
Observability


Chapter 1. Observability service introduction

The observability component is a service you can use to understand the health and utilization of clusters across your fleet. By default, the multicluster observability operator is enabled during the installation of Red Hat Advanced Cluster Management.

Read the following documentation for more details about the observability component:

1.1. Observability architecture

The multiclusterhub-operator enables the multicluster-observability-operator pod by default. You must configure the multicluster-observability-operator pod.

When you enable the service, the observability-endpoint-operator is automatically deployed to each imported or created cluster. This controller collects the data from Red Hat OpenShift Container Platform Prometheus, then sends it to the Red Hat Advanced Cluster Management hub cluster. If the hub cluster is self-managed and imports itself as the local-cluster, observability is also enabled on it and metrics are collected from the hub cluster. As a reminder, when the hub cluster is self-managed, the disableHubSelfManagement parameter is set to false.

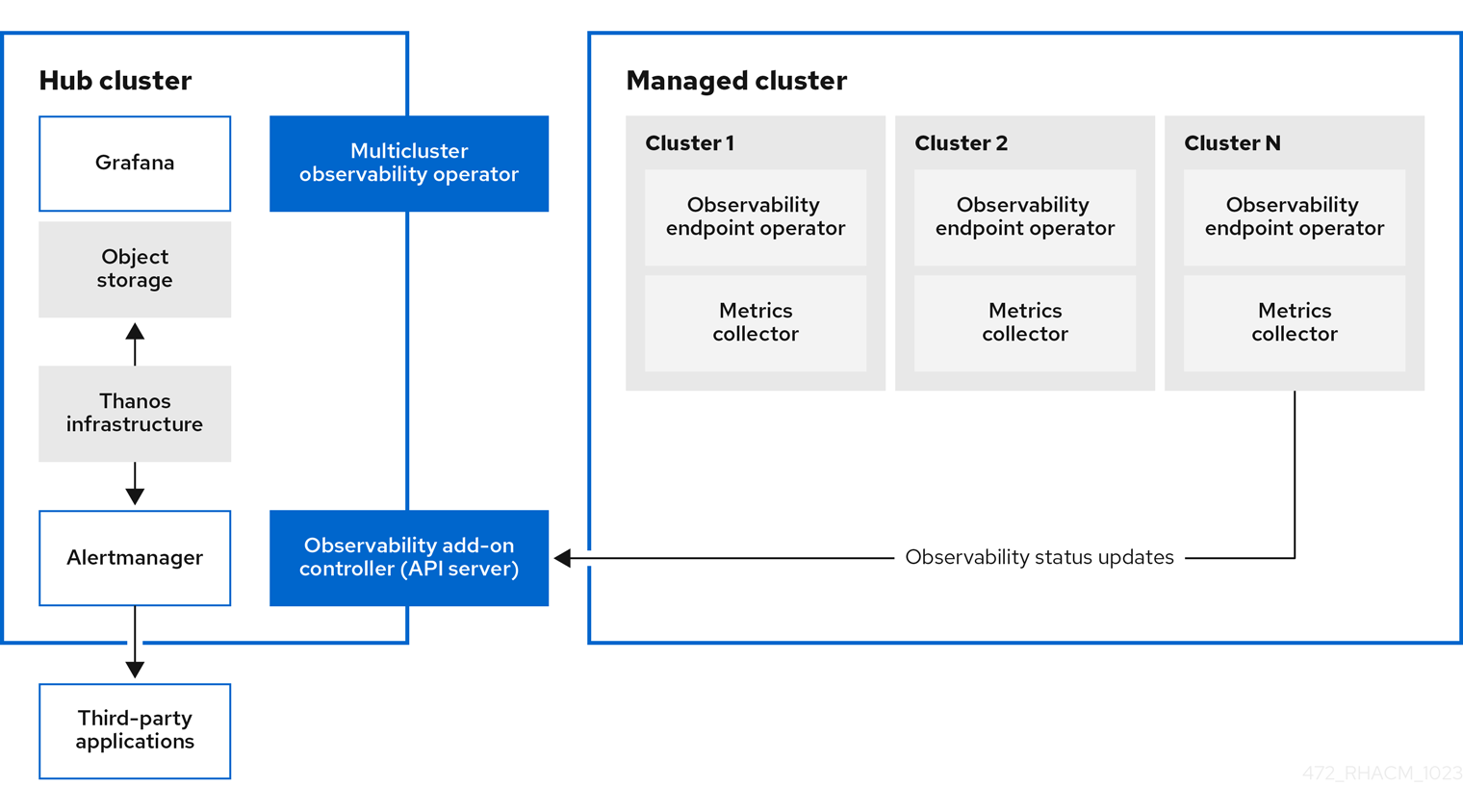

The following diagram shows the components of observability:

The components of the observability architecture include the following items:

- The multicluster hub operator, also known as the multiclusterhub-operator pod, deploys the multicluster-observability-operator pod. It sends hub cluster data to your managed clusters.

- The observability add-on controller is the API server that automatically updates the log of the managed cluster.

- The Thanos infrastructure includes the Thanos Compactor, which is deployed by the multicluster-observability-operator pod. The Thanos Compactor ensures that queries are performing well by using the retention configuration, and compaction of the data in storage.

  To help identify when the Thanos Compactor is experiencing issues, use the four default alerts that are monitoring its health. Read the following table of default alerts:

  Table 1.1. Table of default Thanos alerts

  | Alert | Severity | Description |
  |---|---|---|
  | ACMThanosCompactHalted | critical | An alert is sent when the compactor stops. |
  | ACMThanosCompactHighCompactionFailures | warning | An alert is sent when the compaction failure rate is greater than 5 percent. |
  | ACMThanosCompactBucketHighOperationFailures | warning | An alert is sent when the bucket operation failure rate is greater than 5 percent. |
  | ACMThanosCompactHasNotRun | warning | An alert is sent when the compactor has not uploaded anything in the last 24 hours. |

- The observability component deploys an instance of Grafana to enable data visualization with dashboards (static) or data exploration. Red Hat Advanced Cluster Management supports version 8.5.20 of Grafana. You can also design your Grafana dashboard. For more information, see Designing your Grafana dashboard.

- The Prometheus Alertmanager enables alerts to be forwarded with third-party applications. You can customize the observability service by creating custom recording rules or alerting rules. Red Hat Advanced Cluster Management supports version 0.25 of Prometheus Alertmanager.

1.1.1. Persistent stores used in the observability service

Important: Do not use the local storage operator or a storage class that uses local volumes for persistent storage. You can lose data if the pod is relaunched on a different node after a restart. When this happens, the pod can no longer access the local storage on the node. Be sure that you can access the persistent volumes of the receive and rules pods to avoid data loss.

When you install Red Hat Advanced Cluster Management, the following persistent volumes (PV) must be created so that Persistent Volume Claims (PVC) can attach to them automatically. As a reminder, you must define a storage class in the MultiClusterObservability custom resource when there is no default storage class specified or you want to use a non-default storage class to host the PVs. It is recommended to use Block Storage, similar to what Prometheus uses. Also, each replica of alertmanager, thanos-compactor, thanos-ruler, thanos-receive-default and thanos-store-shard must have its own PV. View the following table:

| Persistent volume name | Purpose |
|---|---|
| alertmanager | Alertmanager stores the |
| thanos-compact | The compactor needs local disk space to store intermediate data for its processing, as well as bucket state cache. The required space depends on the size of the underlying blocks. The compactor must have enough space to download all of the source blocks, then build the compacted blocks on the disk. On-disk data is safe to delete between restarts and should be the first attempt to get crash-looping compactors unstuck. However, it is recommended to give the compactor persistent disks in order to effectively use bucket state cache in between restarts. |
| thanos-rule | The thanos ruler evaluates Prometheus recording and alerting rules against a chosen query API by issuing queries at a fixed interval. Rule results are written back to the disk in the Prometheus 2.0 storage format. The amount of hours or days of data retained in this stateful set was fixed in the API version |
| thanos-receive-default | Thanos receiver accepts incoming data (Prometheus remote-write requests) and writes these into a local instance of the Prometheus TSDB. Periodically (every 2 hours), TSDB blocks are uploaded to the object storage for long term storage and compaction. The amount of hours or days of data retained in this stateful set, which acts as a local cache, was fixed in API Version |
| thanos-store-shard | It acts primarily as an API gateway and therefore does not need a significant amount of local disk space. It joins a Thanos cluster on startup and advertises the data it can access. It keeps a small amount of information about all remote blocks on local disk and keeps it in sync with the bucket. This data is generally safe to delete across restarts at the cost of increased startup times. |

Note: The time series historical data is stored in object stores. Thanos uses object storage as the primary storage for metrics and metadata related to them. For more details about the object storage and downsampling, see Enabling observability service.

1.1.2. Additional resources

1.2. Observability configuration

Continue reading to understand what metrics can be collected with the observability component, and for information about the observability pod capacity.

1.2.1. Metric types

By default, OpenShift Container Platform sends metrics to Red Hat using the Telemetry service. The acm_managed_cluster_info is available with Red Hat Advanced Cluster Management and is included with telemetry, but is not displayed on the Red Hat Advanced Cluster Management Observe environments overview dashboard.

View the following table of metric types that are supported by the framework:

| Metric name | Metric type | Labels/tags | Status |
|---|---|---|---|
| | Gauge | | Stable |
| | Histogram | None | Stable. Read Governance metric for more details. |
| | Histogram | None | Stable. Refer to Governance metric for more details. |
| | Histogram | None | Stable. Read Governance metric for more details. |
| | Gauge | | Stable. Review Governance metric for more details. |
| | Gauge | | Stable. Read Managing insight _PolicyReports_ for more details. |
| | Counter | None | Stable. See the Search components section in the Searching in the console introduction documentation. |
| | Histogram | None | Stable. See the Search components section in the Searching in the console introduction documentation. |
| | Histogram | None | Stable. See the Search components section in the Searching in the console introduction documentation. |
| | Counter | None | Stable. See the Search components section in the Searching in the console introduction documentation. |
| | Histogram | None | Stable. See the Search components section in the Searching in the console introduction documentation. |
| | Gauge | None | Stable. See the Search components section in the Searching in the console introduction documentation. |
| | Histogram | None | Stable. See the Search components section in the Searching in the console introduction documentation. |

1.2.2. Observability pod capacity requests

Observability components require 2701 mCPU and 11972 Mi of memory to install the observability service. The following table is a list of the pod capacity requests for five managed clusters with observability-addons enabled:

| Deployment or StatefulSet | Container name | CPU (mCPU) | Memory (Mi) | Replicas | Pod total CPU | Pod total memory |
|---|---|---|---|---|---|---|
| observability-alertmanager | alertmanager | 4 | 200 | 3 | 12 | 600 |
| | config-reloader | 4 | 25 | 3 | 12 | 75 |
| | alertmanager-proxy | 1 | 20 | 3 | 3 | 60 |
| observability-grafana | grafana | 4 | 100 | 2 | 8 | 200 |
| | grafana-dashboard-loader | 4 | 50 | 2 | 8 | 100 |
| observability-observatorium-api | observatorium-api | 20 | 128 | 2 | 40 | 256 |
| observability-observatorium-operator | observatorium-operator | 100 | 100 | 1 | 10 | 50 |
| observability-rbac-query-proxy | rbac-query-proxy | 20 | 100 | 2 | 40 | 200 |
| | oauth-proxy | 1 | 20 | 2 | 2 | 40 |
| observability-thanos-compact | thanos-compact | 100 | 512 | 1 | 100 | 512 |
| observability-thanos-query | thanos-query | 300 | 1024 | 2 | 600 | 2048 |
| observability-thanos-query-frontend | thanos-query-frontend | 100 | 256 | 2 | 200 | 512 |
| observability-thanos-query-frontend-memcached | memcached | 45 | 128 | 3 | 135 | 384 |
| | exporter | 5 | 50 | 3 | 15 | 150 |
| observability-thanos-receive-controller | thanos-receive-controller | 4 | 32 | 1 | 4 | 32 |
| observability-thanos-receive-default | thanos-receive | 300 | 512 | 3 | 900 | 1536 |
| observability-thanos-rule | thanos-rule | 50 | 512 | 3 | 150 | 1536 |
| | configmap-reloader | 4 | 25 | 3 | 12 | 75 |
| observability-thanos-store-memcached | memcached | 45 | 128 | 3 | 135 | 384 |
| | exporter | 5 | 50 | 3 | 15 | 150 |
| observability-thanos-store-shard | thanos-store | 100 | 1024 | 3 | 300 | 3072 |
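The stated totals of 2701 mCPU and 11972 Mi can be cross-checked against the "Pod total" columns of the table. The following sketch re-types those two columns as plain text and sums them with `awk`. The three-column input format and the `query-frontend-` and `store-` prefixes (used only to tell the two memcached and exporter rows apart) are assumptions of this sketch, not names used by the product.

```shell
#!/bin/sh
# Sum the "Pod total CPU" and "Pod total memory" columns of the capacity table.
# Assumed input format: "<container> <pod total CPU> <pod total memory>" per line.
sum_pod_totals() {
  awk '{ cpu += $2; mem += $3 } END { printf "%d %d\n", cpu, mem }'
}

# Values copied from the table above; the row labels are illustrative only.
pod_totals() {
  cat <<'EOF'
alertmanager 12 600
config-reloader 12 75
alertmanager-proxy 3 60
grafana 8 200
grafana-dashboard-loader 8 100
observatorium-api 40 256
observatorium-operator 10 50
rbac-query-proxy 40 200
oauth-proxy 2 40
thanos-compact 100 512
thanos-query 600 2048
thanos-query-frontend 200 512
query-frontend-memcached 135 384
query-frontend-exporter 15 150
thanos-receive-controller 4 32
thanos-receive 900 1536
thanos-rule 150 1536
configmap-reloader 12 75
store-memcached 135 384
store-exporter 15 150
thanos-store 300 3072
EOF
}

# Prints total mCPU followed by total Mi.
pod_totals | sum_pod_totals
```

The same function can be fed adjusted rows to estimate the effect of changing replica counts before you edit the custom resource.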

1.2.3. Additional resources

- For more information about enabling observability, read Enabling the observability service.

- Read Customizing observability to learn how to configure the observability service, view metrics and other data.

- Read Using Grafana dashboards.

- Learn from the OpenShift Container Platform documentation what types of metrics are collected and sent using telemetry. See Information collected by Telemetry for information.

- Refer to Governance metric for details.

- Read Managing insight PolicyReports.

- Refer to Prometheus recording rules.

- Also refer to Prometheus alerting rules.

- Return to Observability service introduction.

Chapter 2. Enabling the observability service

When you enable the observability service on your hub cluster, the multicluster-observability-operator watches for new managed clusters and automatically deploys metric and alert collection services to the managed clusters. You can use metrics and configure Grafana dashboards to make cluster resource information visible, help you save cost, and prevent service disruptions.

Monitor the status of your managed clusters with the observability component, also known as the multicluster-observability-operator pod.

Required access: Cluster administrator, the open-cluster-management:cluster-manager-admin role, or S3 administrator.

2.1. Prerequisites

- You must install Red Hat Advanced Cluster Management for Kubernetes. See Installing while connected online for more information.

- You must define a storage class in the MultiClusterObservability custom resource, if there is no default storage class specified.

- Direct network access to the hub cluster is required. Network access to load balancers and proxies is not supported. For more information, see Networking.

- You must configure an object store to create a storage solution.

  Important: When you configure your object store, ensure that you meet the encryption requirements that are necessary when sensitive data is persisted. The observability service uses Thanos supported, stable object stores. You might not be able to share an object store bucket across multiple Red Hat Advanced Cluster Management observability installations. Therefore, for each installation, provide a separate object store bucket.

Red Hat Advanced Cluster Management supports the following cloud providers with stable object stores:

- Amazon Web Services S3 (AWS S3)

- Red Hat Ceph (S3 compatible API)

- Google Cloud Storage

- Azure storage

- Red Hat OpenShift Data Foundation, formerly known as Red Hat OpenShift Container Storage

- Red Hat OpenShift on IBM (ROKS)

2.2. Enabling observability from the command line interface

Enable the observability service by creating a MultiClusterObservability custom resource instance. Before you enable observability, see Observability pod capacity requests for more information.

Note:

- When observability is enabled or disabled on OpenShift Container Platform managed clusters that are managed by Red Hat Advanced Cluster Management, the observability endpoint operator updates the cluster-monitoring-config config map by adding additional alertmanager configuration that automatically restarts the local Prometheus.

- The observability endpoint operator updates the cluster-monitoring-config config map by adding additional alertmanager configurations that automatically restart the local Prometheus. Therefore, when you insert the alertmanager configuration in the OpenShift Container Platform managed cluster, the configuration removes the settings that relate to the retention of the Prometheus metrics.

Complete the following steps to enable the observability service:

- Log in to your Red Hat Advanced Cluster Management hub cluster.

- Create a namespace for the observability service with the following command:

      oc create namespace open-cluster-management-observability

- Generate your pull-secret. If Red Hat Advanced Cluster Management is installed in the open-cluster-management namespace, run the following command:

      DOCKER_CONFIG_JSON=`oc extract secret/multiclusterhub-operator-pull-secret -n open-cluster-management --to=-`

  If the multiclusterhub-operator-pull-secret is not defined in the namespace, copy the pull-secret from the openshift-config namespace into the open-cluster-management-observability namespace. Run the following command:

      DOCKER_CONFIG_JSON=`oc extract secret/pull-secret -n openshift-config --to=-`

  Then, create the pull-secret in the open-cluster-management-observability namespace. Run the following command:

      oc create secret generic multiclusterhub-operator-pull-secret \
          -n open-cluster-management-observability \
          --from-literal=.dockerconfigjson="$DOCKER_CONFIG_JSON" \
          --type=kubernetes.io/dockerconfigjson

  Important: If you modify the global pull secret for your cluster by using the OpenShift Container Platform documentation, be sure to also update the global pull secret in the observability namespace. See Updating the global pull secret for more details.

- Create a secret for your object storage for your cloud provider. Your secret must contain the credentials to your storage solution. For example, run the following command:

      oc create -f thanos-object-storage.yaml -n open-cluster-management-observability

  View the following examples of secrets for the supported object stores:

For Amazon S3 or S3 compatible, your secret might resemble the following file:

    apiVersion: v1
    kind: Secret
    metadata:
      name: thanos-object-storage
      namespace: open-cluster-management-observability
    type: Opaque
    stringData:
      thanos.yaml: |
        type: s3
        config:
          bucket: YOUR_S3_BUCKET
          endpoint: YOUR_S3_ENDPOINT
          insecure: true
          access_key: YOUR_ACCESS_KEY
          secret_key: YOUR_SECRET_KEY

For the endpoint value, enter the URL without the protocol. Your Amazon S3 endpoint might resemble the following URL: s3.us-east-1.amazonaws.com.

For more details, see the Amazon Simple Storage Service user guide.
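Because the endpoint value must not include the protocol, it can help to normalize a copied URL before you paste it into the secret. The following is a minimal sketch; the `strip_protocol` helper name is hypothetical and is not part of any Red Hat or Thanos tooling.

```shell
#!/bin/sh
# Remove a leading http:// or https:// so the value fits the endpoint field.
# Values without a protocol pass through unchanged.
strip_protocol() {
  printf '%s\n' "$1" | sed -e 's#^https\{0,1\}://##'
}

strip_protocol "https://s3.us-east-1.amazonaws.com"
```

A value such as `example.redhat.com:443`, which already has no protocol, is returned as-is, so the helper is safe to apply to any candidate endpoint.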

For Google Cloud Platform, your secret might resemble the following file:

    apiVersion: v1
    kind: Secret
    metadata:
      name: thanos-object-storage
      namespace: open-cluster-management-observability
    type: Opaque
    stringData:
      thanos.yaml: |
        type: GCS
        config:
          bucket: YOUR_GCS_BUCKET
          service_account: YOUR_SERVICE_ACCOUNT

For more details, see Google Cloud Storage.

For Azure, your secret might resemble the following file:

    apiVersion: v1
    kind: Secret
    metadata:
      name: thanos-object-storage
      namespace: open-cluster-management-observability
    type: Opaque
    stringData:
      thanos.yaml: |
        type: AZURE
        config:
          storage_account: YOUR_STORAGE_ACCT
          storage_account_key: YOUR_STORAGE_KEY
          container: YOUR_CONTAINER
          endpoint: blob.core.windows.net
          max_retries: 0

For the endpoint value, note the following behavior:

- If you use the msi_resource path, the endpoint authentication is completed by using the system-assigned managed identity. Your value must resemble the following endpoint: https://<storage-account-name>.blob.core.windows.net.

- If you use the user_assigned_id path, endpoint authentication is completed by using the user-assigned managed identity. When you use the user_assigned_id, the msi_resource endpoint default value is https://<storage_account>.<endpoint>.

For more details, see Azure Storage documentation.

Note: If you use Azure as an object storage for a Red Hat OpenShift Container Platform cluster, the storage account associated with the cluster is not supported. You must create a new storage account.

For Red Hat OpenShift Data Foundation, your secret might resemble the following file:

    apiVersion: v1
    kind: Secret
    metadata:
      name: thanos-object-storage
      namespace: open-cluster-management-observability
    type: Opaque
    stringData:
      thanos.yaml: |
        type: s3
        config:
          bucket: YOUR_RH_DATA_FOUNDATION_BUCKET
          endpoint: YOUR_RH_DATA_FOUNDATION_ENDPOINT
          insecure: false
          access_key: YOUR_RH_DATA_FOUNDATION_ACCESS_KEY
          secret_key: YOUR_RH_DATA_FOUNDATION_SECRET_KEY

For the endpoint value, enter the URL without the protocol. Your Red Hat OpenShift Data Foundation endpoint might resemble the following URL: example.redhat.com:443.

For more details, see Red Hat OpenShift Data Foundation.

For Red Hat OpenShift on IBM (ROKS), your secret might resemble the following file:

    apiVersion: v1
    kind: Secret
    metadata:
      name: thanos-object-storage
      namespace: open-cluster-management-observability
    type: Opaque
    stringData:
      thanos.yaml: |
        type: s3
        config:
          bucket: YOUR_ROKS_S3_BUCKET
          endpoint: YOUR_ROKS_S3_ENDPOINT
          insecure: true
          access_key: YOUR_ROKS_ACCESS_KEY
          secret_key: YOUR_ROKS_SECRET_KEY

For the endpoint value, enter the URL without the protocol. Your ROKS object storage endpoint might resemble the following URL: example.redhat.com:443.

For more details, follow the IBM Cloud documentation, Cloud Object Storage. Be sure to use the service credentials to connect with the object storage. For more details, follow the IBM Cloud documentation, Cloud Object Store and Service Credentials.

2.2.1. Configuring storage for AWS Security Token Service

For Amazon S3 or S3 compatible storage, you can also use short term, limited-privilege credentials that are generated with AWS Security Token Service (AWS STS). Refer to AWS Security Token Service documentation for more details.

Generating access keys using AWS Security Service requires the following additional steps:

- Create an IAM policy that limits access to an S3 bucket.

- Create an IAM role with a trust policy to generate JWT tokens for OpenShift Container Platform service accounts.

- Specify annotations for the observability service accounts that require access to the S3 bucket.

You can find an example of how observability on a Red Hat OpenShift Service on AWS (ROSA) cluster can be configured to work with AWS STS tokens in the Set environment step. See Red Hat OpenShift Service on AWS (ROSA) for more details, along with ROSA with STS explained for an in-depth description of the requirements and setup to use STS tokens.
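The trust policy identifies each service account by a subject string of the form `system:serviceaccount:<namespace>:<name>`. The following sketch prints the subjects for the five Thanos service accounts that the trust policy in the next section lists. The `trust_policy_subjects` function name is hypothetical, and the namespace argument assumes the default observability namespace.

```shell
#!/bin/sh
# Print the subject string for each observability service account
# that needs access to the S3 bucket. $1 is the namespace.
trust_policy_subjects() {
  namespace="$1"
  for sa in observability-thanos-query \
            observability-thanos-store-shard \
            observability-thanos-compact \
            observability-thanos-rule \
            observability-thanos-receive; do
    printf 'system:serviceaccount:%s:%s\n' "$namespace" "$sa"
  done
}

trust_policy_subjects open-cluster-management-observability
```

Generating the list this way keeps the namespace in one place, which reduces the chance of a typo in one of the entries when the policy is written by hand.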

2.2.2. Generating access keys using the AWS Security Service

Complete the following steps to generate access keys using the AWS Security Service:

- Set up the AWS environment. Run the following commands:

      export POLICY_VERSION=$(date +"%m-%d-%y")
      export TRUST_POLICY_VERSION=$(date +"%m-%d-%y")
      export CLUSTER_NAME=<my-cluster>
      export S3_BUCKET=$CLUSTER_NAME-acm-observability
      export REGION=us-east-2
      export NAMESPACE=open-cluster-management-observability
      export SA=tbd
      export SCRATCH_DIR=/tmp/scratch
      export OIDC_PROVIDER=$(oc get authentication.config.openshift.io cluster -o json | jq -r .spec.serviceAccountIssuer| sed -e "s/^https:\/\///")
      export AWS_ACCOUNT_ID=$(aws sts get-caller-identity --query Account --output text)
      export AWS_PAGER=""
      rm -rf $SCRATCH_DIR
      mkdir -p $SCRATCH_DIR

- Create an S3 bucket with the following command:

      aws s3 mb s3://$S3_BUCKET

- Create an s3-policy JSON file for access to your S3 bucket. Save the following content as $SCRATCH_DIR/s3-policy.json:

      {
        "Version": "$POLICY_VERSION",
        "Statement": [
          {
            "Sid": "Statement",
            "Effect": "Allow",
            "Action": [
              "s3:ListBucket",
              "s3:GetObject",
              "s3:DeleteObject",
              "s3:PutObject",
              "s3:PutObjectAcl",
              "s3:CreateBucket",
              "s3:DeleteBucket"
            ],
            "Resource": [
              "arn:aws:s3:::$S3_BUCKET/*",
              "arn:aws:s3:::$S3_BUCKET"
            ]
          }
        ]
      }

- Apply the policy with the following command:

      S3_POLICY=$(aws iam create-policy --policy-name $CLUSTER_NAME-acm-obs \
          --policy-document file://$SCRATCH_DIR/s3-policy.json \
          --query 'Policy.Arn' --output text)
      echo $S3_POLICY

- Create a TrustPolicy JSON file. Save the following content as $SCRATCH_DIR/TrustPolicy.json:

      {
        "Version": "$TRUST_POLICY_VERSION",
        "Statement": [
          {
            "Effect": "Allow",
            "Principal": {
              "Federated": "arn:aws:iam::${AWS_ACCOUNT_ID}:oidc-provider/${OIDC_PROVIDER}"
            },
            "Action": "sts:AssumeRoleWithWebIdentity",
            "Condition": {
              "StringEquals": {
                "${OIDC_PROVIDER}:sub": [
                  "system:serviceaccount:${NAMESPACE}:observability-thanos-query",
                  "system:serviceaccount:${NAMESPACE}:observability-thanos-store-shard",
                  "system:serviceaccount:${NAMESPACE}:observability-thanos-compact",
                  "system:serviceaccount:${NAMESPACE}:observability-thanos-rule",
                  "system:serviceaccount:${NAMESPACE}:observability-thanos-receive"
                ]
              }
            }
          }
        ]
      }

- Create a role for AWS Prometheus and CloudWatch with the following command:

      S3_ROLE=$(aws iam create-role \
          --role-name "$CLUSTER_NAME-acm-obs-s3" \
          --assume-role-policy-document file://$SCRATCH_DIR/TrustPolicy.json \
          --query "Role.Arn" --output text)
      echo $S3_ROLE

- Attach the policies to the role. Run the following command:

      aws iam attach-role-policy \
          --role-name "$CLUSTER_NAME-acm-obs-s3" \
          --policy-arn $S3_POLICY

  Your secret might resemble the following file. The config section specifies signature_version2: false and does not specify access_key and secret_key:

      apiVersion: v1
      kind: Secret
      metadata:
        name: thanos-object-storage
        namespace: open-cluster-management-observability
      type: Opaque
      stringData:
        thanos.yaml: |
          type: s3
          config:
            bucket: $S3_BUCKET
            endpoint: s3.$REGION.amazonaws.com
            signature_version2: false

- Specify service account annotations when you create the MultiClusterObservability custom resource, as described in the Creating the MultiClusterObservability custom resource section.

- You can retrieve the S3 access key and secret key for your cloud providers with the following commands. You must decode, edit, and encode your base64 string in the secret:

      YOUR_CLOUD_PROVIDER_ACCESS_KEY=$(oc -n open-cluster-management-observability get secret <object-storage-secret> -o jsonpath="{.data.thanos\.yaml}" | base64 --decode | grep access_key | awk '{print $2}')
      echo $YOUR_CLOUD_PROVIDER_ACCESS_KEY

      YOUR_CLOUD_PROVIDER_SECRET_KEY=$(oc -n open-cluster-management-observability get secret <object-storage-secret> -o jsonpath="{.data.thanos\.yaml}" | base64 --decode | grep secret_key | awk '{print $2}')
      echo $YOUR_CLOUD_PROVIDER_SECRET_KEY

- Verify that observability is enabled by checking the pods for the following deployments and stateful sets. You might receive the following information:

      observability-thanos-query (deployment)
      observability-thanos-compact (statefulset)
      observability-thanos-receive-default (statefulset)
      observability-thanos-rule (statefulset)
      observability-thanos-store-shard-x (statefulsets)
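The decode, grep, and awk pipeline that retrieves the keys can be tried offline before you run it against a cluster. The following sketch applies the same pipeline to a locally built sample payload. The `thanos_field` function name and the `DEMO_` placeholder values are hypothetical; on a real cluster the input comes from the `oc get secret` command instead.

```shell
#!/bin/sh
# Read a base64-encoded thanos.yaml payload on stdin and print the value
# of the field named in $1, using the decode | grep | awk approach.
thanos_field() {
  base64 -d | grep "$1" | awk '{print $2}'
}

# Sample payload with placeholder values, encoded the way a Secret stores it.
sample=$(printf 'type: s3\nconfig:\n  bucket: demo-bucket\n  access_key: DEMO_ACCESS\n  secret_key: DEMO_SECRET\n' | base64 | tr -d '\n')

printf '%s' "$sample" | thanos_field access_key
printf '%s' "$sample" | thanos_field secret_key
```

Note that the pipeline matches on the field name as plain text, so it assumes each key appears on exactly one line of thanos.yaml.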

2.2.3. Creating the MultiClusterObservability custom resource

Use the MultiClusterObservability custom resource to specify the persistent volume storage size for various components. You must set the storage size during the initial creation of the MultiClusterObservability custom resource. When you update the storage size values post-deployment, changes take effect only if the storage class supports dynamic volume expansion. For more information, see Expanding persistent volumes from the Red Hat OpenShift Container Platform documentation.

Complete the following steps to create the MultiClusterObservability custom resource on your hub cluster:

- Create the MultiClusterObservability custom resource YAML file named multiclusterobservability_cr.yaml.

  View the following default YAML file for observability:

      apiVersion: observability.open-cluster-management.io/v1beta2
      kind: MultiClusterObservability
      metadata:
        name: observability
      spec:
        observabilityAddonSpec: {}
        storageConfig:
          metricObjectStorage:
            name: thanos-object-storage
            key: thanos.yaml

  You might want to modify the value for the retentionConfig parameter in the advanced section. For more information, see Thanos Downsampling resolution and retention. Depending on the number of managed clusters, you might want to update the amount of storage for stateful sets. If your S3 bucket is configured to use STS tokens, annotate the service accounts to use STS with S3 role. View the following configuration:

      spec:
        advanced:
          compact:
            serviceAccountAnnotations:
              eks.amazonaws.com/role-arn: $S3_ROLE
          store:
            serviceAccountAnnotations:
              eks.amazonaws.com/role-arn: $S3_ROLE
          rule:
            serviceAccountAnnotations:
              eks.amazonaws.com/role-arn: $S3_ROLE
          receive:
            serviceAccountAnnotations:
              eks.amazonaws.com/role-arn: $S3_ROLE
          query:
            serviceAccountAnnotations:
              eks.amazonaws.com/role-arn: $S3_ROLE

  See Observability API for more information.

- To deploy on infrastructure machine sets, you must set a label for your set by updating the nodeSelector in the MultiClusterObservability YAML. Your YAML might resemble the following content:

      nodeSelector:
        node-role.kubernetes.io/infra:

  For more information, see Creating infrastructure machine sets.

- Apply the observability YAML to your cluster by running the following command:

      oc apply -f multiclusterobservability_cr.yaml

  All the pods in the open-cluster-management-observability namespace for Thanos, Grafana and Alertmanager are created. All the managed clusters connected to the Red Hat Advanced Cluster Management hub cluster are enabled to send metrics back to the Red Hat Advanced Cluster Management Observability service.

- Validate that the observability service is enabled and the data is populated by launching the Grafana dashboards.

  - Click the Grafana link that is near the console header, from either the console Overview page or the Clusters page.

  - Alternatively, access the OpenShift Container Platform 3.11 Grafana dashboards with the following URL: https://$ACM_URL/grafana/dashboards.

  - To view the OpenShift Container Platform 3.11 dashboards, select the folder named OCP 3.11.

- Access the multicluster-observability-operator deployment to verify that the multicluster-observability-operator pod is being deployed by the multiclusterhub-operator deployment. Run the following command:

      oc get deploy multicluster-observability-operator -n open-cluster-management --show-labels

      NAME                                  READY   UP-TO-DATE   AVAILABLE   AGE   LABELS
      multicluster-observability-operator   1/1     1            1           35m   installer.name=multiclusterhub,installer.namespace=open-cluster-management

- View the labels section of the multicluster-observability-operator deployment for labels that are associated with the resource. The labels section might contain the following details:

      labels:
        installer.name: multiclusterhub
        installer.namespace: open-cluster-management

- Optional: If you want to exclude specific managed clusters from collecting the observability data, add the following cluster label to your clusters: observability: disabled.

The observability service is enabled. After you enable the observability service, the following functions are initiated:

- All the alert managers from the managed clusters are forwarded to the Red Hat Advanced Cluster Management hub cluster.

- All the managed clusters that are connected to the Red Hat Advanced Cluster Management hub cluster are enabled to send alerts back to the Red Hat Advanced Cluster Management observability service. You can configure the Red Hat Advanced Cluster Management Alertmanager to take care of deduplicating, grouping, and routing the alerts to the correct receiver integration such as email, PagerDuty, or OpsGenie. You can also handle silencing and inhibition of the alerts.

Note: Alert forwarding to the Red Hat Advanced Cluster Management hub cluster feature is only supported by managed clusters with Red Hat OpenShift Container Platform version 4.12 or later. After you install Red Hat Advanced Cluster Management with observability enabled, alerts from OpenShift Container Platform 4.12 and later are automatically forwarded to the hub cluster. See Forwarding alerts to learn more.

2.3. Enabling observability from the Red Hat OpenShift Container Platform console

Optionally, you can enable observability from the Red Hat OpenShift Container Platform console. Create a project named open-cluster-management-observability. Be sure to create an image pull-secret named multiclusterhub-operator-pull-secret in the open-cluster-management-observability project.

Create your object storage secret named thanos-object-storage in the open-cluster-management-observability project. Enter the object storage secret details, then click Create. See step four of the Enabling observability section to view an example of a secret.

Create the MultiClusterObservability custom resource instance. When you receive the following message, the observability service is enabled successfully from OpenShift Container Platform: Observability components are deployed and running.

2.3.1. Verifying the Thanos version

After Thanos is deployed on your cluster, verify the Thanos version from the command line interface (CLI).

After you log in to your hub cluster, run the following command in the observability pods to receive the Thanos version:

    thanos --version

The Thanos version is displayed.

2.4. Disabling observability

You can disable observability, which stops data collection on the Red Hat Advanced Cluster Management hub cluster.

2.4.1. Disabling observability on all clusters

Disable observability by removing observability components on all managed clusters. Update the multicluster-observability-operator resource by setting enableMetrics to false. Your updated resource might resemble the following change:

    spec:
      imagePullPolicy: Always
      imagePullSecret: multiclusterhub-operator-pull-secret
      observabilityAddonSpec: # The ObservabilityAddonSpec defines the global settings for all managed clusters which have observability add-on enabled
        enableMetrics: false # indicates the observability addon push metrics to hub server

2.4.2. Disabling observability on a single cluster

Disable observability by removing observability components on specific managed clusters. Add the observability: disabled label to the managedclusters.cluster.open-cluster-management.io custom resource. From the Red Hat Advanced Cluster Management console Clusters page, add the observability=disabled label to the specified cluster.

Note: When a managed cluster with the observability component is detached, the metrics-collector deployments are removed.
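As an alternative to the console, the label can be applied from the command line with `oc label`. The following sketch only builds and prints the command so that you can review it before running it. The `observability_disable_cmd` function name and the `my-managed-cluster` cluster name are hypothetical.

```shell
#!/bin/sh
# Build the command that adds the observability=disabled label to a
# ManagedCluster resource. $1 is the managed cluster name.
# The command is printed, not executed, so it can be reviewed first.
observability_disable_cmd() {
  printf 'oc label managedcluster %s observability=disabled --overwrite\n' "$1"
}

observability_disable_cmd my-managed-cluster
```

The `--overwrite` flag lets the command succeed when the cluster already has an `observability` label with a different value.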

2.5. Removing observability

When you remove the MultiClusterObservability custom resource, you are disabling and uninstalling the observability service. From the OpenShift Container Platform console navigation, select Operators > Installed Operators > Advanced Cluster Manager for Kubernetes. Remove the MultiClusterObservability custom resource.

2.6. Additional resources

Links to cloud provider documentation for object storage information:

- See Using observability.

- To learn more about customizing the observability service, see Customizing observability.

- For more related topics, return to the Observability service introduction.

Chapter 3. Using observability

Use the observability service to view the utilization of clusters across your fleet.

3.1. Querying metrics using the observability API

Observability provides an external API for metrics to be queried through the OpenShift route, rbac-query-proxy. See the following options to get your queries for the rbac-query-proxy route:

You can get the details of the route with the following command:

oc get route rbac-query-proxy -n open-cluster-management-observability

You can also access the rbac-query-proxy route with your OpenShift OAuth access token. The token should be associated with a user or service account that has permission to get namespaces. For more information, see Managing user-owned OAuth access tokens.

Complete the following steps to create proxy-byo-cert secrets for observability:

Get the default CA certificate and store the content of the tls.crt key in a local file. Run the following command:

oc -n openshift-ingress get secret router-certs-default -o jsonpath="{.data.tls\.crt}" | base64 -d > ca.crt

Run the following command to query metrics:

curl --cacert ./ca.crt -H "Authorization: Bearer {TOKEN}" https://{PROXY_ROUTE_URL}/api/v1/query?query={QUERY_EXPRESSION}

Note: The QUERY_EXPRESSION is the standard Prometheus query expression. For example, query the cluster_infrastructure_provider metric by replacing the URL in the previously mentioned command with the following URL: https://{PROXY_ROUTE_URL}/api/v1/query?query=cluster_infrastructure_provider. For more details, see Querying Prometheus.

Run the following command to create proxy-byo-ca secrets using the generated certificates:

oc -n open-cluster-management-observability create secret tls proxy-byo-ca --cert ./ca.crt --key ./ca.key

Create proxy-byo-cert secrets using the generated certificates by using the following command:

oc -n open-cluster-management-observability create secret tls proxy-byo-cert --cert ./ingress.crt --key ./ingress.key
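The query URL used in the curl step can also be assembled programmatically. The following Python sketch only builds the request and does not send it. The function name build_query_request, the host rbac-query-proxy.example.com, and the token value are placeholders for illustration and are not part of the product.

```python
from urllib.parse import urlencode
from urllib.request import Request


def build_query_request(proxy_route_host: str, token: str, query_expression: str) -> Request:
    """Build (but do not send) a GET request for the rbac-query-proxy query endpoint."""
    # urlencode percent-encodes characters such as { } = and " in the PromQL expression.
    params = urlencode({"query": query_expression})
    url = "https://" + proxy_route_host + "/api/v1/query?" + params
    return Request(url, headers={"Authorization": "Bearer " + token})


# Placeholder host and token, for illustration only.
req = build_query_request(
    "rbac-query-proxy.example.com",
    "sha256~example-token",
    'cluster_infrastructure_provider{clusterType="SNO"}',
)
print(req.full_url)
```

To send the request, you could pass it to urllib.request.urlopen together with an SSL context created by ssl.create_default_context(cafile="ca.crt"), which plays the same role as the --cacert option in the curl command.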

3.2. Exporting metrics to external endpoints

Export metrics to external endpoints, which support the Prometheus Remote-Write specification in real time. Complete the following steps to export metrics to external endpoints:

Create the Kubernetes secret for an external endpoint with the access information of the external endpoint in the open-cluster-management-observability namespace. View the following example secret:

apiVersion: v1
kind: Secret
metadata:
  name: victoriametrics
  namespace: open-cluster-management-observability
type: Opaque
stringData:
  ep.yaml: |
    url: http://victoriametrics:8428/api/v1/write
    http_client_config:
      basic_auth:
        username: test
        password: test

The ep.yaml is the key of the content and is used in the MultiClusterObservability custom resource in the next step. Currently, observability supports exporting metrics to endpoints without any security checks, with basic authentication, or with TLS enablement. View the following tables for a full list of supported parameters:

| Name | Description | Schema |
| --- | --- | --- |
| url (required) | URL for the external endpoint. | string |
| http_client_config (optional) | Advanced configuration for the HTTP client. | HttpClientConfig |

HttpClientConfig

| Name | Description | Schema |
| --- | --- | --- |
| basic_auth (optional) | HTTP client configuration for basic authentication. | BasicAuth |
| tls_config (optional) | HTTP client configuration for TLS. | TLSConfig |

BasicAuth

| Name | Description | Schema |
| --- | --- | --- |
| username (optional) | User name for basic authorization. | string |
| password (optional) | Password for basic authorization. | string |

TLSConfig

| Name | Description | Schema |
| --- | --- | --- |
| secret_name (required) | Name of the secret that contains certificates. | string |
| ca_file_key (optional) | Key of the CA certificate in the secret (only optional if insecure_skip_verify is set to true). | string |
| cert_file_key (required) | Key of the client certificate in the secret. | string |
| key_file_key (required) | Key of the client key in the secret. | string |
| insecure_skip_verify (optional) | Parameter to skip the verification for target certificate. | bool |

Add the writeStorage parameter to the MultiClusterObservability custom resource to add a list of external endpoints to which you want to export metrics. View the following example:

spec:
  storageConfig:
    writeStorage: # (1)
    - key: ep.yaml
      name: victoriametrics

(1) Each item contains two attributes: name and key. Name is the name of the Kubernetes secret that contains endpoint access information, and key is the key of the content in the secret. If you add more than one item to the list, then the metrics are exported to multiple external endpoints.

View the status of metric export after the metrics export is enabled by checking the acm_remote_write_requests_total metric.

- From the OpenShift Container Platform console of your hub cluster, navigate to the Metrics page by clicking Metrics in the Observe section.

- Then query the acm_remote_write_requests_total metric. The value of that metric is the total number of requests with a specific response for one external endpoint, on one observatorium API instance. The name label is the name for the external endpoint. The code label is the return code of the HTTP request for the metrics export.
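The required and optional markers in the parameter tables can be expressed as a small validation routine. The following Python sketch checks an already parsed ep.yaml mapping against those tables. The function name validate_endpoint and the wording of the messages are hypothetical, and the sketch is not how the operator itself validates the secret.

```python
def validate_endpoint(config):
    """Return a list of problems found in a parsed ep.yaml mapping (empty if none)."""
    problems = []
    if not config.get("url"):
        problems.append("url is required")
    http = config.get("http_client_config") or {}
    tls = http.get("tls_config")
    if tls is not None:
        # These three keys are marked as required in the TLSConfig table.
        for key in ("secret_name", "cert_file_key", "key_file_key"):
            if not tls.get(key):
                problems.append("tls_config." + key + " is required")
        # ca_file_key is only optional when insecure_skip_verify is true.
        if not tls.get("insecure_skip_verify") and not tls.get("ca_file_key"):
            problems.append("tls_config.ca_file_key is required unless insecure_skip_verify is true")
    return problems


# The basic authentication example from the secret passes the check.
basic_auth_example = {
    "url": "http://victoriametrics:8428/api/v1/write",
    "http_client_config": {"basic_auth": {"username": "test", "password": "test"}},
}
print(validate_endpoint(basic_auth_example))
```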

3.3. Viewing and exploring data by using dashboards

View the data from your managed clusters by accessing Grafana from the hub cluster. You can query specific alerts and add filters for the query.

For example, to explore the cluster_infrastructure_provider metric from a single-node OpenShift cluster, use the following query expression: cluster_infrastructure_provider{clusterType="SNO"}

Note: Do not set the ObservabilitySpec.resources.CPU.limits parameter if observability is enabled on single node managed clusters. When you set the CPU limits, it causes the observability pod to be counted against the capacity for your managed cluster. See the reference for Management Workload Partitioning in the Additional resources section.

3.3.1. Viewing historical data

When you query historical data, manually set your query parameter options to control how much data is displayed from the dashboard. Complete the following steps:

- From your hub cluster, select the Grafana link that is in the console header.

- Edit your cluster dashboard by selecting Edit Panel.

- From the Query front-end data source in Grafana, click the Query tab.

- Select $datasource.

- If you want to see more data, increase the value of the Step parameter section. If the Step parameter section is empty, it is automatically calculated.

- Find the Custom query parameters field and select max_source_resolution=auto.

- To verify that the data is displayed, refresh your Grafana page.

Your query data appears from the Grafana dashboard.

3.3.2. Viewing Red Hat Advanced Cluster Management dashboards

When you enable the Red Hat Advanced Cluster Management observability service, three dashboards become available. View the following dashboard descriptions:

- Alert Analysis: Overview dashboard of the alerts being generated within the managed cluster fleet.

- Clusters by Alert: Alert dashboard where you can filter by the alert name.

- Alerts by Cluster: Alert dashboard where you can filter by cluster, and view real-time data for alerts that are initiated or pending within the cluster environment.

3.3.3. Viewing the etcd table

You can also view the etcd table from the hub cluster dashboard in Grafana to learn the stability of the etcd as a data store. Select the Grafana link from your hub cluster to view the etcd table data, which is collected from your hub cluster. The Leader election changes across managed clusters are displayed.

3.3.4. Viewing the Kubernetes API server dashboard

View the following options to view the Kubernetes API server dashboards:

View the cluster fleet Kubernetes API service-level overview from the hub cluster dashboard in Grafana.

- Navigate to the Grafana dashboard.

Access the managed dashboard menu by selecting Kubernetes > Service-Level Overview > API Server. The Fleet Overview and Top Cluster details are displayed.

The total number of clusters that are exceeding or meeting the targeted service-level objective (SLO) value for the past seven or 30-day period, the offending and non-offending clusters, and the API Server Request Duration are displayed.

View the Kubernetes API service-level overview table from the hub cluster dashboard in Grafana.

- Navigate to the Grafana dashboard from your hub cluster.

Access the managed dashboard menu by selecting Kubernetes > Service-Level Overview > API Server. The Fleet Overview and Top Cluster details are displayed.

The error budget for the past seven or 30-day period, the remaining downtime, and trend are displayed.
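As a worked illustration of how an error budget relates to an SLO target, the following Python sketch computes the allowed downtime for a window and how much of it remains. The 99% target and the 99.5% observed availability are assumed values chosen for the arithmetic. They are not the targets that the dashboard uses, and the function names are hypothetical.

```python
def error_budget_minutes(slo_target, window_days):
    """Total downtime, in minutes, that the SLO allows over the window."""
    return (1.0 - slo_target) * window_days * 24 * 60


def remaining_budget_minutes(slo_target, window_days, observed_availability):
    """Allowed downtime minus the downtime already spent in the window."""
    spent = (1.0 - observed_availability) * window_days * 24 * 60
    return error_budget_minutes(slo_target, window_days) - spent


# Assumed values: a 99% target over 30 days, with 99.5% availability observed so far.
budget = error_budget_minutes(0.99, 30)
remaining = remaining_budget_minutes(0.99, 30, 0.995)
print(round(budget), round(remaining))
```

With these assumed values, the budget is roughly 432 minutes for the 30-day window, and about half of it remains.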

3.4. Additional resources

- For more information, see Prometheus Remote-Write specification.

- Read Enabling the observability service.

- For more topics, return to Observability service introduction.

3.5. Using Grafana dashboards

Use Grafana dashboards to view hub cluster and managed cluster metrics. The data displayed in the Grafana alerts dashboard relies on alerts metrics, originating from managed clusters. The alerts metric does not affect managed clusters forwarding alerts to Red Hat Advanced Cluster Management alert manager on the hub cluster. Therefore, the metrics and alerts have distinct propagation mechanisms and follow separate code paths.

Even if you see data in the Grafana alerts dashboard, that does not guarantee that the managed cluster alerts are successfully forwarding to the Red Hat Advanced Cluster Management hub cluster alert manager. If the metrics are propagated from the managed clusters, you can view the data displayed in the Grafana alerts dashboard.

To use the Grafana dashboards for your development needs, complete the following:

3.5.1. Setting up the Grafana developer instance

You can design your Grafana dashboard by creating a grafana-dev instance. Be sure to use the most current grafana-dev instance.

Complete the following steps to set up the Grafana developer instance:

- Clone the open-cluster-management/multicluster-observability-operator/ repository, so that you are able to run the scripts that are in the tools folder.

- Run the setup-grafana-dev.sh script to set up your Grafana instance. When you run the script, the following resources are created: secret/grafana-dev-config, deployment.apps/grafana-dev, service/grafana-dev, ingress.extensions/grafana-dev, persistentvolumeclaim/grafana-dev:

./setup-grafana-dev.sh --deploy
secret/grafana-dev-config created
deployment.apps/grafana-dev created
service/grafana-dev created
serviceaccount/grafana-dev created
clusterrolebinding.rbac.authorization.k8s.io/open-cluster-management:grafana-crb-dev created
route.route.openshift.io/grafana-dev created
persistentvolumeclaim/grafana-dev created
oauthclient.oauth.openshift.io/grafana-proxy-client-dev created
deployment.apps/grafana-dev patched
service/grafana-dev patched
route.route.openshift.io/grafana-dev patched
oauthclient.oauth.openshift.io/grafana-proxy-client-dev patched
clusterrolebinding.rbac.authorization.k8s.io/open-cluster-management:grafana-crb-dev patched

- Switch the user role to Grafana administrator with the switch-to-grafana-admin.sh script.

- Select the Grafana URL, https://grafana-dev-open-cluster-management-observability.{OPENSHIFT_INGRESS_DOMAIN}, and log in.

- Then run the following command to add the switched user as Grafana administrator. For example, after you log in using kubeadmin, run the following command:

./switch-to-grafana-admin.sh kube:admin
User <kube:admin> switched to be grafana admin

The Grafana developer instance is set up.

3.5.1.1. Verifying Grafana version

Verify the Grafana version from the command line interface (CLI) or from the Grafana user interface.

After you log in to your hub cluster, access the observability-grafana pod terminal. Run the following command:

grafana-cli

The Grafana version that is currently deployed within the cluster environment is displayed.

Alternatively, you can navigate to the Manage tab in the Grafana dashboard. Scroll to the end of the page, where the version is listed.

3.5.2. Designing your Grafana dashboard

After you set up the Grafana instance, you can design the dashboard. Complete the following steps to refresh the Grafana console and design your dashboard:

- From the Grafana console, create a dashboard by selecting the Create icon from the navigation panel. Select Dashboard, and then click Add new panel.

- From the New Dashboard/Edit Panel view, navigate to the Query tab.

- Configure your query by selecting Observatorium from the data source selector and enter a PromQL query.

- From the Grafana dashboard header, click the Save icon.

- Add a descriptive name and click Save.

3.5.2.1. Designing your Grafana dashboard with a ConfigMap

Design your Grafana dashboard with a ConfigMap. You can use the generate-dashboard-configmap-yaml.sh script to generate the dashboard ConfigMap, and to save the ConfigMap locally:

./generate-dashboard-configmap-yaml.sh "Your Dashboard Name"

Save dashboard <your-dashboard-name> to ./your-dashboard-name.yaml

If you do not have permissions to run the previously mentioned script, complete the following steps:

- Select a dashboard and click the Dashboard settings icon.

- Click the JSON Model icon from the navigation panel.

- Copy the dashboard JSON data and paste it in the data section.

- Modify the name and replace $your-dashboard-name. Enter a universally unique identifier (UUID) in the uid field in data.$your-dashboard-name.json.$$your_dashboard_json. You can use a program such as uuidgen to create a UUID. Your ConfigMap might resemble the following file:

kind: ConfigMap
apiVersion: v1
metadata:
  name: $your-dashboard-name
  namespace: open-cluster-management-observability
  labels:
    grafana-custom-dashboard: "true"
data:
  $your-dashboard-name.json: |-
    $your_dashboard_json

Notes:

- If your dashboard is created within the grafana-dev instance, you can take the name of the dashboard and pass it as an argument in the script. For example, a dashboard named Demo Dashboard is created in the grafana-dev instance. From the CLI, you can run the following script:

./generate-dashboard-configmap-yaml.sh "Demo Dashboard"

After running the script, you might receive the following message:

Save dashboard <demo-dashboard> to ./demo-dashboard.yaml

- If your dashboard is not in the General folder, you can specify the folder name in the annotations section of this ConfigMap:

annotations:
  observability.open-cluster-management.io/dashboard-folder: Custom

After you complete your updates for the ConfigMap, you can install it to import the dashboard to the Grafana instance.

Verify that the YAML file is created by applying the YAML from the CLI or OpenShift Container Platform console. A ConfigMap within the open-cluster-management-observability namespace is created. Run the following command from the CLI:

oc apply -f demo-dashboard.yaml

From the OpenShift Container Platform console, create the ConfigMap using the demo-dashboard.yaml file. The dashboard is located in the Custom folder.
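If you cannot run the generate-dashboard-configmap-yaml.sh script, the manual steps can be approximated in a few lines of Python. The following is a minimal sketch and not a replacement for the script. The function name dashboard_configmap is hypothetical, and the naming rule (lowercase, with spaces replaced by hyphens) is inferred from the Demo Dashboard example. The sketch prints JSON, which oc apply -f also accepts as a manifest format.

```python
import json
import uuid
from typing import Optional


def dashboard_configmap(dashboard_name: str, dashboard_json: dict, folder: Optional[str] = None) -> dict:
    """Wrap a Grafana dashboard JSON model in a ConfigMap for the observability namespace."""
    # Inferred naming rule: "Demo Dashboard" becomes "demo-dashboard".
    slug = dashboard_name.strip().lower().replace(" ", "-")
    dashboard = dict(dashboard_json)
    # Add a UUID as the uid if the dashboard model does not carry one.
    dashboard.setdefault("uid", str(uuid.uuid4()))
    metadata = {
        "name": slug,
        "namespace": "open-cluster-management-observability",
        "labels": {"grafana-custom-dashboard": "true"},
    }
    if folder:
        metadata["annotations"] = {
            "observability.open-cluster-management.io/dashboard-folder": folder
        }
    return {
        "kind": "ConfigMap",
        "apiVersion": "v1",
        "metadata": metadata,
        "data": {slug + ".json": json.dumps(dashboard, indent=2)},
    }


# A tiny placeholder dashboard model, for illustration only.
cm = dashboard_configmap("Demo Dashboard", {"title": "Demo Dashboard", "panels": []}, folder="Custom")
print(json.dumps(cm, indent=2))
```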

3.5.3. Uninstalling the Grafana developer instance

When you uninstall the instance, the related resources are also deleted. Run the following command:

./setup-grafana-dev.sh --clean
secret "grafana-dev-config" deleted
deployment.apps "grafana-dev" deleted
serviceaccount "grafana-dev" deleted
route.route.openshift.io "grafana-dev" deleted
persistentvolumeclaim "grafana-dev" deleted
oauthclient.oauth.openshift.io "grafana-proxy-client-dev" deleted
clusterrolebinding.rbac.authorization.k8s.io "open-cluster-management:grafana-crb-dev" deleted

3.5.4. Additional resources

- See Exporting metrics to external endpoints.

- See uuidgen for instructions to create a UUID.

- See Using managed cluster labels in Grafana for more details.

- Return to the beginning of this topic, Using Grafana dashboards.

- For more topics, see the Observing environments introduction.

Chapter 4. Customizing observability configuration

Customize the observability configuration to the specific needs of your environment, after you enable observability.

To learn more about how you want to manage and view cluster fleet data that the observability service collects, read the following sections:

Required access: Cluster administrator

- Creating custom rules

- Adding custom metrics

- Adding advanced configuration

- Updating the MultiClusterObservability custom resource replicas from the console

- Customizing route certification

- Customizing certificates for accessing the object store

- Configuring proxy settings for observability add-ons

- Disabling proxy settings for observability add-ons

4.1. Creating custom rules

Create custom rules for the observability installation by adding Prometheus recording rules and alerting rules to the observability resource.

To precalculate expensive expressions, use the recording rules abilities. The results are saved as a new set of time series. With the alerting rules, you can specify the alert conditions based on how you want to send an alert to an external service.

Note: When you update your custom rules, observability-thanos-rule pods restart automatically.

Define custom rules with Prometheus to create alert conditions, and send notifications to an external messaging service. View the following examples of custom rules:

Create a custom alert rule. Create a config map named thanos-ruler-custom-rules in the open-cluster-management-observability namespace. You must name the key custom_rules.yaml, as shown in the following example. You can create multiple rules in the configuration.

- Create a custom alert rule that notifies you when your CPU usage passes your defined value. Your YAML might resemble the following content:

data:
  custom_rules.yaml: |
    groups:
      - name: cluster-health
        rules:
        - alert: ClusterCPUHealth-jb
          annotations:
            summary: Notify when CPU utilization on a cluster is greater than the defined utilization limit
            description: "The cluster has a high CPU usage: {{ $value }} core for {{ $labels.cluster }} {{ $labels.clusterID }}."
          expr: |
            max(cluster:cpu_usage_cores:sum) by (clusterID, cluster, prometheus) > 0
          for: 5s
          labels:
            cluster: "{{ $labels.cluster }}"
            prometheus: "{{ $labels.prometheus }}"
            severity: critical

- The default alert rules are in the thanos-ruler-default-rules config map of the open-cluster-management-observability namespace.

Create a custom recording rule within the thanos-ruler-custom-rules config map. Create a recording rule that gives you the ability to get the sum of the container memory cache of a pod. Your YAML might resemble the following content:

data:
  custom_rules.yaml: |
    groups:
      - name: container-memory
        rules:
        - record: pod:container_memory_cache:sum
          expr: sum(container_memory_cache{pod!=""}) BY (pod, container)

Note: After you make changes to the config map, the configuration automatically reloads. The configuration reloads because of the config-reload within the observability-thanos-ruler sidecar.

- To verify that the alert rules are functioning correctly, go to the Grafana dashboard, select the Explore page, and query ALERTS. The alert is only available in Grafana if you created the alert.
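To make the recording rule expression concrete, the following Python sketch mimics what sum(container_memory_cache{pod!=""}) BY (pod, container) computes over a handful of samples. The sample values and label sets are invented for illustration, and the function name sum_by_pod_container is hypothetical. Prometheus and Thanos perform this aggregation themselves, so the sketch only shows the arithmetic.

```python
from collections import defaultdict


def sum_by_pod_container(samples):
    """Sum sample values per (pod, container), skipping series whose pod label is empty."""
    totals = defaultdict(float)
    for labels, value in samples:
        if labels.get("pod", "") == "":
            continue  # the pod!="" matcher drops series without a pod label
        totals[(labels["pod"], labels.get("container", ""))] += value
    return dict(totals)


# Invented samples: (labels, value in bytes).
samples = [
    ({"pod": "api-0", "container": "app", "node": "n1"}, 100.0),
    ({"pod": "api-0", "container": "app", "node": "n2"}, 50.0),
    ({"pod": "api-0", "container": "sidecar", "node": "n1"}, 10.0),
    ({"pod": "", "container": "", "node": "n1"}, 999.0),
]
result = sum_by_pod_container(samples)
print(result)
```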

4.2. Adding custom metrics

Add metrics to the metrics_list.yaml file, to collect from managed clusters. Complete the following steps:

- Before you add a custom metric, verify that mco observability is enabled with the following command:

oc get mco observability -o yaml

- Check that the status.conditions.message section reads:

Observability components are deployed and running

- Create the observability-metrics-custom-allowlist config map in the open-cluster-management-observability namespace with the following command:

oc apply -n open-cluster-management-observability -f observability-metrics-custom-allowlist.yaml

- Add the name of the custom metric to the metrics_list.yaml parameter. Your YAML for the config map might resemble the following content:

kind: ConfigMap
apiVersion: v1
metadata:
  name: observability-metrics-custom-allowlist
data:
  metrics_list.yaml: |
    names: # (1)
      - node_memory_MemTotal_bytes
    rules: # (2)
    - record: apiserver_request_duration_seconds:histogram_quantile_90
      expr: histogram_quantile(0.90,sum(rate(apiserver_request_duration_seconds_bucket{job=\"apiserver\", verb!=\"WATCH\"}[5m])) by (verb,le))

(1) Optional: Add the name of the custom metrics that are to be collected from the managed cluster.

(2) Optional: Enter only one value for the expr and record parameter pair to define the query expression. The metrics are collected as the name that is defined in the record parameter from your managed cluster. The metric value returned is the result of running the query expression.

You can use either one or both of the sections. For user workload metrics, see the Adding user workload metrics section.

- Verify the data collection from your custom metric by querying the metric from the Explore page. You can also use the custom metrics in your own dashboard.

4.2.1. Adding user workload metrics

Collect OpenShift Container Platform user-defined metrics from workloads in OpenShift Container Platform to display the metrics from your Grafana dashboard. Complete the following steps:

Enable monitoring on your OpenShift Container Platform cluster. See Enabling monitoring for user-defined projects in the Additional resources section.

If you have a managed cluster with monitoring for user-defined workloads enabled, the user workloads are located in the test namespace and generate metrics. These metrics are collected by Prometheus from the OpenShift Container Platform user workload.

Add user workload metrics to the observability-metrics-custom-allowlist config map to collect the metrics in the test namespace. View the following example:

kind: ConfigMap
apiVersion: v1
metadata:
  name: observability-metrics-custom-allowlist
  namespace: test
data:
  uwl_metrics_list.yaml: | # (1)
    names: # (2)
    - sample_metrics

(1) Enter the key for the config map data.

(2) Enter the value of the config map data in YAML format. The names section includes the list of metric names, which you want to collect from the test namespace. After you create the config map, the observability collector collects and pushes the metrics from the target namespace to the hub cluster.

4.2.2. Removing default metrics

If you do not want to collect data for a specific metric from your managed cluster, remove the metric from the observability-metrics-custom-allowlist.yaml file. When you remove a metric, the metric data is not collected from your managed clusters. Complete the following steps to remove a default metric:

- Verify that mco observability is enabled by using the following command:

oc get mco observability -o yaml

- Add the name of the default metric to the metrics_list.yaml parameter with a hyphen - at the start of the metric name. View the following metric example:

-cluster_infrastructure_provider

- Create the observability-metrics-custom-allowlist config map in the open-cluster-management-observability namespace with the following command:

oc apply -n open-cluster-management-observability -f observability-metrics-custom-allowlist.yaml

- Verify that the observability service is not collecting the specific metric from your managed clusters. When you query the metric from the Grafana dashboard, the metric is not displayed.
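The combined effect of adding custom metric names and removing default ones with a leading hyphen can be pictured as a merge of two lists. The following Python sketch shows one plausible way to express that merge. It is an illustration of the documented behavior and not the operator's implementation. The function name effective_metric_names is hypothetical, and the default list used here is a shortened, assumed example.

```python
def effective_metric_names(default_names, custom_names):
    """Apply custom allowlist entries to the default list.

    A plain name is added; a name with a leading hyphen removes that default metric.
    """
    effective = list(default_names)
    for name in custom_names:
        if name.startswith("-"):
            removed = name[1:]
            effective = [n for n in effective if n != removed]
        elif name not in effective:
            effective.append(name)
    return effective


# Assumed, shortened default list for illustration.
defaults = ["cluster_infrastructure_provider", "up"]
custom = ["node_memory_MemTotal_bytes", "-cluster_infrastructure_provider"]
merged = effective_metric_names(defaults, custom)
print(merged)
```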

4.3. Adding advanced configuration for retention

Add the advanced configuration section to update the retention for each observability component, according to your needs.

Edit the MultiClusterObservability custom resource and add the advanced section with the following command:

oc edit mco observability -o yaml

Your YAML file might resemble the following contents:

spec:
  advanced:
    retentionConfig:
      blockDuration: 2h
      deleteDelay: 48h
      retentionInLocal: 24h
      retentionResolutionRaw: 30d
      retentionResolution5m: 180d
      retentionResolution1h: 0d
    receive:
      resources:
        limits:
          memory: 4096Gi
      replicas: 3

For descriptions of all the parameters that can be added to the advanced configuration, see the Observability API documentation.
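When you review a retention configuration, it can help to convert the duration strings into comparable values. The following Python sketch parses the values from the example above. The function name parse_duration is hypothetical, and the accepted unit letters are an assumption, because only h and d appear in the example.

```python
import re
from datetime import timedelta

# Assumed unit letters; the example in this section only uses h and d.
_UNITS = {"s": "seconds", "m": "minutes", "h": "hours", "d": "days", "w": "weeks"}


def parse_duration(text):
    """Convert a string such as '48h' or '30d' into a timedelta."""
    match = re.fullmatch(r"(\d+)([smhdw])", text)
    if match is None:
        raise ValueError("unsupported duration: " + repr(text))
    amount, unit = match.groups()
    return timedelta(**{_UNITS[unit]: int(amount)})


retention = {
    "blockDuration": "2h",
    "deleteDelay": "48h",
    "retentionInLocal": "24h",
    "retentionResolutionRaw": "30d",
    "retentionResolution5m": "180d",
    "retentionResolution1h": "0d",
}
parsed = {name: parse_duration(value) for name, value in retention.items()}
for name, value in parsed.items():
    print(name, value)
```

Upstream Thanos treats a retention of 0d as keeping data of that resolution indefinitely. Whether the operator passes the value through unchanged is an assumption to verify in the Observability API documentation.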

4.4. Dynamic metrics for single-node OpenShift clusters

Dynamic metrics collection supports automatic metric collection based on certain conditions. By default, a single-node OpenShift cluster does not collect pod and container resource metrics. Once a single-node OpenShift cluster reaches a specific level of resource consumption, the defined granular metrics are collected dynamically. When the cluster resource consumption is consistently less than the threshold for a period of time, granular metric collection stops.

The metrics are collected dynamically based on the conditions on the managed cluster specified by a collection rule. Because these metrics are collected dynamically, the following Red Hat Advanced Cluster Management Grafana dashboards do not display any data. When a collection rule is activated and the corresponding metrics are collected, the following panels display data for the duration of the time that the collection rule is initiated:

- Kubernetes/Compute Resources/Namespace (Pods)

- Kubernetes/Compute Resources/Namespace (Workloads)

- Kubernetes/Compute Resources/Nodes (Pods)

- Kubernetes/Compute Resources/Pod

- Kubernetes/Compute Resources/Workload

A collection rule includes the following conditions:

- A set of metrics to collect dynamically.

- Conditions written as a PromQL expression.

- A time interval for the collection, which must be set to true.

- A match expression to select clusters where the collect rule must be evaluated.

By default, collection rules are evaluated continuously on managed clusters every 30 seconds, or at a specific time interval. The lowest value between the collection interval and time interval takes precedence. Once the collection rule condition persists for the duration specified by the for attribute, the collection rule starts and the metrics specified by the rule are automatically collected on the managed cluster. Metrics collection stops automatically after the collection rule condition no longer exists on the managed cluster, at least 15 minutes after it starts.

The collection rules are grouped together as a parameter section named collect_rules, where it can be enabled or disabled as a group. Red Hat Advanced Cluster Management installation includes the collection rule group, SNOResourceUsage with two default collection rules: HighCPUUsage and HighMemoryUsage. The HighCPUUsage collection rule begins when the node CPU usage exceeds 70%. The HighMemoryUsage collection rule begins if the overall memory utilization of the single-node OpenShift cluster exceeds 70% of the available node memory. Currently, the previously mentioned thresholds are fixed and cannot be changed. When a collection rule begins for more than the interval specified by the for attribute, the system automatically starts collecting the metrics that are specified in the dynamic_metrics section.
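The start and stop behavior of a collection rule can be sketched as a small state machine. The following Python sketch is one interpretation of the description above and not the metrics collector's code. It assumes that the condition is sampled once per 30-second evaluation, that the for attribute is two minutes, and that "at least 15 minutes" is a minimum active period. The function name simulate_collection and its parameters are hypothetical.

```python
def simulate_collection(condition_samples, interval_s=30, for_s=120, min_active_s=900):
    """Return, for each evaluation, whether dynamic metrics are being collected.

    condition_samples holds one boolean per evaluation: True when the rule
    expression (for example, CPU usage above 70%) holds at that moment.
    """
    collecting = False
    pending_since = None  # time at which the condition first became true
    started_at = None     # time at which collection started
    states = []
    for index, condition in enumerate(condition_samples):
        now = index * interval_s
        if not collecting:
            if condition:
                if pending_since is None:
                    pending_since = now
                # Start only after the condition has persisted for the "for" duration.
                if now - pending_since >= for_s:
                    collecting = True
                    started_at = now
            else:
                pending_since = None
        elif not condition and now - started_at >= min_active_s:
            # Stop once the condition is gone and the minimum active period has elapsed.
            collecting = False
            pending_since = None
        states.append(collecting)
    return states


# The condition holds for five evaluations (0 s to 120 s), then clears.
states = simulate_collection([True] * 5 + [False] * 40)
print(states.index(True), len(states) - states[::-1].index(True) - 1)
```

In this run, collection starts at the fifth evaluation (index 4, after 120 seconds) and continues through index 33, because the assumed 15-minute minimum has not elapsed until index 34.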

View the list of dynamic metrics from the collect_rules section in the following YAML file:

collect_rules:
  - group: SNOResourceUsage
    annotations:
      description: >
        By default, a single-node OpenShift cluster does not collect pod and container resource metrics. Once a single-node OpenShift cluster
        reaches a level of resource consumption, these granular metrics are collected dynamically.
        When the cluster resource consumption is consistently less than the threshold for a period of time,
        collection of the granular metrics stops.
    selector:
      matchExpressions:
        - key: clusterType
          operator: In
          values: ["SNO"]
    rules:
    - collect: SNOHighCPUUsage
      annotations:
        description: >
          Collects the dynamic metrics specified if the cluster cpu usage is constantly more than 70% for 2 minutes
      expr: (1 - avg(rate(node_cpu_seconds_total{mode=\"idle\"}[5m]))) * 100 > 70
      for: 2m
      dynamic_metrics:
        names:
          - container_cpu_cfs_periods_total
          - container_cpu_cfs_throttled_periods_total
          - kube_pod_container_resource_limits
          - kube_pod_container_resource_requests
          - namespace_workload_pod:kube_pod_owner:relabel
          - node_namespace_pod_container:container_cpu_usage_seconds_total:sum_irate
          - node_namespace_pod_container:container_cpu_usage_seconds_total:sum_rate
    - collect: SNOHighMemoryUsage
      annotations:
        description: >
          Collects the dynamic metrics specified if the cluster memory usage is constantly more than 70% for 2 minutes
      expr: (1 - sum(:node_memory_MemAvailable_bytes:sum) / sum(kube_node_status_allocatable{resource=\"memory\"})) * 100 > 70
      for: 2m
      dynamic_metrics:
        names:
          - kube_pod_container_resource_limits
          - kube_pod_container_resource_requests
          - namespace_workload_pod:kube_pod_owner:relabel
        matches:
          - __name__="container_memory_cache",container!=""
          - __name__="container_memory_rss",container!=""
          - __name__="container_memory_swap",container!=""
          - __name__="container_memory_working_set_bytes",container!=""

A collect_rules.group can be disabled in the custom-allowlist as shown in the following example. When a collect_rules.group is disabled, metrics collection reverts to the previous behavior. These metrics are collected at regular, specified intervals:

collect_rules:
  - group: -SNOResourceUsage

The data is only displayed in Grafana when the rule is initiated.

4.5. Updating the MultiClusterObservability custom resource replicas from the console

If your workload increases, increase the number of replicas of your observability pods. Navigate to the Red Hat OpenShift Container Platform console from your hub cluster. Locate the MultiClusterObservability custom resource, and update the replicas parameter value for the component where you want to change the replicas. Your updated YAML might resemble the following content:

spec:
  advanced:
    receive:
      replicas: 6

For more information about the parameters within the mco observability custom resource, see the Observability API documentation.

4.6. Customizing route certificate

If you want to customize the OpenShift Container Platform route certification, you must add the routes in the alt_names section. To ensure your OpenShift Container Platform routes are accessible, add the following information: alertmanager.apps.<domainname>, observatorium-api.apps.<domainname>, rbac-query-proxy.apps.<domainname>.

For more details, see Replacing certificates for alertmanager route in the Governance documentation.

Note: Users are responsible for certificate rotations and updates.

4.7. Customizing certificates for accessing the object store

Complete the following steps to customize certificates for accessing the object store:

- Edit the http_config section by adding the certificate in the object store secret. View the following example:

      thanos.yaml: |
        type: s3
        config:
          bucket: "thanos"
          endpoint: "minio:9000"
          insecure: false
          access_key: "minio"
          secret_key: "minio123"
          http_config:
            tls_config:
              ca_file: /etc/minio/certs/ca.crt
              insecure_skip_verify: false

- Add the object store secret in the open-cluster-management-observability namespace. The secret must contain the ca.crt that you defined in the previous secret example. If you want to enable Mutual TLS, you need to provide public.crt and private.key in the previous secret. View the following example:

      thanos.yaml: |
        type: s3
        config:
          ...
          http_config:
            tls_config:
              ca_file: /etc/minio/certs/ca.crt  # 1
              cert_file: /etc/minio/certs/public.crt
              key_file: /etc/minio/certs/private.key
              insecure_skip_verify: false

  1: The path to certificates and key values for the thanos-object-storage secret.

- Configure the secret name and mount path by updating the tlsSecretName and tlsSecretMountPath parameters in the MultiClusterObservability custom resource. View the following example where the secret name is tls-certs-secret and the mount path for the certificates and key value is the directory that is used in the prior example:

      metricObjectStorage:
        key: thanos.yaml
        name: thanos-object-storage
        tlsSecretName: tls-certs-secret
        tlsSecretMountPath: /etc/minio/certs

- Mount the secret in the tlsSecretMountPath resource of all components that need to access the object store by renaming the existing TLS. See the following example:

      metricObjectStorage:
        key: thanos.yaml
        name: thanos-object-storage
        tlsSecretName: <existing-tls-certs-secret>
        tlsSecretMountPath: /etc/minio/certs
      receiver:
      store:
      ruler:
      compact:

- To verify that you can access the object store, check that the pods are displayed.
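The certificate paths in tls_config and the tlsSecretMountPath value have to agree, and that can be checked before you apply the configuration. The following Python sketch is illustrative only. The check_tls_paths function is a hypothetical helper, not part of the product, and it assumes that each certificate file sits directly in the mount directory, as in the preceding examples.

```python
from pathlib import PurePosixPath


def check_tls_paths(tls_config: dict, mount_path: str) -> list[str]:
    """Return a list of problems found in a Thanos tls_config mapping."""
    problems = []
    mount = PurePosixPath(mount_path)
    for key in ("ca_file", "cert_file", "key_file"):
        value = tls_config.get(key)
        if value is None:
            continue
        # Assumption: every referenced file lives directly in the mount path.
        if PurePosixPath(value).parent != mount:
            problems.append(f"{key} {value} is not under {mount_path}")
    # Mutual TLS needs the client certificate and the key together.
    if ("cert_file" in tls_config) != ("key_file" in tls_config):
        problems.append("cert_file and key_file must be set together")
    return problems


mutual_tls = {
    "ca_file": "/etc/minio/certs/ca.crt",
    "cert_file": "/etc/minio/certs/public.crt",
    "key_file": "/etc/minio/certs/private.key",
}
print(check_tls_paths(mutual_tls, "/etc/minio/certs"))
```

An empty list means that the paths are consistent with the mount path. Any entry in the list names the parameter to correct.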

4.8. Configuring proxy settings for observability add-ons

Configure the proxy settings to allow the managed cluster to communicate with the hub cluster through an HTTP or HTTPS proxy server. Typically, add-ons do not need any special configuration to support HTTP and HTTPS proxy servers between a hub cluster and a managed cluster. However, if you enabled the observability add-on, you must complete the proxy configuration.

4.9. Prerequisite

- You have a hub cluster.

- You have enabled the proxy settings between the hub cluster and managed cluster.

Complete the following steps to configure the proxy settings for the observability add-on:

- Go to the cluster namespace on your hub cluster.

- Create an AddOnDeploymentConfig resource with the proxy settings by adding a spec.proxyConfig parameter. View the following YAML example:

      apiVersion: addon.open-cluster-management.io/v1alpha1
      kind: AddOnDeploymentConfig
      metadata:
        name: <addon-deploy-config-name>
        namespace: <managed-cluster-name>
      spec:
        agentInstallNamespace: open-cluster-management-addon-observability
        proxyConfig:
          httpsProxy: "http://<username>:<password>@<ip>:<port>"
          noProxy: ".cluster.local,.svc,172.30.0.1"

  To get the IP address for the noProxy value, run the following command on your managed cluster:

      oc -n default describe svc kubernetes | grep IP:

- Go to the ManagedClusterAddOn resource and update it by referencing the AddOnDeploymentConfig resource that you made. View the following YAML example:

      apiVersion: addon.open-cluster-management.io/v1alpha1
      kind: ManagedClusterAddOn
      metadata:
        name: observability-controller
        namespace: <managed-cluster-name>
      spec:
        installNamespace: open-cluster-management-addon-observability
        configs:
        - group: addon.open-cluster-management.io
          resource: AddonDeploymentConfig
          name: <addon-deploy-config-name>
          namespace: <managed-cluster-name>

- Verify the proxy settings. If you successfully configured the proxy settings, the metric collector deployed by the observability add-on agent on the managed cluster sends the data to the hub cluster. Complete the following steps:

- Go to the hub cluster then the managed cluster on the Grafana dashboard.

- View the metrics for the proxy settings.
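If you generate the AddOnDeploymentConfig resource for several managed clusters, a small script can keep the proxy values consistent. The following Python sketch is one possible approach, not a product tool. The resource name, cluster name, and proxy URL are hypothetical placeholders, and the namespace spelling open-cluster-management-addon-observability is an assumption taken from the alert forwarding section of this document.

```python
import json


def addon_deployment_config(name, cluster, proxy_url, no_proxy_hosts):
    """Build an AddOnDeploymentConfig manifest as a plain dict."""
    return {
        "apiVersion": "addon.open-cluster-management.io/v1alpha1",
        "kind": "AddOnDeploymentConfig",
        "metadata": {"name": name, "namespace": cluster},
        "spec": {
            # Assumption: namespace spelled as in the alert forwarding section.
            "agentInstallNamespace": "open-cluster-management-addon-observability",
            "proxyConfig": {
                "httpsProxy": proxy_url,
                # noProxy is a single comma-separated string.
                "noProxy": ",".join(no_proxy_hosts),
            },
        },
    }


manifest = addon_deployment_config(
    "proxy-config",                    # hypothetical resource name
    "cluster1",                        # hypothetical managed cluster name
    "http://proxy.example.com:3128",   # hypothetical proxy server
    [".cluster.local", ".svc", "172.30.0.1"],
)
print(json.dumps(manifest, indent=2))
```

The printed JSON can be saved to a file and applied with oc apply -f, which accepts JSON manifests as well as YAML.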

4.10. Disabling proxy settings for observability add-ons

If your development needs change, you might need to disable the proxy setting for the observability add-ons you configured for the hub cluster and managed cluster. You can disable the proxy settings for the observability add-on at any time. Complete the following steps:

- Go to the ManagedClusterAddOn resource.

- Remove the referenced AddOnDeploymentConfig resource.
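The removal step amounts to deleting one entry from the spec.configs list of the ManagedClusterAddOn resource. The following Python sketch shows that edit on a resource that is loaded as a dict. The remove_deploy_config_ref function and the sample names are hypothetical, and the sketch matches the entry by name only.

```python
import copy


def remove_deploy_config_ref(addon: dict, config_name: str) -> dict:
    """Return a copy of a ManagedClusterAddOn without the named config reference."""
    updated = copy.deepcopy(addon)
    configs = updated.get("spec", {}).get("configs", [])
    kept = [c for c in configs if c.get("name") != config_name]
    if kept:
        updated["spec"]["configs"] = kept
    else:
        # Drop the empty list so that no stale configs key remains.
        updated.get("spec", {}).pop("configs", None)
    return updated


addon = {
    "kind": "ManagedClusterAddOn",
    "spec": {
        "installNamespace": "open-cluster-management-addon-observability",
        "configs": [{
            "group": "addon.open-cluster-management.io",
            "resource": "AddonDeploymentConfig",
            "name": "proxy-config",      # hypothetical config name
            "namespace": "cluster1",     # hypothetical managed cluster name
        }],
    },
}
print(remove_deploy_config_ref(addon, "proxy-config")["spec"])
```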

4.11. Additional resources

- Refer to Prometheus configuration for more information. For more information about recording rules and alerting rules, refer to the recording rules and alerting rules from the Prometheus documentation.

- For more information about viewing the dashboard, see Using Grafana dashboards.

- See Exporting metrics to external endpoints.

- See Enabling monitoring for user-defined projects.

- See the Observability API.

- For information about updating the certificate for the alertmanager route, see Replacing certificates for alertmanager.

- For more details about observability alerts, see Observability alerts.

- To learn more about alert forwarding, see the Prometheus Alertmanager documentation.

- See Observability alerts for more information.

- For more topics about the observability service, see Observability service introduction.

- See Management Workload Partitioning for more information.

- Return to the beginning of this topic, Customizing observability.

Chapter 5. Managing observability alerts

Receive and define alerts for the observability service to be notified of hub cluster and managed cluster changes.

5.1. Configuring Alertmanager

Integrate external messaging tools such as email, Slack, and PagerDuty to receive notifications from Alertmanager. You must override the alertmanager-config secret in the open-cluster-management-observability namespace to add integrations, and configure routes for Alertmanager. Complete the following steps to update the custom receiver rules:

- Extract the data from the alertmanager-config secret. Run the following command:

      oc -n open-cluster-management-observability get secret alertmanager-config --template='{{ index .data "alertmanager.yaml" }}' |base64 -d > alertmanager.yaml

- Edit and save the alertmanager.yaml file, then apply the configuration by running the following command:

      oc -n open-cluster-management-observability create secret generic alertmanager-config --from-file=alertmanager.yaml --dry-run -o=yaml | oc -n open-cluster-management-observability replace secret --filename=-

- Your updated secret might resemble the following content:

      global:
        smtp_smarthost: 'localhost:25'
        smtp_from: 'alertmanager@example.org'
        smtp_auth_username: 'alertmanager'
        smtp_auth_password: 'password'
      templates:
      - '/etc/alertmanager/template/*.tmpl'
      route:
        group_by: ['alertname', 'cluster', 'service']
        group_wait: 30s
        group_interval: 5m
        repeat_interval: 3h
        receiver: team-X-mails
        routes:
        - match_re:
            service: ^(foo1|foo2|baz)$
          receiver: team-X-mails

Your changes are applied immediately after the secret is modified. For an example Alertmanager configuration, see prometheus/alertmanager.
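The extraction command works because every value in the data section of a secret is base64-encoded. The following Python sketch shows the same decoding step on a secret that is loaded as a dict, for example from the JSON output of oc get secret. The sample secret and the decode_secret_key function are hypothetical.

```python
import base64


def decode_secret_key(secret: dict, key: str) -> str:
    """Decode one base64-encoded entry from the data mapping of a Secret."""
    return base64.b64decode(secret["data"][key]).decode("utf-8")


# Hypothetical secret with a minimal Alertmanager route as its payload.
sample = {
    "data": {
        "alertmanager.yaml": base64.b64encode(
            b"route:\n  receiver: team-X-mails\n"
        ).decode("ascii")
    }
}
print(decode_secret_key(sample, "alertmanager.yaml"))
```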

5.2. Forwarding alerts

After you enable observability, alerts from your OpenShift Container Platform managed clusters are automatically sent to the hub cluster. You can use the alertmanager-config YAML file to configure alerts with an external notification system.

View the following example of the alertmanager-config YAML file:

    global:
      slack_api_url: '<slack_webhook_url>'

    route:
      receiver: 'slack-notifications'
      group_by: [alertname, datacenter, app]

    receivers:
    - name: 'slack-notifications'
      slack_configs:
      - channel: '#alerts'
        text: 'https://internal.myorg.net/wiki/alerts/{{ .GroupLabels.app }}/{{ .GroupLabels.alertname }}'
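The text value in the Slack receiver is a template. Because the route groups alerts by alertname, datacenter, and app, those labels are available as group labels when the notification is built. The following Python sketch only imitates the substitution to show the resulting link. It is a simplified stand-in for the Alertmanager template engine, and the label values are hypothetical.

```python
import re


def render_group_labels(template: str, group_labels: dict) -> str:
    """Expand {{ .GroupLabels.<name> }} placeholders.

    In this sketch a label that is missing from group_labels becomes "".
    """
    pattern = re.compile(r"\{\{\s*\.GroupLabels\.(\w+)\s*\}\}")
    return pattern.sub(lambda m: group_labels.get(m.group(1), ""), template)


text = ("https://internal.myorg.net/wiki/alerts/"
        "{{ .GroupLabels.app }}/{{ .GroupLabels.alertname }}")
labels = {"alertname": "HighLatency", "app": "checkout"}  # hypothetical alert group
print(render_group_labels(text, labels))
```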

If you want to configure a proxy for alert forwarding, add the following global entry to the alertmanager-config YAML file:

    global:
      slack_api_url: '<slack_webhook_url>'
      http_config:
        proxy_url: http://****

5.2.1. Disabling alert forwarding for managed clusters

To disable alert forwarding for managed clusters, add the following annotation to the MultiClusterObservability custom resource:

    metadata:
      annotations:
        mco-disable-alerting: "true"

When you set the annotation, the alert forwarding configuration on the managed clusters is reverted. Any changes made to the ocp-monitoring-config ConfigMap in the openshift-monitoring namespace are also reverted. Setting the annotation ensures that the ocp-monitoring-config ConfigMap is no longer managed or updated by the observability operator endpoint. After you update the configuration, the Prometheus instance on your managed cluster restarts.

Important: Metrics on your managed cluster are lost if you have a Prometheus instance with a persistent volume for metrics, and the Prometheus instance restarts. Metrics from the hub cluster are not affected.

When the changes are reverted, a ConfigMap named cluster-monitoring-reverted is created in the open-cluster-management-addon-observability namespace. Any new, manually added alert forward configurations are not reverted from the ConfigMap.

Verify that the hub cluster Alertmanager is no longer propagating managed cluster alerts to third-party messaging tools. See the previous section, Configuring Alertmanager.
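Kubernetes annotation values must be strings, so the annotation value has to be the quoted string "true" rather than a boolean. The following Python sketch builds a JSON merge patch that sets the annotation. It is a minimal illustration, and the disable_alerting_patch function is a hypothetical helper.

```python
import json


def disable_alerting_patch() -> str:
    """Build a JSON merge patch that sets the mco-disable-alerting annotation."""
    # The value is the string "true", not the boolean True.
    patch = {"metadata": {"annotations": {"mco-disable-alerting": "true"}}}
    return json.dumps(patch)


print(disable_alerting_patch())
```

You can pass the printed patch to oc patch with the --type=merge option, targeting your MultiClusterObservability custom resource.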

5.3. Silencing alerts

Silence alerts that you do not want to receive. You can silence alerts by the alert name, match label, or time duration. After you add the alert that you want to silence, an ID is created. The ID for your silenced alert might resemble the following string: d839aca9-ed46-40be-84c4-dca8773671da.

Continue reading for ways to silence alerts:

- To silence a Red Hat Advanced Cluster Management alert, you must have access to the alertmanager-main pod in the open-cluster-management-observability namespace. For example, enter the following command in the pod terminal to silence SampleAlert:

      amtool silence add --alertmanager.url="http://localhost:9093" --author="user" --comment="Silencing sample alert" alertname="SampleAlert"

- Silence an alert by using multiple match labels. The following command uses match-label-1 and match-label-2:

      amtool silence add --alertmanager.url="http://localhost:9093" --author="user" --comment="Silencing sample alert" <match-label-1>=<match-value-1> <match-label-2>=<match-value-2>

- If you want to silence an alert for a specific period of time, use the --duration flag. Run the following command to silence the SampleAlert for an hour:

      amtool silence add --alertmanager.url="http://localhost:9093" --author="user" --comment="Silencing sample alert" --duration="1h" alertname="SampleAlert"

- You can also specify a start or end time for the silenced alert. Enter the following command to silence the SampleAlert at a specific start time:

      amtool silence add --alertmanager.url="http://localhost:9093" --author="user" --comment="Silencing sample alert" --start="2023-04-14T15:04:05-07:00" alertname="SampleAlert"

- To view all silenced alerts that are created, run the following command:

      amtool silence --alertmanager.url="http://localhost:9093"

- If you no longer want an alert to be silenced, end the silencing of the alert by running the following command:

      amtool silence expire --alertmanager.url="http://localhost:9093" "d839aca9-ed46-40be-84c4-dca8773671da"

- To end the silencing of all alerts, run the following command:

      amtool silence expire --alertmanager.url="http://localhost:9093" $(amtool silence query --alertmanager.url="http://localhost:9093" -q)
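If you create silences from a script, it can help to assemble the amtool arguments in one place. The following Python sketch builds the argument list for amtool silence add and uses only the flags that appear in the preceding commands. The silence_add_args function is a hypothetical helper, and the sketch prints the command instead of running it.

```python
import shlex


def silence_add_args(matchers, author, comment, duration=None, start=None,
                     url="http://localhost:9093"):
    """Build the argument list for `amtool silence add` from label matchers."""
    args = ["amtool", "silence", "add",
            f"--alertmanager.url={url}",
            f"--author={author}",
            f"--comment={comment}"]
    if duration:
        args.append(f"--duration={duration}")
    if start:
        args.append(f"--start={start}")
    # Each matcher is passed as a positional label=value argument.
    args.extend(f"{label}={value}" for label, value in matchers.items())
    return args


args = silence_add_args({"alertname": "SampleAlert"}, "user",
                        "Silencing sample alert", duration="1h")
# shlex.join quotes arguments that contain spaces, such as the comment.
print(shlex.join(args))
```

Building a list rather than a single string keeps the comment text as one argument, so it can be passed to a process launcher without extra quoting.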

5.4. Suppressing alerts

Globally suppress less severe Red Hat Advanced Cluster Management alerts across your clusters. Suppress alerts by defining an inhibition rule in the alertmanager-config in the open-cluster-management-observability namespace.

An inhibition rule mutes an alert that matches one set of matchers, the target, while another alert that matches a second set of matchers, the source, is active. In order for the rule to take effect, both the target and source alerts must have the same label values for the label names in the equal list. Your inhibit_rules might resemble the following:

    global:
      resolve_timeout: 1h
    inhibit_rules:
      - equal:
          - namespace
        source_match:
          severity: critical
        target_match_re:
          severity: warning|info

- The inhibit_rules parameter section is defined to look for alerts in the same namespace. When a critical alert is initiated within a namespace and if there are any other alerts that contain the severity level warning or info in that namespace, only the critical alerts are routed to the Alertmanager receiver. The following alerts might be displayed when there are matches:

      ALERTS{alertname="foo", namespace="ns-1", severity="critical"}
      ALERTS{alertname="foo", namespace="ns-1", severity="warning"}

- If the value of the source_match and target_match_re parameters do not match, the alert is routed to the receiver:

      ALERTS{alertname="foo", namespace="ns-1", severity="critical"}
      ALERTS{alertname="foo", namespace="ns-2", severity="warning"}

To view suppressed alerts in Red Hat Advanced Cluster Management, enter the following command:

    amtool alert --alertmanager.url="http://localhost:9093" --inhibited
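The matching logic of the inhibition rule can be traced with a small model. The following Python sketch is a simplified illustration of the rule in this section and is not the Alertmanager implementation. It assumes that regular expression matchers must match the whole label value, and the is_inhibited function is a hypothetical helper.

```python
import re

# The rule from this section, written as a plain dict.
RULE = {
    "equal": ["namespace"],
    "source_match": {"severity": "critical"},
    "target_match_re": {"severity": "warning|info"},
}


def is_inhibited(target: dict, alerts: list, rule: dict = RULE) -> bool:
    """Return True if another alert in `alerts` inhibits `target` under `rule`."""
    # The target must match every regular expression in target_match_re.
    for label, pattern in rule["target_match_re"].items():
        if not re.fullmatch(pattern, target.get(label, "")):
            return False
    for source in alerts:
        if source is target:
            continue
        is_source = all(source.get(k) == v
                        for k, v in rule["source_match"].items())
        same_labels = all(source.get(k) == target.get(k)
                          for k in rule["equal"])
        if is_source and same_labels:
            return True
    return False


crit = {"alertname": "foo", "namespace": "ns-1", "severity": "critical"}
warn_same = {"alertname": "foo", "namespace": "ns-1", "severity": "warning"}
warn_other = {"alertname": "foo", "namespace": "ns-2", "severity": "warning"}
alerts = [crit, warn_same, warn_other]

for alert in alerts:
    print(alert["namespace"], alert["severity"], is_inhibited(alert, alerts))
```

In this model, the warning alert in ns-1 is inhibited by the critical alert in the same namespace. The warning alert in ns-2 is not inhibited, because its namespace label differs from the namespace of the critical alert.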

5.5. Additional resources

- See Customizing observability for more details.

- For more observability topics, see Observability service introduction.

Chapter 6. Using managed cluster labels in Grafana