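Scalability and performance

Scaling your OpenShift Container Platform cluster and tuning performance in production environments

Abstract

Chapter 1. Recommended practices for installing large clusters

Apply the following practices when installing large clusters or scaling a cluster to larger node counts.

1.1. Recommended practices for installing large clusters

When installing large clusters or scaling the cluster to larger node counts, set the cluster network cidr accordingly in your install-config.yaml file before you install the cluster: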

networking:
  clusterNetwork:
  - cidr: 10.128.0.0/14
    hostPrefix: 23
  machineCIDR: 10.0.0.0/16
  networkType: OpenShiftSDN
  serviceNetwork:
  - 172.30.0.0/16

The default cluster network cidr 10.128.0.0/14 cannot be used if the cluster size is more than 500 nodes. To grow beyond 500 nodes, set it to 10.128.0.0/12 or 10.128.0.0/10.
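The arithmetic behind the 500-node limit can be sketched as follows. The sketch assumes that each node receives one /hostPrefix subnet carved out of the clusterNetwork cidr, and that nothing else consumes the range. The function names are hypothetical. The code uses only Python's standard ipaddress module.

```python
import ipaddress


def max_node_subnets(cluster_cidr: str, host_prefix: int) -> int:
    """Number of per-node /host_prefix subnets that fit inside cluster_cidr."""
    network = ipaddress.ip_network(cluster_cidr)
    if host_prefix < network.prefixlen:
        raise ValueError("hostPrefix must be at least as long as the cidr prefix")
    return 2 ** (host_prefix - network.prefixlen)


def pod_addresses_per_node(host_prefix: int) -> int:
    """Total IPv4 addresses in one node's /host_prefix subnet."""
    return 2 ** (32 - host_prefix)


for cidr in ("10.128.0.0/14", "10.128.0.0/12", "10.128.0.0/10"):
    print(cidr, max_node_subnets(cidr, 23))
# 10.128.0.0/14 512
# 10.128.0.0/12 2048
# 10.128.0.0/10 8192
```

With hostPrefix: 23, the default /14 range yields only 512 node subnets. Widening it to /12 or /10 multiplies that by 4 or 16.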

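Chapter 2. Recommended host practices

This topic provides recommended host practices for OpenShift Container Platform.

These guidelines apply to OpenShift Container Platform with software-defined networking (SDN), not Open Virtual Network (OVN).

2.1. Recommended node host practices

The OpenShift Container Platform node configuration file contains important options. For example, two parameters control the maximum number of pods that can be scheduled to a node: podsPerCore and maxPods.

When both options are in use, the lower of the two values limits the number of pods on a node. Exceeding these values can result in:

- Increased CPU utilization.

- Slow pod scheduling.

- Potential out-of-memory scenarios, depending on the amount of memory in the node.

- Exhaustion of the pool of IP addresses.

- Resource overcommitting, which leads to poor application performance.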

In Kubernetes, a pod that holds a single container actually uses two containers. The second container sets up networking before the actual container starts. Therefore, a system running 10 pods actually has 20 containers running.

Disk IOPS throttling from the cloud provider might affect CRI-O and the kubelet. They might become overloaded when a large number of I/O-intensive pods are running on the nodes. Monitor the disk I/O on the nodes and use volumes with sufficient throughput for the workload.

podsPerCore sets the number of pods the node can run based on the number of processor cores on the node. For example, if podsPerCore is set to 10 on a node with 4 processor cores, the maximum number of pods allowed on the node is 40.

kubeletConfig:
  podsPerCore: 10

Setting podsPerCore to 0 disables this limit. The default is 0. podsPerCore cannot exceed maxPods.
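The following minimal sketch shows how the two settings combine. It is based only on the rules stated in this section: the lower value wins, and podsPerCore: 0 disables the per-core limit. The function is hypothetical and is not the kubelet's own implementation.

```python
def effective_max_pods(cores: int, pods_per_core: int, max_pods: int) -> int:
    """Pod limit for a node when both podsPerCore and maxPods are configured."""
    if pods_per_core == 0:
        # podsPerCore: 0 disables the per-core limit, so only maxPods applies.
        return max_pods
    # Otherwise the lower of the two limits wins.
    return min(cores * pods_per_core, max_pods)


print(effective_max_pods(cores=4, pods_per_core=10, max_pods=250))   # 40
print(effective_max_pods(cores=32, pods_per_core=10, max_pods=250))  # 250
print(effective_max_pods(cores=4, pods_per_core=0, max_pods=250))    # 250
```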

maxPods sets the number of pods the node can run to a fixed value, regardless of the properties of the node.

kubeletConfig:
  maxPods: 250

2.2. Creating a KubeletConfig CRD to edit kubelet parameters

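The kubelet configuration is currently serialized as an Ignition configuration, so it can be edited directly. However, a new kubelet-config-controller has also been added to the Machine Config Controller (MCC). It lets you use a KubeletConfig custom resource (CR) to edit the kubelet parameters.

The fields in the kubeletConfig object are passed directly to the kubelet from upstream Kubernetes, so the kubelet validates those values directly. Invalid values in the kubeletConfig object might cause cluster nodes to become unavailable. For valid values, see the Kubernetes documentation.

Consider the following guidance: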

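- Create one KubeletConfig CR for each machine config pool with all the config changes you want for that pool. If you are applying the same content to all of the pools, you need only one KubeletConfig CR for all of the pools.

- To change settings, edit an existing KubeletConfig CR instead of creating a new CR for each change. This applies both to modifying existing settings and to adding new ones. Create a new CR only to modify a different machine config pool, or for changes that are intended to be temporary, so that you can revert them.

- As needed, create multiple KubeletConfig CRs, with a limit of 10 per cluster. For the first KubeletConfig CR, the Machine Config Operator (MCO) creates a machine config appended with kubelet. With each subsequent CR, the controller creates another kubelet machine config with a numeric suffix. For example, if you have a kubelet machine config with a -2 suffix, the next kubelet machine config is appended with -3.

If you want to delete the machine configs, delete them in reverse order to avoid exceeding the limit. For example, delete the kubelet-3 machine config before deleting the kubelet-2 machine config.

If you have a machine config with a kubelet-9 suffix and you create another KubeletConfig CR, a new machine config is not created, even if there are fewer than 10 kubelet machine configs.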

Example KubeletConfig CR

$ oc get kubeletconfig

NAME           AGE
set-max-pods   15m

Example showing a KubeletConfig machine config

$ oc get mc | grep kubelet

...
99-worker-generated-kubelet-1   b5c5119de007945b6fe6fb215db3b8e2ceb12511   3.2.0   26m
...

The following procedure shows how to configure the maximum number of pods per node on the worker nodes.

Prerequisites

Obtain the label associated with the static MachineConfigPool CR for the type of node you want to configure. Perform one of the following steps:

View the machine config pool:

$ oc describe machineconfigpool <name>

For example:

$ oc describe machineconfigpool worker

Example output

apiVersion: machineconfiguration.openshift.io/v1
kind: MachineConfigPool
metadata:
  creationTimestamp: 2019-02-08T14:52:39Z
  generation: 1
  labels:
    custom-kubelet: set-max-pods (1)

(1) If a label has been added, it appears under labels.

If the label is not present, add a key/value pair:

$ oc label machineconfigpool worker custom-kubelet=set-max-pods

Procedure

View the available machine configuration objects that you can select:

$ oc get machineconfig

By default, the two kubelet-related configs are 01-master-kubelet and 01-worker-kubelet.

Check the current value for the maximum pods per node:

$ oc describe node <node_name>

For example:

$ oc describe node ci-ln-5grqprb-f76d1-ncnqq-worker-a-mdv94

Look for the pods: <value> entry in the Allocatable stanza:

Example output

Allocatable:
  attachable-volumes-aws-ebs:  25
  cpu:                         3500m
  hugepages-1Gi:               0
  hugepages-2Mi:               0
  memory:                      15341844Ki
  pods:                        250

To set the maximum pods per node on the worker nodes, create a custom resource file that contains the kubelet configuration:

apiVersion: machineconfiguration.openshift.io/v1
kind: KubeletConfig
metadata:
  name: set-max-pods
spec:
  machineConfigPoolSelector:
    matchLabels:
      custom-kubelet: set-max-pods
  kubeletConfig:
    maxPods: 500

Note: The rate at which the kubelet talks to the API server depends on the queries per second (QPS) and burst values. The default values, 50 for kubeAPIQPS and 100 for kubeAPIBurst, are sufficient if a limited number of pods run on each node. If the node has enough CPU and memory resources, it is recommended to raise the kubelet QPS and burst rates:

apiVersion: machineconfiguration.openshift.io/v1
kind: KubeletConfig
metadata:
  name: set-max-pods
spec:
  machineConfigPoolSelector:
    matchLabels:
      custom-kubelet: set-max-pods
  kubeletConfig:
    maxPods: <pod_count>
    kubeAPIBurst: <burst_rate>
    kubeAPIQPS: <QPS>

Update the worker machine config pool with the label:

$ oc label machineconfigpool worker custom-kubelet=set-max-pods

Create the KubeletConfig object:

$ oc create -f change-maxPods-cr.yaml

Verify that the KubeletConfig object is created:

$ oc get kubeletconfig

Example output

NAME           AGE
set-max-pods   15m

Wait for the worker nodes to be rebooted one by one. The time this takes depends on the number of worker nodes in the cluster. For a cluster with 3 worker nodes, it could take about 10 to 15 minutes.

Verify that the changes are applied to the node.

On a worker node, check that the maxPods value has changed:

$ oc describe node <node_name>

Locate the Allocatable stanza:

...
Allocatable:
  attachable-volumes-gce-pd: 127
  cpu: 3500m
  ephemeral-storage: 123201474766
  hugepages-1Gi: 0
  hugepages-2Mi: 0
  memory: 14225400Ki
  pods: 500 (1)
...

(1) In this example, the pods parameter should report the value you set in the KubeletConfig object.

Verify the change in the KubeletConfig object:

$ oc get kubeletconfigs set-max-pods -o yaml

The output should show status: "True" and type: Success, as in the following example:

spec:
  kubeletConfig:
    maxPods: 500
  machineConfigPoolSelector:
    matchLabels:
      custom-kubelet: set-max-pods
status:
  conditions:
  - lastTransitionTime: "2021-06-30T17:04:07Z"
    message: Success
    status: "True"
    type: Success
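The kubeAPIQPS and kubeAPIBurst settings in the note earlier in this procedure behave like a token bucket. The bucket holds at most kubeAPIBurst tokens and refills at kubeAPIQPS tokens per second. The class below is a simplified, hypothetical model that rejects excess requests. The Kubernetes client library typically makes callers wait instead, but the steady-state rate and burst size work the same way.

```python
class TokenBucket:
    """Hypothetical token bucket: refills `qps` tokens per second, stores at most `burst`."""

    def __init__(self, qps: float, burst: int):
        self.qps = qps
        self.burst = burst
        self.tokens = float(burst)  # starts full, so a whole burst can go out at once
        self.last = 0.0

    def allow(self, now: float) -> bool:
        """Return True if a request issued at time `now` (seconds) may proceed."""
        elapsed = now - self.last
        self.last = now
        self.tokens = min(self.burst, self.tokens + elapsed * self.qps)
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


# 300 requests arrive at t=0 against the defaults kubeAPIQPS=50, kubeAPIBurst=100.
bucket = TokenBucket(qps=50, burst=100)
allowed_now = sum(bucket.allow(0.0) for _ in range(300))
print(allowed_now)  # 100
# One second later, only 50 tokens have been refilled.
allowed_later = sum(bucket.allow(1.0) for _ in range(300))
print(allowed_later)  # 50
```

Raising kubeAPIBurst lets a larger spike through at once. Raising kubeAPIQPS raises the sustained rate.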

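2.4. Control plane node sizing

The control plane node resource requirements depend on the number of nodes in the cluster. The following control plane node size recommendations are based on the results of control plane density focused testing. Depending on the node count, the control plane tests create the following objects across the cluster in each namespace:

- 12 image streams

- 3 build configurations

- 6 builds

- 1 deployment with 2 pod replicas, each mounting two secrets

- 2 deployments with 1 pod replica, each mounting two secrets

- 3 services pointing to the previous deployments

- 3 routes pointing to the previous deployments

- 10 secrets, 2 of which are mounted by the previous deployments

- 10 config maps, 2 of which are mounted by the previous deployments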

| Number of worker nodes | Cluster load (namespaces) | CPU cores | Memory (GB) |
|---|---|---|---|
| 25 | 500 | 4 | 16 |
| 100 | 1000 | 8 | 32 |
| 250 | 4000 | 16 | 96 |
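To apply the control plane sizing table in a script, one conservative reading is to round your worker count up to the next tested tier. The helper below is a hypothetical sketch of that lookup. It ignores the namespace count, so check the Cluster load column separately.

```python
# (worker nodes, cluster load in namespaces, CPU cores, memory in GB), copied from the sizing table.
CONTROL_PLANE_SIZING = [
    (25, 500, 4, 16),
    (100, 1000, 8, 32),
    (250, 4000, 16, 96),
]


def control_plane_size(worker_nodes: int) -> tuple[int, int]:
    """Return (cpu_cores, memory_gb) of the smallest tested tier that covers worker_nodes."""
    for nodes, _namespaces, cores, memory_gb in CONTROL_PLANE_SIZING:
        if worker_nodes <= nodes:
            return cores, memory_gb
    raise ValueError("worker count exceeds the largest tested tier (250 nodes)")


print(control_plane_size(80))  # (8, 32)
```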

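On a large and dense cluster with three control plane (master) nodes, CPU and memory usage spike when one of the nodes is stopped, rebooted, or fails. Failures can be caused by unexpected problems with power, the network, or the underlying infrastructure. They also include intentional cases, such as restarting the cluster after shutting it down to save costs. The remaining two control plane nodes must handle the load to stay highly available, which increases their resource usage. This is also expected during upgrades, because the masters are cordoned, drained, and rebooted one after another to apply operating system updates and control plane Operator updates. To avoid cascading failures, keep the overall CPU and memory usage on the control plane nodes at no more than 60% of available capacity, so that they can absorb usage spikes. Increase the CPU and memory on the control plane nodes accordingly to avoid potential downtime due to lack of resources.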
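The 60% guideline can be checked with simple arithmetic. This sketch makes the simplifying assumption that the load of a failed control plane node is spread evenly across the survivors; real spikes can be higher. The function is hypothetical.

```python
def utilization_after_losing_one(per_node_utilization: float, nodes: int = 3) -> float:
    """Per-node utilization on the survivors if one node's load is spread evenly over them."""
    if nodes < 2:
        raise ValueError("need at least two control plane nodes")
    total_load = per_node_utilization * nodes
    return total_load / (nodes - 1)


for u in (0.5, 0.6, 0.7):
    print(u, round(utilization_after_losing_one(u), 2))
# 0.5 0.75
# 0.6 0.9
# 0.7 1.05
```

At 60% per node, the two survivors land at about 90%. At 70%, they would need more than their full capacity.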

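Node sizing varies with the number of nodes and objects in the cluster. It also depends on whether objects are actively being created on the cluster. During object creation, the control plane uses more resources than when the objects are in the running phase.

If you used the installer-provisioned infrastructure installation method, you cannot modify the control plane node size in a running OpenShift Container Platform 4.7 cluster. Instead, you must estimate your total node count and use the suggested control plane node size during installation.

The recommendations are based on data points captured on OpenShift Container Platform clusters with OpenShiftSDN as the network plug-in.

In OpenShift Container Platform 4.7, half of a CPU core (500 millicores) is now reserved by the system by default, which was not the case in OpenShift Container Platform 3.11 and earlier versions. The sizes are determined with this in mind.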

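2.4.1. Increasing the flavor size of the Amazon Web Services (AWS) master instances

When the AWS master nodes in a cluster are overloaded and need more resources, you can increase the flavor size of the master instances.

It is recommended to back up etcd before increasing the flavor size of the AWS master instances.

Prerequisites

- You have an IPI (installer-provisioned infrastructure) or UPI (user-provisioned infrastructure) cluster on AWS.

Procedure

- Open the AWS console and fetch the master instances.

- Stop one master instance.

- Select the stopped instance, and click Actions → Instance Settings → Change instance type.

- Change the instance to a larger type, ensuring that the type has the same base as the previous selection, and apply the changes. For example, you can change m5.xlarge to m5.2xlarge or m5.4xlarge.

- Start the instance back up, and repeat these steps for the next master instance.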

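2.5. Recommended etcd practices

In large and dense clusters, etcd performance can degrade if the keyspace grows too large and exceeds the space quota. Periodically maintain and defragment etcd to free up space in the data store. Monitor the etcd metrics in Prometheus and defragment etcd when required. Otherwise, etcd can raise a cluster-wide alarm and put the cluster into a maintenance mode that accepts only key reads and deletes.

Monitor these key metrics:

- etcd_server_quota_backend_bytes, which is the current quota limit

- etcd_mvcc_db_total_size_in_use_in_bytes, which indicates the actual database usage after a history compaction

- etcd_debugging_mvcc_db_total_size_in_bytes, which shows the database size, including free space waiting for defragmentation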
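As a rough sketch, the three etcd metrics can be combined to estimate two things: how much space a defragmentation would return, and how much of the quota the on-disk database occupies. The function is hypothetical; feed it values you read from Prometheus. The example readings, including the 8 GiB quota, are made-up values for illustration only.

```python
def etcd_space_report(quota_bytes: float, in_use_bytes: float, total_bytes: float) -> dict[str, float]:
    """Summarize the etcd size metrics.

    quota_bytes:  etcd_server_quota_backend_bytes
    in_use_bytes: etcd_mvcc_db_total_size_in_use_in_bytes
    total_bytes:  etcd_debugging_mvcc_db_total_size_in_bytes
    """
    # Free pages left behind by compaction; only a defragmentation returns them to the host.
    reclaimable = total_bytes - in_use_bytes
    return {
        "reclaimable_bytes": reclaimable,
        "fragmented_fraction": reclaimable / total_bytes if total_bytes else 0.0,
        "quota_used_fraction": total_bytes / quota_bytes,
    }


MiB = 1024 * 1024
# Hypothetical readings: 104 MiB on disk, 41 MiB in use, 8 GiB quota.
report = etcd_space_report(quota_bytes=8 * 1024 * MiB, in_use_bytes=41 * MiB, total_bytes=104 * MiB)
print(f"reclaimable by defrag: {report['reclaimable_bytes'] / MiB:.0f} MiB")
print(f"share of quota used:   {report['quota_used_fraction']:.1%}")
```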

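For more information about defragmenting etcd, see Defragmenting etcd data.

etcd writes data to disk and persists proposals on disk, so its performance depends on disk performance. Slow disks, and disk activity from other processes, can cause long fsync latencies. These latencies can cause etcd to miss heartbeats and to fail to commit new proposals to disk on time. Ultimately, etcd can experience request timeouts and temporary leader loss. Run etcd on machines backed by low-latency, high-throughput SSD or NVMe disks. Consider single-level cell (SLC) solid-state drives (SSDs), which store 1 bit per memory cell. They are durable and reliable, and are ideal for write-intensive workloads.

On a deployed OpenShift Container Platform cluster, two key metrics to monitor are the p99 of the etcd disk write-ahead log (WAL) fsync duration and the number of etcd leader changes. Use Prometheus to track these metrics.

- The etcd_disk_wal_fsync_duration_seconds_bucket metric reports the etcd disk fsync duration.

- The etcd_server_leader_changes_seen_total metric reports the leader changes.

- To rule out a slow disk and confirm that the disk is reasonably fast, verify that the 99th percentile of etcd_disk_wal_fsync_duration_seconds_bucket is less than 10 ms.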
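The following sketch shows how a 99th percentile is derived from the _bucket series. It estimates a quantile from cumulative histogram buckets by linear interpolation within the bucket that holds the target rank, which is the approach PromQL's histogram_quantile() takes. In Prometheus you would normally apply rate() to the buckets first. The bucket counts below are made-up example values.

```python
import math


def histogram_quantile(q: float, buckets: list[tuple[float, float]]) -> float:
    """Estimate quantile q from (upper_bound, cumulative_count) pairs.

    The pairs must be sorted by upper bound and end with the +Inf bucket,
    as exported for etcd_disk_wal_fsync_duration_seconds_bucket.
    """
    total = buckets[-1][1]
    if total == 0:
        return math.nan
    rank = q * total
    prev_bound, prev_count = 0.0, 0.0
    for bound, count in buckets:
        if count >= rank:
            if math.isinf(bound):
                # The rank lies beyond the last finite bucket; report that bound.
                return prev_bound
            in_bucket = count - prev_count
            if in_bucket == 0:
                return bound
            # Linear interpolation inside the bucket (prev_bound, bound].
            return prev_bound + (bound - prev_bound) * ((rank - prev_count) / in_bucket)
        prev_bound, prev_count = bound, count
    return prev_bound


# Hypothetical cumulative counts per upper bound (le), in seconds.
fsync_buckets = [
    (0.001, 0),
    (0.002, 50),
    (0.004, 90),
    (0.008, 99),
    (0.016, 100),
    (math.inf, 100),
]

p99 = histogram_quantile(0.99, fsync_buckets)
print(f"p99 fsync: {p99 * 1000:.1f} ms, below 10 ms: {p99 < 0.010}")
```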

To validate the hardware for etcd before or after you create the OpenShift Container Platform cluster, you can use fio, an I/O benchmarking tool.

Prerequisites

- A container runtime such as Podman or Docker is installed on the machine that you are testing.

- Data is written to the /var/lib/etcd path.

Procedure

Run fio and analyze the results:

If you use Podman, run this command:

$ sudo podman run --volume /var/lib/etcd:/var/lib/etcd:Z quay.io/openshift-scale/etcd-perf

If you use Docker, run this command:

$ sudo docker run --volume /var/lib/etcd:/var/lib/etcd:Z quay.io/openshift-scale/etcd-perf

The output reports whether the disk is fast enough to host etcd. It checks whether the 99th percentile of the fsync metric captured during the run is less than 10 ms.

etcd replicates requests among all members, so its performance strongly depends on network input/output (I/O) latency. High network latency makes etcd heartbeats take longer than the election timeout, which triggers leader elections that disrupt the cluster. On a deployed OpenShift Container Platform cluster, a key metric to monitor is the 99th percentile of etcd network peer latency on each etcd cluster member. Use Prometheus to track the metric.

The histogram_quantile(0.99, rate(etcd_network_peer_round_trip_time_seconds_bucket[2m])) query reports the round-trip time for etcd to finish replicating client requests between the members. Ensure that it is less than 50 ms.

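2.6. Defragmenting etcd data

etcd history compaction and other events fragment the disk. Perform manual defragmentation periodically to reclaim that disk space.

History compaction is performed automatically every five minutes and leaves gaps in the back-end database. etcd can reuse this fragmented space, but the host file system cannot. You must defragment etcd to make this space available to the host file system.

etcd writes data to disk, so its performance strongly depends on disk performance. Consider defragmenting etcd every month, twice a month, or as needed for your cluster. You can also monitor the etcd_db_total_size_in_bytes metric to determine whether defragmentation is necessary.

Defragmenting etcd is a blocking action: the etcd member does not respond until defragmentation is complete. For this reason, wait at least one minute between defragmentation actions on each of the pods to allow the cluster to recover.

Follow this procedure to defragment etcd data on each etcd member.

Prerequisites

- You have access to the cluster as a user with the cluster-admin role.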

Procedure

Determine which etcd member is the leader, because the leader should be defragmented last.

Get the list of etcd pods:

$ oc get pods -n openshift-etcd -o wide | grep -v quorum-guard | grep etcd

Example output

etcd-ip-10-0-159-225.example.redhat.com   3/3   Running   0   175m   10.0.159.225   ip-10-0-159-225.example.redhat.com   <none>   <none>
etcd-ip-10-0-191-37.example.redhat.com    3/3   Running   0   173m   10.0.191.37    ip-10-0-191-37.example.redhat.com    <none>   <none>
etcd-ip-10-0-199-170.example.redhat.com   3/3   Running   0   176m   10.0.199.170   ip-10-0-199-170.example.redhat.com   <none>   <none>

Choose a pod and run the following command to determine which etcd member is the leader:

$ oc rsh -n openshift-etcd etcd-ip-10-0-159-225.example.redhat.com etcdctl endpoint status --cluster -w table

Example output

Defaulting container name to etcdctl.
Use 'oc describe pod/etcd-ip-10-0-159-225.example.redhat.com -n openshift-etcd' to see all of the containers in this pod.
+---------------------------+------------------+---------+---------+-----------+------------+-----------+------------+--------------------+--------+
|         ENDPOINT          |        ID        | VERSION | DB SIZE | IS LEADER | IS LEARNER | RAFT TERM | RAFT INDEX | RAFT APPLIED INDEX | ERRORS |
+---------------------------+------------------+---------+---------+-----------+------------+-----------+------------+--------------------+--------+
|  https://10.0.191.37:2379 | 251cd44483d811c3 |   3.4.9 |  104 MB |     false |      false |         7 |      91624 |              91624 |        |
| https://10.0.159.225:2379 | 264c7c58ecbdabee |   3.4.9 |  104 MB |     false |      false |         7 |      91624 |              91624 |        |
| https://10.0.199.170:2379 | 9ac311f93915cc79 |   3.4.9 |  104 MB |      true |      false |         7 |      91624 |              91624 |        |
+---------------------------+------------------+---------+---------+-----------+------------+-----------+------------+--------------------+--------+

Based on the IS LEADER column of this output, the https://10.0.199.170:2379 endpoint is the leader. Matching this endpoint with the output of the previous step, the pod name of the leader is etcd-ip-10-0-199-170.example.redhat.com.

Defragment an etcd member.

Connect to the running etcd container, passing in the name of a pod that is not the leader:

$ oc rsh -n openshift-etcd etcd-ip-10-0-159-225.example.redhat.com

Unset the ETCDCTL_ENDPOINTS environment variable:

sh-4.4# unset ETCDCTL_ENDPOINTS

Defragment the etcd member:

sh-4.4# etcdctl --command-timeout=30s --endpoints=https://localhost:2379 defrag

Example output

Finished defragmenting etcd member[https://localhost:2379]

If a timeout error occurs, increase the value for --command-timeout until the command succeeds.

Verify that the database size was reduced:

sh-4.4# etcdctl endpoint status -w table --cluster

Example output

+---------------------------+------------------+---------+---------+-----------+------------+-----------+------------+--------------------+--------+
|         ENDPOINT          |        ID        | VERSION | DB SIZE | IS LEADER | IS LEARNER | RAFT TERM | RAFT INDEX | RAFT APPLIED INDEX | ERRORS |
+---------------------------+------------------+---------+---------+-----------+------------+-----------+------------+--------------------+--------+
|  https://10.0.191.37:2379 | 251cd44483d811c3 |   3.4.9 |  104 MB |     false |      false |         7 |      91624 |              91624 |        |
| https://10.0.159.225:2379 | 264c7c58ecbdabee |   3.4.9 |   41 MB |     false |      false |         7 |      91624 |              91624 |        |
| https://10.0.199.170:2379 | 9ac311f93915cc79 |   3.4.9 |  104 MB |      true |      false |         7 |      91624 |              91624 |        |
+---------------------------+------------------+---------+---------+-----------+------------+-----------+------------+--------------------+--------+

This example shows that the database size for this etcd member is now 41 MB, down from the starting size of 104 MB.

Repeat these steps to connect to each of the other etcd members and defragment them. Always defragment the leader last.
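If you script the defragmentation steps, you can derive the ordering rule (non-leaders first, leader last) from the etcdctl endpoint status -w table output. The parser below is a hypothetical sketch that assumes the ASCII table layout shown in the example output. The sample is trimmed to four columns for brevity. The sketch does not run any commands, and you still need to wait between defragmentations.

```python
SAMPLE_STATUS = """\
+---------------------------+------------------+---------+-----------+
|         ENDPOINT          |        ID        | DB SIZE | IS LEADER |
+---------------------------+------------------+---------+-----------+
|  https://10.0.191.37:2379 | 251cd44483d811c3 |  104 MB |     false |
| https://10.0.159.225:2379 | 264c7c58ecbdabee |  104 MB |     false |
| https://10.0.199.170:2379 | 9ac311f93915cc79 |  104 MB |      true |
+---------------------------+------------------+---------+-----------+
"""


def parse_endpoint_status(table: str) -> list[dict[str, str]]:
    """Turn the ASCII table into one dict per row, keyed by column header."""
    header: list[str] | None = None
    rows = []
    for line in table.splitlines():
        line = line.strip()
        if not line.startswith("|"):
            continue  # skip +----+ borders and blank lines
        cells = [cell.strip() for cell in line.strip("|").split("|")]
        if header is None:
            header = cells
        else:
            rows.append(dict(zip(header, cells)))
    return rows


def defrag_order(table: str) -> list[str]:
    """Endpoints in the order to defragment them: followers first, leader last."""
    rows = parse_endpoint_status(table)
    followers = [r["ENDPOINT"] for r in rows if r["IS LEADER"] != "true"]
    leaders = [r["ENDPOINT"] for r in rows if r["IS LEADER"] == "true"]
    return followers + leaders


for endpoint in defrag_order(SAMPLE_STATUS):
    print(endpoint)  # wait at least one minute after defragmenting each member
```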

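Wait at least one minute between defragmentation actions to allow the etcd pod to recover. Until the etcd pod recovers, the etcd member does not respond.

If any NOSPACE alarms were triggered because the space quota was exceeded, clear them.

Check whether there are any NOSPACE alarms:

sh-4.4# etcdctl alarm list

Example output

memberID:12345678912345678912 alarm:NOSPACE

Clear the alarms:

sh-4.4# etcdctl alarm disarm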

2.7. OpenShift Container Platform infrastructure components

The following infrastructure workloads do not incur OpenShift Container Platform worker subscriptions:

- Kubernetes and OpenShift Container Platform control plane services that run on masters

- The default router

- The integrated container image registry

- The HAProxy-based Ingress Controller

- The cluster metrics collection, or monitoring service, including components for monitoring user-defined projects

- Cluster aggregated logging

- Service brokers

- Red Hat Quay

- Red Hat OpenShift Container Storage

- Red Hat Advanced Cluster Manager

- Red Hat Advanced Cluster Security for Kubernetes

- Red Hat OpenShift GitOps

- Red Hat OpenShift Pipelines

Any node that runs any other container, pod, or component is a worker node that your subscription must cover.

2.8. Moving the monitoring solution

By default, the Prometheus Cluster Monitoring stack, which contains Prometheus, Grafana, and AlertManager, is deployed to provide cluster monitoring. It is managed by the Cluster Monitoring Operator. To move its components to different machines, create and apply a custom config map.

Procedure

Save the following ConfigMap definition as the cluster-monitoring-configmap.yaml file:

apiVersion: v1
kind: ConfigMap
metadata:
  name: cluster-monitoring-config
  namespace: openshift-monitoring
data:
  config.yaml: |+
    alertmanagerMain:
      nodeSelector:
        node-role.kubernetes.io/infra: ""
    prometheusK8s:
      nodeSelector:
        node-role.kubernetes.io/infra: ""
    prometheusOperator:
      nodeSelector:
        node-role.kubernetes.io/infra: ""
    grafana:
      nodeSelector:
        node-role.kubernetes.io/infra: ""
    k8sPrometheusAdapter:
      nodeSelector:
        node-role.kubernetes.io/infra: ""
    kubeStateMetrics:
      nodeSelector:
        node-role.kubernetes.io/infra: ""
    telemeterClient:
      nodeSelector:
        node-role.kubernetes.io/infra: ""
    openshiftStateMetrics:
      nodeSelector:
        node-role.kubernetes.io/infra: ""
    thanosQuerier:
      nodeSelector:
        node-role.kubernetes.io/infra: ""

Applying this config map forces the components of the monitoring stack to redeploy to infrastructure nodes.

Apply the new config map:

$ oc create -f cluster-monitoring-configmap.yaml

Watch the monitoring pods move to the new machines:

$ watch 'oc get pod -n openshift-monitoring -o wide'

If a component has not moved to the infra node, delete the pod with this component:

$ oc delete pod -n openshift-monitoring <pod>

The component from the deleted pod is re-created on the infra node.

2.9. Moving the default registry

Configure the registry Operator to deploy its pods to different nodes.

Prerequisites

- Configure additional machine sets in your OpenShift Container Platform cluster.

Procedure

View the config/instance object:

$ oc get configs.imageregistry.operator.openshift.io/cluster -o yaml

Example output

apiVersion: imageregistry.operator.openshift.io/v1
kind: Config
metadata:
  creationTimestamp: 2019-02-05T13:52:05Z
  finalizers:
  - imageregistry.operator.openshift.io/finalizer
  generation: 1
  name: cluster
  resourceVersion: "56174"
  selfLink: /apis/imageregistry.operator.openshift.io/v1/configs/cluster
  uid: 36fd3724-294d-11e9-a524-12ffeee2931b
spec:
  httpSecret: d9a012ccd117b1e6616ceccb2c3bb66a5fed1b5e481623
  logging: 2
  managementState: Managed
  proxy: {}
  replicas: 1
  requests:
    read: {}
    write: {}
  storage:
    s3:
      bucket: image-registry-us-east-1-c92e88cad85b48ec8b312344dff03c82-392c
      region: us-east-1
status:
...

Edit the config/instance object:

$ oc edit configs.imageregistry.operator.openshift.io/cluster

Modify the spec section of the object to resemble the following YAML:

spec:
  affinity:
    podAntiAffinity:
      preferredDuringSchedulingIgnoredDuringExecution:
      - podAffinityTerm:
          namespaces:
          - openshift-image-registry
          topologyKey: kubernetes.io/hostname
        weight: 100
  logLevel: Normal
  managementState: Managed
  nodeSelector:
    node-role.kubernetes.io/infra: ""

Verify that the registry pod has been moved to the infrastructure node.

Run the following command to identify the node where the registry pod is located:

$ oc get pods -o wide -n openshift-image-registry

Confirm that the node has the label you specified:

$ oc describe node <node_name>

Review the command output and confirm that node-role.kubernetes.io/infra is in the LABELS list.

2.10. Moving the router

You can deploy the router pod to a different machine set. By default, the pod is deployed to a worker node.

Prerequisites

- Configure additional machine sets in your OpenShift Container Platform cluster.

Procedure

View the IngressController custom resource for the router Operator:

$ oc get ingresscontroller default -n openshift-ingress-operator -o yaml

The command output resembles the following text:

apiVersion: operator.openshift.io/v1
kind: IngressController
metadata:
  creationTimestamp: 2019-04-18T12:35:39Z
  finalizers:
  - ingresscontroller.operator.openshift.io/finalizer-ingresscontroller
  generation: 1
  name: default
  namespace: openshift-ingress-operator
  resourceVersion: "11341"
  selfLink: /apis/operator.openshift.io/v1/namespaces/openshift-ingress-operator/ingresscontrollers/default
  uid: 79509e05-61d6-11e9-bc55-02ce4781844a
spec: {}
status:
  availableReplicas: 2
  conditions:
  - lastTransitionTime: 2019-04-18T12:36:15Z
    status: "True"
    type: Available
  domain: apps.<cluster>.example.com
  endpointPublishingStrategy:
    type: LoadBalancerService
  selector: ingresscontroller.operator.openshift.io/deployment-ingresscontroller=default

Edit the ingresscontroller resource and change the nodeSelector to use the infra label:

$ oc edit ingresscontroller default -n openshift-ingress-operator

Add a nodeSelector stanza that references the infra label to the spec section, as shown:

spec:
  nodePlacement:
    nodeSelector:
      matchLabels:
        node-role.kubernetes.io/infra: ""

Confirm that the router pod is running on the infra node.

View the list of router pods and note the node name of the running pod:

$ oc get pod -n openshift-ingress -o wide

Example output

NAME                              READY   STATUS        RESTARTS   AGE   IP           NODE                           NOMINATED NODE   READINESS GATES
router-default-86798b4b5d-bdlvd   1/1     Running       0          28s   10.130.2.4   ip-10-0-217-226.ec2.internal   <none>           <none>
router-default-955d875f4-255g8    0/1     Terminating   0          19h   10.129.2.4   ip-10-0-148-172.ec2.internal   <none>           <none>

In this example, the running pod is on the ip-10-0-217-226.ec2.internal node.

View the node status of the running pod:

$ oc get node <node_name>

Example output

NAME                           STATUS   ROLES          AGE   VERSION
ip-10-0-217-226.ec2.internal   Ready    infra,worker   17h   v1.20.0

Because the role list includes infra, the pod is running on the correct node.

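2.11. Infrastructure node sizing

The infrastructure node resource requirements depend on the cluster age, nodes, and objects in the cluster, because these factors can increase the number of metrics or time series in Prometheus. The following infrastructure node size recommendations are based on the results of tests focused on cluster maximums and control plane density.

| Number of worker nodes | CPU cores | Memory (GB) |
|---|---|---|
| 25 | 4 | 16 |
| 100 | 8 | 32 |
| 250 | 16 | 128 |
| 500 | 32 | 128 |

These sizing recommendations are based on scale tests that create a large number of objects across the cluster. These tests include reaching some of the cluster maximums. For 250- and 500-node OpenShift Container Platform 4.7 clusters, these maximums include 10000 namespaces with 61000 pods, 10000 deployments, 181000 secrets, and 400 config maps. Prometheus is a highly memory-intensive application. Its resource usage depends on various factors: the number of nodes and objects, the Prometheus metrics scraping interval, the number of metrics or time series, and the age of the cluster. The disk size also depends on the retention period. Take these factors into consideration and size the nodes accordingly.

These sizing recommendations apply only to the infrastructure components that are installed during cluster installation: Prometheus, Router, and Registry. Logging is a day-two operation and is not included in these recommendations.

In OpenShift Container Platform 4.7, half of a CPU core (500 millicores) is now reserved by the system by default, which was not the case in OpenShift Container Platform 3.11 and earlier versions. This affects the sizing recommendations above.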

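Chapter 3. Recommended host practices for IBM Z & LinuxONE environments

This topic provides recommended host practices for OpenShift Container Platform on IBM Z and LinuxONE.

The s390x architecture is unique in many aspects. Therefore, some recommendations made here might not apply to other platforms.

Unless stated otherwise, these practices apply to both z/VM and Red Hat Enterprise Linux (RHEL) KVM installations on IBM Z and LinuxONE.

3.1. Managing CPU overcommitment

In a highly virtualized IBM Z environment, you must carefully plan the infrastructure setup and sizing. One of the most important features of virtualization is resource overcommitment: allocating more resources to the virtual machines than are actually available at the hypervisor level. This is very workload dependent, and no golden rule applies to all setups.

Depending on your setup, consider these best practices regarding CPU overcommitment:

- At the LPAR level (PR/SM hypervisor), avoid assigning all available physical cores (IFLs) to each LPAR. For example, with four physical IFLs available, do not define three LPARs with four logical IFLs each.

- Check and understand LPAR shares and weights.

- An excessive number of virtual CPUs can adversely affect performance. Do not define more virtual processors for a guest than the number of logical processors defined for its LPAR.

- Configure the number of virtual processors per guest for peak workload, and no more.

- Start small and monitor the workload. Increase the number of vCPUs incrementally if necessary.

- Not all workloads are suitable for high overcommitment ratios. If the workload is CPU intensive, you will probably not be able to achieve high ratios without performance problems. Workloads that are more I/O intensive can keep consistent performance even with high overcommitment ratios.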
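Two of the numeric rules above can be checked before deployment: do not give every LPAR all physical IFLs, and do not give a guest more virtual CPUs than its LPAR has logical processors. The function and its input layout are hypothetical. The first check takes a conservative reading and flags any LPAR that receives all physical IFLs.

```python
def check_cpu_plan(
    physical_ifls: int,
    lpars: dict[str, int],
    guests: dict[str, tuple[str, int]],
) -> list[str]:
    """Return warnings for a planned layout.

    lpars:  LPAR name -> number of logical IFLs defined for it
    guests: guest name -> (LPAR it runs in, number of virtual CPUs)
    """
    warnings = []
    for name, logical_ifls in lpars.items():
        # Conservative reading of the first rule: no LPAR gets every physical IFL.
        if logical_ifls >= physical_ifls:
            warnings.append(f"{name}: {logical_ifls} logical IFLs with only {physical_ifls} physical IFLs")
    for guest, (lpar, vcpus) in guests.items():
        # A guest must not have more vCPUs than its LPAR has logical processors.
        if vcpus > lpars[lpar]:
            warnings.append(f"{guest}: {vcpus} vCPUs exceed the {lpars[lpar]} logical IFLs of {lpar}")
    return warnings


# The example from the text (4 physical IFLs, three LPARs with 4 logical IFLs each),
# plus a hypothetical guest with too many vCPUs.
for warning in check_cpu_plan(4, {"LPAR1": 4, "LPAR2": 4, "LPAR3": 4}, {"worker-0": ("LPAR1", 6)}):
    print(warning)
```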

3.2. Disabling Transparent Huge Pages

Transparent Huge Pages (THP) attempt to automate most aspects of creating, managing, and using huge pages. Because THP manages huge pages automatically, the result is not always optimal for every type of workload. THP can lead to performance regressions, because many applications handle huge pages on their own. Therefore, consider disabling THP. The following steps describe how to disable THP by using a Node Tuning Operator (NTO) profile.

3.2.1. Disabling THP with a Node Tuning Operator (NTO) profile

Procedure

Copy the following NTO sample profile into a YAML file, for example thp-s390-tuned.yaml:

apiVersion: tuned.openshift.io/v1
kind: Tuned
metadata:
  name: thp-workers-profile
  namespace: openshift-cluster-node-tuning-operator
spec:
  profile:
  - data: |
      [main]
      summary=Custom tuned profile for OpenShift on IBM Z to turn off THP on worker nodes
      include=openshift-node
      [vm]
      transparent_hugepages=never
    name: openshift-thp-never-worker
  recommend:
  - match:
    - label: node-role.kubernetes.io/worker
    priority: 35
    profile: openshift-thp-never-worker

Create the NTO profile:

$ oc create -f thp-s390-tuned.yaml

Check the list of active profiles:

$ oc get tuned -n openshift-cluster-node-tuning-operator

To remove the profile:

$ oc delete -f thp-s390-tuned.yaml

Verification

Log in to one of the nodes and run a regular THP check to verify whether the node applied the profile successfully:

$ cat /sys/kernel/mm/transparent_hugepage/enabled
always madvise [never]
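The active THP mode is the value shown in brackets. Below is a small sketch for checking it in a script. The helper names are hypothetical; only the sysfs path comes from this procedure.

```python
import re


def active_thp_mode(sysfs_text: str) -> str:
    """Return the bracketed (active) value from .../transparent_hugepage/enabled."""
    match = re.search(r"\[(\w+)\]", sysfs_text)
    if match is None:
        raise ValueError("no active mode marked with brackets")
    return match.group(1)


def thp_disabled(path: str = "/sys/kernel/mm/transparent_hugepage/enabled") -> bool:
    """Read the sysfs file on a node and report whether THP is set to never."""
    with open(path) as f:
        return active_thp_mode(f.read()) == "never"


print(active_thp_mode("always madvise [never]"))  # never
```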

3.3. Boosting networking performance with Receive Flow Steering

Receive Flow Steering (RFS) extends Receive Packet Steering (RPS) by further reducing network latency. RFS is technically based on RPS and improves the efficiency of packet processing by increasing the CPU cache hit rate. To do this, RFS picks the most convenient CPU for the computation, and it also takes queue length into account. This makes cache hits within that CPU more likely. As a result, the CPU cache is invalidated less often and fewer cycles are needed to rebuild it, which can help reduce packet processing time.

3.3.1. Using the Machine Config Operator (MCO) to activate RFS

Procedure

Copy the following MCO sample profile into a YAML file, for example enable-rfs.yaml:

apiVersion: machineconfiguration.openshift.io/v1
kind: MachineConfig
metadata:
  labels:
    machineconfiguration.openshift.io/role: worker
  name: 50-enable-rfs
spec:
  config:
    ignition:
      version: 2.2.0
    storage:
      files:
      - contents:
          source: data:text/plain;charset=US-ASCII,%23%20turn%20on%20Receive%20Flow%20Steering%20%28RFS%29%20for%20all%20network%20interfaces%0ASUBSYSTEM%3D%3D%22net%22%2C%20ACTION%3D%3D%22add%22%2C%20RUN%7Bprogram%7D%2B%3D%22/bin/bash%20-c%20%27for%20x%20in%20/sys/%24DEVPATH/queues/rx-%2A%3B%20do%20echo%208192%20%3E%20%24x/rps_flow_cnt%3B%20%20done%27%22%0A
        filesystem: root
        mode: 0644
        path: /etc/udev/rules.d/70-persistent-net.rules
      - contents:
          source: data:text/plain;charset=US-ASCII,%23%20define%20sock%20flow%20enbtried%20for%20%20Receive%20Flow%20Steering%20%28RFS%29%0Anet.core.rps_sock_flow_entries%3D8192%0A
        filesystem: root
        mode: 0644
        path: /etc/sysctl.d/95-enable-rps.conf

Create the MCO profile:

$ oc create -f enable-rfs.yaml

Verify that an entry named 50-enable-rfs is listed:

$ oc get mc

To deactivate, enter:

$ oc delete mc 50-enable-rfs
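The two files in the RFS MachineConfig are embedded as percent-encoded data URLs. To review or change their contents, you can decode and re-encode them with Python's standard urllib.parse module. This is a sketch: the helper names are hypothetical, base64 data URLs are not handled, and the encoder assumes plain ASCII text.

```python
from urllib.parse import quote, unquote

PREFIX = "data:text/plain;charset=US-ASCII,"

# The two source values from the MachineConfig, split only for line length.
UDEV_SOURCE = (
    PREFIX
    + "%23%20turn%20on%20Receive%20Flow%20Steering%20%28RFS%29%20for%20all%20network%20interfaces%0A"
    + "SUBSYSTEM%3D%3D%22net%22%2C%20ACTION%3D%3D%22add%22%2C%20RUN%7Bprogram%7D%2B%3D%22/bin/bash"
    + "%20-c%20%27for%20x%20in%20/sys/%24DEVPATH/queues/rx-%2A%3B%20do%20echo%208192%20%3E%20"
    + "%24x/rps_flow_cnt%3B%20%20done%27%22%0A"
)
SYSCTL_SOURCE = (
    PREFIX
    + "%23%20define%20sock%20flow%20enbtried%20for%20%20Receive%20Flow%20Steering%20%28RFS%29"
    + "%0Anet.core.rps_sock_flow_entries%3D8192%0A"
)


def decode_data_url(url: str) -> str:
    """Return the plain-text payload of a percent-encoded data URL."""
    header, sep, payload = url.partition(",")
    if not header.startswith("data:") or not sep:
        raise ValueError("not a data URL")
    if header.endswith(";base64"):
        raise ValueError("base64 payloads are not handled in this sketch")
    return unquote(payload)


def encode_data_url(text: str) -> str:
    """Percent-encode text (keeping '/' literal) into a US-ASCII data URL."""
    return PREFIX + quote(text, safe="/")


print(decode_data_url(UDEV_SOURCE))
print(decode_data_url(SYSCTL_SOURCE))
```

After editing a decoded file, for example to change 8192, encode_data_url produces the value to paste back into the source field.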

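3.4. Choosing your networking setup

The networking stack is one of the most important components of a Kubernetes-based product like OpenShift Container Platform. For IBM Z setups, the networking setup depends on the hypervisor you choose. The best fit for a given workload and application usually depends on the use case and the traffic patterns.

Depending on your setup, consider these best practices:

- Consider all options regarding networking devices to optimize your traffic pattern. Explore the advantages of OSA-Express, RoCE Express, HiperSockets, z/VM VSwitch, and Linux Bridge (KVM) to decide which option benefits your setup the most.

- Always use the latest available NIC version. For example, for transactional workload types, OSA Express 7S 10 GbE shows a significant improvement over OSA Express 6S 10 GbE, even though both are 10 GbE adapters.

- Each virtual switch adds an additional layer of latency.

- The load balancer plays an important role in network communication outside the cluster. If this is critical for your application, consider using a production-grade hardware load balancer.

- OpenShift Container Platform SDN introduces flows and rules that affect networking performance. Consider pod affinities and placements, so that services whose communication is critical benefit from locality.

- Balance the trade-off between performance and functionality.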

3.5. Ensuring high disk performance with HyperPAV on z/VM

DASD and ECKD devices are commonly used disk types in IBM Z environments. In a typical OpenShift Container Platform setup in z/VM environments, DASD disks are commonly used to support the local storage for the nodes. You can set up HyperPAV alias devices to improve throughput and overall I/O performance for the DASD disks that support the z/VM guests.

Using HyperPAV for the local storage devices leads to a significant performance benefit. However, be aware of the trade-off between throughput and CPU costs.

3.5.1. Using the Machine Config Operator (MCO) to activate HyperPAV aliases in nodes using z/VM full-pack minidisks

For z/VM-based OpenShift Container Platform setups that use full-pack minidisks, you can use MCO profiles to activate HyperPAV aliases in all of the nodes. You must add YAML configurations for both control plane and compute nodes.

Procedure

Copy the following MCO sample profile into a YAML file for the control plane node, for example 05-master-kernelarg-hpav.yaml:

$ cat 05-master-kernelarg-hpav.yaml
apiVersion: machineconfiguration.openshift.io/v1
kind: MachineConfig
metadata:
  labels:
    machineconfiguration.openshift.io/role: master
  name: 05-master-kernelarg-hpav
spec:
  config:
    ignition:
      version: 3.1.0
  kernelArguments:
    - rd.dasd=800-805

Copy the following MCO sample profile into a YAML file for the compute node, for example 05-worker-kernelarg-hpav.yaml:

$ cat 05-worker-kernelarg-hpav.yaml
apiVersion: machineconfiguration.openshift.io/v1
kind: MachineConfig
metadata:
  labels:
    machineconfiguration.openshift.io/role: worker
  name: 05-worker-kernelarg-hpav
spec:
  config:
    ignition:
      version: 3.1.0
  kernelArguments:
    - rd.dasd=800-805

Note: You must modify the rd.dasd arguments to fit your device IDs.

Create the MCO profiles:

$ oc create -f 05-master-kernelarg-hpav.yaml
$ oc create -f 05-worker-kernelarg-hpav.yaml

To deactivate, enter:

$ oc delete -f 05-master-kernelarg-hpav.yaml
$ oc delete -f 05-worker-kernelarg-hpav.yaml

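3.6. RHEL KVM on IBM Z host recommendations

How to optimize a KVM virtual server environment depends strongly on the workloads of the virtual servers and on the available resources. An action that enhances performance in one environment can have adverse effects in another. Finding the best balance for a particular setting can be a challenge and often involves experimentation.

The following sections introduce best practices for using OpenShift Container Platform with RHEL KVM on IBM Z and LinuxONE environments.

3.6.1. Using multiple queues for your VirtIO network interfaces

With multiple virtual CPUs, you can transfer packets in parallel if you provide multiple queues for incoming and outgoing packets. Use the queues attribute of the driver element to configure multiple queues. Specify an integer of at least 2 that does not exceed the number of virtual CPUs of the virtual server.

The following example specification configures two input and output queues for a network interface:

<interface type="direct">
  <source network="net01"/>
  <model type="virtio"/>
  <driver ... queues="2"/>
</interface>

Multiple queues are designed to improve performance for a network interface, but they also use memory and CPU resources. Start by defining two queues for busy interfaces. Next, try two queues for interfaces with less traffic, or more than two queues for busy interfaces.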
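The following hedged sketch builds the interface element with Python's standard xml.etree.ElementTree. It enforces the rule from this section: at least 2 queues, and no more than the number of virtual CPUs. The function is hypothetical. The other driver attributes, which the sample elides with ..., are omitted.

```python
import xml.etree.ElementTree as ET


def virtio_interface(network: str, queues: int, vcpus: int) -> str:
    """Build a libvirt <interface> element with a multiqueue VirtIO driver."""
    # Rule from the text: an integer of at least 2 that does not exceed the vCPU count.
    if not 2 <= queues <= vcpus:
        raise ValueError(f"queues must be between 2 and {vcpus}, got {queues}")
    iface = ET.Element("interface", type="direct")
    ET.SubElement(iface, "source", network=network)
    ET.SubElement(iface, "model", type="virtio")
    ET.SubElement(iface, "driver", queues=str(queues))
    return ET.tostring(iface, encoding="unicode")


print(virtio_interface("net01", queues=2, vcpus=4))
```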

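3.6.2. Using I/O threads for your virtual block devices

To make virtual block devices use I/O threads, configure one or more I/O threads for the virtual server, and configure each virtual block device to use one of these I/O threads.

The following example specifies <iothreads>3</iothreads> to configure three I/O threads, with consecutive decimal thread IDs 1, 2, and 3. The iothread="2" parameter in the driver element of the disk device makes the disk use the I/O thread with ID 2.

Sample I/O thread specification

...
<domain>
  <iothreads>3</iothreads>
  ...
  <devices>
    ...
    <disk type="block" device="disk">
      <driver ... iothread="2"/>
    </disk>
    ...
  </devices>
  ...
</domain>

Threads can increase the performance of I/O operations for disk devices, but they also use memory and CPU resources. You can configure multiple devices to use the same thread. The best mapping of threads to devices depends on the available resources and the workload.

Start with a small number of I/O threads. Often, a single I/O thread for all disk devices is sufficient. Do not configure more threads than the number of virtual CPUs, and do not configure idle threads.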
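The I/O thread advice above can be expressed as a small planning helper: use few threads, no more threads than virtual CPUs, and no idle threads. This hypothetical sketch assigns disks to I/O thread IDs round-robin. The highest ID is the value for <iothreads>, and each disk's ID goes into its driver element's iothread attribute.

```python
def plan_iothreads(disks: list[str], vcpus: int, requested_threads: int = 1) -> dict[str, int]:
    """Map each disk to a 1-based I/O thread ID.

    The thread count is capped at the vCPU count and at the number of disks,
    so that no thread is left idle. Disks are spread over threads round-robin.
    """
    threads = max(1, min(requested_threads, vcpus, len(disks)))
    return {disk: (i % threads) + 1 for i, disk in enumerate(disks)}


plan = plan_iothreads(["vda", "vdb", "vdc"], vcpus=2, requested_threads=3)
print(plan)                # {'vda': 1, 'vdb': 2, 'vdc': 1}
print(max(plan.values()))  # 2 -> <iothreads>2</iothreads>
```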

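You can use the virsh iothreadadd command to add I/O threads with specific thread IDs to a running virtual server.

3.6.3. Avoiding virtual SCSI devices

Configure virtual SCSI devices only if you need to address the device through SCSI-specific interfaces. Configure disk space as virtual block devices rather than virtual SCSI devices, regardless of the backing on the host.

However, you might need SCSI-specific interfaces for:

- A LUN for a SCSI-attached tape drive on the host.

- A DVD ISO file on the host file system that is mounted on a virtual DVD drive.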

3.6.4. Configuring guest caching for disks

Configure your disk devices so that caching is done by the guest, not by the host.

Ensure that the driver element of the disk device includes the cache="none" and io="native" parameters:

<disk type="block" device="disk">
  <driver name="qemu" type="raw" cache="none" io="native" iothread="1"/>
  ...
</disk>

3.6.5. Excluding the memory balloon device

Unless you need a dynamic memory size, do not define a memory balloon device, and ensure that libvirt does not create one for you. Include the memballoon parameter as a child of the devices element in your domain configuration XML file.

For example:

<memballoon model="none"/>

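3.6.6. Tuning the CPU migration algorithm of your host scheduler

Do not change the scheduler settings unless you are an expert who understands the implications. Do not apply changes to production systems without first testing them and confirming that they have the intended effect.

The kernel.sched_migration_cost_ns parameter specifies a time interval in nanoseconds. After a task last runs, the CPU cache is considered to hold useful content until this interval expires. Increasing this interval results in fewer task migrations. The default value is 500000 ns.

If the CPU idle time is higher than expected while there are runnable processes, try reducing this interval. If tasks bounce between CPUs or nodes too often, try increasing it.

To dynamically set the interval to 60000 ns, enter the following command:

# sysctl kernel.sched_migration_cost_ns=60000

To persistently change the value to 60000 ns, add the following entry to /etc/sysctl.conf:

kernel.sched_migration_cost_ns=60000

3.6.7. Disabling the cpuset cgroup controller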

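This setting applies only to KVM hosts with cgroups version 1. To enable CPU hotplug on the host, disable the cpuset cgroup controller.

Procedure

- Open /etc/libvirt/qemu.conf with an editor of your choice.

- Go to the cgroup_controllers line.

- Duplicate the entire line and remove the leading number sign (#) from the copy.

- Remove the cpuset entry, as follows:

cgroup_controllers = [ "cpu", "devices", "memory", "blkio", "cpuacct" ]

- For the new setting to take effect, restart the libvirtd daemon:

  - Stop all virtual machines.

  - Run the following command:

    # systemctl restart libvirtd

  - Restart the virtual machines.

This setting persists across host reboots.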
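You can also script the qemu.conf edit. The sketch below assumes that the commented-out default line lists the controllers as shown in DEFAULT_LINE. Check your own file, because the default list might differ between libvirt versions. The sketch returns the uncommented copy without cpuset, which you would add below the original commented line.

```python
import json

# Assumption: the commented default line as it might appear in /etc/libvirt/qemu.conf.
DEFAULT_LINE = '#cgroup_controllers = [ "cpu", "devices", "memory", "blkio", "cpuset", "cpuacct" ]'


def without_cpuset(commented_line: str) -> str:
    """Return an uncommented cgroup_controllers line with the cpuset entry removed."""
    key, _, value = commented_line.lstrip("#").partition("=")
    controllers = json.loads(value)  # the bracketed list happens to be valid JSON
    kept = [c for c in controllers if c != "cpuset"]
    return f"{key.strip()} = [ " + ", ".join(f'"{c}"' for c in kept) + " ]"


print(without_cpuset(DEFAULT_LINE))
# cgroup_controllers = [ "cpu", "devices", "memory", "blkio", "cpuacct" ]
```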

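3.6.8. Tuning the polling period for idle virtual CPUs

When a virtual CPU becomes idle, KVM polls for wakeup conditions for the virtual CPU before allocating the host resource. You can specify how long polling lasts in sysfs at /sys/module/kvm/parameters/halt_poll_ns. During that time, polling reduces the wakeup latency of the virtual CPU at the expense of resource usage. Depending on the workload, a longer or shorter polling time can be beneficial. The interval is specified in nanoseconds. The default is 50000 ns.

To optimize for low CPU consumption, enter a small value, or write 0 to disable polling:

# echo 0 > /sys/module/kvm/parameters/halt_poll_ns

To optimize for low latency, for example for transactional workloads, enter a large value:

# echo 80000 > /sys/module/kvm/parameters/halt_poll_ns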

第4章 クラスタースケーリングに関する推奨プラクティス

本セクションのガイダンスは、クラウドプロバイダーの統合によるインストールにのみ関連します。

これらのガイドラインは、Open Virtual Network (OVN) ではなく、ソフトウェア定義ネットワーク (SDN) を使用する OpenShift Container Platform に該当します。

以下のベストプラクティスを適用して、OpenShift Container Platform クラスター内のワーカーマシンの数をスケーリングします。ワーカーのマシンセットで定義されるレプリカ数を増やしたり、減らしたりしてワーカーマシンをスケーリングします。

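4.1. Recommended practices for scaling the cluster

When scaling up the cluster to higher node counts:

- Spread nodes across all of the available zones for higher availability.

- Scale up by no more than 25 to 50 machines at once.

- Consider creating new machine sets in each available zone with alternative instance types of similar size to help mitigate any periodic provider capacity constraints. For example, on AWS, use m5.large and m5d.large.

Cloud providers might implement a quota for API services. Therefore, scale the cluster gradually.

The controller might not be able to create the machines if the replicas in the machine sets are set to higher numbers all at one time. The number of requests that the underlying cloud platform can handle affects the process: while trying to create, check, and update the machines and their status, the controller issues additional queries. The cloud platform on which OpenShift Container Platform is deployed has API request limits, and excessive queries might cause machine creation to fail because of those limits.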
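The guidance to scale by no more than 25 to 50 machines at once can be turned into a sequence of intermediate replica counts. The sketch below is a minimal illustration. The function name plan_scale_up and its step parameter are hypothetical. The intent is that each returned value is applied only after the machines from the previous batch are running.

```python
from typing import List


def plan_scale_up(current: int, target: int, step: int = 25) -> List[int]:
    """Return the replica counts to apply one batch at a time, so that each
    batch adds at most `step` machines. Empty if no scale-up is needed."""
    if step <= 0:
        raise ValueError("step must be a positive number of machines")
    plan: List[int] = []
    replicas = current
    while replicas < target:
        replicas = min(replicas + step, target)  # never overshoot the target
        plan.append(replicas)
    return plan


# Example: grow a machine set from 3 to 120 replicas, 50 machines per batch.
print(plan_scale_up(3, 120, step=50))  # [53, 103, 120]
```

Each value would then be applied with a command such as $ oc scale --replicas=53 machineset <machineset> -n openshift-machine-api.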

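Enable machine health checks when scaling to large node counts. In case of failures, the health checks monitor the condition and automatically repair unhealthy machines.

Scaling down large and dense clusters to lower node counts might take a long time, because the process drains or evicts the objects running on the nodes being terminated in parallel. Also, the client might start to throttle requests if there are too many objects to evict. The default client QPS and burst rates are currently set to 5 and 10 respectively, and they cannot be modified in OpenShift Container Platform.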
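The client-side throttling described above can be modeled as a token bucket. Up to burst requests go out immediately, and later requests are released at qps per second. The sketch below estimates the minimum time spent only on issuing evictions, under these simplifying assumptions:

- each evicted object costs exactly one API request;
- the limiter behaves as a standard token bucket that starts full;
- retries and server-side latency are ignored.

The function name is hypothetical. Real drains also wait for pods to terminate, so actual durations are longer.

```python
def min_eviction_seconds(objects: int, qps: float = 5.0, burst: int = 10) -> float:
    """Lower bound on the time needed just to send `objects` eviction requests
    through a token bucket that starts with `burst` tokens and refills at `qps`
    tokens per second (defaults: QPS 5, burst 10)."""
    if objects <= burst:
        return 0.0  # the initial burst covers every request
    return (objects - burst) / qps


# 1,000 objects: 10 requests go out immediately, the remaining 990 at 5 per second.
print(min_eviction_seconds(1000))  # 198.0
```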

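4.2. Modifying a machine set

To make changes to a machine set, edit the MachineSet YAML. Then, remove all machines associated with the machine set, either by deleting each machine or by scaling the machine set down to 0 replicas. Finally, scale the replicas back to the desired number. Changes you make to a machine set do not affect existing machines.

If you need to scale a machine set without making other changes, you do not need to delete the machines.

By default, the OpenShift Container Platform router pods are deployed on workers. Because the router is required to access some cluster resources, including the web console, do not scale the worker machine set to 0 unless you first relocate the router pods.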

Prerequisites

- Install an OpenShift Container Platform cluster and the oc command line.

- Log in to oc as a user with cluster-admin permission.

Procedure

- Edit the machine set:

$ oc edit machineset <machineset> -n openshift-machine-api

- Scale down the machine set to 0:

$ oc scale --replicas=0 machineset <machineset> -n openshift-machine-api

Or:

$ oc edit machineset <machineset> -n openshift-machine-api

Wait for the machines to be removed.

- Scale up the machine set as needed:

$ oc scale --replicas=2 machineset <machineset> -n openshift-machine-api

Or:

$ oc edit machineset <machineset> -n openshift-machine-api

Wait for the machines to start. The new machines contain the changes you made to the machine set.

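4.3. About machine health checks

Machine health checks automatically repair unhealthy machines in a particular machine pool.

To monitor machine health, create a resource to define the configuration for a controller. Set a condition to check, such as staying in the NotReady status for five minutes or displaying a permanent condition in the node-problem-detector, and a label for the set of machines to monitor.

You cannot apply a machine health check to a machine with the master role.

The controller that observes a MachineHealthCheck resource checks for the defined condition. If a machine fails the health check, it is automatically deleted and a replacement machine is created. When a machine is deleted, you see a machine deleted event.

To limit the disruptive impact of machine deletion, the controller drains and deletes only one node at a time. If there are more unhealthy machines than the maxUnhealthy threshold allows for in the targeted pool of machines, remediation stops so that manual intervention can be performed.

Consider the timeouts carefully, accounting for workloads and requirements:

- Long timeouts can result in long periods of downtime for the workload on the unhealthy machine.

- Timeouts that are too short can result in a remediation loop. For example, the timeout for checking the NotReady status must be long enough to allow the machine to complete the startup process.

To stop the check, remove the resource.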

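4.3.1. Limitations when deploying machine health checks

There are limitations to consider before deploying a machine health check:

- Only machines owned by a machine set are remediated by a machine health check.

- Control plane machines are not currently supported and are not remediated if they are unhealthy.

- If the node for a machine is removed from the cluster, a machine health check considers the machine to be unhealthy and remediates it immediately.

- If the corresponding node for a machine does not join the cluster after the nodeStartupTimeout, the machine is remediated.

- A machine is remediated immediately if the Machine resource phase is Failed.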

4.4. Sample MachineHealthCheck resource

The MachineHealthCheck resource for all cloud-based installation types other than bare metal resembles the following YAML file:

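apiVersion: machine.openshift.io/v1beta1
kind: MachineHealthCheck
metadata:
  name: example
  namespace: openshift-machine-api
spec:
  selector:
    matchLabels:
      machine.openshift.io/cluster-api-machine-role: <role>
      machine.openshift.io/cluster-api-machine-type: <role>
      machine.openshift.io/cluster-api-machineset: <cluster_name>-<label>-<zone>
  unhealthyConditions:
  - type: "Ready"
    timeout: "300s"
    status: "False"
  - type: "Ready"
    timeout: "300s"
    status: "Unknown"
  maxUnhealthy: "40%"
  nodeStartupTimeout: "10m"

Where:

- name: Specify the name of the machine health check to deploy.

- machine.openshift.io/cluster-api-machine-role and machine.openshift.io/cluster-api-machine-type: Specify a label for the machine pool that you want to check.

- machine.openshift.io/cluster-api-machineset: Specify the machine set to track in <cluster_name>-<label>-<zone> format. For example, prod-node-us-east-1a.

- timeout (in each unhealthyConditions entry): Specify the timeout duration for a node condition. If a condition is met for the duration of the timeout, the machine is remediated. Long timeouts can result in long periods of downtime for the workload on the unhealthy machine.

- maxUnhealthy: Specify the number of machines allowed to be remediated concurrently in the targeted pool. This can be set as a percentage or an integer. If the number of unhealthy machines exceeds the limit set by maxUnhealthy, remediation is not performed.

- nodeStartupTimeout: Specify how long a machine health check waits for a node to join the cluster before the machine is determined to be unhealthy.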

The matchLabels are examples only; you must map your machine groups based on your specific needs.

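4.4.1. Short-circuiting machine health check remediation

Short-circuiting ensures that machine health checks remediate machines only when the cluster is healthy. Short-circuiting is configured through the maxUnhealthy field in the MachineHealthCheck resource.

If the user defines a value for the maxUnhealthy field, then before remediating any machines, the MachineHealthCheck compares the value of maxUnhealthy with the number of machines within its target pool that it has determined to be unhealthy. Remediation is not performed if the number of unhealthy machines exceeds the maxUnhealthy limit.

If maxUnhealthy is not set, the value defaults to 100% and the machines are remediated regardless of the state of the cluster.

The appropriate maxUnhealthy value depends on the scale of the cluster you deploy and how many machines the MachineHealthCheck covers. For example, you can use the maxUnhealthy value to cover multiple machine sets across multiple availability zones, so that if you lose an entire zone, your maxUnhealthy setting prevents further remediation within the cluster.

The maxUnhealthy field can be set as either an integer or a percentage. Remediation behaves differently depending on which form of the maxUnhealthy value you use.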

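4.4.1.1. Setting maxUnhealthy by using an absolute value

If maxUnhealthy is set to 2:

- Remediation is performed if 2 or fewer nodes are unhealthy.

- Remediation is not performed if 3 or more nodes are unhealthy.

These values are independent of how many machines are being checked by the machine health check.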

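4.4.1.2. Setting maxUnhealthy by using percentages

If maxUnhealthy is set to 40% and there are 25 machines being checked:

- Remediation is performed if 10 or fewer nodes are unhealthy.

- Remediation is not performed if 11 or more nodes are unhealthy.

If maxUnhealthy is set to 40% and there are 6 machines being checked:

- Remediation is performed if 2 or fewer nodes are unhealthy.

- Remediation is not performed if 3 or more nodes are unhealthy.

When the maxUnhealthy percentage of the checked machines is not a whole number, the allowed number of machines is rounded down. For example, 40% of 6 machines is 2.4, which is rounded down to 2.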
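The short-circuit rule can be summarized in a few lines. The sketch below reproduces the worked examples in this section: an absolute value, a percentage that is rounded down, and the 100% default. The function names are hypothetical and the code is not the machine API controller's implementation.

```python
from typing import Optional, Union

MaxUnhealthy = Optional[Union[int, str]]


def allowed_unhealthy(max_unhealthy: MaxUnhealthy, checked: int) -> int:
    """How many unhealthy machines are tolerated among `checked` machines."""
    if max_unhealthy is None:
        max_unhealthy = "100%"  # default when the field is not set
    if isinstance(max_unhealthy, str) and max_unhealthy.endswith("%"):
        percent = int(max_unhealthy[:-1])
        # Integer division, so a fractional result is rounded down.
        return checked * percent // 100
    return int(max_unhealthy)


def remediation_allowed(max_unhealthy: MaxUnhealthy, checked: int, unhealthy: int) -> bool:
    """Remediation runs only while the unhealthy count stays within the limit."""
    return unhealthy <= allowed_unhealthy(max_unhealthy, checked)


print(allowed_unhealthy("40%", 25), allowed_unhealthy("40%", 6))  # 10 2
```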

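4.5. Creating a MachineHealthCheck resource

You can create a MachineHealthCheck resource for all MachineSets in your cluster. You cannot create a MachineHealthCheck resource that targets control plane machines.

Prerequisites

- Install the oc command line interface.

Procedure

- Create a healthcheck.yml file that contains the definition of your machine health check.

- Apply the healthcheck.yml file to your cluster:

$ oc apply -f healthcheck.yml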

Chapter 5. Using the Node Tuning Operator

Learn about the Node Tuning Operator and how you can use it to manage node-level tuning by orchestrating the Tuned daemon.

5.1. About the Node Tuning Operator

The Node Tuning Operator helps you manage node-level tuning by orchestrating the Tuned daemon. Most high-performance applications require some level of kernel tuning. The Node Tuning Operator provides users with a unified management interface for node-level sysctls, and the flexibility to add custom tuning for their own needs.

The Operator manages the containerized Tuned daemon for OpenShift Container Platform as a Kubernetes daemon set. It ensures that the custom tuning specification is passed, in a format the daemons understand, to all containerized Tuned daemons running in the cluster. The daemons run on all nodes in the cluster, one per node.

Node-level settings applied by the containerized Tuned daemon are rolled back on an event that triggers a profile change, or when the containerized Tuned daemon is terminated gracefully by receiving and handling a termination signal.

The Node Tuning Operator is part of a standard OpenShift Container Platform installation in version 4.1 and later.

5.2. Accessing an example Node Tuning Operator specification

Use this process to access an example Node Tuning Operator specification.

Procedure

Run:

$ oc get Tuned/default -o yaml -n openshift-cluster-node-tuning-operator

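The default CR is meant to deliver standard node-level tuning for the OpenShift Container Platform platform, and it can be modified only to set the Operator Management state. Any other custom changes to the default CR are overwritten by the Operator. For custom tuning, create your own Tuned CRs. Newly created CRs are combined with the default CR, and custom tuning is applied to OpenShift Container Platform nodes based on node or pod labels and profile priorities.

Although in certain situations pod label support can be a convenient way to deliver the required tuning automatically, this practice is discouraged, especially in large-scale clusters. The default Tuned CR ships without pod label matching. If a custom profile is created with pod label matching, the functionality is enabled at that time. The pod label functionality might be deprecated in future versions of the Node Tuning Operator.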

5.3. Default profiles set on a cluster

The following are the default profiles set on a cluster.

apiVersion: tuned.openshift.io/v1
kind: Tuned
metadata:
  name: default
  namespace: openshift-cluster-node-tuning-operator
spec:
  profile:
  - name: "openshift"
    data: |
      [main]
      summary=Optimize systems running OpenShift (parent profile)
      include=${f:virt_check:virtual-guest:throughput-performance}

      [selinux]
      avc_cache_threshold=8192

      [net]
      nf_conntrack_hashsize=131072

      [sysctl]
      net.ipv4.ip_forward=1
      kernel.pid_max=>4194304
      net.netfilter.nf_conntrack_max=1048576
      net.ipv4.conf.all.arp_announce=2
      net.ipv4.neigh.default.gc_thresh1=8192
      net.ipv4.neigh.default.gc_thresh2=32768
      net.ipv4.neigh.default.gc_thresh3=65536
      net.ipv6.neigh.default.gc_thresh1=8192
      net.ipv6.neigh.default.gc_thresh2=32768
      net.ipv6.neigh.default.gc_thresh3=65536
      vm.max_map_count=262144

      [sysfs]
      /sys/module/nvme_core/parameters/io_timeout=4294967295
      /sys/module/nvme_core/parameters/max_retries=10

  - name: "openshift-control-plane"
    data: |
      [main]
      summary=Optimize systems running OpenShift control plane
      include=openshift

      [sysctl]
      # ktune sysctl settings, maximizing i/o throughput
      #
      # Minimal preemption granularity for CPU-bound tasks:
      # (default: 1 msec# (1 + ilog(ncpus)), units: nanoseconds)
      kernel.sched_min_granularity_ns=10000000
      # The total time the scheduler will consider a migrated process
      # "cache hot" and thus less likely to be re-migrated
      # (system default is 500000, i.e. 0.5 ms)
      kernel.sched_migration_cost_ns=5000000
      # SCHED_OTHER wake-up granularity.
      #
      # Preemption granularity when tasks wake up. Lower the value to
      # improve wake-up latency and throughput for latency critical tasks.
      kernel.sched_wakeup_granularity_ns=4000000

  - name: "openshift-node"
    data: |
      [main]
      summary=Optimize systems running OpenShift nodes
      include=openshift

      [sysctl]
      net.ipv4.tcp_fastopen=3
      fs.inotify.max_user_watches=65536
      fs.inotify.max_user_instances=8192

  recommend:
  - profile: "openshift-control-plane"
    priority: 30
    match:
    - label: "node-role.kubernetes.io/master"
    - label: "node-role.kubernetes.io/infra"

  - profile: "openshift-node"
    priority: 40

5.4. Verifying that the Tuned profiles are applied

Use this procedure to check which Tuned profiles are applied on every node.

Procedure

- Check which Tuned pods are running on each node:

$ oc get pods -n openshift-cluster-node-tuning-operator -o wide

Example output

NAME                                            READY   STATUS    RESTARTS   AGE    IP             NODE                                         NOMINATED NODE   READINESS GATES
cluster-node-tuning-operator-599489d4f7-k4hw4   1/1     Running   0          6d2h   10.129.0.76    ip-10-0-145-113.eu-west-3.compute.internal   <none>           <none>
tuned-2jkzp                                     1/1     Running   1          6d3h   10.0.145.113   ip-10-0-145-113.eu-west-3.compute.internal   <none>           <none>
tuned-g9mkx                                     1/1     Running   1          6d3h   10.0.147.108   ip-10-0-147-108.eu-west-3.compute.internal   <none>           <none>
tuned-kbxsh                                     1/1     Running   1          6d3h   10.0.132.143   ip-10-0-132-143.eu-west-3.compute.internal   <none>           <none>
tuned-kn9x6                                     1/1     Running   1          6d3h   10.0.163.177   ip-10-0-163-177.eu-west-3.compute.internal   <none>           <none>
tuned-vvxwx                                     1/1     Running   1          6d3h   10.0.131.87    ip-10-0-131-87.eu-west-3.compute.internal    <none>           <none>
tuned-zqrwq                                     1/1     Running   1          6d3h   10.0.161.51    ip-10-0-161-51.eu-west-3.compute.internal    <none>           <none>

- Extract the profile applied from each pod and match them against the previous list:

$ for p in `oc get pods -n openshift-cluster-node-tuning-operator -l openshift-app=tuned -o=jsonpath='{range .items[*]}{.metadata.name} {end}'`; do printf "\n*** $p ***\n" ; oc logs pod/$p -n openshift-cluster-node-tuning-operator | grep applied; done

Example output

*** tuned-2jkzp ***
2020-07-10 13:53:35,368 INFO tuned.daemon.daemon: static tuning from profile 'openshift-control-plane' applied

*** tuned-g9mkx ***
2020-07-10 14:07:17,089 INFO tuned.daemon.daemon: static tuning from profile 'openshift-node' applied
2020-07-10 15:56:29,005 INFO tuned.daemon.daemon: static tuning from profile 'openshift-node-es' applied
2020-07-10 16:00:19,006 INFO tuned.daemon.daemon: static tuning from profile 'openshift-node' applied
2020-07-10 16:00:48,989 INFO tuned.daemon.daemon: static tuning from profile 'openshift-node-es' applied

*** tuned-kbxsh ***
2020-07-10 13:53:30,565 INFO tuned.daemon.daemon: static tuning from profile 'openshift-node' applied
2020-07-10 15:56:30,199 INFO tuned.daemon.daemon: static tuning from profile 'openshift-node-es' applied

*** tuned-kn9x6 ***
2020-07-10 14:10:57,123 INFO tuned.daemon.daemon: static tuning from profile 'openshift-node' applied
2020-07-10 15:56:28,757 INFO tuned.daemon.daemon: static tuning from profile 'openshift-node-es' applied

*** tuned-vvxwx ***
2020-07-10 14:11:44,932 INFO tuned.daemon.daemon: static tuning from profile 'openshift-control-plane' applied

*** tuned-zqrwq ***
2020-07-10 14:07:40,246 INFO tuned.daemon.daemon: static tuning from profile 'openshift-control-plane' applied
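Some pods in the output above log several applied profiles. For example, tuned-g9mkx switches between openshift-node and openshift-node-es. In that case the last applied line is the one that reflects the current state. The minimal sketch below reduces the loop's output to one current profile per pod. It assumes the log line format shown above stays unchanged. The function and regular expression names are illustrative.

```python
import re
from typing import Dict, Optional

POD_HEADER = re.compile(r"^\*\*\* (\S+) \*\*\*$")
APPLIED = re.compile(r"static tuning from profile '([^']+)' applied")


def current_profiles(output: str) -> Dict[str, str]:
    """Map each Tuned pod to the last profile it logged as applied."""
    profiles: Dict[str, str] = {}
    pod: Optional[str] = None
    for line in output.splitlines():
        line = line.strip()
        header = POD_HEADER.match(line)
        if header:
            pod = header.group(1)  # lines that follow belong to this pod
            continue
        applied = APPLIED.search(line)
        if pod is not None and applied:
            profiles[pod] = applied.group(1)  # later entries override earlier ones
    return profiles
```

To use it, save the loop's output to a file and pass the file's contents to current_profiles(). Then compare the result with the NODE column of the first command.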

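5.5. Custom tuning specification

The custom resource (CR) for the Operator has two major sections. The first section, profile:, is a list of Tuned profiles and their names. The second, recommend:, defines the profile selection logic.

Multiple custom tuning specifications can coexist as multiple CRs in the Operator's namespace. The Operator detects the existence of new CRs and the deletion of old CRs. All existing custom tuning specifications are merged, and the appropriate objects for the containerized Tuned daemons are updated.

Management state

The Operator Management state is set by adjusting the default Tuned CR. By default, the Operator is in the Managed state and the spec.managementState field is not present in the default Tuned CR. Valid values for the Operator Management state are as follows:

- Managed: the Operator updates its operands as configuration resources are updated.

- Unmanaged: the Operator ignores changes to the configuration resources.

- Removed: the Operator removes its operands and the resources it provisioned.

Profile data

The profile: section lists Tuned profiles and their names.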

profile:
- name: tuned_profile_1
  data: |
    # Tuned profile specification
    [main]
    summary=Description of tuned_profile_1 profile

    [sysctl]
    net.ipv4.ip_forward=1
    # ... other sysctl's or other Tuned daemon plugins supported by the containerized Tuned

# ...

- name: tuned_profile_n
  data: |
    # Tuned profile specification
    [main]
    summary=Description of tuned_profile_n profile

    # tuned_profile_n profile settings

Recommended profiles

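The profile: selection logic is defined by the recommend: section of the CR. The recommend: section is a list of items that recommend profiles based on selection criteria.

recommend:
<recommend-item-1>
# ...
<recommend-item-n>

The individual items of the list: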

- machineConfigLabels:
    <mcLabels>
  match:
    <match>
  priority: <priority>
  profile: <tuned_profile_name>
  operand:
    debug: <bool>

Where:

- machineConfigLabels: Optional.

- <mcLabels>: A dictionary of key/value MachineConfig labels. The keys must be unique.

- match: If omitted, a profile match is assumed unless a profile with a higher priority matches first or machineConfigLabels is set.

- <match>: An optional list.

- priority: Profile ordering priority. Lower numbers mean higher priority (0 is the highest priority).

- profile: The TuneD profile to apply on a match. For example, tuned_profile_1.

- operand: Optional operand configuration.

- debug: Turns debugging on or off for the TuneD daemon. Options are true for on or false for off. The default is false.

<match> is an optional list, recursively defined as follows:

- label: <label_name>
  value: <label_value>
  type: <label_type>
    <match>

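If <match> is not omitted, all nested <match> sections must also evaluate to true. Otherwise, false is assumed, and the profile with the respective <match> section is not applied or recommended. Therefore, nesting (child <match> sections) works as a logical AND operator. Conversely, if any item of a <match> list matches, the entire <match> list evaluates to true. Therefore, the list acts as a logical OR operator.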
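The AND/OR semantics above can be expressed as a small recursive evaluator. The sketch below is a simplified model and not the Tuned daemon's code. It rests on these assumptions, which this section does not spell out:

- an omitted value means the label only has to be present;
- an omitted type means node;
- for type pod, the label may appear on any pod running on the node.

Nodes and pods are modeled as plain label dictionaries.

```python
from typing import Dict, List

Labels = Dict[str, str]


def item_matches(item: dict, node: Labels, pods: List[Labels]) -> bool:
    """Evaluate one <match> item: its own label test AND its nested <match> list."""
    # Assumption: type defaults to "node"; "pod" means any pod on this node.
    candidates = pods if item.get("type", "node") == "pod" else [node]
    name, value = item["label"], item.get("value")
    # Assumption: an omitted value means the label only has to be present.
    label_ok = any(name in labels and (value is None or labels[name] == value)
                   for labels in candidates)
    nested = item.get("match")
    return label_ok and (nested is None or match_list(nested, node, pods))


def match_list(items: List[dict], node: Labels, pods: List[Labels]) -> bool:
    """A <match> list is true if ANY of its items matches (logical OR)."""
    return any(item_matches(item, node, pods) for item in items)


es_match = [{
    "label": "tuned.openshift.io/elasticsearch", "type": "pod",
    "match": [{"label": "node-role.kubernetes.io/master"},
              {"label": "node-role.kubernetes.io/infra"}],
}]
infra_node = {"node-role.kubernetes.io/infra": ""}
print(match_list(es_match, infra_node, [{"tuned.openshift.io/elasticsearch": ""}]))  # True
print(match_list(es_match, infra_node, []))  # False: no labelled pod on the node
```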

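If machineConfigLabels is defined, machine config pool based matching is turned on for the given recommend: list item. <mcLabels> specifies the labels for a machine config. The machine config is created automatically to apply host settings, such as kernel boot parameters, for the profile <tuned_profile_name>. This involves finding all machine config pools whose machine config selector matches <mcLabels>, and setting the profile <tuned_profile_name> on all nodes that are assigned to those machine config pools. To target nodes that have both the master and worker roles, you must use the master role.

The list items match and machineConfigLabels are connected by the logical OR operator. The match item is evaluated first, in a short-circuit manner. Therefore, if it evaluates to true, the machineConfigLabels item is not considered.

When using machine config pool based matching, it is advised to group nodes with the same hardware configuration into the same machine config pool. If you do not follow this practice, Tuned operands might calculate conflicting kernel parameters for two or more nodes that share the same machine config pool.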

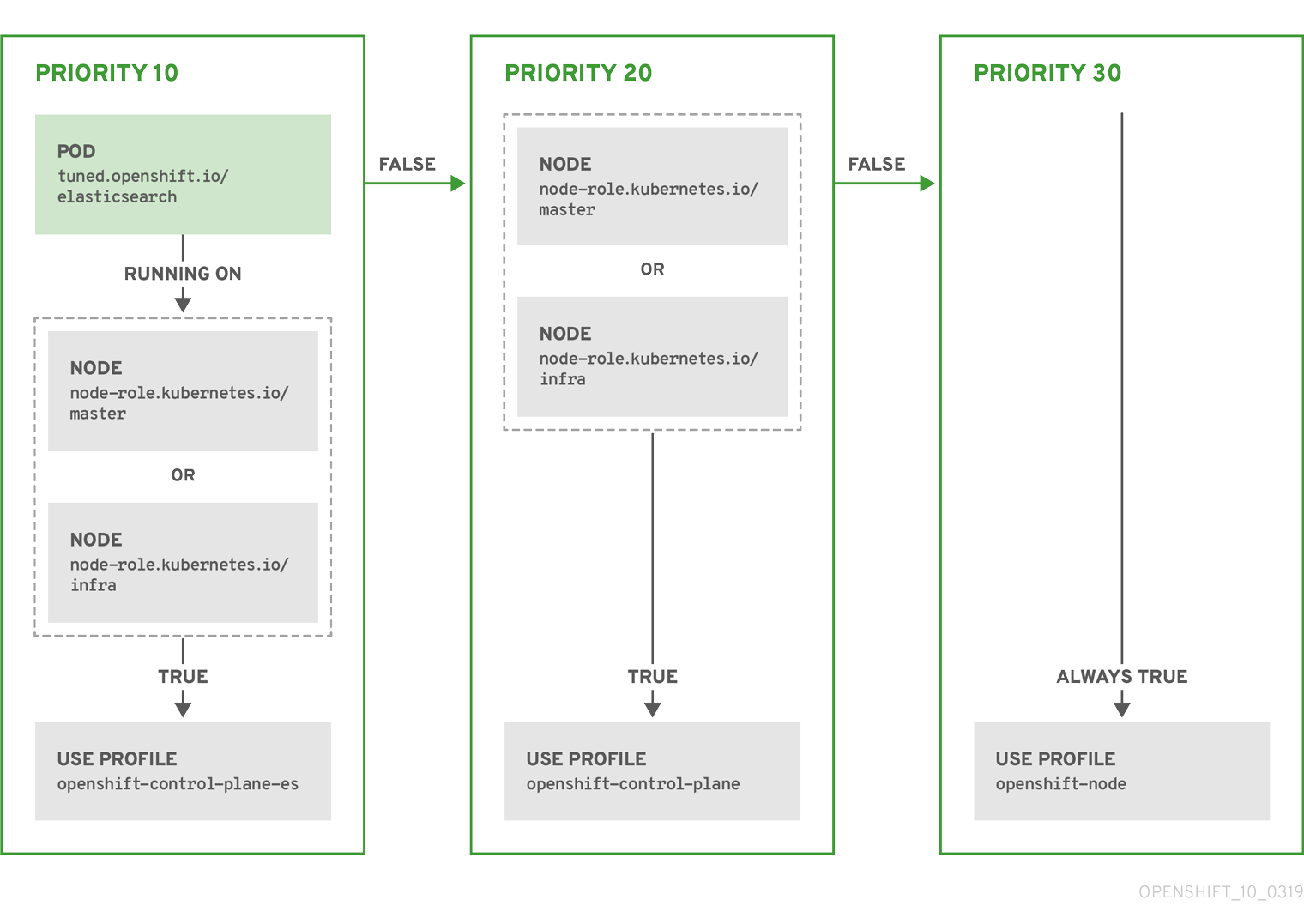

Example: node or pod label based matching

- match:
  - label: tuned.openshift.io/elasticsearch
    match:
    - label: node-role.kubernetes.io/master
    - label: node-role.kubernetes.io/infra
    type: pod
  priority: 10
  profile: openshift-control-plane-es
- match:
  - label: node-role.kubernetes.io/master
  - label: node-role.kubernetes.io/infra
  priority: 20
  profile: openshift-control-plane
- priority: 30
  profile: openshift-node

The CR above is translated for the containerized Tuned daemon into its recommend.conf file based on the profile priorities. The profile with the highest priority (10) is openshift-control-plane-es and, therefore, it is considered first. The containerized Tuned daemon running on a given node checks whether a pod with the tuned.openshift.io/elasticsearch label is running on the same node. If not, the entire <match> section evaluates as false. If such a pod exists, the node must also have the node-role.kubernetes.io/master or node-role.kubernetes.io/infra label for the <match> section to evaluate to true.

If the labels for the profile with priority 10 match, the openshift-control-plane-es profile is applied and no other profile is considered. If the node/pod label combination does not match, the second-highest priority profile (openshift-control-plane) is considered. This profile is applied if the containerized Tuned pod runs on a node with the node-role.kubernetes.io/master or node-role.kubernetes.io/infra label.

Finally, the profile openshift-node has the lowest priority, 30. It lacks the <match> section and, therefore, always matches. It acts as a catch-all profile that sets the openshift-node profile if no other profile with a higher priority matches on a given node.
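The priority walk just described can be sketched as follows. Items are tried from the lowest priority number upwards. The first item whose <match> is true wins, and an item without <match> acts as the catch-all. Several names here are hypothetical: select_profile, RECOMMEND, and the simplified node_labels_only matcher. The matcher checks only top-level labels against one label set and ignores type, value, nested sections, and machineConfigLabels. For the same reason, RECOMMEND abbreviates the example above by dropping the nested <match> of the first item.

```python
from typing import Callable, List, Optional, Set

Matcher = Callable[[list], bool]


def select_profile(recommend: List[dict], matches: Matcher) -> Optional[str]:
    """Return the profile of the highest-priority (lowest number) item whose
    <match> list evaluates to true; an item without <match> always matches."""
    for item in sorted(recommend, key=lambda r: r["priority"]):
        if "match" not in item or matches(item["match"]):
            return item["profile"]
    return None


RECOMMEND = [
    {"priority": 10, "profile": "openshift-control-plane-es",
     "match": [{"label": "tuned.openshift.io/elasticsearch", "type": "pod"}]},
    {"priority": 20, "profile": "openshift-control-plane",
     "match": [{"label": "node-role.kubernetes.io/master"},
               {"label": "node-role.kubernetes.io/infra"}]},
    {"priority": 30, "profile": "openshift-node"},
]


def node_labels_only(labels: Set[str]) -> Matcher:
    """Simplified matcher: top-level label names only (hypothetical helper)."""
    return lambda items: any(item["label"] in labels for item in items)


print(select_profile(RECOMMEND, node_labels_only({"node-role.kubernetes.io/infra"})))
# openshift-control-plane
print(select_profile(RECOMMEND, node_labels_only({"node-role.kubernetes.io/worker"})))
# openshift-node
```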

Example: machine config pool based matching

apiVersion: tuned.openshift.io/v1
kind: Tuned
metadata:
  name: openshift-node-custom
  namespace: openshift-cluster-node-tuning-operator
spec:
  profile:
  - data: |
      [main]
      summary=Custom OpenShift node profile with an additional kernel parameter
      include=openshift-node
      [bootloader]
      cmdline_openshift_node_custom=+skew_tick=1
    name: openshift-node-custom

  recommend:
  - machineConfigLabels:
      machineconfiguration.openshift.io/role: "worker-custom"
    priority: 20
    profile: openshift-node-custom

To minimize node reboots, first label the target nodes with a label that the machine config pool's node selector will match. Then create the Tuned CR above, and finally create the custom machine config pool itself.

5.6. Custom tuning example

The following CR applies custom node-level tuning to OpenShift Container Platform nodes that have the label tuned.openshift.io/ingress-node-label set to any value. As an administrator, use the following command to create a custom Tuned CR.

Custom tuning example

$ oc create -f- <<_EOF_
apiVersion: tuned.openshift.io/v1
kind: Tuned
metadata:
  name: ingress
  namespace: openshift-cluster-node-tuning-operator
spec:
  profile:
  - data: |
      [main]
      summary=A custom OpenShift ingress profile
      include=openshift-control-plane
      [sysctl]
      net.ipv4.ip_local_port_range="1024 65535"
      net.ipv4.tcp_tw_reuse=1
    name: openshift-ingress
  recommend:
  - match:
    - label: tuned.openshift.io/ingress-node-label
    priority: 10
    profile: openshift-ingress
_EOF_

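Custom profile writers are strongly encouraged to include the default Tuned daemon profiles shipped within the default Tuned CR. The example above does this by including the default openshift-control-plane profile.

5.7. Supported Tuned daemon plug-ins

Excluding the [main] section, the following Tuned plug-ins are supported when using custom profiles defined in the profile: section of the Tuned CR: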

- audio

- cpu

- disk

- eeepc_she

- modules

- mounts

- net

- scheduler

- scsi_host

- selinux

- sysctl

- sysfs

- usb

- video

- vm

Some of these plug-ins provide dynamic tuning functionality that is not supported. The following Tuned plug-ins are currently not supported:

- bootloader

- script

- systemd

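For more information, see Available Tuned Plug-ins and Getting Started with Tuned.

Chapter 6. Using the Cluster Loader

Cluster Loader is a tool that deploys large numbers of various objects to a cluster, creating user-defined cluster objects. Build, configure, and run Cluster Loader to measure performance metrics of your OpenShift Container Platform deployment at various cluster states.

6.1. Installing the Cluster Loader

Procedure

To pull the container image, run: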

$ podman pull quay.io/openshift/origin-tests:4.7

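6.2. Running Cluster Loader

Prerequisites

- The repository prompts you to authenticate. The registry credentials give you access to the image, which is not publicly available. Use your existing authentication credentials from installation.

Procedure

Run Cluster Loader with the built-in test configuration, which deploys five template builds and waits for them to complete: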

$ podman run -v ${LOCAL_KUBECONFIG}:/root/.kube/config:z -i \
quay.io/openshift/origin-tests:4.7 /bin/bash -c 'export KUBECONFIG=/root/.kube/config && \
openshift-tests run-test "[sig-scalability][Feature:Performance] Load cluster \
should populate the cluster [Slow][Serial] [Suite:openshift]"'

Alternatively, run Cluster Loader with a user-defined configuration by setting the VIPERCONFIG environment variable:

$ podman run -v ${LOCAL_KUBECONFIG}:/root/.kube/config:z \
-v ${LOCAL_CONFIG_FILE_PATH}:/root/configs/:z \
-i quay.io/openshift/origin-tests:4.7 \
/bin/bash -c 'KUBECONFIG=/root/.kube/config VIPERCONFIG=/root/configs/test.yaml \
openshift-tests run-test "[sig-scalability][Feature:Performance] Load cluster \
should populate the cluster [Slow][Serial] [Suite:openshift]"'

In this example, ${LOCAL_KUBECONFIG} refers to the path of the kubeconfig file on your local file system. ${LOCAL_CONFIG_FILE_PATH} is a directory that contains a configuration file called test.yaml and is mounted into the container. If test.yaml references any external template files or pod spec files, they must also be mounted into the container.

6.3. Configuring Cluster Loader

The tool creates multiple namespaces (projects), which contain multiple templates or pods.

6.3.1. Example Cluster Loader configuration file

The Cluster Loader configuration file is a basic YAML file:

provider: local
ClusterLoader:
  cleanup: true
  projects:
    - num: 1
      basename: clusterloader-cakephp-mysql
      tuning: default
      ifexists: reuse
      templates:
        - num: 1
          file: cakephp-mysql.json

    - num: 1
      basename: clusterloader-dancer-mysql
      tuning: default
      ifexists: reuse
      templates:
        - num: 1
          file: dancer-mysql.json

    - num: 1
      basename: clusterloader-django-postgresql
      tuning: default
      ifexists: reuse
      templates:
        - num: 1
          file: django-postgresql.json

    - num: 1
      basename: clusterloader-nodejs-mongodb
      tuning: default
      ifexists: reuse
      templates:
        - num: 1
          file: quickstarts/nodejs-mongodb.json

    - num: 1
      basename: clusterloader-rails-postgresql
      tuning: default
      templates:
        - num: 1
          file: rails-postgresql.json

  tuningsets:
    - name: default
      pods:
        stepping:
          stepsize: 5
          pause: 0 s
        rate_limit:
          delay: 0 ms

This example assumes that any external template files or pod spec files it references are also mounted into the container.

If you are running Cluster Loader on Microsoft Azure, you must set the AZURE_AUTH_LOCATION variable to a file that contains the output of terraform.azure.auto.tfvars.json, which is present in the installer directory.

6.3.2. Configuration fields

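Top-level Cluster Loader fields:

| Field | Description |
|---|---|
| cleanup | Set to true or false. One definition per configuration. If set to true, cleanup deletes all namespaces (projects) created by Cluster Loader at the end of the test. |
| projects | A sub-object with one or many definitions. |
| tuningsets | A sub-object with one definition per configuration. |
| sync | An optional sub-object with one definition per configuration. Adds synchronization possibilities during object creation. |

Fields under projects:

| Field | Description |
|---|---|
| num | An integer. One definition of the count of how many projects to create. |
| basename | A string. One definition of the base name for the project. The count of identical namespaces is appended to the base name to prevent collisions. |
| tuning | A string. One definition of the tuning set to apply to the objects that you deploy inside this namespace. |
| ifexists | A string containing either reuse or delete. Defines what the tool does if it finds a project or namespace with the same name as one it creates during execution. |
| configmaps | A list of key-value pairs. The key is the config map name and the value is a path to a file from which you create the config map. |
| secrets | A list of key-value pairs. The key is the secret name and the value is a path to a file from which you create the secret. |
| pods | A sub-object with one or many definitions of pods to deploy. |
| templates | A sub-object with one or many definitions of templates to deploy. |

Fields under pods and templates:

| Field | Description |
|---|---|
| num | An integer. The number of pods or templates to deploy. |
| image | A string. The Docker image URL of a repository from which it can be pulled. |
| basename | A string. One definition of the base name for the template (or pod) that you want to create. |
| file | A string. The path to a local file, which is either a pod spec or a template to be created. |
| parameters | Key-value pairs of values to override in the pod or template. |

Fields under tuningsets:

| Field | Description |
|---|---|
| name | A string. The name of the tuning set, which matches the name specified when defining a tuning in a project. |
| pods | A sub-object identifying the tuningsets that apply to pods. |
| templates | A sub-object identifying the tuningsets that apply to templates. |

Fields under tuningsets pods or tuningsets templates:

| Field | Description |
|---|---|
| stepping | A sub-object. A stepping configuration used if you want to create objects in a step creation pattern. |
| rate_limit | A sub-object. A rate-limiting tuning set configuration that limits the object creation rate. |

Fields under stepping and rate_limit:

| Field | Description |
|---|---|
| stepsize | An integer. How many objects to create before pausing object creation. |
| pause | An integer. How many seconds to pause after creating the number of objects defined in stepsize. |
| timeout | An integer. How many seconds to wait before failing if the object creation is not successful. |
| delay | An integer. How many milliseconds (ms) to wait between creation requests. |

Fields under sync:

| Field | Description |
|---|---|
| server | A sub-object with enabled and port fields. The boolean enabled defines whether to start an HTTP server for pod synchronization. The integer port defines the HTTP server port to listen on (9090 by default). |
| running | A boolean. Wait for pods with labels matching selectors to go into the Running state. |
| succeeded | A boolean. Wait for pods with labels matching selectors to go into the Completed state. |
| selectors | A list of selectors to match pods in the Running or Completed states. |
| timeout | A string. The synchronization timeout period to wait for pods in the Running or Completed states. For values that are not 0, use units: [ns\|us\|ms\|s\|m\|h]. |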

6.4. Known issues

- Cluster Loader fails when called without configuration. (BZ#1761925)

- If the IDENTIFIER parameter is not defined in user templates, template creation fails with error: unknown parameter name "IDENTIFIER". If you deploy templates, add this parameter to your template to avoid this error:

{
  "name": "IDENTIFIER",
  "description": "Number to append to the name of resources",
  "value": "1"
}

If you deploy pods, adding the parameter is unnecessary.

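Chapter 7. Using CPU Manager

CPU Manager manages groups of CPUs and constrains workloads to specific CPUs.

CPU Manager is useful for workloads that have some of these attributes:

- Require as much CPU time as possible.

- Are sensitive to processor cache misses.

- Are low-latency network applications.

- Coordinate with other processes and benefit from sharing a single processor cache.

7.1. Setting up CPU Manager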

Procedure

- Optional: Label a node:

# oc label node perf-node.example.com cpumanager=true

- Edit the MachineConfigPool of the nodes where CPU Manager should be enabled. In this example, all workers have CPU Manager enabled:

# oc edit machineconfigpool worker

- Add a label to the worker machine config pool:

metadata:
  creationTimestamp: 2020-xx-xxx
  generation: 3
  labels:
    custom-kubelet: cpumanager-enabled

- Create a KubeletConfig custom resource (CR), cpumanager-kubeletconfig.yaml. Refer to the label created in the previous step so that the correct nodes are updated with the new kubelet config. See the machineConfigPoolSelector section:

apiVersion: machineconfiguration.openshift.io/v1
kind: KubeletConfig
metadata:
  name: cpumanager-enabled
spec:
  machineConfigPoolSelector:
    matchLabels:
      custom-kubelet: cpumanager-enabled
  kubeletConfig:
    cpuManagerPolicy: static
    cpuManagerReconcilePeriod: 5s

- Create the dynamic kubelet config:

# oc create -f cpumanager-kubeletconfig.yaml

This adds the CPU Manager feature to the kubelet config and, if needed, the Machine Config Operator (MCO) reboots the node. A reboot is not needed to enable CPU Manager.

- Check for the merged kubelet config:

# oc get machineconfig 99-worker-XXXXXX-XXXXX-XXXX-XXXXX-kubelet -o json | grep ownerReference -A7

Example output

"ownerReferences": [
    {
        "apiVersion": "machineconfiguration.openshift.io/v1",
        "kind": "KubeletConfig",
        "name": "cpumanager-enabled",
        "uid": "7ed5616d-6b72-11e9-aae1-021e1ce18878"
    }
]

- Check the worker for the updated kubelet.conf:

# oc debug node/perf-node.example.com
sh-4.2# cat /host/etc/kubernetes/kubelet.conf | grep cpuManager

Example output

cpuManagerPolicy: static
cpuManagerReconcilePeriod: 5s

- Create a pod that requests one or more cores. Both limits and requests must have their CPU value set to a whole integer. That value is the number of cores that will be dedicated to this pod:

# cat cpumanager-pod.yaml

Example output

apiVersion: v1
kind: Pod
metadata:
  generateName: cpumanager-
spec:
  containers:
  - name: cpumanager
    image: gcr.io/google_containers/pause-amd64:3.0
    resources:
      requests:
        cpu: 1
        memory: "1G"
      limits:
        cpu: 1
        memory: "1G"
  nodeSelector:
    cpumanager: "true"

- Create the pod:

# oc create -f cpumanager-pod.yaml

- Verify that the pod is scheduled to the node that you labeled:

# oc describe pod cpumanager

Example output

Name:               cpumanager-6cqz7
Namespace:          default
Priority:           0
PriorityClassName:  <none>
Node:               perf-node.example.com/xxx.xx.xx.xxx
...
 Limits:
      cpu:     1
      memory:  1G
 Requests:
      cpu:     1
      memory:  1G
...
QoS Class:       Guaranteed
Node-Selectors:  cpumanager=true

- Verify that the cgroups are set up correctly. Get the process ID (PID) of the pause process:

# ├─init.scope
│ └─1 /usr/lib/systemd/systemd --switched-root --system --deserialize 17
└─kubepods.slice
  ├─kubepods-pod69c01f8e_6b74_11e9_ac0f_0a2b62178a22.slice
  │ ├─crio-b5437308f1a574c542bdf08563b865c0345c8f8c0b0a655612c.scope
  │ └─32706 /pause

Pods in the Guaranteed quality of service (QoS) tier are placed within the kubepods.slice. Pods in other QoS tiers end up in child cgroups of kubepods:

# cd /sys/fs/cgroup/cpuset/kubepods.slice/kubepods-pod69c01f8e_6b74_11e9_ac0f_0a2b62178a22.slice/crio-b5437308f1ad1a7db0574c542bdf08563b865c0345c86e9585f8c0b0a655612c.scope
# for i in `ls cpuset.cpus tasks` ; do echo -n "$i "; cat $i ; done

Example output

cpuset.cpus 1
tasks 32706

- Check the allowed CPU list for the task:

# grep ^Cpus_allowed_list /proc/32706/status

Example output

Cpus_allowed_list:    1

- Verify that another pod on the system cannot run on the core allocated for the Guaranteed pod. In this case the other pod is in the BestEffort QoS tier, as its kubepods-besteffort cgroup path shows:

# cat /sys/fs/cgroup/cpuset/kubepods.slice/kubepods-besteffort.slice/kubepods-besteffort-podc494a073_6b77_11e9_98c0_06bba5c387ea.slice/crio-c56982f57b75a2420947f0afc6cafe7534c5734efc34157525fa9abbf99e3849.scope/cpuset.cpus
0
# oc describe node perf-node.example.com

Example output

...
Capacity:
 attachable-volumes-aws-ebs:  39
 cpu:                         2
 ephemeral-storage:           124768236Ki
 hugepages-1Gi:               0
 hugepages-2Mi:               0
 memory:                      8162900Ki
 pods:                        250
Allocatable:
 attachable-volumes-aws-ebs:  39
 cpu:                         1500m
 ephemeral-storage:           124768236Ki
 hugepages-1Gi:               0
 hugepages-2Mi:               0
 memory:                      7548500Ki
 pods:                        250
-------                               ----                           ------------  ----------  ---------------  -------------  ---
  default                                 cpumanager-6cqz7            1 (66%)       1 (66%)     1G (12%)         1G (12%)       29m

Allocated resources:
  (Total limits may be over 100 percent, i.e., overcommitted.)
  Resource                    Requests          Limits
  --------                    --------          ------
  cpu                         1440m (96%)       1 (66%)

This VM has two CPU cores. The system-reserved setting reserves 500 millicores, meaning that half of one core is subtracted from the total capacity of the node to arrive at the Node Allocatable amount. You can see that Allocatable CPU is 1500 millicores. This means you can run one of the CPU Manager pods, because each one takes one whole core, and a whole core is equivalent to 1000 millicores. If you try to schedule a second pod, the system accepts the pod, but it is never scheduled:

NAME               READY   STATUS    RESTARTS   AGE
cpumanager-6cqz7   1/1     Running   0          33m
cpumanager-7qc2t   0/1     Pending   0          11s
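The arithmetic behind the Pending pod can be checked directly. Allocatable CPU is capacity minus system-reserved, which gives 1500m. The requests already placed on the node total 1440m, so one more whole core (1000m) cannot fit. The sketch below reproduces this with the values from the example output. It assumes that no kube-reserved CPU is configured, so that allocatable CPU is simply capacity minus system-reserved. It also assumes that scheduling is decided on the sum of CPU requests only. The helper names are hypothetical.

```python
def millicores(quantity: str) -> int:
    """Convert a CPU quantity such as "2", "0.5" or "1500m" to millicores."""
    if quantity.endswith("m"):
        return int(quantity[:-1])
    return round(float(quantity) * 1000)


def fits(capacity: str, system_reserved: str, requested: str, pod_request: str) -> bool:
    """True if a new pod's CPU request fits into the node's allocatable CPU
    (assumes no kube-reserved CPU, as stated in the lead-in)."""
    allocatable = millicores(capacity) - millicores(system_reserved)
    return millicores(requested) + millicores(pod_request) <= allocatable


# Values from the example above: 2 cores, 500m reserved, 1440m already requested.
print(millicores("2") - millicores("500m"))  # 1500
print(fits("2", "500m", "1440m", "1"))       # False: the second pod stays Pending
```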

Chapter 8. Using Topology Manager

Topology Manager collects hints from the CPU Manager, Device Manager, and other Hint Providers to align pod resources, such as CPU, SR-IOV VFs, and other device resources, on the same non-uniform memory access (NUMA) node for all Quality of Service (QoS) classes.

Topology Manager uses the topology information from the collected hints to decide whether a pod is admitted to or rejected from a node, based on the configured Topology Manager policy and the pod resources requested.

Topology Manager is useful for workloads that use hardware accelerators to support latency-critical execution and high-throughput parallel computation.

To use Topology Manager, you must use CPU Manager with the static policy. For more information on CPU Manager, see Using CPU Manager.

8.1. Topology Manager policies

Topology Manager aligns the pod resources of all Quality of Service (QoS) classes. It does this by collecting topology hints from Hint Providers, such as CPU Manager and Device Manager, and using the collected hints to align the pod resources.

To align CPU resources with other requested resources in a pod spec, CPU Manager must be enabled with the static CPU Manager policy.

Topology Manager supports four allocation policies, which you assign in the cpumanager-enabled custom resource (CR):

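none policy
This is the default policy and does not perform any topology alignment.

best-effort policy
For each container in a pod with the best-effort topology management policy, kubelet calls each Hint Provider to discover their resource availability. Using this information, the Topology Manager stores the preferred NUMA node affinity for that container. If the affinity is not preferred, Topology Manager stores this and admits the pod to the node.

restricted policy
For each container in a pod with the restricted topology management policy, kubelet calls each Hint Provider to discover their resource availability. Using this information, the Topology Manager stores the preferred NUMA node affinity for that container. If the affinity is not preferred, Topology Manager rejects the pod from the node. This results in a pod in the Terminated state with a pod admission failure.

single-numa-node policy
For each container in a pod with the single-numa-node topology management policy, kubelet calls each Hint Provider to discover their resource availability. Using this information, the Topology Manager determines whether a single NUMA node affinity is possible. If it is, the pod is admitted to the node. If a single NUMA node affinity is not possible, the Topology Manager rejects the pod from the node. This results in a pod in the Terminated state with a pod admission failure.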
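The differences between the four policies come down to what happens after the hints are merged. The sketch below is a deliberately simplified model and not the kubelet's implementation, whose merge logic differs in detail. Each Hint Provider returns candidate hints, where a hint is a set of NUMA node IDs plus a preferred flag. One hint per provider is intersected, and the best non-empty result is kept. The policy then decides admission. The names Hint, merge_hints, and admit are hypothetical.

```python
from itertools import product
from typing import FrozenSet, List, Optional, Tuple

# A hint is (set of NUMA node IDs, preferred?) reported by one Hint Provider.
Hint = Tuple[FrozenSet[int], bool]
POLICIES = ("none", "best-effort", "restricted", "single-numa-node")


def merge_hints(providers: List[List[Hint]]) -> Optional[Hint]:
    """Pick one hint per provider, intersect their NUMA sets, and return the best
    non-empty result: preferred before non-preferred, then the fewest NUMA nodes."""
    best = None
    for combo in product(*providers):
        nodes = frozenset.intersection(*(hint[0] for hint in combo))
        if not nodes:
            continue  # these hints cannot agree on any NUMA node
        preferred = all(hint[1] for hint in combo)
        key = (not preferred, len(nodes), sorted(nodes))
        if best is None or key < best[0]:
            best = (key, (nodes, preferred))
    return None if best is None else best[1]


def admit(policy: str, providers: List[List[Hint]]) -> bool:
    """Simplified admission decision for one container under a given policy."""
    if policy not in POLICIES:
        raise ValueError(f"unknown Topology Manager policy: {policy}")
    if policy in ("none", "best-effort") or not providers:
        return True  # these policies never reject; no hints means nothing to align
    merged = merge_hints(providers)
    if merged is None:
        return False
    nodes, preferred = merged
    if policy == "restricted":
        return preferred
    return preferred and len(nodes) == 1  # single-numa-node


cpu = [(frozenset({0}), True), (frozenset({0, 1}), False)]
device_on_numa1 = [(frozenset({1}), True), (frozenset({0, 1}), False)]
print(admit("best-effort", [cpu, device_on_numa1]))  # True
print(admit("restricted", [cpu, device_on_numa1]))   # False
```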

8.2. Setting up Topology Manager

To use Topology Manager, you must configure an allocation policy in the cpumanager-enabled custom resource (CR). This file might already exist if you have set up CPU Manager. If the file does not exist, you can create it.

Prerequisites

- Configure the CPU Manager policy to be static. Refer to Using CPU Manager in the Scalability and Performance section.

Procedure

To activate Topology Manager:

- Configure the Topology Manager allocation policy in the cpumanager-enabled custom resource (CR):

$ oc edit KubeletConfig cpumanager-enabled

apiVersion: machineconfiguration.openshift.io/v1
kind: KubeletConfig
metadata:
  name: cpumanager-enabled
spec:
  machineConfigPoolSelector:
    matchLabels:
      custom-kubelet: cpumanager-enabled
  kubeletConfig:
    cpuManagerPolicy: static
    cpuManagerReconcilePeriod: 5s
    topologyManagerPolicy: single-numa-node

In this example, cpuManagerPolicy must be static, and topologyManagerPolicy is set to single-numa-node. Replace it with the policy you selected: none, best-effort, restricted, or single-numa-node.

8.3. Pod interactions with Topology Manager policies

The example pod specs below illustrate how pods interact with Topology Manager.

The following pod runs in the BestEffort QoS class because no resource requests or limits are specified.

spec:
  containers:
  - name: nginx
    image: nginx

The next pod runs in the Burstable QoS class because its requests are less than its limits.

spec:
  containers:
  - name: nginx
    image: nginx
    resources:
      limits:
        memory: "200Mi"
      requests:
        memory: "100Mi"

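Even if the selected policy is something other than none, Topology Manager does not consider either of these pod specs.

The last example pod below runs in the Guaranteed QoS class because its requests are equal to its limits.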

spec:
  containers:
  - name: nginx
    image: nginx
    resources:
      limits:
        memory: "200Mi"
        cpu: "2"
        example.com/device: "1"
      requests:
        memory: "200Mi"
        cpu: "2"
        example.com/device: "1"

Topology Manager considers this pod. Topology Manager consults the CPU Manager static policy, which returns the topology of available CPUs. Topology Manager also consults Device Manager to discover the topology of the available devices for example.com/device.

Topology Manager uses this information to store the best topology for this container. For this pod, CPU Manager and Device Manager use the stored information at the resource allocation stage.
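The QoS class of each example pod follows from its requests and limits. The sketch below classifies the three examples above using the standard Kubernetes rules, summarized here as assumptions:

- only cpu and memory count;
- an unset request defaults to its limit;
- a pod is Guaranteed only if every container sets cpu and memory limits equal to its requests;
- a pod is BestEffort if no container sets any cpu or memory request or limit;
- every other pod is Burstable.

This is a simplification. Quantities are compared as literal strings, so "1" and "1000m" would be treated as different, and init containers are ignored. The function name is hypothetical.

```python
from typing import Dict, List

COMPUTE = ("cpu", "memory")  # only these resources decide the QoS class


def qos_class(containers: List[Dict[str, Dict[str, str]]]) -> str:
    """Classify a pod from its containers' "requests" and "limits" maps."""
    any_set = False
    guaranteed = True
    for container in containers:
        limits = {r: v for r, v in container.get("limits", {}).items() if r in COMPUTE}
        requests = {r: v for r, v in container.get("requests", {}).items() if r in COMPUTE}
        for resource, limit in limits.items():
            requests.setdefault(resource, limit)  # an unset request defaults to the limit
        any_set = any_set or bool(requests or limits)
        if any(r not in limits or requests[r] != limits[r] for r in COMPUTE):
            guaranteed = False
    if not any_set:
        return "BestEffort"
    return "Guaranteed" if guaranteed else "Burstable"


print(qos_class([{}]))  # BestEffort
print(qos_class([{"limits": {"memory": "200Mi"}, "requests": {"memory": "100Mi"}}]))  # Burstable
print(qos_class([{"limits": {"memory": "200Mi", "cpu": "2", "example.com/device": "1"},
                  "requests": {"memory": "200Mi", "cpu": "2", "example.com/device": "1"}}]))  # Guaranteed
```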

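Chapter 9. Scaling the Cluster Monitoring Operator

OpenShift Container Platform exposes metrics that the Cluster Monitoring Operator collects and stores in the Prometheus-based monitoring stack. As an administrator, you can view metrics for system resources, containers, and components in one dashboard interface, Grafana.

If you are running cluster monitoring with an attached PVC for Prometheus, you might experience OOM kills during a cluster upgrade. When persistent storage is in use for Prometheus, Prometheus memory usage doubles during the cluster upgrade and for several hours after the upgrade is complete. To avoid the OOM kill issue, allow worker nodes twice the memory that was available before the upgrade. For example, if you are running monitoring on the minimum recommended nodes, which have 2 cores and 8 GB of RAM, increase the memory to 16 GB. For more information, see BZ#1925061.

9.1. Prometheus database storage requirements

Red Hat performed various tests for different scale sizes.

The Prometheus storage requirements below are not prescriptive. Higher resource consumption might be observed in your cluster, depending on workload activity and resource use.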

| Number of nodes | Number of pods | Prometheus storage growth per day | Prometheus storage growth per 15 days | RAM space (per scale size) | Network (per tsdb chunk) |
|---|---|---|---|---|---|
| 50 | 1800 | 6.3 GB | 94 GB | 6 GB | 16 MB |
| 100 | 3600 | 13 GB | 195 GB | 10 GB | 26 MB |
| 150 | 5400 | 19 GB | 283 GB | 12 GB | 36 MB |
| 200 | 7200 | 25 GB | 375 GB | 14 GB | 46 MB |

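Approximately 20 percent of the expected size was added as overhead to ensure that the storage requirements do not exceed the calculated value.

The above calculation is for the default OpenShift Container Platform Cluster Monitoring Operator.

CPU utilization has minimal impact. The ratio is approximately 1 core out of 40 per 50 nodes and 1800 pods.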
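For a node count between the tested rows, a first estimate can be interpolated from the daily growth column and multiplied by the retention period. The sketch below rests on these assumptions:

- growth is linear between the tested points;
- the pod density matches the tests (36 pods per node);
- the node count is within the tested range of 50 to 200 nodes.

The table's 15-day column is slightly below 15 times the daily column (for example, 283 GB against 285 GB at 150 nodes), so treat the result as a rough guide only. The table values already include the roughly 20 percent overhead. The function names are hypothetical.

```python
from bisect import bisect_left

# (nodes, Prometheus storage growth per day in GB), taken from the table above.
MEASURED = [(50, 6.3), (100, 13.0), (150, 19.0), (200, 25.0)]


def daily_growth_gb(nodes: int) -> float:
    """Linearly interpolate the tested daily growth; only 50-200 nodes are covered."""
    xs = [n for n, _ in MEASURED]
    if not xs[0] <= nodes <= xs[-1]:
        raise ValueError("outside the tested range of 50-200 nodes")
    i = bisect_left(xs, nodes)
    if xs[i] == nodes:
        return MEASURED[i][1]
    (x0, y0), (x1, y1) = MEASURED[i - 1], MEASURED[i]
    return y0 + (y1 - y0) * (nodes - x0) / (x1 - x0)


def retention_storage_gb(nodes: int, retention_days: int = 15) -> float:
    """Rough storage estimate for a given retention period."""
    return daily_growth_gb(nodes) * retention_days


print(retention_storage_gb(125))  # 240.0
```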

Recommendations for OpenShift Container Platform

- Use at least three infrastructure (infra) nodes.

- Use at least three openshift-container-storage nodes with non-volatile memory express (NVMe) drives.

9.2. Configuring cluster monitoring

You can increase the storage capacity for the Prometheus component in the cluster monitoring stack.

Procedure

To increase the storage capacity for Prometheus:

- Create a YAML configuration file, cluster-monitoring-config.yaml. For example:

apiVersion: v1
kind: ConfigMap
data:
  config.yaml: |
    prometheusK8s:
      retention: {{PROMETHEUS_RETENTION_PERIOD}} # <1>
      nodeSelector:
        node-role.kubernetes.io/infra: ""
      volumeClaimTemplate:
        spec:
          storageClassName: {{STORAGE_CLASS}} # <2>
          resources:
            requests:
              storage: {{PROMETHEUS_STORAGE_SIZE}} # <3>
    alertmanagerMain:
      nodeSelector:
        node-role.kubernetes.io/infra: ""
      volumeClaimTemplate:
        spec:
          storageClassName: {{STORAGE_CLASS}} # <4>
          resources:
            requests:
              storage: {{ALERTMANAGER_STORAGE_SIZE}} # <5>
metadata:
  name: cluster-monitoring-config
  namespace: openshift-monitoring

<1> The typical value is PROMETHEUS_RETENTION_PERIOD=15d. Time is expressed as a number with one of these unit suffixes: s, m, h, d.

<2> <4> The storage class for your cluster.

<3> The typical value is PROMETHEUS_STORAGE_SIZE=2000Gi. Storage values can be a plain integer or a fixed-point number with one of these suffixes: E, P, T, G, M, k. You can also use the power-of-two equivalents: Ei, Pi, Ti, Gi, Mi, Ki.

<5> The typical value is ALERTMANAGER_STORAGE_SIZE=20Gi. The same storage value formats as in <3> apply.

- Add the values for the retention period, storage class, and storage sizes.

- Save the file.

- Apply the changes by running:

$ oc create -f cluster-monitoring-config.yaml
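Before filling in the retention and storage values, it can help to check that they use the formats described for this ConfigMap. The following Python sketch is a hypothetical helper, not part of OpenShift or `oc`. It accepts only a whole number with one of the retention suffixes s, m, h, d (Prometheus itself accepts more duration forms, which this sketch rejects), and it uses lowercase `k` for the decimal kilo suffix, following Kubernetes resource-quantity syntax.

```python
import re
from decimal import ROUND_CEILING, Decimal

# Retention: a whole number followed by one of the suffixes s, m, h, d.
_DURATION_UNITS = {"s": 1, "m": 60, "h": 3600, "d": 86400}
_DURATION_RE = re.compile(r"(\d+)([smhd])")

# Storage: plain or fixed-point number with an optional decimal or binary suffix.
_QUANTITY_UNITS = {
    "": 1,
    "k": 10**3, "M": 10**6, "G": 10**9, "T": 10**12, "P": 10**15, "E": 10**18,
    "Ki": 2**10, "Mi": 2**20, "Gi": 2**30, "Ti": 2**40, "Pi": 2**50, "Ei": 2**60,
}
_QUANTITY_RE = re.compile(r"(\d+(?:\.\d+)?)(Ki|Mi|Gi|Ti|Pi|Ei|k|M|G|T|P|E)?")


def parse_retention_seconds(value: str) -> int:
    """Convert a retention value such as '15d' to seconds."""
    match = _DURATION_RE.fullmatch(value)
    if match is None:
        raise ValueError(f"invalid retention period: {value!r}")
    amount, unit = match.groups()
    return int(amount) * _DURATION_UNITS[unit]


def parse_storage_bytes(value: str) -> int:
    """Convert a storage value such as '2000Gi' to bytes."""
    match = _QUANTITY_RE.fullmatch(value)
    if match is None:
        raise ValueError(f"invalid storage size: {value!r}")
    number, suffix = match.groups()
    total = Decimal(number) * _QUANTITY_UNITS[suffix or ""]
    # Round fractional bytes up so the request is never smaller than intended.
    return int(total.to_integral_value(rounding=ROUND_CEILING))


if __name__ == "__main__":
    print(parse_retention_seconds("15d"))  # 1296000
    print(parse_storage_bytes("2000Gi"))   # 2147483648000
    print(parse_storage_bytes("20Gi"))     # 21474836480
```

A value such as `10K` raises `ValueError` here, which flags the uppercase decimal suffix before the ConfigMap is applied.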

Chapter 10. Planning your environment according to object maximums

Consider the following tested object maximums when you plan your OpenShift Container Platform cluster.

These guidelines are based on the largest possible cluster. For smaller clusters, the maximums are lower. Many factors influence the stated thresholds, including the etcd version and the storage data format.

These guidelines apply to OpenShift Container Platform with software-defined networking (SDN), not Open Virtual Network (OVN).

In most cases, exceeding these numbers results in lower overall performance. It does not necessarily mean that the cluster will fail.

10.1. OpenShift Container Platform tested cluster maximums for major releases

Tested cloud platforms for OpenShift Container Platform 3.x: Red Hat OpenStack (RHOSP), Amazon Web Services, and Microsoft Azure.

Tested cloud platforms for OpenShift Container Platform 4.x: Amazon Web Services, Microsoft Azure, and Google Cloud Platform.

| Maximum type | 3.x tested maximum | 4.x tested maximum |
|---|---|---|
| Number of nodes | 2,000 | 2,000 |
| Number of pods [1] | 150,000 | 150,000 |
| Number of pods per node | 250 | 500 [2] |
| Number of pods per core | There is no default value. | There is no default value. |
| Number of namespaces [3] | 10,000 | 10,000 |
| Number of builds | 10,000 (default pod RAM 512 Mi), Pipeline strategy | 10,000 (default pod RAM 512 Mi), Source-to-Image (S2I) build strategy |
| Number of pods per namespace [4] | 25,000 | 25,000 |
| Number of routes and back ends per Ingress Controller | 2,000 per router | 2,000 per router |
| Number of secrets | 80,000 | 80,000 |
| Number of config maps | 90,000 | 90,000 |
| Number of services [5] | 10,000 | 10,000 |
| Number of services per namespace | 5,000 | 5,000 |
| Number of back ends per service | 5,000 | 5,000 |
| Number of deployments per namespace [4] | 2,000 | 2,000 |
| Number of build configs | 12,000 | 12,000 |
| Number of secrets | 40,000 | 40,000 |
| Number of custom resource definitions (CRD) | There is no default value. | 512 [6] |

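[1] The pod count displayed here is the number of test pods. The actual number of pods depends on the application's memory, CPU, and storage requirements.

[2] This was tested on a cluster with 100 worker nodes running 500 pods per worker node. The default maxPods is 250. To reach 500 maxPods, the cluster must be created with a custom kubelet configuration that sets maxPods to 500. If you need 500 user pods, you need a hostPrefix of 22, because 10-15 system pods are already running on each node. The maximum number of pods with attached persistent volume claims (PVCs) depends on the storage back end from which the PVCs are allocated. In these tests, only OpenShift Container Storage (OCS v4) was able to support the number of pods per node described in this document.

[3] When there are a large number of active projects, etcd might suffer from poor performance if the keyspace grows excessively large and exceeds the space quota. Periodic maintenance of etcd, including defragmentation, is strongly recommended to free etcd storage.

[4] The system has a number of control loops that iterate over all objects in a given namespace in response to certain state changes. Having a large number of objects of a given type in a single namespace makes those loops more expensive and slows down the processing of those state changes. This limit assumes that the system has enough CPU, memory, and disk to satisfy the application requirements.

[5] Each service port and each service back end has a corresponding entry in iptables. The number of back ends of a given service affects the size of the endpoints objects, which in turn affects the size of the data that is sent throughout the system.

[6] OpenShift Container Platform has a limit of 512 total custom resource definitions (CRDs), including those installed by OpenShift Container Platform, products integrating with OpenShift Container Platform, and user-created CRDs. If more than 512 CRDs are created, oc command requests might be throttled.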
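The hostPrefix requirement in footnote [2] follows from subnet arithmetic: each node receives a /hostPrefix slice of the cluster network CIDR. The Python sketch below uses the standard `ipaddress` module to count node subnets and addresses per node. The `reserved_per_node` allowance (3 here) is a hypothetical value for addresses the network plug-in keeps for itself; the real number depends on the plug-in.

```python
import ipaddress
from typing import Tuple


def node_subnet_layout(cluster_cidr: str, host_prefix: int) -> Tuple[int, int]:
    """Return (number of node subnets, addresses per node subnet)."""
    network = ipaddress.ip_network(cluster_cidr)
    if not network.prefixlen <= host_prefix <= network.max_prefixlen:
        raise ValueError("hostPrefix must lie between the CIDR prefix length and the address size")
    node_subnets = 2 ** (host_prefix - network.prefixlen)
    addresses_per_node = 2 ** (network.max_prefixlen - host_prefix)
    return node_subnets, addresses_per_node


def pods_fit(host_prefix: int, user_pods: int, system_pods: int, reserved_per_node: int = 3) -> bool:
    """Check whether user and system pods fit in one IPv4 node subnet.

    reserved_per_node is a hypothetical allowance; adjust it for your plug-in.
    """
    addresses = 2 ** (32 - host_prefix)  # IPv4 only
    return user_pods + system_pods + reserved_per_node <= addresses


if __name__ == "__main__":
    print(node_subnet_layout("10.128.0.0/14", 23))      # (512, 512)
    print(node_subnet_layout("10.128.0.0/14", 22))      # (256, 1024)
    print(pods_fit(23, user_pods=500, system_pods=15))  # False
    print(pods_fit(22, user_pods=500, system_pods=15))  # True
```

A /23 node subnet holds 512 addresses, so 500 user pods plus 10-15 system pods do not fit even before any reserved addresses; a /22 holds 1024. Note that hostPrefix 22 on a 10.128.0.0/14 cluster network leaves room for only 256 node subnets.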

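Red Hat does not provide direct guidance on sizing your OpenShift Container Platform cluster. Determining whether your cluster is within the supported bounds of OpenShift Container Platform requires careful consideration of all the multidimensional factors that limit cluster scale.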
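As a first-pass check of a plan against the 4.x tested maximums, the following hypothetical Python helper flags each planned dimension that exceeds the corresponding table value. It checks dimensions independently, so staying under every value does not by itself establish that a cluster is within supported bounds, and exceeding one does not mean the cluster will fail. The dictionary keys are names invented for this sketch; secrets are left out because the table lists two different values for them.

```python
from typing import Dict, List

# A subset of the 4.x tested maximums from the table in section 10.1.
# pods_per_node=500 assumes a custom kubelet configuration (default maxPods is 250).
TESTED_MAXIMUMS_4X: Dict[str, int] = {
    "nodes": 2000,
    "pods": 150000,
    "pods_per_node": 500,
    "namespaces": 10000,
    "pods_per_namespace": 25000,
    "services": 10000,
    "services_per_namespace": 5000,
    "backends_per_service": 5000,
    "deployments_per_namespace": 2000,
    "config_maps": 90000,
    "build_configs": 12000,
    "custom_resource_definitions": 512,
}


def exceeded_maximums(plan: Dict[str, int]) -> List[str]:
    """Return the sorted plan keys whose values exceed the tested maximum."""
    unknown = set(plan) - set(TESTED_MAXIMUMS_4X)
    if unknown:
        raise KeyError(f"no tested maximum recorded for: {sorted(unknown)}")
    return sorted(key for key, value in plan.items() if value > TESTED_MAXIMUMS_4X[key])


if __name__ == "__main__":
    planned = {"nodes": 250, "pods": 60000, "pods_per_node": 240, "custom_resource_definitions": 600}
    print(exceeded_maximums(planned))  # ['custom_resource_definitions']
```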

10.2. OpenShift Container Platform environment and configuration on which the cluster maximums are tested

AWS cloud platform:

| Node | Flavor | vCPU | RAM (GiB) | Disk type | Disk size (GiB)/IOPS | Count | Region |
|---|---|---|---|---|---|---|---|
| Master/etcd [1] | r5.4xlarge | 16 | 128 | io1 | 220 / 3000 | 3 | us-west-2 |
| Infra [2] | m5.12xlarge | 48 | 192 | gp2 | 100 | 3 | us-west-2 |
| Workload [3] | m5.4xlarge | 16 | 64 | gp2 | 500 [4] | 1 | us-west-2 |
| Worker | m5.2xlarge | 8 | 32 | gp2 | 100 | 3/25/250/500 [5] | us-west-2 |

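[1] io1 disks with 3000 IOPS are used for master/etcd nodes because etcd is I/O intensive and latency sensitive.

[2] Infrastructure nodes host the monitoring, Ingress, and registry components, which ensures that those components have enough resources to run at large scale.

[3] The workload node is dedicated to running the performance and scalability workload generators.

[4] A larger disk size is used so that there is enough space to store the large amounts of data collected during the performance and scalability test runs.

[5] The cluster is scaled in iterations, and the performance and scalability tests are run at the specified node counts.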

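10.3. How to plan your environment according to tested cluster maximums

Oversubscribing the physical resources on a node affects the resource guarantees that the Kubernetes scheduler makes during pod placement. Learn what measures you can take to avoid memory swapping.

Some of the tested maximums are stretched in only a single dimension, that is, with objects created by a single namespace or user. These limits differ when many objects are running on the cluster.

The numbers noted in this document are based on Red Hat's test methodology, setup, configuration, and tuning. These numbers can vary based on your own setup and environment.

While planning your environment, determine how many pods are expected to fit per node:

required pods per cluster / pods per node = total number of nodes needed (rounded up to a whole node)

The default maximum number of pods per node is 250. However, the number of pods that fit on a node depends on the application itself. Consider the application's memory, CPU, and storage requirements, as described in How to plan your environment according to application requirements.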
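Both sizing formulas in this section can be written as a short Python sketch; the function names are illustrative. Integer ceiling division is used for the node count because a fractional result, such as the 4.4 in the example scenario that follows, still requires a whole additional node.

```python
def nodes_needed(required_pods: int, pods_per_node: int) -> int:
    """required pods per cluster / pods per node, rounded up to whole nodes."""
    if required_pods < 0 or pods_per_node <= 0:
        raise ValueError("required_pods must be >= 0 and pods_per_node > 0")
    return -(-required_pods // pods_per_node)  # integer ceiling division


def expected_pods_per_node(required_pods: int, total_nodes: int) -> float:
    """required pods per cluster / total number of nodes."""
    if total_nodes <= 0:
        raise ValueError("total_nodes must be > 0")
    return required_pods / total_nodes


if __name__ == "__main__":
    print(nodes_needed(2200, 500))           # 5
    print(expected_pods_per_node(2200, 20))  # 110.0
```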

Example scenario

If you want to scope your cluster for 2200 pods, you need at least five nodes, assuming that there are 500 maximum pods per node:

2200 / 500 = 4.4

If you increase the number of nodes to 20, the pod distribution changes to 110 pods per node:

2200 / 20 = 110

Where:

required pods per cluster / total number of nodes = expected pods per node

10.4. How to plan your environment according to application requirements

Consider an example application environment:

| Pod type | Pod quantity | Max memory per pod | CPU cores per pod | Persistent storage per pod |
|---|---|---|---|---|
| apache | 100 | 500 MB | 0.5 | 1 GB |
| node.js | 200 | 1 GB | 1 | 1 GB |
| postgresql | 100 | 1 GB | 2 | 10 GB |
| JBoss EAP | 100 | 1 GB | 1 | 1 GB |

Extrapolated requirements: 550 CPU cores, 450 GB RAM, and 1.4 TB storage.
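The extrapolated requirements are the per-pod values multiplied by the pod quantities and summed. A short Python sketch of that arithmetic, assuming decimal units (500 MB = 0.5 GB, 1000 GB = 1 TB); `PodType` is an illustrative name:

```python
from typing import Dict, List, NamedTuple


class PodType(NamedTuple):
    name: str
    count: int
    memory_gb: float   # max memory per pod (500 MB = 0.5 GB)
    cpu_cores: float   # CPU cores per pod
    storage_gb: float  # persistent storage per pod


EXAMPLE_PODS: List[PodType] = [
    PodType("apache", 100, 0.5, 0.5, 1.0),
    PodType("node.js", 200, 1.0, 1.0, 1.0),
    PodType("postgresql", 100, 1.0, 2.0, 10.0),
    PodType("JBoss EAP", 100, 1.0, 1.0, 1.0),
]


def total_requirements(pods: List[PodType]) -> Dict[str, float]:
    """Sum count x per-pod value for CPU, memory, and storage."""
    return {
        "cpu_cores": sum(p.count * p.cpu_cores for p in pods),
        "memory_gb": sum(p.count * p.memory_gb for p in pods),
        "storage_gb": sum(p.count * p.storage_gb for p in pods),
    }


if __name__ == "__main__":
    print(total_requirements(EXAMPLE_PODS))
    # {'cpu_cores': 550.0, 'memory_gb': 450.0, 'storage_gb': 1400.0}
```

The 1400 GB storage total corresponds to the 1.4 TB stated above; the postgresql pods account for 1000 GB of it.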

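The instance size for nodes can be adjusted up or down, depending on your preference. Nodes are often resource overcommitted. In this deployment scenario, you can choose to run more smaller nodes or fewer larger nodes to provide the same amount of resources. Consider factors such as operational agility and cost per instance.

| Node type | Quantity | CPUs per node | RAM per node (GB) |
|---|---|---|---|
| Nodes (option 1) | 100 | 4 | 16 |
| Nodes (option 2) | 50 | 8 | 32 |
| Nodes (option 3) | 25 | 16 | 64 |

Some applications lend themselves well to overcommitted environments, and some do not. Most Java applications and applications that use huge pages, for example, do not tolerate overcommitment, because that memory cannot be used by other applications. In the example above, the environment is overcommitted by roughly 30 percent, which is a common ratio.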
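The node options can be compared with the CPU requirement in a short Python sketch. Every option provides 400 cores and 1600 GB of RAM in total. Against the 550 CPU cores estimated for this environment, about 27 percent of the CPU requirement is not backed by physical cores; reading the "roughly 30 percent" figure this way is an interpretation assumed here. RAM is not overcommitted in this example, because 1600 GB exceeds the 450 GB estimate.

```python
from typing import List, NamedTuple


class NodeOption(NamedTuple):
    label: str
    quantity: int
    cpus: int
    ram_gb: int


OPTIONS: List[NodeOption] = [
    NodeOption("option 1", 100, 4, 16),
    NodeOption("option 2", 50, 8, 32),
    NodeOption("option 3", 25, 16, 64),
]

REQUIRED_CPU_CORES = 550  # extrapolated requirement for the example environment


def cpu_shortfall(option: NodeOption, required_cores: float = REQUIRED_CPU_CORES) -> float:
    """Fraction of the CPU requirement not backed by physical cores (0.0 = none)."""
    capacity = option.quantity * option.cpus
    return max(0.0, 1 - capacity / required_cores)


if __name__ == "__main__":
    for opt in OPTIONS:
        cores = opt.quantity * opt.cpus
        print(f"{opt.label}: {cores} cores, {opt.quantity * opt.ram_gb} GB RAM, "
              f"CPU shortfall {cpu_shortfall(opt):.0%}")
```

Because every option yields the same totals, the choice between them comes down to the operational factors mentioned above rather than raw capacity.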

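Application pods can access a service either by using environment variables or DNS. If you use environment variables, the kubelet injects the variables for each active service when a pod runs on a node. A cluster-aware DNS server watches the Kubernetes API for new services and creates a set of DNS records for each one. If DNS is enabled throughout your cluster, all pods should be able to resolve services automatically by their DNS names. Service discovery using DNS can be used when you need to go beyond 5000 services. When you use environment variables for service discovery and the argument list exceeds the allowed length after 5000 services in a namespace, pods and deployments start failing. To avoid this, disable service links by setting enableServiceLinks: false in the pod specification of the deployment, as in the following template: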

---
apiVersion: template.openshift.io/v1
kind: Template
metadata:
  name: deployment-config-template
  creationTimestamp:
  annotations:
    description: This template will create a deploymentConfig with 1 replica, 4 env vars and a service.
    tags: ''
objects:
- apiVersion: apps.openshift.io/v1
  kind: DeploymentConfig
  metadata:
    name: deploymentconfig${IDENTIFIER}
  spec:
    template:
      metadata:
        labels:
          name: replicationcontroller${IDENTIFIER}
      spec:
        enableServiceLinks: false
        containers:
        - name: pause${IDENTIFIER}
          image: "${IMAGE}"
          ports:
          - containerPort: 8080
            protocol: TCP
          env:
          - name: ENVVAR1_${IDENTIFIER}
            value: "${ENV_VALUE}"
          - name: ENVVAR2_${IDENTIFIER}
            value: "${ENV_VALUE}"
          - name: ENVVAR3_${IDENTIFIER}
            value: "${ENV_VALUE}"
          - name: ENVVAR4_${IDENTIFIER}
            value: "${ENV_VALUE}"
          resources: {}
          imagePullPolicy: IfNotPresent
          capabilities: {}
          securityContext:
            capabilities: {}
            privileged: false
        restartPolicy: Always
        serviceAccount: ''
    replicas: 1
    selector:
      name: replicationcontroller${IDENTIFIER}
    triggers:
    - type: ConfigChange
    strategy:
      type: Rolling
- apiVersion: v1
  kind: Service
  metadata:
    name: service${IDENTIFIER}
  spec:
    selector:
      name: replicationcontroller${IDENTIFIER}
    ports:
    - name: serviceport${IDENTIFIER}
      protocol: TCP
      port: 80
      targetPort: 8080
    portalIP: ''
    type: ClusterIP
    sessionAffinity: None
  status:
    loadBalancer: {}
parameters:
- name: IDENTIFIER
  description: Number to append to the name of resources
  value: '1'
  required: true
- name: IMAGE
  description: Image to use for deploymentConfig
  value: gcr.io/google-containers/pause-amd64:3.0
  required: false
- name: ENV_VALUE
  description: Value to use for environment variables
  generate: expression
  from: "[A-Za-z0-9]{255}"
  required: false
labels:
  template: deployment-config-template

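When environment variables are used for service discovery, the number of application pods that can run in a namespace depends on the number of services and the length of the service names. ARG_MAX on the system defines the maximum argument length for a new process and is set to 2097152 bytes (2 MiB) by default. The kubelet injects environment variables into each pod scheduled to run in the namespace, including:

- <SERVICE_NAME>_SERVICE_HOST=<IP>

- <SERVICE_NAME>_SERVICE_PORT=<PORT>

- <SERVICE_NAME>_PORT=tcp://<IP>:<PORT>

- <SERVICE_NAME>_PORT_<PORT>_TCP=tcp://<IP>:<PORT>

- <SERVICE_NAME>_PORT_<PORT>_TCP_PROTO=tcp

- <SERVICE_NAME>_PORT_<PORT>_TCP_PORT=<PORT>

- <SERVICE_NAME>_PORT_<PORT>_TCP_ADDR=<ADDR>

The pods in the namespace start to fail if the argument length exceeds the allowed value, and the number of characters in the service names contributes to that length. For example, in a namespace with 5000 services, the limit on the service name is 33 characters, which enables you to run 5000 pods in the namespace.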
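The relationship between service count, service name length, and ARG_MAX can be approximated with a Python sketch that builds the seven variables listed above for each service. It assumes that every service has a 13-character cluster IP (for example 172.30.123.45) and port 80, treats ARG_MAX as 2,097,152 bytes, and counts only each `KEY=VALUE` string plus its NUL terminator. It ignores the pointer overhead the kernel also counts, the pod's other environment variables, and command-line arguments. Under these assumptions the longest name that fits for 5000 services is 33 characters, in line with the example above; other IP lengths or ports shift the result.

```python
from typing import List

ARG_MAX_BYTES = 2_097_152  # common Linux default (2 MiB); check with `getconf ARG_MAX`


def service_env_vars(name: str, ip: str, port: int) -> List[str]:
    """The seven variables listed above for one service (name already upper-cased)."""
    p = str(port)
    return [
        f"{name}_SERVICE_HOST={ip}",
        f"{name}_SERVICE_PORT={p}",
        f"{name}_PORT=tcp://{ip}:{p}",
        f"{name}_PORT_{p}_TCP=tcp://{ip}:{p}",
        f"{name}_PORT_{p}_TCP_PROTO=tcp",
        f"{name}_PORT_{p}_TCP_PORT={p}",
        f"{name}_PORT_{p}_TCP_ADDR={ip}",
    ]


def env_bytes(services: int, name_len: int, ip: str = "172.30.123.45", port: int = 80) -> int:
    """Approximate bytes: each 'KEY=VALUE' string plus its NUL terminator."""
    per_service = sum(len(v) + 1 for v in service_env_vars("S" * name_len, ip, port))
    return services * per_service


def max_service_name_len(services: int, limit: int = ARG_MAX_BYTES) -> int:
    """Longest service name, in characters, whose variables still fit under limit."""
    if services <= 0:
        raise ValueError("services must be positive")
    length = 0
    while env_bytes(services, length + 1) <= limit:
        length += 1
    return length


if __name__ == "__main__":
    print(env_bytes(5000, 33), env_bytes(5000, 34))  # 2085000 2120000
    print(max_service_name_len(5000))                # 33
```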

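Chapter 11. Optimizing storage

Optimizing storage helps to minimize storage use across all resources. By optimizing storage, administrators help ensure that existing storage resources work efficiently.

11.1. Available persistent storage options

Understand your persistent storage options so that you can optimize your OpenShift Container Platform environment.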

| Storage type | Description | Examples |

|---|---|---|

| Block |