네트워킹

AWS 네트워킹에서 Red Hat OpenShift Service 구성

초록

1장. 네트워킹 정보

Red Hat OpenShift Networking은 클러스터가 하나 이상의 하이브리드 클러스터의 네트워크 트래픽을 관리하는 데 필요한 고급 네트워킹 관련 기능을 사용하여 Kubernetes 네트워킹을 개선하는 기능, 플러그인 및 고급 네트워킹 기능으로 구성된 에코시스템입니다. 이 네트워킹 기능의 에코시스템은 수신, 송신, 로드 밸런싱, 고성능 처리량, 보안 및 클러스터 내부 트래픽 관리를 통합합니다. Red Hat OpenShift Networking 에코시스템은 또한 역할 기반 관찰 툴을 제공하여 자연의 복잡성을 줄일 수 있습니다.

다음은 클러스터에서 사용할 수 있는 가장 일반적으로 사용되는 Red Hat OpenShift Networking 기능 중 일부입니다.

- 네트워크 플러그인 관리를 위한 Cluster Network Operator

다음 CNI(Container Network Interface) 플러그인에서 제공하는 기본 클러스터 네트워크입니다.

- 기본 CNI 플러그인인 OVN-Kubernetes 네트워크 플러그인 .

- OpenShift SDN 네트워크 플러그인 - OpenShift 4.16에서 더 이상 사용되지 않고 OpenShift 4.17에서 제거되었습니다.

OpenShift SDN 네트워크 플러그인으로 구성된 ROSA(classic architecture) 클러스터를 버전 4.17로 업그레이드하기 전에 OVN-Kubernetes 네트워크 플러그인으로 마이그레이션해야 합니다. 자세한 내용은 추가 리소스 섹션의 OpenShift SDN 네트워크 플러그인에서 OVN-Kubernetes 네트워크 플러그인으로 마이그레이션 을 참조하십시오.

추가 리소스

2장. Networking Operator

2.1. AWS Load Balancer Operator

AWS Load Balancer Operator는 Red Hat에서 지원하는 Operator로, 사용자가 선택적으로 AWS(ROSA) 클러스터의 SRE 관리 Red Hat OpenShift Service에 설치할 수 있습니다. AWS Load Balancer Operator는 ROSA 클러스터에서 실행되는 애플리케이션에 대해 AWS Elastic Load Balancing v2(ELBv2) 서비스를 프로비저닝하는 AWS Load Balancer 컨트롤러의 라이프사이클을 관리합니다.

AWS Load Balancer Operator에서 생성한 로드 밸런서는 OpenShift 경로에 사용할 수 없으며 OpenShift 경로 의 전체 계층 7 기능이 필요하지 않은 개별 서비스 또는 인그레스 리소스에만 사용해야 합니다.

AWS Load Balancer 컨트롤러는 AWS(ROSA) 클러스터에서 Red Hat OpenShift Service의 AWS Elastic Load Balancer를 관리합니다. 컨트롤러는 LoadBalancer 유형의 Kubernetes 서비스 리소스를 구현할 때 Kubernetes Ingress 리소스 및 AWS NLB(Network Load Balancer)를 생성할 때 AWS Application Load Balancer( ALB )를 프로비저닝합니다.

기본 AWS in-tree 로드 밸런서 공급자와 비교하여 이 컨트롤러는 ALB 및 NLB 모두에 대한 고급 주석으로 개발됩니다. 일부 고급 사용 사례는 다음과 같습니다.

- ALB에서 네이티브 Kubernetes Ingress 오브젝트 사용

- ALB를 AWS Web Application Firewall(WAF) 서비스와 통합

- 사용자 정의 NLB 소스 IP 범위 지정

- 사용자 정의 NLB 내부 IP 주소 지정

AWS Load Balancer Operator 는 ROSA 클러스터에서 aws-load-balancer-controller 인스턴스를 설치, 관리 및 구성하는 데 사용됩니다.

2.1.1. AWS Load Balancer Operator를 설치하도록 환경 설정

AWS Load Balancer Operator에는 여러 가용 영역(AZ)이 있는 클러스터와 클러스터와 동일한 가상 프라이빗 클라우드(VPC)의 3개의 퍼블릭 서브넷이 분할되어야 합니다.

이러한 요구 사항으로 인해 많은 PrivateLink 클러스터에 AWS Load Balancer Operator가 적합하지 않을 수 있습니다. AWS NLB에는 이러한 제한이 없습니다.

AWS Load Balancer Operator를 설치하기 전에 다음을 구성해야 합니다.

- 여러 가용성 영역이 있는 ROSA(클래식 아키텍처) 클러스터

- BYO VPC 클러스터

- AWS CLI

- OC CLI

2.1.1.1. AWS Load Balancer Operator 환경 설정

선택 사항: 임시 환경 변수를 설정하여 설치 명령을 간소화할 수 있습니다.

환경 변수를 사용하지 않으려면 코드 조각에 묻는 값을 수동으로 입력합니다.

프로세스

admin 사용자로 클러스터에 로그인한 후 다음 명령을 실행합니다.

$ export CLUSTER_NAME=$(oc get infrastructure cluster -o=jsonpath="{.status.infrastructureName}") $ export REGION=$(oc get infrastructure cluster -o=jsonpath="{.status.platformStatus.aws.region}") $ export OIDC_ENDPOINT=$(oc get authentication.config.openshift.io cluster -o jsonpath='{.spec.serviceAccountIssuer}' | sed 's|^https://||') $ export AWS_ACCOUNT_ID=$(aws sts get-caller-identity --query Account --output text) $ export SCRATCH="/tmp/${CLUSTER_NAME}/alb-operator" $ mkdir -p ${SCRATCH}다음 명령을 실행하여 변수가 설정되었는지 확인할 수 있습니다.

$ echo "Cluster name: ${CLUSTER_NAME}, Region: ${REGION}, OIDC Endpoint: ${OIDC_ENDPOINT}, AWS Account ID: ${AWS_ACCOUNT_ID}"출력 예

Cluster name: <cluster_id>, Region: us-east-2, OIDC Endpoint: oidc.op1.openshiftapps.com/<oidc_id>, AWS Account ID: <aws_id>

2.1.1.2. AWS VPC 및 서브넷

AWS Load Balancer Operator를 설치하려면 먼저 AWS VPC 리소스에 태그를 지정해야 합니다.

프로세스

ROSA 배포의 적절한 값으로 환경 변수를 설정합니다.

$ export VPC_ID=<vpc-id> $ export PUBLIC_SUBNET_IDS="<public-subnet-a-id> <public-subnet-b-id> <public-subnet-c-id>" $ export PRIVATE_SUBNET_IDS="<private-subnet-a-id> <private-subnet-b-id> <private-subnet-c-id>"클러스터 이름을 사용하여 클러스터의 VPC에 태그를 추가합니다.

$ aws ec2 create-tags --resources ${VPC_ID} --tags Key=kubernetes.io/cluster/${CLUSTER_NAME},Value=owned --region ${REGION}퍼블릭 서브넷에 태그를 추가합니다.

$ aws ec2 create-tags \ --resources ${PUBLIC_SUBNET_IDS} \ --tags Key=kubernetes.io/role/elb,Value='' \ --region ${REGION}프라이빗 서브넷에 태그를 추가합니다.

$ aws ec2 create-tags \ --resources ${PRIVATE_SUBNET_IDS} \ --tags Key=kubernetes.io/role/internal-elb,Value='' \ --region ${REGION}

- 여러 가용성 영역이 있는 ROSA 클래식 클러스터를 설정하려면 기본 옵션을 사용하여 STS를 사용하여 ROSA 클러스터 생성을참조하십시오.

2.1.2. AWS Load Balancer Operator 설치

클러스터로 환경을 설정한 후 CLI를 사용하여 AWS Load Balancer Operator를 설치할 수 있습니다.

프로세스

AWS Load Balancer Operator에 대한 클러스터 내에 새 프로젝트를 생성합니다.

$ oc new-project aws-load-balancer-operatorAWS Load Balancer Controller에 대한 AWS IAM 정책을 생성합니다.

참고업스트림 AWS Load Balancer 컨트롤러 정책에서 AWS IAM 정책을 찾을 수 있습니다. 이 정책에는 Operator가 작동하는 데 필요한 모든 권한이 포함됩니다.

$ POLICY_ARN=$(aws iam list-policies --query \ "Policies[?PolicyName=='aws-load-balancer-operator-policy'].{ARN:Arn}" \ --output text) $ if [[ -z "${POLICY_ARN}" ]]; then wget -O "${SCRATCH}/load-balancer-operator-policy.json" \ https://raw.githubusercontent.com/rh-mobb/documentation/main/content/rosa/aws-load-balancer-operator/load-balancer-operator-policy.json POLICY_ARN=$(aws --region "$REGION" --query Policy.Arn \ --output text iam create-policy \ --policy-name aws-load-balancer-operator-policy \ --policy-document "file://${SCRATCH}/load-balancer-operator-policy.json") fi $ echo $POLICY_ARNAWS Load Balancer Operator에 대한 AWS IAM 신뢰 정책을 생성합니다.

$ cat <<EOF > "${SCRATCH}/trust-policy.json" { "Version": "2012-10-17", "Statement": [ { "Effect": "Allow", "Condition": { "StringEquals" : { "${OIDC_ENDPOINT}:sub": ["system:serviceaccount:aws-load-balancer-operator:aws-load-balancer-operator-controller-manager", "system:serviceaccount:aws-load-balancer-operator:aws-load-balancer-controller-cluster"] } }, "Principal": { "Federated": "arn:aws:iam::$AWS_ACCOUNT_ID:oidc-provider/${OIDC_ENDPOINT}" }, "Action": "sts:AssumeRoleWithWebIdentity" } ] } EOFAWS Load Balancer Operator에 대한 AWS IAM 역할을 생성합니다.

$ ROLE_ARN=$(aws iam create-role --role-name "${ROSA_CLUSTER_NAME}-alb-operator" \ --assume-role-policy-document "file://${SCRATCH}/trust-policy.json" \ --query Role.Arn --output text) $ echo $ROLE_ARN $ aws iam attach-role-policy --role-name "${ROSA_CLUSTER_NAME}-alb-operator" \ --policy-arn $POLICY_ARNAWS Load Balancer Operator가 새로 생성된 AWS IAM 역할을 가정할 시크릿을 생성합니다.

$ cat << EOF | oc apply -f - apiVersion: v1 kind: Secret metadata: name: aws-load-balancer-operator namespace: aws-load-balancer-operator stringData: credentials: | [default] role_arn = $ROLE_ARN web_identity_token_file = /var/run/secrets/openshift/serviceaccount/token EOFAWS Load Balancer Operator를 설치합니다.

$ cat << EOF | oc apply -f - apiVersion: operators.coreos.com/v1 kind: OperatorGroup metadata: name: aws-load-balancer-operator namespace: aws-load-balancer-operator spec: upgradeStrategy: Default --- apiVersion: operators.coreos.com/v1alpha1 kind: Subscription metadata: name: aws-load-balancer-operator namespace: aws-load-balancer-operator spec: channel: stable-v1.0 installPlanApproval: Automatic name: aws-load-balancer-operator source: redhat-operators sourceNamespace: openshift-marketplace startingCSV: aws-load-balancer-operator.v1.0.0 EOFOperator를 사용하여 AWS Load Balancer 컨트롤러 인스턴스를 배포합니다.

참고여기에서 오류가 발생하면 1분 정도 기다린 후 다시 시도하면 Operator가 아직 설치를 완료하지 않은 것입니다.

$ cat << EOF | oc apply -f - apiVersion: networking.olm.openshift.io/v1 kind: AWSLoadBalancerController metadata: name: cluster spec: credentials: name: aws-load-balancer-operator EOFOperator 및 컨트롤러 Pod가 둘 다 실행 중인지 확인합니다.

$ oc -n aws-load-balancer-operator get pods잠시 대기하지 않고 다시 시도하면 다음이 표시됩니다.

NAME READY STATUS RESTARTS AGE aws-load-balancer-controller-cluster-6ddf658785-pdp5d 1/1 Running 0 99s aws-load-balancer-operator-controller-manager-577d9ffcb9-w6zqn 2/2 Running 0 2m4s

2.1.2.1. 배포 검증

새 프로젝트를 생성합니다.

$ oc new-project hello-worldhello world 애플리케이션을 배포합니다.

$ oc new-app -n hello-world --image=docker.io/openshift/hello-openshiftAWS ALB에 연결하도록 NodePort 서비스를 구성합니다.

$ cat << EOF | oc apply -f - apiVersion: v1 kind: Service metadata: name: hello-openshift-nodeport namespace: hello-world spec: ports: - port: 80 targetPort: 8080 protocol: TCP type: NodePort selector: deployment: hello-openshift EOFAWS Load Balancer Operator를 사용하여 AWS ALB를 배포합니다.

$ cat << EOF | oc apply -f - apiVersion: networking.k8s.io/v1 kind: Ingress metadata: name: hello-openshift-alb namespace: hello-world annotations: alb.ingress.kubernetes.io/scheme: internet-facing spec: ingressClassName: alb rules: - http: paths: - path: / pathType: Exact backend: service: name: hello-openshift-nodeport port: number: 80 EOFAWS ALB Ingress 끝점을 curl하여 hello world 애플리케이션에 액세스할 수 있는지 확인합니다.

참고AWS ALB 프로비저닝에는 몇 분이 걸립니다.

curl: (6) 호스트를 해결할 수 없는오류가 발생하면 기다렸다가 다시 시도하십시오.$ INGRESS=$(oc -n hello-world get ingress hello-openshift-alb \ -o jsonpath='{.status.loadBalancer.ingress[0].hostname}') $ curl "http://${INGRESS}"출력 예

Hello OpenShift!hello world 애플리케이션에 사용할 AWS NLB를 배포합니다.

$ cat << EOF | oc apply -f - apiVersion: v1 kind: Service metadata: name: hello-openshift-nlb namespace: hello-world annotations: service.beta.kubernetes.io/aws-load-balancer-type: external service.beta.kubernetes.io/aws-load-balancer-nlb-target-type: instance service.beta.kubernetes.io/aws-load-balancer-scheme: internet-facing spec: ports: - port: 80 targetPort: 8080 protocol: TCP type: LoadBalancer selector: deployment: hello-openshift EOFAWS NLB 끝점을 테스트합니다.

참고NLB 프로비저닝에는 몇 분이 걸립니다.

curl: (6) 호스트를 해결할 수 없는오류가 발생하면 기다렸다가 다시 시도하십시오.$ NLB=$(oc -n hello-world get service hello-openshift-nlb \ -o jsonpath='{.status.loadBalancer.ingress[0].hostname}') $ curl "http://${NLB}"출력 예

Hello OpenShift!

2.1.3. AWS Load Balancer Operator 설치 예 삭제

hello world 애플리케이션 네임스페이스(및 네임스페이스의 모든 리소스)를 삭제합니다.

$ oc delete project hello-worldAWS Load Balancer Operator 및 AWS IAM 역할을 삭제합니다.

$ oc delete subscription aws-load-balancer-operator -n aws-load-balancer-operator $ aws iam detach-role-policy \ --role-name "${ROSA_CLUSTER_NAME}-alb-operator" \ --policy-arn $POLICY_ARN $ aws iam delete-role \ --role-name "${ROSA_CLUSTER_NAME}-alb-operator"AWS IAM 정책을 삭제합니다.

$ aws iam delete-policy --policy-arn $POLICY_ARN

2.2. AWS의 Red Hat OpenShift Service의 DNS Operator

AWS의 Red Hat OpenShift Service에서 DNS Operator는 CoreDNS 인스턴스를 배포 및 관리하여 클러스터 내부 Pod에 이름 확인 서비스를 제공하고 DNS 기반 Kubernetes 서비스 검색을 활성화하며 내부 cluster.local 이름을 확인합니다.

2.2.1. DNS 전달 사용

DNS 전달을 사용하여 다음과 같은 방법으로 /etc/resolv.conf 파일의 기본 전달 구성을 덮어쓸 수 있습니다.

모든 영역에 대해 이름 서버(

spec.servers)를 지정합니다. 전달된 영역이 AWS의 Red Hat OpenShift Service에서 관리하는 인그레스 도메인인 경우 도메인에 대한 업스트림 이름 서버를 인증해야 합니다.중요하나 이상의 영역을 지정해야 합니다. 그렇지 않으면 클러스터가 기능을 손실할 수 있습니다.

-

업스트림 DNS 서버 목록 제공(

spec.upstreamResolvers). - 기본 전달 정책을 변경합니다.

기본 도메인의 DNS 전달 구성에는 /etc/resolv.conf 파일과 업스트림 DNS 서버에 지정된 기본 서버가 모두 있을 수 있습니다.

프로세스

이름이

default인 DNS Operator 오브젝트를 수정합니다.$ oc edit dns.operator/default이전 명령을 실행한 후 Operator는

spec.servers를 기반으로 추가 서버 구성 블록을 사용하여dns-default라는 구성 맵을 생성하고 업데이트합니다.중요zones매개 변수의 값을 지정할 때 인트라넷과 같은 특정 영역으로만 전달해야 합니다. 하나 이상의 영역을 지정해야 합니다. 그렇지 않으면 클러스터가 기능을 손실할 수 있습니다.서버에 쿼리와 일치하는 영역이 없는 경우 이름 확인은 업스트림 DNS 서버로 대체됩니다.

DNS 전달 구성

apiVersion: operator.openshift.io/v1 kind: DNS metadata: name: default spec: cache: negativeTTL: 0s positiveTTL: 0s logLevel: Normal nodePlacement: {} operatorLogLevel: Normal servers: - name: example-server1 zones: - example.com2 forwardPlugin: policy: Random3 upstreams:4 - 1.1.1.1 - 2.2.2.2:5353 upstreamResolvers:5 policy: Random6 protocolStrategy: ""7 transportConfig: {}8 upstreams: - type: SystemResolvConf9 - type: Network address: 1.2.3.410 port: 5311 status: clusterDomain: cluster.local clusterIP: x.y.z.10 conditions: ...- 1

rfc6335서비스 이름 구문을 준수해야 합니다.- 2

rfc1123서비스 이름 구문의 하위 도메인 정의를 준수해야 합니다. 클러스터 도메인인cluster.local은zones필드에 대해 유효하지 않은 하위 도메인입니다.- 3

forwardPlugin에 나열된 업스트림 리졸버를 선택하는 정책을 정의합니다. 기본값은Random입니다.RoundRobin및Sequential값을 사용할 수도 있습니다.- 4

forwardPlugin당 최대 15개의업스트림이 허용됩니다.- 5

upstreamResolvers를 사용하여 기본 전달 정책을 재정의하고 기본 도메인의 지정된 DNS 확인자(업스트림 확인자)로 DNS 확인을 전달할 수 있습니다. 업스트림 확인자를 제공하지 않으면 DNS 이름 쿼리는/etc/resolv.conf에 선언된 서버로 이동합니다.- 6

- 쿼리용으로 업스트림에 나열된

업스트림서버의 순서를 결정합니다. 이러한 값 중 하나를 지정할 수 있습니다.Random,RoundRobin또는Sequential. 기본값은Sequential입니다. - 7

- 생략하면 플랫폼은 원래 클라이언트 요청의 프로토콜인 기본값을 선택합니다. 클라이언트 요청이 UDP를 사용하는 경우에도 플랫폼에서 모든 업스트림 DNS 요청에

TCP를 사용하도록 지정하려면 TCP로 설정합니다. - 8

- DNS 요청을 업스트림 확인기로 전달할 때 사용할 전송 유형, 서버 이름 및 선택적 사용자 정의 CA 또는 CA 번들을 구성하는 데 사용됩니다.

- 9

- 두 가지 유형의

업스트림을 지정할 수 있습니다 :SystemResolvConf또는Network.SystemResolvConf는/etc/resolv.conf를 사용하도록 업스트림을 구성하고Network는Networkresolver를 정의합니다. 하나 또는 둘 다를 지정할 수 있습니다. - 10

- 지정된 유형이

Network인 경우 IP 주소를 제공해야 합니다.address필드는 유효한 IPv4 또는 IPv6 주소여야 합니다. - 11

- 지정된 유형이

네트워크인 경우 선택적으로 포트를 제공할 수 있습니다.port필드에는1에서65535사이의 값이 있어야 합니다. 업스트림에 대한 포트를 지정하지 않으면 기본 포트는 853입니다.

2.3. AWS의 Red Hat OpenShift Service의 Ingress Operator

Ingress Operator는 IngressController API를 구현하며 AWS 클러스터 서비스에서 Red Hat OpenShift Service에 대한 외부 액세스를 활성화하는 구성 요소입니다.

2.3.1. AWS Ingress Operator의 Red Hat OpenShift Service

AWS 클러스터에서 Red Hat OpenShift Service를 생성하면 클러스터에서 실행되는 Pod 및 서비스에 각각 자체 IP 주소가 할당됩니다. IP 주소는 내부에서 실행되지만 외부 클라이언트가 액세스할 수 없는 다른 pod 및 서비스에 액세스할 수 있습니다.

Ingress Operator를 사용하면 라우팅을 처리하기 위해 하나 이상의 HAProxy 기반 Ingress 컨트롤러를 배포하고 관리하여 외부 클라이언트가 서비스에 액세스할 수 있습니다. Red Hat 사이트 안정성 엔지니어(SRE)는 AWS 클러스터에서 Red Hat OpenShift Service용 Ingress Operator를 관리합니다. Ingress Operator의 설정을 변경할 수는 없지만 기본 Ingress 컨트롤러 구성, 상태 및 로그와 Ingress Operator 상태를 볼 수 있습니다.

2.3.2. Ingress 구성 자산

설치 프로그램은 config.openshift.io API 그룹인 cluster-ingress-02-config.yml에 Ingress 리소스가 포함된 자산을 생성합니다.

Ingress 리소스의 YAML 정의

apiVersion: config.openshift.io/v1

kind: Ingress

metadata:

name: cluster

spec:

domain: apps.openshiftdemos.com

설치 프로그램은 이 자산을 manifests / 디렉터리의 cluster-ingress-02-config.yml 파일에 저장합니다. 이 Ingress 리소스는 Ingress와 관련된 전체 클러스터 구성을 정의합니다. 이 Ingress 구성은 다음과 같이 사용됩니다.

- Ingress Operator는 클러스터 Ingress 구성에 설정된 도메인을 기본 Ingress 컨트롤러의 도메인으로 사용합니다.

-

OpenShift API Server Operator는 클러스터 Ingress 구성의 도메인을 사용합니다. 이 도메인은 명시적 호스트를 지정하지 않는

Route리소스에 대한 기본 호스트를 생성할 수도 있습니다.

2.3.3. Ingress 컨트롤러 구성 매개변수

IngressController CR(사용자 정의 리소스)에는 조직의 특정 요구 사항을 충족하도록 구성할 수 있는 선택적 구성 매개변수가 포함되어 있습니다.

| 매개변수 | 설명 |

|---|---|

|

|

비어 있는 경우 기본값은 |

|

|

|

|

|

클라우드 환경의 경우

다음

설정되지 않은 경우, 기본값은

|

|

|

보안에는 키와 데이터, 즉 *

설정하지 않으면 와일드카드 인증서가 자동으로 생성되어 사용됩니다. 인증서는 Ingress 컨트롤러 생성된 인증서 또는 사용자 지정 인증서는 AWS 기본 제공 OAuth 서버의 Red Hat OpenShift Service와 자동으로 통합됩니다. |

|

|

|

|

|

|

|

|

설정하지 않으면 기본값이 사용됩니다. 참고

|

|

|

설정되지 않으면, 기본값은

Ingress 컨트롤러의 최소 TLS 버전은 참고

구성된 보안 프로파일의 암호 및 최소 TLS 버전은 중요

Ingress Operator는 |

|

|

|

|

|

|

|

|

|

|

|

기본적으로 정책은

이러한 조정은 HTTP/1을 사용하는 경우에만 일반 텍스트, 에지 종료 및 재암호화 경로에 적용됩니다.

요청 헤더의 경우 이러한 조정은

|

|

|

|

|

|

|

|

|

캡처하려는 모든 쿠키의 경우 다음 매개변수가

예를 들면 다음과 같습니다. |

|

|

|

|

|

|

|

|

|

|

|

이러한 연결은 로드 밸런서 상태 프로브 또는 웹 브라우저 추측 연결(preconnect)에서 제공되며 무시해도 됩니다. 그러나 이러한 요청은 네트워크 오류로 인해 발생할 수 있으므로 이 필드를 |

2.3.3.1. Ingress 컨트롤러 TLS 보안 프로필

TLS 보안 프로필은 서버가 서버에 연결할 때 연결 클라이언트가 사용할 수 있는 암호를 규제하는 방법을 제공합니다.

2.3.3.1.1. TLS 보안 프로필 이해

TLS(Transport Layer Security) 보안 프로필을 사용하여 AWS 구성 요소의 다양한 Red Hat OpenShift Service에 필요한 TLS 암호를 정의할 수 있습니다. AWS TLS 보안 프로필의 Red Hat OpenShift Service는 Mozilla 권장 구성 을 기반으로 합니다.

각 구성 요소에 대해 다음 TLS 보안 프로필 중 하나를 지정할 수 있습니다.

| Profile | 설명 |

|---|---|

|

| 이 프로필은 레거시 클라이언트 또는 라이브러리와 함께 사용하기 위한 것입니다. 프로필은 이전 버전과의 호환성 권장 구성을 기반으로 합니다.

참고 Ingress 컨트롤러의 경우 최소 TLS 버전이 1.0에서 1.1로 변환됩니다. |

|

| 이 프로필은 Ingress 컨트롤러, kubelet 및 컨트롤 플레인의 기본 TLS 보안 프로필입니다. 프로필은 중간 호환성 권장 구성을 기반으로 합니다.

참고 이 프로필은 대부분의 클라이언트에서 권장되는 구성입니다. |

|

| 이 프로필은 이전 버전과의 호환성이 필요하지 않은 최신 클라이언트와 사용하기 위한 것입니다. 이 프로필은 최신 호환성 권장 구성을 기반으로 합니다.

|

|

| 이 프로필을 사용하면 사용할 TLS 버전과 암호를 정의할 수 있습니다. 주의

|

미리 정의된 프로파일 유형 중 하나를 사용하는 경우 유효한 프로파일 구성은 릴리스마다 변경될 수 있습니다. 예를 들어 릴리스 X.Y.Z에 배포된 중간 프로필을 사용하는 사양이 있는 경우 릴리스 X.Y.Z+1로 업그레이드하면 새 프로필 구성이 적용되어 롤아웃이 발생할 수 있습니다.

2.3.3.1.2. Ingress 컨트롤러의 TLS 보안 프로필 구성

Ingress 컨트롤러에 대한 TLS 보안 프로필을 구성하려면 IngressController CR(사용자 정의 리소스)을 편집하여 사전 정의된 또는 사용자 지정 TLS 보안 프로필을 지정합니다. TLS 보안 프로필이 구성되지 않은 경우 기본값은 API 서버에 설정된 TLS 보안 프로필을 기반으로 합니다.

Old TLS 보안 프로파일을 구성하는 샘플 IngressController CR

apiVersion: operator.openshift.io/v1

kind: IngressController

...

spec:

tlsSecurityProfile:

old: {}

type: Old

...TLS 보안 프로필은 Ingress 컨트롤러의 TLS 연결에 대한 최소 TLS 버전과 TLS 암호를 정의합니다.

Status.Tls Profile 아래의 IngressController CR(사용자 정의 리소스) 및 Spec.Tls Security Profile 아래 구성된 TLS 보안 프로필에서 구성된 TLS 보안 프로필의 암호 및 최소 TLS 버전을 확인할 수 있습니다. Custom TLS 보안 프로필의 경우 특정 암호 및 최소 TLS 버전이 두 매개변수 아래에 나열됩니다.

HAProxy Ingress 컨트롤러 이미지는 TLS 1.3 및 Modern 프로필을 지원합니다.

Ingress Operator는 Old 또는 Custom 프로파일의 TLS 1.0을 1.1로 변환합니다.

사전 요구 사항

-

cluster-admin역할의 사용자로 클러스터에 액세스할 수 있어야 합니다.

절차

openshift-ingress-operator프로젝트에서IngressControllerCR을 편집하여 TLS 보안 프로필을 구성합니다.$ oc edit IngressController default -n openshift-ingress-operatorspec.tlsSecurityProfile필드를 추가합니다.Custom프로필에 대한IngressControllerCR 샘플apiVersion: operator.openshift.io/v1 kind: IngressController ... spec: tlsSecurityProfile: type: Custom1 custom:2 ciphers:3 - ECDHE-ECDSA-CHACHA20-POLY1305 - ECDHE-RSA-CHACHA20-POLY1305 - ECDHE-RSA-AES128-GCM-SHA256 - ECDHE-ECDSA-AES128-GCM-SHA256 minTLSVersion: VersionTLS11 ...- 파일을 저장하여 변경 사항을 적용합니다.

검증

IngressControllerCR에 프로파일이 설정되어 있는지 확인합니다.$ oc describe IngressController default -n openshift-ingress-operator출력 예

Name: default Namespace: openshift-ingress-operator Labels: <none> Annotations: <none> API Version: operator.openshift.io/v1 Kind: IngressController ... Spec: ... Tls Security Profile: Custom: Ciphers: ECDHE-ECDSA-CHACHA20-POLY1305 ECDHE-RSA-CHACHA20-POLY1305 ECDHE-RSA-AES128-GCM-SHA256 ECDHE-ECDSA-AES128-GCM-SHA256 Min TLS Version: VersionTLS11 Type: Custom ...

2.3.3.1.3. 상호 TLS 인증 구성

spec.clientTLS 값을 설정하여 mTLS(mTLS) 인증을 사용하도록 Ingress 컨트롤러를 구성할 수 있습니다. clientTLS 값은 클라이언트 인증서를 확인하도록 Ingress 컨트롤러를 구성합니다. 이 구성에는 구성 맵에 대한 참조인 clientCA 값 설정이 포함됩니다. 구성 맵에는 클라이언트의 인증서를 확인하는 데 사용되는 PEM 인코딩 CA 인증서 번들이 포함되어 있습니다. 필요한 경우 인증서 제목 필터 목록을 구성할 수도 있습니다.

clientCA 값이 X509v3 인증서 취소 목록(CRL) 배포 지점을 지정하는 경우 Ingress Operator는 각 인증서에 지정된 HTTP URI X509v3 CRL Distribution Point 를 기반으로 CRL 구성 맵을 다운로드하고 관리합니다. Ingress 컨트롤러는 mTLS/TLS 협상 중에 이 구성 맵을 사용합니다. 유효한 인증서를 제공하지 않는 요청은 거부됩니다.

사전 요구 사항

-

cluster-admin역할의 사용자로 클러스터에 액세스할 수 있어야 합니다. - PEM 인코딩 CA 인증서 번들이 있습니다.

CA 번들이 CRL 배포 지점을 참조하는 경우 클라이언트 CA 번들에 엔드센티 또는 리프 인증서도 포함해야 합니다. RFC 5280에 설명된 대로 이 인증서에는

CRL 배포 지점아래에 HTTP URI가 포함되어 있어야 합니다. 예를 들면 다음과 같습니다.Issuer: C=US, O=Example Inc, CN=Example Global G2 TLS RSA SHA256 2020 CA1 Subject: SOME SIGNED CERT X509v3 CRL Distribution Points: Full Name: URI:http://crl.example.com/example.crl

절차

openshift-config네임스페이스에서 CA 번들에서 구성 맵을 생성합니다.$ oc create configmap \ router-ca-certs-default \ --from-file=ca-bundle.pem=client-ca.crt \1 -n openshift-config- 1

- 구성 맵 데이터 키는

ca-bundle.pem이어야 하며 데이터 값은 PEM 형식의 CA 인증서여야 합니다.

openshift-ingress-operator프로젝트에서IngressController리소스를 편집합니다.$ oc edit IngressController default -n openshift-ingress-operatorspec.clientTLS필드 및 하위 필드를 추가하여 상호 TLS를 구성합니다.패턴 필터링을 지정하는

clientTLS프로필에 대한IngressControllerCR 샘플apiVersion: operator.openshift.io/v1 kind: IngressController metadata: name: default namespace: openshift-ingress-operator spec: clientTLS: clientCertificatePolicy: Required clientCA: name: router-ca-certs-default allowedSubjectPatterns: - "^/CN=example.com/ST=NC/C=US/O=Security/OU=OpenShift$"-

선택 사항, 다음 명령을 입력하여

allowedSubjectPatterns에 대한 Distinguished Name (DN)을 가져옵니다.

$ openssl x509 -in custom-cert.pem -noout -subject

subject= /CN=example.com/ST=NC/C=US/O=Security/OU=OpenShift2.3.4. 기본 Ingress 컨트롤러 보기

Ingress Operator는 AWS에서 Red Hat OpenShift Service의 핵심 기능이며 즉시 활성화됩니다.

AWS 설치 시 모든 새로운 Red Hat OpenShift Service에는 이름이 default인 ingresscontroller 가 있습니다. 추가 Ingress 컨트롤러를 추가할 수 있습니다. 기본 ingresscontroller가 삭제되면 Ingress Operator가 1분 이내에 자동으로 다시 생성합니다.

프로세스

기본 Ingress 컨트롤러를 확인합니다.

$ oc describe --namespace=openshift-ingress-operator ingresscontroller/default

2.3.5. Ingress Operator 상태 보기

Ingress Operator의 상태를 확인 및 조사할 수 있습니다.

프로세스

Ingress Operator 상태를 확인합니다.

$ oc describe clusteroperators/ingress

2.3.6. Ingress 컨트롤러 로그 보기

Ingress 컨트롤러의 로그를 확인할 수 있습니다.

프로세스

Ingress 컨트롤러 로그를 확인합니다.

$ oc logs --namespace=openshift-ingress-operator deployments/ingress-operator -c <container_name>

2.3.7. Ingress 컨트롤러 상태 보기

특정 Ingress 컨트롤러의 상태를 확인할 수 있습니다.

프로세스

Ingress 컨트롤러의 상태를 확인합니다.

$ oc describe --namespace=openshift-ingress-operator ingresscontroller/<name>

2.3.8. 사용자 정의 Ingress 컨트롤러 생성

클러스터 관리자는 새 사용자 정의 Ingress 컨트롤러를 생성할 수 있습니다. 기본 Ingress 컨트롤러는 AWS의 Red Hat OpenShift Service 중에 변경될 수 있으므로 사용자 정의 Ingress 컨트롤러를 생성하면 클러스터 업데이트 전체에서 지속되는 구성을 수동으로 유지 관리할 때 유용할 수 있습니다.

이 예에서는 사용자 정의 Ingress 컨트롤러에 대한 최소 사양을 제공합니다. 사용자 정의 Ingress 컨트롤러를 추가로 사용자 지정하려면 " Ingress 컨트롤러 구성"을 참조하십시오.

사전 요구 사항

-

OpenShift CLI(

oc)를 설치합니다. -

cluster-admin권한이 있는 사용자로 로그인합니다.

절차

사용자 지정

IngressController오브젝트를 정의하는 YAML 파일을 생성합니다.custom-ingress-controller.yaml파일 예apiVersion: operator.openshift.io/v1 kind: IngressController metadata: name: <custom_name>1 namespace: openshift-ingress-operator spec: defaultCertificate: name: <custom-ingress-custom-certs>2 replicas: 13 domain: <custom_domain>4 다음 명령을 실행하여 오브젝트를 생성합니다.

$ oc create -f custom-ingress-controller.yaml

2.3.9. Ingress 컨트롤러 구성

2.3.9.1. 사용자 정의 기본 인증서 설정

관리자는 Secret 리소스를 생성하고 IngressController CR(사용자 정의 리소스)을 편집하여 사용자 정의 인증서를 사용하도록 Ingress 컨트롤러를 구성할 수 있습니다.

사전 요구 사항

- PEM 인코딩 파일에 인증서/키 쌍이 있어야 합니다. 이때 인증서는 신뢰할 수 있는 인증 기관 또는 사용자 정의 PKI에서 구성한 신뢰할 수 있는 개인 인증 기관의 서명을 받은 인증서입니다.

인증서가 다음 요구 사항을 충족합니다.

- 인증서가 Ingress 도메인에 유효해야 합니다.

-

인증서는

subjectAltName확장자를 사용하여*.apps.ocp4.example.com과같은 와일드카드 도메인을 지정합니다.

IngressControllerCR이 있어야 합니다. 기본 설정을 사용할 수 있어야 합니다.$ oc --namespace openshift-ingress-operator get ingresscontrollers출력 예

NAME AGE default 10m

임시 인증서가 있는 경우 사용자 정의 기본 인증서가 포함 된 보안의 tls.crt 파일에 인증서가 포함되어 있어야 합니다. 인증서를 지정하는 경우에는 순서가 중요합니다. 서버 인증서 다음에 임시 인증서를 나열해야 합니다.

절차

아래에서는 사용자 정의 인증서 및 키 쌍이 현재 작업 디렉터리의 tls.crt 및 tls.key 파일에 있다고 가정합니다. 그리고 tls.crt 및 tls.key의 실제 경로 이름으로 변경합니다. Secret 리소스를 생성하고 IngressController CR에서 참조하는 경우 custom-certs-default를 다른 이름으로 변경할 수도 있습니다.

이 작업을 수행하면 롤링 배포 전략에 따라 Ingress 컨트롤러가 재배포됩니다.

tls.crt및tls.key파일을 사용하여openshift-ingress네임스페이스에 사용자 정의 인증서를 포함하는 Secret 리소스를 만듭니다.$ oc --namespace openshift-ingress create secret tls custom-certs-default --cert=tls.crt --key=tls.key새 인증서 보안 키를 참조하도록 IngressController CR을 업데이트합니다.

$ oc patch --type=merge --namespace openshift-ingress-operator ingresscontrollers/default \ --patch '{"spec":{"defaultCertificate":{"name":"custom-certs-default"}}}'업데이트가 적용되었는지 확인합니다.

$ echo Q |\ openssl s_client -connect console-openshift-console.apps.<domain>:443 -showcerts 2>/dev/null |\ openssl x509 -noout -subject -issuer -enddate다음과 같습니다.

<domain>- 클러스터의 기본 도메인 이름을 지정합니다.

출력 예

subject=C = US, ST = NC, L = Raleigh, O = RH, OU = OCP4, CN = *.apps.example.com issuer=C = US, ST = NC, L = Raleigh, O = RH, OU = OCP4, CN = example.com notAfter=May 10 08:32:45 2022 GM작은 정보다음 YAML을 적용하여 사용자 지정 기본 인증서를 설정할 수 있습니다.

apiVersion: operator.openshift.io/v1 kind: IngressController metadata: name: default namespace: openshift-ingress-operator spec: defaultCertificate: name: custom-certs-default인증서 보안 이름은 CR을 업데이트하는 데 사용된 값과 일치해야 합니다.

IngressController CR이 수정되면 Ingress Operator는 사용자 정의 인증서를 사용하도록 Ingress 컨트롤러의 배포를 업데이트합니다.

2.3.9.2. 사용자 정의 기본 인증서 제거

관리자는 사용할 Ingress 컨트롤러를 구성한 사용자 정의 인증서를 제거할 수 있습니다.

사전 요구 사항

-

cluster-admin역할의 사용자로 클러스터에 액세스할 수 있어야 합니다. -

OpenShift CLI(

oc)가 설치되어 있습니다. - 이전에는 Ingress 컨트롤러에 대한 사용자 정의 기본 인증서를 구성했습니다.

절차

사용자 정의 인증서를 제거하고 AWS에서 Red Hat OpenShift Service와 함께 제공되는 인증서를 복원하려면 다음 명령을 입력합니다.

$ oc patch -n openshift-ingress-operator ingresscontrollers/default \ --type json -p $'- op: remove\n path: /spec/defaultCertificate'클러스터가 새 인증서 구성을 조정하는 동안 지연이 발생할 수 있습니다.

검증

원래 클러스터 인증서가 복원되었는지 확인하려면 다음 명령을 입력합니다.

$ echo Q | \ openssl s_client -connect console-openshift-console.apps.<domain>:443 -showcerts 2>/dev/null | \ openssl x509 -noout -subject -issuer -enddate다음과 같습니다.

<domain>- 클러스터의 기본 도메인 이름을 지정합니다.

출력 예

subject=CN = *.apps.<domain> issuer=CN = ingress-operator@1620633373 notAfter=May 10 10:44:36 2023 GMT

2.3.9.3. Ingress 컨트롤러 자동 스케일링

처리량 증가 요구와 같은 라우팅 성능 또는 가용성 요구 사항을 동적으로 충족하도록 Ingress 컨트롤러를 자동으로 확장할 수 있습니다.

다음 절차에서는 기본 Ingress 컨트롤러를 확장하는 예제를 제공합니다.

사전 요구 사항

-

OpenShift CLI(

oc)가 설치되어 있어야 합니다. -

cluster-admin역할의 사용자로 AWS 클러스터의 Red Hat OpenShift Service에 액세스할 수 있습니다. Custom Metrics Autoscaler Operator 및 관련 KEDA 컨트롤러가 설치되어 있습니다.

-

웹 콘솔에서 OperatorHub를 사용하여 Operator를 설치할 수 있습니다. Operator를 설치한 후

KedaController의 인스턴스를 생성할 수 있습니다.

-

웹 콘솔에서 OperatorHub를 사용하여 Operator를 설치할 수 있습니다. Operator를 설치한 후

절차

다음 명령을 실행하여 Thanos로 인증할 서비스 계정을 생성합니다.

$ oc create -n openshift-ingress-operator serviceaccount thanos && oc describe -n openshift-ingress-operator serviceaccount thanos출력 예

Name: thanos Namespace: openshift-ingress-operator Labels: <none> Annotations: <none> Image pull secrets: thanos-dockercfg-kfvf2 Mountable secrets: thanos-dockercfg-kfvf2 Tokens: <none> Events: <none>다음 명령을 사용하여 서비스 계정 시크릿 토큰을 수동으로 생성합니다.

$ oc apply -f - <<EOF apiVersion: v1 kind: Secret metadata: name: thanos-token namespace: openshift-ingress-operator annotations: kubernetes.io/service-account.name: thanos type: kubernetes.io/service-account-token EOF서비스 계정의 토큰을 사용하여

openshift-ingress-operator네임스페이스에TriggerAuthentication오브젝트를 정의합니다.TriggerAuthentication오브젝트를 생성하고secret변수 값을TOKEN매개변수에 전달합니다.$ oc apply -f - <<EOF apiVersion: keda.sh/v1alpha1 kind: TriggerAuthentication metadata: name: keda-trigger-auth-prometheus namespace: openshift-ingress-operator spec: secretTargetRef: - parameter: bearerToken name: thanos-token key: token - parameter: ca name: thanos-token key: ca.crt EOF

Thanos에서 메트릭을 읽는 역할을 생성하고 적용합니다.

Pod 및 노드에서 지표를 읽는 새 역할

thanos-metrics-reader.yaml을 생성합니다.thanos-metrics-reader.yaml

apiVersion: rbac.authorization.k8s.io/v1 kind: Role metadata: name: thanos-metrics-reader namespace: openshift-ingress-operator rules: - apiGroups: - "" resources: - pods - nodes verbs: - get - apiGroups: - metrics.k8s.io resources: - pods - nodes verbs: - get - list - watch - apiGroups: - "" resources: - namespaces verbs: - get다음 명령을 실행하여 새 역할을 적용합니다.

$ oc apply -f thanos-metrics-reader.yaml

다음 명령을 입력하여 서비스 계정에 새 역할을 추가합니다.

$ oc adm policy -n openshift-ingress-operator add-role-to-user thanos-metrics-reader -z thanos --role-namespace=openshift-ingress-operator$ oc adm policy -n openshift-ingress-operator add-cluster-role-to-user cluster-monitoring-view -z thanos참고add-cluster-role-to-user인수는 네임스페이스 간 쿼리를 사용하는 경우에만 필요합니다. 다음 단계에서는 이 인수가 필요한kube-metrics네임스페이스의 쿼리를 사용합니다.기본 Ingress 컨트롤러 배포를 대상으로 하는 새 scaled

ObjectYAML 파일ingress-autoscaler.yaml을 만듭니다.scaled

Object정의의 예apiVersion: keda.sh/v1alpha1 kind: ScaledObject metadata: name: ingress-scaler namespace: openshift-ingress-operator spec: scaleTargetRef:1 apiVersion: operator.openshift.io/v1 kind: IngressController name: default envSourceContainerName: ingress-operator minReplicaCount: 1 maxReplicaCount: 202 cooldownPeriod: 1 pollingInterval: 1 triggers: - type: prometheus metricType: AverageValue metadata: serverAddress: https://thanos-querier.openshift-monitoring.svc.cluster.local:90913 namespace: openshift-ingress-operator4 metricName: 'kube-node-role' threshold: '1' query: 'sum(kube_node_role{role="worker",service="kube-state-metrics"})'5 authModes: "bearer" authenticationRef: name: keda-trigger-auth-prometheus중요네임스페이스 간 쿼리를 사용하는 경우

serverAddress필드에서 포트 9091이 아닌 포트 9091을 대상으로 지정해야 합니다. 또한 이 포트에서 메트릭을 읽을 수 있는 높은 권한이 있어야 합니다.다음 명령을 실행하여 사용자 정의 리소스 정의를 적용합니다.

$ oc apply -f ingress-autoscaler.yaml

검증

다음 명령을 실행하여 기본 Ingress 컨트롤러가

kube-state-metrics쿼리에서 반환된 값과 일치하도록 확장되었는지 확인합니다.grep명령을 사용하여 Ingress 컨트롤러 YAML 파일에서 복제본을 검색합니다.$ oc get -n openshift-ingress-operator ingresscontroller/default -o yaml | grep replicas:출력 예

replicas: 3openshift-ingress프로젝트에서 Pod를 가져옵니다.$ oc get pods -n openshift-ingress출력 예

NAME READY STATUS RESTARTS AGE router-default-7b5df44ff-l9pmm 2/2 Running 0 17h router-default-7b5df44ff-s5sl5 2/2 Running 0 3d22h router-default-7b5df44ff-wwsth 2/2 Running 0 66s

2.3.9.4. Ingress 컨트롤러 확장

처리량 증가 요구 등 라우팅 성능 또는 가용성 요구 사항을 충족하도록 Ingress 컨트롤러를 수동으로 확장할 수 있습니다. IngressController 리소스를 확장하려면 oc 명령을 사용합니다. 다음 절차는 기본 IngressController를 확장하는 예제입니다.

원하는 수의 복제본을 만드는 데에는 시간이 걸리기 때문에 확장은 즉시 적용되지 않습니다.

절차

기본

IngressController의 현재 사용 가능한 복제본 개수를 살펴봅니다.$ oc get -n openshift-ingress-operator ingresscontrollers/default -o jsonpath='{$.status.availableReplicas}'출력 예

2oc patch명령을 사용하여 기본IngressController의 복제본 수를 원하는 대로 조정합니다. 다음 예제는 기본IngressController를 3개의 복제본으로 조정합니다.$ oc patch -n openshift-ingress-operator ingresscontroller/default --patch '{"spec":{"replicas": 3}}' --type=merge출력 예

ingresscontroller.operator.openshift.io/default patched기본

IngressController가 지정한 복제본 수에 맞게 조정되었는지 확인합니다.$ oc get -n openshift-ingress-operator ingresscontrollers/default -o jsonpath='{$.status.availableReplicas}'출력 예

3작은 정보또는 다음 YAML을 적용하여 Ingress 컨트롤러를 세 개의 복제본으로 확장할 수 있습니다.

apiVersion: operator.openshift.io/v1 kind: IngressController metadata: name: default namespace: openshift-ingress-operator spec: replicas: 31 - 1

- 다른 양의 복제본이 필요한 경우

replicas값을 변경합니다.

2.3.9.5. 수신 액세스 로깅 구성

Ingress 컨트롤러가 로그에 액세스하도록 구성할 수 있습니다. 수신 트래픽이 많지 않은 클러스터의 경우 사이드카에 로그를 기록할 수 있습니다. 트래픽이 많은 클러스터가 있는 경우 로깅 스택의 용량을 초과하지 않거나 AWS의 Red Hat OpenShift Service 외부에서 로깅 인프라와 통합하기 위해 사용자 정의 syslog 끝점으로 로그를 전달할 수 있습니다. 액세스 로그의 형식을 지정할 수도 있습니다.

컨테이너 로깅은 기존 Syslog 로깅 인프라가 없는 경우 트래픽이 적은 클러스터에서 액세스 로그를 활성화하거나 Ingress 컨트롤러의 문제를 진단하는 동안 단기적으로 사용하는 데 유용합니다.

액세스 로그가 OpenShift 로깅 스택 용량을 초과할 수 있는 트래픽이 많은 클러스터 또는 로깅 솔루션이 기존 Syslog 로깅 인프라와 통합되어야 하는 환경에는 Syslog가 필요합니다. Syslog 사용 사례는 중첩될 수 있습니다.

사전 요구 사항

-

cluster-admin권한이 있는 사용자로 로그인합니다.

절차

사이드카에 Ingress 액세스 로깅을 구성합니다.

수신 액세스 로깅을 구성하려면

spec.logging.access.destination을 사용하여 대상을 지정해야 합니다. 사이드카 컨테이너에 로깅을 지정하려면Containerspec.logging.access.destination.type을 지정해야 합니다. 다음 예제는Container대상에 로그를 기록하는 Ingress 컨트롤러 정의입니다.apiVersion: operator.openshift.io/v1 kind: IngressController metadata: name: default namespace: openshift-ingress-operator spec: replicas: 2 logging: access: destination: type: Container사이드카에 로그를 기록하도록 Ingress 컨트롤러를 구성하면 Operator는 Ingress 컨트롤러 Pod에

logs라는 컨테이너를 만듭니다.$ oc -n openshift-ingress logs deployment.apps/router-default -c logs출력 예

2020-05-11T19:11:50.135710+00:00 router-default-57dfc6cd95-bpmk6 router-default-57dfc6cd95-bpmk6 haproxy[108]: 174.19.21.82:39654 [11/May/2020:19:11:50.133] public be_http:hello-openshift:hello-openshift/pod:hello-openshift:hello-openshift:10.128.2.12:8080 0/0/1/0/1 200 142 - - --NI 1/1/0/0/0 0/0 "GET / HTTP/1.1"

Syslog 끝점에 대한 Ingress 액세스 로깅을 구성합니다.

수신 액세스 로깅을 구성하려면

spec.logging.access.destination을 사용하여 대상을 지정해야 합니다. Syslog 끝점 대상에 로깅을 지정하려면spec.logging.access.destination.type에 대한Syslog를 지정해야 합니다. 대상 유형이Syslog인 경우spec.logging.access.destination.syslog.address를 사용하여 대상 끝점도 지정해야 하며spec.logging.access.destination.syslog.facility를 사용하여 기능을 지정할 수 있습니다. 다음 예제는Syslog대상에 로그를 기록하는 Ingress 컨트롤러 정의입니다.apiVersion: operator.openshift.io/v1 kind: IngressController metadata: name: default namespace: openshift-ingress-operator spec: replicas: 2 logging: access: destination: type: Syslog syslog: address: 1.2.3.4 port: 10514참고syslog대상 포트는 UDP여야 합니다.syslog대상 주소는 IP 주소여야 합니다. DNS 호스트 이름을 지원하지 않습니다.

특정 로그 형식으로 Ingress 액세스 로깅을 구성합니다.

spec.logging.access.httpLogFormat을 지정하여 로그 형식을 사용자 정의할 수 있습니다. 다음 예제는 IP 주소 1.2.3.4 및 포트 10514를 사용하여syslog끝점에 로그하는 Ingress 컨트롤러 정의입니다.apiVersion: operator.openshift.io/v1 kind: IngressController metadata: name: default namespace: openshift-ingress-operator spec: replicas: 2 logging: access: destination: type: Syslog syslog: address: 1.2.3.4 port: 10514 httpLogFormat: '%ci:%cp [%t] %ft %b/%s %B %bq %HM %HU %HV'

Ingress 액세스 로깅을 비활성화합니다.

Ingress 액세스 로깅을 비활성화하려면

spec.logging또는spec.logging.access를 비워 둡니다.apiVersion: operator.openshift.io/v1 kind: IngressController metadata: name: default namespace: openshift-ingress-operator spec: replicas: 2 logging: access: null

사이드카를 사용할 때 Ingress 컨트롤러에서 HAProxy 로그 길이를 수정할 수 있도록 허용합니다.

spec.logging.access.destination.destination.를 사용합니다.type: Syslog를 사용하는 경우 spec.logging.access.destination.maxLengthapiVersion: operator.openshift.io/v1 kind: IngressController metadata: name: default namespace: openshift-ingress-operator spec: replicas: 2 logging: access: destination: type: Syslog syslog: address: 1.2.3.4 maxLength: 4096 port: 10514spec.logging.access.destination.destination.를 사용합니다.type: Container를 사용하는 경우 spec.logging.access.destination.maxLengthapiVersion: operator.openshift.io/v1 kind: IngressController metadata: name: default namespace: openshift-ingress-operator spec: replicas: 2 logging: access: destination: type: Container container: maxLength: 8192

2.3.9.6. Ingress 컨트롤러 스레드 수 설정

클러스터 관리자는 클러스터에서 처리할 수 있는 들어오는 연결의 양을 늘리기 위해 스레드 수를 설정할 수 있습니다. 기존 Ingress 컨트롤러에 패치하여 스레드의 양을 늘릴 수 있습니다.

사전 요구 사항

- 다음은 Ingress 컨트롤러를 이미 생성했다고 가정합니다.

절차

스레드 수를 늘리도록 Ingress 컨트롤러를 업데이트합니다.

$ oc -n openshift-ingress-operator patch ingresscontroller/default --type=merge -p '{"spec":{"tuningOptions": {"threadCount": 8}}}'참고많은 리소스를 실행할 수 있는 노드가 있는 경우 원하는 노드의 용량과 일치하는 라벨을 사용하여

spec.nodePlacement.nodeSelector를 구성하고spec.tuningOptions.threadCount를 적절하게 높은 값으로 구성할 수 있습니다.

2.3.9.7. 내부 로드 밸런서를 사용하도록 Ingress 컨트롤러 구성

클라우드 플랫폼에서 Ingress 컨트롤러를 생성할 때 Ingress 컨트롤러는 기본적으로 퍼블릭 클라우드 로드 밸런서에 의해 게시됩니다. 관리자는 내부 클라우드 로드 밸런서를 사용하는 Ingress 컨트롤러를 생성할 수 있습니다.

IngressController 의 범위를 변경하려면 CR(사용자 정의 리소스)을 생성한 후 .spec.endpointPublishingStrategy.loadBalancer.scope 매개변수를 변경할 수 있습니다.

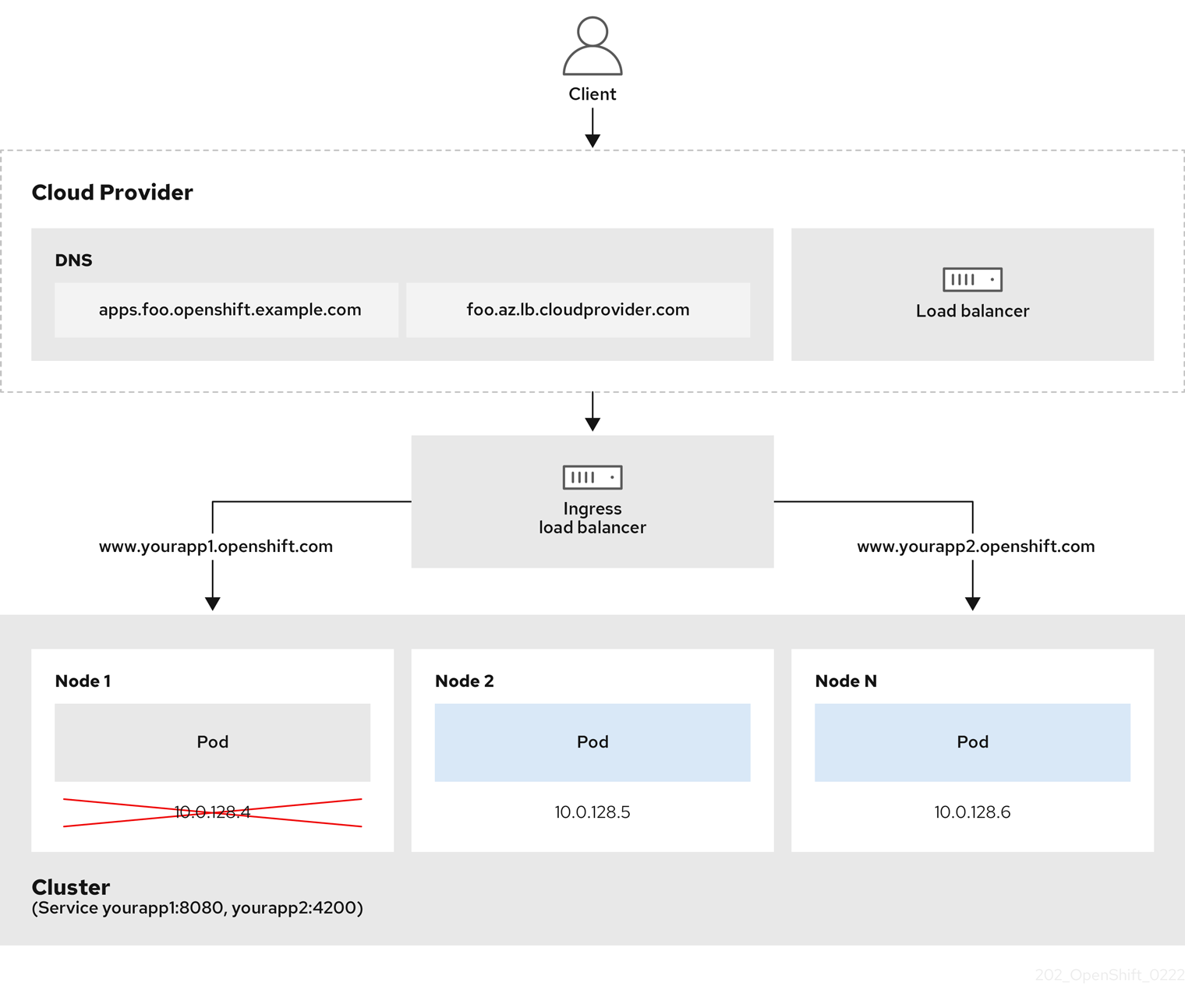

그림 2.1. LoadBalancer 다이어그램

이전 그래픽은 AWS Ingress LoadBalancerService 끝점 게시 전략의 Red Hat OpenShift Service와 관련된 다음 개념을 보여줍니다.

- 클라우드 공급자 로드 밸런서를 사용하거나 내부적으로 OpenShift Ingress 컨트롤러 로드 밸런서를 사용하여 외부에서 부하를 분산할 수 있습니다.

- 그래픽에 표시된 클러스터에 표시된 대로 로드 밸런서의 단일 IP 주소와 8080 및 4200과 같은 더 친숙한 포트를 사용할 수 있습니다.

- 외부 로드 밸런서의 트래픽은 Pod에서 전달되고 다운 노드의 인스턴스에 표시된 대로 로드 밸런서에 의해 관리됩니다. 구현 세부 사항은 Kubernetes 서비스 설명서를 참조하십시오.

사전 요구 사항

-

OpenShift CLI(

oc)를 설치합니다. -

cluster-admin권한이 있는 사용자로 로그인합니다.

프로세스

다음 예제와 같이

<name>-ingress-controller.yam파일에IngressControllerCR(사용자 정의 리소스)을 생성합니다.apiVersion: operator.openshift.io/v1 kind: IngressController metadata: namespace: openshift-ingress-operator name: <name>1 spec: domain: <domain>2 endpointPublishingStrategy: type: LoadBalancerService loadBalancer: scope: Internal3 다음 명령을 실행하여 이전 단계에서 정의된 Ingress 컨트롤러를 생성합니다.

$ oc create -f <name>-ingress-controller.yaml1 - 1

<name>을IngressController오브젝트의 이름으로 변경합니다.

선택 사항: Ingress 컨트롤러가 생성되었는지 확인하려면 다음 명령을 실행합니다.

$ oc --all-namespaces=true get ingresscontrollers

2.3.9.8. Ingress 컨트롤러 상태 점검 간격 설정

클러스터 관리자는 상태 점검 간격을 설정하여 라우터가 연속 상태 점검 사이에 대기하는 시간을 정의할 수 있습니다. 이 값은 모든 경로에 대해 전역적으로 적용됩니다. 기본값은 5초입니다.

사전 요구 사항

- 다음은 Ingress 컨트롤러를 이미 생성했다고 가정합니다.

프로세스

백엔드 상태 점검 간 간격을 변경하도록 Ingress 컨트롤러를 업데이트합니다.

$ oc -n openshift-ingress-operator patch ingresscontroller/default --type=merge -p '{"spec":{"tuningOptions": {"healthCheckInterval": "8s"}}}'참고단일 경로의

healthCheckInterval을 재정의하려면 경로 주석router.openshift.io/haproxy.health.check.interval을 사용합니다.

2.3.9.9. 클러스터의 기본 Ingress 컨트롤러를 내부로 구성

클러스터를 삭제하고 다시 생성하여 클러스터의 default Ingress 컨트롤러를 내부용으로 구성할 수 있습니다.

IngressController 의 범위를 변경하려면 CR(사용자 정의 리소스)을 생성한 후 .spec.endpointPublishingStrategy.loadBalancer.scope 매개변수를 변경할 수 있습니다.

사전 요구 사항

-

OpenShift CLI(

oc)를 설치합니다. -

cluster-admin권한이 있는 사용자로 로그인합니다.

프로세스

클러스터의

기본Ingress 컨트롤러를 삭제하고 다시 생성하여 내부용으로 구성합니다.$ oc replace --force --wait --filename - <<EOF apiVersion: operator.openshift.io/v1 kind: IngressController metadata: namespace: openshift-ingress-operator name: default spec: endpointPublishingStrategy: type: LoadBalancerService loadBalancer: scope: Internal EOF

2.3.9.10. 경로 허용 정책 구성

관리자 및 애플리케이션 개발자는 도메인 이름이 동일한 여러 네임스페이스에서 애플리케이션을 실행할 수 있습니다. 이는 여러 팀이 동일한 호스트 이름에 노출되는 마이크로 서비스를 개발하는 조직을 위한 것입니다.

네임스페이스 간 클레임은 네임스페이스 간 신뢰가 있는 클러스터에 대해서만 허용해야 합니다. 그렇지 않으면 악의적인 사용자가 호스트 이름을 인수할 수 있습니다. 따라서 기본 승인 정책에서는 네임스페이스 간에 호스트 이름 클레임을 허용하지 않습니다.

사전 요구 사항

- 클러스터 관리자 권한이 있어야 합니다.

프로세스

다음 명령을 사용하여

ingresscontroller리소스 변수의.spec.routeAdmission필드를 편집합니다.$ oc -n openshift-ingress-operator patch ingresscontroller/default --patch '{"spec":{"routeAdmission":{"namespaceOwnership":"InterNamespaceAllowed"}}}' --type=merge샘플 Ingress 컨트롤러 구성

spec: routeAdmission: namespaceOwnership: InterNamespaceAllowed ...작은 정보다음 YAML을 적용하여 경로 승인 정책을 구성할 수 있습니다.

apiVersion: operator.openshift.io/v1 kind: IngressController metadata: name: default namespace: openshift-ingress-operator spec: routeAdmission: namespaceOwnership: InterNamespaceAllowed

2.3.9.11. 와일드카드 경로 사용

HAProxy Ingress 컨트롤러는 와일드카드 경로를 지원합니다. Ingress Operator는 wildcardPolicy를 사용하여 Ingress 컨트롤러의 ROUTER_ALLOW_WILDCARD_ROUTES 환경 변수를 구성합니다.

Ingress 컨트롤러의 기본 동작은 와일드카드 정책이 None인 경로를 허용하고, 이는 기존 IngressController 리소스의 이전 버전과 호환됩니다.

프로세스

와일드카드 정책을 구성합니다.

다음 명령을 사용하여

IngressController리소스를 편집합니다.$ oc edit IngressControllerspec에서wildcardPolicy필드를WildcardsDisallowed또는WildcardsAllowed로 설정합니다.spec: routeAdmission: wildcardPolicy: WildcardsDisallowed # or WildcardsAllowed

2.3.9.12. HTTP 헤더 구성

Red Hat OpenShift Service on AWS는 HTTP 헤더로 작업할 수 있는 다양한 방법을 제공합니다. 헤더를 설정하거나 삭제할 때 Ingress 컨트롤러의 특정 필드를 사용하거나 개별 경로를 사용하여 요청 및 응답 헤더를 수정할 수 있습니다. 경로 주석을 사용하여 특정 헤더를 설정할 수도 있습니다. 헤더를 구성하는 다양한 방법은 함께 작업할 때 문제가 발생할 수 있습니다.

IngressController 또는 Route CR 내에서 헤더만 설정하거나 삭제할 수 있으므로 추가할 수 없습니다. HTTP 헤더가 값으로 설정된 경우 해당 값은 완료되어야 하며 나중에 추가할 필요가 없습니다. X-Forwarded-For 헤더와 같은 헤더를 추가하는 것이 적합한 경우 spec.httpHeaders.actions 대신 spec.httpHeaders.forwardedHeaderPolicy 필드를 사용합니다.

2.3.9.12.1. 우선순위 순서

Ingress 컨트롤러와 경로에서 동일한 HTTP 헤더를 수정하는 경우 HAProxy는 요청 또는 응답 헤더인지 여부에 따라 특정 방식으로 작업에 우선순위를 부여합니다.

- HTTP 응답 헤더의 경우 경로에 지정된 작업 후에 Ingress 컨트롤러에 지정된 작업이 실행됩니다. 즉, Ingress 컨트롤러에 지정된 작업이 우선합니다.

- HTTP 요청 헤더의 경우 경로에 지정된 작업은 Ingress 컨트롤러에 지정된 작업 후에 실행됩니다. 즉, 경로에 지정된 작업이 우선합니다.

예를 들어 클러스터 관리자는 다음 구성을 사용하여 Ingress 컨트롤러에서 값이 DENY 인 X-Frame-Options 응답 헤더를 설정합니다.

IngressController 사양 예

apiVersion: operator.openshift.io/v1

kind: IngressController

# ...

spec:

httpHeaders:

actions:

response:

- name: X-Frame-Options

action:

type: Set

set:

value: DENY

경로 소유자는 클러스터 관리자가 Ingress 컨트롤러에 설정한 것과 동일한 응답 헤더를 설정하지만 다음 구성을 사용하여 SAMEORIGIN 값이 사용됩니다.

Route 사양의 예

apiVersion: route.openshift.io/v1

kind: Route

# ...

spec:

httpHeaders:

actions:

response:

- name: X-Frame-Options

action:

type: Set

set:

value: SAMEORIGIN

IngressController 사양과 Route 사양 모두에서 X-Frame-Options 응답 헤더를 구성하는 경우 특정 경로에서 프레임을 허용하는 경우에도 Ingress 컨트롤러의 글로벌 수준에서 이 헤더에 설정된 값이 우선합니다. 요청 헤더의 경우 Route spec 값은 IngressController 사양 값을 재정의합니다.

이 우선순위는 haproxy.config 파일에서 다음 논리를 사용하므로 Ingress 컨트롤러가 프런트 엔드로 간주되고 개별 경로가 백엔드로 간주되기 때문입니다. 프런트 엔드 구성에 적용된 헤더 값 DENY 는 백엔드에 설정된 SAMEORIGIN 값으로 동일한 헤더를 재정의합니다.

frontend public

http-response set-header X-Frame-Options 'DENY'

frontend fe_sni

http-response set-header X-Frame-Options 'DENY'

frontend fe_no_sni

http-response set-header X-Frame-Options 'DENY'

backend be_secure:openshift-monitoring:alertmanager-main

http-response set-header X-Frame-Options 'SAMEORIGIN'또한 Ingress 컨트롤러 또는 경로 주석을 사용하여 설정된 경로 덮어쓰기 값에 정의된 모든 작업입니다.

2.3.9.12.2. 특수 케이스 헤더

다음 헤더는 완전히 설정되거나 삭제되지 않거나 특정 상황에서 허용되지 않습니다.

| 헤더 이름 | IngressController 사양을 사용하여 구성 가능 | Route 사양을 사용하여 구성 가능 | 허용하지 않는 이유 | 다른 방법을 사용하여 구성 가능 |

|---|---|---|---|---|

|

| 없음 | 없음 |

| 없음 |

|

| 없음 | 제공됨 |

| 없음 |

|

| 없음 | 없음 |

|

제공됨: |

|

| 없음 | 없음 | HAProxy가 클라이언트 연결을 특정 백엔드 서버에 매핑하는 세션 추적에 사용되는 쿠키입니다. 이러한 헤더를 설정하도록 허용하면 HAProxy의 세션 선호도를 방해하고 HAProxy의 쿠키 소유권을 제한할 수 있습니다. | 예:

|

2.3.9.13. Ingress 컨트롤러에서 HTTP 요청 및 응답 헤더 설정 또는 삭제

규정 준수 목적 또는 기타 이유로 특정 HTTP 요청 및 응답 헤더를 설정하거나 삭제할 수 있습니다. Ingress 컨트롤러에서 제공하는 모든 경로 또는 특정 경로에 대해 이러한 헤더를 설정하거나 삭제할 수 있습니다.

예를 들어 상호 TLS를 사용하기 위해 클러스터에서 실행 중인 애플리케이션을 마이그레이션할 수 있습니다. 이 경우 애플리케이션에서 X-Forwarded-Client-Cert 요청 헤더를 확인해야 하지만 AWS 기본 Ingress 컨트롤러의 Red Hat OpenShift Service는 X-SSL-Client-Der 요청 헤더를 제공합니다.

다음 절차에서는 X-Forwarded-Client-Cert 요청 헤더를 설정하도록 Ingress 컨트롤러를 수정하고 X-SSL-Client-Der 요청 헤더를 삭제합니다.

사전 요구 사항

-

OpenShift CLI(

oc)가 설치되어 있습니다. -

cluster-admin역할의 사용자로 AWS 클러스터의 Red Hat OpenShift Service에 액세스할 수 있습니다.

절차

Ingress 컨트롤러 리소스를 편집합니다.

$ oc -n openshift-ingress-operator edit ingresscontroller/defaultX-SSL-Client-Der HTTP 요청 헤더를 X-Forwarded-Client-Cert HTTP 요청 헤더로 바꿉니다.

apiVersion: operator.openshift.io/v1 kind: IngressController metadata: name: default namespace: openshift-ingress-operator spec: httpHeaders: actions:1 request:2 - name: X-Forwarded-Client-Cert3 action: type: Set4 set: value: "%{+Q}[ssl_c_der,base64]"5 - name: X-SSL-Client-Der action: type: Delete- 1

- HTTP 헤더에서 수행할 작업 목록입니다.

- 2

- 변경할 헤더 유형입니다. 이 경우 요청 헤더가 있습니다.

- 3

- 변경할 헤더의 이름입니다. 설정하거나 삭제할 수 있는 사용 가능한 헤더 목록은 HTTP 헤더 구성 을 참조하십시오.

- 4

- 헤더에서 수행되는 작업 유형입니다. 이 필드에는

Set또는Delete값이 있을 수 있습니다. - 5

- HTTP 헤더를 설정할 때

값을제공해야 합니다. 값은 해당 헤더에 사용 가능한 지시문 목록(예:DENY)의 문자열이거나 HAProxy의 동적 값 구문을 사용하여 해석되는 동적 값이 될 수 있습니다. 이 경우 동적 값이 추가됩니다.

참고HTTP 응답에 대한 동적 헤더 값을 설정하기 위해 허용되는 샘플 페이퍼는

res.hdr및ssl_c_der입니다. HTTP 요청에 대한 동적 헤더 값을 설정하는 경우 허용되는 샘플 페더는req.hdr및ssl_c_der입니다. request 및 response 동적 값은 모두lower및base64컨버터를 사용할 수 있습니다.- 파일을 저장하여 변경 사항을 적용합니다.

2.3.9.14. X-Forwarded 헤더 사용

HAProxy Ingress 컨트롤러를 구성하여 Forwarded 및 X-Forwarded-For를 포함한 HTTP 헤더 처리 방법에 대한 정책을 지정합니다. Ingress Operator는 HTTPHeaders 필드를 사용하여 Ingress 컨트롤러의 ROUTER_SET_FORWARDED_HEADERS 환경 변수를 구성합니다.

프로세스

Ingress 컨트롤러에 대한

HTTPHeaders필드를 구성합니다.다음 명령을 사용하여

IngressController리소스를 편집합니다.$ oc edit IngressControllerspec에서HTTPHeaders정책 필드를Append,Replace,IfNone또는Never로 설정합니다.apiVersion: operator.openshift.io/v1 kind: IngressController metadata: name: default namespace: openshift-ingress-operator spec: httpHeaders: forwardedHeaderPolicy: Append

사용 사례 예

클러스터 관리자는 다음을 수행할 수 있습니다.

Ingress 컨트롤러로 전달하기 전에

X-Forwarded-For헤더를 각 요청에 삽입하는 외부 프록시를 구성합니다.헤더를 수정하지 않은 상태로 전달하도록 Ingress 컨트롤러를 구성하려면

never정책을 지정합니다. 그러면 Ingress 컨트롤러에서 헤더를 설정하지 않으며 애플리케이션은 외부 프록시에서 제공하는 헤더만 수신합니다.외부 프록시에서 외부 클러스터 요청에 설정한

X-Forwarded-For헤더를 수정하지 않은 상태로 전달하도록 Ingress 컨트롤러를 구성합니다.외부 프록시를 통과하지 않는 내부 클러스터 요청에

X-Forwarded-For헤더를 설정하도록 Ingress 컨트롤러를 구성하려면if-none정책을 지정합니다. HTTP 요청에 이미 외부 프록시를 통해 설정된 헤더가 있는 경우 Ingress 컨트롤러에서 해당 헤더를 보존합니다. 요청이 프록시를 통해 제공되지 않아 헤더가 없는 경우에는 Ingress 컨트롤러에서 헤더를 추가합니다.

애플리케이션 개발자는 다음을 수행할 수 있습니다.

X-Forwarded-For헤더를 삽입하는 애플리케이션별 외부 프록시를 구성합니다.다른 경로에 대한 정책에 영향을 주지 않으면서 애플리케이션 경로에 대한 헤더를 수정하지 않은 상태로 전달하도록 Ingress 컨트롤러를 구성하려면 애플리케이션 경로에 주석

haproxy.router.openshift.io/set-forwarded-headers: if-none또는haproxy.router.openshift.io/set-forwarded-headers: never를 추가하십시오.참고Ingress 컨트롤러에 전역적으로 설정된 값과 관계없이 경로별로

haproxy.router.openshift.io/set-forwarded-headers주석을 설정할 수 있습니다.

2.3.9.15. Ingress 컨트롤러에서 HTTP/2 활성화 또는 비활성화

HAProxy에서 투명한 엔드 투 엔드 HTTP/2 연결을 활성화하거나 비활성화할 수 있습니다. 애플리케이션 소유자는 단일 연결, 헤더 압축, 바이너리 스트림 등을 포함하여 HTTP/2 프로토콜 기능을 사용할 수 있습니다.

개별 Ingress 컨트롤러 또는 전체 클러스터에 대해 HTTP/2 연결을 활성화하거나 비활성화할 수 있습니다.

개별 Ingress 컨트롤러 및 전체 클러스터에 대해 HTTP/2 연결을 활성화하거나 비활성화하는 경우 Ingress 컨트롤러의 HTTP/2 구성이 클러스터의 HTTP/2 구성보다 우선합니다.

클라이언트에서 HAProxy 인스턴스로의 연결에 HTTP/2 사용을 활성화하려면 경로에서 사용자 정의 인증서를 지정해야 합니다. 기본 인증서를 사용하는 경로에서는 HTTP/2를 사용할 수 없습니다. 이것은 동일한 인증서를 사용하는 다른 경로의 연결을 클라이언트가 재사용하는 등 동시 연결로 인한 문제를 방지하기 위한 제한입니다.

각 경로 유형에 대한 HTTP/2 연결에 대해 다음 사용 사례를 고려하십시오.

- 재암호화 경로의 경우 애플리케이션이 ALPN(Application-Level Protocol Negotiation)을 사용하여 HTTP/2를 협상하는 경우 HAProxy에서 애플리케이션 Pod로의 연결은 HTTP/2를 사용할 수 있습니다. Ingress 컨트롤러에 HTTP/2가 활성화된 경우가 아니면 재암호화 경로와 함께 HTTP/2를 사용할 수 없습니다.

- 패스스루 경로의 경우 애플리케이션에서 ALPN 사용을 지원하여 HTTP/2를 클라이언트와 협상하는 경우 HTTP/2를 사용할 수 있습니다. Ingress Controller에 HTTP/2가 활성화되거나 비활성화된 경우 통과 경로와 함께 HTTP/2를 사용할 수 있습니다.

-

에지 종료 보안 경로의 경우 서비스에서

appProtocol: kubernetes.io/h2c만 지정하는 경우 HTTP/2를 사용합니다. Ingress Controller에 HTTP/2가 활성화되거나 비활성화된 경우 에지 종료 보안 경로와 함께 HTTP/2를 사용할 수 있습니다. -

비보안 경로의 경우 서비스에서

appProtocol: kubernetes.io/h2c만 지정하는 경우 HTTP/2를 사용합니다. Ingress Controller에 HTTP/2가 활성화되거나 비활성화된 경우 비보안 경로와 HTTP/2를 사용할 수 있습니다.

패스스루(passthrough)가 아닌 경로의 경우 Ingress 컨트롤러는 클라이언트와의 연결과 관계없이 애플리케이션에 대한 연결을 협상합니다. 즉, 클라이언트가 Ingress 컨트롤러에 연결하고 HTTP/1.1을 협상할 수 있습니다. 그러면 Ingress 컨트롤러가 애플리케이션에 연결하고 HTTP/2를 협상하고, HTTP/2 연결을 사용하여 클라이언트 HTTP/1.1 연결에서 요청을 전달할 수 있습니다.

이러한 이벤트 시퀀스로 인해 클라이언트가 HTTP/1.1에서 WebSocket 프로토콜로 연결을 업그레이드하려고 하면 문제가 발생합니다. WebSocket 연결을 수락하려는 애플리케이션이 있고 애플리케이션에서 HTTP/2 프로토콜 협상을 허용하려고 하면 클라이언트는 WebSocket 프로토콜로 업그레이드하지 못합니다.

2.3.9.15.1. HTTP/2 활성화

특정 Ingress 컨트롤러에서 HTTP/2를 활성화하거나 전체 클러스터에 HTTP/2를 활성화할 수 있습니다.

프로세스

특정 Ingress 컨트롤러에서 HTTP/2를 활성화하려면

oc annotate명령을 입력합니다.$ oc -n openshift-ingress-operator annotate ingresscontrollers/<ingresscontroller_name> ingress.operator.openshift.io/default-enable-http2=true1 - 1

- &

lt;ingresscontroller_name>을 HTTP/2를 활성화하려면 Ingress 컨트롤러의 이름으로 바꿉니다.

전체 클러스터에 HTTP/2를 사용하려면

oc annotate명령을 입력합니다.$ oc annotate ingresses.config/cluster ingress.operator.openshift.io/default-enable-http2=true

또는 다음 YAML 코드를 적용하여 HTTP/2를 활성화할 수 있습니다.

apiVersion: config.openshift.io/v1

kind: Ingress

metadata:

name: cluster

annotations:

ingress.operator.openshift.io/default-enable-http2: "true"2.3.9.15.2. HTTP/2 비활성화

특정 Ingress 컨트롤러에서 HTTP/2를 비활성화하거나 전체 클러스터에 대해 HTTP/2를 비활성화할 수 있습니다.

프로세스

특정 Ingress 컨트롤러에서 HTTP/2를 비활성화하려면

oc annotate명령을 입력합니다.$ oc -n openshift-ingress-operator annotate ingresscontrollers/<ingresscontroller_name> ingress.operator.openshift.io/default-enable-http2=false1 - 1

- &

lt;ingresscontroller_name>을 HTTP/2를 비활성화하려면 Ingress 컨트롤러의 이름으로 바꿉니다.

전체 클러스터에 대해 HTTP/2를 비활성화하려면

oc annotate명령을 입력합니다.$ oc annotate ingresses.config/cluster ingress.operator.openshift.io/default-enable-http2=false

또는 다음 YAML 코드를 적용하여 HTTP/2를 비활성화할 수 있습니다.

apiVersion: config.openshift.io/v1

kind: Ingress

metadata:

name: cluster

annotations:

ingress.operator.openshift.io/default-enable-http2: "false"2.3.9.16. Ingress 컨트롤러에 대한 PROXY 프로토콜 구성

클러스터 관리자는 Ingress 컨트롤러에서 HostNetwork,NodePortService 또는 Private 엔드포인트 게시 전략 유형을 사용하는 경우 PROXY 프로토콜 을 구성할 수 있습니다. PROXY 프로토콜을 사용하면 로드 밸런서에서 Ingress 컨트롤러가 수신하는 연결에 대한 원래 클라이언트 주소를 유지할 수 있습니다. 원래 클라이언트 주소는 HTTP 헤더를 로깅, 필터링 및 삽입하는 데 유용합니다. 기본 구성에서 Ingress 컨트롤러가 수신하는 연결에는 로드 밸런서와 연결된 소스 주소만 포함됩니다.

Keepalived Ingress 가상 IP(VIP)를 사용하는 클라우드 플랫폼에 설치 관리자 프로비저닝 클러스터가 있는 기본 Ingress 컨트롤러는 PROXY 프로토콜을 지원하지 않습니다.

PROXY 프로토콜을 사용하면 로드 밸런서에서 Ingress 컨트롤러가 수신하는 연결에 대한 원래 클라이언트 주소를 유지할 수 있습니다. 원래 클라이언트 주소는 HTTP 헤더를 로깅, 필터링 및 삽입하는 데 유용합니다. 기본 구성에서 Ingress 컨트롤러에서 수신하는 연결에는 로드 밸런서와 연결된 소스 IP 주소만 포함됩니다.

패스스루 경로 구성의 경우 AWS 클러스터의 Red Hat OpenShift Service의 서버는 원래 클라이언트 소스 IP 주소를 관찰할 수 없습니다. 원래 클라이언트 소스 IP 주소를 알아야 하는 경우 클라이언트 소스 IP 주소를 볼 수 있도록 Ingress 컨트롤러에 대한 Ingress 액세스 로깅을 구성합니다.

재암호화 및 엣지 경로의 경우 AWS 라우터의 Red Hat OpenShift Service는 애플리케이션 워크로드가 클라이언트 소스 IP 주소를 확인하도록 Forwarded 및 X-Forwarded-For 헤더를 설정합니다.

Ingress 액세스 로깅에 대한 자세한 내용은 " Ingress 액세스 로깅 구성"을 참조하십시오.

LoadBalancerService 끝점 게시 전략 유형을 사용하는 경우 Ingress 컨트롤러에 대한 PROXY 프로토콜 구성은 지원되지 않습니다. 이는 AWS의 Red Hat OpenShift Service가 클라우드 플랫폼에서 실행되고 Ingress 컨트롤러가 서비스 로드 밸런서를 사용해야 함을 지정하는 경우 Ingress Operator는 로드 밸런서 서비스를 구성하고 소스 주소를 유지하기 위한 플랫폼 요구 사항에 따라 PROXY 프로토콜을 활성화하기 때문입니다.

PROXY 프로토콜 또는 TCP를 사용하도록 AWS에서 Red Hat OpenShift Service와 외부 로드 밸런서를 모두 구성해야 합니다.

이 기능은 클라우드 배포에서 지원되지 않습니다. 이는 AWS의 Red Hat OpenShift Service가 클라우드 플랫폼에서 실행되고 Ingress 컨트롤러가 서비스 로드 밸런서를 사용해야 함을 지정하는 경우 Ingress Operator는 로드 밸런서 서비스를 구성하고 소스 주소를 유지하기 위한 플랫폼 요구 사항에 따라 PROXY 프로토콜을 활성화하기 때문입니다.

PROXY 프로토콜을 사용하거나 TCP(Transmission Control Protocol)를 사용하려면 AWS의 Red Hat OpenShift Service와 외부 로드 밸런서를 모두 구성해야 합니다.

사전 요구 사항

- Ingress 컨트롤러가 생성되어 있습니다.

프로세스

CLI에 다음 명령을 입력하여 Ingress 컨트롤러 리소스를 편집합니다.

$ oc -n openshift-ingress-operator edit ingresscontroller/defaultPROXY 구성을 설정합니다.

Ingress 컨트롤러에서

HostNetwork끝점 게시 전략 유형을 사용하는 경우spec.endpointPublishingStrategy.hostNetwork.protocol하위 필드를PROXY:로 설정합니다.PROXY에 대한hostNetwork구성 샘플# ... spec: endpointPublishingStrategy: hostNetwork: protocol: PROXY type: HostNetwork # ...Ingress 컨트롤러에서

NodePortService끝점 게시 전략 유형을 사용하는 경우spec.endpointPublishingStrategy.nodePort.protocol하위 필드를PROXY로 설정합니다.PROXY에 대한nodePort구성 샘플# ... spec: endpointPublishingStrategy: nodePort: protocol: PROXY type: NodePortService # ...Ingress 컨트롤러에서

Private끝점 게시 전략 유형을 사용하는 경우spec.endpointPublishingStrategy.private.protocol하위 필드를PROXY로 설정합니다.PROXY에 대한개인구성 샘플# ... spec: endpointPublishingStrategy: private: protocol: PROXY type: Private # ...

2.3.9.17. appsDomain 옵션을 사용하여 대체 클러스터 도메인 지정

클러스터 관리자는 appsDomain 필드를 구성하여 사용자가 생성한 경로에 대한 기본 클러스터 도메인의 대안을 지정할 수 있습니다. appsDomain 필드는 domain 필드에 지정된 기본값 대신 사용할 AWS의 Red Hat OpenShift Service의 선택적 도메인 입니다. 대체 도메인을 지정하면 새 경로의 기본 호스트를 결정하기 위해 기본 클러스터 도메인을 덮어씁니다.

예를 들어, 회사의 DNS 도메인을 클러스터에서 실행되는 애플리케이션의 경로 및 인그레스의 기본 도메인으로 사용할 수 있습니다.

사전 요구 사항

- AWS 클러스터에 Red Hat OpenShift Service를 배포했습니다.

-

oc명령줄 인터페이스를 설치했습니다.

프로세스

사용자 생성 경로에 대한 대체 기본 도메인을 지정하여

appsDomain필드를 구성합니다.ingress

클러스터리소스를 편집합니다.$ oc edit ingresses.config/cluster -o yamlYAML 파일을 편집합니다.

test.example.com에 대한appsDomain구성 샘플apiVersion: config.openshift.io/v1 kind: Ingress metadata: name: cluster spec: domain: apps.example.com1 appsDomain: <test.example.com>2

경로를 노출하고 경로 도메인 변경을 확인하여 기존 경로에

appsDomain필드에 지정된 도메인 이름이 포함되어 있는지 확인합니다.참고경로를 노출하기 전에

openshift-apiserver가 롤링 업데이트를 완료할 때까지 기다립니다.경로를 노출합니다.

$ oc expose service hello-openshift route.route.openshift.io/hello-openshift exposed출력 예

$ oc get routes NAME HOST/PORT PATH SERVICES PORT TERMINATION WILDCARD hello-openshift hello_openshift-<my_project>.test.example.com hello-openshift 8080-tcp None

2.3.9.18. HTTP 헤더 대소문자 변환

HAProxy 소문자 HTTP 헤더 이름(예: Host: xyz.com 을 host: xyz.com )으로 변경합니다. 기존 애플리케이션이 HTTP 헤더 이름의 대문자에 민감한 경우 Ingress Controller spec.httpHeaders.headerNameCaseAdjustments API 필드를 사용하여 기존 애플리케이션을 수정할 때 까지 지원합니다.

AWS의 Red Hat OpenShift Service에는 HAProxy 2.8이 포함되어 있습니다. 이 웹 기반 로드 밸런서 버전으로 업데이트하려면 spec.httpHeaders.headerNameCaseAdjustments 섹션을 클러스터의 구성 파일에 추가해야 합니다.

클러스터 관리자는 oc patch 명령을 입력하거나 Ingress 컨트롤러 YAML 파일에서 HeaderNameCaseAdjustments 필드를 설정하여 HTTP 헤더 케이스를 변환할 수 있습니다.

사전 요구 사항

-

OpenShift CLI(

oc)가 설치되어 있습니다. -

cluster-admin역할의 사용자로 클러스터에 액세스할 수 있어야 합니다.

프로세스

oc patch명령을 사용하여 HTTP 헤더를 대문자로 설정합니다.다음 명령을 실행하여 HTTP 헤더를

host에서Host로 변경합니다.$ oc -n openshift-ingress-operator patch ingresscontrollers/default --type=merge --patch='{"spec":{"httpHeaders":{"headerNameCaseAdjustments":["Host"]}}}'주석을 애플리케이션에 적용할 수 있도록

Route리소스 YAML 파일을 생성합니다.my-application이라는 경로 예apiVersion: route.openshift.io/v1 kind: Route metadata: annotations: haproxy.router.openshift.io/h1-adjust-case: true1 name: <application_name> namespace: <application_name> # ...- 1

- Ingress 컨트롤러가 지정된 대로

호스트요청 헤더를 조정할 수 있도록haproxy.router.openshift.io/h1-adjust-case를 설정합니다.

Ingress 컨트롤러 YAML 구성 파일에서

HeaderNameCaseAdjustments필드를 구성하여 조정을 지정합니다.다음 예제 Ingress 컨트롤러 YAML 파일은 적절한 주석이 달린 경로에 대해 HTTP/1 요청에 대해

호스트헤더를Host로 조정합니다.Ingress 컨트롤러 YAML 예시

apiVersion: operator.openshift.io/v1 kind: IngressController metadata: name: default namespace: openshift-ingress-operator spec: httpHeaders: headerNameCaseAdjustments: - Host다음 예제 경로에서는

haproxy.router.openshift.io/h1-adjust-case주석을 사용하여 HTTP 응답 헤더 이름 대소문자 조정을 활성화합니다.경로 YAML의 예

apiVersion: route.openshift.io/v1 kind: Route metadata: annotations: haproxy.router.openshift.io/h1-adjust-case: true1 name: my-application namespace: my-application spec: to: kind: Service name: my-application- 1

haproxy.router.openshift.io/h1-adjust-case를 true로 설정합니다.

2.3.9.19. 라우터 압축 사용

특정 MIME 유형에 대해 전역적으로 라우터 압축을 지정하도록 HAProxy Ingress 컨트롤러를 구성합니다. mimeTypes 변수를 사용하여 압축이 적용되는 MIME 유형의 형식을 정의할 수 있습니다. 유형은 application, image, message, multipart, text, video 또는 "X-"가 붙은 사용자 지정 유형입니다. MIME 유형 및 하위 유형에 대한 전체 표기법을 보려면 RFC1341 을 참조하십시오.

압축에 할당된 메모리는 최대 연결에 영향을 미칠 수 있습니다. 또한 큰 버퍼를 압축하면 많은 regex 또는 긴 regex 목록과 같은 대기 시간이 발생할 수 있습니다.

모든 MIME 유형이 압축의 이점은 아니지만 HAProxy는 여전히 리소스를 사용하여 다음을 지시한 경우 압축합니다. 일반적으로 html, css, js와 같은 텍스트 형식은 압축할 수 있지만 이미 압축한 형식(예: 이미지, 오디오, 비디오 등)은 압축에 소요되는 시간과 리소스를 거의 교환하지 못합니다.

프로세스

Ingress 컨트롤러의

httpCompression필드를 구성합니다.다음 명령을 사용하여

IngressController리소스를 편집합니다.$ oc edit -n openshift-ingress-operator ingresscontrollers/defaultspec에서httpCompression정책 필드를mimeTypes로 설정하고 압축이 적용되어야 하는 MIME 유형 목록을 지정합니다.apiVersion: operator.openshift.io/v1 kind: IngressController metadata: name: default namespace: openshift-ingress-operator spec: httpCompression: mimeTypes: - "text/html" - "text/css; charset=utf-8" - "application/json" ...

2.3.9.20. 라우터 지표 노출

기본 통계 포트인 1936에서 Prometheus 형식으로 기본적으로 HAProxy 라우터 지표를 노출할 수 있습니다. Prometheus와 같은 외부 메트릭 컬렉션 및 집계 시스템은 HAProxy 라우터 지표에 액세스할 수 있습니다. 브라우저에서 HAProxy 라우터 메트릭을 HTML 및 쉼표로 구분된 값(CSV) 형식으로 볼 수 있습니다.

사전 요구 사항

- 기본 통계 포트인 1936에 액세스하도록 방화벽을 구성했습니다.

프로세스

다음 명령을 실행하여 라우터 Pod 이름을 가져옵니다.

$ oc get pods -n openshift-ingress출력 예

NAME READY STATUS RESTARTS AGE router-default-76bfffb66c-46qwp 1/1 Running 0 11h라우터 Pod가

/var/lib/haproxy/conf/metrics-auth/statsUsername및/var/lib/haproxy/conf/metrics-auth/statsPassword파일에 저장하는 라우터의 사용자 이름과 암호를 가져옵니다.다음 명령을 실행하여 사용자 이름을 가져옵니다.

$ oc rsh <router_pod_name> cat metrics-auth/statsUsername다음 명령을 실행하여 암호를 가져옵니다.

$ oc rsh <router_pod_name> cat metrics-auth/statsPassword

다음 명령을 실행하여 라우터 IP 및 메트릭 인증서를 가져옵니다.

$ oc describe pod <router_pod>다음 명령을 실행하여 Prometheus 형식으로 원시 통계를 가져옵니다.

$ curl -u <user>:<password> http://<router_IP>:<stats_port>/metrics다음 명령을 실행하여 메트릭에 안전하게 액세스합니다.

$ curl -u user:password https://<router_IP>:<stats_port>/metrics -k다음 명령을 실행하여 기본 stats 포트 1936에 액세스합니다.

$ curl -u <user>:<password> http://<router_IP>:<stats_port>/metrics예 2.1. 출력 예

... # HELP haproxy_backend_connections_total Total number of connections. # TYPE haproxy_backend_connections_total gauge haproxy_backend_connections_total{backend="http",namespace="default",route="hello-route"} 0 haproxy_backend_connections_total{backend="http",namespace="default",route="hello-route-alt"} 0 haproxy_backend_connections_total{backend="http",namespace="default",route="hello-route01"} 0 ... # HELP haproxy_exporter_server_threshold Number of servers tracked and the current threshold value. # TYPE haproxy_exporter_server_threshold gauge haproxy_exporter_server_threshold{type="current"} 11 haproxy_exporter_server_threshold{type="limit"} 500 ... # HELP haproxy_frontend_bytes_in_total Current total of incoming bytes. # TYPE haproxy_frontend_bytes_in_total gauge haproxy_frontend_bytes_in_total{frontend="fe_no_sni"} 0 haproxy_frontend_bytes_in_total{frontend="fe_sni"} 0 haproxy_frontend_bytes_in_total{frontend="public"} 119070 ... # HELP haproxy_server_bytes_in_total Current total of incoming bytes. # TYPE haproxy_server_bytes_in_total gauge haproxy_server_bytes_in_total{namespace="",pod="",route="",server="fe_no_sni",service=""} 0 haproxy_server_bytes_in_total{namespace="",pod="",route="",server="fe_sni",service=""} 0 haproxy_server_bytes_in_total{namespace="default",pod="docker-registry-5-nk5fz",route="docker-registry",server="10.130.0.89:5000",service="docker-registry"} 0 haproxy_server_bytes_in_total{namespace="default",pod="hello-rc-vkjqx",route="hello-route",server="10.130.0.90:8080",service="hello-svc-1"} 0 ...브라우저에 다음 URL을 입력하여 통계 창을 시작합니다.

http://<user>:<password>@<router_IP>:<stats_port>선택 사항: 브라우저에 다음 URL을 입력하여 CSV 형식으로 통계를 가져옵니다.

http://<user>:<password>@<router_ip>:1936/metrics;csv

2.3.9.21. HAProxy 오류 코드 응답 페이지 사용자 정의

클러스터 관리자는 503, 404 또는 두 오류 페이지에 대한 사용자 지정 오류 코드 응답 페이지를 지정할 수 있습니다. HAProxy 라우터는 애플리케이션 pod가 실행 중이 아닌 경우 503 오류 페이지 또는 요청된 URL이 없는 경우 404 오류 페이지를 제공합니다. 예를 들어 503 오류 코드 응답 페이지를 사용자 지정하면 애플리케이션 pod가 실행되지 않을 때 페이지가 제공되며 HAProxy 라우터에서 잘못된 경로 또는 존재하지 않는 경로에 대해 기본 404 오류 코드 HTTP 응답 페이지가 제공됩니다.

사용자 정의 오류 코드 응답 페이지가 구성 맵에 지정되고 Ingress 컨트롤러에 패치됩니다. 구성 맵 키의 사용 가능한 파일 이름은 error-page-503.http 및 error-page-404.http 입니다.

사용자 지정 HTTP 오류 코드 응답 페이지는 HAProxy HTTP 오류 페이지 구성 지침을 따라야 합니다. 다음은 AWS HAProxy 라우터 http 503 오류 코드 응답 페이지의 기본 Red Hat OpenShift Service의 예입니다. 기본 콘텐츠를 고유한 사용자 지정 페이지를 생성하기 위한 템플릿으로 사용할 수 있습니다.

기본적으로 HAProxy 라우터는 애플리케이션이 실행 중이 아니거나 경로가 올바르지 않거나 존재하지 않는 경우 503 오류 페이지만 제공합니다. 이 기본 동작은 AWS 4.8 및 이전 버전의 Red Hat OpenShift Service의 동작과 동일합니다. HTTP 오류 코드 응답 사용자 정의에 대한 구성 맵이 제공되지 않고 사용자 정의 HTTP 오류 코드 응답 페이지를 사용하는 경우 라우터는 기본 404 또는 503 오류 코드 응답 페이지를 제공합니다.

AWS의 Red Hat OpenShift Service를 기본 503 오류 코드 페이지를 사용자 지정 템플릿으로 사용하는 경우 파일의 헤더에는 CRLF 줄 끝을 사용할 수 있는 편집기가 필요합니다.

절차

openshift-config네임스페이스에my-custom-error-code-pages라는 구성 맵을 생성합니다.$ oc -n openshift-config create configmap my-custom-error-code-pages \ --from-file=error-page-503.http \ --from-file=error-page-404.http중요사용자 정의 오류 코드 응답 페이지에 올바른 형식을 지정하지 않으면 라우터 Pod 중단이 발생합니다. 이 중단을 해결하려면 구성 맵을 삭제하거나 수정하고 영향을 받는 라우터 Pod를 삭제하여 올바른 정보로 다시 생성해야 합니다.

이름별로

my-custom-error-code-pages구성 맵을 참조하도록 Ingress 컨트롤러를 패치합니다.$ oc patch -n openshift-ingress-operator ingresscontroller/default --patch '{"spec":{"httpErrorCodePages":{"name":"my-custom-error-code-pages"}}}' --type=mergeIngress Operator는

my-custom-error-code-pages구성 맵을openshift-config네임스페이스에서openshift-ingress네임스페이스로 복사합니다. Operator는openshift-ingress네임스페이스에서<your_ingresscontroller_name>-errorpages패턴에 따라 구성 맵의 이름을 지정합니다.복사본을 표시합니다.

$ oc get cm default-errorpages -n openshift-ingress출력 예

NAME DATA AGE default-errorpages 2 25s1 - 1

defaultIngress 컨트롤러 CR(사용자 정의 리소스)이 패치되었기 때문에 구성 맵 이름은default-errorpages입니다.

사용자 정의 오류 응답 페이지가 포함된 구성 맵이 라우터 볼륨에 마운트되는지 확인합니다. 여기서 구성 맵 키는 사용자 정의 HTTP 오류 코드 응답이 있는 파일 이름입니다.

503 사용자 지정 HTTP 사용자 정의 오류 코드 응답의 경우:

$ oc -n openshift-ingress rsh <router_pod> cat /var/lib/haproxy/conf/error_code_pages/error-page-503.http404 사용자 지정 HTTP 사용자 정의 오류 코드 응답의 경우:

$ oc -n openshift-ingress rsh <router_pod> cat /var/lib/haproxy/conf/error_code_pages/error-page-404.http

검증

사용자 정의 오류 코드 HTTP 응답을 확인합니다.

테스트 프로젝트 및 애플리케이션을 생성합니다.

$ oc new-project test-ingress$ oc new-app django-psql-example503 사용자 정의 http 오류 코드 응답의 경우:

- 애플리케이션의 모든 pod를 중지합니다.

다음 curl 명령을 실행하거나 브라우저에서 경로 호스트 이름을 방문합니다.

$ curl -vk <route_hostname>

404 사용자 정의 http 오류 코드 응답의 경우:

- 존재하지 않는 경로 또는 잘못된 경로를 방문합니다.

다음 curl 명령을 실행하거나 브라우저에서 경로 호스트 이름을 방문합니다.

$ curl -vk <route_hostname>

errorfile속성이haproxy.config파일에 제대로 있는지 확인합니다.$ oc -n openshift-ingress rsh <router> cat /var/lib/haproxy/conf/haproxy.config | grep errorfile

2.3.9.22. Ingress 컨트롤러 최대 연결 설정

클러스터 관리자는 OpenShift 라우터 배포에 대한 최대 동시 연결 수를 설정할 수 있습니다. 기존 Ingress 컨트롤러를 패치하여 최대 연결 수를 늘릴 수 있습니다.

사전 요구 사항

- 다음은 Ingress 컨트롤러를 이미 생성했다고 가정합니다.

프로세스

HAProxy의 최대 연결 수를 변경하도록 Ingress 컨트롤러를 업데이트합니다.

$ oc -n openshift-ingress-operator patch ingresscontroller/default --type=merge -p '{"spec":{"tuningOptions": {"maxConnections": 7500}}}'주의spec.tuningOptions.maxConnections값을 현재 운영 체제 제한보다 크게 설정하면 HAProxy 프로세스가 시작되지 않습니다. 이 매개변수에 대한 자세한 내용은 "Ingress Controller 구성 매개변수" 섹션의 표를 참조하십시오.

2.3.10. Red Hat OpenShift Service on AWS Ingress Operator 구성

다음 표에서는 Ingress Operator의 구성 요소와 Red Hat 사이트 안정성 엔지니어(SRE)가 AWS 클러스터의 Red Hat OpenShift Service에서 이 구성 요소를 유지보수하는 경우 자세히 설명합니다.

| Ingress 구성 요소 | 관리 대상 | 기본 설정 |

|---|---|---|

| Ingress 컨트롤러 스케일링 | SRE | 제공됨 |

| Ingress Operator 스레드 수 | SRE | 제공됨 |

| Ingress 컨트롤러 액세스 로깅 | SRE | 제공됨 |

| Ingress 컨트롤러 분할 | SRE | 제공됨 |

| Ingress 컨트롤러 경로 허용 정책 | SRE | 제공됨 |

| Ingress 컨트롤러 와일드카드 경로 | SRE | 제공됨 |

| Ingress 컨트롤러 X-Forwarded 헤더 | SRE | 제공됨 |

| Ingress 컨트롤러 경로 압축 | SRE | 제공됨 |

2.4. AWS의 Red Hat OpenShift Service의 Ingress Node Firewall Operator

Ingress Node Firewall Operator는 AWS의 Red Hat OpenShift Service에서 노드 수준 인그레스 트래픽을 관리하기 위한 상태 비저장 eBPF 기반 방화벽을 제공합니다.

2.4.1. Ingress 노드 방화벽 Operator

Ingress Node Firewall Operator는 방화벽 구성에서 지정 및 관리하는 노드에 데몬 세트를 배포하여 노드 수준에서 Ingress 방화벽 규칙을 제공합니다. 데몬 세트를 배포하려면 IngressNodeFirewallConfig CR(사용자 정의 리소스)을 생성합니다. Operator는 IngressNodeFirewallConfig CR을 적용하여 nodeSelector 와 일치하는 모든 노드에서 실행되는 수신 노드 방화벽 데몬 세트를 생성합니다.

IngressNodeFirewall CR의 규칙을 구성하고 nodeSelector 를 사용하여 클러스터에 적용하고 값을 "true"로 설정합니다.

Ingress Node Firewall Operator는 상태 비저장 방화벽 규칙만 지원합니다.

기본 XDP 드라이버를 지원하지 않는 NIC(네트워크 인터페이스 컨트롤러)는 더 낮은 성능에서 실행됩니다.

AWS 4.14 이상에서 Red Hat OpenShift Service의 경우 RHEL 9.0 이상에서 Ingress Node Firewall Operator를 실행해야 합니다.

2.4.2. Ingress Node Firewall Operator 설치

클러스터 관리자는 AWS CLI 또는 웹 콘솔에서 Red Hat OpenShift Service를 사용하여 Ingress Node Firewall Operator를 설치할 수 있습니다.

2.4.2.1. CLI를 사용하여 Ingress Node Firewall Operator 설치

클러스터 관리자는 CLI를 사용하여 Operator를 설치할 수 있습니다.

사전 요구 사항

-

OpenShift CLI(

oc)가 설치되어 있습니다. - 관리자 권한이 있는 계정이 있습니다.

절차

openshift-ingress-node-firewall네임스페이스를 생성하려면 다음 명령을 입력합니다.$ cat << EOF| oc create -f - apiVersion: v1 kind: Namespace metadata: labels: pod-security.kubernetes.io/enforce: privileged pod-security.kubernetes.io/enforce-version: v1.24 name: openshift-ingress-node-firewall EOFOperatorGroupCR을 생성하려면 다음 명령을 입력합니다.$ cat << EOF| oc create -f - apiVersion: operators.coreos.com/v1 kind: OperatorGroup metadata: name: ingress-node-firewall-operators namespace: openshift-ingress-node-firewall EOFIngress Node Firewall Operator에 등록합니다.

Ingress Node Firewall Operator에 대한

서브스크립션CR을 생성하려면 다음 명령을 입력합니다.$ cat << EOF| oc create -f - apiVersion: operators.coreos.com/v1alpha1 kind: Subscription metadata: name: ingress-node-firewall-sub namespace: openshift-ingress-node-firewall spec: name: ingress-node-firewall channel: stable source: redhat-operators sourceNamespace: openshift-marketplace EOF

Operator가 설치되었는지 확인하려면 다음 명령을 입력합니다.

$ oc get ip -n openshift-ingress-node-firewall출력 예

NAME CSV APPROVAL APPROVED install-5cvnz ingress-node-firewall.4.0-202211122336 Automatic trueOperator 버전을 확인하려면 다음 명령을 입력합니다.

$ oc get csv -n openshift-ingress-node-firewall출력 예

NAME DISPLAY VERSION REPLACES PHASE ingress-node-firewall.4.0-202211122336 Ingress Node Firewall Operator 4.0-202211122336 ingress-node-firewall.4.0-202211102047 Succeeded

2.4.2.2. 웹 콘솔을 사용하여 Ingress Node Firewall Operator 설치

클러스터 관리자는 웹 콘솔을 사용하여 Operator를 설치할 수 있습니다.

사전 요구 사항

-

OpenShift CLI(

oc)가 설치되어 있습니다. - 관리자 권한이 있는 계정이 있습니다.

절차

Ingress Node Firewall Operator를 설치합니다.

- AWS 웹 콘솔의 Red Hat OpenShift Service에서 Operator → OperatorHub 를 클릭합니다.

- 사용 가능한 Operator 목록에서 Ingress Node Firewall Operator 를 선택한 다음 설치를 클릭합니다.

- Operator 설치 페이지의 설치된 네임스페이스 에서 Operator 권장 네임스페이스를 선택합니다.

- 설치를 클릭합니다.

Ingress Node Firewall Operator가 성공적으로 설치되었는지 확인합니다.

- Operator → 설치된 Operator 페이지로 이동합니다.

Ingress Node Firewall Operator 가 openshift-ingress-node-firewall 프로젝트에 InstallSucceeded 상태로 나열되어 있는지 확인합니다.

참고설치 중에 Operator는 실패 상태를 표시할 수 있습니다. 나중에 InstallSucceeded 메시지와 함께 설치에 성공하면 이 실패 메시지를 무시할 수 있습니다.

Operator에 InstallSucceeded 상태가 없는 경우 다음 단계를 사용하여 문제를 해결합니다.

- Operator 서브스크립션 및 설치 계획 탭의 상태 아래에서 실패 또는 오류가 있는지 검사합니다.

-

워크로드 → Pod 페이지로 이동하여

openshift-ingress-node-firewall프로젝트에서 Pod 로그를 확인합니다. YAML 파일의 네임스페이스를 확인합니다. 주석이 없는 경우 다음 명령을 사용하여 주석

workload.openshift.io/allowed=management를 Operator 네임스페이스에 추가할 수 있습니다.$ oc annotate ns/openshift-ingress-node-firewall workload.openshift.io/allowed=management참고단일 노드 OpenShift 클러스터의 경우

openshift-ingress-node-firewall네임스페이스에workload.openshift.io/allowed=management주석이 필요합니다.

2.4.3. Ingress Node Firewall Operator 배포

사전 요구 사항

- Ingress Node Firewall Operator가 설치되어 있습니다.

절차

Ingress Node Firewall Operator를 배포하려면 Operator의 데몬 세트를 배포할 IngressNodeFirewallConfig 사용자 정의 리소스를 생성합니다. 방화벽 규칙을 적용하여 하나 이상의 IngressNodeFirewall CRD를 노드에 배포할 수 있습니다.

-

ingressnodefirewallconfig라는openshift-ingress-node-firewall네임스페이스에IngressNodeFirewallConfig를 생성합니다. 다음 명령을 실행하여 Ingress Node Firewall Operator 규칙을 배포합니다.

$ oc apply -f rule.yaml

2.4.3.1. Ingress 노드 방화벽 구성 오브젝트

Ingress 노드 방화벽 구성 오브젝트의 필드는 다음 표에 설명되어 있습니다.

| 필드 | 유형 | 설명 |

|---|---|---|

|

|

|

CR 오브젝트의 이름입니다. 방화벽 규칙 오브젝트의 이름은 |

|

|

|

Ingress Firewall Operator CR 오브젝트의 네임스페이스입니다. |

|

|

| 지정된 노드 라벨을 통해 노드를 대상으로 지정하는 데 사용되는 노드 선택 제약 조건입니다. 예를 들면 다음과 같습니다. 참고

|

|

|

| Node Ingress Firewall Operator에서 eBPF Manager Operator를 사용하거나 eBPF 프로그램을 관리하지 않는지를 지정합니다. 이 기능은 기술 프리뷰 기능입니다. Red Hat 기술 프리뷰 기능의 지원 범위에 대한 자세한 내용은 기술 프리뷰 기능 지원 범위를 참조하십시오. |

Operator는 CR을 사용하고 nodeSelector 와 일치하는 모든 노드에 Ingress 노드 방화벽 데몬 세트를 생성합니다.

Ingress Node Firewall Operator 구성 예

다음 예제에서는 전체 Ingress 노드 방화벽 구성이 지정됩니다.

Ingress 노드 방화벽 구성 오브젝트의 예

apiVersion: ingressnodefirewall.openshift.io/v1alpha1

kind: IngressNodeFirewallConfig

metadata:

name: ingressnodefirewallconfig

namespace: openshift-ingress-node-firewall

spec:

nodeSelector:

node-role.kubernetes.io/worker: ""

Operator는 CR을 사용하고 nodeSelector 와 일치하는 모든 노드에 Ingress 노드 방화벽 데몬 세트를 생성합니다.

2.4.3.2. Ingress 노드 방화벽 규칙 오브젝트

Ingress 노드 방화벽 규칙 오브젝트의 필드는 다음 표에 설명되어 있습니다.

| 필드 | 유형 | 설명 |

|---|---|---|

|

|

| CR 오브젝트의 이름입니다. |

|

|

|

이 오브젝트의 필드는 방화벽 규칙을 적용할 인터페이스를 지정합니다. 예: |

|

|

|

|

|

|

|

|

Ingress 오브젝트 구성

ingress 오브젝트의 값은 다음 표에 정의되어 있습니다.

| 필드 | 유형 | 설명 |

|---|---|---|

|

|

| CIDR 블록을 설정할 수 있습니다. 다른 주소 제품군에서 여러 CIDR을 구성할 수 있습니다. 참고

다른 CIDR을 사용하면 동일한 주문 규칙을 사용할 수 있습니다. CIDR이 겹치는 동일한 노드 및 인터페이스에 |

|

|

|

Ingress 방화벽

규칙을 적용하거나 참고 Ingress 방화벽 규칙은 잘못된 구성을 차단하는 확인 Webhook를 사용하여 확인합니다. 확인 Webhook를 사용하면 API 서버와 같은 중요한 클러스터 서비스를 차단할 수 없습니다. |

Ingress 노드 방화벽 규칙 오브젝트 예

다음 예제에서는 전체 Ingress 노드 방화벽 구성이 지정됩니다.

Ingress 노드 방화벽 구성의 예

apiVersion: ingressnodefirewall.openshift.io/v1alpha1

kind: IngressNodeFirewall

metadata:

name: ingressnodefirewall

spec:

interfaces:

- eth0

nodeSelector:

matchLabels:

<ingress_firewall_label_name>: <label_value>

ingress:

- sourceCIDRs:

- 172.16.0.0/12

rules:

- order: 10

protocolConfig:

protocol: ICMP

icmp:

icmpType: 8 #ICMP Echo request

action: Deny

- order: 20

protocolConfig:

protocol: TCP

tcp:

ports: "8000-9000"

action: Deny

- sourceCIDRs:

- fc00:f853:ccd:e793::0/64

rules:

- order: 10

protocolConfig:

protocol: ICMPv6

icmpv6:

icmpType: 128 #ICMPV6 Echo request

action: Deny- 1

- <label_name> 및 <label_value>는 노드에 있어야 하며

ingressfirewallconfigCR을 실행할 노드에 적용되는nodeselector레이블 및 값과 일치해야 합니다. <label_value>는true또는false일 수 있습니다.nodeSelector레이블을 사용하면 별도의 노드 그룹을 대상으로 하여ingressfirewallconfigCR을 사용하는 데 다른 규칙을 적용할 수 있습니다.

제로 신뢰 Ingress 노드 방화벽 규칙 오브젝트 예

제로 트러스트 Ingress 노드 방화벽 규칙은 다중 인터페이스 클러스터에 추가 보안을 제공할 수 있습니다. 예를 들어 제로 신뢰 Ingress 노드 방화벽 규칙을 사용하여 SSH를 제외한 특정 인터페이스에서 모든 트래픽을 삭제할 수 있습니다.

다음 예에서는 제로 신뢰 Ingress 노드 방화벽 규칙 세트의 전체 구성이 지정됩니다.

사용자는 적절한 기능을 보장하기 위해 애플리케이션이 허용 목록에 사용하는 모든 포트를 추가해야 합니다.

제로 트러스트 Ingress 노드 방화벽 규칙의 예

apiVersion: ingressnodefirewall.openshift.io/v1alpha1

kind: IngressNodeFirewall

metadata:

name: ingressnodefirewall-zero-trust

spec:

interfaces:

- eth1

nodeSelector:

matchLabels:

<ingress_firewall_label_name>: <label_value>

ingress:

- sourceCIDRs:

- 0.0.0.0/0

rules:

- order: 10

protocolConfig:

protocol: TCP

tcp:

ports: 22

action: Allow

- order: 20

action: Deny eBPF Manager Operator 통합은 기술 프리뷰 기능 전용입니다. 기술 프리뷰 기능은 Red Hat 프로덕션 서비스 수준 계약(SLA)에서 지원되지 않으며 기능적으로 완전하지 않을 수 있습니다. 따라서 프로덕션 환경에서 사용하는 것은 권장하지 않습니다. 이러한 기능을 사용하면 향후 제품 기능을 조기에 이용할 수 있어 개발 과정에서 고객이 기능을 테스트하고 피드백을 제공할 수 있습니다.

Red Hat 기술 프리뷰 기능의 지원 범위에 대한 자세한 내용은 기술 프리뷰 기능 지원 범위를 참조하십시오.

2.4.4. Ingress Node Firewall Operator 통합

Ingress 노드 방화벽은 eBPF 프로그램을 사용하여 일부 주요 방화벽 기능을 구현합니다. 기본적으로 이러한 eBPF 프로그램은 Ingress 노드 방화벽과 관련된 메커니즘을 사용하여 커널에 로드됩니다. 대신 eBPF Manager Operator를 사용하여 이러한 프로그램을 로드 및 관리하도록 Ingress Node Firewall Operator를 구성할 수 있습니다.

이 통합을 활성화하면 다음과 같은 제한 사항이 적용됩니다.

- Ingress Node Firewall Operator는 XDP를 사용할 수 없는 경우 TCX를 사용하고 TCX는 bpfman과 호환되지 않습니다.

-

Ingress Node Firewall Operator 데몬 세트 Pod는 방화벽 규칙이 적용될 때까지

ContainerCreating상태로 유지됩니다. - Ingress Node Firewall Operator 데몬 세트 Pod는 privileged로 실행됩니다.

2.4.5. eBPF Manager Operator를 사용하도록 Ingress Node Firewall Operator 구성

Ingress 노드 방화벽은 eBPF 프로그램을 사용하여 일부 주요 방화벽 기능을 구현합니다. 기본적으로 이러한 eBPF 프로그램은 Ingress 노드 방화벽과 관련된 메커니즘을 사용하여 커널에 로드됩니다.

클러스터 관리자는 eBPF Manager Operator를 사용하여 이러한 프로그램을 로드 및 관리하도록 Ingress Node Firewall Operator를 구성하여 추가 보안 및 관찰 기능을 추가할 수 있습니다.

사전 요구 사항

-

OpenShift CLI(

oc)가 설치되어 있습니다. - 관리자 권한이 있는 계정이 있습니다.

- Ingress Node Firewall Operator가 설치되어 있어야 합니다.

- eBPF Manager Operator가 설치되어 있습니다.

절차

ingress-node-firewall-system네임스페이스에 다음 라벨을 적용합니다.$ oc label namespace openshift-ingress-node-firewall \ pod-security.kubernetes.io/enforce=privileged \ pod-security.kubernetes.io/warn=privileged --overwriteingressnodefirewallconfig라는IngressNodeFirewallConfig오브젝트를 편집하고ebpfProgramManagerMode필드를 설정합니다.Ingress Node Firewall Operator 구성 오브젝트

apiVersion: ingressnodefirewall.openshift.io/v1alpha1 kind: IngressNodeFirewallConfig metadata: name: ingressnodefirewallconfig namespace: openshift-ingress-node-firewall spec: nodeSelector: node-role.kubernetes.io/worker: "" ebpfProgramManagerMode: <ebpf_mode>다음과 같습니다.

<ebpf_mode> : Ingress Node Firewall Operator에서 eBPF Manager Operator를 사용하여 eBPF 프로그램을 관리할지 여부를 지정합니다.true또는false여야 합니다. 설정되지 않은 경우 eBPF Manager가 사용되지 않습니다.

2.4.6. Ingress Node Firewall Operator 규칙 보기

절차

다음 명령을 실행하여 현재 모든 규칙을 확인합니다.

$ oc get ingressnodefirewall반환된 <

resource>이름 중 하나를 선택하고 다음 명령을 실행하여 규칙 또는 구성을 확인합니다.$ oc get <resource> <name> -o yaml

2.4.7. Ingress Node Firewall Operator 문제 해결

다음 명령을 실행하여 설치된 Ingress Node Firewall CRD(사용자 정의 리소스 정의)를 나열합니다.

$ oc get crds | grep ingressnodefirewall출력 예

NAME READY UP-TO-DATE AVAILABLE AGE ingressnodefirewallconfigs.ingressnodefirewall.openshift.io 2022-08-25T10:03:01Z ingressnodefirewallnodestates.ingressnodefirewall.openshift.io 2022-08-25T10:03:00Z ingressnodefirewalls.ingressnodefirewall.openshift.io 2022-08-25T10:03:00Z다음 명령을 실행하여 Ingress Node Firewall Operator의 상태를 확인합니다.

$ oc get pods -n openshift-ingress-node-firewall출력 예

NAME READY STATUS RESTARTS AGE ingress-node-firewall-controller-manager 2/2 Running 0 5d21h ingress-node-firewall-daemon-pqx56 3/3 Running 0 5d21h다음 필드는 Operator 상태에 대한 정보를 제공합니다.

READY,STATUS,AGE,RESTARTS. Ingress Node Firewall Operator가 할당된 노드에 데몬 세트를 배포할 때STATUS필드는Running입니다.다음 명령을 실행하여 모든 수신 방화벽 노드 Pod의 로그를 수집합니다.

$ oc adm must-gather – gather_ingress_node_firewall로그는 /s

os_commands/ebpff .에 있는 eBPF보고서에서 사용할 수 있습니다. 이러한 보고서에는 수신 방화벽 XDP가 패킷 처리를 처리하고 통계를 업데이트하고 이벤트를 발송할 때 사용되거나 업데이트된 조회 테이블이 포함됩니다.bpftool출력이 포함된 sos 노드의

3장. ROSA 클러스터에 대한 네트워크 확인

네트워크 확인 검사는 AWS(ROSA)의 Red Hat OpenShift Service를 기존 VPC(Virtual Private Cloud)에 배포하거나 클러스터에 새로 추가된 서브넷을 사용하여 추가 머신 풀을 생성할 때 자동으로 실행됩니다. 이 검사에서는 네트워크 구성을 검증하고 오류를 강조 표시하므로 배포 전에 구성 문제를 해결할 수 있습니다.

네트워크 확인 검사를 수동으로 실행하여 기존 클러스터의 구성을 검증할 수도 있습니다.

3.1. ROSA 클러스터에 대한 네트워크 확인 이해

AWS(ROSA) 클러스터에 Red Hat OpenShift Service를 기존 VPC(Virtual Private Cloud)에 배포하거나 클러스터에 새로 추가된 서브넷을 사용하여 추가 머신 풀을 생성하면 네트워크 확인이 자동으로 실행됩니다. 이를 통해 배포 전에 구성 문제를 식별하고 해결할 수 있습니다.

Red Hat OpenShift Cluster Manager를 사용하여 클러스터 설치를 준비하면 VPC(Virtual Private Cloud) 서브넷 설정 페이지의 서브넷 ID 필드에 서브넷 ID를 입력한 후 자동 검사가 실행됩니다. ROSA CLI(rosa)를 대화형 모드로 사용하여 클러스터를 생성하는 경우 필요한 VPC 네트워크 정보를 제공한 후 검사가 실행됩니다. 대화형 모드 없이 CLI를 사용하는 경우 클러스터 생성 직전에 검사가 시작됩니다.

서브넷이 새로 추가된 서브넷으로 머신 풀을 추가하면 자동 네트워크 확인에서 서브넷을 확인하여 머신 풀을 프로비저닝하기 전에 네트워크 연결을 사용할 수 있는지 확인합니다.

자동 네트워크 확인이 완료되면 서비스 로그에 레코드가 전송됩니다. 레코드는 네트워크 구성 오류를 포함하여 확인 확인 결과를 제공합니다. 배포 전에 확인된 문제를 해결할 수 있으며 배포의 성공 가능성이 더 높습니다.

기존 클러스터에 대해 네트워크 확인을 수동으로 실행할 수도 있습니다. 이를 통해 구성을 변경한 후 클러스터의 네트워크 구성을 확인할 수 있습니다. 네트워크 확인 검사를 수동으로 실행하는 단계는 네트워크 확인 수동 실행을 참조하십시오.

3.2. 네트워크 검증 검사 범위

네트워크 확인에는 다음 요구사항 각각에 대한 검사가 포함됩니다.

- 상위 VPC(Virtual Private Cloud)가 있습니다.

- 지정된 모든 서브넷이 VPC에 속합니다.

-

VPC에

enableDnsSupport가 활성화되어 있습니다. -

VPC에

enableDnsHostnames가 활성화되어 있습니다. - egress는 AWS 방화벽 사전 요구 사항 섹션에 지정된 필수 도메인 및 포트 조합에서 사용할 수 있습니다.

3.3. 자동 네트워크 확인 우회

알려진 네트워크 구성 문제가 있는 AWS(ROSA) 클러스터에 Red Hat OpenShift Service를 기존 VPC(Virtual Private Cloud)에 배포하려면 자동 네트워크 확인을 바이패스할 수 있습니다.

클러스터를 생성할 때 네트워크 확인을 바이패스하면 클러스터의 지원 상태가 제한됩니다. 설치 후 문제를 해결한 다음 네트워크 확인을 수동으로 실행할 수 있습니다. 제한된 지원 상태는 검증에 성공한 후 제거됩니다.

OpenShift Cluster Manager를 사용하여 자동 네트워크 확인 우회

Red Hat OpenShift Cluster Manager를 사용하여 기존 VPC에 클러스터를 설치하는 경우 VPC(Virtual Private Cloud) 서브넷 설정 페이지에서 Bypass network verification 을 선택하여 자동 확인을 바이패스할 수 있습니다.

3.4. 수동으로 네트워크 확인 실행

AWS(ROSA) 클러스터에 Red Hat OpenShift Service를 설치한 후 Red Hat OpenShift Cluster Manager 또는 ROSA CLI(rosa)를 사용하여 네트워크 확인 검사를 수동으로 실행할 수 있습니다.

OpenShift Cluster Manager를 사용하여 네트워크 확인 수동으로 실행

Red Hat OpenShift Cluster Manager를 사용하여 AWS(ROSA) 클러스터에서 기존 Red Hat OpenShift Service에 대한 네트워크 확인 검사를 수동으로 실행할 수 있습니다.

사전 요구 사항

- 기존 ROSA 클러스터가 있습니다.

- 클러스터 소유자이거나 클러스터 편집기 역할이 있습니다.

절차

- OpenShift Cluster Manager 로 이동하여 클러스터를 선택합니다.

- 작업 드롭다운 메뉴에서 네트워크 확인을 선택합니다.

CLI를 사용하여 수동으로 네트워크 확인 실행

ROSA CLI(rosa)를 사용하여 AWS(ROSA) 클러스터에서 기존 Red Hat OpenShift Service에 대한 네트워크 확인 검사를 수동으로 실행할 수 있습니다.

네트워크 확인을 실행하면 VPC 서브넷 ID 세트 또는 클러스터 이름을 지정할 수 있습니다.

사전 요구 사항

-

설치 호스트에 최신 ROSA CLI(

rosa)를 설치하고 구성했습니다. - 기존 ROSA 클러스터가 있습니다.

- 클러스터 소유자이거나 클러스터 편집기 역할이 있습니다.

절차

다음 방법 중 하나를 사용하여 네트워크 구성을 확인합니다.

클러스터 이름을 지정하여 네트워크 구성을 확인합니다. 서브넷 ID가 자동으로 탐지됩니다.

$ rosa verify network --cluster <cluster_name>1 - 1

- &

lt;cluster_name>을 클러스터 이름으로 바꿉니다.

출력 예

I: Verifying the following subnet IDs are configured correctly: [subnet-03146b9b52b6024cb subnet-03146b9b52b2034cc] I: subnet-03146b9b52b6024cb: pending I: subnet-03146b9b52b2034cc: passed I: Run the following command to wait for verification to all subnets to complete: rosa verify network --watch --status-only --region us-east-1 --subnet-ids subnet-03146b9b52b6024cb,subnet-03146b9b52b2034cc모든 서브넷에 대한 확인이 완료되었는지 확인합니다.

$ rosa verify network --watch \1 --status-only \2 --region <region_name> \3 --subnet-ids subnet-03146b9b52b6024cb,subnet-03146b9b52b2034cc4 - 1

watch플래그는 test의 모든 서브넷이 실패 또는 전달된 상태에 있는 후 명령이 완료되도록 합니다.- 2

status-only플래그는 네트워크 확인을 트리거하지 않지만 현재 상태를 반환합니다(예:subnet-123(확인은 여전히 진행 중). 기본적으로 이 옵션이 없으면 이 명령을 호출하면 지정된 서브넷의 확인이 항상 트리거됩니다.- 3

- AWS_REGION 환경 변수를 재정의하는 특정 AWS 리전을 사용합니다.

- 4

- 쉼표로 구분된 서브넷 ID 목록을 입력하여 확인합니다. 서브넷이 없는 경우

'subnet-<subnet_number> 서브넷에 대한 Network 확인 메시지가 표시되고 서브넷을 확인하지 않습니다.

출력 예

I: Checking the status of the following subnet IDs: [subnet-03146b9b52b6024cb subnet-03146b9b52b2034cc] I: subnet-03146b9b52b6024cb: passed I: subnet-03146b9b52b2034cc: passed작은 정보전체 확인 테스트 목록을 출력하려면

rosa verify network명령을 실행할 때--debug인수를 포함할 수 있습니다.

VPC 서브넷 ID를 지정하여 네트워크 구성을 확인합니다. <

region_name>을 AWS 리전으로 바꾸고 <AWS_account_ID>를 AWS 계정 ID로 바꿉니다.$ rosa verify network --subnet-ids 03146b9b52b6024cb,subnet-03146b9b52b2034cc --region <region_name> --role-arn arn:aws:iam::<AWS_account_ID>:role/my-Installer-Role출력 예

I: Verifying the following subnet IDs are configured correctly: [subnet-03146b9b52b6024cb subnet-03146b9b52b2034cc] I: subnet-03146b9b52b6024cb: pending I: subnet-03146b9b52b2034cc: passed I: Run the following command to wait for verification to all subnets to complete: rosa verify network --watch --status-only --region us-east-1 --subnet-ids subnet-03146b9b52b6024cb,subnet-03146b9b52b2034cc모든 서브넷에 대한 확인이 완료되었는지 확인합니다.

$ rosa verify network --watch --status-only --region us-east-1 --subnet-ids subnet-03146b9b52b6024cb,subnet-03146b9b52b2034cc출력 예

I: Checking the status of the following subnet IDs: [subnet-03146b9b52b6024cb subnet-03146b9b52b2034cc] I: subnet-03146b9b52b6024cb: passed I: subnet-03146b9b52b2034cc: passed

4장. 클러스터 전체 프록시 구성

기존 VPC(Virtual Private Cloud)를 사용하는 경우 Red Hat OpenShift Service on AWS(ROSA) 클러스터 설치 중 또는 클러스터가 설치된 후 클러스터 전체 프록시를 구성할 수 있습니다. 프록시를 활성화하면 코어 클러스터 구성 요소가 인터넷에 대한 직접 액세스가 거부되지만 프록시는 사용자 워크로드에 영향을 미치지 않습니다.