Storage Administration Guide

Deploying and configuring single-node storage in Red Hat Enterprise Linux 6

Abstract

Chapter 1. Overview

1.1. What's New in Red Hat Enterprise Linux 6

File System Encryption (Technology Preview)

File System Caching (Technology Preview)

Btrfs (Technology Preview)

I/O Limit Processing

ext4 Support

Network Block Storage

Part I. File Systems

Chapter 2. File System Structure and Maintenance

- Shareable versus unshareable files

- Variable versus static files

2.1. Overview of Filesystem Hierarchy Standard (FHS)

- Compatibility with other FHS-compliant systems

- The ability to mount a

/usr/partition as read-only. This is especially crucial, since/usr/contains common executables and should not be changed by users. In addition, since/usr/is mounted as read-only, it should be mountable from the CD-ROM drive or from another machine via a read-only NFS mount.

2.1.1. FHS Organization

2.1.1.1. Gathering File System Information

df command reports the system's disk space usage. Its output looks similar to the following:

Example 2.1. df command output

df shows the partition size in 1 kilobyte blocks and the amount of used and available disk space in kilobytes. To view the information in megabytes and gigabytes, use the command df -h. The -h argument stands for "human-readable" format. The output for df -h looks similar to the following:

Example 2.2. df -h command output

Note

/dev/shm represents the system's virtual memory file system.

du command displays the estimated amount of space being used by files in a directory, displaying the disk usage of each subdirectory. The last line in the output of du shows the total disk usage of the directory; to see only the total disk usage of a directory in human-readable format, use du -hs. For more options, refer to man du.

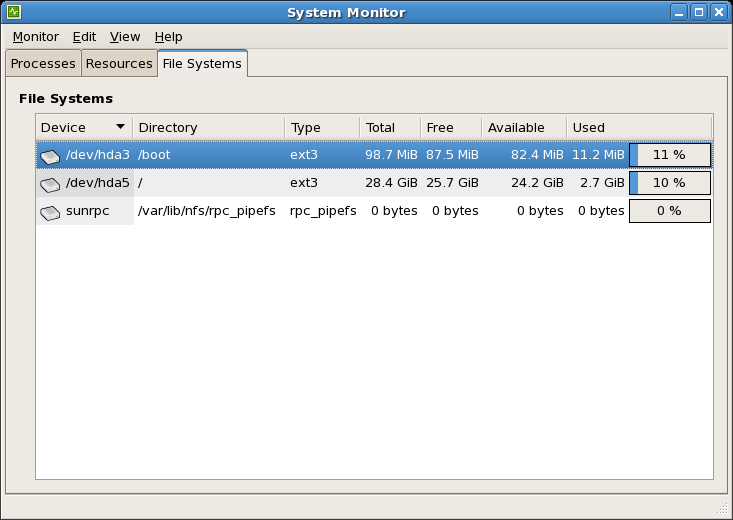

gnome-system-monitor. Select the File Systems tab to view the system's partitions. The figure below illustrates the File Systems tab.

Figure 2.1. GNOME System Monitor File Systems tab

2.1.1.2. The /boot/ Directory

/boot/ directory contains static files required to boot the system, for example, the Linux kernel. These files are essential for the system to boot properly.

Warning

/boot/ directory. Doing so renders the system unbootable.

2.1.1.3. The /dev/ Directory

/dev/ directory contains device nodes that represent the following device types:

- devices attached to the system;

- virtual devices provided by the kernel.

udevd daemon creates and removes device nodes in /dev/ as needed.

/dev/ directory and subdirectories are defined as either character (providing only a serial stream of input and output, for example, mouse or keyboard) or block (accessible randomly, for example, a hard drive or a floppy drive). If GNOME or KDE is installed, some storage devices are automatically detected when connected (such as with a USB) or inserted (such as a CD or DVD drive), and a pop-up window displaying the contents appears.

| File | Description |

|---|---|

| /dev/hda | The master device on the primary IDE channel. |

| /dev/hdb | The slave device on the primary IDE channel. |

| /dev/tty0 | The first virtual console. |

| /dev/tty1 | The second virtual console. |

| /dev/sda | The first device on the primary SCSI or SATA channel. |

| /dev/lp0 | The first parallel port. |

| /dev/ttyS0 | Serial port. |

2.1.1.4. The /etc/ Directory

/etc/ directory is reserved for configuration files that are local to the machine. It should contain no binaries; any binaries should be moved to /bin/ or /sbin/.

/etc/skel/ directory stores "skeleton" user files, which are used to populate a home directory when a user is first created. Applications also store their configuration files in this directory and may reference them when executed. The /etc/exports file controls which file systems export to remote hosts.

2.1.1.5. The /lib/ Directory

/lib/ directory should only contain libraries needed to execute the binaries in /bin/ and /sbin/. These shared library images are used to boot the system or execute commands within the root file system.

2.1.1.6. The /media/ Directory

/media/ directory contains subdirectories used as mount points for removable media, such as USB storage media, DVDs, and CD-ROMs.

2.1.1.7. The /mnt/ Directory

/mnt/ directory is reserved for temporarily mounted file systems, such as NFS file system mounts. For all removable storage media, use the /media/ directory. Automatically detected removable media will be mounted in the /media directory.

Important

/mnt directory must not be used by installation programs.

2.1.1.8. The /opt/ Directory

/opt/ directory is normally reserved for software and add-on packages that are not part of the default installation. A package that installs to /opt/ creates a directory bearing its name, for example /opt/packagename/. In most cases, such packages follow a predictable subdirectory structure; most store their binaries in /opt/packagename/bin/ and their man pages in /opt/packagename/man/.

2.1.1.9. The /proc/ Directory

/proc/ directory contains special files that either extract information from the kernel or send information to it. Examples of such information include system memory, CPU information, and hardware configuration. For more information about /proc/, refer to Section 2.3, “The /proc Virtual File System”.

2.1.1.10. The /sbin/ Directory

/sbin/ directory stores binaries essential for booting, restoring, recovering, or repairing the system. The binaries in /sbin/ require root privileges to use. In addition, /sbin/ contains binaries used by the system before the /usr/ directory is mounted; any system utilities used after /usr/ is mounted are typically placed in /usr/sbin/.

/sbin/:

arpclockhaltinitfsck.*grubifconfigmingettymkfs.*mkswaprebootrouteshutdownswapoffswapon

2.1.1.11. The /srv/ Directory

/srv/ directory contains site-specific data served by a Red Hat Enterprise Linux system. This directory gives users the location of data files for a particular service, such as FTP, WWW, or CVS. Data that only pertains to a specific user should go in the /home/ directory.

Note

/var/www/html for served content.

2.1.1.12. The /sys/ Directory

/sys/ directory utilizes the new sysfs virtual file system specific to the 2.6 kernel. With the increased support for hot plug hardware devices in the 2.6 kernel, the /sys/ directory contains information similar to that held by /proc/, but displays a hierarchical view of device information specific to hot plug devices.

2.1.1.13. The /usr/ Directory

/usr/ directory is for files that can be shared across multiple machines. The /usr/ directory is often on its own partition and is mounted read-only. The /usr/ directory usually contains the following subdirectories:

/usr/bin- This directory is used for binaries.

/usr/etc- This directory is used for system-wide configuration files.

/usr/games- This directory stores games.

/usr/include- This directory is used for C header files.

/usr/kerberos- This directory is used for Kerberos-related binaries and files.

/usr/lib- This directory is used for object files and libraries that are not designed to be directly utilized by shell scripts or users. This directory is for 32-bit systems.

/usr/lib64- This directory is used for object files and libraries that are not designed to be directly utilized by shell scripts or users. This directory is for 64-bit systems.

/usr/libexec- This directory contains small helper programs called by other programs.

/usr/sbin- This directory stores system administration binaries that do not belong to

/sbin/. /usr/share- This directory stores files that are not architecture-specific.

/usr/src- This directory stores source code.

/usr/tmplinked to/var/tmp- This directory stores temporary files.

/usr/ directory should also contain a /local/ subdirectory. As per the FHS, this subdirectory is used by the system administrator when installing software locally, and should be safe from being overwritten during system updates. The /usr/local directory has a structure similar to /usr/, and contains the following subdirectories:

/usr/local/bin/usr/local/etc/usr/local/games/usr/local/include/usr/local/lib/usr/local/libexec/usr/local/sbin/usr/local/share/usr/local/src

/usr/local/ differs slightly from the FHS. The FHS states that /usr/local/ should be used to store software that should remain safe from system software upgrades. Since the RPM Package Manager can perform software upgrades safely, it is not necessary to protect files by storing them in /usr/local/.

/usr/local/ for software local to the machine. For instance, if the /usr/ directory is mounted as a read-only NFS share from a remote host, it is still possible to install a package or program under the /usr/local/ directory.

2.1.1.14. The /var/ Directory

/usr/ as read-only, any programs that write log files or need spool/ or lock/ directories should write them to the /var/ directory. The FHS states /var/ is for variable data, which includes spool directories and files, logging data, transient and temporary files.

/var/ directory depending on what is installed on the system:

/var/account//var/arpwatch//var/cache//var/crash//var/db//var/empty//var/ftp//var/gdm//var/kerberos//var/lib//var/local//var/lock//var/log//var/maillinked to/var/spool/mail//var/mailman//var/named//var/nis//var/opt//var/preserve//var/run//var/spool//var/tmp//var/tux//var/www//var/yp/

messages and lastlog, go in the /var/log/ directory. The /var/lib/rpm/ directory contains RPM system databases. Lock files go in the /var/lock/ directory, usually in directories for the program using the file. The /var/spool/ directory has subdirectories that store data files for some programs. These subdirectories may include:

/var/spool/at//var/spool/clientmqueue//var/spool/cron//var/spool/cups//var/spool/exim//var/spool/lpd//var/spool/mail//var/spool/mailman//var/spool/mqueue//var/spool/news//var/spool/postfix//var/spool/repackage//var/spool/rwho//var/spool/samba//var/spool/squid//var/spool/squirrelmail//var/spool/up2date//var/spool/uucp//var/spool/uucppublic//var/spool/vbox/

2.2. Special Red Hat Enterprise Linux File Locations

/var/lib/rpm/ directory. For more information on RPM, refer to man rpm.

/var/cache/yum/ directory contains files used by the Package Updater, including RPM header information for the system. This location may also be used to temporarily store RPMs downloaded while updating the system. For more information about the Red Hat Network, refer to the documentation online at https://rhn.redhat.com/.

/etc/sysconfig/ directory. This directory stores a variety of configuration information. Many scripts that run at boot time use the files in this directory.

2.3. The /proc Virtual File System

/proc contains neither text nor binary files. Instead, it houses virtual files; as such, /proc is normally referred to as a virtual file system. These virtual files are typically zero bytes in size, even if they contain a large amount of information.

/proc file system is not used for storage. Its main purpose is to provide a file-based interface to hardware, memory, running processes, and other system components. Real-time information can be retrieved on many system components by viewing the corresponding /proc file. Some of the files within /proc can also be manipulated (by both users and applications) to configure the kernel.

/proc files are relevant in managing and monitoring system storage:

- /proc/devices

- Displays various character and block devices that are currently configured.

- /proc/filesystems

- Lists all file system types currently supported by the kernel.

- /proc/mdstat

- Contains current information on multiple-disk or RAID configurations on the system, if they exist.

- /proc/mounts

- Lists all mounts currently used by the system.

- /proc/partitions

- Contains partition block allocation information.

/proc file system, refer to the Red Hat Enterprise Linux 6 Deployment Guide.

2.4. Discard unused blocks

fstrim command. This command discards all unused blocks in a file system that match the user's criteria. Both operation types are supported for use with ext4 file systems as of Red Hat Enterprise Linux 6.2 and later, so long as the block device underlying the file system supports physical discard operations. This is also the case with XFS file systems as of Red Hat Enterprise Linux 6.4 and later. Physical discard operations are supported if the value of /sys/block/device/queue/discard_max_bytes is not zero.

-o discard option (either in /etc/fstab or as part of the mount command), and run in realtime without user intervention. Online discard operations only discard blocks that are transitioning from used to free. Online discard operations are supported on ext4 file systems as of Red Hat Enterprise Linux 6.2 and later, and on XFS file systems as of Red Hat Enterprise Linux 6.4 and later.

Chapter 3. Encrypted File System

mkfs. Instead, eCryptfs is initiated by issuing a special mount command. To manage file systems protected by eCryptfs, the ecryptfs-utils package must be installed first.

3.1. Mounting a File System as Encrypted

mount -t ecryptfs /source /destination

# mount -t ecryptfs /source /destination/source in the above example) with eCryptfs means mounting it to a mount point encrypted by eCryptfs (/destination in the example above). All file operations to /destination will be passed encrypted to the underlying /source file system. In some cases, however, it may be possible for a file operation to modify /source directly without passing through the eCryptfs layer; this could lead to inconsistencies.

/source and /destination be identical. For example:

mount -t ecryptfs /home /home

# mount -t ecryptfs /home /home/home pass through the eCryptfs layer.

mount will allow the following settings to be configured:

- Encryption key type

openssl,tspi, orpassphrase. When choosingpassphrase,mountwill ask for one.- Cipher

aes,blowfish,des3_ede,cast6, orcast5.- Key bytesize

16,32, or24.plaintext passthrough- Enabled or disabled.

filename encryption- Enabled or disabled.

mount will display all the selections made and perform the mount. This output consists of the command-line option equivalents of each chosen setting. For example, mounting /home with a key type of passphrase, aes cipher, key bytesize of 16 with both plaintext passthrough and filename encryption disabled, the output would be:

-o option of mount. For example:

mount -t ecryptfs /home /home -o ecryptfs_unlink_sigs \ ecryptfs_key_bytes=16 ecryptfs_cipher=aes ecryptfs_sig=c7fed37c0a341e19[2]

# mount -t ecryptfs /home /home -o ecryptfs_unlink_sigs \

ecryptfs_key_bytes=16 ecryptfs_cipher=aes ecryptfs_sig=c7fed37c0a341e19[2]3.2. Additional Information

man ecryptfs (provided by the ecryptfs-utils package). The following Kernel document (provided by the kernel-doc package) also provides additional information on eCryptfs:

/usr/share/doc/kernel-doc-version/Documentation/filesystems/ecryptfs.txt

Chapter 4. Btrfs

Btrfs) is a local file system that aims to provide better performance and scalability. Btrfs was introduced in Red Hat Enterprise Linux 6 as a Technology Preview, available on AMD64 and Intel 64 architectures. The Btrfs Technology Preview ended as of Red Hat Enterprise Linux 6.6 and will not be updated in the future. Btrfs will be included in future releases of Red Hat Enterprise Linux 6, but will not be supported in any way.

Btrfs Features

- Built-in System Rollback

- File system snapshots make it possible to roll a system back to a prior, known-good state if something goes wrong.

- Built-in Compression

- This makes saving space easier.

- Checksum Functionality

- This improves error detection.

- dynamic, online addition or removal of new storage devices

- internal support for RAID across the component devices

- the ability to use different RAID levels for meta or user data

- full checksum functionality for all meta and user data.

Chapter 5. The Ext3 File System

- Availability

- After an unexpected power failure or system crash (also called an unclean system shutdown), each mounted ext2 file system on the machine must be checked for consistency by the

e2fsckprogram. This is a time-consuming process that can delay system boot time significantly, especially with large volumes containing a large number of files. During this time, any data on the volumes is unreachable.It is possible to runfsck -non a live filesystem. However, it will not make any changes and may give misleading results if partially written metadata is encountered.If LVM is used in the stack, another option is to take an LVM snapshot of the filesystem and runfsckon it instead.Finally, there is the option to remount the filesystem as read only. All pending metadata updates (and writes) are then forced to the disk prior to the remount. This ensures the filesystem is in a consistent state, provided there is no previous corruption. It is now possible to runfsck -n.The journaling provided by the ext3 file system means that this sort of file system check is no longer necessary after an unclean system shutdown. The only time a consistency check occurs using ext3 is in certain rare hardware failure cases, such as hard drive failures. The time to recover an ext3 file system after an unclean system shutdown does not depend on the size of the file system or the number of files; rather, it depends on the size of the journal used to maintain consistency. The default journal size takes about a second to recover, depending on the speed of the hardware.Note

The only journaling mode in ext3 supported by Red Hat isdata=ordered(default). - Data Integrity

- The ext3 file system prevents loss of data integrity in the event that an unclean system shutdown occurs. The ext3 file system allows you to choose the type and level of protection that your data receives. With regard to the state of the file system, ext3 volumes are configured to keep a high level of data consistency by default.

- Speed

- Despite writing some data more than once, ext3 has a higher throughput in most cases than ext2 because ext3's journaling optimizes hard drive head motion. You can choose from three journaling modes to optimize speed, but doing so means trade-offs in regards to data integrity if the system was to fail.

- Easy Transition

- It is easy to migrate from ext2 to ext3 and gain the benefits of a robust journaling file system without reformatting. Refer to Section 5.2, “Converting to an Ext3 File System” for more information on how to perform this task.

The default size of the on-disk inode has increased for more efficient storage of extended attributes, for example, ACLs or SELinux attributes. Along with this change, the default number of inodes created on a file system of a given size has been decreased. The inode size may be selected with the mke2fs -I option or specified in /etc/mke2fs.conf to set system wide defaults for mke2fs.

Note

data_err

A new mount option has been added: data_err=abort. This option instructs ext3 to abort the journal if an error occurs in a file data (as opposed to metadata) buffer in data=ordered mode. This option is disabled by default (set as data_err=ignore).

When creating a file system (that is, mkfs), mke2fs will attempt to "discard" or "trim" blocks not used by the file system metadata. This helps to optimize SSDs or thinly-provisioned storage. To suppress this behavior, use the mke2fs -K option.

5.1. Creating an Ext3 File System

Procedure 5.1. Create an ext3 file system

- Format the partition with the ext3 file system using

mkfs. - Label the file system using

e2label.

5.2. Converting to an Ext3 File System

tune2fs command converts an ext2 file system to ext3.

Note

e2fsck utility to check your file system before and after using tune2fs. Before trying to convert ext2 to ext3, back up all file systems in case any errors occur.

ext2 file system to ext3, log in as root and type the following command in a terminal:

tune2fs -j block_device

# tune2fs -j block_device- A mapped device

- A logical volume in a volume group, for example,

/dev/mapper/VolGroup00-LogVol02. - A static device

- A traditional storage volume, for example,

/dev/sdbX, where sdb is a storage device name and X is the partition number.

df command to display mounted file systems.

5.3. Reverting to an Ext2 File System

/dev/mapper/VolGroup00-LogVol02

Procedure 5.2. Revert from ext3 to ext2

- Unmount the partition by logging in as root and typing:

umount /dev/mapper/VolGroup00-LogVol02

# umount /dev/mapper/VolGroup00-LogVol02Copy to Clipboard Copied! Toggle word wrap Toggle overflow - Change the file system type to ext2 by typing the following command:

tune2fs -O ^has_journal /dev/mapper/VolGroup00-LogVol02

# tune2fs -O ^has_journal /dev/mapper/VolGroup00-LogVol02Copy to Clipboard Copied! Toggle word wrap Toggle overflow - Check the partition for errors by typing the following command:

e2fsck -y /dev/mapper/VolGroup00-LogVol02

# e2fsck -y /dev/mapper/VolGroup00-LogVol02Copy to Clipboard Copied! Toggle word wrap Toggle overflow - Then mount the partition again as ext2 file system by typing:

mount -t ext2 /dev/mapper/VolGroup00-LogVol02 /mount/point

# mount -t ext2 /dev/mapper/VolGroup00-LogVol02 /mount/pointCopy to Clipboard Copied! Toggle word wrap Toggle overflow In the above command, replace /mount/point with the mount point of the partition.Note

If a.journalfile exists at the root level of the partition, delete it.

/etc/fstab file, otherwise it will revert back after booting.

Chapter 6. The Ext4 File System

Note

fsck. For more information, see Chapter 5, The Ext3 File System.

- Main Features

- Ext4 uses extents (as opposed to the traditional block mapping scheme used by ext2 and ext3), which improves performance when using large files and reduces metadata overhead for large files. In addition, ext4 also labels unallocated block groups and inode table sections accordingly, which allows them to be skipped during a file system check. This makes for quicker file system checks, which becomes more beneficial as the file system grows in size.

- Allocation Features

- The ext4 file system features the following allocation schemes:

- Persistent pre-allocation

- Delayed allocation

- Multi-block allocation

- Stripe-aware allocation

Because of delayed allocation and other performance optimizations, ext4's behavior of writing files to disk is different from ext3. In ext4, when a program writes to the file system, it is not guaranteed to be on-disk unless the program issues anfsync()call afterwards.By default, ext3 automatically forces newly created files to disk almost immediately even withoutfsync(). This behavior hid bugs in programs that did not usefsync()to ensure that written data was on-disk. The ext4 file system, on the other hand, often waits several seconds to write out changes to disk, allowing it to combine and reorder writes for better disk performance than ext3.Warning

Unlike ext3, the ext4 file system does not force data to disk on transaction commit. As such, it takes longer for buffered writes to be flushed to disk. As with any file system, use data integrity calls such asfsync()to ensure that data is written to permanent storage. - Other Ext4 Features

- The ext4 file system also supports the following:

- Extended attributes (

xattr) — This allows the system to associate several additional name and value pairs per file. - Quota journaling — This avoids the need for lengthy quota consistency checks after a crash.

Note

The only supported journaling mode in ext4 isdata=ordered(default). - Subsecond timestamps — This gives timestamps to the subsecond.

6.1. Creating an Ext4 File System

mkfs.ext4 command. In general, the default options are optimal for most usage scenarios:

mkfs.ext4 /dev/device

# mkfs.ext4 /dev/deviceExample 6.1. mkfs.ext4 command output

mkfs.ext4 chooses an optimal geometry. This may also be true on some hardware RAIDs which export geometry information to the operating system.

-E option of mkfs.ext4 (that is, extended file system options) with the following sub-options:

- stride=value

- Specifies the RAID chunk size.

- stripe-width=value

- Specifies the number of data disks in a RAID device, or the number of stripe units in the stripe.

value must be specified in file system block units. For example, to create a file system with a 64k stride (that is, 16 x 4096) on a 4k-block file system, use the following command:

mkfs.ext4 -E stride=16,stripe-width=64 /dev/device

# mkfs.ext4 -E stride=16,stripe-width=64 /dev/deviceman mkfs.ext4.

Important

tune2fs to enable some ext4 features on ext3 file systems, and to use the ext4 driver to mount an ext3 file system. These actions, however, are not supported in Red Hat Enterprise Linux 6, as they have not been fully tested. Because of this, Red Hat cannot guarantee consistent performance and predictable behavior for ext3 file systems converted or mounted in this way.

6.2. Mounting an Ext4 File System

mount /dev/device /mount/point

# mount /dev/device /mount/pointacl parameter enables access control lists, while the user_xattr parameter enables user extended attributes. To enable both options, use their respective parameters with -o, as in:

mount -o acl,user_xattr /dev/device /mount/point

# mount -o acl,user_xattr /dev/device /mount/pointtune2fs utility also allows administrators to set default mount options in the file system superblock. For more information on this, refer to man tune2fs.

Write Barriers

nobarrier option, as in:

mount -o nobarrier /dev/device /mount/point

# mount -o nobarrier /dev/device /mount/point6.3. Resizing an Ext4 File System

resize2fs command:

resize2fs /mount/device node

# resize2fs /mount/device noderesize2fs command can also decrease the size of an unmounted ext4 file system:

resize2fs /dev/device size

# resize2fs /dev/device sizeresize2fs utility reads the size in units of file system block size, unless a suffix indicating a specific unit is used. The following suffixes indicate specific units:

s— 512 byte sectorsK— kilobytesM— megabytesG— gigabytes

Note

resize2fs automatically expands to fill all available space of the container, usually a logical volume or partition.

man resize2fs.

6.4. Backup ext2/3/4 File Systems

Procedure 6.1. Backup ext2/3/4 File Systems Example

- All data must be backed up before attempting any kind of restore operation. Data backups should be made on a regular basis. In addition to data, there is configuration information that should be saved, including

/etc/fstaband the output offdisk -l. Running an sosreport/sysreport will capture this information and is strongly recommended.Copy to Clipboard Copied! Toggle word wrap Toggle overflow In this example, we will use the/dev/sda6partition to save backup files, and we assume that/dev/sda6is mounted on/backup-files. - If the partition being backed up is an operating system partition, bootup your system into Single User Mode. This step is not necessary for normal data partitions.

- Use

dumpto backup the contents of the partitions:Note

- If the system has been running for a long time, it is advisable to run

e2fsckon the partitions before backup. dumpshould not be used on heavily loaded and mounted filesystem as it could backup corrupted version of files. This problem has been mentioned on dump.sourceforge.net.Important

When backing up operating system partitions, the partition must be unmounted.While it is possible to back up an ordinary data partition while it is mounted, it is adviseable to unmount it where possible. The results of attempting to back up a mounted data partition can be unpredicteable.

dump -0uf /backup-files/sda1.dump /dev/sda1 dump -0uf /backup-files/sda2.dump /dev/sda2 dump -0uf /backup-files/sda3.dump /dev/sda3

# dump -0uf /backup-files/sda1.dump /dev/sda1 # dump -0uf /backup-files/sda2.dump /dev/sda2 # dump -0uf /backup-files/sda3.dump /dev/sda3Copy to Clipboard Copied! Toggle word wrap Toggle overflow If you want to do a remote backup, you can use both ssh or configure a non-password login.Note

If using standard redirection, the '-f' option must be passed separately.dump -0u -f - /dev/sda1 | ssh root@remoteserver.example.com dd of=/tmp/sda1.dump

# dump -0u -f - /dev/sda1 | ssh root@remoteserver.example.com dd of=/tmp/sda1.dumpCopy to Clipboard Copied! Toggle word wrap Toggle overflow

6.5. Restore an ext2/3/4 File System

Procedure 6.2. Restore an ext2/3/4 File System Example

- If you are restoring an operating system partition, bootup your system into Rescue Mode. This step is not required for ordinary data partitions.

- Rebuild sda1/sda2/sda3/sda4/sda5 by using the

fdiskcommand.Note

If necessary, create the partitions to contain the restored file systems. The new partitions must be large enough to contain the restored data. It is important to get the start and end numbers right; these are the starting and ending sector numbers of the partitions. - Format the destination partitions by using the

mkfscommand, as shown below.Important

DO NOT format/dev/sda6in the above example because it saves backup files.mkfs.ext3 /dev/sda1 mkfs.ext3 /dev/sda2 mkfs.ext3 /dev/sda3

# mkfs.ext3 /dev/sda1 # mkfs.ext3 /dev/sda2 # mkfs.ext3 /dev/sda3Copy to Clipboard Copied! Toggle word wrap Toggle overflow - If creating new partitions, re-label all the partitions so they match the

fstabfile. This step is not required if the partitions are not being recreated.e2label /dev/sda1 /boot1 e2label /dev/sda2 / e2label /dev/sda3 /data mkswap -L SWAP-sda5 /dev/sda5

# e2label /dev/sda1 /boot1 # e2label /dev/sda2 / # e2label /dev/sda3 /data # mkswap -L SWAP-sda5 /dev/sda5Copy to Clipboard Copied! Toggle word wrap Toggle overflow - Prepare the working directories.

Copy to Clipboard Copied! Toggle word wrap Toggle overflow - Restore the data.

Copy to Clipboard Copied! Toggle word wrap Toggle overflow If you want to restore from a remote host or restore from a backup file on a remote host you can use either ssh or rsh. You will need to configure a password-less login for the following examples:Login into 10.0.0.87, and restore sda1 from local sda1.dump file:ssh 10.0.0.87 "cd /mnt/sda1 && cat /backup-files/sda1.dump | restore -rf -"

# ssh 10.0.0.87 "cd /mnt/sda1 && cat /backup-files/sda1.dump | restore -rf -"Copy to Clipboard Copied! Toggle word wrap Toggle overflow Login into 10.0.0.87, and restore sda1 from a remote 10.66.0.124 sda1.dump file:ssh 10.0.0.87 "cd /mnt/sda1 && RSH=/usr/bin/ssh restore -r -f 10.66.0.124:/tmp/sda1.dump"

# ssh 10.0.0.87 "cd /mnt/sda1 && RSH=/usr/bin/ssh restore -r -f 10.66.0.124:/tmp/sda1.dump"Copy to Clipboard Copied! Toggle word wrap Toggle overflow - Reboot.

6.6. Other Ext4 File System Utilities

- e2fsck

- Used to repair an ext4 file system. This tool checks and repairs an ext4 file system more efficiently than ext3, thanks to updates in the ext4 disk structure.

- e2label

- Changes the label on an ext4 file system. This tool also works on ext2 and ext3 file systems.

- quota

- Controls and reports on disk space (blocks) and file (inode) usage by users and groups on an ext4 file system. For more information on using

quota, refer toman quotaand Section 16.1, “Configuring Disk Quotas”.

tune2fs utility can also adjust configurable file system parameters for ext2, ext3, and ext4 file systems. In addition, the following tools are also useful in debugging and analyzing ext4 file systems:

- debugfs

- Debugs ext2, ext3, or ext4 file systems.

- e2image

- Saves critical ext2, ext3, or ext4 file system metadata to a file.

man pages.

Chapter 7. Global File System 2

fsck command on a very large file system can take a long time and consume a large amount of memory. Additionally, in the event of a disk or disk-subsystem failure, recovery time is limited by the speed of backup media.

clvmd, and running in a Red Hat Cluster Suite cluster. The daemon makes it possible to use LVM2 to manage logical volumes across a cluster, allowing all nodes in the cluster to share the logical volumes. For information on the Logical Volume Manager, see Red Hat's Logical Volume Manager Administration guide.

gfs2.ko kernel module implements the GFS2 file system and is loaded on GFS2 cluster nodes.

Chapter 8. The XFS File System

- Main Features

- XFS supports metadata journaling, which facilitates quicker crash recovery. The XFS file system can also be defragmented and enlarged while mounted and active. In addition, Red Hat Enterprise Linux 6 supports backup and restore utilities specific to XFS.

- Allocation Features

- XFS features the following allocation schemes:

- Extent-based allocation

- Stripe-aware allocation policies

- Delayed allocation

- Space pre-allocation

Delayed allocation and other performance optimizations affect XFS the same way that they do ext4. Namely, a program's writes to an XFS file system are not guaranteed to be on-disk unless the program issues anfsync()call afterwards.For more information on the implications of delayed allocation on a file system, refer to Allocation Features in Chapter 6, The Ext4 File System. The workaround for ensuring writes to disk applies to XFS as well. - Other XFS Features

- The XFS file system also supports the following:

- Extended attributes (

xattr) - This allows the system to associate several additional name/value pairs per file.

- Quota journaling

- This avoids the need for lengthy quota consistency checks after a crash.

- Project/directory quotas

- This allows quota restrictions over a directory tree.

- Subsecond timestamps

- This allows timestamps to go to the subsecond.

- Extended attributes (

8.1. Creating an XFS File System

mkfs.xfs /dev/device command. In general, the default options are optimal for common use.

mkfs.xfs on a block device containing an existing file system, use the -f option to force an overwrite of that file system.

Example 8.1. mkfs.xfs command output

mkfs.xfs command:

Note

xfs_growfs command (refer to Section 8.4, “Increasing the Size of an XFS File System”).

mkfs.xfs chooses an optimal geometry. This may also be true on some hardware RAIDs that export geometry information to the operating system.

mkfs.xfs sub-options:

- su=value

- Specifies a stripe unit or RAID chunk size. The

valuemust be specified in bytes, with an optionalk,m, orgsuffix. - sw=value

- Specifies the number of data disks in a RAID device, or the number of stripe units in the stripe.

mkfs.xfs -d su=64k,sw=4 /dev/device

# mkfs.xfs -d su=64k,sw=4 /dev/deviceman mkfs.xfs.

8.2. Mounting an XFS File System

mount /dev/device /mount/point

# mount /dev/device /mount/pointinode64 mount option. This option configures XFS to allocate inodes and data across the entire file system, which can improve performance:

mount -o inode64 /dev/device /mount/point

# mount -o inode64 /dev/device /mount/pointWrite Barriers

nobarrier option:

mount -o nobarrier /dev/device /mount/point

# mount -o nobarrier /dev/device /mount/point8.3. XFS Quota Management

noenforce; this will allow usage reporting without enforcing any limits. Valid quota mount options are:

uquota/uqnoenforce- User quotasgquota/gqnoenforce- Group quotaspquota/pqnoenforce- Project quota

xfs_quota tool can be used to set limits and report on disk usage. By default, xfs_quota is run interactively, and in basic mode. Basic mode sub-commands simply report usage, and are available to all users. Basic xfs_quota sub-commands include:

- quota username/userID

- Show usage and limits for the given

usernameor numericuserID - df

- Shows free and used counts for blocks and inodes.

xfs_quota also has an expert mode. The sub-commands of this mode allow actual configuration of limits, and are available only to users with elevated privileges. To use expert mode sub-commands interactively, run xfs_quota -x. Expert mode sub-commands include:

- report /path

- Reports quota information for a specific file system.

- limit

- Modify quota limits.

help.

-c option, with -x for expert sub-commands.

Example 8.2. Display a sample quota report

/home (on /dev/blockdevice), use the command xfs_quota -x -c 'report -h' /home. This will display output similar to the following:

john (whose home directory is /home/john), use the following command:

xfs_quota -x -c 'limit isoft=500 ihard=700 /home/john'

# xfs_quota -x -c 'limit isoft=500 ihard=700 /home/john'limit sub-command recognizes targets as users. When configuring the limits for a group, use the -g option (as in the previous example). Similarly, use -p for projects.

bsoft or bhard instead of isoft or ihard.

Example 8.3. Set a soft and hard block limit

accounting on the /target/path file system, use the following command:

xfs_quota -x -c 'limit -g bsoft=1000m bhard=1200m accounting' /target/path

# xfs_quota -x -c 'limit -g bsoft=1000m bhard=1200m accounting' /target/pathImportant

rtbhard/rtbsoft) are described in man xfs_quota as valid units when setting quotas, the real-time sub-volume is not enabled in this release. As such, the rtbhard and rtbsoft options are not applicable.

Setting Project Limits

/etc/projects. Project names can be added to/etc/projectid to map project IDs to project names. Once a project is added to /etc/projects, initialize its project directory using the following command:

xfs_quota -c 'project -s projectname'

# xfs_quota -c 'project -s projectname'xfs_quota -x -c 'limit -p bsoft=1000m bhard=1200m projectname'

# xfs_quota -x -c 'limit -p bsoft=1000m bhard=1200m projectname'quota, repquota, and edquota for example) may also be used to manipulate XFS quotas. However, these tools cannot be used with XFS project quotas.

man xfs_quota.

8.4. Increasing the Size of an XFS File System

xfs_growfs command:

xfs_growfs /mount/point -D size

# xfs_growfs /mount/point -D size-D size option grows the file system to the specified size (expressed in file system blocks). Without the -D size option, xfs_growfs will grow the file system to the maximum size supported by the device.

-D size, ensure that the underlying block device is of an appropriate size to hold the file system later. Use the appropriate resizing methods for the affected block device.

Note

man xfs_growfs.

8.5. Repairing an XFS File System

xfs_repair:

xfs_repair /dev/device

# xfs_repair /dev/devicexfs_repair utility is highly scalable and is designed to repair even very large file systems with many inodes efficiently. Unlike other Linux file systems, xfs_repair does not run at boot time, even when an XFS file system was not cleanly unmounted. In the event of an unclean unmount, xfs_repair simply replays the log at mount time, ensuring a consistent file system.

Warning

xfs_repair utility cannot repair an XFS file system with a dirty log. To clear the log, mount and unmount the XFS file system. If the log is corrupt and cannot be replayed, use the -L option ("force log zeroing") to clear the log, that is, xfs_repair -L /dev/device. Be aware that this may result in further corruption or data loss.

man xfs_repair.

8.6. Suspending an XFS File System

xfs_freeze. Suspending write activity allows hardware-based device snapshots to be used to capture the file system in a consistent state.

Note

xfs_freeze utility is provided by the xfsprogs package, which is only available on x86_64.

xfs_freeze -f /mount/point

# xfs_freeze -f /mount/pointxfs_freeze -u /mount/point

# xfs_freeze -u /mount/pointxfs_freeze to suspend the file system first. Rather, the LVM management tools will automatically suspend the XFS file system before taking the snapshot.

Note

xfs_freeze utility can also be used to freeze or unfreeze an ext3, ext4, GFS2, XFS, and BTRFS, file system. The syntax for doing so is the same.

man xfs_freeze.

8.7. Backup and Restoration of XFS File Systems

xfsdump and xfsrestore.

xfsdump utility. Red Hat Enterprise Linux 6 supports backups to tape drives or regular file images, and also allows multiple dumps to be written to the same tape. The xfsdump utility also allows a dump to span multiple tapes, although only one dump can be written to a regular file. In addition, xfsdump supports incremental backups, and can exclude files from a backup using size, subtree, or inode flags to filter them.

xfsdump uses dump levels to determine a base dump to which a specific dump is relative. The -l option specifies a dump level (0-9). To perform a full backup, perform a level 0 dump on the file system (that is, /path/to/filesystem), as in:

xfsdump -l 0 -f /dev/device /path/to/filesystem

# xfsdump -l 0 -f /dev/device /path/to/filesystemNote

-f option specifies a destination for a backup. For example, the /dev/st0 destination is normally used for tape drives. An xfsdump destination can be a tape drive, regular file, or remote tape device.

xfsdump -l 1 -f /dev/st0 /path/to/filesystem

# xfsdump -l 1 -f /dev/st0 /path/to/filesystemxfsrestore utility restores file systems from dumps produced by xfsdump. The xfsrestore utility has two modes: a default simple mode, and a cumulative mode. Specific dumps are identified by session ID or session label. As such, restoring a dump requires its corresponding session ID or label. To display the session ID and labels of all dumps (both full and incremental), use the -I option:

xfsrestore -I

# xfsrestore -IExample 8.4. Session ID and labels of all dumps

Simple Mode for xfsrestore

session-ID), restore it fully to /path/to/destination using:

xfsrestore -f /dev/st0 -S session-ID /path/to/destination

# xfsrestore -f /dev/st0 -S session-ID /path/to/destinationNote

-f option specifies the location of the dump, while the -S or -L option specifies which specific dump to restore. The -S option is used to specify a session ID, while the -L option is used for session labels. The -I option displays both session labels and IDs for each dump.

Cumulative Mode for xfsrestore

xfsrestore allows file system restoration from a specific incremental backup, for example, level 1 to level 9. To restore a file system from an incremental backup, simply add the -r option:

xfsrestore -f /dev/st0 -S session-ID -r /path/to/destination

# xfsrestore -f /dev/st0 -S session-ID -r /path/to/destinationInteractive Operation

xfsrestore utility also allows specific files from a dump to be extracted, added, or deleted. To use xfsrestore interactively, use the -i option, as in:

xfsrestore -f /dev/st0 -i

xfsrestore finishes reading the specified device. Available commands in this dialogue include cd, ls, add, delete, and extract; for a complete list of commands, use help.

man xfsdump and man xfsrestore.

8.8. Other XFS File System Utilities

- xfs_fsr

- Used to defragment mounted XFS file systems. When invoked with no arguments,

xfs_fsrdefragments all regular files in all mounted XFS file systems. This utility also allows users to suspend a defragmentation at a specified time and resume from where it left off later.In addition,xfs_fsralso allows the defragmentation of only one file, as inxfs_fsr /path/to/file. Red Hat advises against periodically defragmenting an entire file system, as this is normally not warranted. - xfs_bmap

- Prints the map of disk blocks used by files in an XFS filesystem. This map lists each extent used by a specified file, as well as regions in the file with no corresponding blocks (that is, holes).

- xfs_info

- Prints XFS file system information.

- xfs_admin

- Changes the parameters of an XFS file system. The

xfs_adminutility can only modify parameters of unmounted devices or file systems. - xfs_copy

- Copies the contents of an entire XFS file system to one or more targets in parallel.

- xfs_metadump

- Copies XFS file system metadata to a file. The

xfs_metadumputility should only be used to copy unmounted, read-only, or frozen/suspended file systems; otherwise, generated dumps could be corrupted or inconsistent. - xfs_mdrestore

- Restores an XFS metadump image (generated using

xfs_metadump) to a file system image. - xfs_db

- Debugs an XFS file system.

man pages.

Chapter 9. Network File System (NFS)

9.1. How NFS Works

rpcbind service, supports ACLs, and utilizes stateful operations. Red Hat Enterprise Linux 6 supports NFSv2, NFSv3, and NFSv4 clients. When mounting a file system via NFS, Red Hat Enterprise Linux uses NFSv4 by default, if the server supports it.

rpcbind [3], lockd, and rpc.statd daemons. The rpc.mountd daemon is required on the NFS server to set up the exports.

Note

'-p' command line option that can set the port, making firewall configuration easier.

/etc/exports configuration file to determine whether the client is allowed to access any exported file systems. Once verified, all file and directory operations are available to the user.

Important

rpc.nfsd process now allow binding to any specified port during system start up. However, this can be error-prone if the port is unavailable, or if it conflicts with another daemon.

9.1.1. Required Services

rpcbind service. To share or mount NFS file systems, the following services work together depending on which version of NFS is implemented:

Note

portmap service was used to map RPC program numbers to IP address port number combinations in earlier versions of Red Hat Enterprise Linux. This service is now replaced by rpcbind in Red Hat Enterprise Linux 6 to enable IPv6 support.

- nfs

service nfs startstarts the NFS server and the appropriate RPC processes to service requests for shared NFS file systems.- nfslock

service nfslock startactivates a mandatory service that starts the appropriate RPC processes allowing NFS clients to lock files on the server.- rpcbind

rpcbindaccepts port reservations from local RPC services. These ports are then made available (or advertised) so the corresponding remote RPC services can access them.rpcbindresponds to requests for RPC services and sets up connections to the requested RPC service. This is not used with NFSv4.- rpc.nfsd

rpc.nfsdallows explicit NFS versions and protocols the server advertises to be defined. It works with the Linux kernel to meet the dynamic demands of NFS clients, such as providing server threads each time an NFS client connects. This process corresponds to thenfsservice.Note

As of Red Hat Enterprise Linux 6.3, only the NFSv4 server usesrpc.idmapd. The NFSv4 client uses the keyring-based idmappernfsidmap.nfsidmapis a stand-alone program that is called by the kernel on-demand to perform ID mapping; it is not a daemon. If there is a problem withnfsidmapdoes the client fall back to usingrpc.idmapd. More information regardingnfsidmapcan be found on the nfsidmap man page.

- rpc.mountd

- This process is used by an NFS server to process

MOUNTrequests from NFSv2 and NFSv3 clients. It checks that the requested NFS share is currently exported by the NFS server, and that the client is allowed to access it. If the mount request is allowed, the rpc.mountd server replies with aSuccessstatus and provides theFile-Handlefor this NFS share back to the NFS client. - lockd

lockdis a kernel thread which runs on both clients and servers. It implements the Network Lock Manager (NLM) protocol, which allows NFSv2 and NFSv3 clients to lock files on the server. It is started automatically whenever the NFS server is run and whenever an NFS file system is mounted.- rpc.statd

- This process implements the Network Status Monitor (NSM) RPC protocol, which notifies NFS clients when an NFS server is restarted without being gracefully brought down.

rpc.statdis started automatically by thenfslockservice, and does not require user configuration. This is not used with NFSv4. - rpc.rquotad

- This process provides user quota information for remote users.

rpc.rquotadis started automatically by thenfsservice and does not require user configuration. - rpc.idmapd

rpc.idmapdprovides NFSv4 client and server upcalls, which map between on-the-wire NFSv4 names (strings in the form ofuser@domain) and local UIDs and GIDs. Foridmapdto function with NFSv4, the/etc/idmapd.conffile must be configured. At a minimum, the "Domain" parameter should be specified, which defines the NFSv4 mapping domain. If the NFSv4 mapping domain is the same as the DNS domain name, this parameter can be skipped. The client and server must agree on the NFSv4 mapping domain for ID mapping to function properly.

Important

9.2. pNFS

-o v4.1 mount option on mounts from a pNFS-enabled server.

nfs_layout_nfsv41_files kernel is automatically loaded on the first mount. If the module is successfully loaded, the following message is logged in the /var/log/messages file:

kernel: nfs4filelayout_init: NFSv4 File Layout Driver Registering...

kernel: nfs4filelayout_init: NFSv4 File Layout Driver Registering...mount | grep /proc/mounts

$ mount | grep /proc/mountsImportant

files, blocks, objects, flexfiles, and SCSI.

9.3. NFS Client Configuration

mount command mounts NFS shares on the client side. Its format is as follows:

mount -t nfs -o options server:/remote/export /local/directory

# mount -t nfs -o options server:/remote/export /local/directory- options

- A comma-delimited list of mount options; refer to Section 9.5, “Common NFS Mount Options” for details on valid NFS mount options.

- server

- The hostname, IP address, or fully qualified domain name of the server exporting the file system you wish to mount

- /remote/export

- The file system or directory being exported from the server, that is, the directory you wish to mount

- /local/directory

- The client location where /remote/export is mounted

mount options nfsvers or vers. By default, mount will use NFSv4 with mount -t nfs. If the server does not support NFSv4, the client will automatically step down to a version supported by the server. If the nfsvers/vers option is used to pass a particular version not supported by the server, the mount will fail. The file system type nfs4 is also available for legacy reasons; this is equivalent to running mount -t nfs -o nfsvers=4 host:/remote/export /local/directory.

man mount for more details.

/etc/fstab file and the autofs service. Refer to Section 9.3.1, “Mounting NFS File Systems using /etc/fstab” and Section 9.4, “autofs” for more information.

9.3.1. Mounting NFS File Systems using /etc/fstab

/etc/fstab file. The line must state the hostname of the NFS server, the directory on the server being exported, and the directory on the local machine where the NFS share is to be mounted. You must be root to modify the /etc/fstab file.

Example 9.1. Syntax example

/etc/fstab is as follows:

server:/usr/local/pub /pub nfs defaults 0 0

server:/usr/local/pub /pub nfs defaults 0 0/pub must exist on the client machine before this command can be executed. After adding this line to /etc/fstab on the client system, use the command mount /pub, and the mount point /pub is mounted from the server.

/etc/fstab file is referenced by the netfs service at boot time, so lines referencing NFS shares have the same effect as manually typing the mount command during the boot process.

/etc/fstab entry to mount an NFS export should contain the following information:

server:/remote/export /local/directory nfs options 0 0

server:/remote/export /local/directory nfs options 0 0Note

/etc/fstab is read. Otherwise, the mount will fail.

/etc/fstab, refer to man fstab.

9.4. autofs

/etc/fstab is that, regardless of how infrequently a user accesses the NFS mounted file system, the system must dedicate resources to keep the mounted file system in place. This is not a problem with one or two mounts, but when the system is maintaining mounts to many systems at one time, overall system performance can be affected. An alternative to /etc/fstab is to use the kernel-based automount utility. An automounter consists of two components:

- a kernel module that implements a file system, and

- a user-space daemon that performs all of the other functions.

automount utility can mount and unmount NFS file systems automatically (on-demand mounting), therefore saving system resources. It can be used to mount other file systems including AFS, SMBFS, CIFS, and local file systems.

Important

autofs uses /etc/auto.master (master map) as its default primary configuration file. This can be changed to use another supported network source and name using the autofs configuration (in /etc/sysconfig/autofs) in conjunction with the Name Service Switch (NSS) mechanism. An instance of the autofs version 4 daemon was run for each mount point configured in the master map and so it could be run manually from the command line for any given mount point. This is not possible with autofs version 5, because it uses a single daemon to manage all configured mount points; as such, all automounts must be configured in the master map. This is in line with the usual requirements of other industry standard automounters. Mount point, hostname, exported directory, and options can all be specified in a set of files (or other supported network sources) rather than configuring them manually for each host.

9.4.1. Improvements in autofs Version 5 over Version 4

autofs version 5 features the following enhancements over version 4:

- Direct map support

- Direct maps in

autofsprovide a mechanism to automatically mount file systems at arbitrary points in the file system hierarchy. A direct map is denoted by a mount point of/-in the master map. Entries in a direct map contain an absolute path name as a key (instead of the relative path names used in indirect maps). - Lazy mount and unmount support

- Multi-mount map entries describe a hierarchy of mount points under a single key. A good example of this is the

-hostsmap, commonly used for automounting all exports from a host under/net/hostas a multi-mount map entry. When using the-hostsmap, anlsof/net/hostwill mount autofs trigger mounts for each export from host. These will then mount and expire them as they are accessed. This can greatly reduce the number of active mounts needed when accessing a server with a large number of exports. - Enhanced LDAP support

- The

autofsconfiguration file (/etc/sysconfig/autofs) provides a mechanism to specify theautofsschema that a site implements, thus precluding the need to determine this via trial and error in the application itself. In addition, authenticated binds to the LDAP server are now supported, using most mechanisms supported by the common LDAP server implementations. A new configuration file has been added for this support:/etc/autofs_ldap_auth.conf. The default configuration file is self-documenting, and uses an XML format. - Proper use of the Name Service Switch (

nsswitch) configuration. - The Name Service Switch configuration file exists to provide a means of determining from where specific configuration data comes. The reason for this configuration is to allow administrators the flexibility of using the back-end database of choice, while maintaining a uniform software interface to access the data. While the version 4 automounter is becoming increasingly better at handling the NSS configuration, it is still not complete. Autofs version 5, on the other hand, is a complete implementation.Refer to

man nsswitch.conffor more information on the supported syntax of this file. Not all NSS databases are valid map sources and the parser will reject ones that are invalid. Valid sources are files,yp,nis,nisplus,ldap, andhesiod. - Multiple master map entries per autofs mount point

- One thing that is frequently used but not yet mentioned is the handling of multiple master map entries for the direct mount point

/-. The map keys for each entry are merged and behave as one map.Example 9.2. Multiple master map entries per autofs mount point

An example is seen in the connectathon test maps for the direct mounts below:/- /tmp/auto_dcthon /- /tmp/auto_test3_direct /- /tmp/auto_test4_direct

/- /tmp/auto_dcthon /- /tmp/auto_test3_direct /- /tmp/auto_test4_directCopy to Clipboard Copied! Toggle word wrap Toggle overflow

9.4.2. autofs Configuration

/etc/auto.master, also referred to as the master map which may be changed as described in the Section 9.4.1, “Improvements in autofs Version 5 over Version 4”. The master map lists autofs-controlled mount points on the system, and their corresponding configuration files or network sources known as automount maps. The format of the master map is as follows:

mount-point map-name options

mount-point map-name options- mount-point

- The

autofsmount point,/home, for example. - map-name

- The name of a map source which contains a list of mount points, and the file system location from which those mount points should be mounted. The syntax for a map entry is described below.

- options

- If supplied, these will apply to all entries in the given map provided they don't themselves have options specified. This behavior is different from

autofsversion 4 where options were cumulative. This has been changed to implement mixed environment compatibility.

Example 9.3. /etc/auto.master file

/etc/auto.master file (displayed with cat /etc/auto.master):

/home /etc/auto.misc

/home /etc/auto.miscmount-point [options] location

mount-point [options] location- mount-point

- This refers to the

autofsmount point. This can be a single directory name for an indirect mount or the full path of the mount point for direct mounts. Each direct and indirect map entry key (mount-pointabove) may be followed by a space separated list of offset directories (sub directory names each beginning with a "/") making them what is known as a multi-mount entry. - options

- Whenever supplied, these are the mount options for the map entries that do not specify their own options.

- location

- This refers to the file system location such as a local file system path (preceded with the Sun map format escape character ":" for map names beginning with "/"), an NFS file system or other valid file system location.

/etc/auto.misc):

payroll -fstype=nfs personnel:/exports/payroll sales -fstype=ext3 :/dev/hda4

payroll -fstype=nfs personnel:/exports/payroll

sales -fstype=ext3 :/dev/hda4autofs mount point (sales and payroll from the server called personnel). The second column indicates the options for the autofs mount while the third column indicates the source of the mount. Following the above configuration, the autofs mount points will be /home/payroll and /home/sales. The -fstype= option is often omitted and is generally not needed for correct operation.

service autofs start(if the automount daemon has stopped)service autofs restart

autofs unmounted directory such as /home/payroll/2006/July.sxc, the automount daemon automatically mounts the directory. If a timeout is specified, the directory will automatically be unmounted if the directory is not accessed for the timeout period.

service autofs status

# service autofs status9.4.3. Overriding or Augmenting Site Configuration Files

- Automounter maps are stored in NIS and the

/etc/nsswitch.conffile has the following directive:automount: files nis

automount: files nisCopy to Clipboard Copied! Toggle word wrap Toggle overflow - The

auto.masterfile contains the following+auto.master

+auto.masterCopy to Clipboard Copied! Toggle word wrap Toggle overflow - The NIS

auto.mastermap file contains the following:/home auto.home

/home auto.homeCopy to Clipboard Copied! Toggle word wrap Toggle overflow - The NIS

auto.homemap contains the following:beth fileserver.example.com:/export/home/beth joe fileserver.example.com:/export/home/joe * fileserver.example.com:/export/home/&

beth fileserver.example.com:/export/home/beth joe fileserver.example.com:/export/home/joe * fileserver.example.com:/export/home/&Copy to Clipboard Copied! Toggle word wrap Toggle overflow - The file map

/etc/auto.homedoes not exist.

auto.home and mount home directories from a different server. In this case, the client will need to use the following /etc/auto.master map:

/home /etc/auto.home +auto.master

/home /etc/auto.home

+auto.master/etc/auto.home map contains the entry:

* labserver.example.com:/export/home/&

* labserver.example.com:/export/home/&/home will contain the contents of /etc/auto.home instead of the NIS auto.home map.

auto.home map with just a few entries, create an /etc/auto.home file map, and in it put the new entries. At the end, include the NIS auto.home map. Then the /etc/auto.home file map will look similar to:

mydir someserver:/export/mydir +auto.home

mydir someserver:/export/mydir

+auto.homeauto.home map listed above, ls /home would now output:

beth joe mydir

beth joe mydirautofs does not include the contents of a file map of the same name as the one it is reading. As such, autofs moves on to the next map source in the nsswitch configuration.

9.4.4. Using LDAP to Store Automounter Maps

openldap package should be installed automatically as a dependency of the automounter. To configure LDAP access, modify /etc/openldap/ldap.conf. Ensure that BASE, URI, and schema are set appropriately for your site.

rfc2307bis. To use this schema it is necessary to set it in the autofs configuration /etc/autofs.conf by removing the comment characters from the schema definition.

Example 9.4. Setting autofs configuration

Note

/etc/autofs.conf file instead of the /etc/systemconfig/autofs file as was the case in previous releases.

automountKey replaces the cn attribute in the rfc2307bis schema. An LDIF of a sample configuration is described below:

Example 9.5. LDIF configuration

9.5. Common NFS Mount Options

mount commands, /etc/fstab settings, and autofs.

- intr

- Allows NFS requests to be interrupted if the server goes down or cannot be reached.

- lookupcache=mode

- Specifies how the kernel should manage its cache of directory entries for a given mount point. Valid arguments for mode are

all,none, orpos/positive. - nfsvers=version

- Specifies which version of the NFS protocol to use, where version is 2, 3, or 4. This is useful for hosts that run multiple NFS servers. If no version is specified, NFS uses the highest version supported by the kernel and

mountcommand.The optionversis identical tonfsvers, and is included in this release for compatibility reasons. - noacl

- Turns off all ACL processing. This may be needed when interfacing with older versions of Red Hat Enterprise Linux, Red Hat Linux, or Solaris, since the most recent ACL technology is not compatible with older systems.

- nolock

- Disables file locking. This setting is occasionally required when connecting to older NFS servers.

- noexec

- Prevents execution of binaries on mounted file systems. This is useful if the system is mounting a non-Linux file system containing incompatible binaries.

- nosuid

- Disables

set-user-identifierorset-group-identifierbits. This prevents remote users from gaining higher privileges by running asetuidprogram. - port=num

port=num— Specifies the numeric value of the NFS server port. Ifnumis0(the default), thenmountqueries the remote host'srpcbindservice for the port number to use. If the remote host's NFS daemon is not registered with itsrpcbindservice, the standard NFS port number of TCP 2049 is used instead.- rsize=num and wsize=num

- These settings speed up NFS communication for reads (

rsize) and writes (wsize) by setting a larger data block size (num, in bytes), to be transferred at one time. Be careful when changing these values; some older Linux kernels and network cards do not work well with larger block sizes.Note

If an rsize value is not specified, or if the specified value is larger than the maximum that either client or server can support, then the client and server negotiate the largest resize value they can both support. - sec=mode

- Specifies the type of security to utilize when authenticating an NFS connection. Its default setting is

sec=sys, which uses local UNIX UIDs and GIDs by usingAUTH_SYSto authenticate NFS operations.sec=krb5uses Kerberos V5 instead of local UNIX UIDs and GIDs to authenticate users.sec=krb5iuses Kerberos V5 for user authentication and performs integrity checking of NFS operations using secure checksums to prevent data tampering.sec=krb5puses Kerberos V5 for user authentication, integrity checking, and encrypts NFS traffic to prevent traffic sniffing. This is the most secure setting, but it also involves the most performance overhead. - tcp

- Instructs the NFS mount to use the TCP protocol.

- udp

- Instructs the NFS mount to use the UDP protocol.

man mount and man nfs.

9.6. Starting and Stopping NFS

rpcbind[3] service must be running. To verify that rpcbind is active, use the following command:

service rpcbind status

# service rpcbind statusrpcbind service is running, then the nfs service can be started. To start an NFS server, use the following command:

service nfs start

# service nfs startnfslock must also be started for both the NFS client and server to function properly. To start NFS locking, use the following command:

service nfslock start

# service nfslock startnfslock also starts by running chkconfig --list nfslock. If nfslock is not set to on, this implies that you will need to manually run the service nfslock start each time the computer starts. To set nfslock to automatically start on boot, use chkconfig nfslock on.

nfslock is only needed for NFSv2 and NFSv3.

service nfs stop

# service nfs stoprestart option is a shorthand way of stopping and then starting NFS. This is the most efficient way to make configuration changes take effect after editing the configuration file for NFS. To restart the server type:

service nfs restart

# service nfs restartcondrestart (conditional restart) option only starts nfs if it is currently running. This option is useful for scripts, because it does not start the daemon if it is not running. To conditionally restart the server type:

service nfs condrestart

# service nfs condrestartservice nfs reload

# service nfs reload9.7. NFS Server Configuration

- Manually editing the NFS configuration file, that is,

/etc/exports, and - through the command line, that is, by using the command

exportfs

9.7.1. The /etc/exports Configuration File

/etc/exports file controls which file systems are exported to remote hosts and specifies options. It follows the following syntax rules:

- Blank lines are ignored.

- To add a comment, start a line with the hash mark (

#). - You can wrap long lines with a backslash (

\). - Each exported file system should be on its own individual line.

- Any lists of authorized hosts placed after an exported file system must be separated by space characters.

- Options for each of the hosts must be placed in parentheses directly after the host identifier, without any spaces separating the host and the first parenthesis.

export host(options)

export host(options)- export

- The directory being exported

- host

- The host or network to which the export is being shared

- options

- The options to be used for host

export host1(options1) host2(options2) host3(options3)

export host1(options1) host2(options2) host3(options3)/etc/exports file only specifies the exported directory and the hosts permitted to access it, as in the following example:

Example 9.6. The /etc/exports file

/exported/directory bob.example.com

/exported/directory bob.example.combob.example.com can mount /exported/directory/ from the NFS server. Because no options are specified in this example, NFS will use default settings.

- ro

- The exported file system is read-only. Remote hosts cannot change the data shared on the file system. To allow hosts to make changes to the file system (that is, read/write), specify the

rwoption. - sync

- The NFS server will not reply to requests before changes made by previous requests are written to disk. To enable asynchronous writes instead, specify the option

async. - wdelay

- The NFS server will delay writing to the disk if it suspects another write request is imminent. This can improve performance as it reduces the number of times the disk must be accesses by separate write commands, thereby reducing write overhead. To disable this, specify the

no_wdelay.no_wdelayis only available if the defaultsyncoption is also specified. - root_squash

- This prevents root users connected remotely (as opposed to locally) from having root privileges; instead, the NFS server will assign them the user ID

nfsnobody. This effectively "squashes" the power of the remote root user to the lowest local user, preventing possible unauthorized writes on the remote server. To disable root squashing, specifyno_root_squash.

all_squash. To specify the user and group IDs that the NFS server should assign to remote users from a particular host, use the anonuid and anongid options, respectively, as in:

export host(anonuid=uid,anongid=gid)

export host(anonuid=uid,anongid=gid)anonuid and anongid options allow you to create a special user and group account for remote NFS users to share.

no_acl option when exporting the file system.

rw option is not specified, then the exported file system is shared as read-only. The following is a sample line from /etc/exports which overrides two default options:

/another/exported/directory 192.168.0.3(rw,async)

192.168.0.3 can mount /another/exported/directory/ read/write and all writes to disk are asynchronous. For more information on exporting options, refer to man exportfs.

man exports for details on these less-used options.

Important

/etc/exports file is very precise, particularly in regards to use of the space character. Remember to always separate exported file systems from hosts and hosts from one another with a space character. However, there should be no other space characters in the file except on comment lines.

/home bob.example.com(rw) /home bob.example.com (rw)

/home bob.example.com(rw)

/home bob.example.com (rw)bob.example.com read/write access to the /home directory. The second line allows users from bob.example.com to mount the directory as read-only (the default), while the rest of the world can mount it read/write.

9.7.2. The exportfs Command

/etc/exports file. When the nfs service starts, the /usr/sbin/exportfs command launches and reads this file, passes control to rpc.mountd (if NFSv2 or NFSv3) for the actual mounting process, then to rpc.nfsd where the file systems are then available to remote users.

/usr/sbin/exportfs command allows the root user to selectively export or unexport directories without restarting the NFS service. When given the proper options, the /usr/sbin/exportfs command writes the exported file systems to /var/lib/nfs/etab. Since rpc.mountd refers to the etab file when deciding access privileges to a file system, changes to the list of exported file systems take effect immediately.

/usr/sbin/exportfs:

- -r

- Causes all directories listed in

/etc/exportsto be exported by constructing a new export list in/etc/lib/nfs/etab. This option effectively refreshes the export list with any changes made to/etc/exports. - -a

- Causes all directories to be exported or unexported, depending on what other options are passed to

/usr/sbin/exportfs. If no other options are specified,/usr/sbin/exportfsexports all file systems specified in/etc/exports. - -o file-systems

- Specifies directories to be exported that are not listed in

/etc/exports. Replace file-systems with additional file systems to be exported. These file systems must be formatted in the same way they are specified in/etc/exports. This option is often used to test an exported file system before adding it permanently to the list of file systems to be exported. Refer to Section 9.7.1, “The/etc/exportsConfiguration File” for more information on/etc/exportssyntax. - -i

- Ignores

/etc/exports; only options given from the command line are used to define exported file systems. - -u

- Unexports all shared directories. The command

/usr/sbin/exportfs -uasuspends NFS file sharing while keeping all NFS daemons up. To re-enable NFS sharing, useexportfs -r. - -v

- Verbose operation, where the file systems being exported or unexported are displayed in greater detail when the

exportfscommand is executed.

exportfs command, it displays a list of currently exported file systems. For more information about the exportfs command, refer to man exportfs.

9.7.2.1. Using exportfs with NFSv4

RPCNFSDARGS= -N 4 in /etc/sysconfig/nfs.

9.7.3. Running NFS Behind a Firewall

rpcbind, which dynamically assigns ports for RPC services and can cause problems for configuring firewall rules. To allow clients to access NFS shares behind a firewall, edit the /etc/sysconfig/nfs configuration file to control which ports the required RPC services run on.

/etc/sysconfig/nfs may not exist by default on all systems. If it does not exist, create it and add the following variables, replacing port with an unused port number (alternatively, if the file exists, un-comment and change the default entries as required):

MOUNTD_PORT=port- Controls which TCP and UDP port

mountd(rpc.mountd) uses. STATD_PORT=port- Controls which TCP and UDP port status (

rpc.statd) uses. LOCKD_TCPPORT=port- Controls which TCP port

nlockmgr(lockd) uses. LOCKD_UDPPORT=port- Controls which UDP port

nlockmgr(lockd) uses.

/var/log/messages. Normally, NFS will fail to start if you specify a port number that is already in use. After editing /etc/sysconfig/nfs, restart the NFS service using service nfs restart. Run the rpcinfo -p command to confirm the changes.

Procedure 9.1. Configure a firewall to allow NFS

- Allow TCP and UDP port 2049 for NFS.

- Allow TCP and UDP port 111 (

rpcbind/sunrpc). - Allow the TCP and UDP port specified with

MOUNTD_PORT="port" - Allow the TCP and UDP port specified with

STATD_PORT="port" - Allow the TCP port specified with

LOCKD_TCPPORT="port" - Allow the UDP port specified with

LOCKD_UDPPORT="port"

Note

/proc/sys/fs/nfs/nfs_callback_tcpport and allow the server to connect to that port on the client.

mountd, statd, and lockd are not required in a pure NFSv4 environment.

9.7.3.1. Discovering NFS exports

showmount command:

showmount -e myserver Export list for mysever /exports/foo /exports/bar

$ showmount -e myserver

Export list for mysever

/exports/foo

/exports/bar/ and look around.

Note

9.7.4. Hostname Formats

- Single machine

- A fully-qualified domain name (that can be resolved by the server), hostname (that can be resolved by the server), or an IP address.

- Series of machines specified with wildcards

- Use the

*or?character to specify a string match. Wildcards are not to be used with IP addresses; however, they may accidentally work if reverse DNS lookups fail. When specifying wildcards in fully qualified domain names, dots (.) are not included in the wildcard. For example,*.example.comincludesone.example.combut does notinclude one.two.example.com. - IP networks

- Use a.b.c.d/z, where a.b.c.d is the network and z is the number of bits in the netmask (for example 192.168.0.0/24). Another acceptable format is a.b.c.d/netmask, where a.b.c.d is the network and netmask is the netmask (for example, 192.168.100.8/255.255.255.0).

- Netgroups

- Use the format @group-name, where group-name is the NIS netgroup name.

9.7.5. NFS over RDMA

Procedure 9.2. Enabling RDMA transport in the NFS server

- Ensure the RDMA RPM is installed and the RDMA service is enabled:

yum install rdma; chkconfig --level 2345 rdma on

# yum install rdma; chkconfig --level 2345 rdma onCopy to Clipboard Copied! Toggle word wrap Toggle overflow - Ensure the package that provides the

nfs-rdmaservice is installed and the service is enabled:yum install rdma; chkconfig --level 345 nfs-rdma on

# yum install rdma; chkconfig --level 345 nfs-rdma onCopy to Clipboard Copied! Toggle word wrap Toggle overflow - Ensure that the RDMA port is set to the preferred port (default for Red Hat Enterprise Linux 6 is

2050): edit the/etc/rdma/rdma.conffile to setNFSoRDMA_LOAD=yesandNFSoRDMA_PORTto the desired port. - Set up the exported file system as normal for NFS mounts.

Procedure 9.3. Enabling RDMA from the client

- Ensure the RDMA RPM is installed and the RDMA service is enabled:

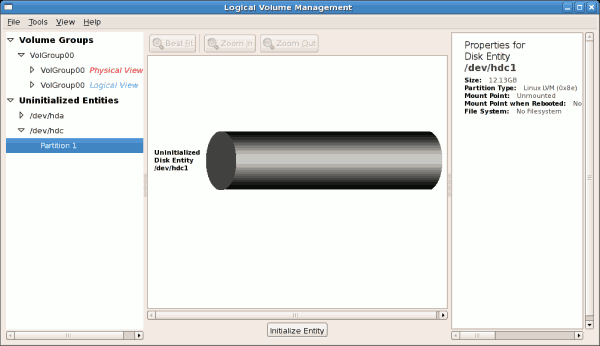

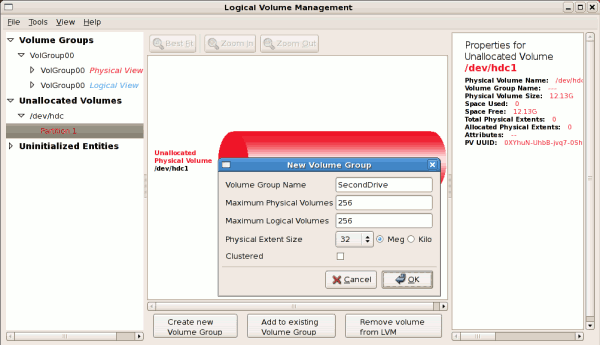

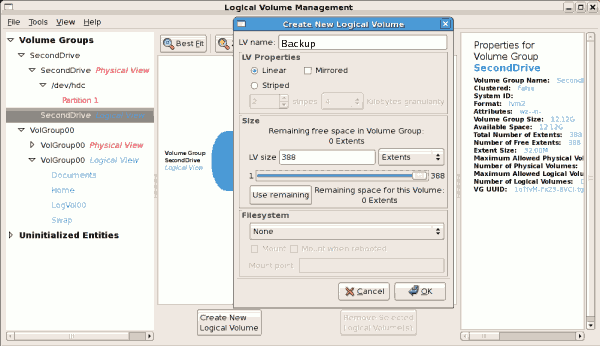

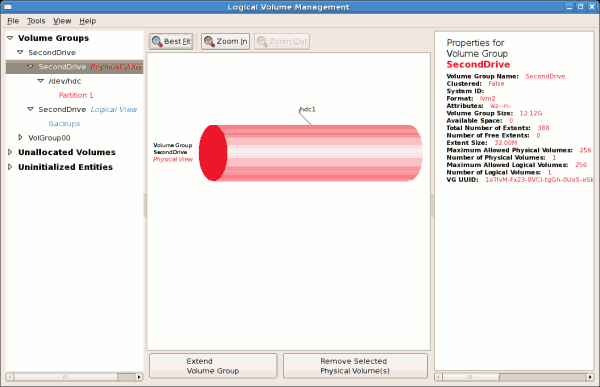

yum install rdma; chkconfig --level 2345 rdma on