System Administrator’s Guide

Deployment, configuration, and administration of RHEL 7

Abstract

Part I. Basic System Configuration

This part covers basic post-installation tasks and basic system administration tasks such as keyboard configuration, date and time configuration, managing users and groups, and gaining privileges.

Chapter 1. Getting Started

This chapter covers the basic tasks that you might need to perform just after you have installed Red Hat Enterprise Linux 7.

Note that some of these tasks, such as registering the system, are usually completed during the installation process, but they do not have to be. The subsections dealing with such tasks briefly summarize how to complete them during the installation and provide links to related documentation in a special section.

For detailed information on Red Hat Enterprise Linux 7 installation, consult Red Hat Enterprise Linux 7 Installation Guide.

This chapter includes commands for you to run. Commands that must be entered by the root user have # in the prompt, while commands that can be run by a regular user have $ in the prompt.

For further information on common post-installation tasks, see also Red Hat Enterprise Linux 7 Installation Guide.

Although all post-installation tasks can be achieved through the command line, you can also use the web console tool to perform some of them.

What web console Is and Which Tasks It Can Be Used For

The web console is a system administration tool that provides a user interface for monitoring and administering servers through a web browser.

The web console enables you to perform these tasks:

- Monitoring basic system features, such as hardware, internet connection, or performance characteristics

- Analyzing the content of the system log files

- Configuring basic networking features, such as interfaces, network logs, and packet sizes

- Managing user accounts

- Monitoring and configuring system services

- Creating diagnostic reports

- Setting kernel dump configuration

- Configuring SELinux

- Managing system subscriptions

- Accessing the terminal

For more information on installing and using web console, see Managing systems using the RHEL 7 web console.

1.1. Basic Configuration of the Environment

Basic configuration of the environment includes:

- Date and Time

- System Locales

- Keyboard Layout

Setting of these items is normally a part of the installation process.

For more information, see the appropriate source according to the installation method:

When installing with the Anaconda installer, see:

Date&Time, Language Support and Keyboard Configuration in Red Hat Enterprise Linux 7 Installation Guide

When installing with the Kickstart file, consult:

Kickstart Commands and Options in Red Hat Enterprise Linux 7 Installation Guide.

If you need to reconfigure the basic characteristics of the environment after the installation, follow the instructions in this section.

1.1.1. Introduction to Configuring the Date and Time

Accurate time keeping is important for a number of reasons. In Red Hat Enterprise Linux 7, time keeping is ensured by the NTP protocol, which is implemented by a daemon running in user space. The user space daemon updates the system clock running in the kernel. The system clock can keep time by using various clock sources.

Red Hat Enterprise Linux 7 uses the following daemons to implement NTP:

chronyd

The chronyd daemon is used by default. It is available from the chrony package. For more information on configuring and using NTP with chronyd, see Chapter 18, Configuring NTP Using the chrony Suite.

ntpd

The ntpd daemon is available from the ntp package. For more information on configuring and using NTP with ntpd, see Chapter 19, Configuring NTP Using ntpd.

If you want to use ntpd instead of the default chronyd, you need to disable chronyd, and then install, enable, and configure ntpd as shown in Chapter 19, Configuring NTP Using ntpd.

Displaying the Current Date and Time

To display the current date and time, use one of the following commands:

~]$ date

~]$ timedatectl

Note that the timedatectl command provides more verbose output, including universal time, currently used time zone, the status of the Network Time Protocol (NTP) configuration, and some additional information.
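As a small illustration of the date command shown above, the following sketch prints the current time in UTC in an ISO 8601-style layout. The format string and the variable name now_utc are choices made for this example only and are not prescribed by this guide.

```shell
# Print the current time in UTC, for example for use in a log entry.
# -u selects UTC; the +FORMAT string is an illustrative choice.
now_utc=$(date -u +%Y-%m-%dT%H:%M:%SZ)
echo "$now_utc"
```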

For more information on configuring the date and time, see Chapter 3, Configuring the Date and Time.

1.1.2. Introduction to Configuring the System Locale

System-wide locale settings are stored in the /etc/locale.conf file, which is read at early boot by the systemd daemon. The locale settings configured in /etc/locale.conf are inherited by every service or user, unless individual programs or individual users override them.

Basic tasks to handle the system locales:

Listing available system locale settings:

~]$ localectl list-locales

Displaying current status of the system locale settings:

~]$ localectl status

Setting or changing the default system locale settings:

~]# localectl set-locale LANG=locale

For more information on configuring the system locale, see Chapter 2, System Locale and Keyboard Configuration.
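Because /etc/locale.conf is a plain file of variable assignments, the configured default can also be read directly. The following is a minimal sketch, assuming the file contains a line of the form LANG=value; the helper name get_lang and the temporary sample file are hypothetical and exist only for this illustration.

```shell
# Print the value of LANG from a locale.conf-style file.
# Usage: get_lang [FILE]   (FILE defaults to /etc/locale.conf)
get_lang() {
    # Take the last LANG= assignment and strip optional double quotes.
    sed -n 's/^LANG=//p' "${1:-/etc/locale.conf}" | tail -n 1 | tr -d '"'
}

# Demonstration on a temporary sample file rather than the real one.
sample=$(mktemp)
printf 'LANG="en_US.UTF-8"\n' > "$sample"
get_lang "$sample"
rm -f "$sample"
```

On an installed system, calling get_lang without an argument reads /etc/locale.conf itself; localectl status remains the supported way to inspect the setting.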

1.1.3. Introduction to Configuring the Keyboard Layout

The keyboard layout settings control the layout used on the text console and graphical user interfaces.

Basic tasks to handle the keyboard layout include:

Listing available keymaps:

~]$ localectl list-keymaps

Displaying current status of keymap settings:

~]$ localectl status

Setting or changing the default system keymap:

~]# localectl set-keymap map

Replace map with one of the names printed by the localectl list-keymaps command.

For more information on configuring the keyboard layout, see Chapter 2, System Locale and Keyboard Configuration.

1.2. Configuring and Inspecting Network Access

The network access is usually configured during the installation process. However, the installation process does not prompt you to configure network interfaces on some common installation paths. Consequently, it is possible that the network access is not configured after the installation. If this happens, you can configure network access after installation.

For a quickstart to configuring network access during the installation, see Section 1.2.1, “Configuring Network Access During the Installation Process”. To configure network access after the installation, you can use either the nmcli command-line utility or the nmtui text user interface utility, both described in Red Hat Enterprise Linux 7 Networking Guide.

The nmcli and nmtui utilities also enable you to add one or more new network connections, as well as modify and inspect the existing connections. If you want to create and manage network connections with nmcli, see Section 1.2.2, “Managing Network Connections After the Installation Process Using nmcli”. If you want to create and manage network connections with nmtui, see Section 1.2.3, “Managing Network Connections After the Installation Process Using nmtui”.

1.2.1. Configuring Network Access During the Installation Process

Ways to configure network access during the installation process:

- The menu at the Installation Summary screen in the graphical user interface of the Anaconda installation program

- The option in the text mode of the Anaconda installation program

- The Kickstart file

When the system boots for the first time after the installation has finished, any network interfaces which you configured during the installation are automatically activated.

For detailed information on configuration of network access during installation process, see Red Hat Enterprise Linux 7 Installation Guide.

1.2.2. Managing Network Connections After the Installation Process Using nmcli

Run the following commands as the root user to manage network connections using the nmcli utility.

To create a new connection:

~]# nmcli con add type connection_type con-name connection_name ifname interface_name ip4 ipv4_address gw4 gateway_address

Replace connection_type with the type of the connection, connection_name with the name of the connection, interface_name with the name of the interface, ipv4_address with the IPv4 address, and gateway_address with the gateway address.

To modify the existing connection:

~]# nmcli con mod "con-name"

To display all connections:

~]# nmcli con show

To display the active connection:

~]# nmcli con show --active

To display all configuration settings of a particular connection:

~]# nmcli con show "con-name"

For more information on the nmcli command-line utility, see Red Hat Enterprise Linux 7 Networking Guide.

1.2.3. Managing Network Connections After the Installation Process Using nmtui

The NetworkManager text user interface (TUI) utility, nmtui, provides a text interface to configure networking by controlling NetworkManager.

For more information about installing and using the nmtui text interface tool, see Red Hat Enterprise Linux 7 Networking Guide.

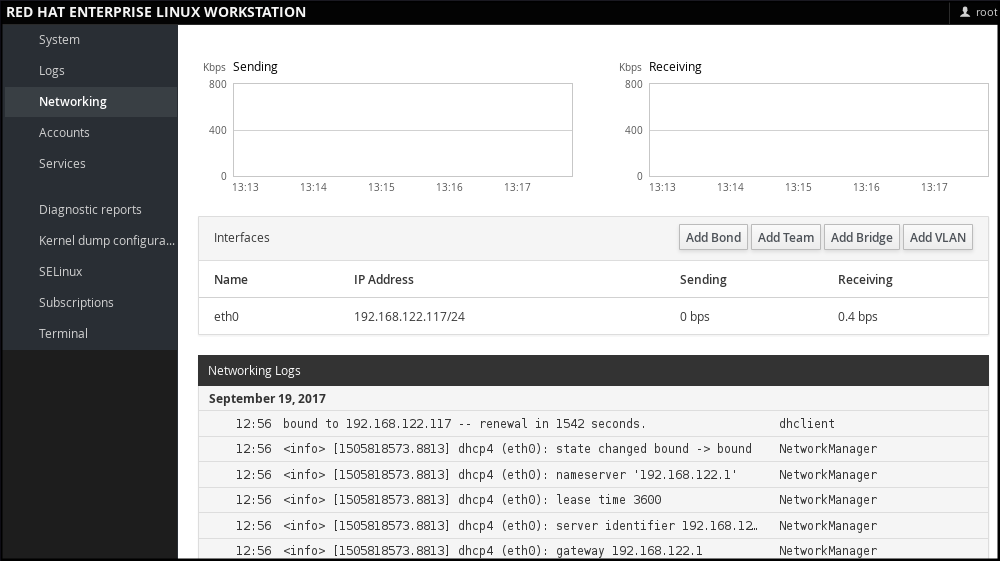

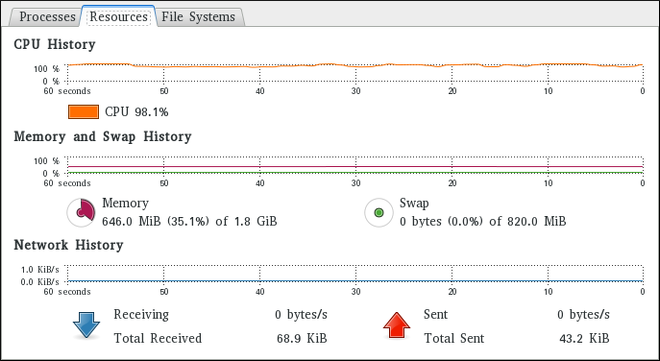

1.2.4. Managing Networking in web console

In web console, the menu enables you to:

- Display currently received and sent packets

- Display the most important characteristics of available network interfaces

- Display the content of the networking logs

- Add various types of network interfaces (bond, team, bridge, VLAN)

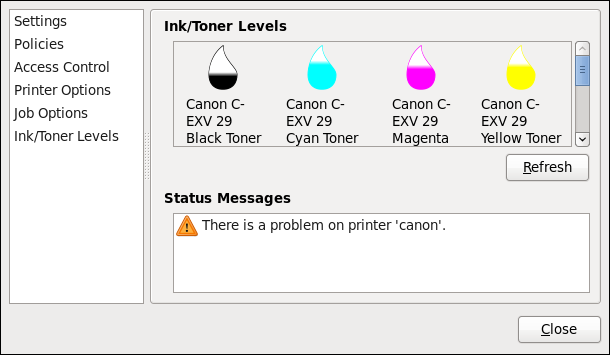

Figure 1.1. Managing Networking in web console

1.3. The Basics of Registering the System and Managing Subscriptions

1.3.1. What Red Hat Subscriptions Are and Which Tasks They Can Be Used For

The products installed on Red Hat Enterprise Linux 7, including the operating system itself, are covered by subscriptions.

A subscription to Red Hat Content Delivery Network is used to track:

- Registered systems

- Products installed on those systems

- Subscriptions attached to those products

1.3.2. Registering the System During the Installation

This section provides a brief summary of registering Red Hat Enterprise Linux 7 during the installation process. If your operating system is not registered after the installation, you can find what might have been missed during the installation by reading through this section. For detailed information, consult Red Hat Enterprise Linux 7 Installation Guide.

Basically, there are two ways to register the system during the installation:

- Normally, registration is a part of the Initial Setup configuration process. For more information, see Red Hat Enterprise Linux 7 Installation Guide.

- Another option is to run Subscription Manager as a post-installation script, which performs the automatic registration when the installation is complete and before the system is rebooted for the first time. To do so, modify the %post section of the Kickstart file. For more detailed information on running Subscription Manager as a post-installation script, see Red Hat Enterprise Linux 7 Installation Guide.

1.3.3. Registering the System after the Installation

If you did not register your system during the installation process, you can do it afterwards by following this procedure. Note that all commands in this procedure need to be performed as the root user.

Registering and Subscribing Your System

Register your system:

~]# subscription-manager register

The command will prompt you to enter your Red Hat Customer Portal user name and password.

Determine the pool ID of a subscription that you require:

~]# subscription-manager list --available

This command displays all available subscriptions for your Red Hat account. For every subscription, various characteristics are displayed, including the pool ID.

Attach the appropriate subscription to your system by replacing pool_id with the pool ID determined in the previous step:

~]# subscription-manager attach --pool=pool_id

For more information on registration of your system and attachment of the Red Hat Content Delivery Network subscriptions, see Chapter 7, Registering the System and Managing Subscriptions.
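When many subscriptions are listed, picking out the pool IDs by eye can be tedious. The following is a minimal sketch of filtering them from the listing, assuming each pool is reported on a line that begins with "Pool ID:". The helper name pool_ids and the sample text are hypothetical; the sample stands in for real command output so that the example runs without a registered system.

```shell
# Extract pool IDs from "subscription-manager list --available" output
# read on standard input. Assumes each pool appears on a line starting
# with "Pool ID:" (an assumption about the output layout).
pool_ids() {
    awk -F': *' '/^Pool ID:/ { print $2 }'
}

# Hypothetical sample output, used here instead of a live system.
sample_output='Subscription Name:   Example Subscription
Pool ID:             0123456789abcdef0123456789abcdef
Available:           5'

printf '%s\n' "$sample_output" | pool_ids
```

On a registered system, the same filter could be applied by piping the output of subscription-manager list --available into pool_ids.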

1.3.4. Registering a System to EUS Content

To access the Extended Update Support (EUS) content, register your system as follows:

Verify that EUS entitlements are available:

~]# subscription-manager list --available --matches="*Extended Update Support"

Attach the applicable subscription using the Pool identifier:

~]# subscription-manager attach --pool 8a99f9ac7238188b01723d9c8a8a06a9

Replace the default repositories enabled for the system with the EUS variants:

~]# subscription-manager repos --disable \*

Enable the repositories which represent the EUS content set for the RHEL revision in use:

~]# subscription-manager repos --enable rhel-7-server-eus-rpms

Select the required and supported release for the end system:

~]# subscription-manager release --set 7.6

For currently supported EUS releases, see Extended Update Support Add-on.

1.3.5. Registering a System to E4S Content

The following procedure describes how to register a system and use the Update Services for SAP Solutions (E4S) content.

Register your system using the following command:

~]# subscription-manager register

Verify that E4S entitlements are available:

~]# subscription-manager list --available --matches="*Update Services for SAP Solutions*"

Attach the applicable subscription using the Pool identifier:

~]# subscription-manager attach --pool=#################

Replace the default repositories enabled for the system with the E4S variants:

~]# subscription-manager repos --disable="*"

Enable the repositories which represent the E4S content set for the RHEL revision in use:

~]# subscription-manager repos --enable=rhel-7-server-e4s-rpms

Clear the repository cache and lock the system to a valid E4S release that supports your SAP application:

~]# yum clean all && subscription-manager release --set=7.7

1.4. Installing Software

This section provides information to guide you through the basics of software installation on a Red Hat Enterprise Linux 7 system. It mentions the prerequisites that you need to fulfil to be able to install software in Section 1.4.1, “Prerequisites for Software Installation”, provides the basic information on software packaging and software repositories in Section 1.4.2, “Introduction to the System of Software Packaging and Software Repositories”, and references the ways to perform basic tasks related to software installation in Section 1.4.3, “Managing Basic Software Installation Tasks with Subscription Manager and Yum”.

1.4.1. Prerequisites for Software Installation

The Red Hat Content Delivery Network subscription service provides a mechanism to handle Red Hat software inventory and enables you to install additional software or update already installed packages. You can start installing software once you have registered your system and attached a subscription, as described in Section 1.3, “The Basics of Registering the System and Managing Subscriptions”.

1.4.2. Introduction to the System of Software Packaging and Software Repositories

All software on a Red Hat Enterprise Linux system is divided into RPM packages, which are stored in particular repositories. When a system is subscribed to the Red Hat Content Delivery Network, a repository file is created in the /etc/yum.repos.d/ directory.

Use the yum utility to manage package operations:

- Searching for information about packages

- Installing packages

- Updating packages

- Removing packages

- Checking the list of currently available repositories

- Adding or removing a repository

- Enabling or disabling a repository

For information on basic tasks related to the installation of software, see Section 1.4.3, “Managing Basic Software Installation Tasks with Subscription Manager and Yum”. For further information on managing software repositories, see Section 7.2, “Managing Software Repositories”. For detailed information on using the yum utility, see Chapter 9, Yum.

1.4.3. Managing Basic Software Installation Tasks with Subscription Manager and Yum

The most basic software-installation tasks that you might need after the operating system has been installed include:

Listing all available repositories:

~]# subscription-manager repos --list

Listing all currently enabled repositories:

~]$ yum repolist

Enabling or disabling a repository:

~]# subscription-manager repos --enable repository

~]# subscription-manager repos --disable repository

Searching for packages matching a specific string:

~]$ yum search string

Installing a package:

~]# yum install package_name

Updating all packages and their dependencies:

~]# yum update

Updating a package:

~]# yum update package_name

Uninstalling a package and any packages that depend on it:

~]# yum remove package_name

Listing information on all installed and available packages:

~]$ yum list all

Listing information on all installed packages:

~]$ yum list installed

1.5. Making systemd Services Start at Boot Time

Systemd is a system and service manager for Linux operating systems that introduces the concept of systemd units. For more information on systemd, see Section 10.1, “Introduction to systemd”.

This section provides the information on how to ensure that a service is enabled or disabled at boot time. It also explains how to manage the services through web console.

1.5.1. Enabling or Disabling the Services

You can specify which services are enabled or disabled at boot time during the installation process, or you can enable or disable a service on an installed operating system.

To create the list of services enabled or disabled at boot time during the installation process, use the services option in the Kickstart file:

services [--disabled=list] [--enabled=list]

The list of disabled services is processed before the list of enabled services. Therefore, if a service appears on both lists, it will be enabled. Specify each list in comma-separated format, and do not include spaces in it. For detailed information, refer to Red Hat Enterprise Linux 7 Installation Guide.

To enable or disable a service on an already installed operating system:

~]# systemctl enable service_name

~]# systemctl disable service_name

For further details, see Section 10.2, “Managing System Services”.

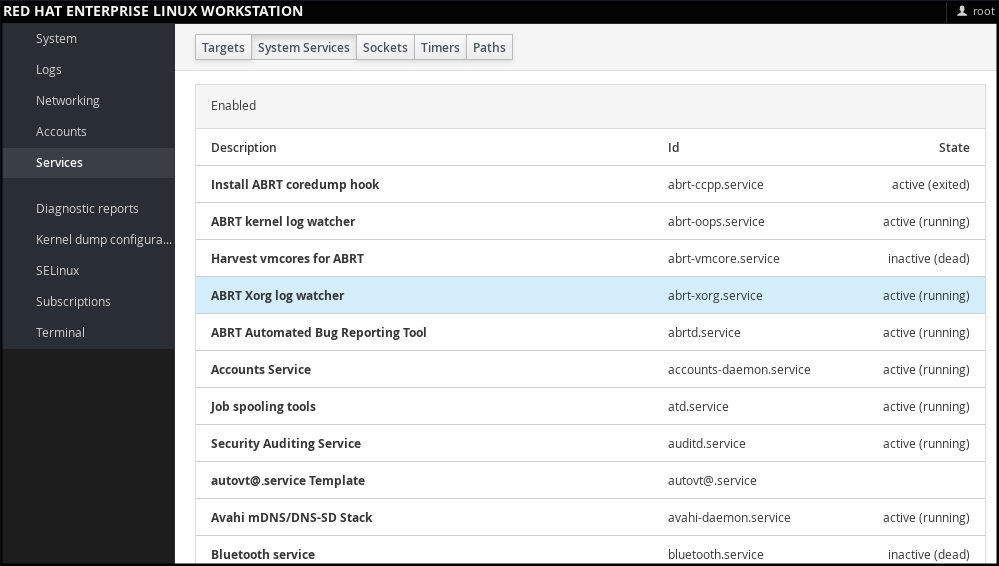

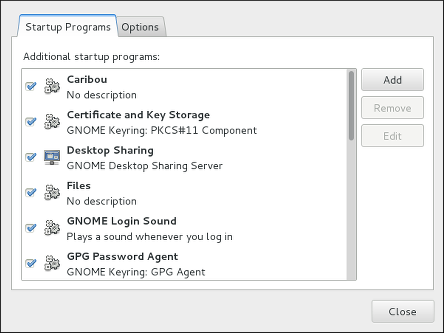

1.5.2. Managing Services in web console

In web console, you can manage systemd targets, services, sockets, timers, and paths. There you can check their status, start or stop them, and enable or disable them.

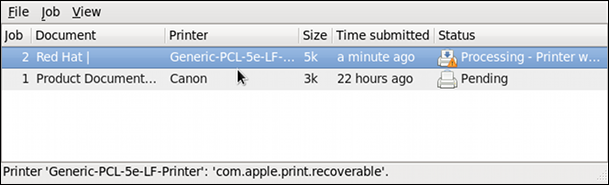

Figure 1.2. Managing Services in web console

1.5.3. Additional Resources on systemd Services

For more information on systemd, see Chapter 10, Managing Services with systemd.

1.6. Enhancing System Security with a Firewall, SELinux and SSH Logins

Computer security is the protection of computer systems from theft of or damage to their hardware, software, or information, as well as from disruption or misdirection of the services they provide. Ensuring computer security is therefore an essential task, and not only in enterprises that process sensitive data or handle business transactions.

Computer security includes a wide variety of features and tools. This section covers only the basic security features that you need to configure after you have installed the operating system. For detailed information on securing Red Hat Enterprise Linux 7, see Red Hat Enterprise Linux 7 Security Guide.

1.6.1. Ensuring the Firewall Is Enabled and Running

1.6.1.1. What a Firewall Is and How It Enhances System Security

A firewall is a network security system that monitors and controls the incoming and outgoing network traffic based on predetermined security rules. A firewall typically establishes a barrier between a trusted, secure internal network and another outside network.

On Red Hat Enterprise Linux 7, the firewall is provided by the firewalld service, which is automatically enabled during the installation of Red Hat Enterprise Linux. However, if you explicitly disabled the service, for example in the Kickstart configuration, you can re-enable it, as described in Section 1.6.1.2, “Re-enabling the firewalld Service”. For an overview of firewall setting options in the Kickstart file, see Red Hat Enterprise Linux 7 Installation Guide.

1.6.1.2. Re-enabling the firewalld Service

If the firewalld service is disabled after the installation, Red Hat recommends that you consider re-enabling it.

You can display the current status of firewalld even as a regular user:

~]$ systemctl status firewalld

If firewalld is not enabled and running, switch to the root user, and change its status:

~]# systemctl start firewalld

~]# systemctl enable firewalld

For further information on post-installation procedures related to firewalld, and for detailed information on configuring and using the firewall, see Red Hat Enterprise Linux 7 Security Guide.

1.6.2. Ensuring the Appropriate State of SELinux

1.6.2.1. What SELinux Is and How It Enhances System Security

Security Enhanced Linux (SELinux) is an additional layer of system security that determines which process can access which files, directories, and ports.

SELinux States

SELinux has two possible states:

- Enabled

- Disabled

When SELinux is disabled, only Discretionary Access Control (DAC) rules are used.

SELinux Modes

When SELinux is enabled, it can run in one of the following modes:

- Enforcing

- Permissive

Enforcing mode means that the SELinux policy is enforced. SELinux denies access based on SELinux policy rules, and enables only the interactions that are specifically allowed. Enforcing mode is the default mode after the installation and it is also the safest SELinux mode.

Permissive mode means that the SELinux policy is not enforced. SELinux does not deny access, but denials are logged for actions that would have been denied if running in enforcing mode. Permissive mode is the default mode during the installation. Operating in permissive mode is also useful in some specific cases, for example if you require access to the Access Vector Cache (AVC) denials when troubleshooting problems.

For further information on SELinux in Red Hat Enterprise Linux 7, see Red Hat Enterprise Linux 7 SELinux User’s and Administrator’s Guide.

1.6.2.2. Ensuring the Required State of SELinux

By default, SELinux operates in permissive mode during the installation and in enforcing mode when the installation has finished.

However, in some specific situations, SELinux might be explicitly set to permissive mode or it might even be disabled on the installed operating system. This can be set, for example, in the Kickstart configuration. For an overview of SELinux setting options in the Kickstart file, see Red Hat Enterprise Linux 7 Installation Guide.

Red Hat recommends keeping your system in enforcing mode.

To display the current SELinux mode, and to set the mode as needed:

Ensuring the Required State of SELinux

Display the current SELinux mode in effect:

~]$ getenforce

If needed, switch between the SELinux modes.

The switch can be either temporary or permanent. A temporary switch is not persistent across reboots, while a permanent switch is.

To temporarily switch to either enforcing or permissive mode:

~]# setenforce Enforcing

~]# setenforce Permissive

To permanently set the SELinux mode, modify the SELINUX variable in the /etc/selinux/config configuration file.

For example, to switch SELinux to enforcing mode:

SELINUX=enforcing
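The permanent change to the SELINUX variable can also be scripted instead of made in an editor. The following is a minimal sketch, assuming GNU sed and a configuration file that already contains a line beginning with SELINUX=. The helper name set_selinux_mode is hypothetical, and the demonstration works on a temporary file rather than on /etc/selinux/config.

```shell
# Set the SELINUX variable in an SELinux configuration file.
# Usage: set_selinux_mode MODE [FILE]
# FILE defaults to /etc/selinux/config; changing that file requires root.
set_selinux_mode() {
    case "$1" in
        enforcing|permissive|disabled) ;;
        *) echo "invalid mode: $1" >&2; return 1 ;;
    esac
    # Rewrite only the line that assigns SELINUX; SELINUXTYPE is untouched
    # because the pattern requires "=" directly after "SELINUX".
    sed -i "s/^SELINUX=.*/SELINUX=$1/" "${2:-/etc/selinux/config}"
}

# Demonstration on a temporary file rather than the real configuration.
demo=$(mktemp)
printf 'SELINUX=permissive\nSELINUXTYPE=targeted\n' > "$demo"
set_selinux_mode enforcing "$demo"
cat "$demo"
rm -f "$demo"
```

As with a manual edit, a change made this way takes effect on the next boot; use setenforce to change the mode of the running system.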

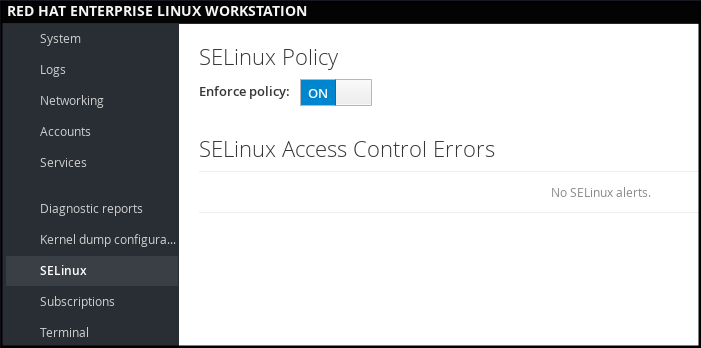

1.6.2.3. Managing SELinux in web console

In web console, use the option to turn SELinux enforcing policy on or off.

By default, SELinux enforcing policy in web console is on, and SELinux operates in enforcing mode. By turning it off, you can switch SELinux into permissive mode. Note that such deviation from the default configuration in the /etc/sysconfig/selinux file is automatically reverted on the next boot.

Figure 1.3. Managing SELinux in web console

1.6.3. Using SSH-based Authentication

1.6.3.1. What SSH-based Authentication Is and How It Enhances System Security

If you want to secure your communication with another computer, you can use SSH-based authentication.

Secure Shell (SSH) is a protocol which facilitates client-server communication and allows users to log in to any host system running SSH remotely. SSH encrypts the connection. The client transmits its authentication information to the server using encryption, and all data sent and received during a session are transferred under the encryption.

SSH enables its users to authenticate without a password. To do so, SSH uses a private-public key scheme.

For further information about SSH safeguards, see Section 12.1.2, “Main Features”.

1.6.3.2. Establishing an SSH Connection

To be able to use an SSH connection, create a key pair consisting of a public key and a private key.

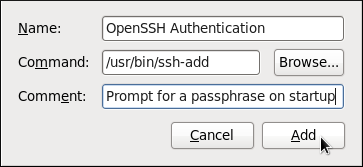

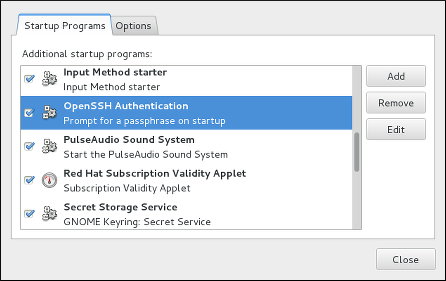

Creating the Key Files and Copying Them to the Server

Generate a public and a private key:

~]$ ssh-keygen

Both keys are stored in the ~/.ssh/ directory:

- ~/.ssh/id_rsa.pub - public key

- ~/.ssh/id_rsa - private key

The public key does not need to be secret. It is used to verify the private key. The private key is secret. You can choose to protect the private key with the passphrase that you specify during the key generation process. With the passphrase, authentication is even more secure, but is no longer password-less. You can avoid this using the ssh-agent command. In this case, you will enter the passphrase only once, at the beginning of a session. For more information on ssh-agent configuration, see Section 12.2.4, “Using Key-based Authentication”.

Copy the most recently modified public key to a remote machine you want to log into:

~]# ssh-copy-id USER@hostname

As a result, you are now able to enter the system in a secure way, but without entering a password.

1.6.3.3. Disabling SSH Root Login

To increase system security, you can disable SSH access for the root user, which is enabled by default.

For more information on this topic, see Red Hat Enterprise Linux 7 Security Guide.

Disabling SSH Root Login

Access the /etc/ssh/sshd_config file:

~]# vi /etc/ssh/sshd_config

Change the line that reads #PermitRootLogin yes to:

PermitRootLogin no

Restart the sshd service:

~]# systemctl restart sshd
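The edit in the procedure above can also be applied non-interactively. The following is a minimal sketch, assuming GNU sed and a simple sshd_config without Match blocks. The helper name disable_root_login is hypothetical, and the demonstration runs against a temporary file rather than /etc/ssh/sshd_config.

```shell
# Set "PermitRootLogin no" in an sshd_config-style file.
# Usage: disable_root_login [FILE]   (FILE defaults to /etc/ssh/sshd_config)
disable_root_login() {
    f="${1:-/etc/ssh/sshd_config}"
    if grep -Eq '^[[:space:]]*#?[[:space:]]*PermitRootLogin[[:space:]]' "$f"; then
        # Replace the existing directive, whether or not it is commented out.
        sed -i -E 's/^[[:space:]]*#?[[:space:]]*PermitRootLogin[[:space:]].*/PermitRootLogin no/' "$f"
    else
        # No directive present: append one at the end of the file.
        echo 'PermitRootLogin no' >> "$f"
    fi
}

# Demonstration on a temporary file rather than the real configuration.
demo=$(mktemp)
printf 'Port 22\n#PermitRootLogin yes\n' > "$demo"
disable_root_login "$demo"
cat "$demo"
rm -f "$demo"
```

On a real system, checking the edited file with sshd -t before restarting the service helps avoid losing access through a malformed configuration.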

1.7. The Basics of Managing User Accounts

Red Hat Enterprise Linux 7 is a multi-user operating system, which enables multiple users on different computers to access a single system installed on one machine. Every user operates under their own account, and managing user accounts thus represents a core element of Red Hat Enterprise Linux system administration.

Normal and System Accounts

Normal accounts are created for users of a particular system. Such accounts can be added, removed, and modified during normal system administration.

System accounts represent a particular application's identifier on a system. Such accounts are generally added or manipulated only at software installation time, and they are not modified later.

System accounts are presumed to be available locally on a system. If these accounts are configured and provided remotely, such as in the instance of an LDAP configuration, system breakage and service start failures can occur.

For system accounts, user IDs below 1000 are reserved. For normal accounts, you can use IDs starting at 1000. However, the recommended practice is to assign IDs starting at 5000. See Section 4.1, “Introduction to Users and Groups” for more information. The guidelines for assigning IDs can be found in the /etc/login.defs file.
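The ID ranges described above can be checked with a few lines of shell. The following is a minimal sketch, assuming that /etc/login.defs defines the lower bound for normal accounts on a line of the form "UID_MIN value". The helper names uid_min and classify_uid are hypothetical and used only for this illustration.

```shell
# Print UID_MIN from a login.defs-style file (default /etc/login.defs).
uid_min() {
    awk '$1 == "UID_MIN" { print $2; exit }' "${1:-/etc/login.defs}"
}

# Classify a numeric user ID as "system" or "normal" given a threshold.
# Usage: classify_uid UID [THRESHOLD]   (THRESHOLD defaults to 1000)
classify_uid() {
    if [ "$1" -lt "${2:-1000}" ]; then
        echo system
    else
        echo normal
    fi
}

classify_uid 48      # prints "system"
classify_uid 5000    # prints "normal"
```

To classify the current user against the configured bound, the two helpers can be combined, for example as classify_uid "$(id -u)" "$(uid_min)".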

What Groups Are and Which Purposes They Can Be Used For

A group is an entity which ties together multiple user accounts for a common purpose, such as granting access to particular files.

1.7.1. The Most Basic Command-Line Tools to Manage User Accounts and Groups

The most basic tasks to manage user accounts and groups, and the appropriate command-line tools, include:

Displaying user and group IDs:

~]$ id

Creating a new user account:

~]# useradd [options] user_name

Assigning a new password to the user account user_name:

~]# passwd user_name

Adding a user to a group:

~]# usermod -a -G group_name user_name
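To confirm that a change made with usermod took effect, you can inspect the group names reported by the id command. The helper below is a small sketch; the name in_group is made up for this example, and user_name and group_name are placeholders.

```shell
# Return 0 if the given user is a member of the given group, 1 otherwise.
in_group() {
    user=$1
    group=$2
    # "id -nG" prints the names of all groups the user belongs to.
    for g in $(id -nG "$user" 2>/dev/null); do
        [ "$g" = "$group" ] && return 0
    done
    return 1
}

# Example with placeholder names:
if in_group user_name group_name; then
    echo "user_name is in group_name"
else
    echo "user_name is not in group_name"
fi
```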

For detailed information on managing users and groups, see Chapter 4, Managing Users and Groups.

If you want to use a graphical user interface to manage users and groups, see Section 4.2, “Managing Users in a Graphical Environment”.

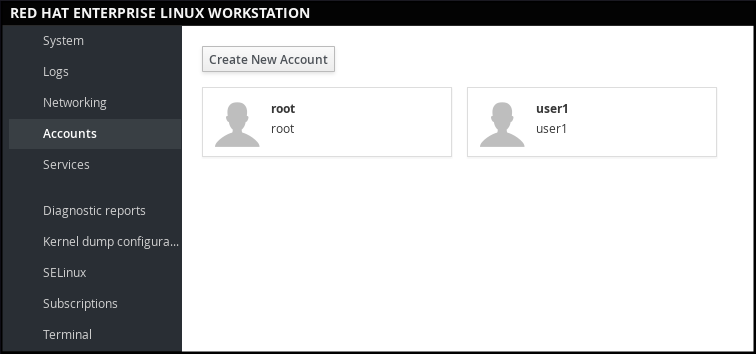

1.7.2. Managing User Accounts in web console

To manage accounts in web console, select the menu.

Figure 1.4. Managing User Accounts in web console

1.8. Dumping the Crashed Kernel Using the kdump Mechanism

This section provides an introduction to the Kernel crash dump mechanism, also called kdump, and briefly explains what kdump is used for in Section 1.8.1, “What kdump Is and Which Tasks It Can Be Used For”.

Activation of the kdump service is a part of the installation process, and by default, kdump is enabled during the installation. This section summarizes how to activate kdump during the installation in Section 1.8.2, “Enabling and Activating kdump During the Installation Process”, and how to manually enable the kdump service if it is disabled after the installation in Section 1.8.3, “Ensuring That kdump Is Installed and Enabled after the Installation Process”.

You can also use web console to configure kdump. See Section 1.8.4, “Configuring kdump in web console” for more information.

1.8.1. What kdump Is and Which Tasks It Can Be Used For

In case of a system crash, you can use the Kernel crash dump mechanism called kdump that enables you to save the content of the system’s memory for later analysis. The kdump mechanism relies on the kexec system call, which can be used to boot a Linux kernel from the context of another kernel, bypass BIOS, and preserve the contents of the first kernel’s memory that would otherwise be lost.

When a kernel crash occurs, kdump uses kexec to boot into a second kernel (a capture kernel), which resides in a reserved part of the system memory that is inaccessible to the first kernel. The second kernel captures the contents of the crashed kernel’s memory (a crash dump) and saves it.

1.8.2. Enabling and Activating kdump During the Installation Process

During the installation, enablement and activation of kdump can be done either in the Anaconda installer or using the %addon com_redhat_kdump command in the Kickstart file.

For more information, see the appropriate source according to the installation method:

When installing with the Anaconda installer, see:

Installing Using Anaconda in Red Hat Enterprise Linux 7 Installation Guide.

When installing with the Kickstart file, see:

Kickstart Commands and Options in Red Hat Enterprise Linux 7 Installation Guide.

1.8.3. Ensuring That kdump Is Installed and Enabled after the Installation Process

To ensure that kdump is installed and to configure it:

Checking Whether kdump Is Installed and Configuring kdump

To check whether kdump is installed on your system:

~]$ rpm -q kexec-tools

If not installed, to install kdump, enter as the root user:

~]# yum install kexec-tools

To configure kdump:

Use either the command line or graphical user interface.

Both options are described in detail in Red Hat Enterprise Linux 7 Kernel Crash Dump Guide.

If you need to install the graphical configuration tool:

~]# yum install system-config-kdump

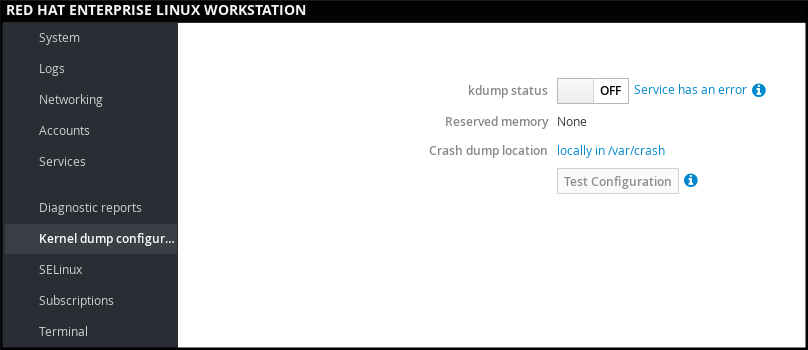

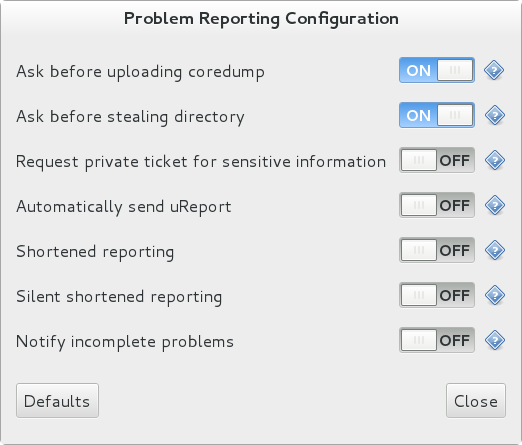

1.8.4. Configuring kdump in web console

In web console, select to verify:

- the kdump status

- the amount of memory reserved for kdump

- the location of the crash dump files

Figure 1.5. Configuring kdump in web console

1.8.5. Additional Resources on kdump

For more information on kdump, see Red Hat Enterprise Linux 7 Kernel Crash Dump Guide.

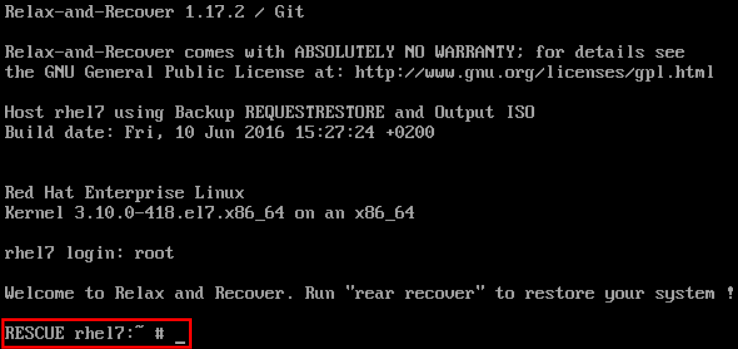

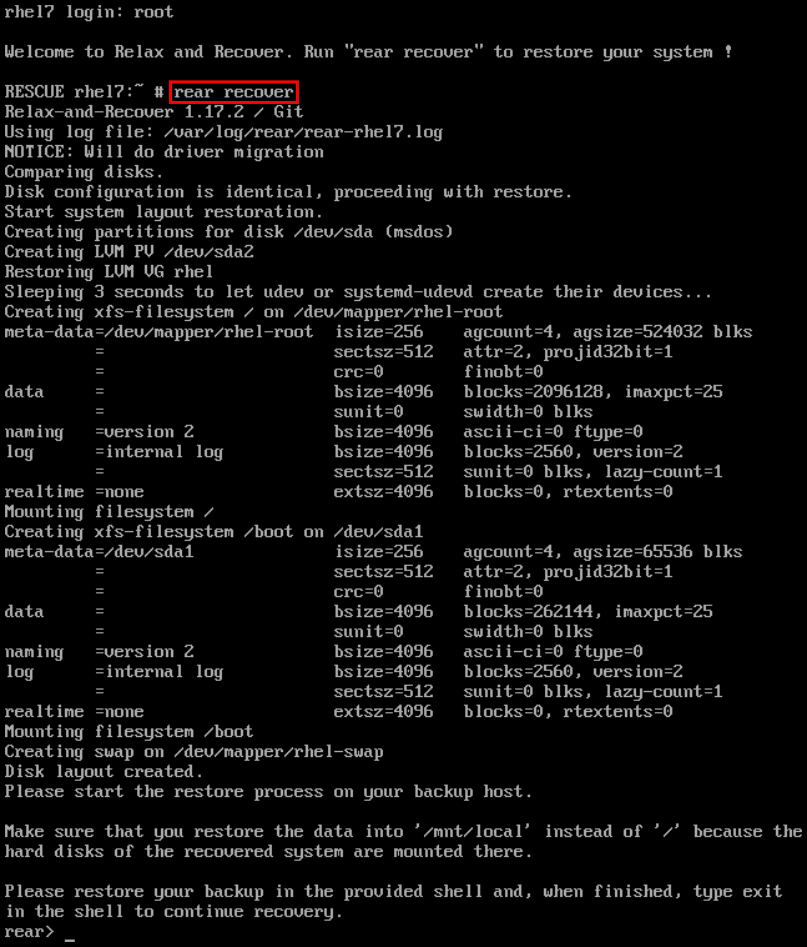

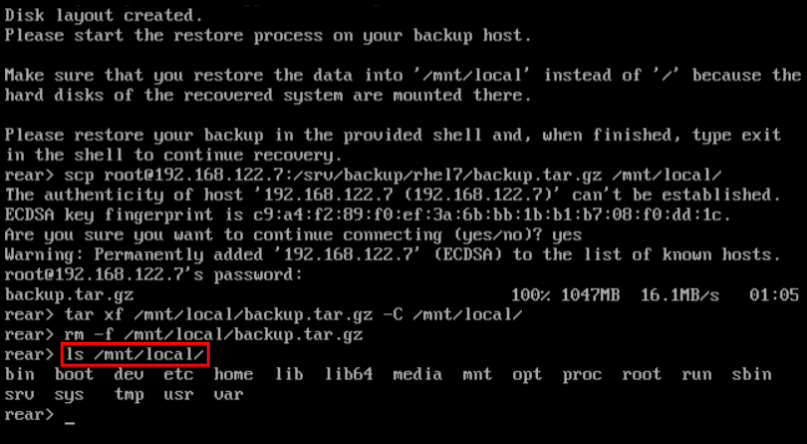

1.9. Performing System Rescue and Creating System Backup with ReaR

When a software or hardware failure breaks the operating system, you need a mechanism to rescue the system. It is also useful to have a system backup saved. Red Hat recommends using the Relax-and-Recover (ReaR) tool to fulfil both these needs.

1.9.1. What ReaR Is and Which Tasks It Can Be Used For

ReaR is a disaster recovery and system migration utility which enables you to create a complete rescue system. By default, this rescue system restores only the storage layout and the boot loader, but not the actual user and system files.

Additionally, certain backup software enables you to integrate ReaR for disaster recovery.

ReaR enables you to perform the following tasks:

- Booting a rescue system on the new hardware

- Replicating the original storage layout

- Restoring user and system files

1.9.2. Quickstart to Installation and Configuration of ReaR

To install ReaR, enter as the root user:

~]# yum install rear

Use the settings in the /etc/rear/local.conf file to configure ReaR.

For further information, see Section 27.1, “Basic ReaR Usage”.

1.9.3. Quickstart to Creation of the Rescue System with ReaR

To create the rescue system, run the following command as the root user:

~]# rear mkrescue

For further information on creating the rescue system with ReaR, see Section 27.1.3, “Creating a Rescue System”.

1.9.4. Quickstart to Configuration of ReaR with the Backup Software

ReaR contains a fully-integrated built-in, or internal, backup method called NETFS.

To make ReaR use its internal backup method, add these lines to the /etc/rear/local.conf file:

BACKUP=NETFS

BACKUP_URL=backup location
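For illustration only, a minimal /etc/rear/local.conf that sends the backup to an NFS share might look like the following. The host name backup.example.com and the export path /srv/rear are placeholders, not values from this guide; substitute the location of your own backup storage.

```shell
# /etc/rear/local.conf (sketch; the host and path below are hypothetical)
BACKUP=NETFS
BACKUP_URL=nfs://backup.example.com/srv/rear
```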

You can also configure ReaR to keep the previous backup archives when the new ones are created by adding the following line to /etc/rear/local.conf:

NETFS_KEEP_OLD_BACKUP_COPY=y

To make the backups incremental, meaning that only the changed files are backed up on each run, add this line to /etc/rear/local.conf:

BACKUP_TYPE=incremental

For detailed information about using the ReaR NETFS internal backup method, see Section 27.2.1, “The Built-in Backup Method”.

For information on supported external backup methods and unsupported backup methods, see Section 27.2.2, “Supported Backup Methods” and Section 27.2.3, “Unsupported Backup Methods”.

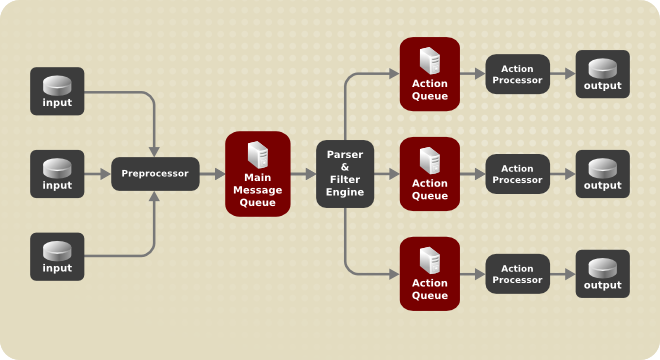

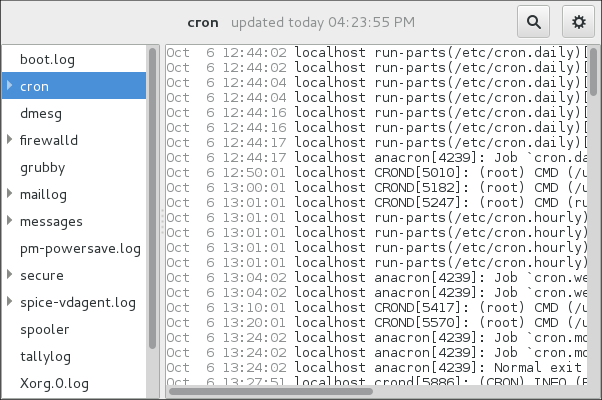

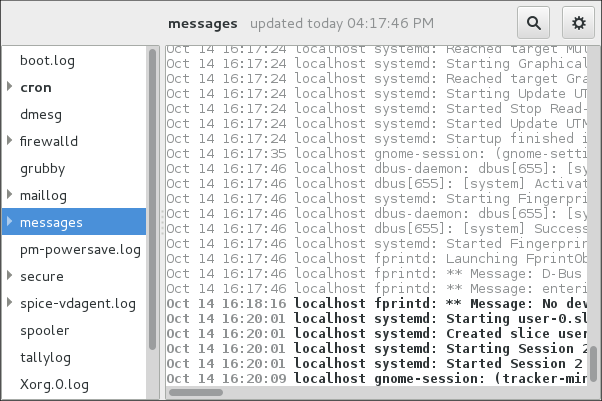

1.10. Using the Log Files to Troubleshoot Problems

When troubleshooting a problem, the log files, which contain various information and messages about the operating system, are a useful resource. The logging system in Red Hat Enterprise Linux 7 is based on the built-in syslog protocol. Particular programs use this system to record events and organize them into log files, which are useful when auditing the operating system and troubleshooting various problems.

For more information on log files, see Chapter 23, Viewing and Managing Log Files.

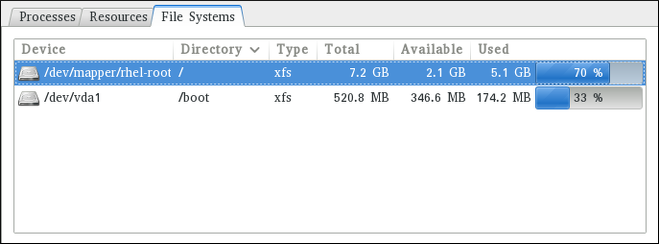

1.10.1. Services Handling the syslog Messages

The syslog messages are handled by two services:

- The systemd-journald daemon collects messages from the kernel, the early stages of the boot process, standard output and error of daemons as they start up and run, and syslog, and forwards the messages to the rsyslog service for further processing.

- The rsyslog service sorts the syslog messages by type and priority, and writes them to the files in the /var/log directory, where the logs are persistently stored.

1.10.2. Subdirectories Storing the syslog Messages

The syslog messages are stored in the following files under the /var/log directory, according to the kind of messages and logs they contain:

- /var/log/messages - all syslog messages except those mentioned below

- /var/log/secure - security and authentication-related messages and errors

- /var/log/maillog - mail server-related messages and errors

- /var/log/cron - log files related to periodically executed tasks

- /var/log/boot.log - log files related to system startup
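Because these logs are plain text files, standard tools such as grep can be used to search them. The sketch below counts the lines in a log file that match a pattern. The function name count_log_matches is illustrative, and reading files such as /var/log/secure typically requires root privileges.

```shell
# Count the lines in a log file that match an extended regular expression.
# Usage: count_log_matches PATTERN FILE
count_log_matches() {
    pattern=$1
    log_file=$2
    # grep -c prints the number of matching lines; it exits non-zero when
    # the count is 0, so "|| true" keeps the function from reporting failure.
    grep -c -E -- "$pattern" "$log_file" || true
}

# Example (run as root): count sshd-related lines in the authentication log.
# count_log_matches 'sshd' /var/log/secure
```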

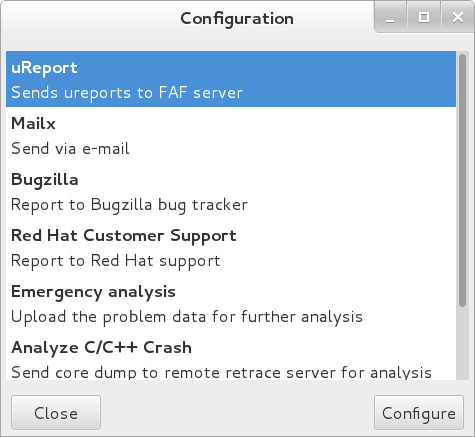

1.11. Accessing Red Hat Support

To obtain support from Red Hat, use the Red Hat Customer Portal, which provides access to everything available with your subscription.

This section describes:

- Obtaining Red Hat support, see Section 1.11.1, “Obtaining Red Hat Support Through Red Hat Customer Portal”

- Using the SOS report to troubleshoot problems, see Section 1.11.2, “Using the SOS Report to Troubleshoot Problems”

1.11.1. Obtaining Red Hat Support Through Red Hat Customer Portal

By using the Red Hat Customer Portal you can:

- Open a new support case

- Initiate a live chat with a Red Hat expert

- Contact a Red Hat expert by making a call or sending an email

To access the Red Hat Customer Portal, go to https://access.redhat.com.

To work with Red Hat Customer Portal services related to Red Hat support, you can use:

- Web browser

- Red Hat Support Tool

1.11.1.1. What the Red Hat Support Tool Is and Which Tasks It Can Be Used For

The Red Hat Support Tool is a command-line based tool which provides a text console interface to the subscription-based Red Hat access services. This tool is contained in the redhat-support-tool package.

The Red Hat Support Tool enables you to perform support-related tasks, such as:

- Opening or updating support cases

- Searching in the Red Hat knowledge base solutions

- Analyzing Python and Java errors

To start the tool in interactive mode:

~]$ redhat-support-tool

Welcome to the Red Hat Support Tool.

Command (? for help):

In interactive mode, enter ? to display the available commands:

Command (? for help): ?

For more information about the installation and use of the Red Hat Support Tool, see Chapter 8, Accessing Support Using the Red Hat Support Tool, and the Red Hat Knowledgebase article Red Hat Access: Red Hat Support Tool.

1.11.2. Using the SOS Report to Troubleshoot Problems

The SOS report collects configuration details, system information and diagnostic information from a Red Hat Enterprise Linux system. Attach the report when you open a support case.

Note that the SOS report is provided in the sos package, which is not installed with the default minimal installation of Red Hat Enterprise Linux 7.

To install the sos package:

~]# yum install sos

To generate an SOS report:

~]# sosreport

To attach the sos report to your support case, see the Red Hat Knowledgebase article How can I attach a file to a Red Hat support case?. Note that you will be prompted to enter the number of the support case when attaching the sos report.

For more information on SOS report, see the Red Hat Knowledgebase article What is a sosreport and how to create one in Red Hat Enterprise Linux 4.6 and later?.

Chapter 2. System Locale and Keyboard Configuration

The system locale specifies the language settings of system services and user interfaces. The keyboard layout settings control the layout used on the text console and graphical user interfaces.

These settings can be made by modifying the /etc/locale.conf configuration file or by using the localectl utility. Also, you can use the graphical user interface to perform the task; for a description of this method, see Red Hat Enterprise Linux 7 Installation Guide.

2.1. Setting the System Locale

System-wide locale settings are stored in the /etc/locale.conf file, which is read at early boot by the systemd daemon. The locale settings configured in /etc/locale.conf are inherited by every service or user, unless individual programs or individual users override them.

The basic file format of /etc/locale.conf is a newline-separated list of variable assignments. For example, a German locale with English messages in /etc/locale.conf looks as follows:

LANG=de_DE.UTF-8

LC_MESSAGES=C

Here, the LC_MESSAGES option determines the locale used for diagnostic messages written to the standard error output. To further specify locale settings in /etc/locale.conf, you can use several other options; the most relevant are summarized in Table 2.1, “Options configurable in /etc/locale.conf”. See the locale(7) manual page for detailed information on these options. Note that the LC_ALL option, which represents all possible options, should not be configured in /etc/locale.conf.

| Option | Description |
|---|---|
| LANG | Provides a default value for the system locale. |
| LC_COLLATE | Changes the behavior of functions which compare strings in the local alphabet. |
| LC_CTYPE | Changes the behavior of the character handling and classification functions and the multibyte character functions. |
| LC_NUMERIC | Describes the way numbers are usually printed, with details such as decimal point versus decimal comma. |
| LC_TIME | Changes the display of the current time, 24-hour versus 12-hour clock. |
| LC_MESSAGES | Determines the locale used for diagnostic messages written to the standard error output. |
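Because /etc/locale.conf is a simple list of assignments, individual values are easy to read from scripts. The following sketch prints the value of one variable. The function name get_locale_var is hypothetical, and the parsing assumes one unquoted VAR=value assignment per line, as in the German locale example above.

```shell
# Print the value assigned to a variable in a locale.conf-style file.
# Usage: get_locale_var NAME [FILE]
get_locale_var() {
    name=$1
    conf_file=${2:-/etc/locale.conf}
    # Strip the "NAME=" prefix from matching lines and print the remainder;
    # if the variable is assigned more than once, keep the last value.
    sed -n "s/^${name}=//p" "$conf_file" | tail -n 1
}

# Example: get_locale_var LANG
```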

2.1.1. Displaying the Current Status

The localectl command can be used to query and change the system locale and keyboard layout settings. To show the current settings, use the status option:

localectl status

Example 2.1. Displaying the Current Status

The output of the previous command lists the currently set locale and the keyboard layout configured for the console and for the X11 window system.

~]$ localectl status

System Locale: LANG=en_US.UTF-8

VC Keymap: us

X11 Layout: n/a

2.1.2. Listing Available Locales

To list all locales available for your system, type:

localectl list-locales

Example 2.2. Listing Locales

Imagine you want to select a specific English locale, but you are not sure if it is available on the system. You can check that by listing all English locales with the following command:

~]$ localectl list-locales | grep en_

2.1.3. Setting the Locale

To set the default system locale, use the following command as root:

localectl set-locale LANG=locale

Replace locale with the locale name, found with the localectl list-locales command. The above syntax can also be used to configure parameters from Table 2.1, “Options configurable in /etc/locale.conf”.

Example 2.3. Changing the Default Locale

For example, if you want to set British English as your default locale, first find the name of this locale by using list-locales. Then, as root, type the command in the following form:

~]# localectl set-locale LANG=en_GB.utf8

2.1.4. Making System Locale Settings Permanent when Installing with Kickstart

When Red Hat Enterprise Linux is installed with the Red Hat Kickstart installation method, setting of the system locales might not be persistent after an upgrade of the operating system.

When the %packages section of the Kickstart file includes the --instLang option, the _install_langs RPM macro is set to the particular value for this installation, and the set of installed locales is adjusted accordingly. However, this adjustment affects only this installation, not subsequent upgrades. If an upgrade reinstalls the glibc package, the entire set of locales is upgraded instead of only the locales you requested during the installation.

To avoid this, make the choice of locales permanent. You have these options:

- If you have not started the Kickstart installation, modify the Kickstart file to include instructions for setting RPM macros globally by applying this procedure: Setting RPM macros during the Kickstart installation

- If you have already installed the system, set RPM macros globally on the system by applying this procedure: Setting RPM macros globally

Setting RPM macros during the Kickstart installation

Modify the %post section of the Kickstart file:

LANG=en_US

echo "%_install_langs $LANG" > /etc/rpm/macros.language-conf

yum-config-manager --setopt=override_install_langs=$LANG --save

Modify the %packages section of the Kickstart file:

%packages

yum-utils*

%end

Setting RPM macros globally

Create the RPM configuration file at /etc/rpm/macros.language-conf with the following contents:

%_install_langs LANG

LANG is the value of the instLang option.

Update the /etc/yum.conf file with:

override_install_langs=LANG

2.2. Changing the Keyboard Layout

The keyboard layout settings enable the user to control the layout used on the text console and graphical user interfaces.

2.2.1. Displaying the Current Settings

As mentioned before, you can check your current keyboard layout configuration with the following command:

localectl status

Example 2.4. Displaying the Keyboard Settings

In the following output, you can see the keyboard layout configured for the virtual console and for the X11 window system.

~]$ localectl status

System Locale: LANG=en_US.utf8

VC Keymap: us

X11 Layout: us

2.2.2. Listing Available Keymaps

To list all available keyboard layouts that can be configured on your system, type:

localectl list-keymaps

Example 2.5. Searching for a Particular Keymap

You can use grep to search the output of the previous command for a specific keymap name. There are often multiple keymaps compatible with your currently set locale. For example, to find available Czech keyboard layouts, type:

~]$ localectl list-keymaps | grep cz

2.2.3. Setting the Keymap

To set the default keyboard layout for your system, use the following command as root:

localectl set-keymap map

Replace map with the name of the keymap taken from the output of the localectl list-keymaps command. Unless the --no-convert option is passed, the selected setting is also applied to the default keyboard mapping of the X11 window system, after converting it to the closest matching X11 keyboard mapping. This also applies in reverse: you can specify both keymaps with the following command as root:

localectl set-x11-keymap map

If you want your X11 layout to differ from the console layout, use the --no-convert option.

localectl --no-convert set-x11-keymap map

With this option, the X11 keymap is specified without changing the previous console layout setting.

Example 2.6. Setting the X11 Keymap Separately

Imagine you want to use a German keyboard layout in the graphical interface, but for console operations you want to retain the US keymap. To do so, type as root:

~]# localectl --no-convert set-x11-keymap de

Then you can verify if your setting was successful by checking the current status:

~]$ localectl status

System Locale: LANG=de_DE.UTF-8

VC Keymap: us

X11 Layout: de

Apart from keyboard layout (map), three other options can be specified:

localectl set-x11-keymap map model variant options

Replace model with the keyboard model name, and variant and options with the keyboard variant and option components, which can be used to enhance the keyboard behavior. These options are not set by default. For more information on X11 Model, X11 Variant, and X11 Options see the kbd(4) man page.

2.3. Additional Resources

For more information on how to configure the keyboard layout on Red Hat Enterprise Linux, see the resources listed below:

Installed Documentation

- localectl(1) - The manual page for the localectl command line utility documents how to use this tool to configure the system locale and keyboard layout.

- loadkeys(1) - The manual page for the loadkeys command provides more information on how to use this tool to change the keyboard layout in a virtual console.

See Also

- Chapter 6, Gaining Privileges documents how to gain administrative privileges by using the su and sudo commands.

- Chapter 10, Managing Services with systemd provides more information on systemd and documents how to use the systemctl command to manage system services.

Chapter 3. Configuring the Date and Time

Modern operating systems distinguish between the following two types of clocks:

- A real-time clock (RTC), commonly referred to as a hardware clock (typically an integrated circuit on the system board), that is completely independent of the current state of the operating system and runs even when the computer is shut down.

- A system clock, also known as a software clock, that is maintained by the kernel and whose initial value is based on the real-time clock. Once the system is booted and the system clock is initialized, the system clock is completely independent of the real-time clock.

The system time is always kept in Coordinated Universal Time (UTC) and converted in applications to local time as needed. Local time is the actual time in your current time zone, taking into account daylight saving time (DST). The real-time clock can use either UTC or local time. UTC is recommended.

Red Hat Enterprise Linux 7 offers three command line tools that can be used to configure and display information about the system date and time:

- The timedatectl utility, which is new in Red Hat Enterprise Linux 7 and is part of systemd.

- The traditional date command.

- The hwclock utility for accessing the hardware clock.

3.1. Using the timedatectl Command

The timedatectl utility is distributed as part of the systemd system and service manager and allows you to review and change the configuration of the system clock. You can use this tool to change the current date and time, set the time zone, or enable automatic synchronization of the system clock with a remote server.

For information on how to display the current date and time in a custom format, see also Section 3.2, “Using the date Command”.

3.1.1. Displaying the Current Date and Time

To display the current date and time along with detailed information about the configuration of the system and hardware clock, run the timedatectl command with no additional command line options:

timedatectl

This displays the local and universal time, the currently used time zone, the status of the Network Time Protocol (NTP) configuration, and additional information related to DST.

Example 3.1. Displaying the Current Date and Time

The following is an example output of the timedatectl command on a system that does not use NTP to synchronize the system clock with a remote server:

Changes to the status of chrony or ntpd will not be immediately noticed by timedatectl. If changes to the configuration or status of these tools are made, enter the following command:

~]# systemctl restart systemd-timedated.service

3.1.2. Changing the Current Time

To change the current time, type the following at a shell prompt as root:

timedatectl set-time HH:MM:SS

Replace HH with an hour, MM with a minute, and SS with a second, all typed in two-digit form.

This command updates both the system time and the hardware clock. The result is similar to using both the date --set and hwclock --systohc commands.

The command will fail if an NTP service is enabled. See Section 3.1.5, “Synchronizing the System Clock with a Remote Server” to temporarily disable the service.

Example 3.2. Changing the Current Time

To change the current time to 11:26 p.m., run the following command as root:

~]# timedatectl set-time 23:26:00

By default, the system is configured to use UTC. To configure your system to maintain the clock in the local time, run the timedatectl command with the set-local-rtc option as root:

timedatectl set-local-rtc boolean

To configure your system to maintain the clock in the local time, replace boolean with yes (or, alternatively, y, true, t, or 1). To configure the system to use UTC, replace boolean with no (or, alternatively, n, false, f, or 0). The default option is no.

3.1.3. Changing the Current Date

To change the current date, type the following at a shell prompt as root:

timedatectl set-time YYYY-MM-DD

Replace YYYY with a four-digit year, MM with a two-digit month, and DD with a two-digit day of the month.

Note that changing the date without specifying the current time results in setting the time to 00:00:00.

Example 3.3. Changing the Current Date

To change the current date to 2 June 2017 and keep the current time (11:26 p.m.), run the following command as root:

~]# timedatectl set-time "2017-06-02 23:26:00"

3.1.4. Changing the Time Zone

To list all available time zones, type the following at a shell prompt:

timedatectl list-timezones

To change the currently used time zone, type as root:

timedatectl set-timezone time_zone

Replace time_zone with any of the values listed by the timedatectl list-timezones command.

Example 3.4. Changing the Time Zone

To identify which time zone is closest to your present location, use the timedatectl command with the list-timezones command line option. For example, to list all available time zones in Europe, type:

~]$ timedatectl list-timezones | grep Europe

To change the time zone to Europe/Prague, type as root:

~]# timedatectl set-timezone Europe/Prague

3.1.5. Synchronizing the System Clock with a Remote Server

As opposed to the manual adjustments described in the previous sections, the timedatectl command also allows you to enable automatic synchronization of your system clock with a group of remote servers using the NTP protocol. Enabling NTP enables the chronyd or ntpd service, depending on which of them is installed.

The NTP service can be enabled and disabled using a command as follows:

timedatectl set-ntp boolean

To enable your system to synchronize the system clock with a remote NTP server, replace boolean with yes (the default option). To disable this feature, replace boolean with no.

Example 3.5. Synchronizing the System Clock with a Remote Server

To enable automatic synchronization of the system clock with a remote server, type:

~]# timedatectl set-ntp yes

The command will fail if an NTP service is not installed. See Section 18.3.1, “Installing chrony” for more information.

3.2. Using the date Command

The date utility is available on all Linux systems and allows you to display and configure the current date and time. It is frequently used in scripts to display detailed information about the system clock in a custom format.

For information on how to change the time zone or enable automatic synchronization of the system clock with a remote server, see Section 3.1, “Using the timedatectl Command”.

3.2.1. Displaying the Current Date and Time

To display the current date and time, run the date command with no additional command line options:

date

This displays the day of the week followed by the current date, local time, abbreviated time zone, and year.

By default, the date command displays the local time. To display the time in UTC, run the command with the --utc or -u command line option:

date --utc

You can also customize the format of the displayed information by providing the +"format" option on the command line:

date +"format"

Replace format with one or more supported control sequences as illustrated in Example 3.6, “Displaying the Current Date and Time”. See Table 3.1, “Commonly Used Control Sequences” for a list of the most frequently used formatting options, or the date(1) manual page for a complete list of these options.

| Control Sequence | Description |
|---|---|
| %H | The hour in the HH format (for example, 17). |
| %M | The minute in the MM format (for example, 30). |
| %S | The second in the SS format (for example, 24). |
| %d | The day of the month in the DD format (for example, 16). |
| %m | The month in the MM format (for example, 09). |
| %Y | The year in the YYYY format (for example, 2016). |
| %Z | The time zone abbreviation (for example, CEST). |
| %F | The full date in the YYYY-MM-DD format (for example, 2016-09-16). This option is equal to %Y-%m-%d. |
| %T | The full time in the HH:MM:SS format (for example, 17:30:24). This option is equal to %H:%M:%S. |
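To see how control sequences combine, the following sketch formats a fixed point in time instead of the current time, so that the result is reproducible. It assumes GNU date, whose -d option accepts @N as a number of seconds since the epoch. The function name format_epoch and the chosen timestamp are arbitrary choices for this example.

```shell
# Format a given epoch timestamp in UTC using a date(1) format string.
# Usage: format_epoch SECONDS FORMAT
format_epoch() {
    date -u -d "@$1" +"$2"
}

format_epoch 1474039824 "%Y-%m-%d %H:%M:%S"   # prints: 2016-09-16 15:30:24

# %F and %T are shorthand for %Y-%m-%d and %H:%M:%S, so this prints the same.
format_epoch 1474039824 "%F %T"
```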

Example 3.6. Displaying the Current Date and Time

To display the current date and local time, type the following at a shell prompt:

~]$ date

Mon Sep 16 17:30:24 CEST 2016

To display the current date and time in UTC, type the following at a shell prompt:

~]$ date --utc

Mon Sep 16 15:30:34 UTC 2016

To customize the output of the date command, type:

~]$ date +"%Y-%m-%d %H:%M"

2016-09-16 17:30

3.2.2. Changing the Current Time

To change the current time, run the date command with the --set or -s option as root:

date --set HH:MM:SS

Replace HH with an hour, MM with a minute, and SS with a second, all typed in two-digit form.

By default, the date command sets the system clock to the local time. To set the system clock in UTC, run the command with the --utc or -u command line option:

date --set HH:MM:SS --utc

Example 3.7. Changing the Current Time

To change the current time to 11:26 p.m., run the following command as root:

~]# date --set 23:26:00

3.2.3. Changing the Current Date

To change the current date, run the date command with the --set or -s option as root:

date --set YYYY-MM-DD

Replace YYYY with a four-digit year, MM with a two-digit month, and DD with a two-digit day of the month.

Note that changing the date without specifying the current time results in setting the time to 00:00:00.
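The midnight default can be observed without changing the clock, because GNU date parses the string given to its -d (display) option in the same way as the string given to --set. A small sketch, using an arbitrary example date:

```shell
# Parse a date-only string and print the resulting date and time.
# No time of day is given, so it defaults to 00:00:00.
date -d "2017-06-02" +"%Y-%m-%d %H:%M:%S"

# Supplying the time explicitly preserves it.
date -d "2017-06-02 23:26:00" +"%Y-%m-%d %H:%M:%S"
```

Checking a string with -d first is a low-risk way to confirm how it will be interpreted before passing it to --set as root.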

Example 3.8. Changing the Current Date

To change the current date to 2 June 2017 and keep the current time (11:26 p.m.), run the following command as root:

~]# date --set "2017-06-02 23:26:00"

3.3. Using the hwclock Command

hwclock is a utility for accessing the hardware clock, also referred to as the Real Time Clock (RTC). The hardware clock is independent of the operating system you use and works even when the machine is shut down. This utility is used for displaying the time from the hardware clock. hwclock also contains facilities for compensating for systematic drift in the hardware clock.

The hardware clock stores the values of: year, month, day, hour, minute, and second. It cannot store the time standard (local time or Coordinated Universal Time (UTC)), nor can it set Daylight Saving Time (DST).

The hwclock utility saves its settings in the /etc/adjtime file, which is created with the first change you make, for example, when you set the time manually or synchronize the hardware clock with the system time.

For the changes in the hwclock behaviour between Red Hat Enterprise Linux version 6 and 7, see Red Hat Enterprise Linux 7 Migration Planning Guide.

3.3.1. Displaying the Current Date and Time

Running hwclock with no command line options as the root user returns the date and time in local time to standard output.

hwclock

Note that using the --utc or --localtime options with the hwclock command does not mean you are displaying the hardware clock time in UTC or local time. These options are used for setting the hardware clock to keep time in either of them. The time is always displayed in local time. Additionally, using the hwclock --utc or hwclock --localtime commands does not change the record in the /etc/adjtime file. This command can be useful when you know that the setting saved in /etc/adjtime is incorrect but you do not want to change the setting. On the other hand, you may receive misleading information if you use the command in an incorrect way. See the hwclock(8) manual page for more details.

Example 3.9. Displaying the Current Date and Time

To display the current date and the current local time from the hardware clock, run as root:

~]# hwclock
Tue 15 Apr 2017 04:23:46 PM CEST -0.329272 seconds

CEST is a time zone abbreviation and stands for Central European Summer Time.

For information on how to change the time zone, see Section 3.1.4, “Changing the Time Zone”.

3.3.2. Setting the Date and Time

Besides displaying the date and time, you can manually set the hardware clock to a specific time.

When you need to change the hardware clock date and time, you can do so by appending the --set and --date options along with your specification:

hwclock --set --date "dd mmm yyyy HH:MM"

Replace dd with a day (a two-digit number), mmm with a month (a three-letter abbreviation), yyyy with a year (a four-digit number), HH with an hour (a two-digit number), and MM with a minute (a two-digit number).

At the same time, you can also set the hardware clock to keep the time in either UTC or local time by adding the --utc or --localtime options, respectively. In this case, UTC or LOCAL is recorded in the /etc/adjtime file.
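
If the desired time is available in another notation, the date command can produce the "dd mmm yyyy HH:MM" form. The sketch below assumes an ISO-style input held in a hypothetical variable named iso, and only prints the resulting hwclock command instead of running it.

```shell
# Convert an ISO-style timestamp into the "dd mmm yyyy HH:MM" form.
# LC_ALL=C forces English month abbreviations such as "Jun".
iso='2017-06-02 23:26'
stamp=$(LC_ALL=C date --date "$iso" '+%d %b %Y %H:%M')

# Printed only; running the command itself requires root.
echo "as root: hwclock --set --date \"$stamp\" --utc"
```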

Example 3.10. Setting the Hardware Clock to a Specific Date and Time

If you want to set the date and time to a specific value, for example, to "21:17, October 21, 2016", and keep the hardware clock in UTC, run the command as root in the following format:

~]# hwclock --set --date "21 Oct 2016 21:17" --utc

3.3.3. Synchronizing the Date and Time

You can synchronize the hardware clock and the current system time in both directions.

Either you can set the hardware clock to the current system time by using this command:

hwclock --systohc

Note that if you use NTP, the hardware clock is automatically synchronized to the system clock every 11 minutes, and this command is useful only at boot time to get a reasonable initial system time.

Or, you can set the system time from the hardware clock by using the following command:

hwclock --hctosys

When you synchronize the hardware clock and the system time, you can also specify whether you want to keep the hardware clock in local time or UTC by adding the --utc or --localtime option. Similarly to using --set, UTC or LOCAL is recorded in the /etc/adjtime file.

The hwclock --systohc --utc command is functionally similar to timedatectl set-local-rtc false, and the hwclock --systohc --localtime command is an alternative to timedatectl set-local-rtc true.
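
After either command, the recorded mode can be confirmed from the "RTC in local TZ" line that timedatectl prints. The following is a minimal sketch of such a check. The function name rtc_in_local_tz is hypothetical, and the sample text stands in for real timedatectl output so that the example runs on any system.

```shell
# Read timedatectl-style output on standard input and print the value
# of the "RTC in local TZ" line (yes or no).
rtc_in_local_tz() {
    awk -F': ' '/RTC in local TZ/ { print $2; exit }'
}

# Illustrative sample; on a real system use: timedatectl | rtc_in_local_tz
sample='        RTC time: Fri 2017-06-02 21:26:00
 RTC in local TZ: no'
answer=$(printf '%s\n' "$sample" | rtc_in_local_tz)
echo "hardware clock kept in local time: $answer"
```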

Example 3.11. Synchronizing the Hardware Clock with System Time

To set the hardware clock to the current system time and keep the hardware clock in local time, run the following command as root:

~]# hwclock --systohc --localtime

To avoid problems with time zone and DST switching, it is recommended to keep the hardware clock in UTC. Example 3.11, “Synchronizing the Hardware Clock with System Time” is useful, for example, in a multi-boot setup with a Windows system, which assumes by default that the hardware clock runs in local time, so all other systems need to accommodate it by using local time as well. It may also be needed with a virtual machine; if the virtual hardware clock provided by the host is running in local time, the guest system needs to be configured to use local time, too.

3.4. Additional Resources

For more information on how to configure the date and time in Red Hat Enterprise Linux 7, see the resources listed below.

Installed Documentation

- timedatectl(1): The manual page for the timedatectl command line utility documents how to use this tool to query and change the system clock and its settings.

- date(1): The manual page for the date command provides a complete list of supported command line options.

- hwclock(8): The manual page for the hwclock command provides a complete list of supported command line options.

See Also

- Chapter 2, System Locale and Keyboard Configuration documents how to configure the keyboard layout.

- Chapter 6, Gaining Privileges documents how to gain administrative privileges by using the su and sudo commands.

- Chapter 10, Managing Services with systemd provides more information on systemd and documents how to use the systemctl command to manage system services.

Chapter 4. Managing Users and Groups

The control of users and groups is a core element of Red Hat Enterprise Linux system administration. This chapter explains how to add, manage, and delete users and groups in the graphical user interface and on the command line, and covers advanced topics, such as creating group directories.

4.1. Introduction to Users and Groups

While users can be either people (meaning accounts tied to physical users) or accounts that exist for specific applications to use, groups are logical expressions of organization, tying users together for a common purpose. Users within a group share the same permissions to read, write, or execute files owned by that group.

Each user is associated with a unique numerical identification number called a user ID (UID). Likewise, each group is associated with a group ID (GID). A user who creates a file is also the owner and group owner of that file. The file is assigned separate read, write, and execute permissions for the owner, the group, and everyone else. The file owner can be changed only by root, and access permissions can be changed by both the root user and file owner.
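
The IDs and the ownership rule described above can be inspected from the shell. The sketch below uses the id and stat commands from GNU coreutils on a temporary file. The variable names are hypothetical, and only the owner is compared because the group of a new file can also depend on the directory it is created in.

```shell
# Show the numeric IDs of the current user and of the user's primary group.
uid=$(id -u)
gid=$(id -g)
echo "UID=$uid GID=$gid"

# A newly created file is owned by the user who created it.
f=$(mktemp)
owner_uid=$(stat -c '%u' "$f")
stat -c 'owner=%U group=%G mode=%a' "$f"
rm -f "$f"
```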

Additionally, Red Hat Enterprise Linux supports access control lists (ACLs) for files and directories which allow permissions for specific users outside of the owner to be set. For more information about this feature, see Chapter 5, Access Control Lists.

Reserved User and Group IDs

Red Hat Enterprise Linux reserves user and group IDs below 1000 for system users and groups. By default, the User Manager does not display the system users. Reserved user and group IDs are documented in the setup package. To view the documentation, use this command:

cat /usr/share/doc/setup*/uidgid
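
To see which accounts on a particular system fall into the reserved range, the third field of each /etc/passwd entry (the UID) can be compared with 1000. The following is a rough sketch: the function name system_accounts and the sample entries, including the user alice, are hypothetical.

```shell
# Print the names of accounts whose UID is below a limit (default 1000).
# $1: passwd-style file (default /etc/passwd), $2: limit.
system_accounts() {
    awk -F: -v limit="${2:-1000}" '$3 < limit { print $1 }' "${1:-/etc/passwd}"
}

# Demonstration on made-up entries in the /etc/passwd layout.
sample=$(mktemp)
printf '%s\n' 'root:x:0:0:root:/root:/bin/bash' \
              'alice:x:1000:1000::/home/alice:/bin/bash' > "$sample"
reserved=$(system_accounts "$sample")
echo "accounts below 1000: $reserved"
rm -f "$sample"
```

Calling system_accounts with no arguments reads /etc/passwd on the local system.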

The recommended practice is to assign IDs starting at 5,000 that were not already reserved, as the reserved range can increase in the future. To make the IDs assigned to new users by default start at 5,000, change the UID_MIN and GID_MIN directives in the /etc/login.defs file:

[file contents truncated]

UID_MIN 5000

[file contents truncated]

GID_MIN 5000

[file contents truncated]

Users created before you changed the UID_MIN and GID_MIN directives keep their existing UIDs, which start at the default 1000.

Even with new user and group IDs beginning with 5,000, it is recommended not to raise IDs reserved by the system above 1000 to avoid conflict with systems that retain the 1000 limit.
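
Before and after editing /etc/login.defs, it can be helpful to read back the values that are actually in effect. The sketch below assumes the "NAME value" layout shown above. The function name login_def is hypothetical, and the demonstration runs against a made-up sample file rather than the real configuration.

```shell
# Print the value of a directive from a login.defs-style file.
# Commented-out lines are skipped because their first field starts with "#".
login_def() {
    awk -v key="$1" '$1 == key { print $2; exit }' "${2:-/etc/login.defs}"
}

# Demonstration on a made-up sample file.
sample=$(mktemp)
printf '%s\n' '#UID_MIN 1000' 'UID_MIN 5000' 'GID_MIN 5000' > "$sample"
uid_min=$(login_def UID_MIN "$sample")
gid_min=$(login_def GID_MIN "$sample")
echo "UID_MIN=$uid_min GID_MIN=$gid_min"
rm -f "$sample"
```

On a real system, login_def UID_MIN with no file argument reads /etc/login.defs.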

4.1.1. User Private Groups

Red Hat Enterprise Linux uses a user private group (UPG) scheme, which makes UNIX groups easier to manage. A user private group is created whenever a new user is added to the system. It has the same name as the user for which it was created and that user is the only member of the user private group.

User private groups make it safe to set default permissions for a newly created file or directory, allowing both the user and the group of that user to make modifications to the file or directory.

The setting which determines what permissions are applied to a newly created file or directory is called a umask and is configured in the /etc/bashrc file. Traditionally on UNIX-based systems, the umask is set to 022, which allows only the user who created the file or directory to make modifications. Under this scheme, all other users, including members of the creator’s group, are not allowed to make any modifications. However, under the UPG scheme, this "group protection" is not necessary since every user has their own private group. See Section 4.3.5, “Setting Default Permissions for New Files Using umask” for more information.
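
The difference between the two schemes can be seen by creating files under different umask values. The following sketch compares the traditional 022 with 002, which is used here as the contrasting value that leaves the group write bit set. The directory and file names are arbitrary.

```shell
dir=$(mktemp -d)

# Subshells keep each umask change from leaking into the calling shell.
mode_022=$( umask 022; touch "$dir/a"; stat -c '%a' "$dir/a" )
mode_002=$( umask 002; touch "$dir/b"; stat -c '%a' "$dir/b" )

# 644: only the owner can write. 664: the owner and the group can write.
echo "umask 022 -> $mode_022, umask 002 -> $mode_002"
rm -rf "$dir"
```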

A list of all groups is stored in the /etc/group configuration file.

4.1.2. Shadow Passwords

In environments with multiple users, it is very important to use shadow passwords provided by the shadow-utils package to enhance the security of system authentication files. For this reason, the installation program enables shadow passwords by default.

The following is a list of the advantages shadow passwords have over the traditional way of storing passwords on UNIX-based systems:

- Shadow passwords improve system security by moving encrypted password hashes from the world-readable /etc/passwd file to /etc/shadow, which is readable only by the root user.

- Shadow passwords store information about password aging.

- Shadow passwords allow you to enforce some of the security policies set in the /etc/login.defs file.

Most utilities provided by the shadow-utils package work properly whether or not shadow passwords are enabled. However, since password aging information is stored exclusively in the /etc/shadow file, some utilities and commands do not work without first enabling shadow passwords:

- The chage utility for setting password aging parameters. For details, see the Password Security section in the Red Hat Enterprise Linux 7 Security Guide.

- The gpasswd utility for administrating the /etc/group file.

- The usermod command with the -e, --expiredate or -f, --inactive option.

- The useradd command with the -e, --expiredate or -f, --inactive option.
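
The aging information in /etc/shadow is stored as day counts since 1 January 1970, as described in the shadow(5) manual page. The sketch below converts such counts to calendar dates. The entry for the user alice is entirely made up (the hash field is a placeholder), and the function name days_to_date is hypothetical.

```shell
# Convert a day count since 1970-01-01 into a calendar date.
days_to_date() {
    date --utc --date "@$(( $1 * 86400 ))" +%F
}

# Hypothetical entry; the hash field is a placeholder, not a real hash.
entry='alice:$6$placeholder:17319:0:90:7:::'

# Field 3 is the day of the last password change, field 5 the maximum age.
last_change=$(printf '%s\n' "$entry" | cut -d: -f3)
max_age=$(printf '%s\n' "$entry" | cut -d: -f5)

changed_on=$(days_to_date "$last_change")
expires_on=$(days_to_date "$(( last_change + max_age ))")
echo "changed $changed_on, must change by $expires_on"
```

On a real system, the chage utility reports the same information in readable form, so a conversion like this is mainly useful for understanding the file format.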

4.2. Managing Users in a Graphical Environment

The Users utility allows you to view, modify, add, and delete local users in the graphical user interface.

4.2.1. Using the Users Settings Tool

Press the Super key to enter the Activities Overview, type Users and then press Enter. The Users settings tool appears. The Super key appears in a variety of guises, depending on the keyboard and other hardware, but often as either the Windows or Command key, and typically to the left of the Space bar. Alternatively, you can open the Users utility from the Settings menu after clicking your user name in the top right corner of the screen.

To make changes to the user accounts, first select the Unlock button and authenticate yourself as indicated by the dialog box that appears. Note that unless you have superuser privileges, the application will prompt you to authenticate as root. To add and remove users, select the + and - buttons respectively. To add a user to the administrative group wheel, change the Account Type from Standard to Administrator. To edit a user’s language setting, select the language and a drop-down menu appears.

Figure 4.1. The Users Settings Tool

When a new user is created, the account is disabled until a password is set. The Password drop-down menu, shown in Figure 4.2, “The Password Menu”, contains the options to set a password by the administrator immediately, choose a password by the user at the first login, or create a guest account with no password required to log in. You can also disable or enable an account from this menu.

Figure 4.2. The Password Menu

4.3. Using Command-Line Tools

Apart from the Users settings tool described in Section 4.2, “Managing Users in a Graphical Environment”, which is designed for basic managing of users, you can use command line tools for managing users and groups that are listed in Table 4.1, “Command line utilities for managing users and groups”.

| Utilities | Description |

|---|---|

|

| Displays user and group IDs. |

|