Architecture

OpenShift Container Platform 3.10 Architecture Information

Chapter 1. Overview

OpenShift v3 is a layered system designed to expose underlying Docker-formatted container image and Kubernetes concepts as accurately as possible, with a focus on easy composition of applications by a developer. For example, install Ruby, push code, and add MySQL.

Unlike OpenShift v2, more flexibility of configuration is exposed after creation in all aspects of the model. The concept of an application as a separate object is removed in favor of more flexible composition of "services", allowing two web containers to reuse a database or expose a database directly to the edge of the network.

1.1. What Are the Layers?

The Docker service provides the abstraction for packaging and creating Linux-based, lightweight container images. Kubernetes provides the cluster management and orchestrates containers on multiple hosts.

OpenShift Container Platform adds:

- Source code management, builds, and deployments for developers

- Managing and promoting images at scale as they flow through your system

- Application management at scale

- Team and user tracking for organizing a large developer organization

- Networking infrastructure that supports the cluster

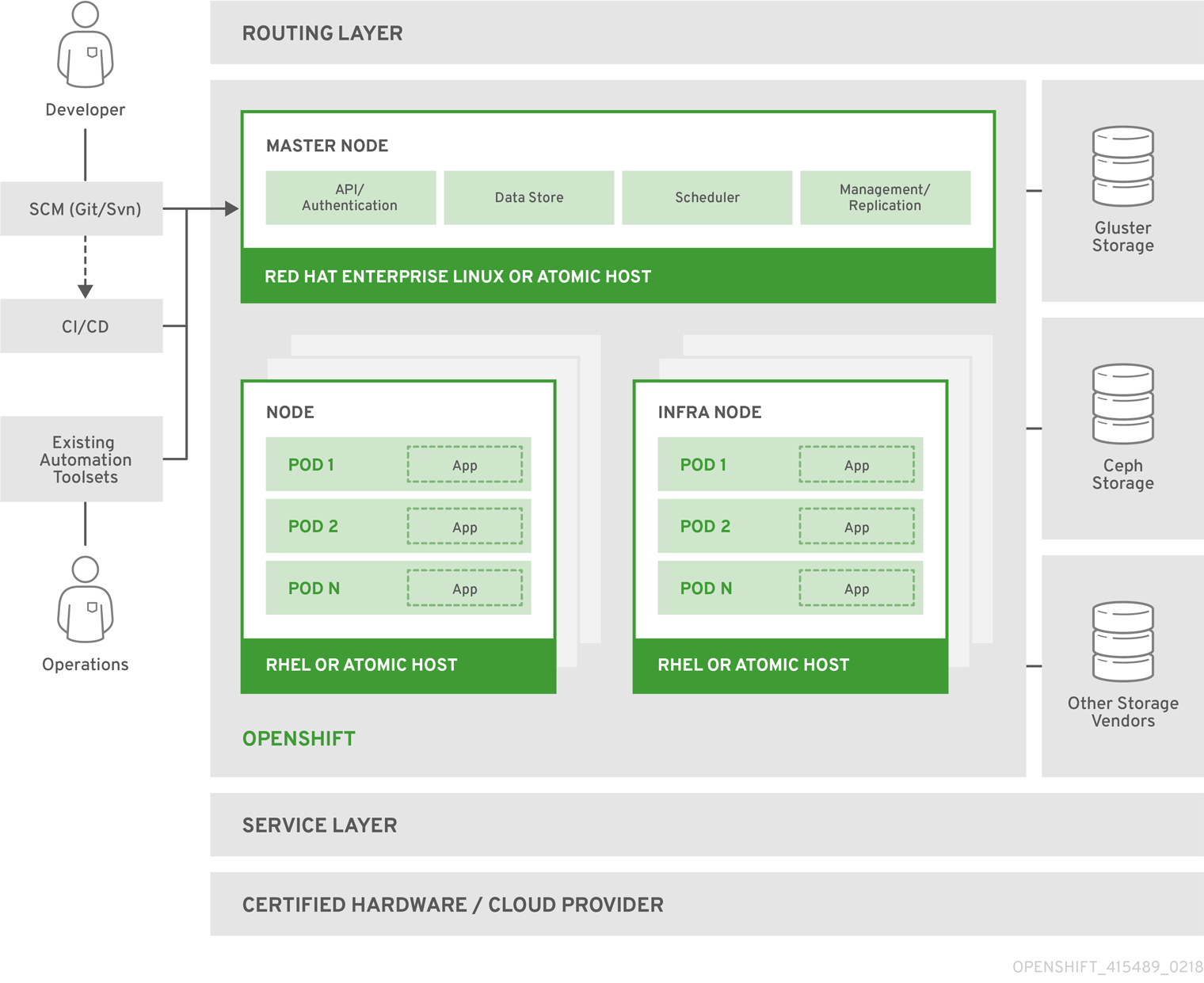

Figure 1.1. OpenShift Container Platform Architecture Overview

For more information on the node types in the architecture overview, see Kubernetes Infrastructure.

1.2. What Is the OpenShift Container Platform Architecture?

OpenShift Container Platform has a microservices-based architecture of smaller, decoupled units that work together. It runs on top of a Kubernetes cluster, with data about the objects stored in etcd, a reliable clustered key-value store. Those services are broken down by function:

- REST APIs, which expose each of the core objects.

- Controllers, which read those APIs, apply changes to other objects, and report status or write back to the object.

Users make calls to the REST API to change the state of the system. Controllers use the REST API to read the user’s desired state, and then try to bring the other parts of the system into sync. For example, when a user requests a build they create a "build" object. The build controller sees that a new build has been created, and runs a process on the cluster to perform that build. When the build completes, the controller updates the build object via the REST API and the user sees that their build is complete.

The controller pattern means that much of the functionality in OpenShift Container Platform is extensible. The way that builds are run and launched can be customized independently of how images are managed, or how deployments happen. The controllers are performing the "business logic" of the system, taking user actions and transforming them into reality. By customizing those controllers or replacing them with your own logic, different behaviors can be implemented. From a system administration perspective, this also means the API can be used to script common administrative actions on a repeating schedule. Those scripts are also controllers that watch for changes and take action. OpenShift Container Platform makes the ability to customize the cluster in this way a first-class behavior.

To make this possible, controllers leverage a reliable stream of changes to the system to sync their view of the system with what users are doing. This event stream pushes changes from etcd to the REST API and then to the controllers as soon as changes occur, so changes can ripple out through the system very quickly and efficiently. However, since failures can occur at any time, the controllers must also be able to get the latest state of the system at startup, and confirm that everything is in the right state. This resynchronization is important, because it means that even if something goes wrong, then the operator can restart the affected components, and the system double checks everything before continuing. The system should eventually converge to the user’s intent, since the controllers can always bring the system into sync.
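The event-plus-resynchronization pattern described above can be illustrated with a minimal sketch. It is not OpenShift code: the `BuildController` class, the in-memory `store`, and the status strings are hypothetical stand-ins for objects that would really be read and written through the REST API.

```python
from dataclasses import dataclass, field


@dataclass
class BuildController:
    """Toy controller that drives build objects toward completion."""

    # Hypothetical in-memory stand-in for objects stored behind the REST API.
    store: dict = field(default_factory=dict)

    def on_event(self, name: str) -> None:
        """React to a change notification for a single build object."""
        build = self.store[name]
        if build["status"] == "New":
            # A real controller would launch a process on the cluster here
            # and write the result back through the API.
            build["status"] = "Complete"

    def resync(self) -> None:
        """Re-examine every object, for example after a restart or a missed event."""
        for name in list(self.store):
            self.on_event(name)


controller = BuildController()
controller.store["build-1"] = {"status": "New"}
controller.on_event("build-1")  # normal path: the event arrives

controller.store["build-2"] = {"status": "New"}  # this event is "lost"
controller.resync()  # recovery path: a full pass picks it up

print({name: build["status"] for name, build in controller.store.items()})
```

Because `on_event` is idempotent, running `resync` repeatedly is harmless, which is what lets the system converge even when individual notifications are missed.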

1.3. How Is OpenShift Container Platform Secured?

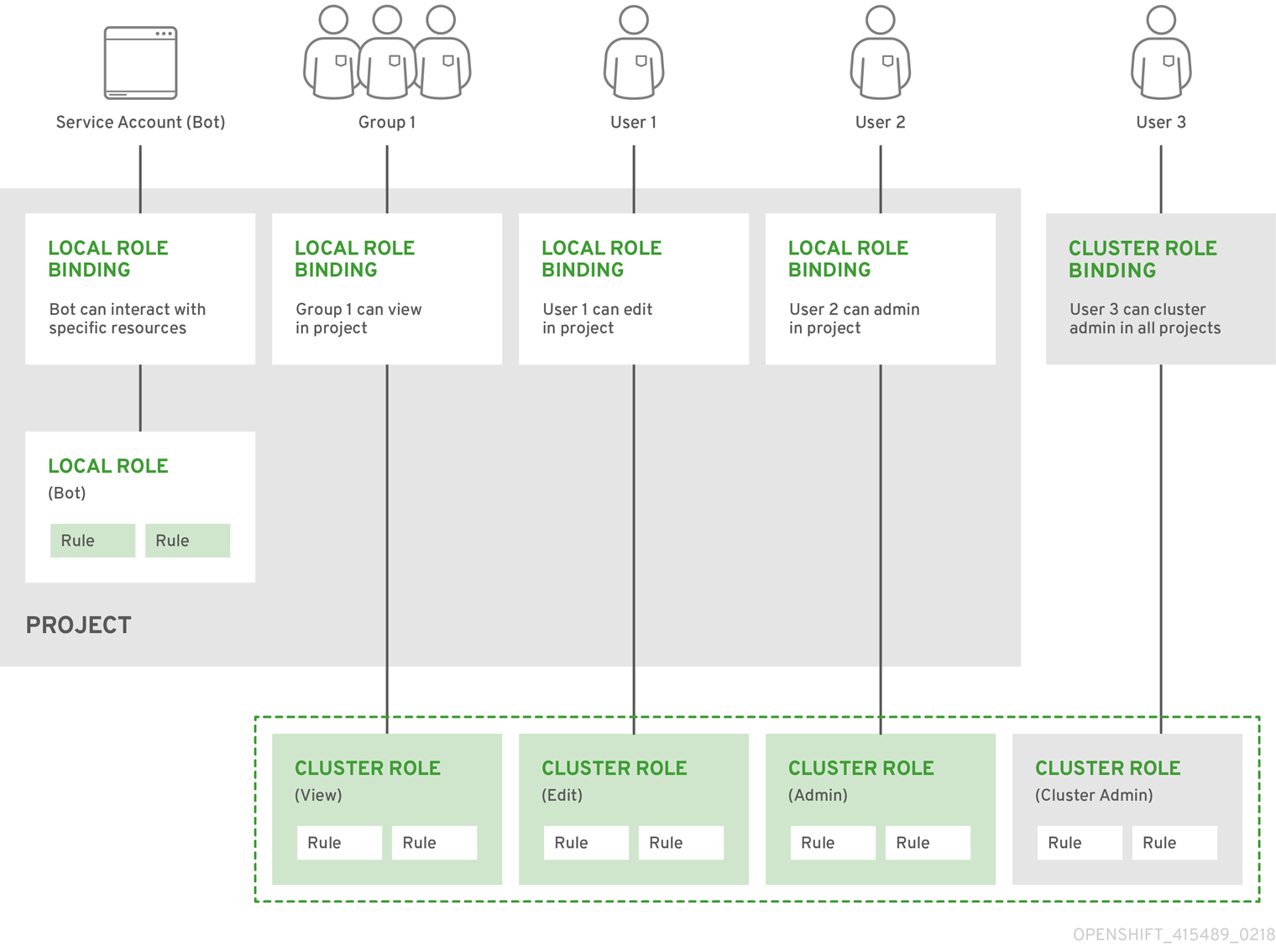

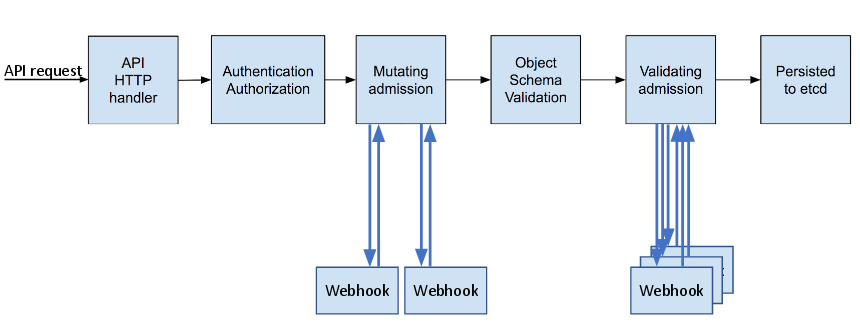

The OpenShift Container Platform and Kubernetes APIs authenticate users who present credentials, and then authorize them based on their role. Both developers and administrators can be authenticated via a number of means, primarily OAuth tokens and X.509 client certificates. OAuth tokens are signed with JSON Web Algorithm RS256, which is RSA signature algorithm PKCS#1 v1.5 with SHA-256.

Developers (clients of the system) typically make REST API calls from a client program like oc or to the web console via their browser, and use OAuth bearer tokens for most communications. Infrastructure components (like nodes) use client certificates generated by the system that contain their identities. Infrastructure components that run in containers use a token associated with their service account to connect to the API.

Authorization is handled in the OpenShift Container Platform policy engine, which defines actions like "create pod" or "list services" and groups them into roles in a policy document. Roles are bound to users or groups by the user or group identifier. When a user or service account attempts an action, the policy engine checks for one or more of the roles assigned to the user (e.g., cluster administrator or administrator of the current project) before allowing it to continue.
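As a rough illustration of the role check described above, the following sketch groups actions into roles and binds roles to user or group identifiers. The role names, bindings, and the `is_allowed` helper are hypothetical and do not reflect the actual policy engine or its data model.

```python
# Hypothetical roles: each one groups (verb, resource) actions.
ROLES = {
    "admin": {("create", "pods"), ("delete", "pods"), ("list", "services")},
    "view": {("list", "pods"), ("list", "services")},
}

# Hypothetical bindings from a user or group identifier to role names.
BINDINGS = {
    "user:alice": {"admin"},
    "group:developers": {"view"},
}


def is_allowed(user, groups, verb, resource):
    """Return True if any role bound to the user or their groups grants the action."""
    subjects = [f"user:{user}"] + [f"group:{group}" for group in groups]
    for subject in subjects:
        for role in BINDINGS.get(subject, ()):
            if (verb, resource) in ROLES[role]:
                return True
    return False


print(is_allowed("alice", [], "create", "pods"))
print(is_allowed("bob", ["developers"], "create", "pods"))
```

The check succeeds as soon as one bound role grants the action, mirroring the "one or more of the roles" wording above.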

Since every container that runs on the cluster is associated with a service account, it is also possible to associate secrets to those service accounts and have them automatically delivered into the container. This enables the infrastructure to manage secrets for pulling and pushing images, builds, and the deployment components, and also allows application code to easily leverage those secrets.

1.3.1. TLS Support

All communication channels with the REST API, as well as between master components such as etcd and the API server, are secured with TLS. TLS provides strong encryption, data integrity, and authentication of servers with X.509 server certificates and public key infrastructure. By default, a new internal PKI is created for each deployment of OpenShift Container Platform. The internal PKI uses 2048 bit RSA keys and SHA-256 signatures. Custom certificates for public hosts are supported as well.

OpenShift Container Platform uses Golang’s standard library implementation of crypto/tls and does not depend on any external crypto and TLS libraries. Additionally, the client depends on external libraries for GSSAPI authentication and OpenPGP signatures. GSSAPI is typically provided by either MIT Kerberos or Heimdal Kerberos, which both use OpenSSL’s libcrypto. OpenPGP signature verification is handled by libgpgme and GnuPG.

The insecure versions SSL 2.0 and SSL 3.0 are unsupported and not available. The OpenShift Container Platform server and oc client only provide TLS 1.2 by default. TLS 1.0 and TLS 1.1 can be enabled in the server configuration. Both server and client prefer modern cipher suites with authenticated encryption algorithms and perfect forward secrecy. Cipher suites with deprecated and insecure algorithms such as RC4, 3DES, and MD5 are disabled. Some internal clients (for example, LDAP authentication) have less restrictive settings, with TLS 1.0 to 1.2 and more cipher suites enabled.

| TLS Version | OpenShift Container Platform Server | oc Client | Other Clients |
|---|---|---|---|
| SSL 2.0 | Unsupported | Unsupported | Unsupported |
| SSL 3.0 | Unsupported | Unsupported | Unsupported |
| TLS 1.0 | No [a] | No [a] | Maybe [b] |
| TLS 1.1 | No [a] | No [a] | Maybe [b] |
| TLS 1.2 | Yes | Yes | Yes |
| TLS 1.3 | N/A [c] | N/A [c] | N/A [c] |

[a] Disabled by default, but can be enabled in the server configuration.

[b] Some internal clients, such as the LDAP client.

[c] TLS 1.3 is still under development.

The cipher suites enabled in OpenShift Container Platform’s server and oc client are listed below in preferred order:

- TLS_ECDHE_ECDSA_WITH_CHACHA20_POLY1305
- TLS_ECDHE_RSA_WITH_CHACHA20_POLY1305
- TLS_ECDHE_ECDSA_WITH_AES_128_GCM_SHA256
- TLS_ECDHE_RSA_WITH_AES_128_GCM_SHA256
- TLS_ECDHE_ECDSA_WITH_AES_256_GCM_SHA384
- TLS_ECDHE_RSA_WITH_AES_256_GCM_SHA384
- TLS_ECDHE_ECDSA_WITH_AES_128_CBC_SHA256
- TLS_ECDHE_RSA_WITH_AES_128_CBC_SHA256
- TLS_ECDHE_ECDSA_WITH_AES_128_CBC_SHA
- TLS_ECDHE_ECDSA_WITH_AES_256_CBC_SHA
- TLS_ECDHE_RSA_WITH_AES_128_CBC_SHA
- TLS_ECDHE_RSA_WITH_AES_256_CBC_SHA
- TLS_RSA_WITH_AES_128_GCM_SHA256
- TLS_RSA_WITH_AES_256_GCM_SHA384
- TLS_RSA_WITH_AES_128_CBC_SHA
- TLS_RSA_WITH_AES_256_CBC_SHA
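For a custom client that talks to the API, a comparable policy (TLS 1.2 as the minimum, forward-secret key exchange with authenticated encryption) might be sketched as follows with Python's standard `ssl` module. This is an illustrative assumption about how such a client could be configured, not how oc itself is built; the cipher string uses OpenSSL syntax rather than the suite names listed above, and `make_client_context` is a hypothetical helper.

```python
import ssl


def make_client_context() -> ssl.SSLContext:
    """Build a client context that refuses anything older than TLS 1.2."""
    ctx = ssl.create_default_context()
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    # OpenSSL cipher-string syntax: ECDHE key exchange with AEAD ciphers only.
    ctx.set_ciphers("ECDHE+AESGCM:ECDHE+CHACHA20")
    return ctx


ctx = make_client_context()
print(ctx.minimum_version.name)
```

Restricting the list to ECDHE suites with GCM or ChaCha20-Poly1305 is stricter than the server's full list, which also keeps CBC and plain RSA suites for compatibility.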

Chapter 2. Infrastructure Components

2.1. Kubernetes Infrastructure

2.1.1. Overview

Within OpenShift Container Platform, Kubernetes manages containerized applications across a set of containers or hosts and provides mechanisms for deployment, maintenance, and application-scaling. The container runtime packages, instantiates, and runs containerized applications. A Kubernetes cluster consists of one or more masters and a set of nodes.

You can optionally configure your masters for high availability (HA) to ensure that the cluster has no single point of failure.

OpenShift Container Platform uses Kubernetes 1.10 and Docker 1.13.

2.1.2. Masters

The master is the host or hosts that contain the control plane components, including the API server, controller manager server, and etcd. The master manages nodes in its Kubernetes cluster and schedules pods to run on those nodes.

| Component | Description |
|---|---|
| API Server | The Kubernetes API server validates and configures the data for pods, services, and replication controllers. It also assigns pods to nodes and synchronizes pod information with service configuration. |
| etcd | etcd stores the persistent master state while other components watch etcd for changes to bring themselves into the desired state. etcd can be optionally configured for high availability, typically deployed with 2n+1 peer services. |
| Controller Manager Server | The controller manager server watches etcd for changes to replication controller objects and then uses the API to enforce the desired state. Several such processes create a cluster with one active leader at a time. |
| HAProxy | Optional, used when configuring highly-available masters with the native HA method. |

2.1.2.1. Control Plane Static Pods

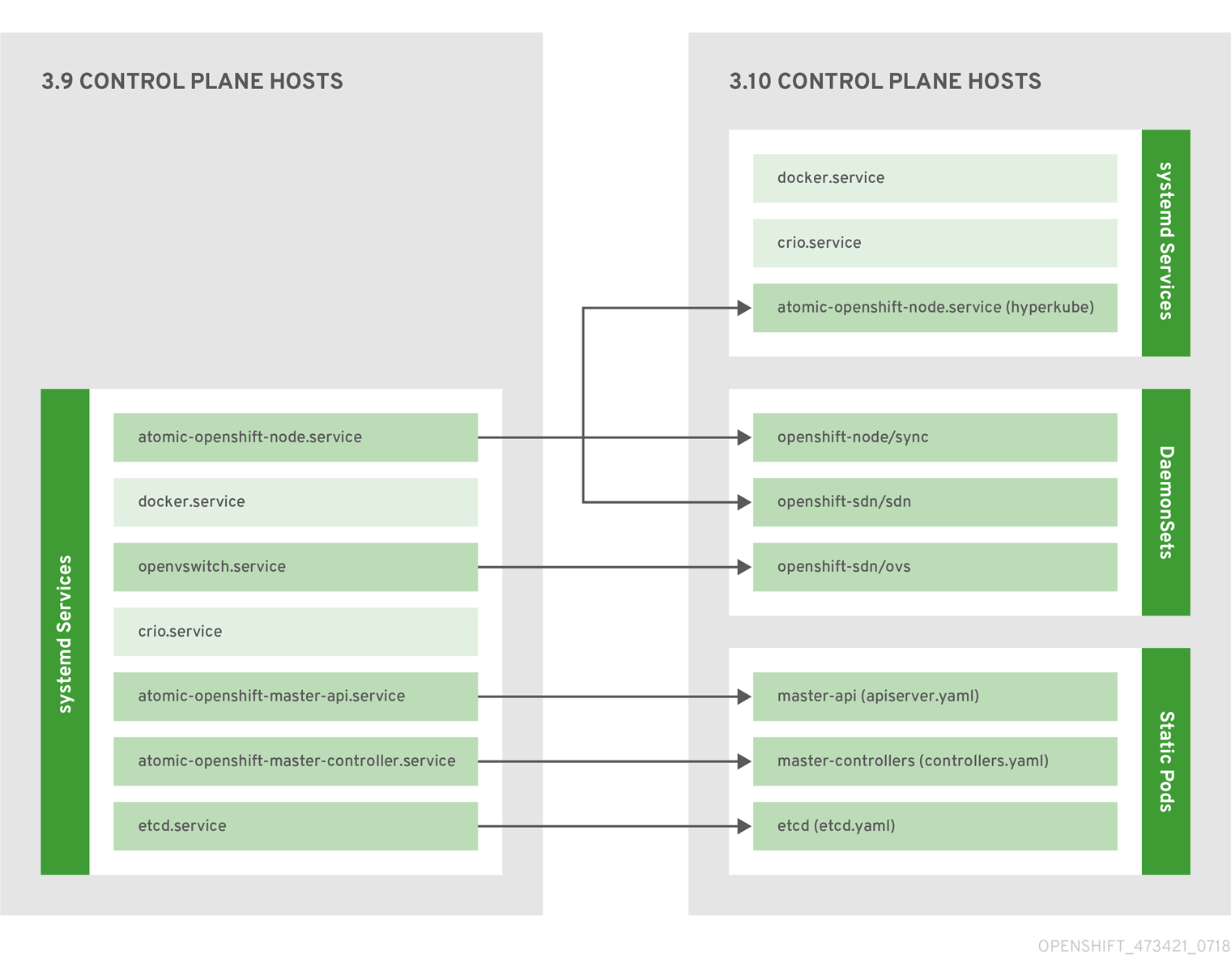

Starting in OpenShift Container Platform 3.10, the deployment model for installing and operating the core control plane components changed. Prior to 3.10, the API server and the controller manager components ran as stand-alone host processes operated by systemd. In 3.10, these two components are moved to static pods operated by the kubelet.

For masters that have etcd co-located on the same host, etcd is also moved to static pods. RPM-based etcd is still supported on etcd hosts that are not also masters.

In addition, the node components openshift-sdn and openvswitch are now run using a DaemonSet instead of a systemd service.

Figure 2.1. Control plane host architecture changes

Even with control plane components running as static pods, master hosts still source their configuration from the /etc/origin/master/master-config.yaml file, as described in the Master and Node Configuration topic.

Mirror Pods

The kubelet on master nodes automatically creates mirror pods on the API server for each of the control plane static pods so that they are visible in the cluster in the kube-system project. Manifests for these static pods are installed by default by the openshift-ansible installer, located in the /etc/origin/node/pods directory on the master host.

These pods have the following hostPath volumes defined:

| Volume | Description |
|---|---|
| /etc/origin/master | Contains all certificates, configuration files, and the admin.kubeconfig file. |
| /var/lib/origin | Contains volumes and potential core dumps of the binary. |
| /etc/origin/cloudprovider | Contains cloud provider specific configuration (AWS, Azure, etc.). |
| /usr/libexec/kubernetes/kubelet-plugins | Contains additional third party volume plug-ins. |
| /etc/origin/kubelet-plugins | Contains additional third party volume plug-ins for system containers. |

The set of operations you can do on the static pods is limited. For example:

$ oc logs master-api-<hostname> -n kube-system

returns the standard output from the API server. However:

$ oc delete pod master-api-<hostname> -n kube-system

will not actually delete the pod.

As another example, a cluster administrator may want to perform a common operation, such as increasing the loglevel of the API server to provide more verbose data if a problem occurs. In OpenShift Container Platform 3.10, you must edit the /etc/origin/master/master.env file, where the --loglevel parameter in the OPTIONS variable can be modified, as this is passed to the process running inside the container. Changes require a restart of the process running inside the container.

Restarting Master Services

To restart control plane services running in control plane static pods, use the master-restart command on the master host.

To restart the master API:

# master-restart api

To restart the controllers:

# master-restart controllers

To restart etcd:

# master-restart etcd

Viewing Master Service Logs

To view logs for control plane services running in control plane static pods, use the master-logs command for the respective component:

# master-logs api api

# master-logs controllers controllers

# master-logs etcd etcd

2.1.2.2. High Availability Masters

You can optionally configure your masters for high availability (HA) to ensure that the cluster has no single point of failure.

To mitigate concerns about availability of the master, two activities are recommended:

- A runbook entry should be created for reconstructing the master. A runbook entry is a necessary backstop for any highly-available service. Additional solutions merely control the frequency that the runbook must be consulted. For example, a cold standby of the master host can adequately fulfill SLAs that require no more than minutes of downtime for creation of new applications or recovery of failed application components.

- Use a high availability solution to configure your masters and ensure that the cluster has no single point of failure. The cluster installation documentation provides specific examples using the native HA method and configuring HAProxy. You can also take the concepts and apply them towards your existing HA solutions using the native method instead of HAProxy.

In production OpenShift Container Platform clusters, you must maintain high availability of the API Server load balancer. If the API Server load balancer is not available, nodes cannot report their status, all their pods are marked dead, and the pods' endpoints are removed from the service.

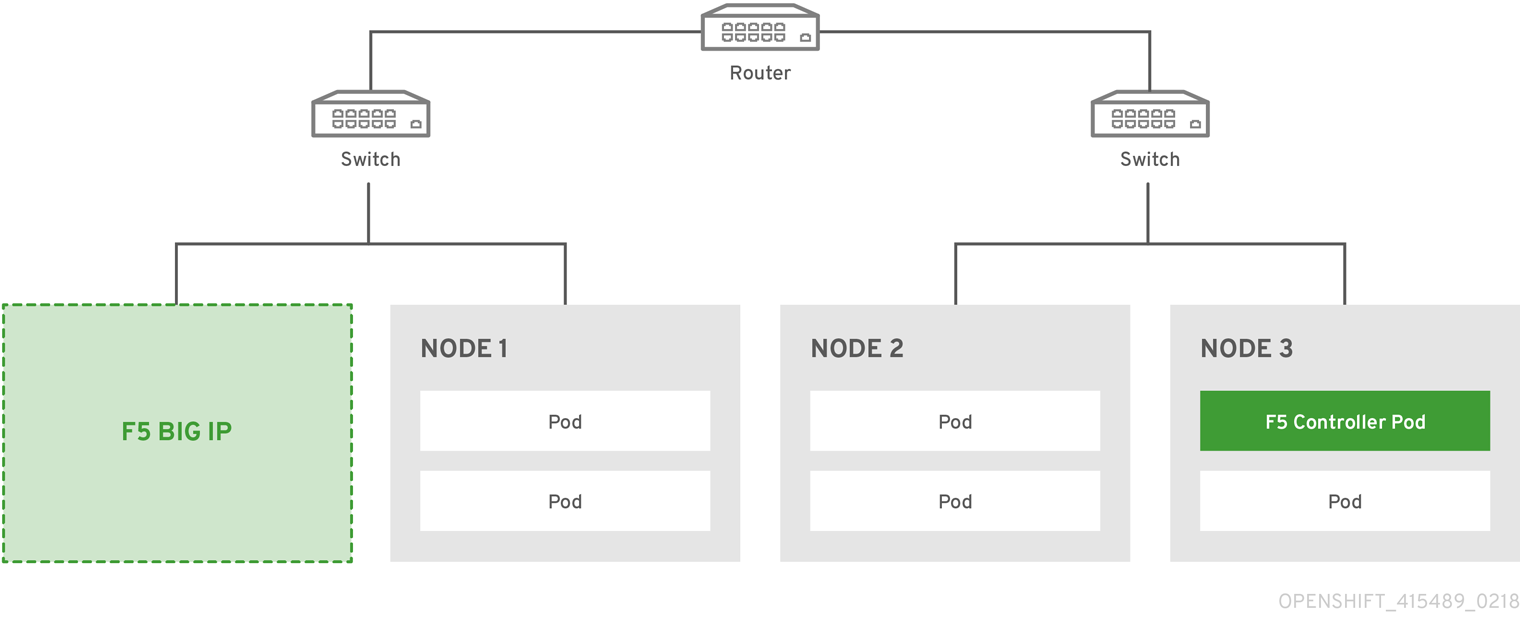

In addition to configuring HA for OpenShift Container Platform, you must separately configure HA for the API Server load balancer. To configure HA, it is much preferred to integrate an enterprise load balancer (LB) such as an F5 Big-IP™ or a Citrix Netscaler™ appliance. If such solutions are not available, it is possible to run multiple HAProxy load balancers and use Keepalived to provide a floating virtual IP address for HA. However, this solution is not recommended for production instances.

When using the native HA method with HAProxy, master components have the following availability:

| Role | Style | Notes |
|---|---|---|
| etcd | Active-active | Fully redundant deployment with load balancing. Can be installed on separate hosts or collocated on master hosts. |
| API Server | Active-active | Managed by HAProxy. |
| Controller Manager Server | Active-passive | One instance is elected as a cluster leader at a time. |
| HAProxy | Active-passive | Balances load between API master endpoints. |

While clustered etcd requires an odd number of hosts for quorum, the master services have no quorum or requirement that they have an odd number of hosts. However, since you need at least two master services for HA, it is common to maintain a uniform odd number of hosts when collocating master services and etcd.
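The preference for an odd number of etcd hosts follows from majority quorum arithmetic. The short sketch below computes the quorum size and the number of member failures a cluster of a given size can survive; the function names are illustrative only.

```python
def quorum(members: int) -> int:
    """Smallest majority of a cluster with the given number of members."""
    return members // 2 + 1


def fault_tolerance(members: int) -> int:
    """How many members can fail while a majority is still reachable."""
    return members - quorum(members)


for n in (1, 2, 3, 4, 5):
    print(f"members={n} quorum={quorum(n)} tolerated_failures={fault_tolerance(n)}")
```

Going from three members to four raises the quorum without raising the number of tolerated failures, which is why sizes of the form 2n+1 are the usual choice.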

2.1.3. Nodes

A node provides the runtime environments for containers. Each node in a Kubernetes cluster has the required services to be managed by the master. Nodes also have the required services to run pods, including the container runtime, a kubelet, and a service proxy.

OpenShift Container Platform creates nodes from a cloud provider, physical systems, or virtual systems. Kubernetes interacts with node objects that are a representation of those nodes. The master uses the information from node objects to validate nodes with health checks. A node is ignored until it passes the health checks, and the master continues checking nodes until they are valid. The Kubernetes documentation has more information on node statuses and management.

Administrators can manage nodes in an OpenShift Container Platform instance using the CLI. To define full configuration and security options when launching node servers, use dedicated node configuration files.

See the cluster limits section for the recommended maximum number of nodes.

2.1.3.1. Kubelet

Each node has a kubelet that updates the node as specified by a container manifest, which is a YAML file that describes a pod. The kubelet uses a set of manifests to ensure that its containers are started and that they continue to run.

A container manifest can be provided to a kubelet by:

- A file path on the command line that is checked every 20 seconds.

- An HTTP endpoint passed on the command line that is checked every 20 seconds.

- The kubelet watching an etcd server, such as /registry/hosts/$(hostname -f), and acting on any changes.

- The kubelet listening for HTTP and responding to a simple API to submit a new manifest.
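The file-path source in the list above amounts to periodic polling. A minimal sketch of how one poll could be compared with the previous one is shown below; it is not the kubelet's implementation, and the `snapshot` and `diff` helpers, the `*.yaml` glob, and the use of SHA-256 digests are all assumptions made for illustration.

```python
import hashlib
from pathlib import Path


def snapshot(manifest_dir: Path) -> dict:
    """Map each manifest file name to a digest of its current contents."""
    return {
        path.name: hashlib.sha256(path.read_bytes()).hexdigest()
        for path in sorted(manifest_dir.glob("*.yaml"))
    }


def diff(old: dict, new: dict) -> dict:
    """Classify manifests as added, removed, or changed between two polls."""
    return {
        "added": sorted(new.keys() - old.keys()),
        "removed": sorted(old.keys() - new.keys()),
        "changed": sorted(k for k in old.keys() & new.keys() if old[k] != new[k]),
    }


# Two hand-written snapshots standing in for consecutive polls of a directory.
before = {"api.yaml": "digest-1", "etcd.yaml": "digest-2"}
after = {"api.yaml": "digest-1b", "controllers.yaml": "digest-3"}
print(diff(before, after))
```

A polling loop would call `snapshot` on each interval (20 seconds in the text above) and start, restart, or stop containers according to the result of `diff`.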

2.1.3.2. Service Proxy

Each node also runs a simple network proxy that reflects the services defined in the API on that node. This allows the node to do simple TCP and UDP stream forwarding across a set of back ends.
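To make "forwarding across a set of back ends" concrete, the sketch below picks a back end for each new connection in round-robin order. The balancing policy is an assumption, since the text does not say which policy the proxy uses, and the class name and addresses are hypothetical.

```python
from itertools import cycle


class RoundRobinProxy:
    """Toy stand-in for a service proxy that picks a back end per connection."""

    def __init__(self, backends):
        if not backends:
            raise ValueError("a service needs at least one back end")
        self._next = cycle(list(backends))

    def pick(self):
        """Return the back end that should receive the next connection."""
        return next(self._next)


proxy = RoundRobinProxy(["10.1.0.5:8080", "10.1.1.7:8080"])
picks = [proxy.pick() for _ in range(4)]
print(picks)
```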

2.1.3.3. Node Object Definition

The following is an example node object definition in Kubernetes:

    apiVersion: v1
    kind: Node
    metadata:
      creationTimestamp: null
      labels:
        kubernetes.io/hostname: node1.example.com
      name: node1.example.com
    spec:
      externalID: node1.example.com
    status:
      nodeInfo:
        bootID: ""
        containerRuntimeVersion: ""
        kernelVersion: ""
        kubeProxyVersion: ""
        kubeletVersion: ""
        machineID: ""
        osImage: ""
        systemUUID: ""

- apiVersion defines the API version to use.
- kind set to Node identifies this as a definition for a node object.
- metadata.labels lists any labels that have been added to the node.
- metadata.name is a required value that defines the name of the node object. This value is shown in the NAME column when running the oc get nodes command.
- spec.externalID defines the fully-qualified domain name where the node can be reached. Defaults to the metadata.name value when empty.
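The defaulting rule for spec.externalID can be expressed as a small sketch over a plain dictionary. The `with_defaults` helper is hypothetical and only mirrors the behavior described above; it is not part of any OpenShift or Kubernetes client library.

```python
import copy


def with_defaults(node: dict) -> dict:
    """Return a copy of the node definition with spec.externalID filled in."""
    node = copy.deepcopy(node)
    spec = node.setdefault("spec", {})
    if not spec.get("externalID"):
        # Fall back to the required metadata.name value when externalID is empty.
        spec["externalID"] = node["metadata"]["name"]
    return node


node = {
    "apiVersion": "v1",
    "kind": "Node",
    "metadata": {"name": "node1.example.com"},
    "spec": {"externalID": ""},
}
print(with_defaults(node)["spec"]["externalID"])
```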

2.1.3.4. Node Bootstrapping

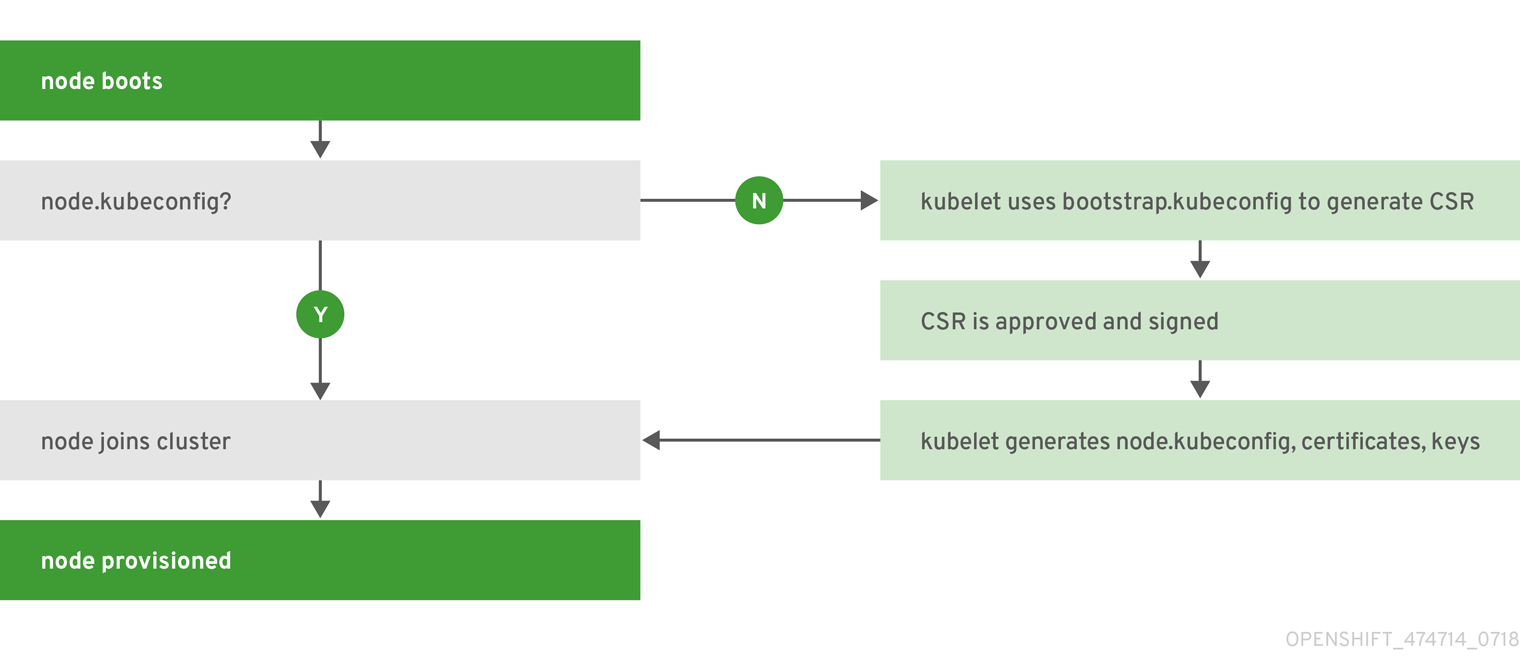

Starting in OpenShift Container Platform 3.10, a node’s configuration is bootstrapped from the master, which means nodes pull their pre-defined configuration and client and server certificates from the master. This allows faster node start-up by reducing the differences between nodes, as well as centralizing more configuration and letting the cluster converge on the desired state. Certificate rotation and centralized certificate management are enabled by default.

Figure 2.2. Node bootstrapping workflow overview

When node services are started, the node checks if the /etc/origin/node/node.kubeconfig file and other node configuration files exist before joining the cluster. If they do not, the node pulls the configuration from the master, then joins the cluster.

ConfigMaps are used to store the node configuration in the cluster, which populates the configuration file on the node host at /etc/origin/node/node-config.yaml. For definitions of the set of default node groups and their ConfigMaps, see Defining Node Groups and Host Mappings in Installing Clusters.

Node Bootstrap Workflow

The process for automatic node bootstrapping uses the following workflow:

- By default during cluster installation, a set of clusterrole, clusterrolebinding and serviceaccount objects are created for use in node bootstrapping:

  - The system:node-bootstrapper cluster role is used for creating certificate signing requests (CSRs) during node bootstrapping:

        # oc describe clusterrole.authorization.openshift.io/system:node-bootstrapper
        Name:         system:node-bootstrapper
        Created:      17 hours ago
        Labels:       kubernetes.io/bootstrapping=rbac-defaults
        Annotations:  authorization.openshift.io/system-only=true
                      openshift.io/reconcile-protect=false
        Verbs                    Non-Resource URLs  Resource Names  API Groups             Resources
        [create get list watch]  []                 []              [certificates.k8s.io]  [certificatesigningrequests]

  - The following node-bootstrapper service account is created in the openshift-infra project:

        # oc describe sa node-bootstrapper -n openshift-infra
        Name:                node-bootstrapper
        Namespace:           openshift-infra
        Labels:              <none>
        Annotations:         <none>
        Image pull secrets:  node-bootstrapper-dockercfg-f2n8r
        Mountable secrets:   node-bootstrapper-token-79htp
                             node-bootstrapper-dockercfg-f2n8r
        Tokens:              node-bootstrapper-token-79htp
                             node-bootstrapper-token-mqn2q
        Events:              <none>

  - The following system:node-bootstrapper cluster role binding is for the node bootstrapper cluster role and service account:

        # oc describe clusterrolebindings system:node-bootstrapper
        Name:             system:node-bootstrapper
        Created:          17 hours ago
        Labels:           <none>
        Annotations:      openshift.io/reconcile-protect=false
        Role:             /system:node-bootstrapper
        Users:            <none>
        Groups:           <none>
        ServiceAccounts:  openshift-infra/node-bootstrapper
        Subjects:         <none>
        Verbs                    Non-Resource URLs  Resource Names  API Groups             Resources
        [create get list watch]  []                 []              [certificates.k8s.io]  [certificatesigningrequests]

- Also by default during cluster installation, the openshift-ansible installer creates an OpenShift Container Platform certificate authority and various other certificates, keys, and kubeconfig files in the /etc/origin/master directory. Two files of note are:

  | File | Description |
  |---|---|
  | /etc/origin/master/admin.kubeconfig | Uses the system:admin user. |
  | /etc/origin/master/bootstrap.kubeconfig | Used for bootstrapping nodes other than masters. |

  The /etc/origin/master/bootstrap.kubeconfig file is created when the installer uses the node-bootstrapper service account as follows:

      $ oc --config=/etc/origin/master/admin.kubeconfig \
          serviceaccounts create-kubeconfig node-bootstrapper \
          -n openshift-infra

- On master nodes, the /etc/origin/master/admin.kubeconfig file is used as the bootstrapping file and is copied to /etc/origin/node/bootstrap.kubeconfig. On non-master nodes, the /etc/origin/master/bootstrap.kubeconfig file is copied to /etc/origin/node/bootstrap.kubeconfig on each node host.

- The copied bootstrap.kubeconfig file is then passed to the kubelet using the --bootstrap-kubeconfig flag as follows:

      --bootstrap-kubeconfig=/etc/origin/node/bootstrap.kubeconfig

- The kubelet is first started with the supplied /etc/origin/node/bootstrap.kubeconfig file. After initial connection internally, the kubelet creates certificate signing requests (CSRs) and sends them to the master.

- The CSRs are verified and approved via the controller manager (specifically the certificate signing controller). If approved, the kubelet client and server certificates are created in the /etc/origin/node/certificates directory. For example:

      # ls -al /etc/origin/node/certificates/
      total 12
      drwxr-xr-x. 2 root root  212 Jun 18 21:56 .
      drwx------. 4 root root  213 Jun 19 15:18 ..
      -rw-------. 1 root root 2826 Jun 18 21:53 kubelet-client-2018-06-18-21-53-15.pem
      -rw-------. 1 root root 1167 Jun 18 21:53 kubelet-client-2018-06-18-21-53-45.pem
      lrwxrwxrwx. 1 root root   68 Jun 18 21:53 kubelet-client-current.pem -> /etc/origin/node/certificates/kubelet-client-2018-06-18-21-53-45.pem
      -rw-------. 1 root root 1447 Jun 18 21:56 kubelet-server-2018-06-18-21-56-52.pem
      lrwxrwxrwx. 1 root root   68 Jun 18 21:56 kubelet-server-current.pem -> /etc/origin/node/certificates/kubelet-server-2018-06-18-21-56-52.pem

- After the CSR approval, the node.kubeconfig file is created at /etc/origin/node/node.kubeconfig.

- The kubelet is restarted with the /etc/origin/node/node.kubeconfig file and the certificates in the /etc/origin/node/certificates/ directory, after which point it is ready to join the cluster.
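The branch a node takes at start-up can be summarized in a small decision sketch. The two file paths come from the workflow above; the `next_step` function, its return labels, and the third "missing credentials" outcome are hypothetical and exist only to make the branching explicit.

```python
NODE_KUBECONFIG = "/etc/origin/node/node.kubeconfig"
BOOTSTRAP_KUBECONFIG = "/etc/origin/node/bootstrap.kubeconfig"


def next_step(existing_paths: set) -> str:
    """Decide what a starting node does, given which files already exist."""
    if NODE_KUBECONFIG in existing_paths:
        # Certificates were issued earlier, so the node can join directly.
        return "join-cluster"
    if BOOTSTRAP_KUBECONFIG in existing_paths:
        # First start: use the bootstrap credentials to submit CSRs.
        return "request-certificates"
    # Hypothetical outcome, not described in the text above.
    return "missing-bootstrap-credentials"


print(next_step({BOOTSTRAP_KUBECONFIG}))
print(next_step({BOOTSTRAP_KUBECONFIG, NODE_KUBECONFIG}))
```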

Node Configuration Workflow

Sourcing a node’s configuration uses the following workflow:

- Initially the node’s kubelet is started with the bootstrap configuration file, bootstrap-node-config.yaml in the /etc/origin/node/ directory, created at the time of node provisioning.

- On each node, the node service file uses the local script openshift-node in the /usr/local/bin/ directory to start the kubelet with the supplied bootstrap-node-config.yaml.

- On each master, the directory /etc/origin/node/pods contains pod manifests for apiserver, controller and etcd which are created as static pods on masters.

- During cluster installation, a sync DaemonSet is created which creates a sync pod on each node. The sync pod monitors changes in the file /etc/sysconfig/atomic-openshift-node. It specifically watches for BOOTSTRAP_CONFIG_NAME to be set. BOOTSTRAP_CONFIG_NAME is set by the openshift-ansible installer and is the name of the ConfigMap based on the node configuration group the node belongs to.

  By default, the installer creates the following node configuration groups:

  - node-config-master
  - node-config-infra
  - node-config-compute
  - node-config-all-in-one
  - node-config-master-infra

  A ConfigMap for each group is created in the openshift-node project.

- The sync pod extracts the appropriate ConfigMap based on the value set in BOOTSTRAP_CONFIG_NAME.

- The sync pod converts the ConfigMap data into kubelet configurations and creates a /etc/origin/node/node-config.yaml for that node host. If a change is made to this file (or it is the file’s initial creation), the kubelet is restarted.
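The two decisions the sync pod makes (which ConfigMap applies, and whether the kubelet needs a restart) can be sketched as follows. This is not the sync pod's actual script: it assumes the sysconfig file uses plain KEY=VALUE lines, and both helper functions are hypothetical.

```python
def bootstrap_config_name(sysconfig_text: str):
    """Return the BOOTSTRAP_CONFIG_NAME value, or None if it is not set."""
    for line in sysconfig_text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue
        key, sep, value = line.partition("=")
        if sep and key.strip() == "BOOTSTRAP_CONFIG_NAME":
            return value.strip().strip('"') or None
    return None


def kubelet_restart_needed(current, rendered: str) -> bool:
    """Restart when the file is created for the first time or its content changed."""
    return current != rendered


sample = "# example content\nBOOTSTRAP_CONFIG_NAME=node-config-compute\n"
print(bootstrap_config_name(sample))
print(kubelet_restart_needed(None, "kind: NodeConfig\n"))
```

Passing `None` as the current content models the initial creation of node-config.yaml, which the text above treats the same as a change.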

Modifying Node Configurations

A node’s configuration is modified by editing the appropriate ConfigMap in the openshift-node project. The /etc/origin/node/node-config.yaml must not be modified directly.

For example, for a node that is in the node-config-compute group, edit the ConfigMap using:

$ oc edit cm node-config-compute -n openshift-node

2.2. Container Registry

2.2.1. Overview

OpenShift Container Platform can utilize any server implementing the Docker registry API as a source of images, including the Docker Hub, private registries run by third parties, and the integrated OpenShift Container Platform registry.

2.2.2. Integrated OpenShift Container Registry

OpenShift Container Platform provides an integrated container registry called OpenShift Container Registry (OCR) that adds the ability to automatically provision new image repositories on demand. This provides users with a built-in location for their application builds to push the resulting images.

Whenever a new image is pushed to OCR, the registry notifies OpenShift Container Platform about the new image, passing along all the information about it, such as the namespace, name, and image metadata. Different pieces of OpenShift Container Platform react to new images, creating new builds and deployments.

OCR can also be deployed as a stand-alone component that acts solely as a container registry, without the build and deployment integration. See Installing a Stand-alone Deployment of OpenShift Container Registry for details.

2.2.3. Third Party Registries

OpenShift Container Platform can create containers using images from third party registries, but it is unlikely that these registries offer the same image notification support as the integrated OpenShift Container Platform registry. In this situation OpenShift Container Platform will fetch tags from the remote registry upon imagestream creation. Refreshing the fetched tags is as simple as running oc import-image <stream>. When new images are detected, the previously-described build and deployment reactions occur.

2.2.3.1. Authentication

OpenShift Container Platform can communicate with registries to access private image repositories using credentials supplied by the user. This allows OpenShift to push and pull images to and from private repositories. The Authentication topic has more information.

2.3. Web Console

2.3.1. Overview

The OpenShift Container Platform web console is a user interface accessible from a web browser. Developers can use the web console to visualize, browse, and manage the contents of projects.

JavaScript must be enabled to use the web console. For the best experience, use a web browser that supports WebSockets.

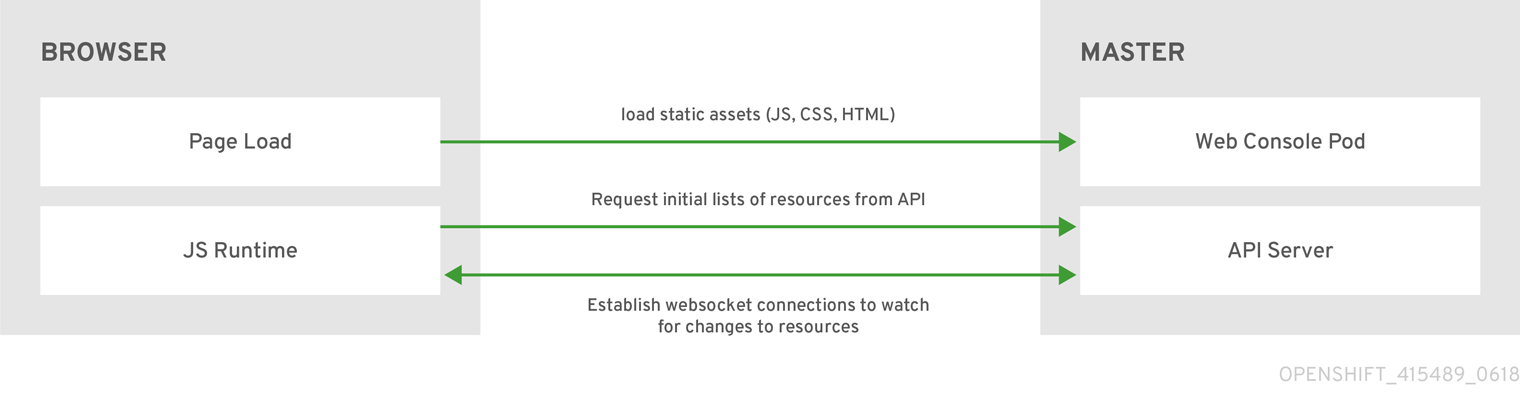

The web console runs as a pod on the master. The static assets required to run the web console are served by the pod. Administrators can also customize the web console using extensions, which let you run scripts and load custom stylesheets when the web console loads.

When you access the web console from a browser, it first loads all required static assets. It then makes requests to the OpenShift Container Platform APIs using the values defined from the openshift start option --public-master, or from the related parameter masterPublicURL in the webconsole-config config map defined in the openshift-web-console namespace. The web console uses WebSockets to maintain a persistent connection with the API server and receive updated information as soon as it is available.

Figure 2.3. Web Console Request Architecture

The configured host names and IP addresses for the web console are whitelisted to access the API server safely even when the browser would consider the requests to be cross-origin. To access the API server from a web application using a different host name, you must whitelist that host name by specifying the --cors-allowed-origins option on openshift start or from the related master configuration file parameter corsAllowedOrigins.

Each corsAllowedOrigins entry in the configuration field is used as a regular expression exactly as written; no pinning or escaping is applied to the value. The following is an example of how you can pin a host name and escape dots:

corsAllowedOrigins:

- (?i)//my\.subdomain\.domain\.com(:|\z)

In this example:

- The (?i) makes it case-insensitive.
- The // pins to the beginning of the domain (and matches the double slash following http: or https:).
- The \. escapes dots in the domain name.
- The (:|\z) matches the end of the domain name (\z) or a port separator (:).
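The effect of pinning and escaping can be checked with a short sketch. It uses Python's `re` module purely for illustration, so the end-of-string anchor is written `\Z` where the example above uses `\z`; the `origin_allowed` helper and the sample origins are hypothetical.

```python
import re

# Same pattern as the example above, with \Z as Python's end-of-string anchor.
ORIGIN_PATTERN = re.compile(r"(?i)//my\.subdomain\.domain\.com(:|\Z)")


def origin_allowed(origin: str) -> bool:
    """Return True if the origin matches the pinned, escaped pattern."""
    return ORIGIN_PATTERN.search(origin) is not None


for origin in (
    "https://my.subdomain.domain.com",
    "http://MY.subdomain.domain.com:8443",
    "https://my.subdomain.domain.com.evil.example",
    "https://myXsubdomain.domain.com",
):
    print(origin, origin_allowed(origin))
```

Without the trailing `(:|\Z)` group the third origin would match, and without the escaped dots the fourth would, which is why both are recommended.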

2.3.2. CLI Downloads

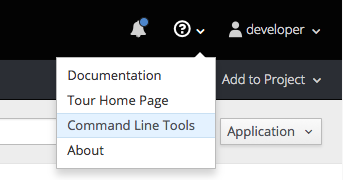

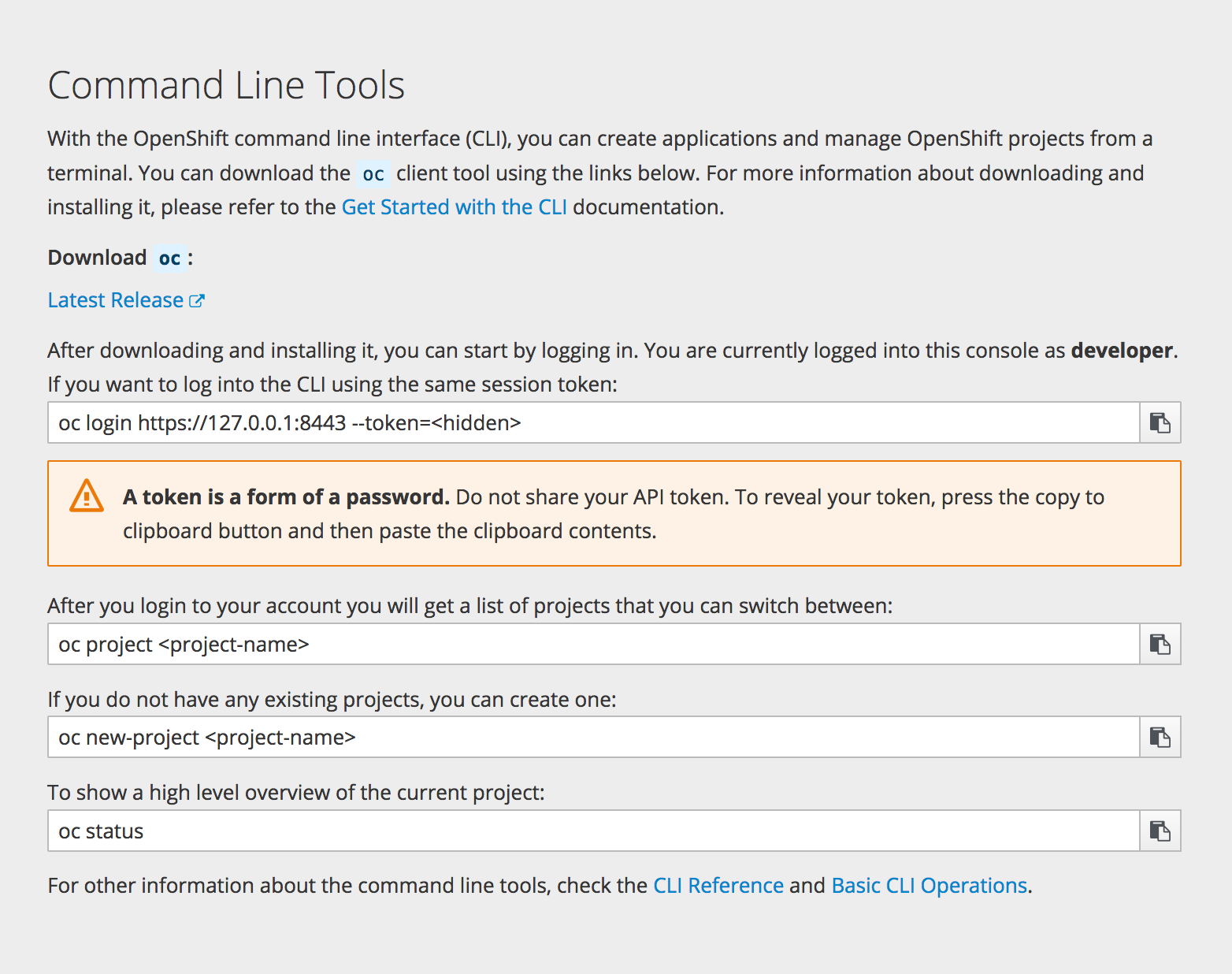

You can access CLI downloads from the Help icon in the web console:

Cluster administrators can customize these links further.

2.3.3. Browser Requirements

Review the tested integrations for OpenShift Container Platform.

2.3.4. Project Overviews

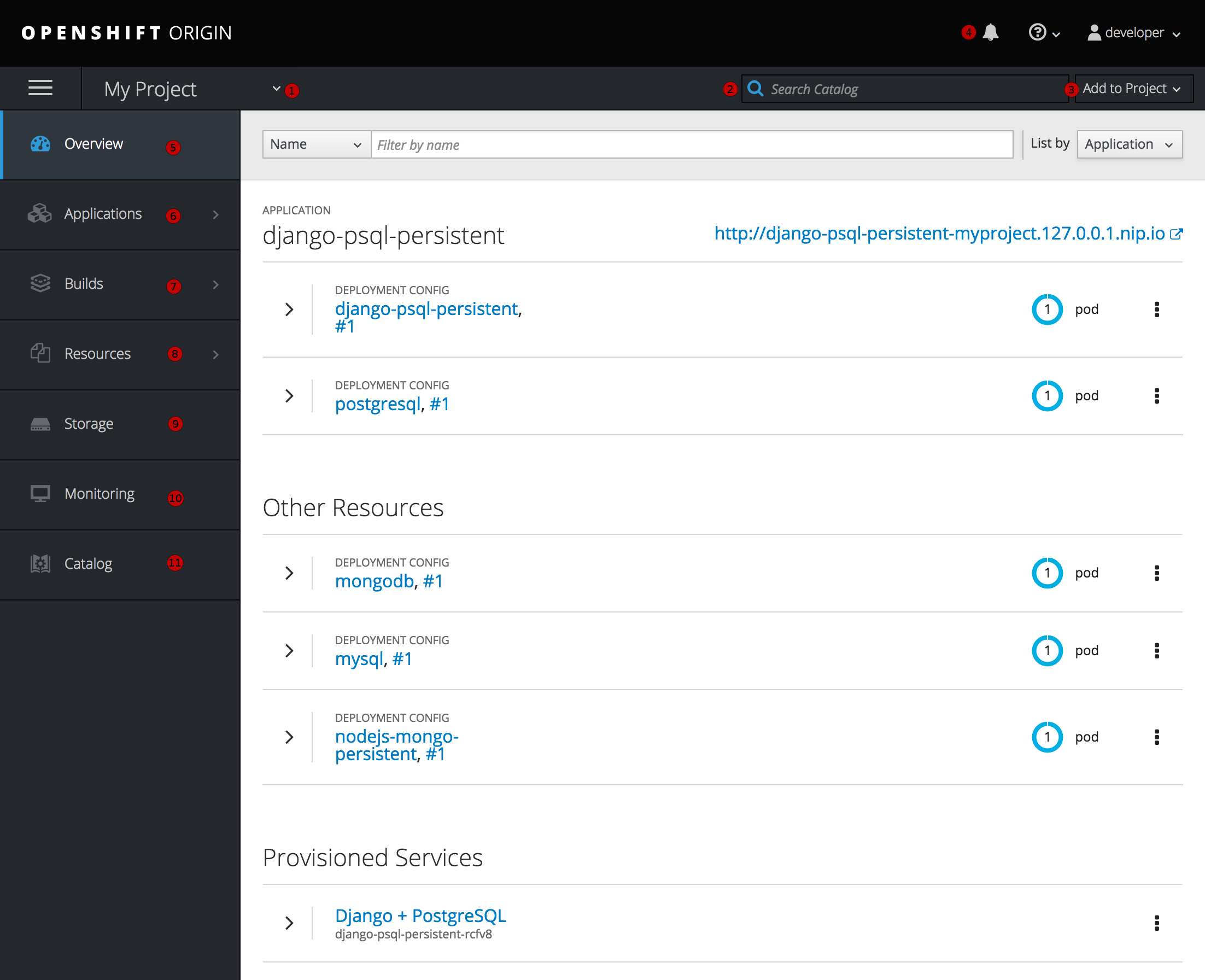

After logging in, the web console provides developers with an overview for the currently selected project:

Figure 2.4. Web Console Project Overview

- The project selector allows you to switch between projects you have access to.

- To quickly find services from within the project view, type in your search criteria.

- Create new applications using a source repository or service from the service catalog.

- Notifications related to your project.

- The Overview tab (currently selected) visualizes the contents of your project with a high-level view of each component.

- Applications tab: Browse and perform actions on your deployments, pods, services, and routes.

- Builds tab: Browse and perform actions on your builds and image streams.

- Resources tab: View your current quota consumption and other resources.

- Storage tab: View persistent volume claims and request storage for your applications.

- Monitoring tab: View logs for builds, pods, and deployments, as well as event notifications for all objects in your project.

- Catalog tab: Quickly get to the catalog from within a project.

Cockpit is automatically installed and enabled in OpenShift Container Platform 3.1 and later to help you monitor your development environment. Red Hat Enterprise Linux Atomic Host: Getting Started with Cockpit provides more information on using Cockpit.

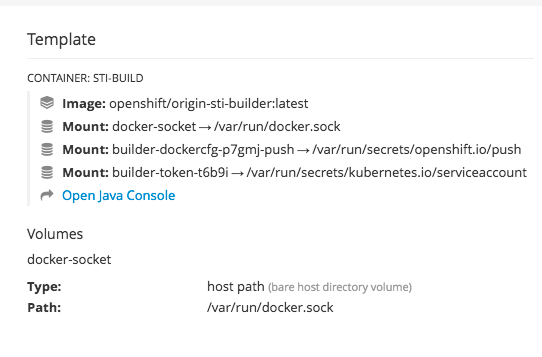

2.3.5. JVM Console

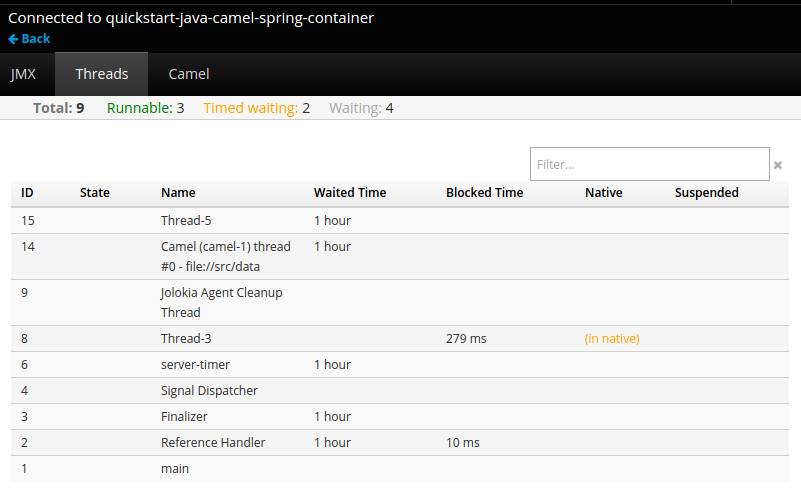

For pods based on Java images, the web console also exposes access to a hawt.io-based JVM console for viewing and managing any relevant integration components. A Connect link is displayed in the pod’s details on the Browse → Pods page, provided the container has a port named jolokia.

Figure 2.5. Pod with a Link to the JVM Console

After connecting to the JVM console, different pages are displayed depending on which components are relevant to the connected pod.

Figure 2.6. JVM Console

The following pages are available:

| Page | Description |
|---|---|
| JMX | View and manage JMX domains and mbeans. |
| Threads | View and monitor the state of threads. |
| ActiveMQ | View and manage Apache ActiveMQ brokers. |
| Camel | View and manage Apache Camel routes and dependencies. |
| OSGi | View and manage the JBoss Fuse OSGi environment. |

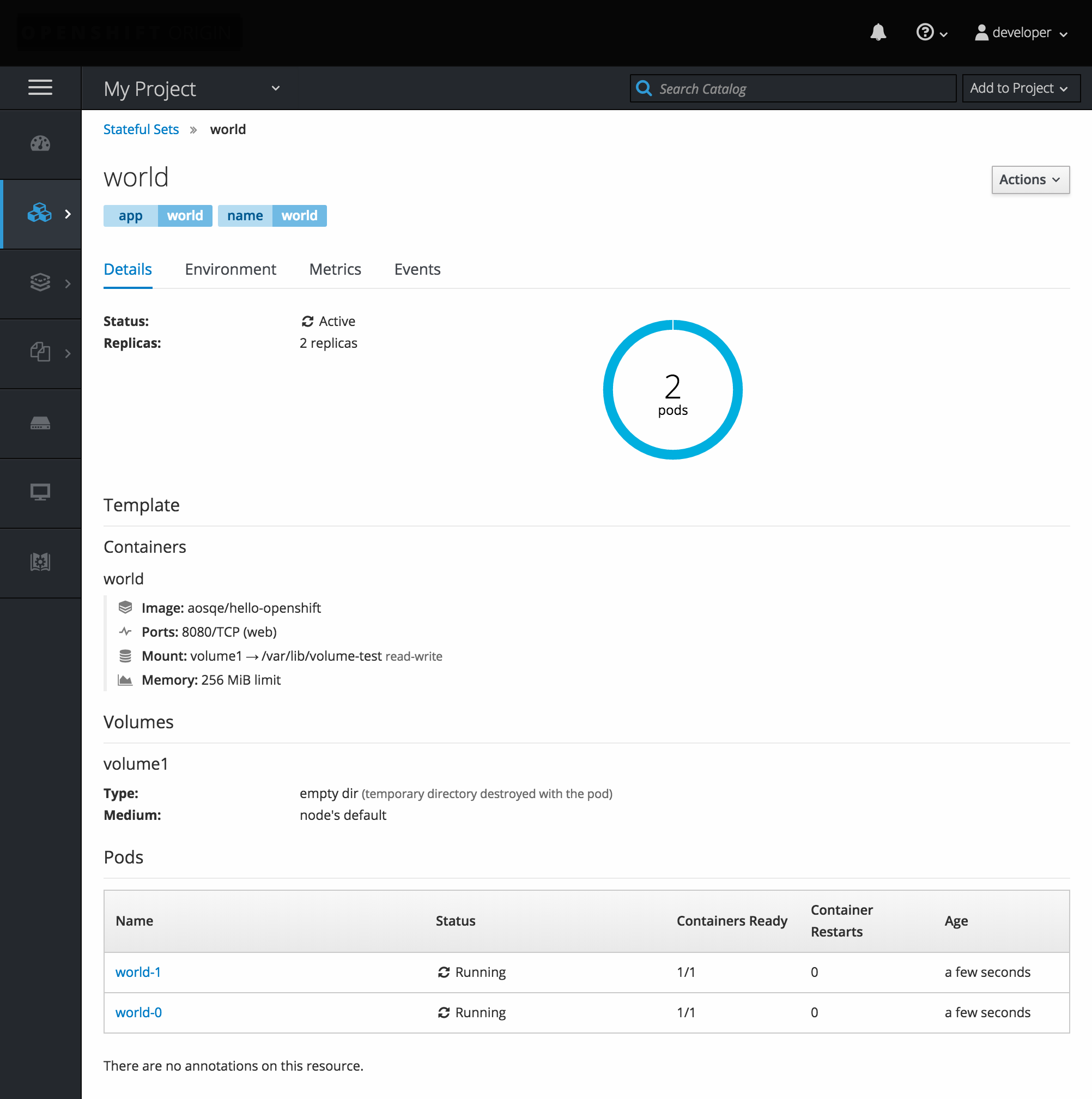

2.3.6. StatefulSets

A StatefulSet controller provides a unique identity to its pods and determines the order of deployments and scaling. StatefulSet is useful for unique network identifiers, persistent storage, graceful deployment and scaling, and graceful deletion and termination.

Figure 2.7. StatefulSet in OpenShift Container Platform

Chapter 3. Core Concepts

3.1. Overview

The following topics provide high-level, architectural information on core concepts and objects you will encounter when using OpenShift Container Platform. Many of these objects come from Kubernetes, which is extended by OpenShift Container Platform to provide a more feature-rich development lifecycle platform.

- Containers and images are the building blocks for deploying your applications.

- Pods and services allow for containers to communicate with each other and proxy connections.

- Projects and users provide the space and means for communities to organize and manage their content together.

- Builds and image streams allow you to build working images and react to new images.

- Deployments add expanded support for the software development and deployment lifecycle.

- Routes announce your service to the world.

- Templates allow for many objects to be created at once based on customized parameters.

3.2. Containers and Images

3.2.1. Containers

The basic units of OpenShift Container Platform applications are called containers. Linux container technologies are lightweight mechanisms for isolating running processes so that they are limited to interacting with only their designated resources.

Many application instances can be running in containers on a single host without visibility into each others' processes, files, network, and so on. Typically, each container provides a single service (often called a "micro-service"), such as a web server or a database, though containers can be used for arbitrary workloads.

The Linux kernel has been incorporating capabilities for container technologies for years. More recently the Docker project has developed a convenient management interface for Linux containers on a host. OpenShift Container Platform and Kubernetes add the ability to orchestrate Docker-formatted containers across multi-host installations.

Though you do not directly interact with the Docker CLI or service when using OpenShift Container Platform, understanding their capabilities and terminology is important for understanding their role in OpenShift Container Platform and how your applications function inside of containers. The docker RPM is available as part of RHEL 7, as well as CentOS and Fedora, so you can experiment with it separately from OpenShift Container Platform. Refer to the article Get Started with Docker Formatted Container Images on Red Hat Systems for a guided introduction.

3.2.1.1. Init Containers

A pod can have init containers in addition to application containers. Init containers allow you to reorganize setup scripts and binding code. An init container differs from a regular container in that it always runs to completion. Each init container must complete successfully before the next one is started.

For more information, see Pods and Services.

3.2.2. Images

Containers in OpenShift Container Platform are based on Docker-formatted container images. An image is a binary that includes all of the requirements for running a single container, as well as metadata describing its needs and capabilities.

You can think of it as a packaging technology. Containers only have access to resources defined in the image unless you give the container additional access when creating it. By deploying the same image in multiple containers across multiple hosts and load balancing between them, OpenShift Container Platform can provide redundancy and horizontal scaling for a service packaged into an image.

You can use the Docker CLI directly to build images, but OpenShift Container Platform also supplies builder images that assist with creating new images by adding your code or configuration to existing images.

Because applications develop over time, a single image name can actually refer to many different versions of the "same" image. Each different image is referred to uniquely by its hash (a long hexadecimal number e.g. fd44297e2ddb050ec4f…) which is usually shortened to 12 characters (e.g. fd44297e2ddb).

Image Version Tag Policy

Rather than version numbers, the Docker service allows applying tags (such as v1, v2.1, GA, or the default latest) in addition to the image name to further specify the image desired, so you may see the same image referred to as centos (implying the latest tag), centos:centos7, or fd44297e2ddb.

Do not use the latest tag for any official OpenShift Container Platform images. These are images that start with openshift3/. The latest tag can refer to a number of versions, such as 3.4 or 3.5.

How you tag the images dictates the updating policy. The more specific you are, the less frequently the image will be updated. Use the following to determine your chosen OpenShift Container Platform images policy:

- vX.Y: The vX.Y tag points to X.Y.Z-<number>. For example, if the registry-console image is updated to v3.4, it points to the newest 3.4.Z-<number> tag, such as 3.4.1-8.

- X.Y.Z: Similar to the vX.Y example above, the X.Y.Z tag points to the latest X.Y.Z-<number>. For example, 3.4.1 would point to 3.4.1-8.

- X.Y.Z-<number>: The tag is unique and does not change. When using this tag, the image does not update if an image is updated. For example, the 3.4.1-8 will always point to 3.4.1-8, even if an image is updated.
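The tag policy can be pictured as a lookup from a floating tag to the newest fully qualified tag that shares its prefix. The sketch below is only an illustration of that idea, not how the registry resolves tags; the resolve function and the list of available tags are hypothetical.

```python
import re
from typing import List, Optional, Tuple


def parse(tag: str) -> Optional[Tuple[int, ...]]:
    """Split 'X.Y.Z-N' into a tuple of ints so tags compare numerically."""
    match = re.fullmatch(r"(\d+)\.(\d+)\.(\d+)-(\d+)", tag)
    return tuple(int(g) for g in match.groups()) if match else None


def resolve(tag: str, available: List[str]) -> Optional[str]:
    """Return the newest fully qualified tag that `tag` points to, if any."""
    prefix = tag[1:] if tag.startswith("v") else tag
    candidates = [
        t for t in available
        if parse(t) is not None
        and (t == prefix or t.startswith(prefix + ".") or t.startswith(prefix + "-"))
    ]
    return max(candidates, key=parse, default=None)


if __name__ == "__main__":
    tags = ["3.4.0-4", "3.4.1-2", "3.4.1-8", "3.5.0-1"]  # hypothetical registry contents
    for requested in ("v3.4", "3.4.1", "3.4.1-8"):
        print(requested, "->", resolve(requested, tags))
```

A fully qualified tag such as 3.4.1-8 only ever resolves to itself, which is why it is the most stable choice.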

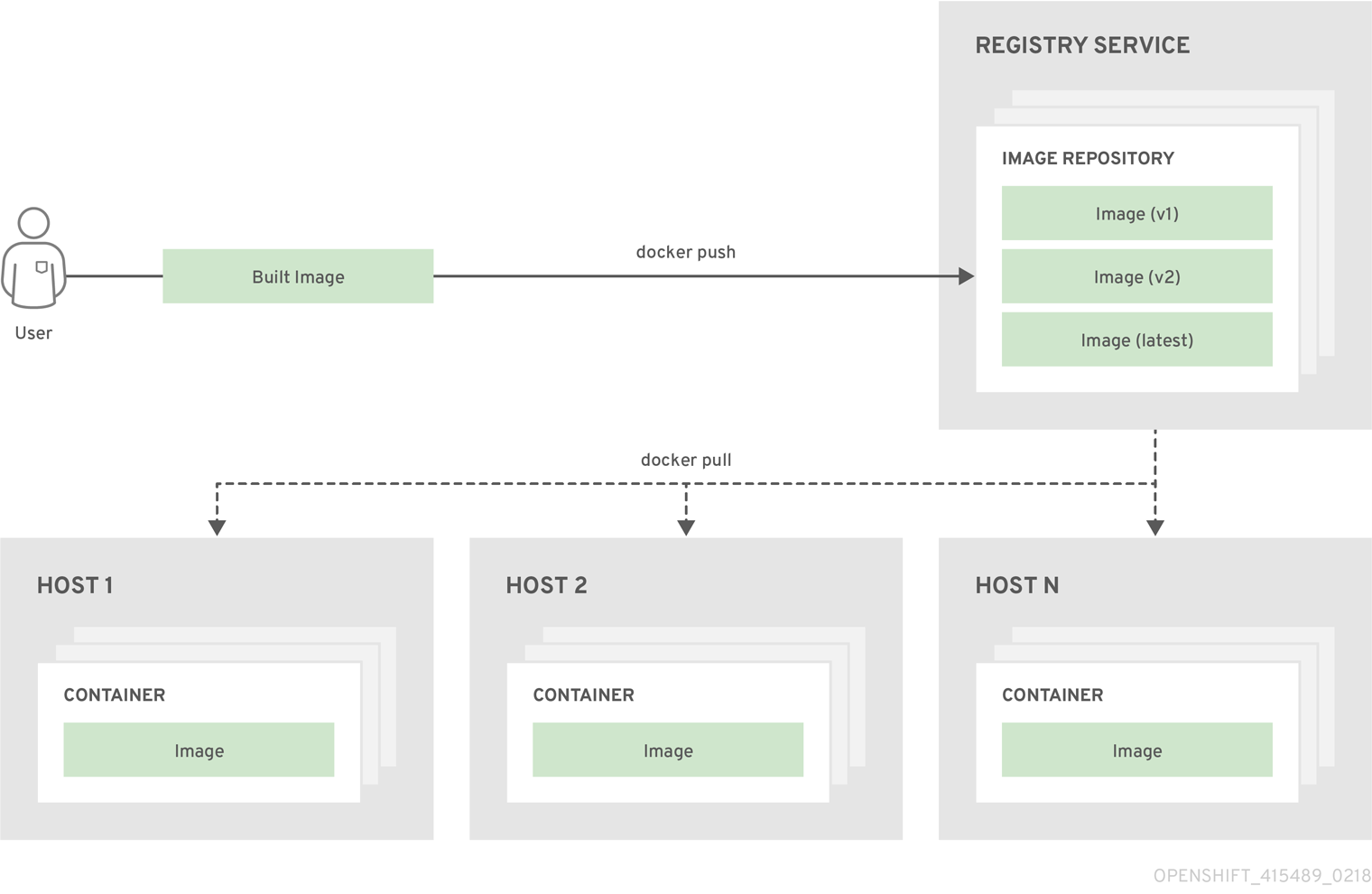

3.2.3. Container Registries

A container registry is a service for storing and retrieving Docker-formatted container images. A registry contains a collection of one or more image repositories. Each image repository contains one or more tagged images. Docker provides its own registry, the Docker Hub, and you can also use private or third-party registries. Red Hat provides a registry at registry.access.redhat.com for subscribers. OpenShift Container Platform can also supply its own internal registry for managing custom container images.

The relationship between containers, images, and registries is depicted in the following diagram:

3.3. Pods and Services

3.3.1. Pods

OpenShift Container Platform leverages the Kubernetes concept of a pod, which is one or more containers deployed together on one host, and the smallest compute unit that can be defined, deployed, and managed.

Pods are the rough equivalent of a machine instance (physical or virtual) to a container. Each pod is allocated its own internal IP address, therefore owning its entire port space, and containers within pods can share their local storage and networking.

Pods have a lifecycle; they are defined, then they are assigned to run on a node, then they run until their container(s) exit or they are removed for some other reason. Pods, depending on policy and exit code, may be removed after exiting, or may be retained in order to enable access to the logs of their containers.

OpenShift Container Platform treats pods as largely immutable; changes cannot be made to a pod definition while it is running. OpenShift Container Platform implements changes by terminating an existing pod and recreating it with modified configuration, base image(s), or both. Pods are also treated as expendable, and do not maintain state when recreated. Therefore pods should usually be managed by higher-level controllers, rather than directly by users.

For the maximum number of pods per OpenShift Container Platform node host, see the Cluster Limits.

Bare pods that are not managed by a replication controller will not be rescheduled upon node disruption.

Below is an example definition of a pod that provides a long-running service, which is actually a part of the OpenShift Container Platform infrastructure: the integrated container registry. It demonstrates many features of pods, most of which are discussed in other topics and thus only briefly mentioned here:

Example 3.1. Pod Object Definition (YAML)

    apiVersion: v1
    kind: Pod
    metadata:
      annotations: { ... }
      labels:                                 # (1)
        deployment: docker-registry-1
        deploymentconfig: docker-registry
        docker-registry: default
      generateName: docker-registry-1-        # (2)
    spec:
      containers:                             # (3)
      - env:                                  # (4)
        - name: OPENSHIFT_CA_DATA
          value: ...
        - name: OPENSHIFT_CERT_DATA
          value: ...
        - name: OPENSHIFT_INSECURE
          value: "false"
        - name: OPENSHIFT_KEY_DATA
          value: ...
        - name: OPENSHIFT_MASTER
          value: https://master.example.com:8443
        image: openshift/origin-docker-registry:v0.6.2   # (5)
        imagePullPolicy: IfNotPresent
        name: registry
        ports:                                # (6)
        - containerPort: 5000
          protocol: TCP
        resources: {}
        securityContext: { ... }              # (7)
        volumeMounts:                         # (8)
        - mountPath: /registry
          name: registry-storage
        - mountPath: /var/run/secrets/kubernetes.io/serviceaccount
          name: default-token-br6yz
          readOnly: true
      dnsPolicy: ClusterFirst
      imagePullSecrets:
      - name: default-dockercfg-at06w
      restartPolicy: Always                   # (9)
      serviceAccount: default                 # (10)
      volumes:                                # (11)
      - emptyDir: {}
        name: registry-storage
      - name: default-token-br6yz
        secret:
          secretName: default-token-br6yz

1. Pods can be "tagged" with one or more labels, which can then be used to select and manage groups of pods in a single operation. The labels are stored in key/value format in the metadata hash. One label in this example is docker-registry=default.

2. Pods must have a unique name within their namespace. A pod definition may specify the basis of a name with the generateName attribute, and random characters will be added automatically to generate a unique name.

3. containers specifies an array of container definitions; in this case (as with most), just one.

4. Environment variables can be specified to pass necessary values to each container.

5. Each container in the pod is instantiated from its own Docker-formatted container image.

6. The container can bind to ports which will be made available on the pod’s IP.

7. OpenShift Container Platform defines a security context for containers which specifies whether they are allowed to run as privileged containers, run as a user of their choice, and more. The default context is very restrictive but administrators can modify this as needed.

8. The container specifies where external storage volumes should be mounted within the container. In this case, there is a volume for storing the registry’s data, and one for access to credentials the registry needs for making requests against the OpenShift Container Platform API.

9. The pod restart policy with possible values Always, OnFailure, and Never. The default value is Always.

10. Pods making requests against the OpenShift Container Platform API is a common enough pattern that there is a serviceAccount field for specifying which service account user the pod should authenticate as when making the requests. This enables fine-grained access control for custom infrastructure components.

11. The pod defines storage volumes that are available to its container(s) to use. In this case, it provides an ephemeral volume for the registry storage and a secret volume containing the service account credentials.

This pod definition does not include attributes that are filled by OpenShift Container Platform automatically after the pod is created and its lifecycle begins. The Kubernetes pod documentation has details about the functionality and purpose of pods.

3.3.1.1. Pod Restart Policy

A pod restart policy determines how OpenShift Container Platform responds when containers in that pod exit. The policy applies to all containers in that pod.

The possible values are:

- Always - Tries restarting a successfully exited container on the pod continuously, with an exponential back-off delay (10s, 20s, 40s) until the pod is restarted. The default is Always.

- OnFailure - Tries restarting a failed container on the pod with an exponential back-off delay (10s, 20s, 40s) capped at 5 minutes.

- Never - Does not try to restart exited or failed containers on the pod. Pods immediately fail and exit.
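The back-off sequence and the per-policy restart decision described above can be sketched as follows. This is an illustrative model only, not the kubelet's implementation; the function names are hypothetical, and the 300-second cap mirrors the five-minute limit mentioned above.

```python
from itertools import islice


def backoff_delays(initial: int = 10, cap: int = 300):
    """Yield restart delays in seconds: doubling from `initial`, capped at `cap`."""
    delay = initial
    while True:
        yield delay
        delay = min(delay * 2, cap)


def should_restart(policy: str, exit_code: int) -> bool:
    """Decide whether an exited container is restarted under the given policy."""
    if policy == "Always":
        return True
    if policy == "OnFailure":
        return exit_code != 0  # only failed containers are restarted
    if policy == "Never":
        return False
    raise ValueError(f"unknown restart policy: {policy}")


if __name__ == "__main__":
    print(list(islice(backoff_delays(), 7)))
    print(should_restart("OnFailure", 0), should_restart("OnFailure", 1))
```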

Once bound to a node, a pod will never be bound to another node. This means that a controller is necessary in order for a pod to survive node failure:

| Condition | Controller Type | Restart Policy |
|---|---|---|
| Pods that are expected to terminate (such as batch computations) | Job | OnFailure or Never |
| Pods that are expected to not terminate (such as web servers) | Replication Controller | Always |
| Pods that need to run one-per-machine | Daemonset | Any |

If a container on a pod fails and the restart policy is set to OnFailure, the pod stays on the node and the container is restarted. If you do not want the container to restart, use a restart policy of Never.

If an entire pod fails, OpenShift Container Platform starts a new pod. Developers need to address the possibility that applications might be restarted in a new pod. In particular, applications need to handle temporary files, locks, incomplete output, and so forth caused by previous runs.

Kubernetes architecture expects reliable endpoints from cloud providers. When a cloud provider is down, the kubelet prevents OpenShift Container Platform from restarting.

If the underlying cloud provider endpoints are not reliable, do not install a cluster using cloud provider integration. Install the cluster as if it was in a no-cloud environment. It is not recommended to toggle cloud provider integration on or off in an installed cluster.

For details on how OpenShift Container Platform uses restart policy with failed containers, see the Example States in the Kubernetes documentation.

3.3.1.2. Injecting Information into Pods Using Pod Presets

A pod preset is an object that injects user-specified information into pods as they are created.

As of OpenShift Container Platform 3.7, pod presets are no longer supported.

Using pod preset objects you can inject:

- secret objects

- ConfigMap objects

- storage volumes

- container volume mounts

- environment variables

Developers need to ensure the pod labels match the label selector on the PodPreset in order to add all that information to the pod. The label on a pod associates the pod with one or more pod preset objects that have matching label selectors.

Using pod presets, a developer can provision pods without needing to know the details about the services the pod will consume. An administrator can keep configuration items of a service invisible from a developer without preventing the developer from deploying pods.

The Pod Preset feature is available only if the Service Catalog has been installed.

You can exclude specific pods from being injected using the podpreset.admission.kubernetes.io/exclude: "true" parameter in the pod specification. See the example pod specification.

For more information, see Injecting Information into Pods Using Pod Presets.

3.3.2. Init Containers

An init container is a container in a pod that is started before the pod app containers are started. Init containers can share volumes, perform network operations, and perform computations before the remaining containers start. Init containers can also block or delay the startup of application containers until some precondition is met.

When a pod starts, after the network and volumes are initialized, the init containers are started in order. Each init container must exit successfully before the next is invoked. If an init container fails to start (due to the runtime) or exits with failure, it is retried according to the pod restart policy.

A pod cannot be ready until all init containers have succeeded.

See the Kubernetes documentation for some init container usage examples.

The following example outlines a simple pod which has two init containers. The first init container waits for myservice and the second waits for mydb. Once both containers succeed, the Pod starts.

Example 3.2. Sample Init Container Pod Object Definition (YAML)

    apiVersion: v1
    kind: Pod
    metadata:
      name: myapp-pod
      labels:
        app: myapp
    spec:
      containers:
      - name: myapp-container
        image: busybox
        command: ['sh', '-c', 'echo The app is running! && sleep 3600']
      initContainers:
      - name: init-myservice
        image: busybox
        command: ['sh', '-c', 'until nslookup myservice; do echo waiting for myservice; sleep 2; done;']
      - name: init-mydb
        image: busybox
        command: ['sh', '-c', 'until nslookup mydb; do echo waiting for mydb; sleep 2; done;']

Each init container has all of the fields of an app container except for readinessProbe. Init containers must exit for pod startup to continue and cannot define readiness other than completion.

Init containers can include activeDeadlineSeconds on the pod and livenessProbe on the container to prevent init containers from failing forever. The active deadline includes init containers.
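The ordering rule for init containers (each one must exit successfully before the next is invoked, and the application containers start only after all of them succeed) can be modeled with a short simulation. This is a sketch under simplifying assumptions: the max_attempts limit stands in for the retry behavior of the pod restart policy, and make_step and start_pod are hypothetical helpers.

```python
from typing import Callable, List, Tuple


def make_step(name: str, exit_codes: List[int],
              log: List[Tuple[str, int]]) -> Callable[[], int]:
    """Build a fake init container that returns the given exit codes in turn."""
    codes = iter(exit_codes)

    def run() -> int:
        code = next(codes)
        log.append((name, code))  # record every attempt in order
        return code

    return run


def start_pod(init_containers: List[Callable[[], int]], max_attempts: int = 3) -> bool:
    """Run init steps in order; return True once the app containers may start."""
    for run in init_containers:
        for _ in range(max_attempts):
            if run() == 0:  # exit code 0 means the step succeeded
                break
        else:
            return False  # step never succeeded, so the pod cannot become ready
    return True


if __name__ == "__main__":
    log: List[Tuple[str, int]] = []
    ready = start_pod([
        make_step("init-myservice", [1, 0], log),  # fails once, then succeeds
        make_step("init-mydb", [0], log),
    ])
    print(ready, log)
```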

3.3.3. Services

A Kubernetes service serves as an internal load balancer. It identifies a set of replicated pods in order to proxy the connections it receives to them. Backing pods can be added to or removed from a service arbitrarily while the service remains consistently available, enabling anything that depends on the service to refer to it at a consistent address. The default service clusterIP addresses are from the OpenShift Container Platform internal network and they are used to permit pods to access each other.

To permit external access to the service, additional externalIP and ingressIP addresses that are external to the cluster can be assigned to the service. These externalIP addresses can also be virtual IP addresses that provide highly available access to the service.

Services are assigned an IP address and port pair that, when accessed, proxy to an appropriate backing pod. A service uses a label selector to find all the containers running that provide a certain network service on a certain port.

Like pods, services are REST objects. The following example shows the definition of a service for the pod defined above:

Example 3.3. Service Object Definition (YAML)

    apiVersion: v1
    kind: Service
    metadata:
      name: docker-registry        # (1)
    spec:
      selector:                    # (2)
        docker-registry: default
      clusterIP: 172.30.136.123    # (3)
      ports:
      - nodePort: 0
        port: 5000                 # (4)
        protocol: TCP
        targetPort: 5000           # (5)

1. The service name docker-registry is also used to construct an environment variable with the service IP that is inserted into other pods in the same namespace. The maximum name length is 63 characters.

2. The label selector identifies all pods with the docker-registry=default label attached as its backing pods.

3. Virtual IP of the service, allocated automatically at creation from a pool of internal IPs.

4. Port the service listens on.

5. Port on the backing pods to which the service forwards connections.
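To illustrate how a service name turns into environment variables, the sketch below applies the naming convention documented by Kubernetes (service name upper-cased, dashes replaced by underscores, with _SERVICE_HOST and _SERVICE_PORT suffixes). The service_env helper is hypothetical, and the values are taken from the example service above.

```python
from typing import Dict


def service_env(name: str, cluster_ip: str, port: int) -> Dict[str, str]:
    """Derive the host and port variables that pods see for a service."""
    prefix = name.upper().replace("-", "_")
    return {
        f"{prefix}_SERVICE_HOST": cluster_ip,
        f"{prefix}_SERVICE_PORT": str(port),
    }


if __name__ == "__main__":
    for key, value in service_env("docker-registry", "172.30.136.123", 5000).items():
        print(f"{key}={value}")
```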

The Kubernetes documentation has more information on services.

3.3.3.1. Service externalIPs

In addition to the cluster’s internal IP addresses, the user can configure IP addresses that are external to the cluster. The administrator is responsible for ensuring that traffic arrives at a node with this IP.

The externalIPs must be selected by the cluster administrators from the externalIPNetworkCIDRs range configured in the master-config.yaml file. When master-config.yaml is changed, the master services must be restarted.

Example 3.4. Sample externalIPNetworkCIDR /etc/origin/master/master-config.yaml

    networkConfig:
      externalIPNetworkCIDRs:
      - 192.0.1.0/24

Example 3.5. Service externalIPs Definition (JSON)

    {
        "kind": "Service",
        "apiVersion": "v1",
        "metadata": {
            "name": "my-service"
        },
        "spec": {
            "selector": {
                "app": "MyApp"
            },
            "ports": [
                {
                    "name": "http",
                    "protocol": "TCP",
                    "port": 80,
                    "targetPort": 9376
                }
            ],
            "externalIPs" : [
                "192.0.1.1"
            ]
        }
    }

The externalIPs field is the list of external IP addresses on which the port is exposed. This list is in addition to the internal IP address list.
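Before assigning an external IP to a service, it can help to confirm that the address falls inside one of the configured externalIPNetworkCIDRs ranges. The following sketch uses Python's standard ipaddress module for that check; the helper name is hypothetical, and the range is assumed to be 192.0.1.0/24, matching the 192.0.1.1 address used in the service definition.

```python
import ipaddress
from typing import Iterable


def external_ip_allowed(ip: str, cidrs: Iterable[str]) -> bool:
    """Return True if `ip` falls inside at least one of the configured ranges."""
    address = ipaddress.ip_address(ip)
    return any(address in ipaddress.ip_network(cidr) for cidr in cidrs)


if __name__ == "__main__":
    configured = ["192.0.1.0/24"]  # assumed externalIPNetworkCIDRs value
    print(external_ip_allowed("192.0.1.1", configured))
    print(external_ip_allowed("192.0.2.1", configured))
```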

3.3.3.2. Service ingressIPs

In non-cloud clusters, externalIP addresses can be automatically assigned from a pool of addresses. This eliminates the need for the administrator to assign them manually.

The pool is configured in the /etc/origin/master/master-config.yaml file. After changing this file, restart the master service.

The ingressIPNetworkCIDR is set to 172.29.0.0/16 by default. If the cluster environment is not already using this private range, use the default range or set a custom range.

If you are using high availability, then this range must be less than 256 addresses.
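The size of a candidate range is easy to check with Python's standard ipaddress module. The sketch below only counts addresses in a CIDR block and compares the count with the 256-address limit stated above; the helper name is hypothetical, and the /25 range is an arbitrary example.

```python
import ipaddress


def ingress_range_ok_for_ha(cidr: str, limit: int = 256) -> bool:
    """Return True if the range holds fewer than `limit` addresses."""
    return ipaddress.ip_network(cidr).num_addresses < limit


if __name__ == "__main__":
    for cidr in ("172.29.0.0/16", "172.29.0.0/24", "172.29.0.0/25"):
        size = ipaddress.ip_network(cidr).num_addresses
        print(cidr, size, ingress_range_ok_for_ha(cidr))
```

By this count the default 172.29.0.0/16 range is far larger than the limit, so a high availability setup would need a narrower custom range.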

Example 3.6. Sample ingressIPNetworkCIDR /etc/origin/master/master-config.yaml

    networkConfig:
      ingressIPNetworkCIDR: 172.29.0.0/16

3.3.3.3. Service NodePort

Setting the service type=NodePort will allocate a port from a flag-configured range (default: 30000-32767), and each node will proxy that port (the same port number on every node) into your service.

The selected port will be reported in the service configuration, under spec.ports[*].nodePort.

To specify a custom port just place the port number in the nodePort field. The custom port number must be in the configured range for nodePorts. When 'master-config.yaml' is changed the master services must be restarted.

Example 3.7. Sample servicesNodePortRange /etc/origin/master/master-config.yaml

    kubernetesMasterConfig:
      servicesNodePortRange: ""

The service will be visible as both <NodeIP>:spec.ports[].nodePort and spec.clusterIP:spec.ports[].port.

Setting a nodePort is a privileged operation.
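A custom nodePort must fall inside the configured range. The sketch below checks a port against a range written as "low-high", which is assumed here to be the format of the range setting; both helper names are hypothetical, and the default of 30000-32767 comes from the text above.

```python
from typing import Tuple


def parse_port_range(spec: str = "30000-32767") -> Tuple[int, int]:
    """Split a 'low-high' range string into two integers."""
    low, high = (int(part) for part in spec.split("-"))
    return low, high


def node_port_allowed(port: int, spec: str = "30000-32767") -> bool:
    """Return True if `port` lies inside the inclusive range."""
    low, high = parse_port_range(spec)
    return low <= port <= high


if __name__ == "__main__":
    for port in (8080, 30080, 32767, 32768):
        print(port, node_port_allowed(port))
```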

3.3.3.4. Service Proxy Mode

OpenShift Container Platform has two different implementations of the service-routing infrastructure. The default implementation is entirely iptables-based, and uses probabilistic iptables rewriting rules to distribute incoming service connections between the endpoint pods. The older implementation uses a user space process to accept incoming connections and then proxy traffic between the client and one of the endpoint pods.

The iptables-based implementation is much more efficient, but it requires that all endpoints are always able to accept connections; the user space implementation is slower, but can try multiple endpoints in turn until it finds one that works. If you have good readiness checks (or generally reliable nodes and pods), then the iptables-based service proxy is the best choice. Otherwise, you can enable the user space-based proxy when installing, or after deploying the cluster by editing the node configuration file.
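One way to see how sequential probabilistic rules can spread connections evenly is to work through the arithmetic. The sketch below assumes a rule layout in which the rules are tried in order and rule i matches with probability 1/(number of endpoints not yet tried); that layout is an assumption made for illustration, not a description taken from this section.

```python
from fractions import Fraction
from typing import List


def rule_probabilities(n: int) -> List[Fraction]:
    """Per-rule match probability for n endpoints: 1/n, 1/(n-1), ..., 1."""
    return [Fraction(1, n - i) for i in range(n)]


def effective_share(n: int) -> List[Fraction]:
    """Overall chance that a new connection lands on each endpoint."""
    shares = []
    remaining = Fraction(1)  # probability that no earlier rule matched
    for p in rule_probabilities(n):
        shares.append(remaining * p)
        remaining *= 1 - p
    return shares


if __name__ == "__main__":
    print(rule_probabilities(3))
    print(effective_share(3))
```

Each endpoint ends up with the same overall share, but nothing in this scheme retries another endpoint if the chosen one cannot accept the connection, which is the trade-off described above.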

3.3.3.5. Headless services

If your application does not need load balancing or single-service IP addresses, you can create a headless service. When you create a headless service, no load-balancing or proxying is done and no cluster IP is allocated for this service. For such services, DNS is automatically configured depending on whether the service has selectors defined or not.

Services with selectors: For headless services that define selectors, the endpoints controller creates Endpoints records in the API and modifies the DNS configuration to return A records (addresses) that point directly to the pods backing the service.

Services without selectors: For headless services that do not define selectors, the endpoints controller does not create Endpoints records. However, the DNS system looks for and configures the following records:

- For ExternalName type services, CNAME records.

- For all other service types, A records for any endpoints that share a name with the service.

3.3.3.5.1. Creating a headless service

Creating a headless service is similar to creating a standard service, but you do not declare the ClusterIP address. To create a headless service, add the clusterIP: None parameter value to the service YAML definition.

For example, consider a group of pods that you want to be a part of the same cluster or service.

List of pods

    $ oc get pods -o wide
    NAME               READY   STATUS    RESTARTS   AGE   IP           NODE
    frontend-1-287hw   1/1     Running   0          7m    172.17.0.3   node_1
    frontend-1-68km5   1/1     Running   0          7m    172.17.0.6   node_1

You can define the headless service as:

Headless service definition

    apiVersion: v1
    kind: Service
    metadata:
      labels:
        app: ruby-helloworld-sample
        template: application-template-stibuild
      name: frontend-headless
    spec:
      clusterIP: None
      ports:
      - name: web
        port: 5432
        protocol: TCP
        targetPort: 8080
      selector:
        name: frontend
      sessionAffinity: None
      type: ClusterIP
    status:
      loadBalancer: {}

Also, a headless service does not have any IP address of its own.

    $ oc get svc
    NAME                TYPE        CLUSTER-IP      EXTERNAL-IP   PORT(S)    AGE
    frontend            ClusterIP   172.30.232.77   <none>        5432/TCP   12m
    frontend-headless   ClusterIP   None            <none>        5432/TCP   10m

3.3.3.5.2. Endpoint discovery by using a headless service

The benefit of using a headless service is that you can discover a pod’s IP address directly. Standard services act as a load balancer or proxy and give access to the workload object by using the service name. With headless services, the service name resolves to the set of IP addresses of the pods that are grouped by the service.

When you look up the DNS A record for a standard service, you get the load-balanced IP of the service.

    $ dig frontend.test A +search +short
    172.30.232.77

But for a headless service, you get the list of IPs of individual pods.

    $ dig frontend-headless.test A +search +short
    172.17.0.3
    172.17.0.6

For using a headless service with a StatefulSet and related use cases where you need to resolve DNS for the pod during initialization and termination, set publishNotReadyAddresses to true (the default value is false). When publishNotReadyAddresses is set to true, it indicates that DNS implementations must publish the notReadyAddresses of subsets for the Endpoints associated with the Service.
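The difference between the two lookups, and the effect of publishNotReadyAddresses, can be summarized in a small model. This is a simplified sketch of the behavior described above, not the cluster DNS implementation; the a_records function is hypothetical and the addresses are those from the examples.

```python
from typing import Iterable, List


def a_records(cluster_ip: str,
              ready_pod_ips: Iterable[str],
              not_ready_pod_ips: Iterable[str] = (),
              publish_not_ready: bool = False) -> List[str]:
    """Return the addresses a DNS A lookup for the service would yield."""
    if cluster_ip != "None":
        return [cluster_ip]  # standard service: single load-balanced IP
    records = list(ready_pod_ips)  # headless service: the pods themselves
    if publish_not_ready:
        records += list(not_ready_pod_ips)
    return records


if __name__ == "__main__":
    pods = ["172.17.0.3", "172.17.0.6"]
    print(a_records("172.30.232.77", pods))  # frontend
    print(a_records("None", pods))           # frontend-headless
```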

3.3.4. Labels

Labels are used to organize, group, or select API objects. For example, pods are "tagged" with labels, and then services use label selectors to identify the pods they proxy to. This makes it possible for services to reference groups of pods, even treating pods with potentially different containers as related entities.

Most objects can include labels in their metadata. So labels can be used to group arbitrarily-related objects; for example, all of the pods, services, replication controllers, and deployment configurations of a particular application can be grouped.

Labels are simple key/value pairs, as in the following example:

    labels:
      key1: value1
      key2: value2

Consider:

- A pod consisting of an nginx container, with the label role=webserver.

- A pod consisting of an Apache httpd container, with the same label role=webserver.

A service or replication controller that is defined to use pods with the role=webserver label treats both of these pods as part of the same group.
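Equality-based label selection of this kind amounts to checking that every key/value pair in the selector is present on the object. The sketch below shows that check on plain dictionaries; the pod names and the extra database pod are made up for illustration.

```python
from typing import Dict, List


def matches(selector: Dict[str, str], labels: Dict[str, str]) -> bool:
    """Return True if every selector pair is present in the labels."""
    return all(labels.get(key) == value for key, value in selector.items())


def select(selector: Dict[str, str], pods: List[dict]) -> List[str]:
    """Return the names of the pods whose labels satisfy the selector."""
    return [pod["name"] for pod in pods if matches(selector, pod["labels"])]


PODS = [
    {"name": "nginx-pod", "labels": {"role": "webserver"}},
    {"name": "httpd-pod", "labels": {"role": "webserver"}},
    {"name": "db-pod", "labels": {"role": "database"}},  # hypothetical extra pod
]

if __name__ == "__main__":
    print(select({"role": "webserver"}, PODS))
```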

The Kubernetes documentation has more information on labels.

3.3.5. Endpoints

The servers that back a service are called its endpoints, and are specified by an object of type Endpoints with the same name as the service. When a service is backed by pods, those pods are normally specified by a label selector in the service specification, and OpenShift Container Platform automatically creates the Endpoints object pointing to those pods.

In some cases, you may want to create a service but have it be backed by external hosts rather than by pods in the OpenShift Container Platform cluster. In this case, you can leave out the selector field in the service, and create the Endpoints object manually.

Note that OpenShift Container Platform will not let most users manually create an Endpoints object that points to an IP address in the network blocks reserved for pod and service IPs. Only cluster admins or other users with permission to create resources under endpoints/restricted can create such Endpoints objects.

3.4. Projects and Users

3.4.1. Users

Interaction with OpenShift Container Platform is associated with a user. An OpenShift Container Platform user object represents an actor which may be granted permissions in the system by adding roles to them or to their groups.

Several types of users can exist:

| Regular users | This is the way most interactive OpenShift Container Platform users will be represented. Regular users are created automatically in the system upon first login, or can be created via the API. Regular users are represented with the |
| System users | Many of these are created automatically when the infrastructure is defined, mainly for the purpose of enabling the infrastructure to interact with the API securely. They include a cluster administrator (with access to everything), a per-node user, users for use by routers and registries, and various others. Finally, there is an |
| Service accounts | These are special system users associated with projects; some are created automatically when the project is first created, while project administrators can create more for the purpose of defining access to the contents of each project. Service accounts are represented with the |

Every user must authenticate in some way in order to access OpenShift Container Platform. API requests with no authentication or invalid authentication are authenticated as requests by the anonymous system user. Once authenticated, policy determines what the user is authorized to do.

3.4.2. Namespaces

A Kubernetes namespace provides a mechanism to scope resources in a cluster. In OpenShift Container Platform, a project is a Kubernetes namespace with additional annotations.

Namespaces provide a unique scope for:

- Named resources to avoid basic naming collisions.

- Delegated management authority to trusted users.

- The ability to limit community resource consumption.

Most objects in the system are scoped by namespace, but some are excepted and have no namespace, including nodes and users.

The Kubernetes documentation has more information on namespaces.

3.4.3. Projects

A project is a Kubernetes namespace with additional annotations, and is the central vehicle by which access to resources for regular users is managed. A project allows a community of users to organize and manage their content in isolation from other communities. Users must be given access to projects by administrators, or if allowed to create projects, automatically have access to their own projects.

Projects can have a separate name, displayName, and description.

- The mandatory name is a unique identifier for the project and is most visible when using the CLI tools or API. The maximum name length is 63 characters.

- The optional displayName is how the project is displayed in the web console (defaults to name).

- The optional description can be a more detailed description of the project and is also visible in the web console.

Each project scopes its own set of:

| Objects | Pods, services, replication controllers, etc. |

| Policies | Rules for which users can or cannot perform actions on objects. |

| Constraints | Quotas for each kind of object that can be limited. |

| Service accounts | Service accounts act automatically with designated access to objects in the project. |

Cluster administrators can create projects and delegate administrative rights for the project to any member of the user community. Cluster administrators can also allow developers to create their own projects.

Developers and administrators can interact with projects using the CLI or the web console.

3.4.3.1. Projects provided at installation

OpenShift Container Platform comes with a number of projects out of the box, and projects starting with openshift- are the most essential to users.

Starting with version 3.10, a number of projects whose names start with openshift- host the master components, which run as pods, and other infrastructure components. Pods created in these namespaces that carry a critical pod annotation are considered critical and have guaranteed admission by the kubelet. Pods created for master components in these namespaces are already marked critical.

3.5. Builds and Image Streams

3.5.1. Builds

A build is the process of transforming input parameters into a resulting object. Most often, the process is used to transform input parameters or source code into a runnable image. A BuildConfig object is the definition of the entire build process.

OpenShift Container Platform leverages Kubernetes by creating Docker-formatted containers from build images and pushing them to a container registry.

Build objects share common characteristics: inputs for a build, the need to complete a build process, logging the build process, publishing resources from successful builds, and publishing the final status of the build. Builds take advantage of resource restrictions, specifying limitations on resources such as CPU usage, memory usage, and build or pod execution time.

The OpenShift Container Platform build system provides extensible support for build strategies that are based on selectable types specified in the build API. There are three primary build strategies available:

- Docker build

- Source-to-Image (S2I) build

- Custom build

By default, Docker builds and S2I builds are supported.

The resulting object of a build depends on the builder used to create it. For Docker and S2I builds, the resulting objects are runnable images. For Custom builds, the resulting objects are whatever the builder image author has specified.

Additionally, the Pipeline build strategy can be used to implement sophisticated workflows:

- continuous integration

- continuous deployment

For a list of build commands, see the Developer’s Guide.

For more information on how OpenShift Container Platform leverages Docker for builds, see the upstream documentation.

3.5.1.1. Docker Build

The Docker build strategy invokes the docker build command, and it therefore expects a repository with a Dockerfile and all required artifacts in it to produce a runnable image.

3.5.1.2. Source-to-Image (S2I) Build

Source-to-Image (S2I) is a tool for building reproducible, Docker-formatted container images. It produces ready-to-run images by injecting application source into a container image and assembling a new image. The new image incorporates the base image (the builder) and built source and is ready to use with the docker run command. S2I supports incremental builds, which re-use previously downloaded dependencies, previously built artifacts, etc.

The advantages of S2I include the following:

| Image flexibility | S2I scripts can be written to inject application code into almost any existing Docker-formatted container image, taking advantage of the existing ecosystem. Note that, currently, S2I relies on |

| Speed | With S2I, the assemble process can perform a large number of complex operations without creating a new layer at each step, resulting in a fast process. In addition, S2I scripts can be written to re-use artifacts stored in a previous version of the application image, rather than having to download or build them each time the build is run. |

| Patchability | S2I allows you to rebuild the application consistently if an underlying image needs a patch due to a security issue. |

| Operational efficiency | By restricting build operations instead of allowing arbitrary actions, as a Dockerfile would allow, the PaaS operator can avoid accidental or intentional abuses of the build system. |

| Operational security | Building an arbitrary Dockerfile exposes the host system to root privilege escalation. This can be exploited by a malicious user because the entire Docker build process is run as a user with Docker privileges. S2I restricts the operations performed as a root user and can run the scripts as a non-root user. |

| User efficiency | S2I prevents developers from performing arbitrary |

| Ecosystem | S2I encourages a shared ecosystem of images where you can leverage best practices for your applications. |

| Reproducibility | Produced images can include all inputs including specific versions of build tools and dependencies. This ensures that the image can be reproduced precisely. |

3.5.1.3. Custom Build

The Custom build strategy allows developers to define a specific builder image responsible for the entire build process. Using your own builder image allows you to customize your build process.

A Custom builder image is a plain Docker-formatted container image embedded with build process logic, for example for building RPMs or base images. The openshift/origin-custom-docker-builder image is available on the Docker Hub registry as an example implementation of a Custom builder image.

3.5.1.4. Pipeline Build

The Pipeline build strategy allows developers to define a Jenkins pipeline for execution by the Jenkins pipeline plugin. The build can be started, monitored, and managed by OpenShift Container Platform in the same way as any other build type.

Pipeline workflows are defined in a Jenkinsfile, either embedded directly in the build configuration, or supplied in a Git repository and referenced by the build configuration.

The first time a project defines a build configuration using a Pipeline strategy, OpenShift Container Platform instantiates a Jenkins server to execute the pipeline. Subsequent Pipeline build configurations in the project share this Jenkins server.

For more details on how the Jenkins server is deployed and how to configure or disable the autoprovisioning behavior, see Configuring Pipeline Execution.

The Jenkins server is not automatically removed, even if all Pipeline build configurations are deleted. It must be manually deleted by the user.

For more information about Jenkins Pipelines, see the Jenkins documentation.

3.5.2. Image Streams

An image stream and its associated tags provide an abstraction for referencing Docker images from within OpenShift Container Platform. The image stream and its tags allow you to see what images are available and ensure that you are using the specific image you need even if the image in the repository changes.

Image streams do not contain actual image data, but present a single virtual view of related images, similar to an image repository.

You can configure Builds and Deployments to watch an image stream for notifications when new images are added and react by performing a Build or Deployment, respectively.

For example, if a Deployment is using a certain image and a new version of that image is created, a Deployment could be automatically performed to pick up the new version of the image.

However, if the image stream tag used by the Deployment or Build is not updated, then even if the Docker image in the Docker registry is updated, the Build or Deployment will continue using the previous (presumably known good) image.
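The behavior described above can be pictured with a small Python simulation. This is a toy model only, not the OpenShift implementation: the ImageStream class, its method names, the example/python:3.5 registry entry, and the short sha256 values are all hypothetical. It shows an image stream tag staying on a previous image while the registry content changes, and a watcher being notified only when the tag itself is updated.

```python
from typing import Callable, Dict, List


class ImageStream:
    """Toy model: maps image stream tags to immutable image IDs."""

    def __init__(self, name: str) -> None:
        self.name = name
        self._tags: Dict[str, str] = {}
        self._watchers: List[Callable[[str, str], None]] = []

    def watch(self, callback: Callable[[str, str], None]) -> None:
        """Register a callback invoked as callback(tag, image_id) on updates."""
        self._watchers.append(callback)

    def update_tag(self, tag: str, image_id: str) -> None:
        """Point a tag at an image ID and notify watchers if it changed."""
        if self._tags.get(tag) == image_id:
            return
        self._tags[tag] = image_id
        for notify in self._watchers:
            notify(tag, image_id)

    def resolve(self, tag: str) -> str:
        """Return the image ID the tag currently points to."""
        return self._tags[tag]


# Hypothetical registry with a mutable tag whose content can change.
registry = {"example/python:3.5": "sha256:aaa"}

stream = ImageStream("python")
deployments: List[str] = []
stream.watch(lambda tag, image_id: deployments.append(f"deploy {tag} -> {image_id}"))

# Importing the tag triggers the first deployment.
stream.update_tag("35", registry["example/python:3.5"])

# The registry image changes, but the image stream tag is not updated,
# so consumers of the tag keep resolving to the previous image.
registry["example/python:3.5"] = "sha256:bbb"
pinned = stream.resolve("35")

# Only an explicit update of the tag triggers a new deployment.
stream.update_tag("35", registry["example/python:3.5"])
```

In this sketch the deployment list grows only when update_tag changes what the tag points to, which mirrors why a Build or Deployment keeps using the earlier image until the image stream tag moves.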

The source images can be stored in any of the following:

- OpenShift Container Platform’s integrated registry

- An external registry, for example registry.access.redhat.com or hub.docker.com

- Other image streams in the OpenShift Container Platform cluster

When you define an object that references an image stream tag (such as a Build or Deployment configuration), you point to an image stream tag, not the Docker repository. When you Build or Deploy your application, OpenShift Container Platform queries the Docker repository using the image stream tag to locate the associated ID of the image and uses that exact image.

The image stream metadata is stored in the etcd instance along with other cluster information.

The following image stream contains two tags: 34 which points to a Python v3.4 image and 35 which points to a Python v3.5 image:

    oc describe is python
    Name:               python
    Namespace:          imagestream
    Created:            25 hours ago
    Labels:             app=python
    Annotations:        openshift.io/generated-by=OpenShiftWebConsole
                        openshift.io/image.dockerRepositoryCheck=2017-10-03T19:48:00Z
    Docker Pull Spec:   docker-registry.default.svc:5000/imagestream/python
    Image Lookup:       local=false
    Unique Images:      2
    Tags:               2

    34
      tagged from centos/python-34-centos7

      * centos/python-34-centos7@sha256:28178e2352d31f240de1af1370be855db33ae9782de737bb005247d8791a54d0
          14 seconds ago

    35
      tagged from centos/python-35-centos7

      * centos/python-35-centos7@sha256:2efb79ca3ac9c9145a63675fb0c09220ab3b8d4005d35e0644417ee552548b10
          7 seconds ago

Using image streams has several significant benefits:

- You can tag, roll back a tag, and quickly deal with images, without having to re-push using the command line.

- You can trigger Builds and Deployments when a new image is pushed to the registry. Also, OpenShift Container Platform has generic triggers for other resources (such as Kubernetes objects).

- You can mark a tag for periodic re-import. If the source image has changed, that change is picked up and reflected in the image stream, which triggers the Build and/or Deployment flow, depending upon the Build or Deployment configuration.

- You can share images using fine-grained access control and quickly distribute images across your teams.

- If the source image changes, the image stream tag will still point to a known-good version of the image, ensuring that your application will not break unexpectedly.

- You can configure security around who can view and use the images through permissions on the image stream objects.

- Users that lack permission to read or list images on the cluster level can still retrieve the images tagged in a project using image streams.
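One way to picture why tagging and rolling back need no re-push is to treat each image stream tag as a short history of image IDs, where moving the tag only changes a pointer. The Python sketch below is a simplified, hypothetical model (the TagHistory class and its methods are illustrative names, and the real system may record history differently).

```python
from typing import Dict, List


class TagHistory:
    """Toy per-tag history of image IDs, newest first (hypothetical model)."""

    def __init__(self) -> None:
        self._history: Dict[str, List[str]] = {}

    def tag(self, name: str, image_id: str) -> None:
        """Record image_id as the newest image for the tag."""
        self._history.setdefault(name, []).insert(0, image_id)

    def current(self, name: str) -> str:
        """Return the image ID the tag points to right now."""
        return self._history[name][0]

    def rollback(self, name: str) -> str:
        """Drop the newest entry and return the image the tag now points to."""
        entries = self._history[name]
        if len(entries) < 2:
            raise ValueError(f"tag {name!r} has no earlier image to roll back to")
        entries.pop(0)
        return entries[0]
```

No image data moves in either operation; only the mapping from tag to image ID changes.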

For a curated set of image streams, see the OpenShift Image Streams and Templates library.

When using image streams, it is important to understand what the image stream tag is pointing to and how changes to tags and images can affect you. For example:

- If your image stream tag points to a Docker image tag, you need to understand how that Docker image tag is updated. For example, a Docker image tag docker.io/ruby:2.5 points to a v2.5 ruby image, but a Docker image tag docker.io/ruby:latest changes with major versions. So, the Docker image tag that an image stream tag points to can tell you how stable the image stream tag is.

- If your image stream tag follows another image stream tag instead of pointing directly to a Docker image tag, it is possible that the image stream tag might be updated to follow a different image stream tag in the future. This change might result in picking up an incompatible version change.
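To judge how stable a reference is, it can help to separate its parts. The Python sketch below splits a pull spec into repository, tag, and SHA-based image ID, and reports whether the reference is pinned to an ID. The parse_reference function and ImageReference type are hypothetical helpers written for this illustration, and the parsing rules are a simplification of the Docker reference format.

```python
from typing import NamedTuple, Optional


class ImageReference(NamedTuple):
    """Parts of a pull spec (hypothetical helper type)."""

    repository: str
    tag: Optional[str]
    digest: Optional[str]

    @property
    def is_pinned(self) -> bool:
        """True when the reference names an image ID, which cannot change."""
        return self.digest is not None


def parse_reference(ref: str) -> ImageReference:
    """Split a pull spec into repository, tag, and SHA-based image ID."""
    digest = None
    tag = None
    if "@" in ref:
        ref, digest = ref.split("@", 1)
    # A ':' in the last path segment separates the tag; an earlier ':'
    # belongs to a registry host:port such as docker-registry.default.svc:5000.
    last_segment = ref.rsplit("/", 1)[-1]
    if ":" in last_segment:
        ref, tag = ref.rsplit(":", 1)
    return ImageReference(ref, tag, digest)
```

Under these assumptions, docker.io/ruby:latest parses to a tag-only reference that can move, while a reference containing @sha256: is pinned to one image.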

3.5.2.1. Important terms

- Docker repository

A collection of related Docker images and tags identifying them. For example, the OpenShift Jenkins images are in a Docker repository:

docker.io/openshift/jenkins-2-centos7

- Docker registry

A content server that can store and service images from Docker repositories. For example:

registry.access.redhat.com

- Docker image

A specific set of content that can be run as a container. Usually associated with a particular tag within a Docker repository.

- Docker image tag

A label applied to a Docker image in a repository that distinguishes a specific image. For example, here 3.6.0 is a tag:

docker.io/openshift/jenkins-2-centos7:3.6.0

A Docker image tag can be updated to point to new Docker image content at any time.

- Docker image ID

A SHA (Secure Hash Algorithm) code that can be used to pull an image. For example:

docker.io/openshift/jenkins-2-centos7@sha256:ab312bda324

A SHA image ID cannot change. A specific SHA identifier always references the exact same Docker image content.

- Image stream