Configuring AMQ Broker

For Use with AMQ Broker 7.8

Abstract

Chapter 1. Overview

AMQ Broker configuration files define important settings for a broker instance. By editing a broker’s configuration files, you can control how the broker operates in your environment.

1.1. AMQ Broker configuration files and locations

All of a broker’s configuration files are stored in <broker-instance-dir>/etc. You can configure a broker by editing the settings in these configuration files.

Each broker instance uses the following configuration files:

- broker.xml: The main configuration file. You use this file to configure most aspects of the broker, such as network connections, security settings, message addresses, and so on.

- bootstrap.xml: The file that AMQ Broker uses to start a broker instance. You use it to change the location of broker.xml, configure the web server, and set some security settings.

- logging.properties: You use this file to set logging properties for the broker instance.

- artemis.profile: You use this file to set environment variables used while the broker instance is running.

- login.config, artemis-users.properties, artemis-roles.properties: Security-related files. You use these files to set up authentication for user access to the broker instance.

1.2. Understanding the default broker configuration

You configure most of a broker’s functionality by editing the broker.xml configuration file. This file contains default settings, which are sufficient to start and operate a broker. However, you will likely need to change some of the default settings and add new settings to configure the broker for your environment.

By default, broker.xml contains default settings for the following functionality:

- Message persistence

- Acceptors

- Security

- Message addresses

Default message persistence settings

By default, AMQ Broker persistence uses an append-only file journal that consists of a set of files on disk. The journal saves messages, transactions, and other information.
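As a rough illustration, the persistence-related elements inside the core element of a generated broker.xml typically resemble the following sketch. The directory values and journal type shown here are assumptions based on a typical broker instance and may differ in your installation.

```xml
<core xmlns="urn:activemq:core">
  <!-- Store messages on disk so that they survive a broker restart -->
  <persistence-enabled>true</persistence-enabled>
  <!-- NIO is the portable Java journal; ASYNCIO relies on Linux libaio when available -->
  <journal-type>NIO</journal-type>
  <!-- Locations are relative to the broker instance directory -->
  <journal-directory>data/journal</journal-directory>
  <bindings-directory>data/bindings</bindings-directory>
  <paging-directory>data/paging</paging-directory>
  <large-messages-directory>data/large-messages</large-messages-directory>
</core>
```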

Default acceptor settings

Brokers listen for incoming client connections by using an acceptor configuration element to define the port and protocols a client can use to make connections. By default, AMQ Broker includes configuration for an acceptor for each supported messaging protocol.

Default security settings

AMQ Broker contains a flexible role-based security model for applying security to queues, based on their addresses. The default configuration uses wildcards to apply the amq role to all addresses (represented by the number sign, #).
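For orientation, the default security settings in broker.xml typically resemble the following sketch. It is based on a standard generated broker instance; the exact list of permissions in your file may differ by version, so treat it as illustrative.

```xml
<security-settings>
  <!-- "#" is the wildcard that matches every address -->
  <security-setting match="#">
    <!-- Each permission is granted to the amq role -->
    <permission type="createAddress" roles="amq"/>
    <permission type="createDurableQueue" roles="amq"/>
    <permission type="createNonDurableQueue" roles="amq"/>
    <permission type="consume" roles="amq"/>
    <permission type="send" roles="amq"/>
    <!-- further permission types omitted for brevity -->
  </security-setting>
</security-settings>
```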

Default message address settings

AMQ Broker includes a default address that establishes a default set of configuration settings to be applied to any created queue or topic.

Additionally, the default configuration defines two queues: DLQ (Dead Letter Queue) handles messages that arrive with no known destination, and Expiry Queue holds messages that have lived past their expiration and therefore should not be routed to their original destination.
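A minimal sketch of how these defaults usually appear in broker.xml follows. It assumes a typical generated configuration, in which the expiry queue is named ExpiryQueue; other address settings are omitted, and the names in your file may differ.

```xml
<address-settings>
  <!-- Catch-all settings applied to every address -->
  <address-setting match="#">
    <dead-letter-address>DLQ</dead-letter-address>
    <expiry-address>ExpiryQueue</expiry-address>
  </address-setting>
</address-settings>

<addresses>
  <address name="DLQ">
    <anycast>
      <queue name="DLQ"/>
    </anycast>
  </address>
  <address name="ExpiryQueue">
    <anycast>
      <queue name="ExpiryQueue"/>
    </anycast>
  </address>
</addresses>
```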

1.3. Reloading configuration updates

By default, a broker checks for changes in the configuration files every 5000 milliseconds. If the broker detects a change in the "last modified" time stamp of the configuration file, the broker determines that a configuration change took place. In this case, the broker reloads the configuration file to activate the changes.

When the broker reloads the broker.xml configuration file, it reloads the following modules:

Address settings and queues

When the configuration file is reloaded, the address settings determine how to handle addresses and queues that have been deleted from the configuration file. You can set this with the config-delete-addresses and config-delete-queues properties. For more information, see Appendix B, Address Setting Configuration Elements.

Security settings

SSL/TLS keystores and truststores on an existing acceptor can be reloaded to establish new certificates without any impact to existing clients. Connected clients, even those with older or differing certificates, can continue to send and receive messages.

Diverts

A configuration reload deploys any new divert that you have added. However, removal of a divert from the configuration or a change to a sub-element within a <divert> element does not take effect until you restart the broker.
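The following sketch shows one way the config-delete-addresses and config-delete-queues properties mentioned above might be set. The match value is an illustrative assumption; FORCE asks the broker to remove addresses and queues that no longer appear in the configuration file on reload, whereas OFF leaves them in place.

```xml
<address-settings>
  <address-setting match="#">
    <!-- On reload, delete queues and addresses that were removed from broker.xml -->
    <config-delete-queues>FORCE</config-delete-queues>
    <config-delete-addresses>FORCE</config-delete-addresses>
  </address-setting>
</address-settings>
```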

The following procedure shows how to change the interval at which the broker checks for changes to the broker.xml configuration file.

Procedure

- Open the <broker-instance-dir>/etc/broker.xml configuration file.

- Within the <core> element, add the <configuration-file-refresh-period> element and set the refresh period (in milliseconds).

  This example sets the configuration refresh period to be 60000 milliseconds:
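A refresh period of 60000 milliseconds can be sketched as follows. The surrounding elements are abbreviated, and other attributes and settings that a real broker.xml contains are omitted.

```xml
<configuration xmlns="urn:activemq">
  <core xmlns="urn:activemq:core">
    <!-- Check the configuration files for changes once every 60 seconds -->
    <configuration-file-refresh-period>60000</configuration-file-refresh-period>
  </core>
</configuration>
```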


1.4. Modularizing the broker configuration file

If you have multiple brokers that share common configuration settings, you can define the common configuration in separate files, and then include these files in each broker’s broker.xml configuration file.

The most common configuration settings that you might share between brokers include:

- Addresses

- Address settings

- Security settings

Procedure

- Create a separate XML file for each broker.xml section that you want to share.

  Each XML file can only include a single section from broker.xml (for example, either addresses or address settings, but not both). The top-level element must also define the element namespace (xmlns="urn:activemq:core").

  This example shows a security settings configuration defined in my-security-settings.xml:

- Open the <broker-instance-dir>/etc/broker.xml configuration file for each broker that should use the common configuration settings.

- For each broker.xml file that you opened, do the following:

  - In the <configuration> element at the beginning of broker.xml, verify that the following line appears:

    xmlns:xi="http://www.w3.org/2001/XInclude"

  - Add an XML inclusion for each XML file that contains shared configuration settings.

    This example includes the my-security-settings.xml file.

  - If desired, validate broker.xml to verify that the XML is valid against the schema.

    You can use any XML validator program. This example uses xmllint to validate broker.xml against the artemis-server.xsd schema.

    $ xmllint --noout --xinclude --schema /opt/redhat/amq-broker/amq-broker-7.2.0/schema/artemis-server.xsd /var/opt/amq-broker/mybroker/etc/broker.xml
    /var/opt/amq-broker/mybroker/etc/broker.xml validates
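As a rough sketch of the procedure above, a shared security settings file might look like the following. The match value and role name are illustrative assumptions; only the xmlns declaration on the top-level element comes from the requirements described in the procedure.

```xml
<!-- my-security-settings.xml: exactly one broker.xml section, declared in the core namespace -->
<security-settings xmlns="urn:activemq:core">
  <security-setting match="example.#">
    <permission type="send" roles="example-role"/>
    <permission type="consume" roles="example-role"/>
  </security-setting>
</security-settings>
```

The broker.xml file of each broker could then pull that file in with an XInclude element. The href path below is a hypothetical location, and other attributes of the configuration and core elements are omitted.

```xml
<configuration xmlns="urn:activemq"
               xmlns:xi="http://www.w3.org/2001/XInclude">
  <core xmlns="urn:activemq:core">
    <!-- Takes the place of an inline security-settings section -->
    <xi:include href="/opt/my-broker-config/my-security-settings.xml"/>
  </core>
</configuration>
```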

Additional resources

- For more information about XML Inclusions (XIncludes), see https://www.w3.org/TR/xinclude/.

1.4.1. Reloading modular configuration files

When the broker periodically checks for configuration changes (according to the frequency specified by configuration-file-refresh-period), it does not automatically detect changes made to configuration files that are included in the broker.xml configuration file via xi:include. For example, if broker.xml includes my-address-settings.xml and you make configuration changes to my-address-settings.xml, the broker does not automatically detect the changes in my-address-settings.xml and reload the configuration.

To force a reload of the broker.xml configuration file and any modified configuration files included within it, you must ensure that the "last modified" time stamp of the broker.xml configuration file has changed. You can use a standard Linux touch command to update the last-modified time stamp of broker.xml without making any other changes. For example:

$ touch -m <broker-instance-dir>/etc/broker.xml

1.5. Document conventions

This document uses the following conventions for the sudo command and file paths.

The sudo command

In this document, sudo is used for any command that requires root privileges. You should always exercise caution when using sudo, as any changes can affect the entire system.

For more information about using sudo, see The sudo Command.

About the use of file paths in this document

In this document, all file paths are valid for Linux, UNIX, and similar operating systems (for example, /home/...). If you are using Microsoft Windows, you should use the equivalent Microsoft Windows paths (for example, C:\Users\...).

Chapter 2. Network Connections: Acceptors and Connectors

There are two types of connections used in AMQ Broker: network and In-VM. Network connections are used when the two parties are located in different virtual machines, whether on the same server or physically remote. An In-VM connection is used when the client, whether an application or a server, resides within the same virtual machine as the broker.

Network connections rely on Netty. Netty is a high-performance, low-level network library that allows network connections to be configured in several different ways: using Java IO or NIO, TCP sockets, SSL/TLS, even tunneling over HTTP or HTTPS. Netty also allows for a single port to be used for all messaging protocols. A broker will automatically detect which protocol is being used and direct the incoming message to the appropriate handler for further processing.

The URI within a network connection’s configuration determines its type. For example, using vm in the URI will create an In-VM connection. In the example below, note that the URI of the acceptor starts with vm.

<acceptor name="in-vm-example">vm://0</acceptor>


Using tcp in the URI, alternatively, will create a network connection.

<acceptor name="network-example">tcp://localhost:61617</acceptor>

This chapter will first discuss the two configuration elements specific to network connections, Acceptors and Connectors. Next, configuration steps for TCP, HTTP, and SSL/TLS network connections, as well as In-VM connections, are explained.

2.1. About Acceptors

One of the most important concepts when discussing network connections in AMQ Broker is the acceptor. Acceptors define the way connections are made to the broker. Below is a typical configuration for an acceptor that might be found inside the configuration file BROKER_INSTANCE_DIR/etc/broker.xml.

<acceptors>
  <acceptor name="example-acceptor">tcp://localhost:61617</acceptor>
</acceptors>

Note that each acceptor is grouped inside an acceptors element. There is no upper limit to the number of acceptors you can list per server.

Configuring an Acceptor

You configure an acceptor by appending key-value pairs to the query string of the URI defined for the acceptor. Use a semicolon (';') to separate multiple key-value pairs, as shown in the following example. It configures an acceptor for SSL/TLS by adding multiple key-value pairs at the end of the URI, starting with sslEnabled=true.

<acceptor name="example-acceptor">tcp://localhost:61617?sslEnabled=true;key-store-path=/path</acceptor>


For details on acceptor configuration parameters, see Acceptor and Connector Configuration Parameters.

2.2. About Connectors

Whereas acceptors define how a server accepts connections, a connector is used by clients to define how they can connect to a server.

Below is a typical connector as defined in the BROKER_INSTANCE_DIR/etc/broker.xml configuration file:

<connectors>
  <connector name="example-connector">tcp://localhost:61617</connector>
</connectors>

Note that connectors are defined inside a connectors element. There is no upper limit to the number of connectors per server.

Although connectors are used by clients, they are configured on the server just like acceptors. There are a couple of important reasons why:

- A server itself can act as a client and therefore needs to know how to connect to other servers. For example, when one server is bridged to another or when a server takes part in a cluster.

- A server is often used by JMS clients to look up connection factory instances. In these cases, JNDI needs to know details of the connection factories used to create client connections. The information is provided to the client when a JNDI lookup is performed. See Configuring a Connection on the Client Side for more information.

Configuring a Connector

Like acceptors, connectors have their configuration attached to the query string of their URI. Below is an example of a connector that has the tcpNoDelay parameter set to false, which leaves Nagle’s algorithm enabled for this connection. (Setting tcpNoDelay to true is what turns Nagle’s algorithm off.)

<connector name="example-connector">tcp://localhost:61616?tcpNoDelay=false</connector>


For details on connector configuration parameters, see Acceptor and Connector Configuration Parameters.

2.3. Configuring a TCP Connection

AMQ Broker uses Netty to provide basic, unencrypted, TCP-based connectivity that can be configured to use blocking Java IO or the newer, non-blocking Java NIO. Java NIO is preferred for better scalability with many concurrent connections. However, using the old IO can sometimes give you better latency than NIO when you are less worried about supporting many thousands of concurrent connections.

If you are running connections across an untrusted network, remember that a TCP network connection is unencrypted. You may want to consider using an SSL or HTTPS configuration to encrypt messages sent over this connection if encryption is a priority. Refer to Section 5.1, “Securing connections” for details. When using a TCP connection, all connections are initiated from the client side. In other words, the server does not initiate any connections to the client, which works well with firewall policies that force connections to be initiated from one direction.

For TCP connections, the host and the port of the connector’s URI define the address used for the connection.

Procedure

- Open the configuration file BROKER_INSTANCE_DIR/etc/broker.xml.

- Add or modify the connection and include a URI that uses tcp as the protocol. Be sure to include both an IP or hostname as well as a port.

In the example below, an acceptor is configured as a TCP connection. A broker configured with this acceptor will accept clients making TCP connections to the IP 10.10.10.1 and port 61617.

<acceptors>
  <acceptor name="tcp-acceptor">tcp://10.10.10.1:61617</acceptor>
  ...
</acceptors>

You configure a connector to use TCP in much the same way.

<connectors>
  <connector name="tcp-connector">tcp://10.10.10.2:61617</connector>
  ...
</connectors>

The connector above would be referenced by a client, or even the broker itself, when making a TCP connection to the specified IP and port, 10.10.10.2:61617.

For details on available configuration parameters for TCP connections, see Acceptor and Connector Configuration Parameters. Most parameters can be used either with acceptors or connectors, but some only work with acceptors.

2.4. Configuring an HTTP Connection

HTTP connections tunnel packets over the HTTP protocol and are useful in scenarios where firewalls allow only HTTP traffic. With single port support, AMQ Broker will automatically detect if HTTP is being used, so configuring a network connection for HTTP is the same as configuring a connection for TCP. For a full working example showing how to use HTTP, see the http-transport example, located under INSTALL_DIR/examples/features/standard/.

Procedure

- Open the configuration file BROKER_INSTANCE_DIR/etc/broker.xml.

- Add or modify the connection and include a URI that uses tcp as the protocol. Be sure to include both an IP or hostname as well as a port.

In the example below, the broker will accept HTTP communications from clients connecting to port 80 at the IP address 10.10.10.1. Furthermore, the broker will automatically detect that the HTTP protocol is in use and will communicate with the client accordingly.

<acceptors>
  <acceptor name="http-acceptor">tcp://10.10.10.1:80</acceptor>
  ...
</acceptors>

Configuring a connector for HTTP is again the same as for TCP.

<connectors>
  <connector name="http-connector">tcp://10.10.10.2:80</connector>
  ...
</connectors>

Using the configuration in the example above, a broker will create an outbound HTTP connection to port 80 at the IP address 10.10.10.2.

An HTTP connection uses the same configuration parameters as TCP, but it also has some of its own. For details on HTTP-related and other configuration parameters, see Acceptor and Connector Configuration Parameters.

2.5. Configuring an SSL/TLS Connection

You can also configure connections to use SSL/TLS. Refer to Section 5.1, “Securing connections” for details.

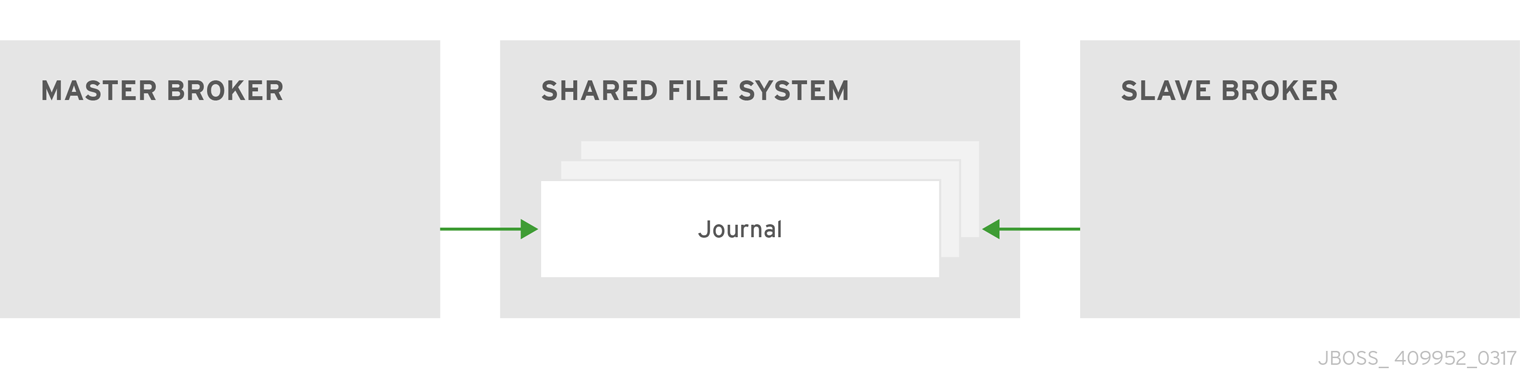

2.6. Configuring an In-VM Connection

An In-VM connection can be used when multiple brokers are co-located in the same virtual machine, as part of a high availability solution for example. In-VM connections can also be used by local clients running in the same JVM as the server. For an in-VM connection, the authority part of the URI defines a unique server ID. In fact, no other part of the URI is needed.

Procedure

- Open the configuration file BROKER_INSTANCE_DIR/etc/broker.xml.

- Add or modify the connection and include a URI that uses vm as the protocol.

<acceptors>
  <acceptor name="in-vm-acceptor">vm://0</acceptor>
  ...
</acceptors>

The example acceptor above tells the broker to accept connections from the server with an ID of 0. The other server must be running in the same virtual machine as the broker.

Configuring a connector as an in-vm connection follows the same syntax.

<connectors>
  <connector name="in-vm-connector">vm://0</connector>
  ...
</connectors>

The connector in the example above defines how clients establish an in-VM connection to the server with an ID of 0 that resides in the same virtual machine. The client can be an application or a broker.

2.7. Configuring a Connection from the Client Side

Connectors are also used indirectly in client applications. You can configure the JMS connection factory directly on the client side without having to define a connector on the server side:

Chapter 3. Network Connections: Protocols

AMQ Broker has a pluggable protocol architecture, so that you can easily enable one or more protocols for a network connection.

The broker supports the following protocols:

- AMQP

- MQTT

- OpenWire

- STOMP

In addition to the protocols above, the broker also supports its own native protocol known as "Core Protocol". Past versions of this protocol were known as "HornetQ" and were used by Red Hat JBoss Enterprise Application Platform.

3.1. Configuring a Network Connection to Use a Protocol

You must associate a protocol with a network connection before you can use it. (See Network Connections: Acceptors and Connectors for more information about how to create and configure network connections.) The default configuration, located in the file BROKER_INSTANCE_DIR/etc/broker.xml, includes several connections already defined. For convenience, AMQ Broker includes an acceptor for each supported protocol, plus a default acceptor that supports all protocols.

Overview of default acceptors

Shown below are the acceptors included by default in the broker.xml configuration file.

The only requirement to enable a protocol on a given network connection is to add the protocols parameter to the URI for the acceptor. The value of the parameter must be a comma-separated list of protocol names. If the protocols parameter is omitted from the URI, all protocols are enabled.
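As a minimal sketch of a comma-separated protocols value, the following hypothetical acceptor enables only the Core protocol and AMQP on a single port. The acceptor name and port number are illustrative assumptions.

```xml
<acceptors>
  <!-- Only CORE and AMQP clients can connect on this port; other protocols are rejected -->
  <acceptor name="core-and-amqp">tcp://0.0.0.0:61620?protocols=CORE,AMQP</acceptor>
</acceptors>
```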

For example, to create an acceptor for receiving messages on port 3232 using the AMQP protocol, follow these steps:

- Open the configuration file BROKER_INSTANCE_DIR/etc/broker.xml.

- Add the following line to the <acceptors> stanza:

<acceptor name="ampq">tcp://0.0.0.0:3232?protocols=AMQP</acceptor>

Additional parameters in default acceptors

In a minimal acceptor configuration, you specify a protocol as part of the connection URI. However, the default acceptors in the broker.xml configuration file have some additional parameters configured. The following table details the additional parameters configured for the default acceptors.

| Acceptor(s) | Parameter | Description |
|---|---|---|
| All-protocols acceptor, AMQP, STOMP | tcpSendBufferSize | Size of the TCP send buffer in bytes. The default value is […]. |
| All-protocols acceptor, AMQP, STOMP | tcpReceiveBufferSize | Size of the TCP receive buffer in bytes. The default value is […]. TCP buffer sizes should be tuned according to the bandwidth and latency of your network. In summary, TCP send/receive buffer sizes should be calculated as: buffer_size = bandwidth * RTT, where bandwidth is in bytes per second and network round trip time (RTT) is in seconds. RTT can be easily measured using a utility such as ping. For fast networks you may want to increase the buffer sizes from the defaults. |
| All-protocols acceptor, AMQP, STOMP, HornetQ, MQTT | useEpoll | Use Netty epoll if using a system (Linux) that supports it. The Netty native transport offers better performance than the NIO transport. The default value of this option is […]. |
| All-protocols acceptor, AMQP | amqpCredits | Maximum number of messages that an AMQP producer can send, regardless of the total message size. The default value is […]. To learn more about how credits are used to control the flow of AMQP messages, see Section 10.2.3, “Blocking AMQP Messages”. |
| All-protocols acceptor, AMQP | amqpLowCredits | Lower threshold at which the broker replenishes producer credits. The default value is […]. To learn more about how credits are used to control the flow of AMQP messages, see Section 10.2.3, “Blocking AMQP Messages”. |
| HornetQ compatibility acceptor | anycastPrefix | Prefix that clients use to specify the anycast routing type. For more information about configuring a prefix to enable clients to specify a routing type when connecting to an address, see Section 4.6, “Adding a routing type to an acceptor configuration”. |
| HornetQ compatibility acceptor | multicastPrefix | Prefix that clients use to specify the multicast routing type. For more information about configuring a prefix to enable clients to specify a routing type when connecting to an address, see Section 4.6, “Adding a routing type to an acceptor configuration”. |
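To illustrate the buffer sizing rule from the table, consider an assumed network with 1 Gbit/s of bandwidth (125,000,000 bytes per second) and a round trip time of 8 ms (0.008 seconds): 125,000,000 * 0.008 = 1,000,000 bytes. A hypothetical acceptor tuned for that network might look like the following sketch; the acceptor name, port, and figures are illustrative only.

```xml
<acceptors>
  <!-- buffer_size = bandwidth * RTT = 125000000 bytes/s * 0.008 s = 1000000 bytes -->
  <acceptor name="tuned-acceptor">tcp://0.0.0.0:61616?tcpSendBufferSize=1000000;tcpReceiveBufferSize=1000000</acceptor>
</acceptors>
```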

Additional resources

- For information about other parameters that you can configure for Netty network connections, see Appendix A, Acceptor and Connector Configuration Parameters.

3.2. Using AMQP with a Network Connection

The broker supports the AMQP 1.0 specification. An AMQP link is a uni-directional protocol for messages between a source and a target, that is, a client and the broker.

Procedure

- Open the configuration file BROKER_INSTANCE_DIR/etc/broker.xml.

- Add or configure an acceptor to receive AMQP clients by including the protocols parameter with a value of AMQP as part of the URI, as shown in the following example:

<acceptors>
  <acceptor name="amqp-acceptor">tcp://localhost:5672?protocols=AMQP</acceptor>
  ...
</acceptors>

In the preceding example, the broker accepts AMQP 1.0 clients on port 5672, which is the default AMQP port.

An AMQP link has two endpoints, a sender and a receiver. When senders transmit a message, the broker converts it into an internal format, so it can be forwarded to its destination on the broker. Receivers connect to the destination at the broker and convert the messages back into AMQP before they are delivered.

If an AMQP link is dynamic, a temporary queue is created and either the remote source or the remote target address is set to the name of the temporary queue. If the link is not dynamic, the address of the remote target or source is used for the queue. If the remote target or source does not exist, an exception is sent.

A link target can also be a Coordinator, which is used to handle the underlying session as a transaction, either rolling it back or committing it.

AMQP allows the use of multiple transactions per session (amqp:multi-txns-per-ssn); however, the current version of AMQ Broker supports only a single transaction per session.

The details of distributed transactions (XA) within AMQP are not provided in the 1.0 version of the specification. If your environment requires support for distributed transactions, it is recommended that you use the AMQ Core Protocol JMS.

See the AMQP 1.0 specification for more information about the protocol and its features.

3.2.1. Using an AMQP Link as a Topic

Unlike JMS, the AMQP protocol does not include topics. However, it is still possible to treat AMQP consumers or receivers as subscriptions rather than just consumers on a queue. By default, any receiving link that attaches to an address with the prefix jms.topic. is treated as a subscription, and a subscription queue is created. The subscription queue is made durable or volatile, depending on how the Terminus Durability is configured, as captured in the following table:

| To create this kind of subscription for a multicast-only queue… | Set Terminus Durability to this… |
|---|---|
| Durable | |
| Non-durable | |

The name of a durable queue is composed of the container ID and the link name, for example my-container-id:my-link-name.

AMQ Broker also supports the qpid-jms client and will respect its use of topics regardless of the prefix used for the address.

3.2.2. Configuring AMQP Security

The broker supports AMQP SASL Authentication. See Security for more information about how to configure SASL-based authentication on the broker.

3.3. Using MQTT with a Network Connection

The broker supports MQTT v3.1.1 (and also the older v3.1 code message format). MQTT is a lightweight, client-to-server, publish/subscribe messaging protocol. MQTT reduces messaging overhead and network traffic, as well as a client’s code footprint. For these reasons, MQTT is ideally suited to constrained devices such as sensors and actuators and is quickly becoming the de facto standard communication protocol for the Internet of Things (IoT).

Procedure

- Open the configuration file BROKER_INSTANCE_DIR/etc/broker.xml.

- Add an acceptor with the MQTT protocol enabled. For example:

<acceptors>
  <acceptor name="mqtt">tcp://localhost:1883?protocols=MQTT</acceptor>
  ...
</acceptors>

MQTT comes with a number of useful features including:

- Quality of Service: Each message can define a quality of service that is associated with it. The broker will attempt to deliver messages to subscribers at the highest quality of service level defined.

- Retained Messages: Messages can be retained for a particular address. New subscribers to that address receive the last-sent retained message before any other messages, even if the retained message was sent before the client connected.

- Wild card subscriptions: MQTT addresses are hierarchical, similar to the hierarchy of a file system. Clients are able to subscribe to specific topics or to whole branches of a hierarchy.

- Will Messages: Clients are able to set a "will message" as part of their connect packet. If the client abnormally disconnects, the broker will publish the will message to the specified address. Other subscribers receive the will message and can react accordingly.

The best source of information about the MQTT protocol is in the specification. The MQTT v3.1.1 specification can be downloaded from the OASIS website.

3.4. Using OpenWire with a Network Connection

The broker supports the OpenWire protocol, which allows a JMS client to talk directly to a broker. Use this protocol to communicate with older versions of AMQ Broker.

Currently AMQ Broker supports OpenWire clients that use standard JMS APIs only.

Procedure

- Open the configuration file BROKER_INSTANCE_DIR/etc/broker.xml.

- Add or modify an acceptor so that it includes OPENWIRE as part of the protocols parameter, as shown in the following example:

<acceptors>
  <acceptor name="openwire-acceptor">tcp://localhost:61616?protocols=OPENWIRE</acceptor>
  ...
</acceptors>

In the preceding example, the broker will listen on port 61616 for incoming OpenWire commands.

For more details, see the examples located under INSTALL_DIR/examples/protocols/openwire.

3.5. Using STOMP with a Network Connection

STOMP is a text-oriented wire protocol that allows STOMP clients to communicate with STOMP brokers. The broker supports STOMP 1.0, 1.1, and 1.2. STOMP clients are available for several languages and platforms, making it a good choice for interoperability.

Procedure

- Open the configuration file BROKER_INSTANCE_DIR/etc/broker.xml.

- Configure an existing acceptor or create a new one and include a protocols parameter with a value of STOMP, as below.

<acceptors>
  <acceptor name="stomp-acceptor">tcp://localhost:61613?protocols=STOMP</acceptor>
  ...
</acceptors>

In the preceding example, the broker accepts STOMP connections on the port 61613, which is the default.

See the stomp example located under INSTALL_DIR/examples/protocols for an example of how to configure a broker with STOMP.

3.5.1. Knowing the Limitations When Using STOMP

When using STOMP, the following limitations apply:

- The broker currently does not support virtual hosting, which means the host header in CONNECT frames is ignored.

- Message acknowledgements are not transactional. The ACK frame cannot be part of a transaction, and it is ignored if its transaction header is set.

3.5.2. Providing IDs for STOMP Messages

When receiving STOMP messages through a JMS consumer or a QueueBrowser, the messages do not contain any JMS properties, for example JMSMessageID, by default. However, you can set a message ID on each incoming STOMP message by using a broker parameter.

Procedure

- Open the configuration file BROKER_INSTANCE_DIR/etc/broker.xml.

- Set the stompEnableMessageId parameter to true for the acceptor used for STOMP connections, as shown in the following example:

<acceptors>
  <acceptor name="stomp-acceptor">tcp://localhost:61613?protocols=STOMP;stompEnableMessageId=true</acceptor>
  ...
</acceptors>

By using the stompEnableMessageId parameter, each STOMP message sent using this acceptor has an extra property added. The property key is amq-message-id and the value is a String representation of an internal message id prefixed with “STOMP”, as shown in the following example:

amq-message-id : STOMP12345


If stompEnableMessageId is not specified in the configuration, the default value is false.

3.5.3. Setting a Connection’s Time to Live (TTL)

STOMP clients must send a DISCONNECT frame before closing their connections. This allows the broker to close any server-side resources, such as sessions and consumers. However, if STOMP clients exit without sending a DISCONNECT frame, or if they fail, the broker has no way of knowing immediately whether the client is still alive. STOMP connections are therefore configured with a default "Time to Live" (TTL) of 1 minute. This means that the broker closes the connection to the STOMP client if the connection has been idle for more than one minute.

Procedure

- Open the configuration file BROKER_INSTANCE_DIR/etc/broker.xml.

- Add the connectionTTL parameter to the URI of the acceptor used for STOMP connections, as shown in the following example:

<acceptors>

<acceptor name="stomp-acceptor">tcp://localhost:61613?protocols=STOMP;connectionTTL=20000</acceptor>

...

</acceptors>

In the preceding example, any STOMP connection that uses the stomp-acceptor has its TTL set to 20 seconds.

Version 1.0 of the STOMP protocol does not contain any heartbeat frame. It is therefore the user’s responsibility to make sure data is sent within connection-ttl or the broker will assume the client is dead and clean up server-side resources. With version 1.1, you can use heart-beats to maintain the life cycle of STOMP connections.

Overriding the Broker’s Default Time to Live (TTL)

As noted, the default TTL for a STOMP connection is one minute. You can override this value by adding the connection-ttl-override element to the broker configuration.

Procedure

- Open the configuration file BROKER_INSTANCE_DIR/etc/broker.xml.

- Add the connection-ttl-override element inside the <core> stanza and provide a value in milliseconds for the new default. For example, a value of 30000 sets the default Time to Live (TTL) for a STOMP connection to 30000 milliseconds.
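A minimal sketch of this setting, assuming the standard core namespace and the 30000 millisecond value used in this section. All other elements inside <core> are omitted:

```xml
<core xmlns="urn:activemq:core">
  <!-- Default connection TTL in milliseconds; 30000 is an illustrative value. -->
  <connection-ttl-override>30000</connection-ttl-override>
</core>
```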

3.5.4. Sending and Consuming STOMP Messages from JMS

STOMP is mainly a text-orientated protocol. To make it simpler to interoperate with JMS, the STOMP implementation checks for the presence of the content-length header to decide how to map a STOMP message to JMS.

| If you want a STOMP message to map to a… | The message should… |
|---|---|
| JMS TextMessage | Not include a content-length header. |
| JMS BytesMessage | Include a content-length header. |

The same logic applies when mapping a JMS message to STOMP. A STOMP client can confirm the presence of the content-length header to determine the type of the message body (string or bytes).

See the STOMP specification for more information about message headers.

3.5.5. Mapping STOMP Destinations to AMQ Broker Addresses and Queues

When sending messages and subscribing, STOMP clients typically include a destination header. Destination names are string values, which are mapped to a destination on the broker. In AMQ Broker, these destinations are mapped to addresses and queues. See the STOMP specification for more information about the destination frame.

Take for example a STOMP client that sends the following message (headers and body included):


SEND

destination:/my/stomp/queue

hello queue a

^@

In this case, the broker will forward the message to any queues associated with the address /my/stomp/queue.

For example, when a STOMP client sends a message (by using a SEND frame), the specified destination is mapped to an address.

It works the same way when the client sends a SUBSCRIBE or UNSUBSCRIBE frame, but in this case AMQ Broker maps the destination to a queue.


SUBSCRIBE

destination: /other/stomp/queue

ack: client

^@

In the preceding example, the broker will map the destination to the queue /other/stomp/queue.

Mapping STOMP Destinations to JMS Destinations

JMS destinations are also mapped to broker addresses and queues. If you want to use STOMP to send messages to JMS destinations, the STOMP destinations must follow the same convention:

- Send or subscribe to a JMS Queue by prepending the queue name with jms.queue. (including the trailing period). For example, to send a message to the orders JMS Queue, the STOMP client must send the frame:

SEND

destination:jms.queue.orders

hello queue orders

^@

- Send or subscribe to a JMS Topic by prepending the topic name with jms.topic. (including the trailing period). For example, to subscribe to the stocks JMS Topic, the STOMP client must send a frame similar to the following:

SUBSCRIBE

destination:jms.topic.stocks

^@

Chapter 4. Configuring addresses and queues

4.1. Addresses, queues, and routing types

In AMQ Broker, the addressing model comprises three main concepts: addresses, queues, and routing types.

An address represents a messaging endpoint. Within the configuration, a typical address is given a unique name, one or more queues, and a routing type.

A queue is associated with an address. There can be multiple queues per address. Once an incoming message is matched to an address, the message is sent on to one or more of its queues, depending on the routing type configured. Queues can be configured to be automatically created and deleted. You can also configure an address (and hence its associated queues) as durable. Messages in a durable queue can survive a crash or restart of the broker, as long as the messages in the queue are also persistent. By contrast, messages in a non-durable queue do not survive a crash or restart of the broker, even if the messages themselves are persistent.

A routing type determines how messages are sent to the queues associated with an address. In AMQ Broker, you can configure an address with two different routing types, as shown in the table.

| If you want your messages routed to… | Use this routing type… |
|---|---|
| A single queue within the matching address, in a point-to-point manner | anycast |
| Every queue within the matching address, in a publish-subscribe manner | multicast |

An address must have at least one defined routing type.

It is possible to define more than one routing type per address, but this is not recommended.

If an address does have both routing types defined, and the client does not show a preference for either one, the broker defaults to the multicast routing type.

Additional resources

For more information about configuring:

- Point-to-point messaging using the anycast routing type, see Section 4.3, “Configuring addresses for point-to-point messaging”.

- Publish-subscribe messaging using the multicast routing type, see Section 4.4, “Configuring addresses for publish-subscribe messaging”.

4.1.1. Address and queue naming requirements

Be aware of the following requirements when you configure addresses and queues:

- To ensure that a client can connect to a queue, regardless of which wire protocol the client uses, your address and queue names should not include any of the following characters:

  & :: , ? >

- The number sign (#) and asterisk (*) characters are reserved for wildcard expressions and should not be used in address and queue names. For more information, see Section 4.2.1, “AMQ Broker wildcard syntax”.

- Address and queue names should not include spaces.

- To separate words in an address or queue name, use the configured delimiter character. The default delimiter character is a period (.). For more information, see Section 4.2.1, “AMQ Broker wildcard syntax”.

4.2. Applying address settings to sets of addresses

In AMQ Broker, you can apply the configuration specified in an address-setting element to a set of addresses by using a wildcard expression to represent the matching address name.

The following sections describe how to use wildcard expressions.

4.2.1. AMQ Broker wildcard syntax

AMQ Broker uses a specific syntax for representing wildcards in address settings. Wildcards can also be used in security settings, and when creating consumers.

- A wildcard expression contains words delimited by a period (.).

- The number sign (#) and asterisk (*) characters also have special meaning and can take the place of a word, as follows:

  - The number sign character means "match any sequence of zero or more words". Use this at the end of your expression.

  - The asterisk character means "match a single word". Use this anywhere within your expression.

Matching is not done character by character, but at each delimiter boundary. For example, an address-setting element that is configured to match queues with my in their name would not match with a queue named myqueue.

When more than one address-setting element matches an address, the broker overlays configurations, using the configuration of the least specific match as the baseline. Literal expressions are more specific than wildcards, and an asterisk (*) is more specific than a number sign (#). For example, both my.destination and my.* match the address my.destination. In this case, the broker first applies the configuration found under my.*, since a wildcard expression is less specific than a literal. Next, the broker overlays the configuration of the my.destination address setting element, which overwrites any configuration shared with my.*. For example, given the following configuration, a queue associated with my.destination has max-delivery-attempts set to 3 and last-value-queue set to false.
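The overlay described above could result from a configuration like the following sketch. The values under my.* are assumptions chosen to produce the stated outcome. Only the result for my.destination (max-delivery-attempts of 3 and last-value-queue of false) comes from the text:

```xml
<address-settings>
  <!-- Less specific match: applied first, as the baseline. -->
  <address-setting match="my.*">
    <max-delivery-attempts>3</max-delivery-attempts>
    <last-value-queue>true</last-value-queue>
  </address-setting>
  <!-- Literal match: overlaid last, so it overrides last-value-queue only. -->
  <address-setting match="my.destination">
    <last-value-queue>false</last-value-queue>
  </address-setting>
</address-settings>
```

Because my.destination does not set max-delivery-attempts, that value is inherited from the my.* baseline.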

The examples in the following table illustrate how wildcards are used to match a set of addresses.

| Example | Description |

|---|---|

|

|

The default |

|

|

Matches |

|

|

Matches |

|

|

Matches |
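As an illustration of the matching rules in this section, the following sketch shows three address-setting elements with wildcard match expressions. The news.* address names and the max-delivery-attempts values are hypothetical. The comments are derived from the rules that # matches zero or more words and * matches exactly one word:

```xml
<address-settings>
  <!-- Matches news.europe, news.europe.sport, and news.europe.politics.fr,
       but not news.usa. -->
  <address-setting match="news.europe.#">
    <max-delivery-attempts>5</max-delivery-attempts>
  </address-setting>
  <!-- Matches news.europe and news.usa, but not news.europe.sport. -->
  <address-setting match="news.*">
    <max-delivery-attempts>4</max-delivery-attempts>
  </address-setting>
  <!-- Matches news.europe.sport and news.usa.sport,
       but not news.europe.fr.sport. -->
  <address-setting match="news.*.sport">
    <max-delivery-attempts>3</max-delivery-attempts>
  </address-setting>
</address-settings>
```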

4.2.2. Configuring the broker wildcard syntax

The following procedure shows how to customize the syntax used for wildcard addresses.

Procedure

- Open the <broker-instance-dir>/etc/broker.xml configuration file.

- Add a <wildcard-addresses> section to the configuration. The section supports the following settings:

  - enabled: When set to true, instructs the broker to use your custom settings.

  - delimiter: Provide a custom character to use as the delimiter instead of the default, which is a period (.).

  - any-words: The character provided as the value for any-words is used to mean "match any sequence of zero or more words" and replaces the default #. Use this character at the end of your expression.

  - single-word: The character provided as the value for single-word is used to mean "match a single word" and replaces the default *. Use this character anywhere within your expression.
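A sketch of such a section, assuming that the element names follow the settings listed above. The replacement characters (a comma, @, and $) are illustrative choices, not recommendations:

```xml
<wildcard-addresses>
  <!-- Turn on the custom wildcard syntax defined below. -->
  <enabled>true</enabled>
  <!-- Words are separated by a comma instead of a period. -->
  <delimiter>,</delimiter>
  <!-- @ replaces # (zero or more words). -->
  <any-words>@</any-words>
  <!-- $ replaces * (exactly one word). -->
  <single-word>$</single-word>
</wildcard-addresses>
```

With these hypothetical values, an expression such as news,europe,@ would play the role that news.europe.# plays in the default syntax.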

4.3. Configuring addresses for point-to-point messaging

Point-to-point messaging is a common scenario in which a message sent by a producer has only one consumer. AMQP and JMS message producers and consumers can make use of point-to-point messaging queues, for example. To ensure that the queues associated with an address receive messages in a point-to-point manner, you define an anycast routing type for the given address element in your broker configuration.

When a message is received on an address using anycast, the broker locates the queue associated with the address and routes the message to it. A consumer might then request to consume messages from that queue. If multiple consumers connect to the same queue, messages are distributed between the consumers equally, provided that the consumers are equally able to handle them.

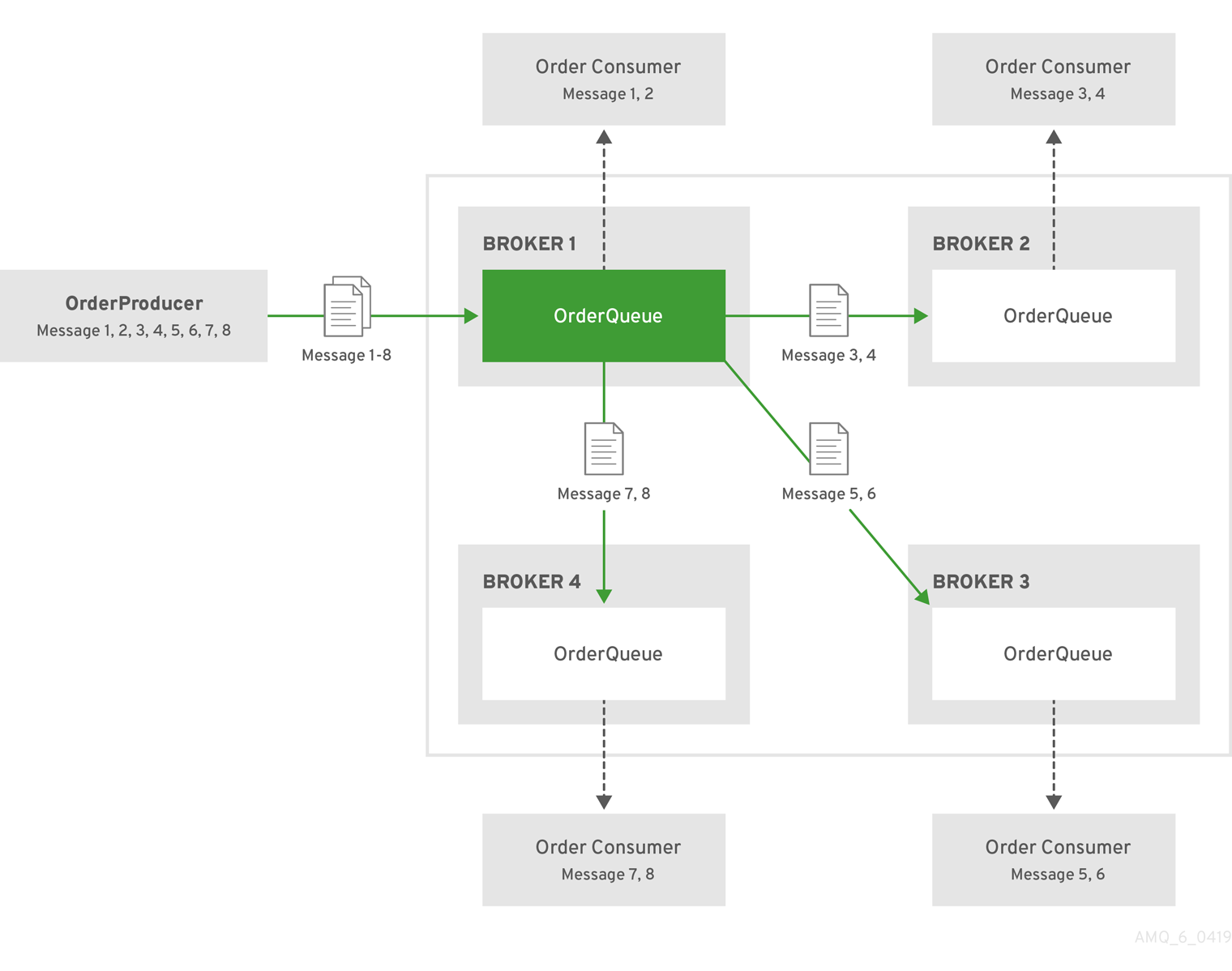

The following figure shows an example of point-to-point messaging.

4.3.1. Configuring basic point-to-point messaging

The following procedure shows how to configure an address with a single queue for point-to-point messaging.

Procedure

- Open the <broker-instance-dir>/etc/broker.xml configuration file.

- Wrap an anycast configuration element around the chosen queue element of an address. Ensure that the values of the name attribute for both the address and queue elements are the same.
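As a sketch of this step, the following fragment defines one address with a single anycast queue of the same name. The name my.anycast.destination is a hypothetical example, and the fragment belongs inside the addresses section of broker.xml:

```xml
<address name="my.anycast.destination">
  <anycast>
    <!-- The queue name matches the address name. -->
    <queue name="my.anycast.destination"/>
  </anycast>
</address>
```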

4.3.2. Configuring point-to-point messaging for multiple queues

You can define more than one queue on an address that uses an anycast routing type. The broker distributes messages sent to an anycast address evenly across all associated queues. By specifying a Fully Qualified Queue Name (FQQN), you can connect a client to a specific queue. If more than one consumer connects to the same queue, the broker distributes messages evenly between the consumers.

The following figure shows an example of point-to-point messaging using two queues.

The following procedure shows how to configure point-to-point messaging for an address that has multiple queues.

Procedure

- Open the <broker-instance-dir>/etc/broker.xml configuration file.

- Wrap an anycast configuration element around the queue elements in the address element.
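As a sketch of this step, the following fragment defines one address with two anycast queues. The names my.address, q1, and q2 are hypothetical:

```xml
<address name="my.address">
  <anycast>
    <!-- Messages sent to my.address are distributed across both queues. -->
    <queue name="q1"/>
    <queue name="q2"/>
  </anycast>
</address>
```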

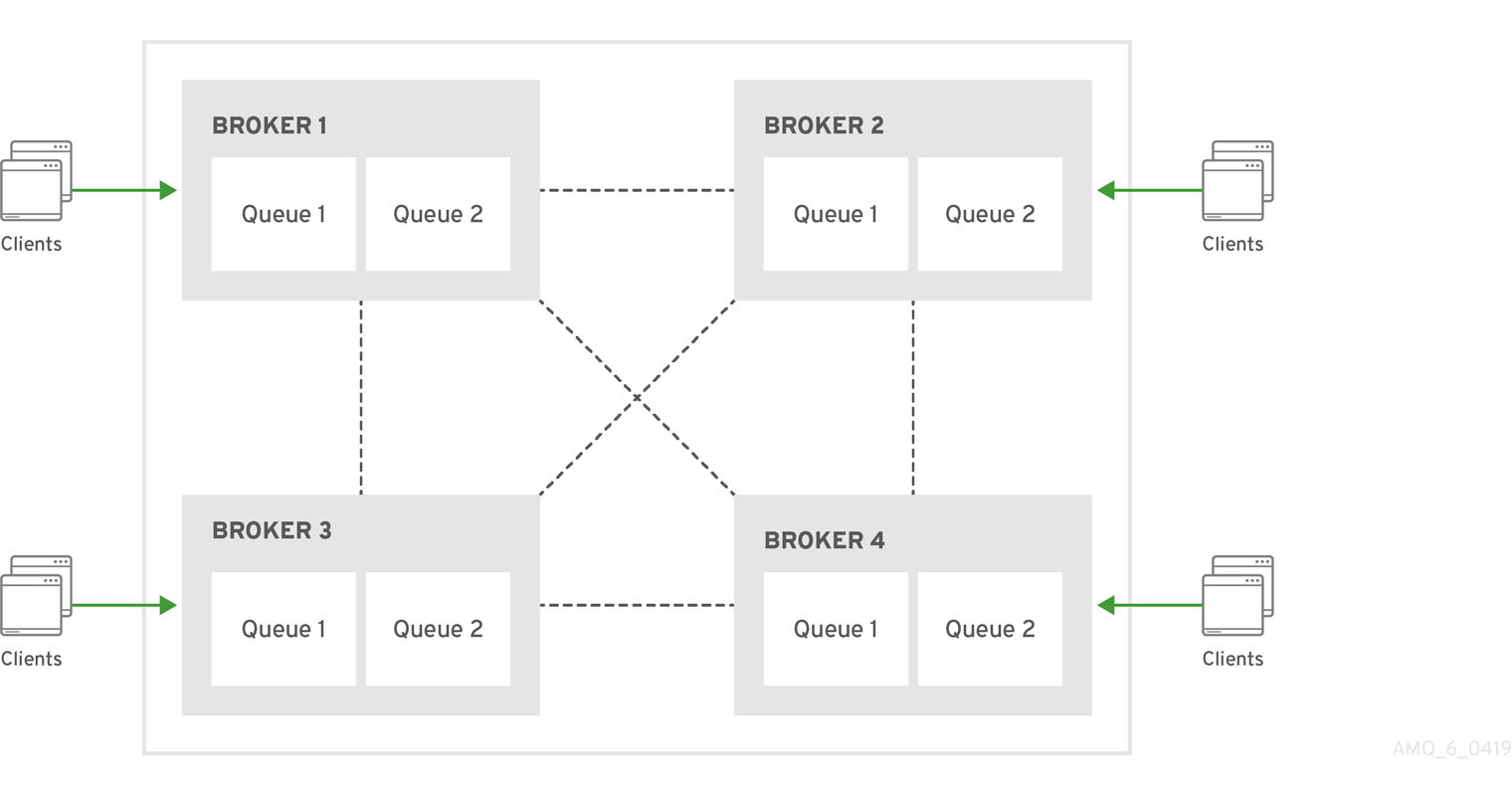

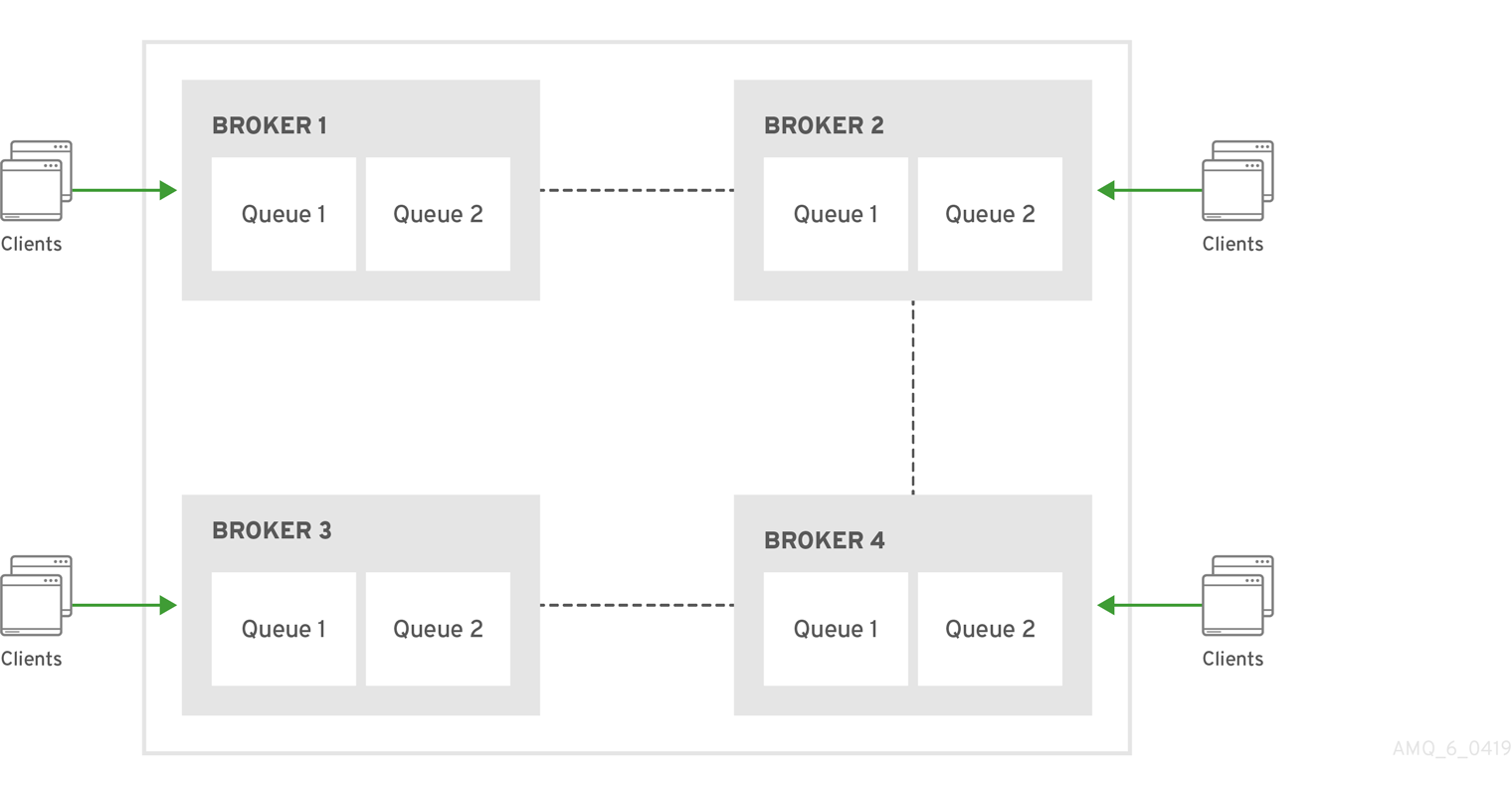

If you have a configuration such as that shown above mirrored across multiple brokers in a cluster, the cluster can load-balance point-to-point messaging in a way that is opaque to producers and consumers. The exact behavior depends on how the message load balancing policy is configured for the cluster.

Additional resources

For more information about:

- Specifying Fully Qualified Queue Names, see Section 4.9, “Specifying a fully qualified queue name”.

- How to configure message load balancing for a broker cluster, see Section 16.1.1, “How broker clusters balance message load”.

4.4. Configuring addresses for publish-subscribe messaging

In a publish-subscribe scenario, messages are sent to every consumer subscribed to an address. JMS topics and MQTT subscriptions are two examples of publish-subscribe messaging. To ensure that the queues associated with an address receive messages in a publish-subscribe manner, you define a multicast routing type for the given address element in your broker configuration.

When a message is received on an address with a multicast routing type, the broker routes a copy of the message to each queue associated with the address. To reduce the overhead of copying, each queue is sent only a reference to the message, and not a full copy.

The following figure shows an example of publish-subscribe messaging.

The following procedure shows how to configure an address for publish-subscribe messaging.

Procedure

- Open the <broker-instance-dir>/etc/broker.xml configuration file.

- Add an empty multicast configuration element to the address.

- (Optional) Add one or more queue elements to the address and wrap the multicast element around them. This step is typically not needed since the broker automatically creates a queue for each subscription requested by a client.
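The two variants described in this procedure might look like the following sketch. All address and queue names are hypothetical, and the two addresses are shown together only for comparison:

```xml
<addresses>
  <!-- Variant 1: empty multicast element; the broker creates
       subscription queues on demand. -->
  <address name="my.multicast.destination">
    <multicast/>
  </address>
  <!-- Variant 2 (optional): subscription queues defined up front. -->
  <address name="my.other.multicast.destination">
    <multicast>
      <queue name="client123.my.other.multicast.destination"/>
      <queue name="client456.my.other.multicast.destination"/>
    </multicast>
  </address>
</addresses>
```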

4.5. Configuring an address for both point-to-point and publish-subscribe messaging

You can also configure an address with both point-to-point and publish-subscribe semantics.

Configuring an address that uses both point-to-point and publish-subscribe semantics is not typically recommended. However, it can be useful when you want, for example, a JMS queue named orders and a JMS topic also named orders. The different routing types make the addresses appear to be distinct for client connections. In this situation, messages sent by a JMS queue producer use the anycast routing type. Messages sent by a JMS topic producer use the multicast routing type. When a JMS topic consumer connects to the broker, it is attached to its own subscription queue. A JMS queue consumer, however, is attached to the anycast queue.

The following figure shows an example of point-to-point and publish-subscribe messaging used together.

The following procedure shows how to configure an address for both point-to-point and publish-subscribe messaging.

The behavior in this scenario is dependent on the protocol being used. For JMS, there is a clear distinction between topic and queue producers and consumers, which makes the logic straightforward. Other protocols like AMQP do not make this distinction. A message being sent via AMQP is routed by both anycast and multicast and consumers default to anycast. For more information, see Chapter 3, Network Connections: Protocols.

Procedure

- Open the <broker-instance-dir>/etc/broker.xml configuration file.

- Wrap an anycast configuration element around the queue elements in the address element.

- Add an empty multicast configuration element to the address.

Note: Typically, the broker creates subscription queues on demand, so there is no need to list specific queue elements inside the multicast element.
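A sketch of an address that combines both routing types, using the orders name from the scenario described in this section. The queue name is an assumption:

```xml
<address name="orders">
  <!-- Point-to-point: JMS queue consumers attach to this queue. -->
  <anycast>
    <queue name="orders"/>
  </anycast>
  <!-- Publish-subscribe: subscription queues are created on demand. -->
  <multicast/>
</address>
```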

4.6. Adding a routing type to an acceptor configuration

Normally, if a message is received by an address that uses both anycast and multicast, one of the anycast queues and all of the multicast queues receive the message. However, clients can specify a special prefix when connecting to an address to specify whether to connect using anycast or multicast. The prefixes are custom values that are designated using the anycastPrefix and multicastPrefix parameters within the URL of an acceptor in the broker configuration.

The following procedure shows how to configure prefixes for a given acceptor.

Procedure

- Open the <broker-instance-dir>/etc/broker.xml configuration file.

- For a given acceptor, to configure an anycast prefix, add anycastPrefix to the configured URL and set a custom value. For example, if the acceptor is configured to use anycast:// for the anycast prefix, client code can specify anycast://<my.destination>/ if the client needs to send a message to only one of the anycast queues.

- For a given acceptor, to configure a multicast prefix, add multicastPrefix to the configured URL and set a custom value. For example, if the acceptor is configured to use multicast:// for the multicast prefix, client code can specify multicast://<my.destination>/ if the client needs the message sent to only the multicast queues.
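A sketch of an acceptor that sets both prefixes to the values used in this procedure. The acceptor name, host, port, and protocol are assumptions for illustration:

```xml
<acceptors>
  <!-- anycast:// and multicast:// are the custom prefixes that clients
       prepend to a destination name to select a routing type. -->
  <acceptor name="artemis">tcp://0.0.0.0:61616?protocols=AMQP;anycastPrefix=anycast://;multicastPrefix=multicast://</acceptor>
</acceptors>
```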

4.7. Configuring subscription queues

In most cases, it is not necessary to manually create subscription queues because protocol managers create subscription queues automatically when clients first request to subscribe to an address. See Section 4.8.3, “Protocol managers and addresses” for more information. For durable subscriptions, the generated queue name is usually a concatenation of the client ID and the address.

The following sections show how to manually create subscription queues, when required.

4.7.1. Configuring a durable subscription queue

When a queue is configured as a durable subscription, the broker saves messages for any inactive subscribers and delivers them to the subscribers when they reconnect. Therefore, a client is guaranteed to receive each message delivered to the queue after subscribing to it.

Procedure

- Open the <broker-instance-dir>/etc/broker.xml configuration file.

- Add the durable configuration element to a chosen queue. Set a value of true.

Note: Because queues are durable by default, including the durable element and setting the value to true is not strictly necessary to create a durable queue. However, explicitly including the element enables you to later change the behavior of the queue to non-durable, if necessary.
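As a sketch of this step, the following fragment marks a subscription queue as durable. The address and queue names are hypothetical:

```xml
<address name="my.durable.address">
  <multicast>
    <queue name="q1">
      <!-- Explicitly durable, although this is also the default. -->
      <durable>true</durable>
    </queue>
  </multicast>
</address>
```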

4.7.3. Configuring a non-durable subscription queue

Non-durable subscriptions are usually managed by the relevant protocol manager, which creates and deletes temporary queues.

However, if you want to manually create a queue that behaves like a non-durable subscription queue, you can use the purge-on-no-consumers attribute on the queue. When purge-on-no-consumers is set to true, the queue does not start receiving messages until a consumer is connected. In addition, when the last consumer is disconnected from the queue, the queue is purged (that is, its messages are removed). The queue does not receive any further messages until a new consumer is connected to the queue.

Procedure

- Open the <broker-instance-dir>/etc/broker.xml configuration file.

- Add the purge-on-no-consumers attribute to each chosen queue. Set a value of true.
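As a sketch of this step, the following fragment sets purge-on-no-consumers on a queue. The address and queue names are hypothetical:

```xml
<address name="my.non.durable.address">
  <multicast>
    <!-- The queue is purged when its last consumer disconnects. -->
    <queue name="orders1" purge-on-no-consumers="true"/>
  </multicast>
</address>
```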

4.8. Creating and deleting addresses and queues automatically

You can configure the broker to automatically create addresses and queues, and to delete them after they are no longer in use. This saves you from having to pre-configure each address before a client can connect to it.

4.8.1. Configuration options for automatic queue creation and deletion

The following table lists the configuration elements available when configuring an address-setting element to automatically create and delete queues and addresses.

| If you want the address-setting to… | Add this configuration… |
|---|---|
| Create addresses when a client sends a message to or attempts to consume a message from a queue mapped to an address that does not exist. | auto-create-addresses |
| Create a queue when a client sends a message to or attempts to consume a message from a queue. | auto-create-queues |
| Delete an automatically created address when it no longer has any queues. | auto-delete-addresses |
| Delete an automatically created queue when the queue has 0 consumers and 0 messages. | auto-delete-queues |
| Use a specific routing type if the client does not specify one. | default-address-routing-type |

4.8.2. Configuring automatic creation and deletion of addresses and queues

The following procedure shows how to configure automatic creation and deletion of addresses and queues.

Procedure

- Open the <broker-instance-dir>/etc/broker.xml configuration file.

- Configure an address-setting for automatic creation and deletion. You can use any of the configuration elements mentioned in the previous table:

  - address-setting: The configuration of the address-setting element is applied to any address or queue that matches its wildcard address, such as activemq.#.

  - auto-create-addresses: When a client requests to connect to an address that does not yet exist, the broker creates the address.

  - auto-delete-addresses: An automatically created address is deleted when it no longer has any queues associated with it.

  - auto-create-queues: When a client requests to connect to a queue that does not yet exist, the broker creates the queue.

  - auto-delete-queues: An automatically created queue is deleted when it no longer has any consumers or messages.

  - default-address-routing-type: If the client does not specify a routing type when connecting, the broker uses the configured routing type, such as ANYCAST, when delivering messages to an address. The default value is MULTICAST.
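A sketch of an address-setting that uses all of these elements, with the activemq.# match and the ANYCAST routing type mentioned in this procedure. Setting every option to true is an assumption for illustration:

```xml
<address-settings>
  <address-setting match="activemq.#">
    <!-- Create addresses and queues on demand. -->
    <auto-create-addresses>true</auto-create-addresses>
    <auto-create-queues>true</auto-create-queues>
    <!-- Remove auto-created addresses and queues once they are unused. -->
    <auto-delete-addresses>true</auto-delete-addresses>
    <auto-delete-queues>true</auto-delete-queues>
    <!-- Routing type used when the client does not specify one. -->
    <default-address-routing-type>ANYCAST</default-address-routing-type>
  </address-setting>
</address-settings>
```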

Additional resources

For more information about:

- The wildcard syntax that you can use when configuring addresses, see Section 4.2, “Applying address settings to sets of addresses”.

- Routing types, see Section 4.1, “Addresses, queues, and routing types”.

4.8.3. Protocol managers and addresses

A component called a protocol manager maps protocol-specific concepts to concepts used in the AMQ Broker address model: queues and routing types. In certain situations, a protocol manager might automatically create queues on the broker.

For example, when a client sends an MQTT subscription packet with the addresses /house/room1/lights and /house/room2/lights, the MQTT protocol manager understands that the two addresses require multicast semantics. Therefore, the protocol manager first looks to ensure that multicast is enabled for both addresses. If not, it attempts to dynamically create them. If successful, the protocol manager then creates special subscription queues for each subscription requested by the client.

Each protocol behaves slightly differently. The table below describes what typically happens when clients send subscribe requests for various types of queue.

| If the queue is of this type… | The typical action for a protocol manager is to… |
|---|---|
| Durable subscription queue | Look for the appropriate address and ensure that multicast semantics is enabled. The protocol manager then creates a special subscription queue, typically named with the client ID and the address. The special name allows the protocol manager to quickly identify the required client subscription queues should the client disconnect and reconnect at a later date. When the client unsubscribes, the queue is deleted. |
| Temporary subscription queue | Look for the appropriate address and ensure that multicast semantics is enabled. The protocol manager then creates a temporary subscription queue. When the client disconnects, the queue is deleted. |
| Point-to-point queue | Look for the appropriate address and ensure that the anycast routing type is enabled. If the queue is auto-created, it is automatically deleted once there are no consumers and no messages in it. |

4.9. Specifying a fully qualified queue name

Internally, the broker maps a client’s request for an address to specific queues. The broker decides on behalf of the client to which queues to send messages, or from which queue to receive messages. However, more advanced use cases might require that the client specifies a queue name directly. In these situations the client can use a fully qualified queue name (FQQN). An FQQN includes both the address name and the queue name, separated by a ::.

The following procedure shows how to specify an FQQN when connecting to an address with multiple queues.

Prerequisites

You have an address configured with two or more queues, for example an address named my.address with queues named q1 and q2.
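A sketch of such a prerequisite configuration. The address name my.address and the queue q1 match the FQQN used in the procedure that follows, and q2 and the anycast routing type are assumptions:

```xml
<address name="my.address">
  <anycast>
    <!-- A client can target either queue directly,
         for example with the FQQN my.address::q1. -->
    <queue name="q1"/>
    <queue name="q2"/>
  </anycast>
</address>
```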

Procedure

- In the client code, use both the address name and the queue name when requesting a connection from the broker. Use two colons, ::, to separate the names. For example:

String FQQN = "my.address::q1";

Queue q1 = session.createQueue(FQQN);

MessageConsumer consumer = session.createConsumer(q1);

4.10. Configuring sharded queues

A common pattern for processing of messages across a queue where only partial ordering is required is to use queue sharding. This means that you define an anycast address that acts as a single logical queue, but which is backed by many underlying physical queues.

Procedure

- Open the <broker-instance-dir>/etc/broker.xml configuration file.

- Add an address element and set the name attribute.

- Add the anycast routing type and include the desired number of sharded queues, for example three queues named q1, q2, and q3 added as anycast destinations.
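A sketch of the resulting configuration, using the my.sharded.address name and the q1, q2, and q3 queues referred to in this section:

```xml
<address name="my.sharded.address">
  <anycast>
    <!-- Three physical queues backing one logical queue. -->
    <queue name="q1"/>
    <queue name="q2"/>
    <queue name="q3"/>
  </anycast>
</address>
```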

Based on the preceding configuration, messages sent to my.sharded.address are distributed equally across q1, q2, and q3. Clients can connect directly to a specific physical queue by using a Fully Qualified Queue Name (FQQN), and then receive only the messages sent to that specific queue.

To tie particular messages to a particular queue, clients can specify a message group for each message. The broker routes grouped messages to the same queue, and one consumer processes them all.

Additional resources

For more information about:

- Fully Qualified Queue Names, see Section 4.9, “Specifying a fully qualified queue name”

- Message grouping, see Chapter 11, Message Grouping

4.11. Configuring last value queues

A last value queue is a type of queue that discards messages in the queue when a newer message with the same last value key value is placed in the queue. Through this behavior, last value queues retain only the last values for messages of the same key.

A simple use case for a last value queue is for monitoring stock prices, where only the latest value for a particular stock is of interest.

If a message without a configured last value key is sent to a last value queue, the broker handles this message as a "normal" message. Such messages are not purged from the queue when a new message with a configured last value key arrives.

You can configure last value queues individually, or for all of the queues associated with a set of addresses.

The following procedures show how to configure last value queues in these ways.

4.11.1. Configuring last value queues individually

The following procedure shows how to configure last value queues individually.

- Open the <broker-instance-dir>/etc/broker.xml configuration file.

- For a given queue, add the last-value-key attribute and specify a custom value. For example:

<address name="my.address">

<multicast>

<queue name="prices1" last-value-key="stock_ticker"/>

</multicast>

</address>

- Alternatively, you can configure a last value queue that uses the default last value key name of _AMQ_LVQ_NAME. To do this, add the last-value attribute to a given queue. Set the value to true. For example:

<address name="my.address">

<multicast>

<queue name="prices1" last-value="true"/>

</multicast>

</address>

4.11.2. Configuring last value queues for addresses

The following procedure shows how to configure last value queues for an address or set of addresses.

- Open the <broker-instance-dir>/etc/broker.xml configuration file.

- In the address-setting element, for a matching address, add default-last-value-key. Specify a custom value. For example:

<address-setting match="lastValue">

<default-last-value-key>stock_ticker</default-last-value-key>

</address-setting>

Based on the preceding configuration, all queues associated with the lastValue address use a last value key of stock_ticker. By default, the value of default-last-value-key is not set.

- To configure last value queues for a set of addresses, you can specify an address wildcard. For example:

<address-setting match="lastValue.*">

<default-last-value-key>stock_ticker</default-last-value-key>

</address-setting>

- Alternatively, you can configure all queues associated with an address or set of addresses to use the default last value key name of _AMQ_LVQ_NAME. To do this, add default-last-value-queue instead of default-last-value-key. Set the value to true. For example:

<address-setting match="lastValue">

<default-last-value-queue>true</default-last-value-queue>

</address-setting>

Additional resources

- For more information about the wildcard syntax that you can use when configuring addresses, see Section 4.2, “Applying address settings to sets of addresses”.

4.11.3. Example of last value queue behavior

This example shows the behavior of a last value queue.

In your broker.xml configuration file, suppose that you have added configuration that looks like the following:

<address name="my.address">
   <multicast>
      <queue name="prices1" last-value-key="stock_ticker"/>
   </multicast>
</address>

The preceding configuration creates a queue called prices1, with a last value key of stock_ticker.

Now, suppose that a client sends two messages. Each message has the same value of ATN for the property stock_ticker. Each message has a different value for a property called stock_price. Each message is sent to the same queue, prices1.

// First message: stock_ticker is ATN, stock_price is 36.83
TextMessage message = session.createTextMessage("First message with last value property set");
message.setStringProperty("stock_ticker", "ATN");
message.setStringProperty("stock_price", "36.83");
producer.send(message);

// Second message: same stock_ticker value, different stock_price
message = session.createTextMessage("Second message with last value property set");
message.setStringProperty("stock_ticker", "ATN");
message.setStringProperty("stock_price", "37.02");
producer.send(message);

When two messages with the same value for the stock_ticker last value key (in this case, ATN) arrive at the prices1 queue, only the latest message remains in the queue, and the broker purges the first message. In your client code, you can add the following lines to validate this behavior:

// receive() returns null if no message arrives within 5000 milliseconds
TextMessage messageReceived = (TextMessage)messageConsumer.receive(5000);
System.out.format("Received message: %s\n", messageReceived.getText());

In this example, the output you see is the second message, since both messages use the same value for the last value key and the second message was received in the queue after the first.

4.11.4. Enforcing non-destructive consumption for last value queues

When a consumer connects to a queue, the normal behavior is that messages sent to that consumer are acquired exclusively by the consumer. When the consumer acknowledges receipt of the messages, the broker removes the messages from the queue.

As an alternative to the normal consumption behavior, you can configure a queue to enforce non-destructive consumption. In this case, when a queue sends a message to a consumer, the message can still be received by other consumers. In addition, the message remains in the queue even when a consumer has consumed it. When you enforce this non-destructive consumption behavior, the consumers are known as queue browsers.

Enforcing non-destructive consumption is a useful configuration for last value queues, because it ensures that the queue always holds the latest value for a particular last value key.

The following procedure shows how to enforce non-destructive consumption for a last value queue.

Prerequisites

- You have already configured last value queues individually, or for all queues associated with an address or set of addresses. For more information, see the earlier procedures in Section 4.11.

Procedure

- Open the <broker-instance-dir>/etc/broker.xml configuration file.

- If you previously configured a queue individually as a last value queue, add the non-destructive key. Set the value to true. For example:

<address name="my.address">
   <multicast>
      <queue name="orders1" last-value-key="stock_ticker" non-destructive="true" />
   </multicast>
</address>

- If you previously configured an address or set of addresses for last value queues, add the default-non-destructive key. Set the value to true. For example:

<address-setting match="lastValue">
   <default-last-value-key>stock_ticker</default-last-value-key>
   <default-non-destructive>true</default-non-destructive>
</address-setting>

Note

By default, the value of default-non-destructive is false.
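As a minimal sketch, the two address-level settings described in this section can be combined for a set of addresses. The fragment below assumes the lastValue.* wildcard used earlier as an illustrative match and relies on the default last value key name instead of a custom key; both choices are assumptions that you would adapt to your own addresses.

```xml
<address-setting match="lastValue.*">
   <!-- Use the default last value key name, _AMQ_LVQ_NAME -->
   <default-last-value-queue>true</default-last-value-queue>
   <!-- Enforce non-destructive consumption on the matching queues -->
   <default-non-destructive>true</default-non-destructive>
</address-setting>
```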

4.12. Moving expired messages to an expiry address

For a queue other than a last value queue, if you have only non-destructive consumers, the broker never deletes messages from the queue, causing the queue size to increase over time. To prevent this unconstrained growth in queue size, you can configure when messages expire and specify an address to which the broker moves expired messages.

4.12.1. Configuring message expiry

The following procedure shows how to configure message expiry.

Procedure

- Open the <broker-instance-dir>/etc/broker.xml configuration file.

- In the core element, set the message-expiry-scan-period to specify how frequently the broker scans for expired messages.

<configuration ...>
   <core ...>
      ...
      <message-expiry-scan-period>1000</message-expiry-scan-period>
      ...

Based on the preceding configuration, the broker scans queues for expired messages every 1000 milliseconds.

- In the address-setting element for a matching address or set of addresses, specify an expiry address. Also, set a message expiration time.

expiry-address
Expiry address for the matching address or addresses. For example, if an address-setting element that matches the stocks address sets expiry-address to ExpiryAddress, the broker sends expired messages for the stocks address to an expiry address called ExpiryAddress.

expiry-delay
Expiration time, in milliseconds, that the broker applies to messages that are using the default expiration time. By default, messages have an expiration time of 0, meaning that they don’t expire. For messages with an expiration time greater than the default, expiry-delay has no effect.

For example, suppose you set expiry-delay on an address to 10. If a message with the default expiration time of 0 arrives at a queue at this address, then the broker changes the expiration time of the message from 0 to 10. However, if another message that is using an expiration time of 20 arrives, then its expiration time is unchanged. If you set expiry-delay to -1, this feature is disabled. By default, expiry-delay is set to -1.

Alternatively, instead of specifying a value for expiry-delay, you can specify minimum and maximum expiry delay values.

min-expiry-delay
Minimum expiration time, in milliseconds, that the broker applies to messages.

max-expiry-delay
Maximum expiration time, in milliseconds, that the broker applies to messages.

The broker applies the values of min-expiry-delay and max-expiry-delay as follows:

- For a message with the default expiration time of 0, the broker sets the expiration time to the specified value of max-expiry-delay. If you have not specified a value for max-expiry-delay, the broker sets the expiration time to the specified value of min-expiry-delay. If you have not specified a value for min-expiry-delay, the broker does not change the expiration time of the message.

- For a message with an expiration time above the value of max-expiry-delay, the broker sets the expiration time to the specified value of max-expiry-delay.

- For a message with an expiration time below the value of min-expiry-delay, the broker sets the expiration time to the specified value of min-expiry-delay.

- For a message with an expiration time between the values of min-expiry-delay and max-expiry-delay, the broker does not change the expiration time of the message.

- If you specify a value for expiry-delay (that is, other than the default value of -1), this overrides any values that you specify for min-expiry-delay and max-expiry-delay.

- The default value for both min-expiry-delay and max-expiry-delay is -1 (that is, disabled).

- In the addresses element of your configuration file, configure the address previously specified for expiry-address. Define a queue at this address. For example, defining a queue called ExpiryQueue at the address ExpiryAddress associates the expiry queue, ExpiryQueue, with the expiry address, ExpiryAddress.
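The steps in this procedure can be sketched together as the following broker.xml fragment. The stocks address, the ExpiryAddress and ExpiryQueue names, the scan period of 1000, and the expiry-delay value of 10 come from the descriptions above. The orders address, the min-expiry-delay and max-expiry-delay values, and the anycast routing type of the expiry queue are assumptions chosen only for illustration.

```xml
<!-- All of the following elements belong inside the <core> element of broker.xml -->
<message-expiry-scan-period>1000</message-expiry-scan-period>

<address-settings>
   <!-- Expired messages for the stocks address are moved to ExpiryAddress -->
   <address-setting match="stocks">
      <expiry-address>ExpiryAddress</expiry-address>
      <!-- Applied only to messages that use the default expiration time of 0 -->
      <expiry-delay>10</expiry-delay>
   </address-setting>

   <!-- Hypothetical alternative: bound expiration times instead of setting expiry-delay -->
   <address-setting match="orders">
      <expiry-address>ExpiryAddress</expiry-address>
      <min-expiry-delay>10</min-expiry-delay>
      <max-expiry-delay>100</max-expiry-delay>
   </address-setting>
</address-settings>

<addresses>
   <!-- The address named in expiry-address, with a queue that holds expired messages -->
   <address name="ExpiryAddress">
      <anycast>
         <queue name="ExpiryQueue"/>
      </anycast>
   </address>
</addresses>
```

Under these assumed values, and following the rules listed in the procedure, a message sent to orders with the default expiration time of 0 would be given an expiration time of 100. A message with an expiration time of 500 would be reduced to 100, a message with an expiration time of 5 would be raised to 10, and a message with an expiration time of 50 would be left unchanged.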

4.12.2. Creating expiry resources automatically

A common use case is to segregate expired messages according to their original addresses. For example, you might choose to route expired messages from an address called stocks to an expiry queue called EXP.stocks. Likewise, you might route expired messages from an address called orders to an expiry queue called EXP.orders.

This type of routing pattern makes it easy to track, inspect, and administer expired messages. However, a pattern such as this is difficult to implement in an environment that uses mainly automatically-created addresses and queues. In this type of environment, an administrator does not want the extra effort required to manually create addresses and queues to hold expired messages.

As a solution, you can configure the broker to automatically create resources (that is, addresses and queues) to handle expired messages for a given address or set of addresses. The following procedure shows an example.

Prerequisites

- You have already configured an expiry address for a given address or set of addresses. For more information, see Section 4.12.1, “Configuring message expiry”.

Procedure

- Open the <broker-instance-dir>/etc/broker.xml configuration file.

- Locate the <address-setting> element that you previously added to the configuration file to define an expiry address for a matching address or set of addresses.

- In the <address-setting> element, add configuration items that instruct the broker to automatically create expiry resources (that is, addresses and queues) and how to name these resources.

auto-create-expiry-resources
Specifies whether the broker automatically creates an expiry address and queue to receive expired messages. The default value is false.

If the parameter value is set to true, the broker automatically creates an <address> element that defines an expiry address and an associated expiry queue. The name value of the automatically-created <address> element matches the name value specified for <expiry-address>.

The automatically-created expiry queue has the multicast routing type. By default, the broker names the expiry queue to match the address to which expired messages were originally sent, for example, stocks.

The broker also defines a filter for the expiry queue that uses the _AMQ_ORIG_ADDRESS property. This filter ensures that the expiry queue receives only messages sent to the corresponding original address.

expiry-queue-prefix
Prefix that the broker applies to the name of the automatically-created expiry queue. The default value is EXP. (the trailing period is part of the prefix).

When you define a prefix value or keep the default value, the name of the expiry queue is a concatenation of the prefix and the original address, for example, EXP.stocks.

expiry-queue-suffix
Suffix that the broker applies to the name of an automatically-created expiry queue. The default value is not defined (that is, the broker applies no suffix).

You can directly access the expiry queue using either the queue name by itself (for example, when using the AMQ Broker Core Protocol JMS client) or using the fully qualified queue name (for example, when using another JMS client).

Because the expiry address and queue are automatically created, any address settings related to deletion of automatically-created addresses and queues also apply to these expiry resources.
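A minimal sketch of the address-setting described in this procedure follows. It assumes the stocks address and the ExpiryAddress expiry address used as examples in Section 4.12.1, and it sets expiry-queue-prefix explicitly to its default value only to make the naming visible. No suffix is configured.

```xml
<address-setting match="stocks">
   <!-- Expiry address configured previously for the stocks address -->
   <expiry-address>ExpiryAddress</expiry-address>
   <!-- Let the broker create the expiry address and queue automatically -->
   <auto-create-expiry-resources>true</auto-create-expiry-resources>
   <!-- Default prefix, shown explicitly; with it, the expiry queue for stocks is named EXP.stocks -->
   <expiry-queue-prefix>EXP.</expiry-queue-prefix>
</address-setting>
```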

Additional resources