Thorntail Runtime Guide

Use Thorntail to develop small, stand-alone, microservice-based applications that run on OpenShift and on stand-alone RHEL

Abstract

Preface

This guide covers concepts as well as practical details needed by developers to use the Thorntail runtime.

Providing feedback on Red Hat documentation

We appreciate your feedback on our documentation. To provide feedback, you can highlight the text in a document and add comments.

This section explains how to submit feedback.

Prerequisites

- You are logged in to the Red Hat Customer Portal.

- In the Red Hat Customer Portal, view the document in Multi-page HTML format.

Procedure

To provide your feedback, perform the following steps:

- Click the Feedback button in the top-right corner of the document to see existing feedback.

  Note: The feedback feature is enabled only in the Multi-page HTML format.

- Highlight the section of the document where you want to provide feedback.

- Click the Add Feedback pop-up that appears near the highlighted text.

  A text box appears in the feedback section on the right side of the page.

- Enter your feedback in the text box and click Submit.

  A documentation issue is created.

- To view the issue, click the issue tracker link in the feedback view.

Chapter 1. Introduction to Application Development with Thorntail

This section explains the basic concepts of application development with Red Hat runtimes. It also provides an overview of the Thorntail runtime.

1.1. Overview of Application Development with Red Hat Runtimes

Red Hat OpenShift is a container application platform, which provides a collection of cloud-native runtimes. You can use the runtimes to develop, build, and deploy Java or JavaScript applications on OpenShift.

Application development using Red Hat Runtimes for OpenShift includes:

- A collection of runtimes, such as Eclipse Vert.x, Thorntail, and Spring Boot, designed to run on OpenShift.

- A prescriptive approach to cloud-native development on OpenShift.

OpenShift helps you manage, secure, and automate the deployment and monitoring of your applications. You can break your business problems into smaller microservices and use OpenShift to deploy, monitor, and maintain the microservices. You can implement patterns such as circuit breaker, health check, and service discovery in your applications.

Cloud-native development takes full advantage of cloud computing.

You can build, deploy, and manage your applications on:

- OpenShift Container Platform: A private on-premise cloud by Red Hat.

- Red Hat Container Development Kit (Minishift): A local cloud that you can install and execute on your local machine. This functionality is provided by Red Hat Container Development Kit (CDK) or Minishift.

- Red Hat CodeReady Studio: An integrated development environment (IDE) for developing, testing, and deploying applications.

To help you get started with application development, all the runtimes are available with example applications. These example applications are accessible from the Developer Launcher. You can use the examples as templates to create your applications. For more information on example applications, see the section Introduction to example applications.

This guide provides detailed information about the Thorntail runtime. For more information on other runtimes, see the relevant runtime documentation.

1.2. Application Development on Red Hat OpenShift using Developer Launcher

You can get started with developing cloud-native applications on OpenShift using Developer Launcher (developers.redhat.com/launch). It is a service provided by Red Hat.

Developer Launcher is a stand-alone project generator. You can use it to build and deploy applications on OpenShift instances, such as OpenShift Container Platform, Minishift, or CDK.

For more information on how to download and deploy applications using Developer Launcher, see the section Downloading and deploying applications using Developer Launcher.

1.3. Overview of Thorntail

Thorntail was formerly known as WildFly Swarm.

Thorntail deconstructs the features in JBoss EAP and allows them to be selectively reconstructed based on the needs of your application. This allows you to create microservices that run on a just-enough-appserver that supports the exact subset of APIs you need.

The Thorntail runtime enables you to run Thorntail applications and services in OpenShift while providing all the advantages and conveniences of the OpenShift platform such as rolling updates, service discovery, and canary deployments. OpenShift also makes it easier for your applications to implement common microservice patterns such as externalized configuration, health check, circuit breaker, and failover.

Thorntail has a product version of its runtime that runs on OpenShift and is provided as part of a Red Hat subscription.

1.3.1. Supported Architectures by Thorntail

Thorntail supports the following architectures:

- x86_64 (AMD64)

- IBM Z (s390x) in the OpenShift environment

Different images are supported for different architectures. The code examples in this guide show the commands for the x86_64 architecture. If you are using other architectures, specify the relevant image name in the commands. Refer to the section Supported Java images for Thorntail for more information about the image names.

1.3.2. Introduction to example applications

Examples are working applications that demonstrate how to build cloud native applications and services. They demonstrate prescriptive architectures, design patterns, tools, and best practices that should be used when you develop your applications. The example applications can be used as templates to create your cloud-native microservices. You can update and redeploy these examples using the deployment process explained in this guide.

The examples implement microservice patterns such as:

- Creating REST APIs

- Interoperating with a database

- Implementing the health check pattern

- Externalizing the configuration of your applications to make them more secure and easier to scale

You can use the example applications as:

- Working demonstration of the technology

- Learning tool or a sandbox to understand how to develop applications for your project

- Starting point for updating or extending your own use case

Each example application is implemented in one or more runtimes. For example, the REST API Level 0 example is available for the following runtimes:

The subsequent sections explain the example applications implemented for the Thorntail runtime.

You can download and deploy all the example applications on:

- x86_64 architecture - The example applications in this guide demonstrate how to build and deploy example applications on x86_64 architecture.

- s390x architecture - To deploy the example applications on OpenShift environments provisioned on IBM Z infrastructure, specify the relevant IBM Z image name in the commands. Refer to the section Supported Java images for Thorntail for more information about the image names.

Some of the example applications also require other products, such as Red Hat Data Grid, to demonstrate the workflows. In this case, you must also change the image names of these products to their relevant IBM Z image names in the YAML file of the example applications.

Chapter 2. Downloading and deploying applications using Developer Launcher

This section shows you how to download and deploy example applications provided with the runtimes. The example applications are available on Developer Launcher.

2.1. Working with Developer Launcher

Developer Launcher (developers.redhat.com/launch) runs on OpenShift. When you deploy example applications, the Developer Launcher guides you through the process of:

- Selecting a runtime

- Building and executing the application

Based on your selection, Developer Launcher generates a custom project. You can either download a ZIP version of the project or directly launch the application on an OpenShift Online instance.

When you deploy your application on OpenShift using Developer Launcher, the Source-to-Image (S2I) build process is used. This build process handles all the configuration, build, and deployment steps that are required to run your application on OpenShift.

2.2. Downloading the example applications using Developer Launcher

Red Hat provides example applications that help you get started with the Thorntail runtime. These examples are available on Developer Launcher (developers.redhat.com/launch).

You can download the example applications, build, and deploy them. This section explains how to download example applications.

You can use the example applications as templates to create your own cloud-native applications.

Procedure

- Go to Developer Launcher (developers.redhat.com/launch).

- Click Start.

- Click Deploy an Example Application.

- Click Select an Example to see the list of example applications available with the runtime.

- Select a runtime.

- Select an example application.

  Note: Some example applications are available for multiple runtimes. If you have not selected a runtime in the previous step, you can select a runtime from the list of available runtimes in the example application.

- Select the release version for the runtime. You can choose from the community or product releases listed for the runtime.

- Click Save.

- Click Download to download the example application.

  A ZIP file containing the source and documentation files is downloaded.

2.3. Deploying an example application on OpenShift Container Platform or CDK (Minishift)

You can deploy the example application to either OpenShift Container Platform or CDK (Minishift). Depending on where you want to deploy your application, use the relevant web console for authentication.

Prerequisites

- An example application project created using Developer Launcher.

- If you are deploying your application on OpenShift Container Platform, you must have access to the OpenShift Container Platform web console.

- If you are deploying your application on CDK (Minishift), you must have access to the CDK (Minishift) web console.

- oc command-line client installed.

Procedure

- Download the example application.

- You can deploy the example application on OpenShift Container Platform or CDK (Minishift) using the oc command-line client. You must authenticate the client using the token provided by the web console. Depending on where you want to deploy your application, use either the OpenShift Container Platform web console or the CDK (Minishift) web console. Perform the following steps to authenticate the client:

  - Log in to the web console.

  - Click the question mark icon in the upper-right corner of the web console.

  - Select Command Line Tools from the list.

  - Copy the oc login command.

  - Paste the command in a terminal to authenticate your oc CLI client with your account.

    $ oc login OPENSHIFT_URL --token=MYTOKEN

- Extract the contents of the ZIP file.

  $ unzip MY_APPLICATION_NAME.zip

- Create a new project in OpenShift.

  $ oc new-project MY_PROJECT_NAME

- Navigate to the root directory of MY_APPLICATION_NAME.

- Deploy your example application using Maven.

  $ mvn clean fabric8:deploy -Popenshift

  Note: Some example applications may require additional setup. To build and deploy these example applications, follow the instructions provided in the README file.

- Check the status of your application and ensure your pod is running.

  $ oc get pods -w
  NAME                        READY     STATUS      RESTARTS   AGE
  MY_APP_NAME-1-aaaaa         1/1       Running     0          58s
  MY_APP_NAME-s2i-1-build     0/1       Completed   0          2m

  The MY_APP_NAME-1-aaaaa pod has the status Running after it is fully deployed and started. The pod name of your application may be different. The numeric value in the pod name is incremented for every new build. The letters at the end are generated when the pod is created.

- After your example application is deployed and started, determine its route.

  Example Route Information

  $ oc get routes
  NAME          HOST/PORT                                        PATH      SERVICES      PORT      TERMINATION
  MY_APP_NAME   MY_APP_NAME-MY_PROJECT_NAME.OPENSHIFT_HOSTNAME             MY_APP_NAME   8080

  The route information of a pod gives you the base URL that you can use to access it. In this example, you can use http://MY_APP_NAME-MY_PROJECT_NAME.OPENSHIFT_HOSTNAME as the base URL to access the application.
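Watching oc get pods -w works well interactively, but in a script you may want to check the output programmatically. The following Java sketch parses the tabular output shown in the procedure above. It returns the pods whose STATUS is Running and whose READY column shows all containers ready. It assumes the default whitespace-separated columns (NAME, READY, STATUS, RESTARTS, AGE). The class name PodStatusCheck is hypothetical. For robust automation, a structured format such as oc get pods -o json is a better input than column parsing.

```java
import java.util.ArrayList;
import java.util.List;

/** Hypothetical helper: finds running, fully ready pods in "oc get pods" text output. */
class PodStatusCheck {

    /**
     * Returns the names of pods whose STATUS is "Running" and whose READY
     * column shows every container ready (for example "1/1").
     * Assumes the default columns: NAME READY STATUS RESTARTS AGE.
     */
    static List<String> runningPods(String ocOutput) {
        List<String> running = new ArrayList<>();
        String[] lines = ocOutput.trim().split("\\R");
        // Line 0 is the header row (NAME READY STATUS ...), so start at 1.
        for (int i = 1; i < lines.length; i++) {
            String[] cols = lines[i].trim().split("\\s+");
            if (cols.length < 3) {
                continue; // not a pod row
            }
            String name = cols[0];
            String[] ready = cols[1].split("/");
            String status = cols[2];
            boolean allReady = ready.length == 2 && ready[0].equals(ready[1]);
            if ("Running".equals(status) && allReady) {
                running.add(name);
            }
        }
        return running;
    }
}
```

Given the sample output above, runningPods returns only MY_APP_NAME-1-aaaaa. The MY_APP_NAME-s2i-1-build pod is skipped because its status is Completed.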

Chapter 3. Developing and deploying Thorntail application

In addition to using an example, you can create new Thorntail applications from scratch and deploy them to OpenShift.

3.1. Creating an application from scratch

This section shows how to create a simple Thorntail-based application with a REST endpoint from scratch.

Prerequisites

- OpenJDK 8 or OpenJDK 11 installed

- Maven 3.5.0 installed

Procedure

Create a directory for the application and navigate to it:

$ mkdir myApp
$ cd myApp

We recommend you start tracking the directory contents with Git. For more information, see Git tutorial.

In the directory, create a pom.xml file with the following content.

<?xml version="1.0" encoding="UTF-8"?>
<project xmlns="http://maven.apache.org/POM/4.0.0"
         xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"
         xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/maven-v4_0_0.xsd">
  <modelVersion>4.0.0</modelVersion>
  <groupId>com.example</groupId>
  <artifactId>restful-endpoint</artifactId>
  <version>1.0.0-SNAPSHOT</version>
  <packaging>war</packaging>
  <name>Thorntail Example</name>
  <properties>
    <version.thorntail>{version}</version.thorntail>
    <maven.compiler.source>1.8</maven.compiler.source>
    <maven.compiler.target>1.8</maven.compiler.target>
    <failOnMissingWebXml>false</failOnMissingWebXml>
    <project.build.sourceEncoding>UTF-8</project.build.sourceEncoding>
    <!-- Specify the JDK builder image used to build your application. -->
    <fabric8.generator.from>registry.access.redhat.com/redhat-openjdk-18/openjdk18-openshift:latest</fabric8.generator.from>
  </properties>
  <dependencyManagement>
    <dependencies>
      <dependency>
        <groupId>io.thorntail</groupId>
        <artifactId>bom</artifactId>
        <version>${version.thorntail}</version>
        <scope>import</scope>
        <type>pom</type>
      </dependency>
    </dependencies>
  </dependencyManagement>
  <dependencies>
    <dependency>
      <groupId>io.thorntail</groupId>
      <artifactId>jaxrs</artifactId>
    </dependency>
  </dependencies>
  <build>
    <finalName>restful-endpoint</finalName>
    <plugins>
      <plugin>
        <groupId>io.thorntail</groupId>
        <artifactId>thorntail-maven-plugin</artifactId>
        <version>${version.thorntail}</version>
        <executions>
          <execution>
            <goals>
              <goal>package</goal>
            </goals>
          </execution>
        </executions>
      </plugin>
    </plugins>
  </build>
  <!-- Specify the repositories containing RHOAR artifacts -->
  <repositories>
    <repository>
      <id>redhat-ga</id>
      <name>Red Hat GA Repository</name>
      <url>https://maven.repository.redhat.com/ga/</url>
    </repository>
  </repositories>
  <pluginRepositories>
    <pluginRepository>
      <id>redhat-ga</id>
      <name>Red Hat GA Repository</name>
      <url>https://maven.repository.redhat.com/ga/</url>
    </pluginRepository>
  </pluginRepositories>
</project>

Create a directory structure for your application:

$ mkdir -p src/main/java/com/example/rest

In the src/main/java/com/example/rest directory, create the source files:

HelloWorldEndpoint.java with the class that serves the HTTP endpoint:

package com.example.rest;

import javax.ws.rs.Path;
import javax.ws.rs.core.Response;
import javax.ws.rs.GET;
import javax.ws.rs.Produces;

@Path("/hello")
public class HelloWorldEndpoint {

    @GET
    @Produces("text/plain")
    public Response doGet() {
        return Response.ok("Hello from Thorntail!").build();
    }
}

RestApplication.java with the application context:

package com.example.rest;

import javax.ws.rs.core.Application;
import javax.ws.rs.ApplicationPath;

@ApplicationPath("/rest")
public class RestApplication extends Application {
}

Execute the application using Maven:

$ mvn thorntail:run

Results

Accessing the http://localhost:8080/rest/hello URL in your browser should return the following message:

Hello from Thorntail!

After finishing the procedure, there should be a directory on your hard drive with the following contents:

myApp
├── pom.xml
└── src
    └── main
        └── java
            └── com
                └── example
                    └── rest
                        ├── HelloWorldEndpoint.java
                        └── RestApplication.java

3.2. Deploying Thorntail application to OpenShift

To deploy your Thorntail application to OpenShift, configure the pom.xml file in your application and then use the Fabric8 Maven plugin. You can specify a Java image by replacing the fabric8.generator.from URL in the pom.xml file.

The images are available in the Red Hat Ecosystem Catalog.

<fabric8.generator.from>IMAGE_NAME</fabric8.generator.from>

For example, the Java image for RHEL 7 with OpenJDK 8 is specified as:

<fabric8.generator.from>registry.access.redhat.com/redhat-openjdk-18/openjdk18-openshift:latest</fabric8.generator.from>

3.2.1. Supported Java images for Thorntail

Thorntail is certified and tested with various Java images that are available for different operating systems. For example, Java images are available for RHEL 7 and RHEL 8 with OpenJDK 8 or OpenJDK 11. Similar images are available on IBM Z.

You need Docker or Podman authentication to access the RHEL 8 images in the Red Hat Ecosystem Catalog.

The following table lists the images supported by Thorntail for different architectures. It also provides links to the images available in the Red Hat Ecosystem Catalog. The image pages contain authentication procedures required to access the RHEL 8 images.

3.2.1.1. Images on x86_64 architecture

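| OS | Java | Image in Red Hat Ecosystem Catalog |
|---|---|---|
| RHEL 7 | OpenJDK 8 | registry.access.redhat.com/redhat-openjdk-18/openjdk18-openshift |
| RHEL 7 | OpenJDK 11 | registry.access.redhat.com/openjdk/openjdk-11-rhel7 |
| RHEL 8 | OpenJDK 8 | registry.redhat.io/openjdk/openjdk-8-rhel8 |
| RHEL 8 | OpenJDK 11 | registry.redhat.io/openjdk/openjdk-11-rhel8 |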

The use of a RHEL 8-based container on a RHEL 7 host, for example with OpenShift 3 or OpenShift 4, has limited support. For more information, see the Red Hat Enterprise Linux Container Compatibility Matrix.

3.2.1.2. Images on s390x (IBM Z) architecture

| OS | Java | Image in Red Hat Ecosystem Catalog |
|---|---|---|
| RHEL 8 | Eclipse OpenJ9 11 | registry.access.redhat.com/openj9/openj9-11-rhel8 |

The use of a RHEL 8-based container on a RHEL 7 host, for example with OpenShift 3 or OpenShift 4, has limited support. For more information, see the Red Hat Enterprise Linux Container Compatibility Matrix.

3.2.2. Preparing Thorntail application for OpenShift deployment

To deploy your Thorntail application to OpenShift, it must contain:

- Launcher profile information in the application’s pom.xml file.

In the following procedure, a profile with Fabric8 Maven plugin is used for building and deploying the application to OpenShift.

Prerequisites

- Maven is installed.

- Docker or Podman authentication with the Red Hat Ecosystem Catalog to access RHEL 8 images.

Procedure

Add the following content to the pom.xml file in the application root directory:

...
<profiles>
  <profile>
    <id>openshift</id>
    <build>
      <plugins>
        <plugin>
          <groupId>io.fabric8</groupId>
          <artifactId>fabric8-maven-plugin</artifactId>
          <version>4.3.0</version>
          <executions>
            <execution>
              <goals>
                <goal>resource</goal>
                <goal>build</goal>
              </goals>
            </execution>
          </executions>
        </plugin>
      </plugins>
    </build>
  </profile>
</profiles>

Replace the fabric8.generator.from property in the pom.xml file to specify the relevant Java image for your architecture.

x86_64 architecture

RHEL 7 with OpenJDK 8

<fabric8.generator.from>registry.access.redhat.com/redhat-openjdk-18/openjdk18-openshift:latest</fabric8.generator.from>

RHEL 7 with OpenJDK 11

<fabric8.generator.from>registry.access.redhat.com/openjdk/openjdk-11-rhel7:latest</fabric8.generator.from>

RHEL 8 with OpenJDK 8

<fabric8.generator.from>registry.redhat.io/openjdk/openjdk-8-rhel8:latest</fabric8.generator.from>

RHEL 8 with OpenJDK 11

<fabric8.generator.from>registry.redhat.io/openjdk/openjdk-11-rhel8:latest</fabric8.generator.from>

s390x (IBM Z) architecture

RHEL 8 with Eclipse OpenJ9 11

<fabric8.generator.from>registry.access.redhat.com/openj9/openj9-11-rhel8:latest</fabric8.generator.from>
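If you build for more than one architecture, it can help to keep the image choices above in one place. The following sketch is a hypothetical lookup table that returns the fabric8.generator.from element for a given architecture, RHEL version, and JDK. The class BuilderImages and its key format are assumptions, not part of Thorntail or the Fabric8 Maven plugin. The image names are copied from the list above.

```java
import java.util.HashMap;
import java.util.Map;

/** Hypothetical lookup of the builder images listed in this guide. */
class BuilderImages {

    private static final Map<String, String> IMAGES = new HashMap<>();

    static {
        // Key format (an assumption of this sketch): architecture/os/jdk
        IMAGES.put("x86_64/rhel7/openjdk8",
                "registry.access.redhat.com/redhat-openjdk-18/openjdk18-openshift:latest");
        IMAGES.put("x86_64/rhel7/openjdk11",
                "registry.access.redhat.com/openjdk/openjdk-11-rhel7:latest");
        IMAGES.put("x86_64/rhel8/openjdk8",
                "registry.redhat.io/openjdk/openjdk-8-rhel8:latest");
        IMAGES.put("x86_64/rhel8/openjdk11",
                "registry.redhat.io/openjdk/openjdk-11-rhel8:latest");
        IMAGES.put("s390x/rhel8/openj9-11",
                "registry.access.redhat.com/openj9/openj9-11-rhel8:latest");
    }

    /** Returns the pom.xml property element for the given combination. */
    static String generatorFrom(String arch, String os, String jdk) {
        String key = arch + "/" + os + "/" + jdk;
        String image = IMAGES.get(key);
        if (image == null) {
            // Fail fast instead of silently falling back to another architecture.
            throw new IllegalArgumentException("No image listed for " + key);
        }
        return "<fabric8.generator.from>" + image + "</fabric8.generator.from>";
    }
}
```

For example, BuilderImages.generatorFrom("x86_64", "rhel8", "openjdk11") returns the RHEL 8 with OpenJDK 11 element shown above. An unlisted combination, such as s390x with RHEL 7, fails with an IllegalArgumentException.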

3.2.3. Deploying Thorntail application to OpenShift using Fabric8 Maven plugin

To deploy your Thorntail application to OpenShift, you must perform the following:

- Log in to your OpenShift instance.

- Deploy the application to the OpenShift instance.

Prerequisites

- oc CLI client installed.

- Maven installed.

Procedure

Log in to your OpenShift instance with the oc client.

$ oc login ...

Create a new project in the OpenShift instance.

$ oc new-project MY_PROJECT_NAME

Deploy the application to OpenShift using Maven from the application’s root directory. The root directory of an application contains the pom.xml file.

$ mvn clean fabric8:deploy -Popenshift

This command uses the Fabric8 Maven Plugin to launch the S2I process on OpenShift and start the pod.

Verify the deployment.

Check the status of your application and ensure your pod is running.

$ oc get pods -w
NAME                        READY     STATUS      RESTARTS   AGE
MY_APP_NAME-1-aaaaa         1/1       Running     0          58s
MY_APP_NAME-s2i-1-build     0/1       Completed   0          2m

The MY_APP_NAME-1-aaaaa pod should have a status of Running once it is fully deployed and started. Your specific pod name will vary.

Determine the route for the pod.

Example Route Information

$ oc get routes
NAME          HOST/PORT                                        PATH      SERVICES      PORT      TERMINATION
MY_APP_NAME   MY_APP_NAME-MY_PROJECT_NAME.OPENSHIFT_HOSTNAME             MY_APP_NAME   8080

The route information of a pod gives you the base URL that you use to access it. In this example, http://MY_APP_NAME-MY_PROJECT_NAME.OPENSHIFT_HOSTNAME is the base URL to access the application.

Verify that your application is running in OpenShift.

$ curl http://MY_APP_NAME-MY_PROJECT_NAME.OPENSHIFT_HOSTNAME/rest/hello
Hello from Thorntail!
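You can also script the route lookup and the final check. The following sketch uses the hypothetical class name RouteUrl. It reads the oc get routes text output and returns the HOST/PORT value for a named route. It then joins that value with the /rest application path and the /hello resource path from the example in Section 3.1, “Creating an application from scratch”. The sketch assumes a plain HTTP route, as in the example, and the default column layout. A command such as oc get route MY_APP_NAME -o jsonpath='{.spec.host}' avoids column parsing altogether.

```java
import java.util.Optional;

/** Hypothetical helper: builds the endpoint URL from "oc get routes" output. */
class RouteUrl {

    /** Returns the HOST/PORT value for the named route, if present. */
    static Optional<String> host(String ocOutput, String routeName) {
        String[] lines = ocOutput.trim().split("\\R");
        for (int i = 1; i < lines.length; i++) { // line 0 is the header
            String[] cols = lines[i].trim().split("\\s+");
            // Column 0 is NAME and column 1 is HOST/PORT. An empty PATH
            // column only shifts the columns after these two.
            if (cols.length >= 2 && cols[0].equals(routeName)) {
                return Optional.of(cols[1]);
            }
        }
        return Optional.empty();
    }

    /** Joins the route host with the JAX-RS application and resource paths (plain HTTP assumed). */
    static String endpoint(String host, String appPath, String resourcePath) {
        return "http://" + host + appPath + resourcePath;
    }
}
```

With the example output above, RouteUrl.endpoint(host, "/rest", "/hello") produces the same URL passed to curl in the last step.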

3.3. Deploying Thorntail application to stand-alone Red Hat Enterprise Linux

To deploy your Thorntail application to stand-alone Red Hat Enterprise Linux, configure the pom.xml file in the application, package the application using Maven, and deploy it using the java -jar command.

Prerequisites

- RHEL 7 or RHEL 8 installed.

3.3.1. Preparing Thorntail application for stand-alone Red Hat Enterprise Linux deployment

To deploy your Thorntail application to stand-alone Red Hat Enterprise Linux, you must first package the application using Maven.

Prerequisites

- Maven installed.

Procedure

Add the following content to the pom.xml file in the application’s root directory:

...
<build>
  <plugins>
    <plugin>
      <groupId>io.thorntail</groupId>
      <artifactId>thorntail-maven-plugin</artifactId>
      <version>${version.thorntail}</version>
      <executions>
        <execution>
          <goals>
            <goal>package</goal>
          </goals>
        </execution>
      </executions>
    </plugin>
  </plugins>
</build>
...

Package your application using Maven.

$ mvn clean package

The resulting JAR file is in the target directory.
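The uberjar produced by the package goal is the file in target whose name ends in -thorntail.jar, like the my-app-thorntail.jar and myapp-thorntail.jar names used in this guide. The following sketch finds that file and builds the same command as java -jar. The class name UberJarLocator is hypothetical. For a WAR project such as the one in Section 3.1, target may also contain the plain .war file, which the -thorntail.jar pattern ignores.

```java
import java.io.IOException;
import java.io.UncheckedIOException;
import java.nio.file.DirectoryStream;
import java.nio.file.Files;
import java.nio.file.Path;
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;

/** Hypothetical helper: locates the uberjar produced by "mvn clean package". */
class UberJarLocator {

    /** Returns the single *-thorntail.jar file in the given directory. */
    static Path find(Path targetDir) {
        List<Path> matches = new ArrayList<>();
        try (DirectoryStream<Path> stream =
                     Files.newDirectoryStream(targetDir, "*-thorntail.jar")) {
            for (Path p : stream) {
                matches.add(p);
            }
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        if (matches.size() != 1) {
            throw new IllegalStateException(
                    "Expected one *-thorntail.jar in " + targetDir + ", found " + matches);
        }
        return matches.get(0);
    }

    /** Builds the equivalent of "java -jar <uberjar>", sharing this console's I/O. */
    static ProcessBuilder launcher(Path uberJar) {
        return new ProcessBuilder(Arrays.asList("java", "-jar", uberJar.toString()))
                .inheritIO();
    }
}
```

Calling start() on the returned ProcessBuilder launches the application with its output sent to the current console.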

3.3.2. Deploying Thorntail application to stand-alone Red Hat Enterprise Linux using jar

To deploy your Thorntail application to stand-alone Red Hat Enterprise Linux, use the java -jar command.

Prerequisites

- RHEL 7 or RHEL 8 installed.

- OpenJDK 8 or OpenJDK 11 installed.

- A JAR file with the application.

Procedure

Deploy the JAR file with the application.

$ java -jar my-app-thorntail.jar

Verify the deployment.

Use curl or your browser to verify your application is running at http://localhost:8080:

$ curl http://localhost:8080
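If curl is not available, you can run the same check from Java. This sketch uses only HttpURLConnection from the JDK, so it should run on either OpenJDK 8 or OpenJDK 11. It returns the response body and fails on a non-2xx status code. The class name EndpointCheck and the five-second timeouts are illustrative choices.

```java
import java.io.ByteArrayOutputStream;
import java.io.IOException;
import java.io.InputStream;
import java.io.UncheckedIOException;
import java.net.HttpURLConnection;
import java.net.URI;
import java.nio.charset.StandardCharsets;

/** Hypothetical helper: a minimal, curl-like HTTP GET using only the JDK. */
class EndpointCheck {

    /** Performs a GET request and returns the body; throws on non-2xx codes. */
    static String get(String url) {
        try {
            HttpURLConnection conn = (HttpURLConnection) URI.create(url).toURL().openConnection();
            try {
                conn.setRequestMethod("GET");
                conn.setConnectTimeout(5000);
                conn.setReadTimeout(5000);
                int code = conn.getResponseCode();
                if (code < 200 || code > 299) {
                    throw new IllegalStateException("HTTP " + code + " from " + url);
                }
                try (InputStream in = conn.getInputStream()) {
                    ByteArrayOutputStream out = new ByteArrayOutputStream();
                    byte[] buf = new byte[4096];
                    int n;
                    while ((n = in.read(buf)) != -1) {
                        out.write(buf, 0, n);
                    }
                    return new String(out.toByteArray(), StandardCharsets.UTF_8);
                }
            } finally {
                conn.disconnect();
            }
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }
}
```

For the application from Section 3.1, EndpointCheck.get("http://localhost:8080/rest/hello") should return Hello from Thorntail!.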

Chapter 4. Using Thorntail Maven Plugin

Thorntail provides a Maven plugin to accomplish most of the work of building uberjar packages.

4.1. Thorntail Maven plugin general usage

The Thorntail Maven plugin is used like any other Maven plugin, that is, by editing the pom.xml file in your application and adding a <plugin> section:

<plugin>
  <groupId>io.thorntail</groupId>
  <artifactId>thorntail-maven-plugin</artifactId>
  <version>${version.thorntail}</version>
  <executions>
    ...
    <execution>
      <goals>
        ...
      </goals>
      <configuration>
        ...
      </configuration>
    </execution>
  </executions>
</plugin>

4.2. Thorntail Maven plugin goals

The Thorntail Maven plugin provides several goals:

- package: Creates the executable package (see Section 9.2, “Creating an uberjar”).

- run: Executes your application in the Maven process. The application is stopped if the Maven build is interrupted, for example when you press Ctrl + C.

- start and multistart: Executes your application in a forked process. Generally, it is only useful for running integration tests using a plugin, such as the maven-failsafe-plugin. The multistart variant allows starting multiple Thorntail-built applications using Maven GAVs to support complex testing scenarios.

- stop: Stops any previously started applications.

  Note: The stop goal can only stop applications that were started in the same Maven execution.

4.3. Thorntail Maven plugin configuration options

The Thorntail Maven plugin accepts the following configuration options:

- bundleDependencies

  If true, dependencies are included in the -thorntail.jar file. Otherwise, they are resolved from $M2_REPO or from the network at runtime.

  Property: thorntail.bundleDependencies
  Default: true
  Used by: package

- debug

  The port to use for debugging. If set, the Thorntail process suspends on start and opens a debugger on this port.

  Property: thorntail.debug.port
  Default: none
  Used by: run, start

- environment

  A properties-style list of environment variables to use when executing the application.

  Property: none
  Default: none
  Used by: multistart, run, start

- environmentFile

  A .properties file with environment variables to use when executing the application.

  Property: thorntail.environmentFile
  Default: none
  Used by: multistart, run, start

- filterWebInfLib

  If true, the plugin removes artifacts that are provided by the Thorntail runtime from the WEB-INF/lib directory of the project WAR file. Otherwise, the contents of WEB-INF/lib remain untouched.

  Property: thorntail.filterWebInfLib
  Default: true
  Used by: package

  This option is generally not necessary and is provided as a workaround in case the Thorntail plugin removes a dependency required by the application. When false, it is the responsibility of the developer to ensure that the WEB-INF/lib directory does not contain Thorntail artifacts that would compromise the functionality of the application. One way to do that is to avoid expressing dependencies on fractions and to rely on auto-detection, or to explicitly list any required extra fractions using the fractions option.

- fractionDetectMode

  The mode of fraction detection. The available options are:

  - when_missing: Runs only when no Thorntail dependencies are found.
  - force: Always run, and merge any detected fractions with the existing dependencies. Existing dependencies take precedence.
  - never: Disable fraction detection.

  Property: thorntail.detect.mode
  Default: when_missing
  Used by: package, run, start

- fractions

  A list of extra fractions to include when using auto-detection. It is useful for fractions that cannot be detected or for user-provided fractions.

  Use one of the following formats when specifying a fraction:

  - group:artifact:version
  - artifact:version
  - artifact

  If no group is provided, io.thorntail is assumed. If no version is provided, the version is taken from the Thorntail BOM for the version of the plugin you are using.

  If the value starts with a ! character, the corresponding auto-detected fraction is not installed (unless it is a dependency of any other fraction). In the following example, the Undertow fraction is not installed even though your application references a class from the javax.servlet package:

  <plugin>
    <groupId>io.thorntail</groupId>
    <artifactId>thorntail-maven-plugin</artifactId>
    <version>${version.thorntail}</version>
    <executions>
      <execution>
        <goals>
          <goal>package</goal>
        </goals>
        <configuration>
          <fractions>
            <fraction>!undertow</fraction>
          </fractions>
        </configuration>
      </execution>
    </executions>
  </plugin>

  Property: none
  Default: none
  Used by: package, run, start

- jvmArguments

  A list of <jvmArgument> elements specifying additional JVM arguments (such as -Xmx32m).

  Property: thorntail.jvmArguments
  Default: none
  Used by: multistart, run, start

- modules

  Paths to directories containing additional module definitions.

  Property: none
  Default: none
  Used by: package, run, start

- processes

  Application configurations to start (see multistart).

  Property: none
  Default: none
  Used by: multistart

- properties

  See Section 4.4, “Thorntail Maven plugin configuration properties”.

  Property: none
  Default: none
  Used by: package, run, start

- propertiesFile

  See Section 4.4, “Thorntail Maven plugin configuration properties”.

  Property: thorntail.propertiesFile
  Default: none
  Used by: package, run, start

- stderrFile

  Specifies the path to a file where the stderr output is stored instead of being sent to the stderr output of the launching process.

  Property: thorntail.stderr
  Default: none
  Used by: run, start

- stdoutFile

  Specifies the path to a file where the stdout output is stored instead of being sent to the stdout output of the launching process.

  Property: thorntail.stdout
  Default: none
  Used by: run, start

- useUberJar

  If specified, the -thorntail.jar file located in ${project.build.directory} is used. This JAR is not created automatically, so make sure you execute the package goal first.

  Property: thorntail.useUberJar
  Default: none
  Used by: run, start
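The fraction formats and the ! prefix described for the fractions option can be summarized as a small parser. FractionSpec below is a hypothetical model written from that description, not the plugin's own code. It applies the io.thorntail default group, leaves the version as null when the Thorntail BOM should supply it, and marks values such as !undertow as excluded.

```java
/**
 * Hypothetical model of one fraction value from the plugin configuration.
 * Written from the rules described above; not the plugin's own parser.
 */
final class FractionSpec {

    static final String DEFAULT_GROUP = "io.thorntail";

    final String groupId;
    final String artifactId;
    final String version;    // null: the version comes from the Thorntail BOM
    final boolean excluded;  // true for values such as "!undertow"

    private FractionSpec(String groupId, String artifactId, String version, boolean excluded) {
        this.groupId = groupId;
        this.artifactId = artifactId;
        this.version = version;
        this.excluded = excluded;
    }

    static FractionSpec parse(String value) {
        String v = value.trim();
        boolean excluded = v.startsWith("!");
        if (excluded) {
            v = v.substring(1);
        }
        // Limit -1 keeps trailing empty segments, so "jaxrs:" is rejected.
        String[] parts = v.split(":", -1);
        for (String part : parts) {
            if (part.isEmpty()) {
                throw new IllegalArgumentException("Empty segment in fraction: " + value);
            }
        }
        switch (parts.length) {
            case 1:  // artifact
                return new FractionSpec(DEFAULT_GROUP, parts[0], null, excluded);
            case 2:  // artifact:version
                return new FractionSpec(DEFAULT_GROUP, parts[0], parts[1], excluded);
            case 3:  // group:artifact:version
                return new FractionSpec(parts[0], parts[1], parts[2], excluded);
            default:
                throw new IllegalArgumentException("Unrecognized fraction format: " + value);
        }
    }
}
```

For example, parsing !undertow yields the io.thorntail group, the undertow artifact, no explicit version, and the excluded flag set. This matches the configuration example above.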

4.4. Thorntail Maven plugin configuration properties

Properties can be used to configure the execution and affect the packaging or running of your application.

If you add a <properties> or <propertiesFile> section to the <configuration> of the plugin, the properties are used when executing your application using the mvn thorntail:run command. In addition to that, the same properties are added to your myapp-thorntail.jar file to affect subsequent executions of the uberjar. Any properties loaded from the <propertiesFile> override identically-named properties in the <properties> section.

Any properties added to the uberjar can be overridden at runtime using the traditional -Dname=value mechanism of the java binary, or using the YAML-based configuration files.

Only the following properties are added to the uberjar at package time:

- The properties specified outside of the <properties> section or the <propertiesFile>, whose name starts with one of the following:

  - jboss.
  - wildfly.
  - thorntail.
  - swarm.
  - maven.

- The properties that override a property specified in the <properties> section or the <propertiesFile>.
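The rules in this section can be read as a merge of several property sources. UberJarProperties below is a hypothetical model written from this section's description, not the plugin's implementation. It rests on three assumptions:

- <propertiesFile> entries override the <properties> section.
- Properties defined elsewhere (for example, Maven or system properties) are kept only when their name has one of the listed prefixes or when they override an existing entry.
- -Dname=value on the java command line wins at runtime.

The YAML-based configuration files are not modeled.

```java
import java.util.Arrays;
import java.util.List;
import java.util.Properties;

/** Hypothetical model of the property rules in this section (not plugin code). */
class UberJarProperties {

    private static final List<String> PREFIXES =
            Arrays.asList("jboss.", "wildfly.", "thorntail.", "swarm.", "maven.");

    /**
     * Computes the properties stored in the uberjar.
     *
     * @param section  values from the plugin's properties section
     * @param file     values loaded from propertiesFile; these win over section
     * @param external properties defined elsewhere (assumption: Maven or system properties)
     */
    static Properties packaged(Properties section, Properties file, Properties external) {
        Properties result = new Properties();
        result.putAll(section);
        result.putAll(file); // propertiesFile overrides identically named entries
        for (String name : external.stringPropertyNames()) {
            boolean prefixed = PREFIXES.stream().anyMatch(name::startsWith);
            boolean overrides = result.containsKey(name);
            if (prefixed || overrides) {
                result.setProperty(name, external.getProperty(name));
            }
        }
        return result;
    }

    /** At runtime, a -Dname=value system property wins over the packaged value. */
    static String effective(Properties packaged, Properties systemProperties, String name) {
        String override = systemProperties.getProperty(name);
        return override != null ? override : packaged.getProperty(name);
    }
}
```

Under this model, an unrelated external property such as user.home is not packaged. An external property that redefines a name from the <properties> section replaces the packaged value.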

Chapter 5. Using Thorntail fractions

5.1. Fractions

Thorntail is defined by an unbounded set of capabilities. Each piece of functionality is called a fraction. Some fractions provide only access to APIs, such as JAX-RS or CDI; other fractions provide higher-level capabilities, such as integration with RHSSO (Keycloak).

The typical method for consuming Thorntail fractions is through Maven coordinates, which you add to the pom.xml file in your application. The functionality the fraction provides is then packaged with your application (see Section 9.2, “Creating an uberjar”).

To enable easier consumption of Thorntail fractions, a bill of materials (BOM) is available. For more information, see Chapter 6, Using a BOM.

5.2. Auto-detecting fractions

Migrating existing legacy applications to benefit from Thorntail is simple when using fraction auto-detection. If you enable the Thorntail Maven plugin in your application, Thorntail detects which APIs you use, and includes the appropriate fractions at build time.

By default, Thorntail only auto-detects if you do not specify any fractions explicitly. This behavior is controlled by the fractionDetectMode property. For more information, see the Maven plugin configuration reference.

For example, suppose your pom.xml already specifies the API .jar file for a specification such as JAX-RS:

<dependencies>
  <dependency>
    <groupId>org.jboss.spec.javax.ws.rs</groupId>
    <artifactId>jboss-jaxrs-api_2.1_spec</artifactId>
    <version>${version.jaxrs-api}</version>
    <scope>provided</scope>
  </dependency>
</dependencies>

Thorntail then includes the jaxrs fraction during the build automatically.
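The detection and merge behavior can be sketched in a few lines. FractionDetection below is a simplified, hypothetical model, not Thorntail's detector. It knows only the two API-to-fraction pairs mentioned in this guide: javax.ws.rs maps to jaxrs, and javax.servlet maps to undertow. It works on a list of referenced class names and applies the three fractionDetectMode values described in the Maven plugin configuration reference.

```java
import java.util.Collection;
import java.util.LinkedHashMap;
import java.util.LinkedHashSet;
import java.util.Map;
import java.util.Set;

/** Hypothetical, simplified model of fraction auto-detection (not Thorntail code). */
class FractionDetection {

    enum Mode { WHEN_MISSING, FORCE, NEVER }

    // Only the two package-to-fraction pairs mentioned in this guide.
    private static final Map<String, String> API_TO_FRACTION = new LinkedHashMap<>();

    static {
        API_TO_FRACTION.put("javax.ws.rs.", "jaxrs");
        API_TO_FRACTION.put("javax.servlet.", "undertow");
    }

    /** Fractions suggested by the class names an application references. */
    static Set<String> detect(Collection<String> referencedClasses) {
        Set<String> found = new LinkedHashSet<>();
        for (String cls : referencedClasses) {
            for (Map.Entry<String, String> e : API_TO_FRACTION.entrySet()) {
                if (cls.startsWith(e.getKey())) {
                    found.add(e.getValue());
                }
            }
        }
        return found;
    }

    /** Combines explicit fraction dependencies with detected ones according to the mode. */
    static Set<String> resolve(Mode mode, Set<String> explicit, Collection<String> referencedClasses) {
        Set<String> result = new LinkedHashSet<>(explicit); // explicit fractions are always kept
        switch (mode) {
            case NEVER:
                break;
            case WHEN_MISSING:
                if (explicit.isEmpty()) {
                    result.addAll(detect(referencedClasses));
                }
                break;
            case FORCE:
                // Merge: an explicit entry already in the set is left as it is.
                result.addAll(detect(referencedClasses));
                break;
        }
        return result;
    }
}
```

Suppose an application references only javax.ws.rs classes and declares no explicit fractions. In that case, WHEN_MISSING yields jaxrs. Once an explicit fraction such as cdi is declared, WHEN_MISSING adds nothing, while FORCE adds jaxrs alongside it.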

Prerequisites

- An existing Maven-based application with a pom.xml file.

Procedure

Add the thorntail-maven-plugin to your pom.xml in a <plugin> block, with an <execution> specifying the package goal.

<plugins>
  <plugin>
    <groupId>io.thorntail</groupId>
    <artifactId>thorntail-maven-plugin</artifactId>
    <version>${version.thorntail}</version>
    <executions>
      <execution>
        <id>package</id>
        <goals>
          <goal>package</goal>
        </goals>
      </execution>
    </executions>
  </plugin>
</plugins>

Perform a normal Maven build:

$ mvn package

Execute the resulting uberjar:

$ java -jar ./target/myapp-thorntail.jar


5.3. Using explicit fractions

When writing your application from scratch, ensure it compiles correctly and uses the correct version of APIs by explicitly selecting which fractions are packaged with it.

Prerequisites

- A Maven-based application with a pom.xml file.

Procedure

- Add the BOM to your pom.xml. For more information, see Chapter 6, Using a BOM.
- Add the Thorntail Maven plugin to your pom.xml. For more information, see Section 9.2, “Creating an uberjar”.

Add one or more dependencies on Thorntail fractions to the pom.xml file:

<dependencies>
  <dependency>
    <groupId>io.thorntail</groupId>
    <artifactId>jaxrs</artifactId>
  </dependency>
</dependencies>

Perform a normal Maven build:

$ mvn package

Execute the resulting uberjar:

$ java -jar ./target/myapp-thorntail.jar


Chapter 6. Using a BOM

If you want to explicitly specify the Thorntail fractions your application uses instead of relying on auto-detection, you can use one of the BOMs (bills of materials) that Thorntail includes. A BOM saves you from having to track and update Maven artifact versions in several places.

6.1. Thorntail product BOM types

Thorntail is described as “just enough app-server”, which means it consists of multiple pieces. Your application includes only the pieces it needs.

When using the Thorntail product, you can specify the following Maven BOMs:

- bom

- All fractions available in the product.

- bom-certified

- All community fractions that have been certified against the product. Any fraction used from bom-certified is unsupported.

6.2. Specifying a BOM in your application

Importing a specific BOM in the pom.xml file in your application allows you to track all your application dependencies in one place.

One shortcoming of importing a Maven BOM is that the import does not handle configuration at the <pluginManagement> level. When you use the Thorntail Maven Plugin, you must specify the version of the plugin to use.

If you use the same version property for the plugin as for the BOM import in your pom.xml file, you can easily ensure that your plugin usage matches the release of Thorntail that you are targeting.

<plugins>
  <plugin>
    <groupId>io.thorntail</groupId>
    <artifactId>thorntail-maven-plugin</artifactId>
    <version>${version.thorntail}</version>
    ...
  </plugin>
</plugins>

Prerequisites

- Your application as a Maven-based project with a pom.xml file.

Procedure

Include a bom artifact in your pom.xml.

Tracking the current version of Thorntail through a property in your pom.xml is recommended.

<properties>
  <version.thorntail>{version}</version.thorntail>
</properties>

Import BOMs in the <dependencyManagement> section. Specify the <type>pom</type> and <scope>import</scope>.

<dependencyManagement>
  <dependencies>
    <dependency>
      <groupId>io.thorntail</groupId>
      <artifactId>bom</artifactId>
      <version>${version.thorntail}</version>
      <type>pom</type>
      <scope>import</scope>
    </dependency>
  </dependencies>
</dependencyManagement>

In the example above, the bom artifact is imported to ensure that only stable fractions are available.

By including the BOMs of your choice in the <dependencyManagement> section, you have:

- Provided version management for any Thorntail artifacts you subsequently choose to use.
- Provided support to your IDE for auto-completing known artifacts when you edit the pom.xml file of your application.

Include Thorntail dependencies.

Even though you imported the Thorntail BOMs in the <dependencyManagement> section, your application still has no dependencies on Thorntail artifacts.

To include Thorntail artifact dependencies based on the capabilities your application uses, enter the relevant artifacts as <dependency> elements:

Note: You do not have to specify the version of the artifacts because the BOM imported in <dependencyManagement> handles that.

<dependencies>
  <dependency>
    <groupId>io.thorntail</groupId>
    <artifactId>jaxrs</artifactId>
  </dependency>
  <dependency>
    <groupId>io.thorntail</groupId>
    <artifactId>datasources</artifactId>
  </dependency>
</dependencies>

In the example above, we include explicit dependencies on the jaxrs and datasources fractions, which transitively include other fractions, for example undertow.
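The effect of the import can be modeled in a few lines of Java. This is a simplified sketch, not Maven's resolver: BomSketch, Dependency, and the version string 1.2.3 are hypothetical. A dependency declared without a version takes its version from the managed entries, while an explicitly declared version is kept as-is. As noted above, plugin versions are not covered by this mechanism.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;

// Hypothetical, simplified model of <dependencyManagement> with an imported BOM.
class BomSketch {

    static final class Dependency {
        final String groupId;
        final String artifactId;
        final String version; // null when the pom.xml omits <version>

        Dependency(String groupId, String artifactId, String version) {
            this.groupId = groupId;
            this.artifactId = artifactId;
            this.version = version;
        }

        String coordinates() {
            return groupId + ":" + artifactId;
        }

        @Override
        public String toString() {
            return coordinates() + ":" + version;
        }
    }

    // Fills in missing versions from the managed versions (groupId:artifactId -> version).
    static List<Dependency> resolveVersions(List<Dependency> declared, Map<String, String> managed) {
        List<Dependency> resolved = new ArrayList<>();
        for (Dependency d : declared) {
            if (d.version != null) {
                resolved.add(d); // an explicit version wins over the managed one
                continue;
            }
            String managedVersion = managed.get(d.coordinates());
            if (managedVersion == null) {
                throw new IllegalStateException("No managed version for " + d.coordinates());
            }
            resolved.add(new Dependency(d.groupId, d.artifactId, managedVersion));
        }
        return resolved;
    }

    public static void main(String[] args) {
        Map<String, String> bom = Map.of(
                "io.thorntail:jaxrs", "1.2.3",        // hypothetical version
                "io.thorntail:datasources", "1.2.3"); // hypothetical version
        List<Dependency> declared = List.of(
                new Dependency("io.thorntail", "jaxrs", null),
                new Dependency("io.thorntail", "datasources", null));
        System.out.println(resolveVersions(declared, bom));
    }
}
```

Running main prints [io.thorntail:jaxrs:1.2.3, io.thorntail:datasources:1.2.3]: neither declared dependency carries a version, yet both resolve through the managed entries.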

Chapter 7. Accessing logs on your Thorntail application

7.1. Enabling logging

Each Thorntail fraction depends on the Logging fraction, which means that if you use any Thorntail fraction in your application, logging is automatically enabled for messages at the INFO level and higher. If you want to enable logging explicitly, add the Logging fraction to the POM file of your application.

Prerequisites

- A Maven-based application

Procedure

Find the <dependencies> section in the pom.xml file of your application. Verify it contains the following coordinates. If it does not, add them.

<dependency>
  <groupId>io.thorntail</groupId>
  <artifactId>logging</artifactId>
</dependency>

If you want to log messages of a level other than INFO, launch the application while specifying the thorntail.logging system property:

$ mvn thorntail:run -Dthorntail.logging=FINE

See the org.wildfly.swarm.config.logging.Level class for the list of available levels.
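The level acts as a minimum: a message is logged only if its level is at least as severe as the configured one. The following hypothetical sketch demonstrates that comparison with the JDK's java.util.logging.Level class. It accepts only the JDK level names (such as FINE or INFO), it is not the Thorntail Level class mentioned above, and the class and method names are illustrative.

```java
import java.util.logging.Level;

// Hypothetical sketch: checks whether a message level passes a named threshold,
// using java.util.logging ordering (FINE < CONFIG < INFO < WARNING < SEVERE).
class LogLevelSketch {

    static boolean isEnabled(String threshold, Level messageLevel) {
        Level minimum = Level.parse(threshold); // throws IllegalArgumentException for unknown names
        return messageLevel.intValue() >= minimum.intValue();
    }

    public static void main(String[] args) {
        // Defaults to INFO, mirroring the default described above.
        String threshold = System.getProperty("thorntail.logging", "INFO");
        System.out.println("FINE messages logged: " + isEnabled(threshold, Level.FINE));
    }
}
```

Running the sketch with -Dthorntail.logging=FINE prints true; without the property it prints false, because FINE is below the INFO threshold.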

7.2. Logging to a file

In addition to the console logging, you can save the logs of your application in a file. Typically, deployments use rotating logs to save disk space.

In Thorntail, logging is configured using system properties. Even though it is possible to use the -Dproperty=value syntax when launching your application, it is strongly recommended to configure file logging using the YAML profile files.

Prerequisites

- A Maven-based application with the logging fraction enabled. For more information, see Section 7.1, “Enabling logging”.

- A writable directory on your file system.

Procedure

Open a YAML profile file of your choice. If you do not know which one to use, open project-defaults.yml in the src/main/resources directory in your application sources. In the YAML file, add the following section:

thorntail:
  logging:

Configure a formatter (optional). The following formatters are configured by default:

- PATTERN

- Useful for logging into a file.

- COLOR_PATTERN

- Color output. Useful for logging to the console.

To configure a custom formatter, add a new formatter with a pattern of your choice in the logging section. In this example, it is called LOG_FORMATTER:

pattern-formatters:
  LOG_FORMATTER:
    pattern: "%p [%c] %s%e%n"

Configure a file handler to use with the loggers. This example shows the configuration of a periodic rotating file handler. Under logging, add a periodic-rotating-file-handlers section with a new handler.

periodic-rotating-file-handlers:
  FILE:
    file:
      path: target/MY_APP_NAME.log
    suffix: .yyyy-MM-dd
    named-formatter: LOG_FORMATTER
    level: INFO

Here, a new handler named FILE is created, logging events of the INFO level and higher. It logs in the target directory, and each log file is named MY_APP_NAME.log with the suffix .yyyy-MM-dd. Thorntail automatically parses the log rotation period from the suffix, so ensure you use a format compatible with the java.text.SimpleDateFormat class.

Configure the root logger.

The root logger is by default configured to use the CONSOLE handler only. Under logging, add a root-logger section with the handlers you wish to use:

root-logger:
  handlers:
  - CONSOLE
  - FILE

Here, the FILE handler from the previous step is used, along with the default console handler.
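The following Java sketch illustrates how a suffix can drive rotation, under simplifying assumptions: the rotated file name is the log path plus the suffix formatted with SimpleDateFormat, and the rotation period is taken from the finest date field in the suffix. The class and method names are hypothetical, and the real handler may treat some pattern letters differently.

```java
import java.text.SimpleDateFormat;
import java.util.Date;
import java.util.TimeZone;

// Hypothetical sketch of how a rotation suffix can drive file naming and rotation period.
class RotationSketch {

    // Name a rotated file would receive: the log path followed by the formatted suffix.
    static String rotatedFileName(String path, String suffix, Date rotationTime) {
        SimpleDateFormat format = new SimpleDateFormat(suffix);
        format.setTimeZone(TimeZone.getTimeZone("UTC")); // fixed zone keeps the example deterministic
        return path + format.format(rotationTime);
    }

    // Simplified rule: the finest date field present in the pattern sets the period.
    static String rotationPeriod(String suffix) {
        String fields = suffix.replaceAll("'[^']*'", ""); // drop quoted literal text
        if (fields.contains("m")) return "MINUTE";
        if (fields.matches(".*[HhKk].*")) return "HOUR";
        if (fields.matches(".*[dD].*")) return "DAY";
        if (fields.matches(".*[wW].*")) return "WEEK";
        if (fields.contains("M")) return "MONTH";
        if (fields.matches(".*[yY].*")) return "YEAR";
        throw new IllegalArgumentException("No date field in suffix " + suffix);
    }

    public static void main(String[] args) {
        String suffix = ".yyyy-MM-dd";
        System.out.println(rotatedFileName("target/MY_APP_NAME.log", suffix, new Date()));
        System.out.println(rotationPeriod(suffix)); // DAY
    }
}
```

With the .yyyy-MM-dd suffix from the example, the finest field is the day of the month, so the sketch reports daily rotation.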

Below, you can see the complete logging configuration section:

The logging section in a YAML configuration profile

thorntail:
  logging:
    pattern-formatters:
      LOG_FORMATTER:
        pattern: "CUSTOM LOG FORMAT %p [%c] %s%e%n"
    periodic-rotating-file-handlers:
      FILE:
        file:
          path: path/to/your/file.log
        suffix: .yyyy-MM-dd
        named-formatter: LOG_FORMATTER
    root-logger:
      handlers:
      - CONSOLE
      - FILE

Chapter 8. Configuring a Thorntail application

You can configure numerous options with applications built with Thorntail. For most options, reasonable defaults are already applied, so you do not have to change any options unless you explicitly want to.

This reference is a complete list of all configurable items, grouped by the fraction that introduces them. Only the items related to the fractions that your application uses are relevant to you.

8.1. System properties

Using system properties for configuring your application is advantageous for experimenting, debugging, and other short-term activities.

8.1.1. Commonly used system properties

This is a non-exhaustive list of system properties you are likely to use in your application:

General system properties

- thorntail.bind.address

The interface to bind servers to. Default: 0.0.0.0

- thorntail.port.offset

The global port adjustment. Default: 0

- thorntail.context.path

The context path for the deployed application. Default: /

- thorntail.http.port

The port for the HTTP server. Default: 8080

- thorntail.https.port

The port for the HTTPS server. Default: 8443

- thorntail.debug.port

If provided, the Thorntail process pauses for debugging on the given port.

This option is only available when running an Arquillian test or starting the application using the mvn thorntail:run command, not when executing a JAR file. Executing a JAR file requires the normal Java debug agent parameters.
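The following hypothetical sketch shows how the port-related properties above combine, assuming that thorntail.port.offset is added to every configured port (the usual WildFly socket-binding behavior). The class and method names are illustrative.

```java
import java.util.Properties;

// Hypothetical sketch: effective listening port = configured port + global offset.
class PortSettingsSketch {

    static int effectivePort(Properties props, String portProperty, int defaultPort) {
        int base = Integer.parseInt(props.getProperty(portProperty, String.valueOf(defaultPort)));
        int offset = Integer.parseInt(props.getProperty("thorntail.port.offset", "0"));
        return base + offset;
    }

    public static void main(String[] args) {
        Properties system = System.getProperties();
        System.out.println("HTTP port:  " + effectivePort(system, "thorntail.http.port", 8080));
        System.out.println("HTTPS port: " + effectivePort(system, "thorntail.https.port", 8443));
    }
}
```

For example, with -Dthorntail.port.offset=100 and no other port settings, the sketch reports 8180 and 8543, which is a convenient way to run two instances side by side on one machine.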

Datasource-related system properties

With JDBC driver autodetection, use the following properties to configure the datasource:

- thorntail.ds.name

The name of the datasource. Default: ExampleDS

- thorntail.ds.username

The user name to access the database. Default: driver-specific

- thorntail.ds.password

The password to access the database. Default: driver-specific

- thorntail.ds.connection.url

The JDBC connection URL. Default: driver-specific

For a full set of available properties, see the documentation for each fraction and the Javadoc of the SwarmProperties class.

8.1.2. Application configuration using system properties

Configuration properties are presented using dotted notation and are suitable for use as Java system property names. Your application consumes them either through explicit settings in the Maven plugin configuration or through the command line when your application is being executed.

Any property that has the KEY parameter in its name indicates that you must supply a key or identifier in that segment of the name.

Configuration of items with the KEY parameter

A configuration item documented as thorntail.undertow.servers.KEY.default-host indicates that the configuration applies to a particular named server.

In practical usage, the property would be, for example, thorntail.undertow.servers.default.default-host for a server known as default.
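To turn a documented name into a usable one, replace each KEY segment with your identifier. The hypothetical helper below does that and also builds the matching -D argument. It replaces only whole segments named KEY, in order, and the names KeyedPropertySketch, resolve, and toJvmArgument are illustrative.

```java
// Hypothetical helper for documented property names that contain KEY segments.
class KeyedPropertySketch {

    // Replaces each whole "KEY" segment, left to right, with the supplied identifiers.
    static String resolve(String documentedName, String... keys) {
        String[] segments = documentedName.split("\\.", -1);
        int used = 0;
        for (int i = 0; i < segments.length; i++) {
            if (segments[i].equals("KEY")) {
                if (used == keys.length) {
                    throw new IllegalArgumentException("Not enough keys for " + documentedName);
                }
                segments[i] = keys[used++];
            }
        }
        if (used != keys.length) {
            throw new IllegalArgumentException("Too many keys for " + documentedName);
        }
        return String.join(".", segments);
    }

    // Builds the -D argument you would pass to the java command.
    static String toJvmArgument(String documentedName, String value, String... keys) {
        return "-D" + resolve(documentedName, keys) + "=" + value;
    }

    public static void main(String[] args) {
        System.out.println(toJvmArgument("thorntail.undertow.servers.KEY.default-host", "myhost", "default"));
    }
}
```

Running main prints -Dthorntail.undertow.servers.default.default-host=myhost, the concrete form of the documented property for a server named default.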

8.1.3. Setting system properties using the Maven plugin

Setting properties using the Maven plugin is useful for temporarily changing a configuration item for a single execution of your Thorntail application.

Even though the configuration in the POM file of your application is persistent, it is not recommended to use it for long-term configuration of your application. Instead, use the YAML configuration files.

If you want to set explicit configuration values as defaults through the Maven plugin, add a <properties> section to the <configuration> block of the plugin in the pom.xml file in your application.

Prerequisites

- Your Thorntail-based application with a POM file

Procedure

- In the POM file of your application, locate the configuration you want to modify.

Insert a block with configuration of the io.thorntail:thorntail-maven-plugin artifact, for example:

<build>
  <plugins>
    <plugin>
      <groupId>io.thorntail</groupId>
      <artifactId>thorntail-maven-plugin</artifactId>
      <version>{version}</version>
      <configuration>
        <properties>
          <thorntail.bind.address>127.0.0.1</thorntail.bind.address>
          <java.net.preferIPv4Stack>true</java.net.preferIPv4Stack>
        </properties>
      </configuration>
    </plugin>
  </plugins>
</build>

In the example above, the thorntail.bind.address property is set to 127.0.0.1 and the java.net.preferIPv4Stack property is set to true.

8.1.4. Setting system properties using the command line

Setting properties on the command line is useful for temporarily changing a configuration item for a single execution of your Thorntail application.

You can customize an environment-specific setting or experiment with configuration items before setting them in a YAML configuration file.

To use a property on the command line, pass it as a command-line parameter to the Java binary.

Prerequisites

- A JAR file with your application

Procedure

- In a terminal application, navigate to the directory with your application JAR file.

Execute your application JAR file using the Java binary and specify the property and its value:

$ java -Dthorntail.bind.address=127.0.0.1 -jar myapp-thorntail.jar

In this example, you assign the value 127.0.0.1 to the property called thorntail.bind.address.

8.1.5. Specifying JDBC drivers for hollow JARs

When executing a hollow JAR, you can specify a JDBC Driver JAR using the thorntail.classpath property. This way, you do not need to package the driver in the hollow JAR.

The thorntail.classpath property accepts one or more paths to JAR files separated by ; (a semicolon). The specified JAR files are added to the classpath of the application.
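The following hypothetical Java sketch shows how such a semicolon-separated value can be split into individual JAR paths. It illustrates the documented format only and is not Thorntail's own parser; in particular, ignoring empty entries and surrounding whitespace is an assumption of the sketch.

```java
import java.nio.file.Path;
import java.nio.file.Paths;
import java.util.ArrayList;
import java.util.List;

// Hypothetical sketch: splits a thorntail.classpath value into JAR paths.
class ThorntailClasspathSketch {

    static List<Path> parse(String value) {
        List<Path> jars = new ArrayList<>();
        if (value == null) {
            return jars;
        }
        for (String entry : value.split(";")) {
            String trimmed = entry.trim();
            if (!trimmed.isEmpty()) { // tolerate empty entries such as "a.jar;;b.jar"
                jars.add(Paths.get(trimmed));
            }
        }
        return jars;
    }

    public static void main(String[] args) {
        for (Path jar : parse(System.getProperty("thorntail.classpath"))) {
            System.out.println(jar + " exists: " + jar.toFile().isFile());
        }
    }
}
```

When you pass several JARs on a shell command line, quote the value, for example -Dthorntail.classpath="./a.jar;./b.jar", because an unquoted ; ends the command in most shells.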

Prerequisites

- A JAR file with your application

Procedure

- In a terminal application, navigate to the directory with your application JAR file.

Execute your application JAR file using the Java binary and specify the JDBC driver:

$ java -Dthorntail.classpath=./h2-1.4.196.jar -jar microprofile-jpa-hollow-thorntail.jar example-jpa-jaxrs-cdi.war

8.2. Environment Variables

Use environment variables to configure your application or override values stored in YAML files.

8.2.1. Application configuration using environment variables

Use environment variables to configure your application in various deployments, especially in a containerized environment such as Docker.

Example 8.1. Environment variables configuration

A property documented as thorntail.undertow.servers.KEY.default-host translates to the following environment variable (substituting the KEY segment with the default identifier):

export THORNTAIL_UNDERTOW_SERVERS_DEFAULT_DEFAULT_DASH_HOST=<myhost>

Unlike other configuration options, properties defined as environment variables in Linux-based containers do not allow defining non-alphanumeric characters like dot (.), dash/hyphen (-) or any other characters not in the [A-Za-z0-9_] range. Many configuration properties in Thorntail contain these characters, so you must follow these rules when defining the environment variables in the following environments:

Linux-based container rules

- It is a naming convention that all environment properties are defined using uppercase letters. For example, define the serveraddress property as SERVERADDRESS.
- All the dot (.) characters must be replaced with underscore (_). For example, define the thorntail.bind.address=127.0.0.1 property as THORNTAIL_BIND_ADDRESS=127.0.0.1.
- All dash/hyphen (-) characters must be replaced with the _DASH_ string. For example, define the thorntail.data-sources.foo.url=<url> property as THORNTAIL_DATA_DASH_SOURCES_FOO_URL=<url>.
- If the property name contains underscores, all underscore (_) characters must be replaced with the _UNDERSCORE_ string. For example, define the thorntail.data_sources.foo.url=<url> property as THORNTAIL_DATA_UNDERSCORE_SOURCES_FOO_URL=<url>.
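The rules above can be applied mechanically. In this hypothetical Java sketch (the class and method names are illustrative), the order of the replacements matters: underscores are escaped first, because the _DASH_ and dot replacements introduce new underscores that must not be escaped again.

```java
import java.util.Locale;

// Hypothetical helper applying the Linux container naming rules listed above.
class EnvVarNameSketch {

    static String toEnvVarName(String property) {
        return property
                .replace("_", "_UNDERSCORE_") // first, so underscores added below are not escaped again
                .replace("-", "_DASH_")
                .replace(".", "_")
                .toUpperCase(Locale.ROOT);
    }

    public static void main(String[] args) {
        System.out.println(toEnvVarName("thorntail.bind.address"));         // THORNTAIL_BIND_ADDRESS
        System.out.println(toEnvVarName("thorntail.data-sources.foo.url")); // THORNTAIL_DATA_DASH_SOURCES_FOO_URL
    }
}
```

Applied to thorntail.undertow.servers.default.default-host, the sketch yields THORNTAIL_UNDERTOW_SERVERS_DEFAULT_DEFAULT_DASH_HOST.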

Example 8.2. An example data source configuration

| System property | Env. variable |

8.3. YAML files

YAML is the preferred method for long-term configuration of your application. In addition to that, the YAML strategy provides grouping of environment-specific configurations, which you can selectively enable when executing the application.

8.3.1. The general YAML file format

The Thorntail configuration item names correspond to the YAML configuration structure. That is, to write a piece of YAML configuration for a configuration property, split the property name at the . characters and nest each segment under the previous one.

Example 8.3. YAML configuration

For example, a configuration item documented as thorntail.undertow.servers.KEY.default-host translates to the following YAML structure, substituting the KEY segment with the default identifier:

thorntail:
  undertow:
    servers:
      default:
        default-host: <myhost>

This simple rule always applies; there are no exceptions and no additional delimiters. Most notably, some Eclipse MicroProfile specifications define configuration properties that use / as a delimiter, because the . character is used in fully qualified class names. When writing the YAML configuration, you must still split around . and not around /.
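The splitting rule is mechanical enough to express in a few lines of Java. This hypothetical sketch (YamlPathSketch and toYaml are illustrative names) splits a property name only at . characters, so / stays inside a segment, and prints the nested YAML for a single value. It does not quote or escape values.

```java
// Hypothetical sketch: turns one dotted property name into nested YAML.
class YamlPathSketch {

    static String toYaml(String property, String value) {
        // Split only at '.', so a segment such as "Service/mp-rest/url" stays intact.
        String[] segments = property.split("\\.");
        StringBuilder yaml = new StringBuilder();
        for (int depth = 0; depth < segments.length; depth++) {
            yaml.append("  ".repeat(depth)).append(segments[depth]).append(':');
            if (depth == segments.length - 1) {
                yaml.append(' ').append(value); // value is written as-is, without quoting
            }
            yaml.append('\n');
        }
        return yaml.toString();
    }

    public static void main(String[] args) {
        System.out.print(toYaml("thorntail.undertow.servers.default.default-host", "myhost"));
    }
}
```

Calling toYaml with com.example.demo.client.Service/mp-rest/url produces the same nesting as Example 8.4, with Service/mp-rest/url kept as a single key.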

Example 8.4. YAML configuration for MicroProfile Rest Client

For example, MicroProfile Rest Client specifies that you can configure the URL of an external service with a configuration property named com.example.demo.client.Service/mp-rest/url. This translates to the following YAML:

com:
  example:
    demo:
      client:
        Service/mp-rest/url: http://localhost:8080/...

8.3.2. Default Thorntail YAML Files

By default, Thorntail looks for permanent configuration in files with specific names on the classpath.

project-defaults.yml

If the original .war file with your application contains a file named project-defaults.yml, that file represents the defaults applied over the absolute defaults that Thorntail provides.

Other default file names

In addition to the project-defaults.yml file, you can provide specific configuration files using the -S <name> command-line option. The specified files are loaded, in the order you provided them, before project-defaults.yml. A name provided in the -S <name> argument specifies the project-<name>.yml file on your classpath.

Example 8.5. Specifying configuration files on the command line

Consider the following application execution:

$ java -jar myapp-thorntail.jar -Stesting -Scloud

The following YAML files are loaded, in this order. The first file containing a given configuration item takes precedence over others:

- project-testing.yml
- project-cloud.yml
- project-defaults.yml
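The precedence rule, where the first loaded file that defines an item wins, can be sketched in Java by modeling each YAML file as a flat map from dotted property names to values. The class name and the sample values are hypothetical.

```java
import java.util.List;
import java.util.Map;
import java.util.Optional;

// Hypothetical sketch: first file in load order that defines a key supplies its value.
class ConfigPrecedenceSketch {

    static Optional<String> lookup(List<Map<String, String>> filesInLoadOrder, String key) {
        for (Map<String, String> file : filesInLoadOrder) {
            String value = file.get(key);
            if (value != null) {
                return Optional.of(value);
            }
        }
        return Optional.empty();
    }

    public static void main(String[] args) {
        Map<String, String> testing = Map.of("thorntail.http.port", "9090");
        Map<String, String> cloud = Map.of("thorntail.http.port", "8081", "thorntail.bind.address", "0.0.0.0");
        Map<String, String> defaults = Map.of("thorntail.context.path", "/");
        List<Map<String, String>> loadOrder = List.of(testing, cloud, defaults);
        System.out.println(lookup(loadOrder, "thorntail.http.port").orElse("<unset>"));    // 9090
        System.out.println(lookup(loadOrder, "thorntail.bind.address").orElse("<unset>")); // 0.0.0.0
    }
}
```

Here the HTTP port comes from the testing profile even though the cloud profile also sets it, while the bind address, defined only in the cloud profile, falls through to that file.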

8.3.3. Non-default Thorntail YAML configuration files

In addition to default configuration files for your Thorntail-based application, you can specify YAML files outside of your application. Use the -s <path> command-line option to load the desired file.

Both the -s <path> and -S <name> command-line options can be used at the same time, but files specified using the -s <path> option take precedence over YAML files contained in your application.

Example 8.6. Specifying configuration files inside and outside of the application

Consider the following application execution:

$ java -jar myapp-thorntail.jar -s/home/app/openshift.yml -Scloud -Stesting

The following YAML files are loaded, in this order:

- /home/app/openshift.yml
- project-cloud.yml
- project-testing.yml
- project-defaults.yml

The same order of preference is applied even if you invoke the application as follows:

$ java -jar myapp-thorntail.jar -Scloud -Stesting -s/home/app/openshift.yml
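The ordering rules of sections 8.3.2 and 8.3.3 can be captured in a short, hypothetical Java sketch: -s paths come first, then the -S names in the order given, then project-defaults.yml. The sketch assumes that several -s files keep their command-line order, which this section does not state, and it accepts both the attached form (-Scloud) and a separate operand (-s ./project-defaults.yml).

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical sketch of the YAML load order derived from -s/-S arguments.
class ConfigLoadOrderSketch {

    static List<String> loadOrder(String... args) {
        List<String> externalFiles = new ArrayList<>(); // from -s <path>
        List<String> namedProfiles = new ArrayList<>(); // from -S <name>
        for (int i = 0; i < args.length; i++) {
            String arg = args[i];
            boolean external = arg.startsWith("-s");
            boolean named = arg.startsWith("-S");
            if (!external && !named) {
                continue; // other arguments, such as a .war file, are ignored here
            }
            String operand;
            if (arg.length() > 2) {
                operand = arg.substring(2);
            } else if (i + 1 < args.length) {
                operand = args[++i];
            } else {
                throw new IllegalArgumentException("Missing value after " + arg);
            }
            if (external) {
                externalFiles.add(operand);
            } else {
                namedProfiles.add("project-" + operand + ".yml");
            }
        }
        List<String> order = new ArrayList<>(externalFiles);
        order.addAll(namedProfiles);
        order.add("project-defaults.yml");
        return order;
    }

    public static void main(String[] args) {
        System.out.println(loadOrder("-Scloud", "-Stesting", "-s/home/app/openshift.yml"));
    }
}
```

Running main prints [/home/app/openshift.yml, project-cloud.yml, project-testing.yml, project-defaults.yml], the same order as Example 8.6 regardless of where -s appears.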

Chapter 9. Packaging your application

This section contains information about packaging your Thorntail-based application for deployment and execution.

9.1. Packaging Types

When using Thorntail, you can package your runtime and application in the following ways, depending on how you intend to use and deploy it:

9.1.1. Uberjar

An uberjar is a single Java .jar file that includes everything you need to execute your application. It contains both the runtime components you have selected, which you can think of as the app server, and the application components (your .war file).

An uberjar is useful for many continuous integration and continuous deployment (CI/CD) pipeline styles, in which a single executable binary artifact is produced and moved through the testing, validation, and production environments in your organization.

The names of the uberjars that Thorntail produces include the name of your application and the -thorntail.jar suffix.

An uberjar can be executed like any executable JAR:

$ java -jar myapp-thorntail.jar

9.1.2. Hollow JAR

A hollow JAR is similar to an uberjar, but includes only the runtime components, and does not include your application code.

A hollow JAR is suitable for deployment processes that involve Linux containers such as Docker. When using containers, place the runtime components in a container image lower in the image hierarchy, where they change less often, so that the higher layer containing only your application code can be rebuilt more quickly.

The names of the hollow JARs that Thorntail produces include the name of your application, and the -hollow-thorntail.jar suffix. You must package the .war file of your application separately in order to benefit from the hollow JAR.

Using hollow JARs has certain limitations:

- To enable Thorntail to autodetect a JDBC driver, you must add the JAR with the driver to the thorntail.classpath system property, for example:

$ java -Dthorntail.classpath=./h2-1.4.196.jar -jar my-hollow-thorntail.jar myApp.war

- YAML configuration files in your application are not automatically applied. You must specify them manually, for example:

$ java -jar my-hollow-thorntail.jar myApp.war -s ./project-defaults.yml

When executing the hollow JAR, provide the application .war file as an argument to the Java binary:

$ java -jar myapp-hollow-thorntail.jar myapp.war

9.1.2.1. Pre-Built Hollow JARs

Thorntail ships the following pre-built hollow JARs:

- web

- Functionality focused on web technologies

- microprofile

- Functionality defined by all Eclipse MicroProfile specifications

The hollow JARs are available under the following coordinates:

<dependency>
  <groupId>io.thorntail.servers</groupId>
  <artifactId>[web|microprofile]</artifactId>
</dependency>

9.2. Creating an uberjar

One method of packaging an application for execution with Thorntail is as an uberjar.

Prerequisites

- A Maven-based application with a pom.xml file.

Procedure

Add the thorntail-maven-plugin to your pom.xml in a <plugin> block, with an <execution> specifying the package goal.

<plugins>
  <plugin>
    <groupId>io.thorntail</groupId>
    <artifactId>thorntail-maven-plugin</artifactId>
    <version>${version.thorntail}</version>
    <executions>
      <execution>
        <id>package</id>
        <goals>
          <goal>package</goal>
        </goals>
      </execution>
    </executions>
  </plugin>
</plugins>

Perform a normal Maven build:

$ mvn package

Execute the resulting uberjar:

$ java -jar ./target/myapp-thorntail.jar

Chapter 10. Testing your application

10.1. Testing in a container

Using Arquillian, you can inject unit tests into a running application. This allows you to verify that your application is behaving correctly. There is an adapter for Thorntail that makes Arquillian-based testing work well with Thorntail-based applications.

Prerequisites

- A Maven-based application with a pom.xml file.

Procedure

Include the Thorntail BOM as described in Chapter 6, Using a BOM:

<dependencyManagement>
  <dependencies>
    <dependency>
      <groupId>io.thorntail</groupId>
      <artifactId>bom</artifactId>
      <version>${version.thorntail}</version>
      <type>pom</type>
      <scope>import</scope>
    </dependency>
  </dependencies>
</dependencyManagement>

Reference the io.thorntail:arquillian artifact in your pom.xml file with the <scope> set to test:

<dependencies>
  <dependency>
    <groupId>io.thorntail</groupId>
    <artifactId>arquillian</artifactId>
    <scope>test</scope>
  </dependency>
</dependencies>

Create your application.

Write your application as you normally would; use any default project-defaults.yml files you need to configure it.

thorntail:
  datasources:
    data-sources:
      MyDS:
        driver-name: myh2
        connection-url: jdbc:h2:mem:test;DB_CLOSE_DELAY=-1;DB_CLOSE_ON_EXIT=FALSE
        user-name: sa
        password: sa
    jdbc-drivers:
      myh2:
        driver-module-name: com.h2database.h2
        driver-xa-datasource-class-name: org.h2.jdbcx.JdbcDataSource

Create a test class.

Note: Creating an Arquillian test before Thorntail existed usually involved programmatically creating an Archive, because applications were larger and the aim was to test a single component in isolation.

package org.wildfly.swarm.howto.incontainer;

public class InContainerTest {
}

Create a deployment.

In the context of microservices, the entire application represents one small microservice component.

Use the @DefaultDeployment annotation to automatically create the deployment of the entire application. The @DefaultDeployment annotation defaults to creating a .war file, which is not applicable in this case because Undertow is not involved in this process.

Apply the @DefaultDeployment annotation at the class level of a JUnit test, along with the @RunWith(Arquillian.class) annotation:

@RunWith(Arquillian.class)
@DefaultDeployment(type = DefaultDeployment.Type.JAR)
public class InContainerTest {

Using the @DefaultDeployment annotation provided by the Arquillian integration with Thorntail means you should not use the Arquillian @Deployment annotation on static methods that return an Archive.

The @DefaultDeployment annotation inspects the package of the test:

package org.wildfly.swarm.howto.incontainer;

From the package, it uses heuristics to include all of your other application classes in the same package or deeper in the Java packaging hierarchy.

Even though using the @DefaultDeployment annotation allows you to write tests that only create a default deployment for sub-packages of your application, it also prevents you from placing tests in an unrelated package, for example:

package org.mycorp.myapp.test;

Write your test code.

Write an Arquillian-type test as you normally would, including using Arquillian facilities to gain access to internal running components.

In the example below, Arquillian is used to inject the InitialContext of the running application into an instance member of the test case:

@ArquillianResource
InitialContext context;

That means the test method itself can use that InitialContext to ensure the datasource you configured using project-defaults.yml is live and available:

@Test
public void testDataSourceIsBound() throws Exception {
    DataSource ds = (DataSource) context.lookup("java:jboss/datasources/MyDS");
    assertNotNull(ds);
}

Run the tests.

Because Arquillian provides an integration with JUnit, you can execute your test classes using Maven or your IDE:

$ mvn install

Note: In many IDEs, you can execute a test class by right-clicking it and selecting Run.

Chapter 11. Debugging your application

This section contains information about debugging your Thorntail-based application in both local and remote deployments.

11.1. Remote debugging

To remotely debug an application, you must first configure it to start in a debugging mode, and then attach a debugger to it.

11.1.1. Starting your application locally in debugging mode

One of the ways of debugging a Maven-based project is manually launching the application while specifying a debugging port, and subsequently connecting a remote debugger to that port. This method is applicable at least to the following deployments of the application:

- When launching the application manually using the mvn thorntail:run goal.
- When starting the application without waiting for it to exit using the mvn thorntail:start goal. This is especially useful when performing integration testing.
- When using the Arquillian adapter for Thorntail.

Prerequisites

- A Maven-based application

Procedure

- In a console, navigate to the directory with your application.

Launch your application and specify the debug port using the -Dthorntail.debug.port argument:

$ mvn thorntail:run -Dthorntail.debug.port=$PORT_NUMBER

Here, $PORT_NUMBER is an unused port number of your choice. Remember this number for the remote debugger configuration.

11.1.2. Starting an uberjar in debugging mode

If you chose to package your application as a Thorntail uberjar, debug it by executing it with the following parameters.

Prerequisites

- An uberjar with your application

Procedure

- In a console, navigate to the directory with the uberjar.

Execute the uberjar with the following parameters. Ensure that all the parameters are specified before the name of the uberjar on the line.

$ java -agentlib:jdwp=transport=dt_socket,server=y,suspend=n,address=$PORT_NUMBER -jar $UBERJAR_FILENAME

$PORT_NUMBER is an unused port number of your choice. Remember this number for the remote debugger configuration.

If you want the JVM to pause and wait for a remote debugger connection before it starts the application, change suspend to y.
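The option ordering matters because everything after the JAR name is passed to the application rather than to the JVM. This hypothetical helper builds the command above as a list suitable for ProcessBuilder; the class and method names and the port 5005 are illustrative, and the launch line in main is left as a comment so the sketch does not start a process.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical helper that assembles the debug launch command for an uberjar.
class DebugLaunchSketch {

    static List<String> debugCommand(String uberjar, int port, boolean suspend) {
        List<String> command = new ArrayList<>();
        command.add("java");
        // JVM options such as -agentlib must come before -jar; later arguments go to the application.
        command.add("-agentlib:jdwp=transport=dt_socket,server=y,suspend="
                + (suspend ? "y" : "n") + ",address=" + port);
        command.add("-jar");
        command.add(uberjar);
        return command;
    }

    public static void main(String[] args) {
        List<String> command = debugCommand("target/myapp-thorntail.jar", 5005, false);
        System.out.println(String.join(" ", command));
        // To actually launch it: new ProcessBuilder(command).inheritIO().start();
    }
}
```

On JDK 9 and later, address=5005 makes the agent listen on the loopback interface only; address=*:5005 listens on all interfaces, which matters when the debugger connects from another machine.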


11.1.3. Starting your application on OpenShift in debugging mode

To debug your Thorntail-based application on OpenShift remotely, you must set the JAVA_DEBUG environment variable inside the container to true and configure port forwarding so that you can connect to your application from a remote debugger.

Prerequisites

- Your application running on OpenShift.

- The oc binary installed on your machine.
- The ability to execute the oc port-forward command in your target OpenShift environment.

Procedure

Using the oc command, list the available deployment configurations:

$ oc get dc

Set the JAVA_DEBUG environment variable in the deployment configuration of your application to true, which configures the JVM to open the port number 5005 for debugging. For example:

$ oc set env dc/MY_APP_NAME JAVA_DEBUG=true

Redeploy the application if it is not set to redeploy automatically on configuration change. For example:

$ oc rollout latest dc/MY_APP_NAME

Configure port forwarding from your local machine to the application pod:

List the currently running pods and find one containing your application:

$ oc get pod
NAME                  READY   STATUS    RESTARTS   AGE
MY_APP_NAME-3-1xrsp   0/1     Running   0          6s
...

Configure port forwarding:

$ oc port-forward MY_APP_NAME-3-1xrsp $LOCAL_PORT_NUMBER:5005

Here, $LOCAL_PORT_NUMBER is an unused port number of your choice on your local machine. Remember this number for the remote debugger configuration.

When you are done debugging, unset the JAVA_DEBUG environment variable in your application pod. For example:

$ oc set env dc/MY_APP_NAME JAVA_DEBUG-

Additional resources

You can also set the JAVA_DEBUG_PORT environment variable if you want to change the debug port from the default, which is 5005.

11.1.4. Attaching a remote debugger to the application

When your application is configured for debugging, attach a remote debugger of your choice to it. In this guide, Red Hat CodeReady Studio is covered, but the procedure is similar when using other programs.

Prerequisites

- The application running either locally or on OpenShift, and configured for debugging.

- The port number that your application is listening on for debugging.

- Red Hat CodeReady Studio installed on your machine. You can download it from the Red Hat CodeReady Studio download page.

Procedure

- Start Red Hat CodeReady Studio.

Create a new debug configuration for your application:

- Click Run→Debug Configurations.

- In the list of configurations, double-click Remote Java application. This creates a new remote debugging configuration.

- Enter a suitable name for the configuration in the Name field.

- Enter the path to the directory with your application into the Project field. You can use the Browse… button for convenience.

- Set the Connection Type field to Standard (Socket Attach) if it is not already.

- Set the Port field to the port number that your application is listening on for debugging.

- Click Apply.

Start debugging by clicking the Debug button in the Debug Configurations window.

To quickly launch your debug configuration after the first time, click Run→Debug History and select the configuration from the list.
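The Standard (Socket Attach) connection type corresponds to the socket-attaching connector of the Java Debug Interface (JDI) that ships with the JDK in the jdk.jdi module. The following is a minimal sketch of attaching programmatically with that connector, which can be useful for checking connectivity outside the IDE. The class name is hypothetical, and localhost with port 5005 are example values; for an application on OpenShift, pass the local port you chose for oc port-forward.

```java
import com.sun.jdi.Bootstrap;
import com.sun.jdi.VirtualMachine;
import com.sun.jdi.connect.AttachingConnector;
import com.sun.jdi.connect.Connector;
import com.sun.jdi.connect.IllegalConnectorArgumentsException;
import java.io.IOException;
import java.util.Map;

/** Hypothetical sketch: attach to a JDWP port the way a "Socket Attach" debugger does. */
public class SocketAttachSketch {

    /** Looks up the JDI connector used for socket attach. */
    static AttachingConnector socketAttachConnector() {
        for (AttachingConnector c : Bootstrap.virtualMachineManager().attachingConnectors()) {
            if ("com.sun.jdi.SocketAttach".equals(c.name())) {
                return c;
            }
        }
        throw new IllegalStateException("SocketAttach connector not available");
    }

    static VirtualMachine attach(String host, int port)
            throws IOException, IllegalConnectorArgumentsException {
        AttachingConnector connector = socketAttachConnector();
        Map<String, Connector.Argument> arguments = connector.defaultArguments();
        arguments.get("hostname").setValue(host);
        arguments.get("port").setValue(Integer.toString(port));
        return connector.attach(arguments);
    }

    public static void main(String[] args) throws Exception {
        int port = args.length > 0 ? Integer.parseInt(args[0]) : 5005;
        VirtualMachine vm = attach("localhost", port);
        System.out.println("Attached to " + vm.name() + " " + vm.version());
        vm.dispose(); // detach; the target JVM keeps running
    }
}
```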

Additional resources

Debug an OpenShift Java Application with JBoss Developer Studio on Red Hat Knowledgebase.

Red Hat CodeReady Studio was previously called JBoss Developer Studio.

- A Debugging Java Applications On OpenShift and Kubernetes article on OpenShift Blog.

11.2. Debug logging

11.2.1. Local debug logging

To enable debug logging locally, see Section 7.1, “Enabling logging” and use the DEBUG log level.

If you want to enable debug logging permanently, add the following configuration to the src/main/resources/project-defaults.yml file in your application:

Debug logging YAML configuration

swarm:
  logging: DEBUG

11.2.2. Accessing debug logs on OpenShift

Start your application and interact with it to see the debugging statements in OpenShift.

Prerequisites

- A Maven-based application with debug logging enabled.

- The oc CLI client installed and authenticated.

Procedure

Deploy your application to OpenShift:

$ mvn clean fabric8:deploy -Popenshift

View the logs:

Get the name of the pod with your application:

$ oc get pods

Start watching the log output:

$ oc logs -f pod/MY_APP_NAME-2-aaaaa

Keep the terminal window with the log output open so that you can watch it.

Interact with your application:

For example, if you added debug logging to the REST API Level 0 example to log the message variable in the /api/greeting method:

Get the route of your application:

$ oc get routes

Make an HTTP request on the /api/greeting endpoint of your application:

$ curl $APPLICATION_ROUTE/api/greeting?name=Sarah

Return to the window with your pod logs and inspect debug logging messages in the logs.

...
2018-02-11 11:12:31,158 INFO [io.openshift.MY_APP_NAME] (default task-18) Hello, Sarah!
...

To disable debug logging, remove the logging key from the project-defaults.yml file and redeploy the application.
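A debug statement for the message variable might look like the following minimal sketch. It is a hypothetical, dependency-free stand-in for the /api/greeting method, not the code of the REST API Level 0 example. It uses java.util.logging at the FINE level on the assumption that the server's log manager treats FINE as DEBUG.

```java
import java.util.logging.Logger;

/** Hypothetical stand-in for the greeting method with a debug statement. */
public class GreetingService {

    private static final Logger LOG = Logger.getLogger(GreetingService.class.getName());

    static String greeting(String name) {
        String message = String.format("Hello, %s!", name == null ? "World" : name);
        // Logged at FINE (assumed to map to DEBUG); the supplier is only
        // evaluated when FINE is enabled for this logger.
        LOG.fine(() -> "message = " + message);
        return message;
    }

    public static void main(String[] args) {
        System.out.println(greeting(args.length > 0 ? args[0] : null));
    }
}
```

With the DEBUG level enabled, a request with name=Sarah would add a line containing message = Hello, Sarah! to the pod logs.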

Additional resources

Chapter 12. Monitoring your application

This section contains information about monitoring your Thorntail-based application running on OpenShift.

12.1. Accessing JVM metrics for your application on OpenShift

12.1.1. Accessing JVM metrics using Jolokia on OpenShift

Jolokia is a built-in lightweight solution for accessing JMX (Java Management Extension) metrics over HTTP on OpenShift. Jolokia allows you to access CPU, storage, and memory usage data collected by JMX over an HTTP bridge. Jolokia uses a REST interface and JSON-formatted message payloads. It is suitable for monitoring cloud applications thanks to its comparatively high speed and low resource requirements.

For Java-based applications, the OpenShift Web console provides the integrated hawt.io console that collects and displays all relevant metrics output by the JVM running your application.
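To see what kind of data Jolokia bridges, the following minimal sketch reads one JMX attribute in-process: the heap usage of the java.lang:type=Memory MBean. It also builds the corresponding Jolokia read path, which follows the /jolokia/read/<mbean>/<attribute> form. The class name is hypothetical, and the host, port, TLS, and authentication settings of the Jolokia agent on OpenShift are not covered here.

```java
import java.lang.management.ManagementFactory;
import javax.management.MBeanServer;
import javax.management.ObjectName;
import javax.management.openmbean.CompositeData;

/** Hypothetical sketch: the JMX attribute behind a Jolokia "read" request. */
public class HeapUsageSketch {

    static final String MBEAN = "java.lang:type=Memory";
    static final String ATTRIBUTE = "HeapMemoryUsage";

    /** Path of a Jolokia read request for one MBean attribute. */
    static String jolokiaReadPath(String mbean, String attribute) {
        return "/jolokia/read/" + mbean + "/" + attribute;
    }

    /** Reads the "used" field of HeapMemoryUsage directly through JMX. */
    static long usedHeapBytes() throws Exception {
        MBeanServer server = ManagementFactory.getPlatformMBeanServer();
        CompositeData usage = (CompositeData) server.getAttribute(new ObjectName(MBEAN), ATTRIBUTE);
        return (Long) usage.get("used");
    }

    public static void main(String[] args) throws Exception {
        System.out.println("Jolokia path: " + jolokiaReadPath(MBEAN, ATTRIBUTE));
        System.out.println("Used heap bytes: " + usedHeapBytes());
    }
}
```

Through Jolokia, the same attribute comes back as a JSON object whose value holds the init, used, committed, and max fields.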

Prerequisites

- The oc client authenticated

- A Java-based application container running in a project on OpenShift

- The latest JDK 1.8.0 image

Procedure

List the deployment configurations of the pods inside your project and select the one that corresponds to your application.

$ oc get dc
NAME          REVISION   DESIRED   CURRENT   TRIGGERED BY
MY_APP_NAME   2          1         1         config,image(my-app:6)
...

Open the YAML deployment template of the pod running your application for editing.

$ oc edit dc/MY_APP_NAME

Add the following entry to the ports section of the template and save your changes:

...
spec:
  ...
  ports:
  - containerPort: 8778
    name: jolokia
    protocol: TCP
  ...
...

Redeploy the pod running your application.

$ oc rollout latest dc/MY_APP_NAME

The pod is redeployed with the updated deployment configuration and exposes the port 8778.

- Log into the OpenShift Web console.

- In the sidebar, navigate to Applications > Pods, and click on the name of the pod running your application.

- In the pod details screen, click Open Java Console to access the hawt.io console.

Additional resources

12.2. Application metrics

Thorntail provides ways of exposing application metrics in order to track performance and service availability.

12.2.1. What are metrics

In the microservices architecture, where multiple services are invoked in order to serve a single user request, diagnosing performance issues or reacting to service outages might be hard. To make solving problems easier, applications must expose machine-readable data about their behavior, such as:

- How many requests are currently being processed.

- How many connections to the database are currently in use.

- How long service invocations take.

These kinds of data are referred to as metrics. Collecting and visualizing metrics, setting alerts, and discovering trends are essential for keeping a service healthy.
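The three examples above correspond to three common kinds of metrics: a value that goes up and down (requests currently in progress), a count that only grows (total requests), and a timing from which a mean duration can be derived. The following plain-Java sketch illustrates the difference. It is not the MicroProfile Metrics API, and the class and field names are hypothetical.

```java
import java.util.concurrent.Callable;
import java.util.concurrent.atomic.AtomicLong;

/** Hypothetical illustration of gauge-like, counter-like, and timer-like data. */
public class RequestMetrics {

    final AtomicLong inFlight = new AtomicLong();      // requests in progress; rises and falls
    final AtomicLong totalRequests = new AtomicLong(); // completed requests; only grows
    final AtomicLong totalNanos = new AtomicLong();    // summed durations, used for a mean

    /** Runs one unit of work while recording all three values. */
    <T> T track(Callable<T> work) throws Exception {
        inFlight.incrementAndGet();
        long start = System.nanoTime();
        try {
            return work.call();
        } finally {
            totalNanos.addAndGet(System.nanoTime() - start);
            totalRequests.incrementAndGet();
            inFlight.decrementAndGet();
        }
    }

    /** Mean duration in seconds; the two reads are not an atomic snapshot. */
    double meanSeconds() {
        long n = totalRequests.get();
        return n == 0 ? 0.0 : totalNanos.get() / 1e9 / n;
    }

    public static void main(String[] args) throws Exception {
        RequestMetrics metrics = new RequestMetrics();
        for (int i = 0; i < 3; i++) {
            metrics.track(() -> "Hello");
        }
        System.out.println("in flight: " + metrics.inFlight.get());
        System.out.println("total: " + metrics.totalRequests.get());
        System.out.println("mean seconds: " + metrics.meanSeconds());
    }
}
```

A metrics library does this bookkeeping for you and also exports the values so that a monitoring system can collect them.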

Thorntail provides a fraction for Eclipse MicroProfile Metrics, an easy-to-use API for exposing metrics. Among other formats, it supports exporting data in the native format of Prometheus, a popular monitoring solution. Inside the application, this fraction is all you need. Prometheus typically runs outside the application.

Additional resources

- The MicroProfile Metrics GitHub page.

- The Prometheus homepage.

- A popular solution to visualize metrics stored in Prometheus is Grafana. For more information, see the Grafana homepage.

12.2.2. Exposing application metrics

In this example, you:

- Configure your application to expose metrics.

- Collect and view the data using Prometheus.

Note that Prometheus actively connects to a monitored application to collect data; the application does not actively send metrics to a server.
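The pull model means that the application only has to serve a page of current values, and Prometheus fetches that page on its own schedule. The MicroProfile Metrics fraction generates this page for you. The following sketch only illustrates the idea with the JDK's built-in HTTP server and a single counter in the Prometheus text format; the class name and the metric name are examples.

```java
import com.sun.net.httpserver.HttpServer;
import java.io.IOException;
import java.io.OutputStream;
import java.net.InetSocketAddress;
import java.nio.charset.StandardCharsets;
import java.util.concurrent.atomic.AtomicLong;

/** Hypothetical illustration of a pull-based /metrics endpoint. */
public class PullModelSketch {

    static final AtomicLong HELLO_COUNT = new AtomicLong();

    /** Renders one counter in the Prometheus text exposition format. */
    static String render(long count) {
        return "# TYPE application:hello_count counter\n"
                + "application:hello_count " + count + "\n";
    }

    /** Starts a server that answers GET /metrics; port 0 picks a free port. */
    static HttpServer start(int port) throws IOException {
        HttpServer server = HttpServer.create(new InetSocketAddress(port), 0);
        server.createContext("/metrics", exchange -> {
            byte[] body = render(HELLO_COUNT.get()).getBytes(StandardCharsets.UTF_8);
            exchange.getResponseHeaders().set("Content-Type", "text/plain; version=0.0.4");
            exchange.sendResponseHeaders(200, body.length);
            try (OutputStream out = exchange.getResponseBody()) {
                out.write(body);
            }
        });
        server.start();
        return server;
    }

    public static void main(String[] args) throws IOException {
        HELLO_COUNT.addAndGet(3);
        start(8080); // Prometheus would scrape http://localhost:8080/metrics
        System.out.println("Serving http://localhost:8080/metrics");
    }
}
```

Nothing in the application knows where Prometheus runs; the scrape target is configured only on the Prometheus side.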

Prerequisites

Prometheus configured to collect metrics from the application:

Download and extract the archive with the latest Prometheus release:

$ wget https://github.com/prometheus/prometheus/releases/download/v2.4.3/prometheus-2.4.3.linux-amd64.tar.gz
$ tar -xvf prometheus-2.4.3.linux-amd64.tar.gz

Navigate to the directory with Prometheus:

$ cd prometheus-2.4.3.linux-amd64

Append the following snippet to the prometheus.yml file to make Prometheus automatically collect metrics from your application:

  - job_name: 'thorntail'
    static_configs:
    - targets: ['localhost:8080']

The default behavior of Thorntail-based applications is to expose metrics at the /metrics endpoint. This is what the MicroProfile Metrics specification requires, and also what Prometheus expects.

The Prometheus server started on localhost:

Start Prometheus and wait until the Server is ready to receive web requests message is displayed in the console.

$ ./prometheus

Procedure

Include the microprofile-metrics fraction in the pom.xml file in your application:

pom.xml

<dependencies>
  <dependency>
    <groupId>io.thorntail</groupId>
    <artifactId>microprofile-metrics</artifactId>
  </dependency>
</dependencies>

Annotate methods or classes with the metrics annotations, for example:

import javax.ws.rs.GET;
import org.eclipse.microprofile.metrics.annotation.Counted;
import org.eclipse.microprofile.metrics.annotation.Timed;

// Inside a JAX-RS resource class:
@GET
@Counted(name = "hello-count", absolute = true)
@Timed(name = "hello-time", absolute = true)
public String get() {
    return "Hello from counted and timed endpoint";
}

Here, the @Counted annotation is used to keep track of how many times this method was invoked. The @Timed annotation is used to keep track of how long the invocations took.

In this example, a JAX-RS resource method was annotated directly, but you can annotate any CDI bean in your application as well.

Launch your application:

$ mvn thorntail:run

Invoke the instrumented endpoint several times:

$ curl http://localhost:8080/
Hello from counted and timed endpoint

Wait at least 15 seconds, the default scrape interval in prometheus.yml, for the collection to happen, and see the metrics in the Prometheus UI:

- Open the Prometheus UI at http://localhost:9090/ and type hello into the Expression box.

- From the suggestions, select for example application:hello_count and click Execute.

- In the table that is displayed, you can see how many times the resource method was invoked.

- Alternatively, select application:hello_time_mean_seconds to see the mean time of all the invocations.

Note that all metrics you created are prefixed with application:. There are other metrics, automatically exposed by Thorntail as the MicroProfile Metrics specification requires. Those metrics are prefixed with base: and vendor: and expose information about the JVM in which the application runs.

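Because the scope is part of each metric name, you can separate application metrics from base and vendor metrics when inspecting a scraped page; the MicroProfile Metrics specification also serves each scope on its own path, for example /metrics/application. The following sketch filters the lines of a page in the Prometheus text format by scope prefix. The class name is hypothetical and the sample lines are made up for illustration.

```java
import java.util.List;
import java.util.stream.Collectors;

/** Hypothetical helper: keep only the sample lines of one metric scope. */
public class ScopeFilter {

    static List<String> linesForScope(String page, String scope) {
        String prefix = scope + ":";
        return page.lines()
                .filter(line -> !line.startsWith("#"))   // skip HELP and TYPE comments
                .filter(line -> line.startsWith(prefix)) // keep e.g. "application:..."
                .collect(Collectors.toList());
    }

    public static void main(String[] args) {
        String page = String.join("\n",
                "# TYPE application:hello_count counter",
                "application:hello_count 3",
                "# TYPE base:thread_count gauge",
                "base:thread_count 42");
        System.out.println(linesForScope(page, "application"));
        System.out.println(linesForScope(page, "base"));
    }
}
```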

Additional resources

- For additional types of metrics, see the Eclipse MicroProfile Metrics documentation.

Chapter 13. Available examples for Thorntail

The Thorntail runtime provides example applications. When you start developing applications on OpenShift, you can use the example applications as templates.

You can access these example applications on Developer Launcher.

You can download and deploy all the example applications on:

- x86_64 architecture - The example applications in this guide demonstrate how to build and deploy example applications on x86_64 architecture.

- s390x architecture - To deploy the example applications on OpenShift environments provisioned on IBM Z infrastructure, specify the relevant IBM Z image name in the commands. Refer to the section Supported Java images for Thorntail for more information about the image names.

Some of the example applications also require other products, such as Red Hat Data Grid to demonstrate the workflows. In this case, you must also change the image names of these products to their relevant IBM Z image names in the YAML file of the example applications.

The Secured example application in Thorntail requires Red Hat SSO 7.3. Since Red Hat SSO 7.3 is not supported on IBM Z, the Secured example is not available for IBM Z.

13.1. REST API Level 0 example for Thorntail

The following example is not meant to be run in a production environment.

Example proficiency level: Foundational.

What the REST API Level 0 example does

The REST API Level 0 example shows how to map business operations to a remote procedure call endpoint over HTTP using a REST framework. This corresponds to Level 0 in the Richardson Maturity Model. Creating an HTTP endpoint using REST and its underlying principles to define your API lets you quickly prototype and design the API flexibly.

This example introduces the mechanics of interacting with a remote service using the HTTP protocol. It allows you to:

- Execute an HTTP GET request on the api/greeting endpoint.

- Receive a response in JSON format with a payload consisting of the Hello, World! String.

- Execute an HTTP GET request on the api/greeting endpoint while passing in a String argument. This uses the name request parameter in the query string.

- Receive a response in JSON format with a payload of Hello, $name! with $name replaced by the value of the name parameter passed into the request.
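The request and response behavior listed above can be sketched without any framework. The following minimal sketch builds the greeting and wraps it in a JSON object. The class name and the JSON field name content are assumptions for illustration, not taken from the example's source code.

```java
/** Hypothetical sketch of the greeting payload returned by api/greeting. */
public class GreetingJson {

    /** "Hello, World!" when no name parameter is given, otherwise "Hello, <name>!". */
    static String greeting(String name) {
        String who = (name == null || name.isEmpty()) ? "World" : name;
        return "Hello, " + who + "!";
    }

    /** Escapes characters that must not appear raw inside a JSON string. */
    static String escape(String s) {
        StringBuilder sb = new StringBuilder();
        for (char c : s.toCharArray()) {
            switch (c) {
                case '"': sb.append("\\\""); break;
                case '\\': sb.append("\\\\"); break;
                case '\n': sb.append("\\n"); break;
                case '\r': sb.append("\\r"); break;
                case '\t': sb.append("\\t"); break;
                default:
                    if (c < 0x20) {
                        sb.append(String.format("\\u%04x", (int) c)); // other control characters
                    } else {
                        sb.append(c);
                    }
            }
        }
        return sb.toString();
    }

    /** JSON body with the greeting in an (assumed) "content" field. */
    static String toJson(String name) {
        return "{\"content\":\"" + escape(greeting(name)) + "\"}";
    }

    public static void main(String[] args) {
        System.out.println(toJson(null));    // GET api/greeting
        System.out.println(toJson("Sarah")); // GET api/greeting?name=Sarah
    }
}
```

Escaping the name matters because the name request parameter comes from the client and could otherwise break the JSON payload.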

13.1.1. REST API Level 0 design tradeoffs

| Pros | Cons |

|---|---|

|

|

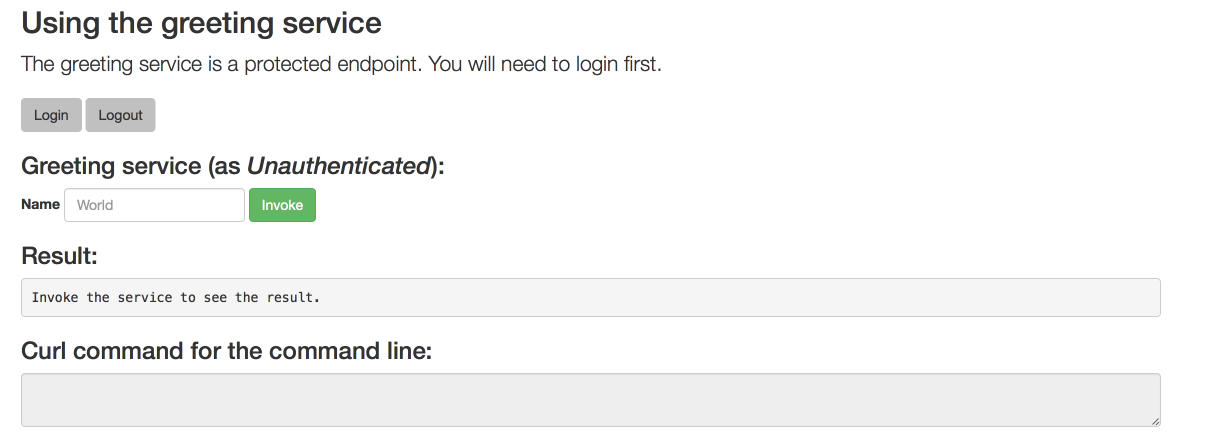

13.1.2. Deploying the REST API Level 0 example application to OpenShift Online