Block Device Guide

Managing, creating, configuring, and using Red Hat Ceph Storage Block Devices

Chapter 1. Introduction to Ceph block devices

A block is a fixed-length sequence of bytes, for example, a 512-byte block of data. Many blocks combined into a single file can serve as a storage device that you can read from and write to. Block-based storage interfaces are the most common way to store data on rotating media such as:

- Hard drives

- CD/DVD discs

- Floppy disks

- Traditional 9-track tapes

The ubiquity of block device interfaces makes a virtual block device an ideal candidate for interacting with a mass data storage system like Red Hat Ceph Storage.

Ceph block devices are thin-provisioned, resizable and store data striped over multiple Object Storage Devices (OSD) in a Ceph storage cluster. Ceph block devices are also known as Reliable Autonomic Distributed Object Store (RADOS) Block Devices (RBDs). Ceph block devices leverage RADOS capabilities such as:

- Snapshots

- Replication

- Data consistency

Ceph block devices interact with OSDs by using the librbd library.
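To make the striping idea concrete, the following sketch computes which backing object holds a given byte offset of an image. It assumes 4 MiB objects and no custom striping layout; both are assumptions for illustration, and the `locate_offset` helper is hypothetical, not part of any Ceph tool.

```shell
#!/usr/bin/env bash
# Sketch: which backing object holds a given byte offset of an image?
# Assumption: 4 MiB objects and no custom striping (stripe count of 1).
object_size=$((4 * 1024 * 1024))

# locate_offset BYTE_OFFSET
# Prints the zero-based object index and the offset inside that object.
locate_offset() {
    local offset=$1
    echo "object=$((offset / object_size)) offset_in_object=$((offset % object_size))"
}

locate_offset 0          # first byte of the image
locate_offset 10485760   # the byte at 10 MiB
```

Because only objects that have been written to exist in the cluster, an image that is mostly empty consumes little physical space, which is what thin provisioning means in practice.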

Ceph block devices deliver high performance with infinite scalability to Kernel Virtual Machines (KVMs), such as Quick Emulator (QEMU), and cloud-based computing systems, like OpenStack, that rely on the libvirt and QEMU utilities to integrate with Ceph block devices. You can use the same storage cluster to operate the Ceph Object Gateway and Ceph block devices simultaneously.

Using Ceph block devices requires access to a running Ceph storage cluster. For details on installing a Red Hat Ceph Storage cluster, see the Red Hat Ceph Storage Installation Guide.

Chapter 2. Ceph block device commands

As a storage administrator, being familiar with Ceph’s block device commands can help you effectively manage the Red Hat Ceph Storage cluster. You can create and manage block device pools and images, and enable or disable the various features of Ceph block devices.

2.1. Prerequisites

- A running Red Hat Ceph Storage cluster.

2.2. Displaying the command help

Display command and subcommand online help from the command-line interface.

The -h option still displays help for all available commands.

Prerequisites

- A running Red Hat Ceph Storage cluster.

- Root-level access to the client node.

Procedure

Use the rbd help command to display help for a particular rbd command and its subcommand:

Syntax

rbd help COMMAND SUBCOMMAND

To display help for the snap list command:

[root@rbd-client ~]# rbd help snap list

2.3. Creating a block device pool

Before using the block device client, ensure a pool for rbd exists, is enabled and initialized.

You MUST create a pool first before you can specify it as a source.

Prerequisites

- A running Red Hat Ceph Storage cluster.

- Root-level access to the client node.

Procedure

To create an rbd pool, execute the following:

Syntax

ceph osd pool create POOL_NAME PG_NUM
ceph osd pool application enable POOL_NAME rbd
rbd pool init -p POOL_NAME

Example

[root@rbd-client ~]# ceph osd pool create example 128
[root@rbd-client ~]# ceph osd pool application enable example rbd
[root@rbd-client ~]# rbd pool init -p example
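The three commands in this procedure always run together, so they can be wrapped in one helper. The following is a minimal sketch, not a supported tool: the `run` and `create_rbd_pool` functions and the `DRY_RUN` variable are hypothetical names. By default the script only prints the commands; set `DRY_RUN=0` to execute them against a cluster.

```shell
#!/usr/bin/env bash
# Print each command instead of executing it unless DRY_RUN=0 is set.
run() {
    if [ "${DRY_RUN:-1}" = "1" ]; then
        echo "$*"
    else
        "$@"
    fi
}

# create_rbd_pool POOL_NAME PG_NUM
# Creates the pool, tags it for the rbd application, then initializes it.
# Stops at the first command that fails.
create_rbd_pool() {
    local pool=$1 pg_num=$2
    run ceph osd pool create "$pool" "$pg_num" &&
    run ceph osd pool application enable "$pool" rbd &&
    run rbd pool init -p "$pool"
}

create_rbd_pool example 128
```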

Additional Resources

- See the Pools chapter in the Red Hat Ceph Storage Storage Strategies Guide for additional details.

2.4. Creating a block device image

Before adding a block device to a node, create an image for it in the Ceph storage cluster.

Prerequisites

- A running Red Hat Ceph Storage cluster.

- Root-level access to the client node.

Procedure

To create a block device image, execute the following command:

Syntax

rbd create IMAGE_NAME --size MEGABYTES --pool POOL_NAME

Example

[root@rbd-client ~]# rbd create data --size 1024 --pool stack

This example creates a 1 GB image named data that stores information in a pool named stack.

Note: Ensure the pool exists before creating an image.
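Because the syntax above takes the size in megabytes, larger images need a small conversion. The following sketch shows the arithmetic; the `gib_to_mb` helper is a hypothetical name, and the script only prints the resulting command rather than running it.

```shell
#!/usr/bin/env bash
# gib_to_mb GIB
# Converts a size in GiB to the megabyte count passed to --size.
gib_to_mb() {
    echo $(( $1 * 1024 ))
}

size_mb=$(gib_to_mb 10)
# Print the command for a 10 GiB image in the pool named stack.
echo "rbd create data --size ${size_mb} --pool stack"
```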

Additional Resources

- See the Creating a block device pool section in the Red Hat Ceph Storage Block Device Guide for additional details.

2.5. Listing the block device images

List the block device images.

Prerequisites

- A running Red Hat Ceph Storage cluster.

- Root-level access to the client node.

Procedure

To list block devices in the rbd pool, execute the following (rbd is the default pool name):

[root@rbd-client ~]# rbd ls

To list block devices in a particular pool, execute the following, but replace POOL_NAME with the name of the pool:

Syntax

rbd ls POOL_NAME

Example

[root@rbd-client ~]# rbd ls swimmingpool

2.6. Retrieving the block device image information

Retrieve information on the block device image.

Prerequisites

- A running Red Hat Ceph Storage cluster.

- Root-level access to the client node.

Procedure

To retrieve information from a particular image, execute the following, but replace IMAGE_NAME with the name for the image:

Syntax

rbd --image IMAGE_NAME info

Example

[root@rbd-client ~]# rbd --image foo info

To retrieve information from an image within a pool, execute the following, but replace IMAGE_NAME with the name of the image and replace POOL_NAME with the name of the pool:

Syntax

rbd --image IMAGE_NAME -p POOL_NAME info

Example

[root@rbd-client ~]# rbd --image bar -p swimmingpool info

2.7. Resizing a block device image

Ceph block device images are thin-provisioned. They do not actually use any physical storage until you begin saving data to them. However, they do have a maximum capacity that you set with the --size option.

Prerequisites

- A running Red Hat Ceph Storage cluster.

- Root-level access to the client node.

Procedure

To increase or decrease the maximum size of a Ceph block device image:

Syntax

[root@rbd-client ~]# rbd resize --image IMAGE_NAME --size SIZE
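The procedure covers both growing and shrinking an image. As a hedged illustration, the sketch below prints the resize command for either direction and adds the `--allow-shrink` option when the new size is smaller than the current one, since rbd refuses to shrink an image without it. The `resize_cmd` helper and the image name foo are assumptions; sizes are in megabytes.

```shell
#!/usr/bin/env bash
# resize_cmd IMAGE_NAME CURRENT_MB NEW_MB
# Prints the rbd resize command; adds --allow-shrink only when shrinking.
resize_cmd() {
    local image=$1 current_mb=$2 new_mb=$3
    if [ "$new_mb" -lt "$current_mb" ]; then
        echo "rbd resize --image $image --size $new_mb --allow-shrink"
    else
        echo "rbd resize --image $image --size $new_mb"
    fi
}

resize_cmd foo 1024 2048   # grow from 1 GiB to 2 GiB
resize_cmd foo 2048 1024   # shrink back to 1 GiB
```

Shrinking discards any data stored beyond the new size, so reduce the file system inside the image first.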

2.8. Removing a block device image

Remove a block device image.

Prerequisites

- A running Red Hat Ceph Storage cluster.

- Root-level access to the client node.

Procedure

To remove a block device, execute the following, but replace IMAGE_NAME with the name of the image you want to remove:

Syntax

rbd rm IMAGE_NAME

Example

[root@rbd-client ~]# rbd rm foo

To remove a block device from a pool, execute the following, but replace IMAGE_NAME with the name of the image to remove and replace POOL_NAME with the name of the pool:

Syntax

rbd rm IMAGE_NAME -p POOL_NAME

Example

[root@rbd-client ~]# rbd rm bar -p swimmingpool

2.9. Managing block device images using the trash command

RADOS Block Device (RBD) images can be moved to the trash using the rbd trash command.

This command provides a wide array of options such as:

- Removing images from the trash.

- Listing images from the trash.

- Deferring deletion of images from the trash.

- Deleting images from the trash.

- Restoring images from the trash.

- Restoring images from the trash and renaming them.

- Purging expired images from the trash.

- Scheduling purge from the trash.

Prerequisites

- A running Red Hat Ceph Storage cluster.

- Root-level access to the client node.

Procedure

Move an image to the trash:

Syntax

rbd trash mv POOL_NAME/IMAGE_NAME

Example

[root@rbd-client ~]# rbd trash mv mypool/myimage

Once an image is in the trash, a unique image ID is assigned.

Note: You need this image ID to specify the image later if you need to use any of the trash options.

List the images in the trash:

Syntax

rbd trash ls POOL_NAME

Example

[root@rbd-client ~]# rbd trash ls mypool
1558a57fa43b rename_image

The unique IMAGE_ID 1558a57fa43b can be used for any trash options.

Move an image to the trash and defer the deletion of the image from the trash:

Syntax

rbd trash mv POOL_NAME/IMAGE_NAME --expires-at "EXPIRATION_TIME"

The EXPIRATION_TIME can be a number of seconds, hours, date, time in "HH:MM:SS", or "tomorrow".

Example

[root@rbd-client ~]# rbd trash mv mypool/myimage --expires-at "60 seconds"

In this example, myimage is moved to the trash. However, you cannot delete it from the trash until 60 seconds have elapsed.

Restore the image from the trash:

Syntax

rbd trash restore POOL_NAME/IMAGE_ID

Example

[root@rbd-client ~]# rbd trash restore mypool/14502ff9ee4d

Delete the image from the trash:

Syntax

rbd trash rm POOL_NAME/IMAGE_ID [--force]

Example

[root@rbd-client ~]# rbd trash rm mypool/14502ff9ee4d
Removing image: 100% complete...done.

If the image is deferred for deletion, then you cannot delete it from the trash until expiration. You get the following error message:

Example

Deferment time has not expired, please use --force if you really want to remove the image
Removing image: 0% complete...failed.
2021-12-02 06:37:49.573 7fb5d237a500 -1 librbd::api::Trash: remove: error: deferment time has not expired.

Important: Once an image is deleted from the trash, it cannot be restored.

Rename the image and then restore it from the trash:

Syntax

rbd trash restore POOL_NAME/IMAGE_ID --image NEW_IMAGE_NAME

Example

[root@rbd-client ~]# rbd trash restore mypool/14502ff9ee4d --image test_image

Remove expired images from the trash:

Syntax

rbd trash purge POOL_NAME

Example

[root@rbd-client ~]# rbd trash purge mypool

In this example, all the images that are trashed from mypool are removed.

2.10. Enabling and disabling image features

You can enable or disable image features, such as fast-diff, exclusive-lock, object-map, or journaling, on already existing images.

The deep flatten feature can be only disabled on already existing images but not enabled. To use deep flatten, enable it when creating images.

Prerequisites

- A running Red Hat Ceph Storage cluster.

- Root-level access to the client node.

Procedure

To enable a feature:

Syntax

rbd feature enable POOL_NAME/IMAGE_NAME FEATURE_NAME

To enable the exclusive-lock feature on the image1 image in the data pool:

Example

[root@rbd-client ~]# rbd feature enable data/image1 exclusive-lock

Important: If you enable the fast-diff and object-map features, then rebuild the object map:

Syntax

rbd object-map rebuild POOL_NAME/IMAGE_NAME

To disable a feature:

Syntax

rbd feature disable POOL_NAME/IMAGE_NAME FEATURE_NAME

To disable the fast-diff feature on the image2 image in the data pool:

Example

[root@rbd-client ~]# rbd feature disable data/image2 fast-diff
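Enabling object-map and fast-diff and then rebuilding the object map is a two-step sequence that is easy to leave half done. The sketch below chains the two commands. It assumes exclusive-lock is already enabled on the image, because object-map depends on it. The `run` and `enable_object_map` helpers and the `DRY_RUN` variable are hypothetical; the script prints the commands unless `DRY_RUN=0` is set.

```shell
#!/usr/bin/env bash
# Print each command instead of executing it unless DRY_RUN=0 is set.
run() {
    if [ "${DRY_RUN:-1}" = "1" ]; then
        echo "$*"
    else
        "$@"
    fi
}

# enable_object_map POOL_NAME/IMAGE_NAME
# Assumption: exclusive-lock is already enabled on the image.
enable_object_map() {
    local image_spec=$1
    run rbd feature enable "$image_spec" object-map fast-diff &&
    run rbd object-map rebuild "$image_spec"
}

enable_object_map data/image1
```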

2.11. Working with image metadata

Ceph supports adding custom image metadata as key-value pairs. The pairs do not have any strict format.

Also, by using metadata, you can set the RADOS Block Device (RBD) configuration parameters for particular images.

Use the rbd image-meta commands to work with metadata.

Prerequisites

- A running Red Hat Ceph Storage cluster.

- Root-level access to the client node.

Procedure

To set a new metadata key-value pair:

Syntax

rbd image-meta set POOL_NAME/IMAGE_NAME KEY VALUE

Example

[root@rbd-client ~]# rbd image-meta set data/dataset last_update 2016-06-06

This example sets the last_update key to the 2016-06-06 value on the dataset image in the data pool.

To remove a metadata key-value pair:

Syntax

rbd image-meta remove POOL_NAME/IMAGE_NAME KEY

Example

[root@rbd-client ~]# rbd image-meta remove data/dataset last_update

This example removes the last_update key-value pair from the dataset image in the data pool.

To view a value of a key:

Syntax

rbd image-meta get POOL_NAME/IMAGE_NAME KEY

Example

[root@rbd-client ~]# rbd image-meta get data/dataset last_update

This example views the value of the last_update key.

To show all metadata on an image:

Syntax

rbd image-meta list POOL_NAME/IMAGE_NAME

Example

[root@rbd-client ~]# rbd image-meta list data/dataset

This example lists the metadata set for the dataset image in the data pool.

To override the RBD image configuration settings set in the Ceph configuration file for a particular image:

Syntax

rbd config image set POOL_NAME/IMAGE_NAME PARAMETER VALUE

Example

[root@rbd-client ~]# rbd config image set data/dataset rbd_cache false

This example disables the RBD cache for the dataset image in the data pool.

Additional Resources

- See the Block device general options section in the Red Hat Ceph Storage Block Device Guide for a list of possible configuration options.

2.12. Moving images between pools

You can move RADOS Block Device (RBD) images between different pools within the same cluster. The migration can be between replicated pools, erasure-coded pools, or between replicated and erasure-coded pools.

During this process, the source image is copied to the target image with all snapshot history and, optionally, with a link to the source image’s parent to help preserve sparseness. The source image is read-only, and the target image is writable. The target image is linked to the source image while the migration is in progress.

You can safely run this process in the background while the new target image is in use. However, stop all clients using the source image before the preparation step to ensure that clients using the image are updated to point to the new target image.

The krbd kernel module does not support live migration at this time.

Prerequisites

- Stop all clients that use the source image.

- Root-level access to the client node.

Procedure

Prepare for migration by creating the new target image that cross-links the source and target images:

Syntax

rbd migration prepare SOURCE_IMAGE TARGET_IMAGE

Replace:

- SOURCE_IMAGE with the name of the image to be moved. Use the POOL/IMAGE_NAME format.

- TARGET_IMAGE with the name of the new image. Use the POOL/IMAGE_NAME format.

Example

[root@rbd-client ~]# rbd migration prepare data/source stack/target

Verify that the state of the new target image is prepared:

Syntax

rbd status TARGET_IMAGE

Example

[root@rbd-client ~]# rbd status stack/target
Watchers: none
Migration:
    source: data/source (5e2cba2f62e)
    destination: stack/target (5e2ed95ed806)
    state: prepared

- Optionally, restart the clients using the new target image name.

Copy the source image to target image:

Syntax

rbd migration execute TARGET_IMAGE

Example

[root@rbd-client ~]# rbd migration execute stack/target

Ensure that the migration is completed:

Example

[root@rbd-client ~]# rbd status stack/target
Watchers:
    watcher=1.2.3.4:0/3695551461 client.123 cookie=123
Migration:
    source: data/source (5e2cba2f62e)
    destination: stack/target (5e2ed95ed806)
    state: executed

Commit the migration. This removes the cross-link between the source and target images, and also removes the source image:

Syntax

rbd migration commit TARGET_IMAGE

Example

[root@rbd-client ~]# rbd migration commit stack/target

If the source image is a parent of one or more clones, use the --force option after ensuring that the clone images are not in use:

Example

[root@rbd-client ~]# rbd migration commit stack/target --force

- If you did not restart the clients after the preparation step, restart them using the new target image name.
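The prepare, execute, and commit steps must run in that order, and a later step should not run if an earlier one fails. The following sketch chains them under those assumptions. The `run` and `migrate_image` helpers and the `DRY_RUN` variable are hypothetical names; the script prints the commands unless `DRY_RUN=0` is set. Stopping and restarting clients remains a manual step outside the script.

```shell
#!/usr/bin/env bash
# Print each command instead of executing it unless DRY_RUN=0 is set.
run() {
    if [ "${DRY_RUN:-1}" = "1" ]; then
        echo "$*"
    else
        "$@"
    fi
}

# migrate_image SOURCE_IMAGE TARGET_IMAGE
# Both arguments use the POOL/IMAGE_NAME format.
# Each step runs only if the previous one succeeded.
migrate_image() {
    local source=$1 target=$2
    run rbd migration prepare "$source" "$target" &&
    run rbd status "$target" &&
    run rbd migration execute "$target" &&
    run rbd migration commit "$target"
}

migrate_image data/source stack/target
```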

2.13. The rbdmap service

The systemd unit file, rbdmap.service, is included with the ceph-common package. The rbdmap.service unit executes the rbdmap shell script.

This script automates the mapping and unmapping of RADOS Block Devices (RBD) for one or more RBD images. The script can be run manually at any time, but the typical use case is to automatically mount RBD images at boot time, and unmount them at shutdown. The script takes a single argument, which can be either map, for mounting, or unmap, for unmounting RBD images. The script parses a configuration file, which defaults to /etc/ceph/rbdmap but can be overridden by using the RBDMAPFILE environment variable. Each line of the configuration file corresponds to an RBD image.

The format of the configuration file is as follows:

IMAGE_SPEC RBD_OPTS

Where IMAGE_SPEC specifies the POOL_NAME/IMAGE_NAME, or just the IMAGE_NAME, in which case the POOL_NAME defaults to rbd. The RBD_OPTS is an optional list of options to be passed to the underlying rbd map command. Specify these parameters and their values as a comma-separated string:

OPT1=VAL1,OPT2=VAL2,…,OPT_N=VAL_N

This will cause the script to issue an rbd map command like the following:

rbd map POOL_NAME/IMAGE_NAME --OPT1 VAL1 --OPT2 VAL2

For options and values that contain commas or equal signs, enclose them in apostrophes to prevent the script from replacing them.

When successful, the rbd map operation maps the image to a /dev/rbdX device, at which point a udev rule is triggered to create a friendly device name symlink, for example, /dev/rbd/POOL_NAME/IMAGE_NAME, pointing to the real mapped device. For mounting or unmounting to succeed, the friendly device name must have a corresponding entry in the /etc/fstab file. When writing /etc/fstab entries for RBD images, it is a good idea to specify the noauto or nofail mount option. This prevents the init system from trying to mount the device too early, before the device exists.
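The translation from a configuration line to an rbd map command can be sketched in a few lines of shell. This is a simplified illustration, not the rbdmap script itself: the `rbdmap_to_cmd` helper is a hypothetical name, it assumes every option has the KEY=VALUE form, and it does not handle values wrapped in apostrophes.

```shell
#!/usr/bin/env bash
# rbdmap_to_cmd IMAGE_SPEC [RBD_OPTS]
# Prints the rbd map command that corresponds to one configuration line.
# Simplified: assumes KEY=VALUE options and no quoted values.
rbdmap_to_cmd() {
    local spec=$1 opts=${2:-} cmd pair
    # A bare image name falls back to the default pool, rbd.
    case $spec in
        */*) ;;
        *) spec="rbd/$spec" ;;
    esac
    cmd="rbd map $spec"
    # Split the option string on commas, then each pair on its first "=".
    local IFS=,
    for pair in $opts; do
        cmd="$cmd --${pair%%=*} ${pair#*=}"
    done
    echo "$cmd"
}

rbdmap_to_cmd foo/bar1 id=admin,keyring=/etc/ceph/ceph.client.admin.keyring
rbdmap_to_cmd bar
```

The first call prints a command with the `--id` and `--keyring` options expanded; the second shows the default pool being applied to a bare image name.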

2.14. Configuring the rbdmap service

Configure the rbdmap service to automatically map and mount RADOS Block Devices (RBD) at boot time, and to unmap and unmount them at shutdown.

Prerequisites

- Root-level access to the node doing the mounting.

- Installation of the ceph-common package.

Procedure

Open the /etc/ceph/rbdmap configuration file for editing.

Add the RBD image or images to the configuration file:

Example

foo/bar1 id=admin,keyring=/etc/ceph/ceph.client.admin.keyring
foo/bar2 id=admin,keyring=/etc/ceph/ceph.client.admin.keyring,options='lock_on_read,queue_depth=1024'

Save changes to the configuration file.

Enable the RBD mapping service:

Example

[root@client ~]# systemctl enable rbdmap.service
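For the service to mount an image, the friendly device name also needs an entry in the /etc/fstab file with the noauto option. The sketch below prints such an entry. The `fstab_entry` helper, the /mnt/bar1 mount point, and the xfs file system type are assumptions for illustration; review the line before appending it to /etc/fstab.

```shell
#!/usr/bin/env bash
# fstab_entry POOL_NAME/IMAGE_NAME MOUNT_POINT FS_TYPE
# Prints an /etc/fstab line for the friendly device name, with noauto so
# that the init system does not try to mount it before it is mapped.
fstab_entry() {
    local image_spec=$1 mount_point=$2 fs_type=$3
    printf '/dev/rbd/%s %s %s noauto 0 0\n' "$image_spec" "$mount_point" "$fs_type"
}

fstab_entry foo/bar1 /mnt/bar1 xfs
```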

2.15. Monitoring performance of Ceph Block Devices using the command-line interface

Starting with Red Hat Ceph Storage 4.1, a performance metrics gathering framework is integrated within the Ceph OSD and Manager components. This framework provides a built-in method to generate and process performance metrics upon which other Ceph Block Device performance monitoring solutions are built.

A new Ceph Manager module, rbd_support, aggregates the performance metrics when enabled. The rbd command has two new actions: iotop and iostat.

The initial use of these actions can take around 30 seconds to populate the data fields.

Prerequisites

- User-level access to a Ceph Monitor node.

Procedure

Enable the rbd_support Ceph Manager module:

Example

[user@mon ~]$ ceph mgr module enable rbd_support

To display an "iotop"-style view of images:

Example

[user@mon ~]$ rbd perf image iotop

Note: The write-ops, read-ops, write-bytes, read-bytes, write-latency, and read-latency columns can be sorted dynamically by using the right and left arrow keys.

To display an "iostat"-style view of images:

Example

[user@mon ~]$ rbd perf image iostat

Note: The output from this command can be in JSON or XML format, and then can be sorted using other command-line tools.

2.16. Additional Resources

- See Chapter 3, The rbd kernel module for details on mapping and unmapping block devices.

Chapter 3. The rbd kernel module

As a storage administrator, you can access Ceph block devices through the rbd kernel module. You can map and unmap a block device, and display those mappings. Also, you can get a list of images through the rbd kernel module.

Kernel clients on Linux distributions other than Red Hat Enterprise Linux (RHEL) are permitted but not supported. If issues are found in the storage cluster when using these kernel clients, Red Hat will address them, but if the root cause is found to be on the kernel client side, the issue will have to be addressed by the software vendor.

3.1. Prerequisites

- A running Red Hat Ceph Storage cluster.

3.2. Create a Ceph Block Device and use it from a Linux kernel module client

As a storage administrator, you can create a Ceph Block Device for a Linux kernel module client in the Red Hat Ceph Storage Dashboard. As a system administrator, you can map that block device on a Linux client, and partition, format, and mount it, using the command line. After this, you can read and write files to it.

Prerequisites

- A running Red Hat Ceph Storage cluster.

- A Red Hat Enterprise Linux client.

3.2.1. Create a Ceph Block Device for a Linux kernel module client using Dashboard

You can create a Ceph Block Device specifically for a Linux kernel module client using the Dashboard web interface by enabling only the features it requires.

Prerequisites

- A running Red Hat Ceph Storage cluster.

Procedure

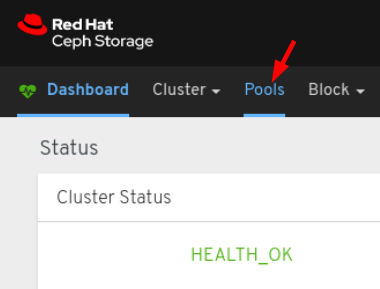

- Log in to the Dashboard.

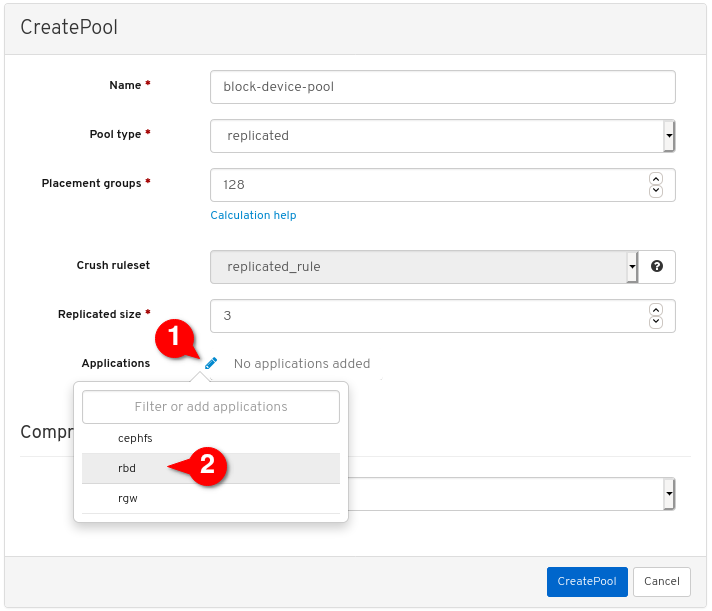

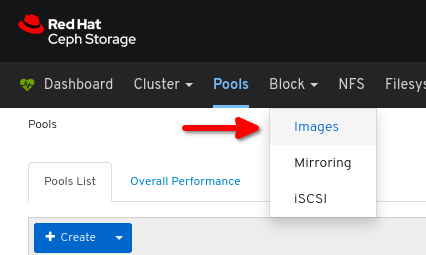

On the navigation bar, click Pools:

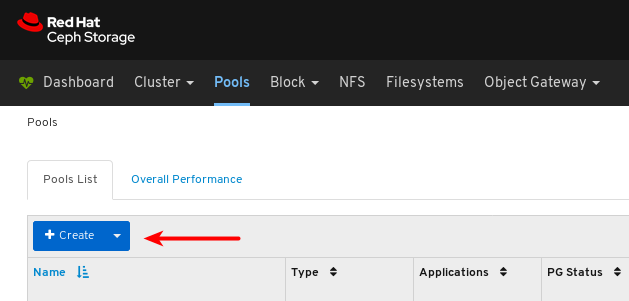

Click the Create button:

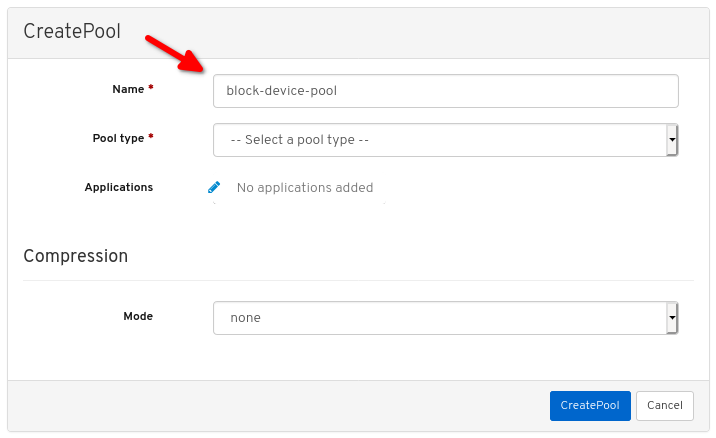

In the dialog window, set the name:

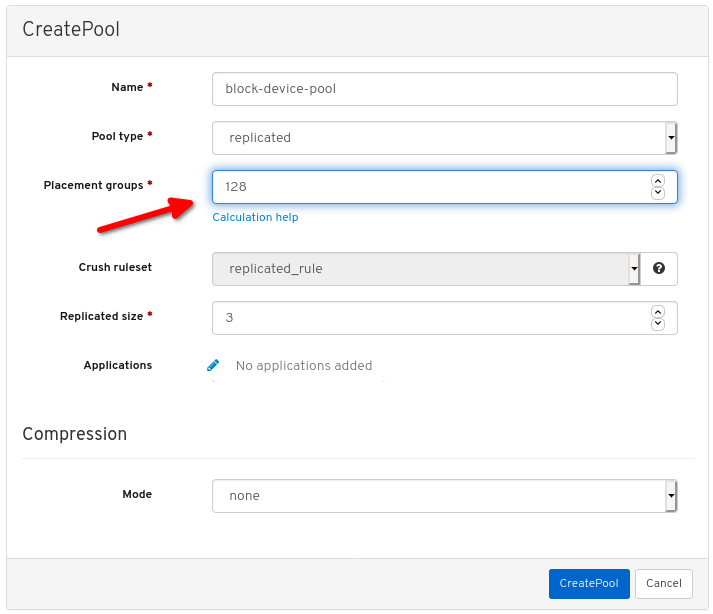

Set the Pool type to replicated:

Set the Placement Group (PG) number:

For assistance in choosing the PG number, use the PG calculator. Contact Red Hat Technical Support if unsure.

Set the replicated size:

Enable the

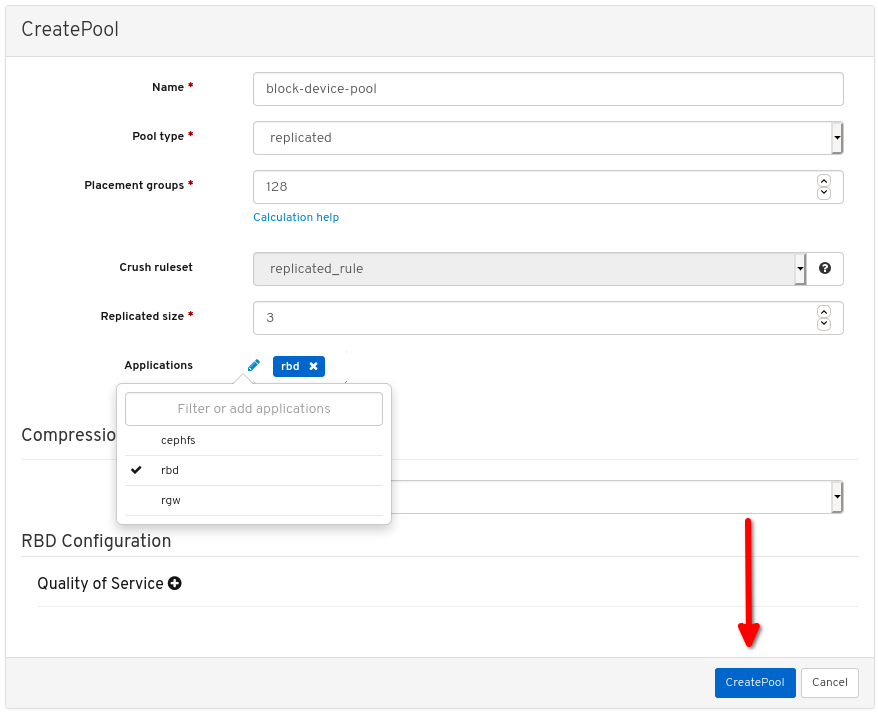

rbdapplication:

Click Create pool:

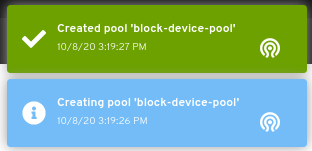

View the notifications indicating the pool was created successfully:

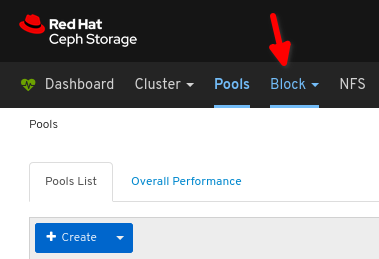

Click Block:

Click Images:

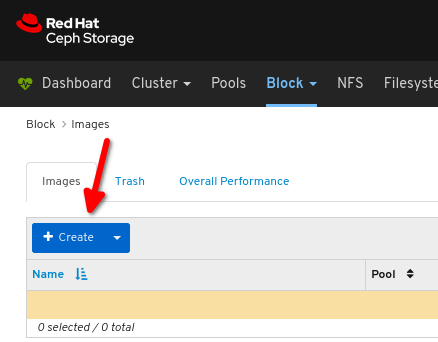

Click Create:

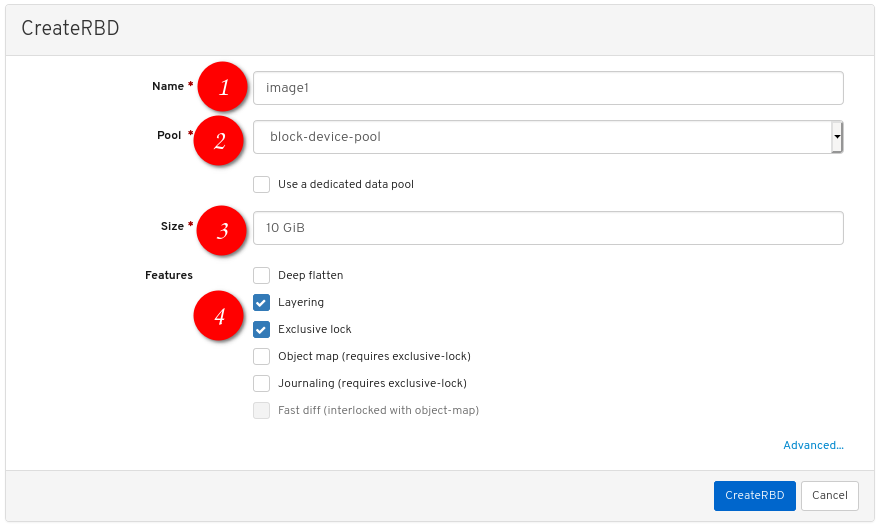

Configure the following:

- Enter the desired image name.

- Set Pool to the pool created earlier.

- Set the desired size of the image.

- Ensure Layering and Exclusive lock are the only enabled features:

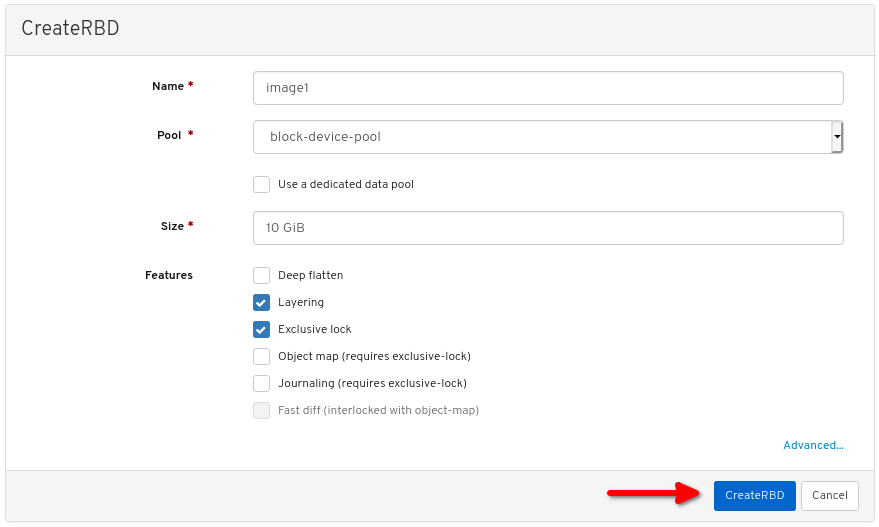

Click CreateRBD:

View the notification indicating the image was created successfully:

Additional Resources

- For more information, see Map and mount a Ceph Block Device on Linux using the command line.

- For more information, see the Dashboard Guide.

3.2.2. Map and mount a Ceph Block Device on Linux using the command line

You can map a Ceph Block Device from a Red Hat Enterprise Linux client using the Linux rbd kernel module. After mapping it, you can partition, format, and mount it, so you can write files to it.

Prerequisites

- A running Red Hat Ceph Storage cluster.

- A Ceph block device for a Linux kernel module client was created.

- A Red Hat Enterprise Linux client.

Procedure

On the Red Hat Enterprise Linux client node, enable the Red Hat Ceph Storage 4 Tools repository:

Red Hat Enterprise Linux 7

[root@client1 ~]# subscription-manager repos --enable=rhel-7-server-rhceph-4-tools-rpms

Red Hat Enterprise Linux 8

[root@client1 ~]# subscription-manager repos --enable=rhceph-4-tools-for-rhel-8-x86_64-rpms

Install the ceph-common RPM package:

Red Hat Enterprise Linux 7

[root@client1 ~]# yum install ceph-common

Red Hat Enterprise Linux 8

[root@client1 ~]# dnf install ceph-common

Copy the Ceph configuration file from a Monitor node to the Client node:

scp root@MONITOR_NODE:/etc/ceph/ceph.conf /etc/ceph/ceph.conf

Example

[root@client1 ~]# scp root@cluster1-node2:/etc/ceph/ceph.conf /etc/ceph/ceph.conf
root@192.168.0.32's password:
ceph.conf                                 100%  497   724.9KB/s   00:00

Copy the key file from a Monitor node to the Client node:

scp root@MONITOR_NODE:/etc/ceph/ceph.client.admin.keyring /etc/ceph/ceph.client.admin.keyring

Example

[root@client1 ~]# scp root@cluster1-node2:/etc/ceph/ceph.client.admin.keyring /etc/ceph/ceph.client.admin.keyring
root@192.168.0.32's password:
ceph.client.admin.keyring                 100%  151   265.0KB/s   00:00

Map the image:

rbd map --pool POOL_NAME IMAGE_NAME --id admin

Example

[root@client1 ~]# rbd map --pool block-device-pool image1 --id admin
/dev/rbd0

Create a partition table on the block device:

parted /dev/MAPPED_BLOCK_DEVICE mklabel msdos

Example

[root@client1 ~]# parted /dev/rbd0 mklabel msdos
Information: You may need to update /etc/fstab.

Create a partition for an XFS file system:

parted /dev/MAPPED_BLOCK_DEVICE mkpart primary xfs 0% 100%

Example

[root@client1 ~]# parted /dev/rbd0 mkpart primary xfs 0% 100%
Information: You may need to update /etc/fstab.

Format the partition:

mkfs.xfs /dev/MAPPED_BLOCK_DEVICE_WITH_PARTITION_NUMBER

Example

[root@client1 ~]# mkfs.xfs /dev/rbd0p1
meta-data=/dev/rbd0p1            isize=512    agcount=16, agsize=163824 blks
         =                       sectsz=512   attr=2, projid32bit=1
         =                       crc=1        finobt=1, sparse=1, rmapbt=0
         =                       reflink=1
data     =                       bsize=4096   blocks=2621184, imaxpct=25
         =                       sunit=16     swidth=16 blks
naming   =version 2              bsize=4096   ascii-ci=0, ftype=1
log      =internal log           bsize=4096   blocks=2560, version=2
         =                       sectsz=512   sunit=16 blks, lazy-count=1
realtime =none                   extsz=4096   blocks=0, rtextents=0

Create a directory to mount the new file system on:

mkdir PATH_TO_DIRECTORY

Example

[root@client1 ~]# mkdir /mnt/ceph

Mount the file system:

mount /dev/MAPPED_BLOCK_DEVICE_WITH_PARTITION_NUMBER PATH_TO_DIRECTORY

Example

[root@client1 ~]# mount /dev/rbd0p1 /mnt/ceph/

Verify that the file system is mounted and showing the correct size:

df -h PATH_TO_DIRECTORY

Example

[root@client1 ~]# df -h /mnt/ceph/
Filesystem      Size  Used Avail Use% Mounted on
/dev/rbd0p1      10G  105M  9.9G   2% /mnt/ceph
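The partition, format, and mount steps of this procedure can be collected into one function once the image is mapped. The following is a sketch under stated assumptions: the `run` and `prepare_and_mount` helpers and the `DRY_RUN` variable are hypothetical names, and the device /dev/rbd0 is the one returned by rbd map in the example. The script only prints the commands by default, because running them for real destroys any existing data on the device.

```shell
#!/usr/bin/env bash
# Print each command instead of executing it unless DRY_RUN=0 is set.
run() {
    if [ "${DRY_RUN:-1}" = "1" ]; then
        echo "$*"
    else
        "$@"
    fi
}

# prepare_and_mount MAPPED_DEVICE MOUNT_DIRECTORY
# Partitions the mapped device, creates an XFS file system on the first
# partition, mounts it, and reports its size.
prepare_and_mount() {
    local dev=$1 dir=$2
    run parted "$dev" mklabel msdos &&
    run parted "$dev" mkpart primary xfs 0% 100% &&
    run mkfs.xfs "${dev}p1" &&
    run mkdir -p "$dir" &&
    run mount "${dev}p1" "$dir" &&
    run df -h "$dir"
}

prepare_and_mount /dev/rbd0 /mnt/ceph
```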

Additional Resources

- For more information, see Create a Ceph Block Device for a Linux kernel module client using Dashboard.

- For more information, see Managing file systems for Red Hat Enterprise Linux 8.

- For more information, see Storage Administration Guide for Red Hat Enterprise Linux 7.

3.3. Getting a list of images

Get a list of Ceph block device images.

Prerequisites

- A running Red Hat Ceph Storage cluster.

- Root-level access to the node.

Procedure

To mount a block device image, first return a list of the images:

[root@rbd-client ~]# rbd list

3.4. Mapping a block device

Use rbd to map an image name to a kernel module. You must specify the image name, the pool name and the user name. rbd will load the RBD kernel module if it is not already loaded.

Prerequisites

- A running Red Hat Ceph Storage cluster.

- Root-level access to the node.

Procedure

Map an image name to a kernel module:

Syntax

rbd device map POOL_NAME/IMAGE_NAME --id USER_NAME

Example

[root@rbd-client ~]# rbd device map rbd/myimage --id admin

Specify a secret when using cephx authentication by either the keyring or a file containing the secret:

Syntax

[root@rbd-client ~]# rbd device map POOL_NAME/IMAGE_NAME --id USER_NAME --keyring PATH_TO_KEYRING

or

[root@rbd-client ~]# rbd device map POOL_NAME/IMAGE_NAME --id USER_NAME --keyfile PATH_TO_FILE

3.5. Displaying mapped block devices

You can display which block device images are mapped to the kernel module with the rbd command.

Prerequisites

- A running Red Hat Ceph Storage cluster.

- Root-level access to the node.

Procedure

Display the mapped block devices:

[root@rbd-client ~]# rbd device list

3.6. Unmapping a block device

You can unmap a block device image with the rbd command, by using the unmap option and providing the device name.

Prerequisites

- A running Red Hat Ceph Storage cluster.

- Root-level access to the node.

Procedure

Unmap the block device image:

Syntax

rbd device unmap /dev/rbd/POOL_NAME/IMAGE_NAME

Example

[root@rbd-client ~]# rbd device unmap /dev/rbd/rbd/foo

3.7. Segregating images within isolated namespaces within the same pool

When using Ceph Block Devices directly without a higher-level system, such as OpenStack or OpenShift Container Storage, it was previously not possible to restrict user access to specific block device images. By combining namespaces with CephX capabilities, you can restrict users to specific pool namespaces and so limit their access to the images.

You can use RADOS namespaces, a new level of identity to identify an object, to provide isolation between RADOS clients within a pool. For example, a client can only have full permissions on a namespace specific to them. This makes using a different RADOS client for each tenant feasible, which is particularly useful for a block device where many different tenants are accessing their own block device images.

You can segregate block device images within isolated namespaces within the same pool.

Prerequisites

- A running Red Hat Ceph Storage cluster.

- All clients upgraded to a 4.x kernel and to current librbd and librados libraries.

- Root-level access to the monitor and client nodes.

Procedure

Create an rbd pool:

Syntax

ceph osd pool create POOL_NAME PG_NUM

Example

[root@mon ~]# ceph osd pool create mypool 100
pool 'mypool' created

Associate the rbd pool with the RBD application:

Syntax

ceph osd pool application enable POOL_NAME rbd

Example

[root@mon ~]# ceph osd pool application enable mypool rbd
enabled application 'rbd' on pool 'mypool'

Initialize the pool with the RBD application:

Syntax

rbd pool init -p POOL_NAME

Example

[root@mon ~]# rbd pool init -p mypool

Create two namespaces:

Syntax

rbd namespace create --namespace NAMESPACE

Example

[root@mon ~]# rbd namespace create --namespace namespace1

[root@mon ~]# rbd namespace create --namespace namespace2

[root@mon ~]# rbd namespace ls --format=json
[{"name":"namespace2"},{"name":"namespace1"}]

Provide access to the namespaces for two users:

Syntax

ceph auth get-or-create client.USER_NAME mon 'profile rbd' osd 'profile rbd pool=rbd namespace=NAMESPACE' -o /etc/ceph/client.USER_NAME.keyring

Example

[root@mon ~]# ceph auth get-or-create client.testuser mon 'profile rbd' osd 'profile rbd pool=rbd namespace=namespace1' -o /etc/ceph/client.testuser.keyring

[root@mon ~]# ceph auth get-or-create client.newuser mon 'profile rbd' osd 'profile rbd pool=rbd namespace=namespace2' -o /etc/ceph/client.newuser.keyring

Get the key of the clients:

Syntax

ceph auth get client.USER_NAME

Example

[root@mon ~]# ceph auth get client.testuser
[client.testuser]
    key = AQDMp61hBf5UKRAAgjQ2In0Z3uwAase7mrlKnQ==
    caps mon = "profile rbd"
    caps osd = "profile rbd pool=rbd namespace=namespace1"
exported keyring for client.testuser

[root@mon ~]# ceph auth get client.newuser
[client.newuser]
    key = AQDfp61hVfLFHRAA7D80ogmZl80ROY+AUG4A+Q==
    caps mon = "profile rbd"
    caps osd = "profile rbd pool=rbd namespace=namespace2"
exported keyring for client.newuser

Create the block device images and use the pre-defined namespace within a pool:

Syntax

rbd create --namespace NAMESPACE IMAGE_NAME --size SIZE_IN_GB

Example

[root@mon ~]# rbd create --namespace namespace1 image01 --size 1G

[root@mon ~]# rbd create --namespace namespace2 image02 --size 1G

Optional: Get the details of the namespace and the associated image:

Syntax

rbd --namespace NAMESPACE ls --long

Example

[root@mon ~]# rbd --namespace namespace1 ls --long
NAME    SIZE  PARENT FMT PROT LOCK
image01 1 GiB        2

[root@mon ~]# rbd --namespace namespace2 ls --long
NAME    SIZE  PARENT FMT PROT LOCK
image02 1 GiB        2

Copy the Ceph configuration file from the Ceph Monitor node to the client node:

scp /etc/ceph/ceph.conf root@CLIENT_NODE:/etc/ceph/

Example

[root@mon ~]# scp /etc/ceph/ceph.conf root@host02:/etc/ceph/
root@host02's password:
ceph.conf                                 100%  497   724.9KB/s   00:00

Copy the admin keyring from the Ceph Monitor node to the client node:

Syntax

scp /etc/ceph/ceph.client.admin.keyring root@CLIENT_NODE:/etc/ceph

Example

[root@mon ~]# scp /etc/ceph/ceph.client.admin.keyring root@host02:/etc/ceph/
root@host02's password:
ceph.client.admin.keyring                 100%  151   265.0KB/s   00:00

Copy the keyrings of the users from the Ceph Monitor node to the client node:

Syntax

scp /etc/ceph/client.USER_NAME.keyring root@CLIENT_NODE:/etc/ceph/

Example

[root@mon ~]# scp /etc/ceph/client.newuser.keyring root@host02:/etc/ceph/

[root@mon ~]# scp /etc/ceph/client.testuser.keyring root@host02:/etc/ceph/

Map the block device image:

Syntax

rbd map --namespace NAMESPACE IMAGE_NAME -n client.USER_NAME --keyring /etc/ceph/client.USER_NAME.keyring

Example

[root@mon ~]# rbd map --namespace namespace1 image01 -n client.testuser --keyring=/etc/ceph/client.testuser.keyring
/dev/rbd0

[root@mon ~]# rbd map --namespace namespace2 image02 -n client.newuser --keyring=/etc/ceph/client.newuser.keyring
/dev/rbd1

Users cannot access images in the other namespaces in the same pool.

Example

[root@mon ~]# rbd map --namespace namespace2 image02 -n client.testuser --keyring=/etc/ceph/client.testuser.keyring
rbd: warning: image already mapped as /dev/rbd1
rbd: sysfs write failed
rbd: error asserting namespace: (1) Operation not permitted
In some cases useful info is found in syslog - try "dmesg | tail".
2021-12-06 02:49:08.106 7f8d4fde2500 -1 librbd::api::Namespace: exists: error asserting namespace: (1) Operation not permitted
rbd: map failed: (1) Operation not permitted

[root@mon ~]# rbd map --namespace namespace1 image01 -n client.newuser --keyring=/etc/ceph/client.newuser.keyring
rbd: warning: image already mapped as /dev/rbd0
rbd: sysfs write failed
rbd: error asserting namespace: (1) Operation not permitted
In some cases useful info is found in syslog - try "dmesg | tail".
2021-12-03 12:16:24.011 7fcad776a040 -1 librbd::api::Namespace: exists: error asserting namespace: (1) Operation not permitted
rbd: map failed: (1) Operation not permitted

Verify the device:

Example

[root@mon ~]# rbd showmapped id pool namespace image snap device 0 rbd namespace1 image01 - /dev/rbd0 1 rbd namespace2 image02 - /dev/rbd1

Chapter 4. Snapshot management

As a storage administrator, being familiar with Ceph’s snapshotting feature can help you manage the snapshots and clones of images stored in the Red Hat Ceph Storage cluster.

4.1. Prerequisites

- A running Red Hat Ceph Storage cluster.

4.2. Ceph block device snapshots

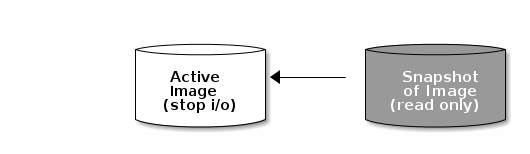

A snapshot is a read-only copy of the state of an image at a particular point in time. One of the advanced features of Ceph block devices is that you can create snapshots of the images to retain a history of an image’s state. Ceph also supports snapshot layering, which allows you to clone images quickly and easily, for example a virtual machine image. Ceph supports block device snapshots using the rbd command and many higher level interfaces, including QEMU, libvirt, OpenStack and CloudStack.

If a snapshot is taken while I/O is occurring, the snapshot might not contain the exact or latest data of the image, and the snapshot might have to be cloned to a new image to be mountable. Red Hat recommends stopping I/O before taking a snapshot of an image. If the image contains a filesystem, the filesystem must be in a consistent state before taking a snapshot. To stop I/O, you can use the fsfreeze command. For virtual machines, the qemu-guest-agent can be used to automatically freeze filesystems when creating a snapshot.
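The freeze, snapshot, and thaw sequence can be sketched as follows. This is a minimal illustration, not a supported procedure: the mount point /mnt/rbd-foo, the image rbd/foo, and the snapshot name are example values, and the run wrapper and DRY_RUN variable are hypothetical helpers that are not part of Ceph. With DRY_RUN=1, the default here, the commands are only printed.

```shell
#!/usr/bin/env bash
# Sketch: quiesce a filesystem, snapshot the backing image, then thaw it.
# MOUNT_POINT, IMAGE_SPEC, and SNAP_NAME are example values (assumptions).
MOUNT_POINT="${MOUNT_POINT:-/mnt/rbd-foo}"
IMAGE_SPEC="${IMAGE_SPEC:-rbd/foo}"
SNAP_NAME="${SNAP_NAME:-snapname}"
DRY_RUN="${DRY_RUN:-1}"

# run: print the command in dry-run mode, otherwise execute it.
run() {
    if [ "$DRY_RUN" = "1" ]; then
        printf '%s\n' "$*"
    else
        "$@"
    fi
}

snapshot_with_freeze() {
    # Stop if the filesystem cannot be frozen.
    run fsfreeze --freeze "$MOUNT_POINT" || return 1
    run rbd snap create "${IMAGE_SPEC}@${SNAP_NAME}"
    local rc=$?
    # Always thaw the filesystem, even if the snapshot failed.
    run fsfreeze --unfreeze "$MOUNT_POINT"
    return "$rc"
}

snapshot_with_freeze
```

Setting DRY_RUN=0 executes the commands instead of printing them. The thaw step runs regardless of the snapshot result so that the filesystem is not left frozen.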

Additional Resources

- See the fsfreeze(8) man page for more details.

4.3. The Ceph user and keyring

When cephx is enabled, you must specify a user name or ID and a path to the keyring containing the corresponding key for the user.

cephx is enabled by default.

You might also add the CEPH_ARGS environment variable to avoid re-entry of the following parameters:

Syntax

rbd --id USER_ID --keyring=/path/to/secret [commands]

rbd --name USERNAME --keyring=/path/to/secret [commands]

Example

[root@rbd-client ~]# rbd --id admin --keyring=/etc/ceph/ceph.keyring [commands]

[root@rbd-client ~]# rbd --name client.admin --keyring=/etc/ceph/ceph.keyring [commands]

Add the user and secret to the CEPH_ARGS environment variable so that you do not need to enter them each time.
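As a minimal sketch, the user and keyring from the example above can be stored in CEPH_ARGS once per shell session. The keyring path is the example value used in this section; adjust it for your environment.

```shell
# Store the user and keyring once; Ceph command-line tools such as rbd
# read the CEPH_ARGS environment variable on every invocation.
export CEPH_ARGS="--id admin --keyring=/etc/ceph/ceph.keyring"

# Later commands in the same shell can then omit the options, for example:
#   rbd snap ls rbd/foo
echo "CEPH_ARGS=${CEPH_ARGS}"
```

The variable applies only to the current shell and its child processes, so it must be set again in new sessions or added to a shell profile.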

4.4. Creating a block device snapshot

Create a snapshot of a Ceph block device.

Prerequisites

- A running Red Hat Ceph Storage cluster.

- Root-level access to the node.

Procedure

Specify the snap create option, the pool name, and the image name:

Syntax

rbd --pool POOL_NAME snap create --snap SNAP_NAME IMAGE_NAME

rbd snap create POOL_NAME/IMAGE_NAME@SNAP_NAME

Example

[root@rbd-client ~]# rbd --pool rbd snap create --snap snapname foo

[root@rbd-client ~]# rbd snap create rbd/foo@snapname

4.5. Listing the block device snapshots

List the block device snapshots.

Prerequisites

- A running Red Hat Ceph Storage cluster.

- Root-level access to the node.

Procedure

Specify the pool name and the image name:

Syntax

rbd --pool POOL_NAME snap ls IMAGE_NAME

rbd snap ls POOL_NAME/IMAGE_NAME

Example

[root@rbd-client ~]# rbd --pool rbd snap ls foo

[root@rbd-client ~]# rbd snap ls rbd/foo

4.6. Rolling back a block device snapshot

Roll back a block device snapshot.

Rolling back an image to a snapshot means overwriting the current version of the image with data from a snapshot. The time it takes to execute a rollback increases with the size of the image. It is faster to clone from a snapshot than to roll back an image to a snapshot, and cloning is the preferred method of returning to a pre-existing state.

Prerequisites

- A running Red Hat Ceph Storage cluster.

- Root-level access to the node.

Procedure

Specify the snap rollback option, the pool name, the image name, and the snapshot name:

Syntax

rbd --pool POOL_NAME snap rollback --snap SNAP_NAME IMAGE_NAME

rbd snap rollback POOL_NAME/IMAGE_NAME@SNAP_NAME

Example

[root@rbd-client ~]# rbd --pool rbd snap rollback --snap snapname foo

[root@rbd-client ~]# rbd snap rollback rbd/foo@snapname

4.7. Deleting a block device snapshot

Delete a snapshot for Ceph block devices.

Prerequisites

- A running Red Hat Ceph Storage cluster.

- Root-level access to the node.

Procedure

Specify the snap rm option, the pool name, the image name, and the snapshot name:

Syntax

rbd --pool POOL_NAME snap rm --snap SNAP_NAME IMAGE_NAME

rbd snap rm POOL_NAME/IMAGE_NAME@SNAP_NAME

Example

[root@rbd-client ~]# rbd --pool rbd snap rm --snap snapname foo

[root@rbd-client ~]# rbd snap rm rbd/foo@snapname

If an image has any clones, the cloned images retain reference to the parent image snapshot. To delete the parent image snapshot, you must flatten the child images first.

Ceph OSD daemons delete data asynchronously, so deleting a snapshot does not free up the disk space immediately.

Additional Resources

- See the Flattening cloned images section in the Red Hat Ceph Storage Block Device Guide for details.

4.8. Purging the block device snapshots

Purge block device snapshots.

Prerequisites

- A running Red Hat Ceph Storage cluster.

- Root-level access to the node.

Procedure

Specify the snap purge option and the image name:

Syntax

rbd --pool POOL_NAME snap purge IMAGE_NAME

rbd snap purge POOL_NAME/IMAGE_NAME

Example

[root@rbd-client ~]# rbd --pool rbd snap purge foo

[root@rbd-client ~]# rbd snap purge rbd/foo

4.9. Renaming a block device snapshot

Rename a block device snapshot.

Prerequisites

- A running Red Hat Ceph Storage cluster.

- Root-level access to the node.

Procedure

To rename a snapshot:

Syntax

rbd snap rename POOL_NAME/IMAGE_NAME@ORIGINAL_SNAPSHOT_NAME POOL_NAME/IMAGE_NAME@NEW_SNAPSHOT_NAME

Example

[root@rbd-client ~]# rbd snap rename data/dataset@snap1 data/dataset@snap2

This renames the snap1 snapshot of the dataset image on the data pool to snap2.

Execute the rbd help snap rename command to display additional details on renaming snapshots.

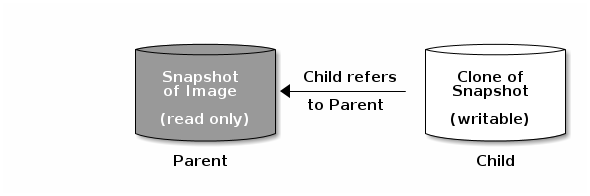

4.10. Ceph block device layering

Ceph supports the ability to create many copy-on-write (COW) or copy-on-read (COR) clones of a block device snapshot. Snapshot layering enables Ceph block device clients to create images very quickly. For example, you might create a block device image with a Linux VM written to it. Then, snapshot the image, protect the snapshot, and create as many clones as you like. A snapshot is read-only, so cloning a snapshot simplifies semantics—making it possible to create clones rapidly.

The terms parent and child mean a Ceph block device snapshot, parent, and the corresponding image cloned from the snapshot, child. These terms are important for the command line usage below.

Each cloned image, the child, stores a reference to its parent image, which enables the cloned image to open the parent snapshot and read it. This reference is removed when the clone is flattened, that is, when information from the snapshot is completely copied to the clone.

A clone of a snapshot behaves exactly like any other Ceph block device image. You can read from, write to, clone, and resize the cloned images. There are no special restrictions with cloned images. However, the clone of a snapshot refers to the snapshot, so you MUST protect the snapshot before you clone it.

A clone of a snapshot can be a copy-on-write (COW) or copy-on-read (COR) clone. Copy-on-write (COW) is always enabled for clones while copy-on-read (COR) has to be enabled explicitly. Copy-on-write (COW) copies data from the parent to the clone when it writes to an unallocated object within the clone. Copy-on-read (COR) copies data from the parent to the clone when it reads from an unallocated object within the clone. Reading data from a clone will only read data from the parent if the object does not yet exist in the clone. The RADOS Block Device (RBD) breaks up large images into multiple objects. The default object size is 4 MB, and all copy-on-write (COW) and copy-on-read (COR) operations occur on a full object. That is, writing 1 byte to a clone results in a 4 MB object being read from the parent and written to the clone if the destination object does not already exist in the clone from a previous COW/COR operation.
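The object-granularity arithmetic can be illustrated with a short calculation. This is a simplified sketch that assumes the default 4 MB object size, a plain (non-striped) layout, and that every object touched by the write is still unallocated in the clone, so the result is an upper bound. The function names are hypothetical.

```shell
#!/usr/bin/env bash
# Sketch: estimate how much data a write to a clone copies from the parent.
OBJECT_SIZE=$((4 * 1024 * 1024))   # default object size: 4 MB, in bytes

# object_index OFFSET -> index of the object that contains the byte offset
object_index() {
    echo $(( $1 / OBJECT_SIZE ))
}

# cow_copy_bytes OFFSET LENGTH -> bytes copied from the parent, assuming
# every touched object is unallocated in the clone (upper bound)
cow_copy_bytes() {
    local first=$(( $1 / OBJECT_SIZE ))
    local last=$(( ($1 + $2 - 1) / OBJECT_SIZE ))
    echo $(( (last - first + 1) * OBJECT_SIZE ))
}

# A 1-byte write at offset 0 copies one full object.
cow_copy_bytes 0 1
# A 2-byte write that straddles an object boundary copies two objects.
cow_copy_bytes $((OBJECT_SIZE - 1)) 2
```

The second call shows why small writes near object boundaries can be disproportionately expensive on a fresh clone.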

Whether or not copy-on-read (COR) is enabled, any reads that cannot be satisfied by reading an underlying object from the clone will be rerouted to the parent. Since there is practically no limit to the number of parents, meaning that you can clone a clone, this reroute continues until an object is found or you hit the base parent image. If copy-on-read (COR) is enabled, any reads that fail to be satisfied directly from the clone result in a full object read from the parent and writing that data to the clone so that future reads of the same extent can be satisfied from the clone itself without the need of reading from the parent.

This is essentially an on-demand, object-by-object flatten operation. This is especially useful when the clone is separated from its parent by a high-latency connection, for example when the parent is in a different pool in another geographical location. Copy-on-read (COR) reduces the amortized latency of reads. The first few reads have high latency because they result in extra data being read from the parent. For example, you read 1 byte from the clone, but 4 MB has to be read from the parent and written to the clone. All future reads of that object are served from the clone itself.

To create copy-on-read (COR) clones from a snapshot, you must explicitly enable this feature by adding rbd_clone_copy_on_read = true under the [global] or [client] section in the ceph.conf file.

Additional Resources

- For more information on flattening, see the Flattening cloned images section in the Red Hat Ceph Storage Block Device Guide.

4.11. Protecting a block device snapshot

Clones access the parent snapshots. All clones would break if a user inadvertently deleted the parent snapshot. To prevent data loss, by default, you MUST protect the snapshot before you can clone it.

Prerequisites

- A running Red Hat Ceph Storage cluster.

- Root-level access to the node.

Procedure

Specify POOL_NAME, IMAGE_NAME, and SNAPSHOT_NAME in the following command:

Syntax

rbd --pool POOL_NAME snap protect --image IMAGE_NAME --snap SNAPSHOT_NAME

rbd snap protect POOL_NAME/IMAGE_NAME@SNAPSHOT_NAME

Example

[root@rbd-client ~]# rbd --pool rbd snap protect --image my-image --snap my-snapshot

[root@rbd-client ~]# rbd snap protect rbd/my-image@my-snapshot

Note

You cannot delete a protected snapshot.

4.12. Cloning a block device snapshot

Clone a block device snapshot to create a read or write child image of the snapshot within the same pool or in another pool. One use case would be to maintain read-only images and snapshots as templates in one pool, and writable clones in another pool.

By default, you must protect the snapshot before you can clone it. To avoid having to protect the snapshot before you clone it, set ceph osd set-require-min-compat-client mimic. You can set it to higher versions than mimic as well.

Prerequisites

- A running Red Hat Ceph Storage cluster.

- Root-level access to the node.

Procedure

To clone a snapshot, you need to specify the parent pool, snapshot, child pool and image name:

Syntax

rbd clone --pool POOL_NAME --image PARENT_IMAGE --snap SNAP_NAME --dest-pool POOL_NAME --dest CHILD_IMAGE_NAME

rbd clone POOL_NAME/PARENT_IMAGE@SNAP_NAME POOL_NAME/CHILD_IMAGE_NAME

Example

[root@rbd-client ~]# rbd clone --pool rbd --image my-image --snap my-snapshot --dest-pool rbd --dest new-image

[root@rbd-client ~]# rbd clone rbd/my-image@my-snapshot rbd/new-image

4.13. Unprotecting a block device snapshot

Before you can delete a snapshot, you must unprotect it. Additionally, you may NOT delete snapshots that have references from clones. You must flatten each clone of a snapshot before you can delete the snapshot.

Prerequisites

- A running Red Hat Ceph Storage cluster.

- Root-level access to the node.

Procedure

Run the following commands:

Syntax

rbd --pool POOL_NAME snap unprotect --image IMAGE_NAME --snap SNAPSHOT_NAME

rbd snap unprotect POOL_NAME/IMAGE_NAME@SNAPSHOT_NAME

Example

[root@rbd-client ~]# rbd --pool rbd snap unprotect --image my-image --snap my-snapshot

[root@rbd-client ~]# rbd snap unprotect rbd/my-image@my-snapshot

4.14. Listing the children of a snapshot

List the children of a snapshot.

Prerequisites

- A running Red Hat Ceph Storage cluster.

- Root-level access to the node.

Procedure

To list the children of a snapshot, execute the following:

Syntax

rbd --pool POOL_NAME children --image IMAGE_NAME --snap SNAP_NAME

rbd children POOL_NAME/IMAGE_NAME@SNAPSHOT_NAME

Example

[root@rbd-client ~]# rbd --pool rbd children --image my-image --snap my-snapshot

[root@rbd-client ~]# rbd children rbd/my-image@my-snapshot

4.15. Flattening cloned images

Cloned images retain a reference to the parent snapshot. When you remove the reference from the child clone to the parent snapshot, you effectively "flatten" the image by copying the information from the snapshot to the clone. The time it takes to flatten a clone increases with the size of the snapshot. Because a flattened image contains all the information from the snapshot, a flattened image will use more storage space than a layered clone.

If the deep flatten feature is enabled on an image, the image clone is dissociated from its parent by default.

Prerequisites

- A running Red Hat Ceph Storage cluster.

- Root-level access to the node.

Procedure

To delete a parent image snapshot associated with child images, you must flatten the child images first:

Syntax

rbd --pool POOL_NAME flatten --image IMAGE_NAME

rbd flatten POOL_NAME/IMAGE_NAME

Example

[root@rbd-client ~]# rbd --pool rbd flatten --image my-image

[root@rbd-client ~]# rbd flatten rbd/my-image
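The snapshot, protect, clone, flatten, unprotect, and delete operations described in this chapter depend on one another's order. The following sketch only prints the rbd commands for one full cycle so that the sequence can be reviewed; it does not run them. The function name is hypothetical, and the image, snapshot, and clone names are the example values used in this chapter.

```shell
#!/usr/bin/env bash
# Sketch: print the rbd commands for a full clone lifecycle, in order.
# Nothing is executed; review the output before running any of it.
print_clone_lifecycle() {
    local parent="$1" snap="$2" child="$3"
    printf '%s\n' \
        "rbd snap create ${parent}@${snap}" \
        "rbd snap protect ${parent}@${snap}" \
        "rbd clone ${parent}@${snap} ${child}" \
        "rbd children ${parent}@${snap}" \
        "rbd flatten ${child}" \
        "rbd snap unprotect ${parent}@${snap}" \
        "rbd snap rm ${parent}@${snap}"
}

print_clone_lifecycle rbd/my-image my-snapshot rbd/new-image
```

The flatten step targets the child image, while the unprotect and delete steps target the parent snapshot. Every child reported by the children command must be flattened before the snapshot can be unprotected and deleted.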

Chapter 5. Mirroring Ceph block devices

As a storage administrator, you can add another layer of redundancy to Ceph block devices by mirroring data images between Red Hat Ceph Storage clusters. Understanding and using Ceph block device mirroring can provide you protection against data loss, such as a site failure. There are two configurations for mirroring Ceph block devices, one-way mirroring or two-way mirroring, and you can configure mirroring on pools and individual images.

5.1. Prerequisites

- A minimum of two healthy running Red Hat Ceph Storage clusters.

- Network connectivity between the two storage clusters.

- Access to a Ceph client node for each Red Hat Ceph Storage cluster.

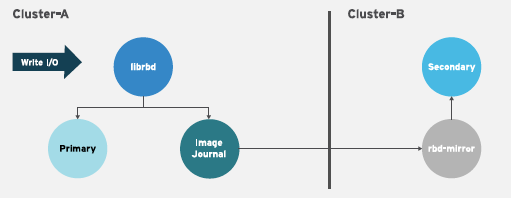

5.2. Ceph block device mirroring

RADOS Block Device (RBD) mirroring is a process of asynchronous replication of Ceph block device images between two or more Ceph storage clusters. By locating Ceph storage clusters in different geographic locations, RBD mirroring can help you recover from a site disaster. Journal-based Ceph block device mirroring ensures point-in-time consistent replicas of all changes to an image, including reads and writes, block device resizing, snapshots, clones and flattening.

RBD mirroring uses exclusive locks and the journaling feature to record all modifications to an image in the order in which they occur. This ensures that a crash-consistent mirror of an image is available.

The CRUSH hierarchies supporting primary and secondary pools that mirror block device images must have the same capacity and performance characteristics, and must have adequate bandwidth to ensure mirroring without excess latency. For example, if you have X MB/s average write throughput to images in the primary storage cluster, the network must support N * X throughput in the network connection to the secondary site plus a safety factor of Y% to mirror N images.
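The bandwidth estimate above can be read as N * X * (1 + Y/100). The following sketch computes it with integer arithmetic and rounds up. The function name and the sample figures are hypothetical and serve only to illustrate the formula.

```shell
#!/usr/bin/env bash
# Sketch: required link throughput for mirroring N images.
# required_bandwidth N X Y
#   N = number of mirrored images
#   X = average write throughput per image, in MB/s
#   Y = safety factor, in percent
# Prints N * X * (1 + Y/100) in MB/s, rounded up to a whole number.
required_bandwidth() {
    local n="$1" x="$2" y="$3"
    echo $(( (n * x * (100 + y) + 99) / 100 ))
}

# Example: 10 images at 5 MB/s each with a 20% safety factor.
required_bandwidth 10 5 20
```

With these sample values the base throughput is 50 MB/s, and the 20% safety factor brings the requirement to 60 MB/s.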

The rbd-mirror daemon is responsible for synchronizing images from one Ceph storage cluster to another Ceph storage cluster by pulling changes from the remote primary image and writing those changes to the local, non-primary image. The rbd-mirror daemon can run either on a single Ceph storage cluster for one-way mirroring or on two Ceph storage clusters for two-way mirroring that participate in the mirroring relationship.

For RBD mirroring to work, either using one-way or two-way replication, a couple of assumptions are made:

- A pool with the same name exists on both storage clusters.

- A pool contains journal-enabled images you want to mirror.

In one-way or two-way replication, each instance of rbd-mirror must be able to connect to the other Ceph storage cluster simultaneously. Additionally, the network must have sufficient bandwidth between the two data center sites to handle mirroring.

One-way Replication

One-way mirroring implies that a primary image or pool of images in one storage cluster gets replicated to a secondary storage cluster. One-way mirroring also supports replicating to multiple secondary storage clusters.

On the secondary storage cluster, the image is the non-primary replica; that is, Ceph clients cannot write to the image. When data is mirrored from a primary storage cluster to a secondary storage cluster, the rbd-mirror daemon runs ONLY on the secondary storage cluster.

For one-way mirroring to work, a couple of assumptions are made:

- You have two Ceph storage clusters and you want to replicate images from a primary storage cluster to a secondary storage cluster.

- The secondary storage cluster has a Ceph client node attached to it running the rbd-mirror daemon. The rbd-mirror daemon will connect to the primary storage cluster to sync images to the secondary storage cluster.

Two-way Replication

Two-way replication adds an rbd-mirror daemon on the primary cluster so images can be demoted on it and promoted on the secondary cluster. Changes can then be made to the images on the secondary cluster and they will be replicated in the reverse direction, from secondary to primary. Both clusters must have rbd-mirror running to allow promoting and demoting images on either cluster. Currently, two-way replication is only supported between two sites.

For two-way mirroring to work, a couple of assumptions are made:

- You have two storage clusters and you want to be able to replicate images between them in either direction.

- Both storage clusters have a client node attached to them running the rbd-mirror daemon. The rbd-mirror daemon running on the secondary storage cluster will connect to the primary storage cluster to synchronize images to secondary, and the rbd-mirror daemon running on the primary storage cluster will connect to the secondary storage cluster to synchronize images to primary.

As of Red Hat Ceph Storage 4, running multiple active rbd-mirror daemons in a single cluster is supported.

Mirroring Modes

Mirroring is configured on a per-pool basis with mirror peering storage clusters. Ceph supports two mirroring modes, depending on the type of images in the pool.

- Pool Mode: All images in a pool with the journaling feature enabled are mirrored.

- Image Mode: Only a specific subset of images within a pool are mirrored. You must enable mirroring for each image separately.

Image States

Whether or not an image can be modified depends on its state:

- Images in the primary state can be modified.

- Images in the non-primary state cannot be modified.

Images are automatically promoted to primary when mirroring is first enabled on an image. The promotion can happen:

- Implicitly by enabling mirroring in pool mode.

- Explicitly by enabling mirroring of a specific image.

It is possible to demote primary images and promote non-primary images.

Additional Resources

- See the Image promotion and demotion section of the Red Hat Ceph Storage Block Device Guide for more details.

5.3. Configuring one-way mirroring using Ansible

This procedure uses ceph-ansible to configure one-way replication of images on a primary storage cluster known as site-a, to a secondary storage cluster known as site-b. In the following examples, data is the name of the pool that contains the images to be mirrored.

Prerequisites

- Two running Red Hat Ceph Storage clusters.

- A Ceph client node.

- A pool with the same name exists on both clusters.

- Images within the pool must have exclusive-lock and journaling enabled for journal-based mirroring.

When using one-way replication, you can mirror to multiple secondary storage clusters.

Procedure

On the cluster where the images originate, enable the exclusive-lock and journaling features on an image.

For new images, use the --image-feature option:

Syntax

rbd create IMAGE_NAME --size MEGABYTES --pool POOL_NAME --image-feature FEATURE[,FEATURE]

Example

[root@rbd-client ~]# rbd create image1 --size 1024 --pool data --image-feature exclusive-lock,journaling

For existing images, use the rbd feature enable command:

Syntax

rbd feature enable POOL_NAME/IMAGE_NAME FEATURE_NAME

Example

[root@rbd-client ~]# rbd feature enable data/image1 exclusive-lock,journaling

To enable exclusive-lock and journaling on all new images by default, add the following setting to the Ceph configuration file:

rbd_default_features = 125
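The value 125 is a bitmask. As a sketch, it can be reproduced by combining the individual RBD feature bits. The bit values below are the upstream RBD feature values and are not stated in this guide, so verify them against your release before relying on them. The has_feature helper is hypothetical.

```shell
#!/usr/bin/env bash
# Sketch: decompose rbd_default_features = 125 into feature bits.
# Bit values follow the upstream RBD feature definitions (assumption:
# unchanged in your release).
LAYERING=1
STRIPING=2
EXCLUSIVE_LOCK=4
OBJECT_MAP=8
FAST_DIFF=16
DEEP_FLATTEN=32
JOURNALING=64

default_features=$(( LAYERING | EXCLUSIVE_LOCK | OBJECT_MAP | FAST_DIFF | DEEP_FLATTEN | JOURNALING ))
echo "rbd_default_features = ${default_features}"

# has_feature MASK BIT -> exit status 0 if BIT is set in MASK
has_feature() {
    [ $(( $1 & $2 )) -ne 0 ]
}

if has_feature "$default_features" "$JOURNALING"; then
    echo "journaling is enabled"
fi
```

Under these bit values, 125 enables every listed feature except striping, which includes the exclusive-lock and journaling features that journal-based mirroring requires.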

In the site-a cluster, complete the following steps:

On a monitor node, create the user that the rbd-mirror daemon will use to connect to the cluster. The example creates a site-a user and outputs the key to a file named site-a.client.site-a.keyring:

Syntax

ceph auth get-or-create client.CLUSTER_NAME mon 'profile rbd' osd 'profile rbd pool=data' -o /etc/ceph/CLUSTER_NAME.client.USER_NAME.keyring

Example

[root@mon ~]# ceph auth get-or-create client.site-a mon 'profile rbd' osd 'profile rbd pool=data' -o /etc/ceph/site-a.client.site-a.keyring

Copy the Ceph configuration file and the newly created key file from the monitor node to the site-b monitor and client nodes.

Rename the Ceph configuration file from ceph.conf to CLUSTER-NAME.conf. In these examples, the file is /etc/ceph/site-a.conf.

In the site-b cluster, complete the following steps:

On the Ansible administration node, add an [rbdmirrors] group in the Ansible inventory file. The usual inventory file is /etc/ansible/hosts.

Under the [rbdmirrors] group, add the name of the site-b client node on which the rbd-mirror daemon will run. The daemon will pull image changes from site-a to site-b.

[rbdmirrors]
ceph-client

Navigate to the /usr/share/ceph-ansible/ directory:

[root@admin ~]# cd /usr/share/ceph-ansible

Create a new rbdmirrors.yml file by copying group_vars/rbdmirrors.yml.sample to group_vars/rbdmirrors.yml:

[root@admin ceph-ansible]# cp group_vars/rbdmirrors.yml.sample group_vars/rbdmirrors.yml

Open the group_vars/rbdmirrors.yml file for editing.

Set ceph_rbd_mirror_configure to true. Set ceph_rbd_mirror_pool to the pool in which you want to mirror images. In these examples, data is the name of the pool.

ceph_rbd_mirror_configure: true
ceph_rbd_mirror_pool: "data"

By default, ceph-ansible configures mirroring using pool mode, which mirrors all images in a pool. Enable image mode where only images that have mirroring explicitly enabled are mirrored. To enable image mode, set ceph_rbd_mirror_mode to image:

ceph_rbd_mirror_mode: image

Set a name for the cluster that rbd-mirror will pull from. In these examples, the other cluster is site-a.

ceph_rbd_mirror_remote_cluster: "site-a"

On the Ansible administration node, set the user name of the key using ceph_rbd_mirror_remote_user. Use the same name you used when you created the key. In these examples the user is named client.site-a.

ceph_rbd_mirror_remote_user: "client.site-a"

As the ceph-ansible user, run the Ansible playbook:

Bare-metal deployments:

[user@admin ceph-ansible]$ ansible-playbook site.yml --limit rbdmirrors -i hosts

Container deployments:

[ansible@admin ceph-ansible]$ ansible-playbook site-container.yml --limit rbdmirrors -i hosts


Explicitly enable mirroring on the desired images in both site-a and site-b clusters:

Syntax

Journal-based mirroring:

rbd mirror image enable POOL/IMAGE

Snapshot-based mirroring:

rbd mirror image enable POOL/IMAGE snapshot

Example

[root@mon ~]# rbd mirror image enable data/image1

[root@mon ~]# rbd mirror image enable data/image1 snapshot

Note

Repeat this step whenever you want to mirror a new image to the peer cluster.

Verify the mirroring status. Run the following command from a Ceph Monitor node in the site-b cluster:

Example

Journal-based mirroring:

[root@mon ~]# rbd mirror image status data/image1
image1:
  global_id:   7d486c3f-d5a1-4bee-ae53-6c4f1e0c8eac
  state:       up+replaying
  description: replaying, master_position=[object_number=3, tag_tid=1, entry_tid=3], mirror_position=[object_number=3, tag_tid=1, entry_tid=3], entries_behind_master=0
  last_update: 2019-04-22 13:19:27

Snapshot-based mirroring:

[root@mon ~]# rbd mirror image status data/image1
image1:
  global_id:   06acc9e6-a63d-4aa1-bd0d-4f3a79b0ae33
  state:       up+replaying
  description: replaying, {"bytes_per_second":0.0,"bytes_per_snapshot":0.0,"local_snapshot_timestamp":1642689843,"remote_snapshot_timestamp":1642689843,"replay_state":"idle"}
  service:     admin on ceph-rbd2-vasi-43-5hwia4-node2
  last_update: 2022-01-20 12:41:57

Note

Based on the connection between the sites, mirroring can take a long time to sync the images.

5.4. Configuring two-way mirroring using Ansible

This procedure uses ceph-ansible to configure two-way replication so images can be mirrored in either direction between two clusters known as site-a and site-b. In the following examples, data is the name of the pool that contains the images to be mirrored.

Two-way mirroring does not allow simultaneous writes to be made to the same image on either cluster. Images are promoted on one cluster and demoted on another. Depending on their status, they will mirror in one direction or the other.

Prerequisites

- Two running Red Hat Ceph Storage clusters.

- Each cluster has a client node.

- A pool with the same name exists on both clusters.

- Images within the pool must have exclusive-lock and journaling enabled for journal-based mirroring.

Procedure

On the cluster where the images originate, enable the exclusive-lock and journaling features on an image.

For new images, use the --image-feature option:

Syntax

rbd create IMAGE_NAME --size MEGABYTES --pool POOL_NAME --image-feature FEATURE[,FEATURE]

Example

[root@rbd-client ~]# rbd create image1 --size 1024 --pool data --image-feature exclusive-lock,journaling

For existing images, use the rbd feature enable command:

Syntax

rbd feature enable POOL_NAME/IMAGE_NAME FEATURE_NAME

Example

[root@rbd-client ~]# rbd feature enable data/image1 exclusive-lock,journaling

To enable exclusive-lock and journaling on all new images by default, add the following setting to the Ceph configuration file:

rbd_default_features = 125

In the site-a cluster, complete the following steps:

On a monitor node, create the user the rbd-mirror daemon will use to connect to the cluster. The example creates a site-a user and outputs the key to a file named site-a.client.site-a.keyring, and the Ceph configuration file is /etc/ceph/site-a.conf.

Syntax

ceph auth get-or-create client.PRIMARY_CLUSTER_NAME mon 'profile rbd' osd 'profile rbd pool=data' -o /etc/ceph/PRIMARY_CLUSTER_NAME.client.USER_NAME.keyring -c /etc/ceph/PRIMARY_CLUSTER_NAME.conf

Example

[root@mon ~]# ceph auth get-or-create client.site-a mon 'profile rbd' osd 'profile rbd pool=data' -o /etc/ceph/site-a.client.site-a.keyring -c /etc/ceph/site-a.conf

Copy the keyring to the site-b cluster. Copy the file to the client node in the site-b cluster that the rbd-mirror daemon will run on. Save the file to /etc/ceph/site-a.client.site-a.keyring:

Syntax

scp /etc/ceph/PRIMARY_CLUSTER_NAME.client.USER_NAME.keyring root@SECONDARY_CLIENT_NODE_NAME:/etc/ceph/PRIMARY_CLUSTER_NAME.client.USER_NAME.keyring

Example

[root@mon ~]# scp /etc/ceph/site-a.client.site-a.keyring root@client.site-b:/etc/ceph/site-a.client.site-a.keyring

Copy the Ceph configuration file from the monitor node to the site-b monitor node and client nodes. The Ceph configuration file in this example is /etc/ceph/site-a.conf.

Syntax

scp /etc/ceph/PRIMARY_CLUSTER_NAME.conf root@SECONDARY_MONITOR_NODE_NAME:/etc/ceph/PRIMARY_CLUSTER_NAME.conf

scp /etc/ceph/PRIMARY_CLUSTER_NAME.conf user@SECONDARY_CLIENT_NODE_NAME:/etc/ceph/PRIMARY_CLUSTER_NAME.conf

Example

[root@mon ~]# scp /etc/ceph/site-a.conf root@mon.site-b:/etc/ceph/site-a.conf

[root@mon ~]# scp /etc/ceph/site-a.conf user@client.site-b:/etc/ceph/site-a.conf

In the site-b cluster, complete the following steps:

Configure mirroring from site-a to site-b. On the Ansible administration node, add an [rbdmirrors] group in the Ansible inventory file, usually /usr/share/ceph-ansible/hosts.

Under the [rbdmirrors] group, add the name of a site-b client node that the rbd-mirror daemon will run on. This daemon pulls image changes from site-a to site-b.

Example

[rbdmirrors]
client.site-b

Navigate to the /usr/share/ceph-ansible/ directory:

[root@admin ~]# cd /usr/share/ceph-ansible

Create a new rbdmirrors.yml file by copying group_vars/rbdmirrors.yml.sample to group_vars/rbdmirrors.yml:

[root@admin ceph-ansible]# cp group_vars/rbdmirrors.yml.sample group_vars/rbdmirrors.yml

Open the group_vars/rbdmirrors.yml file for editing.

Set ceph_rbd_mirror_configure to true, and set ceph_rbd_mirror_pool to the pool you want to mirror images in. In these examples, data is the name of the pool.

ceph_rbd_mirror_configure: true
ceph_rbd_mirror_pool: "data"

By default, ceph-ansible configures mirroring using pool mode, which mirrors all images in a pool. Enable image mode where only images that have mirroring explicitly enabled are mirrored. To enable image mode, set ceph_rbd_mirror_mode to image:

ceph_rbd_mirror_mode: image

Set a name for the cluster that rbd-mirror will pull from in the group_vars/rbdmirrors.yml file. In these examples, the other cluster is site-a.

ceph_rbd_mirror_remote_cluster: "site-a"

On the Ansible administration node, set the user name of the key using ceph_rbd_mirror_remote_user in the group_vars/rbdmirrors.yml file. Use the same name you used when you created the key. In these examples the user is named client.site-a.

ceph_rbd_mirror_remote_user: "client.site-a"

As the ansible user, run the Ansible playbook:

Bare-metal deployments:

[user@admin ceph-ansible]$ ansible-playbook site.yml --limit rbdmirrors -i hosts

Container deployments:

[user@admin ceph-ansible]$ ansible-playbook site-container.yml --limit rbdmirrors -i hosts


Verify the mirroring status. Run the following command from a Ceph Monitor node on the site-b cluster:

Example

Journal-based mirroring:

[root@mon ~]# rbd mirror image status data/image1
image1:
  global_id:   7d486c3f-d5a1-4bee-ae53-6c4f1e0c8eac
  state:       up+replaying
  description: replaying, master_position=[object_number=3, tag_tid=1, entry_tid=3], mirror_position=[object_number=3, tag_tid=1, entry_tid=3], entries_behind_master=0
  last_update: 2021-04-22 13:19:27

Snapshot-based mirroring:

[root@mon ~]# rbd mirror image status data/image1
image1:
  global_id:   06acc9e6-a63d-4aa1-bd0d-4f3a79b0ae33
  state:       up+replaying
  description: replaying, {"bytes_per_second":0.0,"bytes_per_snapshot":0.0,"local_snapshot_timestamp":1642689843,"remote_snapshot_timestamp":1642689843,"replay_state":"idle"}
  service:     admin on ceph-rbd2-vasi-43-5hwia4-node2
  last_update: 2022-01-20 12:41:57

Note

Based on the connection between the sites, mirroring can take a long time to sync the images.

In the site-b cluster, complete the following steps. The steps are largely the same as above:

On a monitor node, create the user the rbd-mirror daemon will use to connect to the cluster. The example creates a site-b user and outputs the key to a file named site-b.client.site-b.keyring, and the Ceph configuration file is /etc/ceph/site-b.conf.

Syntax

ceph auth get-or-create client.SECONDARY_CLUSTER_NAME mon 'profile rbd' osd 'profile rbd pool=data' -o /etc/ceph/SECONDARY_CLUSTER_NAME.client.USER_NAME.keyring -c /etc/ceph/SECONDARY_CLUSTER_NAME.conf

Example

[root@mon ~]# ceph auth get-or-create client.site-b mon 'profile rbd' osd 'profile rbd pool=data' -o /etc/ceph/site-b.client.site-b.keyring -c /etc/ceph/site-b.conf

Copy the keyring to the site-a cluster. Copy the file to the client node in the site-a cluster that the rbd-mirror daemon will run on. Save the file to /etc/ceph/site-b.client.site-b.keyring:

Syntax

scp /etc/ceph/SECONDARY_CLUSTER_NAME.client.USER_NAME.keyring root@PRIMARY_CLIENT_NODE_NAME:/etc/ceph/SECONDARY_CLUSTER_NAME.client.USER_NAME.keyring

Example

[root@mon ~]# scp /etc/ceph/site-b.client.site-b.keyring root@client.site-a:/etc/ceph/site-b.client.site-b.keyring

Copy the Ceph configuration file from the monitor node to the site-a monitor node and client nodes. The Ceph configuration file in this example is /etc/ceph/site-b.conf.

Syntax

scp /etc/ceph/SECONDARY_CLUSTER_NAME.conf root@PRIMARY_MONITOR_NODE_NAME:/etc/ceph/SECONDARY_CLUSTER_NAME.conf

scp /etc/ceph/SECONDARY_CLUSTER_NAME.conf user@PRIMARY_CLIENT_NODE_NAME:/etc/ceph/SECONDARY_CLUSTER_NAME.conf

Example

[root@mon ~]# scp /etc/ceph/site-b.conf root@mon.site-a:/etc/ceph/site-b.conf

[root@mon ~]# scp /etc/ceph/site-b.conf user@client.site-a:/etc/ceph/site-b.conf

In the site-a cluster, complete the following steps:

Configure mirroring from site-b to site-a. On the Ansible administration node, add an [rbdmirrors] group in the Ansible inventory file, usually /usr/share/ceph-ansible/hosts.

Under the [rbdmirrors] group, add the name of a site-a client node that the rbd-mirror daemon will run on. This daemon pulls image changes from site-b to site-a.

Example

[rbdmirrors]
client.site-a

Navigate to the /usr/share/ceph-ansible/ directory:

[root@admin ~]# cd /usr/share/ceph-ansible

Create a new rbdmirrors.yml file by copying group_vars/rbdmirrors.yml.sample to group_vars/rbdmirrors.yml:

[root@admin ceph-ansible]# cp group_vars/rbdmirrors.yml.sample group_vars/rbdmirrors.yml

Open the group_vars/rbdmirrors.yml file for editing.

Set ceph_rbd_mirror_configure to true, and set ceph_rbd_mirror_pool to the pool you want to mirror images in. In these examples, data is the name of the pool.

ceph_rbd_mirror_configure: true
ceph_rbd_mirror_pool: "data"

By default, ceph-ansible configures mirroring using pool mode, which mirrors all images in a pool. In image mode, only images that have mirroring explicitly enabled are mirrored. To enable image mode, set ceph_rbd_mirror_mode to image:

ceph_rbd_mirror_mode: image

On the Ansible administration node, set a name for the cluster that rbd-mirror pulls from by using ceph_rbd_mirror_remote_cluster in the group_vars/rbdmirrors.yml file. Following the examples, the other cluster is named site-b.

ceph_rbd_mirror_remote_cluster: "site-b"

On the Ansible administration node, set the user name of the key using ceph_rbd_mirror_remote_user in the group_vars/rbdmirrors.yml file. In these examples, the user is named client.site-b.

ceph_rbd_mirror_remote_user: "client.site-b"

As the Ansible user on the administration node, run the Ansible playbook:

Bare-metal deployments:

[user@admin ceph-ansible]$ ansible-playbook site.yml --limit rbdmirrors -i hosts

Container deployments:

[user@admin ceph-ansible]$ ansible-playbook site-container.yml --limit rbdmirrors -i hosts
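The settings from the preceding steps all end up in the same group_vars/rbdmirrors.yml file. As a minimal sketch for reviewing them together before running the playbook, the following prints the resulting fragment. The function name render_rbdmirrors_vars is hypothetical and not part of ceph-ansible; the variable names and values are the ones used in the examples above, with image mode assumed.

```shell
#!/usr/bin/env bash
# Hypothetical helper (not part of ceph-ansible): print the rbdmirrors.yml
# settings described in this procedure so they can be reviewed in one place.
# Arguments: pool name, remote cluster name, remote user name.
render_rbdmirrors_vars() {
  local pool="$1" remote_cluster="$2" remote_user="$3"
  cat <<EOF
ceph_rbd_mirror_configure: true
ceph_rbd_mirror_pool: "${pool}"
ceph_rbd_mirror_mode: image
ceph_rbd_mirror_remote_cluster: "${remote_cluster}"
ceph_rbd_mirror_remote_user: "${remote_user}"
EOF
}

# Values from the site-a examples: site-a pulls from site-b.
render_rbdmirrors_vars data site-b client.site-b
```

The output is only printed, so it can be compared against the edited file rather than overwriting it.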


Explicitly enable mirroring on the desired images in both the site-a and site-b clusters:

Syntax

Journal-based mirroring:

rbd mirror image enable POOL/IMAGE

Snapshot-based mirroring:

rbd mirror image enable POOL/IMAGE snapshot

Example

[root@mon ~]# rbd mirror image enable data/image1
[root@mon ~]# rbd mirror image enable data/image1 snapshot

Note

Repeat this step whenever you want to mirror a new image to the peer cluster.
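Because mirroring must be enabled per image in image mode, it can help to generate the commands for several images at once. The following is a minimal sketch that only prints the commands rather than running them. The function name print_enable_commands and the image name image2 are hypothetical; the command forms are the two shown in the Syntax above.

```shell
#!/usr/bin/env bash
# Print (do not run) one "rbd mirror image enable" command per image.
# Arguments: pool, mirroring mode ("journal" or "snapshot"), then image names.
print_enable_commands() {
  local pool="$1" mode="$2"
  shift 2
  local image
  for image in "$@"; do
    if [ "$mode" = "snapshot" ]; then
      # Snapshot-based mirroring takes the extra "snapshot" argument.
      echo "rbd mirror image enable ${pool}/${image} snapshot"
    else
      # Journal-based mirroring uses the command without a mode argument.
      echo "rbd mirror image enable ${pool}/${image}"
    fi
  done
}

# image2 is a hypothetical second image used only for illustration.
print_enable_commands data snapshot image1 image2
```

Reviewing the printed commands before running them on a node in each cluster avoids enabling mirroring on an unintended image.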

Verify the mirroring status. Run the following command from the client node on the site-a cluster:

Example

Journal-based mirroring:

[root@mon ~]# rbd mirror image status data/image1
image1:
  global_id:   08027096-d267-47f8-b52e-59de1353a034
  state:       up+stopped
  description: local image is primary
  last_update: 2021-04-16 15:45:31

Snapshot-based mirroring:

[root@mon ~]# rbd mirror image status data/image1
image1:
  global_id:   47fd1aae-5f19-4193-a5df-562b5c644ea7
  state:       up+stopped
  description: local image is primary
  service:     admin on ceph-rbd1-vasi-43-5hwia4-node2
  last_update: 2022-01-20 12:42:54
  peer_sites:
    name: rbd-mirror.site-b
    state: up+replaying
    description: replaying, {"bytes_per_second":0.0,"bytes_per_snapshot":0.0,"local_snapshot_timestamp":1642693094,"remote_snapshot_timestamp":1642693094,"replay_state":"idle"}
    last_update: 2022-01-20 12:42:59
  snapshots:
    5 .mirror.primary.47fd1aae-5f19-4193-a5df-562b5c644ea7.dda146c6-5f21-4e75-ba93-660f6e57e301 (peer_uuids:[bfd09289-c9c9-40c8-b2d3-ead9b6a99a45])
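When checking many images, reading the state field by eye becomes tedious. As a minimal sketch, the following extracts the first state value from status text supplied on standard input. The function name mirror_state is hypothetical, and the sample text is modeled on the journal-based example above rather than captured from a live cluster; real output may differ in layout between releases.

```shell
#!/usr/bin/env bash
# Extract the first "state:" value from `rbd mirror image status` text on stdin.
# The first match is the local image state; any peer_sites state comes later.
mirror_state() {
  awk '$1 == "state:" { print $2; exit }'
}

# Sample text modeled on the example output, not live cluster output.
sample='image1:
  global_id:   08027096-d267-47f8-b52e-59de1353a034
  state:       up+stopped
  description: local image is primary
  last_update: 2021-04-16 15:45:31'

printf '%s\n' "$sample" | mirror_state
```

On a cluster node, the same function could be fed from the real command, for example by piping rbd mirror image status data/image1 into it.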

5.5. Configuring one-way mirroring using the command-line interface

This procedure configures one-way replication of a pool from the primary storage cluster to a secondary storage cluster.

When using one-way replication you can mirror to multiple secondary storage clusters.

Examples in this section distinguish between the two storage clusters by referring to the primary storage cluster, which holds the primary images, as site-a, and to the secondary storage cluster that you are replicating the images to as site-b. The pool used in these examples is named data.

Prerequisites

- A minimum of two healthy and running Red Hat Ceph Storage clusters.

- Root-level access to a Ceph client node for each storage cluster.

- A CephX user with administrator-level capabilities.

- Images within the pool must have exclusive-lock and journaling enabled for journal-based mirroring.

Procedure

Install the rbd-mirror package on the client node connected to the site-b storage cluster:

Red Hat Enterprise Linux 7

[root@rbd-client ~]# yum install rbd-mirror

Red Hat Enterprise Linux 8

[root@rbd-client ~]# dnf install rbd-mirror

Note

The package is provided by the Red Hat Ceph Storage Tools repository.

Enable the exclusive-lock and journaling features on an image.

For new images, use the --image-feature option:

Syntax

rbd create IMAGE_NAME --size MEGABYTES --pool POOL_NAME --image-feature FEATURE [,FEATURE]

Example

[root@rbd-client ~]# rbd create image1 --size 1024 --pool data --image-feature exclusive-lock,journaling

For existing images, use the rbd feature enable command:

Syntax

rbd feature enable POOL_NAME/IMAGE_NAME FEATURE [,FEATURE]

Example

[root@rbd-client ~]# rbd feature enable data/image1 exclusive-lock,journaling

To enable exclusive-lock and journaling on all new images by default, add the following setting to the Ceph configuration file:

rbd_default_features = 125
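The value 125 is a bitmask, with each RBD image feature contributing one bit: layering is 1, striping is 2, exclusive-lock is 4, object-map is 8, fast-diff is 16, deep-flatten is 32, and journaling is 64. As a minimal sketch, the following sums the bits for a list of feature names. The function name features_mask is hypothetical and not part of any Ceph tool.

```shell
#!/usr/bin/env bash
# Combine RBD feature bits by name into the numeric rbd_default_features value.
features_mask() {
  local mask=0 name
  for name in "$@"; do
    case "$name" in
      layering)       mask=$((mask | 1)) ;;
      striping)       mask=$((mask | 2)) ;;
      exclusive-lock) mask=$((mask | 4)) ;;
      object-map)     mask=$((mask | 8)) ;;
      fast-diff)      mask=$((mask | 16)) ;;
      deep-flatten)   mask=$((mask | 32)) ;;
      journaling)     mask=$((mask | 64)) ;;
      *) echo "unknown feature: $name" >&2; return 1 ;;
    esac
  done
  echo "$mask"
}

# The six features whose bits add up to 125.
features_mask layering exclusive-lock object-map fast-diff deep-flatten journaling
```

The setting therefore enables layering, object-map, fast-diff, and deep-flatten in addition to the exclusive-lock and journaling features that journal-based mirroring requires; those two on their own would give 68.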

Choose the mirroring mode, either pool or image mode.

Important

Use image mode for snapshot-based mirroring.

Enabling pool mode:

Syntax

rbd mirror pool enable POOL_NAME MODE

Example

[root@rbd-client ~]# rbd mirror pool enable data pool

This example enables mirroring of the whole pool named data.

Enabling image mode:

Syntax

rbd mirror pool enable POOL_NAME MODE

Example

[root@rbd-client ~]# rbd mirror pool enable data image

This example enables image mode mirroring on the pool named data.

Verify that mirroring has been successfully enabled:

Syntax

rbd mirror pool info POOL_NAME

Example

[root@rbd-client ~]# rbd mirror pool info data
Mode: image
Site Name: 94cbd9ca-7f9a-441a-ad4b-52a33f9b7148
Peer Sites: none
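The Mode line in this output is what confirms that the intended mirroring mode took effect. As a minimal sketch for scripting that check, the following reads pool info text from standard input and prints the mode. The function name pool_mirror_mode is hypothetical, and the sample text copies the example output above; it assumes the Mode line starts at the beginning of the line, as it does there.

```shell
#!/usr/bin/env bash
# Print the value of the "Mode:" line from `rbd mirror pool info` text on stdin.
pool_mirror_mode() {
  awk -F': *' '$1 == "Mode" { print $2; exit }'
}

# Sample text copied from the example output, not live cluster output.
sample='Mode: image
Site Name: 94cbd9ca-7f9a-441a-ad4b-52a33f9b7148
Peer Sites: none'

printf '%s\n' "$sample" | pool_mirror_mode
```

A script could compare the printed value with the expected mode, image or pool, and stop before the peer bootstrap steps if they differ.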

In the site-a cluster, complete the following steps:

On the Ceph client node, create a user:

Syntax

ceph auth get-or-create client.PRIMARY_CLUSTER_NAME mon 'profile rbd-mirror' osd 'profile rbd' -o /etc/ceph/ceph.PRIMARY_CLUSTER_NAME.keyring

Example

[root@rbd-client-site-a ~]# ceph auth get-or-create client.rbd-mirror.site-a mon 'profile rbd-mirror' osd 'profile rbd' -o /etc/ceph/ceph.client.rbd-mirror.site-a.keyring

Copy the keyring to the site-b cluster:

Syntax

scp /etc/ceph/ceph.PRIMARY_CLUSTER_NAME.keyring root@SECONDARY_CLUSTER:PATH

Example

[root@rbd-client-site-a ~]# scp /etc/ceph/ceph.client.rbd-mirror.site-a.keyring root@rbd-client-site-b:/etc/ceph/

On a Ceph client node, bootstrap the storage cluster peers.

Register the storage cluster peer to the pool:

Syntax

rbd mirror pool peer bootstrap create --site-name LOCAL_SITE_NAME POOL_NAME > PATH_TO_BOOTSTRAP_TOKEN

Example

[root@rbd-client-site-a ~]# rbd mirror pool peer bootstrap create --site-name rbd-mirror.site-a data > /root/bootstrap_token_rbd-mirror.site-a

Note

This example bootstrap command creates the client.rbd-mirror-peer Ceph user.

Copy the bootstrap token file to the site-b storage cluster.

Syntax

scp PATH_TO_BOOTSTRAP_TOKEN root@SECONDARY_CLUSTER:/root/

Example

[root@rbd-client-site-a ~]# scp /root/bootstrap_token_rbd-mirror.site-a root@ceph-rbd2:/root/

In the site-b cluster, complete the following steps:

On the client node, create a user:

Syntax

ceph auth get-or-create client.SECONDARY_CLUSTER_NAME mon 'profile rbd-mirror' osd 'profile rbd' -o /etc/ceph/ceph.SECONDARY_CLUSTER_NAME.keyring

Example

[root@rbd-client-site-b ~]# ceph auth get-or-create client.rbd-mirror.site-b mon 'profile rbd-mirror' osd 'profile rbd' -o /etc/ceph/ceph.client.rbd-mirror.site-b.keyring

Copy the keyring to the Ceph client node in the site-a cluster:

Syntax

scp /etc/ceph/ceph.SECONDARY_CLUSTER_NAME.keyring root@PRIMARY_CLUSTER:PATH

Example

[root@rbd-client-site-b ~]# scp /etc/ceph/ceph.client.rbd-mirror.site-b.keyring root@rbd-client-site-a:/etc/ceph/

Import the bootstrap token:

Syntax

rbd mirror pool peer bootstrap import --site-name LOCAL_SITE_NAME --direction rx-only POOL_NAME PATH_TO_BOOTSTRAP_TOKEN

Example

[root@rbd-client-site-b ~]# rbd mirror pool peer bootstrap import --site-name rbd-mirror.site-b --direction rx-only data /root/bootstrap_token_rbd-mirror.site-a

Note

For one-way RBD mirroring, you must use the --direction rx-only argument, because two-way mirroring is the default when bootstrapping peers.

Enable and start the rbd-mirror daemon on the client node:

Syntax

systemctl enable ceph-rbd-mirror.target
systemctl enable ceph-rbd-mirror@rbd-mirror.CLIENT_ID
systemctl start ceph-rbd-mirror@rbd-mirror.CLIENT_ID

Replace CLIENT_ID with the Ceph user created earlier.

Example

[root@rbd-client-site-b ~]# systemctl enable ceph-rbd-mirror.target
[root@rbd-client-site-b ~]# systemctl enable ceph-rbd-mirror@rbd-mirror.site-a
[root@rbd-client-site-b ~]# systemctl start ceph-rbd-mirror@rbd-mirror.site-a

Important

Each rbd-mirror daemon must have a unique Client ID.

To verify the mirroring status, run the following command from a Ceph Monitor node in the site-a and site-b clusters:

Syntax

rbd mirror image status POOL_NAME/IMAGE_NAME

Example

Journal-based mirroring:

[root@mon-site-a ~]# rbd mirror image status data/image1
image1:
  global_id:   08027096-d267-47f8-b52e-59de1353a034
  state:       up+stopped
  description: local image is primary
  last_update: 2021-04-22 13:45:31

Snapshot-based mirroring:

[root@mon-site-a ~]# rbd mirror image status data/image1
image1:
  global_id:   47fd1aae-5f19-4193-a5df-562b5c644ea7
  state:       up+stopped
  description: local image is primary
  service:     admin on ceph-rbd1-vasi-43-5hwia4-node2
  last_update: 2022-01-20 12:42:54
  peer_sites:
    name: rbd-mirror.site-b
    state: up+replaying
    description: replaying, {"bytes_per_second":0.0,"bytes_per_snapshot":0.0,"local_snapshot_timestamp":1642693094,"remote_snapshot_timestamp":1642693094,"replay_state":"idle"}
    last_update: 2022-01-20 12:42:59
  snapshots:
    5 .mirror.primary.47fd1aae-5f19-4193-a5df-562b5c644ea7.dda146c6-5f21-4e75-ba93-660f6e57e301 (peer_uuids:[bfd09289-c9c9-40c8-b2d3-ead9b6a99a45])

Example

Journal-based mirroring: