Configuration Guide

Configuration settings for Red Hat Ceph Storage

Abstract

Chapter 1. The basics of Ceph configuration

As a storage administrator, you need to have a basic understanding of how to view the Ceph configuration, and how to set the Ceph configuration options for the Red Hat Ceph Storage cluster. You can view and set the Ceph configuration options at runtime.

1.1. Prerequisites

- Installation of the Red Hat Ceph Storage software.

1.2. Ceph configuration

All Red Hat Ceph Storage clusters have a configuration, which defines:

- Cluster identity

- Authentication settings

- Ceph daemons

- Network configuration

- Node names and addresses

- Paths to keyrings

- Paths to OSD log files

- Other runtime options

A deployment tool, such as cephadm, will typically create an initial Ceph configuration file for you. However, you can create one yourself if you prefer to bootstrap a Red Hat Ceph Storage cluster without using a deployment tool.

Additional Resources

- For more information about cephadm and the Ceph orchestrator, see the Red Hat Ceph Storage Operations Guide.

1.3. The Ceph configuration database

The Ceph Monitor manages a configuration database of Ceph options that centralizes configuration management by storing configuration options for the entire storage cluster. Centralizing the Ceph configuration in a database simplifies storage cluster administration.

The priority order that Ceph uses to set options, from lowest to highest, is:

- Compiled-in default values

- Ceph cluster configuration database

- Local ceph.conf file

- Runtime override, using the ceph daemon DAEMON-NAME config set or ceph tell DAEMON-NAME injectargs commands

There are still a few Ceph options that can be defined in the local Ceph configuration file, which is /etc/ceph/ceph.conf by default. However, ceph.conf has been deprecated for Red Hat Ceph Storage 5.

cephadm uses a basic ceph.conf file that only contains a minimal set of options for connecting to Ceph Monitors, authenticating, and fetching configuration information. In most cases, cephadm uses only the mon_host option. To avoid using ceph.conf only for the mon_host option, use DNS SRV records to perform operations with Monitors.

Red Hat recommends that you use the assimilate-conf administrative command to move valid options into the configuration database from the ceph.conf file. For more information about assimilate-conf, see Administrative Commands.

Ceph allows you to make changes to the configuration of a daemon at runtime. This capability is useful for increasing or decreasing logging output by enabling or disabling debug settings, and for runtime optimization.

When the same option exists in the configuration database and the Ceph configuration file, the configuration database option has a lower priority than what is set in the Ceph configuration file.
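To make the priority order concrete, the following Python sketch models each configuration source as a layer and lets a later layer override an earlier one. It is a toy model, not Ceph's option-resolution code; the layer labels and the debug_osd values are hypothetical examples.

```python
"""Toy model of the Ceph option priority order: later layers override earlier ones."""

from typing import Dict, List, Optional, Tuple

# Layers ordered from lowest to highest priority. The labels are illustrative,
# not Ceph identifiers.
LAYER_ORDER: List[str] = [
    "default",    # compiled-in default values
    "mon_db",     # Ceph cluster configuration database
    "ceph_conf",  # local ceph.conf file
    "override",   # runtime override (ceph daemon ... config set / injectargs)
]


def resolve(option: str, layers: Dict[str, Dict[str, str]]) -> Optional[Tuple[str, str]]:
    """Return (value, layer) from the highest-priority layer that sets `option`."""
    result: Optional[Tuple[str, str]] = None
    for name in LAYER_ORDER:
        values = layers.get(name, {})
        if option in values:
            result = (values[option], name)  # a later layer replaces an earlier match
    return result


if __name__ == "__main__":
    # Hypothetical values for one daemon.
    layers = {
        "default": {"debug_osd": "1/5"},
        "mon_db": {"debug_osd": "10/10"},
        "ceph_conf": {"debug_osd": "5/5"},
    }
    print(resolve("debug_osd", layers))  # ('5/5', 'ceph_conf')
```

In this model, dropping the ceph_conf entry lets the configuration database value win, which is the effect of moving options out of ceph.conf.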

Sections and Masks

Just as you can configure Ceph options globally, per daemon type, or for a specific daemon in the Ceph configuration file, you can also configure the Ceph options in the configuration database according to these sections:

| Section | Description |
|---|---|
| global | Affects all daemons and clients. |
| mon | Affects all Ceph Monitors. |
| mgr | Affects all Ceph Managers. |
| osd | Affects all Ceph OSDs. |
| mds | Affects all Ceph Metadata Servers. |
| client | Affects all Ceph Clients, including mounted file systems, block devices, and RADOS Gateways. |

Ceph configuration options can have a mask associated with them. These masks can further restrict which daemons or clients the options apply to.

Masks have two forms:

type:location
The type is a CRUSH property, for example, rack or host. The location is a value for the property type. For example, host:foo limits the option only to daemons or clients running on the foo host.

Example

ceph config set osd/host:magna045 debug_osd 20

class:device-class
The device-class is the name of the CRUSH device class, such as hdd or ssd. For example, class:ssd limits the option only to Ceph OSDs backed by solid state drives (SSD). This mask has no effect on non-OSD daemons or clients.

Example

ceph config set osd/class:hdd osd_max_backfills 8
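The mask syntax can be illustrated with a small Python sketch that splits a WHO target such as osd/host:magna045 into its section and mask, and then decides whether the option would apply to a given daemon. The Daemon class and its fields are hypothetical, the sketch handles only a single mask, and it treats a class mask as never matching non-OSD daemons. It is not how the Ceph Monitors evaluate masks internally.

```python
"""Sketch: parse a WHO target with an optional mask and test a daemon against it."""

from dataclasses import dataclass, field
from typing import Dict, Optional, Tuple


@dataclass
class Daemon:
    """Hypothetical description of a running daemon or client."""
    name: str                                            # for example "osd.3" or "mon.a"
    crush: Dict[str, str] = field(default_factory=dict)  # CRUSH location, e.g. {"host": "magna045"}
    device_class: Optional[str] = None                   # only meaningful for OSDs


def parse_who(who: str) -> Tuple[str, Optional[str], Optional[str]]:
    """Split 'section[/kind:value]' into (section, mask_kind, mask_value)."""
    section, _, mask = who.partition("/")
    if not mask:
        return section, None, None
    kind, sep, value = mask.partition(":")
    if not sep or not value:
        raise ValueError(f"malformed mask: {mask!r}")
    return section, kind, value


def applies_to(who: str, daemon: Daemon) -> bool:
    """Return True if an option set for `who` would apply to `daemon` in this model."""
    section, kind, value = parse_who(who)
    daemon_type = daemon.name.split(".", 1)[0]
    if section not in ("global", daemon_type, daemon.name):
        return False
    if kind is None:
        return True
    if kind == "class":
        # A device-class mask only ever matches OSDs.
        return daemon_type == "osd" and daemon.device_class == value
    # Any other kind is treated as a CRUSH property such as host or rack.
    return daemon.crush.get(kind) == value
```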

Administrative Commands

The Ceph configuration database can be administered with the subcommand ceph config ACTION. The following actions are available:

ls - Lists the available configuration options.

dump - Dumps the entire configuration database of options for the storage cluster.

get WHO - Dumps the configuration for a specific daemon or client. For example, WHO can be a daemon, like mds.a.

set WHO OPTION VALUE - Sets a configuration option in the Ceph configuration database, where WHO is the target daemon, OPTION is the option to set, and VALUE is the desired value.

show WHO - Shows the reported running configuration for a running daemon. These options might be different from those stored by the Ceph Monitors if there is a local configuration file in use or options have been overridden on the command line or at run time. The source of each option value is also reported as part of the output.

assimilate-conf -i INPUT_FILE -o OUTPUT_FILE - Assimilates a configuration file from INPUT_FILE and moves any valid options into the Ceph Monitors’ configuration database. Any options that are unrecognized, invalid, or cannot be controlled by the Ceph Monitor are returned in an abbreviated configuration file stored in OUTPUT_FILE. This command can be useful for transitioning from legacy configuration files to a centralized configuration database. Note that when you assimilate a configuration and the Monitors or other daemons have different configuration values set for the same set of options, the end result depends on the order in which the files are assimilated.

help OPTION -f json-pretty - Displays help for a particular OPTION in JSON format.
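As an illustration of what assimilate-conf does conceptually, the following Python sketch splits a ceph.conf into options that a central store would accept and leftovers that would be written back to a smaller file. This is not Ceph's implementation: the KNOWN_OPTIONS set is a hypothetical stand-in for the options the Monitors recognize, and Python's configparser only approximates Ceph's own configuration file parser.

```python
"""Sketch of the idea behind assimilate-conf: accepted options versus leftovers."""

import configparser
import io
from typing import Dict, Tuple

# Hypothetical stand-in for the set of options the Monitors recognize.
KNOWN_OPTIONS = {"osd_memory_target", "debug_osd", "public_network"}


def normalize(key: str) -> str:
    # Ceph treats spaces and underscores in option names as equivalent.
    return key.strip().replace(" ", "_")


def assimilate(conf_text: str) -> Tuple[Dict[Tuple[str, str], str], str]:
    """Return ({(section, option): value}, leftover_conf_text)."""
    parser = configparser.ConfigParser(interpolation=None)
    parser.read_string(conf_text)
    accepted: Dict[Tuple[str, str], str] = {}
    leftover = configparser.ConfigParser(interpolation=None)
    for section in parser.sections():
        for key, value in parser.items(section):
            name = normalize(key)
            if name in KNOWN_OPTIONS:
                accepted[(section, name)] = value
            else:
                # Unrecognized options stay behind in the abbreviated file.
                if not leftover.has_section(section):
                    leftover.add_section(section)
                leftover.set(section, name, value)
    out = io.StringIO()
    leftover.write(out)
    return accepted, out.getvalue()
```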

1.4. Using the Ceph metavariables

Metavariables simplify Ceph storage cluster configuration dramatically. When a metavariable is set in a configuration value, Ceph expands the metavariable into a concrete value.

Metavariables are very powerful when used within the [global], [osd], [mon], or [client] sections of the Ceph configuration file. However, you can also use them with the administration socket. Ceph metavariables are similar to Bash shell expansion.

Ceph supports the following metavariables:

$cluster

- Description: Expands to the Ceph storage cluster name. Useful when running multiple Ceph storage clusters on the same hardware.

- Example: /etc/ceph/$cluster.keyring

- Default: ceph

$type

- Description: Expands to the type of the current daemon, for example, osd or mon.

- Example: /var/lib/ceph/$type

$id

- Description: Expands to the daemon identifier. For osd.0, this would be 0.

- Example: /var/lib/ceph/$type/$cluster-$id

$host

- Description: Expands to the host name of the current daemon.

$name

- Description: Expands to $type.$id.

- Example: /var/run/ceph/$cluster-$name.asok
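Ceph performs metavariable expansion itself. To show the substitution rules above in action, this Python sketch reproduces them with string.Template, which uses the same $name syntax; the cluster, daemon, and host values are examples.

```python
"""Sketch: expand Ceph-style metavariables with string.Template."""

from string import Template


def expand(value: str, cluster: str, daemon_type: str, daemon_id: str, host: str) -> str:
    variables = {
        "cluster": cluster,
        "type": daemon_type,
        "id": daemon_id,
        "host": host,
        "name": f"{daemon_type}.{daemon_id}",  # $name expands to $type.$id
    }
    # safe_substitute leaves unknown $words untouched instead of raising.
    return Template(value).safe_substitute(variables)


if __name__ == "__main__":
    print(expand("/var/run/ceph/$cluster-$name.asok", "ceph", "osd", "0", "node1"))
    # /var/run/ceph/ceph-osd.0.asok
```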

1.5. Viewing the Ceph configuration at runtime

The Ceph configuration files can be viewed at boot time and run time.

Prerequisites

- Root-level access to the Ceph node.

- Access to admin keyring.

Procedure

To view a runtime configuration, log in to a Ceph node running the daemon and execute:

Syntax

ceph daemon DAEMON_TYPE.ID config show

To see the configuration for osd.0, log in to the node containing osd.0 and execute this command:

Example

[root@osd ~]# ceph daemon osd.0 config show

For additional options, specify a daemon and help.

Example

[root@osd ~]# ceph daemon osd.0 help

1.6. Viewing a specific configuration at runtime

Configuration settings for Red Hat Ceph Storage can be viewed at runtime from the Ceph Monitor node.

Prerequisites

- A running Red Hat Ceph Storage cluster.

- Root-level access to the Ceph Monitor node.

Procedure

Log in to the Ceph node running the daemon and execute:

Syntax

ceph daemon DAEMON_TYPE.ID config get PARAMETER

Example

[root@mon ~]# ceph daemon osd.0 config get public_addr

1.7. Setting a specific configuration at runtime

To set a specific Ceph configuration at runtime, use the ceph config set command.

Prerequisites

- A running Red Hat Ceph Storage cluster.

- Root-level access to the Ceph Monitor or OSD nodes.

Procedure

Set the configuration on all Monitor or OSD daemons:

Syntax

ceph config set DAEMON CONFIG-OPTION VALUE

Example

[root@mon ~]# ceph config set osd debug_osd 10

Validate that the option and value are set:

Example

[root@mon ~]# ceph config dump
osd    advanced    debug_osd    10/10

To remove the configuration option from all daemons:

Syntax

ceph config rm DAEMON CONFIG-OPTION

Example

[root@mon ~]# ceph config rm osd debug_osd

To set the configuration for a specific daemon:

Syntax

ceph config set DAEMON.DAEMON-NUMBER CONFIG-OPTION VALUE

Example

[root@mon ~]# ceph config set osd.0 debug_osd 10

To validate that the configuration is set for the specified daemon:

Example

[root@mon ~]# ceph config dump
osd.0    advanced    debug_osd    10/10

To remove the configuration for a specific daemon:

Syntax

ceph config rm DAEMON.DAEMON-NUMBER CONFIG-OPTION

Example

[root@mon ~]# ceph config rm osd.0 debug_osd

If you use a client that does not support reading options from the configuration database, or if you still need to use ceph.conf to change your cluster configuration for other reasons, run the following command:

ceph config set mgr mgr/cephadm/manage_etc_ceph_ceph_conf false

In that case, you must maintain and distribute the ceph.conf file across the storage cluster yourself.

1.8. OSD Memory Target

BlueStore keeps OSD heap memory usage under a designated target size with the osd_memory_target configuration option.

The option osd_memory_target sets OSD memory based upon the available RAM in the system. Use this option when TCMalloc is configured as the memory allocator, and when the bluestore_cache_autotune option in BlueStore is set to true.

Ceph OSD memory caching is more important when the block device is slow, for example, a traditional hard drive, because the benefit of a cache hit is much higher than it would be with a solid state drive. However, this must be weighed against a decision to colocate OSDs with other services, such as in a hyper-converged infrastructure (HCI), or with other applications.

1.8.1. Setting the OSD memory target

Use the osd_memory_target option to set the maximum memory threshold for all OSDs in the storage cluster, or for specific OSDs. An OSD with an osd_memory_target option set to 16 GB might use up to 16 GB of memory.

Configuration options for individual OSDs take precedence over the settings for all OSDs.

Prerequisites

- A running Red Hat Ceph Storage cluster.

- Root-level access to all hosts in the storage cluster.

Procedure

To set osd_memory_target for all OSDs in the storage cluster:

Syntax

ceph config set osd osd_memory_target VALUE

VALUE is the amount of memory to be allocated to each OSD in the storage cluster, in bytes or with a unit suffix such as 16G.

To set osd_memory_target for a specific OSD in the storage cluster:

Syntax

ceph config set osd.ID osd_memory_target VALUE

ID is the ID of the OSD and VALUE is the amount of memory to be allocated to the specified OSD. For example, to configure the OSD with ID 8 to use up to 16 GB of memory:

Example

[ceph: root@host01 /]# ceph config set osd.8 osd_memory_target 16G

To set an individual OSD to use one maximum amount of memory and configure the rest of the OSDs to use another amount, set the value for all OSDs and then set the value for the individual OSD. The setting for the individual OSD takes precedence:

Example

[ceph: root@host01 /]# ceph config set osd osd_memory_target 16G
[ceph: root@host01 /]# ceph config set osd.8 osd_memory_target 8G
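The following Python sketch shows how a per-OSD value overrides the all-OSD value in the example above. It assumes binary (IEC) unit suffixes, so 16G is 16 x 2^30 bytes; check the option reference for the exact suffixes your release accepts. The config dictionary is a hypothetical stand-in for the configuration database.

```python
"""Sketch: effective osd_memory_target when both an all-OSD and a per-OSD value are set."""

from typing import Dict

# Assumed multipliers for unit suffixes (binary, IEC style).
UNITS = {"K": 2**10, "M": 2**20, "G": 2**30, "T": 2**40}


def to_bytes(value: str) -> int:
    """Convert '16G' or '7860684936' to a byte count."""
    text = value.strip().upper()
    if text and text[-1] in UNITS:
        return int(text[:-1]) * UNITS[text[-1]]
    return int(text)


def effective_target(osd_name: str, config: Dict[str, str]) -> int:
    """A per-OSD entry such as 'osd.8' takes precedence over the 'osd' entry."""
    if osd_name in config:
        return to_bytes(config[osd_name])
    return to_bytes(config["osd"])


if __name__ == "__main__":
    # Mirrors the two commands in the example above.
    config = {"osd": "16G", "osd.8": "8G"}
    print(effective_target("osd.8", config))  # 8589934592
    print(effective_target("osd.3", config))  # 17179869184
```

Because the per-OSD entry wins on specificity, the order in which the two ceph config set commands are run does not change the result in this model.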

1.9. Automatically tuning OSD memory

The OSD daemons adjust the memory consumption based on the osd_memory_target configuration option. The option osd_memory_target sets OSD memory based upon the available RAM in the system.

If Red Hat Ceph Storage is deployed on dedicated nodes that do not share memory with other services, cephadm automatically adjusts the per-OSD consumption based on the total amount of RAM and the number of deployed OSDs.

By default, the osd_memory_target_autotune parameter is set to true in Red Hat Ceph Storage 5.1.

Syntax

ceph config set osd osd_memory_target_autotune true

After the storage cluster is upgraded to Red Hat Ceph Storage 5.0, Red Hat recommends setting the osd_memory_target_autotune parameter to true for cluster maintenance, such as adding or replacing OSDs, so that OSD memory is autotuned according to system memory.

Cephadm starts with a fraction of the total RAM in the system, set by mgr/cephadm/autotune_memory_target_ratio (0.7 by default), subtracts any memory consumed by non-autotuned daemons, such as non-OSD daemons and OSDs for which osd_memory_target_autotune is false, and then divides the remainder by the number of remaining OSDs.

By default, autotune_memory_target_ratio is 0.2 for hyper-converged infrastructure and 0.7 for other environments.

The osd_memory_target parameter is calculated as follows:

Syntax

osd_memory_target = (TOTAL_RAM_OF_THE_OSD_NODE (in bytes) * autotune_memory_target_ratio - SPACE_ALLOCATED_FOR_OTHER_DAEMONS (in bytes)) / NUMBER_OF_OSDS_IN_THE_OSD_NODE

SPACE_ALLOCATED_FOR_OTHER_DAEMONS may optionally include the following daemon space allocations:

- Alertmanager: 1 GB

- Grafana: 1 GB

- Ceph Manager: 4 GB

- Ceph Monitor: 2 GB

- Node-exporter: 1 GB

- Prometheus: 1 GB

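For example, if a node has 24 OSDs and 251 GB of RAM, uses the default ratio of 0.7, and has no space allocated for other daemons, then osd_memory_target is 7860684936 bytes, that is, 251 GiB x 0.7 / 24, rounded down.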
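The arithmetic can be checked with a short Python sketch that follows the order described in this section: take the autotune ratio of total RAM, subtract the memory reserved for other daemons, and divide by the number of OSDs. Treating 1 GB as 2^30 bytes is an assumption that reproduces the 7860684936 figure for 251 GB and 24 OSDs; the per-daemon reservations come from the list above.

```python
"""Sketch of the osd_memory_target autotuning arithmetic."""

from typing import Iterable

GIB = 2**30  # assumption: "GB" in this section means 2**30 bytes

# Optional per-daemon reservations from the list above, in GB.
OTHER_DAEMON_GB = {
    "alertmanager": 1,
    "grafana": 1,
    "mgr": 4,
    "mon": 2,
    "node-exporter": 1,
    "prometheus": 1,
}


def osd_memory_target(total_ram_gb: float, num_osds: int, ratio: float = 0.7,
                      other_daemons: Iterable[str] = ()) -> int:
    """Return (total RAM * ratio - reserved) / num_osds in bytes, rounded down."""
    if num_osds <= 0:
        raise ValueError("num_osds must be positive")
    reserved = sum(OTHER_DAEMON_GB[d] for d in other_daemons) * GIB
    budget = total_ram_gb * GIB * ratio - reserved
    return int(budget // num_osds)


if __name__ == "__main__":
    print(osd_memory_target(251, 24))  # 7860684936
    # Same node, but also hosting a Monitor and a Manager.
    print(osd_memory_target(251, 24, other_daemons=("mon", "mgr")))
```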

The final targets are reflected in the configuration database as osd_memory_target options. You can view the limits and the current memory consumed by each daemon in the ceph orch ps output; the limits appear in the MEM LIMIT column.

In Red Hat Ceph Storage 5.1, the default setting of osd_memory_target_autotune to true is unsuitable for hyper-converged infrastructures where compute and Ceph storage services are colocated. In a hyper-converged infrastructure, you can set autotune_memory_target_ratio to 0.2 to reduce the memory consumption of Ceph.

Example

[ceph: root@host01 /]# ceph config set mgr mgr/cephadm/autotune_memory_target_ratio 0.2

You can manually set a specific memory target for an OSD in the storage cluster.

Example

[ceph: root@host01 /]# ceph config set osd.123 osd_memory_target 7860684936

You can manually set a specific memory target for an OSD host in the storage cluster.

Syntax

ceph config set osd/host:HOSTNAME osd_memory_target TARGET_BYTES

Example

[ceph: root@host01 /]# ceph config set osd/host:host01 osd_memory_target 1000000000

Enabling osd_memory_target_autotune overwrites existing manual OSD memory target settings. To prevent daemon memory from being tuned even when the osd_memory_target_autotune option or other similar options are enabled, set the _no_autotune_memory label on the host.

Syntax

ceph orch host label add HOSTNAME _no_autotune_memory

You can exclude an OSD from memory autotuning by disabling the autotune option and setting a specific memory target.

Example

[ceph: root@host01 /]# ceph config set osd.123 osd_memory_target_autotune false

[ceph: root@host01 /]# ceph config set osd.123 osd_memory_target 16G

1.10. MDS Memory Cache Limit

MDS servers keep their metadata in a separate storage pool, named cephfs_metadata, and are the users of Ceph OSDs. For Ceph File Systems, MDS servers have to support an entire Red Hat Ceph Storage cluster, not just a single storage device within the storage cluster, so their memory requirements can be significant, particularly if the workload consists of small-to-medium-size files, where the ratio of metadata to data is much higher.

Example: Set the mds_cache_memory_limit to 2000000000 bytes

ceph_conf_overrides:
  mds:
    mds_cache_memory_limit: 2000000000

For a large Red Hat Ceph Storage cluster with a metadata-intensive workload, do not put an MDS server on the same node as other memory-intensive services. Keeping the MDS on a separate node gives you the option to allocate more memory to it, for example, sizes greater than 100 GB.

Additional Resources

- See Metadata Server cache size limits in Red Hat Ceph Storage File System Guide.

1.11. Additional Resources

- See the general Ceph configuration options in Appendix A for specific option descriptions and usage.

Chapter 2. Ceph network configuration

As a storage administrator, you must understand the network environment that the Red Hat Ceph Storage cluster will operate in, and configure the Red Hat Ceph Storage accordingly. Understanding and configuring the Ceph network options will ensure optimal performance and reliability of the overall storage cluster.

2.1. Prerequisites

- Network connectivity.

- Installation of the Red Hat Ceph Storage software.

2.2. Network configuration for Ceph

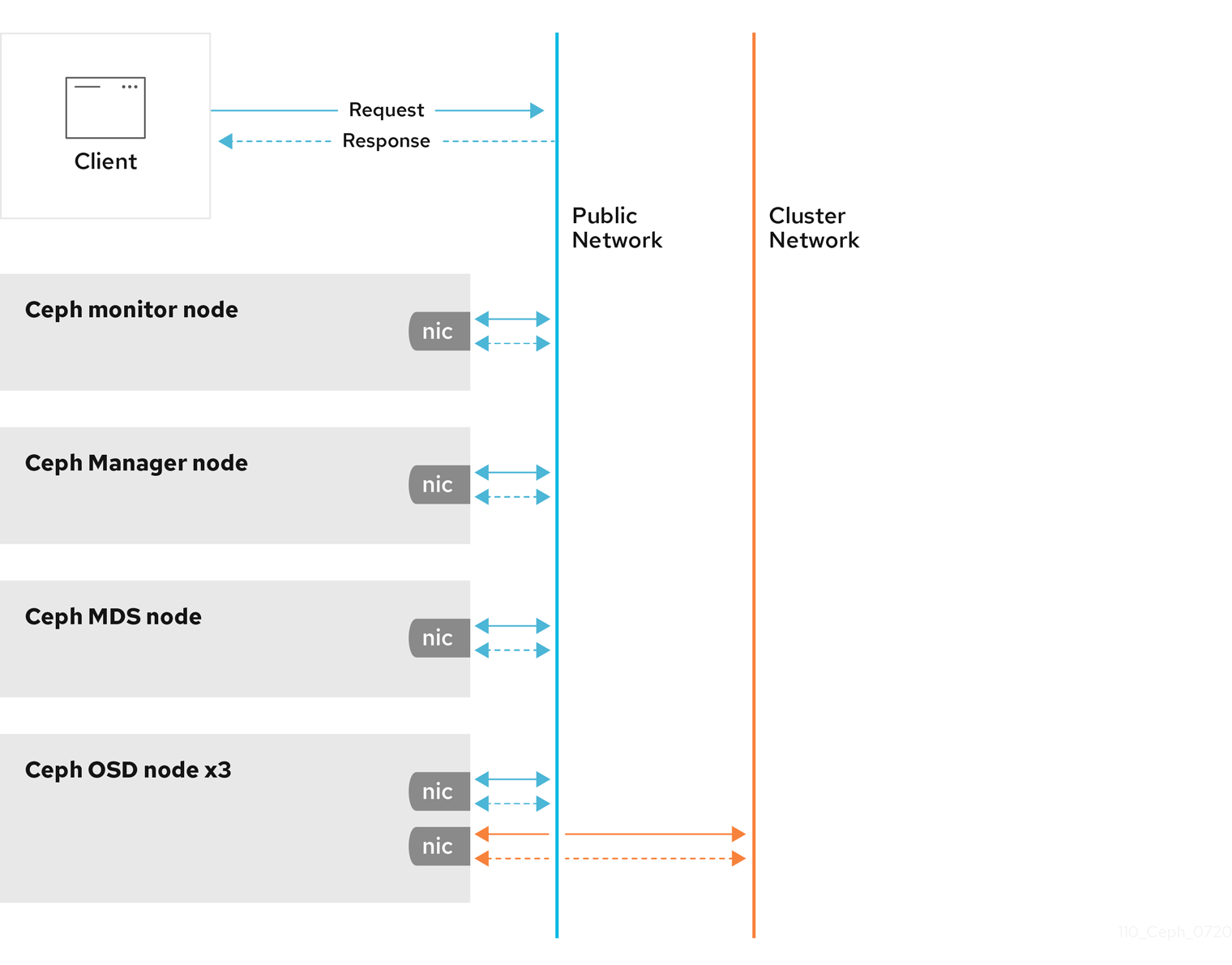

Network configuration is critical for building a high-performance Red Hat Ceph Storage cluster. The Ceph storage cluster does not perform request routing or dispatching on behalf of the Ceph client. Instead, Ceph clients make requests directly to Ceph OSD daemons. Ceph OSDs perform data replication on behalf of Ceph clients, which means replication and other factors impose additional loads on the networks of Ceph storage clusters.

Ceph has one network configuration requirement that applies to all daemons. The Ceph configuration file must specify the host for each daemon.

Some deployment utilities, such as cephadm, create a configuration file for you. Do not set these values if the deployment utility does it for you.

The host option is the short name of the node, not its FQDN. It is not an IP address.

All Ceph clusters must use a public network. However, unless you specify an internal cluster network, Ceph assumes a single public network. Ceph can function with a public network only, but for large storage clusters, you will see significant performance improvement with a second private network for carrying only cluster-related traffic.

Red Hat recommends running a Ceph storage cluster with two networks: one public network and one private network.

To support two networks, each Ceph Node will need to have more than one network interface card (NIC).

There are several reasons to consider operating two separate networks:

- Performance: Ceph OSDs handle data replication for the Ceph clients. When Ceph OSDs replicate data more than once, the network load between Ceph OSDs easily dwarfs the network load between Ceph clients and the Ceph storage cluster. This can introduce latency and create a performance problem. Recovery and rebalancing can also introduce significant latency on the public network.

- Security: While most people are generally civil, some actors will engage in what is known as a Denial of Service (DoS) attack. When traffic between Ceph OSDs gets disrupted, peering may fail and placement groups may no longer reflect an active + clean state, which may prevent users from reading and writing data. A great way to defeat this type of attack is to maintain a completely separate cluster network that does not connect directly to the internet.

Network configuration settings are not required. Ceph can function with a public network only, assuming a public network is configured on all hosts running a Ceph daemon. However, Ceph allows you to establish much more specific criteria, including multiple IP networks and subnet masks for your public network. You can also establish a separate cluster network to handle OSD heartbeat, object replication, and recovery traffic.

Do not confuse the IP addresses you set in the configuration with the public-facing IP addresses network clients might use to access your service. Typical internal IP networks are often 192.168.0.0 or 10.0.0.0.

Ceph uses CIDR notation for subnets, for example, 10.0.0.0/24.

If you specify more than one IP address and subnet mask for either the public or the private network, the subnets within the network must be capable of routing to each other. Additionally, make sure you include each IP address and subnet in your IP tables and open ports for them as necessary.
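To illustrate what CIDR notation expresses, the following sketch uses Python's standard ipaddress module to describe 10.0.0.0/24 and to test whether an address belongs to one of several configured subnets. The subnets and addresses are examples.

```python
"""Sketch: what CIDR notation such as 10.0.0.0/24 describes."""

import ipaddress
from typing import Dict, Iterable, Union


def describe(cidr: str) -> Dict[str, Union[str, int]]:
    """Return the network address, netmask, and size of a subnet."""
    net = ipaddress.ip_network(cidr)  # raises ValueError if host bits are set
    return {
        "network": str(net.network_address),
        "netmask": str(net.netmask),
        "addresses": net.num_addresses,
    }


def in_any(address: str, cidrs: Iterable[str]) -> bool:
    """True if `address` falls inside any of the given subnets."""
    ip = ipaddress.ip_address(address)
    return any(ip in ipaddress.ip_network(c) for c in cidrs)


if __name__ == "__main__":
    print(describe("10.0.0.0/24"))
    # {'network': '10.0.0.0', 'netmask': '255.255.255.0', 'addresses': 256}
    print(in_any("192.168.0.15", ["10.0.0.0/24", "192.168.0.0/24"]))  # True
```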

After you configure the networks, you can restart the cluster or restart each daemon. Ceph daemons bind dynamically, so you do not have to restart the entire cluster at once if you change the network configuration.

2.3. Ceph network messenger

Messenger is the Ceph network layer implementation. Red Hat supports two messenger types:

- simple

- async

In Red Hat Ceph Storage 4 and higher, async is the default messenger type. To change the messenger type, specify the ms_type configuration setting in the [global] section of the Ceph configuration file.

For the async messenger, Red Hat supports the posix transport type, but does not currently support rdma or dpdk. By default, the ms_type setting in Red Hat Ceph Storage 4 or higher reflects async+posix, where async is the messenger type and posix is the transport type.

SimpleMessenger

The SimpleMessenger implementation uses TCP sockets with two threads per socket. Ceph associates each logical session with a connection. A pipe handles the connection, including the input and output of each message. While SimpleMessenger is effective for the posix transport type, it is not effective for other transport types such as rdma or dpdk.

AsyncMessenger

Consequently, AsyncMessenger is the default messenger type for Red Hat Ceph Storage 4 or higher. For Red Hat Ceph Storage 4 or higher, the AsyncMessenger implementation uses TCP sockets with a fixed-size thread pool for connections, which should be equal to the highest number of replicas or erasure-code chunks. The thread count can be set to a lower value if performance degrades due to a low CPU count or a high number of OSDs per server.

Red Hat does not support other transport types such as rdma or dpdk at this time.

Additional Resources

- See the AsyncMessenger options in Red Hat Ceph Storage Configuration Guide, Appendix B for specific option descriptions and usage.

- See the Red Hat Ceph Storage Architecture Guide for details about using on-wire encryption with the Ceph messenger version 2 protocol.

2.4. Configuring a public network

To configure Ceph networks, use the config set command within the cephadm shell. Note that the IP addresses you set in your network configuration are different from the public-facing IP addresses that network clients might use to access your service.

Ceph functions perfectly well with only a public network. However, Ceph allows you to establish much more specific criteria, including multiple IP networks for your public network.

You can also establish a separate, private cluster network to handle OSD heartbeat, object replication, and recovery traffic. For more information about the private network, see Configuring a private network.

Ceph uses CIDR notation for subnets, for example, 10.0.0.0/24. Typical internal IP networks are often 192.168.0.0/24 or 10.0.0.0/24.

If you specify more than one IP address for either the public or the cluster network, the subnets within the network must be capable of routing to each other. In addition, make sure you include each IP address in your IP tables, and open ports for them as necessary.

The public network configuration allows you to specifically define IP addresses and subnets for the public network.

Prerequisites

- Installation of the Red Hat Ceph Storage software.

Procedure

Log in to the cephadm shell:

Example

[root@host01 ~]# cephadm shell

Configure the public network with the subnet:

Syntax

ceph config set mon public_network IP_ADDRESS_WITH_SUBNET

Example

[ceph: root@host01 /]# ceph config set mon public_network 192.168.0.0/24

Get the list of services in the storage cluster:

Example

[ceph: root@host01 /]# ceph orch ls

Restart the daemons. Ceph daemons bind dynamically, so you do not have to restart the entire cluster at once if you change the network configuration for a specific daemon.

Example

[ceph: root@host01 /]# ceph orch restart mon

Optional: If you want to restart the cluster, on the admin node as a root user, run the systemctl command:

Syntax

systemctl restart ceph-FSID_OF_CLUSTER.target

Example

[root@host01 ~]# systemctl restart ceph-1ca9f6a8-d036-11ec-8263-fa163ee967ad.target
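The systemd target in the last step is named after the cluster FSID, which you can print with ceph fsid. The Python sketch below builds that target name and rejects a malformed FSID; it only assembles the string shown in the Syntax line and does not call systemctl.

```python
"""Sketch: build the cluster's systemd target name from its FSID."""

import uuid


def cluster_target(fsid: str) -> str:
    """Return 'ceph-<FSID>.target', rejecting FSIDs that are not valid UUIDs."""
    parsed = uuid.UUID(fsid)  # raises ValueError for a malformed FSID
    return f"ceph-{parsed}.target"  # str(UUID) is the lowercase hyphenated form


if __name__ == "__main__":
    print(cluster_target("1ca9f6a8-d036-11ec-8263-fa163ee967ad"))
    # ceph-1ca9f6a8-d036-11ec-8263-fa163ee967ad.target
```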

2.5. Configuring multiple public networks to the cluster

When the user wants to place the Ceph Monitor daemons on hosts belonging to multiple network subnets, configuring multiple public networks to the cluster is necessary. An example of usage is a stretch cluster mode used for Advanced Cluster Management (ACM) in Metro DR for OpenShift Data Foundation.

You can configure multiple public networks to the cluster during bootstrap or after bootstrap is complete.

Prerequisites

- Before adding a host, be sure that you have a running Red Hat Ceph Storage cluster.

Procedure

Bootstrap a Ceph cluster configured with multiple public networks.

Prepare a ceph.conf file containing a mon public network section:

Important
At least one of the provided public networks must be configured on the current host used for bootstrap.

Syntax

[mon]
public_network = PUBLIC_NETWORK1, PUBLIC_NETWORK2

Example

[mon]
public_network = 10.40.0.0/24, 10.41.0.0/24, 10.42.0.0/24

This is an example with three public networks to be provided for bootstrap.

Bootstrap the cluster by providing the ceph.conf file as input:

Note
During the bootstrap you can include any other arguments that you want to provide.

Syntax

cephadm --image IMAGE_URL bootstrap --mon-ip MONITOR_IP -c PATH_TO_CEPH_CONF

Note
Alternatively, an IMAGE_ID (such as 13ea90216d0be03003d12d7869f72ad9de5cec9e54a27fd308e01e467c0d4a0a) can be used instead of IMAGE_URL.

Example

[root@host01 ~]# cephadm --image cp.icr.io/cp/ibm-ceph/ceph-5-rhel8:latest bootstrap --mon-ip 10.40.0.10 -c /etc/ceph/ceph.conf

Add new hosts to the subnets:

Note
The host being added must be reachable from the host that the active manager is running on.

Install the cluster’s public SSH key in the new host’s root user’s authorized_keys file:

Syntax

ssh-copy-id -f -i /etc/ceph/ceph.pub root@NEW_HOST

Example

[root@host01 ~]# ssh-copy-id -f -i /etc/ceph/ceph.pub root@host02
[root@host01 ~]# ssh-copy-id -f -i /etc/ceph/ceph.pub root@host03

Log in to the cephadm shell:

Example

[root@host01 ~]# cephadm shell

Add the new host to the Ceph cluster:

Syntax

ceph orch host add NEW_HOST IP [LABEL1 ...]

Example

[root@host01 ~]# ceph orch host add host02 10.10.0.102 label1
[root@host01 ~]# ceph orch host add host03 10.10.0.103 label2

Note
- It is best to explicitly provide the host IP address. If an IP is not provided, then the host name is immediately resolved via DNS and that IP is used.

- One or more labels can also be included to immediately label the new host. For example, by default the _admin label makes cephadm maintain a copy of the ceph.conf file and a client.admin keyring file in the /etc/ceph directory.

Add the network configurations for the public network parameters to a running cluster. Be sure that the subnets are separated by commas and that the subnets are listed in subnet/mask format.

Syntax

ceph config set mon public_network "SUBNET_1,SUBNET_2, ..."

Example

[root@host01 ~]# ceph config set mon public_network "192.168.0.0/24, 10.42.0.0/24, ..."

If necessary, update the mon specifications to place the mon daemons on hosts within the specified subnets.
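Before running ceph config set mon public_network, you might check the value locally. The following Python sketch, using the standard ipaddress module, enforces the comma-separated subnet/mask format described above and also tests the bootstrap requirement that at least one listed network contains an address of the bootstrap host. The host addresses are examples; on a real host you would read them from its interfaces.

```python
"""Sketch: validate a public_network list and check it against a host's addresses."""

import ipaddress
from typing import Iterable, List


def parse_public_networks(value: str) -> List[str]:
    """Return normalized subnets, raising ValueError on the first invalid entry."""
    subnets = []
    for raw in value.split(","):
        entry = raw.strip()
        if not entry:
            raise ValueError("empty entry in network list")
        if "/" not in entry:
            # ip_network() would silently accept a bare address as a /32 or /128.
            raise ValueError(f"{entry!r} is not in subnet/mask format")
        # strict=True rejects entries with host bits set, such as 192.168.0.1/24.
        subnets.append(str(ipaddress.ip_network(entry, strict=True)))
    return subnets


def covers_host(value: str, host_addresses: Iterable[str]) -> bool:
    """True if any host address lies inside any listed subnet."""
    networks = [ipaddress.ip_network(s) for s in parse_public_networks(value)]
    return any(
        ipaddress.ip_address(addr) in net
        for addr in host_addresses
        for net in networks
    )
```

The normalized list can be joined with "," to produce the quoted argument for the ceph config set command.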

2.6. Configuring a private network

Network configuration settings are not required. Ceph assumes a public network with all hosts operating on it, unless you specifically configure a cluster network, also known as a private network.

If you create a cluster network, OSDs route heartbeat, object replication, and recovery traffic over the cluster network. This can improve performance compared to using a single network.

For added security, the cluster network should not be reachable from the public network or the Internet.

To assign a cluster network, use the --cluster-network option with the cephadm bootstrap command. The cluster network that you specify must define a subnet in CIDR notation (for example, 10.90.90.0/24 or fe80::/64).

You can also configure the cluster_network after bootstrap.

Prerequisites

- Access to the Ceph software repository.

- Root-level access to all nodes in the storage cluster.

Procedure

Run the cephadm bootstrap command from the initial node that you want to use as the Monitor node in the storage cluster. Include the --cluster-network option in the command.

Syntax

cephadm bootstrap --mon-ip IP-ADDRESS --registry-url registry.redhat.io --registry-username USER_NAME --registry-password PASSWORD --cluster-network NETWORK-IP-ADDRESS

Example

[root@host01 ~]# cephadm bootstrap --mon-ip 10.10.128.68 --registry-url registry.redhat.io --registry-username myuser1 --registry-password mypassword1 --cluster-network 10.10.0.0/24

To configure the cluster_network after bootstrap, run the config set command and redeploy the daemons:

Log in to the cephadm shell:

Example

[root@host01 ~]# cephadm shell

Configure the cluster network with the subnet:

Syntax

ceph config set global cluster_network IP_ADDRESS_WITH_SUBNET

Example

[ceph: root@host01 /]# ceph config set global cluster_network 10.10.0.0/24

Get the list of services in the storage cluster:

Example

[ceph: root@host01 /]# ceph orch ls

Restart the daemons. Ceph daemons bind dynamically, so you do not have to restart the entire cluster at once if you change the network configuration for a specific daemon.

Example

[ceph: root@host01 /]# ceph orch restart mon

Optional: If you want to restart the cluster, on the admin node as a root user, run the systemctl command:

Syntax

systemctl restart ceph-FSID_OF_CLUSTER.target

Example

[root@host01 ~]# systemctl restart ceph-1ca9f6a8-d036-11ec-8263-fa163ee967ad.target

2.7. Verifying firewall rules are configured for default Ceph ports

By default, Red Hat Ceph Storage daemons use TCP ports 6800-7100 to communicate with other hosts in the cluster. You can verify that the host’s firewall allows connection on these ports.

If your network has a dedicated firewall, you might need to verify its configuration in addition to following this procedure. See the firewall’s documentation for more information.


Prerequisites

- Root-level access to the host.

Procedure

Verify the host’s iptables configuration:

List active rules:

[root@host1 ~]# iptables -L

Verify the absence of rules that restrict connectivity on TCP ports 6800-7100, such as the following:

Example

REJECT all -- anywhere anywhere reject-with icmp-host-prohibited

Verify the host’s firewalld configuration:

List ports open on the host:

Syntax

firewall-cmd --zone ZONE --list-ports

Example

[root@host1 ~]# firewall-cmd --zone public --list-ports

- Verify the range is inclusive of TCP ports 6800-7100.
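The firewalld check can be scripted. The Python sketch below parses the space-separated entries that firewall-cmd --list-ports prints, such as 3300/tcp or 6800-7300/tcp, and reports whether TCP ports 6800-7100 are fully covered. The sample strings are illustrative, not captured output.

```python
"""Sketch: check whether firewall-cmd --list-ports output covers a TCP port range."""

from typing import List, Tuple


def parse_ports(output: str, proto: str = "tcp") -> List[Tuple[int, int]]:
    """Turn entries like '3300/tcp' or '6800-7300/tcp' into (start, end) tuples."""
    ranges = []
    for entry in output.split():
        ports, _, entry_proto = entry.partition("/")
        if entry_proto != proto:
            continue  # skip other protocols such as udp
        start, _, end = ports.partition("-")
        ranges.append((int(start), int(end or start)))
    return ranges


def covers(output: str, low: int = 6800, high: int = 7100, proto: str = "tcp") -> bool:
    """True if every port from `low` to `high` (inclusive) is open."""
    needed = low  # first port not yet known to be open
    for start, end in sorted(parse_ports(output, proto)):
        if start > needed:
            break  # gap: `needed` is not covered by any remaining range
        needed = max(needed, end + 1)
        if needed > high:
            return True
    return needed > high


if __name__ == "__main__":
    sample = "3300/tcp 6789/tcp 6800-7300/tcp"  # illustrative, not real output
    print(covers(sample))  # True
```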

2.8. Firewall settings for Ceph Monitor node

You can enable encryption for all Ceph traffic over the network with the introduction of the messenger version 2 protocol. The secure mode setting for messenger v2 encrypts communication between Ceph daemons and Ceph clients, giving you end-to-end encryption.

Messenger v2 Protocol

The second version of Ceph’s on-wire protocol, msgr2, includes several new features:

- A secure mode encrypts all data moving through the network.

- Encapsulation improvement of authentication payloads.

- Improvements to feature advertisement and negotiation.

The Ceph daemons bind to multiple ports allowing both the legacy, v1-compatible, and the new, v2-compatible, Ceph clients to connect to the same storage cluster. Ceph clients or other Ceph daemons connecting to the Ceph Monitor daemon will try to use the v2 protocol first, if possible, but if not, then the legacy v1 protocol will be used. By default, both messenger protocols, v1 and v2, are enabled. The new v2 port is 3300, and the legacy v1 port is 6789, by default.
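Because a Monitor listens on both ports, its address is commonly written as an address vector that lists the v2 endpoint before the v1 endpoint, for example, [v2:10.0.0.1:3300,v1:10.0.0.1:6789]. This Python sketch assembles that form from an IP address and the default ports above. The IP address is an example, and output from commands such as ceph mon dump may include extra fields, such as a /0 suffix on each endpoint.

```python
"""Sketch: format a Monitor address vector listing the v2 endpoint before v1."""

import ipaddress

V2_PORT = 3300  # default port for the v2 protocol
V1_PORT = 6789  # default port for the legacy v1 protocol


def mon_addrvec(ip: str, v2_port: int = V2_PORT, v1_port: int = V1_PORT) -> str:
    addr = ipaddress.ip_address(ip)  # raises ValueError for malformed input
    # IPv6 addresses need brackets so the port separator is unambiguous.
    host = f"[{addr}]" if addr.version == 6 else str(addr)
    # v2 comes first because clients try the v2 protocol before falling back to v1.
    return f"[v2:{host}:{v2_port},v1:{host}:{v1_port}]"


if __name__ == "__main__":
    print(mon_addrvec("10.0.0.1"))  # [v2:10.0.0.1:3300,v1:10.0.0.1:6789]
```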

Prerequisites

- A running Red Hat Ceph Storage cluster.

- Access to the Ceph software repository.

- Root-level access to the Ceph Monitor node.

Procedure

Add rules using the following example:

[root@mon ~]# sudo iptables -A INPUT -i IFACE -p tcp -s IP-ADDRESS/NETMASK --dport 6789 -j ACCEPT [root@mon ~]# sudo iptables -A INPUT -i IFACE -p tcp -s IP-ADDRESS/NETMASK --dport 3300 -j ACCEPT-

Replace IFACE with the public network interface (for example, eth0, eth1, and so on).

Replace IP-ADDRESS with the IP address of the public network and NETMASK with the netmask for the public network.

For the firewalld daemon, execute the following commands:

[root@mon ~]# firewall-cmd --zone=public --add-port=6789/tcp
[root@mon ~]# firewall-cmd --zone=public --add-port=6789/tcp --permanent
[root@mon ~]# firewall-cmd --zone=public --add-port=3300/tcp
[root@mon ~]# firewall-cmd --zone=public --add-port=3300/tcp --permanent
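If you configure several Monitor hosts, generating these commands can reduce typing errors. The following Python sketch only builds the command strings shown in this procedure; it does not run them. It assumes the default Monitor ports (3300 for msgr2 and 6789 for legacy v1), and the helper names iptables_rules and firewalld_rules, along with the interface and network values, are hypothetical.

```python
"""Hypothetical helper: build firewall commands for the Ceph Monitor ports.

Assumes the default Monitor ports (3300 for msgr2, 6789 for legacy v1).
The interface, network, and zone values are placeholders you supply.
"""

MON_PORTS = (3300, 6789)  # msgr2 port, legacy v1 port (defaults)


def iptables_rules(iface, cidr, ports=MON_PORTS):
    # One ACCEPT rule per Monitor port, limited to the public network CIDR.
    return [
        f"iptables -A INPUT -i {iface} -p tcp -s {cidr} --dport {port} -j ACCEPT"
        for port in ports
    ]


def firewalld_rules(zone="public", ports=MON_PORTS):
    # Without --permanent, firewall-cmd changes only the runtime configuration;
    # the --permanent variant updates the saved configuration, so both are issued.
    cmds = []
    for port in ports:
        cmds.append(f"firewall-cmd --zone={zone} --add-port={port}/tcp")
        cmds.append(f"firewall-cmd --zone={zone} --add-port={port}/tcp --permanent")
    return cmds


if __name__ == "__main__":
    for cmd in iptables_rules("eth0", "192.168.0.0/24") + firewalld_rules():
        print(cmd)
```

Review the generated commands before running them as root on each Monitor host.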

2.9. Firewall settings for Ceph OSDs

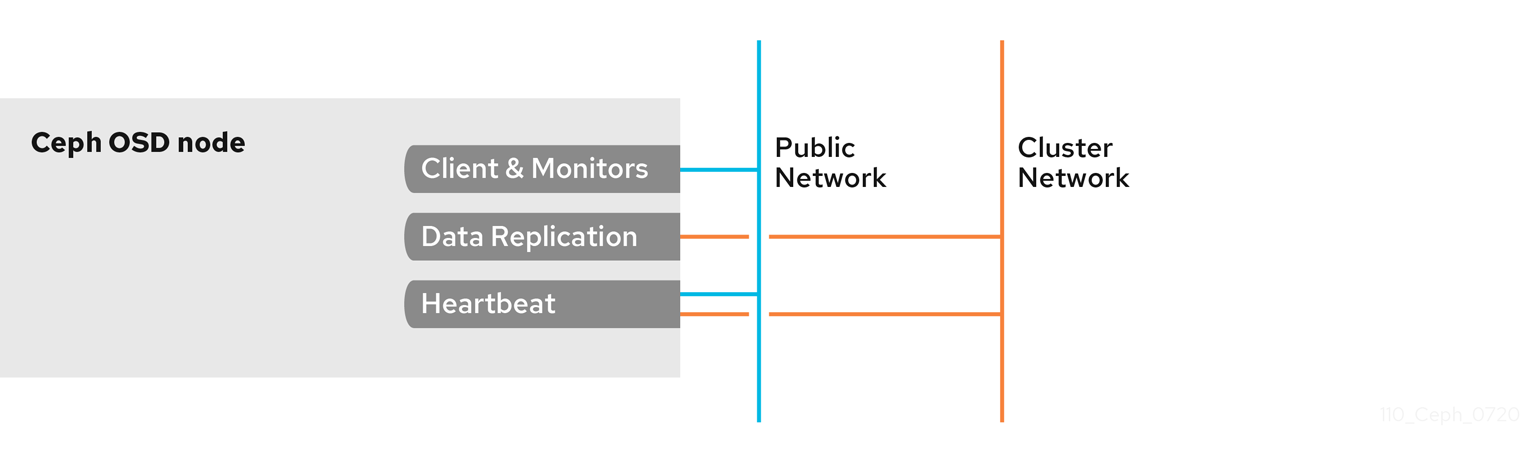

By default, Ceph OSDs bind to the first available ports on a Ceph node beginning at port 6800. Open at least four ports beginning at port 6800 for each OSD that runs on the node:

- One for talking to clients and monitors on the public network.

- One for sending data to other OSDs on the cluster network.

- Two for sending heartbeat packets on the cluster network.

Ports are node-specific. However, you might need to open more ports than the Ceph daemons running on that node require: if a daemon fails and restarts without releasing its port, the restarted daemon binds to a new port. Consider opening a few additional ports for this case, or open the port range 6800-7300 on each OSD node.
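To check that an opened range is large enough for a node, you can budget four ports per OSD plus some spare ports. The following Python sketch assumes the four-ports-per-OSD rule and the 6800-7300 range described above; the spare allowance and the function names are hypothetical.

```python
"""Rough port-budget check for one OSD node (a sketch, not a Ceph tool).

Assumptions: four ports per OSD as described above, OSDs bind from port 6800
upward, and the opened range is 6800-7300 (inclusive). `spare` is a
hypothetical allowance for daemons that restart without releasing ports.
"""

PORTS_PER_OSD = 4  # public, cluster data, and two heartbeat ports
RANGE_START, RANGE_END = 6800, 7300


def ports_needed(osds_on_node, spare=0):
    return osds_on_node * PORTS_PER_OSD + spare


def fits_in_range(osds_on_node, spare=0):
    available = RANGE_END - RANGE_START + 1  # inclusive range: 501 ports
    return ports_needed(osds_on_node, spare) <= available


if __name__ == "__main__":
    print(ports_needed(12, spare=8))   # 56
    print(fits_in_range(12, spare=8))  # True
```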

If you set separate public and cluster networks, you must add rules for both the public network and the cluster network, because clients will connect using the public network and other Ceph OSD Daemons will connect using the cluster network.

Prerequisites

- A running Red Hat Ceph Storage cluster.

- Access to the Ceph software repository.

- Root-level access to the Ceph OSD nodes.

Procedure

Add rules using the following example:

[root@mon ~]# sudo iptables -A INPUT -i IFACE -m multiport -p tcp -s IP-ADDRESS/NETMASK --dports 6800:7300 -j ACCEPT

Replace IFACE with the public network interface (for example, eth0, eth1, and so on).

Replace IP-ADDRESS with the IP address of the public network and NETMASK with the netmask for the public network.

For the firewalld daemon, execute the following:

[root@mon ~]# firewall-cmd --zone=public --add-port=6800-7300/tcp
[root@mon ~]# firewall-cmd --zone=public --add-port=6800-7300/tcp --permanent

If you put the cluster network into another zone, open the ports within that zone as appropriate.

2.10. Additional Resources

- See the Red Hat Ceph Storage network configuration options in Appendix B for specific option descriptions and usage.

- See the Red Hat Ceph Storage Architecture Guide for details about using on-wire encryption with the Ceph messenger version 2 protocol.

Chapter 3. Ceph Monitor configuration

As a storage administrator, you can use the default configuration values for the Ceph Monitor or customize them according to the intended workload.

3.1. Prerequisites

- Installation of the Red Hat Ceph Storage software.

3.2. Ceph Monitor configuration

Understanding how to configure a Ceph Monitor is an important part of building a reliable Red Hat Ceph Storage cluster. All storage clusters have at least one monitor. A Ceph Monitor configuration usually remains fairly consistent, but you can add, remove or replace a Ceph Monitor in a storage cluster.

Ceph monitors maintain a "master copy" of the cluster map. That means a Ceph client can determine the location of all Ceph monitors and Ceph OSDs just by connecting to one Ceph monitor and retrieving a current cluster map.

Before Ceph clients can read from or write to Ceph OSDs, they must connect to a Ceph Monitor first. With a current copy of the cluster map and the CRUSH algorithm, a Ceph client can compute the location for any object. The ability to compute object locations allows a Ceph client to talk directly to Ceph OSDs, which is a very important aspect of Ceph’s high scalability and performance.

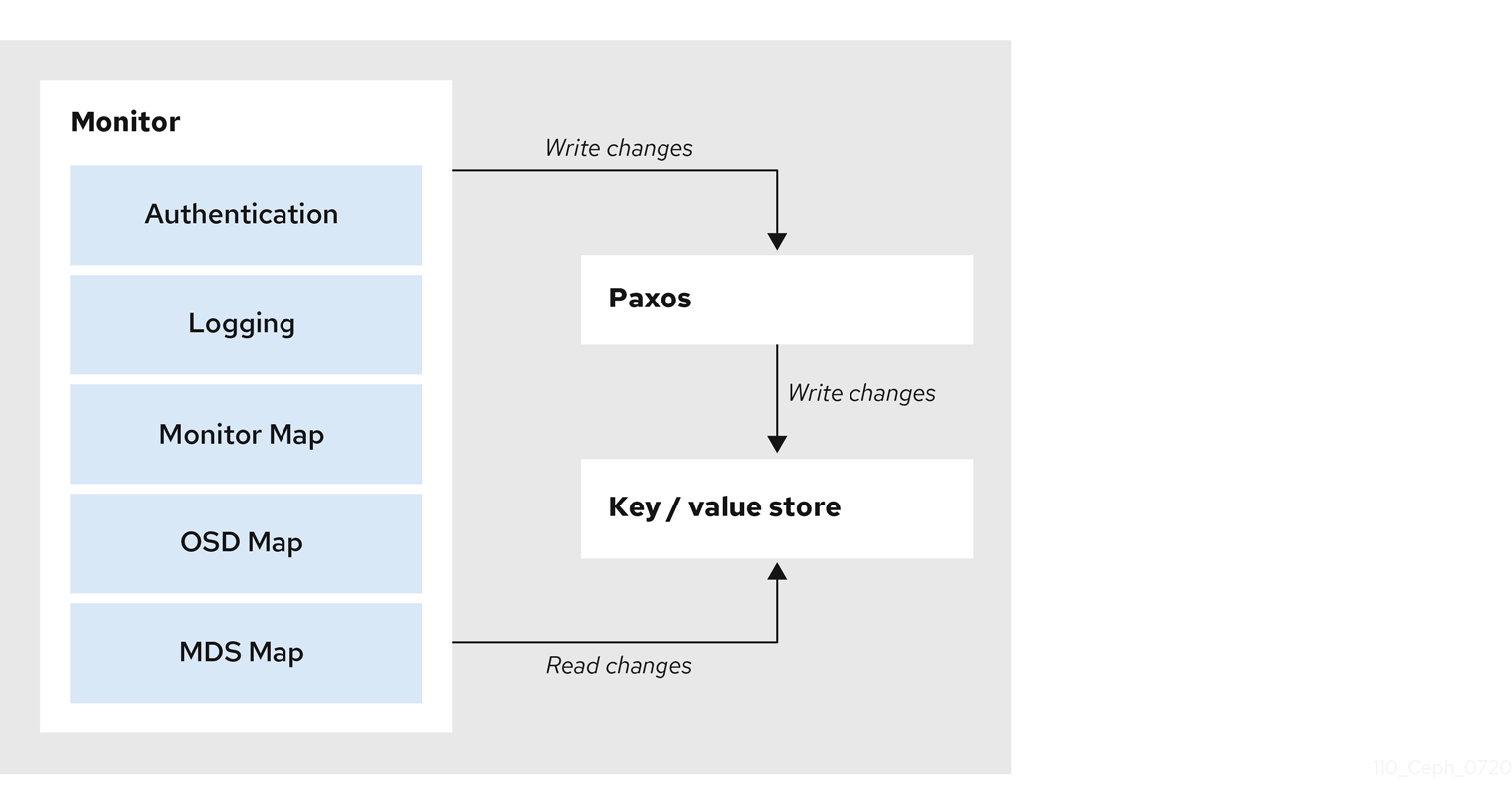

The primary role of the Ceph Monitor is to maintain a master copy of the cluster map. Ceph Monitors also provide authentication and logging services. Ceph Monitors write all changes in the monitor services to a single Paxos instance, and Paxos writes the changes to a key-value store for strong consistency. Ceph Monitors can query the most recent version of the cluster map during synchronization operations. Ceph Monitors leverage the key-value store’s snapshots and iterators, using the rocksdb database, to perform store-wide synchronization.

3.2.1. Viewing the Ceph Monitor configuration database

You can view Ceph Monitor configuration in the configuration database.

Previous releases of Red Hat Ceph Storage centralized Ceph Monitor configuration in /etc/ceph/ceph.conf. This configuration file has been deprecated as of Red Hat Ceph Storage 5.

Prerequisites

- A running Red Hat Ceph Storage cluster.

- Root-level access to a Ceph Monitor host.

Procedure

Log into the cephadm shell:

[root@host01 ~]# cephadm shell

Use the ceph config command to view the configuration database:

Example

[ceph: root@host01 /]# ceph config get mon

3.3. Ceph cluster maps

The cluster map is a composite of maps, including the monitor map, the OSD map, and the placement group map. The cluster map tracks a number of important events:

- Which processes are in the Red Hat Ceph Storage cluster.

- Which processes that are in the Red Hat Ceph Storage cluster are up and running or down.

- Whether the placement groups are active or inactive, and clean or in some other state.

- Other details that reflect the current state of the cluster, such as the total amount of storage space or the amount of storage used.

When there is a significant change in the state of the cluster, for example, a Ceph OSD goes down or a placement group falls into a degraded state, the cluster map is updated to reflect the current state of the cluster. Additionally, the Ceph monitor also maintains a history of the prior states of the cluster. The monitor map, OSD map, and placement group map each maintain a history of their map versions. Each version is called an epoch.

When operating the Red Hat Ceph Storage cluster, keeping track of these states is an important part of the cluster administration.

3.4. Ceph Monitor quorum

A cluster will run sufficiently with a single monitor. However, a single monitor is a single-point-of-failure. To ensure high availability in a production Ceph storage cluster, run Ceph with multiple monitors so that the failure of a single monitor will not cause a failure of the entire storage cluster.

When a Ceph storage cluster runs multiple Ceph Monitors for high availability, Ceph Monitors use the Paxos algorithm to establish consensus about the master cluster map. Establishing a quorum requires a majority of the monitors to be running, for example, 1 out of 1, 2 out of 3, 3 out of 5, 4 out of 6, and so on.
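The majority rule can be expressed as a small calculation. The following Python sketch computes the smallest strict majority for a given number of monitors and how many monitors can fail while a quorum can still form; the function names are hypothetical and not part of any Ceph tool.

```python
def quorum_size(num_monitors):
    """Smallest strict majority of num_monitors (a sketch)."""
    if num_monitors < 1:
        raise ValueError("at least one monitor is required")
    return num_monitors // 2 + 1


def tolerated_failures(num_monitors):
    # Monitors that can be lost while a majority can still form a quorum.
    return num_monitors - quorum_size(num_monitors)


if __name__ == "__main__":
    for n in (1, 3, 5, 6):
        print(n, quorum_size(n), tolerated_failures(n))
    # 1 1 0
    # 3 2 1
    # 5 3 2
    # 6 4 2
```

Six monitors tolerate no more failures than five, which is one reason odd monitor counts are commonly used.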

Red Hat recommends running a production Red Hat Ceph Storage cluster with at least three Ceph Monitors to ensure high availability. When you run multiple monitors, you can specify the initial monitors that must be members of the storage cluster to establish a quorum. This may reduce the time it takes for the storage cluster to come online.

[mon]

mon_initial_members = a,b,c

A majority of the monitors in the storage cluster must be able to reach each other to establish a quorum. You can decrease the initial number of monitors needed to establish a quorum with the mon_initial_members option.

3.5. Ceph Monitor consistency

When you add monitor settings to the Ceph configuration file, you need to be aware of some of the architectural aspects of Ceph Monitors. Ceph imposes strict consistency requirements for a Ceph Monitor when discovering another Ceph Monitor within the cluster. Whereas Ceph clients and other Ceph daemons use the Ceph configuration file to discover monitors, monitors discover each other using the monitor map (monmap), not the Ceph configuration file.

A Ceph Monitor always refers to the local copy of the monitor map when discovering other Ceph Monitors in the Red Hat Ceph Storage cluster. Using the monitor map instead of the Ceph configuration file avoids errors that could break the cluster, for example, typos in a monitor address or port in the Ceph configuration file. Since monitors use monitor maps for discovery and they share monitor maps with clients and other Ceph daemons, the monitor map provides monitors with a strict guarantee that their consensus is valid.

Strict consistency when applying updates to the monitor maps

As with any other updates on the Ceph Monitor, changes to the monitor map always run through a distributed consensus algorithm called Paxos. The Ceph Monitors must agree on each update to the monitor map, such as adding or removing a Ceph Monitor, to ensure that each monitor in the quorum has the same version of the monitor map. Updates to the monitor map are incremental so that Ceph Monitors have the latest agreed-upon version and a set of previous versions.

Maintaining history

Maintaining a history enables a Ceph Monitor that has an older version of the monitor map to catch up with the current state of the Red Hat Ceph Storage cluster.

If Ceph Monitors discovered each other through the Ceph configuration file instead of through the monitor map, it would introduce additional risks because the Ceph configuration files are not updated and distributed automatically. Ceph Monitors might inadvertently use an older Ceph configuration file, fail to recognize a Ceph Monitor, fall out of a quorum, or develop a situation where Paxos is not able to determine the current state of the system accurately.

3.6. Bootstrap the Ceph Monitor

In most configuration and deployment cases, tools that deploy Ceph, such as cephadm, might help bootstrap the Ceph monitors by generating a monitor map for you.

A Ceph monitor requires a few explicit settings:

- File System ID: The fsid is the unique identifier for your object store. Since you can run multiple storage clusters on the same hardware, you must specify the unique ID of the object store when bootstrapping a monitor. Deployment tools, such as cephadm, generate a file system identifier, but you can also specify the fsid manually.

- Monitor ID: A monitor ID is a unique ID assigned to each monitor within the cluster. By convention, the ID is set to the monitor’s hostname. This option can be set using a deployment tool, using the ceph command, or in the Ceph configuration file. In the Ceph configuration file, sections are formed as follows:

Example

[mon.host1]
[mon.host2]

- Keys: The monitor must have secret keys.

Additional Resources

- For more information about cephadm and the Ceph orchestrator, see the Red Hat Ceph Storage Operations Guide.

3.7. Minimum configuration for a Ceph Monitor

The bare minimum monitor settings for a Ceph Monitor in the Ceph configuration file include a host name for each monitor, if it is not configured for DNS, and the monitor address. The Ceph Monitors run on ports 3300 and 6789 by default.

Do not edit the Ceph configuration file.

This minimum configuration for monitors assumes that a deployment tool generates the fsid and the mon. key for you.

You can use the following commands to set or read the storage cluster configuration options.

- ceph config dump - Dumps the entire configuration database for the whole storage cluster.

- ceph config generate-minimal-conf - Generates a minimal ceph.conf file.

- ceph config get WHO - Dumps the configuration for a specific daemon or a client, as stored in the Ceph Monitor’s configuration database.

- ceph config set WHO OPTION VALUE - Sets the configuration option in the Ceph Monitor’s configuration database.

- ceph config show WHO - Shows the reported running configuration for a running daemon.

- ceph config assimilate-conf -i INPUT_FILE -o OUTPUT_FILE - Ingests a configuration file from the input file and moves any valid options into the Ceph Monitors’ configuration database.

Here, the WHO parameter can be the name of a section or a Ceph daemon, OPTION is a configuration option, and VALUE is the value for that option, for example, true or false for a Boolean option.

When a Ceph daemon needs a config option prior to getting the option from the config store, you can set the configuration by running the following command:

ceph cephadm set-extra-ceph-conf

This command adds text to the ceph.conf files of all daemons. It is a workaround and is NOT a recommended operation.

3.8. Unique identifier for Ceph

Each Red Hat Ceph Storage cluster has a unique identifier (fsid). If specified, it usually appears under the [global] section of the configuration file. Deployment tools usually generate the fsid and store it in the monitor map, so the value may not appear in a configuration file. The fsid makes it possible to run daemons for multiple clusters on the same hardware.

Do not set this value if you use a deployment tool that does it for you.

3.9. Ceph Monitor data store

Ceph provides a default path where Ceph monitors store data.

Red Hat recommends running Ceph monitors on separate drives from Ceph OSDs for optimal performance in a production Red Hat Ceph Storage cluster.

A dedicated /var/lib/ceph partition should be used for the MON database with a size between 50 and 100 GB.

Ceph monitors call the fsync() function often, which can interfere with Ceph OSD workloads.

Ceph monitors store their data as key-value pairs. Using a data store prevents recovering Ceph monitors from running corrupted versions through Paxos, and it enables multiple modification operations in one single atomic batch, among other advantages.

Red Hat does not recommend changing the default data location. If you modify the default location, make it uniform across Ceph monitors by setting it in the [mon] section of the configuration file.

3.10. Ceph storage capacity

When a Red Hat Ceph Storage cluster gets close to its maximum capacity (specified by the mon_osd_full_ratio parameter), Ceph prevents you from writing to or reading from Ceph OSDs as a safety measure to prevent data loss. Therefore, letting a production Red Hat Ceph Storage cluster approach its full ratio is not a good practice, because it sacrifices high availability. The default full ratio is .95, or 95% of capacity. This is a very aggressive setting for a test cluster with a small number of OSDs.

When monitoring a cluster, be alert to warnings related to the nearfull ratio. Such a warning means that the failure of one or more OSDs could result in a temporary service disruption. Consider adding more OSDs to increase storage capacity.

A common scenario for test clusters involves a system administrator removing a Ceph OSD from the Red Hat Ceph Storage cluster to watch the cluster rebalance, then removing another Ceph OSD, and so on, until the cluster eventually reaches the full ratio and locks up.

Red Hat recommends a bit of capacity planning even with a test cluster. Planning enables you to gauge how much spare capacity you will need to maintain high availability.

Ideally, you want to plan for a series of Ceph OSD failures where the cluster can recover to an active + clean state without replacing those Ceph OSDs immediately. You can run a cluster in an active + degraded state, but this is not ideal for normal operating conditions.

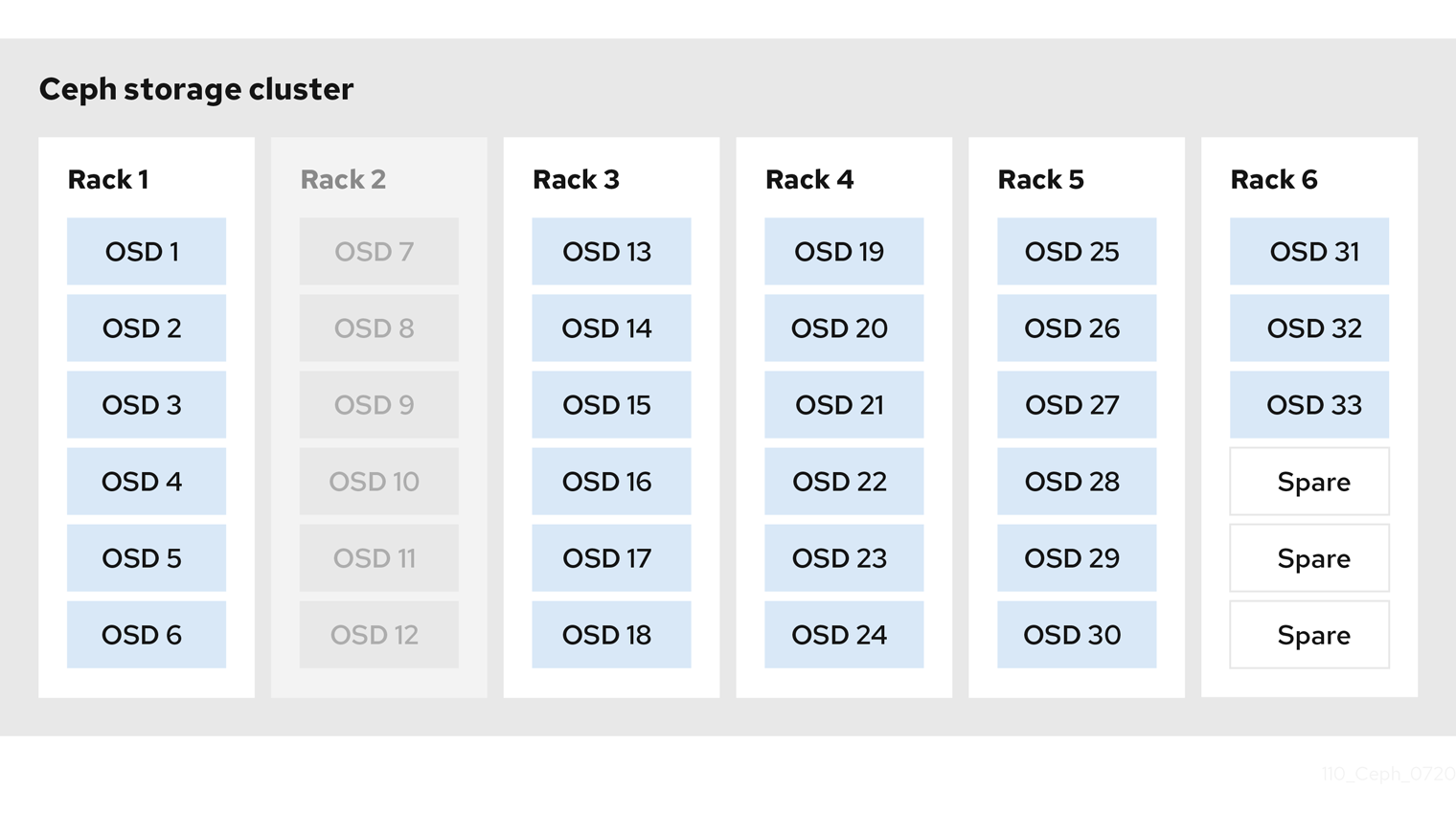

The following diagram depicts a simplistic Red Hat Ceph Storage cluster containing 33 Ceph Nodes with one Ceph OSD per host, each Ceph OSD Daemon reading from and writing to a 3 TB drive. This example cluster therefore has a maximum actual capacity of 99 TB. With a mon_osd_full_ratio of 0.95, if the cluster falls to approximately 5 TB of remaining capacity, the cluster will not allow Ceph clients to read and write data. So the cluster’s operating capacity is approximately 94 TB (0.95 of 99 TB is 94.05 TB), not 99 TB.

It is normal in such a cluster for one or two OSDs to fail. A less frequent but reasonable scenario involves a rack’s router or power supply failing, which brings down multiple OSDs simultaneously, for example, OSDs 7-12. In such a scenario, you should still strive for a cluster that can remain operational and achieve an active + clean state, even if that means adding a few hosts with additional OSDs in short order. If your capacity utilization is too high, you might not lose data, but you could still sacrifice data availability while resolving an outage within a failure domain if capacity utilization of the cluster exceeds the full ratio. For this reason, Red Hat recommends at least some rough capacity planning.

Identify two numbers for your cluster:

- the number of OSDs

- the total capacity of the cluster

To determine the mean capacity of an OSD within a cluster, divide the total capacity of the cluster by the number of OSDs in the cluster. Multiply that number by the number of OSDs you expect to fail simultaneously during normal operations (a relatively small number). Next, multiply the capacity of the cluster by the full ratio to arrive at a maximum operating capacity. Then, subtract the capacity of the OSDs you expect to fail from the maximum operating capacity, and divide the result by the total capacity of the cluster to arrive at a reasonable full ratio. Repeat the foregoing process with a higher number of OSD failures (for example, a rack of OSDs) to arrive at a reasonable number for a near full ratio.
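The planning steps above reduce to a few lines of arithmetic. The following Python sketch applies them to the example cluster of 33 OSDs with 3 TB each; it assumes all OSDs have roughly the same capacity, and the function name planned_ratio and the failure counts (one OSD, and a rack of six OSDs such as OSDs 7-12) are illustrative choices, not Ceph settings.

```python
"""Rough full-ratio planning following the steps described above (a sketch).

Assumptions: all OSDs have roughly the same capacity, capacities are in TB,
and the failure counts passed in are your own estimates.
"""


def planned_ratio(total_tb, num_osds, failed_osds, full_ratio=0.95):
    if not 0 <= failed_osds < num_osds:
        raise ValueError("failed_osds must be between 0 and num_osds - 1")
    mean_osd_tb = total_tb / num_osds           # mean capacity of one OSD
    lost_tb = mean_osd_tb * failed_osds         # capacity lost to failures
    max_operating_tb = total_tb * full_ratio    # usable capacity before "full"
    return (max_operating_tb - lost_tb) / total_tb


if __name__ == "__main__":
    total = 33 * 3                               # 99 TB raw capacity
    print(round(total * 0.95, 2))                # 94.05
    print(round(planned_ratio(total, 33, 1), 2))  # 0.92
    print(round(planned_ratio(total, 33, 6), 2))  # 0.77
```

In this example, about 0.92 is a candidate full ratio for single-OSD failures, and about 0.77 is a candidate near full ratio that leaves room to lose a rack of six OSDs.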

3.11. Ceph heartbeat

Ceph monitors know about the cluster by requiring reports from each OSD, and by receiving reports from OSDs about the status of their neighboring OSDs. Ceph provides reasonable default settings for interaction between monitor and OSD; however, you can modify them as needed.

3.12. Ceph Monitor synchronization role

When you run a production cluster with multiple monitors, which is recommended, each monitor checks whether a neighboring monitor has a more recent version of the cluster map, that is, a map whose epoch number is one or more higher than the most recent epoch in the local monitor’s map. Periodically, one monitor in the cluster might fall behind the other monitors to the point where it must leave the quorum, synchronize to retrieve the most current information about the cluster, and then rejoin the quorum.

Synchronization roles

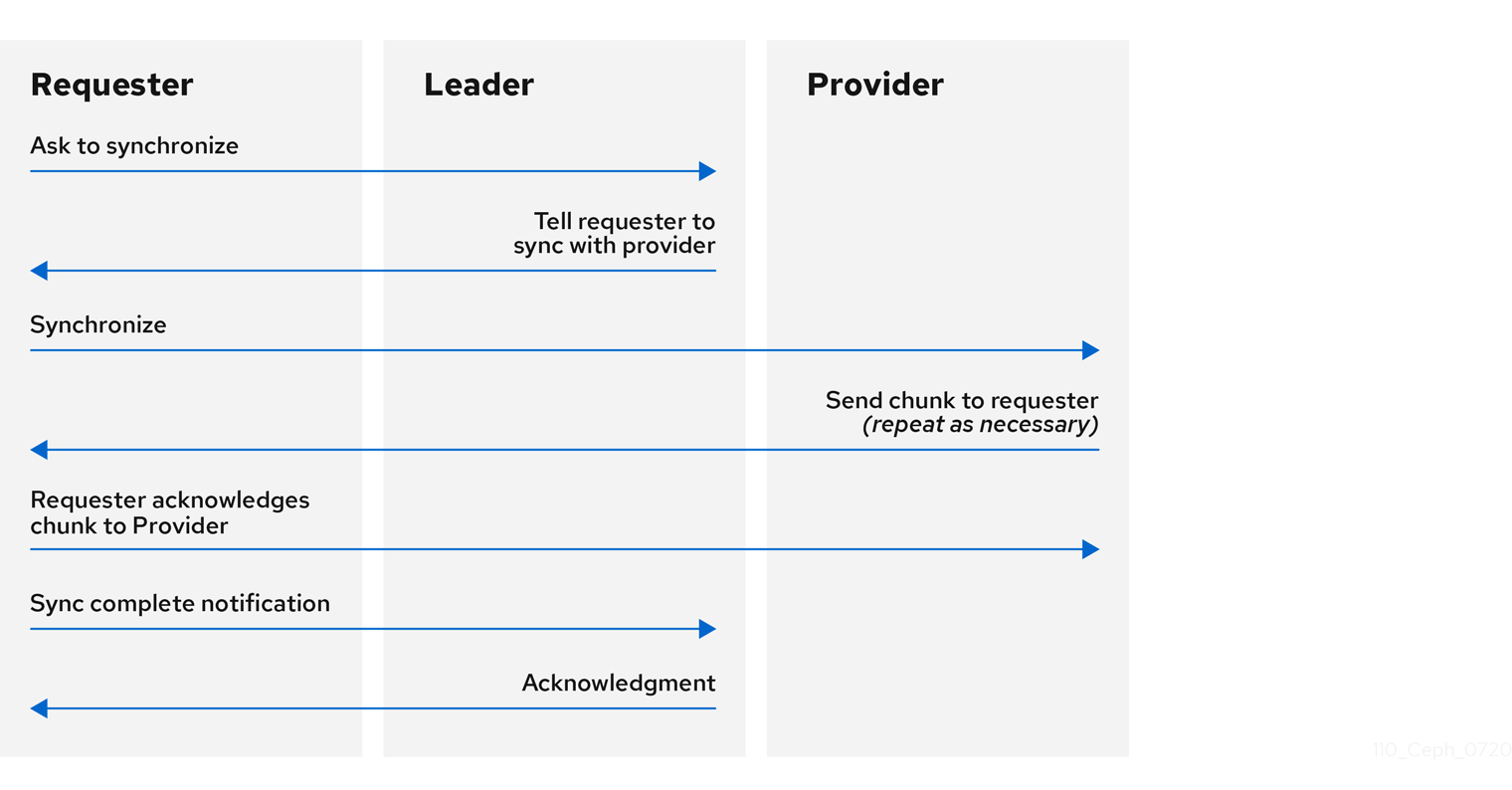

For the purposes of synchronization, monitors can assume one of three roles:

- Leader: The Leader is the first monitor to achieve the most recent Paxos version of the cluster map.

- Provider: The Provider is a monitor that has the most recent version of the cluster map, but was not the first to achieve the most recent version.

- Requester: The Requester is a monitor that has fallen behind the leader and must synchronize to retrieve the most recent information about the cluster before it can rejoin the quorum.

These roles enable a leader to delegate synchronization duties to a provider, which prevents synchronization requests from overloading the leader and improves performance. In the following diagram, the requester has learned that it has fallen behind the other monitors. The requester asks the leader to synchronize, and the leader tells the requester to synchronize with a provider.
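The role assignment and delegation described above can be illustrated with a toy model. The following Python sketch is not how ceph-mon is implemented: it assumes each monitor is summarized by the latest cluster map epoch it holds, takes the leader as a given input, and uses hypothetical monitor names and epochs.

```python
"""Toy model of Ceph Monitor synchronization roles (illustration only).

This is NOT how ceph-mon is implemented. It mirrors the roles described
above: a leader, providers that hold the latest cluster map epoch, and
requesters that have fallen behind. Names and epochs are hypothetical.
"""
from dataclasses import dataclass


@dataclass
class Monitor:
    name: str
    epoch: int  # most recent cluster map epoch this monitor holds


def assign_roles(monitors, leader):
    latest = max(m.epoch for m in monitors)
    roles = {}
    for m in monitors:
        if m.name == leader:
            roles[m.name] = "leader"
        elif m.epoch == latest:
            roles[m.name] = "provider"   # up to date, but not the leader
        else:
            roles[m.name] = "requester"  # behind; must sync before rejoining
    return roles


def sync_source(roles):
    # The leader delegates to a provider when one exists, so that
    # synchronization requests do not overload the leader.
    providers = sorted(n for n, r in roles.items() if r == "provider")
    if providers:
        return providers[0]
    return next(n for n, r in roles.items() if r == "leader")


if __name__ == "__main__":
    mons = [Monitor("a", 120), Monitor("b", 120), Monitor("c", 117)]
    roles = assign_roles(mons, leader="a")
    print(roles)               # {'a': 'leader', 'b': 'provider', 'c': 'requester'}
    print(sync_source(roles))  # b
```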

Monitor synchronization

Synchronization always occurs when a new monitor joins the cluster. During runtime operations, monitors can receive updates to the cluster map at different times. This means the leader and provider roles may migrate from one monitor to another. If this happens while synchronizing, for example, a provider falls behind the leader, the provider can terminate synchronization with a requester.

Once synchronization is complete, Ceph requires trimming across the cluster. Trimming requires that the placement groups are active + clean.

3.13. Ceph time synchronization

Ceph daemons pass critical messages to each other, which must be processed before daemons reach a timeout threshold. If the clocks in Ceph monitors are not synchronized, it can lead to a number of anomalies.

For example:

- Daemons ignoring received messages, for example, because of outdated timestamps.

- Timeouts triggered too soon or too late when a message was not received in time.

Install NTP on the Ceph monitor hosts to ensure that the monitor cluster operates with synchronized clocks.

Clock drift may still be noticeable with NTP even though the discrepancy is not yet harmful. Ceph clock drift and clock skew warnings can be triggered even though NTP maintains a reasonable level of synchronization. Increasing the allowed clock drift may be tolerable under such circumstances. However, a number of factors, such as workload, network latency, overrides to default timeouts, and other synchronization options, can influence the level of acceptable clock drift without compromising Paxos guarantees.
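The idea of an allowed clock drift can be shown with a simple threshold check. The following Python sketch is an illustration, not Ceph code: it assumes an allowance of 0.05 seconds, which is the default value of the mon_clock_drift_allowed option, and the per-monitor offsets and the function name skewed_monitors are hypothetical.

```python
"""Sketch of a monitor clock-skew check (illustration, not Ceph code).

Assumption: 0.05 s allowed drift, the default of mon_clock_drift_allowed.
Offsets are hypothetical measurements of each monitor's clock relative to a
reference monitor, in seconds.
"""

ALLOWED_DRIFT_S = 0.05


def skewed_monitors(offsets, allowed=ALLOWED_DRIFT_S):
    # A monitor is flagged when the absolute offset exceeds the allowance.
    return sorted(name for name, off in offsets.items() if abs(off) > allowed)


if __name__ == "__main__":
    offsets = {"b": 0.012, "c": -0.081}
    print(skewed_monitors(offsets))               # ['c']
    print(skewed_monitors(offsets, allowed=0.1))  # []
```

Raising the allowance silences the warning for monitor c, which is the trade-off described above: it is only safe when the remaining drift does not affect Paxos.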

Additional Resources

- See the section on Ceph time synchronization for more details.

3.14. Additional Resources

- See all the Red Hat Ceph Storage Monitor configuration options in Appendix C for specific option descriptions and usage.

Chapter 4. Ceph authentication configuration

As a storage administrator, authenticating users and services is important to the security of the Red Hat Ceph Storage cluster. Red Hat Ceph Storage includes the Cephx protocol, as the default, for cryptographic authentication, and the tools to manage authentication in the storage cluster.

4.1. Prerequisites

- Installation of the Red Hat Ceph Storage software.

4.2. Cephx authentication

The cephx protocol is enabled by default. Cryptographic authentication has some computational costs, though they are generally quite low. If the network environment connecting clients and hosts is considered safe and you cannot afford authentication computational costs, you can disable it. When deploying a Ceph storage cluster, the deployment tool will create the client.admin user and keyring.

Red Hat recommends using authentication.

If you disable authentication, you are at risk of a man-in-the-middle attack altering client and server messages, which could lead to significant security issues.

Enabling and disabling Cephx

Enabling Cephx requires that you have deployed keys for the Ceph Monitors and OSDs. When toggling Cephx authentication on or off, you do not have to repeat the deployment procedures.

4.3. Enabling Cephx

When cephx is enabled, Ceph will look for the keyring in the default search path, which includes /etc/ceph/$cluster.$name.keyring. You can override this location by adding a keyring option in the [global] section of the Ceph configuration file, but this is not recommended.

Execute the following procedures to enable cephx on a cluster with authentication disabled. If you or your deployment utility have already generated the keys, you may skip the steps related to generating keys.

Prerequisites

- A running Red Hat Ceph Storage cluster.

- Root-level access to the Ceph Monitor node.

Procedure

Create a client.admin key, and save a copy of the key for your client host:

[root@mon ~]# ceph auth get-or-create client.admin mon 'allow *' osd 'allow *' -o /etc/ceph/ceph.client.admin.keyring

Warning

This will erase the contents of any existing /etc/ceph/ceph.client.admin.keyring file. Do not perform this step if a deployment tool has already done it for you.

Create a keyring for the monitor cluster and generate a monitor secret key:

[root@mon ~]# ceph-authtool --create-keyring /tmp/ceph.mon.keyring --gen-key -n mon. --cap mon 'allow *'

Copy the monitor keyring into every monitor’s mon data directory. For example, to copy it to mon.a in cluster ceph, use the following:

[root@mon ~]# cp /tmp/ceph.mon.keyring /var/lib/ceph/mon/ceph-a/keyring

Generate a secret key for every OSD, where ID is the OSD number:

ceph auth get-or-create osd.ID mon 'allow rwx' osd 'allow *' -o /var/lib/ceph/osd/ceph-ID/keyring

By default, the cephx authentication protocol is enabled.

Note

If the cephx authentication protocol was previously disabled by setting the authentication options to none, removing the following lines from the [global] section of the Ceph configuration file (/etc/ceph/ceph.conf) re-enables the cephx authentication protocol:

auth_cluster_required = none
auth_service_required = none
auth_client_required = none

Start or restart the Ceph storage cluster.

Important

Enabling cephx requires downtime because the cluster needs to be completely restarted, or it needs to be shut down and then started while client I/O is disabled.

These flags need to be set before restarting or shutting down the storage cluster:

[root@mon ~]# ceph osd set noout
[root@mon ~]# ceph osd set norecover
[root@mon ~]# ceph osd set norebalance
[root@mon ~]# ceph osd set nobackfill
[root@mon ~]# ceph osd set nodown
[root@mon ~]# ceph osd set pause

Once cephx is enabled and all PGs are active and clean, unset the flags:

[root@mon ~]# ceph osd unset noout
[root@mon ~]# ceph osd unset norecover
[root@mon ~]# ceph osd unset norebalance
[root@mon ~]# ceph osd unset nobackfill
[root@mon ~]# ceph osd unset nodown
[root@mon ~]# ceph osd unset pause

4.4. Disabling Cephx

The following procedure describes how to disable Cephx. If your cluster environment is relatively safe, you can avoid the computational expense of running authentication.

Red Hat recommends enabling authentication.

However, it may be easier during setup or troubleshooting to temporarily disable authentication.

Prerequisites

- A running Red Hat Ceph Storage cluster.

- Root-level access to the Ceph Monitor node.

Procedure

Disable cephx authentication by setting the following options in the [global] section of the Ceph configuration file:

Example

auth_cluster_required = none
auth_service_required = none
auth_client_required = none

Start or restart the Ceph storage cluster.

4.5. Cephx user keyrings

When you run Ceph with authentication enabled, the ceph administrative commands and Ceph clients require authentication keys to access the Ceph storage cluster.

The most common way to provide these keys to the ceph administrative commands and clients is to include a Ceph keyring under the /etc/ceph/ directory. The file name is usually ceph.client.admin.keyring or $cluster.client.admin.keyring. If you include the keyring under the /etc/ceph/ directory, you do not need to specify a keyring entry in the Ceph configuration file.

Red Hat recommends copying the Red Hat Ceph Storage cluster keyring file to nodes where you will run administrative commands, because it contains the client.admin key.

To do so, execute the following command:

# scp USER@HOSTNAME:/etc/ceph/ceph.client.admin.keyring /etc/ceph/ceph.client.admin.keyring

Replace USER with the user name used on the host with the client.admin key and HOSTNAME with the host name of that host.

Ensure the ceph.client.admin.keyring file has appropriate permissions set on the client machine.

You can specify the key itself in the Ceph configuration file using the key setting, which is not recommended, or a path to a key file using the keyfile setting.

4.6. Cephx daemon keyrings

Administrative users or deployment tools might generate daemon keyrings in the same way as user keyrings. By default, Ceph stores daemon keyrings inside their data directory. The default keyring locations, and the capabilities necessary for the daemon to function.

The monitor keyring contains a key but no capabilities, and is not part of the Ceph storage cluster auth database.

The daemon data directory locations default to directories of the form:

/var/lib/ceph/$type/$cluster-$id

Example

/var/lib/ceph/osd/ceph-12

You can override these locations, but it is not recommended.
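The following Python sketch shows how the data directory pattern expands into concrete paths, and where a daemon keyring ends up when it is stored inside the data directory. It assumes the pattern /var/lib/ceph/$type/$cluster-$id with the default cluster name ceph and a keyring file named keyring, as in the keyring commands of the Cephx procedure; the helper names are hypothetical.

```python
"""Expand the default daemon data directory pattern (a sketch).

Assumes /var/lib/ceph/$type/$cluster-$id, where $type is the daemon type
(for example, osd or mon), $cluster is the cluster name (ceph by default),
and $id is the daemon ID. The helper names are hypothetical.
"""
from string import Template

DATA_DIR = Template("/var/lib/ceph/$type/$cluster-$id")


def data_dir(daemon_type, daemon_id, cluster="ceph"):
    # Template.substitute converts values with str(), so 12 and "12" both work.
    return DATA_DIR.substitute(type=daemon_type, cluster=cluster, id=daemon_id)


def keyring_path(daemon_type, daemon_id, cluster="ceph"):
    # Daemon keyrings are stored inside the data directory by default.
    return data_dir(daemon_type, daemon_id, cluster) + "/keyring"


if __name__ == "__main__":
    print(data_dir("osd", 12))       # /var/lib/ceph/osd/ceph-12
    print(keyring_path("mon", "a"))  # /var/lib/ceph/mon/ceph-a/keyring
```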

4.7. Cephx message signatures

Ceph provides fine-grained control so you can enable or disable signatures for service messages between the client and Ceph. You can also enable or disable signatures for messages between Ceph daemons.

Red Hat recommends that Ceph authenticate all ongoing messages between the entities using the session key set up for that initial authentication.

Ceph kernel modules do not support signatures yet.

4.8. Additional Resources

- See all the Red Hat Ceph Storage Cephx configuration options in Appendix D for specific option descriptions and usage.

Chapter 5. Pools, placement groups, and CRUSH configuration

As a storage administrator, you can choose to use the Red Hat Ceph Storage default options for pools, placement groups, and the CRUSH algorithm or customize them for the intended workload.

5.1. Prerequisites

- Installation of the Red Hat Ceph Storage software.

5.2. Pools, placement groups, and CRUSH

When you create pools and set the number of placement groups for the pool, Ceph uses default values when you do not specifically override the defaults.

Red Hat recommends overriding some of the defaults. Specifically, set a pool’s replica size and override the default number of placement groups.

You can set these values when running pool commands.

By default, Ceph makes 3 replicas of objects. If you want 4 copies of an object as the default value, a primary copy and three replica copies, set the osd_pool_default_size option to 4. If you want to allow Ceph to write fewer copies in a degraded state, set osd_pool_default_min_size to a number less than the osd_pool_default_size value.

Example

[ceph: root@host01 /]# ceph config set global osd_pool_default_size 4 # Write an object 4 times.

[ceph: root@host01 /]# ceph config set global osd_pool_default_min_size 1 # Allow writing one copy in a degraded state.
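The relationship between the two options can be sketched as a simple rule. The following Python sketch assumes that a placement group keeps serving I/O while at least min_size of its size copies are available; the function name accepts_io is hypothetical and the check is an illustration, not Ceph code.

```python
"""Sketch of the size / min_size rule (illustration, not Ceph code).

Assumption: a placement group keeps serving I/O while at least min_size
of its size copies are available. Names are hypothetical.
"""


def accepts_io(available_copies, size, min_size):
    if not 1 <= min_size <= size:
        raise ValueError("min_size must be between 1 and size")
    return available_copies >= min_size


if __name__ == "__main__":
    # Values from the example above: size 4, min_size 1.
    print(accepts_io(1, size=4, min_size=1))  # True
    # With size 3 and min_size 2, a single remaining copy is not enough.
    print(accepts_io(1, size=3, min_size=2))  # False
```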

Ensure you have a realistic number of placement groups. Red Hat recommends approximately 100 per OSD, that is, the total number of OSDs multiplied by 100, divided by the number of replicas (osd_pool_default_size). For 10 OSDs and osd_pool_default_size = 4, the recommended value is approximately (100 * 10) / 4 = 250.
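The placement group formula above can be written as a one-line calculation. The following Python sketch assumes the target of approximately 100 placement groups per OSD stated above and rounds the result to the nearest integer; the function name recommended_pg_num is hypothetical.

```python
def recommended_pg_num(num_osds, pool_size, pgs_per_osd=100):
    """Approximate PG count: (pgs_per_osd * num_osds) / pool_size, rounded."""
    if num_osds < 1 or pool_size < 1:
        raise ValueError("num_osds and pool_size must be positive")
    return round(pgs_per_osd * num_osds / pool_size)


if __name__ == "__main__":
    print(recommended_pg_num(10, 4))  # 250
```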

Example

[ceph: root@host01 /]# ceph config set global osd_pool_default_pg_num 250

[ceph: root@host01 /]# ceph config set global osd_pool_default_pgp_num 250

5.3. Additional Resources

- See all the Red Hat Ceph Storage pool, placement group, and CRUSH configuration options in Appendix E for specific option descriptions and usage.

Chapter 6. Ceph Object Storage Daemon (OSD) configuration

As a storage administrator, you can configure the Ceph Object Storage Daemon (OSD) to be redundant and optimized based on the intended workload.

6.1. Prerequisites

- Installation of the Red Hat Ceph Storage software.

6.2. Ceph OSD configuration

All Ceph clusters have a configuration, which defines:

- Cluster identity

- Authentication settings

- Ceph daemon membership in the cluster

- Network configuration

- Host names and addresses

- Paths to keyrings

- Paths to OSD log files

- Other runtime options

A deployment tool, such as cephadm, will typically create an initial Ceph configuration file for you. However, you can create one yourself if you prefer to bootstrap a cluster without using a deployment tool.

For your convenience, each daemon has a series of default values. Many are set in the ceph/src/common/config_opts.h file. You can override these settings with a Ceph configuration file or at runtime by using the monitor tell command or connecting directly to a daemon socket on a Ceph node.

Red Hat does not recommend changing the default paths, as it makes it more difficult to troubleshoot Ceph later.

Additional Resources

- For more information about cephadm and the Ceph orchestrator, see the Red Hat Ceph Storage Operations Guide.

6.3. Scrubbing the OSD

In addition to making multiple copies of objects, Ceph ensures data integrity by scrubbing placement groups. Ceph scrubbing is analogous to the fsck command on the object storage layer.

For each placement group, Ceph generates a catalog of all objects and compares each primary object and its replicas to ensure that no objects are missing or mismatched.

Light scrubbing (daily) checks the object size and attributes. Deep scrubbing (weekly) reads the data and uses checksums to ensure data integrity.
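The difference between light and deep scrubbing can be illustrated with a toy comparison of object catalogs. The following Python sketch is not Ceph code: it models each copy of a placement group as a dictionary of hypothetical objects, compares sizes and attributes for a light scrub, and also compares data checksums for a deep scrub, using zlib.crc32 as a stand-in for the checksums Ceph uses.

```python
"""Toy model of light and deep scrubbing (illustration only, not Ceph code).

Each copy of a placement group is a dict mapping an object name to a
(size, attrs, data) tuple. Light scrubbing compares sizes and attributes;
deep scrubbing also compares data checksums. zlib.crc32 is a stand-in;
all names and data structures are hypothetical.
"""
import zlib


def scrub(primary, replicas, deep=False):
    problems = []
    for idx, replica in enumerate(replicas):
        # Objects cataloged on the primary but absent from this replica.
        for name in primary.keys() - replica.keys():
            problems.append((idx, name, "missing"))
        for name in primary.keys() & replica.keys():
            p_size, p_attrs, p_data = primary[name]
            r_size, r_attrs, r_data = replica[name]
            if (p_size, p_attrs) != (r_size, r_attrs):
                problems.append((idx, name, "size or attribute mismatch"))
            elif deep and zlib.crc32(p_data) != zlib.crc32(r_data):
                problems.append((idx, name, "data checksum mismatch"))
    return sorted(problems)


if __name__ == "__main__":
    primary = {"obj1": (4, {"v": 1}, b"abcd"), "obj2": (3, {}, b"xyz")}
    replica = {"obj1": (4, {"v": 1}, b"abcX")}  # same size, corrupted data
    print(scrub(primary, [replica]))             # light: obj2 is missing
    print(scrub(primary, [replica], deep=True))  # deep: also flags obj1
```

The corrupted object passes the light scrub because its size and attributes match, which is why deep scrubbing reads the data itself.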

Scrubbing is important for maintaining data integrity, but it can reduce performance. You can adjust the scrubbing settings, described with the other OSD options in Appendix F, to increase or decrease scrubbing operations.
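To make the daily and weekly cadence concrete, the following Python sketch decides which kind of scrub a single placement group is due for, given when it was last scrubbed. It is a simplified model under stated assumptions: the real scheduler also weighs options such as osd_scrub_min_interval, osd_deep_scrub_interval, and osd_max_scrubs, system load, and allowed time windows, none of which are modeled here. The scrub_due function and the timestamps are hypothetical.

```python
from datetime import datetime, timedelta

# Assumed intervals matching the "daily" and "weekly" cadence described above.
LIGHT_SCRUB_INTERVAL = timedelta(days=1)
DEEP_SCRUB_INTERVAL = timedelta(weeks=1)


def scrub_due(now, last_scrub, last_deep_scrub):
    """Return "deep", "light", or None for one placement group.

    Simplified model: a due deep scrub takes precedence, because it also
    covers the size and attribute checks of a light scrub.
    """
    if now - last_deep_scrub >= DEEP_SCRUB_INTERVAL:
        return "deep"
    if now - last_scrub >= LIGHT_SCRUB_INTERVAL:
        return "light"
    return None


now = datetime(2024, 1, 8, 12, 0)
print(scrub_due(now, now - timedelta(hours=2), now - timedelta(days=2)))   # None
print(scrub_due(now, now - timedelta(hours=25), now - timedelta(days=3)))  # light
print(scrub_due(now, now - timedelta(hours=2), now - timedelta(days=7)))   # deep
```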

6.4. Backfilling an OSD

When you add Ceph OSDs to a cluster or remove them from it, the CRUSH algorithm rebalances the cluster by moving placement groups to or from Ceph OSDs. Migrating placement groups and the objects they contain can considerably reduce the cluster's operational performance. To maintain operational performance, Ceph performs this migration with the backfill process, which allows Ceph to give backfill operations a lower priority than requests to read or write data.
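The effect of giving backfill a lower priority than client I/O can be sketched with a priority queue. The following Python model is illustrative only: the StrictPriorityQueue class is hypothetical, the client value mirrors the osd_client_op_priority default of 63, and the backfill value is made up.

```python
import heapq
import itertools

# Illustrative priorities: a higher number means more urgent. The client value
# mirrors the osd_client_op_priority default of 63; the backfill value is made up.
CLIENT_PRIORITY = 63
BACKFILL_PRIORITY = 1


class StrictPriorityQueue:
    """Hypothetical queue that always serves the highest-priority operation."""

    def __init__(self):
        self._heap = []
        self._seq = itertools.count()  # keeps FIFO order within one priority

    def push(self, priority, op):
        # heapq is a min-heap, so store the negated priority
        heapq.heappush(self._heap, (-priority, next(self._seq), op))

    def pop(self):
        return heapq.heappop(self._heap)[2]

    def __len__(self):
        return len(self._heap)


queue = StrictPriorityQueue()
queue.push(BACKFILL_PRIORITY, "backfill pg 1.a")
queue.push(CLIENT_PRIORITY, "client write obj1")
queue.push(BACKFILL_PRIORITY, "backfill pg 1.b")
queue.push(CLIENT_PRIORITY, "client read obj2")

served = [queue.pop() for _ in range(len(queue))]
print(served)
# ['client write obj1', 'client read obj2', 'backfill pg 1.a', 'backfill pg 1.b']
```

A purely strict queue like this one could starve backfill under sustained client load. Ceph's OSD schedulers, selected with the osd_op_queue option, use weighted or QoS-based scheduling instead, so this sketch shows only the ordering idea.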

6.5. OSD recovery

When the cluster starts or when a Ceph OSD terminates unexpectedly and restarts, the OSD begins peering with other Ceph OSDs before a write operation can occur.

If a Ceph OSD crashes and comes back online, usually it will be out of sync with other Ceph OSDs containing more recent versions of objects in the placement groups. When this happens, the Ceph OSD goes into recovery mode and seeks to get the latest copy of the data and bring its map back up to date. Depending upon how long the Ceph OSD was down, the OSD’s objects and placement groups may be significantly out of date. Also, if a failure domain went down, for example, a rack, more than one Ceph OSD might come back online at the same time. This can make the recovery process time consuming and resource intensive.

To maintain operational performance, Ceph performs recovery with limits on the number of recovery requests, threads, and object chunk sizes, which allows Ceph to perform well in a degraded state.
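The trade-off behind these limits can be shown with simple arithmetic. The following Python sketch counts how many rounds a recovery would take if objects are pushed in bounded chunks with a cap on concurrent pushes. The parameter names echo the osd_recovery_max_active and osd_recovery_max_chunk options, but the recovery_rounds function, the object sizes, and the limit values are hypothetical, and real recovery scheduling is more involved.

```python
import math

MiB = 1024 * 1024


def recovery_rounds(object_sizes, max_active, max_chunk):
    """Count rounds needed to recover a set of objects in a simplified model.

    Each object is pushed in chunks of at most max_chunk bytes (an empty
    object still needs one push), and at most max_active chunk pushes run
    at the same time.
    """
    chunks = sum(max(1, math.ceil(size / max_chunk)) for size in object_sizes)
    return math.ceil(chunks / max_active)


objects = [4 * MiB, 20 * MiB, 0]  # hypothetical objects needing recovery
print(recovery_rounds(objects, max_active=3, max_chunk=8 * MiB))  # 2
print(recovery_rounds(objects, max_active=5, max_chunk=8 * MiB))  # 1
```

Lower limits put less load on the cluster in each round but stretch recovery over more rounds, which is the balance these settings control.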

6.6. Additional Resources

- See Appendix F for descriptions and usage of all the Red Hat Ceph Storage OSD configuration options.

Chapter 7. Ceph Monitor and OSD interaction configuration

As a storage administrator, you must properly configure the interactions between the Ceph Monitors and OSDs to ensure a stable working environment.

7.1. Prerequisites

- Installation of the Red Hat Ceph Storage software.

7.2. Ceph Monitor and OSD interaction

After you have completed your initial Ceph configuration, you can deploy and run Ceph. When you execute a command such as ceph health or ceph -s, the Ceph Monitor reports on the current state of the Ceph storage cluster. The Ceph Monitor knows about the Ceph storage cluster by requiring reports from each Ceph OSD daemon, and by receiving reports from Ceph OSD daemons about the status of their neighboring Ceph OSD daemons. If the Ceph Monitor does not receive reports, or if it receives reports of changes in the Ceph storage cluster, the Ceph Monitor updates the status of the Ceph cluster map.

Ceph provides reasonable default settings for Ceph Monitor and OSD interaction. However, you can override the defaults. The following sections describe how Ceph Monitors and Ceph OSD daemons interact for the purposes of monitoring the Ceph storage cluster.

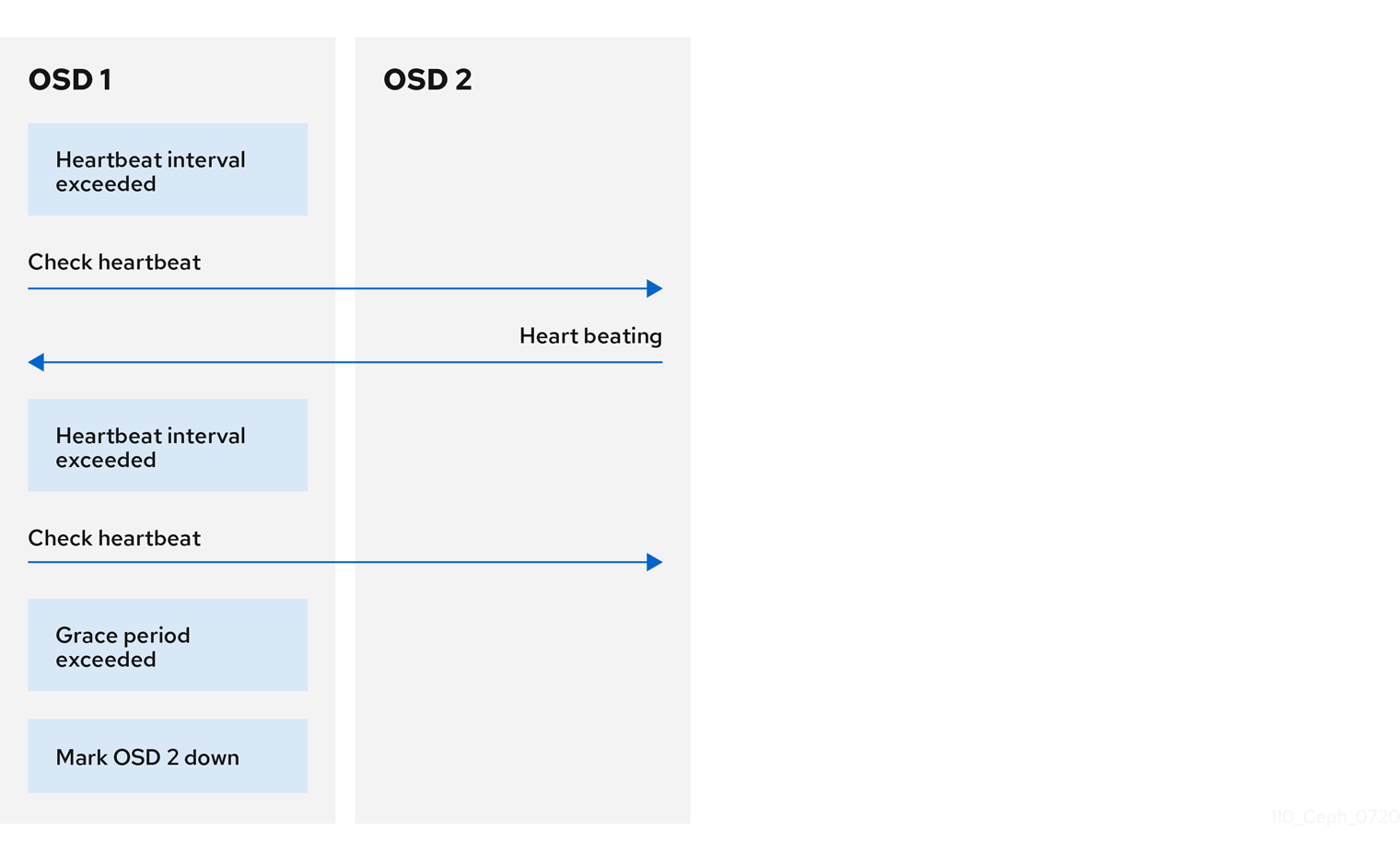

7.3. OSD heartbeat

Each Ceph OSD daemon checks the heartbeat of other Ceph OSD daemons every 6 seconds. To change the heartbeat interval, change the value at runtime:

Syntax

ceph config set osd osd_heartbeat_interval TIME_IN_SECONDS

Example

[ceph: root@host01 /]# ceph config set osd osd_heartbeat_interval 60

If a neighboring Ceph OSD daemon does not send heartbeat packets within a 20-second grace period, the Ceph OSD daemon might consider the neighboring Ceph OSD daemon down and report it to a Ceph Monitor, which updates the Ceph cluster map. To change the grace period, set the value at runtime:

Syntax

ceph config set osd osd_heartbeat_grace TIME_IN_SECONDS

Example

[ceph: root@host01 /]# ceph config set osd osd_heartbeat_grace 30
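The following Python sketch models the peer check described above: given the time of each neighbor's last heartbeat reply, it lists the peers that have exceeded the grace period. It is a simplified illustration rather than Ceph's heartbeat code; the overdue_peers function, the timestamps, and the use of a strict greater-than comparison are assumptions.

```python
HEARTBEAT_INTERVAL = 6  # seconds between pings (osd_heartbeat_interval default)
HEARTBEAT_GRACE = 20    # seconds without a reply before a peer is suspect (osd_heartbeat_grace default)


def overdue_peers(now, last_reply, grace=HEARTBEAT_GRACE):
    """Return, sorted, the peers whose last heartbeat reply is older than the grace period."""
    return sorted(peer for peer, seen in last_reply.items() if now - seen > grace)


last_reply = {"osd.1": 95, "osd.2": 79, "osd.3": 80}  # hypothetical timestamps in seconds
print(overdue_peers(100, last_reply))            # ['osd.2']
print(overdue_peers(100, last_reply, grace=30))  # []
# The default grace period spans three full heartbeat intervals
print(HEARTBEAT_GRACE // HEARTBEAT_INTERVAL)     # 3
```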

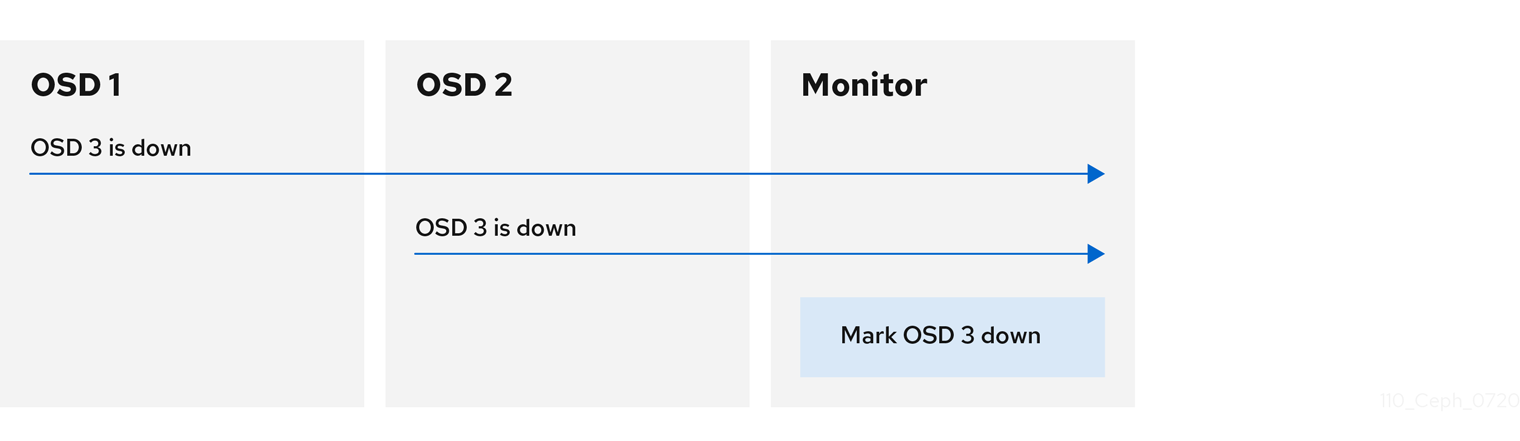

7.4. Reporting an OSD as down

By default, two Ceph OSD Daemons from different hosts must report to the Ceph Monitors that another Ceph OSD Daemon is down before the Ceph Monitors acknowledge that the reported Ceph OSD Daemon is down.

However, all the OSDs reporting the failure might be on different hosts in a rack with a bad switch that causes connection problems between OSDs.

To avoid a "false alarm," Ceph considers the peers reporting the failure as a proxy for a "subcluster" that is similarly laggy. While this is not always the case, it may help administrators localize the grace correction to a subset of the system that is performing poorly.

Ceph uses the mon_osd_reporter_subtree_level setting to group the peers into the "subcluster" by their common ancestor type in the CRUSH map.

By default, reports from only two different subtrees are required to report another Ceph OSD Daemon down. Administrators can change the number of reporters from unique subtrees, and the common ancestor type required to report a Ceph OSD Daemon down to a Ceph Monitor, by setting the mon_osd_min_down_reporters and mon_osd_reporter_subtree_level values at runtime:

Syntax

ceph config set mon mon_osd_min_down_reporters NUMBER

Example

[ceph: root@host01 /]# ceph config set mon mon_osd_min_down_reporters 4

Syntax

ceph config set mon mon_osd_reporter_subtree_level CRUSH_ITEM

Example

[ceph: root@host01 /]# ceph config set mon mon_osd_reporter_subtree_level host

[ceph: root@host01 /]# ceph config set mon mon_osd_reporter_subtree_level rack

[ceph: root@host01 /]# ceph config set mon mon_osd_reporter_subtree_level osd
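The following Python sketch models how grouping reporters by subtree changes the outcome. Reporting OSDs are grouped by their ancestor at the chosen CRUSH level, and the reported OSD is marked down only when enough distinct subtrees agree. The default arguments mirror the values stated above (two reporters, host level). The CRUSH layout and the marks_down function are hypothetical, and the model ignores other factors the monitor considers.

```python
# Hypothetical CRUSH layout: each OSD's ancestor name at each CRUSH type.
CRUSH_ANCESTORS = {
    "osd.1": {"osd": "osd.1", "host": "host01", "rack": "rack1"},
    "osd.2": {"osd": "osd.2", "host": "host01", "rack": "rack1"},
    "osd.5": {"osd": "osd.5", "host": "host02", "rack": "rack1"},
    "osd.9": {"osd": "osd.9", "host": "host03", "rack": "rack2"},
}


def reporter_subtrees(reporters, subtree_level):
    """Group reporting OSDs by their common ancestor at subtree_level."""
    return {CRUSH_ANCESTORS[osd][subtree_level] for osd in reporters}


def marks_down(reporters, min_down_reporters=2, subtree_level="host"):
    """Simplified model of the monitor's decision for one reported OSD."""
    return len(reporter_subtrees(reporters, subtree_level)) >= min_down_reporters


print(marks_down(["osd.1", "osd.2"]))                                 # False: one host
print(marks_down(["osd.1", "osd.5"]))                                 # True: two hosts
print(marks_down(["osd.1", "osd.5"], subtree_level="rack"))           # False: one rack
print(marks_down(["osd.1", "osd.5", "osd.9"], min_down_reporters=4))  # False: three hosts
```

Raising the subtree level to rack means that reports from a single misbehaving rack, like the bad-switch case above, are not enough on their own.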

7.5. Reporting a peering failure

If a Ceph OSD daemon cannot peer with any of the Ceph OSD daemons defined in its Ceph configuration file or the cluster map, it pings a Ceph Monitor for the most recent copy of the cluster map every 30 seconds. You can change the Ceph Monitor heartbeat interval by setting the value at runtime:

Syntax

ceph config set osd osd_mon_heartbeat_interval TIME_IN_SECONDS

Example

[ceph: root@host01 /]# ceph config set osd osd_mon_heartbeat_interval 60

7.6. OSD reporting status

If a Ceph OSD Daemon does not report to a Ceph Monitor, the Ceph Monitor marks the Ceph OSD Daemon down after the mon_osd_report_timeout, which is 900 seconds by default, elapses. A Ceph OSD Daemon sends a report to a Ceph Monitor within 5 seconds of a reportable event, such as a failure, a change in placement group statistics, a change in up_thru, or booting.

You can change the Ceph OSD Daemon minimum report interval by setting the osd_mon_report_interval value at runtime:

Syntax

ceph config set osd osd_mon_report_interval TIME_IN_SECONDS

To get, set, and verify the configuration, you can use the following example:

Example

[ceph: root@host01 /]# ceph config get osd osd_mon_report_interval

5

[ceph: root@host01 /]# ceph config set osd osd_mon_report_interval 20

[ceph: root@host01 /]# ceph config dump | grep osd

global advanced osd_pool_default_crush_rule -1

osd basic osd_memory_target 4294967296

osd advanced osd_mon_report_interval 20
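The two timers in this section work on different sides of the connection, as the following Python sketch illustrates. The OSD sends event-driven reports no more often than its minimum report interval, and the monitor gives up on an OSD that has been silent longer than the report timeout. Both functions and the timestamps are hypothetical; the sketch is a simplified model, not Ceph's implementation.

```python
MON_OSD_REPORT_TIMEOUT = 900  # seconds (mon_osd_report_timeout default)
OSD_MON_REPORT_INTERVAL = 5   # seconds (osd_mon_report_interval default)


def osd_sends_report(now, last_report, event_pending, min_interval=OSD_MON_REPORT_INTERVAL):
    """Simplified model: report a pending event, but no more often than min_interval."""
    return event_pending and now - last_report >= min_interval


def monitor_marks_down(now, last_report, timeout=MON_OSD_REPORT_TIMEOUT):
    """Simplified model: the monitor gives up on an OSD that has been silent too long."""
    return now - last_report > timeout


print(osd_sends_report(now=103, last_report=100, event_pending=True))                   # False: too soon
print(osd_sends_report(now=105, last_report=100, event_pending=True))                   # True
print(osd_sends_report(now=105, last_report=100, event_pending=True, min_interval=20))  # False
print(monitor_marks_down(now=1000, last_report=50))   # True: silent for 950 s
print(monitor_marks_down(now=1000, last_report=200))  # False: silent for 800 s
```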

7.7. Additional Resources

- See Appendix G for descriptions and usage of all the Red Hat Ceph Storage Ceph Monitor and OSD configuration options.

Chapter 8. Ceph debugging and logging configuration

As a storage administrator, you can increase the amount of debugging and logging information in cephadm to help diagnose problems with Red Hat Ceph Storage.

8.1. Prerequisites

- Red Hat Ceph Storage software is installed.

8.2. Additional Resources

- For more information about troubleshooting cephadm, see Cephadm troubleshooting in the Red Hat Ceph Storage Administration Guide.

- For more information about cephadm logging, see Cephadm operations in the Red Hat Ceph Storage Administration Guide.

Appendix A. General configuration options

These are the general configuration options for Ceph.

Typically, these will be set automatically by deployment tools, such as cephadm.

fsid

- Description: The file system ID. One per cluster.

- Type: UUID

- Required: No

- Default: N/A. Usually generated by deployment tools.

admin_socket

- Description: The socket for executing administrative commands on a daemon, irrespective of whether Ceph Monitors have established a quorum.

- Type: String

- Required: No

- Default: /var/run/ceph/$cluster-$name.asok

pid_file

- Description: The file in which the monitor or OSD will write its PID. For instance, /var/run/$cluster/$type.$id.pid will create /var/run/ceph/mon.a.pid for the mon with ID a running in the ceph cluster. The PID file is removed when the daemon stops gracefully. If the process is not daemonized (meaning it runs with the -f or -d option), the PID file is not created.

- Type: String

- Required: No

- Default: No
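The $cluster, $type, $id, and $name metavariables in the pid_file and admin_socket paths expand per daemon. The following Python sketch reproduces the expansions used in these two entries with string.Template. It is a simplified model: the expand_metavariables function is hypothetical, it assumes $name means $type.$id, and it does not handle other metavariables that Ceph supports, such as $host.

```python
from string import Template


def expand_metavariables(path, cluster, daemon_type, daemon_id):
    """Expand $cluster, $type, $id, and $name in a Ceph path setting.

    Simplified model: $name is $type.$id. Template.substitute raises
    KeyError for any metavariable not listed here.
    """
    values = {
        "cluster": cluster,
        "type": daemon_type,
        "id": daemon_id,
        "name": f"{daemon_type}.{daemon_id}",
    }
    return Template(path).substitute(values)


print(expand_metavariables("/var/run/$cluster/$type.$id.pid", "ceph", "mon", "a"))
# /var/run/ceph/mon.a.pid
print(expand_metavariables("/var/run/ceph/$cluster-$name.asok", "ceph", "osd", "0"))
# /var/run/ceph/ceph-osd.0.asok
```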

chdir

- Description: The directory Ceph daemons change to once they are up and running. The default / directory is recommended.

- Type: String

- Required: No

- Default: /

max_open_files

- Description: If set, when the Red Hat Ceph Storage cluster starts, Ceph sets the max_open_fds at the OS level (that is, the maximum number of file descriptors). This helps prevent Ceph OSDs from running out of file descriptors.

- Type: 64-bit Integer

- Required: No

- Default: 0
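To see the OS-level limit that this option relates to, the following Python sketch reads the current file descriptor limits with the standard resource module (Unix only) and computes the soft limit a process would request for a given max_open_files value. The target_nofile_limit function and the 131072 value are hypothetical, and the sketch does not describe how Ceph itself applies the option.

```python
import resource


def target_nofile_limit(max_open_files, soft, hard):
    """Return the soft RLIMIT_NOFILE a process would request for max_open_files.

    Simplified model: 0 means "leave the limit alone"; otherwise the request
    is capped at the hard limit, because a soft limit cannot exceed it.
    """
    if max_open_files <= 0:
        return soft
    if hard != resource.RLIM_INFINITY:
        return min(max_open_files, hard)
    return max_open_files


soft, hard = resource.getrlimit(resource.RLIMIT_NOFILE)
print(f"current soft limit: {soft}, hard limit: {hard}")
print(target_nofile_limit(131072, soft, hard))
```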

fatal_signal_handlers

- Description: If set, Ceph installs signal handlers for the SEGV, ABRT, BUS, ILL, FPE, XCPU, XFSZ, and SYS signals to generate a useful log message.

- Type: Boolean

- Default: true

Appendix B. Ceph network configuration options

These are the common network configuration options for Ceph.

public_network

- Description: The IP address and netmask of the public (front-side) network (for example, 192.168.0.0/24). Set in [global]. You can specify comma-delimited subnets.

- Type: <ip-address>/<netmask> [, <ip-address>/<netmask>]

- Required: No

- Default: N/A
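The comma-delimited form of this value can be checked with Python's standard ipaddress module. The following sketch parses a public_network value and tests whether an address belongs to one of its subnets. The parse_networks and in_networks functions are hypothetical, and the strict parsing (which rejects a subnet written with host bits set) is a choice of this sketch, not a statement about how Ceph parses the option.

```python
import ipaddress


def parse_networks(value):
    """Parse a comma-delimited public_network or cluster_network value."""
    return [ipaddress.ip_network(part.strip()) for part in value.split(",") if part.strip()]


def in_networks(address, networks):
    """Return True if address falls inside any of the parsed subnets."""
    ip = ipaddress.ip_address(address)
    return any(ip in net for net in networks)


public = parse_networks("192.168.0.0/24, 192.168.1.0/24")
print([str(net) for net in public])         # ['192.168.0.0/24', '192.168.1.0/24']
print(in_networks("192.168.1.17", public))  # True
print(in_networks("10.0.0.5", public))      # False
```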

public_addr

- Description: The IP address for the public (front-side) network. Set for each daemon.

- Type: IP Address

- Required: No

- Default: N/A

cluster_network

- Description: The IP address and netmask of the cluster network (for example, 10.0.0.0/24). Set in [global]. You can specify comma-delimited subnets.

- Type: <ip-address>/<netmask> [, <ip-address>/<netmask>]

- Required: No

- Default: N/A

cluster_addr

- Description: The IP address for the cluster network. Set for each daemon.

- Type: Address

- Required: No

- Default: N/A
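The per-daemon address options and the network options work together. Assuming that a daemon without an explicit public_addr or cluster_addr uses a local address that falls inside the configured subnet, the following Python sketch models that matching step for one node with separate public and cluster interfaces. The pick_address function and the host addresses are hypothetical; this is not Ceph's address-selection code.

```python
import ipaddress


def pick_address(host_addresses, network_value):
    """Return the first host address inside one of the comma-delimited subnets, or None."""
    networks = [ipaddress.ip_network(p.strip()) for p in network_value.split(",") if p.strip()]
    for address in host_addresses:
        ip = ipaddress.ip_address(address)
        if any(ip in net for net in networks):
            return address
    return None


# Hypothetical addresses configured on one OSD node
host_addresses = ["127.0.0.1", "192.168.0.11", "10.0.0.11"]
print(pick_address(host_addresses, "192.168.0.0/24"))  # 192.168.0.11 (public side)
print(pick_address(host_addresses, "10.0.0.0/24"))     # 10.0.0.11 (cluster side)
print(pick_address(host_addresses, "172.16.0.0/16"))   # None
```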

ms_type

- Description: The messenger type for the network transport layer. Red Hat supports the simple and the async messenger types using posix semantics.

- Type: String

- Required: No

- Default: async+posix

ms_public_type

- Description: The messenger type for the network transport layer of the public network. It operates identically to ms_type, but is applicable only to the public or front-side network. This setting enables Ceph to use a different messenger type for the public or front-side and cluster or back-side networks.

- Type: String

- Required: No

- Default: None

ms_cluster_type

- Description: The messenger type for the network transport layer of the cluster network. It operates identically to ms_type, but is applicable only to the cluster or back-side network. This setting enables Ceph to use a different messenger type for the public or front-side and cluster or back-side networks.

- Type: String

- Required: No

- Default: None

Host options

You must declare at least one Ceph Monitor in the Ceph configuration file, with a mon_addr setting under each declared monitor. Ceph expects a host setting under each declared monitor, metadata server, and OSD in the Ceph configuration file.

Do not use localhost. Use the short name of the node, not the fully qualified domain name (FQDN). Do not specify any value for host when using a third-party deployment system that retrieves the node name for you.
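The short-name rule can be applied mechanically. The following Python sketch derives the value for a host setting by stripping the domain part of an FQDN, similar to what hostname -s prints, and rejects localhost. The short_host_name helper is hypothetical and only encodes the two rules stated above.

```python
import socket


def short_host_name(name):
    """Return the short host name for a Ceph `host` setting.

    Hypothetical helper: strips the domain part of an FQDN and rejects
    localhost, following the rules above.
    """
    short = name.split(".", 1)[0]
    if short in ("", "localhost"):
        raise ValueError(f"not a usable host name: {name!r}")
    return short


print(short_host_name("host01.example.com"))  # host01
try:
    print(short_host_name(socket.gethostname()))  # this node's short name
except ValueError as err:
    print(err)
```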

mon_addr- Description

-

A list of