Operations Guide

Operational tasks for Red Hat Ceph Storage

Abstract

Chapter 1. Introduction to the Ceph Orchestrator

As a storage administrator, you can use the Ceph Orchestrator with Cephadm utility that provides the ability to discover devices and create services in a Red Hat Ceph Storage cluster.

1.1. Use of the Ceph Orchestrator

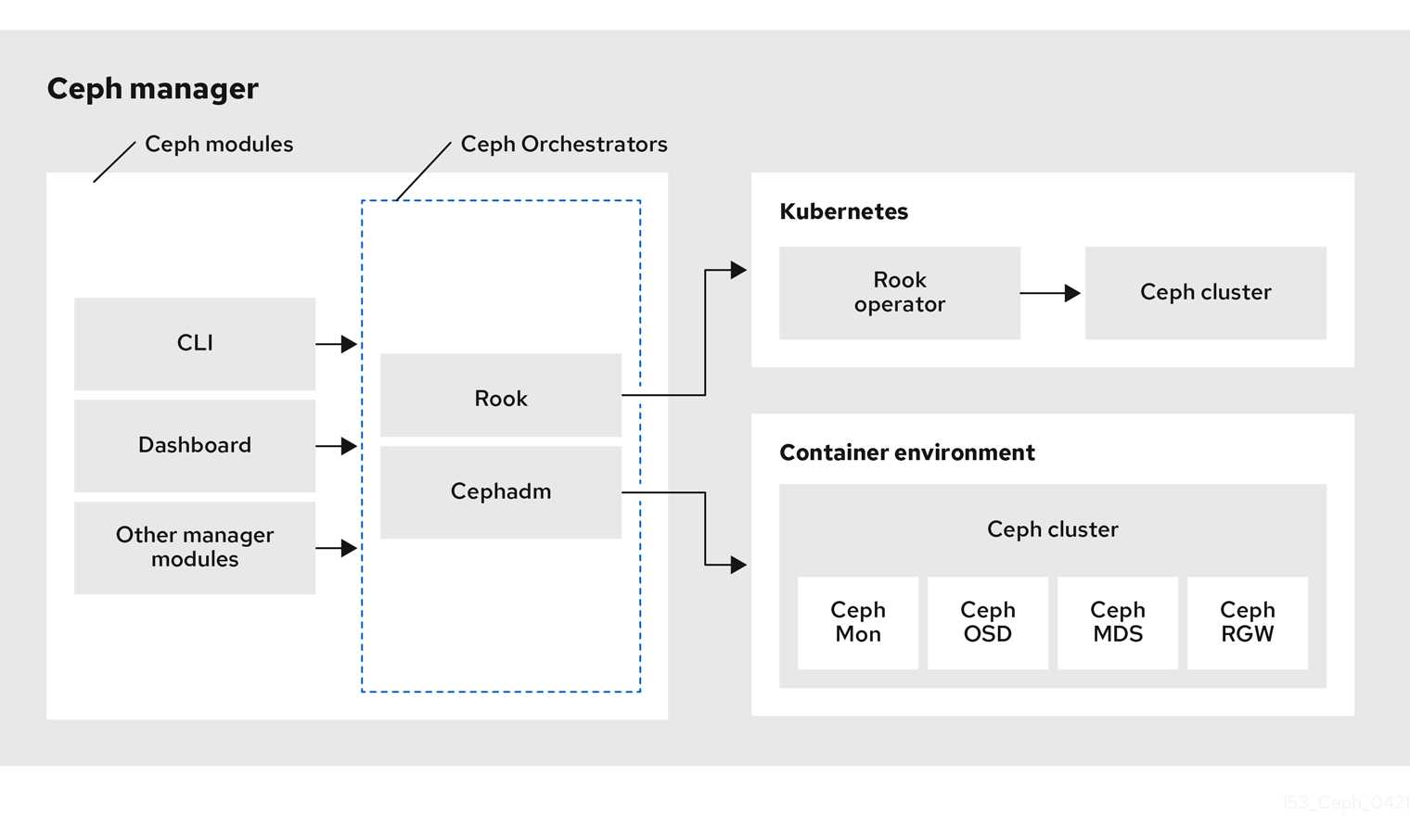

Red Hat Ceph Storage Orchestrators are manager modules that primarily act as a bridge between a Red Hat Ceph Storage cluster and deployment tools like Rook and Cephadm for a unified experience. They also integrate with the Ceph command line interface and Ceph Dashboard.

The following is a workflow diagram of Ceph Orchestrator:

NFS-Ganesha gateway is not supported, starting from Red Hat Ceph Storage 5.1 release.

Types of Red Hat Ceph Storage Orchestrators

There are three main types of Red Hat Ceph Storage Orchestrators:

Orchestrator CLI : These are common APIs used in Orchestrators and include a set of commands that can be implemented. These APIs also provide a common command line interface (CLI) to orchestrate

ceph-mgrmodules with external orchestration services. The following are the nomenclature used with the Ceph Orchestrator:- Host : This is the host name of the physical host and not the pod name, DNS name, container name, or host name inside the container.

- Service type : This is the type of the service, such as nfs, mds, osd, mon, rgw, and mgr.

- Service : A functional service provided by a Ceph storage cluster such as monitors service, managers service, OSD services, Ceph Object Gateway service, and NFS service.

- Daemon : A specific instance of a service deployed by one or more hosts such as Ceph Object Gateway services can have different Ceph Object Gateway daemons running in three different hosts.

Cephadm Orchestrator - This is a Ceph Orchestrator module that does not rely on an external tool such as Rook or Ansible, but rather manages nodes in a cluster by establishing an SSH connection and issuing explicit management commands. This module is intended for day-one and day-two operations.

Using the Cephadm Orchestrator is the recommended way of installing a Ceph storage cluster without leveraging any deployment frameworks like Ansible. The idea is to provide the manager daemon with access to an SSH configuration and key that is able to connect to all nodes in a cluster to perform any management operations, like creating an inventory of storage devices, deploying and replacing OSDs, or starting and stopping Ceph daemons. In addition, the Cephadm Orchestrator will deploy container images managed by

systemdin order to allow independent upgrades of co-located services.This orchestrator will also likely highlight a tool that encapsulates all necessary operations to manage the deployment of container image based services on the current host, including a command that bootstraps a minimal cluster running a Ceph Monitor and a Ceph Manager.

Rook Orchestrator - Rook is an orchestration tool that uses the Kubernetes Rook operator to manage a Ceph storage cluster running inside a Kubernetes cluster. The rook module provides integration between Ceph’s Orchestrator framework and Rook. Rook is an open source cloud-native storage operator for Kubernetes.

Rook follows the “operator” model, in which a custom resource definition (CRD) object is defined in Kubernetes to describe a Ceph storage cluster and its desired state, and a rook operator daemon is running in a control loop that compares the current cluster state to desired state and takes steps to make them converge. The main object describing Ceph’s desired state is the Ceph storage cluster CRD, which includes information about which devices should be consumed by OSDs, how many monitors should be running, and what version of Ceph should be used. Rook defines several other CRDs to describe RBD pools, CephFS file systems, and so on.

The Rook Orchestrator module is the glue that runs in the

ceph-mgrdaemon and implements the Ceph orchestration API by making changes to the Ceph storage cluster in Kubernetes that describe desired cluster state. A Rook cluster’sceph-mgrdaemon is running as a Kubernetes pod, and hence, the rook module can connect to the Kubernetes API without any explicit configuration.

Chapter 2. Management of services using the Ceph Orchestrator

As a storage administrator, after installing the Red Hat Ceph Storage cluster, you can monitor and manage the services in a storage cluster using the Ceph Orchestrator. A service is a group of daemons that are configured together.

This section covers the following administrative information:

- Placement specification of the Ceph Orchestrator.

- Deploying the Ceph daemons using the command line interface.

- Deploying the Ceph daemons on a subset of hosts using the command line interface.

- Service specification of the Ceph Orchestrator.

- Deploying the Ceph daemons using the service specification.

- Deploying the Ceph File System mirroring daemon using the service specification.

2.1. Placement specification of the Ceph Orchestrator

You can use the Ceph Orchestrator to deploy osds, mons, mgrs, mds, and rgw services. Red Hat recommends deploying services using placement specifications. You need to know where and how many daemons have to be deployed to deploy a service using the Ceph Orchestrator. Placement specifications can either be passed as command line arguments or as a service specification in a yaml file.

There are two ways of deploying the services using the placement specification:

Using the placement specification directly in the command line interface. For example, if you want to deploy three monitors on the hosts, running the following command deploys three monitors on

host01,host02, andhost03.Example

[ceph: root@host01 /]# ceph orch apply mon --placement="3 host01 host02 host03"Using the placement specification in the YAML file. For example, if you want to deploy

node-exporteron all the hosts, then you can specify the following in theyamlfile.Example

service_type: node-exporter placement: host_pattern: '*' extra_entrypoint_args: - "--collector.textfile.directory=/var/lib/node_exporter/textfile_collector2"

2.2. Deploying the Ceph daemons using the command line interface

Using the Ceph Orchestrator, you can deploy the daemons such as Ceph Manager, Ceph Monitors, Ceph OSDs, monitoring stack, and others using the ceph orch command. Placement specification is passed as --placement argument with the Orchestrator commands.

Prerequisites

- A running Red Hat Ceph Storage cluster.

- Hosts are added to the storage cluster.

Procedure

Log into the Cephadm shell:

Example

[root@host01 ~]# cephadm shellUse one of the following methods to deploy the daemons on the hosts:

Method 1: Specify the number of daemons and the host names:

Syntax

ceph orch apply SERVICE_NAME --placement="NUMBER_OF_DAEMONS HOST_NAME_1 HOST_NAME_2 HOST_NAME_3"Example

[ceph: root@host01 /]# ceph orch apply mon --placement="3 host01 host02 host03"Method 2: Add the labels to the hosts and then deploy the daemons using the labels:

Add the labels to the hosts:

Syntax

ceph orch host label add HOSTNAME_1 LABELExample

[ceph: root@host01 /]# ceph orch host label add host01 monDeploy the daemons with labels:

Syntax

ceph orch apply DAEMON_NAME label:LABELExample

ceph orch apply mon label:mon

Method 3: Add the labels to the hosts and deploy using the

--placementargument:Add the labels to the hosts:

Syntax

ceph orch host label add HOSTNAME_1 LABELExample

[ceph: root@host01 /]# ceph orch host label add host01 monDeploy the daemons using the label placement specification:

Syntax

ceph orch apply DAEMON_NAME --placement="label:LABEL"Example

ceph orch apply mon --placement="label:mon"

Verification

List the service:

Example

[ceph: root@host01 /]# ceph orch lsList the hosts, daemons, and processes:

Syntax

ceph orch ps --daemon_type=DAEMON_NAME ceph orch ps --service_name=SERVICE_NAMEExample

[ceph: root@host01 /]# ceph orch ps --daemon_type=mon [ceph: root@host01 /]# ceph orch ps --service_name=mon

2.3. Deploying the Ceph daemons on a subset of hosts using the command line interface

You can use the --placement option to deploy daemons on a subset of hosts. You can specify the number of daemons in the placement specification with the name of the hosts to deploy the daemons.

Prerequisites

- A running Red Hat Ceph Storage cluster.

- Hosts are added to the cluster.

Procedure

Log into the Cephadm shell:

Example

[root@host01 ~]# cephadm shellList the hosts on which you want to deploy the Ceph daemons:

Example

[ceph: root@host01 /]# ceph orch host lsDeploy the daemons:

Syntax

ceph orch apply SERVICE_NAME --placement="NUMBER_OF_DAEMONS HOST_NAME_1 _HOST_NAME_2 HOST_NAME_3"Example

ceph orch apply mgr --placement="2 host01 host02 host03"In this example, the

mgrdaemons are deployed only on two hosts.

Verification

List the hosts:

Example

[ceph: root@host01 /]# ceph orch host ls

2.4. Service specification of the Ceph Orchestrator

A service specification is a data structure to specify the service attributes and configuration settings that is used to deploy the Ceph service. The following is an example of the multi-document YAML file, cluster.yaml, for specifying service specifications:

Example

service_type: mon

placement:

host_pattern: "mon*"

---

service_type: mgr

placement:

host_pattern: "mgr*"

---

service_type: osd

service_id: default_drive_group

placement:

host_pattern: "osd*"

data_devices:

all: trueThe following list are the parameters where the properties of a service specification are defined as follows:

service_type: The type of service:- Ceph services like mon, crash, mds, mgr, osd, rbd, or rbd-mirror.

- Ceph gateway like nfs or rgw.

- Monitoring stack like Alertmanager, Prometheus, Grafana or Node-exporter.

- Container for custom containers.

-

service_id: A unique name of the service. -

placement: This is used to define where and how to deploy the daemons. -

unmanaged: If set totrue, the Orchestrator will neither deploy nor remove any daemon associated with this service.

Stateless service of Orchestrators

A stateless service is a service that does not need information of the state to be available. For example, to start an rgw service, additional information is not needed to start or run the service. The rgw service does not create information about this state in order to provide the functionality. Regardless of when the rgw service starts, the state is the same.

2.5. Disabling automatic management of daemons

You can mark the Cephadm services as managed or unmanaged without having to edit and re-apply the service specification.

Prerequisites

- A running Red Hat Ceph Storage cluster.

- Root-level access to all the nodes.

Procedure

Set

unmanagedfor services by using this command:Syntax

ceph orch set-unmanaged SERVICE_NAMEExample

[root@host01 ~]# ceph orch set-unmanaged grafanaSet

managedfor services by using this command:Syntax

ceph orch set-managed SERVICE_NAMEExample

[root@host01 ~]# ceph orch set-managed mon

2.6. Deploying the Ceph daemons using the service specification

Using the Ceph Orchestrator, you can deploy daemons such as ceph Manager, Ceph Monitors, Ceph OSDs, monitoring stack, and others using the service specification in a YAML file.

Prerequisites

- A running Red Hat Ceph Storage cluster.

- Root-level access to all the nodes.

Procedure

Create the

yamlfile:Example

[root@host01 ~]# touch mon.yamlThis file can be configured in two different ways:

Edit the file to include the host details in placement specification:

Syntax

service_type: SERVICE_NAME placement: hosts: - HOST_NAME_1 - HOST_NAME_2Example

service_type: mon placement: hosts: - host01 - host02 - host03Edit the file to include the label details in placement specification:

Syntax

service_type: SERVICE_NAME placement: label: "LABEL_1"Example

service_type: mon placement: label: "mon"

Optional: You can also use extra container arguments in the service specification files such as CPUs, CA certificates, and other files while deploying services:

Example

extra_container_args: - "-v" - "/etc/pki/ca-trust/extracted:/etc/pki/ca-trust/extracted:ro" - "--security-opt" - "label=disable" - "cpus=2" - "--collector.textfile.directory=/var/lib/node_exporter/textfile_collector2"NoteRed Hat Ceph Storage supports the use of extra arguments to enable additional metrics in node-exporter deployed by Cephadm.

Mount the YAML file under a directory in the container:

Example

[root@host01 ~]# cephadm shell --mount mon.yaml:/var/lib/ceph/mon/mon.yamlNavigate to the directory:

Example

[ceph: root@host01 /]# cd /var/lib/ceph/mon/Deploy the Ceph daemons using service specification:

Syntax

ceph orch apply -i FILE_NAME.yamlExample

[ceph: root@host01 mon]# ceph orch apply -i mon.yaml

Verification

List the service:

Example

[ceph: root@host01 /]# ceph orch lsList the hosts, daemons, and processes:

Syntax

ceph orch ps --daemon_type=DAEMON_NAMEExample

[ceph: root@host01 /]# ceph orch ps --daemon_type=mon

2.7. Deploying the Ceph File System mirroring daemon using the service specification

Ceph File System (CephFS) supports asynchronous replication of snapshots to a remote CephFS file system using the CephFS mirroring daemon (cephfs-mirror). Snapshot synchronization copies snapshot data to a remote CephFS, and creates a new snapshot on the remote target with the same name. Using the Ceph Orchestrator, you can deploy cephfs-mirror using the service specification in a YAML file.

Prerequisites

- A running Red Hat Ceph Storage cluster.

- Root-level access to all the nodes.

- A CephFS created.

Procedure

Create the

yamlfile:Example

[root@host01 ~]# touch mirror.yamlEdit the file to include the following:

Syntax

service_type: cephfs-mirror service_name: SERVICE_NAME placement: hosts: - HOST_NAME_1 - HOST_NAME_2 - HOST_NAME_3Example

service_type: cephfs-mirror service_name: cephfs-mirror placement: hosts: - host01 - host02 - host03Mount the YAML file under a directory in the container:

Example

[root@host01 ~]# cephadm shell --mount mirror.yaml:/var/lib/ceph/mirror.yamlNavigate to the directory:

Example

[ceph: root@host01 /]# cd /var/lib/ceph/Deploy the

cephfs-mirrordaemon using the service specification:Example

[ceph: root@host01 /]# ceph orch apply -i mirror.yaml

Verification

List the service:

Example

[ceph: root@host01 /]# ceph orch lsList the hosts, daemons, and processes:

Example

[ceph: root@host01 /]# ceph orch ps --daemon_type=cephfs-mirror

Chapter 3. Management of hosts using the Ceph Orchestrator

As a storage administrator, you can use the Ceph Orchestrator with Cephadm in the backend to add, list, and remove hosts in an existing Red Hat Ceph Storage cluster.

You can also add labels to hosts. Labels are free-form and have no specific meanings. Each host can have multiple labels. For example, apply the mon label to all hosts that have monitor daemons deployed, mgr for all hosts with manager daemons deployed, rgw for Ceph object gateways, and so on.

Labeling all the hosts in the storage cluster helps to simplify system management tasks by allowing you to quickly identify the daemons running on each host. In addition, you can use the Ceph Orchestrator or a YAML file to deploy or remove daemons on hosts that have specific host labels.

This section covers the following administrative tasks:

- Adding hosts using the Ceph Orchestrator.

- Adding multiple hosts using the Ceph Orchestrator.

- Listing hosts using the Ceph Orchestrator.

- Adding a label to a host.

- Removing a label from a host.

- Removing hosts using the Ceph Orchestrator.

- Placing hosts in the maintenance mode using the Ceph Orchestrator.

Prerequisites

- A running Red Hat Ceph Storage cluster.

- Root-level access to all the nodes.

-

The IP addresses of the new hosts should be updated in

/etc/hostsfile.

3.1. Adding hosts using the Ceph Orchestrator

You can use the Ceph Orchestrator with Cephadm in the backend to add hosts to an existing Red Hat Ceph Storage cluster.

Prerequisites

- A running Red Hat Ceph Storage cluster.

- Root-level access to all nodes in the storage cluster.

- Register the nodes to the CDN and attach subscriptions.

-

Ansible user with sudo and passwordless

sshaccess to all nodes in the storage cluster.

Procedure

From the Ceph administration node, log into the Cephadm shell:

Example

[root@host01 ~]# cephadm shellExtract the cluster’s public SSH keys to a folder:

Syntax

ceph cephadm get-pub-key > ~/PATHExample

[ceph: root@host01 /]# ceph cephadm get-pub-key > ~/ceph.pubCopy Ceph cluster’s public SSH keys to the root user’s

authorized_keysfile on the new host:Syntax

ssh-copy-id -f -i ~/PATH root@HOST_NAME_2Example

[ceph: root@host01 /]# ssh-copy-id -f -i ~/ceph.pub root@host02From the Ansible administration node, add the new host to the Ansible inventory file. The default location for the file is

/usr/share/cephadm-ansible/hosts. The following example shows the structure of a typical inventory file:Example

host01 host02 host03 [admin] host00NoteIf you have previously added the new host to the Ansible inventory file and run the preflight playbook on the host, skip to step 6.

Run the preflight playbook with the

--limitoption:Syntax

ansible-playbook -i INVENTORY_FILE cephadm-preflight.yml --extra-vars "ceph_origin=rhcs" --limit NEWHOSTExample

[ceph-admin@admin cephadm-ansible]$ ansible-playbook -i hosts cephadm-preflight.yml --extra-vars "ceph_origin=rhcs" --limit host02The preflight playbook installs

podman,lvm2,chronyd, andcephadmon the new host. After installation is complete,cephadmresides in the/usr/sbin/directory.From the Ceph administration node, log into the Cephadm shell:

Example

[root@host01 ~]# cephadm shellUse the

cephadmorchestrator to add hosts to the storage cluster:Syntax

ceph orch host add HOST_NAME IP_ADDRESS_OF_HOST [--label=LABEL_NAME_1,LABEL_NAME_2]The

--labeloption is optional and this adds the labels when adding the hosts. You can add multiple labels to the host.Example

[ceph: root@host01 /]# ceph orch host add host02 10.10.128.70 --labels=mon,mgr

Verification

List the hosts:

Example

[ceph: root@host01 /]# ceph orch host ls

3.2. Adding multiple hosts using the Ceph Orchestrator

You can use the Ceph Orchestrator to add multiple hosts to a Red Hat Ceph Storage cluster at the same time using the service specification in YAML file format.

Prerequisites

- A running Red Hat Ceph Storage cluster.

Procedure

Create the

hosts.yamlfile:Example

[root@host01 ~]# touch hosts.yamlEdit the

hosts.yamlfile to include the following details:Example

service_type: host addr: host01 hostname: host01 labels: - mon - osd - mgr --- service_type: host addr: host02 hostname: host02 labels: - mon - osd - mgr --- service_type: host addr: host03 hostname: host03 labels: - mon - osdMount the YAML file under a directory in the container:

Example

[root@host01 ~]# cephadm shell --mount hosts.yaml:/var/lib/ceph/hosts.yamlNavigate to the directory:

Example

[ceph: root@host01 /]# cd /var/lib/ceph/Deploy the hosts using service specification:

Syntax

ceph orch apply -i FILE_NAME.yamlExample

[ceph: root@host01 hosts]# ceph orch apply -i hosts.yaml

Verification

List the hosts:

Example

[ceph: root@host01 /]# ceph orch host ls

3.3. Listing hosts using the Ceph Orchestrator

You can list hosts of a Ceph cluster with Ceph Orchestrators.

The STATUS of the hosts is blank, in the output of the ceph orch host ls command.

Prerequisites

- A running Red Hat Ceph Storage cluster.

- Hosts are added to the storage cluster.

Procedure

Log into the Cephadm shell:

Example

[root@host01 ~]# cephadm shellList the hosts of the cluster:

Example

[ceph: root@host01 /]# ceph orch host lsYou will see that the STATUS of the hosts is blank which is expected.

3.4. Adding a label to a host

Use the Ceph Orchestrator to add a label to a host. Labels can be used to specify placement of daemons.

A few examples of labels are mgr, mon, and osd based on the service deployed on the hosts. Each host can have multiple labels.

You can also add the following host labels that have special meaning to cephadm and they begin with _:

-

_no_schedule: This label preventscephadmfrom scheduling or deploying daemons on the host. If it is added to an existing host that already contains Ceph daemons, it causescephadmto move those daemons elsewhere, except OSDs which are not removed automatically. When a host is added with the_no_schedulelabel, no daemons are deployed on it. When the daemons are drained before the host is removed, the_no_schedulelabel is set on that host. -

_no_autotune_memory: This label does not autotune memory on the host. It prevents the daemon memory from being tuned even when theosd_memory_target_autotuneoption or other similar options are enabled for one or more daemons on that host. -

_admin: By default, the_adminlabel is applied to the bootstrapped host in the storage cluster and theclient.adminkey is set to be distributed to that host with theceph orch client-keyring {ls|set|rm}function. Adding this label to additional hosts normally causescephadmto deploy configuration and keyring files in the/etc/cephdirectory.

Prerequisites

- A storage cluster that has been installed and bootstrapped.

- Root-level access to all nodes in the storage cluster.

- Hosts are added to the storage cluster.

Procedure

Log in to the Cephadm shell:

Example

[root@host01 ~]# cephadm shellAdd a label to a host:

Syntax

ceph orch host label add HOSTNAME LABELExample

[ceph: root@host01 /]# ceph orch host label add host02 mon

Verification

List the hosts:

Example

[ceph: root@host01 /]# ceph orch host ls

3.5. Removing a label from a host

You can use the Ceph orchestrator to remove a label from a host.

Prerequisites

- A storage cluster that has been installed and bootstrapped.

- Root-level access to all nodes in the storage cluster.

Procedure

Launch the

cephadmshell:[root@host01 ~]# cephadm shell [ceph: root@host01 /]#Remove the label.

Syntax

ceph orch host label rm HOSTNAME LABELExample

[ceph: root@host01 /]# ceph orch host label rm host02 mon

Verification

List the hosts:

Example

[ceph: root@host01 /]# ceph orch host ls

3.6. Removing hosts using the Ceph Orchestrator

You can remove hosts of a Ceph cluster with the Ceph Orchestrators. All the daemons are removed with the drain option which adds the _no_schedule label to ensure that you cannot deploy any daemons or a cluster till the operation is complete.

If you are removing the bootstrap host, be sure to copy the admin keyring and the configuration file to another host in the storage cluster before you remove the host.

Prerequisites

- A running Red Hat Ceph Storage cluster.

- Root-level access to all the nodes.

- Hosts are added to the storage cluster.

- All the services are deployed.

- Cephadm is deployed on the nodes where the services have to be removed.

Procedure

Log into the Cephadm shell:

Example

[root@host01 ~]# cephadm shellFetch the host details:

Example

[ceph: root@host01 /]# ceph orch host lsDrain all the daemons from the host:

Syntax

ceph orch host drain HOSTNAMEExample

[ceph: root@host01 /]# ceph orch host drain host02The

_no_schedulelabel is automatically applied to the host which blocks deployment.Check the status of OSD removal:

Example

[ceph: root@host01 /]# ceph orch osd rm statusWhen no placement groups (PG) are left on the OSD, the OSD is decommissioned and removed from the storage cluster.

Check if all the daemons are removed from the storage cluster:

Syntax

ceph orch ps HOSTNAMEExample

[ceph: root@host01 /]# ceph orch ps host02Remove the host:

Syntax

ceph orch host rm HOSTNAMEExample

[ceph: root@host01 /]# ceph orch host rm host02

3.7. Placing hosts in the maintenance mode using the Ceph Orchestrator

You can use the Ceph Orchestrator to place the hosts in and out of the maintenance mode. The ceph orch host maintenance enter command stops the systemd target which causes all the Ceph daemons to stop on the host. Similarly, the ceph orch host maintenance exit command restarts the systemd target and the Ceph daemons restart on their own.

The orchestrator adopts the following workflow when the host is placed in maintenance:

-

Confirms the removal of hosts does not impact data availability by running the

orch host ok-to-stopcommand. -

If the host has Ceph OSD daemons, it applies

nooutto the host subtree to prevent data migration from triggering during the planned maintenance slot. - Stops the Ceph target, thereby, stopping all the daemons.

-

Disables the

ceph targeton the host, to prevent a reboot from automatically starting Ceph services.

Exiting maintenance reverses the above sequence.

Prerequisites

- A running Red Hat Ceph Storage cluster.

- Root-level access to all the nodes.

- Hosts added to the cluster.

Procedure

Log into the Cephadm shell:

Example

[root@host01 ~]# cephadm shellYou can either place the host in maintenance mode or place it out of the maintenance mode:

Place the host in maintenance mode:

Syntax

ceph orch host maintenance enter HOST_NAME [--force]Example

[ceph: root@host01 /]# ceph orch host maintenance enter host02 --forceThe

--forceflag allows the user to bypass warnings, but not alerts.Place the host out of the maintenance mode:

Syntax

ceph orch host maintenance exit HOST_NAMEExample

[ceph: root@host01 /]# ceph orch host maintenance exit host02

Verification

List the hosts:

Example

[ceph: root@host01 /]# ceph orch host ls

Chapter 4. Management of monitors using the Ceph Orchestrator

As a storage administrator, you can deploy additional monitors using placement specification, add monitors using service specification, add monitors to a subnet configuration, and add monitors to specific hosts. Apart from this, you can remove the monitors using the Ceph Orchestrator.

By default, a typical Red Hat Ceph Storage cluster has three or five monitor daemons deployed on different hosts.

Red Hat recommends deploying five monitors if there are five or more nodes in a cluster.

Red Hat recommends deploying three monitors when Ceph is deployed with the OSP director.

Ceph deploys monitor daemons automatically as the cluster grows, and scales back monitor daemons automatically as the cluster shrinks. The smooth execution of this automatic growing and shrinking depends upon proper subnet configuration.

If your monitor nodes or your entire cluster are located on a single subnet, then Cephadm automatically adds up to five monitor daemons as you add new hosts to the cluster. Cephadm automatically configures the monitor daemons on the new hosts. The new hosts reside on the same subnet as the bootstrapped host in the storage cluster.

Cephadm can also deploy and scale monitors to correspond to changes in the size of the storage cluster.

4.1. Ceph Monitors

Ceph Monitors are lightweight processes that maintain a master copy of the storage cluster map. All Ceph clients contact a Ceph monitor and retrieve the current copy of the storage cluster map, enabling clients to bind to a pool and read and write data.

Ceph Monitors use a variation of the Paxos protocol to establish consensus about maps and other critical information across the storage cluster. Due to the nature of Paxos, Ceph requires a majority of monitors running to establish a quorum, thus establishing consensus.

Red Hat requires at least three monitors on separate hosts to receive support for a production cluster.

Red Hat recommends deploying an odd number of monitors. An odd number of Ceph Monitors has a higher resilience to failures than an even number of monitors. For example, to maintain a quorum on a two-monitor deployment, Ceph cannot tolerate any failures; with three monitors, one failure; with four monitors, one failure; with five monitors, two failures. This is why an odd number is advisable. Summarizing, Ceph needs a majority of monitors to be running and to be able to communicate with each other, two out of three, three out of four, and so on.

For an initial deployment of a multi-node Ceph storage cluster, Red Hat requires three monitors, increasing the number two at a time if a valid need for more than three monitors exists.

Since Ceph Monitors are lightweight, it is possible to run them on the same host as OpenStack nodes. However, Red Hat recommends running monitors on separate hosts.

Red Hat ONLY supports collocating Ceph services in containerized environments.

When you remove monitors from a storage cluster, consider that Ceph Monitors use the Paxos protocol to establish a consensus about the master storage cluster map. You must have a sufficient number of Ceph Monitors to establish a quorum.

4.2. Configuring monitor election strategy

The monitor election strategy identifies the net splits and handles failures. You can configure the election monitor strategy in three different modes:

-

classic- This is the default mode in which the lowest ranked monitor is voted based on the elector module between the two sites. -

disallow- This mode lets you mark monitors as disallowed, in which case they will participate in the quorum and serve clients, but cannot be an elected leader. This lets you add monitors to a list of disallowed leaders. If a monitor is in the disallowed list, it will always defer to another monitor. -

connectivity- This mode is mainly used to resolve network discrepancies. It evaluates connection scores, based on pings that check liveness, provided by each monitor for its peers and elects the most connected and reliable monitor to be the leader. This mode is designed to handle net splits, which may happen if your cluster is stretched across multiple data centers or otherwise susceptible. This mode incorporates connection score ratings and elects the monitor with the best score. If a specific monitor is desired to be the leader, configure the election strategy so that the specific monitor is the first monitor in the list with a rank of0.

Red Hat recommends you to stay in the classic mode unless you require features in the other modes.

Before constructing the cluster, change the election_strategy to classic, disallow, or connectivity in the following command:

Syntax

ceph mon set election_strategy {classic|disallow|connectivity}4.3. Deploying the Ceph monitor daemons using the command line interface

The Ceph Orchestrator deploys one monitor daemon by default. You can deploy additional monitor daemons by using the placement specification in the command line interface. To deploy a different number of monitor daemons, specify a different number. If you do not specify the hosts where the monitor daemons should be deployed, the Ceph Orchestrator randomly selects the hosts and deploys the monitor daemons to them.

Prerequisites

- A running Red Hat Ceph Storage cluster.

- Hosts are added to the cluster.

Procedure

Log into the Cephadm shell:

Example

[root@host01 ~]# cephadm shell- There are four different ways of deploying Ceph monitor daemons:

Method 1

Use placement specification to deploy monitors on hosts:

NoteRed Hat recommends that you use the

--placementoption to deploy on specific hosts.Syntax

ceph orch apply mon --placement="HOST_NAME_1 HOST_NAME_2 HOST_NAME_3"Example

[ceph: root@host01 /]# ceph orch apply mon --placement="host01 host02 host03"NoteBe sure to include the bootstrap node as the first node in the command.

ImportantDo not add the monitors individually as

ceph orch apply monsupersedes and will not add the monitors to all the hosts. For example, if you run the following commands, then the first command creates a monitor onhost01. Then the second command supersedes the monitor on host1 and creates a monitor onhost02. Then the third command supersedes the monitor onhost02and creates a monitor onhost03. Eventually, there is a monitor only on the third host.# ceph orch apply mon host01 # ceph orch apply mon host02 # ceph orch apply mon host03

Method 2

Use placement specification to deploy specific number of monitors on specific hosts with labels:

Add the labels to the hosts:

Syntax

ceph orch host label add HOSTNAME_1 LABELExample

[ceph: root@host01 /]# ceph orch host label add host01 monDeploy the daemons:

Syntax

ceph orch apply mon --placement="HOST_NAME_1:mon HOST_NAME_2:mon HOST_NAME_3:mon"Example

[ceph: root@host01 /]# ceph orch apply mon --placement="host01:mon host02:mon host03:mon"

Method 3

Use placement specification to deploy specific number of monitors on specific hosts:

Syntax

ceph orch apply mon --placement="NUMBER_OF_DAEMONS HOST_NAME_1 HOST_NAME_2 HOST_NAME_3"Example

[ceph: root@host01 /]# ceph orch apply mon --placement="3 host01 host02 host03"

Method 4

Deploy monitor daemons randomly on the hosts in the storage cluster:

Syntax

ceph orch apply mon NUMBER_OF_DAEMONSExample

[ceph: root@host01 /]# ceph orch apply mon 3

Verification

List the service:

Example

[ceph: root@host01 /]# ceph orch lsList the hosts, daemons, and processes:

Syntax

ceph orch ps --daemon_type=DAEMON_NAMEExample

[ceph: root@host01 /]# ceph orch ps --daemon_type=mon

4.4. Deploying the Ceph monitor daemons using the service specification

The Ceph Orchestrator deploys one monitor daemon by default. You can deploy additional monitor daemons by using the service specification, like a YAML format file.

Prerequisites

- A running Red Hat Ceph Storage cluster.

- Hosts are added to the cluster.

Procedure

Create the

mon.yamlfile:Example

[root@host01 ~]# touch mon.yamlEdit the

mon.yamlfile to include the following details:Syntax

service_type: mon placement: hosts: - HOST_NAME_1 - HOST_NAME_2Example

service_type: mon placement: hosts: - host01 - host02Mount the YAML file under a directory in the container:

Example

[root@host01 ~]# cephadm shell --mount mon.yaml:/var/lib/ceph/mon/mon.yamlNavigate to the directory:

Example

[ceph: root@host01 /]# cd /var/lib/ceph/mon/Deploy the monitor daemons:

Syntax

ceph orch apply -i FILE_NAME.yamlExample

[ceph: root@host01 mon]# ceph orch apply -i mon.yaml

Verification

List the service:

Example

[ceph: root@host01 /]# ceph orch lsList the hosts, daemons, and processes:

Syntax

ceph orch ps --daemon_type=DAEMON_NAMEExample

[ceph: root@host01 /]# ceph orch ps --daemon_type=mon

4.5. Deploying the monitor daemons on specific network using the Ceph Orchestrator

The Ceph Orchestrator deploys one monitor daemon by default. You can explicitly specify the IP address or CIDR network for each monitor and control where each monitor is placed.

Prerequisites

- A running Red Hat Ceph Storage cluster.

- Hosts are added to the cluster.

Procedure

Log into the Cephadm shell:

Example

[root@host01 ~]# cephadm shellDisable automated monitor deployment:

Example

[ceph: root@host01 /]# ceph orch apply mon --unmanagedDeploy monitors on hosts on specific network:

Syntax

ceph orch daemon add mon HOST_NAME_1:IP_OR_NETWORKExample

[ceph: root@host01 /]# ceph orch daemon add mon host03:10.1.2.123

Verification

List the service:

Example

[ceph: root@host01 /]# ceph orch lsList the hosts, daemons, and processes:

Syntax

ceph orch ps --daemon_type=DAEMON_NAMEExample

[ceph: root@host01 /]# ceph orch ps --daemon_type=mon

4.6. Managing the Ceph Monitor service with Red Hat OpenStack Platform

Understand the impact of replacing Controller nodes that host the Ceph Monitor service in director-deployed Red Hat Ceph Storage environments.

If you are using director-deployed Red Hat Ceph Storage, it is important to understand the impact of replacing Controller nodes. The Ceph Monitor service runs on the Controller nodes and is typically assigned IP addresses from the Storage network.

These Ceph Monitor service IP addresses are associated with virtual machine instances that use Red Hat Ceph Storage. The IP addresses are not dynamically updated if a Ceph Monitor service IP address changes during replacement of a Controller node. As a result, replacing Controller nodes can lead to a storage outage, particularly if multiple Controller nodes are replaced.

In this situation, each affected virtual machine instance must be migrated, rebooted, or shelved and unshelved to resolve the IP address change and restore storage access.

To avoid this issue, reuse the IP addresses of the removed Ceph Monitor service instances instead of assigning new IP addresses.

For example,

- name: Controller

count: 3

defaults:

network_config:

template: /home/stack/templates/nic-config/myController.j2

default_route_network:

- external

instances:

- hostname: overcloud-controller-0

name: node00

networks:

- network: ctlplane

vif: true

- network: external

subnet: external_subnet

- network: internal_api

subnet: internal_api_subnet01

fixed_ip: 172.21.11.100

- network: storage

subnet: storage_subnet01

- network: storage_mgmt

subnet: storage_mgmt_subnet01

- network: tenant

subnet: tenant_subnet01To find the current Ceph Monitor service IP addresses on a Controller node, by using the following command:

sudo cephadm shell -- ceph mon statFor more information, see Red Hat OpenStack Platform documentation.

4.7. Removing the monitor daemons using the Ceph Orchestrator

To remove the monitor daemons from the host, you can just redeploy the monitor daemons on other hosts.

Prerequisites

- A running Red Hat Ceph Storage cluster.

- Hosts are added to the cluster.

- At least one monitor daemon deployed on the hosts.

Procedure

Log into the Cephadm shell:

Example

[root@host01 ~]# cephadm shellRun the

ceph orch applycommand to deploy the required monitor daemons:Syntax

ceph orch apply mon “NUMBER_OF_DAEMONS HOST_NAME_1 HOST_NAME_3”If you want to remove monitor daemons from

host02, then you can redeploy the monitors on other hosts.Example

[ceph: root@host01 /]# ceph orch apply mon “2 host01 host03”

Verification

List the hosts,daemons, and processes:

Syntax

ceph orch ps --daemon_type=DAEMON_NAMEExample

[ceph: root@host01 /]# ceph orch ps --daemon_type=mon

4.8. Removing a Ceph Monitor from an unhealthy storage cluster

You can remove a ceph-mon daemon from an unhealthy storage cluster. An unhealthy storage cluster is one that has placement groups persistently in not active + clean state.

Prerequisites

- A running Red Hat Ceph Storage cluster.

- Root-level access to the Ceph Monitor node.

- At least one running Ceph Monitor node.

Procedure

Identify a surviving monitor and log into the host:

Syntax

ssh root@MONITOR_IDExample

[root@admin ~]# ssh root@host00Log in to each Ceph Monitor host and stop all the Ceph Monitors:

Syntax

cephadm unit --name DAEMON_NAME.HOSTNAME stopExample

[root@host00 ~]# cephadm unit --name mon.host00 stopSet up the environment suitable for extended daemon maintenance and to run the daemon interactively:

Syntax

cephadm shell --name DAEMON_NAME.HOSTNAMEExample

[root@host00 ~]# cephadm shell --name mon.host00Extract a copy of the

monmapfile:Syntax

ceph-mon -i HOSTNAME --extract-monmap TEMP_PATHExample

[ceph: root@host00 /]# ceph-mon -i host01 --extract-monmap /tmp/monmap 2022-01-05T11:13:24.440+0000 7f7603bd1700 -1 wrote monmap to /tmp/monmapRemove the non-surviving Ceph Monitor(s):

Syntax

monmaptool TEMPORARY_PATH --rm HOSTNAMEExample

[ceph: root@host00 /]# monmaptool /tmp/monmap --rm host01Inject the surviving monitor map with the removed monitor(s) into the surviving Ceph Monitor:

Syntax

ceph-mon -i HOSTNAME --inject-monmap TEMP_PATHExample

[ceph: root@host00 /]# ceph-mon -i host00 --inject-monmap /tmp/monmapStart only the surviving monitors:

Syntax

cephadm unit --name DAEMON_NAME.HOSTNAME startExample

[root@host00 ~]# cephadm unit --name mon.host00 startVerify the monitors form a quorum:

Example

[ceph: root@host00 /]# ceph -s-

Optional: Archive the removed Ceph Monitor’s data directory in

/var/lib/ceph/CLUSTER_FSID/mon.HOSTNAMEdirectory.

Chapter 5. Management of managers using the Ceph Orchestrator

As a storage administrator, you can use the Ceph Orchestrator to deploy additional manager daemons. Cephadm automatically installs a manager daemon on the bootstrap node during the bootstrapping process.

In general, you should set up a Ceph Manager on each of the hosts running the Ceph Monitor daemon to achieve same level of availability.

By default, whichever ceph-mgr instance comes up first is made active by the Ceph Monitors, and others are standby managers. There is no requirement that there should be a quorum among the ceph-mgr daemons.

If the active daemon fails to send a beacon to the monitors for more than the mon mgr beacon grace, then it is replaced by a standby.

If you want to pre-empt failover, you can explicitly mark a ceph-mgr daemon as failed with ceph mgr fail MANAGER_NAME command.

Prerequisites

- A running Red Hat Ceph Storage cluster.

- Root-level access to all the nodes.

- Hosts are added to the cluster.

5.1. Deploying the manager daemons using the Ceph Orchestrator

The Ceph Orchestrator deploys two Manager daemons by default. You can deploy additional manager daemons using the placement specification in the command line interface. To deploy a different number of Manager daemons, specify a different number. If you do not specify the hosts where the Manager daemons should be deployed, the Ceph Orchestrator randomly selects the hosts and deploys the Manager daemons to them.

Ensure your deployment has at least three Ceph Managers in each deployment.

Prerequisites

- A running Red Hat Ceph Storage cluster.

- Hosts are added to the cluster.

Procedure

Log into the Cephadm shell:

Example

[root@host01 ~]# cephadm shell- You can deploy manager daemons in two different ways:

Method 1

Deploy manager daemons using placement specification on specific set of hosts:

NoteRed Hat recommends that you use the

--placementoption to deploy on specific hosts.Syntax

ceph orch apply mgr --placement=" HOST_NAME_1 HOST_NAME_2 HOST_NAME_3"Example

[ceph: root@host01 /]# ceph orch apply mgr --placement="host01 host02 host03"

Method 2

Deploy manager daemons randomly on the hosts in the storage cluster:

Syntax

ceph orch apply mgr NUMBER_OF_DAEMONSExample

[ceph: root@host01 /]# ceph orch apply mgr 3

Verification

List the service:

Example

[ceph: root@host01 /]# ceph orch lsList the hosts, daemons, and processes:

Syntax

ceph orch ps --daemon_type=DAEMON_NAMEExample

[ceph: root@host01 /]# ceph orch ps --daemon_type=mgr

5.2. Removing the manager daemons using the Ceph Orchestrator

To remove the manager daemons from the host, you can just redeploy the daemons on other hosts.

Prerequisites

- A running Red Hat Ceph Storage cluster.

- Root-level access to all the nodes.

- Hosts are added to the cluster.

- At least one manager daemon deployed on the hosts.

Procedure

Log into the Cephadm shell:

Example

[root@host01 ~]# cephadm shellRun the

ceph orch applycommand to redeploy the required manager daemons:Syntax

ceph orch apply mgr "NUMBER_OF_DAEMONS HOST_NAME_1 HOST_NAME_3"If you want to remove manager daemons from

host02, then you can redeploy the manager daemons on other hosts.Example

[ceph: root@host01 /]# ceph orch apply mgr "2 host01 host03"

Verification

List the hosts,daemons, and processes:

Syntax

ceph orch ps --daemon_type=DAEMON_NAMEExample

[ceph: root@host01 /]# ceph orch ps --daemon_type=mgr

5.3. Using the Ceph Manager modules

Use the ceph mgr module ls command to see the available modules and the modules that are presently enabled.

Enable or disable modules with ceph mgr module enable MODULE command or ceph mgr module disable MODULE command respectively.

If a module is enabled, then the active ceph-mgr daemon loads and executes it. In the case of modules that provide a service, such as an HTTP server, the module might publish its address when it is loaded. To see the addresses of such modules, run the ceph mgr services command.

Some modules might also implement a special standby mode which runs on standby ceph-mgr daemon as well as the active daemon. This enables modules that provide services to redirect their clients to the active daemon, if the client tries to connect to a standby.

Following is an example to enable the dashboard module:

[ceph: root@host01 /]# ceph mgr module enable dashboard

[ceph: root@host01 /]# ceph mgr module ls

MODULE

balancer on (always on)

crash on (always on)

devicehealth on (always on)

orchestrator on (always on)

pg_autoscaler on (always on)

progress on (always on)

rbd_support on (always on)

status on (always on)

telemetry on (always on)

volumes on (always on)

cephadm on

dashboard on

iostat on

nfs on

prometheus on

restful on

alerts -

diskprediction_local -

influx -

insights -

k8sevents -

localpool -

mds_autoscaler -

mirroring -

osd_perf_query -

osd_support -

rgw -

rook -

selftest -

snap_schedule -

stats -

telegraf -

test_orchestrator -

zabbix -

[ceph: root@host01 /]# ceph mgr services

{

"dashboard": "http://myserver.com:7789/",

"restful": "https://myserver.com:8789/"

}

The first time the cluster starts, it uses the mgr_initial_modules setting to override which modules to enable. However, this setting is ignored through the rest of the lifetime of the cluster: only use it for bootstrapping. For example, before starting your monitor daemons for the first time, you might add a section like this to your ceph.conf file:

[mon]

mgr initial modules = dashboard balancerWhere a module implements comment line hooks, the commands are accessible as ordinary Ceph commands and Ceph automatically incorporates module commands into the standard CLI interface and route them appropriately to the module:

[ceph: root@host01 /]# ceph <command | help>You can use the following configuration parameters with the above command:

| Configuration | Description | Type | Default |

|---|---|---|---|

|

| Path to load modules from. | String |

|

|

| Path to load daemon data (such as keyring) | String |

|

|

| How many seconds between manager beacons to monitors, and other periodic checks. | Integer |

|

|

| How long after last beacon should a manager be considered failed. | Integer |

|

5.4. Using the Ceph Manager balancer module

The balancer is a module for Ceph Manager (ceph-mgr) that optimizes the placement of placement groups (PGs) across OSDs in order to achieve a balanced distribution, either automatically or in a supervised fashion.

Currently the balancer module cannot be disabled. It can only be turned off to customize the configuration.

Modes

There are currently two supported balancer modes:

crush-compat: The CRUSH compat mode uses the compat

weight-setfeature, introduced in Ceph Luminous, to manage an alternative set of weights for devices in the CRUSH hierarchy. The normal weights should remain set to the size of the device to reflect the target amount of data that you want to store on the device. The balancer then optimizes theweight-setvalues, adjusting them up or down in small increments in order to achieve a distribution that matches the target distribution as closely as possible. Because PG placement is a pseudorandom process, there is a natural amount of variation in the placement; by optimizing the weights, the balancer counter-acts that natural variation.This mode is fully backwards compatible with older clients. When an OSDMap and CRUSH map are shared with older clients, the balancer presents the optimized weightsff as the real weights.

The primary restriction of this mode is that the balancer cannot handle multiple CRUSH hierarchies with different placement rules if the subtrees of the hierarchy share any OSDs. Because this configuration makes managing space utilization on the shared OSDs difficult, it is generally not recommended. As such, this restriction is normally not an issue.

upmap: Starting with Luminous, the OSDMap can store explicit mappings for individual OSDs as exceptions to the normal CRUSH placement calculation. These

upmapentries provide fine-grained control over the PG mapping. This CRUSH mode will optimize the placement of individual PGs in order to achieve a balanced distribution. In most cases, this distribution is "perfect", with an equal number of PGs on each OSD +/-1 PG, as they might not divide evenly.ImportantTo allow use of this feature, you must tell the cluster that it only needs to support luminous or later clients with the following command:

[ceph: root@host01 /]# ceph osd set-require-min-compat-client luminousThis command fails if any pre-luminous clients or daemons are connected to the monitors.

Due to a known issue, kernel CephFS clients report themselves as jewel clients. To work around this issue, use the

--yes-i-really-mean-itflag:[ceph: root@host01 /]# ceph osd set-require-min-compat-client luminous --yes-i-really-mean-itYou can check what client versions are in use with:

[ceph: root@host01 /]# ceph features

5.4.1. Balancing a Red Hat Ceph cluster using capacity balancer

Balance a Red Hat Ceph storage cluster using the capacity balancer.

Prerequisites

- A running Red Hat Ceph Storage cluster.

Procedure

Check if the balancer module is enabled:

Example

[ceph: root@host01 /]# ceph mgr module enable balancerTurn on the balancer module:

Example

[ceph: root@host01 /]# ceph balancer onTo change the mode use the following command. The default mode is

upmap:Example

[ceph: root@host01 /]# ceph balancer mode crush-compator

Example

[ceph: root@host01 /]# ceph balancer mode upmapCheck the current status of the balancer.

Example

[ceph: root@host01 /]# ceph balancer status

Automatic balancing

By default, when turning on the balancer module, automatic balancing is used:

Example

[ceph: root@host01 /]# ceph balancer onYou can turn off the balancer again with:

Example

[ceph: root@host01 /]# ceph balancer off

This uses the crush-compat mode, which is backward compatible with older clients and makes small changes to the data distribution over time to ensure that OSDs are equally utilized.

Throttling

No adjustments are made to the PG distribution if the cluster is degraded, for example, if an OSD has failed and the system has not yet healed itself.

When the cluster is healthy, the balancer throttles its changes such that the percentage of PGs that are misplaced, or need to be moved, is below a threshold of 5% by default. This percentage can be adjusted using the target_max_misplaced_ratio setting. For example, to increase the threshold to 7%:

Example

[ceph: root@host01 /]# ceph config-key set mgr target_max_misplaced_ratio .07For automatic balancing:

- Set the number of seconds to sleep in between runs of the automatic balancer:

Example

[ceph: root@host01 /]# ceph config set mgr mgr/balancer/sleep_interval 60- Set the time of day to begin automatic balancing in HHMM format:

Example

[ceph: root@host01 /]# ceph config set mgr mgr/balancer/begin_time 0000- Set the time of day to finish automatic balancing in HHMM format:

Example

[ceph: root@host01 /]# ceph config set mgr mgr/balancer/end_time 2359-

Restrict automatic balancing to this day of the week or later. Uses the same conventions as crontab,

0is Sunday,1is Monday, and so on:

Example

[ceph: root@host01 /]# ceph config set mgr mgr/balancer/begin_weekday 0-

Restrict automatic balancing to this day of the week or earlier. This uses the same conventions as crontab,

0is Sunday,1is Monday, and so on:

Example

[ceph: root@host01 /]# ceph config set mgr mgr/balancer/end_weekday 6-

Define the pool IDs to which the automatic balancing is limited. The default for this is an empty string, meaning all pools are balanced. The numeric pool IDs can be gotten with the

ceph osd pool ls detailcommand:

Example

[ceph: root@host01 /]# ceph config set mgr mgr/balancer/pool_ids 1,2,3Supervised optimization

The balancer operation is broken into a few distinct phases:

-

Building a

plan. -

Evaluating the quality of the data distribution, either for the current PG distribution, or the PG distribution that would result after executing a

plan. Executing the

plan.To evaluate and score the current distribution:

Example

[ceph: root@host01 /]# ceph balancer evalTo evaluate the distribution for a single pool:

Syntax

ceph balancer eval POOL_NAMEExample

[ceph: root@host01 /]# ceph balancer eval rbdTo see greater detail for the evaluation:

Example

[ceph: root@host01 /]# ceph balancer eval-verbose ...To generate a plan using the currently configured mode:

Syntax

ceph balancer optimize PLAN_NAMEReplace PLAN_NAME with a custom plan name.

Example

[ceph: root@host01 /]# ceph balancer optimize rbd_123To see the contents of a plan:

Syntax

ceph balancer show PLAN_NAMEExample

[ceph: root@host01 /]# ceph balancer show rbd_123To discard old plans:

Syntax

ceph balancer rm PLAN_NAMEExample

[ceph: root@host01 /]# ceph balancer rm rbd_123To see currently recorded plans use the status command:

[ceph: root@host01 /]# ceph balancer statusTo calculate the quality of the distribution that would result after executing a plan:

Syntax

ceph balancer eval PLAN_NAMEExample

[ceph: root@host01 /]# ceph balancer eval rbd_123To execute the plan:

Syntax

ceph balancer execute PLAN_NAMEExample

[ceph: root@host01 /]# ceph balancer execute rbd_123NoteOnly execute the plan if it is expected to improve the distribution. After execution, the plan is discarded.

5.4.2. Balancing a Red Hat Ceph cluster using read balancer [Technology Preview]

Read Balancer is a Technology Preview feature only for Red Hat Ceph Storage 7.0. Technology Preview features are not supported with Red Hat production service level agreements (SLAs), might not be functionally complete, and Red Hat does not recommend using them for production. These features provide early access to upcoming product features, enabling customers to test functionality and provide feedback during the development process. See the support scope for Red Hat Technology Preview features for more details.

If you have unbalanced primary OSDs, you can update them with an offline optimizer that is built into the osdmaptool.

Red Hat recommends that you run the capacity balancer before running the read balancer to ensure optimal results.

Follow the steps in the procedure to balance a cluster using the read balancer:

Prerequisites

- A running and capacity balanced Red Hat Ceph Storage cluster.

Red Hat recommends that you run the capacity balancer to balance the capacity on each OSD before running the read balancer to ensure optimal results. Run the following steps to balance the capacity:

Get the latest copy of your osdmap.

[ceph: root@host01 /]# ceph osd getmap -o mapRun the upmap balancer.

[ceph: root@host01 /]# ospmaptool map –upmap out.txtThe file out.txt contains the proposed solution.

The commands in this procedure are normal Ceph CLI commands that are run to apply the changes to the cluster.

Run the following command if there are any recommendations in the out.txt file.

[ceph: root@host01 /]# source out.txtFor information, see Balancing IBM Ceph cluster using capacity balancer

Procedure

Check the

read_balance_score, available for each pool:[ceph: root@host01 /]# ceph osd pool ls detailIf the

read_balance_scoreis considerably above 1, your pool has unbalanced primary OSDs.For a homogenous cluster the optimal score is [Ceil{(number of PGs/Number of OSDs)}/(number of PGs/Number of OSDs)]/[ (number of PGs/Number of OSDs)/(number of PGs/Number of OSDs)]. For example, if you have a pool with 32 PG and 10 OSDs then (number of PGs/Number of OSDs) = 32/10 = 3.2. So, the optimal score if all the devices are identical is the ceiling value of 3.2 divided by (number of PGs/Number of OSDs) that is 4/3.2 = 1.25. If you have another pool in the same system with 64 PGs the optimal score is 7/6.4 =1.09375

Example output:

$ ceph osd pool ls detail pool 1 '.mgr' replicated size 3 min_size 1 crush_rule 0 object_hash rjenkins pg_num 1 pgp_num 1 autoscale_mode on last_change 17 flags hashpspool stripe_width 0 pg_num_max 32 pg_num_min 1 application mgr read_balance_score 3.00 pool 2 'cephfs.a.meta' replicated size 3 min_size 1 crush_rule 0 object_hash rjenkins pg_num 16 pgp_num 16 autoscale_mode on last_change 55 lfor 0/0/25 flags hashpspool stripe_width 0 pg_autoscale_bias 4 pg_num_min 16 recovery_priority 5 application cephfs read_balance_score 1.50 pool 3 'cephfs.a.data' replicated size 3 min_size 1 crush_rule 0 object_hash rjenkins pg_num 128 pgp_num 128 autoscale_mode on last_change 27 lfor 0/0/25 flags hashpspool,bulk stripe_width 0 application cephfs read_balance_score 1.31Get the the latest copy of your

osdmap:[ceph: root@host01 /]# ceph osd getmap -o omExample output:

got osdmap epoch 56Run the optimizer:

The file

out.txtcontains the the proposed solution.[ceph: root@host01 /]# osdmaptool om --read out.txt --read-pool _POOL_NAME_ [--vstart]Example output:

$ osdmaptool om --read out.txt --read-pool cephfs.a.meta ./bin/osdmaptool: osdmap file 'om' writing upmap command output to: out.txt ---------- BEFORE ------------ osd.0 | primary affinity: 1 | number of prims: 4 osd.1 | primary affinity: 1 | number of prims: 8 osd.2 | primary affinity: 1 | number of prims: 4 read_balance_score of 'cephfs.a.meta': 1.5 ---------- AFTER ------------ osd.0 | primary affinity: 1 | number of prims: 5 osd.1 | primary affinity: 1 | number of prims: 6 osd.2 | primary affinity: 1 | number of prims: 5 read_balance_score of 'cephfs.a.meta': 1.13 num changes: 2The file

out.txtcontains the the proposed solution.The commands in this procedure are normal Ceph CLI commands that are run in order to apply the changes to the cluster. If you are working in a vstart cluster, you can pass the

--vstartparameter so the CLI commands are formatted with the./bin/ prefix.[ceph: root@host01 /]# source out.txtExample output:

$ cat out.txt ceph osd pg-upmap-primary 2.3 0 ceph osd pg-upmap-primary 2.4 2 $ source out.txt change primary for pg 2.3 to osd.0 change primary for pg 2.4 to osd.2NoteIf you are running the command

ceph osd pg-upmap-primaryfor the first time, you might get a warning as:Error EPERM: min_compat_client luminous < reef, which is required for pg-upmap-primary. Try 'ceph osd set-require-min-compat-client reef' before using the new interfaceIn this case, run the recommended command

ceph osd set-require-min-compat-client reefand adjust your cluster’s min-compact-client.

Consider rechecking the scores and re-running the balancer if the number of placement groups (PGs) change or if any OSDs are added or removed from the cluster as these operations can considerably impact the read balancer effect on a pool.

5.5. Using the Ceph Manager alerts module

You can use the Ceph Manager alerts module to send simple alert messages about the Red Hat Ceph Storage cluster’s health by email.

This module is not intended to be a robust monitoring solution. The fact that it is run as part of the Ceph cluster itself is fundamentally limiting in that a failure of the ceph-mgr daemon prevents alerts from being sent. This module can, however, be useful for standalone clusters that exist in environments where existing monitoring infrastructure does not exist.

Prerequisites

- A running Red Hat Ceph Storage cluster.

- Root-level access to the Ceph Monitor node.

Procedure

Log into the Cephadm shell:

Example

[root@host01 ~]# cephadm shellEnable the alerts module:

Example

[ceph: root@host01 /]# ceph mgr module enable alertsEnsure the alerts module is enabled:

Example

[ceph: root@host01 /]# ceph mgr module ls | more { "always_on_modules": [ "balancer", "crash", "devicehealth", "orchestrator", "pg_autoscaler", "progress", "rbd_support", "status", "telemetry", "volumes" ], "enabled_modules": [ "alerts", "cephadm", "dashboard", "iostat", "nfs", "prometheus", "restful" ]Configure the Simple Mail Transfer Protocol (SMTP):

Syntax

ceph config set mgr mgr/alerts/smtp_host SMTP_SERVER ceph config set mgr mgr/alerts/smtp_destination RECEIVER_EMAIL_ADDRESS ceph config set mgr mgr/alerts/smtp_sender SENDER_EMAIL_ADDRESSExample

[ceph: root@host01 /]# ceph config set mgr mgr/alerts/smtp_host smtp.example.com [ceph: root@host01 /]# ceph config set mgr mgr/alerts/smtp_destination example@example.com [ceph: root@host01 /]# ceph config set mgr mgr/alerts/smtp_sender example2@example.comOptional: By default, the alerts module uses SSL and port 465.

Syntax

ceph config set mgr mgr/alerts/smtp_port PORT_NUMBERExample

[ceph: root@host01 /]# ceph config set mgr mgr/alerts/smtp_port 587Do not set the

smtp_sslparameter while configuring alerts.Authenticate to the SMTP server:

Syntax

ceph config set mgr mgr/alerts/smtp_user USERNAME ceph config set mgr mgr/alerts/smtp_password PASSWORDExample

[ceph: root@host01 /]# ceph config set mgr mgr/alerts/smtp_user admin1234 [ceph: root@host01 /]# ceph config set mgr mgr/alerts/smtp_password admin1234Optional: By default, SMTP

Fromname isCeph. To change that, set thesmtp_from_nameparameter:Syntax

ceph config set mgr mgr/alerts/smtp_from_name CLUSTER_NAMEExample

[ceph: root@host01 /]# ceph config set mgr mgr/alerts/smtp_from_name 'Ceph Cluster Test'Optional: By default, the alerts module checks the storage cluster’s health every minute, and sends a message when there is a change in the cluster health status. To change the frequency, set the

intervalparameter:Syntax

ceph config set mgr mgr/alerts/interval INTERVALExample

[ceph: root@host01 /]# ceph config set mgr mgr/alerts/interval "5m"In this example, the interval is set to 5 minutes.

Optional: Send an alert immediately:

Example

[ceph: root@host01 /]# ceph alerts send

5.6. Using the Ceph manager crash module

Using the Ceph manager crash module, you can collect information about daemon crashdumps and store it in the Red Hat Ceph Storage cluster for further analysis.

By default, daemon crashdumps are dumped in /var/lib/ceph/crash. You can configure it with the option crash dir. Crash directories are named by time, date, and a randomly-generated UUID, and contain a metadata file meta and a recent log file, with a crash_id that is the same.

You can use ceph-crash.service to submit these crashes automatically and persist in the Ceph Monitors. The ceph-crash.service watches the crashdump directory and uploads them with ceph crash post.

The RECENT_CRASH heath message is one of the most common health messages in a Ceph cluster. This health message means that one or more Ceph daemons has crashed recently, and the crash has not yet been archived or acknowledged by the administrator. This might indicate a software bug, a hardware problem like a failing disk, or some other problem. The option mgr/crash/warn_recent_interval controls the time period of what recent means, which is two weeks by default. You can disable the warnings by running the following command:

Example

[ceph: root@host01 /]# ceph config set mgr/crash/warn_recent_interval 0

The option mgr/crash/retain_interval controls the period for which you want to retain the crash reports before they are automatically purged. The default for this option is one year.

Prerequisites

- A running Red Hat Ceph Storage cluster.

Procedure

Ensure the crash module is enabled:

Example

[ceph: root@host01 /]# ceph mgr module ls | more { "always_on_modules": [ "balancer", "crash", "devicehealth", "orchestrator_cli", "progress", "rbd_support", "status", "volumes" ], "enabled_modules": [ "dashboard", "pg_autoscaler", "prometheus" ]Save a crash dump: The metadata file is a JSON blob stored in the crash dir as

meta. You can invoke the ceph command-i -option, which reads from stdin.Example

[ceph: root@host01 /]# ceph crash post -i metaList the timestamp or the UUID crash IDs for all the new and archived crash info:

Example

[ceph: root@host01 /]# ceph crash lsList the timestamp or the UUID crash IDs for all the new crash information:

Example

[ceph: root@host01 /]# ceph crash ls-newList the timestamp or the UUID crash IDs for all the new crash information:

Example

[ceph: root@host01 /]# ceph crash ls-newList the summary of saved crash information grouped by age:

Example

[ceph: root@host01 /]# ceph crash stat 8 crashes recorded 8 older than 1 days old: 2022-05-20T08:30:14.533316Z_4ea88673-8db6-4959-a8c6-0eea22d305c2 2022-05-20T08:30:14.590789Z_30a8bb92-2147-4e0f-a58b-a12c2c73d4f5 2022-05-20T08:34:42.278648Z_6a91a778-bce6-4ef3-a3fb-84c4276c8297 2022-05-20T08:34:42.801268Z_e5f25c74-c381-46b1-bee3-63d891f9fc2d 2022-05-20T08:34:42.803141Z_96adfc59-be3a-4a38-9981-e71ad3d55e47 2022-05-20T08:34:42.830416Z_e45ed474-550c-44b3-b9bb-283e3f4cc1fe 2022-05-24T19:58:42.549073Z_b2382865-ea89-4be2-b46f-9a59af7b7a2d 2022-05-24T19:58:44.315282Z_1847afbc-f8a9-45da-94e8-5aef0738954eView the details of the saved crash:

Syntax

ceph crash info CRASH_IDExample

[ceph: root@host01 /]# ceph crash info 2022-05-24T19:58:42.549073Z_b2382865-ea89-4be2-b46f-9a59af7b7a2d { "assert_condition": "session_map.sessions.empty()", "assert_file": "/builddir/build/BUILD/ceph-16.1.0-486-g324d7073/src/mon/Monitor.cc", "assert_func": "virtual Monitor::~Monitor()", "assert_line": 287, "assert_msg": "/builddir/build/BUILD/ceph-16.1.0-486-g324d7073/src/mon/Monitor.cc: In function 'virtual Monitor::~Monitor()' thread 7f67a1aeb700 time 2022-05-24T19:58:42.545485+0000\n/builddir/build/BUILD/ceph-16.1.0-486-g324d7073/src/mon/Monitor.cc: 287: FAILED ceph_assert(session_map.sessions.empty())\n", "assert_thread_name": "ceph-mon", "backtrace": [ "/lib64/libpthread.so.0(+0x12b30) [0x7f679678bb30]", "gsignal()", "abort()", "(ceph::__ceph_assert_fail(char const*, char const*, int, char const*)+0x1a9) [0x7f6798c8d37b]", "/usr/lib64/ceph/libceph-common.so.2(+0x276544) [0x7f6798c8d544]", "(Monitor::~Monitor()+0xe30) [0x561152ed3c80]", "(Monitor::~Monitor()+0xd) [0x561152ed3cdd]", "main()", "__libc_start_main()", "_start()" ], "ceph_version": "16.2.8-65.el8cp", "crash_id": "2022-07-06T19:58:42.549073Z_b2382865-ea89-4be2-b46f-9a59af7b7a2d", "entity_name": "mon.ceph-adm4", "os_id": "rhel", "os_name": "Red Hat Enterprise Linux", "os_version": "8.5 (Ootpa)", "os_version_id": "8.5", "process_name": "ceph-mon", "stack_sig": "957c21d558d0cba4cee9e8aaf9227b3b1b09738b8a4d2c9f4dc26d9233b0d511", "timestamp": "2022-07-06T19:58:42.549073Z", "utsname_hostname": "host02", "utsname_machine": "x86_64", "utsname_release": "4.18.0-240.15.1.el8_3.x86_64", "utsname_sysname": "Linux", "utsname_version": "#1 SMP Wed Jul 06 03:12:15 EDT 2022" }Remove saved crashes older than KEEP days: Here, KEEP must be an integer.

Syntax

ceph crash prune KEEPExample

[ceph: root@host01 /]# ceph crash prune 60Archive a crash report so that it is no longer considered for the

RECENT_CRASHhealth check and does not appear in thecrash ls-newoutput. It appears in thecrash ls.Syntax

ceph crash archive CRASH_IDExample

[ceph: root@host01 /]# ceph crash archive 2022-05-24T19:58:42.549073Z_b2382865-ea89-4be2-b46f-9a59af7b7a2dArchive all crash reports:

Example

[ceph: root@host01 /]# ceph crash archive-allRemove the crash dump:

Syntax

ceph crash rm CRASH_IDExample

[ceph: root@host01 /]# ceph crash rm 2022-05-24T19:58:42.549073Z_b2382865-ea89-4be2-b46f-9a59af7b7a2d

5.7. Telemetry module

The telemetry module sends data about the storage cluster to help understand how Ceph is used and what problems are encountered during operations. The data is visualized on the public dashboard to view the summary statistics on how many clusters are reporting, their total capacity and OSD count, and version distribution trends.

Channels

The telemetry report is broken down into different channels, each with a different type of information. After the telemetry is enabled, you can turn on or turn off the individual channels.

The following are the four different channels:

basic- The default ison. This channel provides the basic information about the clusters, which includes the following information:- The capacity of the cluster.

- The number of monitors, managers, OSDs, MDSs, object gateways, or other daemons.

- The software version that is currently being used.

- The number and types of RADOS pools and Ceph File Systems.

- The names of configuration options that are changed from their default (but not their values).

crash- The default ison. This channel provides information about the daemon crashes, which includes the following information:- The type of daemon.

- The version of the daemon.

- The operating system, the OS distribution, and the kernel version.

- The stack trace that identifies where in the Ceph code the crash occurred.

-

device- The default ison. This channel provides information about the device metrics, which includes anonymized SMART metrics. -

ident- The default isoff. This channel provides the user-provided identifying information about the cluster such as cluster description, and contact email address. perf- The default isoff. This channel provides the various performance metrics of the cluster, which can be used for the following:- Reveal overall cluster health.

- Identify workload patterns.

- Troubleshoot issues with latency, throttling, memory management, and other similar issues.

- Monitor cluster performance by daemon.

The data that is reported does not contain any sensitive data such as pool names, object names, object contents, hostnames, or device serial numbers.

It contains counters and statistics on how the cluster is deployed, Ceph version, host distribution, and other parameters that help the project to gain a better understanding of the way Ceph is used.

Data is secure and is sent to https://telemetry.ceph.com.

Enable telemetry

Before enabling channels, ensure that the telemetry is on.

Enable telemetry:

ceph telemetry on

Enable and disable channels

Enable or disable individual channels:

ceph telemetry enable channel basic ceph telemetry enable channel crash ceph telemetry enable channel device ceph telemetry enable channel ident ceph telemetry enable channel perf ceph telemetry disable channel basic ceph telemetry disable channel crash ceph telemetry disable channel device ceph telemetry disable channel ident ceph telemetry disable channel perfEnable or disable multiple channels:

ceph telemetry enable channel basic crash device ident perf ceph telemetry disable channel basic crash device ident perfEnable or disable all channels together:

ceph telemetry enable channel all ceph telemetry disable channel all

Sample report

To review the data reported at any time, generate a sample report:

ceph telemetry showIf telemetry is

off, preview the sample report:ceph telemetry previewIt takes longer to generate a sample report for storage clusters with hundreds of OSDs or more.

To protect your privacy, device reports are generated separately, and data such as hostname and device serial number are anonymized. The device telemetry is sent to a different endpoint and does not associate the device data with a particular cluster. To see the device report, run the following command:

ceph telemetry show-deviceIf telemetry is

off, preview the sample device report:ceph telemetry preview-deviceGet a single output of both the reports with telemetry

on:ceph telemetry show-allGet a single output of both the reports with telemetry

off:ceph telemetry preview-allGenerate a sample report by channel:

Syntax

ceph telemetry show CHANNEL_NAMEGenerate a preview of the sample report by channel:

Syntax

ceph telemetry preview CHANNEL_NAME

Collections

Collections are different aspects of data that is collected within a channel.

List the collections:

ceph telemetry collection lsSee the difference between the collections that you are enrolled in, and the new, available collections:

ceph telemetry diffEnroll to the most recent collections:

Syntax

ceph telemetry on ceph telemetry enable channel CHANNEL_NAME

Interval

The module compiles and sends a new report every 24 hours by default.

Adjust the interval:

Syntax

ceph config set mgr mgr/telemetry/interval INTERVALExample

[ceph: root@host01 /]# ceph config set mgr mgr/telemetry/interval 72In the example, the report is generated every three days (72 hours).

Status

View the current configuration:

ceph telemetry status

Manually sending telemetry

Send telemetry data on an ad hoc basis:

ceph telemetry sendIf telemetry is disabled, add

--license sharing-1-0to theceph telemetry sendcommand.

Sending telemetry through a proxy

If the cluster cannot connect directly to the configured telemetry endpoint, you can configure a HTTP/HTTPs proxy server:

Syntax

ceph config set mgr mgr/telemetry/proxy PROXY_URLExample

[ceph: root@host01 /]# ceph config set mgr mgr/telemetry/proxy https://10.0.0.1:8080You can include the user pass in the command:

Example

[ceph: root@host01 /]# ceph config set mgr mgr/telemetry/proxy https://10.0.0.1:8080

Contact and description

Optional: Add a contact and description to the report:

Syntax

ceph config set mgr mgr/telemetry/contact '_CONTACT_NAME_' ceph config set mgr mgr/telemetry/description '_DESCRIPTION_' ceph config set mgr mgr/telemetry/channel_ident trueExample

[ceph: root@host01 /]# ceph config set mgr mgr/telemetry/contact 'John Doe <john.doe@example.com>' [ceph: root@host01 /]# ceph config set mgr mgr/telemetry/description 'My first Ceph cluster' [ceph: root@host01 /]# ceph config set mgr mgr/telemetry/channel_ident trueIf

identflag is enabled, its details are not displayed in the leaderboard.

Leaderboard

Participate in a leaderboard on the public dashboard:

Example

[ceph: root@host01 /]# ceph config set mgr mgr/telemetry/leaderboard trueThe leaderboard displays basic information about the storage cluster. This board includes the total storage capacity and the number of OSDs.

Disable telemetry

Disable telemetry any time:

Example

ceph telemetry off

Chapter 6. Management of OSDs using the Ceph Orchestrator

As a storage administrator, you can use the Ceph Orchestrators to manage OSDs of a Red Hat Ceph Storage cluster.

6.1. Ceph OSDs

When a Red Hat Ceph Storage cluster is up and running, you can add OSDs to the storage cluster at runtime.

A Ceph OSD generally consists of one ceph-osd daemon for one storage drive and its associated journal within a node. If a node has multiple storage drives, then map one ceph-osd daemon for each drive.

Red Hat recommends checking the capacity of a cluster regularly to see if it is reaching the upper end of its storage capacity. As a storage cluster reaches its near full ratio, add one or more OSDs to expand the storage cluster’s capacity.

When you want to reduce the size of a Red Hat Ceph Storage cluster or replace the hardware, you can also remove an OSD at runtime. If the node has multiple storage drives, you might also need to remove one of the ceph-osd daemon for that drive. Generally, it’s a good idea to check the capacity of the storage cluster to see if you are reaching the upper end of its capacity. Ensure that when you remove an OSD that the storage cluster is not at its near full ratio.