High Availability Add-On Reference

Reference guide for configuration and management of the High Availability Add-On

Abstract

Chapter 1. Red Hat High Availability Add-On Configuration and Management Reference Overview

pcs configuration interface or with the pcsd GUI interface.

1.1. New and Changed Features

1.1.1. New and Changed Features for Red Hat Enterprise Linux 7.1

- The pcs resource cleanup command can now reset the resource status and failcount for all resources, as documented in Section 6.11, “Cluster Resources Cleanup”.

- You can specify a lifetime parameter for the pcs resource move command, as documented in Section 8.1, “Manually Moving Resources Around the Cluster”.

- As of Red Hat Enterprise Linux 7.1, you can use the pcs acl command to set permissions for local users to allow read-only or read-write access to the cluster configuration by using access control lists (ACLs). For information on ACLs, see Section 4.5, “Setting User Permissions”.

- Section 7.2.3, “Ordered Resource Sets” and Section 7.3, “Colocation of Resources” have been extensively updated and clarified.

- Section 6.1, “Resource Creation” documents the disabled parameter of the pcs resource create command, to indicate that the resource being created is not started automatically.

- Section 10.1, “Configuring Quorum Options” documents the new cluster quorum unblock feature, which prevents the cluster from waiting for all nodes when establishing quorum.

- Section 6.1, “Resource Creation” documents the before and after parameters of the pcs resource create command, which can be used to configure resource group ordering.

- As of the Red Hat Enterprise Linux 7.1 release, you can back up the cluster configuration in a tarball and restore the cluster configuration files on all nodes from backup with the backup and restore options of the pcs config command. For information on this feature, see Section 3.8, “Backing Up and Restoring a Cluster Configuration”.

- Small clarifications have been made throughout this document.

1.1.2. New and Changed Features for Red Hat Enterprise Linux 7.2

- You can now use the pcs resource relocate run command to move a resource to its preferred node, as determined by current cluster status, constraints, location of resources and other settings. For information on this command, see Section 8.1.2, “Moving a Resource to its Preferred Node”.

- Section 13.2, “Event Notification with Monitoring Resources” has been modified and expanded to better document how to configure the ClusterMon resource to execute an external program to determine what to do with cluster notifications.

- When configuring fencing for redundant power supplies, you now are only required to define each device once and to specify that both devices are required to fence the node. For information on configuring fencing for redundant power supplies, see Section 5.10, “Configuring Fencing for Redundant Power Supplies”.

- This document now provides a procedure for adding a node to an existing cluster in Section 4.4.3, “Adding Cluster Nodes”.

- The new resource-discovery location constraint option allows you to indicate whether Pacemaker should perform resource discovery on a node for a specified resource, as documented in Table 7.1, “Simple Location Constraint Options”.

- Small clarifications and corrections have been made throughout this document.

1.1.3. New and Changed Features for Red Hat Enterprise Linux 7.3

- Section 9.4, “The pacemaker_remote Service”, has been wholly rewritten for this version of the document.

- You can configure Pacemaker alerts by means of alert agents, which are external programs that the cluster calls in the same manner as the cluster calls resource agents to handle resource configuration and operation. Pacemaker alert agents are described in Section 13.1, “Pacemaker Alert Agents (Red Hat Enterprise Linux 7.3 and later)”.

- New quorum administration commands are supported with this release which allow you to display the quorum status and to change the expected_votes parameter. These commands are described in Section 10.2, “Quorum Administration Commands (Red Hat Enterprise Linux 7.3 and Later)”.

- You can now modify general quorum options for your cluster with the pcs quorum update command, as described in Section 10.3, “Modifying Quorum Options (Red Hat Enterprise Linux 7.3 and later)”.

- You can configure a separate quorum device which acts as a third-party arbitration device for the cluster. The primary use of this feature is to allow a cluster to sustain more node failures than standard quorum rules allow. This feature is provided for technical preview only. For information on quorum devices, see Section 10.5, “Quorum Devices”.

- Red Hat Enterprise Linux release 7.3 provides the ability to configure high availability clusters that span multiple sites through the use of a Booth cluster ticket manager. This feature is provided for technical preview only. For information on the Booth cluster ticket manager, see Chapter 14, Configuring Multi-Site Clusters with Pacemaker.

- When configuring a KVM guest node running the pacemaker_remote service, you can include guest nodes in groups, which allows you to group a storage device, file system, and VM. For information on configuring KVM guest nodes, see Section 9.4.5, “Configuration Overview: KVM Guest Node”.

1.1.4. New and Changed Features for Red Hat Enterprise Linux 7.4

- Red Hat Enterprise Linux release 7.4 provides full support for the ability to configure high availability clusters that span multiple sites through the use of a Booth cluster ticket manager. For information on the Booth cluster ticket manager, see Chapter 14, Configuring Multi-Site Clusters with Pacemaker.

- Red Hat Enterprise Linux 7.4 provides full support for the ability to configure a separate quorum device which acts as a third-party arbitration device for the cluster. The primary use of this feature is to allow a cluster to sustain more node failures than standard quorum rules allow. For information on quorum devices, see Section 10.5, “Quorum Devices”.

- You can now specify nodes in fencing topology by a regular expression applied on a node name and by a node attribute and its value. For information on configuring fencing levels, see Section 5.9, “Configuring Fencing Levels”.

- Red Hat Enterprise Linux 7.4 supports the NodeUtilization resource agent, which can detect the system parameters of available CPU, host memory availability, and hypervisor memory availability and add these parameters into the CIB. For information on this resource agent, see Section 9.6.5, “The NodeUtilization Resource Agent (Red Hat Enterprise Linux 7.4 and later)”.

- For Red Hat Enterprise Linux 7.4, the cluster node add-guest and the cluster node remove-guest commands replace the cluster remote-node add and cluster remote-node remove commands. The pcs cluster node add-guest command sets up the authkey for guest nodes and the pcs cluster node add-remote command sets up the authkey for remote nodes. For updated guest and remote node configuration procedures, see Section 9.3, “Configuring a Virtual Domain as a Resource”.

- Red Hat Enterprise Linux 7.4 supports the systemd resource-agents-deps target. This allows you to configure the appropriate startup order for a cluster that includes resources with dependencies that are not themselves managed by the cluster, as described in Section 9.7, “Configuring Startup Order for Resource Dependencies not Managed by Pacemaker (Red Hat Enterprise Linux 7.4 and later)”.

- The format for the command to create a resource as a master/slave clone has changed for this release. For information on creating a master/slave clone, see Section 9.2, “Multistate Resources: Resources That Have Multiple Modes”.

1.1.5. New and Changed Features for Red Hat Enterprise Linux 7.5

- As of Red Hat Enterprise Linux 7.5, you can use the pcs_snmp_agent daemon to query a Pacemaker cluster for data by means of SNMP. For information on querying a cluster with SNMP, see Section 9.8, “Querying a Pacemaker Cluster with SNMP (Red Hat Enterprise Linux 7.5 and later)”.

1.1.6. New and Changed Features for Red Hat Enterprise Linux 7.8

- As of Red Hat Enterprise Linux 7.8, you can configure Pacemaker so that when a node shuts down cleanly, the resources attached to the node will be locked to the node and unable to start elsewhere until they start again when the node that has shut down rejoins the cluster. This allows you to power down nodes during maintenance windows when service outages are acceptable without causing that node’s resources to fail over to other nodes in the cluster. For information on configuring resources to remain stopped on clean node shutdown, see Section 9.9, “Configuring Resources to Remain Stopped on Clean Node Shutdown (Red Hat Enterprise Linux 7.8 and later)”.

1.2. Installing Pacemaker configuration tools

yum install command to install the Red Hat High Availability Add-On software packages along with all available fence agents from the High Availability channel.

# yum install pcs pacemaker fence-agents-all

# yum install pcs pacemaker fence-agents-model

# rpm -q -a | grep fence

fence-agents-rhevm-4.0.2-3.el7.x86_64

fence-agents-ilo-mp-4.0.2-3.el7.x86_64

fence-agents-ipmilan-4.0.2-3.el7.x86_64

...

lvm2-cluster and gfs2-utils packages are part of ResilientStorage channel. You can install them, as needed, with the following command.

# yum install lvm2-cluster gfs2-utils

Warning

1.3. Configuring the iptables Firewall to Allow Cluster Components

Note

firewalld daemon by executing the following commands.

# firewall-cmd --permanent --add-service=high-availability

# firewall-cmd --add-service=high-availability

| Port | When Required |
|---|---|
| TCP 2224 | Required on all nodes (needed by the pcsd Web UI and required for node-to-node communication). It is crucial to open port 2224 in such a way that pcs from any node can talk to all nodes in the cluster, including itself. When using the Booth cluster ticket manager or a quorum device you must open port 2224 on all related hosts, such as Booth arbiters or the quorum device host. |
| TCP 3121 | Required on all nodes if the cluster has any Pacemaker Remote nodes. Pacemaker's crmd daemon on the full cluster nodes will contact the pacemaker_remoted daemon on Pacemaker Remote nodes at port 3121. If a separate interface is used for cluster communication, the port only needs to be open on that interface. At a minimum, the port should open on Pacemaker Remote nodes to full cluster nodes. Because users may convert a host between a full node and a remote node, or run a remote node inside a container using the host's network, it can be useful to open the port to all nodes. It is not necessary to open the port to any hosts other than nodes. |
| TCP 5403 | Required on the quorum device host when using a quorum device with corosync-qnetd. The default value can be changed with the -p option of the corosync-qnetd command. |
| UDP 5404 | Required on corosync nodes if corosync is configured for multicast UDP |
| UDP 5405 | Required on all corosync nodes (needed by corosync) |
| TCP 21064 | Required on all nodes if the cluster contains any resources requiring DLM (such as clvm or GFS2) |
| TCP 9929, UDP 9929 | Required to be open on all cluster nodes and booth arbitrator nodes to connections from any of those same nodes when the Booth ticket manager is used to establish a multi-site cluster. |
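The firewalld high-availability service shown above is the documented way to open these ports. Where only individual ports should be opened, the table can be turned into explicit rules. The following is a minimal sketch that only prints the firewall-cmd calls; the variable and function names are illustrative, and it assumes every port in the table is wanted, which is rarely the case in practice.

```shell
#!/bin/sh
# Ports from the table above, written as port/protocol pairs.
# Assumption: all of them are needed; trim the list to match your cluster.
HA_PORTS="2224/tcp 3121/tcp 5403/tcp 5404/udp 5405/udp 21064/tcp 9929/tcp 9929/udp"

# Print (do not run) the firewall-cmd calls that would open each port
# in both the permanent and the running firewalld configuration.
print_port_rules() {
    for p in $HA_PORTS; do
        printf 'firewall-cmd --permanent --add-port=%s\n' "$p"
        printf 'firewall-cmd --add-port=%s\n' "$p"
    done
}

print_port_rules
```

Review the printed commands and run only the ones that match the components your cluster actually uses.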

1.4. The Cluster and Pacemaker Configuration Files

corosync.conf and cib.xml.

The corosync.conf file provides the cluster parameters used by corosync, the cluster manager that Pacemaker is built on. In general, you should not edit the corosync.conf file directly but, instead, use the pcs or pcsd interface. However, there may be a situation where you do need to edit this file directly. For information on editing the corosync.conf file, see Editing the corosync.conf file in Red Hat Enterprise Linux 7.

The cib.xml file is an XML file that represents both the cluster’s configuration and the current state of all resources in the cluster. This file is used by Pacemaker's Cluster Information Base (CIB). The contents of the CIB are automatically kept in sync across the entire cluster. Do not edit the cib.xml file directly; use the pcs or pcsd interface instead.

1.5. Cluster Configuration Considerations

- Red Hat does not support cluster deployments greater than 32 nodes for RHEL 7.7 (and later). It is possible, however, to scale beyond that limit with remote nodes running the pacemaker_remote service. For information on the pacemaker_remote service, see Section 9.4, “The pacemaker_remote Service”.

- The use of Dynamic Host Configuration Protocol (DHCP) for obtaining an IP address on a network interface that is utilized by the corosync daemons is not supported. The DHCP client can periodically remove and re-add an IP address to its assigned interface during address renewal. This will result in corosync detecting a connection failure, which will result in fencing activity from any other nodes in the cluster using corosync for heartbeat connectivity.

1.6. Updating a Red Hat Enterprise Linux High Availability Cluster

- Rolling Updates: Remove one node at a time from service, update its software, then integrate it back into the cluster. This allows the cluster to continue providing service and managing resources while each node is updated.

- Entire Cluster Update: Stop the entire cluster, apply updates to all nodes, then start the cluster back up.

Warning

1.7. Issues with Live Migration of VMs in a RHEL cluster

Note

- If any preparations need to be made before stopping or moving the resources or software running on the VM to migrate, perform those steps.

- Move any managed resources off the VM. If there are specific requirements or preferences for where resources should be relocated, then consider creating new location constraints to place the resources on the correct node.

- Place the VM in standby mode to ensure it is not considered in service, and to cause any remaining resources to be relocated elsewhere or stopped.

# pcs cluster standby VM

- Run the following command on the VM to stop the cluster software on the VM.

# pcs cluster stop

- Perform the live migration of the VM.

- Start cluster services on the VM.

# pcs cluster start

- Take the VM out of standby mode.

# pcs cluster unstandby VM

- If you created any temporary location constraints before putting the VM in standby mode, adjust or remove those constraints to allow resources to go back to their normally preferred locations.
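The command sequence in the procedure above can be written down as an ordered checklist. The following sketch only prints the steps; it does not run pcs. The function name and the host name are hypothetical, and the "[any node]" label is an assumption, since the procedure states only that the stop and start commands run on the VM itself.

```shell
#!/bin/sh
# Print the pcs steps for live-migrating a VM that is a cluster node.
# $1 = cluster node name of the VM (placeholder value used below).
# Assumption: standby/unstandby may be issued from any cluster node.
migration_plan() {
    vm=$1
    printf '[any node] pcs cluster standby %s\n' "$vm"
    printf '[%s] pcs cluster stop\n' "$vm"
    printf '[manual] perform the live migration of %s\n' "$vm"
    printf '[%s] pcs cluster start\n' "$vm"
    printf '[any node] pcs cluster unstandby %s\n' "$vm"
}

migration_plan vm1.example.com
```

Preparation steps and any temporary location constraints are site-specific and are deliberately left out of the sketch.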

Chapter 2. The pcsd Web UI

pcsd Web UI.

2.1. pcsd Web UI Setup

pcsd Web UI to configure a cluster, use the following procedure.

- Install the Pacemaker configuration tools, as described in Section 1.2, “Installing Pacemaker configuration tools”.

- On each node that will be part of the cluster, use the passwd command to set the password for user hacluster, using the same password on each node.

- Start and enable the pcsd daemon on each node:

# systemctl start pcsd.service

# systemctl enable pcsd.service

- On one node of the cluster, authenticate the nodes that will constitute the cluster with the following command. After executing this command, you will be prompted for a Username and a Password. Specify hacluster as the Username.

# pcs cluster auth node1 node2 ... nodeN

- On any system, open a browser to the following URL, specifying one of the nodes you have authorized (note that this uses the https protocol). This brings up the pcsd Web UI login screen.

https://nodename:2224

- Log in as user hacluster. This brings up the Manage Clusters page as shown in Figure 2.1, “Manage Clusters page”.

Figure 2.1. Manage Clusters page

2.2. Creating a Cluster with the pcsd Web UI

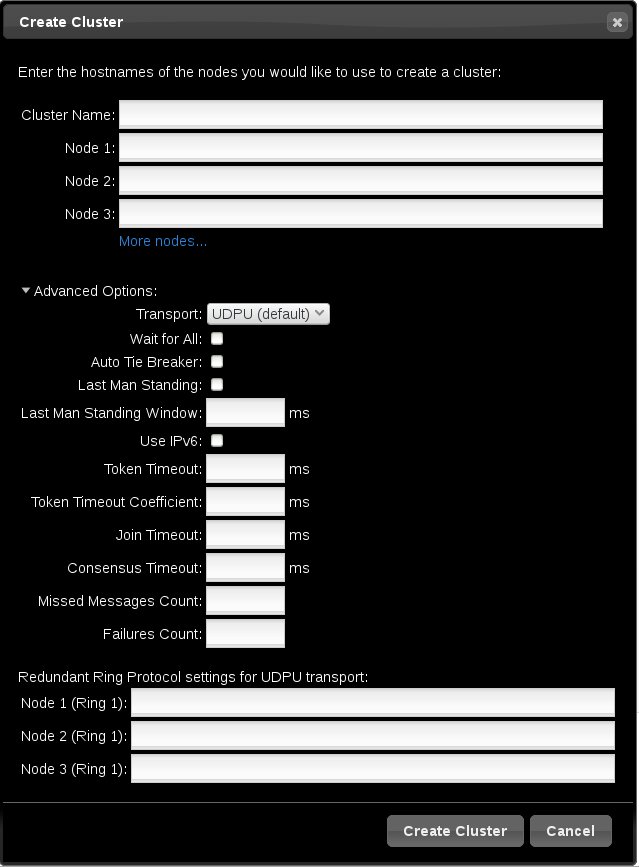

- To create a cluster, click on Create New and enter the name of the cluster to create and the nodes that constitute the cluster. You can also configure advanced cluster options from this screen, including the transport mechanism for cluster communication, as described in Section 2.2.1, “Advanced Cluster Configuration Options”. After entering the cluster information, click .

- To add an existing cluster to the Web UI, click on Add Existing and enter the host name or IP address of a node in the cluster that you would like to manage with the Web UI.

Note

pcsd Web UI to configure a cluster, you can move your mouse over the text describing many of the options to see longer descriptions of those options as a tooltip display.

2.2.1. Advanced Cluster Configuration Options

Figure 2.2. Create Clusters page

2.2.2. Setting Cluster Management Permissions

- Permissions for managing the cluster with the Web UI, which also grants permissions to run pcs commands that connect to nodes over a network. This section describes how to configure those permissions with the Web UI.

- Permissions for local users to allow read-only or read-write access to the cluster configuration, using ACLs. Configuring ACLs with the Web UI is described in Section 2.3.4, “Configuring ACLs”.

hacluster to manage the cluster through the Web UI and to run pcs commands that connect to nodes over a network by adding them to the group haclient. You can then configure the permissions set for an individual member of the group haclient by clicking the tab on the page and setting the permissions on the resulting screen. From this screen, you can also set permissions for groups.

- Read permissions, to view the cluster settings

- Write permissions, to modify cluster settings (except for permissions and ACLs)

- Grant permissions, to modify cluster permissions and ACLs

- Full permissions, for unrestricted access to a cluster, including adding and removing nodes, with access to keys and certificates

2.3. Configuring Cluster Components

- , as described in Section 2.3.1, “Cluster Nodes”

- , as described in Section 2.3.2, “Cluster Resources”

- , as described in Section 2.3.3, “Fence Devices”

- , as described in Section 2.3.4, “Configuring ACLs”

- , as described in Section 2.3.5, “Cluster Properties”

Figure 2.3. Cluster Components Menu

2.3.1. Cluster Nodes

Nodes option from the menu along the top of the cluster management page displays the currently configured nodes and the status of the currently selected node, including which resources are running on the node and the resource location preferences. This is the default page that displays when you select a cluster from the Manage Clusters screen.

Configure Fencing.

2.3.2. Cluster Resources

2.3.3. Fence Devices

2.3.4. Configuring ACLs

ACLS option from the menu along the top of the cluster management page displays a screen from which you can set permissions for local users, allowing read-only or read-write access to the cluster configuration by using access control lists (ACLs).

2.3.5. Cluster Properties

Cluster Properties option from the menu along the top of the cluster management page displays the cluster properties and allows you to modify these properties from their default values. For information on the Pacemaker cluster properties, see Chapter 12, Pacemaker Cluster Properties.

2.4. Configuring a High Availability pcsd Web UI

pcsd Web UI, you connect to one of the nodes of the cluster to display the cluster management pages. If the node to which you are connecting goes down or becomes unavailable, you can reconnect to the cluster by opening your browser to a URL that specifies a different node of the cluster. It is possible, however, to configure the pcsd Web UI itself for high availability, in which case you can continue to manage the cluster without entering a new URL.

pcsd Web UI for high availability, perform the following steps.

- Ensure that PCSD_SSL_CERT_SYNC_ENABLED is set to true in the /etc/sysconfig/pcsd configuration file, which is the default value in RHEL 7. Enabling certificate syncing causes pcsd to sync the pcsd certificates for the cluster setup and node add commands.

- Create an IPaddr2 cluster resource, which is a floating IP address that you will use to connect to the pcsd Web UI. The IP address must not be one already associated with a physical node. If the IPaddr2 resource’s NIC device is not specified, the floating IP must reside on the same network as one of the node’s statically assigned IP addresses, otherwise the NIC device to assign the floating IP address cannot be properly detected.

- Create custom SSL certificates for use with pcsd and ensure that they are valid for the addresses of the nodes used to connect to the pcsd Web UI.

  - To create custom SSL certificates, you can use either wildcard certificates or you can use the Subject Alternative Name certificate extension. For information on the Red Hat Certificate System, see the Red Hat Certificate System Administration Guide.

  - Install the custom certificates for pcsd with the pcs pcsd certkey command.

  - Sync the pcsd certificates to all nodes in the cluster with the pcs pcsd sync-certificates command.

- Connect to the pcsd Web UI using the floating IP address you configured as a cluster resource.
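The pcs commands named in the steps above could be chained as in the following sketch. It prints the commands by default and runs them only when RUN=1 is set. The resource name pcsd-ip, the address 192.0.2.10, the netmask, and the certificate paths are all hypothetical placeholders, and the sketch assumes the certificate and key files already exist.

```shell
#!/bin/sh
# Hypothetical values; replace them with your own.
FLOATING_IP=192.0.2.10
CERT=/root/pcsd.crt
KEY=/root/pcsd.key

# Print each command by default; execute it only when RUN=1 is set.
run() {
    if [ "${RUN:-0}" = 1 ]; then
        "$@"
    else
        printf '%s\n' "$*"
    fi
}

setup_ha_webui() {
    # Floating IP used to reach the pcsd Web UI (assumed /24 network).
    run pcs resource create pcsd-ip ocf:heartbeat:IPaddr2 ip="$FLOATING_IP" cidr_netmask=24
    # Install the custom certificate and key for pcsd on this node.
    run pcs pcsd certkey "$CERT" "$KEY"
    # Copy the pcsd certificates to all nodes in the cluster.
    run pcs pcsd sync-certificates
}

setup_ha_webui
```

Printing first makes it easy to confirm the address and file paths before anything is changed on the cluster.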

Note

pcsd Web UI for high availability, you will be asked to log in again when the node to which you are connecting goes down.

Chapter 3. The pcs Command Line Interface

pcs command line interface controls and configures corosync and Pacemaker by providing an interface to the corosync.conf and cib.xml files.

pcs command is as follows.

pcs [-f file] [-h] [commands]...

3.1. The pcs Commands

pcs commands are as follows.

- cluster: Configure cluster options and nodes. For information on the pcs cluster command, see Chapter 4, Cluster Creation and Administration.

- resource: Create and manage cluster resources. For information on the pcs resource command, see Chapter 6, Configuring Cluster Resources, Chapter 8, Managing Cluster Resources, and Chapter 9, Advanced Configuration.

- stonith: Configure fence devices for use with Pacemaker. For information on the pcs stonith command, see Chapter 5, Fencing: Configuring STONITH.

- constraint: Manage resource constraints. For information on the pcs constraint command, see Chapter 7, Resource Constraints.

- property: Set Pacemaker properties. For information on setting properties with the pcs property command, see Chapter 12, Pacemaker Cluster Properties.

- status: View current cluster and resource status. For information on the pcs status command, see Section 3.5, “Displaying Status”.

- config: Display complete cluster configuration in user readable form. For information on the pcs config command, see Section 3.6, “Displaying the Full Cluster Configuration”.

3.2. pcs Usage Help Display

-h option of pcs to display the parameters of a pcs command and a description of those parameters. For example, the following command displays the parameters of the pcs resource command. Only a portion of the output is shown.

# pcs resource -h

Usage: pcs resource [commands]...

Manage pacemaker resources

Commands:

show [resource id] [--all]

Show all currently configured resources or if a resource is specified

show the options for the configured resource. If --all is specified

resource options will be displayed

start <resource id>

Start resource specified by resource_id

...

3.3. Viewing the Raw Cluster Configuration

pcs cluster cib command.

pcs cluster cib filename command as described in Section 3.4, “Saving a Configuration Change to a File”.

3.4. Saving a Configuration Change to a File

pcs command, you can use the -f option to save a configuration change to a file without affecting the active CIB.

pcs cluster cib filename

testfile.

# pcs cluster cib testfile

testfile but does not add that resource to the currently running cluster configuration.

# pcs -f testfile resource create VirtualIP ocf:heartbeat:IPaddr2 ip=192.168.0.120 cidr_netmask=24 op monitor interval=30s

testfile to the CIB with the following command.

# pcs cluster cib-push testfile

3.5. Displaying Status

pcs status commands

resources, groups, cluster, nodes, or pcsd.

3.6. Displaying the Full Cluster Configuration

pcs config

3.7. Displaying The Current pcs Version

pcs that is running.

pcs --version

3.8. Backing Up and Restoring a Cluster Configuration

pcs config backup filename

--local option restores the cluster configuration files only on the node from which you run this command. If you do not specify a file name, the standard input will be used.

pcs config restore [--local] [filename]
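The two command forms above could be wrapped as in the following sketch, which prints the commands by default and runs them only when RUN=1 is set. The function names and the file name cluster-backup.tar.bz2 are hypothetical.

```shell
#!/bin/sh
# Print each command by default; execute it only when RUN=1 is set.
run() {
    if [ "${RUN:-0}" = 1 ]; then
        "$@"
    else
        printf '%s\n' "$*"
    fi
}

# $1 = backup file name
backup_config() {
    run pcs config backup "$1"
}

# $1 = backup file name, $2 = "local" to restore only on the current node
restore_config() {
    if [ "${2:-}" = local ]; then
        run pcs config restore --local "$1"
    else
        run pcs config restore "$1"
    fi
}

backup_config cluster-backup.tar.bz2
restore_config cluster-backup.tar.bz2 local
```

Passing the file name explicitly avoids relying on standard input for the restore.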

Chapter 4. Cluster Creation and Administration

4.1. Cluster Creation

- Start the pcsd daemon on each node in the cluster.

- Authenticate the nodes that will constitute the cluster.

- Configure and sync the cluster nodes.

- Start cluster services on the cluster nodes.

4.1.1. Starting the pcsd daemon

pcsd service and enable pcsd at system start. These commands should be run on each node in the cluster.

# systemctl start pcsd.service

# systemctl enable pcsd.service

4.1.2. Authenticating the Cluster Nodes

pcs to the pcs daemon on the nodes in the cluster.

- The user name for the pcs administrator must be hacluster on every node. It is recommended that the password for user hacluster be the same on each node.

- If you do not specify username or password, the system will prompt you for those parameters for each node when you execute the command.

- If you do not specify any nodes, this command will authenticate pcs on the nodes that are specified with a pcs cluster setup command, if you have previously executed that command.

pcs cluster auth [node] [...] [-u username] [-p password]

hacluster on z1.example.com for both of the nodes in the cluster that consist of z1.example.com and z2.example.com. This command prompts for the password for user hacluster on the cluster nodes.

[root@z1 ~]# pcs cluster auth z1.example.com z2.example.com

Username: hacluster

Password:

z1.example.com: Authorized

z2.example.com: Authorized

~/.pcs/tokens (or /var/lib/pcsd/tokens).

4.1.3. Configuring and Starting the Cluster Nodes

- If you specify the --start option, the command will also start the cluster services on the specified nodes. If necessary, you can also start the cluster services with a separate pcs cluster start command.

  When you create a cluster with the pcs cluster setup --start command or when you start cluster services with the pcs cluster start command, there may be a slight delay before the cluster is up and running. Before performing any subsequent actions on the cluster and its configuration, it is recommended that you use the pcs cluster status command to be sure that the cluster is up and running.

- If you specify the --local option, the command will perform changes on the local node only.

pcs cluster setup [--start] [--local] --name cluster_name node1 [node2] [...]

- If you specify the --all option, the command starts cluster services on all nodes.

- If you do not specify any nodes, cluster services are started on the local node only.

pcs cluster start [--all] [node] [...]
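Because there may be a slight delay before the cluster is up, a script that continues after setup or start may want to poll pcs cluster status first. The following is a minimal sketch of such a retry helper. The function name, attempt count, and delay are illustrative, and it assumes that pcs cluster status exits with a non-zero status while the cluster is not yet running on the node.

```shell
#!/bin/sh
# Retry a check command until it succeeds or the attempts run out.
# $1 = maximum attempts, $2 = seconds to sleep between attempts,
# remaining arguments = the check command and its arguments.
wait_until_ok() {
    attempts=$1
    delay=$2
    shift 2
    i=1
    while [ "$i" -le "$attempts" ]; do
        if "$@" >/dev/null 2>&1; then
            return 0
        fi
        i=$((i + 1))
        sleep "$delay"
    done
    return 1
}

# Intended use on a cluster node (not executed by this sketch):
#   pcs cluster setup --start --name my_cluster node1 node2
#   wait_until_ok 30 2 pcs cluster status && echo "cluster is up"
```

The helper is generic on purpose, so the check command can be swapped for a stricter test if an exit code alone is not a sufficient signal in your environment.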

4.2. Configuring Timeout Values for a Cluster

When you create a cluster with the pcs cluster setup command, timeout values for the cluster are set to default values that should be suitable for most cluster configurations. If your system requires different timeout values, however, you can modify these values with the pcs cluster setup options summarized in Table 4.1, “Timeout Options”.

| Option | Description |
|---|---|
| --token timeout | Sets time in milliseconds until a token loss is declared after not receiving a token (default 1000 ms) |
| --join timeout | Sets time in milliseconds to wait for join messages (default 50 ms) |
| --consensus timeout | Sets time in milliseconds to wait for consensus to be achieved before starting a new round of membership configuration (default 1200 ms) |
| --miss_count_const count | Sets the maximum number of times on receipt of a token a message is checked for retransmission before a retransmission occurs (default 5 messages) |
| --fail_recv_const failures | Specifies how many rotations of the token without receiving any messages when messages should be received may occur before a new configuration is formed (default 2500 failures) |

new_cluster and sets the token timeout value to 10000 milliseconds (10 seconds) and the join timeout value to 100 milliseconds.

# pcs cluster setup --name new_cluster nodeA nodeB --token 10000 --join 100

4.3. Configuring Redundant Ring Protocol (RRP)

Note

pcs cluster setup command, you can configure a cluster with Redundant Ring Protocol by specifying both interfaces for each node. When using the default udpu transport, when you specify the cluster nodes you specify the ring 0 address followed by a ',', then the ring 1 address.

my_rrp_cluster with two nodes, node A and node B. Node A has two interfaces, nodeA-0 and nodeA-1. Node B has two interfaces, nodeB-0 and nodeB-1. To configure these nodes as a cluster using RRP, execute the following command.

# pcs cluster setup --name my_rrp_cluster nodeA-0,nodeA-1 nodeB-0,nodeB-1

udp transport, see the help screen for the pcs cluster setup command.
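The ring 0 and ring 1 pairing used with the default udpu transport can be assembled by a small helper, as in the following sketch. It only prints the resulting setup command. The function name and the character check are assumptions made for illustration; the check simply rejects empty values and anything that would break the comma-separated form.

```shell
#!/bin/sh
# Join a node's ring 0 and ring 1 addresses into the "ring0,ring1" form.
# Assumption: addresses are host names or IP addresses made of letters,
# digits, dots, colons, and hyphens; anything else is rejected.
rrp_node() {
    for addr in "$1" "$2"; do
        case $addr in
            ''|*[!A-Za-z0-9.:-]*)
                echo "invalid address: '$addr'" >&2
                return 1
                ;;
        esac
    done
    printf '%s,%s' "$1" "$2"
}

# Print the setup command for the two-node example described above.
printf 'pcs cluster setup --name my_rrp_cluster %s %s\n' \
    "$(rrp_node nodeA-0 nodeA-1)" "$(rrp_node nodeB-0 nodeB-1)"
```

The printed line matches the example command, so the helper can be checked against a known-good invocation before it is used with other node names.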

4.4. Managing Cluster Nodes

4.4.1. Stopping Cluster Services

pcs cluster start, the --all option stops cluster services on all nodes and if you do not specify any nodes, cluster services are stopped on the local node only.

pcs cluster stop [--all] [node] [...]

kill -9 command.

pcs cluster kill

4.4.2. Enabling and Disabling Cluster Services

- If you specify the --all option, the command enables cluster services on all nodes.

- If you do not specify any nodes, cluster services are enabled on the local node only.

pcs cluster enable [--all] [node] [...]

- If you specify the --all option, the command disables cluster services on all nodes.

- If you do not specify any nodes, cluster services are disabled on the local node only.

pcs cluster disable [--all] [node] [...]

4.4.3. Adding Cluster Nodes

Note

clusternode-01.example.com, clusternode-02.example.com, and clusternode-03.example.com. The new node is newnode.example.com.

- Install the cluster packages. If the cluster uses SBD, the Booth ticket manager, or a quorum device, you must manually install the respective packages (sbd, booth-site, corosync-qdevice) on the new node as well.

[root@newnode ~]# yum install -y pcs fence-agents-all

- If you are running the firewalld daemon, execute the following commands to enable the ports that are required by the Red Hat High Availability Add-On.

# firewall-cmd --permanent --add-service=high-availability

# firewall-cmd --add-service=high-availability

- Set a password for the user ID hacluster. It is recommended that you use the same password for each node in the cluster.

[root@newnode ~]# passwd hacluster

Changing password for user hacluster.

New password:

Retype new password:

passwd: all authentication tokens updated successfully.

- Execute the following commands to start the pcsd service and to enable pcsd at system start.

# systemctl start pcsd.service

# systemctl enable pcsd.service

- Authenticate user hacluster on the new cluster node.

[root@clusternode-01 ~]# pcs cluster auth newnode.example.com

Username: hacluster

Password:

newnode.example.com: Authorized

- Add the new node to the existing cluster. This command also syncs the cluster configuration file corosync.conf to all nodes in the cluster, including the new node you are adding.

[root@clusternode-01 ~]# pcs cluster node add newnode.example.com

- Start and enable cluster services on the new node.

[root@newnode ~]# pcs cluster start

Starting Cluster...

[root@newnode ~]# pcs cluster enable

- Ensure that you configure and test a fencing device for the new cluster node. For information on configuring fencing devices, see Chapter 5, Fencing: Configuring STONITH.
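The procedure above alternates between the new node and an existing cluster node, which is easy to get wrong. The following sketch prints the same commands grouped by the host they run on. It does not execute anything; the function name is hypothetical, and the optional packages (sbd, booth-site, corosync-qdevice) and the fencing configuration are left out.

```shell
#!/bin/sh
# Print the commands for adding a node, prefixed by the host they run on.
# $1 = node being added, $2 = any node already in the cluster.
add_node_plan() {
    new=$1
    existing=$2
    cat <<EOF
[$new] yum install -y pcs fence-agents-all
[$new] firewall-cmd --permanent --add-service=high-availability
[$new] firewall-cmd --add-service=high-availability
[$new] passwd hacluster
[$new] systemctl start pcsd.service
[$new] systemctl enable pcsd.service
[$existing] pcs cluster auth $new
[$existing] pcs cluster node add $new
[$new] pcs cluster start
[$new] pcs cluster enable
EOF
}

add_node_plan newnode.example.com clusternode-01.example.com
```

The output can serve as a checklist while working through the procedure by hand.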

4.4.4. Removing Cluster Nodes

corosync.conf, on all of the other nodes in the cluster. For information on removing all information about the cluster from the cluster nodes entirely, thereby destroying the cluster permanently, see Section 4.6, “Removing the Cluster Configuration”.

pcs cluster node remove node

4.4.5. Standby Mode

--all, this command puts all nodes into standby mode.

pcs cluster standby node | --all

The following command removes the specified node from standby mode. If you specify --all, this command removes all nodes from standby mode.

pcs cluster unstandby node | --all

Executing the pcs cluster standby command prevents resources from running on the indicated node. Executing the pcs cluster unstandby command allows resources to run on the indicated node again. This does not necessarily move the resources back to the indicated node; where the resources can run at that point depends on how you have configured your resources initially. For information on resource constraints, see Chapter 7, Resource Constraints.
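A common use of standby mode is to wrap a maintenance task so that the node always leaves standby afterwards, even if the task fails. The following is a minimal sketch of that pattern; the with_standby function and the PCS variable are hypothetical names introduced here for illustration, and only the pcs cluster standby and pcs cluster unstandby commands come from this section.

```shell
#!/bin/bash
# PCS names the pcs binary; overriding it (for example PCS=echo)
# gives a dry run. This variable is an assumption of this sketch.
PCS="${PCS:-pcs}"

# Usage: with_standby NODE COMMAND [ARGS...]
# Puts NODE into standby, runs COMMAND, then removes NODE from standby
# and returns COMMAND's exit status.
with_standby() {
    local node="$1" rc
    shift
    "$PCS" cluster standby "$node" || return 1
    "$@"
    rc=$?
    "$PCS" cluster unstandby "$node" || return 1
    return "$rc"
}

# Example (not executed here):
#   with_standby node1 yum update -y
```

As noted above, removing the node from standby only makes it eligible to run resources again; whether resources move back depends on your constraints.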

4.5. Setting User Permissions

You can grant permission for specific users other than user hacluster to manage the cluster. There are two sets of permissions that you can grant to individual users:

- Permissions that allow individual users to manage the cluster through the Web UI and to run pcs commands that connect to nodes over a network, as described in Section 4.5.1, “Setting Permissions for Node Access Over a Network”. Commands that connect to nodes over a network include commands to set up a cluster, or to add or remove nodes from a cluster.

- Permissions for local users to allow read-only or read-write access to the cluster configuration, as described in Section 4.5.2, “Setting Local Permissions Using ACLs”. Commands that do not require connecting over a network include commands that edit the cluster configuration, such as those that create resources and configure constraints.

Most pcs commands do not require network access, and in those cases the network permissions do not apply.

4.5.1. Setting Permissions for Node Access Over a Network

To grant permission for specific users to manage the cluster through the Web UI and to run pcs commands that connect to nodes over a network, add those users to the group haclient. You can then use the Web UI to grant permissions for those users, as described in Section 2.2.2, “Setting Cluster Management Permissions”.

4.5.2. Setting Local Permissions Using ACLs

You can use the pcs acl command to set permissions for local users to allow read-only or read-write access to the cluster configuration by using access control lists (ACLs). You can also configure ACLs using the pcsd Web UI, as described in Section 2.3.4, “Configuring ACLs”. By default, the root user and any user who is a member of the group haclient have full local read/write access to the cluster configuration.

- Execute the pcs acl role create... command to create a role which defines the permissions for that role.

- Assign the role you created to a user with the pcs acl user create command.

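The following example procedure provides read-only access to the cluster configuration for a local user named rouser.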

- This procedure requires that the user rouser exists on the local system and that the user rouser is a member of the group haclient.

# adduser rouser

# usermod -a -G haclient rouser

- Enable Pacemaker ACLs with the enable-acl cluster property.

# pcs property set enable-acl=true --force

- Create a role named read-only with read-only permissions for the cib.

# pcs acl role create read-only description="Read access to cluster" read xpath /cib

- Create the user rouser in the pcs ACL system and assign that user the read-only role.

# pcs acl user create rouser read-only

- View the current ACLs.

# pcs acl

User: rouser

Roles: read-only

Role: read-only

Description: Read access to cluster

Permission: read xpath /cib (read-only-read)

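The following example procedure provides write access to the cluster configuration for a local user named wuser.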

- This procedure requires that the user wuser exists on the local system and that the user wuser is a member of the group haclient.

# adduser wuser

# usermod -a -G haclient wuser

- Enable Pacemaker ACLs with the enable-acl cluster property.

# pcs property set enable-acl=true --force

- Create a role named write-access with write permissions for the cib.

# pcs acl role create write-access description="Full access" write xpath /cib

- Create the user wuser in the pcs ACL system and assign that user the write-access role.

# pcs acl user create wuser write-access

- View the current ACLs.

# pcs acl

User: rouser

Roles: read-only

User: wuser

Roles: write-access

Role: read-only

Description: Read access to cluster

Permission: read xpath /cib (read-only-read)

Role: write-access

Description: Full access

Permission: write xpath /cib (write-access-write)

For further information about cluster ACLs, see the help screen for the pcs acl command.
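The two procedures above differ only in the user name, the role name, the permission, and the description. The following sketch prints the command sequence for one user so that it can be reviewed before being run. The acl_plan function is a hypothetical helper, and it is deliberately limited to the read and write permissions shown in this section.

```shell
#!/bin/bash
# Print (not run) the ACL setup steps from this section for one user.
# acl_plan is a hypothetical helper, not part of pcs.
# $1 = user, $2 = role name, $3 = permission (read or write),
# $4 = role description.
acl_plan() {
    local user="$1" role="$2" perm="$3" desc="$4"
    case "$perm" in
        read|write) ;;
        *) echo "permission must be read or write" >&2; return 1 ;;
    esac
    printf 'adduser %s\n' "$user"
    printf 'usermod -a -G haclient %s\n' "$user"
    printf 'pcs property set enable-acl=true --force\n'
    printf 'pcs acl role create %s description="%s" %s xpath /cib\n' \
        "$role" "$desc" "$perm"
    printf 'pcs acl user create %s %s\n' "$user" "$role"
}
```

For example, acl_plan rouser read-only read "Read access to cluster" prints the same five commands as the first procedure above.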

4.6. Removing the Cluster Configuration

Warning

It is recommended that you run pcs cluster stop before destroying the cluster.

pcs cluster destroy

4.7. Displaying Cluster Status

pcs status

pcs cluster status

pcs status resources

4.8. Cluster Maintenance

- If you need to stop a node in a cluster while continuing to provide the services running on that cluster on another node, you can put the cluster node in standby mode. A node that is in standby mode is no longer able to host resources. Any resource currently active on the node will be moved to another node, or stopped if no other node is eligible to run the resource. For information on standby mode, see Section 4.4.5, “Standby Mode”.

- If you need to move an individual resource off the node on which it is currently running without stopping that resource, you can use the pcs resource move command to move the resource to a different node. For information on the pcs resource move command, see Section 8.1, “Manually Moving Resources Around the Cluster”.

When you execute the pcs resource move command, this adds a constraint to the resource to prevent it from running on the node on which it is currently running. When you are ready to move the resource back, you can execute the pcs resource clear or the pcs constraint delete command to remove the constraint. This does not necessarily move the resources back to the original node, however, since where the resources can run at that point depends on how you have configured your resources initially. You can relocate a resource to a specified node with the pcs resource relocate run command, as described in Section 8.1.1, “Moving a Resource from its Current Node”.

- If you need to stop a running resource entirely and prevent the cluster from starting it again, you can use the pcs resource disable command. For information on the pcs resource disable command, see Section 8.4, “Enabling, Disabling, and Banning Cluster Resources”.

- If you want to prevent Pacemaker from taking any action for a resource (for example, if you want to disable recovery actions while performing maintenance on the resource, or if you need to reload the /etc/sysconfig/pacemaker settings), use the pcs resource unmanage command, as described in Section 8.6, “Managed Resources”. Pacemaker Remote connection resources should never be unmanaged.

- If you need to put the cluster in a state where no services will be started or stopped, you can set the maintenance-mode cluster property. Putting the cluster into maintenance mode automatically unmanages all resources. For information on setting cluster properties, see Table 12.1, “Cluster Properties”.

- If you need to perform maintenance on a Pacemaker remote node, you can remove that node from the cluster by disabling the remote node resource, as described in Section 9.4.8, “System Upgrades and pacemaker_remote”.

Chapter 5. Fencing: Configuring STONITH

5.1. Available STONITH (Fencing) Agents

pcs stonith list [filter]

5.2. General Properties of Fencing Devices

- You can disable a fencing device by running the pcs stonith disable stonith_id command. This will prevent any node from using that device.

- To prevent a specific node from using a fencing device, you can configure location constraints for the fencing resource with the pcs constraint location ... avoids command.

- Configuring stonith-enabled=false will disable fencing altogether. Note, however, that Red Hat does not support clusters when fencing is disabled, as it is not suitable for a production environment.

Note

| Field | Type | Default | Description |

|---|---|---|---|

pcmk_host_map | string | | A mapping of host names to port numbers for devices that do not support host names. For example: node1:1;node2:2,3 tells the cluster to use port 1 for node1 and ports 2 and 3 for node2. |

pcmk_host_list | string | | A list of machines controlled by this device (optional unless pcmk_host_check=static-list). |

pcmk_host_check | string | dynamic-list | How to determine which machines are controlled by the device. Allowed values: dynamic-list (query the device), static-list (check the pcmk_host_list attribute), none (assume every device can fence every machine) |
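The pcmk_host_map value is a semicolon-separated list of host:port entries, where one host can carry several comma-separated ports. As an illustration of that format only, the following sketch assembles the value from individual entries; build_host_map is a hypothetical helper name and is not part of pcs.

```shell
#!/bin/bash
# Join "host:port[,port...]" arguments into a pcmk_host_map value.
# build_host_map is a hypothetical helper, not part of pcs.
build_host_map() {
    local IFS=';'       # "$*" joins arguments with the first IFS character
    printf '%s\n' "$*"
}

# Example (not executed here):
#   build_host_map node1:1 node2:2,3
```

The result can then be passed as pcmk_host_map="..." when creating a fencing device. Quote the value, because an unquoted semicolon would be interpreted by the shell as a command separator.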

5.3. Displaying Device-Specific Fencing Options

pcs stonith describe stonith_agent

# pcs stonith describe fence_apc

Stonith options for: fence_apc

ipaddr (required): IP Address or Hostname

login (required): Login Name

passwd: Login password or passphrase

passwd_script: Script to retrieve password

cmd_prompt: Force command prompt

secure: SSH connection

port (required): Physical plug number or name of virtual machine

identity_file: Identity file for ssh

switch: Physical switch number on device

inet4_only: Forces agent to use IPv4 addresses only

inet6_only: Forces agent to use IPv6 addresses only

ipport: TCP port to use for connection with device

action (required): Fencing Action

verbose: Verbose mode

debug: Write debug information to given file

version: Display version information and exit

help: Display help and exit

separator: Separator for CSV created by operation list

power_timeout: Test X seconds for status change after ON/OFF

shell_timeout: Wait X seconds for cmd prompt after issuing command

login_timeout: Wait X seconds for cmd prompt after login

power_wait: Wait X seconds after issuing ON/OFF

delay: Wait X seconds before fencing is started

retry_on: Count of attempts to retry power on

Warning

For fence agents that provide a method option, a value of cycle is unsupported and should not be specified, as it may cause data corruption.

5.4. Creating a Fencing Device

pcs stonith create stonith_id stonith_device_type [stonith_device_options]

# pcs stonith create MyStonith fence_virt pcmk_host_list=f1 op monitor interval=30s

- Some fence devices can automatically determine what nodes they can fence.

- You can use the pcmk_host_list parameter when creating a fencing device to specify all of the machines that are controlled by that fencing device.

- Some fence devices require a mapping of host names to the specifications that the fence device understands. You can map host names with the pcmk_host_map parameter when creating a fencing device.

For information on the pcmk_host_list and pcmk_host_map parameters, see Table 5.1, “General Properties of Fencing Devices”.

5.5. Displaying Fencing Devices

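The following command shows the currently configured fence devices. If a stonith_id is specified, the command shows the options for that device only. If the --full option is specified, all configured stonith options are displayed.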

pcs stonith show [stonith_id] [--full]

5.6. Modifying and Deleting Fencing Devices

pcs stonith update stonith_id [stonith_device_options]

pcs stonith delete stonith_id

5.7. Managing Nodes with Fence Devices

If you specify --off, this will use the off API call to stonith, which turns the node off instead of rebooting it.

pcs stonith fence node [--off]

Warning

pcs stonith confirm node

5.8. Additional Fencing Configuration Options

| Field | Type | Default | Description |

|---|---|---|---|

pcmk_host_argument | string | port | An alternate parameter to supply instead of port. Some devices do not support the standard port parameter or may provide additional ones. Use this to specify an alternate, device-specific, parameter that should indicate the machine to be fenced. A value of none can be used to tell the cluster not to supply any additional parameters. |

pcmk_reboot_action | string | reboot | An alternate command to run instead of reboot. Some devices do not support the standard commands or may provide additional ones. Use this to specify an alternate, device-specific, command that implements the reboot action. |

pcmk_reboot_timeout | time | 60s | Specify an alternate timeout to use for reboot actions instead of stonith-timeout. Some devices need much more/less time to complete than normal. Use this to specify an alternate, device-specific, timeout for reboot actions. |

pcmk_reboot_retries | integer | 2 | The maximum number of times to retry the reboot command within the timeout period. Some devices do not support multiple connections. Operations may fail if the device is busy with another task so Pacemaker will automatically retry the operation, if there is time remaining. Use this option to alter the number of times Pacemaker retries reboot actions before giving up. |

pcmk_off_action | string | off | An alternate command to run instead of off. Some devices do not support the standard commands or may provide additional ones. Use this to specify an alternate, device-specific, command that implements the off action. |

pcmk_off_timeout | time | 60s | Specify an alternate timeout to use for off actions instead of stonith-timeout. Some devices need much more or much less time to complete than normal. Use this to specify an alternate, device-specific, timeout for off actions. |

pcmk_off_retries | integer | 2 | The maximum number of times to retry the off command within the timeout period. Some devices do not support multiple connections. Operations may fail if the device is busy with another task so Pacemaker will automatically retry the operation, if there is time remaining. Use this option to alter the number of times Pacemaker retries off actions before giving up. |

pcmk_list_action | string | list | An alternate command to run instead of list. Some devices do not support the standard commands or may provide additional ones. Use this to specify an alternate, device-specific, command that implements the list action. |

pcmk_list_timeout | time | 60s | Specify an alternate timeout to use for list actions instead of stonith-timeout. Some devices need much more or much less time to complete than normal. Use this to specify an alternate, device-specific, timeout for list actions. |

pcmk_list_retries | integer | 2 | The maximum number of times to retry the list command within the timeout period. Some devices do not support multiple connections. Operations may fail if the device is busy with another task so Pacemaker will automatically retry the operation, if there is time remaining. Use this option to alter the number of times Pacemaker retries list actions before giving up. |

pcmk_monitor_action | string | monitor | An alternate command to run instead of monitor. Some devices do not support the standard commands or may provide additional ones. Use this to specify an alternate, device-specific, command that implements the monitor action. |

pcmk_monitor_timeout | time | 60s | Specify an alternate timeout to use for monitor actions instead of stonith-timeout. Some devices need much more or much less time to complete than normal. Use this to specify an alternate, device-specific, timeout for monitor actions. |

pcmk_monitor_retries | integer | 2 | The maximum number of times to retry the monitor command within the timeout period. Some devices do not support multiple connections. Operations may fail if the device is busy with another task so Pacemaker will automatically retry the operation, if there is time remaining. Use this option to alter the number of times Pacemaker retries monitor actions before giving up. |

pcmk_status_action | string | status | An alternate command to run instead of status. Some devices do not support the standard commands or may provide additional ones. Use this to specify an alternate, device-specific, command that implements the status action. |

pcmk_status_timeout | time | 60s | Specify an alternate timeout to use for status actions instead of stonith-timeout. Some devices need much more or much less time to complete than normal. Use this to specify an alternate, device-specific, timeout for status actions. |

pcmk_status_retries | integer | 2 | The maximum number of times to retry the status command within the timeout period. Some devices do not support multiple connections. Operations may fail if the device is busy with another task so Pacemaker will automatically retry the operation, if there is time remaining. Use this option to alter the number of times Pacemaker retries status actions before giving up. |

pcmk_delay_base | time | 0s | Enable a base delay for stonith actions and specify a base delay value. In a cluster with an even number of nodes, configuring a delay can help avoid nodes fencing each other at the same time in an even split. A random delay can be useful when the same fence device is used for all nodes, and differing static delays can be useful on each fencing device when a separate device is used for each node. The overall delay is derived from a random delay value added to this static delay, so that the sum is kept below the maximum delay. If you set pcmk_delay_base but do not set pcmk_delay_max, there is no random component to the delay and it will be the value of pcmk_delay_base. Some individual fence agents implement a "delay" parameter, which is independent of delays configured with a pcmk_delay_* property. If both of these delays are configured, they are added together and thus would generally not be used in conjunction. |

pcmk_delay_max | time | 0s | Enable a random delay for stonith actions and specify the maximum of random delay. In a cluster with an even number of nodes, configuring a delay can help avoid nodes fencing each other at the same time in an even split. A random delay can be useful when the same fence device is used for all nodes, and differing static delays can be useful on each fencing device when a separate device is used for each node. The overall delay is derived from this random delay value added to a static delay, so that the sum is kept below the maximum delay. If you set pcmk_delay_max but do not set pcmk_delay_base, there is no static component to the delay. Some individual fence agents implement a "delay" parameter, which is independent of delays configured with a pcmk_delay_* property. If both of these delays are configured, they are added together and thus would generally not be used in conjunction. |

pcmk_action_limit | integer | 1 | The maximum number of actions that can be performed in parallel on this device. The cluster property concurrent-fencing=true needs to be configured first. A value of -1 is unlimited. |

pcmk_on_action | string | on | For advanced use only: An alternate command to run instead of on. Some devices do not support the standard commands or may provide additional ones. Use this to specify an alternate, device-specific, command that implements the on action. |

pcmk_on_timeout | time | 60s | For advanced use only: Specify an alternate timeout to use for on actions instead of stonith-timeout. Some devices need much more or much less time to complete than normal. Use this to specify an alternate, device-specific, timeout for on actions. |

pcmk_on_retries | integer | 2 | For advanced use only: The maximum number of times to retry the on command within the timeout period. Some devices do not support multiple connections. Operations may fail if the device is busy with another task so Pacemaker will automatically retry the operation, if there is time remaining. Use this option to alter the number of times Pacemaker retries on actions before giving up. |
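The interaction between pcmk_delay_base and pcmk_delay_max described in the table can be illustrated with a small calculation. The following is only a sketch of the stated rule (a static base plus a random component, with the sum kept below the maximum); it is not Pacemaker's implementation, the fence_delay function is hypothetical, and whole seconds are assumed for simplicity.

```shell
#!/bin/bash
# Illustrative only: compute an overall delay in whole seconds from a
# base and a maximum, following the rule described in the table above.
# fence_delay is a hypothetical name; this is not Pacemaker's code.
# $1 = pcmk_delay_base (seconds), $2 = pcmk_delay_max (seconds, 0 = unset)
fence_delay() {
    local base="$1" max="$2"
    if [ "$max" -le "$base" ]; then
        # No room for a random component: the delay is the base value.
        echo "$base"
    else
        # Static base plus a random part; the sum stays below max.
        echo $((base + RANDOM % (max - base)))
    fi
}
```

With a base of 5 and no maximum, the sketch always yields 5. With a base of 0 and a maximum of 10, it yields a value from 0 through 9, which corresponds to the purely random case.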

You can determine how a cluster node should react if notified of its own fencing by setting the fence-reaction cluster property, as described in Table 12.1, “Cluster Properties”. A cluster node may receive notification of its own fencing if fencing is misconfigured, or if fabric fencing is in use that does not cut cluster communication. Although the default value for this property is stop, which attempts to immediately stop Pacemaker and keep it stopped, the safest choice for this value is panic, which attempts to immediately reboot the local node. If you prefer the stop behavior, as is most likely to be the case in conjunction with fabric fencing, it is recommended that you set this explicitly.

5.9. Configuring Fencing Levels

- Each level is attempted in ascending numeric order, starting at 1.

- If a device fails, processing terminates for the current level. No further devices in that level are exercised and the next level is attempted instead.

- If fencing succeeds for all devices in a level, then that level has succeeded and no other levels are tried.

- The operation is finished when a level has passed (success), or all levels have been attempted (failed).
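The rules above can be traced with a small simulation. The following sketch does not fence anything; it models each fence attempt with a hypothetical try_device function that fails for any device listed in a FAILING_DEVICES variable, and fence_by_levels (also a hypothetical name) walks the levels in the order described.

```shell
#!/bin/bash
# Simulation only: no real fencing happens here.
# try_device is a hypothetical stand-in for one fence attempt. It fails
# for any device named in the space-separated FAILING_DEVICES variable.
try_device() {
    case " ${FAILING_DEVICES:-} " in
        *" $1 "*) return 1 ;;
        *) return 0 ;;
    esac
}

# Usage: fence_by_levels LEVEL1_DEVICES [LEVEL2_DEVICES ...]
# Each argument is one level, in ascending order, given as a
# comma-separated device list.
fence_by_levels() {
    local IFS=',' level=0 devices dev level_ok
    for devices in "$@"; do
        level=$((level + 1))
        level_ok=1
        for dev in $devices; do
            if ! try_device "$dev"; then
                level_ok=0   # a device failed: abandon this level
                break
            fi
        done
        if [ "$level_ok" -eq 1 ]; then
            echo "fenced at level $level"
            return 0
        fi
    done
    echo "all levels failed"
    return 1
}
```

With FAILING_DEVICES="my_ilo", calling fence_by_levels my_ilo my_apc reports success at level 2, because the failure at level 1 causes the next level to be attempted.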

pcs stonith level add level node devices

pcs stonith level

In the following example, there are two fence devices configured for node rh7-2: an ilo fence device called my_ilo and an apc fence device called my_apc. These commands set up fence levels so that if the device my_ilo fails and is unable to fence the node, then Pacemaker will attempt to use the device my_apc. This example also shows the output of the pcs stonith level command after the levels are configured.

# pcs stonith level add 1 rh7-2 my_ilo

# pcs stonith level add 2 rh7-2 my_apc

# pcs stonith level

Node: rh7-2

Level 1 - my_ilo

Level 2 - my_apc

pcs stonith level remove level [node_id] [stonith_id] ... [stonith_id]

pcs stonith level clear [node|stonith_id(s)]

# pcs stonith level clear dev_a,dev_b

pcs stonith level verify

The following commands configure nodes node1, node2, and node3 to use fence devices apc1 and apc2, and nodes node4, node5, and node6 to use fence devices apc3 and apc4.

pcs stonith level add 1 "regexp%node[1-3]" apc1,apc2

pcs stonith level add 1 "regexp%node[4-6]" apc3,apc4

pcs node attribute node1 rack=1

pcs node attribute node2 rack=1

pcs node attribute node3 rack=1

pcs node attribute node4 rack=2

pcs node attribute node5 rack=2

pcs node attribute node6 rack=2

pcs stonith level add 1 attrib%rack=1 apc1,apc2

pcs stonith level add 1 attrib%rack=2 apc3,apc4
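The attribute-based commands above repeat the same pattern for each rack. As a sketch of how that repetition could be generated rather than typed, the following function prints the commands for one rack; rack_fencing_plan is a hypothetical helper name, and the rack attribute name is taken from the example above.

```shell
#!/bin/bash
# Print (not run) the attribute-based topology commands for one rack.
# rack_fencing_plan is a hypothetical helper, not part of pcs.
# $1 = rack number, $2 = comma-separated fence devices, rest = node names.
rack_fencing_plan() {
    local rack="$1" devices="$2" node
    shift 2
    for node in "$@"; do
        printf 'pcs node attribute %s rack=%s\n' "$node" "$rack"
    done
    # %% prints a literal percent sign in the attrib%name=value selector.
    printf 'pcs stonith level add 1 attrib%%rack=%s %s\n' "$rack" "$devices"
}
```

For example, rack_fencing_plan 1 apc1,apc2 node1 node2 node3 prints the three attribute commands for rack 1 followed by the matching level command.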

5.10. Configuring Fencing for Redundant Power Supplies

# pcs stonith create apc1 fence_apc_snmp ipaddr=apc1.example.com login=user passwd='7a4D#1j!pz864' pcmk_host_map="node1.example.com:1;node2.example.com:2"

# pcs stonith create apc2 fence_apc_snmp ipaddr=apc2.example.com login=user passwd='7a4D#1j!pz864' pcmk_host_map="node1.example.com:1;node2.example.com:2"

# pcs stonith level add 1 node1.example.com apc1,apc2

# pcs stonith level add 1 node2.example.com apc1,apc2

5.11. Configuring ACPI For Use with Integrated Fence Devices

Disabling ACPI Soft-Off allows an integrated fence device to turn off a node immediately and completely rather than attempting a clean shutdown (for example, shutdown -h now). Otherwise, if ACPI Soft-Off is enabled, an integrated fence device can take four or more seconds to turn off a node (see the note that follows). In addition, if ACPI Soft-Off is enabled and a node panics or freezes during shutdown, an integrated fence device may not be able to turn off the node. Under those circumstances, fencing is delayed or unsuccessful. Consequently, when a node is fenced with an integrated fence device and ACPI Soft-Off is enabled, a cluster recovers slowly or requires administrative intervention to recover.

Note

- The preferred way to disable ACPI Soft-Off is to change the BIOS setting to "instant-off" or an equivalent setting that turns off the node without delay, as described in Section 5.11.1, “Disabling ACPI Soft-Off with the BIOS”.

- Setting HandlePowerKey=ignore in the /etc/systemd/logind.conf file and verifying that the node turns off immediately when fenced, as described in Section 5.11.2, “Disabling ACPI Soft-Off in the logind.conf file”. This is the first alternate method of disabling ACPI Soft-Off.

- Appending acpi=off to the kernel boot command line, as described in Section 5.11.3, “Disabling ACPI Completely in the GRUB 2 File”. This is the second alternate method of disabling ACPI Soft-Off, if the preferred or the first alternate method is not available.

Important

This method completely disables ACPI; some computers do not boot correctly if ACPI is completely disabled. Use this method only if the other methods are not effective for your cluster.

5.11.1. Disabling ACPI Soft-Off with the BIOS

Note

- Reboot the node and start the BIOS CMOS Setup Utility program.

- Navigate to the Power menu (or equivalent power management menu).

- At the Power menu, set the Soft-Off by PWR-BTTN function (or equivalent) to Instant-Off (or the equivalent setting that turns off the node by means of the power button without delay). Example 5.1, “BIOS CMOS Setup Utility: Soft-Off by PWR-BTTN set to Instant-Off” shows a Power menu with ACPI Function set to Enabled and Soft-Off by PWR-BTTN set to Instant-Off.

Note

The equivalents to ACPI Function, Soft-Off by PWR-BTTN, and Instant-Off may vary among computers. However, the objective of this procedure is to configure the BIOS so that the computer is turned off by means of the power button without delay.

- Exit the BIOS CMOS Setup Utility program, saving the BIOS configuration.

- Verify that the node turns off immediately when fenced. For information on testing a fence device, see Section 5.12, “Testing a Fence Device”.

Example 5.1. BIOS CMOS Setup Utility: Soft-Off by PWR-BTTN set to Instant-Off

+---------------------------------------------|-------------------+

| ACPI Function [Enabled] | Item Help |

| ACPI Suspend Type [S1(POS)] |-------------------|

| x Run VGABIOS if S3 Resume Auto | Menu Level * |

| Suspend Mode [Disabled] | |

| HDD Power Down [Disabled] | |

| Soft-Off by PWR-BTTN [Instant-Off | |

| CPU THRM-Throttling [50.0%] | |

| Wake-Up by PCI card [Enabled] | |

| Power On by Ring [Enabled] | |

| Wake Up On LAN [Enabled] | |

| x USB KB Wake-Up From S3 Disabled | |

| Resume by Alarm [Disabled] | |

| x Date(of Month) Alarm 0 | |

| x Time(hh:mm:ss) Alarm 0 : 0 : | |

| POWER ON Function [BUTTON ONLY | |

| x KB Power ON Password Enter | |

| x Hot Key Power ON Ctrl-F1 | |

| | |

| | |

+---------------------------------------------|-------------------+

5.11.2. Disabling ACPI Soft-Off in the logind.conf file

To disable power-key handling in the /etc/systemd/logind.conf file, use the following procedure.

- Define the following configuration in the /etc/systemd/logind.conf file:

HandlePowerKey=ignore

- Reload the systemd configuration:

# systemctl daemon-reload

- Verify that the node turns off immediately when fenced. For information on testing a fence device, see Section 5.12, “Testing a Fence Device”.

5.11.3. Disabling ACPI Completely in the GRUB 2 File

You can disable ACPI Soft-Off by appending acpi=off to the GRUB menu entry for a kernel.

Important

- Use the --args option in combination with the --update-kernel option of the grubby tool to change the grub.cfg file of each cluster node as follows:

# grubby --args=acpi=off --update-kernel=ALL

For general information on GRUB 2, see the Working with GRUB 2 chapter in the System Administrator's Guide.

- Reboot the node.

- Verify that the node turns off immediately when fenced. For information on testing a fence device, see Section 5.12, “Testing a Fence Device”.

5.12. Testing a Fence Device

- Use ssh, telnet, HTTP, or whatever remote protocol is used to connect to the device to manually log in and test the fence device or see what output is given. For example, if you will be configuring fencing for an IPMI-enabled device, then try to log in remotely with ipmitool. Take note of the options used when logging in manually because those options might be needed when using the fencing agent.

If you are unable to log in to the fence device, verify that the device is pingable, there is nothing such as a firewall configuration that is preventing access to the fence device, remote access is enabled on the fencing agent, and the credentials are correct.

- Run the fence agent manually, using the fence agent script. This does not require that the cluster services are running, so you can perform this step before the device is configured in the cluster. This can ensure that the fence device is responding properly before proceeding.

Note

The examples in this section use the fence_ilo fence agent script for an iLO device. The actual fence agent you will use and the command that calls that agent will depend on your server hardware. You should consult the man page for the fence agent you are using to determine which options to specify. You will usually need to know the login and password for the fence device and other information related to the fence device.

The following example shows the format you would use to run the fence_ilo fence agent script with the -o status parameter to check the status of the fence device interface on another node without actually fencing it. This allows you to test the device and get it working before attempting to reboot the node. When running this command, you specify the name and password of an iLO user that has power on and off permissions for the iLO device.

# fence_ilo -a ipaddress -l username -p password -o status

The following example shows the format you would use to run the fence_ilo fence agent script with the -o reboot parameter. Running this command on one node reboots another node on which you have configured the fence agent.

# fence_ilo -a ipaddress -l username -p password -o reboot

If the fence agent failed to properly do a status, off, on, or reboot action, you should check the hardware, the configuration of the fence device, and the syntax of your commands. In addition, you can run the fence agent script with the debug output enabled. The debug output is useful for some fencing agents to see where in the sequence of events the fencing agent script is failing when logging into the fence device.

# fence_ilo -a ipaddress -l username -p password -o status -D /tmp/$(hostname)-fence_agent.debug

When diagnosing a failure that has occurred, you should ensure that the options you specified when manually logging in to the fence device are identical to what you passed on to the fence agent with the fence agent script.

For fence agents that support an encrypted connection, you may see an error due to certificate validation failing, requiring that you trust the host or that you use the fence agent's ssl-insecure parameter. Similarly, if SSL/TLS is disabled on the target device, you may need to account for this when setting the SSL parameters for the fence agent.

Note

If the fence agent that is being tested is a fence_drac, fence_ilo, or some other fencing agent for a systems management device that continues to fail, then fall back to trying fence_ipmilan. Most systems management cards support IPMI remote login and the only supported fencing agent is fence_ipmilan.

- Once the fence device has been configured in the cluster with the same options that worked manually and the cluster has been started, test fencing with the pcs stonith fence command from any node (or even multiple times from different nodes), as in the following example. The pcs stonith fence command reads the cluster configuration from the CIB and calls the fence agent as configured to execute the fence action. This verifies that the cluster configuration is correct.

# pcs stonith fence node_name

If the pcs stonith fence command works properly, that means the fencing configuration for the cluster should work when a fence event occurs. If the command fails, it means that cluster management cannot invoke the fence device through the configuration it has retrieved. Check for the following issues and update your cluster configuration as needed.

- Check your fence configuration. For example, if you have used a host map you should ensure that the system can find the node using the host name you have provided.

- Check whether the password and user name for the device include any special characters that could be misinterpreted by the bash shell. Making sure that you enter passwords and user names surrounded by quotation marks could address this issue.

- Check whether you can connect to the device using the exact IP address or host name you specified in the pcs stonith command. For example, if you give the host name in the stonith command but test by using the IP address, that is not a valid test.

- If the protocol that your fence device uses is accessible to you, use that protocol to try to connect to the device. For example, many agents use ssh or telnet. You should try to connect to the device with the credentials you provided when configuring the device, to see if you get a valid prompt and can log in to the device.

If you determine that all your parameters are appropriate but you still have trouble connecting to your fence device, you can check the logging on the fence device itself, if the device provides that, which will show if the user has connected and what command the user issued. You can also search through the /var/log/messages file for instances of stonith and error, which could give some idea of what is transpiring, but some agents can provide additional information.

- Once the fence device tests are working and the cluster is up and running, test an actual failure. To do this, take an action in the cluster that should initiate a token loss.

- Take down a network. How you take down a network depends on your specific configuration. In many cases, you can physically pull the network or power cables out of the host.

Note

Disabling the network interface on the local host rather than physically disconnecting the network or power cables is not recommended as a test of fencing because it does not accurately simulate a typical real-world failure.

- Block corosync traffic both inbound and outbound using the local firewall.

The following example blocks corosync, assuming the default corosync port is used, firewalld is used as the local firewall, and the network interface used by corosync is in the default firewall zone:

# firewall-cmd --direct --add-rule ipv4 filter OUTPUT 2 -p udp --dport=5405 -j DROP

# firewall-cmd --add-rich-rule='rule family="ipv4" port port="5405" protocol="udp" drop'

- Simulate a crash and panic your machine with sysrq-trigger. Note, however, that triggering a kernel panic can cause data loss; it is recommended that you disable your cluster resources first.

# echo c > /proc/sysrq-trigger
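The troubleshooting steps above suggest searching /var/log/messages for instances of stonith and error. The following is a minimal sketch of that search. The function name fence_log_hits is hypothetical and is not part of pcs; it simply wraps grep and assumes that you are allowed to read the log file.

```shell
#!/bin/sh
# fence_log_hits [LOG_FILE]
# Hypothetical helper (not part of pcs): prints the lines of a log file
# that mention "stonith" or "error", ignoring case.
# Defaults to /var/log/messages when no file is given.
fence_log_hits() {
    log_file="${1:-/var/log/messages}"
    grep -iE 'stonith|error' "$log_file"
}

# Example invocation (run as a user who can read the log):
#   fence_log_hits /var/log/messages | tail -n 20
```

Matching either word keeps the filter broad; narrowing the pattern to a specific node name or fence agent may be more useful on a busy system.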

Chapter 6. Configuring Cluster Resources

6.1. Resource Creation

Use the following command to create a cluster resource.

pcs resource create resource_id [standard:[provider:]]type [resource_options] [op operation_action operation_options [operation_action operation_options]...] [meta meta_options...] [clone [clone_options] | master [master_options] | --group group_name [--before resource_id | --after resource_id] | bundle bundle_id] [--disabled] [--wait[=n]]

When you specify the --group option, the resource is added to the resource group named. If the group does not exist, this creates the group and adds this resource to the group. For information on resource groups, see Section 6.5, “Resource Groups”.

The --before and --after options specify the position of the added resource relative to a resource that already exists in a resource group.

Specifying the --disabled option indicates that the resource is not started automatically.

The following command creates a resource with the name VirtualIP of standard ocf, provider heartbeat, and type IPaddr2. The floating address of this resource is 192.168.0.120, and the system will check whether the resource is running every 30 seconds.

# pcs resource create VirtualIP ocf:heartbeat:IPaddr2 ip=192.168.0.120 cidr_netmask=24 op monitor interval=30s

Alternately, you can omit the standard and provider fields and use the following command. This defaults to a standard of ocf and a provider of heartbeat.

# pcs resource create VirtualIP IPaddr2 ip=192.168.0.120 cidr_netmask=24 op monitor interval=30s

Use the following command to delete a configured resource.

pcs resource delete resource_id

For example, the following command deletes an existing resource with a resource ID of VirtualIP.

# pcs resource delete VirtualIP

- For information on the resource_id, standard, provider, and type fields of the pcs resource create command, see Section 6.2, “Resource Properties”.

- For information on defining resource parameters for individual resources, see Section 6.3, “Resource-Specific Parameters”.

- For information on defining resource meta options, which are used by the cluster to decide how a resource should behave, see Section 6.4, “Resource Meta Options”.

- For information on defining the operations to perform on a resource, see Section 6.6, “Resource Operations”.

- Specifying the clone option creates a clone resource. Specifying the master option creates a master/slave resource. For information on resource clones and resources with multiple modes, see Chapter 9, Advanced Configuration.

6.2. Resource Properties

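| Field | Description |
|---|---|
| resource_id | Your name for the resource. |
| standard | The standard the resource agent conforms to, for example ocf, service, systemd, lsb, or stonith. |
| type | The name of the resource agent to use, for example IPaddr2. |
| provider | The vendor that supplies the resource agent; the OCF standard allows multiple vendors to supply the same agent. The examples in this chapter use heartbeat. |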

| pcs Display Command | Output |
|---|---|
| pcs resource list | Displays a list of all available resources. |
| pcs resource standards | Displays a list of available resource agent standards. |
| pcs resource providers | Displays a list of available resource agent providers. |
| pcs resource list string | Displays a list of available resources filtered by the specified string. You can use this command to display resources filtered by the name of a standard, a provider, or a type. |

6.3. Resource-Specific Parameters

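For any individual resource, you can use the following command to display the parameters you can set for that resource.

# pcs resource describe standard:provider:type|type

For example, the following command displays the parameters you can set for a resource of type LVM.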

# pcs resource describe LVM

Resource options for: LVM

volgrpname (required): The name of volume group.

exclusive: If set, the volume group will be activated exclusively.

partial_activation: If set, the volume group will be activated even

only partial of the physical volumes available. It helps to set to

true, when you are using mirroring logical volumes.

6.4. Resource Meta Options

| Field | Default | Description |
|---|---|---|
| priority | 0 | If not all resources can be active, the cluster will stop lower priority resources in order to keep higher priority ones active. |
| target-role | Started | What state should the cluster attempt to keep this resource in? Allowed values: Stopped (force the resource to be stopped); Started (allow the resource to be started; multistate resources will not be promoted to master). |
| is-managed | true | Is the cluster allowed to start and stop the resource? Allowed values: true, false. |
| resource-stickiness | 0 | Value to indicate how much the resource prefers to stay where it is. |
| requires | Calculated | Indicates under what conditions the resource can be started. Defaults to fencing except under the conditions noted below. Possible values: nothing (the cluster can always start the resource); quorum (the cluster can only start this resource if a majority of the configured nodes are active; this is the default value if stonith-enabled is false or the resource's standard is stonith); fencing (the cluster can only start this resource if a majority of the configured nodes are active and any failed or unknown nodes have been powered off); unfencing (the cluster can only start this resource if a majority of the configured nodes are active and any failed or unknown nodes have been powered off, and only on nodes that have been unfenced; this is the default value if the provides=unfencing stonith meta option has been set for a fencing device). |
| migration-threshold | INFINITY | How many failures may occur for this resource on a node before this node is marked ineligible to host this resource. A value of 0 indicates that this feature is disabled (the node will never be marked ineligible); by contrast, the cluster treats INFINITY (the default) as a very large but finite number. This option has an effect only if the failed operation has on-fail=restart (the default), and additionally for failed start operations if the cluster property start-failure-is-fatal is false. For information on configuring the migration-threshold option, see Section 8.2, “Moving Resources Due to Failure”. For information on the start-failure-is-fatal option, see Table 12.1, “Cluster Properties”. |
| failure-timeout | 0 (disabled) | Used in conjunction with the migration-threshold option, indicates how many seconds to wait before acting as if the failure had not occurred, potentially allowing the resource back to the node on which it failed. As with any time-based action, this is not guaranteed to be checked more frequently than the value of the cluster-recheck-interval cluster parameter. For information on configuring the failure-timeout option, see Section 8.2, “Moving Resources Due to Failure”. |
| multiple-active | stop_start | What should the cluster do if it ever finds the resource active on more than one node? Allowed values: block (mark the resource as unmanaged); stop_only (stop all active instances and leave them that way); stop_start (stop all active instances and start the resource in one location only). |
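The migration-threshold rule described in the table can be illustrated with a small sketch. This is a simplified model, not cluster code: the function name node_ineligible is hypothetical, the value 1000000 used for INFINITY is an assumption about how the cluster represents it, and the model ignores on-fail, start-failure-is-fatal, and failure-timeout.

```shell
#!/bin/sh
# node_ineligible FAILCOUNT THRESHOLD
# Hypothetical helper: prints "yes" when a node would be marked ineligible
# to host the resource under this simplified model, otherwise "no".
node_ineligible() {
    failcount=$1
    threshold=$2
    # Assumption: INFINITY is a very large but finite number (1000000 here).
    if [ "$threshold" = "INFINITY" ]; then
        threshold=1000000
    fi
    if [ "$threshold" -eq 0 ]; then
        # A threshold of 0 disables the feature entirely.
        echo no
    elif [ "$failcount" -ge "$threshold" ]; then
        echo yes
    else
        echo no
    fi
}

node_ineligible 2 3          # prints "no"
node_ineligible 3 3          # prints "yes"
node_ineligible 50 0         # prints "no"
node_ineligible 3 INFINITY   # prints "no"
```

The last two calls show the contrast the table draws: 0 switches the check off, while INFINITY leaves it on with a limit that is unlikely to be reached in practice.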

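To change the default value of a resource option, use the following command.

pcs resource defaults options

For example, the following command resets the default value of resource-stickiness to 100.

# pcs resource defaults resource-stickiness=100

Omitting the options parameter from the pcs resource defaults command displays a list of currently configured default values for resource options. The following example shows the output of this command after you have reset the default value of resource-stickiness to 100.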

# pcs resource defaults

resource-stickiness:100

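Whether or not you have reset the default value of a resource meta option, you can set a resource option for a particular resource to a value other than the default when you create the resource. The following shows the format of the pcs resource create command you use when specifying a value for a resource meta option.

pcs resource create resource_id standard:provider:type|type [resource options] [meta meta_options...]

For example, the following command creates a resource with a resource-stickiness value of 50.

# pcs resource create VirtualIP ocf:heartbeat:IPaddr2 ip=192.168.0.120 cidr_netmask=24 meta resource-stickiness=50

You can also set the value of a resource meta option for an existing resource, group, cloned resource, or master resource with the following command.

pcs resource meta resource_id | group_id | clone_id | master_id meta_options

In the following example, there is an existing resource named dummy_resource. This command sets the failure-timeout meta option to 20 seconds, so that the resource can attempt to restart on the same node in 20 seconds.

# pcs resource meta dummy_resource failure-timeout=20s

After executing this command, you can display the values for the resource to verify that failure-timeout=20s is set.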

# pcs resource show dummy_resource

Resource: dummy_resource (class=ocf provider=heartbeat type=Dummy)

Meta Attrs: failure-timeout=20s

Operations: start interval=0s timeout=20 (dummy_resource-start-timeout-20)

stop interval=0s timeout=20 (dummy_resource-stop-timeout-20)

monitor interval=10 timeout=20 (dummy_resource-monitor-interval-10)
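If you want to check a meta option from a script rather than by eye, one approach is to filter the Meta Attrs line of the output shown above. The following is a sketch under assumptions: the function name meta_attr_value is hypothetical, and it assumes the output keeps the "Meta Attrs: name=value ..." layout shown in this example. The sample text is fed in through a here-document in place of a live cluster.

```shell
#!/bin/sh
# meta_attr_value NAME
# Hypothetical helper: reads "pcs resource show"-style output on stdin and
# prints the value of the meta option NAME found on the "Meta Attrs:" line.
meta_attr_value() {
    awk -v key="$1" '
        /Meta Attrs:/ {
            for (i = 1; i <= NF; i++) {
                eq = index($i, "=")
                # Compare the text before "=" with the requested option name.
                if (eq > 0 && substr($i, 1, eq - 1) == key) {
                    print substr($i, eq + 1)
                }
            }
        }'
}

# Sample output copied from the example above.
meta_attr_value failure-timeout <<'EOF'
 Resource: dummy_resource (class=ocf provider=heartbeat type=Dummy)
  Meta Attrs: failure-timeout=20s
  Operations: start interval=0s timeout=20 (dummy_resource-start-timeout-20)
EOF
# prints "20s"
```

On a running cluster, the same function could be fed from the pcs resource show dummy_resource command through a pipe.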

6.5. Resource Groups

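You create a resource group with the following command, specifying the resources to include in the group.

pcs resource group add group_name resource_id [resource_id] ... [resource_id] [--before resource_id | --after resource_id]

You can use the --before and --after options of this command to specify the position of the added resources relative to a resource that already exists in the group.

You can also add a new resource to an existing group when you create the resource, using the following command. The resource you create is added to the group named group_name.

pcs resource create resource_id standard:provider:type|type [resource_options] [op operation_action operation_options] --group group_name

You remove a resource from a group with the following command.

pcs resource group remove group_name resource_id...

The following command lists all currently configured resource groups.

pcs resource group list

The following example creates a resource group named shortcut that contains the existing resources IPaddr and Email.

# pcs resource group add shortcut IPaddr Email

A group has the following properties.

- Resources are started in the order in which you specify them (in this example, IPaddr first, then Email).

- Resources are stopped in the reverse order in which you specify them (Email first, then IPaddr).

- If IPaddr cannot run anywhere, neither can Email.

- If Email cannot run anywhere, however, this does not affect IPaddr in any way.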
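The start and stop ordering described in the list above can be shown with a short sketch. The function name stop_order is hypothetical; it only reverses its arguments to illustrate the rule and does not query the cluster.

```shell
#!/bin/sh
# stop_order RESOURCE...
# Hypothetical helper: prints the given resources in reverse order, which is
# the order in which a group stops them.
stop_order() {
    reversed=""
    for res in "$@"; do
        # Prepend each resource so the last one given ends up first.
        reversed="$res${reversed:+ $reversed}"
    done
    echo "$reversed"
}

echo "start order: IPaddr Email"
echo "stop order:  $(stop_order IPaddr Email)"   # stop order:  Email IPaddr
```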

6.5.1. Group Options

A resource group inherits the following options from the resources that it contains: priority, target-role, is-managed. For information on resource options, see Table 6.3, “Resource Meta Options”.

6.5.2. Group Stickiness

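Stickiness, the measure of how much a resource wants to stay where it is, is additive in groups. Every active resource of the group contributes its stickiness value to the group's total. So if the default resource-stickiness is 100, and a group has seven members, five of which are active, then the group as a whole will prefer its current location with a score of 500.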
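The arithmetic in this example can be written out as a one-line calculation. The function name group_stickiness is hypothetical, and the sketch assumes every active member has the same resource-stickiness value.

```shell
#!/bin/sh
# group_stickiness STICKINESS ACTIVE_MEMBERS
# Hypothetical helper: multiplies the per-resource stickiness by the number
# of active members. Inactive members contribute nothing.
group_stickiness() {
    echo $(( $1 * $2 ))
}

# Seven members, five active, stickiness 100 each:
group_stickiness 100 5   # prints 500
```

Only the five active members count; the two inactive members of the seven do not add to the total.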

6.6. Resource Operations

If you do not specify a monitoring operation for a resource, by default the pcs command will create a monitoring operation, with an interval that is determined by the resource agent. If the resource agent does not provide a default monitoring interval, the pcs command will create a monitoring operation with an interval of 60 seconds.

6.6.1. Configuring Resource Operations

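You can configure monitoring operations when you create a resource, using the following command.

pcs resource create resource_id standard:provider:type|type [resource_options] [op operation_action operation_options [operation_action operation_options]...]

For example, the following command creates an IPaddr2 resource with a monitoring operation. The new resource is called VirtualIP with an IP address of 192.168.0.99 and a netmask of 24 on eth2. A monitoring operation will be performed every 30 seconds.

# pcs resource create VirtualIP ocf:heartbeat:IPaddr2 ip=192.168.0.99 cidr_netmask=24 nic=eth2 op monitor interval=30s

Alternately, you can add a monitoring operation to an existing resource with the following command.

pcs resource op add resource_id operation_action [operation_properties]

Use the following command to delete a configured resource operation.

pcs resource op remove resource_id operation_name operation_properties

Note

You must specify the exact operation properties to properly remove an existing operation.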

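To change the values of a monitoring option, you can update the resource. For example, you can create a VirtualIP with the following command.

# pcs resource create VirtualIP ocf:heartbeat:IPaddr2 ip=192.168.0.99 cidr_netmask=24 nic=eth2

By default, this command creates these operations.

Operations: start interval=0s timeout=20s (VirtualIP-start-timeout-20s)

stop interval=0s timeout=20s (VirtualIP-stop-timeout-20s)

monitor interval=10s timeout=20s (VirtualIP-monitor-interval-10s)

To change the stop timeout operation, execute the following command.

# pcs resource update VirtualIP op stop interval=0s timeout=40s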

# pcs resource show VirtualIP

Resource: VirtualIP (class=ocf provider=heartbeat type=IPaddr2)

Attributes: ip=192.168.0.99 cidr_netmask=24 nic=eth2

Operations: start interval=0s timeout=20s (VirtualIP-start-timeout-20s)

monitor interval=10s timeout=20s (VirtualIP-monitor-interval-10s)

stop interval=0s timeout=40s (VirtualIP-name-stop-interval-0s-timeout-40s)

Note

When you update a resource's operation with the pcs resource update command, any options you do not specifically call out are reset to their default values.

6.6.2. Configuring Global Resource Operation Defaults

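You can use the following command to set global default values for monitoring operations.

pcs resource op defaults [options]

For example, the following command sets a global default of a timeout value of 240 seconds for all monitoring operations.

# pcs resource op defaults timeout=240s

To display the currently configured default values for monitoring operations, do not specify any options when you execute the pcs resource op defaults command.

For example, the following command displays the default monitoring operation values for a cluster that has been configured with a timeout value of 240 seconds.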

# pcs resource op defaults

timeout: 240s

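Note that a cluster resource uses the global default only when the option is not specified in the cluster resource definition. By default, resource agents define the timeout option for all operations. For the global operation timeout value to be honored, you must create the cluster resource without the timeout option explicitly or you must remove the timeout option by updating the cluster resource, as in the following command.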