Security Guide

Concepts and techniques to secure RHEL servers and workstations

Abstract

Chapter 1. Overview of Security Topics

Note

This document makes several references to files in the /lib directory. When using 64-bit systems, some of the files mentioned may instead be located in /lib64.

1.1. What is Computer Security?

1.1.1. Standardizing Security

- Confidentiality — Sensitive information must be available only to a set of pre-defined individuals. Unauthorized transmission and usage of information should be restricted. For example, confidentiality of information ensures that a customer's personal or financial information is not obtained by an unauthorized individual for malicious purposes such as identity theft or credit fraud.

- Integrity — Information should not be altered in ways that render it incomplete or incorrect. Unauthorized users should be restricted from the ability to modify or destroy sensitive information.

- Availability — Information should be accessible to authorized users any time that it is needed. Availability is a warranty that information can be obtained with an agreed-upon frequency and timeliness. This is often measured in terms of percentages and agreed to formally in Service Level Agreements (SLAs) used by network service providers and their enterprise clients.
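To make the availability percentage concrete, the following shell sketch converts an availability target into the downtime it permits per year. The function name allowed_downtime_minutes and the 365-day year are assumptions made for illustration; an actual SLA defines its own measurement period.

```shell
# Sketch: convert an availability percentage into permitted yearly downtime.
# allowed_downtime_minutes is a hypothetical helper, not a RHEL tool.
# Assumes a 365-day year (525,600 minutes).
allowed_downtime_minutes() {
    awk -v pct="$1" 'BEGIN { printf "%.1f\n", (100 - pct) / 100 * 365 * 24 * 60 }'
}

allowed_downtime_minutes 99.9    # minutes of downtime per year at 99.9%
```

At 99.9% availability this works out to 525.6 minutes, or a little under nine hours of downtime per year.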

1.1.2. Cryptographic Software and Certifications

1.2. Security Controls

- Physical

- Technical

- Administrative

1.2.1. Physical Controls

- Closed-circuit surveillance cameras

- Motion or thermal alarm systems

- Security guards

- Picture IDs

- Locked and dead-bolted steel doors

- Biometrics (includes fingerprint, voice, face, iris, handwriting, and other automated methods used to recognize individuals)

1.2.2. Technical Controls

- Encryption

- Smart cards

- Network authentication

- Access control lists (ACLs)

- File integrity auditing software

1.2.3. Administrative Controls

- Training and awareness

- Disaster preparedness and recovery plans

- Personnel recruitment and separation strategies

- Personnel registration and accounting

1.3. Vulnerability Assessment

- The expertise of the staff responsible for configuring, monitoring, and maintaining the technologies.

- The ability to patch and update services and kernels quickly and efficiently.

- The ability of those responsible to keep constant vigilance over the network.

1.3.1. Defining Assessment and Testing

Warning

- Creates proactive focus on information security.

- Finds potential exploits before crackers find them.

- Results in systems being kept up to date and patched.

- Promotes growth and aids in developing staff expertise.

- Abates financial loss and negative publicity.

1.3.2. Establishing a Methodology for Vulnerability Assessment

- https://www.owasp.org/ — The Open Web Application Security Project

1.3.3. Vulnerability Assessment Tools

For details, see the README file or man page for each tool. Additionally, look to the Internet for more information, such as articles, step-by-step guides, or even mailing lists specific to the tools.

1.3.3.1. Scanning Hosts with Nmap

To install Nmap, run the yum install nmap command as the root user.

1.3.3.1.1. Using Nmap

Nmap can be run from a shell prompt by typing the nmap command followed by the host name or IP address of the machine to scan:

nmap <hostname>

For example, to scan a machine with the host name foo.example.com, type the following at a shell prompt:

~]$ nmap foo.example.com

Interesting ports on foo.example.com:

Not shown: 1710 filtered ports

PORT STATE SERVICE

22/tcp open ssh

53/tcp open domain

80/tcp open http

113/tcp closed auth
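When reviewing scan results, it can help to reduce the report to the ports that are actually open. The following is a minimal sketch that filters Nmap's plain-text output as shown above; open_ports is a hypothetical helper name, and the filter assumes the three-column PORT STATE SERVICE layout.

```shell
# Sketch: list open ports from Nmap's plain-text output read on standard input.
# open_ports is a hypothetical helper name, not part of Nmap.
open_ports() {
    # Port lines look like "22/tcp open ssh"; keep those whose state is "open"
    # and print the port and the service name.
    awk '$1 ~ /^[0-9]+\/(tcp|udp)$/ && $2 == "open" { print $1, $3 }'
}

# Example usage: nmap foo.example.com | open_ports
```

For the sample scan above, the filter would keep the ssh, domain, and http lines and drop the closed auth port.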

1.3.3.2. Nessus

Note

1.3.3.3. OpenVAS

1.3.3.4. Nikto

1.4. Security Threats

1.4.1. Threats to Network Security

Insecure Architectures

Broadcast Networks

Centralized Servers

1.4.2. Threats to Server Security

Unused Services and Open Ports

Unpatched Services

Inattentive Administration

Inherently Insecure Services

1.4.3. Threats to Workstation and Home PC Security

Bad Passwords

Vulnerable Client Applications

1.5. Common Exploits and Attacks

| Exploit | Description | Notes |
|---|---|---|
| Null or Default Passwords | Leaving administrative passwords blank or using a default password set by the product vendor. This is most common in hardware such as routers and firewalls, but some services that run on Linux can contain default administrator passwords as well (though Red Hat Enterprise Linux 7 does not ship with them). | Commonly associated with networking hardware such as routers, firewalls, VPNs, and network attached storage (NAS) appliances. Common in many legacy operating systems, especially those that bundle services (such as UNIX and Windows). Administrators sometimes create privileged user accounts in a rush and leave the password null, creating a perfect entry point for malicious users who discover the account. |
| Default Shared Keys | Secure services sometimes package default security keys for development or evaluation testing purposes. If these keys are left unchanged and are placed in a production environment on the Internet, all users with the same default keys have access to that shared-key resource, and any sensitive information that it contains. | Most common in wireless access points and preconfigured secure server appliances. |
| IP Spoofing | A remote machine acts as a node on your local network, finds vulnerabilities with your servers, and installs a backdoor program or Trojan horse to gain control over your network resources. | Spoofing is quite difficult as it involves the attacker predicting TCP/IP sequence numbers to coordinate a connection to target systems, but several tools are available to assist crackers in exploiting such a vulnerability. Depends on the target system running services (such as rsh, telnet, FTP, and others) that use source-based authentication techniques, which are not recommended when compared to PKI or other forms of encrypted authentication used in ssh or SSL/TLS. |
| Eavesdropping | Collecting data that passes between two active nodes on a network by eavesdropping on the connection between the two nodes. | This type of attack works mostly with plain text transmission protocols such as Telnet, FTP, and HTTP transfers. A remote attacker must have access to a compromised system on a LAN in order to perform such an attack; usually the cracker has used an active attack (such as IP spoofing or man-in-the-middle) to compromise a system on the LAN. Preventative measures include services with cryptographic key exchange, one-time passwords, or encrypted authentication to prevent password snooping; strong encryption during transmission is also advised. |
| Service Vulnerabilities | An attacker finds a flaw or loophole in a service run over the Internet; through this vulnerability, the attacker compromises the entire system and any data that it may hold, and could possibly compromise other systems on the network. | HTTP-based services such as CGI are vulnerable to remote command execution and even interactive shell access. Even if the HTTP service runs as a non-privileged user such as "nobody", information such as configuration files and network maps can be read, or the attacker can start a denial of service attack which drains system resources or renders it unavailable to other users. Services sometimes can have vulnerabilities that go unnoticed during development and testing; these vulnerabilities (such as buffer overflows, where attackers crash a service using arbitrary values that fill the memory buffer of an application, giving the attacker an interactive command prompt from which they may execute arbitrary commands) can give complete administrative control to an attacker. Administrators should make sure that services do not run as the root user, and should stay vigilant of patches and errata updates for applications from vendors or security organizations such as CERT and CVE. |
| Application Vulnerabilities | Attackers find faults in desktop and workstation applications (such as email clients) and execute arbitrary code, implant Trojan horses for future compromise, or crash systems. Further exploitation can occur if the compromised workstation has administrative privileges on the rest of the network. | Workstations and desktops are more prone to exploitation as workers do not have the expertise or experience to prevent or detect a compromise; it is imperative to inform individuals of the risks they are taking when they install unauthorized software or open unsolicited email attachments. Safeguards can be implemented such that email client software does not automatically open or execute attachments. Additionally, the automatic update of workstation software using Red Hat Network or other system management services can alleviate the burdens of multi-seat security deployments. |
| Denial of Service (DoS) Attacks | Attacker or group of attackers coordinate against an organization's network or server resources by sending unauthorized packets to the target host (either server, router, or workstation). This forces the resource to become unavailable to legitimate users. | The most reported DoS case in the US occurred in 2000. Several highly-trafficked commercial and government sites were rendered unavailable by a coordinated ping flood attack using several compromised systems with high bandwidth connections acting as zombies, or redirected broadcast nodes. Source packets are usually forged (as well as rebroadcast), making investigation as to the true source of the attack difficult. Advances in ingress filtering (IETF RFC 2267) using iptables and Network Intrusion Detection Systems such as snort assist administrators in tracking down and preventing distributed DoS attacks. |

Chapter 2. Security Tips for Installation

2.1. Securing BIOS

2.1.1. BIOS Passwords

- Preventing Changes to BIOS Settings — If an intruder has access to the BIOS, they can set it to boot from a CD-ROM or a flash drive. This makes it possible for them to enter rescue mode or single user mode, which in turn allows them to start arbitrary processes on the system or copy sensitive data.

- Preventing System Booting — Some BIOSes allow password protection of the boot process. When activated, an attacker is forced to enter a password before the BIOS launches the boot loader.

2.1.1.1. Securing Non-BIOS-based Systems

2.2. Partitioning the Disk

It is recommended to create separate partitions for the /boot, /, /home, /tmp, and /var/tmp/ directories. The reasons for each are different, and we will address each partition.

- /boot

- This partition is the first partition that is read by the system during boot up. The boot loader and kernel images that are used to boot your system into Red Hat Enterprise Linux 7 are stored in this partition. This partition should not be encrypted. If this partition is included in / and that partition is encrypted or otherwise becomes unavailable, then your system will not be able to boot.

- /home

- When user data (/home) is stored in / instead of in a separate partition, the partition can fill up, causing the operating system to become unstable. Also, when upgrading your system to the next version of Red Hat Enterprise Linux 7, it is a lot easier when you can keep your data in the /home partition, as it will not be overwritten during installation. If the root partition (/) becomes corrupt, your data could be lost forever. By using a separate partition, there is slightly more protection against data loss. You can also target this partition for frequent backups.

- /tmp and /var/tmp/

- Both the /tmp and /var/tmp/ directories are used to store data that does not need to be stored for a long period of time. However, if a lot of data floods one of these directories, it can consume all of your storage space. If this happens and these directories are stored within /, then your system could become unstable and crash. For this reason, moving these directories into their own partitions is a good idea.

Note

2.3. Installing the Minimum Amount of Packages Required

For more information about installing the Minimal install environment, see the Software Selection chapter of the Red Hat Enterprise Linux 7 Installation Guide. A minimal installation can also be performed by a Kickstart file using the --nobase option. For more information about Kickstart installations, see the Package Selection section from the Red Hat Enterprise Linux 7 Installation Guide.

2.4. Restricting Network Connectivity During the Installation Process

2.5. Post-installation Procedures

- Update your system. enter the following command as root:

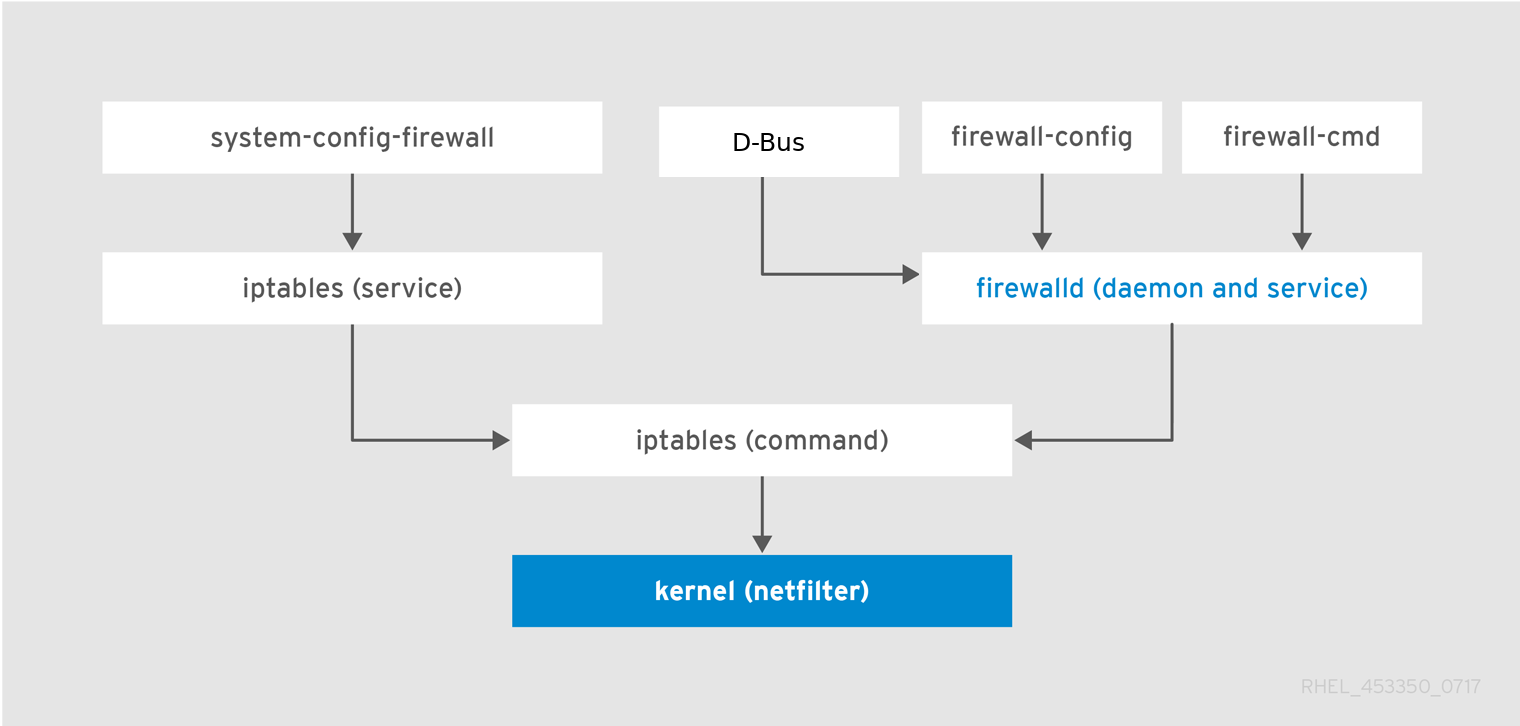

~]# yum update - Even though the firewall service,

firewalld, is automatically enabled with the installation of Red Hat Enterprise Linux, there are scenarios where it might be explicitly disabled, for example in the kickstart configuration. In such a case, it is recommended to consider re-enabling the firewall.To startfirewalldenter the following commands as root:~]# systemctl start firewalld ~]# systemctl enable firewalld - To enhance security, disable services you do not need. For example, if there are no printers installed on your computer, disable the

cupsservice using the following command:~]# systemctl disable cupsTo review active services, enter the following command:~]$ systemctl list-units | grep service

2.6. Additional Resources

Chapter 3. Keeping Your System Up-to-Date

3.1. Maintaining Installed Software

3.1.1. Planning and Configuring Security Updates

3.1.1.1. Using the Security Features of Yum

To check for security-related updates available for your system, enter the following command as root:

~]# yum check-update --security

Loaded plugins: langpacks, product-id, subscription-manager

rhel-7-workstation-rpms/x86_64 | 3.4 kB 00:00:00

No packages needed for security; 0 packages available

To install only security-related updates, enter the following command as root:

~]# yum update --security

Use the updateinfo subcommand to display or act upon information provided by repositories about available updates. The updateinfo subcommand itself accepts a number of commands, some of which pertain to security-related uses. See Table 3.1, “Security-related commands usable with yum updateinfo” for an overview of these commands.

Table 3.1. Security-related commands usable with yum updateinfo

| Command | Description |
|---|---|
| advisory [advisories] | Displays information about one or more advisories. Replace advisories with an advisory number or numbers. |
| cves | Displays the subset of information that pertains to CVE (Common Vulnerabilities and Exposures). |
| security or sec | Displays all security-related information. |
| severity [severity_level] or sev [severity_level] | Displays information about security-relevant packages of the supplied severity_level. |

3.1.2. Updating and Installing Packages

3.1.2.1. Verifying Signed Packages

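To have Yum verify package signatures, ensure that the gpgcheck configuration directive is set to 1 in the /etc/yum.conf configuration file.

To verify a package file manually, use the following command:

rpmkeys --checksig package_file.rpm

3.1.2.2. Installing Signed Packages

To install verified packages, use the yum install command as the root user as follows:

yum install package_file.rpm

Use a shell glob to install several packages at once. For example, the following command installs all .rpm packages in the current directory:

yum install *.rpm

Important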

3.1.3. Applying Changes Introduced by Installed Updates

Note

- Applications

- User-space applications are any programs that can be initiated by the user. Typically, such applications are used only when the user, a script, or an automated task utility launches them. Once such a user-space application is updated, halt any instances of the application on the system, and launch the program again to use the updated version.

- Kernel

- The kernel is the core software component for the Red Hat Enterprise Linux 7 operating system. It manages access to memory, the processor, and peripherals, and it schedules all tasks. Because of its central role, the kernel cannot be restarted without also rebooting the computer. Therefore, an updated version of the kernel cannot be used until the system is rebooted.

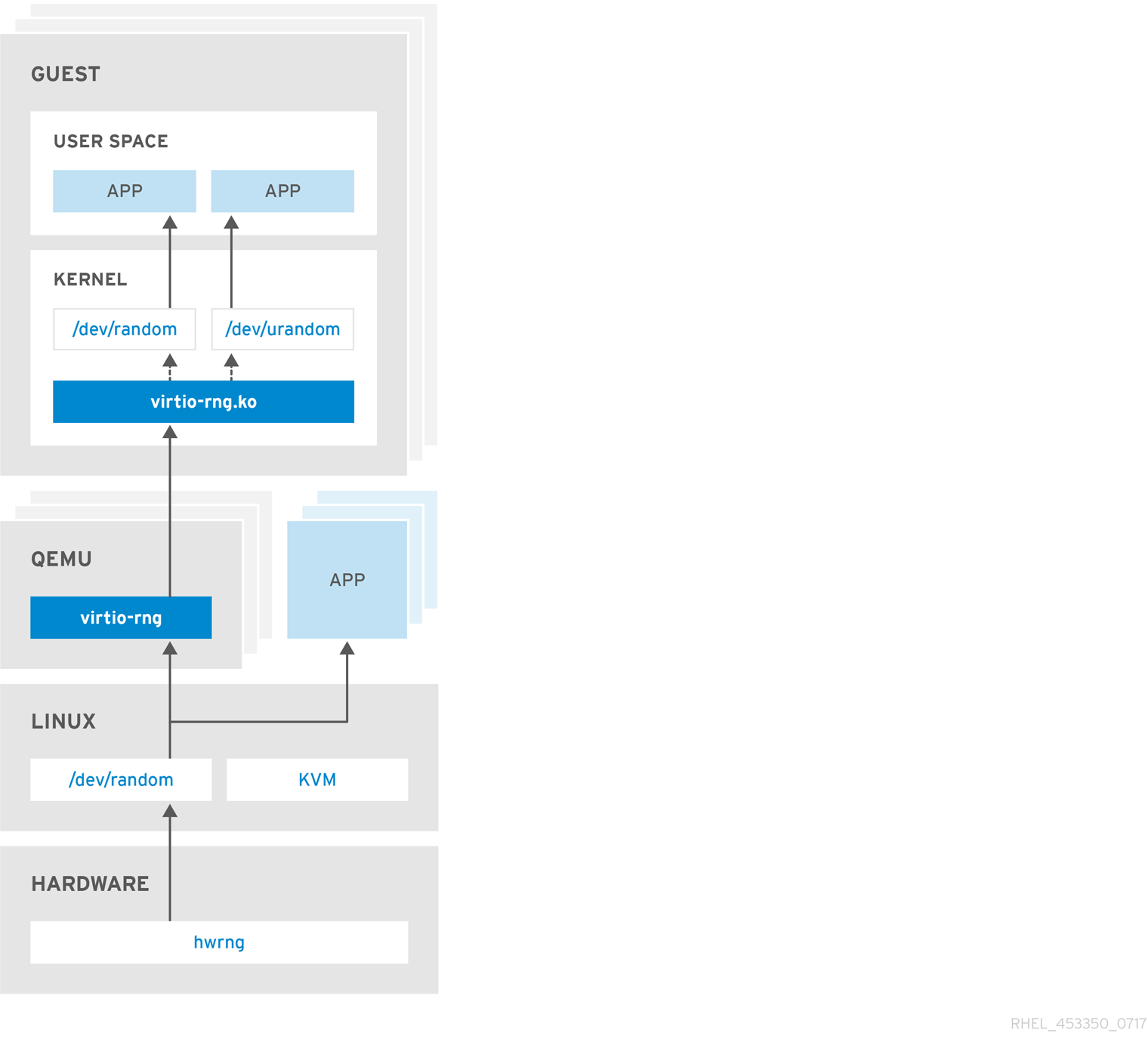

- KVM

- When the qemu-kvm and libvirt packages are updated, it is necessary to stop all guest virtual machines, reload relevant virtualization modules (or reboot the host system), and restart the virtual machines.

Use the lsmod command to determine which modules from the following are loaded: kvm, kvm-intel, or kvm-amd. Then use the modprobe -r command to remove and subsequently the modprobe -a command to reload the affected modules. For example:

~]# lsmod | grep kvm

kvm_intel 143031 0

kvm 460181 1 kvm_intel

~]# modprobe -r kvm-intel

~]# modprobe -r kvm

~]# modprobe -a kvm kvm-intel

- Shared Libraries

- Shared libraries are units of code, such as glibc, that are used by a number of applications and services. Applications utilizing a shared library typically load the shared code when the application is initialized, so any applications using an updated library must be halted and relaunched.

To determine which running applications link against a particular library, use the lsof command:

lsof library

For example, to determine which running applications link against the libwrap.so.0 library, type:

~]# lsof /lib64/libwrap.so.0

COMMAND PID USER FD TYPE DEVICE SIZE/OFF NODE NAME

pulseaudi 12363 test mem REG 253,0 42520 34121785 /usr/lib64/libwrap.so.0.7.6

gnome-set 12365 test mem REG 253,0 42520 34121785 /usr/lib64/libwrap.so.0.7.6

gnome-she 12454 test mem REG 253,0 42520 34121785 /usr/lib64/libwrap.so.0.7.6

This command returns a list of all the running programs that use TCP wrappers for host-access control. Therefore, any program listed must be halted and relaunched when the tcp_wrappers package is updated.

- systemd Services

- systemd services are persistent server programs usually launched during the boot process. Examples of systemd services include sshd or vsftpd.

Because these programs usually persist in memory as long as a machine is running, each updated systemd service must be halted and relaunched after its package is upgraded. This can be done as the root user using the systemctl command:

systemctl restart service_name

Replace service_name with the name of the service you want to restart, such as sshd.

- Other Software

- Follow the instructions outlined by the resources linked below to correctly update the following applications.

- Red Hat Directory Server — See the Release Notes for the version of the Red Hat Directory Server in question at https://access.redhat.com/documentation/en-US/Red_Hat_Directory_Server/.

- Red Hat Enterprise Virtualization Manager — See the Installation Guide for the version of the Red Hat Enterprise Virtualization in question at https://access.redhat.com/documentation/en-US/Red_Hat_Enterprise_Virtualization/.
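For the shared-library case described earlier in this section, the lsof listing can be reduced to the processes that need a restart. The following is a minimal sketch; procs_to_restart is a hypothetical helper name, and it assumes the default lsof column layout in which COMMAND and PID are the first two fields.

```shell
# Sketch: reduce lsof output (read on standard input) to the distinct
# processes that still map a library and therefore need a restart.
# procs_to_restart is a hypothetical helper name.
procs_to_restart() {
    # Skip the header row, then print each distinct "COMMAND PID" pair.
    awk 'NR > 1 { print $1, $2 }' | sort -u
}

# Example usage (as root): lsof /lib64/libwrap.so.0 | procs_to_restart
```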

3.2. Using the Red Hat Customer Portal

3.2.1. Viewing Security Advisories on the Customer Portal

3.2.3. Understanding Issue Severity Classification

3.3. Additional Resources

Installed Documentation

- yum(8) — The manual page for the Yum package manager provides information about the way Yum can be used to install, update, and remove packages on your systems.

- rpmkeys(8) — The manual page for the rpmkeys utility describes the way this program can be used to verify the authenticity of downloaded packages.

Online Documentation

- Red Hat Enterprise Linux 7 System Administrator's Guide — The System Administrator's Guide for Red Hat Enterprise Linux 7 documents the use of the Yum and rpm commands that are used to install, update, and remove packages on Red Hat Enterprise Linux 7 systems.

- Red Hat Enterprise Linux 7 SELinux User's and Administrator's Guide — The SELinux User's and Administrator's Guide for Red Hat Enterprise Linux 7 documents the configuration of the SELinux mandatory access control mechanism.

Red Hat Customer Portal

- Red Hat Customer Portal, Security — The Security section of the Customer Portal contains links to the most important resources, including the Red Hat CVE database, and contacts for Red Hat Product Security.

- Red Hat Security Blog — Articles about latest security-related issues from Red Hat security professionals.

See Also

- Chapter 2, Security Tips for Installation describes how to configure your system securely from the beginning to make it easier to implement additional security settings later.

- Section 4.9.2, “Creating GPG Keys” describes how to create a set of personal GPG keys to authenticate your communications.

Chapter 4. Hardening Your System with Tools and Services

4.1. Desktop Security

4.1.1. Password Security

If shadow passwords are disabled, all passwords are stored as a one-way hash in the world-readable /etc/passwd file, which makes the system vulnerable to offline password cracking attacks. If an intruder can gain access to the machine as a regular user, he can copy the /etc/passwd file to his own machine and run any number of password cracking programs against it. If there is an insecure password in the file, it is only a matter of time before the password cracker discovers it.

Shadow passwords eliminate this type of attack by storing the password hashes in the file /etc/shadow, which is readable only by the root user.

Note

4.1.1.1. Creating Strong Passwords

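randomword1 randomword2 randomword3 randomword4

If the system enforces the use of uppercase, lowercase, or special characters, a passphrase like the one above can be modified in a simple way, for example by changing the first character to uppercase and appending "1!". Note that such a modification does not increase the security of the passphrase significantly.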

The pwmake utility lets you specify the number of entropy bits used to generate a password; the entropy is pulled from /dev/urandom. The minimum number of bits you can specify is 56, which is enough for passwords on systems and services where brute force attacks are rare. 64 bits is adequate for applications where the attacker does not have direct access to the password hash file. For situations when the attacker might obtain direct access to the password hash, or the password is used as an encryption key, 80 to 128 bits should be used. If you specify an invalid number of entropy bits, pwmake will use the default of 64 bits. To create a password of 128 bits, enter the following command:

pwmake 128

While there are different approaches to creating a secure password, always avoid the following bad practices:

- Using a single dictionary word, a word in a foreign language, an inverted word, or only numbers.

- Using fewer than 10 characters for a password or passphrase.

- Using a sequence of keys from the keyboard layout.

- Writing down your passwords.

- Using personal information in a password, such as birth dates, anniversaries, family member names, or pet names.

- Using the same passphrase or password on multiple machines.
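The entropy sizes discussed for pwmake above can be illustrated with a short shell sketch that reads a fixed number of bits from /dev/urandom. This is not how pwmake is implemented and not a replacement for it: random_hex_secret is a hypothetical helper, and its output uses only hexadecimal digits, so it would not satisfy a policy that requires several character classes.

```shell
# Sketch: print BITS bits of entropy from /dev/urandom as hexadecimal text.
# random_hex_secret is a hypothetical helper, not part of libpwquality.
# BITS is assumed to be a multiple of 8.
random_hex_secret() {
    bits=$1
    # Read BITS/8 bytes and print each as two hex digits, stripping the
    # spaces and line breaks that od inserts between bytes.
    od -An -N "$((bits / 8))" -tx1 /dev/urandom | tr -d ' \n'
    echo
}

random_hex_secret 128    # 16 random bytes, printed as 32 hex characters
```

Each hexadecimal character carries 4 bits, which is why 128 bits of entropy needs 32 characters in this encoding.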

4.1.1.2. Forcing Strong Passwords

Users create or change passwords with the passwd command-line utility, which is PAM-aware (Pluggable Authentication Modules) and checks to see if the password is too short or otherwise easy to crack. This checking is performed by the pam_pwquality.so PAM module.

Note

In Red Hat Enterprise Linux 7, the pam_pwquality PAM module replaced pam_cracklib, which was used in Red Hat Enterprise Linux 6 as a default module for password quality checking. It uses the same back end as pam_cracklib.

The pam_pwquality module is used to check a password's strength against a set of rules. Its procedure consists of two steps: first it checks if the provided password is found in a dictionary. If not, it continues with a number of additional checks. pam_pwquality is stacked alongside other PAM modules in the password component of the /etc/pam.d/passwd file, and the custom set of rules is specified in the /etc/security/pwquality.conf configuration file. For a complete list of these checks, see the pwquality.conf(5) manual page.

Example 4.1. Configuring password strength-checking in pwquality.conf

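To enable using pam_pwquality, add the following line to the password stack in the /etc/pam.d/passwd file:

password required pam_pwquality.so retry=3

Options for the checks are specified one per line. For example, to require a password with a minimum length of 8 characters, including all four classes of characters, add the following lines to the /etc/security/pwquality.conf file:

minlen = 8

minclass = 4

To set a password strength-check for character sequences and same consecutive characters, add the following lines to /etc/security/pwquality.conf:

maxsequence = 3

maxrepeat = 3

After entering these options, the new password cannot contain more than 3 characters in a monotonic sequence, such as abcd, or more than 3 identical consecutive characters, such as 1111.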
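To see what rules such as minlen = 8, minclass = 4, and maxrepeat = 3 mean in practice, the following shell sketch applies simplified versions of them to a candidate password. It is an illustration only: check_password is a hypothetical helper, it does not reproduce the pam_pwquality algorithm, and it ignores the dictionary check, credit settings, and maxsequence.

```shell
# Sketch: simplified versions of three pwquality.conf rules
# (minlen = 8, minclass = 4, maxrepeat = 3).
# check_password is a hypothetical helper, not the pam_pwquality algorithm.
check_password() {
    pw=$1
    classes=0

    # minlen = 8: reject passwords shorter than 8 characters.
    [ "${#pw}" -ge 8 ] || { echo "too short"; return 1; }

    # minclass = 4: count digits, lowercase, uppercase, and other characters.
    case $pw in *[[:digit:]]*) classes=$((classes + 1)) ;; esac
    case $pw in *[[:lower:]]*) classes=$((classes + 1)) ;; esac
    case $pw in *[[:upper:]]*) classes=$((classes + 1)) ;; esac
    case $pw in *[![:alnum:]]*) classes=$((classes + 1)) ;; esac
    [ "$classes" -ge 4 ] || { echo "needs all four character classes"; return 1; }

    # maxrepeat = 3: reject 4 or more identical consecutive characters.
    if printf '%s\n' "$pw" | grep -q '\(.\)\1\1\1'; then
        echo "too many repeated characters"
        return 1
    fi

    echo "ok"
}
```

For example, check_password 'Aa1!1111x' is rejected because of the run of four identical digits, even though it is long enough and uses all four character classes.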

Note

4.1.1.3. Configuring Password Aging

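To configure password aging, use the chage command.

Important

The -M option of the chage command specifies the maximum number of days the password is valid. For example, to set a user's password to expire in 90 days, use the following command:

chage -M 90 username

In the above command, replace username with the name of the user. To disable password expiration, use the value of -1 after the -M option.

For more information on the options available with the chage command, see the table below.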

| Option | Description |
|---|---|
| -d days | Specifies the number of days since January 1, 1970 the password was changed. |
| -E date | Specifies the date on which the account is locked, in the format YYYY-MM-DD. Instead of the date, the number of days since January 1, 1970 can also be used. |
| -I days | Specifies the number of inactive days after the password expiration before locking the account. If the value is -1, the account is not locked after the password expires. |
| -l | Lists current account aging settings. |
| -m days | Specifies the minimum number of days between password changes. If the value is 0, the user can change the password at any time. |
| -M days | Specifies the maximum number of days for which the password is valid. When the number of days specified by this option plus the number of days specified with the -d option is less than the current day, the user must change passwords before using the account. |
| -W days | Specifies the number of days before the password expiration date to warn the user. |
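Several chage options count days since January 1, 1970. The following sketch converts a calendar date into that day count; days_since_epoch is a hypothetical helper name, and it assumes GNU date (the -d option), which is what Red Hat Enterprise Linux ships.

```shell
# Sketch: convert YYYY-MM-DD into the number of days since January 1, 1970,
# the unit used by the chage -d and -E options.
# days_since_epoch is a hypothetical helper; it assumes GNU date.
days_since_epoch() {
    # Seconds since the epoch at 00:00 UTC on the given date, divided by
    # the number of seconds in a day.
    echo $(( $(date -u -d "$1" +%s) / 86400 ))
}

days_since_epoch 2006-08-18
```

For 2006-08-18 the result is 13378, so chage -d 13378 username and chage -d 2006-08-18 username would record the same last-change date.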

You can also use the chage command in interactive mode to modify multiple password aging and account details. Use the following command to enter interactive mode:

chage <username>

The following is a sample interactive session using this command:

~]# chage juan

Changing the aging information for juan

Enter the new value, or press ENTER for the default

Minimum Password Age [0]: 10

Maximum Password Age [99999]: 90

Last Password Change (YYYY-MM-DD) [2006-08-18]:

Password Expiration Warning [7]:

Password Inactive [-1]:

Account Expiration Date (YYYY-MM-DD) [1969-12-31]:

You can configure a password to expire the first time a user logs in, which forces the user to change it immediately:

- Set up an initial password. To assign a default password, enter the following command at a shell prompt as root:

passwd username

Warning

The passwd utility has the option to set a null password. Using a null password, while convenient, is a highly insecure practice, as any third party can log in and access the system using the insecure user name. Avoid using null passwords wherever possible. If it is not possible, always make sure that the user is ready to log in before unlocking an account with a null password.

- Force immediate password expiration by running the following command as root:

chage -d 0 username

This command sets the value for the date the password was last changed to the epoch (January 1, 1970). This value forces immediate password expiration no matter what password aging policy, if any, is in place.

4.1.2. Account Locking

The pam_faillock PAM module allows system administrators to lock out user accounts after a specified number of failed attempts. Limiting user login attempts serves mainly as a security measure that aims to prevent possible brute force attacks targeted to obtain a user's account password.

With the pam_faillock module, failed login attempts are stored in a separate file for each user in the /var/run/faillock directory.

Note

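The order of lines in the failed attempt log files is important. Any change in this order can lock all user accounts, including the root user account when the even_deny_root option is used.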

- To lock out any non-root user after three unsuccessful attempts and unlock that user after 10 minutes, add two lines to the auth section of the /etc/pam.d/system-auth and /etc/pam.d/password-auth files. After your edits, the entire auth section in both files should look like this:

1 auth required pam_env.so

2 auth required pam_faillock.so preauth silent audit deny=3 unlock_time=600

3 auth sufficient pam_unix.so nullok try_first_pass

4 auth [default=die] pam_faillock.so authfail audit deny=3 unlock_time=600

5 auth requisite pam_succeed_if.so uid >= 1000 quiet_success

6 auth required pam_deny.so

Lines number 2 and 4 have been added.

- Add the following line to the account section of both files specified in the previous step:

account required pam_faillock.so

- To apply account locking for the root user as well, add the even_deny_root option to the pam_faillock entries in the /etc/pam.d/system-auth and /etc/pam.d/password-auth files:

auth required pam_faillock.so preauth silent audit deny=3 even_deny_root unlock_time=600

auth sufficient pam_unix.so nullok try_first_pass

auth [default=die] pam_faillock.so authfail audit deny=3 even_deny_root unlock_time=600

account required pam_faillock.so

When the user john attempts to log in for the fourth time after failing to log in three times previously, his account is locked upon the fourth attempt:

~]$ su - john

Account locked due to 3 failed logins

su: incorrect password

To prevent the system from locking users out even after multiple failed logins, add the following line just above the line where pam_faillock is called for the first time in both /etc/pam.d/system-auth and /etc/pam.d/password-auth. Also replace user1, user2, and user3 with the actual user names.

auth [success=1 default=ignore] pam_succeed_if.so user in user1:user2:user3

To view the number of failed attempts per user, run, as root, the following command:

~]$ faillock

john:

When Type Source Valid

2013-03-05 11:44:14 TTY pts/0 V

To unlock a user's account, run, as root, the following command:

faillock --user <username> --reset

Important

Running cron jobs resets the failure counter of pam_faillock for the user that is running the cron job, and thus pam_faillock should not be configured for cron. See the Knowledge Centered Support (KCS) solution for more information.

Keeping Custom Settings with authconfig

When you modify the authentication configuration using the authconfig utility, the system-auth and password-auth files are overwritten with the settings from the authconfig utility. This can be avoided by creating symbolic links in place of the configuration files, which authconfig recognizes and does not overwrite. In order to use custom settings in the configuration files and authconfig simultaneously, configure account locking using the following steps:

- Check whether the system-auth and password-auth files are already symbolic links pointing to system-auth-ac and password-auth-ac (this is the system default):

~]# ls -l /etc/pam.d/{password,system}-auth

If the output is similar to the following, the symbolic links are in place, and you can skip to step number 3:

lrwxrwxrwx. 1 root root 16 24. Feb 09.29 /etc/pam.d/password-auth -> password-auth-ac

lrwxrwxrwx. 1 root root 28 24. Feb 09.29 /etc/pam.d/system-auth -> system-auth-ac

If the system-auth and password-auth files are not symbolic links, continue with the next step.

- Rename the configuration files:

~]# mv /etc/pam.d/system-auth /etc/pam.d/system-auth-ac

~]# mv /etc/pam.d/password-auth /etc/pam.d/password-auth-ac

- Create configuration files with your custom settings:

~]# vi /etc/pam.d/system-auth-local

The /etc/pam.d/system-auth-local file should contain the following lines:

auth required pam_faillock.so preauth silent audit deny=3 unlock_time=600

auth include system-auth-ac

auth [default=die] pam_faillock.so authfail silent audit deny=3 unlock_time=600

account required pam_faillock.so

account include system-auth-ac

password include system-auth-ac

session include system-auth-ac

~]# vi /etc/pam.d/password-auth-local

The /etc/pam.d/password-auth-local file should contain the following lines:

auth required pam_faillock.so preauth silent audit deny=3 unlock_time=600

auth include password-auth-ac

auth [default=die] pam_faillock.so authfail silent audit deny=3 unlock_time=600

account required pam_faillock.so

account include password-auth-ac

password include password-auth-ac

session include password-auth-ac

- Create the following symbolic links:

~]# ln -sf /etc/pam.d/system-auth-local /etc/pam.d/system-auth

~]# ln -sf /etc/pam.d/password-auth-local /etc/pam.d/password-auth

For more information on the various pam_faillock configuration options, see the pam_faillock(8) manual page.

Removing the nullok option

The nullok option, which allows users to log in with a blank password if the password field in the /etc/shadow file is empty, is enabled by default. To disable the nullok option, remove the nullok string from configuration files in the /etc/pam.d/ directory, such as /etc/pam.d/system-auth or /etc/pam.d/password-auth.

For more information, see the KCS solution on whether the nullok option allows users to log in without entering a password.

4.1.3. Session Locking

Note

4.1.3.1. Locking Virtual Consoles Using vlock

Virtual consoles can be locked using the vlock utility. Install it by entering the following command as root:

~]# yum install kbd

After installation, any console session can be locked using the vlock command without any additional parameters. This locks the currently active virtual console session while still allowing access to the others. To prevent access to all virtual consoles on the workstation, execute the following:

vlock -a

In this case, vlock locks the currently active console and the -a option prevents switching to other virtual consoles.

See the vlock(1) man page for additional information.

4.1.4. Enforcing Read-Only Mounting of Removable Media

To enforce read-only mounting of removable media, you can use a udev rule to detect removable media and configure them to be mounted read-only using the blockdev utility. This is sufficient for enforcing read-only mounting of physical media.

Using blockdev to Force Read-Only Mounting of Removable Media

Create a new udev configuration file named, for example, 80-readonly-removables.rules in the /etc/udev/rules.d/ directory with the following content:

SUBSYSTEM=="block",ATTRS{removable}=="1",RUN{program}="/sbin/blockdev --setro %N"

The above udev rule ensures that any newly connected removable block (storage) device is automatically configured as read-only using the blockdev utility.

Applying New udev Settings

For these settings to take effect, the new udev rules need to be applied. The udev service automatically detects changes to its configuration files, but new settings are not applied to already existing devices. Only newly connected devices are affected by the new settings. Therefore, you need to unmount and unplug all connected removable media to ensure that the new settings are applied to them when they are next plugged in.

To force udev to re-apply all rules to already existing devices, enter the following command as root:

~# udevadm trigger

Note that forcing udev to re-apply all rules using the above command does not affect any storage devices that are already mounted.

To force udev to reload all rules (in case the new rules are not automatically detected for some reason), use the following command:

~# udevadm control --reload

4.2. Controlling Root Access

Certain tasks must be performed as the root user or by acquiring effective root privileges using a setuid program, such as sudo or su. A setuid program is one that operates with the user ID (UID) of the program's owner rather than that of the user operating the program. Such programs are denoted by an s in the owner section of a long format listing, as in the following example:

~]$ ls -l /bin/su

-rwsr-xr-x. 1 root root 34904 Mar 10 2011 /bin/su

Note

The s may be upper case or lower case. If it appears as upper case, it means that the underlying permission bit has not been set.
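As a rough illustration of the long listing above, setuid programs in a directory tree can be enumerated with find instead of being inspected one at a time. The following is a minimal sketch; the function name list_setuid is hypothetical and not part of any Red Hat tool.

```shell
# list_setuid DIR
# Hypothetical helper: print regular files under DIR whose mode includes
# the setuid bit (octal 4000), staying on a single file system.
list_setuid() {
    # -xdev        do not descend into other mounted file systems
    # -type f      regular files only
    # -perm -4000  all bits in 4000 (setuid) must be set
    find "$1" -xdev -type f -perm -4000 -print 2>/dev/null
}
```

For example, running list_setuid /usr/bin prints the setuid programs installed under /usr/bin, which can then be reviewed individually.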

Through the PAM module pam_console.so, some activities normally reserved only for the root user, such as rebooting and mounting removable media, are allowed for the first user that logs in at the physical console. However, other important system administration tasks, such as altering network settings, configuring a new mouse, or mounting network devices, are not possible without administrative privileges. As a result, system administrators must decide how much access the users on their network should receive.

4.2.1. Disallowing Root Access

If an administrator is uncomfortable allowing users to log in as root for these or other reasons, the root password should be kept secret, and access to runlevel one or single user mode should be disallowed through boot loader password protection (see Section 4.2.5, “Securing the Boot Loader” for more information on this topic).

An administrator can further ensure that root logins are disallowed in the following ways:

- Changing the root shell

To prevent users from logging in directly as root, the system administrator can set the root account's shell to /sbin/nologin in the /etc/passwd file.

Table 4.2. Disabling the Root Shell

Effects: Prevents access to a root shell and logs any such attempts. The following programs are prevented from accessing the root account: login, gdm, kdm, xdm, su, ssh, scp, sftp.

Does Not Affect: Programs that do not require a shell, such as FTP clients, mail clients, and many setuid programs. The following programs are not prevented from accessing the root account: sudo, FTP clients, email clients.

- Disabling root access using any console device (tty)

To further limit access to the root account, administrators can disable root logins at the console by editing the /etc/securetty file. This file lists all devices the root user is allowed to log into. If the file does not exist at all, the root user can log in through any communication device on the system, whether through the console or a raw network interface. This is dangerous, because a user can log in to their machine as root using Telnet, which transmits the password in plain text over the network.

By default, Red Hat Enterprise Linux 7's /etc/securetty file only allows the root user to log in at the console physically attached to the machine. To prevent the root user from logging in, remove the contents of this file by typing the following command at a shell prompt as root:

echo > /etc/securetty

To enable securetty support in the KDM, GDM, and XDM login managers, add the following line:

auth [user_unknown=ignore success=ok ignore=ignore default=bad] pam_securetty.so

to the files listed below:

/etc/pam.d/gdm
/etc/pam.d/gdm-autologin
/etc/pam.d/gdm-fingerprint
/etc/pam.d/gdm-password
/etc/pam.d/gdm-smartcard
/etc/pam.d/kdm
/etc/pam.d/kdm-np
/etc/pam.d/xdm

Warning

A blank /etc/securetty file does not prevent the root user from logging in remotely using the OpenSSH suite of tools because the console is not opened until after authentication.

Table 4.3. Disabling Root Logins

Effects: Prevents access to the root account using the console or the network. The following programs are prevented from accessing the root account: login, gdm, kdm, xdm, other network services that open a tty.

Does Not Affect: Programs that do not log in as root, but perform administrative tasks through setuid or other mechanisms. The following programs are not prevented from accessing the root account: su, sudo, ssh, scp, sftp.

- Disabling root SSH logins

To prevent root logins through the SSH protocol, edit the SSH daemon's configuration file, /etc/ssh/sshd_config, and change the line that reads:

#PermitRootLogin yes

to read as follows:

PermitRootLogin no

Table 4.4. Disabling Root SSH Logins

Effects: Prevents root access using the OpenSSH suite of tools. The following programs are prevented from accessing the root account: ssh, scp, sftp.

Does Not Affect: Programs that are not part of the OpenSSH suite of tools.

- Using PAM to limit root access to services

PAM, through the /lib/security/pam_listfile.so module, allows great flexibility in denying specific accounts. The administrator can use this module to reference a list of users who are not allowed to log in. To limit root access to a system service, edit the file for the target service in the /etc/pam.d/ directory and make sure the pam_listfile.so module is required for authentication.

The following is an example of how the module is used for the vsftpd FTP server in the /etc/pam.d/vsftpd PAM configuration file (the \ character at the end of the first line is not necessary if the directive is on a single line):

auth required /lib/security/pam_listfile.so item=user \
 sense=deny file=/etc/vsftpd.ftpusers onerr=succeed

This instructs PAM to consult the /etc/vsftpd.ftpusers file and deny access to the service for any listed user. The administrator can change the name of this file, and can keep separate lists for each service or use one central list to deny access to multiple services.

If the administrator wants to deny access to multiple services, a similar line can be added to the PAM configuration files, such as /etc/pam.d/pop and /etc/pam.d/imap for mail clients, or /etc/pam.d/ssh for SSH clients.

For more information about PAM, see The Linux-PAM System Administrator's Guide, located in the /usr/share/doc/pam-<version>/html/ directory.

Table 4.5. Disabling Root Using PAM

Effects: Prevents root access to network services that are PAM aware. The following services are prevented from accessing the root account: login, gdm, kdm, xdm, ssh, scp, sftp, FTP clients, email clients, any PAM aware services.

Does Not Affect: Programs and services that are not PAM aware.
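The /etc/ssh/sshd_config change described under "Disabling root SSH logins" is easy to get wrong if the line is left commented out or if an earlier PermitRootLogin line already exists, because sshd uses the first value it obtains for each keyword. The following is a minimal sketch of a check for this; the function name permit_root_login is hypothetical, and the sketch deliberately ignores Match blocks and the keyword=value form, so the output of sshd -T remains the authoritative answer on a real system.

```shell
# permit_root_login FILE
# Hypothetical helper: print the first uncommented PermitRootLogin value
# found in an sshd_config-style FILE, or "unset" if there is none.
# Assumption: no Match blocks and no "keyword=value" syntax are in use.
permit_root_login() {
    awk '
        # Keywords are case-insensitive; commented lines start with "#"
        # and therefore never compare equal to the keyword.
        tolower($1) == "permitrootlogin" {
            print tolower($2)
            found = 1
            exit
        }
        END { if (!found) print "unset" }
    ' "$1"
}
```

For example, permit_root_login /etc/ssh/sshd_config is expected to print no once the change above has been made and no earlier PermitRootLogin line overrides it.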

4.2.2. Allowing Root Access

If the users within an organization are trusted and computer-literate, then allowing them root access may not be an issue. Allowing root access by users means that minor activities, like adding devices or configuring network interfaces, can be handled by the individual users, leaving system administrators free to deal with network security and other important issues.

On the other hand, giving root access to individual users can lead to the following issues:

- Machine Misconfiguration — Users with root access can misconfigure their machines and require assistance to resolve issues. Even worse, they might open up security holes without knowing it.

- Running Insecure Services — Users with root access might run insecure servers on their machine, such as FTP or Telnet, potentially putting usernames and passwords at risk. These services transmit this information over the network in plain text.

- Running Email Attachments As Root — Although rare, email viruses that affect Linux do exist. A malicious program poses the greatest threat when run by the root user.

- Keeping the audit trail intact — Because the root account is often shared by multiple users, so that multiple system administrators can maintain the system, it is impossible to figure out which of those users was root at a given time. When using separate logins, the account a user logs in with, as well as a unique number for session tracking purposes, is put into the task structure, which is inherited by every process that the user starts. When using concurrent logins, the unique number can be used to trace actions to specific logins. When an action generates an audit event, it is recorded with the login account and the session associated with that unique number. Use the aulast command to view these logins and sessions. The --proof option of the aulast command can be used to suggest a specific ausearch query to isolate auditable events generated by a particular session. For more information about the Audit system, see Chapter 7, System Auditing.

4.2.3. Limiting Root Access

Rather than completely denying access to the root user, the administrator may want to allow access only through setuid programs, such as su or sudo. For more information on su and sudo, see the Gaining Privileges chapter in Red Hat Enterprise Linux 7 System Administrator's Guide, and the su(1) and sudo(8) man pages.

4.2.4. Enabling Automatic Logouts

When the user is logged in as root, an unattended login session may pose a significant security risk. To reduce this risk, you can configure the system to automatically log out idle users after a fixed period of time.

- As root, add the following line at the beginning of the /etc/profile file to make sure the processing of this file cannot be interrupted:

trap "" 1 2 3 15

- As root, insert the following lines into the /etc/profile file to automatically log out after 120 seconds:

export TMOUT=120
readonly TMOUT

The TMOUT variable terminates the shell if there is no activity for the specified number of seconds (set to 120 in the above example). You can change the limit according to the needs of the particular installation.

4.2.5. Securing the Boot Loader

- Preventing Access to Single User Mode — If attackers can boot the system into single user mode, they are logged in automatically as root without being prompted for the root password.

Warning

Protecting access to single user mode with a password by editing the SINGLE parameter in the /etc/sysconfig/init file is not recommended. An attacker can bypass the password by specifying a custom initial command (using the init= parameter) on the kernel command line in GRUB 2. It is recommended to password-protect the GRUB 2 boot loader, as described in the Protecting GRUB 2 with a Password chapter in Red Hat Enterprise Linux 7 System Administrator's Guide.

- Preventing Access to the GRUB 2 Console — If the machine uses GRUB 2 as its boot loader, an attacker can use the GRUB 2 editor interface to change its configuration or to gather information using the cat command.

- Preventing Access to Insecure Operating Systems — If it is a dual-boot system, an attacker can select an operating system at boot time, for example DOS, which ignores access controls and file permissions.

4.2.5.1. Disabling Interactive Startup

To disable interactive startup, as root, disable the PROMPT parameter in the /etc/sysconfig/init file:

PROMPT=no

4.2.6. Protecting Hard and Symbolic Links

- The user owns the file to which they link.

- The user already has read and write access to the file to which they link.

- The process following the symbolic link is the owner of the symbolic link.

- The owner of the directory is the same as the owner of the symbolic link.

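This protection is controlled by the following options in the /usr/lib/sysctl.d/50-default.conf file:

fs.protected_hardlinks = 1

fs.protected_symlinks = 1

To override the default settings and disable the protection, create a new configuration file called, for example, 51-no-protect-links.conf in the /etc/sysctl.d/ directory with the following content:

fs.protected_hardlinks = 0

fs.protected_symlinks = 0

Note

To override the default system settings, the new configuration file needs to have the .conf extension, and it needs to be read after the default system file (the files are read in lexicographic order, therefore settings contained in a file with a higher number at the beginning of the file name take precedence).

See the sysctl.d(5) manual page for more information about the sysctl mechanism.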
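The precedence rule described in the note (files are read in lexicographic order of their names, and a later assignment wins) can be sketched as follows. This is an illustration under stated assumptions rather than a reimplementation of the boot-time mechanism: the function name effective_sysctl is hypothetical, only simple key = value lines are handled, and the rule that a file in /etc/sysctl.d/ completely replaces a file of the same name in /usr/lib/sysctl.d/ is not modelled.

```shell
# effective_sysctl KEY DIR...
# Hypothetical helper: print the value KEY would end up with if all
# *.conf files in the given directories were applied in lexicographic
# order of their file names (last assignment wins).
effective_sysctl() {
    key=$1
    shift
    for dir in "$@"; do
        for f in "$dir"/*.conf; do
            # Emit "basename<TAB>path" so sorting is by file name only.
            if [ -f "$f" ]; then
                printf '%s\t%s\n' "$(basename "$f")" "$f"
            fi
        done
    done | LC_ALL=C sort | cut -f2 | while IFS= read -r f; do
        # Print the value of every uncommented assignment to KEY.
        awk -F= -v k="$key" '
            {
                name = $1
                gsub(/[ \t]/, "", name)
                if (name == k) {
                    value = $2
                    gsub(/^[ \t]+|[ \t]+$/, "", value)
                    print value
                }
            }' "$f"
    done | tail -n 1
}
```

For example, effective_sysctl fs.protected_symlinks /usr/lib/sysctl.d /etc/sysctl.d reports which of the two files described above takes effect. On a running system, sysctl fs.protected_symlinks shows the value actually in use.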

4.3. Securing Services

4.3.1. Risks To Services

- Denial of Service Attacks (DoS) — By flooding a service with requests, a denial of service attack can render a system unusable as it tries to log and answer each request.

- Distributed Denial of Service Attack (DDoS) — A type of DoS attack which uses multiple compromised machines (often numbering in the thousands or more) to direct a coordinated attack on a service, flooding it with requests and making it unusable.

- Script Vulnerability Attacks — If a server is using scripts to execute server-side actions, as Web servers commonly do, an attacker can target improperly written scripts. These script vulnerability attacks can lead to a buffer overflow condition or allow the attacker to alter files on the system.

- Buffer Overflow Attacks — Services that want to listen on ports 1 through 1023 must either start with administrative privileges or have the CAP_NET_BIND_SERVICE capability set for them. Once a process is bound to a port and is listening on it, the privileges or the capability are often dropped. If the privileges or the capability are not dropped, and the application has an exploitable buffer overflow, an attacker could gain access to the system as the user running the daemon. Because exploitable buffer overflows exist, crackers use automated tools to identify systems with vulnerabilities, and once they have gained access, they use automated rootkits to maintain their access to the system.

Note

Important

4.3.2. Identifying and Configuring Services

- cups — The default print server for Red Hat Enterprise Linux 7.

- cups-lpd — An alternative print server.

- xinetd — A super server that controls connections to a range of subordinate servers, such as gssftp and telnet.

- sshd — The OpenSSH server, which is a secure replacement for Telnet.

If printing is not required, do not leave cups running. The same is true for portreserve. If you do not mount NFSv3 volumes or use NIS (the ypbind service), then rpcbind should be disabled. Checking which network services are available to start at boot time is not sufficient. It is recommended to also check which ports are open and listening. Refer to Section 4.4.2, “Verifying Which Ports Are Listening” for more information.

4.3.3. Insecure Services

- Transmit Usernames and Passwords Over a Network Unencrypted — Many older protocols, such as Telnet and FTP, do not encrypt the authentication session and should be avoided whenever possible.

- Transmit Sensitive Data Over a Network Unencrypted — Many protocols transmit data over the network unencrypted. These protocols include Telnet, FTP, HTTP, and SMTP. Many network file systems, such as NFS and SMB, also transmit information over the network unencrypted. It is the user's responsibility when using these protocols to limit what type of data is transmitted.

Examples of inherently insecure services include rlogin, rsh, telnet, and vsftpd.

All remote login and shell programs (rlogin, rsh, and telnet) should be avoided in favor of SSH. See Section 4.3.11, “Securing SSH” for more information about sshd.

FTP is not as inherently dangerous to the security of the system as remote shells, but FTP servers must be carefully configured and monitored to avoid problems. See Section 4.3.9, “Securing FTP” for more information about securing FTP servers.

- auth

- nfs-server

- smb and nbm (Samba)

- yppasswdd

- ypserv

- ypxfrd

4.3.4. Securing rpcbind

The rpcbind service is a dynamic port assignment daemon for RPC services such as NIS and NFS. It has weak authentication mechanisms and has the ability to assign a wide range of ports for the services it controls. For these reasons, it is difficult to secure.

Note

Securing rpcbind only affects NFSv2 and NFSv3 implementations, since NFSv4 no longer requires it. If you plan to implement an NFSv2 or NFSv3 server, then rpcbind is required, and the following section applies.

4.3.4.1. Protect rpcbind With TCP Wrappers

Use TCP Wrappers to limit which networks or hosts have access to the rpcbind service, since it has no built-in form of authentication.

4.3.4.2. Protect rpcbind With firewalld

To further restrict access to the rpcbind service, it is a good idea to add firewalld rules to the server and restrict access to specific networks.

Below are two example firewalld rich language commands. The first allows TCP connections to the port 111 (used by the rpcbind service) from the 192.168.0.0/24 network. The second allows TCP connections to the same port from the localhost. All other packets are dropped.

~]# firewall-cmd --add-rich-rule='rule family="ipv4" port port="111" protocol="tcp" source address="192.168.0.0/24" invert="True" drop'

~]# firewall-cmd --add-rich-rule='rule family="ipv4" port port="111" protocol="tcp" source address="127.0.0.1" accept'

To similarly limit UDP traffic, use the following command:

~]# firewall-cmd --add-rich-rule='rule family="ipv4" port port="111" protocol="udp" source address="192.168.0.0/24" invert="True" drop'

Note

Add --permanent to the firewalld rich language commands to make the settings permanent. See Chapter 5, Using Firewalls for more information about implementing firewalls.

4.3.5. Securing rpc.mountd

The rpc.mountd daemon implements the server side of the NFS MOUNT protocol, a protocol used by NFS version 2 (RFC 1094) and NFS version 3 (RFC 1813).

4.3.5.1. Protect rpc.mountd With TCP Wrappers

Use TCP Wrappers to limit which networks or hosts have access to the rpc.mountd service, since it has no built-in form of authentication.

Use only IP addresses when limiting access to the service. Avoid using host names, as they can be forged by DNS poisoning and other methods.

4.3.5.2. Protect rpc.mountd With firewalld

To further restrict access to the rpc.mountd service, add firewalld rich language rules to the server and restrict access to specific networks.

Below are two example firewalld rich language commands. The first allows mountd connections from the 192.168.0.0/24 network. The second allows mountd connections from the local host. All other packets are dropped.

~]# firewall-cmd --add-rich-rule 'rule family="ipv4" source NOT address="192.168.0.0/24" service name="mountd" drop'

~]# firewall-cmd --add-rich-rule 'rule family="ipv4" source address="127.0.0.1" service name="mountd" accept'

Note

Add --permanent to the firewalld rich language commands to make the settings permanent. See Chapter 5, Using Firewalls for more information about implementing firewalls.

4.3.6. Securing NIS

The Network Information Service (NIS) is an RPC service, called ypserv, which is used in conjunction with rpcbind and other related services to distribute maps of user names, passwords, and other sensitive information to any computer claiming to be within its domain.

- /usr/sbin/rpc.yppasswdd — Also called the yppasswdd service, this daemon allows users to change their NIS passwords.

- /usr/sbin/rpc.ypxfrd — Also called the ypxfrd service, this daemon is responsible for NIS map transfers over the network.

- /usr/sbin/ypserv — This is the NIS server daemon.

First secure the rpcbind service as outlined in Section 4.3.4, “Securing rpcbind”, then address the following issues, such as network planning.

4.3.6.1. Carefully Plan the Network

4.3.6.2. Use a Password-like NIS Domain Name and Hostname

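Anyone who knows the NIS server's DNS host name and NIS domain name can use the following command to reveal the /etc/passwd map:

ypcat -d <NIS_domain> -h <DNS_hostname> passwd

If this attacker is a root user, they can obtain the /etc/shadow file by typing the following command:

ypcat -d <NIS_domain> -h <DNS_hostname> shadow

Note

If Kerberos is used, the /etc/shadow file is not stored within a NIS map.

To make access to NIS maps harder for an attacker, create a random string for the DNS host name, such as o7hfawtgmhwg.domain.com. Similarly, create a different randomized NIS domain name. This makes it much more difficult for an attacker to access the NIS server.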

4.3.6.3. Edit the /var/yp/securenets File

If the /var/yp/securenets file is blank or does not exist (as is the case after a default installation), NIS listens to all networks. One of the first things to do is to put netmask/network pairs in the file so that ypserv only responds to requests from the appropriate network.

Below is a sample entry from a /var/yp/securenets file:

255.255.255.0 192.168.0.0

Warning

Never start a NIS server for the first time without creating the /var/yp/securenets file.

4.3.6.4. Assign Static Ports and Use Rich Language Rules

All of the servers related to NIS can be assigned specific ports except for rpc.yppasswdd, the daemon that allows users to change their login passwords. Assigning ports to the other two NIS server daemons, rpc.ypxfrd and ypserv, allows for the creation of firewall rules to further protect the NIS server daemons from intruders.

To do this, add the following lines to /etc/sysconfig/network:

YPSERV_ARGS="-p 834"

YPXFRD_ARGS="-p 835"

The following firewalld rules can then be used to enforce which network the server listens to for these ports:

~]# firewall-cmd --add-rich-rule='rule family="ipv4" source address="192.168.0.0/24" invert="True" port port="834-835" protocol="tcp" drop'

~]# firewall-cmd --add-rich-rule='rule family="ipv4" source address="192.168.0.0/24" invert="True" port port="834-835" protocol="udp" drop'

This means that the server only allows connections to ports 834 and 835 if the requests come from the 192.168.0.0/24 network. The first rule is for TCP and the second for UDP.

Note

4.3.6.5. Use Kerberos Authentication

One of the issues to consider when NIS is used for authentication is that whenever a user logs into a machine, a password hash from the /etc/shadow map is sent over the network. If an intruder gains access to a NIS domain and sniffs network traffic, they can collect user names and password hashes. With enough time, a password cracking program can guess weak passwords, and an attacker can gain access to a valid account on the network.

4.3.7. Securing NFS

Important

All versions of NFS support Kerberos user and group authentication as part of the RPCSEC_GSS kernel module. Information on rpcbind is still included, since Red Hat Enterprise Linux 7 supports NFSv3, which utilizes rpcbind.

4.3.7.1. Carefully Plan the Network

4.3.7.2. Securing NFS Mount Options

The use of the mount command in the /etc/fstab file is explained in the Using the mount Command chapter of the Red Hat Enterprise Linux 7 Storage Administration Guide. From a security administration point of view, it is worthwhile to note that the NFS mount options can also be specified in /etc/nfsmount.conf, which can be used to set custom default options.

4.3.7.2.1. Review the NFS Server

Warning

exports(5) man page.

Use the ro option to export the file system as read-only whenever possible to reduce the number of users able to write to the mounted file system. Only use the rw option when specifically required. See the exports(5) man page for more information. Allowing write access increases the risk from, for example, symlink attacks. This includes temporary directories such as /tmp and /usr/tmp.

Where directories must be mounted with the rw option, avoid making them world-writable whenever possible to reduce risk. Exporting home directories is also viewed as a risk, as some applications store passwords in clear text or weakly encrypted. This risk is being reduced as application code is reviewed and improved. Some users do not set passwords on their SSH keys, so this too means home directories present a risk. Enforcing the use of passwords or using Kerberos would mitigate that risk.

Use the showmount -e command on an NFS server to review what the server is exporting. Do not export anything that is not specifically required.

Do not use the no_root_squash option, and review existing installations to make sure it is not used. See Section 4.3.7.4, “Do Not Use the no_root_squash Option” for more information.

The secure option is the server-side export option used to restrict exports to “reserved” ports. By default, the server allows client communication only from “reserved” ports (ports numbered less than 1024), because traditionally clients have only allowed “trusted” code (such as in-kernel NFS clients) to use those ports. However, on many networks it is not difficult for anyone to become root on some client, so it is rarely safe for the server to assume that communication from a reserved port is privileged. Therefore the restriction to reserved ports is of limited value; it is better to rely on Kerberos, firewalls, and restriction of exports to particular clients.

4.3.7.2.2. Review the NFS Client

Use the nosuid option to disallow the use of a setuid program. The nosuid option disables the set-user-identifier or set-group-identifier bits. This prevents remote users from gaining higher privileges by running a setuid program. Use this option on the client and the server side.

The noexec option disables all executable files on the client. Use this to prevent users from inadvertently executing files placed in the file system being shared. The nosuid and noexec options are standard options for most, if not all, file systems.

Use the nodev option to prevent “device-files” from being processed as a hardware device by the client.

The resvport option is a client-side mount option and secure is the corresponding server-side export option (see explanation above). It restricts communication to a "reserved port". The reserved or "well known" ports are reserved for privileged users and processes such as the root user. Setting this option causes the client to use a reserved source port to communicate with the server.

All versions of NFS support mounting with Kerberos authentication. The mount option to enable this is sec=krb5.

NFSv4 supports mounting with Kerberos using krb5i for integrity and krb5p for privacy protection. These are used when mounting with sec=krb5, but need to be configured on the NFS server. See the man page on exports (man 5 exports) for more information.

The NFS man page (man 5 nfs) has a “SECURITY CONSIDERATIONS” section which explains the security enhancements in NFSv4 and contains all the NFS specific mount options.

Important

4.3.7.3. Beware of Syntax Errors

The NFS server determines which file systems to export and which hosts to export these directories to by consulting the /etc/exports file. Be careful not to add extraneous spaces when editing this file.

For instance, the following line in the /etc/exports file shares the directory /tmp/nfs/ to the host bob.example.com with read/write permissions.

/tmp/nfs/ bob.example.com(rw)

The following line in the /etc/exports file, on the other hand, shares the same directory to the host bob.example.com with read-only permissions and shares it to the world with read/write permissions due to a single space character after the host name.

/tmp/nfs/ bob.example.com (rw)

It is good practice to check any configured NFS shares by using the showmount command to verify what is being shared:

showmount -e <hostname>

4.3.7.4. Do Not Use the no_root_squash Option

By default, NFS shares change the root user to the nfsnobody user, an unprivileged user account. This changes the owner of all root-created files to nfsnobody, which prevents uploading of programs with the setuid bit set.

If no_root_squash is used, remote root users are able to change any file on the shared file system and leave applications infected by Trojans for other users to inadvertently execute.
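Both the stray-space mistake described in Section 4.3.7.3 and the use of no_root_squash can be spotted mechanically in an exports file. The following is a minimal sketch of such a check; the function name check_exports and its message wording are hypothetical, and the sketch assumes one export per line without backslash continuations or quoted paths containing spaces.

```shell
# check_exports FILE
# Hypothetical helper: report export lines in an /etc/exports-style FILE
# where an option list is separated from its host by a space, or where
# no_root_squash is set.
check_exports() {
    awk '
        /^[ \t]*(#|$)/ { next }    # skip comments and blank lines
        {
            # Field 1 is the exported path; the rest are host(options).
            for (i = 2; i <= NF; i++) {
                if ($i ~ /^\(/)
                    printf "line %d: %s applies to all hosts (space before parenthesis)\n", NR, $i
                if ($i ~ /no_root_squash/)
                    printf "line %d: no_root_squash is set\n", NR
            }
        }
    ' "$1"
}
```

Running check_exports /etc/exports prints nothing when neither problem is found. The showmount -e command remains the way to confirm what the server actually exports.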

4.3.7.5. NFS Firewall Configuration

Configuring Ports for NFSv3

The ports used for NFS are assigned dynamically by the rpcbind service, which might cause problems when creating firewall rules. To simplify this process, use the /etc/sysconfig/nfs file to specify which ports are to be used:

- MOUNTD_PORT — TCP and UDP port for mountd (rpc.mountd)

- STATD_PORT — TCP and UDP port for status (rpc.statd)

Set the TCP and UDP ports for the NFS lock manager (nlockmgr) in the /etc/modprobe.d/lockd.conf file:

- nlm_tcpport — TCP port for nlockmgr (rpc.lockd)

- nlm_udpport — UDP port for nlockmgr (rpc.lockd)

See /etc/modprobe.d/lockd.conf for descriptions of additional customizable NFS lock manager parameters.

Run the rpcinfo -p command on the NFS server to see which ports and RPC programs are being used.

4.3.7.6. Securing NFS with Red Hat Identity Management

4.3.8. Securing HTTP Servers

4.3.8.1. Securing the Apache HTTP Server

chown root <directory_name>

chmod 755 <directory_name>

System administrators should be careful when using the following configuration options (configured in /etc/httpd/conf/httpd.conf):

- FollowSymLinks — This directive is enabled by default, so be sure to use caution when creating symbolic links to the document root of the Web server. For instance, it is a bad idea to provide a symbolic link to /.

- Indexes — This directive is enabled by default, but may not be desirable. To prevent visitors from browsing files on the server, remove this directive.

- UserDir — The UserDir directive is disabled by default because it can confirm the presence of a user account on the system. To enable user directory browsing on the server, use the following directives:

UserDir enabled
UserDir disabled root

These directives activate user directory browsing for all user directories other than /root/. To add users to the list of disabled accounts, add a space-delimited list of users on the UserDir disabled line.

- ServerTokens — The ServerTokens directive controls the server response header field which is sent back to clients. It includes various information which can be customized using the following parameters:

  - ServerTokens Full (default option) — provides all available information (OS type and used modules), for example: Apache/2.0.41 (Unix) PHP/4.2.2 MyMod/1.2

  - ServerTokens Prod or ServerTokens ProductOnly — provides the following information: Apache

  - ServerTokens Major — provides the following information: Apache/2

  - ServerTokens Minor — provides the following information: Apache/2.0

  - ServerTokens Min or ServerTokens Minimal — provides the following information: Apache/2.0.41

  - ServerTokens OS — provides the following information: Apache/2.0.41 (Unix)

It is recommended to use the ServerTokens Prod option so that a possible attacker does not gain any valuable information about your system.

Important

Do not remove the IncludesNoExec directive. By default, the Server-Side Includes (SSI) module cannot execute commands. It is recommended that you do not change this setting unless absolutely necessary, as it could potentially enable an attacker to execute commands on the system.

Removing httpd Modules

In certain scenarios, it is beneficial to remove certain httpd modules to limit the functionality of the HTTP Server. To do so, edit configuration files in the /etc/httpd/conf.modules.d directory. For example, to remove the proxy module:

echo '# All proxy modules disabled' > /etc/httpd/conf.modules.d/00-proxy.conf

Note that the /etc/httpd/conf.d/ directory contains configuration files which are used to load modules as well.

httpd and SELinux

4.3.8.2. Securing NGINX

Perform the following configuration changes in the server section of your NGINX configuration files.

Disabling Version Strings

server_tokens off;

This has the effect of removing the version number and simply reporting the string nginx in all requests served by NGINX:

$ curl -sI http://localhost | grep Server

Server: nginx

Including Additional Security-related Headers

- add_header X-Frame-Options SAMEORIGIN; — this option prevents any page outside of your domain from framing content served by NGINX, effectively mitigating clickjacking attacks.

- add_header X-Content-Type-Options nosniff; — this option prevents MIME-type sniffing in certain older browsers.

- add_header X-XSS-Protection "1; mode=block"; — this option enables Cross-Site Scripting (XSS) filtering, which prevents a browser from rendering potentially malicious content included in a response by NGINX.

Disabling Potentially Harmful HTTP Methods

# Allow GET, PUT, POST; return "405 Method Not Allowed" for all others.
if ( $request_method !~ ^(GET|PUT|POST)$ ) {
    return 405;
}

Configuring SSL

4.3.9. Securing FTP

- Red Hat Content Accelerator (tux) — A kernel-space Web server with FTP capabilities.

- vsftpd — A standalone, security oriented implementation of the FTP service.

The following security guidelines are for setting up the vsftpd FTP service.

4.3.9.1. FTP Greeting Banner

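To change the greeting banner for vsftpd, add the following directive to the /etc/vsftpd/vsftpd.conf file:

ftpd_banner=<insert_greeting_here>

Replace <insert_greeting_here> in the above directive with the text of the greeting message.

For multi-line banners, it is best to use a banner file. To simplify management of multiple banners, place all banners in a new directory called /etc/banners/. The banner file for FTP connections in this example is /etc/banners/ftp.msg. Below is an example of what such a file may look like:

######### Hello, all activity on ftp.example.com is logged. #########

Note

It is not necessary to begin each line of the file with 220 as specified in Section 4.4.1, “Securing Services With TCP Wrappers and xinetd”.

To reference this greeting banner file in vsftpd, add the following directive to the /etc/vsftpd/vsftpd.conf file:

banner_file=/etc/banners/ftp.msg

4.3.9.2. Anonymous Access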

The presence of the /var/ftp/ directory activates the anonymous account.

The easiest way to create this directory is to install the vsftpd package. This package establishes a directory tree for anonymous users and configures the permissions on directories to read-only for anonymous users.

Warning

4.3.9.2.1. Anonymous Upload

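To allow anonymous users to upload files, it is recommended to create a write-only directory within /var/ftp/pub/. To do this, enter the following command as root:

~]# mkdir /var/ftp/pub/upload

Next, change the permissions so that anonymous users cannot view the contents of the directory:

~]# chmod 730 /var/ftp/pub/upload

A long format listing of the directory should look like this:

~]# ls -ld /var/ftp/pub/upload

drwx-wx---. 2 root ftp 4096 Nov 14 22:57 /var/ftp/pub/upload

Additionally, under vsftpd, add the following line to the /etc/vsftpd/vsftpd.conf file:

anon_upload_enable=YES

4.3.9.3. User Accounts

To disable all user accounts in vsftpd, add the following directive to /etc/vsftpd/vsftpd.conf:

local_enable=NO

4.3.9.3.1. Restricting User Accounts

To disable FTP access for specific accounts or specific groups of accounts, such as the root user and those with sudo privileges, the easiest way is to use a PAM list file as described in Section 4.2.1, “Disallowing Root Access”. The PAM configuration file for vsftpd is /etc/pam.d/vsftpd.

To disable specific user accounts directly in vsftpd, add the user name to /etc/vsftpd/ftpusers.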

4.3.9.4. Use TCP Wrappers To Control Access

4.3.10. Securing Postfix

4.3.10.1. Limiting a Denial of Service Attack

The effectiveness of denial of service attacks can be limited by setting limits for the directives in the /etc/postfix/main.cf file. You can change the value of the directives which are already there or you can add the directives you need with the value you want in the following format:

<directive> = <value>

The following directives can be used to limit a denial of service attack:

- smtpd_client_connection_rate_limit — The maximum number of connection attempts any client is allowed to make to this service per time unit (described below). The default value is 0, which means a client can make as many connections per time unit as Postfix can accept. By default, clients in trusted networks are excluded.

- anvil_rate_time_unit — This time unit is used for rate limit calculations. The default value is 60 seconds.

- smtpd_client_event_limit_exceptions — Clients that are excluded from the connection and rate limit commands. By default, clients in trusted networks are excluded.

- smtpd_client_message_rate_limit — The maximum number of message deliveries a client is allowed to request per time unit (regardless of whether or not Postfix actually accepts those messages).

- default_process_limit — The default maximum number of Postfix child processes that provide a given service. This limit can be overruled for specific services in the master.cf file. By default the value is 100.

- queue_minfree — The minimum amount of free space in bytes in the queue file system that is needed to receive mail. This is currently used by the Postfix SMTP server to decide if it will accept any mail at all. By default, the Postfix SMTP server rejects MAIL FROM commands when the amount of free space is less than 1.5 times the message_size_limit. To specify a higher minimum free space limit, specify a queue_minfree value that is at least 1.5 times the message_size_limit. By default the queue_minfree value is 0.

- header_size_limit — The maximum amount of memory in bytes for storing a message header. If a header is larger, the excess is discarded. By default the value is 102400.

- message_size_limit — The maximum size in bytes of a message, including envelope information. By default the value is 10240000.
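The relationship between queue_minfree and message_size_limit described above is simple arithmetic, and it can be worked through as follows. This is a minimal sketch; the function name min_queue_minfree is hypothetical, and it only computes the smallest whole number of bytes that is at least 1.5 times a given message_size_limit.

```shell
# min_queue_minfree MESSAGE_SIZE_LIMIT
# Hypothetical helper: print the smallest queue_minfree value (in bytes)
# that is at least 1.5 times MESSAGE_SIZE_LIMIT, rounding up.
min_queue_minfree() {
    # Integer arithmetic: ceil(1.5 * n) == (3n + 1) / 2 for n >= 0.
    echo $(( ($1 * 3 + 1) / 2 ))
}
```

With the default message_size_limit of 10240000 bytes, min_queue_minfree 10240000 gives 15360000, so a queue_minfree setting of 15360000 or more satisfies the recommendation.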

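The queue_minfree guidance above involves a small calculation, which the following Python sketch illustrates. It reads main.cf-style text and computes the smallest queue_minfree value that is at least 1.5 times message_size_limit. The helper names (parse_main_cf, minimum_queue_minfree) and the sample values are hypothetical, and the parser is a simplification that handles only plain "name = value" lines, not continuation lines or $variable expansion.

```python
# Default message_size_limit, taken from the directive list above.
DEFAULT_MESSAGE_SIZE_LIMIT = 10240000


def parse_main_cf(text):
    """Collect simple 'name = value' settings from main.cf-style text.

    Simplification: comment lines, blank lines, and continuation lines
    (lines starting with whitespace) are skipped.
    """
    settings = {}
    for line in text.splitlines():
        if not line.strip() or line[0].isspace() or line.startswith("#"):
            continue
        if "=" not in line:
            continue
        name, value = line.split("=", 1)
        settings[name.strip()] = value.strip()
    return settings


def minimum_queue_minfree(settings):
    """Return the smallest integer that is >= 1.5 * message_size_limit."""
    limit = int(settings.get("message_size_limit", DEFAULT_MESSAGE_SIZE_LIMIT))
    # Integer arithmetic for ceil(1.5 * limit), avoiding float rounding.
    return (3 * limit + 1) // 2


# Hypothetical values, for illustration only.
sample = """
smtpd_client_connection_rate_limit = 30
anvil_rate_time_unit = 60s
message_size_limit = 20480000
"""

settings = parse_main_cf(sample)
print(minimum_queue_minfree(settings))
```

With the default message_size_limit of 10240000 bytes, the same calculation gives 15360000 bytes.
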
4.3.10.2. NFS and Postfix

Never put the mail spool directory, /var/spool/postfix/, on an NFS shared volume. Because NFSv2 and NFSv3 do not maintain control over user and group IDs, two or more users can have the same UID, and receive and read each other's mail.

Note

The SECRPC_GSS kernel module does not utilize UID-based authentication. However, it is still considered good practice not to put the mail spool directory on NFS shared volumes.

4.3.10.3. Mail-only Users

Mail users should not have shell accounts on the mail server: all user shells in the /etc/passwd file should be set to /sbin/nologin (with the possible exception of the root user).
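
One way to review this setting is to list the accounts whose shell is something other than /sbin/nologin. The Python sketch below does this for passwd-formatted text. The function name accounts_with_login_shell and the sample entries are hypothetical, and it only reads text passed to it rather than changing any account.

```python
def accounts_with_login_shell(passwd_text, allowed=("root",)):
    """Return account names whose shell field is not /sbin/nologin.

    Accounts named in 'allowed' are skipped (root by default).
    """
    flagged = []
    for line in passwd_text.splitlines():
        if not line.strip() or line.startswith("#"):
            continue
        fields = line.split(":")
        if len(fields) != 7:
            continue  # skip malformed entries
        name, shell = fields[0], fields[6]
        if name in allowed:
            continue
        if shell != "/sbin/nologin":
            flagged.append(name)
    return flagged


# Hypothetical entries in /etc/passwd format.
sample_passwd = """\
root:x:0:0:root:/root:/bin/bash
postfix:x:89:89::/var/spool/postfix:/sbin/nologin
alice:x:1000:1000:Alice:/home/alice:/bin/bash
bob:x:1001:1001:Bob:/home/bob:/sbin/nologin
"""

print(accounts_with_login_shell(sample_passwd))
```

On a real system the text could be read from /etc/passwd. Expect some system accounts to be listed as well, and review those individually.
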

4.3.10.4. Disable Postfix Network Listening

View the file /etc/postfix/main.cf to ensure that only the following inet_interfaces line appears:

inet_interfaces = localhost

To have Postfix listen on all interfaces instead, the inet_interfaces = all setting can be used.

4.3.10.5. Configuring Postfix to Use SASL

Postfix can use the Dovecot or Cyrus SASL implementations for SMTP Authentication (or SMTP AUTH). SMTP Authentication is an extension of the Simple Mail Transfer Protocol. When enabled, SMTP clients are required to authenticate to the SMTP server using an authentication method supported and accepted by both the server and the client. This section describes how to configure Postfix to make use of the Dovecot SASL implementation.

To install the Dovecot POP/IMAP server, and thus make the Dovecot SASL implementation available on your system, issue the following command as the root user:

~]# yum install dovecot

The Postfix SMTP server can communicate with the Dovecot SASL implementation using either a UNIX-domain socket or a TCP socket. The latter method is only needed if the Postfix and Dovecot applications are running on separate machines. This guide gives preference to the UNIX-domain socket method, which affords better privacy.

Before Postfix can use the Dovecot SASL implementation, a number of configuration changes need to be performed for both applications. Follow the procedures below to make these changes.

Setting Up Dovecot

- Modify the main Dovecot configuration file, /etc/dovecot/conf.d/10-master.conf, to include the following lines (the default configuration file already includes most of the relevant section, and the lines just need to be uncommented):

  service auth {
    unix_listener /var/spool/postfix/private/auth {
      mode = 0660
      user = postfix
      group = postfix
    }
  }

  The above example assumes the use of UNIX-domain sockets for communication between Postfix and Dovecot. It also assumes default settings of the Postfix SMTP server, which include the mail queue located in the /var/spool/postfix/ directory, and the application running under the postfix user and group. In this way, read and write permissions are limited to the postfix user and group.

  Alternatively, you can use the following configuration to set up Dovecot to listen for Postfix authentication requests through TCP:

  service auth {
    inet_listener {
      port = 12345
    }
  }

  In the above example, replace 12345 with the number of the port you want to use.

- Edit the /etc/dovecot/conf.d/10-auth.conf configuration file to instruct Dovecot to provide the Postfix SMTP server with the plain and login authentication mechanisms:

  auth_mechanisms = plain login

Setting Up Postfix

For Postfix, the main configuration file, /etc/postfix/main.cf, needs to be modified. Add or edit the following configuration directives:

- Enable SMTP Authentication in the Postfix SMTP server:

  smtpd_sasl_auth_enable = yes

- Instruct Postfix to use the Dovecot SASL implementation for SMTP Authentication:

  smtpd_sasl_type = dovecot

- Provide the authentication path relative to the Postfix queue directory (note that the use of a relative path ensures that the configuration works regardless of whether the Postfix server runs in a chroot or not):

  smtpd_sasl_path = private/auth

  This step assumes that you want to use UNIX-domain sockets for communication between Postfix and Dovecot. To configure Postfix to look for Dovecot on a different machine when you use TCP sockets for communication, use configuration values similar to the following:

  smtpd_sasl_path = inet:127.0.0.1:12345

  In the above example, 127.0.0.1 needs to be substituted by the IP address of the Dovecot machine and 12345 by the port specified in Dovecot's /etc/dovecot/conf.d/10-master.conf configuration file.

- Specify SASL mechanisms that the Postfix SMTP server makes available to clients. Note that different mechanisms can be specified for encrypted and unencrypted sessions.

  smtpd_sasl_security_options = noanonymous, noplaintext
  smtpd_sasl_tls_security_options = noanonymous

  The above example specifies that during unencrypted sessions, no anonymous authentication is allowed and no mechanisms that transmit unencrypted user names or passwords are allowed. For encrypted sessions (using TLS), only non-anonymous authentication mechanisms are allowed.

  See http://www.postfix.org/SASL_README.html#smtpd_sasl_security_options for a list of all supported policies for limiting allowed SASL mechanisms.
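
The Postfix directives in this procedure can be checked together after editing. The following Python sketch inspects main.cf-style text and returns the names of directives that do not match the values described above. The names parse_main_cf and sasl_problems are hypothetical. The parser handles only plain "name = value" lines, and a directive left at its built-in default is reported as missing, so treat the result as a prompt for review rather than a verdict.

```python
import re

# Values described in the procedure above.
REQUIRED_VALUES = {
    "smtpd_sasl_auth_enable": "yes",
    "smtpd_sasl_type": "dovecot",
}
REQUIRED_OPTIONS = {
    "smtpd_sasl_security_options": {"noanonymous", "noplaintext"},
    "smtpd_sasl_tls_security_options": {"noanonymous"},
}


def parse_main_cf(text):
    """Collect simple 'name = value' settings; continuation lines are skipped."""
    settings = {}
    for line in text.splitlines():
        if not line.strip() or line[0].isspace() or line.startswith("#"):
            continue
        if "=" not in line:
            continue
        name, value = line.split("=", 1)
        settings[name.strip()] = value.strip()
    return settings


def sasl_problems(text):
    """Return the names of directives that are missing or differ."""
    settings = parse_main_cf(text)
    problems = []
    for name, expected in REQUIRED_VALUES.items():
        if settings.get(name) != expected:
            problems.append(name)
    # The path may be private/auth or an inet: address, so only require a value.
    if not settings.get("smtpd_sasl_path"):
        problems.append("smtpd_sasl_path")
    for name, required in REQUIRED_OPTIONS.items():
        present = set(filter(None, re.split(r"[,\s]+", settings.get(name, ""))))
        if not required <= present:
            problems.append(name)
    return problems


good = """\
smtpd_sasl_auth_enable = yes
smtpd_sasl_type = dovecot
smtpd_sasl_path = private/auth
smtpd_sasl_security_options = noanonymous, noplaintext
smtpd_sasl_tls_security_options = noanonymous
"""

# Same configuration, but plaintext mechanisms allowed on unencrypted sessions.
weak = good.replace("noanonymous, noplaintext", "noanonymous")

print(sasl_problems(good))
print(sasl_problems(weak))
```
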

Additional Resources

The following online resources provide additional information on configuring Postfix SMTP Authentication through SASL.

- http://wiki2.dovecot.org/HowTo/PostfixAndDovecotSASL contains information on how to set up Postfix to use the Dovecot SASL implementation for SMTP Authentication.

- http://www.postfix.org/SASL_README.html#server_sasl contains information on how to set up Postfix to use either the Dovecot or Cyrus SASL implementations for SMTP Authentication.

4.3.11. Securing SSH

Transmissions over SSH are encrypted and protected from interception. See the OpenSSH chapter of the Red Hat Enterprise Linux 7 System Administrator's Guide for general information about the SSH protocol and about using the SSH service in Red Hat Enterprise Linux 7.

Important

This section covers common ways of securing an SSH setup. By no means should this list of suggested measures be considered exhaustive or definitive. See sshd_config(5) for a description of all configuration directives available for modifying the behavior of the sshd daemon and ssh(1) for an explanation of basic SSH concepts.

4.3.11.1. Cryptographic Login

SSH supports the use of cryptographic keys for logging in to computers. This is much more secure than using only a password. If you combine this method with other authentication methods, it can be considered a multi-factor authentication. See Section 4.3.11.2, “Multiple Authentication Methods” for more information about using multiple authentication methods.

To enable the use of cryptographic keys for logging in, the PubkeyAuthentication configuration directive in the /etc/ssh/sshd_config file needs to be set to yes. Note that this is the default setting. Set the PasswordAuthentication directive to no to disable the possibility of using passwords for logging in.

SSH keys can be generated using the ssh-keygen command. If invoked without additional arguments, it creates a 2048-bit RSA key set. The keys are stored, by default, in the ~/.ssh/ directory. You can utilize the -b switch to modify the bit-strength of the key. Using 2048-bit keys is normally sufficient. The Configuring OpenSSH chapter in the Red Hat Enterprise Linux 7 System Administrator's Guide includes detailed information about generating key pairs.

The two keys are stored in your ~/.ssh/ directory. If you accepted the defaults when running the ssh-keygen command, then the generated files are named id_rsa and id_rsa.pub and contain the private and public key respectively. You should always protect the private key from exposure by making it unreadable by anyone but the file's owner. The public key, however, needs to be transferred to the system you are going to log in to. You can use the ssh-copy-id command to transfer the key to the server:

~]$ ssh-copy-id -i [user@]server

This command appends the public key to the ~/.ssh/authorized_keys file on the server. The sshd daemon will check this file when you attempt to log in to the server.

Change your SSH keys regularly. When you do, make sure you remove any unused keys from the authorized_keys file.
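
The requirement that the private key be unreadable by anyone but its owner can be checked by inspecting the file's permission bits. The Python sketch below does so on a throwaway file rather than on a real key. The function name is_private and the file contents are hypothetical, and the example assumes a POSIX file system.

```python
import os
import stat
import tempfile


def is_private(path):
    """Return True if group and others have no permission bits on the file."""
    mode = stat.S_IMODE(os.stat(path).st_mode)
    return (mode & 0o077) == 0


# Demonstrate on a temporary stand-in file, not on a real private key.
with tempfile.TemporaryDirectory() as tmp:
    fake_key = os.path.join(tmp, "id_rsa")
    with open(fake_key, "w") as handle:
        handle.write("not a real key\n")

    os.chmod(fake_key, 0o644)   # readable by group and others
    exposed_before = not is_private(fake_key)

    os.chmod(fake_key, 0o600)   # owner read/write only
    private_after = is_private(fake_key)

print(exposed_before, private_after)
```
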

4.3.11.2. Multiple Authentication Methods

Use the AuthenticationMethods configuration directive in the /etc/ssh/sshd_config file to specify which authentication methods are to be utilized. Note that it is possible to define more than one list of required authentication methods using this directive. If that is the case, the user must complete every method in at least one of the lists. The lists need to be separated by blank spaces, and the individual authentication-method names within the lists must be comma-separated. For example:

AuthenticationMethods publickey,gssapi-with-mic publickey,keyboard-interactive

An sshd daemon configured using the above AuthenticationMethods directive only grants access if the user attempting to log in successfully completes publickey authentication followed by either gssapi-with-mic or keyboard-interactive authentication. Note that each of the requested authentication methods needs to be explicitly enabled using a corresponding configuration directive (such as PubkeyAuthentication) in the /etc/ssh/sshd_config file. See the AUTHENTICATION section of ssh(1) for a general list of available authentication methods.
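
The list semantics described above can be modelled in a few lines. The following Python sketch splits an AuthenticationMethods value into its lists and tests whether a set of completed methods satisfies at least one of them. The function names are hypothetical. The model deliberately ignores the order in which methods are completed and does not handle method names with a submethod suffix, so it illustrates the rule rather than reproducing the behavior of sshd.

```python
def parse_authentication_methods(value):
    """Split the directive value into lists: spaces separate lists,
    commas separate method names within a list."""
    return [item.split(",") for item in value.split()]


def access_granted(value, completed):
    """Return True if every method in at least one list was completed.

    Simplification: the order of completion is not modelled.
    """
    done = set(completed)
    return any(
        all(method in done for method in methods)
        for methods in parse_authentication_methods(value)
    )


value = "publickey,gssapi-with-mic publickey,keyboard-interactive"

print(parse_authentication_methods(value))
print(access_granted(value, ["publickey"]))
print(access_granted(value, ["publickey", "keyboard-interactive"]))
```

A public key alone does not satisfy either list, whereas a public key combined with keyboard-interactive authentication satisfies the second one.
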

4.3.11.3. Other Ways of Securing SSH

Protocol Version

Although the implementation of the SSH protocol supplied with Red Hat Enterprise Linux 7 still supports both the SSH-1 and SSH-2 versions of the protocol for SSH clients, only the latter should be used whenever possible. The SSH-2 version contains a number of improvements over the older SSH-1, and the majority of advanced configuration options are only available when using SSH-2.

The SSH protocol protects the authentication and communication for which it is used. The version or versions of the protocol supported by the sshd daemon can be specified using the Protocol configuration directive in the /etc/ssh/sshd_config file. The default setting is 2. Note that the SSH-2 version is the only version supported by the Red Hat Enterprise Linux 7 SSH server.

Key Types

While the ssh-keygen command generates a pair of SSH-2 RSA keys by default, it can be instructed to generate DSA or ECDSA keys as well by using the -t option. ECDSA (Elliptic Curve Digital Signature Algorithm) offers better performance at an equivalent symmetric key strength. It also generates shorter keys.

Non-Default Port

By default, the sshd daemon listens on TCP port 22. Changing the port reduces the exposure of the system to attacks based on automated network scanning, thus increasing security through obscurity. The port can be specified using the Port directive in the /etc/ssh/sshd_config configuration file. Note also that the default SELinux policy must be changed to allow for the use of a non-default port. You can do this by modifying the ssh_port_t SELinux type by typing the following command as root:

~]# semanage port -a -t ssh_port_t -p tcp port_number

In the above command, replace port_number with the new port number specified using the Port directive.

No Root Login

If you do not need to log in as the root user, you should consider setting the PermitRootLogin configuration directive to no in the /etc/ssh/sshd_config file. With root logins disabled, administrators must log in as regular users and then gain root rights, which makes it possible to audit which user runs which privileged command.
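
Several of the sshd_config settings discussed in this section can be reviewed together. The Python sketch below parses sshd_config-style text and reports keywords whose value differs from the recommendations above. The names parse_sshd_config and deviations are hypothetical. The sketch assumes that the first value found for a keyword is the one that applies and that keywords are case-insensitive. It stops at the first Match block and reports an absent keyword as None instead of guessing its default.

```python
# Recommendations taken from this section; keys are lowercased keywords.
RECOMMENDED = {
    "pubkeyauthentication": "yes",
    "passwordauthentication": "no",
    "permitrootlogin": "no",
}


def parse_sshd_config(text):
    """Collect 'Keyword value' pairs, keeping the first value per keyword.

    Simplification: parsing stops at the first Match block, so conditional
    overrides are not considered.
    """
    settings = {}
    for line in text.splitlines():
        stripped = line.strip()
        if not stripped or stripped.startswith("#"):
            continue
        parts = stripped.split(None, 1)
        if len(parts) != 2:
            continue
        keyword, value = parts[0].lower(), parts[1].strip()
        if keyword == "match":
            break
        settings.setdefault(keyword, value)
    return settings


def deviations(text):
    """Return {keyword: found_value} for settings that differ from RECOMMENDED.

    A keyword that is absent is reported with the value None.
    """
    settings = parse_sshd_config(text)
    return {
        keyword: settings.get(keyword)
        for keyword, expected in RECOMMENDED.items()
        if (settings.get(keyword) or "").lower() != expected
    }


# Hypothetical excerpt, for illustration only.
sample = """\
Port 2222
PubkeyAuthentication yes
PasswordAuthentication yes
PermitRootLogin no
permitrootlogin yes
"""

print(deviations(sample))
```

In the sample, only PasswordAuthentication is reported: the second PermitRootLogin line is ignored because an earlier value for that keyword already exists.
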

Using the X Security extension

By default, the ForwardX11Trusted option in the /etc/ssh/ssh_config file is set to yes, and there is no difference between the ssh -X remote_machine (untrusted host) and ssh -Y remote_machine (trusted host) commands.

Warning

4.3.12. Securing PostgreSQL

The postgresql-server package provides PostgreSQL. If it is not installed, enter the following command as the root user to install it:

~]# yum install postgresql-server

The file system location of the database directory is specified with the -D option of the initdb command. For example:

~]$ initdb -D /home/postgresql/db1

The initdb command will attempt to create the directory you specify if it does not already exist. We use the name /home/postgresql/db1 in this example. The /home/postgresql/db1 directory contains all the data stored in the database and also the client authentication configuration file:

~]$ cat pg_hba.conf

# PostgreSQL Client Authentication Configuration File