Fuse on OpenShift Guide

Installing and developing with Red Hat Fuse on OpenShift

Abstract

Chapter 1. Before You Begin

1.1. Release Notes

See the Release Notes for important information about this release.

1.2. Version Compatibility and Support

See the Red Hat JBoss Fuse Supported Configurations page for details of version compatibility and support.

1.3. Support for Windows O/S

The developer tooling (oc client and Container Development Kit) for Fuse on OpenShift is fully supported on the Windows O/S. The examples shown in Linux command-line syntax can also work on the Windows O/S, provided they are modified appropriately to obey Windows command-line syntax.

1.4. Comparison: Fuse Standalone and Fuse on OpenShift

There are several major functionality differences:

- An application deployment with Fuse on OpenShift consists of an application and all required runtime components packaged inside a Docker image. Applications are not deployed to a runtime as with Fuse Standalone; instead, the application image itself is a complete runtime environment deployed and managed through OpenShift.

- Patching in an OpenShift environment is different from Fuse Standalone, as each application image is a complete runtime environment. To apply a patch, the application image is rebuilt and redeployed within OpenShift. Core OpenShift management capabilities allow for rolling upgrades and side-by-side deployment to maintain availability of your application during upgrade.

- Provisioning and clustering capabilities provided by Fabric in Fuse have been replaced with equivalent functionality in Kubernetes and OpenShift. There is no need to create or configure individual child containers as OpenShift automatically does this for you as part of deploying and scaling your application.

- Fabric endpoints are not used within an OpenShift environment. Kubernetes services must be used instead.

- Messaging services are created and managed using the A-MQ for OpenShift image and not included directly within a Karaf container. Fuse on OpenShift provides an enhanced version of the camel-amq component to allow for seamless connectivity to messaging services in OpenShift through Kubernetes.

- Live updates to running Karaf instances using the Karaf shell are strongly discouraged, as updates will not be preserved if an application container is restarted or scaled up. This is a fundamental tenet of immutable architecture and essential to achieving scalability and flexibility within OpenShift.

- Maven dependencies directly linked to Red Hat Fuse components are supported by Red Hat. Third-party Maven dependencies introduced by users are not supported.

- The SSH Agent is not included in the Apache Karaf micro-container, so you cannot connect to it using the bin/client console client.

- Protocol compatibility and Camel components within a Fuse on OpenShift application: non-HTTP based communications must use TLS and SNI to be routable from outside OpenShift into a Fuse service (Camel consumer endpoint).

Chapter 2. Get Started for Administrators

If you are an OpenShift administrator, you can prepare an OpenShift cluster for Fuse on OpenShift deployments by installing the Fuse on OpenShift images and templates as described here.

2.1. Prepare the OpenShift Server

- Start the OpenShift Server.

Log in to the OpenShift Server as an administrator, as follows:

oc login -u system:admin

Install the Fuse on OpenShift image streams. Enter the following commands at a command prompt:

BASEURL=https://raw.githubusercontent.com/jboss-fuse/application-templates/application-templates-2.1.fuse-000099-redhat-5
oc create -n openshift -f ${BASEURL}/fis-image-streams.json

Install the quickstart templates. Enter the following commands at a command prompt:

Install the templates for Fuse Console (Hawtio). Enter the following commands at a command prompt:

oc create -n openshift -f https://raw.githubusercontent.com/jboss-fuse/application-templates/application-templates-2.1.fuse-000099-redhat-5/fis-console-cluster-template.json
oc create -n openshift -f https://raw.githubusercontent.com/jboss-fuse/application-templates/application-templates-2.1.fuse-000099-redhat-5/fis-console-namespace-template.json
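The image stream and console template installs in this chapter all follow the same pattern, so they can be sketched as a small loop. The following is only an illustration, not an official installer: the function name install_fuse_resources and the DRY_RUN switch are assumptions made for this sketch, and the quickstart template files are left out because they are not listed in this chapter. With DRY_RUN=1 (the default here) the script only prints the oc commands it would run.

```shell
#!/usr/bin/env bash
# Sketch: install the Fuse on OpenShift image streams and console templates.
# DRY_RUN=1 (the default in this sketch) prints the oc commands instead of running them.

BASEURL="https://raw.githubusercontent.com/jboss-fuse/application-templates/application-templates-2.1.fuse-000099-redhat-5"
DRY_RUN="${DRY_RUN:-1}"

# Resource files named in this chapter. The quickstart template files are not
# listed here and would have to be added by the administrator.
RESOURCES="fis-image-streams.json fis-console-cluster-template.json fis-console-namespace-template.json"

# Hypothetical helper: creates each resource in the openshift namespace.
install_fuse_resources() {
  local resource
  for resource in $RESOURCES; do
    if [ "$DRY_RUN" = "1" ]; then
      echo "oc create -n openshift -f ${BASEURL}/${resource}"
    else
      oc create -n openshift -f "${BASEURL}/${resource}" || return 1
    fi
  done
}

install_fuse_resources
```

To perform the installation for real, log in first with oc login -u system:admin and run the script with DRY_RUN=0.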

Chapter 3. Get Started for Developers

You can start using Fuse on OpenShift by creating an application and deploying it to OpenShift using one of the following OpenShift Source-to-Image (S2I) application development workflows:

- S2I binary workflow

- S2I with build input from a binary source. This workflow is characterized by the fact that the build is partly executed on the developer’s own machine. After building a binary package locally, this workflow hands off the binary package to OpenShift. For more details, see Binary Source from the OpenShift 3.9 Developer Guide.

- S2I source workflow

- S2I with build input from a Git source. This workflow is characterized by the fact that the build is executed entirely on the OpenShift server. For more details, see Git Source from the OpenShift 3.9 Developer Guide.

3.1. Prerequisites

3.1.1. Access to an OpenShift Server

The fundamental requirement for developing and testing Fuse on OpenShift projects is having access to an OpenShift Server. You have the following basic alternatives:

3.1.1.1. Install Container Development Kit (CDK) on Your Local Machine

To get started quickly, the most practical alternative for a developer is to install Red Hat CDK on their local machine. Using CDK, you can boot a virtual machine (VM) instance that runs an image of OpenShift on Red Hat Enterprise Linux (RHEL) 7. An installation of CDK consists of the following key components:

- A virtual machine (libvirt, VirtualBox, or Hyper-V)

- Minishift to start and manage the Container Development Environment

For Fuse on OpenShift, we recommend you install version 3.4 of CDK. Detailed instructions for installing and using CDK 3.4 are provided in the following guide:

If you opt to use CDK, we recommend that you read and thoroughly understand the content of the preceding guide before proceeding with the examples in this chapter.

Recent versions of CDK have Fuse on OpenShift images and templates pre-installed. If the images and templates are not pre-installed, however, or if the provided versions are out of date, you will need to install (or update) the Fuse on OpenShift images and templates manually — see Chapter 2, Get Started for Administrators.

Red Hat CDK is intended for development purposes only. It is not intended for other purposes, such as production environments, and may not address known security vulnerabilities. For full support of running mission-critical applications inside of docker-formatted containers, you need an active RHEL 7 or RHEL Atomic subscription. For more details, see Support for Red Hat Container Development Kit (CDK).

3.1.1.2. Get Remote Access to an Existing OpenShift Server

Your IT department might already have set up an OpenShift cluster on some server machines. In this case, the following requirements must be satisfied for getting started with Fuse on OpenShift:

- The server machines must be running a supported version of OpenShift Container Platform (as documented in the Supported Configurations page). The examples in this guide have been tested against version 3.9.

- Ask the OpenShift administrator to install the latest Fuse on OpenShift container base images and the Fuse on OpenShift templates on the OpenShift servers.

- Ask the OpenShift administrator to create a user account for you, having the usual developer permissions (enabling you to create, deploy, and run OpenShift projects).

- Ask the administrator for the URL of the OpenShift Server (which you can use either to browse to the OpenShift console or connect to OpenShift using the oc command-line client) and the login credentials for your account.

3.1.2. Java Version

On your developer machine, make sure you have installed a Java version that is supported by Fuse 7.0. For details of the supported Java versions, see Supported Configurations.

3.1.3. Install the Requisite Client-Side Tools

We recommend that you have the following tools installed on your developer machine:

- Apache Maven 3.3.x

- Required for local builds of OpenShift projects. Download the appropriate package from the Apache Maven download page. Make sure that you have at least version 3.3.x (or later) installed, otherwise Maven might have problems resolving dependencies when you build your project.

- Git

- Required for the OpenShift S2I source workflow and generally recommended for source control of your Fuse on OpenShift projects. Download the appropriate package from the Git Downloads page.

- OpenShift client

If you are using CDK, you can add the oc binary to your PATH using minishift oc-env, which displays the command you need to type into your shell (the output of oc-env will differ depending on OS and shell type):

$ minishift oc-env
export PATH="/Users/john/.minishift/cache/oc/v1.5.0:$PATH"
# Run this command to configure your shell:
# eval $(minishift oc-env)

For more details, see Using the OpenShift Client Binary in CDK 3.4 Getting Started Guide.

If you are not using CDK, follow the instructions in the CLI Reference to install the oc client tool.

- (Optional) Docker client

Advanced users might find it convenient to have the Docker client tool installed (to communicate with the docker daemon running on an OpenShift server). For information about specific binary installations for your operating system, see the Docker installation site.

For more details, see Reusing the docker Daemon in CDK 3.4 Getting Started Guide.

Make sure that you install versions of the oc tool and the docker tool that are compatible with the version of OpenShift running on the OpenShift Server.
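A quick way to confirm that the tools listed above are available is a small check script. The sketch below is an assumption-laden illustration: the function names version_ge and check_tools are hypothetical, the version comparison handles plain dotted numbers only, and the Maven version is read from the first line of mvn -version output, which is assumed to have the form "Apache Maven X.Y.Z ...". The docker client is optional, so a "missing" report for it is not an error.

```shell
#!/usr/bin/env bash
# Sketch: report which client-side tools are on the PATH and check the Maven version.

# Succeeds when dotted version $1 is greater than or equal to dotted version $2.
# Handles up to three numeric fields; missing fields count as 0.
version_ge() {
  local IFS=.
  local -a have=($1) want=($2)
  local i h w
  for i in 0 1 2; do
    h="${have[i]:-0}"
    w="${want[i]:-0}"
    if (( 10#$h > 10#$w )); then return 0; fi
    if (( 10#$h < 10#$w )); then return 1; fi
  done
  return 0
}

# Hypothetical helper: prints one line per tool, then a Maven version verdict.
check_tools() {
  local tool mvn_version
  for tool in mvn git oc docker; do
    if command -v "$tool" >/dev/null 2>&1; then
      echo "found:   $tool"
    else
      echo "missing: $tool"
    fi
  done
  if command -v mvn >/dev/null 2>&1; then
    # Assumes the first output line looks like "Apache Maven 3.x.y (...)".
    mvn_version="$(mvn -version 2>/dev/null | awk 'NR==1 {print $3}')"
    if version_ge "${mvn_version%%-*}" 3.3.0; then
      echo "Maven $mvn_version satisfies the 3.3.x minimum"
    else
      echo "Maven $mvn_version is older than 3.3.x"
    fi
  fi
}

check_tools
```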

3.2. Prepare Your Development Environment

After installing the required software and tools, prepare your development environment as follows.

3.2.1. Configure Maven Repositories

Configure the Maven repositories, which hold the archetypes and artifacts you will need for building a Fuse on OpenShift project on your local machine. Edit your Maven settings.xml file, which is usually located in ~/.m2/settings.xml (on Linux or macOS) or Documents and Settings\<USER_NAME>\.m2\settings.xml (on Windows). The following Maven repositories are required:

- Maven central: https://repo1.maven.org/maven2

- Red Hat GA repository: https://maven.repository.redhat.com/ga

- Red Hat EA repository: https://maven.repository.redhat.com/earlyaccess/all

You must add the preceding repositories to both the dependency repositories section and the plug-in repositories section of your settings.xml file.
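One possible layout for these settings is a single profile that lists the three repositories twice, once under repositories and once under pluginRepositories. The sketch below writes such a file to a separate example path rather than touching your real ~/.m2/settings.xml. The file name settings-fuse-example.xml, the function name write_fuse_settings, the profile ID fuse-repos, and the repository IDs are all assumptions chosen for illustration; only the three URLs come from the list above.

```shell
#!/usr/bin/env bash
# Sketch: write an example Maven settings file that lists the three repositories
# as both dependency repositories and plug-in repositories.

SETTINGS_FILE="${SETTINGS_FILE:-./settings-fuse-example.xml}"  # hypothetical path

# Hypothetical helper: writes the example settings to the file given as $1.
write_fuse_settings() {
  cat > "$1" <<'EOF'
<?xml version="1.0" encoding="UTF-8"?>
<settings xmlns="http://maven.apache.org/SETTINGS/1.0.0">
  <profiles>
    <profile>
      <id>fuse-repos</id>
      <repositories>
        <repository>
          <id>maven-central</id>
          <url>https://repo1.maven.org/maven2</url>
        </repository>
        <repository>
          <id>redhat-ga</id>
          <url>https://maven.repository.redhat.com/ga</url>
        </repository>
        <repository>
          <id>redhat-ea</id>
          <url>https://maven.repository.redhat.com/earlyaccess/all</url>
        </repository>
      </repositories>
      <pluginRepositories>
        <pluginRepository>
          <id>maven-central</id>
          <url>https://repo1.maven.org/maven2</url>
        </pluginRepository>
        <pluginRepository>
          <id>redhat-ga</id>
          <url>https://maven.repository.redhat.com/ga</url>
        </pluginRepository>
        <pluginRepository>
          <id>redhat-ea</id>
          <url>https://maven.repository.redhat.com/earlyaccess/all</url>
        </pluginRepository>
      </pluginRepositories>
    </profile>
  </profiles>
  <activeProfiles>
    <activeProfile>fuse-repos</activeProfile>
  </activeProfiles>
</settings>
EOF
}

write_fuse_settings "$SETTINGS_FILE"
echo "Wrote $SETTINGS_FILE"
```

You can try the generated file without replacing your own settings by passing it to Maven with the -s option, or merge the profile into your existing settings.xml by hand.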

3.2.2. (Optional) Install Developer Studio

Red Hat JBoss Developer Studio is an Eclipse-based development environment, which includes support for developing Fuse on OpenShift applications. For details about how to install this development environment, see Install Red Hat JBoss Developer Studio.

3.3. Create and Deploy a Project Using the S2I Binary Workflow

In this section, you will use the OpenShift S2I binary workflow to create, build, and deploy a Fuse on OpenShift project.

Create a new Fuse on OpenShift project using a Maven archetype. For this example, we use an archetype that creates a sample Spring Boot Camel project. Open a new shell prompt and enter the following Maven command:

mvn org.apache.maven.plugins:maven-archetype-plugin:2.4:generate \
  -DarchetypeCatalog=https://maven.repository.redhat.com/ga/io/fabric8/archetypes/archetypes-catalog/2.2.0.fuse-000110-redhat-5/archetypes-catalog-2.2.0.fuse-000110-redhat-5-archetype-catalog.xml \
  -DarchetypeGroupId=org.jboss.fuse.fis.archetypes \
  -DarchetypeArtifactId=spring-boot-camel-xml-archetype \
  -DarchetypeVersion=2.2.0.fuse-000110-redhat-5

The archetype plug-in switches to interactive mode to prompt you for the remaining fields. When prompted, enter org.example.fis for the groupId value and fuse70-spring-boot for the artifactId value. Accept the defaults for the remaining fields.

- If the previous command exited with the BUILD SUCCESS status, you should now have a new Fuse on OpenShift project under the fuse70-spring-boot subdirectory. You can inspect the XML DSL code in the fuse70-spring-boot/src/main/resources/spring/camel-context.xml file. The demonstration code defines a simple Camel route that continuously sends a message containing a random number to the log.

In preparation for building and deploying the Fuse on OpenShift project, log in to the OpenShift Server as follows:

oc login -u developer -p developer https://OPENSHIFT_IP_ADDR:8443

Where OPENSHIFT_IP_ADDR is a placeholder for the OpenShift server’s IP address, as this IP address is not always the same.

Note: The developer user (with developer password) is a standard account that is automatically created on the virtual OpenShift Server by CDK. If you are accessing a remote server, use the URL and credentials provided by your OpenShift administrator.

Create a new project namespace called test (assuming it does not already exist), as follows:

oc new-project test

If the test project namespace already exists, you can switch to it using the following command:

oc project test

You are now ready to build and deploy the fuse70-spring-boot project. Assuming you are still logged into OpenShift, change to the directory of the fuse70-spring-boot project, and then build and deploy the project, as follows:

cd fuse70-spring-boot
mvn fabric8:deploy -P openshift

At the end of a successful build, you should see some output like the following:

Note: The first time you run this command, Maven has to download a lot of dependencies, which takes several minutes. Subsequent builds will be faster.

- Navigate to the OpenShift console in your browser and log in to the console with your credentials (for example, with username developer and password developer).

- In the OpenShift console, scroll down to find the test project namespace. Click the test project to open the test project namespace. The Overview tab of the test project opens, showing the fuse70-spring-boot application.

Click the arrow on the left of the fuse70-spring-boot deployment to expand and view the details of this deployment, as shown.

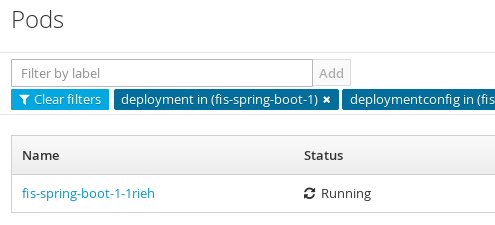

Click in the centre of the pod icon (blue circle) to view the list of pods for fuse70-spring-boot.

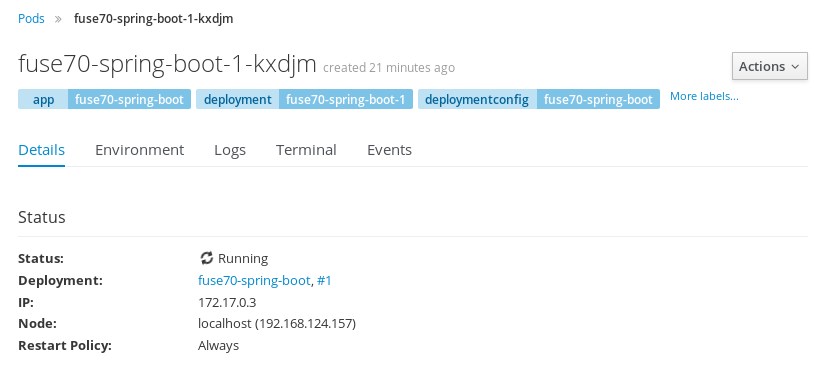

Click on the pod Name (in this example, fuse70-spring-boot-1-kxdjm) to view the details of the running pod.

Click on the Logs tab to view the application log and scroll down the log to find the random number log messages generated by the Camel application.

- Click Overview on the left-hand navigation bar to return to the applications overview in the test namespace. To shut down the running pod, click the down arrow beside the pod icon. When a dialog prompts you with the question Scale down deployment fuse70-spring-boot-1?, click Scale Down.

(Optional) If you are using CDK, you can shut down the virtual OpenShift Server completely by returning to the shell prompt and entering the following command:

minishift stop

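The command-line part of this section (log in, select the project namespace, build and deploy) can be collected into one script. The sketch below is an illustration under stated assumptions: the run wrapper, the function name deploy_binary_workflow, and the DRY_RUN, OPENSHIFT_URL, and PROJECT variables are hypothetical names introduced here, and OPENSHIFT_IP_ADDR remains a placeholder that you must replace. With DRY_RUN=1 (the default in this sketch) each command is printed rather than executed.

```shell
#!/usr/bin/env bash
# Sketch of the command-line steps in this section.
# DRY_RUN=1 (the default in this sketch) prints each command instead of running it.

DRY_RUN="${DRY_RUN:-1}"
OPENSHIFT_URL="${OPENSHIFT_URL:-https://OPENSHIFT_IP_ADDR:8443}"  # placeholder, as in the text
PROJECT="${PROJECT:-test}"

# Hypothetical wrapper: prints the command in dry-run mode, otherwise runs it.
run() {
  if [ "$DRY_RUN" = "1" ]; then
    echo "+ $*"
  else
    "$@"
  fi
}

deploy_binary_workflow() {
  run oc login -u developer -p developer "$OPENSHIFT_URL" || return 1
  # Switch to the project namespace if it exists; otherwise create it.
  run oc project "$PROJECT" || run oc new-project "$PROJECT" || return 1
  run cd fuse70-spring-boot || return 1
  run mvn fabric8:deploy -P openshift
}

deploy_binary_workflow
```

Trying oc project before oc new-project means the same script works whether or not the test namespace already exists.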

3.3.1. Undeploy and Redeploy the Project

You can undeploy or redeploy your projects, as follows:

To undeploy the project, enter the command:

mvn fabric8:undeploy

To redeploy the project, enter the commands:

mvn fabric8:undeploy
mvn fabric8:deploy -P openshift

3.3.2. Opening the HawtIO Console

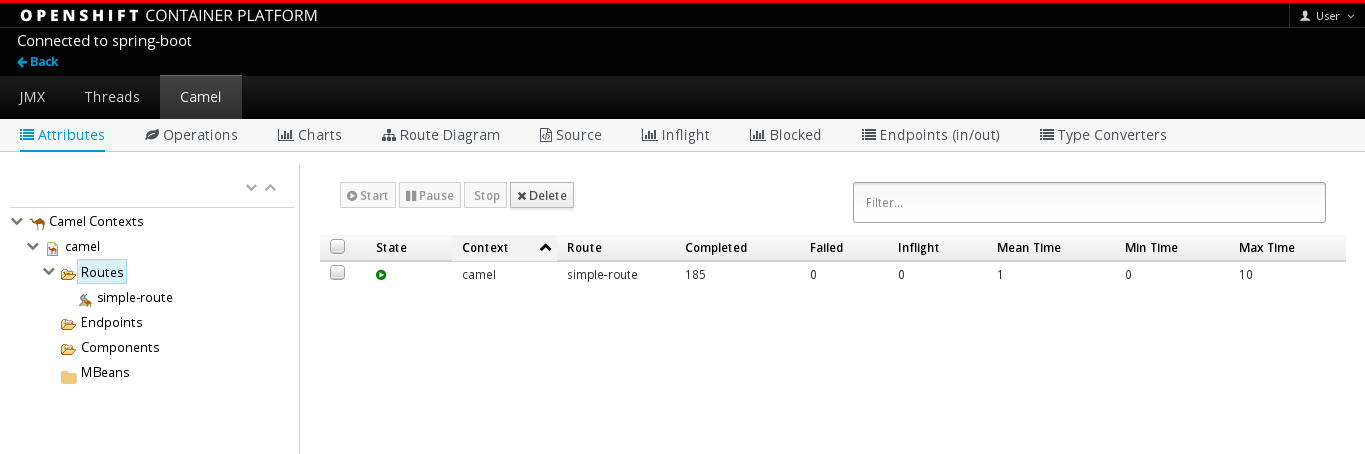

To open the HawtIO console for a pod running the Fuse on OpenShift Spring Boot example, proceed as follows:

From the Applications → Pods view in your OpenShift project, click on the pod name to view the details of the running Fuse on OpenShift Spring Boot pod. On the right-hand side of this page, you see a summary of the container template:

From this view, click on the Open Java Console link to open the HawtIO console.

Note: To configure OpenShift to display a link to the HawtIO console in the pod view, the pod running a Fuse on OpenShift image must declare a TCP port with a name attribute set to jolokia.

3.4. Create and Deploy a Project Using the S2I Source Workflow

In this section, you will use the OpenShift S2I source workflow to build and deploy a Fuse on OpenShift project based on a template. The starting point for this demonstration is a quickstart project stored in a remote Git repository. Using the OpenShift console, you will download, build, and deploy this quickstart project in the OpenShift server.

-

Navigate to the OpenShift console in your browser (https://OPENSHIFT_IP_ADDR:8443, replace

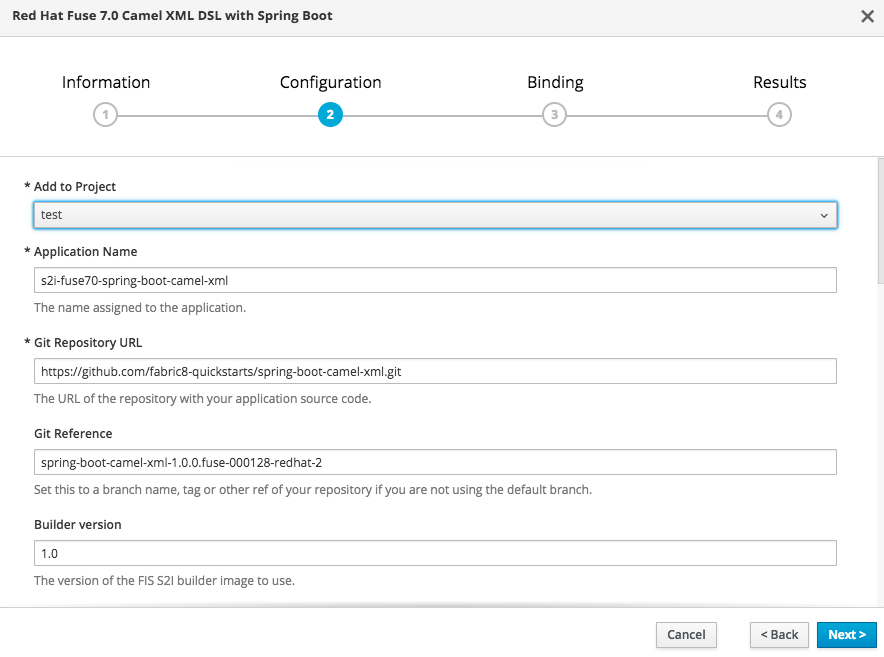

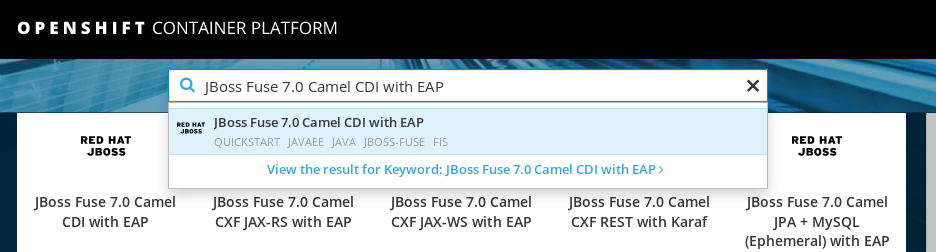

OPENSHIFT_IP_ADDRwith the IP address that was displayed in the case of CDK) and log in to the console with your credentials (for example, with usernamedeveloperand password,developer). In the Catalog search field, enter

Red Hat Fuse 7.0 Camel XML DSL with Spring Bootas the search string and select the Red Hat Fuse 7.0 Camel XML DSL with Spring Boot template.

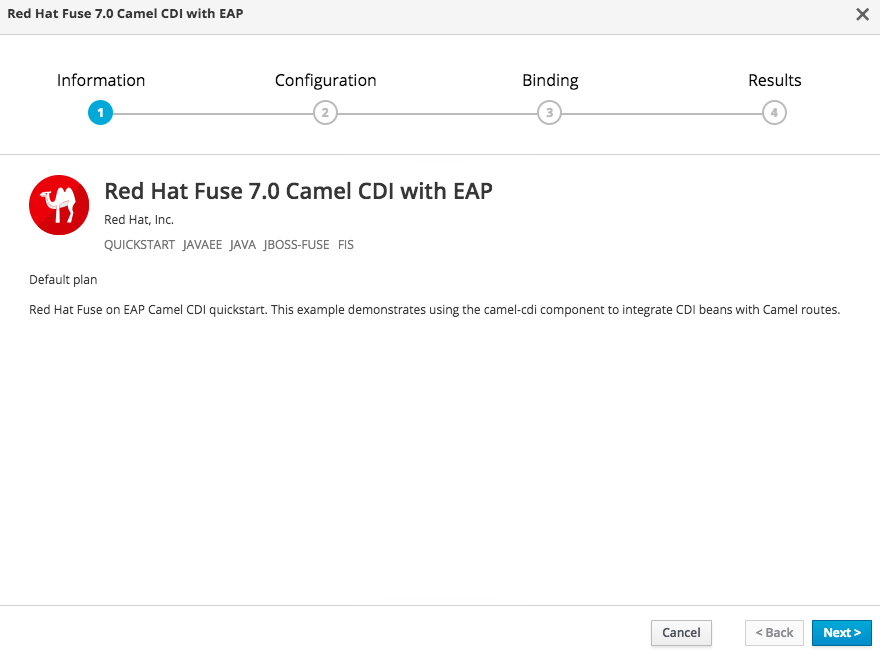

The Information step of the template wizard opens. Click Next.

The Configuration step of the template wizard opens, as shown. From the Add to Project dropdown, select test. You can accept the default values for the rest of the settings in the Configuration step.

Note: Alternatively, if you prefer to create a new project for this example, select Create Project from the Add to Project dropdown. A Project Name field then appears for you to fill in the name of the new project.

Note: If you want to modify the application code (instead of just running the quickstart as is), you would need to fork the original quickstart Git repository and fill in the appropriate values in the Git Repository URL and Git Reference fields.

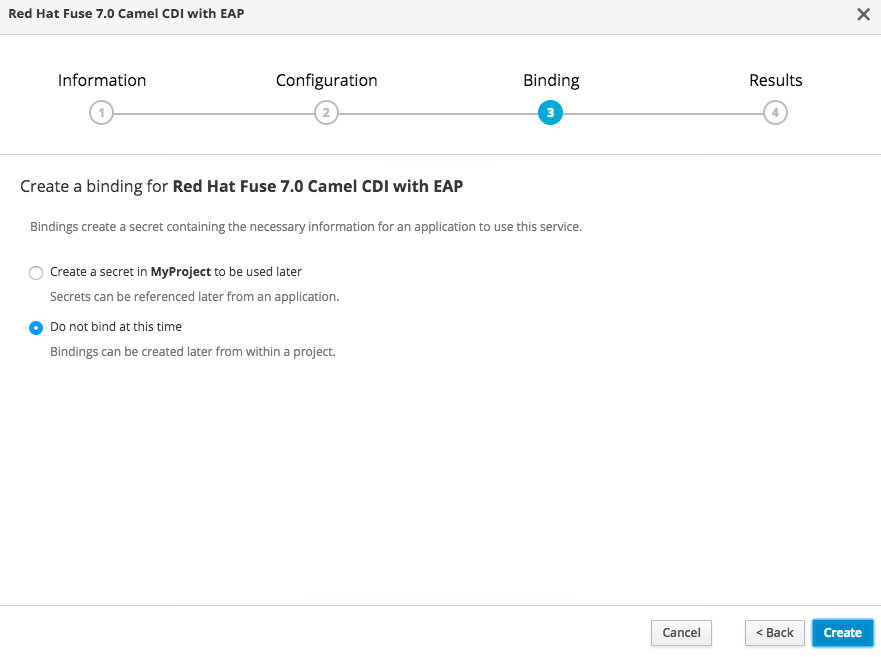

The Bindings option creates a secret with necessary information for an application to use a service. Click Create.

- The Results step of the template wizard opens. Click Close.

- In the right-hand My Projects pane, click test. The Overview tab of the test project opens, showing the s2i-fuse70-spring-boot-camel-xml application.

Click the arrow on the left of the s2i-fuse70-spring-boot-camel-xml deployment to expand and view the details of this deployment, as shown.

In this view, you can see the build log. If the build should fail for any reason, the build log can help you to diagnose the problem.

Note: The build can take several minutes to complete, because a lot of dependencies must be downloaded from remote Maven repositories. To speed up build times, we recommend you deploy a Nexus server on your local network.

If the build completes successfully, the pod icon shows as a blue circle with 1 pod running. Click in the centre of the pod icon (blue circle) to view the list of pods for s2i-fuse70-spring-boot-camel-xml.

Note: If multiple pods are running, you would see a list of running pods at this point. Otherwise (if there is just one pod), you get straight through to the details view of the running pod.

The pod details view opens. Click on the Logs tab to view the application log and scroll down the log to find the log messages generated by the Camel application.

- Click Overview on the left-hand navigation bar to return to the overview of the applications in the test namespace. To shut down the running pod, click the down arrow beside the pod icon. When a dialog prompts you with the question Scale down deployment s2i-fuse70-spring-boot-camel-xml-1?, click Scale Down.

(Optional) If you are using CDK, you can shut down the virtual OpenShift Server completely by returning to the shell prompt and entering the following command:

minishift stop


Chapter 4. Develop an Application for the Spring Boot Image

4.1. Overview

This chapter explains how to develop applications for the Spring Boot image.

4.2. Create a Spring Boot Project using Maven Archetype

To create a Spring Boot project, follow these steps:

- Go to the appropriate directory on your system.

In a shell prompt, enter the following mvn command to create a Spring Boot project:

mvn org.apache.maven.plugins:maven-archetype-plugin:2.4:generate \
  -DarchetypeCatalog=https://maven.repository.redhat.com/ga/io/fabric8/archetypes/archetypes-catalog/2.2.0.fuse-000110-redhat-5/archetypes-catalog-2.2.0.fuse-000110-redhat-5-archetype-catalog.xml \
  -DarchetypeGroupId=org.jboss.fuse.fis.archetypes \
  -DarchetypeArtifactId=spring-boot-camel-xml-archetype \
  -DarchetypeVersion=2.2.0.fuse-000110-redhat-5

The archetype plug-in switches to interactive mode to prompt you for the remaining fields. When prompted, enter org.example.fis for the groupId value and fuse70-spring-boot for the artifactId value. Accept the defaults for the remaining fields.

Then, follow the instructions in the quickstart on how to build and deploy the example.

For the full list of available Spring Boot archetypes, see Section 4.4, “Spring Boot Archetype Catalog”.

4.3. Structure of the Camel Spring Boot Application

The directory structure of a Camel Spring Boot application is as follows:

Where the following files are important for developing an application:

- pom.xml

Includes additional dependencies. Camel components that are compatible with Spring Boot are available in the starter version, for example camel-jdbc-starter or camel-infinispan-starter. Once the starters are included in the pom.xml, they are automatically configured and registered with the Camel context at boot time. Users can configure the properties of the components using the application.properties file.

- application.properties

Allows you to externalize your configuration and work with the same application code in different environments. For details, see Externalized Configuration.

For example, in this Camel application you can configure certain properties, such as the name of the application or the IP addresses.

- Application.java

This file is the entry point that runs your application. Here you import the camel-context.xml file to configure routes using the Spring DSL.

The Application.java file specifies the @SpringBootApplication annotation, which is equivalent to @Configuration, @EnableAutoConfiguration and @ComponentScan with their default attributes.

Application.java

@SpringBootApplication
// load the Spring XML file from the classpath that contains the Camel XML DSL
@ImportResource({"classpath:spring/camel-context.xml"})

The Application class must have a main method to run the Spring Boot application.

- camel-context.xml

The src/main/resources/spring/camel-context.xml file is important for developing the application, as it contains the Camel routes.

Note: You can find more information on developing Spring-Boot applications at Developing your first Spring Boot Application.

- src/main/fabric8/deployment.yml

Provides additional configuration that is merged with the default OpenShift configuration file generated by the fabric8-maven-plugin.

Note: This file is not used as part of the Spring Boot application, but it is used in all quickstarts to limit resources such as CPU and memory usage.

4.4. Spring Boot Archetype Catalog

The Spring Boot Archetype catalog includes the following examples.

| Name | Description |
|---|---|
| | Demonstrates how to use Apache Camel with Spring Boot based on a fabric8 Java base image. |
| | Demonstrates how to connect a Spring-Boot application to an ActiveMQ broker and use JMS messaging between two Camel routes using Kubernetes or OpenShift. |
| | Demonstrates how to configure a Spring-Boot application using Kubernetes ConfigMaps and Secrets. |
| | Demonstrates how to use Apache Camel to integrate a Spring-Boot application running on Kubernetes or OpenShift with a remote Kie Server. |
| | Demonstrates how to connect a Spring-Boot application to a JBoss Data Grid or Infinispan server using the Hot Rod protocol. |
| | Demonstrates how to connect Apache Camel to a remote JBoss Data Virtualization (or Teiid) Server using the JDBC protocol. |
| | Demonstrates how to configure Camel routes in Spring Boot via a Blueprint configuration file. |
| | Demonstrates how to use Apache CXF with Spring Boot based on a fabric8 Java base image. The quickstart uses Spring Boot to configure an application that includes a CXF JAXRS endpoint with Swagger enabled. |
| | Demonstrates how to use Apache CXF with Spring Boot based on a fabric8 Java base image. The quickstart uses Spring Boot to configure an application that includes a CXF JAXWS endpoint. |

A Technology Preview quickstart is also available. The Spring Boot Camel XA Transactions quickstart demonstrates how to use Spring Boot to run a Camel service that supports XA transactions. This quickstart shows the use of two external transactional resources: a JMS (AMQ) broker and a database (PostgreSQL). You can find this quickstart here: https://github.com/jboss-fuse/spring-boot-camel-xa.

Technology Preview features are not supported with Red Hat production service level agreements (SLAs), might not be functionally complete, and Red Hat does not recommend using them in production. These features provide early access to upcoming product features, enabling customers to test functionality and provide feedback during the development process. For more information, see Red Hat Technology Preview features support scope.

4.5. Camel Starter Modules

4.5.1. Overview

Starters are Apache Camel modules intended to be used in Spring Boot applications. There is a camel-xxx-starter module for each Camel component (with a few exceptions listed below).

Starters meet the following requirements:

- Allow auto-configuration of the component using the native Spring Boot configuration system, which is compatible with IDE tooling.

- Allow auto-configuration of data formats and languages.

- Manage transitive logging dependencies to integrate with the Spring Boot logging system.

- Include additional dependencies and align transitive dependencies to minimize the effort of creating a working Spring Boot application.

Each starter has its own integration test in tests/camel-itest-spring-boot, which verifies compatibility with the current release of Spring Boot.

4.5.2. Using Camel Starter Modules

Apache Camel provides a starter module that allows you to develop Spring Boot applications using starters.

To use the Spring Boot starter:

Add the following to your Spring Boot pom.xml file:

<dependency>
  <groupId>org.apache.camel</groupId>
  <artifactId>camel-spring-boot-starter</artifactId>
</dependency>

Add classes with your Camel routes such as:

These routes will be started automatically.

To keep the main thread blocked so that Camel stays up, either include the spring-boot-starter-web dependency, or add camel.springboot.main-run-controller=true to your application.properties or application.yml file.

You can customize the Camel application in the application.properties or application.yml file with camel.springboot.* properties.
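As an illustration of the property-based option, the following sketch adds camel.springboot.main-run-controller=true to a properties file only if the key is not already present. The helper name set_property and the PROPS_FILE variable are assumptions made for this example; the default path is the conventional Spring Boot location, which may differ in your project. The key check is a simple pattern match, so treat it as a convenience rather than a full properties parser.

```shell
#!/usr/bin/env bash
# Sketch: set a property in a Spring Boot application.properties file without
# adding a duplicate line when the key is already present.

# Hypothetical helper: set_property FILE KEY VALUE
set_property() {
  local file="$1" key="$2" value="$3"
  mkdir -p "$(dirname "$file")"
  touch "$file"
  # The dots in the key are treated as regex wildcards here, which is
  # acceptable for this sketch but not an exact match.
  if grep -q "^${key}=" "$file"; then
    echo "$key is already set in $file"
  else
    printf '%s=%s\n' "$key" "$value" >> "$file"
  fi
}

PROPS_FILE="${PROPS_FILE:-src/main/resources/application.properties}"  # assumed location
set_property "$PROPS_FILE" camel.springboot.main-run-controller true
```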

4.6. Unsupported Starter Modules

The following components do not have a starter because of compatibility issues:

- camel-blueprint (intended for OSGi only)

- camel-cdi (intended for CDI only)

- camel-core-osgi (intended for OSGi only)

- camel-ejb (intended for JEE only)

- camel-eventadmin (intended for OSGi only)

- camel-ibatis (camel-mybatis-starter is included)

- camel-jclouds

- camel-mina (camel-mina2-starter is included)

- camel-paxlogging (intended for OSGi only)

- camel-quartz (camel-quartz2-starter is included)

- camel-spark-rest

- camel-swagger (camel-swagger-java-starter is included)

4.7. BOM file for Spring Boot

The purpose of a Maven Bill of Materials (BOM) file is to provide a curated set of Maven dependency versions that work well together, saving you from having to define versions individually for every Maven artifact.

The Fuse BOM for Spring Boot offers the following advantages:

- Defines versions for Maven dependencies, so that you do not need to specify the version when you add a dependency to your POM.

- Defines a set of curated dependencies that are fully tested and supported for a specific version of Fuse.

- Simplifies upgrades of Fuse.

Only the set of dependencies defined by a Fuse BOM is supported by Red Hat.

To incorporate a BOM file into your Maven project, specify a dependencyManagement element in your project’s pom.xml file (or, possibly, in a parent POM file), as shown in the following example:

The org.jboss.redhat-fuse BOM is new in Fuse 7.0 and has been designed to simplify BOM versioning. The Fuse quickstarts and Maven archetypes still use the old style of BOM, however, as they have not yet been refactored to use the new one. Both BOMs are correct and you can use either one in your Maven projects. In an upcoming Fuse release, the quickstarts and Maven archetypes will be refactored to use the new BOM.

After specifying the BOM using the dependency management mechanism, it becomes possible to add Maven dependencies to your POM without specifying the version of the artifact. For example, to add a dependency for the camel-hystrix component, you would add the following XML fragment to the dependencies element in your POM:

<dependency>
  <groupId>org.apache.camel</groupId>
  <artifactId>camel-hystrix-starter</artifactId>
</dependency>

Note how the Camel artifact ID is specified with the -starter suffix — that is, you specify the Camel Hystrix component as camel-hystrix-starter, not as camel-hystrix. The Camel starter components are packaged in a way that is optimized for the Spring Boot environment.

4.8. Spring Boot Maven plugin

The Spring Boot Maven plugin is provided by Spring Boot and it is a developer utility for building and running a Spring Boot project:

- Building: create an executable Jar package for your Spring Boot application by entering the command mvn package in the project directory. The output of the build is placed in the target/ subdirectory of your Maven project.

- Running: for convenience, you can run the newly-built application with the command mvn spring-boot:start.

To incorporate the Spring Boot Maven plugin into your project POM file, add the plugin configuration to the project/build/plugins section of your pom.xml file, as shown in the following example:

Chapter 5. Apache Camel in Spring Boot

5.1. Introduction to Camel Spring Boot

The Camel Spring Boot component provides auto configuration for Apache Camel. Auto-configuration of the Camel context auto-detects Camel routes available in the Spring context and registers the key Camel utilities such as producer template, consumer template, and the type converter as beans.

Every Camel Spring Boot application should use dependencyManagement with productized versions; see the quickstart pom. Versions that are tagged later can be omitted, so that they do not override the versions from the BOM.

The camel-spring-boot jar includes a spring.factories file, so as soon as you add that dependency to your classpath, Spring Boot automatically auto-configures Camel.

5.2. Introduction to Camel Spring Boot Starter

Apache Camel includes a Spring Boot starter module that allows you to develop Spring Boot applications using starters.

For more details, see sample application in the source code.

To use the starter, add the following snippet to your Spring Boot pom.xml file:

<dependency>
  <groupId>org.apache.camel</groupId>
  <artifactId>camel-spring-boot-starter</artifactId>
</dependency>

The starter allows you to add classes with your Camel routes. Once these routes are added to the class path, they are started automatically.

You can customize the Camel application in the application.properties or application.yml file.

Camel Spring Boot supports referring to a bean by its ID in the configuration files (application.properties or application.yml) when you configure any of the Camel starter components. In the src/main/resources/application.properties (or YAML) file, you can configure Camel options that refer to other beans by specifying the bean ID. For example, the xslt component can refer to a custom bean with the ID myExtensionFactory as follows:

camel.component.xslt.saxon-extension-functions=myExtensionFactory

You can then create the bean using the Spring Boot @Bean annotation as follows:

@Bean(name = "myExtensionFactory")
public ExtensionFunctionDefinition myExtensionFactory() {
    // your code here: create and return the ExtensionFunctionDefinition
}

Or, in case of a Jackson ObjectMapper in the camel-jackson data-format:

camel.dataformat.json-jackson.object-mapper=myJacksonMapper

5.3. Auto-configured Camel context

Camel auto-configuration creates a SpringCamelContext and provides it as the CamelContext instance. It also takes care of initializing and shutting down that context. The Camel context is registered in the Spring application context under the camelContext bean name, and you can access it like any other Spring bean.

For example, you can access the camelContext as shown below:

5.4. Auto-detecting Camel routes

Camel auto-configuration collects all the RouteBuilder instances from the Spring context and automatically injects them into the CamelContext. This simplifies the process of creating a new Camel route with the Spring Boot starter. You can create routes by adding a class annotated with @Component to your classpath.

To create a new route RouteBuilder bean in your @Configuration class, see below:

5.5. Camel properties

Camel Spring Boot auto-configuration automatically connects Spring Boot external configuration (such as property placeholders, OS environment variables, or system properties) with Camel properties support.

These properties are defined in the application.properties file:

route.from = jms:invoices

Alternatively, set a property as a system property:

java -Droute.to=jms:processed.invoices -jar mySpringApp.jar

Use the properties as placeholders in a Camel route:
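In a Camel route, such properties are referenced with the {{key}} placeholder syntax, for example {{route.from}}. The following plain-Java sketch is not Camel code. It only illustrates, under simplified assumptions, how a placeholder might be resolved against the two sources named above. The class name PlaceholderSketch and the rule that a system property wins over the properties file are assumptions made for this illustration.

```java
import java.util.Properties;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

// Hypothetical helper: a simplified stand-in for property placeholder resolution.
final class PlaceholderSketch {

    // Matches {{some.key}} and captures the key.
    private static final Pattern PLACEHOLDER = Pattern.compile("\\{\\{([^{}]+)\\}\\}");

    // Replaces every {{key}} in text; system properties take precedence
    // over the values loaded from application.properties (assumption).
    static String resolve(String text, Properties appProps) {
        Matcher m = PLACEHOLDER.matcher(text);
        StringBuffer out = new StringBuffer();
        while (m.find()) {
            String key = m.group(1).trim();
            String value = System.getProperty(key, appProps.getProperty(key));
            if (value == null) {
                throw new IllegalArgumentException("No value for property: " + key);
            }
            m.appendReplacement(out, Matcher.quoteReplacement(value));
        }
        m.appendTail(out);
        return out.toString();
    }

    public static void main(String[] args) {
        Properties appProps = new Properties();
        appProps.setProperty("route.from", "jms:invoices");              // as in application.properties
        System.setProperty("route.to", "jms:processed.invoices");        // as with -Droute.to=...

        System.out.println(resolve("{{route.from}}", appProps));
        System.out.println(resolve("{{route.to}}", appProps));
    }
}
```

In a real application, Camel and Spring Boot perform this resolution for you; the sketch only shows why route.from can live in a file while route.to is supplied on the command line.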

5.6. Custom Camel context configuration

To perform operations on the CamelContext bean created by Camel auto-configuration, you need to register a CamelContextConfiguration instance in your Spring context as shown below:

The method CamelContextConfiguration.beforeApplicationStart(CamelContext) will be called before the Spring context is started, so the CamelContext instance passed to this callback is fully auto-configured. You can add many instances of CamelContextConfiguration into your Spring context and all of them will be executed.

5.7. Disabling JMX

JMX is enabled by default. To disable JMX for the auto-configured CamelContext, set the camel.springboot.jmxEnabled property to false.

For example, you could add the following property to your application.properties file:

camel.springboot.jmxEnabled = false

5.8. Auto-configured consumer and producer templates

Camel auto configuration provides pre-configured ConsumerTemplate and ProducerTemplate instances. You can inject them into your Spring-managed beans:

By default consumer templates and producer templates come with the endpoint cache sizes set to 1000. You can change those values using the following Spring properties:

camel.springboot.consumerTemplateCacheSize = 100
camel.springboot.producerTemplateCacheSize = 200

5.9. Auto-configured TypeConverter

Camel auto configuration registers a TypeConverter instance named typeConverter in the Spring context.

5.10. Spring type conversion API bridge

Spring provides its own type conversion API, which is similar to the Camel type converter API. Because of the similarities between the two APIs, Camel Spring Boot automatically registers a bridge converter (SpringTypeConverter) that delegates to the Spring conversion API. This means that, out of the box, Camel treats Spring converters like Camel converters.

This allows you to access both Camel and Spring converters using the Camel TypeConverter API, as shown below:

Here, Camel Spring Boot delegates conversion to the Spring ConversionService instances available in the application context. If no ConversionService instance is available, Camel Spring Boot auto-configuration creates an instance of ConversionService.

5.11. Disabling type conversions features

To disable registering type conversion features of Camel Spring Boot such as TypeConverter instance or Spring bridge, set the camel.springboot.typeConversion property to false as shown below:

camel.springboot.typeConversion = false

5.12. Adding XML routes

By default, you can put Camel XML routes in the classpath under the directory camel, which camel-spring-boot will auto detect and include. From Camel version 2.17 onwards you can configure the directory name or disable this feature using the configuration option, as shown below:

# turn off
camel.springboot.xmlRoutes = false
# scan in the com/foo/routes classpath
camel.springboot.xmlRoutes = classpath:com/foo/routes/*.xml

The XML files should be Camel XML routes and not CamelContext such as:

When using Spring XML files with <camelContext>, you can configure Camel in the Spring XML file as well as in the application.properties file. For example, to set a name on Camel and turn on stream caching, add:

camel.springboot.name = MyCamel
camel.springboot.stream-caching-enabled=true

5.13. Adding XML Rest-DSL

By default, you can put Camel Rest-DSL XML routes in the classpath under the directory camel-rest, which camel-spring-boot will auto detect and include. You can configure the directory name or disable this feature using the configuration option, as shown below:

# turn off
camel.springboot.xmlRests = false
# scan in the com/foo/rests classpath
camel.springboot.xmlRests = classpath:com/foo/rests/*.xml

The Rest-DSL XML files should be Camel XML rests and not CamelContext such as:

5.14. Testing with Camel Spring Boot

When Camel runs on Spring Boot, Spring Boot automatically embeds Camel and all of its routes that are annotated with @Component. When testing with Spring Boot, use @SpringBootTest instead of @ContextConfiguration to specify which configuration class to use.

When you have multiple Camel routes in different RouteBuilder classes, Camel Spring Boot will include all these routes. Hence, when you wish to test routes from only one RouteBuilder class you can use the following patterns to include or exclude which RouteBuilders to enable:

- java-routes-include-pattern: Used for including RouteBuilder classes that match the pattern.

- java-routes-exclude-pattern: Used for excluding RouteBuilder classes that match the pattern. Exclude takes precedence over include.

You can specify these patterns in your unit test classes as properties of the @SpringBootTest annotation, as shown below:

@RunWith(CamelSpringBootRunner.class)
@SpringBootTest(classes = {MyApplication.class},
    properties = {"camel.springboot.java-routes-include-pattern=**/Foo*"})
public class FooTest {
    // test methods go here
}

In the FooTest class, the include pattern is **/Foo*, which is an Ant-style pattern. The pattern starts with a double asterisk, which matches any leading package name. The /Foo* part means that the class name must start with Foo, for example, FooRoute. You can run the test using the following Maven command:

mvn test -Dtest=FooTest
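
To make the Ant-style include pattern described above more concrete, the following JDK-only sketch translates a pattern such as **/Foo* into a regular expression and applies it to a class name whose dots have been replaced by slashes. This is a simplified approximation for illustration, not the matcher that Camel Spring Boot uses; the class AntPatternSketch, its methods, and the example class names are hypothetical.

```java
import java.util.regex.Pattern;

// Hypothetical helper: a simplified Ant-style matcher for class names.
final class AntPatternSketch {

    // Translates a small subset of Ant pattern syntax to a regex:
    //   "**/" matches zero or more leading path segments,
    //   "**"  matches anything, "*" matches within one segment,
    //   "?"   matches one character within a segment.
    static Pattern toRegex(String antPattern) {
        StringBuilder regex = new StringBuilder();
        int i = 0;
        while (i < antPattern.length()) {
            if (antPattern.startsWith("**/", i)) {
                regex.append("(?:.*/)?");
                i += 3;
            } else if (antPattern.startsWith("**", i)) {
                regex.append(".*");
                i += 2;
            } else {
                char c = antPattern.charAt(i);
                if (c == '*') {
                    regex.append("[^/]*");
                } else if (c == '?') {
                    regex.append("[^/]");
                } else {
                    regex.append(Pattern.quote(String.valueOf(c)));
                }
                i++;
            }
        }
        return Pattern.compile(regex.toString());
    }

    // Treats the fully qualified class name as a path (assumption) and matches it.
    static boolean matches(String antPattern, String className) {
        String path = className.replace('.', '/');
        return toRegex(antPattern).matcher(path).matches();
    }

    public static void main(String[] args) {
        System.out.println(matches("**/Foo*", "com.example.FooRoute"));
        System.out.println(matches("**/Foo*", "com.example.BarRoute"));
    }
}
```

With this approximation, a class named FooRoute in any package is included by **/Foo*, while BarRoute is not.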

5.15. See Also

Chapter 6. Integrate a Camel Application with the AMQ Broker

6.1. Example How to Deploy a Spring Boot Camel A-MQ Quickstart

This tutorial shows how to deploy a quickstart using the A-MQ image.

6.1.1. Prerequisites

- Ensure that OpenShift is running correctly and the Fuse image streams are already installed in OpenShift. See Section 3.1, “Prerequisites”.

- Ensure that Maven Repositories are configured for fuse, see Section 3.2.1, “Configure Maven Repositories”

6.1.2. Building and Deploying the Quickstart

This example requires a Red Hat A-MQ 6 image and deployment template. If you are using CDK 3.1.1+, Red Hat A-MQ 6 images and templates should be already installed in the openshift namespace by default.

To build and deploy the A-MQ quickstart, perform the following steps:

Log in to OpenShift as a developer, for example:

oc login -u developer -p developer

Create a new project amq-quickstart:

oc new-project amq-quickstart

Determine the version of the A-MQ 6 images and templates installed:

oc get template -n openshift

You should be able to find a template named amqXX-basic, where XX is the version of A-MQ installed in OpenShift.

Deploy the A-MQ 6 image in the amq-quickstart namespace (replace XX with the actual version of A-MQ found in the previous step):

oc process openshift//amqXX-basic -p APPLICATION_NAME=broker -p MQ_USERNAME=admin -p MQ_PASSWORD=admin -p MQ_QUEUES=test -p MQ_PROTOCOL=amqp -n amq-quickstart | oc create -f -

Note: This oc command could fail if you use an older version of oc. This syntax works with oc versions 3.5.x (based on Kubernetes 1.5.x).

Add the role that is needed for discovery of mesh endpoints (through the Kubernetes REST API agent):

oc policy add-role-to-user view system:serviceaccount:amq-quickstart:default

Create the quickstart project using the Maven workflow:

mvn org.apache.maven.plugins:maven-archetype-plugin:2.4:generate \
  -DarchetypeCatalog=https://maven.repository.redhat.com/ga/io/fabric8/archetypes/archetypes-catalog/2.2.0.fuse-000110-redhat-5/archetypes-catalog-2.2.0.fuse-000110-redhat-5-archetype-catalog.xml \
  -DarchetypeGroupId=org.jboss.fuse.fis.archetypes \
  -DarchetypeArtifactId=spring-boot-camel-amq-archetype \
  -DarchetypeVersion=2.2.0.fuse-000110-redhat-5

The archetype plug-in switches to interactive mode to prompt you for the remaining fields.

When prompted, enter org.example.fis for the groupId value and fuse70-spring-boot-camel-amq for the artifactId value. Accept the defaults for the remaining fields.

Navigate to the quickstart directory fuse70-spring-boot-camel-amq:

cd fuse70-spring-boot-camel-amq

Customize the client credentials for logging on to the broker, by setting the ACTIVEMQ_BROKER_USERNAME and ACTIVEMQ_BROKER_PASSWORD environment variables. In the fuse70-spring-boot-camel-amq project, set these variables in the src/main/fabric8/deployment.yml file.

Run the mvn command to deploy the quickstart to the OpenShift server:

mvn fabric8:deploy

To verify that the quickstart is running successfully, navigate to the OpenShift console, select the project amq-quickstart, click Applications, select Pods, click fuse70-spring-boot-camel-amq-xxx, and click Logs.

The output shows the messages are sent successfully.

To view the routes on the web interface, click Open Java Console and check the messages in the A-MQ queue.

Chapter 7. Integrate Spring Boot with Kubernetes

7.1. Introduction to Spring Boot with Kubernetes Integration

7.1.1. What are we Integrating?

The Spring Cloud Kubernetes plug-in currently enables you to integrate the following features of Spring Boot and Kubernetes:

7.1.2. Spring Boot Externalized Configuration

In Spring Boot, externalized configuration is the mechanism that enables you to inject configuration values from external sources into Java code. In your Java code, injection is typically enabled by annotating with the @Value annotation (to inject into a single field) or the @ConfigurationProperties annotation (to inject into multiple properties on a Java bean class).

The configuration data can come from a wide variety of different sources (or property sources). In particular, configuration properties are often set in a project’s application.properties file (or application.yaml file, if you prefer).

7.1.3. Kubernetes ConfigMap

A Kubernetes ConfigMap is a mechanism that can provide configuration data to a deployed application. A ConfigMap object is typically defined in a YAML file, which is then uploaded to the Kubernetes cluster, making the configuration data available to deployed applications.

7.1.4. Kubernetes Secrets

A Kubernetes Secret is a mechanism for providing sensitive data (such as passwords, certificates, and so on) to deployed applications.

7.1.5. Spring Cloud Kubernetes Plug-In

The Spring Cloud Kubernetes plug-in implements the integration between Kubernetes and Spring Boot. In principle, you could access the configuration data from a ConfigMap using the Kubernetes API. It is much more convenient, however, to integrate Kubernetes ConfigMap directly with the Spring Boot externalized configuration mechanism, so that Kubernetes ConfigMaps behave as an alternative property source for Spring Boot configuration. This is essentially what the Spring Cloud Kubernetes plug-in provides.

7.1.6. How to Enable Spring Boot with Kubernetes Integration

In a typical Spring Boot Maven project, you can enable the integration by adding the following Maven dependency to your project’s POM file:

To complete the integration, you need to add some annotations to your Java source code, create a Kubernetes ConfigMap object, and modify the OpenShift service account permissions to allow your application to read the ConfigMap object. These steps are described in detail in Section 7.2, “Tutorial for ConfigMap Property Source”.

7.2. Tutorial for ConfigMap Property Source

The following tutorial is based on the spring-boot-camel-config-archetype Maven archetype, which enables you to experiment with setting Kubernetes Secrets and ConfigMaps. The Spring Cloud Kubernetes plug-in is also enabled, making it possible to integrate Kubernetes configuration objects with Spring Boot Externalized Configuration.

7.2.1. Build and run the spring-boot-camel-config quickstart

Perform the following steps to create a simple Camel Spring Boot project:

Open a new shell prompt and enter the following Maven command:

mvn org.apache.maven.plugins:maven-archetype-plugin:2.4:generate \
  -DarchetypeCatalog=https://maven.repository.redhat.com/ga/io/fabric8/archetypes/archetypes-catalog/2.2.0.fuse-000110-redhat-5/archetypes-catalog-2.2.0.fuse-000110-redhat-5-archetype-catalog.xml \
  -DarchetypeGroupId=org.jboss.fuse.fis.archetypes \
  -DarchetypeArtifactId=spring-boot-camel-config-archetype \
  -DarchetypeVersion=2.2.0.fuse-000110-redhat-5

The archetype plug-in switches to interactive mode to prompt you for the remaining fields.

When prompted, enter org.example.fis for the groupId value and fuse70-configmap for the artifactId value. Accept the defaults for the remaining fields.

Log in to OpenShift and switch to the OpenShift project where you will deploy your application. For example, to log in as the developer user and deploy to the test project, enter the following commands:

oc login -u developer -p developer
oc project test

At the command line, change to the directory of the new fuse70-configmap project and create the Secret object for this application:

oc create -f sample-secret.yml

Note: It is necessary to create the Secret object before you deploy the application, otherwise the deployed container enters a wait state until the Secret becomes available. If you subsequently create the Secret, the container will come out of the wait state.

Build and deploy the quickstart application. From the top level of the fuse70-configmap project, enter:

mvn fabric8:deploy

View the application log as follows. Open the OpenShift console in your browser and select the relevant project namespace (for example, test). Click in the center of the circular pod icon for the fuse70-configmap service and then, in the Pods view, click on the pod Name to view the details of the running pod (alternatively, you will get straight through to the details page, if there is only one pod running). Now click on the Logs tab to view the application log and scroll down to find the log messages generated by the Camel application.

The default recipient list, which is configured in src/main/resources/application.properties, sends the generated messages to two dummy endpoints: direct:async-queue and direct:file. This causes messages like the following to be written to the application log:

15:44:57.376 [Camel (camel) thread #0 - timer://order] INFO generate-order-route - Generating message message-44, sending to the recipient list
15:44:57.378 [Camel (camel) thread #0 - timer://order] INFO target-route-queue - ----> message-44 pushed to an async queue (simulation)
15:44:57.379 [Camel (camel) thread #0 - timer://order] INFO target-route-queue - ----> Using username 'myuser' for the async queue
15:44:57.380 [Camel (camel) thread #0 - timer://order] INFO target-route--file - ----> message-44 written to a file

Before you can update the configuration of the fuse70-configmap application using a ConfigMap object, you must give the fuse70-configmap application permission to view data from the OpenShift ApiServer. Enter the following command to give the view permission to the fuse70-configmap application’s service account:

oc policy add-role-to-user view system:serviceaccount:test:qs-camel-config

Note: A service account is specified using the syntax system:serviceaccount:PROJECT_NAME:SERVICE_ACCOUNT_NAME. The fis-config deployment descriptor defines the SERVICE_ACCOUNT_NAME to be qs-camel-config.

To see the live reload feature in action, create a ConfigMap object as follows:

oc create -f sample-configmap.yml

The new ConfigMap overrides the recipient list of the Camel route in the running application, configuring it to send the generated messages to three dummy endpoints: direct:async-queue, direct:file, and direct:mail. This causes messages like the following to be written to the application log:

16:25:24.121 [Camel (camel) thread #0 - timer://order] INFO generate-order-route - Generating message message-9, sending to the recipient list
16:25:24.124 [Camel (camel) thread #0 - timer://order] INFO target-route-queue - ----> message-9 pushed to an async queue (simulation)
16:25:24.125 [Camel (camel) thread #0 - timer://order] INFO target-route-queue - ----> Using username 'myuser' for the async queue
16:25:24.125 [Camel (camel) thread #0 - timer://order] INFO target-route--file - ----> message-9 written to a file (simulation)
16:25:24.126 [Camel (camel) thread #0 - timer://order] INFO target-route--mail - ----> message-9 sent via mail

7.2.2. Configuration Properties bean

A configuration properties bean is a regular Java bean that can receive configuration settings by injection. It provides the basic interface between your Java code and the external configuration mechanisms.

7.2.2.1. Overview

Externalized Configuration and Bean Registry shows how Spring Boot Externalized Configuration works in the spring-boot-camel-config quickstart.

Externalized Configuration and Bean Registry

The configuration mechanism has the following main parts:

- Property Sources

- Provides property settings for injection into configuration. The default property source is the application’s application.properties file, and this can optionally be overridden by a ConfigMap object or a Secret object.

- Configuration Properties bean

- Receives configuration updates from the property sources. A configuration properties bean is a Java bean decorated by the @Configuration and @ConfigurationProperties annotations.

- Spring bean registry

- With the requisite annotations, a configuration properties bean is registered in the Spring bean registry.

- Integration with Camel bean registry

- The Camel bean registry is automatically integrated with the Spring bean registry, so that registered Spring beans can be referenced in your Camel routes.
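
The override relationship between property sources described above can be pictured with plain java.util.Properties. The sketch below is only an analogy, not how Spring Boot or Spring Cloud Kubernetes is implemented: the class PropertySourceSketch is hypothetical, and the comma-separated property values are assumed for illustration, based on the endpoint names used in this tutorial.

```java
import java.util.Properties;

// Hypothetical helper: models "ConfigMap overrides application.properties".
final class PropertySourceSketch {

    // Returns a view in which override values win and defaults fill the gaps.
    static Properties layered(Properties defaults, Properties overrides) {
        Properties effective = new Properties(defaults); // defaults are consulted last
        for (String name : overrides.stringPropertyNames()) {
            effective.setProperty(name, overrides.getProperty(name));
        }
        return effective;
    }

    public static void main(String[] args) {
        // Stand-in for the application.properties file packaged with the application.
        Properties appProps = new Properties();
        appProps.setProperty("quickstart.recipients", "direct:async-queue,direct:file");
        appProps.setProperty("quickstart.queue-username", "defaultuser"); // assumed value

        // Stand-in for settings supplied later by a ConfigMap.
        Properties configMap = new Properties();
        configMap.setProperty("quickstart.recipients", "direct:async-queue,direct:file,direct:mail");

        Properties effective = layered(appProps, configMap);
        System.out.println(effective.getProperty("quickstart.recipients"));
        System.out.println(effective.getProperty("quickstart.queue-username"));
    }
}
```

The recipients value comes from the overriding source, while the user name falls back to the default source because nothing overrides it.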

7.2.2.2. QuickstartConfiguration class

The configuration properties bean for the fuse70-configmap project is defined as the QuickstartConfiguration Java class (under the src/main/java/org/example/fis/ directory), as follows:

- 1

- The @Configuration annotation causes the QuickstartConfiguration class to be instantiated and registered in Spring as the bean with ID, quickstartConfiguration. This automatically makes the bean accessible from Camel. For example, the target-route-queue route is able to access the queueUsername property using the Camel syntax ${bean:quickstartConfiguration?method=getQueueUsername}.

- 2

- The @ConfigurationProperties annotation defines a prefix, quickstart, that must be used when defining property values in a property source. For example, a properties file would reference the recipients property as quickstart.recipients.

- 3

- The recipients property is injectable from property sources.

- 4

- The queueUsername property is injectable from property sources.

- 5

- The queuePassword property is injectable from property sources.

7.2.3. How to set up the Secret

The Kubernetes Secret in this quickstart is set up in the standard way, apart from one additional required step: the Spring Cloud Kubernetes plug-in must be configured with the mount paths of the Secrets, so that it can read the Secrets at run time.

For more details, see the chapter on Secrets in the Kubernetes reference documentation.

7.2.3.1. Sample Secret object

The quickstart project provides a sample Secret, sample-secret.yml, as follows:

Note the following settings:

- metadata.name

- Identifies the Secret. Other parts of the OpenShift system use this identifier to reference the Secret.

- quickstart.queue-username

- Is meant to be injected into the queueUsername property of the quickstartConfiguration bean. The value must be base64 encoded.

- quickstart.queue-password

- Is meant to be injected into the queuePassword property of the quickstartConfiguration bean. The value must be base64 encoded.

Property values in Secret objects are always base64 encoded (use the base64 command-line utility). When the Secret is mounted in a pod’s filesystem, the values are automatically decoded back into plain text.
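
As an alternative to the base64 command-line utility, the encoding can be reproduced with the JDK's java.util.Base64 class. This is a minimal sketch; the class name SecretValueSketch and the sample value myuser (the user name that appears in the quickstart log output) are used purely for illustration.

```java
import java.nio.charset.StandardCharsets;
import java.util.Base64;

// Hypothetical helper for preparing and inspecting Secret values.
final class SecretValueSketch {

    // Encodes a plain-text value the way it must appear in the Secret's data section.
    static String encode(String plainText) {
        return Base64.getEncoder().encodeToString(plainText.getBytes(StandardCharsets.UTF_8));
    }

    // Decodes a value back to plain text, as seen by the application after mounting.
    static String decode(String encoded) {
        return new String(Base64.getDecoder().decode(encoded), StandardCharsets.UTF_8);
    }

    public static void main(String[] args) {
        String encoded = encode("myuser");
        System.out.println(encoded);
        System.out.println(decode(encoded));
    }
}
```

Note that base64 is an encoding, not encryption: anyone who can read the Secret object can decode its values.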

Kubernetes does not allow you to define property names in CamelCase (it requires property names to be all lowercase). To work around this limitation, use the hyphenated form queue-username, which Spring Boot matches with queueUsername. This takes advantage of Spring Boot’s relaxed binding rules for externalized configuration.
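
The correspondence between the hyphenated key and the Java property name can be sketched in a few lines of plain Java. This is an illustration of the naming rule only, not Spring Boot's relaxed binding implementation; the class RelaxedNameSketch and its method are hypothetical.

```java
// Hypothetical helper: shows how a hyphenated key lines up with a camelCase property.
final class RelaxedNameSketch {

    // Converts, for example, "queue-username" to "queueUsername".
    static String toCamelCase(String hyphenated) {
        StringBuilder result = new StringBuilder();
        boolean upperNext = false;
        for (char c : hyphenated.toCharArray()) {
            if (c == '-') {
                upperNext = true;            // drop the hyphen, capitalize what follows
            } else if (upperNext) {
                result.append(Character.toUpperCase(c));
                upperNext = false;
            } else {
                result.append(c);
            }
        }
        return result.toString();
    }

    public static void main(String[] args) {
        System.out.println(toCamelCase("queue-username"));
        System.out.println(toCamelCase("queue-password"));
    }
}
```

This is why the Secret can use the all-lowercase keys quickstart.queue-username and quickstart.queue-password while the configuration bean exposes queueUsername and queuePassword.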

7.2.3.2. Configure volume mount for the Secret

The application must be configured to load the Secret at run time, by configuring the Secret as a volume mount. After the application starts, the Secret properties then become available at the specified location in the filesystem.

The Example 7.1, “deployment.yml file” listing shows the application’s deployment.yml file (located under src/main/fabric8/), which defines the volume mount for the Secret.

Example 7.1. deployment.yml file

- 1

- In the volumes section, the deployment declares a new volume named camel-config, which references the Secret named camel-config.

- 2

- In the volumeMounts section, the deployment declares a new volume mount, which references the camel-config volume and specifies that the Secret volume should be mounted to the path /etc/secrets/camel-config in the pod’s filesystem.

7.2.3.3. Configure spring-cloud-kubernetes to read Secret properties

To integrate secrets with Spring Boot externalized configuration, the Spring Cloud Kubernetes plug-in must be configured with the secret’s mount path. Spring Cloud Kubernetes reads the secrets from the specified location and makes them available to Spring Boot as property sources.

The Spring Cloud Kubernetes plug-in is configured by settings in the bootstrap.yml file, located under src/main/resources in the quickstart project, as shown in the Example 7.2, “bootstrap.yml file” listing.

Example 7.2. bootstrap.yml file
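As a rough sketch, a bootstrap.yml along these lines would configure the plug-in for the quickstart. The application name camel-config is an assumption (chosen to match the ConfigMap and Secret names used in this chapter); the property names are the ones documented in this chapter.

```yaml
# Startup configuration for spring-cloud-kubernetes (illustrative sketch)
spring:
  application:
    # Assumed name; must match the metadata.name of the ConfigMap
    name: camel-config
  cloud:
    kubernetes:
      reload:
        # Enable live reload when the ConfigMap changes
        enabled: true
      secrets:
        # Same path as the volume mount declared in deployment.yml
        paths: /etc/secrets/camel-config
```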

The spring.cloud.kubernetes.secrets.paths property specifies the list of paths of secrets volume mounts in the pod.

A bootstrap.properties file (or bootstrap.yml file) behaves similarly to an application.properties file, but it is loaded at an earlier phase of application start-up. It is more reliable to set the properties relating to the Spring Cloud Kubernetes plug-in in the bootstrap.properties file.

7.2.4. How to set up the ConfigMap

In addition to creating a ConfigMap object and setting the view permission appropriately, the integration with Spring Cloud Kubernetes requires you to match the ConfigMap’s metadata.name with the value of the spring.application.name property configured in the project’s bootstrap.yml file.

7.2.4.1. Sample ConfigMap object

The quickstart project provides a sample ConfigMap, sample-configmap.yml, as follows:
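A minimal sketch of what such a ConfigMap could look like is given here. The name camel-config and the recipients value are illustrative assumptions, not necessarily the contents of the quickstart's sample-configmap.yml.

```yaml
kind: ConfigMap
apiVersion: v1
metadata:
  # Assumed name; must match the spring.application.name property
  name: camel-config
data:
  application.properties: |
    # Overrides settings from the application.properties deployed with the app
    # (hypothetical recipient list, for illustration only)
    quickstart.recipients=direct:async-queue,direct:file,direct:mail
```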

Note the following settings:

- metadata.name: Identifies the ConfigMap. Other parts of the OpenShift system use this identifier to reference the ConfigMap.

- data.application.properties: This section lists property settings that can override settings from the original application.properties file that was deployed with the application.

- quickstart.recipients: Is meant to be injected into the recipients property of the quickstartConfiguration bean.

For more details about the format of this file, see Section 7.3, “ConfigMap PropertySource”.

7.2.4.2. Setting the view permission

As shown in the Example 7.1, “deployment.yml file” listing, the serviceAccountName is set to qs-camel-config in the project’s deployment.yml file. Hence, you need to enter the following command to enable the view permission on the quickstart application (assuming that it deploys into the test project namespace):

oc policy add-role-to-user view system:serviceaccount:test:qs-camel-config

7.2.4.3. Configuring the Spring Cloud Kubernetes plug-in

The Spring Cloud Kubernetes plug-in is configured by the following settings in the bootstrap.yml file, as shown in the Example 7.2, “bootstrap.yml file” listing:

- spring.application.name: This value must match the metadata.name of the ConfigMap object (for example, as defined in sample-configmap.yml in the quickstart project). It defaults to application.

- spring.cloud.kubernetes.reload.enabled: Setting this to true enables dynamic reloading of ConfigMap objects.

For more details about the supported properties, see Section 7.5, “PropertySource Reload”.

7.3. ConfigMap PropertySource

Kubernetes has the notion of a ConfigMap for passing configuration to the application. The Spring Cloud Kubernetes plug-in provides integration with ConfigMap to make config maps accessible by Spring Boot.

When enabled, the ConfigMap PropertySource looks up in Kubernetes a ConfigMap named after the application (see spring.application.name). If the ConfigMap is found, its data is read and applied as described in the following subsections.

7.3.1. Apply Individual Properties

Let’s assume that we have a Spring Boot application named demo that uses properties to read its thread pool configuration.

- pool.size.core

- pool.size.max

This can be externalized to config map in YAML format:
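A minimal sketch of such a ConfigMap, assuming illustrative pool sizes of 1 and 16. The values are quoted because ConfigMap data entries are strings.

```yaml
kind: ConfigMap
apiVersion: v1
metadata:
  # Matches spring.application.name of the demo application
  name: demo
data:
  # Each key becomes an individual Spring Boot property (illustrative values)
  pool.size.core: "1"
  pool.size.max: "16"
```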

7.3.2. Apply Property Named application.yaml

Individual properties work fine for most cases but sometimes we find YAML is more convenient. In this case we use a single property named application.yaml and embed our YAML inside it:
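For instance, the same thread pool settings could be embedded as follows (a sketch with the same illustrative values):

```yaml
kind: ConfigMap
apiVersion: v1
metadata:
  name: demo
data:
  # The content of this single property is itself parsed as YAML
  application.yaml: |-
    pool:
      size:
        core: 1
        max: 16
```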

7.3.3. Apply Property Named application.properties

You can also define the ConfigMap properties in the style of a Spring Boot application.properties file. In this case we use a single property named application.properties and list the property settings inside it:
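A sketch of the equivalent ConfigMap in this style, again with illustrative values:

```yaml
kind: ConfigMap
apiVersion: v1
metadata:
  name: demo
data:
  # The content of this single property is parsed as a properties file
  application.properties: |-
    pool.size.core=1
    pool.size.max=16
```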

7.3.4. Deploying a ConfigMap

To deploy a ConfigMap and make it accessible to a Spring Boot application, perform the following steps:

- In your Spring Boot application, use the externalized configuration mechanism to access the ConfigMap property source. For example, by annotating a Java bean with the @Configuration annotation, it becomes possible for the bean’s property values to be injected by a ConfigMap.

- In your project’s bootstrap.properties file (or bootstrap.yaml file), set the spring.application.name property to match the name of the ConfigMap.

- Enable the view permission on the service account that is associated with your application (by default, this would be the service account called default). For example, to add the view permission to the default service account:

oc policy add-role-to-user view system:serviceaccount:$(oc project -q):default -n $(oc project -q)

7.4. Secrets PropertySource

Kubernetes has the notion of Secrets for storing sensitive data such as passwords, OAuth tokens, and so on. The Spring Cloud Kubernetes plug-in provides integration with Secrets to make secrets accessible by Spring Boot.

When enabled, the Secrets property source looks up Secrets in Kubernetes from the following sources:

1. Reading recursively from secrets mounts

2. Named after the application (see spring.application.name)

3. Matching some labels

Please note that, by default, consuming Secrets via API (points 2 and 3 above) is not enabled.

If the secrets are found, their data is made available to the application.

7.4.1. Example of Setting Secrets

Let’s assume that we have a Spring Boot application named demo that uses properties to read its ActiveMQ and PostgreSQL configuration.

- amq.username

- amq.password

- pg.username

- pg.password

These secrets can be externalized to Secrets in YAML format:

- ActiveMQ Secrets

- PostgreSQL Secrets
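A sketch of the two Secret objects could look like the following. The Secret names and labels follow the ones used in the next section (activemq-secrets is assumed by analogy with postgres-secrets), and the credentials are hypothetical placeholders: the encoded values decode to myuser / 1f2d1e2e67df and user / pgadmin respectively.

```yaml
apiVersion: v1
kind: Secret
metadata:
  name: activemq-secrets        # assumed name
  labels:
    broker: activemq
type: Opaque
data:
  # base64-encoded placeholder credentials
  amq.username: bXl1c2Vy
  amq.password: MWYyZDFlMmU2N2Rm
---
apiVersion: v1
kind: Secret
metadata:
  name: postgres-secrets
  labels:
    db: postgres
type: Opaque
data:
  # base64-encoded placeholder credentials
  pg.username: dXNlcg==
  pg.password: cGdhZG1pbg==
```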

7.4.2. Consuming the Secrets

You can select the Secrets to consume in a number of ways:

- By listing the directories where the secrets are mapped:

-Dspring.cloud.kubernetes.secrets.paths=/etc/secrets/activemq,/etc/secrets/postgres

If you have all the secrets mapped to a common root, you can set them like this:

-Dspring.cloud.kubernetes.secrets.paths=/etc/secrets

- By setting a named secret:

-Dspring.cloud.kubernetes.secrets.name=postgres-secrets

- By defining a list of labels:

-Dspring.cloud.kubernetes.secrets.labels.broker=activemq -Dspring.cloud.kubernetes.secrets.labels.db=postgres
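The same selections can presumably be expressed in the bootstrap.yml file instead of as system properties. The sketch below shows all three selection methods together purely for illustration; in practice you would keep only the ones you need. Setting enableApi to true is included on the assumption that lookups by name or by label go through the Kubernetes API, which is disabled by default.

```yaml
spring:
  cloud:
    kubernetes:
      secrets:
        # Needed only for the name and labels lookups (API access)
        enableApi: true
        # Select by mounted directories
        paths:
          - /etc/secrets/activemq
          - /etc/secrets/postgres
        # Select a named Secret
        name: postgres-secrets
        # Select Secrets by label
        labels:
          broker: activemq
          db: postgres
```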

7.4.3. Secrets Configuration Properties

You can use the following properties to configure the Secrets property source:

- spring.cloud.kubernetes.secrets.enabled: Enable the Secrets property source. Type is Boolean and default is true.

- spring.cloud.kubernetes.secrets.name: Sets the name of the secret to look up. Type is String and default is ${spring.application.name}.

- spring.cloud.kubernetes.secrets.labels: Sets the labels used to look up secrets. This property behaves as defined by Map-based binding. Type is java.util.Map and default is null.

- spring.cloud.kubernetes.secrets.paths: Sets the paths where secrets are mounted. This property behaves as defined by Collection-based binding. Type is java.util.List and default is null.

- spring.cloud.kubernetes.secrets.enableApi: Enable/disable consuming secrets via APIs. Type is Boolean and default is false.

Access to secrets via API may be restricted for security reasons; the preferred way is to mount a secret to the pod.

7.5. PropertySource Reload

Some applications may need to detect changes on external property sources and update their internal status to reflect the new configuration. The reload feature of Spring Cloud Kubernetes is able to trigger an application reload when a related ConfigMap or Secret changes.

This feature is disabled by default and can be enabled using the configuration property spring.cloud.kubernetes.reload.enabled=true (for example, in the bootstrap.properties file).

The following levels of reload are supported (property spring.cloud.kubernetes.reload.strategy):

- refresh: (default) only configuration beans annotated with @ConfigurationProperties or @RefreshScope are reloaded. This reload level leverages the refresh feature of Spring Cloud Context.

- restart_context: the whole Spring ApplicationContext is gracefully restarted. Beans are recreated with the new configuration.

- shutdown: the Spring ApplicationContext is shut down to activate a restart of the container. When using this level, make sure that the lifecycle of all non-daemon threads is bound to the ApplicationContext and that a replication controller or replica set is configured to restart the pod.

Note: The PropertySource reload feature can only be used for simple properties (that is, not collections) when the reload strategy is set to refresh. Properties backed by collections must not be changed at runtime.

7.5.1. Example

Assuming that the reload feature is enabled with default settings (refresh mode), the following bean will be refreshed when the config map changes:

A way to see that changes effectively happen is creating another bean that prints the message periodically.

The message printed by the application can be changed using a ConfigMap like the following one:
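A minimal sketch of such a ConfigMap, assuming the application is named reload-example (a hypothetical name that would have to match spring.application.name) and using a placeholder message:

```yaml
apiVersion: v1
kind: ConfigMap
metadata:
  # Hypothetical name; must match the application's spring.application.name
  name: reload-example
data:
  application.properties: |-
    # Editing this value changes the message printed by the application
    bean.message=Hello World!
```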

Any change to the property named bean.message in the ConfigMap associated with the pod will be reflected in the output of the program.

The full example is available in the spring-cloud-kubernetes-reload-example project (spring-cloud-kubernetes-examples/spring-cloud-kubernetes-reload-example).

The reload feature supports two operating modes:

- event: (default) watches for changes in ConfigMaps or secrets using the Kubernetes API (web socket). Any event will produce a re-check on the configuration and a reload in case of changes. The view role on the service account is required in order to listen for config map changes. A higher level role (for example, edit) is required for secrets (secrets are not monitored by default).

- polling: re-creates the configuration periodically from config maps and secrets to see if it has changed. The polling period can be configured using the property spring.cloud.kubernetes.reload.period and defaults to 15 seconds. It requires the same role as the monitored property source. This means, for example, that using polling on file mounted secret sources does not require particular privileges.

The following properties can be used to configure the reloading feature:

- spring.cloud.kubernetes.reload.enabled: Enables monitoring of property sources and configuration reload. Type is Boolean and default is false.

- spring.cloud.kubernetes.reload.monitoring-config-maps: Allow monitoring changes in config maps. Type is Boolean and default is true.

- spring.cloud.kubernetes.reload.monitoring-secrets: Allow monitoring changes in secrets. Type is Boolean and default is false.

- spring.cloud.kubernetes.reload.strategy: The strategy to use when firing a reload (refresh, restart_context, shutdown). Type is Enum and default is refresh.

- spring.cloud.kubernetes.reload.mode: Specifies how to listen for changes in property sources (event, polling). Type is Enum and default is event.

- spring.cloud.kubernetes.reload.period: The period in milliseconds for verifying changes when using the polling mode. Type is Long and default is 15000.
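As an illustration, the properties above might be combined in bootstrap.yml as follows. This is a sketch only: the application name demo and the 5000 ms period are arbitrary example values, not recommendations.

```yaml
spring:
  application:
    name: demo                      # example name
  cloud:
    kubernetes:
      reload:
        enabled: true               # reload is disabled by default
        strategy: refresh           # refresh | restart_context | shutdown
        mode: polling               # event | polling
        period: 5000                # milliseconds; example value (default 15000)
        monitoring-config-maps: true
        monitoring-secrets: true    # secrets are not monitored by default
```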

Note the following points:

- The spring.cloud.kubernetes.reload.* properties should not be used in ConfigMaps or Secrets. Changing such properties at run time may lead to unexpected results.

- Deleting a property or the whole config map does not restore the original state of the beans when using the refresh level.

Chapter 8. Develop an Application for the Karaf Image

8.1. Create a Karaf Project using Maven Archetype

To create a Karaf project using a Maven archetype, follow these steps:

- Go to the appropriate directory on your system.

Launch the Maven command to create a Karaf project