Debezium User Guide

For use with Debezium 1.1

Abstract

Preface

Debezium is a set of distributed services that capture row-level changes in your databases so that your applications can see and respond to those changes. Debezium records all row-level changes committed to each database table in a change event stream. Each application reads the streams of interest to see all operations in the order in which they occurred.

This guide provides details about using Debezium connectors:

- Chapter 1, High level overview of Debezium

- Chapter 2, Debezium Connector for MySQL

- Chapter 3, Debezium Connector for PostgreSQL

- Chapter 4, Debezium Connector for MongoDB

- Chapter 5, Debezium Connector for SQL Server

- Chapter 6, Monitoring Debezium

- Chapter 7, Debezium logging

- Chapter 8, Configuring Debezium connectors for your application

Chapter 1. High level overview of Debezium

Debezium is a set of distributed services that capture changes in your databases. Your applications can consume and respond to those changes. Debezium captures each row-level change in each database table in a change event record and streams these records to Kafka topics. Applications read these streams, which provide the change event records in the same order in which they were generated.

More details are in the following sections:

1.1. Debezium Features

Debezium is a set of source connectors for Apache Kafka Connect, ingesting changes from different databases using change data capture (CDC). Unlike other approaches such as polling or dual writes, log-based CDC as implemented by Debezium:

- makes sure that all data changes are captured

- produces change events with a very low delay (for example, in the millisecond range for MySQL or Postgres) while avoiding the increased CPU usage of frequent polling

- requires no changes to your data model (such as a "Last Updated" column)

- can capture deletes

- can capture old record state and further metadata such as transaction id and causing query (depending on the database’s capabilities and configuration)

To learn more about the advantages of log-based CDC, refer to this blog post.

The actual change data capture feature of Debezium is complemented by a range of related capabilities and options:

- Snapshots: optionally, an initial snapshot of a database's current state can be taken if a connector is started and not all logs still exist (typically the case when the database has been running for some time and has discarded any transaction logs that are no longer needed for transaction recovery or replication). Different snapshot modes exist; refer to the documentation of the specific connector you're using to learn more.

- Filters: the set of captured schemas, tables and columns can be configured via whitelist/blacklist filters

- Masking: the values from specific columns can be masked, e.g. for sensitive data

- Monitoring: most connectors can be monitored using JMX

- Different ready-to-use message transformations: e.g. for message routing, extraction of new record state (relational connectors, MongoDB) and routing of events from a transactional outbox table

Refer to the connector documentation for a list of all supported databases and detailed information about the features and configuration options of each connector.

1.2. Debezium Architecture

You deploy Debezium by means of Apache Kafka Connect. Kafka Connect is a framework and runtime for implementing and operating:

- Source connectors such as Debezium that send records into Kafka

- Sink connectors that propagate records from Kafka topics to other systems

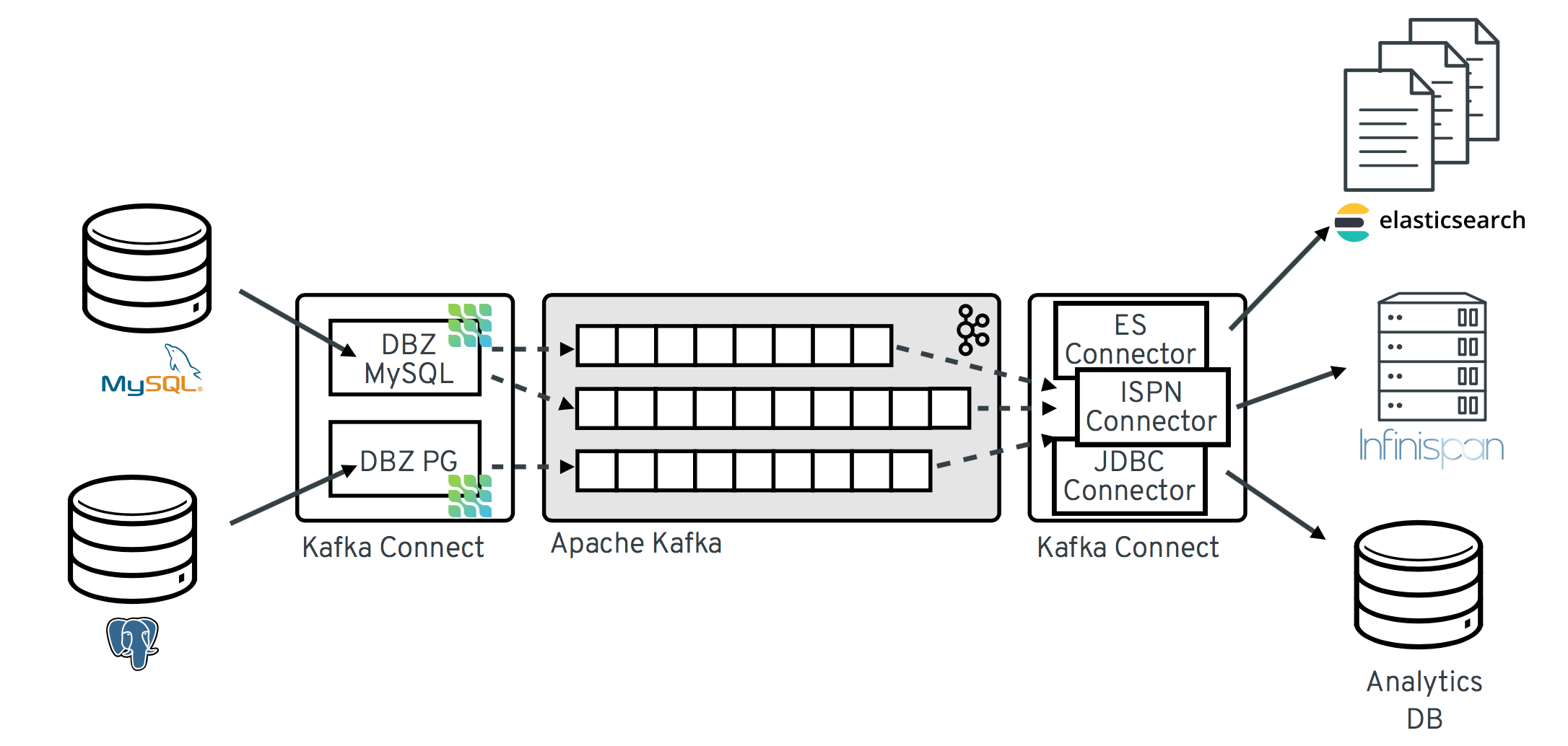

The following image shows the architecture of a change data capture pipeline based on Debezium:

As shown in the image, the Debezium connectors for MySQL and PostgreSQL are deployed to capture changes to these two types of databases. Each Debezium connector establishes a connection to its source database:

- The MySQL connector uses a client library for accessing the binlog.

- The PostgreSQL connector reads from a logical replication stream.

Kafka Connect operates as a separate service alongside the Kafka broker.

By default, changes from one database table are written to a Kafka topic whose name corresponds to the table name. If needed, you can adjust the destination topic name by configuring Debezium’s topic routing transformation. For example, you can:

- Route records to a topic whose name is different from the table’s name

- Stream change event records for multiple tables into a single topic
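The default topic naming and a regex-based reroute can be sketched in Python. This is an illustration only: it assumes the MySQL connector's <serverName>.<databaseName>.<tableName> topic naming, and reroute() is a hypothetical helper that mimics the idea behind the topic routing transformation's topic.regex and topic.replacement options rather than reproducing its code. Python writes group references as \1, where a Java replacement string uses $1.

```python
import re


def default_topic(server_name: str, database: str, table: str) -> str:
    """Topic name for a table, assuming <serverName>.<databaseName>.<tableName>."""
    return f"{server_name}.{database}.{table}"


def reroute(topic: str, topic_regex: str, replacement: str) -> str:
    """Rename a topic if the whole name matches topic_regex; otherwise keep it.

    Hypothetical helper: Python uses \\1 for group references where a Java
    replacement string would use $1.
    """
    match = re.fullmatch(topic_regex, topic)
    return match.expand(replacement) if match else topic


# Stream the change events of several sharded tables into a single topic.
for shard in ("customers_shard1", "customers_shard2"):
    original = default_topic("myserver", "mydb", shard)
    print(original, "->", reroute(original, r"(.*)customers_shard(.*)", r"\1customers_all_shards"))
```

Topics that do not match the expression, such as myserver.mydb.orders, keep their default name.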

After change event records are in Apache Kafka, different connectors in the Kafka Connect ecosystem can stream the records to other systems and databases such as Elasticsearch, data warehouses and analytics systems, or caches such as Infinispan. Depending on the chosen sink connector, you might need to configure Debezium's new record state extraction transformation. This Kafka Connect single message transformation (SMT) propagates only the after structure of Debezium's change event to the sink connector, in place of the verbose change event record that is propagated by default.
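To make the difference concrete, here is a hypothetical Python helper that applies the same idea to a JSON-decoded event value. It is not the SMT, which runs inside Kafka Connect and offers options for handling delete events and tombstones; this sketch simply returns None for both.

```python
from typing import Optional


def extract_new_record_state(event_value: Optional[dict]) -> Optional[dict]:
    """Return only the row state after the change, dropping the envelope."""
    if event_value is None:  # tombstone: no value at all
        return None
    # For delete events "after" is null, so this also returns None.
    return event_value["payload"]["after"]


create_event = {
    "schema": {},  # schema omitted for brevity
    "payload": {
        "op": "c",
        "before": None,
        "after": {"id": 1004, "first_name": "Anne", "last_name": "Kretchmar", "email": "annek@noanswer.org"},
    },
}
print(extract_new_record_state(create_event))
```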

Chapter 2. Debezium Connector for MySQL

MySQL has a binary log (binlog) that records all operations in the order in which they are committed to the database. This includes changes to table schemas and the data within tables. MySQL uses the binlog for replication and recovery.

The MySQL connector reads the binlog and produces change events for row-level INSERT, UPDATE, and DELETE operations and records the change events in a Kafka topic. Client applications read those Kafka topics.

Because MySQL is typically set up to purge binlogs after a specified period of time, the MySQL connector performs an initial consistent snapshot of each of your databases. The connector then reads the binlog from the point at which the snapshot was made.

2.1. Overview of how the MySQL connector works

The Debezium MySQL connector tracks the structure of the tables, performs snapshots, transforms binlog events into Debezium change events, and records those change events in Kafka.

2.1.1. How the MySQL connector uses database schemas

When a database client queries a database, the client uses the database’s current schema. However, the database schema can be changed at any time, which means that the connector must be able to identify what the schema was at the time each insert, update, or delete operation was recorded. Also, a connector cannot just use the current schema because the connector might be processing events that are relatively old and may have been recorded before the tables' schemas were changed.

To handle this, MySQL includes in the binlog the row-level changes to the data and the DDL statements that are applied to the database. As the connector reads the binlog and comes across these DDL statements, it parses them and updates an in-memory representation of each table’s schema. The connector uses this schema representation to identify the structure of the tables at the time of each insert, update, or delete and to produce the appropriate change event. In a separate database history Kafka topic, the connector also records all DDL statements along with the position in the binlog where each DDL statement appeared.

When the connector restarts after having crashed or been stopped gracefully, the connector starts reading the binlog from a specific position, that is, from a specific point in time. The connector rebuilds the table structures that existed at this point in time by reading the database history Kafka topic and parsing all DDL statements up to the point in the binlog where the connector is starting.
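The restart logic can be pictured as a filter over the history topic. The sketch below assumes the history has already been read into memory as (binlog position, DDL) records, that positions compare as (file name, offset) tuples, and that DDL recorded strictly before the start position must be replayed; HistoryRecord and ddl_to_replay are hypothetical names, not Debezium classes.

```python
from typing import List, NamedTuple, Tuple

BinlogPosition = Tuple[str, int]  # (binlog file name, offset within that file)


class HistoryRecord(NamedTuple):
    position: BinlogPosition
    ddl: str


def ddl_to_replay(history: List[HistoryRecord], start: BinlogPosition) -> List[str]:
    """DDL statements to re-parse, in order, to rebuild the schemas at `start`.

    Assumes zero-padded binlog file names (mysql-bin.000003), so comparing
    (file, offset) tuples orders positions correctly.
    """
    return [record.ddl for record in history if record.position < start]


history = [
    HistoryRecord(("mysql-bin.000001", 120), "CREATE TABLE customers (id INT PRIMARY KEY)"),
    HistoryRecord(("mysql-bin.000002", 400), "ALTER TABLE customers ADD COLUMN email VARCHAR(255)"),
    HistoryRecord(("mysql-bin.000003", 900), "ALTER TABLE customers DROP COLUMN email"),
]
# Restarting at mysql-bin.000003:154 replays the CREATE and the ADD COLUMN, not the DROP.
print(ddl_to_replay(history, ("mysql-bin.000003", 154)))
```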

This database history topic is for connector use only. The connector can optionally generate schema change events on a different topic that is intended for consumer applications. This is described in how the MySQL connector handles schema change topics.

If the MySQL connector captures changes in a table to which a schema change tool such as gh-ost or pt-online-schema-change is applied, the helper tables that the tool creates during the migration process must be included among the whitelisted tables.

If downstream systems do not need the messages generated for these temporary tables, you can write and apply a simple message transformation (SMT) to filter them out.
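The filtering decision itself is a predicate on the table name in each event's source field. The Python sketch below only illustrates that logic (an actual SMT would be written in Java for Kafka Connect). The helper-table patterns are assumptions based on the tools' default naming (gh-ost: _<table>_gho, _<table>_ghc, _<table>_del; pt-online-schema-change: _<table>_new, _<table>_old), so check them against the tool version and options you use.

```python
import re
from typing import Optional

# Assumed default helper-table names:
#   gh-ost:                   _<table>_gho, _<table>_ghc, _<table>_del
#   pt-online-schema-change:  _<table>_new, _<table>_old
HELPER_TABLE = re.compile(r"_.+_(gho|ghc|del|new|old)")


def is_helper_table(table_name: str) -> bool:
    return HELPER_TABLE.fullmatch(table_name) is not None


def keep_event(event_value: Optional[dict]) -> bool:
    """Return False for change events whose source table is a migration helper table."""
    if event_value is None:  # tombstones carry no source table, so keep them
        return True
    return not is_helper_table(event_value["payload"]["source"]["table"])
```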

For information about topic naming conventions, see MySQL connector and Kafka topics.

2.1.2. How the MySQL connector performs database snapshots

When your Debezium MySQL connector is first started, it performs an initial consistent snapshot of your database. The following flow describes how this snapshot is completed.

This is the default snapshot mode, which corresponds to the initial value of the snapshot.mode property. For other snapshot modes, see the MySQL connector configuration properties.

The connector performs the following steps:

| Step | Action |
|---|---|
| 1 | Grabs a global read lock that blocks writes by other database clients. Note: The snapshot itself does not prevent other clients from applying DDL, which might interfere with the connector's attempt to read the binlog position and table schemas. The global read lock is kept while the binlog position is read and is released in a later step. |
| 2 | Starts a transaction with repeatable read semantics to ensure that all subsequent reads within the transaction are done against the consistent snapshot. |
| 3 | Reads the current binlog position. |
| 4 | Reads the schema of the databases and tables allowed by the connector's configuration. |
| 5 | Releases the global read lock. This now allows other database clients to write to the database. |
| 6 | Writes the DDL changes to the schema change topic, including all necessary … Note: This happens if applicable. |
| 7 | Scans the database tables and generates … |
| 8 | Commits the transaction. |
| 9 | Records the completed snapshot in the connector offsets. |
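The steps above map roughly onto the following MySQL statements. This is a sketch for orientation, not the exact sequence Debezium issues: the statement choice is an assumption, the table names are hypothetical, and writing the DDL to the schema change topic and recording the offsets happen in Kafka rather than in MySQL.

```python
from typing import List


def global_lock_snapshot_plan(tables: List[str]) -> List[str]:
    """Approximate MySQL statements for the global-read-lock snapshot steps."""
    plan = [
        "FLUSH TABLES WITH READ LOCK",                      # block writes by other clients
        "SET TRANSACTION ISOLATION LEVEL REPEATABLE READ",  # repeatable read semantics
        "START TRANSACTION WITH CONSISTENT SNAPSHOT",
        "SHOW MASTER STATUS",                               # current binlog position
    ]
    plan += [f"SHOW CREATE TABLE {t}" for t in tables]      # schemas of captured tables
    plan.append("UNLOCK TABLES")                            # other clients may write again
    # Writing DDL to the schema change topic happens in Kafka, not in MySQL.
    plan += [f"SELECT * FROM {t}" for t in tables]          # scan each table
    plan.append("COMMIT")                                   # end the snapshot transaction
    # Recording the completed snapshot in the offsets happens in Kafka Connect.
    return plan


for statement in global_lock_snapshot_plan(["inventory.customers", "inventory.products"]):
    print(statement)
```

Note that the lock is released before the table scan starts, which keeps the window in which writes are blocked short.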

2.1.2.1. What happens if the connector fails?

If the connector fails, stops, or is rebalanced while making the initial snapshot, the connector creates a new snapshot once restarted. Once that initial snapshot is completed, the Debezium MySQL connector restarts from the same position in the binlog so that it does not miss any updates.

If the connector stops for long enough, MySQL could purge old binlog files and the connector’s position would be lost. If the position is lost, the connector reverts to the initial snapshot for its starting position. For more tips on troubleshooting the Debezium MySQL connector, see MySQL connector common issues.

2.1.2.2. What if Global Read Locks are not allowed?

Some environments do not allow a global read lock. If the Debezium MySQL connector detects that global read locks are not permitted, the connector uses table-level locks instead and performs a snapshot with this method.

The MySQL user that the connector uses must have the LOCK TABLES privilege.

The connector performs the following steps:

| Step | Action |
|---|---|
| 1 | Starts a transaction with repeatable read semantics to ensure that all subsequent reads within the transaction are done against the consistent snapshot. |
| 2 | Reads and filters the names of the databases and tables. |
| 3 | Reads the current binlog position. |
| 4 | Reads the schema of the databases and tables allowed by the connector's configuration. |
| 5 | Writes the DDL changes to the schema change topic, including all necessary … Note: This happens if applicable. |
| 6 | Scans the database tables and generates … |
| 7 | Commits the transaction. |
| 8 | Releases the table-level locks. |
| 9 | Records the completed snapshot in the connector offsets. |
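Read literally, the two step lists differ in when the lock is released: the global read lock is dropped before the tables are scanned, while the table-level locks are released only after the transaction commits. The sketch below encodes both lists (paraphrased labels, not Debezium identifiers) and checks which steps run once the lock is gone.

```python
from typing import List

GLOBAL_LOCK_STEPS = [
    "grab global read lock", "start repeatable-read transaction", "read binlog position",
    "read schemas", "release global read lock", "write DDL to schema change topic",
    "scan tables", "commit transaction", "record snapshot in offsets",
]
TABLE_LOCK_STEPS = [
    "start repeatable-read transaction", "read and filter table names", "read binlog position",
    "read schemas", "write DDL to schema change topic", "scan tables",
    "commit transaction", "release table-level locks", "record snapshot in offsets",
]


def steps_after_release(steps: List[str], release_step: str) -> List[str]:
    """Steps that run after the lock has been released."""
    return steps[steps.index(release_step) + 1:]


print("scan tables" in steps_after_release(GLOBAL_LOCK_STEPS, "release global read lock"))  # True
print("scan tables" in steps_after_release(TABLE_LOCK_STEPS, "release table-level locks"))  # False
```

This suggests that, with table-level locks, writes to the captured tables remain blocked for the whole table scan.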

2.1.3. How the MySQL connector handles schema change topics

You can configure the Debezium MySQL connector to produce schema change events that include all DDL statements applied to databases in the MySQL server. The connector writes all of these events to a Kafka topic named <serverName> where serverName is the name of the connector as specified in the database.server.name configuration property.

If you choose to use schema change events, use the schema change topic and do not consume the database history topic.

It is vital that there is a global order of the events in the database schema history. Therefore, the database history topic must not be partitioned. This means that a partition count of 1 must be specified when creating this topic. When relying on auto topic creation, make sure that Kafka’s num.partitions configuration option (the default number of partitions) is set to 1.
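Kafka guarantees ordering only within a single partition, which is why a partition count of 1 matters here. The sketch below assumes round-robin placement and a consumer that drains one partition after another (a simplification; real consumers interleave partitions unpredictably, which is just as harmful) and shows DDL history coming back out of order as soon as a second partition is used. The helper names are hypothetical.

```python
from typing import Dict, List

ddl_history = [
    "CREATE TABLE customers (id INT PRIMARY KEY)",
    "ALTER TABLE customers ADD COLUMN email VARCHAR(255)",
    "ALTER TABLE customers DROP COLUMN email",
]


def distribute(records: List[str], partitions: int) -> Dict[int, List[str]]:
    """Spread records round-robin across partitions (a simplifying assumption)."""
    layout: Dict[int, List[str]] = {p: [] for p in range(partitions)}
    for i, record in enumerate(records):
        layout[i % partitions].append(record)
    return layout


def read_partition_by_partition(layout: Dict[int, List[str]]) -> List[str]:
    """Order seen by a consumer that drains partition 0, then partition 1, and so on."""
    return [record for p in sorted(layout) for record in layout[p]]


print(read_partition_by_partition(distribute(ddl_history, 1)))  # original order
print(read_partition_by_partition(distribute(ddl_history, 2)))  # DROP COLUMN before ADD COLUMN
```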

2.1.3.1. Schema change topic structure

Each message that is written to the schema change topic contains a message key which includes the name of the connected database used when applying DDL statements:

```json
{
  "schema": {
    "type": "struct",
    "name": "io.debezium.connector.mysql.SchemaChangeKey",
    "optional": false,
    "fields": [
      { "field": "databaseName", "type": "string", "optional": false }
    ]
  },
  "payload": {
    "databaseName": "inventory"
  }
}
```

The schema change event message value contains a structure that includes the DDL statements, the database to which the statements were applied, and the position in the binlog where the statements appeared:

```json
{
  "schema": {
    "type": "struct",
    "name": "io.debezium.connector.mysql.SchemaChangeValue",
    "optional": false,
    "fields": [
      { "field": "databaseName", "type": "string", "optional": false },
      { "field": "ddl", "type": "string", "optional": false },
      {
        "field": "source",
        "type": "struct",
        "name": "io.debezium.connector.mysql.Source",
        "optional": false,
        "fields": [
          { "type": "string", "optional": true, "field": "version" },
          { "type": "string", "optional": false, "field": "name" },
          { "type": "int64", "optional": false, "field": "server_id" },
          { "type": "int64", "optional": false, "field": "ts_sec" },
          { "type": "string", "optional": true, "field": "gtid" },
          { "type": "string", "optional": false, "field": "file" },
          { "type": "int64", "optional": false, "field": "pos" },
          { "type": "int32", "optional": false, "field": "row" },
          { "type": "boolean", "optional": true, "default": false, "field": "snapshot" },
          { "type": "int64", "optional": true, "field": "thread" },
          { "type": "string", "optional": true, "field": "db" },
          { "type": "string", "optional": true, "field": "table" },
          { "type": "string", "optional": true, "field": "query" }
        ]
      }
    ]
  },
  "payload": {
    "databaseName": "inventory",
    "ddl": "CREATE TABLE products ( id INTEGER NOT NULL AUTO_INCREMENT PRIMARY KEY, name VARCHAR(255) NOT NULL, description VARCHAR(512), weight FLOAT ); ALTER TABLE products AUTO_INCREMENT = 101;",
    "source": {
      "version": "0.10.0.Beta4",
      "name": "mysql-server-1",
      "server_id": 0,
      "ts_sec": 0,
      "gtid": null,
      "file": "mysql-bin.000003",
      "pos": 154,
      "row": 0,
      "snapshot": true,
      "thread": null,
      "db": null,
      "table": null,
      "query": null
    }
  }
}
```

2.1.3.1.1. Important tips regarding schema change topics

The ddl field may contain multiple DDL statements. Every statement applies to the database in the databaseName field, and the statements appear in the same order in which they were applied in the database. The source field is structured exactly as it is in a standard data change event written to a table-specific topic. This field is useful to correlate events on different topics.

```json
"payload": {
    "databaseName": "inventory",
    "ddl": "CREATE TABLE products ( id INTEGER NOT NULL AUTO_INCREMENT PRIMARY KEY,...
    "source": {
        ....
    }
}
```

What if a client submits DDL statements to multiple databases?

- If MySQL applies them atomically, the connector takes the DDL statements in order, groups them by database, and creates a schema change event for each group.

- If MySQL applies them individually, the connector creates a separate schema change event for each statement.
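The atomic case can be sketched as grouping (database, DDL) pairs while preserving statement order. group_ddl_by_database is a hypothetical helper: it assumes each statement carries its own trailing semicolon, joins statements with a space as in the ddl example above, and emits groups in the order their database first appears, which the text above does not specify.

```python
from typing import Dict, List, Tuple


def group_ddl_by_database(statements: List[Tuple[str, str]]) -> List[Dict[str, str]]:
    """Build one schema change payload per database from ordered (database, ddl) pairs."""
    groups: Dict[str, List[str]] = {}
    for database, ddl in statements:
        # Statements keep their original relative order within each database.
        groups.setdefault(database, []).append(ddl)
    return [{"databaseName": db, "ddl": " ".join(ddls)} for db, ddls in groups.items()]


for payload in group_ddl_by_database([
    ("inventory", "CREATE TABLE a (id INT);"),
    ("crm", "CREATE TABLE b (id INT);"),
    ("inventory", "DROP TABLE c;"),
]):
    print(payload)
```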

Additional resources

- If you do not use the schema change topics detailed here, check out the database history topic.

2.1.4. MySQL connector events

All data change events produced by the Debezium MySQL connector contain a key and a value. The change event key and the change event value each contain a schema and a payload where the schema describes the structure of the payload and the payload contains the data.

The MySQL connector ensures that all Kafka Connect schema names adhere to the Avro schema name format: each part of a name must start with a Latin letter or an underscore, and the remaining characters must be Latin letters, digits, or underscores. The connector replaces any other character with an underscore. This is important because the replacement can lead to unexpected conflicts in schema names when the logical server names, database names, or table names contain characters that are replaced with underscores.
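The Avro naming rule (a name starts with [A-Za-z_] and continues with [A-Za-z0-9_]) and the collision risk can be sketched in Python. avro_safe_name_part and avro_safe_full_name are hypothetical helpers, not Debezium's own name adjuster; they sanitize each dot-separated part of a name independently.

```python
import re


def avro_safe_name_part(part: str) -> str:
    """Replace characters that are invalid in an Avro name with underscores."""
    if not part:
        return part
    head = part[0] if re.fullmatch(r"[A-Za-z_]", part[0]) else "_"
    tail = re.sub(r"[^A-Za-z0-9_]", "_", part[1:])
    return head + tail


def avro_safe_full_name(name: str) -> str:
    return ".".join(avro_safe_name_part(p) for p in name.split("."))


# Two different logical server names end up with the same schema name.
print(avro_safe_full_name("my-server.inventory.customers"))
print(avro_safe_full_name("my_server.inventory.customers"))
```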

2.1.4.1. Change event key

For any given table, the change event’s key has a structure that contains a field for each column in the PRIMARY KEY (or unique constraint) at the time the event was created. Let us look at an example table and then how the schema and payload would appear for the table.

example table

CREATE TABLE customers ( id INTEGER NOT NULL AUTO_INCREMENT PRIMARY KEY, first_name VARCHAR(255) NOT NULL, last_name VARCHAR(255) NOT NULL, email VARCHAR(255) NOT NULL UNIQUE KEY ) AUTO_INCREMENT=1001;

example change event key

```json
{
  "schema": { // (1)
    "type": "struct",
    "name": "mysql-server-1.inventory.customers.Key", // (2)
    "optional": false, // (3)
    "fields": [ // (4)
      {
        "field": "id",
        "type": "int32",
        "optional": false
      }
    ]
  },
  "payload": { // (5)
    "id": 1001
  }
}
```

- (1) The schema describes what is in the payload.

- (2) mysql-server-1.inventory.customers.Key is the name of the schema that defines the structure, where mysql-server-1 is the connector name, inventory is the database, and customers is the table.

- (3) Denotes that the payload is not optional.

- (4) Specifies the type of fields expected in the payload.

- (5) The payload itself, which in this case contains only a single id field.

This key describes the row in the inventory.customers table, captured by the connector named mysql-server-1, whose id primary key column has a value of 1001.
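A hypothetical sketch of how such a key relates to the row: the schema name combines the connector, database, and table names, and the payload keeps one field per key column. The helper names are illustrative, not part of Debezium.

```python
from typing import Dict, List


def key_schema_name(server_name: str, database: str, table: str) -> str:
    return f"{server_name}.{database}.{table}.Key"


def key_payload(row: Dict[str, object], key_columns: List[str]) -> Dict[str, object]:
    """Key payload: one field per PRIMARY KEY (or unique key) column."""
    return {column: row[column] for column in key_columns}


row = {"id": 1004, "first_name": "Anne", "last_name": "Kretchmar", "email": "annek@noanswer.org"}
print(key_schema_name("mysql-server-1", "inventory", "customers"))
print(key_payload(row, ["id"]))
```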

2.1.4.2. Change event value

The change event value contains a schema and a payload section. There are three types of change event values which have an envelope structure. The fields in this structure are explained below and marked on each of the change event value examples.

| Item | Field name | Description |
|---|---|---|
| 1 | | |
| 2 | op | A mandatory string that describes the type of operation. Values include c (create), u (update), and d (delete), as the examples below show. |
| 3 | before | An optional field that specifies the state of the row before the event occurred. |
| 4 | after | An optional field that specifies the state of the row after the event occurred. |
| 5 | source | A mandatory field that describes the source metadata for the event, including: … Note: If the binlog_rows_query_log_events option is enabled and the connector has the … |
| 6 | ts_ms | An optional field that displays the time at which the connector processed the event. Note: The time is based on the system clock in the JVM running the Kafka Connect task. |

Let us look at an example table and then how the schema and payload would appear for the table.

example table

CREATE TABLE customers ( id INTEGER NOT NULL AUTO_INCREMENT PRIMARY KEY, first_name VARCHAR(255) NOT NULL, last_name VARCHAR(255) NOT NULL, email VARCHAR(255) NOT NULL UNIQUE KEY ) AUTO_INCREMENT=1001;

2.1.4.2.1. Create change event value

This example shows a create event for the customers table:

```json
{
  "schema": { // (1)
    "type": "struct",
    "fields": [
      {
        "type": "struct",
        "fields": [
          { "type": "int32", "optional": false, "field": "id" },
          { "type": "string", "optional": false, "field": "first_name" },
          { "type": "string", "optional": false, "field": "last_name" },
          { "type": "string", "optional": false, "field": "email" }
        ],
        "optional": true,
        "name": "mysql-server-1.inventory.customers.Value",
        "field": "before"
      },
      {
        "type": "struct",
        "fields": [
          { "type": "int32", "optional": false, "field": "id" },
          { "type": "string", "optional": false, "field": "first_name" },
          { "type": "string", "optional": false, "field": "last_name" },
          { "type": "string", "optional": false, "field": "email" }
        ],
        "optional": true,
        "name": "mysql-server-1.inventory.customers.Value",
        "field": "after"
      },
      {
        "type": "struct",
        "fields": [
          { "type": "string", "optional": false, "field": "version" },
          { "type": "string", "optional": false, "field": "connector" },
          { "type": "string", "optional": false, "field": "name" },
          { "type": "int64", "optional": false, "field": "ts_ms" },
          { "type": "boolean", "optional": true, "default": false, "field": "snapshot" },
          { "type": "string", "optional": false, "field": "db" },
          { "type": "string", "optional": true, "field": "table" },
          { "type": "int64", "optional": false, "field": "server_id" },
          { "type": "string", "optional": true, "field": "gtid" },
          { "type": "string", "optional": false, "field": "file" },
          { "type": "int64", "optional": false, "field": "pos" },
          { "type": "int32", "optional": false, "field": "row" },
          { "type": "int64", "optional": true, "field": "thread" },
          { "type": "string", "optional": true, "field": "query" }
        ],
        "optional": false,
        "name": "io.debezium.connector.mysql.Source",
        "field": "source"
      },
      { "type": "string", "optional": false, "field": "op" },
      { "type": "int64", "optional": true, "field": "ts_ms" }
    ],
    "optional": false,
    "name": "mysql-server-1.inventory.customers.Envelope"
  },
  "payload": { // (2)
    "op": "c",
    "ts_ms": 1465491411815,
    "before": null,
    "after": {
      "id": 1004,
      "first_name": "Anne",
      "last_name": "Kretchmar",
      "email": "annek@noanswer.org"
    },
    "source": {
      "version": "1.1.2.Final",
      "connector": "mysql",
      "name": "mysql-server-1",
      "ts_ms": 0,
      "snapshot": false,
      "db": "inventory",
      "table": "customers",
      "server_id": 0,
      "gtid": null,
      "file": "mysql-bin.000003",
      "pos": 154,
      "row": 0,
      "thread": 7,
      "query": "INSERT INTO customers (first_name, last_name, email) VALUES ('Anne', 'Kretchmar', 'annek@noanswer.org')"
    }
  }
}
```

- (1) The schema portion of this event's value shows the schema for the envelope, the schema for the source structure (which is specific to the MySQL connector and reused across all events), and the table-specific schemas for the before and after fields.

- (2) The payload portion of this event's value shows the information in the event, namely that it describes a row that was created (because op=c), and that the after field value contains the values of the newly inserted row's id, first_name, last_name, and email columns.

2.1.4.2.2. Update change event value

The value of an update change event on the customers table has the exact same schema as a create event. The payload is structured the same, but holds different values. Here is an example (formatted for readability):

```json
{
  "schema": { ... },
  "payload": {
    "before": { // (1)
      "id": 1004,
      "first_name": "Anne",
      "last_name": "Kretchmar",
      "email": "annek@noanswer.org"
    },
    "after": { // (2)
      "id": 1004,
      "first_name": "Anne Marie",
      "last_name": "Kretchmar",
      "email": "annek@noanswer.org"
    },
    "source": { // (3)
      "version": "1.1.2.Final",
      "connector": "mysql",
      "name": "mysql-server-1",
      "ts_ms": 1465581,
      "snapshot": false,
      "db": "inventory",
      "table": "customers",
      "server_id": 223344,
      "gtid": null,
      "file": "mysql-bin.000003",
      "pos": 484,
      "row": 0,
      "thread": 7,
      "query": "UPDATE customers SET first_name='Anne Marie' WHERE id=1004"
    },
    "op": "u", // (4)
    "ts_ms": 1465581029523 // (5)
  }
}
```

Comparing this to the value in the create event, you can see a couple of differences in the payload section:

- (1) The before field now has the state of the row with the values before the database commit.

- (2) The after field now has the updated state of the row, and the first_name value is now Anne Marie. You can compare the before and after structures to determine what actually changed in this row because of the commit.

- (3) The source field structure has the same fields as before, but the values are different (this event is from a different position in the binlog). The source structure shows information about MySQL's record of this change (providing traceability). It also has information you can use to compare to other events in this and other topics to know whether this event occurred before, after, or as part of the same MySQL commit as other events.

- (4) The op field value is now u, signifying that this row changed because of an update.

- (5) The ts_ms field shows the timestamp when Debezium processed this event.
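Comparing before and after, as suggested above, takes only a few lines. changed_columns is a hypothetical consumer-side helper that works on the JSON-decoded payload.

```python
from typing import Dict, Tuple


def changed_columns(before: Dict[str, object], after: Dict[str, object]) -> Dict[str, Tuple[object, object]]:
    """Map each column whose value differs to its (old, new) pair."""
    return {
        column: (before.get(column), after.get(column))
        for column in before.keys() | after.keys()
        if before.get(column) != after.get(column)
    }


before = {"id": 1004, "first_name": "Anne", "last_name": "Kretchmar", "email": "annek@noanswer.org"}
after = {"id": 1004, "first_name": "Anne Marie", "last_name": "Kretchmar", "email": "annek@noanswer.org"}
print(changed_columns(before, after))  # {'first_name': ('Anne', 'Anne Marie')}
```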

When the columns for a row’s primary or unique key are updated, the value of the row’s key is changed and Debezium outputs three events: a DELETE event and tombstone event with the old key for the row, followed by an INSERT event with the new key for the row.
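The sequence of events for a key change can be sketched as follows. For brevity the values contain only op, before, and after (no schema or source), and events_for_key_change is a hypothetical helper that illustrates the order of events rather than Debezium's code.

```python
from typing import Dict, List, Optional, Tuple

Event = Tuple[Dict[str, object], Optional[Dict[str, object]]]  # (key, value or None)


def events_for_key_change(before: Dict[str, object], after: Dict[str, object],
                          key_columns: List[str]) -> List[Event]:
    """Delete and tombstone under the old key, then create under the new key."""
    old_key = {c: before[c] for c in key_columns}
    new_key = {c: after[c] for c in key_columns}
    return [
        (old_key, {"op": "d", "before": before, "after": None}),
        (old_key, None),  # tombstone: same key, null value
        (new_key, {"op": "c", "before": None, "after": after}),
    ]


for key, value in events_for_key_change({"id": 1004, "first_name": "Anne"},
                                        {"id": 2004, "first_name": "Anne"}, ["id"]):
    print(key, value)
```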

2.1.4.2.3. Delete change event value

The value of a delete change event on the customers table has the exact same schema as create and update events. The payload is structured the same, but holds different values. Here is an example (formatted for readability):

```json
{
  "schema": { ... },
  "payload": {
    "before": { // (1)
      "id": 1004,
      "first_name": "Anne Marie",
      "last_name": "Kretchmar",
      "email": "annek@noanswer.org"
    },
    "after": null, // (2)
    "source": { // (3)
      "version": "1.1.2.Final",
      "connector": "mysql",
      "name": "mysql-server-1",
      "ts_ms": 1465581,
      "snapshot": false,
      "db": "inventory",
      "table": "customers",
      "server_id": 223344,
      "gtid": null,
      "file": "mysql-bin.000003",
      "pos": 805,
      "row": 0,
      "thread": 7,
      "query": "DELETE FROM customers WHERE id=1004"
    },
    "op": "d", // (4)
    "ts_ms": 1465581902461 // (5)
  }
}
```

Comparing the payload portion to the payloads in the create and update events, you can see some differences:

- (1) The before field now has the state of the row that was deleted with the database commit.

- (2) The after field is null, signifying that the row no longer exists.

- (3) The source field structure has many of the same values as before, except that the pos field has changed (the ts_ms and file fields might also change in other scenarios).

- (4) The op field value is now d, signifying that this row was deleted.

- (5) The ts_ms field shows the timestamp when Debezium processed this event.

This event provides a consumer with the information that it needs to process the removal of this row. The old values are included because some consumers might require them in order to properly handle the removal.
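A consumer that keeps an in-memory copy of the table can handle all three event types with one function. apply_change is a hypothetical sketch that works on payload-only values; it removes the row on a delete event or a tombstone and treats every other op as an upsert of the after state, which is an assumption for ops not shown in this section.

```python
from typing import Dict, Optional, Tuple

RowKey = Tuple[Tuple[str, object], ...]  # hashable form of a key payload


def apply_change(table: Dict[RowKey, dict], key: dict, value: Optional[dict]) -> None:
    """Apply one change event, given its key payload and payload-only value."""
    row_key: RowKey = tuple(sorted(key.items()))
    if value is None or value["op"] == "d":
        # Tombstone or delete event: the row no longer exists.
        table.pop(row_key, None)
    else:
        # Create, update, and (by assumption) any other op: store the new state.
        table[row_key] = value["after"]


replica: Dict[RowKey, dict] = {}
apply_change(replica, {"id": 1004}, {"op": "c", "before": None, "after": {"id": 1004, "first_name": "Anne"}})
apply_change(replica, {"id": 1004}, {"op": "u", "before": {"id": 1004, "first_name": "Anne"},
                                     "after": {"id": 1004, "first_name": "Anne Marie"}})
print(replica)
```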

The MySQL connector’s events are designed to work with Kafka log compaction, which allows for the removal of some older messages as long as at least the most recent message for every key is kept. This allows Kafka to reclaim storage space while ensuring the topic contains a complete data set and can be used for reloading key-based state.

When a row is deleted, the delete event value listed above still works with log compaction, because Kafka can still remove all earlier messages with that same key. If the message value is null, Kafka knows that it can remove all messages with that same key. To make this possible, Debezium’s MySQL connector always follows a delete event with a special tombstone event that has the same key but a null value.
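Log compaction with tombstones can be simulated on a list of (key, value) records. The sketch is a simplification with a hypothetical compact() helper: real Kafka compaction runs asynchronously and keeps tombstones for the topic's delete.retention.ms period before removing them, whereas this function drops them immediately.

```python
from typing import Dict, List, Optional, Tuple

Record = Tuple[str, Optional[str]]  # (key, value); a None value is a tombstone


def compact(log: List[Record]) -> List[Record]:
    """Keep the latest record per key and drop keys whose latest record is a tombstone."""
    latest: Dict[str, Optional[str]] = {}
    for key, value in log:
        latest.pop(key, None)  # re-insert so the output follows the order of latest writes
        latest[key] = value
    return [(key, value) for key, value in latest.items() if value is not None]


log = [("1004", "create"), ("1005", "create"), ("1004", "update"), ("1005", "delete"), ("1005", None)]
print(compact(log))  # [('1004', 'update')]
```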

2.1.5. How the MySQL connector maps data types

The Debezium MySQL connector represents changes to rows with events that are structured like the table in which the row exists. The event contains a field for each column value. The MySQL data type of that column dictates how the value is represented in the event.

Columns that store strings are defined in MySQL with a character set and collation. The MySQL connector uses the column’s character set when reading the binary representation of the column values in the binlog events. The following table shows how the connector maps the MySQL data types to both literal and semantic types.

- literal type : how the value is represented using Kafka Connect schema types

- semantic type : how the Kafka Connect schema captures the meaning of the field (schema name)

| MySQL type | Literal type | Semantic type |

|---|---|---|

|

|

| n/a |

|

|

| n/a |

|

|

|

Note

The example (where n is bits)

numBytes = n/8 + (n%8== 0 ? 0 : 1) |

|

|

| n/a |

|

|

| n/a |

|

|

| n/a |

|

|

| n/a |

|

|

| n/a |

|

|

| n/a |

|

|

| n/a |

|

|

| n/a |

|

|

| n/a |

|

|

| n/a |

|

|

| n/a |

|

|

| n/a |

|

|

| n/a |

|

|

| n/a |

|

|

| n/a |

|

|

| n/a |

|

|

| n/a |

|

|

| n/a |

|

|

| n/a |

|

|

| n/a |

|

|

|

Note

Contains the string representation of a |

|

|

|

Note

The |

|

|

|

Note

The |

|

|

|

|

|

|

|

Note

In ISO 8601 format with microsecond precision. MySQL allows |
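The numBytes formula in the note above (where n is the number of bits) rounds n/8 up to a whole byte, with / as integer division. A direct Python transcription, with bit_num_bytes as a hypothetical name:

```python
def bit_num_bytes(n: int) -> int:
    """Bytes needed for an n-bit value: n/8 rounded up."""
    return n // 8 + (0 if n % 8 == 0 else 1)


print([bit_num_bytes(n) for n in (1, 8, 9, 64)])  # [1, 1, 2, 8]
```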

2.1.5.1. Temporal values

Excluding the TIMESTAMP data type, MySQL temporal types depend on the value of the time.precision.mode configuration property. For TIMESTAMP columns whose default value is specified as CURRENT_TIMESTAMP or NOW, the value 1970-01-01 00:00:00 is used as the default value in the Kafka Connect schema.

MySQL allows zero-values for DATE, DATETIME, and TIMESTAMP columns because zero-values are sometimes preferred over null values. The MySQL connector represents zero-values as null values when the column definition allows null values, or as the epoch day when the column does not allow null values.

Temporal values without time zones

The DATETIME type represents a local date and time such as "2018-01-13 09:48:27". As you can see, there is no time zone information. Such columns are converted into epoch milliseconds or microseconds, based on the column's precision, by using UTC. The TIMESTAMP type represents a timestamp without time zone information and is converted by MySQL from the server (or session's) current time zone into UTC when writing and vice versa when reading back the value. For example:

- DATETIME with a value of 2018-06-20 06:37:03 becomes 1529476623000.

- TIMESTAMP with a value of 2018-06-20 06:37:03 becomes 2018-06-20T13:37:03Z.

TIMESTAMP columns are converted into an equivalent io.debezium.time.ZonedTimestamp in UTC based on the server (or session's) current time zone. By default, the connector queries the time zone from the server. If this fails, you must specify it explicitly with the database.serverTimezone connector configuration property. For example, if the database's time zone (either globally or configured for the connector by means of the database.serverTimezone property) is "America/Los_Angeles", the TIMESTAMP value "2018-06-20 06:37:03" is represented by a ZonedTimestamp with the value "2018-06-20T13:37:03Z".

Note that the time zone of the JVM running Kafka Connect and Debezium does not affect these conversions.
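The two conversions can be reproduced with Python's datetime module. This sketch ignores fractional seconds, and because America/Los_Angeles is on UTC-7 (PDT) on 2018-06-20 it uses a fixed offset instead of a time zone database; zoneinfo.ZoneInfo("America/Los_Angeles") would give correct daylight saving behavior for any date. The function names are hypothetical.

```python
from datetime import datetime, timedelta, timezone, tzinfo

EPOCH = datetime(1970, 1, 1, tzinfo=timezone.utc)


def datetime_to_epoch_millis(value: str) -> int:
    """DATETIME carries no zone: interpret it as UTC and count milliseconds since epoch."""
    as_utc = datetime.fromisoformat(value).replace(tzinfo=timezone.utc)
    return (as_utc - EPOCH) // timedelta(milliseconds=1)


def timestamp_to_zoned(value: str, server_zone: tzinfo) -> str:
    """TIMESTAMP: interpret in the server's zone, then render in UTC like a ZonedTimestamp."""
    zoned = datetime.fromisoformat(value).replace(tzinfo=server_zone)
    return zoned.astimezone(timezone.utc).strftime("%Y-%m-%dT%H:%M:%SZ")


PDT = timezone(timedelta(hours=-7))  # fixed offset for America/Los_Angeles in June 2018
print(datetime_to_epoch_millis("2018-06-20 06:37:03"))  # 1529476623000
print(timestamp_to_zoned("2018-06-20 06:37:03", PDT))   # 2018-06-20T13:37:03Z
```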

More details about properties related to temporal values are in the documentation for MySQL connector configuration properties.

- time.precision.mode=adaptive_time_microseconds (default)

The MySQL connector determines the literal type and semantic type based on the column's data type definition so that events represent exactly the values in the database. TIME fields are always captured in microseconds. Only positive TIME field values in the range of 00:00:00.000000 to 23:59:59.999999 can be captured correctly.

| MySQL type | Literal type | Semantic type |
|---|---|---|
| DATE | INT32 | io.debezium.time.Date. Note: Represents the number of days since epoch. |
| TIME[(M)] | INT64 | io.debezium.time.MicroTime. Note: Represents the time value in microseconds and does not include time zone information. MySQL allows M to be in the range of 0-6. |
| DATETIME, DATETIME(0), DATETIME(1), DATETIME(2), DATETIME(3) | INT64 | io.debezium.time.Timestamp. Note: Represents the number of milliseconds past epoch and does not include time zone information. |
| DATETIME(4), DATETIME(5), DATETIME(6) | INT64 | io.debezium.time.MicroTimestamp. Note: Represents the number of microseconds past epoch and does not include time zone information. |
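The adaptive mapping can be summarized as a small lookup. adaptive_mapping and days_since_epoch are hypothetical helpers that restate the DATE, TIME, and DATETIME rows of the table above; they do not cover other MySQL types.

```python
from datetime import date
from typing import Optional, Tuple


def adaptive_mapping(mysql_type: str, precision: Optional[int] = None) -> Tuple[str, str]:
    """(literal type, semantic type) under time.precision.mode=adaptive_time_microseconds."""
    if mysql_type == "DATE":
        return "INT32", "io.debezium.time.Date"
    if mysql_type == "TIME":
        return "INT64", "io.debezium.time.MicroTime"
    if mysql_type == "DATETIME":
        # DATETIME without an explicit precision behaves like DATETIME(0).
        if (precision or 0) <= 3:
            return "INT64", "io.debezium.time.Timestamp"
        return "INT64", "io.debezium.time.MicroTimestamp"
    raise ValueError(f"not covered by this sketch: {mysql_type}")


def days_since_epoch(value: date) -> int:
    """The number carried by an io.debezium.time.Date field."""
    return (value - date(1970, 1, 1)).days


print(adaptive_mapping("DATETIME", 6), days_since_epoch(date(2018, 6, 20)))
```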

- time.precision.mode=connect

The MySQL connector uses the predefined Kafka Connect logical types. This approach is less precise than the default approach, and events can lose precision if a database column has a fractional second precision value greater than 3. Only TIME values in the range of 00:00:00.000 to 23:59:59.999 can be handled. Set time.precision.mode=connect only if you can ensure that the TIME values in your tables never exceed the supported ranges. The connect setting is expected to be removed in a future version of Debezium.

| MySQL type | Literal type | Semantic type |
|---|---|---|
| DATE | INT32 | org.apache.kafka.connect.data.Date. Note: Represents the number of days since epoch. |
| TIME[(M)] | INT32 | org.apache.kafka.connect.data.Time. Note: Represents the time value in milliseconds since midnight and does not include time zone information. |
| DATETIME[(M)] | INT64 | org.apache.kafka.connect.data.Timestamp. Note: Represents the number of milliseconds since epoch and does not include time zone information. |
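With time.precision.mode=connect, a DATETIME(6) column is mapped to org.apache.kafka.connect.data.Timestamp, which holds only milliseconds since epoch, so the last three fractional digits are lost. The following Python sketch, with hypothetical helper names, compares both mappings for the same value. It assumes that sub-millisecond digits are truncated rather than rounded.

```python
from datetime import datetime

EPOCH = datetime(1970, 1, 1)  # DATETIME values carry no time zone


def micros_since_epoch(value: datetime) -> int:
    delta = value - EPOCH
    return (delta.days * 86_400 + delta.seconds) * 1_000_000 + delta.microseconds


def adaptive_datetime6(value: datetime) -> int:
    # adaptive_time_microseconds: DATETIME(6) -> io.debezium.time.MicroTimestamp
    return micros_since_epoch(value)


def connect_datetime(value: datetime) -> int:
    # connect: DATETIME[(M)] -> org.apache.kafka.connect.data.Timestamp (milliseconds);
    # truncating the sub-millisecond digits is an assumption of this sketch
    return micros_since_epoch(value) // 1000


value = datetime(2018, 6, 20, 6, 37, 3, 123456)
print(adaptive_datetime6(value))  # 1529476623123456
print(connect_datetime(value))    # 1529476623123 (the trailing 456 microseconds are gone)
```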

Decimal values

Decimals are handled via the decimal.handling.mode property.

See MySQL connector configuration properties for more details.

- decimal.handling.mode=precise

| MySQL type | Literal type | Semantic type |
|---|---|---|
| NUMERIC[(M[,D])] | BYTES | org.apache.kafka.connect.data.Decimal. Note: The scale schema parameter contains an integer that represents how many digits the decimal point is shifted. |
| DECIMAL[(M[,D])] | BYTES | org.apache.kafka.connect.data.Decimal. Note: The scale schema parameter contains an integer that represents how many digits the decimal point is shifted. |

- decimal.handling.mode=double

| MySQL type | Literal type | Semantic type |
|---|---|---|
| NUMERIC[(M[,D])] | FLOAT64 | n/a |
| DECIMAL[(M[,D])] | FLOAT64 | n/a |

- decimal.handling.mode=string

| MySQL type | Literal type | Semantic type |
|---|---|---|
| NUMERIC[(M[,D])] | STRING | n/a |
| DECIMAL[(M[,D])] | STRING | n/a |
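The three modes differ in what a consumer receives for the same DECIMAL value. The following Python sketch shows the idea; the helper names are hypothetical. For precise, it assumes the Kafka Connect Decimal convention of an unscaled integer encoded as minimal big-endian two's-complement bytes, with the scale carried separately as the schema parameter.

```python
from decimal import Decimal


def encode_precise(value: Decimal) -> tuple[bytes, int]:
    """Return (unscaled bytes, scale) in the style of org.apache.kafka.connect.data.Decimal."""
    sign, digits, exponent = value.as_tuple()
    unscaled = int("".join(str(d) for d in digits))
    if exponent > 0:
        unscaled *= 10 ** exponent
    if sign:
        unscaled = -unscaled
    scale = max(0, -exponent)  # how many digits the decimal point is shifted
    # Minimal big-endian two's-complement length, including room for the sign bit.
    bits = unscaled.bit_length() if unscaled >= 0 else (~unscaled).bit_length()
    return unscaled.to_bytes(bits // 8 + 1, byteorder="big", signed=True), scale


def decode_precise(data: bytes, scale: int) -> Decimal:
    unscaled = int.from_bytes(data, byteorder="big", signed=True)
    digits = tuple(int(c) for c in str(abs(unscaled)))
    return Decimal((0 if unscaled >= 0 else 1, digits, -scale))


def encode(value: Decimal, mode: str):
    if mode == "precise":
        return encode_precise(value)
    if mode == "double":
        return float(value)  # FLOAT64: may lose precision
    if mode == "string":
        return str(value)    # STRING: exact digits, but not typed as a number
    raise ValueError(f"unknown decimal.handling.mode: {mode}")


print(encode(Decimal("123.45"), "precise"))  # (b'09', 2), that is, 0x3039 = 12345 with scale 2
```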

2.1.5.2. Boolean values

MySQL handles the BOOLEAN value internally in a specific way: a BOOLEAN column is internally mapped to the TINYINT(1) data type. When a table is created during streaming, Debezium receives the original DDL and therefore uses the proper BOOLEAN mapping. During a snapshot, however, Debezium executes SHOW CREATE TABLE to obtain the table definition, and this statement returns TINYINT(1) for both BOOLEAN and TINYINT(1) columns.

Debezium then has no way to obtain the original type mapping and maps the column to TINYINT(1).

To emit such columns as boolean values, you can configure the io.debezium.connector.mysql.converters.TinyIntOneToBooleanConverter custom converter. An example configuration is:

converters=boolean
boolean.type=io.debezium.connector.mysql.converters.TinyIntOneToBooleanConverter
boolean.selector=db1.table1.*, db1.table2.column1
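The boolean.selector value in the converter configuration above limits which TINYINT(1) columns are converted. The following Python sketch shows one plausible reading of the selector. Both of the following are assumptions, not confirmed converter behavior: that the value is a comma-separated list of regular expressions, and that each expression must match the whole databaseName.tableName.columnName identifier. The helper names are hypothetical. Note that an unescaped "." in a pattern matches any character.

```python
import re


def parse_selector(selector: str) -> list[re.Pattern]:
    # Assumption: comma-separated regular expressions; surrounding whitespace is ignored.
    return [re.compile(entry.strip()) for entry in selector.split(",") if entry.strip()]


def converts_to_boolean(column_type: str, fq_column: str, patterns: list[re.Pattern]) -> bool:
    """Hypothetical check: would this column be emitted as a boolean?"""
    # Only TINYINT(1) columns are candidates for the converter.
    if column_type.replace(" ", "").upper() != "TINYINT(1)":
        return False
    # Assumption: a pattern must match the full databaseName.tableName.columnName.
    return any(pattern.fullmatch(fq_column) for pattern in patterns)


patterns = parse_selector("db1.table1.*, db1.table2.column1")
print(converts_to_boolean("TINYINT(1)", "db1.table1.active", patterns))   # True
print(converts_to_boolean("TINYINT(1)", "db1.table2.column2", patterns))  # False
```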

2.1.5.3. Spatial data types

Currently, the Debezium MySQL connector supports the following spatial data types:

| MySQL type | Literal type | Semantic type |
|---|---|---|
| | | Note: Contains a structure with two fields. |

2.1.6. The MySQL connector and Kafka topics

The Debezium MySQL connector writes events for all INSERT, UPDATE, and DELETE operations from a single table to a single Kafka topic. Kafka topics are named according to the following format:

serverName.databaseName.tableName

Example 2.1.

Suppose that fulfillment is the server name and inventory is a database that contains three tables: orders, customers, and products. The Debezium MySQL connector produces events on three Kafka topics, one for each table in the database:

fulfillment.inventory.orders
fulfillment.inventory.customers
fulfillment.inventory.products
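The naming rule fits in a one-line function. This Python sketch (topic_name is a hypothetical helper) reproduces the three topic names from the example above.

```python
def topic_name(server_name: str, database_name: str, table_name: str) -> str:
    # serverName.databaseName.tableName
    return f"{server_name}.{database_name}.{table_name}"


topics = [topic_name("fulfillment", "inventory", table) for table in ("orders", "customers", "products")]
print(topics)
# ['fulfillment.inventory.orders', 'fulfillment.inventory.customers', 'fulfillment.inventory.products']
```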

2.1.7. MySQL supported topologies

The Debezium MySQL connector supports the following MySQL topologies:

- Standalone

- When a single MySQL server is used, the server must have the binlog enabled (and optionally GTIDs enabled) so the Debezium MySQL connector can monitor the server. This is often acceptable, since the binary log can also be used as an incremental backup. In this case, the MySQL connector always connects to and follows this standalone MySQL server instance.

- Master and slave

The Debezium MySQL connector can follow one of the masters or one of the slaves (if that slave has its binlog enabled), but the connector only sees changes in the cluster that are visible to that server. Generally, this is not a problem except for the multi-master topologies.

The connector records its position in the server’s binlog, which is different on each server in the cluster. Therefore, the connector will need to follow just one MySQL server instance. If that server fails, it must be restarted or recovered before the connector can continue.

- Highly available clusters

- A variety of high availability solutions exist for MySQL, and they make it far easier to tolerate and almost immediately recover from problems and failures. Most HA MySQL clusters use GTIDs so that slaves are able to keep track of all changes on any of the masters.

- Multi-master

A multi-master MySQL topology uses one or more MySQL slaves that each replicate from multiple masters. This is a powerful way to aggregate the replication of multiple MySQL clusters, and it requires using GTIDs.

The Debezium MySQL connector can use these multi-master MySQL slaves as sources, and can fail over to different multi-master MySQL slaves as long as the new slave is caught up to the old slave (that is, the new slave has all of the transactions that were last seen on the first slave). This works even if the connector is only using a subset of databases and/or tables, because the connector can be configured to include or exclude specific GTID sources when attempting to reconnect to a new multi-master MySQL slave and find the correct position in the binlog.

- Hosted

The Debezium MySQL connector can use hosted options such as Amazon RDS and Amazon Aurora.

Important: Because these hosted options do not allow a global read lock, table-level locks are used to create the consistent snapshot.
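When the connector reconnects to a different multi-master slave, only the GTID ranges from selected source servers are relevant. The following Python sketch shows how a MySQL GTID set (entries of the form uuid:interval, separated by commas) can be filtered by source UUID with include or exclude regular expressions. It is not Debezium's implementation. The function name, the full-match semantics, and the sample UUIDs are assumptions for illustration.

```python
import re
from typing import Optional, Sequence


def filter_gtid_set(gtid_set: str,
                    includes: Optional[Sequence[str]] = None,
                    excludes: Optional[Sequence[str]] = None) -> str:
    """Keep the GTID ranges whose source UUID passes the include or exclude patterns."""
    if includes and excludes:
        raise ValueError("use either include or exclude patterns, not both")
    kept = []
    # MySQL prints GTID sets as "uuid:interval[:interval...]" entries separated by commas.
    for entry in gtid_set.replace("\n", "").split(","):
        entry = entry.strip()
        if not entry:
            continue
        source_uuid = entry.split(":", 1)[0]
        if includes:
            keep = any(re.fullmatch(p, source_uuid) for p in includes)
        elif excludes:
            keep = not any(re.fullmatch(p, source_uuid) for p in excludes)
        else:
            keep = True
        if keep:
            kept.append(entry)
    return ",".join(kept)


gtids = ("3e11fa47-71ca-11e1-9e33-c80aa9429562:1-5,\n"
         "2174b383-5441-11e8-b90a-c80aa9429562:1-100:102-110")
print(filter_gtid_set(gtids, includes=["3e11fa47-.*"]))
# 3e11fa47-71ca-11e1-9e33-c80aa9429562:1-5
```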

2.2. Setting up MySQL server

2.2.1. Creating a MySQL user for Debezium

You have to define a MySQL user with appropriate permissions on all databases that the Debezium MySQL connector monitors.

Prerequisites

- You must have a MySQL server.

- You must know basic SQL commands.

Procedure

- Create the MySQL user:

mysql> CREATE USER 'user'@'localhost' IDENTIFIED BY 'password';

- Grant the required permissions to the user:

mysql> GRANT SELECT, RELOAD, SHOW DATABASES, REPLICATION SLAVE, REPLICATION CLIENT ON *.* TO 'user' IDENTIFIED BY 'password';

See permissions explained for notes on each permission.

If you use a hosted option such as Amazon RDS or Amazon Aurora, which does not allow a global read lock, table-level locks are used to create the consistent snapshot. In this case, you must also grant the LOCK TABLES privilege to the user that you create. See Overview of how the MySQL connector works for more details.

- Finalize the user’s permissions:

mysql> FLUSH PRIVILEGES;
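To confirm that the grants above are complete, you can compare the output of SHOW GRANTS with the required privileges. The following Python sketch does this for a single global GRANT line. The helper names are hypothetical, and the parser handles only the simple GRANT ... ON *.* TO ... form.

```python
import re

REQUIRED_PRIVILEGES = {"SELECT", "RELOAD", "SHOW DATABASES", "REPLICATION SLAVE", "REPLICATION CLIENT"}


def global_privileges(grant_line: str) -> set[str]:
    """Extract the privileges from a 'GRANT ... ON *.* TO ...' line as printed by SHOW GRANTS."""
    match = re.match(r"GRANT (.+?) ON \*\.\* TO ", grant_line, re.IGNORECASE)
    if not match:
        return set()
    return {privilege.strip().upper() for privilege in match.group(1).split(",")}


def missing_privileges(granted: set[str], needs_table_locks: bool = False) -> set[str]:
    required = set(REQUIRED_PRIVILEGES)
    if needs_table_locks:  # hosted options such as Amazon RDS or Amazon Aurora
        required.add("LOCK TABLES")
    if "ALL PRIVILEGES" in granted:
        return set()
    return required - granted


grant = ("GRANT SELECT, RELOAD, SHOW DATABASES, REPLICATION SLAVE, REPLICATION CLIENT "
         "ON *.* TO `user`@`localhost`")
print(missing_privileges(global_privileges(grant)))                          # set()
print(missing_privileges(global_privileges(grant), needs_table_locks=True))  # {'LOCK TABLES'}
```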

2.2.1.1. Permissions explained

| Permission/item | Description |
|---|---|
| SELECT | Enables the connector to select rows from tables in databases. Note: This is only used when performing a snapshot. |
| RELOAD | Enables the connector to use the FLUSH statement to clear or reload internal caches, flush tables, or acquire locks. Note: This is only used when performing a snapshot. |
| SHOW DATABASES | Enables the connector to see database names by issuing the SHOW DATABASES statement. Note: This is only used when performing a snapshot. |
| REPLICATION SLAVE | Enables the connector to connect to and read the MySQL server binlog. |
| REPLICATION CLIENT | Enables the connector to use the statements that report the status of the binlog and of replication. Important: This is always required for the connector. |
| ON | Identifies the database to which the permissions apply. |
| TO 'user' | Specifies the user to which the permissions are granted. |
| IDENTIFIED BY 'password' | Specifies the password for the user. |

2.2.2. Enabling the MySQL binlog for Debezium

You must enable binary logging for MySQL replication. The binary logs record transaction updates for replication tools to propagate changes.

Prerequisites

- You must have a MySQL server.

- You should have appropriate MySQL user privileges.

Procedure

- Check whether the log-bin option is already on:

mysql> SHOW GLOBAL VARIABLES LIKE 'log_bin';

- If it is OFF, add the following properties to your MySQL server configuration file. See Binlog configuration properties for notes on each property.

server-id         = 223344     # 1
log_bin           = mysql-bin  # 2
binlog_format     = ROW        # 3
binlog_row_image  = FULL       # 4
expire_logs_days  = 10         # 5

- Confirm your changes by checking the binlog status once more.

mysql> SHOW GLOBAL VARIABLES LIKE 'log_bin';

2.2.2.1. Binlog configuration properties

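| Number | Property | Description |
|---|---|---|
| 1 | server-id | The value for the server-id must be unique for each server and replication client in the MySQL cluster. |
| 2 | log_bin | The value of log_bin is the base name of the sequence of binlog files. |
| 3 | binlog_format | The binlog_format must be set to ROW or row. |
| 4 | binlog_row_image | The binlog_row_image must be set to FULL or full. |
| 5 | expire_logs_days | This is the number of days for automatic binlog file removal. The default is 0, which means no automatic removal. Tip: Set the value to match the needs of your environment. |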
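After restarting the server, you can check the resulting values programmatically. The following Python sketch compares a name-to-value mapping, such as the rows returned by SHOW GLOBAL VARIABLES, with the three settings that have a single required value. The helper name is hypothetical. server-id and expire_logs_days are not checked because their values depend on your environment.

```python
REQUIRED_SETTINGS = {"log_bin": "ON", "binlog_format": "ROW", "binlog_row_image": "FULL"}


def binlog_problems(variables: dict[str, str]) -> list[str]:
    """Compare SHOW GLOBAL VARIABLES output (name -> value) with the settings listed above."""
    problems = []
    for name, expected in REQUIRED_SETTINGS.items():
        actual = str(variables.get(name, "")).upper()  # ROW and row are both accepted
        if actual != expected:
            problems.append(f"{name} is {actual or 'not set'}, expected {expected}")
    return problems


print(binlog_problems({"log_bin": "ON", "binlog_format": "MIXED", "binlog_row_image": "FULL"}))
# ['binlog_format is MIXED, expected ROW']
```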

2.2.3. Enabling MySQL Global Transaction Identifiers for Debezium

Global transaction identifiers (GTIDs) uniquely identify transactions that occur on a server within a cluster. Though not required for the Debezium MySQL connector, using GTIDs simplifies replication and allows you to more easily confirm if master and slave servers are consistent.

GTIDs are only available from MySQL 5.6.5 and later. See the MySQL documentation for more details.

Prerequisites

- You must have a MySQL server.

- You must know basic SQL commands.

- You must have access to the MySQL configuration file.

Procedure

- Enable gtid_mode in the MySQL configuration file:

gtid_mode=ON

- Enable enforce_gtid_consistency in the MySQL configuration file:

enforce_gtid_consistency=ON

- Confirm the changes:

mysql> show global variables like '%GTID%';

The response should include the following values:

+--------------------------+-------+
| Variable_name            | Value |
+--------------------------+-------+
| enforce_gtid_consistency | ON    |
| gtid_mode                | ON    |
+--------------------------+-------+

2.2.3.1. Options explained

| Option | Description |
|---|---|
| gtid_mode | Specifies whether GTID mode of the MySQL server is enabled. |
| enforce_gtid_consistency | Instructs the server whether to enforce GTID consistency by allowing only the execution of statements that can be logged in a transactionally safe manner; required when using GTIDs. |

2.2.4. Setting up session timeouts for Debezium

When an initial consistent snapshot is made for large databases, your established connection could time out while the tables are being read. You can prevent this behavior by configuring interactive_timeout and wait_timeout in your MySQL configuration file.

Prerequisites

- You must have a MySQL server.

- You must know basic SQL commands.

- You must have access to the MySQL configuration file.

Procedure

- Configure interactive_timeout in the MySQL configuration file:

interactive_timeout=<duration-in-seconds>

- Configure wait_timeout in the MySQL configuration file:

wait_timeout=<duration-in-seconds>

2.2.4.1. Options explained

| Option | Description |
|---|---|
| interactive_timeout | The number of seconds the server waits for activity on an interactive connection before closing it. Tip: See MySQL’s documentation for more details. |
| wait_timeout | The number of seconds the server waits for activity on a noninteractive connection before closing it. Tip: See MySQL’s documentation for more details. |

2.2.5. Enabling query log events for Debezium

You might want to see the original SQL statement for each binlog event. Enabling the binlog_rows_query_log_events option in the MySQL configuration file allows you to do this.

This option is only available from MySQL 5.6 and later.

Prerequisites

- You must have a MySQL server.

- You must know basic SQL commands.

- You must have access to the MySQL configuration file.

Procedure

- Enable binlog_rows_query_log_events in the MySQL configuration file:

binlog_rows_query_log_events=ON

2.2.5.1. Options explained

| Option | Description |
|---|---|
| binlog_rows_query_log_events | Boolean that enables or disables support for including the original SQL statement in the binlog entry. |

2.3. Deploying the MySQL connector

2.3.1. Installing the MySQL connector

Installing the Debezium MySQL connector is a simple process: download the connector plug-in archive, extract it into your Kafka Connect environment, and ensure that the plug-in’s parent directory is specified in your Kafka Connect environment.

Prerequisites

- You have Zookeeper, Kafka, and Kafka Connect installed.

- You have MySQL Server installed and set up.

Procedure

- Download the Debezium MySQL connector.

- Extract the files into your Kafka Connect environment.

- Add the plug-in’s parent directory to your Kafka Connect plugin.path:

plugin.path=/kafka/connect

The above example assumes that you have extracted the Debezium MySQL connector to the /kafka/connect/debezium-connector-mysql path.

- Restart your Kafka Connect process. This ensures the new JARs are picked up.
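A common installation mistake is to add the connector directory itself, rather than its parent, to plugin.path. Kafka Connect reads plugin.path as a comma-separated list of top-level directories, and each plug-in sits in its own subdirectory directly below one of them. The following Python sketch checks that relationship. The helper name is hypothetical, and the sketch assumes POSIX-style paths.

```python
from pathlib import PurePosixPath


def plugin_path_covers(plugin_path: str, connector_dir: str) -> bool:
    """True if connector_dir sits directly below one of the plugin.path entries."""
    parent = PurePosixPath(connector_dir).parent
    entries = [PurePosixPath(entry.strip()) for entry in plugin_path.split(",") if entry.strip()]
    return parent in entries


print(plugin_path_covers("/kafka/connect", "/kafka/connect/debezium-connector-mysql"))  # True
print(plugin_path_covers("/kafka/connect/debezium-connector-mysql",
                         "/kafka/connect/debezium-connector-mysql"))                    # False
```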

2.3.2. Configuring the MySQL connector

Typically, you configure the Debezium MySQL connector in a .yaml file using the configuration properties available for the connector.

Prerequisites

- You should have completed the installation process for the connector.

Procedure

- Set the "name" of the connector in the .yaml file.

- Set the configuration properties that you require for your Debezium MySQL connector.

For a complete list of configuration properties, see MySQL connector configuration properties.

MySQL connector example configuration

apiVersion: kafka.strimzi.io/v1beta1
kind: KafkaConnector
metadata:
  name: inventory-connector  # 1
  labels:
    strimzi.io/cluster: my-connect-cluster
spec:
  class: io.debezium.connector.mysql.MySqlConnector
  tasksMax: 1  # 2
  config:  # 3
    database.hostname: mysql  # 4
    database.port: 3306
    database.user: debezium
    database.password: dbz
    database.server.id: 184054  # 5
    database.server.name: dbserver1  # 6
    database.whitelist: inventory  # 7
    database.history.kafka.bootstrap.servers: my-cluster-kafka-bootstrap:9092  # 8
    database.history.kafka.topic: schema-changes.inventory  # 9

- 1: The name of the connector.

- 2: Only one task should operate at any one time. Because the MySQL connector reads the MySQL server’s binlog, using a single connector task ensures proper order and event handling. The Kafka Connect service uses connectors to start one or more tasks that do the work, and it automatically distributes the running tasks across the cluster of Kafka Connect services. If any of the services stop or crash, those tasks are redistributed to running services.

- 3: The connector’s configuration.

- 4: The database host, which is the name of the container running the MySQL server (mysql).

- 5, 6: A unique server ID and name. The server name is the logical identifier for the MySQL server or cluster of servers. This name is used as the prefix for all Kafka topics.

- 7: Only changes in the inventory database are detected.

- 8, 9: The connector stores the history of the database schemas in Kafka using this broker (the same broker to which you are sending events) and topic name. Upon restart, the connector recovers the schemas of the database that existed at the point in time in the binlog when the connector should begin reading.
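Outside of a Strimzi-managed cluster, the same settings are commonly submitted to the Kafka Connect REST API as a JSON body in a POST request to /connectors. The following Python sketch builds that body from the example above. Here the Strimzi class and tasksMax fields become the standard Kafka Connect properties connector.class and tasks.max. The Connect URL http://localhost:8083 is an assumption about your environment, and register is a minimal hypothetical helper that the sketch does not call.

```python
import json
import urllib.request

CONNECT_URL = "http://localhost:8083"  # assumption: Kafka Connect REST endpoint


def connector_payload() -> dict:
    """Request body for POST /connectors, mirroring the KafkaConnector example."""
    return {
        "name": "inventory-connector",
        "config": {
            "connector.class": "io.debezium.connector.mysql.MySqlConnector",
            "tasks.max": "1",
            "database.hostname": "mysql",
            "database.port": "3306",
            "database.user": "debezium",
            "database.password": "dbz",
            "database.server.id": "184054",
            "database.server.name": "dbserver1",
            "database.whitelist": "inventory",
            "database.history.kafka.bootstrap.servers": "my-cluster-kafka-bootstrap:9092",
            "database.history.kafka.topic": "schema-changes.inventory",
        },
    }


def register(payload: dict, connect_url: str = CONNECT_URL) -> int:
    """Submit the connector and return the HTTP status code (201 when it is created)."""
    request = urllib.request.Request(
        f"{connect_url}/connectors",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request) as response:
        return response.status


print(json.dumps(connector_payload(), indent=2))
```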

2.3.3. MySQL connector configuration properties

The following table describes the configuration properties of the Debezium MySQL connector. There are also advanced MySQL connector properties whose default values rarely need to be changed; therefore, they do not need to be specified in the connector configuration.

The Debezium MySQL connector supports pass-through configuration when creating the Kafka producer and consumer. See information about pass-through properties at the end of this section, and also see the Kafka documentation for more details about pass-through properties.

| Property | Default | Description |
|---|---|---|
| name | | Unique name for the connector. Attempting to register again with the same name will fail. (This property is required by all Kafka Connect connectors.) |
| | | The name of the Java class for the connector. Always use a value of io.debezium.connector.mysql.MySqlConnector for the MySQL connector. |
| | | The maximum number of tasks that should be created for this connector. The MySQL connector always uses a single task and therefore does not use this value, so the default is always acceptable. |
| database.hostname | | IP address or hostname of the MySQL database server. |
| database.port | | Integer port number of the MySQL database server. |
| database.user | | Name of the MySQL user to use when connecting to the MySQL database server. |
| database.password | | Password to use when connecting to the MySQL database server. |
| database.server.name | | Logical name that identifies and provides a namespace for the particular MySQL database server/cluster being monitored. The logical name should be unique across all other connectors, since it is used as a prefix for all Kafka topic names emanating from this connector. Only alphanumeric characters and underscores should be used. |
| database.server.id | random | A numeric ID of this database client, which must be unique across all currently-running database processes in the MySQL cluster. This connector joins the MySQL database cluster as another server (with this unique ID) so it can read the binlog. By default, a random number between 5400 and 6400 is generated, though we recommend setting an explicit value. |
| database.history.kafka.topic | | The full name of the Kafka topic where the connector will store the database schema history. |
| database.history.kafka.bootstrap.servers | | A list of host/port pairs that the connector will use for establishing an initial connection to the Kafka cluster. This connection will be used for retrieving database schema history previously stored by the connector, and for writing each DDL statement read from the source database. This should point to the same Kafka cluster used by the Kafka Connect process. |
| database.whitelist | empty string | An optional comma-separated list of regular expressions that match database names to be monitored; any database name not included in the whitelist will be excluded from monitoring. By default all databases will be monitored. May not be used together with the database blacklist. |
| | empty string | An optional comma-separated list of regular expressions that match database names to be excluded from monitoring; any database name not included in the blacklist will be monitored. May not be used together with database.whitelist. |
| | empty string | An optional comma-separated list of regular expressions that match fully-qualified table identifiers for tables to be monitored; any table not included in the whitelist will be excluded from monitoring. Each identifier is of the form databaseName.tableName. By default the connector will monitor every non-system table in each monitored database. May not be used together with the table blacklist. |
| | empty string | An optional comma-separated list of regular expressions that match fully-qualified table identifiers for tables to be excluded from monitoring; any table not included in the blacklist will be monitored. Each identifier is of the form databaseName.tableName. May not be used together with the table whitelist. |
| | empty string | An optional comma-separated list of regular expressions that match the fully-qualified names of columns that should be excluded from change event message values. Fully-qualified names for columns are of the form databaseName.tableName.columnName, or databaseName.schemaName.tableName.columnName. |
| | n/a | An optional comma-separated list of regular expressions that match the fully-qualified names of character-based columns whose values should be truncated in the change event message values if the field values are longer than the specified number of characters. Multiple properties with different lengths can be used in a single configuration, although in each the length must be a positive integer. Fully-qualified names for columns are of the form databaseName.tableName.columnName. |
| | n/a | An optional comma-separated list of regular expressions that match the fully-qualified names of character-based columns whose values should be replaced in the change event message values with a field value consisting of the specified number of asterisk (*) characters. |
| | n/a | An optional comma-separated list of regular expressions that match the fully-qualified names of columns whose original type and length should be added as a parameter to the corresponding field schemas in the emitted change messages. |
| | n/a | An optional comma-separated list of regular expressions that match the database-specific data type name of columns whose original type and length should be added as a parameter to the corresponding field schemas in the emitted change messages. |
| time.precision.mode | adaptive_time_microseconds | Time, date, and timestamps can be represented with different kinds of precision, including: adaptive_time_microseconds (the default), which bases the literal and semantic types on each column’s data type definition so that events represent exactly the values in the database; and connect, which always uses the predefined Kafka Connect logical types and can lose precision. |
| decimal.handling.mode | | Specifies how the connector should handle values for DECIMAL and NUMERIC columns: precise represents them as org.apache.kafka.connect.data.Decimal values in binary form; double represents them as FLOAT64 values, which may lose precision; string represents them as STRING values. |
| | | Specifies how BIGINT UNSIGNED columns should be represented in change events. |
| | | Boolean value that specifies whether the connector should publish changes in the database schema to a Kafka topic with the same name as the logical database server name (database.server.name). Each schema change is recorded using a key that contains the database name and whose value includes the DDL statement(s). This is independent of how the connector internally records database history. |
| | | Boolean value that specifies whether the connector should include the original SQL query that generated the change event. |
| | | Specifies how the connector should react to exceptions during deserialization of binlog events. |
| | | Specifies how the connector should react to binlog events that relate to tables that are not present in the internal schema representation (that is, the internal representation is not consistent with the database). |
| | | Positive integer value that specifies the maximum size of the blocking queue into which change events read from the database log are placed before they are written to Kafka. This queue can provide backpressure to the binlog reader when, for example, writes to Kafka are slower or if Kafka is not available. Events that appear in the queue are not included in the offsets periodically recorded by this connector. Defaults to 8192, and should always be larger than the maximum batch size. |
| | | Positive integer value that specifies the maximum size of each batch of events that should be processed during each iteration of this connector. Defaults to 2048. |
| | | Positive integer value that specifies the number of milliseconds the connector should wait during each iteration for new change events to appear. Defaults to 1000 milliseconds, or 1 second. |
| | | A positive integer value that specifies the maximum time in milliseconds this connector should wait after trying to connect to the MySQL database server before timing out. Defaults to 30 seconds. |
| | | A comma-separated list of regular expressions that match source UUIDs in the GTID set used to find the binlog position in the MySQL server. Only the GTID ranges that have sources matching one of these include patterns will be used. May not be used together with the GTID source exclude list. |
| | | A comma-separated list of regular expressions that match source UUIDs in the GTID set used to find the binlog position in the MySQL server. Only the GTID ranges that have sources matching none of these exclude patterns will be used. May not be used together with the GTID source include list. |
| | | Controls whether a tombstone event should be generated after a delete event. |
| | empty string | A semicolon-separated list of regular expressions that match fully-qualified tables and columns to map a primary key. |
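The truncation and masking rows in the table above describe two different treatments of a matching character column. The following Python sketch illustrates the difference. The helper names and the sample column are hypothetical, and the full-match semantics of the column patterns is an assumption.

```python
import re


def matches_any(fq_column: str, patterns: list[str]) -> bool:
    # Assumption: a pattern must match the whole databaseName.tableName.columnName.
    return any(re.fullmatch(pattern, fq_column) for pattern in patterns)


def truncate_value(value: str, length: int) -> str:
    # Values longer than `length` characters are cut; shorter values are unchanged.
    return value[:length]


def mask_value(value: str, length: int) -> str:
    # The original value is ignored and replaced by exactly `length` asterisks.
    return "*" * length


patterns = [r"inventory\.customers\.email"]
column = "inventory.customers.email"
if matches_any(column, patterns):
    print(truncate_value("annek@noanswer.org", 5))  # annek
    print(mask_value("annek@noanswer.org", 8))      # ********
```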

2.3.3.1. Advanced MySQL connector properties

The following table describes advanced MySQL connector properties.

| Property | Default | Description |
|---|---|---|
| | | A boolean value that specifies whether a separate thread should be used to ensure that the connection to the MySQL server/cluster is kept alive. |
| | | Boolean value that specifies whether built-in system tables should be ignored. This applies regardless of the table whitelist or blacklists. By default system tables are excluded from monitoring, and no events are generated when changes are made to any of the system tables. |
| | | An integer value that specifies the maximum number of milliseconds the connector should wait during startup/recovery while polling for persisted data. The default is 100ms. |
| | | The maximum number of times that the connector should attempt to read persisted history data before the connector recovery fails with an error. The maximum amount of time to wait after receiving no data is the number of attempts multiplied by the polling interval described in the previous row. |
| | | Boolean value that specifies whether the connector should ignore malformed or unknown database statements or stop processing so that an operator can fix the issue. The safe default is to stop processing. |
| | | Boolean value that specifies whether the connector should record all DDL statements or, when enabled, only those that are relevant to tables monitored by Debezium. |
| | | Specifies whether to use an encrypted connection. |
| binlog.buffer.size | 0 | The size of a look-ahead buffer used by the binlog reader. |
| | | Specifies the criteria for running a snapshot upon startup of the connector. |
| | | Controls whether and how long the connector holds the global MySQL read lock (preventing any updates to the database) while it is performing a snapshot. There are three possible values. |
| | | Controls which rows from tables are included in the snapshot. |
| | | During a snapshot operation, the connector queries each included table to produce a read event for all rows in that table. This parameter determines whether the MySQL connection pulls all results for a table into memory (which is fast but requires large amounts of memory), or whether the results are instead streamed (which can be slower, but works for very large tables). The value specifies the minimum number of rows a table must contain before the connector streams results, and defaults to 1,000. Set this parameter to '0' to skip all table size checks and always stream all results during a snapshot. |
| | | Controls how frequently heartbeat messages are sent. |
| | | Controls the naming of the topic to which heartbeat messages are sent. |
| | | A semicolon-separated list of SQL statements to be executed when a JDBC connection (not the transaction log reading connection) to the database is established. Use a doubled semicolon (';;') to use a semicolon as a character and not as a delimiter. |
| | | An interval in milliseconds that the connector should wait before taking a snapshot after starting up. |
| | | Specifies the maximum number of rows that should be read in one go from each table while taking a snapshot. The connector reads the table contents in multiple batches of this size. |
| | | Positive integer value that specifies the maximum amount of time (in milliseconds) to wait to obtain table locks when performing a snapshot. If table locks cannot be acquired in this time interval, the snapshot fails. See How the MySQL connector performs database snapshots. |
| | | MySQL allows users to insert year values as either 2-digit or 4-digit values. Two-digit values are automatically mapped to the range 1970 to 2069. This is usually done by the database. |
| | | Whether field names are sanitized to adhere to Avro naming requirements. |
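The row-count threshold for streaming snapshot results, described in the table above, amounts to a simple decision per table. The following Python sketch restates it. The helper name is hypothetical, and treating "minimum number of rows" as an inclusive (>=) comparison is an assumption about the exact boundary.

```python
def streams_results(table_row_count: int, min_row_count: int = 1000) -> bool:
    """Hypothetical decision: stream a table's rows instead of loading them all into memory."""
    if min_row_count == 0:
        return True  # '0' skips the table size check and always streams
    return table_row_count >= min_row_count  # inclusive boundary is an assumption


print(streams_results(500))        # False: small table, results are loaded into memory
print(streams_results(2_000_000))  # True: large table, results are streamed
```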

2.3.3.2. Pass-through configuration properties

The MySQL connector also supports pass-through configuration properties that are used when creating the Kafka producer and consumer. Specifically, all connector configuration properties that begin with the database.history.producer. prefix are used (without the prefix) when creating the Kafka producer that writes to the database history. All properties that begin with the prefix database.history.consumer. are used (without the prefix) when creating the Kafka consumer that reads the database history upon connector start-up.

For example, the following connector configuration properties can be used to secure connections to the Kafka broker:

database.history.producer.security.protocol=SSL
database.history.producer.ssl.keystore.location=/var/private/ssl/kafka.server.keystore.jks
database.history.producer.ssl.keystore.password=test1234
database.history.producer.ssl.truststore.location=/var/private/ssl/kafka.server.truststore.jks
database.history.producer.ssl.truststore.password=test1234
database.history.producer.ssl.key.password=test1234
database.history.consumer.security.protocol=SSL
database.history.consumer.ssl.keystore.location=/var/private/ssl/kafka.server.keystore.jks
database.history.consumer.ssl.keystore.password=test1234
database.history.consumer.ssl.truststore.location=/var/private/ssl/kafka.server.truststore.jks
database.history.consumer.ssl.truststore.password=test1234
database.history.consumer.ssl.key.password=test1234
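The prefix rule above selects properties by prefix and strips that prefix. The following Python sketch shows the rule on a subset of the SSL example. pass_through is a hypothetical helper that illustrates the documented behavior; it is not the connector's code.

```python
def pass_through(config: dict[str, str], prefix: str) -> dict[str, str]:
    """Return the properties that start with `prefix`, with the prefix removed."""
    return {key[len(prefix):]: value for key, value in config.items() if key.startswith(prefix)}


connector_config = {
    "database.history.kafka.topic": "schema-changes.inventory",
    "database.history.producer.security.protocol": "SSL",
    "database.history.producer.ssl.truststore.location": "/var/private/ssl/kafka.server.truststore.jks",
    "database.history.consumer.security.protocol": "SSL",
}

producer_config = pass_through(connector_config, "database.history.producer.")
consumer_config = pass_through(connector_config, "database.history.consumer.")
print(producer_config)
# {'security.protocol': 'SSL', 'ssl.truststore.location': '/var/private/ssl/kafka.server.truststore.jks'}
```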

2.3.3.3. Pass-through properties for database drivers

In addition to the pass-through properties for the Kafka producer and consumer, there are pass-through properties for database drivers. These properties have the database. prefix. For example, database.tinyInt1isBit=false is passed to the JDBC URL.

2.3.4. MySQL connector monitoring metrics

The Debezium MySQL connector has three metric types in addition to the built-in support for JMX metrics that Zookeeper, Kafka, and Kafka Connect have.

- snapshot metrics; for monitoring the connector when performing snapshots

- binlog metrics; for monitoring the connector when reading CDC table data

- schema history metrics; for monitoring the status of the connector’s schema history

Refer to the monitoring documentation for details of how to expose these metrics via JMX.

2.3.4.1. Snapshot metrics

The MBean is debezium.mysql:type=connector-metrics,context=snapshot,server=<database.server.name>.
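The MBean names in this section differ only in the context key (snapshot, binlog, or schema-history). The following Python sketch builds all three for the dbserver1 server name used in the configuration example. mbean_name is a hypothetical helper. A JMX client, for example one based on Java's javax.management package, would wrap the resulting string in an ObjectName.

```python
METRIC_CONTEXTS = ("snapshot", "binlog", "schema-history")


def mbean_name(context: str, server_name: str) -> str:
    """Build the JMX object name for one of the MySQL connector's metric contexts."""
    if context not in METRIC_CONTEXTS:
        raise ValueError(f"unknown metrics context: {context}")
    return f"debezium.mysql:type=connector-metrics,context={context},server={server_name}"


for context in METRIC_CONTEXTS:
    print(mbean_name(context, "dbserver1"))
```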

| Attribute | Type | Description |
|---|---|---|
| | | The total number of tables that are being included in the snapshot. |
| | | The number of tables that the snapshot has yet to copy. |
| | | Whether the connector currently holds a global or table write lock. |
| | | Whether the snapshot was started. |
| | | Whether the snapshot was aborted. |
| | | Whether the snapshot completed. |
| | | The total number of seconds that the snapshot has taken so far, even if not complete. |
| | | Map containing the number of rows scanned for each table in the snapshot. Tables are incrementally added to the map during processing. Updates every 10,000 rows scanned and upon completing a table. |
| | | The last snapshot event that the connector has read. |
| | | The number of milliseconds since the connector has read and processed the most recent event. |
| | | The total number of events that this connector has seen since last started or reset. |
| | | The number of events that have been filtered by whitelist or blacklist filtering rules configured on the connector. |
| | | The list of tables that are monitored by the connector. |
| | | The length of the queue used to pass events between the snapshot reader and the main Kafka Connect loop. |
| | | The free capacity of the queue used to pass events between the snapshot reader and the main Kafka Connect loop. |

2.3.4.2. Binlog metrics

The MBean is debezium.mysql:type=connector-metrics,context=binlog,server=<database.server.name>.

The transaction-related attributes are only available if binlog event buffering is enabled. See binlog.buffer.size in the advanced connector configuration properties for more details.

| Attribute | Type | Description |
|---|---|---|
|  |  | Flag that denotes whether the connector is currently connected to the MySQL server. |
|  |  | The name of the binlog file that the connector has most recently read. |
|  |  | The most recent position (in bytes) within the binlog that the connector has read. |
|  |  | Flag that denotes whether the connector is currently tracking GTIDs from the MySQL server. |
|  |  | The string representation of the most recent GTID set seen by the connector when reading the binlog. |
|  |  | The last binlog event that the connector has read. |
|  |  | The number of seconds since the connector read and processed the most recent event. |
|  |  | The number of seconds between the last event’s MySQL timestamp and the connector processing it. The value incorporates any difference between the clocks on the machines where the MySQL server and the MySQL connector are running. |
|  |  | The number of milliseconds between the last event’s MySQL timestamp and the connector processing it. The value incorporates any difference between the clocks on the machines where the MySQL server and the MySQL connector are running. |
|  |  | The total number of events that this connector has seen since it was last started or reset. |
|  |  | The number of events that have been skipped by the MySQL connector. Typically, events are skipped because an event in the MySQL binlog is malformed or cannot be parsed. |
|  |  | The number of events that have been filtered by the whitelist or blacklist filtering rules configured on the connector. |
|  |  | The number of disconnects by the MySQL connector. |
|  |  | The coordinates of the last received event. |
|  |  | The transaction identifier of the last processed transaction. |
|  |  | The last binlog event that the connector has read. |
|  |  | The number of milliseconds since the connector read and processed the most recent event. |
|  |  | The list of tables that are monitored by Debezium. |
|  |  | The length of the queue used to pass events between the binlog reader and the main Kafka Connect loop. |
|  |  | The free capacity of the queue used to pass events between the binlog reader and the main Kafka Connect loop. |
|  |  | The number of processed transactions that were committed. |
|  |  | The number of processed transactions that were rolled back and not streamed. |
|  |  | The number of transactions that did not conform to the expected protocol |
|  |  | The number of transactions that did not fit into the look-ahead buffer. Should be significantly smaller than |
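
The binlog metrics can be read with any JMX client. As a minimal sketch that uses only the standard javax.management API, the class below builds the MBean name given at the start of this section and lists whatever attributes the MBean exposes, so it does not depend on specific attribute names. The class and method names are hypothetical, and the sketch assumes it runs inside the Kafka Connect worker’s JVM; for a remote worker, an MBeanServerConnection obtained through javax.management.remote.JMXConnectorFactory could be passed in instead. The same naming pattern applies to the schema history MBean, with context=schema-history.

```java
import java.io.IOException;
import java.lang.management.ManagementFactory;
import java.util.ArrayList;
import java.util.List;
import javax.management.JMException;
import javax.management.MBeanAttributeInfo;
import javax.management.MBeanServerConnection;
import javax.management.MalformedObjectNameException;
import javax.management.ObjectName;

public class MySqlMetricsProbe {

    // Builds a MySQL connector metrics MBean name, for example with context "binlog" or "schema-history".
    // Assumes the logical server name needs no ObjectName quoting (no ',', '=', ':', '"', '*' or '?').
    static ObjectName metricsName(String context, String serverName) {
        try {
            return new ObjectName("debezium.mysql:type=connector-metrics,context="
                    + context + ",server=" + serverName);
        } catch (MalformedObjectNameException e) {
            throw new IllegalArgumentException("Cannot build MBean name for " + serverName, e);
        }
    }

    // Reads every attribute the MBean exposes, so no attribute names are hard-coded.
    // Returns an empty list when the MBean is not registered in the given MBean server.
    static List<String> describe(MBeanServerConnection mbs, ObjectName name)
            throws IOException, JMException {
        List<String> lines = new ArrayList<>();
        if (!mbs.isRegistered(name)) {
            return lines;
        }
        for (MBeanAttributeInfo info : mbs.getMBeanInfo(name).getAttributes()) {
            Object value;
            try {
                value = mbs.getAttribute(name, info.getName());
            } catch (JMException | RuntimeException e) {
                // Keep listing the remaining attributes if one of them cannot be read.
                value = "<unreadable: " + e.getClass().getSimpleName() + ">";
            }
            lines.add(info.getName() + " (" + info.getType() + ") = " + value);
        }
        return lines;
    }

    public static void main(String[] args) throws Exception {
        // "dbserver1" is a placeholder for the connector's database.server.name value.
        String server = args.length > 0 ? args[0] : "dbserver1";
        ObjectName binlog = metricsName("binlog", server);
        // The platform MBean server only sees MBeans registered in this JVM.
        List<String> lines = describe(ManagementFactory.getPlatformMBeanServer(), binlog);
        if (lines.isEmpty()) {
            System.out.println("Not registered in this JVM: " + binlog);
        }
        lines.forEach(System.out::println);
    }
}
```

Because the transaction-related attributes are available only when binlog.buffer.size is set, the list printed for the binlog MBean can differ between connector configurations.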

2.3.4.3. Schema history metrics

The MBean is debezium.mysql:type=connector-metrics,context=schema-history,server=<database.server.name>.

| Attribute | Type | Description |
|---|---|---|
|  |  | One of |
|  |  | The time, in epoch seconds, at which recovery started. |
|  |  | The number of changes that were read during the recovery phase. |
|  |  | The total number of schema changes applied during recovery and runtime. |
|  |  | The number of milliseconds that elapsed since the last change was recovered from the history store. |
|  |  | The number of milliseconds that elapsed since the last change was applied. |
|  |  | The string representation of the last change recovered from the history store. |
|  |  | The string representation of the last applied change. |

2.4. MySQL connector common issues

2.4.1. Configuration and startup errors

The Debezium MySQL connector fails, reports an error, and stops running when the following startup errors occur:

- The connector’s configuration is invalid.

- The connector cannot connect to the MySQL server using the specified connectivity parameters.

- The connector is attempting to restart at a position in the binlog where MySQL no longer has the history available.

If you receive any of these errors, the error message provides more details and, where possible, a workaround.

2.4.3. Kafka Connect stops

The following scenarios describe what happens when Kafka Connect stops:

2.4.3.1. Kafka Connect stops gracefully

When Kafka Connect stops gracefully, there is only a short delay while the Debezium MySQL connector tasks are stopped and restarted on new Kafka Connect processes.

2.4.3.2. Kafka Connect process crashes

If Kafka Connect crashes, the process stops and any Debezium MySQL connector tasks terminate without their most recently processed offsets being recorded. In distributed mode, Kafka Connect restarts the connector tasks on other processes, and these replacement tasks resume from the last offset that the earlier processes recorded. As a result, the replacement tasks may generate some of the same events that were processed before the crash, creating duplicate events.

Each change event message includes source-specific information that you can use to identify duplicate events, including:

- the event origin

- the MySQL server’s event time

- the binlog filename and position

- GTIDs (if used)
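
A consumer can use these coordinates to drop the duplicates that replacement tasks re-emit after a crash. The following is a minimal sketch of one such approach, a high-water mark on binlog coordinates; it is not something Debezium provides. The Coordinates class is hypothetical, and the sketch assumes that events arrive in order from a single topic partition, that they all come from the same MySQL server (binlog file names and positions are specific to one server), and that a row index distinguishes the rows of a multi-row binlog event.

```java
public class BinlogDeduplicator {

    // Hypothetical holder for an event's binlog coordinates; a real consumer would copy
    // these values out of the source information carried by each change event.
    static final class Coordinates implements Comparable<Coordinates> {
        final String binlogFile; // for example "mysql-bin.000003"
        final long position;     // byte position of the event within the binlog file
        final int row;           // assumed index of the row within a multi-row binlog event

        Coordinates(String binlogFile, long position, int row) {
            this.binlogFile = binlogFile;
            this.position = position;
            this.row = row;
        }

        // Binlog files share a base name and differ by a numeric suffix, so order them by that number.
        long fileIndex() {
            return Long.parseLong(binlogFile.substring(binlogFile.lastIndexOf('.') + 1));
        }

        @Override
        public int compareTo(Coordinates other) {
            int c = Long.compare(fileIndex(), other.fileIndex());
            if (c != 0) {
                return c;
            }
            c = Long.compare(position, other.position);
            return c != 0 ? c : Integer.compare(row, other.row);
        }
    }

    // Highest coordinates processed so far; null until the first event arrives.
    private Coordinates highWaterMark;

    // Returns true for a new event, false for one at or before the high-water mark,
    // such as an event re-emitted by a replacement task after a crash.
    boolean accept(Coordinates event) {
        if (highWaterMark != null && event.compareTo(highWaterMark) <= 0) {
            return false;
        }
        highWaterMark = event;
        return true;
    }
}
```

A real consumer would also need to persist the high-water mark together with its own processing state; otherwise a consumer restart would reset it and let duplicates through.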

2.4.4. MySQL purges binlog files

If the Debezium MySQL connector stops for longer than the MySQL server retains its binlog files, the server purges the older binlog files and the connector’s last position may be lost. When the connector is restarted, the MySQL server no longer has the starting point, so the connector performs another initial snapshot. If snapshots are disabled, the connector fails with an error.

See How the MySQL connector performs database snapshots for more information on initial snapshots.
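
The following simplified model, which is not the connector’s actual code, restates that restart decision for offsets based on binlog file and position (without GTIDs). The class, enum, and method names are hypothetical; the list of files still on the server would typically come from the Log_name column of the MySQL statement SHOW BINARY LOGS.

```java
import java.util.List;
import java.util.Objects;

public class BinlogRetentionCheck {

    enum Action { RESUME_STREAMING, NEW_SNAPSHOT, FAIL }

    // Models the restart decision for position-based offsets (no GTIDs).
    // serverBinlogs: binlog file names the server still has.
    // offsetBinlogFile: binlog file recorded in the connector's last offset.
    // snapshotsEnabled: whether the connector is allowed to take a snapshot.
    static Action onRestart(List<String> serverBinlogs, String offsetBinlogFile,
                            boolean snapshotsEnabled) {
        Objects.requireNonNull(offsetBinlogFile, "offsetBinlogFile");
        if (serverBinlogs.contains(offsetBinlogFile)) {
            return Action.RESUME_STREAMING;
        }
        // The recorded starting point was purged, so streaming cannot resume from it.
        return snapshotsEnabled ? Action.NEW_SNAPSHOT : Action.FAIL;
    }

    public static void main(String[] args) {
        // Hypothetical state: the server has already purged mysql-bin.000009.
        List<String> onServer = List.of("mysql-bin.000012", "mysql-bin.000013");
        System.out.println(onRestart(onServer, "mysql-bin.000009", true));  // prints NEW_SNAPSHOT
        System.out.println(onRestart(onServer, "mysql-bin.000009", false)); // prints FAIL
        System.out.println(onRestart(onServer, "mysql-bin.000013", true));  // prints RESUME_STREAMING
    }
}
```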

Chapter 3. Debezium Connector for PostgreSQL

Debezium’s PostgreSQL Connector can monitor and record row-level changes in the schemas of a PostgreSQL database.

The first time it connects to a PostgreSQL server/cluster, it reads a consistent snapshot of all of the schemas. When that snapshot is complete, the connector continuously streams the changes that were committed to PostgreSQL 9.6 or later and generates corresponding insert, update and delete events. All of the events for each table are recorded in a separate Kafka topic, where they can be easily consumed by applications and services.

3.1. Overview

PostgreSQL’s logical decoding feature, introduced in version 9.4, is a mechanism for extracting the changes committed to the transaction log and processing them in a user-friendly manner with the help of an output plug-in. The output plug-in must be installed before the PostgreSQL server is started, and it must be enabled together with a replication slot so that clients can consume the changes.

The PostgreSQL connector consists of two parts that work together to read and process server changes:

- A logical decoding output plug-in, which has to be installed and configured in the PostgreSQL server.

- Java code (the actual Kafka Connect connector) that reads the changes produced by the plug-in, using PostgreSQL’s streaming replication protocol via the PostgreSQL JDBC driver.

The connector then produces a change event for every row-level insert, update, and delete operation that it receives, recording all the change events for each table in a separate Kafka topic. Your client applications read the Kafka topics that correspond to the database tables they are interested in following, and react to every row-level event they see in those topics.

PostgreSQL normally purges WAL segments after some period of time. This means that the connector does not have the complete history of all changes that have been made to the database. Therefore, when the PostgreSQL connector first connects to a particular PostgreSQL database, it starts by performing a consistent snapshot of each of the database schemas. After the connector completes the snapshot, it continues streaming changes from the exact point at which the snapshot was made. This way, the connector starts with a consistent view of all of the data, yet does not lose any of the changes made while the snapshot was being taken.

The connector is also tolerant of failures. As the connector reads changes and produces events, it records the position in the write-ahead log (WAL) with each event. If the connector stops for any reason (including communication failures, network problems, or crashes), upon restart it continues reading the WAL where it last left off. This includes snapshots: if the snapshot was not complete when the connector stopped, the connector begins a new snapshot upon restart.
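
The restart behavior described in the two paragraphs above can be modeled as follows. This is a minimal sketch, not the connector’s implementation: StoredOffset and plan are hypothetical names, and the only external fact it relies on is PostgreSQL’s textual LSN format, two hexadecimal numbers separated by a slash that together form a 64-bit WAL position.

```java
import java.util.OptionalLong;

public class PgRestartPlan {

    // Converts an LSN in its text form "X/Y" (high and low 32 bits, in hex) into one 64-bit value.
    // An LSN is unsigned, so compare parsed values with Long.compareUnsigned.
    static long parseLsn(String lsn) {
        int slash = lsn.indexOf('/');
        if (slash < 0) {
            throw new IllegalArgumentException("Not an LSN: " + lsn);
        }
        long high = Long.parseLong(lsn.substring(0, slash), 16);
        long low = Long.parseLong(lsn.substring(slash + 1), 16);
        return (high << 32) | low;
    }

    // Hypothetical stored offset: the WAL position recorded with the last event and whether
    // the snapshot had finished. Right after a completed snapshot, the position is the point
    // at which the snapshot was made.
    static final class StoredOffset {
        final String lsn;
        final boolean snapshotCompleted;

        StoredOffset(String lsn, boolean snapshotCompleted) {
            this.lsn = lsn;
            this.snapshotCompleted = snapshotCompleted;
        }
    }

    // Empty result: take a new snapshot (no offset yet, or the previous snapshot was interrupted).
    // Present result: resume streaming the WAL from the recorded position.
    static OptionalLong plan(StoredOffset offset) {
        if (offset == null || !offset.snapshotCompleted) {
            return OptionalLong.empty();
        }
        return OptionalLong.of(parseLsn(offset.lsn));
    }
}
```

Parsing the LSN into a number matters for comparisons: as strings, "0/FFFFFFFF" sorts after "1/0", although it is the earlier WAL position.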

3.1.1. Logical decoding output plug-in

The pgoutput logical decoder is the only supported logical decoder in the Technology Preview release of Debezium.

pgoutput, the standard logical decoding plug-in in PostgreSQL 10+, is maintained by the PostgreSQL community and is also used by PostgreSQL for logical replication. The pgoutput plug-in is always present, so no additional libraries need to be installed, and the connector interprets the raw replication event stream directly into change events.
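
Because pgoutput ships with PostgreSQL, selecting it is a matter of connector configuration. The sketch below builds such a configuration as java.util.Properties, assuming the PostgreSQL connector’s plugin.name property selects the logical decoding plug-in. The host, credentials, database, logical server name, and slot name are placeholders, and the class name is hypothetical.

```java
import java.util.Properties;

public class PgoutputConfigSketch {

    // Minimal PostgreSQL connector configuration that selects the pgoutput plug-in.
    // All values other than connector.class and plugin.name are placeholders.
    static Properties connectorConfig() {
        Properties props = new Properties();
        props.setProperty("connector.class", "io.debezium.connector.postgresql.PostgresConnector");
        props.setProperty("plugin.name", "pgoutput"); // PostgreSQL's built-in logical decoding plug-in
        props.setProperty("database.hostname", "postgres.example.com");
        props.setProperty("database.port", "5432");
        props.setProperty("database.user", "debezium");
        props.setProperty("database.password", "change-me");
        props.setProperty("database.dbname", "inventory");
        props.setProperty("database.server.name", "dbserver1");
        props.setProperty("slot.name", "debezium");
        return props;
    }

    public static void main(String[] args) {
        Properties config = connectorConfig();
        // Print in key order so the output is stable.
        config.stringPropertyNames().stream().sorted()
                .forEach(key -> System.out.println(key + "=" + config.getProperty(key)));
    }
}
```

The same key/value pairs apply however the connector is deployed; only plugin.name is specific to choosing pgoutput.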

The connector’s functionality relies on PostgreSQL’s logical decoding feature, so the connector is subject to the following limitations of logical decoding:

- Logical decoding does not support DDL changes: this means that the connector is unable to report DDL change events back to consumers.

- Logical decoding replication slots are supported only on primary servers: this means that when there is a cluster of PostgreSQL servers, the connector can run only on the active primary server. It cannot run on hot or warm standby replicas. If the primary server fails or is demoted, the connector stops. Once the primary has recovered, the connector can simply be restarted. If a different PostgreSQL server has been promoted to primary, the connector configuration must be adjusted before the connector is restarted. Make sure you read more about how the connector behaves when things go wrong.

Debezium currently supports only databases with UTF-8 character encoding. With a single-byte character encoding, it is not possible to correctly process strings that contain extended ASCII characters.
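
The encoding limitation can be illustrated outside Debezium. In this minimal sketch (the class is hypothetical and unrelated to the connector’s code), the byte 0xE9, which is 'é' in the single-byte ISO-8859-1 encoding, decodes correctly as ISO-8859-1 but turns into the Unicode replacement character when it is read as UTF-8.

```java
import java.nio.charset.StandardCharsets;

public class EncodingMismatchDemo {

    // 0xE9 encodes 'é' in the single-byte ISO-8859-1 (Latin-1) character set.
    static final byte[] LATIN1_E_ACUTE = { (byte) 0xE9 };

    static String decodeAsLatin1(byte[] bytes) {
        return new String(bytes, StandardCharsets.ISO_8859_1);
    }

    // In UTF-8, 0xE9 can only start a three-byte sequence, so on its own it is malformed.
    // Java's String constructor substitutes U+FFFD (the replacement character) for it.
    static String decodeAsUtf8(byte[] bytes) {
        return new String(bytes, StandardCharsets.UTF_8);
    }

    // Formats each code point as U+XXXX so the result does not depend on the console encoding.
    static String codePoints(String s) {
        StringBuilder sb = new StringBuilder();
        s.codePoints().forEach(cp -> sb.append(String.format("U+%04X ", cp)));
        return sb.toString().trim();
    }

    public static void main(String[] args) {
        System.out.println(codePoints(decodeAsLatin1(LATIN1_E_ACUTE))); // prints U+00E9
        System.out.println(codePoints(decodeAsUtf8(LATIN1_E_ACUTE)));   // prints U+FFFD
    }
}
```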

3.2. Setting up PostgreSQL