Development Guide

Red Hat JBoss BPM Suite Development Guide for Red Hat JBoss Developers

Abstract

Part I. Overview

Chapter 1. About This Guide

This guide is intended for users who are implementing a standalone Red Hat JBoss BRMS solution or the complete Red Hat JBoss BPM Suite solution. It discusses the following topics:

- Detailed Architecture of Red Hat JBoss BRMS and Red Hat JBoss BPM Suite.

- Detailed description of how to author, test, debug, and package simple and complex business rules and processes using Integrated Development environment (IDE).

- Red Hat JBoss BRMS runtime environment.

- Domain specific languages (DSLs) and how to use them in a rule.

- Complex event processing.

This guide comprises the following sections:

Overview

This section provides detailed information on Red Hat JBoss BRMS and Red Hat JBoss BPM suite, their architecture, key components. It also discusses the role of Maven in project building and deploying.

All About Rules

This section provides details on all you have to know to author rules with Red Hat JBoss Developer Studio. It describes the rule algorithms, rule structure, components, advanced conditions, constraints, commands, Domain Specific Languages and Complex Event Processing. It provides details on how to use the various views, editors, and perspectives that Red Hat JBoss Developer Studio offers.

All About Processes

This section describes what comprises a business process and how you can author and test them using Red Hat JBoss Developer Studio.

KIE

This section highlights the KIE API with detailed description of how to create, build, deploy, and run KIE projects.

Appendix

This section comprises important reference material such as key knowledge terms, and examples.

1.1. Audience

This book has been designed to be understood by:

- Author of rules and processes who are responsible for authoring and testing business rules and processes using Red Hat JBoss Developer Studio.

- Java application developers responsible for developing and integrating business rules and processes into Java and Java EE enterprise applications.

1.2. Prerequisites

Users of this guide must meet one or more of the following prerequisites:

- Basic Java/Java EE programming experience

- Knowledge of the Eclipse IDE, Maven, and GIT

Chapter 2. Red Hat JBoss BRMS and Red Hat JBoss BPM Suite Architecture

2.1. Red Hat JBoss Business Rules Management System

Red Hat JBoss BRMS is an open source business rule management system that provides rules development, access, change, and management capabilities. In today’s world, when IT organizations consistently face changes in terms of policies, new products, government imposed regulations, a system like JBoss BRMS makes it easy by separating business logic from the underlying code. It includes a rule engine, a rules development environment, a management system, and a repository. It allows both developers and business analysts to view, manage, and verify business rules as they are executed within an IT application infrastructure.

Red Hat JBoss BRMS can be executed in any Java EE-compliant container. It supports an open choice of authoring and management consoles and language and decision table inputs.

2.1.1. Red Hat JBoss BRMS Key Components

Red Hat JBoss BRMS comprises the following components:

Drools Expert

Drools Expert is a pattern matching based rule engine that runs on Java EE application servers, Red Hat JBoss BRMS platform, or bundled with Java applications. It comprises an inference engine, a production memory, and a working memory. Rules are stored in the production memory and the facts that the inference engine matches the rules against, are stored in the working memory.

Business Central

Business Central is a web-based application intended for business analysts for creation and maintenance of business rules and rule artifacts. It is designed to ease creation, testing, and packaging of rules for business users.

Drools Flow

Drools flow provides business process capabilities to the Red Hat JBoss BRMS platform. This framework can be embedded into any Java application or can even run standalone on a server. A business process provides stepwise tasks using a flow chart, for the Rule Engine to execute.

Drools Fusion

Drools Fusion provides event processing capabilities to the Red Hat JBoss BRMS platform. Drools Fusion defines a set of goals to be achieved such as:

- Support events as first class citizens.

- Support detection, correlation, aggregation and composition of events.

- Support processing streams of events.

- Support temporal constraints in order to model the temporal relationships between events.

Drools Integrated Development Environment (IDE)

We encourage you to use Red Hat JBoss Developer Studio (JBDS) with Red Hat JBoss BRMS plug-ins to develop and test business rules. The Red Hat JBoss Developer Studio builds upon an extensible, open source Java-based IDE Eclipse providing platform and framework capabilities, making it ideal for Red Hat JBoss BRMS rules development.

2.1.2. Red Hat JBoss BRMS Features

The Red Hat JBoss BRMS provides the following key features:

- Centralized repository of business assets (JBoss BRMS artifacts).

- IDE tools to define and govern decision logic.

- Building, deploying, and testing the decision logic.

- Packages of business assets.

- Categorization of business assets.

- Integration with development tools.

- Business logic and data separation.

- Business logic open to reuse and changes.

- Easy to maintain business logic.

- Enables several stakeholders (business analysts, developer, administrators) to contribute in defining the business logic.

2.2. Red Hat JBoss Business Process Management Suite

Red Hat JBoss BPM Suite is an open source business process management system that combines business process management and business rules management. Red Hat JBoss BRMS offers tools to author rules and business processes, but does not provide tools to start or manage the business processes. Red Hat JBoss BPM Suite includes all the Red Hat JBoss BRMS functionality, with additional capabilities of business activity monitoring, starting business processes, and managing tasks using Business Central. Red Hat JBoss BPM Suite also provides a central repository to store rules and processes.

2.2.1. Red Hat JBoss BPM Suite Key Components

The Red Hat JBoss BPM Suite comprises the following components:

JBoss BPM Central (Business Central)

Business Central is a web-based application for creating, editing, building, managing, and monitoring Red Hat JBoss BPM Suite business assets. It also allows execution of business processes and management of tasks created by those processes.

Business Activity Monitoring Dashboards

The Business Activity Monitor (BAM) dashboard provides report generation capabilities. It enables you to use a pre-defined dashboard and even create your own customized dashboard.

Maven Artifact Repository

Red Hat JBoss BPM Suite projects are built as Apache Maven projects and the default location of the Maven repository is

WORKING_DIRECTORY/repositories/kie. You can specify an alternate repository location by changing theorg.guvnor.m2repo.dirproperty.Each project builds a JAR artifact file called a KJAR. You can store your project artifacts and dependent JAR files in this repository.

Execution Engine

The Red Hat JBoss BPM Suite execution engine is responsible for executing business processes and managing the tasks, which result from these processes. Business Central provides a user interface for executing processes and managing tasks.

NoteTo execute your business processes, you can use Business Central web application that bundles the execution engine, enabling a ready-to-use process execution environment. Alternatively, you can create your own execution server and embed the Red Hat JBoss BPM Suite and Red Hat JBoss BRMS libraries with your application using Java EE.

For example, if you are developing a web application, include the Red Hat JBoss BPM Suite or Red Hat JBoss BRMS libraries in the

WEB-INF/libfolder of your application.Business Central Repository

The business artifacts of a Red Hat JBoss BPM Suite project, such as process models, rules, and forms, are stored in Git repositories managed through the Business Central. You can also access these repositories outside of Business Central through the Git or SSH protocols.

2.2.2. Red Hat JBoss BPM Suite Features

Red Hat JBoss BPM Suite provides the following features:

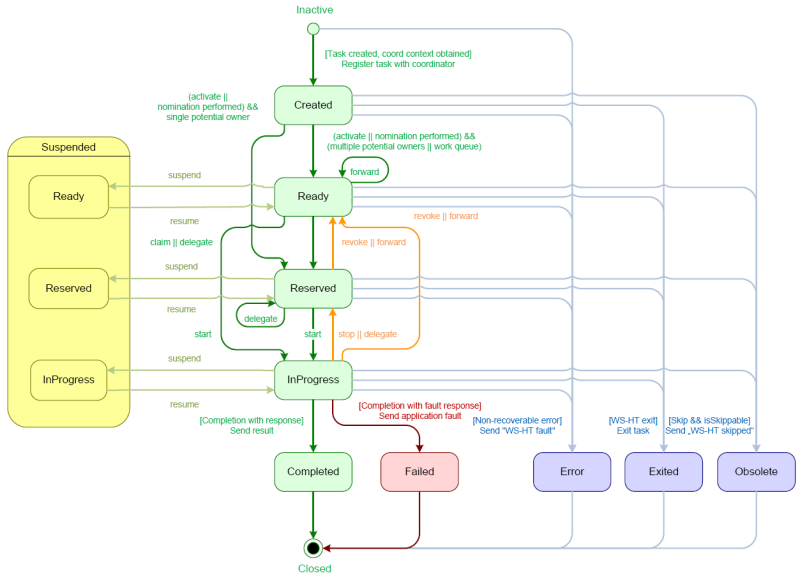

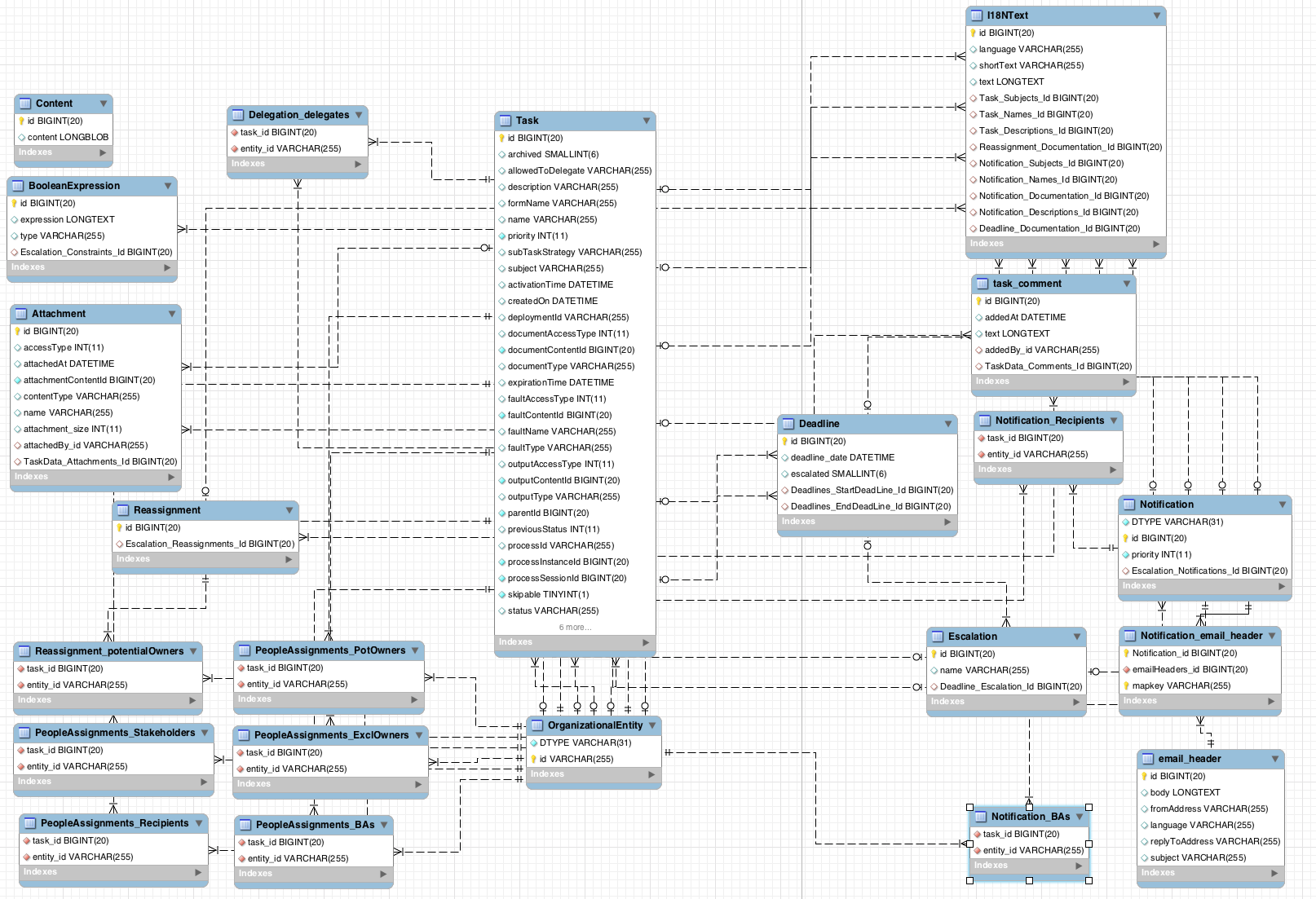

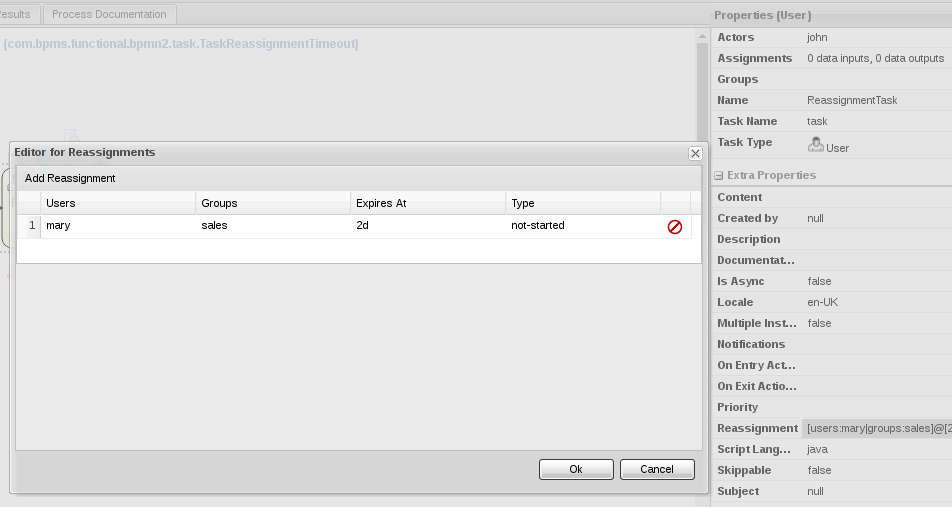

- Pluggable human task service for including tasks that need to be performed by human actors (based on the WS-HumanTask specification).

- Pluggable persistence and transactions (based on JPA/JTA).

- Web-based process designer to support the graphical creation and simulation of your business processes (drag and drop).

- Web-based data modeler and form modeler to support the creation of data models and process and task forms.

- Web-based, customizable dashboards and reporting.

A web-based workbench called Business Central, supporting the complete BPM life cycle:

- Modeling and deployment: to author your processes, rules, data models, forms and other assets.

- Execution: to execute processes, tasks, rules and events on the core runtime engine.

- Runtime Management: to work on assigned task, manage process instances.

- Reporting: to monitor the execution using Business Activity Monitoring capabilities.

- Eclipse-based developer tools to support the modeling, testing and debugging of processes.

- Remote API to process engine as a service (REST, JMS, Remote Java API).

- Integration with Maven, Spring, and OSGi.

2.3. Supported Platforms and APIs

For a list of supported containers and configurations, see section Supported Platforms of Red Hat JBoss BPM Suite Installation Guide.

The kie-api is a fully supported API and it is the recommended way to interact with your project. For further information about API supportability, see Knowledgebase article What Are the Public and Internal APIs for BPM Suite and BRMS 6?.

2.4. Use Cases

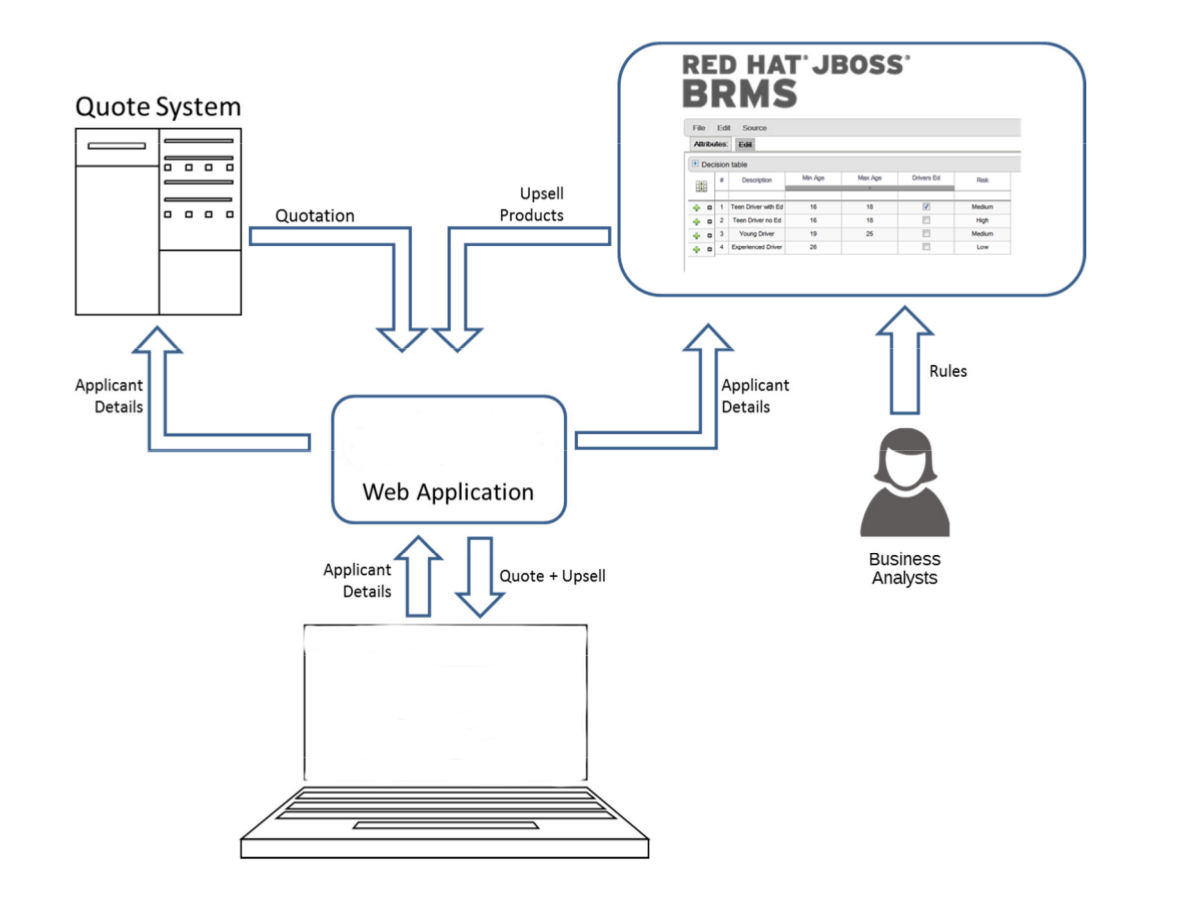

2.4.1. Use Case: Business Decision Management in Insurance Industry with Red Hat JBoss BRMS

Red Hat JBoss BRMS comprises a high performance rule engine, a rule repository, easy to use rule authoring tools, and complex event processing rule engine extensions. The following use case describes how these features of Red Hat JBoss BRMS are implemented in insurance industry.

The consumer insurance market is extremely competitive, and it is imperative that customers receive efficient, competitive, and comprehensive services when visiting an online insurance quotation solution. An insurance provider increased revenue from their online quotation solution by upselling relevant, additional products during the quotation process to the visitors of the solution.

The diagram below shows integration of Red Hat JBoss BRMS with the insurance provider’s infrastructure. This integration is fruitful in such a way that when a request for insurance is processed, Red Hat JBoss BRMS is consulted and appropriate additional products are presented with the insurance quotation.

Figure 2.1. JBoss BRMS Use Case: Insurance Industry Decision Making

Red Hat JBoss BRMS provides the decision management functionality, that automatically determines the products to present to the applicant based on the rules defined by the business analysts. The rules are implemented as decision tables, so they can be easily understood and modified without requiring additional support from IT.

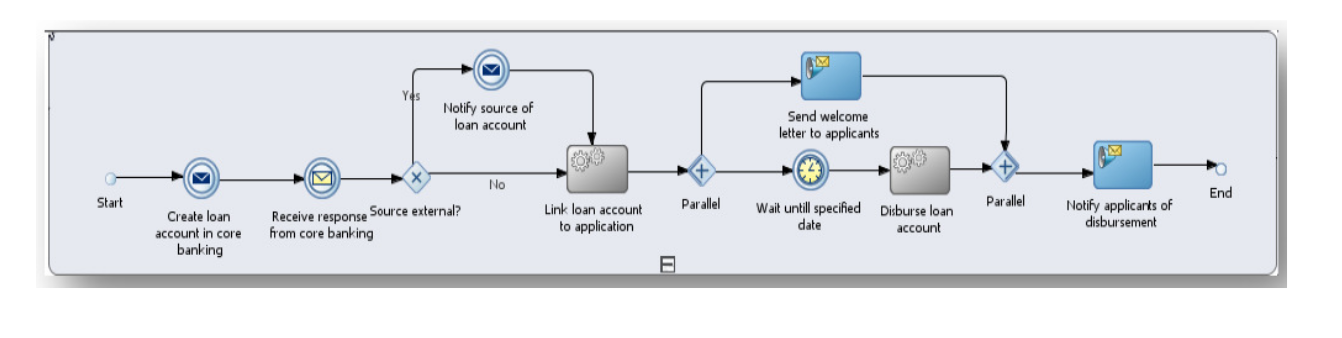

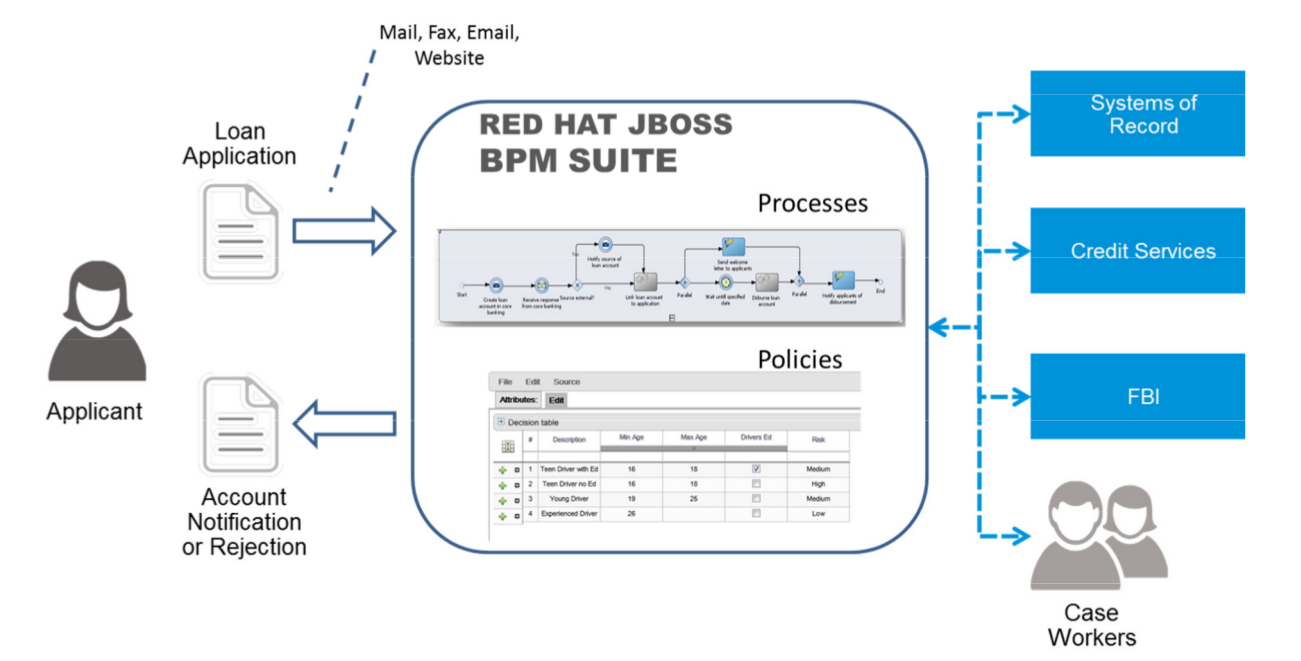

2.4.2. Use Case: Process-Based Solution in Loan Industry

This section describes a use case of deploying Red Hat JBoss BPM Suite to automate business processes (such as loan approval process) at a retail bank. This use case is a typical process-based specific deployment that might be the first step in a wider adoption of Red Hat JBoss BPM Suite throughout an enterprise. It leverages features of both business rules and processes of Red Hat JBoss BPM Suite.

A retail bank offers several types of loan products each with varying terms and eligibility requirements. Customers requiring a loan must file a loan application with the bank. The bank then processes the application in several steps, such as verifying eligibility, determining terms, checking for fraudulent activity, and determining the most appropriate loan product. Once approved, the bank creates and funds a loan account for the applicant, who can then access funds. The bank must be sure to comply with all relevant banking regulations at each step of the process, and has to manage its loan portfolio to maximize profitability. Policies are in place to aid in decision making at each step, and those policies are actively managed to optimize outcomes for the bank.

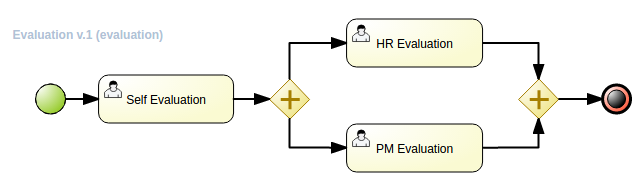

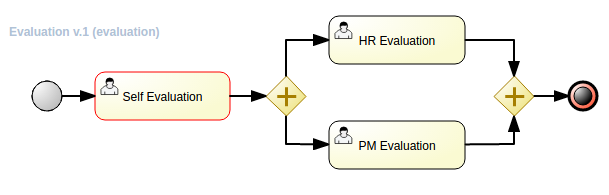

Business analysts at the bank model the loan application processes using the BPMN2 authoring tools (Process Designer) in Red Hat JBoss BPM Suite. Here is the process flow:

High-Level Loan Application Process Flow

Business rules are developed with the rule authoring tools in Red Hat JBoss BPM Suite to enforce policies and make decisions. Rules are linked with the process models to enforce the correct policies at each process step.

The bank’s IT organization deploys the Red Hat JBoss BPM Suite so that the entire loan application process can be automated.

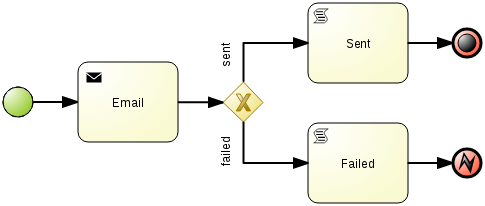

Figure 2.2. Loan Application Process Automation

The entire loan process and rules can be modified at any time by the bank’s business analysts. The bank is able to maintain constant compliance with changing regulations, and is able to quickly introduce new loan products and improve loan policies in order to compete effectively and drive profitability.

Chapter 3. Apache Maven

Apache Maven is a distributed build automation tool used in Java application development to build and manage software projects. Apart from building, publishing, and deploying capabilities, using Maven for your Red Hat JBoss BRMS and Red Hat JBoss BPM suite projects ensures the following:

- The build process is easy and a uniform build system is implemented across projects.

- All of the required JAR files for a project are made available at compile time.

- A proper project structure is configured.

- Dependencies and versions are well managed.

- No need for additional build processing, as Maven builds output into a number of predefined types, such as JAR and WAR.

3.1. Maven Repositories

Maven uses repositories to store Java libraries, plug-ins, and other build artifacts. These repositories can be local or remote. Red Hat JBoss BRMS and Red Hat JBoss BPM Suite products maintain local and remote maven repositories that you can add to your project for accessing the rules, processes, events, and other project dependencies. You must configure Maven to use these repositories and the Maven Central Repository to provide correct build functionality.

When building projects and archetypes, Maven dynamically retrieves Java libraries and Maven plug-ins from local or remote repositories. Doing so promotes sharing and reuse of dependencies across projects.

3.2. Using the Maven Repository in Your Project

You can direct Maven to use the Red Hat JBoss Enterprise Application Platform Maven repository in your project in one of the following ways:

-

Configure the Project Object Model (POM) file (

pom.xml). -

Modify the Maven settings file (

settings.xml).

The recommended approach is to direct Maven to use the Red Hat JBoss Enterprise Application Platform Maven repository across all projects by using the Maven global or user settings.

From version 6.1.0 onwards, Red Hat JBoss BPM Suite and Red Hat JBoss BRMS are designed to be used in combination with Red Hat JBoss Middleware Maven Repository and Maven Central repository as dependency sources. Ensure that both repositories are available for project builds.

3.3. Maven Project Configuration File

To use Maven for building and managing your Red Hat JBoss BRMS and Red Hat JBoss BPM Suite projects, you must configure your projects to be built with Maven. To do so, Maven provides the POM file (pom.xml) that holds configuration details for your project.

pom.xml is an XML file that contains information about the project (such as project name, version, description, developers, mailing list, and license), and build details (such as dependencies, location of the source, test, target directories, repositories, and plug-ins).

When you generate a Maven project, a pom.xml file is automatically generated. You can edit pom.xml to add more dependencies and new repositories. Maven downloads all of the JAR files and the dependent JAR files from the Maven repository when you compile and package your project.

Find the schema for the pom.xml file at http://maven.apache.org/maven-v4_0_0.xsd.

For more information about POM files, see Apache Maven Project POM Reference.

3.4. Maven Settings File

The Maven settings file (settings.xml) is used to configure Maven execution. You can locate this file in the following locations:

-

In the Maven install directory at

$M2_HOME/conf/settings.xml. These settings are called global settings. -

In the user’s install directory at

$USER_HOME/.m2/settings.xml. These settings are called user settings. -

A custom location specified by the system property

kie.maven.settings.custom.

The settings used is a merge of the files located in these locations.

The following is an example of a Maven settings.xml file. Note the activeByDefault tag, which specifies the default profile. In the following example, it is a profile with a remote Maven repository.

<settings>

<profiles>

<profile>

<id>my-profile</id>

<activation>

<activeByDefault>true</activeByDefault>

</activation>

<repositories>

<repository>

<id>fusesource</id>

<url>http://repo.fusesource.com/nexus/content/groups/public/</url>

<snapshots>

<enabled>false</enabled>

</snapshots>

<releases>

<enabled>true</enabled>

</releases>

</repository>

...

</repositories>

</profile>

</profiles>

...

</settings>3.5. Dependency Management

In order to use the correct Maven dependencies in your Red Hat JBoss BPM Suite project, you must add relevant Bill Of Materials (BOM) files to the project’s pom.xml file. Adding the BOM files ensures that the correct versions of transitive dependencies from the provided Maven repositories are included in the project.

See the Supported Component Versions chapter of Red Hat JBoss BPM Suite Installation Guide to view the supported BOM components.

Declare the BOM in pom.xml. For example:

Example 3.1. BOM for Red Hat JBoss BPM Suite 6.4.0

<dependencyManagement>

<dependencies>

<dependency>

<groupId>org.jboss.bom.brms</groupId>

<artifactId>jboss-brms-bpmsuite-platform-bom</artifactId>

<version>6.4.2.GA-redhat-2</version>

<type>pom</type>

<scope>import</scope>

</dependency>

</dependencies>

</dependencyManagement>

<dependencies>

<!-- Your dependencies -->

</dependencies>To check the current BOM version, see the Supported Component Versions chapter of Red Hat JBoss BPM Suite Installation Guide.

Furthermore, declare dependencies needed for your project in the dependencies tag.

For a basic Red Hat JBoss BPM Suite project, declare the following dependencies:

Embedded jBPM Engine Dependencies

<dependency> <groupId>org.jbpm</groupId> <artifactId>jbpm-kie-services</artifactId> </dependency> <!-- Dependency needed for default WorkItemHandler implementations. --> <dependency> <groupId>org.jbpm</groupId> <artifactId>jbpm-workitems</artifactId> </dependency> <!-- Logging dependency. You can use any logging framework compatible with slf4j. --> <dependency> <groupId>ch.qos.logback</groupId> <artifactId>logback-classic</artifactId> <version>${logback.version}</version> </dependency> <dependency> <groupId>org.kie</groupId> <artifactId>kie-api</artifactId> </dependency>For a Red Hat JBoss BPM Suite project that uses CDI, declare the following dependencies:

CDI-Enabled jBPM Engine dependencies

<dependency> <groupId>org.kie</groupId> <artifactId>kie-api</artifactId> </dependency> <dependency> <groupId>org.jbpm</groupId> <artifactId>jbpm-kie-services</artifactId> </dependency> <dependency> <groupId>org.jbpm</groupId> <artifactId>jbpm-services-cdi</artifactId> </dependency>For a basic Red Hat JBoss BRMS project, declare the following dependencies:

Embedded Drools Engine Dependencies

<dependency> <groupId>org.drools</groupId> <artifactId>drools-compiler</artifactId> </dependency> <!-- Dependency for persistence support. --> <dependency> <groupId>org.drools</groupId> <artifactId>drools-persistence-jpa</artifactId> </dependency> <!-- Dependencies for decision tables, templates, and scorecards. For other assets, declare org.drools:drools-workbench-models-* dependencies. --> <dependency> <groupId>org.drools</groupId> <artifactId>drools-decisiontables</artifactId> </dependency> <dependency> <groupId>org.drools</groupId> <artifactId>drools-templates</artifactId> </dependency> <dependency> <groupId>org.drools</groupId> <artifactId>drools-scorecards</artifactId> </dependency> <!-- Dependency for loading KJARs from a Maven repository using KieScanner. --> <dependency> <groupId>org.kie</groupId> <artifactId>kie-ci</artifactId> </dependency> <!-- Dependency for loading KJARs from a Maven repository using KieScanner in an OSGi environment. --> <dependency> <groupId>org.kie</groupId> <artifactId>kie-ci-osgi</artifactId> </dependency> <dependency> <groupId>org.kie</groupId> <artifactId>kie-api</artifactId> </dependency>Do not use both

kie-ciandkie-ci-osgiin onepom.xmlfile.To use the Intelligent Process Server, declare the following dependencies:

Client Application Intelligent Process Server Dependencies

<dependency> <groupId>org.kie.server</groupId> <artifactId>kie-server-client</artifactId> </dependency> <dependency> <groupId>org.kie.server</groupId> <artifactId>kie-server-api</artifactId> </dependency> <!-- Dependency for Red Hat JBoss BRMS functionality. --> <dependency> <groupId>org.drools</groupId> <artifactId>drools-core</artifactId> </dependency> <dependency> <groupId>org.kie</groupId> <artifactId>kie-api</artifactId> </dependency>To create a remote client for Red Hat JBoss BPM Suite or Red Hat JBoss BRMS, declare the following dependencies:

Client Dependencies

<dependency> <groupId>org.kie.remote</groupId> <artifactId>kie-remote-client</artifactId> </dependency>To use assets in

KJARpackaging, the preferred way is to includekie-maven-plugin:Kie Maven Plugin

<!-- BOM does not resolve plugin versioning. Consult section Supported Components of Red Hat JBoss BPM Suite Installation Guide for newest version number. --> <packaging>kjar</packaging> <build> <plugins> <plugin> <groupId>org.kie</groupId> <artifactId>kie-maven-plugin</artifactId> <version>6.5.0.Final-redhat-7</version> <extensions>true</extensions> </plugin> </plugins> </build>For testing purposes, declare the following dependencies:

Testing Dependencies

<!-- JUnit dependency --> <dependency> <groupId>junit</groupId> <artifactId>junit</artifactId> <version>${junit.version}</version> <scope>test</scope> </dependency> <!-- Red Hat JBoss BPM Suite integration services dependency --> <dependency> <groupId>org.jbpm</groupId> <artifactId>jbpm-shared-services</artifactId> <classifier>btm</classifier> <scope>test</scope> </dependency> <!-- Logging dependency --> <dependency> <groupId>ch.qos.logback</groupId> <artifactId>logback-classic</artifactId> <version>${logback.version}</version> <scope>test</scope> </dependency> <!-- Persistence tests dependencies --> <dependency> <groupId>org.hibernate</groupId> <artifactId>hibernate-entitymanager</artifactId> <version>${hibernate.version}</version> <scope>test</scope> </dependency> <dependency> <groupId>org.hibernate</groupId> <artifactId>hibernate-core</artifactId> <version>${hibernate.core.version}</version> <scope>test</scope> </dependency> <dependency> <groupId>com.h2database</groupId> <artifactId>h2</artifactId> <version>${h2.version}</version> <scope>test</scope> </dependency> <dependency> <groupId>org.codehaus.btm</groupId> <artifactId>btm</artifactId> <version>${btm.version}</version> <scope>test</scope> </dependency> <dependency> <groupId>org.kie</groupId> <artifactId>kie-api</artifactId> </dependency>Alternatively, for extensive testing of Red Hat JBoss BPM Suite, include the

jbpm-testdependency. Note thatjbpm-testincludes some of the previous dependencies, for example thejunitdependency, dependencies required for persistence tests, and others.Declaring jbpm-test Dependency

<dependency> <groupId>org.jbpm</groupId> <artifactId>jbpm-test</artifactId> </dependency>To include the

jbpm-testdependency as part of your KJAR, set the dependency scope toprovided. Doing so ensures that the dependency is available at runtime, thereby avoiding unresolved dependency errors. The recommended practice is to use only business resources in your KJAR and not includejbpm-testdependency in it. It is a best practice to keep the test suite for the KJAR in a separate project.NoteIf you are deploying Red Hat JBoss BRMS or Red Hat JBoss BPM Suite on Red Hat JBoss EAP 7, you must make changes to the project BOM files. For more information on the BOM changes, see the Red Hat JBoss EAP Migration chapter in the Red Hat JBoss BPM Suite Migration Guide.

For more information on BOM usage in Red Hat JBoss EAP 7, see the Using Maven with JBoss EAP chapter in the Red Hat JBoss EAP Development Guide.

3.6. Integrated Maven Dependencies

Throughout the Red Hat JBoss BRMS and BPM Suite documentation, various code samples are presented with KIE API for the 6.1.x releases. These code samples will require Maven dependencies in the various pom.xml file and should be included like the following example:

<dependency>

<groupId>commons-logging</groupId>

<artifactId>commons-logging</artifactId>

<version>1.1.1-redhat-2</version>

<scope>compile</scope>

</dependency>All the Red Hat JBoss related product dependencies can be found at the following location: Red Hat Maven Repository.

3.7. Uploading Artifacts to Maven Repository

There may be scenarios when your project may fail to fetch dependencies from a remote repository configured in its pom.xml. In such cases, you can programmatically upload dependencies to Red Hat JBoss BPM Suite by uploading artifacts to the embedded maven repository through Business Central. Red Hat JBoss BPM Suite uses a servlet for the maven repository interactions. This servlet processes a GET request to download an artifact and a POST request to upload one. You can leverage the servlet’s POST request to upload an artifact to the repository using REST. To do this, implement the Http basic authentication and issue an HTTP POST request in the following format:

PROTOCOL://HOST_NAME:PORT/CONTEXT_ROOT/maven2/[GROUP_ID replacing '.' with '/']/ARTIFACT_ID/VERSION/ARTIFACT_ID-VERSION.jar

For example, to upload the org.slf4j:slf4j-api:1.7.7.jar, where ARTIFACT_ID is slf4j-api, GROUP_ID is slf4j, and VERSION is 1.7.7, the URI must be:

http://localhost:8080/business-central/maven2/org/slf4j/slf4j-api/1.7.7/slf4j-api-1.7.7.jar

The following example illustrates uploading a JAR located at /tmp directory as a user bpmsAdmin with the password abcd1234!, to an instance of Red Hat JBoss BPM Suite running locally:

package com.rhc.example;

import java.io.File;

import java.io.IOException;

import org.apache.http.HttpEntity;

import org.apache.http.HttpHost;

import org.apache.http.auth.AuthScope;

import org.apache.http.auth.UsernamePasswordCredentials;

import org.apache.http.client.AuthCache;

import org.apache.http.client.ClientProtocolException;

import org.apache.http.client.CredentialsProvider;

import org.apache.http.client.methods.CloseableHttpResponse;

import org.apache.http.client.methods.HttpPost;

import org.apache.http.client.protocol.HttpClientContext;

import org.apache.http.entity.mime.HttpMultipartMode;

import org.apache.http.entity.mime.MultipartEntityBuilder;

import org.apache.http.entity.mime.content.FileBody;

import org.apache.http.impl.auth.BasicScheme;

import org.apache.http.impl.client.BasicAuthCache;

import org.apache.http.impl.client.BasicCredentialsProvider;

import org.apache.http.impl.client.CloseableHttpClient;

import org.apache.http.impl.client.HttpClients;

import org.slf4j.Logger;

import org.slf4j.LoggerFactory;

public class UploadMavenArtifact {

private static final Logger LOG = LoggerFactory.getLogger(UploadMavenArtifact.class);

public static void main(String[] args) {

// Maven coordinates:

String groupId = "com.rhc.example";

String artifactId = "bpms-upload-jar";

String version = "1.0.0-SNAPSHOT";

// File to upload:

File file = new File("/tmp/" + artifactId + "-" + version + ".jar");

// Server properties:

String protocol = "http";

String hostname = "localhost";

Integer port = 8080;

String username = "bpmsAdmin";

String password = "abcd1234!";

// Create the HttpEntity (body of our POST):

FileBody fileBody = new FileBody(file);

MultipartEntityBuilder builder = MultipartEntityBuilder.create();

builder.setMode(HttpMultipartMode.BROWSER_COMPATIBLE);

builder.addPart("upfile", fileBody);

HttpEntity entity = builder.build();

// Calculate the endpoint from the Maven coordinates:

String resource = "/business-central/maven2/" + groupId.replace('.', '/') + "/" + artifactId +"/" + version + "/" + artifactId + "-" + version + ".jar";

LOG.info("POST " + hostname + ":" + port + resource);

// Set up HttpClient to use Basic pre-emptive authentication with the provided credentials:

HttpHost target = new HttpHost(hostname, port, protocol);

CredentialsProvider credsProvider = new BasicCredentialsProvider();

credsProvider.setCredentials(

new AuthScope(target.getHostName(), target.getPort()),

new UsernamePasswordCredentials(username,password));

CloseableHttpClient httpclient = HttpClients.custom().setDefaultCredentialsProvider(credsProvider).build();

HttpPost httpPost = new HttpPost(resource);

httpPost.setEntity(entity);

AuthCache authCache = new BasicAuthCache();

BasicScheme basicAuth = new BasicScheme();

authCache.put(target, basicAuth);

HttpClientContext localContext = HttpClientContext.create();

localContext.setAuthCache(authCache);

try {

// Perform the HTTP POST:

CloseableHttpResponse response = httpclient.execute(target, httpPost, localContext);

LOG.info(response.toString());

// Now check your artifact repository!

} catch (ClientProtocolException e) {

LOG.error("Protocol Error", e);

throw new RuntimeException(e);

} catch (IOException e) {

LOG.error("IOException while getting response", e);

throw new RuntimeException(e);

}

}

}Alternative Maven Approach

An alternative Maven approach is to configure your projects pom.xml by adding the repository as shown below:

<distributionManagement>

<repository>

<id>guvnor-m2-repo</id>

<name>maven repo</name>

<url>http://localhost:8080/business-central/maven2/</url>

<layout>default</layout>

</repository>

</distributionManagement>

Once you specify the repository information in the pom.xml, add the corresponding configuration in settings.xml as shown below:

<server>

<id>guvnor-m2-repo</id>

<username>bpmsAdmin</username>

<password>abcd1234!</password>

<configuration>

<wagonProvider>httpclient</wagonProvider>

<httpConfiguration>

<all>

<usePreemptive>true</usePreemptive>

</all>

</httpConfiguration>

</configuration>

</server>

Now when you run the mvn deploy command, the JAR file gets uploaded.

3.8. Deploying Red Hat JBoss BPM Suite Artifacts to Red Hat JBoss Fuse

Red Hat JBoss Fuse is an open source Enterprise Service Bus (ESB) with an elastic footprint and is based on Apache Karaf. The 6.4 version of Red Hat JBoss BPM Suite supports deployment of runtime artifacts to Fuse.

With the 6.1 release, Red Hat JBoss BPM Suite runtime components (in the form of JARs) are OSGi enabled. The runtime engines JARs MANIFEST.MF files describe their dependencies, amongst other things. You can plug these JARs directly into an OSGi environment, like Fuse.

Red Hat JBoss BPM Suite uses a scanner to enable continuous integration, resolution, and fetching of artifacts from remote Maven repositories. This scanner, called KIE-CI, uses a native Maven parser called Plexus to parse Maven POMs. However, this parser is not OSGi compatible and fails to instantiate in an OSGi environment. KIE-CI automatically switches to a simpler POM parser called MinimalPomParser.

The MinimalPomParser is a very simple POM parser implementation provided by Drools and is limited in what it can parse. It ignores some POM file parts, such as the parent POM of a KJAR. This means that users must not rely on those POM features (such as dependencies declared in the parent POM in their KJARs) when using KIE-CI in an OSGi environment.

Separating Assets and Code

One of the main advantage of deploying Red Hat JBoss BPM Suite artifacts on Red Hat JBoss Fuse is that each bundle is isolated, running in its own classloader. This allows you to separate the logic (code) from the assets. Business users can produce and change the rules and processes (assets) and package them in their own bundle, keeping them separate from the project bundle (code), created by the developer team. Assets can be updated without needing to change the project code.

Chapter 4. Install and Set up Red Hat JBoss Developer Studio

Red Hat JBoss Developer Studio is the JBoss Integrated Development Environment (IDE) based on Eclipse. Get the latest Red Hat JBoss Developer Studio from the Red Hat Customer Portal. Red Hat JBoss Developer Studio provides plug-ins with tools and interfaces for Red Hat JBoss BRMS and Red Hat JBoss BPM Suite. These plugins are based on the community version of these products. So, the Red Hat JBoss BRMS plug-in is called the Drools plug-in and the Red Hat JBoss BPM Suite plug-in is called the jBPM plug-in.

See the Red Hat JBoss Developer Studio documentation for installation and setup instructions.

Due to an issue in the way multi-byte rule names are handled, you must ensure that the instance of Red Hat JBoss Developer Studio is started with the file encoding set to UTF-8. You can do this by editing the $JBDS_HOME/studio/jbdevstudio.ini file and adding the following property: "-Dfile.encoding=UTF-8".

4.1. Installing Red Hat JBoss Developer Studio Plug-ins

Get the latest Red Hat JBoss Developer Studio from the Red Hat Customer Portal. The Red Hat JBoss BRMS and Red Hat JBoss BPM Suite plug-ins for Red Hat JBoss Developer Studio are available using the update site.

Installing Red Hat JBoss BRMS and Red Hat JBoss BPM Suite Plug-ins in Red Hat JBoss Developer Studio

- Start Red Hat JBoss Developer Studio.

- Click Help → Install New Software.

- Click Add to enter the Add Repository menu.

-

Provide a name next to the Name field and add the following URL in the Location field:

https://devstudio.jboss.com/10.0/stable/updates/integration-stack/. - Click OK.

- Select the JBoss Business Process and Rule Development feature from the available options, click Next and then Next again.

- Read the license and accept it by selecting the appropriate radio button, and click Finish.

- Restart Red Hat JBoss Developer Studio after the installation process finishes.

4.2. Configuring Red Hat JBoss BRMS/BPM Suite Server

Red Hat JBoss Developer Studio can be configured to run the Red Hat JBoss BRMS and Red Hat JBoss BPM Suite server.

Configuring Red Hat JBoss BRMS and Red Hat JBoss BPM Suite Server

Open the Drools view: click Window → Open Perspective → Other, select Drools and click OK.

To open the Red Hat JBoss BPM Suite view, go to Window → Open Perspective → Other, select jBPM and click OK.

- Click Window → Show View → Other… and select Server → Servers to add the server view.

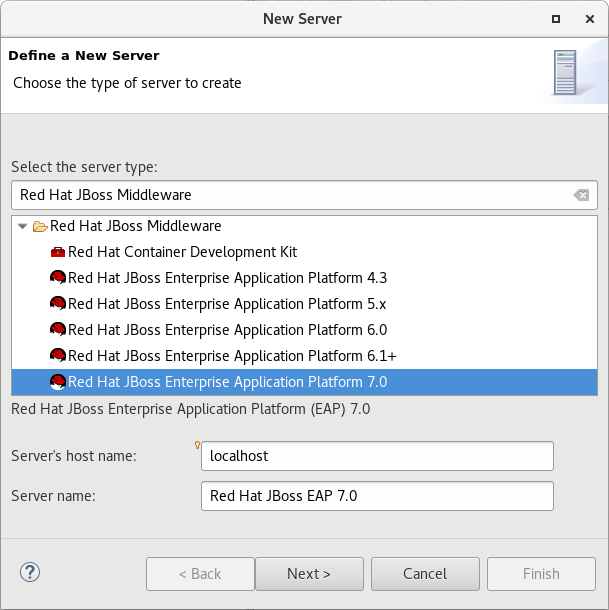

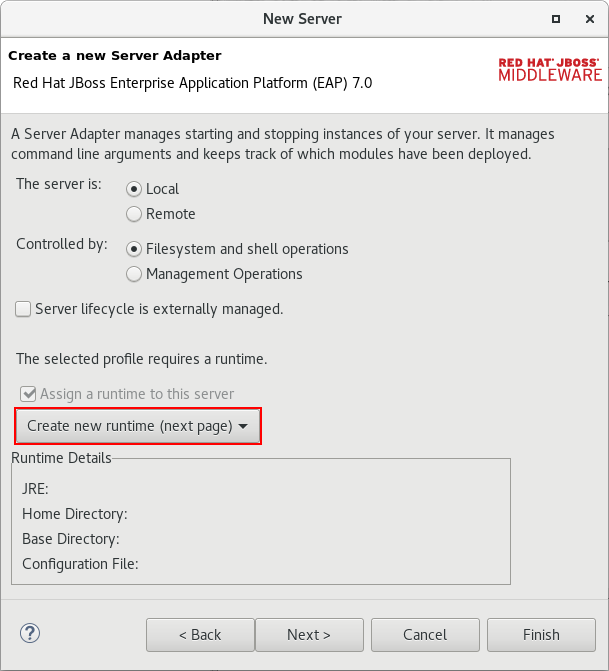

- Right click the Servers panel and select New → Server to open the server menu.

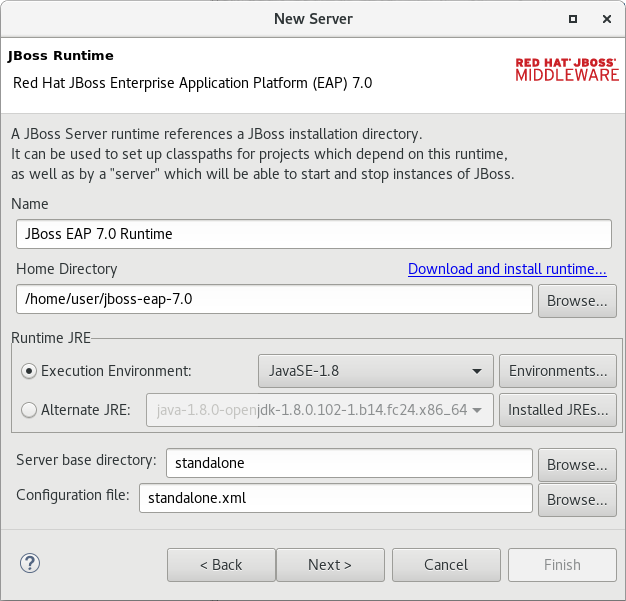

- Click JBoss Enterprise Middleware → JBoss Enterprise Application Platform 6.1+ and click Next to define the server.

Set the home directory by clicking Browse button. Navigate to the Red Hat JBoss EAP directory which has Red Hat JBoss BRMS installed.

For configuring Red Hat JBoss BPM Suite server, select the Red Hat JBoss EAP directory which has Red Hat JBoss BPM Suite installed.

- Provide a name for the server in the Name field, ensure that the configuration file is set, and click Finish.

4.3. Importing Projects from Git Repository into Red Hat JBoss Developer Studio

You can configure Red Hat JBoss Developer Studio to connect to a central Git asset repository. The repository stores rules, models, functions, and processes.

You can either clone a remote Git repository or import a local Git repository.

Cloning Remote Git Repository

- Select the server from the Server tab and click the start icon to start your server.

Start the Secure Shell server, if not running already, by using the following command. The command is Linux and Mac specific only. On these platforms, if

sshdhas already been started, this command fails. In that case, you may safely ignore this step./sbin/service sshd start- In Red Hat JBoss Developer Studio , select File → Import… and navigate to the Git folder. Open the Git folder to select Projects from Git and click Next.

- Select the repository source as Clone URI and click Next.

- Enter the details of the Git repository in the next window and click Next.

- Select the branch you wish to import in the following window and click Next.

- To define the local storage for this project, enter (or select) a non-empty directory, make any configuration changes and click Next.

- Import the project as a general project in the following window and click Next.

- Name the project and click Finish.

Importing Local Git Repository

- Select your server from the Server tab and click the start icon to start the server.

- In Red Hat JBoss Developer Studio, select File → Import… and navigate to the Git folder. Open the Git folder to select Projects from Git and click Next.

- Select the repository source as Existing local repository and click Next.

- Select the repository that is to be configured from the list of available repositories and click Next.

- In the dialog window that opens, select the Import as general project radio button from the Wizard for project import group and click Next.

- Name the project and click Finish.

Part II. All About Rules

Chapter 5. Rule Algorithms

5.1. PHREAK Algorithm

The new PHREAK algorithm is evolved from the RETE algorithm. While RETE is considered eager and data oriented, PHREAK on the other hand follows lazy and goal oriented approach. The RETE algorithm does a lot of work during the insert, update and delete actions in order to find partial matches for all rules. In case of PHREAK, this partial matching of rule is delayed deliberately.

The eagerness of RETE algorithm during rule matching wastes a lot of time in case of large systems as it does result in a rule firing eventually. PHREAK algorithm addresses this issue and therefore is able to handle large data more efficiently.

PHREAK is derived from a number of algorithms including the following LEAPS, RETE/UL and Collection-Oriented Match algorithms.

In addition to the enhancements listed in the Rete00 algorithm, PHREAK algorithm adds the following set of enhancements:

- Three layers of contextual memory: Node, Segment, and Rule memories.

- Rule, segment, and node based linking.

- Lazy (delayed) rule evaluation.

- Stack-based evaluations with pause and resume.

- Isolated rule evaluation.

- Set-oriented propagations.

5.2. Rule Evaluation With PHREAK Algorithm

When the rule engine starts, all the rules are unlinked. At this stage, there is no rule evaluation. The insert, update, and delete actions are queued before entering the beta network. The rule engine uses a simple heuristic—based on the rule most likely to result in firings—to calculate and select the next rule for evaluation. This delays the evaluation and firing of the other rules. When a rule has all the right input values populated, it gets linked in—a goal representing this rule is created and placed into a priority queue, which is ordered by salience. Each queue is associated with an AgendaGroup. The engine only evaluates rules for the active AgendaGroup by inspecting the queue and popping the goal for the rule with the highest salience. This means the work done shifts from the insert, update, delete phase to the fireAllRules phase. Only the rule for which the goal was created is evaluated, and other potential rule evaluations are delayed. While individual rules are evaluated, node sharing is still achieved through the process of segmentation.

Unlike the tuple-oriented RETE, the PHREAK propagation is collection-oriented. For the rule that is being evaluated, the engine accesses the first node and processes all queued insert, update, and delete actions. The results are added to a set, and the set is propagated to the child node. In the child node, all queued insert, update, and delete actions are processed, adding the results to the same set. Once finished, this set is propagated to the next child node and the same process repeats until it reaches the terminal node. This creates a batch process effect, which can provide performance advantages for certain rule constructs.

This linking and unlinking of rules happens through a layered bit mask system, based on network segmentation. When the rule network is built, segments are created for nodes that are shared by the same set of rules. A rule itself is made up from a path of segments. In case a rule does not share any node with any other rule, it becomes a single segment.

A bit-mask offset is assigned to each node in the segment. Furthermore, another bit mask is assigned to each segment in the rule’s path according to these rules:

- If there is at least one input, the node’s bit is set to the on state.

- If each node in a segment has its bit set to the on state, the segment’s bit is also set to the on state.

- If any node’s bit is set to the off state, the segment is also set to the off state.

- If each segment in the rule’s path is set to the on state, the rule is said to be linked in, and a goal is created to schedule the rule for evaluation.

The same bit-mask technique is used to also track dirty nodes, segments, and rules. This allows for an already linked rule to be scheduled for evaluation if it has been considered dirty since it was last evaluated. This ensures that no rule will ever evaluate partial matches.

As opposed to a single unit of memory in RETE, PHREAK has three levels of memory. This allows for much more contextual understanding during the evaluation of a rule.

PHREAK and Sequential Mode

The sequential mode is supported for the PHREAK algorithm: the modify and update rule statements are now allowed. Any rule that has not yet been evaluated will have access to data modified by the previous rules that used modify or update. This results in a more intuitive behavior of the sequential mode.

For example, consider the following rule:

rule "Rule1"

salience 100

when

$fact : MyFact( field1 == false )

then

System.out.println("Rule1 : " + $fact);

$fact.setField1(true);

update($fact);

end

rule "Rule2"

salience 95

when

$fact : MyFact( field1 == true )

then

System.out.println("Rule2 : " + $fact);

update($fact);

end

When you insert a MyFact with the value field1==false:

-

The ReteOO algorithm executes only

Rule1. -

The PHREAK algorithm executes both

Rule1andRule2.

For more information about the sequential mode, see Section 20.1.11.2.1, “Sequential Mode”.

5.3. Rete Algorithm

5.3.1. ReteOO

The Rete implementation used in BRMS is called ReteOO. It is an enhanced and optimized implementation of the Rete algorithm specifically for object-oriented systems. The Rete Algorithm has now been deprecated, and PHREAK is an enhancement of Rete. However, Rete can still be used by developers. This section describes how the Rete Algorithm functions.

Rete Root Node

When using ReteOO, the root node is where all objects enter the network. From there, it immediately goes to the ObjectTypeNode.

Figure 5.1. ReteNode

ObjectTypeNode

The ObjectTypeNode helps to reduce the workload of the rules engine. If there are several objects, the rule engine wastes a lot of cycles trying to evaluate every node against every object. To make things efficient, the ObjectTypeNode is used so that the engine only passes objects to the nodes that match the object’s type. This way, if an application asserts a new Account, it does not propagate to the nodes for the Order object.

In Red Hat JBoss BRMS, an inserted object retrieves a list of valid ObjectTypesNodes through a lookup in a HashMap from the object’s class. If this list does not exist, it scans all the ObjectTypeNodes to find valid matches. It then caches these matched nodes in the list. This enables Red Hat JBoss BRMS to match against any class type that matches with an instanceof check.

AlphaNodes

AlphaNodes are used to evaluate literal conditions. When a rule has multiple literal conditions for a single object type, they are linked together. This means that if an application asserts an Account object, it must first satisfy the first literal condition before it can proceed to the next AlphaNode.

AlphaNodes are propagated using ObjectTypeNodes.

Hashing

Red Hat JBoss BRMS uses hashing to extend Rete by optimizing the propagation from ObjectTypeNode to AlphaNode. Each time an AlphaNode is added to an ObjectTypeNode, it adds the literal value as a key to the HashMap with the AlphaNode as the value. When a new instance enters the ObjectType node, rather than propagating to each AlphaNode, it retrieves the correct AlphaNode from the HashMap. This avoids unnecessary literal checks.

When facts enter from one side, you may do a hash lookup returning potentially valid candidates (referred to as indexing). At any point a valid join is found, the Tuple joins with the Object (referred to as a partial match) and then propagates to the next node.

BetaNodes

BetaNodes are used to compare two objects and their fields. The objects may be of the same or different types.

Alpha Memory and Beta Memory

Alpha memory refers to the left input on a BetaNode. In Red Hat JBoss BRMS, this input remembers all incoming objects.

Beta memory is the term used to refer to the right input of a BetaNode. It remembers all incoming tuples.

Lookups with BetaNodes

When facts enter from one side, you can do a hash lookup returning potentially valid candidates (referred to as indexing). If a valid join is found, the Tuple joins with the Object (referred to as a partial match) and then propagates to the next node.

LeftInputNodeAdapters

A LeftInputNodeAdapter takes an Object as an input and propagates a single Object Tuple.

Terminal Nodes

Terminal nodes are used to indicate when a single rule matches all its conditions (that is, the rule has a full match). A rule with an OR conditional disjunctive connective results in a sub-rule generation for each possible logical branch. Because of this, one rule can have multiple terminal nodes.

Node Sharing

Node sharing is used to prevent redundancy. As many rules repeat the same patterns, node sharing allows users to collapse those patterns so that the patterns need not be reevaluated for every single instance.

The following rules share the first pattern but not the last:

rule

when

Cheese($cheddar : name == "cheddar")

$person: Person(favouriteCheese == $cheddar)

then

System.out.println($person.getName() + "likes cheddar");

endrule

when

Cheese($cheddar : name == "cheddar")

$person : Person(favouriteCheese != $cheddar)

then

System.out.println($person.getName() + " does not like cheddar");

end

The Rete network displayed below denotes that the alpha node is shared but the beta nodes are not. Each beta node has its own TerminalNode.

Figure 5.2. Node Sharing

5.4. Switching Between PHREAK and ReteOO

It is possible to switch between PHREAK and ReteOO either by setting system properties, or in KieBase configuration. PHREAK is the default algorithm in both cases.

Switching to ReteOO requires the drools-reteoo-VERSION.jar file to be available on the class path. To include the file, add the following ReteOO Maven dependency to the pom.xml file in your project:

<dependency>

<groupId>org.drools</groupId>

<artifactId>drools-reteoo</artifactId>

<version>DROOLS_VERSION</version>

</dependency>For the supported Maven artifact version, see the Supported Component Versions section of the Red Hat JBoss BPM Suite Installation Guide.

If the ReteOO Maven dependency is not specified in the pom.xml file in your project, the BRMS engine uses PHREAK instead and issues a warning.

Switching Between PHREAK and ReteOO in System Properties

To switch between the PHREAK and ReteOO algorithms, edit the drools.ruleEngine system property to contain one the following values:

drools.ruleEngine=phreakdrools.ruleEngine=reteoo

The default value is phreak.

Switching Between PHREAK and ReteOO in KieBaseConfiguration

When creating a KieBase, specify the rule engine algorithm in KieBaseConfiguration. See the following example:

import org.kie.api.KieBase;

import org.kie.api.KieBaseConfiguration;

import org.kie.api.KieServices;

import org.kie.api.runtime.KieContainer;

import org.kie.internal.builder.conf.RuleEngineOption;

...KieServices kservices = KieServices.Factory.get();

KieBaseConfiguration kconfig = kieServices.Factory.get().newKieBaseConfiguration();

// You can either specify PHREAK (default):

kconfig.setOption(RuleEngineOption.PHREAK);

// or legacy ReteOO:

kconfig.setOption(RuleEngineOption.RETEOO);

// ... and then create a KieBase for the selected algorithm

// (getKieClasspathContainer() is just an example):

KieContainer container = kservices.getKieClasspathContainer();

KieBase kbase = container.newKieBase(kieBaseName, kconfig);For a list of Maven dependencies, see example Embedded jBPM Engine Dependencies. If you use Red Hat JBoss BRMS, see example Embedded Drools Engine Dependencies.

Additionally, if you want to switch to ReteOO, use the drools-reteoo dependency:

<dependency>

<groupId>org.drools</groupId>

<artifactId>drools-reteoo</artifactId>

<version>6.5.0.Final-redhat-2</version>

</dependency>For the current Maven artifact version, see chapter Supported Component Versions of the Red Hat JBoss BPM Suite Installation Guide.

Switching to ReteOO requires drools-reteoo-(version).jar to exist on the classpath. If not present, the BRMS Engine reverts back to PHREAK and issues a warning. This applies for switching with KieBaseConfiguration and system properties.

Chapter 6. Getting Started with Rules and Facts

To create business rules, an appropriate fact model on which the business rules operate must be present. A fact is an instance of an application object represented as POJO. Rules that contain the business logic can then be authored by using either the Business Central web user interface or Red Hat JBoss Developer Studio.

The structure of a rule is as follows:

rule "NAME"

when

RULE CONDITIONS

then

RULE CONSEQUENCES

end

Conditions inside the when clause of a rule query for fact combinations that match the criteria. If such a fact combination is found, consequences specified in the then clause are executed. These actions can assert a fact, retract a fact, or update a fact within the rule engine. As a result, other rules can be fired as well.

Rules Processing Steps

-

BRMS parses all

.drlrule files into the knowledge base. - Each fact is asserted into the working memory. As the facts are being asserted, BRMS uses the PHREAK or ReteOO algorithm to infer how the facts relate to the rules. After that, the working memory contains copies of the parsed rules and a reference to the facts.

-

The

fireAllRules()method is called. All rules and facts are evaluated by the rule engine and rule-facts pairs are created, based on which rules match against which set of facts. - All the rule-facts combinations are queued within a data construct called an agenda.

- Finally, activations are processed one by one from the agenda, calling the rule consequences on the facts. Note that executing an activation can modify the contents of the agenda before the next activation is performed. The PHREAK and ReteOO algorithms handle such situations efficiently.

6.1. Creating and Executing Rules

In this section, procedures describing how to create and execute rules using plain Java, Maven, Red Hat JBoss Developer Studio, and Business Central in Red Hat JBoss BPM Suite are provided.

6.1.1. Creating and Executing Rules Using Plain Java

Create a fact model.

Create a Plain old Java object (POJO) on which a rule will operate. In this example, a

Person.javafile in a directorymy-projectis created. ThePersonclass contains getter and setter methods to set and retrieve the first name, last name, hourly rate, and the wage of a person:import org.kie.api.KieServices; import org.kie.api.runtime.KieContainer; import org.kie.api.runtime.KieSession; public class Person { private String firstName; private String lastName; private Integer hourlyRate; private Integer wage; public String getFirstName() { return firstName; } public void setFirstName(String firstName) { this.firstName = firstName; } public String getLastName() { return lastName; } public void setLastName(String lastName) { this.lastName = lastName; } public Integer getHourlyRate() { return hourlyRate; } public void setHourlyRate(Integer hourlyRate) { this.hourlyRate = hourlyRate; } public Integer getWage(){ return wage; } public void setWage(Integer wage){ this.wage = wage; } }Create a rule.

Create a rule file in the

.drlformat under themy-projectdirectory. The followingPerson.drlrule calculates the wage and hourly rate values and displays a message based on the result afterwards.dialect "java" rule "Wage" when Person(hourlyRate * wage > 100) Person(name : firstName, surname : lastName) then System.out.println("Hello" + " " + name + " " + surname + "!"); System.out.println("You are rich!"); endCreate a main class.

Create a main class and save it to the same directory as the POJO created earlier. The main class will load the knowledge base and fire rules. In the following example, a main class

DroolsTest.javais created.In the main class:

Add the following

importstatements to import KIE services, a KIE container, and a KIE session:import org.kie.api.KieServices; import org.kie.api.runtime.KieContainer; import org.kie.api.runtime.KieSession;Load the knowledge base, insert facts, and fire the rule from the

main()method which passes the fact model to the rule:public class DroolsTest { public static final void main(String[] args) { try { // Load the knowledge base: KieServices ks = KieServices.Factory.get(); KieContainer kContainer = ks.getKieClasspathContainer(); KieSession kSession = kContainer.newKieSession(); // Go! Person p = new Person(); p.setWage(12); p.setFirstName("Tom"); p.setLastName("Summers"); p.setHourlyRate(10); kSession.insert(p); kSession.fireAllRules(); } catch (Throwable t) { t.printStackTrace(); } } }

-

Download the Red Hat JBoss BRMS 6.4 Core Engine ZIP file from the Red Hat Customer Portal and extract it under

my-project/BRMS-engine-jars/. In the

my-project/META-INFdirectory, create akmodule.xmlmetadata file with the following content:<?xml version="1.0" encoding="UTF-8"?> <kmodule xmlns="http://www.drools.org/xsd/kmodule"> </kmodule>Build the example.

To compile and build your Java files, navigate to the

my-projectdirectory on the command line and run the following command:javac -classpath "./BRMS-engine-jars/*:." DroolsTest.javaRun the example.

If there are no compilation errors, run the following command to execute the rule:

java -classpath "./BRMS-engine-jars/*:." DroolsTestThe expected output looks similar to the following:

Hello Tom Summers! You are rich!

6.1.2. Creating and Executing Rules Using Maven

Create a basic Maven archetype.

Navigate to a directory where you want to create a Maven archetype and run the following command:

mvn archetype:generate -DgroupId=com.sample.app -DartifactId=my-app -DarchetypeArtifactId=maven-archetype-quickstart -DinteractiveMode=falseThis creates a directory

my-appwith the following structure:my-app |-- pom.xml `-- src |-- main | `-- java | `-- com | `-- mycompany | `-- app | `-- App.java `-- test `-- java `-- com `-- mycompany `-- app `-- AppTest.javaThe

my-appdirectory contains:-

A

src/maindirectory for storing the application’s sources. -

A

src/testdirectory for storing the test sources. -

A

pom.xmlfile with the project’s configuration.

-

A

Create a fact model.

A fact model is a POJO, based on which a rule will operate. Create a

Person.javafile under themy-app/src/main/java/com/mycompany/appdirectory. ThePersonclass contains getter and setter methods to set and retrieve the first name, last name, hourly rate, and the wage of a person.package com.mycompany.app; public class Person { private String firstName; private String lastName; private Integer hourlyRate; private Integer wage; public String getFirstName() { return firstName; } public void setFirstName(String firstName) { this.firstName = firstName; } public String getLastName() { return lastName; } public void setLastName(String lastName) { this.lastName = lastName; } public Integer getHourlyRate() { return hourlyRate; } public void setHourlyRate(Integer hourlyRate) { this.hourlyRate = hourlyRate; } public Integer getWage(){ return wage; } public void setWage(Integer wage){ this.wage = wage; } }Create a rule.

Create a rule file in the

.drlformat under themy-app/src/main/resources/rulesdirectory. See the following example with a simple rulePerson.drlwhich imports thePersonclass:package com.mycompany.app; import com.mycompany.app.Person; dialect "java" rule "Wage" when Person(hourlyRate * wage > 100) Person(name : firstName, surname : lastName) then System.out.println("Hello " + name + " " + surname + "!"); System.out.println("You are rich!"); endThe rule above calculates the wage and hourly rate values and displays a message based on the result afterwards.

In the

my-app/src/main/resources/META-INFdirectory, create a metadata filekmodule.xmlwith the following content:<?xml version="1.0" encoding="UTF-8"?> <kmodule xmlns="http://www.drools.org/xsd/kmodule"> </kmodule>Set project dependencies.

Specify the libraries your application requires in the

my-app/pom.xmlconfiguration file. Provide the Red Hat JBoss BRMS dependencies as well as the group ID, artifact ID, and version (GAV) of your application as shown below:<?xml version="1.0" encoding="UTF-8"?> <project xmlns="http://maven.apache.org/POM/4.0.0" xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance" xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/xsd/maven-4.0.0.xsd"> <modelVersion>4.0.0</modelVersion> <groupId>com.mycompany.app</groupId> <artifactId>my-app</artifactId> <version>1.0.0</version> <repositories> <repository> <id>jboss-ga-repository</id> <url>http://maven.repository.redhat.com/ga/</url> </repository> </repositories> <dependencies> <dependency> <groupId>org.drools</groupId> <artifactId>drools-compiler</artifactId> <version>VERSION</version> </dependency> <dependency> <groupId>org.kie</groupId> <artifactId>kie-api</artifactId> <version>VERSION</version> </dependency> <dependency> <groupId>junit</groupId> <artifactId>junit</artifactId> <version>4.11</version> <scope>test</scope> </dependency> </dependencies> </project>For the supported Maven artifact version, see section Supported Component Versions of the Red Hat JBoss BPM Suite Installation Guide.

Test the example.

Use the

testAppmethod inmy-app/src/test/java/com/mycompany/app/AppTest.javato test the rule. TheAppTest.javafile is created by Maven by default.In the

AppTest.javafile:Add the following

importstatements to import KIE services, a KIE container, and a KIE session:import org.kie.api.KieServices; import org.kie.api.runtime.KieContainer; import org.kie.api.runtime.KieSession;Load the knowledge base, insert facts, and fire the rule from the

testApp()method which passes the fact model to the rule:public void testApp() { // Load the knowledge base: KieServices ks = KieServices.Factory.get(); KieContainer kContainer = ks.getKieClasspathContainer(); KieSession kSession = kContainer.newKieSession(); // Set up the fact model: Person p = new Person(); p.setWage(12); p.setFirstName("Tom"); p.setLastName("Summers"); p.setHourlyRate(10); // Insert the person into the session: kSession.insert(p); // Fire all rules: kSession.fireAllRules(); }

Build the example.

On the command line, navigate to the

my-appdirectory and run the following command:mvn clean installNote that executing this command for the first time may take a while.

The expected output looks similar to the following:

Hello Tom Summers! You are rich! Tests run: 1, Failures: 0, Errors: 0, Skipped: 0, Time elapsed: 1.194 sec Results : Tests run: 1, Failures: 0, Errors: 0, Skipped: 0 [INFO] ... [INFO] -------------------------------------------------------------- [INFO] BUILD SUCCESS [INFO] -------------------------------------------------------------- [INFO] Total time: 6.393 s ... [INFO] --------------------------------------------------------------

6.1.3. Creating and Executing Rules Using Red Hat JBoss Developer Studio

Make sure you have Red Hat JBoss Developer Studio properly set before proceeding further. See chapter Red Hat JBoss Developer Studio of Red Hat JBoss BPM Suite Installation Guide for more information.

Create a BRMS project.

To create a BRMS project in Red Hat JBoss Developer Studio:

- Start Red Hat JBoss Developer Studio and click File → New → Project.

- In the New Project dialog window that opens, select Drools → Drools Project and click Next.

- Click on the second icon to create a project and populate it with some example files to help you get started quickly. Click Next.

Enter a name of the project a select the Maven radio button as the project building option. Specify the GAV values which form the project’s fully qualified name, for example:

-

Group ID:

com.mycompany.app -

Artifact ID:

my-app -

Version:

1.0.0

-

Group ID:

- Click Finish.

This configuration sets up a basic project structure, class path, and sample rules. The project structure is as follows:

My-Project `-- src/main/java | `-- com.sample | `-- DecisionTable.java | `-- DroolsTest.java | `-- ProcessTest.java | `-- src/main/resources | `-- dtables | `-- Sample.xls | `-- process | `-- sample.bpmn | `-- rules | `-- Sample.drl | `-- META-INF | `-- JRE System Library | `-- Maven Dependencies | `-- Drools Library | `-- src | `-- target | `-- pom.xmlNotice the following:

-

A

Sample.drlrule file in thesrc/main/resourcesdirectory, containing an exampleHello WorldandGoodByerules. -

A

DroolsTest.javafile under thesrc/main/javadirectory in thecom.samplepackage. TheDroolsTestclass can be used to execute rules. -

The

Drools Librarydirectory which acts as a custom class path containing JAR files necessary for execution.

Create a fact model.

The

DroolsTest.javafile contains a sample POJOMessagewith getter and setter methods. You can edit this class or create a different POJO. In this example, a classPersoncontaining methods to set and retrieve the first name, last name, hourly rate, and wage of a person is used.public static class Person { private String firstName; private String lastName; private Integer hourlyRate; private Integer wage; public String getFirstName() { return firstName; } public void setFirstName(String firstName) { this.firstName = firstName; } public String getLastName() { return lastName; } public void setLastName(String lastName) { this.lastName = lastName; } public Integer getHourlyRate() { return hourlyRate; } public void setHourlyRate(Integer hourlyRate) { this.hourlyRate = hourlyRate; } public Integer getWage(){ return wage; } public void setWage(Integer wage){ this.wage = wage; } }Update the

main()method.The

DroolsTest.javafile contains amain()method that loads the knowledge base, inserts facts, and fires rules. Update the method to pass the objectPersonto a rule:public static final void main(String[] args) { try { // Load the knowledge base: KieServices ks = KieServices.Factory.get(); KieContainer kContainer = ks.getKieClasspathContainer(); KieSession kSession = kContainer.newKieSession("ksession-rules"); // Go! Person p = new Person(); p.setWage(12); p.setFirstName("Tom"); p.setLastName("Summers"); p.setHourlyRate(10); kSession.insert(p); kSession.fireAllRules(); } catch (Throwable t) { t.printStackTrace(); } }To load the knowledge base, get a

KieServicesinstance and a class-path-basedKieContainerand build theKieSessionwith theKieContainer. In the example above, a sessionksession-rulesmatching the one defined inkmodule.xmlfile is passed.Create a rule.

The rule file

Sample.drlcontains an example of two rules. Edit this file or create a new one. In your rule file:Specify the package name:

package com.sampleImport facts:

import com.sample.DroolsTest.Person;Write the rule:

dialect "java" rule "Wage" when Person(hourlyRate * wage > 100) Person(name : firstName, surname : lastName) then System.out.println("Hello" + " " + name + " " + surname + "!"); System.out.println("You are rich!"); end

Test the rule.

Right-click the

DroolsTest.javafile and select Run As → Java Application.The expected output looks similar to the following:

Hello Tom Summers! You are rich!

6.1.4. Creating and Executing Rules Using Business Central

Make sure you have Red Hat JBoss BPM Suite successfully installed before proceeding further.

- Start the server and log in to Business Central. For more information how to do so, see sections Starting Server and Logging into Business Central of Red Hat JBoss BPM Suite Installation Guide.

Create a repository structure and a project.

- In Business Central, click Authoring → Administration.

- Click Organizational Units → Manage Organizational Units.

- In the displayed Organizational Unit Manager, click Add.

In the Add New Organizational Unit dialog window, define the unit properties. For example:

-

Name:

EmployeeWage -

Owner:

Employee

-

Name:

- Click Ok.

- Click Repositories → New repository.

In the New Repository dialog window, define the repository properties. For example:

-

Repository Name:

EmployeeRepo -

In Organizational Unit:

EmployeeWage

-

Repository Name:

- Click Finish.

- In the main menu, click Authoring → Project Authoring.

-

In Project Explorer, navigate to the

EmployeeWageorganizational unit and theEmployeeReporepository. - Click New Item → Project.

In the New Project dialog window, enter a name of the project, for example

MyProject, and specify project’s Maven properties. For example:-

Group ID:

org.bpms -

Artifact ID:

MyProject -

Version:

1.0.0

-

Group ID:

- Click Finish.

Create a fact model.

- Click New Item → Data Object.

In the Create new Data Object dialog window, enter the object’s name and specify a package. For example:

-

Data Object:

Person -

Package:

org.bpms.myproject

-

Data Object:

- Click Ok.

In the Editor than opens, click Add field and create four fields with the following values by clicking Create and continue:

-

Id:

firstName, Type: String -

Id:

lastName, Type: String -

Id:

hourlyRate, Type: Integer -

Id:

wage, Type: Integer

-

Id:

- Save the project.

Create a rule.

- Click New Item → DRL file.

In the Create new DRL file dialog window, enter a name of the rule and specify a package. For example:

-

DRL file:

MyRule -

Package:

org.bpms.myproject

-

DRL file:

- Click Ok.

Paste the definition of a rule shown below into the DRL Editor or create your own rule.

package org.bpms.myproject; rule "MyRule" ruleflow-group "MyProjectGroup" when Person(hourlyRate * wage > 100) Person(name : firstName, surname : lastName) then System.out.println("Hello" + " " + name + " " + surname + "!"); System.out.println("You are rich!"); end- Click Save.

Create a business process with a business rule task.

- Click New Item → Business Process.

In the Create new Business Process dialog window, enter a name of the business process and specify a package. For example:

-

Business Process:

MyProcess -

Package:

org.bpms.myproject

-

Business Process:

- Click Ok. The Business Process Editor opens with a Start Event element on the canvas.

- Expand the Object Library palette on the left and drag and drop a Business Rule task (Tasks → Business Rule) on the canvas.

- Click on an empty space on the canvas and open the Properties panel on the right. Click on the Value text field of the Variable Definitions property. Click on the arrow that appears on the right to open the Editor for Variable Definitions dialog window.

Click Add Variable and define the following variable:

-

Name:

person -

Defined Types:

Person [org.bpms.myproject]

-

Name:

- Click Ok.

-

Click on the Business Rule task on the canvas and in the Properties panel on the right, set the Name of the task, for example

My_Rule. -

Click on the Value text field of the Ruleflow Group property. Click on the arrow that appears on the right to open the Editor for RuleFlow Groups dialog window. Select

MyProjectGroupand click Save. Click on the Value text field of the Assignments property. Click on the arrow that appears on the right to open the My_Rule Data I/O dialog window and click Add next to the Data Inputs and Assignments option to add the following:

-

Name:

Person -

Data Type:

Person [org.bpms.myproject] -

Source:

person

-

Name:

Click Save.

You have now successfully created an object that maps to the variables you set before in your fact model. Your business process passes this object as an input to the rule.

- Add an End Event and connect all events on the canvas to complete the process.

-

Click

and select Generate all Forms.

and select Generate all Forms.

- Save the process.

Build and deploy the rule.

Click Open Project Editor on the left, change the version of the project and click Build → Build & Deploy.

A notification appears in the upper part of the screen informing you that the project has been built successfully.

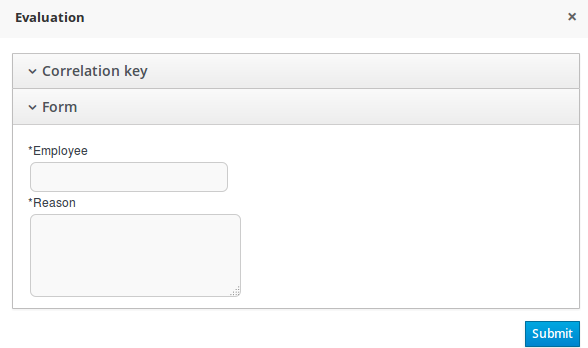

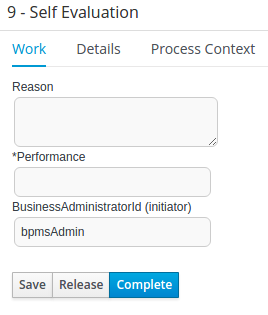

- Click Process Management → Process Definitions.

- Click Start next to the newly built process.

In the opened MyProcess dialog window, provide the following values of the variables defined in your fact model and click Submit:

-

firstName:

Tom -

lastName:

Summers -

hourlyRate:

12 -

wage:

10

As these values satisfy the rule condition, the expected output looks similar to the following:

16:19:58,479 INFO [org.jbpm.kie.services.impl.store.DeploymentSynchronizer] (http-/127.0.0.1:8080-1) Deployment unit org.bpms:MyProject:1.0 stored successfully 16:26:56,119 INFO [stdout] (http-/127.0.0.1:8080-5) Hello Tom Summers! 16:26:56,119 INFO [stdout] (http-/127.0.0.1:8080-5) You are rich!-

firstName:

6.2. Execution of Rules

6.2.1. Agenda

The Agenda is a Rete feature. During actions on the WorkingMemory, rules may become fully matched and eligible for execution. A single Working Memory Action can result in multiple eligible rules. When a rule is fully matched an Activation is created, referencing the rule and the matched facts, and placed onto the Agenda. The Agenda controls the execution order of these Activations using a Conflict Resolution strategy.

6.2.2. Agenda Processing

The engine cycles repeatedly through two phases:

-

Working Memory Actions. This is where most of the work takes place, either in the Consequence (the RHS itself) or the main Java application process. Once the Consequence has finished or the main Java application process calls

fireAllRules()the engine switches to the Agenda Evaluation phase. - Agenda Evaluation. This attempts to select a rule to fire. If no rule is found it exits, otherwise it fires the found rule, switching the phase back to Working Memory Actions.

The process repeats until the agenda is clear, in which case control returns to the calling application. When Working Memory Actions are taking place, no rules are being fired.

6.2.3. Conflict Resolution

Conflict resolution is required when there are multiple rules on the agenda. As firing a rule may have side effects on the working memory, the rule engine needs to know in what order the rules should fire (for instance, firing ruleA may cause ruleB to be removed from the agenda).

6.2.4. AgendaGroup

Agenda groups are a way to partition rules on the agenda. At any one time, only one group has "focus" which means that activations for rules in that group only will take effect. You can also have rules with "auto focus" which means that the focus is taken for its agenda group when that rule’s conditions are true.

Agenda groups are known as "modules" in CLIPS terminology. Agenda groups provide a way to create a "flow" between grouped rules. You can switch the group which has focus either from within the rule engine, or via the API. If your rules have a clear need for multiple "phases" or "sequences" of processing, consider using agenda-groups for this purpose.

6.2.5. setFocus()

Each time setFocus() is called it pushes the specified Agenda Group onto a stack. When the focus group is empty it is popped from the stack and the focus group that is now on top evaluates. An Agenda Group can appear in multiple locations on the stack. The default Agenda Group is "MAIN", with all rules which do not specify an Agenda Group being in this group. It is also always the first group on the stack, given focus initially, by default.

The setFocus() method call looks like follows:

ksession.getAgenda().getAgendaGroup("Group A").setFocus();6.2.6. ActivationGroup

An activation group is a set of rules bound together by the same activation-group rule attribute. In this group only one rule can fire, and after that rule has fired all the other rules are cancelled from the agenda. The clear() method can be called at any time, which cancels all of the activations before one has had a chance to fire.

An activation group looks like follows:

ksession.getAgenda().getActivationGroup("Group B").clear();6.3. Inference

6.3.1. The Inference Engine

The inference engine is the part of the Red Hat JBoss BRMS engine which matches production facts and data to rules. It is often called the brain of a Production Rules System as it is able to scale to a large number of rules and facts. It makes inferences based on its existing knowledge and performs the actions based on what it infers from the information.

The rules are stored in the production memory and the facts that the inference engine matches against, are stored in the working memory. Facts are asserted into the working memory where they may get modified or retracted. A system with a large number of rules and facts may result in many rules being true for the same fact assertion. Such conflicting rules are managed using a conflict resolution strategy. This strategy determines the order of execution of the rules by assigning a priority level to each rule.