Networking Guide

An Advanced Guide to OpenStack Networking

Abstract

Preface

OpenStack Networking (codename neutron) is the software-defined networking component of Red Hat OpenStack Platform 12.

1. OpenStack Networking and SDN

Software-defined networking (SDN) is an approach to computer networking that allows network administrators to manage network services through abstraction of lower-level functionality. While server workloads have been migrated into virtual environments, they’re still just servers looking for a network connection to let them send and receive data. SDN meets this need by moving networking equipment (such as routers and switches) into the same virtualized space. If you’re already familiar with basic networking concepts, then it’s not much of a leap to consider that they’ve now been virtualized just like the servers they’re connecting.

This book intends to give administrators an understanding of basic administration and troubleshooting tasks in Part 1, and explores the advanced capabilities of OpenStack Networking in a cookbook style in Part 2. If you’re already comfortable with general networking concepts, then the content of this book should be accessible to you (someone less familiar with networking might benefit from the general networking overview in Part 1).

1.1. Topics covered in this book

- Preface - Describes the political landscape of SDN in large organizations, and offers a short introduction to general networking concepts.

Part 1 - Covers common administrative tasks and basic troubleshooting steps:

- Adding and removing network resources

- Basic network troubleshooting

- Tenant network troubleshooting

Part 2 - Contains cookbook-style scenarios for advanced OpenStack Networking features, including:

- Configure Layer 3 High Availability for virtual routers

- Configure DVR and other Neutron features

2. The Politics of Virtual Networks

Software-defined networking (SDN) allows engineers to deploy virtual routers and switches in their virtualization environment, be it OpenStack or RHEV-based. SDN also shifts the business of moving data packets between computers into an unfamiliar space. These routers and switches were previously physical devices with all kinds of cabling running through them, but with SDN they can be deployed and operational just by clicking a few buttons.

In many large virtualization environments, the adoption of software-defined networking (SDN) can result in political tensions within the organization. Virtualization engineers who may not be familiar with advanced networking concepts are expected to suddenly manage the virtual routers and switches of their cloud deployment, and need to think sensibly about IP address allocation, VLAN isolation, and subnetting. And while this is going on, the network engineers are watching this other team discuss technologies that used to be their exclusive domain, resulting in agitation and perhaps job security concerns. This demarcation can also greatly complicate troubleshooting: When systems are down and can’t connect to each other, are the virtualization engineers expected to hand over the troubleshooting efforts to the network engineers the moment they see the packets reaching the physical switch?

This tension can be more easily mitigated if you think of your virtual network as an extension of your physical network. All of the same concepts of default gateways, routers, and subnets still apply, and it all still runs using TCP/IP.

However you choose to manage this politically, there are also technical measures available to address this. For example, Cisco’s Nexus product enables OpenStack operators to deploy a virtual router that runs the familiar Cisco NX-OS. This allows network engineers to log in and manage network ports the way they already do with their existing physical Cisco networking equipment. Alternatively, if the network engineers are not going to manage the virtual network, it would still be sensible to involve them from the very beginning. Physical networking infrastructure will still be required for the OpenStack nodes, IP addresses will still need to be allocated, VLANs will need to be trunked, and switch ports will need to be configured to trunk the VLANs. Aside from troubleshooting, there are times when extensive co-operation will be expected from both teams. For example, when adjusting the MTU size for a VM, this will need to be done from end-to-end, including all virtual and physical switches and routers, requiring a carefully choreographed change between both teams.

Network engineers remain a critical part of your virtualization deployment, even more so after the introduction of SDN. The additional complexity will certainly need to draw on their skills, especially when things go wrong and their sage wisdom is needed.

Chapter 1. Networking Overview

1.1. How Networking Works

The term Networking refers to the act of moving information from one computer to another. At the most basic level, this is performed by running a cable between two machines, each with network interface cards (NICs) installed.

If you’ve ever studied the OSI networking model, this would be layer 1.

Now, if you want more than two computers to get involved in the conversation, you would need to scale out this configuration by adding a device called a switch. Enterprise switches resemble pizza boxes with multiple Ethernet ports for you to plug in additional machines. By the time you’ve done all this, you have on your hands something that’s called a Local Area Network (LAN).

Switches move us up the OSI model to layer 2, and apply a bit more intelligence than the lower layer 1: Each NIC has a unique MAC address number assigned to the hardware, and it’s this number that lets machines plugged into the same switch find each other. The switch maintains a list of which MAC addresses are plugged into which ports, so that when one computer attempts to send data to another, the switch knows where both are situated. It records these MAC-address-to-port mappings in the CAM (Content Addressable Memory) table and updates the entries as it sees traffic.
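The MAC learning behavior described above can be illustrated with a minimal Python sketch. This is a toy model, not how any particular switch is implemented; the `LearningSwitch` class, its port numbering, and the MAC addresses are hypothetical names chosen for illustration.

```python
class LearningSwitch:
    """Toy layer 2 switch that learns which MAC address sits behind which port."""

    def __init__(self, port_count):
        self.ports = list(range(1, port_count + 1))
        self.mac_table = {}  # MAC address -> port number (stands in for the CAM table)

    def receive(self, in_port, src_mac, dst_mac):
        """Return the list of ports the frame is forwarded to."""
        # Learn (or refresh) where the sender is plugged in.
        self.mac_table[src_mac] = in_port
        out_port = self.mac_table.get(dst_mac)
        if out_port is None:
            # Unknown destination: flood to every port except the ingress port.
            return [p for p in self.ports if p != in_port]
        if out_port == in_port:
            return []  # destination is on the ingress port; nothing to forward
        return [out_port]


switch = LearningSwitch(port_count=4)
first = switch.receive(1, "aa:aa:aa:aa:aa:01", "aa:aa:aa:aa:aa:02")
reply = switch.receive(2, "aa:aa:aa:aa:aa:02", "aa:aa:aa:aa:aa:01")
print(first)  # [2, 3, 4]
print(reply)  # [1]
```

The first frame is flooded because the destination has not been seen yet; the reply is sent to a single port because the switch learned the first sender's location.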

1.1.1. VLANs

VLANs allow you to segment network traffic for computers running on the same switch. In other words, you can logically carve up your switch by configuring the ports to be members of different networks — they are basically mini-LANs that allow you to separate traffic for security reasons. For example, if your switch has 24 ports in total, you can say that ports 1-6 belong to VLAN200, and ports 7-18 belong to VLAN201. As a result, computers plugged into VLAN200 are completely separate from those on VLAN201; they can no longer communicate directly, and if they wanted to, the traffic would have to pass through a router as if they were two separate physical switches (which would be a useful way to think of them). This is where firewalls can also be useful for governing which VLANs can communicate with each other.
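As a rough sketch of the port assignment in this example, the following Python snippet models VLAN membership as a simple lookup table. The `port_vlans` table and the `same_vlan` helper are hypothetical; a real switch is configured through its own management interface.

```python
# Hypothetical port assignment matching the example in the text:
# ports 1-6 belong to VLAN 200, ports 7-18 belong to VLAN 201.
port_vlans = {}
for port in range(1, 7):
    port_vlans[port] = 200
for port in range(7, 19):
    port_vlans[port] = 201


def same_vlan(port_a, port_b):
    """True if both ports are assigned and belong to the same VLAN."""
    vlan_a = port_vlans.get(port_a)
    vlan_b = port_vlans.get(port_b)
    return vlan_a is not None and vlan_a == vlan_b


print(same_vlan(1, 6))    # True: both ports are in VLAN 200
print(same_vlan(6, 7))    # False: traffic would need to pass through a router
print(same_vlan(19, 20))  # False: ports 19-24 are unassigned in this example
```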

1.2. Connecting two LANs together

Imagine that you have two LANs running on two separate switches, and now you’d like them to share information with each other. You have two options for configuring this:

- First option: Use 802.1Q VLAN tagging to configure a single VLAN that spans across both physical switches. For this to work, you take a network cable and plug one end into a port on each switch, then you configure these ports as 802.1Q tagged ports (sometimes known as trunk ports). Basically you’ve now configured these two switches to act as one big logical switch, and the connected computers can now successfully find each other. The downside to this option is scalability: you can only daisy-chain so many switches until overhead becomes an issue.

- Second option: Buy a device called a router and plug in cables from each switch. As a result, the router will be aware of the networks configured on both switches. Each end plugged into the switch will be assigned an IP address, known as the default gateway for that network. The "default" in default gateway defines the destination where traffic will be sent if it is clear that the destined machine is not on the same LAN as you. By setting this default gateway on each of your computers, they don’t need to be aware of all the other computers on the other networks in order to send traffic to them. Now they just send it on to the default gateway and let the router handle it from there. And since the router is aware of which networks reside on which interface, it should have no trouble sending the packets on to their intended destinations. Routing works at layer 3 of the OSI model, and is where the familiar concepts like IP addresses and subnets do their work.

This concept is how the internet itself works. Lots of separate networks run by different organizations are all interconnected using switches and routers. Keep following the right default gateways and your traffic will eventually get to where it needs to be.
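The default gateway decision described above can be sketched with Python's standard `ipaddress` module. The `next_hop` function and the addresses are assumptions made for illustration; real hosts make this decision using their routing table.

```python
import ipaddress


def next_hop(source_interface, destination, default_gateway):
    """Return the address a host hands the packet to next."""
    iface = ipaddress.ip_interface(source_interface)
    dest = ipaddress.ip_address(destination)
    if dest in iface.network:
        return dest  # same LAN: deliver directly through the switch
    # Different network: let the router at the default gateway handle it.
    return ipaddress.ip_address(default_gateway)


local = next_hop("192.168.100.10/24", "192.168.100.20", "192.168.100.1")
remote = next_hop("192.168.100.10/24", "192.168.200.20", "192.168.100.1")
print(local)   # 192.168.100.20
print(remote)  # 192.168.100.1
```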

1.2.1. Firewalls

Firewalls can filter traffic across multiple OSI layers, including layer 7 (for inspecting actual content). They are often situated in the same network segments as routers, where they govern the traffic moving between all the networks. Firewalls refer to a pre-defined set of rules that prescribe which traffic may or may not enter a network. These rules can become very granular, for example:

"Servers on VLAN200 may only communicate with computers on VLAN201, and only on a Thursday afternoon, and only if they are sending encrypted web traffic (HTTPS) in one direction".

To help enforce these rules, some firewalls also perform Deep Packet Inspection (DPI) at layers 5-7, whereby they examine the contents of packets to ensure they actually are whatever they claim to be. Hackers are known to exfiltrate data by having the traffic masquerade as something it’s not, so DPI is one of the means that can help mitigate that threat.
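To make the example rule above more concrete, here is a minimal Python sketch of how such a rule might be evaluated. It is not modeled on any real firewall product: the `is_allowed` function is hypothetical, "afternoon" is assumed to mean 12:00 to 18:00, and HTTPS is approximated as TCP traffic to port 443 without any packet inspection.

```python
from datetime import datetime


def is_allowed(src_vlan, dst_vlan, protocol, dst_port, when):
    """Evaluate the single example rule; anything that does not match is denied."""
    # datetime.weekday() counts from Monday = 0, so Thursday = 3.
    thursday_afternoon = when.weekday() == 3 and 12 <= when.hour < 18
    looks_like_https = protocol == "tcp" and dst_port == 443
    return (
        src_vlan == 200
        and dst_vlan == 201
        and thursday_afternoon
        and looks_like_https
    )


thursday = datetime(2024, 1, 4, 14, 30)  # 4 January 2024 was a Thursday
friday = datetime(2024, 1, 5, 14, 30)

print(is_allowed(200, 201, "tcp", 443, thursday))  # True
print(is_allowed(200, 201, "tcp", 443, friday))    # False: wrong day
print(is_allowed(201, 200, "tcp", 443, thursday))  # False: wrong direction
print(is_allowed(200, 201, "tcp", 80, thursday))   # False: not HTTPS
```

Checking only the port number is exactly the weakness that Deep Packet Inspection addresses: traffic can claim to be HTTPS by using port 443 without actually being HTTPS.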

1.3. OpenStack Networking (neutron)

These same networking concepts apply in OpenStack, where they are known as Software-Defined Networking (SDN). The OpenStack Networking (neutron) component provides the API for virtual networking capabilities, and includes switches, routers, and firewalls. The virtual network infrastructure allows your instances to communicate with each other and also externally using the physical network. The Open vSwitch bridge allocates virtual ports to instances, and can span across to the physical network for incoming and outgoing traffic.

1.4. Using CIDR format

IP addresses are generally first allocated in blocks of subnets. For example, the IP address range 192.168.100.0 - 192.168.100.255 with a subnet mask of 255.255.255.0 allows for 254 IP addresses (the first and last addresses are reserved).
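The arithmetic behind this example can be checked with Python's standard `ipaddress` module. This is only a quick verification sketch; the variable names are arbitrary.

```python
import ipaddress

network = ipaddress.ip_network("192.168.100.0/24")
usable = list(network.hosts())  # excludes the network and broadcast addresses

print(network.netmask)        # 255.255.255.0
print(network.num_addresses)  # 256
print(len(usable))            # 254
print(usable[0], usable[-1])  # 192.168.100.1 192.168.100.254

# The same network can also be written with a dotted subnet mask.
dotted = ipaddress.ip_network("192.168.100.0/255.255.255.0")
print(dotted == network)      # True
```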

These subnets can be represented in a number of ways:

Common usage: Subnet addresses are traditionally displayed using the network address accompanied by the subnet mask. For example:

- Network Address: 192.168.100.0

- Subnet mask: 255.255.255.0

Using CIDR format: This format shortens the subnet mask into its total number of active bits. For example, in 192.168.100.0/24 the /24 is a shortened representation of 255.255.255.0, and is the total number of bits set to 1 when the mask is converted to binary. For example, CIDR format can be used in ifcfg-xxx scripts instead of the NETMASK value:

#NETMASK=255.255.255.0

PREFIX=24

Chapter 2. OpenStack Networking Concepts

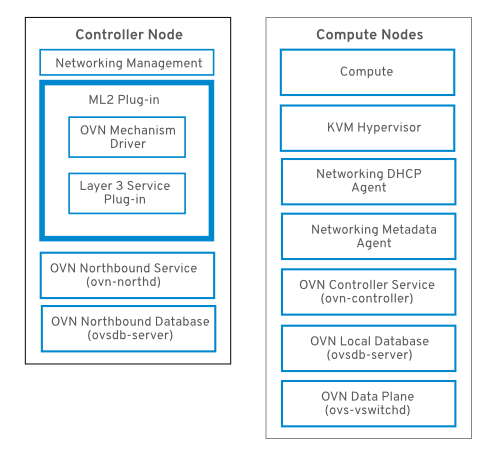

OpenStack Networking has system services to manage core services such as routing, DHCP, and metadata. Together, these services are included in the concept of the controller node, which is a conceptual role assigned to a physical server. A physical server is typically assigned the role of Network node, keeping it dedicated to the task of managing Layer 3 routing for network traffic to and from instances. In OpenStack Networking, you can have multiple physical hosts performing this role, allowing for redundant service in the event of hardware failure. For more information, see the chapter on Layer 3 High Availability.

Red Hat OpenStack Platform 11 added support for composable roles, allowing you to separate network services into a custom role. However, for simplicity, this guide assumes that a deployment uses the default controller role.

2.1. Installing OpenStack Networking (neutron)

2.1.1. Supported installation

The OpenStack Networking component is installed as part of a Red Hat OpenStack Platform director deployment. Refer to the Director Installation and Usage guide for more information.

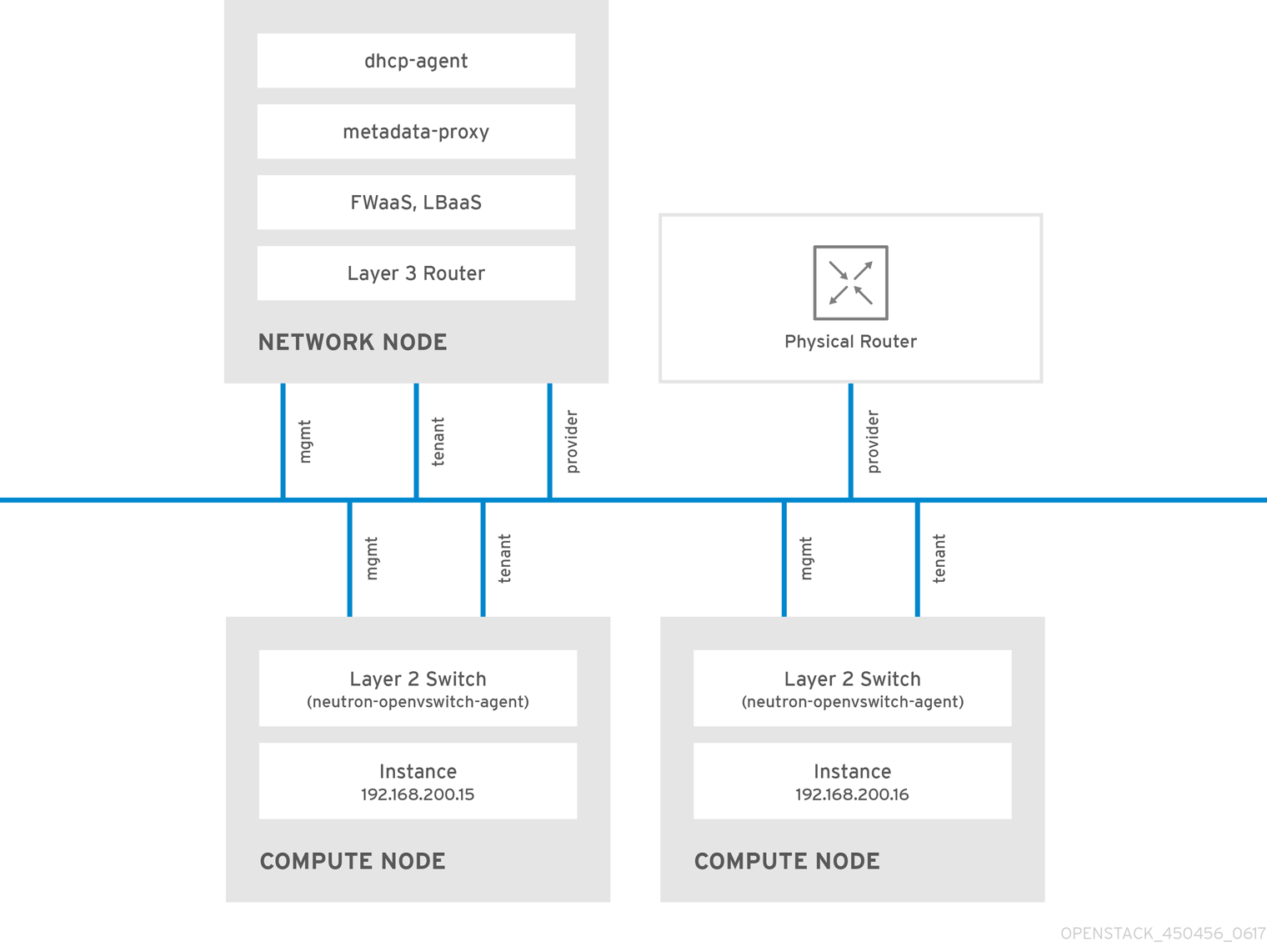

2.2. OpenStack Networking diagram

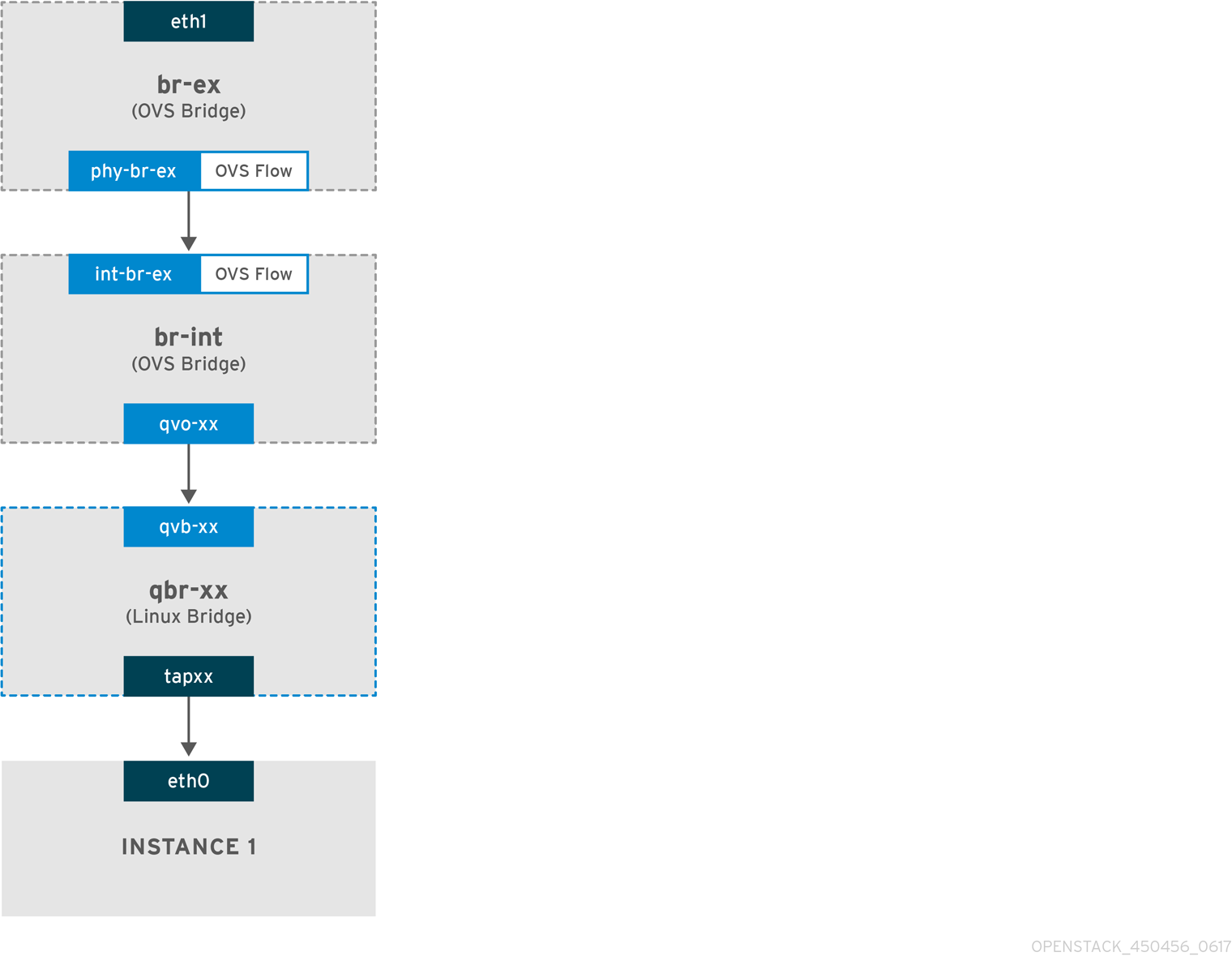

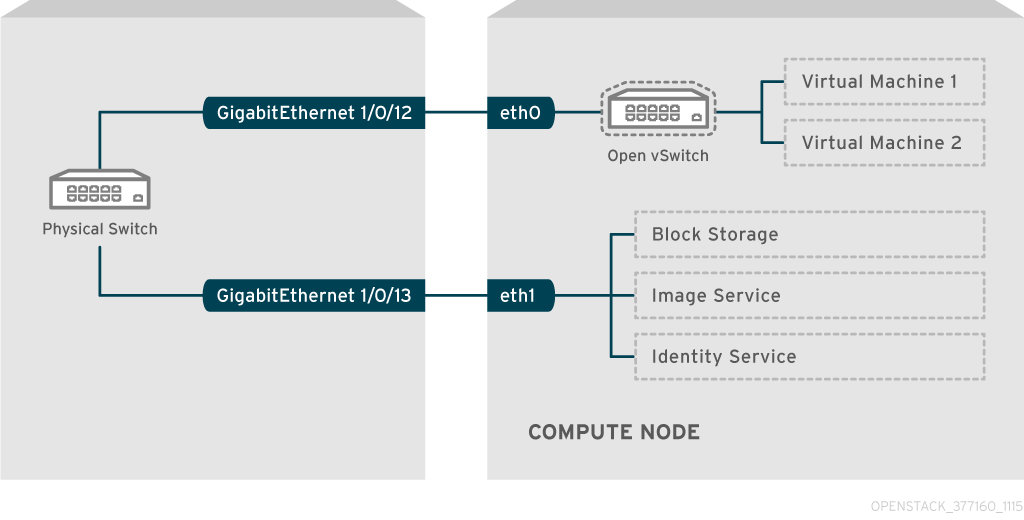

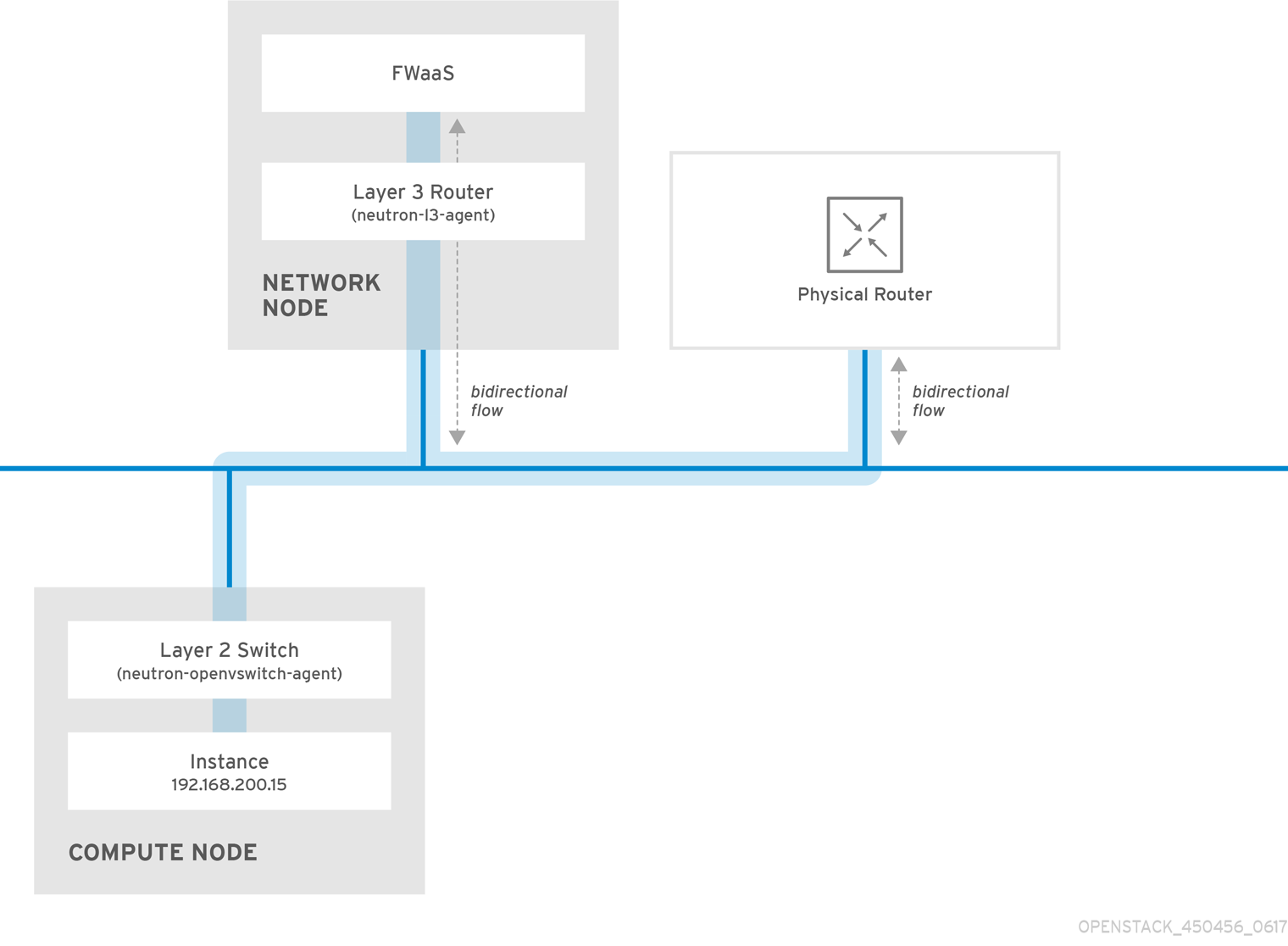

This diagram depicts a sample OpenStack Networking deployment, with a dedicated OpenStack Networking node performing L3 routing and DHCP, and running the advanced services FWaaS and LBaaS. Two Compute nodes run the Open vSwitch (openvswitch-agent) and have two physical network cards each, one for tenant traffic, and another for management connectivity. The OpenStack Networking node has a third network card specifically for provider traffic:

2.3. Security Groups

Security groups and rules filter the type and direction of network traffic sent to (and received from) a given neutron port. This provides an additional layer of security to complement any firewall rules present on the Compute instance. The security group is a container object with one or more security rules. A single security group can manage traffic to multiple compute instances. Ports created for floating IP addresses, OpenStack Networking LBaaS VIPs, and instances are associated with a security group. If none is specified, then the port is associated with the default security group. By default, this group will drop all inbound traffic and allow all outbound traffic. Additional security rules can be added to the default security group to modify its behavior or new security groups can be created as necessary.
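The default behavior described above can be sketched in a few lines of Python. This is a deliberately simplified model and not the neutron implementation: it only tracks inbound rules as (protocol, port) pairs, and the `permits` function and the example groups are hypothetical.

```python
def permits(ingress_rules, direction, protocol, port):
    """Return True if this simplified security group allows the traffic."""
    if direction == "egress":
        return True  # as described in the text, all outbound traffic is allowed
    # Inbound traffic is dropped unless a rule explicitly allows it.
    return (protocol, port) in ingress_rules


default_group = set()                    # no inbound rules added yet
web_group = {("tcp", 22), ("tcp", 443)}  # hypothetical rules added by an operator

print(permits(default_group, "ingress", "tcp", 22))  # False
print(permits(default_group, "egress", "tcp", 443))  # True
print(permits(web_group, "ingress", "tcp", 443))     # True
print(permits(web_group, "ingress", "tcp", 80))      # False
```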

2.4. Open vSwitch

Open vSwitch (OVS) is a software-defined networking (SDN) virtual switch similar to the Linux software bridge. OVS provides switching services to virtualized networks with support for industry standard NetFlow, OpenFlow, and sFlow. Open vSwitch is also able to integrate with physical switches using layer 2 features, such as STP, LACP, and 802.1Q VLAN tagging. Tunneling with VXLAN and GRE is supported with Open vSwitch version 1.11.0-1.el6 or later.

Do not use LACP with OVS-based bonds, as this configuration is problematic and unsupported. Instead, consider using bond_mode=balance-slb as a replacement for this functionality. In addition, you can still use LACP with Linux bonding. For the technical details behind this requirement, see BZ#1267291.

To mitigate the risk of network loops in Open vSwitch, only a single interface or a single bond may be a member of a given bridge. If you require multiple bonds or interfaces, you can configure multiple bridges.

2.5. Modular Layer 2 (ML2)

ML2 is the OpenStack Networking core plug-in introduced in OpenStack’s Havana release. Superseding the previous model of monolithic plug-ins, ML2’s modular design enables the concurrent operation of mixed network technologies. The monolithic Open vSwitch and Linux Bridge plug-ins have been deprecated and removed; their functionality has instead been reimplemented as ML2 mechanism drivers.

ML2 is the default OpenStack Networking plug-in, with Open vSwitch configured as the default mechanism driver.

2.5.1. The reasoning behind ML2

Previously, OpenStack Networking deployments were only able to use the plug-in that had been selected at implementation time. For example, a deployment running the Open vSwitch plug-in was only able to use Open vSwitch exclusively; it wasn’t possible to simultaneously run another plug-in such as linuxbridge. This was found to be a limitation in environments with heterogeneous requirements.

2.5.2. ML2 network types

Multiple network segment types can be operated concurrently. In addition, these network segments can interconnect using ML2’s support for multi-segmented networks. Ports are automatically bound to the segment with connectivity; it is not necessary to bind them to a specific segment. Depending on the mechanism driver, ML2 supports the following network segment types:

- flat

- GRE

- local

- VLAN

- VXLAN

The various Type drivers are enabled in the ML2 section of the ml2_conf.ini file:

[ml2]

type_drivers = local,flat,vlan,gre,vxlan

2.5.3. ML2 Mechanism Drivers

Plug-ins have been reimplemented as mechanisms with a common code base. This approach enables code reuse and eliminates much of the complexity around code maintenance and testing.

Refer to the Release Notes for the list of supported mechanism drivers.

The various mechanism drivers are enabled in the ML2 section of the ml2_conf.ini file. For example:

[ml2]

mechanism_drivers = openvswitch,linuxbridge,l2population

If your deployment uses Red Hat OpenStack Platform director, then these settings are managed by director and should not be changed manually.

Neutron’s Linux Bridge ML2 driver and agent were deprecated in Red Hat OpenStack Platform 11. The Open vSwitch (OVS) plugin is the one deployed by default by the OpenStack Platform director, and is recommended by Red Hat for general usage.
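For reviewing a configuration, the comma-separated driver lists in the [ml2] section can be read with a short Python sketch. This uses the standard `configparser` module as a stand-in (neutron itself does not load its configuration this way), and the `csv_option` helper is hypothetical. The sample text repeats the values shown in this section.

```python
import configparser

# Sample content repeating the [ml2] options shown in this section.
ML2_CONF = """
[ml2]
type_drivers = local,flat,vlan,gre,vxlan
mechanism_drivers = openvswitch,linuxbridge,l2population
"""

parser = configparser.ConfigParser()
parser.read_string(ML2_CONF)


def csv_option(section, option):
    """Split a comma-separated option into a clean list of names."""
    raw = parser.get(section, option, fallback="")
    return [item.strip() for item in raw.split(",") if item.strip()]


type_drivers = csv_option("ml2", "type_drivers")
mechanism_drivers = csv_option("ml2", "mechanism_drivers")

print(type_drivers)       # ['local', 'flat', 'vlan', 'gre', 'vxlan']
print(mechanism_drivers)  # ['openvswitch', 'linuxbridge', 'l2population']
```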

2.6. L2 Population

The L2 Population driver enables broadcast, multicast, and unicast traffic to scale out on large overlay networks. By default, Open vSwitch GRE and VXLAN replicate broadcasts to every agent, including those that do not host the destination network. This design requires the acceptance of significant network and processing overhead. The alternative design introduced by the L2 Population driver implements a partial mesh for ARP resolution and MAC learning traffic; it also creates tunnels for a particular network only between the nodes that host the network. This traffic is sent only to the necessary agent by encapsulating it as a targeted unicast.
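The difference between the two designs can be illustrated by counting tunnels. The following Python sketch compares a full mesh between all nodes with tunnels created only between nodes that host the same network. The node names and the `hosting` table are hypothetical, and the sketch only counts node pairs; it does not model how neutron builds tunnels.

```python
from itertools import combinations

# Hypothetical placement: which nodes host ports on which network.
hosting = {
    "net-a": {"compute1", "compute2"},
    "net-b": {"compute2", "compute3", "network1"},
}
all_nodes = {"compute1", "compute2", "compute3", "compute4", "network1"}


def full_mesh(nodes):
    """Every pair of nodes gets a tunnel."""
    return {frozenset(pair) for pair in combinations(sorted(nodes), 2)}


def partial_mesh(placement):
    """Only nodes that host the same network get a tunnel between them."""
    tunnels = set()
    for nodes in placement.values():
        tunnels |= full_mesh(nodes)
    return tunnels


print(len(full_mesh(all_nodes)))   # 10
print(len(partial_mesh(hosting)))  # 4
```

In this example, compute4 hosts no networks, so it takes part in no tunnels under the partial mesh.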

1. Enable the L2 population driver by adding it to the list of mechanism drivers. You also need to have at least one tunneling driver enabled; either GRE, VXLAN, or both. Add the appropriate configuration options to the ml2_conf.ini file:

[ml2]

type_drivers = local,flat,vlan,gre,vxlan

mechanism_drivers = openvswitch,linuxbridge,l2population

Neutron’s Linux Bridge ML2 driver and agent were deprecated in Red Hat OpenStack Platform 11. The Open vSwitch (OVS) plugin is the one deployed by default by the OpenStack Platform director, and is recommended by Red Hat for general usage.

2. Enable L2 population in the openvswitch_agent.ini file. This must be enabled on each node running the L2 agent:

[agent]

l2_population = True

To install ARP reply flows, you will need to configure the arp_responder flag. For example:

[agent]

l2_population = True

arp_responder = True

2.7. OpenStack Networking Services

By default, Red Hat OpenStack Platform includes components that integrate with the ML2 and Open vSwitch plugin to provide networking functionality in your deployment:

2.7.1. L3 Agent

The L3 agent is part of the openstack-neutron package. Network namespaces are used to provide each project with its own isolated layer 3 routers, which direct traffic and provide gateway services for the layer 2 networks; the L3 agent assists with managing these routers. The nodes on which the L3 agent is to be hosted must not have a manually-configured IP address on a network interface that is connected to an external network. Instead there must be a range of IP addresses from the external network that are available for use by OpenStack Networking. These IP addresses will be assigned to the routers that provide the link between the internal and external networks. The range selected must be large enough to provide a unique IP address for each router in the deployment as well as each desired floating IP.
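The sizing requirement above is simple arithmetic, sketched here in Python with the standard `ipaddress` module. The function names are hypothetical, and the router and floating IP counts are made-up planning figures.

```python
import ipaddress


def pool_size(start, end):
    """Number of addresses in an inclusive range."""
    return int(ipaddress.ip_address(end)) - int(ipaddress.ip_address(start)) + 1


def range_is_large_enough(start, end, routers, floating_ips):
    """One address per router plus one per desired floating IP."""
    return pool_size(start, end) >= routers + floating_ips


print(pool_size("192.168.100.20", "192.168.100.100"))  # 81
print(range_is_large_enough("192.168.100.20", "192.168.100.100", 10, 60))  # True
print(range_is_large_enough("192.168.100.20", "192.168.100.100", 10, 80))  # False
```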

2.7.2. DHCP Agent

The OpenStack Networking DHCP agent manages the network namespaces that are spawned for each project subnet to act as DHCP server. Each namespace is running a dnsmasq process that is capable of allocating IP addresses to virtual machines running on the network. If the agent is enabled and running when a subnet is created then by default that subnet has DHCP enabled.

2.7.3. Open vSwitch Agent

The Open vSwitch (OVS) neutron plug-in uses its own agent, which runs on each node and manages the OVS bridges. The ML2 plugin integrates with a dedicated agent to manage L2 networks. By default, Red Hat OpenStack Platform uses ovs-agent, which builds overlay networks using OVS bridges.

2.8. Tenant and Provider networks

The following diagram presents an overview of the tenant and provider network types, and illustrates how they interact within the overall OpenStack Networking topology:

2.8.1. Tenant networks

Tenant networks are created by users for connectivity within projects. They are fully isolated by default and are not shared with other projects. OpenStack Networking supports a range of tenant network types:

- Flat - All instances reside on the same network, which can also be shared with the hosts. No VLAN tagging or other network segregation takes place.

- VLAN - OpenStack Networking allows users to create multiple provider or tenant networks using VLAN IDs (802.1Q tagged) that correspond to VLANs present in the physical network. This allows instances to communicate with each other across the environment. They can also communicate with dedicated servers, firewalls, load balancers and other network infrastructure on the same layer 2 VLAN.

- VXLAN and GRE tunnels - VXLAN and GRE use network overlays to support private communication between instances. An OpenStack Networking router is required to enable traffic to traverse outside of the GRE or VXLAN tenant network. A router is also required to connect directly-connected tenant networks with external networks, including the Internet; the router provides the ability to connect to instances directly from an external network using floating IP addresses.

You can configure QoS policies for tenant networks. For more information, see Chapter 11, Configure Quality-of-Service (QoS).

2.8.2. Provider networks

Provider networks are created by the OpenStack administrator and map directly to an existing physical network in the data center. Useful network types in this category are flat (untagged) and VLAN (802.1Q tagged). It is possible to allow provider networks to be shared among tenants as part of the network creation process.

2.8.2.1. Flat provider networks

You can use flat provider networks to connect instances directly to the external network. This is useful if you have multiple physical networks (for example, physnet1 and physnet2) and separate physical interfaces (eth0 → physnet1 and eth1 → physnet2), and intend to connect each Compute and Network node to those external networks. If you would like to use multiple vlan-tagged interfaces on a single interface to connect to multiple provider networks, please refer to Section 7.2, “Using VLAN provider networks”.

2.8.2.2. Configure controller nodes

1. Edit /etc/neutron/plugin.ini (symbolic link to /etc/neutron/plugins/ml2/ml2_conf.ini) and add flat to the existing list of values, and set flat_networks to *. For example:

type_drivers = vxlan,flat

flat_networks = *

2. Create an external network as a flat network and associate it with the configured physical_network. Configuring it as a shared network (using --shared) will let other users create instances directly connected to it.

neutron net-create public01 --provider:network_type flat --provider:physical_network physnet1 --router:external=True --shared

3. Create a subnet using neutron subnet-create, or the dashboard. For example:

# neutron subnet-create --name public_subnet --enable_dhcp=False --allocation_pool start=192.168.100.20,end=192.168.100.100 --gateway=192.168.100.1 public01 192.168.100.0/24

4. Restart the neutron-server service to apply the change:

systemctl restart neutron-server

2.8.2.3. Configure the Network and Compute nodes

Perform these steps on the network node and compute nodes. This will connect the nodes to the external network, and allow instances to communicate directly with the external network.

1. Create an external network bridge (br-ex) and add an associated port (eth1) to it:

Create the external bridge in /etc/sysconfig/network-scripts/ifcfg-br-ex:

DEVICE=br-ex

TYPE=OVSBridge

DEVICETYPE=ovs

ONBOOT=yes

NM_CONTROLLED=no

BOOTPROTO=none

In /etc/sysconfig/network-scripts/ifcfg-eth1, configure eth1 to connect to br-ex:

DEVICE=eth1

TYPE=OVSPort

DEVICETYPE=ovs

OVS_BRIDGE=br-ex

ONBOOT=yes

NM_CONTROLLED=no

BOOTPROTO=none

Reboot the node or restart the network service for the changes to take effect.

2. Configure physical networks in /etc/neutron/plugins/ml2/openvswitch_agent.ini and map bridges to the physical network:

bridge_mappings = physnet1:br-ex

For more information on bridge mappings, see Chapter 12, Configure Bridge Mappings.

3. Restart the neutron-openvswitch-agent service on both the network and compute nodes for the changes to take effect:

systemctl restart neutron-openvswitch-agent

2.8.2.4. Configure the network node

1. Set external_network_bridge = to an empty value in /etc/neutron/l3_agent.ini:

Previously, OpenStack Networking used external_network_bridge when only a single bridge was used for connecting to an external network. This value may now be set to a blank string, which allows multiple external network bridges. OpenStack Networking will then create a patch from each bridge to br-int.

# Name of bridge used for external network traffic. This should be set to

# empty value for the linux bridge

external_network_bridge =

2. Restart neutron-l3-agent for the changes to take effect.

systemctl restart neutron-l3-agent

If there are multiple flat provider networks, then each of them should have a separate physical interface and bridge to connect them to the external network. You will need to configure the ifcfg-* scripts appropriately and use a comma-separated list for each network when specifying the mappings in the bridge_mappings option. For more information on bridge mappings, see Chapter 12, Configure Bridge Mappings.
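When several mappings are listed, it can help to check the value before restarting the agent. The following Python sketch parses a comma-separated bridge_mappings value and rejects entries that reuse a physical network or a bridge. The `parse_bridge_mappings` function is hypothetical, as is the second mapping (physnet2:br-ex2); this is not the parser that neutron uses.

```python
def parse_bridge_mappings(value):
    """Parse 'physnet1:br-ex,physnet2:br-ex2' into {physnet: bridge}."""
    mappings = {}
    for entry in value.split(","):
        entry = entry.strip()
        if not entry:
            continue
        physnet, sep, bridge = entry.partition(":")
        physnet, bridge = physnet.strip(), bridge.strip()
        if not sep or not physnet or not bridge:
            raise ValueError(f"malformed mapping: {entry!r}")
        if physnet in mappings:
            raise ValueError(f"physical network listed twice: {physnet}")
        if bridge in mappings.values():
            raise ValueError(f"bridge used by more than one network: {bridge}")
        mappings[physnet] = bridge
    return mappings


mappings = parse_bridge_mappings("physnet1:br-ex, physnet2:br-ex2")
print(mappings)  # {'physnet1': 'br-ex', 'physnet2': 'br-ex2'}
```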

2.9. Layer 2 and layer 3 networking

When designing your virtual network, you will need to anticipate where the majority of traffic is going to be sent. Network traffic moves faster within the same logical network, rather than between networks. This is because traffic between logical networks (using different subnets) needs to pass through a router, resulting in additional latency.

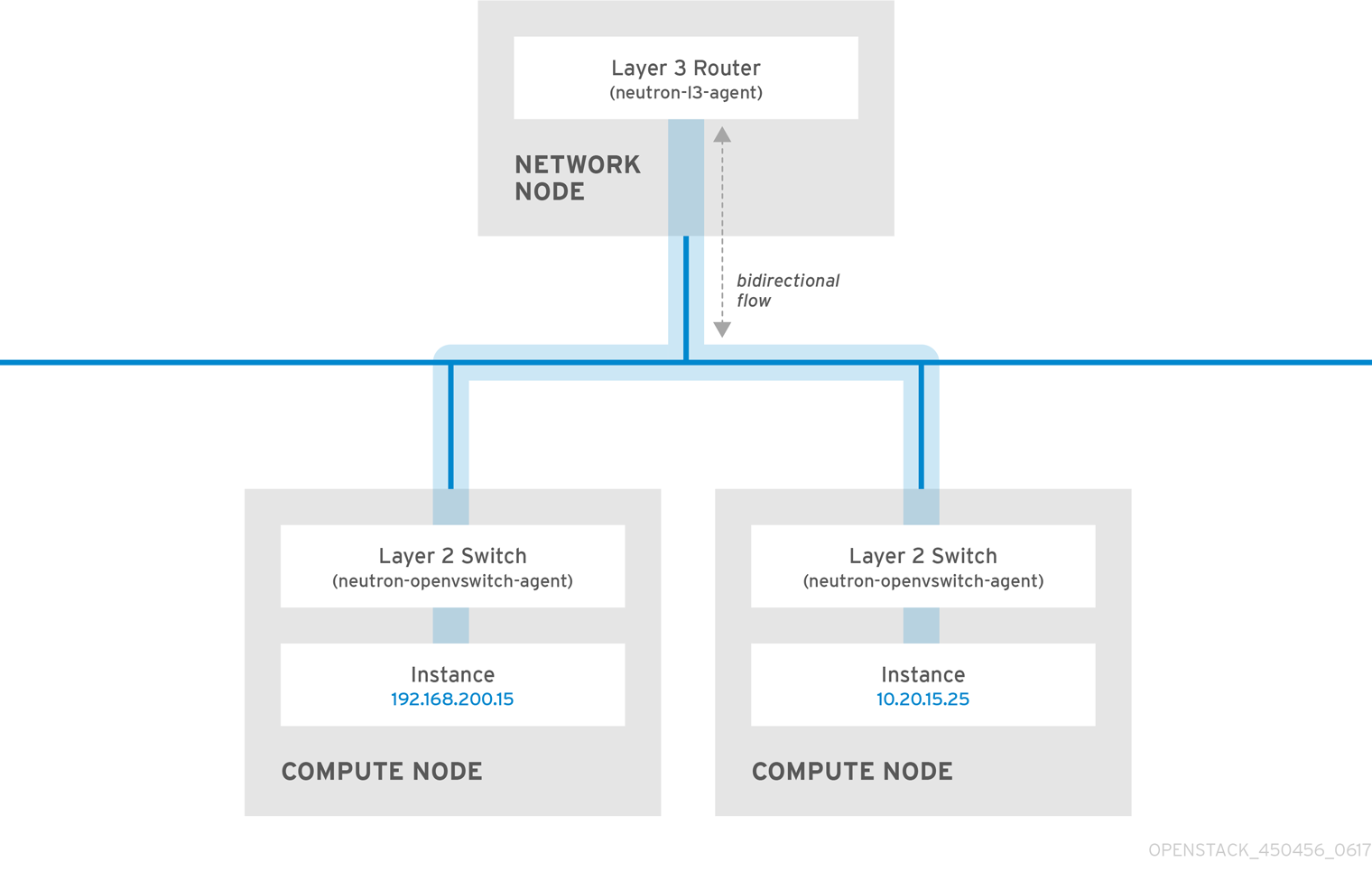

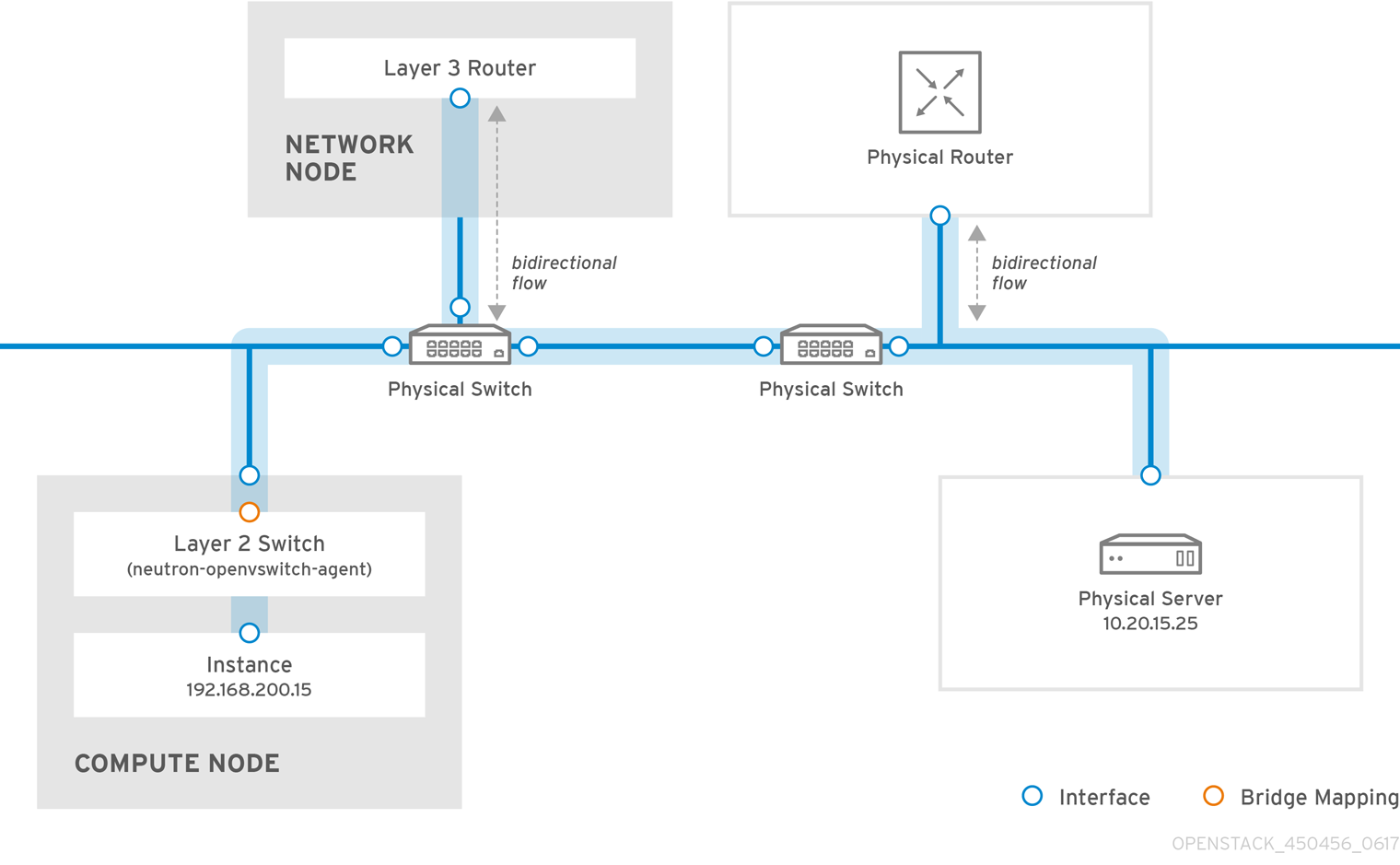

Consider the diagram below which has network traffic flowing between instances on separate VLANs:

Even a high performance hardware router is still going to add some latency to this configuration.

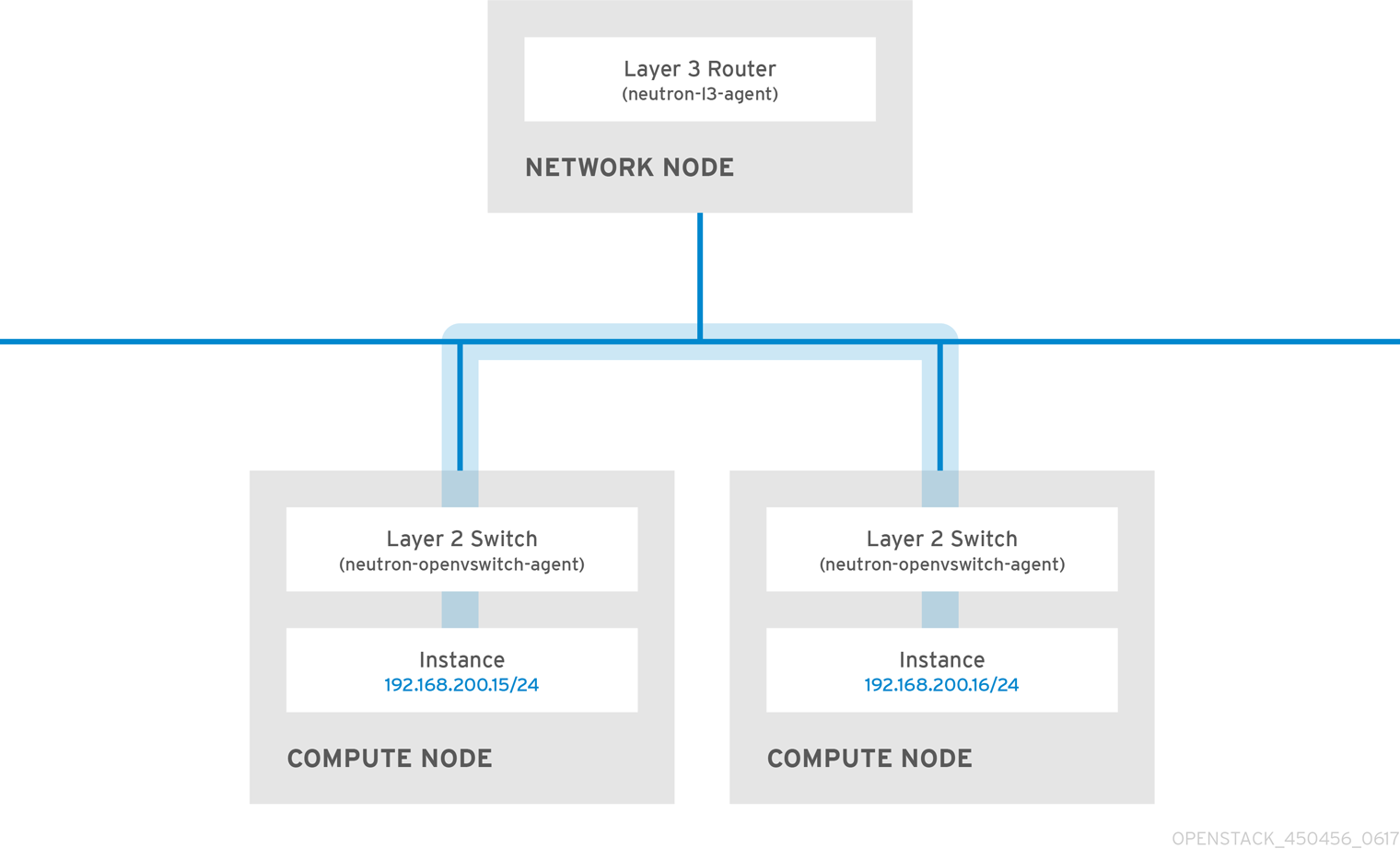

2.9.1. Use switching where possible

Switching occurs at a lower level of the network (layer 2), so it can function much more quickly than the routing that occurs at layer 3. The preference should be to have as few hops as possible between systems that frequently communicate. For example, this diagram depicts a switched network that spans two physical nodes, allowing the two instances to directly communicate without using a router for navigation first. You’ll notice that the instances now share the same subnet, to indicate that they’re on the same logical network:

In order to allow instances on separate nodes to communicate as if they’re on the same logical network, you’ll need to use an encapsulation tunnel such as VXLAN or GRE. It is recommended you consider adjusting the MTU size from end-to-end in order to accommodate the additional bits required for the tunnel header, otherwise network performance can be negatively impacted as a result of fragmentation. For more information, see Configure MTU Settings.

You can further improve the performance of VXLAN tunneling by using supported hardware that features VXLAN offload capabilities. The full list is available here: https://access.redhat.com/articles/1390483
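To give a sense of the MTU adjustment mentioned above, the following Python sketch works through the VXLAN case. It assumes VXLAN carried over IPv4 with no additional options, which adds 50 bytes in front of the instance's IP packet; the constant and function names are hypothetical, and GRE and IPv6 underlays have different overheads that are not covered here.

```python
# Header sizes in bytes, assuming VXLAN over an IPv4 underlay.
OUTER_IPV4 = 20
OUTER_UDP = 8
VXLAN_HEADER = 8
INNER_ETHERNET = 14  # the instance's Ethernet header travels inside the tunnel

VXLAN_OVERHEAD = OUTER_IPV4 + OUTER_UDP + VXLAN_HEADER + INNER_ETHERNET


def instance_mtu(physical_mtu):
    """Largest instance MTU that avoids fragmentation on the physical network."""
    return physical_mtu - VXLAN_OVERHEAD


def physical_mtu_for(wanted_instance_mtu):
    """Physical MTU needed end-to-end to carry the wanted instance MTU."""
    return wanted_instance_mtu + VXLAN_OVERHEAD


print(VXLAN_OVERHEAD)          # 50
print(instance_mtu(1500))      # 1450
print(physical_mtu_for(1500))  # 1550
print(instance_mtu(9000))      # 8950
```

Either the instance MTU is lowered to fit the existing physical network, or the physical MTU is raised on every switch and router along the path.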

Part I. Common Tasks

Covers common administrative tasks and basic troubleshooting steps.

Chapter 3. Common administrative tasks

OpenStack Networking (neutron) is the software-defined networking component of Red Hat OpenStack Platform. The virtual network infrastructure enables connectivity between instances and the physical external network.

This section describes common administration tasks, such as adding and removing subnets and routers to suit your Red Hat OpenStack Platform deployment.

3.1. Create a network

Create a network to give your instances a place to communicate with each other and receive IP addresses using DHCP. A network can also be integrated with external networks in your Red Hat OpenStack Platform deployment or elsewhere, such as the physical network. This integration allows your instances to communicate with outside systems. For more information, see Bridge the physical network.

When creating networks, it is important to know that networks can host multiple subnets. This is useful if you intend to host distinctly different systems in the same network, and would prefer a measure of isolation between them. For example, you can designate that only webserver traffic is present on one subnet, while database traffic traverses another. Subnets are isolated from each other, and any instance that wishes to communicate with another subnet must have its traffic directed by a router. Consider placing systems that will require a high volume of traffic amongst themselves in the same subnet, so that they don’t require routing, and avoid the subsequent latency and load.

1. In the dashboard, select Project > Network > Networks.

2. Click +Create Network and specify the following:

| Field | Description |
|---|---|
| Network Name | Descriptive name, based on the role that the network will perform. If you are integrating the network with an external VLAN, consider appending the VLAN ID number to the name. |
| Admin State | Controls whether the network is immediately available. This field allows you to create the network but still keep it in a Down state, where it is logically present but still inactive. This is useful if you do not intend to enter the network into production right away. |

3. Click the Next button, and specify the following in the Subnet tab:

| Field | Description |
|---|---|
| Create Subnet | Determines whether a subnet is created. For example, you might not want to create a subnet if you intend to keep this network as a placeholder without network connectivity. |
| Subnet Name | Enter a descriptive name for the subnet. |
| Network Address | Enter the address in CIDR format, which contains the IP address range and subnet mask in one value. To determine the address, calculate the number of bits masked in the subnet mask and append that value to the IP address range. For example, the subnet mask 255.255.255.0 has 24 masked bits. To use this mask with the IPv4 address range 192.168.122.0, specify the address 192.168.122.0/24. |
| IP Version | Specifies the internet protocol version, where valid types are IPv4 or IPv6. The IP address range in the Network Address field must match whichever version you select. |
| Gateway IP | IP address of the router interface for your default gateway. This address is the next hop for routing any traffic destined for an external location, and must be within the range specified in the Network Address field. For example, if your CIDR network address is 192.168.122.0/24, then your default gateway is likely to be 192.168.122.1. |
| Disable Gateway | Disables forwarding and keeps the subnet isolated. |

4. Click Next to specify DHCP options:

- Enable DHCP - Enables DHCP services for this subnet. DHCP allows you to automate the distribution of IP settings to your instances.

- IPv6 Address Configuration Modes - If creating an IPv6 network, specifies how IPv6 addresses and additional information are allocated:

- No Options Specified - Select this option if IP addresses are set manually, or a non OpenStack-aware method is used for address allocation.

- SLAAC (Stateless Address Autoconfiguration) - Instances generate IPv6 addresses based on Router Advertisement (RA) messages sent from the OpenStack Networking router. This configuration results in an OpenStack Networking subnet created with ra_mode set to slaac and address_mode set to slaac.

- DHCPv6 stateful - Instances receive IPv6 addresses as well as additional options (for example, DNS) from the OpenStack Networking DHCPv6 service. This configuration results in a subnet created with ra_mode set to dhcpv6-stateful and address_mode set to dhcpv6-stateful.

- DHCPv6 stateless - Instances generate IPv6 addresses based on Router Advertisement (RA) messages sent from the OpenStack Networking router. Additional options (for example, DNS) are allocated from the OpenStack Networking DHCPv6 service. This configuration results in a subnet created with ra_mode set to dhcpv6-stateless and address_mode set to dhcpv6-stateless.

- Allocation Pools - Range of IP addresses you would like DHCP to assign, given as a start address and an end address. For example, the value 192.168.22.100,192.168.22.100 considers all IP addresses in that range as available for allocation.

- DNS Name Servers - IP addresses of the DNS servers available on the network. DHCP distributes these addresses to the instances for name resolution.

- Host Routes - Static host routes. First specify the destination network in CIDR format, followed by the next hop that should be used for routing. For example: 192.168.23.0/24, 10.1.31.1. Provide this value if you need to distribute static routes to instances.

5. Click Create.

The completed network is available for viewing in the Networks tab. You can also click Edit to change any options as needed. When you create instances, you can now configure them to use this network's subnet, and they will subsequently receive any specified DHCP options.
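
The addressing rules from the Subnet and DHCP tabs can be checked before you enter the values in the dashboard. The following is a minimal sketch that uses Python's standard ipaddress module. The helper name check_subnet_plan and the pool 192.168.122.100 to 192.168.122.200 are illustrative assumptions and are not part of OpenStack Networking.

```python
import ipaddress


def check_subnet_plan(network_address, netmask, gateway, pool_start, pool_end):
    """Return the CIDR string and pool size for a planned subnet.

    Hypothetical helper for illustration; raises ValueError on a bad plan.
    """
    # Combine the address range and the subnet mask into one CIDR value.
    network = ipaddress.ip_network(f"{network_address}/{netmask}")

    # The gateway must be inside the range given in Network Address.
    if ipaddress.ip_address(gateway) not in network:
        raise ValueError(f"gateway {gateway} is outside {network}")

    start = ipaddress.ip_address(pool_start)
    end = ipaddress.ip_address(pool_end)
    if start not in network or end not in network or start > end:
        raise ValueError("allocation pool is not a valid range inside the subnet")

    # Both ends of the pool are included in the count.
    pool_size = int(end) - int(start) + 1
    return str(network), pool_size


cidr, pool_size = check_subnet_plan(
    "192.168.122.0", "255.255.255.0", "192.168.122.1",
    "192.168.122.100", "192.168.122.200",
)
print(cidr, pool_size)  # prints: 192.168.122.0/24 101
```

The subnet mask 255.255.255.0 is converted to the /24 suffix automatically, which matches the manual calculation described in the Network Address field.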

3.2. Create an advanced network

Advanced network options are available for administrators, when creating a network from the Admin view. These options define the network type to use, and allow tenants to be specified:

1. In the dashboard, select Admin > Networks > Create Network > Project. Select a destination project to host the new network using Project.

2. Review the options in Provider Network Type:

- Local - Traffic remains on the local Compute host and is effectively isolated from any external networks.

- Flat - Traffic remains on a single network and can also be shared with the host. No VLAN tagging or other network segregation takes place.

- VLAN - Create a network using a VLAN ID that corresponds to a VLAN present in the physical network. Allows instances to communicate with systems on the same layer 2 VLAN.

- GRE - Use a network overlay that spans multiple nodes for private communication between instances. Traffic egressing the overlay must be routed.

- VXLAN - Similar to GRE, and uses a network overlay to span multiple nodes for private communication between instances. Traffic egressing the overlay must be routed.

Click Create Network, and review the Project’s Network Topology to validate that the network has been successfully created.

3.3. Add network routing

To allow traffic to be routed to and from your new network, you must add its subnet as an interface to an existing virtual router:

1. In the dashboard, select Project > Network > Routers.

2. Click on your virtual router’s name in the Routers list, and click +Add Interface. In the Subnet list, select the name of your new subnet. You can optionally specify an IP address for the interface in this field.

3. Click Add Interface.

Instances on your network are now able to communicate with systems outside the subnet.

3.4. Delete a network

There are occasions where it becomes necessary to delete a network that was previously created, perhaps as housekeeping or as part of a decommissioning process. In order to successfully delete a network, you must first remove or detach any interfaces where it is still in use. The following procedure provides the steps for deleting a network in your project, together with any dependent interfaces.

1. In the dashboard, select Project > Network > Networks. Remove all router interfaces associated with the target network’s subnets. To remove an interface, first find the ID of the network you would like to delete: click your target network in the Networks list and note the value in its ID field. All of the network’s associated subnets share this value in their Network ID field.

2. Select Project > Network > Routers, click on your virtual router’s name in the Routers list, and locate the interface attached to the subnet you would like to delete. You can distinguish it from the others by the IP address that would have served as the gateway IP. In addition, you can further validate the distinction by ensuring that the interface’s network ID matches the ID you noted in the previous step.

3. Click the interface’s Delete Interface button.

Select Project > Network > Networks, and click the name of your network. Click the target subnet’s Delete Subnet button.

If you are still unable to remove the subnet at this point, ensure it is not already being used by any instances.

4. Select Project > Network > Networks, and select the network you would like to delete.

5. Click Delete Networks.

3.5. Purge a tenant’s networking

In previous releases, after deleting a project, you might have noticed stale resources that were once allocated to the project. These included networks, routers, and ports. Previously, these stale resources had to be deleted manually, and in the correct order. This has been addressed in Red Hat OpenStack Platform 12, where you can instead use the neutron purge command to delete all the neutron resources that once belonged to a particular project.

For example, to purge the neutron resources of test-project prior to deletion:

# openstack project list

+----------------------------------+--------------+

| ID | Name |

+----------------------------------+--------------+

| 02e501908c5b438dbc73536c10c9aac0 | test-project |

| 519e6344f82e4c079c8e2eabb690023b | services |

| 80bf5732752a41128e612fe615c886c6 | demo |

| 98a2f53c20ce4d50a40dac4a38016c69 | admin |

+----------------------------------+--------------+

# neutron purge 02e501908c5b438dbc73536c10c9aac0

Purging resources: 100% complete.

Deleted 1 security_group, 1 router, 1 port, 1 network.

# openstack project delete 02e501908c5b438dbc73536c10c9aac0

3.6. Create a subnet

Subnets are the means by which instances are granted network connectivity. Each instance is assigned to a subnet as part of the instance creation process, so it’s important to consider proper placement of instances to best accommodate their connectivity requirements. Subnets are created in pre-existing networks. Remember that tenant networks in OpenStack Networking can host multiple subnets. This is useful if you intend to host distinctly different systems in the same network, and would prefer a measure of isolation between them. For example, you can designate that only webserver traffic is present on one subnet, while database traffic traverses another. Subnets are isolated from each other, and any instance that needs to communicate with another subnet must have its traffic directed by a router. Consider placing systems that exchange a high volume of traffic in the same subnet, so that they don’t require routing, and avoid the subsequent latency and load.
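
To make the placement advice concrete, the following minimal sketch checks whether traffic between two addresses stays inside one subnet or has to pass through a router. It uses Python's standard ipaddress module. The function name needs_router and the sample addresses are hypothetical values chosen for illustration.

```python
import ipaddress


def needs_router(ip_a, ip_b, cidr):
    """Return True if traffic between the two addresses must be routed.

    cidr is the subnet that ip_a belongs to. If ip_b is outside that
    subnet, the traffic has to be directed by a router.
    """
    subnet = ipaddress.ip_network(cidr)
    if ipaddress.ip_address(ip_a) not in subnet:
        raise ValueError(f"{ip_a} is not in {cidr}")
    return ipaddress.ip_address(ip_b) not in subnet


# Two systems in the same subnet: no routing is involved.
print(needs_router("192.168.122.10", "192.168.122.20", "192.168.122.0/24"))  # False

# A system in a different subnet: traffic must go through a router.
print(needs_router("192.168.122.10", "192.168.123.20", "192.168.122.0/24"))  # True
```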

3.6.1. Create a new subnet

In the dashboard, select Project > Network > Networks, and click your network’s name in the Networks view.

1. Click Create Subnet, and specify the following.

| Field | Description |

|---|---|

| Subnet Name | Descriptive subnet name. |

| Network Address | Address in CIDR format, which contains the IP address range and subnet mask in one value. To determine the address, calculate the number of bits masked in the subnet mask and append that value to the IP address range. For example, the subnet mask 255.255.255.0 has 24 masked bits. To use this mask with the IPv4 address range 192.168.122.0, specify the address 192.168.122.0/24. |

| IP Version | Internet protocol version, where valid types are IPv4 or IPv6. The IP address range in the Network Address field must match whichever version you select. |

| Gateway IP | IP address of the router interface for your default gateway. This address is the next hop for routing any traffic destined for an external location, and must be within the range specified in the Network Address field. For example, if your CIDR network address is 192.168.122.0/24, then your default gateway is likely to be 192.168.122.1. |

| Disable Gateway | Disables forwarding and keeps the subnet isolated. |

2. Click Next to specify DHCP options:

- Enable DHCP - Enables DHCP services for this subnet. DHCP allows you to automate the distribution of IP settings to your instances.

- IPv6 Address Configuration Modes - If creating an IPv6 network, specifies how IPv6 addresses and additional information are allocated:

- No Options Specified - Select this option if IP addresses are set manually, or a non OpenStack-aware method is used for address allocation.

- SLAAC (Stateless Address Autoconfiguration) - Instances generate IPv6 addresses based on Router Advertisement (RA) messages sent from the OpenStack Networking router. This configuration results in an OpenStack Networking subnet created with ra_mode set to slaac and address_mode set to slaac.

- DHCPv6 stateful - Instances receive IPv6 addresses as well as additional options (for example, DNS) from the OpenStack Networking DHCPv6 service. This configuration results in a subnet created with ra_mode set to dhcpv6-stateful and address_mode set to dhcpv6-stateful.

- DHCPv6 stateless - Instances generate IPv6 addresses based on Router Advertisement (RA) messages sent from the OpenStack Networking router. Additional options (for example, DNS) are allocated from the OpenStack Networking DHCPv6 service. This configuration results in a subnet created with ra_mode set to dhcpv6-stateless and address_mode set to dhcpv6-stateless.

- Allocation Pools - Range of IP addresses you would like DHCP to assign, given as a start address and an end address. For example, the value 192.168.22.100,192.168.22.100 considers all IP addresses in that range as available for allocation.

- DNS Name Servers - IP addresses of the DNS servers available on the network. DHCP distributes these addresses to the instances for name resolution.

- Host Routes - Static host routes. First specify the destination network in CIDR format, followed by the next hop that should be used for routing. For example: 192.168.23.0/24, 10.1.31.1. Provide this value if you need to distribute static routes to instances.

3. Click Create.

The new subnet is available for viewing in your network’s Subnets list. You can also click Edit to change any options as needed. When you create instances, you can now configure them to use this subnet, and they will subsequently receive any specified DHCP options.
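
The IPv6 address configuration modes listed in the DHCP options step can be summarized as a small lookup table. The following is a minimal sketch in Python. The names IPV6_MODES and subnet_ipv6_attributes are hypothetical, and the entry for No Options Specified assumes that both attributes are simply left unset.

```python
# Dashboard option -> (ra_mode, address_mode), taken from the list of modes.
# Assumption: "No Options Specified" leaves both attributes unset (None).
IPV6_MODES = {
    "No Options Specified": (None, None),
    "SLAAC": ("slaac", "slaac"),
    "DHCPv6 stateful": ("dhcpv6-stateful", "dhcpv6-stateful"),
    "DHCPv6 stateless": ("dhcpv6-stateless", "dhcpv6-stateless"),
}


def subnet_ipv6_attributes(option):
    """Return the subnet attributes implied by a dashboard option."""
    try:
        ra_mode, address_mode = IPV6_MODES[option]
    except KeyError:
        raise ValueError(f"unknown IPv6 configuration mode: {option!r}") from None
    return {"ra_mode": ra_mode, "address_mode": address_mode}


def addresses_come_from_dhcpv6(option):
    """Only the stateful mode hands out addresses through DHCPv6."""
    return subnet_ipv6_attributes(option)["address_mode"] == "dhcpv6-stateful"


print(subnet_ipv6_attributes("DHCPv6 stateless"))
print(addresses_come_from_dhcpv6("SLAAC"))  # False: addresses are derived from RA messages
```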

3.7. Delete a subnet

You can delete a subnet if it is no longer in use. However, if any instances are still configured to use the subnet, the deletion attempt fails and the dashboard displays an error message. This procedure demonstrates how to delete a specific subnet in a network:

In the dashboard, select Project > Network > Networks, and click the name of your network. Select the target subnet and click Delete Subnets.

3.8. Add a router

OpenStack Networking provides routing services using an SDN-based virtual router. Routers are a requirement for your instances to communicate with external subnets, including those out in the physical network. Routers and subnets connect using interfaces, with each subnet requiring its own interface to the router. A router’s default gateway defines the next hop for any traffic received by the router. Its network is typically configured to route traffic to the external physical network using a virtual bridge.

1. In the dashboard, select Project > Network > Routers, and click +Create Router.

2. Enter a descriptive name for the new router, and click Create router.

3. Click Set Gateway next to the new router’s entry in the Routers list.

4. In the External Network list, specify the network that will receive traffic destined for an external location.

5. Click Set Gateway.

After adding a router, the next step is to configure any subnets you have created to send traffic using this router. You do this by creating interfaces between the subnet and the router.

3.9. Delete a router

You can delete a router if it has no connected interfaces. This procedure describes the steps needed to first remove a router’s interfaces, and then the router itself.

1. In the dashboard, select Project > Network > Routers, and click on the name of the router you would like to delete.

2. Select the interfaces of type Internal Interface. Click Delete Interfaces.

3. From the Routers list, select the target router and click Delete Routers.

3.10. Add an interface

Interfaces allow you to interconnect routers with subnets. As a result, the router can direct any traffic that instances send to destinations outside of their immediate subnet. This procedure adds a router interface and connects it to a subnet. The procedure uses the Network Topology feature, which displays a graphical representation of all your virtual routers and networks and enables you to perform network management tasks.

1. In the dashboard, select Project > Network > Network Topology.

2. Locate the router you wish to manage, hover your mouse over it, and click Add Interface.

3. Specify the Subnet to which you would like to connect the router. You have the option of specifying an IP Address. The address is useful for testing and troubleshooting purposes, since a successful ping to this interface indicates that the traffic is routing as expected.

4. Click Add interface.

The Network Topology diagram automatically updates to reflect the new interface connection between the router and subnet.

3.11. Delete an interface

You can remove an interface to a subnet if you no longer require the router to direct its traffic. This procedure demonstrates the steps required for deleting an interface:

1. In the dashboard, select Project > Network > Routers.

2. Click on the name of the router that hosts the interface you would like to delete.

3. Select the interface (will be of type Internal Interface), and click Delete Interfaces.

3.12. Configure IP addressing

You can use procedures in this section to manage your IP address allocation in OpenStack Networking.

3.12.1. Create floating IP pools

Floating IP addresses allow you to direct ingress network traffic to your OpenStack instances. You begin by defining a pool of validly routable external IP addresses, which can then be dynamically assigned to an instance. OpenStack Networking then knows to route all incoming traffic destined for that floating IP to the instance to which it has been assigned.

OpenStack Networking allocates floating IP addresses to all projects (tenants) from the same IP ranges/CIDRs. This means that every subnet of floating IPs is consumable by any project. You can manage this behavior using quotas for specific projects. For example, you can set the default to 10 for ProjectA and ProjectB, while setting ProjectC's quota to 0.

The Floating IP allocation pool is defined when you create an external subnet. If the subnet only hosts floating IP addresses, consider disabling DHCP allocation with the enable_dhcp=False option:

# neutron subnet-create --name SUBNET_NAME --enable_dhcp=False --allocation_pool start=IP_ADDRESS,end=IP_ADDRESS --gateway=IP_ADDRESS NETWORK_NAME CIDR

For example:

# neutron subnet-create --name public_subnet --enable_dhcp=False --allocation_pool start=192.168.100.20,end=192.168.100.100 --gateway=192.168.100.1 public 192.168.100.0/24

3.12.2. Assign a specific floating IP

You can assign a specific floating IP address to an instance using the nova command.

# nova floating-ip-associate INSTANCE_NAME IP_ADDRESS

In this example, a floating IP address is allocated to an instance named corp-vm-01:

# nova floating-ip-associate corp-vm-01 192.168.100.20

3.12.3. Assign a random floating IP

Floating IP addresses can be dynamically allocated to instances. You do not select a particular IP address, but instead request that OpenStack Networking allocates one from the pool. Allocate a floating IP from the previously created pool:

# neutron floatingip-create public

+---------------------+--------------------------------------+

| Field | Value |

+---------------------+--------------------------------------+

| fixed_ip_address | |

| floating_ip_address | 192.168.100.20 |

| floating_network_id | 7a03e6bc-234d-402b-9fb2-0af06c85a8a3 |

| id | 9d7e2603482d |

| port_id | |

| router_id | |

| status | ACTIVE |

| tenant_id | 9e67d44eab334f07bf82fa1b17d824b6 |

+---------------------+--------------------------------------+

With the IP address allocated, you can assign it to a particular instance. Locate the ID of the port associated with your instance (this will match the fixed IP address allocated to the instance). This port ID is used in the following step to associate the instance’s port ID with the floating IP address ID. You can further distinguish the correct port ID by ensuring the MAC address in the third column matches the one on the instance.

# neutron port-list

+--------+------+-------------+--------------------------------------------------------+

| id | name | mac_address | fixed_ips |

+--------+------+-------------+--------------------------------------------------------+

| ce8320 | | 3e:37:09:4b | {"subnet_id": "361f27", "ip_address": "192.168.100.2"} |

| d88926 | | 3e:1d:ea:31 | {"subnet_id": "361f27", "ip_address": "192.168.100.5"} |

| 8190ab | | 3e:a3:3d:2f | {"subnet_id": "b74dbb", "ip_address": "10.10.1.25"} |

+--------+------+-------------+--------------------------------------------------------+

Use the neutron command to associate the floating IP address with the desired port ID of an instance:

# neutron floatingip-associate 9d7e2603482d 8190ab

3.13. Create multiple floating IP pools

OpenStack Networking supports one floating IP pool per L3 agent. Therefore, scaling out your L3 agents allows you to create additional floating IP pools.

Ensure that handle_internal_only_routers in /etc/neutron/neutron.conf is configured to True for only one L3 agent in your environment. This option configures the L3 agent to manage only non-external routers.

3.14. Bridge the physical network

The procedure below enables you to bridge your virtual network to the physical network to enable connectivity to and from virtual instances. In this procedure, the example physical eth0 interface is mapped to the br-ex bridge; the virtual bridge acts as the intermediary between the physical network and any virtual networks. As a result, all traffic traversing eth0 uses the configured Open vSwitch to reach instances. Map a physical NIC to the virtual Open vSwitch bridge (for more information, see Chapter 12, Configure Bridge Mappings):

IPADDR, NETMASK, GATEWAY, and DNS1 (name server) must be updated to match your network.

# vi /etc/sysconfig/network-scripts/ifcfg-eth0

DEVICE=eth0

TYPE=OVSPort

DEVICETYPE=ovs

OVS_BRIDGE=br-ex

ONBOOT=yes

Configure the virtual bridge with the IP address details that were previously allocated to eth0:

# vi /etc/sysconfig/network-scripts/ifcfg-br-ex

DEVICE=br-ex

DEVICETYPE=ovs

TYPE=OVSBridge

BOOTPROTO=static

IPADDR=192.168.120.10

NETMASK=255.255.255.0

GATEWAY=192.168.120.1

DNS1=192.168.120.1

ONBOOT=yes

You can now assign floating IP addresses to instances and make them available to the physical network.
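
Because IPADDR, NETMASK, and GATEWAY must match your network, it can help to sanity-check the bridge settings before restarting networking. The following minimal sketch parses the example ifcfg-br-ex content and confirms that the gateway lies inside the network implied by IPADDR and NETMASK. It uses only the Python standard library. The helper names parse_ifcfg and gateway_is_on_link are hypothetical, and the parser only handles simple KEY=value lines.

```python
import ipaddress

# Example content copied from the ifcfg-br-ex file in this procedure.
IFCFG_BR_EX = """\
DEVICE=br-ex
DEVICETYPE=ovs
TYPE=OVSBridge
BOOTPROTO=static
IPADDR=192.168.120.10
NETMASK=255.255.255.0
GATEWAY=192.168.120.1
DNS1=192.168.120.1
ONBOOT=yes
"""


def parse_ifcfg(text):
    """Parse simple KEY=value lines, ignoring blank lines and comments."""
    settings = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue
        key, _, value = line.partition("=")
        settings[key] = value.strip('"')
    return settings


def gateway_is_on_link(settings):
    """Return True if GATEWAY is inside the network of IPADDR/NETMASK."""
    interface = ipaddress.ip_interface(
        f"{settings['IPADDR']}/{settings['NETMASK']}"
    )
    return ipaddress.ip_address(settings["GATEWAY"]) in interface.network


settings = parse_ifcfg(IFCFG_BR_EX)
print(settings["OVS_BRIDGE"] if "OVS_BRIDGE" in settings else settings["DEVICE"])
print(gateway_is_on_link(settings))  # True for the example values
```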

Chapter 4. Planning IP Address usage

An OpenStack deployment can consume a larger number of IP addresses than might be expected. This section aims to help with correctly anticipating the quantity of addresses required, and explains where they will be used.

VIPs (also known as Virtual IP Addresses) - VIP addresses host HA services, and are basically an IP address shared between multiple controller nodes.

4.1. Using multiple VLANs

When planning your OpenStack deployment, you might begin with a number of these subnets, from which you would be expected to allocate how the individual addresses will be used. Having multiple subnets allows you to segregate traffic between systems into VLANs. For example, you would not generally want management or API traffic to share the same network as systems serving web traffic. Traffic between VLANs will also need to traverse through a router, which represents an opportunity to have firewalls in place to further govern traffic flow.

4.2. Isolating VLAN traffic

You would typically allocate separate VLANs for the different types of network traffic you will host. For example, you could have separate VLANs for each of these types of networks. Of these, only the External network needs to be routable to the external physical network. In this release, DHCP services are provided by the director.

Not all of the isolated VLANs in this section will be required for every OpenStack deployment. For example, if your cloud users don’t need to create ad hoc virtual networks on demand, then you may not require a tenant network; if you just need each VM to connect directly to the same switch as any other physical system, then you probably just need to connect your Compute nodes directly to a provider network and have your instances use that provider network directly.

- Provisioning network - This VLAN is dedicated to deploying new nodes using director over PXE boot. OpenStack Orchestration (heat) installs OpenStack onto the overcloud bare metal servers; these are attached to the physical network to receive the platform installation image from the undercloud infrastructure.

- Internal API network - The Internal API network is used for communication between the OpenStack services, and covers API communication, RPC messages, and database communication. In addition, this network is used for operational messages between controller nodes. When planning your IP address allocation, note that each API service requires its own IP address. Specifically, an IP address is required for each of these services:

- vip-msg (amqp)

- vip-keystone-int

- vip-glance-int

- vip-cinder-int

- vip-nova-int

- vip-neutron-int

- vip-horizon-int

- vip-heat-int

- vip-ceilometer-int

- vip-swift-int

- vip-keystone-pub

- vip-glance-pub

- vip-cinder-pub

- vip-nova-pub

- vip-neutron-pub

- vip-horizon-pub

- vip-heat-pub

- vip-ceilometer-pub

- vip-swift-pub

When using High Availability, Pacemaker expects to be able to move the VIP addresses between the physical nodes.

- Storage - Block Storage, NFS, iSCSI, among others. Ideally, this would be isolated to separate physical Ethernet links for performance reasons.

- Storage Management - OpenStack Object Storage (swift) uses this network to synchronise data objects between participating replica nodes. The proxy service acts as the intermediary interface between user requests and the underlying storage layer. The proxy receives incoming requests and locates the necessary replica to retrieve the requested data. Services that use a Ceph back-end connect over the Storage Management network, since they do not interact with Ceph directly but rather use the front-end service. Note that the RBD driver is an exception; this traffic connects directly to Ceph.

- Tenant networks - Neutron provides each tenant with their own networks using either VLAN segregation (where each tenant network is a network VLAN), or tunneling via VXLAN or GRE. Network traffic is isolated within each tenant network. Each tenant network has an IP subnet associated with it, and multiple tenant networks may use the same addresses.

- External - The External network hosts the public API endpoints and connections to the Dashboard (horizon). You can also optionally use this same network for SNAT, but this is not a requirement. In a production deployment, you will likely use a separate network for floating IP addresses and NAT.

- Provider networks - These networks allow instances to be attached to existing network infrastructure. You can use provider networks to map directly to an existing physical network in the data center, using flat networking or VLAN tags. This allows an instance to share the same layer-2 network as a system external to the OpenStack Networking infrastructure.

4.3. IP address consumption

The following systems will consume IP addresses from your allocated range:

- Physical nodes - Each physical NIC will require one IP address; it is common practice to dedicate physical NICs to specific functions. For example, management and NFS traffic would each be allocated their own physical NICs (sometimes with multiple NICs connecting across to different switches for redundancy purposes).

- Virtual IPs (VIPs) for High Availability - You can expect to allocate around 1 to 3 for each network shared between controller nodes.

4.4. Virtual Networking

These virtual resources consume IP addresses in OpenStack Networking. These are considered local to the cloud infrastructure, and do not need to be reachable by systems in the external physical network:

- Tenant networks - Each tenant network will require a subnet from which it will allocate IP addresses to instances.

- Virtual routers - Each router interface plugging into a subnet will require one IP address (with an additional address required if DHCP is enabled).

- Instances - Each instance will require an address from the tenant subnet it is hosted in. If ingress traffic is needed, an additional floating IP address will need to be allocated from the designated external network.

- Management traffic - Includes OpenStack Services and API traffic. In Red Hat OpenStack Platform 11, requirements for virtual IP addresses have been reduced; all services will instead share a small number of VIPs. API, RPC and database services will communicate on the internal API VIP.
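
As a rough planning aid, the per-subnet consumption described in the list above can be added up and compared with the size of a candidate subnet. The following is a minimal sketch in Python. The function names are hypothetical, the counting rule is a simplified reading of the list (one address per instance, one per router interface, and one more if DHCP is enabled), and floating IP addresses are not counted because they come from the external network.

```python
import ipaddress


def tenant_subnet_addresses_needed(instances, router_interfaces, dhcp_enabled):
    """Estimate the addresses consumed inside one tenant subnet."""
    dhcp_addresses = 1 if dhcp_enabled else 0
    return instances + router_interfaces + dhcp_addresses


def subnet_can_hold(cidr, needed):
    """Return True if an IPv4 subnet has enough assignable addresses.

    Assumes a prefix of /30 or shorter, where the network and broadcast
    addresses are reserved and cannot be assigned.
    """
    network = ipaddress.ip_network(cidr)
    usable = network.num_addresses - 2
    return needed <= usable


needed = tenant_subnet_addresses_needed(
    instances=200, router_interfaces=1, dhcp_enabled=True
)
print(needed)                                      # 202
print(subnet_can_hold("192.168.122.0/24", needed))  # True: 254 usable addresses
```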

4.5. Example network plan

This example shows a number of networks that accommodate multiple subnets, with each subnet being assigned a range of IP addresses:

| Subnet name | Address range | Number of addresses | Subnet Mask |

|---|---|---|---|

| Provisioning network | 192.168.100.1 - 192.168.100.250 | 250 | 255.255.255.0 |

| Internal API network | 172.16.1.10 - 172.16.1.250 | 241 | 255.255.255.0 |

| Storage | 172.16.2.10 - 172.16.2.250 | 241 | 255.255.255.0 |

| Storage Management | 172.16.3.10 - 172.16.3.250 | 241 | 255.255.255.0 |

| Tenant network (GRE/VXLAN) | 172.16.4.10 - 172.16.4.250 | 241 | 255.255.255.0 |

| External network (incl. floating IPs) | 10.1.2.10 - 10.1.3.222 | 469 | 255.255.254.0 |

| Provider network (infrastructure) | 10.10.3.10 - 10.10.3.250 | 241 | 255.255.252.0 |
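
The Number of addresses column can be reproduced from the address ranges. The following minimal sketch recomputes the count for three of the rows above and checks that each range fits inside the network implied by its subnet mask. It uses Python's standard ipaddress module, and the function names are hypothetical.

```python
import ipaddress


def addresses_in_range(first, last):
    """Count the addresses from first to last, including both ends."""
    return int(ipaddress.ip_address(last)) - int(ipaddress.ip_address(first)) + 1


def range_fits_mask(first, last, netmask):
    """Return True if first and last belong to the same network for netmask."""
    network = ipaddress.ip_interface(f"{first}/{netmask}").network
    return ipaddress.ip_address(last) in network


plan = [
    ("Provisioning network", "192.168.100.1", "192.168.100.250", "255.255.255.0"),
    ("Internal API network", "172.16.1.10", "172.16.1.250", "255.255.255.0"),
    ("External network", "10.1.2.10", "10.1.3.222", "255.255.254.0"),
]

for name, first, last, netmask in plan:
    print(name, addresses_in_range(first, last), range_fits_mask(first, last, netmask))
```

For these rows the counts come out as 250, 241, and 469, which agrees with the table.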

Chapter 5. Review OpenStack Networking router ports

Virtual routers in OpenStack Networking use ports to interconnect with subnets. You can review the state of these ports to determine whether they’re connecting as expected.

5.1. View current port status

This procedure lists all the ports attached to a particular router, then demonstrates how to retrieve a port’s state (DOWN or ACTIVE).

1. View all the ports attached to the router named r1:

# neutron router-port-list r1

Example result:

+--------------------------------------+------+-------------------+--------------------------------------------------------------------------------------+

| id | name | mac_address | fixed_ips |

+--------------------------------------+------+-------------------+--------------------------------------------------------------------------------------+

| b58d26f0-cc03-43c1-ab23-ccdb1018252a | | fa:16:3e:94:a7:df | {"subnet_id": "a592fdba-babd-48e0-96e8-2dd9117614d3", "ip_address": "192.168.200.1"} |

| c45e998d-98a1-4b23-bb41-5d24797a12a4 | | fa:16:3e:ee:6a:f7 | {"subnet_id": "43f8f625-c773-4f18-a691-fd4ebfb3be54", "ip_address": "172.24.4.225"} |

+--------------------------------------+------+-------------------+--------------------------------------------------------------------------------------+

2. View the details of each port by running this command against its ID (the value in the left column). The result includes the port’s status, indicated in the following example as having an ACTIVE state:

# neutron port-show b58d26f0-cc03-43c1-ab23-ccdb1018252a

Example result:

+-----------------------+--------------------------------------------------------------------------------------+

| Field | Value |

+-----------------------+--------------------------------------------------------------------------------------+

| admin_state_up | True |

| allowed_address_pairs | |

| binding:host_id | node.example.com |

| binding:profile | {} |

| binding:vif_details | {"port_filter": true, "ovs_hybrid_plug": true} |

| binding:vif_type | ovs |

| binding:vnic_type | normal |

| device_id | 49c6ebdc-0e62-49ad-a9ca-58cea464472f |

| device_owner | network:router_interface |

| extra_dhcp_opts | |

| fixed_ips | {"subnet_id": "a592fdba-babd-48e0-96e8-2dd9117614d3", "ip_address": "192.168.200.1"} |

| id | b58d26f0-cc03-43c1-ab23-ccdb1018252a |

| mac_address | fa:16:3e:94:a7:df |

| name | |

| network_id | 63c24160-47ac-4140-903d-8f9a670b0ca4 |

| security_groups | |

| status | ACTIVE |

| tenant_id | d588d1112e0f496fb6cac22f9be45d49 |

+-----------------------+--------------------------------------------------------------------------------------+

Perform this step for each port to retrieve its status.
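
If you need to check many ports, you might capture the command output and extract the status field in a script. The following is a minimal sketch that parses a two-column Field/Value table like the one above into a dictionary. The function name parse_cli_table is hypothetical, the sample text is an abbreviated excerpt of the example result, and the parser assumes that values do not contain the | character. If your client offers a machine-readable output format, that is likely to be more robust than parsing the table.

```python
SAMPLE_OUTPUT = """\
+----------------+--------------------------------------+
| Field          | Value                                |
+----------------+--------------------------------------+
| admin_state_up | True                                 |
| device_owner   | network:router_interface             |
| id             | b58d26f0-cc03-43c1-ab23-ccdb1018252a |
| name           |                                      |
| status         | ACTIVE                               |
+----------------+--------------------------------------+
"""


def parse_cli_table(text):
    """Turn a two-column Field/Value table into a dict of strings."""
    fields = {}
    for line in text.splitlines():
        line = line.strip()
        if not line.startswith("|"):
            continue  # skip +----+ border lines and blank lines
        cells = [cell.strip() for cell in line.strip("|").split("|")]
        if len(cells) != 2 or cells[0] == "Field":
            continue  # skip the header row and anything unexpected
        fields[cells[0]] = cells[1]
    return fields


port = parse_cli_table(SAMPLE_OUTPUT)
print(port["status"])  # ACTIVE
if port["status"] != "ACTIVE":
    print("port", port["id"], "is not active")
```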

Chapter 6. Troubleshoot Provider Networks

A deployment of virtual routers and switches, also known as software-defined networking (SDN), may seem to introduce complexity at first glance. However, the diagnostic process of troubleshooting network connectivity in OpenStack Networking is similar to that of physical networks. If using VLANs, the virtual infrastructure can be considered a trunked extension of the physical network, rather than a wholly separate environment.

6.1. Topics covered

- Basic ping testing

- Troubleshooting VLAN networks

- Troubleshooting from within tenant networks

6.2. Basic ping testing

The ping command is a useful tool for analyzing network connectivity problems. The results serve as a basic indicator of network connectivity, but might not entirely exclude all connectivity issues, such as a firewall blocking the actual application traffic. The ping command works by sending traffic to specified destinations, and then reports back whether the attempts were successful.

The ping command expects that ICMP traffic is allowed to traverse any intermediary firewalls.

Ping tests are most useful when run from the machine experiencing network issues, so it may be necessary to connect to the command line via the VNC management console if the machine seems to be completely offline.

For example, the ping test command below needs to validate multiple layers of network infrastructure in order to succeed; name resolution, IP routing, and network switching will all need to be functioning correctly:

$ ping www.redhat.com

PING e1890.b.akamaiedge.net (125.56.247.214) 56(84) bytes of data.

64 bytes from a125-56.247-214.deploy.akamaitechnologies.com (125.56.247.214): icmp_seq=1 ttl=54 time=13.4 ms

64 bytes from a125-56.247-214.deploy.akamaitechnologies.com (125.56.247.214): icmp_seq=2 ttl=54 time=13.5 ms

64 bytes from a125-56.247-214.deploy.akamaitechnologies.com (125.56.247.214): icmp_seq=3 ttl=54 time=13.4 ms

^C

You can terminate the ping command with Ctrl-c, after which a summary of the results is presented. Zero packet loss indicates that the connection was timely and stable:

--- e1890.b.akamaiedge.net ping statistics ---

3 packets transmitted, 3 received, 0% packet loss, time 2003ms

rtt min/avg/max/mdev = 13.461/13.498/13.541/0.100 ms
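When ping tests are scripted rather than read by eye, the packet-loss figure can be pulled out of the summary line. The following is a minimal sketch, assuming the summary format shown above; the function name ping_loss is hypothetical and not part of any OpenStack tooling.

```shell
# Read ping output on stdin and print the packet-loss percentage
# taken from the summary line (for example "0" for "0% packet loss").
ping_loss() {
    sed -n 's/.* \([0-9.]*\)% packet loss.*/\1/p'
}

# Usage sketch: send three probes, then act on the reported loss.
# loss=$(ping -c 3 www.redhat.com | ping_loss)
# [ "$loss" = "0" ] && echo "no packet loss"
```

An empty result means no summary line was found, which usually indicates that ping failed before sending anything (for example, a name resolution error).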

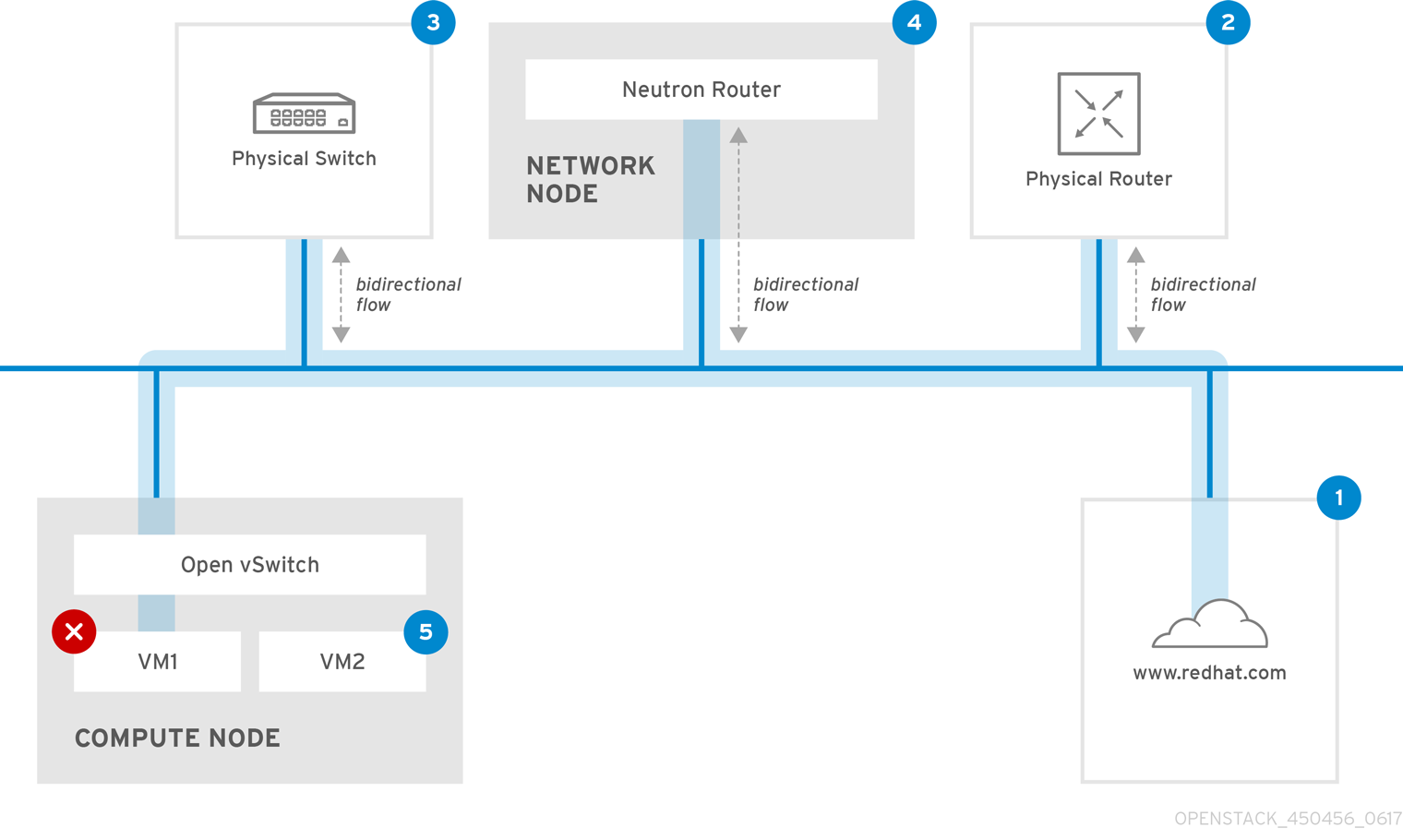

In addition, the results of a ping test can be very revealing, depending on which destination gets tested. For example, in the diagram above VM1 is experiencing some form of connectivity issue. The possible destinations are numbered in blue, and the conclusions drawn from a successful or failed result are presented:

1. The internet - a common first step is to send a ping test to an internet location, such as www.redhat.com.

- Success: This test indicates that all the various network points in between are working as expected. This includes the virtual and physical network infrastructure.

- Failure: There are various ways in which a ping test to a distant internet location can fail. If other machines on your network are able to successfully ping the internet, that proves the internet connection is working, and it’s time to bring the troubleshooting closer to home.

2. Physical router - This is the router interface designated by the network administrator to direct traffic onward to external destinations.

- Success: Ping tests to the physical router can determine whether the local network and underlying switches are functioning. These packets don’t traverse the router, so they do not prove whether there is a routing issue present on the default gateway.

- Failure: This indicates that the problem lies between VM1 and the default gateway. The router/switches might be down, or you may be using an incorrect default gateway. Compare the configuration with that on another server that is known to be working. Try pinging another server on the local network.

3. Neutron router - This is the virtual SDN (Software-defined Networking) router used by Red Hat OpenStack Platform to direct the traffic of virtual machines.

- Success: The firewall is allowing ICMP traffic, and the Networking node is online.

- Failure: Confirm whether ICMP traffic is permitted in the instance’s security group. Check that the Networking node is online, confirm that all the required services are running, and review the L3 agent log (/var/log/neutron/l3-agent.log).

4. Physical switch - The physical switch’s role is to manage traffic between nodes on the same physical network.

- Success: Traffic sent by a VM to the physical switch will need to pass through the virtual network infrastructure, indicating that this segment is functioning as expected.

- Failure: Is the physical switch port configured to trunk the required VLANs?

5. VM2 - Attempt to ping a VM on the same subnet, on the same Compute node.

- Success: The NIC driver and basic IP configuration on VM1 are functional.

- Failure: Validate the network configuration on VM1. Or, VM2’s firewall might simply be blocking ping traffic. In addition, confirm that the virtual switching is set up correctly, and review the Open vSwitch (or Linux Bridge) log files.
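The destination-by-destination approach above can be sketched as a small shell helper that probes a list of targets in the order given and stops at the first one that does not answer. This is only an illustration: the function name first_unreachable and the label=address argument format are assumptions, and the addresses must come from your own environment.

```shell
# Probe each destination in the order given and stop at the first failure.
# Each argument is a label=address pair, for example vm2=<address of VM2>.
first_unreachable() {
    for pair in "$@"; do
        label=${pair%%=*}   # text before the first "="
        addr=${pair#*=}     # text after the first "="
        # -c 3: send three probes; -W 2: wait up to two seconds per reply
        if ping -c 3 -W 2 "$addr" >/dev/null 2>&1; then
            echo "ok: $label ($addr)"
        else
            echo "FAILED: $label ($addr)"
            return 1
        fi
    done
}

# Usage sketch, nearest destination first (replace the placeholders):
# first_unreachable vm2=<VM2 address> neutron-router=<router address> \
#     physical-router=<gateway address> internet=www.redhat.com
```

Listing the nearest destination first means that the first failure reported points at the segment closest to VM1 that is not responding.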

6.3. Troubleshooting VLAN networks

OpenStack Networking is able to trunk VLAN networks through to the SDN switches. Support for VLAN-tagged provider networks means that virtual instances are able to integrate with server subnets in the physical network.

To troubleshoot connectivity to a VLAN Provider network, attempt to ping the gateway IP designated when the network was created. For example, if you created the network with these commands:

# neutron net-create provider --provider:network_type=vlan --provider:physical_network=phy-eno1 --provider:segmentation_id=120

# neutron subnet-create "provider" --allocation-pool start=192.168.120.1,end=192.168.120.253 --disable-dhcp --gateway 192.168.120.254 192.168.120.0/24

Then you’ll want to attempt to ping the defined gateway IP of 192.168.120.254.

If that fails, confirm that you have network flow for the associated VLAN (as defined during network creation). In the example above, OpenStack Networking is configured to trunk VLAN 120 to the provider network. This option is set using the parameter --provider:segmentation_id=120.

Confirm the VLAN flow on the bridge interface, which in this case is named br-ex:

# ovs-ofctl dump-flows br-ex

NXST_FLOW reply (xid=0x4):

cookie=0x0, duration=987.521s, table=0, n_packets=67897, n_bytes=14065247, idle_age=0, priority=1 actions=NORMAL

cookie=0x0, duration=986.979s, table=0, n_packets=8, n_bytes=648, idle_age=977, priority=2,in_port=12 actions=drop

6.3.1. Review the VLAN configuration and log files
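On a busy bridge the output of ovs-ofctl dump-flows can run to many lines, so it can help to narrow it to the entries that mention the VLAN ID in question before reviewing the configuration. The sketch below assumes that Open vSwitch prints a VLAN match as dl_vlan=<id> and a VLAN rewrite as mod_vlan_vid:<id>; the function name flows_for_vlan is hypothetical.

```shell
# Read "ovs-ofctl dump-flows" output on stdin and print only the flows
# that match on, or rewrite to, the given VLAN ID.
flows_for_vlan() {
    # The trailing group stops 120 from also matching 1200.
    grep -E "(dl_vlan=|mod_vlan_vid:)$1([^0-9]|\$)"
}

# Usage sketch for the example network (segmentation ID 120):
# ovs-ofctl dump-flows br-ex | flows_for_vlan 120
```

No output suggests that no flow on that bridge references the VLAN, which is consistent with the flows not having been created. A match is only a hint, because an internal VLAN tag could happen to use the same number.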

- OpenStack Networking (neutron) agents - Use the neutron command to verify that all agents are up and registered with the correct names:

# neutron agent-list

+--------------------------------------+--------------------+-----------------------+-------+----------------+

| id | agent_type | host | alive | admin_state_up |

+--------------------------------------+--------------------+-----------------------+-------+----------------+

| a08397a8-6600-437d-9013-b2c5b3730c0c | Metadata agent | rhelosp.example.com | :-) | True |

| a5153cd2-5881-4fc8-b0ad-be0c97734e6a | L3 agent | rhelosp.example.com | :-) | True |

| b54f0be7-c555-43da-ad19-5593a075ddf0 | DHCP agent | rhelosp.example.com | :-) | True |

| d2be3cb0-4010-4458-b459-c5eb0d4d354b | Open vSwitch agent | rhelosp.example.com | :-) | True |

+--------------------------------------+--------------------+-----------------------+-------+----------------+

- Review /var/log/neutron/openvswitch-agent.log - this log should provide confirmation that the creation process used the ovs-ofctl command to configure VLAN trunking.

- Validate external_network_bridge in the /etc/neutron/l3_agent.ini file. A hardcoded value here won’t allow you to use a provider network via the L3-agent, and won’t create the necessary flows. As a result, this value should look like this:

external_network_bridge = ""

- Check network_vlan_ranges in the /etc/neutron/plugin.ini file. You don’t need to specify the numeric VLAN ID if it’s a provider network. The only time you need to specify the ID(s) here is if you’re using VLAN isolated tenant networks.

- Validate the bridge mappings in the OVS agent configuration file: confirm that the bridge mapped to phy-eno1 exists and is properly connected to eno1.
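Two of the checks above lend themselves to a quick script. The following sketch assumes the table layout printed by neutron agent-list, that agents which are not alive show a marker other than :-) in the alive column, and that bridge mappings are stored as a comma-separated list of physnet:bridge pairs under the bridge_mappings key. The function names, and the configuration file path in the usage comment, are assumptions.

```shell
# Read "neutron agent-list" output on stdin and print every agent whose
# alive column is anything other than ":-)".
dead_agents() {
    awk -F'|' '
        NF >= 6 && $2 !~ /^ *id *$/ {        # skip borders and the header row
            alive = $5; gsub(/ /, "", alive)
            if (alive != ":-)") {
                type = $3; host = $4
                gsub(/^ +| +$/, "", type)
                gsub(/^ +| +$/, "", host)
                print type " on " host
            }
        }'
}

# Read an agent configuration file on stdin and print the bridge that is
# mapped to the physical network named in $1.
bridge_for_physnet() {
    sed -n 's/^ *bridge_mappings *= *//p' | tr ',' '\n' |
        awk -F: -v net="$1" '{ gsub(/ /, "") } $1 == net { print $2 }'
}

# Usage sketch (the file path is an assumption; adjust it for your deployment):
# neutron agent-list | dead_agents
# br=$(bridge_for_physnet phy-eno1 < /etc/neutron/plugins/ml2/openvswitch_agent.ini)
# ovs-vsctl list-ports "$br"    # eno1 should appear in this list
```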

6.4. Troubleshooting from within tenant networks

In OpenStack Networking, all tenant traffic is contained within network namespaces. This allows tenants to configure networks without interfering with each other. For example, network namespaces allow different tenants to have the same subnet range of 192.168.1.0/24 without resulting in any interference between them.

To begin troubleshooting a tenant network, first determine which network namespace contains the network:

1. List all the tenant networks using the neutron command:

# neutron net-list

+--------------------------------------+-------------+-------------------------------------------------------+

| id | name | subnets |

+--------------------------------------+-------------+-------------------------------------------------------+

| 9cb32fe0-d7fb-432c-b116-f483c6497b08 | web-servers | 453d6769-fcde-4796-a205-66ee01680bba 192.168.212.0/24 |

| a0cc8cdd-575f-4788-a3e3-5df8c6d0dd81 | private | c1e58160-707f-44a7-bf94-8694f29e74d3 10.0.0.0/24 |

| baadd774-87e9-4e97-a055-326bb422b29b | private | 340c58e1-7fe7-4cf2-96a7-96a0a4ff3231 192.168.200.0/24 |

| 24ba3a36-5645-4f46-be47-f6af2a7d8af2 | public | 35f3d2cb-6e4b-4527-a932-952a395c4bb3 172.24.4.224/28 |

+--------------------------------------+-------------+-------------------------------------------------------+

In this example, we’ll be examining the web-servers network. Make a note of the id value in the web-servers row (in this case, it is 9cb32fe0-d7fb-432c-b116-f483c6497b08). This value is appended to the name of the network namespace, which will help you identify it in the next step.

2. List all the network namespaces using the ip command:

# ip netns list

qdhcp-9cb32fe0-d7fb-432c-b116-f483c6497b08

qrouter-31680a1c-9b3e-4906-bd69-cb39ed5faa01

qrouter-62ed467e-abae-4ab4-87f4-13a9937fbd6b

qdhcp-a0cc8cdd-575f-4788-a3e3-5df8c6d0dd81

qrouter-e9281608-52a6-4576-86a6-92955df46f56

In the result there is a namespace that matches the web-servers network id. In this example it’s presented as qdhcp-9cb32fe0-d7fb-432c-b116-f483c6497b08.

3. Examine the configuration of the web-servers network by running commands within the namespace. This is done by prefixing the troubleshooting commands with ip netns exec (namespace). For example:

a) View the routing table of the web-servers network:

# ip netns exec qrouter-62ed467e-abae-4ab4-87f4-13a9937fbd6b route -n

Kernel IP routing table

Destination Gateway Genmask Flags Metric Ref Use Iface

0.0.0.0 172.24.4.225 0.0.0.0 UG 0 0 0 qg-8d128f89-87

172.24.4.224 0.0.0.0 255.255.255.240 U 0 0 0 qg-8d128f89-87

192.168.200.0 0.0.0.0 255.255.255.0 U 0 0 0 qr-8efd6357-96

6.4.1. Perform advanced ICMP testing within the namespace

1. Capture ICMP traffic using the tcpdump command.

# ip netns exec qrouter-62ed467e-abae-4ab4-87f4-13a9937fbd6b tcpdump -qnntpi any icmp

There may not be any output until you perform the next step:

2. In a separate command line window, perform a ping test to an external network:

# ip netns exec qrouter-62ed467e-abae-4ab4-87f4-13a9937fbd6b ping www.redhat.com

3. In the terminal running the tcpdump session, you will observe detailed results of the ping test.

tcpdump: listening on any, link-type LINUX_SLL (Linux cooked), capture size 65535 bytes

IP (tos 0xc0, ttl 64, id 55447, offset 0, flags [none], proto ICMP (1), length 88)

172.24.4.228 > 172.24.4.228: ICMP host 192.168.200.20 unreachable, length 68

IP (tos 0x0, ttl 64, id 22976, offset 0, flags [DF], proto UDP (17), length 60)

172.24.4.228.40278 > 192.168.200.21: [bad udp cksum 0xfa7b -> 0xe235!] UDP, length 32

When performing a tcpdump analysis of traffic, you might observe the responding packets heading to the router interface rather than the instance. This is expected behaviour, as the qrouter performs DNAT on the return packets.
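The lookup from network id to namespace name described in this section can be scripted, so that a test is only attempted when the namespace actually exists on the node. This is a minimal sketch, assuming the qdhcp-<network id> naming shown in the ip netns list output; the function name dhcp_netns and the target variable are hypothetical.

```shell
# Read "ip netns list" output on stdin and print the DHCP namespace for the
# network ID given in $1. Exits non-zero when no such namespace is listed.
dhcp_netns() {
    awk -v want="qdhcp-$1" '
        $1 == want { print $1; found = 1 }   # $1 ignores any trailing "(id: N)"
        END { exit found ? 0 : 1 }'
}

# Usage sketch: ping an address on the tenant network from inside its namespace.
# Set "target" to an instance address of your own.
# ns=$(ip netns list | dhcp_netns 9cb32fe0-d7fb-432c-b116-f483c6497b08) &&
#     ip netns exec "$ns" ping -c 3 "$target"
```

If the function prints nothing, the namespace is not present on this node, which is worth checking before looking at anything inside it.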

Chapter 7. Connect an instance to the physical network

This chapter explains how to use provider networks to connect instances directly to an external network.

Overview of the OpenStack Networking topology:

OpenStack Networking (neutron) has two categories of services distributed across a number of node types.

- Neutron server - This service runs the OpenStack Networking API server, which provides the API for end-users and services to interact with OpenStack Networking. This server also integrates with the underlying database to store and retrieve tenant network, router, and loadbalancer details, among others.

- Neutron agents - These are the services that perform the network functions for OpenStack Networking:

  - neutron-dhcp-agent - manages DHCP IP addressing for tenant private networks.

  - neutron-l3-agent - performs layer 3 routing between tenant private networks, the external network, and others.

  - neutron-lbaas-agent - provisions the LBaaS routers created by tenants.

- Compute node - This node hosts the hypervisor that runs the virtual machines, also known as instances. A Compute node must be wired directly to the network in order to provide external connectivity for instances. This node is typically where the l2 agents run, such as neutron-openvswitch-agent.

Service placement:

The OpenStack Networking services can either run together on the same physical server, or on separate dedicated servers, which are named according to their roles:

- Controller node - The server that runs the API service.

- Network node - The server that runs the OpenStack Networking agents.

- Compute node - The hypervisor server that hosts the instances.

The steps in this chapter assume that your environment has deployed these three node types. If your deployment has both the Controller and Network node roles on the same physical node, then the steps from both sections must be performed on that server. This also applies for a High Availability (HA) environment, where all three nodes might be running the Controller node and Network node services with HA. As a result, sections applicable to Controller and Network nodes will need to be performed on all three nodes.

7.1. Using Flat Provider Networks

This procedure creates flat provider networks that can connect instances directly to external networks. You would do this if you have multiple physical networks (for example, physnet1, physnet2) and separate physical interfaces (eth0 -> physnet1, and eth1 -> physnet2), and you need to connect each Compute node and Network node to those external networks.

If you want to connect multiple VLAN-tagged interfaces (on a single NIC) to multiple provider networks, please refer to Section 7.2, “Using VLAN provider networks”.

Configure the Controller nodes: