Bare Metal Provisioning

Install and configure the Bare Metal Provisioning service (ironic)

Abstract

Making open source more inclusive

Red Hat is committed to replacing problematic language in our code, documentation, and web properties. We are beginning with these four terms: master, slave, blacklist, and whitelist. Because of the enormity of this endeavor, these changes will be implemented gradually over several upcoming releases. For more details, see our CTO Chris Wright’s message.

Providing feedback on Red Hat documentation

We appreciate your input on our documentation. Tell us how we can make it better.

Using the Direct Documentation Feedback (DDF) function

Use the Add Feedback DDF function for direct comments on specific sentences, paragraphs, or code blocks.

- View the documentation in the Multi-page HTML format.

- Ensure that you see the Feedback button in the upper right corner of the document.

- Highlight the part of the text that you want to comment on.

- Click Add Feedback.

- Complete the Add Feedback field with your comments.

- Optional: Add your email address so that the documentation team can contact you for clarification on your issue.

- Click Submit.

Chapter 1. Bare Metal Provisioning service (ironic) functionality

You use the Bare Metal Provisioning service (ironic) components to provision and manage physical machines as bare metal instances for your cloud users. To provision and manage bare metal instances, the Bare Metal Provisioning service interacts with the following Red Hat OpenStack Platform (RHOSP) services in the overcloud:

- The Compute service (nova) provides scheduling, tenant quotas, and a user-facing API for virtual machine instance management. The Bare Metal Provisioning service provides the administrative API for hardware management.

- The Identity service (keystone) provides request authentication and assists the Bare Metal Provisioning service to locate other RHOSP services.

- The Image service (glance) manages disk and instance images and image metadata.

- The Networking service (neutron) provides DHCP and network configuration, and provisions the virtual or physical networks that instances connect to on boot.

- The Object Storage service (swift) exposes temporary image URLs for some drivers.

Bare Metal Provisioning service components

The Bare Metal Provisioning service consists of several services, named ironic-*. The following components are the core of the Bare Metal Provisioning service:

- Bare Metal Provisioning API (ironic-api): This service provides the external REST API to users. The API sends application requests to the Bare Metal Provisioning conductor over remote procedure call (RPC).

- Bare Metal Provisioning conductor (ironic-conductor): This service uses drivers to perform the following bare metal node management tasks:

  - Adds, edits, and deletes bare metal nodes.

  - Powers bare metal nodes on and off with IPMI, Redfish, or other vendor-specific protocols.

  - Provisions, deploys, and cleans bare metal nodes.

- Bare Metal Provisioning inspector (ironic-inspector): This service discovers the hardware properties of a bare metal node that are required for scheduling bare metal instances, and creates the Bare Metal Provisioning service ports for the discovered Ethernet MAC addresses.

- Bare Metal Provisioning database: This database tracks hardware information and state.

- Message queue: All services use this messaging service to communicate with each other, including implementing the RPC between ironic-api and ironic-conductor.

- Bare Metal Provisioning agent (ironic-python-agent): This service runs in a temporary ramdisk to provide the ironic-conductor and ironic-inspector services with remote access, in-band hardware control, and hardware introspection.

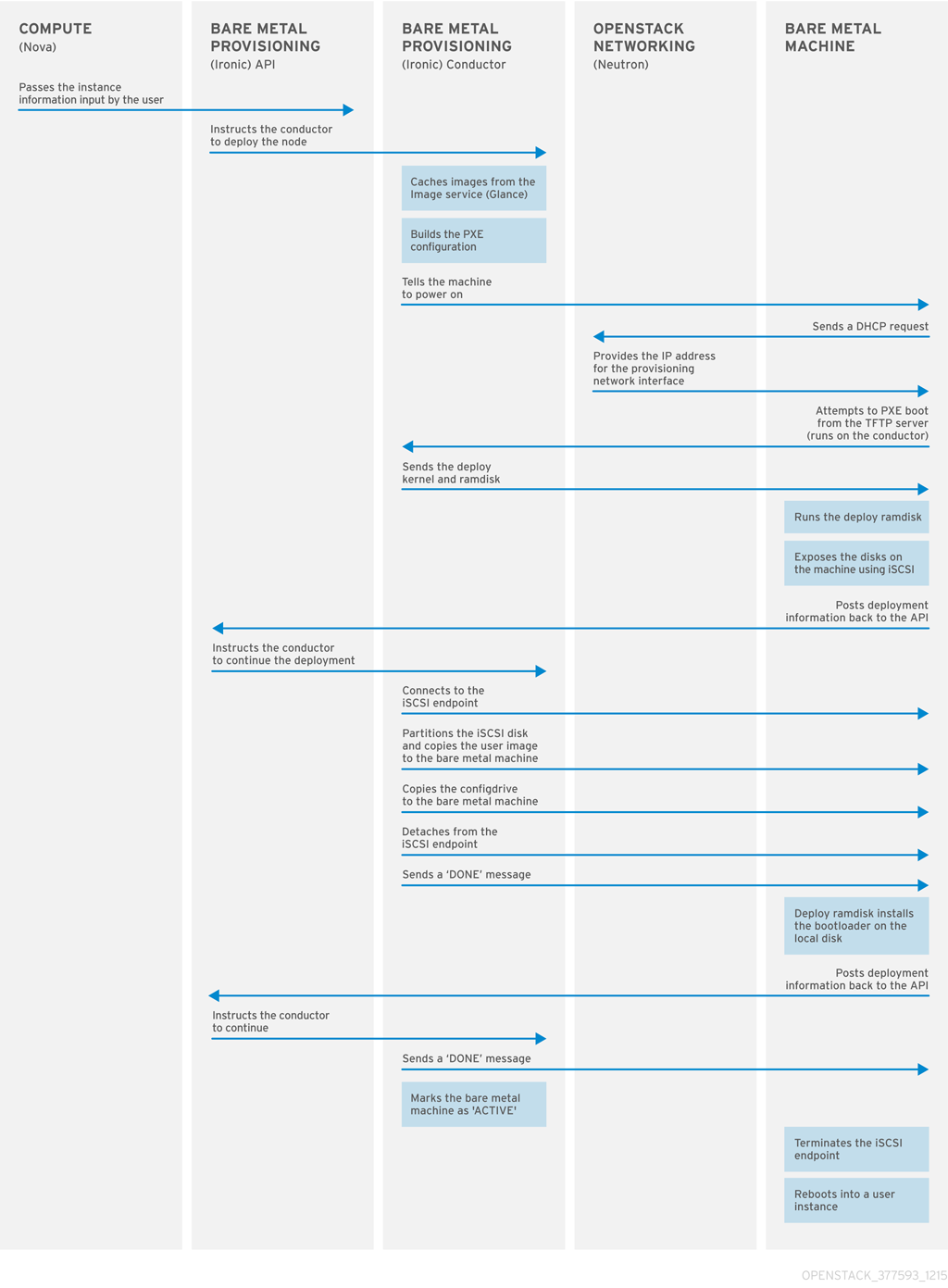

Provisioning a bare metal instance

The Bare Metal Provisioning service uses iPXE to provision physical machines as bare metal instances. The following diagram outlines how the RHOSP services interact during the provisioning process when a cloud user launches a new bare metal instance with the default drivers.

Chapter 2. Requirements for bare metal provisioning

To provide an overcloud where cloud users can launch bare metal instances, your Red Hat OpenStack Platform (RHOSP) environment must have the required hardware and network configuration.

2.1. Hardware requirements

The hardware requirements for the bare metal machines that you want to make available to your cloud users for provisioning depend on the operating system. For information about the hardware requirements for Red Hat Enterprise Linux installations, see Product Documentation for Red Hat Enterprise Linux.

All bare metal machines that you want to make available to your cloud users for provisioning must have the following capabilities:

- A NIC to connect to the bare metal network.

- A power management interface, for example, Redfish or IPMI, that is connected to a network that is reachable from the ironic-conductor service. By default, ironic-conductor runs on all of the Controller nodes, unless you use composable roles and run ironic-conductor elsewhere.

- PXE boot on the bare metal network. Disable PXE boot on all other NICs in the deployment.

2.2. Networking requirements

The bare metal network must be a private network for the Bare Metal Provisioning service to use for the following operations:

- The provisioning and management of bare metal machines on the overcloud.

- Cleaning bare metal nodes when a node is unprovisioned.

- Tenant access to the bare metal machines.

The bare metal network provides DHCP and PXE boot functions to discover bare metal systems. This network must use a native VLAN on a trunked interface so that the Bare Metal Provisioning service can serve PXE boot and DHCP requests.

The Bare Metal Provisioning service in the overcloud is designed for a trusted tenant environment because the bare metal machines have direct access to the control plane network of your Red Hat OpenStack Platform (RHOSP) environment. Therefore, the default bare metal network uses a flat network for ironic-conductor services.

The default flat provisioning network can introduce security concerns in a customer environment because a tenant can interfere with the control plane network. To prevent this risk, you can configure a custom composable bare metal provisioning network for the Bare Metal Provisioning service that does not have access to the control plane.

The bare metal network must be untagged for provisioning, and must also have access to the Bare Metal Provisioning API. The control plane network, also known as the director provisioning network, is always untagged. Other networks can be tagged.

The Controller nodes that host the Bare Metal Provisioning service must have access to the bare metal network.

The NIC that the bare metal machine is configured to PXE-boot from must have access to the bare metal network.

The bare metal network is created by the OpenStack operator. Cloud users have direct access to the public OpenStack APIs, and to the bare metal network. With the default flat bare metal network, cloud users also have indirect access to the control plane.

The Bare Metal Provisioning service uses the bare metal network for node cleaning.

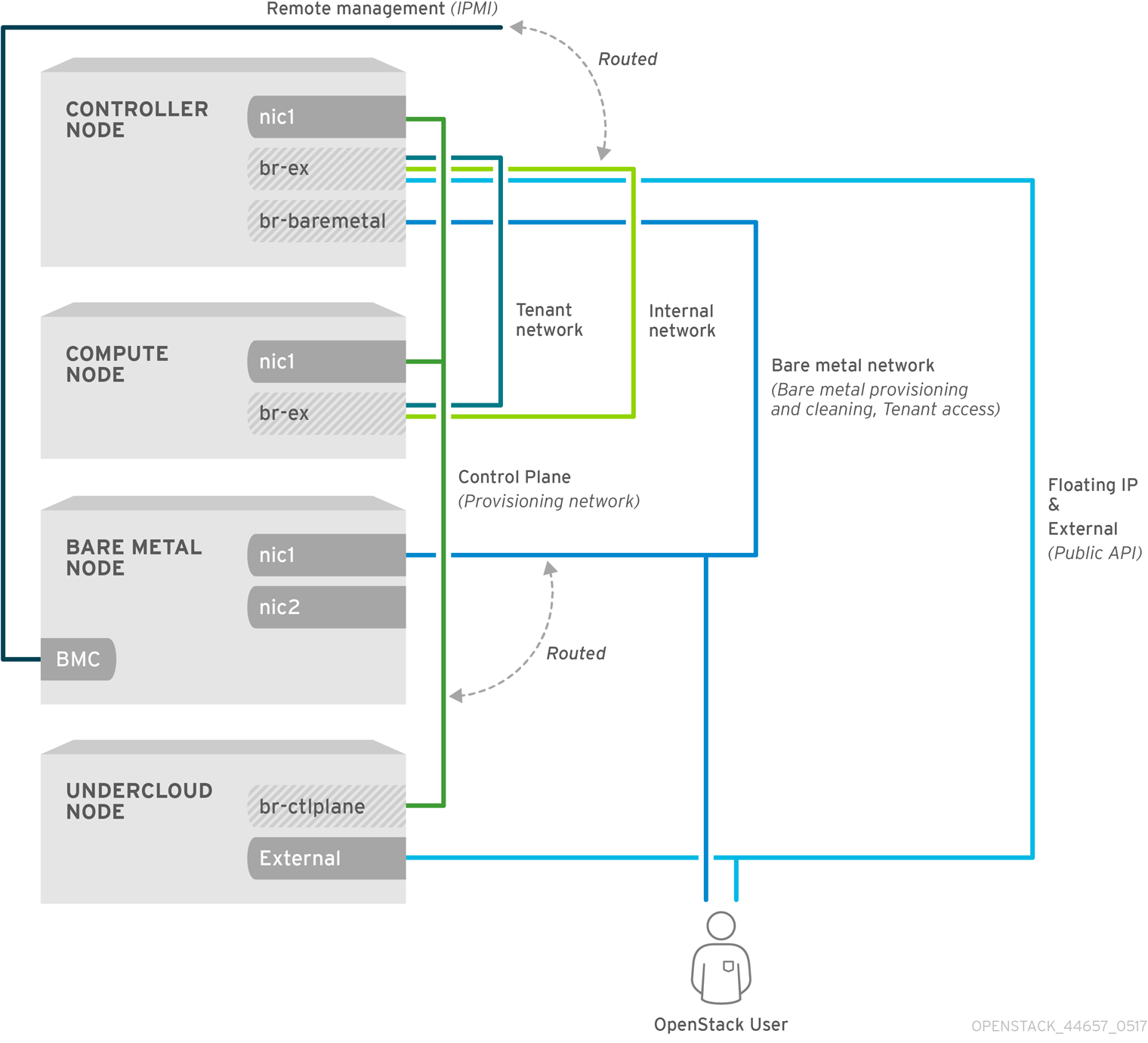

2.2.1. The default bare metal network

In the default Bare Metal Provisioning service deployment architecture, the bare metal network is separate from the control plane network. The bare metal network is a flat network that also acts as the tenant network. This network must route to the Bare Metal Provisioning services on the control plane, known as the director provisioning network. If you define an isolated bare metal network, the bare metal nodes cannot PXE boot.

Default bare metal network architecture diagram

2.2.2. The custom composable bare metal network

When you use a custom composable bare metal network in your Bare Metal Provisioning service deployment architecture, the bare metal network is a custom composable network that does not have access to the control plane. Use a custom composable bare metal network if you want to limit access to the control plane.

Chapter 3. Deploying an overcloud with the Bare Metal Provisioning service

To deploy an overcloud with the Bare Metal Provisioning service (ironic), you must create and configure the bare metal network, and configure the overcloud to enable bare metal provisioning.

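1. Create the bare metal network. You can reuse the provisioning network interface on the Controller nodes to create a flat network, or you can create a custom network:

   - Configuring the default flat network

   - Configuring a custom IPv4 provisioning network

   - Configuring a custom IPv6 provisioning network

2. Configure the overcloud to enable bare metal provisioning:

   - Configuring the overcloud to enable bare metal provisioning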

If you use Open Virtual Network (OVN), the Bare Metal Provisioning service is supported only with the DHCP agent that is defined in the ironic-overcloud.yaml file, neutron-dhcp-agent. The built-in DHCP server on OVN cannot provision bare metal nodes or serve DHCP for the provisioning networks. To enable iPXE chain loading, you must set the --dhcp-match tag in dnsmasq, which the OVN DHCP server does not support.

Prerequisites

- Your environment meets the minimum requirements. For more information, see Requirements for bare metal provisioning.

3.1. Configuring the default flat network

To use the default flat bare metal network, you reuse the provisioning network interface on the Controller nodes to create a bridge for the Bare Metal Provisioning service (ironic).

Procedure

1. Log in to the undercloud as the stack user.

2. Source the stackrc file:

       [stack@director ~]$ source ~/stackrc

3. Modify the /home/stack/templates/nic-configs/controller.yaml file to reuse the provisioning network interface on the Controller nodes, eth1, to create a bridge for the bare metal network:

       network_config:
         - type: ovs_bridge
           name: br-baremetal
           use_dhcp: false
           members:
             - type: interface
               name: eth1
           addresses:
             - ip_netmask:
                 list_join:
                   - /
                   - - get_param: ControlPlaneIp
                     - get_param: ControlPlaneSubnetCidr

   Note: You cannot VLAN tag the bare metal network when you create it by reusing the provisioning network.

4. Add br-baremetal to the NeutronBridgeMappings parameter in your network-environment.yaml file:

       parameter_defaults:
         NeutronBridgeMappings: datacentre:br-ex,baremetal:br-baremetal

5. Add baremetal to the list of networks specified by the NeutronFlatNetworks parameter in your network-environment.yaml file:

       parameter_defaults:
         NeutronBridgeMappings: datacentre:br-ex,baremetal:br-baremetal
         NeutronFlatNetworks: datacentre,baremetal
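NeutronBridgeMappings and NeutronFlatNetworks must agree on the physical network name (baremetal in the examples above), so a quick consistency check can catch typos before you deploy. The following Python sketch is illustrative only. The function names are hypothetical, and the sketch assumes the comma-separated physnet:bridge format shown above.

```python
from __future__ import annotations


def parse_bridge_mappings(value: str) -> dict[str, str]:
    """Parse a NeutronBridgeMappings string such as
    'datacentre:br-ex,baremetal:br-baremetal' into {physnet: bridge}."""
    mappings: dict[str, str] = {}
    for entry in value.split(","):
        entry = entry.strip()
        if not entry:
            continue  # tolerate stray commas
        physnet, sep, bridge = entry.partition(":")
        if not sep or not physnet or not bridge:
            raise ValueError(f"malformed bridge mapping: {entry!r}")
        mappings[physnet] = bridge
    return mappings


def unmapped_flat_networks(bridge_mappings: str, flat_networks: str) -> list[str]:
    """Return the flat networks that have no bridge in the mappings."""
    mapped = parse_bridge_mappings(bridge_mappings)
    flats = [name.strip() for name in flat_networks.split(",") if name.strip()]
    return [name for name in flats if name not in mapped]


if __name__ == "__main__":
    mappings = "datacentre:br-ex,baremetal:br-baremetal"
    print(parse_bridge_mappings(mappings))
    print(unmapped_flat_networks(mappings, "datacentre,baremetal"))  # []
```

An empty result means that every flat network has a bridge. A name such as baremetal in the result means that the name is missing from NeutronBridgeMappings.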

3.2. Configuring a custom IPv4 provisioning network

Create a custom IPv4 provisioning network to provision and deploy the overcloud over IPv4.

Procedure

1. Log in to the undercloud host as the stack user.

2. Source the stackrc undercloud credentials file:

       $ source ~/stackrc

3. Copy the network_data.yaml file to your environment file directory:

       (undercloud) [stack@host01 ~]$ cp /usr/share/openstack-tripleo-heat-templates/network_data.yaml /home/stack/templates/network_data.yaml

4. Add a new network for overcloud provisioning to your network_data.yaml file:

       # custom network for overcloud provisioning
       - name: OcProvisioning
         name_lower: oc_provisioning
         vip: true
         vlan: 205
         ip_subnet: '<ipv4_subnet_address>/<ipv4_mask>'
         allocation_pools: [{'start': '<ipv4_start_address>', 'end': '<ipv4_end_address>'}]

   - Replace <ipv4_subnet_address> with the IPv4 address of your IPv4 subnet.

   - Replace <ipv4_mask> with the IPv4 network mask for your IPv4 subnet.

   - Replace <ipv4_start_address> and <ipv4_end_address> with the IPv4 range that you want to use for address allocation.

5. Configure IronicApiNetwork and IronicNetwork in your ServiceNetMap configuration to use the new IPv4 provisioning network:

       parameter_defaults:
         ServiceNetMap:
           IronicApiNetwork: oc_provisioning
           IronicNetwork: oc_provisioning

6. Add the new network as an interface to your local Controller NIC configuration file:

       network_config:
         - type: vlan
           vlan_id:
             get_param: OcProvisioningNetworkVlanID
           addresses:
             - ip_netmask:
                 get_param: OcProvisioningIpSubnet

7. Copy the roles_data.yaml file to your environment file directory:

       (undercloud) [stack@host01 ~]$ cp /usr/share/openstack-tripleo-heat-templates/roles_data.yaml /home/stack/templates/roles_data.yaml

8. Add the new network for the Controller role to your roles_data.yaml file:

       networks:
         ...
         OcProvisioning:
           subnet: oc_provisioning_subnet

9. Include the IronicInspector service in the Ironic role in your roles_data.yaml file, if it is not already present:

       ServicesDefault:
         ...
         - OS::TripleO::Services::IronicInspector
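Three files must agree in this procedure. In the snippets above, the Controller NIC template parameters derive from the network name set in network_data.yaml (OcProvisioning becomes OcProvisioningNetworkVlanID and OcProvisioningIpSubnet), and ServiceNetMap refers to the network by its name_lower value. The following Python sketch assumes that naming pattern, which is inferred from the snippets rather than from a specification. The helper names are hypothetical. You can use the sketch to cross-check the three files before you deploy.

```python
from __future__ import annotations


def nic_template_params(network_name: str) -> dict[str, str]:
    """Derive the get_param names used in the Controller NIC template from
    the 'name' field of a network_data.yaml entry (assumed convention)."""
    return {
        "vlan_id": f"{network_name}NetworkVlanID",
        "ip_subnet": f"{network_name}IpSubnet",
    }


def service_net_map_mismatches(
    service_net_map: dict[str, str],
    name_lower: str,
    keys: tuple[str, ...] = ("IronicApiNetwork", "IronicNetwork"),
) -> list[str]:
    """Return the ServiceNetMap keys that do not point at name_lower."""
    return [key for key in keys if service_net_map.get(key) != name_lower]


if __name__ == "__main__":
    print(nic_template_params("OcProvisioning"))
    print(service_net_map_mismatches(
        {"IronicApiNetwork": "oc_provisioning", "IronicNetwork": "oc_provisioning"},
        "oc_provisioning",
    ))  # []
```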

3.3. Configuring a custom IPv6 provisioning network

Create a custom IPv6 provisioning network to provision and deploy the overcloud over IPv6.

Procedure

1. Log in to the undercloud as the stack user.

2. Source the stackrc file:

       [stack@director ~]$ source ~/stackrc

3. Copy the network_data.yaml file to your environment file directory:

       (undercloud) [stack@host01 ~]$ cp /usr/share/openstack-tripleo-heat-templates/network_data.yaml /home/stack/templates/network_data.yaml

4. Add a new IPv6 network for overcloud provisioning to your network_data.yaml file:

       # custom network for IPv6 overcloud provisioning
       - name: OcProvisioningIPv6
         vip: true
         name_lower: oc_provisioning_ipv6
         vlan: 10
         ipv6: true
         ipv6_subnet: '<ipv6_subnet_address>/<ipv6_prefix>'
         ipv6_allocation_pools: [{'start': '<ipv6_start_address>', 'end': '<ipv6_end_address>'}]
         gateway_ipv6: '<ipv6_gw_address>'

   - Replace <ipv6_subnet_address> with the IPv6 address of your IPv6 subnet.

   - Replace <ipv6_prefix> with the IPv6 network prefix for your IPv6 subnet.

   - Replace <ipv6_start_address> and <ipv6_end_address> with the IPv6 range that you want to use for address allocation.

   - Replace <ipv6_gw_address> with the IPv6 address of your gateway.

5. Create a new file, network_environment_overrides.yaml, in your environment file directory:

       $ touch /home/stack/templates/network_environment_overrides.yaml

6. Configure IronicApiNetwork and IronicNetwork in your network_environment_overrides.yaml file to use the new IPv6 provisioning network:

       parameter_defaults:
         ServiceNetMap:
           IronicApiNetwork: oc_provisioning_ipv6
           IronicNetwork: oc_provisioning_ipv6

7. Set the IronicIpVersion parameter to 6:

       parameter_defaults:
         IronicIpVersion: 6

8. Enable the RabbitIPv6, MysqlIPv6, and RedisIPv6 parameters:

       parameter_defaults:
         RabbitIPv6: True
         MysqlIPv6: True
         RedisIPv6: True

9. Add the new network as an interface to your local Controller NIC configuration file:

       network_config:
         - type: vlan
           vlan_id:
             get_param: OcProvisioningIPv6NetworkVlanID
           addresses:
             - ip_netmask:
                 get_param: OcProvisioningIPv6IpSubnet

10. Copy the roles_data.yaml file to your environment file directory:

        (undercloud) [stack@host01 ~]$ cp /usr/share/openstack-tripleo-heat-templates/roles_data.yaml /home/stack/templates/roles_data.yaml

11. Add the new network for the Controller role to your roles_data.yaml file:

        networks:
          ...
          - OcProvisioningIPv6

12. Include the IronicInspector service in the Ironic role in your roles_data.yaml file, if it is not already present:

        ServicesDefault:
          ...
          - OS::TripleO::Services::IronicInspector
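The IPv6 procedure sets IronicIpVersion and enables RabbitIPv6, MysqlIPv6, and RedisIPv6. These settings are easy to miss when they are spread across files. The following Python sketch uses a hypothetical helper name. It assumes that you have already loaded your merged parameter_defaults into a dictionary, for example with a YAML parser, and it reports which of the values from this procedure are missing or different.

```python
from __future__ import annotations

from typing import Any

# Values that this procedure sets for an IPv6 provisioning network.
REQUIRED_IPV6_PARAMS: dict[str, Any] = {
    "IronicIpVersion": 6,
    "RabbitIPv6": True,
    "MysqlIPv6": True,
    "RedisIPv6": True,
}


def missing_ipv6_params(parameter_defaults: dict[str, Any]) -> list[str]:
    """Return the parameter names that are absent or set to another value."""
    return sorted(
        name
        for name, expected in REQUIRED_IPV6_PARAMS.items()
        if parameter_defaults.get(name) != expected
    )


if __name__ == "__main__":
    overrides = {"IronicIpVersion": 6, "RabbitIPv6": True, "MysqlIPv6": True}
    print(missing_ipv6_params(overrides))  # ['RedisIPv6']
```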

3.4. Configuring the overcloud to enable bare metal provisioning

Use one of the default templates located in the /usr/share/openstack-tripleo-heat-templates/environments/services directory to deploy the overcloud with the Bare Metal Provisioning service (ironic) enabled:

- For deployments that use OVS: ironic.yaml

- For deployments that use OVN: ironic-overcloud.yaml

You can create a local environment file to override the default configuration, as required by your deployment.

Procedure

1. Create an environment file in your local directory to configure the Bare Metal Provisioning service for your deployment, for example, ironic-overrides.yaml.

2. Optional: Configure the type of cleaning that is performed on the bare metal machines before and between provisioning:

       parameter_defaults:
         IronicCleaningDiskErase: <cleaning_type>

   Replace <cleaning_type> with one of the following values:

   - full: (Default) Performs a full clean.

   - metadata: Cleans only the partition table. This type of cleaning substantially speeds up the cleaning process. However, because the deployment is less secure in a multi-tenant environment, use this option only in a trusted tenant environment.

3. Optional: Add additional drivers to the default drivers:

       parameter_defaults:
         IronicEnabledHardwareTypes: ipmi,idrac,ilo,[additional_driver_1],...,[additional_driver_n]

   Replace [additional_driver_1], and optionally all drivers up to [additional_driver_n], with the additional drivers that you want to enable.

4. To enable bare metal introspection, add the following configuration to your local Bare Metal Provisioning service environment file, ironic-overrides.yaml:

       parameter_defaults:
         IronicInspectorSubnets:
           - ip_range: <ip_range>
         IPAImageURLs: '["http://<ip_address>:<port>/agent.kernel", "http://<ip_address>:<port>/agent.ramdisk"]'
         IronicInspectorInterface: '<baremetal_interface>'

   - Replace <ip_range> with the IP ranges for your environments, for example, 192.168.0.100,192.168.0.120.

   - Replace <ip_address>:<port> with the IP address and port of the web server that hosts the IPA kernel and ramdisk. To use the same images that you use on the undercloud, set the IP address to the undercloud IP address, and the port to 8088. If you omit this parameter, you must include alternatives on each Controller node.

   - Replace <baremetal_interface> with the bare metal network interface, for example, br-baremetal.

5. Add your new role and custom environment files to the stack with your other environment files and deploy the overcloud:

       (undercloud)$ openstack overcloud deploy --templates \
         -e [your environment files] \
         -e /home/stack/templates/node-info.yaml \
         -r /home/stack/templates/roles_data.yaml \
         -e /usr/share/openstack-tripleo-heat-templates/network-environment.yaml \
         -e /usr/share/openstack-tripleo-heat-templates/environments/services/<default_ironic_template> \
         -e /usr/share/openstack-tripleo-heat-templates/environments/services/ironic-inspector.yaml \
         -e /home/stack/templates/network_environment_overrides.yaml \
         -n /home/stack/templates/network_data.yaml \
         -e /home/stack/templates/ironic-overrides.yaml

   - Replace <default_ironic_template> with either ironic.yaml or ironic-overcloud.yaml, depending on the Networking service mechanism driver for your deployment.

   Note: The order in which you pass your environment files to the openstack overcloud deploy command is important, because the configuration in later files takes precedence. Therefore, pass the environment file that enables and configures bare metal provisioning on your overcloud to the command after any network configuration files.
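You can capture the template choice (OVS or OVN) and the ordering rule from the note above in a small script that assembles the -e arguments. The following Python sketch is hypothetical. It builds only the environment-file portion of the command as an argument list, maps "ovs" and "ovn" to the templates listed at the start of this section, and checks that the Bare Metal Provisioning files come after the network files.

```python
from __future__ import annotations

SERVICES_DIR = "/usr/share/openstack-tripleo-heat-templates/environments/services"

# Default ironic template per Networking service mechanism driver.
IRONIC_TEMPLATES = {"ovs": "ironic.yaml", "ovn": "ironic-overcloud.yaml"}


def env_file_args(mechanism_driver: str, network_files: list[str],
                  ironic_files: list[str]) -> list[str]:
    """Return '-e <file>' pairs: network files first, then the default
    ironic template, then your ironic override files."""
    try:
        template = IRONIC_TEMPLATES[mechanism_driver.lower()]
    except KeyError:
        raise ValueError(f"unsupported mechanism driver: {mechanism_driver!r}") from None
    ordered = [*network_files, f"{SERVICES_DIR}/{template}", *ironic_files]
    args: list[str] = []
    for path in ordered:
        args += ["-e", path]
    return args


def files_after(args: list[str], earlier: list[str], later: list[str]) -> bool:
    """True if every file in 'later' is passed after every file in 'earlier'.

    Raises ValueError if a listed file is not passed with -e at all.
    """
    env_files = [args[i + 1] for i, arg in enumerate(args[:-1]) if arg == "-e"]
    last_earlier = max((env_files.index(f) for f in earlier), default=-1)
    first_later = min((env_files.index(f) for f in later), default=len(env_files))
    return last_earlier < first_later
```

You might pass the result to subprocess.run together with the other parts of the command, such as --templates, -r, and -n. Using an argument list avoids shell quoting issues.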

3.5. Testing the Bare Metal Provisioning service

You can use the OpenStack Integration Test Suite to validate your Red Hat OpenStack Platform (RHOSP) deployment. For more information, see the OpenStack Integration Test Suite Guide.

Additional verification methods for the Bare Metal Provisioning service:

1. Configure the shell to access Identity as the administrative user:

       $ source ~/overcloudrc

2. Check that the nova-compute service is running on the Controller nodes:

       $ openstack compute service list -c Binary -c Host -c Status

3. If you changed the default ironic drivers, ensure that the required drivers are enabled:

       $ openstack baremetal driver list

4. Ensure that the ironic endpoints are listed:

       $ openstack catalog list
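The checks above are interactive. To script the nova-compute check, you can add -f json to the openstack compute service list command and parse the result. The following Python sketch assumes that the JSON keys match the column names (Binary, Host, Status). The helper names and the sample host names are hypothetical.

```python
from __future__ import annotations

import json


def enabled_compute_hosts(service_list_json: str) -> list[str]:
    """Return hosts that run an enabled nova-compute service.

    Expects the JSON printed by (key names assumed to match the columns):
      openstack compute service list -c Binary -c Host -c Status -f json
    """
    rows = json.loads(service_list_json)
    return sorted(
        row["Host"]
        for row in rows
        if row.get("Binary") == "nova-compute" and row.get("Status") == "enabled"
    )


def controllers_missing_compute(service_list_json: str, controllers: list[str]) -> list[str]:
    """Return the Controller hosts that have no enabled nova-compute service."""
    hosts = set(enabled_compute_hosts(service_list_json))
    return [name for name in controllers if name not in hosts]


if __name__ == "__main__":
    # Hypothetical sample output; the host names are illustrative only.
    sample = json.dumps([
        {"Binary": "nova-compute", "Host": "overcloud-controller-0", "Status": "enabled"},
        {"Binary": "nova-scheduler", "Host": "overcloud-controller-0", "Status": "enabled"},
        {"Binary": "nova-compute", "Host": "overcloud-controller-1", "Status": "disabled"},
    ])
    print(controllers_missing_compute(
        sample, ["overcloud-controller-0", "overcloud-controller-1"]))  # ['overcloud-controller-1']
```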

3.6. Additional resources

- Deployment command options in the Director Installation and Usage guide

- IPv6 Networking for the Overcloud

- Bare Metal (ironic) Parameters in the Overcloud Parameters guide

Chapter 4. Configuring the Bare Metal Provisioning service after deployment

When you have deployed your overcloud with the Bare Metal Provisioning service (ironic), you must prepare your overcloud for bare-metal workloads. To prepare your overcloud for bare-metal workloads and enable your cloud users to create bare-metal instances, complete the following tasks:

- Configure the Networking service (neutron) to integrate with the Bare Metal Provisioning service.

- Configure node cleaning.

- Create the bare metal flavor and resource class.

- Optional: Create the bare metal images.

- Add physical machines as bare-metal nodes.

- Optional: Configure Redfish virtual media boot.

- Optional: Create host aggregates to separate physical and virtual machine provisioning.

4.1. Configuring the Networking service for bare metal provisioning

You can configure the Networking service (neutron) to integrate with the Bare Metal Provisioning service (ironic). You can configure the bare-metal network by using one of the following methods:

- Create a single flat bare-metal network for the Bare Metal Provisioning conductor services, ironic-conductor. This network must route to the Bare Metal Provisioning services on the control plane network.

- Create a custom composable network to implement Bare Metal Provisioning services in the overcloud.

4.1.1. Configuring the Networking service to integrate with the Bare Metal Provisioning service on a flat network

You can configure the Networking service (neutron) to integrate with the Bare Metal Provisioning service (ironic) by creating a single flat bare-metal network for the Bare Metal Provisioning conductor services, ironic-conductor. This network must route to the Bare Metal Provisioning services on the control plane network.

Procedure

1. Log in to the node that hosts the Networking service (neutron) as the root user.

2. Source your overcloud credentials file:

       # source ~/<credentials_file>

   - Replace <credentials_file> with the name of your credentials file, for example, overcloudrc.

3. Create the flat network over which to provision bare-metal instances:

       # openstack network create \
         --provider-network-type flat \
         --provider-physical-network <provider_physical_network> \
         --share <network_name>

   - Replace <provider_physical_network> with the name of the physical network over which you implement the virtual network. This name is configured with the NeutronBridgeMappings parameter in your network-environment.yaml file.

   - Replace <network_name> with a name for this network.

4. Create the subnet on the flat network:

       # openstack subnet create \
         --network <network_name> \
         --subnet-range <network_cidr> \
         --ip-version 4 \
         --gateway <gateway_ip> \
         --allocation-pool start=<start_ip>,end=<end_ip> \
         --dhcp <subnet_name>

   - Replace <network_name> with the name of the provisioning network that you created in the previous step.

   - Replace <network_cidr> with the Classless Inter-Domain Routing (CIDR) representation of the block of IP addresses that the subnet represents. The range of IP addresses that starts with <start_ip> and ends with <end_ip> must be within the block of IP addresses specified by <network_cidr>.

   - Replace <gateway_ip> with the IP address of the router interface that acts as the gateway for the new subnet. This address must be within the block of IP addresses specified by <network_cidr>, but outside of the range that starts with <start_ip> and ends with <end_ip>.

   - Replace <start_ip> with the IP address that denotes the start of the range of IP addresses within the new subnet from which IP addresses are allocated.

   - Replace <end_ip> with the IP address that denotes the end of the range of IP addresses within the new subnet from which IP addresses are allocated.

   - Replace <subnet_name> with a name for the subnet.

5. Create a router for the network and subnet to ensure that the Networking service serves metadata requests:

       # openstack router create <router_name>

   - Replace <router_name> with a name for the router.

6. Attach the subnet to the new router so that the Networking service can serve metadata requests from cloud-init and the node can be configured:

       # openstack router add subnet <router_name> <subnet>

   - Replace <router_name> with the name of your router.

   - Replace <subnet> with the ID or name of the bare-metal subnet that you created in step 4.
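You can check the address rules for the openstack subnet create command in this procedure before you run it. The allocation pool must be inside <network_cidr>, and the gateway must be inside <network_cidr> but outside the pool. The following Python sketch uses the standard ipaddress module. The function name and the example addresses are hypothetical, and the sketch expects literal IP addresses. Because ipaddress handles both address families, the same check also applies to the IPv6 values in network_data.yaml.

```python
from __future__ import annotations

import ipaddress


def subnet_problems(network_cidr: str, gateway_ip: str,
                    start_ip: str, end_ip: str) -> list[str]:
    """Return a list of rule violations; an empty list means the values fit."""
    net = ipaddress.ip_network(network_cidr)  # raises ValueError if host bits are set
    gateway = ipaddress.ip_address(gateway_ip)
    start = ipaddress.ip_address(start_ip)
    end = ipaddress.ip_address(end_ip)

    # Ordering comparisons between IPv4 and IPv6 addresses are not allowed.
    if len({net.version, gateway.version, start.version, end.version}) != 1:
        return ["addresses mix IPv4 and IPv6"]

    problems = []
    if start not in net or end not in net:
        problems.append("allocation pool is outside <network_cidr>")
    if start > end:
        problems.append("allocation pool start is after its end")
    if gateway not in net:
        problems.append("gateway is outside <network_cidr>")
    elif start <= gateway <= end:
        problems.append("gateway is inside the allocation pool")
    return problems


if __name__ == "__main__":
    print(subnet_problems("192.168.24.0/24", "192.168.24.1",
                          "192.168.24.100", "192.168.24.200"))  # []
    print(subnet_problems("192.168.24.0/24", "192.168.24.150",
                          "192.168.24.100", "192.168.24.200"))
```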

4.1.2. Configuring the Networking service to integrate with the Bare Metal Provisioning service on a custom composable network

You can configure the Networking service (neutron) to integrate with the Bare Metal Provisioning service (ironic) by creating a custom composable network to implement Bare Metal Provisioning services in the overcloud.

Procedure

1. Log in to the undercloud host.

2. Source your overcloud credentials file:

       $ source ~/<credentials_file>

   - Replace <credentials_file> with the name of your credentials file, for example, overcloudrc.

3. Retrieve the UUID for the provider network that hosts the Bare Metal Provisioning service:

       (overcloud)$ openstack network show <network_name> -f value -c id

   - Replace <network_name> with the name of the provider network that you want to use for the bare-metal instance provisioning network.

4. Open your local environment file that configures the Bare Metal Provisioning service for your deployment, for example, ironic-overrides.yaml.

5. Configure the network to use as the bare-metal instance provisioning network:

       parameter_defaults:
         IronicProvisioningNetwork: <network_uuid>

   - Replace <network_uuid> with the UUID of the provider network that you retrieved in step 3.

6. Source the stackrc undercloud credentials file:

       $ source ~/stackrc

7. To apply the bare-metal instance provisioning network configuration, add your Bare Metal Provisioning environment files to the stack with your other environment files and deploy the overcloud:

       (undercloud)$ openstack overcloud deploy --templates \
         -e [your environment files] \
         -e /home/stack/templates/node-info.yaml \
         -r /home/stack/templates/roles_data.yaml \
         -e /usr/share/openstack-tripleo-heat-templates/network-environment.yaml \
         -e /usr/share/openstack-tripleo-heat-templates/environments/services/<default_ironic_template> \
         -e /usr/share/openstack-tripleo-heat-templates/environments/services/ironic-inspector.yaml \
         -e /home/stack/templates/network_environment_overrides.yaml \
         -n /home/stack/templates/network_data.yaml \
         -e /home/stack/templates/ironic-overrides.yaml

   - Replace <default_ironic_template> with either ironic.yaml or ironic-overcloud.yaml, depending on the Networking service mechanism driver for your deployment.
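IronicProvisioningNetwork expects the network UUID, so a network name pasted by mistake is a common source of errors. The following Python sketch uses a hypothetical helper name. It uses the standard uuid module to reject anything that is not a canonical, hyphenated UUID, and then renders the parameter_defaults snippet shown above. The example UUID is made up.

```python
import uuid


def network_override(parameter: str, network_uuid: str) -> str:
    """Return a parameter_defaults snippet for a network UUID parameter,
    such as IronicProvisioningNetwork, after checking the UUID format."""
    parsed = uuid.UUID(network_uuid)  # raises ValueError for names such as "provisioning"
    if str(parsed) != network_uuid.lower():
        # uuid.UUID also accepts forms without hyphens or with braces.
        raise ValueError(f"use the canonical hyphenated UUID, not {network_uuid!r}")
    return f"parameter_defaults:\n  {parameter}: {parsed}\n"


if __name__ == "__main__":
    print(network_override("IronicProvisioningNetwork",
                           "3f6a9e1c-2b4d-4e8f-9a1b-7c5d3e2f1a0b"), end="")
```

The same helper works for other parameters that take a network UUID, such as IronicCleaningNetwork.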

4.2. Cleaning bare-metal nodes

The Bare Metal Provisioning service cleans nodes to prepare them for provisioning. You can clean bare-metal nodes by using one of the following methods:

- Automatic: You can configure your overcloud to automatically perform node cleaning when you unprovision a node.

- Manual: You can manually clean individual nodes when required.

4.2.1. Configuring automatic node cleaning

Automatic bare-metal node cleaning runs after you enroll a node, and before the node reaches the available provisioning state. Automatic cleaning also runs each time the node is unprovisioned.

By default, the Bare Metal Provisioning service uses a network named provisioning for node cleaning. However, network names are not unique in the Networking service (neutron), so it is possible for a project to create a network with the same name, which causes a conflict with the Bare Metal Provisioning service. To avoid the conflict, use the network UUID to configure the node cleaning network.
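The following toy model illustrates why a name-based lookup can fail. It is not the Networking service's lookup logic: the Network class, the resolve_network helper, and the sample data are all hypothetical. When a project creates its own network named provisioning, the name matches two networks, but the UUID still identifies exactly one.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Network:
    id: str
    name: str
    project: str


def resolve_network(networks: list[Network], name_or_id: str) -> Network:
    """Resolve a network by UUID first, then by name; refuse ambiguous names."""
    for net in networks:
        if net.id == name_or_id:
            return net
    matches = [net for net in networks if net.name == name_or_id]
    if len(matches) != 1:
        raise LookupError(
            f"{name_or_id!r} matches {len(matches)} networks; use the network UUID")
    return matches[0]


if __name__ == "__main__":
    # Hypothetical data: a project created its own network named "provisioning".
    networks = [
        Network("0b1c2d3e-0000-4000-8000-000000000001", "provisioning", "admin"),
        Network("0b1c2d3e-0000-4000-8000-000000000002", "provisioning", "tenant-a"),
    ]
    print(resolve_network(networks, "0b1c2d3e-0000-4000-8000-000000000001").project)  # admin
    try:
        resolve_network(networks, "provisioning")
    except LookupError as err:
        print(err)
```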

Procedure

1. Log in to the undercloud host.

2. Source your overcloud credentials file:

       $ source ~/<credentials_file>

   - Replace <credentials_file> with the name of your credentials file, for example, overcloudrc.

3. Retrieve the UUID for the provider network that hosts the Bare Metal Provisioning service:

       (overcloud)$ openstack network show <network_name> -f value -c id

   - Replace <network_name> with the name of the network that you want to use for the bare-metal node cleaning network.

4. Open your local environment file that configures the Bare Metal Provisioning service for your deployment, for example, ironic-overrides.yaml.

5. Configure the network to use as the node cleaning network:

       parameter_defaults:
         IronicCleaningNetwork: <network_uuid>

   - Replace <network_uuid> with the UUID of the provider network that you retrieved in step 3.

6. Source the stackrc undercloud credentials file:

       $ source ~/stackrc

7. To apply the node cleaning network configuration, add your Bare Metal Provisioning environment files to the stack with your other environment files and deploy the overcloud:

       (undercloud)$ openstack overcloud deploy --templates \
         -e [your environment files] \
         -e /home/stack/templates/node-info.yaml \
         -r /home/stack/templates/roles_data.yaml \
         -e /usr/share/openstack-tripleo-heat-templates/network-environment.yaml \
         -e /usr/share/openstack-tripleo-heat-templates/environments/services/<default_ironic_template> \
         -e /usr/share/openstack-tripleo-heat-templates/environments/services/ironic-inspector.yaml \
         -e /home/stack/templates/network_environment_overrides.yaml \
         -n /home/stack/templates/network_data.yaml \
         -e /home/stack/templates/ironic-overrides.yaml

   - Replace <default_ironic_template> with either ironic.yaml or ironic-overcloud.yaml, depending on the Networking service mechanism driver for your deployment.

4.2.2. Cleaning nodes manually

You can clean specific nodes manually as required. Node cleaning has two modes:

- Metadata only clean: Removes partitions from all disks on the node. The metadata only mode of cleaning is faster than a full clean, but less secure because it erases only partition tables. Use this mode only on trusted tenant environments.

- Full clean: Removes all data from all disks, by using either ATA secure erase or shredding. A full clean can take several hours to complete.

Procedure

1. Source your overcloud credentials file:

       $ source ~/<credentials_file>

   - Replace <credentials_file> with the name of your credentials file, for example, overcloudrc.

2. Check the current state of the node:

       $ openstack baremetal node show \
         -f value -c provision_state <node>

   - Replace <node> with the name or UUID of the node to clean.

3. If the node is not in the manageable state, then set it to manageable:

       $ openstack baremetal node manage <node>

4. Clean the node:

       $ openstack baremetal node clean <node> \
         --clean-steps '[{"interface": "deploy", "step": "<clean_mode>"}]'

   - Replace <node> with the name or UUID of the node to clean.

   - Replace <clean_mode> with the type of cleaning to perform on the node:

     - erase_devices: Performs a full clean.

     - erase_devices_metadata: Performs a metadata only clean.

5. Wait for the clean to complete, then check the status of the node:

   - manageable: The clean was successful, and the node is ready to provision.

   - clean failed: The clean was unsuccessful. Inspect the last_error field for the cause of failure.
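The --clean-steps value is a JSON list, and the shell quoting around it is easy to get wrong. The following Python sketch uses hypothetical helper names. It builds the value from the two clean modes described in this section, returns the clean command as an argument list so that no shell quoting is needed, and maps the resulting provision_state to the outcomes listed in the procedure. The in-progress states cleaning and clean wait are included as an addition to what this section describes.

```python
from __future__ import annotations

import json

# Clean modes described in this section, mapped to their clean step names.
CLEAN_STEPS = {
    "full": "erase_devices",
    "metadata": "erase_devices_metadata",
}


def clean_steps_json(mode: str) -> str:
    """Return the --clean-steps value for a full or metadata-only clean."""
    try:
        step = CLEAN_STEPS[mode]
    except KeyError:
        raise ValueError(
            f"unknown clean mode {mode!r}; expected one of {sorted(CLEAN_STEPS)}") from None
    return json.dumps([{"interface": "deploy", "step": step}])


def clean_command(node: str, mode: str) -> list[str]:
    """Build the 'openstack baremetal node clean' command as an argument list."""
    return ["openstack", "baremetal", "node", "clean", node,
            "--clean-steps", clean_steps_json(mode)]


def clean_outcome(provision_state: str) -> str:
    """Interpret the node's provision_state after a manual clean."""
    if provision_state == "manageable":
        return "succeeded"
    if provision_state == "clean failed":
        return "failed; inspect the last_error field"
    if provision_state in ("cleaning", "clean wait"):
        return "still running"
    return "unexpected state"


if __name__ == "__main__":
    print(clean_steps_json("metadata"))
    # [{"interface": "deploy", "step": "erase_devices_metadata"}]
```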

4.3. Creating the bare metal flavor and resource class

You must create a flavor and a resource class that you use to tag bare metal nodes for a particular workload.

Procedure

Source the overcloud credentials file:

$ source ~/overcloudrcCreate a new instance flavor for bare metal nodes:

(overcloud)$ openstack flavor create --id auto \ --ram <ram_size_mb> --disk <disk_size_gb> \ --vcpus <no_vcpus> baremetal-

Replace

<ram_size_mb>with the RAM of the bare metal node, in MB. -

Replace

<disk_size_gb>with the size of the disk on the bare metal node, in GB. Replace

<no_vcpus>with the number of CPUs on the bare metal node.NoteThese properties are not used for scheduling instances. However, the Compute scheduler does use the disk size to determine the root partition size.

-

Replace

Retrieve a list of your nodes to identify their UUIDs:

(overcloud)$ openstack baremetal node list

Tag each bare metal node with a custom bare metal resource class:

(overcloud)$ openstack baremetal node set \
  --resource-class baremetal.<CUSTOM> <node>

- Replace <CUSTOM> with a string that identifies the purpose of the resource class. For example, set it to GPU to create a custom GPU resource class that you can use to tag bare metal nodes that you want to designate for GPU workloads.
- Replace <node> with the ID of the bare metal node.

Associate the new instance flavor for bare metal nodes with the custom resource class:

(overcloud)$ openstack flavor set \
  --property resources:CUSTOM_BAREMETAL_<CUSTOM>=1 \
  baremetal

To determine the name of a custom resource class that corresponds to the resource class of a Bare Metal Provisioning service node, convert the resource class to uppercase, replace each punctuation mark with an underscore, and add the prefix CUSTOM_.

Note: A flavor can request only one instance of a bare metal resource class.
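The naming rule for custom resource classes is mechanical, so you can script it. The following shell sketch shows one way to apply it. to_custom_rc is a hypothetical helper, and it treats every character in the POSIX [:punct:] class as punctuation.

```shell
# Hypothetical helper: derive the Compute resource class name from a Bare
# Metal Provisioning service resource class: uppercase it, replace each
# punctuation character with an underscore, and add the CUSTOM_ prefix.
to_custom_rc() {
  printf 'CUSTOM_%s\n' \
    "$(printf '%s' "$1" | tr '[:lower:]' '[:upper:]' | tr '[:punct:]' '_')"
}

# Example: print the flavor property to use for the baremetal.GPU class.
rc=$(to_custom_rc baremetal.GPU)
echo "resources:${rc}=1"
```

For baremetal.GPU, the example prints resources:CUSTOM_BAREMETAL_GPU=1, which matches the CUSTOM_BAREMETAL_<CUSTOM> pattern used in the flavor command.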

Set the following flavor properties to prevent the Compute scheduler from using the bare metal flavor properties to schedule instances:

(overcloud)$ openstack flavor set \
  --property resources:VCPU=0 \
  --property resources:MEMORY_MB=0 \
  --property resources:DISK_GB=0 baremetal

Verify that the new flavor has the correct values:

(overcloud)$ openstack flavor list

4.4. Creating the bare metal images

An overcloud that includes the Bare Metal Provisioning service (ironic) requires two sets of images. During deployment, the Bare Metal Provisioning service boots bare metal nodes from the deploy image, and copies the user image onto nodes.

- The deploy image
  The Bare Metal Provisioning service uses the deploy image to boot the bare metal node and copy a user image onto the bare metal node. The deploy image consists of the kernel image and the ramdisk image.
- The user image
  The user image is the image that you deploy onto the bare metal node. The user image also has a kernel image and a ramdisk image, but it additionally contains a main image. The main image is either a root partition image or a whole-disk image.
  - A whole-disk image is an image that contains the partition table and boot loader. The Bare Metal Provisioning service does not control the subsequent reboot of a node deployed with a whole-disk image because the node supports local boot.
  - A root partition image contains only the root partition of the operating system. If you use a root partition, after the deploy image is loaded into the Image service, you can set the deploy image as the node boot image in the node properties. A subsequent reboot of the node uses netboot to pull down the user image.

The examples in this section use a root partition image to provision bare metal nodes.

4.4.1. Preparing the deploy images

You do not have to create the deploy image because it was already created when the undercloud deployed the overcloud. The deploy image consists of two images, the kernel image and the ramdisk image:

/tftpboot/agent.kernel

/tftpboot/agent.ramdisk

These images are often in the home directory, unless you have deleted them or unpacked them elsewhere. If they are not in the home directory and you still have the rhosp-director-images-ipa package installed, these images are in the /usr/share/rhosp-director-images/ironic-python-agent*.tar file.

Prerequisites

- A successful overcloud deployment that includes the Bare Metal Provisioning service. For more information, see Deploying an overcloud with the Bare Metal Provisioning service.

Procedure

Extract the images and upload them to the Image service:

$ openstack image create \
  --container-format aki \
  --disk-format aki \
  --public \
  --file ./tftpboot/agent.kernel bm-deploy-kernel
$ openstack image create \
  --container-format ari \
  --disk-format ari \
  --public \
  --file ./tftpboot/agent.ramdisk bm-deploy-ramdisk

4.4.2. Preparing the user image

The final image that you need is the user image that you deploy onto the bare metal node. User images also have a kernel and ramdisk, along with a main image. To create these images, you must first configure the disk image environment variables to suit your requirements.

4.4.3. Installing the user image

Configure the user image and then upload the image to the Image service (glance).

Prerequisites

- A successful overcloud deployment that includes the Bare Metal Provisioning service. For more information, see Deploying an overcloud with the Bare Metal Provisioning service.

Procedure

- Download the Red Hat Enterprise Linux KVM guest image from the Customer Portal.

Define DIB_LOCAL_IMAGE as the downloaded image:

$ export DIB_LOCAL_IMAGE=rhel-8.0-x86_64-kvm.qcow2

Set your registration information. If you use Red Hat Customer Portal, you must configure the following information:

$ export REG_USER='USER_NAME'
$ export REG_PASSWORD='PASSWORD'
$ export REG_AUTO_ATTACH=true
$ export REG_METHOD=portal
$ export https_proxy='IP_address:port'  # if applicable
$ export http_proxy='IP_address:port'   # if applicable

If you use Red Hat Satellite, you must configure the following information:

$ export REG_USER='USER_NAME'
$ export REG_PASSWORD='PASSWORD'
$ export REG_SAT_URL='<SATELLITE URL>'
$ export REG_ORG='<SATELLITE ORG>'
$ export REG_ENV='<SATELLITE ENV>'
$ export REG_METHOD=<METHOD>

If you have any offline repositories, you can define DIB_YUM_REPO_CONF as a local repository configuration:

$ export DIB_YUM_REPO_CONF=<path-to-local-repository-config-file>

Create the user images with the diskimage-builder tool:

$ export DIB_RELEASE=8
$ disk-image-create rhel baremetal -o rhel-image

This command extracts the kernel as rhel-image.vmlinuz and the initial ramdisk as rhel-image.initrd.

Upload the images to the Image service:

$ KERNEL_ID=$(openstack image create \
  --file rhel-image.vmlinuz --public \
  --container-format aki --disk-format aki \
  -f value -c id rhel-image.vmlinuz)
$ RAMDISK_ID=$(openstack image create \
  --file rhel-image.initrd --public \
  --container-format ari --disk-format ari \
  -f value -c id rhel-image.initrd)
$ openstack image create \
  --file rhel-image.qcow2 --public \
  --container-format bare \
  --disk-format qcow2 \
  --property kernel_id=$KERNEL_ID \
  --property ramdisk_id=$RAMDISK_ID \
  rhel-image
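You can wrap the three upload commands in one function that first checks the output files of disk-image-create and stops if any upload fails. This is a sketch only. upload_user_image is a hypothetical name, the function assumes that the openstack client is installed and overcloudrc is sourced, and it adds -f value -c id to the last command so that the ID of the main image is printed.

```shell
# Hypothetical helper: upload the kernel, ramdisk, and main image that
# disk-image-create produced, linking them through kernel_id/ramdisk_id.
# Usage: upload_user_image <basename>   (for example, rhel-image)
# Assumes the openstack CLI is on PATH and overcloudrc is sourced.
upload_user_image() {
  local base="$1" f kernel_id ramdisk_id
  # Fail early if any of the three expected files is missing.
  for f in "$base.vmlinuz" "$base.initrd" "$base.qcow2"; do
    [ -f "$f" ] || { echo "missing $f" >&2; return 1; }
  done
  kernel_id=$(openstack image create --file "$base.vmlinuz" --public \
    --container-format aki --disk-format aki \
    -f value -c id "$base.vmlinuz") || return 1
  ramdisk_id=$(openstack image create --file "$base.initrd" --public \
    --container-format ari --disk-format ari \
    -f value -c id "$base.initrd") || return 1
  # Print the ID of the main image so that callers can capture it.
  openstack image create --file "$base.qcow2" --public \
    --container-format bare --disk-format qcow2 \
    --property kernel_id="$kernel_id" \
    --property ramdisk_id="$ramdisk_id" \
    -f value -c id "$base"
}
```

For example, `IMAGE_ID=$(upload_user_image rhel-image)` uploads all three images and stores the ID of the main image for later use with openstack server create.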

4.5. Adding physical machines as bare metal nodes

Use one of the following methods to enroll a bare metal node:

- Prepare an inventory file with the node details, import the file into the Bare Metal Provisioning service, and make the nodes available.

- Register a physical machine as a bare metal node, and then manually add its hardware details and create ports for each of its Ethernet MAC addresses. You can perform these steps on any node that has your overcloudrc file.

4.5.1. Enrolling a bare metal node with an inventory file

Prepare an inventory file with the node details, import the file into the Bare Metal Provisioning service (ironic), and make the nodes available.

Prerequisites

- An overcloud deployment that includes the Bare Metal Provisioning service. For more information, see Deploying an overcloud with the Bare Metal Provisioning service.

Procedure

Create an inventory file, overcloud-nodes.yaml, that includes the node details. You can enroll multiple nodes with one file.

nodes:
  - name: node0
    driver: ipmi
    driver_info:
      ipmi_address: <ipmi_ip>
      ipmi_username: <user>
      ipmi_password: <password>
      [<property>: <value>]
    properties:
      cpus: <cpu_count>
      cpu_arch: <cpu_arch>
      memory_mb: <memory>
      local_gb: <root_disk>
      root_device:
        serial: <serial>
    ports:
      - address: <mac_address>

- Replace <ipmi_ip> with the address of the baseboard management controller (BMC) of the node.
- Replace <user> with your IPMI username.
- Replace <password> with your IPMI password.
- Optional: Replace <property>: <value> with an IPMI property that you want to configure, and the property value. For information on the available properties, see Intelligent Platform Management Interface (IPMI) power management driver.
- Replace <cpu_count> with the number of CPUs.
- Replace <cpu_arch> with the type of architecture of the CPUs.
- Replace <memory> with the amount of memory in MiB.
- Replace <root_disk> with the size of the root disk in GiB. Only required when the machine has multiple disks.
- Replace <serial> with the serial number of the disk that you want to use for deployment.
- Replace <mac_address> with the MAC address of the NIC used to PXE boot.
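When you enroll many nodes, you can generate the inventory file instead of writing it by hand. The following shell sketch prints one node entry in the same layout as the example inventory. node_entry is a hypothetical helper, and all values in the example call are illustrative placeholders. Values are inserted verbatim, so quote any value, such as a password, that contains YAML special characters.

```shell
# Hypothetical helper: print one node entry in the inventory layout above.
# Usage: node_entry <name> <ipmi_ip> <user> <password> <cpus> <arch> \
#                   <memory_mib> <root_gib> <disk_serial> <mac>
node_entry() {
  cat <<EOF
  - name: $1
    driver: ipmi
    driver_info:
      ipmi_address: $2
      ipmi_username: $3
      ipmi_password: $4
    properties:
      cpus: $5
      cpu_arch: $6
      memory_mb: $7
      local_gb: $8
      root_device:
        serial: $9
    ports:
      - address: ${10}
EOF
}

# Build a two-node inventory file; every value below is an example only.
{
  echo "nodes:"
  node_entry node0 192.0.2.10 admin secret 8 x86_64 16384 100 S1 52:54:00:aa:bb:01
  node_entry node1 192.0.2.11 admin secret 8 x86_64 16384 100 S2 52:54:00:aa:bb:02
} > overcloud-nodes.yaml
```

You can then import the generated overcloud-nodes.yaml file with the openstack baremetal create command in the same way as a hand-written file.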

Source the overcloudrc file:

$ source ~/overcloudrc

Import the inventory file into the Bare Metal Provisioning service:

$ openstack baremetal create overcloud-nodes.yaml

The nodes are now in the enroll state.

Specify the deploy kernel and deploy ramdisk on each node:

$ openstack baremetal node set <node> \
  --driver-info deploy_kernel=<kernel_file> \
  --driver-info deploy_ramdisk=<initramfs_file>

- Replace <node> with the name or ID of the node.
- Replace <kernel_file> with the path to the kernel image, for example, file:///var/lib/ironic/httpboot/agent.kernel.
- Replace <initramfs_file> with the path to the ramdisk (initramfs) image, for example, file:///var/lib/ironic/httpboot/agent.ramdisk.
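When you import several nodes at once, you can set the deploy images on all of them in one pass. This shell sketch assumes the openstack client is installed and overcloudrc is sourced. set_deploy_images is a hypothetical name. The function selects nodes with openstack baremetal node list --provision-state enroll, so run it before you move the nodes out of the enroll state.

```shell
# Hypothetical helper: set deploy_kernel and deploy_ramdisk on every node
# that is still in the enroll state, instead of one node at a time.
# Usage: set_deploy_images <kernel_url> <ramdisk_url>
# Assumes the openstack CLI is on PATH and ~/overcloudrc is sourced.
set_deploy_images() {
  local kernel="$1" ramdisk="$2" uuid
  # -f value -c UUID prints one node UUID per line.
  openstack baremetal node list --provision-state enroll -f value -c UUID |
  while read -r uuid; do
    [ -n "$uuid" ] || continue
    echo "updating $uuid"
    # Redirect stdin so that the command cannot consume the list being read.
    openstack baremetal node set "$uuid" \
      --driver-info deploy_kernel="$kernel" \
      --driver-info deploy_ramdisk="$ramdisk" < /dev/null
  done
}

# Example, using the paths from this procedure:
# set_deploy_images file:///var/lib/ironic/httpboot/agent.kernel \
#                   file:///var/lib/ironic/httpboot/agent.ramdisk
```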

Optional: Specify the IPMI cipher suite for each node:

$ openstack baremetal node set <node> \
  --driver-info ipmi_cipher_suite=<version>

- Replace <node> with the name or ID of the node.
- Replace <version> with the cipher suite version to use on the node. Set to one of the following valid values:
  - 3: The node uses the AES-128 with SHA1 cipher suite.
  - 17: The node uses the AES-128 with SHA256 cipher suite.

Set the provisioning state of the node to available:

$ openstack baremetal node manage <node>
$ openstack baremetal node provide <node>

The Bare Metal Provisioning service cleans the node if you enabled node cleaning.

Set the local boot option on the node:

$ openstack baremetal node set <node> --property capabilities="boot_option:local"

Check that the nodes are enrolled:

$ openstack baremetal node list

There might be a delay between enrolling a node and its state being shown.

4.5.2. Enrolling a bare-metal node manually

Register a physical machine as a bare metal node, then manually add its hardware details and create ports for each of its Ethernet MAC addresses. You can perform these steps on any node that has your overcloudrc file.

Prerequisites

- An overcloud deployment that includes the Bare Metal Provisioning service. For more information, see Deploying an overcloud with the Bare Metal Provisioning service.

- The driver for the new node must be enabled by using the IronicEnabledHardwareTypes parameter. For more information about supported drivers, see Bare metal drivers.

Procedure

- Log in to the undercloud host as the stack user.

Source the overcloud credentials file:

(undercloud)$ source ~/overcloudrc

Add a new node:

$ openstack baremetal node create --driver <driver_name> --name <node_name>

- Replace <driver_name> with the name of the driver, for example, ipmi.
- Replace <node_name> with the name of your new bare metal node.

Note the UUID that is assigned to the node when it is created.

Set the boot option to local for each registered node:

$ openstack baremetal node set \
  --property capabilities="boot_option:local" <node>

Replace <node> with the UUID of the bare metal node.

Specify the deploy kernel and deploy ramdisk for the node driver:

$ openstack baremetal node set <node> \
  --driver-info deploy_kernel=<kernel_file> \
  --driver-info deploy_ramdisk=<initramfs_file>

- Replace <node> with the ID of the bare metal node.
- Replace <kernel_file> with the path to the kernel image, for example, file:///var/lib/ironic/httpboot/agent.kernel.
- Replace <initramfs_file> with the path to the ramdisk (initramfs) image, for example, file:///var/lib/ironic/httpboot/agent.ramdisk.

Update the node properties to match the hardware specifications on the node:

$ openstack baremetal node set <node> \
  --property cpus=<cpu> \
  --property memory_mb=<ram> \
  --property local_gb=<disk> \
  --property cpu_arch=<arch>

- Replace <node> with the ID of the bare metal node.
- Replace <cpu> with the number of CPUs.
- Replace <ram> with the RAM in MB.
- Replace <disk> with the disk size in GB.
- Replace <arch> with the architecture type.

Optional: Specify the IPMI cipher suite for each node:

$ openstack baremetal node set <node> \
  --driver-info ipmi_cipher_suite=<version>

- Replace <node> with the ID of the bare metal node.
- Replace <version> with the cipher suite version to use on the node. Set to one of the following valid values:
  - 3: The node uses the AES-128 with SHA1 cipher suite.
  - 17: The node uses the AES-128 with SHA256 cipher suite.

Optional: Specify the IPMI details for each node:

$ openstack baremetal node set <node> \
  --driver-info <property>=<value>

- Replace <node> with the ID of the bare metal node.
- Replace <property> with the IPMI property that you want to configure. For information on the available properties, see Intelligent Platform Management Interface (IPMI) power management driver.
- Replace <value> with the property value.

Optional: If you have multiple disks, set the root device hints to inform the deploy ramdisk which disk to use for deployment:

$ openstack baremetal node set <node> \
  --property root_device='{"<property>": "<value>"}'

- Replace <node> with the ID of the bare metal node.
- Replace <property> and <value> with details about the disk that you want to use for deployment, for example, root_device='{"size": "128"}'. RHOSP supports the following properties:
  - model (String): Device identifier.
  - vendor (String): Device vendor.
  - serial (String): Disk serial number.
  - hctl (String): Host:Channel:Target:Lun for SCSI.
  - size (Integer): Size of the device in GB.
  - wwn (String): Unique storage identifier.
  - wwn_with_extension (String): Unique storage identifier with the vendor extension appended.
  - wwn_vendor_extension (String): Unique vendor storage identifier.
  - rotational (Boolean): True for a rotational device (HDD), otherwise false (SSD).
  - name (String): The name of the device, for example, /dev/sdb1. Use this property only for devices with persistent names.

Note: If you specify more than one property, the device must match all of those properties.
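Quoting JSON inside a shell argument is error-prone, so you might prefer to build the root_device value from key=value pairs. The following is a shell sketch. root_device_json is a hypothetical helper. It emits size as a JSON integer and rotational as a JSON boolean to match the property types listed above, emits every other hint as a string, and assumes that values do not contain double quotes.

```shell
# Hypothetical helper: build the JSON value for --property root_device
# from key=value arguments. size becomes a JSON integer, rotational a JSON
# boolean, and every other hint a JSON string.
root_device_json() {
  local pair key value item out=""
  for pair in "$@"; do
    key=${pair%%=*}
    value=${pair#*=}
    case "$key" in
      size)
        # Reject anything that is not a plain non-negative integer.
        case "$value" in ''|*[!0-9]*) echo "size must be an integer" >&2; return 1 ;; esac
        item="\"size\": $value" ;;
      rotational)
        case "$value" in true|false) ;; *) echo "rotational must be true or false" >&2; return 1 ;; esac
        item="\"rotational\": $value" ;;
      *)
        item="\"$key\": \"$value\"" ;;
    esac
    # Join items with ", " as in a JSON object.
    out="${out:+$out, }$item"
  done
  printf '{%s}\n' "$out"
}

# Example: a 128 GB disk with a known serial number.
# $ openstack baremetal node set <node> \
#     --property root_device="$(root_device_json serial=ABC123 size=128)"
```

Because the device must match every hint that you pass, combine only hints that you know are true for the target disk.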

Inform the Bare Metal Provisioning service of the node network card by creating a port with the MAC address of the NIC on the provisioning network:

$ openstack baremetal port create --node <node_uuid> <mac_address>

- Replace <node_uuid> with the unique ID of the bare metal node.
- Replace <mac_address> with the MAC address of the NIC used to PXE boot.

Validate the configuration of the node:

$ openstack baremetal node validate <node>
+------------+--------+---------------------------------------------+
| Interface  | Result | Reason                                      |
+------------+--------+---------------------------------------------+
| boot       | False  | Cannot validate image information for node  |
|            |        | a02178db-1550-4244-a2b7-d7035c743a9b        |
|            |        | because one or more parameters are missing  |
|            |        | from its instance_info. Missing are:        |
|            |        | ['ramdisk', 'kernel', 'image_source']       |
| console    | None   | not supported                               |
| deploy     | False  | Cannot validate image information for node  |
|            |        | a02178db-1550-4244-a2b7-d7035c743a9b        |
|            |        | because one or more parameters are missing  |
|            |        | from its instance_info. Missing are:        |
|            |        | ['ramdisk', 'kernel', 'image_source']       |
| inspect    | None   | not supported                               |
| management | True   |                                             |
| network    | True   |                                             |
| power      | True   |                                             |
| raid       | True   |                                             |
| storage    | True   |                                             |
+------------+--------+---------------------------------------------+

The Result column of the validation output indicates the following:

- False: The interface has failed validation. If the reason provided includes the missing instance_info parameters ['ramdisk', 'kernel', 'image_source'], this might be because the Compute service populates those parameters at the beginning of the deployment process, so they have not been set at this point. If you are using a whole-disk image, you might need to set only image_source to pass the validation.
- True: The interface has passed validation.
- None: The interface is not supported for your driver.
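When you validate many nodes, a small filter can separate real failures from the instance_info failures that are expected before deployment. The following is a shell sketch. failed_interfaces is a hypothetical helper that reads one interface per line as "<Interface> <Result> <Reason...>". Producing that input with `openstack baremetal node validate <node> -f value -c Interface -c Result -c Reason` is an assumption based on the column names in the table above.

```shell
# Hypothetical helper: list interfaces that failed validation.
# Input: one interface per line as "<Interface> <Result> <Reason...>".
# Failures whose reason mentions instance_info are flagged separately,
# because the Compute service fills those fields in at deployment time.
failed_interfaces() {
  awk '
    $2 != "False" { next }
    /instance_info/ { print $1 " (instance_info not populated yet)"; next }
    { print $1 }
  '
}

# Example with input shaped like the validation table:
printf 'boot False Missing are: instance_info\npower True\nconsole None not supported\n' |
  failed_interfaces
```

Interfaces that report None are skipped, because None means that the driver does not support the interface rather than that validation failed.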

4.5.3. Bare-metal node provisioning states

A bare-metal node transitions through several provisioning states during its lifetime. API requests and conductor events performed on the node initiate the transitions. There are two categories of provisioning states: "stable" and "in transition".

Use the following table to understand the provisioning states a node can be in, and the actions that are available for you to use to transition the node from one provisioning state to another.

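| State | Category | Description |
|---|---|---|
| enroll | Stable | The initial state of each node. For information on enrolling a node, see Adding physical machines as bare metal nodes. |
| verifying | In transition | The Bare Metal Provisioning service validates that it can manage the node by using the driver_info credentials. |
| manageable | Stable | The node is transitioned to the manageable state when the Bare Metal Provisioning service has verified that it can manage the node. |
| inspecting | In transition | The Bare Metal Provisioning service uses node introspection to update the hardware-derived node properties to reflect the current state of the hardware. |
| inspect wait | In transition | The provisioning state that indicates that an asynchronous inspection is in progress. If the node inspection is successful, the node transitions to the manageable state. |
| inspect failed | Stable | The provisioning state that indicates that the node inspection failed. |
| cleaning | In transition | Nodes in the cleaning state are being scrubbed and reprogrammed into a known configuration. |
| clean wait | In transition | Nodes in the clean wait state are being cleaned, and the Bare Metal Provisioning service is waiting for the ramdisk to boot or for an in-band clean step to finish. You can interrupt the cleaning process of a node in the clean wait state by aborting it. |
| available | Stable | After nodes have been successfully preconfigured and cleaned, they are moved into the available state and are ready to be provisioned. |
| deploying | In transition | Nodes in the deploying state are being prepared to run a workload. |
| wait call-back | In transition | Nodes in the wait call-back state are being deployed, and the Bare Metal Provisioning service is waiting for a task to complete before it moves on to the next deploy step. You can interrupt the deployment of a node in the wait call-back state by undeploying it. |
| deploy failed | Stable | The provisioning state that indicates that the node deployment failed. |
| active | Stable | Nodes in the active state have a workload running on them. |
| deleting | In transition | When a node is in the deleting state, the Bare Metal Provisioning service tears down the active workload and removes any configuration and resources that it added during deployment or rescue. |
| error | Stable | If a node deletion is unsuccessful, the node is moved into the error state. |
| adopting | In transition | You can use the adopt action to bring a node that already runs a workload under Bare Metal Provisioning service management, moving it from manageable to active without cleaning or deploying it again. |
| rescuing | In transition | Nodes in the rescuing state are being prepared to perform rescue operations. |
| rescue wait | In transition | Nodes in the rescue wait state are being rescued, and the Bare Metal Provisioning service is waiting for the ramdisk to boot or for in-band rescue tasks to finish. You can interrupt the rescue operation of a node in the rescue wait state by aborting it. |
| rescue failed | Stable | The provisioning state that indicates that the node rescue failed. |
| rescue | Stable | Nodes in the rescue state are running a rescue ramdisk. |
| unrescuing | In transition | Nodes in the unrescuing state are being prepared to transition from the rescue state to the active state. |
| unrescue failed | Stable | The provisioning state that indicates that the node unrescue operation failed. |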
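The two state categories are useful when scripting. A loop can keep waiting while a node is in transition and stop when the node reaches any stable state. The following shell sketch implements that idea. state_category and wait_until_stable are hypothetical helper names. The state strings are the values that openstack baremetal node show reports in its provision_state field, and the sketch assumes that the openstack client is installed and overcloudrc is sourced.

```shell
# Hypothetical helper: classify a provisioning state as stable or in
# transition. Prints "stable", "transition", or "unknown".
state_category() {
  case "$1" in
    enroll|manageable|"inspect failed"|available|"deploy failed"|active|error|\
    "rescue failed"|rescue|"unrescue failed")
      echo stable ;;
    verifying|inspecting|"inspect wait"|cleaning|"clean wait"|deploying|\
    "wait call-back"|deleting|adopting|rescuing|"rescue wait"|unrescuing)
      echo transition ;;
    *)
      echo unknown ;;
  esac
}

# Hypothetical helper: poll until the node leaves all in-transition
# states, then print the state that it settled in.
# Usage: wait_until_stable <node> [interval_seconds]
wait_until_stable() {
  local node="$1" interval="${2:-10}" state
  while :; do
    state=$(openstack baremetal node show "$node" -f value -c provision_state) || return 2
    if [ "$(state_category "$state")" != transition ]; then
      echo "$state"
      return 0
    fi
    sleep "$interval"
  done
}
```

The loop also stops on states that the function does not recognize, so a state added in a later release cannot make the loop wait forever.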

4.6. Configuring Redfish virtual media boot

This feature is available in this release as a Technology Preview, and therefore is not fully supported by Red Hat. It should only be used for testing, and should not be deployed in a production environment. For more information about Technology Preview features, see Scope of Coverage Details.

You can use Redfish virtual media boot to supply a boot image to the Baseboard Management Controller (BMC) of a node so that the BMC can insert the image into one of the virtual drives. The node can then boot from the virtual drive into the operating system that exists in the image.

Redfish hardware types support booting deploy, rescue, and user images over virtual media. The Bare Metal Provisioning service (ironic) uses the kernel and ramdisk images associated with a node to build bootable ISO images for UEFI or BIOS boot modes when the node is deployed. The major advantage of virtual media boot is that you can eliminate the TFTP image transfer phase of PXE and use HTTP GET, or other methods, instead.

4.6.1. Deploying a bare metal server with Redfish virtual media boot


To boot a node with the redfish hardware type over virtual media, set the boot interface to redfish-virtual-media and, for UEFI nodes, define the EFI System Partition (ESP) image. Then configure an enrolled node to use Redfish virtual media boot.

Prerequisites

- Redfish driver enabled in the enabled_hardware_types parameter in the undercloud.conf file.
- A bare metal node registered and enrolled.
- IPA (ironic-python-agent) and instance images in the Image service (glance).
- For UEFI nodes, an EFI System Partition (ESP) image available in the Image service (glance).
- A bare metal flavor.
- A network for cleaning and provisioning.
- Sushy library installed:

  $ sudo yum install sushy

Procedure

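Set the Bare Metal Provisioning service (ironic) boot interface to redfish-virtual-media:

$ openstack baremetal node set --boot-interface redfish-virtual-media $NODE_NAME

Replace $NODE_NAME with the name of the node.

For UEFI nodes, set the boot mode to uefi:

$ openstack baremetal node set --property capabilities="boot_mode:uefi" $NODE_NAME

Replace $NODE_NAME with the name of the node. This command replaces the whole capabilities value, so if the node already has other capabilities, such as boot_option:local, include them in the same comma-separated string, for example, capabilities="boot_option:local,boot_mode:uefi".

Note: For BIOS nodes, do not complete this step.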
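The capabilities property is a single comma-separated string of key:value pairs, and setting it with --property capabilities=... replaces the whole string. The following shell sketch changes one key while keeping the others. merge_capability is a hypothetical helper. You must supply the current capabilities string yourself, for example, copied from the properties shown by openstack baremetal node show.

```shell
# Hypothetical helper: set one key:value pair inside a capabilities
# string without dropping the other pairs.
# Usage: merge_capability <existing_capabilities> <key:value>
merge_capability() {
  local existing="$1" new="$2" key out="" pair
  key=${new%%:*}
  # Split the existing string on commas; keep every pair whose key differs.
  local IFS=,
  for pair in $existing; do
    [ -n "$pair" ] || continue
    [ "${pair%%:*}" = "$key" ] && continue
    out="${out:+$out,}$pair"
  done
  printf '%s\n' "${out:+$out,}$new"
}

# Example:
# $ openstack baremetal node set $NODE_NAME --property \
#     capabilities="$(merge_capability 'boot_option:local' 'boot_mode:uefi')"
```

If the existing string already contains the key, the old value is dropped and the new pair is appended at the end, so boot_mode:bios becomes boot_mode:uefi rather than appearing twice.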

For UEFI nodes, define the EFI System Partition (ESP) image:

$ openstack baremetal node set --driver-info bootloader=$ESP $NODE_NAME

Replace $ESP with the glance image UUID or URL for the ESP image, and replace $NODE_NAME with the name of the node.

Note: For BIOS nodes, do not complete this step.

Create a port on the bare metal node and associate the port with the MAC address of the NIC on the bare metal node:

$ openstack baremetal port create --pxe-enabled True --node $UUID $MAC_ADDRESS

Replace $UUID with the UUID of the bare metal node, and replace $MAC_ADDRESS with the MAC address of the NIC on the bare metal node.

Create the new bare metal server:

$ openstack server create \
  --flavor baremetal \
  --image $IMAGE \
  --network $NETWORK \
  test_instance

Replace $IMAGE and $NETWORK with the names of the image and network that you want to use.

4.7. Using host aggregates to separate physical and virtual machine provisioning

OpenStack Compute uses host aggregates to partition availability zones and to group together nodes that have specific shared properties. When an instance is provisioned, the Compute scheduler compares properties on the flavor with the properties assigned to host aggregates, and ensures that the instance is provisioned in the correct aggregate and on the correct host: either on a physical machine or as a virtual machine.

Complete the steps in this section to perform the following operations:

- Add the property baremetal to your flavors and set it to either true or false.
- Create separate host aggregates for bare metal hosts and compute nodes with a matching baremetal property. Nodes grouped into an aggregate inherit this property.

Prerequisites

- A successful overcloud deployment that includes the Bare Metal Provisioning service. For more information, see Deploying an overcloud with the Bare Metal Provisioning service.

Procedure

Set the baremetal property to true on the baremetal flavor:

$ openstack flavor set baremetal --property baremetal=true

Set the baremetal property to false on the flavors that virtual instances use:

$ openstack flavor set FLAVOR_NAME --property baremetal=false

Create a host aggregate called baremetal-hosts:

$ openstack aggregate create --property baremetal=true baremetal-hosts

Add each Controller node to the baremetal-hosts aggregate:

$ openstack aggregate add host baremetal-hosts HOSTNAME

Note: If you have created a composable role with the NovaIronic service, add all the nodes with this service to the baremetal-hosts aggregate. By default, only the Controller nodes have the NovaIronic service.

Create a host aggregate called virtual-hosts:

$ openstack aggregate create --property baremetal=false virtual-hosts

Add each Compute node to the virtual-hosts aggregate:

$ openstack aggregate add host virtual-hosts HOSTNAME

If you did not add the following Compute scheduler filter when you deployed the overcloud, add it now to the existing list under scheduler_default_filters in the /etc/nova/nova.conf file:

AggregateInstanceExtraSpecsFilter
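If you prefer to script the nova.conf change instead of editing the file by hand, the following shell sketch appends a filter to scheduler_default_filters only if it is not already listed. The function name add_scheduler_filter is hypothetical. It assumes that the option is written on a single key = value line, and it does not restart any service. Apply it to the nova.conf file that your scheduler actually reads, and note that director-managed configuration can be overwritten by a later overcloud deployment.

```shell
# Hypothetical helper: append a filter to scheduler_default_filters in a
# nova.conf-style file if it is not already listed. Edits the file in place.
# Usage: add_scheduler_filter <conf_file> <FilterName>
add_scheduler_filter() {
  local conf="$1" filter="$2" tmp
  tmp=$(mktemp) || return 1
  awk -v f="$filter" '
    /^[ \t]*scheduler_default_filters[ \t]*=/ && index($0, f) == 0 {
      sub(/[ \t]+$/, "")
      # An empty list gets no leading comma.
      if ($0 ~ /=$/) { $0 = $0 f } else { $0 = $0 "," f }
    }
    { print }
  ' "$conf" > "$tmp" && cat "$tmp" > "$conf"
  local rc=$?
  rm -f "$tmp"
  return "$rc"
}

# Example:
# add_scheduler_filter /etc/nova/nova.conf AggregateInstanceExtraSpecsFilter
```

Running the function twice is safe: the second run finds the filter on the line and leaves the file unchanged.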

Chapter 5. Administering bare metal nodes

After you deploy an overcloud that includes the Bare Metal Provisioning service (ironic), you can provision a physical machine on an enrolled bare metal node and launch bare metal instances in your overcloud.

Prerequisites

- A successful overcloud deployment that includes the Bare Metal Provisioning service. For more information, see Deploying an overcloud with the Bare Metal Provisioning service.

5.1. Launching bare metal instances

You can launch instances either from the command line or from the OpenStack dashboard.

Prerequisites

- A successful overcloud deployment that includes the Bare Metal Provisioning service. For more information, see Deploying an overcloud with the Bare Metal Provisioning service.

5.1.1. Launching instances with the command line interface

You can create a bare-metal instance by using the OpenStack Client CLI.

Prerequisites

- A successful overcloud deployment that includes the Bare Metal Provisioning service. For more information, see Deploying an overcloud with the Bare Metal Provisioning service.

Procedure

Configure the shell to access the Identity service (keystone) as the administrative user:

$ source ~/overcloudrc

Create your bare metal instance:

$ openstack server create \
  --nic net-id=<network_uuid> \
  --flavor baremetal \
  --image <image_uuid> \
  myBareMetalInstance

- Replace <network_uuid> with the unique identifier for the network that you created to use with the Bare Metal Provisioning service.
- Replace <image_uuid> with the unique identifier for the image that has the software profile that your instance requires.

Check the status of the instance:

$ openstack server list --name myBareMetalInstance

5.1.2. Launching instances with the dashboard

Use the dashboard graphical user interface to deploy a bare metal instance.

Prerequisites

- A successful overcloud deployment that includes the Bare Metal Provisioning service. For more information, see Deploying an overcloud with the Bare Metal Provisioning service.

Procedure

- Log in to the dashboard at http[s]://DASHBOARD_IP/dashboard.

- Click Project > Compute > Instances.
- Click Launch Instance.
  - In the Details tab, specify the Instance Name and select 1 for Count.
  - In the Source tab, select Image from Select Boot Source, then click the + (plus) symbol to select an operating system disk image. The image that you choose moves to Allocated.
  - In the Flavor tab, select baremetal.
  - In the Networks tab, use the + (plus) and - (minus) buttons to move required networks from Available to Allocated. Ensure that the shared network that you created for the Bare Metal Provisioning service is selected here.
  - If you want to assign the instance to a security group, in the Security Groups tab, use the arrow to move the group to Allocated.
- Click Launch Instance.

5.2. Configuring port groups in the Bare Metal Provisioning service

Port group functionality for bare metal nodes is available in this release as a Technology Preview, and therefore is not fully supported by Red Hat. It should be used only for testing, and should not be deployed in a production environment. For more information about Technology Preview features, see Scope of Coverage Details.

Port groups (bonds) provide a method to aggregate multiple network interfaces into a single ‘bonded’ interface. Port group configuration always takes precedence over an individual port configuration.

If a port group has a physical network, then all the ports in that port group must have the same physical network. The Bare Metal Provisioning service uses configdrive to support configuration of port groups in the instances.

Bare Metal Provisioning service API version 1.26 supports port group configuration.

Prerequisites

- A successful overcloud deployment that includes the Bare Metal Provisioning service. For more information, see Deploying an overcloud with the Bare Metal Provisioning service.

5.2.1. Configuring port groups on switches manually

To configure port groups in a bare metal deployment, you must configure the port groups on the switches manually. Ensure that the mode and properties on the switch correspond to the mode and properties on the bare metal side, because the naming can vary on the switch.

You cannot use port groups for provisioning and cleaning if you need to boot a deployment using iPXE.

With port group fallback, all the ports in a port group can fall back to individual switch ports when a connection fails. Depending on whether a switch supports port group fallback, use the --support-standalone-ports or --unsupport-standalone-ports option.

Prerequisites

- A successful overcloud deployment that includes the Bare Metal Provisioning service. For more information, see Deploying an overcloud with the Bare Metal Provisioning service.

5.2.2. Configuring port groups in the Bare Metal Provisioning service

Create a port group to aggregate multiple network interfaces into a single bonded interface.

Prerequisites

- A successful overcloud deployment that includes the Bare Metal Provisioning service. For more information, see Deploying an overcloud with the Bare Metal Provisioning service.

Procedure

Create a port group by specifying the node to which it belongs, its name, address, mode, properties, and whether it supports fallback to standalone ports:

# openstack baremetal port group create \
  --node NODE_UUID --name NAME --address MAC_ADDRESS --mode MODE \
  --property miimon=100 --property xmit_hash_policy="layer2+3" \
  --support-standalone-ports

You can also use the openstack baremetal port group set command to update a port group.

If you do not specify an address, the deployed instance port group address is the same as the OpenStack Networking port. If you do not attach the neutron port, the port group configuration fails.

During interface attachment, port groups have a higher priority than the ports, so they are used first. Currently, it is not possible to specify whether a port group or a port is desired in an interface attachment request. Port groups that do not have any ports are ignored.

Note: You must configure port groups manually in standalone mode, either in the image or by generating the configdrive and adding it to the node’s instance_info. Ensure that you have cloud-init version 0.7.7 or later for the port group configuration to work.

Associate a port with a port group:

During port creation:

# openstack baremetal port create --node NODE_UUID --address MAC_ADDRESS --port-group test

During port update:

# openstack baremetal port set PORT_UUID --port-group PORT_GROUP_UUID

Boot an instance by providing an image that has cloud-init or supports bonding.

To check if the port group is configured properly, run the following command on the instance:

# cat /proc/net/bonding/bondX

Here, X is a number that cloud-init generates automatically for each configured port group, starting with 0 and incremented by one for each configured port group.
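When an instance has several bonds, reading each file by hand is tedious. The following shell sketch summarizes them. bond_status is a hypothetical helper that you run inside the instance. It assumes the usual layout of the kernel bonding driver status files, in which the first "MII Status:" line describes the bond itself and later ones describe its member interfaces.

```shell
# Hypothetical helper: report the MII status of every bond that the kernel
# bonding driver exposes under /proc/net/bonding (run inside the instance).
# Usage: bond_status [directory]   (defaults to /proc/net/bonding)
bond_status() {
  local dir="${1:-/proc/net/bonding}" f status
  [ -d "$dir" ] || { echo "no bonds configured" >&2; return 1; }
  for f in "$dir"/bond*; do
    [ -e "$f" ] || continue
    # The first "MII Status:" line describes the bond; later lines
    # describe the member interfaces.
    status=$(awk -F': ' '/^MII Status:/ { print $2; exit }' "$f")
    echo "$(basename "$f"): ${status:-unknown}"
  done
}
```

A healthy bond prints a line such as bond0: up. If the function reports no bonds, check that the image includes cloud-init or that bonding is configured in the image.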

5.3. Determining the host to IP address mapping

Use the following commands to determine which IP addresses are assigned to which host and bare metal node. With these commands, you can view the host to IP mapping from the undercloud without accessing the hosts directly.

Prerequisites

- A successful overcloud deployment that includes the Bare Metal Provisioning service. For more information, see Deploying an overcloud with the Bare Metal Provisioning service.

Procedure

Run the following command to display the IP address for each host:

(undercloud) [stack@host01 ~]$ openstack stack output show overcloud HostsEntry --max-width 80
+--------------+---------------------------------------------------------------+
| Field        | Value                                                         |
+--------------+---------------------------------------------------------------+
| description  | The content that should be appended to your /etc/hosts if you |
|              | want to get                                                   |
|              | hostname-based access to the deployed nodes (useful for       |
|              | testing without                                               |
|              | setting up a DNS).                                            |
|              |                                                               |
| output_key   | HostsEntry                                                    |
| output_value | 172.17.0.10 overcloud-controller-0.localdomain overcloud-     |
|              | controller-0                                                  |
|              | 10.8.145.18 overcloud-controller-0.external.localdomain       |
|              | overcloud-controller-0.external                               |
|              | 172.17.0.10 overcloud-controller-0.internalapi.localdomain    |
|              | overcloud-controller-0.internalapi                            |
|              | 172.18.0.15 overcloud-controller-0.storage.localdomain        |
|              | overcloud-controller-0.storage                                |
|              | 172.21.2.12 overcloud-controller-0.storagemgmt.localdomain    |
|              | overcloud-controller-0.storagemgmt                            |
|              | 172.16.0.15 overcloud-controller-0.tenant.localdomain         |
|              | overcloud-controller-0.tenant                                 |
|              | 10.8.146.13 overcloud-controller-0.management.localdomain     |
|              | overcloud-controller-0.management                             |
|              | 10.8.146.13 overcloud-controller-0.ctlplane.localdomain       |
|              | overcloud-controller-0.ctlplane                               |
|              |                                                               |
|              | 172.17.0.21 overcloud-compute-0.localdomain overcloud-        |
|              | compute-0                                                     |
|              | 10.8.146.12 overcloud-compute-0.external.localdomain          |
|              | overcloud-compute-0.external                                  |
|              | 172.17.0.21 overcloud-compute-0.internalapi.localdomain       |
|              | overcloud-compute-0.internalapi                               |
|              | 172.18.0.20 overcloud-compute-0.storage.localdomain           |
|              | overcloud-compute-0.storage                                   |
|              | 10.8.146.12 overcloud-compute-0.storagemgmt.localdomain       |
|              | overcloud-compute-0.storagemgmt                               |
|              | 172.16.0.16 overcloud-compute-0.tenant.localdomain overcloud- |
|              | compute-0.tenant                                              |
|              | 10.8.146.12 overcloud-compute-0.management.localdomain        |
|              | overcloud-compute-0.management                                |
|              | 10.8.146.12 overcloud-compute-0.ctlplane.localdomain          |
|              | overcloud-compute-0.ctlplane                                  |
|              |                                                               |
|              |                                                               |
|              |                                                               |
|              |                                                               |
|              | 10.8.145.16 overcloud.localdomain                             |
|              | 10.8.146.7 overcloud.ctlplane.localdomain                     |
|              | 172.17.0.19 overcloud.internalapi.localdomain                 |
|              | 172.18.0.19 overcloud.storage.localdomain                     |
|              | 172.21.2.16 overcloud.storagemgmt.localdomain                 |
+--------------+---------------------------------------------------------------+

To filter a particular host, run the following command:

(undercloud) [stack@host01 ~]$ openstack stack output show overcloud HostsEntry -c output_value -f value | grep overcloud-controller-0
172.17.0.12 overcloud-controller-0.localdomain overcloud-controller-0
10.8.145.18 overcloud-controller-0.external.localdomain overcloud-controller-0.external
172.17.0.12 overcloud-controller-0.internalapi.localdomain overcloud-controller-0.internalapi
172.18.0.12 overcloud-controller-0.storage.localdomain overcloud-controller-0.storage
172.21.2.13 overcloud-controller-0.storagemgmt.localdomain overcloud-controller-0.storagemgmt
172.16.0.19 overcloud-controller-0.tenant.localdomain overcloud-controller-0.tenant
10.8.146.13 overcloud-controller-0.management.localdomain overcloud-controller-0.management
10.8.146.13 overcloud-controller-0.ctlplane.localdomain overcloud-controller-0.ctlplane

To map the hosts to bare metal nodes, run the following command:

(undercloud) [stack@host01 ~]$ openstack baremetal node list --fields uuid name instance_info -f json
[
  {
    "UUID": "c0d2568e-1825-4d34-96ec-f08bbf0ba7ae",
    "Instance Info": {
      "root_gb": "40",
      "display_name": "overcloud-compute-0",
      "image_source": "24a33990-e65a-4235-9620-9243bcff67a2",
      "capabilities": "{\"boot_option\": \"local\"}",
      "memory_mb": "4096",
      "vcpus": "1",
      "local_gb": "557",
      "configdrive": "******",
      "swap_mb": "0",
      "nova_host_id": "host01.lab.local"
    },
    "Name": "host2"
  },
  {
    "UUID": "8c3faec8-bc05-401c-8956-99c40cdea97d",
    "Instance Info": {
      "root_gb": "40",
      "display_name": "overcloud-controller-0",
      "image_source": "24a33990-e65a-4235-9620-9243bcff67a2",
      "capabilities": "{\"boot_option\": \"local\"}",
      "memory_mb": "4096",
      "vcpus": "1",
      "local_gb": "557",
      "configdrive": "******",
      "swap_mb": "0",
      "nova_host_id": "host01.lab.local"
    },
    "Name": "host3"
  }
]
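The grep filter in the host filtering step matches substrings, so a pattern such as overcloud-controller-1 would also match overcloud-controller-10. The following sketch is a hypothetical shell function, host_ips, that keeps only the entries whose fully qualified name equals the host name or starts with the host name followed by a dot. It assumes that the HostsEntry value uses the /etc/hosts layout shown above: IP address, FQDN, short name.

```shell
#!/usr/bin/env bash
# host_ips HOST (hypothetical helper): read HostsEntry lines in /etc/hosts
# format on stdin and print "IP FQDN" for every entry that belongs to HOST.
# The FQDN must equal HOST or start with "HOST.", so overcloud-controller-1
# does not also match overcloud-controller-10.
host_ips() {
  awk -v host="$1" '{
    if ($2 == host || index($2, host ".") == 1) print $1, $2
  }'
}
```

Example usage on the undercloud:

(undercloud) [stack@host01 ~]$ openstack stack output show overcloud HostsEntry -c output_value -f value | host_ips overcloud-controller-0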

5.4. Attaching and detaching virtual network interfaces

The Bare Metal Provisioning service has an API that you can use to manage the mapping between virtual network interfaces, such as the ports in the OpenStack Networking service (neutron), and your physical network interfaces (NICs). You can configure these interfaces for each Bare Metal Provisioning node to set the virtual network interface (VIF) to physical network interface (PIF) mapping logic. To configure the interfaces, use the openstack baremetal node vif* commands.
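As an illustration of the vif* commands, the following sketch is a hypothetical helper, vif_replace, that detaches one VIF from a node, attaches another, and lists the result by using openstack baremetal node vif detach, vif attach, and vif list. It assumes a node that the Compute service does not manage; for an instance that the Compute service manages, the procedure in this section uses openstack server remove port and openstack server add port instead. The run wrapper and the DRY_RUN variable are assumptions for illustration: by default the commands are only printed.

```shell
#!/usr/bin/env bash
# run (hypothetical wrapper): print the command when DRY_RUN=1 (the default),
# otherwise execute it.
run() {
  if [ "${DRY_RUN:-1}" = "1" ]; then
    echo "$*"
  else
    "$@"
  fi
}

# vif_replace NODE OLD_VIF NEW_VIF (hypothetical helper): swap the VIF that is
# attached to a bare metal node, then list the VIFs to confirm the change.
vif_replace() {
  local node=$1 old_vif=$2 new_vif=$3
  run openstack baremetal node vif detach "$node" "$old_vif"
  run openstack baremetal node vif attach "$node" "$new_vif"
  run openstack baremetal node vif list "$node"
}
```

For example, vif_replace baremetal-0 4475bc5a-6f6e-466d-bcb6-6c2dce0fba16 6181c089-7e33-4f1c-b8fe-2523ff431ffc prints the three commands in order; run it with DRY_RUN=0 to execute them.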

Prerequisites

- A successful overcloud deployment that includes the Bare Metal Provisioning service. For more information, see Deploying an overcloud with the Bare Metal Provisioning service.

Procedure

List the VIF IDs currently connected to the bare metal node:

$ openstack baremetal node vif list baremetal-0
+--------------------------------------+
| ID                                   |
+--------------------------------------+
| 4475bc5a-6f6e-466d-bcb6-6c2dce0fba16 |
+--------------------------------------+

After the VIF is attached, the Bare Metal Provisioning service updates the virtual port in the OpenStack Networking service with the actual MAC address of the physical port. Check this port address:

$ openstack port show 4475bc5a-6f6e-466d-bcb6-6c2dce0fba16 -c mac_address -c fixed_ips
+-------------+-----------------------------------------------------------------------------+
| Field       | Value                                                                       |
+-------------+-----------------------------------------------------------------------------+
| fixed_ips   | ip_address='192.168.24.9', subnet_id='1d11c677-5946-4733-87c3-23a9e06077aa' |
| mac_address | 00:2d:28:2f:8d:95                                                           |
+-------------+-----------------------------------------------------------------------------+

Create a new port on the network where you created the baremetal-0 node:

$ openstack port create --network baremetal --fixed-ip ip-address=192.168.24.24 baremetal-0-extra

Remove a port from the instance:

$ openstack server remove port overcloud-baremetal-0 4475bc5a-6f6e-466d-bcb6-6c2dce0fba16

Check that the IP address no longer exists on the list:

$ openstack server list

Check if there are VIFs attached to the node:

$ openstack baremetal node vif list baremetal-0
$ openstack port list

Add the newly created port:

$ openstack server add port overcloud-baremetal-0 baremetal-0-extra

Verify that the server list shows the new IP address:

$ openstack server list
+--------------------------------------+-------------------------+--------+------------------------+------------------------------+---------+
| ID                                   | Name                    | Status | Networks               | Image                        | Flavor  |
+--------------------------------------+-------------------------+--------+------------------------+------------------------------+---------+
| 53095a64-1646-4dd1-bbf3-b51cbcc38789 | overcloud-controller-2  | ACTIVE | ctlplane=192.168.24.7  | overcloud-hardened-uefi-full | control |
| 3a1bc89c-5d0d-44c7-a569-f2a3b4c73d65 | overcloud-controller-0  | ACTIVE | ctlplane=192.168.24.8  | overcloud-hardened-uefi-full | control |
| 6b01531a-f55d-40e9-b3a2-6d02be0b915b | overcloud-controller-1  | ACTIVE | ctlplane=192.168.24.16 | overcloud-hardened-uefi-full | control |
| c61cc52b-cc48-4903-a971-073c60f53091 | overcloud-baremetal-0   | ACTIVE | ctlplane=192.168.24.24 | overcloud-hardened-uefi-full | compute |
+--------------------------------------+-------------------------+--------+------------------------+------------------------------+---------+

Check that the VIF ID is the UUID of the new port:

$ openstack baremetal node vif list baremetal-0
+--------------------------------------+
| ID                                   |
+--------------------------------------+
| 6181c089-7e33-4f1c-b8fe-2523ff431ffc |
+--------------------------------------+

Check that the OpenStack Networking port MAC address is updated and matches one of the Bare Metal Provisioning service ports:

$ openstack port show 6181c089-7e33-4f1c-b8fe-2523ff431ffc -c mac_address -c fixed_ips
+-------------+------------------------------------------------------------------------------+
| Field       | Value                                                                        |
+-------------+------------------------------------------------------------------------------+
| fixed_ips   | ip_address='192.168.24.24', subnet_id='1d11c677-5946-4733-87c3-23a9e06077aa' |
| mac_address | 00:2d:28:2f:8d:95                                                            |
+-------------+------------------------------------------------------------------------------+

Reboot the bare metal node so that it recognizes the new IP address:

$ openstack server reboot overcloud-baremetal-0

After you detach or attach interfaces, the bare metal OS removes, adds, or modifies the network interfaces that have changed. When you replace a port, a DHCP request obtains the new IP address, but this might take some time because the old DHCP lease is still valid. To initiate these changes immediately, reboot the bare metal host.
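The main steps of this port replacement procedure can be collected into one script. This is a minimal sketch: the variable names, the run wrapper, and the DRY_RUN switch are hypothetical, and the default values are the example names from this procedure. With DRY_RUN left at 1, the script prints the commands in order so that you can review them; set DRY_RUN=0 to run them. The script omits the intermediate verification steps, so still check the server list, VIF list, and port MAC address as described above.

```shell
#!/usr/bin/env bash
# Example values from this procedure; override them through the environment.
SERVER=${SERVER:-overcloud-baremetal-0}
NODE=${NODE:-baremetal-0}
NETWORK=${NETWORK:-baremetal}
NEW_IP=${NEW_IP:-192.168.24.24}
NEW_PORT=${NEW_PORT:-baremetal-0-extra}
OLD_PORT=${OLD_PORT:-4475bc5a-6f6e-466d-bcb6-6c2dce0fba16}

# Hypothetical wrapper: print the command when DRY_RUN=1 (the default),
# otherwise execute it.
run() {
  if [ "${DRY_RUN:-1}" = "1" ]; then
    echo "$*"
  else
    "$@"
  fi
}

swap_port() {
  # Create the replacement port with a fixed IP address.
  run openstack port create --network "$NETWORK" --fixed-ip "ip-address=$NEW_IP" "$NEW_PORT"
  # Detach the old port from the instance and attach the new one.
  run openstack server remove port "$SERVER" "$OLD_PORT"
  run openstack server add port "$SERVER" "$NEW_PORT"
  # Confirm that the node now lists the new VIF.
  run openstack baremetal node vif list "$NODE"
  # Reboot so that the bare metal host picks up the new address immediately.
  run openstack server reboot "$SERVER"
}

swap_port
```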

5.5. Configuring notifications for the Bare Metal Provisioning service

You can configure the Bare Metal Provisioning service (ironic) to emit notifications for different events that occur within the service. External services can use these notifications for billing, for monitoring a data store, and for other purposes. To enable notifications for the Bare Metal Provisioning service, you must set the following options in your ironic.conf configuration file.

Prerequisites

- A successful overcloud deployment that includes the Bare Metal Provisioning service. For more information, see Deploying an overcloud with the Bare Metal Provisioning service.

Procedure

- The notification_level option in the [DEFAULT] section determines the minimum priority level for which notifications are sent. You can set this option to debug, info, warning, error, or critical. If the option is set to warning, all notifications with priority level warning, error, or critical are sent, but not notifications with priority level debug or info. If this option is not set, no notifications are sent. Each notification is emitted at a predefined priority level.

- The transport_url option in the [oslo_messaging_notifications] section determines the message bus used when sending notifications. If this option is not set, the transport that is used for RPC is used by default.
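The threshold rule for notification_level can be expressed as a small shell function. notifications_sent is a hypothetical helper for illustration only: it does not read ironic.conf; it only shows which priorities pass for a given value, assuming the ascending order debug, info, warning, error, critical described above.

```shell
#!/usr/bin/env bash
# notifications_sent LEVEL (hypothetical helper): print the priorities that are
# emitted when notification_level is set to LEVEL.
notifications_sent() {
  local wanted=${1:-} level found=0 out=""
  # If notification_level is not set, no notifications are sent.
  if [ -z "$wanted" ]; then
    return 0
  fi
  # Priorities in ascending order: every level at or above the threshold is sent.
  for level in debug info warning error critical; do
    if [ "$level" = "$wanted" ]; then
      found=1
    fi
    if [ "$found" -eq 1 ]; then
      out="${out:+$out }$level"
    fi
  done
  if [ "$found" -eq 0 ]; then
    echo "invalid notification_level: $wanted" >&2
    return 1
  fi
  echo "$out"
}
```

For example, notifications_sent warning prints warning error critical, which matches the behavior described for the warning level.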

All notifications are emitted on the ironic_versioned_notifications topic in the message bus. Generally, each type of message that traverses the message bus is associated with a topic that describes the contents of the message.
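On an overcloud, director generates ironic.conf, so manual edits on the nodes can be overwritten when you redeploy. One way to set notification_level persistently is hieradata in an environment file. The following sketch writes such a file. It assumes that the ironic::config::ironic_config hash from the puppet-ironic module is available in your release; verify this before you use it. The file name ironic-notifications.yaml and the info level are examples. Because transport_url is omitted, the RPC transport is reused.

```shell
#!/usr/bin/env bash
# Example threshold; override through the environment if needed.
NOTIFICATION_LEVEL=${NOTIFICATION_LEVEL:-info}

# Write a director environment file that sets [DEFAULT]/notification_level in
# ironic.conf through hieradata (assumes puppet-ironic's ironic::config class).
cat > ironic-notifications.yaml <<EOF
parameter_defaults:
  ExtraConfig:
    ironic::config::ironic_config:
      DEFAULT/notification_level:
        value: ${NOTIFICATION_LEVEL}
EOF
```

Include the file with the -e option in your openstack overcloud deploy command. If another environment file already sets ExtraConfig, add the key to that file instead, because a later definition of the parameter replaces the earlier one.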

5.6. Configuring automatic power fault recovery

The Bare Metal Provisioning service (ironic) has a string field, fault, that records power, cleaning, and rescue abort failures for nodes.

| Fault | Description |
|---|---|
| power failure | The node is in maintenance mode due to power sync failures that exceed the maximum number of retries. |
| clean failure | The node is in maintenance mode due to the failure of a cleaning operation. |
| rescue abort failure | The node is in maintenance mode due to the failure of a cleaning operation during rescue abort. |
| none | There is no fault present. |

The conductor checks the value of this field periodically. If the conductor detects a power failure state and can successfully restore power to the node, the node is removed from maintenance mode and restored to operation.

If the operator places a node in maintenance mode manually, the conductor does not automatically remove the node from maintenance mode.
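The fault values and recovery rules above can be summarized in a small decision helper. recovery_hint is hypothetical: it maps a fault value to the expected recovery path, treating only power failure as recovered automatically, as described above. The usage example assumes that your client version shows the fault field in openstack baremetal node show output.

```shell
#!/usr/bin/env bash
# recovery_hint FAULT (hypothetical helper): summarize whether the conductor
# recovers a node automatically for the given fault value.
recovery_hint() {
  case "$1" in
    "power failure")
      echo "automatic: the conductor retries power sync and clears maintenance mode on success" ;;
    "clean failure"|"rescue abort failure")
      echo "manual: investigate and resolve the failure; the conductor does not clear this fault" ;;
    ""|none|None)
      echo "no fault recorded" ;;
    *)
      echo "unknown fault: $1"
      return 1 ;;
  esac
}
```

Example usage:

$ recovery_hint "$(openstack baremetal node show baremetal-0 -f value -c fault)"

If you placed the node in maintenance mode manually, remove it from maintenance mode yourself, for example with openstack baremetal node maintenance unset baremetal-0.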

The default interval is 300 seconds. However, you can configure this interval with director by using hieradata.

Prerequisites

- A successful overcloud deployment that includes the Bare Metal Provisioning service. For more information, see Deploying an overcloud with the Bare Metal Provisioning service.

Procedure

Include the following hieradata to configure a custom recovery interval:

ironic::conductor::power_failure_recovery_interval

To disable automatic power fault recovery, set the value to 0.
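The hieradata key takes an interval in seconds. The following sketch writes an environment file that sets it. The file name ironic-power-recovery.yaml and the value 600 are examples, and ExtraConfig is one of several hieradata parameters that you could use. Set INTERVAL=0 when you run it to disable automatic power fault recovery.

```shell
#!/usr/bin/env bash
# Recovery interval in seconds (example value); 0 disables automatic recovery.
INTERVAL=${INTERVAL:-600}

# Write a director environment file that passes the interval as hieradata.
cat > ironic-power-recovery.yaml <<EOF
parameter_defaults:
  ExtraConfig:
    ironic::conductor::power_failure_recovery_interval: ${INTERVAL}
EOF
```

Include the file with the -e option in your openstack overcloud deploy command, together with your other environment files. If another environment file already sets ExtraConfig, add the key to that file instead, because a later definition of the parameter replaces the earlier one.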

5.7. Introspecting overcloud nodes

Perform introspection of overcloud nodes to identify and store the specification of your nodes in director.