Network Functions Virtualization Planning and Configuration Guide

Planning and Configuring the Network Functions Virtualization (NFV) OpenStack Deployment

Abstract

Providing feedback on Red Hat documentation

We appreciate your input on our documentation. Tell us how we can make it better.

Providing documentation feedback in Jira

Use the Create Issue form to provide feedback on the documentation. The Jira issue will be created in the Red Hat OpenStack Platform Jira project, where you can track the progress of your feedback.

- Ensure that you are logged in to Jira. If you do not have a Jira account, create an account to submit feedback.

- Click the following link to open the Create Issue page: Create Issue

- Complete the Summary and Description fields. In the Description field, include the documentation URL, chapter or section number, and a detailed description of the issue. Do not modify any other fields in the form.

- Click Create.

Chapter 1. Understanding Red Hat Network Functions Virtualization (NFV)

Network Functions Virtualization (NFV) is a software-based solution that helps Communication Service Providers (CSPs) move beyond traditional, proprietary hardware to achieve greater efficiency and agility while reducing operational costs.

An NFV environment allows for IT and network convergence by providing a virtualized infrastructure that uses standard virtualization technologies, running on standard hardware devices such as switches, routers, and storage, to virtualize network functions (VNFs). The management and orchestration logic deploys and sustains these services. NFV also includes systems administration, automation, and life-cycle management, which reduce the manual work necessary.

1.1. Advantages of NFV

The main advantages of implementing network functions virtualization (NFV) are as follows:

- Accelerates the time-to-market by allowing you to quickly deploy and scale new networking services to address changing demands.

- Supports innovation by enabling service developers to self-manage their resources and prototype using the same platform that will be used in production.

- Addresses customer demands in hours or minutes instead of weeks or days, without sacrificing security or performance.

- Reduces capital expenditure because it uses commodity-off-the-shelf hardware instead of expensive tailor-made equipment.

- Uses streamlined operations and automation that optimize day-to-day tasks to improve employee productivity and reduce operational costs.

1.2. Supported Configurations for NFV Deployments

You can use the Red Hat OpenStack Platform director toolkit to isolate specific network types, for example, external, project, internal API, and so on. You can deploy a network on a single network interface, or distributed over a multiple-host network interface. With Open vSwitch you can create bonds by assigning multiple interfaces to a single bridge. Configure network isolation in a Red Hat OpenStack Platform installation with template files. If you do not provide template files, the service networks deploy on the provisioning network.

There are two types of template configuration files:

- network-environment.yaml

  This file contains network details, such as subnets and IP address ranges, for the overcloud nodes. This file also contains the different settings that override the default parameter values for various scenarios.

- Host network templates, for example, compute.yaml and controller.yaml

  These templates define the network interface configuration for the overcloud nodes. The values of the network details are provided by the network-environment.yaml file.

These heat template files are located at /usr/share/openstack-tripleo-heat-templates/ on the undercloud node. For samples of these heat template files for NFV, see Sample DPDK SR-IOV YAML files.

The Hardware requirements and Software requirements sections provide more details on how to plan and configure the heat template files for NFV using the Red Hat OpenStack Platform director.

You can edit YAML files to configure NFV. For an introduction to the YAML file format, see YAML in a Nutshell.

- Data Plane Development Kit (DPDK) and Single Root I/O Virtualization (SR-IOV)

  Red Hat OpenStack Platform (RHOSP) supports NFV deployments with the inclusion of automated OVS-DPDK and SR-IOV configuration.

  Important: Red Hat does not support the use of OVS-DPDK for non-NFV workloads. If you need OVS-DPDK functionality for non-NFV workloads, contact your Technical Account Manager (TAM) or open a customer service request case to discuss a Support Exception and other options. To open a customer service request case, go to Create a case and choose Account > Customer Service Request.

- Hyper-converged Infrastructure (HCI)

  You can colocate the Compute sub-system with the Red Hat Ceph Storage nodes. This hyper-converged model delivers lower cost of entry, smaller initial deployment footprints, maximized capacity utilization, and more efficient management in NFV use cases. For more information about HCI, see the Hyperconverged Infrastructure Guide.

- Composable roles

  You can use composable roles to create custom deployments. Composable roles allow you to add or remove services from each role. For more information about composable roles, see Composable services and custom roles.

- Open vSwitch (OVS) with LACP

  As of OVS 2.9, LACP with OVS is fully supported. This is not recommended for OpenStack control plane traffic, as OVS or OpenStack Networking interruptions might interfere with management. For more information, see Open vSwitch (OVS) bonding options.

- OVS Hardware offload

  Red Hat OpenStack Platform supports, with limitations, the deployment of OVS hardware offload. For information about deploying OVS with hardware offload, see Configuring OVS hardware offload.

- Open Virtual Network (OVN)

  The following NFV OVN configurations are available in RHOSP 16.1.4:

1.3. NFV data plane connectivity

With the introduction of NFV, more networking vendors are starting to implement their traditional devices as VNFs. While the majority of networking vendors are considering virtual machines, some are also investigating a container-based approach as a design choice. An OpenStack-based solution should be rich and flexible for two primary reasons:

- Application readiness - Network vendors are currently in the process of transforming their devices into VNFs. Different VNFs in the market have different maturity levels; common barriers to this readiness include enabling RESTful interfaces in their APIs, evolving their data models to become stateless, and providing automated management operations. OpenStack should provide a common platform for all.

- Broad use-cases - NFV includes a broad range of applications that serve different use-cases. For example, Virtual Customer Premise Equipment (vCPE) aims at providing a number of network functions such as routing, firewall, virtual private network (VPN), and network address translation (NAT) at customer premises. Virtual Evolved Packet Core (vEPC) is a cloud architecture that provides a cost-effective platform for the core components of a Long-Term Evolution (LTE) network, allowing dynamic provisioning of gateways and mobile endpoints to sustain the increased volumes of data traffic from smartphones and other devices.

These use cases are implemented using different network applications and protocols, and require different connectivity, isolation, and performance characteristics from the infrastructure. It is also common to separate control plane interfaces and protocols from the actual forwarding plane. OpenStack must be flexible enough to offer different datapath connectivity options.

In principle, there are two common approaches for providing data plane connectivity to virtual machines:

- Direct hardware access bypasses the Linux kernel and provides secure direct memory access (DMA) to the physical NIC using technologies such as PCI Passthrough or single root I/O virtualization (SR-IOV) for both Virtual Function (VF) and Physical Function (PF) pass-through.

- Using a virtual switch (vSwitch), implemented as a software service of the hypervisor. Virtual machines are connected to the vSwitch using virtual interfaces (vNICs), and the vSwitch is capable of forwarding traffic between virtual machines, as well as between virtual machines and the physical network.

Some of the fast data path options are as follows:

- Single Root I/O Virtualization (SR-IOV) is a standard that makes a single PCI hardware device appear as multiple virtual PCI devices. It works by introducing Physical Functions (PFs), which are the fully featured PCIe functions that represent the physical hardware ports, and Virtual Functions (VFs), which are lightweight functions that are assigned to the virtual machines. To the VM, the VF resembles a regular NIC that communicates directly with the hardware. NICs support multiple VFs.

- Open vSwitch (OVS) is an open source software switch that is designed to be used as a virtual switch within a virtualized server environment. OVS supports the capabilities of a regular L2-L3 switch and also supports SDN protocols such as OpenFlow to create user-defined overlay networks (for example, VXLAN). OVS uses Linux kernel networking to switch packets between virtual machines and across hosts using physical NICs. OVS now supports connection tracking (Conntrack) with built-in firewall capability to avoid the overhead of Linux bridges that use iptables/ebtables. Open vSwitch for Red Hat OpenStack Platform environments offers default OpenStack Networking (neutron) integration with OVS.

- Data Plane Development Kit (DPDK) consists of a set of libraries and poll mode drivers (PMD) for fast packet processing. It is designed to run mostly in user space, enabling applications to perform their own packet processing directly from or to the NIC. DPDK reduces latency and allows more packets to be processed. DPDK Poll Mode Drivers (PMDs) run in a busy loop, constantly scanning the NIC ports on the host and the vNIC ports in the guest for arriving packets.

- DPDK accelerated Open vSwitch (OVS-DPDK) is Open vSwitch bundled with DPDK for a high performance user-space solution with Linux kernel bypass and direct memory access (DMA) to physical NICs. The idea is to replace the standard OVS kernel data path with a DPDK-based data path, creating a user-space vSwitch on the host that uses DPDK internally for its packet forwarding. The advantage of this architecture is that it is mostly transparent to users. The interfaces it exposes, such as OpenFlow, OVSDB, the command line, remain mostly the same.

1.4. ETSI NFV Architecture

The European Telecommunications Standards Institute (ETSI) is an independent standardization group that develops standards for information and communications technologies (ICT) in Europe.

Network functions virtualization (NFV) focuses on addressing problems involved in using proprietary hardware devices. With NFV, the necessity to install network-specific equipment is reduced, depending upon the use case requirements and economic benefits. The ETSI Industry Specification Group for Network Functions Virtualization (ETSI ISG NFV) sets the requirements, reference architecture, and the infrastructure specifications necessary to ensure virtualized functions are supported.

Red Hat offers an open-source-based, cloud-optimized solution to help Communication Service Providers (CSPs) achieve IT and network convergence. Red Hat adds NFV features such as single root I/O virtualization (SR-IOV) and Open vSwitch with Data Plane Development Kit (OVS-DPDK) to Red Hat OpenStack.

1.5. NFV ETSI architecture and components

In general, a network functions virtualization (NFV) platform has the following components:

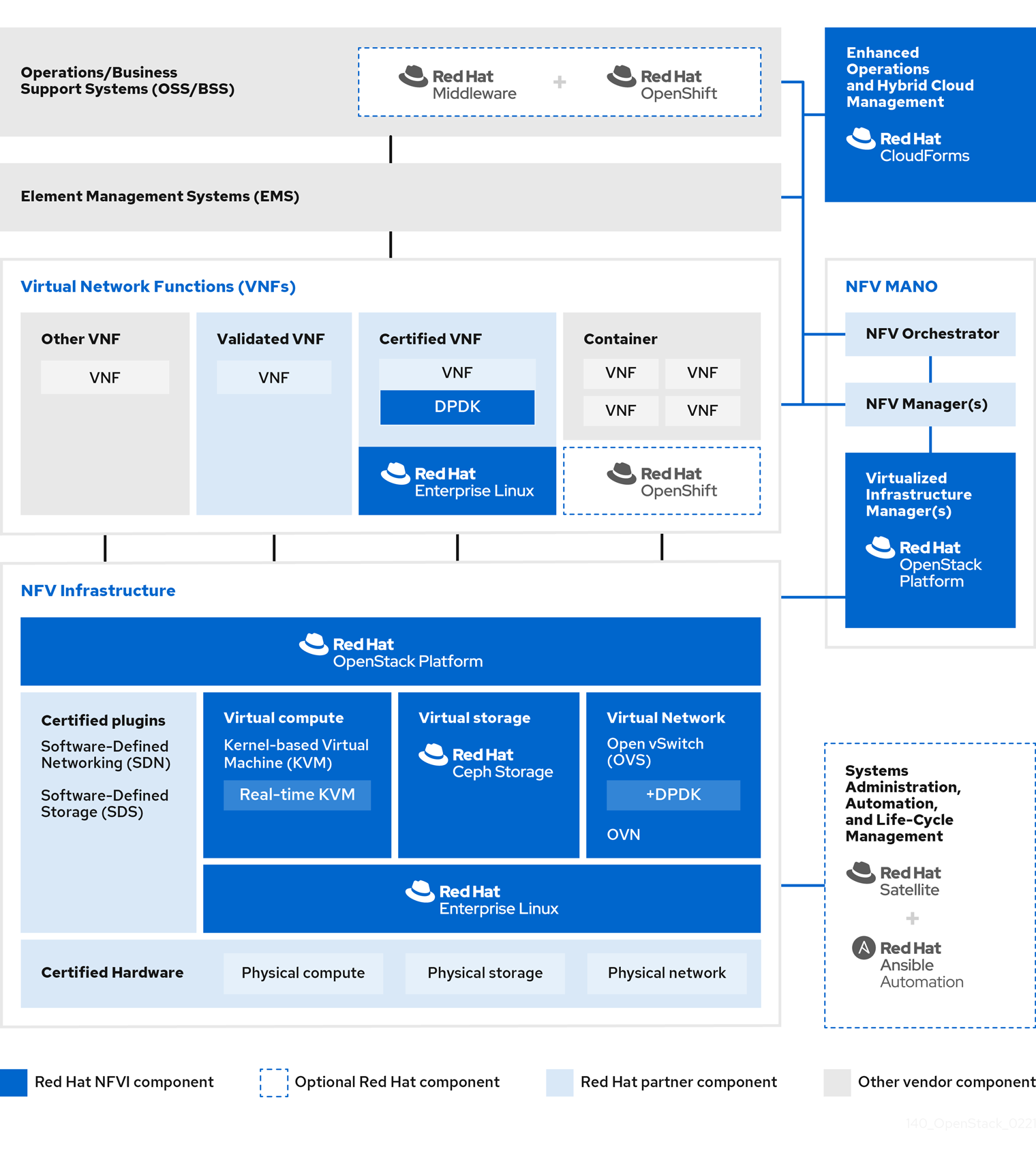

Figure 1.1. NFV ETSI architecture and components

- Virtualized Network Functions (VNFs) - the software implementation of routers, firewalls, load balancers, broadband gateways, mobile packet processors, servicing nodes, signalling, location services, and other network functions.

- NFV Infrastructure (NFVi) - the physical resources (compute, storage, network) and the virtualization layer that make up the infrastructure. The network includes the datapath for forwarding packets between virtual machines and across hosts. This allows you to install VNFs without being concerned about the details of the underlying hardware. NFVi forms the foundation of the NFV stack. NFVi supports multi-tenancy and is managed by the Virtual Infrastructure Manager (VIM). Enhanced Platform Awareness (EPA) improves the virtual machine packet forwarding performance (throughput, latency, jitter) by exposing low-level CPU and NIC acceleration components to the VNF.

- NFV Management and Orchestration (MANO) - the management and orchestration layer focuses on all the service management tasks required throughout the life cycle of the VNF. The main goals of MANO are to allow service definition, automation, error-correlation, monitoring, and life-cycle management of the network functions offered by the operator to its customers, decoupled from the physical infrastructure. This decoupling requires additional layers of management, provided by the Virtual Network Function Manager (VNFM). VNFM manages the life cycle of the virtual machines and VNFs by either interacting directly with them or through the Element Management System (EMS) provided by the VNF vendor. The other important component defined by MANO is the Orchestrator, also known as NFVO. NFVO interfaces with various databases and systems including Operations/Business Support Systems (OSS/BSS) on the top and the VNFM on the bottom. If the NFVO wants to create a new service for a customer, it asks the VNFM to trigger the instantiation of a VNF, which may result in multiple virtual machines.

- Operations and Business Support Systems (OSS/BSS) - provides the essential business function applications, for example, operations support and billing. The OSS/BSS needs to be adapted to NFV, integrating with both legacy systems and the new MANO components. The BSS systems set policies based on service subscriptions and manage reporting and billing.

- Systems Administration, Automation and Life-Cycle Management - manages system administration, automation of the infrastructure components and life cycle of the NFVi platform.

1.6. Red Hat NFV components

Red Hat’s solution for NFV includes a range of products that can act as the different components of the NFV framework in the ETSI model. The following products from the Red Hat portfolio integrate into an NFV solution:

- Red Hat OpenStack Platform - Supports IT and NFV workloads. The Enhanced Platform Awareness (EPA) features deliver deterministic performance improvements through CPU Pinning, Huge pages, Non-Uniform Memory Access (NUMA) affinity and network adaptors (NICs) that support SR-IOV and OVS-DPDK.

- Red Hat Enterprise Linux and Red Hat Enterprise Linux Atomic Host - Create virtual machines and containers as VNFs.

- Red Hat Ceph Storage - Provides the unified elastic and high-performance storage layer for all the needs of the service provider workloads.

- Red Hat JBoss Middleware and OpenShift Enterprise by Red Hat - Optionally provide the ability to modernize the OSS/BSS components.

- Red Hat CloudForms - Provides a VNF manager and presents data from multiple sources, such as the VIM and the NFVi in a unified display.

- Red Hat Satellite and Ansible by Red Hat - Optionally provide enhanced systems administration, automation and life-cycle management.

1.7. NFV installation summary

The Red Hat OpenStack Platform director installs and manages a complete OpenStack environment. The director is based on the upstream OpenStack TripleO project, which is an abbreviation for "OpenStack-On-OpenStack". This project takes advantage of the OpenStack components to install a fully operational OpenStack environment; this includes a minimal OpenStack node called the undercloud. The undercloud provisions and controls the overcloud (a series of bare metal systems used as the production OpenStack nodes). The director provides a simple method for installing a complete Red Hat OpenStack Platform environment that is both lean and robust.

For more information on installing the undercloud and overcloud, see the Director Installation and Usage guide.

To install the NFV features, complete the following additional steps:

- Include SR-IOV and PCI Passthrough parameters in your network-environment.yaml file, update the post-install.yaml file for CPU tuning, modify the compute.yaml file, and run the overcloud_deploy.sh script to deploy the overcloud.

- Install the DPDK libraries and drivers for fast packet processing by polling data directly from the NICs. Include the DPDK parameters in your network-environment.yaml file, update the post-install.yaml files for CPU tuning, update the compute.yaml file to set the bridge with DPDK port, update the controller.yaml file to set the bridge and an interface with VLAN configured, and run the overcloud_deploy.sh script to deploy the overcloud.

Chapter 2. NFV performance considerations

For a network functions virtualization (NFV) solution to be useful, its virtualized functions must meet or exceed the performance of physical implementations. Red Hat’s virtualization technologies are based on the high-performance Kernel-based Virtual Machine (KVM) hypervisor, common in OpenStack and cloud deployments.

Red Hat OpenStack Platform director configures the Compute nodes to enforce resource partitioning and fine tuning to achieve line rate performance for the guest virtual network functions (VNFs). The key performance factors in the NFV use case are throughput, latency, and jitter.

You can enable high-performance packet switching between physical NICs and virtual machines using data plane development kit (DPDK) accelerated virtual machines. OVS 2.10 embeds support for DPDK 17 and includes support for vhost-user multiqueue, allowing scalable performance. OVS-DPDK provides line-rate performance for guest VNFs.

Single root I/O virtualization (SR-IOV) networking provides enhanced performance, including improved throughput for specific networks and virtual machines.

Other important features for performance tuning include huge pages, NUMA alignment, host isolation, and CPU pinning. VNF flavors require huge pages and emulator thread isolation for better performance. Host isolation and CPU pinning improve NFV performance and prevent spurious packet loss.

2.1. CPUs and NUMA nodes

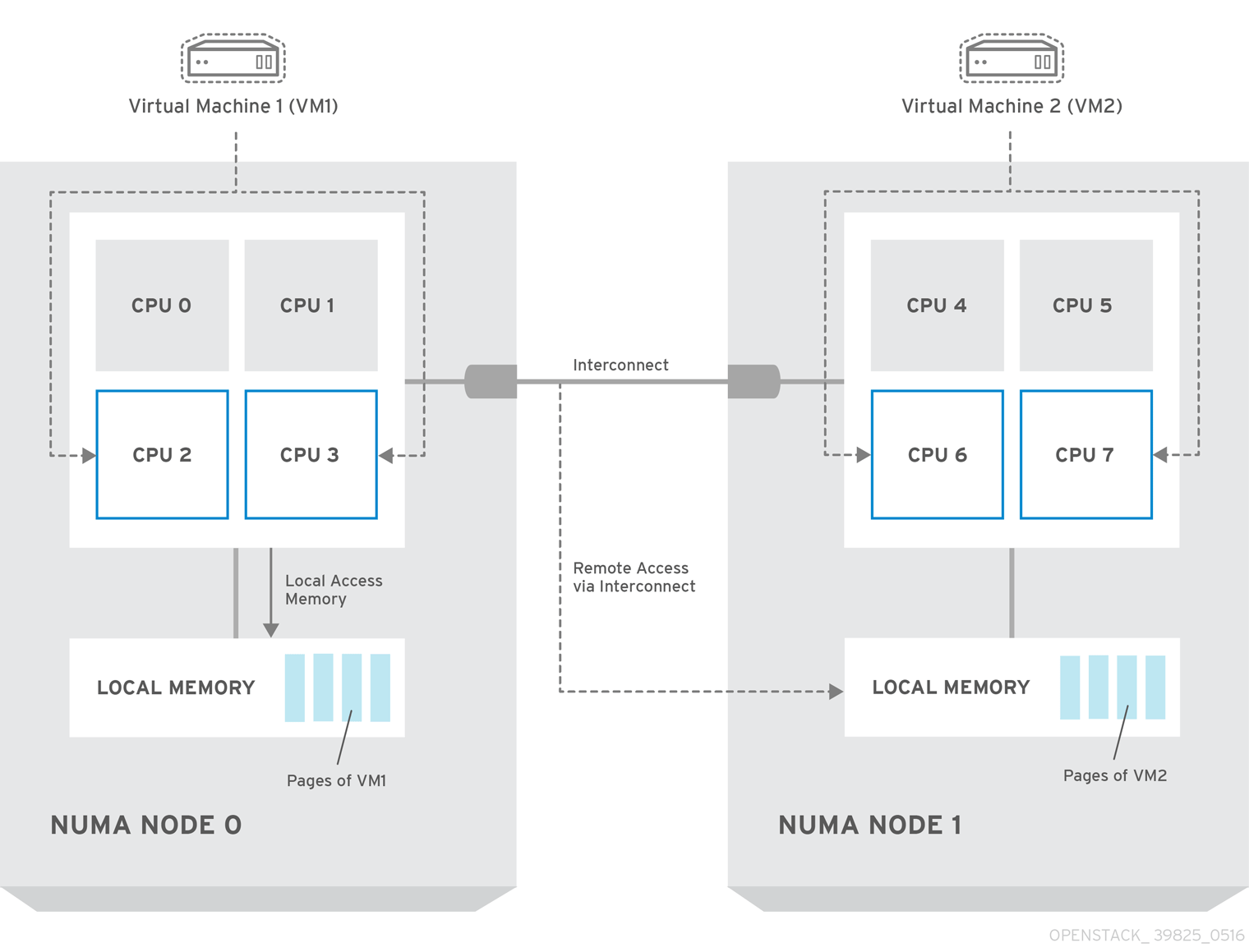

Previously, all memory on x86 systems was equally accessible to all CPUs in the system. This resulted in memory access times that were the same regardless of which CPU in the system was performing the operation and was referred to as Uniform Memory Access (UMA).

In Non-Uniform Memory Access (NUMA), system memory is divided into zones called nodes, which are allocated to particular CPUs or sockets. Access to memory that is local to a CPU is faster than memory connected to remote CPUs on that system. Normally, each socket on a NUMA system has a local memory node whose contents can be accessed faster than the memory in the node local to another CPU or the memory on a bus shared by all CPUs.

Similarly, physical NICs are placed in PCI slots on the Compute node hardware. These slots connect to specific CPU sockets that are associated to a particular NUMA node. For optimum performance, connect your datapath NICs to the same NUMA nodes in your CPU configuration (SR-IOV or OVS-DPDK).

The performance impact of NUMA misses is significant, generally starting at a 10% performance hit or higher. Each CPU socket can have multiple CPU cores, which are treated as individual CPUs for virtualization purposes.

For more information about NUMA, see What is NUMA and how does it work on Linux?

2.1.1. NUMA node example

The following diagram provides an example of a two-node NUMA system and the way the CPU cores and memory pages are made available:

Figure 2.1. Example: two-node NUMA system

Remote memory available via Interconnect is accessed only if VM1 from NUMA node 0 has a CPU core in NUMA node 1. In this case, the memory of NUMA node 1 acts as local for the third CPU core of VM1 (for example, if VM1 is allocated with CPU 4 in the diagram above), but at the same time, it acts as remote memory for the other CPU cores of the same VM.

2.1.2. NUMA aware instances

You can configure an OpenStack environment to use NUMA topology awareness on systems with a NUMA architecture. When running a guest operating system in a virtual machine (VM) there are two NUMA topologies involved:

- the NUMA topology of the physical hardware of the host

- the NUMA topology of the virtual hardware exposed to the guest operating system

You can optimize the performance of guest operating systems by aligning the virtual hardware with the physical hardware NUMA topology.

2.2. CPU pinning

CPU pinning is the ability to run a specific virtual machine’s virtual CPU on a specific physical CPU, in a given host. vCPU pinning provides similar advantages to task pinning on bare-metal systems. Since virtual machines run as user space tasks on the host operating system, pinning increases cache efficiency.

For details on how to configure CPU pinning, see Configuring CPU pinning on Compute nodes in the Configuring the Compute service for instance creation guide.
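The bare-metal task pinning that vCPU pinning is compared to can be illustrated with a short sketch. This is not how RHOSP configures vCPU pinning. It only shows the underlying idea of restricting a task to one physical CPU, using Python's Linux-only `os.sched_setaffinity` call. The helper name `pin_current_process` is an assumption introduced for illustration.

```python
import os


def pin_current_process(cpu: int) -> set:
    """Restrict the calling process to one CPU and return the new affinity set."""
    if not hasattr(os, "sched_setaffinity"):
        raise NotImplementedError("CPU affinity calls are Linux-specific")
    os.sched_setaffinity(0, {cpu})  # pid 0 means the calling process
    return os.sched_getaffinity(0)


if hasattr(os, "sched_getaffinity"):
    allowed = os.sched_getaffinity(0)      # CPUs the process may run on now
    target = min(allowed)                  # pick any CPU that is already allowed
    print("allowed before:", sorted(allowed))
    print("allowed after: ", sorted(pin_current_process(target)))
    os.sched_setaffinity(0, allowed)       # restore the original affinity
```

Once pinned, the task stops migrating between cores, so its working set is more likely to stay in the cache of the core it runs on. This is the cache-efficiency benefit the text describes.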

2.3. Huge pages

Physical memory is segmented into contiguous regions called pages. For efficiency, the system retrieves memory by accessing entire pages instead of individual bytes of memory. To translate a virtual address to a physical address, the system first looks in the Translation Lookaside Buffers (TLB), which contain the virtual-to-physical address mappings for the most recently or frequently used pages. When the system cannot find a mapping in the TLB, the processor must walk the page tables to determine the address mapping. Optimize the TLB to minimize the performance penalty that occurs during these TLB misses.

The typical page size in an x86 system is 4 KB, with other larger page sizes available. Larger page sizes mean that there are fewer pages overall, which increases the amount of system memory that can have its virtual-to-physical address translation stored in the TLB. Consequently, TLB misses decrease and performance increases. With larger page sizes, there is an increased potential for memory to be under-utilized, because processes must allocate memory in whole pages even when they do not need all of it. As a result, choosing a page size is a compromise between providing faster access times with larger pages, and ensuring maximum memory utilization with smaller pages.
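The trade-off between page size, TLB coverage, and wasted memory can be made concrete with a little arithmetic. The sketch below assumes a hypothetical TLB with 1536 entries that can each map a page of any size. Real processors have different entry counts, often separate ones per page size, so treat the numbers as illustrative only.

```python
# Hypothetical TLB capacity (an assumption); real values vary by CPU model.
ASSUMED_TLB_ENTRIES = 1536

# Page sizes available on x86-64 systems, in bytes.
PAGE_SIZES = {
    "4 KB": 4 * 1024,
    "2 MB": 2 * 1024**2,
    "1 GB": 1024**3,
}


def tlb_reach_bytes(entries: int, page_size: int) -> int:
    """Memory covered by the TLB when every entry maps one page."""
    return entries * page_size


def pages_needed(memory_bytes: int, page_size: int) -> int:
    """Pages needed to back a region, rounded up to whole pages."""
    return -(-memory_bytes // page_size)


def wasted_bytes(memory_bytes: int, page_size: int) -> int:
    """Memory allocated but not required because allocation is per page."""
    return pages_needed(memory_bytes, page_size) * page_size - memory_bytes


request = 3 * 1024**2  # a process that needs 3 MiB
for label, size in PAGE_SIZES.items():
    reach_mib = tlb_reach_bytes(ASSUMED_TLB_ENTRIES, size) / 1024**2
    waste_kib = wasted_bytes(request, size) / 1024
    print(f"{label} pages: TLB reach {reach_mib:,.0f} MiB, "
          f"waste for a 3 MiB request {waste_kib:,.0f} KiB")
```

Under these assumptions, 4 KB pages give a TLB reach of 6 MiB while 2 MB pages give 3,072 MiB. The same 3 MiB request wastes nothing with 4 KB pages but 1 MiB with 2 MB pages, which is the compromise described above.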

2.4. Port security

Port security is an anti-spoofing measure that blocks any egress traffic that does not match the source IP and source MAC address of the originating network port. You cannot view or modify this behavior using security group rules.

By default, the port_security_enabled parameter is set to enabled on newly created Neutron networks in OpenStack. Newly created ports copy the value of the port_security_enabled parameter from the network they are created on.

For some NFV use cases, such as building a firewall or router, you must disable port security.

To disable port security on a single port, run the following command:

    openstack port set --disable-port-security <port-id>

To prevent port security from being enabled on any newly created port on a network, run the following command:

    openstack network set --disable-port-security <network-id>

Chapter 3. Hardware requirements for NFV

This section describes the hardware requirements for NFV.

For a complete list of the certified hardware for Red Hat OpenStack Platform, see Red Hat OpenStack Platform certified hardware.

3.1. Tested NICs for NFV

For a list of tested NICs for NFV, see the Red Hat Knowledgebase solution Network Adapter Fast Datapath Feature Support Matrix.

Use the default driver for the supported NIC, unless you are configuring OVS-DPDK on NVIDIA (Mellanox) network interfaces. For NVIDIA network interfaces, you must set the corresponding kernel driver in the j2 network configuration template.

Example

In this example, the mlx5_core driver is set for the Mellanox ConnectX-5 network interface:

    members:
      - type: ovs_dpdk_port
        name: dpdk0
        driver: mlx5_core
        members:
          - type: interface
            name: enp3s0f0

3.2. Troubleshooting hardware offload

In a Red Hat OpenStack Platform (RHOSP) 16.2 deployment, OVS Hardware Offload might not offload flows for VMs with switchdev-capable ports and Mellanox ConnectX-5 NICs. To troubleshoot and configure offload flows in this scenario, disable the ESWITCH_IPV4_TTL_MODIFY_ENABLE Mellanox firmware parameter. For more troubleshooting information about OVS Hardware Offload in RHOSP 16.2, see the Red Hat Knowledgebase solution OVS Hardware Offload with Mellanox NIC in OpenStack Platform 16.2.

Procedure

- Log in to the Compute nodes in your RHOSP deployment that have Mellanox NICs that you want to configure.

- Use the mstflint utility to query the ESWITCH_IPV4_TTL_MODIFY_ENABLE Mellanox firmware parameter.

      [root@compute-1 ~]# yum install -y mstflint
      [root@compute-1 ~]# mstconfig -d <PF PCI BDF> q ESWITCH_IPV4_TTL_MODIFY_ENABLE

- If the ESWITCH_IPV4_TTL_MODIFY_ENABLE parameter is enabled and set to 1, then set the value to 0 to disable it.

      [root@compute-1 ~]# mstconfig -d <PF PCI BDF> s ESWITCH_IPV4_TTL_MODIFY_ENABLE=0

- Reboot the node.

3.3. Discovering your NUMA node topology

When you plan your deployment, you must understand the NUMA topology of your Compute node to partition the CPU and memory resources for optimum performance. To determine the NUMA information, perform one of the following tasks:

- Enable hardware introspection to retrieve this information from bare-metal nodes.

- Log on to each bare-metal node to manually collect the information.

You must install and configure the undercloud before you can retrieve NUMA information through hardware introspection. For more information about undercloud configuration, see the Director Installation and Usage guide.

3.4. Retrieving hardware introspection details

The Bare Metal service hardware-inspection-extras feature is enabled by default, and you can use it to retrieve hardware details for overcloud configuration. For more information about the inspection_extras parameter in the undercloud.conf file, see Configuring director.

For example, the numa_topology collector is part of the hardware-inspection extras and includes the following information for each NUMA node:

- RAM (in kilobytes)

- Physical CPU cores and their sibling threads

- NICs associated with the NUMA node

Procedure

To retrieve the information listed above, substitute <UUID> with the UUID of the bare-metal node to complete the following command:

    $ openstack baremetal introspection data save \
      <UUID> | jq .numa_topology

The following example shows the retrieved NUMA information for a bare-metal node:

    {
      "cpus": [
        { "cpu": 1, "thread_siblings": [ 1, 17 ], "numa_node": 0 },
        { "cpu": 2, "thread_siblings": [ 10, 26 ], "numa_node": 1 },
        { "cpu": 0, "thread_siblings": [ 0, 16 ], "numa_node": 0 },
        { "cpu": 5, "thread_siblings": [ 13, 29 ], "numa_node": 1 },
        { "cpu": 7, "thread_siblings": [ 15, 31 ], "numa_node": 1 },
        { "cpu": 7, "thread_siblings": [ 7, 23 ], "numa_node": 0 },
        { "cpu": 1, "thread_siblings": [ 9, 25 ], "numa_node": 1 },
        { "cpu": 6, "thread_siblings": [ 6, 22 ], "numa_node": 0 },
        { "cpu": 3, "thread_siblings": [ 11, 27 ], "numa_node": 1 },
        { "cpu": 5, "thread_siblings": [ 5, 21 ], "numa_node": 0 },
        { "cpu": 4, "thread_siblings": [ 12, 28 ], "numa_node": 1 },
        { "cpu": 4, "thread_siblings": [ 4, 20 ], "numa_node": 0 },
        { "cpu": 0, "thread_siblings": [ 8, 24 ], "numa_node": 1 },
        { "cpu": 6, "thread_siblings": [ 14, 30 ], "numa_node": 1 },
        { "cpu": 3, "thread_siblings": [ 3, 19 ], "numa_node": 0 },
        { "cpu": 2, "thread_siblings": [ 2, 18 ], "numa_node": 0 }
      ],
      "ram": [
        { "size_kb": 66980172, "numa_node": 0 },
        { "size_kb": 67108864, "numa_node": 1 }
      ],
      "nics": [
        { "name": "ens3f1", "numa_node": 1 },
        { "name": "ens3f0", "numa_node": 1 },
        { "name": "ens2f0", "numa_node": 0 },
        { "name": "ens2f1", "numa_node": 0 },
        { "name": "ens1f1", "numa_node": 0 },
        { "name": "ens1f0", "numa_node": 0 },
        { "name": "eno4", "numa_node": 0 },
        { "name": "eno1", "numa_node": 0 },
        { "name": "eno3", "numa_node": 0 },
        { "name": "eno2", "numa_node": 0 }
      ]
    }
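When planning CPU and NIC placement, it can help to regroup this output by NUMA node. The following sketch assumes that you saved the `numa_topology` JSON in the shape shown above. It uses an abbreviated sample rather than the full output, and the function name `summarize` is an assumption introduced for illustration.

```python
import json
from collections import defaultdict

# Abbreviated sample in the same shape as the numa_topology output.
SAMPLE = """
{
  "cpus": [
    {"cpu": 0, "thread_siblings": [0, 16], "numa_node": 0},
    {"cpu": 1, "thread_siblings": [1, 17], "numa_node": 0},
    {"cpu": 0, "thread_siblings": [8, 24], "numa_node": 1},
    {"cpu": 1, "thread_siblings": [9, 25], "numa_node": 1}
  ],
  "ram": [
    {"size_kb": 66980172, "numa_node": 0},
    {"size_kb": 67108864, "numa_node": 1}
  ],
  "nics": [
    {"name": "ens3f0", "numa_node": 1},
    {"name": "ens2f0", "numa_node": 0},
    {"name": "eno1", "numa_node": 0}
  ]
}
"""


def summarize(topology: dict) -> dict:
    """Group logical CPU threads, RAM, and NIC names by NUMA node ID."""
    summary = defaultdict(lambda: {"threads": [], "ram_kb": 0, "nics": []})
    for core in topology["cpus"]:
        summary[core["numa_node"]]["threads"].extend(core["thread_siblings"])
    for bank in topology["ram"]:
        summary[bank["numa_node"]]["ram_kb"] += bank["size_kb"]
    for nic in topology["nics"]:
        summary[nic["numa_node"]]["nics"].append(nic["name"])
    for node in summary.values():
        node["threads"].sort()
        node["nics"].sort()
    return dict(summary)


summary = summarize(json.loads(SAMPLE))
for node_id in sorted(summary):
    node = summary[node_id]
    print(f"NUMA node {node_id}: threads={node['threads']} "
          f"ram_kb={node['ram_kb']} nics={node['nics']}")
```

A per-node view like this makes it easier to choose datapath NICs and CPU threads that share a NUMA node.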

3.5. NFV BIOS settings

The following table describes the required BIOS settings for NFV:

You must enable SR-IOV global and NIC settings in the BIOS, or your Red Hat OpenStack Platform (RHOSP) deployment with SR-IOV Compute nodes will fail.

| Parameter | Setting |
|---|---|
| | Disabled. |
| | Disabled. |
| | Enabled. |
| | Enabled. |
| | Enabled. |
| | Enabled. |
| | Performance. |
| | Enabled. |
| | Disabled in NFV deployments that require deterministic performance. |
| | Enabled for Intel cards if VFIO functionality is needed. |
| | Disabled. |

On processors that use the intel_idle driver, Red Hat Enterprise Linux can ignore BIOS settings and re-enable the processor C-state.

You can disable intel_idle and instead use the acpi_idle driver by specifying the key-value pair intel_idle.max_cstate=0 on the kernel boot command line.

Confirm that the processor is using the acpi_idle driver by checking the contents of current_driver:

    # cat /sys/devices/system/cpu/cpuidle/current_driver
    acpi_idle

You will experience some latency after changing drivers, because it takes time for the Tuned daemon to start. However, after Tuned loads, the processor does not use the deeper C-state.
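To confirm that the `intel_idle.max_cstate=0` key-value pair is actually present on the kernel command line, you can parse the command line string. The sketch below works on a sample string. On a running Linux host, the same text is available in `/proc/cmdline`. The function names and the sample boot arguments are assumptions introduced for illustration.

```python
def max_cstate_from_cmdline(cmdline: str):
    """Return the intel_idle.max_cstate value as an int, or None if absent."""
    value = None
    for token in cmdline.split():
        key, sep, raw = token.partition("=")
        if key == "intel_idle.max_cstate" and sep and raw.isdigit():
            value = int(raw)  # in this sketch, the last occurrence wins
    return value


def intel_idle_disabled(cmdline: str) -> bool:
    """True when the command line sets intel_idle.max_cstate=0."""
    return max_cstate_from_cmdline(cmdline) == 0


# Hypothetical kernel command line used only as sample input.
sample = "BOOT_IMAGE=/vmlinuz root=/dev/sda1 ro intel_idle.max_cstate=0"
print(intel_idle_disabled(sample))
```

This check only covers the boot parameter. The `current_driver` file shown earlier remains the way to confirm which idle driver is active.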

Chapter 4. Software requirements for NFV

This section describes the supported configurations and drivers, and subscription details necessary for NFV.

4.1. Registering and enabling repositories

To install Red Hat OpenStack Platform, you must register Red Hat OpenStack Platform director using the Red Hat Subscription Manager, and subscribe to the required channels. For more information about registering and updating your undercloud, see Registering the undercloud and attaching subscriptions in the Director Installation and Usage guide.

Procedure

- Register your system with the Content Delivery Network, entering your Customer Portal user name and password when prompted.

      [stack@director ~]$ sudo subscription-manager register

- Determine the entitlement pool ID for Red Hat OpenStack Platform director, for example {Pool ID} from the following command and output:

      [stack@director ~]$ sudo subscription-manager list --available --all --matches="Red Hat OpenStack"
      Subscription Name:   Name of SKU
      Provides:            Red Hat Single Sign-On
                           Red Hat Enterprise Linux Workstation
                           Red Hat CloudForms
                           Red Hat OpenStack
                           Red Hat Software Collections (for RHEL Workstation)
      SKU:                 SKU-Number
      Contract:            Contract-Number
      Pool ID:             {Pool-ID}-123456
      Provides Management: Yes
      Available:           1
      Suggested:           1
      Service Level:       Support-level
      Service Type:        Service-Type
      Subscription Type:   Sub-type
      Ends:                End-date
      System Type:         Physical

- Include the Pool ID value in the following command to attach the Red Hat OpenStack Platform 16.2 entitlement.

      [stack@director ~]$ sudo subscription-manager attach --pool={Pool-ID}-123456

- Disable the default repositories.

      [stack@director ~]$ sudo subscription-manager repos --disable=*

- Enable the required repositories for Red Hat OpenStack Platform with NFV.

      $ sudo subscription-manager repos \
          --enable=rhel-8-for-x86_64-baseos-eus-rpms \
          --enable=rhel-8-for-x86_64-appstream-eus-rpms \
          --enable=rhel-8-for-x86_64-highavailability-eus-rpms \
          --enable=ansible-2.9-for-rhel-8-x86_64-rpms \
          --enable=openstack-16.2-for-rhel-8-x86_64-rpms \
          --enable=rhel-8-for-x86_64-nfv-rpms \
          --enable=fast-datapath-for-rhel-8-x86_64-rpms

- Update your system so you have the latest base system packages.

      [stack@director ~]$ sudo dnf update -y
      [stack@director ~]$ sudo reboot

To register your overcloud nodes, see Ansible-based overcloud registration in the Advanced Overcloud Customization guide.
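The pool lookup step in the procedure above can be scripted. The following is a minimal sketch, assuming the `Pool ID:` line format shown in the sample output; `extract_pool_id` is a hypothetical helper name, not part of `subscription-manager`, and the sample values are placeholders.

```shell
#!/bin/sh
# Hypothetical helper (not part of subscription-manager): read the output of
# `subscription-manager list --available` on stdin and print each Pool ID value.
extract_pool_id() {
  # Fields are separated by a colon and any following spaces,
  # so field 2 is the value on the "Pool ID:" line.
  awk -F': *' '/^Pool ID:/ { print $2 }'
}

# Canned output for illustration only; these are not real subscription values.
sample_output='Subscription Name:   Name of SKU
SKU:                 SKU-Number
Pool ID:             example-pool-123456
Available:           1'

printf '%s\n' "$sample_output" | extract_pool_id
```

On a registered system, you could pipe the real `subscription-manager list --available --all --matches="Red Hat OpenStack"` output into the function instead of the canned text, and pass the result to `subscription-manager attach --pool=`.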

4.2. Supported configurations for NFV deployments

Red Hat OpenStack Platform (RHOSP) supports the following NFV deployments using director:

- Single root I/O virtualization (SR-IOV)

- Open vSwitch with Data Plane Development Kit (OVS-DPDK)

Additionally, you can deploy RHOSP with any of the following features:

- Implementing composable services and custom roles. For more information, see Composable services and custom roles in the Advanced Overcloud Customization guide.

- Colocating Compute and Ceph Storage service on the same host. For more information, see the Hyperconverged Infrastructure Guide.

- Configuring Real-time Compute nodes. For more information, see Configuring real-time compute in the Configuring the Compute Service for Instance Creation guide.

- Enabling hardware offload. For more information, see Configuring OVS hardware offload.

4.2.1. Deploying RHOSP with the OVS mechanism driver

Deploy RHOSP with the OVS mechanism driver:

Procedure

Modify the containers-prepare-parameter.yaml file so that the neutron_driver parameter is set to ovs.

    parameter_defaults:
      ContainerImagePrepare:
      - push_destination: true
        set:
          neutron_driver: ovs
          ...

Include the neutron-ovs.yaml environment file in the /usr/share/openstack-tripleo-heat-templates/environments/services directory with your deployment script.

    TEMPLATES=/usr/share/openstack-tripleo-heat-templates
    openstack overcloud deploy --templates \
      -e ${TEMPLATES}/environments/network-environment.yaml \
      -e ${TEMPLATES}/environments/network-isolation.yaml \
      -e ${TEMPLATES}/environments/services/neutron-ovs.yaml \
      -e ${TEMPLATES}/environments/services/neutron-ovs-dpdk.yaml \
      -e ${TEMPLATES}/environments/services/neutron-sriov.yaml \
      -e /home/stack/containers-prepare-parameter.yaml

4.2.2. Deploying OVN with OVS-DPDK and SR-IOV

Deploy DPDK and SR-IOV VMs on the same node as OVN.

Procedure

Generate the ComputeOvsDpdkSriov role:

    openstack overcloud roles generate -o roles_data.yaml Controller ComputeOvsDpdkSriov

Add OS::TripleO::Services::OVNMetadataAgent to the Controller role.

Add the custom resources for OVS-DPDK with the resource_registry parameter:

    resource_registry:
      # Specify the relative/absolute path to the config files that you want to use to override the default.
      OS::TripleO::ComputeOvsDpdkSriov::Net::SoftwareConfig: nic-configs/computeovsdpdksriov.yaml
      OS::TripleO::Controller::Net::SoftwareConfig: nic-configs/controller.yaml

In the parameter_defaults section, edit the value of the tunnel type parameter to geneve:

    NeutronTunnelTypes: 'geneve'
    NeutronNetworkType: ['geneve', 'vlan']

Optional: If you use a centralized routing model, disable Distributed Virtual Routing (DVR):

    NeutronEnableDVR: false

Under parameter_defaults, set the bridge mapping:

    # The OVS logical-to-physical bridge mappings to use.
    NeutronBridgeMappings: "datacentre:br-ex,data1:br-link0,data2:br-link1"

Configure the network interfaces in the computeovsdpdksriov.yaml file:

    - type: ovs_user_bridge
      name: br-link0
      use_dhcp: false
      ovs_extra:
        - str_replace:
            template: set port br-link0 tag=_VLAN_TAG_
            params:
              _VLAN_TAG_:
                get_param: TenantNetworkVlanID
      addresses:
        - ip_netmask:
            get_param: TenantIpSubnet
      members:
        - type: ovs_dpdk_port
          name: br-link0-dpdk-port0
          rx_queue: 1
          members:
            - type: interface
              name: eno3
    - type: sriov_pf
      name: eno4
      use_dhcp: false
      numvfs: 5
      defroute: false
      nm_controlled: true
      hotplug: true
      promisc: false

Include the following yaml files in your deployment script:

- neutron-ovn-dpdk.yaml

- neutron-ovn-sriov.yaml

Open Virtual Networking (OVN) is the default networking mechanism driver in Red Hat OpenStack Platform 16.2. If you want to use OVN with distributed virtual routing (DVR), you must include the environments/services/neutron-ovn-dvr-ha.yaml file in the openstack overcloud deploy command. If you want to use OVN without DVR, you must include the environments/services/neutron-ovn-ha.yaml file in the openstack overcloud deploy command, and set the NeutronEnableDVR parameter to false. If you want to use OVN with SR-IOV, you must include the environments/services/neutron-ovn-sriov.yaml file as the last of the OVN environment files in the openstack overcloud deploy command.
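The environment file rules in the preceding paragraph can be sketched as a small selection function. This is an illustrative sketch only: `ovn_env_args` is a hypothetical helper, and it assumes the relative `environments/services` paths named above. It does not set `NeutronEnableDVR`, which you must still set to `false` in your own environment file when you deploy without DVR.

```shell
#!/bin/sh
# Hypothetical helper: print the OVN "-e" arguments in the order described above.
#   $1 = "true" to use OVN with DVR, anything else for OVN without DVR
#   $2 = "true" to add SR-IOV support
ovn_env_args() {
  use_dvr="$1"
  use_sriov="$2"
  services="environments/services"

  if [ "$use_dvr" = "true" ]; then
    args="-e ${services}/neutron-ovn-dvr-ha.yaml"
  else
    # Without DVR, NeutronEnableDVR must also be set to false elsewhere.
    args="-e ${services}/neutron-ovn-ha.yaml"
  fi

  # The SR-IOV file goes last among the OVN environment files.
  if [ "$use_sriov" = "true" ]; then
    args="${args} -e ${services}/neutron-ovn-sriov.yaml"
  fi

  printf '%s\n' "$args"
}

ovn_env_args false true
```

The function only builds the argument string; appending it to an `openstack overcloud deploy` command line is left to your deployment script.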

4.2.3. Deploying OVN with OVS TC Flower offload

Deploy OVS TC Flower offload on the same node as OVN.

The Red Hat Enterprise Linux Traffic Control (TC) subsystem does not support connection tracking (conntrack) helpers or application layer gateways (ALGs). Therefore, if you are using ALGs, you must disable TC Flower offload.

Procedure

Generate the roles data file that includes the ControllerSriov and ComputeSriov roles:

    openstack overcloud roles generate -o roles_data.yaml \
      ControllerSriov ComputeSriov

Configure the physical_network parameter settings relevant to your deployment.

- For VLAN, set the physical_network parameter to the name of the network that you create in neutron after deployment. Use this value for the NeutronBridgeMappings parameter also.

- Under role-specific parameters, such as ComputeSriovOffloadParameters, ensure the value of the OvsHwOffload parameter is true.

    parameter_defaults:
      NeutronBridgeMappings: 'datacentre:br-ex,tenant:br-offload'
      NeutronNetworkVLANRanges: 'tenant:502:505'
      NeutronFlatNetworks: 'datacentre,tenant'
      NeutronPhysicalDevMappings:
        - tenant:ens1f0
        - tenant:ens1f1
      NovaPCIPassthrough:
      - address: "0000:17:00.1"
        physical_network: "tenant"
      - address: "0000:3b:00.1"
        physical_network: "tenant"
      NeutronTunnelTypes: ''
      NeutronNetworkType: 'vlan'
      ComputeSriovOffloadParameters:
        OvsHwOffload: True
        KernelArgs: "default_hugepagesz=1GB hugepagesz=1G hugepages=32 intel_iommu=on iommu=pt isolcpus=1-11,13-23"
        IsolCpusList: "1-11,13-23"
        NovaReservedHostMemory: 4096
        NovaComputeCpuDedicatedSet: ['1-11','13-23']
        NovaComputeCpuSharedSet: ['0','12']

Configure the network interfaces in the computeovsdpdksriov.yaml file:

    - type: ovs_bridge
      name: br-offload
      mtu: 9000
      use_dhcp: false
      addresses:
      - ip_netmask:
          get_param: TenantIpSubnet
      members:
      - type: linux_bond
        name: bond-pf
        bonding_options: "mode=active-backup miimon=100"
        members:
        - type: sriov_pf
          name: ens1f0
          numvfs: 3
          primary: true
          promisc: true
          use_dhcp: false
          defroute: false
          link_mode: switchdev
        - type: sriov_pf
          name: ens1f1
          numvfs: 3
          promisc: true
          use_dhcp: false
          defroute: false
          link_mode: switchdev

Include the following yaml files in your deployment script:

- ovs-hw-offload.yaml

- neutron-ovn-sriov.yaml

    TEMPLATES_HOME="/usr/share/openstack-tripleo-heat-templates"
    CUSTOM_TEMPLATES="/home/stack/templates"
    openstack overcloud deploy --templates \
      -r ${CUSTOM_TEMPLATES}/roles_data.yaml \
      -e ${TEMPLATES_HOME}/environments/services/neutron-ovn-sriov.yaml \
      -e ${TEMPLATES_HOME}/environments/ovs-hw-offload.yaml \
      -e ${CUSTOM_TEMPLATES}/network-environment.yaml

4.3. Supported drivers for NFV

For a complete list of supported drivers, see Component, Plug-In, and Driver Support in Red Hat OpenStack Platform.

For a list of NICs tested for Red Hat OpenStack Platform deployments with NFV, see Tested NICs for NFV.

4.4. Compatibility with third-party software

For a complete list of products and services tested, supported, and certified to perform with Red Hat OpenStack Platform, see Third Party Software compatible with Red Hat OpenStack Platform. You can filter the list by product version and software category.

For a complete list of products and services tested, supported, and certified to perform with Red Hat Enterprise Linux, see Third Party Software compatible with Red Hat Enterprise Linux. You can filter the list by product version and software category.

Chapter 5. Network considerations for NFV

The undercloud host requires at least the following networks:

- Provisioning network - Provides DHCP and PXE-boot functions to help discover bare-metal systems for use in the overcloud.

- External network - A separate network for remote connectivity to all nodes. The interface connecting to this network requires a routable IP address, either defined statically, or generated dynamically from an external DHCP service.

The minimal overcloud network configuration includes the following NIC configurations:

- Single NIC configuration - One NIC for the provisioning network on the native VLAN and tagged VLANs that use subnets for the different overcloud network types.

- Dual NIC configuration - One NIC for the provisioning network and the other NIC for the external network.

- Dual NIC configuration - One NIC for the provisioning network on the native VLAN, and the other NIC for tagged VLANs that use subnets for different overcloud network types.

- Multiple NIC configuration - Each NIC uses a subnet for a different overcloud network type.

For more information on the networking requirements, see Preparing your undercloud networking in the Director Installation and Usage guide.

Chapter 6. Planning an SR-IOV deployment

Optimize single root I/O virtualization (SR-IOV) deployments for NFV by setting individual parameters based on your Compute node hardware.

To evaluate your hardware impact on the SR-IOV parameters, see Discovering your NUMA node topology.

6.1. Hardware partitioning for an SR-IOV deployment

To achieve high performance with SR-IOV, partition the resources between the host and the guest.

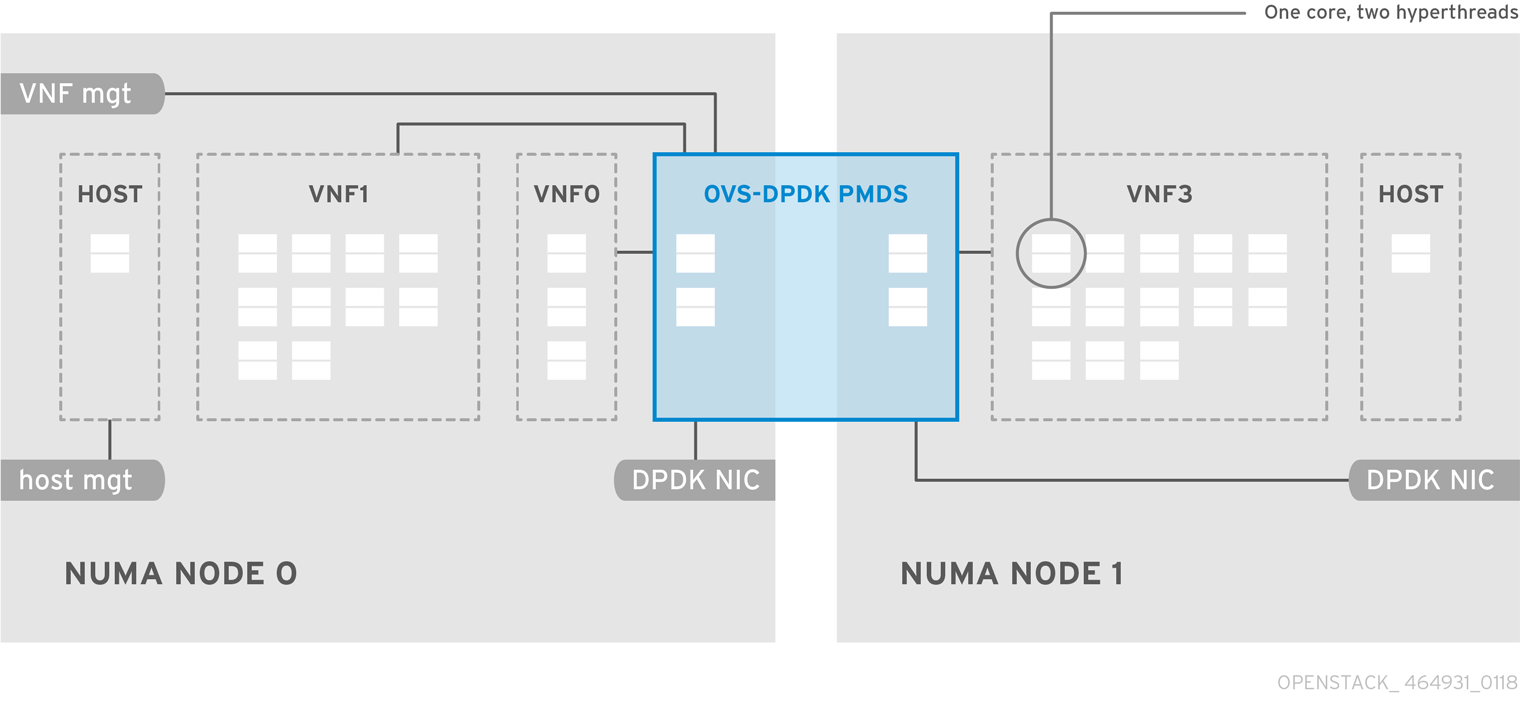

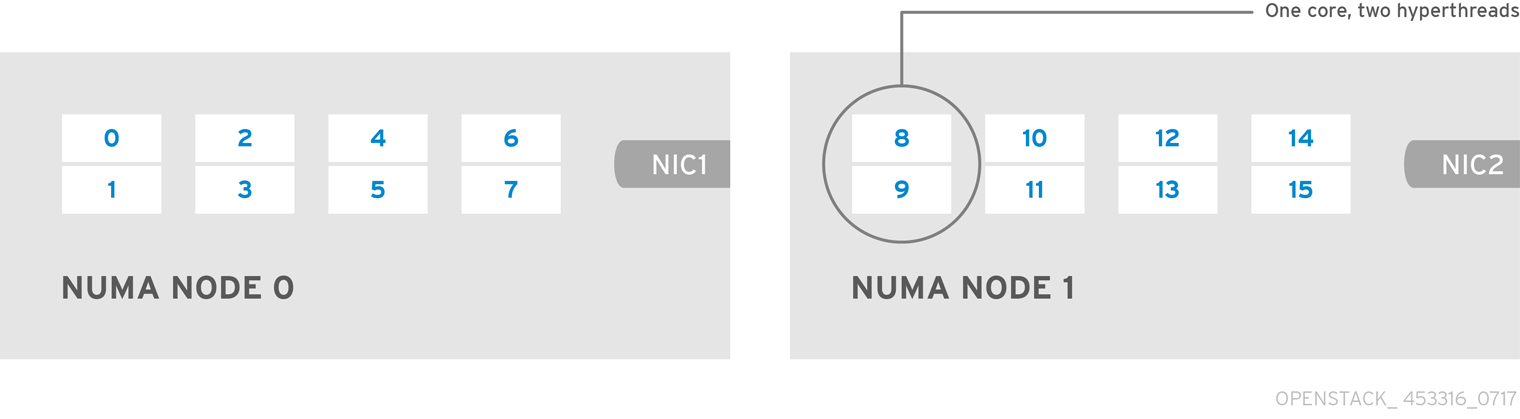

Figure 6.1. NUMA node topology

A typical topology includes 14 cores per NUMA node on dual socket Compute nodes. Both hyper-threading (HT) and non-HT cores are supported. Each core has two sibling threads. One core is dedicated to the host on each NUMA node. The virtual network function (VNF) handles the SR-IOV interface bonding. All the interrupt requests (IRQs) are routed on the host cores. The VNF cores are dedicated to the VNFs. They provide isolation from other VNFs and isolation from the host. Each VNF must use resources on a single NUMA node. The SR-IOV NICs used by the VNF must also be associated with that same NUMA node. This topology does not have a virtualization overhead. The host, OpenStack Networking (neutron), and Compute (nova) configuration parameters are exposed in a single file for ease of use and consistency, and to avoid inconsistent settings that break isolation and cause preemption and packet loss. The host and virtual machine isolation depend on a tuned profile, which defines the boot parameters and any Red Hat OpenStack Platform modifications based on the list of isolated CPUs.
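The partitioning arithmetic for the typical topology above can be worked through in a few lines. This is a sketch under the stated figures only (two NUMA nodes, 14 cores per node, two sibling threads per core, one host core per node); `vnf_thread_count` is a hypothetical helper, and real CPU numbering depends on your hardware.

```shell
#!/bin/sh
# Hypothetical helper: count the hardware threads left for VNFs after
# reserving host cores on every NUMA node.
#   $1 = NUMA nodes, $2 = cores per NUMA node,
#   $3 = threads per core, $4 = host cores reserved per NUMA node
vnf_thread_count() {
  numa_nodes="$1"
  cores_per_node="$2"
  threads_per_core="$3"
  host_cores_per_node="$4"
  echo $(( numa_nodes * (cores_per_node - host_cores_per_node) * threads_per_core ))
}

# Typical topology from the text: 2 sockets x 14 cores x 2 threads,
# with one core per NUMA node kept for the host.
vnf_thread_count 2 14 2 1
```

With these inputs the host keeps 4 of the 56 threads and the remaining threads are candidates for the isolated CPU list. Which CPU IDs those threads map to must be read from the NUMA topology of the actual node.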

6.2. Topology of an NFV SR-IOV deployment

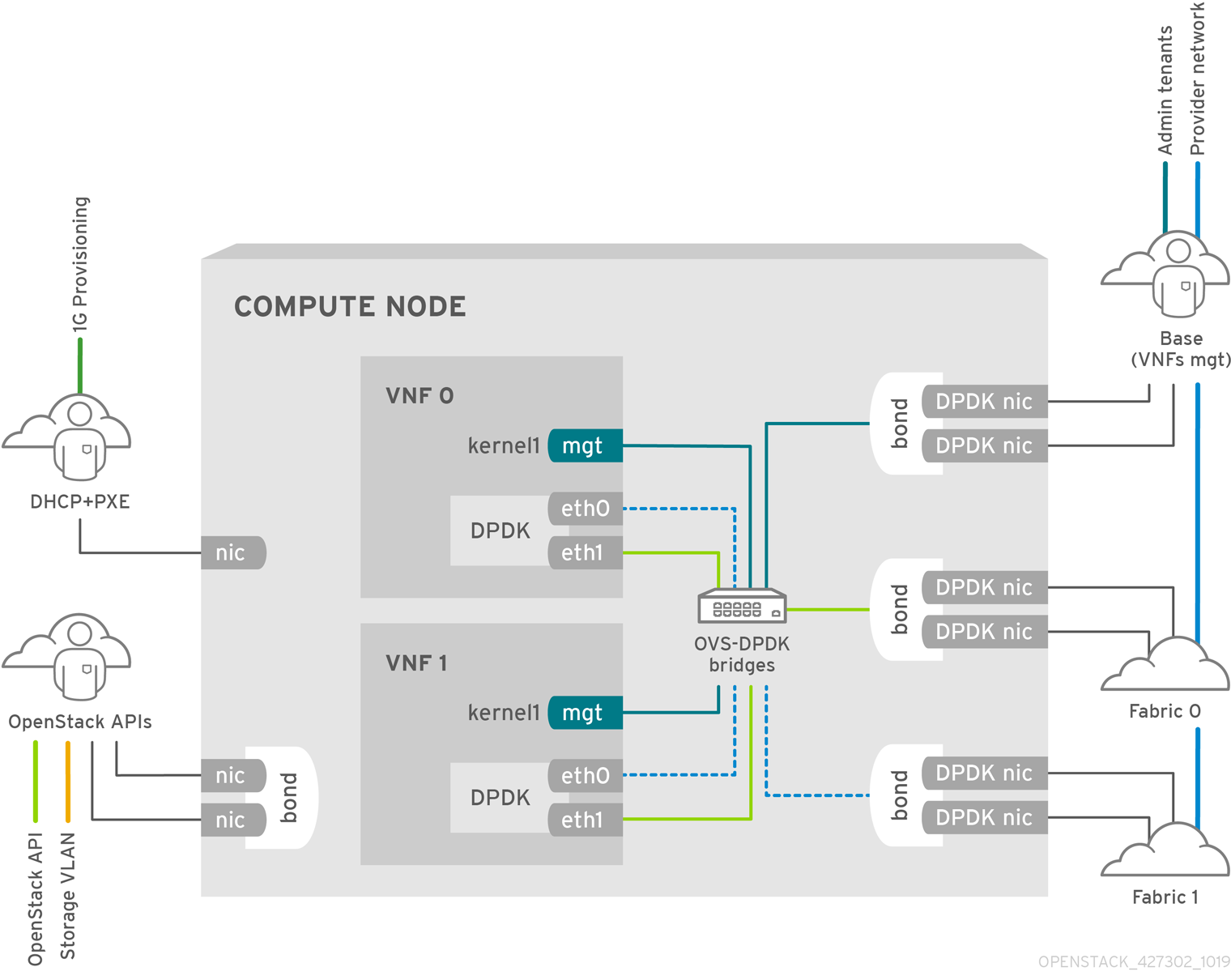

The image shows two VNFs, each with a management interface, represented by mgt, and data plane interfaces. The management interface handles access such as SSH. The data plane interfaces bond the VNFs to DPDK to ensure high availability, as VNFs bond the data plane interfaces using the DPDK library. The image also has two provider networks for redundancy. The Compute node has two regular NICs bonded together and shared between the VNF management and the Red Hat OpenStack Platform API management.

Figure 6.2. NFV SR-IOV topology

The image shows a VNF that uses DPDK at an application level, and has access to SR-IOV virtual functions (VFs) and physical functions (PFs), for better availability or performance, depending on the fabric configuration. DPDK improves performance, while the VF/PF DPDK bonds provide support for failover, and high availability. The VNF vendor must ensure that the DPDK poll mode driver (PMD) supports the SR-IOV card that is being exposed as a VF/PF. The management network uses OVS, therefore the VNF sees a mgmt network device using the standard virtIO drivers. You can use that device to initially connect to the VNF, and ensure that the DPDK application bonds the two VF/PFs.

6.3. Topology for NFV SR-IOV without HCI

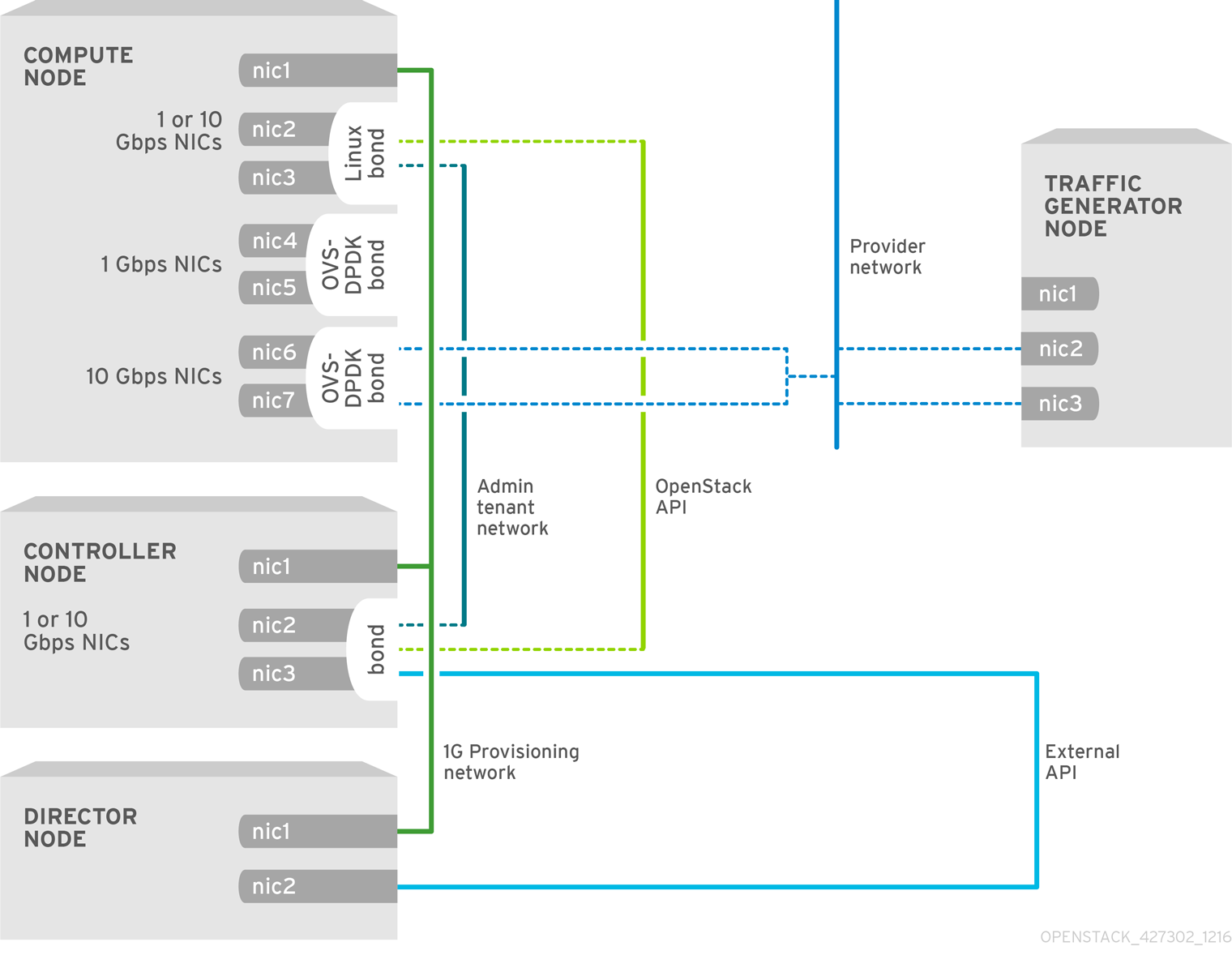

The image shows the topology for SR-IOV without hyper-converged infrastructure (HCI) for NFV. It consists of Compute and Controller nodes with 1 Gbps NICs, and the director node.

Figure 6.3. NFV SR-IOV topology without HCI

Chapter 7. Deploying SR-IOV technologies

In your Red Hat OpenStack Platform NFV deployment, you can achieve higher performance with single root I/O virtualization (SR-IOV), when you configure direct access from your instances to a shared PCIe resource through virtual resources.

7.1. Configuring SR-IOV

To deploy Red Hat OpenStack Platform (RHOSP) with single root I/O virtualization (SR-IOV), configure the shared PCIe resources that have SR-IOV capabilities that instances can request direct access to.

The following CPU assignments, memory allocation, and NIC configurations are examples, and might be different from your use case.

Prerequisites

- For details on how to install and configure the undercloud before deploying the overcloud, see the Director Installation and Usage guide.

  Note: Do not manually edit any values in /etc/tuned/cpu-partitioning-variables.conf that director heat templates modify.

- Access to the undercloud host and credentials for the stack user.

Procedure

Log in to the undercloud as the stack user.

Source the stackrc file:

    [stack@director ~]$ source ~/stackrc

Generate a new roles data file named roles_data_compute_sriov.yaml that includes the Controller and ComputeSriov roles:

    (undercloud)$ openstack overcloud roles \
      generate -o /home/stack/templates/roles_data_compute_sriov.yaml \
      Controller ComputeSriov

ComputeSriov is a custom role provided with your RHOSP installation that includes the NeutronSriovAgent and NeutronSriovHostConfig services, in addition to the default compute services.

To prepare the SR-IOV containers, include the neutron-sriov.yaml and roles_data_compute_sriov.yaml files when you generate the overcloud_images.yaml file.

    $ sudo openstack tripleo container image prepare \
      --roles-file ~/templates/roles_data_compute_sriov.yaml \
      -e /usr/share/openstack-tripleo-heat-templates/environments/services/neutron-sriov.yaml \
      -e ~/containers-prepare-parameter.yaml \
      --output-env-file=/home/stack/templates/overcloud_images.yaml

For more information on container image preparation, see Preparing container images in the Director Installation and Usage guide.

Create a copy of the /usr/share/openstack-tripleo-heat-templates/environments/network-environment.yaml file in your environment file directory:

    $ cp /usr/share/openstack-tripleo-heat-templates/environments/network-environment.yaml /home/stack/templates/network-environment-sriov.yaml

Add the following parameters under parameter_defaults in your network-environment-sriov.yaml file to configure the SR-IOV nodes for your cluster and your hardware configuration:

    NeutronNetworkType: 'vlan'
    NeutronNetworkVLANRanges:
      - tenant:22:22
      - tenant:25:25
    NeutronTunnelTypes: ''

To determine the vendor_id and product_id for each PCI device type, use one of the following commands on the physical server that has the PCI cards:

- To return the vendor_id and product_id from a deployed overcloud, use the following command:

    # lspci -nn -s <pci_device_address>
    3b:00.0 Ethernet controller [0200]: Intel Corporation Ethernet Controller X710 for 10GbE SFP+ [<vendor_id>: <product_id>] (rev 02)

- To return the vendor_id and product_id of a physical function (PF) if you have not yet deployed the overcloud, use the following command:

    (undercloud) [stack@undercloud-0 ~]$ openstack baremetal introspection data save <baremetal_node_name> | jq '.inventory.interfaces[] | .name, .vendor, .product'

Configure role-specific parameters for SR-IOV Compute nodes in your network-environment-sriov.yaml file:

    ComputeSriovParameters:
      IsolCpusList: "1-19,21-39"
      KernelArgs: "default_hugepagesz=1GB hugepagesz=1G hugepages=32 iommu=pt intel_iommu=on isolcpus=1-19,21-39"
      TunedProfileName: "cpu-partitioning"
      NeutronBridgeMappings:
        - tenant:br-link0
      NeutronPhysicalDevMappings:
        - tenant:p7p1
      NovaComputeCpuDedicatedSet: '1-19,21-39'
      NovaReservedHostMemory: 4096

Note: The NovaVcpuPinSet parameter is now deprecated, and is replaced by NovaComputeCpuDedicatedSet for dedicated, pinned workloads.

Configure the PCI passthrough devices for the SR-IOV Compute nodes in your network-environment-sriov.yaml file:

    ComputeSriovParameters:
      ...
      NovaPCIPassthrough:
        - vendor_id: "<vendor_id>"
          product_id: "<product_id>"
          address: <NIC_address>
          physical_network: "<physical_network>"
      ...

- Replace <vendor_id> with the vendor ID of the PCI device.

- Replace <product_id> with the product ID of the PCI device.

- Replace <NIC_address> with the address of the PCI device. For information about how to configure the address parameter, see Guidelines for configuring NovaPCIPassthrough in the Configuring the Compute Service for Instance Creation guide.

- Replace <physical_network> with the name of the physical network the PCI device is located on.

Note: Do not use the devname parameter when you configure PCI passthrough because the device name of a NIC can change. To create a Networking service (neutron) port on a PF, specify the vendor_id, the product_id, and the PCI device address in NovaPCIPassthrough, and create the port with the --vnic-type direct-physical option. To create a Networking service port on a virtual function (VF), specify the vendor_id and product_id in NovaPCIPassthrough, and create the port with the --vnic-type direct option. The values of the vendor_id and product_id parameters might be different between physical function (PF) and VF contexts. For more information about how to configure NovaPCIPassthrough, see Guidelines for configuring NovaPCIPassthrough in the Configuring the Compute Service for Instance Creation guide.

Configure the SR-IOV enabled interfaces in the compute.yaml network configuration template. To create SR-IOV VFs, configure the interfaces as standalone NICs:

    - type: sriov_pf
      name: p7p3
      mtu: 9000
      numvfs: 10
      use_dhcp: false
      defroute: false
      nm_controlled: true
      hotplug: true
      promisc: false
    - type: sriov_pf
      name: p7p4
      mtu: 9000
      numvfs: 10
      use_dhcp: false
      defroute: false
      nm_controlled: true
      hotplug: true
      promisc: false

Note: The numvfs parameter replaces the NeutronSriovNumVFs parameter in the network configuration templates. Red Hat does not support modification of the NeutronSriovNumVFs parameter or the numvfs parameter after deployment. If you modify either parameter after deployment, it might cause a disruption for the running instances that have an SR-IOV port on that PF. In this case, you must hard reboot these instances to make the SR-IOV PCI device available again.

Ensure that the list of default filters includes the value AggregateInstanceExtraSpecsFilter:

    NovaSchedulerDefaultFilters: ['AvailabilityZoneFilter','ComputeFilter','ComputeCapabilitiesFilter','ImagePropertiesFilter','ServerGroupAntiAffinityFilter','ServerGroupAffinityFilter','PciPassthroughFilter','AggregateInstanceExtraSpecsFilter']

Run the overcloud_deploy.sh script.
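The vendor_id and product_id lookup in the procedure above can be sketched as a text filter over `lspci -nn` output. This assumes the usual `[vvvv:dddd]` bracket form that `lspci -nn` prints for numeric IDs; `pci_ids_from_lspci` is a hypothetical helper name, and the device name and hex values in the sample line are made up for illustration.

```shell
#!/bin/sh
# Hypothetical helper: read `lspci -nn` lines on stdin and print
# "<vendor_id> <product_id>" for each line that carries a [vvvv:dddd] pair.
pci_ids_from_lspci() {
  # The class code, for example [0200], has no colon inside the brackets,
  # so only the vendor:product pair matches this pattern.
  sed -n 's/.*\[\([0-9a-f]\{4\}\):\([0-9a-f]\{4\}\)].*/\1 \2/p'
}

# Illustrative line only; the IDs are placeholders, not a real device.
sample_line='3b:00.0 Ethernet controller [0200]: Example NIC [abcd:1234] (rev 02)'

printf '%s\n' "$sample_line" | pci_ids_from_lspci
```

On a node with the PCI cards installed, `lspci -nn -s <pci_device_address> | pci_ids_from_lspci` would feed real output through the same filter. The two values printed are what the `vendor_id` and `product_id` keys of `NovaPCIPassthrough` expect.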

7.2. Configuring NIC partitioning

You can reduce the number of NICs that you need for each host by configuring single root I/O virtualization (SR-IOV) virtual functions (VFs) for Red Hat OpenStack Platform (RHOSP) management networks and provider networks. When you partition a single, high-speed NIC into multiple VFs, you can use the NIC for both control and data plane traffic. This feature has been validated on Intel Fortville NICs, and Mellanox CX-5 NICs.

Procedure

Open the NIC config file for your chosen role.

Add an entry for the interface type sriov_pf to configure a physical function that the host can use:

    - type: sriov_pf
      name: <interface_name>
      use_dhcp: false
      numvfs: <number_of_vfs>
      promisc: <true/false>

- Replace <interface_name> with the name of the interface.

- Replace <number_of_vfs> with the number of VFs.

- Optional: Replace <true/false> with true to set promiscuous mode, or false to disable promiscuous mode. The default value is true.

Note: The numvfs parameter replaces the NeutronSriovNumVFs parameter in the network configuration templates. Red Hat does not support modification of the NeutronSriovNumVFs parameter or the numvfs parameter after deployment. If you modify either parameter after deployment, it might cause a disruption for the running instances that have an SR-IOV port on that physical function (PF). In this case, you must hard reboot these instances to make the SR-IOV PCI device available again.

Add an entry for the interface type sriov_vf to configure virtual functions that the host can use:

    - type: <bond_type>
      name: internal_bond
      bonding_options: mode=<bonding_option>
      use_dhcp: false
      members:
        - type: sriov_vf
          device: <pf_device_name>
          vfid: <vf_id>
        - type: sriov_vf
          device: <pf_device_name>
          vfid: <vf_id>
    - type: vlan
      vlan_id:
        get_param: InternalApiNetworkVlanID
      spoofcheck: false
      device: internal_bond
      addresses:
      - ip_netmask:
          get_param: InternalApiIpSubnet
      routes:
        list_concat_unique:
        - get_param: InternalApiInterfaceRoutes

- Replace <bond_type> with the required bond type, for example, linux_bond. You can apply VLAN tags on the bond for other bonds, such as ovs_bond.

- Replace <bonding_option> with one of the following supported bond modes: active-backup or balance-slb.

  Note: LACP bonds are not supported.

- Specify the sriov_vf as the interface type to bond in the members section.

  Note: If you are using an OVS bridge as the interface type, you can configure only one OVS bridge on the sriov_vf of a sriov_pf device. More than one OVS bridge on a single sriov_pf device can result in packet duplication across VFs, and decreased performance.

- Replace <pf_device_name> with the name of the PF device.

- If you use a linux_bond, you must assign VLAN tags. If you set a VLAN tag, ensure that you set a unique tag for each VF associated with a single sriov_pf device. You cannot have two VFs from the same PF on the same VLAN.

- Replace <vf_id> with the ID of the VF. The applicable VF ID range starts at zero, and ends at the maximum number of VFs minus one.

- Disable spoof checking.

- Apply VLAN tags on the sriov_vf for linux_bond over VFs.

To reserve VFs for instances, include the NovaPCIPassthrough parameter in an environment file, for example:

    NovaPCIPassthrough:
     - address: "0000:19:0e.3"
       trusted: "true"
       physical_network: "sriov1"
     - address: "0000:19:0e.0"
       trusted: "true"
       physical_network: "sriov2"

Director identifies the host VFs, and derives the PCI addresses of the VFs that are available to the instance.

Enable IOMMU on all nodes that require NIC partitioning. For example, if you want NIC Partitioning for Compute nodes, enable IOMMU using the KernelArgs parameter for that role:

    parameter_defaults:
      ComputeParameters:
        KernelArgs: "intel_iommu=on iommu=pt"

Note: When you first add the KernelArgs parameter to the configuration of a role, the overcloud nodes are automatically rebooted. If required, you can disable the automatic rebooting of nodes and instead perform node reboots manually after each overcloud deployment. For more information, see Configuring manual node reboot to define KernelArgs in the Configuring the Compute Service for Instance Creation guide.

Add your role file and environment files to the stack with your other environment files and deploy the overcloud:

    (undercloud)$ openstack overcloud deploy --templates \
      -r os-net-config.yaml \
      -e [your environment files] \
      -e /home/stack/templates/<compute_environment_file>.yaml
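The VF ID rule in the procedure above (IDs start at zero and end at the number of VFs minus one) can be checked before you deploy. The following is a small sketch; `valid_vfid` is a hypothetical helper and is not part of os-net-config or director.

```shell
#!/bin/sh
# Hypothetical helper: succeed when a vfid is valid for a PF that is
# configured with the given numvfs value.
#   $1 = vfid to check, $2 = numvfs configured on the sriov_pf entry
valid_vfid() {
  vfid="$1"
  numvfs="$2"

  # Reject empty values and anything that is not a plain non-negative integer.
  case "$vfid" in
    ''|*[!0-9]*) return 1 ;;
  esac

  # Valid IDs run from 0 to numvfs - 1.
  [ "$vfid" -ge 0 ] && [ "$vfid" -lt "$numvfs" ]
}

# Example: a PF with numvfs: 10 accepts IDs 0 through 9.
for id in 0 9 10; do
  if valid_vfid "$id" 10; then
    echo "vfid $id: ok"
  else
    echo "vfid $id: out of range"
  fi
done
```

A check like this only covers the ID range. It does not detect two VFs of the same PF sharing a VLAN tag, which the procedure also prohibits.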

Validation

Log in to the overcloud Compute node as heat-admin and check the number of VFs:

    [heat-admin@overcloud-compute-0 heat-admin]$ sudo cat /sys/class/net/p4p1/device/sriov_numvfs
    10
    [heat-admin@overcloud-compute-0 heat-admin]$ sudo cat /sys/class/net/p4p2/device/sriov_numvfs
    10

Show OVS connections:

    [heat-admin@overcloud-compute-0]$ sudo ovs-vsctl show
    b6567fa8-c9ec-4247-9a08-cbf34f04c85f
        Manager "ptcp:6640:127.0.0.1"
            is_connected: true
        Bridge br-sriov2
            Controller "tcp:127.0.0.1:6633"
                is_connected: true
            fail_mode: secure
            datapath_type: netdev
            Port phy-br-sriov2
                Interface phy-br-sriov2
                    type: patch
                    options: {peer=int-br-sriov2}
            Port br-sriov2
                Interface br-sriov2
                    type: internal
        Bridge br-sriov1
            Controller "tcp:127.0.0.1:6633"
                is_connected: true
            fail_mode: secure
            datapath_type: netdev
            Port phy-br-sriov1
                Interface phy-br-sriov1
                    type: patch
                    options: {peer=int-br-sriov1}
            Port br-sriov1
                Interface br-sriov1
                    type: internal
        Bridge br-ex
            Controller "tcp:127.0.0.1:6633"
                is_connected: true
            fail_mode: secure
            datapath_type: netdev
            Port br-ex
                Interface br-ex
                    type: internal
            Port phy-br-ex
                Interface phy-br-ex
                    type: patch
                    options: {peer=int-br-ex}
        Bridge br-tenant
            Controller "tcp:127.0.0.1:6633"
                is_connected: true
            fail_mode: secure
            datapath_type: netdev
            Port br-tenant
                tag: 305
                Interface br-tenant
                    type: internal
            Port phy-br-tenant
                Interface phy-br-tenant
                    type: patch
                    options: {peer=int-br-tenant}
            Port dpdkbond0
                Interface dpdk0
                    type: dpdk
                    options: {dpdk-devargs="0000:18:0e.0"}
                Interface dpdk1
                    type: dpdk
                    options: {dpdk-devargs="0000:18:0a.0"}
        Bridge br-tun
            Controller "tcp:127.0.0.1:6633"
                is_connected: true
            fail_mode: secure
            datapath_type: netdev
            Port vxlan-98140025
                Interface vxlan-98140025
                    type: vxlan
                    options: {df_default="true", egress_pkt_mark="0", in_key=flow, local_ip="152.20.0.229", out_key=flow, remote_ip="152.20.0.37"}
            Port br-tun
                Interface br-tun
                    type: internal
            Port patch-int
                Interface patch-int
                    type: patch
                    options: {peer=patch-tun}
            Port vxlan-98140015
                Interface vxlan-98140015
                    type: vxlan
                    options: {df_default="true", egress_pkt_mark="0", in_key=flow, local_ip="152.20.0.229", out_key=flow, remote_ip="152.20.0.21"}
            Port vxlan-9814009f
                Interface vxlan-9814009f
                    type: vxlan
                    options: {df_default="true", egress_pkt_mark="0", in_key=flow, local_ip="152.20.0.229", out_key=flow, remote_ip="152.20.0.159"}
            Port vxlan-981400cc
                Interface vxlan-981400cc
                    type: vxlan
                    options: {df_default="true", egress_pkt_mark="0", in_key=flow, local_ip="152.20.0.229", out_key=flow, remote_ip="152.20.0.204"}
        Bridge br-int
            Controller "tcp:127.0.0.1:6633"
                is_connected: true
            fail_mode: secure
            datapath_type: netdev
            Port int-br-tenant
                Interface int-br-tenant
                    type: patch
                    options: {peer=phy-br-tenant}
            Port int-br-ex
                Interface int-br-ex
                    type: patch
                    options: {peer=phy-br-ex}
            Port int-br-sriov1
                Interface int-br-sriov1
                    type: patch
                    options: {peer=phy-br-sriov1}
            Port patch-tun
                Interface patch-tun
                    type: patch
                    options: {peer=patch-int}
            Port br-int
                Interface br-int
                    type: internal
            Port int-br-sriov2
                Interface int-br-sriov2
                    type: patch
                    options: {peer=phy-br-sriov2}
            Port vhu4142a221-93
                tag: 1
                Interface vhu4142a221-93
                    type: dpdkvhostuserclient
                    options: {vhost-server-path="/var/lib/vhost_sockets/vhu4142a221-93"}
        ovs_version: "2.13.2"

Log in to your OVS-DPDK SR-IOV Compute node as heat-admin and check Linux bonds:

    [heat-admin@overcloud-computeovsdpdksriov-1 ~]$ cat /proc/net/bonding/<bond_name>
    Ethernet Channel Bonding Driver: v3.7.1 (April 27, 2011)
    Bonding Mode: fault-tolerance (active-backup)
    Primary Slave: None
    Currently Active Slave: eno3v1
    MII Status: up
    MII Polling Interval (ms): 0
    Up Delay (ms): 0
    Down Delay (ms): 0
    Peer Notification Delay (ms): 0
    Slave Interface: eno3v1
    MII Status: up
    Speed: 10000 Mbps
    Duplex: full
    Link Failure Count: 0
    Permanent HW addr: 4e:77:94:bd:38:d2
    Slave queue ID: 0
    Slave Interface: eno4v1
    MII Status: up
    Speed: 10000 Mbps
    Duplex: full
    Link Failure Count: 0
    Permanent HW addr: 4a:74:52:a7:aa:7c
    Slave queue ID: 0

List OVS bonds:

    [heat-admin@overcloud-computeovsdpdksriov-1 ~]$ sudo ovs-appctl bond/show
    ---- dpdkbond0 ----
    bond_mode: balance-slb
    bond may use recirculation: no, Recirc-ID : -1
    bond-hash-basis: 0
    updelay: 0 ms
    downdelay: 0 ms
    next rebalance: 9491 ms
    lacp_status: off
    lacp_fallback_ab: false
    active slave mac: ce:ee:c7:58:8e:b2(dpdk1)
    slave dpdk0: enabled
      may_enable: true
    slave dpdk1: enabled
      active slave
      may_enable: true

If you used NovaPCIPassthrough to pass VFs to instances, test by Deploying an instance for SR-IOV.
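The VF count check in the validation steps can be wrapped in a function that compares the value in sysfs with the `numvfs` value you configured. This is a sketch, assuming the `sriov_numvfs` path shown above; `check_numvfs` is a hypothetical helper, and the optional third argument exists only so that the function can be exercised against a test directory instead of `/sys`.

```shell
#!/bin/sh
# Hypothetical helper: succeed when an interface reports the expected VF count.
#   $1 = interface name, for example p4p1
#   $2 = expected number of VFs (the numvfs value from the NIC config)
#   $3 = optional sysfs root, defaults to /sys
# Returns 0 on match, 1 on mismatch, 2 when the sysfs file cannot be read.
check_numvfs() {
  iface="$1"
  expected="$2"
  sysfs_root="${3:-/sys}"
  path="${sysfs_root}/class/net/${iface}/device/sriov_numvfs"

  if [ ! -r "$path" ]; then
    echo "cannot read ${path}" >&2
    return 2
  fi

  actual="$(cat "$path")"
  [ "$actual" = "$expected" ]
}
```

On a Compute node you might run `check_numvfs p4p1 10 && echo "p4p1 ok"`, using your own interface names and VF counts. If the file is readable only by root on your system, run the check in a root shell.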

7.3. Example configurations for NIC partitions

Linux bond over VFs

The following example configures a Linux bond over VFs, disables spoofcheck, and applies VLAN tags to sriov_vf:

    - type: linux_bond
      name: bond_api
      bonding_options: "mode=active-backup"
      members:
        - type: sriov_vf
          device: eno2
          vfid: 1
          vlan_id:
            get_param: InternalApiNetworkVlanID
          spoofcheck: false
        - type: sriov_vf
          device: eno3
          vfid: 1
          vlan_id:
            get_param: InternalApiNetworkVlanID
          spoofcheck: false
      addresses:
        - ip_netmask:
            get_param: InternalApiIpSubnet
      routes:
        list_concat_unique:
        - get_param: InternalApiInterfaceRoutes

OVS bridge on VFs

The following example configures an OVS bridge on VFs:

    - type: ovs_bridge
      name: br-bond
      use_dhcp: true
      members:
        - type: vlan
          vlan_id:
            get_param: TenantNetworkVlanID
          addresses:
          - ip_netmask:
              get_param: TenantIpSubnet
          routes:
            list_concat_unique:
              - get_param: ControlPlaneStaticRoutes
        - type: ovs_bond
          name: bond_vf
          ovs_options: "bond_mode=active-backup"
          members:
            - type: sriov_vf
              device: p2p1
              vfid: 2
            - type: sriov_vf
              device: p2p2
              vfid: 2

OVS user bridge on VFs

The following example configures an OVS user bridge on VFs and applies VLAN tags to ovs_user_bridge:

    - type: ovs_user_bridge
      name: br-link0
      use_dhcp: false
      mtu: 9000
      ovs_extra:
        - str_replace:
            template: set port br-link0 tag=_VLAN_TAG_
            params:
              _VLAN_TAG_:
                get_param: TenantNetworkVlanID
      addresses:
        - ip_netmask:
            get_param: TenantIpSubnet
      routes:
        list_concat_unique:
          - get_param: TenantInterfaceRoutes
      members:
        - type: ovs_dpdk_bond
          name: dpdkbond0
          mtu: 9000
          ovs_extra:
            - set port dpdkbond0 bond_mode=balance-slb
          members:
            - type: ovs_dpdk_port
              name: dpdk0
              members:
                - type: sriov_vf
                  device: eno2
                  vfid: 3
            - type: ovs_dpdk_port
              name: dpdk1
              members:
                - type: sriov_vf
                  device: eno3
                  vfid: 3

7.4. Configuring OVS hardware offload

The procedure for OVS hardware offload configuration shares many of the same steps as configuring SR-IOV.

Since Red Hat OpenStack Platform 16.2.3, to offload traffic from Compute nodes with OVS hardware offload and ML2/OVS, you must set the disable_packet_marking parameter to true in the openvswitch_agent.ini configuration file, and then restart the neutron_ovs_agent container.

    cat /var/lib/config-data/puppet-generated/neutron/\
    etc/neutron/plugins/ml2/openvswitch_agent.ini
    [ovs]
    disable_packet_marking=True

Procedure

Generate an overcloud role for OVS hardware offload that is based on the Compute role:

openstack overcloud roles generate -o roles_data.yaml \ Controller Compute:ComputeOvsHwOffload-

Optional: Change the

HostnameFormatDefault: '%stackname%-compute-%index%'name for theComputeOvsHwOffloadrole. -

Add the

OvsHwOffloadparameter under role-specific parameters with a value oftrue. -

To configure neutron to use the iptables/hybrid firewall driver implementation, include the line:

NeutronOVSFirewallDriver: iptables_hybrid. For more information aboutNeutronOVSFirewallDriver, see Using the Open vSwitch Firewall in the Advanced Overcloud Customization Guide. Configure the

physical_networkparameter to match your environment.-

For VLAN, set the

physical_networkparameter to the name of the network you create in neutron after deployment. This value should also be inNeutronBridgeMappings. For VXLAN, set the

physical_networkparameter tonull.Example:

parameter_defaults: NeutronOVSFirewallDriver: iptables_hybrid ComputeSriovParameters: IsolCpusList: 2-9,21-29,11-19,31-39 KernelArgs: "default_hugepagesz=1GB hugepagesz=1G hugepages=128 intel_iommu=on iommu=pt" OvsHwOffload: true TunedProfileName: "cpu-partitioning" NeutronBridgeMappings: - tenant:br-tenant NovaPCIPassthrough: - vendor_id: <vendor-id> product_id: <product-id> address: <address> physical_network: "tenant" - vendor_id: <vendor-id> product_id: <product-id> address: <address> physical_network: "null" NovaReservedHostMemory: 4096 NovaComputeCpuDedicatedSet: 1-9,21-29,11-19,31-39-

Replace

<vendor-id>with the vendor ID of the physical NIC. -

Replace

<product-id>with the product ID of the NIC VF. Replace

<address>with the address of the physical NIC.For more information about how to configure

NovaPCIPassthrough, see Guidelines for configuring NovaPCIPassthrough in the Configuring the Compute Service for Instance Creation guide.


Ensure that the list of default filters includes NUMATopologyFilter:

parameter_defaults:
  NovaSchedulerEnabledFilters:
    - AvailabilityZoneFilter
    - ComputeFilter
    - ComputeCapabilitiesFilter
    - ImagePropertiesFilter
    - ServerGroupAntiAffinityFilter
    - ServerGroupAffinityFilter
    - PciPassthroughFilter
    - NUMATopologyFilter

Note

Optional: For details on how to troubleshoot and configure OVS Hardware Offload issues in RHOSP 16.2 with Mellanox ConnectX5 NICs, see Troubleshooting Hardware Offload.

Configure one or more network interfaces intended for hardware offload in the compute-sriov.yaml configuration file:

- type: ovs_bridge
  name: br-tenant
  mtu: 9000
  members:
  - type: sriov_pf
    name: p7p1
    numvfs: 5
    mtu: 9000
    primary: true
    promisc: true
    use_dhcp: false
    link_mode: switchdev

Note

- Do not use the NeutronSriovNumVFs parameter when configuring Open vSwitch hardware offload. The number of virtual functions is specified using the numvfs parameter in a network configuration file used by os-net-config. Red Hat does not support modifying the numvfs setting during update or redeployment.

- Do not configure Mellanox network interfaces as a nic-config interface type ovs-vlan because this prevents tunnel endpoints such as VXLAN from passing traffic due to driver limitations.


Include the ovs-hw-offload.yaml file in the overcloud deploy command:

TEMPLATES_HOME="/usr/share/openstack-tripleo-heat-templates"
CUSTOM_TEMPLATES="/home/stack/templates"

openstack overcloud deploy --templates \
  -r ${CUSTOM_TEMPLATES}/roles_data.yaml \
  -e ${TEMPLATES_HOME}/environments/ovs-hw-offload.yaml \
  -e ${CUSTOM_TEMPLATES}/network-environment.yaml \
  -e ${CUSTOM_TEMPLATES}/neutron-ovs.yaml

Verification

Confirm that a PCI device is in switchdev mode:

# devlink dev eswitch show pci/0000:03:00.0
pci/0000:03:00.0: mode switchdev inline-mode none encap enable

Verify that offload is enabled in OVS:

# ovs-vsctl get Open_vSwitch . other_config:hw-offload
"true"
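If you script this verification, the devlink output can be parsed into key/value pairs. The following Python snippet is a minimal sketch that assumes the single-line output format shown above; parse_eswitch_show is a hypothetical helper name, not part of devlink or director.

```python
def parse_eswitch_show(line):
    """Parse one line of `devlink dev eswitch show` output into a dict."""
    # The device handle ends at the first ": " (colon followed by a space).
    device, _, rest = line.strip().partition(": ")
    tokens = rest.split()
    # The remaining tokens alternate between setting name and value.
    settings = dict(zip(tokens[0::2], tokens[1::2]))
    settings["device"] = device
    return settings


sample = "pci/0000:03:00.0: mode switchdev inline-mode none encap enable"
info = parse_eswitch_show(sample)
print(info["device"], "is in", info["mode"], "mode")
```

A check such as info["mode"] == "switchdev" can then gate later automation steps.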

7.5. Tuning examples for OVS hardware offload

For optimal performance, you must complete additional configuration steps.

Adjusting the number of channels for each network interface to improve performance

A channel includes an interrupt request (IRQ) and the set of queues that trigger the IRQ. When you set the mlx5_core driver to switchdev mode, the mlx5_core driver defaults to one combined channel, which might not deliver optimal performance.

Procedure

On the PF representors, enter the following command to adjust the number of combined channels, and with them the number of CPUs available to the host for servicing the interface. Replace $(nproc) with the number of CPUs you want to make available:

$ sudo ethtool -L enp3s0f0 combined $(nproc)

CPU pinning

To prevent performance degradation from cross-NUMA operations, locate NICs, their applications, the VF guest, and OVS in the same NUMA node. For more information, see Configuring CPU pinning on Compute nodes in the Configuring the Compute Service for Instance Creation guide.

7.6. Configuring components of OVS hardware offload

This section is a reference for configuring and troubleshooting the components of OVS HW Offload with Mellanox smart NICs.

Nova

Configure the Nova scheduler to use the NovaPCIPassthrough filter with the NUMATopologyFilter and DerivePciWhitelistEnabled parameters. When you enable OVS HW Offload, the Nova scheduler operates similarly to SR-IOV passthrough for instance spawning.

Neutron

When you enable OVS HW Offload, use the devlink CLI tool to set the NIC e-switch mode to switchdev. Switchdev mode establishes representor ports on the NIC that are mapped to the VFs.

Procedure

To allocate a port from a switchdev-enabled NIC, log in as an admin user, create a neutron port with a binding-profile value of capabilities, and disable port security:

$ openstack port create --network private --vnic-type=direct --binding-profile '{"capabilities": ["switchdev"]}' direct_port1 --disable-port-security

Pass this port information when you create the instance.

You associate the representor port with the instance VF interface and connect the representor port to OVS bridge br-int for one-time OVS data path processing. A VF port representor functions like a software version of a physical “patch panel” front-end.

For more information about new instance creation, see Deploying an instance for SR-IOV.
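When you create many switchdev ports from a script, generating the binding profile with a JSON encoder avoids quoting mistakes. The following Python snippet is a minimal sketch that only assembles the command line shown above and does not contact OpenStack; port_create_command is a hypothetical helper name, and the network and port names are the example values from this section.

```python
import json
import shlex


def port_create_command(network, port_name):
    """Build the `openstack port create` argument list for a switchdev port."""
    binding_profile = json.dumps({"capabilities": ["switchdev"]})
    return [
        "openstack", "port", "create",
        "--network", network,
        "--vnic-type=direct",
        "--binding-profile", binding_profile,
        port_name,
        "--disable-port-security",
    ]


argv = port_create_command("private", "direct_port1")
# shlex.join quotes the JSON argument so that the line is safe to paste into a shell.
print(shlex.join(argv))
```

The argument list can be passed to subprocess.run as is, which avoids shell quoting altogether.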

OVS

In an environment with hardware offload configured, the first packet transmitted traverses the OVS kernel path, and this packet journey establishes the ml2 OVS rules for the incoming and outgoing traffic of the instance. When the flows of the traffic stream are established, OVS uses the traffic control (TC) Flower utility to push these flows to the NIC hardware.

Procedure

- Use director to apply the following configuration on OVS:

$ sudo ovs-vsctl set Open_vSwitch . other_config:hw-offload=true

- Restart to enable HW Offload.

Traffic Control (TC) subsystems

When you enable the hw-offload flag, OVS uses the TC data path. TC Flower is an iproute2 utility that writes data path flows on hardware. This ensures that the flow is programmed on both the hardware and software data paths, for redundancy.

Procedure

- Apply the following configuration. This is the default option if you do not explicitly configure tc-policy:

$ sudo ovs-vsctl set Open_vSwitch . other_config:tc-policy=none

- Restart OVS.

NIC PF and VF drivers

Mlx5_core is the PF and VF driver for the Mellanox ConnectX-5 NIC. The mlx5_core driver performs the following tasks:

- Creates routing tables on hardware.

- Manages network flows.

- Configures the Ethernet switch device driver model, switchdev.

- Creates block devices.

Procedure

Use the following devlink commands to set and query the mode of the PCI device:

$ sudo devlink dev eswitch set pci/0000:03:00.0 mode switchdev
$ sudo devlink dev eswitch show pci/0000:03:00.0
pci/0000:03:00.0: mode switchdev inline-mode none encap enable

NIC firmware

The NIC firmware performs the following tasks:

- Maintains routing tables and rules.

- Fixes the pipelines of the tables.

- Manages hardware resources.

- Creates VFs.

The firmware works with the driver for optimal performance.

Although the NIC firmware is non-volatile and persists after you reboot, you can modify the configuration during run time.

Procedure

Apply the following configuration on the interfaces, and the representor ports, to ensure that TC Flower pushes the flow programming at the port level:

$ sudo ethtool -K enp3s0f0 hw-tc-offload on

Ensure that you keep the firmware updated. Yum or dnf updates might not complete the firmware update. For more information, see your vendor documentation.

7.7. Troubleshooting OVS hardware offload

Prerequisites

- Linux Kernel 4.13 or newer

- OVS 2.8 or newer

- RHOSP 12 or newer

- Iproute 4.12 or newer

- Mellanox NIC firmware, for example FW ConnectX-5 16.21.0338 or newer

For more information about supported prerequisites, see the Red Hat Knowledgebase solution Network Adapter Fast Datapath Feature Support Matrix.

Configuring the network in an OVS HW offload deployment

In a HW offload deployment, you can choose one of the following scenarios for your network configuration according to your requirements:

- You can base guest VMs on VXLAN and VLAN by using either the same set of interfaces attached to a bond, or a different set of NICs for each type.

- You can bond two ports of a Mellanox NIC by using Linux bond.

- You can host tenant VXLAN networks on VLAN interfaces on top of a Mellanox Linux bond.

Ensure that individual NICs and bonds are members of an ovs-bridge.

Refer to the following example network configuration:

- type: ovs_bridge
  name: br-offload
  mtu: 9000
  use_dhcp: false
  members:
  - type: linux_bond
    name: bond-pf
    bonding_options: "mode=active-backup miimon=100"
    members:
    - type: sriov_pf
      name: p5p1
      numvfs: 3
      primary: true
      promisc: true
      use_dhcp: false
      defroute: false
      link_mode: switchdev
    - type: sriov_pf
      name: p5p2
      numvfs: 3
      promisc: true
      use_dhcp: false
      defroute: false
      link_mode: switchdev

- type: vlan
  vlan_id:
    get_param: TenantNetworkVlanID
  device: bond-pf
  addresses:
  - ip_netmask:
      get_param: TenantIpSubnet

The following bonding configurations are supported:

- active-backup - mode=1

- active-active or balance-xor - mode=2

- 802.3ad (LACP) - mode=4

The following bonding configuration is not supported:

- xmit_hash_policy=layer3+4
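The supported and unsupported bonding settings listed above can be checked against a bonding_options string before you deploy. The following Python snippet is a minimal sketch: check_bonding_options is a hypothetical helper, and it assumes that options are space-separated key=value pairs, as in the example network configuration.

```python
# Supported modes from the list above, by name and by numeric value.
SUPPORTED_MODES = {"active-backup": "1", "balance-xor": "2", "802.3ad": "4"}
UNSUPPORTED_HASH_POLICY = "layer3+4"


def check_bonding_options(options):
    """Return a list of problems found in a bonding_options string."""
    # partition("=")[::2] yields (key, value), even if "=" is missing.
    opts = dict(item.partition("=")[::2] for item in options.split())
    problems = []
    mode = opts.get("mode")
    if mode not in SUPPORTED_MODES and mode not in SUPPORTED_MODES.values():
        problems.append(f"unsupported bond mode: {mode}")
    if opts.get("xmit_hash_policy") == UNSUPPORTED_HASH_POLICY:
        problems.append("xmit_hash_policy=layer3+4 is not supported")
    return problems


print(check_bonding_options("mode=active-backup miimon=100"))
print(check_bonding_options("mode=802.3ad xmit_hash_policy=layer3+4"))
```

An empty list means that the string uses only the settings listed as supported in this section.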

Verifying the interface configuration

Verify the interface configuration with the following procedure.

Procedure

- During deployment, use the host network configuration tool os-net-config to enable hw-tc-offload.

- Enable hw-tc-offload on the sriov_config service any time you reboot the Compute node.

- Set the hw-tc-offload parameter to on for the NICs that are attached to the bond:

[root@overcloud-computesriov-0 ~]# ethtool -k ens1f0 | grep tc-offload
hw-tc-offload: on

Verifying the interface mode

Verify the interface mode with the following procedure.

Procedure

- Set the eswitch mode to switchdev for the interfaces you use for HW offload.

- Use the host network configuration tool os-net-config to enable eswitch during deployment.

- Enable eswitch on the sriov_config service any time you reboot the Compute node.

[root@overcloud-computesriov-0 ~]# devlink dev eswitch show pci/$(ethtool -i ens1f0 | grep bus-info | cut -d ':' -f 2,3,4 | awk '{$1=$1};1')

The driver of the PF interface is set to "mlx5e_rep", to show that it is a representor of the e-switch uplink port. This does not affect the functionality.
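The shell pipeline in the preceding command extracts the PCI address from the bus-info line of the ethtool -i output and prefixes it with pci/. The following Python snippet is a minimal sketch of the same extraction; devlink_handle is a hypothetical helper name, and the sample text is an illustrative excerpt rather than captured output.

```python
def devlink_handle(ethtool_i_output):
    """Return the devlink device handle (pci/<address>) for an interface."""
    for line in ethtool_i_output.splitlines():
        # Split on the first colon only, because the PCI address contains colons.
        key, _, value = line.partition(":")
        if key.strip() == "bus-info":
            return "pci/" + value.strip()
    raise ValueError("no bus-info line found")


# Illustrative excerpt of `ethtool -i ens1f0` output (assumed values).
sample = "driver: mlx5e_rep\nbus-info: 0000:65:00.0\n"
print(devlink_handle(sample))
```

The returned handle is the argument that devlink dev eswitch show expects.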

Verifying the offload state in OVS

Verify the offload state in OVS with the following procedure.

Confirm that hardware offload is enabled in OVS on the Compute node:

[root@overcloud-computesriov-0 ~]# ovs-vsctl get Open_vSwitch . other_config:hw-offload
"true"

Verifying the name of the VF representor port

To ensure consistent naming of VF representor ports, os-net-config uses udev rules to rename the ports in the <PF-name>_<VF_id> format.

Procedure

After deployment, verify that the VF representor ports are named correctly.

[root@overcloud-computesriov-0 ~]# cat /etc/udev/rules.d/80-persistent-os-net-config.rules
# This file is autogenerated by os-net-config
SUBSYSTEM=="net", ACTION=="add", ATTR{phys_switch_id}!="", ATTR{phys_port_name}=="pf*vf*", ENV{NM_UNMANAGED}="1"
SUBSYSTEM=="net", ACTION=="add", DRIVERS=="?*", KERNELS=="0000:65:00.0", NAME="ens1f0"
SUBSYSTEM=="net", ACTION=="add", ATTR{phys_switch_id}=="98039b7f9e48", ATTR{phys_port_name}=="pf0vf*", IMPORT{program}="/etc/udev/rep-link-name.sh $attr{phys_port_name}", NAME="ens1f0_$env{NUMBER}"
SUBSYSTEM=="net", ACTION=="add", DRIVERS=="?*", KERNELS=="0000:65:00.1", NAME="ens1f1"
SUBSYSTEM=="net", ACTION=="add", ATTR{phys_switch_id}=="98039b7f9e49", ATTR{phys_port_name}=="pf1vf*", IMPORT{program}="/etc/udev/rep-link-name.sh $attr{phys_port_name}", NAME="ens1f1_$env{NUMBER}"
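The naming scheme that these rules produce can be sketched in a few lines. The following Python snippet is an illustration only: it assumes that rep-link-name.sh sets NUMBER to the VF index taken from phys_port_name, which this section does not show, and representor_name is a hypothetical helper name.

```python
import re


def representor_name(pf_name, phys_port_name):
    """Return the expected <PF-name>_<VF_id> representor name."""
    # phys_port_name has the form pf<PF index>vf<VF index>, for example pf0vf3.
    match = re.fullmatch(r"pf(\d+)vf(\d+)", phys_port_name)
    if match is None:
        raise ValueError(f"not a VF representor port name: {phys_port_name}")
    vf_index = int(match.group(2))
    return f"{pf_name}_{vf_index}"


print(representor_name("ens1f0", "pf0vf3"))
print(representor_name("ens1f1", "pf1vf0"))
```

You can compare the generated names with the interface names reported on the Compute node after deployment.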

Examining network traffic flow

HW offloaded network flow functions in a similar way to physical switches or routers with application-specific integrated circuit (ASIC) chips. You can access the ASIC shell of a switch or router to examine the routing table and for other debugging. The following procedure uses a Broadcom chipset from a Cumulus Linux switch as an example. Replace the example values with values that are appropriate to your environment.

Procedure

To get Broadcom chip table content, use the bcmcmd command.

root@dni-7448-26:~# cl-bcmcmd l2 show
mac=00:02:00:00:00:08 vlan=2000 GPORT=0x2 modid=0 port=2/xe1
mac=00:02:00:00:00:09 vlan=2000 GPORT=0x2 modid=0 port=2/xe1 Hit

Inspect the Traffic Control (TC) Layer.

# tc -s filter show dev p5p1_1 ingress
…
filter block 94 protocol ip pref 3 flower chain 5
filter block 94 protocol ip pref 3 flower chain 5 handle 0x2
  eth_type ipv4
  src_ip 172.0.0.1
  ip_flags nofrag
  in_hw in_hw_count 1
        action order 1: mirred (Egress Redirect to device eth4) stolen
        index 3 ref 1 bind 1 installed 364 sec used 0 sec
        Action statistics:
        Sent 253991716224 bytes 169534118 pkt (dropped 0, overlimits 0 requeues 0)
        Sent software 43711874200 bytes 30161170 pkt
        Sent hardware 210279842024 bytes 139372948 pkt
        backlog 0b 0p requeues 0
        cookie 8beddad9a0430f0457e7e78db6e0af48
        no_percpu

- Examine the in_hw flags and the statistics in this output. The word hardware indicates that the hardware processes the network traffic. If you use tc-policy=none, you can check this output or a tcpdump to investigate when hardware or software handles the packets. You can see a corresponding log message in dmesg or in ovs-vswitchd.log when the driver is unable to offload packets.

- For Mellanox, as an example, the log entries resemble syndrome messages in dmesg.

[13232.860484] mlx5_core 0000:3b:00.0: mlx5_cmd_check:756:(pid 131368): SET_FLOW_TABLE_ENTRY(0x936) op_mod(0x0) failed, status bad parameter(0x3), syndrome (0x6b1266)

In this example, the error code (0x6b1266) represents the following behavior:

0x6B1266 | set_flow_table_entry: pop vlan and forward to uplink is not allowed
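To compare the software and hardware counters in the tc statistics without reading them by eye, you can extract them with a short script. The following Python snippet is a minimal sketch that assumes the "Sent software" and "Sent hardware" line format shown in the tc output above; offload_summary is a hypothetical helper name, and SAMPLE repeats the relevant lines of that output.

```python
import re

# Relevant lines copied from the tc output in this section.
SAMPLE = """\
in_hw in_hw_count 1
Sent 253991716224 bytes 169534118 pkt (dropped 0, overlimits 0 requeues 0)
Sent software 43711874200 bytes 30161170 pkt
Sent hardware 210279842024 bytes 139372948 pkt
"""


def offload_summary(tc_output):
    """Summarize how much of a flow's traffic the hardware handled."""
    sw = re.search(r"Sent software (\d+) bytes", tc_output)
    hw = re.search(r"Sent hardware (\d+) bytes", tc_output)
    sw_bytes = int(sw.group(1)) if sw else 0
    hw_bytes = int(hw.group(1)) if hw else 0
    total = sw_bytes + hw_bytes
    return {
        # in_hw appears as a standalone token when the flow is in hardware.
        "in_hw": "in_hw" in tc_output.split(),
        "software_bytes": sw_bytes,
        "hardware_bytes": hw_bytes,
        "hardware_share": hw_bytes / total if total else 0.0,
    }


summary = offload_summary(SAMPLE)
print(f"in_hw={summary['in_hw']} hardware share={summary['hardware_share']:.1%}")
```

For the sample above, the software and hardware byte counts add up to the total on the Sent line, and the hardware handled roughly 83 percent of the bytes.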

Validating systems