Developer Guide

OpenShift Enterprise 3.1 Developer Reference

Chapter 1. Overview

This guide helps developers set up and configure a workstation to develop and deploy applications in an OpenShift Enterprise cloud environment with a command-line interface (CLI). This guide provides detailed instructions and examples to help developers:

- Monitor and browse projects with the web console

- Configure and utilize the CLI

- Generate configurations using templates

- Manage builds and webhooks

- Define and trigger deployments

- Integrate external services (databases, SaaS endpoints)

Chapter 2. Authentication

2.1. Web Console Authentication

When accessing the web console from a browser at <master_public_addr>:8443, you are automatically redirected to a login page.

Review the browser versions and operating systems that can be used to access the web console.

You can provide your login credentials on this page to obtain a token to make API calls. After logging in, you can navigate your projects using the web console.

2.2. CLI Authentication

You can authenticate from the command line using the CLI command oc login. You can get started with the CLI by running this command without any options:

$ oc login

The command’s interactive flow helps you establish a session to an OpenShift server with the provided credentials. If any information required to log in to an OpenShift server is not provided, the command prompts for it. The configuration is saved automatically and is then used for every subsequent command.

All configuration options for the oc login command, listed in the oc login --help command output, are optional. The following example shows usage with some common options:

$ oc login [-u=<username>] \
  [-p=<password>] \
  [-s=<server>] \
  [-n=<project>] \
  [--certificate-authority=</path/to/file.crt>|--insecure-skip-tls-verify]

The following table describes these common options:

| Option | Syntax | Description |
|---|---|---|
| -s, --server | $ oc login -s=<server> | Specifies the host name of the OpenShift server. If a server is provided through this flag, the command does not ask for it interactively. This flag can also be used if you already have a CLI configuration file and want to log in and switch to another server. |
| -u, --username and -p, --password | $ oc login -u=<username> -p=<password> | Allows you to specify the credentials to log in to the OpenShift server. If the user name or password is provided through these flags, the command does not ask for it interactively. These flags can also be used if you already have a configuration file with a session token established and want to log in and switch to another user name. |
| -n, --namespace | $ oc login -u=<username> -p=<password> -n=<project> | A global CLI option which, when used with oc login, specifies the project to switch to when logging in. |
| --certificate-authority | $ oc login --certificate-authority=</path/to/file.crt> | Correctly and securely authenticates with an OpenShift server that uses HTTPS. The path to a certificate authority file must be provided. |
| --insecure-skip-tls-verify | $ oc login --insecure-skip-tls-verify | Allows interaction with an HTTPS server while bypassing the server certificate checks; note, however, that this is not secure. |
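
The options above can be combined into a scripted, partly non-interactive login. The following is a minimal sketch, not a supported tool: the function name build_login_args and the variables OC_SERVER, OC_USER, OC_PROJECT, and OC_CA_FILE are hypothetical names chosen for illustration. It only prints the arguments and does not call oc itself.

```shell
#!/usr/bin/env bash
# Sketch: assemble `oc login` arguments from the options described above.
# OC_SERVER, OC_USER, OC_PROJECT, and OC_CA_FILE are hypothetical variable names.

build_login_args() {
  local args=(login)
  if [ -n "${OC_SERVER:-}" ]; then args+=("-s=${OC_SERVER}"); fi
  if [ -n "${OC_USER:-}" ]; then args+=("-u=${OC_USER}"); fi
  if [ -n "${OC_PROJECT:-}" ]; then args+=("-n=${OC_PROJECT}"); fi
  # Pass a CA file only when one is supplied. The sketch never adds
  # --insecure-skip-tls-verify on its own, because that option is not secure.
  if [ -n "${OC_CA_FILE:-}" ]; then
    args+=("--certificate-authority=${OC_CA_FILE}")
  fi
  # The password is deliberately left out so that oc prompts for it.
  printf '%s\n' "${args[@]}"
}

# Print the arguments, one per line, for a hypothetical server and user.
OC_SERVER="https://master.example.com:8443" OC_USER="alice" build_login_args
```

To perform the login, the printed arguments could be read into an array and passed to oc. Leaving out -p keeps the password out of the shell history and lets the interactive prompt handle it.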

CLI configuration files allow you to easily manage multiple CLI profiles.

If you have access to administrator credentials but are no longer logged in as the default system user system:admin, you can log back in as this user at any time as long as the credentials are still present in your CLI configuration file. The following command logs in and switches to the default project:

$ oc login -u system:admin -n default

Chapter 3. Projects

3.1. Overview

A project allows a community of users to organize and manage their content in isolation from other communities.

3.2. Creating a Project

If allowed by your cluster administrator, you can create a new project using the CLI or the web console.

To create a new project using the CLI:

$ oc new-project <project_name> \
  --description="<description>" --display-name="<display_name>"

For example:

$ oc new-project hello-openshift \
  --description="This is an example project to demonstrate OpenShift v3" \
  --display-name="Hello OpenShift"

3.3. Viewing Projects

When viewing projects, you are restricted to seeing only the projects you have access to view based on the authorization policy.

To view a list of projects:

$ oc get projects

You can change from the current project to a different project for CLI operations. The specified project is then used in all subsequent operations that manipulate project-scoped content:

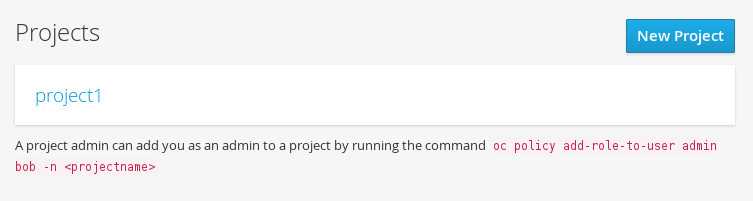

$ oc project <project_name>

You can also use the web console to view and change between projects. After authenticating and logging in, you are presented with a list of projects that you have access to:

If you use the CLI to create a new project, you can then refresh the page in the browser to see the new project.

Selecting a project brings you to the project overview for that project.

3.4. Checking Project Status

The oc status command provides a high-level overview of the current project, with its components and their relationships. This command takes no argument:

$ oc status

3.5. Filtering by Labels

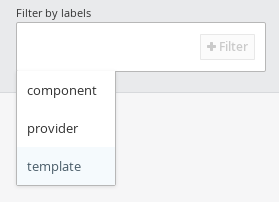

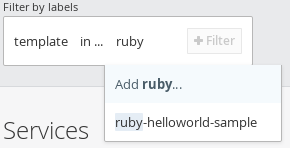

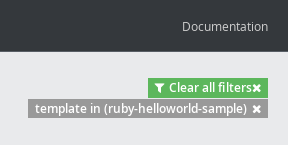

You can filter the contents of a project page in the web console by using the labels of a resource. You can pick from a suggested label name and values, or type in your own. Multiple filters can be added. When multiple filters are applied, resources must match all of the filters to remain visible.

To filter by labels:

Select a label type:

Select one of the following:

- exists: Verify that the label name exists, but ignore its value.

- in: Verify that the label name exists and is equal to one of the selected values.

- not in: Verify that the label name does not exist, or is not equal to any of the selected values.

If you selected in or not in, select a set of values then select Filter:

After adding filters, you can stop filtering by selecting Clear all filters or by clicking individual filters to remove them:
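
The three operators, and the rule that a resource must match every filter, can be sketched in shell. This is only an illustration of the semantics described above, not the web console's implementation; label_value and filter_matches are hypothetical helper names, and labels are passed as key=value arguments.

```shell
#!/usr/bin/env bash
# Sketch of the three filter operators. Labels are given as "key=value" arguments.
# label_value and filter_matches are hypothetical helper names.

# Print the value of label $1 from the remaining "key=value" arguments; fail if absent.
label_value() {
  local key="$1" pair
  shift
  for pair in "$@"; do
    if [ "${pair%%=*}" = "$key" ]; then
      printf '%s\n' "${pair#*=}"
      return 0
    fi
  done
  return 1
}

# Usage: filter_matches OP KEY "v1,v2" label...   (OP is exists, in, or notin)
filter_matches() {
  local op="$1" key="$2" values="$3" value
  shift 3
  if ! value="$(label_value "$key" "$@")"; then
    # The label is absent, so only "not in" can match.
    [ "$op" = "notin" ]
    return
  fi
  case "$op" in
    exists) return 0 ;;
    in)     case ",$values," in *",$value,"*) return 0 ;; *) return 1 ;; esac ;;
    notin)  case ",$values," in *",$value,"*) return 1 ;; *) return 0 ;; esac ;;
    *)      return 2 ;;
  esac
}

# A resource stays visible only if it matches all filters.
labels=(app=ruby env=prod)
if filter_matches in app "ruby,php" "${labels[@]}" &&
   filter_matches notin env "dev" "${labels[@]}"; then
  echo "visible"
else
  echo "hidden"
fi
```

Chaining the calls with && mirrors the requirement that every filter must match for a resource to remain in the list.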

3.6. Deleting a Project

When you delete a project, the server updates the project status to Terminating from Active. The server then clears all content from a project that is Terminating before finally removing the project. While a project is in Terminating status, a user cannot add new content to the project.

To delete a project:

$ oc delete project <project_name>

Chapter 4. Service Accounts

4.1. Overview

When a person uses the OpenShift Enterprise CLI or web console, their API token authenticates them to the OpenShift API. However, when a regular user’s credentials are not available, it is common for components to make API calls independently. For example:

- Replication controllers make API calls to create or delete pods.

- Applications inside containers could make API calls for discovery purposes.

- External applications could make API calls for monitoring or integration purposes.

Service accounts provide a flexible way to control API access without sharing a regular user’s credentials.

4.2. Usernames and groups

Every service account has an associated username that can be granted roles, just like a regular user. The username is derived from its project and name: system:serviceaccount:<project>:<name>

For example, to add the view role to the robot service account in the top-secret project:

$ oc policy add-role-to-user view system:serviceaccount:top-secret:robot

Every service account is also a member of two groups:

- system:serviceaccounts, which includes all service accounts in the system

- system:serviceaccounts:<project>, which includes all service accounts in the specified project
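
The naming rules for the username and the two groups can be captured in a pair of small shell helpers, which may be convenient when scripting role assignments. This is a sketch only; sa_username and sa_groups are hypothetical names and are not part of the oc CLI.

```shell
#!/usr/bin/env bash
# sa_username and sa_groups are hypothetical helper names.

# Usage: sa_username <project> <name>
sa_username() {
  printf 'system:serviceaccount:%s:%s\n' "$1" "$2"
}

# Usage: sa_groups <project>
# Prints the two groups that every service account in the project belongs to.
sa_groups() {
  printf 'system:serviceaccounts\n'
  printf 'system:serviceaccounts:%s\n' "$1"
}

sa_username top-secret robot
sa_groups top-secret
```

The output of sa_username has the form expected wherever a user name is passed to oc policy, and each line printed by sa_groups is a group name.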

For example, to allow all service accounts in all projects to view resources in the top-secret project:

$ oc policy add-role-to-group view system:serviceaccounts -n top-secret

To allow all service accounts in the managers project to edit resources in the top-secret project:

$ oc policy add-role-to-group edit system:serviceaccounts:managers -n top-secret

4.3. Default service accounts and roles

Three service accounts are automatically created in every project:

- builder is used by build pods. It is given the system:image-builder role, which allows pushing images to any image stream in the project using the internal Docker registry.

- deployer is used by deployment pods and is given the system:deployer role, which allows viewing and modifying replication controllers and pods in the project.

- default is used to run all other pods unless they specify a different service account.

All service accounts in a project are given the system:image-puller role, which allows pulling images from any image stream in the project using the internal Docker registry.

4.4. Managing service accounts

$ more sa.json
{
  "apiVersion": "v1",
  "kind": "ServiceAccount",
  "metadata": {
    "name": "robot"
  }
}

$ oc create -f sa.json
serviceaccounts/robot

Managing service account credentials

As soon as a service account is created, two secrets are automatically added to it:

- an API token

- credentials for the internal Docker registry

These can be seen by describing the service account:

$ oc describe serviceaccount robot
Name:               robot
Labels:             <none>
Image pull secrets: robot-dockercfg-624cx
Mountable secrets:  robot-token-uzkbh
                    robot-dockercfg-624cx
Tokens:             robot-token-8bhpp
                    robot-token-uzkbh

The system ensures that service accounts always have an API token and internal Docker registry credentials.

The generated API token and Docker registry credentials do not expire, but they can be revoked by deleting the secret. When the secret is deleted, a new one is automatically generated to take its place.

Managing allowed secrets

In addition to providing API credentials, a pod’s service account determines which secrets the pod is allowed to use.

Pods use secrets in two ways:

- image pull secrets, providing credentials used to pull images for the pod’s containers

- mountable secrets, injecting the contents of secrets into containers as files

To allow a secret to be used as an image pull secret by a service account’s pods, run:

$ oc secrets add --for=pull serviceaccount/<serviceaccount-name> secret/<secret-name>

To allow a secret to be mounted by a service account’s pods, run:

$ oc secrets add --for=mount serviceaccount/<serviceaccount-name> secret/<secret-name>

This example creates and adds secrets to a service account:

$ oc secrets new secret-plans plan1.txt plan2.txt
secret/secret-plans

$ oc secrets new-dockercfg my-pull-secret \
  --docker-username=mastermind \
  --docker-password=12345 \
  --docker-email=mastermind@example.com
secret/my-pull-secret

$ oc secrets add serviceaccount/robot secret/secret-plans --for=mount

$ oc secrets add serviceaccount/robot secret/my-pull-secret --for=pull

$ oc describe serviceaccount robot
Name:               robot
Labels:             <none>
Image pull secrets: robot-dockercfg-624cx
                    my-pull-secret
Mountable secrets:  robot-token-uzkbh
                    robot-dockercfg-624cx
                    secret-plans
Tokens:             robot-token-8bhpp
                    robot-token-uzkbh

Using a service account’s credentials inside a container

When a pod is created, it specifies a service account (or uses the default service account), and is allowed to use that service account’s API credentials and referenced secrets.

A file containing an API token for a pod’s service account is automatically mounted at /var/run/secrets/kubernetes.io/serviceaccount/token

That token can be used to make API calls as the pod’s service account. This example calls the users/~ API to get information about the user identified by the token:

$ TOKEN="$(cat /var/run/secrets/kubernetes.io/serviceaccount/token)"

$ curl --cacert /var/run/secrets/kubernetes.io/serviceaccount/ca.crt \
  "https://openshift.default.svc.cluster.local/oapi/v1/users/~" \
  -H "Authorization: Bearer $TOKEN"

kind: "User"
apiVersion: "v1"
metadata:
  name: "system:serviceaccount:top-secret:robot"
  selflink: "/oapi/v1/users/system:serviceaccount:top-secret:robot"
  creationTimestamp: null
identities: null
groups:
  - "system:serviceaccounts"
  - "system:serviceaccounts:top-secret"

4.6. Using a Service Account’s Credentials Externally

The same token can be distributed to external applications that need to authenticate to the API.

Use oc describe secret <secret-name> to view a service account’s API token:

$ oc describe secret robot-token-uzkbh -n top-secret
Name:        robot-token-uzkbh
Labels:      <none>
Annotations: kubernetes.io/service-account.name=robot,kubernetes.io/service-account.uid=49f19e2e-16c6-11e5-afdc-3c970e4b7ffe
Type:        kubernetes.io/service-account-token

Data
====
token: eyJhbGciOiJSUzI1NiIsInR5cCI6IkpXVCJ9...

$ oc login --token=eyJhbGciOiJSUzI1NiIsInR5cCI6IkpXVCJ9...
Logged into "https://server:8443" as "system:serviceaccount:top-secret:robot" using the token provided.

You don't have any projects. You can try to create a new project, by running

    $ oc new-project <projectname>

$ oc whoami
system:serviceaccount:top-secret:robot

Chapter 5. Creating New Applications

5.1. Overview

You can create a new OpenShift Enterprise application from source code, images, or templates by using either the OpenShift CLI or web console.

5.2. Creating an Application Using the CLI

5.2.1. Creating an Application From Source Code

The new-app command allows you to create applications using source code in a local or remote Git repository.

To create an application using a Git repository in a local directory:

$ oc new-app /path/to/source/code

If using a local Git repository, the repository must have an origin remote that points to a URL accessible by the OpenShift Enterprise cluster.

You can use a subdirectory of your source code repository by specifying a --context-dir flag. To create an application using a remote Git repository and a context subdirectory:

$ oc new-app https://github.com/sclorg/s2i-ruby-container.git \
  --context-dir=2.0/test/puma-test-app

Also, when specifying a remote URL, you can specify a Git branch to use by appending #<branch_name> to the end of the URL:

$ oc new-app https://github.com/openshift/ruby-hello-world.git#beta4

Using new-app results in a build configuration, which creates a new application image from your source code. It also constructs a deployment configuration to deploy the new image, and a service to provide load-balanced access to the deployment running your image.

OpenShift Enterprise automatically detects whether the Docker or Source build strategy is being used, and in the case of Source builds, detects an appropriate language builder image.

Build Strategy Detection

If a Dockerfile is in the repository when creating a new application, OpenShift Enterprise generates a Docker build strategy. Otherwise, it generates a Source strategy.

You can specify a strategy by setting the --strategy flag to either source or docker.

$ oc new-app /home/user/code/myapp --strategy=docker

Language Detection

If creating a Source build, new-app attempts to determine the language builder to use by the presence of certain files in the root of the repository:

| Language | Files |
|---|---|
| ruby | Rakefile, Gemfile, config.ru |
| jee | pom.xml |
| nodejs | app.json, package.json |
| php | index.php, composer.json |
| python | requirements.txt, setup.py |
| perl | index.pl, cpanfile |

After a language is detected, new-app searches the OpenShift Enterprise server for image stream tags that have a supports annotation matching the detected language, or an image stream that matches the name of the detected language. If a match is not found, new-app searches the Docker Hub registry for an image that matches the detected language based on name.
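
The strategy and language rules above can be approximated locally to preview what new-app is likely to choose for a repository. This is a rough sketch of the documented rules, not how oc new-app is implemented: detect_strategy and detect_language are hypothetical helpers, and both the language names and the order in which marker files are checked are assumptions.

```shell
#!/usr/bin/env bash
# detect_strategy and detect_language are hypothetical helpers that mirror the
# rules described above; they are not how oc new-app is implemented.

# Print "docker" if the repository root contains a Dockerfile, otherwise "source".
detect_strategy() {
  if [ -f "$1/Dockerfile" ]; then echo docker; else echo source; fi
}

# Print a language guess based on marker files in the repository root.
# The language names and the order in which they are checked are assumptions.
detect_language() {
  local dir="$1" entry lang file
  for entry in \
    "ruby:Rakefile Gemfile config.ru" \
    "jee:pom.xml" \
    "nodejs:app.json package.json" \
    "php:index.php composer.json" \
    "python:requirements.txt setup.py" \
    "perl:index.pl cpanfile"; do
    lang="${entry%%:*}"
    # Word splitting is intentional here: the file list is space-separated.
    for file in ${entry#*:}; do
      if [ -f "$dir/$file" ]; then
        echo "$lang"
        return 0
      fi
    done
  done
  return 1
}

# Example: a throwaway directory that looks like a Ruby application.
repo="$(mktemp -d)"
touch "$repo/Gemfile"
detect_strategy "$repo"
detect_language "$repo"
rm -rf "$repo"
```

When the guess differs from what you want, the --strategy flag and the explicit builder syntax described in this section remain the way to override detection.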

You can override the image the builder uses for a particular source repository by specifying the image (either an image stream or Docker specification) and the repository, with a ~ as a separator.
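
Together with the #<branch_name> suffix described earlier, a source argument has the general shape [<builder>~]<repository>[#<branch>]. The following sketch splits such an argument into its parts using shell parameter expansion. It illustrates the syntax only and is not the parser that oc new-app uses; split_source_arg is a hypothetical helper name.

```shell
#!/usr/bin/env bash
# split_source_arg is a hypothetical helper; it illustrates the argument syntax
# only and is not the parser that oc new-app uses.
split_source_arg() {
  local arg="$1" builder="" repo ref=""
  case "$arg" in
    # Everything before the first "~" is the builder image.
    *"~"*) builder="${arg%%"~"*}"; repo="${arg#*"~"}" ;;
    *)     repo="$arg" ;;
  esac
  case "$repo" in
    # Everything after the last "#" is the Git branch.
    *"#"*) ref="${repo##*"#"}"; repo="${repo%"#"*}" ;;
  esac
  printf 'builder=%s\nrepo=%s\nref=%s\n' "$builder" "$repo" "$ref"
}

split_source_arg "myproject/my-ruby~https://github.com/openshift/ruby-hello-world.git#beta4"
```

Splitting on the first ~ means that a local path such as /home/user/code/my-ruby-app after the separator is kept intact as the repository.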

For example, to use the myproject/my-ruby image stream with the source in a remote repository:

$ oc new-app myproject/my-ruby~https://github.com/openshift/ruby-hello-world.git

To use the openshift/ruby-20-centos7:latest Docker image stream with the source in a local repository:

$ oc new-app openshift/ruby-20-centos7:latest~/home/user/code/my-ruby-app

5.2.2. Creating an Application From an Image

You can deploy an application from an existing image. Images can come from image streams in the OpenShift Enterprise server, images in a specific registry or Docker Hub registry, or images in the local Docker server.

The new-app command attempts to determine the type of image specified in the arguments passed to it. However, you can explicitly tell new-app whether the image is a Docker image (using the --docker-image argument) or an image stream (using the -i|--image argument).

If you specify an image from your local Docker repository, you must ensure that the same image is available to the OpenShift Enterprise cluster nodes.

For example, to create an application from the DockerHub MySQL image:

$ oc new-app mysql

To create an application using an image in a private registry, specify the full Docker image specification:

$ oc new-app myregistry:5000/example/myimage

If the registry containing the image is not secured with SSL, cluster administrators must ensure that the Docker daemon on the OpenShift Enterprise node hosts is run with the --insecure-registry flag pointing to that registry. You must also tell new-app that the image comes from an insecure registry with the --insecure-registry=true flag.

You can create an application from an existing image stream and tag (optional) for the image stream:

$ oc new-app my-stream:v1

5.2.3. Creating an Application From a Template

You can create an application from a previously stored template or from a template file, by specifying the name of the template as an argument. For example, you can store a sample application template and use it to create an application.

To create an application from a stored template:

$ oc create -f examples/sample-app/application-template-stibuild.json

$ oc new-app ruby-helloworld-sample

To directly use a template in your local file system, without first storing it in OpenShift Enterprise, use the -f|--file argument:

$ oc new-app -f examples/sample-app/application-template-stibuild.json

Template Parameters

When creating an application based on a template, use the -p|--param argument to set parameter values defined by the template:

$ oc new-app ruby-helloworld-sample \
  -p ADMIN_USERNAME=admin,ADMIN_PASSWORD=mypassword

5.2.4. Further Modifying Application Creation

The new-app command generates OpenShift Enterprise objects that will build, deploy, and run the application being created. Normally, these objects are created in the current project using names derived from the input source repositories or the input images. However, new-app allows you to modify this behavior.

The set of objects created by new-app depends on the artifacts passed as input: source repositories, images, or templates.

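| Object | Description |
|---|---|
| BuildConfig | Creates a new application image from your source code. |
| ImageStream | Represents the images the application uses, such as the builder image and the image that a build produces. |
| DeploymentConfig | Deploys the new image. |
| Service | Provides load-balanced access to the deployment running your image. |
| Other | Other objects can be generated when instantiating templates. |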

5.2.4.1. Specifying Environment Variables

When generating applications from a source or an image, you can use the -e|--env argument to pass environment variables to the application container at run time:

$ oc new-app openshift/postgresql-92-centos7 \
  -e POSTGRESQL_USER=user \
  -e POSTGRESQL_DATABASE=db \
  -e POSTGRESQL_PASSWORD=password

5.2.4.2. Specifying Labels

When generating applications from source, images, or templates, you can use the -l|--label argument to add labels to the created objects. Labels make it easy to collectively select, configure, and delete objects associated with the application.

$ oc new-app https://github.com/openshift/ruby-hello-world -l name=hello-world

5.2.4.3. Viewing the Output Without Creation

To see a dry-run of what new-app will create, you can use the -o|--output argument with a yaml or json value. You can then use the output to preview the objects that will be created, or redirect it to a file that you can edit. Once you are satisfied, you can use oc create to create the OpenShift Enterprise objects.

To output new-app artifacts to a file, edit them, then create them:

$ oc new-app https://github.com/openshift/ruby-hello-world \
  -o yaml > myapp.yaml

$ vi myapp.yaml

$ oc create -f myapp.yaml

5.2.4.4. Creating Objects With Different Names

Objects created by new-app are normally named after the source repository, or the image used to generate them. You can set the name of the objects produced by adding a --name flag to the command:

$ oc new-app https://github.com/openshift/ruby-hello-world --name=myapp

5.2.4.5. Creating Objects in a Different Project

Normally, new-app creates objects in the current project. However, you can create objects in a different project that you have access to using the -n|--namespace argument:

$ oc new-app https://github.com/openshift/ruby-hello-world -n myproject

5.2.4.6. Creating Multiple Objects

The new-app command can create multiple applications when you pass it multiple arguments. Labels specified on the command line apply to all objects created by the single command. Environment variables apply to all components created from source or images.

To create an application from a source repository and a Docker Hub image:

$ oc new-app https://github.com/openshift/ruby-hello-world mysql

If a source code repository and a builder image are specified as separate arguments, new-app uses the builder image as the builder for the source code repository. If this is not the intent, simply specify a specific builder image for the source using the ~ separator.

5.2.4.7. Grouping Images and Source in a Single Pod

The new-app command allows deploying multiple images together in a single pod. In order to specify which images to group together, use the + separator. The --group command line argument can also be used to specify the images that should be grouped together. To group the image built from a source repository with other images, specify its builder image in the group:

$ oc new-app nginx+mysql

To deploy an image built from source and an external image together:

$ oc new-app \
  ruby~https://github.com/openshift/ruby-hello-world \
  mysql \
  --group=ruby+mysql

5.2.4.8. Useful Edits

Following are some specific examples of useful edits to make in the myapp.yaml file.

These examples presume myapp.yaml was created as a result of the oc new-app … -o yaml command.

Example 5.1. Deploy to Selected Nodes

apiVersion: v1
items:
- apiVersion: v1
  kind: Project
  metadata:
    name: myapp
    annotations:
      openshift.io/node-selector: region=west
- apiVersion: v1
  kind: ImageStream
  ...
kind: List
metadata: {}

- In myapp.yaml, the section that defines the myapp project has both kind: Project and metadata.name: myapp. If this section is missing, add it at the second level, as a new item of the list items, peer to the kind: ImageStream definitions.

- Add the openshift.io/node-selector annotation to the myapp project to cause its pods to be deployed only on nodes that have the label region=west.

5.3. Creating an Application Using the Web Console

While in the desired project, click Add to Project:

Select either a builder image from the list of images in your project, or from the global library:

Note

Only image stream tags that have the builder tag listed in their annotations appear in this list, as demonstrated here:

kind: "ImageStream"
apiVersion: "v1"
metadata:
  name: "ruby"
  creationTimestamp: null
spec:
  dockerImageRepository: "registry.access.redhat.com/openshift3/ruby-20-rhel7"
  tags:
    - name: "2.0"
      annotations:
        description: "Build and run Ruby 2.0 applications"
        iconClass: "icon-ruby"
        tags: "builder,ruby"
        supports: "ruby:2.0,ruby"
        version: "2.0"

Including builder in the tags annotation ensures this ImageStreamTag appears in the web console as a builder.

Modify the settings in the new application screen to configure the objects to support your application:

- The builder image name and description.

- The application name used for the generated OpenShift Enterprise objects.

- The Git repository URL, reference, and context directory for your source code.

- Routing configuration section for making this application publicly accessible.

- Deployment configuration section for customizing deployment triggers and image environment variables.

- Build configuration section for customizing build triggers.

- Replica scaling section for configuring the number of running instances of the application.

- The labels to assign to all items generated for the application. You can add and edit labels for all objects here.

Note

To see all of the configuration options, click the "Show advanced build and deployment options" link.

Chapter 6. Application Tutorials

6.1. Overview

This topic group includes information on how to get your application up and running in OpenShift Enterprise and covers different languages and their frameworks.

6.2. Quickstart Templates

6.2.1. Overview

A quickstart is a basic example of an application running on OpenShift Enterprise. Quickstarts come in a variety of languages and frameworks, and are defined in a template, which is constructed from a set of services, build configurations, and deployment configurations. This template references the necessary images and source repositories to build and deploy the application.

To explore a quickstart, create an application from a template. Your administrator may have already installed these templates in your OpenShift Enterprise cluster, in which case you can simply select it from the web console. See the template documentation for more information on how to upload, create from, and modify a template.

Quickstarts refer to a source repository that contains the application source code. To customize the quickstart, fork the repository and, when creating an application from the template, substitute the default source repository name with your forked repository. This results in builds that are performed using your source code instead of the provided example source. You can then update the code in your source repository and launch a new build to see the changes reflected in the deployed application.

6.2.2. Web Framework Quickstart Templates

These quickstarts provide a basic application of the indicated framework and language:

CakePHP: a PHP web framework (includes a MySQL database)

Dancer: a Perl web framework (includes a MySQL database)

Django: a Python web framework (includes a PostgreSQL database)

NodeJS: a NodeJS web application (includes a MongoDB database)

Rails: a Ruby web framework (includes a PostgreSQL database)

6.3. Ruby on Rails

6.3.1. Overview

Ruby on Rails is a popular web framework written in Ruby. This guide covers using Rails 4 on OpenShift Enterprise.

We strongly advise going through the whole tutorial to get an overview of all the steps necessary to run your application on OpenShift Enterprise. If you experience a problem, try reading through the entire tutorial and then going back to your issue. It can also be useful to review your previous steps to ensure that all the steps were executed correctly.

For this guide you will need:

- Basic Ruby/Rails knowledge

- Locally installed version of Ruby 2.0.0+, Rubygems, Bundler

- Basic Git knowledge

- Running instance of OpenShift Enterprise v3

6.3.2. Local Workstation Setup

First make sure that an instance of OpenShift Enterprise is running and is available. For more info on how to get OpenShift Enterprise up and running check the installation methods. Also make sure that your oc CLI client is installed and the command is accessible from your command shell, so you can use it to log in using your email address and password.

6.3.2.1. Setting Up the Database

Rails applications are almost always used with a database. For the local development we chose the PostgreSQL database. To install it type:

$ sudo yum install -y postgresql postgresql-server postgresql-devel

Next, initialize the database with:

$ sudo postgresql-setup initdb

This command will create the /var/lib/pgsql/data directory, in which the data will be stored.

Start the database by typing:

$ sudo systemctl start postgresql.service

When the database is running, create your rails user:

$ sudo -u postgres createuser -s rails

Note that the user we created has no password.

6.3.3. Writing Your Application

If you are starting your Rails application from scratch, you need to install the Rails gem first.

$ gem install rails
Successfully installed rails-4.2.0
1 gem installed

After you install the Rails gem, create a new application with PostgreSQL as your database:

$ rails new rails-app --database=postgresql

Then change into your new application directory.

$ cd rails-app

If you already have an application, make sure the pg (postgresql) gem is present in your Gemfile. If not, edit your Gemfile by adding the gem:

gem 'pg'

To generate a new Gemfile.lock with all your dependencies run:

$ bundle install

In addition to using the PostgreSQL database with the pg gem, ensure that config/database.yml uses the postgresql adapter.

Update the default section in the config/database.yml file so that it looks like this:

default: &default
  adapter: postgresql
  encoding: unicode
  pool: 5
  host: localhost
  username: rails
  password:

Create your application’s development and test databases by using this rake command:

$ rake db:create

This creates the development and test databases in your PostgreSQL server.

6.3.3.1. Creating a Welcome Page

Since Rails 4 no longer serves a static public/index.html page in production, we need to create a new root page.

To have a custom welcome page, complete the following steps:

- Create a controller with an index action

- Create a view page for the welcome controller index action

- Create a route that serves the application’s root page with the created controller and view

Rails offers a generator that does all of these steps for you.

$ rails generate controller welcome index

All the necessary files have been created. Now edit line 2 in the config/routes.rb file so that it looks like:

root 'welcome#index'

Run the rails server to verify the page is available.

$ rails server

You should see your page by visiting http://localhost:3000 in your browser. If you don’t see the page, check the logs that are output to your server to debug.

6.3.3.2. Configuring the Application for OpenShift Enterprise

In order to have your application communicating with the PostgreSQL database service that will be running in OpenShift Enterprise, you will need to edit the default section in your config/database.yml to use environment variables, which you will define later, upon the database service creation.

The default section in your edited config/database.yml, together with the pre-defined variables, should look like:

<% user = ENV.key?("POSTGRESQL_ADMIN_PASSWORD") ? "root" : ENV["POSTGRESQL_USER"] %>
<% password = ENV.key?("POSTGRESQL_ADMIN_PASSWORD") ? ENV["POSTGRESQL_ADMIN_PASSWORD"] : ENV["POSTGRESQL_PASSWORD"] %>
<% db_service = ENV.fetch("DATABASE_SERVICE_NAME","").upcase %>

default: &default
  adapter: postgresql
  encoding: unicode
  # For details on connection pooling, see rails configuration guide
  # http://guides.rubyonrails.org/configuring.html#database-pooling
  pool: <%= ENV["POSTGRESQL_MAX_CONNECTIONS"] || 5 %>
  username: <%= user %>
  password: <%= password %>
  host: <%= ENV["#{db_service}_SERVICE_HOST"] %>
  port: <%= ENV["#{db_service}_SERVICE_PORT"] %>
  database: <%= ENV["POSTGRESQL_DATABASE"] %>

For an example of how the final file should look, see Ruby on Rails example application config/database.yml.
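
To check which values the template would pick up in a given environment, the same lookups can be traced in shell. This is a sketch that mirrors the ERB logic shown above; resolve_db_settings is a hypothetical helper name, and the sample values in the example are made up.

```shell
#!/usr/bin/env bash
# Sketch of the lookups performed by the ERB template above, expressed in shell.
# resolve_db_settings is a hypothetical helper name.
resolve_db_settings() {
  local user password service host_var port_var
  # When POSTGRESQL_ADMIN_PASSWORD is set, the template connects as "root".
  if [ -n "${POSTGRESQL_ADMIN_PASSWORD+set}" ]; then
    user="root"
    password="$POSTGRESQL_ADMIN_PASSWORD"
  else
    user="${POSTGRESQL_USER-}"
    password="${POSTGRESQL_PASSWORD-}"
  fi
  # DATABASE_SERVICE_NAME is upper-cased to form the service variable names.
  service="$(printf '%s' "${DATABASE_SERVICE_NAME-}" | tr '[:lower:]' '[:upper:]')"
  host_var="${service}_SERVICE_HOST"
  port_var="${service}_SERVICE_PORT"
  # ${!name-} reads the variable whose name is stored in "name".
  printf 'username=%s\npassword=%s\nhost=%s\nport=%s\ndatabase=%s\npool=%s\n' \
    "$user" "$password" "${!host_var-}" "${!port_var-}" \
    "${POSTGRESQL_DATABASE-}" "${POSTGRESQL_MAX_CONNECTIONS-5}"
}

# Example with made-up values, run in a subshell so nothing leaks into the caller.
(
  DATABASE_SERVICE_NAME=postgresql
  POSTGRESQL_SERVICE_HOST=172.30.0.10
  POSTGRESQL_SERVICE_PORT=5432
  POSTGRESQL_USER=rails
  POSTGRESQL_PASSWORD=secret
  POSTGRESQL_DATABASE=rails_app
  resolve_db_settings
)
```

Running the function inside the application container would show whether the service host and port variables are present before Rails tries to connect.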

6.3.3.3. Storing Your Application in Git

Building an application in OpenShift Enterprise usually requires that the source code be stored in a git repository, so you will need to install git if you do not already have it.

Make sure you are in your Rails application directory by running the ls -1 command. The output of the command should look like:

$ ls -1

app

bin

config

config.ru

db

Gemfile

Gemfile.lock

lib

log

public

Rakefile

README.rdoc

test

tmp

vendor

Now run these commands in your Rails app directory to initialize and commit your code to git:

$ git init

$ git add .

$ git commit -m "initial commit"

Once your application is committed, you need to push it to a remote repository. For this you need a GitHub account, in which you create a new repository.

Set the remote that points to your git repository:

$ git remote add origin git@github.com:<namespace/repository-name>.git

After that, push your application to your remote git repository.

$ git push

6.3.4. Deploying Your Application to OpenShift Enterprise

To deploy your Ruby on Rails application, create a new Project for the application:

$ oc new-project rails-app --description="My Rails application" --display-name="Rails Application"

After creating the rails-app project, you are automatically switched to the new project namespace.

Deploying your application in OpenShift Enterprise involves three steps:

- Creating a database service from OpenShift Enterprise’s PostgreSQL image

- Creating a frontend service from OpenShift Enterprise’s Ruby 2.0 builder image and your Ruby on Rails source code, which is wired to the database service

- Creating a route for your application

6.3.4.1. Creating the Database Service

Your Rails application expects a running database service. For this service, use the PostgreSQL database image.

To create the database service, use the oc new-app command. Pass it the environment variables that are used inside the database container to set the username, password, and name of the database. You can change the values of these environment variables to anything you like. The variables to set are:

- POSTGRESQL_DATABASE

- POSTGRESQL_USER

- POSTGRESQL_PASSWORD

Setting these variables ensures:

- A database exists with the specified name

- A user exists with the specified name

- The user can access the specified database with the specified password

For example:

$ oc new-app postgresql -e POSTGRESQL_DATABASE=db_name -e POSTGRESQL_USER=username -e POSTGRESQL_PASSWORD=password

To also set the password for the database administrator, append the following to the previous command:

-e POSTGRESQL_ADMIN_PASSWORD=admin_pw

To watch the progress of this command:

$ oc get pods --watch

6.3.4.2. Creating the Frontend Service

To bring your application to OpenShift Enterprise, specify the repository in which your application lives, again using the oc new-app command. Pass the database-related environment variables that you set up in Creating the Database Service:

$ oc new-app path/to/source/code --name=rails-app -e POSTGRESQL_USER=username -e POSTGRESQL_PASSWORD=password -e POSTGRESQL_DATABASE=db_name

With this command, OpenShift Enterprise fetches the source code, sets up the Builder image, builds your application image, and deploys the newly created image together with the specified environment variables. The application is named rails-app.

You can verify the environment variables have been added by viewing the JSON document of the rails-app DeploymentConfig:

$ oc get dc rails-app -o json

You should see the following section:

"env": [
    {
        "name": "POSTGRESQL_USER",
        "value": "username"
    },
    {
        "name": "POSTGRESQL_PASSWORD",
        "value": "password"
    },
    {
        "name": "POSTGRESQL_DATABASE",
        "value": "db_name"
    }
],

To check the build process, follow the build logs:

$ oc logs -f build/rails-app-1

Once the build is complete, you can look at the running pods in OpenShift Enterprise:

$ oc get pods

You should see a line starting with rails-app-<number>-<hash>; that is your application running in OpenShift Enterprise.

Before your application will be functional, you need to initialize the database by running the database migration script. There are two ways you can do this:

- Manually from the running frontend container:

First, open a shell in the frontend container with the rsh command:

$ oc rsh <FRONTEND_POD_ID>

Run the migration from inside the container:

$ RAILS_ENV=production bundle exec rake db:migrate

If you are running your Rails application in a development or test environment you don’t have to specify the RAILS_ENV environment variable.

- By adding pre-deployment lifecycle hooks in your template. For an example, see the hooks example in our Rails example application.

6.3.4.3. Creating a Route for Your Application

To expose a service by giving it an externally-reachable hostname like www.example.com, use an OpenShift Enterprise route. In your case you need to expose the frontend service by typing:

$ oc expose service rails-app --hostname=www.example.com

It’s the user’s responsibility to ensure the hostname they specify resolves to the IP address of the router. For more information, see the OpenShift Enterprise documentation on routes.

Chapter 7. Opening a Remote Shell to Containers

7.1. Overview

The oc rsh command allows you to locally access and manage tools that are on the system. The secure shell (SSH) is the underlying technology and industry standard that provides a secure connection to the application. Access to applications with the shell environment is protected and restricted with Security-Enhanced Linux (SELinux) policies.

7.2. Start a Secure Shell Session

Open a remote shell session to a container:

$ oc rsh <pod>

While in the remote shell, you can issue commands as if you are inside the container and perform local operations like monitoring, debugging, and using CLI commands specific to what is running in the container.

For example, in a MySQL container, you can count the number of records in the database by invoking the mysql command, then using the prompt to type in the SELECT command. You can also use commands like ps(1) and ls(1) for validation.

BuildConfigs and DeploymentConfigs map out how you want things to look, and pods (with containers inside) are created and dismantled as needed. Your changes are not persistent. If you make changes directly within the container and that container is destroyed and rebuilt, your changes will no longer exist.

oc exec can be used to execute a command remotely. However, the oc rsh command provides an easier way to keep a remote shell open persistently.

7.3. Secure Shell Session Help

For help with usage, options, and to see examples:

$ oc rsh -h

Chapter 8. Templates

8.1. Overview

A template describes a set of objects that can be parameterized and processed to produce a list of objects for creation by OpenShift Enterprise. A template can be processed to create anything you have permission to create within a project, for example services, build configurations, and deployment configurations. A template may also define a set of labels to apply to every object defined in the template.

You can create a list of objects from a template using the CLI or, if a template has been uploaded to your project or the global template library, using the web console.

8.2. Uploading a Template

If you have a JSON or YAML file that defines a template, you can upload the template to projects using the CLI. This saves the template to the project for repeated use by any user with appropriate access to that project. Instructions on writing your own templates are provided later in this topic.

To upload a template to your current project’s template library, pass the JSON or YAML file with the following command:

$ oc create -f <filename>

You can upload a template to a different project using the -n option with the name of the project:

$ oc create -f <filename> -n <project>

The template is now available for selection using the web console or the CLI.

8.3. Creating from Templates Using the Web Console

To create the objects from an uploaded template using the web console:

While in the desired project, click Add to Project:

Select a template from the list of templates in your project, or provided by the global template library:

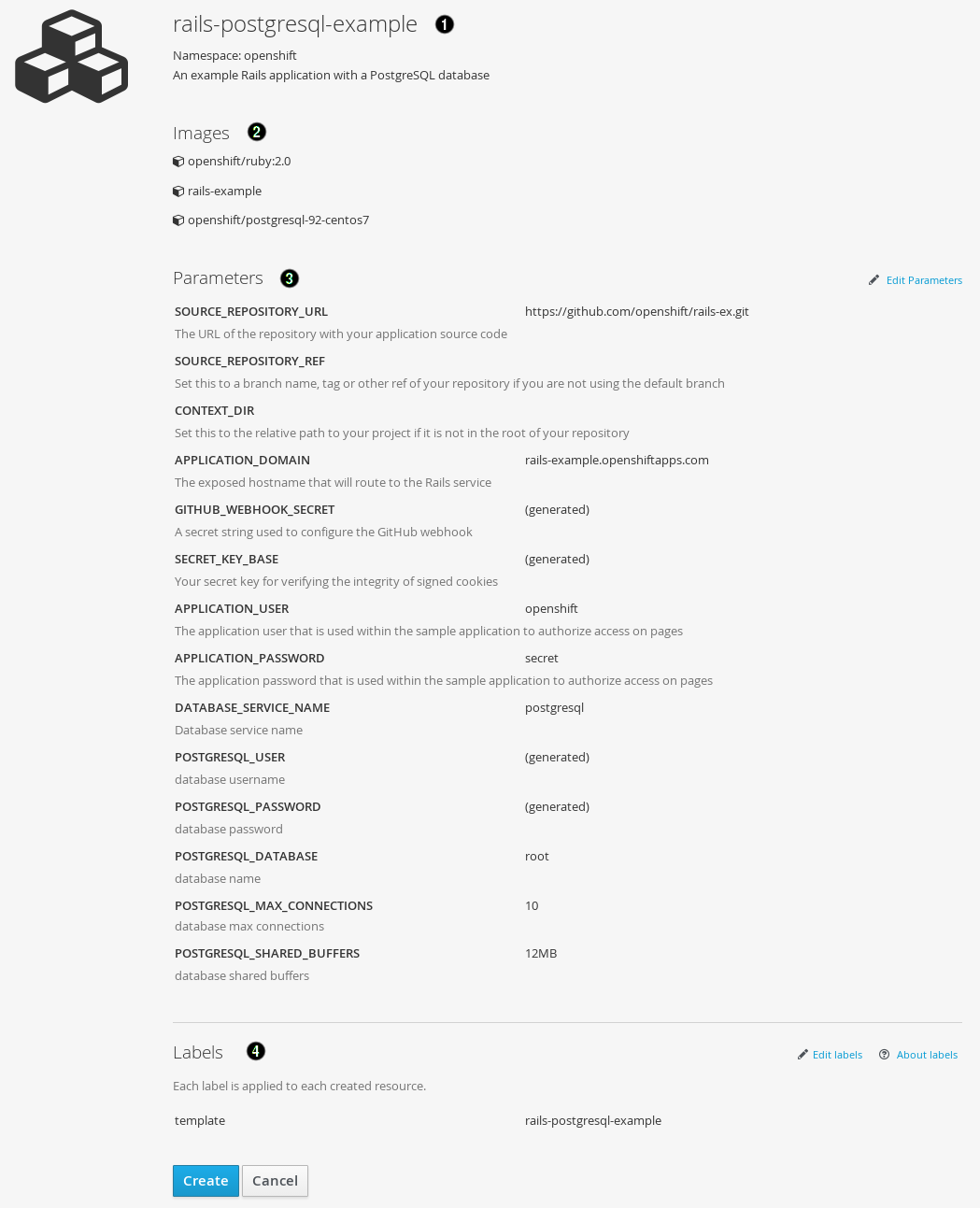

Modify template parameters in the template creation screen:

- Template name and description.

- Container images included in the template.

- Parameters defined by the template. You can edit values for parameters defined in the template here.

- Labels to assign to all items included in the template. You can add and edit labels for objects.

8.4. Creating from Templates Using the CLI

You can use the CLI to process templates and use the configuration that is generated to create objects.

8.4.1. Labels

Labels are used to manage and organize generated objects, such as pods. The labels specified in the template are applied to every object that is generated from the template.

You can also add labels to the template from the command line:

$ oc process -f <filename> -l name=otherLabel

8.4.2. Parameters

The parameters that you can override are listed in the parameters section of the template. You can list them with the CLI by using the following command and specifying the file to be used:

$ oc process --parameters -f <filename>

Alternatively, if the template is already uploaded:

$ oc process --parameters -n <project> <template_name>

For example, the following shows the output when listing the parameters for one of the Quickstart templates in the default openshift project:

$ oc process --parameters -n openshift rails-postgresql-example

| NAME | DESCRIPTION | GENERATOR | VALUE |
| --- | --- | --- | --- |
| SOURCE_REPOSITORY_URL | The URL of the repository with your application source code | | https://github.com/sclorg/rails-ex.git |
| SOURCE_REPOSITORY_REF | Set this to a branch name, tag or other ref of your repository if you are not using the default branch | | |
| CONTEXT_DIR | Set this to the relative path to your project if it is not in the root of your repository | | |
| APPLICATION_DOMAIN | The exposed hostname that will route to the Rails service | | rails-postgresql-example.openshiftapps.com |
| GITHUB_WEBHOOK_SECRET | A secret string used to configure the GitHub webhook | expression | [a-zA-Z0-9]{40} |
| SECRET_KEY_BASE | Your secret key for verifying the integrity of signed cookies | expression | [a-z0-9]{127} |
| APPLICATION_USER | The application user that is used within the sample application to authorize access on pages | | openshift |
| APPLICATION_PASSWORD | The application password that is used within the sample application to authorize access on pages | | secret |
| DATABASE_SERVICE_NAME | Database service name | | postgresql |
| POSTGRESQL_USER | database username | expression | user[A-Z0-9]{3} |
| POSTGRESQL_PASSWORD | database password | expression | [a-zA-Z0-9]{8} |
| POSTGRESQL_DATABASE | database name | | root |
| POSTGRESQL_MAX_CONNECTIONS | database max connections | | 10 |
| POSTGRESQL_SHARED_BUFFERS | database shared buffers | | 12MB |

The output identifies several parameters that are generated with a regular expression-like generator when the template is processed.

8.4.3. Generating a List of Objects

Using the CLI, you can process a file defining a template to return the list of objects to standard output:

$ oc process -f <filename>

Alternatively, if the template has already been uploaded to the current project:

$ oc process <template_name>

The process command can also take several templates at once. In that case, every template is processed, and the resulting list contains the objects from all templates passed to the command:

$ cat <first_template> <second_template> | oc process -f -

You can create objects from a template by processing the template and piping the output to oc create:

$ oc process -f <filename> | oc create -f -

Alternatively, if the template has already been uploaded to the current project:

$ oc process <template> | oc create -f -

You can override any parameter values defined in the file by adding the -v option followed by a comma-separated list of <name>=<value> pairs. A parameter reference may appear in any text field inside the template items.

For example, in the following the POSTGRESQL_USER and POSTGRESQL_DATABASE parameters of a template are overridden to output a configuration with customized environment variables:

Example 8.1. Creating a List of Objects from a Template

$ oc process -f my-rails-postgresql \

-v POSTGRESQL_USER=bob,POSTGRESQL_DATABASE=mydatabase
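The comma-separated `<name>=<value>` format accepted by -v can be illustrated with a small Python sketch. This is only a model of the documented syntax, not the CLI's actual parser; the helper names `parse_overrides` and `apply_overrides` are hypothetical, and the sketch assumes values do not themselves contain commas.

```python
def parse_overrides(value):
    """Split 'NAME=value,NAME2=value2' into a dict of overrides."""
    overrides = {}
    for pair in value.split(","):
        name, sep, val = pair.partition("=")
        if not sep or not name:
            raise ValueError(f"expected <name>=<value>, got {pair!r}")
        overrides[name] = val
    return overrides


def apply_overrides(defaults, overrides):
    """Return the template defaults with the overridden values applied."""
    unknown = set(overrides) - set(defaults)
    if unknown:
        raise KeyError(f"template has no parameter(s): {sorted(unknown)}")
    merged = dict(defaults)
    merged.update(overrides)
    return merged


# Defaults loosely based on the parameter listing shown earlier.
defaults = {"POSTGRESQL_USER": "", "POSTGRESQL_DATABASE": "root"}
overrides = parse_overrides("POSTGRESQL_USER=bob,POSTGRESQL_DATABASE=mydatabase")
print(apply_overrides(defaults, overrides))
```

Rejecting unknown names is a design choice of this sketch; it surfaces typos in parameter names early.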

The JSON output can either be redirected to a file or applied directly, without uploading the template, by piping the processed output to the oc create command:

$ oc process -f my-rails-postgresql \
    -v POSTGRESQL_USER=bob,POSTGRESQL_DATABASE=mydatabase \
    | oc create -f -

8.5. Modifying an Uploaded Template

You can edit a template that has already been uploaded to your project by using the following command:

$ oc edit template <template>

8.6. Using the Instant App and Quickstart Templates

OpenShift Enterprise provides a number of default Instant App and Quickstart templates to make it easy to quickly get started creating a new application for different languages. Templates are provided for Rails (Ruby), Django (Python), Node.js, CakePHP (PHP), and Dancer (Perl). Your cluster administrator should have created these templates in the default, global openshift project so you have access to them. You can list the available default Instant App and Quickstart templates with:

$ oc get templates -n openshift

If they are not available, direct your cluster administrator to the Loading the Default Image Streams and Templates topic.

By default, the templates build using a public source repository on GitHub that contains the necessary application code. In order to be able to modify the source and build your own version of the application, you must:

- Fork the repository referenced by the template’s default SOURCE_REPOSITORY_URL parameter.

- Override the value of the SOURCE_REPOSITORY_URL parameter when creating from the template, specifying your fork instead of the default value.

By doing this, the build configuration created by the template will now point to your fork of the application code, and you can modify the code and rebuild the application at will. A walkthrough of this process using the web console is provided in Getting Started for Developers: Web Console.

Some of the Instant App and Quickstart templates define a database deployment configuration. The configuration they define uses ephemeral storage for the database content. These templates should be used for demonstration purposes only as all database data will be lost if the database pod restarts for any reason.

8.7. Writing Templates

You can define new templates to make it easy to recreate all the objects of your application. The template will define the objects it creates along with some metadata to guide the creation of those objects.

8.7.1. Description

The template description covers information that informs users what your template does and helps them find it when searching in the web console. In addition to general descriptive information, it includes a set of tags. Useful tags include the name of the language your template is related to (e.g., java, php, ruby, etc.). In addition, adding the special tag instant-app causes your template to be displayed in the list of Instant Apps on the template selection page of the web console.

kind: "Template"
apiVersion: "v1"
metadata:
  name: "cakephp-mysql-example"
  annotations:
    description: "An example CakePHP application with a MySQL database"
    tags: "instant-app,php,cakephp,mysql"
    iconClass: "icon-php"

8.7.2. Labels

Templates can include a set of labels. These labels will be added to each object created when the template is instantiated. Defining a label in this way makes it easy for users to find and manage all the objects created from a particular template.

kind: "Template"
apiVersion: "v1"
...
labels:
  template: "cakephp-mysql-example" # (1)

- (1) A label that will be applied to all objects created from this template.
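As a rough illustration of what "added to each object" means, the following Python sketch copies a template's labels onto every object in a list. The function name `apply_template_labels` is hypothetical, and letting the template label win when an object already carries the same key is an assumption of this sketch, not documented behavior.

```python
import copy


def apply_template_labels(objects, labels):
    """Return copies of the objects with the template labels added."""
    labeled = copy.deepcopy(objects)  # leave the caller's objects untouched
    for obj in labeled:
        metadata = obj.setdefault("metadata", {})
        object_labels = metadata.setdefault("labels", {})
        # Assumption: on a key conflict, the template label replaces the old value.
        object_labels.update(labels)
    return labeled


objects = [
    {"kind": "Service", "metadata": {"name": "cakephp-mysql-example"}},
    {"kind": "BuildConfig", "metadata": {"name": "cakephp-mysql-example",
                                         "labels": {"tier": "frontend"}}},
]
labeled = apply_template_labels(objects, {"template": "cakephp-mysql-example"})
for obj in labeled:
    print(obj["kind"], obj["metadata"]["labels"])
```

Because every object ends up with the same label, a single label selector is enough to find everything created from one template.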

8.7.3. Parameters

Parameters allow a value to be supplied by the user or generated when the template is instantiated. This is useful for generating random passwords or allowing the user to supply a host name or other user-specific value that is required to customize the template. Parameters can be referenced by placing values in the form "${PARAMETER_NAME}" in place of any string field in the template.

kind: Template
apiVersion: v1
objects:
  - kind: BuildConfig
    apiVersion: v1
    metadata:
      name: cakephp-mysql-example
      annotations:
        description: Defines how to build the application
    spec:
      source:
        type: Git
        git:
          uri: "${SOURCE_REPOSITORY_URL}" # (1)
          ref: "${SOURCE_REPOSITORY_REF}"
        contextDir: "${CONTEXT_DIR}"
parameters:
  - name: SOURCE_REPOSITORY_URL # (2)
    description: The URL of the repository with your application source code # (3)
    value: https://github.com/sclorg/cakephp-ex.git # (4)
    required: true # (5)
  - name: GITHUB_WEBHOOK_SECRET
    description: A secret string used to configure the GitHub webhook
    generate: expression # (6)
    from: "[a-zA-Z0-9]{40}" # (7)

- (1) This value will be replaced with the value of the SOURCE_REPOSITORY_URL parameter when the template is instantiated.

- (2) The name of the parameter. This value is displayed to users and used to reference the parameter within the template.

- (3) A description of the parameter.

- (4) A default value for the parameter which will be used if the user does not override the value when instantiating the template.

- (5) Indicates this parameter is required, meaning the user cannot override it with an empty value. If the parameter does not provide a default or generated value, the user must supply a value.

- (6) A parameter which has its value generated via a regular expression-like syntax.

- (7) The input to the generator. In this case, the generator will produce a 40 character alphanumeric value including upper and lowercase characters.

8.7.4. Object List

The main portion of the template is the list of objects which will be created when the template is instantiated. This can be any valid API object, such as a BuildConfig, DeploymentConfig, Service, etc. The object will be created exactly as defined here, with any parameter values substituted in prior to creation. The definition of these objects can reference parameters defined earlier.

kind: "Template"
apiVersion: "v1"
objects:
  - kind: "Service" # (1)
    apiVersion: "v1"
    metadata:
      name: "cakephp-mysql-example"
      annotations:
        description: "Exposes and load balances the application pods"
    spec:
      ports:
        - name: "web"
          port: 8080
          targetPort: 8080
      selector:
        name: "cakephp-mysql-example"

- (1) The definition of a Service which will be created by this template.

If an object definition’s metadata includes a namespace field, the field will be stripped out of the definition during template instantiation. This is necessary because all objects created during instantiation are placed into the target namespace, so it would be invalid for the object to declare a different namespace.
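The two instantiation behaviors described in this section, parameter substitution in string fields and removal of the namespace field, can be sketched in Python as follows. This is a simplified model under stated assumptions: all parameter values are strings, references to undefined parameters are left untouched, and the names `substitute` and `instantiate` are hypothetical.

```python
import re

_PARAM_REF = re.compile(r"\$\{([A-Za-z0-9_]+)\}")


def substitute(value, params):
    """Recursively replace ${NAME} references in every string field."""
    if isinstance(value, str):
        # Assumption: unknown parameter names are left as they are.
        return _PARAM_REF.sub(lambda m: params.get(m.group(1), m.group(0)), value)
    if isinstance(value, list):
        return [substitute(item, params) for item in value]
    if isinstance(value, dict):
        return {key: substitute(item, params) for key, item in value.items()}
    return value


def instantiate(objects, params):
    """Substitute parameters and strip metadata.namespace from each object."""
    result = []
    for obj in objects:
        processed = substitute(obj, params)
        processed.get("metadata", {}).pop("namespace", None)
        result.append(processed)
    return result


template_objects = [{
    "kind": "BuildConfig",
    "metadata": {"name": "cakephp-mysql-example", "namespace": "other-project"},
    "spec": {"source": {"git": {"uri": "${SOURCE_REPOSITORY_URL}"}}},
}]
params = {"SOURCE_REPOSITORY_URL": "https://github.com/sclorg/cakephp-ex.git"}
created = instantiate(template_objects, params)
print(created[0]["spec"]["source"]["git"]["uri"])
print("namespace" in created[0]["metadata"])
```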

8.7.5. Creating a Template from Existing Objects

Rather than writing an entire template from scratch, you can also export existing objects from your project in template form, and then modify the template from there by adding parameters and other customizations. To export objects in a project in template form, run:

$ oc export all --as-template=<template_name>

You can also substitute a particular resource type or multiple resources instead of all. Run $ oc export -h for more examples.

Chapter 9. Builds

9.1. Overview

A build is the process of transforming input parameters into a resulting object. Most often, the process is used to transform source code into a runnable image.

Build configurations are characterized by a strategy and one or more sources. The strategy determines the aforementioned process, while the sources provide its input.

There are three build strategies:

- Source-To-Image (S2I) (description, options)

- Docker (description, options)

- Custom (description, options)

And there are three types of build source:

- Git

- Dockerfile

- Binary

It is up to each build strategy to consider or ignore a certain type of source, as well as to determine how it is to be used.

Binary and Git are mutually exclusive source types, while Dockerfile can be used by itself or together with Git and Binary.
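The combination rule for source types can be expressed as a short check. The following Python sketch is only an illustration of the rule stated above; `validate_source_types` is a hypothetical helper, not part of any OpenShift API.

```python
KNOWN_SOURCE_TYPES = {"Git", "Dockerfile", "Binary"}


def validate_source_types(source_types):
    """Raise ValueError if the combination of build sources is not allowed."""
    kinds = set(source_types)
    if not kinds:
        raise ValueError("at least one build source is required")
    unknown = kinds - KNOWN_SOURCE_TYPES
    if unknown:
        raise ValueError(f"unknown source type(s): {sorted(unknown)}")
    # Git and Binary are mutually exclusive; Dockerfile may accompany either.
    if {"Git", "Binary"} <= kinds:
        raise ValueError("Git and Binary sources cannot be combined")
    return True


print(validate_source_types(["Git", "Dockerfile"]))
print(validate_source_types(["Dockerfile"]))
```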

9.2. Defining a BuildConfig

A build configuration describes a single build definition and a set of triggers for when a new build should be created.

A build configuration is defined by a BuildConfig, which is a REST object that can be used in a POST to the API server to create a new instance. The following example BuildConfig results in a new build every time a Docker image tag or the source code changes:

Example 9.1. BuildConfig Object Definition

kind: "BuildConfig"
apiVersion: "v1"
metadata:
  name: "ruby-sample-build" # (1)
spec:
  triggers: # (2)
    - type: "GitHub"
      github:
        secret: "secret101"
    - type: "Generic"
      generic:
        secret: "secret101"
    - type: "ImageChange"
  source: # (3)
    type: "Git"
    git:
      uri: "https://github.com/openshift/ruby-hello-world"
    dockerfile: "FROM openshift/ruby-22-centos7\nUSER example"
  strategy: # (4)
    type: "Source"
    sourceStrategy:
      from:
        kind: "ImageStreamTag"
        name: "ruby-20-centos7:latest"
  output: # (5)
    to:
      kind: "ImageStreamTag"
      name: "origin-ruby-sample:latest"

- (1) This specification will create a new BuildConfig named ruby-sample-build.

- (2) You can specify a list of triggers, which cause a new build to be created.

- (3) The source section defines the source of the build. The source type determines the primary source of input, and can be either Git, to point to a code repository location, Dockerfile, to build from an inline Dockerfile, or Binary, to accept binary payloads. It is possible to have multiple sources at once; refer to the documentation for each source type for details.

- (4) The strategy section describes the build strategy used to execute the build. You can specify Source, Docker, and Custom strategies here. The above example uses the ruby-20-centos7 Docker image that Source-To-Image will use for the application build.

- (5) After the Docker image is successfully built, it will be pushed into the repository described in the output section.

9.3. Source-to-Image Strategy Options

The following options are specific to the S2I build strategy.

9.3.1. Force Pull

By default, if the builder image specified in the build configuration is available locally on the node, that image will be used. However, to override the local image and refresh it from the registry to which the image stream points, create a BuildConfig with the forcePull flag set to true:

strategy:
  type: "Source"
  sourceStrategy:
    from:
      kind: "ImageStreamTag"
      name: "builder-image:latest" # (1)
    forcePull: true # (2)

- (1) The builder image being used, where the local version on the node may not be up to date with the version in the registry to which the image stream points.

- (2) This flag causes the local builder image to be ignored and a fresh version to be pulled from the registry to which the image stream points. Setting forcePull to false results in the default behavior of honoring the image stored locally.

9.3.2. Incremental Builds

S2I can perform incremental builds, which means it reuses artifacts from previously-built images. To create an incremental build, create a BuildConfig with the following modification to the strategy definition:

strategy:
  type: "Source"
  sourceStrategy:
    from:
      kind: "ImageStreamTag"
      name: "incremental-image:latest" # (1)
    incremental: true # (2)

- (1) Specify an image that supports incremental builds. Consult the documentation of the builder image to determine if it supports this behavior.

- (2) This flag controls whether an incremental build is attempted. If the builder image does not support incremental builds, the build will still succeed, but you will get a log message stating the incremental build was not successful because of a missing save-artifacts script.

See the S2I Requirements topic for information on how to create a builder image supporting incremental builds.

9.3.3. Overriding Builder Image Scripts

You can override the assemble, run, and save-artifacts S2I scripts provided by the builder image in one of two ways. Either:

- Provide an assemble, run, and/or save-artifacts script in the .sti/bin directory of your application source repository, or

- Provide a URL of a directory containing the scripts as part of the strategy definition. For example:

strategy:
  type: "Source"
  sourceStrategy:
    from:
      kind: "ImageStreamTag"
      name: "builder-image:latest"
    scripts: "http://somehost.com/scripts_directory" # (1)

- (1) This path will have run, assemble, and save-artifacts appended to it. If any or all scripts are found they will be used in place of the same named script(s) provided in the image.

Files located at the scripts URL take precedence over files located in .sti/bin of the source repository. See the S2I Requirements topic and the S2I documentation for information on how S2I scripts are used.
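The precedence between the three script locations can be summarized in a small Python sketch. It models the lookup with plain sets instead of real downloads or file access; `resolve_script_sources` and the `source:`/`image:` prefixes in its output are hypothetical, chosen only to make the result readable.

```python
SCRIPT_NAMES = ("assemble", "run", "save-artifacts")


def resolve_script_sources(scripts_url, available_at_url, repo_paths, image_scripts):
    """Pick, per script, the location that wins under the documented precedence."""
    resolved = {}
    for name in SCRIPT_NAMES:
        if scripts_url and name in available_at_url:
            # 1. The script name is appended to the scripts URL.
            resolved[name] = scripts_url.rstrip("/") + "/" + name
        elif f".sti/bin/{name}" in repo_paths:
            # 2. Scripts in the application source repository.
            resolved[name] = f"source:.sti/bin/{name}"
        elif name in image_scripts:
            # 3. Scripts shipped with the builder image.
            resolved[name] = f"image:{name}"
    return resolved


resolved = resolve_script_sources(
    "http://somehost.com/scripts_directory",
    available_at_url={"assemble"},
    repo_paths={".sti/bin/assemble", ".sti/bin/run"},
    image_scripts={"assemble", "run", "save-artifacts"},
)
for name, location in resolved.items():
    print(name, "->", location)
```

In this example the assemble script comes from the URL even though the repository also provides one, while run falls back to the repository and save-artifacts to the image.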

9.3.4. Environment Variables

There are two ways to make environment variables available to the source build process and resulting image: environment files and BuildConfig environment values.

9.3.4.1. Environment Files

Source build enables you to set environment values (one per line) inside your application, by specifying them in a .sti/environment file in the source repository. The environment variables specified in this file are present during the build process and in the final Docker image. The complete list of supported environment variables is available in the documentation for each image.

If you provide a .sti/environment file in your source repository, S2I reads this file during the build. This allows customization of the build behavior as the assemble script may use these variables.

For example, if you want to disable assets compilation for your Rails application, you can add DISABLE_ASSET_COMPILATION=true in the .sti/environment file to cause assets compilation to be skipped during the build.

In addition to builds, the specified environment variables are also available in the running application itself. For example, you can add RAILS_ENV=development to the .sti/environment file to cause the Rails application to start in development mode instead of production.
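The one-variable-per-line format of the environment file is simple enough to model in a few lines of Python. This sketch is not the S2I parser; skipping blank lines and lines starting with `#` is an assumption, and `parse_environment_file` is a hypothetical name.

```python
def parse_environment_file(text):
    """Parse NAME=value lines, one per line, into a dict."""
    variables = {}
    for raw_line in text.splitlines():
        line = raw_line.strip()
        # Assumption: blank lines and '#' comment lines are ignored.
        if not line or line.startswith("#"):
            continue
        name, sep, value = line.partition("=")
        if not sep:
            continue  # not a NAME=value line
        variables[name.strip()] = value.strip()
    return variables


example_file = """\
DISABLE_ASSET_COMPILATION=true
RAILS_ENV=development
"""
variables = parse_environment_file(example_file)
print(variables)
```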

9.3.4.2. BuildConfig Environment

You can add environment variables to the sourceStrategy definition of the BuildConfig. The environment variables defined there are visible during the assemble script execution and will be defined in the output image, making them also available to the run script and application code.

For example, to disable assets compilation for your Rails application:

sourceStrategy:
  ...
  env:
    - name: "DISABLE_ASSET_COMPILATION"
      value: "true"

9.4. Docker Strategy Options

The following options are specific to the Docker build strategy.

9.4.1. FROM Image

The FROM instruction of the Dockerfile will be replaced by the from of the BuildConfig:

strategy:
  type: Docker
  dockerStrategy:
    from:
      kind: "ImageStreamTag"
      name: "debian:latest"

9.4.2. Dockerfile Path

By default, Docker builds use a Dockerfile (named Dockerfile) located at the root of the context specified in the BuildConfig.spec.source.contextDir field.

The dockerfilePath field allows the build to use a different path to locate your Dockerfile, relative to the BuildConfig.spec.source.contextDir field. It can be simply a different file name other than the default Dockerfile (for example, MyDockerfile), or a path to a Dockerfile in a subdirectory (for example, dockerfiles/app1/):

strategy:
  type: Docker
  dockerStrategy:
    dockerfilePath: dockerfiles/app1/

9.4.3. No Cache

Docker builds normally reuse cached layers found on the host performing the build. Setting the noCache option to true forces the build to ignore cached layers and rerun all steps of the Dockerfile:

strategy:
  type: "Docker"
  dockerStrategy:
    noCache: true

9.4.4. Force Pull

By default, if the builder image specified in the build configuration is available locally on the node, that image will be used. However, to override the local image and refresh it from the registry to which the image stream points, create a BuildConfig with the forcePull flag set to true:

strategy:
  type: "Docker"
  dockerStrategy:
    forcePull: true # (1)

- (1) This flag causes the local builder image to be ignored, and a fresh version to be pulled from the registry to which the image stream points. Setting forcePull to false results in the default behavior of honoring the image stored locally.

9.4.5. Environment Variables

To make environment variables available to the Docker build process and resulting image, you can add environment variables to the dockerStrategy definition of the BuildConfig.

The environment variables defined there are inserted as a single ENV Dockerfile instruction right after the FROM instruction, so that it can be referenced later on within the Dockerfile.

The variables are defined during build and stay in the output image, therefore they will be present in any container that runs that image as well.
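The placement of the generated instruction can be pictured with a short Python sketch that inserts one ENV line directly after the first FROM line of a Dockerfile. The exact text OpenShift Enterprise generates is not shown in this guide, so the `ENV NAME="value"` form and the helper name `inject_env` are assumptions; the sketch also does not escape quotes inside values.

```python
def inject_env(dockerfile, env):
    """Insert a single ENV instruction right after the first FROM line."""
    if not env:
        return dockerfile
    pairs = " ".join(f'{name}="{value}"' for name, value in env)
    env_line = f"ENV {pairs}"
    lines = dockerfile.split("\n")
    for index, line in enumerate(lines):
        if line.strip().upper().startswith("FROM "):
            lines.insert(index + 1, env_line)
            return "\n".join(lines)
    raise ValueError("Dockerfile has no FROM instruction")


dockerfile = "FROM openshift/ruby-22-centos7\nUSER example"
result = inject_env(dockerfile, [("HTTP_PROXY", "http://myproxy.net:5187/")])
print(result)
```

Because the ENV line comes before every other instruction, later steps in the Dockerfile can already reference the variable.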

For example, defining a custom HTTP proxy to be used during build and runtime:

dockerStrategy:
  ...
  env:
    - name: "HTTP_PROXY"
      value: "http://myproxy.net:5187/"

9.5. Custom Strategy Options

The following options are specific to the Custom build strategy.

9.5.1. Exposing the Docker Socket

In order to allow the running of Docker commands and the building of Docker images from inside the Docker container, the build container must be bound to an accessible socket. To do so, set the exposeDockerSocket option to true:

strategy:
  type: "Custom"
  customStrategy:
    exposeDockerSocket: true

9.5.2. Secrets

In addition to secrets for source and images that can be added to all build types, custom strategies allow adding an arbitrary list of secrets to the builder pod.

Each secret can be mounted at a specific location:

strategy:
  type: "Custom"
  customStrategy:
    secrets:
      - secretSource:
          name: "secret1"
        mountPath: "/tmp/secret1"
      - secretSource:
          name: "secret2"
        mountPath: "/tmp/secret2"

9.5.3. Force Pull

By default, when setting up the build pod, the build controller checks if the image specified in the build configuration is available locally on the node. If so, that image will be used. However, to override the local image and refresh it from the registry to which the image stream points, create a BuildConfig with the forcePull flag set to true:

strategy:
  type: "Custom"
  customStrategy:
    forcePull: true # (1)

- (1) This flag causes the local builder image to be ignored, and a fresh version to be pulled from the registry to which the image stream points. Setting forcePull to false results in the default behavior of honoring the image stored locally.

9.5.4. Environment Variables

To make environment variables available to the Custom build process, you can add environment variables to the customStrategy definition of the BuildConfig.

The environment variables defined there are passed to the pod that runs the custom build.

For example, defining a custom HTTP proxy to be used during build:

customStrategy:
  ...
  env:
    - name: "HTTP_PROXY"
      value: "http://myproxy.net:5187/"

9.6. Git Repository Source Options

When the BuildConfig.spec.source.type is Git, a Git repository is required, and an inline Dockerfile is optional.

The source code is fetched from the location specified and, if the BuildConfig.spec.source.dockerfile field is specified, the inline Dockerfile replaces the one in the contextDir of the Git repository.

The source definition is part of the spec section in the BuildConfig:

source:
  type: "Git"
  git:  # (1)
    uri: "https://github.com/openshift/ruby-hello-world"
    ref: "master"
  contextDir: "app/dir"  # (2)
  dockerfile: "FROM openshift/ruby-22-centos7\nUSER example"  # (3)

(1) The git field contains the URI to the remote Git repository of the source code. Optionally, specify the ref field to check out a specific Git reference. A valid ref can be a commit SHA1, a tag, or a branch name.

(2) The contextDir field allows you to override the default location inside the source code repository where the build looks for the application source code. If your application exists inside a sub-directory, you can override the default location (the root folder) using this field.

(3) If the optional dockerfile field is provided, it should be a string containing a Dockerfile that overwrites any Dockerfile that may exist in the source repository.
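As a variation, the following sketch pins the build to a tag instead of a branch and omits the optional contextDir and dockerfile fields, so the build uses the repository root and whatever Dockerfile the repository itself contains. The tag name is a hypothetical placeholder and is not known to exist in the example repository.

```yaml
# Sketch only: the ref value is an illustrative assumption.
source:
  type: "Git"
  git:
    uri: "https://github.com/openshift/ruby-hello-world"
    ref: "v1.0.0"   # hypothetical tag; a branch name or commit SHA1 is also accepted
```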

9.6.1. Using a Proxy for Git Cloning

If your Git repository can only be accessed using a proxy, you can define the proxy to use in the source section of the BuildConfig. You can configure both an HTTP and an HTTPS proxy. Both fields are optional.

Your source URI must use the HTTP or HTTPS protocol for this to work.

source:
  type: Git
  git:
    uri: "https://github.com/openshift/ruby-hello-world"
    httpProxy: http://proxy.example.com
    httpsProxy: https://proxy.example.com

9.6.2. Using Private Repositories for Builds

Supply valid credentials to build an application from a private repository.

Currently two types of authentication are supported: basic username-password and SSH key based authentication.

9.6.2.1. Basic Authentication

Basic authentication requires either a combination of username and password, or a token to authenticate against the SCM server. A CA certificate file or a .gitconfig file can also be attached.

A secret is used to store your keys.

1. Create the secret first before using the username and password to access the private repository:

$ oc secrets new-basicauth basicsecret --username=USERNAME --password=PASSWORD

To create a Basic Authentication Secret with a token:

$ oc secrets new-basicauth basicsecret --password=TOKEN

To create a Basic Authentication Secret with a CA certificate file:

$ oc secrets new-basicauth basicsecret --username=USERNAME --password=PASSWORD --ca-cert=FILENAME

To create a Basic Authentication Secret with a .gitconfig file:

$ oc secrets new-basicauth basicsecret --username=USERNAME --password=PASSWORD --gitconfig=FILENAME

2. Add the secret to the builder service account. Each build is run with the serviceaccount/builder role, so you need to give it access to your secret with the following command:

$ oc secrets add serviceaccount/builder secrets/basicsecret

3. Add a sourceSecret field to the source section inside the BuildConfig and set it to the name of the secret that you created, in this case basicsecret:

apiVersion: "v1"
kind: "BuildConfig"
metadata:
  name: "sample-build"
spec:
  output:
    to:
      kind: "ImageStreamTag"
      name: "sample-image:latest"
  source:
    git:
      uri: "https://github.com/user/app.git"  # (1)
    sourceSecret:
      name: "basicsecret"
    type: "Git"
  strategy:
    sourceStrategy:
      from:
        kind: "ImageStreamTag"
        name: "python-33-centos7:latest"
    type: "Source"

(1) The URL of a private repository accessed by basic authentication is usually in the http or https form.

9.6.2.2. SSH Key Based Authentication

SSH Key Based Authentication requires a private SSH key. A .gitconfig file can also be attached.

The repository keys are usually located in the $HOME/.ssh/ directory, and are named id_dsa.pub, id_ecdsa.pub, id_ed25519.pub, or id_rsa.pub by default. Generate SSH key credentials with the following command:

$ ssh-keygen -t rsa -C "your_email@example.com"

Creating a passphrase for the SSH key prevents OpenShift Enterprise from building. When prompted for a passphrase, leave it blank.

Two files are created: the public key and a corresponding private key (one of id_dsa, id_ecdsa, id_ed25519, or id_rsa). With both of these in place, consult your source control management (SCM) system’s manual on how to upload the public key. The private key will be used to access your private repository.

A secret is used to store your keys.

1. Create the secret first before using the SSH key to access the private repository:

$ oc secrets new-sshauth sshsecret --ssh-privatekey=$HOME/.ssh/id_rsa

To create an SSH Based Authentication Secret with a .gitconfig file:

$ oc secrets new-sshauth sshsecret --ssh-privatekey=$HOME/.ssh/id_rsa --gitconfig=FILENAME

2. Add the secret to the builder service account. Each build is run with the serviceaccount/builder role, so you need to give it access to your secret with the following command:

$ oc secrets add serviceaccount/builder secrets/sshsecret

3. Add a sourceSecret field to the source section inside the BuildConfig and set it to the name of the secret that you created, in this case sshsecret:

apiVersion: "v1"
kind: "BuildConfig"
metadata:
  name: "sample-build"
spec:
  output:
    to:
      kind: "ImageStreamTag"
      name: "sample-image:latest"
  source:
    git:
      uri: "git@repository.com:user/app.git"  # (1)
    sourceSecret:
      name: "sshsecret"
    type: "Git"
  strategy:
    sourceStrategy:
      from:
        kind: "ImageStreamTag"
        name: "python-33-centos7:latest"
    type: "Source"

(1) The URL of a private repository accessed by a private SSH key is usually in the form git@example.com:<username>/<repository>.git.

9.6.2.3. Other

If cloning your application depends on a CA certificate, a .gitconfig file, or both, you can create a secret that contains them, add it to the builder service account, and then add it to your BuildConfig.

1. Create the desired type of secret:

To create a secret from a .gitconfig:

$ oc secrets new mysecret .gitconfig=path/to/.gitconfig

To create a secret from a CA certificate:

$ oc secrets new mysecret ca.crt=path/to/certificate

To create a secret from a CA certificate and .gitconfig:

$ oc secrets new mysecret ca.crt=path/to/certificate .gitconfig=path/to/.gitconfig

Note: SSL verification can be turned off if sslVerify=false is set for the http section in your .gitconfig file:

[http]
    sslVerify=false

2. Add the secret to the builder service account. Each build is run with the serviceaccount/builder role, so you need to give it access to your secret with the following command:

$ oc secrets add serviceaccount/builder secrets/mysecret

3. Add the secret to the BuildConfig:

source:
  git:
    uri: "https://github.com/sclorg/nodejs-ex.git"
  sourceSecret:
    name: "mysecret"

9.7. Dockerfile Source

When the BuildConfig.spec.source.type is Dockerfile, an inline Dockerfile is used as the build input, and no additional sources can be provided.

This source type is valid when the build strategy type is Docker or Custom.

The source definition is part of the spec section in the BuildConfig:

source:
  type: "Dockerfile"
  dockerfile: "FROM centos:7\nRUN yum install -y httpd"  # (1)

(1) The dockerfile field contains an inline Dockerfile that will be built.
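Because this source type is only valid with the Docker or Custom strategy, it can help to see the source and strategy together. The following is a minimal sketch of a complete BuildConfig that pairs the inline Dockerfile with a Docker strategy; the object name and the output tag are hypothetical placeholders, not values from this guide.

```yaml
# Sketch only: names marked "hypothetical" are illustrative assumptions.
apiVersion: "v1"
kind: "BuildConfig"
metadata:
  name: "httpd-inline"              # hypothetical name
spec:
  source:
    type: "Dockerfile"
    dockerfile: "FROM centos:7\nRUN yum install -y httpd"
  strategy:
    type: "Docker"
    dockerStrategy: {}              # no strategy-specific options set
  output:
    to:
      kind: "ImageStreamTag"
      name: "httpd-inline:latest"   # hypothetical output tag
```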

9.8. Binary Source

When the BuildConfig.spec.source.type is Binary, the build will expect a binary as input, and an inline Dockerfile is optional.

The binary is generally assumed to be a tar, gzipped tar, or zip file depending on the strategy. For Docker builds, this is the build context and an optional Dockerfile may be specified to override any Dockerfile in the build context. For Source builds, this is assumed to be an archive as described above. For Source and Docker builds, if binary.asFile is set the build context will consist of a single file named by the value of binary.asFile. The contextDir field may be used when an archive is provided. Custom builds will receive this binary as input on standard input (stdin).

A binary source potentially extracts content (if asFile is not set), in which case contextDir allows changing to a subdirectory within the content before the build executes.

The source definition is part of the spec section in the BuildConfig:

source:
  git:
    uri: https://github.com/sclorg/nodejs-ex.git
  secrets:
    - secret:
        name: secret-npmrc
  type: Git

To include the secrets in a new build configuration, run the following command:

$ oc new-build openshift/nodejs-010-centos7~https://github.com/sclorg/nodejs-ex.git

During the build, the .npmrc file is copied into the directory where the source code is located. In case of the OpenShift Enterprise S2I builder images, this is the image working directory, which is set using the WORKDIR instruction in the Dockerfile. If you want to specify another directory, add a destinationDir to the secret definition:

source:
  git:
    uri: https://github.com/sclorg/nodejs-ex.git
  secrets:
    - secret:
        name: secret-npmrc
      destinationDir: /etc
  type: Git

You can also specify the destination directory when creating a new build configuration:

$ oc new-build openshift/nodejs-010-centos7~https://github.com/sclorg/nodejs-ex.git

The fields of a Binary source definition are:

- The binary field specifies the details of the binary source.
- The asFile field specifies the name of the file that will be created with the binary contents.
- The contextDir field specifies a subdirectory within the extracted contents of a binary archive.
- If the optional dockerfile field is provided, it should be a string containing an inline Dockerfile that potentially replaces one within the contents of the binary archive.
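The field descriptions above can be sketched as two alternative Binary source definitions: one that receives an archive and builds from a subdirectory of it, and one that receives a single file. The subdirectory and file name are hypothetical placeholders chosen for illustration, and the inline Dockerfile is borrowed from the Dockerfile source example.

```yaml
# Sketch only: values marked "hypothetical" are illustrative assumptions.
# Variant 1: the binary input is an archive that is extracted before the build.
source:
  type: "Binary"
  binary: {}                  # asFile not set, so the input is treated as an archive
  contextDir: "app/dir"       # hypothetical subdirectory inside the extracted archive
  dockerfile: "FROM centos:7\nRUN yum install -y httpd"   # optional inline Dockerfile
---
# Variant 2: the binary input is stored as a single file in the build context.
source:
  type: "Binary"
  binary:
    asFile: "webapp.war"      # hypothetical file name
```

The two variants are kept separate because contextDir applies to extracted archive content, while asFile produces a build context that consists of a single file.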

9.9. Starting a Build

Manually start a new build from an existing build configuration in your current project using the following command:

$ oc start-build <buildconfig_name>

Re-run a build using the --from-build flag:

$ oc start-build --from-build=<build_name>

Specify the --follow flag to stream the build’s logs to stdout:

$ oc start-build <buildconfig_name> --follow

Specify the --env flag to set any desired environment variable for the build:

$ oc start-build <buildconfig_name> --env=<key>=<value>

Rather than relying on a Git source pull or a Dockerfile for a build, you can also start a build by directly pushing your source, which could be the contents of a Git or SVN working directory, a set of prebuilt binary artifacts you want to deploy, or a single file. This can be done by specifying one of the following options for the start-build command:

| Option | Description |
|---|---|
| --from-dir=<directory> | Specifies a directory that will be archived and used as a binary input for the build. |
| --from-file=<file> | Specifies a single file that will be the only file in the build source. The file is placed in the root of an empty directory with the same file name as the original file provided. |
| --from-repo=<local_source_repo> | Specifies a path to a local repository to use as the binary input for a build. Add the --commit option to control which branch, tag, or commit is used for the build. |

When passing any of these options directly to the build, the contents are streamed to the build and override the current build source settings.

Builds triggered from binary input will not preserve the source on the server, so rebuilds triggered by base image changes will use the source specified in the build configuration.

For example, the following command sends the contents of a local Git repository as an archive from the tag v2 and starts a build:

$ oc start-build hello-world --from-repo=../hello-world --commit=v2

9.10. Canceling a Build

Manually cancel a build using the web console, or with the following CLI command:

$ oc cancel-build <build_name>

9.11. Viewing Build Details