
Chapter 25. OVN-Kubernetes network plugin

25.1. About the OVN-Kubernetes network plugin

The OpenShift Container Platform cluster uses a virtualized network for pod and service networks.

Part of Red Hat OpenShift Networking, the OVN-Kubernetes network plugin is the default network provider for OpenShift Container Platform. OVN-Kubernetes is based on Open Virtual Network (OVN) and provides an overlay-based networking implementation.

For a cloud controller manager (CCM) with the --cloud-provider=external option set to cloud-provider-vsphere, a known issue exists for a cluster that operates in a networking environment with multiple subnets.

When you upgrade your cluster from OpenShift Container Platform 4.12 to OpenShift Container Platform 4.13, the CCM selects a wrong node IP address and this operation generates an error message in the namespaces/openshift-cloud-controller-manager/pods/vsphere-cloud-controller-manager logs. The error message indicates a mismatch with the node IP address and the vsphere-cloud-controller-manager pod IP address in your cluster.

The known issue might not impact the cluster upgrade operation, but you can set the correct IP address in both the nodeNetworking.external.networkSubnetCidr and the nodeNetworking.internal.networkSubnetCidr parameters for the nodeNetworking object that your cluster uses for its networking requirements.

A cluster that uses the OVN-Kubernetes plugin also runs Open vSwitch (OVS) on each node. OVN configures OVS on each node to implement the declared network configuration.

OVN-Kubernetes is the default networking solution for OpenShift Container Platform and single-node OpenShift deployments.

OVN-Kubernetes, which arose from the OVS project, uses many of the same constructs, such as OpenFlow rules, to determine how packets travel through the network. For more information, see the Open Virtual Network website.

OVN-Kubernetes is a series of daemons for OVS that translate virtual network configurations into OpenFlow rules. OpenFlow is a protocol for communicating with network switches and routers, providing a means for remotely controlling the flow of network traffic on a network device, allowing network administrators to configure, manage, and monitor the flow of network traffic.

OVN-Kubernetes provides advanced functionality that is not available with OpenFlow alone. OVN supports distributed virtual routing, distributed logical switches, access control, DHCP, and DNS. OVN implements distributed virtual routing within logical flows, which equate to OpenFlow flows. For example, if a pod broadcasts a DHCP request on the network, a logical flow rule matches that packet and responds with a gateway, a DNS server, an IP address, and so on.

OVN-Kubernetes runs a daemon on each node. There are daemon sets for the databases and for the OVN controller that run on every node. The OVN controller programs the Open vSwitch daemon on the nodes to support the network provider features: egress IPs, firewalls, routers, hybrid networking, IPsec encryption, IPv6, network policy, network policy logs, hardware offloading, and multicast.

25.1.1. OVN-Kubernetes purpose

The OVN-Kubernetes network plugin is an open-source, fully-featured Kubernetes CNI plugin. The OVN-Kubernetes network plugin:

- Uses OVN (Open Virtual Network) to manage network traffic flows. OVN is a community developed, vendor-agnostic network virtualization solution.

- Implements Kubernetes network policy support, including ingress and egress rules.

- Uses the Geneve (Generic Network Virtualization Encapsulation) protocol rather than VXLAN to create an overlay network between nodes.

The OVN-Kubernetes network plugin provides the following advantages over OpenShift SDN:

- Full support for IPv6 single-stack and IPv4/IPv6 dual-stack networking on supported platforms

- Support for hybrid clusters with both Linux and Microsoft Windows workloads

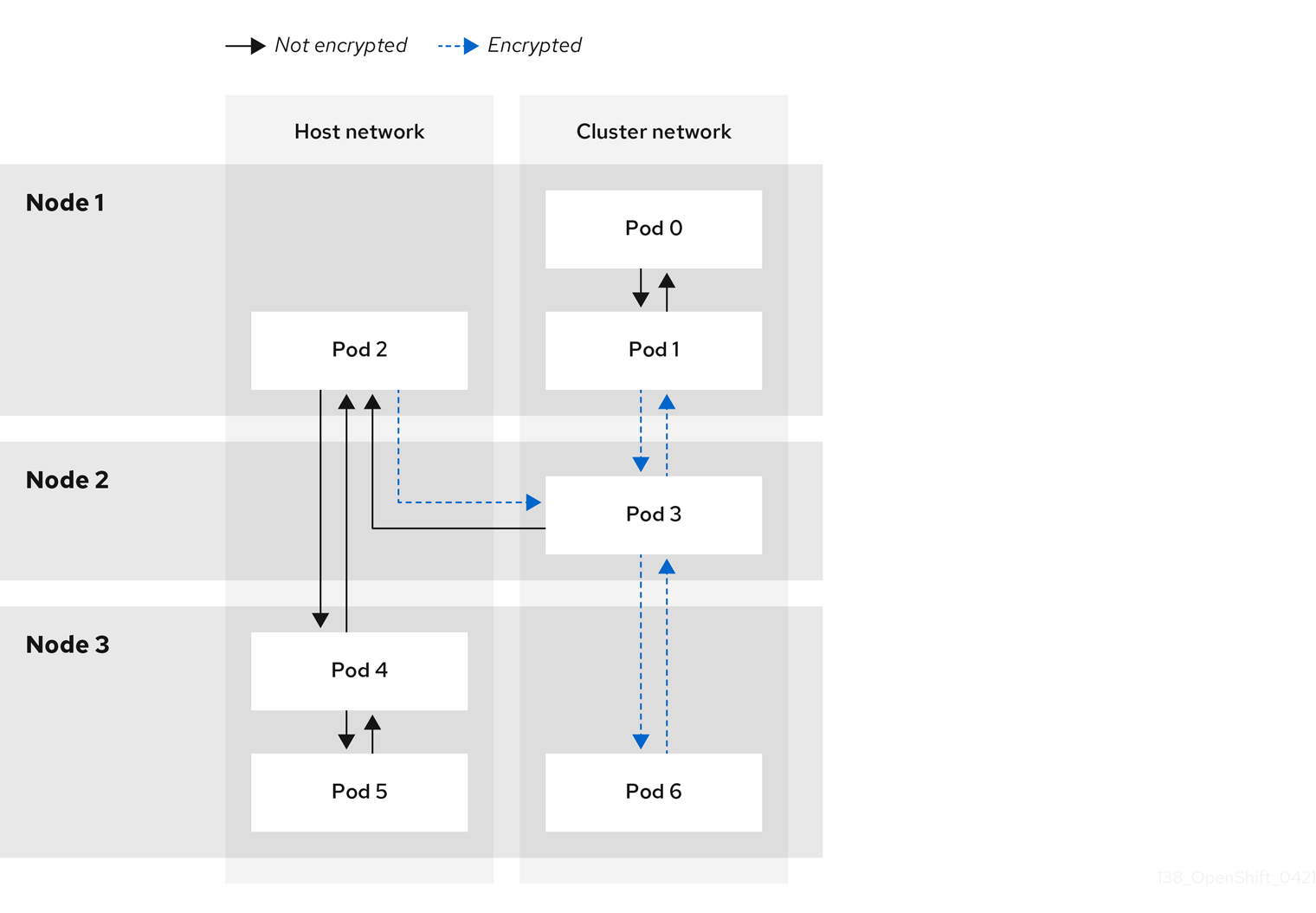

- Optional IPsec encryption of intra-cluster communications

- Offload of network data processing from host CPU to compatible network cards and data processing units (DPUs)

25.1.2. Supported network plugin feature matrix

Red Hat OpenShift Networking offers two options for the network plugin: OpenShift SDN and OVN-Kubernetes. The following table summarizes the current feature support for both network plugins:

| Feature | OpenShift SDN | OVN-Kubernetes |
|---|---|---|
| Egress IPs | Supported | Supported |
| Egress firewall | Supported | Supported [1] |
| Egress router | Supported | Supported [2] |
| Hybrid networking | Not supported | Supported |
| IPsec encryption for intra-cluster communication | Not supported | Supported |
| IPv4 single-stack | Supported | Supported |
| IPv6 single-stack | Not supported | Supported [3] |
| IPv4/IPv6 dual-stack | Not supported | Supported [4] |
| IPv6/IPv4 dual-stack | Not supported | Supported [5] |
| Kubernetes network policy | Supported | Supported |
| Kubernetes network policy logs | Not supported | Supported |
| Hardware offloading | Not supported | Supported |
| Multicast | Supported | Supported |

1. Egress firewall is also known as egress network policy in OpenShift SDN. This is not the same as network policy egress.
2. Egress router for OVN-Kubernetes supports only redirect mode.
3. IPv6 single-stack networking on a bare-metal platform.
4. IPv4/IPv6 dual-stack networking on bare-metal, VMware vSphere (installer-provisioned infrastructure installations only), IBM Power®, and IBM Z® platforms. On VMware vSphere, dual-stack networking limitations exist.
5. IPv6/IPv4 dual-stack networking on bare-metal and IBM Power® platforms.

25.1.3. OVN-Kubernetes IPv6 and dual-stack limitations

The OVN-Kubernetes network plugin has the following limitations:

- For clusters configured for dual-stack networking, both IPv4 and IPv6 traffic must use the same network interface as the default gateway. If this requirement is not met, pods on the host in the `ovnkube-node` daemon set enter the `CrashLoopBackOff` state. If you display a pod with a command such as `oc get pod -n openshift-ovn-kubernetes -l app=ovnkube-node -o yaml`, the `status` field contains more than one message about the default gateway, as shown in the following output:

      I1006 16:09:50.985852   60651 helper_linux.go:73] Found default gateway interface br-ex 192.168.127.1
      I1006 16:09:50.985923   60651 helper_linux.go:73] Found default gateway interface ens4 fe80::5054:ff:febe:bcd4
      F1006 16:09:50.985939   60651 ovnkube.go:130] multiple gateway interfaces detected: br-ex ens4

  The only resolution is to reconfigure the host networking so that both IP families use the same network interface for the default gateway.

- For clusters configured for dual-stack networking, both the IPv4 and IPv6 routing tables must contain the default gateway. If this requirement is not met, pods on the host in the `ovnkube-node` daemon set enter the `CrashLoopBackOff` state. If you display a pod with a command such as `oc get pod -n openshift-ovn-kubernetes -l app=ovnkube-node -o yaml`, the `status` field contains more than one message about the default gateway, as shown in the following output:

      I0512 19:07:17.589083  108432 helper_linux.go:74] Found default gateway interface br-ex 192.168.123.1
      F0512 19:07:17.589141  108432 ovnkube.go:133] failed to get default gateway interface

  The only resolution is to reconfigure the host networking so that both IP families contain the default gateway.
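Both limitations come down to the default routes of the two IP families. The following is a minimal sketch of a pre-check, not part of OVN-Kubernetes: the function names `default_route_dev` and `check_dual_stack_gateway` are hypothetical, and the sample route lines are made up to mirror the first log excerpt. It assumes route text in the format printed by `ip route show default`.

```shell
#!/bin/sh
# Print the interface that follows "dev" on the first default route on stdin.
default_route_dev() {
  awk '$1 == "default" {
         for (i = 1; i < NF; i++)
           if ($i == "dev") { print $(i + 1); exit }
       }'
}

# Hypothetical check: succeed only when both families have a default route
# and both default routes use the same interface.
# $1 = IPv4 default route text, $2 = IPv6 default route text.
check_dual_stack_gateway() {
  v4_dev=$(printf '%s\n' "$1" | default_route_dev)
  v6_dev=$(printf '%s\n' "$2" | default_route_dev)
  if [ -z "$v4_dev" ] || [ -z "$v6_dev" ]; then
    echo "missing default gateway for at least one IP family"
    return 1
  fi
  if [ "$v4_dev" != "$v6_dev" ]; then
    echo "multiple gateway interfaces: $v4_dev $v6_dev"
    return 1
  fi
  echo "ok: $v4_dev"
}

# Sample routes (illustrative): IPv4 uses br-ex, IPv6 uses ens4.
check_dual_stack_gateway \
  "default via 192.168.127.1 dev br-ex proto dhcp metric 48" \
  "default via fe80::5054:ff:febe:bcd4 dev ens4 proto ra metric 100" || true
```

On a node, the two arguments could be supplied as `"$(ip -4 route show default)"` and `"$(ip -6 route show default)"`. A non-zero return status corresponds to one of the two failure conditions described above.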

25.1.4. Session affinity

Session affinity is a feature that applies to Kubernetes Service objects. You can use session affinity if you want to ensure that each time you connect to a <service_VIP>:<Port>, the traffic is always load balanced to the same back end. For more information, including how to set session affinity based on a client’s IP address, see Session affinity.

Stickiness timeout for session affinity

The OVN-Kubernetes network plugin for OpenShift Container Platform calculates the stickiness timeout for a session from a client based on the last packet. For example, if you run a curl command 10 times, the sticky session timer starts from the tenth packet, not the first. As a result, if the client continuously contacts the service, the session never times out. The timeout starts when the service has not received a packet for the amount of time set by the timeoutSeconds parameter.
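The timer behavior can be illustrated with a few lines of shell arithmetic. This is a sketch of the rule only, not of how OVN-Kubernetes implements it: the function name `affinity_expiry` and the packet timestamps are hypothetical, and 10800 seconds is used because it is the Kubernetes default for `timeoutSeconds`.

```shell
#!/bin/sh
# Hypothetical helper: given timeoutSeconds followed by the arrival times
# (in epoch seconds) of packets from one client, print the time at which the
# sticky session expires. Only the most recent packet matters, because each
# packet restarts the timer.
affinity_expiry() {
  timeout=$1
  shift
  last=0
  for t in "$@"; do
    if [ "$t" -gt "$last" ]; then
      last=$t
    fi
  done
  echo $(( last + timeout ))
}

# Ten requests, one per second starting at t=1000, with a 10800-second timeout.
affinity_expiry 10800 1000 1001 1002 1003 1004 1005 1006 1007 1008 1009
```

The call prints `11809`, which is the tenth packet's timestamp (1009) plus the timeout, rather than 11800, which a timer started at the first packet would give.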

25.2. OVN-Kubernetes architecture

25.2.1. Introduction to OVN-Kubernetes architecture

The following diagram shows the OVN-Kubernetes architecture.

Figure 25.1. OVN-Kubernetes architecture

The key components are:

- Cloud Management System (CMS) - A platform specific client for OVN that provides a CMS specific plugin for OVN integration. The plugin translates the cloud management system’s concept of the logical network configuration, stored in the CMS configuration database in a CMS-specific format, into an intermediate representation understood by OVN.

- OVN Northbound database (`nbdb`) - Stores the logical network configuration passed by the CMS plugin.

- OVN Southbound database (`sbdb`) - Stores the physical and logical network configuration state for the Open vSwitch (OVS) system on each node, including tables that bind them.

- ovn-northd - This is the intermediary client between `nbdb` and `sbdb`. It translates the logical network configuration in terms of conventional network concepts, taken from the `nbdb`, into logical data path flows in the `sbdb` below it. The container name is `northd` and it runs in the `ovnkube-master` pods.

- ovn-controller - This is the OVN agent that interacts with OVS and hypervisors, for any information or update that is needed for `sbdb`. The `ovn-controller` reads logical flows from the `sbdb`, translates them into `OpenFlow` flows and sends them to the node’s OVS daemon. The container name is `ovn-controller` and it runs in the `ovnkube-node` pods.

The OVN northbound database has the logical network configuration passed down to it by the cloud management system (CMS). The OVN northbound database contains the current desired state of the network, presented as a collection of logical ports, logical switches, logical routers, and more. The ovn-northd daemon (northd container) connects to the OVN northbound database and the OVN southbound database. It translates the logical network configuration in terms of conventional network concepts, taken from the OVN northbound database, into logical data path flows in the OVN southbound database.

The OVN southbound database has physical and logical representations of the network and binding tables that link them together. Every node in the cluster is represented in the southbound database, and you can see the ports that are connected to it. It also contains all the logical flows. The logical flows are shared with the ovn-controller process that runs on each node, and the ovn-controller turns them into OpenFlow rules to program Open vSwitch.

The Kubernetes control plane nodes each contain an ovnkube-master pod which hosts containers for the OVN northbound and southbound databases. All OVN northbound databases form a Raft cluster and all southbound databases form a separate Raft cluster. At any given time a single ovnkube-master is the leader and the other ovnkube-master pods are followers.

25.2.2. Listing all resources in the OVN-Kubernetes project

Finding the resources and containers that run in the OVN-Kubernetes project is important to help you understand the OVN-Kubernetes networking implementation.

Prerequisites

- Access to the cluster as a user with the `cluster-admin` role.

- The OpenShift CLI (`oc`) installed.

Procedure

Run the following command to get all resources, endpoints, and `ConfigMaps` in the OVN-Kubernetes project:

    $ oc get all,ep,cm -n openshift-ovn-kubernetes

Example output

    NAME                       READY   STATUS    RESTARTS      AGE
    pod/ovnkube-master-9g7zt   6/6     Running   1 (48m ago)   57m
    pod/ovnkube-master-lqs4v   6/6     Running   0             57m
    pod/ovnkube-master-vxhtq   6/6     Running   0             57m
    pod/ovnkube-node-9k9kc     5/5     Running   0             57m
    pod/ovnkube-node-jg52r     5/5     Running   0             51m
    pod/ovnkube-node-k8wf7     5/5     Running   0             57m
    pod/ovnkube-node-tlwk6     5/5     Running   0             47m
    pod/ovnkube-node-xsvnk     5/5     Running   0             57m

    NAME                            TYPE        CLUSTER-IP   EXTERNAL-IP   PORT(S)             AGE
    service/ovn-kubernetes-master   ClusterIP   None         <none>        9102/TCP            57m
    service/ovn-kubernetes-node     ClusterIP   None         <none>        9103/TCP,9105/TCP   57m
    service/ovnkube-db              ClusterIP   None         <none>        9641/TCP,9642/TCP   57m

    NAME                            DESIRED   CURRENT   READY   UP-TO-DATE   AVAILABLE   NODE SELECTOR                                                 AGE
    daemonset.apps/ovnkube-master   3         3         3       3            3           beta.kubernetes.io/os=linux,node-role.kubernetes.io/master=   57m
    daemonset.apps/ovnkube-node     5         5         5       5            5           beta.kubernetes.io/os=linux                                   57m

    NAME                              ENDPOINTS                                                        AGE
    endpoints/ovn-kubernetes-master   10.0.132.11:9102,10.0.151.18:9102,10.0.192.45:9102               57m
    endpoints/ovn-kubernetes-node     10.0.132.11:9105,10.0.143.72:9105,10.0.151.18:9105 + 7 more...   57m
    endpoints/ovnkube-db              10.0.132.11:9642,10.0.151.18:9642,10.0.192.45:9642 + 3 more...   57m

    NAME                                 DATA   AGE
    configmap/control-plane-status       1      55m
    configmap/kube-root-ca.crt           1      57m
    configmap/openshift-service-ca.crt   1      57m
    configmap/ovn-ca                     1      57m
    configmap/ovnkube-config             1      57m
    configmap/signer-ca                  1      57m

There are three `ovnkube-master` pods that run on the control plane nodes, and two daemon sets used to deploy the `ovnkube-master` and `ovnkube-node` pods. There is one `ovnkube-node` pod for each node in the cluster. The `ovnkube-config` `ConfigMap` has the OpenShift Container Platform OVN-Kubernetes configurations started by `ovnkube-master` and `ovnkube-node`.

List all the containers in the `ovnkube-master` pods by running the following command:

    $ oc get pods ovnkube-master-9g7zt \
        -o jsonpath='{.spec.containers[*].name}' -n openshift-ovn-kubernetes

Expected output

    northd nbdb kube-rbac-proxy sbdb ovnkube-master ovn-dbchecker

The `ovnkube-master` pod is made up of several containers. It hosts the northbound database (`nbdb` container) and the southbound database (`sbdb` container), watches for cluster events for pods, egress IPs, namespaces, services, endpoints, egress firewall, and network policy and writes them to the northbound database (`ovnkube-master` pod), and manages pod subnet allocation to nodes.

List all the containers in the `ovnkube-node` pods by running the following command:

    $ oc get pods ovnkube-node-jg52r \
        -o jsonpath='{.spec.containers[*].name}' -n openshift-ovn-kubernetes

Expected output

    ovn-controller ovn-acl-logging kube-rbac-proxy kube-rbac-proxy-ovn-metrics ovnkube-node

The `ovnkube-node` pod has a container (`ovn-controller`) that resides on each OpenShift Container Platform node. Each node’s `ovn-controller` connects northbound to the OVN southbound database to learn about the OVN configuration. The `ovn-controller` connects southbound to `ovs-vswitchd` as an OpenFlow controller, for control over network traffic, and to the local `ovsdb-server` to allow it to monitor and control Open vSwitch configuration.

List the currently elected OVN-Kubernetes master leader by running the following command:

    $ oc get lease -n openshift-ovn-kubernetes

Expected output

    NAME                    HOLDER                               AGE
    ovn-kubernetes-master   ci-ln-gz990pb-72292-rthz2-master-2   50m

25.2.3. Listing the OVN-Kubernetes northbound database contents

To understand logical flow rules, examine the northbound database and the objects it contains to see how they are translated into logical flow rules. The most up-to-date information is present on the OVN Raft leader. This procedure describes how to find the Raft leader and then query it to list the OVN northbound database contents.

Prerequisites

- Access to the cluster as a user with the `cluster-admin` role.

- The OpenShift CLI (`oc`) installed.

Procedure

Find the OVN Raft leader for the northbound database.

Note: The Raft leader stores the most up-to-date information.

List the pods by running the following command:

    $ oc get po -n openshift-ovn-kubernetes

Example output

    NAME                   READY   STATUS    RESTARTS       AGE
    ovnkube-master-7j97q   6/6     Running   2 (148m ago)   149m
    ovnkube-master-gt4ms   6/6     Running   1 (140m ago)   147m
    ovnkube-master-mk6p6   6/6     Running   0              148m
    ovnkube-node-8qvtr     5/5     Running   0              149m
    ovnkube-node-fqdc9     5/5     Running   0              149m
    ovnkube-node-tlfwv     5/5     Running   0              149m
    ovnkube-node-wlwkn     5/5     Running   0              142m

Choose one of the master pods at random and run the following command:

    $ oc exec -n openshift-ovn-kubernetes ovnkube-master-7j97q \
        -- /usr/bin/ovn-appctl -t /var/run/ovn/ovnnb_db.ctl \
        --timeout=3 cluster/status OVN_Northbound

Example output

    Defaulted container "northd" out of: northd, nbdb, kube-rbac-proxy, sbdb, ovnkube-master, ovn-dbchecker
    1c57
    Name: OVN_Northbound
    Cluster ID: c48a (c48aa5c0-a704-4c77-a066-24fe99d9b338)
    Server ID: 1c57 (1c57b6fc-2849-49b7-8679-fbf18bafe339)
    Address: ssl:10.0.147.219:9643
    Status: cluster member
    Role: follower
    Term: 5
    Leader: 2b4f
    Vote: unknown

    Election timer: 10000
    Log: [2, 3018]
    Entries not yet committed: 0
    Entries not yet applied: 0
    Connections: ->0000 ->0000 <-8844 <-2b4f
    Disconnections: 0
    Servers:
        1c57 (1c57 at ssl:10.0.147.219:9643) (self)
        8844 (8844 at ssl:10.0.163.212:9643) last msg 8928047 ms ago
        2b4f (2b4f at ssl:10.0.242.240:9643) last msg 620 ms ago

In this output, the queried pod is a follower. The leader has server ID `2b4f`, which the `Servers` list shows at IP address `10.0.242.240`.

Find the `ovnkube-master` pod running on IP Address `10.0.242.240` using the following command:

    $ oc get po -o wide -n openshift-ovn-kubernetes | grep 10.0.242.240 | grep -v ovnkube-node

Example output

    ovnkube-master-gt4ms   6/6     Running   1 (143m ago)   150m   10.0.242.240   ip-10-0-242-240.ec2.internal   <none>   <none>

The `ovnkube-master-gt4ms` pod runs on IP Address 10.0.242.240.
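Reading the leader out of the `cluster/status` text by eye is easy to get wrong, so the lookup can be scripted. The following is a sketch under stated assumptions: the function name `raft_leader_address` is hypothetical, and it assumes the `Leader:` and `Servers:` layout shown in the example output of this procedure. The embedded sample is an abbreviated copy of that output.

```shell
#!/bin/sh
# Hypothetical helper: read cluster/status text on stdin and print the
# address of the server whose ID matches the "Leader:" field.
raft_leader_address() {
  awk '
    $1 == "Leader:" { leader = $2 }
    # Server lines look like: 2b4f (2b4f at ssl:10.0.242.240:9643) last msg ...
    $3 == "at" && $4 ~ /^ssl:/ {
      addr = $4
      sub(/^ssl:/, "", addr)        # drop the scheme
      sub(/:[0-9]+\)$/, "", addr)   # drop the port and closing parenthesis
      servers[$1] = addr
    }
    END { if (leader in servers) print servers[leader] }
  '
}

# Abbreviated sample of the northbound status output.
raft_leader_address <<'EOF'
Name: OVN_Northbound
Role: follower
Term: 5
Leader: 2b4f
Servers:
    1c57 (1c57 at ssl:10.0.147.219:9643) (self)
    8844 (8844 at ssl:10.0.163.212:9643) last msg 8928047 ms ago
    2b4f (2b4f at ssl:10.0.242.240:9643) last msg 620 ms ago
EOF
```

For the sample, the function prints `10.0.242.240`. Against a live cluster, the `oc exec ... cluster/status OVN_Northbound` command from this procedure could be piped into the function instead of the here-document.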

Run the following command to show all the objects in the northbound database:

    $ oc exec -n openshift-ovn-kubernetes -it ovnkube-master-gt4ms \
        -c northd -- ovn-nbctl show

The output is too long to list here. The list includes the NAT rules, logical switches, load balancers, and so on.

Run the following command to display the options available with the command `ovn-nbctl`:

    $ oc exec -n openshift-ovn-kubernetes -it ovnkube-master-mk6p6 \
        -c northd -- ovn-nbctl --help

You can narrow down and focus on specific components by using some of the following commands:

Run the following command to show the list of logical routers:

    $ oc exec -n openshift-ovn-kubernetes -it ovnkube-master-gt4ms \
        -c northd -- ovn-nbctl lr-list

Example output

    f971f1f3-5112-402f-9d1e-48f1d091ff04 (GR_ip-10-0-145-205.ec2.internal)
    69c992d8-a4cf-429e-81a3-5361209ffe44 (GR_ip-10-0-147-219.ec2.internal)
    7d164271-af9e-4283-b84a-48f2a44851cd (GR_ip-10-0-163-212.ec2.internal)
    111052e3-c395-408b-97b2-8dd0a20a29a5 (GR_ip-10-0-165-9.ec2.internal)
    ed50ce33-df5d-48e8-8862-2df6a59169a0 (GR_ip-10-0-209-170.ec2.internal)
    f44e2a96-8d1e-4a4d-abae-ed8728ac6851 (GR_ip-10-0-242-240.ec2.internal)
    ef3d0057-e557-4b1a-b3c6-fcc3463790b0 (ovn_cluster_router)

Note: From this output you can see there is a router on each node plus an `ovn_cluster_router`.

Run the following command to show the list of logical switches:

    $ oc exec -n openshift-ovn-kubernetes -it ovnkube-master-gt4ms \
        -c northd -- ovn-nbctl ls-list

Example output

    82808c5c-b3bc-414a-bb59-8fec4b07eb14 (ext_ip-10-0-145-205.ec2.internal)
    3d22444f-0272-4c51-afc6-de9e03db3291 (ext_ip-10-0-147-219.ec2.internal)
    bf73b9df-59ab-4c58-a456-ce8205b34ac5 (ext_ip-10-0-163-212.ec2.internal)
    bee1e8d0-ec87-45eb-b98b-63f9ec213e5e (ext_ip-10-0-165-9.ec2.internal)
    812f08f2-6476-4abf-9a78-635f8516f95e (ext_ip-10-0-209-170.ec2.internal)
    f65e710b-32f9-482b-8eab-8d96a44799c1 (ext_ip-10-0-242-240.ec2.internal)
    84dad700-afb8-4129-86f9-923a1ddeace9 (ip-10-0-145-205.ec2.internal)
    1b7b448b-e36c-4ca3-9f38-4a2cf6814bfd (ip-10-0-147-219.ec2.internal)
    d92d1f56-2606-4f23-8b6a-4396a78951de (ip-10-0-163-212.ec2.internal)
    6864a6b2-de15-4de3-92d8-f95014b6f28f (ip-10-0-165-9.ec2.internal)
    c26bf618-4d7e-4afd-804f-1a2cbc96ec6d (ip-10-0-209-170.ec2.internal)
    ab9a4526-44ed-4f82-ae1c-e20da04947d9 (ip-10-0-242-240.ec2.internal)
    a8588aba-21da-4276-ba0f-9d68e88911f0 (join)

Note: From this output you can see there is an ext switch for each node plus switches with the node name itself and a join switch.
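The three kinds of switch in the `ls-list` output can be tallied with a short filter. This is an illustrative sketch: the function name `switch_kinds` is hypothetical, and the classification relies only on the naming pattern visible in the example output (an `ext_` prefix, the literal name `join`, and everything else treated as a node switch), which is an assumption rather than a documented contract.

```shell
#!/bin/sh
# Hypothetical helper: read "ovn-nbctl ls-list" output on stdin and count the
# switches by kind, based on the name in parentheses.
switch_kinds() {
  awk '
    NF < 2 { next }                 # skip blank or malformed lines
    {
      name = $2
      gsub(/[()]/, "", name)        # strip the surrounding parentheses
      if (name == "join")      kind = "join"
      else if (name ~ /^ext_/) kind = "external"
      else                     kind = "node"
      count[kind]++
    }
    END {
      printf "external=%d node=%d join=%d\n", count["external"], count["node"], count["join"]
    }
  '
}

# Abbreviated sample taken from the example output.
switch_kinds <<'EOF'
82808c5c-b3bc-414a-bb59-8fec4b07eb14 (ext_ip-10-0-145-205.ec2.internal)
3d22444f-0272-4c51-afc6-de9e03db3291 (ext_ip-10-0-147-219.ec2.internal)
84dad700-afb8-4129-86f9-923a1ddeace9 (ip-10-0-145-205.ec2.internal)
1b7b448b-e36c-4ca3-9f38-4a2cf6814bfd (ip-10-0-147-219.ec2.internal)
a8588aba-21da-4276-ba0f-9d68e88911f0 (join)
EOF
```

For the abbreviated sample, the function prints `external=2 node=2 join=1`. On a healthy cluster you would expect the external and node counts to match the number of nodes.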

Run the following command to show the list of load balancers:

    $ oc exec -n openshift-ovn-kubernetes -it ovnkube-master-gt4ms \
        -c northd -- ovn-nbctl lb-list

Example output

    UUID                                    LB                  PROTO    VIP                     IPs
    f0fb50f9-4968-4b55-908c-616bae4db0a2    Service_default/    tcp      172.30.0.1:443          10.0.147.219:6443,10.0.163.212:6443,169.254.169.2:6443
    0dc42012-4f5b-432e-ae01-2cc4bfe81b00    Service_default/    tcp      172.30.0.1:443          10.0.147.219:6443,169.254.169.2:6443,10.0.242.240:6443
    f7fff5d5-5eff-4a40-98b1-3a4ba8f7f69c    Service_default/    tcp      172.30.0.1:443          169.254.169.2:6443,10.0.163.212:6443,10.0.242.240:6443
    12fe57a0-50a4-4a1b-ac10-5f288badee07    Service_default/    tcp      172.30.0.1:443          10.0.147.219:6443,10.0.163.212:6443,10.0.242.240:6443
    3f137fbf-0b78-4875-ba44-fbf89f254cf7    Service_openshif    tcp      172.30.23.153:443       10.130.0.14:8443
    174199fe-0562-4141-b410-12094db922a7    Service_openshif    tcp      172.30.69.51:50051      10.130.0.84:50051
    5ee2d4bd-c9e2-4d16-a6df-f54cd17c9ac3    Service_openshif    tcp      172.30.143.87:9001      10.0.145.205:9001,10.0.147.219:9001,10.0.163.212:9001,10.0.165.9:9001,10.0.209.170:9001,10.0.242.240:9001
    a056ae3d-83f8-45bc-9c80-ef89bce7b162    Service_openshif    tcp      172.30.164.74:443       10.0.147.219:6443,10.0.163.212:6443,10.0.242.240:6443
    bac51f3d-9a6f-4f5e-ac02-28fd343a332a    Service_openshif    tcp      172.30.0.10:53          10.131.0.6:5353
                                                                tcp      172.30.0.10:9154        10.131.0.6:9154
    48105bbc-51d7-4178-b975-417433f9c20a    Service_openshif    tcp      172.30.26.159:2379      10.0.147.219:2379,169.254.169.2:2379,10.0.242.240:2379
                                                                tcp      172.30.26.159:9979      10.0.147.219:9979,169.254.169.2:9979,10.0.242.240:9979
    7de2b8fc-342a-415f-ac13-1a493f4e39c0    Service_openshif    tcp      172.30.53.219:443       10.128.0.7:8443
                                                                tcp      172.30.53.219:9192      10.128.0.7:9192
    2cef36bc-d720-4afb-8d95-9350eff1d27a    Service_openshif    tcp      172.30.81.66:443        10.128.0.23:8443
    365cb6fb-e15e-45a4-a55b-21868b3cf513    Service_openshif    tcp      172.30.96.51:50051      10.130.0.19:50051
    41691cbb-ec55-4cdb-8431-afce679c5e8d    Service_openshif    tcp      172.30.98.218:9099      169.254.169.2:9099
    82df10ba-8143-400b-977a-8f5f416a4541    Service_openshif    tcp      172.30.26.159:2379      10.0.147.219:2379,10.0.163.212:2379,169.254.169.2:2379
                                                                tcp      172.30.26.159:9979      10.0.147.219:9979,10.0.163.212:9979,169.254.169.2:9979
    debe7f3a-39a8-490e-bc0a-ebbfafdffb16    Service_openshif    tcp      172.30.23.244:443       10.128.0.48:8443,10.129.0.27:8443,10.130.0.45:8443
    8a749239-02d9-4dc2-8737-716528e0da7b    Service_openshif    tcp      172.30.124.255:8443     10.128.0.14:8443
    880c7c78-c790-403d-a3cb-9f06592717a3    Service_openshif    tcp      172.30.0.10:53          10.130.0.20:5353
                                                                tcp      172.30.0.10:9154        10.130.0.20:9154
    d2f39078-6751-4311-a161-815bbaf7f9c7    Service_openshif    tcp      172.30.26.159:2379      169.254.169.2:2379,10.0.163.212:2379,10.0.242.240:2379
                                                                tcp      172.30.26.159:9979      169.254.169.2:9979,10.0.163.212:9979,10.0.242.240:9979
    30948278-602b-455c-934a-28e64c46de12    Service_openshif    tcp      172.30.157.35:9443      10.130.0.43:9443
    2cc7e376-7c02-4a82-89e8-dfa1e23fb003    Service_openshif    tcp      172.30.159.212:17698    10.128.0.48:17698,10.129.0.27:17698,10.130.0.45:17698
    e7d22d35-61c2-40c2-bc30-265cff8ed18d    Service_openshif    tcp      172.30.143.87:9001      10.0.145.205:9001,10.0.147.219:9001,10.0.163.212:9001,10.0.165.9:9001,10.0.209.170:9001,169.254.169.2:9001
    75164e75-e0c5-40fb-9636-bfdbf4223a02    Service_openshif    tcp      172.30.150.68:1936      10.129.4.8:1936,10.131.0.10:1936
                                                                tcp      172.30.150.68:443       10.129.4.8:443,10.131.0.10:443
                                                                tcp      172.30.150.68:80        10.129.4.8:80,10.131.0.10:80
    7bc4ee74-dccf-47e9-9149-b011f09aff39    Service_openshif    tcp      172.30.164.74:443       10.0.147.219:6443,10.0.163.212:6443,169.254.169.2:6443
    0db59e74-1cc6-470c-bf44-57c520e0aa8f    Service_openshif    tcp      10.0.163.212:31460
                                                                tcp      10.0.163.212:32361
    c300e134-018c-49af-9f84-9deb1d0715f8    Service_openshif    tcp      172.30.42.244:50051     10.130.0.47:50051
    5e352773-429b-4881-afb3-a13b7ba8b081    Service_openshif    tcp      172.30.244.66:443       10.129.0.8:8443,10.130.0.8:8443
    54b82d32-1939-4465-a87d-f26321442a7a    Service_openshif    tcp      172.30.12.9:8443        10.128.0.35:8443

Note: From this truncated output you can see there are many OVN-Kubernetes load balancers. Load balancers in OVN-Kubernetes are representations of services.
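Because the same service VIP appears in several load balancer rows, it can help to reduce the `lb-list` output to the distinct VIPs. The following is a minimal sketch: the function name `lb_vips` is hypothetical, and it assumes the column layout shown in the example output, where the VIP is the field immediately after the protocol.

```shell
#!/bin/sh
# Hypothetical helper: read "ovn-nbctl lb-list" output on stdin and print each
# distinct VIP once. Continuation rows (no UUID or LB name) are handled because
# the VIP is located relative to the protocol field, not by column number.
lb_vips() {
  awk '
    {
      for (i = 1; i < NF; i++)
        if ($i == "tcp" || $i == "udp" || $i == "sctp") { print $(i + 1); break }
    }
  ' | sort -u
}

# Abbreviated sample: two load balancers that expose the same DNS service VIP.
lb_vips <<'EOF'
UUID                                    LB                  PROTO    VIP                 IPs
bac51f3d-9a6f-4f5e-ac02-28fd343a332a    Service_openshif    tcp      172.30.0.10:53      10.131.0.6:5353
                                                            tcp      172.30.0.10:9154    10.131.0.6:9154
880c7c78-c790-403d-a3cb-9f06592717a3    Service_openshif    tcp      172.30.0.10:53      10.130.0.20:5353
                                                            tcp      172.30.0.10:9154    10.130.0.20:9154
EOF
```

For the sample, the four rows collapse to two VIPs, `172.30.0.10:53` and `172.30.0.10:9154`, which matches the idea that load balancers are representations of services.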

25.2.4. Command-line arguments for ovn-nbctl to examine northbound database contents

The following table describes the command-line arguments that can be used with ovn-nbctl to examine the contents of the northbound database.

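| Argument | Description |
|---|---|
| `ovn-nbctl show` | An overview of the northbound database contents. |
| `ovn-nbctl show <switch_or_router>` | Show the details associated with the specified switch or router. |
| `ovn-nbctl lr-list` | Show the logical routers. |
| `ovn-nbctl lrp-list <router>` | Using the router information from `ovn-nbctl lr-list`, show the router ports. |
| `ovn-nbctl lr-nat-list <router>` | Show network address translation details for the specified router. |
| `ovn-nbctl ls-list` | Show the logical switches. |
| `ovn-nbctl lsp-list <switch>` | Using the switch information from `ovn-nbctl ls-list`, show the switch ports. |
| `ovn-nbctl lsp-get-type <port>` | Get the type for the logical port. |
| `ovn-nbctl lb-list` | Show the load balancers. |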

25.2.5. Listing the OVN-Kubernetes southbound database contents

Logical flow rules are stored in the southbound database, which is a representation of your infrastructure. The most up-to-date information is present on the OVN Raft leader. This procedure describes how to find the Raft leader and query it to list the OVN southbound database contents.

Prerequisites

- Access to the cluster as a user with the `cluster-admin` role.

- The OpenShift CLI (`oc`) installed.

Procedure

Find the OVN Raft leader for the southbound database.

Note: The Raft leader stores the most up-to-date information.

List the pods by running the following command:

    $ oc get po -n openshift-ovn-kubernetes

Example output

    NAME                   READY   STATUS    RESTARTS       AGE
    ovnkube-master-7j97q   6/6     Running   2 (134m ago)   135m
    ovnkube-master-gt4ms   6/6     Running   1 (126m ago)   133m
    ovnkube-master-mk6p6   6/6     Running   0              134m
    ovnkube-node-8qvtr     5/5     Running   0              135m
    ovnkube-node-bqztb     5/5     Running   0              117m
    ovnkube-node-fqdc9     5/5     Running   0              135m
    ovnkube-node-tlfwv     5/5     Running   0              135m
    ovnkube-node-wlwkn     5/5     Running   0              128m

Choose one of the master pods at random and run the following command to find the OVN southbound Raft leader:

    $ oc exec -n openshift-ovn-kubernetes ovnkube-master-7j97q \
        -- /usr/bin/ovn-appctl -t /var/run/ovn/ovnsb_db.ctl \
        --timeout=3 cluster/status OVN_Southbound

Example output

    Defaulted container "northd" out of: northd, nbdb, kube-rbac-proxy, sbdb, ovnkube-master, ovn-dbchecker
    1930
    Name: OVN_Southbound
    Cluster ID: f772 (f77273c0-7986-42dd-bd3c-a9f18e25701f)
    Server ID: 1930 (1930f4b7-314b-406f-9dcb-b81fe2729ae1)
    Address: ssl:10.0.147.219:9644
    Status: cluster member
    Role: follower
    Term: 3
    Leader: 7081
    Vote: unknown

    Election timer: 16000
    Log: [2, 2423]
    Entries not yet committed: 0
    Entries not yet applied: 0
    Connections: ->0000 ->7145 <-7081 <-7145
    Disconnections: 0
    Servers:
        7081 (7081 at ssl:10.0.163.212:9644) last msg 59 ms ago
        1930 (1930 at ssl:10.0.147.219:9644) (self)
        7145 (7145 at ssl:10.0.242.240:9644) last msg 7871735 ms ago

In this output, the queried pod is a follower. The leader has server ID `7081`, which the `Servers` list shows at IP address `10.0.163.212`.

Find the `ovnkube-master` pod running on IP Address `10.0.163.212` using the following command:

    $ oc get po -o wide -n openshift-ovn-kubernetes | grep 10.0.163.212 | grep -v ovnkube-node

Example output

    ovnkube-master-mk6p6   6/6     Running   0              136m   10.0.163.212   ip-10-0-163-212.ec2.internal   <none>   <none>

The `ovnkube-master-mk6p6` pod runs on IP Address 10.0.163.212.

Run the following command to show all the information stored in the southbound database:

    $ oc exec -n openshift-ovn-kubernetes -it ovnkube-master-mk6p6 \
        -c northd -- ovn-sbctl show

Example output

    Chassis "8ca57b28-9834-45f0-99b0-96486c22e1be"
        hostname: ip-10-0-156-16.ec2.internal
        Encap geneve
            ip: "10.0.156.16"
            options: {csum="true"}
        Port_Binding k8s-ip-10-0-156-16.ec2.internal
        Port_Binding etor-GR_ip-10-0-156-16.ec2.internal
        Port_Binding jtor-GR_ip-10-0-156-16.ec2.internal
        Port_Binding openshift-ingress-canary_ingress-canary-hsblx
        Port_Binding rtoj-GR_ip-10-0-156-16.ec2.internal
        Port_Binding openshift-monitoring_prometheus-adapter-658fc5967-9l46x
        Port_Binding rtoe-GR_ip-10-0-156-16.ec2.internal
        Port_Binding openshift-multus_network-metrics-daemon-77nvz
        Port_Binding openshift-ingress_router-default-64fd8c67c7-df598
        Port_Binding openshift-dns_dns-default-ttpcq
        Port_Binding openshift-monitoring_alertmanager-main-0
        Port_Binding openshift-e2e-loki_loki-promtail-g2pbh
        Port_Binding openshift-network-diagnostics_network-check-target-m6tn4
        Port_Binding openshift-monitoring_thanos-querier-75b5cf8dcb-qf8qj
        Port_Binding cr-rtos-ip-10-0-156-16.ec2.internal
        Port_Binding openshift-image-registry_image-registry-7b7bc44566-mp9b8

This detailed output shows the chassis and the ports that are attached to the chassis, which in this case are all of the router ports and anything that runs on host networking. Pods communicate out to the wider network by using source network address translation (SNAT). The pod IP address is translated into the IP address of the node that the pod is running on, and the traffic is then sent out into the network.

In addition to the chassis information, the southbound database has all the logical flows. Those logical flows are sent to the `ovn-controller` running on each of the nodes. The `ovn-controller` translates the logical flows into OpenFlow rules and ultimately programs `OpenvSwitch` so that your pods can follow OpenFlow rules to reach destinations outside the cluster network.

Run the following command to display the options available with the command `ovn-sbctl`:

    $ oc exec -n openshift-ovn-kubernetes -it ovnkube-master-mk6p6 \
        -c northd -- ovn-sbctl --help
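The `ovn-sbctl show` output groups `Port_Binding` entries under each chassis, so a per-node port count is a quick way to summarize it. The following is a sketch only: the function name `ports_per_chassis` is hypothetical, and it assumes the `hostname:` and `Port_Binding` line layout shown in the example output. The sample is an abbreviated copy of that output.

```shell
#!/bin/sh
# Hypothetical helper: read "ovn-sbctl show" output on stdin and print the
# number of Port_Binding entries listed under each chassis hostname.
ports_per_chassis() {
  awk '
    $1 == "hostname:"    { host = $2 }
    $1 == "Port_Binding" { count[host]++ }
    END { for (h in count) print h, count[h] }
  ' | sort
}

# Abbreviated sample with one chassis and three port bindings.
ports_per_chassis <<'EOF'
Chassis "8ca57b28-9834-45f0-99b0-96486c22e1be"
    hostname: ip-10-0-156-16.ec2.internal
    Encap geneve
        ip: "10.0.156.16"
        options: {csum="true"}
    Port_Binding k8s-ip-10-0-156-16.ec2.internal
    Port_Binding etor-GR_ip-10-0-156-16.ec2.internal
    Port_Binding openshift-dns_dns-default-ttpcq
EOF
```

For the sample, the function prints `ip-10-0-156-16.ec2.internal 3`. With the full cluster output, each node appears on its own line.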

25.2.6. Command-line arguments for ovn-sbctl to examine southbound database contents

The following table describes the command-line arguments that can be used with ovn-sbctl to examine the contents of the southbound database.

| Argument | Description |
|---|---|
| `ovn-sbctl show` | Overview of the southbound database contents. |
| `ovn-sbctl list Port_Binding <port>` | List the contents of the southbound database for the specified port. |
| `ovn-sbctl dump-flows` | List the logical flows. |

25.2.7. OVN-Kubernetes logical architecture

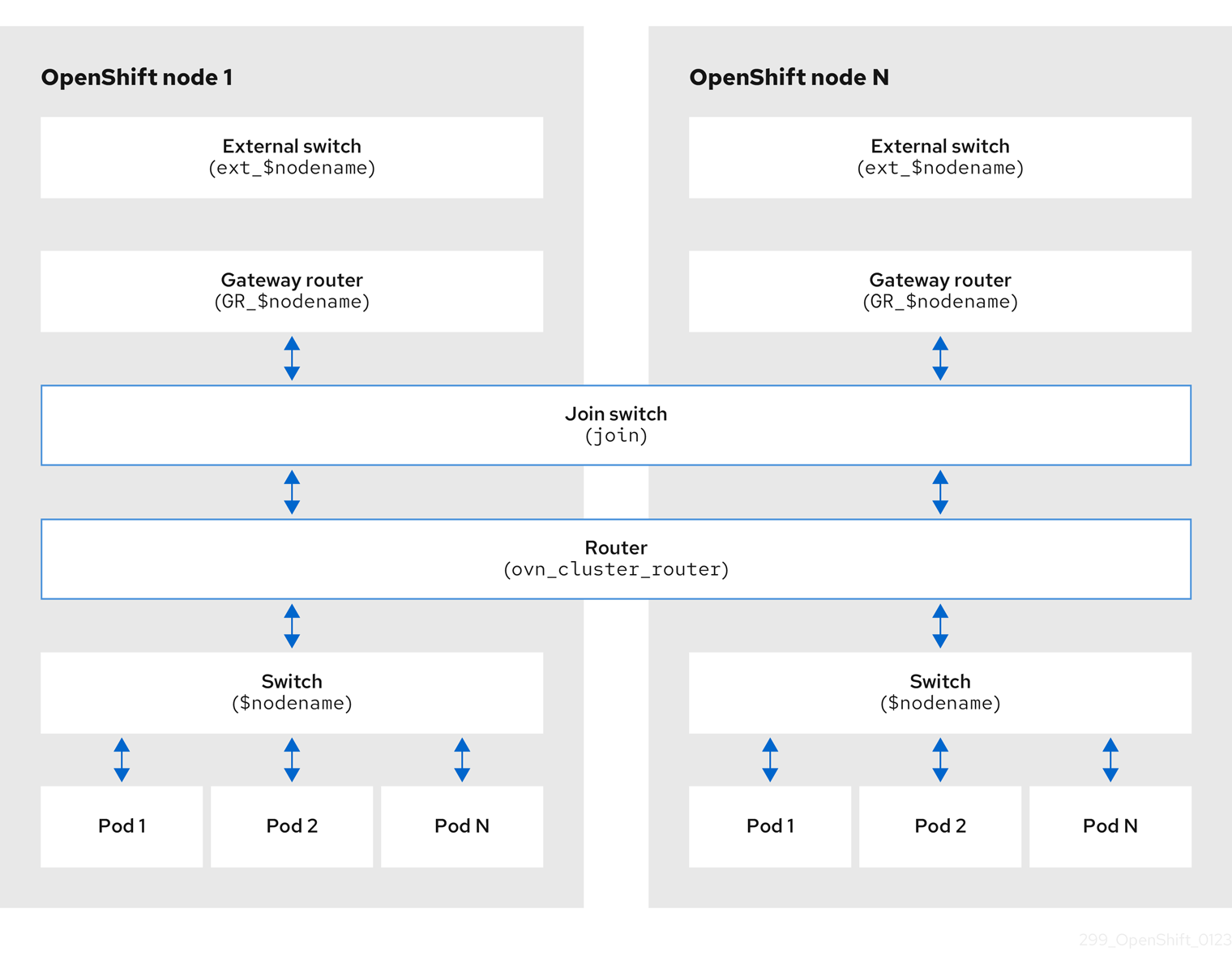

OVN is a network virtualization solution. It creates logical switches and routers, which can be interconnected to create any network topology. When you run ovnkube-trace with the log level set to 2 or 5, the OVN-Kubernetes logical components are exposed. The following diagram shows how the routers and switches are connected in OpenShift Container Platform.

Figure 25.2. OVN-Kubernetes router and switch components

The key components involved in packet processing are:

- Gateway routers

  Gateway routers, sometimes called L3 gateway routers, are typically used between the distributed routers and the physical network. Gateway routers, including their logical patch ports, are bound to a physical location (not distributed), or chassis. The patch ports on this router are known as l3gateway ports in the OVN southbound database (`ovn-sbdb`).

- Distributed logical routers

  Distributed logical routers and the logical switches behind them, to which virtual machines and containers attach, effectively reside on each hypervisor.

- Join local switch

  Join local switches are used to connect the distributed router and gateway routers. They reduce the number of IP addresses needed on the distributed router.

- Logical switches with patch ports

  Logical switches with patch ports are used to virtualize the network stack. They connect remote logical ports through tunnels.

- Logical switches with localnet ports

  Logical switches with localnet ports are used to connect OVN to the physical network. They connect remote logical ports by bridging the packets to directly connected physical L2 segments using localnet ports.

- Patch ports

  Patch ports represent connectivity between logical switches and logical routers and between peer logical routers. A single connection has a pair of patch ports at each such point of connectivity, one on each side.

- l3gateway ports

  l3gateway ports are the port binding entries in the `ovn-sbdb` for logical patch ports used in the gateway routers. They are called l3gateway ports rather than patch ports to show that these ports are bound to a chassis, just like the gateway router itself.

- localnet ports

  localnet ports are present on the bridged logical switches and allow a connection to a locally accessible network from each `ovn-controller` instance. This helps model the direct connectivity to the physical network from the logical switches. A logical switch can only have a single localnet port attached to it.

25.2.7.1. Installing network-tools on local host

Install network-tools on your local host to make a collection of tools available for debugging OpenShift Container Platform cluster network issues.

Procedure

Clone the network-tools repository onto your workstation with the following command:

$ git clone git@github.com:openshift/network-tools.git

Change into the directory for the repository you just cloned:

$ cd network-tools

Optional: List all available commands:

$ ./debug-scripts/network-tools -h

25.2.7.2. Running network-tools

Get information about the logical switches and routers by running network-tools.

Prerequisites

- You installed the OpenShift CLI (oc).

- You are logged in to the cluster as a user with cluster-admin privileges.

- You have installed network-tools on local host.

Procedure

List the routers by running the following command:

$ ./debug-scripts/network-tools ovn-db-run-command ovn-nbctl lr-list

Example output

Leader pod is ovnkube-master-vslqm
5351ddd1-f181-4e77-afc6-b48b0a9df953 (GR_helix13.lab.eng.tlv2.redhat.com)
ccf9349e-1948-4df8-954e-39fb0c2d4d06 (GR_helix14.lab.eng.tlv2.redhat.com)
e426b918-75a8-4220-9e76-20b7758f92b7 (GR_hlxcl7-master-0.hlxcl7.lab.eng.tlv2.redhat.com)
dded77c8-0cc3-4b99-8420-56cd2ae6a840 (GR_hlxcl7-master-1.hlxcl7.lab.eng.tlv2.redhat.com)
4f6747e6-e7ba-4e0c-8dcd-94c8efa51798 (GR_hlxcl7-master-2.hlxcl7.lab.eng.tlv2.redhat.com)
52232654-336e-4952-98b9-0b8601e370b4 (ovn_cluster_router)

List the localnet ports by running the following command:

$ ./debug-scripts/network-tools ovn-db-run-command \
  ovn-sbctl find Port_Binding type=localnet

Example output

Leader pod is ovnkube-master-vslqm
_uuid                       : 3de79191-cca8-4c28-be5a-a228f0f9ebfc
additional_chassis          : []
additional_encap            : []
chassis                     : []
datapath                    : 3f1a4928-7ff5-471f-9092-fe5f5c67d15c
encap                       : []
external_ids                : {}
gateway_chassis             : []
ha_chassis_group            : []
logical_port                : br-ex_helix13.lab.eng.tlv2.redhat.com
mac                         : [unknown]
nat_addresses               : []
options                     : {network_name=physnet}
parent_port                 : []
port_security               : []
requested_additional_chassis: []
requested_chassis           : []
tag                         : []
tunnel_key                  : 2
type                        : localnet
up                          : false
virtual_parent              : []

_uuid                       : dbe21daf-9594-4849-b8f0-5efbfa09a455
additional_chassis          : []
additional_encap            : []
chassis                     : []
datapath                    : db2a6067-fe7c-4d11-95a7-ff2321329e11
encap                       : []
external_ids                : {}
gateway_chassis             : []
ha_chassis_group            : []
logical_port                : br-ex_hlxcl7-master-2.hlxcl7.lab.eng.tlv2.redhat.com
mac                         : [unknown]
nat_addresses               : []
options                     : {network_name=physnet}
parent_port                 : []
port_security               : []
requested_additional_chassis: []
requested_chassis           : []
tag                         : []
tunnel_key                  : 2
type                        : localnet
up                          : false
virtual_parent              : []

[...]

List the l3gateway ports by running the following command:

$ ./debug-scripts/network-tools ovn-db-run-command \
  ovn-sbctl find Port_Binding type=l3gateway

Example output

Leader pod is ovnkube-master-vslqm
_uuid                       : 9314dc80-39e1-4af7-9cc0-ae8a9708ed59
additional_chassis          : []
additional_encap            : []
chassis                     : 336a923d-99e8-4e71-89a6-12564fde5760
datapath                    : db2a6067-fe7c-4d11-95a7-ff2321329e11
encap                       : []
external_ids                : {}
gateway_chassis             : []
ha_chassis_group            : []
logical_port                : etor-GR_hlxcl7-master-2.hlxcl7.lab.eng.tlv2.redhat.com
mac                         : ["52:54:00:3e:95:d3"]
nat_addresses               : ["52:54:00:3e:95:d3 10.46.56.77"]
options                     : {l3gateway-chassis="7eb1f1c3-87c2-4f68-8e89-60f5ca810971", peer=rtoe-GR_hlxcl7-master-2.hlxcl7.lab.eng.tlv2.redhat.com}
parent_port                 : []
port_security               : []
requested_additional_chassis: []
requested_chassis           : []
tag                         : []
tunnel_key                  : 1
type                        : l3gateway
up                          : true
virtual_parent              : []

_uuid                       : ad7eb303-b411-4e9f-8d36-d07f1f268e27
additional_chassis          : []
additional_encap            : []
chassis                     : f41453b8-29c5-4f39-b86b-e82cf344bce4
datapath                    : 082e7a60-d9c7-464b-b6ec-117d3426645a
encap                       : []
external_ids                : {}
gateway_chassis             : []
ha_chassis_group            : []
logical_port                : etor-GR_helix14.lab.eng.tlv2.redhat.com
mac                         : ["34:48:ed:f3:e2:2c"]
nat_addresses               : ["34:48:ed:f3:e2:2c 10.46.56.14"]
options                     : {l3gateway-chassis="2e8abe3a-cb94-4593-9037-f5f9596325e2", peer=rtoe-GR_helix14.lab.eng.tlv2.redhat.com}
parent_port                 : []
port_security               : []
requested_additional_chassis: []
requested_chassis           : []
tag                         : []
tunnel_key                  : 1
type                        : l3gateway
up                          : true
virtual_parent              : []

[...]

List the patch ports by running the following command:

$ ./debug-scripts/network-tools ovn-db-run-command \
  ovn-sbctl find Port_Binding type=patch

Example output

Leader pod is ovnkube-master-vslqm
_uuid                       : c48b1380-ff26-4965-a644-6bd5b5946c61
additional_chassis          : []
additional_encap            : []
chassis                     : []
datapath                    : 72734d65-fae1-4bd9-a1ee-1bf4e085a060
encap                       : []
external_ids                : {}
gateway_chassis             : []
ha_chassis_group            : []
logical_port                : jtor-ovn_cluster_router
mac                         : [router]
nat_addresses               : []
options                     : {peer=rtoj-ovn_cluster_router}
parent_port                 : []
port_security               : []
requested_additional_chassis: []
requested_chassis           : []
tag                         : []
tunnel_key                  : 4
type                        : patch
up                          : false
virtual_parent              : []

_uuid                       : 5df51302-f3cd-415b-a059-ac24389938f7
additional_chassis          : []
additional_encap            : []
chassis                     : []
datapath                    : 0551c90f-e891-4909-8e9e-acc7909e06d0
encap                       : []
external_ids                : {}
gateway_chassis             : []
ha_chassis_group            : []
logical_port                : rtos-hlxcl7-master-1.hlxcl7.lab.eng.tlv2.redhat.com
mac                         : ["0a:58:0a:82:00:01 10.130.0.1/23"]
nat_addresses               : []
options                     : {chassis-redirect-port=cr-rtos-hlxcl7-master-1.hlxcl7.lab.eng.tlv2.redhat.com, peer=stor-hlxcl7-master-1.hlxcl7.lab.eng.tlv2.redhat.com}
parent_port                 : []
port_security               : []
requested_additional_chassis: []
requested_chassis           : []
tag                         : []
tunnel_key                  : 4
type                        : patch
up                          : false
virtual_parent              : []

[...]
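The Port_Binding records are long, and often only the port names matter. The following is a minimal sketch, not part of network-tools, of a shell filter that keeps only the logical_port values. The function name list_logical_ports is an assumption made for illustration, and the filter assumes the "name : value" layout shown in the example output.

```shell
# Hypothetical helper (not part of network-tools): read Port_Binding records
# on stdin and print only the value of each logical_port field.
list_logical_ports() {
  awk -F':' '$1 ~ /^logical_port[ \t]*$/ {
    sub(/^[^:]*:[ \t]*/, "")   # drop the field name and the separator
    print
  }'
}
```

For example, piping the patch port query through the filter, as in `./debug-scripts/network-tools ovn-db-run-command ovn-sbctl find Port_Binding type=patch | list_logical_ports`, would reduce each record to a single line.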

25.3. Troubleshooting OVN-Kubernetes

OVN-Kubernetes has many sources of built-in health checks and logs.

25.3.1. Monitoring OVN-Kubernetes health by using readiness probes

The ovnkube-master and ovnkube-node pods have containers configured with readiness probes.

Prerequisites

- Access to the OpenShift CLI (oc).

- You have access to the cluster with cluster-admin privileges.

- You have installed jq.

Procedure

Review the details of the ovnkube-master readiness probe by running the following command:

$ oc get pods -n openshift-ovn-kubernetes -l app=ovnkube-master \
  -o json | jq '.items[0].spec.containers[] | .name,.readinessProbe'

The readiness probe for the northbound and southbound database containers in the ovnkube-master pod checks for the health of the Raft cluster hosting the databases.

Review the details of the ovnkube-node readiness probe by running the following command:

$ oc get pods -n openshift-ovn-kubernetes -l app=ovnkube-node \
  -o json | jq '.items[0].spec.containers[] | .name,.readinessProbe'

The ovnkube-node container in the ovnkube-node pod has a readiness probe to verify the presence of the ovn-kubernetes CNI configuration file. The absence of this file indicates that the pod is not running or is not ready to accept requests to configure pods.

Show all events, including the probe failures, for the namespace by using the following command:

$ oc get events -n openshift-ovn-kubernetes

Show the events for a specific pod:

$ oc describe pod ovnkube-master-tp2z8 -n openshift-ovn-kubernetes

Show the messages and statuses from the cluster network operator:

$ oc get co/network -o json | jq '.status.conditions[]'

Show the ready status of each container in ovnkube-master pods by running the following script:

$ for p in $(oc get pods --selector app=ovnkube-master -n openshift-ovn-kubernetes \
  -o jsonpath='{range.items[*]}{" "}{.metadata.name}'); do echo === $p ===; \
  oc get pods -n openshift-ovn-kubernetes $p -o json | jq '.status.containerStatuses[] | .name, .ready'; \
  done

Note

All container statuses are expected to report true. Failure of a readiness probe sets the status to false.
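When many pods are involved, it can help to print only the containers that are not ready. The following is a minimal sketch under stated assumptions: the function name not_ready_containers is hypothetical, and the input is assumed to be alternating lines of container name and ready status, which is the shape that a jq filter of the form '.name, .ready' emits.

```shell
# Hypothetical helper: read alternating lines of container name and ready
# status on stdin and print the names whose status is not "true".
not_ready_containers() {
  while IFS= read -r name && IFS= read -r ready; do
    [ "$ready" = "true" ] || printf '%s\n' "$name"
  done
}
```

One possible use is `oc get pods -n openshift-ovn-kubernetes <pod_name> -o json | jq -r '.status.containerStatuses[] | .name, .ready' | not_ready_containers`, where the -r option makes jq print the names without quotation marks. Empty output means every container reported true.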

25.3.2. Viewing OVN-Kubernetes alerts in the console

The Alerting UI provides detailed information about alerts and their governing alerting rules and silences.

Prerequisites

- You have access to the cluster as a developer or as a user with view permissions for the project that you are viewing metrics for.

Procedure (UI)

- In the Administrator perspective, select Observe → Alerting. The three main pages in the Alerting UI in this perspective are the Alerts, Silences, and Alerting Rules pages.

- View the rules for OVN-Kubernetes alerts by selecting Observe → Alerting → Alerting Rules.

25.3.3. Viewing OVN-Kubernetes alerts in the CLI

You can get information about alerts and their governing alerting rules and silences from the command line.

Prerequisites

- Access to the cluster as a user with the cluster-admin role.

- The OpenShift CLI (oc) installed.

- You have installed jq.

Procedure

View active or firing alerts by running the following commands.

Set the alert manager route environment variable by running the following command:

$ ALERT_MANAGER=$(oc get route alertmanager-main -n openshift-monitoring \
  -o jsonpath='{@.spec.host}')

Issue a curl request to the alert manager route API with the correct authorization details, requesting specific fields, by running the following command:

$ curl -s -k -H "Authorization: Bearer $(oc create token prometheus-k8s -n openshift-monitoring)" \
  https://$ALERT_MANAGER/api/v1/alerts \
  | jq '.data[] | "\(.labels.severity) \(.labels.alertname) \(.labels.pod) \(.labels.container) \(.labels.endpoint) \(.labels.instance)"'

View alerting rules by running the following command:

$ oc -n openshift-monitoring exec -c prometheus prometheus-k8s-0 -- curl -s 'http://localhost:9090/api/v1/rules' | jq '.data.groups[].rules[] | select(((.name|contains("ovn")) or (.name|contains("OVN")) or (.name|contains("Ovn")) or (.name|contains("North")) or (.name|contains("South"))) and .type=="alerting")'

25.3.4. Viewing the OVN-Kubernetes logs using the CLI

You can view the logs for each of the containers in the ovnkube-master and ovnkube-node pods by using the OpenShift CLI (oc).

Prerequisites

- Access to the cluster as a user with the cluster-admin role.

- Access to the OpenShift CLI (oc).

- You have installed jq.

Procedure

View the log for a specific pod:

$ oc logs -f <pod_name> -c <container_name> -n <namespace>

where:

- -f: Optional. Specifies that the output follows what is being written into the logs.

- <pod_name>: Specifies the name of the pod.

- <container_name>: Optional. Specifies the name of a container. When a pod has more than one container, you must specify the container name.

- <namespace>: Specifies the namespace the pod is running in.

For example:

$ oc logs ovnkube-master-7h4q7 -n openshift-ovn-kubernetes

$ oc logs -f ovnkube-master-7h4q7 -n openshift-ovn-kubernetes -c ovn-dbchecker

The contents of log files are printed out.

Examine the most recent entries in all the containers in the ovnkube-master pods:

$ for p in $(oc get pods --selector app=ovnkube-master -n openshift-ovn-kubernetes \
  -o jsonpath='{range.items[*]}{" "}{.metadata.name}'); \
  do echo === $p ===; for container in $(oc get pods -n openshift-ovn-kubernetes $p \
  -o json | jq -r '.status.containerStatuses[] | .name');do echo ---$container---; \
  oc logs -c $container $p -n openshift-ovn-kubernetes --tail=5; done; done

View the last 5 lines of every log in every container in an ovnkube-master pod using the following command:

$ oc logs -l app=ovnkube-master -n openshift-ovn-kubernetes --all-containers --tail 5

25.3.5. Viewing the OVN-Kubernetes logs using the web console

You can view the logs for each of the containers in the ovnkube-master and ovnkube-node pods in the web console.

Prerequisites

- Access to the OpenShift CLI (oc).

Procedure

- In the OpenShift Container Platform console, navigate to Workloads → Pods or navigate to the pod through the resource you want to investigate.

- Select the openshift-ovn-kubernetes project from the drop-down menu.

- Click the name of the pod you want to investigate.

- Click Logs. By default, for the ovnkube-master pod, the logs associated with the northd container are displayed.

- Use the drop-down menu to select logs for each container in turn.

25.3.5.1. Changing the OVN-Kubernetes log levels

The default log level for OVN-Kubernetes is 2. To debug OVN-Kubernetes, set the log level to 5. Follow this procedure to increase the log level of OVN-Kubernetes to help you debug an issue.

Prerequisites

- You have access to the cluster with cluster-admin privileges.

- You have access to the OpenShift Container Platform web console.

Procedure

Run the following command to get detailed information for all pods in the OVN-Kubernetes project:

$ oc get po -o wide -n openshift-ovn-kubernetes

Example output

NAME                   READY   STATUS    RESTARTS      AGE   IP             NODE                           NOMINATED NODE   READINESS GATES
ovnkube-master-84nc9   6/6     Running   0             50m   10.0.134.156   ip-10-0-134-156.ec2.internal   <none>           <none>
ovnkube-master-gmlqv   6/6     Running   0             50m   10.0.209.180   ip-10-0-209-180.ec2.internal   <none>           <none>
ovnkube-master-nhts2   6/6     Running   1 (48m ago)   50m   10.0.147.31    ip-10-0-147-31.ec2.internal    <none>           <none>
ovnkube-node-2cbh8     5/5     Running   0             43m   10.0.217.114   ip-10-0-217-114.ec2.internal   <none>           <none>
ovnkube-node-6fvzl     5/5     Running   0             50m   10.0.147.31    ip-10-0-147-31.ec2.internal    <none>           <none>
ovnkube-node-f4lzz     5/5     Running   0             24m   10.0.146.76    ip-10-0-146-76.ec2.internal    <none>           <none>
ovnkube-node-jf67d     5/5     Running   0             50m   10.0.209.180   ip-10-0-209-180.ec2.internal   <none>           <none>
ovnkube-node-np9mf     5/5     Running   0             40m   10.0.165.191   ip-10-0-165-191.ec2.internal   <none>           <none>
ovnkube-node-qjldg     5/5     Running   0             50m   10.0.134.156   ip-10-0-134-156.ec2.internal   <none>           <none>

Create a ConfigMap file similar to the following example and use a filename such as env-overrides.yaml:

Example ConfigMap file

kind: ConfigMap
apiVersion: v1
metadata:
  name: env-overrides
  namespace: openshift-ovn-kubernetes
data:
  ip-10-0-217-114.ec2.internal: |
    # This sets the log level for the ovn-kubernetes node process:
    OVN_KUBE_LOG_LEVEL=5
    # You might also/instead want to enable debug logging for ovn-controller:
    OVN_LOG_LEVEL=dbg
  ip-10-0-209-180.ec2.internal: |
    # This sets the log level for the ovn-kubernetes node process:
    OVN_KUBE_LOG_LEVEL=5
    # You might also/instead want to enable debug logging for ovn-controller:
    OVN_LOG_LEVEL=dbg
  _master: |
    # This sets the log level for the ovn-kubernetes master process as well as the ovn-dbchecker:
    OVN_KUBE_LOG_LEVEL=5
    # You might also/instead want to enable debug logging for northd, nbdb and sbdb on all masters:
    OVN_LOG_LEVEL=dbg

Apply the ConfigMap file by using the following command:

$ oc apply -n openshift-ovn-kubernetes -f env-overrides.yaml

Example output

configmap/env-overrides created

Restart the ovnkube pods to apply the new log level by using the following commands:

$ oc delete pod -n openshift-ovn-kubernetes \
  --field-selector spec.nodeName=ip-10-0-217-114.ec2.internal -l app=ovnkube-node

$ oc delete pod -n openshift-ovn-kubernetes \
  --field-selector spec.nodeName=ip-10-0-209-180.ec2.internal -l app=ovnkube-node

$ oc delete pod -n openshift-ovn-kubernetes -l app=ovnkube-master
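Writing the env-overrides file by hand becomes repetitive when several nodes need the same log level. The following is a minimal sketch, assuming only the ConfigMap layout from the example in this procedure. The function name gen_env_overrides and its argument order are hypothetical, and only OVN_KUBE_LOG_LEVEL is set.

```shell
# Hypothetical generator: print an env-overrides ConfigMap that sets
# OVN_KUBE_LOG_LEVEL for every key (node name or _master) given as argument.
# Usage: gen_env_overrides <level> <key>...
gen_env_overrides() {
  level=$1
  shift
  printf 'kind: ConfigMap\napiVersion: v1\nmetadata:\n'
  printf '  name: env-overrides\n  namespace: openshift-ovn-kubernetes\ndata:\n'
  for key in "$@"; do
    printf '  %s: |\n    OVN_KUBE_LOG_LEVEL=%s\n' "$key" "$level"
  done
}
```

For example, `gen_env_overrides 5 ip-10-0-217-114.ec2.internal _master > env-overrides.yaml` would produce a file that can then be applied with the oc apply command from this procedure.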

25.3.6. Checking the OVN-Kubernetes pod network connectivity

The connectivity check controller, in OpenShift Container Platform 4.10 and later, orchestrates connection verification checks in your cluster. These include checks of the Kubernetes API, the OpenShift API, and individual nodes. The results for the connection tests are stored in PodNetworkConnectivityCheck objects in the openshift-network-diagnostics namespace. Connection tests are performed every minute in parallel.

Prerequisites

- Access to the OpenShift CLI (oc).

- Access to the cluster as a user with the cluster-admin role.

- You have installed jq.

Procedure

To list the current PodNetworkConnectivityCheck objects, enter the following command:

$ oc get podnetworkconnectivitychecks -n openshift-network-diagnostics

View the most recent success for each connection object by using the following command:

$ oc get podnetworkconnectivitychecks -n openshift-network-diagnostics \
  -o json | jq '.items[]| .spec.targetEndpoint,.status.successes[0]'

View the most recent failures for each connection object by using the following command:

$ oc get podnetworkconnectivitychecks -n openshift-network-diagnostics \
  -o json | jq '.items[]| .spec.targetEndpoint,.status.failures[0]'

View the most recent outages for each connection object by using the following command:

$ oc get podnetworkconnectivitychecks -n openshift-network-diagnostics \
  -o json | jq '.items[]| .spec.targetEndpoint,.status.outages[0]'

The connectivity check controller also logs metrics from these checks into Prometheus.

View all the metrics by running the following command:

$ oc exec prometheus-k8s-0 -n openshift-monitoring -- \
  promtool query instant http://localhost:9090 \
  '{component="openshift-network-diagnostics"}'

View the latency between the source pod and the OpenShift API service for the last 5 minutes:

$ oc exec prometheus-k8s-0 -n openshift-monitoring -- \
  promtool query instant http://localhost:9090 \
  '{component="openshift-network-diagnostics"}'

25.4. Tracing OpenFlow with ovnkube-trace

OVN and OVS traffic flows can be simulated in a single utility called ovnkube-trace. The ovnkube-trace utility runs ovn-trace, ovs-appctl ofproto/trace and ovn-detrace and correlates that information in a single output.

You can execute the ovnkube-trace binary from a dedicated container. For releases after OpenShift Container Platform 4.7, you can also copy the binary to a local host and execute it from that host.

The binaries in the Quay images do not currently work for dual-stack or IPv6-only environments. For those environments, you must build from source.

25.4.1. Installing ovnkube-trace on local host

The ovnkube-trace tool traces packet simulations for arbitrary UDP or TCP traffic between points in an OVN-Kubernetes driven OpenShift Container Platform cluster. Copy the ovnkube-trace binary to your local host to make it available to run against the cluster.

Prerequisites

- You installed the OpenShift CLI (oc).

- You are logged in to the cluster as a user with cluster-admin privileges.

Procedure

Create a pod variable by using the following command:

$ POD=$(oc get pods -n openshift-ovn-kubernetes -l app=ovnkube-master -o name | head -1 | awk -F '/' '{print $NF}')

Run the following command on your local host to copy the binary from the ovnkube-master pods:

$ oc cp -n openshift-ovn-kubernetes $POD:/usr/bin/ovnkube-trace ovnkube-trace

Make ovnkube-trace executable by running the following command:

$ chmod +x ovnkube-trace

Display the options available with ovnkube-trace by running the following command:

$ ./ovnkube-trace -help

Expected output

I0111 15:05:27.973305 204872 ovs.go:90] Maximum command line arguments set to: 191102
Usage of ./ovnkube-trace:
  -dst string
        dest: destination pod name
  -dst-ip string
        destination IP address (meant for tests to external targets)
  -dst-namespace string
        k8s namespace of dest pod (default "default")
  -dst-port string
        dst-port: destination port (default "80")
  -kubeconfig string
        absolute path to the kubeconfig file
  -loglevel string
        loglevel: klog level (default "0")
  -ovn-config-namespace string
        namespace used by ovn-config itself
  -service string
        service: destination service name
  -skip-detrace
        skip ovn-detrace command
  -src string
        src: source pod name
  -src-namespace string
        k8s namespace of source pod (default "default")
  -tcp
        use tcp transport protocol
  -udp
        use udp transport protocol

The supported command-line arguments are familiar Kubernetes constructs, such as namespaces, pods, and services, so you do not need to find the MAC address, the IP address of the destination nodes, or the ICMP type.

The log levels are:

- 0 (minimal output)

- 2 (more verbose output showing results of trace commands)

- 5 (debug output)

25.4.2. Running ovnkube-trace

Run ovnkube-trace to simulate packet forwarding within an OVN logical network.

Prerequisites

- You installed the OpenShift CLI (oc).

- You are logged in to the cluster as a user with cluster-admin privileges.

- You have installed ovnkube-trace on local host.

Example: Testing that DNS resolution works from a deployed pod

This example illustrates how to test the DNS resolution from a deployed pod to the core DNS pod that runs in the cluster.

Procedure

Start a web service in the default namespace by entering the following command:

$ oc run web --namespace=default --image=nginx --labels="app=web" --expose --port=80

List the pods running in the openshift-dns namespace:

$ oc get pods -n openshift-dns

Example output

NAME                  READY   STATUS    RESTARTS   AGE
dns-default-467qw     2/2     Running   0          49m
dns-default-6prvx     2/2     Running   0          53m
dns-default-fkqr8     2/2     Running   0          53m
dns-default-qv2rg     2/2     Running   0          49m
dns-default-s29vr     2/2     Running   0          49m
dns-default-vdsbn     2/2     Running   0          53m
node-resolver-6thtt   1/1     Running   0          53m
node-resolver-7ksdn   1/1     Running   0          49m
node-resolver-8sthh   1/1     Running   0          53m
node-resolver-c5ksw   1/1     Running   0          50m
node-resolver-gbvdp   1/1     Running   0          53m
node-resolver-sxhkd   1/1     Running   0          50m

Run the following ovnkube-trace command to verify DNS resolution is working:

$ ./ovnkube-trace \
  -src-namespace default \
  -src web \
  -dst-namespace openshift-dns \
  -dst dns-default-467qw \
  -udp -dst-port 53 \
  -loglevel 0

Expected output

I0116 10:19:35.601303 17900 ovs.go:90] Maximum command line arguments set to: 191102
ovn-trace source pod to destination pod indicates success from web to dns-default-467qw
ovn-trace destination pod to source pod indicates success from dns-default-467qw to web
ovs-appctl ofproto/trace source pod to destination pod indicates success from web to dns-default-467qw
ovs-appctl ofproto/trace destination pod to source pod indicates success from dns-default-467qw to web
ovn-detrace source pod to destination pod indicates success from web to dns-default-467qw
ovn-detrace destination pod to source pod indicates success from dns-default-467qw to web

The output indicates success from the deployed pod to the DNS port and also indicates success in the return direction. Bidirectional traffic is therefore supported on UDP port 53, which the web pod needs to resolve names through the core DNS pod.

If, for example, the trace did not succeed and you wanted the output of ovn-trace, ovs-appctl ofproto/trace, and ovn-detrace, along with more debug information, increase the log level to 2 and run the command again as follows:

$ ./ovnkube-trace \
  -src-namespace default \
  -src web \
  -dst-namespace openshift-dns \
  -dst dns-default-467qw \
  -udp -dst-port 53 \
  -loglevel 2

The output from this increased log level is too much to list here. In a failure situation, the output of this command shows which flow is dropping that traffic. For example, an egress or ingress network policy might be configured on the cluster that does not allow that traffic.
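At log level 0, each summary line states whether a trace "indicates success" or "indicates failure", so a script can decide whether a rerun at a higher log level is worthwhile. The following is a minimal sketch that relies only on that wording from the example output. The function name trace_failures is hypothetical.

```shell
# Hypothetical helper: read ovnkube-trace summary output on stdin and print
# only the lines that report a failure. Prints nothing when every trace
# succeeded.
trace_failures() {
  grep 'indicates failure' || true
}
```

For example, the summary output of an ovnkube-trace run at log level 0 can be piped into trace_failures. If anything is printed, the same command can be repeated with -loglevel 2 to see which flow drops the traffic.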

Example: Verifying a configured default deny by using debug output

This example illustrates how to use the debug output to identify that an ingress default deny policy blocks traffic.

Procedure

Create the following YAML that defines a deny-by-default policy to deny ingress from all pods in all namespaces. Save the YAML in the deny-by-default.yaml file:

kind: NetworkPolicy
apiVersion: networking.k8s.io/v1
metadata:
  name: deny-by-default
  namespace: default
spec:
  podSelector: {}
  ingress: []

Apply the policy by entering the following command:

$ oc apply -f deny-by-default.yaml

Example output

networkpolicy.networking.k8s.io/deny-by-default created

Start a web service in the default namespace by entering the following command:

$ oc run web --namespace=default --image=nginx --labels="app=web" --expose --port=80

Run the following command to create the prod namespace:

$ oc create namespace prod

Run the following command to label the prod namespace:

$ oc label namespace/prod purpose=production

Run the following command to deploy an alpine image in the prod namespace and start a shell:

$ oc run test-6459 --namespace=prod --rm -i -t --image=alpine -- sh

Open another terminal session.

In this new terminal session, run ovnkube-trace to verify the failure in communication between the source pod test-6459 running in namespace prod and the destination pod running in the default namespace:

$ ./ovnkube-trace \
  -src-namespace prod \
  -src test-6459 \
  -dst-namespace default \
  -dst web \
  -tcp -dst-port 80 \
  -loglevel 0

Expected output

I0116 14:20:47.380775 50822 ovs.go:90] Maximum command line arguments set to: 191102
ovn-trace source pod to destination pod indicates failure from test-6459 to web

Increase the log level to 2 to expose the reason for the failure by running the following command:

$ ./ovnkube-trace \
  -src-namespace prod \
  -src test-6459 \
  -dst-namespace default \
  -dst web \
  -tcp -dst-port 80 \
  -loglevel 2

Expected output

ct_lb_mark /* default (use --ct to customize) */
------------------------------------------------
 3. ls_out_acl_hint (northd.c:6092): !ct.new && ct.est && !ct.rpl && ct_mark.blocked == 0, priority 4, uuid 32d45ad4
    reg0[8] = 1;
    reg0[10] = 1;
    next;
 4. ls_out_acl (northd.c:6435): reg0[10] == 1 && (outport == @a16982411286042166782_ingressDefaultDeny), priority 2000, uuid f730a887
    ct_commit { ct_mark.blocked = 1; };

The ls_out_acl entry that matches ingressDefaultDeny indicates that ingress traffic is blocked because the default deny policy is in place.

Create a policy that allows traffic from all pods in namespaces that have the label purpose=production. Save the YAML in the web-allow-prod.yaml file:

kind: NetworkPolicy
apiVersion: networking.k8s.io/v1
metadata:
  name: web-allow-prod
  namespace: default
spec:
  podSelector:
    matchLabels:
      app: web
  policyTypes:
  - Ingress
  ingress:
  - from:
    - namespaceSelector:
        matchLabels:
          purpose: production

Apply the policy by entering the following command:

$ oc apply -f web-allow-prod.yaml

Run ovnkube-trace to verify that traffic is now allowed by entering the following command:

$ ./ovnkube-trace \
  -src-namespace prod \
  -src test-6459 \
  -dst-namespace default \
  -dst web \
  -tcp -dst-port 80 \
  -loglevel 0

Expected output

I0116 14:25:44.055207 51695 ovs.go:90] Maximum command line arguments set to: 191102
ovn-trace source pod to destination pod indicates success from test-6459 to web
ovn-trace destination pod to source pod indicates success from web to test-6459
ovs-appctl ofproto/trace source pod to destination pod indicates success from test-6459 to web
ovs-appctl ofproto/trace destination pod to source pod indicates success from web to test-6459
ovn-detrace source pod to destination pod indicates success from test-6459 to web
ovn-detrace destination pod to source pod indicates success from web to test-6459

In the shell that you opened in the test-6459 pod, run the following command:

wget -qO- --timeout=2 http://web.default

Expected output

<!DOCTYPE html>
<html>
<head>
<title>Welcome to nginx!</title>
<style>
html { color-scheme: light dark; }
body { width: 35em; margin: 0 auto;
font-family: Tahoma, Verdana, Arial, sans-serif; }
</style>
</head>
<body>
<h1>Welcome to nginx!</h1>
<p>If you see this page, the nginx web server is successfully installed and
working. Further configuration is required.</p>
<p>For online documentation and support please refer to
<a href="http://nginx.org/">nginx.org</a>.<br/>
Commercial support is available at
<a href="http://nginx.com/">nginx.com</a>.</p>
<p><em>Thank you for using nginx.</em></p>
</body>
</html>

25.5. Migrating from the OpenShift SDN network plugin

As a cluster administrator, you can migrate to the OVN-Kubernetes network plugin from the OpenShift SDN network plugin.

You can use the offline migration method for migrating from the OpenShift SDN network plugin to the OVN-Kubernetes plugin. The offline migration method is a manual process that includes some downtime.

25.5.1. Migration to the OVN-Kubernetes network plugin

Migrating to the OVN-Kubernetes network plugin is a manual process that includes some downtime during which your cluster is unreachable.

Before you migrate your OpenShift Container Platform cluster to use the OVN-Kubernetes network plugin, update your cluster to the latest z-stream release so that all the latest bug fixes apply to your cluster.

Although a rollback procedure is provided, the migration is intended to be a one-way process.

A migration to the OVN-Kubernetes network plugin is supported on the following platforms:

- Bare metal hardware

- Amazon Web Services (AWS)

- Google Cloud

- IBM Cloud®

- Microsoft Azure

- Red Hat OpenStack Platform (RHOSP)

- Red Hat Virtualization (RHV)

- VMware vSphere

Migrating to or from the OVN-Kubernetes network plugin is not supported for managed OpenShift cloud services such as Red Hat OpenShift Dedicated, Azure Red Hat OpenShift (ARO), and Red Hat OpenShift Service on AWS (ROSA).

Migrating from the OpenShift SDN network plugin to the OVN-Kubernetes network plugin is not supported on Nutanix.

25.5.1.1. Considerations for migrating to the OVN-Kubernetes network plugin

If you have more than 150 nodes in your OpenShift Container Platform cluster, then open a support case for consultation on your migration to the OVN-Kubernetes network plugin.

The subnets assigned to nodes and the IP addresses assigned to individual pods are not preserved during the migration.

While the OVN-Kubernetes network plugin implements many of the capabilities present in the OpenShift SDN network plugin, the configuration is not the same.

If your cluster uses any of the following OpenShift SDN network plugin capabilities, you must manually configure the same capability in the OVN-Kubernetes network plugin:

- Namespace isolation

- Egress router pods

- If your cluster or surrounding network uses any part of the 100.64.0.0/16 address range, you must choose another unused IP range by specifying the v4InternalSubnet spec under the spec.defaultNetwork.ovnKubernetesConfig object definition. OVN-Kubernetes uses the IP range 100.64.0.0/16 internally by default.

- If your openshift-sdn cluster with Precision Time Protocol (PTP) uses the User Datagram Protocol (UDP) for hardware time stamping and you migrate to the OVN-Kubernetes plugin, the hardware time stamping cannot be applied to primary interface devices, such as an Open vSwitch (OVS) bridge. As a result, UDP version 4 configurations cannot work with a br-ex interface.
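Whether a network in your environment uses part of the 100.64.0.0/16 range can be checked mechanically. The following is a minimal sketch for IPv4 only, assuming a shell with POSIX arithmetic such as bash. The function names ip_to_int and cidr_overlaps are hypothetical and are not part of any OpenShift tooling. It relies on the fact that two CIDR ranges overlap exactly when they agree on the shorter of the two prefixes.

```shell
# Hypothetical helper: convert a dotted-quad IPv4 address to an integer.
ip_to_int() {
  old_ifs=$IFS
  IFS=.
  set -- $1          # split the address into its four octets
  IFS=$old_ifs
  echo $(( ($1 << 24) + ($2 << 16) + ($3 << 8) + $4 ))
}

# Hypothetical helper: return 0 when two IPv4 CIDR ranges overlap, 1 otherwise.
# Usage: cidr_overlaps 100.64.0.0/16 <other_cidr>
cidr_overlaps() {
  a_ip=$(ip_to_int "${1%/*}"); a_len=${1#*/}
  b_ip=$(ip_to_int "${2%/*}"); b_len=${2#*/}
  # Compare both addresses under the shorter prefix length.
  len=$(( $a_len < $b_len ? $a_len : $b_len ))
  mask=$(( $len == 0 ? 0 : (0xFFFFFFFF << (32 - $len)) & 0xFFFFFFFF ))
  [ $(( $a_ip & $mask )) -eq $(( $b_ip & $mask )) ]
}
```

For example, `cidr_overlaps 100.64.0.0/16 100.64.12.0/24` succeeds, which would mean that a different v4InternalSubnet value is needed, while `cidr_overlaps 100.64.0.0/16 10.128.0.0/14` fails.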

The following sections highlight the differences in configuration between the aforementioned capabilities in OVN-Kubernetes and OpenShift SDN network plugins.

Primary network interface

The OpenShift SDN plugin allows application of the NodeNetworkConfigurationPolicy (NNCP) custom resource (CR) to the primary interface on a node. The OVN-Kubernetes network plugin does not have this capability.

If you have an NNCP applied to the primary interface, you must delete the NNCP before migrating to the OVN-Kubernetes network plugin. Deleting the NNCP does not remove the configuration from the primary interface, but with OVN-Kubernetes, the Kubernetes NMState cannot manage this configuration. Instead, the configure-ovs.sh shell script manages the primary interface and the configuration attached to this interface.

Namespace isolation

OVN-Kubernetes supports only the network policy isolation mode.

For a cluster using OpenShift SDN that is configured in either the multitenant or subnet isolation mode, you can still migrate to the OVN-Kubernetes network plugin. Note that after the migration operation, multitenant isolation mode is dropped, so you must manually configure network policies to achieve the same level of project-level isolation for pods and services.
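One common way to approximate project-level isolation with network policies is to allow ingress only from pods in the same namespace. The following is a minimal sketch, not an exact equivalent of the multitenant isolation mode. The function name gen_same_namespace_policy and the policy name allow-same-namespace are assumptions chosen for illustration.

```shell
# Hypothetical generator: print a NetworkPolicy for the given namespace that
# admits ingress only from pods in that same namespace.
# Usage: gen_same_namespace_policy <namespace>
gen_same_namespace_policy() {
  cat <<EOF
kind: NetworkPolicy
apiVersion: networking.k8s.io/v1
metadata:
  name: allow-same-namespace
  namespace: $1
spec:
  podSelector: {}
  ingress:
  - from:
    - podSelector: {}
EOF
}
```

The generated policy could be applied with a command such as `gen_same_namespace_policy <namespace> | oc apply -f -`. Traffic that must still reach the project from other namespaces needs additional policies.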

Egress IP addresses

OpenShift SDN supports two different Egress IP modes:

- In the automatically assigned approach, an egress IP address range is assigned to a node.

- In the manually assigned approach, a list of one or more egress IP addresses is assigned to a node.

The migration process supports migrating Egress IP configurations that use the automatically assigned mode.

The differences in configuring an egress IP address between OVN-Kubernetes and OpenShift SDN are described in the following table:

| OVN-Kubernetes | OpenShift SDN |

|---|---|

|

|

For more information on using egress IP addresses in OVN-Kubernetes, see "Configuring an egress IP address".

Egress network policies

The difference in configuring an egress network policy, also known as an egress firewall, between OVN-Kubernetes and OpenShift SDN is described in the following table:

| OVN-Kubernetes | OpenShift SDN |

|---|---|

|

|

Because the name of an EgressFirewall object can only be set to default, after the migration all migrated EgressNetworkPolicy objects are named default, regardless of what the name was under OpenShift SDN.

If you subsequently roll back to OpenShift SDN, all EgressNetworkPolicy objects are named default because the prior names are lost.

For more information on using an egress firewall in OVN-Kubernetes, see "Configuring an egress firewall for a project".
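Because the original EgressNetworkPolicy names are not preserved, it can help to record them before you migrate. The following is a minimal sketch, not part of the documented procedure; the function name and the default output file name are assumptions made for this example.

```shell
#!/bin/bash
# Sketch only: record the original EgressNetworkPolicy names before migrating.
# The function name and default output file name are illustrative.

save_egress_policy_names() {
  local outfile="${1:-egressnetworkpolicy-names.txt}"
  # One "<namespace> <name>" pair per line, without the header row.
  oc get egressnetworkpolicy --all-namespaces --no-headers \
    -o custom-columns=NAMESPACE:.metadata.namespace,NAME:.metadata.name \
    > "$outfile"
}
```

Run `save_egress_policy_names` while logged in to the cluster, and keep the resulting file with your other pre-migration backups.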

Egress router pods

OVN-Kubernetes supports egress router pods in redirect mode. OVN-Kubernetes does not support egress router pods in HTTP proxy mode or DNS proxy mode.

When you deploy an egress router with the Cluster Network Operator, you cannot specify a node selector to control which node is used to host the egress router pod.

Multicast

The difference between enabling multicast traffic on OVN-Kubernetes and OpenShift SDN is described in the following table:

| OVN-Kubernetes | OpenShift SDN |

|---|---|

|

|

For more information on using multicast in OVN-Kubernetes, see "Enabling multicast for a project".

Network policies

OVN-Kubernetes fully supports the Kubernetes NetworkPolicy API in the networking.k8s.io/v1 API group. No changes are necessary in your network policies when migrating from OpenShift SDN.

25.5.1.2. How the migration process works

The following table summarizes the migration process by separating the user-initiated steps from the actions that the migration performs in response.

| User-initiated steps | Migration activity |

|---|---|

|

Set the |

|

|

Update the |

|

| Reboot each node in the cluster. |

|

If a rollback to OpenShift SDN is required, the following table describes the process.

You must wait until the migration process from OpenShift SDN to OVN-Kubernetes network plugin is successful before initiating a rollback.

| User-initiated steps | Migration activity |

|---|---|

| Suspend the MCO to ensure that it does not interrupt the migration. | The MCO stops. |

|

Set the |

|

|

Update the |

|

| Reboot each node in the cluster. |

|

| Enable the MCO after all nodes in the cluster reboot. |

|

25.5.1.3. Using an Ansible playbook to migrate to the OVN-Kubernetes network plugin

As a cluster administrator, you can use an Ansible collection, network.offline_migration_sdn_to_ovnk, to migrate from the OpenShift SDN Container Network Interface (CNI) network plugin to the OVN-Kubernetes plugin for your cluster. The Ansible collection includes the following playbooks:

- playbooks/playbook-migration.yml: Includes playbooks that execute in a sequence where each playbook represents a step in the migration process.

- playbooks/playbook-rollback.yml: Includes playbooks that execute in a sequence where each playbook represents a step in the rollback process.

Prerequisites

- You installed the python3 package, minimum version 3.10.

- You installed the jmespath and jq packages.

- You logged in to the Red Hat Hybrid Cloud Console and opened the Ansible Automation Platform web console.

- You created a security group rule that allows User Datagram Protocol (UDP) packets on port 6081 for all nodes on all cloud platforms. If you do not do this task, your cluster might fail to schedule pods.

- Check if your cluster uses static routes or routing policies in the host network.

  - If true, a later procedure step requires that you set the routingViaHost parameter to true and the ipForwarding parameter to Global in the gatewayConfig section of the playbooks/playbook-migration.yml file.

- If the OpenShift-SDN plugin uses the 100.64.0.0/16 and 100.88.0.0/16 address ranges, you patched the address ranges. For more information, see "Patching OVN-Kubernetes address ranges" in the Additional resources section.
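The software prerequisites above can be checked with a short pre-flight script. The following is a sketch under stated assumptions: the function names are hypothetical, jmespath is assumed to be installed as a Python module, and `sort -V` is assumed to be available (GNU coreutils).

```shell
#!/bin/bash
# Sketch only: pre-flight checks for the software prerequisites.
# Function names are illustrative; sort -V assumes GNU coreutils.

# version_ge A B: succeeds when dotted version A is greater than or equal to B.
version_ge() {
  [ "$(printf '%s\n%s\n' "$2" "$1" | sort -V | head -n 1)" = "$2" ]
}

check_prereqs() {
  local py_version
  py_version="$(python3 -c 'import platform; print(platform.python_version())' 2>/dev/null)" || {
    echo "python3 not found"; return 1; }
  version_ge "$py_version" "3.10" || {
    echo "python3 $py_version is older than 3.10"; return 1; }
  command -v jq >/dev/null || { echo "jq not found"; return 1; }
  # Assumption: jmespath is provided as a Python module.
  python3 -c 'import jmespath' 2>/dev/null || {
    echo "jmespath Python module not found"; return 1; }
  echo "software prerequisites look OK"
}
```

Calling `check_prereqs` prints the first missing prerequisite and returns a non-zero status. It does not cover the security group rule or the address-range patch, which depend on your platform.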

Procedure

Install the ansible-core package, minimum version 2.15. The following example command shows how to install the ansible-core package on Red Hat Enterprise Linux (RHEL):

    $ sudo dnf install -y ansible-core

Create an ansible.cfg file and add information similar to the following example to the file. Ensure that the file exists in the same directory where the ansible-galaxy commands and the playbooks run.

    $ cat << EOF >> ansible.cfg
    [galaxy]
    server_list = automation_hub, validated
    [galaxy_server.automation_hub]
    url=https://console.redhat.com/api/automation-hub/content/published/
    auth_url=https://sso.redhat.com/auth/realms/redhat-external/protocol/openid-connect/token
    token=
    #[galaxy_server.release_galaxy]
    #url=https://galaxy.ansible.com/
    [galaxy_server.validated]
    url=https://console.redhat.com/api/automation-hub/content/validated/
    auth_url=https://sso.redhat.com/auth/realms/redhat-external/protocol/openid-connect/token
    token=
    EOF

From the Ansible Automation Platform web console, go to the Connect to Hub page and complete the following steps:

- In the Offline token section of the page, click the Load token button.

- After the token loads, click the Copy to clipboard icon.

- Open the ansible.cfg file and paste the API token in the token= parameter. The API token is required for authenticating against the server URL specified in the ansible.cfg file.

Install the network.offline_migration_sdn_to_ovnk Ansible collection by entering the following ansible-galaxy command:

    $ ansible-galaxy collection install network.offline_migration_sdn_to_ovnk

Verify that the network.offline_migration_sdn_to_ovnk Ansible collection is installed on your system:

    $ ansible-galaxy collection list | grep network.offline_migration_sdn_to_ovnk

Example output

    network.offline_migration_sdn_to_ovnk 1.0.2

The network.offline_migration_sdn_to_ovnk Ansible collection is saved in the default path of ~/.ansible/collections/ansible_collections/network/offline_migration_sdn_to_ovnk/.

Configure migration features in the playbooks/playbook-migration.yml file:

    # ...
    migration_interface_name: eth0
    migration_disable_auto_migration: true
    migration_egress_ip: false
    migration_egress_firewall: false
    migration_multicast: false
    migration_routing_via_host: true
    migration_ip_forwarding: Global
    migration_cidr: "10.240.0.0/14"
    migration_prefix: 23
    migration_mtu: 1400
    migration_geneve_port: 6081
    migration_ipv4_subnet: "100.64.0.0/16"
    # ...

- migration_interface_name: If you use a NodeNetworkConfigurationPolicy (NNCP) resource on a primary interface, specify the interface name in the playbooks/playbook-migration.yml file so that the NNCP resource gets deleted on the primary interface during the migration process.

- migration_disable_auto_migration: Disables the auto-migration of OpenShift SDN CNI plugin features to the OVN-Kubernetes plugin. If you disable auto-migration of features, you must also set the migration_egress_ip, migration_egress_firewall, and migration_multicast parameters to false. If you need to enable auto-migration of features, set the parameter to false.

- migration_routing_via_host: Set to true to configure local gateway mode or false to configure shared gateway mode for nodes in your cluster. The default value is false. In local gateway mode, traffic is routed through the host network stack. In shared gateway mode, traffic is not routed through the host network stack.

- migration_ip_forwarding: If you configured local gateway mode, set IP forwarding to Global if you need the host network of the node to act as a router for traffic not related to OVN-Kubernetes.

- migration_cidr: Specifies a Classless Inter-Domain Routing (CIDR) IP address block for your cluster. You cannot use any CIDR block that overlaps the 100.64.0.0/16 CIDR block, because the OVN-Kubernetes network provider uses this block internally.

- migration_prefix: Ensure that you specify a prefix value, which is the slice of the CIDR block apportioned to each node in your cluster.

- migration_mtu: Optional parameter that sets a specific maximum transmission unit (MTU) to your cluster network after the migration process.

- migration_geneve_port: Optional parameter that sets a Geneve port for OVN-Kubernetes. The default port is 6081.

- migration_ipv4_subnet: Optional parameter that sets an IPv4 address range for internal use by OVN-Kubernetes. The default value for the parameter is 100.64.0.0/16.
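The migration_cidr restriction above amounts to a CIDR overlap test. Two IPv4 blocks overlap exactly when their network addresses agree on the shorter of the two prefix lengths. The following sketch shows one way to check this before you run the playbook; the function names are hypothetical and the script is not part of the Ansible collection.

```shell
#!/bin/bash
# Sketch only: check whether two IPv4 CIDR blocks overlap.
# Function names are illustrative.

# ip_to_int A.B.C.D: print the address as a 32-bit integer.
ip_to_int() {
  local a b c d
  IFS=. read -r a b c d <<< "$1"
  echo $(( (a << 24) | (b << 16) | (c << 8) | d ))
}

# cidr_overlaps CIDR1 CIDR2: succeeds when the two blocks share any address.
cidr_overlaps() {
  local ip1="${1%/*}" len1="${1#*/}" ip2="${2%/*}" len2="${2#*/}"
  # Compare both network addresses under the shorter prefix length.
  local len=$(( len1 < len2 ? len1 : len2 ))
  local mask=$(( len == 0 ? 0 : (0xFFFFFFFF << (32 - len)) & 0xFFFFFFFF ))
  [ $(( $(ip_to_int "$ip1") & mask )) -eq $(( $(ip_to_int "$ip2") & mask )) ]
}
```

For example, `cidr_overlaps "10.240.0.0/14" "100.64.0.0/16"` returns a non-zero status, so the example value of migration_cidr shown earlier does not collide with the internal range.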

To run the playbooks/playbook-migration.yml file, enter the following command:

    $ ansible-playbook -v playbooks/playbook-migration.yml

25.5.2. Migrating to the OVN-Kubernetes network plugin

As a cluster administrator, you can change the network plugin for your cluster to OVN-Kubernetes. During the migration, you must reboot every node in your cluster.

While performing the migration, your cluster is unavailable and workloads might be interrupted. Perform the migration only when an interruption in service is acceptable.

Prerequisites

- You have a cluster configured with the OpenShift SDN CNI network plugin in the network policy isolation mode.

- You installed the OpenShift CLI (oc).

- You have access to the cluster as a user with the cluster-admin role.

- You have a recent backup of the etcd database.

- You can manually reboot each node.

- You checked that your cluster is in a known good state without any errors.

- You created a security group rule that allows User Datagram Protocol (UDP) packets on port 6081 for all nodes on all cloud platforms.

Procedure

To back up the configuration for the cluster network, enter the following command:

    $ oc get Network.config.openshift.io cluster -o yaml > cluster-openshift-sdn.yaml

Verify that the OVN_SDN_MIGRATION_TIMEOUT environment variable is set and is equal to 0s by running the following command:

    #!/bin/bash

    if [ -n "$OVN_SDN_MIGRATION_TIMEOUT" ] && [ "$OVN_SDN_MIGRATION_TIMEOUT" = "0s" ]; then
        unset OVN_SDN_MIGRATION_TIMEOUT
    fi

    # Repeatedly check the cluster Operators, bounded by the timeout command, until all are available.
    co_timeout=${OVN_SDN_MIGRATION_TIMEOUT:-1200s}
    timeout "$co_timeout" bash <<EOT
    until
      oc wait co --all --for='condition=AVAILABLE=True' --timeout=10s && \
      oc wait co --all --for='condition=PROGRESSING=False' --timeout=10s && \
      oc wait co --all --for='condition=DEGRADED=False' --timeout=10s;
    do
      sleep 10
      echo "Some ClusterOperators Degraded=False,Progressing=True,or Available=False";
    done
    EOT

Remove the configuration from the Cluster Network Operator (CNO) configuration object by running the following command:

    $ oc patch Network.operator.openshift.io cluster --type='merge' \
      --patch '{"spec":{"migration":null}}'

Delete the NodeNetworkConfigurationPolicy (NNCP) custom resource (CR) that defines the primary network interface for the OpenShift SDN network plugin by completing the following steps:

- Check that the existing NNCP CR bonded the primary interface to your cluster by entering the following command:

      $ oc get nncp

  Example output

      NAME          STATUS      REASON
      bondmaster0   Available   SuccessfullyConfigured

  Network Manager stores the connection profile for the bonded primary interface in the /etc/NetworkManager/system-connections system path.

- Remove the NNCP from your cluster:

      $ oc delete nncp <nncp_manifest_filename>

To prepare all the nodes for the migration, set the migration field on the CNO configuration object by running the following command:

    $ oc patch Network.operator.openshift.io cluster --type='merge' \
      --patch '{ "spec": { "migration": { "networkType": "OVNKubernetes" } } }'

Note: This step does not deploy OVN-Kubernetes immediately. Instead, specifying the migration field triggers the Machine Config Operator (MCO) to apply new machine configs to all the nodes in the cluster in preparation for the OVN-Kubernetes deployment.

Check that the reboot is finished by running the following command:

    $ oc get mcp

Check that all cluster Operators are available by running the following command:

    $ oc get co

Alternatively: You can disable automatic migration of several OpenShift SDN capabilities to the OVN-Kubernetes equivalents:

- Egress IPs

- Egress firewall

- Multicast

To disable automatic migration of the configuration for any of the previously noted OpenShift SDN features, specify the following keys:

    $ oc patch Network.operator.openshift.io cluster --type='merge' \
      --patch '{
        "spec": {
          "migration": {
            "networkType": "OVNKubernetes",
            "features": {
              "egressIP": <bool>,
              "egressFirewall": <bool>,
              "multicast": <bool>
            }
          }
        }
      }'

where: