
Chapter 3. Installing SAP application server instances

3.1. Configuration options used in this document

Below are the configuration options that will be used for instances in this document. Please adapt these options according to your local requirements.

For the HA cluster nodes and the (A)SCS and ERS instances managed by the HA cluster, the following values are used:

- 1st HA cluster node name: node1
- 2nd HA cluster node name: node2
- SID: S4H
- ASCS instance number: 20
- ASCS virtual hostname: s4ascs
- ASCS virtual IP address: 192.168.200.101
- ERS instance number: 29
- ERS virtual hostname: s4ers
- ERS virtual IP address: 192.168.200.102

For the optional primary application server (PAS) and additional application server (AAS) instances, the following values are used:

- PAS instance number: 21
- PAS virtual hostname: s4pas
- PAS virtual IP address: 192.168.200.103
- AAS instance number: 22
- AAS virtual hostname: s4aas
- AAS virtual IP address: 192.168.200.104

3.2. Preparing the cluster nodes for installation of the SAP instances

Before starting the installation, ensure that:

- RHEL 9 is installed and configured on all HA cluster nodes according to the recommendations from SAP and Red Hat for running SAP application server instances on RHEL 9.

- The RHEL for SAP Applications or RHEL for SAP Solutions subscriptions are activated, and the required repositories are enabled on all HA cluster nodes, as documented in RHEL for SAP Subscriptions and Repositories.

- Shared storage and instance directories are present at the correct mount points.

- The virtual hostnames and IP addresses used by the SAP instances can be resolved in both directions, and the virtual IP addresses are reachable.

- The SAP installation media are accessible on each HA cluster node where a SAP instance will be installed.
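The name resolution requirement from the list above can be checked with a small script. The following is a minimal sketch and not part of the official procedure: it assumes that getent and awk are available on the node, and the function name check_resolution is made up for this example. In the reverse lookup it accepts either the short virtual hostname or a fully qualified form of it.

```shell
#!/bin/sh
# Sketch: check forward and reverse resolution of a virtual hostname.
# Usage: check_resolution <virtual hostname> <expected virtual IP>
# check_resolution is a hypothetical helper, not an SAP or Red Hat tool.
check_resolution() {
    name="$1"
    ip="$2"

    # Forward lookup: the first field of the getent output is the address.
    fwd=$(getent hosts "$name" | awk '{ print $1; exit }')
    if [ "$fwd" != "$ip" ]; then
        echo "forward lookup failed: $name -> ${fwd:-none} (expected $ip)" >&2
        return 1
    fi

    # Reverse lookup: look for the short name, or a name starting with
    # "<short name>.", among the names returned for the address.
    if ! getent hosts "$ip" | awk -v n="$name" '
            { for (i = 2; i <= NF; i++) if ($i == n || index($i, n ".") == 1) found = 1 }
            END { exit !found }'; then
        echo "reverse lookup failed: $ip does not map back to $name" >&2
        return 1
    fi

    echo "ok: $name <-> $ip"
}
```

For example, check_resolution s4ascs 192.168.200.101 could be run on each HA cluster node, once for every virtual hostname listed in section 3.1.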

These setup steps can be partially automated using Ansible and rhel-system-roles-sap system roles. For more information on this, please check out Red Hat Enterprise Linux System Roles for SAP.

3.3. Installing SAP instances

Using Software Provisioning Manager (SWPM), install the instances in the following order:

- (A)SCS instance

- ERS instance

- DB instance

- PAS instance

- AAS instances

The following sections just provide some specific recommendations that should be followed when installing SAP instances that will be managed by the HA cluster setup described in this document. Please check the official SAP installation guides for detailed instructions on how to install SAP NetWeaver or S/4HANA application server instances.

3.3.1. Installing (A)SCS on node1

The local directories and mount points required by the SAP instance must be created on the HA cluster node where the (A)SCS instance will be installed:

/sapmnt/
/usr/sap/
/usr/sap/SYS/
/usr/sap/trans/
/usr/sap/S4H/ASCS20/

The shared directories and the instance directory must be manually mounted before starting the installation.

Also, the virtual IP address for the (A)SCS instance must be enabled on node1, and you must verify that the virtual hostname for the (A)SCS instance resolves to that virtual IP address.
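Enabling the virtual IP address and mounting the instance directory by hand might look like the following sketch. It is only an illustration under several assumptions: the helper names enable_virtual_ip and mount_instance_dir are made up, and the prefix length, network interface, file system type and export path depend entirely on the local environment. The commands must be run as root.

```shell
#!/bin/sh
# Sketch: temporarily bring up a virtual IP address and mount an instance
# directory before running the installer. Helper names are hypothetical.

# Usage: enable_virtual_ip <virtual IP> <prefix length> <interface>
enable_virtual_ip() {
    vip="$1"
    prefix="$2"
    dev="$3"
    # Add the address only if it is not already configured on the interface.
    if ! ip -o -4 address show dev "$dev" | grep -qwF "$vip"; then
        ip address add "$vip/$prefix" dev "$dev"
    fi
}

# Usage: mount_instance_dir <file system type> <source> <mount point>
mount_instance_dir() {
    fstype="$1"
    src="$2"
    dir="$3"
    mkdir -p "$dir"
    # mountpoint -q returns 0 if something is already mounted on the directory.
    mountpoint -q "$dir" || mount -t "$fstype" "$src" "$dir"
}
```

With the example values from section 3.1, a call could look like enable_virtual_ip 192.168.200.101 24 eth0 followed by mount_instance_dir nfs nfs.example.com:/export/S4H/ASCS20 /usr/sap/S4H/ASCS20, where the prefix length 24, the interface eth0 and the NFS export are placeholders. An address added this way is not persistent, which fits its temporary use during the installation.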

When running the SAP installer, please make sure to specify the virtual hostname that should be used for the (A)SCS instance:

[root@node1]# ./sapinst SAPINST_USE_HOSTNAME=s4ascs

Select the High-Availability System option for the installation of the (A)SCS instance:

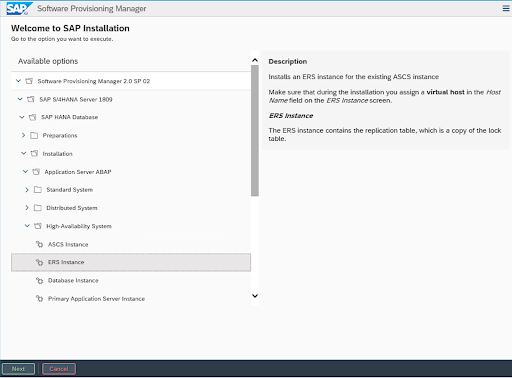

3.3.2. Installing ERS on node2

The local directories and mount points required by the SAP instance must be created on the HA cluster node where the ERS instance will be installed:

/sapmnt/
/usr/sap/
/usr/sap/SYS/
/usr/sap/trans/
/usr/sap/S4H/ERS29/

The shared directories and the instance directory must be manually mounted before starting the installation.

Also, the virtual IP address for the ERS instance must be enabled on node2, and you must verify that the virtual hostname for the ERS instance resolves to that virtual IP address.

Make sure to specify the virtual hostname for the ERS instance when starting the installation:

[root@node2]# ./sapinst SAPINST_USE_HOSTNAME=s4ers

Select the High-Availability System option for the installation of the ERS instance.

3.3.3. Installing primary/additional application server instances

The local directories and mount points required by the SAP instance must be created on the HA cluster node where the primary or additional application server instance(s) will be installed:

/sapmnt/
/usr/sap/
/usr/sap/SYS/
/usr/sap/trans/
/usr/sap/S4H/
/usr/sap/S4H/D<Ins#>

The shared directories and the instance directory must be manually mounted before starting the installation.

Also, the virtual IP address for the application server instance must be enabled on the HA cluster node, and it must have been verified that the virtual hostname for the application server instance resolves to the virtual IP address.

Specify the virtual hostname for the instance when starting the installer:

[root@node<X>]# ./sapinst SAPINST_USE_HOSTNAME=<virtual hostname of instance>

Select the High-Availability System option for the installation of the application server instance.

3.4. Post Installation

3.4.1. (A)SCS profile modification

The (A)SCS instance profile has to be modified to prevent the automatic restart of the enqueue server process by the sapstartsrv process of the instance, since the instance will be managed by the cluster.

To modify the (A)SCS instance profile, run the following command:

[root@node1]# sed -i -e 's/Restart_Program_01/Start_Program_01/' /sapmnt/S4H/profile/S4H_ASCS20_s4ascs
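Because sed -i changes the profile in place, it can be useful to keep a copy and confirm the result. The following is a minimal sketch under the assumption that the profile contains a Restart_Program entry with the given number. The function name disable_auto_restart and the .orig backup suffix are made up for this example.

```shell
#!/bin/sh
# Sketch: switch Restart_Program_NN to Start_Program_NN in an instance
# profile, keep a backup copy, and verify the result.
# Usage: disable_auto_restart <profile file> <two-digit program number>
# disable_auto_restart is a hypothetical helper name.
disable_auto_restart() {
    profile="$1"
    nr="$2"

    # Keep a copy of the original profile next to it.
    cp -p "$profile" "$profile.orig" || return 1

    # Same substitution as the documented command, with the number as a parameter.
    sed -i -e "s/Restart_Program_${nr}/Start_Program_${nr}/" "$profile" || return 1

    # Fail if a Restart_Program entry with this number is still present.
    if grep -q "Restart_Program_${nr}" "$profile"; then
        echo "Restart_Program_${nr} still present in $profile" >&2
        return 1
    fi

    # Print the resulting entry; returns non-zero if there is none.
    grep "Start_Program_${nr}" "$profile"
}
```

For the (A)SCS profile in this document the call would be disable_auto_restart /sapmnt/S4H/profile/S4H_ASCS20_s4ascs 01. The same helper would apply to the ERS profile with program number 00.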

3.4.2. ERS profile modification

The ERS instance profile has to be modified to prevent the automatic restart of the enqueue replication server process by the sapstartsrv of the instance since the ERS instance will be managed by the cluster.

To modify the ERS instance profile, run the following command:

[root@node2]# sed -i -e 's/Restart_Program_00/Start_Program_00/' /sapmnt/S4H/profile/S4H_ERS29_s4ers

3.4.3. Updating the /usr/sap/sapservices file

To prevent the SAP instances that will be managed by the HA cluster from being started outside of the control of the HA cluster, make sure the following lines are commented out in the /usr/sap/sapservices file on all cluster nodes:

#LD_LIBRARY_PATH=/usr/sap/S4H/ERS29/exe:$LD_LIBRARY_PATH; export LD_LIBRARY_PATH; /usr/sap/S4H/ERS29/exe/sapstartsrv pf=/usr/sap/S4H/SYS/profile/S4H_ERS29_s4ers -D -u s4hadm
#LD_LIBRARY_PATH=/usr/sap/S4H/ASCS20/exe:$LD_LIBRARY_PATH; export LD_LIBRARY_PATH; /usr/sap/S4H/ASCS20/exe/sapstartsrv pf=/usr/sap/S4H/SYS/profile/S4H_ASCS20_s4ascs -D -u s4hadm
#LD_LIBRARY_PATH=/usr/sap/S4H/D21/exe:$LD_LIBRARY_PATH; export LD_LIBRARY_PATH; /usr/sap/S4H/D21/exe/sapstartsrv pf=/usr/sap/S4H/SYS/profile/S4H_D21_s4pas -D -u s4hadm
#LD_LIBRARY_PATH=/usr/sap/S4H/D22/exe:$LD_LIBRARY_PATH; export LD_LIBRARY_PATH; /usr/sap/S4H/D22/exe/sapstartsrv pf=/usr/sap/S4H/SYS/profile/S4H_D22_s4aas -D -u s4hadm
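Commenting out the entries can also be scripted. The sketch below assumes the sapservices line format shown above, in which every entry references /usr/sap/<SID>/<instance>/. The function name comment_out_instance is made up for this example. Lines that are already commented out are left unchanged, and a copy of the file is kept with a .bak suffix.

```shell
#!/bin/sh
# Sketch: comment out the sapservices entries of one instance.
# Usage: comment_out_instance <sapservices file> <SID> <instance directory name>
# comment_out_instance is a hypothetical helper name.
comment_out_instance() {
    file="$1"
    sid="$2"
    inst="$3"
    # On lines that reference /usr/sap/<SID>/<instance>/ and do not already
    # start with '#', prefix the line with '#'. The address uses ',' as the
    # regex delimiter so that the slashes in the path need no escaping.
    sed -i.bak -e "\,/usr/sap/${sid}/${inst}/,s,^[^#],#&," "$file"
}
```

For example, comment_out_instance /usr/sap/sapservices S4H ASCS20 would be run as root on each cluster node, once per instance that the HA cluster will manage.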

3.4.4. Creating mount points for the instance specific directories on the failover node

The mount points where the instance-specific directories will be mounted have to be created and the user and group ownership must be set to the <sid>adm user and the sapsys group on all HA cluster nodes:

[root@node1]# mkdir /usr/sap/S4H/ERS29/
[root@node1]# chown s4hadm:sapsys /usr/sap/S4H/ERS29/
[root@node2]# mkdir /usr/sap/S4H/ASCS20
[root@node2]# chown s4hadm:sapsys /usr/sap/S4H/ASCS20
[root@node<x>]# mkdir /usr/sap/S4H/D<Ins#>
[root@node<x>]# chown s4hadm:sapsys /usr/sap/S4H/D<Ins#>

3.4.5. Verifying that the SAP instances can be started and stopped on all cluster nodes

Stop the (A)SCS and ERS instances using sapcontrol, unmount the instance-specific directories, and then mount them on the other node:

/usr/sap/S4H/ASCS20/
/usr/sap/S4H/ERS29/
/usr/sap/S4H/D<Ins#>/

Verify that manual starting and stopping of all SAP instances using sapcontrol works on all HA cluster nodes and that the SAP instances are running correctly using the tools provided by SAP.
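The manual start and stop check can be wrapped in a few shell functions. The following is a minimal sketch and not an official procedure: the function names are made up, the timeouts of 300 seconds with a 10 second polling delay are arbitrary, and it assumes that the commands run as the s4hadm user on a node where the instance directory is mounted and the sapstartsrv service of the instance is already running.

```shell
#!/bin/sh
# Sketch: stop or start one instance with sapcontrol and report its state.
# The function names are hypothetical; sapcontrol is the SAP-provided tool.

# Usage: stop_instance <instance number>
stop_instance() {
    nr="$1"
    sapcontrol -nr "$nr" -function Stop || return 1
    # Wait up to 300 seconds, polling every 10 seconds.
    sapcontrol -nr "$nr" -function WaitforStopped 300 10
}

# Usage: start_instance <instance number>
start_instance() {
    nr="$1"
    sapcontrol -nr "$nr" -function Start || return 1
    sapcontrol -nr "$nr" -function WaitforStarted 300 10
}

# Usage: instance_state <instance number>
instance_state() {
    nr="$1"
    rc=0
    sapcontrol -nr "$nr" -function GetProcessList >/dev/null 2>&1 || rc=$?
    # For GetProcessList, sapcontrol uses exit code 3 for "all processes
    # running" and exit code 4 for "all processes stopped".
    case "$rc" in
        3) echo running ;;
        4) echo stopped ;;
        *) echo unknown ;;
    esac
}
```

With the values used in this document, stop_instance 20 followed by instance_state 20 would be expected to report stopped on node1 before the /usr/sap/S4H/ASCS20/ directory is unmounted and mounted on node2.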

3.4.6. Verifying that the correct version of SAP Host Agent is installed on all HA cluster nodes

Run the following command on each cluster node to verify that the same version of SAP Host Agent is installed on all nodes and that it meets the minimum version requirement:

[root@node<x>]# /usr/sap/hostctrl/exe/saphostexec -version
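To compare the nodes without reading the output by eye, the command can be run remotely and the results compared as plain text. This is only a sketch: it assumes passwordless root SSH between the nodes, which this document does not require, and it treats the complete saphostexec -version output as the value to compare rather than parsing individual fields. The function names are made up for this example.

```shell
#!/bin/sh
# Sketch: compare the saphostexec -version output of two nodes.
# Assumes root SSH access to both nodes; helper names are hypothetical.

# Usage: hostagent_version <node>
hostagent_version() {
    ssh "root@$1" /usr/sap/hostctrl/exe/saphostexec -version
}

# Usage: compare_hostagent <node A> <node B>
# Returns 0 if the output is identical, 1 if it differs, 2 if a query failed.
compare_hostagent() {
    v1=$(hostagent_version "$1") || return 2
    v2=$(hostagent_version "$2") || return 2
    if [ "$v1" = "$v2" ]; then
        echo "SAP Host Agent version output is identical on $1 and $2"
    else
        echo "SAP Host Agent version output differs between $1 and $2" >&2
        return 1
    fi
}
```

A call such as compare_hostagent node1 node2 only shows whether the two nodes match. Whether the installed version meets the minimum requirement still has to be checked against the SAP documentation.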

Please check SAP Note 1031096 - Installing Package SAPHOSTAGENT in case SAP Host Agent needs to be updated.

3.4.7. Installing permanent SAP license keys

To ensure that the SAP instances continue to run after a failover, it might be necessary to install several SAP license keys based on the hardware key of each cluster node. Please see SAP Note 1178686 - Linux: Alternative method to generate a SAP hardware key for more information.

3.4.8. Additional changes required when using systemd enabled SAP instances

If the SAP instances that will be managed by the cluster are systemd enabled, additional configuration changes are required to ensure that systemd does not interfere with the management of the SAP instances by the HA cluster. For more information, see section 2. Red Hat HA Solutions for SAP in The Systemd-Based SAP Startup Framework.