Security hardening

Enhancing security of Red Hat Enterprise Linux 10 systems

Abstract

Providing feedback on Red Hat documentation

We are committed to providing high-quality documentation and value your feedback. To help us improve, you can submit suggestions or report errors through the Red Hat Jira tracking system.

Procedure

- Log in to the Jira website. If you do not have an account, select the option to create one.

- Click Create in the top navigation bar.

- Enter a descriptive title in the Summary field.

- Enter your suggestion for improvement in the Description field. Include links to the relevant parts of the documentation.

- Click Create at the bottom of the dialogue.

Chapter 1. Switching RHEL to FIPS mode

To enable the cryptographic module self-checks mandated by the Federal Information Processing Standard (FIPS) 140-3, you must operate RHEL 10 in FIPS mode. The only correct way to switch the system to FIPS mode is to enable it during the RHEL installation.

Switching a system to FIPS mode after installation is not supported in Red Hat Enterprise Linux 10. In particular, setting the FIPS system-wide cryptographic policy is not sufficient to enable FIPS mode and guarantee compliance with the FIPS 140 standard. The fips-mode-setup tool, which used the FIPS policy for enabling FIPS mode, has been removed from RHEL.

To turn off FIPS mode, you must reinstall the system without enabling FIPS mode during the installation process.

1.1. Federal Information Processing Standards 140 and FIPS mode

The Federal Information Processing Standard (FIPS) 140 is a government standard specifying cryptographic module security. FIPS mode enables required self-checks for compliance.

FIPS Publication 140 is a series of computer security standards developed by the National Institute of Standards and Technology (NIST) to ensure the quality of cryptographic modules. The FIPS 140 standard ensures that cryptographic tools implement their algorithms correctly. Runtime self-tests of cryptographic algorithms and of module integrity are some of the mechanisms that ensure a system uses cryptography that meets the requirements of the standard.

1.1.1. RHEL in FIPS mode

To ensure that your RHEL system generates and uses all cryptographic keys only with FIPS-approved algorithms, you must switch RHEL to FIPS mode.

To enable FIPS mode, start the installation in FIPS mode. This avoids cryptographic key material regeneration and reevaluation of the compliance of the resulting system associated with converting already deployed systems. Additionally, components that change their algorithm choices based on whether FIPS mode is enabled choose the correct algorithms. For example, LUKS disk encryption uses the PBKDF2 key derivation function (KDF) during installation in FIPS mode, but it chooses the non-FIPS-compliant Argon2 KDF otherwise. Therefore, a non-FIPS installation with disk encryption is either not compliant or potentially unbootable when switched to FIPS mode after the installation.

To operate a FIPS-compliant system, create all cryptographic key material in FIPS mode. Furthermore, the cryptographic key material must never leave the FIPS environment unless it is securely wrapped and never unwrapped in non-FIPS environments.
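The LUKS example above can be checked on an already deployed system. The following is a minimal sketch, not a Red Hat procedure: the function name non_fips_kdfs is hypothetical, and it assumes that the output of cryptsetup luksDump for a LUKS2 device reports the KDF of each keyslot on a line that starts with PBKDF:. The sample input is illustrative and was not captured from a real device.

```shell
#!/bin/sh
# Hypothetical helper: read `cryptsetup luksDump` output on stdin and print
# every key derivation function that is not PBKDF2.
# Assumption: each keyslot reports its KDF on a line of the form "PBKDF: <name>".
non_fips_kdfs() {
    awk '$1 == "PBKDF:" && $2 != "pbkdf2" { print $2 }'
}

# Illustrative input only; on a real system, pipe in the output of
#   cryptsetup luksDump <device>
sample='  0: luks2
        PBKDF:      argon2id
  1: luks2
        PBKDF:      pbkdf2'

printf '%s\n' "$sample" | non_fips_kdfs
```

Any name that the function prints points to a keyslot that was probably created outside FIPS mode.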

1.1.2. FIPS mode status

Whether FIPS mode is enabled is determined by the fips=1 boot option on the kernel command line. The system-wide cryptographic policy automatically follows this setting if it is not explicitly set by using the update-crypto-policies --set FIPS command. Systems with a separate partition for /boot additionally require a boot=UUID=<uuid-of-boot-disk> kernel command line argument. The installation program performs the required changes when started in FIPS mode.

Enforcement of restrictions required in FIPS mode depends on the contents of the /proc/sys/crypto/fips_enabled file. If the file contains 1, RHEL core cryptographic components switch to a mode in which they use only FIPS-approved implementations of cryptographic algorithms. If /proc/sys/crypto/fips_enabled contains 0, the cryptographic components do not enable their FIPS mode.
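The two indicators described in this section, the fips=1 boot option and the fips_enabled file, can be read from a script. The following is a minimal sketch under stated assumptions: the function names fips_state and cmdline_has_fips are hypothetical, and the optional file argument exists only so that the function can be tried against a test file.

```shell
#!/bin/sh
# Hypothetical helper: report the FIPS state from a fips_enabled-style file.
# With no argument, it reads /proc/sys/crypto/fips_enabled.
fips_state() {
    file="${1:-/proc/sys/crypto/fips_enabled}"
    if [ ! -r "$file" ]; then
        echo "unknown"
        return 2
    fi
    case "$(cat "$file")" in
        1) echo "enabled" ;;
        0) echo "disabled" ;;
        *) echo "unknown"; return 2 ;;
    esac
}

# Hypothetical helper: succeed if a kernel command line string contains fips=1.
cmdline_has_fips() {
    case " $1 " in
        *" fips=1 "*) return 0 ;;
        *) return 1 ;;
    esac
}

# Print the state of the running system without failing the script.
fips_state || true
```

To check the boot option of the running system, pass the kernel command line to the second function, for example cmdline_has_fips "$(cat /proc/cmdline)".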

1.1.3. FIPS in crypto-policies

The FIPS system-wide cryptographic policy helps to configure higher-level restrictions. Therefore, communication protocols supporting cryptographic agility do not announce ciphers that the system refuses when selected. For example, the ChaCha20 algorithm is not FIPS-approved, and the FIPS cryptographic policy ensures that TLS servers and clients do not announce the TLS_ECDHE_ECDSA_WITH_CHACHA20_POLY1305_SHA256 TLS cipher suite, because any attempt to use such a cipher fails.

If you operate RHEL in FIPS mode and use an application providing its own FIPS-mode-related configuration options, ignore these options and the corresponding application guidance. The system runs in FIPS mode and the system-wide cryptographic policies enforce only FIPS-compliant cryptography. For example, the Node.js configuration option --enable-fips is ignored if the system runs in FIPS mode. If you use the --enable-fips option on a system not running in FIPS mode, you do not meet the FIPS 140 compliance requirements.

1.1.4. RHEL 10.0 OpenSSL FIPS indicators

Because RHEL introduced OpenSSL FIPS indicators before the OpenSSL upstream did, and both designs differ, the indicators might change in a future minor version of RHEL 10. After the potential adoption of the upstream API, the RHEL 10.0 indicators might return an error message "unsupported" instead of a result. See the OpenSSL FIPS Indicators GitHub document for details.

For the certification status of RHEL 10 cryptographic modules according to the FIPS 140-3 requirements by the National Institute of Standards and Technology (NIST) Cryptographic Module Validation Program (CMVP), see the FIPS - Federal Information Processing Standards section on the Product compliance Red Hat Customer Portal page.

1.2. Installing the system with FIPS mode enabled

To enable the cryptographic module self-checks mandated by the Federal Information Processing Standard (FIPS) 140, enable FIPS mode during the Red Hat Enterprise Linux system installation.

After you complete the setup of FIPS mode, you cannot disable FIPS mode without putting the system into an inconsistent state. If your scenario requires this change, the only correct way is a complete re-installation of the system.

Procedure

- Add the fips=1 option to the kernel command line at the start of the system installation, when the Red Hat Enterprise Linux boot window opens and displays available boot options.

  - On UEFI systems, press the e key, move the cursor to the end of the linuxefi kernel command line, and add fips=1 to the end of this line, for example:

        linuxefi /images/pxeboot/vmlinuz inst.stage2=hd:LABEL=RHEL-10-0-BaseOS-x86_64 rd.live.check quiet fips=1

  - On BIOS systems, press the Tab key, move the cursor to the end of the kernel command line, and add fips=1 to the end of this line, for example:

        > vmlinuz initrd=initrd.img inst.stage2=hd:LABEL=RHEL-10-0-BaseOS-x86_64 rd.live.check quiet fips=1

- During the software selection stage, do not install any third-party software.

- After the installation, the system starts in FIPS mode automatically.

Verification

- After the system starts, check that FIPS mode is enabled:

      $ cat /proc/sys/crypto/fips_enabled
      1

1.3. Enabling FIPS mode with RHEL image builder

You can create a customized image and boot a FIPS-enabled RHEL image. Before you compose the image, you must change the value of the fips directive in your blueprint.

Prerequisites

- You are logged in as the root user or a user who is a member of the weldr group.

Procedure

- Create a blueprint as a plain text file in the Tom’s Obvious, Minimal Language (TOML) format, and set the fips directive in it to enable FIPS mode.

- Build the customized RHEL image:

      # image-builder build image-type --blueprint blueprint-name
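The blueprint content is not reproduced in this section. The following is a minimal sketch of what such a file might look like, written as a shell function so that it can be tried safely. The file name, the function name write_fips_blueprint, and the name, description, and version values are placeholders, and fips = true in the [customizations] table is an assumption about the form of the fips directive. The sketch also omits the user account that the verification step relies on.

```shell
#!/bin/sh
# Write a minimal blueprint that turns on the fips customization.
# Assumptions: all values below are placeholders, and "fips = true" under
# [customizations] is the assumed spelling of the fips directive.
write_fips_blueprint() {
    cat > "$1" <<'EOF'
name = "fips-example"
description = "Example blueprint with FIPS mode enabled"
version = "0.0.1"

[customizations]
fips = true
EOF
}

write_fips_blueprint ./fips-blueprint.toml
```

The resulting file would then be passed to the --blueprint option of the build command.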

Verification

- Log in to the system image with the username and password that you configured in your blueprint.

- Check if FIPS mode is enabled:

      $ cat /proc/sys/crypto/fips_enabled
      1

1.4. Creating a bootable disk image for a FIPS-enabled system

You can create a disk image and enable FIPS mode when performing an Anaconda installation. You must add the fips=1 kernel argument when booting the disk image.

Prerequisites

- You have Podman installed on your host machine.

- You have virt-install installed on your host machine.

- You have root access to run the bootc-image-builder tool, and run the containers in --privileged mode, to build the images.

Procedure

- Create a 01-fips.toml file to configure FIPS enablement, for example:

      # Enable FIPS
      kargs = ["fips=1"]

- Create a Containerfile with the following instructions to enable the fips=1 kernel argument and adjust the cryptographic policies:

      FROM registry.redhat.io/rhel10/rhel-bootc:latest

      # Enable fips=1 kernel argument: https://bootc-dev.github.io/bootc/building/kernel-arguments.html
      COPY 01-fips.toml /usr/lib/bootc/kargs.d/
      # Install and enable the FIPS crypto policy
      RUN dnf install -y crypto-policies-scripts && update-crypto-policies --no-reload --set FIPS

- Before running the container, initialize the output folder. Use the -p argument to ensure that the command does not fail if the directory already exists:

      $ mkdir -p ./output

- Create your bootc <image> compatible base disk image by using the Containerfile in the current directory.

- Enable FIPS mode during the system installation:

  - When booting the RHEL Anaconda installer, on the installation screen, press the TAB key and add the fips=1 kernel argument.

  After the installation, the system starts in FIPS mode automatically.

Verification

- After logging in to the system, check that FIPS mode is enabled:

      $ cat /proc/sys/crypto/fips_enabled
      1
      $ update-crypto-policies --show
      FIPS

1.5. List of RHEL applications using cryptography that is not compliant with FIPS 140-3

Review the RHEL applications that use cryptography that is not compliant with FIPS 140-3. To pass all relevant cryptographic certifications, such as FIPS 140-3, use libraries from the core cryptographic components set.

These libraries, except for libgcrypt, also follow the RHEL system-wide cryptographic policies.

See the RHEL core cryptographic components Red Hat Knowledgebase article for information about the core cryptographic components, how they are selected, how they integrate with the operating system, how they support hardware security modules and smart cards, and how cryptographic certifications apply to them.

The following RHEL 10 applications use cryptography that is not compliant with FIPS 140-3:

- Bacula

- Implements the CRAM-MD5 authentication protocol.

- Cyrus SASL

- Uses the SCRAM-SHA-1 authentication method.

- curl

- Supports NTLM authentication which uses MD4 and MD5.

- Dovecot

- Uses SCRAM-SHA-1.

- Emacs

- Uses SCRAM-SHA-1.

- FreeRADIUS

- Uses MD5 and SHA-1 for authentication protocols.

- Ghostscript

- Custom cryptography implementation (MD5, RC4, SHA-2, AES) to encrypt and decrypt documents.

- GnuPG

- The package uses the libgcrypt module, which is not validated.

- GRUB2

- Supports legacy firmware protocols requiring SHA-1 and includes the libgcrypt library.

- iPXE

- Implements TLS stack.

- Kerberos

- Preserves support for SHA-1 (interoperability with Windows).

- Lasso

- The lasso_wsse_username_token_derive_key() key derivation function (KDF) uses SHA-1.

- libgcrypt

- The module is deprecated. It is no longer validated since RHEL 10.0.

- MariaDB, MariaDB Connector

- The mysql_native_password authentication plugin uses SHA-1.

- MySQL

- mysql_native_password uses SHA-1.

- OpenIPMI

- The RAKP-HMAC-MD5 authentication method is not approved for FIPS usage and does not work in FIPS mode.

- Ovmf (UEFI firmware), Edk2, shim

- Full cryptographic stack (an embedded copy of the OpenSSL library).

- Perl

- Uses HMAC, HMAC-SHA1, HMAC-MD5, SHA-1, and SHA-224.

- Pidgin

- Implements DES and RC4 ciphers.

- Poppler

- Can save PDFs with signatures, passwords, and encryption based on non-allowed algorithms if they are present in the original PDF (for example, MD5, RC4, and SHA-1).

- PostgreSQL

- Implements Blowfish, DES, and MD5. A KDF uses SHA-1.

- QAT Engine

- Uses a mix of hardware and software implementation of cryptographic primitives (RSA, EC, DH, AES, and others).

- Ruby

- Provides insecure MD5 and SHA-1 library functions.

- Samba

- Preserves support for RC4 and DES (interoperability with Windows).

- Sequoia

- Uses the deprecated OpenSSL API, which does not work in FIPS mode.

- Syslinux

- Firmware passwords use SHA-1.

- SWTPM

- Explicitly disables FIPS mode in its OpenSSL usage.

- Unbound

- DNS specification requires that DNSSEC resolvers use a SHA-1-based algorithm in DNSKEY records for validation.

- Valgrind

- AES, SHA hashes.[1]

- zip

- Custom cryptography implementation (insecure PKWARE encryption algorithm) to encrypt and decrypt archives by using a password.

Chapter 2. Using system-wide cryptographic policies

The system-wide cryptographic policies component configures the core cryptographic subsystems, which cover the TLS, IPsec, SSH, DNSSEC, and Kerberos protocols. As an administrator, you can select one of the provided cryptographic policies for your system.

2.1. System-wide cryptographic policies

The RHEL system-wide cryptographic policies configure core subsystems, such as TLS and SSH, which ensures that applications reject weak algorithms by default. The four predefined policies are DEFAULT, LEGACY, FUTURE, and FIPS.

When a system-wide policy is set up, applications in RHEL follow it and deny using algorithms and protocols that do not meet the policy, unless you explicitly request the application to do so. That is, the policy applies to the default behavior of applications when running with the system-provided configuration but you can override it if required.

2.1.1. Predefined cryptographic policies

RHEL 10 contains the following predefined policies:

- DEFAULT

- The default system-wide cryptographic policy level offers secure settings for current threat models. It allows the TLS 1.2 and 1.3 protocols, as well as the IKEv2 and SSH2 protocols. The RSA keys and Diffie-Hellman parameters are accepted if they are at least 2048 bits long. TLS ciphers that use the RSA key exchange are rejected.

- LEGACY

- Ensures maximum compatibility with Red Hat Enterprise Linux 6 and earlier; it is less secure due to an increased attack surface. CBC-mode ciphers are allowed to be used with SSH. It allows the TLS 1.2 and 1.3 protocols, as well as the IKEv2 and SSH2 protocols. The RSA keys and Diffie-Hellman parameters are accepted if they are at least 2048 bits long. SHA-1 signatures are allowed outside TLS. Ciphers that use the RSA key exchange are accepted.

- FUTURE

- A stricter forward-looking security level intended for testing a possible future policy. This policy does not allow the use of SHA-1 in DNSSEC or as an HMAC. SHA2-224 and SHA3-224 hashes are rejected. 128-bit ciphers are disabled. CBC-mode ciphers are disabled except in Kerberos. It allows the TLS 1.2 and 1.3 protocols, as well as the IKEv2 and SSH2 protocols. The RSA keys and Diffie-Hellman parameters are accepted if they are at least 3072 bits long. If your system communicates on the public internet, you might face interoperability problems.

  Important: Because a cryptographic key used by a certificate on the Customer Portal API does not meet the requirements by the FUTURE system-wide cryptographic policy, the redhat-support-tool utility does not work with this policy level at the moment. To work around this problem, use the DEFAULT cryptographic policy while connecting to the Customer Portal API.

- FIPS

- Conforms with the FIPS 140 requirements. Red Hat Enterprise Linux systems in FIPS mode use this policy.

  Note: Your system is not FIPS-compliant after you set the FIPS cryptographic policy. The only correct way to make your RHEL system compliant with the FIPS 140 standards is by installing it in FIPS mode.

  RHEL also provides the FIPS:OSPP system-wide subpolicy, which contains further restrictions for cryptographic algorithms required by the Common Criteria (CC) certification. The system becomes less interoperable after you set this subpolicy. For example, you cannot use RSA and DH keys shorter than 3072 bits, additional SSH algorithms, and several TLS groups. Setting FIPS:OSPP also prevents connecting to Red Hat Content Delivery Network (CDN) structure. Furthermore, you cannot integrate Active Directory (AD) into the IdM deployments that use FIPS:OSPP, communication between RHEL hosts that use FIPS:OSPP and AD domains might not work, or some AD accounts might not be able to authenticate.

  Note: Your system is not CC-compliant after you set the FIPS:OSPP cryptographic subpolicy. The only correct way to make your RHEL system compliant with the CC standard is by following the guidance provided in the cc-config package. See the Common Criteria section on the Product compliance Red Hat Customer Portal page for a list of certified RHEL versions, validation reports, and links to CC guides.

Red Hat continuously adjusts all policy levels so that all libraries provide secure defaults, except when using the LEGACY policy. Even though the LEGACY profile does not provide secure defaults, it does not include any algorithms that are easily exploitable. As such, the set of enabled algorithms or acceptable key sizes in any provided policy might change during the lifetime of Red Hat Enterprise Linux.

Such changes reflect new security standards and new security research. If you must ensure interoperability with a specific system for the whole lifetime of Red Hat Enterprise Linux, opt out from the system-wide cryptographic policies for components that interact with that system or re-enable specific algorithms by using custom cryptographic policies.

The specific algorithms and ciphers described as allowed in the policy levels are available only if an application supports them:

| | LEGACY | DEFAULT | FIPS | FUTURE |
|---|---|---|---|---|
| IKEv1 | no | no | no | no |
| 3DES | no | no | no | no |
| RC4 | no | no | no | no |
| DH | min. 2048-bit | min. 2048-bit | min. 2048-bit | min. 3072-bit |
| RSA | min. 2048-bit | min. 2048-bit | min. 2048-bit | min. 3072-bit |
| DSA | no | no | no | no |
| TLS v1.1 and older | no | no | no | no |
| TLS v1.2 and newer | yes | yes | yes | yes |
| SHA-1 in digital signatures and certificates | yes[a] | no | no | no |
| CBC mode ciphers | yes | no[b] | no[c] | no[d] |
| Symmetric ciphers with keys < 256 bits | yes | yes | yes | no |

[a] SHA-1 signatures are disabled in TLS contexts

[b] CBC ciphers are disabled for SSH

[c] CBC ciphers are disabled for all protocols except Kerberos

[d] CBC ciphers are disabled for all protocols except Kerberos

You can find further details about cryptographic policies and covered applications in the crypto-policies(7) man page on your system.
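The DH and RSA rows of the table above can be expressed as a small lookup, which might be convenient when checking key sizes before switching policies. The following is a minimal sketch: the function names min_key_bits and key_size_allowed are hypothetical, and the values reflect only the predefined policies in the table, not any subpolicy or custom policy.

```shell
#!/bin/sh
# Hypothetical helper: minimum RSA and DH size, in bits, that each predefined
# policy accepts according to the table in this section.
min_key_bits() {
    case "$1" in
        LEGACY|DEFAULT|FIPS) echo 2048 ;;
        FUTURE)              echo 3072 ;;
        *) echo "unknown policy: $1" >&2; return 1 ;;
    esac
}

# Hypothetical helper: succeed if a key of $2 bits meets the minimum of policy $1.
key_size_allowed() {
    min=$(min_key_bits "$1") || return 1
    [ "$2" -ge "$min" ]
}

key_size_allowed FUTURE 2048 || echo "2048-bit keys are rejected under FUTURE"
```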

2.1.2. Post-quantum cryptography

RHEL 10.0 introduced post-quantum cryptography (PQC) in its core cryptographic subsystems. The purpose of PQC is to safeguard the confidentiality, integrity, and authenticity of digital communication and stored data against future attacks that use cryptographically relevant quantum computers (CRQCs).

While CRQCs are not currently operational, continued advances in quantum computing might render classic cryptographic algorithms such as RSA and elliptic curve cryptography computationally feasible to break. PQC algorithms mitigate this anticipated long-term security risk by providing quantum-resistant equivalents. You can prevent the current risk of "harvest now, decrypt later" attacks by configuring RHEL with PQC.

Starting with RHEL 10.1, post-quantum cryptography (PQC) algorithms are enabled by default in all predefined policy levels. To turn off support for post-quantum cryptography, apply the NO-PQ subpolicy to your selected cryptographic policy.

2.2. Changing the system-wide cryptographic policy

You can change the system-wide cryptographic policy on your system by using the update-crypto-policies tool and restarting your system. With update-crypto-policies, you can quickly switch between predefined policies such as DEFAULT, LEGACY, or FUTURE.

The update-crypto-policies(8) man page on your system provides the reference of all options, corresponding files, and per-application details.

Prerequisites

- You have root privileges on the system.

Procedure

- Optional: Display the current cryptographic policy:

      $ update-crypto-policies --show
      DEFAULT

- Set the new cryptographic policy:

      # update-crypto-policies --set <POLICY>
      <POLICY>

  Replace <POLICY> with the policy or subpolicy you want to set, for example, FUTURE, LEGACY, or FIPS:OSPP.

- Restart the system:

      # reboot

Verification

- Display the current cryptographic policy:

      $ update-crypto-policies --show
      <POLICY>
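The value passed to --set is a base policy that can be followed by colon-separated subpolicies, as in FIPS:OSPP. The following is a minimal sketch of taking such a string apart before applying it. The function names base_policy, subpolicies, and is_predefined are hypothetical, and the sketch does not call update-crypto-policies itself.

```shell
#!/bin/sh
# Hypothetical helper: print the base policy of a string such as FIPS:OSPP.
base_policy() {
    printf '%s\n' "${1%%:*}"
}

# Hypothetical helper: print each subpolicy on its own line, or nothing.
subpolicies() {
    case "$1" in
        *:*) printf '%s\n' "${1#*:}" | tr ':' '\n' ;;
    esac
}

# Hypothetical helper: succeed if the base is one of the four predefined policies.
is_predefined() {
    case "$(base_policy "$1")" in
        DEFAULT|LEGACY|FUTURE|FIPS) return 0 ;;
        *) return 1 ;;
    esac
}

policy="FIPS:OSPP"
is_predefined "$policy" && echo "base policy: $(base_policy "$policy")"
```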

2.3. Switching the system-wide cryptographic policy to mode compatible with earlier releases

Switch to the LEGACY cryptographic policy if environments require compatibility with systems such as RHEL 6 or earlier. This action sacrifices security for interoperability with insecure protocols.

Switching to the LEGACY policy results in a less secure system and applications.

Prerequisites

- Commands that start with the # command prompt require administrative privileges provided by sudo or root user access. For information on how to configure sudo access, see Enabling unprivileged users to run certain commands.

Procedure

- To switch the system-wide cryptographic policy to LEGACY, enter:

      # update-crypto-policies --set LEGACY
      Setting system policy to LEGACY

  For the list of available cryptographic policies, see the update-crypto-policies(8) man page on your system.

- To make your cryptographic settings effective for already running services and applications, restart the system:

      # reboot

Verification

- After the restart, verify the current policy is set to LEGACY:

      $ update-crypto-policies --show
      LEGACY

Next steps

- For defining custom cryptographic policies, see the Custom Policies section in the update-crypto-policies(8) man page and the Crypto Policy Definition Format section in the crypto-policies(7) man page on your system.

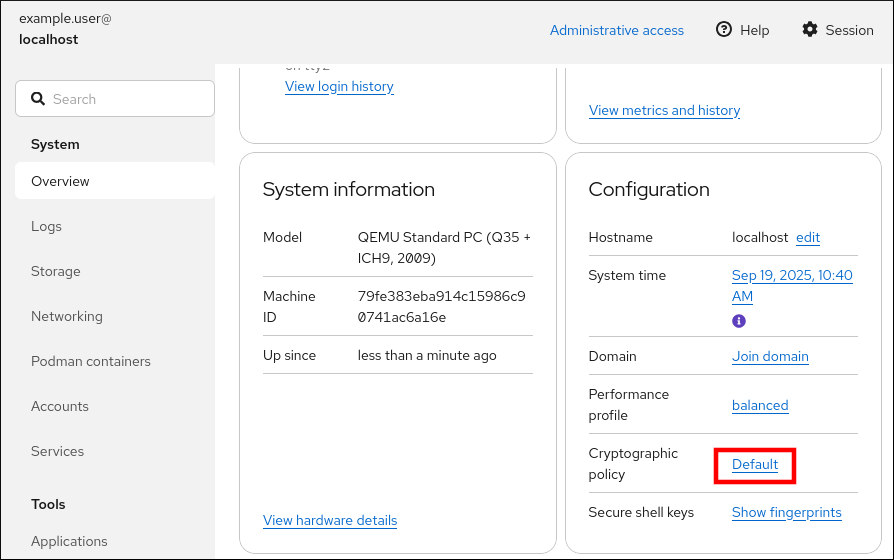

2.4. Setting up system-wide cryptographic policies in the web console

You can set up system-wide cryptographic policies in the RHEL web console. This provides a graphical interface for changing policies such as DEFAULT, LEGACY, or FUTURE.

Besides the three predefined system-wide cryptographic policies, you can also apply a combination of the LEGACY policy and the AD-SUPPORT subpolicy through the graphical interface. The LEGACY:AD-SUPPORT policy is the LEGACY policy with less secure settings that improve interoperability for Active Directory services.

Prerequisites

- You have installed the RHEL 10 web console. For instructions, see Installing and enabling the web console.

- Commands that start with the # command prompt require administrative privileges provided by sudo or root user access. For information on how to configure sudo access, see Enabling unprivileged users to run certain commands.

Procedure

- Log in to the RHEL 10 web console.

- In the Configuration card of the Overview page, click your current policy value next to Crypto policy.

- In the Change crypto policy dialog window, click the policy you want to start using on your system.

- Click the button.

Verification

- After the restart, log back in to the web console, and check that the Crypto policy value corresponds to the one you selected.

  Alternatively, you can enter the update-crypto-policies --show command to display the current system-wide cryptographic policy in your terminal.

2.5. Examples of opting out of system-wide cryptographic policies

Review examples demonstrating how to opt out of system-wide crypto policies for applications such as OpenSSH and wget. Use these methods when specific applications require legacy ciphers or protocols.

You can customize cryptographic settings used by your application by configuring supported cipher suites and protocols directly in the application.

You can also remove a symlink related to your application from the /etc/crypto-policies/back-ends directory and replace it with your customized cryptographic settings. This configuration prevents system-wide cryptographic policies from being applied to applications that use the excluded back end. Furthermore, this modification is not supported by Red Hat.

- curl

- To specify ciphers used by the curl tool, use the --ciphers option and provide a colon-separated list of ciphers as a value. For example:

      $ curl https://example.com --ciphers '@SECLEVEL=0:DES-CBC3-SHA:RSA-DES-CBC3-SHA'

  See the curl(1) man page for more information.

- Libreswan

- See the Enabling legacy ciphers and algorithms in Libreswan section in the Securing networks document for detailed information.

- Mozilla Firefox

- Even though you cannot opt out of system-wide cryptographic policies in the Mozilla Firefox web browser, you can further restrict supported ciphers and TLS versions in Firefox’s Configuration Editor. Type about:config in the address bar and change the value of the security.tls.version.min option as required. Setting security.tls.version.min to 1 allows TLS 1.0 as the minimum required, setting it to 2 allows TLS 1.1 as the minimum, and so on.

- OpenSSH server

- To opt out of the system-wide cryptographic policies for your OpenSSH server, specify the cryptographic policy in a drop-in configuration file located in the /etc/ssh/sshd_config.d/ directory. Use a two-digit number prefix smaller than 50, so that it lexicographically precedes the 50-redhat.conf file, and a .conf suffix, for example, 49-crypto-policy-override.conf.

  See the sshd_config(5) man page for more information.

- OpenSSH client

- To opt out of system-wide cryptographic policies for your OpenSSH client, perform one of the following tasks:

  - For a given user, override the global ssh_config with a user-specific configuration in the ~/.ssh/config file.

  - For the entire system, specify the cryptographic policy in a drop-in configuration file located in the /etc/ssh/ssh_config.d/ directory, with a two-digit number prefix smaller than 50, so that it lexicographically precedes the 50-redhat.conf file, and with a .conf suffix, for example, 49-crypto-policy-override.conf.

  See the ssh_config(5) man page for more information.

- wget

- To customize cryptographic settings used by the wget network downloader, use the --secure-protocol and --ciphers options. For example:

      $ wget --secure-protocol=TLSv1_1 --ciphers="SECURE128" https://example.com

  See the HTTPS (SSL/TLS) Options section of the wget(1) man page for more information.
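The naming rule for the OpenSSH drop-in files matters because a file that sorts after 50-redhat.conf is read too late to override it. The following is a minimal sketch of checking a candidate file name before placing it in the drop-in directory. The function name precedes_redhat_conf is hypothetical, and the check assumes that files are read in byte-wise (C locale) order.

```shell
#!/bin/sh
# Hypothetical helper: succeed if a drop-in file name has a .conf suffix and
# sorts lexicographically before 50-redhat.conf.
precedes_redhat_conf() {
    case "$1" in
        *.conf) ;;
        *) return 1 ;;
    esac
    # Sort the candidate together with 50-redhat.conf and keep the first name.
    first=$(printf '%s\n' "$1" 50-redhat.conf | LC_ALL=C sort | head -n 1)
    [ "$1" != 50-redhat.conf ] && [ "$first" = "$1" ]
}

precedes_redhat_conf 49-crypto-policy-override.conf && echo "read before 50-redhat.conf"
```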

2.6. Customizing system-wide cryptographic policies with subpolicies

Adjust the set of enabled cryptographic algorithms or protocols on the system. In RHEL, you can either apply custom subpolicies on top of an existing system-wide cryptographic policy or define such a policy from scratch.

The concept of scoped policies enables different sets of algorithms for different back ends. You can limit each configuration directive to specific protocols, libraries, or services.

Furthermore, you can use wildcard characters in directives, for example, an asterisk to specify multiple values. For the complete syntax reference, see the Custom Policies section in the update-crypto-policies(8) man page and the Crypto Policy Definition Format section in the crypto-policies(7) man page on your system.

- The /etc/crypto-policies/state/CURRENT.pol file lists all settings in the currently applied system-wide cryptographic policy after wildcard expansion.

- To make your cryptographic policy more strict, consider using values listed in the /usr/share/crypto-policies/policies/FUTURE.pol file.

- You can find example subpolicies in the /usr/share/crypto-policies/policies/modules/ directory.

Procedure

- Change to the /etc/crypto-policies/policies/modules/ directory:

      # cd /etc/crypto-policies/policies/modules/

- Create subpolicies for your adjustments, for example:

      # touch <MYCRYPTO-1>.pmod
      # touch <SCOPES-AND-WILDCARDS>.pmod

  Important: Use upper-case letters in file names of policy modules.

- Open the policy modules in a text editor of your choice and insert options that modify the system-wide cryptographic policy, for example:

      # vi <MYCRYPTO-1>.pmod

      min_rsa_size = 3072
      hash = SHA2-384 SHA2-512 SHA3-384 SHA3-512

      # vi <SCOPES-AND-WILDCARDS>.pmod

- Save the changes in the module files.

- Apply your policy adjustments to the DEFAULT system-wide cryptographic policy level:

      # update-crypto-policies --set DEFAULT:<MYCRYPTO-1>:<SCOPES-AND-WILDCARDS>

- To make your cryptographic settings effective for already running services and applications, restart the system:

      # reboot

Verification

Check that the /etc/crypto-policies/state/CURRENT.pol file contains your changes, for example:

$ cat /etc/crypto-policies/state/CURRENT.pol | grep rsa_size
min_rsa_size = 3072
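If you want to check several values at once, the same verification can be scripted. The following Python sketch parses "key = value" lines into a dictionary. It assumes that the policy file consists of such lines, as the grep output above suggests, and that text after a # character is a comment. The function name read_policy_values and the sample text are illustrative only.

```python
def read_policy_values(text):
    """Parse 'key = value' lines into a dict; later lines override earlier ones."""
    values = {}
    for line in text.splitlines():
        line = line.split("#", 1)[0].strip()  # drop comments and padding
        if "=" not in line:
            continue
        key, value = line.split("=", 1)
        values[key.strip()] = value.strip()
    return values


# Sample text for illustration; not captured from a real system.
sample = """\
# illustrative content only
min_rsa_size = 3072
hash = SHA2-384 SHA2-512 SHA3-384 SHA3-512
"""
policy = read_policy_values(sample)
print(policy["min_rsa_size"])
```

On a real system, you could pass the content of the /etc/crypto-policies/state/CURRENT.pol file to the function instead of the sample text.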

2.7. Creating and setting a custom system-wide cryptographic policy

Apply custom subpolicies on top of an existing system-wide policy to modify enabled cryptographic algorithms or protocols. By using this granular customization, you can ensure that your system adheres to your specific security requirements.

Procedure

Create a policy file for your customizations:

# cd /etc/crypto-policies/policies/
# touch <MYPOLICY>.pol

Alternatively, start by copying one of the four predefined policy levels:

# cp /usr/share/crypto-policies/policies/DEFAULT.pol /etc/crypto-policies/policies/<MYPOLICY>.pol

Edit the file with your custom cryptographic policy in a text editor of your choice to fit your requirements, for example:

# vi /etc/crypto-policies/policies/<MYPOLICY>.pol

See the Custom Policies section in the update-crypto-policies(8) man page and the Crypto Policy Definition Format section in the crypto-policies(7) man page on your system for the complete syntax reference.

Switch the system-wide cryptographic policy to your custom level:

# update-crypto-policies --set <MYPOLICY>

To make your cryptographic settings effective for already running services and applications, restart the system:

# reboot

2.8. Disabling post-quantum algorithms system-wide

From RHEL 10.1, post-quantum cryptography (PQC) algorithms are enabled in all predefined policies by default. You can turn them off by applying the NO-PQ subpolicy.

Prerequisites

- Commands that start with the # command prompt require administrative privileges provided by sudo or root user access. For information on how to configure sudo access, see Enabling unprivileged users to run certain commands.

Procedure

Apply the NO-PQ cryptographic subpolicy on top of your current system-wide policy, for example:

# update-crypto-policies --show
DEFAULT
# update-crypto-policies --set DEFAULT:NO-PQ

To make your cryptographic settings effective for already running services and applications, restart the system:

# reboot

Verification

Check that the /etc/crypto-policies/state/CURRENT.pol file does not contain the PQC algorithm strings MLKEM, MLDSA, and KEM-ECDH, for example:

$ cat /etc/crypto-policies/state/CURRENT.pol | grep MLKEM

The output of the previous command must be empty.
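The grep command above checks one string at a time. As a rough sketch, the following Python function searches policy text for all three PQC strings named in this section in one pass. The function name find_pqc_lines and the sample lines in the usage example are hypothetical and serve only as an illustration.

```python
# PQC strings named in the verification step of this section.
PQC_MARKERS = ("MLKEM", "MLDSA", "KEM-ECDH")


def find_pqc_lines(text):
    """Return the lines of a policy dump that mention any PQC marker."""
    return [
        line
        for line in text.splitlines()
        if any(marker in line for marker in PQC_MARKERS)
    ]


# Illustrative input only; not captured from a real system.
sample = "group = X25519 SECP256R1\nhash = SHA2-256 SHA2-384\n"
print(find_pqc_lines(sample))
```

An empty list corresponds to the empty grep output that the verification expects after the NO-PQ subpolicy is applied.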

2.9. Enhancing security with the FUTURE cryptographic policy using the crypto_policies RHEL system role

You can use the crypto_policies RHEL system role to configure the FUTURE cryptographic policy on your managed nodes.

The FUTURE policy helps to achieve, for example:

- Future-proofing against emerging threats

- Anticipates advancements in computational power.

- Enhanced security

- Stronger encryption standards require longer key lengths and more secure algorithms.

- Compliance with high-security standards

- In some industries, for example, healthcare, telco, and finance, data sensitivity is high and the availability of strong cryptography is critical.

Typically, FUTURE is suitable for environments handling highly sensitive data, preparing for future regulations, or adopting long-term security strategies.

Legacy systems and software might not support the more modern and stricter algorithms and protocols enforced by the FUTURE policy. For example, older systems might not support TLS 1.3 or larger key sizes. This could lead to interoperability problems.

Also, using strong algorithms usually increases the computational workload, which could negatively affect your system performance.

Prerequisites

- You have prepared the control node and the managed nodes.

- You are logged in to the control node as a user who can run playbooks on the managed nodes.

- The account you use to connect to the managed nodes has sudo permissions for these nodes.

Procedure

Create a playbook file, for example, ~/playbook.yml, with the following content:

The settings specified in the example playbook include the following:

- crypto_policies_policy: FUTURE

- Configures the required cryptographic policy (FUTURE) on the managed node. It can be either the base policy or a base policy with some subpolicies. The specified base policy and subpolicies have to be available on the managed node. The default value is null, which means that the configuration is not changed and the crypto_policies RHEL system role only collects the Ansible facts.

- crypto_policies_reboot_ok: true

- Causes the system to reboot after the cryptographic policy change to make sure all of the services and applications will read the new configuration files. The default value is false.

For details about the role variables and the cryptographic configuration options, see the /usr/share/ansible/roles/rhel-system-roles.crypto_policies/README.md file and the update-crypto-policies(8) and crypto-policies(7) manual pages on the control node.

Validate the playbook syntax:

$ ansible-playbook --syntax-check ~/playbook.yml

Note that this command only validates the syntax and does not protect against a wrong but valid configuration.

Run the playbook:

$ ansible-playbook ~/playbook.yml

Verification

On the control node, create another playbook named, for example, verify_playbook.yml:

The settings specified in the example playbook include the following:

- crypto_policies_active

- An exported Ansible fact that contains the currently active policy name in the format as accepted by the crypto_policies_policy variable.

Validate the playbook syntax:

$ ansible-playbook --syntax-check ~/verify_playbook.yml

Run the playbook:

$ ansible-playbook ~/verify_playbook.yml
TASK [debug] **************************
ok: [host] => {
    "crypto_policies_active": "FUTURE"
}

The crypto_policies_active variable shows the active policy on the managed node.

Chapter 3. Configuring applications to use cryptographic hardware through PKCS #11

Configure applications to use cryptographic hardware such as smart cards and HSMs through the consistent PKCS #11 API. Isolating secrets on hardware helps provide an additional layer of security.

3.1. Cryptographic hardware support through PKCS #11

In RHEL, you can use the PKCS #11 API standard and the p11-kit tool to access cryptographic tokens such as smart cards and hardware security modules (HSMs). By isolating keys on these dedicated devices, you ensure your sensitive data is managed securely.

Public-Key Cryptography Standard (PKCS) #11 defines an application programming interface (API) to cryptographic devices that hold cryptographic information and perform cryptographic functions.

PKCS #11 introduces the cryptographic token, an object that presents each hardware or software device to applications in a unified manner. Therefore, applications view devices such as smart cards, which are typically used by persons, and hardware security modules, which are typically used by computers, as PKCS #11 cryptographic tokens.

A PKCS #11 token can store various object types including a certificate; a data object; and a public, private, or secret key. These objects are uniquely identifiable through the PKCS #11 Uniform Resource Identifier (URI) scheme.

A PKCS #11 URI is a standard way to identify a specific object in a PKCS #11 module according to the object attributes. This enables you to configure all libraries and applications with the same configuration string in the form of a URI.
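To illustrate how such a URI is assembled from object attributes, the following Python sketch joins path attributes with semicolons and appends query attributes after a question mark, percent-encoding values along the way. It is a simplified illustration, not a complete RFC 7512 implementation: the function name build_pkcs11_uri is hypothetical, no attribute names are validated, and the sketch encodes more characters than the standard strictly requires.

```python
from urllib.parse import quote


def _encode(value, safe=""):
    """Percent-encode one attribute value; bytes are encoded byte by byte."""
    if isinstance(value, bytes):
        return "".join(f"%{byte:02X}" for byte in value)
    return quote(value, safe=safe)


def build_pkcs11_uri(path_attrs, query_attrs=None):
    """Build 'pkcs11:a=b;c=d?q=r&s=t' from two attribute mappings."""
    path = ";".join(f"{key}={_encode(val)}" for key, val in path_attrs.items())
    uri = "pkcs11:" + path
    if query_attrs:
        # "/" stays literal in query values so module paths remain readable.
        query = "&".join(
            f"{key}={_encode(val, safe='/')}" for key, val in query_attrs.items()
        )
        uri += "?" + query
    return uri


# Object ID 0x01 on a token whose module is loaded from an explicit path.
print(build_pkcs11_uri(
    {"id": b"\x01"},
    {"module-path": "/usr/lib64/pkcs11/opensc-pkcs11.so"},
))
```

Passing binary identifiers as bytes keeps the percent-encoding of values such as the object ID explicit, while text values such as token labels go through the standard library quote function.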

RHEL provides the OpenSC PKCS #11 driver for smart cards by default. However, hardware tokens and HSMs can have their own PKCS #11 modules that do not have their counterpart in the system. You can register such PKCS #11 modules with the p11-kit tool, which acts as a wrapper over the registered smart-card drivers in the system.

To make your own PKCS #11 module work on the system, add a new text file to the /etc/pkcs11/modules/ directory. For example, the OpenSC configuration file in p11-kit looks as follows:

$ cat /usr/share/p11-kit/modules/opensc.module
module: opensc-pkcs11.so

3.2. Authenticating by SSH keys stored on a smart card

Use SSH keys stored on a smart card for authentication to add a physical layer of protection to your credentials. This method provides enhanced security against unauthorized access.

You can create and store ECDSA and RSA keys on a smart card and authenticate by the smart card on an OpenSSH client. Smart-card authentication replaces the default password authentication.

Prerequisites

- On the client side, the opensc package is installed and the pcscd service is running.

Procedure

List all keys provided by the OpenSC PKCS #11 module including their PKCS #11 URIs and save the output to the keys.pub file:

$ ssh-keygen -D pkcs11: > keys.pub

Transfer the public key to the remote server. Use the ssh-copy-id command with the keys.pub file created in the previous step:

$ ssh-copy-id -f -i keys.pub <username@ssh-server-example.com>

Connect to <ssh-server-example.com> by using the ECDSA key. You can use just a subset of the URI, which uniquely references your key, for example:

$ ssh -i "pkcs11:id=%01?module-path=/usr/lib64/pkcs11/opensc-pkcs11.so" <ssh-server-example.com>
Enter PIN for 'SSH key':
[ssh-server-example.com] $

Because OpenSSH uses the p11-kit-proxy wrapper and the OpenSC PKCS #11 module is registered to the p11-kit tool, you can simplify the previous command:

$ ssh -i "pkcs11:id=%01" <ssh-server-example.com>
Enter PIN for 'SSH key':
[ssh-server-example.com] $

If you skip the id= part of a PKCS #11 URI, OpenSSH loads all keys that are available in the proxy module. This can reduce the amount of typing required:

$ ssh -i pkcs11: <ssh-server-example.com>
Enter PIN for 'SSH key':
[ssh-server-example.com] $

Optional: You can use the same URI string in the ~/.ssh/config file to make the configuration permanent:

$ cat ~/.ssh/config
IdentityFile "pkcs11:id=%01?module-path=/usr/lib64/pkcs11/opensc-pkcs11.so"
$ ssh <ssh-server-example.com>
Enter PIN for 'SSH key':
[ssh-server-example.com] $

The ssh client utility now automatically uses this URI and the key from the smart card.

3.3. Application settings for authentication with certificates on smart cards

You can configure applications such as curl to authenticate using certificates stored on a smart card. This robust method helps protect your system with physical security credentials.

Authentication by using smart cards in applications might increase security and simplify automation. You can integrate the Public Key Cryptography Standard (PKCS) #11 URIs into your application by using the following methods:

- The Firefox web browser automatically loads the p11-kit-proxy PKCS #11 module. This means that every supported smart card in the system is automatically detected. For using TLS client authentication, no additional setup is required and keys and certificates from a smart card are automatically used when a server requests them.

- If your application uses the GnuTLS or NSS library, it already supports PKCS #11 URIs. Also, applications that rely on the OpenSSL library can access cryptographic hardware modules, including smart cards, through the PKCS #11 provider installed by the pkcs11-provider package.

- Applications that require working with private keys on smart cards and that do not use NSS, GnuTLS, or OpenSSL can use the p11-kit API directly to work with cryptographic hardware modules, including smart cards, rather than using the PKCS #11 API of specific PKCS #11 modules.

- With the wget network downloader, you can specify PKCS #11 URIs instead of paths to locally stored private keys and certificates. This might simplify creation of scripts for tasks that require safely stored private keys and certificates. For example:

$ wget --private-key 'pkcs11:token=softhsm;id=%01;type=private?pin-value=111111' --certificate 'pkcs11:token=softhsm;id=%01;type=cert' https://example.com/

You can also specify a PKCS #11 URI when using the curl tool:

$ curl --key 'pkcs11:token=softhsm;id=%01;type=private?pin-value=111111' --cert 'pkcs11:token=softhsm;id=%01;type=cert' https://example.com/

Note: Because a PIN is a security measure that controls access to keys stored on a smart card and the configuration file contains the PIN in the plain text form, consider additional protection to prevent an attacker from reading the PIN. For example, you can use the pin-source attribute and provide a file: URI for reading the PIN from a file. See RFC 7512: PKCS #11 URI Scheme Query Attribute Semantics for more information. Note that using a command path as a value of the pin-source attribute is not supported.

See the curl(1), wget(1), p11-kit(8), and provider-pkcs11(7) man pages on your system for details and additional examples.

3.4. Using HSMs protecting private keys in Apache

The Apache HTTP server can work with private keys stored on hardware security modules (HSMs), which helps to prevent the keys' disclosure and man-in-the-middle attacks. Note that this usually requires high-performance HSMs for busy servers.

For secure communication in the form of the HTTPS protocol, the Apache HTTP server (httpd) uses the OpenSSL library. OpenSSL does not support PKCS #11 natively. To use HSMs, you must install the pkcs11-provider package, which provides access to PKCS #11 modules. You can use a PKCS #11 URI instead of a regular file name to specify a server key and a certificate in the /etc/httpd/conf.d/ssl.conf configuration file, for example:

SSLCertificateFile "pkcs11:id=%01;token=softhsm;type=cert"

SSLCertificateKeyFile "pkcs11:id=%01;token=softhsm;type=private?pin-value=111111"

Install the httpd-manual package to obtain complete documentation for the Apache HTTP Server, including TLS configuration. The directives available in the /etc/httpd/conf.d/ssl.conf configuration file are described in detail in the /usr/share/httpd/manual/mod/mod_ssl.html file.

Chapter 4. Controlling access to smart cards by using polkit

Configure the polkit framework in RHEL to control access to smart cards. This provides fine-grained control and mitigates threats that built-in mechanisms, such as PINs, PIN pads, and biometrics, cannot prevent.

System administrators can configure polkit to fit specific scenarios, such as smart-card access for non-privileged or non-local users or services.

4.1. Smart-card access control through polkit

With the polkit authorization manager, you can define precise policies for securing smart cards. Administrators can use this framework to control which users can perform specific privileged operations with the card.

The Personal Computer/Smart Card (PC/SC) protocol specifies a standard for integrating smart cards and their readers into computing systems. In RHEL, the pcsc-lite package provides middleware to access smart cards that use the PC/SC API. A part of this package, the pcscd (PC/SC Smart Card) daemon, ensures that the system can access a smart card by using the PC/SC protocol.

Because access-control mechanisms built into smart cards, such as PINs, PIN pads, and biometrics, do not cover all possible threats, RHEL uses the polkit framework for more robust access control. The polkit authorization manager can grant access to privileged operations. In addition to granting access to disks, you can use polkit also to specify policies for securing smart cards. For example, you can define which users can perform which operations with a smart card.

After installing the pcsc-lite package and starting the pcscd daemon, the system enforces policies defined in the /usr/share/polkit-1/actions/ directory. The default system-wide policy is in the /usr/share/polkit-1/actions/org.debian.pcsc-lite.policy file. Polkit policy files use the XML format and the syntax is described in the polkit(8) man page.

The polkitd service monitors the /etc/polkit-1/rules.d/ and /usr/share/polkit-1/rules.d/ directories for any changes in rule files stored in these directories. The files contain authorization rules in JavaScript format. System administrators can add custom rule files in both directories, and polkitd reads them in lexical order based on their file name. If two files have the same names, then the file in /etc/polkit-1/rules.d/ is read first.
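The read order described above can be pictured as a sort by file name with a tie-break in favor of the /etc/polkit-1/rules.d/ directory. The following Python sketch models only that description. The function name rule_read_order and all file names except 00-test.rules are hypothetical, and plain string comparison is assumed to be a close enough stand-in for the lexical order that polkitd uses.

```python
ETC_DIR = "/etc/polkit-1/rules.d"
USR_DIR = "/usr/share/polkit-1/rules.d"


def rule_read_order(etc_files, usr_files):
    """Order rule files by name; on equal names the /etc copy comes first."""
    entries = [(name, 0, ETC_DIR) for name in etc_files]
    entries += [(name, 1, USR_DIR) for name in usr_files]
    entries.sort(key=lambda entry: (entry[0], entry[1]))
    return [f"{directory}/{name}" for name, _, directory in entries]


for path in rule_read_order(
    ["00-test.rules", "50-default.rules"],
    ["10-example.rules", "50-default.rules"],
):
    print(path)
```

A low numeric prefix such as 00- therefore places a custom rule file ahead of most other rule files in this model.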

4.3. Displaying more detailed information about polkit authorization to PC/SC

In the default configuration, the polkit authorization framework sends only limited information to the Journal log. You can extend polkit log entries related to the PC/SC protocol by adding new rules.

Prerequisites

- You have installed the pcsc-lite package on your system.

- The pcscd daemon is running.

Procedure

Create a new file in the /etc/polkit-1/rules.d/ directory:

# touch /etc/polkit-1/rules.d/00-test.rules

Edit the file in an editor of your choice, for example:

# vi /etc/polkit-1/rules.d/00-test.rules

Insert the following lines:

Save the file, and exit the editor.

Restart the pcscd and polkit services:

# systemctl restart pcscd.service pcscd.socket polkit.service

Verification

Make an authorization request for pcscd. For example, open the Mozilla Firefox web browser or use the pkcs11-tool -L command provided by the opensc package.

Display the extended log entries, for example:

# journalctl -u polkit --since "1 hour ago"
polkitd[1224]: <no filename>:4: action=[Action id='org.debian.pcsc-lite.access_pcsc']
polkitd[1224]: <no filename>:5: subject=[Subject pid=2020481 user=user' groups=user,wheel,mock,wireshark seat=null session=null local=true active=true]

Chapter 5. Scanning the system for configuration compliance

Scan your RHEL configuration against specific rules defined in a compliance policy. With these checklists, you can ensure that your environment adheres to strict security requirements.

Security professionals define the compliance policy by specifying the required settings for a computing environment, often in the form of a checklist.

Compliance policies can vary substantially across organizations and even across different systems within the same organization. Differences among these policies are based on the purpose of each system and its importance for the organization. Custom software settings and deployment characteristics also raise a need for custom policy checklists.

5.1. Configuration compliance tools in RHEL

You can use tools such as OpenSCAP and the SCAP Security Guide (SSG) in RHEL to audit system security and maintain compliance with established security baselines.

RHEL 10 provides a set of configuration-compliance tools for performing a fully automated compliance audit. These tools are based on the Security Content Automation Protocol (SCAP) standard and are designed for automated tailoring of compliance policies.

- OpenSCAP

- The OpenSCAP library, with the accompanying oscap command-line utility, is designed to perform configuration scans on a local system, to validate configuration compliance content, and to generate reports and guides based on these scans and evaluations. With oscap, you can scan systems to assess their alignment with security policies contained in scap-security-guide. You can also perform an automated remediation that configures the system into a state that is aligned with a selected policy.

Important: You can experience memory-consumption problems while using OpenSCAP, which can stop the program prematurely and prevent it from generating any result files. See the OpenSCAP memory-consumption problems Knowledgebase article for details.

- SCAP Security Guide (SSG)

- The scap-security-guide package provides collections of security policies for Linux systems. The guidance consists of a catalog of practical hardening advice, linked to government requirements where applicable. The project bridges the gap between generalized policy requirements and specific implementation guidelines.

- Script Check Engine (SCE)

- With SCE, which is an extension to the SCAP protocol, administrators can write their security content by using a scripting language, such as Bash, Python, or Ruby. The SCE extension is provided in the openscap-engine-sce package. The SCE itself is not part of the SCAP standard.

Alternatively, you can perform automated compliance audits on multiple systems remotely by using the OpenSCAP solution for Red Hat Satellite.

5.2. Configuration compliance scanning

Verify if your Red Hat Enterprise Linux systems adhere to security baselines, such as industry standards or internal policies, by performing a configuration compliance scan. You can scan local and remote systems, containers, and container images using OpenSCAP and the SCAP Security Guide.

5.2.1. Configuration compliance in RHEL

Use configuration compliance scanning to conform to a baseline defined by a specific organization. For example, if you are a payment processor, you can align your systems with the Payment Card Industry Data Security Standard (PCI-DSS). You can also perform scanning to harden your system security.

Follow the Security Content Automation Protocol (SCAP) content provided in the SCAP Security Guide package because it is in line with Red Hat best practices for affected components.

The SCAP Security Guide package provides content which conforms to the SCAP 1.2 and SCAP 1.3 standards. The openscap scanner utility is compatible with both SCAP 1.2 and SCAP 1.3 content provided in the SCAP Security Guide package.

Performing a configuration compliance scan does not guarantee that the system is compliant.

The SCAP Security Guide suite provides profiles for several platforms in the form of data stream documents. A data stream is a file that contains definitions, benchmarks, profiles, and individual rules. Each rule specifies the applicability and requirements for compliance. RHEL provides several profiles for compliance with security policies. In addition to the industry standard, Red Hat data streams also contain information for remediation of failed rules. The data streams use the following structure of compliance scanning resources:

A profile is a set of rules based on a security policy, such as PCI-DSS and Health Insurance Portability and Accountability Act (HIPAA). After you select a profile, you can then perform an automated audit of the system for compliance with that profile.

You can also modify, or tailor, a profile to customize certain rules, for example, password length. For more information about profile tailoring, see Customizing a security profile with autotailor.

5.2.2. Possible results of an OpenSCAP scan

Understanding possible results of an OpenSCAP scan, such as Pass, Fail, or Not Applicable, helps you interpret compliance reports accurately.

Depending on the data stream and profile applied to an OpenSCAP scan and the various properties of your system, each rule produces a specific result:

- Pass

- The scan did not find any conflicts with this rule.

- Fail

- The scan found a conflict with this rule.

- Not checked

- OpenSCAP does not perform an automatic evaluation of this rule. Check whether your system conforms to this rule manually.

- Not applicable

- This rule does not apply to the current configuration.

- Not selected

- This rule is not part of the profile. OpenSCAP does not evaluate this rule and does not display these rules in the results.

- Error

- The scan encountered an error. For additional information, you can enter the oscap command with the --verbose DEVEL option. File a support case on the Red Hat Customer Portal or open a ticket in the RHEL project in Red Hat Jira.

- Unknown

- The scan encountered an unexpected situation. For additional information, you can enter the oscap command with the --verbose DEVEL option. File a support case on the Red Hat Customer Portal or open a ticket in the RHEL project in Red Hat Jira.
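When you post-process scan results, it can help to group the results listed above by the follow-up they call for. The following Python sketch counts results and separates rules that need attention from rules that need a manual check. It assumes that results are available as pairs of rule ID and result string, and that the strings use the lower-case XCCDF spelling without spaces, such as notchecked. The function name summarize and the rule IDs are hypothetical.

```python
from collections import Counter

# Result strings assumed to follow the XCCDF spelling (lower case, no spaces).
NEEDS_ATTENTION = {"fail", "error", "unknown"}
NEEDS_MANUAL_CHECK = {"notchecked"}


def summarize(results):
    """Count results and list the rules that need follow-up."""
    counts = Counter(result for _, result in results)
    attention = [rule for rule, result in results if result in NEEDS_ATTENTION]
    manual = [rule for rule, result in results if result in NEEDS_MANUAL_CHECK]
    return counts, attention, manual


# Hypothetical rule IDs and results for illustration.
sample = [
    ("rule_a", "pass"),
    ("rule_b", "fail"),
    ("rule_c", "notchecked"),
    ("rule_d", "notapplicable"),
    ("rule_e", "pass"),
]
counts, attention, manual = summarize(sample)
print(dict(counts), attention, manual)
```

Treating error and unknown like fail is a design choice of this sketch: all three mean that the scan did not confirm conformance with the rule.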

5.2.3. Viewing profiles for configuration compliance

Before you decide to use profiles for scanning or remediation, you can list them and check their detailed descriptions by using the oscap info subcommand.

Prerequisites

- The openscap-scanner and scap-security-guide packages are installed.

Procedure

List all available files with security compliance profiles provided by the SCAP Security Guide project:

$ ls /usr/share/xml/scap/ssg/content/
ssg-rhel10-ds.xml

Display detailed information about a selected data stream by using the oscap info subcommand. XML files containing data streams are indicated by the -ds string in their names. In the Profiles section, you can find a list of available profiles and their IDs:

Select a profile from the data stream file and display additional details about the selected profile. To do so, use oscap info with the --profile option followed by the last section of the ID displayed in the output of the previous command. For example, the ID of the HIPAA profile is xccdf_org.ssgproject.content_profile_hipaa, and the value for the --profile option is hipaa:
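The relationship between the full profile ID and the short value for the --profile option can be expressed in a few lines. The following Python sketch assumes that every profile ID in the data stream starts with the same prefix as the HIPAA example in this section. The function name short_profile_name is hypothetical.

```python
# Prefix taken from the HIPAA example ID in this section.
PROFILE_PREFIX = "xccdf_org.ssgproject.content_profile_"


def short_profile_name(profile_id):
    """Return the last section of a full profile ID."""
    if not profile_id.startswith(PROFILE_PREFIX):
        raise ValueError(f"unexpected profile ID: {profile_id!r}")
    return profile_id[len(PROFILE_PREFIX):]


print(short_profile_name("xccdf_org.ssgproject.content_profile_hipaa"))
```

The sketch rejects IDs with a different prefix instead of guessing, because tailored or third-party content might use another naming scheme.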

5.2.4. Assessing configuration compliance with a specific baseline

You can determine whether your system or a remote system conforms to a specific baseline, and save the results in a report by using the oscap command-line tool.

Prerequisites

- The openscap-scanner and scap-security-guide packages are installed.

- You know the ID of the profile within the baseline with which the system should comply. To find the ID, see the Viewing profiles for configuration compliance section.

Procedure

Scan the local system for compliance with the selected profile and save the scan results to a file:

$ oscap xccdf eval --report <scan_report.html> --profile <profile_ID> /usr/share/xml/scap/ssg/content/ssg-rhel10-ds.xml

Replace:

- <scan_report.html> with the file name where oscap saves the scan results.

- <profile_ID> with the profile ID with which the system should comply, for example, hipaa.

Optional: Scan a remote system for compliance with the selected profile and save the scan results to a file:

$ oscap-ssh <username>@<hostname> <port> xccdf eval --report <scan_report.html> --profile <profile_ID> /usr/share/xml/scap/ssg/content/ssg-rhel10-ds.xml

Replace:

- <username>@<hostname> with the user name and hostname of the remote system.

- <port> with the port number through which you can access the remote system.

- <scan_report.html> with the file name where oscap saves the scan results.

- <profile_ID> with the profile ID with which the system should comply, for example, hipaa.
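If you run the local and remote scans from a script, you can build both command lines from the same parameters. The following Python sketch returns an argument list that uses only the options shown in this procedure. The function name build_scan_command, its parameter names, and the default port 22 are assumptions of this sketch.

```python
DATASTREAM = "/usr/share/xml/scap/ssg/content/ssg-rhel10-ds.xml"


def build_scan_command(profile_id, report, remote=None, port=22):
    """Return the argument list for a local (oscap) or remote (oscap-ssh) scan."""
    eval_args = [
        "xccdf", "eval",
        "--report", report,
        "--profile", profile_id,
        DATASTREAM,
    ]
    if remote is None:
        return ["oscap", *eval_args]
    # oscap-ssh takes the SSH target and the port before the oscap arguments.
    return ["oscap-ssh", remote, str(port), *eval_args]


print(" ".join(build_scan_command("hipaa", "scan_report.html")))
```

Returning a list instead of a single string keeps each argument separate, so you can pass the result to a function such as subprocess.run without shell quoting.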

5.2.5. Assessing security compliance of a container or a container image with a specific baseline

Assess the compliance of a container or container image in RHEL with security baselines, such as Payment Card Industry Data Security Standard (PCI-DSS) and Health Insurance Portability and Accountability Act (HIPAA), to identify vulnerabilities and ensure adherence to security standards.

Prerequisites

- The openscap-utils and scap-security-guide packages are installed.

- You have root access to the system.

Procedure

Find the ID of a container or a container image:

To find the ID of a container:

# podman ps -a

To find the ID of a container image:

# podman images

Evaluate the compliance of the container or container image with a profile and save the scan results into a file:

# oscap-podman <ID> xccdf eval --report <scan_report.html> --profile <profile_ID> /usr/share/xml/scap/ssg/content/ssg-rhel10-ds.xml

Replace:

- <ID> with the ID of your container or container image.

- <scan_report.html> with the file name where oscap saves the scan results.

- <profile_ID> with the profile ID with which the system should comply, for example, hipaa or pci-dss.

Verification

Check the results in a browser of your choice, for example:

$ firefox <scan_report.html> &

The rules marked as notapplicable apply only to bare metal and virtual systems and not to containers or container images.
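If you need to assess several containers or images, you can generate one oscap-podman command per ID. The following sketch reads IDs from standard input and prints the corresponding commands. The function name scan_cmds_for_ids, the example IDs, and the report-<ID>.html naming scheme are assumptions made for illustration.

```shell
#!/bin/sh
# Sketch: build one oscap-podman command per ID read from standard input.
# The report naming scheme (report-<ID>.html) is an assumption.

DATASTREAM=/usr/share/xml/scap/ssg/content/ssg-rhel10-ds.xml

# scan_cmds_for_ids <profile_ID>
# Reads container or image IDs, one per line, and prints the matching
# oscap-podman command for each. Empty lines are skipped.
scan_cmds_for_ids() {
    while IFS= read -r id; do
        [ -n "$id" ] || continue
        printf 'oscap-podman %s xccdf eval --report report-%s.html --profile %s %s\n' \
            "$id" "$id" "$1" "$DATASTREAM"
    done
}

# Example with two made-up image IDs. On a real system, the IDs could come
# from "podman images --quiet" run as root.
printf '%s\n' 0123456789ab ba9876543210 | scan_cmds_for_ids hipaa
```

Printing the commands first lets you confirm which containers or images are scanned before you run the scans as root.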

5.3. Configuration compliance remediation

To automatically align your system with a specific profile, you can perform a remediation. You can remediate the system to align with any profile provided by the SCAP Security Guide.

5.3.1. Remediating the system to align with a specific baseline

Remediate your RHEL system to align with a specific security baseline by using the oscap xccdf eval --remediate command. This automatically fixes configuration rules defined in the SCAP Security Guide.

For details on listing available profiles, see the Viewing profiles for configuration compliance section.

Remediations are supported on RHEL systems in the default configuration. Remediating a system that has been altered after installation might render the system nonfunctional or noncompliant with the required security profile. Red Hat does not provide any automated method to revert changes made by security-hardening remediations.

Test the effects of the remediation before applying it on production systems.

Prerequisites

- The openscap-scanner and scap-security-guide packages are installed.

Procedure

Remediate the system:

# oscap xccdf eval --profile <profile_ID> --remediate /usr/share/xml/scap/ssg/content/ssg-rhel10-ds.xml

Replace <profile_ID> with the profile ID with which the system should comply, for example, hipaa.

Restart your system.

Verification

Evaluate compliance of the system with the profile, and save the scan results to a file:

$ oscap xccdf eval --report <scan_report.html> --profile <profile_ID> /usr/share/xml/scap/ssg/content/ssg-rhel10-ds.xml

Replace:

- <scan_report.html> with the file name where oscap saves the scan results.

- <profile_ID> with the profile ID with which the system should comply, for example, hipaa.
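In scripts, you can also use the exit status of the verification scan. According to the oscap(8) man page, an evaluation exits with 0 when the operation succeeds, with 2 when the operation succeeds but the assessed system is not compliant, and with 1 on error. The following sketch maps these values to short messages. The function name describe_scan_status and the message texts are illustrative.

```shell
#!/bin/sh
# Sketch: map the exit status of "oscap xccdf eval" to a short message.
# The function name and the message texts are illustrative.

# describe_scan_status <exit_status>
describe_scan_status() {
    case "$1" in
        0) echo "compliant: the scan finished and no rule failed" ;;
        2) echo "noncompliant: the scan finished but at least one rule did not pass" ;;
        *) echo "error: the scan did not finish successfully" ;;
    esac
}

# Example: interpret a few status values without running a scan.
for status in 0 1 2; do
    printf '%s -> %s\n' "$status" "$(describe_scan_status "$status")"
done
```

On a real system, you would call describe_scan_status "$?" immediately after the oscap xccdf eval command.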

5.3.2. Remediating the system to align with a specific baseline by using an SSG Ansible Playbook

Use an Ansible Playbook provided by the SCAP Security Guide project to remediate your system against a specific security baseline. This helps ensure consistency and automation across multiple systems.

Remediations are supported on RHEL systems in the default configuration. Remediating a system that has been altered after installation might render the system nonfunctional or noncompliant with the required security profile. Red Hat does not provide any automated method to revert changes made by security-hardening remediations.

Test the effects of the remediation before applying it on production systems.

Prerequisites

- The scap-security-guide package is installed.

- The ansible-core package is installed. See the Ansible Installation Guide for more information.

- The rhc-worker-playbook package is installed.

- You know the ID of the profile according to which you want to remediate your system. For details, see Viewing profiles for configuration compliance.

Procedure

Remediate your system to align with a selected profile by using Ansible:

# ANSIBLE_COLLECTIONS_PATH=/usr/share/rhc-worker-playbook/ansible/collections/ansible_collections/ ansible-playbook -i "localhost," -c local /usr/share/scap-security-guide/ansible/rhel10-playbook-<profile_ID>.yml

The ANSIBLE_COLLECTIONS_PATH environment variable is necessary for the command to run the playbook.

Replace <profile_ID> with the profile ID of the selected profile.

Restart the system.

Verification

Evaluate the compliance of the system with the selected profile, and save the scan results to a file:

# oscap xccdf eval --profile <profile_ID> --report <scan_report.html> /usr/share/xml/scap/ssg/content/ssg-rhel10-ds.xml

Replace <scan_report.html> with the file name where oscap saves the scan results.
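Before you run a remediation, it can help to confirm that a playbook for your profile is actually installed. The following sketch derives the playbook path from the profile ID by using the path pattern shown in the procedure and reports whether the file is readable. The function name ssg_playbook_path is hypothetical.

```shell
#!/bin/sh
# Sketch: derive the SSG playbook path for a profile and report whether
# the file is present. The function name is hypothetical.

PLAYBOOK_DIR=/usr/share/scap-security-guide/ansible

# ssg_playbook_path <profile_ID>
# Prints the path of the RHEL 10 playbook for the given profile.
ssg_playbook_path() {
    printf '%s/rhel10-playbook-%s.yml\n' "$PLAYBOOK_DIR" "$1"
}

# Example: look for the hipaa playbook before attempting a remediation.
playbook=$(ssg_playbook_path hipaa)
if [ -r "$playbook" ]; then
    echo "found: $playbook"
else
    echo "missing: $playbook (is scap-security-guide installed?)"
fi
```

The ansible-playbook command also accepts the --check option, which runs the playbook in check mode without applying changes. Whether every task in an SSG playbook reports meaningful results in check mode is not confirmed here, so treat such a run only as a preview.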

5.3.3. Creating a remediation Ansible Playbook to align the system with a specific baseline

You can create an Ansible Playbook containing only the remediations required to align your system with a specific baseline. This playbook is smaller because it does not cover requirements that are already satisfied.

Creating the playbook does not modify your system in any way, because you only prepare a file for later application.

Prerequisites

- The scap-security-guide package is installed.

- The ansible-core package is installed. See the Ansible Installation Guide for more information.

- The rhc-worker-playbook package is installed.

- You know the ID of the profile according to which you want to remediate your system. For details, see Viewing profiles for configuration compliance.

Procedure

Scan the system and save the results:

# oscap xccdf eval --profile <profile_ID> --results <profile_results.xml> /usr/share/xml/scap/ssg/content/ssg-rhel10-ds.xml

Replace:

- <profile_ID> with the profile ID with which the system should comply, for example, hipaa.

- <profile_results.xml> with the path to the file where oscap saves the results.

Find the value of the result ID in the file with the results:

# oscap info <profile_results.xml>

Generate an Ansible Playbook based on the results file generated in the first step:

# oscap xccdf generate fix --fix-type ansible --result-id xccdf_org.open-scap_testresult_xccdf_org.ssgproject.content_profile_<profile_ID> --output <profile_remediations.yml> <profile_results.xml>

Replace <profile_remediations.yml> with the path to the file where oscap saves the remediations for the rules that failed the scan.

Review the generated <profile_remediations.yml> file.

Remediate your system to align with a selected profile by using Ansible:

# ANSIBLE_COLLECTIONS_PATH=/usr/share/rhc-worker-playbook/ansible/collections/ansible_collections/ ansible-playbook -i "localhost," -c local <profile_remediations.yml>

The ANSIBLE_COLLECTIONS_PATH environment variable is necessary for the command to run the playbook.

Warning

Remediations are supported on RHEL systems in the default configuration. Remediating a system that has been altered after installation might render the system nonfunctional or noncompliant with the required security profile. Red Hat does not provide any automated method to revert changes made by security-hardening remediations.

Test the effects of the remediation before applying it on production systems.

Verification

Evaluate the compliance of the system with the selected profile, and save the scan results to a file:

# oscap xccdf eval --profile <profile_ID> --report <scan_report.html> /usr/share/xml/scap/ssg/content/ssg-rhel10-ds.xml

Replace <scan_report.html> with the file name where oscap saves the scan results.
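The --result-id value in the oscap xccdf generate fix command follows a fixed pattern in this procedure: a test result prefix followed by the full profile ID. The following sketch builds that value from a profile ID. The function name result_id_for_profile is hypothetical, and the pattern is taken only from the command shown in the procedure, so treat the value reported by oscap info <profile_results.xml> as authoritative.

```shell
#!/bin/sh
# Sketch: build the --result-id value used by "oscap xccdf generate fix".
# The pattern is taken from the command in the procedure; verify it
# against the output of "oscap info <profile_results.xml>".

# result_id_for_profile <profile_ID>
# Accepts a short ID such as "hipaa" or the full
# xccdf_org.ssgproject.content_profile_<profile_ID> form.
result_id_for_profile() {
    short=${1#xccdf_org.ssgproject.content_profile_}
    printf 'xccdf_org.open-scap_testresult_xccdf_org.ssgproject.content_profile_%s\n' "$short"
}

# Example: print the result ID for the hipaa profile.
result_id_for_profile hipaa
```

Accepting both the short and the full form of the profile ID avoids a doubled prefix if you paste an ID copied from oscap info output.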

5.4. Performing a hardened installation of RHEL with Kickstart

To make your system compliant with a specific security profile, such as DISA STIG, CIS, or ANSSI, you can prepare a Kickstart file that defines the hardened configuration, customize it with a tailoring file, and run an automated installation of the hardened system.

Prerequisites

- The openscap-scanner package is installed on your system.

- The scap-security-guide package is installed on your system and the package version corresponds to the version of RHEL that you want to install. For more information, see Supported versions of the SCAP Security Guide in RHEL. Using a different version can cause conflicts.

Note

If your system has the same version of RHEL as the version you want to install, you can install the scap-security-guide package directly.

Procedure

Find the ID of the security profile from the data stream file:

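One way to find the profile ID, assuming the data stream is installed at the path used elsewhere in this chapter, is to run oscap info on the data stream file and keep only the profile identifiers. The following sketch filters such output. The function name list_profile_ids is hypothetical, and the sample input is a made-up excerpt, not real scanner output.

```shell
#!/bin/sh
# Sketch: extract profile IDs from "oscap info" output read on standard input.
# The function name is hypothetical.

# list_profile_ids
# Prints every xccdf_org.ssgproject.content_profile_* identifier found
# in the input, one per line, without duplicates.
list_profile_ids() {
    grep -o 'xccdf_org\.ssgproject\.content_profile_[A-Za-z0-9_-]*' | sort -u
}

# Example with a made-up excerpt. On a real system, pipe in the output of:
#   oscap info /usr/share/xml/scap/ssg/content/ssg-rhel10-ds.xml
printf '%s\n' \
    'Title: Health Insurance Portability and Accountability Act (HIPAA)' \
    '    Id: xccdf_org.ssgproject.content_profile_hipaa' |
    list_profile_ids
```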

Optional: If you want to customize your hardening with an XCCDF tailoring file, you can use the autotailor command provided in the openscap-utils package. For more information, see Customizing a security profile with autotailor.

Generate the Kickstart file from the SCAP source data stream:

$ oscap xccdf generate fix --profile <profile_ID> --output <kickstart_file>.cfg --fix-type kickstart /usr/share/xml/scap/ssg/content/ssg-rhel10-ds.xml

Replace <profile_ID> with the profile ID with which the system should comply, for example, hipaa.

If you are using a tailoring file, embed the tailoring file into the generated Kickstart file by using the --tailoring-file tailoring.xml option and your custom profile ID, for example:

$ oscap xccdf generate fix --tailoring-file tailoring.xml --profile <custom_profile_ID> --output <kickstart_file>.cfg --fix-type kickstart ./ssg-rhel10-ds.xml

Review and, if necessary, manually modify the generated <kickstart_file>.cfg to fit the needs of your deployment. Follow the instructions in the comments in the file.

Note

Some changes might affect the compliance of the systems installed by the Kickstart file. For example, some security policies require defined partitions or specific packages and services.

Use the Kickstart file for your installation. To make the Kickstart file available to the installation program, you can serve it through a web server, provide it in PXE, or embed it into the ISO image. For detailed steps, see the Semi-automated installations: Making Kickstart files available to the RHEL installer chapter in the Automatically installing RHEL document.

After the installation finishes, the system reboots automatically. After the reboot, log in and review the installation SCAP report saved in the /root directory.
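The Kickstart generation step differs only in whether a tailoring file is passed. The following sketch prints the oscap xccdf generate fix command for both cases by using the options shown in the procedure. The function name kickstart_fix_cmd, the hardened.cfg file name, and the hipaa_customized profile ID are assumptions made for illustration.

```shell
#!/bin/sh
# Sketch: print the "oscap xccdf generate fix" command for a Kickstart file,
# adding --tailoring-file only when a tailoring file is given.
# File names and the custom profile ID in the examples are hypothetical.

DATASTREAM=/usr/share/xml/scap/ssg/content/ssg-rhel10-ds.xml

# kickstart_fix_cmd <profile_ID> <kickstart_file> [tailoring_file]
kickstart_fix_cmd() {
    tailoring_opt=
    if [ -n "${3:-}" ]; then
        tailoring_opt="--tailoring-file $3 "
    fi
    printf 'oscap xccdf generate fix %s--profile %s --output %s --fix-type kickstart %s\n' \
        "$tailoring_opt" "$1" "$2" "$DATASTREAM"
}

# Without a tailoring file:
kickstart_fix_cmd hipaa hardened.cfg
# With a tailoring file and a custom profile ID:
kickstart_fix_cmd hipaa_customized hardened.cfg tailoring.xml
```

The sketch only prints the command, so you can compare it with the procedure before you generate the Kickstart file. It does not handle file names that contain spaces.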

Verification

Scan the system for compliance and save the report in an HTML file for review:

- With the original profile:

# oscap xccdf eval --report report.html --profile <profile_ID> /usr/share/xml/scap/ssg/content/ssg-rhel10-ds.xml

- With the tailored profile:

oscap xccdf eval --report report.html --tailoring-file tailoring.xml --profile <custom_profile_ID> /usr/share/xml/scap/ssg/content/ssg-rhel10-ds.xml