Este contenido no está disponible en el idioma seleccionado.

Developer Guide

OpenShift Container Platform 3.3 Developer Reference

Abstract

- Monitor and browse projects with the web console

- Configure and utilize the CLI

- Generate configurations using templates

- Manage builds and webhooks

- Define and trigger deployments

- Integrate external services (databases, SaaS endpoints)

Chapter 1. Overview

This guide helps developers set up and configure a workstation to develop and deploy applications in an OpenShift Container Platform cloud environment with a command-line interface (CLI). This guide provides detailed instructions and examples to help developers:

- Monitor and browse projects with the web console.

- Configure and utilize the CLI.

- Generate configurations using templates.

- Manage builds and webhooks.

- Define and trigger deployments.

- Integrate external services (databases, SaaS endpoints).

Chapter 2. Application Life Cycle Management

2.1. Planning Your Development Process

2.1.1. Overview

OpenShift Container Platform is designed for building and deploying applications. Depending on how much you want to involve OpenShift Container Platform in your development process, you can choose to:

- focus your development within an OpenShift Container Platform project, using it to build an application from scratch then continuously develop and manage its lifecycle, or

- bring an application (e.g., binary, container image, source code) you have already developed in a separate environment and deploy it onto OpenShift Container Platform.

2.1.2. Using OpenShift Container Platform as Your Development Environment

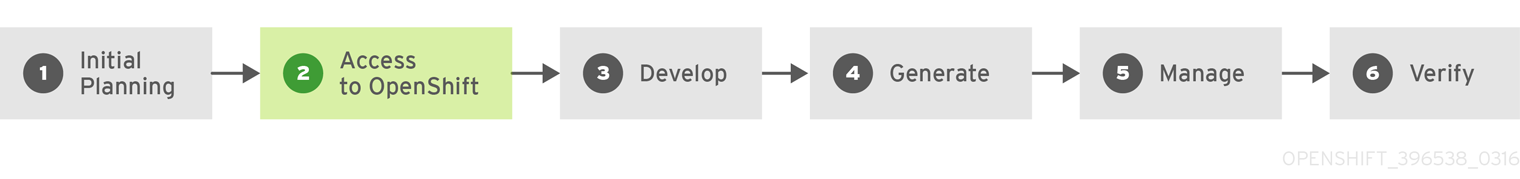

You can begin your application’s development from scratch using OpenShift Container Platform directly. Consider the following steps when planning this type of development process:

Initial Planning

- What does your application do?

- What programming language will it be developed in?

Access to OpenShift Container Platform

- OpenShift Container Platform should be installed by this point, either by yourself or an administrator within your organization.

Develop

- Using your editor or IDE of choice, create a basic skeleton of an application. It should be developed enough to tell OpenShift Container Platform what kind of application it is.

- Push the code to your Git repository.

Generate

-

Create a basic application using the

oc new-appcommand. OpenShift Container Platform generates build and deployment configurations.

Manage

- Start developing your application code.

- Ensure your application builds successfully.

- Continue to locally develop and polish your code.

- Push your code to a Git repository.

- Is any extra configuration needed? Explore the Developer Guide for more options.

Verify

-

You can verify your application in a number of ways. You can push your changes to your application’s Git repository, and use OpenShift Container Platform to rebuild and redeploy your application. Alternatively, you can hot deploy using

rsyncto synchronize your code changes into a running pod.

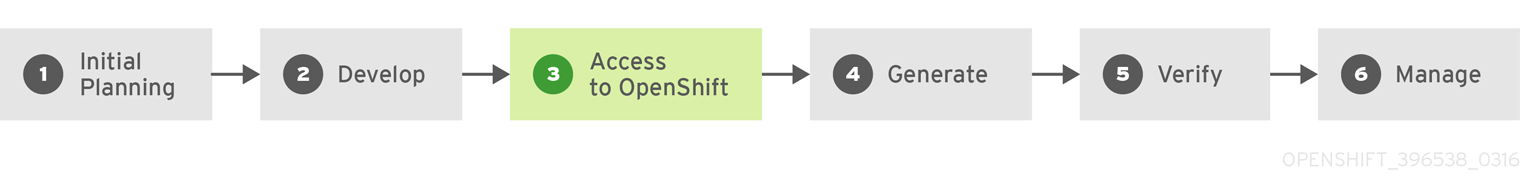

2.1.3. Bringing an Application to Deploy on OpenShift Container Platform

Another possible application development strategy is to develop locally, then use OpenShift Container Platform to deploy your fully developed application. Use the following process if you plan to have application code already, then want to build and deploy onto an OpenShift Container Platform installation when completed:

Initial Planning

- What does your application do?

- What programming language will it be developed in?

Develop

- Develop your application code using your editor or IDE of choice.

- Build and test your application code locally.

- Push your code to a Git repository.

Access to OpenShift Container Platform

- OpenShift Container Platform should be installed by this point, either by yourself or an administrator within your organization.

Generate

-

Create a basic application using the

oc new-appcommand. OpenShift Container Platform generates build and deployment configurations.

Verify

- Ensure that the application that you have built and deployed in the above Generate step is successfully running on OpenShift Container Platform.

Manage

- Continue to develop your application code until you are happy with the results.

- Rebuild your application in OpenShift Container Platform to accept any newly pushed code.

- Is any extra configuration needed? Explore the Developer Guide for more options.

2.2. Creating New Applications

2.2.1. Overview

You can create a new OpenShift Container Platform application from components including source or binary code, images and/or templates by using either the OpenShift CLI or web console.

2.2.2. Creating an Application Using the CLI

2.2.2.1. Creating an Application From Source Code

The new-app command allows you to create applications from source code in a local or remote Git repository.

To create an application using a Git repository in a local directory:

$ oc new-app /path/to/source/code

If using a local Git repository, the repository should have a remote named origin that points to a URL accessible by the OpenShift Container Platform cluster. If there is no recognised remote, new-app will create a binary build.

You can use a subdirectory of your source code repository by specifying a --context-dir flag. To create an application using a remote Git repository and a context subdirectory:

$ oc new-app https://github.com/openshift/sti-ruby.git \

--context-dir=2.0/test/puma-test-app

Also, when specifying a remote URL, you can specify a Git branch to use by appending #<branch_name> to the end of the URL:

$ oc new-app https://github.com/openshift/ruby-hello-world.git#beta4

The new-app command creates a build configuration, which itself creates a new application image from your source code. The new-app command typically also creates a deployment configuration to deploy the new image, and a service to provide load-balanced access to the deployment running your image.

OpenShift Container Platform automatically detects whether the Docker, Pipeline or Sourcebuild strategy should be used, and in the case of Source builds, detects an appropriate language builder image.

Build Strategy Detection

If a Jenkinsfile exists in the root or specified context directory of the source repository when creating a new application, OpenShift Container Platform generates a Pipeline build strategy. Otherwise, if a Dockerfile is found, OpenShift Container Platform generates a Docker build strategy. Otherwise, it generates a Source build strategy.

You can override the build strategy by setting the --strategy flag to either docker, pipeline or source.

$ oc new-app /home/user/code/myapp --strategy=dockerLanguage Detection

If using the Source build strategy, new-app attempts to determine the language builder to use by the presence of certain files in the root or specified context directory of the repository:

| Language | Files |

|---|---|

|

| project.json, *.csproj |

|

| pom.xml |

|

| app.json, package.json |

|

| cpanfile, index.pl |

|

| composer.json, index.php |

|

| requirements.txt, setup.py |

|

| Gemfile, Rakefile, config.ru |

|

| build.sbt |

After a language is detected, new-app searches the OpenShift Container Platform server for image stream tags that have a supports annotation matching the detected language, or an image stream that matches the name of the detected language. If a match is not found, new-app searches the Docker Hub registry for an image that matches the detected language based on name.

You can override the image the builder uses for a particular source repository by specifying the image (either an image stream or container specification) and the repository, with a ~ as a separator. Note that if this is done, build strategy detection and language detection are not carried out.

For example, to use the myproject/my-ruby image stream with the source in a remote repository:

$ oc new-app myproject/my-ruby~https://github.com/openshift/ruby-hello-world.gitTo use the openshift/ruby-20-centos7:latest container image stream with the source in a local repository:

$ oc new-app openshift/ruby-20-centos7:latest~/home/user/code/my-ruby-app2.2.2.2. Creating an Application From an Image

You can deploy an application from an existing image. Images can come from image streams in the OpenShift Container Platform server, images in a specific registry or Docker Hub registry, or images in the local Docker server.

The new-app command attempts to determine the type of image specified in the arguments passed to it. However, you can explicitly tell new-app whether the image is a Docker image (using the --docker-image argument) or an image stream (using the -i|--image argument).

If you specify an image from your local Docker repository, you must ensure that the same image is available to the OpenShift Container Platform cluster nodes.

For example, to create an application from the DockerHub MySQL image:

$ oc new-app mysqlTo create an application using an image in a private registry, specify the full Docker image specification:

$ oc new-app myregistry:5000/example/myimage

If the registry containing the image is not secured with SSL, cluster administrators must ensure that the Docker daemon on the OpenShift Container Platform node hosts is run with the --insecure-registry flag pointing to that registry. You must also tell new-app that the image comes from an insecure registry with the --insecure-registry flag.

You can create an application from an existing image stream and optional image stream tag:

$ oc new-app my-stream:v12.2.2.3. Creating an Application From a Template

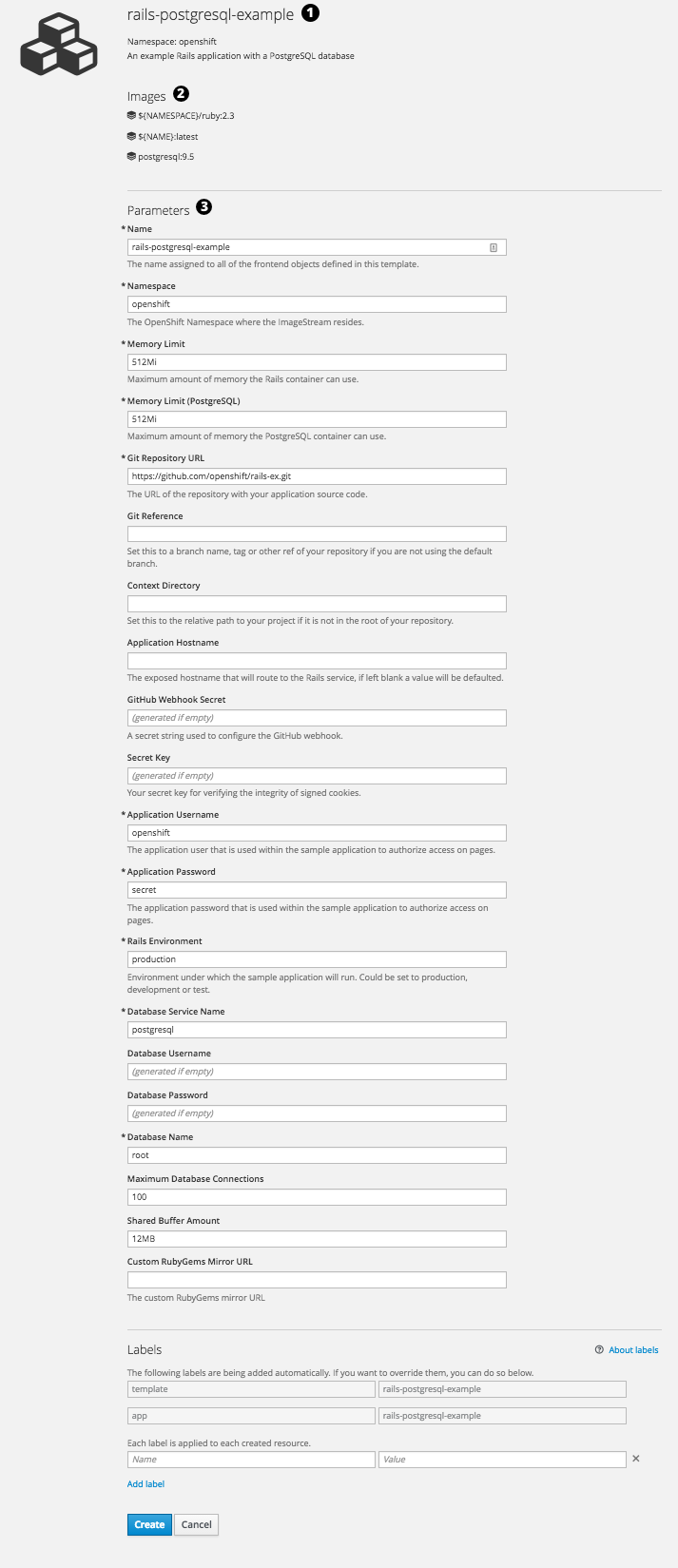

You can create an application from a previously stored template or from a template file, by specifying the name of the template as an argument. For example, you can store a sample application template and use it to create an application.

To create an application from a stored template:

$ oc create -f examples/sample-app/application-template-stibuild.json

$ oc new-app ruby-helloworld-sample

To directly use a template in your local file system, without first storing it in OpenShift Container Platform, use the -f|--file argument:

$ oc new-app -f examples/sample-app/application-template-stibuild.jsonTemplate Parameters

When creating an application based on a template, use the -p|--param argument to set parameter values defined by the template:

$ oc new-app ruby-helloworld-sample \

-p ADMIN_USERNAME=admin,ADMIN_PASSWORD=mypassword2.2.2.4. Further Modifying Application Creation

The new-app command generates OpenShift Container Platform objects that will build, deploy, and run the application being created. Normally, these objects are created in the current project using names derived from the input source repositories or the input images. However, new-app allows you to modify this behavior.

The set of objects created by new-app depends on the artifacts passed as input: source repositories, images, or templates.

| Object | Description |

|---|---|

|

|

A |

|

|

For |

|

|

A |

|

|

The |

| Other | Other objects may be generated when instantiating templates, according to the template. |

2.2.2.4.1. Specifying Environment Variables

When generating applications from a source or an image, you can use the -e|--env argument to pass environment variables to the application container at run time:

$ oc new-app openshift/postgresql-92-centos7 \

-e POSTGRESQL_USER=user \

-e POSTGRESQL_DATABASE=db \

-e POSTGRESQL_PASSWORD=password2.2.2.4.2. Specifying Labels

When generating applications from source, images, or templates, you can use the -l|--label argument to add labels to the created objects. Labels make it easy to collectively select, configure, and delete objects associated with the application.

$ oc new-app https://github.com/openshift/ruby-hello-world -l name=hello-world2.2.2.4.3. Viewing the Output Without Creation

To see a dry-run of what new-app will create, you can use the -o|--output argument with a yaml or json value. You can then use the output to preview the objects that will be created, or redirect it to a file that you can edit. Once you are satisfied, you can use oc create to create the OpenShift Container Platform objects.

To output new-app artifacts to a file, edit them, then create them:

$ oc new-app https://github.com/openshift/ruby-hello-world \

-o yaml > myapp.yaml

$ vi myapp.yaml

$ oc create -f myapp.yaml2.2.2.4.4. Creating Objects With Different Names

Objects created by new-app are normally named after the source repository, or the image used to generate them. You can set the name of the objects produced by adding a --name flag to the command:

$ oc new-app https://github.com/openshift/ruby-hello-world --name=myapp2.2.2.4.5. Creating Objects in a Different Project

Normally, new-app creates objects in the current project. However, you can create objects in a different project that you have access to using the -n|--namespace argument:

$ oc new-app https://github.com/openshift/ruby-hello-world -n myproject2.2.2.4.6. Creating Multiple Objects

The new-app command allows creating multiple applications specifying multiple parameters to new-app. Labels specified in the command line apply to all objects created by the single command. Environment variables apply to all components created from source or images.

To create an application from a source repository and a Docker Hub image:

$ oc new-app https://github.com/openshift/ruby-hello-world mysql

If a source code repository and a builder image are specified as separate arguments, new-app uses the builder image as the builder for the source code repository. If this is not the intent, specify the required builder image for the source using the ~ separator.

2.2.2.4.7. Grouping Images and Source in a Single Pod

The new-app command allows deploying multiple images together in a single pod. In order to specify which images to group together, use the + separator. The --group command line argument can also be used to specify the images that should be grouped together. To group the image built from a source repository with other images, specify its builder image in the group:

$ oc new-app ruby+mysqlTo deploy an image built from source and an external image together:

$ oc new-app \

ruby~https://github.com/openshift/ruby-hello-world \

mysql \

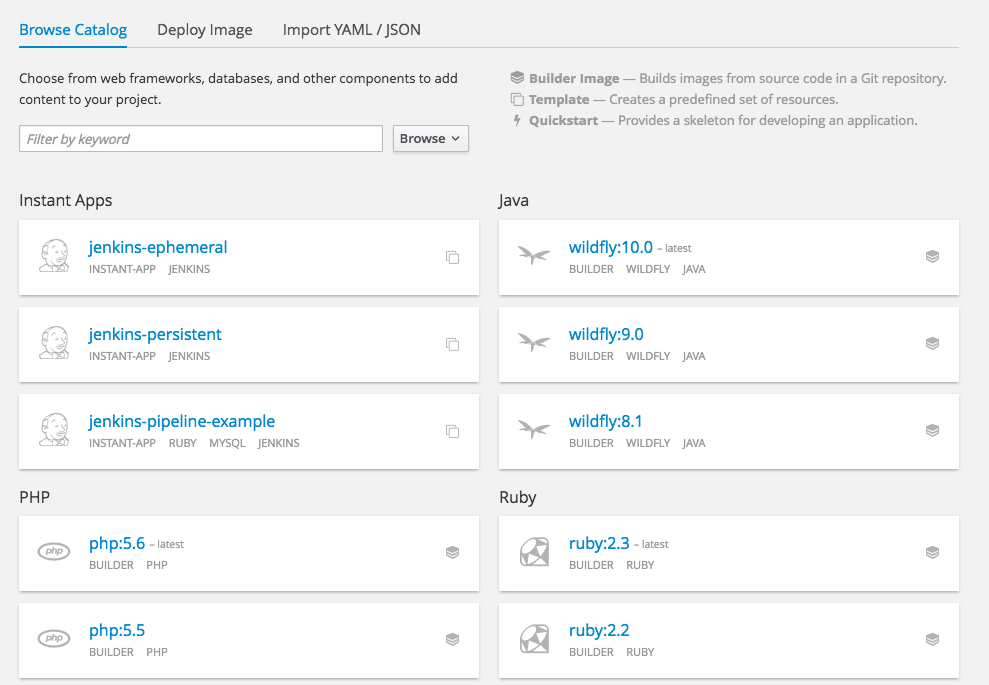

--group=ruby+mysql2.2.3. Creating an Application Using the Web Console

While in the desired project, click Add to Project:

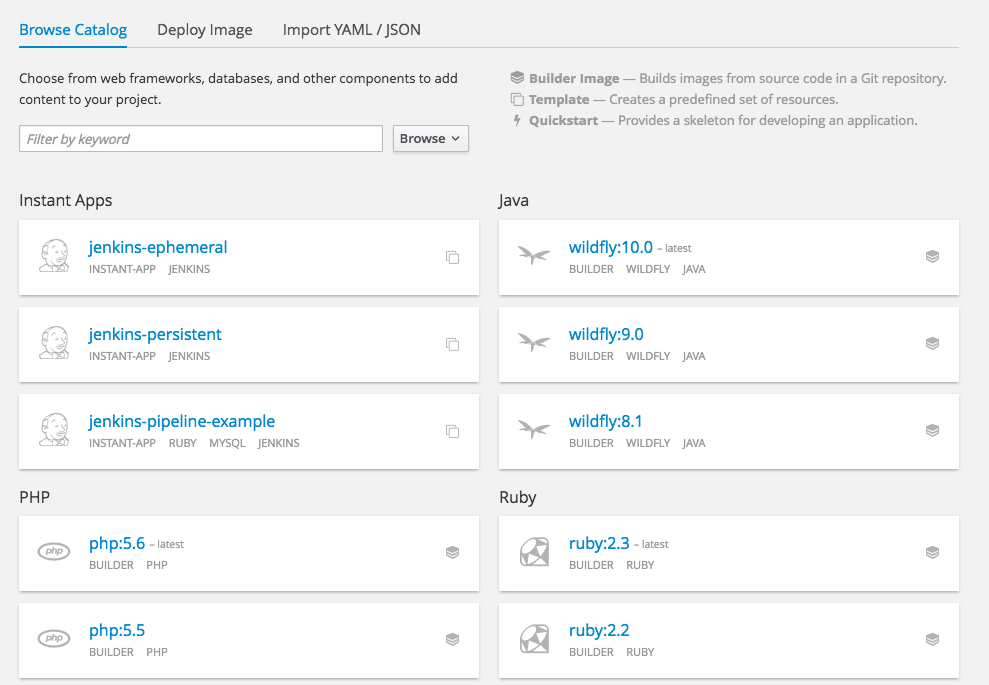

Select either a builder image from the list of images in your project, or from the global library:

Note

NoteOnly image stream tags that have the builder tag listed in their annotations appear in this list, as demonstrated here:

kind: "ImageStream" apiVersion: "v1" metadata: name: "ruby" creationTimestamp: null spec: dockerImageRepository: "registry.access.redhat.com/openshift3/ruby-20-rhel7" tags: - name: "2.0" annotations: description: "Build and run Ruby 2.0 applications" iconClass: "icon-ruby" tags: "builder,ruby"1 supports: "ruby:2.0,ruby" version: "2.0"- 1

- Including builder here ensures this

ImageStreamTagappears in the web console as a builder.

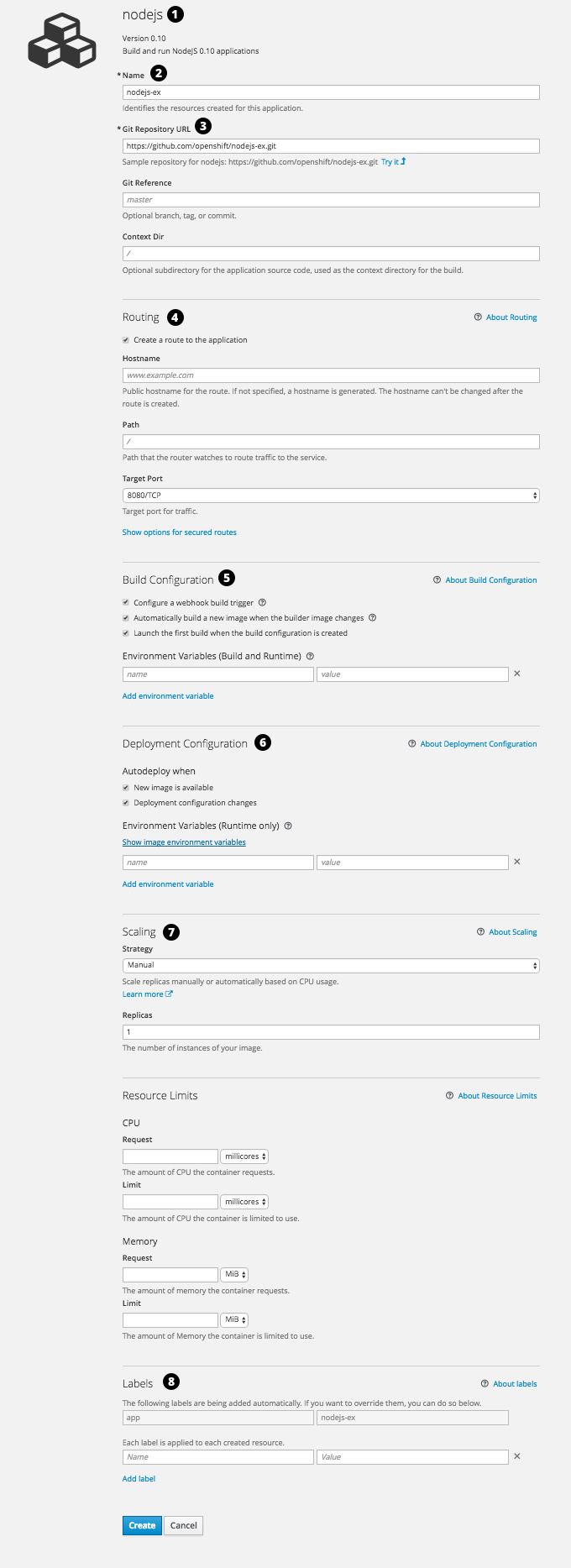

Modify the settings in the new application screen to configure the objects to support your application:

- The builder image name and description.

- The application name used for the generated OpenShift Container Platform objects.

- The Git repository URL, reference, and context directory for your source code.

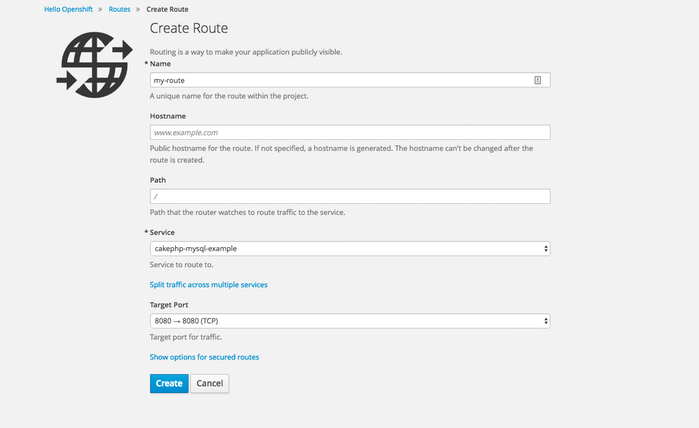

- Routing configuration section for making this application publicly accessible.

- Build configuration section for customizing build triggers.

- Deployment configuration section for customizing deployment triggers and image environment variables.

- Replica scaling section for configuring the number of running instances of the application.

- The labels to assign to all items generated for the application. You can add and edit labels for all objects here.

NoteTo see all of the configuration options, click the "Show advanced build and deployment options" link.

2.3. Promoting Applications Across Environments

2.3.1. Overview

Application promotion means moving an application through various runtime environments, typically with an increasing level of maturity. For example, an application might start out in a development environment, then be promoted to a stage environment for further testing, before finally being promoted into a production environment. As changes are introduced in the application, again the changes will start in development and be promoted through stage and production.

The "application" today is more than just the source code written in Java, Perl, Python, etc. It is more now than the static web content, the integration scripts, or the associated configuration for the language specific runtimes for the application. It is more than the application specific archives consumed by those language specific runtimes.

In the context of OpenShift Container Platform and its combined foundation of Kubernetes and Docker, additional application artifacts include:

- Docker container images with their rich set of metadata and associated tooling.

- Environment variables that are injected into containers for application use.

API objects (also known as resource definitions; see Core Concepts) of OpenShift Container Platform, which:

- are injected into containers for application use.

- dictate how OpenShift Container Platform manages containers and pods.

In examining how to promote applications in OpenShift Container Platform, this topic will:

- Elaborate on these new artifacts introduced to the application definition.

- Describe how you can demarcate the different environments for your application promotion pipeline.

- Discuss methodologies and tools for managing these new artifacts.

- Provide examples that apply the various concepts, constructs, methodologies, and tools to application promotion.

2.3.2. Application Components

2.3.2.1. API Objects

With regard to OpenShift Container Platform and Kubernetes resource definitions (the items newly introduced to the application inventory), there are a couple of key design points for these API objects that are relevant to revisit when considering the topic of application promotion.

First, as highlighted throughout OpenShift Container Platform documentation, every API object can be expressed via either JSON or YAML, making it easy to manage these resource definitions via traditional source control and scripting.

Also, the API objects are designed such that there are portions of the object which specify the desired state of the system, and other portions which reflect the status or current state of the system. This can be thought of as inputs and outputs. The input portions, when expressed in JSON or YAML, in particular are items that fit naturally as source control managed (SCM) artifacts.

Remember, the input or specification portions of the API objects can be totally static or dynamic in the sense that variable substitution via template processing is possible on instantiation.

The result of these points with respect to API objects is that with their expression as JSON or YAML files, you can treat the configuration of the application as code.

Conceivably, almost any of the API objects may be considered an application artifact by your organization. Listed below are the objects most commonly associated with deploying and managing an application:

- BuildConfigs

-

This is a special case resource in the context of application promotion. While a

BuildConfigis certainly a part of the application, especially from a developer’s perspective, typically theBuildConfigis not promoted through the pipeline. It produces theImagethat is promoted (along with other items) through the pipeline. - Templates

-

In terms of application promotion,

Templatescan serve as the starting point for setting up resources in a given staging environment, especially with the parameterization capabilities. Additional post-instantiation modifications are very conceivable though when applications move through a promotion pipeline. See Scenarios and Examples for more on this. - Routes

-

These are the most typical resources that differ stage to stage in the application promotion pipeline, as tests against different stages of an application access that application via its

Route. Also, remember that you have options with regard to manual specification or auto-generation of host names, as well as the HTTP-level security of theRoute. - Services

-

If reasons exist to avoid

RoutersandRoutesat given application promotion stages (perhaps for simplicity’s sake for individual developers at early stages), an application can be accessed via theClusterIP address and port. If used, some management of the address and port between stages could be warranted. - Endpoints

-

Certain application-level services (e.g., database instances in many enterprises) may not be managed by OpenShift Container Platform. If so, then creating those

Endpointsyourself, along with the necessary modifications to the associatedService(omitting the selector field on theService) are activities that are either duplicated or shared between stages (based on how you delineate your environment). - Secrets

-

The sensitive information encapsulated by

Secretsare shared between staging environments when the corresponding entity (either aServicemanaged by OpenShift Container Platform or an external service managed outside of OpenShift Container Platform) the information pertains to is shared. If there are different versions of the said entity in different stages of your application promotion pipeline, it may be necessary to maintain a distinctSecretin each stage of the pipeline or to make modifications to it as it traverses through the pipeline. Also, take care that if you are storing theSecretas JSON or YAML in an SCM, some form of encryption to protect the sensitive information may be warranted. - DeploymentConfigs

- This object is the primary resource for defining and scoping the environment for a given application promotion pipeline stage; it controls how your application starts up. While there are aspects of it that will be common across all the different stage, undoubtedly there will be modifications to this object as it progresses through your application promotion pipeline to reflect differences in the environments for each stage, or changes in behavior of the system to facilitate testing of the different scenarios your application must support.

- ImageStreams, ImageStreamTags, and ImageStreamImage

- Detailed in the Images section, these objects are central to the OpenShift Container Platform additions around managing container images.

- ServiceAccounts and RoleBindings

-

Management of permissions to other API objects within OpenShift Container Platform, as well as the external services of your enterprise, are intrinsic to managing your application. Similar to

Secrets, theServiceAccountsandRoleBindingscanobjects vary in how they are shared between the different stages of your application promotion pipeline based on how your enterprise needs to share or isolate those different environments. - PersistentVolumeClaims

- Relevant to stateful services like databases, how much these are shared between your different application promotion stages directly correlates to how your organization shares or isolates the copies of your application data.

- ConfigMaps

-

A useful decoupling of

Podconfiguration from thePoditself (think of an environment variable style configuration), these can either be shared by the various staging environments when consistentPodbehavior is desired. They can also be modified between stages to alterPodbehavior (usually as different aspects of the application are vetted at different stages).

2.3.2.2. Images

As noted earlier, container images are now artifacts of your application. In fact, of the new applications artifacts, images and the management of images are the key pieces with respect to application promotion. In some cases, an image might encapsulate the entirety of your application, and the application promotion flow consists solely of managing the image.

Images are not typically managed in a SCM system, just as application binaries were not in previous systems. However, just as with binaries, installable artifacts and corresponding repositories (that is, RPMs, RPM repositories, Nexus, etc.) arose with similar semantics to SCMs, similar constructs and terminology around image management that are similar to SCMs have arisen:

- Image registry == SCM server

- Image repository == SCM repository

As images reside in registries, application promotion is concerned with ensuring the appropriate image exists in a registry that can be accessed from the environment that needs to run the application represented by that image.

Rather than reference images directly, application definitions typically abstract the reference into an image stream. This means the image stream will be another API object that makes up the application components. For more details on image streams, see Core Concepts.

2.3.2.3. Summary

Now that the application artifacts of note, images and API objects, have been detailed in the context of application promotion within OpenShift Container Platform, the notion of where you run your application in the various stages of your promotion pipeline is next the point of discussion.

2.3.3. Deployment Environments

A deployment environment, in this context, describes a distinct space for an application to run during a particular stage of a CI/CD pipeline. Typical environments include development, test, stage, and production, for example. The boundaries of an environment can be defined in different ways, such as:

- Via labels and unique naming within a single project.

- Via distinct projects within a cluster.

- Via distinct clusters.

And it is conceivable that your organization leverages all three.

2.3.3.1. Considerations

Typically, you will consider the following heuristics in how you structure the deployment environments:

- How much resource sharing the various stages of your promotion flow allow

- How much isolation the various stages of your promotion flow require

- How centrally located (or geographically dispersed) the various stages of your promotion flow are

Also, some important reminders on how OpenShift Container Platform clusters and projects relate to image registries:

- Multiple project in the same cluster can access the same image streams.

- Multiple clusters can access the same external registries.

- Clusters can only share a registry if the OpenShift Container Platform internal image registry is exposed via a route.

2.3.3.2. Summary

After deployment environments are defined, promotion flows with delineation of stages within a pipeline can be implemented. The methods and tools for constructing those promotion flow implementations are the next point of discussion.

2.3.4. Methods and Tools

Fundamentally, application promotion is a process of moving the aforementioned application components from one environment to another. The following subsections outline tools that can be used to move the various components by hand, before advancing to discuss holistic solutions for automating application promotion.

There are a number of insertion points available during both the build and deployment processes. They are defined within BuildConfig and DeploymentConfig API objects. These hooks allow for the invocation of custom scripts which can interact with deployed components such as databases, and with the OpenShift Container Platform cluster itself.

Therefore, it is possible to use these hooks to perform component management operations that effectively move applications between environments, for example by performing an image tag operation from within a hook. However, the various hook points are best suited to managing an application’s lifecycle within a given environment (for example, using them to perform database schema migrations when a new version of the application is deployed), rather than to move application components between environments.

2.3.4.1. Managing API Objects

Resources, as defined in one environment, will be exported as JSON or YAML file content in preparation for importing it into a new environment. Therefore, the expression of API objects as JSON or YAML serves as the unit of work as you promote API objects through your application pipeline. The oc CLI is used to export and import this content.

While not required for promotion flows with OpenShift Container Platform, with the JSON or YAML stored in files, you can consider storing and retrieving the content from a SCM system. This allows you to leverage the versioning related capabilities of the SCM, including the creation of branches, and the assignment of and query on various labels or tags associated to versions.

2.3.4.1.1. Exporting API Object State

API object specifications should be captured with oc export. This operation removes environment specific data from the object definitions (e.g., current namespace or assigned IP addresses), allowing them to be recreated in different environments (unlike oc get operations, which output an unfiltered state of the object).

Use of oc label, which allows for adding, modifying, or removing labels on API objects, can prove useful as you organize the set of object collected for promotion flows, because labels allow for selection and management of groups of pods in a single operation. This makes it easier to export the correct set of objects and, because the labels will carry forward when the objects are created in a new environment, they also make for easier management of the application components in each environment.

API objects often contain references such as a DeploymentConfig that references a Secret. When moving an API object from one environment to another, you must ensure that such references are also moved to the new environment.

Similarly, API objects such as a DeploymentConfig often contain references to ImageStreams that reference an external registry. When moving an API object from one environment to another, you must ensure such references are resolvable within the new environment, meaning that the reference must be resolvable and the ImageStream must reference an accessible registry in the new environment. See Moving Images and Promotion Caveats for more detail.

2.3.4.1.2. Importing API Object State

2.3.4.1.2.1. Initial Creation

The first time an application is being introduced into a new environment, it is sufficient to take the JSON or YAML expressing the specifications of your API objects and run oc create to create them in the appropriate environment. When using oc create, keep the --save-config option in mind. Saving configuration elements on the object in its annotation list facilitates the later use of oc apply to modify the object.

2.3.4.1.2.2. Iterative Modification

After the various staging environments are initially established, as promotion cycles commence and the application moves from stage to stage, the updates to your application can include modification of the API objects that are part of the application. Changes in these API objects are conceivable since they represent the configuration for the OpenShift Container Platform system. Motivations for such changes include:

- Accounting for environmental differences between staging environments.

- Verifying various scenarios your application supports.

Transfer of the API objects to the next stage’s environment is accomplished via use of the oc CLI. While a rich set of oc commands which modify API objects exist, this topic focuses on oc apply, which computes and applies differences between objects.

Specifically, you can view oc apply as a three-way merge that takes in files or stdin as the input along with an existing object definition. It performs a three-way merge between:

- the input into the command,

- the current version of the object, and

- the most recent user specified object definition stored as an annotation in the current object.

The existing object is then updated with the result.

If further customization of the API objects is necessary, as in the case when the objects are not expected to be identical between the source and target environments, oc commands such as oc set can be used to modify the object after applying the latest object definitions from the upstream environment.

Some specific usages are cited in Scenarios and Examples.

2.3.4.2. Managing Images and Image Streams

Images in OpenShift Container Platform are managed via a series of API objects as well. However, managing images are so central to application promotion that discussion of the tools and API objects most directly tied to images warrant separate discussion. Both manual and automated forms exist to assist you in managing image promotion (the propagation of images through your pipeline).

2.3.4.2.1. Moving Images

For all the detailed caveats around managing images, refer to the Managing Images topic.

2.3.4.2.1.2. When Staging Environments Use Different Registries

More advanced usage occurs when your staging environments leverage different OpenShift Container Platform registries. Accessing the Internal Registry spells out the steps in detail, but in summary you can:

-

Use the

dockercommand in conjunction which obtaining the OpenShift Container Platform access token to supply into yourdocker logincommand. -

After being logged into the OpenShift Container Platform registry, use

docker pull,docker taganddocker pushto transfer the image. -

After the image is available in the registry of the next environment of your pipeline, use

oc tagas needed to populate any image streams.

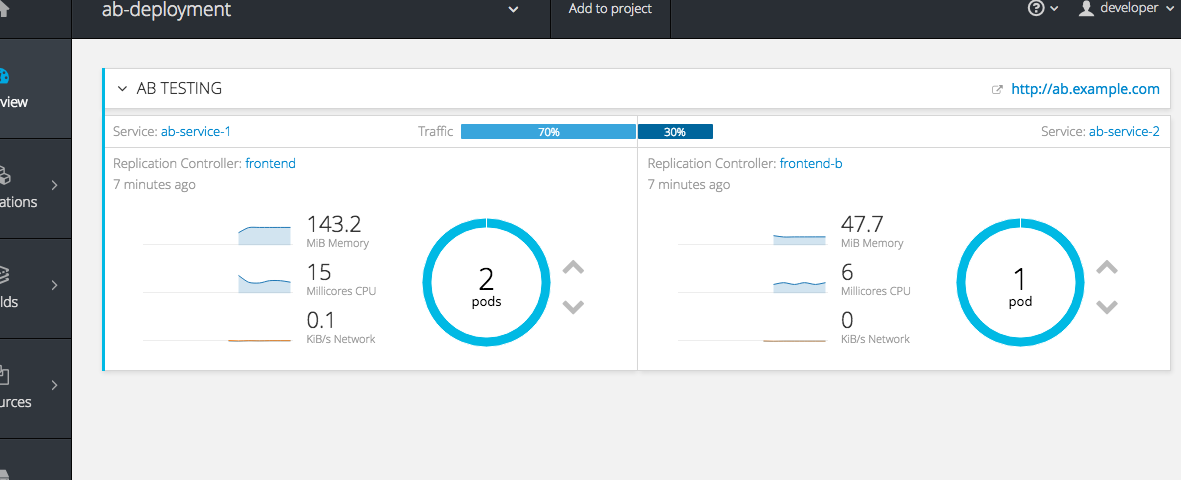

2.3.4.2.2. Deploying

Whether changing the underlying application image or the API objects that configure the application, a deployment is typically necessary to pick up the promoted changes. If the images for your application change (for example, due to an oc tag operation or a docker push as part of promoting an image from an upstream environment), ImageChangeTriggers on your DeploymentConfig can trigger the new deployment. Similarly, if the DeploymentConfig API object itself is being changed, a ConfigChangeTrigger can initiate a deployment when the API object is updated by the promotion step (for example, oc apply).

Otherwise, the oc commands that facilitate manual deployment include:

-

oc deploy: The original method to view, start, cancel, or retry deployments. -

oc rollout: The new approach to manage deployments, including pause and resume semantics and richer features around managing history. -

oc rollback: Allows for reversion to a previous deployment; in the promotion scenario, if testing of a new version encounters issues, confirming it still works with the previous version could be warranted.

2.3.4.2.3. Automating Promotion Flows with Jenkins

After you understand the components of your application that need to be moved between environments when promoting it and the steps required to move the components, you can start to orchestrate and automate the workflow. OpenShift Container Platform provides a Jenkins image and plug-ins to help with this process.

The OpenShift Container Platform Jenkins image is detailed in Using Images, including the set of OpenShift Container Platform-centric plug-ins that facilitate the integration of Jenkins, and Jenkins Pipelines. Also, the Pipeline build strategy facilitates the integration between Jenkins Pipelines and OpenShift Container Platform. All of these focus on enabling various aspects of CI/CD, including application promotion.

When moving beyond manual execution of application promotion steps, the Jenkins-related features provided by OpenShift Container Platform should be kept in mind:

- OpenShift Container Platform provides a Jenkins image that is heavily customized to greatly ease deployment in an OpenShift Container Platform cluster.

- The Jenkins image contains the OpenShift Pipeline plug-in, which provides building blocks for implementing promotion workflows. These building blocks include the triggering of Jenkins jobs as image streams change, as well as the triggering of builds and deployments within those jobs.

-

BuildConfigsemploying the OpenShift Container Platform Jenkins Pipeline build strategy enable execution of Jenkinsfile-based Jenkins Pipeline jobs. Pipeline jobs are the strategic direction within Jenkins for complex promotion flows and can leverage the steps provided by the OpenShift Pipeline Plug-in.

2.3.4.2.4. Promotion Caveats

2.3.4.2.4.1. API Object References

API objects can reference other objects. A common use for this is to have a DeploymentConfig that references an image stream, but other reference relationships may also exist.

When copying an API object from one environment to another, it is critical that all references can still be resolved in the target environment. There are a few reference scenarios to consider:

- The reference is "local" to the project. In this case, the referenced object resides in the same project as the object that references it. Typically the correct thing to do is to ensure that you copy the referenced object into the target environment in the same project as the object referencing it.

The reference is to an object in another project. This is typical when an image stream in a shared project is used by multiple application projects (see Managing Images). In this case, when copying the referencing object to the new environment, you must update the reference as needed so it can be resolved in the target environment. That may mean:

- Changing the project the reference points to, if the shared project has a different name in the target environment.

- Moving the referenced object from the shared project into the local project in the target environment and updating the reference to point to the local project when moving the primary object into the target environment.

- Some other combination of copying the referenced object into the target environment and updating references to it.

In general, the guidance is to consider objects referenced by the objects being copied to a new environment and ensure the references are resolvable in the target environment. If not, take appropriate action to fix the references and make the referenced objects available in the target environment.

2.3.4.2.4.2. Image Registry References

Image streams point to image repositories to indicate the source of the image they represent. When an image stream is moved from one environment to another, it is important to consider whether the registry and repository reference should also change:

- If different image registries are used to assert isolation between a test environment and a production environment.

- If different image repositories are used to separate test and production-ready images.

If either of these are the case, the image stream must be modified when it is copied from the source environment to the target environment so that it resolves to the correct image. This is in addition to performing the steps described in Scenarios and Examples to copy the image from one registry and repository to another.

2.3.4.3. Summary

At this point, the following have been defined:

- New application artifacts that make up a deployed application.

- Correlation of application promotion activities to tools and concepts provided by OpenShift Container Platform.

- Integration between OpenShift Container Platform and the CI/CD pipeline engine Jenkins.

Putting together examples of application promotion flows within OpenShift Container Platform is the final step for this topic.

2.3.5. Scenarios and Examples

Having defined the new application artifact components introduced by the Docker, Kubernetes, and OpenShift Container Platform ecosystems, this section covers how to promote those components between environments using the mechanisms and tools provided by OpenShift Container Platform.

Of the components making up an application, the image is the primary artifact of note. Taking that premise and extending it to application promotion, the core, fundamental application promotion pattern is image promotion, where the unit of work is the image. The vast majority of application promotion scenarios entails management and propagation of the image through the promotion pipeline.

Simpler scenarios solely deal with managing and propagating the image through the pipeline. As the promotion scenarios broaden in scope, the other application artifacts, most notably the API objects, are included in the inventory of items managed and propagated through the pipeline.

This topic lays out some specific examples around promoting images as well as API objects, using both manual and automated approaches. But first, note the following on setting up the environment(s) for your application promotion pipeline.

2.3.5.1. Setting up for Promotion

After you have completed development of the initial revision of your application, the next logical step is to package up the contents of the application so that you can transfer to the subsequent staging environments of your promotion pipeline.

First, group all the API objects you view as transferable and apply a common

labelto them:labels: promotion-group: <application_name>As previously described, the

oc labelcommand facilitates the management of labels with your various API objects.TipIf you initially define your API objects in a OpenShift Container Platform template, you can easily ensure all related objects have the common label you will use to query on when exporting in preparation for a promotion.

You can leverage that label on subsequent queries. For example, consider the following set of

occommand invocations that would then achieve the transfer of your application’s API objects:$ oc login <source_environment> $ oc project <source_project> $ oc export dc,is,svc,route,secret,sa -l promotion-group=<application_name> -o yaml > export.yaml $ oc login <target_environment> $ oc new-project <target_project>1 $ oc create -f export.yaml- 1

- Alternatively,

oc project <target_project>if it already exists.

NoteOn the

oc exportcommand, whether or not you include theistype for image streams depends on how you choose to manage images, image streams, and registries across the different environments in your pipeline. The caveats around this are discussed below. See also the Managing Images topic.You must also get any tokens necessary to operate against each registry used in the different staging environments in your promotion pipeline. For each environment:

Log in to the environment:

$ oc login <each_environment_with_a_unique_registry>Get the access token with:

$ oc whoami -t- Copy and paste the token value for later use.

2.3.5.2. Repeatable Promotion Process

After the initial setup of the different staging environments for your pipeline, a set of repeatable steps to validate each iteration of your application through the promotion pipeline can commence. These basic steps are taken each time the image or API objects in the source environment are changed:

Move updated images → Move updated API objects → Apply environment specific customizations

Typically, the first step is promoting any updates to the image(s) associated with your application to the next stage in the pipeline. As noted above, the key differentiator in promoting images is whether the OpenShift Container Platform registry is shared or not between staging environments.

If the registry is shared, simply leverage

oc tag:$ oc tag <project_for_stage_N>/<imagestream_name_for_stage_N>:<tag_for_stage_N> <project_for_stage_N+1>/<imagestream_name_for_stage_N+1>:<tag_for_stage_N+1>If the registry is not shared, you can leverage the access tokens for each of your promotion pipeline registries as you log into both the source and destination registries, pulling, tagging, and pushing your application images accordingly:

Log in to the source environment registry:

$ docker login -u <username> -e <any_email_address> -p <token_value> <src_env_registry_ip>:<port>Pull your application’s image:

$ docker pull <src_env_registry_ip>:<port>/<namespace>/<image name>:<tag>Tag your application’s image to the destination registry’s location, updating namespace, name, and tag as needed to conform to the destination staging environment:

$ docker tag <src_env_registry_ip>:<port>/<namespace>/<image name>:<tag> <dest_env_registry_ip>:<port>/<namespace>/<image name>:<tag>Log into the destination staging environment registry:

$ docker login -u <username> -e <any_email_address> -p <token_value> <dest_env_registry_ip>:<port>Push the image to its destination:

$ docker push <dest_env_registry_ip>:<port>/<namespace>/<image name>:<tag>TipTo automatically import new versions of an image from an external registry, the

oc tagcommand has a--scheduledoption. If used, the image theImageStreamTagreferences will be periodically pulled from the registry hosting the image.

Next, there are the cases where the evolution of your application necessitates fundamental changes to your API objects or additions and deletions from the set of API objects that make up the application. When such evolution in your application’s API objects occurs, the OpenShift Container Platform CLI provides a broad range of options to transfer to changes from one staging environment to the next.

Start in the same fashion as you did when you initially set up your promotion pipeline:

$ oc login <source_environment> $ oc project <source_project> $ oc export dc,is,svc,route,secret,sa -l template=<application_template_name> -o yaml > export.yaml $ oc login <target_environment> $ oc <target_project>Rather than simply creating the resources in the new environment, update them. You can do this a few different ways:

The more conservative approach is to leverage

oc applyand merge the new changes to each API object in the target environment. In doing so, you can--dry-run=trueoption and examine the resulting objects prior to actually changing the objects:$ oc apply -f export.yaml --dry-run=trueIf satisfied, actually run the

applycommand:$ oc apply -f export.yamlThe

applycommand optionally takes additional arguments that help with more complicated scenarios. Seeoc apply --helpfor more details.Alternatively, the simpler but more aggressive approach is to leverage

oc replace. There is no dry run with this update and replace. In the most basic form, this involves executing:$ oc replace -f export.yamlAs with

apply,replaceoptionally takes additional arguments for more sophisticated behavior. Seeoc replace --helpfor more details.

-

The previous steps automatically handle new API objects that were introduced, but if API objects were deleted from the source environment, they must be manually deleted from the target environment using

oc delete. Tuning of the environment variables cited on any of the API objects may be necessary as the desired values for those may differ between staging environments. For this, use

oc set env:$ oc set env <api_object_type>/<api_object_ID> <env_var_name>=<env_var_value>-

Finally, trigger a new deployment of the updated application using the

oc rolloutcommand or one of the other mechanisms discussed in the Deployments section above.

2.3.5.3. Repeatable Promotion Process Using Jenkins

The OpenShift Sample job defined in the Jenkins Docker Image for OpenShift Container Platform is an example of image promotion within OpenShift Container Platform within the constructs of Jenkins. Setup for this sample is located in the OpenShift Origin source repository.

This sample includes:

- Use of Jenkins as the CI/CD engine.

-

Use of the OpenShift Pipeline plug-in for Jenkins. This plug-in provides a subset of the functionality provided by the

ocCLI for OpenShift Container Platform packaged as Jenkins Freestyle and DSL Job steps. Note that theocbinary is also included in the Jenkins Docker Image for OpenShift Container Platform, and can also be used to interact with OpenShift Container Platform in Jenkins jobs. - The OpenShift Container Platform-provided templates for Jenkins. There is a template for both ephemeral and persistent storage.

-

A sample application: defined in the OpenShift Origin source repository, this application leverages

ImageStreams,ImageChangeTriggers,ImageStreamTags,BuildConfigs, and separateDeploymentConfigsandServicescorresponding to different stages in the promotion pipeline.

The following examines the various pieces of the OpenShift Sample job in more detail:

-

The first step is the equivalent of an

oc scale dc frontend --replicas=0call. This step is intended to bring down any previous versions of the application image that may be running. -

The second step is the equivalent of an

oc start-build frontendcall. -

The third step is the equivalent of an

oc deploy frontend --latestoroc rollout latest dc/frontendcall. - The fourth step is the "test" for this sample. It ensures that the associated service for this application is in fact accessible from a network perspective. Under the covers, a socket connection is attempted against the IP address and port associated with the OpenShift Container Platform service. Of course, additional tests can be added (if not via OpenShift Pipepline plug-in steps, then via use of the Jenkins Shell step to leverage OS-level commands and scripts to test your application).

-

The fifth step commences under that assumption that the testing of your application passed and hence intends to mark the image as "ready". In this step, a new prod tag is created for the application image off of the latest image. With the frontend

DeploymentConfighaving anImageChangeTriggerdefined for that tag, the corresponding "production" deployment is launched. - The sixth and last step is a verification step, where the plug-in confirms that OpenShift Container Platform launched the desired number of replicas for the "production" deployment.

Chapter 3. Authentication

3.1. Web Console Authentication

When accessing the web console from a browser at <master_public_addr>:8443, you are automatically redirected to a login page.

Review the browser versions and operating systems that can be used to access the web console.

You can provide your login credentials on this page to obtain a token to make API calls. After logging in, you can navigate your projects using the web console.

3.2. CLI Authentication

You can authenticate from the command line using the CLI command oc login. You can get started with the CLI by running this command without any options:

$ oc loginThe command’s interactive flow helps you establish a session to an OpenShift Container Platform server with the provided credentials. If any information required to successfully log in to an OpenShift Container Platform server is not provided, the command prompts for user input as required. The configuration is automatically saved and is then used for every subsequent command.

All configuration options for the oc login command, listed in the oc login --help command output, are optional. The following example shows usage with some common options:

$ oc login [-u=<username>] \

[-p=<password>] \

[-s=<server>] \

[-n=<project>] \

[--certificate-authority=</path/to/file.crt>|--insecure-skip-tls-verify]The following table describes these common options:

| Option | Syntax | Description |

|---|---|---|

|

|

| Specifies the host name of the OpenShift Container Platform server. If a server is provided through this flag, the command does not ask for it interactively. This flag can also be used if you already have a CLI configuration file and want to log in and switch to another server. |

|

|

| Allows you to specify the credentials to log in to the OpenShift Container Platform server. If user name or password are provided through these flags, the command does not ask for it interactively. These flags can also be used if you already have a configuration file with a session token established and want to log in and switch to another user name. |

|

|

|

A global CLI option which, when used with |

|

|

| Correctly and securely authenticates with an OpenShift Container Platform server that uses HTTPS. The path to a certificate authority file must be provided. |

|

|

|

Allows interaction with an HTTPS server bypassing the server certificate checks; however, note that it is not secure. If you try to |

CLI configuration files allow you to easily manage multiple CLI profiles.

If you have access to administrator credentials but are no longer logged in as the default system user system:admin, you can log back in as this user at any time as long as the credentials are still present in your CLI configuration file. The following command logs in and switches to the default project:

$ oc login -u system:admin -n defaultChapter 4. Projects

4.1. Overview

A project allows a community of users to organize and manage their content in isolation from other communities.

4.2. Creating a Project

If allowed by your cluster administrator , you can create a new project using the CLI or the web console.

To create a new project using the CLI:

$ oc new-project <project_name> \

--description="<description>" --display-name="<display_name>"For example:

$ oc new-project hello-openshift \

--description="This is an example project to demonstrate OpenShift v3" \

--display-name="Hello OpenShift"The number of projects you are allowed to create may be limited by the system administrator. Once your limit is reached, you may need to delete an existing project in order to create a new one.

4.3. Viewing Projects

When viewing projects, you are restricted to seeing only the projects you have access to view based on the authorization policy.

To view a list of projects:

$ oc get projectsYou can change from the current project to a different project for CLI operations. The specified project is then used in all subsequent operations that manipulate project-scoped content:

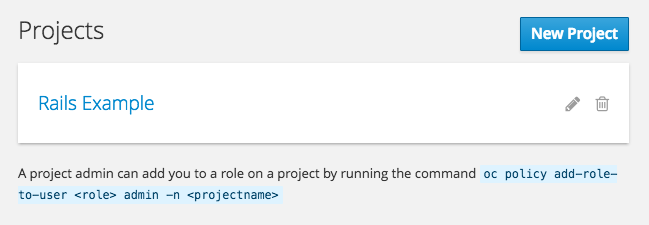

$ oc project <project_name>You can also use the web console to view and change between projects. After authenticating and logging in, you are presented with a list of projects that you have access to:

If you use the CLI to create a new project, you can then refresh the page in the browser to see the new project.

Selecting a project brings you to the project overview for that project.

4.4. Checking Project Status

The oc status command provides a high-level overview of the current project, with its components and their relationships. This command takes no argument:

$ oc status4.5. Filtering by Labels

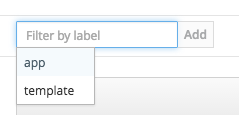

You can filter the contents of a project page in the web console by using the labels of a resource. You can pick from a suggested label name and values, or type in your own. Multiple filters can be added. When multiple filters are applied, resources must match all of the filters to remain visible.

To filter by labels:

Select a label type:

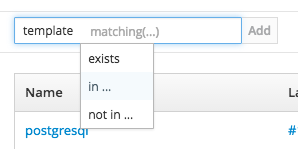

Select one of the following:

exists

Verify that the label name exists, but ignore its value.

in

Verify that the label name exists and is equal to one of the selected values.

not in

Verify that the label name does not exist, or is not equal to any of the selected values.

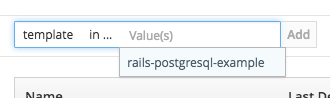

If you selected in or not in, select a set of values then select Filter:

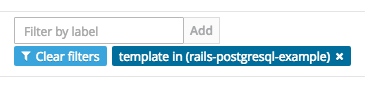

After adding filters, you can stop filtering by selecting Clear all filters or by clicking individual filters to remove them:

4.6. Deleting a Project

When you delete a project, the server updates the project status to Terminating from Active. The server then clears all content from a project that is Terminating before finally removing the project. While a project is in Terminating status, a user cannot add new content to the project. Projects can be deleted from the CLI or the web console.

To delete a project using the CLI:

$ oc delete project <project_name>Chapter 5. Migrating Applications

5.1. Overview

This topic covers the migration procedure of OpenShift version 2 (v2) applications to OpenShift version 3 (v3).

This topic uses some terminology that is specific to OpenShift v2. Comparing OpenShift Enterprise 2 and OpenShift Enterprise 3 provides insight on the differences between the two versions and the language used.

To migrate OpenShift v2 applications to OpenShift Container Platform v3, all cartridges in the v2 application must be recorded as each v2 cartridge is equivalent with a corresponding image or template in OpenShift Container Platform v3 and they must be migrated individually. For each cartridge, all dependencies or required packages also must be recorded, as they must be included in the v3 images.

The general migration procedure is:

Back up the v2 application.

- Web cartridge: The source code can be backed up to a Git repository such as by pushing to a repository on GitHub.

-

Database cartridge: The database can be backed up using a dump command (

mongodump,mysqldump,pg_dump) to back up the database. Web and database cartridges:

rhcclient tool provides snapshot ability to back up multiple cartridges:$ rhc snapshot save <app_name>The snapshot is a tar file that can be unzipped, and its content is application source code and the database dump.

- If the application has a database cartridge, create a v3 database application, sync the database dump to the pod of the new v3 database application, then restore the v2 database in the v3 database application with database restore commands.

- For a web framework application, edit the application source code to make it v3 compatible. Then, add any dependencies or packages required in appropriate files in the Git repository. Convert v2 environment variables to corresponding v3 environment variables.

- Create a v3 application from source (your Git repository) or from a quickstart with your Git URL. Also, add the database service parameters to the new application to link the database application to the web application.

- In v2, there is an integrated Git environment and your applications automatically rebuild and restart whenever a change is pushed to your v2 Git repository. In v3, in order to have a build automatically triggered by source code changes pushed to your public Git repository, you must set up a webhook after the initial build in v3 is completed.

5.2. Migrating Database Applications

5.2.1. Overview

This topic reviews how to migrate MySQL, PostgreSQL, and MongoDB database applications from OpenShift version 2 (v2) to OpenShift version 3 (v3).

5.2.2. Supported Databases

| v2 | v3 |

|---|---|

| MongoDB: 2.4 | MongoDB: 2.4, 2.6 |

| MySQL: 5.5 | MySQL: 5.5, 5.6 |

| PostgreSQL: 9.2 | PostgreSQL: 9.2, 9.4 |

5.2.3. MySQL

Export all databases to a dump file and copy it to a local machine (into the current directory):

$ rhc ssh <v2_application_name> $ mysqldump --skip-lock-tables -h $OPENSHIFT_MYSQL_DB_HOST -P ${OPENSHIFT_MYSQL_DB_PORT:-3306} -u ${OPENSHIFT_MYSQL_DB_USERNAME:-'admin'} \ --password="$OPENSHIFT_MYSQL_DB_PASSWORD" --all-databases > ~/app-root/data/all.sql $ exitDownload dbdump to your local machine:

$ mkdir mysqldumpdir $ rhc scp -a <v2_application_name> download mysqldumpdir app-root/data/all.sqlCreate a v3 mysql-persistent pod from template:

$ oc new-app mysql-persistent -p \ MYSQL_USER=<your_V2_mysql_username> -p \ MYSQL_PASSWORD=<your_v2_mysql_password> -p MYSQL_DATABASE=<your_v2_database_name>Check to see if the pod is ready to use:

$ oc get podsWhen the pod is up and running, copy database archive files to your v3 MySQL pod:

$ oc rsync /local/mysqldumpdir <mysql_pod_name>:/var/lib/mysql/dataRestore the database in the v3 running pod:

$ oc rsh <mysql_pod> $ cd /var/lib/mysql/data/mysqldumpdirIn v3, to restore databases you need to access MySQL as root user.

In v2, the

$OPENSHIFT_MYSQL_DB_USERNAMEhad full privileges on all databases. In v3, you must grant privileges to$MYSQL_USERfor each database.$ mysql -u root $ source all.sqlGrant all privileges on <dbname> to

<your_v2_username>@localhost, then flush privileges.Remove the dump directory from the pod:

$ cd ../; rm -rf /var/lib/mysql/data/mysqldumpdir

Supported MySQL Environment Variables

| v2 | v3 |

|---|---|

|

|

|

|

|

|

|

|

|

|

|

|

|

| |

|

| |

|

| |

|

| |

|

| |

|

| |

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| |

|

| |

|

| |

|

| |

|

| |

|

|

5.2.4. PostgreSQL

Back up the v2 PostgreSQL database from the gear:

$ rhc ssh -a <v2-application_name> $ mkdir ~/app-root/data/tmp $ pg_dump <database_name> | gzip > ~/app-root/data/tmp/<database_name>.gzExtract the backup file back to your local machine:

$ rhc scp -a <v2_application_name> download <local_dest> app-root/data/tmp/<db-name>.gz $ gzip -d <database-name>.gzNoteSave the backup file to a separate folder for step 4.

Create the PostgreSQL service using the v2 application database name, user name and password to create the new service:

$ oc new-app postgresql-persistent -p POSTGRESQL_DATABASE=dbname -p POSTGRESQL_PASSWORD=password -p POSTGRESQL_USER=usernameCheck to see if the pod is ready to use:

$ oc get podsWhen the pod is up and running, sync the backup directory to pod:

$ oc rsync /local/path/to/dir <postgresql_pod_name>:/var/lib/pgsql/dataRemotely access the pod:

$ oc rsh <pod_name>Restore the database:

psql dbname < /var/lib/pgsql/data/<database_backup_file>Remove all backup files that are no longer needed:

$ rm /var/lib/pgsql/data/<database-backup-file>

Supported PostgreSQL Environment Variables

| v2 | v3 |

|---|---|

|

|

|

|

|

|

|

|

|

|

|

|

|

| |

|

| |

|

| |

|

| |

|

| |

|

| |

|

| |

|

| |

|

| |

|

| |

|

| |

|

| |

|

| |

|

|

5.2.5. MongoDB

- For OpenShift v3: MongoDB shell version 3.2.6

- For OpenShift v2: MongoDB shell version 2.4.9

Remotely access the v2 application via the

sshcommand:$ rhc ssh <v2_application_name>Run mongodump, specifying a single database with

-d <database_name> -c <collections>. Without those options, dump all databases. Each database is dumped in its own directory:$ mongodump -h $OPENSHIFT_MONGODB_DB_HOST -o app-root/repo/mydbdump -u 'admin' -p $OPENSHIFT_MONGODB_DB_PASSWORD $ cd app-root/repo/mydbdump/<database_name>; tar -cvzf dbname.tar.gz $ exitDownload dbdump to a local machine in the mongodump directory:

$ mkdir mongodump $ rhc scp -a <v2 appname> download mongodump \ app-root/repo/mydbdump/<dbname>/dbname.tar.gzStart a MongoDB pod in v3. Because the latest image (3.2.6) does not include mongo-tools, to use

mongorestoreormongoimportcommands you need to edit the default mongodb-persistent template to specify the image tag that contains themongo-tools, “mongodb:2.4”. For that reason, the followingoc exportcommand and edit are necessary:$ oc export template mongodb-persistent -n openshift -o json > mongodb-24persistent.jsonEdit L80 of mongodb-24persistent.json; replace

mongodb:latestwithmongodb:2.4.$ oc new-app --template=mongodb-persistent -n <project-name-that-template-was-created-in> \ MONGODB_USER=user_from_v2_app -p \ MONGODB_PASSWORD=password_from_v2_db -p \ MONGODB_DATABASE=v2_dbname -p \ MONGODB_ADMIN_PASSWORD=password_from_v2_db $ oc get podsWhen the mongodb pod is up and running, copy the database archive files to the v3 MongoDB pod:

$ oc rsync local/path/to/mongodump <mongodb_pod_name>:/var/lib/mongodb/data $ oc rsh <mongodb_pod>In the MongoDB pod, complete the following for each database you want to restore:

$ cd /var/lib/mongodb/data/mongodump $ tar -xzvf dbname.tar.gz $ mongorestore -u $MONGODB_USER -p $MONGODB_PASSWORD -d dbname -v /var/lib/mongodb/data/mongodumpCheck if the database is restored:

$ mongo admin -u $MONGODB_USER -p $MONGODB_ADMIN_PASSWORD $ use dbname $ show collections $ exitRemove the mongodump directory from the pod:

$ rm -rf /var/lib/mongodb/data/mongodump

Supported MongoDB Environment Variables

| v2 | v3 |

|---|---|

|

|

|

|

|

|

|

|

|

|

|

|

|

| |

|

| |

|

| |

|

| |

|

| |

|

| |

|

| |

|

| |

|

|

5.3. Migrating Web Framework Applications

5.3.1. Overview

This topic reviews how to migrate Python, Ruby, PHP, Perl, Node.js, JBoss EAP, JBoss WS (Tomcat), and Wildfly 10 (JBoss AS) web framework applications from OpenShift version 2 (v2) to OpenShift version 3 (v3).

5.3.2. Python

Set up a new GitHub repository and add it as a remote branch to the current, local v2 Git repository:

$ git remote add <remote-name> https://github.com/<github-id>/<repo-name>.gitPush the local v2 source code to the new repository:

$ git push -u <remote-name> masterEnsure that all important files such as setup.py, wsgi.py, requirements.txt, and etc are pushed to new repository.

- Ensure all required packages for your application are included in requirements.txt.

Use the

occommand to launch a new Python application from the builder image and source code:$ oc new-app --strategy=source python:3.3~https://github.com/<github-id>/<repo-name> --name=<app-name> -e <ENV_VAR_NAME>=<env_var_value>

Supported Python Versions

| v2 | v3 |

|---|---|

| Python: 2.6, 2.7, 3.3 | Python: 2.7, 3.3, 3.4 |

| Django | Django-psql-example (quickstart) |

5.3.3. Ruby

Set up a new GitHub repository and add it as a remote branch to the current, local v2 Git repository:

$ git remote add <remote-name> https://github.com/<github-id>/<repo-name>.gitPush the local v2 source code to the new repository:

$ git push -u <remote-name> masterIf you do not have a Gemfile and are running a simple rack application, copy this Gemfile into the root of your source:

https://github.com/openshift/ruby-ex/blob/master/GemfileNoteThe latest version of the rack gem that supports Ruby 2.0 is 1.6.4, so the Gemfile needs to be modified to

gem 'rack', “1.6.4”.For Ruby 2.2 or later, use the rack gem 2.0 or later.

Use the

occommand to launch a new Ruby application from the builder image and source code:$ oc new-app --strategy=source ruby:2.0~https://github.com/<github-id>/<repo-name>.git

Supported Ruby Versions

| v2 | v3 |

|---|---|

| Ruby: 1.8, 1.9, 2.0 | Ruby: 2.0, 2.2 |

| Ruby on Rails: 3, 4 | Rails-postgresql-example (quickstart) |

| Sinatra |

5.3.4. PHP

Set up a new GitHub repository and add it as a remote branch to the current, local v2 Git repository:

$ git remote add <remote-name> https://github.com/<github-id>/<repo-name>Push the local v2 source code to the new repository:

$ git push -u <remote-name> masterUse the

occommand to launch a new PHP application from the builder image and source code:$ oc new-app https://github.com/<github-id>/<repo-name>.git --name=<app-name> -e <ENV_VAR_NAME>=<env_var_value>

Supported PHP Versions

| v2 | v3 |

|---|---|

| PHP: 5.3, 5.4 | PHP:5.5, 5.6 |

| PHP 5.4 with Zend Server 6.1 | |

| CodeIgniter 2 | |

| HHVM | |

| Laravel 5.0 | |

| cakephp-mysql-example (quickstart) |

5.3.5. Perl

Set up a new GitHub repository and add it as a remote branch to the current, local v2 Git repository:

$ git remote add <remote-name> https://github.com/<github-id>/<repo-name>Push the local v2 source code to the new repository:

$ git push -u <remote-name> masterEdit the local Git repository and push changes upstream to make it v3 compatible:

In v2, CPAN modules reside in .openshift/cpan.txt. In v3, the s2i builder looks for a file named cpanfile in the root directory of the source.

$ cd <local-git-repository> $ mv .openshift/cpan.txt cpanfileEdit cpanfile, as it has a slightly different format:

Expand format of cpanfile format of cpan.txt requires ‘cpan::mod’;

cpan::mod

requires ‘Dancer’;

Dancer

requires ‘YAML’;

YAML

Remove .openshift directory

NoteIn v3, action_hooks and cron tasks are not supported in the same way. See Action Hooks for more information.

-

Use the

occommand to launch a new Perl application from the builder image and source code:

$ oc new-app https://github.com/<github-id>/<repo-name>.gitSupported Perl Versions

| v2 | v3 |

|---|---|

| Perl: 5.10 | Perl: 5.16, 5.20 |

| Dancer-mysql-example (quickstart) |

5.3.6. Node.js

Set up a new GitHub repository and add it as a remote branch to the current, local Git repository:

$ git remote add <remote-name> https://github.com/<github-id>/<repo-name>Push the local v2 source code to the new repository:

$ git push -u <remote-name> masterEdit the local Git repository and push changes upstream to make it v3 compatible:

Remove the .openshift directory.

NoteIn v3, action_hooks and cron tasks are not supported in the same way. See Action Hooks for more information.

Edit server.js.

- L116 server.js: 'self.app = express();'

- L25 server.js: self.ipaddress = '0.0.0.0';

L26 server.js: self.port = 8080;

NoteLines(L) are from the base V2 cartridge server.js.

Use the

occommand to launch a new Node.js application from the builder image and source code:$ oc new-app https://github.com/<github-id>/<repo-name>.git --name=<app-name> -e <ENV_VAR_NAME>=<env_var_value>

Supported Node.js Versions

| v2 | v3 |

|---|---|

| Node.js 0.10 | Nodejs: 0.10 |

| Nodejs-mongodb-example (quickstart) |

5.3.7. JBoss EAP

Set up a new GitHub repository and add it as a remote branch to the current, local Git repository:

$ git remote add <remote-name> https://github.com/<github-id>/<repo-name>Push the local v2 source code to the new repository:

$ git push -u <remote-name> master- If the repository includes pre-built .war files, they need to reside in the deployments directory off the root directory of the repository.

Create the new application using the JBoss EAP 6 builder image (jboss-eap64-openshift) and the source code repository from GitHub:

$ oc new-app --strategy=source jboss-eap64-openshift~https://github.com/<github-id>/<repo-name>.git

5.3.8. JBoss WS (Tomcat)

Set up a new GitHub repository and add it as a remote branch to the current, local Git repository:

$ git remote add <remote-name> https://github.com/<github-id>/<repo-name>Push the local v2 source code to the new repository:

$ git push -u <remote-name> master- If the repository includes pre-built .war files, they need to reside in the deployments directory off the root directory of the repository.

Create the new application using the JBoss Web Server 3 (Tomcat 7) builder image (jboss-webserver30-tomcat7) and the source code repository from GitHub:

$ oc new-app --strategy=source jboss-webserver30-tomcat7-openshift~https://github.com/<github-id>/<repo-name>.git --name=<app-name> -e <ENV_VAR_NAME>=<env_var_value>

5.3.9. JBoss AS (Wildfly 10)

Set up a new GitHub repository and add it as a remote branch to the current, local Git repository:

$ git remote add <remote-name> https://github.com/<github-id>/<repo-name>Push the local v2 source code to the new repository:

$ git push -u <remote-name> masterEdit the local Git repository and push the changes upstream to make it v3 compatible:

Remove .openshift directory.

NoteIn v3, action_hooks and cron tasks are not supported in the same way. See Action Hooks for more information.

- Add the deployments directory to the root of the source repository. Move the .war files to ‘deployments’ directory.

Use the the

occommand to launch a new Wildfly application from the builder image and source code:$ oc new-app https://github.com/<github-id>/<repo-name>.git --image-stream=”openshift/wildfly:10.0" --name=<app-name> -e <ENV_VAR_NAME>=<env_var_value>NoteThe argument

--nameis optional to specify the name of your application. The argument-eis optional to add environment variables that are needed for build and deployment processes, such asOPENSHIFT_PYTHON_DIR.

5.3.10. Supported JBoss/XPaas Versions

| v2 | v3 |

|---|---|

| JBoss App Server 7 | |

| Tomcat 6 (JBoss EWS 1.0) | jboss-webserver30-tomcat7-openshift: 1.1 |

| Tomcat 7 (JBoss EWS 2.0) | |

| Vert.x 2.1 | |

| WildFly App Server 10 | |

| WildFly App Server 8.2.1.Final | |

| WildFly App Server 9 | |

| CapeDwarf | |

| JBoss Data Virtualization 6 | |

| JBoss Enterprise App Platform 6 | jboss-eap64-openshift: 1.2, 1.3 |

| JBoss Unified Push Server 1.0.0.Beta1, Beta2 | |

| JBoss BPM Suite | |

| JBoss BRMS | |

| jboss-eap70-openshift: 1.3-Beta | |

| eap64-https-s2i | |

| eap64-mongodb-persistent-s2i | |

| eap64-mysql-persistent-s2i | |

| eap64-psql-persistent-s2i |

5.4. QuickStart Examples

5.4.1. Overview

Although there is no clear-cut migration path for v2 quickstart to v3 quickstart, the following quickstarts are currently available in v3. If you have an application with a database, rather than using oc new-app to create your application, then oc new-app again to start a separate database service and linking the two with common environment variables, you can use one of the following to instantiate the linked application and database at once, from your GitHub repository containing your source code. You can list all available templates with oc get templates -n openshift:

CakePHP MySQL https://github.com/openshift/cakephp-ex

- template: cakephp-mysql-example

Node.js MongoDB https://github.com/openshift/nodejs-ex

- template: nodejs-mongodb-example

Django PosgreSQL https://github.com/openshift/django-ex

- template: django-psql-example

Dancer MySQL https://github.com/openshift/dancer-ex

- template: dancer-mysql-example

Rails PostgreSQL https://github.com/openshift/rails-ex

- template: rails-postgresql-example

5.4.2. Workflow

Run a git clone of one of the above template URLs locally. Add and commit your application source code and push a GitHub repository, then start a v3 quickstart application from one of the templates listed above:

- Create a GitHub repository for your application.

Clone a quickstart template and add your GitHub repository as a remote:

$ git clone <one-of-the-template-URLs-listed-above> $ cd <your local git repository> $ git remote add upstream <https://github.com/<git-id>/<quickstart-repo>.git> $ git push -u upstream masterCommit and push your source code to GitHub:

$ cd <your local repository> $ git commit -am “added code for my app” $ git push origin masterCreate a new application in v3:

$ oc new-app --template=<template> \ -p SOURCE_REPOSITORY_URL=<https://github.com/<git-id>/<quickstart_repo>.git> \ -p DATABASE_USER=<your_db_user> \ -p DATABASE_NAME=<your_db_name> \ -p DATABASE_PASSWORD=<your_db_password> \ -p DATABASE_ADMIN_PASSWORD=<your_db_admin_password>1 - 1

- Only applicable for MongoDB.

You should now have 2 pods running, a web framework pod, and a database pod. The web framework pod environment should match the database pod environment. You can list the environment variables with

oc set env pod/<pod_name> --list:-

DATABASE_NAMEis now<DB_SERVICE>_DATABASE -

DATABASE_USERis now<DB_SERVICE>_USER -

DATABASE_PASSWORDis now<DB_SERVICE>_PASSWORD DATABASE_ADMIN_PASSWORDis nowMONGODB_ADMIN_PASSWORD(only applicable for MongoDB)If no

SOURCE_REPOSITORY_URLis specified, the template will use the template URL (https://github.com/openshift/<quickstart>-ex) listed above as the source repository, and a hello-welcome application will be started.

-

If you are migrating a database, export databases to a dump file and restore the database in the new v3 database pod. Refer to the steps outlined in Database Applications, skipping the

oc new-appstep as the database pod is already up and running.

5.5. Continuous Integration and Deployment (CI/CD)

5.5.1. Overview

This topic reviews the differences in continuous integration and deployment (CI/CD) applications between OpenShift version 2 (v2) and OpenShift version 3 (v3) and how to migrate these applications into the v3 environment.

5.5.2. Jenkins