2.4. The Clustered Logical Volume Manager (CLVM)

The Clustered Logical Volume Manager (CLVM) is a set of clustering extensions to LVM. These extensions allow a cluster of computers to manage shared storage (for example, on a SAN) using LVM. CLVM is part of the Resilient Storage Add-On.

Whether you should use CLVM depends on your system requirements:

- If only one node of your system requires access to the storage you are configuring as logical volumes, then you can use LVM without the CLVM extensions, and the logical volumes created on that node are all local to the node.

- If you are using a clustered system for failover where only a single node that accesses the storage is active at any one time, you should use High Availability Logical Volume Management agents (HA-LVM).

- If more than one node of your cluster requires access to storage that is shared among the active nodes, you must use CLVM. CLVM lets a user configure logical volumes on shared storage by locking access to physical storage while a logical volume is being configured, and it uses clustered locking services to manage the shared storage.
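The three cases above can be summarized as a small decision helper. This is only an illustrative sketch: the function name `choose_lvm_mode` and its two arguments are hypothetical and are not part of LVM or of the cluster software.

```shell
#!/bin/sh
# choose_lvm_mode NODES_WITH_ACCESS ACTIVE_AT_ONCE
# Hypothetical helper: prints the LVM variant suggested by the guidelines above.
choose_lvm_mode() {
    nodes_with_access=$1   # number of nodes that need access to the storage
    active_at_once=$2      # number of those nodes active at the same time

    if [ "$nodes_with_access" -le 1 ]; then
        echo "LVM"         # single node: plain LVM, volumes are local
    elif [ "$active_at_once" -le 1 ]; then
        echo "HA-LVM"      # failover: only one active node at any one time
    else
        echo "CLVM"        # several active nodes share the storage
    fi
}
```

For example, `choose_lvm_mode 3 3` describes three nodes that all actively use the storage at once, which is the CLVM case.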

In order to use CLVM, the High Availability Add-On and Resilient Storage Add-On software, including the

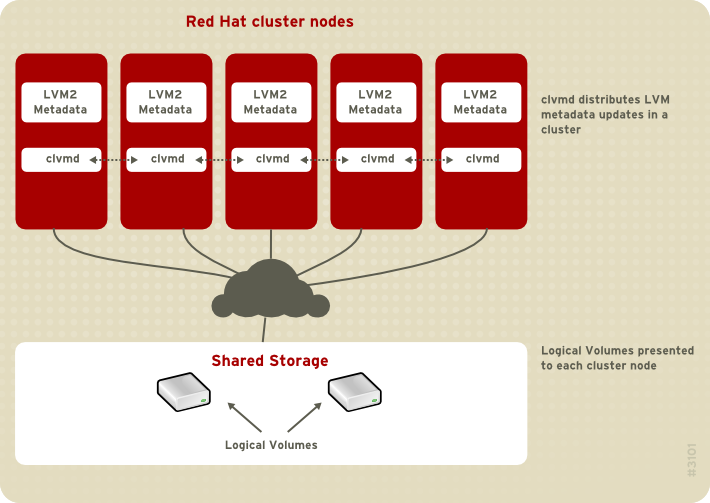

clvmd daemon, must be running. The clvmd daemon is the key clustering extension to LVM. The clvmd daemon runs in each cluster computer and distributes LVM metadata updates in a cluster, presenting each cluster computer with the same view of the logical volumes. For information on installing and administering the High Availability Add-On see Cluster Administration.

To ensure that clvmd is started at boot time, you can execute a chkconfig ... on command on the clvmd service, as follows:

    # chkconfig clvmd on

If the clvmd daemon has not been started, you can execute a service ... start command on the clvmd service, as follows:

    # service clvmd start
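The two commands above can be combined into a helper that enables clvmd at boot and starts it only when it is not already running. This is a minimal sketch, not a supported script. It assumes that `service clvmd status` exits with a non-zero status when the daemon is stopped, and the `SERVICE_CMD` and `CHKCONFIG_CMD` variables are hypothetical overrides added here so the logic can be tried without touching a real system.

```shell
#!/bin/sh
# Hypothetical override variables; by default the real commands are used.
SERVICE_CMD=${SERVICE_CMD:-service}
CHKCONFIG_CMD=${CHKCONFIG_CMD:-chkconfig}

ensure_clvmd() {
    # Enable the clvmd service at boot time.
    "$CHKCONFIG_CMD" clvmd on || return 1

    # Assumption: the status action exits non-zero when clvmd is not running.
    if "$SERVICE_CMD" clvmd status >/dev/null 2>&1; then
        echo "clvmd already running"
    else
        "$SERVICE_CMD" clvmd start
    fi
}
```

Run `ensure_clvmd` as root on each cluster node.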

Creating LVM logical volumes in a cluster environment is identical to creating LVM logical volumes on a single node. There is no difference in the LVM commands themselves, or in the LVM graphical user interface, as described in Chapter 5, LVM Administration with CLI Commands and Chapter 8, LVM Administration with the LVM GUI. In order to enable the LVM volumes you are creating in a cluster, the cluster infrastructure must be running and the cluster must be quorate.
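Because the cluster must be quorate before clustered volumes can be enabled, it can help to check quorum before running LVM commands. The sketch below is one possible check. The helper name `is_quorate` is hypothetical, and it assumes that the cluster status output contains a line beginning with `Member Status: Quorate`, as `clustat` is expected to print. Verify this against the output on your own cluster before relying on it.

```shell
#!/bin/sh
# is_quorate: reads cluster status text on stdin and succeeds only if it
# contains a line starting with "Member Status: Quorate" (assumed format).
is_quorate() {
    grep -q '^Member Status: Quorate'
}

# Example use on a cluster node (not executed here):
#   clustat | is_quorate && echo "cluster is quorate"
```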

By default, logical volumes created with CLVM on shared storage are visible to all systems that have access to the shared storage. It is possible to create volume groups in which all of the storage devices are visible to only one node in the cluster. It is also possible to change the status of a volume group from a local volume group to a clustered volume group. For information, see Section 5.3.3, “Creating Volume Groups in a Cluster” and Section 5.3.8, “Changing the Parameters of a Volume Group”.
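As an illustration of the local and clustered volume group states described above, the following sketch wraps the `-c` (`--clustered`) option of `vgcreate` and `vgchange`. The helper names, the `DRY_RUN` variable, and the volume group and device names in the usage line are hypothetical. By default the helpers only print the command they would run; see the referenced sections for the authoritative procedure.

```shell
#!/bin/sh
# run: prints the command when DRY_RUN=1 (the default here), otherwise executes it.
DRY_RUN=${DRY_RUN:-1}
run() {
    if [ "$DRY_RUN" = 1 ]; then
        echo "$*"
    else
        "$@"
    fi
}

# create_local_vg VG DEVICE...: create a volume group that is local to this node.
create_local_vg() {
    run vgcreate -c n "$@"
}

# set_vg_clustered VG y|n: mark an existing volume group as clustered (y) or local (n).
set_vg_clustered() {
    run vgchange -c "$2" "$1"
}
```

For example, `set_vg_clustered vg_shared y` prints `vgchange -c y vg_shared`. Set `DRY_RUN=0` to execute the command instead of printing it.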

Warning

When you create volume groups with CLVM on shared storage, you must ensure that all nodes in the cluster have access to the physical volumes that constitute the volume group. Asymmetric cluster configurations in which some nodes have access to the storage and others do not are not supported.

Figure 2.2, “CLVM Overview” shows a CLVM overview in a cluster.

Figure 2.2. CLVM Overview

Note

CLVM requires changes to the lvm.conf file for cluster-wide locking. Information on configuring the lvm.conf file to support clustered locking is provided within the lvm.conf file itself. For information about the lvm.conf file, see Appendix B, The LVM Configuration Files.
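One way to confirm which locking setting is active is to read the `locking_type` value from the configuration file. The sketch below assumes the setting appears as an uncommented `locking_type = N` line and that a value of 3 selects the built-in clustered locking. The helper name `locking_type_of` is hypothetical. Check the comments in your own lvm.conf for the values that apply to your release.

```shell
#!/bin/sh
# locking_type_of FILE: prints the value of the last uncommented
# "locking_type = N" line in FILE; prints nothing if none is found.
locking_type_of() {
    sed -n 's/^[[:space:]]*locking_type[[:space:]]*=[[:space:]]*\([0-9][0-9]*\).*/\1/p' "$1" | tail -n 1
}

# Example use (not executed here):
#   [ "$(locking_type_of /etc/lvm/lvm.conf)" = "3" ] && echo "clustered locking enabled"
```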