Installation and Configuration

OpenShift Container Platform 3.7 Installation and Configuration

Abstract

Chapter 1. Overview

OpenShift Container Platform Installation and Configuration topics cover the basics of installing and configuring OpenShift Container Platform in your environment. Configuration, management, and logging are also covered. Use these topics for the one-time tasks required to quickly set up your OpenShift Container Platform environment and configure it based on your organizational needs.

For day-to-day cluster administration tasks, see Cluster Administration.

Chapter 2. Installing a Cluster

2.1. Planning

2.1.1. Initial Planning

For production environments, several factors influence installation. Consider the following questions as you read through the documentation:

- Which installation method do you want to use? The Installation Methods section provides some information about the quick and advanced installation methods.

- How many pods are required in your cluster? The Sizing Considerations section provides limits for nodes and pods so you can calculate how large your environment needs to be.

- How many hosts do you require in the cluster? The Environment Scenarios section provides multiple examples of Single Master and Multiple Master configurations.

- Is high availability required? High availability is recommended for fault tolerance. In this situation, you might aim to use the Multiple Masters Using Native HA example as a basis for your environment.

- Which installation type do you want to use: RPM or containerized? Both installations provide a working OpenShift Container Platform environment, but you might have a preference for a particular method of installing, managing, and updating your services.

- Which identity provider do you use for authentication? If you already use a supported identity provider, it is a best practice to configure OpenShift Container Platform to use that identity provider during advanced installation.

- Is my installation supported if integrating with other technologies? See the OpenShift Container Platform Tested Integrations for a list of tested integrations.

2.1.2. Installation Methods

Both the quick and advanced installation methods are supported for development and production environments. If you want to quickly get OpenShift Container Platform up and running to try out for the first time, use the quick installer and let the interactive CLI guide you through the configuration options relevant to your environment.

For the most control over your cluster’s configuration, you can use the advanced installation method. This method is particularly suited if you are already familiar with Ansible. However, following along with the OpenShift Container Platform documentation should equip you with enough information to reliably deploy your cluster and continue to manage its configuration post-deployment using the provided Ansible playbooks directly.

If you install initially using the quick installer, you can always further tweak your cluster’s configuration and adjust the number of hosts in the cluster using the same installer tool. If you later want to switch to the advanced method, you can create an inventory file for your configuration and carry on that way.

2.1.3. Sizing Considerations

Determine how many nodes and pods you require for your OpenShift Container Platform cluster. Cluster scalability correlates to the number of pods in a cluster environment. That number influences the other numbers in your setup. See Cluster Limits for the latest limits for objects in OpenShift Container Platform.

2.1.4. Environment Scenarios

This section outlines different examples of scenarios for your OpenShift Container Platform environment. Use these scenarios as a basis for planning your own OpenShift Container Platform cluster, based on your sizing needs.

Moving from a single master cluster to multiple masters after installation is not supported.

For information on updating labels, see Updating Labels on Nodes.

2.1.4.1. Single Master and Node on One System

OpenShift Container Platform can be installed on a single system for a development environment only. An all-in-one environment is not considered a production environment.

2.1.4.2. Single Master and Multiple Nodes

The following table describes an example environment for a single master (with embedded etcd) and two nodes:

| Host Name | Infrastructure Component to Install |
|---|---|
| master.example.com | Master and node |
| node1.example.com | Node |
| node2.example.com | Node |

2.1.4.3. Single Master, Multiple etcd, and Multiple Nodes

The following table describes an example environment for a single master, three etcd hosts, and two nodes:

| Host Name | Infrastructure Component to Install |
|---|---|
| master.example.com | Master and node |
| etcd1.example.com | etcd |
| etcd2.example.com | etcd |
| etcd3.example.com | etcd |
| node1.example.com | Node |
| node2.example.com | Node |

When specifying multiple etcd hosts, external etcd is installed and configured. Clustering of OpenShift Container Platform’s embedded etcd is not supported.

2.1.4.4. Multiple Masters Using Native HA

The following describes an example environment for three masters, one HAProxy load balancer, three etcd hosts, and two nodes using the native HA method:

| Host Name | Infrastructure Component to Install |
|---|---|
| master1.example.com | Master (clustered using native HA) and node |
| master2.example.com | Master (clustered using native HA) and node |
| master3.example.com | Master (clustered using native HA) and node |
| lb.example.com | HAProxy to load balance API master endpoints |
| etcd1.example.com | etcd |
| etcd2.example.com | etcd |
| etcd3.example.com | etcd |
| node1.example.com | Node |
| node2.example.com | Node |

When specifying multiple etcd hosts, external etcd is installed and configured. Clustering of OpenShift Container Platform’s embedded etcd is not supported.

2.1.4.5. Stand-alone Registry

You can also install OpenShift Container Platform to act as a stand-alone registry using the OpenShift Container Platform’s integrated registry. See Installing a Stand-alone Registry for details on this scenario.

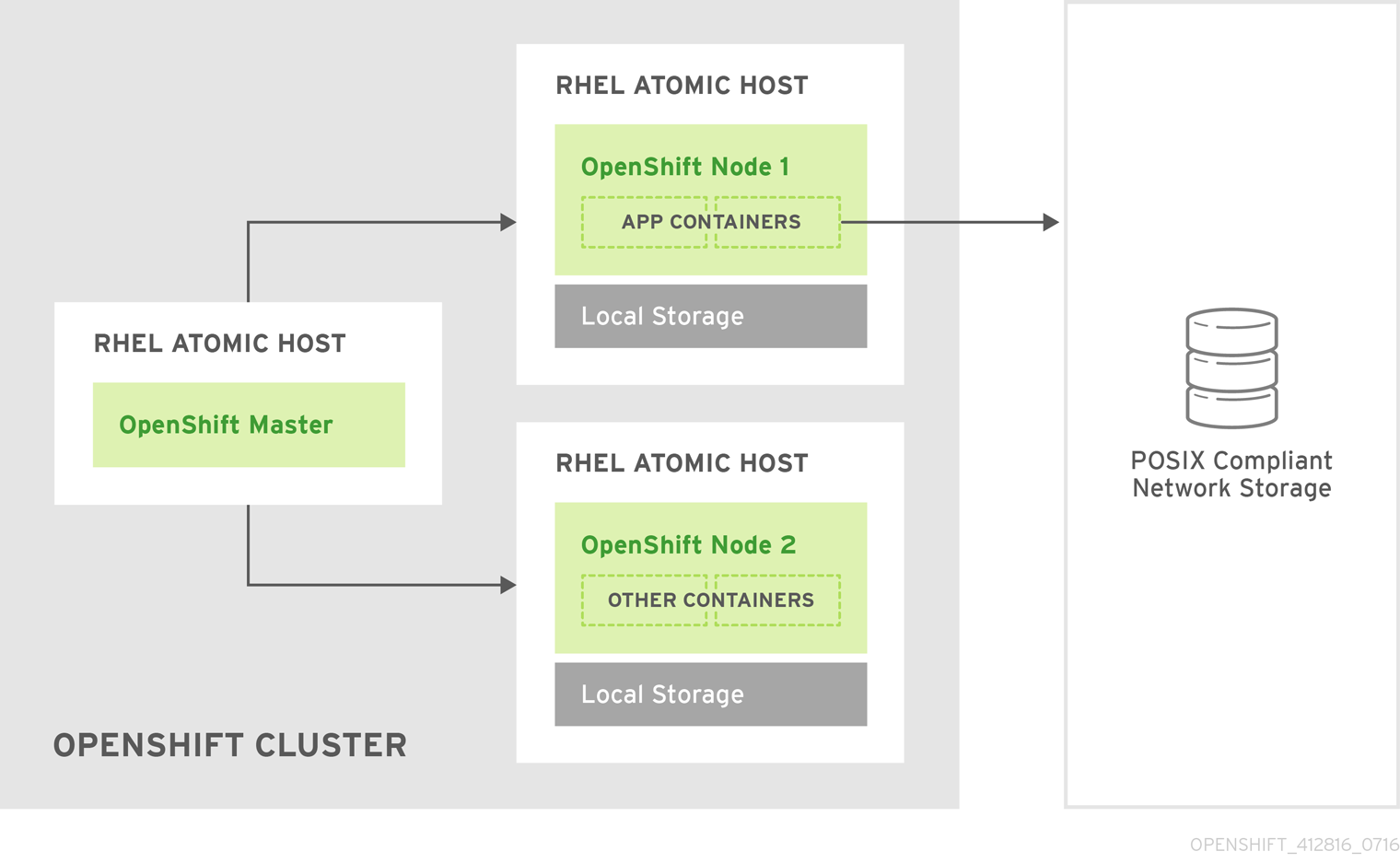

2.1.5. RPM Versus Containerized

An RPM installation installs all services through package management and configures services to run within the same user space, while a containerized installation installs services using container images and runs separate services in individual containers.

See the Installing on Containerized Hosts topic for more details on configuring your installation to use containerized services.

2.2. Prerequisites

2.2.1. System Requirements

The following sections identify the hardware specifications and system-level requirements of all hosts within your OpenShift Container Platform environment.

2.2.1.1. Red Hat Subscriptions

You must have an active OpenShift Container Platform subscription on your Red Hat account to proceed. If you do not, contact your sales representative for more information.

OpenShift Container Platform 3.7 requires Docker 1.12.

2.2.1.2. Minimum Hardware Requirements

The system requirements vary per host type:

| |

| |

| External etcd Nodes |

|

Meeting the /var/ file system sizing requirements in RHEL Atomic Host requires making changes to the default configuration. See Managing Storage with Docker-formatted Containers for instructions on configuring this during or after installation.

The system’s temporary directory is determined according to the rules defined in the tempfile module in Python’s standard library.

OpenShift Container Platform only supports servers with the x86_64 architecture.

2.2.1.3. Production Level Hardware Requirements

Test or sample environments function with the minimum requirements. For production environments, the following recommendations apply:

- Master Hosts

- In a highly available OpenShift Container Platform cluster with external etcd, a master host should have, in addition to the minimum requirements in the table above, 1 CPU core and 1.5 GB of memory for each 1000 pods. Therefore, the recommended size of a master host in an OpenShift Container Platform cluster of 2000 pods would be the minimum requirements of 2 CPU cores and 16 GB of RAM, plus 2 CPU cores and 3 GB of RAM, totaling 4 CPU cores and 19 GB of RAM.

A minimum of three etcd hosts and a load-balancer between the master hosts are required.
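The master sizing rule above can be worked through as a short shell sketch. The function name `master_size` is hypothetical, the 2-core and 16 GB baseline is taken from the worked example in the text, and rounding partial thousands of pods up is an assumption of this sketch rather than a documented rule.

```shell
# Hypothetical helper: estimate master host size for a given pod count.
# Baseline of 2 cores and 16 GB comes from the worked example in the text;
# add 1 core and 1.5 GB of memory per 1000 pods.
master_size() {
    awk -v pods="$1" 'BEGIN {
        units = int((pods + 999) / 1000)   # thousands of pods, rounded up (assumption)
        cores = 2 + units
        mem   = 16 + 1.5 * units
        printf "%d cores, %g GB RAM\n", cores, mem
    }'
}

master_size 2000   # prints "4 cores, 19 GB RAM"
```

Treat the result as a starting point for planning; actual requirements depend on the size of the resources stored in the cluster.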

The OpenShift Container Platform master caches deserialized versions of resources aggressively to ease CPU load. However, in smaller clusters of fewer than 1000 pods, this cache can waste a lot of memory for negligible CPU load reduction. The default cache size is 50,000 entries, which, depending on the size of your resources, can grow to occupy 1 to 2 GB of memory. This cache size can be reduced using the following setting in /etc/origin/master/master-config.yaml:

kubernetesMasterConfig:
  apiServerArguments:
    deserialization-cache-size:
    - "1000"

- Node Hosts

- The size of a node host depends on the expected size of its workload. As an OpenShift Container Platform cluster administrator, you will need to calculate the expected workload, then add about 10 percent for overhead. For production environments, allocate enough resources so that a node host failure does not affect your maximum capacity.

Use the above with the following table to plan the maximum loads for nodes and pods:

| Host | Sizing Recommendation |
|---|---|
| Maximum nodes per cluster | 2000 |
| Maximum pods per cluster | 120000 |
| Maximum pods per node | 250 |
| Maximum pods per core | 10 |
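As a rough illustration of how the per-core and per-node limits in the table combine, the following sketch computes an upper bound on pods for a single node. The function name `max_pods_for_node` is hypothetical, and the sketch considers only these two limits, not memory or workload overhead.

```shell
# Hypothetical helper: upper bound on pods for one node, using the
# limits from the table (10 pods per core, 250 pods per node).
max_pods_for_node() {
    cores=$1
    by_cores=$((cores * 10))
    if [ "$by_cores" -gt 250 ]; then
        echo 250          # capped by the per-node limit
    else
        echo "$by_cores"  # capped by the per-core limit
    fi
}

max_pods_for_node 8    # prints 80
max_pods_for_node 32   # prints 250
```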

Oversubscribing the physical resources on a node affects resource guarantees the Kubernetes scheduler makes during pod placement. Learn what measures you can take to avoid memory swapping.

2.2.1.4. Storage management

| Directory | Notes | Sizing | Expected Growth |
|---|---|---|---|
| /var/lib/openshift | Used for etcd storage only when in single master mode and etcd is embedded in the atomic-openshift-master process. | Less than 10 GB. | Will grow slowly with the environment. Only storing metadata. |
| /var/lib/etcd | Used for etcd storage when in Multi-Master mode or when etcd is made standalone by an administrator. | Less than 20 GB. | Will grow slowly with the environment. Only storing metadata. |
| /var/lib/docker | When the run time is docker, this is the mount point. Storage used for active container runtimes (including pods) and storage of local images (not used for registry storage). Mount point should be managed by docker-storage rather than manually. | 50 GB for a Node with 16 GB memory. Additional 20-25 GB for every additional 8 GB of memory. | Growth is limited by the capacity for running containers. |
| /var/lib/containers | When the run time is CRI-O, this is the mount point. Storage used for active container runtimes (including pods) and storage of local images (not used for registry storage). | 50 GB for a Node with 16 GB memory. Additional 20-25 GB for every additional 8 GB of memory. | Growth is limited by the capacity for running containers. |
| /var/lib/origin/openshift.local.volumes | Ephemeral volume storage for pods. This includes anything external that is mounted into a container at runtime. Includes environment variables, kube secrets, and data volumes not backed by persistent storage PVs. | Varies | Minimal if pods requiring storage are using persistent volumes. If using ephemeral storage, this can grow quickly. |
| /var/log | Log files for all components. | 10 to 30 GB. | Log files can grow quickly; size can be managed by growing disks or managed using log rotate. |
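The container storage rule of thumb in the table (50 GB for a node with 16 GB of memory, plus 20-25 GB for every additional 8 GB) can be sketched as follows. The function name `container_storage_gb` is hypothetical; counting each started block of 8 GB and treating nodes with less than 16 GB the same as 16 GB nodes are assumptions of this sketch.

```shell
# Hypothetical helper: suggested container storage size range in GB for
# a node with the given amount of memory in GB.
container_storage_gb() {
    mem_gb=$1
    extra=0
    if [ "$mem_gb" -gt 16 ]; then
        # Number of started 8 GB blocks above 16 GB (rounding up is an assumption).
        extra=$(( (mem_gb - 16 + 7) / 8 ))
    fi
    low=$((50 + extra * 20))
    high=$((50 + extra * 25))
    if [ "$low" -eq "$high" ]; then
        echo "$low GB"
    else
        echo "$low-$high GB"
    fi
}

container_storage_gb 32   # prints "90-100 GB"
```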

2.2.1.5. Configuring Core Usage

By default, OpenShift Container Platform masters and nodes use all available cores in the system they run on. You can choose the number of cores you want OpenShift Container Platform to use by setting the GOMAXPROCS environment variable.

For example, run the following before starting the server to make OpenShift Container Platform only run on one core:

# export GOMAXPROCS=1

2.2.1.6. SELinux

Security-Enhanced Linux (SELinux) must be enabled on all of the servers before installing OpenShift Container Platform or the installer will fail. Also, configure SELINUXTYPE=targeted in the /etc/selinux/config file:

# This file controls the state of SELinux on the system.
# SELINUX= can take one of these three values:
# enforcing - SELinux security policy is enforced.
# permissive - SELinux prints warnings instead of enforcing.
# disabled - No SELinux policy is loaded.
SELINUX=enforcing
# SELINUXTYPE= can take one of these three values:
# targeted - Targeted processes are protected,
# minimum - Modification of targeted policy. Only selected processes are protected.
# mls - Multi Level Security protection.
SELINUXTYPE=targeted

Using OverlayFS

OverlayFS is a union file system that allows you to overlay one file system on top of another.

As of Red Hat Enterprise Linux 7.4, you have the option to configure your OpenShift Container Platform environment to use OverlayFS. The overlay2 graph driver is fully supported in addition to the older overlay driver. However, Red Hat recommends using overlay2 instead of overlay, because of its speed and simple implementation.

See the Overlay Graph Driver section of the Atomic Host documentation for instructions on how to enable the overlay2 graph driver for the Docker service.

2.2.1.7. Security Warning

OpenShift Container Platform runs containers on hosts in the cluster, and in some cases, such as build operations and the registry service, it does so using privileged containers. Furthermore, those containers access the hosts' Docker daemon and perform docker build and docker push operations. As such, cluster administrators should be aware of the inherent security risks associated with performing docker run operations on arbitrary images as they effectively have root access. This is particularly relevant for docker build operations.

Exposure to harmful containers can be limited by assigning specific builds to nodes so that any exposure is limited to those nodes. To do this, see the Assigning Builds to Specific Nodes section of the Developer Guide. For cluster administrators, see the Configuring Global Build Defaults and Overrides section of the Installation and Configuration Guide.

You can also use security context constraints to control the actions that a pod can perform and what it has the ability to access. For instructions on how to enable images to run with USER in the Dockerfile, see Managing Security Context Constraints (requires a user with cluster-admin privileges).

For more information, see these articles:

2.2.2. Environment Requirements

The following section defines the requirements of the environment containing your OpenShift Container Platform configuration. This includes networking considerations and access to external services, such as Git repository access, storage, and cloud infrastructure providers.

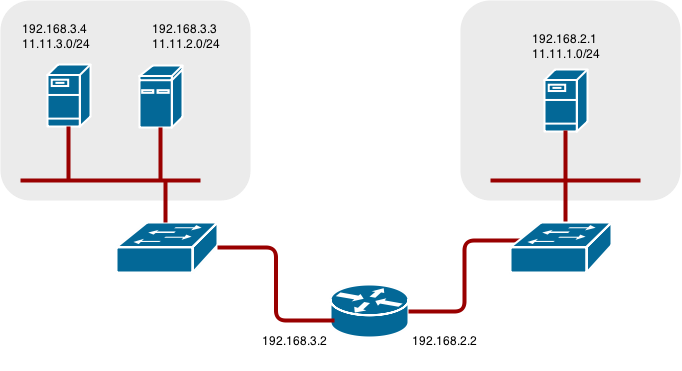

2.2.2.1. DNS

OpenShift Container Platform requires a fully functional DNS server in the environment. This is ideally a separate host running DNS software and can provide name resolution to hosts and containers running on the platform.

Adding entries into the /etc/hosts file on each host is not enough. This file is not copied into containers running on the platform.

Key components of OpenShift Container Platform run themselves inside of containers and use the following process for name resolution:

- By default, containers receive their DNS configuration file (/etc/resolv.conf) from their host.

- OpenShift Container Platform then inserts one DNS value into the pods (above the node’s nameserver values). That value is defined in the /etc/origin/node/node-config.yaml file by the dnsIP parameter, which by default is set to the address of the host node because the host is using dnsmasq.

- If the dnsIP parameter is omitted from the node-config.yaml file, then the value defaults to the kubernetes service IP, which is the first nameserver in the pod’s /etc/resolv.conf file.

As of OpenShift Container Platform 3.2, dnsmasq is automatically configured on all masters and nodes. The pods use the nodes as their DNS, and the nodes forward the requests. By default, dnsmasq is configured on the nodes to listen on port 53, therefore the nodes cannot run any other type of DNS application.

NetworkManager is required on the nodes in order to populate dnsmasq with the DNS IP addresses. DNS does not work properly when the network interface for OpenShift Container Platform has NM_CONTROLLED=no.

The following is an example set of DNS records:

master1 A 10.64.33.100

master2 A 10.64.33.103

node1 A 10.64.33.101

node2 A 10.64.33.102

If you do not have a properly functioning DNS environment, you could experience failure with:

- Product installation via the reference Ansible-based scripts

- Deployment of the infrastructure containers (registry, routers)

- Access to the OpenShift Container Platform web console, because it is not accessible via IP address alone

2.2.2.1.1. Configuring Hosts to Use DNS

Make sure each host in your environment is configured to resolve hostnames from your DNS server. The configuration for hosts' DNS resolution depends on whether DHCP is enabled. If DHCP is:

- Disabled, then configure your network interface to be static, and add DNS nameservers to NetworkManager.

- Enabled, then the NetworkManager dispatch script automatically configures DNS based on the DHCP configuration. Optionally, you can add a value to dnsIP in the node-config.yaml file to prepend the pod’s resolv.conf file. The second nameserver is then defined by the host’s first nameserver. By default, this will be the IP address of the node host.

Note

For most configurations, do not set the openshift_dns_ip option during the advanced installation of OpenShift Container Platform (using Ansible), because this option overrides the default IP address set by dnsIP.

Instead, allow the installer to configure each node to use dnsmasq and forward requests to SkyDNS or the external DNS provider. If you do set the openshift_dns_ip option, then it should be set either with a DNS IP that queries SkyDNS first, or to the SkyDNS service or endpoint IP (the Kubernetes service IP).

To verify that hosts can be resolved by your DNS server:

Check the contents of /etc/resolv.conf:

$ cat /etc/resolv.conf
# Generated by NetworkManager
search example.com
nameserver 10.64.33.1
# nameserver updated by /etc/NetworkManager/dispatcher.d/99-origin-dns.sh

In this example, 10.64.33.1 is the address of our DNS server.

Test that the DNS servers listed in /etc/resolv.conf are able to resolve host names to the IP addresses of all masters and nodes in your OpenShift Container Platform environment:

$ dig <node_hostname> @<IP_address> +short

For example:

$ dig master.example.com @10.64.33.1 +short
10.64.33.100
$ dig node1.example.com @10.64.33.1 +short
10.64.33.101
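When there are many hosts to verify, the check can be looped over every nameserver in /etc/resolv.conf. The following is a minimal sketch; the function names `list_nameservers` and `check_hosts` are hypothetical, and `check_hosts` assumes that dig is installed and the DNS servers are reachable.

```shell
# List the nameserver addresses found in a resolv.conf-style file
# (defaults to /etc/resolv.conf). Comment lines are ignored.
list_nameservers() {
    awk '$1 == "nameserver" { print $2 }' "${1:-/etc/resolv.conf}"
}

# Query every listed nameserver for every host name given as an argument.
check_hosts() {
    for ns in $(list_nameservers); do
        for host in "$@"; do
            printf '%s via %s: %s\n' "$host" "$ns" "$(dig "$host" "@$ns" +short)"
        done
    done
}

# Example, using the sample host names from this section:
# check_hosts master.example.com node1.example.com
```

An empty value after the final colon indicates that the nameserver returned no address for that host name.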

2.2.2.1.2. Configuring a DNS Wildcard

Optionally, configure a wildcard for the router to use, so that you do not need to update your DNS configuration when new routes are added.

A wildcard for a DNS zone must ultimately resolve to the IP address of the OpenShift Container Platform router.

For example, create a wildcard DNS entry for cloudapps that has a low time-to-live value (TTL) and points to the public IP address of the host where the router will be deployed:

*.cloudapps.example.com. 300 IN A 192.168.133.2

In almost all cases, when referencing VMs you must use host names, and the host names that you use must match the output of the hostname -f command on each node.

In your /etc/resolv.conf file on each node host, ensure that the DNS server that has the wildcard entry is not listed as a nameserver or that the wildcard domain is not listed in the search list. Otherwise, containers managed by OpenShift Container Platform may fail to resolve host names properly.
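The search list part of this check can be scripted. The sketch below uses a hypothetical function name, `in_search_list`, and the sample wildcard domain from this section; it inspects only the search line and does not verify which nameserver holds the wildcard entry.

```shell
# Succeeds (exit status 0) if the given domain appears on a "search" line
# of a resolv.conf-style file (defaults to /etc/resolv.conf).
in_search_list() {
    domain=$1
    file=${2:-/etc/resolv.conf}
    awk -v d="$domain" '
        $1 == "search" { for (i = 2; i <= NF; i++) if ($i == d) found = 1 }
        END { exit (found ? 0 : 1) }
    ' "$file"
}

if in_search_list cloudapps.example.com; then
    echo "warning: wildcard domain is in the resolv.conf search list"
fi
```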

2.2.2.2. Network Access

A shared network must exist between the master and node hosts. If you plan to configure multiple masters for high-availability using the advanced installation method, you must also select an IP to be configured as your virtual IP (VIP) during the installation process. The IP that you select must be routable between all of your nodes, and if you configure using a FQDN it should resolve on all nodes.

2.2.2.2.1. NetworkManager

NetworkManager, a program for providing detection and configuration for systems to automatically connect to the network, is required. DNS does not work properly when the network interface for OpenShift Container Platform has NM_CONTROLLED=no.

2.2.2.2.2. Configuring firewalld as the firewall

While iptables is the default firewall, firewalld is recommended for new installations. You can enable firewalld by setting os_firewall_use_firewalld=true in the Ansible inventory file.

[OSEv3:vars]

os_firewall_use_firewalld=True

Setting this variable to true opens the required ports and adds rules to the default zone, which ensure that firewalld is configured correctly.

The firewalld default configuration comes with limited configuration options, and cannot be overridden. For example, while you can set up a storage network with interfaces in multiple zones, the interface that nodes communicate on must be in the default zone.

2.2.2.2.3. Required Ports

The OpenShift Container Platform installation automatically creates a set of internal firewall rules on each host using iptables. However, if your network configuration uses an external firewall, such as a hardware-based firewall, you must ensure infrastructure components can communicate with each other through specific ports that act as communication endpoints for certain processes or services.

Ensure the following ports required by OpenShift Container Platform are open on your network and configured to allow access between hosts. Some ports are optional depending on your configuration and usage.

| 4789 | UDP | Required for SDN communication between pods on separate hosts. |

| 53 or 8053 | TCP/UDP | Required for DNS resolution of cluster services (SkyDNS). Installations prior to 3.2 or environments upgraded to 3.2 use port 53. New installations will use 8053 by default so that dnsmasq may be configured. |

| 4789 | UDP | Required for SDN communication between pods on separate hosts. |

| 443 or 8443 | TCP | Required for node hosts to communicate to the master API, for the node hosts to post back status, to receive tasks, and so on. |

| 4789 | UDP | Required for SDN communication between pods on separate hosts. |

| 10250 | TCP | The master proxies to node hosts via the Kubelet for |

| 53 or 8053 | TCP/UDP | Required for DNS resolution of cluster services (SkyDNS). Installations prior to 3.2 or environments upgraded to 3.2 use port 53. New installations will use 8053 by default so that dnsmasq may be configured. |

| 2049 | TCP/UDP | Required when provisioning an NFS host as part of the installer. |

| 2379 | TCP | Used for standalone etcd (clustered) to accept changes in state. |

| 2380 | TCP | etcd requires this port be open between masters for leader election and peering connections when using standalone etcd (clustered). |

| 4001 | TCP | Used for embedded etcd (non-clustered) to accept changes in state. |

| 4789 | UDP | Required for SDN communication between pods on separate hosts. |

| 9000 | TCP | If you choose the |

| 443 or 8443 | TCP | Required for node hosts to communicate to the master API, for node hosts to post back status, to receive tasks, and so on. |

| 8444 | TCP | Port that the |

| 22 | TCP | Required for SSH by the installer or system administrator. |

| 53 or 8053 | TCP/UDP | Required for DNS resolution of cluster services (SkyDNS). Installations prior to 3.2 or environments upgraded to 3.2 use port 53. New installations will use 8053 by default so that dnsmasq may be configured. Only required to be internally open on master hosts. |

| 80 or 443 | TCP | For HTTP/HTTPS use for the router. Required to be externally open on node hosts, especially on nodes running the router. |

| 1936 | TCP | (Optional) Required to be open when running the template router to access statistics. Can be open externally or internally to connections depending on if you want the statistics to be expressed publicly. Can require extra configuration to open. See the Notes section below for more information. |

| 4001 | TCP | For embedded etcd (non-clustered) use. Only required to be internally open on the master host. 4001 is for server-client connections. |

| 2379 and 2380 | TCP | For standalone etcd use. Only required to be internally open on the master host. 2379 is for server-client connections. 2380 is for server-server connections, and is only required if you have clustered etcd. |

| 4789 | UDP | For VxLAN use (OpenShift SDN). Required only internally on node hosts. |

| 8443 | TCP | For use by the OpenShift Container Platform web console, shared with the API server. |

| 10250 | TCP | For use by the Kubelet. Required to be externally open on nodes. |

Notes

- In the above examples, port 4789 is used for User Datagram Protocol (UDP).

- When deployments are using the SDN, the pod network is accessed via a service proxy, unless it is accessing the registry from the same node the registry is deployed on.

- OpenShift Container Platform internal DNS cannot be received over SDN. Depending on the detected values of openshift_facts, or if the openshift_ip and openshift_public_ip values are overridden, it will be the computed value of openshift_ip. For non-cloud deployments, this will default to the IP address associated with the default route on the master host. For cloud deployments, it will default to the IP address associated with the first internal interface as defined by the cloud metadata.

- The master host uses port 10250 to reach the nodes and does not go over SDN. It depends on the target host of the deployment and uses the computed values of openshift_hostname and openshift_public_hostname.

- Port 1936 can still be inaccessible due to your iptables rules. Use the following to configure iptables to open port 1936:

# iptables -A OS_FIREWALL_ALLOW -p tcp -m state --state NEW -m tcp \
    --dport 1936 -j ACCEPT

| 9200 | TCP | For Elasticsearch API use. Required to be internally open on any infrastructure nodes so Kibana is able to retrieve logs for display. It can be externally opened for direct access to Elasticsearch by means of a route. The route can be created using |

| 9300 | TCP | For Elasticsearch inter-cluster use. Required to be internally open on any infrastructure node so the members of the Elasticsearch cluster may communicate with each other. |

2.2.2.3. Persistent Storage

The Kubernetes persistent volume framework allows you to provision an OpenShift Container Platform cluster with persistent storage using networked storage available in your environment. This can be done after completing the initial OpenShift Container Platform installation depending on your application needs, giving users a way to request those resources without having any knowledge of the underlying infrastructure.

The Installation and Configuration Guide provides instructions for cluster administrators on provisioning an OpenShift Container Platform cluster with persistent storage using NFS, GlusterFS, Ceph RBD, OpenStack Cinder, AWS Elastic Block Store (EBS), GCE Persistent Disks, and iSCSI.

2.2.2.4. Cloud Provider Considerations

There are certain aspects to take into consideration if installing OpenShift Container Platform on a cloud provider.

- For Amazon Web Services, see the Permissions and the Configuring a Security Group sections.

- For OpenStack, see the Permissions and the Configuring a Security Group sections.

2.2.2.4.1. Overriding Detected IP Addresses and Host Names

Some deployments require that the user override the detected host names and IP addresses for the hosts. To see the default values, run the openshift_facts playbook:

# ansible-playbook [-i /path/to/inventory] \
    /usr/share/ansible/openshift-ansible/playbooks/byo/openshift_facts.yml

For Amazon Web Services, see the Overriding Detected IP Addresses and Host Names section.

Now, verify the detected common settings. If they are not what you expect them to be, you can override them.

The Advanced Installation topic discusses the available Ansible variables in greater detail.

| Variable | Usage |

|---|---|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

If openshift_hostname is set to a value other than the metadata-provided private-dns-name value, the native cloud integration for those providers will no longer work.

2.2.2.4.2. Post-Installation Configuration for Cloud Providers

Following the installation process, you can configure OpenShift Container Platform for AWS, OpenStack, or GCE.

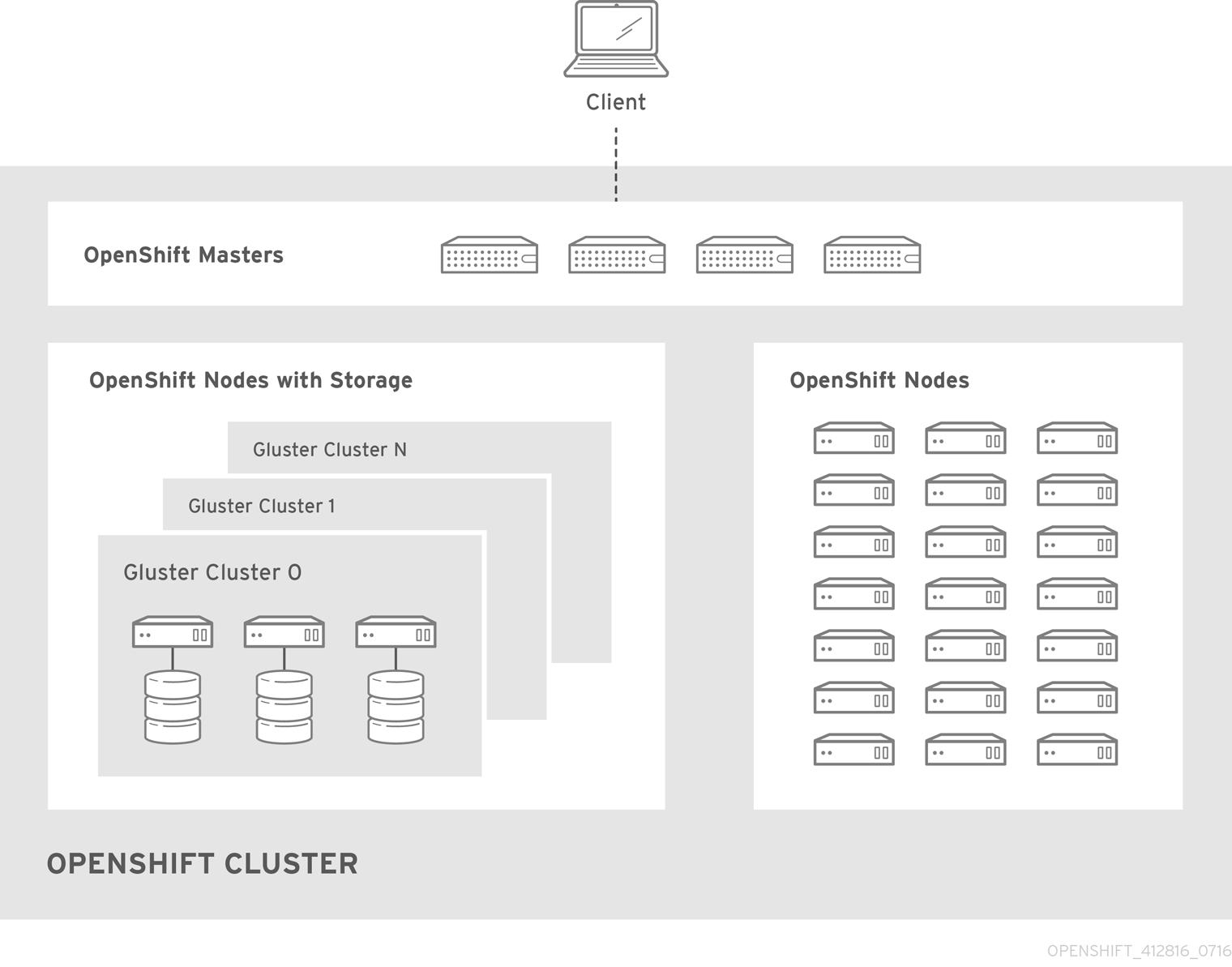

2.2.2.5. Containerized GlusterFS Considerations

If you choose to configure containerized GlusterFS persistent storage for your cluster, or if you choose to configure a containerized GlusterFS-backed OpenShift Container Registry, you must consider the following prerequisites.

2.2.2.5.1. Storage Nodes

To use containerized GlusterFS persistent storage:

- A minimum of 3 storage nodes is required.

- Each storage node must have at least 1 raw block device with at least 100 GB available.

To run a containerized GlusterFS-backed OpenShift Container Registry:

- A minimum of 3 storage nodes is required.

- Each storage node must have at least 1 raw block device with at least 10 GB of free storage.

While containerized GlusterFS persistent storage can be configured and deployed on the same OpenShift Container Platform cluster as a containerized GlusterFS-backed registry, the storage for each should be kept separate, which requires additional storage nodes. For example, if both are configured, a total of 6 storage nodes would be needed: 3 for the registry and 3 for persistent storage. This limitation is imposed to avoid potential impacts on performance in I/O and volume creation.

2.2.2.5.2. Required Software Components

For any RHEL (non-Atomic) storage nodes, the following RPM repository must be enabled:

# subscription-manager repos --enable=rh-gluster-3-client-for-rhel-7-server-rpms

The mount.glusterfs command must be available on all nodes that will host pods that will use GlusterFS volumes. For RPM-based systems, the glusterfs-fuse package must be installed:

# yum install glusterfs-fuse

If GlusterFS is already installed on the nodes, ensure the latest version is installed:

# yum update glusterfs-fuse

2.3. Host Preparation

2.3.1. Setting PATH

The PATH for the root user on each host must contain the following directories:

- /bin

- /sbin

- /usr/bin

- /usr/sbin

These should all be included by default in a fresh RHEL 7.x installation.
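One way to confirm this on a host is a short check such as the following sketch. The function name `missing_path_dirs` is hypothetical; run it as root so that the default argument reflects the root user's PATH.

```shell
# Print each required directory that is missing from a PATH-style string.
# Defaults to the PATH of the current user.
missing_path_dirs() {
    path_value=${1:-$PATH}
    for dir in /bin /sbin /usr/bin /usr/sbin; do
        case ":$path_value:" in
            *":$dir:"*) ;;        # directory is present
            *) echo "$dir" ;;     # directory is missing
        esac
    done
}

missing_path_dirs   # prints nothing when all four directories are present
```

Wrapping the value in colons before matching keeps /bin from being mistaken for a match inside /usr/bin.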

2.3.2. Operating System Requirements

A base installation of RHEL 7.3 or 7.4 (with the latest packages from the Extras channel) or RHEL Atomic Host 7.4.2 or later is required for master and node hosts. See the following documentation for the respective installation instructions, if required:

2.3.3. Host Registration

Each host must be registered using Red Hat Subscription Manager (RHSM) and have an active OpenShift Container Platform subscription attached to access the required packages.

On each host, register with RHSM:

# subscription-manager register --username=<user_name> --password=<password>

Pull the latest subscription data from RHSM:

# subscription-manager refresh

List the available subscriptions:

# subscription-manager list --available --matches '*OpenShift*'

In the output for the previous command, find the pool ID for an OpenShift Container Platform subscription and attach it:

# subscription-manager attach --pool=<pool_id>

Disable all yum repositories:

Disable all the enabled RHSM repositories:

# subscription-manager repos --disable="*"

List the remaining yum repositories and note their names under repo id, if any:

# yum repolist

Use yum-config-manager to disable the remaining yum repositories:

# yum-config-manager --disable <repo_id>

Alternatively, disable all repositories:

# yum-config-manager --disable \*

Note that this could take a few minutes if you have a large number of available repositories.

Enable only the repositories required by OpenShift Container Platform 3.7:

# subscription-manager repos \
    --enable="rhel-7-server-rpms" \
    --enable="rhel-7-server-extras-rpms" \
    --enable="rhel-7-server-ose-3.7-rpms" \
    --enable="rhel-7-fast-datapath-rpms"
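To double-check the result, the enabled repositories can be compared against the four required ones. The sketch below assumes that `subscription-manager repos --list-enabled` is available on your version and prints one `Repo ID:` line per repository; the helper name `missing_repos` is hypothetical.

```shell
# Read `subscription-manager repos --list-enabled` output on stdin and print
# every required repository ID that is not enabled.
# The function body runs in a subshell so its variables stay local.
missing_repos() (
  enabled=$(sed -n 's/^Repo ID:[[:space:]]*//p')
  for repo in rhel-7-server-rpms rhel-7-server-extras-rpms \
              rhel-7-server-ose-3.7-rpms rhel-7-fast-datapath-rpms; do
    # -x matches whole lines, -F treats the repository ID as a fixed string
    printf '%s\n' "$enabled" | grep -qxF "$repo" || echo "$repo"
  done
)

# Example (on a registered host):
#   subscription-manager repos --list-enabled | missing_repos
```

No output indicates that all four repositories are enabled. The check does not report extra repositories that are still enabled.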

2.3.4. Installing Base Packages

For RHEL 7 systems:

Install the following base packages:

# yum install wget git net-tools bind-utils yum-utils iptables-services bridge-utils bash-completion kexec-tools sos psacct

Update the system to the latest packages:

# yum update
# systemctl reboot

If you plan to use the RPM-based installer to run an advanced installation, you can skip this step. However, if you plan to use the containerized installer (currently a Technology Preview feature):

- Install the atomic package:

# yum install atomic

- Skip to Installing Docker.

Install the following package, which provides RPM-based OpenShift Container Platform installer utilities and pulls in other tools required by the quick and advanced installation methods, such as Ansible and related configuration files:

# yum install atomic-openshift-utils

For RHEL Atomic Host 7 systems:

Ensure the host is up to date by upgrading to the latest Atomic tree if one is available:

# atomic host upgrade

After the upgrade is completed and prepared for the next boot, reboot the host:

# systemctl reboot

2.3.5. Installing Docker

At this point, you should install Docker on all master and node hosts. This allows you to configure your Docker storage options before installing OpenShift Container Platform.

For RHEL 7 systems, install Docker 1.12:

# yum install docker-1.12.6

On RHEL Atomic Host 7 systems, Docker should already be installed, configured, and running by default.

After the package installation is complete, verify that version 1.12 was installed:

# rpm -V docker-1.12.6
# docker version

The Advanced Installation method automatically changes /etc/sysconfig/docker.
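For scripted host preparation, the version check can be reduced to a pass/fail test. This is a minimal sketch: the helper name `is_docker_1_12` is hypothetical, and the commented usage assumes the Docker daemon is running and that `docker version --format` is supported by the installed client.

```shell
# Succeed only when the given version string belongs to the 1.12 series.
is_docker_1_12() {
  case "$1" in
    1.12|1.12.*) return 0 ;;
    *) return 1 ;;
  esac
}

# Example:
#   is_docker_1_12 "$(docker version --format '{{.Server.Version}}')" \
#     || echo "unexpected Docker version" >&2
```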

2.3.6. Configuring Docker Storage

Containers and the images they are created from are stored in Docker’s storage back end. This storage is ephemeral and separate from any persistent storage allocated to meet the needs of your applications.

For RHEL Atomic Host

The default storage back end for Docker on RHEL Atomic Host is a thin pool logical volume, which is supported for production environments. You must ensure that enough space is allocated for this volume per the Docker storage requirements mentioned in System Requirements.

If you do not have enough allocated, see Managing Storage with Docker Formatted Containers for details on using docker-storage-setup and basic instructions on storage management in RHEL Atomic Host.

For RHEL

The default storage back end for Docker on RHEL 7 is a thin pool on loopback devices, which is not supported for production use and only appropriate for proof of concept environments. For production environments, you must create a thin pool logical volume and re-configure Docker to use that volume.

Docker stores images and containers in a graph driver, which is a pluggable storage technology, such as DeviceMapper, OverlayFS, and Btrfs. Each has advantages and disadvantages. For example, OverlayFS is faster than DeviceMapper at starting and stopping containers, but is not Portable Operating System Interface for Unix (POSIX) compliant because of the architectural limitations of a union file system and is not supported prior to Red Hat Enterprise Linux 7.2. See the Red Hat Enterprise Linux release notes for information on using OverlayFS with your version of RHEL.

For more information on the benefits and limitations of DeviceMapper and OverlayFS, see Choosing a Graph Driver.

2.3.6.1. Configuring OverlayFS

OverlayFS is a type of union file system. It allows you to overlay one file system on top of another. Changes are recorded in the upper file system, while the lower file system remains unmodified.

Comparing the Overlay Versus Overlay2 Graph Drivers has more information about the overlay and overlay2 drivers.

For information on enabling the OverlayFS storage driver for the Docker service, see the Red Hat Enterprise Linux Atomic Host documentation.

2.3.6.2. Configuring Thin Pool Storage

You can use the docker-storage-setup script included with Docker to create a thin pool device and configure Docker’s storage driver. This can be done after installing Docker and should be done before creating images or containers. The script reads configuration options from the /etc/sysconfig/docker-storage-setup file and supports three options for creating the logical volume:

- Option A) Use an additional block device.

- Option B) Use an existing, specified volume group.

- Option C) Use the remaining free space from the volume group where your root file system is located.

Option A is the most robust option; however, it requires adding an additional block device to your host before configuring Docker storage. Options B and C both require leaving free space available when provisioning your host. Option C is known to cause issues with some applications, for example Red Hat Mobile Application Platform (RHMAP).

Create the docker-pool volume using one of the following three options:

Option A) Use an additional block device.

In /etc/sysconfig/docker-storage-setup, set DEVS to the path of the block device you wish to use. Set VG to the volume group name you wish to create; docker-vg is a reasonable choice. For example:

# cat <<EOF > /etc/sysconfig/docker-storage-setup
DEVS=/dev/vdc
VG=docker-vg
EOF

Then run docker-storage-setup and review the output to ensure the docker-pool volume was created:

# docker-storage-setup                                                                                                                                                                                                                                [5/1868]
0
Checking that no-one is using this disk right now ...
OK

Disk /dev/vdc: 31207 cylinders, 16 heads, 63 sectors/track
sfdisk:  /dev/vdc: unrecognized partition table type

Old situation:
sfdisk: No partitions found

New situation:
Units: sectors of 512 bytes, counting from 0

   Device Boot    Start       End   #sectors  Id  System
/dev/vdc1          2048  31457279   31455232  8e  Linux LVM
/dev/vdc2             0         -          0   0  Empty
/dev/vdc3             0         -          0   0  Empty
/dev/vdc4             0         -          0   0  Empty
Warning: partition 1 does not start at a cylinder boundary
Warning: partition 1 does not end at a cylinder boundary
Warning: no primary partition is marked bootable (active)
This does not matter for LILO, but the DOS MBR will not boot this disk.
Successfully wrote the new partition table

Re-reading the partition table ...

If you created or changed a DOS partition, /dev/foo7, say, then use dd(1)
to zero the first 512 bytes:  dd if=/dev/zero of=/dev/foo7 bs=512 count=1
(See fdisk(8).)
  Physical volume "/dev/vdc1" successfully created
  Volume group "docker-vg" successfully created
  Rounding up size to full physical extent 16.00 MiB
  Logical volume "docker-poolmeta" created.
  Logical volume "docker-pool" created.
  WARNING: Converting logical volume docker-vg/docker-pool and docker-vg/docker-poolmeta to pool's data and metadata volumes.
  THIS WILL DESTROY CONTENT OF LOGICAL VOLUME (filesystem etc.)
  Converted docker-vg/docker-pool to thin pool.
  Logical volume "docker-pool" changed.

Option B) Use an existing, specified volume group.

In /etc/sysconfig/docker-storage-setup, set VG to the desired volume group. For example:

# cat <<EOF > /etc/sysconfig/docker-storage-setup
VG=docker-vg
EOF

Then run docker-storage-setup and review the output to ensure the docker-pool volume was created:

# docker-storage-setup
  Rounding up size to full physical extent 16.00 MiB
  Logical volume "docker-poolmeta" created.
  Logical volume "docker-pool" created.
  WARNING: Converting logical volume docker-vg/docker-pool and docker-vg/docker-poolmeta to pool's data and metadata volumes.
  THIS WILL DESTROY CONTENT OF LOGICAL VOLUME (filesystem etc.)
  Converted docker-vg/docker-pool to thin pool.
  Logical volume "docker-pool" changed.

Option C) Use the remaining free space from the volume group where your root file system is located.

Verify that the volume group where your root file system resides has the desired free space, then run docker-storage-setup and review the output to ensure the docker-pool volume was created:

# docker-storage-setup
  Rounding up size to full physical extent 32.00 MiB
  Logical volume "docker-poolmeta" created.
  Logical volume "docker-pool" created.
  WARNING: Converting logical volume rhel/docker-pool and rhel/docker-poolmeta to pool's data and metadata volumes.
  THIS WILL DESTROY CONTENT OF LOGICAL VOLUME (filesystem etc.)
  Converted rhel/docker-pool to thin pool.
  Logical volume "docker-pool" changed.

Verify your configuration. You should have a dm.thinpooldev value in the /etc/sysconfig/docker-storage file and a docker-pool logical volume:

# cat /etc/sysconfig/docker-storage
DOCKER_STORAGE_OPTIONS=--storage-opt dm.fs=xfs --storage-opt dm.thinpooldev=/dev/mapper/docker--vg-docker--pool

# lvs
  LV          VG   Attr       LSize  Pool Origin Data%  Meta%  Move Log Cpy%Sync Convert
  docker-pool rhel twi-a-t--- 9.29g             0.00   0.12

Important: Before using Docker or OpenShift Container Platform, verify that the docker-pool logical volume is large enough to meet your needs. The docker-pool volume should be 60% of the available volume group and will grow to fill the volume group via LVM monitoring.
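The dm.thinpooldev check can also be scripted. The following sketch extracts the device path from the contents of /etc/sysconfig/docker-storage and fails when the option is absent; the helper name `thinpool_device` is an illustrative assumption, not part of docker-storage-setup.

```shell
# Read the contents of /etc/sysconfig/docker-storage on stdin and print the
# dm.thinpooldev device path; return non-zero if the option is not set.
# The function body runs in a subshell so its variables stay local.
thinpool_device() (
  dev=$(sed -n 's/.*dm\.thinpooldev=\([^[:space:]"]*\).*/\1/p' | head -n 1)
  [ -n "$dev" ] && echo "$dev"
)

# Example:
#   thinpool_device < /etc/sysconfig/docker-storage \
#     || echo "no thin pool configured" >&2
```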

Check if Docker is running:

# systemctl is-active docker

If Docker has not yet been started on the host, enable and start the service:

# systemctl enable docker
# systemctl start docker

If Docker is already running, re-initialize Docker:

Warning: This will destroy any containers or images currently on the host.

# systemctl stop docker
# rm -rf /var/lib/docker/*
# systemctl restart docker

If there is any content in /var/lib/docker/, it must be deleted. Files will be present if Docker has been used prior to the installation of OpenShift Container Platform.

2.3.6.3. Reconfiguring Docker Storage

Should you need to reconfigure Docker storage after having created the docker-pool, you should first remove the docker-pool logical volume. If you are using a dedicated volume group, you should also remove the volume group and any associated physical volumes before reconfiguring docker-storage-setup according to the instructions above.

See Logical Volume Manager Administration for more detailed information on LVM management.
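The removal order described above can be sketched as a dry run that only prints the LVM commands. The helper name `docker_pool_teardown_plan` and the example device are assumptions for illustration. Review the printed commands before running any of them, because they destroy data; stopping Docker first is also assumed here rather than stated in this section.

```shell
# Print, without running, the LVM commands that remove the docker-pool volume.
# $1: volume group name; remaining arguments: physical volumes of a dedicated
# volume group (omit them when the volume group is shared with other data).
docker_pool_teardown_plan() {
  vg=$1
  shift
  echo "lvremove $vg/docker-pool"
  if [ "$#" -gt 0 ]; then
    echo "vgremove $vg"
    for pv in "$@"; do
      echo "pvremove $pv"
    done
  fi
}

# Dedicated volume group created with Option A:
docker_pool_teardown_plan docker-vg /dev/vdc1
```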

2.3.6.4. Enabling Image Signature Support

OpenShift Container Platform is capable of cryptographically verifying that images are from trusted sources. The Container Security Guide provides a high-level description of how image signing works.

You can configure image signature verification using the atomic command line interface (CLI), version 1.12.5 or greater. The atomic CLI is pre-installed on RHEL Atomic Host systems.

For more on the atomic CLI, see the Atomic CLI documentation.

Install the atomic package if it is not installed on the host system:

$ yum install atomic

The atomic trust sub-command manages trust configuration. The default configuration is to whitelist all registries. This means no signature verification is configured.

$ atomic trust show
* (default)                         accept

A reasonable configuration might be to whitelist a particular registry or namespace, blacklist (reject) untrusted registries, and require signature verification on a vendor registry. The following set of commands performs this example configuration:

Example Atomic Trust Configuration

$ atomic trust add --type insecureAcceptAnything 172.30.1.1:5000

$ atomic trust add --sigstoretype atomic \
  --pubkeys pub@example.com \
  172.30.1.1:5000/production

$ atomic trust add --sigstoretype atomic \
  --pubkeys /etc/pki/example.com.pub \
  172.30.1.1:5000/production

$ atomic trust add --sigstoretype web \
  --sigstore https://access.redhat.com/webassets/docker/content/sigstore \
  --pubkeys /etc/pki/rpm-gpg/RPM-GPG-KEY-redhat-release \
  registry.access.redhat.com

# atomic trust show
* (default)                         accept
172.30.1.1:5000                     accept
172.30.1.1:5000/production          signed security@example.com
registry.access.redhat.com          signed security@redhat.com,security@redhat.com

When all the signed sources are verified, nodes may be further hardened with a global reject default:

$ atomic trust default reject

$ atomic trust show
* (default)                         reject
172.30.1.1:5000                     accept
172.30.1.1:5000/production          signed security@example.com
registry.access.redhat.com          signed security@redhat.com,security@redhat.com

See the atomic-trust man page (man atomic-trust) for additional examples.

The following files and directories comprise the trust configuration of a host:

- /etc/containers/registries.d/*

- /etc/containers/policy.json

The trust configuration can be managed directly on each node, or the generated files can be managed on a separate host and distributed to the appropriate nodes using, for example, Ansible. See this Red Hat Knowledgebase Article for an example of automating file distribution with Ansible.

2.3.6.5. Managing Container Logs

Sometimes a container’s log file (the /var/lib/docker/containers/<hash>/<hash>-json.log file on the node where the container is running) can increase to a problematic size. You can manage this by configuring Docker’s json-file logging driver to restrict the size and number of log files.

| Option | Purpose |
|---|---|
| | Sets the size at which a new log file is created. |
| | Sets the file on each host to configure the options. |

For example, to set the maximum file size to 1MB and always keep the last three log files, edit the /etc/sysconfig/docker file to configure max-size=1M and max-file=3:

OPTIONS='--insecure-registry=172.30.0.0/16 --selinux-enabled --log-opt max-size=1M --log-opt max-file=3'

Next, restart the Docker service:

# systemctl restart docker

2.3.6.6. Viewing Available Container Logs

Container logs are stored in the /var/lib/docker/containers/<hash>/ directory on the node where the container is running. For example:

# ls -lh /var/lib/docker/containers/f088349cceac173305d3e2c2e4790051799efe363842fdab5732f51f5b001fd8/

total 2.6M

-rw-r--r--. 1 root root 5.6K Nov 24 00:12 config.json

-rw-r--r--. 1 root root 649K Nov 24 00:15 f088349cceac173305d3e2c2e4790051799efe363842fdab5732f51f5b001fd8-json.log

-rw-r--r--. 1 root root 977K Nov 24 00:15 f088349cceac173305d3e2c2e4790051799efe363842fdab5732f51f5b001fd8-json.log.1

-rw-r--r--. 1 root root 977K Nov 24 00:15 f088349cceac173305d3e2c2e4790051799efe363842fdab5732f51f5b001fd8-json.log.2

-rw-r--r--. 1 root root 1.3K Nov 24 00:12 hostconfig.json

drwx------. 2 root root    6 Nov 24 00:12 secrets

See Docker’s documentation for additional information on how to configure logging drivers.
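The listing is consistent with the max-size=1M and max-file=3 settings from Managing Container Logs: each container keeps at most three files of roughly 1 MB. As a rough sizing aid, the following sketch multiplies the two settings to give an approximate upper bound per container. The helper name `max_log_mb` and the optional container count are illustrative assumptions, and the active log file can slightly exceed max-size before it rotates.

```shell
# Print the approximate worst-case json-file log usage in megabytes.
# $1: max-size in MB, $2: max-file, $3: number of containers (default 1)
max_log_mb() {
  echo $(( $1 * $2 * ${3:-1} ))
}

max_log_mb 1 3        # one container with max-size=1M, max-file=3: prints 3
max_log_mb 1 3 250    # 250 such containers on a node: prints 750
```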

2.3.6.7. Blocking Local Volume Usage

When a volume is provisioned using the VOLUME instruction in a Dockerfile or using the docker run -v <volumename> command, a host’s storage space is used. Using this storage can lead to an unexpected out of space issue and could bring down the host.

In OpenShift Container Platform, users trying to run their own images risk filling the entire storage space on a node host. One solution to this issue is to prevent users from running images with volumes. This way, the only storage a user has access to can be limited, and the cluster administrator can assign storage quota.

Using docker-novolume-plugin solves this issue by disallowing starting a container with local volumes defined. In particular, the plug-in blocks docker run commands that contain:

- The --volumes-from option

- Images that have VOLUME(s) defined

- References to existing volumes that were provisioned with the docker volume command

The plug-in does not block references to bind mounts.

To enable docker-novolume-plugin, perform the following steps on each node host:

Install the docker-novolume-plugin package:

$ yum install docker-novolume-plugin

Enable and start the docker-novolume-plugin service:

$ systemctl enable docker-novolume-plugin
$ systemctl start docker-novolume-plugin

Edit the /etc/sysconfig/docker file and append the following to the OPTIONS list:

--authorization-plugin=docker-novolume-plugin

Restart the docker service:

$ systemctl restart docker

After you enable this plug-in, containers with local volumes defined fail to start and show the following error message:

runContainer: API error (500): authorization denied by plugin docker-novolume-plugin: volumes are not allowed

2.3.7. Ensuring Host Access

The quick and advanced installation methods require a user that has access to all hosts. If you want to run the installer as a non-root user, passwordless sudo rights must be configured on each destination host.

For example, you can generate an SSH key on the host where you will invoke the installation process:

# ssh-keygen

Do not use a password.

An easy way to distribute your SSH keys is by using a bash loop:

# for host in master.example.com \
    node1.example.com \
    node2.example.com; \
    do ssh-copy-id -i ~/.ssh/id_rsa.pub $host; \
    done

Modify the host names in the above command according to your configuration.
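After distributing the keys, it can be useful to confirm that every host accepts a non-interactive login before starting the installer. The sketch below is one way to do that. The function name `unreachable_hosts` is hypothetical, and the five-second timeout is an arbitrary choice.

```shell
# Print every host that does not accept key-based, non-interactive SSH logins.
# BatchMode=yes makes ssh fail instead of prompting for a password.
unreachable_hosts() {
  for host in "$@"; do
    ssh -o BatchMode=yes -o ConnectTimeout=5 "$host" true </dev/null \
      || echo "$host"
  done
}

# Example:
#   unreachable_hosts master.example.com node1.example.com node2.example.com
```

No output means that all listed hosts accepted the key.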

2.3.8. Setting Proxy Overrides

If the /etc/environment file on your nodes contains either an http_proxy or https_proxy value, you must also set a no_proxy value in that file to allow open communication between OpenShift Container Platform components.

The no_proxy parameter in the /etc/environment file is not the same value as the global proxy values that you set in your inventory file. The global proxy values configure specific OpenShift Container Platform services with your proxy settings. See Configuring Global Proxy Options for details.

If the /etc/environment file contains proxy values, define the following values in the no_proxy parameter of that file on each node:

- Master and node host names or their domain suffix.

- Other internal host names or their domain suffix.

- Etcd IP addresses. You must provide IP addresses and not host names because etcd access is controlled by IP address.

- Kubernetes IP address, by default 172.30.0.1. Must be the value set in the openshift_portal_net parameter in your inventory file.

- Kubernetes internal domain suffix, cluster.local.

- Kubernetes internal domain suffix, .svc.

Because no_proxy does not support CIDR, you can use domain suffixes.
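The components listed above can be assembled with a small helper. This is a sketch only: the function name `build_no_proxy` and the `KUBERNETES_IP` variable are hypothetical, and the default of 172.30.0.1 must be changed if your inventory file sets a different openshift_portal_net.

```shell
# Join the arguments (domain suffixes and etcd IP addresses) into a
# comma-separated no_proxy value, then append the Kubernetes entries.
# The function body runs in a subshell so the IFS change stays local.
build_no_proxy() (
  IFS=,
  echo "$*,.cluster.local,.svc,localhost,127.0.0.1,${KUBERNETES_IP:-172.30.0.1}"
)

# Example:
#   echo "no_proxy=$(build_no_proxy .internal.example.com 10.0.0.1 10.0.0.2 10.0.0.3)"
```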

If you use either an http_proxy or https_proxy value, your no_proxy parameter value resembles the following example:

no_proxy=.internal.example.com,10.0.0.1,10.0.0.2,10.0.0.3,.cluster.local,.svc,localhost,127.0.0.1,172.30.0.1

2.3.9. What’s Next?

If you are interested in installing OpenShift Container Platform using the containerized method (optional for RHEL but required for RHEL Atomic Host), see Installing on Containerized Hosts to prepare your hosts.

When you are ready to proceed, you can install OpenShift Container Platform using the quick installation or advanced installation method.

If you are installing a stand-alone registry, continue with Installing a Stand-alone Registry.

2.4. Installing on Containerized Hosts

2.4.1. RPM Versus Containerized Installation

You can opt to install OpenShift Container Platform using the RPM or containerized package method. Either installation method results in a working environment, but the choice comes from the operating system and how you choose to update your hosts.

The default method for installing OpenShift Container Platform on Red Hat Enterprise Linux (RHEL) uses RPMs. When targeting a Red Hat Atomic Host system, the containerized method is the only available option, and is automatically selected for you based on the detection of the /run/ostree-booted file.

When using RPMs, all services are installed and updated via package management from an outside source. These modify a host’s existing configuration within the same user space. Containerized installs, by contrast, are complete, all-in-one resources that use container images and run their own operating system within the container. Updated containers replace any existing ones on your host. Choosing one method over the other depends on how you choose to update OpenShift Container Platform in the future.

The following table outlines further differences between the RPM and Containerized methods:

| | RPM | Containerized |
|---|---|---|
| Installation Method | Packages via | Container images via |
| Service Management | | |
| Operating System | Red Hat Enterprise Linux | Red Hat Enterprise Linux or Red Hat Atomic Host |

2.4.2. Install Methods for Containerized Hosts

As with the RPM installation, you can choose between the quick and advanced install methods for the containerized install.

For the quick installation method, you can choose between the RPM or containerized method on a per host basis during the interactive installation, or set the values manually in an installation configuration file.

For the advanced installation method, you can set the Ansible variable containerized=true in an inventory file on a cluster-wide or per host basis.

For the disconnected installation method, to install the etcd container, you can set the Ansible variable osm_etcd_image to be the fully qualified name of the etcd image on your local registry, for example, registry.example.com/rhel7/etcd.

2.4.3. Required Images

Containerized installations make use of the following images:

- openshift3/ose

- openshift3/node

- openshift3/openvswitch

- registry.access.redhat.com/rhel7/etcd

By default, all of the above images are pulled from the Red Hat Registry at registry.access.redhat.com.

If you need to use a private registry to pull these images during the installation, you can specify the registry information ahead of time. For the advanced installation method, you can set the following Ansible variables in your inventory file, as required:

openshift_docker_additional_registries=<registry_hostname>
openshift_docker_insecure_registries=<registry_hostname>
openshift_docker_blocked_registries=<registry_hostname>

For the quick installation method, you can export the following environment variables on each target host:

# export OO_INSTALL_ADDITIONAL_REGISTRIES=<registry_hostname>
# export OO_INSTALL_INSECURE_REGISTRIES=<registry_hostname>

Blocked Docker registries cannot currently be specified using the quick installation method.

The configuration of additional, insecure, and blocked Docker registries occurs at the beginning of the installation process to ensure that these settings are applied before attempting to pull any of the required images.

2.4.4. Starting and Stopping Containers

The installation process creates relevant systemd units which can be used to start, stop, and poll services using normal systemctl commands. For containerized installations, these unit names match those of an RPM installation, with the exception of the etcd service which is named etcd_container.

This change is necessary as currently RHEL Atomic Host ships with the etcd package installed as part of the operating system, so a containerized version is used for the OpenShift Container Platform installation instead. The installation process disables the default etcd service. The etcd package is slated to be removed from RHEL Atomic Host in the future.

2.4.5. File Paths

All OpenShift Container Platform configuration files are placed in the same locations during a containerized installation as during an RPM-based installation, and they survive OSTree upgrades.

However, the default image stream and template files are installed at /etc/origin/examples/ for containerized installations rather than the standard /usr/share/openshift/examples/, because that directory is read-only on RHEL Atomic Host.

2.4.6. Storage Requirements

RHEL Atomic Host installations normally have a very small root file system. However, the etcd, master, and node containers persist data in the /var/lib/ directory. Ensure that you have enough space on the root file system before installing OpenShift Container Platform. See the System Requirements section for details.

2.4.7. Open vSwitch SDN Initialization

OpenShift SDN initialization requires that the Docker bridge be reconfigured and that Docker is restarted. This complicates the situation when the node is running within a container. When using the Open vSwitch (OVS) SDN, you will see the node start, reconfigure Docker, restart Docker (which restarts all containers), and finally start successfully.

In this case, the node service may fail to start and be restarted a few times, because the master services are also restarted along with Docker. The current implementation uses a workaround which relies on setting the Restart=always parameter in the Docker based systemd units.

2.5. Quick Installation

2.5.1. Overview

The quick installation method allows you to use an interactive CLI utility, the atomic-openshift-installer command, to install OpenShift Container Platform across a set of hosts. This installer can deploy OpenShift Container Platform components on targeted hosts by either installing RPMs or running containerized services.

While RHEL Atomic Host is supported for running containerized OpenShift Container Platform services, the installer is provided by an RPM and not available by default in RHEL Atomic Host. Therefore, it must be run from a Red Hat Enterprise Linux 7 system. The host initiating the installation does not need to be intended for inclusion in the OpenShift Container Platform cluster, but it can be.

This installation method is provided to make the installation experience easier by interactively gathering the data needed to run on each host. The installer is a self-contained wrapper intended for usage on a Red Hat Enterprise Linux (RHEL) 7 system.

In addition to running interactive installations from scratch, the atomic-openshift-installer command can also be run or re-run using a predefined installation configuration file. This file can be used with the installer to:

- run an unattended installation,

- add nodes to an existing cluster,

- upgrade your cluster, or

- reinstall the OpenShift Container Platform cluster completely.

Alternatively, you can use the advanced installation method for more complex environments.

To install OpenShift Container Platform as a stand-alone registry, see Installing a Stand-alone Registry.

2.5.2. Before You Begin

The installer allows you to install OpenShift Container Platform master and node components on a defined set of hosts.

By default, any hosts you designate as masters during the installation process are automatically also configured as nodes so that the masters are configured as part of the OpenShift Container Platform SDN. The node components on the masters, however, are marked unschedulable, which blocks pods from being scheduled on them. After the installation, you can mark them schedulable if you want.

Before installing OpenShift Container Platform, you must first satisfy the prerequisites on your hosts, which includes verifying system and environment requirements and properly installing and configuring Docker. You must also be prepared to provide or validate the following information for each of your targeted hosts during the course of the installation:

- User name on the target host that should run the Ansible-based installation (can be root or non-root)

- Host name

- Whether to install components for master, node, or both

- Whether to use the RPM or containerized method

- Internal and external IP addresses

If you are installing OpenShift Container Platform using the containerized method (optional for RHEL but required for RHEL Atomic Host), see the Installing on Containerized Hosts topic to ensure that you understand the differences between these methods, then return to this topic to continue.

After following the instructions in the Prerequisites topic and deciding between the RPM and containerized methods, you can proceed to running an interactive or unattended installation.

2.5.3. Running an Interactive Installation

Ensure you have read through Before You Begin.

You can start the interactive installation by running:

$ atomic-openshift-installer install

Then follow the on-screen instructions to install a new OpenShift Container Platform cluster.

After it has finished, ensure that you back up the ~/.config/openshift/installer.cfg.yml installation configuration file that is created, as it is required if you later want to re-run the installation, add hosts to the cluster, or upgrade your cluster. Then, verify the installation.

2.5.4. Defining an Installation Configuration File

The installer can use a predefined installation configuration file, which contains information about your installation, individual hosts, and cluster. When running an interactive installation, an installation configuration file based on your answers is created for you in ~/.config/openshift/installer.cfg.yml. The file is created if you are instructed to exit the installation to manually modify the configuration or when the installation completes. You can also create the configuration file manually from scratch to perform an unattended installation.

Installation Configuration File Specification

version: v2

variant: openshift-enterprise

variant_version: 3.7

ansible_log_path: /tmp/ansible.log

deployment:

ansible_ssh_user: root

hosts:

- ip: 10.0.0.1

hostname: master-private.example.com

public_ip: 24.222.0.1

public_hostname: master.example.com

roles:

- master

- node

containerized: true

connect_to: 24.222.0.1

- ip: 10.0.0.2

hostname: node1-private.example.com

public_ip: 24.222.0.2

public_hostname: node1.example.com

node_labels: {'region': 'infra'}

roles:

- node

connect_to: 10.0.0.2

- ip: 10.0.0.3

hostname: node2-private.example.com

public_ip: 24.222.0.3

public_hostname: node2.example.com

roles:

- node

connect_to: 10.0.0.3

roles:

master:

<variable_name1>: "<value1>"

<variable_name2>: "<value2>"

node:

<variable_name1>: "<value1>" - 1

- The version of this installation configuration file. As of OpenShift Container Platform 3.3, the only valid version here is

v2. - 2

- The OpenShift Container Platform variant to install. For OpenShift Container Platform, set this to

openshift-enterprise. - 3

- A valid version of your selected variant:

3.7,3.6,3.5,3.4,3.3,3.2, or3.1. If not specified, this defaults to the latest version for the specified variant. - 4

- Defines where the Ansible logs are stored. By default, this is the /tmp/ansible.log file.

- 5

- Defines which user Ansible uses to SSH in to remote systems for gathering facts and for the installation. By default, this is the root user, but you can set it to any user that has sudo privileges.

- 6

- Defines a list of the hosts onto which you want to install the OpenShift Container Platform master and node components.

- 7 8

- Required. Allows the installer to connect to the system and gather facts before proceeding with the install.

- 9 10

- Required for unattended installations. If these details are not specified, then this information is pulled from the facts gathered by the installer, and you are asked to confirm the details. If undefined for an unattended installation, the installation fails.

- 11

- Determines the type of services that are installed. Specified as a list.

- 12

- If set to true, containerized OpenShift Container Platform services are run on target master and node hosts instead of installed using RPM packages. If set to false or unset, the default RPM method is used. RHEL Atomic Host requires the containerized method, and is automatically selected for you based on the detection of the /run/ostree-booted file. See Installing on Containerized Hosts for more details.

- 13

- The IP address that Ansible attempts to connect to when installing, upgrading, or uninstalling the systems. If the configuration file was auto-generated, then this is the value you first enter for the host during that interactive install process.

- 14

- Node labels can optionally be set per-host.

- 15

- Defines a dictionary of roles across the deployment.

- 16 17

- Defines any Ansible variables that should be applied only to hosts assigned a given role. For examples, see Configuring Ansible.

2.5.5. Running an Unattended Installation

Ensure you have read through the Before You Begin section.

Unattended installations allow you to define your hosts and cluster configuration in an installation configuration file before running the installer so that you do not have to go through all of the interactive installation questions and answers. They also allow you to resume an interactive installation that you left unfinished and quickly get back to where you left off.

To run an unattended installation, first define an installation configuration file at ~/.config/openshift/installer.cfg.yml. Then, run the installer with the -u flag:

$ atomic-openshift-installer -u install

By default in interactive or unattended mode, the installer uses the configuration file located at ~/.config/openshift/installer.cfg.yml if the file exists. If it does not exist, attempting to start an unattended installation fails.

Alternatively, you can specify a different location for the configuration file using the -c option, but doing so will require you to specify the file location every time you run the installation:

$ atomic-openshift-installer -u -c </path/to/file> install

After the unattended installation finishes, ensure that you back up the ~/.config/openshift/installer.cfg.yml file that was used, as it is required if you later want to re-run the installation, add hosts to the cluster, or upgrade your cluster. Then, verify the installation.
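The unattended flow described above can be wrapped in a small script. The following is a minimal sketch, not part of the installer: the helper names require_config and backup_config and the timestamped .bak suffix are assumptions chosen for illustration. It checks that the configuration file exists before the run and copies it aside afterwards.

```shell
#!/usr/bin/env bash
# Sketch: guard and back up the installer configuration around an unattended run.

# Default location used by the installer, per the text above.
CONFIG_FILE="${CONFIG_FILE:-$HOME/.config/openshift/installer.cfg.yml}"

# Fail early if the configuration file is missing (hypothetical helper).
require_config() {
    local cfg="$1"
    if [ ! -f "$cfg" ]; then
        echo "missing installer configuration: $cfg" >&2
        return 1
    fi
}

# Copy the configuration file next to itself with a timestamp suffix
# and print the path of the backup (hypothetical helper).
backup_config() {
    local cfg="$1"
    local backup
    backup="${cfg}.$(date +%Y%m%d%H%M%S).bak"
    cp -p "$cfg" "$backup" && echo "$backup"
}

# Example flow (commented out so that sourcing this file has no side effects):
# require_config "$CONFIG_FILE" \
#   && atomic-openshift-installer -u -c "$CONFIG_FILE" install \
#   && backup_config "$CONFIG_FILE"
```

Keeping the backup outside the host that ran the installer may be worth considering, because the same file is needed later to add hosts or upgrade the cluster.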

2.5.6. Verifying the Installation

Verify that the master is started and nodes are registered and reporting in Ready status. On the master host, run the following as root:

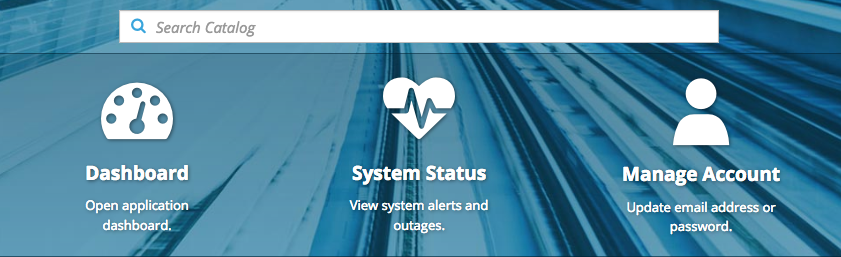

# oc get nodes

NAME STATUS AGE

master.example.com Ready,SchedulingDisabled 165d

node1.example.com Ready 165d

node2.example.com Ready 165d

To verify that the web console is installed correctly, use the master host name and the web console port number to access the web console with a web browser.

For example, for a master host with a host name of master.openshift.com and using the default port of 8443, the web console would be found at https://master.openshift.com:8443/console.

Then, see What’s Next for the next steps on configuring your OpenShift Container Platform cluster.
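The verification steps in this section can be partly scripted. The sketch below is an illustration under stated assumptions: the helper names not_ready_nodes and console_url are hypothetical, and the parser assumes the three-column `oc get nodes` layout shown in this section, with a header line.

```shell
#!/usr/bin/env bash
# Sketch: post-install checks based on the verification steps in this section.

# Read `oc get nodes` output (header line included) on stdin and print the
# names of nodes whose STATUS column does not begin with "Ready".
not_ready_nodes() {
    awk 'NR > 1 && $2 !~ /^Ready/ { print $1 }'
}

# Build the web console URL from a master host name and an optional port
# (8443 is the default port named in the text).
console_url() {
    local host="$1" port="${2:-8443}"
    printf 'https://%s:%s/console\n' "$host" "$port"
}

# Example use on the master host, as root (commented out; needs a live cluster):
# oc get nodes | not_ready_nodes
# console_url master.openshift.com
```

An empty result from not_ready_nodes means every listed node reported a status beginning with Ready, including masters shown as Ready,SchedulingDisabled.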

2.5.7. Uninstalling OpenShift Container Platform

You can uninstall OpenShift Container Platform from all hosts in your cluster using the installer’s uninstall command. By default, the installer uses the installation configuration file located at ~/.config/openshift/installer.cfg.yml if the file exists:

$ atomic-openshift-installer uninstall

Alternatively, you can specify a different location for the configuration file using the -c option:

$ atomic-openshift-installer -c </path/to/file> uninstall

See the advanced installation method for more options.

2.5.8. What’s Next?

Now that you have a working OpenShift Container Platform instance, you can:

- Configure authentication; by default, authentication is set to Deny All.

- Configure the automatically-deployed integrated Docker registry.

- Configure the automatically-deployed router.

2.6. Advanced Installation

2.6.1. Overview

A reference configuration implemented using Ansible playbooks is available as the advanced installation method for installing an OpenShift Container Platform cluster. Familiarity with Ansible is assumed; however, you can use this configuration as a reference to create your own implementation using the configuration management tool of your choosing.

While RHEL Atomic Host is supported for running containerized OpenShift Container Platform services, the advanced installation method utilizes Ansible, which is not available in RHEL Atomic Host, and must therefore be run from a RHEL 7 system. The host initiating the installation does not need to be intended for inclusion in the OpenShift Container Platform cluster, but it can be.

Alternatively, a containerized version of the installer is available as a system container, which is currently a Technology Preview feature.

Alternatively, you can use the quick installation method if you prefer an interactive installation experience.

To install OpenShift Container Platform as a stand-alone registry, see Installing a Stand-alone Registry.

Running Ansible playbooks with the --tags or --check options is not supported by Red Hat.

2.6.2. Before You Begin

Before installing OpenShift Container Platform, you must first see the Prerequisites and Host Preparation topics to prepare your hosts. This includes verifying system and environment requirements per component type and properly installing and configuring Docker. It also includes installing Ansible version 2.3 or later, as the advanced installation method is based on Ansible playbooks and as such requires directly invoking Ansible.
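The Ansible version requirement can be checked before starting. The following is a minimal sketch: version_ge is a hypothetical helper, it relies on the version sort (`sort -V`) from GNU coreutils, and the commented usage assumes that the first line of `ansible --version` has the form "ansible 2.3.1.0", which may differ between Ansible releases.

```shell
#!/usr/bin/env bash
# Sketch: check that the installed Ansible meets the 2.3 minimum.

# Return success when version $1 is greater than or equal to version $2.
# Relies on `sort -V` (version sort), available in GNU coreutils.
version_ge() {
    [ "$(printf '%s\n%s\n' "$2" "$1" | sort -V | head -n 1)" = "$2" ]
}

# Example use (commented out; assumes the first line of `ansible --version`
# looks like "ansible 2.3.1.0", which may differ between releases):
# installed="$(ansible --version | head -n 1 | awk '{ print $2 }')"
# version_ge "$installed" 2.3 || echo "Ansible $installed is older than 2.3" >&2
```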

If you are interested in installing OpenShift Container Platform using the containerized method (optional for RHEL but required for RHEL Atomic Host), see Installing on Containerized Hosts to ensure that you understand the differences between these methods, then return to this topic to continue.

For large-scale installs, including suggestions for optimizing install time, see the Scaling and Performance Guide.

After following the instructions in the Prerequisites topic and deciding between the RPM and containerized methods, you can continue in this topic to Configuring Ansible Inventory Files.

2.6.3. Configuring Ansible Inventory Files

The /etc/ansible/hosts file is Ansible’s inventory file for the playbook used to install OpenShift Container Platform. The inventory file describes the configuration for your OpenShift Container Platform cluster. You must replace the default contents of the file with your desired configuration.

The following sections describe commonly-used variables to set in your inventory file during an advanced installation, followed by example inventory files you can use as a starting point for your installation.

Many of the Ansible variables described are optional. Accepting the default values should suffice for development environments, but for production environments, it is recommended you read through and become familiar with the various options available.

The example inventories describe various environment topologies, including using multiple masters for high availability. You can choose an example that matches your requirements, modify it to match your own environment, and use it as your inventory file when running the advanced installation.

Image Version Policy

Images require a version number policy in order to maintain updates. See the Image Version Tag Policy section in the Architecture Guide for more information.

2.6.3.1. Configuring Cluster Variables

To assign environment variables during the Ansible install that apply globally to your OpenShift Container Platform cluster, list the desired variables in the /etc/ansible/hosts file, each on its own single line, within the [OSEv3:vars] section. For example:

[OSEv3:vars]

openshift_master_identity_providers=[{'name': 'htpasswd_auth', 'login': 'true', 'challenge': 'true', 'kind': 'HTPasswdPasswordIdentityProvider', 'filename': '/etc/origin/master/htpasswd'}]

openshift_master_default_subdomain=apps.test.example.com

If a parameter value in the Ansible inventory file contains special characters, such as #, { or }, you must double-escape the value (that is, enclose the value in both single and double quotation marks). For example, to use mypasswordwith###hashsigns as a value for the variable openshift_cloudprovider_openstack_password, declare it as openshift_cloudprovider_openstack_password='"mypasswordwith###hashsigns"' in the Ansible host inventory file.
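When inventory lines are generated by a script, the double-escaping rule can be applied mechanically. The sketch below uses a hypothetical helper, inventory_quote, that wraps a value in double and then single quotation marks. It does not handle values that themselves contain quotation marks.

```shell
#!/usr/bin/env bash
# Sketch: emit an inventory line with a double-escaped value.

# Print NAME='"VALUE"' so that characters such as #, { or } are kept as part
# of the value. Hypothetical helper; values that themselves contain quotation
# marks are not handled.
inventory_quote() {
    local name="$1" value="$2"
    printf "%s='\"%s\"'\n" "$name" "$value"
}

# Example:
# inventory_quote openshift_cloudprovider_openstack_password 'mypasswordwith###hashsigns'
```

The commented example prints the same declaration given in the text for openshift_cloudprovider_openstack_password.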

The following table describes variables for use with the Ansible installer that can be assigned cluster-wide:

| Variable | Purpose |

|---|---|

|

|

This variable sets the SSH user for the installer to use and defaults to |

|

|

If |

|

|

This variable sets which INFO messages are logged to the

For more information on debug log levels, see Configuring Logging Levels. |

|

|

If set to |

|

|

Whether to enable Network Time Protocol (NTP) on cluster nodes. Important To prevent masters and nodes in the cluster from going out of sync, do not change the default value of this parameter. |

|

| This variable sets the parameter and arbitrary JSON values as per the requirement in your inventory hosts file. For example: |

|

| This variable enables API service auditing. See Audit Configuration for more information. |

|

| This variable overrides the host name for the cluster, which defaults to the host name of the master. |

|

| This variable overrides the public host name for the cluster, which defaults to the host name of the master. If you use an external load balancer, specify the address of the external load balancer. For example: |

|

|

Optional. This variable defines the HA method when deploying multiple masters. Supports the |

|

|

This variable enables rolling restarts of HA masters (i.e., masters are taken down one at a time) when running the upgrade playbook directly. It defaults to |

|

|

This variable configures which OpenShift SDN plug-in to use for the pod network, which defaults to |

|

| This variable sets the identity provider. The default value is Deny All. If you use a supported identity provider, configure OpenShift Container Platform to use it. |

|

| These variables are used to configure custom certificates which are deployed as part of the installation. See Configuring Custom Certificates for more information. |

|

| |

|

| Provide the location of the custom certificates for the hosted router. |

|

|

Validity of the auto-generated registry certificate in days. Defaults to |

|

|

Validity of the auto-generated CA certificate in days. Defaults to |

|

|

Validity of the auto-generated node certificate in days. Defaults to |

|

|

Validity of the auto-generated master certificate in days. Defaults to |

|

|

Validity of the auto-generated external etcd certificates in days. Controls validity for etcd CA, peer, server and client certificates. Defaults to |

|

|

Set to |

|

| These variables override defaults for session options in the OAuth configuration. See Configuring Session Options for more information. |

|

| |

|

| |

|

| |

|

|

This variable configures |

|

|

This variable configures the subnet in which services will be created within the OpenShift Container Platform SDN. This network block should be private and must not conflict with any existing network blocks in your infrastructure to which pods, nodes, or the master may require access to, or the installation will fail. Defaults to |

|

| This variable overrides the default subdomain to use for exposed routes. |

|

|

This variable specifies the service proxy mode to use: either |

|

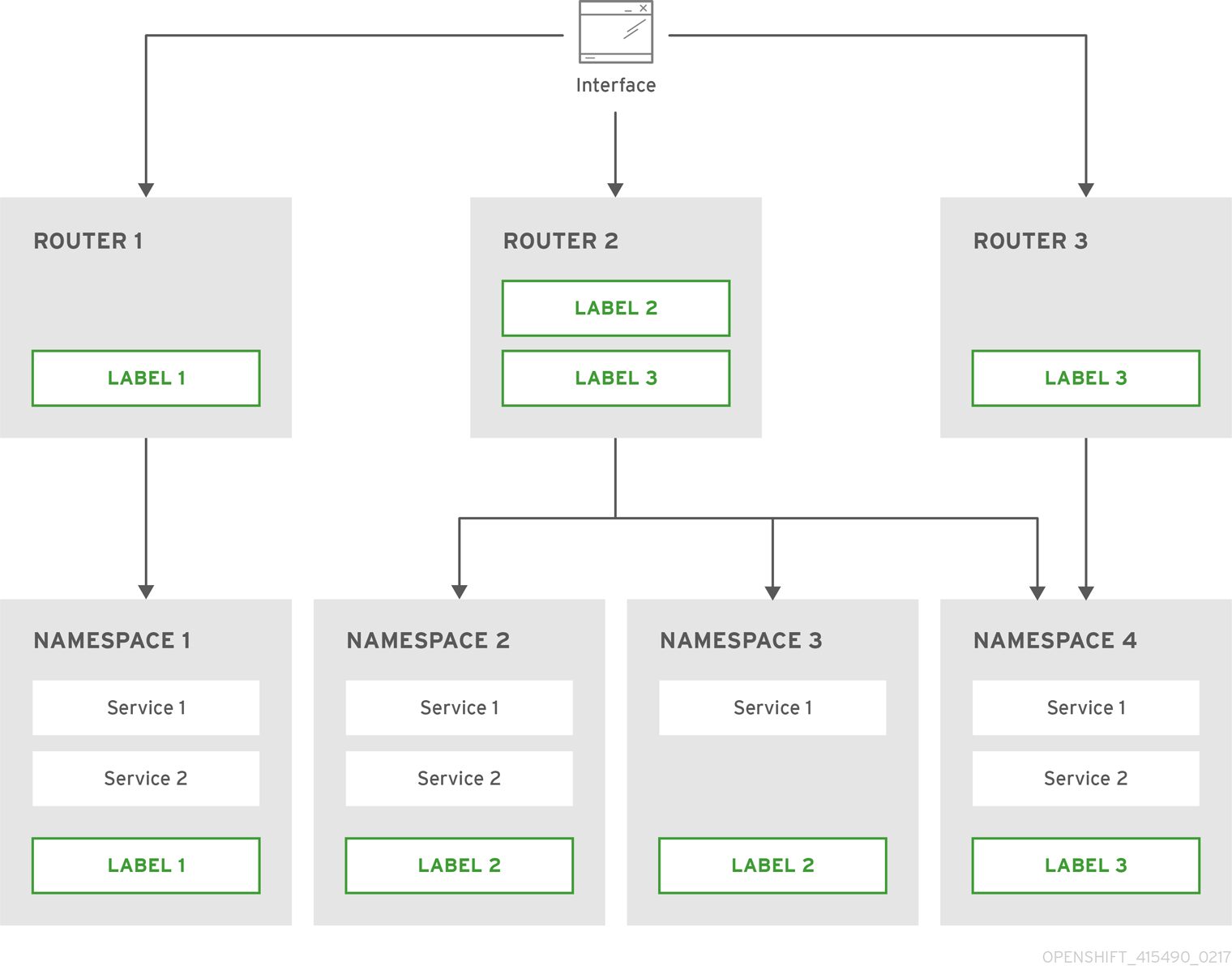

| Default node selector for automatically deploying router pods. See Configuring Node Host Labels for details. |

|

| Default node selector for automatically deploying registry pods. See Configuring Node Host Labels for details. |

|

| This variable enables the template service broker by specifying one or more namespaces whose templates will be served by the broker. |

|

|

Default node selector for automatically deploying template service broker pods, defaults |

|

| This variable overrides the node selector that projects will use by default when placing pods. |

|

|

This variable overrides the SDN cluster network CIDR block. This is the network from which pod IPs are assigned. This network block should be a private block and must not conflict with existing network blocks in your infrastructure to which pods, nodes, or the master may require access. Defaults to |

|

|