Postinstallation configuration

Day 2 operations for OpenShift Container Platform

Chapter 1. Postinstallation configuration overview

After installing OpenShift Container Platform, a cluster administrator can configure and customize the following components:

- Machine

- Bare metal

- Cluster

- Node

- Network

- Storage

- Users

- Alerts and notifications

1.1. Post-installation configuration tasks

You can perform the post-installation configuration tasks to configure your environment to meet your needs.

The following list details these configurations:

- Configure operating system features: The Machine Config Operator (MCO) manages MachineConfig objects. By using the MCO, you can configure nodes and custom resources.

- Configure bare metal nodes: You can use the Bare Metal Operator (BMO) to manage bare metal hosts. The BMO can complete the following operations:

- Inspect hardware details of the host and report them to the bare metal host.

- Inspect firmware and configure BIOS settings.

- Provision hosts with a desired image.

- Clean disk contents for the host before or after provisioning the host.

- Configure cluster features: You can modify the following features of an OpenShift Container Platform cluster:

- Image registry

- Networking configuration

- Image build behavior

- Identity provider

- The etcd configuration

- Machine set creation to handle the workloads

- Cloud provider credential management

- Configure a private cluster: By default, the installation program provisions OpenShift Container Platform by using publicly accessible DNS and endpoints. To make your cluster accessible only from within an internal network, configure the following components to make them private:

- DNS

- Ingress Controller

- API server

- Perform node operations: By default, OpenShift Container Platform uses Red Hat Enterprise Linux CoreOS (RHCOS) compute machines. You can perform the following node operations:

- Add and remove compute machines.

- Add and remove taints and tolerations.

- Configure the maximum number of pods per node.

- Enable Device Manager.

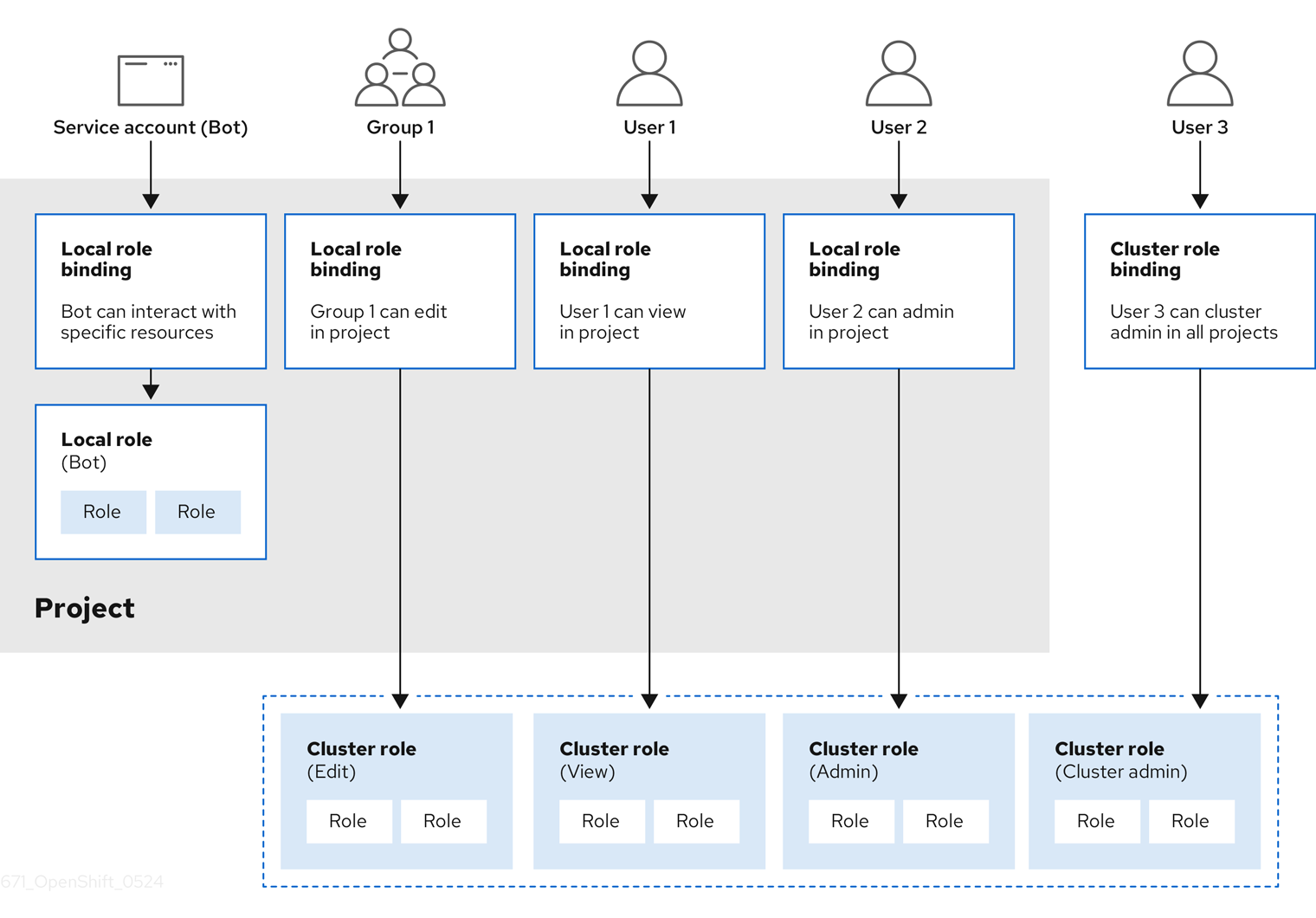

- Configure users: OAuth access tokens allow users to authenticate themselves to the API. You can configure OAuth to perform the following tasks:

- Specify an identity provider

- Use role-based access control to define and supply permissions to users

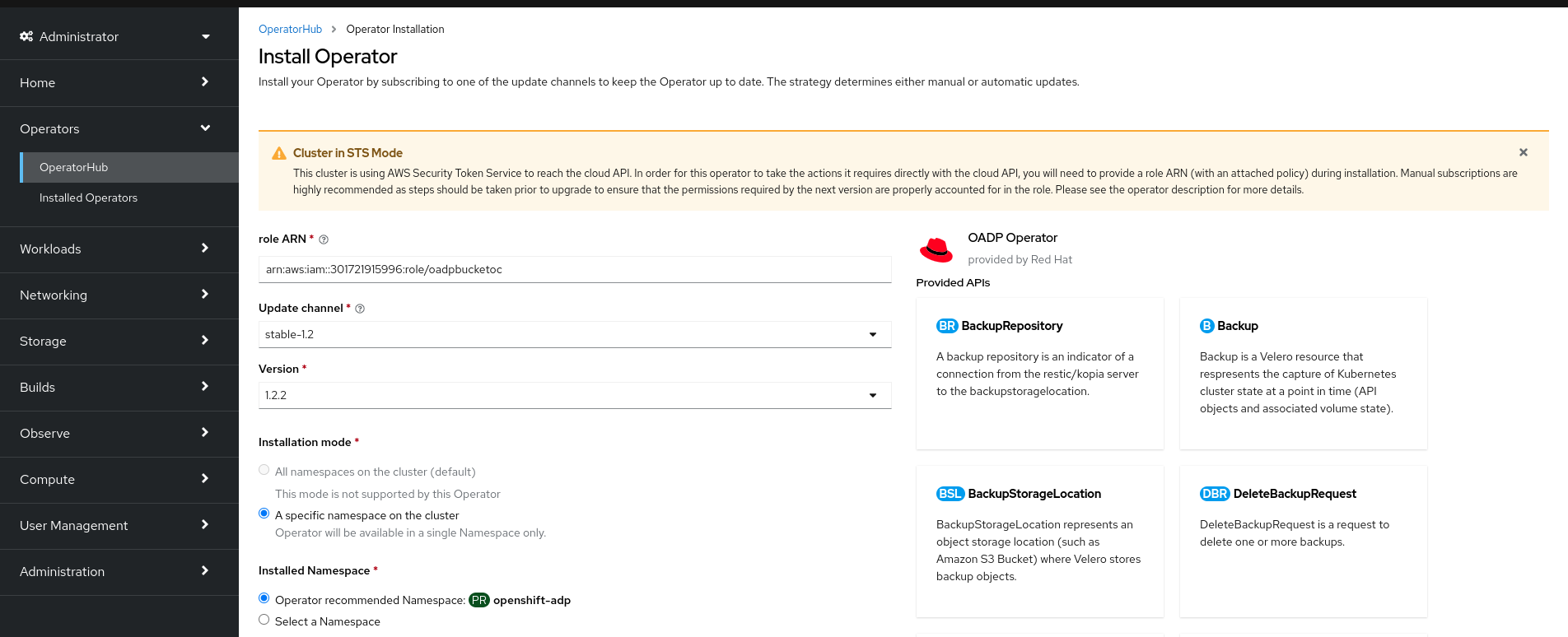

- Install an Operator from OperatorHub

- Configuring alert notifications: By default, firing alerts are displayed on the Alerting UI of the web console. You can also configure OpenShift Container Platform to send alert notifications to external systems.

Chapter 2. Configuring a private cluster

After you install an OpenShift Container Platform version 4.14 cluster, you can set some of its core components to be private.

2.1. About private clusters

By default, OpenShift Container Platform is provisioned by using publicly accessible DNS and endpoints. You can set the DNS, Ingress Controller, and API server to private after you deploy your cluster.

If the cluster has any public subnets, load balancer services created by administrators might be publicly accessible. To ensure cluster security, verify that these services are explicitly annotated as private.

2.1.1. DNS

If you install OpenShift Container Platform on installer-provisioned infrastructure, the installation program creates records in a pre-existing public zone and, where possible, creates a private zone for the cluster’s own DNS resolution. In both the public zone and the private zone, the installation program or cluster creates DNS entries for *.apps, for the Ingress object, and api, for the API server.

The *.apps records in the public and private zone are identical, so when you delete the public zone, the private zone seamlessly provides all DNS resolution for the cluster.

2.1.2. Ingress Controller

Because the default Ingress object is created as public, the load balancer is internet-facing and in the public subnets.

The Ingress Operator generates a default certificate for an Ingress Controller to serve as a placeholder until you configure a custom default certificate. Do not use Operator-generated default certificates in production clusters. The Ingress Operator does not rotate its own signing certificate or the default certificates that it generates. Operator-generated default certificates are intended as placeholders for custom default certificates that you configure.

2.1.3. API server

By default, the installation program creates appropriate network load balancers for the API server to use for both internal and external traffic.

On Amazon Web Services (AWS), separate public and private load balancers are created. The load balancers are identical except that an additional port is available on the internal one for use within the cluster. Although the installation program automatically creates or destroys the load balancer based on API server requirements, the cluster does not manage or maintain them. As long as you preserve the cluster’s access to the API server, you can manually modify or move the load balancers. For the public load balancer, port 6443 is open and the health check is configured for HTTPS against the /readyz path.

On Google Cloud, a single load balancer is created to manage both internal and external API traffic, so you do not need to modify the load balancer.

On Microsoft Azure, both public and private load balancers are created. However, because of limitations in the current implementation, you retain both load balancers in a private cluster.

2.2. Configuring DNS records to be published in a private zone

For all OpenShift Container Platform clusters, whether public or private, DNS records are published in a public zone by default.

You can remove the public zone from the cluster DNS configuration to avoid exposing DNS records to the public. You might want to avoid exposing sensitive information, such as internal domain names, internal IP addresses, or the number of clusters at an organization, or you might simply have no need to publish records publicly. If all the clients that should be able to connect to services within the cluster use a private DNS service that has the DNS records from the private zone, then there is no need to have a public DNS record for the cluster.

After you deploy a cluster, you can modify its DNS to use only a private zone by modifying the DNS custom resource (CR). Modifying the DNS CR in this way means that any DNS records that are subsequently created are not published to public DNS servers, which keeps knowledge of the DNS records isolated to internal users. This can be done when you configure the cluster to be private, or if you never want DNS records to be publicly resolvable.

Alternatively, even in a private cluster, you might keep the public zone for DNS records because it allows clients to resolve DNS names for applications running on that cluster. For example, an organization can have machines that connect to the public internet and then establish VPN connections for certain private IP ranges in order to connect to private IP addresses. The DNS lookups from these machines use the public DNS to determine the private addresses of those services, and then connect to the private addresses over the VPN.

Procedure

Review the DNS CR for your cluster by running the following command and observing the output:

    $ oc get dnses.config.openshift.io/cluster -o yaml

Example output

    apiVersion: config.openshift.io/v1
    kind: DNS
    metadata:
      creationTimestamp: "2019-10-25T18:27:09Z"
      generation: 2
      name: cluster
      resourceVersion: "37966"
      selfLink: /apis/config.openshift.io/v1/dnses/cluster
      uid: 0e714746-f755-11f9-9cb1-02ff55d8f976
    spec:
      baseDomain: <base_domain>
      privateZone:
        tags:
          Name: <infrastructure_id>-int
          kubernetes.io/cluster/<infrastructure_id>: owned
      publicZone:
        id: Z2XXXXXXXXXXA4
    status: {}

Note that the spec section contains both a private and a public zone.

Patch the DNS CR to remove the public zone by running the following command:

    $ oc patch dnses.config.openshift.io/cluster --type=merge --patch='{"spec": {"publicZone": null}}'

Example output

    dns.config.openshift.io/cluster patched

The Ingress Operator consults the DNS CR definition when it creates DNS records for IngressController objects. If only private zones are specified, only private records are created.

Important: Existing DNS records are not modified when you remove the public zone. You must manually delete previously published public DNS records if you no longer want them to be published publicly.

Verification

Review the DNS CR for your cluster and confirm that the public zone was removed, by running the following command and observing the output:

    $ oc get dnses.config.openshift.io/cluster -o yaml

Example output

    apiVersion: config.openshift.io/v1
    kind: DNS
    metadata:
      creationTimestamp: "2019-10-25T18:27:09Z"
      generation: 2
      name: cluster
      resourceVersion: "37966"
      selfLink: /apis/config.openshift.io/v1/dnses/cluster
      uid: 0e714746-f755-11f9-9cb1-02ff55d8f976
    spec:
      baseDomain: <base_domain>
      privateZone:
        tags:
          Name: <infrastructure_id>-int
          kubernetes.io/cluster/<infrastructure_id>: owned
    status: {}
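The verification above can also be scripted. The following is a minimal sketch, assuming that `oc` is logged in to the cluster; the function name `dns_zone_mode` is hypothetical and not part of the product. It reads only the `spec.publicZone` field shown in the example output and reports whether a public zone is still configured.

```shell
# Hypothetical helper: reports which zones the cluster DNS CR references.
dns_zone_mode() {
    # The JSONPath query prints nothing when spec.publicZone is unset or null.
    public_zone=$(oc get dnses.config.openshift.io/cluster \
        -o jsonpath='{.spec.publicZone}') || return 1
    if [ -n "$public_zone" ]; then
        echo "public+private"
    else
        echo "private-only"
    fi
}
```

Running `dns_zone_mode` after the patch should print `private-only` if the public zone was removed.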

2.3. Setting the Ingress Controller to private

After you deploy a cluster, you can modify its Ingress Controller to use only a private zone.

Procedure

Modify the default Ingress Controller to use only an internal endpoint:

    $ oc replace --force --wait --filename - <<EOF
    apiVersion: operator.openshift.io/v1
    kind: IngressController
    metadata:
      namespace: openshift-ingress-operator
      name: default
    spec:
      endpointPublishingStrategy:
        type: LoadBalancerService
        loadBalancer:
          scope: Internal
    EOF

Example output

    ingresscontroller.operator.openshift.io "default" deleted
    ingresscontroller.operator.openshift.io/default replaced

The public DNS entry is removed, and the private zone entry is updated.
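To confirm the change, you can read back the scope that the procedure sets. This is a minimal sketch, assuming a logged-in `oc` session; the function names are hypothetical. It queries the same `spec.endpointPublishingStrategy.loadBalancer.scope` field that the replacement object defines.

```shell
# Hypothetical helpers: read the load balancer scope of the default
# Ingress Controller and test whether it is Internal.
ingress_scope() {
    oc get ingresscontroller/default -n openshift-ingress-operator \
        -o jsonpath='{.spec.endpointPublishingStrategy.loadBalancer.scope}'
}

ingress_is_internal() {
    [ "$(ingress_scope)" = "Internal" ]
}
```

For example, `ingress_is_internal && echo "Ingress Controller is private"` prints the message only when the scope is `Internal`.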

2.4. Restricting the API server to private

After you deploy a cluster to Amazon Web Services (AWS) or Microsoft Azure, you can reconfigure the API server to use only the private zone.

Prerequisites

- Install the OpenShift CLI (oc).

- Have access to the web console as a user with admin privileges.

Procedure

In the web portal or console for your cloud provider, take the following actions:

- Locate and delete the appropriate load balancer component:

  - For AWS, delete the external load balancer. The API DNS entry in the private zone already points to the internal load balancer, which uses an identical configuration, so you do not need to modify the internal load balancer.

  - For Azure, delete the api-internal rule for the load balancer.

- Delete the api.$clustername.$yourdomain DNS entry in the public zone.

Remove the external load balancers:

Important: You can run the following steps only for an installer-provisioned infrastructure (IPI) cluster. For a user-provisioned infrastructure (UPI) cluster, you must manually remove or disable the external load balancers.

If your cluster uses a control plane machine set, delete the lines that describe the external load balancer, marked 1 and 2 in the following example, from the control plane machine set custom resource:

    providerSpec:
      value:
        loadBalancers:
        - name: lk4pj-ext   # 1
          type: network     # 2
        - name: lk4pj-int
          type: network

If your cluster does not use a control plane machine set, you must delete the external load balancers from each control plane machine.

From your terminal, list the cluster machines by running the following command:

    $ oc get machine -n openshift-machine-api

Example output

    NAME                            STATE     TYPE        REGION      ZONE         AGE
    lk4pj-master-0                  running   m4.xlarge   us-east-1   us-east-1a   17m
    lk4pj-master-1                  running   m4.xlarge   us-east-1   us-east-1b   17m
    lk4pj-master-2                  running   m4.xlarge   us-east-1   us-east-1a   17m
    lk4pj-worker-us-east-1a-5fzfj   running   m4.xlarge   us-east-1   us-east-1a   15m
    lk4pj-worker-us-east-1a-vbghs   running   m4.xlarge   us-east-1   us-east-1a   15m
    lk4pj-worker-us-east-1b-zgpzg   running   m4.xlarge   us-east-1   us-east-1b   15m

The control plane machines contain master in the name.

Remove the external load balancer from each control plane machine:

- Edit a control plane machine object by running the following command:

      $ oc edit machines -n openshift-machine-api <control_plane_name>

  Where <control_plane_name> is the name of the control plane machine object to modify.

- Remove the lines that describe the external load balancer, which are marked 1 and 2 in the following example:

      providerSpec:
        value:
          loadBalancers:
          - name: lk4pj-ext   # 1
            type: network     # 2
          - name: lk4pj-int
            type: network

- Save your changes and exit the object specification.

- Repeat this process for each of the control plane machines.
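Because the edit must be repeated for every control plane machine, it can help to list which load balancers each machine still references. The following is a sketch under stated assumptions: the function names are hypothetical, control plane machines are identified by `master` in their names as described above, and the load balancers are read from `spec.providerSpec.value.loadBalancers` of each Machine object, which matches the AWS example in this procedure.

```shell
# Hypothetical helper: print the names of the control plane machines.
control_plane_machines() {
    # "-o name" prints <resource>/<name>; keep only the part after the slash.
    oc get machine -n openshift-machine-api -o name | grep master | sed 's|.*/||'
}

# Hypothetical helper: print "<machine>: <load balancer names>" per machine.
show_load_balancers() {
    for machine in $(control_plane_machines); do
        printf '%s: ' "$machine"
        oc get machine "$machine" -n openshift-machine-api \
            -o jsonpath='{.spec.providerSpec.value.loadBalancers[*].name}'
        printf '\n'
    done
}
```

After the procedure is complete, no line printed by `show_load_balancers` should list a load balancer whose name ends in `-ext`.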

Chapter 3. Bare metal configuration

When deploying OpenShift Container Platform on bare metal hosts, there are times when you need to make changes to the host either before or after provisioning. This can include inspecting the host’s hardware and firmware details. It can also include formatting disks or changing modifiable firmware settings.

3.1. About the Bare Metal Operator

Use the Bare Metal Operator (BMO) to provision, manage, and inspect bare-metal hosts in your cluster.

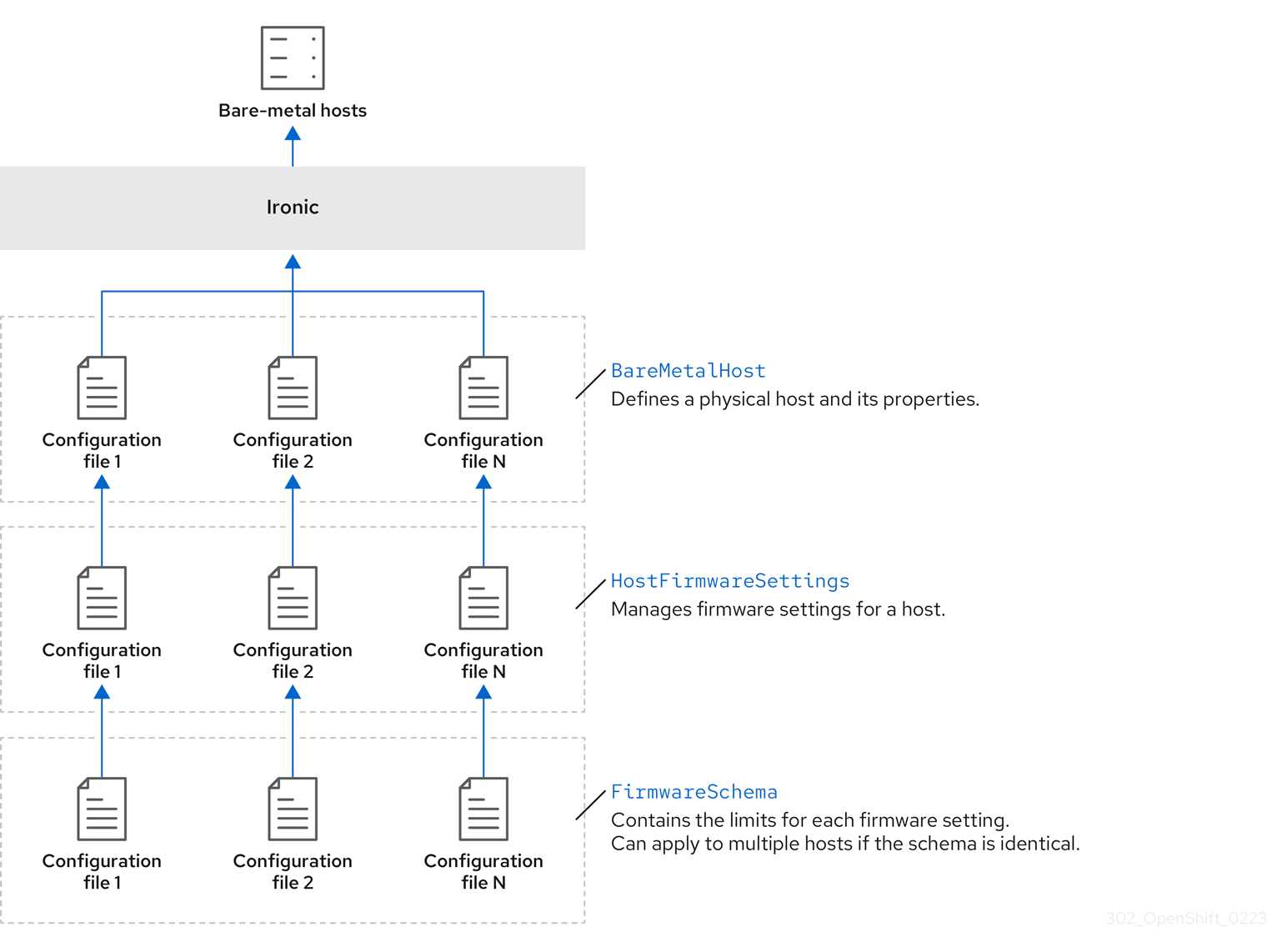

The BMO uses three resources to complete these tasks:

- BareMetalHost

- HostFirmwareSettings

- FirmwareSchema

The BMO maintains an inventory of the physical hosts in the cluster by mapping each bare-metal host to an instance of the BareMetalHost custom resource definition. Each BareMetalHost resource features hardware, software, and firmware details. The BMO continually inspects the bare-metal hosts in the cluster to ensure each BareMetalHost resource accurately details the components of the corresponding host.

The BMO also uses the HostFirmwareSettings resource and the FirmwareSchema resource to detail firmware specifications for the bare-metal host.

The BMO interfaces with bare-metal hosts in the cluster by using the Ironic API service. The Ironic service uses the Baseboard Management Controller (BMC) on the host to interface with the machine.

Some common tasks you can complete by using the BMO include the following:

- Provision bare-metal hosts to the cluster with a specific image

- Format a host’s disk contents before provisioning or after deprovisioning

- Turn on or off a host

- Change firmware settings

- View the host’s hardware details

3.1.1. Bare Metal Operator architecture

The Bare Metal Operator (BMO) uses three resources to provision, manage, and inspect bare-metal hosts in your cluster. The following diagram illustrates the architecture of these resources:

BareMetalHost

The BareMetalHost resource defines a physical host and its properties. When you provision a bare-metal host to the cluster, you must define a BareMetalHost resource for that host. For ongoing management of the host, you can inspect the information in the BareMetalHost or update this information.

The BareMetalHost resource features provisioning information such as the following:

- Deployment specifications such as the operating system boot image or the custom RAM disk

- Provisioning state

- Baseboard Management Controller (BMC) address

- Desired power state

The BareMetalHost resource features hardware information such as the following:

- Number of CPUs

- MAC address of a NIC

- Size of the host’s storage device

- Current power state

HostFirmwareSettings

You can use the HostFirmwareSettings resource to retrieve and manage the firmware settings for a host. When a host moves to the Available state, the Ironic service reads the host’s firmware settings and creates the HostFirmwareSettings resource. There is a one-to-one mapping between the BareMetalHost resource and the HostFirmwareSettings resource.

You can use the HostFirmwareSettings resource to inspect the firmware specifications for a host or to update a host’s firmware specifications.

You must adhere to the schema specific to the vendor firmware when you edit the spec field of the HostFirmwareSettings resource. This schema is defined in the read-only FirmwareSchema resource.

FirmwareSchema

Firmware settings vary among hardware vendors and host models. A FirmwareSchema resource is a read-only resource that contains the types and limits for each firmware setting on each host model. The data comes directly from the BMC by using the Ironic service. The FirmwareSchema resource enables you to identify valid values you can specify in the spec field of the HostFirmwareSettings resource.

A FirmwareSchema resource can apply to many BareMetalHost resources if the schema is the same.

3.2. About the BareMetalHost resource

Metal3 introduces the concept of the BareMetalHost resource, which defines a physical host and its properties. The BareMetalHost resource contains two sections:

- The BareMetalHost spec

- The BareMetalHost status

3.2.1. The BareMetalHost spec

The spec section of the BareMetalHost resource defines the desired state of the host.

| Parameters | Description |
|---|---|
| | An interface to enable or disable automated cleaning during provisioning and de-provisioning. When set to |
| | The |
| | The MAC address of the NIC used for provisioning the host. |
| | The boot mode of the host. It defaults to |
| | A reference to another resource that is using the host. It could be empty if another resource is not currently using the host. For example, a |
| | A human-provided string to help identify the host. |
| | A boolean indicating whether the host provisioning and deprovisioning are managed externally. When set: |
| | Contains information about the BIOS configuration of bare-metal hosts. Currently, |
| | The |
| | A reference to the secret containing the network configuration data and its namespace, so that it can be attached to the host before the host boots to set up the network. |
| | A boolean indicating whether the host should be powered on ( |
| | (Optional) Contains the information about the RAID configuration for bare-metal hosts. If not specified, it retains the current configuration. Note: OpenShift Container Platform 4.14 supports hardware RAID for BMCs using the iRMC protocol only. OpenShift Container Platform 4.14 does not support software RAID on the installation drive. See the following configuration settings: You can set the If you receive an error message indicating that the driver does not support RAID, set the |
| | The |

3.2.2. The BareMetalHost status

The BareMetalHost status represents the host’s current state, and includes tested credentials, current hardware details, and other information.

| Parameters | Description |
|---|---|
| | A reference to the secret and its namespace holding the last set of baseboard management controller (BMC) credentials the system was able to validate as working. |
| | Details of the last error reported by the provisioning backend, if any. |
| | Indicates the class of problem that has caused the host to enter an error state. The error types are: |
| | The |
| | Contains BIOS firmware information. For example, the hardware vendor and version. |
| | The |
| | The host’s amount of memory in Mebibytes (MiB). |
| | The |
| | Contains information about the host’s |
| | The timestamp of the last time the status of the host was updated. |
| | The status of the server. The status is one of the following: |
| | Boolean indicating whether the host is powered on. |
| | The |
| | A reference to the secret and its namespace holding the last set of BMC credentials that were sent to the provisioning backend. |

3.3. Getting the BareMetalHost resource

The BareMetalHost resource contains the properties of a physical host. You must get the BareMetalHost resource for a physical host to review its properties.

Procedure

Get the list of BareMetalHost resources:

    $ oc get bmh -n openshift-machine-api -o yaml

Note: You can use baremetalhost as the long form of bmh with the oc get command.

Get the list of hosts:

    $ oc get bmh -n openshift-machine-api

Get the BareMetalHost resource for a specific host:

    $ oc get bmh <host_name> -n openshift-machine-api -o yaml

Where <host_name> is the name of the host.

Example output

    apiVersion: metal3.io/v1alpha1
    kind: BareMetalHost
    metadata:
      creationTimestamp: "2022-06-16T10:48:33Z"
      finalizers:
      - baremetalhost.metal3.io
      generation: 2
      name: openshift-worker-0
      namespace: openshift-machine-api
      resourceVersion: "30099"
      uid: 1513ae9b-e092-409d-be1b-ad08edeb1271
    spec:
      automatedCleaningMode: metadata
      bmc:
        address: redfish://10.46.61.19:443/redfish/v1/Systems/1
        credentialsName: openshift-worker-0-bmc-secret
        disableCertificateVerification: true
      bootMACAddress: 48:df:37:c7:f7:b0
      bootMode: UEFI
      consumerRef:
        apiVersion: machine.openshift.io/v1beta1
        kind: Machine
        name: ocp-edge-958fk-worker-0-nrfcg
        namespace: openshift-machine-api
      customDeploy:
        method: install_coreos
      online: true
      rootDeviceHints:
        deviceName: /dev/disk/by-id/scsi-<serial_number>
      userData:
        name: worker-user-data-managed
        namespace: openshift-machine-api
    status:
      errorCount: 0
      errorMessage: ""
      goodCredentials:
        credentials:
          name: openshift-worker-0-bmc-secret
          namespace: openshift-machine-api
        credentialsVersion: "16120"
      hardware:
        cpu:
          arch: x86_64
          clockMegahertz: 2300
          count: 64
          flags:
          - 3dnowprefetch
          - abm
          - acpi
          - adx
          - aes
          model: Intel(R) Xeon(R) Gold 5218 CPU @ 2.30GHz
        firmware:
          bios:
            date: 10/26/2020
            vendor: HPE
            version: U30
        hostname: openshift-worker-0
        nics:
        - mac: 48:df:37:c7:f7:b3
          model: 0x8086 0x1572
          name: ens1f3
        ramMebibytes: 262144
        storage:
        - hctl: "0:0:0:0"
          model: VK000960GWTTB
          name: /dev/disk/by-id/scsi-<serial_number>
          sizeBytes: 960197124096
          type: SSD
          vendor: ATA
        systemVendor:
          manufacturer: HPE
          productName: ProLiant DL380 Gen10 (868703-B21)
          serialNumber: CZ200606M3
      lastUpdated: "2022-06-16T11:41:42Z"
      operationalStatus: OK
      poweredOn: true
      provisioning:
        ID: 217baa14-cfcf-4196-b764-744e184a3413
        bootMode: UEFI
        customDeploy:
          method: install_coreos
        image:
          url: ""
        raid:
          hardwareRAIDVolumes: null
          softwareRAIDVolumes: []
        rootDeviceHints:
          deviceName: /dev/disk/by-id/scsi-<serial_number>
        state: provisioned
      triedCredentials:
        credentials:
          name: openshift-worker-0-bmc-secret
          namespace: openshift-machine-api
        credentialsVersion: "16120"
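When you need only one property rather than the full resource, a JSONPath query can extract it. This is a minimal sketch, assuming a logged-in `oc` session; the function name `bmh_field` is hypothetical. The field paths in the usage note are taken from the example output above.

```shell
# Hypothetical helper: print a single field of a BareMetalHost resource.
# $1: host name
# $2: JSONPath expression without the surrounding braces
bmh_field() {
    oc get bmh "$1" -n openshift-machine-api -o jsonpath="{$2}"
}
```

For example, `bmh_field openshift-worker-0 .status.provisioning.state` queries the provisioning state, and `bmh_field openshift-worker-0 .status.hardware.ramMebibytes` queries the amount of memory.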

3.4. About the HostFirmwareSettings resource

You can use the HostFirmwareSettings resource to retrieve and manage the BIOS settings for a host. When a host moves to the Available state, Ironic reads the host’s BIOS settings and creates the HostFirmwareSettings resource. The resource contains the complete BIOS configuration returned from the baseboard management controller (BMC). Whereas the firmware field in the BareMetalHost resource returns three vendor-independent fields, the HostFirmwareSettings resource typically comprises many vendor-specific BIOS settings per host.

The HostFirmwareSettings resource contains two sections:

- The HostFirmwareSettings spec.

- The HostFirmwareSettings status.

3.4.1. The HostFirmwareSettings spec

The spec section of the HostFirmwareSettings resource defines the desired state of the host’s BIOS, and it is empty by default. Ironic uses the settings in the spec.settings section to update the baseboard management controller (BMC) when the host is in the Preparing state. Use the FirmwareSchema resource to ensure that you do not send invalid name/value pairs to hosts. See "About the FirmwareSchema resource" for additional details.

Example

    spec:
      settings:
        ProcTurboMode: Disabled

In the foregoing example, the spec.settings section contains a name/value pair that will set the ProcTurboMode BIOS setting to Disabled.

Integer parameters listed in the status section appear as strings. For example, "1". When setting integers in the spec.settings section, the values should be set as integers without quotes. For example, 1.

3.4.2. The HostFirmwareSettings status

The status represents the current state of the host’s BIOS.

| Parameters | Description |
|---|---|
| | The |
| | The |
| | The |

3.5. Getting the HostFirmwareSettings resource

The HostFirmwareSettings resource contains the vendor-specific BIOS properties of a physical host. You must get the HostFirmwareSettings resource for a physical host to review its BIOS properties.

Procedure

Get the detailed list of HostFirmwareSettings resources:

    $ oc get hfs -n openshift-machine-api -o yaml

Note: You can use hostfirmwaresettings as the long form of hfs with the oc get command.

Get the list of HostFirmwareSettings resources:

    $ oc get hfs -n openshift-machine-api

Get the HostFirmwareSettings resource for a particular host:

    $ oc get hfs <host_name> -n openshift-machine-api -o yaml

Where <host_name> is the name of the host.

3.6. Editing the HostFirmwareSettings resource

You can edit the HostFirmwareSettings of provisioned hosts.

You can only edit hosts when they are in the provisioned state, excluding read-only values. You cannot edit hosts in the externally provisioned state.

Procedure

Get the list of HostFirmwareSettings resources:

    $ oc get hfs -n openshift-machine-api

Edit a host’s HostFirmwareSettings resource:

    $ oc edit hfs <host_name> -n openshift-machine-api

Where <host_name> is the name of a provisioned host. The HostFirmwareSettings resource will open in the default editor for your terminal.

Add name/value pairs to the spec.settings section:

Example

    spec:
      settings:
        name: value

Use the FirmwareSchema resource to identify the available settings for the host. You cannot set values that are read-only.

Save the changes and exit the editor.

Get the host’s machine name:

    $ oc get bmh <host_name> -n openshift-machine-api

Where <host_name> is the name of the host. The machine name appears under the CONSUMER field.

Annotate the machine to delete it from the machineset:

    $ oc annotate machine <machine_name> machine.openshift.io/delete-machine=true -n openshift-machine-api

Where <machine_name> is the name of the machine to delete.

Get a list of nodes and count the number of worker nodes:

    $ oc get nodes

Get the machineset:

    $ oc get machinesets -n openshift-machine-api

Scale the machineset:

    $ oc scale machineset <machineset_name> -n openshift-machine-api --replicas=<n-1>

Where <machineset_name> is the name of the machineset and <n-1> is the decremented number of worker nodes.

When the host enters the Available state, scale up the machineset to make the HostFirmwareSettings resource changes take effect:

    $ oc scale machineset <machineset_name> -n openshift-machine-api --replicas=<n>

Where <machineset_name> is the name of the machineset and <n> is the number of worker nodes.
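The `<n-1>` value in the scale-down step can be computed from the machine set itself instead of counted by hand. The following is a sketch under stated assumptions: the function names are hypothetical, and the current count is read from the `spec.replicas` field of the machine set. It covers only the scale-down step, so you still annotate the machine first and scale back up after the host becomes available.

```shell
# Hypothetical helper: print the replica count a machine set requests.
current_replicas() {
    oc get machineset "$1" -n openshift-machine-api -o jsonpath='{.spec.replicas}'
}

# Hypothetical helper: scale a machine set down by exactly one replica.
scale_down_by_one() {
    n=$(current_replicas "$1") || return 1
    if [ "$n" -le 0 ]; then
        echo "machine set $1 has no replicas to remove" >&2
        return 1
    fi
    oc scale machineset "$1" -n openshift-machine-api --replicas=$((n - 1))
}
```

Record the original replica count before calling `scale_down_by_one <machineset_name>` so that you can restore it in the final step.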

3.7. Verifying the HostFirmwareSettings resource is valid

When the user edits the spec.settings section to make a change to the HostFirmwareSettings (HFS) resource, the Bare Metal Operator (BMO) validates the change against the FirmwareSchema resource, which is a read-only resource. If the setting is invalid, the BMO will set the Type value of the status.Condition setting to False and also generate an event and store it in the HFS resource. Use the following procedure to verify that the resource is valid.

Procedure

Get a list of HostFirmwareSettings resources:

    $ oc get hfs -n openshift-machine-api

Verify that the HostFirmwareSettings resource for a particular host is valid:

    $ oc describe hfs <host_name> -n openshift-machine-api

Where <host_name> is the name of the host.

Example output

    Events:
      Type    Reason            Age    From                                    Message
      ----    ------            ----   ----                                    -------
      Normal  ValidationFailed  2m49s  metal3-hostfirmwaresettings-controller  Invalid BIOS setting: Setting ProcTurboMode is invalid, unknown enumeration value - Foo

Important: If the response returns ValidationFailed, there is an error in the resource configuration and you must update the values to conform to the FirmwareSchema resource.
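The check for a ValidationFailed event can be automated by searching the `oc describe` output. This is a minimal sketch, assuming a logged-in `oc` session; the function name `hfs_validation_failed` is hypothetical, and the match relies only on the event reason shown in the example output.

```shell
# Hypothetical helper: succeeds when the events of a HostFirmwareSettings
# resource include a ValidationFailed reason.
# $1: host name
hfs_validation_failed() {
    oc describe hfs "$1" -n openshift-machine-api | grep -q 'ValidationFailed'
}
```

For example, `hfs_validation_failed <host_name> && echo "fix spec.settings"` prints the reminder only when the event is present.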

3.8. About the FirmwareSchema resource

BIOS settings vary among hardware vendors and host models. A FirmwareSchema resource is a read-only resource that contains the types and limits for each BIOS setting on each host model. The data comes directly from the BMC through Ironic. The FirmwareSchema enables you to identify valid values you can specify in the spec field of the HostFirmwareSettings resource. The FirmwareSchema resource has a unique identifier derived from its settings and limits. Identical host models use the same FirmwareSchema identifier. It is likely that multiple instances of HostFirmwareSettings use the same FirmwareSchema.

| Parameters | Description |
|---|---|
| | The |

3.9. Getting the FirmwareSchema resource

Each host model from each vendor has different BIOS settings. When editing the HostFirmwareSettings resource’s spec section, the name/value pairs you set must conform to that host’s firmware schema. To ensure you are setting valid name/value pairs, get the FirmwareSchema for the host and review it.

Procedure

To get a list of FirmwareSchema resource instances, execute the following:

    $ oc get firmwareschema -n openshift-machine-api

To get a particular FirmwareSchema instance, execute:

    $ oc get firmwareschema <instance_name> -n openshift-machine-api -o yaml

Where <instance_name> is the name of the schema instance stated in the HostFirmwareSettings resource (see Table 3).
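The two lookups can be chained so that you start from a host name instead of a schema instance name. The following is a sketch under a labeled assumption: it reads the schema name from the `status.schema.name` field of the HostFirmwareSettings resource, which is how the upstream Metal3 API exposes it but is not shown in this section. The function name `schema_for_host` is hypothetical.

```shell
# Hypothetical helper: print the FirmwareSchema that applies to a host.
# Assumption: the schema name is stored in status.schema.name of the
# HostFirmwareSettings resource.
# $1: host name
schema_for_host() {
    schema=$(oc get hfs "$1" -n openshift-machine-api \
        -o jsonpath='{.status.schema.name}') || return 1
    if [ -z "$schema" ]; then
        echo "no schema recorded for host $1" >&2
        return 1
    fi
    oc get firmwareschema "$schema" -n openshift-machine-api -o yaml
}
```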

Chapter 4. Configuring multi-architecture compute machines on an OpenShift cluster

4.1. About clusters with multi-architecture compute machines

An OpenShift Container Platform cluster with multi-architecture compute machines is a cluster that supports compute machines with different architectures. Clusters with multi-architecture compute machines are available only on Amazon Web Services (AWS) or Microsoft Azure installer-provisioned infrastructures and bare metal, IBM Power®, and IBM Z® user-provisioned infrastructures with x86_64 control plane machines.

When there are nodes with multiple architectures in your cluster, the architecture of your image must be consistent with the architecture of the node. You need to ensure that the pod is assigned to the node with the appropriate architecture and that it matches the image architecture. For more information on assigning pods to nodes, see Assigning pods to nodes.
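Before you schedule architecture-specific images, it can help to see which architectures the nodes report. This is a minimal sketch, assuming a logged-in `oc` session; the function names are hypothetical. It relies on the standard `kubernetes.io/arch` node label.

```shell
# Hypothetical helper: print "<architecture><TAB><node name>" for each node.
nodes_by_arch() {
    oc get nodes -o jsonpath='{range .items[*]}{.metadata.labels.kubernetes\.io/arch}{"\t"}{.metadata.name}{"\n"}{end}'
}

# Hypothetical helper: count the nodes that report a given architecture.
# $1: architecture label value, for example amd64 or arm64
count_arch() {
    nodes_by_arch | awk -v arch="$1" '$1 == arch { n++ } END { print n + 0 }'
}
```

A pod can then be constrained to matching nodes by using the same label in a node selector.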

The Cluster Samples Operator is not supported on clusters with multi-architecture compute machines. Your cluster can be created without this capability. For more information, see Enabling cluster capabilities.

For information on migrating your single-architecture cluster to a cluster that supports multi-architecture compute machines, see Migrating to a cluster with multi-architecture compute machines.

4.1.1. Configuring your cluster with multi-architecture compute machines

To create a cluster with multi-architecture compute machines for various platforms, you can use the documentation in the following sections:

- Creating a cluster with multi-architecture compute machines on Azure

- Creating a cluster with multi-architecture compute machines on AWS

- Creating a cluster with multi-architecture compute machines on Google Cloud

- Creating a cluster with multi-architecture compute machines on bare metal

- Creating a cluster with multi-architecture compute machines on IBM Z® and IBM® LinuxONE with z/VM

- Creating a cluster with multi-architecture compute machines on IBM Z® and IBM® LinuxONE with RHEL KVM

- Creating a cluster with multi-architecture compute machines on IBM Power®

Autoscaling from zero is currently not supported on Google Cloud.

4.2. Creating a cluster with multi-architecture compute machines on Azure

To deploy an Azure cluster with multi-architecture compute machines, you must first create a single-architecture Azure installer-provisioned cluster that uses the multi-architecture installer binary. For more information on Azure installations, see Installing a cluster on Azure with customizations. You can then add an ARM64 compute machine set to your cluster to create a cluster with multi-architecture compute machines.

The following procedures explain how to generate an ARM64 boot image and create an Azure compute machine set that uses the ARM64 boot image. This adds ARM64 compute nodes to your cluster and deploys the number of ARM64 virtual machines (VMs) that you need.

4.2.1. Verifying cluster compatibility

Before you can start adding compute nodes of different architectures to your cluster, you must verify that your cluster is multi-architecture compatible.

Prerequisites

- You installed the OpenShift CLI (oc).

Procedure

Check that your cluster uses the multi-architecture payload by running the following command:

$ oc adm release info -o jsonpath="{ .metadata.metadata}"

Verification

If you see the following output, then your cluster is using the multi-architecture payload:

{
 "release.openshift.io/architecture": "multi",
 "url": "https://access.redhat.com/errata/<errata_version>"
}

You can then begin adding multi-arch compute nodes to your cluster.

If you see the following output, then your cluster is not using the multi-architecture payload:

{
 "url": "https://access.redhat.com/errata/<errata_version>"
}

Important: To migrate your cluster so the cluster supports multi-architecture compute machines, follow the procedure in Migrating to a cluster with multi-architecture compute machines.
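The comparison of the two outputs can be scripted. The following is a minimal sketch, not part of the product: the function name is_multi_arch_payload is illustrative, and it assumes the metadata is printed as JSON text like the sample outputs in this section.

```shell
# Sketch: succeed (exit 0) only when the release metadata read from stdin
# marks the multi-architecture payload. The function name is illustrative.
is_multi_arch_payload() {
  grep -Eq '"release\.openshift\.io/architecture"[[:space:]]*:[[:space:]]*"multi"'
}

# Demonstration on the two sample outputs from this section (no cluster needed).
multi='{ "release.openshift.io/architecture": "multi", "url": "https://access.redhat.com/errata/<errata_version>" }'
single='{ "url": "https://access.redhat.com/errata/<errata_version>" }'

if printf '%s\n' "$multi" | is_multi_arch_payload; then
  echo "multi-architecture payload"
fi
if ! printf '%s\n' "$single" | is_multi_arch_payload; then
  echo "single-architecture payload"
fi
```

Against a live cluster, the output of the oc adm release info command from the procedure can be piped into the function instead of the sample strings.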

4.2.2. Creating an ARM64 boot image using the Azure image gallery

The following procedure describes how to manually generate an ARM64 boot image.

Prerequisites

- You installed the Azure CLI (az).

- You created a single-architecture Azure installer-provisioned cluster with the multi-architecture installer binary.

Procedure

Log in to your Azure account:

$ az login

Create a storage account and upload the arm64 virtual hard disk (VHD) to your storage account. The OpenShift Container Platform installation program creates a resource group; however, the boot image can also be uploaded to a custom named resource group:

$ az storage account create -n ${STORAGE_ACCOUNT_NAME} -g ${RESOURCE_GROUP} -l westus --sku Standard_LRS

- The westus value is an example region.

Create a storage container using the storage account you generated:

$ az storage container create -n ${CONTAINER_NAME} --account-name ${STORAGE_ACCOUNT_NAME}

You must use the OpenShift Container Platform installation program JSON file to extract the URL and aarch64 VHD name:

- Extract the URL field and store it in the RHCOS_VHD_ORIGIN_URL variable by running the following command:

$ RHCOS_VHD_ORIGIN_URL=$(oc -n openshift-machine-config-operator get configmap/coreos-bootimages -o jsonpath='{.data.stream}' | jq -r '.architectures.aarch64."rhel-coreos-extensions"."azure-disk".url')

- Extract the aarch64 VHD name and store it in the BLOB_NAME variable, which is used as the file name, by running the following command:

$ BLOB_NAME=rhcos-$(oc -n openshift-machine-config-operator get configmap/coreos-bootimages -o jsonpath='{.data.stream}' | jq -r '.architectures.aarch64."rhel-coreos-extensions"."azure-disk".release')-azure.aarch64.vhd

Generate a shared access signature (SAS) token. Use this token to upload the RHCOS VHD to your storage container with the following commands:

$ end=`date -u -d "30 minutes" '+%Y-%m-%dT%H:%MZ'`

$ sas=`az storage container generate-sas -n ${CONTAINER_NAME} --account-name ${STORAGE_ACCOUNT_NAME} --https-only --permissions dlrw --expiry $end -o tsv`

Copy the RHCOS VHD into the storage container:

$ az storage blob copy start --account-name ${STORAGE_ACCOUNT_NAME} --sas-token "$sas" \
    --source-uri "${RHCOS_VHD_ORIGIN_URL}" \
    --destination-blob "${BLOB_NAME}" --destination-container ${CONTAINER_NAME}

You can check the status of the copying process with the following command:

$ az storage blob show -c ${CONTAINER_NAME} -n ${BLOB_NAME} --account-name ${STORAGE_ACCOUNT_NAME} | jq .properties.copy

Example output

{
 "completionTime": null,
 "destinationSnapshot": null,
 "id": "1fd97630-03ca-489a-8c4e-cfe839c9627d",
 "incrementalCopy": null,
 "progress": "17179869696/17179869696",
 "source": "https://rhcos.blob.core.windows.net/imagebucket/rhcos-411.86.202207130959-0-azure.aarch64.vhd",
 "status": "success",
 "statusDescription": null
}

- If the status parameter displays the success value, the copying process is complete.

Create an image gallery using the following command:

$ az sig create --resource-group ${RESOURCE_GROUP} --gallery-name ${GALLERY_NAME}

Use the image gallery to create an image definition. In the following example command, rhcos-arm64 is the name of the image definition.

$ az sig image-definition create --resource-group ${RESOURCE_GROUP} --gallery-name ${GALLERY_NAME} --gallery-image-definition rhcos-arm64 --publisher RedHat --offer arm --sku arm64 --os-type linux --architecture Arm64 --hyper-v-generation V2

To get the URL of the VHD and store it in the RHCOS_VHD_URL variable, run the following command:

$ RHCOS_VHD_URL=$(az storage blob url --account-name ${STORAGE_ACCOUNT_NAME} -c ${CONTAINER_NAME} -n "${BLOB_NAME}" -o tsv)

Use the RHCOS_VHD_URL variable, your storage account, resource group, and image gallery to create an image version. In the following example, 1.0.0 is the image version.

$ az sig image-version create --resource-group ${RESOURCE_GROUP} --gallery-name ${GALLERY_NAME} --gallery-image-definition rhcos-arm64 --gallery-image-version 1.0.0 --os-vhd-storage-account ${STORAGE_ACCOUNT_NAME} --os-vhd-uri ${RHCOS_VHD_URL}

Your arm64 boot image is now generated. You can access the ID of your image with the following command:

$ az sig image-version show -r $GALLERY_NAME -g $RESOURCE_GROUP -i rhcos-arm64 -e 1.0.0

The following example image ID is used in the resourceID parameter of the compute machine set:

Example resourceID

/resourceGroups/${RESOURCE_GROUP}/providers/Microsoft.Compute/galleries/${GALLERY_NAME}/images/rhcos-arm64/versions/1.0.0
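To show how the resourceID path is assembled from the values used in this procedure, here is a small sketch. The function name gallery_image_resource_id and the example-rg and example_gallery values are illustrative placeholders, not names taken from the product.

```shell
# Sketch: print the resourceID path for a gallery image version.
# Arguments: resource group, gallery name, image definition (default rhcos-arm64),
# image version (default 1.0.0). The function name is illustrative.
gallery_image_resource_id() {
  printf '/resourceGroups/%s/providers/Microsoft.Compute/galleries/%s/images/%s/versions/%s\n' \
    "$1" "$2" "${3:-rhcos-arm64}" "${4:-1.0.0}"
}

# Placeholder names stand in for ${RESOURCE_GROUP} and ${GALLERY_NAME}.
gallery_image_resource_id example-rg example_gallery
```

The printed path follows the same pattern as the example resourceID, with the image definition and version defaulting to the values used in the preceding steps.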

4.2.3. Adding a multi-architecture compute machine set to your cluster

To add ARM64 compute nodes to your cluster, you must create an Azure compute machine set that uses the ARM64 boot image. To create your own custom compute machine set on Azure, see "Creating a compute machine set on Azure".

Prerequisites

- You installed the OpenShift CLI (oc).

Procedure

Create a compute machine set and modify the resourceID and vmSize parameters with the following command. This compute machine set controls the arm64 worker nodes in your cluster:

$ oc create -f arm64-machine-set-0.yaml

Sample YAML compute machine set with arm64 boot image

apiVersion: machine.openshift.io/v1beta1
kind: MachineSet
metadata:
  labels:
    machine.openshift.io/cluster-api-cluster: <infrastructure_id>
    machine.openshift.io/cluster-api-machine-role: worker
    machine.openshift.io/cluster-api-machine-type: worker
  name: <infrastructure_id>-arm64-machine-set-0
  namespace: openshift-machine-api
spec:
  replicas: 2
  selector:
    matchLabels:
      machine.openshift.io/cluster-api-cluster: <infrastructure_id>
      machine.openshift.io/cluster-api-machineset: <infrastructure_id>-arm64-machine-set-0
  template:
    metadata:
      labels:
        machine.openshift.io/cluster-api-cluster: <infrastructure_id>
        machine.openshift.io/cluster-api-machine-role: worker
        machine.openshift.io/cluster-api-machine-type: worker
        machine.openshift.io/cluster-api-machineset: <infrastructure_id>-arm64-machine-set-0
    spec:
      lifecycleHooks: {}
      metadata: {}
      providerSpec:
        value:
          acceleratedNetworking: true
          apiVersion: machine.openshift.io/v1beta1
          credentialsSecret:
            name: azure-cloud-credentials
            namespace: openshift-machine-api
          image:
            offer: ""
            publisher: ""
            resourceID: /resourceGroups/${RESOURCE_GROUP}/providers/Microsoft.Compute/galleries/${GALLERY_NAME}/images/rhcos-arm64/versions/1.0.0
            sku: ""
            version: ""
          kind: AzureMachineProviderSpec
          location: <region>
          managedIdentity: <infrastructure_id>-identity
          networkResourceGroup: <infrastructure_id>-rg
          osDisk:
            diskSettings: {}
            diskSizeGB: 128
            managedDisk:
              storageAccountType: Premium_LRS
            osType: Linux
          publicIP: false
          publicLoadBalancer: <infrastructure_id>
          resourceGroup: <infrastructure_id>-rg
          subnet: <infrastructure_id>-worker-subnet
          userDataSecret:
            name: worker-user-data
          vmSize: Standard_D4ps_v5
          vnet: <infrastructure_id>-vnet
          zone: "<zone>"

Verification

Verify that the new ARM64 machines are running by entering the following command:

$ oc get machineset -n openshift-machine-api

Example output

NAME                                      DESIRED   CURRENT   READY   AVAILABLE   AGE
<infrastructure_id>-arm64-machine-set-0   2         2         2       2           10m

You can check that the nodes are ready and schedulable with the following command:

$ oc get nodes
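To narrow the node list to the new ARM64 nodes, one option is to add the architecture label as a column and filter on it. The sketch below assumes the table is produced by oc get nodes -L kubernetes.io/arch, so that the architecture is the last column; the function name and the sample node names are made up for illustration.

```shell
# Sketch: print the names of nodes that are Ready, schedulable, and arm64.
# Expects the table printed by "oc get nodes -L kubernetes.io/arch" on stdin,
# where the architecture label value is the last column.
ready_arm64_nodes() {
  awk 'NR > 1 && $2 == "Ready" && $NF == "arm64" { print $1 }'
}

# Demonstration on made-up sample output (node names are hypothetical).
ready_arm64_nodes <<'EOF'
NAME       STATUS                     ROLES    AGE   VERSION   ARCH
worker-a   Ready                      worker   10m   v1.27.3   arm64
worker-b   Ready,SchedulingDisabled   worker   10m   v1.27.3   arm64
worker-c   Ready                      worker   63m   v1.27.3   amd64
EOF
```

Matching the STATUS column exactly against Ready excludes cordoned nodes, which report Ready,SchedulingDisabled.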

4.3. Creating a cluster with multi-architecture compute machines on AWS

To create an AWS cluster with multi-architecture compute machines, you must first create a single-architecture AWS installer-provisioned cluster with the multi-architecture installer binary. For more information on AWS installations, refer to Installing a cluster on AWS with customizations. You can then add an ARM64 compute machine set to your AWS cluster.

4.3.1. Verifying cluster compatibility

Before you can start adding compute nodes of different architectures to your cluster, you must verify that your cluster is multi-architecture compatible.

Prerequisites

- You installed the OpenShift CLI (oc).

Procedure

Check that your cluster uses the multi-architecture payload by running the following command:

$ oc adm release info -o jsonpath="{ .metadata.metadata}"

Verification

If you see the following output, then your cluster is using the multi-architecture payload:

{
 "release.openshift.io/architecture": "multi",
 "url": "https://access.redhat.com/errata/<errata_version>"
}

You can then begin adding multi-arch compute nodes to your cluster.

If you see the following output, then your cluster is not using the multi-architecture payload:

{
 "url": "https://access.redhat.com/errata/<errata_version>"
}

Important: To migrate your cluster so the cluster supports multi-architecture compute machines, follow the procedure in Migrating to a cluster with multi-architecture compute machines.

4.3.2. Adding an ARM64 compute machine set to your cluster

To configure a cluster with multi-architecture compute machines, you must create an AWS ARM64 compute machine set. This adds ARM64 compute nodes to your cluster so that your cluster has multi-architecture compute machines.

Prerequisites

- You installed the OpenShift CLI (oc).

- You used the installation program to create an AMD64 single-architecture AWS cluster with the multi-architecture installer binary.

Procedure

Create and modify a compute machine set. This compute machine set controls the ARM64 compute nodes in your cluster:

$ oc create -f aws-arm64-machine-set-0.yaml

Sample YAML compute machine set to deploy an ARM64 compute node

apiVersion: machine.openshift.io/v1beta1
kind: MachineSet
metadata:
  labels:
    machine.openshift.io/cluster-api-cluster: <infrastructure_id> # (1)
  name: <infrastructure_id>-aws-arm64-machine-set-0 # (2)
  namespace: openshift-machine-api
spec:
  replicas: 1
  selector:
    matchLabels:
      machine.openshift.io/cluster-api-cluster: <infrastructure_id> # (3)
      machine.openshift.io/cluster-api-machineset: <infrastructure_id>-<role>-<zone> # (4)
  template:
    metadata:
      labels:
        machine.openshift.io/cluster-api-cluster: <infrastructure_id>
        machine.openshift.io/cluster-api-machine-role: <role> # (5)
        machine.openshift.io/cluster-api-machine-type: <role> # (6)
        machine.openshift.io/cluster-api-machineset: <infrastructure_id>-<role>-<zone> # (7)
    spec:
      metadata:
        labels:
          node-role.kubernetes.io/<role>: ""
      providerSpec:
        value:
          ami:
            id: ami-02a574449d4f4d280 # (8)
          apiVersion: awsproviderconfig.openshift.io/v1beta1
          blockDevices:
            - ebs:
                iops: 0
                volumeSize: 120
                volumeType: gp2
          credentialsSecret:
            name: aws-cloud-credentials
          deviceIndex: 0
          iamInstanceProfile:
            id: <infrastructure_id>-worker-profile # (9)
          instanceType: m6g.xlarge # (10)
          kind: AWSMachineProviderConfig
          placement:
            availabilityZone: us-east-1a # (11)
            region: <region> # (12)
          securityGroups:
            - filters:
                - name: tag:Name
                  values:
                    - <infrastructure_id>-worker-sg # (13)
          subnet:
            filters:
              - name: tag:Name
                values:
                  - <infrastructure_id>-private-<zone>
          tags:
            - name: kubernetes.io/cluster/<infrastructure_id> # (14)
              value: owned
            - name: <custom_tag_name>
              value: <custom_tag_value>
          userDataSecret:
            name: worker-user-data

- (1), (2), (3), (9), (13), (14): Specify the infrastructure ID that is based on the cluster ID that you set when you provisioned the cluster. If you have the OpenShift CLI installed, you can obtain the infrastructure ID by running the following command:

$ oc get -o jsonpath='{.status.infrastructureName}{"\n"}' infrastructure cluster

- (4), (7): Specify the infrastructure ID, role node label, and zone.

- (5), (6): Specify the role node label to add.

- (8): Specify an ARM64 supported Red Hat Enterprise Linux CoreOS (RHCOS) Amazon Machine Image (AMI) for your AWS zone for your OpenShift Container Platform nodes.

$ oc get configmap/coreos-bootimages \
    -n openshift-machine-config-operator \
    -o jsonpath='{.data.stream}' | jq \
    -r '.architectures.<arch>.images.aws.regions."<region>".image'

- (10): Specify an ARM64 supported machine type. For more information, refer to "Tested instance types for AWS 64-bit ARM".

- (11): Specify the zone, for example us-east-1a. Ensure that the zone you select offers 64-bit ARM machines.

- (12): Specify the region, for example, us-east-1. Ensure that the zone you select offers 64-bit ARM machines.

Verification

View the list of compute machine sets by entering the following command:

$ oc get machineset -n openshift-machine-api

You can then see your created ARM64 machine set.

Example output

NAME                                          DESIRED   CURRENT   READY   AVAILABLE   AGE
<infrastructure_id>-aws-arm64-machine-set-0   2         2         2       2           10m

You can check that the nodes are ready and schedulable with the following command:

$ oc get nodes

4.4. Creating a cluster with multi-architecture compute machines on Google Cloud

To create a Google Cloud cluster with multi-architecture compute machines, you must first create a single-architecture Google Cloud installer-provisioned cluster with the multi-architecture installer binary. For more information on Google Cloud installations, refer to Installing a cluster on Google Cloud with customizations. You can then add ARM64 compute machine sets to your Google Cloud cluster.

Secure booting is currently not supported on ARM64 machines for Google Cloud.

4.4.1. Verifying cluster compatibility

Before you can start adding compute nodes of different architectures to your cluster, you must verify that your cluster is multi-architecture compatible.

Prerequisites

- You installed the OpenShift CLI (oc).

Procedure

Check that your cluster uses the multi-architecture payload by running the following command:

$ oc adm release info -o jsonpath="{ .metadata.metadata}"

Verification

If you see the following output, then your cluster is using the multi-architecture payload:

{
 "release.openshift.io/architecture": "multi",
 "url": "https://access.redhat.com/errata/<errata_version>"
}

You can then begin adding multi-arch compute nodes to your cluster.

If you see the following output, then your cluster is not using the multi-architecture payload:

{
 "url": "https://access.redhat.com/errata/<errata_version>"
}

Important: To migrate your cluster so the cluster supports multi-architecture compute machines, follow the procedure in Migrating to a cluster with multi-architecture compute machines.

4.4.2. Adding an ARM64 compute machine set to your Google Cloud cluster

To configure a cluster with multi-architecture compute machines, you must create a Google Cloud ARM64 compute machine set. This adds ARM64 compute nodes to your cluster.

Prerequisites

- You installed the OpenShift CLI (oc).

- You used the installation program to create an AMD64 single-architecture Google Cloud cluster with the multi-architecture installer binary.

Procedure

Create and modify a compute machine set. This compute machine set controls the ARM64 compute nodes in your cluster:

$ oc create -f gcp-arm64-machine-set-0.yaml

Sample Google Cloud YAML compute machine set to deploy an ARM64 compute node

apiVersion: machine.openshift.io/v1beta1
kind: MachineSet
metadata:
  labels:
    machine.openshift.io/cluster-api-cluster: <infrastructure_id> # (1)
  name: <infrastructure_id>-w-a
  namespace: openshift-machine-api
spec:
  replicas: 1
  selector:
    matchLabels:
      machine.openshift.io/cluster-api-cluster: <infrastructure_id>
      machine.openshift.io/cluster-api-machineset: <infrastructure_id>-w-a
  template:
    metadata:
      creationTimestamp: null
      labels:
        machine.openshift.io/cluster-api-cluster: <infrastructure_id>
        machine.openshift.io/cluster-api-machine-role: <role> # (2)
        machine.openshift.io/cluster-api-machine-type: <role>
        machine.openshift.io/cluster-api-machineset: <infrastructure_id>-w-a
    spec:
      metadata:
        labels:
          node-role.kubernetes.io/<role>: ""
      providerSpec:
        value:
          apiVersion: gcpprovider.openshift.io/v1beta1
          canIPForward: false
          credentialsSecret:
            name: gcp-cloud-credentials
          deletionProtection: false
          disks:
            - autoDelete: true
              boot: true
              image: <path_to_image> # (3)
              labels: null
              sizeGb: 128
              type: pd-ssd
          gcpMetadata: # (4)
            - key: <custom_metadata_key>
              value: <custom_metadata_value>
          kind: GCPMachineProviderSpec
          machineType: n1-standard-4 # (5)
          metadata:
            creationTimestamp: null
          networkInterfaces:
            - network: <infrastructure_id>-network
              subnetwork: <infrastructure_id>-worker-subnet
          projectID: <project_name> # (6)
          region: us-central1 # (7)
          serviceAccounts:
            - email: <infrastructure_id>-w@<project_name>.iam.gserviceaccount.com
              scopes:
                - https://www.googleapis.com/auth/cloud-platform
          tags:
            - <infrastructure_id>-worker
          userDataSecret:
            name: worker-user-data
          zone: us-central1-a

- (1): Specify the infrastructure ID that is based on the cluster ID that you set when you provisioned the cluster. You can obtain the infrastructure ID by running the following command:

$ oc get -o jsonpath='{.status.infrastructureName}{"\n"}' infrastructure cluster

- (2): Specify the role node label to add.

- (3): Specify the path to the image that is used in current compute machine sets. You need the project and image name for your path to image.

To access the project and image name, run the following command:

$ oc get configmap/coreos-bootimages \
    -n openshift-machine-config-operator \
    -o jsonpath='{.data.stream}' | jq \
    -r '.architectures.aarch64.images.gcp'

Example output

"gcp": {
 "release": "415.92.202309142014-0",
 "project": "rhcos-cloud",
 "name": "rhcos-415-92-202309142014-0-gcp-aarch64"
}

Use the project and name parameters from the output to create the path to image field in your machine set. The path to the image should follow the following format:

projects/<project>/global/images/<image_name>

- (4): Optional: Specify custom metadata in the form of a key:value pair. For example use cases, see the Google Cloud documentation for setting custom metadata.

- (5): Specify an ARM64 supported machine type. For more information, refer to Tested instance types for Google Cloud on 64-bit ARM infrastructures in "Additional resources".

- (6): Specify the name of the Google Cloud project that you use for your cluster.

- (7): Specify the region, for example, us-central1. Ensure that the zone you select offers 64-bit ARM machines.
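As an illustration of the image path format, the following sketch joins a project and image name into the value expected by the image field. The function name gcp_image_path is illustrative; the two arguments are the project and name values from the example output.

```shell
# Sketch: build the machine set image path from the project and image name.
# Arguments: project, image name. The function name is illustrative.
gcp_image_path() {
  printf 'projects/%s/global/images/%s\n' "$1" "$2"
}

# Values copied from the example output in this section.
gcp_image_path rhcos-cloud rhcos-415-92-202309142014-0-gcp-aarch64
```

With the example values, the result is projects/rhcos-cloud/global/images/rhcos-415-92-202309142014-0-gcp-aarch64.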

Verification

View the list of compute machine sets by entering the following command:

$ oc get machineset -n openshift-machine-api

You can then see your created ARM64 machine set.

Example output

NAME                                          DESIRED   CURRENT   READY   AVAILABLE   AGE
<infrastructure_id>-gcp-arm64-machine-set-0   2         2         2       2           10m

You can check that the nodes are ready and schedulable with the following command:

$ oc get nodes

Additional resources

4.5. Creating a cluster with multi-architecture compute machines on bare metal

To create a cluster with multi-architecture compute machines on bare metal, you must have an existing single-architecture bare metal cluster. For more information on bare metal installations, see Installing a user provisioned cluster on bare metal. You can then add 64-bit ARM compute machines to your OpenShift Container Platform cluster on bare metal.

Before you can add 64-bit ARM nodes to your bare metal cluster, you must upgrade your cluster to one that uses the multi-architecture payload. For more information on migrating to the multi-architecture payload, see Migrating to a cluster with multi-architecture compute machines.

The following procedures explain how to create an RHCOS compute machine using an ISO image or network PXE booting. This allows you to add ARM64 nodes to your bare metal cluster and deploy a cluster with multi-architecture compute machines.

4.5.1. Verifying cluster compatibility

Before you can start adding compute nodes of different architectures to your cluster, you must verify that your cluster is multi-architecture compatible.

Prerequisites

- You installed the OpenShift CLI (oc).

Procedure

Check that your cluster uses the multi-architecture payload by running the following command:

$ oc adm release info -o jsonpath="{ .metadata.metadata}"

Verification

If you see the following output, then your cluster is using the multi-architecture payload:

{
 "release.openshift.io/architecture": "multi",
 "url": "https://access.redhat.com/errata/<errata_version>"
}

You can then begin adding multi-arch compute nodes to your cluster.

If you see the following output, then your cluster is not using the multi-architecture payload:

{
 "url": "https://access.redhat.com/errata/<errata_version>"
}

Important: To migrate your cluster so the cluster supports multi-architecture compute machines, follow the procedure in Migrating to a cluster with multi-architecture compute machines.

4.5.2. Creating RHCOS machines using an ISO image

You can create more Red Hat Enterprise Linux CoreOS (RHCOS) compute machines for your bare metal cluster by using an ISO image to create the machines.

Prerequisites

- Obtain the URL of the Ignition config file for the compute machines for your cluster. You uploaded this file to your HTTP server during installation.

- You must have the OpenShift CLI (oc) installed.

Procedure

Extract the Ignition config file from the cluster by running the following command:

$ oc extract -n openshift-machine-api secret/worker-user-data-managed --keys=userData --to=- > worker.ign

Upload the worker.ign Ignition config file you exported from your cluster to your HTTP server. Note the URLs of these files.

You can validate that the Ignition files are available on the URLs. The following example gets the Ignition config files for the compute node:

$ curl -k http://<HTTP_server>/worker.ign

You can access the ISO image for booting your new machine by running the following command:

$ RHCOS_VHD_ORIGIN_URL=$(oc -n openshift-machine-config-operator get configmap/coreos-bootimages -o jsonpath='{.data.stream}' | jq -r '.architectures.<architecture>.artifacts.metal.formats.iso.disk.location')

Use the ISO file to install RHCOS on more compute machines. Use the same method that you used when you created machines before you installed the cluster:

- Burn the ISO image to a disk and boot it directly.

- Use ISO redirection with a LOM interface.

Boot the RHCOS ISO image without specifying any options, or interrupting the live boot sequence. Wait for the installer to boot into a shell prompt in the RHCOS live environment.

Note: You can interrupt the RHCOS installation boot process to add kernel arguments. However, for this ISO procedure you must use the coreos-installer command as outlined in the following steps, instead of adding kernel arguments.

Run the coreos-installer command and specify the options that meet your installation requirements. At a minimum, you must specify the URL that points to the Ignition config file for the node type, and the device that you are installing to:

$ sudo coreos-installer install --ignition-url=http://<HTTP_server>/<node_type>.ign <device> --ignition-hash=sha512-<digest>

- You must run the coreos-installer command by using sudo, because the core user does not have the required root privileges to perform the installation.

- The --ignition-hash option is required when the Ignition config file is obtained through an HTTP URL to validate the authenticity of the Ignition config file on the cluster node. <digest> is the Ignition config file SHA512 digest obtained in a preceding step.

Note: If you want to provide your Ignition config files through an HTTPS server that uses TLS, you can add the internal certificate authority (CA) to the system trust store before running coreos-installer.

The following example initializes a compute node installation to the /dev/sda device. The Ignition config file for the compute node is obtained from an HTTP web server with the IP address 192.168.1.2:

$ sudo coreos-installer install --ignition-url=http://192.168.1.2:80/installation_directory/worker.ign /dev/sda --ignition-hash=sha512-a5a2d43879223273c9b60af66b44202a1d1248fc01cf156c46d4a79f552b6bad47bc8cc78ddf0116e80c59d2ea9e32ba53bc807afbca581aa059311def2c3e3b

Monitor the progress of the RHCOS installation on the console of the machine.

Important: Ensure that the installation is successful on each node before commencing with the OpenShift Container Platform installation. Observing the installation process can also help to determine the cause of RHCOS installation issues that might arise.

- Continue to create more compute machines for your cluster.
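The --ignition-hash value in this procedure is a SHA512 digest of the Ignition config file. One way to produce a value in that format is sketched below, assuming the sha512sum utility from GNU coreutils is available on the machine that holds worker.ign; the function name ignition_hash is illustrative.

```shell
# Sketch: print a value suitable for --ignition-hash for the given file.
# Assumes sha512sum (GNU coreutils) is installed. The function name is illustrative.
ignition_hash() {
  printf 'sha512-%s\n' "$(sha512sum "$1" | awk '{ print $1 }')"
}

# Hypothetical usage, run in the directory that contains worker.ign:
#   ignition_hash worker.ign
```

The digest must be computed from the same file that the HTTP server serves, because any change to the file changes the digest.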

4.5.3. Creating RHCOS machines by PXE or iPXE booting

You can create more Red Hat Enterprise Linux CoreOS (RHCOS) compute machines for your bare metal cluster by using PXE or iPXE booting.

Prerequisites

- Obtain the URL of the Ignition config file for the compute machines for your cluster. You uploaded this file to your HTTP server during installation.

- Obtain the URLs of the RHCOS ISO image, compressed metal BIOS, kernel, and initramfs files that you uploaded to your HTTP server during cluster installation.

- You have access to the PXE booting infrastructure that you used to create the machines for your OpenShift Container Platform cluster during installation. The machines must boot from their local disks after RHCOS is installed on them.

- If you use UEFI, you have access to the grub.conf file that you modified during OpenShift Container Platform installation.

Procedure

Confirm that your PXE or iPXE installation for the RHCOS images is correct.

For PXE:

DEFAULT pxeboot
TIMEOUT 20
PROMPT 0
LABEL pxeboot
    KERNEL http://<HTTP_server>/rhcos-<version>-live-kernel-<architecture>
    APPEND initrd=http://<HTTP_server>/rhcos-<version>-live-initramfs.<architecture>.img coreos.inst.install_dev=/dev/sda coreos.inst.ignition_url=http://<HTTP_server>/worker.ign coreos.live.rootfs_url=http://<HTTP_server>/rhcos-<version>-live-rootfs.<architecture>.img

- KERNEL line: Specify the location of the live kernel file that you uploaded to your HTTP server.

- APPEND line: Specify locations of the RHCOS files that you uploaded to your HTTP server. The initrd parameter value is the location of the live initramfs file, the coreos.inst.ignition_url parameter value is the location of the worker Ignition config file, and the coreos.live.rootfs_url parameter value is the location of the live rootfs file. The coreos.inst.ignition_url and coreos.live.rootfs_url parameters only support HTTP and HTTPS.

Note: This configuration does not enable serial console access on machines with a graphical console. To configure a different console, add one or more console= arguments to the APPEND line. For example, add console=tty0 console=ttyS0 to set the first PC serial port as the primary console and the graphical console as a secondary console. For more information, see How does one set up a serial terminal and/or console in Red Hat Enterprise Linux?.

For iPXE (x86_64 + aarch64):

kernel http://<HTTP_server>/rhcos-<version>-live-kernel-<architecture> initrd=main coreos.live.rootfs_url=http://<HTTP_server>/rhcos-<version>-live-rootfs.<architecture>.img coreos.inst.install_dev=/dev/sda coreos.inst.ignition_url=http://<HTTP_server>/worker.ign
initrd --name main http://<HTTP_server>/rhcos-<version>-live-initramfs.<architecture>.img
boot

- kernel line: Specify the locations of the RHCOS files that you uploaded to your HTTP server. The kernel parameter value is the location of the kernel file, the initrd=main argument is needed for booting on UEFI systems, the coreos.live.rootfs_url parameter value is the location of the rootfs file, and the coreos.inst.ignition_url parameter value is the location of the worker Ignition config file.

- kernel line: If you use multiple NICs, specify a single interface in the ip option. For example, to use DHCP on a NIC that is named eno1, set ip=eno1:dhcp.

- initrd line: Specify the location of the initramfs file that you uploaded to your HTTP server.

Note: This configuration does not enable serial console access on machines with a graphical console. To configure a different console, add one or more console= arguments to the kernel line. For example, add console=tty0 console=ttyS0 to set the first PC serial port as the primary console and the graphical console as a secondary console. For more information, see How does one set up a serial terminal and/or console in Red Hat Enterprise Linux? and "Enabling the serial console for PXE and ISO installation" in the "Advanced RHCOS installation configuration" section.

Note: To network boot the CoreOS kernel on aarch64 architecture, you need to use a version of iPXE built with the IMAGE_GZIP option enabled. See IMAGE_GZIP option in iPXE.

For PXE (with UEFI and GRUB as second stage) on aarch64:

menuentry 'Install CoreOS' {
    linux rhcos-<version>-live-kernel-<architecture> coreos.live.rootfs_url=http://<HTTP_server>/rhcos-<version>-live-rootfs.<architecture>.img coreos.inst.install_dev=/dev/sda coreos.inst.ignition_url=http://<HTTP_server>/worker.ign
    initrd rhcos-<version>-live-initramfs.<architecture>.img
}

- linux line: Specify the locations of the RHCOS files that you uploaded to your HTTP/TFTP server. The kernel parameter value is the location of the kernel file on your TFTP server. The coreos.live.rootfs_url parameter value is the location of the rootfs file, and the coreos.inst.ignition_url parameter value is the location of the worker Ignition config file on your HTTP Server.

- linux line: If you use multiple NICs, specify a single interface in the ip option. For example, to use DHCP on a NIC that is named eno1, set ip=eno1:dhcp.

- initrd line: Specify the location of the initramfs file that you uploaded to your TFTP server.

- Use the PXE or iPXE infrastructure to create the required compute machines for your cluster.
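The PXE, iPXE, and GRUB examples share the same three coreos.* kernel arguments. The sketch below generates them from a few inputs so that the values stay consistent across boot configurations. The function name rhcos_install_args is illustrative, and the server address and version string in the example call are placeholders, not values from a real release.

```shell
# Sketch: print the coreos.* kernel arguments used in the boot configurations.
# Arguments: HTTP server, RHCOS version, architecture, install device (default /dev/sda).
# The function name is illustrative.
rhcos_install_args() {
  printf 'coreos.live.rootfs_url=http://%s/rhcos-%s-live-rootfs.%s.img coreos.inst.install_dev=%s coreos.inst.ignition_url=http://%s/worker.ign\n' \
    "$1" "$2" "$3" "${4:-/dev/sda}" "$1"
}

# Placeholder server address and version string, for illustration only.
rhcos_install_args 192.0.2.10 example-version aarch64
```

The printed arguments can be appended to the APPEND, kernel, or linux line of the matching configuration.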

4.5.4. Approving the certificate signing requests for your machines

When you add machines to a cluster, two pending certificate signing requests (CSRs) are generated for each machine that you added. You must confirm that these CSRs are approved or, if necessary, approve them yourself. The client requests must be approved first, followed by the server requests.

Prerequisites

- You added machines to your cluster.

Procedure

Confirm that the cluster recognizes the machines:

$ oc get nodes

Example output

NAME       STATUS   ROLES    AGE   VERSION
master-0   Ready    master   63m   v1.27.3
master-1   Ready    master   63m   v1.27.3
master-2   Ready    master   64m   v1.27.3

The output lists all of the machines that you created.

Note: The preceding output might not include the compute nodes, also known as worker nodes, until some CSRs are approved.

Review the pending CSRs and ensure that you see the client requests with the Pending or Approved status for each machine that you added to the cluster:

$ oc get csr

Example output

NAME        AGE   REQUESTOR                                                                   CONDITION
csr-8b2br   15m   system:serviceaccount:openshift-machine-config-operator:node-bootstrapper   Pending
csr-8vnps   15m   system:serviceaccount:openshift-machine-config-operator:node-bootstrapper   Pending
...

In this example, two machines are joining the cluster. You might see more approved CSRs in the list.

If the CSRs were not approved automatically, wait until all of the pending CSRs for the machines you added are in the Pending status, and then approve the CSRs for your cluster machines:

Note: Because the CSRs rotate automatically, approve your CSRs within an hour of adding the machines to the cluster. If you do not approve them within an hour, the certificates will rotate, and more than two certificates will be present for each node. You must approve all of these certificates. After the client CSR is approved, the kubelet creates a secondary CSR for the serving certificate, which requires manual approval. Then, subsequent serving certificate renewal requests are automatically approved by the machine-approver if the kubelet requests a new certificate with identical parameters.

Note: For clusters running on platforms that are not machine API enabled, such as bare metal and other user-provisioned infrastructure, you must implement a method of automatically approving the kubelet serving certificate requests (CSRs). If a request is not approved, then the oc exec, oc rsh, and oc logs commands cannot succeed, because a serving certificate is required when the API server connects to the kubelet. Any operation that contacts the kubelet endpoint requires this certificate approval to be in place. The method must watch for new CSRs, confirm that the CSR was submitted by the node-bootstrapper service account in the system:node or system:admin groups, and confirm the identity of the node.

To approve them individually, run the following command for each valid CSR:

$ oc adm certificate approve <csr_name>

<csr_name> is the name of a CSR from the list of current CSRs.

To approve all pending CSRs, run the following command:

$ oc get csr -o go-template='{{range .items}}{{if not .status}}{{.metadata.name}}{{"\n"}}{{end}}{{end}}' | xargs --no-run-if-empty oc adm certificate approve

Note: Some Operators might not become available until some CSRs are approved.

Now that your client requests are approved, you must review the server requests for each machine that you added to the cluster:

$ oc get csr

Example output

NAME        AGE     REQUESTOR                                                CONDITION
csr-bfd72   5m26s   system:node:ip-10-0-50-126.us-east-2.compute.internal   Pending
csr-c57lv   5m26s   system:node:ip-10-0-95-157.us-east-2.compute.internal   Pending
...

If the remaining CSRs are not approved, and are in the Pending status, approve the CSRs for your cluster machines:

To approve them individually, run the following command for each valid CSR:

$ oc adm certificate approve <csr_name>

<csr_name> is the name of a CSR from the list of current CSRs.

To approve all pending CSRs, run the following command:

$ oc get csr -o go-template='{{range .items}}{{if not .status}}{{.metadata.name}}{{"\n"}}{{end}}{{end}}' | xargs --no-run-if-empty oc adm certificate approve
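For platforms that are not machine API enabled, the earlier note asks for a method that watches for new CSRs and checks who submitted them. The following is a minimal sketch of only the filtering step, not a complete approver. It reads oc get csr table output on standard input and prints the names of Pending requests whose requestor is either the node-bootstrapper service account or a system:node:<name> identity found in an allowlist. The function name filter_pending_csrs and the allowlist argument are hypothetical, the column lookup assumes the header row shown in the example output, and the sketch does not check group membership or otherwise confirm node identity.

```shell
#!/bin/sh
# filter_pending_csrs "NODE1 NODE2 ...": hypothetical helper.
# Reads `oc get csr` table output (with its header row) on stdin and prints
# the NAME of each Pending CSR whose REQUESTOR is the node-bootstrapper
# service account or system:node:<name> for a name in the allowlist.
filter_pending_csrs() {
  awk -v nodes="$1" '
    BEGIN {
      n = split(nodes, list, " ")
      for (i = 1; i <= n; i++) expected[list[i]] = 1
      bootstrapper = "system:serviceaccount:openshift-machine-config-operator:node-bootstrapper"
    }
    NR == 1 {
      # Locate the REQUESTOR column from the header row.
      for (i = 1; i <= NF; i++) if ($i == "REQUESTOR") col = i
      next
    }
    col && $NF == "Pending" {
      req = $col
      if (req == bootstrapper) { print $1; next }
      # "system:node:" is 12 characters long; the node name follows it.
      if (index(req, "system:node:") == 1 && (substr(req, 13) in expected)) print $1
    }
  '
}

# Possible usage, assuming a logged-in oc session and two expected nodes:
# oc get csr | filter_pending_csrs "worker-0 worker-1" \
#   | xargs --no-run-if-empty oc adm certificate approve
```

A request from a node name that is not in the allowlist is left Pending for manual review.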

After all client and server CSRs have been approved, the machines have the Ready status. Verify this by running the following command:

$ oc get nodes

Example output

NAME       STATUS   ROLES    AGE   VERSION
master-0   Ready    master   73m   v1.27.3
master-1   Ready    master   73m   v1.27.3
master-2   Ready    master   74m   v1.27.3
worker-0   Ready    worker   11m   v1.27.3
worker-1   Ready    worker   11m   v1.27.3

Note: It can take a few minutes after approval of the server CSRs for the machines to transition to the Ready status.
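Because the transition to Ready can take a few minutes, it can help to list only the nodes that are not there yet. The following is a minimal sketch that assumes the column layout of the example output (NAME first, STATUS second). The function name not_ready_nodes is hypothetical.

```shell
#!/bin/sh
# not_ready_nodes: hypothetical helper.
# Reads `oc get nodes` table output on stdin and prints the name of every
# node whose STATUS column is not exactly "Ready". A combined status such
# as "Ready,SchedulingDisabled" is therefore printed as well.
not_ready_nodes() {
  awk 'NR > 1 && $2 != "Ready" { print $1 }'
}

# Possible usage, assuming a logged-in oc session:
# oc get nodes | not_ready_nodes
```

An empty result means that every listed node reports Ready. It does not show that every expected node is listed, so compare the node names against the machines that you added.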

Additional information

- For more information on CSRs, see Certificate Signing Requests.

4.6. Creating a cluster with multi-architecture compute machines on IBM Z and IBM LinuxONE with z/VM

To create a cluster with multi-architecture compute machines on IBM Z® and IBM® LinuxONE (s390x) with z/VM, you must have an existing single-architecture x86_64 cluster. You can then add s390x compute machines to your OpenShift Container Platform cluster.

Before you can add s390x nodes to your cluster, you must upgrade your cluster to one that uses the multi-architecture payload. For more information on migrating to the multi-architecture payload, see Migrating to a cluster with multi-architecture compute machines.

The following procedures explain how to create a RHCOS compute machine using a z/VM instance. This will allow you to add s390x nodes to your cluster and deploy a cluster with multi-architecture compute machines.

4.6.1. Verifying cluster compatibility

Before you can start adding compute nodes of different architectures to your cluster, you must verify that your cluster is multi-architecture compatible.

Prerequisites

- You installed the OpenShift CLI (oc).

Procedure

Check whether your cluster uses the multi-architecture payload by running the following command:

$ oc adm release info -o jsonpath="{ .metadata.metadata}"

Verification

If you see the following output, then your cluster is using the multi-architecture payload:

{
  "release.openshift.io/architecture": "multi",
  "url": "https://access.redhat.com/errata/<errata_version>"
}

You can then begin adding multi-arch compute nodes to your cluster.

If you see the following output, then your cluster is not using the multi-architecture payload:

{
  "url": "https://access.redhat.com/errata/<errata_version>"
}

Important: To migrate your cluster so the cluster supports multi-architecture compute machines, follow the procedure in Migrating to a cluster with multi-architecture compute machines.
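The comparison of the two outputs can be scripted. The following is a minimal sketch that assumes the command prints the metadata as JSON text, as in the example outputs in this section. The function name is_multi_payload is hypothetical, and the check is a plain text match rather than a JSON parse.

```shell
#!/bin/sh
# is_multi_payload: hypothetical helper.
# Reads release metadata JSON on stdin and succeeds (exit status 0) only if
# the release.openshift.io/architecture key is set to "multi".
is_multi_payload() {
  grep -Eq '"release\.openshift\.io/architecture"[[:space:]]*:[[:space:]]*"multi"'
}

# Possible usage, assuming a logged-in oc session:
# if oc adm release info -o jsonpath="{ .metadata.metadata}" | is_multi_payload; then
#   echo "multi-architecture payload in use"
# else
#   echo "single-architecture payload, migrate before adding s390x nodes"
# fi
```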

4.6.2. Creating RHCOS machines on IBM Z with z/VM

You can create more Red Hat Enterprise Linux CoreOS (RHCOS) compute machines running on IBM Z® with z/VM and attach them to your existing cluster.

Prerequisites

- You have a domain name server (DNS) that can perform hostname and reverse lookup for the nodes.

- You have an HTTP or HTTPS server running on your provisioning machine that is accessible to the machines you create.

Procedure

Disable UDP aggregation.

Currently, UDP aggregation is not supported on IBM Z® and is not automatically deactivated on multi-architecture compute clusters with an x86_64 control plane and additional s390x compute machines. To ensure that the additional compute nodes are added to the cluster correctly, you must manually disable UDP aggregation.

Create a YAML file udp-aggregation-config.yaml with the following content:

apiVersion: v1
kind: ConfigMap
data:
  disable-udp-aggregation: "true"
metadata:
  name: udp-aggregation-config
  namespace: openshift-network-operator

Create the ConfigMap resource by running the following command:

$ oc create -f udp-aggregation-config.yaml

Extract the Ignition config file from the cluster by running the following command:

$ oc extract -n openshift-machine-api secret/worker-user-data-managed --keys=userData --to=- > worker.ign
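Because the extract command redirects its output, a failed extraction can leave an empty or incomplete worker.ign file. The following is a minimal sketch of a sanity check to run before uploading the file. It assumes only that an Ignition config is JSON with a top-level ignition object that carries a version string. The function name looks_like_ignition is hypothetical, and the check is a rough text match, not a schema validation.

```shell
#!/bin/sh
# looks_like_ignition FILE: hypothetical helper.
# Succeeds if FILE is non-empty and contains an "ignition" object and a
# "version" string of the form N.N.N.
looks_like_ignition() {
  [ -s "$1" ] &&
    grep -Eq '"ignition"[[:space:]]*:[[:space:]]*\{' "$1" &&
    grep -Eq '"version"[[:space:]]*:[[:space:]]*"[0-9]+\.[0-9]+\.[0-9]+' "$1"
}

# Possible usage:
# looks_like_ignition worker.ign || echo "worker.ign looks wrong, extract it again" >&2
```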

Upload the worker.ign Ignition config file you exported from your cluster to your HTTP server. Note the URL of this file.

You can validate that the Ignition file is available on the URL. The following example gets the Ignition config file for the compute node:

$ curl -k http://<HTTP_server>/worker.ign

Download the RHEL live kernel, initramfs, and rootfs files by running the following commands:

$ curl -LO $(oc -n openshift-machine-config-operator get configmap/coreos-bootimages -o jsonpath='{.data.stream}' \
    | jq -r '.architectures.s390x.artifacts.metal.formats.pxe.kernel.location')

$ curl -LO $(oc -n openshift-machine-config-operator get configmap/coreos-bootimages -o jsonpath='{.data.stream}' \
    | jq -r '.architectures.s390x.artifacts.metal.formats.pxe.initramfs.location')

$ curl -LO $(oc -n openshift-machine-config-operator get configmap/coreos-bootimages -o jsonpath='{.data.stream}' \
    | jq -r '.architectures.s390x.artifacts.metal.formats.pxe.rootfs.location')

Move the downloaded RHEL live kernel, initramfs, and rootfs files to an HTTP or HTTPS server that is accessible from the z/VM guest you want to add.

Create a parameter file for the z/VM guest. The following parameters are specific for the virtual machine:

- Optional: To specify a static IP address, add an ip= parameter with the following entries, with each separated by a colon:

  - The IP address for the machine.
  - An empty string.
  - The gateway.
  - The netmask.
  - The machine host and domain name in the form hostname.domainname. If you omit this value, RHCOS obtains the hostname through a reverse DNS lookup.
  - The network interface name. If you omit this value, RHCOS applies the IP configuration to all available interfaces.
  - The value none.

- For coreos.inst.ignition_url=, specify the URL to the worker.ign file. Only HTTP and HTTPS protocols are supported.

- For coreos.live.rootfs_url=, specify the matching rootfs artifact for the kernel and initramfs you are booting. Only HTTP and HTTPS protocols are supported.

- For installations on DASD-type disks, complete the following tasks:

  - For coreos.inst.install_dev=, specify /dev/dasda.
  - Use rd.dasd= to specify the DASD where RHCOS is to be installed.
  - Leave all other parameters unchanged.

  The following is an example parameter file, additional-worker-dasd.parm:

  rd.neednet=1 \
  console=ttysclp0 \
  coreos.inst.install_dev=/dev/dasda \
  coreos.live.rootfs_url=http://cl1.provide.example.com:8080/assets/rhcos-live-rootfs.s390x.img \
  coreos.inst.ignition_url=http://cl1.provide.example.com:8080/ignition/worker.ign \
  ip=172.18.78.2::172.18.78.1:255.255.255.0:::none nameserver=172.18.78.1 \
  rd.znet=qeth,0.0.bdf0,0.0.bdf1,0.0.bdf2,layer2=1,portno=0 \
  zfcp.allow_lun_scan=0 \
  rd.dasd=0.0.3490

  Write all options in the parameter file as a single line and make sure that you have no newline characters.

- For installations on FCP-type disks, complete the following tasks:

  - Use rd.zfcp=<adapter>,<wwpn>,<lun> to specify the FCP disk where RHCOS is to be installed. For multipathing, repeat this step for each additional path.

    Note: When you install with multiple paths, you must enable multipathing directly after the installation, because enabling it at a later point in time can cause problems.

  - Set the install device as: coreos.inst.install_dev=/dev/sda.

    Note: If additional LUNs are configured with NPIV, FCP requires zfcp.allow_lun_scan=0. If you must enable zfcp.allow_lun_scan=1 because you use a CSI driver, for example, you must configure your NPIV so that each node cannot access the boot partition of another node.

  - Leave all other parameters unchanged.

    Important: Additional postinstallation steps are required to fully enable multipathing. For more information, see "Enabling multipathing with kernel arguments on RHCOS" in Postinstallation machine configuration tasks.

  The following is an example parameter file, additional-worker-fcp.parm, for a worker node with multipathing:

  rd.neednet=1 \
  console=ttysclp0 \
  coreos.inst.install_dev=/dev/sda \
  coreos.live.rootfs_url=http://cl1.provide.example.com:8080/assets/rhcos-live-rootfs.s390x.img \
  coreos.inst.ignition_url=http://cl1.provide.example.com:8080/ignition/worker.ign \
  ip=172.18.78.2::172.18.78.1:255.255.255.0:::none nameserver=172.18.78.1 \
  rd.znet=qeth,0.0.bdf0,0.0.bdf1,0.0.bdf2,layer2=1,portno=0 \
  zfcp.allow_lun_scan=0 \
  rd.zfcp=0.0.1987,0x50050763070bc5e3,0x4008400B00000000 \
  rd.zfcp=0.0.19C7,0x50050763070bc5e3,0x4008400B00000000 \
  rd.zfcp=0.0.1987,0x50050763071bc5e3,0x4008400B00000000 \
  rd.zfcp=0.0.19C7,0x50050763071bc5e3,0x4008400B00000000

  Write all options in the parameter file as a single line and make sure that you have no newline characters.
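The multipath example repeats rd.zfcp= once for every pairing of two adapters with two WWPNs. The following is a minimal sketch that generates those entries. It assumes that every adapter reaches every target port, as in the example, which depends on your zoning. The function name zfcp_paths is hypothetical.

```shell
#!/bin/sh
# zfcp_paths "ADAPTERS" "WWPNS" LUN: hypothetical helper.
# Prints one rd.zfcp=<adapter>,<wwpn>,<lun> entry for every adapter/WWPN
# combination, separated by spaces on a single line. ADAPTERS and WWPNS are
# space-separated lists. The entries are grouped by WWPN, matching the
# order used in the example parameter file.
zfcp_paths() {
  out=""
  for wwpn in $2; do
    for adapter in $1; do
      out="${out:+$out }rd.zfcp=${adapter},${wwpn},$3"
    done
  done
  printf '%s\n' "$out"
}

# Possible usage with the values from the example parameter file:
# zfcp_paths "0.0.1987 0.0.19C7" \
#   "0x50050763070bc5e3 0x50050763071bc5e3" 0x4008400B00000000
```

If only some adapter and port pairings are valid in your environment, list those entries by hand instead.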

Transfer the initramfs, kernel, parameter files, and RHCOS images to z/VM, for example, by using FTP. For details about how to transfer the files with FTP and boot from the virtual reader, see Installing under Z/VM.

Punch the files to the virtual reader of the z/VM guest virtual machine.

See PUNCH in IBM® Documentation.

Tip: You can use the CP PUNCH command or, if you use Linux, the vmur command to transfer files between two z/VM guest virtual machines.

Log in to CMS on the bootstrap machine.

IPL the bootstrap machine from the reader by running the following command:

$ ipl c

See IPL in IBM® Documentation.
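Two rules for the parameter file in this procedure are easy to get wrong: the ip= value has seven colon-separated fields, some of them empty, and the final file must be a single line. The following is a minimal sketch of helpers for both. It assumes that you keep a readable working copy with one option per line and trailing backslashes, as in the example parameter files. The function names ip_param and flatten_parm are hypothetical.

```shell
#!/bin/sh
# ip_param IP GATEWAY NETMASK [HOSTNAME] [INTERFACE]: hypothetical helper.
# Prints an ip= value with its seven fields in the order this procedure
# lists them. The second field is always empty and the last is always
# "none". HOSTNAME and INTERFACE are left empty when omitted.
ip_param() {
  printf 'ip=%s::%s:%s:%s:%s:none\n' "$1" "$2" "$3" "${4:-}" "${5:-}"
}

# flatten_parm FILE: hypothetical helper.
# Prints the options in FILE as one line: strips each trailing backslash,
# turns newlines into spaces, squeezes repeated spaces, and drops the
# trailing space left by the last newline.
flatten_parm() {
  sed 's/[[:space:]]*\\$//' "$1" | tr -s '\n' ' ' | sed 's/ $//'
}

# Possible usage:
# ip_param 172.18.78.2 172.18.78.1 255.255.255.0
# flatten_parm additional-worker-dasd.parm.multiline > additional-worker-dasd.parm
```

With the addresses from the example parameter files, ip_param prints ip=172.18.78.2::172.18.78.1:255.255.255.0:::none, which matches the ip= entry shown there.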

4.6.3. Approving the certificate signing requests for your machines

When you add machines to a cluster, two pending certificate signing requests (CSRs) are generated for each machine that you added. You must confirm that these CSRs are approved or, if necessary, approve them yourself. The client requests must be approved first, followed by the server requests.

Prerequisites

- You added machines to your cluster.

Procedure

Confirm that the cluster recognizes the machines:

$ oc get nodes

Example output

NAME       STATUS   ROLES    AGE   VERSION
master-0   Ready    master   63m   v1.27.3
master-1   Ready    master   63m   v1.27.3
master-2   Ready    master   64m   v1.27.3

The output lists all of the machines that you created.

Note: The preceding output might not include the compute nodes, also known as worker nodes, until some CSRs are approved.

Review the pending CSRs and ensure that you see the client requests with the Pending or Approved status for each machine that you added to the cluster:

$ oc get csr

Example output

NAME        AGE   REQUESTOR                                                                   CONDITION
csr-8b2br   15m   system:serviceaccount:openshift-machine-config-operator:node-bootstrapper   Pending
csr-8vnps   15m   system:serviceaccount:openshift-machine-config-operator:node-bootstrapper   Pending
...

In this example, two machines are joining the cluster. You might see more approved CSRs in the list.