Scalability and performance

Scaling your OpenShift Container Platform cluster and tuning performance in production environments

Abstract

Chapter 1. OpenShift Container Platform scalability and performance overview

OpenShift Container Platform provides best practices and tools to help you optimize the performance and scale of your clusters. The following documentation provides information on recommended performance and scalability practices, reference design specifications, optimization, and low latency tuning.

To contact Red Hat support, see Getting support.

Some performance and scalability Operators have release cycles that are independent from OpenShift Container Platform release cycles. For more information, see OpenShift Operators.

1.1. Recommended performance and scalability practices

Recommended control plane practices

1.2. Telco reference design specifications

1.3. Planning, optimization, and measurement

Planning your environment according to object maximums

Recommended practices for IBM Z and IBM LinuxONE

Using the Node Tuning Operator

Using CPU Manager and Topology Manager

Scheduling NUMA-aware workloads

Optimizing storage, routing, networking and CPU usage

Managing bare metal hosts and events

What are huge pages and how are they used by apps

Low latency tuning for improving cluster stability and partitioning workload

Improving cluster stability in high latency environments using worker latency profiles

Chapter 2. Recommended performance and scalability practices

2.1. Recommended control plane practices

This topic provides recommended performance and scalability practices for control planes in OpenShift Container Platform.

2.1.1. Recommended practices for scaling the cluster

The guidance in this section is only relevant for installations with cloud provider integration.

Apply the following best practices to scale the number of worker machines in your OpenShift Container Platform cluster. You scale the worker machines by increasing or decreasing the number of replicas that are defined in the worker machine set.

When scaling up the cluster to higher node counts:

- Spread nodes across all of the available zones for higher availability.

- Scale up by no more than 25 to 50 machines at once.

- Consider creating new compute machine sets in each available zone with alternative instance types of similar size to help mitigate any periodic provider capacity constraints. For example, on AWS, use m5.large and m5d.large.

Cloud providers might implement a quota for API services. Therefore, gradually scale the cluster.

The controller might not be able to create the machines if the replicas in the compute machine sets are set to higher numbers all at one time. The number of API requests that the underlying cloud platform can handle affects the process. The controller issues more queries while it creates, checks, and updates the machines and their status. Because the cloud platform enforces API request limits, excessive queries might lead to machine creation failures.

Enable machine health checks when scaling to large node counts. In case of failures, the health checks monitor the condition and automatically repair unhealthy machines.

Scaling large and dense clusters to lower node counts might take a long time because the process involves draining or evicting the objects running on the nodes being terminated in parallel. Also, the client might start to throttle the requests if there are too many objects to evict. The default client queries per second (QPS) and burst rates are currently set to 50 and 100 respectively. These values cannot be modified in OpenShift Container Platform.
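The guidance to scale up gradually can be sketched as a small planning helper. The following Python example is a minimal sketch and is not part of OpenShift Container Platform. The function name `plan_scale_steps` and the default `max_step` of 50 are assumptions, chosen to match the upper end of the 25 to 50 machine range mentioned in this section. The helper only computes intermediate replica counts; applying each count to a compute machine set and waiting for the new machines to become ready is left to you.

```python
def plan_scale_steps(current: int, target: int, max_step: int = 50) -> list[int]:
    """Return the intermediate replica counts to step from current up to target.

    Each step adds at most max_step machines (hypothetical default: 50).
    """
    if max_step <= 0:
        raise ValueError("max_step must be positive")
    steps = []
    replicas = current
    while replicas < target:
        # Never overshoot the target on the final step.
        replicas = min(replicas + max_step, target)
        steps.append(replicas)
    return steps


if __name__ == "__main__":
    # Hypothetical example: grow a compute machine set from 20 to 150 replicas.
    for replicas in plan_scale_steps(20, 150):
        print(f"set replicas to {replicas}, then wait for machines to be ready")
```

A smaller `max_step`, such as 25, produces more steps and places less pressure on the cloud provider API.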

2.1.2. Control plane node sizing

The control plane node resource requirements depend on the number and type of nodes and objects in the cluster. The following control plane node size recommendations are based on the results of control plane density focused testing, or Cluster-density. This test creates the following objects across a given number of namespaces:

- 1 image stream

- 1 build

- 5 deployments, with 2 pod replicas in a sleep state, mounting 4 secrets, 4 config maps, and 1 downward API volume each

- 5 services, each one pointing to the TCP/8080 and TCP/8443 ports of one of the previous deployments

- 1 route pointing to the first of the previous services

- 10 secrets containing 2048 random string characters

- 10 config maps containing 2048 random string characters

| Number of worker nodes | Cluster-density (namespaces) | CPU cores | Memory (GB) |

|---|---|---|---|

| 24 | 500 | 4 | 16 |

| 120 | 1000 | 8 | 32 |

| 252 | 4000 | 16, but 24 if using the OVN-Kubernetes network plug-in | 64, but 128 if using the OVN-Kubernetes network plug-in |

| 501, but untested with the OVN-Kubernetes network plug-in | 4000 | 16 | 96 |

The data in the preceding table is based on an OpenShift Container Platform cluster running on AWS, using r5.4xlarge instances as control plane nodes and m5.2xlarge instances as worker nodes.

On a large and dense cluster with three control plane nodes, the CPU and memory usage spikes when one of the nodes is stopped, rebooted, or fails. The failures can be due to unexpected issues with power, network, or underlying infrastructure, or to intentional cases where the cluster is restarted after shutting it down to save costs. The remaining two control plane nodes must handle the load to remain highly available, which leads to an increase in resource usage. This behavior is also expected during upgrades because the control plane nodes are cordoned, drained, and rebooted serially to apply the operating system updates and the control plane Operator updates. To avoid cascading failures, keep the overall CPU and memory resource usage on the control plane nodes to at most 60% of all available capacity to handle the resource usage spikes. Increase the CPU and memory on the control plane nodes accordingly to avoid potential downtime due to lack of resources.
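The reasoning behind the 60% ceiling can be illustrated with simple arithmetic. The following Python sketch assumes that the load of a lost control plane node is redistributed evenly across the remaining nodes, which is a simplification. The function name `utilization_after_node_loss` is hypothetical.

```python
def utilization_after_node_loss(utilization: float, nodes: int = 3) -> float:
    """Estimate per-node utilization after one of `nodes` control plane nodes is lost.

    Assumes the total load stays constant and is spread evenly over the
    remaining nodes (a simplifying assumption, not measured behavior).
    """
    if nodes < 2:
        raise ValueError("at least two nodes are needed to survive a node loss")
    return utilization * nodes / (nodes - 1)


if __name__ == "__main__":
    for current in (0.50, 0.60, 0.70):
        after = utilization_after_node_loss(current)
        status = "fits" if after <= 1.0 else "exceeds capacity"
        print(f"{current:.0%} per node now, about {after:.0%} after losing one node ({status})")
```

Under this assumption, three nodes at 60% leave the two survivors at roughly 90%, while three nodes at 70% would need more capacity than the survivors have.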

The node sizing varies depending on the number of nodes and object counts in the cluster. It also depends on whether the objects are actively being created on the cluster. During object creation, the control plane is more active in terms of resource usage compared to when the objects are in the running phase.

Operator Lifecycle Manager (OLM) runs on the control plane nodes, and its memory footprint depends on the number of namespaces and user-installed Operators that OLM needs to manage on the cluster. Size the control plane nodes accordingly to avoid out-of-memory (OOM) kills. The following data points are based on the results from cluster maximums testing.

| Number of namespaces | OLM memory at idle state (GB) | OLM memory with 5 user operators installed (GB) |

|---|---|---|

| 500 | 0.823 | 1.7 |

| 1000 | 1.2 | 2.5 |

| 1500 | 1.7 | 3.2 |

| 2000 | 2 | 4.4 |

| 3000 | 2.7 | 5.6 |

| 4000 | 3.8 | 7.6 |

| 5000 | 4.2 | 9.02 |

| 6000 | 5.8 | 11.3 |

| 7000 | 6.6 | 12.9 |

| 8000 | 6.9 | 14.8 |

| 9000 | 8 | 17.7 |

| 10,000 | 9.9 | 21.6 |
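For namespace counts that fall between the tested data points, you can approximate the OLM memory footprint from the preceding table. The following Python sketch copies the table values and interpolates linearly between neighboring rows. Linear interpolation is an assumption made for illustration, not a documented sizing rule, and the function name `estimate_olm_memory_gb` is hypothetical.

```python
from bisect import bisect_left

# (namespaces, idle GB, GB with 5 user Operators), copied from the table above.
OLM_MEMORY_TABLE = [
    (500, 0.823, 1.7), (1000, 1.2, 2.5), (1500, 1.7, 3.2), (2000, 2.0, 4.4),
    (3000, 2.7, 5.6), (4000, 3.8, 7.6), (5000, 4.2, 9.02), (6000, 5.8, 11.3),
    (7000, 6.6, 12.9), (8000, 6.9, 14.8), (9000, 8.0, 17.7), (10000, 9.9, 21.6),
]


def estimate_olm_memory_gb(namespaces: int, with_operators: bool = False) -> float:
    """Interpolate OLM memory (GB) for a namespace count inside the tested range."""
    column = 2 if with_operators else 1
    counts = [row[0] for row in OLM_MEMORY_TABLE]
    if not counts[0] <= namespaces <= counts[-1]:
        raise ValueError("namespace count is outside the tested range")
    index = bisect_left(counts, namespaces)
    if counts[index] == namespaces:
        return OLM_MEMORY_TABLE[index][column]
    low, high = OLM_MEMORY_TABLE[index - 1], OLM_MEMORY_TABLE[index]
    fraction = (namespaces - low[0]) / (high[0] - low[0])
    return low[column] + fraction * (high[column] - low[column])


if __name__ == "__main__":
    print(estimate_olm_memory_gb(2500, with_operators=True))
```

Treat the result as a rough lower bound for planning and leave additional headroom on the control plane nodes.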

You can modify the control plane node size in a running OpenShift Container Platform 4.14 cluster for the following configurations only:

- Clusters installed with a user-provisioned installation method.

- AWS clusters installed with an installer-provisioned infrastructure installation method.

- Clusters that use a control plane machine set to manage control plane machines.

For all other configurations, you must estimate your total node count and use the suggested control plane node size during installation.

The recommendations are based on the data points captured on OpenShift Container Platform clusters with OpenShift SDN as the network plugin.

In OpenShift Container Platform 4.14, half of a CPU core (500 millicore) is now reserved by the system by default compared to OpenShift Container Platform 3.11 and previous versions. The sizes are determined taking that into consideration.

2.1.2.1. Selecting a larger Amazon Web Services instance type for control plane machines

If the control plane machines in an Amazon Web Services (AWS) cluster require more resources, you can select a larger AWS instance type for the control plane machines to use.

The procedure for clusters that use a control plane machine set is different from the procedure for clusters that do not use a control plane machine set.

If you are uncertain about the state of the ControlPlaneMachineSet CR in your cluster, you can verify the CR status.

2.1.2.1.1. Changing the Amazon Web Services instance type by using a control plane machine set

You can change the Amazon Web Services (AWS) instance type that your control plane machines use by updating the specification in the control plane machine set custom resource (CR).

Prerequisites

- Your AWS cluster uses a control plane machine set.

Procedure

Edit your control plane machine set CR by running the following command:

$ oc --namespace openshift-machine-api edit controlplanemachineset.machine.openshift.io cluster

Edit the following line under the providerSpec field:

    providerSpec:
      value:
        ...
        instanceType: <compatible_aws_instance_type>

For <compatible_aws_instance_type>, specify a larger AWS instance type with the same base as the previous selection. For example, you can change m6i.xlarge to m6i.2xlarge or m6i.4xlarge.

Save your changes.

- For clusters that use the default RollingUpdate update strategy, the Operator automatically propagates the changes to your control plane configuration.

- For clusters that are configured to use the OnDelete update strategy, you must replace your control plane machines manually.

2.1.2.1.2. Changing the Amazon Web Services instance type by using the AWS console

You can change the Amazon Web Services (AWS) instance type that your control plane machines use by updating the instance type in the AWS console.

Prerequisites

- You have access to the AWS console with the permissions required to modify the EC2 Instance for your cluster.

- You have access to the OpenShift Container Platform cluster as a user with the cluster-admin role.

Procedure

- Open the AWS console and fetch the instances for the control plane machines.

- Choose one control plane machine instance.

- For the selected control plane machine, back up the etcd data by creating an etcd snapshot. For more information, see "Backing up etcd".

- In the AWS console, stop the control plane machine instance.

- Select the stopped instance, and click Actions → Instance Settings → Change instance type.

- Change the instance to a larger type, ensuring that the type is the same base as the previous selection, and apply changes. For example, you can change m6i.xlarge to m6i.2xlarge or m6i.4xlarge.

- Start the instance.

- If your OpenShift Container Platform cluster has a corresponding Machine object for the instance, update the instance type of the object to match the instance type set in the AWS console.

- Repeat this process for each control plane machine.

2.2. Recommended infrastructure practices

This topic provides recommended performance and scalability practices for infrastructure in OpenShift Container Platform.

2.2.1. Infrastructure node sizing

Infrastructure nodes are nodes that are labeled to run pieces of the OpenShift Container Platform environment. The infrastructure node resource requirements depend on the cluster age, nodes, and objects in the cluster, as these factors can lead to an increase in the number of metrics or time series in Prometheus. The following infrastructure node size recommendations are based on the results observed in cluster-density testing detailed in the Control plane node sizing section, where the monitoring stack and the default ingress-controller were moved to these nodes.

| Number of worker nodes | Cluster density, or number of namespaces | CPU cores | Memory (GB) |

|---|---|---|---|

| 27 | 500 | 4 | 24 |

| 120 | 1000 | 8 | 48 |

| 252 | 4000 | 16 | 128 |

| 501 | 4000 | 32 | 128 |

In general, three infrastructure nodes are recommended per cluster.

These sizing recommendations should be used as a guideline. Prometheus is a highly memory intensive application; the resource usage depends on various factors including the number of nodes, objects, the Prometheus metrics scraping interval, metrics or time series, and the age of the cluster. In addition, the router resource usage can also be affected by the number of routes and the amount/type of inbound requests.

These recommendations apply only to infrastructure nodes hosting Monitoring, Ingress and Registry infrastructure components installed during cluster creation.

In OpenShift Container Platform 4.14, half of a CPU core (500 millicore) is now reserved by the system by default compared to OpenShift Container Platform 3.11 and previous versions. This influences the stated sizing recommendations.

2.2.2. Scaling the Cluster Monitoring Operator

OpenShift Container Platform exposes metrics that the Cluster Monitoring Operator (CMO) collects and stores in the Prometheus-based monitoring stack. As an administrator, you can view dashboards for system resources, containers, and components metrics in the OpenShift Container Platform web console by navigating to Observe → Dashboards.

2.2.3. Prometheus database storage requirements

Red Hat performed various tests for different scale sizes.

- The following Prometheus storage requirements are not prescriptive and should be used as a reference. Higher resource consumption might be observed in your cluster depending on workload activity and resource density, including the number of pods, containers, routes, or other resources exposing metrics collected by Prometheus.

- You can configure the size-based data retention policy to suit your storage requirements.

| Number of nodes | Number of pods (2 containers per pod) | Prometheus storage growth per day | Prometheus storage growth per 15 days | Network (per tsdb chunk) |

|---|---|---|---|---|

| 50 | 1800 | 6.3 GB | 94 GB | 16 MB |

| 100 | 3600 | 13 GB | 195 GB | 26 MB |

| 150 | 5400 | 19 GB | 283 GB | 36 MB |

| 200 | 7200 | 25 GB | 375 GB | 46 MB |

Approximately 20 percent of the expected size was added as overhead to ensure that the storage requirements do not exceed the calculated value.

The above calculation is for the default OpenShift Container Platform Cluster Monitoring Operator.

CPU utilization has a minor impact. The ratio is approximately 1 core out of 40 per 50 nodes and 1800 pods.
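To relate the preceding table to a retention period other than 15 days, you can multiply the daily growth by the number of days that you plan to retain data. The following Python sketch copies the daily growth values from the table and rounds the node count up to the next tested size. The function name `estimate_prometheus_storage_gb` and the round-up rule are assumptions made for illustration. The table values assume the pod density used in the tests, so results can differ slightly from the 15-day column and from what you observe in your cluster.

```python
# Daily Prometheus storage growth in GB per tested node count, from the table above.
DAILY_GROWTH_GB = {50: 6.3, 100: 13, 150: 19, 200: 25}


def estimate_prometheus_storage_gb(nodes: int, retention_days: int) -> float:
    """Estimate storage for a retention period using the next tested node count."""
    if nodes <= 0 or retention_days <= 0:
        raise ValueError("nodes and retention_days must be positive")
    for tested_nodes in sorted(DAILY_GROWTH_GB):
        if nodes <= tested_nodes:
            return DAILY_GROWTH_GB[tested_nodes] * retention_days
    raise ValueError("node count is larger than the tested range")


if __name__ == "__main__":
    # Hypothetical example: 120 nodes with 30 days of retention.
    print(estimate_prometheus_storage_gb(120, 30), "GB")
```

Because the estimate is a reference point and not a prescription, monitor the actual storage growth after deployment and adjust the persistent volume size or the retention policy as needed.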

Recommendations for OpenShift Container Platform

- Use at least two infrastructure (infra) nodes.

- Use at least three openshift-container-storage nodes with SSD or non-volatile memory express (NVMe) drives.

2.2.4. Configuring cluster monitoring

You can increase the storage capacity for the Prometheus component in the cluster monitoring stack.

Procedure

To increase the storage capacity for Prometheus:

Create a YAML configuration file, cluster-monitoring-config.yaml. For example:

    apiVersion: v1
    kind: ConfigMap
    data:
      config.yaml: |
        prometheusK8s:
          retention: {{PROMETHEUS_RETENTION_PERIOD}}
          nodeSelector:
            node-role.kubernetes.io/infra: ""
          volumeClaimTemplate:
            spec:
              storageClassName: {{STORAGE_CLASS}}
              resources:
                requests:
                  storage: {{PROMETHEUS_STORAGE_SIZE}}
        alertmanagerMain:
          nodeSelector:
            node-role.kubernetes.io/infra: ""
          volumeClaimTemplate:
            spec:
              storageClassName: {{STORAGE_CLASS}}
              resources:
                requests:
                  storage: {{ALERTMANAGER_STORAGE_SIZE}}
    metadata:
      name: cluster-monitoring-config
      namespace: openshift-monitoring

- The default value of Prometheus retention is PROMETHEUS_RETENTION_PERIOD=15d. Units are measured in time using one of these suffixes: s, m, h, d.

- STORAGE_CLASS is the storage class for your cluster.

- A typical value is PROMETHEUS_STORAGE_SIZE=2000Gi. Storage values can be a plain integer or a fixed-point integer using one of these suffixes: E, P, T, G, M, K. You can also use the power-of-two equivalents: Ei, Pi, Ti, Gi, Mi, Ki.

- A typical value is ALERTMANAGER_STORAGE_SIZE=20Gi. Storage values can be a plain integer or a fixed-point integer using one of these suffixes: E, P, T, G, M, K. You can also use the power-of-two equivalents: Ei, Pi, Ti, Gi, Mi, Ki.

- Add values for the retention period, storage class, and storage sizes.

- Save the file.

Apply the changes by running:

$ oc create -f cluster-monitoring-config.yaml
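To compare storage values that use different suffixes, such as 2000Gi and 2T, it can help to convert them to bytes. The following Python sketch interprets the decimal and power-of-two suffixes listed in this procedure. It is an illustration only: the function name `parse_storage_size` is hypothetical, and the suffix table mirrors the list in this section and is not an authoritative parser for Kubernetes quantities.

```python
from decimal import Decimal

# Multipliers for the suffixes listed in this section.
SUFFIX_MULTIPLIERS = {
    "K": 10**3, "M": 10**6, "G": 10**9, "T": 10**12, "P": 10**15, "E": 10**18,
    "Ki": 2**10, "Mi": 2**20, "Gi": 2**30, "Ti": 2**40, "Pi": 2**50, "Ei": 2**60,
}


def parse_storage_size(value: str) -> int:
    """Convert a storage value such as '2000Gi' or '5G' to a number of bytes."""
    # Check two-character suffixes first so that 'Gi' is not mistaken for 'G'.
    for suffix in sorted(SUFFIX_MULTIPLIERS, key=len, reverse=True):
        if value.endswith(suffix):
            number = Decimal(value[: -len(suffix)])
            return int(number * SUFFIX_MULTIPLIERS[suffix])
    # No suffix: the value is a plain number of bytes.
    return int(Decimal(value))


if __name__ == "__main__":
    for size in ("2000Gi", "20Gi", "2T"):
        print(size, "=", parse_storage_size(size), "bytes")
```

Note that 2000Gi is larger than 2T because the power-of-two suffixes use multiples of 1024 instead of 1000.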

2.3. Recommended etcd practices

This topic provides recommended performance and scalability practices for etcd in OpenShift Container Platform.

2.3.1. Recommended etcd practices

Because etcd writes data to disk and persists proposals on disk, its performance depends on disk performance. Although etcd is not particularly I/O intensive, it requires a low latency block device for optimal performance and stability. Because etcd’s consensus protocol depends on persistently storing metadata to a log (WAL), etcd is sensitive to disk-write latency. Slow disks and disk activity from other processes can cause long fsync latencies.

Those latencies can cause etcd to miss heartbeats, not commit new proposals to the disk on time, and ultimately experience request timeouts and temporary leader loss. High write latencies also lead to OpenShift API slowness, which affects cluster performance. For these reasons, avoid colocating other workloads on the control plane nodes that are I/O sensitive or intensive and share the same underlying I/O infrastructure.

In terms of latency, run etcd on top of a block device that can write at least 50 IOPS of 8000 bytes long sequentially. That is, with a latency of 10ms, keep in mind that etcd uses fdatasync to synchronize each write in the WAL. For heavily loaded clusters, sequential 500 IOPS of 8000 bytes (2 ms) are recommended. To measure those numbers, you can use a benchmarking tool, such as fio.

To achieve such performance, run etcd on machines that are backed by SSD or NVMe disks with low latency and high throughput. Consider single-level cell (SLC) solid-state drives (SSDs), which provide 1 bit per memory cell, are durable and reliable, and are ideal for write-intensive workloads.

The load on etcd arises from static factors, such as the number of nodes and pods, and dynamic factors, including changes in endpoints due to pod autoscaling, pod restarts, job executions, and other workload-related events. To accurately size your etcd setup, you must analyze the specific requirements of your workload. Consider the number of nodes, pods, and other relevant factors that impact the load on etcd.

The following hard drive practices provide optimal etcd performance:

- Use dedicated etcd drives. Avoid drives that communicate over the network, such as iSCSI. Do not place log files or other heavy workloads on etcd drives.

- Prefer drives with low latency to support fast read and write operations.

- Prefer high-bandwidth writes for faster compactions and defragmentation.

- Prefer high-bandwidth reads for faster recovery from failures.

- Use solid state drives as a minimum selection. Prefer NVMe drives for production environments.

- Use server-grade hardware for increased reliability.

Avoid NAS or SAN setups and spinning drives. Ceph Rados Block Device (RBD) and other types of network-attached storage can result in unpredictable network latency. To provide fast storage to etcd nodes at scale, use PCI passthrough to pass NVM devices directly to the nodes.

Always benchmark by using utilities such as fio. You can use such utilities to continuously monitor the cluster performance as it increases.

Avoid using the Network File System (NFS) protocol or other network based file systems.

Some key metrics to monitor on a deployed OpenShift Container Platform cluster are p99 of etcd disk write ahead log duration and the number of etcd leader changes. Use Prometheus to track these metrics.

The etcd member database sizes can vary in a cluster during normal operations. This difference does not affect cluster upgrades, even if the leader size is different from the other members.

To validate the hardware for etcd before or after you create the OpenShift Container Platform cluster, you can use fio.

Prerequisites

- Container runtimes such as Podman or Docker are installed on the machine that you’re testing.

- Data is written to the /var/lib/etcd path.

Procedure

Run fio and analyze the results:

If you use Podman, run this command:

$ sudo podman run --volume /var/lib/etcd:/var/lib/etcd:Z quay.io/cloud-bulldozer/etcd-perf

If you use Docker, run this command:

$ sudo docker run --volume /var/lib/etcd:/var/lib/etcd:Z quay.io/cloud-bulldozer/etcd-perf

The output reports whether the disk is fast enough to host etcd by checking whether the 99th percentile of the fsync metric captured from the run is less than 10 ms. A few of the most important etcd metrics that might be affected by I/O performance are as follows:

- The etcd_disk_wal_fsync_duration_seconds_bucket metric reports the etcd WAL fsync duration.

- The etcd_disk_backend_commit_duration_seconds_bucket metric reports the etcd backend commit latency duration.

- The etcd_server_leader_changes_seen_total metric reports the leader changes.

Because etcd replicates the requests among all the members, its performance strongly depends on network input/output (I/O) latency. High network latencies result in etcd heartbeats taking longer than the election timeout, which results in leader elections that are disruptive to the cluster. A key metric to monitor on a deployed OpenShift Container Platform cluster is the 99th percentile of etcd network peer latency on each etcd cluster member. Use Prometheus to track the metric.

The histogram_quantile(0.99, rate(etcd_network_peer_round_trip_time_seconds_bucket[2m])) metric reports the round trip time for etcd to finish replicating the client requests between the members. Ensure that it is less than 50 ms.
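To build intuition for how a 99th percentile is estimated from cumulative histogram buckets such as etcd_network_peer_round_trip_time_seconds_bucket, consider the following Python sketch. It is a simplified illustration of bucket-based quantile estimation with linear interpolation, not the Prometheus implementation, and the bucket values in the example are hypothetical.

```python
import math


def histogram_quantile(q: float, buckets: list[tuple[float, float]]) -> float:
    """Estimate the q-quantile from cumulative (upper_bound, count) buckets.

    Buckets must be sorted by upper bound and end with a +Inf bucket.
    The lowest bucket is assumed to start at 0.
    """
    if not 0 < q <= 1:
        raise ValueError("q must be in the range (0, 1]")
    total = buckets[-1][1]
    if total == 0:
        return math.nan
    rank = q * total
    prev_bound, prev_count = 0.0, 0.0
    for upper_bound, count in buckets:
        if count >= rank:
            if math.isinf(upper_bound):
                # The quantile falls in the +Inf bucket: report the highest finite bound.
                return prev_bound
            # Interpolate linearly inside the bucket that contains the rank.
            fraction = (rank - prev_count) / (count - prev_count)
            return prev_bound + (upper_bound - prev_bound) * fraction
        prev_bound, prev_count = upper_bound, count
    return prev_bound


if __name__ == "__main__":
    # Hypothetical cumulative counts for peer round trip times, in seconds.
    example = [(0.005, 90), (0.01, 99), (0.025, 100), (math.inf, 100)]
    p99 = histogram_quantile(0.99, example)
    print("p99 below 50 ms:", p99 < 0.050)
```

Because the estimate is interpolated within a bucket, its precision depends on the bucket boundaries that the metric exposes.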

2.3.2. Moving etcd to a different disk

You can move etcd from a shared disk to a separate disk to prevent or resolve performance issues.

The Machine Config Operator (MCO) is responsible for mounting a secondary disk for OpenShift Container Platform 4.14 container storage.

This encoded script only supports device names for the following device types:

- SCSI or SATA: /dev/sd*

- Virtual device: /dev/vd*

- NVMe: /dev/nvme*[0-9]*n*

Limitations

- When the new disk is attached to the cluster, the etcd database is part of the root mount. It is not part of the secondary disk or the intended disk when the primary node is recreated. As a result, the primary node will not create a separate /var/lib/etcd mount.

Prerequisites

- You have a backup of your cluster’s etcd data.

- You have installed the OpenShift CLI (oc).

- You have access to the cluster with cluster-admin privileges.

- Add additional disks before uploading the machine configuration.

- The MachineConfigPool must match metadata.labels[machineconfiguration.openshift.io/role]. This applies to a controller, worker, or a custom pool.

This procedure does not move parts of the root file system, such as /var/, to another disk or partition on an installed node.

This procedure is not supported when using control plane machine sets.

Procedure

Attach the new disk to the cluster and verify that the disk is detected in the node by running the lsblk command in a debug shell:

$ oc debug node/<node_name>

# lsblk

Note the device name of the new disk reported by the lsblk command.

Create the following script and name it etcd-find-secondary-device.sh:

    #!/bin/bash
    set -uo pipefail

    for device in <device_type_glob>; do
      /usr/sbin/blkid "${device}" &> /dev/null
      if [ $? == 2 ]; then
        echo "secondary device found ${device}"
        echo "creating filesystem for etcd mount"
        mkfs.xfs -L var-lib-etcd -f "${device}" &> /dev/null
        udevadm settle
        touch /etc/var-lib-etcd-mount
        exit
      fi
    done
    echo "Couldn't find secondary block device!" >&2
    exit 77

Replace <device_type_glob> with a shell glob for your block device type. For SCSI or SATA drives, use /dev/sd*; for virtual drives, use /dev/vd*; for NVMe drives, use /dev/nvme*[0-9]*n*.

Create a base64-encoded string from the etcd-find-secondary-device.sh script and note its contents:

$ base64 -w0 etcd-find-secondary-device.sh

Create a MachineConfig YAML file named etcd-mc.yml with contents such as the following:

    apiVersion: machineconfiguration.openshift.io/v1
    kind: MachineConfig
    metadata:
      labels:
        machineconfiguration.openshift.io/role: master
      name: 98-var-lib-etcd
    spec:
      config:
        ignition:
          version: 3.4.0
        storage:
          files:
            - path: /etc/find-secondary-device
              mode: 0755
              contents:
                source: data:text/plain;charset=utf-8;base64,<encoded_etcd_find_secondary_device_script>
        systemd:
          units:
            - name: find-secondary-device.service
              enabled: true
              contents: |
                [Unit]
                Description=Find secondary device
                DefaultDependencies=false
                After=systemd-udev-settle.service
                Before=local-fs-pre.target
                ConditionPathExists=!/etc/var-lib-etcd-mount

                [Service]
                RemainAfterExit=yes
                ExecStart=/etc/find-secondary-device
                RestartForceExitStatus=77

                [Install]
                WantedBy=multi-user.target
            - name: var-lib-etcd.mount
              enabled: true
              contents: |
                [Unit]
                Before=local-fs.target

                [Mount]
                What=/dev/disk/by-label/var-lib-etcd
                Where=/var/lib/etcd
                Type=xfs
                TimeoutSec=120s

                [Install]
                RequiredBy=local-fs.target
            - name: sync-var-lib-etcd-to-etcd.service
              enabled: true
              contents: |
                [Unit]
                Description=Sync etcd data if new mount is empty
                DefaultDependencies=no
                After=var-lib-etcd.mount var.mount
                Before=crio.service

                [Service]
                Type=oneshot
                RemainAfterExit=yes
                ExecCondition=/usr/bin/test ! -d /var/lib/etcd/member
                ExecStart=/usr/sbin/setsebool -P rsync_full_access 1
                ExecStart=/bin/rsync -ar /sysroot/ostree/deploy/rhcos/var/lib/etcd/ /var/lib/etcd/
                ExecStart=/usr/sbin/semanage fcontext -a -t container_var_lib_t '/var/lib/etcd(/.*)?'
                ExecStart=/usr/sbin/setsebool -P rsync_full_access 0
                TimeoutSec=0

                [Install]
                WantedBy=multi-user.target graphical.target
            - name: restorecon-var-lib-etcd.service
              enabled: true
              contents: |
                [Unit]
                Description=Restore recursive SELinux security contexts
                DefaultDependencies=no
                After=var-lib-etcd.mount
                Before=crio.service

                [Service]
                Type=oneshot
                RemainAfterExit=yes
                ExecStart=/sbin/restorecon -R /var/lib/etcd/
                TimeoutSec=0

                [Install]
                WantedBy=multi-user.target graphical.target

Replace <encoded_etcd_find_secondary_device_script> with the encoded script contents that you noted.

Verification steps

Run the grep /var/lib/etcd /proc/mounts command in a debug shell for the node to ensure that the disk is mounted:

$ oc debug node/<node_name>

# grep -w "/var/lib/etcd" /proc/mounts

Example output

/dev/sdb /var/lib/etcd xfs rw,seclabel,relatime,attr2,inode64,logbufs=8,logbsize=32k,noquota 0 0

2.3.3. Defragmenting etcd data

For large and dense clusters, etcd can suffer from poor performance if the keyspace grows too large and exceeds the space quota. Periodically maintain and defragment etcd to free up space in the data store. Monitor Prometheus for etcd metrics and defragment it when required; otherwise, etcd can raise a cluster-wide alarm that puts the cluster into a maintenance mode that accepts only key reads and deletes.

Monitor these key metrics:

- etcd_server_quota_backend_bytes, which is the current quota limit

- etcd_mvcc_db_total_size_in_use_in_bytes, which indicates the actual database usage after a history compaction

- etcd_mvcc_db_total_size_in_bytes, which shows the database size, including free space waiting for defragmentation

Defragment etcd data to reclaim disk space after events that cause disk fragmentation, such as etcd history compaction.

History compaction is performed automatically every five minutes and leaves gaps in the back-end database. This fragmented space is available for use by etcd, but is not available to the host file system. You must defragment etcd to make this space available to the host file system.

Defragmentation occurs automatically, but you can also trigger it manually.

Automatic defragmentation is sufficient for most cases, because the etcd Operator uses cluster information to determine the most efficient operation for the user.

2.3.3.1. Automatic defragmentation

The etcd Operator automatically defragments disks. No manual intervention is needed.

Verify that the defragmentation process is successful by viewing one of these logs:

- etcd logs

- cluster-etcd-operator pod

- operator status error log

Automatic defragmentation can cause leader election failure in various OpenShift core components, such as the Kubernetes controller manager, which triggers a restart of the failing component. The restart is harmless and either triggers failover to the next running instance or the component resumes work again after the restart.

Example log output for successful defragmentation

etcd member has been defragmented: <member_name>, memberID: <member_id>

Example log output for unsuccessful defragmentation

failed defrag on member: <member_name>, memberID: <member_id>: <error_message>

2.3.3.2. Manual defragmentation

A Prometheus alert indicates when you need to use manual defragmentation. The alert is displayed in two cases:

- When etcd uses more than 50% of its available space for more than 10 minutes

- When etcd is actively using less than 50% of its total database size for more than 10 minutes

You can also determine whether defragmentation is needed by checking the etcd database size in MB that will be freed by defragmentation with the PromQL expression: (etcd_mvcc_db_total_size_in_bytes - etcd_mvcc_db_total_size_in_use_in_bytes)/1024/1024
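The PromQL expression and the two alert conditions can be mirrored in a few lines of arithmetic. The following Python sketch is an illustration only. The function names are hypothetical, the sample values are not measurements, and the sketch ignores the 10-minute duration that the alert requires. It also assumes that "available space" refers to the backend quota reported by etcd_server_quota_backend_bytes.

```python
def reclaimable_mb(db_total_bytes: int, db_in_use_bytes: int) -> float:
    """Space in MB that defragmentation would free, as in the PromQL expression."""
    return (db_total_bytes - db_in_use_bytes) / 1024 / 1024


def defrag_indicators(quota_bytes: int, db_total_bytes: int, db_in_use_bytes: int) -> dict:
    """Evaluate both alert conditions at a single point in time."""
    return {
        # etcd uses more than 50% of its available space (assumed: the quota).
        "over_half_of_quota": db_total_bytes > 0.5 * quota_bytes,
        # etcd actively uses less than 50% of its total database size.
        "in_use_under_half_of_db": db_in_use_bytes < 0.5 * db_total_bytes,
    }


if __name__ == "__main__":
    mib = 1024 * 1024
    # Hypothetical sample: 104 MiB database, 41 MiB in use, 8 GiB quota.
    print(reclaimable_mb(104 * mib, 41 * mib), "MB can be reclaimed")
    print(defrag_indicators(8 * 1024 * mib, 104 * mib, 41 * mib))
```

In practice, evaluate these conditions in Prometheus so that the values reflect a sustained state instead of a single sample.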

Defragmenting etcd is a blocking action. The etcd member will not respond until defragmentation is complete. For this reason, wait at least one minute between defragmentation actions on each of the pods to allow the cluster to recover.

Follow this procedure to defragment etcd data on each etcd member.

Prerequisites

- You have access to the cluster as a user with the cluster-admin role.

Procedure

Determine which etcd member is the leader, because the leader should be defragmented last.

Get the list of etcd pods:

$ oc -n openshift-etcd get pods -l k8s-app=etcd -o wide

Example output

    etcd-ip-10-0-159-225.example.redhat.com   3/3   Running   0   175m   10.0.159.225   ip-10-0-159-225.example.redhat.com   <none>   <none>
    etcd-ip-10-0-191-37.example.redhat.com    3/3   Running   0   173m   10.0.191.37    ip-10-0-191-37.example.redhat.com    <none>   <none>
    etcd-ip-10-0-199-170.example.redhat.com   3/3   Running   0   176m   10.0.199.170   ip-10-0-199-170.example.redhat.com   <none>   <none>

Choose a pod and run the following command to determine which etcd member is the leader:

$ oc rsh -n openshift-etcd etcd-ip-10-0-159-225.example.redhat.com etcdctl endpoint status --cluster -w table

Example output

    Defaulting container name to etcdctl.
    Use 'oc describe pod/etcd-ip-10-0-159-225.example.redhat.com -n openshift-etcd' to see all of the containers in this pod.
    +---------------------------+------------------+---------+---------+-----------+------------+-----------+------------+--------------------+--------+
    |         ENDPOINT          |        ID        | VERSION | DB SIZE | IS LEADER | IS LEARNER | RAFT TERM | RAFT INDEX | RAFT APPLIED INDEX | ERRORS |
    +---------------------------+------------------+---------+---------+-----------+------------+-----------+------------+--------------------+--------+
    |  https://10.0.191.37:2379 | 251cd44483d811c3 |   3.5.9 |  104 MB |     false |      false |         7 |      91624 |              91624 |        |
    | https://10.0.159.225:2379 | 264c7c58ecbdabee |   3.5.9 |  104 MB |     false |      false |         7 |      91624 |              91624 |        |
    | https://10.0.199.170:2379 | 9ac311f93915cc79 |   3.5.9 |  104 MB |      true |      false |         7 |      91624 |              91624 |        |
    +---------------------------+------------------+---------+---------+-----------+------------+-----------+------------+--------------------+--------+

Based on the IS LEADER column of this output, the https://10.0.199.170:2379 endpoint is the leader. Matching this endpoint with the output of the previous step, the pod name of the leader is etcd-ip-10-0-199-170.example.redhat.com.

Defragment an etcd member.

Connect to the running etcd container, passing in the name of a pod that is not the leader:

$ oc rsh -n openshift-etcd etcd-ip-10-0-159-225.example.redhat.com

Unset the ETCDCTL_ENDPOINTS environment variable:

sh-4.4# unset ETCDCTL_ENDPOINTS

Defragment the etcd member:

sh-4.4# etcdctl --command-timeout=30s --endpoints=https://localhost:2379 defrag

Example output

Finished defragmenting etcd member[https://localhost:2379]

If a timeout error occurs, increase the value for --command-timeout until the command succeeds.

Verify that the database size was reduced:

sh-4.4# etcdctl endpoint status -w table --cluster

Example output

+---------------------------+------------------+---------+---------+-----------+------------+-----------+------------+--------------------+--------+
|         ENDPOINT          |        ID        | VERSION | DB SIZE | IS LEADER | IS LEARNER | RAFT TERM | RAFT INDEX | RAFT APPLIED INDEX | ERRORS |
+---------------------------+------------------+---------+---------+-----------+------------+-----------+------------+--------------------+--------+
|  https://10.0.191.37:2379 | 251cd44483d811c3 |   3.5.9 |  104 MB |     false |      false |         7 |      91624 |              91624 |        |
| https://10.0.159.225:2379 | 264c7c58ecbdabee |   3.5.9 |   41 MB |     false |      false |         7 |      91624 |              91624 |        |
| https://10.0.199.170:2379 | 9ac311f93915cc79 |   3.5.9 |  104 MB |      true |      false |         7 |      91624 |              91624 |        |
+---------------------------+------------------+---------+---------+-----------+------------+-----------+------------+--------------------+--------+

This example shows that the database size for the defragmented etcd member (https://10.0.159.225:2379) is now 41 MB as opposed to the starting size of 104 MB.

Repeat these steps to connect to each of the other etcd members and defragment them. Always defragment the leader last.

Wait at least one minute between defragmentation actions to allow the etcd pod to recover. Until the etcd pod recovers, the etcd member will not respond.

If any NOSPACE alarms were triggered due to the space quota being exceeded, clear them.

Check if there are any NOSPACE alarms:

sh-4.4# etcdctl alarm list

Example output

memberID:12345678912345678912 alarm:NOSPACE

Clear the alarms:

sh-4.4# etcdctl alarm disarm

2.3.4. Setting tuning parameters for etcd

You can set the control plane hardware speed to "Standard", "Slower", or the default, which is "".

The default setting allows the system to decide which speed to use. This value enables upgrades from versions where this feature does not exist, as the system can select values from previous versions.

By selecting one of the other values, you are overriding the default. If you see many leader elections due to timeouts or missed heartbeats and your system is set to "" or "Standard", set the hardware speed to "Slower" to make the system more tolerant to the increased latency.

Tuning etcd latency tolerances is a Technology Preview feature only. Technology Preview features are not supported with Red Hat production service level agreements (SLAs) and might not be functionally complete. Red Hat does not recommend using them in production. These features provide early access to upcoming product features, enabling customers to test functionality and provide feedback during the development process.

For more information about the support scope of Red Hat Technology Preview features, see Technology Preview Features Support Scope.

2.3.4.1. Changing hardware speed tolerance

To change the hardware speed tolerance for etcd, complete the following steps.

Prerequisites

- You have edited the cluster instance to enable TechPreviewNoUpgrade features. For more information, see "Understanding feature gates" in the Additional resources.

Procedure

Check to see what the current value is by entering the following command:

$ oc describe etcd/cluster | grep "Control Plane Hardware Speed"

Example output

Control Plane Hardware Speed: <VALUE>

Note: If the output is empty, the field has not been set and should be considered as the default ("").

Change the value by entering the following command. Replace <value> with one of the valid values: "", "Standard", or "Slower":

$ oc patch etcd/cluster --type=merge -p '{"spec": {"controlPlaneHardwareSpeed": "<value>"}}'

The following table indicates the heartbeat interval and leader election timeout for each profile. These values are subject to change.

| Profile | ETCD_HEARTBEAT_INTERVAL | ETCD_LEADER_ELECTION_TIMEOUT |
|---|---|---|
| "" | Varies depending on platform | Varies depending on platform |
| Standard | 100 | 1000 |
| Slower | 500 | 2500 |

Review the output:

Example output

etcd.operator.openshift.io/cluster patched

If you enter any value besides the valid values, error output is displayed. For example, if you entered "Faster" as the value, the output is as follows:

Example output

The Etcd "cluster" is invalid: spec.controlPlaneHardwareSpeed: Unsupported value: "Faster": supported values: "", "Standard", "Slower"

Verify that the value was changed by entering the following command:

$ oc describe etcd/cluster | grep "Control Plane Hardware Speed"

Example output

Control Plane Hardware Speed: ""

Wait for etcd pods to roll out:

$ oc get pods -n openshift-etcd -w

The following output shows the expected entries for master-0. Before you continue, wait until all masters show a status of 4/4 Running.

Example output

installer-9-ci-ln-qkgs94t-72292-9clnd-master-0 0/1 Pending 0 0s
installer-9-ci-ln-qkgs94t-72292-9clnd-master-0 0/1 Pending 0 0s
installer-9-ci-ln-qkgs94t-72292-9clnd-master-0 0/1 ContainerCreating 0 0s
installer-9-ci-ln-qkgs94t-72292-9clnd-master-0 0/1 ContainerCreating 0 1s
installer-9-ci-ln-qkgs94t-72292-9clnd-master-0 1/1 Running 0 2s
installer-9-ci-ln-qkgs94t-72292-9clnd-master-0 0/1 Completed 0 34s
installer-9-ci-ln-qkgs94t-72292-9clnd-master-0 0/1 Completed 0 36s
installer-9-ci-ln-qkgs94t-72292-9clnd-master-0 0/1 Completed 0 36s
etcd-guard-ci-ln-qkgs94t-72292-9clnd-master-0 0/1 Running 0 26m
etcd-ci-ln-qkgs94t-72292-9clnd-master-0 4/4 Terminating 0 11m
etcd-ci-ln-qkgs94t-72292-9clnd-master-0 4/4 Terminating 0 11m
etcd-ci-ln-qkgs94t-72292-9clnd-master-0 0/4 Pending 0 0s
etcd-ci-ln-qkgs94t-72292-9clnd-master-0 0/4 Init:1/3 0 1s
etcd-ci-ln-qkgs94t-72292-9clnd-master-0 0/4 Init:2/3 0 2s
etcd-ci-ln-qkgs94t-72292-9clnd-master-0 0/4 PodInitializing 0 3s
etcd-ci-ln-qkgs94t-72292-9clnd-master-0 3/4 Running 0 4s
etcd-guard-ci-ln-qkgs94t-72292-9clnd-master-0 1/1 Running 0 26m
etcd-ci-ln-qkgs94t-72292-9clnd-master-0 3/4 Running 0 20s
etcd-ci-ln-qkgs94t-72292-9clnd-master-0 4/4 Running 0 20s

Enter the following command to review the values:

$ oc describe -n openshift-etcd pod/<ETCD_PODNAME> | grep -e HEARTBEAT_INTERVAL -e ELECTION_TIMEOUT

Note: These values might not have changed from the default.
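As an illustration of the patch step in this procedure, the following sketch builds the JSON merge patch body that is passed to `oc patch` and rejects values outside the documented set before anything is sent to the cluster. It is a minimal sketch under stated assumptions: `build_speed_patch` and `heartbeats_per_election` are hypothetical helper names, and the timing figures are copied from the profile table in this procedure, which notes that they are subject to change.

```python
import json

# Valid values as listed in the procedure: "", "Standard", or "Slower".
VALID_SPEEDS = ("", "Standard", "Slower")

# (heartbeat interval, leader election timeout) per profile, copied from
# the profile table. The "" profile varies by platform, so it is omitted.
PROFILE_TIMINGS = {
    "Standard": (100, 1000),
    "Slower": (500, 2500),
}


def build_speed_patch(value):
    """Return the merge patch body for spec.controlPlaneHardwareSpeed."""
    if value not in VALID_SPEEDS:
        raise ValueError(
            f"Unsupported value {value!r}: supported values are {VALID_SPEEDS}"
        )
    return json.dumps({"spec": {"controlPlaneHardwareSpeed": value}})


def heartbeats_per_election(profile):
    """How many heartbeat intervals fit into one election timeout."""
    heartbeat, election_timeout = PROFILE_TIMINGS[profile]
    return election_timeout // heartbeat


if __name__ == "__main__":
    print(build_speed_patch("Slower"))
    for name in PROFILE_TIMINGS:
        print(name, heartbeats_per_election(name))
```

Validating locally only mirrors the check that the API server already performs, as the "Faster" error output in the procedure shows. It can still be useful in automation that should fail before touching the cluster.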

Chapter 3. Reference design specifications

3.1. Telco core and RAN DU reference design specifications

The telco core reference design specification (RDS) describes OpenShift Container Platform 4.14 clusters running on commodity hardware that can support large scale telco applications including control plane and some centralized data plane functions.

The telco RAN RDS describes the configuration for clusters running on commodity hardware to host 5G workloads in the Radio Access Network (RAN).

3.1.1. Reference design specifications for telco 5G deployments

Red Hat and certified partners offer deep technical expertise and support for networking and operational capabilities required to run telco applications on OpenShift Container Platform 4.14 clusters.

Red Hat’s telco partners require a well-integrated, well-tested, and stable environment that can be replicated at scale for enterprise 5G solutions. The telco core and RAN DU reference design specifications (RDS) outline the recommended solution architecture based on a specific version of OpenShift Container Platform. Each RDS describes a tested and validated platform configuration for telco core and RAN DU use models. The RDS ensures an optimal experience when running your applications by defining the set of critical KPIs for telco 5G core and RAN DU. Following the RDS minimizes high severity escalations and improves application stability.

5G use cases are evolving and your workloads are continually changing. Red Hat is committed to iterating over the telco core and RAN DU RDS to support evolving requirements based on customer and partner feedback.

3.1.2. Reference design scope

The telco core and telco RAN reference design specifications (RDS) capture the recommended, tested, and supported configurations to get reliable and repeatable performance for clusters running the telco core and telco RAN profiles.

Each RDS includes the released features and supported configurations that are engineered and validated for clusters to run the individual profiles. The configurations provide a baseline OpenShift Container Platform installation that meets feature and KPI targets. Each RDS also describes expected variations for each individual configuration. Validation of each RDS includes many long duration and at-scale tests.

The validated reference configurations are updated for each major Y-stream release of OpenShift Container Platform. Z-stream patch releases are periodically re-tested against the reference configurations.

3.1.3. Deviations from the reference design

Deviating from the validated telco core and telco RAN DU reference design specifications (RDS) can have significant impact beyond the specific component or feature that you change. Deviations require analysis and engineering in the context of the complete solution.

All deviations from the RDS should be analyzed and documented with clear action tracking information. Due diligence is expected from partners to understand how to bring deviations into line with the reference design. This might require partners to provide additional resources to engage with Red Hat to work towards enabling their use case to achieve a best in class outcome with the platform. This is critical for the supportability of the solution and ensuring alignment across Red Hat and with partners.

Deviation from the RDS can have some or all of the following consequences:

- It can take longer to resolve issues.

- There is a risk of missing project service-level agreements (SLAs), project deadlines, end provider performance requirements, and so on.

- Unapproved deviations may require escalation at executive levels.

Note: Red Hat prioritizes the servicing of requests for deviations based on partner engagement priorities.

3.2. Telco RAN DU reference design specification

3.2.1. Telco RAN DU 4.14 reference design overview

The Telco RAN distributed unit (DU) 4.14 reference design configures an OpenShift Container Platform 4.14 cluster running on commodity hardware to host telco RAN DU workloads. It captures the recommended, tested, and supported configurations to get reliable and repeatable performance for a cluster running the telco RAN DU profile.

3.2.1.1. OpenShift Container Platform 4.14 features for telco RAN DU

The following features that are included in OpenShift Container Platform 4.14 and are leveraged by the telco RAN DU reference design specification (RDS) have been added or updated.

| Feature | Description |
|---|---|
| GitOps ZTP independence from managed cluster version | You can now use GitOps ZTP to manage clusters that are running different versions of OpenShift Container Platform compared to the version that is running on the hub cluster. You can also have a mix of OpenShift Container Platform versions in the deployed fleet of clusters. |
| Using custom CRs alongside the reference CRs in GitOps ZTP | You can now use custom CRs alongside the reference configuration CRs provided in the |
| Using custom node labels in the | You can now use the |
| Intel Westport Channel E810 NIC as PTP Grandmaster clock (Technology Preview) | You can use the Intel Westport Channel E810-XXVDA4T as a GNSS-sourced grandmaster clock. The NIC is automatically configured by the PTP Operator with the E810 hardware plugin. |
| PTP Operator hardware specific functionality plugin (Technology Preview) | A new E810 NIC hardware plugin is now available in the PTP Operator. You can use the E810 plugin to configure the NIC directly. |
| PTP events and metrics | The |
| Precaching user-specified images | You can now precache application workload images before upgrading your applications on single-node OpenShift clusters with Topology Aware Lifecycle Manager. |
| Using OpenShift capabilities to further reduce the single-node OpenShift DU footprint | Use cluster capabilities to enable or disable optional components before you install the cluster. In OpenShift Container Platform 4.14, the following optional capabilities are available: |
| Set | single-node OpenShift clusters that run DU workloads require logging and log forwarding. |

3.2.1.2. Deployment architecture overview

You deploy the telco RAN DU 4.14 reference configuration to managed clusters from a centrally managed RHACM hub cluster. The reference design specification (RDS) includes configuration of the managed clusters and the hub cluster components.

Figure 3.1. Telco RAN DU deployment architecture overview

3.2.2. Telco RAN DU use model overview

Use the following information to plan telco RAN DU workloads, cluster resources, and hardware specifications for the hub cluster and managed single-node OpenShift clusters.

3.2.2.1. Telco RAN DU application workloads

DU worker nodes must have 3rd Generation Xeon (Ice Lake) 2.20 GHz or better CPUs with firmware tuned for maximum performance.

5G RAN DU user applications and workloads should conform to the following best practices and application limits:

- Develop cloud-native network functions (CNFs) that conform to the latest version of the CNF best practices guide.

- Use SR-IOV for high performance networking.

- Use exec probes sparingly and only when no other suitable options are available.

  - Do not use exec probes if a CNF uses CPU pinning. Use other probe implementations, for example, httpGet or tcpSocket.

  - When you need to use exec probes, limit the exec probe frequency and quantity. The maximum number of exec probes must be kept below 10, and frequency must not be set to less than 10 seconds.

Startup probes require minimal resources during steady-state operation. The limitation on exec probes applies primarily to liveness and readiness probes.
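The exec probe limits above can be checked mechanically before you deploy a workload. The following is a minimal sketch, not a supported validation tool. It assumes that each pod spec is available as a plain dictionary with a `containers` list, as you would get from parsing a manifest, and that the "frequency" limit maps to the probe `periodSeconds` field. The function name `exec_probe_violations` and its parameters are hypothetical.

```python
def exec_probe_violations(pod_specs, exec_probe_limit=10, min_period_seconds=10):
    """Report liveness and readiness exec probes that break the limits.

    pod_specs is a list of dicts shaped like a Pod `spec` section
    (hypothetical input shape). Startup probes are ignored because the
    limitation applies primarily to liveness and readiness probes.
    """
    problems = []
    exec_count = 0
    for pod in pod_specs:
        for container in pod.get("containers", []):
            for kind in ("livenessProbe", "readinessProbe"):
                probe = container.get(kind)
                if not probe or "exec" not in probe:
                    continue  # httpGet and tcpSocket probes are not limited here
                exec_count += 1
                # Kubernetes uses a 10 second period when periodSeconds is omitted.
                period = probe.get("periodSeconds", 10)
                if period < min_period_seconds:
                    problems.append(
                        f"{container['name']}: {kind} runs every {period}s, "
                        f"which is more often than every {min_period_seconds}s"
                    )
    # The text asks for the number of exec probes to stay below the limit.
    if exec_count >= exec_probe_limit:
        problems.append(
            f"{exec_count} exec probes found, must stay below {exec_probe_limit}"
        )
    return problems


if __name__ == "__main__":
    example = [{
        "containers": [{
            "name": "du-app",
            "livenessProbe": {"exec": {"command": ["true"]}, "periodSeconds": 5},
            "readinessProbe": {"httpGet": {"path": "/healthz", "port": 8080}},
        }]
    }]
    for problem in exec_probe_violations(example):
        print(problem)
```

The limit is kept as a parameter because this document states the quantity limit in two slightly different ways ("below 10" here, "a maximum of 10" in the representative workload characteristics).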

3.2.2.2. Telco RAN DU representative reference application workload characteristics

The representative reference application workload has the following characteristics:

- Has a maximum of 15 pods and 30 containers for the vRAN application including its management and control functions

- Uses a maximum of 2 ConfigMap and 4 Secret CRs per pod

- Uses a maximum of 10 exec probes with a frequency of not less than 10 seconds

- Incremental application load on the kube-apiserver is less than 10% of the cluster platform usage

  Note: You can extract CPU load from the platform metrics. For example:

  query=avg_over_time(pod:container_cpu_usage:sum{namespace="openshift-kube-apiserver"}[30m])

- Application logs are not collected by the platform log collector

- Aggregate traffic on the primary CNI is less than 1 MBps

3.2.2.3. Telco RAN DU worker node cluster resource utilization

The maximum number of running pods in the system, inclusive of application workloads and OpenShift Container Platform pods, is 120.

- Resource utilization

OpenShift Container Platform resource utilization varies depending on many factors including application workload characteristics such as:

- Pod count

- Type and frequency of probes

- Messaging rates on primary CNI or secondary CNI with kernel networking

- API access rate

- Logging rates

- Storage IOPS

Cluster resource requirements are applicable under the following conditions:

- The cluster is running the described representative application workload.

- The cluster is managed with the constraints described in "Telco RAN DU worker node cluster resource utilization".

- Components noted as optional in the RAN DU use model configuration are not applied.

You will need to do additional analysis to determine the impact on resource utilization and ability to meet KPI targets for configurations outside the scope of the Telco RAN DU reference design. You might have to allocate additional resources in the cluster depending on your requirements.

3.2.2.4. Hub cluster management characteristics

Red Hat Advanced Cluster Management (RHACM) is the recommended cluster management solution. Configure it to the following limits on the hub cluster:

- Configure a maximum of 5 RHACM policies with a compliant evaluation interval of at least 10 minutes.

- Use a maximum of 10 managed cluster templates in policies. Where possible, use hub-side templating.

- Disable all RHACM add-ons except for the policy-controller and observability-controller add-ons. Set Observability to the default configuration.

Important: Configuring optional components or enabling additional features will result in additional resource usage and can reduce overall system performance.

For more information, see Reference design deployment components.

| Metric | Limit | Notes |
|---|---|---|
| CPU usage | Less than 4000 mc – 2 cores (4 hyperthreads) | Platform CPU is pinned to reserved cores, including both hyperthreads in each reserved core. The system is engineered to use 3 CPUs (3000mc) at steady-state to allow for periodic system tasks and spikes. |
| Memory used | Less than 16G | |

3.2.2.5. Telco RAN DU RDS components

The following sections describe the various OpenShift Container Platform components and configurations that you use to configure and deploy clusters to run telco RAN DU workloads.

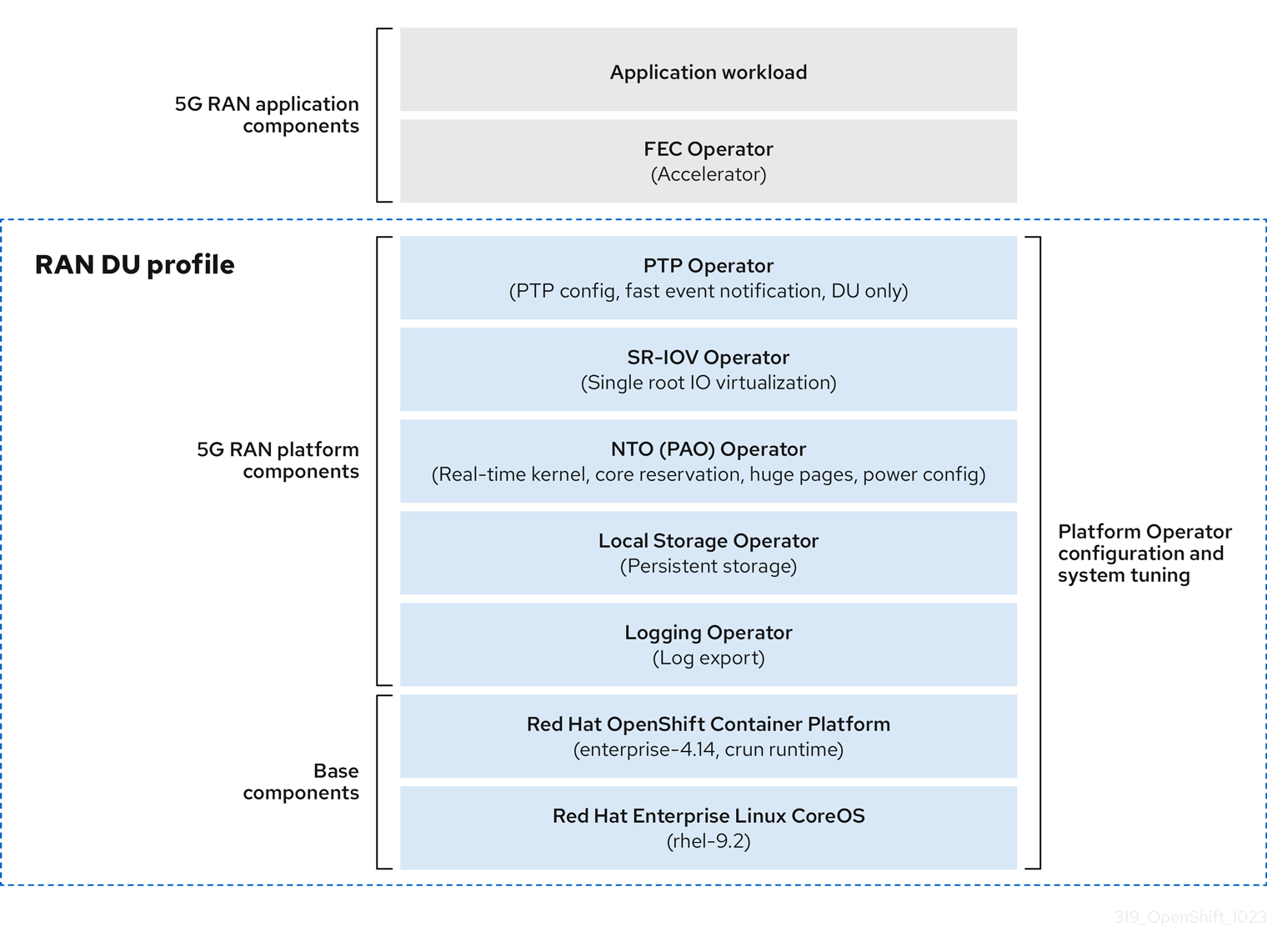

Figure 3.2. Telco RAN DU reference design components

Ensure that components that are not included in the telco RAN DU profile do not affect the CPU resources allocated to workload applications.

Out of tree drivers are not supported.

3.2.3. Telco RAN DU 4.14 reference design components

The following sections describe the various OpenShift Container Platform components and configurations that you use to configure and deploy clusters to run RAN DU workloads.

3.2.3.1. Host firmware tuning

- New in this release

- No reference design updates in this release

- Description

Configure system level performance. See Configuring host firmware for low latency and high performance for recommended settings.

If Ironic inspection is enabled, the firmware setting values are available from the per-cluster BareMetalHost CR on the hub cluster. You enable Ironic inspection with a label in the spec.clusters.nodes field in the SiteConfig CR that you use to install the cluster. For example:

nodes:
  - hostName: "example-node1.example.com"
    ironicInspect: "enabled"

Note: The telco RAN DU reference SiteConfig does not enable the ironicInspect field by default.

- Limits and requirements

- Hyperthreading must be enabled

- Engineering considerations

Tune all settings for maximum performance

Note: You can tune firmware selections for power savings at the expense of performance as required.

3.2.3.2. Node Tuning Operator

- New in this release

- No reference design updates in this release

- Description

You tune the cluster performance by creating a performance profile. Settings that you configure with a performance profile include:

- Selecting the realtime or non-realtime kernel.

- Allocating cores to a reserved or isolated cpuset. OpenShift Container Platform processes allocated to the management workload partition are pinned to the reserved set.

- Enabling kubelet features (CPU manager, topology manager, and memory manager).

- Configuring huge pages.

- Setting additional kernel arguments.

- Setting per-core power tuning and max CPU frequency.

- Limits and requirements

The Node Tuning Operator uses the PerformanceProfile CR to configure the cluster. You need to configure the following settings in the RAN DU profile PerformanceProfile CR:

- Select reserved and isolated cores and ensure that you allocate at least 4 hyperthreads (equivalent to 2 cores) on Intel 3rd Generation Xeon (Ice Lake) 2.20 GHz CPUs or better with firmware tuned for maximum performance.

- Set the reserved cpuset to include both hyperthread siblings for each included core. Unreserved cores are available as allocatable CPU for scheduling workloads. Ensure that hyperthread siblings are not split across reserved and isolated cores.

- Configure reserved and isolated CPUs to include all threads in all cores based on what you have set as reserved and isolated CPUs.

- Set core 0 of each NUMA node to be included in the reserved CPU set.

- Set the huge page size to 1G.

You should not add additional workloads to the management partition. Only those pods which are part of the OpenShift management platform should be annotated into the management partition.
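The cpuset rules in this list can be easier to follow with a worked example. The following sketch is one possible way to derive reserved and isolated sets from a core topology. It is not how the Node Tuning Operator computes anything. The input shape (a list of `(numa_node, [sibling CPU IDs])` tuples) and the function names `plan_cpusets` and `to_cpuset` are assumptions made for illustration, and "core 0 of each NUMA node" is interpreted here as the first listed core on each node.

```python
def plan_cpusets(cores, min_reserved_threads=4):
    """Split whole cores into reserved and isolated CPU lists.

    cores: list of (numa_node, [sibling cpu ids]) tuples, one per physical
    core (hypothetical input shape). Cores are never split, so hyperthread
    siblings always land in the same set.
    """
    reserved_cores = []
    seen_nodes = set()
    # Reserve the first listed core of every NUMA node.
    for index, (node, _threads) in enumerate(cores):
        if node not in seen_nodes:
            seen_nodes.add(node)
            reserved_cores.append(index)
    # Add further whole cores until the minimum thread count is reached.
    for index in range(len(cores)):
        if sum(len(cores[i][1]) for i in reserved_cores) >= min_reserved_threads:
            break
        if index not in reserved_cores:
            reserved_cores.append(index)
    reserved = sorted(cpu for i in reserved_cores for cpu in cores[i][1])
    isolated = sorted(
        cpu
        for i, (_node, threads) in enumerate(cores)
        if i not in reserved_cores
        for cpu in threads
    )
    return reserved, isolated


def to_cpuset(cpus):
    """Format CPU IDs as a cpuset string such as "0-1,4-5"."""
    ranges = []
    for cpu in sorted(cpus):
        if ranges and cpu == ranges[-1][1] + 1:
            ranges[-1][1] = cpu
        else:
            ranges.append([cpu, cpu])
    return ",".join(str(a) if a == b else f"{a}-{b}" for a, b in ranges)


if __name__ == "__main__":
    # One NUMA node, four cores, sibling pairs (0,4), (1,5), (2,6), (3,7).
    topology = [(0, [0, 4]), (0, [1, 5]), (0, [2, 6]), (0, [3, 7])]
    reserved, isolated = plan_cpusets(topology)
    print("reserved:", to_cpuset(reserved))
    print("isolated:", to_cpuset(isolated))
```

The default of 4 reserved threads is the stated minimum only. The engineering considerations in this section expect the actual reserved set to vary with the hardware and the additional components in use.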

- Engineering considerations

You should use the RT kernel to meet performance requirements.

Note: You can use the non-RT kernel if required.

- The number of huge pages that you configure depends on the application workload requirements. Variation in this parameter is expected and allowed.

- Variation is expected in the configuration of reserved and isolated CPU sets based on selected hardware and additional components in use on the system. Variation must still meet the specified limits.

- Hardware without IRQ affinity support impacts isolated CPUs. To ensure that pods with guaranteed whole CPU QoS have full use of the allocated CPU, all hardware in the server must support IRQ affinity. For more information, see About support of IRQ affinity setting.

In OpenShift Container Platform 4.14, any PerformanceProfile CR configured on the cluster causes the Node Tuning Operator to automatically set all cluster nodes to use cgroup v1.

For more information about cgroups, see Configuring Linux cgroup.

3.2.3.3. PTP Operator

- New in this release

- PTP grandmaster clock (T-GM) GPS timing with Intel E810-XXV-4T Westport Channel NIC – minimum firmware version 4.30 (Technology Preview)

- PTP events and metrics for grandmaster (T-GM) are new in OpenShift Container Platform 4.14 (Technology Preview)

- Description

Configure PTP timing support for cluster nodes. The DU node can run in the following modes:

- As an ordinary clock synced to a T-GM or boundary clock (T-BC)

- As dual boundary clocks, one per NIC (high availability is not supported)

- As grandmaster clock with support for E810 Westport Channel NICs (Technology Preview)

- Optionally as a boundary clock for radio units (RUs)

Optional: Subscribe applications to PTP events that happen on the node where the application is running. You subscribe the application to events via HTTP.

- Limits and requirements

- High availability is not supported with dual NIC configurations.

- Westport Channel NICs configured as T-GM do not support DPLL with the current ice driver version.

- GPS offsets are not reported. Use a default offset of less than or equal to 5.

- DPLL offsets are not reported. Use a default offset of less than or equal to 5.

- Engineering considerations

- Configurations are provided for ordinary clock, boundary clock, or grandmaster clock

- PTP fast event notifications use ConfigMap CRs to store PTP event subscriptions

- Use Intel E810-XXV-4T Westport Channel NICs for PTP grandmaster clocks with GPS timing, minimum firmware version 4.40

3.2.3.4. SR-IOV Operator

- New in this release

- No reference design updates in this release

- Description

- The SR-IOV Operator provisions and configures the SR-IOV CNI and device plugins. Both netdevice (kernel VFs) and vfio (DPDK) devices are supported.

- Engineering considerations

- Customer variation on the configuration and number of SriovNetwork and SriovNetworkNodePolicy custom resources (CRs) is expected.

- IOMMU kernel command-line settings are applied with a MachineConfig CR at install time. This ensures that the SriovOperator CR does not cause a reboot of the node when adding them.

3.2.3.5. Logging

- New in this release

- Vector is now the recommended log collector.

- Description

- Use logging to collect logs from the far edge node for remote analysis.

- Engineering considerations

- Handling logs beyond the infrastructure and audit logs, for example, from the application workload, requires additional CPU and network bandwidth based on the additional logging rate.

- As of OpenShift Container Platform 4.14, Vector is the reference log collector.

Note: Use of fluentd in the RAN use model is deprecated.

3.2.3.6. SRIOV-FEC Operator

- New in this release

- No reference design updates in this release

- Description

- SRIOV-FEC Operator is an optional 3rd party Certified Operator supporting FEC accelerator hardware.

- Limits and requirements

Starting with FEC Operator v2.7.0:

- SecureBoot is supported

- The vfio driver for the PF requires the usage of vfio-token that is injected into Pods. The VF token can be passed to DPDK by using the EAL parameter --vfio-vf-token.

- Engineering considerations

- The SRIOV-FEC Operator uses CPU cores from the isolated CPU set.

- You can validate FEC readiness as part of the pre-checks for application deployment, for example, by extending the validation policy.

3.2.3.7. Local Storage Operator

- New in this release

- No reference design updates in this release

- Description

- You can create persistent volumes that can be used as PVC resources by applications with the Local Storage Operator. The number and type of PV resources that you create depends on your requirements.

- Engineering considerations

- Create backing storage for PV CRs before creating the PV. This can be a partition, a local volume, LVM volume, or full disk.

- Refer to the device listing in LocalVolume CRs by the hardware path used to access each device to ensure correct allocation of disks and partitions. Logical names (for example, /dev/sda) are not guaranteed to be consistent across node reboots.

  For more information, see the RHEL 9 documentation on device identifiers.

3.2.3.8. LVMS Operator

- New in this release

- No reference design updates in this release

- New in this release

- Simplified LVMS deviceSelector logic

- LVM Storage with ext4 and PV resources

LVMS Operator is an optional component.

- Description

The LVMS Operator provides dynamic provisioning of block and file storage. The LVMS Operator creates logical volumes from local devices that can be used as PVC resources by applications. Volume expansion and snapshots are also possible.

The following example configuration creates a vg1 volume group that leverages all available disks on the node except the installation disk:

StorageLVMCluster.yaml

apiVersion: lvm.topolvm.io/v1alpha1
kind: LVMCluster
metadata:
  name: storage-lvmcluster
  namespace: openshift-storage
  annotations:
    ran.openshift.io/ztp-deploy-wave: "10"
spec:
  storage:
    deviceClasses:
    - name: vg1
      thinPoolConfig:
        name: thin-pool-1
        sizePercent: 90
        overprovisionRatio: 10

- Limits and requirements

- In single-node OpenShift clusters, persistent storage must be provided by either LVMS or Local Storage, not both.

- Engineering considerations

- The LVMS Operator is not the reference storage solution for the DU use case. If you require LVMS Operator for application workloads, the resource use is accounted for against the application cores.

- Ensure that sufficient disks or partitions are available for storage requirements.
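When you check whether sufficient disks are available, it can help to work through the thin pool arithmetic from the example LVMCluster configuration. The following sketch assumes that sizePercent is the share of the volume group given to the thin pool and that overprovisionRatio is the multiplier on thin pool capacity that can be handed out to volumes. Verify both assumptions against the LVM Storage documentation for your release. The function name `thin_pool_capacity_gib` and the 1000 GiB volume group are hypothetical.

```python
def thin_pool_capacity_gib(vg_size_gib, size_percent=90, overprovision_ratio=10):
    """Return (thin pool size, maximum provisionable size) in GiB.

    Defaults mirror the example configuration: sizePercent 90 and
    overprovisionRatio 10. The interpretation of both fields is an
    assumption stated in the surrounding text.
    """
    pool_gib = vg_size_gib * size_percent / 100
    provisionable_gib = pool_gib * overprovision_ratio
    return pool_gib, provisionable_gib


if __name__ == "__main__":
    pool, provisionable = thin_pool_capacity_gib(1000)
    print(f"thin pool: {pool} GiB, provisionable: {provisionable} GiB")
```

Under these assumptions, a 1000 GiB volume group yields a 900 GiB thin pool and up to 9000 GiB of provisioned volumes. The physical capacity is still 900 GiB, so actual usage needs monitoring.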

3.2.3.9. Workload partitioning

- New in this release

- No reference design updates in this release

- Description

Workload partitioning pins OpenShift platform and Day 2 Operator pods that are part of the DU profile to the reserved cpuset and removes the reserved CPU from node accounting. This leaves all unreserved CPU cores available for user workloads.

The method of enabling and configuring workload partitioning changed in OpenShift Container Platform 4.14.

- 4.14 and later

  - Configure partitions by setting installation parameters:

    cpuPartitioningMode: AllNodes

  - Configure management partition cores with the reserved CPU set in the PerformanceProfile CR

- 4.13 and earlier

  - Configure partitions with extra MachineConfiguration CRs applied at install-time

- Limits and requirements

- Namespace and Pod CRs must be annotated to allow the pod to be applied to the management partition

- Pods with CPU limits cannot be allocated to the partition. This is because mutation can change the pod QoS.

- For more information about the minimum number of CPUs that can be allocated to the management partition, see Node Tuning Operator.

- Engineering considerations

- Workload Partitioning pins all management pods to reserved cores. A sufficient number of cores must be allocated to the reserved set to account for operating system, management pods, and expected spikes in CPU use that occur when the workload starts, the node reboots, or other system events happen.

3.2.3.10. Cluster tuning

- New in this release

You can remove the Image Registry Operator by using the cluster capabilities feature.

Note: You configure cluster capabilities by using the spec.clusters.installConfigOverrides field in the SiteConfig CR that you use to install the cluster.

- Description

The cluster capabilities feature now includes a MachineAPI component which, when excluded, disables the following Operators and their resources in the cluster:

- openshift/cluster-autoscaler-operator

- openshift/cluster-control-plane-machine-set-operator

- openshift/machine-api-operator

- Limits and requirements

- Cluster capabilities are not available for installer-provisioned installation methods.

You must apply all platform tuning configurations. The following table lists the required platform tuning configurations:

Table 3.3. Cluster capabilities configurations

| Feature | Description |
|---|---|
| Remove optional cluster capabilities | Reduce the OpenShift Container Platform footprint by disabling optional cluster Operators on single-node OpenShift clusters only. Remove all optional Operators except the Marketplace and Node Tuning Operators. |
| Configure cluster monitoring | Configure the monitoring stack for reduced footprint by doing the following: disable the local alertmanager and telemeter components; if you use RHACM observability, augment the CR with appropriate additionalAlertManagerConfigs CRs to forward alerts to the hub cluster; reduce the Prometheus retention period to 24h. Note: The RHACM hub cluster aggregates managed cluster metrics. |
| Disable networking diagnostics | Disable networking diagnostics for single-node OpenShift because they are not required. |
| Configure a single Operator Hub catalog source | Configure the cluster to use a single catalog source that contains only the Operators required for a RAN DU deployment. Each catalog source increases the CPU use on the cluster. Using a single CatalogSource fits within the platform CPU budget. |

3.2.3.11. Machine configuration

- New in this release

- Set rcu_normal after node recovery

- Limits and requirements

The CRI-O wipe disable

MachineConfigassumes that images on disk are static other than during scheduled maintenance in defined maintenance windows. To ensure the images are static, do not set the podimagePullPolicyfield toAlways.Expand Table 3.4. Machine configuration options Feature Description Container runtime

Sets the container runtime to

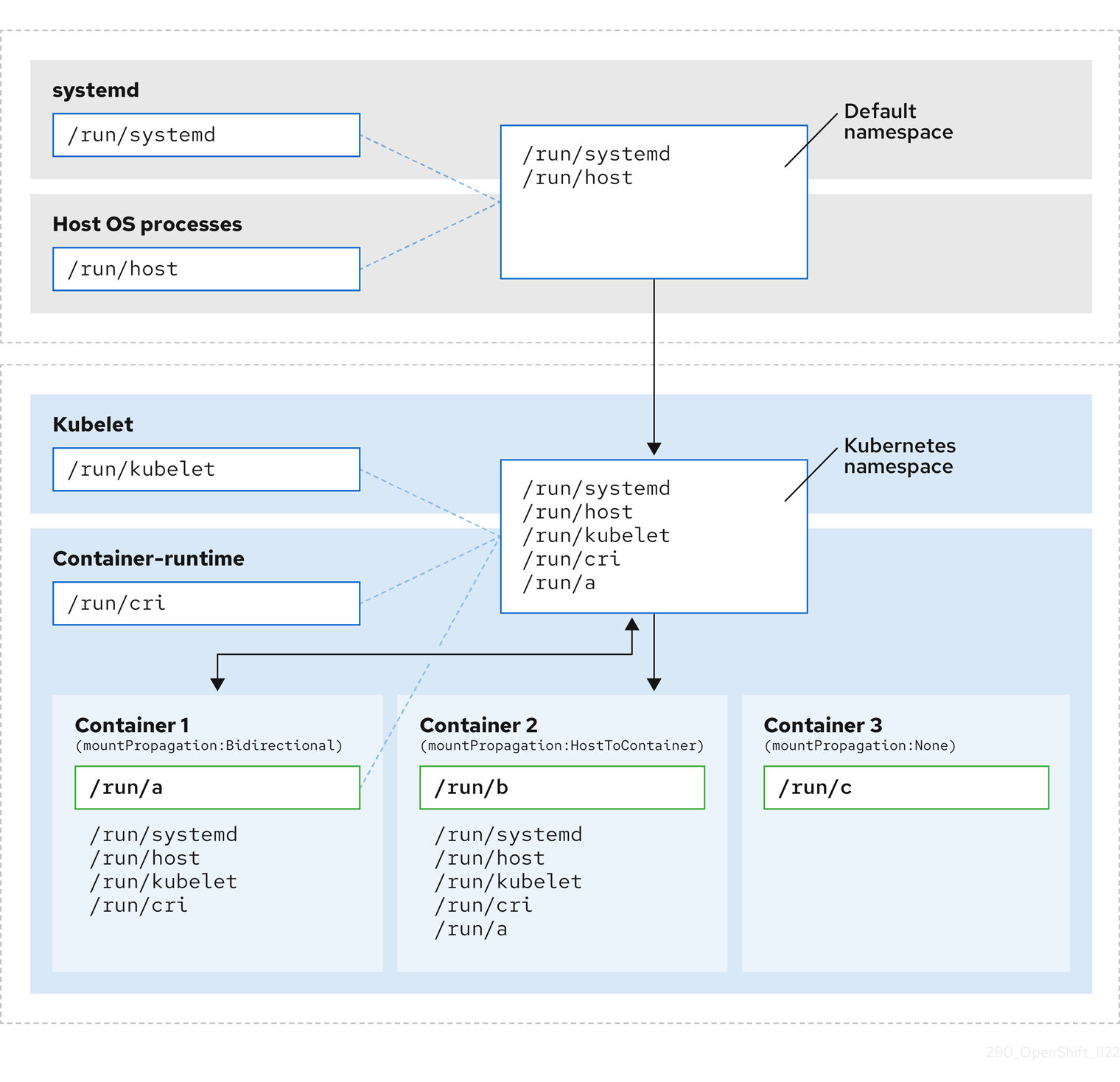

crunfor all node roles.kubelet config and container mount hiding

Reduces the frequency of kubelet housekeeping and eviction monitoring to reduce CPU usage. Create a container mount namespace, visible to kubelet and CRI-O, to reduce system mount scanning resource usage.

SCTP

Optional configuration (enabled by default) Enables SCTP. SCTP is required by RAN applications but disabled by default in RHCOS.

kdump

Optional configuration (enabled by default) Enables kdump to capture debug information when a kernel panic occurs.

CRI-O wipe disable

Disables automatic wiping of the CRI-O image cache after unclean shutdown.

SR-IOV-related kernel arguments

Includes additional SR-IOV related arguments in the kernel command line.

RCU Normal systemd service

Sets

rcu_normalafter the system is fully started.One-shot time sync

Runs a one-time system time synchronization job for control plane or worker nodes.
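The container runtime setting and the image pull policy requirement described in this section can be sketched as follows. This is a minimal illustration, not the shipped reference CR: the resource names (enable-crun-master, example-du-app) and the image reference are hypothetical, and the example targets only the master machine config pool.

```yaml
# Sketch only: select crun as the default runtime for the master pool.
apiVersion: machineconfiguration.openshift.io/v1
kind: ContainerRuntimeConfig
metadata:
  name: enable-crun-master          # hypothetical name
spec:
  machineConfigPoolSelector:
    matchLabels:
      pools.operator.machineconfiguration.openshift.io/master: ""
  containerRuntimeConfig:
    defaultRuntime: crun
---
# Sketch only: a workload pod that avoids imagePullPolicy: Always,
# so that images on disk stay static between maintenance windows.
apiVersion: v1
kind: Pod
metadata:
  name: example-du-app              # hypothetical name
spec:
  containers:
    - name: app
      image: registry.example.com/example/du-app:1.0   # hypothetical image
      imagePullPolicy: IfNotPresent
```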

3.2.3.12. Reference design deployment components

The following sections describe the various OpenShift Container Platform components and configurations that you use to configure the hub cluster with Red Hat Advanced Cluster Management (RHACM).

3.2.3.12.1. Red Hat Advanced Cluster Management (RHACM)

- New in this release

- Additional node labels can be configured during installation.

- Description

RHACM provides Multi Cluster Engine (MCE) installation and ongoing lifecycle management functionality for deployed clusters. You declaratively specify configurations and upgrades with Policy CRs and apply the policies to clusters with the RHACM policy controller as managed by Topology Aware Lifecycle Manager.

- GitOps Zero Touch Provisioning (ZTP) uses the MCE feature of RHACM

- Configuration, upgrades, and cluster status are managed with the RHACM policy controller

- Limits and requirements

- A single hub cluster supports up to 3500 deployed single-node OpenShift clusters with 5 Policy CRs bound to each cluster.

- Engineering considerations

- Cluster specific configuration: managed clusters typically have some number of configuration values that are specific to the individual cluster. These configurations should be managed using RHACM policy hub-side templating with values pulled from ConfigMap CRs based on the cluster name.

- To save CPU resources on managed clusters, policies that apply static configurations should be unbound from managed clusters after GitOps ZTP installation of the cluster. For more information, see Release a persistent volume.
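The hub-side templating approach described above might look like the following sketch. It assumes a hub ConfigMap whose keys are prefixed with the managed cluster name and a CR that is wrapped in a Policy. The ConfigMap name (site-data), the namespace (ztp-group), the key naming scheme, and the SR-IOV policy values are all hypothetical and only show the shape of the lookup.

```yaml
# Sketch only: per-cluster values stored on the hub, keyed by cluster name.
apiVersion: v1
kind: ConfigMap
metadata:
  name: site-data                   # hypothetical name
  namespace: ztp-group              # hypothetical; same namespace as the Policy
data:
  example-sno1-numVfs: "8"
  example-sno2-numVfs: "16"
---
# Sketch only: an incomplete fragment of a CR delivered through a Policy.
# The hub template resolves the value for each managed cluster by name.
apiVersion: sriovnetwork.openshift.io/v1
kind: SriovNetworkNodePolicy
metadata:
  name: example-policy              # hypothetical name
  namespace: openshift-sriov-network-operator
spec:
  resourceName: example_resource    # hypothetical value
  numVfs: '{{hub fromConfigMap "ztp-group" "site-data" (printf "%s-numVfs" .ManagedClusterName) | toInt hub}}'
```

With this layout, one policy can serve many clusters, and only the ConfigMap changes when a site-specific value changes.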

3.2.3.12.2. Topology Aware Lifecycle Manager (TALM)

- New in this release

- Added support for pre-caching additional user-specified images

- Description

- Managed updates

TALM is an Operator that runs only on the hub cluster for managing how changes (including cluster and Operator upgrades, configuration, and so on) are rolled out to the network. TALM does the following:

- Progressively applies updates to fleets of clusters in user-configurable batches by using Policy CRs.

- Adds ztp-done labels or other user configurable labels on a per-cluster basis

- Precaching for single-node OpenShift clusters

TALM supports optional precaching of OpenShift Container Platform, OLM Operator, and additional user images to single-node OpenShift clusters before initiating an upgrade.

A new PreCachingConfig custom resource is available for specifying optional pre-caching configurations. For example:

```yaml
apiVersion: ran.openshift.io/v1alpha1
kind: PreCachingConfig
metadata:
  name: example-config
  namespace: example-ns
spec:
  additionalImages:
    - quay.io/foobar/application1@sha256:3d5800990dee7cd4727d3fe238a97e2d2976d3808fc925ada29c559a47e2e
    - quay.io/foobar/application2@sha256:3d5800123dee7cd4727d3fe238a97e2d2976d3808fc925ada29c559a47adf
    - quay.io/foobar/applicationN@sha256:4fe1334adfafadsf987123adfffdaf1243340adfafdedga0991234afdadfs
  spaceRequired: 45 GiB
  overrides:
    preCacheImage: quay.io/test_images/pre-cache:latest
    platformImage: quay.io/openshift-release-dev/ocp-release@sha256:3d5800990dee7cd4727d3fe238a97e2d2976d3808fc925ada29c559a47e2e
    operatorsIndexes:
      - registry.example.com:5000/custom-redhat-operators:1.0.0
    operatorsPackagesAndChannels:
      - local-storage-operator: stable
      - ptp-operator: stable
      - sriov-network-operator: stable
  excludePrecachePatterns:
    - aws
    - vsphere
```

- Backup and restore for single-node OpenShift

- TALM supports taking a snapshot of the cluster operating system and configuration to a dedicated partition on a local disk. A restore script is provided that returns the cluster to the backed up state.

- Limits and requirements

- TALM supports concurrent cluster deployment in batches of 400

- Precaching and backup features are for single-node OpenShift clusters only.

- Engineering considerations

- The PreCachingConfig CR is optional and does not need to be created if you only want to precache platform-related (OpenShift and OLM Operator) images. The PreCachingConfig CR must be applied before referencing it in the ClusterGroupUpgrade CR.

- Create a recovery partition during installation if you opt to use the TALM backup and restore feature.
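To show how the ordering requirement above plays out, the following sketch is a ClusterGroupUpgrade CR that references an existing PreCachingConfig CR and rolls out a policy in batches. The cluster names, the policy name, and the batch size are hypothetical. The example assumes that the example-config PreCachingConfig CR in the example-ns namespace has already been applied.

```yaml
# Sketch only: a ClusterGroupUpgrade that uses an existing PreCachingConfig.
apiVersion: ran.openshift.io/v1alpha1
kind: ClusterGroupUpgrade
metadata:
  name: example-cgu                 # hypothetical name
  namespace: example-ns
spec:
  clusters:                         # hypothetical managed cluster names
    - example-sno1
    - example-sno2
  managedPolicies:
    - example-platform-upgrade      # hypothetical Policy name
  preCaching: true
  preCachingConfigRef:              # must point to a CR that already exists
    name: example-config
    namespace: example-ns
  remediationStrategy:
    maxConcurrency: 2               # clusters updated per batch
    timeout: 240                    # minutes allowed for the whole rollout
  enable: false                     # set to true to start the update after pre-caching
```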

3.2.3.12.3. GitOps and GitOps ZTP plugins

- New in this release

- GA support for inclusion of user-provided CRs in Git for GitOps ZTP deployments

- GitOps ZTP independence from the deployed cluster version

- Description

GitOps and GitOps ZTP plugins provide a GitOps-based infrastructure for managing cluster deployment and configuration. Cluster definitions and configurations are maintained as a declarative state in Git. ZTP plugins provide support for generating installation CRs from the SiteConfig CR and automatic wrapping of configuration CRs in policies based on PolicyGenTemplate CRs.

You can deploy and manage multiple versions of OpenShift Container Platform on managed clusters with the baseline reference configuration CRs in a /source-crs subdirectory provided that subdirectory also contains the kustomization.yaml file. You add user-provided CRs to this subdirectory that you use with the predefined CRs that are specified in the PolicyGenTemplate CRs. This allows you to tailor your configurations to suit your specific requirements and provides GitOps ZTP version independence between managed clusters and the hub cluster.

- Limits

- 300 SiteConfig CRs per ArgoCD application. You can use multiple applications to achieve the maximum number of clusters supported by a single hub cluster.

- Content in the /source-crs folder in Git overrides content provided in the GitOps ZTP plugin container. Git takes precedence in the search path.

- Add the /source-crs folder in the same directory as the kustomization.yaml file, which includes the PolicyGenTemplate as a generator.

Note: Alternative locations for the /source-crs directory are not supported in this context.
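The placement rule for the /source-crs folder can be sketched with a minimal kustomization.yaml file. The PolicyGenTemplate file names and the directory layout in the comments are hypothetical. The only point illustrated is that /source-crs sits next to the kustomization.yaml file that lists the PolicyGenTemplate files as generators.

```yaml
# Sketch only. Assumed repository layout (hypothetical names):
#
#   policygentemplates/
#     kustomization.yaml        <- this file
#     common-ranGen.yaml        <- PolicyGenTemplate
#     group-du-sno-ranGen.yaml  <- PolicyGenTemplate
#     source-crs/               <- reference CRs and user-provided CRs
#
apiVersion: kustomize.config.k8s.io/v1beta1
kind: Kustomization
generators:
  - common-ranGen.yaml
  - group-du-sno-ranGen.yaml
```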

- Engineering considerations

- To avoid confusion or unintentional overwriting of files when updating content, use unique and distinguishable names for user-provided CRs in the /source-crs folder and extra manifests in Git.

- The SiteConfig CR allows multiple extra-manifest paths. When files with the same name are found in multiple directory paths, the last file found takes precedence. This allows the full set of version specific Day 0 manifests (extra-manifests) to be placed in Git and referenced from the SiteConfig. With this feature, you can deploy multiple OpenShift Container Platform versions to managed clusters simultaneously.

- The extraManifestPath field of the SiteConfig CR is deprecated from OpenShift Container Platform 4.15 and later. Use the new extraManifests.searchPaths field instead.
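As a sketch of the extraManifests.searchPaths field described above, the following incomplete SiteConfig fragment lists two manifest directories. The cluster name and directory names are hypothetical, and a real SiteConfig CR needs many more fields. When the same file name exists in both directories, the file from the later path takes precedence.

```yaml
# Sketch only: an incomplete SiteConfig fragment with two search paths.
apiVersion: ran.openshift.io/v1
kind: SiteConfig
metadata:
  name: example-sno                 # hypothetical name
  namespace: example-sno
spec:
  clusters:
    - clusterName: example-sno
      extraManifests:
        searchPaths:
          - sno-extra-manifest/     # hypothetical: version-specific Day 0 manifests
          - custom-manifest/        # hypothetical: user manifests, searched last
```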

3.2.3.12.4. Agent-based installer

- New in this release

- No reference design updates in this release

- Description

Agent-based installer (ABI) provides installation capabilities without centralized infrastructure. The installation program creates an ISO image that you mount to the server. When the server boots, it installs OpenShift Container Platform and the supplied extra manifests.

Note: You can also use ABI to install OpenShift Container Platform clusters without a hub cluster. An image registry is still required when you use ABI in this manner.

Agent-based installer (ABI) is an optional component.

- Limits and requirements

- You can supply a limited set of additional manifests at installation time.

- You must include MachineConfiguration CRs that are required by the RAN DU use case.

- Engineering considerations

- ABI provides a baseline OpenShift Container Platform installation.

- You install Day 2 Operators and the remainder of the RAN DU use case configurations after installation.

3.2.3.13. Additional components

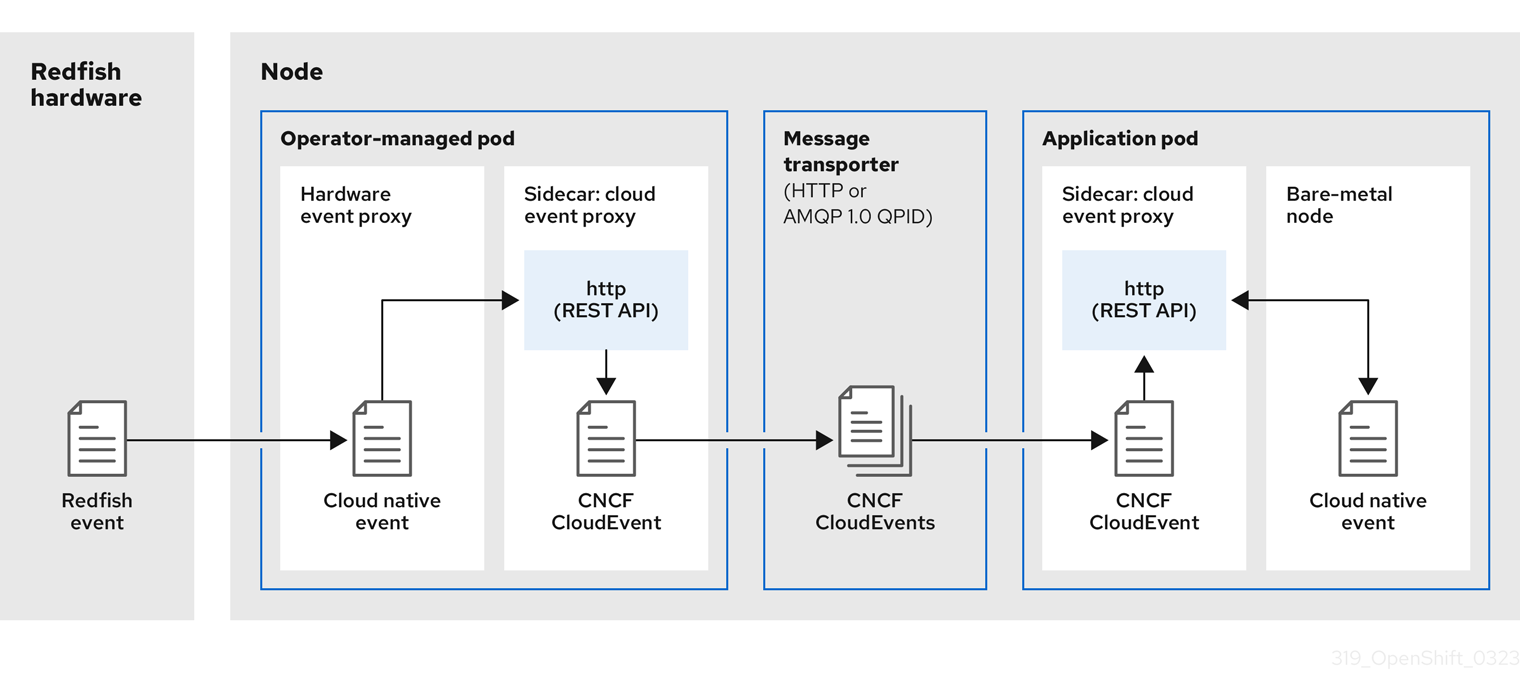

3.2.3.13.1. Bare Metal Event Relay

The Bare Metal Event Relay is an optional Operator that runs exclusively on the managed spoke cluster. It relays Redfish hardware events to cluster applications.

The Bare Metal Event Relay is not included in the RAN DU use model reference configuration and is an optional feature. If you want to use the Bare Metal Event Relay, assign additional CPU resources from the application CPU budget.

3.2.4. Telco RAN distributed unit (DU) reference configuration CRs

Use the following custom resources (CRs) to configure and deploy OpenShift Container Platform clusters with the telco RAN DU profile. Some of the CRs are optional depending on your requirements. CR fields you can change are annotated in the CR with YAML comments.

You can extract the complete set of RAN DU CRs from the ztp-site-generate container image. See Preparing the GitOps ZTP site configuration repository for more information.

3.2.4.1. Day 2 Operators reference CRs

| Component | Reference CR | Optional | New in this release |
|---|---|---|---|
| Cluster logging | | No | No |
| Cluster logging | | No | No |
| Cluster logging | | No | No |
| Cluster logging | | No | No |
| Cluster logging | | No | No |
| Local Storage Operator | | Yes | No |
| Local Storage Operator | | Yes | No |
| Local Storage Operator | | Yes | No |
| Local Storage Operator | | Yes | No |
| Local Storage Operator | | Yes | No |
| Node Tuning Operator | | No | No |
| Node Tuning Operator | | No | No |
| PTP fast event notifications | | Yes | No |
| PTP Operator | | No | No |
| PTP Operator | | No | Yes |
| PTP Operator | | No | No |
| PTP Operator | | No | No |
| PTP Operator | | No | No |
| PTP Operator | | No | No |
| PTP Operator | | No | No |
| SR-IOV FEC Operator | | Yes | No |
| SR-IOV FEC Operator | | Yes | No |
| SR-IOV FEC Operator | | Yes | No |
| SR-IOV FEC Operator | | Yes | No |
| SR-IOV Operator | | No | No |
| SR-IOV Operator | | No | No |
| SR-IOV Operator | | No | No |
| SR-IOV Operator | | No | No |
| SR-IOV Operator | | No | No |
| SR-IOV Operator | | No | No |

3.2.4.2. Cluster tuning reference CRs

| Component | Reference CR | Optional | New in this release |
|---|---|---|---|
| Cluster capabilities | | No | No |
| Disabling network diagnostics | | No | No |
| Monitoring configuration | | No | No |
| OperatorHub | | No | No |
| OperatorHub | | No | No |
| OperatorHub | | No | No |
| OperatorHub | | No | No |

3.2.4.3. Machine configuration reference CRs

| Component | Reference CR | Optional | New in this release |
|---|---|---|---|
| Container runtime (crun) | | No | No |
| Container runtime (crun) | | No | No |
| Disabling CRI-O wipe | | No | No |
| Disabling CRI-O wipe | | No | No |
| Enabling kdump | | No | Yes |
| Enabling kdump | | No | Yes |
| Enabling kdump | | No | No |
| Enabling kdump | | No | No |
| Kubelet configuration and container mount hiding | | No | No |