Advanced networking

Specialized and advanced networking topics in OpenShift Container Platform


Chapter 1. Verifying connectivity to an endpoint

The Cluster Network Operator (CNO) runs a controller, the connectivity check controller, that performs a connection health check between resources within your cluster. By reviewing the results of the health checks, you can diagnose connection problems or eliminate network connectivity as the cause of an issue that you are investigating.

1.1. Connection health checks that are performed

To verify that cluster resources are reachable, a TCP connection is made to each of the following cluster API services:

- Kubernetes API server service

- Kubernetes API server endpoints

- OpenShift API server service

- OpenShift API server endpoints

- Load balancers

To verify that services and service endpoints are reachable on every node in the cluster, a TCP connection is made to each of the following targets:

- Health check target service

- Health check target endpoints

1.2. Implementation of connection health checks

The connectivity check controller orchestrates connection verification checks in your cluster. The results of the connection tests are stored in PodNetworkConnectivityCheck objects in the openshift-network-diagnostics namespace. Connection tests are performed every minute in parallel.

The Cluster Network Operator (CNO) deploys several resources to the cluster to send and receive connectivity health checks:

- Health check source: This program deploys in a single pod replica set managed by a Deployment object. The program consumes PodNetworkConnectivityCheck objects and connects to the spec.targetEndpoint specified in each object.

- Health check target: A pod deployed as part of a daemon set on every node in the cluster. The pod listens for inbound health checks. The presence of this pod on every node allows for the testing of connectivity to each node.

You can use a node selector to configure which nodes the network connectivity sources and targets run on. Additionally, you can specify permissible tolerations for source and target pods. The configuration is defined in the singleton cluster custom resource of the Network API in the config.openshift.io/v1 API group.

Pod scheduling occurs after you have updated the configuration. Therefore, you must apply node labels that you intend to use in your selectors before updating the configuration. Labels applied after updating your network connectivity check pod placement are ignored.

Refer to the default configuration in the following YAML:

Default configuration for connectivity source and target pods

    apiVersion: config.openshift.io/v1
    kind: Network
    metadata:
      name: cluster
    spec:
      # ...
      networkDiagnostics:
        mode: "All"
        sourcePlacement:
          nodeSelector:
            checkNodes: groupA
          tolerations:
          - key: myTaint
            effect: NoSchedule
            operator: Exists
        targetPlacement:
          nodeSelector:
            checkNodes: groupB
          tolerations:
          - key: myOtherTaint
            effect: NoExecute
            operator: Exists

where:

- spec.networkDiagnostics: Specifies the network diagnostics configuration. If a value is not specified or an empty object is specified, and spec.disableNetworkDiagnostics=true is set in the network.operator.openshift.io custom resource named cluster, network diagnostics are disabled. If set, this value overrides spec.disableNetworkDiagnostics=true.

- spec.networkDiagnostics.mode: Specifies the diagnostics mode. The value can be the empty string, All, or Disabled. The empty string is equivalent to specifying All.

- spec.networkDiagnostics.sourcePlacement: Optional: Specifies a selector for connectivity check source pods. You can use the nodeSelector and tolerations fields to further specify the sourceNode pods. These are optional for both source and target pods. You can omit them, use both, or use only one of them.

- spec.networkDiagnostics.targetPlacement: Optional: Specifies a selector for connectivity check target pods. You can use the nodeSelector and tolerations fields to further specify the targetNode pods. These are optional for both source and target pods. You can omit them, use both, or use only one of them.

1.3. Configuring pod connectivity check placement

As a cluster administrator, you can configure which nodes the connectivity check pods run on by modifying the network.config.openshift.io object named cluster.

Prerequisites

- Install the OpenShift CLI (oc).

Procedure

- Edit the connectivity check configuration by entering the following command:

      $ oc edit network.config.openshift.io cluster

- In the text editor, update the networkDiagnostics stanza to specify the node selectors that you want for the source and target pods.

- Save your changes and exit the text editor.

Verification

- Verify that the source and target pods are running on the intended nodes by entering the following command:

      $ oc get pods -n openshift-network-diagnostics -o wide

  Example output

      NAME                                    READY   STATUS    RESTARTS   AGE   IP           NODE                                        NOMINATED NODE   READINESS GATES
      network-check-source-84c69dbd6b-p8f7n   1/1     Running   0          9h    10.131.0.8   ip-10-0-40-197.us-east-2.compute.internal   <none>           <none>
      network-check-target-46pct              1/1     Running   0          9h    10.131.0.6   ip-10-0-40-197.us-east-2.compute.internal   <none>           <none>
      network-check-target-8kwgf              1/1     Running   0          9h    10.128.2.4   ip-10-0-95-74.us-east-2.compute.internal    <none>           <none>
      network-check-target-jc6n7              1/1     Running   0          9h    10.129.2.4   ip-10-0-21-151.us-east-2.compute.internal   <none>           <none>
      network-check-target-lvwnn              1/1     Running   0          9h    10.128.0.7   ip-10-0-17-129.us-east-2.compute.internal   <none>           <none>
      network-check-target-nslvj              1/1     Running   0          9h    10.130.0.7   ip-10-0-89-148.us-east-2.compute.internal   <none>           <none>
      network-check-target-z2sfx              1/1     Running   0          9h    10.129.0.4   ip-10-0-60-253.us-east-2.compute.internal   <none>           <none>

1.4. PodNetworkConnectivityCheck object fields

The PodNetworkConnectivityCheck object fields are described in the following tables.

| Field | Type | Description |
|---|---|---|
| metadata.name | string | The name of the object in the following format: <source>-to-<target>. |
| metadata.namespace | string | The namespace that the object is associated with. This value is always openshift-network-diagnostics. |
| spec.sourcePod | string | The name of the pod where the connection check originates, such as network-check-source-7c88f6d9f-hmg2f. |
| spec.targetEndpoint | string | The target of the connection check, such as 10.0.0.4:6443. |
| spec.tlsClientCert | object | Configuration for the TLS certificate to use. |
| spec.tlsClientCert.name | string | The name of the TLS certificate used, if any. The default value is an empty string. |
| status | object | An object representing the condition of the connection test and logs of recent connection successes and failures. |
| status.conditions | array | The latest status of the connection check and any previous statuses. |
| status.failures | array | Connection test logs from unsuccessful attempts. |
| status.outages | array | Connection test logs covering the time periods of any outages. |
| status.successes | array | Connection test logs from successful attempts. |

The following table describes the fields for objects in the status.conditions array:

| Field | Type | Description |
|---|---|---|
| lastTransitionTime | string | The time that the condition of the connection transitioned from one status to another. |
| message | string | The details about the last transition in a human readable format. |
| reason | string | The last status of the transition in a machine readable format. |
| status | string | The status of the condition. |
| type | string | The type of the condition. |

The following table describes the fields for objects in the status.outages array:

| Field | Type | Description |
|---|---|---|
| end | string | The timestamp from when the connection failure is resolved. |
| endLogs | array | Connection log entries, including the log entry related to the successful end of the outage. |
| message | string | A summary of outage details in a human readable format. |
| start | string | The timestamp from when the connection failure is first detected. |
| startLogs | array | Connection log entries, including the original failure. |

1.4.1. Connection log fields

The fields for a connection log entry are described in the following table. The object is used in the following fields:

- status.failures[]
- status.successes[]
- status.outages[].startLogs[]
- status.outages[].endLogs[]

| Field | Type | Description |
|---|---|---|
| latency | string | Records the duration of the action. |
| message | string | Provides the status in a human readable format. |
| reason | string | Provides the reason for status in a machine readable format. The value is one of TCPConnect, TCPConnectError, DNSResolve, DNSError. |
| success | boolean | Indicates if the log entry is a success or failure. |
| time | string | The start time of the connection check. |

1.5. Verifying network connectivity for an endpoint

As a cluster administrator, you can verify the connectivity of an endpoint, such as an API server, load balancer, service, or pod, and verify that network diagnostics is enabled.

Prerequisites

- Install the OpenShift CLI (oc).

- Access to the cluster as a user with the cluster-admin role.

Procedure

- Confirm that network diagnostics are enabled by entering the following command:

      $ oc get network.config.openshift.io cluster -o yaml

  Example output

      # ...
      status:
        # ...
        conditions:
        - lastTransitionTime: "2024-05-27T08:28:39Z"
          message: ""
          reason: AsExpected
          status: "True"
          type: NetworkDiagnosticsAvailable

- List the current PodNetworkConnectivityCheck objects by entering the following command:

      $ oc get podnetworkconnectivitycheck -n openshift-network-diagnostics

  Example output

      NAME   AGE
      network-check-source-ci-ln-x5sv9rb-f76d1-4rzrp-worker-b-6xdmh-to-kubernetes-apiserver-endpoint-ci-ln-x5sv9rb-f76d1-4rzrp-master-0   75m
      network-check-source-ci-ln-x5sv9rb-f76d1-4rzrp-worker-b-6xdmh-to-kubernetes-apiserver-endpoint-ci-ln-x5sv9rb-f76d1-4rzrp-master-1   73m
      network-check-source-ci-ln-x5sv9rb-f76d1-4rzrp-worker-b-6xdmh-to-kubernetes-apiserver-endpoint-ci-ln-x5sv9rb-f76d1-4rzrp-master-2   75m
      network-check-source-ci-ln-x5sv9rb-f76d1-4rzrp-worker-b-6xdmh-to-kubernetes-apiserver-service-cluster   75m
      network-check-source-ci-ln-x5sv9rb-f76d1-4rzrp-worker-b-6xdmh-to-kubernetes-default-service-cluster   75m
      network-check-source-ci-ln-x5sv9rb-f76d1-4rzrp-worker-b-6xdmh-to-load-balancer-api-external   75m
      network-check-source-ci-ln-x5sv9rb-f76d1-4rzrp-worker-b-6xdmh-to-load-balancer-api-internal   75m
      network-check-source-ci-ln-x5sv9rb-f76d1-4rzrp-worker-b-6xdmh-to-network-check-target-ci-ln-x5sv9rb-f76d1-4rzrp-master-0   75m
      network-check-source-ci-ln-x5sv9rb-f76d1-4rzrp-worker-b-6xdmh-to-network-check-target-ci-ln-x5sv9rb-f76d1-4rzrp-master-1   75m
      network-check-source-ci-ln-x5sv9rb-f76d1-4rzrp-worker-b-6xdmh-to-network-check-target-ci-ln-x5sv9rb-f76d1-4rzrp-master-2   75m
      network-check-source-ci-ln-x5sv9rb-f76d1-4rzrp-worker-b-6xdmh-to-network-check-target-ci-ln-x5sv9rb-f76d1-4rzrp-worker-b-6xdmh   74m
      network-check-source-ci-ln-x5sv9rb-f76d1-4rzrp-worker-b-6xdmh-to-network-check-target-ci-ln-x5sv9rb-f76d1-4rzrp-worker-c-n8mbf   74m
      network-check-source-ci-ln-x5sv9rb-f76d1-4rzrp-worker-b-6xdmh-to-network-check-target-ci-ln-x5sv9rb-f76d1-4rzrp-worker-d-4hnrz   74m
      network-check-source-ci-ln-x5sv9rb-f76d1-4rzrp-worker-b-6xdmh-to-network-check-target-service-cluster   75m
      network-check-source-ci-ln-x5sv9rb-f76d1-4rzrp-worker-b-6xdmh-to-openshift-apiserver-endpoint-ci-ln-x5sv9rb-f76d1-4rzrp-master-0   75m
      network-check-source-ci-ln-x5sv9rb-f76d1-4rzrp-worker-b-6xdmh-to-openshift-apiserver-endpoint-ci-ln-x5sv9rb-f76d1-4rzrp-master-1   75m
      network-check-source-ci-ln-x5sv9rb-f76d1-4rzrp-worker-b-6xdmh-to-openshift-apiserver-endpoint-ci-ln-x5sv9rb-f76d1-4rzrp-master-2   74m
      network-check-source-ci-ln-x5sv9rb-f76d1-4rzrp-worker-b-6xdmh-to-openshift-apiserver-service-cluster   75m

- View the connection test logs:

  - From the output of the previous command, identify the endpoint that you want to review the connectivity logs for.

  - View the object by entering the following command:

        $ oc get podnetworkconnectivitycheck <name> \
          -n openshift-network-diagnostics -o yaml

    where <name> specifies the name of the PodNetworkConnectivityCheck object.

    Example output

        apiVersion: controlplane.operator.openshift.io/v1alpha1
        kind: PodNetworkConnectivityCheck
        metadata:
          name: network-check-source-ci-ln-x5sv9rb-f76d1-4rzrp-worker-b-6xdmh-to-kubernetes-apiserver-endpoint-ci-ln-x5sv9rb-f76d1-4rzrp-master-0
          namespace: openshift-network-diagnostics
          ...
        spec:
          sourcePod: network-check-source-7c88f6d9f-hmg2f
          targetEndpoint: 10.0.0.4:6443
          tlsClientCert:
            name: ""
        status:
          conditions:
          - lastTransitionTime: "2021-01-13T20:11:34Z"
            message: 'kubernetes-apiserver-endpoint-ci-ln-x5sv9rb-f76d1-4rzrp-master-0: tcp connection to 10.0.0.4:6443 succeeded'
            reason: TCPConnectSuccess
            status: "True"
            type: Reachable
          failures:
          - latency: 2.241775ms
            message: 'kubernetes-apiserver-endpoint-ci-ln-x5sv9rb-f76d1-4rzrp-master-0: failed to establish a TCP connection to 10.0.0.4:6443: dial tcp 10.0.0.4:6443: connect: connection refused'
            reason: TCPConnectError
            success: false
            time: "2021-01-13T20:10:34Z"
          - latency: 2.582129ms
            message: 'kubernetes-apiserver-endpoint-ci-ln-x5sv9rb-f76d1-4rzrp-master-0: failed to establish a TCP connection to 10.0.0.4:6443: dial tcp 10.0.0.4:6443: connect: connection refused'
            reason: TCPConnectError
            success: false
            time: "2021-01-13T20:09:34Z"
          - latency: 3.483578ms
            message: 'kubernetes-apiserver-endpoint-ci-ln-x5sv9rb-f76d1-4rzrp-master-0: failed to establish a TCP connection to 10.0.0.4:6443: dial tcp 10.0.0.4:6443: connect: connection refused'
            reason: TCPConnectError
            success: false
            time: "2021-01-13T20:08:34Z"
          outages:
          - end: "2021-01-13T20:11:34Z"
            endLogs:
            - latency: 2.032018ms
              message: 'kubernetes-apiserver-endpoint-ci-ln-x5sv9rb-f76d1-4rzrp-master-0: tcp connection to 10.0.0.4:6443 succeeded'
              reason: TCPConnect
              success: true
              time: "2021-01-13T20:11:34Z"
            - latency: 2.241775ms
              message: 'kubernetes-apiserver-endpoint-ci-ln-x5sv9rb-f76d1-4rzrp-master-0: failed to establish a TCP connection to 10.0.0.4:6443: dial tcp 10.0.0.4:6443: connect: connection refused'
              reason: TCPConnectError
              success: false
              time: "2021-01-13T20:10:34Z"
            - latency: 2.582129ms
              message: 'kubernetes-apiserver-endpoint-ci-ln-x5sv9rb-f76d1-4rzrp-master-0: failed to establish a TCP connection to 10.0.0.4:6443: dial tcp 10.0.0.4:6443: connect: connection refused'
              reason: TCPConnectError
              success: false
              time: "2021-01-13T20:09:34Z"
            - latency: 3.483578ms
              message: 'kubernetes-apiserver-endpoint-ci-ln-x5sv9rb-f76d1-4rzrp-master-0: failed to establish a TCP connection to 10.0.0.4:6443: dial tcp 10.0.0.4:6443: connect: connection refused'
              reason: TCPConnectError
              success: false
              time: "2021-01-13T20:08:34Z"
            message: Connectivity restored after 2m59.999789186s
            start: "2021-01-13T20:08:34Z"
            startLogs:
            - latency: 3.483578ms
              message: 'kubernetes-apiserver-endpoint-ci-ln-x5sv9rb-f76d1-4rzrp-master-0: failed to establish a TCP connection to 10.0.0.4:6443: dial tcp 10.0.0.4:6443: connect: connection refused'
              reason: TCPConnectError
              success: false
              time: "2021-01-13T20:08:34Z"
          successes:
          - latency: 2.845865ms
            message: 'kubernetes-apiserver-endpoint-ci-ln-x5sv9rb-f76d1-4rzrp-master-0: tcp connection to 10.0.0.4:6443 succeeded'
            reason: TCPConnect
            success: true
            time: "2021-01-13T21:14:34Z"
          - latency: 2.926345ms
            message: 'kubernetes-apiserver-endpoint-ci-ln-x5sv9rb-f76d1-4rzrp-master-0: tcp connection to 10.0.0.4:6443 succeeded'
            reason: TCPConnect
            success: true
            time: "2021-01-13T21:13:34Z"
          - latency: 2.895796ms
            message: 'kubernetes-apiserver-endpoint-ci-ln-x5sv9rb-f76d1-4rzrp-master-0: tcp connection to 10.0.0.4:6443 succeeded'
            reason: TCPConnect
            success: true
            time: "2021-01-13T21:12:34Z"
          - latency: 2.696844ms
            message: 'kubernetes-apiserver-endpoint-ci-ln-x5sv9rb-f76d1-4rzrp-master-0: tcp connection to 10.0.0.4:6443 succeeded'
            reason: TCPConnect
            success: true
            time: "2021-01-13T21:11:34Z"
          - latency: 1.502064ms
            message: 'kubernetes-apiserver-endpoint-ci-ln-x5sv9rb-f76d1-4rzrp-master-0: tcp connection to 10.0.0.4:6443 succeeded'
            reason: TCPConnect
            success: true
            time: "2021-01-13T21:10:34Z"
          - latency: 1.388857ms
            message: 'kubernetes-apiserver-endpoint-ci-ln-x5sv9rb-f76d1-4rzrp-master-0: tcp connection to 10.0.0.4:6443 succeeded'
            reason: TCPConnect
            success: true
            time: "2021-01-13T21:09:34Z"
          - latency: 1.906383ms
            message: 'kubernetes-apiserver-endpoint-ci-ln-x5sv9rb-f76d1-4rzrp-master-0: tcp connection to 10.0.0.4:6443 succeeded'
            reason: TCPConnect
            success: true
            time: "2021-01-13T21:08:34Z"
          - latency: 2.089073ms
            message: 'kubernetes-apiserver-endpoint-ci-ln-x5sv9rb-f76d1-4rzrp-master-0: tcp connection to 10.0.0.4:6443 succeeded'
            reason: TCPConnect
            success: true
            time: "2021-01-13T21:07:34Z"
          - latency: 2.156994ms
            message: 'kubernetes-apiserver-endpoint-ci-ln-x5sv9rb-f76d1-4rzrp-master-0: tcp connection to 10.0.0.4:6443 succeeded'
            reason: TCPConnect
            success: true
            time: "2021-01-13T21:06:34Z"
          - latency: 1.777043ms
            message: 'kubernetes-apiserver-endpoint-ci-ln-x5sv9rb-f76d1-4rzrp-master-0: tcp connection to 10.0.0.4:6443 succeeded'
            reason: TCPConnect
            success: true
            time: "2021-01-13T21:05:34Z"
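When a PodNetworkConnectivityCheck object carries many log entries, a short script can make the status easier to read. The following Python sketch is illustrative only: it assumes that the status section of the object has already been loaded into a dictionary (for example, from JSON output), and the function names parse_time and summarize are hypothetical helpers, not part of any OpenShift tooling. It relies only on the status.conditions, status.failures, status.successes, and status.outages fields described in this chapter.

```python
from datetime import datetime


def parse_time(value):
    """Parse a UTC timestamp such as "2021-01-13T20:08:34Z"."""
    return datetime.strptime(value, "%Y-%m-%dT%H:%M:%SZ")


def summarize(status):
    """Summarize the status mapping of a PodNetworkConnectivityCheck object.

    Hypothetical helper: the input is assumed to mirror the status fields
    shown in the example output (conditions, failures, successes, outages).
    """
    outages = []
    for outage in status.get("outages", []):
        end = outage.get("end")
        seconds = None
        if end:  # an outage that is still ongoing might have no end time
            seconds = (parse_time(end) - parse_time(outage["start"])).total_seconds()
        outages.append({"start": outage["start"], "end": end, "seconds": seconds})

    reachable = any(
        cond.get("type") == "Reachable" and cond.get("status") == "True"
        for cond in status.get("conditions", [])
    )
    return {
        "reachable": reachable,
        "failures": len(status.get("failures", [])),
        "successes": len(status.get("successes", [])),
        "outages": outages,
    }


# Abbreviated sample data taken from the example output above.
sample_status = {
    "conditions": [{"type": "Reachable", "status": "True"}],
    "failures": [
        {"success": False, "time": "2021-01-13T20:10:34Z"},
        {"success": False, "time": "2021-01-13T20:09:34Z"},
        {"success": False, "time": "2021-01-13T20:08:34Z"},
    ],
    "successes": [{"success": True, "time": "2021-01-13T21:14:34Z"}],
    "outages": [{"start": "2021-01-13T20:08:34Z", "end": "2021-01-13T20:11:34Z"}],
}

summary = summarize(sample_status)
print(summary)
```

For the sample data, the single outage spans the three minutes between the start and end timestamps, which is consistent with the "Connectivity restored" message in the example output.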

Chapter 2. Changing the MTU for the cluster network

As a cluster administrator, you can change the maximum transmission unit (MTU) for the cluster network after cluster installation. This change is disruptive as cluster nodes must be rebooted to finalize the MTU change.

2.1. About the cluster MTU

During installation, the cluster network maximum transmission unit (MTU) is set automatically based on the primary network interface MTU of cluster nodes. Typically, you do not need to override the detected MTU, but in some instances you must override it.

You might want to change the MTU of the cluster network for one of the following reasons:

- The MTU detected during cluster installation is not correct for your infrastructure.

- Your cluster infrastructure now requires a different MTU, such as from the addition of nodes that need a different MTU for optimal performance.

Only the OVN-Kubernetes network plugin supports changing the MTU value.

2.1.1. Service interruption considerations

When you initiate a maximum transmission unit (MTU) change on your cluster, the following effects might impact service availability:

- At least two rolling reboots are required to complete the migration to a new MTU. During this time, some nodes are not available as they restart.

- Specific applications deployed to the cluster with shorter timeout intervals than the absolute TCP timeout interval might experience disruption during the MTU change.

2.1.2. MTU value selection

When planning your maximum transmission unit (MTU) migration, there are two related but distinct MTU values to consider.

- Hardware MTU: This MTU value is set based on the specifics of your network infrastructure.

- Cluster network MTU: This MTU value is always less than your hardware MTU to account for the cluster network overlay overhead. The specific overhead is determined by your network plugin. For OVN-Kubernetes, the overhead is 100 bytes.

If your cluster requires different MTU values for different nodes, you must subtract the overhead value for your network plugin from the lowest MTU value that is used by any node in your cluster. For example, if some nodes in your cluster have an MTU of 9001, and some have an MTU of 1500, you must set the cluster network MTU to 1400.

To avoid selecting an MTU value that a node does not accept, verify the maximum MTU value (maxmtu) that is accepted by the network interface by using the ip -d link command.
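The selection rule above is simple arithmetic, sketched here in Python for illustration. The function name cluster_network_mtu is hypothetical; the 100-byte overhead is the OVN-Kubernetes value stated in this section.

```python
OVN_KUBERNETES_OVERHEAD = 100  # bytes of overlay overhead for OVN-Kubernetes


def cluster_network_mtu(hardware_mtus, overhead=OVN_KUBERNETES_OVERHEAD):
    """Return the cluster network MTU for a set of node hardware MTUs.

    The lowest hardware MTU in the cluster limits the result, so the
    overhead is subtracted from the minimum value.
    """
    if not hardware_mtus:
        raise ValueError("at least one hardware MTU is required")
    return min(hardware_mtus) - overhead


# Nodes with hardware MTUs of 9001 and 1500, as in the example above.
print(cluster_network_mtu([9001, 1500]))  # 1400
```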

2.1.3. How the migration process works

The following table summarizes the migration process, separating the user-initiated steps from the actions that the migration performs in response.

| User-initiated steps | OpenShift Container Platform activity |
|---|---|
| Set the following values in the Cluster Network Operator configuration: spec.migration.mtu.machine.to, spec.migration.mtu.network.from, and spec.migration.mtu.network.to. | Cluster Network Operator (CNO): Confirms that each field is set to a valid value. If the values provided are valid, the CNO writes out a new temporary configuration with the MTU for the cluster network set to the value of the spec.migration.mtu.network.to field. Machine Config Operator (MCO): Performs a rolling reboot of each node in the cluster. |
| Reconfigure the MTU of the primary network interface for the nodes on the cluster. You can use one of the following methods to accomplish this: a NetworkManager connection configuration, a DHCP server option, or a kernel command line with PXE. | N/A |
| Set the mtu value in the CNO configuration for the network plugin and set spec.migration to null. | Machine Config Operator (MCO): Performs a rolling reboot of each node in the cluster with the new MTU configuration. |

2.2. Changing the cluster network MTU

As a cluster administrator, you can increase or decrease the maximum transmission unit (MTU) for your cluster.

You cannot roll back an MTU value for nodes during the MTU migration process, but you can roll back the value after the MTU migration process completes.

The migration is disruptive and nodes in your cluster might be temporarily unavailable as the MTU update takes effect.

The following procedures describe how to change the cluster network MTU by using machine configs, Dynamic Host Configuration Protocol (DHCP), or an ISO image. If you use either the DHCP or ISO approaches, you must refer to configuration artifacts that you kept after installing your cluster to complete the procedure.

Prerequisites

- You have installed the OpenShift CLI (oc).

- You have access to the cluster using an account with cluster-admin permissions.

- You have identified the target MTU for your cluster. The MTU for the OVN-Kubernetes network plugin must be set to 100 less than the lowest hardware MTU value in your cluster.

- If your nodes are physical machines, ensure that the cluster network and the connected network switches support jumbo frames.

- If your nodes are virtual machines (VMs), ensure that the hypervisor and the connected network switches support jumbo frames.

2.2.1. Checking the current cluster MTU value

To ensure network stability and performance in a hybrid environment where part of your cluster is in the cloud and part is an on-premise environment, you can obtain the current maximum transmission unit (MTU) for the cluster network.

Procedure

To obtain the current MTU for the cluster network, enter the following command:

    $ oc describe network.config cluster

Example output

    ...
    Status:
      Cluster Network:
        Cidr:               10.217.0.0/22
        Host Prefix:        23
      Cluster Network MTU:  1400
      Network Type:         OVNKubernetes
      Service Network:
        10.217.4.0/23
    ...

2.2.2. Preparing your hardware MTU configuration

To maintain network stability during an MTU change, you must prepare the configuration for your underlying hardware using a method such as DHCP, PXE, or NetworkManager. This preparation ensures that all cluster nodes are ready to accept the new MTU value before you apply the changes to the cluster network.

Procedure

Prepare your configuration for the hardware MTU:

- If your hardware MTU is specified with DHCP, update your DHCP configuration such as with the following dnsmasq configuration:

      dhcp-option-force=26,<mtu>

  where:

  - <mtu>: Specifies the hardware MTU for the DHCP server to advertise.

- If your hardware MTU is specified with a kernel command line with PXE, update that configuration accordingly.

- If your hardware MTU is specified in a NetworkManager connection configuration, complete the following steps. This approach is the default for OpenShift Container Platform if you do not explicitly specify your network configuration with DHCP, a kernel command line, or some other method. Your cluster nodes must all use the same underlying network configuration for the following procedure to work unmodified.

  - Find the primary network interface by entering the following command:

        $ oc debug node/<node_name> -- chroot /host nmcli -g connection.interface-name c show ovs-if-phys0

    where:

    - <node_name>: Specifies the name of a node in your cluster.

  - Create the following NetworkManager configuration in the <interface>-mtu.conf file:

        [connection-<interface>-mtu]
        match-device=interface-name:<interface>
        ethernet.mtu=<mtu>

    where:

    - <interface>: Specifies the primary network interface name.
    - <mtu>: Specifies the new hardware MTU value.

- If you used Kubernetes NMState to configure the br-ex bridge, use the Kubernetes NMState Operator to update the MTU for the br-ex bridge. Changing the MTU for this bridge in a .nmconnection file could lead to persistence issues as the Machine Config Operator (MCO) might overwrite the file.
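If you manage several interface names or MTU values, you might prefer to generate the NetworkManager configuration file described in this section rather than edit it by hand. The following Python sketch is one possible way to do that; the function names and the ens3 interface name are hypothetical and are used only for illustration. The file layout follows the <interface>-mtu.conf template shown earlier in this procedure.

```python
def conf_filename(interface):
    """Return the local file name used for the configuration file."""
    return f"{interface}-mtu.conf"


def render_mtu_conf(interface, mtu):
    """Render the NetworkManager configuration for a hardware MTU change."""
    if mtu <= 0:
        raise ValueError("the MTU must be a positive integer")
    return (
        f"[connection-{interface}-mtu]\n"
        f"match-device=interface-name:{interface}\n"
        f"ethernet.mtu={mtu}\n"
    )


# "ens3" is a placeholder; use the interface name reported for your nodes.
interface = "ens3"
content = render_mtu_conf(interface, 9100)
with open(conf_filename(interface), "w") as handle:
    handle.write(content)
print(content, end="")
```

The sketch writes the file to the current working directory so that a later step can pick it up by its local file name.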

2.2.3. Creating MachineConfig objects

To prepare your nodes for a hardware MTU change, you must create MachineConfig objects for both control plane and compute nodes. Creating these objects ensures that the updated network interface settings are ready for deployment without causing immediate cluster instability.

Procedure

- Create two MachineConfig objects, one for the control plane nodes and another for the worker nodes in your cluster:

  - Create the following Butane config in the control-plane-interface.bu file:

    Note: The Butane version you specify in the config file should match the OpenShift Container Platform version and always ends in 0. For example, 4.19.0. See "Creating machine configs with Butane" for information about Butane.

        variant: openshift
        version: 4.19.0
        metadata:
          name: 01-control-plane-interface
          labels:
            machineconfiguration.openshift.io/role: master
        storage:
          files:
            - path: /etc/NetworkManager/conf.d/99-<interface>-mtu.conf
              contents:
                local: <interface>-mtu.conf
              mode: 0600

    where:

    - storage.files.path: Specifies the NetworkManager connection name for the primary network interface.
    - storage.files.local: Specifies the local filename for the updated NetworkManager configuration file from an earlier step.

  - Create the following Butane config in the worker-interface.bu file:

    Note: The Butane version you specify in the config file should match the OpenShift Container Platform version and always ends in 0. For example, 4.19.0. See "Creating machine configs with Butane" for information about Butane.

        variant: openshift
        version: 4.19.0
        metadata:
          name: 01-worker-interface
          labels:
            machineconfiguration.openshift.io/role: worker
        storage:
          files:
            - path: /etc/NetworkManager/conf.d/99-<interface>-mtu.conf
              contents:
                local: <interface>-mtu.conf
              mode: 0600

    where:

    - storage.files.path: Specifies the NetworkManager connection name for the primary network interface.
    - storage.files.local: Specifies the local filename for the updated NetworkManager configuration file from an earlier step.

- Create MachineConfig objects from the Butane configs by running the following command:

      $ for manifest in control-plane-interface worker-interface; do
          butane --files-dir . $manifest.bu > $manifest.yaml
        done

  Warning: Do not apply these machine configs until explicitly instructed later in this procedure. Applying these machine configs now causes a loss of stability for the cluster.

2.2.4. Beginning the MTU migration

Start the maximum transmission unit (MTU) migration by specifying the migration configuration for the cluster network and machine interfaces. The Machine Config Operator performs a rolling reboot of the nodes to prepare the cluster for the MTU change.

Procedure

- To begin the MTU migration, specify the migration configuration by entering the following command. The Machine Config Operator performs a rolling reboot of the nodes in the cluster in preparation for the MTU change.

      $ oc patch Network.operator.openshift.io cluster --type=merge --patch \
        '{"spec": { "migration": { "mtu": { "network": { "from": <overlay_from>, "to": <overlay_to> } , "machine": { "to" : <machine_to> } } } } }'

  where:

  - <overlay_from>: Specifies the current cluster network MTU value.
  - <overlay_to>: Specifies the target MTU for the cluster network. This value is set relative to the value of <machine_to>. For OVN-Kubernetes, this value must be 100 less than the value of <machine_to>.
  - <machine_to>: Specifies the MTU for the primary network interface on the underlying host network.

  For example, the following command increases the cluster network MTU from 1400 to 9000:

      $ oc patch Network.operator.openshift.io cluster --type=merge --patch \
        '{"spec": { "migration": { "mtu": { "network": { "from": 1400, "to": 9000 } , "machine": { "to" : 9100} } } } }'

- As the Machine Config Operator updates machines in each machine config pool, the Operator reboots each node one by one. You must wait until all the nodes are updated. Check the machine config pool status by entering the following command:

      $ oc get machineconfigpools

  A successfully updated node has the following status: UPDATED=true, UPDATING=false, DEGRADED=false.

  Note: By default, the Machine Config Operator updates one machine per pool at a time, causing the total time the migration takes to increase with the size of the cluster.
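Because the three MTU values in the migration patch must be consistent with each other, it can help to build the patch programmatically and check the relationship before you run the command. The following Python sketch is an illustration under stated assumptions: the function name build_migration_patch is hypothetical, and the check encodes only the OVN-Kubernetes rule from this section that the target cluster network MTU is 100 less than the machine MTU.

```python
import json

OVN_KUBERNETES_OVERHEAD = 100  # bytes, per the OVN-Kubernetes rule above


def build_migration_patch(overlay_from, overlay_to, machine_to):
    """Build the merge patch that begins the MTU migration."""
    if overlay_to != machine_to - OVN_KUBERNETES_OVERHEAD:
        raise ValueError(
            f"overlay_to must be {OVN_KUBERNETES_OVERHEAD} less than machine_to"
        )
    return {
        "spec": {
            "migration": {
                "mtu": {
                    "network": {"from": overlay_from, "to": overlay_to},
                    "machine": {"to": machine_to},
                }
            }
        }
    }


# Values from the example command: 1400 -> 9000 with a machine MTU of 9100.
patch = build_migration_patch(1400, 9000, 9100)
print(json.dumps(patch))
```

The printed JSON can be passed as the value of the --patch option in the command shown in this procedure.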

2.2.5. Verifying the machine configuration

Verify the machine configuration on your hosts to confirm that the maximum transmission unit (MTU) migration applied successfully. Checking the configuration state and system settings helps ensure that the nodes use the correct migration script.

Procedure

- Confirm the status of the new machine configuration on the hosts:

  - To list the machine configuration state and the name of the applied machine configuration, enter the following command:

        $ oc describe node | egrep "hostname|machineconfig"

    Example output

        kubernetes.io/hostname=master-0
        machineconfiguration.openshift.io/currentConfig: rendered-master-c53e221d9d24e1c8bb6ee89dd3d8ad7b
        machineconfiguration.openshift.io/desiredConfig: rendered-master-c53e221d9d24e1c8bb6ee89dd3d8ad7b
        machineconfiguration.openshift.io/reason:
        machineconfiguration.openshift.io/state: Done

  - Verify that the following statements are true:

    - The value of the machineconfiguration.openshift.io/state field is Done.
    - The value of the machineconfiguration.openshift.io/currentConfig field is equal to the value of the machineconfiguration.openshift.io/desiredConfig field.

  - To confirm that the machine config is correct, enter the following command:

        $ oc get machineconfig <config_name> -o yaml | grep ExecStart

    where:

    - <config_name>: Specifies the name of the machine config from the machineconfiguration.openshift.io/currentConfig field.

    The machine config must include the following update to the systemd configuration:

        ExecStart=/usr/local/bin/mtu-migration.sh
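The two conditions checked in this procedure can be expressed as a small predicate. The following Python sketch assumes that the node annotations have already been collected into a dictionary keyed by annotation name; the function name node_config_applied is hypothetical.

```python
PREFIX = "machineconfiguration.openshift.io/"


def node_config_applied(annotations):
    """Return True when a node reports that its desired config is applied.

    Both conditions from the procedure must hold: the state is Done, and
    the current config equals the desired config.
    """
    state = annotations.get(PREFIX + "state")
    current = annotations.get(PREFIX + "currentConfig")
    desired = annotations.get(PREFIX + "desiredConfig")
    return state == "Done" and current is not None and current == desired


# Annotation values taken from the example output in this procedure.
annotations = {
    PREFIX + "currentConfig": "rendered-master-c53e221d9d24e1c8bb6ee89dd3d8ad7b",
    PREFIX + "desiredConfig": "rendered-master-c53e221d9d24e1c8bb6ee89dd3d8ad7b",
    PREFIX + "state": "Done",
}
print(node_config_applied(annotations))  # True
```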

2.2.6. Applying the new hardware MTU value

To ensure consistent network communication across your cluster, you must apply the new hardware maximum transmission unit (MTU) value to your nodes. This process involves updating the underlying network interfaces and verifying that the Machine Config Operator successfully reboots and updates each node.

Procedure

Update the underlying network interface MTU value:

If you are specifying the new MTU with a NetworkManager connection configuration, enter the following command. The MachineConfig Operator automatically performs a rolling reboot of the nodes in your cluster.

$ for manifest in control-plane-interface worker-interface; do oc create -f $manifest.yaml done- If you are specifying the new MTU with a DHCP server option or a kernel command line and PXE, make the necessary changes for your infrastructure.

As the Machine Config Operator updates machines in each machine config pool, the Operator reboots each node one by one. You must wait until all the nodes are updated. Check the machine config pool status by entering the following command:

$ oc get machineconfigpoolsA successfully updated node has the following status:

UPDATED=true,UPDATING=false,DEGRADED=false.NoteBy default, the Machine Config Operator updates one machine per pool at a time, causing the total time the migration takes to increase with the size of the cluster.

Confirm the status of the new machine configuration on the hosts:

To list the machine configuration state and the name of the applied machine configuration, enter the following command:

$ oc describe node | egrep "hostname|machineconfig"Example output

kubernetes.io/hostname=master-0 machineconfiguration.openshift.io/currentConfig: rendered-master-c53e221d9d24e1c8bb6ee89dd3d8ad7b machineconfiguration.openshift.io/desiredConfig: rendered-master-c53e221d9d24e1c8bb6ee89dd3d8ad7b machineconfiguration.openshift.io/reason: machineconfiguration.openshift.io/state: DoneVerify that the following statements are true:

-

The value of

machineconfiguration.openshift.io/statefield isDone. -

The value of the

machineconfiguration.openshift.io/currentConfigfield is equal to the value of themachineconfiguration.openshift.io/desiredConfigfield.

-

The value of

To confirm that the machine config is correct, enter the following command:

$ oc get machineconfig <config_name> -o yaml | grep path:

where:

<config_name>: Specifies the name of the machine config from the machineconfiguration.openshift.io/currentConfig field.

If the machine config is successfully deployed, the previous output contains the /etc/NetworkManager/conf.d/99-<interface>-mtu.conf file path and the ExecStart=/usr/local/bin/mtu-migration.sh line.

2.2.7. Finalizing the MTU migration

Finalize the MTU migration to apply the new maximum transmission unit (MTU) settings to the OVN-Kubernetes network plugin. This updates the cluster configuration and triggers a rolling reboot of the nodes to complete the process.

Procedure

To finalize the MTU migration, enter the following command for the OVN-Kubernetes network plugin:

$ oc patch Network.operator.openshift.io cluster --type=merge --patch \
  '{"spec": { "migration": null, "defaultNetwork":{ "ovnKubernetesConfig": { "mtu": <mtu> }}}}'

where:

<mtu>: Specifies the new cluster network MTU that you specified with <overlay_to>.

After finalizing the MTU migration, each machine config pool node is rebooted one by one. You must wait until all the nodes are updated. Check the machine config pool status by entering the following command:

$ oc get machineconfigpools

A successfully updated node has the following status: UPDATED=true, UPDATING=false, DEGRADED=false.
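The JSON merge patch that finalizes the migration is easy to mistype by hand. As a minimal sketch, the following Python function builds the same patch body for a given MTU. The function name and the value 9000 are illustrative assumptions. The null value for migration is what clears the migration settings.

```python
import json


def finalize_mtu_patch(mtu: int) -> str:
    """Build the merge patch that clears migration and sets the OVN-Kubernetes MTU."""
    if isinstance(mtu, bool) or not isinstance(mtu, int) or mtu <= 0:
        raise ValueError("mtu must be a positive integer")
    patch = {
        "spec": {
            "migration": None,  # serialized as JSON null
            "defaultNetwork": {"ovnKubernetesConfig": {"mtu": mtu}},
        }
    }
    return json.dumps(patch)


if __name__ == "__main__":
    # 9000 is a placeholder; use the MTU chosen for your cluster network.
    print(finalize_mtu_patch(9000))
```

The printed string can be passed as the value of the --patch option in the oc patch command shown in this procedure.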

Verification

To get the current MTU for the cluster network, enter the following command:

$ oc describe network.config cluster

Get the current MTU for the primary network interface of a node:

To list the nodes in your cluster, enter the following command:

$ oc get nodes

To obtain the current MTU setting for the primary network interface on a node, enter the following command:

$ oc adm node-logs <node> -u ovs-configuration | grep configure-ovs.sh | grep mtu | grep <interface> | head -1

where:

<node>: Specifies a node from the output from the previous step.

<interface>: Specifies the primary network interface name for the node.

Example output

ens3: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 8051
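To compare the reported value against the expected MTU in a script, the interface name and MTU can be extracted from a line of this shape. The following Python sketch assumes the format of the example output above. The function name parse_mtu is hypothetical.

```python
import re

# Matches "<name>: <FLAGS> mtu <number>" anywhere in a log line.
MTU_LINE = re.compile(r"(\S+):\s+<[^>]*>\s+mtu\s+(\d+)")


def parse_mtu(line: str) -> tuple[str, int]:
    """Return (interface, mtu) from a line such as the example output."""
    match = MTU_LINE.search(line)
    if match is None:
        raise ValueError("no interface/MTU pair found in line")
    return match.group(1), int(match.group(2))


if __name__ == "__main__":
    print(parse_mtu("ens3: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 8051"))
```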

Chapter 3. Network bonding considerations

You can use network bonding, also known as link aggregation, to combine many network interfaces into a single, logical interface. You can use different modes to control how network traffic is distributed across the bonded interfaces. Each mode provides fault tolerance, and some modes also provide load balancing capabilities to your network. Red Hat supports Open vSwitch (OVS) bonding and kernel bonding.

3.1. Open vSwitch (OVS) bonding

With an OVS bonding configuration, you create a single, logical interface by connecting each physical network interface controller (NIC) as a port to a specific bond. This single bond then handles all network traffic, effectively replacing the function of individual interfaces.

Consider the following architectural layout for OVS bridges that interact with OVS interfaces:

- A network interface uses a bridge Media Access Control (MAC) address for managing protocol-level traffic and other administrative tasks, such as IP address assignment.

- The physical MAC addresses of physical interfaces do not handle traffic.

- OVS handles all MAC address management at the OVS bridge level.

This layout simplifies bond interface management as bonds act as data paths, where centralized MAC address management happens at the OVS bridge level.

For OVS bonding, you can select either active-backup mode or balance-slb mode. A bonding mode specifies the policy for how bond interfaces get used during network transmission.

3.1.1. Enable active-backup mode for your cluster

The active-backup mode provides fault tolerance for network connections by switching to a backup link when the primary link fails.

The mode specifies the following ports for your cluster:

- An active port where one physical interface sends and receives traffic at any given time.

- A standby port where all other ports act as backup links and continuously monitor their link status.

During a failover process, if an active port or its link fails, the bonding logic switches all network traffic to a standby port. This standby port becomes the new active port. For failover to work, all ports in a bond must share the same Media Access Control (MAC) address.

3.1.2. Enabling OVS balance-slb mode for your cluster

You can enable the Open vSwitch (OVS) balance-slb mode so that two or more physical interfaces can share their network traffic. A balance-slb mode interface can give source load balancing (SLB) capabilities to a cluster that runs virtualization workloads, without requiring load balancing negotiation with the network switch.

Currently, source load balancing runs on a bond interface, where the interface connects to an auxiliary bridge, such as br-phy. Source load balancing balances only across different Media Access Control (MAC) address and virtual local area network (VLAN) combinations. Note that all OVN-Kubernetes pod traffic uses the same MAC address and VLAN, so this traffic cannot be load balanced across many physical interfaces.
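The consequence of balancing on MAC address and VLAN combinations can be illustrated with a toy model. The following Python sketch maps each (source MAC, VLAN) pair to one link with a stable hash. It is only an illustration under stated assumptions: the function pick_link and the SHA-256 hash are hypothetical, and the sketch does not model the hash that OVS actually uses or any rebalancing that OVS performs.

```python
import hashlib


def pick_link(src_mac: str, vlan: int, links: list[str]) -> str:
    """Choose a link for a flow from its source MAC and VLAN only."""
    key = f"{src_mac.lower()}/{vlan}".encode()
    digest = hashlib.sha256(key).digest()
    return links[int.from_bytes(digest[:4], "big") % len(links)]


if __name__ == "__main__":
    links = ["eno0", "eno1"]
    # Distinct VM MAC addresses are placed independently of each other.
    for mac in ("52:54:00:00:00:01", "52:54:00:00:00:02", "52:54:00:00:00:03"):
        print(mac, "->", pick_link(mac, 100, links))
    # Pod traffic that shares one MAC address and VLAN always lands on one link.
    print({pick_link("0a:58:0a:80:00:01", 0, links) for _ in range(5)})
```

Because the choice depends only on the MAC address and VLAN, every flow with the same pair maps to the same link, which is why traffic that shares one MAC address and VLAN cannot be spread across interfaces.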

The following diagram shows balance-slb mode on a simple cluster infrastructure layout. Virtual machines (VMs) connect to specific localnet NetworkAttachmentDefinition (NAD) custom resource definitions (CRDs), NAD 0 or NAD 1. Each NAD provides VMs with access to the underlying physical network, supporting VLAN-tagged or untagged traffic. A br-ex OVS bridge receives traffic from VMs and passes the traffic to the next OVS bridge, br-phy. The br-phy bridge functions as the controller for the SLB bond. The SLB bond balances traffic from different VM ports over the physical interface links, such as eno0 and eno1. Additionally, ingress traffic from either physical interface can pass through the set of OVS bridges to reach the VMs.

Figure 3.1. OVS balance-slb mode operating on a localnet with two NADs

You can integrate the balance-slb mode interface into primary or secondary network types by using OVS bonding. Note the following points about OVS bonding:

- Supports the OVN-Kubernetes CNI plugin and easily integrates with the plugin.

- Natively supports balance-slb mode.

Prerequisites

- You have more than one physical interface attached to your primary network and you defined the interfaces in a MachineConfig file.

- You created a manifest object and defined a customized br-ex bridge in the object configuration file.

- You have more than one physical interface attached to your primary network and you defined the interfaces in a NAD CRD file.

Procedure

For each bare-metal host that exists in a cluster, in the install-config.yaml file for your cluster, define a networkConfig section similar to the following example:

# ...
networkConfig:
  interfaces:
    - name: enp1s0
      type: ethernet
      state: up
      ipv4:
        dhcp: true
        enabled: true
      ipv6:
        enabled: false
    - name: enp2s0
      type: ethernet
      state: up
      mtu: 1500
      ipv4:
        dhcp: true
        enabled: true
      ipv6:
        dhcp: true
        enabled: true
    - name: enp3s0
      type: ethernet
      state: up
      mtu: 1500
      ipv4:
        enabled: false
      ipv6:
        enabled: false
# ...

where:

enp1s0: The interface for the provisioned network interface controller (NIC).

enp2s0: The first bonded interface that pulls in the Ignition config file for the bond interface.

mtu: Manually set the br-ex maximum transmission unit (MTU) on the bond ports.

enp3s0: The second bonded interface is part of a minimal configuration that pulls Ignition during cluster installation.

Define each network interface in an NMState configuration file:

Example NMState configuration file that defines many network interfaces

ovn:
  bridge-mappings:
    - localnet: localnet-network
      bridge: br-ex
      state: present
interfaces:
  - name: br-ex
    type: ovs-bridge
    state: up
    bridge:
      allow-extra-patch-ports: true
      port:
        - name: br-ex
        - name: patch-ex-to-phy
    ovs-db:
      external_ids:
        bridge-uplink: "patch-ex-to-phy"
  - name: br-ex
    type: ovs-interface
    state: up
    mtu: 1500
    ipv4:
      enabled: true
      dhcp: true
      auto-route-metric: 48
    ipv6:
      enabled: false
      dhcp: false
      auto-route-metric: 48
  - name: br-phy
    type: ovs-bridge
    state: up
    bridge:
      allow-extra-patch-ports: true
      port:
        - name: patch-phy-to-ex
        - name: ovs-bond
          link-aggregation:
            mode: balance-slb
            port:
              - name: enp2s0
              - name: enp3s0
  - name: patch-ex-to-phy
    type: ovs-interface
    state: up
    patch:
      peer: patch-phy-to-ex
  - name: patch-phy-to-ex
    type: ovs-interface
    state: up
    patch:
      peer: patch-ex-to-phy
  - name: enp1s0
    type: ethernet
    state: up
    ipv4:
      dhcp: true
      enabled: true
    ipv6:
      enabled: false
  - name: enp2s0
    type: ethernet
    state: up
    mtu: 1500
    ipv4:
      enabled: false
    ipv6:
      enabled: false
  - name: enp3s0
    type: ethernet
    state: up
    mtu: 1500
    ipv4:
      enabled: false
    ipv6:
      enabled: false
# ...

where:

mtu: Manually set the br-ex MTU on the bond ports.

Use the base64 command to encode the interface content of the NMState configuration file:

$ base64 -w0 <nmstate_configuration>.yml

The -w0 option prevents line wrapping during the base64 encoding operation.

Create MachineConfig manifest files for the master role and the worker role. Ensure that you embed the base64-encoded string from an earlier command into each MachineConfig manifest file. The following example manifest file configures the master role for all nodes that exist in a cluster. You can also create a manifest file for master and worker roles specific to a node.

apiVersion: machineconfiguration.openshift.io/v1
kind: MachineConfig
metadata:
  labels:
    machineconfiguration.openshift.io/role: master
  name: 10-br-ex-master
spec:
  config:
    ignition:
      version: 3.2.0
    storage:
      files:
        - contents:
            source: data:text/plain;charset=utf-8;base64,<base64_encoded_nmstate_configuration>
          mode: 0644
          overwrite: true
          path: /etc/nmstate/openshift/cluster.yml

where:

name: The name of the policy.

source: Writes the encoded base64 information to the specified path.

path: Specify the path to the cluster.yml file. For each node in your cluster, you can specify the short hostname path to your node, such as <node_short_hostname>.yml.

Save each MachineConfig manifest file to the ./<installation_directory>/manifests directory, where <installation_directory> is the directory in which the installation program creates files.

The Machine Config Operator (MCO) takes the content from each manifest file and consistently applies the content to all selected nodes during a rolling update.
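The encoding step and the source value in the manifest fit together as follows. This Python sketch, offered as an illustration rather than a required tool, produces the same unwrapped base64 text as base64 -w0 and prepends the data URL prefix used in the example manifest. The function name to_data_url and the sample content are assumptions.

```python
import base64


def to_data_url(content: bytes) -> str:
    """Return a data URL suitable for the source field of the example manifest."""
    # b64encode emits a single line, matching the effect of `base64 -w0`.
    encoded = base64.b64encode(content).decode("ascii")
    return f"data:text/plain;charset=utf-8;base64,{encoded}"


if __name__ == "__main__":
    # Placeholder content; in practice, read your NMState configuration file.
    sample = b"interfaces:\n  - name: br-ex\n    type: ovs-bridge\n"
    print(to_data_url(sample))
```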

3.2. Kernel bonding

You can use kernel bonding, a built-in Linux kernel function that aggregates many Ethernet interfaces, to create a single logical interface. Combining interfaces in this way can enhance network performance by increasing bandwidth, and it provides redundancy in case of a link failure.

Kernel bonding is the default mode if no bond interfaces depend on OVS bonds. This bonding type does not give the same level of customization as supported OVS bonding.

For kernel-bonding mode, the bond interfaces exist outside of the bridge interface, which means that they are not in its data path. Network traffic in this mode is not sent or received on the bond interface port but instead requires additional bridging capabilities for MAC address assignment at the kernel level.

If you enabled kernel-bonding mode on network interface controllers (NICs) for your nodes, you must specify a Media Access Control (MAC) address failover. This configuration prevents node communication issues with the bond interfaces, such as eno1f0 and eno2f0.

Red Hat supports only the following value for the fail_over_mac parameter:

- 0: Specifies the none value, which disables MAC address failover so that all interfaces receive the same MAC address as the bond interface. This is the default value.

Red Hat does not support the following values for the fail_over_mac parameter:

- 1: Specifies the active value and sets the MAC address of the primary bond interface to always remain the same as active interfaces. If during a failover, the MAC address of an interface changes, the MAC address of the bond interface changes to match the new MAC address of the interface.

- 2: Specifies the follow value so that during a failover, an active interface gets the MAC address of the bond interface and a formerly active interface receives the MAC address of the newly active interface.

Chapter 4. Using the Stream Control Transmission Protocol (SCTP)

As a cluster administrator, you can use the Stream Control Transmission Protocol (SCTP) on a bare-metal cluster.

4.1. Support for SCTP on OpenShift Container Platform

As a cluster administrator, you can enable SCTP on the hosts in the cluster. On Red Hat Enterprise Linux CoreOS (RHCOS), the SCTP module is disabled by default.

SCTP is a reliable, message-based protocol that runs on top of an IP network.

When enabled, you can use SCTP as a protocol with pods, services, and network policy. A Service object must be defined with the type parameter set to either the ClusterIP or NodePort value.
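The Service type restriction can be checked before a manifest is applied. The following Python sketch takes a Service manifest that is already loaded as a dictionary and reports a problem when an SCTP port is combined with a type other than ClusterIP or NodePort. The function name is hypothetical. The fallback to ClusterIP reflects the Kubernetes default when type is omitted.

```python
ALLOWED_TYPES = {"ClusterIP", "NodePort"}


def sctp_service_problems(service: dict) -> list[str]:
    """Return a list of problems for a Service manifest that uses SCTP."""
    spec = service.get("spec", {})
    uses_sctp = any(p.get("protocol") == "SCTP" for p in spec.get("ports", []))
    svc_type = spec.get("type", "ClusterIP")  # Kubernetes default type
    if uses_sctp and svc_type not in ALLOWED_TYPES:
        return [f"SCTP ports require type ClusterIP or NodePort, found {svc_type}"]
    return []


if __name__ == "__main__":
    service = {
        "kind": "Service",
        "spec": {
            "type": "LoadBalancer",
            "ports": [{"name": "sctpserver", "protocol": "SCTP", "port": 30100}],
        },
    }
    print(sctp_service_problems(service))
```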

4.1.1. Example configurations using SCTP protocol

You can configure a pod or service to use SCTP by setting the protocol parameter to the SCTP value in the pod or service object.

In the following example, a pod is configured to use SCTP:

apiVersion: v1
kind: Pod
metadata:
  namespace: project1
  name: example-pod
spec:
  containers:
    - name: example-pod
...
      ports:
        - containerPort: 30100
          name: sctpserver
          protocol: SCTP

In the following example, a service is configured to use SCTP:

apiVersion: v1
kind: Service
metadata:
  namespace: project1
  name: sctpserver
spec:
...
  ports:
    - name: sctpserver
      protocol: SCTP
      port: 30100
      targetPort: 30100
  type: ClusterIP

In the following example, a NetworkPolicy object is configured to allow ingress SCTP network traffic on port 80 to any pods with a specific label:

kind: NetworkPolicy
apiVersion: networking.k8s.io/v1
metadata:
  name: allow-sctp-on-http
spec:
  podSelector:
    matchLabels:
      role: web
  ingress:
  - ports:
    - protocol: SCTP
      port: 80

4.2. Enabling Stream Control Transmission Protocol (SCTP)

As a cluster administrator, you can load and enable the blacklisted SCTP kernel module on worker nodes in your cluster.

Prerequisites

- Install the OpenShift CLI (oc).

- Access to the cluster as a user with the cluster-admin role.

Procedure

Create a file named load-sctp-module.yaml that contains the following YAML definition:

apiVersion: machineconfiguration.openshift.io/v1
kind: MachineConfig
metadata:
  name: load-sctp-module
  labels:
    machineconfiguration.openshift.io/role: worker
spec:
  config:
    ignition:
      version: 3.2.0
    storage:
      files:
        - path: /etc/modprobe.d/sctp-blacklist.conf
          mode: 0644
          overwrite: true
          contents:
            source: data:,
        - path: /etc/modules-load.d/sctp-load.conf
          mode: 0644
          overwrite: true
          contents:
            source: data:,sctp

To create the MachineConfig object, enter the following command:

$ oc create -f load-sctp-module.yaml

Optional: To watch the status of the nodes while the Machine Config Operator applies the configuration change, enter the following command. When the status of a node transitions to Ready, the configuration update is applied.

$ oc get nodes
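The two source values in the MachineConfig object are data URLs, and decoding them shows what each file contains. The following Python sketch is a small, hypothetical decoder for plain and base64 data URLs. It shows that data:, yields an empty file, which overwrites the blacklist entry, and that data:,sctp yields a file whose content is the module name.

```python
import base64
from urllib.parse import unquote_to_bytes


def decode_data_url(url: str) -> bytes:
    """Decode a data URL into the bytes that are written to the file."""
    if not url.startswith("data:"):
        raise ValueError("not a data URL")
    header, sep, payload = url[len("data:"):].partition(",")
    if not sep:
        raise ValueError("data URL has no comma separator")
    raw = unquote_to_bytes(payload)  # undo percent-encoding, if any
    if header.endswith(";base64"):
        return base64.b64decode(raw)
    return raw


if __name__ == "__main__":
    print(decode_data_url("data:,"))      # content for sctp-blacklist.conf
    print(decode_data_url("data:,sctp"))  # content for sctp-load.conf
```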

4.3. Verifying Stream Control Transmission Protocol (SCTP) is enabled

You can verify that SCTP is working on a cluster by creating a pod with an application that listens for SCTP traffic, associating it with a service, and then connecting to the exposed service.

Prerequisites

- Access to the internet from the cluster to install the nc package.

- Install the OpenShift CLI (oc).

- Access to the cluster as a user with the cluster-admin role.

Procedure

Create a pod that starts an SCTP listener:

Create a file named sctp-server.yaml that defines a pod with the following YAML:

apiVersion: v1
kind: Pod
metadata:
  name: sctpserver
  labels:
    app: sctpserver
spec:
  containers:
    - name: sctpserver
      image: registry.access.redhat.com/ubi9/ubi
      command: ["/bin/sh", "-c"]
      args:
        ["dnf install -y nc && sleep inf"]
      ports:
        - containerPort: 30102
          name: sctpserver
          protocol: SCTP

Create the pod by entering the following command:

$ oc create -f sctp-server.yaml

Create a service for the SCTP listener pod.

Create a file named sctp-service.yaml that defines a service with the following YAML:

apiVersion: v1
kind: Service
metadata:
  name: sctpservice
  labels:
    app: sctpserver
spec:
  type: NodePort
  selector:
    app: sctpserver
  ports:
    - name: sctpserver
      protocol: SCTP
      port: 30102
      targetPort: 30102

To create the service, enter the following command:

$ oc create -f sctp-service.yaml

Create a pod for the SCTP client.

Create a file named sctp-client.yaml with the following YAML:

apiVersion: v1
kind: Pod
metadata:
  name: sctpclient
  labels:
    app: sctpclient
spec:
  containers:
    - name: sctpclient
      image: registry.access.redhat.com/ubi9/ubi
      command: ["/bin/sh", "-c"]
      args:
        ["dnf install -y nc && sleep inf"]

To create the Pod object, enter the following command:

$ oc apply -f sctp-client.yaml

Run an SCTP listener on the server.

To connect to the server pod, enter the following command:

$ oc rsh sctpserver

To start the SCTP listener, enter the following command:

$ nc -l 30102 --sctp

Connect to the SCTP listener on the server.

- Open a new terminal window or tab in your terminal program.

Obtain the IP address of the sctpservice service. Enter the following command:

$ oc get services sctpservice -o go-template='{{.spec.clusterIP}}{{"\n"}}'

To connect to the client pod, enter the following command:

$ oc rsh sctpclient

To start the SCTP client, enter the following command. Replace <cluster_IP> with the cluster IP address of the sctpservice service.

# nc <cluster_IP> 30102 --sctp

Chapter 5. Associating secondary interfaces metrics to network attachments

To gain better visibility into cluster traffic, you can associate secondary interface metrics with specific network attachments. By using the pod_network_name_info metric to label interfaces based on their NetworkAttachmentDefinition resource, you can more easily monitor performance and troubleshoot connectivity issues across your network.

5.1. Extending secondary network metrics for monitoring

To monitor and manage network traffic effectively, you can extend secondary network metrics with identifying information. By using the pod_network_name_info metric to label interfaces based on their NetworkAttachmentDefinition resource, you can classify interface types to enable precise metric aggregation and alerting.

Secondary devices, or interfaces, are used for different purposes. Metrics from secondary network interfaces need to be classified to allow for effective aggregation and monitoring.

Exposed metrics contain the interface but do not specify where the interface originates. This is workable when there are no additional interfaces. However, relying on interface names alone becomes problematic when secondary interfaces are added because it is difficult to identify their purpose and use their metrics effectively.

When adding secondary interfaces, their names depend on the order in which they are added. Secondary interfaces can belong to distinct networks that can each serve a different purpose.

With pod_network_name_info it is possible to extend the current metrics with additional information that identifies the interface type. In this way, it is possible to aggregate the metrics and to add specific alarms to specific interface types.

The network type is generated from the name of the NetworkAttachmentDefinition resource, which distinguishes different secondary network classes. For example, different interfaces belonging to different networks or using different CNIs use different network attachment definition names.

5.2. Network Metrics Daemon

The Network Metrics Daemon collects and publishes network-related metrics to support performance management in complex pod environments. This component provides metadata for secondary interfaces, which is required for accurate traffic monitoring across distinct network attachments.

The kubelet already publishes network-related metrics that you can observe. These metrics are:

- container_network_receive_bytes_total

- container_network_receive_errors_total

- container_network_receive_packets_total

- container_network_receive_packets_dropped_total

- container_network_transmit_bytes_total

- container_network_transmit_errors_total

- container_network_transmit_packets_total

- container_network_transmit_packets_dropped_total

The labels in these metrics contain, among others:

- Pod name

- Pod namespace

- Interface name (such as eth0)

These metrics work well until new interfaces are added to the pod, for example via Multus, as it is not clear what the interface names refer to.

The interface label refers to the interface name, but it is not clear what that interface is meant for. In case of many different interfaces, it would be impossible to understand what network the metrics you are monitoring refer to.

This is addressed by the pod_network_name_info metric described in the following section.

5.3. Metrics with network name

To simplify the monitoring of secondary networks, you can use the pod_network_name_info metric to correlate network performance data with specific network names. By joining this metric with container network metrics, you can identify traffic patterns and errors across distinct network attachment definitions.

Example of pod_network_name_info

pod_network_name_info{interface="net0",namespace="namespacename",network_name="nadnamespace/firstNAD",pod="podname"} 0

The network name label is produced using the annotation added by Multus. It is the concatenation of the namespace the network attachment definition belongs to, plus the name of the network attachment definition. The Network Metrics daemonset publishes a pod_network_name_info gauge metric, with a fixed value of 0.

The new metric alone does not provide much value, but combined with the network related container_network_* metrics, it offers better support for monitoring secondary networks.
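The fixed value of 0 matters because the join queries in this section add pod_network_name_info to a container metric: adding 0 leaves the original value unchanged while the network_name label is copied onto the result. The following Python sketch models that join on plain dictionaries. It is a simplified illustration of the matching on namespace, pod, and interface, not an implementation of PromQL, and the function name and sample values are assumptions.

```python
def join_network_name(samples, info):
    """Attach network_name to samples that match on namespace, pod, and interface."""
    names = {
        (i["namespace"], i["pod"], i["interface"]): i["network_name"]
        for i in info
    }
    joined = []
    for labels, value in samples:
        key = (labels["namespace"], labels["pod"], labels["interface"])
        if key in names:  # samples without a matching info series are dropped
            # The info metric has value 0, so the sum equals the original value.
            joined.append(({**labels, "network_name": names[key]}, value + 0))
    return joined


if __name__ == "__main__":
    samples = [
        ({"namespace": "namespacename", "pod": "podname", "interface": "net0"}, 1500.0),
        ({"namespace": "namespacename", "pod": "podname", "interface": "eth0"}, 900.0),
    ]
    info = [
        {"namespace": "namespacename", "pod": "podname", "interface": "net0",
         "network_name": "nadnamespace/firstNAD"},
    ]
    print(join_network_name(samples, info))
```

In this model, only the net0 sample appears in the result, with its value unchanged and the network name attached.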

By using PromQL queries such as the following, you can get a new metric that contains the value and the network name retrieved from the k8s.v1.cni.cncf.io/network-status annotation:

(container_network_receive_bytes_total) + on(namespace,pod,interface) group_left(network_name) ( pod_network_name_info )

(container_network_receive_errors_total) + on(namespace,pod,interface) group_left(network_name) ( pod_network_name_info )

(container_network_receive_packets_total) + on(namespace,pod,interface) group_left(network_name) ( pod_network_name_info )

(container_network_receive_packets_dropped_total) + on(namespace,pod,interface) group_left(network_name) ( pod_network_name_info )

(container_network_transmit_bytes_total) + on(namespace,pod,interface) group_left(network_name) ( pod_network_name_info )

(container_network_transmit_errors_total) + on(namespace,pod,interface) group_left(network_name) ( pod_network_name_info )

(container_network_transmit_packets_total) + on(namespace,pod,interface) group_left(network_name) ( pod_network_name_info )

(container_network_transmit_packets_dropped_total) + on(namespace,pod,interface) group_left(network_name) ( pod_network_name_info )

Chapter 6. BGP routing

6.1. About BGP routing

This feature provides native Border Gateway Protocol (BGP) routing capabilities for the cluster.

If you are using the MetalLB Operator and there are existing FRRConfiguration CRs in the metallb-system namespace created by cluster administrators or third-party cluster components other than the MetalLB Operator, you must ensure that they are copied to the openshift-frr-k8s namespace or that those third-party cluster components use the new namespace. For more information, see Migrating FRR-K8s resources.

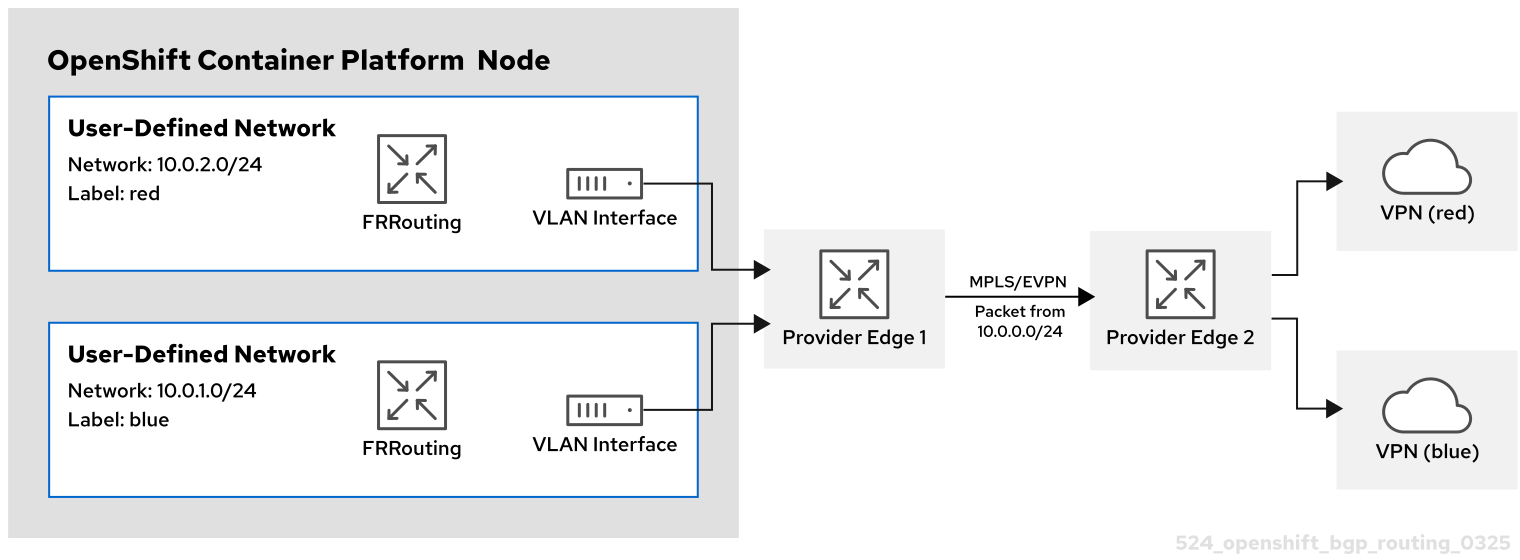

6.1.1. About Border Gateway Protocol (BGP) routing

OpenShift Container Platform supports BGP routing through FRRouting (FRR), a free, open source internet routing protocol suite for Linux, UNIX, and similar operating systems. FRR-K8s is a Kubernetes-based daemon set that exposes a subset of the FRR API in a Kubernetes-compliant manner. As a cluster administrator, you can use the FRRConfiguration custom resource (CR) to access FRR services.

6.1.1.1. Supported platforms

BGP routing is supported on the following infrastructure types:

- Bare metal

BGP routing requires that you have properly configured BGP for your network provider. Outages or misconfigurations of your network provider might cause disruptions to your cluster network.

6.1.1.2. Considerations for use with the MetalLB Operator

The MetalLB Operator is installed as an add-on to the cluster. Deployment of the MetalLB Operator automatically enables FRR-K8s as an additional routing capability provider and uses the FRR-K8s daemon installed by this feature.

Before upgrading to 4.18, any existing FRRConfiguration in the metallb-system namespace not managed by the MetalLB operator (added by a cluster administrator or any other component) needs to be copied to the openshift-frr-k8s namespace manually, creating the namespace if necessary.

If you are using the MetalLB Operator and there are existing FRRConfiguration CRs in the metallb-system namespace created by cluster administrators or third-party cluster components other than MetalLB Operator, you must:

- Ensure that these existing FRRConfiguration CRs are copied to the openshift-frr-k8s namespace.

- Ensure that the third-party cluster components use the new namespace for the FRRConfiguration CRs that they create.
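Copying a CR to another namespace amounts to re-creating it with a new metadata.namespace value. As a minimal sketch, the following Python function prepares such a copy from a manifest that is already loaded as a dictionary. The function name retarget is hypothetical, and the list of server-populated metadata fields to drop is an assumption based on common Kubernetes practice rather than a documented migration step.

```python
import copy

# Fields that the API server fills in; dropped so the copy can be created anew.
SERVER_FIELDS = ("resourceVersion", "uid", "creationTimestamp",
                 "generation", "managedFields")


def retarget(manifest: dict, namespace: str = "openshift-frr-k8s") -> dict:
    """Return a copy of the manifest that targets the given namespace."""
    clone = copy.deepcopy(manifest)
    meta = clone.setdefault("metadata", {})
    for field in SERVER_FIELDS:
        meta.pop(field, None)
    meta["namespace"] = namespace
    clone.pop("status", None)
    return clone


if __name__ == "__main__":
    original = {
        "apiVersion": "frrk8s.metallb.io/v1beta1",
        "kind": "FRRConfiguration",
        "metadata": {"name": "test", "namespace": "metallb-system", "uid": "abc"},
        "spec": {"bgp": {"routers": [{"asn": 64512}]}},
    }
    print(retarget(original))
```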

6.1.1.3. Cluster Network Operator configuration

The Cluster Network Operator API exposes the following API field to configure BGP routing:

- spec.additionalRoutingCapabilities: Enables deployment of the FRR-K8s daemon for the cluster, which can be used independently of route advertisements. When enabled, the FRR-K8s daemon is deployed on all nodes.

6.1.1.4. BGP routing custom resources

The following custom resources are used to configure BGP routing:

FRRConfiguration: This custom resource defines the FRR configuration for BGP routing. This CR is namespaced.

6.1.2. Configuring the FRRConfiguration CRD

The following section provides reference examples that use the FRRConfiguration custom resource (CR).

6.1.2.1. The routers field

You can use the routers field to configure multiple routers, one for each Virtual Routing and Forwarding (VRF) resource. For each router, you must define the Autonomous System Number (ASN).

You can also define a list of Border Gateway Protocol (BGP) neighbors to connect to, as in the following example:

Example FRRConfiguration CR

apiVersion: frrk8s.metallb.io/v1beta1
kind: FRRConfiguration
metadata:
  name: test
  namespace: frr-k8s-system
spec:
  bgp:
    routers:
    - asn: 64512
      neighbors:
      - address: 172.30.0.3
        asn: 4200000000
        ebgpMultiHop: true
        port: 180
      - address: 172.18.0.6
        asn: 4200000000
        port: 179

6.1.2.2. The toAdvertise field

By default, FRR-K8s does not advertise the prefixes configured as part of a router configuration. In order to advertise them, you use the toAdvertise field.

You can advertise a subset of the prefixes, as in the following example:

Example FRRConfiguration CR

apiVersion: frrk8s.metallb.io/v1beta1
kind: FRRConfiguration
metadata:
  name: test
  namespace: frr-k8s-system
spec:
  bgp:
    routers:
    - asn: 64512
      neighbors:
      - address: 172.30.0.3
        asn: 4200000000
        ebgpMultiHop: true
        port: 180
        toAdvertise:
          allowed:
            prefixes:
            - 192.168.2.0/24
      prefixes:
        - 192.168.2.0/24
        - 192.169.2.0/24

In this example, the toAdvertise.allowed.prefixes list advertises a subset of prefixes.

The following example shows you how to advertise all of the prefixes:

Example FRRConfiguration CR

apiVersion: frrk8s.metallb.io/v1beta1
kind: FRRConfiguration
metadata:
  name: test
  namespace: frr-k8s-system
spec:
  bgp:
    routers:
    - asn: 64512
      neighbors:
      - address: 172.30.0.3
        asn: 4200000000
        ebgpMultiHop: true
        port: 180
        toAdvertise:
          allowed:
            mode: all
      prefixes:
        - 192.168.2.0/24
        - 192.169.2.0/24

In this example, the toAdvertise.allowed.mode value of all advertises all prefixes.

6.1.2.3. The toReceive field

By default, FRR-K8s does not process any prefixes advertised by a neighbor. You can use the toReceive field to process such addresses.
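The first example in this section attaches ge and le bounds to one of the received prefixes. The following Python sketch shows one reading of those bounds, assuming they follow the usual prefix-list semantics: without bounds only the exact prefix matches, and with bounds any subnet of the prefix matches when its prefix length lies between ge and le. This is an assumption about behavior, not a statement of the FRR-K8s implementation, and the function name is hypothetical.

```python
import ipaddress
from typing import Optional


def prefix_allowed(route: str, prefix: str,
                   ge: Optional[int] = None, le: Optional[int] = None) -> bool:
    """Check a received route against one allowed prefix entry."""
    net = ipaddress.ip_network(route)
    base = ipaddress.ip_network(prefix)
    if ge is None and le is None:
        return net == base  # exact match only
    low = ge if ge is not None else base.prefixlen
    high = le if le is not None else net.max_prefixlen
    return (
        net.version == base.version
        and net.subnet_of(base)
        and low <= net.prefixlen <= high
    )


if __name__ == "__main__":
    # Entries from the example: one exact prefix, one with ge 25 and le 28.
    print(prefix_allowed("192.168.1.0/24", "192.168.1.0/24"))
    print(prefix_allowed("192.169.2.128/26", "192.169.2.0/24", ge=25, le=28))
    print(prefix_allowed("192.169.2.0/24", "192.169.2.0/24", ge=25, le=28))
```

Under this reading, the /24 itself is not accepted by the bounded entry because its length is below ge, while the /26 inside it is accepted.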

You can configure for a subset of the prefixes, as in this example:

Example FRRConfiguration CR

apiVersion: frrk8s.metallb.io/v1beta1
kind: FRRConfiguration
metadata:
  name: test
  namespace: frr-k8s-system
spec:
  bgp:
    routers:
    - asn: 64512
      neighbors:
      - address: 172.18.0.5
        asn: 64512
        port: 179
        toReceive:
          allowed:
            prefixes:
            - prefix: 192.168.1.0/24
            - prefix: 192.169.2.0/24
              ge: 25
              le: 28

The following example configures FRR to handle all the prefixes announced:

Example FRRConfiguration CR

apiVersion: frrk8s.metallb.io/v1beta1
kind: FRRConfiguration
metadata:
  name: test
  namespace: frr-k8s-system
spec:
  bgp:
    routers:
    - asn: 64512
      neighbors:
      - address: 172.18.0.5
        asn: 64512
        port: 179
        toReceive:
          allowed:
            mode: all

6.1.2.4. The bgp field

You can use the bgp field to define various BFD profiles and associate them with a neighbor. In the following example, BFD backs up the BGP session and FRR can detect link failures:

Example FRRConfiguration CR

apiVersion: frrk8s.metallb.io/v1beta1
kind: FRRConfiguration
metadata:
  name: test
  namespace: frr-k8s-system
spec:
  bgp:
    routers:
    - asn: 64512
      neighbors:
      - address: 172.30.0.3
        asn: 64512
        port: 180
        bfdProfile: defaultprofile
    bfdProfiles:
      - name: defaultprofile

6.1.2.5. The nodeSelector field

By default, FRR-K8s applies the configuration to all nodes where the daemon is running. You can use the nodeSelector field to specify the nodes to which you want to apply the configuration. For example:

Example FRRConfiguration CR

apiVersion: frrk8s.metallb.io/v1beta1
kind: FRRConfiguration
metadata:
  name: test
  namespace: frr-k8s-system
spec:
  bgp:
    routers:
    - asn: 64512
  nodeSelector:
    labelSelector:
      foo: "bar"

6.1.2.6. The interface field

The spec.bgp.routers.neighbors.interface field is a Technology Preview feature only. Technology Preview features are not supported with Red Hat production service level agreements (SLAs) and might not be functionally complete. Red Hat does not recommend using them in production. These features provide early access to upcoming product features, enabling customers to test functionality and provide feedback during the development process.

For more information about the support scope of Red Hat Technology Preview features, see Technology Preview Features Support Scope.

You can use the interface field to configure unnumbered BGP peering by using the following example configuration:

Example FRRConfiguration CR

apiVersion: frrk8s.metallb.io/v1beta1
kind: FRRConfiguration
metadata:
  name: test
  namespace: frr-k8s-system
spec:
  bgp:
    bfdProfiles:
    - echoMode: false
      name: simple
      passiveMode: false
    routers:
    - asn: 64512
      neighbors:
      - asn: 64512
        bfdProfile: simple
        disableMP: false
        interface: net10
        port: 179
        toAdvertise:
          allowed:
            mode: filtered
            prefixes:
            - 5.5.5.5/32
        toReceive:
          allowed:
            mode: filtered
      prefixes:
      - 5.5.5.5/32

In this example, the interface field activates unnumbered BGP peering.

To use the interface field, you must establish a point-to-point, layer 2 connection between the two BGP peers. You can use unnumbered BGP peering with IPv4, IPv6, or dual-stack, but you must enable IPv6 RAs (Router Advertisements). Each interface is limited to one BGP connection.

If you use this field, you cannot specify a value in the spec.bgp.routers.neighbors.address field.
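The mutual exclusion between the interface and address fields can be checked before a CR is applied. The following Python sketch inspects one neighbor entry that is already loaded as a dictionary. The function name is hypothetical, and the check covers only the rule stated in this section.

```python
def neighbor_problems(neighbor: dict) -> list[str]:
    """Report a conflict when a neighbor sets both interface and address."""
    problems = []
    if neighbor.get("interface") and neighbor.get("address"):
        problems.append(
            "neighbor sets both interface and address; "
            "unnumbered peering requires address to be unset"
        )
    return problems


if __name__ == "__main__":
    unnumbered = {"asn": 64512, "interface": "net10", "port": 179}
    conflicting = {"asn": 64512, "interface": "net10", "address": "172.30.0.3"}
    print(neighbor_problems(unnumbered))
    print(neighbor_problems(conflicting))
```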

The fields for the FRRConfiguration custom resource are described in the following table:

| Field | Type | Description |
|---|---|---|
| | | Specifies the routers that FRR is to configure (one per VRF). |
| | | The Autonomous System Number (ASN) to use for the local end of the session. |
| | | Specifies the ID of the |
| | | Specifies the host vrf used to establish sessions from this router. |
| | | Specifies the neighbors to establish BGP sessions with. |
| | | Specifies the ASN to use for the remote end of the session. If you use this field, you cannot specify a value in the |
| | | Detects the ASN to use for the remote end of the session without explicitly setting it. Specify |
| | | Specifies the IP address to establish the session with. If you use this field, you cannot specify a value in the |
| | | Specifies the interface name to use when establishing a session. Use this field to configure unnumbered BGP peering. There must be a point-to-point, layer 2 connection between the two BGP peers. You can use unnumbered BGP peering with IPv4, IPv6, or dual-stack, but you must enable IPv6 RAs (Router Advertisements). Each interface is limited to one BGP connection. The |
| | | Specifies the port to dial when establishing the session. Defaults to 179. |
| | | Specifies the password to use for establishing the BGP session. |
| | | Specifies the name of the authentication secret for the neighbor. The secret must be of type "kubernetes.io/basic-auth", and in the same namespace as the FRR-K8s daemon. The key "password" stores the password in the secret. |
| | | Specifies the requested BGP hold time, per RFC4271. Defaults to 180s. |
| | | Specifies the requested BGP keepalive time, per RFC4271. Defaults to |
| | | Specifies how long BGP waits between connection attempts to a neighbor. |
| | | Indicates if the BGPPeer is multi-hops away. |
| | | Specifies the name of the BFD Profile to use for the BFD session associated with the BGP session. If not set, the BFD session is not set up. |
| | | Represents the list of prefixes to advertise to a neighbor, and the associated properties. |
| | | Specifies the list of prefixes to advertise to a neighbor. This list must match the prefixes that you define in the router. |
| | | Specifies the mode to use when handling the prefixes. You can set to |
| | | Specifies the prefixes associated with an advertised local preference. You must specify the prefixes associated with a local preference in the prefixes allowed to be advertised. |
| | | Specifies the prefixes associated with the local preference. |
| | | Specifies the local preference associated with the prefixes. |
| | | Specifies the prefixes associated with an advertised BGP community. You must include the prefixes associated with a local preference in the list of prefixes that you want to advertise. |
| | | Specifies the prefixes associated with the community. |
| | | Specifies the community associated with the prefixes. |
| | | Specifies the prefixes to receive from a neighbor. |
| | | Specifies the information that you want to receive from a neighbor. |
| | | Specifies the prefixes allowed from a neighbor. |
| | | Specifies the mode to use when handling the prefixes. When set to |
| | | Disables MP BGP to prevent it from separating IPv4 and IPv6 route exchanges into distinct BGP sessions. |
| | | Specifies all prefixes to advertise from this router instance. |
| | | Specifies the list of bfd profiles to use when configuring the neighbors. |
| | | The name of the BFD Profile to be referenced in other parts of the configuration. |
| | | Specifies the minimum interval at which this system can receive control packets, in milliseconds. Defaults to |
| | | Specifies the minimum transmission interval, excluding jitter, that this system wants to use to send BFD control packets, in milliseconds. Defaults to |
| | | Configures the detection multiplier to determine packet loss. To determine the connection loss-detection timer, multiply the remote transmission interval by this value. |
| | | Configures the minimal echo receive transmission-interval that this system can handle, in milliseconds. Defaults to |
| | | Enables or disables the echo transmission mode. This mode is disabled by default, and not supported on multihop setups. |
| | | Mark session as passive. A passive session does not attempt to start the connection and waits for control packets from peers before it begins replying. |
| | | For multihop sessions only. Configures the minimum expected TTL for an incoming BFD control packet. |
| | | Limits the nodes that attempt to apply this configuration. If specified, only those nodes whose labels match the specified selectors attempt to apply the configuration. If it is not specified, all nodes attempt to apply this configuration. |
| | | Defines the observed state of FRRConfiguration. |
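The table describes the BFD loss-detection timer as the remote transmission interval multiplied by the detection multiplier. The following Python sketch works through that arithmetic. The function name and the sample values (a 300 ms remote interval and a multiplier of 3) are assumptions chosen for illustration, not defaults taken from this document.

```python
def bfd_detection_time_ms(remote_transmit_interval_ms: int, detect_multiplier: int) -> int:
    """Return the loss-detection timer in milliseconds.

    Implements the rule from the table: remote transmission interval
    multiplied by the detection multiplier.
    """
    if remote_transmit_interval_ms <= 0 or detect_multiplier <= 0:
        raise ValueError("interval and multiplier must be positive")
    return remote_transmit_interval_ms * detect_multiplier


# Assumed sample values: the peer transmits every 300 ms, multiplier is 3.
print(bfd_detection_time_ms(300, 3))  # prints 900
```

With these sample values, the session is declared down after 900 ms without a control packet from the peer.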

6.2. Enabling BGP routing

As a cluster administrator, you can enable OVN-Kubernetes Border Gateway Protocol (BGP) routing support for your cluster.

6.2.1. Enabling Border Gateway Protocol (BGP) routing

As a cluster administrator, you can enable Border Gateway Protocol (BGP) routing support for your cluster on bare-metal infrastructure.

If you are using BGP routing in conjunction with the MetalLB Operator, the necessary BGP routing support is enabled automatically. You do not need to manually enable BGP routing support.

6.2.1.1. Enabling BGP routing support

As a cluster administrator, you can enable BGP routing support for your cluster.

Prerequisites

- You have installed the OpenShift CLI (oc).

- You are logged in to the cluster as a user with the cluster-admin role.

- The cluster is installed on compatible infrastructure.

Procedure

To enable a dynamic routing provider, enter the following command:

$ oc patch Network.operator.openshift.io/cluster --type=merge -p '{ "spec": { "additionalRoutingCapabilities": { "providers": ["FRR"] } } }'

6.3. Disabling BGP routing

As a cluster administrator, you can disable OVN-Kubernetes Border Gateway Protocol (BGP) routing support for your cluster.

6.3.1. Disabling Border Gateway Protocol (BGP) routing

As a cluster administrator, you can disable Border Gateway Protocol (BGP) routing support for your cluster on bare-metal infrastructure.

6.3.1.1. Disabling BGP routing support

As a cluster administrator, you can disable BGP routing support for your cluster.

Prerequisites

- You have installed the OpenShift CLI (oc).

- You are logged in to the cluster as a user with the cluster-admin role.

- The cluster is installed on compatible infrastructure.

Procedure

To disable dynamic routing, enter the following command:

$ oc patch Network.operator.openshift.io/cluster --type=merge -p '{ "spec": { "additionalRoutingCapabilities": null } }'
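Both the enable and disable commands use `--type=merge`, which applies a JSON merge patch: keys in the patch overwrite or extend the target, and a `null` value removes the key. The following Python sketch shows that behavior on an in-memory dictionary. The `merge_patch` helper is a minimal illustration of merge-patch semantics (RFC 7396), and the `network` object is a hypothetical, heavily trimmed stand-in for the real cluster resource.

```python
import copy
import json


def merge_patch(target, patch):
    """Apply a JSON merge patch to target and return the patched copy."""
    if not isinstance(patch, dict):
        # Scalars and lists replace the target value wholesale.
        return copy.deepcopy(patch)
    result = copy.deepcopy(target) if isinstance(target, dict) else {}
    for key, value in patch.items():
        if value is None:
            # A null value deletes the key from the target.
            result.pop(key, None)
        else:
            result[key] = merge_patch(result.get(key), value)
    return result


# Hypothetical stand-in for the Network.operator.openshift.io/cluster object.
network = {"spec": {"logLevel": "Normal"}}

# Patch bodies copied from the enable and disable commands.
enable = json.loads('{ "spec": { "additionalRoutingCapabilities": { "providers": ["FRR"] } } }')
disable = json.loads('{ "spec": { "additionalRoutingCapabilities": null } }')

enabled = merge_patch(network, enable)
disabled = merge_patch(enabled, disable)
print(json.dumps(enabled))
print(json.dumps(disabled))
```

In this sketch, the disable patch returns the object to its starting state because `null` removes the entire `additionalRoutingCapabilities` key and leaves the other fields in `spec` untouched.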

6.4. Migrating FRR-K8s resources

All user-created FRR-K8s custom resources (CRs) in the metallb-system namespace under OpenShift Container Platform 4.17 and earlier releases must be migrated to the openshift-frr-k8s namespace. As a cluster administrator, complete the steps in this procedure to migrate your FRR-K8s custom resources.

6.4.1. Migrating FRR-K8s resources

You can migrate the FRR-K8s FRRConfiguration custom resources from the metallb-system namespace to the openshift-frr-k8s namespace.

Prerequisites

- You have installed the OpenShift CLI (oc).

- You are logged in to the cluster as a user with the cluster-admin role.

Procedure

When upgrading from an earlier version of OpenShift Container Platform with the MetalLB Operator deployed, you must manually migrate your custom FRRConfiguration configurations from the metallb-system namespace to the openshift-frr-k8s namespace. To move these CRs, complete the following steps:

To create the openshift-frr-k8s namespace, enter the following command:

$ oc create namespace openshift-frr-k8s

To automate the migration, create a shell script named migrate.sh with the following contents:

    #!/bin/bash
    OLD_NAMESPACE="metallb-system"
    NEW_NAMESPACE="openshift-frr-k8s"
    FILTER_OUT="metallb-"
    oc get frrconfigurations.frrk8s.metallb.io -n "${OLD_NAMESPACE}" -o json |\
      jq -r '.items[] | select(.metadata.name | test("'"${FILTER_OUT}"'") | not)' |\
      jq -r '.metadata.namespace = "'"${NEW_NAMESPACE}"'"' |\
      oc create -f -

To execute the migration, run the following command:

$ bash migrate.sh
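The two jq stages in migrate.sh select the user-created resources and rewrite their namespace. The following Python sketch reproduces that selection logic on in-memory data so that you can see which objects the script would re-create. The `select_and_retarget` function and the sample items are hypothetical, and the sketch does not call `oc` or contact a cluster.

```python
import copy
import re

OLD_NAMESPACE = "metallb-system"
NEW_NAMESPACE = "openshift-frr-k8s"
FILTER_OUT = "metallb-"


def select_and_retarget(items, filter_out=FILTER_OUT, new_namespace=NEW_NAMESPACE):
    """Skip items whose name matches filter_out and retarget the rest."""
    migrated = []
    for item in items:
        name = item["metadata"]["name"]
        # jq's test() is an unanchored regex match, so re.search is the
        # closest equivalent: the pattern can match anywhere in the name.
        if re.search(filter_out, name):
            continue
        clone = copy.deepcopy(item)
        clone["metadata"]["namespace"] = new_namespace
        migrated.append(clone)
    return migrated


# Hypothetical sample of `oc get ... -o json` items, trimmed to metadata.
sample_items = [
    {"metadata": {"name": "metallb-worker-0", "namespace": OLD_NAMESPACE}},
    {"metadata": {"name": "custom-peering", "namespace": OLD_NAMESPACE}},
]

for item in select_and_retarget(sample_items):
    print(item["metadata"]["namespace"], item["metadata"]["name"])
# prints "openshift-frr-k8s custom-peering"
```

Because the match is unanchored, a user-created resource whose name contains `metallb-` anywhere is also skipped and would need to be migrated separately.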

Verification

To confirm that the migration succeeded, run the following command:

$ oc get frrconfigurations.frrk8s.metallb.io -n openshift-frr-k8s

After the migration is complete, you can remove the FRRConfiguration custom resources from the metallb-system namespace.

Chapter 7. Route advertisements

7.1. About route advertisements

To simplify network management and improve failover visibility, you can use route advertisements to share pod and egress IP routes between your cluster and the provider network. This feature requires the OVN-Kubernetes plugin and a Border Gateway Protocol (BGP) provider.

For more information, see About BGP routing.

7.1.1. Advertise cluster network routes with Border Gateway Protocol

To simplify routing and improve failover visibility without manual route management, you can enable route advertisements. Route advertisements allow you to advertise default and user-defined network routes, including EgressIPs, between your cluster and the provider network.

With route advertisements enabled, you can advertise network routes for the default pod network and user-defined networks, including EgressIPs, to the provider network, and import routes from the provider network into the default pod network and cluster user-defined networks (CUDNs). This simplifies routing, improves failover visibility, and eliminates manual route management.

From the provider network, IP addresses advertised from the default pod network and user-defined networks can be reached directly, and vice versa.

For example, you can import routes to the default pod network so that you no longer need to manually configure routes on each node. Previously, you might have set the routingViaHost parameter to true and manually configured routes on each node to approximate a similar configuration. With route advertisements, you can accomplish this task with the routingViaHost parameter set to false.

You could also set the routingViaHost parameter to true in the Network custom resource (CR) for your cluster, but you must then manually configure routes on each node to simulate a similar configuration. When you enable route advertisements, you can set routingViaHost=false in the Network CR without having to manually configure routes on each node.

Route reflectors on the provider network are supported and can reduce the number of BGP connections required to advertise routes on large networks.

If you use EgressIPs with route advertisements enabled, the layer 3 provider network is aware of EgressIP failovers. This means that you can locate cluster nodes that host EgressIPs on different layer 2 segments. Previously, only the layer 2 provider network was aware of failovers, which required all egress nodes to be on the same layer 2 segment.

7.1.1.1. Supported platforms

Advertising routes with Border Gateway Protocol (BGP) is supported on the bare-metal infrastructure type.

7.1.1.2. Infrastructure requirements

To use route advertisements, you must have configured BGP for your network infrastructure. Outages or misconfigurations of your network infrastructure might cause disruptions to your cluster network.

7.1.1.3. Compatibility with other networking features