Authentication

Configuring user authentication, encryption, and access controls for users and services

Abstract

Chapter 1. Understanding authentication

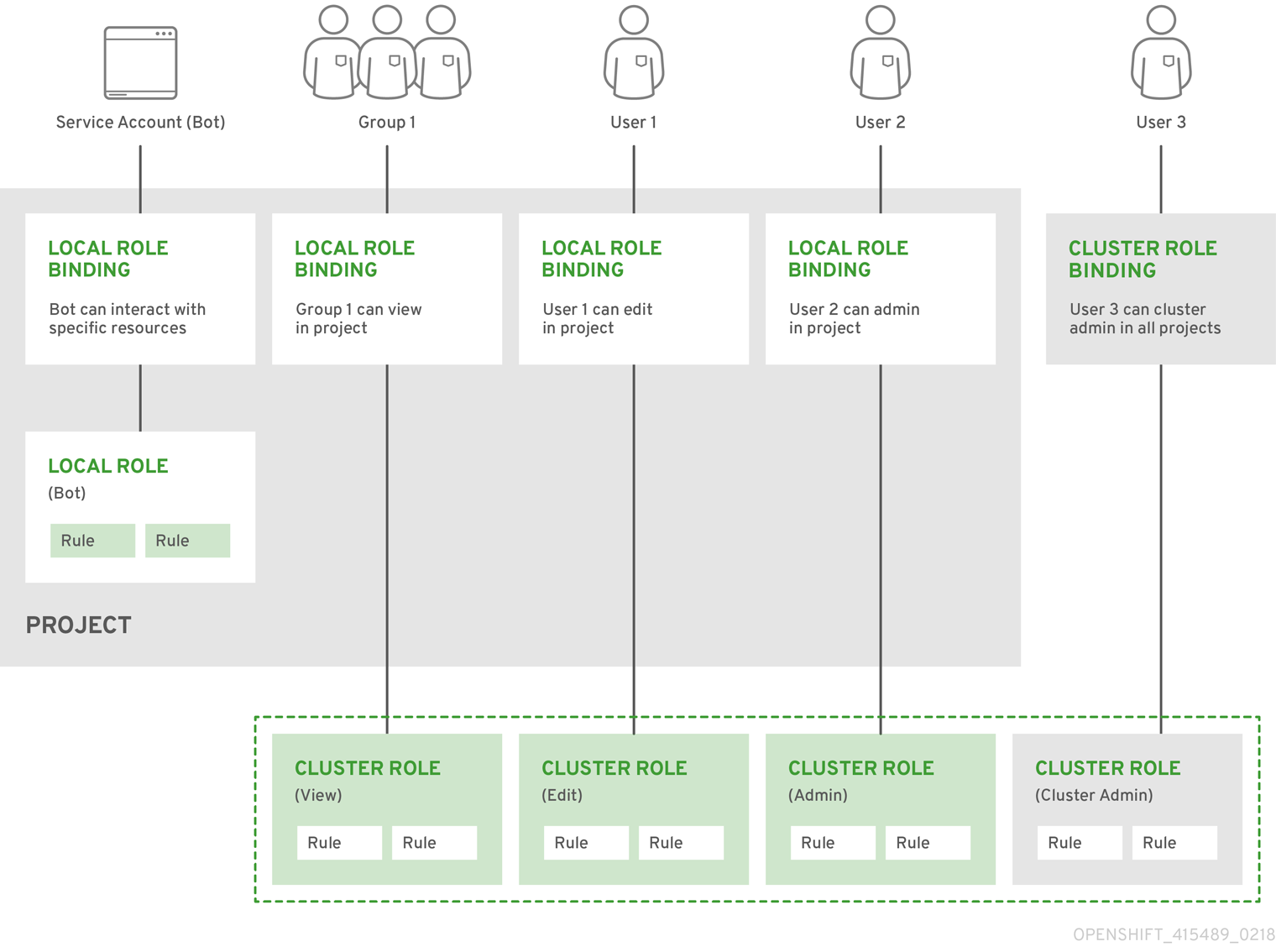

For users to interact with OpenShift Container Platform, they must first authenticate to the cluster. The authentication layer identifies the user associated with requests to the OpenShift Container Platform API. The authorization layer then uses information about the requesting user to determine if the request is allowed.

As an administrator, you can configure authentication for OpenShift Container Platform.

1.1. Users

A user in OpenShift Container Platform is an entity that can make requests to the OpenShift Container Platform API. An OpenShift Container Platform user object represents an actor which can be granted permissions in the system by adding roles to them or to their groups. Typically, this represents the account of a developer or administrator that is interacting with OpenShift Container Platform.

Several types of users can exist:

| User type | Description |
|---|---|
| Regular users | This is the way most interactive OpenShift Container Platform users are represented. Regular users are created automatically in the system upon first login or can be created via the API. Regular users are represented with the User object. |
| System users | Many of these are created automatically when the infrastructure is defined, mainly for the purpose of enabling the infrastructure to interact with the API securely. They include a cluster administrator (with access to everything), a per-node user, users for use by routers and registries, and various others. Finally, there is an anonymous system user that is used by default for unauthenticated requests. |
| Service accounts | These are special system users associated with projects; some are created automatically when the project is first created, while project administrators can create more for the purpose of defining access to the contents of each project. Service accounts are represented with the ServiceAccount object. |

Each user must authenticate in some way in order to access OpenShift Container Platform. API requests with no authentication or invalid authentication are authenticated as requests by the anonymous system user. Once authenticated, policy determines what the user is authorized to do.

1.2. Groups

A user can be assigned to one or more groups, each of which represents a certain set of users. Groups are useful when managing authorization policies because they let you grant permissions, such as access to objects within a project, to multiple users at once rather than to each user individually.

In addition to explicitly defined groups, there are also system groups, or virtual groups, that are automatically provisioned by the cluster.

The following default virtual groups are most important:

| Virtual group | Description |
|---|---|
| system:authenticated | Automatically associated with all authenticated users. |
| system:authenticated:oauth | Automatically associated with all users authenticated with an OAuth access token. |
| system:unauthenticated | Automatically associated with all unauthenticated users. |

1.3. API authentication

Requests to the OpenShift Container Platform API are authenticated using the following methods:

- OAuth Access Tokens

  - Obtained from the OpenShift Container Platform OAuth server using the <namespace_route>/oauth/authorize and <namespace_route>/oauth/token endpoints.

  - Sent as an Authorization: Bearer… header.

  - Sent as a websocket subprotocol header in the form base64url.bearer.authorization.k8s.io.<base64url-encoded-token> for websocket requests.

- X.509 Client Certificates

  - Requires an HTTPS connection to the API server.

  - Verified by the API server against a trusted certificate authority bundle.

  - The API server creates and distributes certificates to controllers to authenticate themselves.
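As a rough illustration of the two ways an OAuth access token can be sent, the following Python sketch builds the Authorization header and the websocket subprotocol string. The function names are hypothetical, and stripping the base64 padding is an assumption made because the = character is not valid in a subprotocol name; verify against your client library before relying on it.

```python
import base64


def bearer_header(token: str) -> dict:
    """Build the HTTP header used to send an OAuth access token."""
    return {"Authorization": f"Bearer {token}"}


def websocket_subprotocol(token: str) -> str:
    """Build the websocket subprotocol value that carries the token.

    Assumption: the token is base64url-encoded without '=' padding.
    """
    encoded = base64.urlsafe_b64encode(token.encode("utf-8")).decode("ascii")
    return "base64url.bearer.authorization.k8s.io." + encoded.rstrip("=")
```

For example, websocket_subprotocol("abc") yields base64url.bearer.authorization.k8s.io.YWJj.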

Any request with an invalid access token or an invalid certificate is rejected by the authentication layer with a 401 error.

If no access token or certificate is presented, the authentication layer assigns the system:anonymous virtual user and the system:unauthenticated virtual group to the request. This allows the authorization layer to determine which requests, if any, an anonymous user is allowed to make.

1.3.1. OpenShift Container Platform OAuth server

The OpenShift Container Platform master includes a built-in OAuth server. Users obtain OAuth access tokens to authenticate themselves to the API.

When a person requests a new OAuth token, the OAuth server uses the configured identity provider to determine the identity of the person making the request.

It then determines what user that identity maps to, creates an access token for that user, and returns the token for use.

1.3.1.1. OAuth token requests

Every request for an OAuth token must specify the OAuth client that will receive and use the token. The following OAuth clients are automatically created when starting the OpenShift Container Platform API:

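| OAuth Client | Usage |
|---|---|
| openshift-browser-client | Requests tokens at <namespace_route>/oauth/token/request with a user-agent that can handle interactive logins. |
| openshift-challenging-client | Requests tokens with a user-agent that can handle WWW-Authenticate challenges. |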

<namespace_route> refers to the namespace’s route. This is found by running the following command.

oc get route oauth-openshift -n openshift-authentication -o json | jq .spec.host

All requests for OAuth tokens involve a request to <namespace_route>/oauth/authorize. Most authentication integrations place an authenticating proxy in front of this endpoint, or configure OpenShift Container Platform to validate credentials against a backing identity provider. Requests to <namespace_route>/oauth/authorize can come from user-agents that cannot display interactive login pages, such as the CLI. Therefore, OpenShift Container Platform supports authenticating using a WWW-Authenticate challenge in addition to interactive login flows.

If an authenticating proxy is placed in front of the <namespace_route>/oauth/authorize endpoint, it sends unauthenticated, non-browser user-agents WWW-Authenticate challenges rather than displaying an interactive login page or redirecting to an interactive login flow.

To prevent cross-site request forgery (CSRF) attacks against browser clients, only send Basic authentication challenges if an X-CSRF-Token header is on the request. Clients that expect to receive Basic WWW-Authenticate challenges must set this header to a non-empty value.

If the authenticating proxy cannot support WWW-Authenticate challenges, or if OpenShift Container Platform is configured to use an identity provider that does not support WWW-Authenticate challenges, you must use a browser to manually obtain a token from <namespace_route>/oauth/token/request.
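A minimal Python sketch of the request that a non-browser client might build for the challenge flow. The helper name build_challenge_request, the example host, and the header value 1 are illustrative assumptions. The response_type=token parameter and the openshift-challenging-client client ID correspond to the implicit grant flow used by challenging clients. The function only assembles the URL and headers; it does not send anything.

```python
import base64
from urllib.parse import urlencode


def build_challenge_request(oauth_host: str, username: str, password: str):
    """Return (url, headers) for a token request answering a Basic challenge."""
    query = urlencode(
        {"response_type": "token", "client_id": "openshift-challenging-client"}
    )
    credentials = base64.b64encode(
        f"{username}:{password}".encode("utf-8")
    ).decode("ascii")
    headers = {
        # Answer to the Basic WWW-Authenticate challenge.
        "Authorization": f"Basic {credentials}",
        # Any non-empty value; required before Basic challenges are sent.
        "X-CSRF-Token": "1",
    }
    return f"https://{oauth_host}/oauth/authorize?{query}", headers
```

Here oauth_host stands for the <namespace_route> value returned by the oc get route command shown earlier.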

1.3.1.2. API impersonation

You can configure a request to the OpenShift Container Platform API to act as though it originated from another user. For more information, see User impersonation in the Kubernetes documentation.
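As a hedged illustration, Kubernetes impersonation is requested with the Impersonate-User and Impersonate-Group HTTP headers. The Python helper below is a hypothetical sketch that only builds those headers; the caller still needs its own credentials and permission to impersonate.

```python
from typing import List, Optional, Sequence, Tuple


def impersonation_headers(
    user: str, groups: Optional[Sequence[str]] = None
) -> List[Tuple[str, str]]:
    """Build impersonation headers; Impersonate-Group may repeat once per group."""
    headers = [("Impersonate-User", user)]
    for group in groups or []:
        headers.append(("Impersonate-Group", group))
    return headers
```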

1.3.1.3. Authentication metrics for Prometheus

OpenShift Container Platform captures the following Prometheus system metrics during authentication attempts:

- openshift_auth_basic_password_count counts the number of oc login user name and password attempts.

- openshift_auth_basic_password_count_result counts the number of oc login user name and password attempts by result, success or error.

- openshift_auth_form_password_count counts the number of web console login attempts.

- openshift_auth_form_password_count_result counts the number of web console login attempts by result, success or error.

- openshift_auth_password_total counts the total number of oc login and web console login attempts.

Chapter 2. Certificate types and descriptions

2.1. Certificate validation

OpenShift Container Platform monitors the validity of the cluster certificates that it issues and manages. The OpenShift Container Platform alerting framework has rules to help identify when a certificate issue is about to occur. These rules consist of the following checks:

- API server client certificate expiration is less than five minutes.

2.2. User-provided certificates for the API server

Purpose

The API server is accessible by clients external to the cluster at api.<cluster_name>.<base_domain>. You might want clients to access the API server at a different host name or without the need to distribute the cluster-managed certificate authority (CA) certificates to the clients. The administrator must set a custom default certificate to be used by the API server when serving content.

Location

The user-provided certificates must be provided in a kubernetes.io/tls type Secret in the openshift-config namespace. Update the API server cluster configuration, the apiserver/cluster resource, to enable the use of the user-provided certificate.

Management

User-provided certificates are managed by the user.

Expiration

User-provided certificates are managed by the user.

Customization

Update the secret containing the user-managed certificate as needed.

2.3. Proxy certificates

Purpose

Proxy certificates allow users to specify one or more custom certificate authority (CA) certificates used by platform components when making egress connections.

The trustedCA field of the Proxy object is a reference to a ConfigMap that contains a user-provided trusted certificate authority (CA) bundle. This bundle is merged with the Red Hat Enterprise Linux CoreOS (RHCOS) trust bundle and injected into the trust store of platform components that make egress HTTPS calls. For example, image-registry-operator calls an external image registry to download images. If trustedCA is not specified, only the RHCOS trust bundle is used for proxied HTTPS connections. Provide custom CA certificates to the RHCOS trust bundle if you want to use your own certificate infrastructure.
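To make the idea of merging two trust bundles concrete, here is a small Python sketch that splits PEM bundles into individual certificates and concatenates them. It is only an illustration: the function names are hypothetical, and dropping exact duplicates is an assumption of this sketch, not a statement about how the platform performs the merge.

```python
import re

# Matches one PEM-encoded certificate block, including its markers.
PEM_CERT = re.compile(
    r"-----BEGIN CERTIFICATE-----.*?-----END CERTIFICATE-----", re.DOTALL
)


def split_bundle(bundle: str) -> list:
    """Return the individual PEM certificate blocks found in a bundle."""
    return PEM_CERT.findall(bundle)


def merge_bundles(system_bundle: str, user_bundle: str) -> str:
    """Concatenate two bundles, keeping order and skipping exact duplicates."""
    merged = []
    seen = set()
    for cert in split_bundle(system_bundle) + split_bundle(user_bundle):
        if cert not in seen:
            seen.add(cert)
            merged.append(cert)
    return "".join(cert + "\n" for cert in merged)
```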

The trustedCA field should only be consumed by a proxy validator. The validator is responsible for reading the certificate bundle from the required key ca-bundle.crt and copying it to a ConfigMap named trusted-ca-bundle in the openshift-config-managed namespace. The namespace for the ConfigMap referenced by trustedCA is openshift-config:

    apiVersion: v1
    kind: ConfigMap
    metadata:
      name: user-ca-bundle
      namespace: openshift-config
    data:
      ca-bundle.crt: |
        -----BEGIN CERTIFICATE-----
        Custom CA certificate bundle.
        -----END CERTIFICATE-----

Managing proxy certificates during installation

The additionalTrustBundle value of the installer configuration is used to specify any proxy-trusted CA certificates during installation. For example:

    $ cat install-config.yaml
    . . .
    proxy:
      httpProxy: http://<HTTP_PROXY>
      httpsProxy: https://<HTTPS_PROXY>
    additionalTrustBundle: |
      -----BEGIN CERTIFICATE-----
      <MY_HTTPS_PROXY_TRUSTED_CA_CERT>
      -----END CERTIFICATE-----
    . . .

Location

The user-provided trust bundle is represented as a ConfigMap. The ConfigMap is mounted into the file system of platform components that make egress HTTPS calls. Typically, Operators mount the ConfigMap to /etc/pki/ca-trust/extracted/pem/tls-ca-bundle.pem, but this is not required by the proxy. A proxy can modify or inspect the HTTPS connection. In either case, the proxy must generate and sign a new certificate for the connection.

Complete proxy support means connecting to the specified proxy and trusting any signatures it has generated. Therefore, it is necessary to let the user specify a trusted root, such that any certificate chain connected to that trusted root is also trusted.

If using the RHCOS trust bundle, place CA certificates in /etc/pki/ca-trust/source/anchors.

See Using shared system certificates in the Red Hat Enterprise Linux documentation for more information.

Expiration

The user sets the expiration term of the user-provided trust bundle.

The default expiration term is defined by the CA certificate itself. It is up to the CA administrator to configure this for the certificate before it can be used by OpenShift Container Platform or RHCOS.

Red Hat does not monitor for when CAs expire. Because CAs are long-lived, this is generally not an issue, but you might need to periodically update the trust bundle.

Services

By default, all platform components that make egress HTTPS calls will use the RHCOS trust bundle. If trustedCA is defined, it will also be used.

Any service that is running on the RHCOS node is able to use the trust bundle of the node.

Management

These certificates are managed by the system and not the user.

Customization

Updating the user-provided trust bundle consists of either:

- updating the PEM-encoded certificates in the ConfigMap referenced by trustedCA, or

- creating a ConfigMap in the namespace openshift-config that contains the new trust bundle and updating trustedCA to reference the name of the new ConfigMap.

The mechanism for writing CA certificates to the RHCOS trust bundle is exactly the same as writing any other file to RHCOS, which is done through the use of MachineConfigs. When the Machine Config Operator (MCO) applies the new MachineConfig that contains the new CA certificates, the node is rebooted. During the next boot, the service coreos-update-ca-trust.service runs on the RHCOS nodes and automatically updates the trust bundle with the new CA certificates. For example:

    apiVersion: machineconfiguration.openshift.io/v1
    kind: MachineConfig
    metadata:
      labels:
        machineconfiguration.openshift.io/role: worker
      name: 50-examplecorp-ca-cert
    spec:
      config:
        ignition:
          version: 2.2.0
        storage:
          files:
          - contents:
              source: data:text/plain;charset=utf-8;base64,LS0tLS1CRUdJTiBDRVJUSUZJQ0FURS0tLS0tCk1JSUVORENDQXh5Z0F3SUJBZ0lKQU51bkkwRDY2MmNuTUEwR0NTcUdTSWIzRFFFQkN3VUFNSUdsTVFzd0NRWUQKV1FRR0V3SlZVekVYTUJVR0ExVUVDQXdPVG05eWRHZ2dRMkZ5YjJ4cGJtRXhFREFPQmdOVkJBY01CMUpoYkdWcApBMmd4RmpBVUJnTlZCQW9NRFZKbFpDQklZWFFzSUVsdVl5NHhFekFSQmdOVkJBc01DbEpsWkNCSVlYUWdTVlF4Ckh6QVpCZ05WQkFNTUVsSmxaQ0JJWVhRZ1NWUWdVbTl2ZENCRFFURWhNQjhHQ1NxR1NJYjNEUUVKQVJZU2FXNW0KWGpDQnBURUxNQWtHQTFVRUJoTUNWVk14RnpBVkJnTlZCQWdNRGs1dmNuUm9JRU5oY205c2FXNWhNUkF3RGdZRApXUVFIREFkU1lXeGxhV2RvTVJZd0ZBWURWUVFLREExU1pXUWdTR0YwTENCSmJtTXVNUk13RVFZRFZRUUxEQXBTCkFXUWdTR0YwSUVsVU1Sc3dHUVlEVlFRRERCSlNaV1FnU0dGMElFbFVJRkp2YjNRZ1EwRXhJVEFmQmdrcWhraUcKMHcwQkNRRVdFbWx1Wm05elpXTkFjbVZrYUdGMExtTnZiVENDQVNJd0RRWUpLb1pJaHZjTkFRRUJCUUFEZ2dFUApCRENDQVFvQ2dnRUJBTFF0OU9KUWg2R0M1TFQxZzgwcU5oMHU1MEJRNHNaL3laOGFFVHh0KzVsblBWWDZNSEt6CmQvaTdsRHFUZlRjZkxMMm55VUJkMmZRRGsxQjBmeHJza2hHSUlaM2lmUDFQczRsdFRrdjhoUlNvYjNWdE5xU28KSHhrS2Z2RDJQS2pUUHhEUFdZeXJ1eTlpckxaaW9NZmZpM2kvZ0N1dDBaV3RBeU8zTVZINXFXRi9lbkt3Z1BFUwpZOXBvK1RkQ3ZSQi9SVU9iQmFNNzYxRWNyTFNNMUdxSE51ZVNmcW5obzNBakxRNmRCblBXbG82MzhabTFWZWJLCkNFTHloa0xXTVNGa0t3RG1uZTBqUTAyWTRnMDc1dkNLdkNzQ0F3RUFBYU5qTUdFd0hRWURWUjBPQkJZRUZIN1IKNXlDK1VlaElJUGV1TDhacXczUHpiZ2NaTUI4R0ExVWRJd1FZTUJhQUZIN1I0eUMrVWVoSUlQZXVMOFpxdzNQegpjZ2NaTUE4R0ExVWRFd0VCL3dRRk1BTUJBZjh3RGdZRFZSMFBBUUgvQkFRREFnR0dNQTBHQ1NxR1NJYjNEUUVCCkR3VUFBNElCQVFCRE52RDJWbTlzQTVBOUFsT0pSOCtlbjVYejloWGN4SkI1cGh4Y1pROGpGb0cwNFZzaHZkMGUKTUVuVXJNY2ZGZ0laNG5qTUtUUUNNNFpGVVBBaWV5THg0ZjUySHVEb3BwM2U1SnlJTWZXK0tGY05JcEt3Q3NhawpwU29LdElVT3NVSks3cUJWWnhjckl5ZVFWMnFjWU9lWmh0UzV3QnFJd09BaEZ3bENFVDdaZTU4UUhtUzQ4c2xqCjVlVGtSaml2QWxFeHJGektjbGpDNGF4S1Fsbk92VkF6eitHbTMyVTB4UEJGNEJ5ZVBWeENKVUh3MVRzeVRtZWwKU3hORXA3eUhvWGN3bitmWG5hK3Q1SldoMWd4VVp0eTMKLS0tLS1FTkQgQ0VSVElGSUNBVEUtLS0tLQo=
            filesystem: root
            mode: 0644
            path: /etc/pki/ca-trust/source/anchors/examplecorp-ca.crt

The trust store of machines must also support updating the trust store of nodes.
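The source field in the MachineConfig example is a data URL that carries the base64-encoded PEM file. As a sketch, assuming you already have the PEM text in hand, the following Python helpers produce and decode such a value. The function names are hypothetical.

```python
import base64

DATA_URL_PREFIX = "data:text/plain;charset=utf-8;base64,"


def pem_to_data_url(pem_text: str) -> str:
    """Encode PEM text as a data URL suitable for a contents.source value."""
    encoded = base64.b64encode(pem_text.encode("utf-8")).decode("ascii")
    return DATA_URL_PREFIX + encoded


def data_url_to_pem(source: str) -> str:
    """Decode a data URL produced by pem_to_data_url back into PEM text."""
    if not source.startswith(DATA_URL_PREFIX):
        raise ValueError("unexpected data URL prefix")
    return base64.b64decode(source[len(DATA_URL_PREFIX):]).decode("utf-8")
```

Decoding the value back with data_url_to_pem is a quick way to confirm that the certificate survived the encoding step before you apply the MachineConfig.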

Renewal

There are no Operators that can auto-renew certificates on the RHCOS nodes.

Red Hat does not monitor for when CAs expire. Because CAs are long-lived, this is generally not an issue, but you might need to periodically update the trust bundle.

2.4. Service CA certificates

Purpose

service-ca is an Operator that creates a self-signed CA when an OpenShift Container Platform cluster is deployed.

Expiration

A custom expiration term is not supported. The self-signed CA is stored in a secret with qualified name service-ca/signing-key in fields tls.crt (certificate(s)), tls.key (private key), and ca-bundle.crt (CA bundle).

Other services can request a service serving certificate by annotating a service resource with service.beta.openshift.io/serving-cert-secret-name: <secret name>. In response, the Operator generates a new certificate, as tls.crt, and a private key, as tls.key, and writes them to the named secret. The certificate is valid for two years.

Other services can request that the CA bundle for the service CA be injected into APIService or ConfigMap resources by annotating with service.beta.openshift.io/inject-cabundle: true to support validating certificates generated from the service CA. In response, the Operator writes its current CA bundle to the CABundle field of APIService or as service-ca.crt to a ConfigMap.

As of OpenShift Container Platform 4.3.5, automated rotation is supported and is backported to some 4.2.z and 4.3.z releases. For any release supporting automated rotation, the service CA is valid for 26 months and is automatically refreshed when there is less than 13 months validity left. If necessary, you can manually refresh the service CA.
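The rotation timing can be worked through with a short Python sketch. It assumes the 26-month validity and the 13-month refresh threshold are counted in calendar months from the issue date, which is a simplification for illustration; the function names are hypothetical.

```python
import calendar
from datetime import date


def add_months(d: date, months: int) -> date:
    """Shift a date by whole calendar months, clamping the day if needed."""
    index = d.year * 12 + (d.month - 1) + months
    year, month0 = divmod(index, 12)
    month = month0 + 1
    last_day = calendar.monthrange(year, month)[1]
    return date(year, month, min(d.day, last_day))


def service_ca_schedule(issued: date) -> dict:
    """Return the expiry date and the date from which a refresh is due."""
    expires = add_months(issued, 26)
    # Refresh is due once fewer than 13 months of validity remain.
    refresh_after = add_months(expires, -13)
    return {"expires": expires, "refresh_after": refresh_after}
```

Under these assumptions, a service CA issued on 2024-01-15 expires on 2026-03-15 and becomes eligible for automatic refresh from 2025-02-15.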

The service CA expiration of 26 months is longer than the expected upgrade interval for a supported OpenShift Container Platform cluster, such that non-control plane consumers of service CA certificates will be refreshed after CA rotation and prior to the expiration of the pre-rotation CA.

A manually-rotated service CA does not maintain trust with the previous service CA. You might experience a temporary service disruption until the Pods in the cluster are restarted, which ensures that Pods are using service serving certificates issued by the new service CA.

Management

These certificates are managed by the system and not the user.

Services

Services that use service CA certificates include:

- cluster-autoscaler-operator

- cluster-monitoring-operator

- cluster-authentication-operator

- cluster-image-registry-operator

- cluster-ingress-operator

- cluster-kube-apiserver-operator

- cluster-kube-controller-manager-operator

- cluster-kube-scheduler-operator

- cluster-networking-operator

- cluster-openshift-apiserver-operator

- cluster-openshift-controller-manager-operator

- cluster-samples-operator

- cluster-svcat-apiserver-operator

- cluster-svcat-controller-manager-operator

- machine-config-operator

- console-operator

- insights-operator

- machine-api-operator

- operator-lifecycle-manager

This is not a comprehensive list.

2.5. Node certificates

Purpose

Node certificates are signed by the cluster; they come from a certificate authority (CA) that is generated by the bootstrap process. Once the cluster is installed, the node certificates are auto-rotated.

Management

These certificates are managed by the system and not the user.

2.6. Bootstrap certificates

Purpose

The kubelet, in OpenShift Container Platform 4 and later, uses the bootstrap certificate located in /etc/kubernetes/kubeconfig to initially bootstrap. This is followed by the bootstrap initialization process and authorization of the kubelet to create a CSR.

In that process, the kubelet generates a CSR while communicating over the bootstrap channel. The controller manager signs the CSR, resulting in a certificate that the kubelet manages.

Management

These certificates are managed by the system and not the user.

Expiration

This bootstrap CA is valid for 10 years.

The kubelet-managed certificate is valid for one year and rotates automatically at around the 80 percent mark of that one year.
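As a rough worked example of the 80 percent mark, the Python sketch below computes the point in a certificate's lifetime at which rotation would be expected. The function name and the exact 0.8 fraction are assumptions for illustration; the text above only says rotation happens at around that point.

```python
from datetime import datetime, timedelta, timezone


def expected_rotation_time(
    not_before: datetime, not_after: datetime, fraction: float = 0.8
) -> datetime:
    """Return the moment when the given fraction of the lifetime has elapsed."""
    lifetime = not_after - not_before
    return not_before + lifetime * fraction


issued = datetime(2024, 1, 1, tzinfo=timezone.utc)
expires = issued + timedelta(days=365)
rotation = expected_rotation_time(issued, expires)
```

With a 365-day lifetime starting on 2024-01-01, rotation lands 292 days after issue.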

Customization

You cannot customize the bootstrap certificates.

2.7. etcd certificates

Purpose

etcd certificates are signed by the etcd-signer; they come from a certificate authority (CA) that is generated by the bootstrap process.

Location

- CA certificates:

  - etcd CA certificate: /etc/ssl/etcd/ca.crt

  - etcd metric CA certificate: /etc/ssl/etcd/metric-ca.crt

- Server certificates: /etc/ssl/etcd/system:etcd-server

- Client certificates: <api_server_pod_directory>/secrets/etcd-client/

- Peer certificates: /etc/ssl/etcd/system:etcd-peer

- Metric certificates: /etc/ssl/etcd/metric-signer

Expiration

The CA certificates are valid for 10 years. The peer, client, and server certificates are valid for three years.

Management

These certificates are managed by the system and not the user.

Services

etcd certificates are used for encrypted communication between etcd member peers, as well as encrypted client traffic. The following certificates are generated and used by etcd and other processes that communicate with etcd:

- Peer certificates: Used for communication between etcd members.

- Client certificates: Used for encrypted server-client communication. Client certificates are currently used by the API server only, and no other service should connect to etcd directly except for the proxy. Client secrets (etcd-client, etcd-metric-client, etcd-metric-signer, and etcd-signer) are added to the openshift-config, openshift-monitoring, and openshift-kube-apiserver namespaces.

- Server certificates: Used by the etcd server for authenticating client requests.

- Metric certificates: All metric consumers connect to proxy with metric-client certificates.

2.8. OLM certificates

Management

All certificates for Operator Lifecycle Manager (OLM) components (olm-operator, catalog-operator, packageserver, and marketplace-operator) are managed by the system.

Operators installed via OLM can have certificates generated for them if they are providing API services. packageserver is one example.

Certificates in the openshift-operator-lifecycle-manager namespace are managed by OLM with the exception of certificates used by Operators that require a validating or mutating webhook.

Operators that install validating or mutating webhooks must currently manage those certificates themselves. They do not require the user to manage the certificates.

OLM will not update the certificates of Operators that it manages in proxy environments. These certificates must be managed by the user via the subscription config.

2.9. User-provided certificates for default ingress

Purpose

Applications are usually exposed at <route_name>.apps.<cluster_name>.<base_domain>. The <cluster_name> and <base_domain> come from the installation config file. <route_name> is the host field of the route, if specified, or the route name. For example, in hello-openshift-default.apps.username.devcluster.openshift.com, hello-openshift is the name of the route and default is the namespace that contains it. You might want clients to access the applications without the need to distribute the cluster-managed CA certificates to the clients. The administrator must set a custom default certificate when serving application content.

The Ingress Operator generates a default certificate for an Ingress Controller to serve as a placeholder until you configure a custom default certificate. Do not use Operator-generated default certificates in production clusters.

Location

The user-provided certificates must be provided in a kubernetes.io/tls type Secret in the openshift-config namespace. Update the ingresscontroller.operator/default resource in the openshift-ingress-operator namespace to enable the use of the user-provided certificate.

Management

User-provided certificates are managed by the user.

Expiration

User-provided certificates are managed by the user.

Services

Applications deployed on the cluster use user-provided certificates for default ingress.

Customization

Update the secret containing the user-managed certificate as needed.

2.10. Ingress certificates

Purpose

The Ingress Operator uses certificates for:

- Securing access to metrics for Prometheus.

- Securing access to routes.

Location

To secure access to Ingress Operator and Ingress Controller metrics, the Ingress Operator uses service serving certificates. The Operator requests a certificate from the service-ca controller for its own metrics, and the service-ca controller puts the certificate in a secret named metrics-tls in the openshift-ingress-operator namespace. Additionally, the Ingress Operator requests a certificate for each Ingress Controller, and the service-ca controller puts the certificate in a secret named router-metrics-certs-<name>, where <name> is the name of the Ingress Controller, in the openshift-ingress namespace.

Each Ingress Controller has a default certificate that it uses for secured routes that do not specify their own certificates. Unless you specify a custom certificate, the Operator uses a self-signed certificate by default. The Operator uses its own self-signed signing certificate to sign any default certificate that it generates. The Operator generates this signing certificate and puts it in a secret named router-ca in the openshift-ingress-operator namespace. When the Operator generates a default certificate, it puts the default certificate in a secret named router-certs-<name> (where <name> is the name of the Ingress Controller) in the openshift-ingress namespace.

The Ingress Operator generates a default certificate for an Ingress Controller to serve as a placeholder until you configure a custom default certificate. Do not use Operator-generated default certificates in production clusters.

Workflow

Figure 2.1. Custom certificate workflow

Figure 2.2. Default certificate workflow

An empty defaultCertificate field causes the Ingress Operator to use its self-signed CA to generate a serving certificate for the specified domain.

The default CA certificate and key generated by the Ingress Operator. Used to sign Operator-generated default serving certificates.

In the default workflow, the wildcard default serving certificate, created by the Ingress Operator and signed using the generated default CA certificate. In the custom workflow, this is the user-provided certificate.

The router deployment. Uses the certificate in secrets/router-certs-default as its default front-end server certificate.

In the default workflow, the contents of the wildcard default serving certificate (public and private parts) are copied here to enable OAuth integration. In the custom workflow, this is the user-provided certificate.

Transitional resource containing the certificate (public part) of the Operator-generated default CA certificate; read by OAuth and the web console to establish trust. This object will be removed in a future release.

The public (certificate) part of the default serving certificate. Replaces the configmaps/router-ca resource.

The user updates the cluster proxy configuration with the CA certificate that signed the ingresscontroller serving certificate. This enables components like auth, console, and the registry to trust the serving certificate.

The cluster-wide trusted CA bundle containing the combined Red Hat Enterprise Linux CoreOS (RHCOS) and user-provided CA bundles or an RHCOS-only bundle if a user bundle is not provided.

The custom CA certificate bundle, which instructs other components (for example, auth and console) to trust an ingresscontroller configured with a custom certificate.

The trustedCA field is used to reference the user-provided CA bundle.

The Cluster Network Operator injects the trusted CA bundle into the proxy-ca ConfigMap.

OpenShift Container Platform 4.3 and earlier versions use router-ca.

Expiration

The expiration terms for the Ingress Operator’s certificates are as follows:

- The expiration date for metrics certificates that the service-ca controller creates is two years after the date of creation.

- The expiration date for the Operator’s signing certificate is two years after the date of creation.

- The expiration date for default certificates that the Operator generates is two years after the date of creation.

You cannot specify custom expiration terms, either during or after installation of OpenShift Container Platform, on certificates that the Ingress Operator or service-ca controller creates.

Services

Prometheus uses the certificates that secure metrics.

The Ingress Operator uses its signing certificate to sign default certificates that it generates for Ingress Controllers for which you do not set custom default certificates.

Cluster components that use secured routes may use the default Ingress Controller’s default certificate.

Ingress to the cluster via a secured route uses the default certificate of the Ingress Controller by which the route is accessed unless the route specifies its own certificate.

Management

Ingress certificates are managed by the user. See Replacing the default ingress certificate for more information.

Renewal

The service-ca controller automatically rotates the certificates that it issues. However, it is possible to use oc delete secret <secret> to manually rotate service serving certificates.

The Ingress Operator does not rotate its own signing certificate or the default certificates that it generates. Operator-generated default certificates are intended as placeholders for custom default certificates that you configure.

Chapter 3. Monitoring and cluster logging Operator component certificates

Monitoring components secure their traffic with service CA certificates. These certificates are valid for 2 years and are replaced automatically on rotation of the service CA, which is every 13 months.

If the certificate lives in the openshift-monitoring or openshift-logging namespace, it is system managed and rotated automatically.

Management

These certificates are managed by the system and not the user.

Chapter 4. Control plane certificates

Location

Control plane certificates are included in these namespaces:

- openshift-config-managed

- openshift-kube-apiserver

- openshift-kube-apiserver-operator

- openshift-kube-controller-manager

- openshift-kube-controller-manager-operator

- openshift-kube-scheduler

Management

Control plane certificates are managed by the system and rotated automatically.

In the rare case that your control plane certificates have expired, see Recovering from expired control plane certificates.

Additional resources

- Manually rotate service serving certificates

- Securing service traffic using service serving certificate secrets

- Recovering from expired control plane certificates

- Configuring the cluster-wide proxy

- Adding API server certificates

- Replacing the default ingress certificate

- Working with nodes

- Recovering from lost master hosts

Chapter 5. Configuring the internal OAuth server

5.1. OpenShift Container Platform OAuth server

The OpenShift Container Platform master includes a built-in OAuth server. Users obtain OAuth access tokens to authenticate themselves to the API.

When a person requests a new OAuth token, the OAuth server uses the configured identity provider to determine the identity of the person making the request.

It then determines what user that identity maps to, creates an access token for that user, and returns the token for use.

5.2. OAuth token request flows and responses

The OAuth server supports the standard authorization code grant and implicit grant OAuth authorization flows.

When requesting an OAuth token using the implicit grant flow (response_type=token) with a client_id configured to request WWW-Authenticate challenges (like openshift-challenging-client), these are the possible server responses from /oauth/authorize, and how they should be handled:

| Status | Content | Client response |
|---|---|---|
| 302 | | Use the |
| 302 | | Fail, optionally surfacing the |
| 302 | Other | Follow the redirect, and process the result using these rules |
| 401 | | Respond to challenge if type is recognized (e.g. |
| 401 | | No challenge authentication is possible. Fail and show response body (which might contain links or details on alternate methods to obtain an OAuth token) |
| Other | Other | Fail, optionally surfacing response body to the user |

5.3. Options for the internal OAuth server

Several configuration options are available for the internal OAuth server.

5.3.1. OAuth token duration options

The internal OAuth server generates two kinds of tokens:

| Access tokens | Longer-lived tokens that grant access to the API. |

| Authorize codes | Short-lived tokens whose only use is to be exchanged for an access token. |

You can configure the default duration for both types of token. If necessary, you can override the duration of the access token by using an OAuthClient object definition.

5.3.2. OAuth grant options

When the OAuth server receives token requests for a client to which the user has not previously granted permission, the action that the OAuth server takes is dependent on the OAuth client’s grant strategy.

The OAuth client requesting the token must provide its own grant strategy.

You can apply the following default methods:

| auto | Auto-approve the grant and retry the request. |
| prompt | Prompt the user to approve or deny the grant. |

5.4. Configuring the internal OAuth server’s token duration

You can configure default options for the internal OAuth server’s token duration.

By default, tokens are only valid for 24 hours. Existing sessions expire after this time elapses.

If the default time is insufficient, then this can be modified using the following procedure.

Procedure

Create a configuration file that contains the token duration options. The following file sets this to 48 hours, twice the default.

apiVersion: config.openshift.io/v1
kind: OAuth
metadata:
  name: cluster
spec:
  tokenConfig:
    accessTokenMaxAgeSeconds: 172800

Set accessTokenMaxAgeSeconds to control the lifetime of access tokens. The default lifetime is 24 hours, or 86400 seconds. This attribute cannot be negative.

Apply the new configuration file:

Note: Because you update the existing OAuth server, you must use the oc apply command to apply the change.

$ oc apply -f </path/to/file.yaml>

Confirm that the changes are in effect:

$ oc describe oauth.config.openshift.io/cluster
...
Spec:
  Token Config:
    Access Token Max Age Seconds:  172800
...
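The conversion from hours to the accessTokenMaxAgeSeconds value is simple arithmetic, but it is easy to mistype. The following Python sketch is illustrative only: the helper names hours_to_seconds and render_oauth_token_config are hypothetical and not part of any OpenShift tooling. It computes the value and prints a manifest in the same shape as the one in this procedure.

```python
def hours_to_seconds(hours: int) -> int:
    """Convert a token lifetime in hours to seconds (hypothetical helper)."""
    if hours < 0:
        # The documentation states that the attribute cannot be negative.
        raise ValueError("token lifetime cannot be negative")
    return hours * 3600


def render_oauth_token_config(hours: int) -> str:
    """Render an OAuth manifest with the given access token lifetime."""
    seconds = hours_to_seconds(hours)
    return (
        "apiVersion: config.openshift.io/v1\n"
        "kind: OAuth\n"
        "metadata:\n"
        "  name: cluster\n"
        "spec:\n"
        "  tokenConfig:\n"
        f"    accessTokenMaxAgeSeconds: {seconds}\n"
    )


if __name__ == "__main__":
    # 48 hours, twice the 24-hour default.
    print(render_oauth_token_config(48))
```

The printed text can be saved to a file and applied with oc apply as described in the procedure.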

5.5. Register an additional OAuth client

If you need an additional OAuth client to manage authentication for your OpenShift Container Platform cluster, you can register one.

Procedure

To register additional OAuth clients:

$ oc create -f <(echo '
kind: OAuthClient
apiVersion: oauth.openshift.io/v1
metadata:
  name: demo
secret: "..."
redirectURIs:
  - "http://www.example.com/"
grantMethod: prompt
')

- The name of the OAuth client is used as the client_id parameter when making requests to <namespace_route>/oauth/authorize and <namespace_route>/oauth/token.

- The secret is used as the client_secret parameter when making requests to <namespace_route>/oauth/token.

- The redirect_uri parameter specified in requests to <namespace_route>/oauth/authorize and <namespace_route>/oauth/token must be equal to or prefixed by one of the URIs listed in the redirectURIs parameter value.

- The grantMethod is used to determine what action to take when this client requests tokens and has not yet been granted access by the user. Specify auto to automatically approve the grant and retry the request, or prompt to prompt the user to approve or deny the grant.
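The redirect_uri rule for registered clients ("equal to or prefixed by one of the URIs listed") can be illustrated with a short Python sketch. This is a literal string-prefix reading of that sentence, not the OAuth server's actual implementation, which might apply additional normalization; the function name redirect_uri_allowed is hypothetical.

```python
from typing import Iterable


def redirect_uri_allowed(redirect_uri: str, registered_uris: Iterable[str]) -> bool:
    """Return True if redirect_uri equals, or starts with, a registered URI.

    Illustrative only: a plain string comparison, assuming no URL
    normalization is performed.
    """
    return any(redirect_uri.startswith(registered) for registered in registered_uris)


if __name__ == "__main__":
    registered = ["http://www.example.com/"]
    print(redirect_uri_allowed("http://www.example.com/callback", registered))
    print(redirect_uri_allowed("http://other.example.org/callback", registered))
```

Because a prefix match is permissive, registering the most specific URI that the client needs keeps the set of accepted redirect targets small.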

5.6. OAuth server metadata

Applications running in OpenShift Container Platform might have to discover information about the built-in OAuth server. For example, they might have to discover what the address of the <namespace_route> is without manual configuration. To aid in this, OpenShift Container Platform implements the IETF OAuth 2.0 Authorization Server Metadata draft specification.

Thus, any application running inside the cluster can issue a GET request to https://openshift.default.svc/.well-known/oauth-authorization-server to fetch the following information:

{
  "issuer": "https://<namespace_route>",
  "authorization_endpoint": "https://<namespace_route>/oauth/authorize",
  "token_endpoint": "https://<namespace_route>/oauth/token",
  "scopes_supported": [
    "user:full",
    "user:info",
    "user:check-access",
    "user:list-scoped-projects",
    "user:list-projects"
  ],
  "response_types_supported": [
    "code",
    "token"
  ],
  "grant_types_supported": [
    "authorization_code",
    "implicit"
  ],
  "code_challenge_methods_supported": [
    "plain",
    "S256"
  ]
}

- issuer: The authorization server’s issuer identifier, which is a URL that uses the https scheme and has no query or fragment components. This is the location where .well-known RFC 5785 resources containing information about the authorization server are published.

- authorization_endpoint: URL of the authorization server’s authorization endpoint. See RFC 6749.

- token_endpoint: URL of the authorization server’s token endpoint. See RFC 6749.

- scopes_supported: JSON array containing a list of the OAuth 2.0 RFC 6749 scope values that this authorization server supports. Note that not all supported scope values are advertised.

- response_types_supported: JSON array containing a list of the OAuth 2.0 response_type values that this authorization server supports. The array values used are the same as those used with the response_types parameter defined by "OAuth 2.0 Dynamic Client Registration Protocol" in RFC 7591.

- grant_types_supported: JSON array containing a list of the OAuth 2.0 grant type values that this authorization server supports. The array values used are the same as those used with the grant_types parameter defined by "OAuth 2.0 Dynamic Client Registration Protocol" in RFC 7591.

- code_challenge_methods_supported: JSON array containing a list of PKCE RFC 7636 code challenge methods supported by this authorization server. Code challenge method values are used in the code_challenge_method parameter defined in Section 4.3 of RFC 7636. The valid code challenge method values are those registered in the IANA PKCE Code Challenge Methods registry. See IANA OAuth Parameters.
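An application that has fetched this metadata document typically needs only a few of its fields. The following Python sketch shows one way to extract them. It parses a sample document rather than making a network request; the host name oauth.example.com stands in for <namespace_route> and, like the function name summarize_metadata, is an assumption made for illustration.

```python
import json

# Sample metadata document; oauth.example.com is a hypothetical host.
SAMPLE_METADATA = """
{
  "issuer": "https://oauth.example.com",
  "authorization_endpoint": "https://oauth.example.com/oauth/authorize",
  "token_endpoint": "https://oauth.example.com/oauth/token",
  "response_types_supported": ["code", "token"],
  "grant_types_supported": ["authorization_code", "implicit"],
  "code_challenge_methods_supported": ["plain", "S256"]
}
"""


def summarize_metadata(raw: str) -> dict:
    """Pick out the endpoints and capabilities a client usually needs."""
    doc = json.loads(raw)
    return {
        "authorize_url": doc["authorization_endpoint"],
        "token_url": doc["token_endpoint"],
        "supports_code_flow": "code" in doc.get("response_types_supported", []),
        "supports_pkce_s256": "S256" in doc.get("code_challenge_methods_supported", []),
    }


if __name__ == "__main__":
    for key, value in summarize_metadata(SAMPLE_METADATA).items():
        print(f"{key}: {value}")
```

Reading the endpoints from the metadata document, rather than hard-coding them, lets the application keep working if the route to the OAuth server changes.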

5.7. Troubleshooting OAuth API events

In some cases the API server returns an unexpected condition error message that is difficult to debug without direct access to the API master log. The underlying reason for the error is purposely obscured in order to avoid providing an unauthenticated user with information about the server’s state.

A subset of these errors is related to service account OAuth configuration issues. These issues are captured in events that can be viewed by non-administrator users. When encountering an unexpected condition server error during OAuth, run oc get events to view these events under ServiceAccount.

The following example warns of a service account that is missing a proper OAuth redirect URI:

$ oc get events | grep ServiceAccount

1m 1m 1 proxy ServiceAccount Warning NoSAOAuthRedirectURIs service-account-oauth-client-getter system:serviceaccount:myproject:proxy has no redirectURIs; set serviceaccounts.openshift.io/oauth-redirecturi.<some-value>=<redirect> or create a dynamic URI using serviceaccounts.openshift.io/oauth-redirectreference.<some-value>=<reference>

Running oc describe sa/<service-account-name> reports any OAuth events associated with the given service account name.

$ oc describe sa/proxy | grep -A5 Events

Events:

FirstSeen LastSeen Count From SubObjectPath Type Reason Message

--------- -------- ----- ---- ------------- -------- ------ -------

3m 3m 1 service-account-oauth-client-getter Warning NoSAOAuthRedirectURIs system:serviceaccount:myproject:proxy has no redirectURIs; set serviceaccounts.openshift.io/oauth-redirecturi.<some-value>=<redirect> or create a dynamic URI using serviceaccounts.openshift.io/oauth-redirectreference.<some-value>=<reference>

The following is a list of the possible event errors:

No redirect URI annotations or an invalid URI is specified

Reason Message

NoSAOAuthRedirectURIs system:serviceaccount:myproject:proxy has no redirectURIs; set serviceaccounts.openshift.io/oauth-redirecturi.<some-value>=<redirect> or create a dynamic URI using serviceaccounts.openshift.io/oauth-redirectreference.<some-value>=<reference>

Invalid route specified

Reason Message

NoSAOAuthRedirectURIs [routes.route.openshift.io "<name>" not found, system:serviceaccount:myproject:proxy has no redirectURIs; set serviceaccounts.openshift.io/oauth-redirecturi.<some-value>=<redirect> or create a dynamic URI using serviceaccounts.openshift.io/oauth-redirectreference.<some-value>=<reference>]

Invalid reference type specified

Reason Message

NoSAOAuthRedirectURIs [no kind "<name>" is registered for version "v1", system:serviceaccount:myproject:proxy has no redirectURIs; set serviceaccounts.openshift.io/oauth-redirecturi.<some-value>=<redirect> or create a dynamic URI using serviceaccounts.openshift.io/oauth-redirectreference.<some-value>=<reference>]

Missing SA tokens

Reason Message

NoSAOAuthTokens system:serviceaccount:myproject:proxy has no tokens

Chapter 6. Understanding identity provider configuration

The OpenShift Container Platform master includes a built-in OAuth server. Developers and administrators obtain OAuth access tokens to authenticate themselves to the API.

As an administrator, you can configure OAuth to specify an identity provider after you install your cluster.

6.1. About identity providers in OpenShift Container Platform

By default, only a kubeadmin user exists on your cluster. To specify an identity provider, you must create a Custom Resource (CR) that describes that identity provider and add it to the cluster.

OpenShift Container Platform user names containing /, :, and % are not supported.

6.2. Supported identity providers

You can configure the following types of identity providers:

| Identity provider | Description |

|---|---|

|

Configure the | |

|

Configure the | |

|

Configure the | |

|

Configure a | |

|

Configure a | |

|

Configure a | |

|

Configure a | |

|

Configure a | |

|

Configure an |

Once an identity provider has been defined, you can use RBAC to define and apply permissions.

6.3. Removing the kubeadmin user

After you define an identity provider and create a new cluster-admin user, you can remove the kubeadmin user to improve cluster security.

If you follow this procedure before another user is a cluster-admin, then OpenShift Container Platform must be reinstalled. It is not possible to undo this command.

Prerequisites

- You must have configured at least one identity provider.

- You must have added the cluster-admin role to a user.

- You must be logged in as an administrator.

Procedure

Remove the kubeadmin secrets:

$ oc delete secrets kubeadmin -n kube-system

6.4. Identity provider parameters

The following parameters are common to all identity providers:

| Parameter | Description |
|---|---|
| name | The provider name is prefixed to provider user names to form an identity name. |
| mappingMethod | Defines how new identities are mapped to users when they log in. Enter one of the following values: |

When adding or changing identity providers, you can map identities from the new provider to existing users by setting the mappingMethod parameter to add.

6.5. Sample identity provider CR

The following Custom Resource (CR) shows the parameters and default values that you use to configure an identity provider. This example uses the HTPasswd identity provider.

Sample identity provider CR

apiVersion: config.openshift.io/v1
kind: OAuth
metadata:
  name: cluster
spec:
  identityProviders:
  - name: my_identity_provider
    mappingMethod: claim
    type: HTPasswd
    htpasswd:
      fileData:
        name: htpass-secret

Chapter 7. Configuring identity providers

7.1. Configuring an HTPasswd identity provider

7.1.1. About identity providers in OpenShift Container Platform

By default, only a kubeadmin user exists on your cluster. To specify an identity provider, you must create a Custom Resource (CR) that describes that identity provider and add it to the cluster.

OpenShift Container Platform user names containing /, :, and % are not supported.

To define an HTPasswd identity provider you must perform the following steps:

- Create an htpasswd file to store the user and password information. Instructions are provided for Linux and Windows.

- Create an OpenShift Container Platform secret to represent the htpasswd file.

- Define the HTPasswd identity provider resource.

- Apply the resource to the default OAuth configuration.

7.1.2. Creating an HTPasswd file using Linux

To use the HTPasswd identity provider, you must generate a flat file that contains the user names and passwords for your cluster by using htpasswd.

Prerequisites

- Have access to the htpasswd utility. On Red Hat Enterprise Linux this is available by installing the httpd-tools package.

Procedure

Create or update your flat file with a user name and hashed password:

$ htpasswd -c -B -b </path/to/users.htpasswd> <user_name> <password>

The command generates a hashed version of the password.

For example:

$ htpasswd -c -B -b users.htpasswd user1 MyPassword!
Adding password for user user1

Continue to add or update credentials to the file:

$ htpasswd -B -b </path/to/users.htpasswd> <user_name> <password>

7.1.3. Creating an HTPasswd file using Windows

To use the HTPasswd identity provider, you must generate a flat file that contains the user names and passwords for your cluster by using htpasswd.

Prerequisites

- Have access to htpasswd.exe. This file is included in the \bin directory of many Apache httpd distributions.

Procedure

Create or update your flat file with a user name and hashed password:

> htpasswd.exe -c -B -b <\path\to\users.htpasswd> <user_name> <password>

The command generates a hashed version of the password.

For example:

> htpasswd.exe -c -B -b users.htpasswd user1 MyPassword!
Adding password for user user1

Continue to add or update credentials to the file:

> htpasswd.exe -b <\path\to\users.htpasswd> <user_name> <password>

7.1.4. Creating the HTPasswd Secret

To use the HTPasswd identity provider, you must define a secret that contains the HTPasswd user file.

Prerequisites

- Create an HTPasswd file.

Procedure

Create an OpenShift Container Platform Secret that contains the HTPasswd users file.

$ oc create secret generic htpass-secret --from-file=htpasswd=</path/to/users.htpasswd> -n openshift-config

Note: The secret key containing the users file for the --from-file argument must be named htpasswd, as shown in the above command.

7.1.5. Sample HTPasswd CR

The following Custom Resource (CR) shows the parameters and acceptable values for an HTPasswd identity provider.

HTPasswd CR

apiVersion: config.openshift.io/v1
kind: OAuth
metadata:
  name: cluster
spec:
  identityProviders:
  - name: my_htpasswd_provider
    mappingMethod: claim
    type: HTPasswd
    htpasswd:
      fileData:
        name: htpass-secret

7.1.6. Adding an identity provider to your clusters

After you install your cluster, add an identity provider to it so your users can authenticate.

Prerequisites

- Create an OpenShift Container Platform cluster.

- Create the Custom Resource (CR) for your identity providers.

- You must be logged in as an administrator.

Procedure

Apply the defined CR:

$ oc apply -f </path/to/CR>

Note: If a CR does not exist, oc apply creates a new CR and might trigger the following warning: Warning: oc apply should be used on resources created by either oc create --save-config or oc apply. In this case you can safely ignore this warning.

Log in to the cluster as a user from your identity provider, entering the password when prompted.

$ oc login -u <username>

Confirm that the user logged in successfully, and display the user name.

$ oc whoami

7.1.7. Updating users for an HTPasswd identity provider

You can add or remove users from an existing HTPasswd identity provider.

Prerequisites

- You have created a secret that contains the HTPasswd user file. This procedure assumes that it is named htpass-secret.

- You have configured an HTPasswd identity provider. This procedure assumes that it is named my_htpasswd_provider.

- You have access to the htpasswd utility. On Red Hat Enterprise Linux this is available by installing the httpd-tools package.

- You have cluster administrator privileges.

Procedure

Retrieve the HTPasswd file from the htpass-secret secret and save the file to your file system:

$ oc get secret htpass-secret -ojsonpath={.data.htpasswd} -n openshift-config | base64 -d > users.htpasswd

Add or remove users from the users.htpasswd file.

To add a new user:

$ htpasswd -bB users.htpasswd <username> <password>
Adding password for user <username>

To remove an existing user:

$ htpasswd -D users.htpasswd <username>
Deleting password for user <username>

Replace the htpass-secret secret with the updated users in the users.htpasswd file:

$ oc create secret generic htpass-secret --from-file=htpasswd=users.htpasswd --dry-run -o yaml -n openshift-config | oc replace -f -

If you removed one or more users, you must additionally remove existing resources for each user.

Delete the user:

$ oc delete user <username>
user.user.openshift.io "<username>" deleted

Be sure to remove the user, otherwise the user can continue using their token as long as it has not expired.

Delete the identity for the user:

$ oc delete identity my_htpasswd_provider:<username>
identity.user.openshift.io "my_htpasswd_provider:<username>" deleted

7.1.8. Configuring identity providers using the web console

Configure your identity provider (IDP) through the web console instead of the CLI.

Prerequisites

- You must be logged in to the web console as a cluster administrator.

Procedure

- Navigate to Administration → Cluster Settings.

- Under the Global Configuration tab, click OAuth.

- Under the Identity Providers section, select your identity provider from the Add drop-down menu.

You can specify multiple IDPs through the web console without overwriting existing IDPs.

7.2. Configuring a Keystone identity provider

Configure the keystone identity provider to integrate your OpenShift Container Platform cluster with Keystone to enable shared authentication with an OpenStack Keystone v3 server configured to store users in an internal database. This configuration allows users to log in to OpenShift Container Platform with their Keystone credentials.

Keystone is an OpenStack project that provides identity, token, catalog, and policy services.

You can configure the integration with Keystone so that the new OpenShift Container Platform users are based on either the Keystone user names or unique Keystone IDs. With both methods, users log in by entering their Keystone user name and password. Basing the OpenShift Container Platform users on the Keystone ID is more secure: when users are based on Keystone user names, if you delete a Keystone user and create a new Keystone user with that user name, the new user might have access to the old user’s resources.

7.2.1. About identity providers in OpenShift Container Platform

By default, only a kubeadmin user exists on your cluster. To specify an identity provider, you must create a Custom Resource (CR) that describes that identity provider and add it to the cluster.

OpenShift Container Platform user names containing /, :, and % are not supported.

7.2.2. Creating the Secret

Identity providers use OpenShift Container Platform Secrets in the openshift-config namespace to contain the client secret, client certificates, and keys.

You can define an OpenShift Container Platform Secret containing a string by using the following command.

$ oc create secret generic <secret_name> --from-literal=clientSecret=<secret> -n openshift-config

You can define an OpenShift Container Platform Secret containing the contents of a file, such as a certificate file, by using the following command.

$ oc create secret generic <secret_name> --from-file=/path/to/file -n openshift-config

7.2.3. Creating a ConfigMap

Identity providers use OpenShift Container Platform ConfigMaps in the openshift-config namespace to contain the certificate authority bundle. These are primarily used to contain certificate bundles needed by the identity provider.

Define an OpenShift Container Platform ConfigMap containing the certificate authority by using the following command. The certificate authority must be stored in the ca.crt key of the ConfigMap.

$ oc create configmap ca-config-map --from-file=ca.crt=/path/to/ca -n openshift-config

7.2.4. Sample Keystone CR

The following Custom Resource (CR) shows the parameters and acceptable values for a Keystone identity provider.

Keystone CR

apiVersion: config.openshift.io/v1
kind: OAuth
metadata:
  name: cluster
spec:
  identityProviders:
  - name: keystoneidp
    mappingMethod: claim
    type: Keystone
    keystone:
      domainName: default
      url: https://keystone.example.com:5000
      ca:
        name: ca-config-map
      tlsClientCert:
        name: client-cert-secret
      tlsClientKey:
        name: client-key-secret

- name: This provider name is prefixed to provider user names to form an identity name.

- mappingMethod: Controls how mappings are established between this provider’s identities and user objects.

- domainName: Keystone domain name. In Keystone, usernames are domain-specific. Only a single domain is supported.

- url: The URL to use to connect to the Keystone server (required). This must use https.

- ca: Optional. Reference to an OpenShift Container Platform ConfigMap containing the PEM-encoded certificate authority bundle to use in validating server certificates for the configured URL.

- tlsClientCert: Optional. Reference to an OpenShift Container Platform Secret containing the client certificate to present when making requests to the configured URL.

- tlsClientKey: Reference to an OpenShift Container Platform Secret containing the key for the client certificate. Required if tlsClientCert is specified.

7.2.5. Adding an identity provider to your clusters

After you install your cluster, add an identity provider to it so your users can authenticate.

Prerequisites

- Create an OpenShift Container Platform cluster.

- Create the Custom Resource (CR) for your identity providers.

- You must be logged in as an administrator.

Procedure

Apply the defined CR:

$ oc apply -f </path/to/CR>

Note: If a CR does not exist, oc apply creates a new CR and might trigger the following warning: Warning: oc apply should be used on resources created by either oc create --save-config or oc apply. In this case you can safely ignore this warning.

Log in to the cluster as a user from your identity provider, entering the password when prompted.

$ oc login -u <username>

Confirm that the user logged in successfully, and display the user name.

$ oc whoami

7.3. Configuring an LDAP identity provider

Configure the ldap identity provider to validate user names and passwords against an LDAPv3 server, using simple bind authentication.

7.3.1. About identity providers in OpenShift Container Platform

By default, only a kubeadmin user exists on your cluster. To specify an identity provider, you must create a Custom Resource (CR) that describes that identity provider and add it to the cluster.

OpenShift Container Platform user names containing /, :, and % are not supported.

7.3.2. About LDAP authentication

During authentication, the LDAP directory is searched for an entry that matches the provided user name. If a single unique match is found, a simple bind is attempted using the distinguished name (DN) of the entry plus the provided password.

These are the steps taken:

- Generate a search filter by combining the attribute and filter in the configured url with the user-provided user name.

- Search the directory using the generated filter. If the search does not return exactly one entry, deny access.

- Attempt to bind to the LDAP server using the DN of the entry retrieved from the search, and the user-provided password.

- If the bind is unsuccessful, deny access.

- If the bind is successful, build an identity using the configured attributes as the identity, email address, display name, and preferred user name.

The configured url is an RFC 2255 URL, which specifies the LDAP host and search parameters to use. The syntax of the URL is:

ldap://host:port/basedn?attribute?scope?filter

For this URL:

| URL Component | Description |
|---|---|
| ldap | For regular LDAP, use the string |
| host:port | The name and port of the LDAP server. Defaults to |
| basedn | The DN of the branch of the directory where all searches should start from. At the very least, this must be the top of your directory tree, but it could also specify a subtree in the directory. |
| attribute | The attribute to search for. Although RFC 2255 allows a comma-separated list of attributes, only the first attribute will be used, no matter how many are provided. If no attributes are provided, the default is to use |
| scope | The scope of the search. Can be either |
| filter | A valid LDAP search filter. If not provided, defaults to |

If you are using an insecure LDAP connection (ldap:// or port 389), then you must check the Insecure option in the configuration wizard.

When doing searches, the attribute, filter, and provided user name are combined to create a search filter that looks like:

(&(<filter>)(<attribute>=<username>))

For example, consider a URL of:

ldap://ldap.example.com/o=Acme?cn?sub?(enabled=true)

When a client attempts to connect using a user name of bob, the resulting search filter will be (&(enabled=true)(cn=bob)).
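The way the URL components and the user name combine into a search filter can be sketched in Python. This is a minimal illustration, not the OAuth server's code: the function names are hypothetical, the parser requires the attribute and filter to be present rather than applying defaults, and the escaping of special characters in the user name follows RFC 4515, which the text above does not describe.

```python
def parse_ldap_url(url: str) -> dict:
    """Split an RFC 2255 style URL into its components (illustrative parser)."""
    scheme, sep, rest = url.partition("://")
    if not sep or scheme not in ("ldap", "ldaps"):
        raise ValueError(f"not an LDAP URL: {url!r}")
    hostport, _, tail = rest.partition("/")
    parts = tail.split("?")
    parts += [""] * (4 - len(parts))  # pad missing trailing components
    basedn, attribute, scope, search_filter = parts[:4]
    return {
        "scheme": scheme,
        "hostport": hostport,
        "basedn": basedn,
        "attribute": attribute,
        "scope": scope,
        "filter": search_filter,
    }


# Characters that RFC 4515 requires to be escaped inside a filter value.
_ESCAPES = {"\\": r"\5c", "*": r"\2a", "(": r"\28", ")": r"\29", "\0": r"\00"}


def escape_filter_value(value: str) -> str:
    """Escape a user-supplied value before placing it in a filter."""
    return "".join(_ESCAPES.get(ch, ch) for ch in value)


def build_search_filter(search_filter: str, attribute: str, username: str) -> str:
    """Combine filter, attribute, and user name as (&(<filter>)(<attribute>=<username>))."""
    if not search_filter or not attribute:
        raise ValueError("this sketch requires both a filter and an attribute")
    if not search_filter.startswith("("):
        search_filter = f"({search_filter})"
    return f"(&{search_filter}({attribute}={escape_filter_value(username)}))"


if __name__ == "__main__":
    parsed = parse_ldap_url("ldap://ldap.example.com/o=Acme?cn?sub?(enabled=true)")
    print(build_search_filter(parsed["filter"], parsed["attribute"], "bob"))
```

Escaping the user name keeps characters such as * or ( in a login name from changing the meaning of the generated filter.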

If the LDAP directory requires authentication to search, specify a bindDN and bindPassword to use to perform the entry search.

7.3.3. Creating the LDAP Secret

To use the identity provider, you must define an OpenShift Container Platform Secret that contains the bindPassword.

Define an OpenShift Container Platform Secret that contains the bindPassword.

$ oc create secret generic ldap-secret --from-literal=bindPassword=<secret> -n openshift-config

Note: The secret key containing the bindPassword for the --from-literal argument must be called bindPassword, as shown in the above command.

7.3.4. Creating a ConfigMap

Identity providers use OpenShift Container Platform ConfigMaps in the openshift-config namespace to contain the certificate authority bundle. These are primarily used to contain certificate bundles needed by the identity provider.

Define an OpenShift Container Platform ConfigMap containing the certificate authority by using the following command. The certificate authority must be stored in the ca.crt key of the ConfigMap.

$ oc create configmap ca-config-map --from-file=ca.crt=/path/to/ca -n openshift-config

7.3.5. Sample LDAP CR

The following Custom Resource (CR) shows the parameters and acceptable values for an LDAP identity provider.

LDAP CR

apiVersion: config.openshift.io/v1
kind: OAuth
metadata:
  name: cluster
spec:
  identityProviders:
  - name: ldapidp
    mappingMethod: claim
    type: LDAP
    ldap:
      attributes:
        id:
        - dn
        email:
        - mail
        name:
        - cn
        preferredUsername:
        - uid
      bindDN: ""
      bindPassword:
        name: ldap-secret
      ca:
        name: ca-config-map
      insecure: false
      url: "ldap://ldap.example.com/ou=users,dc=acme,dc=com?uid"

- name: This provider name is prefixed to the returned user ID to form an identity name.

- mappingMethod: Controls how mappings are established between this provider’s identities and user objects.

- attributes.id: List of attributes to use as the identity. First non-empty attribute is used. At least one attribute is required. If none of the listed attributes has a value, authentication fails. Defined attributes are retrieved as raw, allowing for binary values to be used.

- attributes.email: List of attributes to use as the email address. First non-empty attribute is used.

- attributes.name: List of attributes to use as the display name. First non-empty attribute is used.

- attributes.preferredUsername: List of attributes to use as the preferred user name when provisioning a user for this identity. First non-empty attribute is used.

- bindDN: Optional DN to use to bind during the search phase. Must be set if bindPassword is defined.

- bindPassword: Optional reference to an OpenShift Container Platform Secret containing the bind password. Must be set if bindDN is defined.

- ca: Optional. Reference to an OpenShift Container Platform ConfigMap containing the PEM-encoded certificate authority bundle to use in validating server certificates for the configured URL. Only used when insecure is false.

- insecure: When true, no TLS connection is made to the server. When false, ldaps:// URLs connect using TLS, and ldap:// URLs are upgraded to TLS. This should be set to false when ldaps:// URLs are in use, as these URLs always attempt to connect using TLS.

- url: An RFC 2255 URL which specifies the LDAP host and search parameters to use.

To whitelist users for an LDAP integration, use the lookup mapping method. Before a login from LDAP is allowed, a cluster administrator must create an identity and user object for each LDAP user.

7.3.6. Adding an identity provider to your clusters

After you install your cluster, add an identity provider to it so your users can authenticate.

Prerequisites

- Create an OpenShift Container Platform cluster.

- Create the Custom Resource (CR) for your identity providers.

- You must be logged in as an administrator.

Procedure

Apply the defined CR:

$ oc apply -f </path/to/CR>

Note: If a CR does not exist, oc apply creates a new CR and might trigger the following warning: Warning: oc apply should be used on resources created by either oc create --save-config or oc apply. In this case you can safely ignore this warning.

Log in to the cluster as a user from your identity provider, entering the password when prompted.

$ oc login -u <username>

Confirm that the user logged in successfully, and display the user name.

$ oc whoami

7.4. Configuring a basic authentication identity provider

Configure a basic-authentication identity provider for users to log in to OpenShift Container Platform with credentials validated against a remote identity provider. Basic authentication is a generic backend integration mechanism.

7.4.1. About identity providers in OpenShift Container Platform

By default, only a kubeadmin user exists on your cluster. To specify an identity provider, you must create a Custom Resource (CR) that describes that identity provider and add it to the cluster.

OpenShift Container Platform user names containing /, :, and % are not supported.

7.4.2. About basic authentication

Basic authentication is a generic backend integration mechanism that allows users to log in to OpenShift Container Platform with credentials validated against a remote identity provider.

Because basic authentication is generic, you can use this identity provider for advanced authentication configurations.

Basic authentication must use an HTTPS connection to the remote server to prevent potential snooping of the user ID and password and man-in-the-middle attacks.

With basic authentication configured, users send their user name and password to OpenShift Container Platform, which then validates those credentials against a remote server by making a server-to-server request, passing the credentials as a basic authentication header. This requires users to send their credentials to OpenShift Container Platform during login.

This only works for user name/password login mechanisms, and OpenShift Container Platform must be able to make network requests to the remote authentication server.
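To make the "basic authentication header" mentioned above concrete, here is a minimal Python sketch of how such a header value is built under the standard HTTP Basic scheme. The function name and the sample credentials are illustrative assumptions. This is not the code that OpenShift Container Platform itself runs.

```python
import base64


def basic_auth_header(username: str, password: str) -> str:
    """Build the value of an HTTP Authorization header for basic authentication."""
    # The user name and password are joined with a colon, then base64-encoded.
    credentials = f"{username}:{password}".encode("utf-8")
    return "Basic " + base64.b64encode(credentials).decode("ascii")


if __name__ == "__main__":
    # Hypothetical credentials, for illustration only.
    print("Authorization:", basic_auth_header("alice", "secret"))
    # prints "Authorization: Basic YWxpY2U6c2VjcmV0"
```

The credentials are only encoded, not encrypted, and can be decoded by anyone who sees the header. This is why the connection to the remote server must use HTTPS.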

User names and passwords are validated against a remote URL that is protected by basic authentication and returns JSON.

A 401 response indicates failed authentication.

A non-200 status, or the presence of a non-empty "error" key, indicates an error:

{"error":"Error message"}

A 200 status with a sub (subject) key indicates success:

{"sub":"userid"}

The subject must be unique to the authenticated user and must not be able to be modified.

A successful response can optionally provide additional data, such as:

- A display name using the name key. For example:

  {"sub":"userid", "name": "User Name", ...}

- An email address using the email key. For example:

  {"sub":"userid", "email":"user@example.com", ...}

- A preferred user name using the preferred_username key. This is useful when the unique, unchangeable subject is a database key or UID, and a more human-readable name exists. This is used as a hint when provisioning the OpenShift Container Platform user for the authenticated identity. For example:

  {"sub":"014fbff9a07c", "preferred_username":"bob", ...}
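The response rules above can be summarized in a short Python sketch. It is an illustration only: the function name and the returned labels are hypothetical. Treating a 200 reply that has no sub key as an error is an assumption, because the text does not cover that case.

```python
import json


def classify_response(status: int, body: str) -> tuple[str, dict]:
    """Classify a remote server reply as 'success', 'failed', or 'error'."""
    # A 401 response indicates failed authentication.
    if status == 401:
        return "failed", {}
    try:
        data = json.loads(body) if body else {}
    except json.JSONDecodeError:
        return "error", {"error": "response body is not valid JSON"}
    if not isinstance(data, dict):
        return "error", {"error": "response body is not a JSON object"}
    # A non-200 status or a non-empty "error" key indicates an error.
    if status != 200 or data.get("error"):
        return "error", {"error": data.get("error") or f"unexpected status {status}"}
    if not data.get("sub"):
        # Assumption: a 200 reply without a subject is treated as an error.
        return "error", {"error": "missing sub key"}
    # Keep only the keys described in the text above.
    keys = ("sub", "name", "email", "preferred_username")
    return "success", {k: data[k] for k in keys if k in data}


if __name__ == "__main__":
    print(classify_response(200, '{"sub":"014fbff9a07c", "preferred_username":"bob"}'))
    print(classify_response(401, ""))
    print(classify_response(200, '{"error":"Error message"}'))
```

A helper like this could be pointed at the output of a test request to confirm that a remote server follows the expected contract.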

7.4.3. Creating the Secret

Identity providers use OpenShift Container Platform Secrets in the openshift-config namespace to contain the client secret, client certificates, and keys.

You can define an OpenShift Container Platform Secret containing a string by using the following command.

$ oc create secret generic <secret_name> --from-literal=clientSecret=<secret> -n openshift-config

You can define an OpenShift Container Platform Secret containing the contents of a file, such as a certificate file, by using the following command.

$ oc create secret generic <secret_name> --from-file=/path/to/file -n openshift-config
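For readers who prefer to see the resulting object, the following Python sketch builds a manifest roughly equivalent to what the first command produces: a generic (Opaque) Secret whose values are base64-encoded. The function name, the Secret name, and the literal value are hypothetical placeholders.

```python
import base64
import json


def generic_secret_manifest(name: str, literals: dict[str, str],
                            namespace: str = "openshift-config") -> dict:
    """Build a Secret manifest with base64-encoded values for each literal."""
    return {
        "apiVersion": "v1",
        "kind": "Secret",
        "metadata": {"name": name, "namespace": namespace},
        "type": "Opaque",
        # Secret data values are stored base64-encoded.
        "data": {
            key: base64.b64encode(value.encode("utf-8")).decode("ascii")
            for key, value in literals.items()
        },
    }


if __name__ == "__main__":
    # Hypothetical name and value, for illustration only.
    manifest = generic_secret_manifest("example-client-secret", {"clientSecret": "s3cr3t"})
    print(json.dumps(manifest, indent=2))
```

Base64 is an encoding, not encryption, so a manifest like this should be handled as carefully as the secret itself.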

7.4.4. Creating a ConfigMap

Identity providers use OpenShift Container Platform ConfigMaps in the openshift-config namespace to contain the certificate authority bundle. These are primarily used to contain certificate bundles needed by the identity provider.

Define an OpenShift Container Platform ConfigMap containing the certificate authority by using the following command. The certificate authority must be stored in the ca.crt key of the ConfigMap.

$ oc create configmap ca-config-map --from-file=ca.crt=/path/to/ca -n openshift-config

7.4.5. Sample basic authentication CR

The following Custom Resource (CR) shows the parameters and acceptable values for a basic authentication identity provider.

Basic authentication CR

apiVersion: config.openshift.io/v1
kind: OAuth
metadata:
  name: cluster
spec:
  identityProviders:
  - name: basicidp
    mappingMethod: claim
    type: BasicAuth
    basicAuth:
      url: https://www.example.com/remote-idp
      ca:
        name: ca-config-map
      tlsClientCert:
        name: client-cert-secret
      tlsClientKey:
        name: client-key-secret

- name: This provider name is prefixed to the returned user ID to form an identity name.

- mappingMethod: Controls how mappings are established between this provider’s identities and user objects.

- url: URL accepting credentials in Basic authentication headers.

- ca: Optional: Reference to an OpenShift Container Platform ConfigMap containing the PEM-encoded certificate authority bundle to use in validating server certificates for the configured URL.

- tlsClientCert: Optional: Reference to an OpenShift Container Platform Secret containing the client certificate to present when making requests to the configured URL.

- tlsClientKey: Reference to an OpenShift Container Platform Secret containing the key for the client certificate. Required if tlsClientCert is specified.
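Two constraints stated in this chapter can be checked before the CR is applied: the URL must use HTTPS, and tlsClientKey is required whenever tlsClientCert is set. The Python sketch below illustrates such a check on one entry of spec.identityProviders, represented as a plain dictionary. The function name is hypothetical and the check is deliberately not exhaustive.

```python
def check_basic_auth_provider(provider: dict) -> list[str]:
    """Return a list of problems found in one identityProviders entry."""
    problems = []
    basic = provider.get("basicAuth") or {}
    if provider.get("type") != "BasicAuth":
        problems.append("type must be BasicAuth")
    # Basic authentication must use an HTTPS connection to the remote server.
    if not str(basic.get("url", "")).startswith("https://"):
        problems.append("basicAuth.url must use https")
    # The client key is required if a client certificate is specified.
    if basic.get("tlsClientCert") and not basic.get("tlsClientKey"):
        problems.append("basicAuth.tlsClientKey is required when tlsClientCert is set")
    return problems


if __name__ == "__main__":
    # Mirrors the sample CR above.
    sample = {
        "name": "basicidp",
        "mappingMethod": "claim",
        "type": "BasicAuth",
        "basicAuth": {
            "url": "https://www.example.com/remote-idp",
            "ca": {"name": "ca-config-map"},
            "tlsClientCert": {"name": "client-cert-secret"},
            "tlsClientKey": {"name": "client-key-secret"},
        },
    }
    print(check_basic_auth_provider(sample))  # prints []
```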

7.4.6. Adding an identity provider to your clusters

After you install your cluster, add an identity provider to it so your users can authenticate.

Prerequisites

- Create an OpenShift Container Platform cluster.

- Create the Custom Resource (CR) for your identity providers.

- You must be logged in as an administrator.

Procedure

Apply the defined CR:

$ oc apply -f </path/to/CR>

Note: If a CR does not exist, oc apply creates a new CR and might trigger the following warning: Warning: oc apply should be used on resources created by either oc create --save-config or oc apply. In this case you can safely ignore this warning.

Log in to the cluster as a user from your identity provider, entering the password when prompted.

$ oc login -u <username>

Confirm that the user logged in successfully, and display the user name.

$ oc whoami

7.4.7. Example Apache HTTPD configuration for basic identity providers

The basic identity provider (IDP) configuration in OpenShift Container Platform 4 requires that the IDP server respond with JSON for both successes and failures. You can use CGI scripting in Apache HTTPD to accomplish this. This section provides examples.

/etc/httpd/conf.d/login.conf

<VirtualHost *:443>
  # CGI Scripts in here
  DocumentRoot /var/www/cgi-bin
  # SSL Directives
  SSLEngine on
  SSLCipherSuite PROFILE=SYSTEM
  SSLProxyCipherSuite PROFILE=SYSTEM
  SSLCertificateFile /etc/pki/tls/certs/localhost.crt
  SSLCertificateKeyFile /etc/pki/tls/private/localhost.key
  # Configure HTTPD to execute scripts
  ScriptAlias /basic /var/www/cgi-bin
  # Handles a failed login attempt
  ErrorDocument 401 /basic/fail.cgi
  # Handles authentication
  <Location /basic/login.cgi>
    AuthType Basic
    AuthName "Please Log In"
    AuthBasicProvider file
    AuthUserFile /etc/httpd/conf/passwords
    Require valid-user
  </Location>
</VirtualHost>

/var/www/cgi-bin/login.cgi

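#!/bin/bash
# Emit the JSON body for a successful login. "userid" is a placeholder:
# the sub value must be unique to the authenticated user.
echo "Content-Type: application/json"
echo ""
echo '{"sub":"userid", "name":"'"$REMOTE_USER"'"}'
exit 0

/var/www/cgi-bin/fail.cgi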

#!/bin/bash
echo "Content-Type: application/json"
echo ""
echo '{"error": "Login failure"}'
exit 0

7.4.7.1. File requirements

These are the requirements for the files you create on an Apache HTTPD web server:

- login.cgi and fail.cgi must be executable (chmod +x).

- login.cgi and fail.cgi must have proper SELinux contexts if SELinux is enabled: restorecon -RFv /var/www/cgi-bin, or ensure that the context is httpd_sys_script_exec_t using ls -laZ.

- login.cgi is only executed if your user successfully logs in per Require and Auth directives.

- fail.cgi is executed if the user fails to log in, resulting in an HTTP 401 response.
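The login.cgi example builds its JSON by string concatenation, which yields invalid JSON if the user name contains a quote or backslash. As an alternative sketch, the same response could be generated with a JSON library, shown here in Python. The function name is hypothetical. Using REMOTE_USER as the sub value is an assumption that holds only if user names in the Apache password file are unique and never reassigned.

```python
import json
import os


def login_response(environ) -> str:
    """Return the CGI output (header, blank line, JSON body) for a successful login."""
    # Apache sets REMOTE_USER after the Require and Auth directives succeed.
    user = environ.get("REMOTE_USER", "")
    # Assumption: the Apache user name is unique and stable enough to serve as sub.
    body = json.dumps({"sub": user, "name": user})
    return "Content-Type: application/json\n\n" + body


if __name__ == "__main__":
    print(login_response(os.environ))
```

A script written this way is subject to the same file requirements as the bash versions.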

7.4.8. Basic authentication troubleshooting

The most common issue relates to network connectivity to the backend server. For simple debugging, run curl commands on the master. To test for a successful login, replace the <user> and <password> in the following example command with valid credentials. To test an invalid login, replace them with false credentials.

$ curl --cacert /path/to/ca.crt --cert /path/to/client.crt --key /path/to/client.key -u <user>:<password> -v https://www.example.com/remote-idp

Successful responses

A 200 status with a sub (subject) key indicates success:

{"sub":"userid"}

The subject must be unique to the authenticated user, and must not be able to be modified.

A successful response can optionally provide additional data, such as:

- A display name using the name key:

  {"sub":"userid", "name": "User Name", ...}

- An email address using the email key:

  {"sub":"userid", "email":"user@example.com", ...}

- A preferred user name using the preferred_username key:

  {"sub":"014fbff9a07c", "preferred_username":"bob", ...}

  The preferred_username key is useful when the unique, unchangeable subject is a database key or UID, and a more human-readable name exists. This is used as a hint when provisioning the OpenShift Container Platform user for the authenticated identity.

Failed responses

- A 401 response indicates failed authentication.

- A non-200 status or the presence of a non-empty "error" key indicates an error:

  {"error":"Error message"}

7.5. Configuring a request header identity provider

Configure a request-header identity provider to identify users from request header values, such as X-Remote-User. It is typically used in combination with an authenticating proxy, which sets the request header value.

7.5.1. About identity providers in OpenShift Container Platform

By default, only a kubeadmin user exists on your cluster. To specify an identity provider, you must create a Custom Resource (CR) that describes that identity provider and add it to the cluster.

OpenShift Container Platform user names containing /, :, and % are not supported.

7.5.2. About request header authentication

A request header identity provider identifies users from request header values, such as X-Remote-User. It is typically used in combination with an authenticating proxy, which sets the request header value.

You can also use the request header identity provider for advanced configurations such as the community-supported SAML authentication. Note that this solution is not supported by Red Hat.

For users to authenticate using this identity provider, they must access https://<namespace_route>/oauth/authorize (and subpaths) via an authenticating proxy. To accomplish this, configure the OAuth server to redirect unauthenticated requests for OAuth tokens to the proxy endpoint that proxies to https://<namespace_route>/oauth/authorize.

To redirect unauthenticated requests from clients expecting browser-based login flows:

- Set the provider.loginURL parameter to the authenticating proxy URL that will authenticate interactive clients and then proxy the request to https://<namespace_route>/oauth/authorize.

To redirect unauthenticated requests from clients expecting WWW-Authenticate challenges:

- Set the provider.challengeURL parameter to the authenticating proxy URL that will authenticate clients expecting WWW-Authenticate challenges and then proxy the request to https://<namespace_route>/oauth/authorize.

The provider.challengeURL and provider.loginURL parameters can include the following tokens in the query portion of the URL:

- ${url} is replaced with the current URL, escaped to be safe in a query parameter. For example:

  https://www.example.com/sso-login?then=${url}

- ${query} is replaced with the current query string, unescaped. For example:

  https://www.example.com/auth-proxy/oauth/authorize?${query}
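The effect of the two tokens can be illustrated with a small Python sketch. The function name and the sample current URL are hypothetical. The exact escaping rules used by the OAuth server might differ in detail, so this is only an approximation based on standard percent-encoding.

```python
from urllib.parse import quote, urlsplit


def expand_proxy_url(template: str, current_url: str) -> str:
    """Substitute the ${url} and ${query} tokens in a loginURL or challengeURL value."""
    # ${url}: the whole current URL, percent-encoded so it is safe as a query value.
    escaped_url = quote(current_url, safe="")
    # ${query}: the current query string, inserted as-is (unescaped).
    raw_query = urlsplit(current_url).query
    return template.replace("${url}", escaped_url).replace("${query}", raw_query)


if __name__ == "__main__":
    # Hypothetical current URL, for illustration only.
    current = "https://oauth.example.com/oauth/authorize?client_id=demo&response_type=token"
    print(expand_proxy_url("https://www.example.com/sso-login?then=${url}", current))
    print(expand_proxy_url("https://www.example.com/auth-proxy/oauth/authorize?${query}", current))
```

With ${url}, the proxy receives the full original URL as a single escaped parameter. With ${query}, the original query parameters are passed through to the proxy unchanged.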

As of OpenShift Container Platform 4.1, your proxy must support mutual TLS.

7.5.2.1. SSPI connection support on Microsoft Windows

Using SSPI connection support on Microsoft Windows is a Technology Preview feature. Technology Preview features are not supported with Red Hat production service level agreements (SLAs) and might not be functionally complete. Red Hat does not recommend using them in production. These features provide early access to upcoming product features, enabling customers to test functionality and provide feedback during the development process.