Chapter 3. Install SAP S/4HANA

3.1. Configuration options used in this document

The following configuration options are used for the instances in this document.

Both nodes run the ASCS/ERS and SAP HANA database (HDB) instances in a cluster, with automated SAP HANA System Replication:

1st node hostname: s4node1

2nd node hostname: s4node2

SID: S4H

ASCS Instance number: 20

ASCS virtual hostname: s4ascs

ERS Instance number: 29

ERS virtual hostname: s4ers

HANA database:

SID: S4D

HANA Instance number: 00

HANA virtual hostname: s4db

3.2. Prepare hosts

Before starting the installation, complete the following steps:

- Install RHEL 8 for SAP Solutions (the latest version certified for SAP HANA is recommended)

- Register the system with the Red Hat Customer Portal or Red Hat Satellite

- Enable the RHEL for SAP Applications and RHEL for SAP Solutions repositories

- Enable the High Availability Add-On channel

- Mount the shared storage and file systems at the correct mount points

- Make sure the virtual IP addresses used by the instances are configured and reachable

- Make sure the hostnames used by the instances resolve to IP addresses and back (forward and reverse lookup)

- Make the installation media available

Configure the system according to the recommendations for running SAP S/4HANA.

- For more information, see SAP OSS Note 2772999 - Red Hat Enterprise Linux 8.x: Installation and Configuration

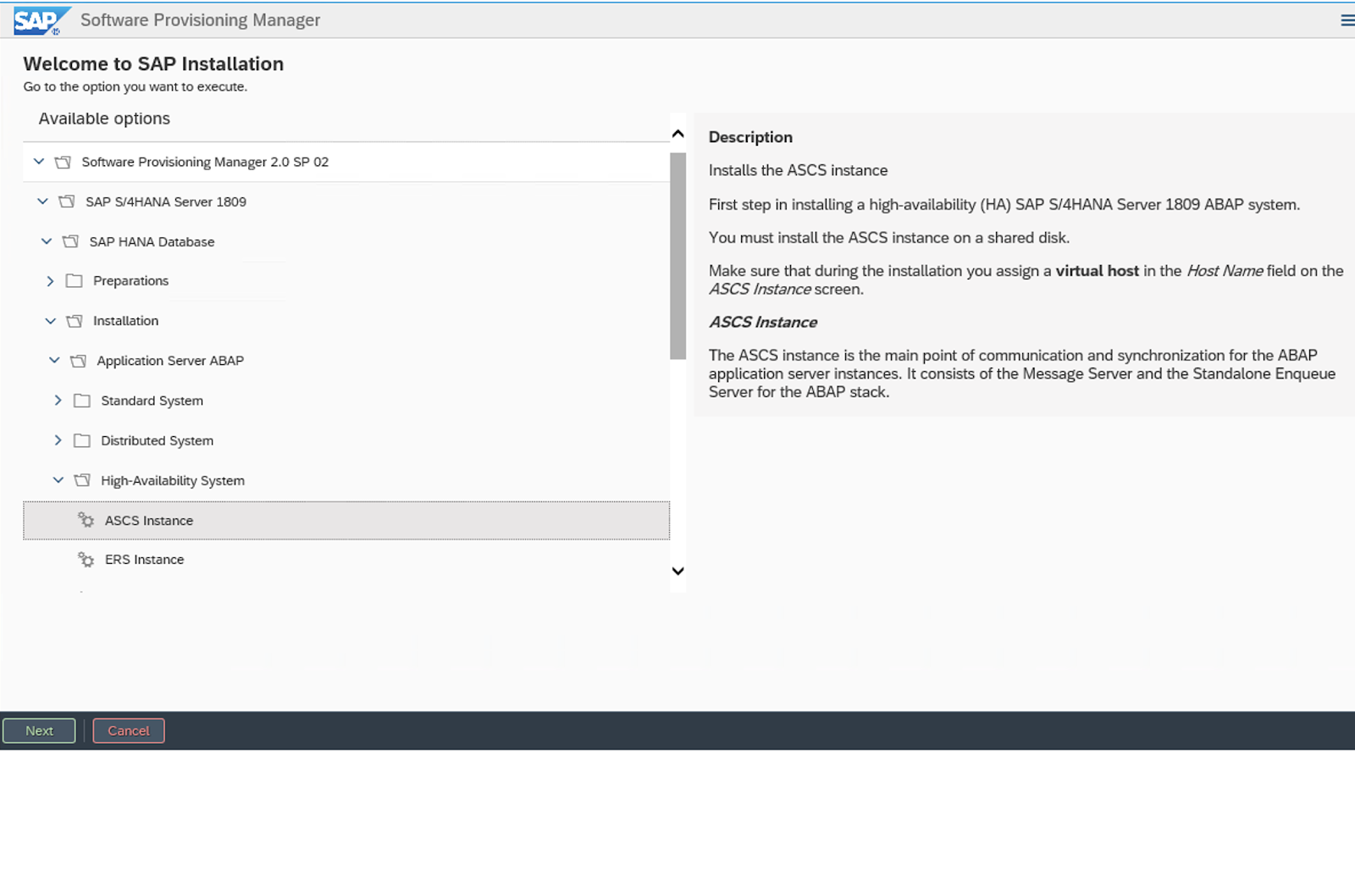

3.3. Install S/4HANA

Use SAP’s Software Provisioning Manager (SWPM) to install instances in the following order:

- ASCS instance

- ERS instance

- SAP HANA DB instances on both nodes with System Replication

3.3.1. Install ASCS on s4node1

The following file systems should be mounted on s4node1, where ASCS will be installed:

/usr/sap/S4H/ASCS20

/usr/sap/S4H/SYS

/usr/sap/trans

/sapmnt

The virtual IP address for s4ascs should be enabled on s4node1.
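Before `sapinst` is started, the mount requirement above can be verified with a short check. A minimal sketch, assuming that a mounted file system appears in `/proc/mounts` (second field is the mount point); `require_mounts` is a hypothetical helper name, and the same function applies on s4node2 with the ERS directory list:

```shell
# Hypothetical pre-flight check: every argument must be an active mount point.
# Reads /proc/mounts, where field 2 is the mount point.
require_mounts() {
  local missing=0 path
  for path in "$@"; do
    # Drop a trailing slash so "/sapmnt/" and "/sapmnt" compare equal.
    case "$path" in
      /) ;;
      */) path=${path%/} ;;
    esac
    if awk -v p="$path" '$2 == p { found = 1 } END { exit !found }' /proc/mounts; then
      echo "mounted:     $path"
    else
      echo "NOT mounted: $path"
      missing=1
    fi
  done
  return $missing
}

# On s4node1, before running ./sapinst SAPINST_USE_HOSTNAME=s4ascs:
# require_mounts /usr/sap/S4H/ASCS20 /usr/sap/S4H/SYS /usr/sap/trans /sapmnt
```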

Run the installer:

[root@s4node1]# ./sapinst SAPINST_USE_HOSTNAME=s4ascs

Select the High-Availability System option.

3.3.2. Install ERS on s4node2

The following file systems should be mounted on s4node2, where ERS will be installed:

/usr/sap/S4H/ERS29

/usr/sap/S4H/SYS

/usr/sap/trans

/sapmnt

The virtual IP address for s4ers should be enabled on s4node2.

Run the installer:

[root@s4node2]# ./sapinst SAPINST_USE_HOSTNAME=s4ers

Select the High-Availability System option.

3.3.3. Install SAP HANA with System Replication

This example uses SAP HANA with the following configuration. Other supported databases can also be used, in line with the applicable support policies.

SAP HANA SID: S4D

SAP HANA Instance number: 00

In this example, the SAP HANA database server is installed on both nodes using the hdblcm command-line tool, and automated SAP HANA System Replication is then set up as described in the document SAP HANA system replication in pacemaker cluster.

Note that in this setup, the ASCS and primary SAP HANA instances can fail over independently of each other, so at some point the ASCS and the primary SAP HANA instance may run on the same node. Therefore, make sure that both nodes have enough resources and free memory for the ASCS/ERS and primary SAP HANA instances to run on a single node if the other node fails.
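The requirement can be sanity-checked with simple arithmetic: the memory the primary SAP HANA instance may use, plus what ASCS, ERS, and the operating system need, has to fit into one node's physical memory. A minimal sketch; `fits_on_one_node` is a hypothetical helper, the ASCS/ERS and OS reserves are placeholder values rather than SAP sizing figures, and the HANA figure stands for whatever limit you configure (for example, the SAP HANA `global_allocation_limit` parameter, which is set in MB):

```shell
# Hypothetical sizing check: can one node host the primary SAP HANA instance
# together with ASCS and ERS? All arguments are in MB.
#   $1 physical memory of the node
#   $2 memory limit of the SAP HANA instance
#   $3 memory reserved for ASCS + ERS (placeholder, not SAP guidance)
#   $4 memory reserved for the OS and cluster stack (placeholder)
fits_on_one_node() {
  local needed=$(( $2 + $3 + $4 ))
  echo "needed ${needed} MB of ${1} MB"
  [ "$needed" -le "$1" ]
}

# Physical memory of this node; MemTotal in /proc/meminfo is given in kB.
mem_kb=$(awk '/^MemTotal:/ { print $2 }' /proc/meminfo 2>/dev/null) || mem_kb=0
total_mb=$(( ${mem_kb:-0} / 1024 ))

# Example with illustrative numbers only:
# fits_on_one_node "$total_mb" 200000 8192 16384
```

With the illustrative numbers, 200000 + 8192 + 16384 = 224576 MB would be needed, so a node with 262144 MB passes and a node with 131072 MB does not.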

To achieve this, memory limits can be set for the SAP HANA instance; we highly recommend contacting SAP to explore the options available for your SAP HANA environment. Some related links are as follows:

3.4. Post Installation

3.4.1. ASCS profile modification

The ASCS instance requires the following modification to its profile to prevent the automatic restart of the enqueue server, because it will be managed by the cluster. To apply the change, run the following command on your ASCS profile /sapmnt/S4H/profile/S4H_ASCS20_s4ascs.

[root]# sed -i -e 's/Restart_Program_01/Start_Program_01/' /sapmnt/S4H/profile/S4H_ASCS20_s4ascs

3.4.2. ERS profile modification

The ERS instance requires the following modification to its profile to prevent the automatic restart of the enqueue replication server, because it will be managed by the cluster. To apply the change, run the following command on your ERS profile /sapmnt/S4H/profile/S4H_ERS29_s4ers.

[root]# sed -i -e 's/Restart_Program_00/Start_Program_00/' /sapmnt/S4H/profile/S4H_ERS29_s4ers

3.4.3. Update the /usr/sap/sapservices file

On both s4node1 and s4node2, make sure the following two entries (each is a single line in the file) are commented out in the /usr/sap/sapservices file:

#LD_LIBRARY_PATH=/usr/sap/S4H/ERS29/exe:$LD_LIBRARY_PATH; export LD_LIBRARY_PATH; /usr/sap/S4H/ERS29/exe/sapstartsrv pf=/usr/sap/S4H/SYS/profile/S4H_ERS29_s4ers -D -u s4hadm

#LD_LIBRARY_PATH=/usr/sap/S4H/ASCS20/exe:$LD_LIBRARY_PATH; export LD_LIBRARY_PATH; /usr/sap/S4H/ASCS20/exe/sapstartsrv pf=/usr/sap/S4H/SYS/profile/S4H_ASCS20_s4ascs -D -u s4hadm

3.4.4. Create mount points for ASCS and ERS on the failover node

[root@s4node1 ~]# mkdir /usr/sap/S4H/ERS29/

[root@s4node1 ~]# chown s4hadm:sapsys /usr/sap/S4H/ERS29/

[root@s4node2 ~]# mkdir /usr/sap/S4H/ASCS20

[root@s4node2 ~]# chown s4hadm:sapsys /usr/sap/S4H/ASCS20

3.4.5. Manually test instances on the other node

Stop the ASCS and ERS instances, then move the instance-specific directories to the other node:

[root@s4node1 ~]# umount /usr/sap/S4H/ASCS20

[root@s4node2 ~]# mount /usr/sap/S4H/ASCS20

[root@s4node2 ~]# umount /usr/sap/S4H/ERS29/

[root@s4node1 ~]# mount /usr/sap/S4H/ERS29/

Manually start the ASCS and ERS instances on the other cluster node, then stop them manually.
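The manual start and stop can be scripted so the test is repeatable on each node. A minimal sketch, assuming it runs as root on the node that now has the instance directory mounted and that the instance's virtual IP has been moved there as well. It starts `sapstartsrv` with a command line equivalent to the commented-out `/usr/sap/sapservices` entry, then uses `sapcontrol` as `s4hadm` to start, wait, list processes, and stop. `run`, `manual_test`, and `DRY_RUN` are hypothetical names, and the 10-second delay is an assumed value; with `DRY_RUN=1` the commands are only printed:

```shell
# Hypothetical helper for the manual test; run as root.
# Usage: manual_test <instance dir> <instance number> <profile file name>
# With DRY_RUN=1 the commands are printed instead of executed.
run() {
  if [ "${DRY_RUN:-0}" = 1 ]; then
    echo "$*"
  else
    "$@"
  fi
}

manual_test() {
  local dir=$1 nr=$2 profile=/usr/sap/S4H/SYS/profile/$3
  # Start the instance agent like the commented-out sapservices entry would.
  run env LD_LIBRARY_PATH="/usr/sap/S4H/$dir/exe" \
    "/usr/sap/S4H/$dir/exe/sapstartsrv" "pf=$profile" -D -u s4hadm
  # Crude, assumed delay so sapstartsrv is listening before sapcontrol calls.
  run sleep 10
  # Start, wait up to 300 s (polling every 10 s), inspect, then stop again.
  run su - s4hadm -c "sapcontrol -nr $nr -function Start"
  run su - s4hadm -c "sapcontrol -nr $nr -function WaitforStarted 300 10"
  run su - s4hadm -c "sapcontrol -nr $nr -function GetProcessList"
  run su - s4hadm -c "sapcontrol -nr $nr -function Stop"
  run su - s4hadm -c "sapcontrol -nr $nr -function WaitforStopped 300 10"
}

# On s4node2 (ASCS directory now mounted there):
#   manual_test ASCS20 20 S4H_ASCS20_s4ascs
# On s4node1 (ERS directory now mounted there):
#   manual_test ERS29 29 S4H_ERS29_s4ers
```

Running it once with `DRY_RUN=1` first shows exactly which commands would be executed on that node.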

3.4.6. Check SAP HostAgent on all nodes

On all nodes, check that SAP HostAgent has the same version and meets the minimum version requirement:

[root]# /usr/sap/hostctrl/exe/saphostexec -version

To upgrade or install SAP HostAgent, follow SAP OSS Note 1031096.
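Comparing the output by eye across nodes is error-prone. A minimal sketch, assuming passwordless root SSH to each node and assuming that the `kernel release` and `patch number` lines of the `saphostexec -version` output identify the version (the exact output layout is an assumption; adjust the filter to what your HostAgent prints). `fetch_version` and `compare_hostagent` are hypothetical helper names:

```shell
# Print the version-identifying lines of SAP HostAgent on one node.
# ASSUMPTION: the "kernel release" and "patch number" lines carry the version.
fetch_version() {
  ssh "root@$1" /usr/sap/hostctrl/exe/saphostexec -version \
    | grep -E 'kernel release|patch number'
}

# Compare every node against the first one given.
# Returns 0 if all match, 1 on a mismatch, 2 if a node cannot be read.
compare_hostagent() {
  local first=$1 ref node out
  ref=$(fetch_version "$first") || { echo "$first: cannot read version"; return 2; }
  shift
  for node in "$@"; do
    out=$(fetch_version "$node") || { echo "$node: cannot read version"; return 2; }
    if [ "$out" != "$ref" ]; then
      echo "$node differs from $first:"
      echo "$out"
      return 1
    fi
  done
  echo "all nodes match:"
  echo "$ref"
}

# compare_hostagent s4node1 s4node2
```

This only checks that the nodes agree; whether the common version meets the minimum requirement still has to be checked against SAP OSS Note 1031096.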

3.4.7. Install permanent SAP license keys

SAP hardware key determination in the high-availability scenario has been improved. It might be necessary to install several SAP license keys based on the hardware key of each cluster node. Please see SAP OSS Note 1178686 - Linux: Alternative method to generate a SAP hardware key for more information.