Chapter 7. Scaling storage nodes

To scale the storage capacity of OpenShift Container Storage, you can do either of the following:

- Scale up storage nodes - Add storage capacity to the existing OpenShift Container Storage worker nodes

- Scale out storage nodes - Add new worker nodes containing storage capacity

7.1. Requirements for scaling storage nodes

Before you proceed to scale the storage nodes, refer to the following sections to understand the node requirements for your specific Red Hat OpenShift Container Storage instance:

- Platform requirements

- Storage device requirements

Always ensure that you have plenty of storage capacity.

If storage ever fills completely, it is not possible to add capacity or delete or migrate content away from the storage to free up space. Completely full storage is very difficult to recover.

Capacity alerts are issued when cluster storage capacity reaches 75% (near-full) and 85% (full) of total capacity. Always address capacity warnings promptly, and review your storage regularly to ensure that you do not run out of storage space.

If you do run out of storage space completely, contact Red Hat Customer Support.
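As a rough illustration of the alert thresholds described above, the following sketch classifies a cluster by the percentage of capacity in use. The function name `capacity_state` and the sample figures are hypothetical and only meant to make the 75% and 85% boundaries concrete; it does not reproduce how the product itself raises alerts.

```shell
# Hypothetical helper: classify fullness against the 75% / 85% thresholds.
# Arguments: used and total capacity as integers in the same unit.
capacity_state() {
  used=$1
  total=$2
  if [ "$total" -le 0 ]; then
    echo "total must be positive" >&2
    return 1
  fi
  # Integer percentage, rounded down.
  pct=$(( used * 100 / total ))
  if [ "$pct" -ge 85 ]; then
    echo "full"
  elif [ "$pct" -ge 75 ]; then
    echo "near-full"
  else
    echo "ok"
  fi
}

# Illustrative values only: 780 of 1000 units used is 78%.
capacity_state 780 1000   # prints near-full
```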

7.2. Scaling up storage by adding capacity to your OpenShift Container Storage nodes on Red Hat OpenStack Platform infrastructure

Use this procedure to add storage capacity and performance to your configured Red Hat OpenShift Container Storage worker nodes.

Prerequisites

- A running OpenShift Container Storage Platform.

- Administrative privileges on the OpenShift Web Console.

- To scale using a storage class other than the one provisioned during deployment, first define an additional storage class. See Creating a storage class for details.

Procedure

- Log in to the OpenShift Web Console.

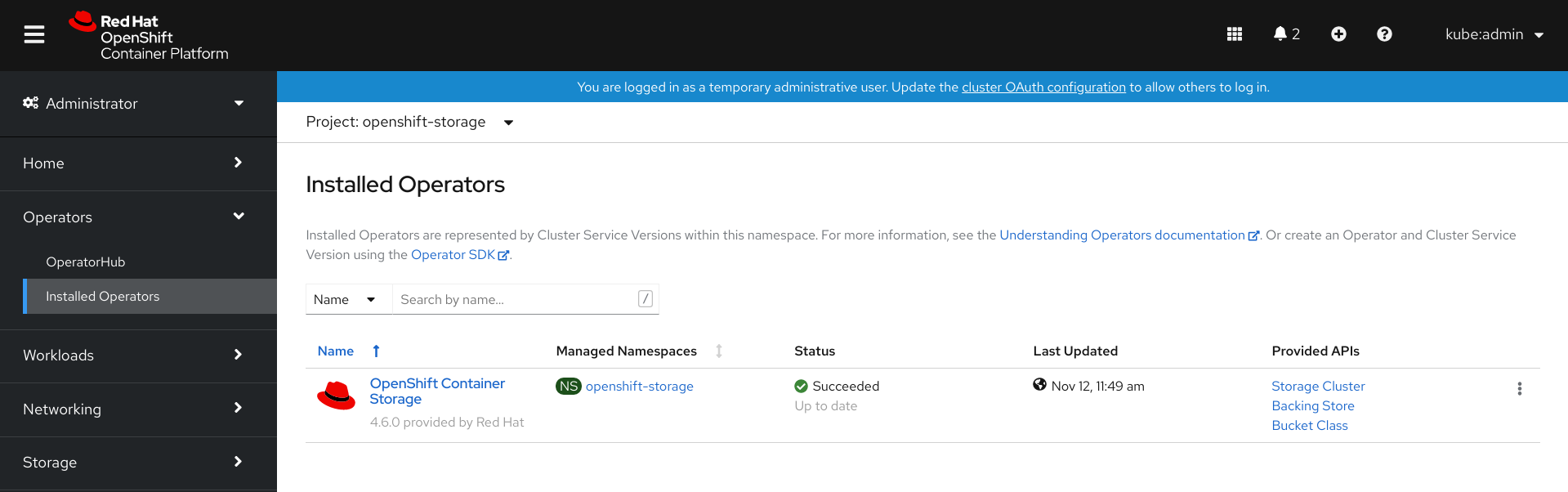

- Click Operators → Installed Operators.

- Click OpenShift Container Storage Operator.

- Click the Storage Cluster tab.

- The visible list should have only one item. Click (⋮) on the far right to extend the options menu.

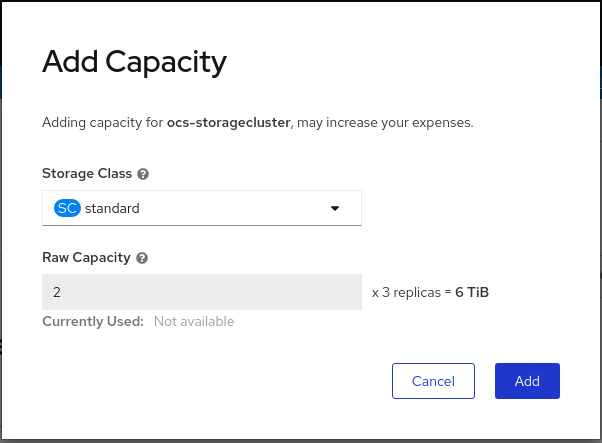

- Select Add Capacity from the options menu.

- Select a storage class.

  The storage class should be set to standard if you are using the default storage class generated during deployment. If you have created other storage classes, select whichever is appropriate.

  The Raw Capacity field shows the size set during storage class creation. The total amount of storage consumed is three times this amount, because OpenShift Container Storage uses a replica count of 3.

- Click Add and wait for the cluster state to change to Ready.
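The replica arithmetic behind the Raw Capacity field can be sketched as follows. The function name `consumed_capacity` and the 512 GiB figure are assumptions chosen for illustration; only the replica count of 3 comes from the procedure above.

```shell
# Hypothetical helper: storage consumed for a given Raw Capacity value.
# $1 = size shown in the Raw Capacity field (for example, in GiB)
# $2 = replica count (defaults to 3, as stated in the procedure)
consumed_capacity() {
  requested=$1
  replicas=${2:-3}
  echo $(( requested * replicas ))
}

# Illustrative value only: a Raw Capacity of 512 GiB.
consumed_capacity 512   # prints 1536
```

In other words, under these assumptions each Add Capacity operation with a 512 GiB Raw Capacity would consume 1536 GiB of underlying storage.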

Verification steps

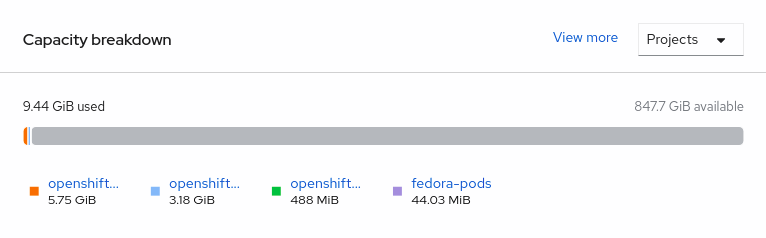

- Navigate to Overview → Persistent Storage tab, then check the Capacity breakdown card.

  Note that the capacity increases based on your selections.

- Verify that the new OSDs and their corresponding new PVCs are created.

  To view the state of the newly created OSDs:

  - Click Workloads → Pods from the OpenShift Web Console.

  - Select openshift-storage from the Project drop-down list.

  To view the state of the PVCs:

  - Click Storage → Persistent Volume Claims from the OpenShift Web Console.

  - Select openshift-storage from the Project drop-down list.

- (Optional) If data encryption is enabled on the cluster, verify that the new OSD devices are encrypted.

  - Identify the node(s) where the new OSD pod(s) are running.

    $ oc get -o=custom-columns=NODE:.spec.nodeName pod/<OSD pod name>

    For example:

    $ oc get -o=custom-columns=NODE:.spec.nodeName pod/rook-ceph-osd-0-544db49d7f-qrgqm

  - For each of the nodes identified in the previous step, do the following:

    - Create a debug pod and open a chroot environment for the selected host(s).

      $ oc debug node/<node name>
      $ chroot /host

    - Run lsblk and check for the crypt keyword beside the ocs-deviceset name(s).

      $ lsblk
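The visual check for the crypt keyword could also be scripted. The sketch below is one possible approach, not part of the documented procedure: the function name `encrypted_devicesets` is hypothetical, and it assumes the input is the two-column raw output of `lsblk -rn -o NAME,TYPE` (raw format, no headings, name and type columns only).

```shell
# Hypothetical helper: read "NAME TYPE" pairs on stdin and print the
# ocs-deviceset devices whose type is crypt. Exits non-zero if none are found.
encrypted_devicesets() {
  awk '$1 ~ /ocs-deviceset/ && $2 == "crypt" { print $1; found = 1 }
       END { exit !found }'
}

# Intended use inside the chroot environment on the node:
#   lsblk -rn -o NAME,TYPE | encrypted_devicesets
```

A non-zero exit status here would only mean that no ocs-deviceset entry of type crypt appeared in the input; confirm against the full lsblk output before drawing conclusions.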

Cluster reduction is not currently supported, regardless of whether reduction would be done by removing nodes or OSDs.

7.3. Scaling out storage capacity by adding new nodes

To scale out storage capacity, you need to perform the following:

- Add a new node to increase the storage capacity when the existing worker nodes are already running the maximum number of supported OSDs. Capacity is added in increments of 3 OSDs, each of the capacity selected during initial configuration.

- Verify that the new node is added successfully

- Scale up the storage capacity after the node is added

Prerequisites

- You must be logged in to the OpenShift Container Platform (RHOCP) cluster.

Procedure

- Navigate to Compute → Machine Sets.

- On the machine set where you want to add nodes, select Edit Machine Count.

- Enter the number of nodes, and click Save.

- Click Compute → Nodes and confirm that the new node is in Ready state.

- Apply the OpenShift Container Storage label to the new node.

  - For the new node, click Action menu (⋮) → Edit Labels.

  - Add cluster.ocs.openshift.io/openshift-storage and click Save.

It is recommended to add 3 nodes, each in a different zone. You must add 3 nodes and perform this procedure for all of them.
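The web console steps above have command-line counterparts. The following is a minimal sketch, assuming the machine sets live in the `openshift-machine-api` namespace and that `oc` is already logged in to the cluster. The function names, the machine set name, and the node name are hypothetical placeholders.

```shell
# Hypothetical helper: set the replica count of a machine set.
# $1 = machine set name, $2 = desired number of machines
scale_machineset() {
  oc scale machineset "$1" -n openshift-machine-api --replicas="$2"
}

# Hypothetical helper: apply the OpenShift Container Storage label
# (with an empty value) to a node.
# $1 = node name
label_storage_node() {
  oc label node "$1" cluster.ocs.openshift.io/openshift-storage=""
}

# Intended use (placeholder names):
#   scale_machineset <machine set name> <count>
#   label_storage_node <node name>
```

Label a node only after it reports Ready, and repeat the labeling for each of the new nodes.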

Verification steps

- To verify that the new node is added, see Verifying the addition of a new node.

7.3.1. Verifying the addition of a new node

Execute the following command and verify that the new node is present in the output:

$ oc get nodes --show-labels | grep cluster.ocs.openshift.io/openshift-storage= |cut -d' ' -f1

Click Workloads → Pods and confirm that at least the following pods on the new node are in Running state:

- csi-cephfsplugin-*

- csi-rbdplugin-*
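The pod check can also be approximated from the command line. This sketch assumes the pods run in the `openshift-storage` project and that the input is "NAME PHASE" pairs. The function name `csi_plugins_running` is hypothetical, and excluding the provisioner pods from the match is an assumption made here, not something the procedure states.

```shell
# Hypothetical helper: read "NAME PHASE" pairs on stdin and succeed only if
# a csi-cephfsplugin-* pod and a csi-rbdplugin-* pod are both Running.
# Provisioner pods are skipped (an assumption of this sketch).
csi_plugins_running() {
  awk '
    $1 ~ /^csi-cephfsplugin-/ && $1 !~ /provisioner/ && $2 == "Running" { cephfs = 1 }
    $1 ~ /^csi-rbdplugin-/    && $1 !~ /provisioner/ && $2 == "Running" { rbd = 1 }
    END { exit !(cephfs && rbd) }
  '
}

# Intended use (placeholder node name):
#   oc get pods -n openshift-storage --no-headers \
#     --field-selector spec.nodeName=<node name> \
#     -o custom-columns=NAME:.metadata.name,PHASE:.status.phase \
#     | csi_plugins_running && echo "CSI plugin pods are running"
```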

7.3.2. Scaling up storage capacity

After you add a new node to OpenShift Container Storage, you must scale up the storage capacity as described in Scaling up storage by adding capacity.