Chapter 4. Adding storage resources for hybrid or Multicloud

4.1. Creating a new backing store

Use this procedure to create a new backing store in OpenShift Data Foundation.

Prerequisites

- Administrator access to OpenShift Data Foundation.

Procedure

- In the OpenShift Web Console, click Storage → Data Foundation.

- Click the Backing Store tab.

- Click Create Backing Store.

- On the Create New Backing Store page, perform the following:

  - Enter a Backing Store Name.

  - Select a Provider.

  - Select a Region.

  - Enter an Endpoint. This is optional.

  - Select a Secret from the drop-down list, or create your own secret. Optionally, you can Switch to Credentials view, which lets you fill in the required secrets.

    For more information on creating an OCP secret, see the section Creating the secret in the OpenShift Container Platform documentation.

    Each backingstore requires a different secret. For more information on creating the secret for a particular backingstore, see Section 4.2, “Adding storage resources for hybrid or Multicloud using the MCG command line interface” and follow the procedure for the addition of storage resources using a YAML.

    Note: This menu is relevant for all providers except Google Cloud and local PVC.

  - Enter the Target bucket. The target bucket is a storage container hosted on the remote cloud service. It allows you to create a connection that tells the MCG that it can use this bucket for the system.

- Click Create Backing Store.

Verification steps

- In the OpenShift Web Console, click Storage → Data Foundation.

- Click the Backing Store tab to view all the backing stores.

4.2. Adding storage resources for hybrid or Multicloud using the MCG command line interface

The Multicloud Object Gateway (MCG) simplifies the process of spanning data across cloud providers and clusters.

Add a backing storage that can be used by the MCG.

Depending on the type of your deployment, you can choose one of the following procedures to create a backing storage:

- For creating an AWS-backed backingstore, see Section 4.2.1, “Creating an AWS-backed backingstore”

- For creating an IBM COS-backed backingstore, see Section 4.2.2, “Creating an IBM COS-backed backingstore”

- For creating an Azure-backed backingstore, see Section 4.2.3, “Creating an Azure-backed backingstore”

- For creating a GCP-backed backingstore, see Section 4.2.4, “Creating a GCP-backed backingstore”

- For creating a local Persistent Volume-backed backingstore, see Section 4.2.5, “Creating a local Persistent Volume-backed backingstore”

For VMware deployments, skip to Section 4.3, “Creating an s3 compatible Multicloud Object Gateway backingstore” for further instructions.

4.2.1. Creating an AWS-backed backingstore

Prerequisites

Download the Multicloud Object Gateway (MCG) command-line interface.

    # subscription-manager repos --enable=rh-odf-4-for-rhel-8-x86_64-rpms
    # yum install mcg

Note: Specify the appropriate architecture for enabling the repositories using the subscription manager. For instance, for IBM Z infrastructure, use the following command:

    # subscription-manager repos --enable=rh-odf-4-for-rhel-8-s390x-rpms

Alternatively, you can install the MCG package from the OpenShift Data Foundation RPMs found at https://access.redhat.com/downloads/content/547/ver=4/rhel---8/4/x86_64/packages

Note: Choose the correct Product Variant according to your architecture.

Procedure

From the MCG command-line interface, run the following command:

    noobaa backingstore create aws-s3 <backingstore_name> --access-key=<AWS ACCESS KEY> --secret-key=<AWS SECRET ACCESS KEY> --target-bucket <bucket-name> -n openshift-storage

- Replace <backingstore_name> with the name of the backingstore.

- Replace <AWS ACCESS KEY> and <AWS SECRET ACCESS KEY> with an AWS access key ID and secret access key you created for this purpose.

- Replace <bucket-name> with an existing AWS bucket name. This argument tells the MCG which bucket to use as a target bucket for its backing store, and subsequently, data storage and administration.

The output will be similar to the following:

    INFO[0001] ✅ Exists: NooBaa "noobaa"
    INFO[0002] ✅ Created: BackingStore "aws-resource"
    INFO[0002] ✅ Created: Secret "backing-store-secret-aws-resource"

You can also add storage resources using a YAML:

Create a secret with the credentials:

    apiVersion: v1
    kind: Secret
    metadata:
      name: <backingstore-secret-name>
      namespace: openshift-storage
    type: Opaque
    data:
      AWS_ACCESS_KEY_ID: <AWS ACCESS KEY ID ENCODED IN BASE64>
      AWS_SECRET_ACCESS_KEY: <AWS SECRET ACCESS KEY ENCODED IN BASE64>

- You must supply and encode your own AWS access key ID and secret access key using Base64, and use the results in place of <AWS ACCESS KEY ID ENCODED IN BASE64> and <AWS SECRET ACCESS KEY ENCODED IN BASE64>.

- Replace <backingstore-secret-name> with a unique name.
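The Base64 encoding of the credentials can be sketched in shell as follows. This is a minimal illustration rather than part of the product: the `b64` helper and the placeholder credential values are assumptions made for the example, and it assumes the standard `base64` utility is installed. `printf '%s'` is used instead of `echo` so that no trailing newline is encoded into the value.

```shell
#!/bin/sh
# Hypothetical helper: encode one credential for the `data:` section of a Secret.
# printf '%s' writes the value without a trailing newline;
# tr removes any line wrapping that base64 adds to long input.
b64() {
    printf '%s' "$1" | base64 | tr -d '\n'
}

# Placeholder values for illustration only; substitute your own credentials.
AWS_KEY_ID='example-access-key-id'
AWS_SECRET='example-secret-access-key'

# Print the two lines in the form expected under `data:` in the Secret.
echo "AWS_ACCESS_KEY_ID: $(b64 "$AWS_KEY_ID")"
echo "AWS_SECRET_ACCESS_KEY: $(b64 "$AWS_SECRET")"
```

The two printed lines can be pasted under `data:` in the secret. The same approach applies to the IBM COS and Azure secrets, with the key names changed accordingly.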

Apply the following YAML for a specific backing store:

    apiVersion: noobaa.io/v1alpha1
    kind: BackingStore
    metadata:
      finalizers:
      - noobaa.io/finalizer
      labels:
        app: noobaa
      name: bs
      namespace: openshift-storage
    spec:
      awsS3:
        secret:
          name: <backingstore-secret-name>
          namespace: openshift-storage
        targetBucket: <bucket-name>
      type: aws-s3

- Replace <bucket-name> with an existing AWS bucket name. This argument tells the MCG which bucket to use as a target bucket for its backing store, and subsequently, data storage and administration.

- Replace <backingstore-secret-name> with the name of the secret created in the previous step.

4.2.2. Creating an IBM COS-backed backingstore

Prerequisites

Download the Multicloud Object Gateway (MCG) command-line interface.

    # subscription-manager repos --enable=rh-odf-4-for-rhel-8-x86_64-rpms
    # yum install mcg

Note: Specify the appropriate architecture for enabling the repositories using the subscription manager. For instance:

- For IBM Power, use the following command:

      # subscription-manager repos --enable=rh-odf-4-for-rhel-8-ppc64le-rpms

- For IBM Z infrastructure, use the following command:

      # subscription-manager repos --enable=rh-odf-4-for-rhel-8-s390x-rpms

Alternatively, you can install the MCG package from the OpenShift Data Foundation RPMs found at https://access.redhat.com/downloads/content/547/ver=4/rhel---8/4/x86_64/packages

Note: Choose the correct Product Variant according to your architecture.

Procedure

From the MCG command-line interface, run the following command:

    noobaa backingstore create ibm-cos <backingstore_name> --access-key=<IBM ACCESS KEY> --secret-key=<IBM SECRET ACCESS KEY> --endpoint=<IBM COS ENDPOINT> --target-bucket <bucket-name> -n openshift-storage

- Replace <backingstore_name> with the name of the backingstore.

- Replace <IBM ACCESS KEY>, <IBM SECRET ACCESS KEY>, and <IBM COS ENDPOINT> with an IBM access key ID, secret access key, and the appropriate regional endpoint that corresponds to the location of the existing IBM bucket.

  To generate the above keys on IBM cloud, you must include HMAC credentials while creating the service credentials for your target bucket.

- Replace <bucket-name> with an existing IBM bucket name. This argument tells the MCG which bucket to use as a target bucket for its backing store, and subsequently, data storage and administration.

The output will be similar to the following:

    INFO[0001] ✅ Exists: NooBaa "noobaa"
    INFO[0002] ✅ Created: BackingStore "ibm-resource"
    INFO[0002] ✅ Created: Secret "backing-store-secret-ibm-resource"

You can also add storage resources using a YAML:

Create a secret with the credentials:

    apiVersion: v1
    kind: Secret
    metadata:
      name: <backingstore-secret-name>
      namespace: openshift-storage
    type: Opaque
    data:
      IBM_COS_ACCESS_KEY_ID: <IBM COS ACCESS KEY ID ENCODED IN BASE64>
      IBM_COS_SECRET_ACCESS_KEY: <IBM COS SECRET ACCESS KEY ENCODED IN BASE64>

- You must supply and encode your own IBM COS access key ID and secret access key using Base64, and use the results in place of <IBM COS ACCESS KEY ID ENCODED IN BASE64> and <IBM COS SECRET ACCESS KEY ENCODED IN BASE64>.

- Replace <backingstore-secret-name> with a unique name.

Apply the following YAML for a specific backing store:

    apiVersion: noobaa.io/v1alpha1
    kind: BackingStore
    metadata:
      finalizers:
      - noobaa.io/finalizer
      labels:
        app: noobaa
      name: bs
      namespace: openshift-storage
    spec:
      ibmCos:
        endpoint: <endpoint>
        secret:
          name: <backingstore-secret-name>
          namespace: openshift-storage
        targetBucket: <bucket-name>
      type: ibm-cos

- Replace <bucket-name> with an existing IBM COS bucket name. This argument tells the MCG which bucket to use as a target bucket for its backing store, and subsequently, data storage and administration.

- Replace <endpoint> with a regional endpoint that corresponds to the location of the existing IBM bucket name. This argument tells Multicloud Object Gateway which endpoint to use for its backing store, and subsequently, data storage and administration.

- Replace <backingstore-secret-name> with the name of the secret created in the previous step.

4.2.3. Creating an Azure-backed backingstore

Prerequisites

Download the Multicloud Object Gateway (MCG) command-line interface.

    # subscription-manager repos --enable=rh-odf-4-for-rhel-8-x86_64-rpms
    # yum install mcg

Note: Specify the appropriate architecture for enabling the repositories using the subscription manager. For instance, for IBM Z infrastructure, use the following command:

    # subscription-manager repos --enable=rh-odf-4-for-rhel-8-s390x-rpms

Alternatively, you can install the MCG package from the OpenShift Data Foundation RPMs found at https://access.redhat.com/downloads/content/547/ver=4/rhel---8/4/x86_64/packages

Note: Choose the correct Product Variant according to your architecture.

Procedure

From the MCG command-line interface, run the following command:

    noobaa backingstore create azure-blob <backingstore_name> --account-key=<AZURE ACCOUNT KEY> --account-name=<AZURE ACCOUNT NAME> --target-blob-container <blob container name> -n openshift-storage

- Replace <backingstore_name> with the name of the backingstore.

- Replace <AZURE ACCOUNT KEY> and <AZURE ACCOUNT NAME> with an Azure account key and account name you created for this purpose.

- Replace <blob container name> with an existing Azure blob container name. This argument tells the MCG which blob container to use as the target for its backing store, and subsequently, data storage and administration.

The output will be similar to the following:

    INFO[0001] ✅ Exists: NooBaa "noobaa"
    INFO[0002] ✅ Created: BackingStore "azure-resource"
    INFO[0002] ✅ Created: Secret "backing-store-secret-azure-resource"

You can also add storage resources using a YAML:

Create a secret with the credentials:

    apiVersion: v1
    kind: Secret
    metadata:
      name: <backingstore-secret-name>
    type: Opaque
    data:
      AccountName: <AZURE ACCOUNT NAME ENCODED IN BASE64>
      AccountKey: <AZURE ACCOUNT KEY ENCODED IN BASE64>

- You must supply and encode your own Azure Account Name and Account Key using Base64, and use the results in place of <AZURE ACCOUNT NAME ENCODED IN BASE64> and <AZURE ACCOUNT KEY ENCODED IN BASE64>.

- Replace <backingstore-secret-name> with a unique name.

Apply the following YAML for a specific backing store:

    apiVersion: noobaa.io/v1alpha1
    kind: BackingStore
    metadata:
      finalizers:
      - noobaa.io/finalizer
      labels:
        app: noobaa
      name: bs
      namespace: openshift-storage
    spec:
      azureBlob:
        secret:
          name: <backingstore-secret-name>
          namespace: openshift-storage
        targetBlobContainer: <blob-container-name>
      type: azure-blob

- Replace <blob-container-name> with an existing Azure blob container name. This argument tells the MCG which blob container to use as the target for its backing store, and subsequently, data storage and administration.

- Replace <backingstore-secret-name> with the name of the secret created in the previous step.

4.2.4. Creating a GCP-backed backingstore

Prerequisites

Download the Multicloud Object Gateway (MCG) command-line interface.

    # subscription-manager repos --enable=rh-odf-4-for-rhel-8-x86_64-rpms
    # yum install mcg

Note: Specify the appropriate architecture for enabling the repositories using the subscription manager. For instance, for IBM Z infrastructure, use the following command:

    # subscription-manager repos --enable=rh-odf-4-for-rhel-8-s390x-rpms

Alternatively, you can install the MCG package from the OpenShift Data Foundation RPMs found at https://access.redhat.com/downloads/content/547/ver=4/rhel---8/4/x86_64/packages

Note: Choose the correct Product Variant according to your architecture.

Procedure

From the MCG command-line interface, run the following command:

    noobaa backingstore create google-cloud-storage <backingstore_name> --private-key-json-file=<PATH TO GCP PRIVATE KEY JSON FILE> --target-bucket <GCP bucket name> -n openshift-storage

- Replace <backingstore_name> with the name of the backingstore.

- Replace <PATH TO GCP PRIVATE KEY JSON FILE> with a path to your GCP private key created for this purpose.

- Replace <GCP bucket name> with an existing GCP object storage bucket name. This argument tells the MCG which bucket to use as a target bucket for its backing store, and subsequently, data storage and administration.

The output will be similar to the following:

    INFO[0001] ✅ Exists: NooBaa "noobaa"
    INFO[0002] ✅ Created: BackingStore "google-gcp"
    INFO[0002] ✅ Created: Secret "backing-store-google-cloud-storage-gcp"

You can also add storage resources using a YAML:

Create a secret with the credentials:

    apiVersion: v1
    kind: Secret
    metadata:
      name: <backingstore-secret-name>
    type: Opaque
    data:
      GoogleServiceAccountPrivateKeyJson: <GCP PRIVATE KEY ENCODED IN BASE64>

- You must supply and encode your own GCP service account private key using Base64, and use the results in place of <GCP PRIVATE KEY ENCODED IN BASE64>.

- Replace <backingstore-secret-name> with a unique name.
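Because the GCP private key is a JSON file rather than a short string, the encoding step reads from a file. The following is a minimal sketch under stated assumptions: the `b64_file` helper is hypothetical, the temporary file stands in for a real service account key, and the standard `base64` utility is assumed to be available.

```shell
#!/bin/sh
# Hypothetical helper: Base64-encode a whole file onto a single line,
# which is the form a Secret `data:` value needs.
b64_file() {
    base64 < "$1" | tr -d '\n'
}

# Stand-in key file for illustration; point KEY_FILE at your real JSON key instead.
KEY_FILE=$(mktemp)
printf '%s' '{"type": "service_account"}' > "$KEY_FILE"

# Print the line in the form expected under `data:` in the Secret.
echo "GoogleServiceAccountPrivateKeyJson: $(b64_file "$KEY_FILE")"

rm -f "$KEY_FILE"
```

Stripping newlines with `tr` keeps the encoded value on one line regardless of how the local `base64` implementation wraps its output.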

Apply the following YAML for a specific backing store:

    apiVersion: noobaa.io/v1alpha1
    kind: BackingStore
    metadata:
      finalizers:
      - noobaa.io/finalizer
      labels:
        app: noobaa
      name: bs
      namespace: openshift-storage
    spec:
      googleCloudStorage:
        secret:
          name: <backingstore-secret-name>
          namespace: openshift-storage
        targetBucket: <target bucket>
      type: google-cloud-storage

- Replace <target bucket> with an existing Google storage bucket. This argument tells the MCG which bucket to use as a target bucket for its backing store, and subsequently, data storage and administration.

- Replace <backingstore-secret-name> with the name of the secret created in the previous step.

4.2.5. Creating a local Persistent Volume-backed backingstore

Prerequisites

Download the Multicloud Object Gateway (MCG) command-line interface.

    # subscription-manager repos --enable=rh-odf-4-for-rhel-8-x86_64-rpms
    # yum install mcg

Note: Specify the appropriate architecture for enabling the repositories using the subscription manager. For instance, for IBM Z infrastructure, use the following command:

    # subscription-manager repos --enable=rh-odf-4-for-rhel-8-s390x-rpms

Alternatively, you can install the MCG package from the OpenShift Data Foundation RPMs found at https://access.redhat.com/downloads/content/547/ver=4/rhel---8/4/x86_64/packages

Note: Choose the correct Product Variant according to your architecture.

Procedure

From the MCG command-line interface, run the following command:

Note: This command must be run from within the openshift-storage namespace.

    noobaa backingstore create pv-pool <backingstore_name> --num-volumes=<NUMBER OF VOLUMES> --pv-size-gb=<VOLUME SIZE> --storage-class=<LOCAL STORAGE CLASS> -n openshift-storage

- Replace <backingstore_name> with the name of the backingstore.

- Replace <NUMBER OF VOLUMES> with the number of volumes you would like to create. Note that increasing the number of volumes scales up the storage.

- Replace <VOLUME SIZE> with the required size, in GB, of each volume.

- Replace <LOCAL STORAGE CLASS> with the local storage class. It is recommended to use ocs-storagecluster-ceph-rbd.

The output will be similar to the following:

    INFO[0001] ✅ Exists: NooBaa "noobaa"
    INFO[0002] ✅ Exists: BackingStore "local-mcg-storage"

You can also add storage resources using a YAML:

Apply the following YAML for a specific backing store:

    apiVersion: noobaa.io/v1alpha1
    kind: BackingStore
    metadata:
      finalizers:
      - noobaa.io/finalizer
      labels:
        app: noobaa
      name: <backingstore_name>
      namespace: openshift-storage
    spec:
      pvPool:
        numVolumes: <NUMBER OF VOLUMES>
        resources:
          requests:
            storage: <VOLUME SIZE>
        storageClass: <LOCAL STORAGE CLASS>
      type: pv-pool

- Replace <backingstore_name> with the name of the backingstore.

- Replace <NUMBER OF VOLUMES> with the number of volumes you would like to create. Note that increasing the number of volumes scales up the storage.

- Replace <VOLUME SIZE> with the required size, in GB, of each volume. Note that the letter G should remain.

- Replace <LOCAL STORAGE CLASS> with the local storage class. It is recommended to use ocs-storagecluster-ceph-rbd.
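As a rough illustration of how the volume count scales the pool, the arithmetic can be sketched in shell. This assumes, for the sake of the example, that raw capacity is simply the number of volumes multiplied by the size of each volume, ignoring any overhead. The `pool_capacity_gb` helper and the sample values are hypothetical.

```shell
#!/bin/sh
# Hypothetical helper: raw pv-pool capacity in GB,
# computed as (number of volumes) x (size of each volume in GB).
pool_capacity_gb() {
    echo $(( $1 * $2 ))
}

NUM_VOLUMES=3      # sample value for <NUMBER OF VOLUMES>
VOLUME_SIZE_GB=50  # sample value for <VOLUME SIZE>

echo "Raw pool capacity: $(pool_capacity_gb "$NUM_VOLUMES" "$VOLUME_SIZE_GB") GB"
```

With these sample values, three 50 GB volumes give 150 GB, and raising the count to four gives 200 GB.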

4.3. Creating an s3 compatible Multicloud Object Gateway backingstore

The Multicloud Object Gateway (MCG) can use any S3 compatible object storage as a backing store, for example, Red Hat Ceph Storage’s RADOS Object Gateway (RGW). The following procedure shows how to create an S3 compatible MCG backing store for Red Hat Ceph Storage’s RGW. Note that when the RGW is deployed, the OpenShift Data Foundation operator creates an S3 compatible backingstore for MCG automatically.

Procedure

From the MCG command-line interface, run the following command:

Note: This command must be run from within the openshift-storage namespace.

    noobaa backingstore create s3-compatible rgw-resource --access-key=<RGW ACCESS KEY> --secret-key=<RGW SECRET KEY> --target-bucket=<bucket-name> --endpoint=<RGW endpoint> -n openshift-storage

- To get the <RGW ACCESS KEY> and <RGW SECRET KEY>, run the following command using your RGW user secret name:

      oc get secret <RGW USER SECRET NAME> -o yaml -n openshift-storage

- Decode the access key ID and the access key from Base64 and keep them.

- Replace <RGW ACCESS KEY> and <RGW SECRET KEY> with the appropriate, decoded data from the previous step.

- Replace <bucket-name> with an existing RGW bucket name. This argument tells the MCG which bucket to use as a target bucket for its backing store, and subsequently, data storage and administration.

- To get the <RGW endpoint>, see Accessing the RADOS Object Gateway S3 endpoint.

The output will be similar to the following:

    INFO[0001] ✅ Exists: NooBaa "noobaa"
    INFO[0002] ✅ Created: BackingStore "rgw-resource"
    INFO[0002] ✅ Created: Secret "backing-store-secret-rgw-resource"
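The Base64 decoding step for the RGW credentials can be sketched in shell. This is a minimal illustration, not a prescribed method: the `b64_decode` helper and the stand-in encoded value are assumptions for the example, and the `-d` flag is the GNU coreutils spelling of the decode option, which may differ on other platforms.

```shell
#!/bin/sh
# Hypothetical helper: decode one Base64 value copied from the
# `data:` section of a Secret.
b64_decode() {
    printf '%s' "$1" | base64 -d
}

# Stand-in value for illustration; paste the encoded string from your secret.
ENCODED='ZXhhbXBsZQ=='

echo "Decoded: $(b64_decode "$ENCODED")"
```

In practice, the encoded strings would be taken from the `data` section of the secret YAML returned by the `oc get secret` command, and the decoded results passed to `--access-key` and `--secret-key`.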

You can also create the backingstore using a YAML:

Create a CephObjectStore user. This also creates a secret containing the RGW credentials:

    apiVersion: ceph.rook.io/v1
    kind: CephObjectStoreUser
    metadata:
      name: <RGW-Username>
      namespace: openshift-storage
    spec:
      store: ocs-storagecluster-cephobjectstore
      displayName: "<Display-name>"

- Replace <RGW-Username> and <Display-name> with a unique username and display name.

Apply the following YAML for an S3-Compatible backing store:

    apiVersion: noobaa.io/v1alpha1
    kind: BackingStore
    metadata:
      finalizers:
      - noobaa.io/finalizer
      labels:
        app: noobaa
      name: <backingstore-name>
      namespace: openshift-storage
    spec:
      s3Compatible:
        endpoint: <RGW endpoint>
        secret:
          name: <backingstore-secret-name>
          namespace: openshift-storage
        signatureVersion: v4
        targetBucket: <RGW-bucket-name>
      type: s3-compatible

- Replace <backingstore-secret-name> with the name of the secret that was created with CephObjectStore in the previous step.

- Replace <RGW-bucket-name> with an existing RGW bucket name. This argument tells the MCG which bucket to use as a target bucket for its backing store, and subsequently, data storage and administration.

- To get the <RGW endpoint>, see Accessing the RADOS Object Gateway S3 endpoint.

4.4. Adding storage resources for hybrid and Multicloud using the user interface

Procedure

- In the OpenShift Web Console, click Storage → Data Foundation.

- In the Storage Systems tab, select the storage system and then click Overview → Object tab.

- Select the Multicloud Object Gateway link.

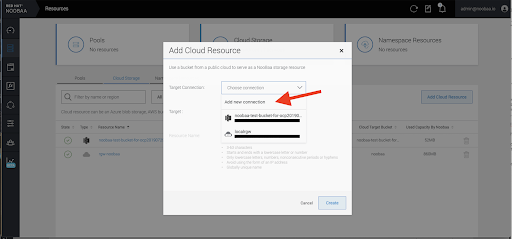

Select the Resources tab in the left, highlighted below. From the list that populates, select Add Cloud Resource.

Select Add new connection.

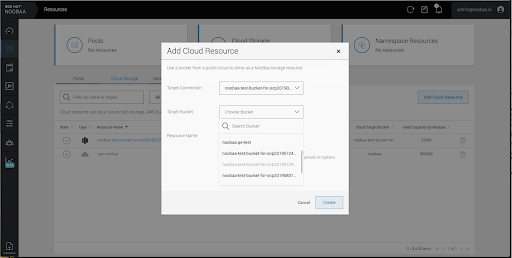

Select the relevant native cloud provider or S3 compatible option and fill in the details.

Select the newly created connection and map it to the existing bucket.

- Repeat these steps to create as many backing stores as needed.

Resources created in NooBaa UI cannot be used by OpenShift UI or MCG CLI.

4.5. Creating a new bucket class

A bucket class is a CRD representing a class of buckets that defines tiering policies and data placements for an Object Bucket Claim (OBC).

Use this procedure to create a bucket class in OpenShift Data Foundation.

Procedure

- In the OpenShift Web Console, click Storage → Data Foundation.

- Click the Bucket Class tab.

- Click Create Bucket Class.

- On the Create new Bucket Class page, perform the following:

  - Select the bucket class type and enter a bucket class name.

    - Select the BucketClass type. Choose one of the following options:

      - Standard: data will be consumed by a Multicloud Object Gateway (MCG), deduplicated, compressed, and encrypted.

      - Namespace: data is stored on the NamespaceStores without performing deduplication, compression, or encryption.

      By default, Standard is selected.

    - Enter a Bucket Class Name.

    - Click Next.

  - In Placement Policy, select Tier 1 - Policy Type and click Next. You can choose either one of the options as per your requirements.

    - Spread allows spreading of the data across the chosen resources.

    - Mirror allows full duplication of the data across the chosen resources.

    - Click Add Tier to add another policy tier.

  - Select at least one Backing Store resource from the available list if you have selected Tier 1 - Policy Type as Spread and click Next. Alternatively, you can also create a new backing store.

    Note: You need to select at least 2 backing stores when you select Policy Type as Mirror in the previous step.

  - Review and confirm Bucket Class settings.

  - Click Create Bucket Class.
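The minimum backing store counts for the two policy types can be expressed as a small check. This is only an illustrative sketch of the rule stated in the procedure (at least one backing store for Spread, at least two for Mirror); the `placement_ok` function is hypothetical and is not part of the console or the MCG CLI.

```shell
#!/bin/sh
# Hypothetical check: succeed if the number of selected backing stores
# meets the minimum for the given Tier 1 policy type
# (1 for Spread, 2 for Mirror).
placement_ok() {
    policy=$1
    count=$2
    case "$policy" in
        Spread) [ "$count" -ge 1 ] ;;
        Mirror) [ "$count" -ge 2 ] ;;
        *)      return 2 ;;   # unknown policy type
    esac
}

if placement_ok Mirror 1; then
    echo "Mirror with 1 backing store: ok"
else
    echo "Mirror with 1 backing store: needs at least 2"
fi
```

Here a Mirror policy with a single backing store is rejected, because mirroring needs a second resource to hold the duplicate copy.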

Verification steps

- In the OpenShift Web Console, click Storage → Data Foundation.

- Click the Bucket Class tab and search for the new bucket class.

4.6. Editing a bucket class

Use the following procedure to edit the bucket class components through the YAML file by clicking the edit button on the OpenShift Web Console.

Prerequisites

- Administrator access to OpenShift Web Console.

Procedure

- In the OpenShift Web Console, click Storage → Data Foundation.

- Click the Bucket Class tab.

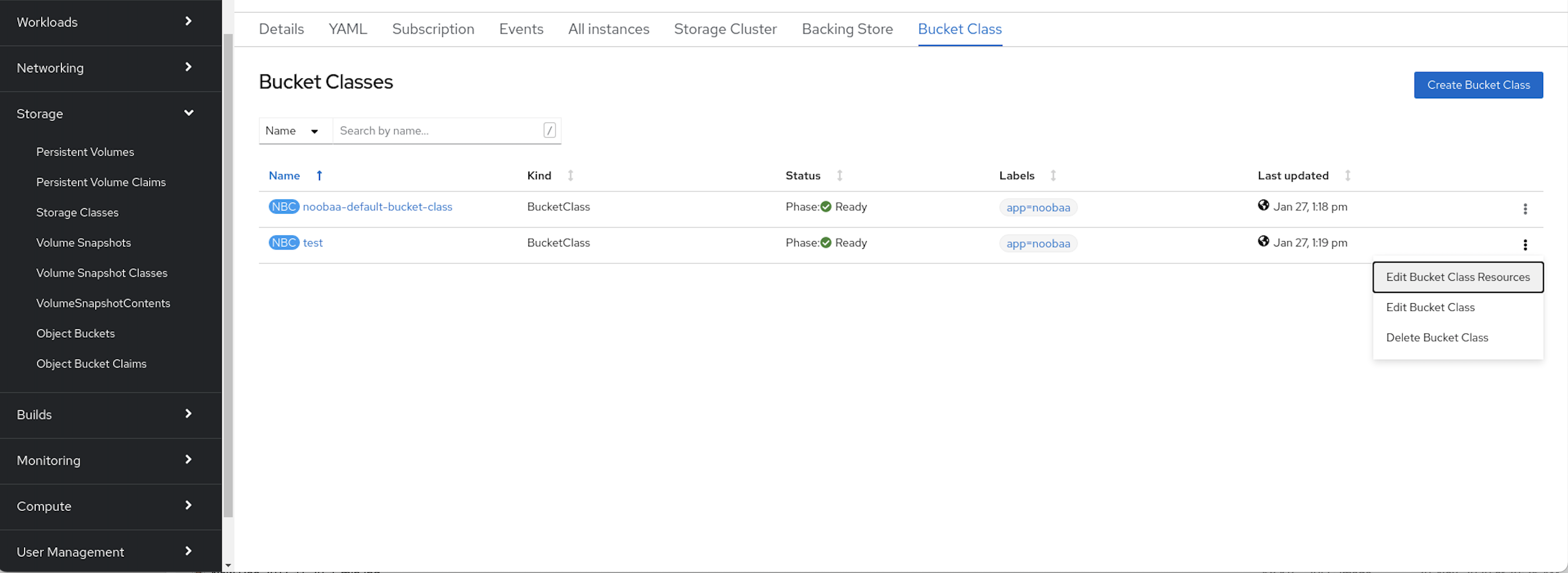

- Click the Action Menu (⋮) next to the Bucket class you want to edit.

- Click Edit Bucket Class.

- You are redirected to the YAML file. Make the required changes in this file and click Save.

4.7. Editing backing stores for bucket class

Use the following procedure to edit an existing Multicloud Object Gateway (MCG) bucket class to change the underlying backing stores used in a bucket class.

Prerequisites

- Administrator access to OpenShift Web Console.

- A bucket class.

- Backing stores.

Procedure

- In the OpenShift Web Console, click Storage → Data Foundation.

- Click the Bucket Class tab.

- Click the Action Menu (⋮) next to the Bucket class you want to edit.

- Click Edit Bucket Class Resources.

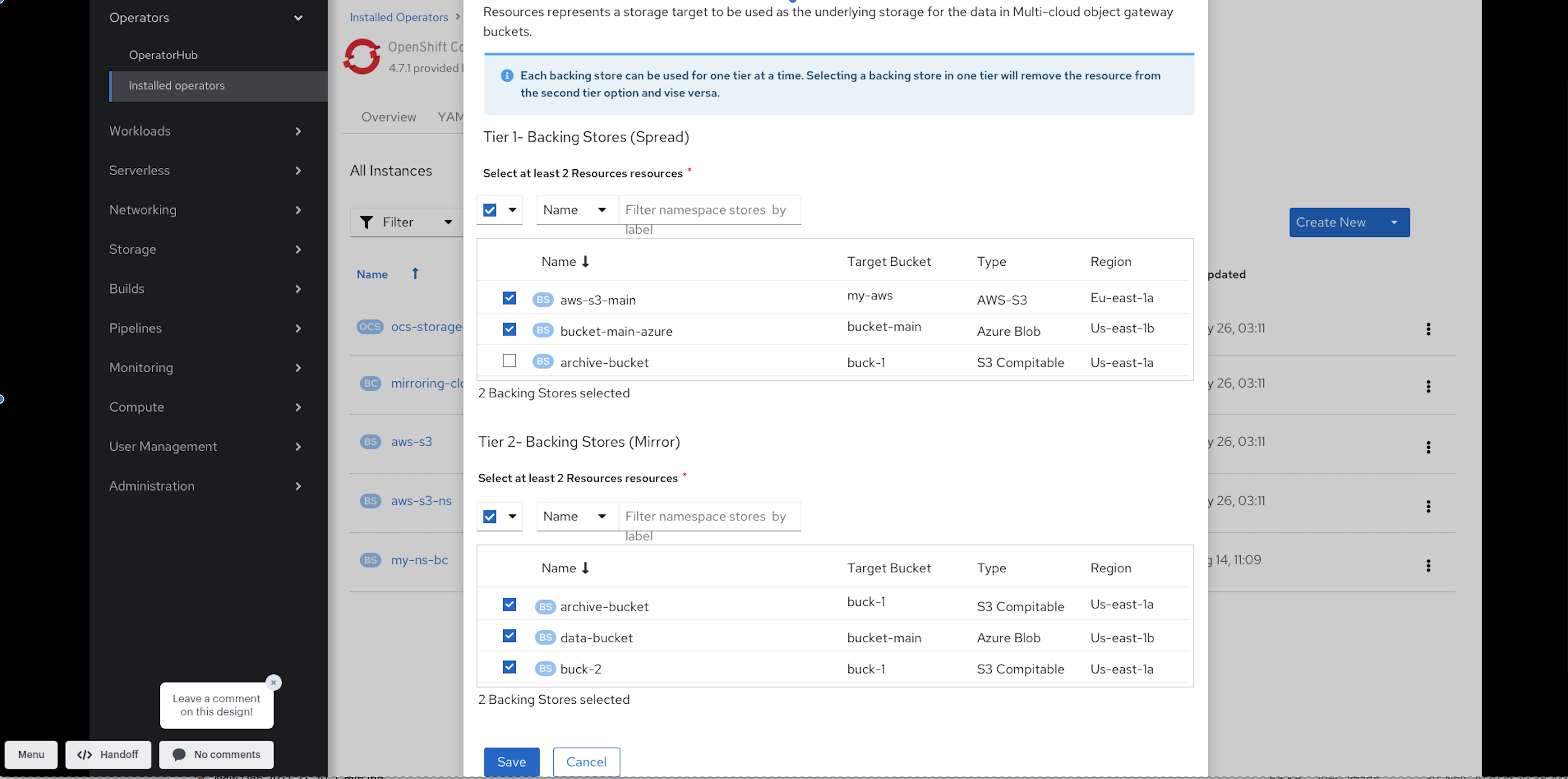

- On the Edit Bucket Class Resources page, edit the bucket class resources either by adding a backing store to the bucket class or by removing a backing store from the bucket class. You can also edit bucket class resources created with one or two tiers and different placement policies.

  - To add a backing store to the bucket class, select the name of the backing store.

  - To remove a backing store from the bucket class, clear the name of the backing store.

- Click Save.