Chapter 2. Block Storage and Volumes

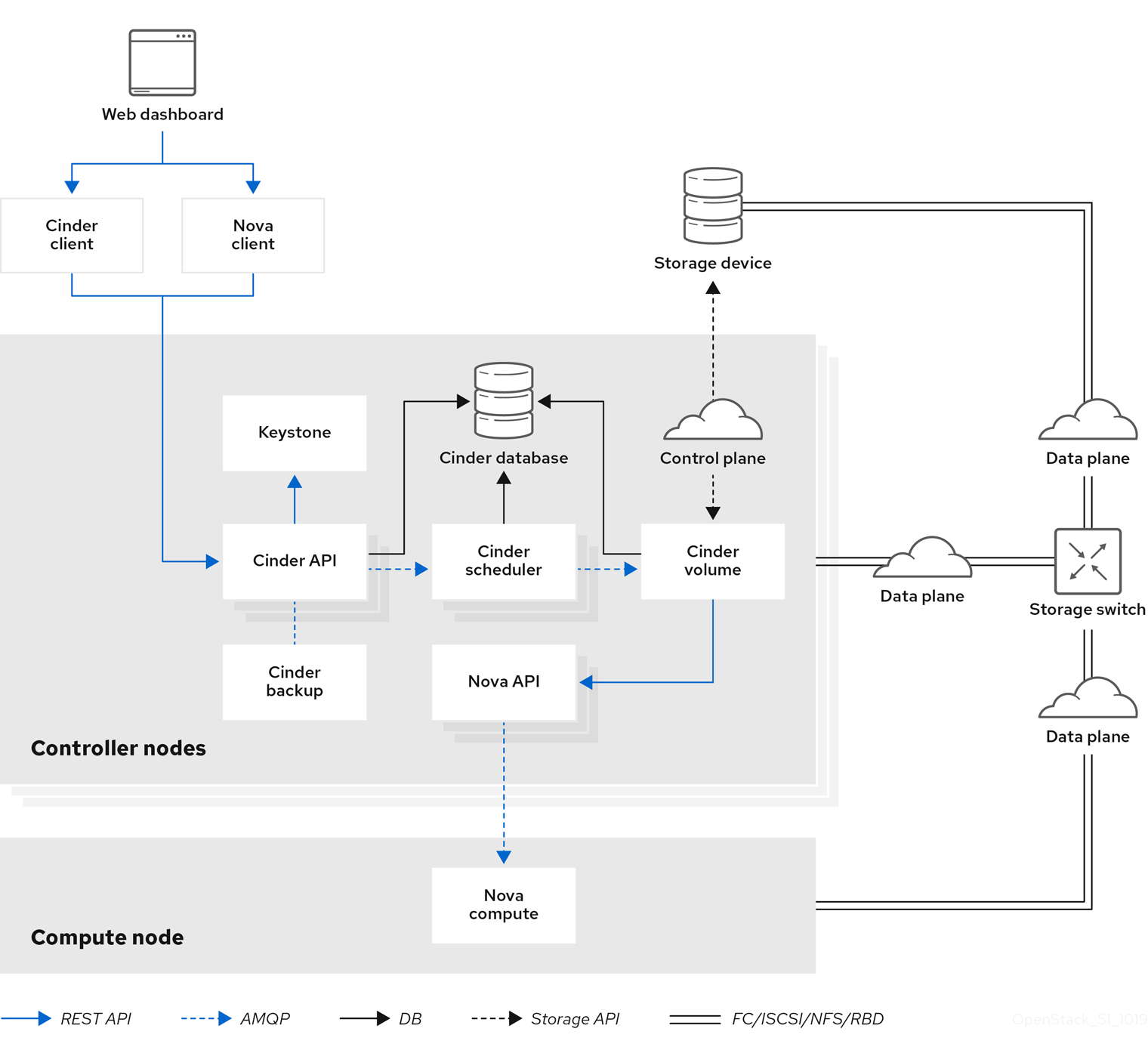

The Block Storage service (openstack-cinder) handles the administration, security, scheduling, and overall management of all volumes. Volumes are the primary form of persistent storage for Compute instances.

For more information about volume backups, refer to the Block Storage Backup Guide.

2.1. Back Ends

Red Hat OpenStack Platform is deployed using the OpenStack Platform director. Doing so helps ensure the proper configuration of each service, including the Block Storage service (and, by extension, its back end). The director also has several integrated back end configurations.

Red Hat OpenStack Platform supports Red Hat Ceph and NFS as Block Storage back ends. By default, the Block Storage service uses an LVM back end as a repository for volumes. While this back end is suitable for test environments, LVM is not supported in production environments.

For instructions on how to deploy Ceph with OpenStack, see Deploying an Overcloud with Containerized Red Hat Ceph.

For instructions on how to set up NFS storage in the overcloud, see Configuring NFS Storage (from the Advanced Overcloud Customization Guide).

2.1.1. Third-Party Storage Providers

You can also configure the Block Storage service to use supported third-party storage appliances. The director includes the components needed to deploy different back end solutions.

For a complete list of supported back end appliances and drivers, see Component, Plug-In, and Driver Support in RHEL OpenStack Platform. Some back ends have individual guides, which are available on the Red Hat OpenStack Storage documentation site.

2.2. Block Storage Service Administration

The following procedures explain how to configure the Block Storage service to suit your needs. All of these procedures require administrator privileges.

2.2.1. Active-active deployment for high availability

In active-passive mode, if the Block Storage service fails in a hyperconverged deployment, node fencing is undesirable. This is because node fencing can trigger storage to be rebalanced unnecessarily. Edge sites do not deploy Pacemaker, although Pacemaker is still present at the control site. Instead, edge sites deploy the Block Storage service in an active-active configuration to support highly available hyperconverged deployments.

Active-active deployments improve scaling and performance, and reduce response time, by balancing workloads across all available nodes. Deploying the Block Storage service in an active-active configuration creates a highly available environment that maintains the management layer during partial network outages and single- or multi-node hardware failures. Active-active deployments allow a cluster to continue providing Block Storage services during a node outage.

Active-active deployments do not, however, enable workflows to resume automatically. If a service stops, individual operations running on the failed node will also fail during the outage. In this situation, confirm that the service is down and initiate a cleanup of resources that had in-flight operations.

2.2.1.1. Enabling active-active configuration for high availability

The cinder-volume-active-active.yaml file enables you to deploy the Block Storage service in an active-active configuration. This file ensures director uses the non-Pacemaker cinder-volume heat template and adds the etcd service to the deployment as a distributed lock manager (DLM).

The cinder-volume-active-active.yaml file also defines the active-active cluster name by assigning a value to the CinderVolumeCluster parameter. CinderVolumeCluster is a global Block Storage parameter. Therefore, you cannot include clustered (active-active) and non-clustered back ends in the same deployment.

Currently, active-active configuration for Block Storage works only with Ceph RADOS Block Device (RBD) back ends. If you plan to use multiple back ends, all back ends must support the active-active configuration. If a back end that does not support the active-active configuration is included in the deployment, that back end will not be available for storage. In an active-active deployment, you risk data loss if you save data on a back end that does not support the active-active configuration.

Procedure

To enable active-active Block Storage service volumes, include the following environment file in your overcloud deployment:

-e /usr/share/openstack-tripleo-heat-templates/environments/cinder-volume-active-active.yaml

2.2.1.2. Maintenance commands for active-active configurations

After deploying an active-active configuration, there are several commands you can use to interact with the environment when using API version 3.17 and later.

| User goal | Command |
| --- | --- |
| See the service listing, including details such as cluster name, host, zone, status, state, disabled reason, and back end state. NOTE: When deployed by director for the Ceph back end, the default cluster name is | |
| See detailed and summary information about clusters as a whole as opposed to individual services. | NOTE: This command requires a cinder API microversion of 3.7 or later. |
| See detailed information about a specific cluster. | NOTE: This command requires a cinder API microversion of 3.7 or later. |
| Enable a disabled service. | NOTE: This command requires a cinder API microversion of 3.7 or later. |
| Disable a clustered service. | NOTE: This command requires a cinder API microversion of 3.7 or later. |

2.2.1.3. Volume manage and unmanage

The unmanage and manage mechanisms facilitate moving volumes from one service using version X to another service using version X+1. Both services remain running during this process.

In API version 3.17 or later, you can see lists of volumes and snapshots that are available for management in Block Storage clusters. To see these lists, use the --cluster argument with cinder manageable-list or cinder snapshot-manageable-list.

In API version 3.16 and later, the cinder manage command also accepts the optional --cluster argument so that you can add previously unmanaged volumes to a Block Storage cluster.

2.2.1.4. Volume migration on a clustered service

With API version 3.16 and later, the cinder migrate and cinder-manage commands accept the --cluster argument to define the destination for active-active deployments.

When you migrate a volume on a Block Storage clustered service, pass the optional --cluster argument and omit the host positional argument, because the arguments are mutually exclusive.

2.2.1.5. Initiating server maintenance

All Block Storage volume services perform their own maintenance when they start. In an environment with multiple volume services grouped in a cluster, you can clean up services that are not currently running.

The command work-cleanup triggers server cleanups. The command returns:

- A list of the services that the command can clean.

- A list of the services that the command cannot clean because they are not currently running in the cluster.

The work-cleanup command works only on servers running API version 3.24 or later.

Procedure

Run the following command to verify whether all of the services for a cluster are running:

cinder cluster-list --detailed

Alternatively, run the cluster show command.

If any services are not running, run the following command to identify those specific services:

cinder service-list

Run the following command to trigger the server cleanup:

cinder work-cleanup [--cluster <cluster-name>] [--host <hostname>] [--binary <binary>] [--is-up <True|true|False|false>] [--disabled <True|true|False|false>] [--resource-id <resource-id>] [--resource-type <Volume|Snapshot>]

Note: Filters, such as --cluster, --host, and --binary, define what the command cleans. You can filter on cluster name, host name, type of service, and resource type, including a specific resource. If you do not apply filtering, the command attempts to clean everything that can be cleaned.

The following example filters by cluster name:

cinder work-cleanup --cluster tripleo@tripleo_ceph

2.2.2. Group Volume Settings with Volume Types

With Red Hat OpenStack Platform, you can create volume types and apply associated settings to each type. You can apply settings during volume creation (see Create a Volume) or after you create a volume (see Changing the Type of a Volume (Volume Re-typing)). The following list shows some of the associated settings that you can apply to a volume type:

- The encryption of a volume. For more information, see Configure Volume Type Encryption.

- The back end that a volume uses. For more information, see Specify Back End for Volume Creation and Migrate between Back Ends.

- Quality-of-Service (QoS) Specs

Settings are associated with volume types using key-value pairs called Extra Specs. When you specify a volume type during volume creation, the Block Storage scheduler applies these key-value pairs as settings. You can associate multiple key-value pairs to the same volume type.

Volume types let you offer different storage tiers to different users. By associating specific performance, resilience, and other settings as key-value pairs to a volume type, you can map tier-specific settings to different volume types. You can then apply tier settings when creating a volume by specifying the corresponding volume type.

2.2.2.1. List the Capabilities of a Host Driver

Available and supported Extra Specs vary per back end driver. Consult the driver documentation for a list of valid Extra Specs.

Alternatively, you can query the Block Storage host directly to determine which well-defined standard Extra Specs are supported by its driver. Start by logging in (through the command line) to the node hosting the Block Storage service. Then:

# cinder service-list

This command returns a list containing the host of each Block Storage service (cinder-backup, cinder-scheduler, and cinder-volume). For example:

+------------------+---------------------------+------+---------

| Binary | Host | Zone | Status ...

+------------------+---------------------------+------+---------

| cinder-backup | localhost.localdomain | nova | enabled ...

| cinder-scheduler | localhost.localdomain | nova | enabled ...

| cinder-volume | *localhost.localdomain@lvm* | nova | enabled ...

+------------------+---------------------------+------+---------

To display the driver capabilities (and, in turn, determine the supported Extra Specs) of a Block Storage service, run:

# cinder get-capabilities _VOLSVCHOST_

Where VOLSVCHOST is the complete name of the cinder-volume's host. For example:

# cinder get-capabilities localhost.localdomain@lvm

+---------------------+-----------------------------------------+

| Volume stats | Value |

+---------------------+-----------------------------------------+

| description | None |

| display_name | None |

| driver_version | 3.0.0 |

| namespace | OS::Storage::Capabilities::localhost.loc...

| pool_name | None |

| storage_protocol | iSCSI |

| vendor_name | Open Source |

| visibility | None |

| volume_backend_name | lvm |

+---------------------+-----------------------------------------+

+--------------------+------------------------------------------+

| Backend properties | Value |

+--------------------+------------------------------------------+

| compression | {u'type': u'boolean', u'description'...

| qos | {u'type': u'boolean', u'des ...

| replication | {u'type': u'boolean', u'description'...

| thin_provisioning | {u'type': u'boolean', u'description': u'S...

+--------------------+------------------------------------------+

The Backend properties column shows a list of Extra Spec Keys that you can set, while the Value column provides information on valid corresponding values.

2.2.2.2. Create and Configure a Volume Type

- As an admin user in the dashboard, select Admin > Volumes > Volume Types.

- Click Create Volume Type.

- Enter the volume type name in the Name field.

- Click Create Volume Type. The new type appears in the Volume Types table.

- Select the volume type’s View Extra Specs action.

- Click Create and specify the Key and Value. The key-value pair must be valid; otherwise, specifying the volume type during volume creation will result in an error.

- Click Create. The associated setting (key-value pair) now appears in the Extra Specs table.

By default, all volume types are accessible to all OpenStack projects. If you need to create volume types with restricted access, you will need to do so through the CLI. For instructions, see Section 2.2.2.5, “Create and Configure Private Volume Types”.

You can also associate a QoS Spec to the volume type. For more information, see Section 2.2.5.4, “Associate a QOS Spec with a Volume Type”.

2.2.2.3. Edit a Volume Type

- As an admin user in the dashboard, select Admin > Volumes > Volume Types.

- In the Volume Types table, select the volume type’s View Extra Specs action.

On the Extra Specs table of this page, you can:

- Add a new setting to the volume type. To do this, click Create and specify the key/value pair of the new setting you want to associate to the volume type.

- Edit an existing setting associated with the volume type by selecting the setting’s Edit action.

- Delete existing settings associated with the volume type by selecting the extra specs' check box and clicking Delete Extra Specs in this and the next dialog screen.

2.2.2.4. Delete a Volume Type

To delete a volume type, select its corresponding check boxes from the Volume Types table and click Delete Volume Types.

2.2.2.5. Create and Configure Private Volume Types

By default, all volume types are available to all projects. You can create a restricted volume type by marking it private. To do so, set the type’s is-public flag to false.

Private volume types are useful for restricting access to volumes with certain attributes. Typically, these are settings that should only be usable by specific projects; examples include new back ends or ultra-high performance configurations that are being tested.

To create a private volume type, run:

$ cinder type-create --is-public false <TYPE-NAME>

By default, private volume types are only accessible to their creators. However, admin users can find and view private volume types using the following command:

$ cinder type-list --all

This command lists both public and private volume types, and it also includes the name and ID of each one. You need the volume type’s ID to provide access to it.

Access to a private volume type is granted at the project level. To grant a project access to a private volume type, run:

$ cinder type-access-add --volume-type <TYPE-ID> --project-id <TENANT-ID>

To view which projects have access to a private volume type, run:

$ cinder type-access-list --volume-type <TYPE-ID>

To remove a project from the access list of a private volume type, run:

$ cinder type-access-remove --volume-type <TYPE-ID> --project-id <TENANT-ID>

By default, only users with administrative privileges can create, view, or configure access for private volume types.
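The project-level access rules described in this section can be pictured with a small model. This is only an illustrative sketch, not the Block Storage implementation: the `VolumeType` class, the helper functions, and the project and type names are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class VolumeType:
    """Hypothetical stand-in for a volume type and its access list."""
    name: str
    is_public: bool = True
    allowed_projects: set = field(default_factory=set)


def grant_access(vtype, project_id):
    # Mirrors the intent of `cinder type-access-add`.
    vtype.allowed_projects.add(project_id)


def revoke_access(vtype, project_id):
    # Mirrors the intent of `cinder type-access-remove`.
    vtype.allowed_projects.discard(project_id)


def project_can_use(vtype, project_id):
    # Public types are usable by every project; private types are usable
    # only by projects on the access list.
    return vtype.is_public or project_id in vtype.allowed_projects


fast = VolumeType("ultra-fast", is_public=False)  # hypothetical private type
grant_access(fast, "project-a")
print(project_can_use(fast, "project-a"))  # True
print(project_can_use(fast, "project-b"))  # False
```

In this model, revoking access simply removes the project from the set, so a later check for that project returns False again.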

2.2.3. Create and Configure an Internal Project for the Block Storage Service

Some Block Storage features (for example, the Image-Volume cache) require the configuration of an internal tenant. The Block Storage service uses this tenant/project to manage block storage items that do not necessarily need to be exposed to normal users. Examples of such items are images cached for frequent volume cloning or temporary copies of volumes being migrated.

To configure an internal project, first create a generic project and user, both named cinder-internal. To do so, log in to the Controller node and run:

# openstack project create --enable --description "Block Storage Internal Project" cinder-internal

+-------------+----------------------------------+

| Property | Value |

+-------------+----------------------------------+

| description | Block Storage Internal Tenant |

| enabled | True |

| id | *cb91e1fe446a45628bb2b139d7dccaef* |

| name | cinder-internal |

+-------------+----------------------------------+

# openstack user create --project cinder-internal cinder-internal

+----------+----------------------------------+

| Property | Value |

+----------+----------------------------------+

| email | None |

| enabled | True |

| id | *84e9672c64f041d6bfa7a930f558d946* |

| name | cinder-internal |

|project_id| *cb91e1fe446a45628bb2b139d7dccaef* |

| username | cinder-internal |

+----------+----------------------------------+

The procedure for adding Extra Config options creates an internal project. Refer to Section 2.2.4, “Configure and Enable the Image-Volume Cache”.

2.2.4. Configure and Enable the Image-Volume Cache

The Block Storage service features an optional Image-Volume cache which can be used when creating volumes from images. This cache is designed to improve the speed of volume creation from frequently-used images. For information on how to create volumes from images, see Section 2.3.1, “Create a volume”.

When enabled, the Image-Volume cache stores a copy of an image the first time a volume is created from it. This stored image is cached locally to the Block Storage back end to help improve performance the next time the image is used to create a volume. The Image-Volume cache’s limit can be set to a size (in GB), number of images, or both.

The Image-Volume cache is supported by several back ends. If you are using a third-party back end, refer to its documentation for information on Image-Volume cache support.

The Image-Volume cache requires that an internal tenant be configured for the Block Storage service. For instructions, see Section 2.2.3, “Create and Configure an Internal Project for the Block Storage Service”.

To enable and configure the Image-Volume cache on a back end (BACKEND), add the values to an ExtraConfig section of an environment file on the undercloud. For example:

parameter_defaults:
  ExtraConfig:
    cinder::config::cinder_config:
      DEFAULT/cinder_internal_tenant_project_id:
        value: TENANTID
      DEFAULT/cinder_internal_tenant_user_id:
        value: USERID
      BACKEND/image_volume_cache_enabled:
        value: True
      BACKEND/image_volume_cache_max_size_gb:
        value: MAXSIZE
      BACKEND/image_volume_cache_max_count:
        value: MAXNUMBER

The Block Storage service database uses a time stamp to track when each cached image was last used to create a volume. If either or both MAXSIZE and MAXNUMBER are set, the Block Storage service will delete cached images as needed to make way for new ones. Cached images with the oldest time stamp are deleted first whenever the Image-Volume cache limits are met.
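The oldest-first eviction described above can be sketched as follows. This is a simplified model, not the Block Storage service code: the `CachedImage` class and `evict_for` function are hypothetical, and the sketch assumes that a limit of 0 means "no limit".

```python
from dataclasses import dataclass


@dataclass
class CachedImage:
    image_id: str
    size_gb: int
    last_used: float  # time stamp of the last volume creation from this entry


def evict_for(cache, new_entry, max_size_gb=0, max_count=0):
    """Return (kept_entries, evicted_entries) after adding new_entry.

    Assumption for this sketch: a limit of 0 means the limit is not set.
    """
    kept = sorted(cache, key=lambda e: e.last_used)  # oldest first
    evicted = []

    def over_limit():
        total = sum(e.size_gb for e in kept) + new_entry.size_gb
        count = len(kept) + 1
        too_big = max_size_gb > 0 and total > max_size_gb
        too_many = max_count > 0 and count > max_count
        return too_big or too_many

    # Drop the entries with the oldest time stamp until the new one fits.
    while kept and over_limit():
        evicted.append(kept.pop(0))
    return kept + [new_entry], evicted


cache = [CachedImage("a", 10, 100.0), CachedImage("b", 10, 200.0)]
kept, evicted = evict_for(cache, CachedImage("c", 10, 300.0), max_size_gb=20)
print([e.image_id for e in kept])     # ['b', 'c']
print([e.image_id for e in evicted])  # ['a']
```

Setting both limits makes the sketch evict until both are satisfied, which matches the "either or both" wording above.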

After you create the environment file in /home/stack/templates/, log in as the stack user and deploy the configuration by running:

$ openstack overcloud deploy --templates \

-e /home/stack/templates/<ENV_FILE>.yaml

Where ENV_FILE.yaml is the name of the file with the ExtraConfig settings added earlier.

If you passed any extra environment files when you created the overcloud, pass them again here using the -e option to avoid making undesired changes to the overcloud.

For additional information on the openstack overcloud deploy command, see Deployment command in the Director Installation and Usage Guide.

2.2.5. Use Quality-of-Service Specifications

You can map multiple performance settings to a single Quality-of-Service specification (QOS Specs). Doing so allows you to provide performance tiers for different user types.

Performance settings are mapped as key-value pairs to QOS Specs, similar to the way volume settings are associated to a volume type. However, QOS Specs are different from volume types in the following respects:

- QOS Specs are used to apply performance settings, which include limiting read/write operations to disks. Available and supported performance settings vary per storage driver. To determine which QOS Specs are supported by your back end, consult the documentation of your back end device’s volume driver.

- Volume types are directly applied to volumes, whereas QOS Specs are not. Rather, QOS Specs are associated to volume types. During volume creation, specifying a volume type also applies the performance settings mapped to the volume type’s associated QOS Specs.

2.2.5.1. Basic volume Quality of Service

You can define performance limits for volumes on a per-volume basis using basic volume QOS values. The Block Storage service supports the following options:

- read_iops_sec

- write_iops_sec

- total_iops_sec

- read_bytes_sec

- write_bytes_sec

- total_bytes_sec

- read_iops_sec_max

- write_iops_sec_max

- total_iops_sec_max

- read_bytes_sec_max

- write_bytes_sec_max

- total_bytes_sec_max

- size_iops_sec

2.2.5.2. Create and Configure a QOS Spec

As an administrator, you can create and configure a QOS Spec through the QOS Specs table. You can associate more than one key/value pair to the same QOS Spec.

- As an admin user in the dashboard, select Admin > Volumes > Volume Types.

- On the QOS Specs table, click Create QOS Spec.

- Enter a name for the QOS Spec.

- In the Consumer field, specify where the QOS policy should be enforced:

Table 2.1. Consumer Types

| Type | Description |
| --- | --- |
| back-end | QOS policy will be applied to the Block Storage back end. |
| front-end | QOS policy will be applied to Compute. |
| both | QOS policy will be applied to both Block Storage and Compute. |

- Click Create. The new QOS Spec should now appear in the QOS Specs table.

- In the QOS Specs table, select the new spec’s Manage Specs action.

- Click Create, and specify the Key and Value. The key-value pair must be valid; otherwise, specifying a volume type associated with this QOS Spec during volume creation will fail. For example, to set read limit IOPS to 500, use the following Key/Value pair: read_iops_sec=500

- Click Create. The associated setting (key-value pair) now appears in the Key-Value Pairs table.

2.2.5.3. Set Capacity-Derived QoS Limits

You can use volume types to implement capacity-derived Quality-of-Service (QoS) limits on volumes. This allows you to set deterministic IOPS throughput based on the size of provisioned volumes. Doing so simplifies how storage resources are provided to users: each user receives a predetermined (and, ultimately, highly predictable) throughput rate based on the volume size they provision.

In particular, the Block Storage service allows you to set how many IOPS to allocate to a volume based on the actual provisioned size. This throughput is set on an IOPS per GB basis through the following QoS keys:

read_iops_sec_per_gb

write_iops_sec_per_gb

total_iops_sec_per_gb

These keys allow you to set read, write, or total IOPS to scale with the size of provisioned volumes. For example, if the volume type uses read_iops_sec_per_gb=500, then a provisioned 3GB volume would automatically have a read IOPS of 1500.
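The scaling rule in the example above is a simple multiplication of the per-GB rate by the provisioned size. The following sketch reproduces that arithmetic. The function name `capacity_derived_iops` is hypothetical and is not part of any Block Storage API.

```python
def capacity_derived_iops(size_gb, iops_per_gb):
    """Scale an IOPS limit with the provisioned volume size (in GB)."""
    if size_gb <= 0 or iops_per_gb < 0:
        raise ValueError("size must be positive and the rate non-negative")
    return size_gb * iops_per_gb


# QoS key taken from the text; the value matches the example above.
qos_spec = {"read_iops_sec_per_gb": 500}
print(capacity_derived_iops(3, qos_spec["read_iops_sec_per_gb"]))  # 1500
```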

Capacity-derived QoS limits are set per volume type, and configured like any normal QoS spec. In addition, these limits are supported by the underlying Block Storage service directly, and are not dependent on any particular driver.

For more information about volume types, see Section 2.2.2, “Group Volume Settings with Volume Types” and Section 2.2.2.2, “Create and Configure a Volume Type”. For instructions on how to set QoS specs, see Section 2.2.5, “Use Quality-of-Service Specifications”.

When you apply a volume type (or perform a volume re-type) with capacity-derived QoS limits to an attached volume, the limits will not be applied. The limits will only be applied once you detach the volume from its instance.

See Section 2.3.12, “Changing the volume type (volume re-typing)” for information about volume re-typing.

2.2.5.4. Associate a QOS Spec with a Volume Type

As an administrator, you can associate a QOS Spec to an existing volume type using the Volume Types table.

- As an administrator in the dashboard, select Admin > Volumes > Volume Types.

- In the Volume Types table, select the type’s Manage QOS Spec Association action.

- Select a QOS Spec from the QOS Spec to be associated list.

- Click Associate. The selected QOS Spec now appears in the Associated QOS Spec column of the edited volume type.

2.2.5.5. Disassociate a QOS Spec from a Volume Type

- As an administrator in the dashboard, select Admin > Volumes > Volume Types.

- In the Volume Types table, select the type’s Manage QOS Spec Association action.

- Select None from the QOS Spec to be associated list.

- Click Associate. The selected QOS Spec is no longer in the Associated QOS Spec column of the edited volume type.

2.2.6. Configure Volume Encryption

Volume encryption helps provide basic data protection in case the volume back end is either compromised or outright stolen. The Compute and Block Storage services are integrated so that instances can read, access, and use encrypted volumes. You must deploy Barbican to take advantage of volume encryption.

At present, volume encryption is not supported on file-based volumes (such as NFS).

Volume encryption is applied through volume type. See Section 2.2.6.1, “Configure Volume Type Encryption Through the Dashboard” for information on encrypted volume types.

2.2.6.1. Configure Volume Type Encryption Through the Dashboard

To create encrypted volumes, you first need an encrypted volume type. Encrypting a volume type involves setting what provider class, cipher, and key size it should use:

- As an admin user in the dashboard, select Admin > Volumes > Volume Types.

- In the Actions column of the volume type to be encrypted, select Create Encryption to launch the Create Volume Type Encryption wizard.

From there, configure the Provider, Control Location, Cipher, and Key Size settings of the volume type’s encryption. The Description column describes each setting.

Important: The values listed below are the only supported options for Provider, Cipher, and Key Size.

- Enter luks for Provider.

- Enter aes-xts-plain64 for Cipher.

- Enter 256 for Key Size.

- Click Create Volume Type Encryption.

Once you have an encrypted volume type, you can invoke it to automatically create encrypted volumes. For more information on creating a volume type, see Section 2.2.2.2, “Create and Configure a Volume Type”. Specifically, select the encrypted volume type from the Type drop-down list in the Create Volume window (see Section 2.3, “Basic volume usage and configuration”).

To configure an encrypted volume type through the CLI, see Section 2.2.6.2, “Configure Volume Type Encryption Through the CLI”.

You can also re-configure the encryption settings of an encrypted volume type.

- Select Update Encryption from the Actions column of the volume type to launch the Update Volume Type Encryption wizard.

- In Project > Compute > Volumes, check the Encrypted column in the Volumes table to determine whether the volume is encrypted.

- If the volume is encrypted, click Yes in that column to view the encryption settings.

2.2.6.2. Configure Volume Type Encryption Through the CLI

To configure Block Storage volume encryption, do the following:

Create a volume type:

cinder type-create encrypt-type

Configure the cipher, key size, control location, and provider settings:

cinder encryption-type-create --cipher aes-xts-plain64 --key-size 256 --control-location front-end encrypt-type luks

Create an encrypted volume:

cinder --debug create 1 --volume-type encrypt-type --name DemoEncVol

2.2.6.3. Automatic deletion of volume image encryption key

The Block Storage service (cinder) creates an encryption key in the Key Management service (barbican) when it uploads an encrypted volume to the Image service (glance). This creates a 1:1 relationship between an encryption key and a stored image.

Encryption key deletion prevents unlimited resource consumption of the Key Management service. The Block Storage, Key Management, and Image services automatically manage the key for an encrypted volume, including the deletion of the key.

The Block Storage service automatically adds two properties to a volume image:

- cinder_encryption_key_id: The identifier of the encryption key that the Key Management service stores for a specific image.

- cinder_encryption_key_deletion_policy: The policy that tells the Image service to tell the Key Management service whether to delete the key associated with this image.

The values of these properties are automatically assigned. To avoid unintentional data loss, do not adjust these values.

When you create a volume image, the Block Storage service sets the cinder_encryption_key_deletion_policy property to on_image_deletion. When you delete a volume image, the Image service deletes the corresponding encryption key if the cinder_encryption_key_deletion_policy equals on_image_deletion.
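The deletion rule above can be sketched as a small decision function. This is an illustrative model only: the `delete_image` function and the dictionary standing in for the Key Management service are hypothetical, and only the two property names and the `on_image_deletion` value come from the text.

```python
def delete_image(image_properties, key_store):
    """Delete the image's encryption key when the policy requires it.

    image_properties: dict of image properties.
    key_store: dict standing in for the Key Management service,
               mapping key IDs to secrets (hypothetical).
    Returns True if a key was deleted.
    """
    key_id = image_properties.get("cinder_encryption_key_id")
    policy = image_properties.get("cinder_encryption_key_deletion_policy")
    if key_id is not None and policy == "on_image_deletion":
        # The key is removed together with the image.
        return key_store.pop(key_id, None) is not None
    # Any other policy value (or no key) leaves the key store untouched.
    return False


keys = {"key-1": "secret"}
props = {
    "cinder_encryption_key_id": "key-1",
    "cinder_encryption_key_deletion_policy": "on_image_deletion",
}
print(delete_image(props, keys))  # True
print(keys)                       # {}
```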

Red Hat does not recommend manual manipulation of the cinder_encryption_key_id or cinder_encryption_key_deletion_policy properties. If you use the encryption key that is identified by the value of cinder_encryption_key_id for any other purpose, you risk data loss.

For additional information, refer to the Manage secrets with the OpenStack Key Manager guide.

2.2.7. Configure How Volumes are Allocated to Multiple Back Ends

If the Block Storage service is configured to use multiple back ends, you can use configured volume types to specify where a volume should be created. For details, see Section 2.3.2, “Specify back end for volume creation”.

The Block Storage service will automatically choose a back end if you do not specify one during volume creation. Block Storage sets the first defined back end as a default; this back end will be used until it runs out of space. At that point, Block Storage will set the second defined back end as a default, and so on.

If this is not suitable for your needs, you can use the filter scheduler to control how Block Storage should select back ends. This scheduler can use different filters to triage suitable back ends, such as:

- AvailabilityZoneFilter: Filters out all back ends that do not meet the availability zone requirements of the requested volume.

- CapacityFilter: Selects only back ends with enough space to accommodate the volume.

- CapabilitiesFilter: Selects only back ends that can support any specified settings in the volume.

- InstanceLocality: Configures clusters to use volumes local to the same node.

To configure the filter scheduler, add an environment file to your deployment containing:

parameter_defaults:
  ControllerExtraConfig:
    cinder::config::cinder_config:
      DEFAULT/scheduler_default_filters:
        value: 'AvailabilityZoneFilter,CapacityFilter,CapabilitiesFilter,InstanceLocality'

You can also add the ControllerExtraConfig: hook and its nested sections to the parameter_defaults: section of an existing environment file.
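To make the filtering idea concrete, the following sketch models three of the filters as simple predicates applied in sequence. It is a simplified illustration, not the Block Storage scheduler implementation: the `BackEnd` and `VolumeRequest` classes, the function names, and the sample back ends are hypothetical, and InstanceLocality is omitted.

```python
from dataclasses import dataclass, field


@dataclass
class BackEnd:
    name: str
    zone: str
    free_gb: int
    capabilities: dict = field(default_factory=dict)


@dataclass
class VolumeRequest:
    size_gb: int
    zone: str
    extra_specs: dict = field(default_factory=dict)


def availability_zone_filter(backend, request):
    # Keep back ends in the availability zone the volume asks for.
    return backend.zone == request.zone


def capacity_filter(backend, request):
    # Keep back ends with enough free space for the volume.
    return backend.free_gb >= request.size_gb


def capabilities_filter(backend, request):
    # Keep back ends that match every requested setting.
    return all(
        backend.capabilities.get(key) == value
        for key, value in request.extra_specs.items()
    )


FILTERS = [availability_zone_filter, capacity_filter, capabilities_filter]


def suitable_back_ends(backends, request, filters=FILTERS):
    """Keep only back ends that pass every filter."""
    return [b for b in backends if all(f(b, request) for f in filters)]


backends = [
    BackEnd("ceph", "zone1", 500, {"thin_provisioning": True}),
    BackEnd("nfs", "zone1", 5, {}),
    BackEnd("iscsi", "zone2", 500, {"thin_provisioning": True}),
]
request = VolumeRequest(10, "zone1", {"thin_provisioning": True})
print([b.name for b in suitable_back_ends(backends, request)])  # ['ceph']
```

In this sample, the nfs back end fails the capacity check and the iscsi back end fails the availability zone check, so only ceph remains.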

2.2.8. Deploying availability zones

An availability zone is a provider-specific method of grouping cloud instances and services. Director uses CinderXXXAvailabilityZone parameters (where XXX is associated with a specific back end) to configure different availability zones for Block Storage volume back ends.

Procedure

To deploy different availability zones for Block Storage volume back ends:

Add the following parameters to the environment file to create two availability zones:

parameter_defaults:
  CinderXXXAvailabilityZone: zone1
  CinderYYYAvailabilityZone: zone2

Replace XXX and YYY with supported back-end values, such as:

CinderISCSIAvailabilityZone
CinderNfsAvailabilityZone
CinderRbdAvailabilityZone

Note: Search the /usr/share/openstack-tripleo-heat-templates/deployment/cinder/ directory for the heat template associated with your back end for the correct back-end value.

The following example deploys two back ends where rbd is zone 1 and iSCSI is zone 2:

parameter_defaults:
  CinderRbdAvailabilityZone: zone1
  CinderISCSIAvailabilityZone: zone2

Deploy the overcloud and include the updated environment file.

2.2.9. Configure and Use Consistency Groups

The Block Storage service allows you to set consistency groups. With this, you can group multiple volumes together as a single entity. This, in turn, allows you to perform operations on multiple volumes at once, rather than individually. Specifically, this release allows you to use consistency groups to create snapshots for multiple volumes simultaneously. By extension, this will also allow you to restore or clone those volumes simultaneously.

A volume may be a member of multiple consistency groups. However, you cannot delete, retype, or migrate volumes once you add them to a consistency group.

2.2.9.1. Set Up Consistency Groups

By default, the Block Storage security policy disables the consistency group APIs. You must enable them before you use the feature. The related consistency group entries in /etc/cinder/policy.json on the node hosting the Block Storage API service (namely, openstack-cinder-api) list the default settings:

    "consistencygroup:create": "group:nobody",
    "consistencygroup:delete": "group:nobody",
    "consistencygroup:update": "group:nobody",
    "consistencygroup:get": "group:nobody",
    "consistencygroup:get_all": "group:nobody",
    "consistencygroup:create_cgsnapshot": "group:nobody",
    "consistencygroup:delete_cgsnapshot": "group:nobody",
    "consistencygroup:get_cgsnapshot": "group:nobody",
    "consistencygroup:get_all_cgsnapshots": "group:nobody",

These settings need to be changed in an environment file and then deployed to the overcloud using the openstack overcloud deploy command. If you edit the JSON file directly, the changes will be overwritten next time the overcloud is deployed.

To enable the consistency groups, edit an environment file and add a new entry to the parameter_defaults section. This will ensure that the entries are updated in the containers and are retained whenever the environment is re-deployed using director with the openstack overcloud deploy command.

Add a new section to an environment file using CinderApiPolicies to set the consistency group settings. The equivalent parameter_defaults section showing the default settings from the JSON file would look like this:

    parameter_defaults:
      CinderApiPolicies: {
        cinder-consistencygroup_create: { key: 'consistencygroup:create', value: 'group:nobody' },
        cinder-consistencygroup_delete: { key: 'consistencygroup:delete', value: 'group:nobody' },
        cinder-consistencygroup_update: { key: 'consistencygroup:update', value: 'group:nobody' },
        cinder-consistencygroup_get: { key: 'consistencygroup:get', value: 'group:nobody' },
        cinder-consistencygroup_get_all: { key: 'consistencygroup:get_all', value: 'group:nobody' },
        cinder-consistencygroup_create_cgsnapshot: { key: 'consistencygroup:create_cgsnapshot', value: 'group:nobody' },
        cinder-consistencygroup_delete_cgsnapshot: { key: 'consistencygroup:delete_cgsnapshot', value: 'group:nobody' },
        cinder-consistencygroup_get_cgsnapshot: { key: 'consistencygroup:get_cgsnapshot', value: 'group:nobody' },
        cinder-consistencygroup_get_all_cgsnapshots: { key: 'consistencygroup:get_all_cgsnapshots', value: 'group:nobody' }
      }

The value ‘group:nobody’ determines that no group can use this feature, effectively disabling it. To enable it, you will need to change the group to another value.

For increased security, set the permissions for the consistency group API and the volume type management API to be identical. The volume type management API is set to "rule:admin_or_owner" by default (in the same /etc/cinder/policy.json file):

    "volume_extension:types_manage": "rule:admin_or_owner",

You can make the consistency groups feature available to all users by setting the API policy entries to allow users to create, use, and manage their own consistency groups. To do so, use rule:admin_or_owner:

    CinderApiPolicies: {
      cinder-consistencygroup_create: { key: 'consistencygroup:create', value: 'rule:admin_or_owner' },
      cinder-consistencygroup_delete: { key: 'consistencygroup:delete', value: 'rule:admin_or_owner' },
      cinder-consistencygroup_update: { key: 'consistencygroup:update', value: 'rule:admin_or_owner' },
      cinder-consistencygroup_get: { key: 'consistencygroup:get', value: 'rule:admin_or_owner' },
      cinder-consistencygroup_get_all: { key: 'consistencygroup:get_all', value: 'rule:admin_or_owner' },
      cinder-consistencygroup_create_cgsnapshot: { key: 'consistencygroup:create_cgsnapshot', value: 'rule:admin_or_owner' },
      cinder-consistencygroup_delete_cgsnapshot: { key: 'consistencygroup:delete_cgsnapshot', value: 'rule:admin_or_owner' },
      cinder-consistencygroup_get_cgsnapshot: { key: 'consistencygroup:get_cgsnapshot', value: 'rule:admin_or_owner' },
      cinder-consistencygroup_get_all_cgsnapshots: { key: 'consistencygroup:get_all_cgsnapshots', value: 'rule:admin_or_owner' }
    }
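Because the nine policy entries differ only in the action name, the section could also be generated rather than typed by hand. The following is a minimal sketch under that assumption; render_cg_policies is a hypothetical helper name, and it emits block-style YAML, which parses to the same structure as the brace-style mapping shown above.

```shell
#!/bin/sh
# Hypothetical helper: print a CinderApiPolicies section that applies one
# rule (for example rule:admin_or_owner) to every consistency group
# policy listed in this section.
render_cg_policies() {
    local rule="$1" action
    echo "parameter_defaults:"
    echo "  CinderApiPolicies:"
    for action in create delete update get get_all \
                  create_cgsnapshot delete_cgsnapshot \
                  get_cgsnapshot get_all_cgsnapshots; do
        echo "    cinder-consistencygroup_${action}:"
        echo "      key: 'consistencygroup:${action}'"
        echo "      value: '${rule}'"
    done
}
```

Generating the entries from one list keeps the key names and the rule value consistent, which avoids the kind of quoting slip that is easy to make when editing nine near-identical lines.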

Once you have created the environment file in /home/stack/templates/, log in as the stack user. Then, deploy the configuration by running:

    $ openstack overcloud deploy --templates \
      -e /home/stack/templates/<ENV_FILE>.yaml

Where <ENV_FILE>.yaml is the name of the file with the CinderApiPolicies settings added earlier.

If you passed any extra environment files when you created the overcloud, pass them again here using the -e option to avoid making undesired changes to the overcloud.

For additional information on the openstack overcloud deploy command, refer to Creating the Overcloud with the CLI Tools section in the Director Installation and Usage Guide.

2.2.9.2. Create and Manage Consistency Groups

After enabling the consistency groups API, you can then start creating consistency groups. To do so:

- As an admin user in the dashboard, select Project > Compute > Volumes > Volume Consistency Groups.

- Click Create Consistency Group.

- In the Consistency Group Information tab of the wizard, enter a name and description for your consistency group. Then, specify its Availability Zone.

- You can also add volume types to your consistency group. When you create volumes within the consistency group, the Block Storage service will apply compatible settings from those volume types. To add a volume type, click its + button from the All available volume types list.

- Click Create Consistency Group. It should appear next in the Volume Consistency Groups table.

You can change the name or description of a consistency group by selecting Edit Consistency Group from its Action column.

In addition, you can also add or remove volumes from a consistency group directly. To do so:

- As an admin user in the dashboard, select Project > Compute > Volumes > Volume Consistency Groups.

- Find the consistency group you want to configure. In the Actions column of that consistency group, select Manage Volumes. Doing so will launch the Add/Remove Consistency Group Volumes wizard.

- To add a volume to the consistency group, click its + button from the All available volumes list.

- To remove a volume from the consistency group, click its - button from the Selected volumes list.

- Click Edit Consistency Group.

2.2.9.3. Create and Manage Consistency Group Snapshots

After adding volumes to a consistency group, you can now create snapshots from it. Before doing so, first log in as admin user from the command line on the node hosting the openstack-cinder-api and run:

    # export OS_VOLUME_API_VERSION=2

Doing so will configure the client to use version 2 of openstack-cinder-api.

To list all available consistency groups (along with their respective IDs, which you will need later):

    # cinder consisgroup-list

To create snapshots using the consistency group, run:

    # cinder cgsnapshot-create --name CGSNAPNAME --description "DESCRIPTION" CGNAMEID

Where:

- CGSNAPNAME is the name of the snapshot (optional).

- DESCRIPTION is a description of the snapshot (optional).

- CGNAMEID is the name or ID of the consistency group.

To display a list of all available consistency group snapshots, run:

    # cinder cgsnapshot-list

2.2.9.4. Clone Consistency Groups

Consistency groups can also be used to create a whole batch of pre-configured volumes simultaneously. You can do this by cloning an existing consistency group or restoring a consistency group snapshot. Both processes use the same command.

To clone an existing consistency group:

    # cinder consisgroup-create-from-src --source-cg CGNAMEID --name CGNAME --description "DESCRIPTION"

Where:

- CGNAMEID is the name or ID of the consistency group you want to clone.

- CGNAME is the name of your consistency group (optional).

- DESCRIPTION is a description of your consistency group (optional).

To create a consistency group from a consistency group snapshot:

    # cinder consisgroup-create-from-src --cgsnapshot CGSNAPNAME --name CGNAME --description "DESCRIPTION"

Replace CGSNAPNAME with the name or ID of the snapshot you are using to create the consistency group.

2.3. Basic volume usage and configuration

The following procedures describe how to perform basic end-user volume management. These procedures do not require administrative privileges.

2.3.1. Create a volume

The default maximum number of volumes you can create for a project is 10.

Procedure

- In the dashboard, select Project > Compute > Volumes.

Click Create Volume, and edit the following fields:

- Volume name: Name of the volume.

- Description: Optional, short description of the volume.

- Type: Optional volume type (see Section 2.2.2, “Group Volume Settings with Volume Types”). If you have multiple Block Storage back ends, you can use this to select a specific back end. See Section 2.3.2, “Specify back end for volume creation”.

- Size (GB): Volume size (in gigabytes).

- Availability Zone: Availability zones (logical server groups), along with host aggregates, are a common method for segregating resources within OpenStack. Availability zones are defined during installation. For more information on availability zones and host aggregates, see Manage Host Aggregates.

Specify a Volume Source:

- No source, empty volume: The volume is empty and does not contain a file system or partition table.

- Snapshot: Use an existing snapshot as a volume source. If you select this option, a new Use snapshot as a source list opens; you can then choose a snapshot from the list. For more information about volume snapshots, see Section 2.3.10, “Create, use, or delete volume snapshots”.

- Image: Use an existing image as a volume source. If you select this option, a new list opens; you can then choose an image from the list.

- Volume: Use an existing volume as a volume source. If you select this option, a new list opens; you can then choose a volume from the list.

- Click Create Volume. After the volume is created, its name appears in the Volumes table.

You can also change the volume type later on. For more information, see Section 2.3.12, “Changing the volume type (volume re-typing)”.

2.3.2. Specify back end for volume creation

Whenever multiple Block Storage back ends are configured, you will also need to create a volume type for each back end. You can then use the type to specify which back end should be used for a created volume. For more information about volume types, see Section 2.2.2, “Group Volume Settings with Volume Types”.

To specify a back end when creating a volume, select its corresponding volume type from the Type drop-down list (see Section 2.3.1, “Create a volume”).

If you do not specify a back end during volume creation, the Block Storage service will automatically choose one for you. By default, the service will choose the back end with the most available free space. You can also configure the Block Storage service to choose randomly among all available back ends instead; for more information, see Section 2.2.7, “Configure How Volumes are Allocated to Multiple Back Ends”.

2.3.3. Edit a volume name or description

- In the dashboard, select Project > Compute > Volumes.

- Select the volume’s Edit Volume button.

- Edit the volume name or description as required.

- Click Edit Volume to save your changes.

To create an encrypted volume, you must first have a volume type configured specifically for volume encryption. In addition, both Compute and Block Storage services must be configured to use the same static key. For information on how to set up the requirements for volume encryption, refer to Section 2.2.6, “Configure Volume Encryption”.

2.3.4. Resize (extend) a volume

Resizing an in-use volume is supported, but it is driver dependent. RBD is supported. You cannot extend in-use multi-attach volumes. For more information about support for this feature, contact Red Hat Support.

List the volumes to retrieve the ID of the volume you want to extend:

    $ cinder list

To resize the volume, run the following commands to specify the correct API microversion, then pass the volume ID and the new size (a value greater than the old one) as parameters:

    $ export OS_VOLUME_API_VERSION=<API microversion>
    $ cinder extend <volume ID> <size>

Replace <API microversion>, <volume ID>, and <size> with appropriate values. Use the following example as a guide:

    $ export OS_VOLUME_API_VERSION=3.42
    $ cinder extend 573e024d-5235-49ce-8332-be1576d323f8 10
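Because the new size must be greater than the current one, a wrapper can check this before it calls cinder extend. The following is a minimal sketch; the function names are hypothetical, it assumes that cinder show prints its usual two-column table with a size row, and the fallback microversion 3.42 is simply the value from the example above.

```shell
#!/bin/sh
# Read `cinder show <volume ID>` table output on stdin and print the
# value of the "size" row (the current size in GB).
current_size_gb() {
    awk -F'|' '$2 ~ /^[ \t]*size[ \t]*$/ { gsub(/[ \t]/, "", $3); print $3 }'
}

# Extend a volume only if the requested size is larger than the current one.
extend_volume() {
    local volume_id="$1" new_size="$2" current
    current=$(cinder show "$volume_id" | current_size_gb)
    if [ -z "$current" ]; then
        echo "could not determine the current size of $volume_id" >&2
        return 1
    fi
    if [ "$new_size" -le "$current" ]; then
        echo "new size (${new_size} GB) must be greater than current size (${current} GB)" >&2
        return 1
    fi
    # Use the caller's microversion if set; otherwise fall back to 3.42.
    OS_VOLUME_API_VERSION="${OS_VOLUME_API_VERSION:-3.42}" \
        cinder extend "$volume_id" "$new_size"
}
```

Checking locally gives a clearer error message than waiting for the API to reject the request, but the Block Storage service remains the authority on whether the extend operation is allowed.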

2.3.5. Delete a volume

- In the dashboard, select Project > Compute > Volumes.

- In the Volumes table, select the volume to delete.

- Click Delete Volumes.

A volume cannot be deleted if it has existing snapshots. For instructions on how to delete snapshots, see Section 2.3.10, “Create, use, or delete volume snapshots”.

2.3.6. Attach and detach a volume to an instance

Instances can use a volume for persistent storage. A volume can only be attached to one instance at a time, unless it is a multi-attach volume (see Section 2.3.7, “Attach a volume to multiple instances”). For more information about instances, see Manage Instances in the Instances and Images Guide.

2.3.6.1. Attaching a volume to an instance

- In the dashboard, select Project > Compute > Volumes.

- Select the Edit Attachments action. If the volume is not attached to an instance, the Attach To Instance drop-down list is visible.

- From the Attach To Instance list, select the instance to which you want to attach the volume.

- Click Attach Volume.

2.3.6.2. Detaching a volume from an instance

- In the dashboard, select Project > Compute > Volumes.

- Select the volume’s Manage Attachments action. If the volume is attached to an instance, the instance’s name is displayed in the Attachments table.

- Click Detach Volume in this and the next dialog screen.

2.3.7. Attach a volume to multiple instances

Volume multi-attach gives multiple instances simultaneous read/write access to a Block Storage volume. This feature works when you use Ceph as a Block Storage (cinder) back end. The Ceph RBD driver is supported.

You must use a multi-attach or cluster-aware file system to manage write operations from multiple instances. Failure to do so causes data corruption.

To use the optional multi-attach feature, your cinder driver must support it. Contact Red Hat Support to verify that multi-attach is supported for your vendor plugin.

Restrictions for multi-attach volumes:

- Live migration of multi-attach volumes is not available.

- Volume encryption is not supported with multi-attach volumes.

- Retyping an attached volume from a multi-attach type to non-multi-attach type or non-multi-attach to multi-attach is not possible.

- Read-only multi-attach is not supported.

- You cannot extend in-use multi-attach volumes.

2.3.7.1. Creating a multi-attach volume type

To attach a volume to multiple instances, set the multiattach flag to "<is> True" in the volume type extra specs. When you create a volume with a multi-attach volume type, the volume inherits the flag and becomes a multi-attach volume.

By default, creating a new volume type is an admin-only operation.

Procedure

Run the following commands to create a multi-attach volume type:

    $ cinder type-create multiattach
    $ cinder type-key multiattach set multiattach="<is> True"

Note: This procedure creates a volume on any back end that supports multiattach. Therefore, if there are two back ends that support multiattach, the scheduler decides which back end to use based on the available space at the time of creation.

Run the following command to specify the back end:

$ cinder type-key multiattach set volume_backend_name=<backend_name>

2.3.7.2. Volume retyping

You can retype a volume to be multi-attach capable or retype a multi-attach capable volume to make it incapable of attaching to multiple instances. However, you can retype a volume only when it is not in use and its status is available.

When you attach a multi-attach volume, some hypervisors require special considerations, such as when you disable caching. Currently, there is no way to safely update an attached volume while keeping it attached the entire time. Retyping fails if you attempt to retype a volume that is attached to multiple instances.

2.3.7.3. Creating a multi-attach volume

After you create a multi-attach volume type, create a multi-attach volume.

Procedure

Run the following command to create a multi-attach volume:

    $ cinder create <volume_size> --name <volume_name> --volume-type multiattach

Run the following command to verify that a volume is multi-attach capable. If the volume is multi-attach capable, the multiattach field equals True.

    $ cinder show <vol_id> | grep multiattach
    | multiattach | True |

You can now attach the volume to multiple instances. For information about how to attach a volume to an instance, see Attach a volume to an instance.
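The type creation and volume creation steps above can be chained in one function. This is a sketch only: the function name is hypothetical, it assumes admin credentials are loaded (creating volume types is admin-only by default), and it uses cinder type-list to skip type creation when a type named multiattach already exists.

```shell
#!/bin/sh
# Sketch: create the multiattach volume type if it is missing, then
# create a volume of that type.
create_multiattach_volume() {
    local size_gb="$1" name="$2"
    # Create and flag the type only when it does not exist yet.
    if ! cinder type-list | grep -qw multiattach; then
        cinder type-create multiattach || return 1
        cinder type-key multiattach set multiattach="<is> True" || return 1
    fi
    cinder create "$size_gb" --name "$name" --volume-type multiattach
}
```

After the function returns, the verification command shown earlier (cinder show piped to grep multiattach) confirms whether the new volume reports True.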

2.3.7.4. Supported back ends

The Block Storage back end must support multi-attach. For information about supported back ends, contact Red Hat Support.

2.3.8. Read-only volumes

A volume can be marked read-only to protect its data from being accidentally overwritten or deleted. To do so, set the volume to read-only using the following command:

    # cinder readonly-mode-update <VOLUME-ID> true

To set a read-only volume back to read-write, run:

    # cinder readonly-mode-update <VOLUME-ID> false

2.3.9. Change a volume owner

To change a volume’s owner, you will have to perform a volume transfer. A volume transfer is initiated by the volume’s owner, and the volume’s change in ownership is complete after the transfer is accepted by the volume’s new owner.

2.3.9.1. Transfer a volume from the command line

- Log in as the volume’s current owner.

List the available volumes:

    # cinder list

Initiate the volume transfer:

    # cinder transfer-create VOLUME

Where VOLUME is the name or ID of the volume you wish to transfer. For example,

    +------------+--------------------------------------+
    | Property   | Value                                |
    +------------+--------------------------------------+
    | auth_key   | f03bf51ce7ead189                     |
    | created_at | 2014-12-08T03:46:31.884066           |
    | id         | 3f5dc551-c675-4205-a13a-d30f88527490 |
    | name       | None                                 |
    | volume_id  | bcf7d015-4843-464c-880d-7376851ca728 |
    +------------+--------------------------------------+

The cinder transfer-create command clears the ownership of the volume and creates an id and auth_key for the transfer. These values can be given to, and used by, another user to accept the transfer and become the new owner of the volume.

The new user can now claim ownership of the volume. To do so, the user should first log in from the command line and run:

    # cinder transfer-accept TRANSFERID TRANSFERKEY

Where TRANSFERID and TRANSFERKEY are the id and auth_key values returned by the cinder transfer-create command, respectively. For example,

    # cinder transfer-accept 3f5dc551-c675-4205-a13a-d30f88527490 f03bf51ce7ead189
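To avoid copying the id and auth_key values by hand, the current owner could derive the accept command directly from the transfer-create output. The following is a minimal sketch; the function name is hypothetical, and it assumes the two-column table layout shown in the example above.

```shell
#!/bin/sh
# Read `cinder transfer-create` table output on stdin and print the
# matching `cinder transfer-accept` command for the recipient.
accept_command_from_transfer() {
    awk -F'|' '
        # Strip padding from the property name and its value.
        { key = $2; val = $3; gsub(/[ \t]/, "", key); gsub(/[ \t]/, "", val) }
        key == "id"       { id = val }
        key == "auth_key" { auth = val }
        END {
            # Fail if either field is missing from the input.
            if (id == "" || auth == "") exit 1
            print "cinder transfer-accept " id " " auth
        }'
}
```

For example, piping cinder transfer-create VOLUME into accept_command_from_transfer prints a single line that the recipient can run. Treat that line as a secret, because anyone who holds the auth_key can claim the volume.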

You can view all available volume transfers using:

    # cinder transfer-list

2.3.9.2. Transfer a volume using the dashboard

Create a volume transfer from the dashboard

- As the volume owner in the dashboard, select Projects > Volumes.

- In the Actions column of the volume to transfer, select Create Transfer.

- In the Create Transfer dialog box, enter a name for the transfer and click Create Volume Transfer.

The volume transfer is created, and in the Volume Transfer screen you can capture the transfer ID and the authorization key to send to the recipient project.

- Click the Download transfer credentials button to download a .txt file containing the transfer name, transfer ID, and authorization key.

Note: The authorization key is available only in the Volume Transfer screen. If you lose the authorization key, you must cancel the transfer and create another transfer to generate a new authorization key.

- Close the Volume Transfer screen to return to the volume list.

The volume status changes to awaiting-transfer until the recipient project accepts the transfer.

Accept a volume transfer from the dashboard

- As the recipient project owner in the dashboard, select Projects > Volumes.

- Click Accept Transfer.

- In the Accept Volume Transfer dialog box, enter the transfer ID and the authorization key that you received from the volume owner and click Accept Volume Transfer.

The volume now appears in the volume list for the active project.

2.3.10. Create, use, or delete volume snapshots

You can preserve the state of a volume at a specific point in time by creating a volume snapshot. You can then use the snapshot to clone new volumes.

Volume backups are different from snapshots. Backups preserve the data contained in the volume, whereas snapshots preserve the state of a volume at a specific point in time. In addition, you cannot delete a volume if it has existing snapshots. Volume backups are used to prevent data loss, whereas snapshots are used to facilitate cloning.

For this reason, snapshot back ends are typically co-located with volume back ends to minimize latency during cloning. By contrast, in a typical enterprise deployment a backup repository is usually kept in a different location (for example, a different node, physical storage, or even geographical location). This protects the backup repository from any damage that might occur to the volume back end.

For more information about volume backups, see the Block Storage Backup Guide.

To create a volume snapshot:

- In the dashboard, select Project > Compute > Volumes.

- Select the target volume’s Create Snapshot action.

- Provide a Snapshot Name for the snapshot and click Create a Volume Snapshot. The Volume Snapshots tab displays all snapshots.

You can clone new volumes from a snapshot when it appears in the Volume Snapshots table. To do so, select the snapshot’s Create Volume action. For more information about volume creation, see Section 2.3.1, “Create a volume”.

To delete a snapshot, select its Delete Volume Snapshot action.

If your OpenStack deployment uses a Red Hat Ceph back end, see Section 2.3.10.1, “Protected and unprotected snapshots in a Red Hat Ceph Storage back end” for more information about snapshot security and troubleshooting.

For RADOS block device (RBD) volumes for the Block Storage service (cinder) that are created from snapshots, you can use the CinderRbdFlattenVolumeFromSnapshot heat parameter to flatten and remove the dependency on the snapshot. When you set CinderRbdFlattenVolumeFromSnapshot to true, the Block Storage service flattens RBD volumes and removes the dependency on the snapshot and also flattens all future snapshots. The default value is false, which is also the default value for the cinder RBD driver.

Be aware that flattening a snapshot removes any potential block sharing with the parent. As a result, snapshots are larger on the back end and take longer to create.

2.3.10.1. Protected and unprotected snapshots in a Red Hat Ceph Storage back end

When using Red Hat Ceph Storage as a back end for your OpenStack deployment, you can set a snapshot to protected in the back end. Attempting to delete protected snapshots through OpenStack (as in, through the dashboard or the cinder snapshot-delete command) will fail.

When this occurs, set the snapshot to unprotected in the Red Hat Ceph back end first. Afterwards, you should be able to delete the snapshot through OpenStack as normal.

For related instructions, see Protecting a Snapshot and Unprotecting a Snapshot.

2.3.11. Upload a volume to the Image Service

You can upload an existing volume as an image to the Image service directly. To do so:

- In the dashboard, select Project > Compute > Volumes.

- Select the target volume’s Upload to Image action.

- Provide an Image Name for the volume and select a Disk Format from the list.

- Click Upload.

To view the uploaded image, select Project > Compute > Images. The new image appears in the Images table. For information on how to use and configure images, see Manage Images in the Instances and Images Guide available at Red Hat OpenStack Platform.

2.3.12. Changing the volume type (volume re-typing)

Volume re-typing is the process of applying a volume type (and, in turn, its settings) to an already existing volume. For more information about volume types, see Section 2.2.2, “Group Volume Settings with Volume Types”.

A volume can be re-typed whether or not it has an existing volume type. In either case, a re-type will only be successful if the Extra Specs of the volume type can be applied to the volume. Volume re-typing is useful for applying pre-defined settings or storage attributes to an existing volume, such as when you want to:

- Migrate the volume to a different back end (Section 2.4.1.2, “Migrating between back ends”).

- Change the volume’s storage class/tier.

Users with no administrative privileges can only re-type volumes they own. To perform a volume re-type:

- In the dashboard, select Project > Compute > Volumes.

- In the Actions column of the volume you want to migrate, select Change Volume Type.

- In the Change Volume Type dialog, select the target volume type and define the new back end from the Type drop-down list.

If you are migrating the volume to another back end, select On Demand from the Migration Policy drop-down list. See Section 2.4.1.2, “Migrating between back ends” for more information.

Note: Retyping a volume between two different types of back ends is not supported in this release.

- Click Change Volume Type to start the migration.

2.4. Advanced Volume Configuration

The following procedures describe how to perform advanced volume management procedures.

2.4.1. Migrate a Volume

The Block Storage service allows you to migrate volumes between hosts or back ends within and across availability zones (AZ). Volume migration has some limitations:

- In-use volume migration depends upon driver support.

- The volume cannot have snapshots.

- The target of the in-use volume migration requires iSCSI or Fibre Channel (FC) block-backed devices and cannot use non-block devices, such as Ceph RADOS Block Device (RBD).

The speed of any migration depends upon your host setup and configuration. With driver-assisted migration, the data movement takes place at the storage backplane instead of inside of the OpenStack Block Storage service. Optimized driver-assisted copying is available for not-in-use RBD volumes if volume re-typing is not required. Otherwise, data is copied from one host to another through the Block Storage service.

2.4.1.1. Migrating between hosts

When you migrate a volume between hosts, both hosts must reside on the same back end. Use the dashboard to migrate a volume between hosts:

- In the dashboard, select Admin > Volumes.

- In the Actions column of the volume you want to migrate, select Migrate Volume.

In the Migrate Volume dialog, select the target host from the Destination Host drop-down list.

Note: To bypass any driver optimizations for the host migration, select the Force Host Copy checkbox.

- Click Migrate to start the migration.

2.4.1.2. Migrating between back ends

Migrating a volume between back ends involves volume re-typing. This means that in order to migrate to a new back end, the following must be true:

- The new back end must be specified as an Extra Spec in a separate target volume type.

- All other Extra Specs defined in the target volume type must be compatible with the original volume type of the volume.


When you define the back end as an Extra Spec, use volume_backend_name as the Key. Its corresponding value is the name of the back end, as defined in the Block Storage configuration file, /etc/cinder/cinder.conf. In this file, each back end is defined in its own section, and its corresponding name is set in the volume_backend_name parameter.

After you have a back end defined in a target volume type, you can migrate a volume to that back end by using the re-typing procedure. This involves re-applying the target volume type to a volume, thereby applying the new back end settings. For more information, see Section 2.3.12, “Changing the volume type (volume re-typing)”.

Retyping a volume between two different types of back ends is not supported in this release.
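The same flow can be sketched from the command line: create a target volume type, set volume_backend_name as its Extra Spec, then retype the volume with the on-demand migration policy. The function name and argument names below are hypothetical, and creating the type assumes administrator credentials. The restriction noted above on retyping between different types of back ends still applies.

```shell
#!/bin/sh
# Sketch: define a volume type that points at a back end, then retype a
# volume to it, allowing migration if the back end changes.
retype_to_backend() {
    local volume="$1" type_name="$2" backend_name="$3"
    # Create the target type and bind it to the back end by name.
    cinder type-create "$type_name" || return 1
    cinder type-key "$type_name" set volume_backend_name="$backend_name" || return 1
    # on-demand lets the service migrate the volume when required.
    cinder retype --migration-policy on-demand "$volume" "$type_name"
}
```

The backend_name argument must match the volume_backend_name value set in the back end's section of /etc/cinder/cinder.conf, as described above.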

2.5. Multipath configuration

Use multipath to configure multiple I/O paths between server nodes and storage arrays into a single device to create redundancy and improve performance. You can configure multipath on new and existing overcloud deployments.

2.5.1. Configuring multipath on new deployments

Complete this procedure to configure multipath on a new overcloud deployment.

For information about how to configure multipath on existing overcloud deployments, see Section 2.5.2, “Configuring multipath on existing deployments”.

Prerequisites

The overcloud Controller and Compute nodes must have access to the Red Hat Enterprise Linux server repository. For more information, see Downloading the base cloud image in the Director Installation and Usage guide.

Procedure

Configure the overcloud.

Note: For more information, see Configuring a basic overcloud with CLI tools in the Director Installation and Usage guide.

Update the heat template to enable multipath:

    parameter_defaults:
      NovaLibvirtVolumeUseMultipath: true
      NovaComputeOptVolumes:
        - /etc/multipath.conf:/etc/multipath.conf:ro
        - /etc/multipath/:/etc/multipath/:rw
      CinderVolumeOptVolumes:
        - /etc/multipath.conf:/etc/multipath.conf:ro
        - /etc/multipath/:/etc/multipath/:rw

Optional: If you are using Block Storage (cinder) as an Image service (glance) back end, you must also complete the following steps:

Add the following GlanceApiOptVolumes configuration to the heat template:

    parameter_defaults:
      GlanceApiOptVolumes:
        - /etc/multipath.conf:/etc/multipath.conf:ro
        - /etc/multipath/:/etc/multipath/:rw

Set the ControllerExtraConfig parameter in the following way:

    parameter_defaults:
      ControllerExtraConfig:
        glance::config::api_config:
          default_backend/cinder_use_multipath:
            value: true

Note: Ensure that both default_backend and the GlanceBackendID heat template default values match.

For every configured back end, set use_multipath_for_image_xfer to true:

    parameter_defaults:
      ExtraConfig:
        cinder::config::cinder_config:
          <backend>/use_multipath_for_image_xfer:
            value: true

Deploy the overcloud:

    $ openstack overcloud deploy

Note: For information about creating the overcloud using overcloud parameters, see Creating the Overcloud with the CLI Tools in the Director Installation and Usage guide.

Before containers are running, install multipath on all Controller and Compute nodes:

$ sudo dnf install -y device-mapper-multipathNoteDirector provides a set of hooks to support custom configuration for specific node roles after the first boot completes and before the core configuration begins. For more information about custom overcloud configuration, see Pre-Configuration: Customizing Specific Overcloud Roles in the Advanced Overcloud Customization guide.

Configure the multipath daemon on all Controller and Compute nodes:

$ mpathconf --enable --with_multipathd y --user_friendly_names n --find_multipaths yNoteThe example code creates a basic multipath configuration that works for most environments. However, check with your storage vendor for recommendations, because some vendors have optimized configurations that are specific to their hardware. For more information about multipath, see the DM Multipath guide.

Run the following command on all Controller and Compute nodes to prevent partition creation:

$ sed -i "s/^defaults {/defaults {\n\tskip_kpartx yes/" /etc/multipath.conf

Note

Setting skip_kpartx to yes prevents kpartx on the Compute node from automatically creating partitions on the device, which prevents unnecessary device mapper entries. For more information about configuration attributes, see Multipaths device configuration attributes in the DM Multipath guide.

Start the multipath daemon on all Controller and Compute nodes:

$ systemctl enable --now multipathd
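The sed command in this procedure inserts a skip_kpartx line every time it runs, so repeating it adds duplicate entries. The following is a minimal sketch of a guarded variant, assuming GNU sed as shipped with Red Hat Enterprise Linux. It works on a scratch file with hypothetical sample content, not on /etc/multipath.conf, and the add_skip_kpartx function name is an illustration only.

```shell
# Scratch copy with hypothetical sample content; the real file is /etc/multipath.conf.
conf="$(mktemp)"
printf 'defaults {\n\tuser_friendly_names no\n\tfind_multipaths yes\n}\n' > "$conf"

# Hypothetical helper: add skip_kpartx to the defaults section only once.
add_skip_kpartx() {
  # Skip the edit when the option is already present in the file.
  if ! grep -q '^[[:space:]]*skip_kpartx' "$1"; then
    # Same substitution as in the procedure (GNU sed expands \n and \t).
    sed -i "s/^defaults {/defaults {\n\tskip_kpartx yes/" "$1"
  fi
}

add_skip_kpartx "$conf"
add_skip_kpartx "$conf"   # the second call leaves the file unchanged

cat "$conf"
```

After you confirm the result on the scratch file, you can apply the same function to /etc/multipath.conf with root privileges.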

2.5.2. Configuring multipath on existing deployments

Complete this procedure to configure multipath on an existing overcloud deployment.

For more information about how to configure multipath on new overcloud deployments, see Section 2.5.1, “Configuring multipath on new deployments”.

Prerequisites

The overcloud Controller and Compute nodes must have access to the Red Hat Enterprise Linux server repository. For more information, see Downloading the base cloud image in the Director Installation and Usage guide.

Procedure

Verify that multipath is installed on all Controller and Compute nodes:

$ rpm -qa | grep device-mapper-multipath
device-mapper-multipath-0.4.9-127.el8.x86_64
device-mapper-multipath-libs-0.4.9-127.el8.x86_64

If multipath is not installed, install it on all Controller and Compute nodes:

$ sudo dnf install -y device-mapper-multipath

Configure the multipath daemon on all Controller and Compute nodes:

$ mpathconf --enable --with_multipathd y --user_friendly_names n --find_multipaths y

Note

The example code creates a basic multipath configuration that works for most environments. However, check with your storage vendor for recommendations, because some vendors have optimized configurations specific to their hardware. For more information about multipath, see the DM Multipath guide.

Run the following command on all Controller and Compute nodes to prevent partition creation:

$ sed -i "s/^defaults {/defaults {\n\tskip_kpartx yes/" /etc/multipath.conf

Note

Setting skip_kpartx to yes prevents kpartx on the Compute node from automatically creating partitions on the device, which prevents unnecessary device mapper entries. For more information about configuration attributes, see Multipaths device configuration attributes in the DM Multipath guide.

Start the multipath daemon on all Controller and Compute nodes:

$ systemctl enable --now multipathd

Update the heat template to enable multipath:

parameter_defaults:
  NovaLibvirtVolumeUseMultipath: true
  NovaComputeOptVolumes:
    - /etc/multipath.conf:/etc/multipath.conf:ro
    - /etc/multipath/:/etc/multipath/:rw
  CinderVolumeOptVolumes:
    - /etc/multipath.conf:/etc/multipath.conf:ro
    - /etc/multipath/:/etc/multipath/:rw

Optional: If you are using Block Storage (cinder) as an Image service (glance) back end, you must also complete the following steps:

Add the following GlanceApiOptVolumes configuration to the heat template:

parameter_defaults:
  GlanceApiOptVolumes:
    - /etc/multipath.conf:/etc/multipath.conf:ro
    - /etc/multipath/:/etc/multipath/:rw

Set the ControllerExtraConfig parameter in the following way:

parameter_defaults:
  ControllerExtraConfig:
    glance::config::api_config:
      default_backend/cinder_use_multipath:
        value: true

Note

Ensure that both default_backend and the GlanceBackendID heat template default values match.

For every configured back end, set use_multipath_for_image_xfer to true:

parameter_defaults:
  ExtraConfig:
    cinder::config::cinder_config:
      <backend>/use_multipath_for_image_xfer:
        value: true

Run the following command to update the overcloud:

$ openstack overcloud deploy

Note

When you run the openstack overcloud deploy command to install and configure multipath, you must pass all previous roles and environment files that you used to deploy the overcloud, such as --templates, --roles-file, -e for all environment files, and --timeout. Failure to pass all previous roles and environment files can cause problems with your overcloud deployment. For more information about using overcloud parameters, see Creating the Overcloud with the CLI Tools in the Director Installation and Usage guide.
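Because the update must repeat every option from the original deployment, it can help to assemble the command in one place and review it before you run it. The following dry-run sketch only prints the command. The build_deploy_cmd function, the file paths, and the timeout value of 120 are hypothetical placeholders, so substitute the roles file, environment files, and timeout from your own deployment.

```shell
# Hypothetical timeout value; use the value from your original deployment.
DEPLOY_TIMEOUT=120

# Hypothetical helper: print the deploy command for a roles file ($1)
# followed by any number of environment files (remaining arguments).
build_deploy_cmd() {
  roles_file=$1
  shift
  cmd="openstack overcloud deploy --templates --roles-file $roles_file"
  # Add one -e option per environment file, in the order given.
  for env_file in "$@"; do
    cmd="$cmd -e $env_file"
  done
  printf '%s --timeout %s\n' "$cmd" "$DEPLOY_TIMEOUT"
}

# Dry run with hypothetical paths: prints the command without executing it.
build_deploy_cmd /home/stack/templates/roles_data.yaml \
  /home/stack/templates/node-info.yaml \
  /home/stack/templates/multipath.yaml
```

Compare the printed command with the one that you used for the original deployment before you run it. The environment file that contains the multipath parameters is the only expected addition.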

2.5.3. Verifying multipath configuration

This procedure describes how to verify multipath configuration on new or existing overcloud deployments.

Procedure

- Create a VM.

- Attach a non-encrypted volume to the VM.

Get the name of the Compute node that contains the instance:

$ nova show INSTANCE | grep OS-EXT-SRV-ATTR:host

Replace INSTANCE with the name of the VM that you booted.

Retrieve the virsh name of the instance:

$ nova show INSTANCE | grep instance_name

Replace INSTANCE with the name of the VM that you booted.

Get the IP address of the Compute node:

$ . stackrc
$ nova list | grep compute_name

Replace compute_name with the name from the output of the nova show INSTANCE command.

SSH into the Compute node that runs the VM:

$ ssh heat-admin@COMPUTE_NODE_IP

Replace COMPUTE_NODE_IP with the IP address of the Compute node.

Log in to the container that runs virsh:

$ podman exec -it nova_libvirt /bin/bash

Enter the following command on a Compute node instance to verify that it is using multipath in the cinder volume host location:

virsh domblklist VIRSH_INSTANCE_NAME | grep /dev/dm

Replace VIRSH_INSTANCE_NAME with the output of the nova show INSTANCE | grep instance_name command.
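The final check passes when at least one block device of the instance is backed by a device mapper path. The following sketch wraps that test in a small function so that the result is explicit. The uses_multipath name and the sample text are hypothetical, and the sample only resembles virsh domblklist output so that the logic can be tried outside the container.

```shell
# Hypothetical helper: succeeds when the text on stdin lists a /dev/dm-* source.
uses_multipath() {
  grep -q '/dev/dm-'
}

# Hypothetical sample that resembles `virsh domblklist` output.
sample_output=' Target   Source
-----------------------------------------------
 vda      /var/lib/nova/instances/example/disk
 vdb      /dev/dm-0'

if printf '%s\n' "$sample_output" | uses_multipath; then
  echo "multipath device found"
else
  echo "no multipath device found"
fi
```

Inside the nova_libvirt container, the same function can read the real output, for example virsh domblklist VIRSH_INSTANCE_NAME | uses_multipath.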