Configuring load balancing as a service

Configuring the Load-balancing service (octavia) to manage network traffic across the data plane in a Red Hat OpenStack Services on OpenShift environment

Abstract

Providing feedback on Red Hat documentation

We appreciate your feedback. Tell us how we can improve the documentation.

To provide documentation feedback for Red Hat OpenStack Services on OpenShift (RHOSO), create a Jira issue in the OSPRH Jira project.

Procedure

- Log in to the Red Hat Atlassian Jira.

- Click the following link to open a Create Issue page: Create issue

- Select Red Hat OpenStack Services on OpenShift as the Project.

- Select Bug as the Issue Type.

- Click Next.

- Complete the Summary and Description fields. In the Description field, include the documentation URL, chapter or section number, and a detailed description of the issue.

- Select documentation as the Component.

- Click Create.

- Review the details of the bug you created.

Some features in this guide are a Technology Preview

The following Load-balancing service (octavia) features are available in this release as a Technology Preview, and therefore are not fully supported by Red Hat. They should be used only for testing, and should not be deployed in a production environment:

- IPv6 support

For more information, see Technology Preview.

Chapter 1. Introduction to the Load-balancing service

The Load-balancing service (octavia) lets you distribute traffic evenly across multiple servers to improve availability, performance, and scalability.

The Load-balancing service provides a load balancing as a service API version 2 implementation for Red Hat OpenStack Services on OpenShift (RHOSO) environments. The Load-balancing service manages multiple virtual machines, containers, or bare metal servers, collectively known as amphorae, which it launches on demand. The ability to provide on-demand, horizontal scaling makes the Load-balancing service a full-featured load balancer that is appropriate for large RHOSO enterprise deployments.

1.1. Load-balancing service components

The Red Hat OpenStack Services on OpenShift (RHOSO) Load-balancing service (octavia) uses a set of VM instances referred to as amphorae that reside on the Compute nodes. The Load-balancing service controllers communicate with the amphorae over a load-balancing management network (lb-mgmt-net).

When you use octavia, you can create load-balancer virtual IPs (VIPs) that do not require floating IPs (FIPs). Not using FIPs improves performance through the load balancer.

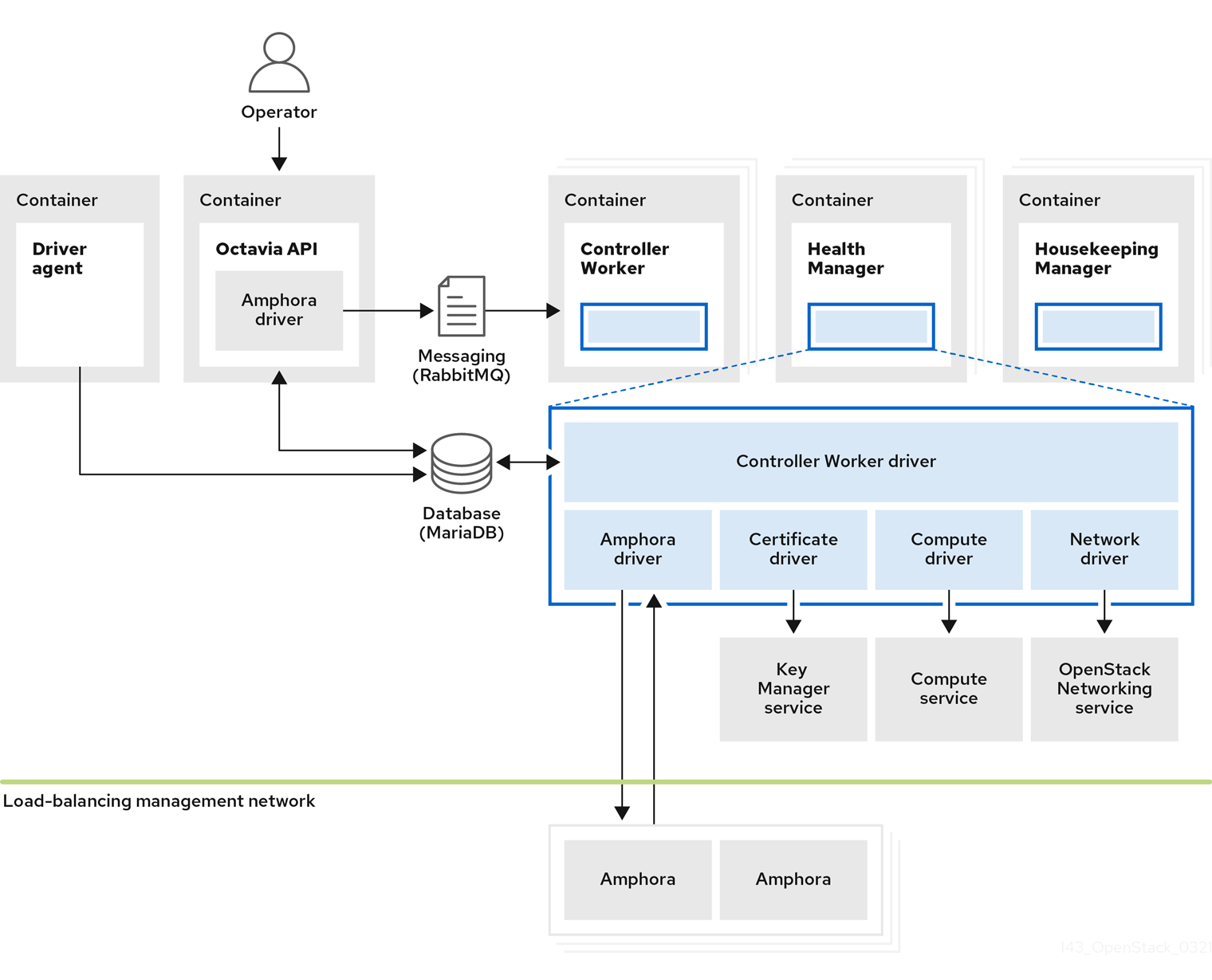

Figure 1.1. Load-balancing service components

As Figure 1.1 shows, the Load-balancing service components are hosted on the same nodes as the Networking API server, which by default are the Red Hat OpenShift worker nodes that host the RHOSO control plane. The Load-balancing service consists of the following components:

- Octavia API (`octavia-api` pods) - Provides the REST API for users to interact with octavia.

- Controller Worker (`octavia-worker` pods) - Sends configuration and configuration updates to amphorae over the load-balancing management network.

- Health Manager (`octavia-healthmanager` pods) - Monitors the health of individual amphorae and handles failover events if an amphora encounters a failure.

- Housekeeping Manager (`octavia-housekeeping` pods) - Cleans up deleted database records, and manages amphora certificate rotation.

- Driver agent (included within the `octavia-api` pods) - Supports other provider drivers, such as OVN.

- Amphora - Performs the load balancing. Amphorae are typically VM instances that run on Compute nodes. The Load-balancing service configures each amphora with load-balancing parameters according to the listener, pool, health monitor, L7 policy, and member configuration. Amphorae send a periodic heartbeat to the Health Manager.

1.2. Octavia network

The Red Hat OpenStack Services on OpenShift (RHOSO) Load-balancing service (octavia) requires a dedicated network, the octavia network, to serve as the external provider network component of the load-balancing management network. This network links the amphorae to the Octavia amphora controller services. The octavia network also provides a dedicated address pool that RHOSO uses to create additional static IP addresses for configuring heartbeat destinations for the amphorae.

The Load-balancing service uses a bridge-type network attachment definition (NAD), octbr, to connect the RHOSO control plane pods to the load-balancing management network. A bridge attachment is required because a macvlan interface would block the bridged packet flow that the Open vSwitch (OVS) bridge depends on to serve multiple tenants. On each node, a network configuration policy creates a VLAN sub-interface and attaches it to the octbr bridge. This setup provides the layer 2 path that carries traffic between pods on different worker nodes. Within the cluster, a dedicated link router connects the provider network to the lb-mgmt-net tenant network, where the amphora instances reside. The control plane pods receive their IP addresses from the provider network through the NAD. This routing arrangement provides the Load-balancing service controllers with direct connectivity to every amphora without using source network address translation (SNAT).

To route traffic between the tenant network and the control plane pods, the Networking service (neutron) treats the octavia network attachment as an externally routed provider network. The OVN controller uses a network interface controller (NIC) mapping to associate the network attachment name with the provider network. The octavia Operator also assigns each Octavia Health Manager an additional dedicated IP address that survives pod restarts so the related configuration in the running amphorae remains valid. This setup establishes standard routing to direct traffic, such as heartbeat signals and control commands, between the amphorae and the Load-balancing service controllers. The octavia network also connects the rsyslogd pods for the Load-balancing service to perform log offloading.
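The following sketch shows what a bridge-type network attachment definition (NAD) for the octavia network might look like. Only the attachment name `octavia` and the bridge name `octbr` come from this guide. The `whereabouts` IPAM plugin, the `172.23.0.0/24` range, and the route to an assumed `172.24.0.0/16` lb-mgmt-net subnet through an assumed link router address `172.23.0.150` are example values, and `render_octavia_nad` is a hypothetical helper. In an installed environment this NAD normally already exists, so treat the output as a reference for review, not something to apply as-is.

```bash
#!/usr/bin/env bash
# Sketch only: prints a bridge-type NetworkAttachmentDefinition for the
# octavia network. The name "octavia" and the bridge "octbr" match this
# guide; the IPAM type, range, and route values are hypothetical defaults.
render_octavia_nad() {
  local range=${1:-172.23.0.0/24}      # provider network range for control plane pods (assumed)
  local route_dst=${2:-172.24.0.0/16}  # lb-mgmt-net tenant subnet (assumed)
  local route_gw=${3:-172.23.0.150}    # link router address on the provider network (assumed)
  cat <<EOF
apiVersion: k8s.cni.cncf.io/v1
kind: NetworkAttachmentDefinition
metadata:
  name: octavia
  namespace: openstack
spec:
  config: |
    {
      "cniVersion": "0.3.1",
      "name": "octavia",
      "type": "bridge",
      "bridge": "octbr",
      "ipam": {
        "type": "whereabouts",
        "range": "${range}",
        "routes": [{"dst": "${route_dst}", "gw": "${route_gw}"}]
      }
    }
EOF
}

render_octavia_nad
```

The `routes` entry is what lets the control plane pods reach the amphorae on lb-mgmt-net through the link router without SNAT, as described above.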

1.3. Load-balancing service object model

The Red Hat OpenStack Services on OpenShift (RHOSO) Load-balancing service (octavia) uses a typical load-balancing object model.

Figure 1.2. Load-balancing service object model diagram

- Load balancer

- The top API object that represents the load-balancing entity. The VIP address is allocated when you create the load balancer. When you use the amphora provider to create the load balancer, one or more amphora instances launch on one or more Compute nodes.

- Listener

- The port on which the load balancer listens, for example, TCP port 80 for HTTP. Listeners also support TLS-terminated HTTPS load balancers.

- Health Monitor

- A process that performs periodic health checks on each back-end member server to pre-emptively detect failed servers and temporarily remove them from the pool.

- Pool

- A group of members that handle client requests from the load balancer. You can associate pools with more than one listener by using the API. You can share pools with L7 policies.

- Member

- Describes how to connect to the back-end instances or services. This description consists of the IP address and network port on which the back-end member is available.

- L7 Rule

- Defines the layer 7 (L7) conditions that determine whether an L7 policy applies to the connection.

- L7 Policy

- A collection of L7 rules associated with a listener, and which might also have an association to a back-end pool. Policies describe actions that the load balancer takes if all of the rules in the policy are true.
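To see how these objects relate, the following sketch creates one of each core object with the `openstack loadbalancer` commands, in dependency order. The object names, the `public-subnet` and `private-subnet` subnets, and the member address `192.0.2.10` are hypothetical. The `run` helper is also hypothetical: it prints each command and executes it only when `DRY_RUN=0`, so by default the sketch is a dry run.

```bash
#!/usr/bin/env bash
# Sketch: create one of each core object from the object model, in
# dependency order. All names, subnets, and addresses are hypothetical.

# Hypothetical helper: print the command; run it only when DRY_RUN=0.
run() {
  printf '+ %s\n' "$*"
  if [ "${DRY_RUN:-1}" = 0 ]; then
    "$@"
  fi
}

build_object_model() {
  # Load balancer: allocates the VIP on the given subnet.
  run openstack loadbalancer create --name lb1 --vip-subnet-id public-subnet --wait
  # Listener: the protocol and port that the load balancer accepts traffic on.
  run openstack loadbalancer listener create --name listener1 --protocol HTTP --protocol-port 80 --wait lb1
  # Pool: the group of members that handles requests from the listener.
  run openstack loadbalancer pool create --name pool1 --lb-algorithm ROUND_ROBIN --listener listener1 --protocol HTTP --wait
  # Health monitor: periodic checks that take failed members out of rotation.
  run openstack loadbalancer healthmonitor create --name hm1 --delay 5 --timeout 3 --max-retries 3 --type HTTP --url-path / --wait pool1
  # Member: one back-end server, identified by IP address and port.
  run openstack loadbalancer member create --subnet-id private-subnet --address 192.0.2.10 --protocol-port 80 --wait pool1
}
```

To create the objects for real, you could run `DRY_RUN=0 build_object_model` from a shell that has RHOSO credentials, such as the `openstackclient` pod. L7 policies and rules attach to a listener in the same way, through `openstack loadbalancer l7policy create` and `openstack loadbalancer l7rule create`.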

1.4. Uses of load balancing in RHOSO

Load balancing is essential for enabling simple or automatic scaling and availability for cloud deployments. The Red Hat OpenStack Services on OpenShift (RHOSO) Load-balancing service (octavia) depends on other RHOSO services:

- Compute service (nova) - For managing the Load-balancing service VM instance (amphora) lifecycle, and creating compute resources on demand.

- Networking service (neutron) - For network connectivity between amphorae, tenant environments, and external networks.

- Key Manager service (barbican) - For managing TLS certificates and credentials, when TLS session termination is configured on a listener.

- Identity service (keystone) - For authentication requests to the octavia API, and for the Load-balancing service to authenticate with other RHOSO services.

- Image service (glance) - For storing the amphora virtual machine image.

The Load-balancing service interacts with the other RHOSO services through a driver interface. The driver interface avoids major restructuring of the Load-balancing service if an external component requires replacement with a functionally equivalent service.

Chapter 2. Considerations for implementing the Load-balancing service

Before you deploy the Red Hat OpenStack Services on OpenShift (RHOSO) Load-balancing service (octavia), you must make various decisions, such as which provider to use or whether to implement a highly available environment.

For more information, see the following sections:

- Section 2.1, “Load-balancing service provider drivers”

- Section 2.2, “Load-balancing service (octavia) feature support matrix”

- Section 2.3, “Load-balancing service software requirements”

- Section 2.4, “Avoiding taskflow interruptions by using flow resumption”

- Section 2.5, “Basics of active-standby topology for Load-balancing service instances”

2.1. Load-balancing service provider drivers

The Red Hat OpenStack Services on OpenShift (RHOSO) Load-balancing service (octavia) supports enabling multiple provider drivers by using the Octavia v2 API. You can choose to use one provider driver, or multiple provider drivers simultaneously.

RHOSO provides two load-balancing providers, amphora and Open Virtual Network (OVN).

Amphora, the default, is a highly available load balancer with a feature set that scales with your compute environment. Because of this, amphora is suited for large-scale deployments.

The OVN load-balancing provider is a lightweight load balancer with a basic feature set. OVN is typically used for east-west, layer 4 network traffic. OVN provisions quickly and consumes fewer resources than a full-featured load-balancing provider such as amphora.
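As a sketch of choosing a provider per load balancer, the following commands list the enabled providers and then create an OVN load balancer for east-west TCP traffic. The load balancer name and subnet are hypothetical arguments, and `run` is a hypothetical helper that prints each command and executes it only when `DRY_RUN=0`. The pool uses `SOURCE_IP_PORT` because the OVN provider supports only that balancing algorithm.

```bash
#!/usr/bin/env bash
# Sketch: create an OVN-provider load balancer for east-west TCP traffic.
# The name and subnet passed in are hypothetical.

# Hypothetical helper: print the command; run it only when DRY_RUN=0.
run() {
  printf '+ %s\n' "$*"
  if [ "${DRY_RUN:-1}" = 0 ]; then
    "$@"
  fi
}

create_ovn_lb() {
  local name=$1 subnet=$2
  # Show which provider drivers are enabled in this deployment.
  run openstack loadbalancer provider list
  # Select the OVN provider explicitly; amphora is the default.
  run openstack loadbalancer create --name "$name" --vip-subnet-id "$subnet" --provider ovn --wait
  run openstack loadbalancer listener create --name "${name}-tcp" --protocol TCP --protocol-port 80 --wait "$name"
  # The OVN provider supports only the SOURCE_IP_PORT algorithm.
  run openstack loadbalancer pool create --name "${name}-pool" --lb-algorithm SOURCE_IP_PORT --listener "${name}-tcp" --protocol TCP --wait
}
```

For example, `DRY_RUN=0 create_ovn_lb lb-east private-subnet` would create the objects; omitting `--provider` would create an amphora load balancer instead.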

2.2. Load-balancing service (octavia) feature support matrix

The Red Hat OpenStack Services on OpenShift (RHOSO) Load-balancing service (octavia) provides two load-balancing providers, amphora and Open Virtual Network (OVN).

Amphora is a full-featured load-balancing provider that requires a separate haproxy VM and an extra latency hop.

OVN runs on every node and requires neither a separate VM nor an extra hop. However, OVN has far fewer load-balancing features than amphora.

The following table lists the Load-balancing service features that RHOSO 18.0 supports, and the support level of each feature for each provider.

If a feature is not listed, then RHOSO 18.0 does not support the feature.

| Feature | Support level in RHOSO 18.0: Amphora provider | Support level in RHOSO 18.0: OVN provider |
|---|---|---|
| Amphora active-standby | Full support | N/A |
| Availability zones | Full support | No support |
| Backup members | Technology Preview | No support |
| DPDK | No support | No support |
| Flow resumption (taskflow jobboard) | Full support | N/A |
| Health monitors | Full support | Technology Preview |
| Listener API timeouts | Full support | No support |
| Load-balancing flavors | Full support | N/A |
| Log offloading | Full support | No support |
| ML2/OVN DVR | Full support | Full support |
| ML2/OVN L3 HA | Full support | Full support |
| Object tags | Full support | Full support |
| SCTP | Technology Preview | Full support |
| SR-IOV | No support | No support |
| TCP | Full support | Full support |
| Terminated HTTPS load balancers (with barbican) | Full support | No support |
| TLS back end encryption | Technology Preview | No support |
| TLS client authentication | Technology Preview | No support |
| UDP | Full support | Full support |
| VIP access control list | Full support | N/A |
| Volume-based amphora | No support | N/A |

2.3. Load-balancing service software requirements

The Red Hat OpenStack Services on OpenShift (RHOSO) Load-balancing service (octavia) requires that you configure the following core OpenStack components:

- Compute (nova)

- OpenStack Networking (neutron)

- Image (glance)

- Identity (keystone)

- Key Manager (barbican)

- MariaDB

- Redis (when flow resumption is enabled)

2.4. Avoiding taskflow interruptions by using flow resumption

If the controller service abruptly shuts down during creation, modification, deletion, or failover of an amphora load balancer, the taskflow is interrupted and the load balancer remains in a PENDING_* state indefinitely. You can avoid these situations by configuring the Load-balancing service (octavia) to use flow resumption, also known as taskflow jobboard. When flow resumption is configured, the Load-balancing service automatically reassigns the flow to an alternate controller if the original controller shuts down unexpectedly.

You configure flow resumption by modifying the OpenStackControlPlane custom resource (CR). For more information, see the optional steps in Deploying the Load-balancing service.

2.5. Basics of active-standby topology for Load-balancing service instances

When you deploy the Red Hat OpenStack Services on OpenShift (RHOSO) Load-balancing service (octavia), you can decide whether, by default, load balancers are highly available when users create them. If you want to give users a choice, then after RHOSO deployment, create a Load-balancing service flavor for creating highly available load balancers and a flavor for creating standalone load balancers.

By default, the amphora provider driver is configured for a single Load-balancing service (amphora) instance topology with limited support for high availability (HA). However, you can make Load-balancing service instances highly available when you implement an active-standby topology.

In this topology, the Load-balancing service boots an active and a standby amphora instance for each load balancer, and maintains session persistence between them. If the active instance becomes unhealthy, the load balancer automatically fails over to the standby instance, making it active. The Load-balancing service health manager automatically rebuilds an instance that fails.
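Following the suggestion earlier in this section, an administrator could offer both topologies as Load-balancing service flavors. This sketch creates an active-standby flavor and a standalone flavor for the amphora provider by using the `loadbalancer_topology` flavor capability. The profile and flavor names are hypothetical, and `run` is a hypothetical helper that prints each command and executes it only when `DRY_RUN=0`.

```bash
#!/usr/bin/env bash
# Sketch: offer both amphora topologies as load-balancer flavors.
# Profile and flavor names are hypothetical.

# Hypothetical helper: print the command; run it only when DRY_RUN=0.
run() {
  printf '+ %s\n' "$*"
  if [ "${DRY_RUN:-1}" = 0 ]; then
    "$@"
  fi
}

create_lb_flavors() {
  # Active-standby: an active and a standby amphora per load balancer.
  run openstack loadbalancer flavorprofile create --name amphora-act-stdby \
    --provider amphora --flavor-data '{"loadbalancer_topology": "ACTIVE_STANDBY"}'
  run openstack loadbalancer flavor create --name act-stdby \
    --flavorprofile amphora-act-stdby --description "Active-standby amphora" --enable
  # Standalone: a single amphora per load balancer.
  run openstack loadbalancer flavorprofile create --name amphora-single \
    --provider amphora --flavor-data '{"loadbalancer_topology": "SINGLE"}'
  run openstack loadbalancer flavor create --name standalone \
    --flavorprofile amphora-single --description "Standalone amphora" --enable
}
```

Users would then pick a topology when they create a load balancer, for example with `openstack loadbalancer create --name lb1 --vip-subnet-id public-subnet --flavor act-stdby`.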

Chapter 3. Deploying the Load-balancing service in an existing environment

Deploying the Load-balancing service (octavia) to an existing Red Hat OpenStack Services on OpenShift (RHOSO) environment consists of creating a secret to secure communication and then deploying the Load-balancing service in the RHOSO control plane.

When your RHOSO environment was installed, the networks required for the Load-balancing service were configured and added to the control plane. For more information, see Preparing networks for Red Hat OpenStack Services on OpenShift in Deploying Red Hat OpenStack Services on OpenShift.

- Overview

You must perform the steps in the following procedures to deploy the Load-balancing service (octavia):

- Section 3.1, “Creating a CA passphrase for certificate generation and signing”

- Section 3.2, “Deploying the Load-balancing service”

The steps in these procedures provide sample values that you add to the required CRs. The actual values that you provide depend on your particular hardware configuration and local networking policies.

3.1. Creating a CA passphrase for certificate generation and signing

In Red Hat OpenStack Services on OpenShift (RHOSO) environments, you create a Secret custom resource (CR) that is used to encrypt the generated private key of the Server CA. RHOSO uses dual CAs to make communication between the Load-balancing service (octavia) amphorae and their controllers more secure.

Prerequisites

- You have the `oc` command line tool installed on your workstation.

- You are logged on to a workstation that has access to the RHOSO control plane as a user with `cluster-admin` privileges.

Procedure

- Generate a Base64-encoded password. Retain the encoded output to use in a later step.

- Example

In this example, the password, `my_password`, is encoded by using the Base64 encoding scheme:

```
$ echo -n my_password | base64
```

- Create a `Secret` CR file on your workstation, for example, `octavia-ca-passphrase.yaml`.

- Add the following configuration to `octavia-ca-passphrase.yaml`:

```yaml
apiVersion: v1
data:
  server-ca-passphrase: <Base64_password>
kind: Secret
metadata:
  name: octavia-ca-passphrase
  namespace: openstack
type: Opaque
```

- Replace `<Base64_password>` with the Base64-encoded password that you created earlier.

- Create the `Secret` CR in the cluster:

```
$ oc create -f octavia-ca-passphrase.yaml
```
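If you prefer to encode the passphrase and write the manifest in one step, the following sketch produces the same `Secret` CR as the procedure above. It uses `printf '%s'` instead of `echo`, so no trailing newline ends up in the encoded value, and it removes the line wrapping that some `base64` implementations add. The function name `make_ca_passphrase_secret` is hypothetical, and `openssl rand` in the usage line is only one way to obtain a random passphrase.

```bash
#!/usr/bin/env bash
# Sketch: write the octavia-ca-passphrase Secret manifest for a passphrase.
# The function name is hypothetical; the manifest fields match this guide.
make_ca_passphrase_secret() {
  local passphrase=$1 out=${2:-octavia-ca-passphrase.yaml} encoded
  # printf '%s' adds no trailing newline; tr removes any base64 line wrapping.
  encoded=$(printf '%s' "$passphrase" | base64 | tr -d '\n')
  cat > "$out" <<EOF
apiVersion: v1
data:
  server-ca-passphrase: ${encoded}
kind: Secret
metadata:
  name: octavia-ca-passphrase
  namespace: openstack
type: Opaque
EOF
}
```

For example, `make_ca_passphrase_secret "$(openssl rand -base64 24)"` writes `octavia-ca-passphrase.yaml`, which you then create with `oc create -f octavia-ca-passphrase.yaml`.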

Verification

- Confirm that the `Secret` CR exists:

```
$ oc describe secret octavia-ca-passphrase -n openstack
```
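To check the stored value and not only that the `Secret` exists, you could compare its Base64 data with the passphrase that you intended. This sketch compares the encoded strings exactly, so it also catches a passphrase that was encoded together with a trailing newline, for example by `echo` without `-n`. The function name is hypothetical.

```bash
#!/usr/bin/env bash
# Sketch: compare the Base64 value read on stdin with the encoding of the
# expected passphrase. The function name is hypothetical.
check_ca_passphrase() {
  local expected=$1 stored want
  stored=$(tr -d '\n')  # Base64 value from the Secret, read from stdin
  want=$(printf '%s' "$expected" | base64 | tr -d '\n')
  if [ "$stored" = "$want" ]; then
    echo "passphrase matches"
  else
    echo "passphrase does not match (check for a stray trailing newline)" >&2
    return 1
  fi
}
```

For example: `oc get secret octavia-ca-passphrase -n openstack -o jsonpath='{.data.server-ca-passphrase}' | check_ca_passphrase my_password`.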

Next steps

- Section 3.2, “Deploying the Load-balancing service”

3.2. Deploying the Load-balancing service

To deploy the Red Hat OpenStack Services on OpenShift (RHOSO) Load-balancing service (octavia), you must configure the OVN controller to create a NIC mapping for the provider network, and add that network to the `networkAttachments` property of each Load-balancing service component that manages load balancers (amphorae).

Prerequisites

- You have the `oc` command line tool installed on your workstation.

- You are logged on to a workstation that has access to the RHOSO control plane as a user with `cluster-admin` privileges.

Procedure

- Use the Skopeo utility to obtain the amphora image version. You need the image version in SHA digest format in a later step:

```
$ podman login registry.redhat.io
$ sudo dnf install -y skopeo
$ skopeo inspect docker://registry.redhat.io/rhoso/octavia-amphora-image-rhel9:$(oc get openstackversion \
    -o jsonpath='{.items[0].status.deployedVersion}' | awk -F '-' '{print $1}') \
    --format '{{.Name}}@{{.Digest}}'
```

- Open your `OpenStackControlPlane` CR file, and enable the Load-balancing service (octavia) by adding the following service configuration:

```yaml
apiVersion: core.openstack.org/v1beta1
kind: OpenStackControlPlane
metadata:
  name: openstack-control-plane
  namespace: openstack
spec:
  ...
  octavia:
    enabled: true
    template:
      octaviaHousekeeping:
        networkAttachments:
          - octavia
      octaviaHealthManager:
        networkAttachments:
          - octavia
      octaviaWorker:
        networkAttachments:
          - octavia
  ...
```

- With the amphora image version that you obtained in an earlier step, use the `octavia.template.amphoraImageContainerImage` parameter to add the amphora image to the Image service (glance):

- Example

```yaml
  ...
  octavia:
    enabled: true
    template:
      octaviaHousekeeping:
        networkAttachments:
          - octavia
      octaviaHealthManager:
        networkAttachments:
          - octavia
      octaviaWorker:
        networkAttachments:
          - octavia
      amphoraImageContainerImage: registry.redhat.io/rhoso/octavia-amphora-image-rhel9@sha256:312cd5e8ea9fe261c1929aefececbeb22afe5e433ae76ef0860d98e561db21c9
  ...
```

- Optional: To enable flow resumption, perform the following steps. For more information, see Avoiding taskflow interruptions by using flow resumption.

- Create the `octavia-redis` database in Redis by adding the name, `octavia-redis:`, and the number of replicas, `replicas: 1`:

```yaml
apiVersion: core.openstack.org/v1beta1
kind: OpenStackControlPlane
metadata:
  name: openstack-control-plane
  namespace: openstack
spec:
  ...
  redis:
    enabled: true
    templates:
      octavia-redis:
        replicas: 1
  ...
```

- Enable the `octavia-redis` database by adding the line `redisServiceName: octavia-redis`:

```yaml
apiVersion: core.openstack.org/v1beta1
kind: OpenStackControlPlane
metadata:
  name: openstack-control-plane
  namespace: openstack
spec:
  ...
  octavia:
    enabled: true
    template:
      databaseInstance: <Galera_CR>
      redisServiceName: octavia-redis
      octaviaHousekeeping:
        networkAttachments:
          - octavia
      octaviaHealthManager:
        networkAttachments:
          - octavia
      octaviaWorker:
        networkAttachments:
          - octavia
  ...
```

- Locate the service configuration for `ovn`, and add the following configuration under `template`:

```yaml
  ...
  ovn:
    template:
      ovnController:
        networkAttachment: tenant
        nicMappings:
          octavia: octbr
```

- `networkAttachment` - Note the one-character difference between the OVN `networkAttachment` property and the octavia `networkAttachments` property. The name `tenant` is an example value.

- `nicMappings` - The value must be `octavia: octbr`.

- Update the `OpenStackControlPlane` custom resource with the required values for the Load-balancing service.

- Example

```
$ oc apply -f openstack_control_plane.yaml -n openstack
```
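The `skopeo inspect` command in the first step builds the image tag by keeping only the part of `deployedVersion` before the first hyphen. The following sketch reproduces that transformation for a sample version string, and adds a check that the value you paste into `amphoraImageContainerImage` is a digest reference (`@sha256:` followed by 64 hexadecimal characters) rather than a tag. The version string `18.0.3-20241126.2` and both function names are hypothetical.

```bash
#!/usr/bin/env bash
# Sketch: derive the amphora image tag from a deployedVersion string, the
# same way as the awk step in the skopeo command. Names are hypothetical.
amphora_image_ref() {
  local deployed_version=$1 tag
  tag=$(printf '%s\n' "$deployed_version" | awk -F '-' '{print $1}')
  printf 'registry.redhat.io/rhoso/octavia-amphora-image-rhel9:%s\n' "$tag"
}

# Returns 0 only for a <registry>/<path>@sha256:<64 hex chars> reference.
is_digest_ref() {
  local re='^[a-z0-9.-]+(/[a-z0-9._-]+)+@sha256:[0-9a-f]{64}$'
  [[ $1 =~ $re ]]
}

amphora_image_ref 18.0.3-20241126.2
```

Pinning the image by digest rather than by tag means that the image uploaded to the Image service cannot change if the tag is later moved.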

Verification

- Wait until RHOCP creates the Load-balancing service resources. Run the following command to check the status:

```
$ oc wait octavia octavia --for condition=Ready
```

- Sample output

You should see output similar to the following:

```
octavia.octavia.openstack.org/octavia condition met
```

- Confirm that the Load-balancing service pods are running:

```
$ oc get pods | grep octavia
```

- Sample output

You should see output similar to the following:

```
octavia-api-78b56bb844-ngjhc    2/2   Running   0   12s
octavia-healthmanager-f6hpx     1/1   Running   0   14s
octavia-housekeeping-knwpf      1/1   Running   0   10s
octavia-redis-redis-0           2/2   Running   0   20s
octavia-rsyslog-4nkv8           1/1   Running   0   23s
octavia-worker-l5hs4            1/1   Running   0   26s
```

- Access the remote shell for the OpenStackClient pod from your workstation:

```
$ oc rsh -n openstack openstackclient
```

- Confirm that the networks `octavia-provider-net` and `lb-mgmt-net` are present:

```
$ openstack network list -f yaml
```

- Sample output

```
- ID: 2e4fc309-546b-4ac8-9eae-aa8d70a27a9b
  Name: octavia-provider-net
  Subnets:
  - eea45073-6e56-47fd-9153-12f7f49bc115
- ID: 77881d3f-04b0-46cb-931f-d54003cce9f0
  Name: lb-mgmt-net
  Subnets:
  - e4ab96af-8077-4971-baa4-e0d40a16f55a
```

The network, `octavia-provider-net`, is the external provider network, and is limited to the RHOSO control plane. The `lb-mgmt-net` network connects the Load-balancing service to amphora instances.

- Exit the `openstackclient` pod:

```
$ exit
```
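If you script the deployment, a check like the following could replace reading the pod list by eye. It reads `oc get pods` output on standard input and reports each expected Load-balancing service component that has no pod in the `Running` state. The component names come from the sample output in this procedure; `octavia-redis` is left out because it exists only when flow resumption is enabled. The function name is hypothetical.

```bash
#!/usr/bin/env bash
# Sketch: report Load-balancing service components without a Running pod.
# Reads `oc get pods` output (NAME READY STATUS ...) on stdin.
check_octavia_pods() {
  local pods component missing=0
  pods=$(cat)
  for component in octavia-api octavia-healthmanager octavia-housekeeping octavia-rsyslog octavia-worker; do
    # A pod counts if its name starts with "<component>-" and STATUS is Running.
    if ! printf '%s\n' "$pods" | awk -v c="$component" \
        '$1 ~ ("^" c "-") && $3 == "Running" {found = 1} END {exit !found}'; then
      echo "missing or not running: $component" >&2
      missing=1
    fi
  done
  return "$missing"
}
```

For example: `oc get pods -n openstack --no-headers | check_octavia_pods && echo "all octavia pods running"`.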

Chapter 4. Managing Load-balancing service instance logs

You can collect the administrative logs and tenant flow logs for Load-balancing service (octavia) instances (amphorae) in one location. The amphorae offload the logs to central syslog receivers that run in a set of containers, or to other syslog receivers at endpoints that you choose.

You can also retain logs when the amphorae are rotated.

Even though log offloading is enabled by default, amphorae continue to write administrative and tenant flow logs to the disk inside the amphorae. You can, however, disable local logging if you choose.

When you use the TCP syslog protocol, you can specify one or more secondary endpoints for administrative and tenant log offloading in the event that the primary endpoint fails.

You can control a range of other logging features such as setting the syslog facility value, changing the tenant flow log format, and widening the scope of administrative logging to include logs from sources like the kernel and from cron.

- Section 4.1, “Configuration parameters for Load-balancing service instance logging”

- Section 4.2, “Load-balancing service instance tenant flow log format”

- Section 4.3, “Disabling Load-balancing service instance tenant flow logging”

- Section 4.4, “Disabling Load-balancing service instance local log storage”

4.1. Configuration parameters for Load-balancing service instance logging

To modify the Load-balancing service (octavia) instance (amphora) logging configuration, set values for one or more configuration parameters that control logging and apply the OpenStackControlPlane custom resource (CR) for the Load-balancing service.

These configuration parameters for amphora logging enable you to control features such as turning off log offloading, defining custom endpoints to offload logs to, setting the syslog facility value for logs, and so on.

The octavia Operator automatically enables log offloading.

- Global logging parameters

To set the configuration parameters for all logs, you must add a specific section to the `OpenStackControlPlane` CR for each of the octavia services: housekeeping, health manager, and worker. Add the configuration parameters for all logs under the `[amphora_agent]` section of `customServiceConfig`.

- Usage example

```yaml
octavia:
  template:
    octaviaHousekeeping:
      customServiceConfig: |
        [amphora_agent]
        <log configuration parameters go here>
    octaviaHealthManager:
      customServiceConfig: |
        [amphora_agent]
        <log configuration parameters go here>
    octaviaWorker:
      customServiceConfig: |
        [amphora_agent]
        <log configuration parameters go here>
```

- `disable_local_log_storage=false`

- When `true`, instances do not store logs on the instance host filesystem. This includes all kernel, system, and security logs. Default: `false`.

- `forward_all_logs=true`

- When `true`, instances forward all log messages to the administrative log endpoints, including logs that are not related to load balancing, such as the cron and kernel logs. Default: `true`.
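Because the same `[amphora_agent]` settings usually have to appear under all three services, a small generator can keep them identical. This sketch prints an `octavia.template` fragment with the options that you pass repeated under `octaviaHousekeeping`, `octaviaHealthManager`, and `octaviaWorker`. The function name is hypothetical, and you still need to merge the output into your existing `octavia` section by hand so that settings such as `networkAttachments` are kept.

```bash
#!/usr/bin/env bash
# Sketch: print an octavia.template fragment that repeats the same
# [amphora_agent] options for all three services. Pass one option per argument.
render_amphora_agent_config() {
  local svc opt
  printf 'octavia:\n  template:\n'
  for svc in octaviaHousekeeping octaviaHealthManager octaviaWorker; do
    printf '    %s:\n      customServiceConfig: |\n        [amphora_agent]\n' "$svc"
    for opt in "$@"; do
      # Options are printed as arguments, so '%' characters stay literal.
      printf '        %s\n' "$opt"
    done
  done
}

render_amphora_agent_config disable_local_log_storage=false forward_all_logs=true
```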

- Administrative logging parameters

To set the configuration parameters for administrative logging, you must add a specific section to the `OpenStackControlPlane` CR for each of the octavia services: housekeeping, health manager, and worker. With the exception of `adminLogTargets`, you add the configuration parameters for administrative logging under the `[amphora_agent]` section of `customServiceConfig`.

- Usage example

```yaml
octavia:
  template:
    octaviaRsyslog:
      adminLogTargets:
        - host: 192.168.1.1
          port: 1514
          protocol: udp
    octaviaHousekeeping:
      customServiceConfig: |
        [amphora_agent]
        <administrative logging parameters go here>
    octaviaHealthManager:
      customServiceConfig: |
        [amphora_agent]
        <administrative logging parameters go here>
    octaviaWorker:
      customServiceConfig: |
        [amphora_agent]
        <administrative logging parameters go here>
```

- `adminLogTargets`

- A list of objects that describe the syslog endpoints that receive administrative log messages:

```yaml
- host: <host>
  port: <port>
  protocol: <protocol>
```

An endpoint can be a container, VM, or physical host that runs a process that listens for the log messages on the specified port. Default: The octavia Operator sets the default value automatically.

You add `adminLogTargets` under the `octaviaRsyslog` parameter.

- `administrative_log_facility=<number>`

- A number between `0` and `7` that is the syslog `LOG_LOCAL` facility to use for the administrative log messages. Default: `1`.
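The facility number is the `n` in `LOG_LOCAL<n>`. Syslog encodes facility and severity together in one priority value, PRI = facility * 8 + severity (RFC 5424), where `LOG_LOCAL0` through `LOG_LOCAL7` are facility codes 16 through 23. The following sketch computes that value, which can help when you filter amphora messages on a receiver. The function name is hypothetical.

```bash
#!/usr/bin/env bash
# Sketch: syslog PRI for LOG_LOCAL<n> at a given severity (RFC 5424).
# LOG_LOCAL0..7 are facility codes 16..23; PRI = facility * 8 + severity.
syslog_pri() {
  local local_n=$1 severity=$2
  if [[ ! $local_n =~ ^[0-7]$ || ! $severity =~ ^[0-7]$ ]]; then
    echo "facility and severity must each be 0-7" >&2
    return 1
  fi
  echo $(( (16 + local_n) * 8 + severity ))
}

syslog_pri 1 6
```

With the default `administrative_log_facility=1`, an informational (severity 6) administrative message carries PRI 142 and matches the rsyslog selector `local1.*`.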

- Tenant flow logging parameters

To set the configuration parameters for tenant flow logging, you must add a specific section to the `OpenStackControlPlane` CR for each of the octavia services: housekeeping, health manager, and worker. With the exception of `tenantLogTargets`, you add the configuration parameters for tenant flow logging under the `[amphora_agent]` section of `customServiceConfig`. For an example of how to set these parameters, see Section 4.3, “Disabling Load-balancing service instance tenant flow logging”.

- Usage example

```yaml
octavia:
  template:
    octaviaRsyslog:
      tenantLogTargets:
        - host: 192.168.1.1
          port: 1514
          protocol: udp
    octaviaHousekeeping:
      customServiceConfig: |
        [amphora_agent]
        <tenant flow logging parameters go here>
        [haproxy_amphora]
        connection_logging=true
    octaviaHealthManager:
      customServiceConfig: |
        [amphora_agent]
        <tenant flow logging parameters go here>
        [haproxy_amphora]
        connection_logging=true
    octaviaWorker:
      customServiceConfig: |
        [amphora_agent]
        <tenant flow logging parameters go here>
        [haproxy_amphora]
        connection_logging=true
```

- `connection_logging=true | false`

- When `true`, tenant connection flows are logged. Default: `true`.

- `tenantLogTargets`

- A list of objects that describe the syslog endpoints that receive tenant traffic flow log messages:

```yaml
- host: <host>
  port: <port>
  protocol: <protocol>
```

These endpoints can be a container, VM, or physical host that runs a process that listens for the log messages on the specified port. Default: The octavia Operator sets the default value automatically.

You add `tenantLogTargets` under the `octaviaRsyslog` parameter.

- `user_log_facility=<number>`

- A number between `0` and `7` that is the syslog `LOG_LOCAL` facility to use for the tenant traffic flow log messages. Default: `0`.

- `user_log_format="<value>"`

- The format for the tenant traffic flow log. Default: `"{{ '{{' }} project_id {{ '}}' }} {{ '{{' }} lb_id {{ '}}' }} %f %ci %cp %t %{+Q}r %ST %B %U %[ssl_c_verify] %{+Q}[ssl_c_s_dn] %b %s %Tt %tsc"`. The alphanumeric codes that start with `%` represent specific log fields, and the values in curly braces ({}) are substitution variables.

4.2. Load-balancing service instance tenant flow log format

Tenant flow logs for Load-balancing service instances (amphorae) use the HAProxy log format. The two exceptions are the project_id and lb_id variables whose values are provided by the amphora provider driver.

- Example

- Here is an example log entry with rsyslog as the syslog receiver:

```
Jun 12 00:44:13 amphora-3e0239c3-5496-4215-b76c-6abbe18de573 haproxy[1644]: 5408b89aa45b48c69a53dca1aaec58db fd8f23df-960b-4b12-ba62-2b1dff661ee7 261ecfc2-9e8e-4bba-9ec2-3c903459a895 172.24.4.1 41152 12/Jun/2019:00:44:13.030 "GET / HTTP/1.1" 200 76 73 - "" e37e0e04-68a3-435b-876c-cffe4f2138a4 6f2720b3-27dc-4496-9039-1aafe2fee105 4 --
```

- Notes

- A hyphen (-) indicates any field that is unknown or not applicable to the connection.

The prefix in the earlier sample log entry originates from the rsyslog receiver, and is not part of the syslog message from the amphora:

```
Jun 12 00:44:13 amphora-3e0239c3-5496-4215-b76c-6abbe18de573 haproxy[1644]:
```

- Default

- The default amphora tenant flow log format is:

`"{{ '{{' }} project_id {{ '}}' }} {{ '{{' }} lb_id {{ '}}' }} %f %ci %cp %t %{+Q}r %ST %B %U %[ssl_c_verify] %{+Q}[ssl_c_s_dn] %b %s %Tt %tsc"`

The following table describes the log file format details.

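| Variable | Type | Field name |
|---|---|---|
| `project_id` | UUID | Project ID (substitution variable from the amphora provider driver) |
| `lb_id` | UUID | Load balancer ID (substitution variable from the amphora provider driver) |
| `%f` | string | frontend_name |
| `%ci` | IP address | client_ip |
| `%cp` | numeric | client_port |
| `%t` | date | date_time |
| `%ST` | numeric | status_code |
| `%B` | numeric | bytes_read |
| `%U` | numeric | bytes_uploaded |
| `%[ssl_c_verify]` | Boolean | client_certificate_verify |
| `%{+Q}[ssl_c_s_dn]` | string | client_certificate_distinguished_name |
| `%b` | string | pool_id |
| `%s` | string | member_id |
| `%Tt` | numeric | processing_time (milliseconds) |
| `%tsc` | string | termination_state |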
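When you process offloaded tenant flow logs, it can help to split each line into the named fields of the log format. The following sketch does that for the default format with a single bash regular expression. It assumes the default `user_log_format`, strips an rsyslog prefix such as the one in the example, and also reports the quoted HTTP request line (`%{+Q}r`), which appears in the default format but has no row of its own in the table. The function name and the `http_request` label are hypothetical, and a custom `user_log_format` needs a matching pattern.

```bash
#!/usr/bin/env bash
# Sketch: split a tenant flow log line in the default format into
# name=value pairs. Assumes the default user_log_format.
parse_flow_log() {
  local line=$1 msg re i
  local names=(project_id lb_id frontend_name client_ip client_port date_time
    http_request status_code bytes_read bytes_uploaded client_certificate_verify
    client_certificate_distinguished_name pool_id member_id processing_time
    termination_state)
  msg=${line#*]: }  # drop an rsyslog "... haproxy[PID]: " prefix, if present
  re='^([0-9a-f-]+) ([0-9a-f-]+) ([^ ]+) ([^ ]+) ([0-9]+) ([^ ]+) "([^"]*)" ([0-9-]+) ([0-9-]+) ([0-9-]+) ([^ ]+) "([^"]*)" ([^ ]+) ([^ ]+) ([0-9-]+) ([^ ]+)$'
  if [[ ! $msg =~ $re ]]; then
    echo "unrecognized log line" >&2
    return 1
  fi
  for i in "${!names[@]}"; do
    printf '%s=%s\n' "${names[i]}" "${BASH_REMATCH[i + 1]}"
  done
}
```

For example, `parse_flow_log "$line" | grep '^status_code='` extracts the HTTP status from one log line.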

4.3. Disabling Load-balancing service instance tenant flow logging

Tenant flow log offloading for Load-balancing service instances (amphorae) is enabled by default.

To disable tenant flow logging without disabling administrative log offloading, you must override the `[amphora_agent]` `tenant_log_targets` option with an empty value in the `customServiceConfig` field of each Load-balancing service component in the `OpenStackControlPlane` custom resource (CR) file.

When the `connection_logging` parameter is `false`, the amphorae do not write tenant flow logs to the disk inside the amphorae, nor offload any logs to syslog receivers listening elsewhere.

Prerequisites

- You have the `oc` command line tool installed on your workstation.

- You are logged on to a workstation that has access to the RHOSO control plane as a user with `cluster-admin` privileges.

Procedure

- Open your `OpenStackControlPlane` CR file, `openstack_control_plane.yaml`, on your workstation.

- Add the following configuration to the `octavia` service configuration:

```yaml
octavia:
  template:
    octaviaHousekeeping:
      customServiceConfig: |
        [amphora_agent]
        tenant_log_targets =
    octaviaHealthManager:
      customServiceConfig: |
        [amphora_agent]
        tenant_log_targets =
    octaviaWorker:
      customServiceConfig: |
        [amphora_agent]
        tenant_log_targets =
```

- Update the control plane:

```
$ oc apply -f openstack_control_plane.yaml -n openstack
```

- Wait until RHOCP creates the resources related to the `OpenStackControlPlane` CR. Run the following command to check the status:

```
$ oc get openstackcontrolplane -n openstack
NAME                      STATUS    MESSAGE
openstack-control-plane   Unknown   Setup started
```

The `OpenStackControlPlane` resources are created when the status is "Setup complete".

Tip: Append the `-w` option to the end of the `get` command to track deployment progress.

- Confirm that the control plane is deployed by reviewing the pods in the `openstack` namespace:

```
$ oc get pods -n openstack
```

The control plane is deployed when all the pods are either completed or running.
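Instead of re-running the status command by hand, you could poll it until the message reads "Setup complete". In this sketch the status command is passed as arguments, which keeps the loop testable with a stub command. The function name, attempt count, and interval are hypothetical.

```bash
#!/usr/bin/env bash
# Sketch: run a status command until its output contains "Setup complete".
# Usage: wait_for_setup_complete <attempts> <interval-seconds> <command...>
wait_for_setup_complete() {
  local attempts=$1 interval=$2 i out
  shift 2
  for (( i = 1; i <= attempts; i++ )); do
    # Capture the output first so a failed command is simply retried.
    out=$("$@" 2>/dev/null) || true
    if [[ $out == *"Setup complete"* ]]; then
      echo "control plane ready after $i attempt(s)"
      return 0
    fi
    if (( i < attempts )); then
      sleep "$interval"
    fi
  done
  echo "control plane not ready after $attempts attempt(s)" >&2
  return 1
}
```

For example: `wait_for_setup_complete 60 10 oc get openstackcontrolplane -n openstack` checks every 10 seconds for up to 60 attempts.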

4.4. Disabling Load-balancing service instance local log storage

Even when you configure Load-balancing service instances (amphorae) to offload administrative and tenant flow logs, the amphorae continue to write these logs to the disk inside the amphorae. To improve the performance of the load balancer, you can stop logging locally.

If you disable logging locally, you also disable all log storage in the amphora, including kernel, system, and security logging.

If you disable local log storage and the OctaviaLogOffload parameter is set to false, ensure that you set OctaviaConnectionLogging to false for improved load balancing performance.

Prerequisites

- You have the oc command line tool installed on your workstation.

- You are logged on to a workstation that has access to the RHOSO control plane as a user with cluster-admin privileges.

Procedure

- Open your OpenStackControlPlane custom resource (CR) file, openstack_control_plane.yaml, on your workstation. Add the following configuration to the octavia service configuration:

octavia:
  template:
    octaviaHousekeeping:
      customServiceConfig: |
        [amphora_agent]
        disable_local_log_storage=true
    octaviaHealthManager:
      customServiceConfig: |
        [amphora_agent]
        disable_local_log_storage=true
    octaviaWorker:
      customServiceConfig: |
        [amphora_agent]
        disable_local_log_storage=true

- Update the control plane:

$ oc apply -f openstack_control_plane.yaml -n openstack

- Wait until RHOCP creates the resources related to the OpenStackControlPlane CR. Run the following command to check the status:

$ oc get openstackcontrolplane -n openstack
NAME                      STATUS    MESSAGE
openstack-control-plane   Unknown   Setup started

The OpenStackControlPlane resources are created when the status is "Setup complete".

Tip: Append the -w option to the end of the get command to track deployment progress.

- Confirm that the control plane is deployed by reviewing the pods in the openstack namespace:

$ oc get pods -n openstack

The control plane is deployed when all the pods are either completed or running.

Chapter 5. Creating Load-balancing service flavors

Load-balancing service (octavia) flavors are sets of provider configuration options that the Red Hat OpenStack Services on OpenShift (RHOSO) administrator creates. When RHOSO users request load balancers, they can specify that the load balancer be built using one of the defined flavors.

The administrator can define one or more flavors for each load-balancing provider driver, which exposes the unique capabilities of the respective provider.

Load-balancing flavors are supported only on the amphora provider.

To create a new Load-balancing service flavor:

- Decide which capabilities of the load-balancing provider you want to configure in the flavor.

- Create the flavor profile with the flavor capabilities you have chosen.

- Create the flavor.

The Load-balancing service ships with a predefined, enhanced flavor, amphora-4vcpus, that enables cloud users to scale up their load-balancing instances to 4 vCPUs, 4GB RAM, and 3GB of disk space. By performing the steps in the following sections, RHOSO administrators can create their own custom flavors that meet the specific requirements of their site and help it scale vertically. For more information, see Section 5.4, “Vertically scaling load balancers”.

5.1. Reviewing Load-balancing service provider capabilities

Before creating a Load-balancing service (octavia) flavor, the Red Hat OpenStack Services on OpenShift (RHOSO) administrator should know the capabilities that each provider driver exposes.

Prerequisites

- You have the oc command line tool installed on your workstation.

- You are logged on to a workstation that has access to the RHOSO control plane as a user with cluster-admin privileges.

- The Load-balancing service uses the amphora provider.

Procedure

- Access the remote shell for the OpenStackClient pod from your workstation:

$ oc rsh -n openstack openstackclient

- List the capabilities for each driver:

$ openstack loadbalancer provider capability list <provider>

Replace <provider> with the name of the provider.

Example

$ openstack loadbalancer provider capability list amphora

The command output lists all of the capabilities that the provider supports.

Sample output

+-----------------------+---------------------------------------------------+
| name                  | description                                       |
+-----------------------+---------------------------------------------------+
| loadbalancer_topology | The load balancer topology. One of: SINGLE - One  |
|                       | amphora per load balancer. ACTIVE_STANDBY - Two   |
|                       | amphora per load balancer.                        |
| ...                   | ...                                               |
+-----------------------+---------------------------------------------------+

- Note the names of the capabilities that you want to include in the flavor that you are creating. You will use these capability names later when you create the profile for the flavor.

- Exit the openstackclient pod:

$ exit
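Before you build a flavor profile, you can check that the provider exposes every capability you noted. The following bash sketch is one way to do that inside the openstackclient pod, before you exit it. It relies on the -f value and -c name output options of the openstack client to print one capability name per line; the function names are hypothetical.

```bash
#!/usr/bin/env bash
# Succeeds if the capability name in $1 matches a whole line of standard input.
has_capability() {
  grep -Fxq -- "$1"
}

# Checks each requested capability against the provider's capability list and
# prints the names that are missing. Returns 1 if any are missing.
check_capabilities() {
  local provider="$1" names cap missing=0
  shift
  # -f value -c name prints one capability name per line.
  names=$(openstack loadbalancer provider capability list "$provider" -f value -c name) || return 2
  for cap in "$@"; do
    if ! printf '%s\n' "$names" | has_capability "$cap"; then
      echo "missing capability: $cap" >&2
      missing=1
    fi
  done
  return "$missing"
}
```

For example, check_capabilities amphora loadbalancer_topology returns 0 if the amphora provider exposes loadbalancer_topology, and prints each missing name otherwise.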

Next step

- Proceed to Section 5.2, “Defining flavor profiles”.

5.2. Defining flavor profiles

Red Hat OpenStack Services on OpenShift (RHOSO) Load-balancing service (octavia) flavor profiles contain the provider driver name and a list of capabilities. RHOSO administrators use a flavor profile to create a flavor that RHOSO users will specify when they create a load balancer.

Prerequisites

- You have the oc command line tool installed on your workstation.

- You are logged on to a workstation that has access to the RHOSO control plane as a user with cluster-admin privileges.

- You must know which load-balancing provider and which of its capabilities you want to include in the flavor profile.

For more information, see Section 5.1, “Reviewing Load-balancing service provider capabilities”.

Procedure

- Access the remote shell for the OpenStackClient pod from your workstation:

$ oc rsh -n openstack openstackclient

- Create a flavor profile:

$ openstack loadbalancer flavorprofile create --name <profile_name> --provider <provider_name> --flavor-data '{"<capability>": "<value>"}'

Example

In this example, a flavor profile is created for the amphora provider. When this profile is specified in a flavor, the load balancer that users create by using the flavor is a single amphora load balancer.

$ openstack loadbalancer flavorprofile create --name amphora-single-profile \
  --provider amphora --flavor-data '{"loadbalancer_topology": "SINGLE"}'

Sample output

+---------------+--------------------------------------+
| Field         | Value                                |
+---------------+--------------------------------------+
| id            | 72b53ac2-b191-48eb-8f73-ed012caca23a |
| name          | amphora-single-profile               |
| provider_name | amphora                              |
| flavor_data   | {"loadbalancer_topology": "SINGLE"}  |
+---------------+--------------------------------------+

Verification

- When you create a flavor profile, the Load-balancing service validates the flavor values with the provider to ensure that the provider can support the capabilities that you have specified.
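The --flavor-data value is a JSON object, and shell quoting mistakes are a common source of errors when a profile sets several capabilities. The following bash sketch builds that JSON from key=value arguments. The flavor_data function is hypothetical: it emits every value as a JSON string and does not escape quotes or backslashes, so use it only for string-valued capabilities with plain identifiers, such as loadbalancer_topology. Check that any other key you pass, for example compute_flavor, appears in the capability list for your provider.

```bash
#!/usr/bin/env bash
# Builds a flat JSON object for --flavor-data from key=value arguments.
# Every value is emitted as a JSON string, and quotes or backslashes are not
# escaped, so use it only for plain string-valued capabilities.
flavor_data() {
  local pair key value sep="" out="{"
  for pair in "$@"; do
    key="${pair%%=*}"      # text before the first "="
    value="${pair#*=}"     # text after the first "="
    out="${out}${sep}\"${key}\": \"${value}\""
    sep=", "
  done
  printf '%s}\n' "$out"
}
```

For example, flavor_data loadbalancer_topology=SINGLE prints the same JSON that the example profile uses, and you could pass the output of flavor_data loadbalancer_topology=ACTIVE_STANDBY to --flavor-data, quoted as "$(...)", for a profile with a hypothetical name such as amphora-ha-profile.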

Next step

- Proceed to Section 5.3, “Creating Load-balancing service flavors”.

5.3. Creating Load-balancing service flavors

Red Hat OpenStack Services on OpenShift (RHOSO) administrators create a user-facing flavor for the Load-balancing service (octavia) by using a flavor profile. The name that you assign to the flavor is the value that a RHOSO user will specify when they create a load balancer.

Prerequisites

- You have the oc command line tool installed on your workstation.

- You are logged on to a workstation that has access to the RHOSO control plane as a user with cluster-admin privileges.

- You must have created a flavor profile.

- The Load-balancing service uses the amphora provider.

Procedure

- Access the remote shell for the OpenStackClient pod from your workstation:

$ oc rsh -n openstack openstackclient

- Create a flavor:

$ openstack loadbalancer flavor create --name <flavor_name> \
  --flavorprofile <flavor-profile> --description "<string>"

Tip: Provide a detailed description so that users can understand the capabilities of the flavor that you are providing.

Example

In this example, a flavor has been defined. When users specify this flavor, they create a load balancer that uses one Load-balancing service instance (amphora) and is not highly available.

$ openstack loadbalancer flavor create --name standalone-lb --flavorprofile amphora-single-profile --description "A non-high availability load balancer for testing."

Sample output

+-------------------+--------------------------------------+
| Field             | Value                                |
+-------------------+--------------------------------------+
| id                | 25cda2d8-f735-4744-b936-d30405c05359 |
| name              | standalone-lb                        |
| flavor_profile_id | 72b53ac2-b191-48eb-8f73-ed012caca23a |
| enabled           | True                                 |
| description       | A non-high availability load         |
|                   | balancer for testing.                |
+-------------------+--------------------------------------+

Note: Disabled flavors are still visible to users, but users cannot use the disabled flavor to create a load balancer.

5.4. Vertically scaling load balancers

Red Hat OpenStack Services on OpenShift (RHOSO) users can scale up their load balancers by increasing the CPU and RAM of the load-balancing instance to improve performance and capacity. Vertically scaling a load balancer increases the maximum number of concurrent connections and the volume of network traffic that it can process.

To scale up a load balancer, use the appropriate load-balancing flavor when you create a load balancer. RHOSO ships with the amphora-4vcpus flavor, which creates an instance that contains 4 vCPUs, 4GB RAM, and 3GB of disk space. The amphora-4vcpus flavor also automatically uses an amphora image that enables CPU pinning in the VM. One vCPU is dedicated to the system, and three vCPUs are dedicated to HAProxy.

Your RHOSO administrator can create other custom load-balancing flavors that meet the load-balancing needs of your particular environment.

Prerequisites

- The administrator has created a project for you and has provided you with a clouds.yaml file for you to access the cloud.

- The python-openstackclient package resides on your workstation.

$ dnf list installed python-openstackclient

- The Load-balancing service uses the amphora provider.

- Your RHOSO administrator has provided you with an enhanced, load-balancing flavor.

Procedure

- Confirm that the system OS_CLOUD variable is set for your cloud:

$ echo $OS_CLOUD
my_cloud

Reset the variable if necessary:

$ export OS_CLOUD=my_other_cloud

As an alternative, you can specify the cloud name by adding the --os-cloud <cloud_name> option each time you run an openstack command.

- Create a load balancer using the load-balancing flavor provided.

Example

In this example, a non-secure HTTP load balancer (lb1) is created on a public subnet (public_subnet), using the flavor (amphora-4vcpus):

$ openstack loadbalancer create --name lb1 \
  --vip-subnet-id public_subnet \
  --flavor amphora-4vcpus --wait

Chapter 6. Monitoring the Load-balancing service

In Red Hat OpenStack Services on OpenShift (RHOSO) environments, to keep load balancing operational, you can use the load-balancer management network and create, modify, and delete load-balancing health monitors.

For more information, see the following sections:

- Section 6.1, “The Load-balancing service networks”

- Section 6.2, “Load-balancing service instance monitoring”

- Section 6.3, “Load-balancing service pool member monitoring”

- Section 6.4, “Load balancer provisioning status monitoring”

- Section 6.5, “Load balancer functionality monitoring”

- Section 6.6, “About Load-balancing service health monitors”

- Section 6.7, “Creating Load-balancing service health monitors”

- Section 6.8, “Modifying Load-balancing service health monitors”

- Section 6.9, “Deleting Load-balancing service health monitors”

- Section 6.10, “Best practices for Load-balancing service HTTP health monitors”

6.1. The Load-balancing service networks

The Red Hat OpenStack Services on OpenShift (RHOSO) Load-balancing service (octavia) controller pods require network connectivity across the OpenStack cloud to monitor and manage amphora load-balancer virtual machines (VMs). The Load-balancing service uses two OpenStack networks to provide this connectivity:

- octavia controller network (octavia-provider-net): an external provider network that connects the Load-balancing service (octavia) controllers running in the control plane.

- Load-balancing management network (lb-mgmt-net): a project (tenant) network that is connected to the amphora VMs.

An OpenStack router routes packets between the management network and the controller network. Both the control plane pods and the load balancer VMs have routes configured that direct traffic for the other network through this router.

Running the command oc rsh -n openstack openstackclient openstack network list -f yaml yields output similar to the following:

- ID: 2e4fc309-546b-4ac8-9eae-aa8d70a27a9b
  Name: octavia-provider-net
  Subnets:
  - eea45073-6e56-47fd-9153-12f7f49bc115
- ID: 77881d3f-04b0-46cb-931f-d54003cce9f0
  Name: lb-mgmt-net
  Subnets:
  - e4ab96af-8077-4971-baa4-e0d40a16f55a

The octavia-provider-net network is the external provider network, and uses the octavia network attachment interface as the physical network. This network is limited to the OpenShift control plane. The lb-mgmt-net network is a self-service tenant network that connects the Octavia amphora instances.

The amphora controllers do not have direct access to the lb-mgmt-net network. The controllers access the lb-mgmt-net network through the octavia network attachment and a router that the octavia-operator manages. You can view the subnets by running the command oc rsh -n openstack openstackclient openstack subnet list -f yaml:

- ID: e4ab96af-8077-4971-baa4-e0d40a16f55a
  Name: lb-mgmt-subnet
  Network: 77881d3f-04b0-46cb-931f-d54003cce9f0
  Subnet: 172.24.0.0/16
- ID: eea45073-6e56-47fd-9153-12f7f49bc115
  Name: octavia-provider-subnet
  Network: 2e4fc309-546b-4ac8-9eae-aa8d70a27a9b
  Subnet: 172.23.0.0/24

The subnet CIDR for octavia-provider-subnet originates from the octavia network attachment. The Subnet CIDR of lb-mgmt-subnet originates from the dst field of the octavia network attachment routes.

The octavia-link-router manages the routing between the octavia-provider-net and lb-mgmt-net networks. To view the routers, run the command oc rsh -n openstack openstackclient openstack router list -f yaml:

- ID: 371d800c-c803-4210-836b-eb468654462a
  Name: octavia-link-router
  Project: dc65b54e9cba475ba0adba7f898060f2
  State: true
  Status: ACTIVE

You can view the configuration of the octavia-link-router by running the command oc rsh -n openstack openstackclient openstack router show -f yaml octavia-link-router:

admin_state_up: true
availability_zone_hints: []
availability_zones: []
created_at: '2024-06-11T17:20:57Z'
description: ''
enable_ndp_proxy: null
external_gateway_info:
  enable_snat: false
  external_fixed_ips:
  - ip_address: 172.23.0.150
    subnet_id: eea45073-6e56-47fd-9153-12f7f49bc115
  network_id: 2e4fc309-546b-4ac8-9eae-aa8d70a27a9b
flavor_id: null
id: 371d800c-c803-4210-836b-eb468654462a
interfaces_info:
- ip_address: 172.24.1.89
  port_id: 1a44e94d-f44a-4752-81db-bc5402857a08
  subnet_id: e4ab96af-8077-4971-baa4-e0d40a16f55a
name: octavia-link-router
project_id: dc65b54e9cba475ba0adba7f898060f2
revision_number: 4
routes: []
status: ACTIVE
tags: []
tenant_id: dc65b54e9cba475ba0adba7f898060f2
updated_at: '2024-06-11T17:21:01Z'

The external_gateway_info of the router corresponds to the gw field of the routes provided in the network attachment.

Notice that source network address translation (SNAT) is disabled (enable_snat: false). This is important because the amphora controllers communicate with the amphorae by using the addresses that OpenStack allocates on the lb-mgmt-net network, not floating IP addresses. The routes of the network attachment direct traffic from the amphora controllers to the router, and the host routes on the lb-mgmt-net subnet establish the reverse route. This host route uses the ip_address of the port in interfaces_info as the nexthop and the Subnet CIDR of octavia-provider-subnet as the destination.

To view the host routes for the lb-mgmt-subnet, run the command oc rsh -n openstack openstackclient openstack subnet show lb-mgmt-subnet -c host_routes -f yaml:

host_routes:
- destination: 172.23.0.0/24
  nexthop: 172.24.1.89
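You can check the routing relationships described above with a small bash sketch: the host route next hop must equal the router interface address, and that address must lie inside lb-mgmt-subnet. The ip_to_int, ip_in_cidr, and check_lb_mgmt_route functions are hypothetical helpers. They handle IPv4 addresses with plain decimal octets only, and they take as arguments the values that you read from the command output in this section.

```bash
#!/usr/bin/env bash
# Converts a dotted-quad IPv4 address to a 32-bit integer.
ip_to_int() {
  printf '%s\n' "$1" | {
    IFS=. read -r a b c d
    echo $(( (a << 24) | (b << 16) | (c << 8) | d ))
  }
}

# Succeeds if IPv4 address $1 is inside CIDR $2, for example 172.24.0.0/16.
ip_in_cidr() {
  local ip="$1" net="${2%/*}" bits="${2#*/}" mask
  mask=$(( (0xFFFFFFFF << (32 - bits)) & 0xFFFFFFFF ))
  [ $(( $(ip_to_int "$ip") & mask )) -eq $(( $(ip_to_int "$net") & mask )) ]
}

# Checks the reverse route: the lb-mgmt-subnet host route must use the router
# interface address as its next hop, and that address must be on lb-mgmt-subnet.
check_lb_mgmt_route() {
  local iface_ip="$1" mgmt_cidr="$2" nexthop="$3"
  if [ "$nexthop" != "$iface_ip" ]; then
    echo "nexthop $nexthop is not the router interface $iface_ip" >&2
    return 1
  fi
  if ! ip_in_cidr "$iface_ip" "$mgmt_cidr"; then
    echo "router interface $iface_ip is not in $mgmt_cidr" >&2
    return 1
  fi
}
```

With the sample values, check_lb_mgmt_route 172.24.1.89 172.24.0.0/16 172.24.1.89 succeeds, and ip_in_cidr 172.23.0.150 172.23.0.0/24 confirms that the router's external gateway address is on octavia-provider-subnet.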

The port that connects lb-mgmt-subnet to the router is named lb-mgmt-router-port. You can view its details by running the command oc rsh -n openstack openstackclient openstack port show lb-mgmt-router-port -f yaml. You can also use the port_id from the router's interfaces_info instead of the port name.

admin_state_up: true
allowed_address_pairs: []
binding_host_id: ''
binding_profile: {}
binding_vif_details: {}
binding_vif_type: unbound
binding_vnic_type: normal
created_at: '2024-06-11T17:20:41Z'
data_plane_status: null
description: ''
device_id: 371d800c-c803-4210-836b-eb468654462a
device_owner: network:router_interface
device_profile: null
dns_assignment:
- fqdn: host-172-24-1-89.openstackgate.local.
  hostname: host-172-24-1-89
  ip_address: 172.24.1.89
dns_domain: ''
dns_name: ''
extra_dhcp_opts: []
fixed_ips:
- ip_address: 172.24.1.89
  subnet_id: e4ab96af-8077-4971-baa4-e0d40a16f55a
id: 1a44e94d-f44a-4752-81db-bc5402857a08
ip_allocation: immediate
mac_address: fa:16:3e:ba:be:ee
name: lb-mgmt-router-port
network_id: 77881d3f-04b0-46cb-931f-d54003cce9f0
numa_affinity_policy: null
port_security_enabled: true
project_id: dc65b54e9cba475ba0adba7f898060f2
propagate_uplink_status: null
qos_network_policy_id: null
qos_policy_id: null
resource_request: null
revision_number: 3
security_group_ids:
- 055686ce-fb2d-409b-ab74-85df9ab3a9e0
- 5c41444b-0863-4609-9335-d5a66bdbcad8
status: ACTIVE
tags: []
trunk_details: null
updated_at: '2024-06-11T17:21:03Z'

Notice the following about these fields and their values:

- fixed_ips: matches the IP address for the interfaces_info of the octavia-link-router.

- device_id: matches the ID for the octavia-link-router.

- device_owner: indicates that OpenStack is using the port as a router interface.

6.2. Load-balancing service instance monitoring

In Red Hat OpenStack Services on OpenShift (RHOSO) environments, the Load-balancing service (octavia) monitors the load balancing instances (amphorae) and initiates failovers and replacements if the amphorae malfunction. Any time a failover occurs, the Load-balancing service logs the failover in the corresponding health manager log on the controller in /var/log/containers/octavia.

Use log analytics to monitor failover trends to address problems early. Problems such as Networking service (neutron) connectivity issues, Denial of Service attacks, and Compute service (nova) malfunctions often lead to higher failover rates for load balancers.

6.3. Load-balancing service pool member monitoring

In Red Hat OpenStack Services on OpenShift (RHOSO) environments, the Load-balancing service (octavia) uses the health information from the underlying load-balancing subsystems to determine the health of members of the load-balancing pool. Health information is streamed to the Load-balancing service database and made available through the status tree or other API methods. For critical applications, you must poll for health information at regular intervals.
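A minimal polling sketch in bash, assuming that you read member health with openstack loadbalancer member list and the -f value output format. The report_unhealthy_members function is hypothetical. It prints every member whose operating status is anything other than ONLINE, so adjust the check if you expect other states in your pools.

```bash
#!/usr/bin/env bash
# Reads "<member_id> <operating_status>" lines on standard input, as printed by:
#   openstack loadbalancer member list pool1 -f value -c id -c operating_status
# Prints each member that is not ONLINE and returns 1 if there is at least one.
report_unhealthy_members() {
  local id status unhealthy=0
  while read -r id status; do
    [ -n "$id" ] || continue          # skip blank lines
    if [ "$status" != "ONLINE" ]; then
      echo "$id $status"
      unhealthy=1
    fi
  done
  return "$unhealthy"
}

# Example poll loop (runs until interrupted); pool1 is a sample pool name.
# while sleep 60; do
#   openstack loadbalancer member list pool1 -f value -c id -c operating_status \
#     | report_unhealthy_members || echo "$(date): unhealthy members found" >&2
# done
```

Reading the member ID rather than the name keeps each line parseable even for members that were created without a name.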

6.4. Load balancer provisioning status monitoring

In Red Hat OpenStack Services on OpenShift (RHOSO) environments, you can monitor the provisioning status of a load balancer and send alerts if the provisioning status is ERROR. Do not configure an alert to trigger when an application is making regular changes to the pool and enters several PENDING stages.

The provisioning status of load balancer objects reflects the ability of the control plane to contact the load balancer and successfully provision a create, update, or delete request. The operating status of a load balancer object reports on the current functionality of the load balancer.

For example, a load balancer might have a provisioning status of ERROR, but an operating status of ONLINE. This might be caused by a Networking service (neutron) failure that blocked the last requested update to the load balancer configuration from completing successfully. In this case, the load balancer continues to process traffic, but might not have applied the latest configuration updates yet.
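The alerting rule in this section can be sketched as a small bash function. The lb_alert_level name and its two severity levels are hypothetical design choices. Both ERROR cases raise an alert, but a load balancer that is still ONLINE gets a lower severity because it continues to pass traffic. PENDING_* states never raise an alert.

```bash
#!/usr/bin/env bash
# Maps a load balancer's provisioning and operating status to an alert level.
# Returns 1 when an alert should be raised, 0 when not, 2 for unknown input.
lb_alert_level() {
  local provisioning="$1" operating="$2"
  case "$provisioning" in
    ERROR)
      if [ "$operating" = "ONLINE" ]; then
        # Last change failed, but the load balancer still processes traffic.
        echo "warning: provisioning ERROR, operating status ONLINE"
      else
        echo "critical: provisioning ERROR, operating status $operating"
      fi
      return 1 ;;
    PENDING_*)
      # Normal while the control plane applies a change; never alert.
      echo "ok: change in progress"
      return 0 ;;
    ACTIVE)
      echo "ok"
      return 0 ;;
    *)
      echo "unknown provisioning status: $provisioning"
      return 2 ;;
  esac
}

# Example (lb1 is a sample name); each field is read separately so that the
# order of the -f value output does not matter:
# lb_alert_level \
#   "$(openstack loadbalancer show lb1 -f value -c provisioning_status)" \
#   "$(openstack loadbalancer show lb1 -f value -c operating_status)"
```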

6.5. Load balancer functionality monitoring

You can monitor the operational status of your load balancer and its child objects in your Red Hat OpenStack Services on OpenShift (RHOSO) environment.

You can also use an external monitoring service that connects to your load balancer listeners and monitors them from outside of the cloud. An external monitoring service indicates if there is a failure outside of the Load-balancing service (octavia) that might impact the functionality of your load balancer, such as router failures, network connectivity issues, and so on.

6.6. About Load-balancing service health monitors

A Red Hat OpenStack Services on OpenShift (RHOSO) Load-balancing service (octavia) health monitor is a process that does periodic health checks on each back end member server to pre-emptively detect failed servers and temporarily pull them out of the pool.

If the health monitor detects a failed server, it removes the server from the pool and sets the operating status of the member to ERROR. After you have corrected the server and it is functional again, the health monitor automatically changes the status of the member from ERROR to ONLINE, and resumes passing traffic to it.

Always use health monitors in production load balancers. If you do not have a health monitor, failed servers are not removed from the pool. This can lead to service disruption for web clients.

There are several types of health monitors, as briefly described here:

- HTTP: By default, probes the / path on the application server.

- HTTPS: Operates exactly like HTTP health monitors, but with TLS back-end servers. If the servers perform client certificate validation, HAProxy does not have a valid certificate. In these cases, TLS-HELLO health monitoring is an alternative.

- TLS-HELLO: Ensures that the back-end server responds to SSLv3 client hello messages. A TLS-HELLO health monitor does not check any other health metrics, like status code or body contents.

- PING: Sends periodic ICMP ping requests to the back-end servers. You must configure back-end servers to allow PINGs so that these health checks pass.

  Important: A PING health monitor checks only whether the member is reachable and responds to ICMP echo requests. PING health monitors do not detect if the application that runs on an instance is healthy. Use PING health monitors only in cases where an ICMP echo request is a valid health check.

- TCP: Opens a TCP connection to the back-end server protocol port. The TCP health monitor opens a TCP connection and, after the TCP handshake, closes the connection without sending any data.

- UDP-CONNECT: Performs a basic UDP port connect. A UDP-CONNECT health monitor might not work correctly if Destination Unreachable (ICMP type 3) is not enabled on the member server, or if it is blocked by a security rule. In these cases, a member server might be marked as having an operating status of ONLINE when it is actually down.

6.7. Creating Load-balancing service health monitors

Use Load-balancing service (octavia) health monitors to avoid service disruptions for your users. The health monitors run periodic health checks on each back end server to pre-emptively detect failed servers and temporarily pull the servers out of the pool in your Red Hat OpenStack Services on OpenShift (RHOSO) environment.

Prerequisites

- The administrator has created a project for you and has provided you with a clouds.yaml file for you to access the cloud.

- The python-openstackclient package resides on your workstation.

$ dnf list installed python-openstackclient

Procedure

- Confirm that the system OS_CLOUD variable is set for your cloud:

$ echo $OS_CLOUD
my_cloud

Reset the variable if necessary:

$ export OS_CLOUD=my_other_cloud

As an alternative, you can specify the cloud name by adding the --os-cloud <cloud_name> option each time you run an openstack command.

- Run the openstack loadbalancer healthmonitor create command, using argument values that are appropriate for your site.

All health monitor types require the following configurable arguments:

- <pool>: Name or ID of the pool of back-end member servers to be monitored.

- --type: The type of health monitor. One of HTTP, HTTPS, PING, SCTP, TCP, TLS-HELLO, or UDP-CONNECT.

- --delay: Number of seconds to wait between health checks.

- --timeout: Number of seconds to wait for any given health check to complete. timeout must always be smaller than delay.

- --max-retries: Number of health checks a back-end server must fail before it is considered down. Also, the number of health checks that a failed back-end server must pass to be considered up again.

In addition, HTTP health monitor types also require the following arguments, which are set by default:

- --url-path: Path part of the URL that should be retrieved from the back-end server. By default this is /.

- --http-method: HTTP method that is used to retrieve the url_path. By default this is GET.

- --expected-codes: List of HTTP status codes that indicate an OK health check. By default this is 200.

Example

$ openstack loadbalancer healthmonitor create --name my-health-monitor --delay 10 --max-retries 4 --timeout 5 --type TCP lb-pool-1 --wait
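The following bash sketch checks timing values before you run openstack loadbalancer healthmonitor create, and prints a rough estimate of how long a failed member stays in the pool: about max-retries consecutive failed checks, one every delay seconds. The check_hm_timing function is hypothetical, the estimate ignores the timeout of the last check, and the 1 to 10 range for max-retries is an assumption to confirm against your Load-balancing service API.

```bash
#!/usr/bin/env bash
# Checks health monitor timing before calling
# "openstack loadbalancer healthmonitor create", then prints a rough
# estimate, in seconds, of how long a failed member stays in the pool.
check_hm_timing() {
  local delay="$1" timeout="$2" max_retries="$3"
  # Rule from this guide: timeout must always be smaller than delay.
  if [ "$timeout" -ge "$delay" ]; then
    echo "timeout ($timeout) must be smaller than delay ($delay)" >&2
    return 1
  fi
  # Assumed API range for max-retries; confirm against your deployment.
  if [ "$max_retries" -lt 1 ] || [ "$max_retries" -gt 10 ]; then
    echo "max-retries ($max_retries) must be between 1 and 10" >&2
    return 1
  fi
  # About max_retries consecutive failed checks, one every delay seconds.
  echo $((delay * max_retries))
}
```

With the values from the example (--delay 10 --timeout 5 --max-retries 4), check_hm_timing 10 5 4 prints 40, so a failed member is taken out of rotation after roughly 40 seconds. Increasing --delay lengthens this window proportionally.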

Verification

- Run the openstack loadbalancer healthmonitor list command and verify that your health monitor is running.

6.8. Modifying Load-balancing service health monitors

You can modify the configuration for Red Hat OpenStack Services on OpenShift (RHOSO) Load-balancing service (octavia) health monitors when you want to change the interval for sending probes to members, the connection timeout interval, the HTTP method for requests, and so on.

Prerequisites

- The administrator has created a project for you and has provided you with a clouds.yaml file for you to access the cloud.

- The python-openstackclient package resides on your workstation.

$ dnf list installed python-openstackclient

Procedure

- Confirm that the system OS_CLOUD variable is set for your cloud:

$ echo $OS_CLOUD
my_cloud

Reset the variable if necessary:

$ export OS_CLOUD=my_other_cloud

As an alternative, you can specify the cloud name by adding the --os-cloud <cloud_name> option each time you run an openstack command.

- Modify your health monitor (my-health-monitor).

In this example, a user is changing the time in seconds that the health monitor waits between sending probes to members.

Example

$ openstack loadbalancer healthmonitor set my-health-monitor --delay 600

Verification

- Run the openstack loadbalancer healthmonitor show command to confirm your configuration changes.

$ openstack loadbalancer healthmonitor show my-health-monitor

6.9. Deleting Load-balancing service health monitors

You can remove a Red Hat OpenStack Services on OpenShift (RHOSO) Load-balancing service (octavia) health monitor.

An alternative to deleting a health monitor is to disable it by using the openstack loadbalancer healthmonitor set --disable command.

Prerequisites

- The administrator has created a project for you and has provided you with a clouds.yaml file for you to access the cloud.

- The python-openstackclient package resides on your workstation.

$ dnf list installed python-openstackclient

Procedure

- Confirm that the system OS_CLOUD variable is set for your cloud:

$ echo $OS_CLOUD
my_cloud

Reset the variable if necessary:

$ export OS_CLOUD=my_other_cloud

As an alternative, you can specify the cloud name by adding the --os-cloud <cloud_name> option each time you run an openstack command.

- Delete the health monitor (my-health-monitor).

Example

$ openstack loadbalancer healthmonitor delete my-health-monitor

Verification

- Run the openstack loadbalancer healthmonitor list command to verify that the health monitor you deleted no longer exists.

6.10. Best practices for Load-balancing service HTTP health monitors

In Red Hat OpenStack Services on OpenShift (RHOSO) environments, when you write the code that generates the health check in your web application, use the following best practices:

- The health monitor url-path does not require authentication to load.

- The health monitor url-path returns an HTTP 200 OK status code to indicate a healthy server, unless you specify alternate expected-codes.

- The health check does enough internal checks to ensure that the application is healthy, and no more. Ensure that the following conditions are met for the application:

  - Any required database or other external storage connections are up and running.
  - The load is acceptable for the server on which the application runs.
  - Your site is not in maintenance mode.
  - Tests specific to your application are operational.

- The page generated by the health check is small in size:

  - It returns in a sub-second interval.
  - It does not induce significant load on the application server.

- The page generated by the health check is never cached, although the code that runs the health check might reference cached data.

  For example, you might find it useful to run a more extensive health check by using cron and store the results to disk. The code that generates the page at the health monitor url-path incorporates the results of this cron job in the tests it performs.

- Because the Load-balancing service only processes the HTTP status code returned, and because health checks run so frequently, you can use the HEAD or OPTIONS HTTP methods to skip processing the entire page.
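The cron-based pattern above might look like the following bash CGI sketch. A separate, hypothetical cron job runs the expensive checks and writes OK, or a failure reason, to a status file. The health_page function only reads that file, so the page stays small and fast, and it sends Cache-Control: no-store so that the response is not cached. The file path, the maximum age, and the 503 status for failures are assumptions; any status code outside your expected-codes marks the member as failed. The stat -c %Y call is GNU coreutils syntax.

```bash
#!/usr/bin/env bash
# Sketch of a CGI handler for the health monitor url-path. It only reads a
# status file that a separate (hypothetical) cron job writes, so each request
# is cheap. The first line of the file must be "OK" for a healthy result.
health_page() {
  local status_file="$1" max_age="$2"   # max_age: seconds before the file is stale
  local now mtime result
  now=$(date +%s)
  # stat -c %Y (modification time in epoch seconds) is GNU coreutils syntax.
  if [ -r "$status_file" ] && mtime=$(stat -c %Y "$status_file") \
     && [ $((now - mtime)) -le "$max_age" ]; then
    result=$(head -n 1 "$status_file")
  else
    result="status file missing or stale"
  fi
  # Any code outside the monitor's expected-codes (default 200) fails the check.
  if [ "$result" = "OK" ]; then
    printf 'Status: 200 OK\r\n'
  else
    printf 'Status: 503 Service Unavailable\r\n'
  fi
  # Tell any intermediate cache not to store the health page.
  printf 'Cache-Control: no-store\r\nContent-Type: text/plain\r\n\r\n%s\n' "$result"
}
```

For example, a CGI script at the health monitor url-path could call health_page /var/run/myapp/health.status 120, where the hypothetical cron job rewrites that file every minute. Treating a stale file as unhealthy means that a stopped cron job also takes the member out of the pool.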

Chapter 7. Creating non-secure HTTP load balancers

In Red Hat OpenStack Services on OpenShift (RHOSO) environments, you can create load balancers for non-secure HTTP network traffic.

For more information, see the following sections:

7.1. Creating an HTTP load balancer with a health monitor

For networks that are not compatible with Red Hat OpenStack Services on OpenShift (RHOSO) Networking service (neutron) floating IPs, create a load balancer to manage network traffic for non-secure HTTP applications. Create a health monitor to ensure that your back-end members remain available.

Prerequisites

- A shared external (public) subnet that you can reach from the internet.

- The administrator has created a project for you and has provided you with a clouds.yaml file for you to access the cloud.

- The python-openstackclient package resides on your workstation.

$ dnf list installed python-openstackclient

Procedure

Confirm that the system

OS_CLOUDvariable is set for your cloud:$ echo $OS_CLOUD my_cloudReset the variable if necessary:

$ export OS_CLOUD=my_other_cloudAs an alternative, you can specify the cloud name by adding the

--os-cloud <cloud_name>option each time you run anopenstackcommand.Create a load balancer (

lb1) on a public subnet (public_subnet).NoteValues inside parentheses are sample values that are used in the example commands in this procedure. Substitute these sample values with values that are appropriate for your site.

- Example

$ openstack loadbalancer create --name lb1 \ --vip-subnet-id public_subnet --wait

Create a listener (

listener1) on a port (80).- Example

$ openstack loadbalancer listener create --name listener1 \ --protocol HTTP --protocol-port 80 lb1 --wait

Verify the state of the listener.

- Example

$ openstack loadbalancer listener show listener1Before going to the next step, ensure that the status is

ACTIVE.

Create the listener default pool (

pool1).- Example

$ openstack loadbalancer pool create --name pool1 \ --lb-algorithm ROUND_ROBIN --listener listener1 --protocol HTTP --wait

Create a health monitor (

healthmon1) of type (HTTP) on the pool (pool1) that connects to the back-end servers and tests the path (/).Health checks can help to avoid a false positive. If no health monitor is defined, the member server is assumed to be

ONLINE.- Example

$ openstack loadbalancer healthmonitor create --name healthmon1 \ --delay 15 --max-retries 4 --timeout 10 --type HTTP --url-path / \ pool1 --wait

Add load balancer members (192.0.2.10 and 192.0.2.11) on the private subnet (private_subnet) to the default pool.

- Example

In this example, the back-end servers, 192.0.2.10 and 192.0.2.11, are named member1 and member2, respectively:

$ openstack loadbalancer member create --name member1 --subnet-id \
  private_subnet --address 192.0.2.10 --protocol-port 80 pool1 --wait

$ openstack loadbalancer member create --name member2 --subnet-id \
  private_subnet --address 192.0.2.11 --protocol-port 80 pool1 --wait
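When a pool has more than a few back-end servers, you can loop over the addresses instead of typing one command per member. The following Bash sketch is hypothetical: the add_members function name and the member<N> naming scheme are assumptions that mirror the member1 and member2 names used above, and the flags are the same ones used in the example commands.

```shell
#!/usr/bin/env bash
# Hypothetical helper: add each address as a pool member named member1,
# member2, ... on the given subnet and protocol port.
# Usage: add_members <pool> <subnet> <protocol_port> <address>...
add_members() {
  local pool="$1" subnet="$2" port="$3"
  shift 3
  local i=1 addr
  for addr in "$@"; do
    # Stop at the first member that cannot be created.
    openstack loadbalancer member create --name "member$i" \
      --subnet-id "$subnet" --address "$addr" \
      --protocol-port "$port" "$pool" --wait || return 1
    i=$((i + 1))
  done
}
```

For example, add_members pool1 private_subnet 80 192.0.2.10 192.0.2.11 runs the same two commands shown in this step.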

Verification

View and verify the load balancer (lb1) settings:

- Example

$ openstack loadbalancer show lb1

- Sample output

+---------------------+--------------------------------------+
| Field               | Value                                |
+---------------------+--------------------------------------+
| admin_state_up      | True                                 |
| created_at          | 2024-01-15T11:11:09                  |
| description         |                                      |
| flavor              |                                      |
| id                  | 788fe121-3dec-4e1b-8360-4020642238b0 |
| listeners           | 09f28053-fde8-4c78-88b9-0f191d84120e |
| name                | lb1                                  |
| operating_status    | ONLINE                               |
| pools               | 627842b3-eed8-4f5f-9f4a-01a738e64d6a |
| project_id          | dda678ca5b1241e7ad7bf7eb211a2fd7     |
| provider            | amphora                              |
| provisioning_status | ACTIVE                               |
| updated_at          | 2024-01-15T11:12:13                  |
| vip_address         | 198.51.100.12                        |
| vip_network_id      | 9bca13be-f18d-49a5-a83d-9d487827fd16 |
| vip_port_id         | 69a85edd-5b1c-458f-96f2-b4552b15b8e6 |
| vip_qos_policy_id   | None                                 |
| vip_subnet_id       | 5bd7334b-49b3-4849-b3a2-b0b83852dba1 |
+---------------------+--------------------------------------+

When a health monitor is present and functioning properly, you can check the status of each member.

A working member (member1) has an ONLINE value for its operating_status.

- Example

$ openstack loadbalancer member show pool1 member1

- Sample output

+---------------------+--------------------------------------+
| Field               | Value                                |
+---------------------+--------------------------------------+
| address             | 192.0.2.10                           |
| admin_state_up      | True                                 |
| created_at          | 2024-01-15T11:16:23                  |
| id                  | b85c807e-4d7c-4cbd-b725-5e8afddf80d2 |
| name                | member1                              |
| operating_status    | ONLINE                               |
| project_id          | dda678ca5b1241e7ad7bf7eb211a2fd7     |
| protocol_port       | 80                                   |
| provisioning_status | ACTIVE                               |
| subnet_id           | 5bd7334b-49b3-4849-b3a2-b0b83852dba1 |
| updated_at          | 2024-01-15T11:20:45                  |
| weight              | 1                                    |
| monitor_port        | None                                 |
| monitor_address     | None                                 |
| backup              | False                                |
+---------------------+--------------------------------------+
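To check every member at once rather than one at a time, you can loop over the member names and read only the operating_status field. This Bash sketch is an assumption-based convenience, not part of the product: the check_members function name is hypothetical, and it treats any status other than ONLINE (for example, ERROR or NO_MONITOR) as a failure.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the operating_status of each named member and
# return non-zero if any member is not ONLINE.
# Usage: check_members <pool> <member>...
check_members() {
  local pool="$1"
  shift
  local member status rc=0
  for member in "$@"; do
    status=$(openstack loadbalancer member show "$pool" "$member" \
      -f value -c operating_status) || return 1
    echo "$member: $status"
    # Remember the failure but keep reporting the remaining members.
    [ "$status" = "ONLINE" ] || rc=1
  done
  return "$rc"
}
```

For example, check_members pool1 member1 member2 prints one line per member.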

7.2. Creating an HTTP load balancer that uses a floating IP

To manage network traffic for non-secure HTTP applications, create a Red Hat OpenStack Services on OpenShift (RHOSO) load balancer with a virtual IP (VIP) that depends on a floating IP. The advantage of using a floating IP is that you retain control of the assigned IP, which is necessary if you need to move, destroy, or recreate your load balancer. It is a best practice to also create a health monitor to ensure that your back-end members remain available.

Floating IPs do not work with IPv6 networks.

Prerequisites

- A floating IP to use with a load balancer VIP.

- A RHOSO Networking service (neutron) shared external (public) subnet that you can reach from the internet to use for the floating IP.

- The administrator has created a project for you and has provided you with a clouds.yaml file to access the cloud.

- The python-openstackclient package resides on your workstation.

  $ dnf list installed python-openstackclient

Procedure

Confirm that the system OS_CLOUD variable is set for your cloud:

$ echo $OS_CLOUD
my_cloud

Reset the variable if necessary:

$ export OS_CLOUD=my_other_cloud

As an alternative, you can specify the cloud name by adding the --os-cloud <cloud_name> option each time you run an openstack command.

Create a load balancer (lb1) on a private subnet (private_subnet).

Note
Values inside parentheses are sample values that are used in the example commands in this procedure. Substitute these sample values with values that are appropriate for your site.

- Example

$ openstack loadbalancer create --name lb1 \
  --vip-subnet-id private_subnet --wait

In the output from the previous step, record the value of vip_port_id, because you must provide it in a later step.

Create a listener (listener1) on a port (80).

- Example

$ openstack loadbalancer listener create --name listener1 \
  --protocol HTTP --protocol-port 80 lb1 --wait

Create the listener default pool (pool1).

- Example

The command in this example creates an HTTP pool that uses a private subnet containing back-end servers that host non-secure HTTP applications on TCP port 80:

$ openstack loadbalancer pool create --name pool1 \
  --lb-algorithm ROUND_ROBIN --listener listener1 --protocol HTTP --wait

Create a health monitor (healthmon1) of type (HTTP) on the pool (pool1) that connects to the back-end servers and tests the path (/).

Health checks can help to avoid a false positive. If no health monitor is defined, the member server is assumed to be ONLINE.

- Example

$ openstack loadbalancer healthmonitor create --name healthmon1 \
  --delay 15 --max-retries 4 --timeout 10 --type HTTP --url-path / \
  pool1 --wait

Add load balancer members (192.0.2.10 and 192.0.2.11) on the private subnet to the default pool.

- Example

In this example, the back-end servers, 192.0.2.10 and 192.0.2.11, are named member1 and member2, respectively:

$ openstack loadbalancer member create --name member1 --subnet-id \
  private_subnet --address 192.0.2.10 --protocol-port 80 pool1 --wait

$ openstack loadbalancer member create --name member2 --subnet-id \
  private_subnet --address 192.0.2.11 --protocol-port 80 pool1 --wait

Create a floating IP address on the shared external network (public).

- Example

$ openstack floating ip create public

In the output from the previous step, record the value of floating_ip_address, because you must provide it in a later step.

Associate this floating IP (203.0.113.0) with the load balancer vip_port_id (69a85edd-5b1c-458f-96f2-b4552b15b8e6).

- Example

$ openstack floating ip set --port 69a85edd-5b1c-458f-96f2-b4552b15b8e6 203.0.113.0
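Instead of copying the VIP port ID and the floating IP address by hand, you can capture both with the -f value -c <column> output options and pass them to openstack floating ip set. The following Bash sketch assumes that the load balancer already exists and is ACTIVE; the attach_floating_ip function name is hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical helper: create a floating IP on an external network and
# associate it with a load balancer's VIP port.
# Usage: attach_floating_ip <load_balancer> <external_network>
# Prints the new floating IP address on success.
attach_floating_ip() {
  local lb="$1" network="$2" vip_port fip
  vip_port=$(openstack loadbalancer show "$lb" \
    -f value -c vip_port_id) || return 1
  fip=$(openstack floating ip create "$network" \
    -f value -c floating_ip_address) || return 1
  openstack floating ip set --port "$vip_port" "$fip" || return 1
  echo "$fip"
}
```

For example, attach_floating_ip lb1 public combines the floating IP steps of this procedure into one call.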

Verification

Verify that HTTP traffic flows across the load balancer by using the floating IP (203.0.113.0).

- Example

$ curl -v http://203.0.113.0 --insecure

- Sample output

* About to connect() to 203.0.113.0 port 80 (#0)
* Trying 203.0.113.0...
* Connected to 203.0.113.0 (203.0.113.0) port 80 (#0)
> GET / HTTP/1.1
> User-Agent: curl/7.29.0
> Host: 203.0.113.0
> Accept: */*
>
< HTTP/1.1 200 OK
< Content-Length: 30
<
* Connection #0 to host 203.0.113.0 left intact
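A single curl request shows only that one member answered. To exercise the ROUND_ROBIN pool, you can send several requests and count how many succeed. This Bash sketch uses only standard curl options (-s, -o /dev/null, and -w '%{http_code}'); the count_http_ok function name and the default of 10 requests are illustrative assumptions.

```shell
#!/usr/bin/env bash
# Hypothetical helper: send <count> GET requests to <url> and report how many
# returned HTTP 200. Returns non-zero unless every request succeeded.
# Usage: count_http_ok <url> [count]
count_http_ok() {
  local url="$1" count="${2:-10}" ok=0 i=0 code
  while [ "$i" -lt "$count" ]; do
    # -o /dev/null discards the body; -w prints only the status code.
    # If curl cannot connect it exits non-zero; record that as 000.
    code=$(curl -s -o /dev/null -w '%{http_code}' "$url") || code=000
    if [ "$code" = "200" ]; then
      ok=$((ok + 1))
    fi
    i=$((i + 1))
  done
  echo "$ok/$count requests returned HTTP 200"
  [ "$ok" -eq "$count" ]
}
```

For example, count_http_ok http://203.0.113.0/ 10 sends ten requests through the floating IP.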

When a health monitor is present and functioning properly, you can check the status of each member.

A working member (member1) has an ONLINE value for its operating_status.

- Example

$ openstack loadbalancer member show pool1 member1

- Sample output

+---------------------+--------------------------------------+
| Field               | Value                                |
+---------------------+--------------------------------------+
| address             | 192.0.2.10                           |
| admin_state_up      | True                                 |
| created_at          | 2024-01-15T11:11:23                  |
| id                  | b85c807e-4d7c-4cbd-b725-5e8afddf80d2 |
| name                | member1                              |
| operating_status    | ONLINE                               |
| project_id          | dda678ca5b1241e7ad7bf7eb211a2fd7     |
| protocol_port       | 80                                   |
| provisioning_status | ACTIVE                               |
| subnet_id           | 5bd7334b-49b3-4849-b3a2-b0b83852dba1 |
| updated_at          | 2024-01-15T11:28:42                  |
| weight              | 1                                    |
| monitor_port        | None                                 |
| monitor_address     | None                                 |
| backup              | False                                |
+---------------------+--------------------------------------+

7.3. Creating an HTTP load balancer with session persistence

To manage network traffic for non-secure HTTP applications, you can create Red Hat OpenStack Services on OpenShift (RHOSO) load balancers that track session persistence. With session persistence, after a client's first request reaches a back-end server, the load balancer directs subsequent requests from the same client to that same server. Session persistence optimizes load balancing by saving time and memory.

Prerequisites

- A shared external (public) subnet that you can reach from the internet.

- The non-secure web applications whose network traffic you are load balancing have cookies enabled.

- The administrator has created a project for you and has provided you with a clouds.yaml file to access the cloud.

- The python-openstackclient package resides on your workstation.

  $ dnf list installed python-openstackclient

Procedure

Confirm that the system OS_CLOUD variable is set for your cloud:

$ echo $OS_CLOUD
my_cloud

Reset the variable if necessary:

$ export OS_CLOUD=my_other_cloud

As an alternative, you can specify the cloud name by adding the --os-cloud <cloud_name> option each time you run an openstack command.

Create a load balancer (lb1) on a public subnet (public_subnet).

Note
Values inside parentheses are sample values that are used in the example commands in this procedure. Substitute these sample values with values that are appropriate for your site.

- Example

$ openstack loadbalancer create --name lb1 \
  --vip-subnet-id public_subnet --wait

Create a listener (listener1) on a port (80).

- Example

$ openstack loadbalancer listener create --name listener1 \
  --protocol HTTP --protocol-port 80 lb1 --wait

Create the listener default pool (pool1) that defines session persistence on a cookie (PHPSESSIONID).

- Example

The command in this example creates an HTTP pool that uses a private subnet containing back-end servers that host non-secure HTTP applications on TCP port 80:

$ openstack loadbalancer pool create --name pool1 \
  --lb-algorithm ROUND_ROBIN --listener listener1 --protocol HTTP \
  --session-persistence type=APP_COOKIE,cookie_name=PHPSESSIONID --wait

Create a health monitor (healthmon1) of type (HTTP) on the pool (pool1) that connects to the back-end servers and tests the path (/).

Health checks can help to avoid a false positive. If no health monitor is defined, the member server is assumed to be ONLINE.

- Example

$ openstack loadbalancer healthmonitor create --name healthmon1 \
  --delay 15 --max-retries 4 --timeout 10 --type HTTP --url-path / \
  pool1 --wait

Add load balancer members (192.0.2.10 and 192.0.2.11) on the private subnet (private_subnet) to the default pool.

- Example

In this example, the back-end servers, 192.0.2.10 and 192.0.2.11, are named member1 and member2, respectively:

$ openstack loadbalancer member create --name member1 --subnet-id \
  private_subnet --address 192.0.2.10 --protocol-port 80 pool1 --wait

$ openstack loadbalancer member create --name member2 --subnet-id \
  private_subnet --address 192.0.2.11 --protocol-port 80 pool1 --wait

Verification

View and verify the load balancer (lb1) settings:

- Example

$ openstack loadbalancer show lb1

- Sample output

+---------------------+--------------------------------------+
| Field               | Value                                |
+---------------------+--------------------------------------+
| admin_state_up      | True                                 |
| created_at          | 2024-01-15T11:11:58                  |
| description         |                                      |
| flavor              |                                      |
| id                  | 788fe121-3dec-4e1b-8360-4020642238b0 |
| listeners           | 09f28053-fde8-4c78-88b9-0f191d84120e |
| name                | lb1                                  |
| operating_status    | ONLINE                               |
| pools               | 627842b3-eed8-4f5f-9f4a-01a738e64d6a |
| project_id          | dda678ca5b1241e7ad7bf7eb211a2fd7     |
| provider            | amphora                              |
| provisioning_status | ACTIVE                               |
| updated_at          | 2024-01-15T11:28:42                  |
| vip_address         | 198.51.100.22                        |
| vip_network_id      | 9bca13be-f18d-49a5-a83d-9d487827fd16 |
| vip_port_id         | 69a85edd-5b1c-458f-96f2-b4552b15b8e6 |
| vip_qos_policy_id   | None                                 |
| vip_subnet_id       | 5bd7334b-49b3-4849-b3a2-b0b83852dba1 |
+---------------------+--------------------------------------+
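To confirm that APP_COOKIE persistence keeps a client on one back-end server, you can replay requests that share a cookie jar and compare the responses. This Bash sketch assumes that each back-end server returns a response body that identifies it (for example, its hostname) and that the application sets the PHPSESSIONID cookie; the check_persistence function name is hypothetical. It uses the standard curl -c (write cookies to a file) and -b (send cookies from a file) options.

```shell
#!/usr/bin/env bash
# Hypothetical helper: send <count> requests that share one cookie jar and
# fail if any response body differs from the first one.
# Assumes each back-end server returns a body that identifies it.
# Usage: check_persistence <url> [count]
check_persistence() {
  local url="$1" count="${2:-5}" jar first body i=1
  jar=$(mktemp) || return 1
  # -c saves cookies that the server sets; -b sends them on later requests.
  if ! first=$(curl -s -c "$jar" -b "$jar" "$url"); then
    rm -f "$jar"
    return 1
  fi
  while [ "$i" -lt "$count" ]; do
    # A failed request or a different body both count as a mismatch.
    if ! body=$(curl -s -c "$jar" -b "$jar" "$url") || [ "$body" != "$first" ]; then
      echo "Request $((i + 1)) reached a different back-end server" >&2
      rm -f "$jar"
      return 1
    fi
    i=$((i + 1))
  done
  rm -f "$jar"
  echo "All $count requests reached the same back-end server"
}
```

For example, check_persistence http://198.51.100.22/ 5 sends five requests to the load balancer VIP from the sample output.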

When a health monitor is present and functioning properly, you can check the status of each member.

A working member (