Chapter 5. Clair for Red Hat Quay

Clair v4 (Clair) is an open source application that leverages static code analyses for parsing image content and reporting vulnerabilities affecting the content. Clair is packaged with Red Hat Quay and can be used in both standalone and Operator deployments. It can be run in highly scalable configurations, where components can be scaled separately as appropriate for enterprise environments.

5.1. Clair vulnerability databases

Clair uses the following vulnerability databases to report issues in your images:

- Ubuntu OVAL database

- Debian Security Tracker

- Red Hat Enterprise Linux (RHEL) OVAL database

- SUSE OVAL database

- Oracle OVAL database

- Alpine SecDB database

- VMware Photon OS database

- Amazon Web Services (AWS) UpdateInfo

- Open Source Vulnerability (OSV) Database

For information about how Clair does security mapping with the different databases, see Claircore Severity Mapping.

5.1.1. Information about Open Source Vulnerability (OSV) database for Clair

Open Source Vulnerability (OSV) is a vulnerability database and monitoring service that focuses on tracking and managing security vulnerabilities in open source software.

OSV provides a comprehensive and up-to-date database of known security vulnerabilities in open source projects. It covers a wide range of open source software, including libraries, frameworks, and other components that are used in software development. For a full list of included ecosystems, see defined ecosystems.

Clair also reports vulnerability and security information for golang, java, and ruby ecosystems through the Open Source Vulnerability (OSV) database.

By leveraging OSV, developers and organizations can proactively monitor and address security vulnerabilities in open source components that they use, which helps to reduce the risk of security breaches and data compromises in projects.

For more information about OSV, see the OSV website.

5.2. Clair on OpenShift Container Platform

To set up Clair v4 (Clair) on a Red Hat Quay deployment on OpenShift Container Platform, it is recommended to use the Red Hat Quay Operator. By default, the Red Hat Quay Operator will install or upgrade a Clair deployment along with your Red Hat Quay deployment and configure Clair automatically.

5.3. Testing Clair

Use the following procedure to test Clair on either a standalone Red Hat Quay deployment, or on an OpenShift Container Platform Operator-based deployment.

Prerequisites

- You have deployed the Clair container image.

Procedure

Pull a sample image by entering the following command:

$ podman pull ubuntu:20.04

Tag the image to your registry by entering the following command:

$ sudo podman tag docker.io/library/ubuntu:20.04 <quay-server.example.com>/<user-name>/ubuntu:20.04

Push the image to your Red Hat Quay registry by entering the following command:

$ sudo podman push --tls-verify=false quay-server.example.com/quayadmin/ubuntu:20.04

Log in to your Red Hat Quay deployment through the UI.

Click the repository name, for example, quayadmin/ubuntu.

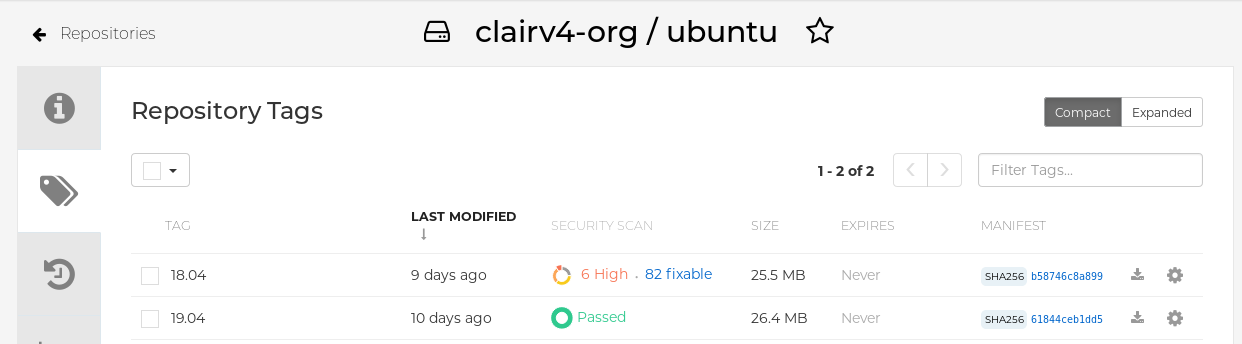

In the navigation pane, click Tags.

Report summary

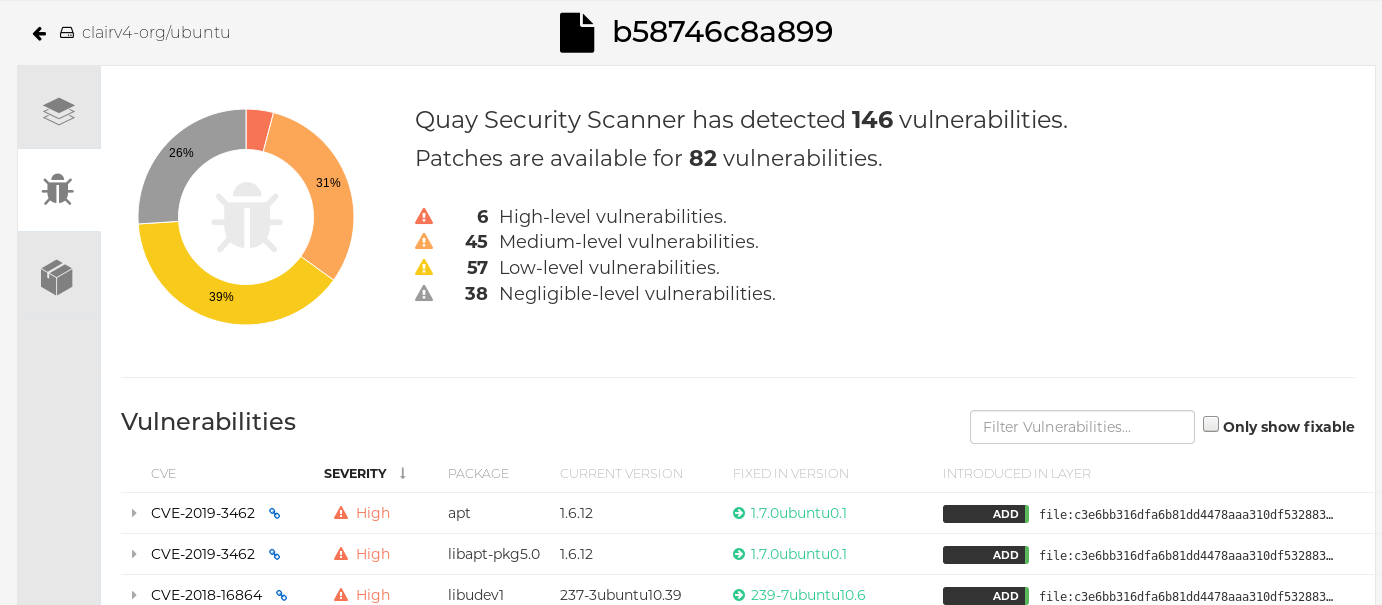

Click the image report, for example, 45 medium, to show a more detailed report:

Report details

Note

In some cases, Clair shows duplicate reports on images, for example, ubi8/nodejs-12 or ubi8/nodejs-16. This occurs because vulnerabilities with the same name apply to different packages. This behavior is expected with Clair vulnerability reporting and will not be addressed as a bug.

5.4. Advanced Clair configuration

Use the procedures in the following sections to configure advanced Clair settings.

5.4.1. Unmanaged Clair configuration

Red Hat Quay users can run an unmanaged Clair configuration with the Red Hat Quay OpenShift Container Platform Operator. This feature allows users to create an unmanaged Clair database, or run their custom Clair configuration without an unmanaged database.

An unmanaged Clair database allows the Red Hat Quay Operator to work in a geo-replicated environment, where multiple instances of the Operator must communicate with the same database. An unmanaged Clair database can also be used when a user requires a highly-available (HA) Clair database that exists outside of a cluster.

5.4.1.1. Running a custom Clair configuration with an unmanaged Clair database

Use the following procedure to set your Clair database to unmanaged.

Procedure

In the Quay Operator, set the clairpostgres component of the QuayRegistry custom resource to managed: false:

    apiVersion: quay.redhat.com/v1
    kind: QuayRegistry
    metadata:
      name: quay370
    spec:
      configBundleSecret: config-bundle-secret
      components:
        - kind: objectstorage
          managed: false
        - kind: route
          managed: true
        - kind: tls
          managed: false
        - kind: clairpostgres
          managed: false

5.4.1.2. Configuring a custom Clair database with an unmanaged Clair database

The Red Hat Quay Operator for OpenShift Container Platform allows users to provide their own Clair database.

Use the following procedure to create a custom Clair database.

The following procedure sets up Clair with SSL/TLS certificates. To view a similar procedure that does not set up Clair with SSL/TLS certificates, see "Configuring a custom Clair database with a managed Clair configuration".

Procedure

Create a Quay configuration bundle secret that includes the clair-config.yaml by entering the following command:

$ oc create secret generic --from-file config.yaml=./config.yaml --from-file extra_ca_cert_rds-ca-2019-root.pem=./rds-ca-2019-root.pem --from-file clair-config.yaml=./clair-config.yaml --from-file ssl.cert=./ssl.cert --from-file ssl.key=./ssl.key config-bundle-secret

Example Clair config.yaml file

    indexer:
      connstring: host=quay-server.example.com port=5432 dbname=quay user=quayrdsdb password=quayrdsdb sslrootcert=/run/certs/rds-ca-2019-root.pem sslmode=verify-ca
      layer_scan_concurrency: 6
      migrations: true
      scanlock_retry: 11
    log_level: debug
    matcher:
      connstring: host=quay-server.example.com port=5432 dbname=quay user=quayrdsdb password=quayrdsdb sslrootcert=/run/certs/rds-ca-2019-root.pem sslmode=verify-ca
      migrations: true
    metrics:
      name: prometheus
    notifier:
      connstring: host=quay-server.example.com port=5432 dbname=quay user=quayrdsdb password=quayrdsdb sslrootcert=/run/certs/rds-ca-2019-root.pem sslmode=verify-ca
      migrations: true

Note

- The database certificate is mounted under /run/certs/rds-ca-2019-root.pem on the Clair application pod in the clair-config.yaml. It must be specified when configuring your clair-config.yaml.

- An example clair-config.yaml can be found at Clair on OpenShift config.

Add the clair-config.yaml file to your bundle secret, for example:

    apiVersion: v1
    kind: Secret
    metadata:
      name: config-bundle-secret
      namespace: quay-enterprise
    data:
      config.yaml: <base64 encoded Quay config>
      clair-config.yaml: <base64 encoded Clair config>
      extra_ca_cert_<name>: <base64 encoded ca cert>
      ssl.crt: <base64 encoded SSL certificate>
      ssl.key: <base64 encoded SSL private key>

Note

When updated, the provided clair-config.yaml file is mounted into the Clair pod. Any fields not provided are automatically populated with defaults using the Clair configuration module.

You can check the status of your Clair pod by clicking the commit in the Build History page, or by running oc get pods -n <namespace>. For example:

$ oc get pods -n <namespace>

Example output

    NAME                                               READY   STATUS    RESTARTS   AGE
    f192fe4a-c802-4275-bcce-d2031e635126-9l2b5-25lg2   1/1     Running   0          7s

5.4.2. Running a custom Clair configuration with a managed Clair database

In some cases, users might want to run a custom Clair configuration with a managed Clair database. This is useful in the following scenarios:

- When a user wants to disable specific updater resources.

- When a user is running Red Hat Quay in a disconnected environment. For more information about running Clair in a disconnected environment, see Configuring access to the Clair database in the air-gapped OpenShift cluster.

Note

- If you are running Red Hat Quay in a disconnected environment, the airgap parameter of your clair-config.yaml must be set to True.

- If you are running Red Hat Quay in a disconnected environment, you should disable all updater components.

5.4.2.1. Setting a Clair database to managed

Use the following procedure to set your Clair database to managed.

Procedure

In the Quay Operator, set the clairpostgres component of the QuayRegistry custom resource to managed: true:

    apiVersion: quay.redhat.com/v1
    kind: QuayRegistry
    metadata:
      name: quay370
    spec:
      configBundleSecret: config-bundle-secret
      components:
        - kind: objectstorage
          managed: false
        - kind: route
          managed: true
        - kind: tls
          managed: false
        - kind: clairpostgres
          managed: true

5.4.2.2. Configuring a custom Clair database with a managed Clair configuration

The Red Hat Quay Operator for OpenShift Container Platform allows users to provide their own Clair database.

Use the following procedure to create a custom Clair database.

Procedure

Create a Quay configuration bundle secret that includes the clair-config.yaml by entering the following command:

$ oc create secret generic --from-file config.yaml=./config.yaml --from-file extra_ca_cert_rds-ca-2019-root.pem=./rds-ca-2019-root.pem --from-file clair-config.yaml=./clair-config.yaml config-bundle-secret

Example Clair config.yaml file

    indexer:
      connstring: host=quay-server.example.com port=5432 dbname=quay user=quayrdsdb password=quayrdsdb sslmode=disable
      layer_scan_concurrency: 6
      migrations: true
      scanlock_retry: 11
    log_level: debug
    matcher:
      connstring: host=quay-server.example.com port=5432 dbname=quay user=quayrdsdb password=quayrdsdb sslmode=disable
      migrations: true
    metrics:
      name: prometheus
    notifier:
      connstring: host=quay-server.example.com port=5432 dbname=quay user=quayrdsdb password=quayrdsdb sslmode=disable
      migrations: true

Note

- The database certificate is mounted under /run/certs/rds-ca-2019-root.pem on the Clair application pod in the clair-config.yaml. It must be specified when configuring your clair-config.yaml.

- An example clair-config.yaml can be found at Clair on OpenShift config.

Add the clair-config.yaml file to your bundle secret, for example:

    apiVersion: v1
    kind: Secret
    metadata:
      name: config-bundle-secret
      namespace: quay-enterprise
    data:
      config.yaml: <base64 encoded Quay config>
      clair-config.yaml: <base64 encoded Clair config>

Note

When updated, the provided clair-config.yaml file is mounted into the Clair pod. Any fields not provided are automatically populated with defaults using the Clair configuration module.

You can check the status of your Clair pod by clicking the commit in the Build History page, or by running oc get pods -n <namespace>. For example:

$ oc get pods -n <namespace>

Example output

    NAME                                               READY   STATUS    RESTARTS   AGE
    f192fe4a-c802-4275-bcce-d2031e635126-9l2b5-25lg2   1/1     Running   0          7s

5.4.3. Clair in disconnected environments

Clair uses a set of components called updaters to handle the fetching and parsing of data from various vulnerability databases. Updaters are set up by default to pull vulnerability data directly from the internet and work for immediate use. However, some users might require Red Hat Quay to run in a disconnected environment, or an environment without direct access to the internet. Clair supports disconnected environments by working with different types of update workflows that take network isolation into consideration. This works by using the clairctl command line interface tool, which obtains updater data from the internet by using an open host, securely transfers the data to an isolated host, and then imports the updater data on the isolated host into Clair.

Use this guide to deploy Clair in a disconnected environment.

Currently, Clair enrichment data is CVSS data. Enrichment data is currently unsupported in disconnected environments.

For more information about Clair updaters, see "Clair updaters".

5.4.3.1. Setting up Clair in a disconnected OpenShift Container Platform cluster

Use the following procedures to set up an OpenShift Container Platform provisioned Clair pod in a disconnected OpenShift Container Platform cluster.

5.4.3.1.1. Installing the clairctl command line utility tool for OpenShift Container Platform deployments

Use the following procedure to install the clairctl CLI tool for OpenShift Container Platform deployments.

Procedure

Install the clairctl program for a Clair deployment in an OpenShift Container Platform cluster by entering the following command:

$ oc -n quay-enterprise exec example-registry-clair-app-64dd48f866-6ptgw -- cat /usr/bin/clairctl > clairctl

Note

Unofficially, the clairctl tool can be downloaded

Set the permissions of the clairctl file so that it can be executed and run by the user, for example:

$ chmod u+x ./clairctl

5.4.3.1.2. Retrieving and decoding the Clair configuration secret for Clair deployments on OpenShift Container Platform

Use the following procedure to retrieve and decode the configuration secret for an OpenShift Container Platform provisioned Clair instance on OpenShift Container Platform.

Prerequisites

- You have installed the clairctl command line utility tool.

Procedure

Enter the following command to retrieve and decode the configuration secret, and then save it to a Clair configuration YAML:

$ oc get secret -n quay-enterprise example-registry-clair-config-secret -o "jsonpath={$.data['config\.yaml']}" | base64 -d > clair-config.yaml

Update the clair-config.yaml file so that the disable_updaters and airgap parameters are set to True, for example:

    ---
    indexer:
      airgap: true
    ---
    matcher:
      disable_updaters: true
    ---
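Before continuing, it can help to confirm that the file really sets both parameters. The following is a minimal sketch, not part of clairctl or the documented procedure: the function name check_airgap_config is hypothetical, and it does a plain text match rather than parsing YAML, so it assumes each parameter sits on its own line as in the example above.

```shell
# Hypothetical helper: succeeds only if the given file contains both
# "airgap: true" and "disable_updaters: true" (case-insensitive),
# each on its own line. This is a text match, not a YAML parser.
check_airgap_config() {
  config_file="$1"
  grep -Eqi '^[[:space:]]*airgap:[[:space:]]*true[[:space:]]*$' "$config_file" &&
    grep -Eqi '^[[:space:]]*disable_updaters:[[:space:]]*true[[:space:]]*$' "$config_file"
}
```

For example, check_airgap_config ./clair-config.yaml returns a zero exit status when both settings are present and a non-zero status otherwise.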

5.4.3.1.3. Exporting the updaters bundle from a connected Clair instance

Use the following procedure to export the updaters bundle from a Clair instance that has access to the internet.

Prerequisites

- You have installed the clairctl command line utility tool.

- You have retrieved and decoded the Clair configuration secret, and saved it to a Clair config.yaml file.

- The disable_updaters and airgap parameters are set to True in your Clair config.yaml file.

Procedure

From a Clair instance that has access to the internet, use the clairctl CLI tool with your configuration file to export the updaters bundle. For example:

$ ./clairctl --config ./config.yaml export-updaters updates.gz
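Because the bundle is later carried to an isolated host, recording a checksum at export time and verifying it before import can catch a corrupted or altered transfer. The following is a minimal sketch of that idea. It is not part of the documented Clair procedure, the function names are hypothetical, and it assumes the sha256sum utility (for example, from GNU coreutils) is available on both hosts.

```shell
# Hypothetical helper for the connected host: writes <bundle>.sha256
# next to the bundle. Works from the bundle's directory so that the
# checksum file stores a relative file name.
record_checksum() {
  bundle="$1"
  ( cd "$(dirname "$bundle")" &&
    sha256sum "$(basename "$bundle")" > "$(basename "$bundle").sha256" )
}

# Hypothetical helper for the isolated host: succeeds only if the
# bundle still matches the checksum file that was transferred with it.
verify_checksum() {
  bundle="$1"
  ( cd "$(dirname "$bundle")" &&
    sha256sum -c "$(basename "$bundle").sha256" >/dev/null 2>&1 )
}
```

In this sketch, you would run record_checksum updates.gz after the export, transfer both updates.gz and updates.gz.sha256, and run verify_checksum updates.gz before the import.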

5.4.3.1.4. Configuring access to the Clair database in the disconnected OpenShift Container Platform cluster

Use the following procedure to configure access to the Clair database in your disconnected OpenShift Container Platform cluster.

Prerequisites

- You have installed the clairctl command line utility tool.

- You have retrieved and decoded the Clair configuration secret, and saved it to a Clair config.yaml file.

- The disable_updaters and airgap parameters are set to True in your Clair config.yaml file.

- You have exported the updaters bundle from a Clair instance that has access to the internet.

Procedure

Determine your Clair database service by using the oc CLI tool, for example:

$ oc get svc -n quay-enterprise

Example output

    NAME                              TYPE        CLUSTER-IP      EXTERNAL-IP   PORT(S)           AGE
    example-registry-clair-app        ClusterIP   172.30.224.93   <none>        80/TCP,8089/TCP   4d21h
    example-registry-clair-postgres   ClusterIP   172.30.246.88   <none>        5432/TCP          4d21h
    ...

Forward the Clair database port so that it is accessible from the local machine. For example:

$ oc port-forward -n quay-enterprise service/example-registry-clair-postgres 5432:5432

Update your Clair config.yaml file, for example:

    indexer:
      connstring: host=localhost port=5432 dbname=postgres user=postgres password=postgres sslmode=disable # (1)
      scanlock_retry: 10
      layer_scan_concurrency: 5
      migrations: true
      scanner:
        repo:
          rhel-repository-scanner: # (2)
            repo2cpe_mapping_file: /data/cpe-map.json
        package:
          rhel_containerscanner: # (3)
            name2repos_mapping_file: /data/repo-map.json

(1) Replace the value of the host in the multiple connstring fields with localhost.

(2) For more information about the rhel-repository-scanner parameter, see "Mapping repositories to Common Product Enumeration information".

(3) For more information about the rhel_containerscanner parameter, see "Mapping repositories to Common Product Enumeration information".
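Editing the host value by hand in every connstring field is easy to get wrong, so the replacement can be scripted. The following is a minimal sketch under stated assumptions: the function name use_localhost_db is hypothetical, it relies on sed with extended regular expressions (-E), and it assumes each connection string is on a single line that contains the text connstring:.

```shell
# Hypothetical helper: prints the given configuration file with the
# host=... value replaced by host=localhost on every line that
# contains "connstring:". Other lines are printed unchanged, and the
# input file itself is not modified.
use_localhost_db() {
  sed -E '/connstring:/ s/host=[^[:space:]]+/host=localhost/' "$1"
}
```

For example, use_localhost_db clair-config.yaml > clair-config.local.yaml writes an edited copy that you can review before passing it to clairctl.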

5.4.3.1.5. Importing the updaters bundle into the disconnected OpenShift Container Platform cluster

Use the following procedure to import the updaters bundle into your disconnected OpenShift Container Platform cluster.

Prerequisites

- You have installed the clairctl command line utility tool.

- You have retrieved and decoded the Clair configuration secret, and saved it to a Clair config.yaml file.

- The disable_updaters and airgap parameters are set to True in your Clair config.yaml file.

- You have exported the updaters bundle from a Clair instance that has access to the internet.

- You have transferred the updaters bundle into your disconnected environment.

Procedure

Use the clairctl CLI tool to import the updaters bundle into the Clair database that is deployed by OpenShift Container Platform. For example:

$ ./clairctl --config ./clair-config.yaml import-updaters updates.gz

5.4.3.2. Setting up a self-managed deployment of Clair for a disconnected OpenShift Container Platform cluster

Use the following procedures to set up a self-managed deployment of Clair for a disconnected OpenShift Container Platform cluster.

5.4.3.2.1. Installing the clairctl command line utility tool for a self-managed Clair deployment on OpenShift Container Platform

Use the following procedure to install the clairctl CLI tool for self-managed Clair deployments on OpenShift Container Platform.

Procedure

Install the clairctl program for a self-managed Clair deployment by using the podman cp command, for example:

$ sudo podman cp clairv4:/usr/bin/clairctl ./clairctl

Set the permissions of the clairctl file so that it can be executed and run by the user, for example:

$ chmod u+x ./clairctl

5.4.3.2.2. Deploying a self-managed Clair container for disconnected OpenShift Container Platform clusters

Use the following procedure to deploy a self-managed Clair container for disconnected OpenShift Container Platform clusters.

Prerequisites

- You have installed the clairctl command line utility tool.

Procedure

Create a folder for your Clair configuration file, for example:

$ mkdir /etc/clairv4/config/

Create a Clair configuration file with the disable_updaters parameter set to True, for example:

    ---
    indexer:
      airgap: true
    ---
    matcher:
      disable_updaters: true
    ---

Start Clair by using the container image, mounting in the configuration from the file you created:

$ sudo podman run -it --rm --name clairv4 \
  -p 8081:8081 -p 8088:8088 \
  -e CLAIR_CONF=/clair/config.yaml \
  -e CLAIR_MODE=combo \
  -v /etc/clairv4/config:/clair:Z \
  registry.redhat.io/quay/clair-rhel8:v3.9.15

5.4.3.2.3. Exporting the updaters bundle from a connected Clair instance

Use the following procedure to export the updaters bundle from a Clair instance that has access to the internet.

Prerequisites

- You have installed the clairctl command line utility tool.

- You have deployed Clair.

- The disable_updaters and airgap parameters are set to True in your Clair config.yaml file.

Procedure

From a Clair instance that has access to the internet, use the clairctl CLI tool with your configuration file to export the updaters bundle. For example:

$ ./clairctl --config ./config.yaml export-updaters updates.gz

5.4.3.2.4. Configuring access to the Clair database in the disconnected OpenShift Container Platform cluster

Use the following procedure to configure access to the Clair database in your disconnected OpenShift Container Platform cluster.

Prerequisites

- You have installed the clairctl command line utility tool.

- You have deployed Clair.

- The disable_updaters and airgap parameters are set to True in your Clair config.yaml file.

- You have exported the updaters bundle from a Clair instance that has access to the internet.

Procedure

Determine your Clair database service by using the oc CLI tool, for example:

$ oc get svc -n quay-enterprise

Example output

    NAME                              TYPE        CLUSTER-IP      EXTERNAL-IP   PORT(S)           AGE
    example-registry-clair-app        ClusterIP   172.30.224.93   <none>        80/TCP,8089/TCP   4d21h
    example-registry-clair-postgres   ClusterIP   172.30.246.88   <none>        5432/TCP          4d21h
    ...

Forward the Clair database port so that it is accessible from the local machine. For example:

$ oc port-forward -n quay-enterprise service/example-registry-clair-postgres 5432:5432

Update your Clair config.yaml file, for example:

    indexer:
      connstring: host=localhost port=5432 dbname=postgres user=postgres password=postgres sslmode=disable # (1)
      scanlock_retry: 10
      layer_scan_concurrency: 5
      migrations: true
      scanner:
        repo:
          rhel-repository-scanner: # (2)
            repo2cpe_mapping_file: /data/cpe-map.json
        package:
          rhel_containerscanner: # (3)
            name2repos_mapping_file: /data/repo-map.json

(1) Replace the value of the host in the multiple connstring fields with localhost.

(2) For more information about the rhel-repository-scanner parameter, see "Mapping repositories to Common Product Enumeration information".

(3) For more information about the rhel_containerscanner parameter, see "Mapping repositories to Common Product Enumeration information".

5.4.3.2.5. Importing the updaters bundle into the disconnected OpenShift Container Platform cluster

Use the following procedure to import the updaters bundle into your disconnected OpenShift Container Platform cluster.

Prerequisites

- You have installed the clairctl command line utility tool.

- You have deployed Clair.

- The disable_updaters and airgap parameters are set to True in your Clair config.yaml file.

- You have exported the updaters bundle from a Clair instance that has access to the internet.

- You have transferred the updaters bundle into your disconnected environment.

Procedure

Use the clairctl CLI tool to import the updaters bundle into the Clair database that is deployed by OpenShift Container Platform:

$ ./clairctl --config ./clair-config.yaml import-updaters updates.gz

5.4.4. Mapping repositories to Common Product Enumeration information

Clair’s Red Hat Enterprise Linux (RHEL) scanner relies on a Common Product Enumeration (CPE) file to map RPM packages to the corresponding security data to produce matching results. These files are owned by product security and updated daily.

The CPE file must be present, or access to the file must be allowed, for the scanner to properly process RPM packages. If the file is not present, RPM packages installed in the container image will not be scanned.

| CPE | Link to JSON mapping file |
|---|---|
| | |
| | |

In addition to uploading CVE information to the database for disconnected Clair installations, you must also make the mapping file available locally:

- For standalone Red Hat Quay and Clair deployments, the mapping file must be loaded into the Clair pod.

- For Red Hat Quay Operator deployments on OpenShift Container Platform and Clair deployments, you must set the Clair component to unmanaged. Then, Clair must be deployed manually, setting the configuration to load a local copy of the mapping file.

5.4.4.1. Mapping repositories to Common Product Enumeration example configuration

Use the repo2cpe_mapping_file and name2repos_mapping_file fields in your Clair configuration to include the CPE JSON mapping files. For example:

    indexer:
      scanner:
        repo:
          rhel-repository-scanner:
            repo2cpe_mapping_file: /data/cpe-map.json
        package:
          rhel_containerscanner:
            name2repos_mapping_file: /data/repo-map.json

For more information, see How to accurately match OVAL security data to installed RPMs.
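Because RPM packages are silently skipped when a mapping file is absent, a quick presence check on the configured paths can save debugging time. The following is a minimal sketch: the function name check_mapping_files is hypothetical, and it only checks that each file exists and is not empty. It does not validate the JSON content.

```shell
# Hypothetical helper: succeeds only if every path given as an
# argument exists and is a non-empty file. Reports the first file
# that fails the check on standard error.
check_mapping_files() {
  for mapping_file in "$@"; do
    if [ ! -s "$mapping_file" ]; then
      echo "missing or empty mapping file: $mapping_file" >&2
      return 1
    fi
  done
}
```

With the paths from the example configuration, this could be run where Clair reads its files as check_mapping_files /data/cpe-map.json /data/repo-map.json.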

5.5. Deploying Red Hat Quay on infrastructure nodes

By default, Quay-related pods are placed on arbitrary worker nodes when using the Red Hat Quay Operator to deploy the registry. For more information about how to use machine sets to configure nodes to only host infrastructure components, see Creating infrastructure machine sets.

If you are not using OpenShift Container Platform machine set resources to deploy infra nodes, this section shows you how to manually label and taint nodes for infrastructure purposes. After you have configured your infrastructure nodes, either manually or by using machine sets, you can control the placement of Quay pods on these nodes by using node selectors and tolerations.

5.5.1. Labeling and tainting nodes for infrastructure use

Use the following procedure to label and taint nodes for infrastructure use.

Enter the following command to reveal the master and worker nodes. In this example, there are three master nodes and six worker nodes.

$ oc get nodes

Example output

    NAME                                               STATUS   ROLES    AGE     VERSION
    user1-jcnp6-master-0.c.quay-devel.internal         Ready    master   3h30m   v1.20.0+ba45583
    user1-jcnp6-master-1.c.quay-devel.internal         Ready    master   3h30m   v1.20.0+ba45583
    user1-jcnp6-master-2.c.quay-devel.internal         Ready    master   3h30m   v1.20.0+ba45583
    user1-jcnp6-worker-b-65plj.c.quay-devel.internal   Ready    worker   3h21m   v1.20.0+ba45583
    user1-jcnp6-worker-b-jr7hc.c.quay-devel.internal   Ready    worker   3h21m   v1.20.0+ba45583
    user1-jcnp6-worker-c-jrq4v.c.quay-devel.internal   Ready    worker   3h21m   v1.20.0+ba45583
    user1-jcnp6-worker-c-pwxfp.c.quay-devel.internal   Ready    worker   3h21m   v1.20.0+ba45583
    user1-jcnp6-worker-d-h5tv2.c.quay-devel.internal   Ready    worker   3h22m   v1.20.0+ba45583
    user1-jcnp6-worker-d-m9gg4.c.quay-devel.internal   Ready    worker   3h21m   v1.20.0+ba45583

Enter the following commands to label the three worker nodes for infrastructure use:

$ oc label node --overwrite user1-jcnp6-worker-c-pwxfp.c.quay-devel.internal node-role.kubernetes.io/infra=

$ oc label node --overwrite user1-jcnp6-worker-d-h5tv2.c.quay-devel.internal node-role.kubernetes.io/infra=

$ oc label node --overwrite user1-jcnp6-worker-d-m9gg4.c.quay-devel.internal node-role.kubernetes.io/infra=

Now, when listing the nodes in the cluster, the last three worker nodes have the infra role. For example:

$ oc get nodes

Example output

    NAME                                               STATUS   ROLES          AGE     VERSION
    user1-jcnp6-master-0.c.quay-devel.internal         Ready    master         4h14m   v1.20.0+ba45583
    user1-jcnp6-master-1.c.quay-devel.internal         Ready    master         4h15m   v1.20.0+ba45583
    user1-jcnp6-master-2.c.quay-devel.internal         Ready    master         4h14m   v1.20.0+ba45583
    user1-jcnp6-worker-b-65plj.c.quay-devel.internal   Ready    worker         4h6m    v1.20.0+ba45583
    user1-jcnp6-worker-b-jr7hc.c.quay-devel.internal   Ready    worker         4h5m    v1.20.0+ba45583
    user1-jcnp6-worker-c-jrq4v.c.quay-devel.internal   Ready    worker         4h5m    v1.20.0+ba45583
    user1-jcnp6-worker-c-pwxfp.c.quay-devel.internal   Ready    infra,worker   4h6m    v1.20.0+ba45583
    user1-jcnp6-worker-d-h5tv2.c.quay-devel.internal   Ready    infra,worker   4h6m    v1.20.0+ba45583
    user1-jcnp6-worker-d-m9gg4.c.quay-devel.internal   Ready    infra,worker   4h6m    v1.20.0+ba4558

When a worker node is assigned the infra role, there is a chance that user workloads could get inadvertently assigned to an infra node. To avoid this, you can apply a taint to the infra node, and then add tolerations for the pods that you want to control. For example:

$ oc adm taint nodes user1-jcnp6-worker-c-pwxfp.c.quay-devel.internal node-role.kubernetes.io/infra:NoSchedule

$ oc adm taint nodes user1-jcnp6-worker-d-h5tv2.c.quay-devel.internal node-role.kubernetes.io/infra:NoSchedule

$ oc adm taint nodes user1-jcnp6-worker-d-m9gg4.c.quay-devel.internal node-role.kubernetes.io/infra:NoSchedule
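The label and taint commands follow the same pattern for every node, so they can be generated from a list of node names. The following is a minimal sketch: the function name print_infra_commands is hypothetical, and it only prints the commands shown in this section so that they can be reviewed before anything is run against the cluster.

```shell
# Hypothetical helper: for each node name given as an argument,
# prints (but does not run) the label command and the taint command
# used in this section.
print_infra_commands() {
  for node in "$@"; do
    printf 'oc label node --overwrite %s node-role.kubernetes.io/infra=\n' "$node"
    printf 'oc adm taint nodes %s node-role.kubernetes.io/infra:NoSchedule\n' "$node"
  done
}
```

For example, print_infra_commands user1-jcnp6-worker-c-pwxfp.c.quay-devel.internal prints two lines, one for the label and one for the taint. After reviewing the output, it could be piped to sh to run the commands.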

5.5.2. Creating a project with node selector and tolerations

Use the following procedure to create a project with node selector and tolerations.

If you have already deployed Red Hat Quay using the Operator, remove the installed Operator and any specific namespaces that you created for the deployment.

Procedure

Create a project resource, specifying a node selector and toleration. For example:

quay-registry.yaml

    kind: Project
    apiVersion: project.openshift.io/v1
    metadata:
      name: quay-registry
      annotations:
        openshift.io/node-selector: 'node-role.kubernetes.io/infra='
        scheduler.alpha.kubernetes.io/defaultTolerations: >-
          [{"operator": "Exists", "effect": "NoSchedule", "key": "node-role.kubernetes.io/infra"}]

Enter the following command to create the project:

$ oc apply -f quay-registry.yaml

Example output

    project.project.openshift.io/quay-registry created

Subsequent resources created in the quay-registry namespace should now be scheduled on the dedicated infrastructure nodes.

5.5.3. Installing the Red Hat Quay Operator in the namespace

Use the following procedure to install the Red Hat Quay Operator in the namespace.

To install the Red Hat Quay Operator in a specific namespace, you must explicitly specify the appropriate project namespace, as in the following command. In this example, we are using quay-registry. This results in the Operator pod landing on one of the three infrastructure nodes. For example:

$ oc get pods -n quay-registry -o wide

Example output

    NAME                                    READY   STATUS    RESTARTS   AGE   IP            NODE
    quay-operator.v3.4.1-6f6597d8d8-bd4dp   1/1     Running   0          30s   10.131.0.16   user1-jcnp6-worker-d-h5tv2.c.quay-devel.internal

5.5.4. Creating the Red Hat Quay registry

Use the following procedure to create the Red Hat Quay registry.

Enter the following command to create the Red Hat Quay registry. Then, wait for the deployment to be marked as ready. In the following example, you should see that the pods have only been scheduled on the three nodes that you have labelled for infrastructure purposes.

$ oc get pods -n quay-registry -o wide

Example output

    NAME                                                   READY   STATUS      RESTARTS   AGE     IP            NODE
    example-registry-clair-app-789d6d984d-gpbwd            1/1     Running     1          5m57s   10.130.2.80   user1-jcnp6-worker-d-m9gg4.c.quay-devel.internal
    example-registry-clair-postgres-7c8697f5-zkzht         1/1     Running     0          4m53s   10.129.2.19   user1-jcnp6-worker-c-pwxfp.c.quay-devel.internal
    example-registry-quay-app-56dd755b6d-glbf7             1/1     Running     1          5m57s   10.129.2.17   user1-jcnp6-worker-c-pwxfp.c.quay-devel.internal
    example-registry-quay-config-editor-7bf9bccc7b-dpc6d   1/1     Running     0          5m57s   10.131.0.23   user1-jcnp6-worker-d-h5tv2.c.quay-devel.internal
    example-registry-quay-database-8dc7cfd69-dr2cc         1/1     Running     0          5m43s   10.129.2.18   user1-jcnp6-worker-c-pwxfp.c.quay-devel.internal
    example-registry-quay-mirror-78df886bcc-v75p9          1/1     Running     0          5m16s   10.131.0.24   user1-jcnp6-worker-d-h5tv2.c.quay-devel.internal
    example-registry-quay-postgres-init-8s8g9              0/1     Completed   0          5m54s   10.130.2.79   user1-jcnp6-worker-d-m9gg4.c.quay-devel.internal
    example-registry-quay-redis-5688ddcdb6-ndp4t           1/1     Running     0          5m56s   10.130.2.78   user1-jcnp6-worker-d-m9gg4.c.quay-devel.internal
    quay-operator.v3.4.1-6f6597d8d8-bd4dp                  1/1     Running     0          22m     10.131.0.16   user1-jcnp6-worker-d-h5tv2.c.quay-devel.internal

5.6. Resizing Managed Storage

When deploying the Red Hat Quay Operator, three distinct persistent volume claims (PVCs) are deployed:

- One for the PostgreSQL 13 registry.

- One for the Clair PostgreSQL 13 registry.

- One that uses NooBaa as a backend storage.

The connection between Red Hat Quay and NooBaa is done through the S3 API and ObjectBucketClaim API in OpenShift Container Platform. Red Hat Quay leverages that API group to create a bucket in NooBaa, obtain access keys, and automatically set everything up. On the backend, or NooBaa, side, that bucket is created inside of the backing store. As a result, NooBaa PVCs are not mounted or connected to Red Hat Quay pods.

The default size for the PostgreSQL 13 and Clair PostgreSQL 13 PVCs is set to 50 GiB. You can expand storage for these PVCs on the OpenShift Container Platform console by using the following procedure.

The following procedure shares commonality with Expanding Persistent Volume Claims on Red Hat OpenShift Data Foundation.

5.6.1. Resizing PostgreSQL 13 PVCs on Red Hat Quay

Use the following procedure to resize the PostgreSQL 13 and Clair PostgreSQL 13 PVCs.

Prerequisites

- You have cluster admin privileges on OpenShift Container Platform.

Procedure

- Log into the OpenShift Container Platform console and select Storage → Persistent Volume Claims.

- Select the desired PersistentVolumeClaim for either PostgreSQL 13 or Clair PostgreSQL 13, for example, example-registry-quay-postgres-13.

- From the Action menu, select Expand PVC.

- Enter the new size of the Persistent Volume Claim and select Expand.

After a few minutes, the expanded size should reflect in the PVC’s Capacity field.
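As an alternative to the console, the same expansion can in principle be requested from the command line by patching the storage request of the PVC. The following is a minimal sketch and not part of the documented procedure: the function name pvc_resize_patch is hypothetical, the size 70Gi is only an example value, and the patch is accepted only if the underlying storage class allows volume expansion.

```shell
# Hypothetical helper: prints a merge patch that sets the requested
# storage size of a PersistentVolumeClaim to the given value.
pvc_resize_patch() {
  printf '{"spec":{"resources":{"requests":{"storage":"%s"}}}}\n' "$1"
}

# Example usage (commented out because it changes a live cluster):
# oc patch pvc example-registry-quay-postgres-13 -n <namespace> \
#   -p "$(pvc_resize_patch 70Gi)"
```

Printing the patch separately makes it easy to inspect the exact JSON before it is sent to the cluster.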

5.7. Customizing Default Operator Images

In certain circumstances, it might be useful to override the default images used by the Red Hat Quay Operator. This can be done by setting one or more environment variables in the Red Hat Quay Operator ClusterServiceVersion.

Using this mechanism is not supported for production Red Hat Quay environments and is strongly encouraged only for development or testing purposes. There is no guarantee your deployment will work correctly when using non-default images with the Red Hat Quay Operator.

5.7.1. Environment Variables

The following environment variables are used in the Red Hat Quay Operator to override component images:

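| Environment Variable | Component |
|---|---|
| RELATED_IMAGE_COMPONENT_QUAY | |
| RELATED_IMAGE_COMPONENT_CLAIR | |
| RELATED_IMAGE_COMPONENT_POSTGRES | |
| RELATED_IMAGE_COMPONENT_REDIS | |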

Overridden images must be referenced by manifest (@sha256:) and not by tag (:latest).
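To catch a tag reference before it is placed in an override, the image string can be checked for a digest suffix. The following is a minimal sketch: the function name is_digest_reference is hypothetical, and it assumes that a valid manifest reference ends with @sha256: followed by 64 lowercase hexadecimal characters.

```shell
# Hypothetical helper: succeeds if the image reference ends with
# "@sha256:" followed by exactly 64 lowercase hexadecimal characters,
# and fails for tag-based references such as ":latest".
is_digest_reference() {
  printf '%s\n' "$1" | grep -Eq '@sha256:[0-9a-f]{64}$'
}
```

For example, is_digest_reference quay.io/projectquay/quay:latest returns a non-zero exit status, which signals that the reference should not be used as an override.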

5.7.2. Applying overrides to a running Operator

When the Red Hat Quay Operator is installed in a cluster through the Operator Lifecycle Manager (OLM), the managed component container images can be easily overridden by modifying the ClusterServiceVersion object.

Use the following procedure to apply overrides to a running Red Hat Quay Operator.

Procedure

The ClusterServiceVersion object is Operator Lifecycle Manager's representation of a running Operator in the cluster. Find the Red Hat Quay Operator's ClusterServiceVersion by using a Kubernetes UI or the kubectl/oc CLI tool. For example:

$ oc get clusterserviceversions -n <your-namespace>

Using the UI, oc edit, or another method, modify the Red Hat Quay ClusterServiceVersion to include the environment variables outlined above to point to the override images:

JSONPath: spec.install.spec.deployments[0].spec.template.spec.containers[0].env

    - name: RELATED_IMAGE_COMPONENT_QUAY
      value: quay.io/projectquay/quay@sha256:c35f5af964431673f4ff5c9e90bdf45f19e38b8742b5903d41c10cc7f6339a6d
    - name: RELATED_IMAGE_COMPONENT_CLAIR
      value: quay.io/projectquay/clair@sha256:70c99feceb4c0973540d22e740659cd8d616775d3ad1c1698ddf71d0221f3ce6
    - name: RELATED_IMAGE_COMPONENT_POSTGRES
      value: centos/postgresql-10-centos7@sha256:de1560cb35e5ec643e7b3a772ebaac8e3a7a2a8e8271d9e91ff023539b4dfb33
    - name: RELATED_IMAGE_COMPONENT_REDIS
      value: centos/redis-32-centos7@sha256:06dbb609484330ec6be6090109f1fa16e936afcf975d1cbc5fff3e6c7cae7542

This is done at the Operator level, so every QuayRegistry will be deployed using these same overrides.
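If you prefer a scripted change over oc edit, one possible approach is a JSON Patch that appends an entry to the env list at the JSONPath given above. The following is a minimal sketch under stated assumptions: the function name csv_env_patch is hypothetical, the patch assumes that the env list already exists on the first container of the first deployment, and the values passed in must not contain characters that need JSON escaping.

```shell
# Hypothetical helper: prints a JSON Patch document that appends one
# name/value pair to the env list of the first container in the first
# deployment of a ClusterServiceVersion.
csv_env_patch() {
  printf '[{"op":"add","path":"/spec/install/spec/deployments/0/spec/template/spec/containers/0/env/-","value":{"name":"%s","value":"%s"}}]\n' "$1" "$2"
}

# Example usage (commented out because it changes a live cluster):
# oc patch clusterserviceversion <csv-name> -n <your-namespace> --type=json \
#   -p "$(csv_env_patch RELATED_IMAGE_COMPONENT_QUAY <image>@sha256:<digest>)"
```

Because the patch appends rather than replaces, running it twice for the same variable would add a duplicate entry, so review the resulting ClusterServiceVersion afterwards.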

5.8. AWS S3 CloudFront

Use the following procedure if you are using AWS S3 CloudFront for your backend registry storage.

Procedure

Enter the following command to specify the registry key:

$ oc create secret generic --from-file config.yaml=./config_awss3cloudfront.yaml --from-file default-cloudfront-signing-key.pem=./default-cloudfront-signing-key.pem test-config-bundle