Chapter 2. Upgrading the Red Hat Quay Operator Overview

The Red Hat Quay Operator follows a synchronized versioning scheme, which means that each version of the Operator is tied to the version of Red Hat Quay and the components that it manages. There is no field on the QuayRegistry custom resource which sets the version of Red Hat Quay to deploy; the Operator can only deploy a single version of all components. This scheme was chosen to ensure that all components work well together and to reduce the complexity of the Operator needing to know how to manage the lifecycles of many different versions of Red Hat Quay on Kubernetes.

2.1. Operator Lifecycle Manager

The Red Hat Quay Operator should be installed and upgraded using the Operator Lifecycle Manager (OLM). When creating a Subscription with the default approvalStrategy: Automatic, OLM will automatically upgrade the Red Hat Quay Operator whenever a new version becomes available.

When the Red Hat Quay Operator is installed by Operator Lifecycle Manager, it might be configured to support automatic or manual upgrades. This option is shown on the Operator Hub page for the Red Hat Quay Operator during installation. It can also be found in the Red Hat Quay Operator Subscription object by the approvalStrategy field. Choosing Automatic means that your Red Hat Quay Operator will automatically be upgraded whenever a new Operator version is released. If this is not desirable, then the Manual approval strategy should be selected.
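As a minimal sketch, a Subscription that requires manual approval might look like the following. In the OLM Subscription API, the approval strategy is stored in the spec.installPlanApproval field. The Subscription name, namespace, channel, and catalog source shown here are assumptions for illustration and should be replaced with the values from your own cluster.

```yaml
apiVersion: operators.coreos.com/v1alpha1
kind: Subscription
metadata:
  name: quay-operator              # assumed Subscription name
  namespace: openshift-operators   # assumed namespace
spec:
  name: quay-operator              # assumed package name
  channel: stable-3.9              # assumed update channel
  source: redhat-operators         # assumed catalog source
  sourceNamespace: openshift-marketplace
  installPlanApproval: Manual      # set to Automatic to let OLM upgrade without approval
```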

2.2. Upgrading the Quay Operator

The standard approach for upgrading installed Operators on OpenShift Container Platform is documented at Upgrading installed Operators.

In general, Red Hat Quay supports upgrades from a prior (N-1) minor version only. For example, upgrading directly from Red Hat Quay 3.0.5 to the latest version of 3.5 is not supported. Instead, users would have to upgrade as follows:

- 3.0.5 → 3.1.3

- 3.1.3 → 3.2.2

- 3.2.2 → 3.3.4

- 3.3.4 → 3.4.z

- 3.4.z → 3.5.z

This is required to ensure that any necessary database migrations are done correctly and in the right order during the upgrade.

In some cases, Red Hat Quay supports direct, single-step upgrades from prior (N-2, N-3) minor versions. This simplifies the upgrade procedure for customers on older releases. The following upgrade paths are supported:

- 3.3.z → 3.6.z

- 3.4.z → 3.6.z

- 3.4.z → 3.7.z

- 3.5.z → 3.7.z

- 3.7.z → 3.8.z

- 3.6.z → 3.9.z

- 3.7.z → 3.9.z

- 3.8.z → 3.9.z

For users on standalone deployments of Red Hat Quay wanting to upgrade to 3.9, see the Standalone upgrade guide.

2.2.1. Upgrading Quay

To update Red Hat Quay from one minor version to the next, for example, 3.4 → 3.5, change the update channel for the Red Hat Quay Operator.

For z stream upgrades, for example, from 3.4.2 to a later 3.4.z release, the procedure depends on the approvalStrategy as outlined above. If the approval strategy is set to Automatic, the Quay Operator upgrades automatically to the newest z stream. This results in automatic, rolling Quay updates to newer z streams with little to no downtime. Otherwise, the update must be manually approved before installation can begin.

2.2.2. Updating Red Hat Quay from 3.8 to 3.9

If your Red Hat Quay deployment is upgrading from one y-stream to the next, for example, from 3.8.10 to 3.9, do not switch the upgrade channel from stable-3.8 to stable-3.9 while that upgrade is in progress. Changing the upgrade channel in the middle of a y-stream upgrade prevents Red Hat Quay from upgrading to 3.9. This is a known issue and will be fixed in a future version of Red Hat Quay.

When updating Red Hat Quay 3.8 to 3.9, the existing PostgreSQL databases for Red Hat Quay and Clair are upgraded from version 10 to version 13.

- This upgrade is irreversible. It is highly recommended that you upgrade to PostgreSQL 13. PostgreSQL 10 had its final release on November 10, 2022 and is no longer supported. For more information, see the PostgreSQL Versioning Policy.

- By default, Red Hat Quay is configured to remove old persistent volume claims (PVCs) from PostgreSQL 10. To disable this setting and back up old PVCs, you must set POSTGRES_UPGRADE_RETAIN_BACKUP to True in your quay-operator Subscription object.
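The following is a sketch of how the POSTGRES_UPGRADE_RETAIN_BACKUP setting could be passed to the Operator through the spec.config.env field of the Subscription, which OLM applies as environment variables on the Operator deployment. The namespace, channel, and catalog source are assumptions and must match your existing Subscription. Environment variable values must be strings, so the value is quoted.

```yaml
apiVersion: operators.coreos.com/v1alpha1
kind: Subscription
metadata:
  name: quay-operator
  namespace: quay-enterprise       # assumed namespace
spec:
  name: quay-operator              # assumed package name
  channel: stable-3.8              # assumed: the channel in use before the upgrade
  source: redhat-operators         # assumed catalog source
  sourceNamespace: openshift-marketplace
  config:
    env:
      - name: POSTGRES_UPGRADE_RETAIN_BACKUP
        value: "true"              # keep the old PostgreSQL 10 PVCs after the upgrade
```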

Prerequisites

- You have installed Red Hat Quay 3.8 on OpenShift Container Platform.

- You have 100 GB of free, additional storage.

During the upgrade process, additional persistent volume claims (PVCs) are provisioned to store the migrated data. This helps prevent a destructive operation on user data. The upgrade process provisions a 50 GB PVC for the Red Hat Quay database upgrade and another 50 GB PVC for the Clair database upgrade.

Procedure

- Optional. Back up your old PVCs from PostgreSQL 10 by setting POSTGRES_UPGRADE_RETAIN_BACKUP to True in your quay-operator Subscription object.

- In the OpenShift Container Platform Web Console, navigate to Operators → Installed Operators.

- Click on the Red Hat Quay Operator.

- Navigate to the Subscription tab.

- Under Subscription details click Update channel.

- Select stable-3.9 and save the changes.

- Check the progress of the new installation under Upgrade status. Wait until the upgrade status changes to 1 installed before proceeding.

- In your OpenShift Container Platform cluster, navigate to Workloads → Pods. Existing pods should be terminated, or in the process of being terminated.

- Wait for the following pods, which are responsible for upgrading the database and alembic migration of existing data, to spin up: clair-postgres-upgrade, quay-postgres-upgrade, and quay-app-upgrade.

- After the clair-postgres-upgrade, quay-postgres-upgrade, and quay-app-upgrade pods are marked as Completed, the remaining pods for your Red Hat Quay deployment spin up. This takes approximately ten minutes.

- Verify that the quay-database and clair-postgres pods now use the postgresql-13 image.

- After the quay-app pod is marked as Running, you can reach your Red Hat Quay registry.

2.2.3. Upgrading directly from 3.3.z or 3.4.z to 3.6

The following section provides important information when upgrading from Red Hat Quay 3.3.z or 3.4.z to 3.6.

2.2.3.1. Upgrading with edge routing enabled

- Previously, when running a 3.3.z version of Red Hat Quay with edge routing enabled, users were unable to upgrade to 3.4.z versions of Red Hat Quay. This has been resolved with the release of Red Hat Quay 3.6.

- When upgrading from 3.3.z to 3.6, if tls.termination is set to none in your Red Hat Quay 3.3.z deployment, it will change to HTTPS with TLS edge termination and use the default cluster wildcard certificate.
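For orientation, a 3.3.z QuayEcosystem affected by this change might contain a fragment like the one below. This is only a sketch: the text above names the tls.termination setting, while the enclosing spec.quay.externalAccess path, the resource name, and the hostname are assumptions that should be checked against your own QuayEcosystem object.

```yaml
apiVersion: redhatcop.redhat.io/v1alpha1
kind: QuayEcosystem
metadata:
  name: example-quayecosystem      # hypothetical name
spec:
  quay:
    externalAccess:                # assumed location of the tls block
      hostname: quay.example.com   # hypothetical hostname
      tls:
        termination: none          # becomes TLS edge termination after upgrading to 3.6
```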

2.2.3.2. Upgrading with custom SSL/TLS certificate/key pairs without Subject Alternative Names

There is an issue for customers using their own SSL/TLS certificate/key pairs without Subject Alternative Names (SANs) when upgrading from Red Hat Quay 3.3.4 to Red Hat Quay 3.6 directly. During the upgrade to Red Hat Quay 3.6, the deployment is blocked, with the error message from the Red Hat Quay Operator pod logs indicating that the Red Hat Quay SSL/TLS certificate must have SANs.

If possible, you should regenerate your SSL/TLS certificates with the correct hostname in the SANs. A possible workaround involves defining an environment variable in the quay-app, quay-upgrade and quay-config-editor pods after upgrade to enable CommonName matching:

GODEBUG=x509ignoreCN=0

The GODEBUG=x509ignoreCN=0 flag enables the legacy behavior of treating the CommonName field on X.509 certificates as a hostname when no SANs are present. However, this workaround is not recommended, as it will not persist across a redeployment.

2.2.3.3. Configuring Clair v4 when upgrading from 3.3.z or 3.4.z to 3.6 using the Red Hat Quay Operator

To set up Clair v4 on a new Red Hat Quay deployment on OpenShift Container Platform, it is highly recommended to use the Red Hat Quay Operator. By default, the Red Hat Quay Operator will install or upgrade a Clair deployment along with your Red Hat Quay deployment and configure Clair automatically.

For instructions about setting up Clair v4 in a disconnected OpenShift Container Platform cluster, see Setting Up Clair on a Red Hat Quay OpenShift deployment.

2.2.4. Swift configuration when upgrading from 3.3.z to 3.6

When upgrading from Red Hat Quay 3.3.z to 3.6.z, some users might receive the following error: Switch auth v3 requires tenant_id (string) in os_options. As a workaround, you can manually update your DISTRIBUTED_STORAGE_CONFIG to add the os_options and tenant_id parameters.
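A sketch of what the updated storage section of config.yaml might look like is shown below. Only os_options and tenant_id come from the workaround described above. The storage location name, the other SwiftStorage keys, and every value are assumptions or placeholders, so keep whatever your existing configuration already uses and add only the os_options block.

```yaml
DISTRIBUTED_STORAGE_CONFIG:
  swift_storage:                   # hypothetical storage location name
    - SwiftStorage
    - auth_url: https://swift.example.com/v3   # placeholder
      auth_version: 3
      swift_user: <swift_user>                 # placeholder
      swift_password: <swift_password>         # placeholder
      swift_container: <swift_container>       # placeholder
      storage_path: /datastorage/registry      # assumed path
      os_options:
        tenant_id: <tenant_id>                 # added to satisfy the v3 auth requirement
```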

2.2.5. Changing the update channel for the Red Hat Quay Operator

The subscription of an installed Operator specifies an update channel, which is used to track and receive updates for the Operator. To upgrade the Red Hat Quay Operator to start tracking and receiving updates from a newer channel, change the update channel in the Subscription tab for the installed Red Hat Quay Operator. For subscriptions with an Automatic approval strategy, the upgrade begins automatically and can be monitored on the page that lists the Installed Operators.
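Expressed as YAML, changing the update channel amounts to editing one field of the Subscription. The excerpt below is a sketch that shows only the changed field. The Subscription name, namespace, and channel names are assumptions, and the remaining spec fields are left as they already exist in your object.

```yaml
apiVersion: operators.coreos.com/v1alpha1
kind: Subscription
metadata:
  name: quay-operator              # assumed Subscription name
  namespace: openshift-operators   # assumed namespace
spec:
  channel: stable-3.9              # assumed new channel, for example changed from stable-3.8
```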

2.2.6. Manually approving a pending Operator upgrade

If an installed Operator has the approval strategy in its subscription set to Manual, when new updates are released in its current update channel, the update must be manually approved before installation can begin. If the Red Hat Quay Operator has a pending upgrade, this status will be displayed in the list of Installed Operators. In the Subscription tab for the Red Hat Quay Operator, you can preview the install plan and review the resources that are listed as available for upgrade. If satisfied, click Approve and return to the page that lists Installed Operators to monitor the progress of the upgrade.

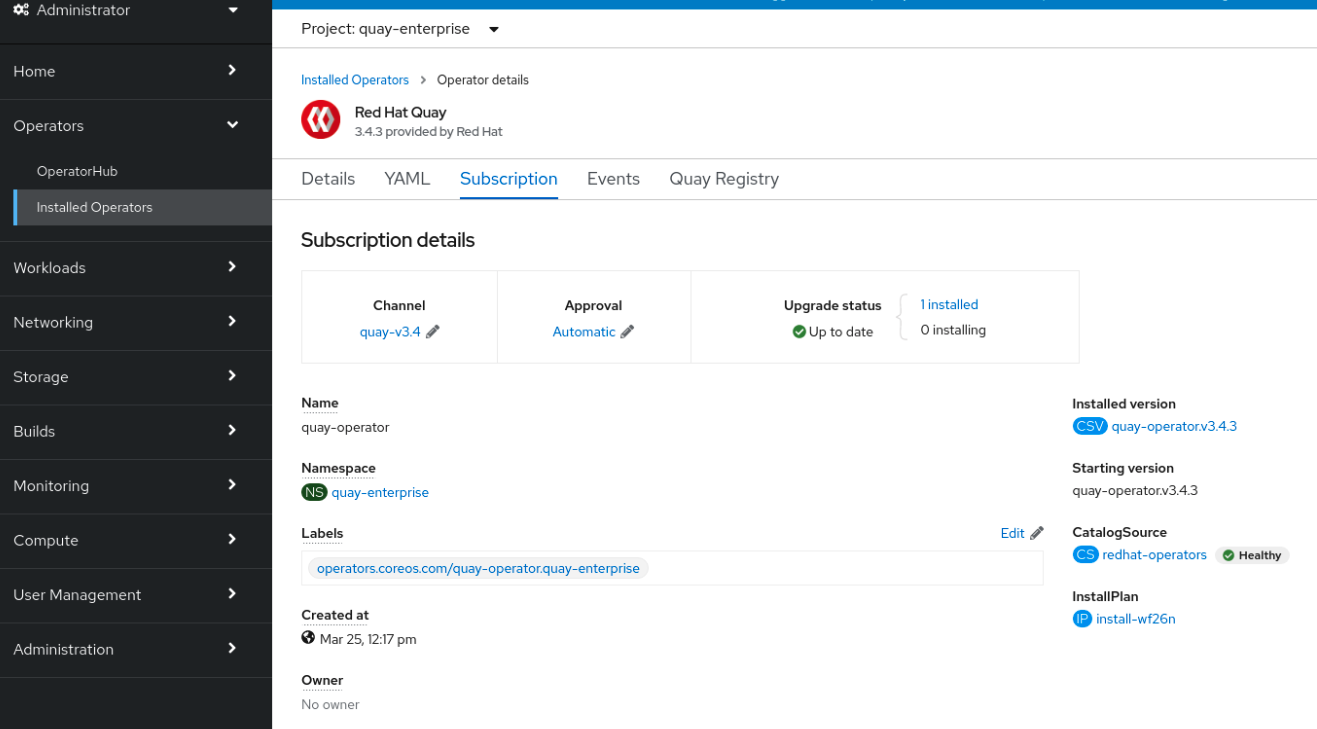

The following image shows the Subscription tab in the UI, including the update Channel, the Approval strategy, the Upgrade status and the InstallPlan:

The list of Installed Operators provides a high-level summary of the current Quay installation.
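Clicking Approve in the console corresponds to setting spec.approved to true on the pending InstallPlan. The excerpt below is a sketch of such an object after approval. The InstallPlan name and namespace are hypothetical, because OLM generates InstallPlan names.

```yaml
apiVersion: operators.coreos.com/v1alpha1
kind: InstallPlan
metadata:
  name: install-abcde              # hypothetical; generated by OLM
  namespace: openshift-operators   # assumed namespace
spec:
  approval: Manual                 # matches the Manual approval strategy of the Subscription
  approved: true                   # false while the upgrade is pending approval
```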

2.3. Upgrading a QuayRegistry

When the Red Hat Quay Operator starts, it immediately looks for any QuayRegistries it can find in the namespace(s) it is configured to watch. When it finds one, the following logic is used:

- If status.currentVersion is unset, reconcile as normal.

- If status.currentVersion equals the Operator version, reconcile as normal.

- If status.currentVersion does not equal the Operator version, check if it can be upgraded. If it can, perform upgrade tasks and set the status.currentVersion to the Operator's version once complete. If it cannot be upgraded, return an error and leave the QuayRegistry and its deployed Kubernetes objects alone.
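To see which branch of this logic applies, you can inspect the status block of the QuayRegistry. The excerpt below is a sketch of what that block might contain. The registry name, namespace, version, and endpoint are hypothetical values.

```yaml
apiVersion: quay.redhat.com/v1
kind: QuayRegistry
metadata:
  name: example-registry           # hypothetical name
  namespace: quay-enterprise       # assumed namespace
status:
  currentVersion: 3.8.10           # hypothetical; compared against the Operator version
  registryEndpoint: https://example-registry.apps.example.com   # hypothetical endpoint
```

In this hypothetical case, a 3.9 Operator would find that status.currentVersion differs from its own version and would check whether the registry can be upgraded.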

2.4. Upgrading a QuayEcosystem

Upgrades are supported from previous versions of the Operator which used the QuayEcosystem API for a limited set of configurations. To ensure that migrations do not happen unexpectedly, a special label needs to be applied to the QuayEcosystem for it to be migrated. A new QuayRegistry will be created for the Operator to manage, but the old QuayEcosystem will remain until manually deleted to ensure that you can roll back and still access Quay in case anything goes wrong. To migrate an existing QuayEcosystem to a new QuayRegistry, use the following procedure.

Procedure

- Add "quay-operator/migrate": "true" to the metadata.labels of the QuayEcosystem.

    $ oc edit quayecosystem <quayecosystemname>

    metadata:
      labels:
        quay-operator/migrate: "true"

- Wait for a QuayRegistry to be created with the same metadata.name as your QuayEcosystem. The QuayEcosystem will be marked with the label "quay-operator/migration-complete": "true".

- After the status.registryEndpoint of the new QuayRegistry is set, access Red Hat Quay and confirm that all data and settings were migrated successfully.

- If everything works correctly, you can delete the QuayEcosystem and Kubernetes garbage collection will clean up all old resources.

2.4.1. Reverting QuayEcosystem Upgrade

If something goes wrong during the automatic upgrade from QuayEcosystem to QuayRegistry, follow these steps to revert back to using the QuayEcosystem:

Procedure

- Delete the QuayRegistry using either the UI or kubectl:

    $ kubectl delete -n <namespace> quayregistry <quayecosystem-name>

- If external access was provided using a Route, change the Route to point back to the original Service using the UI or kubectl.

If your QuayEcosystem was managing the PostgreSQL database, the upgrade process will migrate your data to a new PostgreSQL database managed by the upgraded Operator. Your old database will not be changed or removed but Red Hat Quay will no longer use it once the migration is complete. If there are issues during the data migration, the upgrade process will exit and it is recommended that you continue with your database as an unmanaged component.

2.4.2. Supported QuayEcosystem Configurations for Upgrades

The Red Hat Quay Operator reports errors in its logs and in status.conditions if migrating a QuayEcosystem component fails or is unsupported. All unmanaged components should migrate successfully because no Kubernetes resources need to be adopted and all the necessary values are already provided in Red Hat Quay’s config.yaml file.

Database

Ephemeral database not supported (volumeSize field must be set).

Redis

Nothing special needed.

External Access

Only passthrough Route access is supported for automatic migration. Manual migration required for other methods.

- LoadBalancer without custom hostname: After the QuayEcosystem is marked with label "quay-operator/migration-complete": "true", delete the metadata.ownerReferences field from the existing Service before deleting the QuayEcosystem to prevent Kubernetes from garbage collecting the Service and removing the load balancer. A new Service will be created with metadata.name format <QuayEcosystem-name>-quay-app. Edit the spec.selector of the existing Service to match the spec.selector of the new Service so traffic to the old load balancer endpoint will now be directed to the new pods. You are now responsible for the old Service; the Quay Operator will not manage it.

- LoadBalancer/NodePort/Ingress with custom hostname: A new Service of type LoadBalancer will be created with metadata.name format <QuayEcosystem-name>-quay-app. Change your DNS settings to point to the status.loadBalancer endpoint provided by the new Service.

Clair

Nothing special needed.

Object Storage

QuayEcosystem did not have a managed object storage component, so object storage will always be marked as unmanaged. Local storage is not supported.

Repository Mirroring

Nothing special needed.