Red Hat Quay Operator features

Advanced Red Hat Quay Operator features

Abstract

Preface

To configure and manage advanced capabilities in Red Hat Quay on OpenShift Container Platform, you can use this guide. It covers features such as vulnerability scanning with Clair, geo-replication, backup and restore operations, FIPS compliance, monitoring and alerting, and custom SSL certificates.

Chapter 1. Federal Information Processing Standard (FIPS) readiness and compliance

The Federal Information Processing Standard (FIPS), developed by the National Institute of Standards and Technology (NIST), is highly regarded for securing and encrypting sensitive data, notably in highly regulated areas such as banking, healthcare, and the public sector. Red Hat Enterprise Linux (RHEL) and OpenShift Container Platform support FIPS by providing a FIPS mode, in which the system only allows usage of specific FIPS-validated cryptographic modules like openssl. This ensures FIPS compliance.

1.1. Enabling FIPS compliance

To enable FIPS compliance for your Red Hat Quay deployment, you can set the FEATURE_FIPS configuration field to True in your config.yaml file. This ensures that Red Hat Quay uses only FIPS-validated cryptographic modules for securing sensitive data.

Prerequisites

- If you are running a standalone deployment of Red Hat Quay, your Red Hat Enterprise Linux (RHEL) deployment is version 8 or later and FIPS-enabled.

- If you are deploying Red Hat Quay on OpenShift Container Platform, OpenShift Container Platform is version 4.10 or later.

- Your Red Hat Quay version is 3.5.0 or later.

If you are using the Red Hat Quay on OpenShift Container Platform on an IBM Power or IBM Z cluster:

- OpenShift Container Platform version 4.14 or later is required

- Red Hat Quay version 3.10 or later is required

- You have administrative privileges for your Red Hat Quay deployment.

Procedure

In your Red Hat Quay config.yaml file, set the FEATURE_FIPS configuration field to True. For example:

    # ...
    FEATURE_FIPS: true
    # ...

With FEATURE_FIPS set to True, Red Hat Quay runs using FIPS-compliant hash functions.
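The prerequisites and the procedure above can be spot-checked from a shell. The following is a minimal sketch, not an official tool: it assumes a Linux host that exposes the kernel FIPS flag at /proc/sys/crypto/fips_enabled, and the function names and the FIPS_FLAG_FILE variable are hypothetical helpers introduced only for illustration.

```shell
# Path to the kernel FIPS flag (assumption: a RHEL-like Linux host).
# Overridable so the check can be exercised against a test file.
FIPS_FLAG_FILE="${FIPS_FLAG_FILE:-/proc/sys/crypto/fips_enabled}"

# host_fips_enabled: succeeds when the flag file exists and contains 1.
host_fips_enabled() {
  [ -r "$FIPS_FLAG_FILE" ] && [ "$(cat "$FIPS_FLAG_FILE")" = "1" ]
}

# quay_fips_enabled CONFIG: succeeds when CONFIG sets FEATURE_FIPS to true
# (case-insensitive, so both "true" and "True" match).
quay_fips_enabled() {
  grep -Eiq '^[[:space:]]*FEATURE_FIPS:[[:space:]]*true[[:space:]]*$' "$1"
}
```

For example, `host_fips_enabled && quay_fips_enabled ./config.yaml` returns success only when both the host and the Red Hat Quay configuration report FIPS as enabled.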

Chapter 2. Console monitoring and alerting

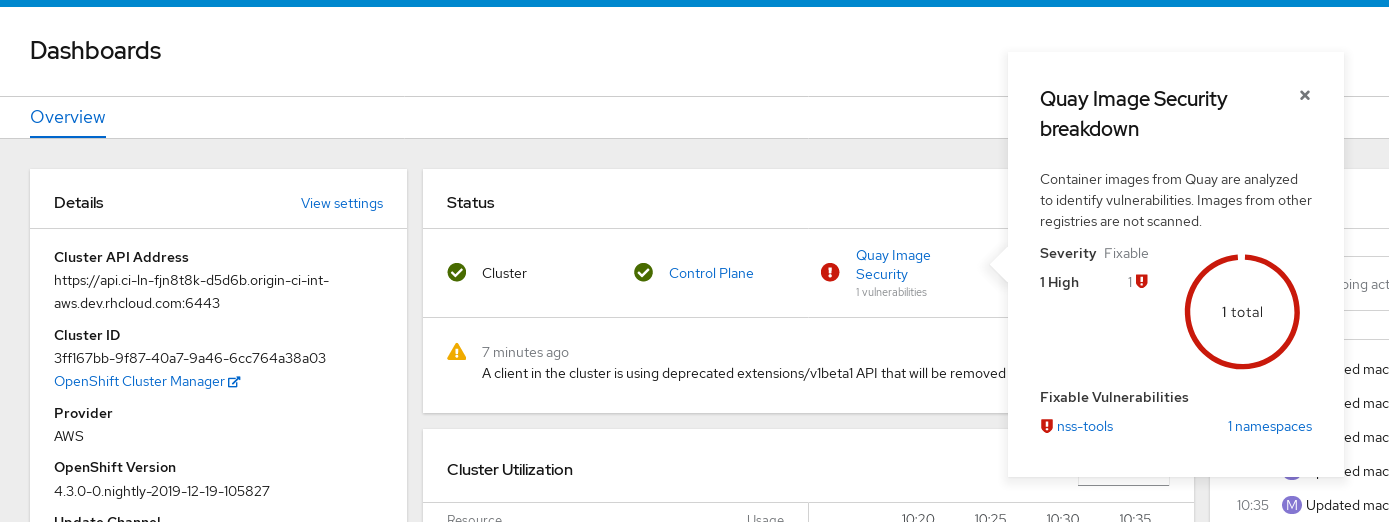

Red Hat Quay provides monitoring and alerting features in the OpenShift Container Platform console for instances deployed by the Operator. You can use Grafana dashboards, individual metrics, and alerts to monitor registry performance and receive notifications when Quay pods restart frequently.

To enable the monitoring features, you must select All namespaces on the cluster as the installation mode when installing the Red Hat Quay Operator.

2.1. Dashboard

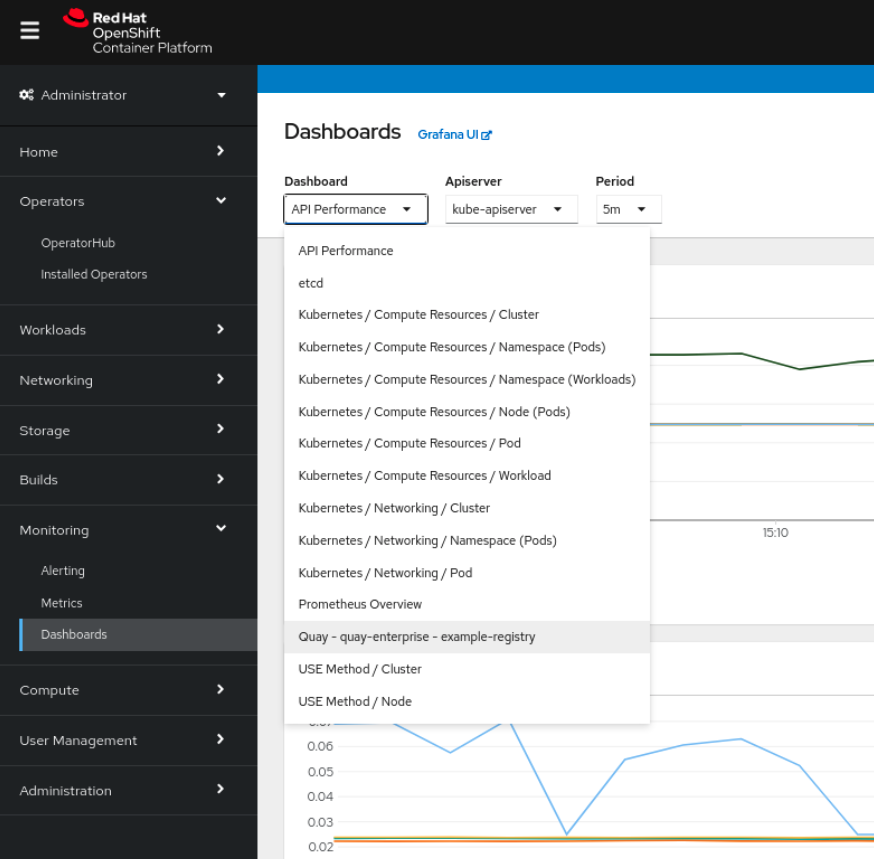

On the OpenShift Container Platform console, click Monitoring → Dashboards and search for the dashboard of your desired Red Hat Quay registry instance:

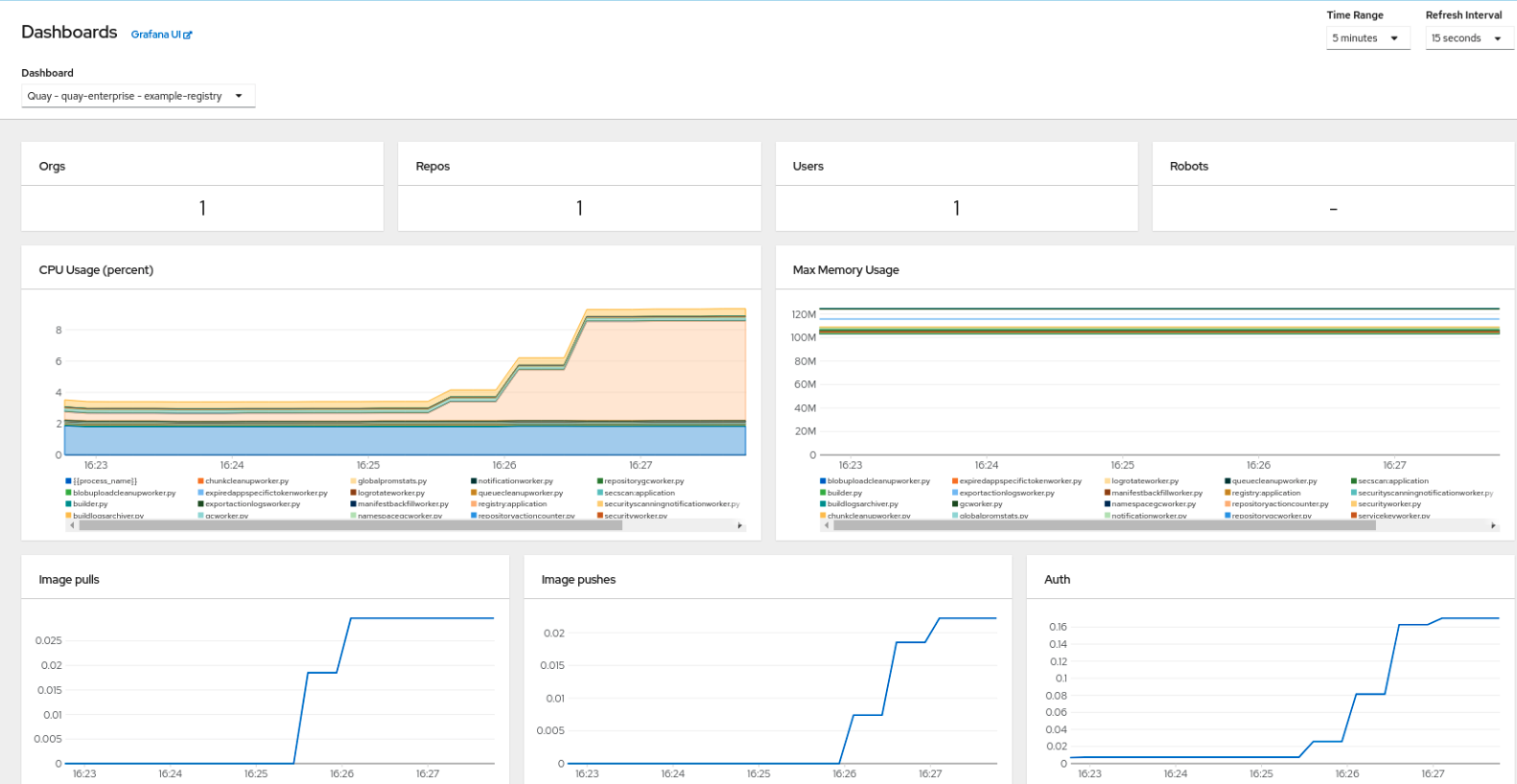

The dashboard shows various statistics including the following:

- The number of Organizations, Repositories, Users, and Robot accounts

- CPU Usage

- Max memory usage

- Rates of pulls and pushes, and authentication requests

- API request rate

- Latencies

2.2. Metrics

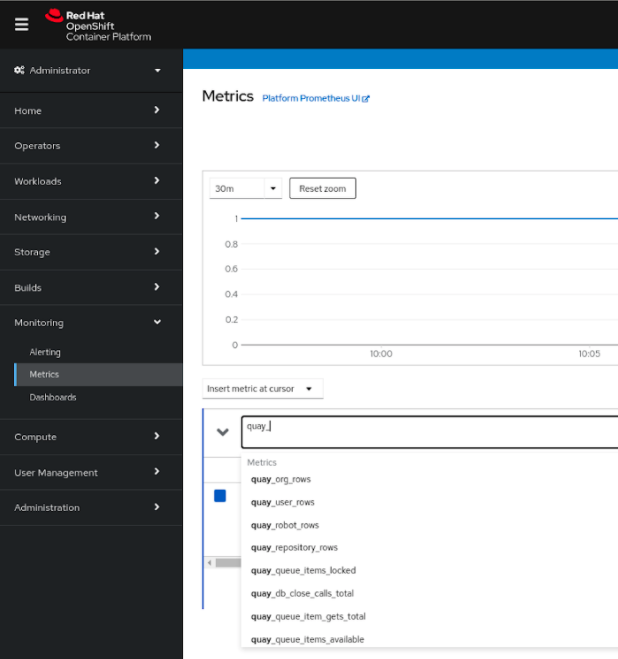

You can see the underlying metrics behind the Red Hat Quay dashboard by accessing Monitoring → Metrics in the UI. In the Expression field, enter the text quay_ to see the list of metrics available:

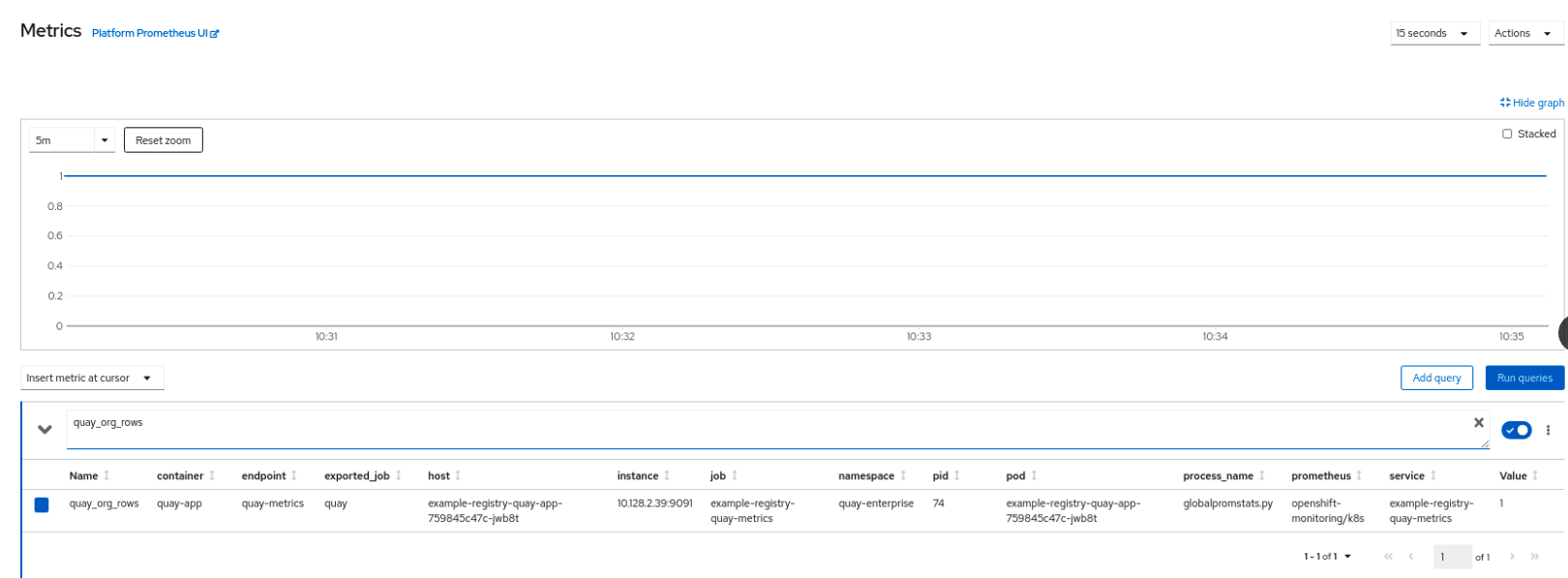

Select a sample metric, for example, quay_org_rows:

This metric shows the number of organizations in the registry. It is also directly surfaced in the dashboard.

2.3. Alerting

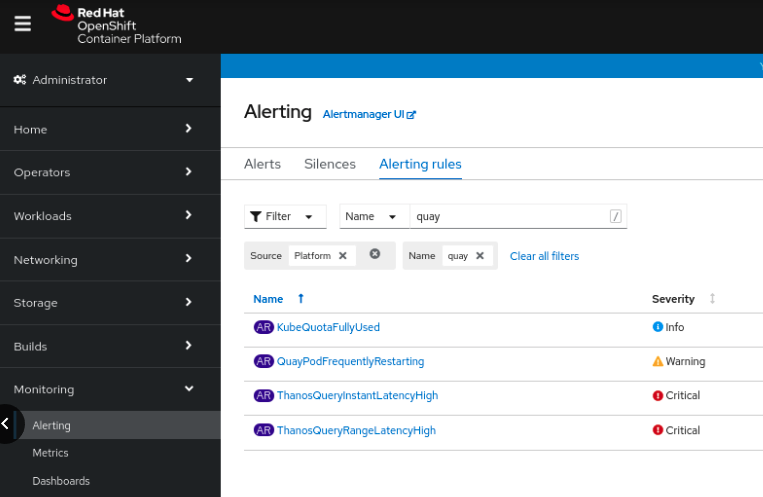

An alert is raised if the Quay pods restart too often. The alert can be configured by accessing the Alerting rules tab from Monitoring → Alerting in the console UI and searching for the Quay-specific alert:

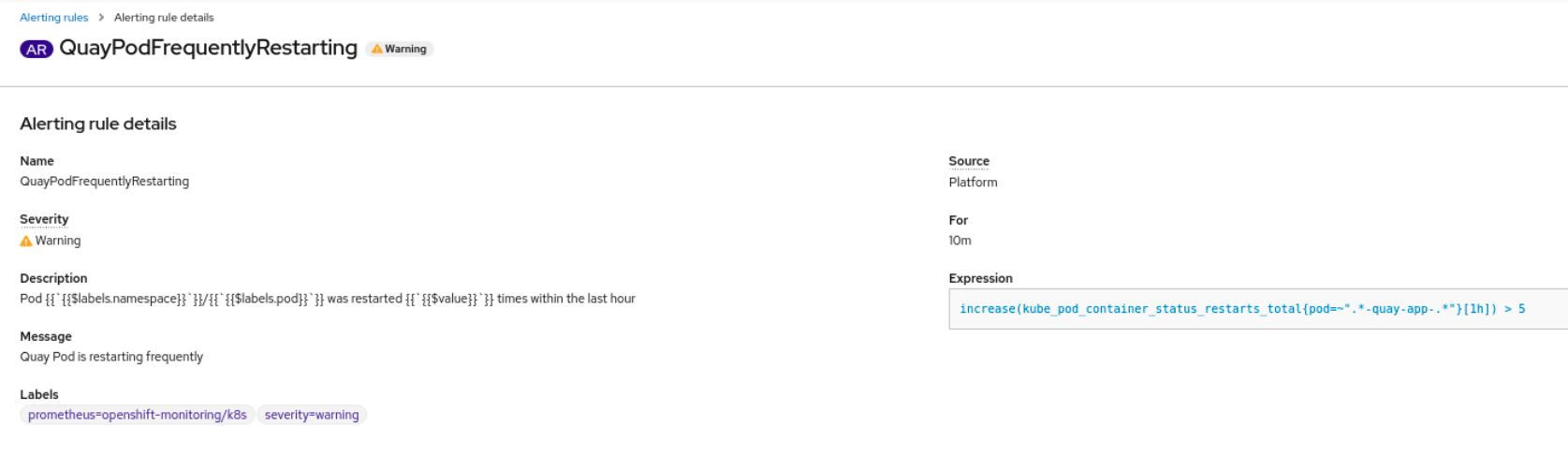

Select the QuayPodFrequentlyRestarting rule detail to configure the alert:

Chapter 3. Clair security scanner

Clair is an open source security scanner that analyzes container images and reports vulnerabilities. You can use Clair to automatically scan images and identify security issues in your container registry.

3.1. Clair vulnerability databases

Clair uses multiple vulnerability databases to identify security issues in container images. These databases provide comprehensive coverage across different operating systems and programming languages.

Clair uses the following vulnerability databases to report issues in your images:

- Ubuntu Oval database

- Debian Security Tracker

- Red Hat Enterprise Linux (RHEL) Oval database

- SUSE Oval database

- Oracle Oval database

- Alpine SecDB database

- VMware Photon OS database

- Amazon Web Services (AWS) UpdateInfo

- Open Source Vulnerability (OSV) Database

For information about how Clair does security mapping with the different databases, see Claircore Severity Mapping.

3.1.1. Information about Open Source Vulnerability (OSV) database for Clair

Open Source Vulnerability (OSV) is a vulnerability database and monitoring service that focuses on tracking and managing security vulnerabilities in open source software.

OSV provides a comprehensive and up-to-date database of known security vulnerabilities in open source projects. It covers a wide range of open source software, including libraries, frameworks, and other components that are used in software development. For a full list of included ecosystems, see defined ecosystems.

Clair also reports vulnerability and security information for golang, java, and ruby ecosystems through the Open Source Vulnerability (OSV) database.

By leveraging OSV, developers and organizations can proactively monitor and address security vulnerabilities in open source components that they use, which helps to reduce the risk of security breaches and data compromises in projects.

For more information about OSV, see the OSV website.

3.2. Clair on OpenShift Container Platform

The Red Hat Quay Operator automatically installs and configures Clair when you deploy Red Hat Quay on OpenShift Container Platform. This simplifies setup by eliminating the need for manual Clair configuration.

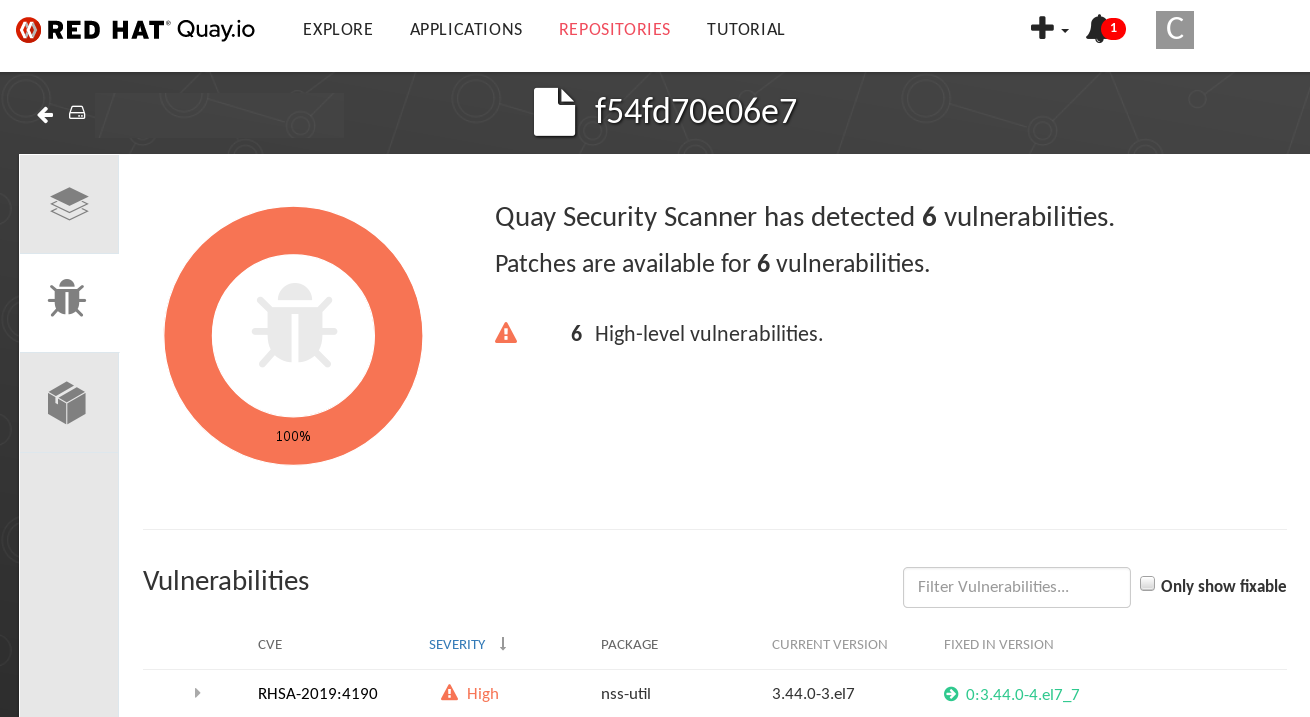

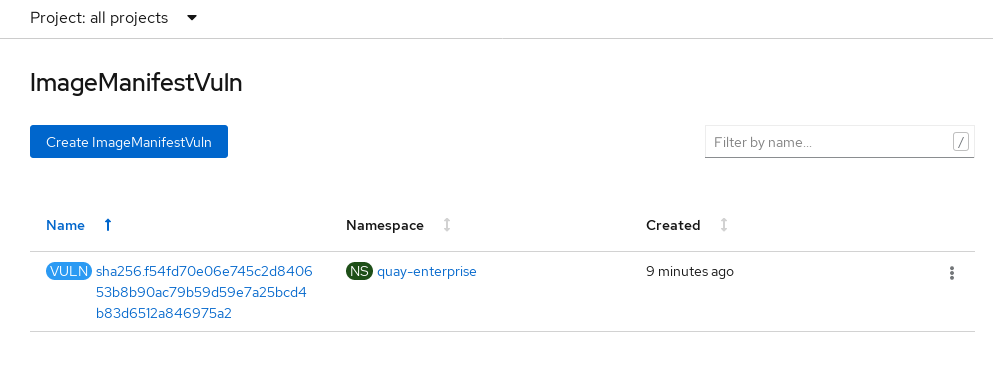

3.3. Testing Clair

To verify that Clair is working correctly on your Red Hat Quay deployment, you can pull, tag, and push a sample image to your registry, then view the vulnerability report in the UI.

Prerequisites

- You have deployed the Clair container image.

Procedure

Pull a sample image by entering the following command:

$ podman pull ubuntu:20.04Tag the image to your registry by entering the following command:

$ sudo podman tag docker.io/library/ubuntu:20.04 <quay-server.example.com>/<user-name>/ubuntu:20.04Push the image to your Red Hat Quay registry by entering the following command:

$ sudo podman push --tls-verify=false quay-server.example.com/quayadmin/ubuntu:20.04- Log in to your Red Hat Quay deployment through the UI.

- Click the repository name, for example, quayadmin/ubuntu.

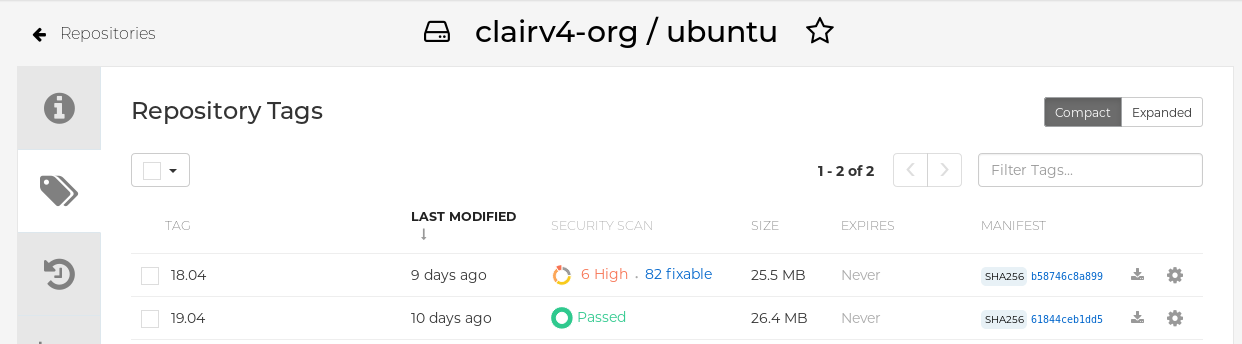

In the navigation pane, click Tags.

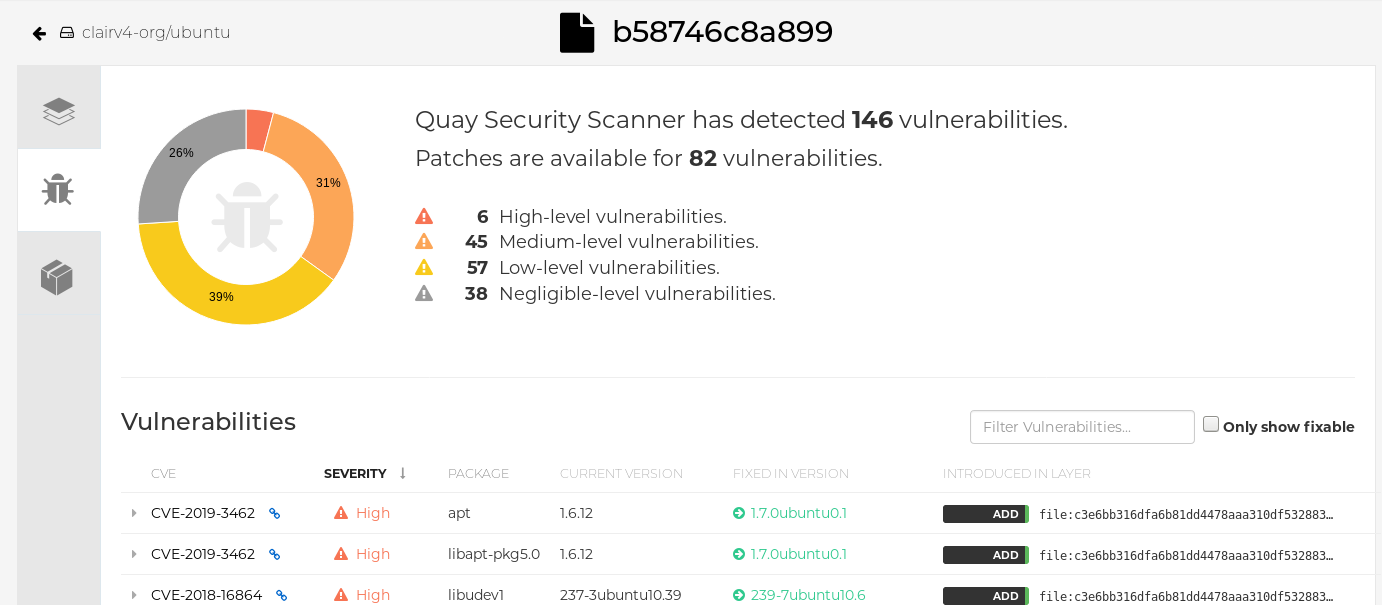

Click the image report, for example, 45 medium, to show a more detailed report:

Note

In some cases, Clair shows duplicate reports on images, for example, ubi8/nodejs-12 or ubi8/nodejs-16. This occurs because vulnerabilities with the same name apply to different packages. This behavior is expected with Clair vulnerability reporting and will not be addressed as a bug.

3.4. Advanced Clair configuration

Advanced Clair configuration lets you customize Clair settings beyond the default installation. You can use these options to adjust scanning behavior, database connections, and other advanced features to meet specific security and performance requirements.

3.4.1. Unmanaged Clair configuration

Unmanaged Clair configuration lets you run a custom Clair setup or use an external Clair database with the Red Hat Quay Operator. You can use this configuration for geo-replicated environments where multiple Operator instances share the same database, or when you need a highly available database outside your cluster.

3.4.1.1. Running a custom Clair configuration with an unmanaged Clair database

To run a custom Clair configuration with an unmanaged Clair database, you can set the clairpostgres component to unmanaged in your QuayRegistry custom resource. This lets you use an external database for geo-replicated environments or highly available setups outside your cluster.

You must not use the same externally managed PostgreSQL database for both Red Hat Quay and Clair deployments. Your PostgreSQL database must also not be shared with other workloads, as it might exhaust the natural connection limit on the PostgreSQL side when connection-intensive workloads, like Red Hat Quay or Clair, contend for resources. Additionally, pgBouncer is not supported with Red Hat Quay or Clair, so it is not an option to resolve this issue.

Procedure

In the Quay Operator, set the clairpostgres component of the QuayRegistry custom resource to managed: false:

    apiVersion: quay.redhat.com/v1
    kind: QuayRegistry
    metadata:
      name: quay370
    spec:
      configBundleSecret: config-bundle-secret
      components:
        - kind: objectstorage
          managed: false
        - kind: route
          managed: true
        - kind: tls
          managed: false
        - kind: clairpostgres
          managed: false

3.4.1.2. Configuring a custom Clair database with an unmanaged Clair database

To configure a custom Clair database with SSL/TLS certificates for your Red Hat Quay deployment, you can create a Quay configuration bundle secret that includes the clair-config.yaml file. This lets you use your own external database with secure connections for Clair vulnerability scanning.

The following procedure sets up Clair with SSL/TLS certificates. To view a similar procedure that does not set up Clair with SSL/TLS certificates, see "Configuring a custom Clair database with a managed Clair configuration".

Procedure

- Create a Quay configuration bundle secret that includes the clair-config.yaml by entering the following command:

      $ oc create secret generic \
          --from-file config.yaml=./config.yaml \
          --from-file extra_ca_cert_rds-ca-2019-root.pem=./rds-ca-2019-root.pem \
          --from-file clair-config.yaml=./clair-config.yaml \
          --from-file ssl.cert=./ssl.cert \
          --from-file ssl.key=./ssl.key \
          config-bundle-secret

  Example Clair config.yaml file

      indexer:
        connstring: host=quay-server.example.com port=5432 dbname=quay user=quayrdsdb password=quayrdsdb sslrootcert=/run/certs/rds-ca-2019-root.pem sslmode=verify-ca
        layer_scan_concurrency: 6
        migrations: true
        scanlock_retry: 11
      log_level: debug
      matcher:
        connstring: host=quay-server.example.com port=5432 dbname=quay user=quayrdsdb password=quayrdsdb sslrootcert=/run/certs/rds-ca-2019-root.pem sslmode=verify-ca
        migrations: true
      metrics:
        name: prometheus
      notifier:
        connstring: host=quay-server.example.com port=5432 dbname=quay user=quayrdsdb password=quayrdsdb sslrootcert=/run/certs/rds-ca-2019-root.pem sslmode=verify-ca
        migrations: true

  Note

  - The database certificate is mounted under /run/certs/rds-ca-2019-root.pem on the Clair application pod in the clair-config.yaml. It must be specified when configuring your clair-config.yaml.

  - An example clair-config.yaml can be found at Clair on OpenShift config.

- Add the clair-config.yaml file to your bundle secret, for example:

      apiVersion: v1
      kind: Secret
      metadata:
        name: config-bundle-secret
        namespace: quay-enterprise
      data:
        config.yaml: <base64 encoded Quay config>
        clair-config.yaml: <base64 encoded Clair config>
        extra_ca_cert_<name>: <base64 encoded ca cert>
        ssl.cert: <base64 encoded SSL certificate>
        ssl.key: <base64 encoded SSL private key>

  Note

  When updated, the provided clair-config.yaml file is mounted into the Clair pod. Any fields not provided are automatically populated with defaults using the Clair configuration module.

- You can check the status of your Clair pod by clicking the commit in the Build History page, or by running oc get pods -n <namespace>. For example:

      $ oc get pods -n <namespace>

  Example output

      NAME                                               READY   STATUS    RESTARTS   AGE
      f192fe4a-c802-4275-bcce-d2031e635126-9l2b5-25lg2   1/1     Running   0          7s
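The data fields of a Secret manifest like the one in this procedure hold base64-encoded file contents. When you create the secret with oc create secret generic --from-file, the encoding is done for you. If you write the manifest by hand, a value can be produced as in the following minimal sketch. The function name encode_secret_value is a hypothetical helper, and the sketch assumes a base64 utility is available on the workstation.

```shell
# encode_secret_value FILE: prints the contents of FILE as a single-line
# base64 string, suitable for a value under "data:" in a Secret manifest.
# Line wrapping added by some base64 implementations is stripped with tr.
encode_secret_value() {
  base64 < "$1" | tr -d '\n'
}
```

For example, `encode_secret_value ./clair-config.yaml` prints the string to paste as the clair-config.yaml value.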

3.4.2. Running a custom Clair configuration with a managed Clair database

Running a custom Clair configuration with a managed Clair database lets you customize Clair settings while the Operator manages the database. You can use this approach to disable specific updater resources or configure Clair for disconnected environments.

- If you are running Red Hat Quay in a disconnected environment, the airgap parameter of your clair-config.yaml must be set to True.

- If you are running Red Hat Quay in a disconnected environment, you should disable all updater components.

3.4.2.1. Setting a Clair database to managed

To have the Red Hat Quay Operator manage your Clair database, you can set the clairpostgres component to managed in your QuayRegistry custom resource. This simplifies deployment and maintenance by letting the Operator handle database provisioning and configuration.

Procedure

In the Quay Operator, set the clairpostgres component of the QuayRegistry custom resource to managed: true:

    apiVersion: quay.redhat.com/v1
    kind: QuayRegistry
    metadata:
      name: quay370
    spec:
      configBundleSecret: config-bundle-secret
      components:
        - kind: objectstorage
          managed: false
        - kind: route
          managed: true
        - kind: tls
          managed: false
        - kind: clairpostgres
          managed: true

3.4.2.2. Configuring a custom Clair database with a managed Clair configuration

To configure a custom Clair database while keeping the Clair configuration managed by the Operator, you can create a Quay configuration bundle secret that includes the clair-config.yaml file. This lets you use your own external database while the Operator continues to manage Clair settings.

Procedure

- Create a Quay configuration bundle secret that includes the clair-config.yaml by entering the following command:

      $ oc create secret generic \
          --from-file config.yaml=./config.yaml \
          --from-file extra_ca_cert_rds-ca-2019-root.pem=./rds-ca-2019-root.pem \
          --from-file clair-config.yaml=./clair-config.yaml \
          config-bundle-secret

  Example Clair config.yaml file

      indexer:
        connstring: host=quay-server.example.com port=5432 dbname=quay user=quayrdsdb password=quayrdsdb sslmode=disable
        layer_scan_concurrency: 6
        migrations: true
        scanlock_retry: 11
      log_level: debug
      matcher:
        connstring: host=quay-server.example.com port=5432 dbname=quay user=quayrdsdb password=quayrdsdb sslmode=disable
        migrations: true
      metrics:
        name: prometheus
      notifier:
        connstring: host=quay-server.example.com port=5432 dbname=quay user=quayrdsdb password=quayrdsdb sslmode=disable
        migrations: true

  Note

  - The database certificate is mounted under /run/certs/rds-ca-2019-root.pem on the Clair application pod in the clair-config.yaml. It must be specified when configuring your clair-config.yaml.

  - An example clair-config.yaml can be found at Clair on OpenShift config.

- Add the clair-config.yaml file to your bundle secret, for example:

      apiVersion: v1
      kind: Secret
      metadata:
        name: config-bundle-secret
        namespace: quay-enterprise
      data:
        config.yaml: <base64 encoded Quay config>
        clair-config.yaml: <base64 encoded Clair config>

  Note

  When updated, the provided clair-config.yaml file is mounted into the Clair pod. Any fields not provided are automatically populated with defaults using the Clair configuration module.

- You can check the status of your Clair pod by clicking the commit in the Build History page, or by running oc get pods -n <namespace>. For example:

      $ oc get pods -n <namespace>

  Example output

      NAME                                               READY   STATUS    RESTARTS   AGE
      f192fe4a-c802-4275-bcce-d2031e635126-9l2b5-25lg2   1/1     Running   0          7s

3.4.3. Clair in disconnected environments

Clair supports disconnected environments where your Red Hat Quay deployment has no direct internet access. You can use the clairctl tool to transfer vulnerability database updates from an open host to your isolated environment, enabling Clair to scan images without internet connectivity.

Clair uses a set of components called updaters to handle the fetching and parsing of data from various vulnerability databases. Updaters are set up by default to pull vulnerability data directly from the internet and work for immediate use.

Currently, Clair enrichment data is CVSS data. Enrichment data is currently unsupported in disconnected environments.

For more information about Clair updaters, see "Clair updaters".

3.4.3.1. Setting up Clair in a disconnected OpenShift Container Platform cluster

To install the clairctl command line utility for disconnected OpenShift Container Platform deployments, you can extract the tool from a running Clair pod and set its execution permissions. This lets you use clairctl to manage vulnerability database updates in disconnected environments.

Procedure

- Install the clairctl program for a Clair deployment in an OpenShift Container Platform cluster by entering the following command:

      $ oc -n quay-enterprise exec example-registry-clair-app-64dd48f866-6ptgw -- cat /usr/bin/clairctl > clairctl

  Note

  Unofficially, the clairctl tool can be downloaded

- Set the permissions of the clairctl file so that it can be executed and run by the user, for example:

      $ chmod u+x ./clairctl

3.4.3.1.1. Retrieving and decoding the Clair configuration secret for Clair deployments on OpenShift Container Platform

To configure Clair for disconnected environments on OpenShift Container Platform, you can retrieve and decode the Clair configuration secret, then update the clair-config.yaml file to set disable_updaters and airgap parameters to True. This prepares Clair to work without direct internet access.

Prerequisites

- You have installed the clairctl command line utility tool.

Procedure

- Enter the following command to retrieve and decode the configuration secret, and then save it to a Clair configuration YAML:

      $ oc get secret -n quay-enterprise example-registry-clair-config-secret -o "jsonpath={$.data['config\.yaml']}" | base64 -d > clair-config.yaml

- Update the clair-config.yaml file so that the disable_updaters and airgap parameters are set to True, for example:

      # ...
      indexer:
        airgap: true
      # ...
      matcher:
        disable_updaters: true
      # ...
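Later procedures list these two settings as prerequisites, so it can help to confirm them before continuing. The following is a minimal sketch that uses plain text matching rather than a YAML parser, so it does not check which section a key belongs to. The function names are hypothetical helpers introduced for illustration.

```shell
# has_true_setting CONFIG KEY: succeeds when CONFIG has a line "KEY: true"
# (case-insensitive, leading indentation allowed).
has_true_setting() {
  grep -Eiq "^[[:space:]]*$2:[[:space:]]*true[[:space:]]*\$" "$1"
}

# clair_airgap_ready CONFIG: reports each required setting and returns
# a non-zero status if either one is not set to true.
clair_airgap_ready() {
  status=0
  for key in airgap disable_updaters; do
    if has_true_setting "$1" "$key"; then
      echo "$key: ok"
    else
      echo "$key: not set to true"
      status=1
    fi
  done
  return "$status"
}
```

For example, `clair_airgap_ready ./clair-config.yaml` prints one line per setting and fails if either is missing.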

3.4.3.1.2. Exporting the updaters bundle from a connected Clair instance

To export vulnerability database updates from a connected Clair instance for use in disconnected environments, you can use the clairctl tool with your configuration file to export the updaters bundle. This creates a bundle file that you can transfer to your isolated environment.

Prerequisites

- You have installed the clairctl command line utility tool.

- You have retrieved and decoded the Clair configuration secret, and saved it to a Clair config.yaml file.

- The disable_updaters and airgap parameters are set to True in your Clair config.yaml file.

Procedure

From a Clair instance that has access to the internet, use the clairctl CLI tool with your configuration file to export the updaters bundle. For example:

    $ ./clairctl --config ./config.yaml export-updaters updates.gz
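Because the exported bundle is moved between environments, you might want to confirm that it arrives unchanged before importing it. This step is not part of the documented procedure. The following is a minimal sketch that assumes the sha256sum utility from GNU coreutils is available on both hosts; the function names are hypothetical helpers.

```shell
# record_checksum BUNDLE: on the connected host, writes BUNDLE.sha256 next
# to the bundle. The checksum file stores only the file name, so it stays
# valid when both files are copied to a different directory.
record_checksum() {
  ( cd "$(dirname "$1")" && sha256sum "$(basename "$1")" > "$(basename "$1").sha256" )
}

# verify_checksum BUNDLE: on the disconnected host, succeeds only when
# BUNDLE still matches the digest recorded in BUNDLE.sha256.
verify_checksum() {
  ( cd "$(dirname "$1")" && sha256sum -c "$(basename "$1").sha256" >/dev/null 2>&1 )
}
```

For example, run `record_checksum updates.gz` after the export, transfer both updates.gz and updates.gz.sha256, and run `verify_checksum updates.gz` before the import.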

3.4.3.1.3. Configuring access to the Clair database in the disconnected OpenShift Container Platform cluster

To configure access to the Clair database in your disconnected OpenShift Container Platform cluster, you can determine the database service, forward the database port, and update your Clair config.yaml file to use localhost. This lets you import the updaters bundle into the database using the clairctl tool.

Prerequisites

- You have installed the clairctl command line utility tool.

- You have retrieved and decoded the Clair configuration secret, and saved it to a Clair config.yaml file.

- The disable_updaters and airgap parameters are set to True in your Clair config.yaml file.

- You have exported the updaters bundle from a Clair instance that has access to the internet.

Procedure

- Determine your Clair database service by using the oc CLI tool, for example:

      $ oc get svc -n quay-enterprise

  Example output

      NAME                              TYPE        CLUSTER-IP      EXTERNAL-IP   PORT(S)           AGE
      example-registry-clair-app        ClusterIP   172.30.224.93   <none>        80/TCP,8089/TCP   4d21h
      example-registry-clair-postgres   ClusterIP   172.30.246.88   <none>        5432/TCP          4d21h
      ...

- Forward the Clair database port so that it is accessible from the local machine. For example:

      $ oc port-forward -n quay-enterprise service/example-registry-clair-postgres 5432:5432

- Update your Clair config.yaml file, for example:

      indexer:
        connstring: host=localhost port=5432 dbname=postgres user=postgres password=postgres sslmode=disable
        layer_scan_concurrency: 5
        migrations: true
        scanlock_retry: 10
        airgap: true
        scanner:
          repo:
            rhel-repository-scanner:
              repo2cpe_mapping_file: /data/repository-to-cpe.json
          package:
            rhel_containerscanner:
              name2repos_mapping_file: /data/container-name-repos-map.json

  where:

  - connstring: Specifies the connection string for the database.

  - rhel-repository-scanner: Specifies the repository scanner configuration.

  - rhel_containerscanner: Specifies the container scanner configuration.
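The host value in each connstring is the part that changes when the database is reached through the forwarded port. The following is a minimal sketch that makes that substitution with sed instead of a manual edit. The function name use_localhost is a hypothetical helper, and the sketch assumes each connection string sits on one line and starts with host=, as in the example in this section.

```shell
# use_localhost CONFIG: prints CONFIG with the host in every
# "connstring: host=..." entry replaced by localhost.
# The input file itself is left unchanged.
use_localhost() {
  sed -E 's/(connstring:[[:space:]]*host=)[^[:space:]]+/\1localhost/' "$1"
}
```

For example, `use_localhost ./clair-config.yaml > ./clair-config.local.yaml` writes a copy for use with the port forward, where the output file name is only an illustration.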

3.4.3.1.4. Importing the updaters bundle into the disconnected OpenShift Container Platform cluster

To import vulnerability database updates into your disconnected OpenShift Container Platform cluster, you can use the clairctl tool with your Clair configuration file to import the updaters bundle. This populates the Clair database with vulnerability data so Clair can scan images without internet access.

Prerequisites

- You have installed the clairctl command line utility tool.

- You have retrieved and decoded the Clair configuration secret, and saved it to a Clair config.yaml file.

- The disable_updaters and airgap parameters are set to True in your Clair config.yaml file.

- You have exported the updaters bundle from a Clair instance that has access to the internet.

- You have transferred the updaters bundle into your disconnected environment.

Procedure

Use the clairctl CLI tool to import the updaters bundle into the Clair database that is deployed by OpenShift Container Platform. For example:

    $ ./clairctl --config ./clair-config.yaml import-updaters updates.gz

3.4.3.2. Setting up a self-managed deployment of Clair for a disconnected OpenShift Container Platform cluster

To install the clairctl command line utility for a self-managed Clair deployment on OpenShift Container Platform, you can copy the tool from a Clair container using podman and set its execution permissions. This lets you use clairctl to manage vulnerability database updates in disconnected environments.

Procedure

- Install the clairctl program for a self-managed Clair deployment by using the podman cp command, for example:

      $ sudo podman cp clairv4:/usr/bin/clairctl ./clairctl

- Set the permissions of the clairctl file so that it can be executed and run by the user, for example:

      $ chmod u+x ./clairctl

3.4.3.2.1. Deploying a self-managed Clair container for disconnected OpenShift Container Platform clusters

To deploy a self-managed Clair container for disconnected OpenShift Container Platform clusters, you can create a configuration directory, configure a Clair configuration file with disable_updaters enabled, and start the container using podman. This lets you run Clair independently in environments without direct internet access.

Prerequisites

- You have installed the clairctl command line utility tool.

Procedure

- Create a folder for your Clair configuration file, for example:

      $ mkdir /etc/clairv4/config/

- Create a Clair configuration file with the disable_updaters parameter set to True, for example:

      # ...
      indexer:
        airgap: true
      # ...
      matcher:
        disable_updaters: true
      # ...

- Start Clair by using the container image, mounting in the configuration from the file you created:

      $ sudo podman run -it --rm --name clairv4 \
          -p 8081:8081 -p 8088:8088 \
          -e CLAIR_CONF=/clair/config.yaml \
          -e CLAIR_MODE=combo \
          -v /etc/clairv4/config:/clair:Z \
          registry.redhat.io/quay/clair-rhel9:v3.17.0

3.4.3.2.2. Exporting the updaters bundle from a connected Clair instance

To export vulnerability database updates from a connected self-managed Clair instance for use in disconnected environments, you can use the clairctl tool with your configuration file to export the updaters bundle. This creates a bundle file that you can transfer to your isolated environment.

Prerequisites

- You have installed the clairctl command line utility tool.

- You have deployed Clair.

- The disable_updaters and airgap parameters are set to True in your Clair config.yaml file.

Procedure

From a Clair instance that has access to the internet, use the clairctl CLI tool with your configuration file to export the updaters bundle. For example:

    $ ./clairctl --config ./config.yaml export-updaters updates.gz

3.4.3.2.3. Configuring access to the Clair database in the disconnected OpenShift Container Platform cluster

To configure access to the Clair database in your disconnected OpenShift Container Platform cluster for a self-managed deployment, you can determine the database service, forward the database port, and update your Clair config.yaml file to use localhost. This lets you import the updaters bundle into the database using the clairctl tool.

Prerequisites

- You have installed the clairctl command line utility tool.

- You have deployed Clair.

- The disable_updaters and airgap parameters are set to True in your Clair config.yaml file.

- You have exported the updaters bundle from a Clair instance that has access to the internet.

Procedure

- Determine your Clair database service by using the oc CLI tool, for example:

      $ oc get svc -n quay-enterprise

  Example output

      NAME                              TYPE        CLUSTER-IP      EXTERNAL-IP   PORT(S)           AGE
      example-registry-clair-app        ClusterIP   172.30.224.93   <none>        80/TCP,8089/TCP   4d21h
      example-registry-clair-postgres   ClusterIP   172.30.246.88   <none>        5432/TCP          4d21h
      ...

- Forward the Clair database port so that it is accessible from the local machine. For example:

      $ oc port-forward -n quay-enterprise service/example-registry-clair-postgres 5432:5432

- Update your Clair config.yaml file, for example:

      indexer:
        connstring: host=localhost port=5432 dbname=postgres user=postgres password=postgres sslmode=disable
        layer_scan_concurrency: 5
        migrations: true
        scanlock_retry: 10
        airgap: true
        scanner:
          repo:
            rhel-repository-scanner:
              repo2cpe_mapping_file: /data/repository-to-cpe.json
          package:
            rhel_containerscanner:
              name2repos_mapping_file: /data/container-name-repos-map.json

  where:

  - connstring: Specifies the connection string for the database.

  - rhel-repository-scanner: Specifies the repository scanner configuration.

  - rhel_containerscanner: Specifies the container scanner configuration.

3.4.3.2.4. Importing the updaters bundle into the disconnected OpenShift Container Platform cluster

To import vulnerability database updates into your disconnected OpenShift Container Platform cluster for a self-managed deployment, you can use the clairctl tool with your Clair configuration file to import the updaters bundle. This populates the Clair database with vulnerability data so Clair can scan images without internet access.

Prerequisites

- You have installed the clairctl command line utility tool.

- You have deployed Clair.

- The disable_updaters and airgap parameters are set to True in your Clair config.yaml file.

- You have exported the updaters bundle from a Clair instance that has access to the internet.

- You have transferred the updaters bundle into your disconnected environment.

Procedure

Use the clairctl CLI tool to import the updaters bundle into the Clair database that is deployed by OpenShift Container Platform:

    $ ./clairctl --config ./clair-config.yaml import-updaters updates.gz

3.4.4. Common Platform Enumeration mapping in Clair

Clair uses Common Platform Enumeration (CPE) mapping files to map RPM packages to security data for accurate vulnerability scanning of Red Hat Enterprise Linux (RHEL) container images. Understanding how Clair uses these files helps keep your vulnerability reports accurate and comprehensive.

The scanner requires the CPE file to be present and accessible to process RPM packages properly. If these files are missing or inaccessible, RPM packages installed in the container image are skipped during the scanning process.

By default, the Clair indexer includes the repos2cpe and names2repos data files within the Clair container. This allows you to reference local paths such as /data/repository-to-cpe.json without additional external configuration.

While Red Hat Product Security updates CPE files regularly, the versions bundled within the Clair container are only updated during Red Hat Quay releases. This can lead to temporary discrepancies between the latest security data and the versions bundled with your current installation.


3.4.4.1. CPE mapping configuration reference

Common Platform Enumeration (CPE) mapping configuration defines the fields and file paths used by Clair to associate packages with standardized product identifiers.

| CPE Type | Link to JSON mapping file |
|---|---|

Example configuration

    indexer:
      scanner:
        repo:
          rhel-repository-scanner:
            repo2cpe_mapping_file: /data/repository-to-cpe.json
        package:
          rhel_containerscanner:
            name2repos_mapping_file: /data/container-name-repos-map.json

where:

- repo2cpe_mapping_file: Specifies the path to the JSON file mapping Red Hat repositories to CPEs.

- name2repos_mapping_file: Specifies the path to the JSON file mapping container names to repositories.
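Because RPM packages are skipped when a mapping file is missing or inaccessible, it can be useful to confirm that every configured path is readable. The following is a minimal sketch, not part of Clair. It assumes it runs where the configured paths resolve, for example inside the Clair container, and that paths contain no spaces. The function name check_mapping_files is a hypothetical helper.

```shell
# check_mapping_files CONFIG: finds every "*_mapping_file: PATH" entry in
# CONFIG, reports whether each PATH is readable, and returns a non-zero
# status if any of them is not.
check_mapping_files() {
  missing=0
  for path in $(sed -n 's/^[[:space:]]*[A-Za-z0-9_]*_mapping_file:[[:space:]]*//p' "$1"); do
    if [ -r "$path" ]; then
      echo "ok: $path"
    else
      echo "missing: $path"
      missing=1
    fi
  done
  return "$missing"
}
```

For example, `check_mapping_files /clair/config.yaml` lists each mapping file and fails if one cannot be read; the configuration path shown here is only an illustration.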

3.5. Resizing Managed Storage

To expand storage capacity for your Red Hat Quay on OpenShift Container Platform deployment, you can use the OpenShift Container Platform console to resize the PostgreSQL and Clair PostgreSQL persistent volume claims. This lets you increase storage beyond the default 50 GiB allocation when your registry needs more space.

When deploying Red Hat Quay on OpenShift Container Platform, three distinct persistent volume claims (PVCs) are deployed:

- One for the PostgreSQL 15 registry.

- One for the Clair PostgreSQL 15 registry.

- One that uses NooBaa as a backend storage.

The connection between Red Hat Quay and NooBaa is made through the S3 API and the ObjectBucketClaim API in OpenShift Container Platform. Red Hat Quay uses that API group to create a bucket in NooBaa, obtain access keys, and automatically set everything up. On the backend, or NooBaa, side, that bucket is created inside the backing store. As a result, NooBaa PVCs are not mounted or connected to Red Hat Quay pods.

Prerequisites

- You have cluster admin privileges on OpenShift Container Platform.

Procedure

- Log in to the OpenShift Container Platform console and select Storage → Persistent Volume Claims.

- Select the desired PersistentVolumeClaim for either the PostgreSQL or Clair PostgreSQL database, for example, example-registry-quay-postgres-13.

- From the Action menu, select Expand PVC.

- Enter the new size of the Persistent Volume Claim and select Expand.

  After a few minutes, the expanded size should reflect in the PVC’s Capacity field.
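The console steps above change the storage request on the PVC. As an alternative that this guide does not document, the same request can be changed from the command line with a merge patch. The following is a minimal sketch that only builds the patch body. The function name pvc_resize_patch is a hypothetical helper, and the approach assumes the storage class of the PVC allows volume expansion.

```shell
# pvc_resize_patch SIZE: prints a JSON merge patch that sets the storage
# request of a PersistentVolumeClaim to SIZE (for example, 100Gi).
pvc_resize_patch() {
  printf '{"spec":{"resources":{"requests":{"storage":"%s"}}}}\n' "$1"
}

# Usage (requires cluster access, shown as a comment only):
#   oc patch pvc example-registry-quay-postgres-13 -n quay-enterprise \
#     -p "$(pvc_resize_patch 100Gi)"
```

The PVC name and namespace in the usage comment are the example values used elsewhere in this guide; substitute your own.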

3.6. Customizing Default Operator Images

Currently, customizing default Operator images is not supported on IBM Power and IBM Z.

Customizing default Operator images lets you override the default container images used by the Red Hat Quay Operator by setting environment variables in the ClusterServiceVersion object.

Customizing default Operator images is not supported for production Red Hat Quay environments and is only recommended for development or testing purposes. There is no guarantee your deployment will work correctly when using non-default images with the Red Hat Quay Operator.

3.6.1. Environment Variables

The Red Hat Quay Operator uses environment variables to override default container images for components such as base, clair, postgres, and redis. You can set these variables in the ClusterServiceVersion object to customize which images the Operator uses for each component.

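| Environment Variable | Component |
|---|---|
| RELATED_IMAGE_COMPONENT_QUAY | base |
| RELATED_IMAGE_COMPONENT_CLAIR | clair |
| RELATED_IMAGE_COMPONENT_POSTGRES | postgres |
| RELATED_IMAGE_COMPONENT_REDIS | redis |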

Overridden images must be referenced by manifest (@sha256:) and not by tag (:latest).
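The digest requirement can be checked mechanically before an override is applied. The following Python sketch is illustrative only: `is_digest_pinned` is a hypothetical helper, not part of the Operator, and it assumes a SHA-256 digest written as 64 lowercase hexadecimal characters.

```python
import re

# Matches "<repository>@sha256:<64 lowercase hex characters>".
_DIGEST_REF = re.compile(r"^[^@\s]+@sha256:[0-9a-f]{64}$")


def is_digest_pinned(image_ref: str) -> bool:
    """Return True if the image reference is pinned by a sha256 manifest digest."""
    return bool(_DIGEST_REF.match(image_ref))


if __name__ == "__main__":
    candidates = [
        "quay.io/projectquay/quay@sha256:" + "c3" * 32,  # placeholder digest
        "quay.io/projectquay/quay:latest",               # tag only, rejected
    ]
    for ref in candidates:
        print(ref, "->", "ok" if is_digest_pinned(ref) else "not pinned by digest")
```

A check like this only validates the shape of the reference. It does not confirm that the digest exists in the registry.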

3.6.2. Applying overrides to a running Operator

To override container images for a running Red Hat Quay Operator, you can modify the ClusterServiceVersion object to add environment variables that point to your custom images. This applies the overrides at the Operator level, so all QuayRegistry instances use the same custom images.

Procedure

- The ClusterServiceVersion object is Operator Lifecycle Manager's representation of a running Operator in the cluster. Find the Red Hat Quay Operator's ClusterServiceVersion by using a Kubernetes UI or the kubectl/oc CLI tool. For example:

      $ oc get clusterserviceversions -n <namespace>

- Using the UI, oc edit, or another method, modify the ClusterServiceVersion object to include the environment variables outlined above to point to the override images:

  JSONPath: spec.install.spec.deployments[0].spec.template.spec.containers[0].env

      - name: RELATED_IMAGE_COMPONENT_QUAY
        value: quay.io/projectquay/quay@sha256:c35f5af964431673f4ff5c9e90bdf45f19e38b8742b5903d41c10cc7f6339a6d
      - name: RELATED_IMAGE_COMPONENT_CLAIR
        value: quay.io/projectquay/clair@sha256:70c99feceb4c0973540d22e740659cd8d616775d3ad1c1698ddf71d0221f3ce6
      - name: RELATED_IMAGE_COMPONENT_POSTGRES
        value: centos/postgresql-10-centos7@sha256:de1560cb35e5ec643e7b3a772ebaac8e3a7a2a8e8271d9e91ff023539b4dfb33
      - name: RELATED_IMAGE_COMPONENT_REDIS
        value: centos/redis-32-centos7@sha256:06dbb609484330ec6be6090109f1fa16e936afcf975d1cbc5fff3e6c7cae7542
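If you prefer to script the change rather than edit the object by hand, one possible approach is to generate a JSON Patch document for the env list. The sketch below is an assumption-laden illustration: `build_env_patch` is a hypothetical helper, the path is the JSON Pointer form of the JSONPath shown in the procedure, and the patch assumes that the container already has an `env` array to append to.

```python
import json

# JSON Pointer form of the JSONPath given in the procedure.
ENV_PATH = "/spec/install/spec/deployments/0/spec/template/spec/containers/0/env"


def build_env_patch(overrides: dict) -> list:
    """Build JSON Patch operations that append one env entry per override."""
    return [
        {
            "op": "add",
            "path": ENV_PATH + "/-",  # "-" appends to the end of the array
            "value": {"name": name, "value": image},
        }
        for name, image in overrides.items()
    ]


if __name__ == "__main__":
    patch = build_env_patch({
        # Placeholder digest; substitute the digest of your own image.
        "RELATED_IMAGE_COMPONENT_REDIS": "registry.example.com/redis@sha256:" + "0" * 64,
    })
    print(json.dumps(patch))
```

The printed document could then be supplied to `oc patch clusterserviceversion <name> -n <namespace> --type=json -p '<patch>'`. Test this on a non-production cluster first, because image overrides are not supported for production environments.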

3.7. AWS S3 CloudFront

To configure AWS S3 CloudFront for your Red Hat Quay backend registry storage, you can create a secret that includes your config.yaml file and the CloudFront signing key. This enables CloudFront content delivery for your registry storage.

Procedure

- Create a secret that includes your config.yaml file and the CloudFront signing key by entering the following command:

      $ oc create secret generic --from-file config.yaml=./config_awss3cloudfront.yaml --from-file default-cloudfront-signing-key.pem=./default-cloudfront-signing-key.pem test-config-bundle

Chapter 4. Geo-replication

Geo-replication lets multiple geographically distributed Red Hat Quay deployments work as a single registry from the client perspective. This improves push and pull performance in globally distributed setups and provides transparent failover and redirect for clients.

Geo-replication is supported on standalone and Operator-based deployments.

4.1. Geo-replication features

Geo-replication features optimize image push and pull operations by routing pushes to the nearest storage backend and replicating data in the background to other locations. Pulls automatically use the closest available storage engine to maximize performance, with fallback to the source storage if replication is incomplete.

The following are the key features of geo-replication:

- When geo-replication is configured, container image pushes are written to the preferred storage engine for that Red Hat Quay instance. This is typically the nearest storage backend within the region.

- After the initial push, image data is replicated in the background to other storage engines.

- The list of replication locations is configurable, and the locations can use different storage backends.

- An image pull always uses the closest available storage engine to maximize pull performance.

- If replication has not been completed yet, the pull uses the source storage backend instead.
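The pull behavior described in the list above can be modeled in a few lines. This Python sketch is a conceptual model only, not Red Hat Quay's implementation: `choose_pull_location` and its parameters are hypothetical names, and the preference list is assumed to be ordered from closest to farthest.

```python
def choose_pull_location(preference, replicated_to, source):
    """Pick the storage location that serves a pull.

    preference:    storage location names for this instance, closest first
    replicated_to: set of locations that already hold the blob
    source:        location that the blob was originally pushed to
    """
    for location in preference:
        if location in replicated_to:
            return location
    # Replication has not reached any preferred location yet,
    # so fall back to the storage that received the push.
    return source


if __name__ == "__main__":
    eu_preference = ["eustorage", "usstorage"]
    # Right after a push to the US site, only usstorage holds the blob.
    print(choose_pull_location(eu_preference, {"usstorage"}, "usstorage"))
    # Once background replication completes, the EU site pulls locally.
    print(choose_pull_location(eu_preference, {"usstorage", "eustorage"}, "usstorage"))
```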

4.2. Geo-replication requirements and constraints

To run geo-replication reliably in Red Hat Quay, you must meet strict requirements for shared object storage, networking, load balancing, and external health monitoring. Geo-replication does not provide automatic failover, database replication, or storage awareness, so you must design and manage these behaviors outside of Red Hat Quay.

The following are the requirements and constraints for geo-replication:

- In geo-replicated setups, Red Hat Quay requires that every region can read from and write to the object storage of every other region. Object storage must be geographically accessible by all other regions.

- If the object storage system of one geo-replicating site fails, that site's Red Hat Quay deployment must be shut down so that a global load balancer redirects clients to the remaining sites with intact storage systems. Otherwise, clients will experience pull and push failures.

- Red Hat Quay has no internal awareness of the health or availability of the connected object storage system. You must configure a global load balancer (LB) to monitor the health of your distributed system and to route traffic to different sites based on their storage status.

- To check the status of your geo-replication deployment, you must use the /health/endtoend checkpoint, which is used for global health monitoring. You must configure the redirect manually by using the /health/endtoend endpoint. The /health/instance endpoint only checks local instance health.

- If the object storage system of one site becomes unavailable, there is no automatic redirect to the remaining storage system, or systems, of the remaining site, or sites.

- Geo-replication is asynchronous. The permanent loss of a site incurs the loss of the data that has been saved in that site's object storage system but has not yet been replicated to the remaining sites at the time of failure.

- A single database, and therefore all metadata and Red Hat Quay configuration, is shared across all regions.

  Geo-replication does not replicate the database. In the event of an outage, Red Hat Quay with geo-replication enabled will not fail over to another database.

- A single Redis cache is shared across the entire Red Hat Quay setup and needs to be accessible by all Red Hat Quay pods.

- The exact same configuration should be used across all regions, with the exception of the storage backend, which can be configured explicitly by using the QUAY_DISTRIBUTED_STORAGE_PREFERENCE environment variable.

- Geo-replication requires object storage in each region. It does not work with local storage.

- Each region must be able to access every storage engine in each region, which requires a network path.

- Alternatively, the storage proxy option can be used.

- The entire storage backend, for example, all blobs, is replicated. Repository mirroring, by contrast, can be limited to a repository, or an image.

- All Red Hat Quay instances must share the same entrypoint, typically through a load balancer.

- All Red Hat Quay instances must have the same set of superusers, as they are defined inside the common configuration file.

- In geo-replication environments, your Clair configuration can be set to unmanaged. An unmanaged Clair database allows the Red Hat Quay Operator to work in a geo-replicated environment where multiple instances of the Operator must communicate with the same database. For more information, see Advanced Clair configuration.

  If you keep your Clair configuration managed, you must retrieve the configuration file for the Clair instance that is deployed by the Operator. For more information, see Retrieving and decoding the Clair configuration secret for Clair deployments on OpenShift Container Platform.

- Geo-replication requires SSL/TLS certificates and keys. For more information, see Proof of concept deployment using SSL/TLS certificates.

If the above requirements cannot be met, you should instead use two or more distinct Red Hat Quay deployments and take advantage of repository mirroring functions.
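Because Red Hat Quay does not monitor storage health itself, the external health check carries the routing decision. The following Python sketch shows the kind of probe a global load balancer or monitoring job might run against /health/endtoend. It is a minimal illustration under stated assumptions: `site_is_healthy` and `healthy_sites` are hypothetical names, the site URLs are placeholders, and the probe treats only an HTTP 200 response as healthy without inspecting the response body.

```python
import urllib.error
import urllib.request


def site_is_healthy(base_url, timeout=5.0, opener=urllib.request.urlopen):
    """Return True if <base_url>/health/endtoend answers with HTTP 200."""
    url = base_url.rstrip("/") + "/health/endtoend"
    try:
        with opener(url, timeout=timeout) as response:
            return response.status == 200
    except (urllib.error.URLError, OSError):
        # Connection failures, timeouts, and HTTP error responses
        # are all treated as an unhealthy site.
        return False


def healthy_sites(base_urls, **kwargs):
    """Return the subset of sites that should keep receiving traffic."""
    return [url for url in base_urls if site_is_healthy(url, **kwargs)]


if __name__ == "__main__":
    # Placeholder hostnames; replace with the per-site endpoints.
    sites = ["https://quay-us.example.com", "https://quay-eu.example.com"]
    print(healthy_sites(sites))
```

The `opener` parameter exists only so that the probe can be exercised without network access. A real load balancer would normally use its own built-in health check feature with the same endpoint.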

4.2.1. Preparing your OpenShift Container Platform environment for geo-replication

To prepare your OpenShift Container Platform environment for geo-replication, you must deploy shared PostgreSQL and Redis instances, create object storage backends for each cluster, and configure a load balancer. This sets up the infrastructure needed for multiple Red Hat Quay deployments to work as a single registry.

Procedure

- Deploy a PostgreSQL instance for Red Hat Quay.

- Log in to the database by entering the following command:

      psql -U <username> -h <hostname> -p <port> -d <database_name>

- Create a database for Red Hat Quay named quay. For example:

      CREATE DATABASE quay;

- Enable the pg_trgm extension inside the database:

      \c quay;
      CREATE EXTENSION IF NOT EXISTS pg_trgm;

- Deploy a Redis instance:

  Note:
  - Deploying a Redis instance might be unnecessary if your cloud provider has its own service.
  - Deploying a Redis instance is required if you are leveraging Builders.

  - Deploy a VM for Redis.

  - Verify that it is accessible from the clusters where Red Hat Quay is running.

  - Port 6379/TCP must be open.

  - Run Redis inside the instance:

        sudo dnf install -y podman
        podman run -d --name redis -p 6379:6379 redis

- Create two object storage backends, one for each cluster. Ideally, one object storage bucket will be close to the first, or primary, cluster, and the other will run closer to the second, or secondary, cluster.

- Deploy the clusters with the same config bundle, using environment variable overrides to select the appropriate storage backend for an individual cluster.

- Configure a load balancer to provide a single entry point to the clusters.

4.2.2. Configuring geo-replication for Red Hat Quay on OpenShift Container Platform

To configure geo-replication for Red Hat Quay on OpenShift Container Platform, you can create a shared config.yaml file with PostgreSQL, Redis, and storage backend details, create a configBundleSecret, and deploy QuayRegistry resources in each cluster with storage preference overrides. This enables multiple Red Hat Quay deployments to work as a single registry with improved performance across geographic regions.

Prerequisites

- You have prepared your OpenShift Container Platform environment for geo-replication by following the "Preparing your OpenShift Container Platform environment for geo-replication" procedure.

Procedure

- Create a config.yaml file that is shared between clusters. This config.yaml file contains the details for the common PostgreSQL, Redis, and storage backends:

  Geo-replication config.yaml file

      SERVER_HOSTNAME: <georep.quayteam.org or any other name>
      DB_CONNECTION_ARGS:
        autorollback: true
        threadlocals: true
      DB_URI: postgresql://postgres:password@10.19.0.1:5432/quay
      BUILDLOGS_REDIS:
        host: 10.19.0.2
        port: 6379
      USER_EVENTS_REDIS:
        host: 10.19.0.2
        port: 6379
      DATABASE_SECRET_KEY: 0ce4f796-c295-415b-bf9d-b315114704b8
      DISTRIBUTED_STORAGE_CONFIG:
        usstorage:
          - GoogleCloudStorage
          - access_key: GOOGQGPGVMASAAMQABCDEFG
            bucket_name: georep-test-bucket-0
            secret_key: AYWfEaxX/u84XRA2vUX5C987654321
            storage_path: /quaygcp
        eustorage:
          - GoogleCloudStorage
          - access_key: GOOGQGPGVMASAAMQWERTYUIOP
            bucket_name: georep-test-bucket-1
            secret_key: AYWfEaxX/u84XRA2vUX5Cuj12345678
            storage_path: /quaygcp
      DISTRIBUTED_STORAGE_DEFAULT_LOCATIONS:
        - usstorage
        - eustorage
      DISTRIBUTED_STORAGE_PREFERENCE:
        - usstorage
        - eustorage
      FEATURE_STORAGE_REPLICATION: true

  where:

  - SERVER_HOSTNAME must match the hostname of the global load balancer.

- Create the configBundleSecret custom resource (CR) by entering the following command:

      $ oc create secret generic --from-file config.yaml=./config.yaml georep-config-bundle

- In each of the clusters, set the configBundleSecret and use the QUAY_DISTRIBUTED_STORAGE_PREFERENCE environment variable override to configure the appropriate storage for that cluster. For example:

  Note: The config.yaml file between both deployments must match. If making a change to one cluster, it must also be changed in the other.

  US cluster QuayRegistry example

      apiVersion: quay.redhat.com/v1
      kind: QuayRegistry
      metadata:
        name: example-registry
        namespace: quay-enterprise
      spec:
        configBundleSecret: georep-config-bundle
        components:
          - kind: objectstorage
            managed: false
          - kind: route
            managed: true
          - kind: tls
            managed: false
          - kind: postgres
            managed: false
          - kind: clairpostgres
            managed: false
          - kind: redis
            managed: false
          - kind: quay
            managed: true
            overrides:
              env:
              - name: QUAY_DISTRIBUTED_STORAGE_PREFERENCE
                value: usstorage
          - kind: mirror
            managed: true
            overrides:
              env:
              - name: QUAY_DISTRIBUTED_STORAGE_PREFERENCE
                value: usstorage

  Note: Because SSL/TLS is unmanaged, and the route is managed, you must supply the certificates directly in the config bundle. For more information, see Configuring SSL/TLS and Routes.

  European cluster QuayRegistry example

      apiVersion: quay.redhat.com/v1
      kind: QuayRegistry
      metadata:
        name: example-registry
        namespace: quay-enterprise
      spec:
        configBundleSecret: georep-config-bundle
        components:
          - kind: objectstorage
            managed: false
          - kind: route
            managed: true
          - kind: tls
            managed: false
          - kind: postgres
            managed: false
          - kind: clairpostgres
            managed: false
          - kind: redis
            managed: false
          - kind: quay
            managed: true
            overrides:
              env:
              - name: QUAY_DISTRIBUTED_STORAGE_PREFERENCE
                value: eustorage
          - kind: mirror
            managed: true
            overrides:
              env:
              - name: QUAY_DISTRIBUTED_STORAGE_PREFERENCE
                value: eustorage

  Note: Because SSL/TLS is unmanaged, and the route is managed, you must supply the certificates directly in the config bundle. For more information, see Configuring SSL and TLS for Red Hat Quay.
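The two QuayRegistry resources in this procedure differ only in the value of QUAY_DISTRIBUTED_STORAGE_PREFERENCE. One way to keep them from drifting apart is to generate both from a single template. The Python sketch below is illustrative: `quay_registry_manifest` is a hypothetical helper, and its default names are simply the example values used in this procedure.

```python
import json


def quay_registry_manifest(storage_preference,
                           name="example-registry",
                           namespace="quay-enterprise",
                           config_bundle="georep-config-bundle"):
    """Build a QuayRegistry manifest for one cluster of a geo-replicated setup."""

    def with_preference(kind):
        # Both the quay and mirror components receive the storage override.
        return {
            "kind": kind,
            "managed": True,
            "overrides": {
                "env": [{
                    "name": "QUAY_DISTRIBUTED_STORAGE_PREFERENCE",
                    "value": storage_preference,
                }]
            },
        }

    components = [
        {"kind": "objectstorage", "managed": False},
        {"kind": "route", "managed": True},
        {"kind": "tls", "managed": False},
        {"kind": "postgres", "managed": False},
        {"kind": "clairpostgres", "managed": False},
        {"kind": "redis", "managed": False},
        with_preference("quay"),
        with_preference("mirror"),
    ]
    return {
        "apiVersion": "quay.redhat.com/v1",
        "kind": "QuayRegistry",
        "metadata": {"name": name, "namespace": namespace},
        "spec": {"configBundleSecret": config_bundle, "components": components},
    }


if __name__ == "__main__":
    # oc apply accepts JSON manifests as well as YAML.
    for preference in ("usstorage", "eustorage"):
        print(json.dumps(quay_registry_manifest(preference), indent=2))
```

Generating the manifests from one function makes the "same configuration everywhere except the storage preference" constraint explicit in code rather than relying on manual comparison.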

4.2.3. Mixed storage for geo-replication

Geo-replication supports using different storage backends, such as AWS S3 in public cloud and Ceph on-premise, for replication targets. You must grant access to all storage backends from all Red Hat Quay pods and cluster nodes, so use a VPN or token pair with bucket-specific access to meet security requirements.

Because geo-replication supports multiple replication targets, it is recommended to use a VPN or token pair with bucket-specific access to meet security requirements. This results in the public cloud instance of Red Hat Quay having access to on-premise storage, but the network is encrypted, protected, and uses ACLs, thereby meeting security requirements. If you cannot implement these security measures, it might be preferable to deploy two distinct Red Hat Quay registries and to use repository mirroring as an alternative to geo-replication.

4.2.4. Upgrading a geo-replication deployment of Red Hat Quay on OpenShift Container Platform

To upgrade your geo-replicated Red Hat Quay on OpenShift Container Platform deployment, you must stop operations, scale down secondary systems, upgrade the primary system, then upgrade secondary systems. This ensures a safe upgrade process with minimal downtime across your geo-replicated registry.

- When upgrading a geo-replicated Red Hat Quay on OpenShift Container Platform deployment to the next y-stream release (for example, Red Hat Quay 3.16 → Red Hat Quay 3), you must stop operations before upgrading.

- There is intermittent downtime when upgrading from one y-stream release to the next.

- It is highly recommended to back up your Red Hat Quay on OpenShift Container Platform deployment before upgrading.

The following procedure assumes that you are running the Red Hat Quay registry on three or more systems. For this procedure, three systems named System A, System B, and System C are used. System A serves as the primary system in which the Red Hat Quay Operator is deployed.

Procedure

- On System B and System C, scale down your Red Hat Quay registry. This is done by disabling auto scaling and overriding the replica count for Red Hat Quay, mirror workers, and Clair if it is managed. Use the following quayregistry.yaml file as a reference:

      apiVersion: quay.redhat.com/v1
      kind: QuayRegistry
      metadata:
        name: registry
        namespace: ns
      spec:
        components:
          …
          - kind: horizontalpodautoscaler
            managed: false
          - kind: quay
            managed: true
            overrides:
              replicas: 0
          - kind: clair
            managed: true
            overrides:
              replicas: 0
          - kind: mirror
            managed: true
            overrides:
              replicas: 0
          …

  where:

  - managed: false disables auto scaling of Quay, Clair, and Mirroring workers.

  - overrides sets the replica count to 0 for components accessing the database and objectstorage.

  Note: You must keep the Red Hat Quay registry running on System A. Do not update the quayregistry.yaml file on System A.

- Wait for the registry-quay-app, registry-quay-mirror, and registry-clair-app pods to disappear. Enter the following command to check their status:

      oc get pods -n <quay-namespace>

  Example output

      quay-operator.v3.7.1-6f9d859bd-p5ftc           1/1   Running     0             12m
      quayregistry-clair-postgres-7487f5bd86-xnxpr   1/1   Running     1 (12m ago)   12m
      quayregistry-quay-app-upgrade-xq2v6            0/1   Completed   0             12m
      quayregistry-quay-redis-84f888776f-hhgms       1/1   Running     0             12m

- On System A, initiate a Red Hat Quay upgrade to the latest y-stream version. This is a manual process. For more information about upgrading installed Operators, see Upgrading installed Operators. For more information about Red Hat Quay upgrade paths, see Upgrading the Red Hat Quay Operator.

- After the new Red Hat Quay registry is installed, the necessary upgrades on the cluster are automatically completed. Afterwards, new Red Hat Quay pods are started with the latest y-stream version. Additionally, new Quay pods are scheduled and started. Confirm that the update has worked properly by navigating to the Red Hat Quay UI:

  - In the OpenShift console, navigate to Operators → Installed Operators, and click the Registry Endpoint link.

  Important: Do not execute the following step until the Red Hat Quay UI is available. Do not upgrade the Red Hat Quay registry on System B and on System C until the UI is available on System A.

- After confirming that the update has worked properly on System A, initiate the Red Hat Quay upgrade on System B and on System C. The Operator upgrade results in an upgraded Red Hat Quay installation, and the pods are restarted.

  Note: Because the database schema is correct for the new y-stream installation, the new pods on System B and on System C should quickly start.

- After updating, revert the changes made in step 1 of this procedure by removing overrides for the components. For example:

      apiVersion: quay.redhat.com/v1
      kind: QuayRegistry
      metadata:
        name: registry
        namespace: ns
      spec:
        components:
          …
          - kind: horizontalpodautoscaler
            managed: true
          - kind: quay
            managed: true
          - kind: clair
            managed: true
          - kind: mirror
            managed: true
          …

  where:

  - kind: horizontalpodautoscaler: Set this resource to True if the horizontalpodautoscaler resource was set to True before the upgrade procedure, or if you want Red Hat Quay to scale in case of a resource shortage.
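The scale-down in step 1 and its reversal in the last step are mirror-image edits to the same QuayRegistry spec. The following Python sketch expresses both as transformations of a parsed manifest. It is a hedged illustration: `scale_down` and `revert_scale_down` are hypothetical helpers that operate on a plain dictionary, and they do not talk to the cluster.

```python
import copy

# Components that access the database and object storage.
SCALED_COMPONENTS = ("quay", "clair", "mirror")


def scale_down(registry):
    """Return a copy with auto scaling disabled and replicas set to 0."""
    result = copy.deepcopy(registry)
    for component in result["spec"]["components"]:
        if component["kind"] == "horizontalpodautoscaler":
            component["managed"] = False
        elif component["kind"] in SCALED_COMPONENTS and component.get("managed"):
            # Only managed components accept a replica override.
            component.setdefault("overrides", {})["replicas"] = 0
    return result


def revert_scale_down(registry, autoscaling=True):
    """Return a copy with the replica overrides removed again."""
    result = copy.deepcopy(registry)
    for component in result["spec"]["components"]:
        if component["kind"] == "horizontalpodautoscaler":
            component["managed"] = autoscaling
        elif component["kind"] in SCALED_COMPONENTS:
            overrides = component.get("overrides", {})
            overrides.pop("replicas", None)
            if not overrides:
                # Drop the now-empty overrides block entirely.
                component.pop("overrides", None)
    return result


if __name__ == "__main__":
    registry = {"spec": {"components": [
        {"kind": "horizontalpodautoscaler", "managed": True},
        {"kind": "quay", "managed": True},
        {"kind": "clair", "managed": True},
        {"kind": "mirror", "managed": True},
    ]}}
    down = scale_down(registry)
    print(down)
    print(revert_scale_down(down) == registry)
```

Pass `autoscaling=False` to `revert_scale_down` if the horizontalpodautoscaler component was unmanaged before the upgrade.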

4.2.5. Removing a geo-replicated site from your Red Hat Quay on OpenShift Container Platform deployment

To remove a geo-replicated site from your Red Hat Quay on OpenShift Container Platform deployment, you must sync all blobs between sites, remove the storage configuration entry, and use the removelocation utility to permanently delete the site. This action cannot be undone, so ensure that all data is synchronized before proceeding.

Prerequisites

- You are logged into OpenShift Container Platform.

- You have configured Red Hat Quay geo-replication with at least two sites, for example, usstorage and eustorage.

- Each site has its own Organization, Repository, and image tags.

Procedure

- Sync the blobs between all of your defined sites by running the following command:

      $ python -m util.backfillreplication

  Warning: Prior to removing storage engines from your Red Hat Quay config.yaml file, you must ensure that all blobs are synced between all defined sites.

  When running this command, replication jobs are created, which are picked up by the replication worker. If there are blobs that need to be replicated, the script returns the UUIDs of the blobs that will be replicated. If you run this command multiple times and the script returns empty output, it does not mean that the replication process is done; it means that there are no more blobs to be queued for replication. Use appropriate judgement before proceeding, because the time that replication takes depends on the number of blobs detected.

  Alternatively, you could use a third party cloud tool, such as Microsoft Azure, to check the synchronization status.

  This step must be completed before proceeding.

- In your Red Hat Quay config.yaml file for site usstorage, remove the DISTRIBUTED_STORAGE_CONFIG entry for the eustorage site.

- Identify your Red Hat Quay application pods by entering the following command:

      $ oc get pod -n <quay_namespace>

  Example output

      quay390usstorage-quay-app-5779ddc886-2drh2
      quay390eustorage-quay-app-66969cd859-n2ssm

- Open an interactive shell session in the usstorage pod by entering the following command:

      $ oc rsh quay390usstorage-quay-app-5779ddc886-2drh2

- Permanently remove the eustorage site by entering the following command:

  Important: The following action cannot be undone. Use with caution.

      sh-4.4$ python -m util.removelocation eustorage

  Example output

      WARNING: This is a destructive operation. Are you sure you want to remove eustorage from your storage locations? [y/n] y
      Deleted placement 30
      Deleted placement 31
      Deleted placement 32
      Deleted placement 33
      Deleted location eustorage
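The config.yaml edit in this procedure can be rehearsed on a parsed copy of the configuration before touching the real file. The Python sketch below is illustrative only: `remove_storage_location` is a hypothetical helper that works on a dictionary, and the storage values are placeholders. The procedure only states that the DISTRIBUTED_STORAGE_CONFIG entry is removed, so the helper reports, rather than edits, any other list that still names the removed site.

```python
import copy

REFERENCE_KEYS = (
    "DISTRIBUTED_STORAGE_DEFAULT_LOCATIONS",
    "DISTRIBUTED_STORAGE_PREFERENCE",
)


def remove_storage_location(config, location):
    """Return (new_config, dangling) after dropping one storage entry.

    dangling lists the configuration keys that still mention the location,
    so that you can decide how to handle them.
    """
    result = copy.deepcopy(config)
    storage = result.get("DISTRIBUTED_STORAGE_CONFIG", {})
    if location not in storage:
        raise KeyError(f"unknown storage location: {location}")
    if len(storage) == 1:
        raise ValueError("refusing to remove the only storage location")
    del storage[location]
    dangling = [key for key in REFERENCE_KEYS if location in result.get(key, [])]
    return result, dangling


if __name__ == "__main__":
    config = {
        "DISTRIBUTED_STORAGE_CONFIG": {
            "usstorage": ["GoogleCloudStorage", {"bucket_name": "georep-test-bucket-0"}],
            "eustorage": ["GoogleCloudStorage", {"bucket_name": "georep-test-bucket-1"}],
        },
        "DISTRIBUTED_STORAGE_DEFAULT_LOCATIONS": ["usstorage", "eustorage"],
        "DISTRIBUTED_STORAGE_PREFERENCE": ["usstorage", "eustorage"],
    }
    updated, dangling = remove_storage_location(config, "eustorage")
    print(sorted(updated["DISTRIBUTED_STORAGE_CONFIG"]))
    print("still referenced in:", dangling)
```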

Chapter 5. Backing up and restoring Red Hat Quay managed by the Red Hat Quay Operator

To protect Red Hat Quay data and enable recovery from failures, you can back up and restore Red Hat Quay instances managed by the Red Hat Quay Operator on OpenShift Container Platform.

5.1. Enabling read-only mode for Red Hat Quay on OpenShift Container Platform

To maintain service availability during backup and restore operations, you can enable read-only mode for your Red Hat Quay deployment on OpenShift Container Platform. Read-only mode restricts write access to ensure data integrity while keeping the registry online.

When backing up and restoring, you are required to scale down your Red Hat Quay on OpenShift Container Platform deployment. This results in service unavailability during the backup period which, in some cases, might be unacceptable. Enabling read-only mode ensures service availability during the backup and restore procedure for Red Hat Quay on OpenShift Container Platform deployments.

In some cases, a read-only option for Red Hat Quay is not possible since it requires inserting a service key and other manual configuration changes. As an alternative to read-only mode, Red Hat Quay administrators might consider enabling the DISABLE_PUSHES feature. When this field is set to True, users are unable to push images or image tags to the registry when using the CLI. Enabling DISABLE_PUSHES differs from read-only mode because the database is not set as read-only when it is enabled.

This field might be useful in some situations, such as when Red Hat Quay administrators want to calculate their registry's quota and disable image pushing until the calculation has completed. With this method, administrators can avoid putting the whole registry in read-only mode, which affects the database, so that most operations can still be done.

For information about enabling this configuration field, see Miscellaneous configuration fields.

5.1.1. Prerequisites for enabling read-only mode

You must meet the following prerequisites to enable read-only mode for Red Hat Quay on OpenShift Container Platform:

If you are using Red Hat Enterprise Linux (RHEL) 7.x:

- You have enabled the Red Hat Software Collections List (RHSCL).

- You have installed Python 3.6.

- You have downloaded the virtualenv package.

- You have installed the git CLI.

If you are using Red Hat Enterprise Linux (RHEL) 8:

- You have installed Python 3 on your machine.

- You have downloaded the python3-virtualenv package.

- You have installed the git CLI.

- You have cloned the https://github.com/quay/quay.git repository.

- You have installed the oc CLI.

- You have access to the cluster with cluster-admin privileges.

5.1.2. Creating service keys for Red Hat Quay on OpenShift Container Platform

To enable Red Hat Quay to communicate with components and sign completed requests such as image scanning and login, you can create service keys. Access the Quay container pod and run the keypair generation script to create the necessary keys.

Procedure

- Enter the following command to obtain a list of Red Hat Quay pods:

      $ oc get pods -n <namespace>

  Example output

      example-registry-clair-app-7dc7ff5844-4skw5       0/1   Error                    0             70d
      example-registry-clair-app-7dc7ff5844-nvn4f       1/1   Running                  0             31d
      example-registry-clair-app-7dc7ff5844-x4smw       0/1   ContainerStatusUnknown   6 (70d ago)   70d
      example-registry-clair-app-7dc7ff5844-xjnvt       1/1   Running                  0             60d
      example-registry-clair-postgres-547d75759-75c49   1/1   Running                  0             70d
      example-registry-quay-app-76c8f55467-52wjz        1/1   Running                  0             70d
      example-registry-quay-app-76c8f55467-hwz4c        1/1   Running                  0             70d
      example-registry-quay-app-upgrade-57ghs           0/1   Completed                1             70d
      example-registry-quay-database-7c55899f89-hmnm6   1/1   Running                  0             70d
      example-registry-quay-mirror-6cccbd76d-btsnb      1/1   Running                  0             70d
      example-registry-quay-mirror-6cccbd76d-x8g42      1/1   Running                  0             70d
      example-registry-quay-redis-85cbdf96bf-4vk5m      1/1   Running                  0             70d

- Open a remote shell session to the Quay container by entering the following command:

      $ oc rsh example-registry-quay-app-76c8f55467-52wjz

- Create the necessary service keys by entering the following command:

      sh-4.4$ python3 tools/generatekeypair.py quay-readonly

  Example output

      Writing public key to quay-readonly.jwk
      Writing key ID to quay-readonly.kid
      Writing private key to quay-readonly.pem

5.1.3. Adding keys to the PostgreSQL database

To enable read-only mode configuration in Red Hat Quay, you can add service keys to the PostgreSQL database. Use SQL INSERT statements to store the keys and their approval information.

Prerequisites

- You have created the service keys.

Procedure

- Enter the following command to enter your Red Hat Quay database environment:

      $ oc rsh example-registry-quay-database-7c55899f89-hmnm6 psql -U <database_username> -d <database_name>

- Display the approval types and associated notes of the servicekeyapproval table by entering the following command:

      quay=# select * from servicekeyapproval;

  Example output

       id | approver_id |          approval_type           |       approved_date        | notes
      ----+-------------+----------------------------------+----------------------------+-------
        1 |             | ServiceKeyApprovalType.AUTOMATIC | 2024-05-07 03:47:48.181347 |
        2 |             | ServiceKeyApprovalType.AUTOMATIC | 2024-05-07 03:47:55.808087 |
        3 |             | ServiceKeyApprovalType.AUTOMATIC | 2024-05-07 03:49:04.27095  |
        4 |             | ServiceKeyApprovalType.AUTOMATIC | 2024-05-07 03:49:05.46235  |
        5 |           1 | ServiceKeyApprovalType.SUPERUSER | 2024-05-07 04:05:10.296796 |
      ...

- Add the service key to your Red Hat Quay database by entering the following query:

      quay=# INSERT INTO servicekey (name, service, metadata, kid, jwk, created_date, expiration_date) VALUES ('quay-readonly', 'quay', '{}', '{<contents_of_.kid_file>}', '{<contents_of_.jwk_file>}', '{<created_date_of_read-only>}', '{<expiration_date_of_read-only>}');

  Example output

      INSERT 0 1

- Next, add the key approval with the following query. Column names are unquoted identifiers, string values use single quotes, and CURRENT_DATE is an unquoted keyword:

      quay=# INSERT INTO servicekeyapproval (approval_type, approved_date, notes) VALUES ('ServiceKeyApprovalType.SUPERUSER', CURRENT_DATE, '{include_notes_here_on_why_this_is_being_added}');

  Example output

      INSERT 0 1

- Set the approval_id field on the created service key row to the id field from the created service key approval. You can use the following UPDATE statement, which looks up the approval ID with a SELECT subquery:

      UPDATE servicekey SET approval_id = (SELECT id FROM servicekeyapproval WHERE approval_type = 'ServiceKeyApprovalType.SUPERUSER') WHERE name = 'quay-readonly';

  Example output

      UPDATE 1

  The subquery must return exactly one row. If more than one approval row has the SUPERUSER type, as in the example output earlier in this procedure, narrow the WHERE clause, for example by the id of the row that you just inserted.
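The three statements in this procedure depend on each other: the service key row must end up pointing at the approval row that was just created. The following Python sketch rehearses that flow against an in-memory SQLite database as a stand-in for PostgreSQL. Everything here is an assumption for illustration: the table definitions are a reduced, hypothetical subset containing only the columns named in this section, and `add_approved_service_key` and `make_demo_db` are not part of Red Hat Quay. Capturing the new approval ID directly avoids relying on a subquery that might match several rows.

```python
import sqlite3
from datetime import date


def make_demo_db():
    """Create an in-memory database with a reduced, hypothetical schema."""
    conn = sqlite3.connect(":memory:")
    conn.executescript("""
        CREATE TABLE servicekeyapproval (
            id INTEGER PRIMARY KEY, approver_id INTEGER,
            approval_type TEXT, approved_date TEXT, notes TEXT);
        CREATE TABLE servicekey (
            id INTEGER PRIMARY KEY, name TEXT, service TEXT, metadata TEXT,
            kid TEXT, jwk TEXT, created_date TEXT, expiration_date TEXT,
            approval_id INTEGER);
    """)
    return conn


def add_approved_service_key(conn, name, kid, jwk, created, expires, notes):
    """Insert a service key and its approval, then link the two rows."""
    cur = conn.cursor()
    cur.execute(
        "INSERT INTO servicekey (name, service, metadata, kid, jwk,"
        " created_date, expiration_date) VALUES (?, 'quay', '{}', ?, ?, ?, ?)",
        (name, kid, jwk, created, expires),
    )
    cur.execute(
        "INSERT INTO servicekeyapproval (approval_type, approved_date, notes)"
        " VALUES (?, ?, ?)",
        ("ServiceKeyApprovalType.SUPERUSER", date.today().isoformat(), notes),
    )
    approval_id = cur.lastrowid  # id of the approval row inserted just above
    cur.execute(
        "UPDATE servicekey SET approval_id = ? WHERE name = ?",
        (approval_id, name),
    )
    conn.commit()
    return approval_id


if __name__ == "__main__":
    conn = make_demo_db()
    print(add_approved_service_key(
        conn, "quay-readonly", "<kid>", "<jwk>",
        "2024-05-07", "2025-05-07", "enable read-only mode for backup"))
```

With PostgreSQL itself, the same effect can be achieved by adding `RETURNING id` to the approval INSERT and using the returned value in the UPDATE.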

5.1.4. Configuring read-only mode for Red Hat Quay on OpenShift Container Platform

To enable read-only mode in Red Hat Quay and safely manage registry operations such as backup and restore, you can modify the configuration secret and restart the Quay container.

Deploying Red Hat Quay on OpenShift Container Platform in read-only mode requires you to modify the secrets stored inside of your OpenShift Container Platform cluster. It is highly recommended that you create a backup of the secret prior to making changes to it.

Prerequisites

- You have created the service keys and added them to your PostgreSQL database.

Procedure

- Read the secret name of your Red Hat Quay on OpenShift Container Platform deployment by entering the following command:

      $ oc get deployment -o yaml <quay_main_app_deployment_name>

- Use the base64 command to encode the quay-readonly.kid and quay-readonly.pem files by entering the following commands:

      $ base64 -w0 quay-readonly.kid

  Example output

      ZjUyNDFm...

      $ base64 -w0 quay-readonly.pem

  Example output

      LS0tLS1CRUdJTiBSU0E...

- Obtain the current configuration bundle and secret by entering the following command. Save the output to a file called config.yaml:

      $ oc get secret quay-config-secret-name -o json | jq '.data."config.yaml"' | cut -d '"' -f2 | base64 -d -w0 > config.yaml

- Edit the config.yaml file and add the following information to enable read-only mode:

      # ...
      REGISTRY_STATE: readonly
      INSTANCE_SERVICE_KEY_KID_LOCATION: 'conf/stack/quay-readonly.kid'
      INSTANCE_SERVICE_KEY_LOCATION: 'conf/stack/quay-readonly.pem'
      # ...

- Save the file and base64 encode it by entering the following command:

      $ base64 -w0 config.yaml

- Scale down the Red Hat Quay Operator pods to 0 by entering the following command. This ensures that the Operator does not reconcile the secret after editing it.

      $ oc scale --replicas=0 deployment quay-operator -n openshift-operators

- Edit the secret to include the new content by entering the following command:

      $ oc edit secret quay-config-secret-name -n quay-namespace

      # ...
      data:
        "quay-readonly.kid": "ZjUyNDFm..."
        "quay-readonly.pem": "LS0tLS1CRUdJTiBSU0E..."
        "config.yaml": "QUNUSU9OX0xPR19..."
      # ...

With your Red Hat Quay on OpenShift Container Platform deployment in read-only mode, you can safely manage your registry's operations and perform such actions as backup and restore.
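The encoding steps in this procedure can also be performed in one pass. The Python sketch below builds the three base64 values that go under the secret's data field. It is a minimal illustration under stated assumptions: `build_secret_data` is a hypothetical helper, it appends the read-only settings to the end of the existing config.yaml text, and it assumes that none of the three keys is already present in that text.

```python
import base64

READ_ONLY_SETTINGS = (
    "REGISTRY_STATE: readonly\n"
    "INSTANCE_SERVICE_KEY_KID_LOCATION: 'conf/stack/quay-readonly.kid'\n"
    "INSTANCE_SERVICE_KEY_LOCATION: 'conf/stack/quay-readonly.pem'\n"
)


def b64(data: bytes) -> str:
    """Base64-encode bytes without line wrapping, like `base64 -w0`."""
    return base64.b64encode(data).decode("ascii")


def build_secret_data(config_yaml: str, kid: bytes, pem: bytes) -> dict:
    """Return the data entries for the config secret in read-only mode."""
    if not config_yaml.endswith("\n"):
        config_yaml += "\n"
    updated = config_yaml + READ_ONLY_SETTINGS
    return {
        "quay-readonly.kid": b64(kid),
        "quay-readonly.pem": b64(pem),
        "config.yaml": b64(updated.encode("utf-8")),
    }


if __name__ == "__main__":
    # Placeholder inputs; in practice, read these from the generated files.
    data = build_secret_data("SERVER_HOSTNAME: quay.example.com\n", b"<kid>", b"<pem>")
    for key, value in data.items():
        print(f'"{key}": "{value}"')
```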

5.1.5. Scaling up Red Hat Quay from a read-only deployment

To exit read-only mode and restore normal operations in Red Hat Quay, you can remove the read-only settings from the config.yaml file and scale the Operator deployment back up.

Depending on your needs, you might wait to scale up the Red Hat Quay deployment until after you have backed up and restored your registry.

Procedure

- Edit the config.yaml file and remove the following information:

      # ...
      REGISTRY_STATE: readonly
      INSTANCE_SERVICE_KEY_KID_LOCATION: 'conf/stack/quay-readonly.kid'
      INSTANCE_SERVICE_KEY_LOCATION: 'conf/stack/quay-readonly.pem'
      # ...

- Scale the Red Hat Quay Operator back up by entering the following command:

      $ oc scale --replicas=1 deployment quay-operator -n openshift-operators
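Removing the read-only settings is a simple line filter when each setting is a single top-level line, as in the example above. The following Python sketch shows one way to do it. `strip_read_only_settings` is a hypothetical helper, and it assumes that the three keys are not indented and that their values do not span multiple lines.

```python
READ_ONLY_KEYS = (
    "REGISTRY_STATE",
    "INSTANCE_SERVICE_KEY_KID_LOCATION",
    "INSTANCE_SERVICE_KEY_LOCATION",
)


def strip_read_only_settings(config_yaml: str) -> str:
    """Return the config text without the three read-only mode settings."""
    prefixes = tuple(key + ":" for key in READ_ONLY_KEYS)
    kept = [
        line for line in config_yaml.splitlines()
        if not line.startswith(prefixes)  # matches top-level keys only
    ]
    return "\n".join(kept) + "\n"


if __name__ == "__main__":
    text = (
        "SERVER_HOSTNAME: quay.example.com\n"
        "REGISTRY_STATE: readonly\n"
        "INSTANCE_SERVICE_KEY_KID_LOCATION: 'conf/stack/quay-readonly.kid'\n"
        "INSTANCE_SERVICE_KEY_LOCATION: 'conf/stack/quay-readonly.pem'\n"
    )
    print(strip_read_only_settings(text), end="")
```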

5.2. Backing up Red Hat Quay on OpenShift Container Platform

To create backups of your Red Hat Quay on OpenShift Container Platform deployment for disaster recovery, you can back up the configuration, PostgreSQL database, and object storage. Regular backups ensure you can restore your registry to a previous state if needed.

Database backups should be performed regularly using either the supplied tools on the PostgreSQL image or your own backup infrastructure. The Red Hat Quay Operator does not ensure that the PostgreSQL database is backed up.

PostgreSQL and S3 object storage backups must be taken at the same time to avoid desynchronization. If backups are taken at different times, the database might contain references to storage blobs that are not present in the storage backup, which can cause data inconsistency and restore failures.

This procedure covers backing up your Red Hat Quay PostgreSQL database. It does not cover backing up the Clair PostgreSQL database. Backing up the Clair PostgreSQL database is not needed because it can be recreated. If you opt to recreate it from scratch, you must wait for the information to be repopulated after all images inside of your Red Hat Quay deployment are scanned. During this downtime, security reports are unavailable.

If you are considering backing up the Clair PostgreSQL database, you must consider that its size is dependent upon the number of images stored inside of Red Hat Quay. As a result, the database can be extremely large.

5.2.1. Prerequisites for backing up Red Hat Quay on OpenShift Container Platform

- A healthy Red Hat Quay deployment on OpenShift Container Platform using the Red Hat Quay Operator. The status condition Available is set to True.

- The components quay, postgres and objectstorage are set to managed: true.

- If the component clair is set to managed: true, the component clairpostgres is also set to managed: true (Red Hat Quay v3.7 or later).

If your deployment contains partially unmanaged database or storage components and you are using external services for PostgreSQL or S3-compatible object storage to run your Red Hat Quay deployment, you must refer to the service provider or vendor documentation to create a backup of the data. You can refer to the tools described in this guide as a starting point on how to back up your external PostgreSQL database or object storage.
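The first prerequisite can be checked from the command line. The following sketch assumes that the Available condition is exposed under .status.conditions of the QuayRegistry resource, which is where oc wait --for=condition looks. The function name and its arguments are placeholders chosen for illustration.

```shell
# Hypothetical helper: prints the status of the Available condition.
# $1 = QuayRegistry name, $2 = namespace. Prints "True" for a healthy registry.
registry_available_status() {
  oc get quayregistry "$1" -n "$2" \
    -o jsonpath='{.status.conditions[?(@.type=="Available")].status}'
}

# Example use (not executed here):
#   [ "$(registry_available_status registry quay)" = "True" ] || echo "not ready"
```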

5.2.2. Red Hat Quay configuration backup

To back up your Red Hat Quay configuration for disaster recovery, you can export the QuayRegistry custom resource, back up the managed secret keys, and save the config bundle and config.yaml files. This procedure creates backup files that you can use to restore your registry configuration.

Procedure

Back up the QuayRegistry custom resource by exporting it. Enter the following command:

$ oc get quayregistry <quay_registry_name> -n <quay_namespace> -o yaml > quay-registry.yaml

Edit the resulting quay-registry.yaml file and remove the status section and the following metadata fields:

metadata.creationTimestamp
metadata.finalizers
metadata.generation
metadata.resourceVersion
metadata.uid

Back up the managed keys secret by entering the following command:

Note
If you are running a version older than Red Hat Quay 3.7.0, this step can be skipped. Some secrets are automatically generated while deploying Red Hat Quay for the first time. These are stored in a secret called <quay_registry_name>-quay-registry-managed-secret-keys in the namespace of the QuayRegistry resource.

$ oc get secret -n <quay_namespace> <quay_registry_name>-quay-registry-managed-secret-keys -o yaml > managed-secret-keys.yaml

Edit the resulting managed-secret-keys.yaml file and remove the entry metadata.ownerReferences. Your managed-secret-keys.yaml file should look similar to the following:

apiVersion: v1
kind: Secret
type: Opaque
metadata:
  name: <quay_registry_name>-quay-registry-managed-secret-keys
  namespace: <quay_namespace>
data:
  CONFIG_EDITOR_PW: <redacted>
  DATABASE_SECRET_KEY: <redacted>
  DB_ROOT_PW: <redacted>
  DB_URI: <redacted>
  SECRET_KEY: <redacted>
  SECURITY_SCANNER_V4_PSK: <redacted>

All information under the data property should remain the same.

Back up the current Quay configuration bundle by entering the following command:

$ oc get secret -n <quay_namespace> $(oc get quayregistry <quay_registry_name> -n <quay_namespace> -o jsonpath='{.spec.configBundleSecret}') -o yaml > config-bundle.yaml

Back up the /conf/stack/config.yaml file mounted inside of the Quay pods:

$ oc exec -it <quay_pod_name> -- cat /conf/stack/config.yaml > quay_config.yaml

Obtain the Quay database name:

$ oc -n <quay_namespace> rsh $(oc get pod -l app=quay -o NAME -n <quay_namespace> |head -n 1) cat /conf/stack/config.yaml|awk -F"/" '/^DB_URI/ {print $4}'

Example output

quayregistry-quay-database
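Removing the status section and the cluster-assigned metadata fields from the exported QuayRegistry file by hand is error-prone. The following sketch shows one way the edit could be scripted. It is a line-based filter that assumes the two-space indentation that oc get -o yaml emits, and the function name strip_cluster_fields is hypothetical. Review the result before relying on it.

```shell
# Hypothetical helper: print a copy of an exported resource ($1) without the
# status block and without creationTimestamp, finalizers, generation,
# resourceVersion, and uid under metadata.
strip_cluster_fields() {
  awk '
    /^[A-Za-z]/ { section = $1 }          # remember the current top-level key
    section == "status:" { next }         # drop the whole status block
    section == "metadata:" && /^  finalizers:/ { in_list = 1; next }
    in_list && /^  [ -]/ { next }         # drop the finalizers list items
    { in_list = 0 }
    section == "metadata:" &&
      /^  (creationTimestamp|generation|resourceVersion|uid):/ { next }
    { print }
  ' "$1"
}

# Example use (not executed here):
#   strip_cluster_fields quay-registry.yaml > quay-registry.clean.yaml
```

Writing to a second file keeps the original export intact so that the two can be compared.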

5.2.3. Scaling down the Red Hat Quay deployment

To create a consistent backup of your Red Hat Quay deployment, you must scale down the deployment by disabling auto scaling and setting replica counts to zero. This ensures the registry is in a quiescent state before backing up.

This step is needed to create a consistent backup of the state of your Red Hat Quay deployment. Do not omit this step, including in setups where PostgreSQL databases and/or S3-compatible object storage are provided by external services (unmanaged by the Red Hat Quay Operator).

Procedure

Scale down the Red Hat Quay deployment by disabling auto scaling and overriding the replica count for Red Hat Quay, mirror workers, and Clair (if managed). For example:

apiVersion: quay.redhat.com/v1
kind: QuayRegistry
metadata:
  name: registry
  namespace: ns
spec:
  components:
    …
    - kind: horizontalpodautoscaler
      managed: false
    - kind: quay
      managed: true
      overrides:
        replicas: 0
    - kind: clair
      managed: true
      overrides:
        replicas: 0
    - kind: mirror
      managed: true
      overrides:
        replicas: 0
    …

where:

- managed: false disables auto scaling of Quay, Clair and Mirroring workers.

- overrides sets the replica count to 0 for components accessing the database and objectstorage.

Wait for the registry-quay-app, registry-quay-mirror and registry-clair-app pods (depending on which components you set to be managed by the Red Hat Quay Operator) to disappear. You can check their status by entering the following command:

$ oc get pods -n <quay_namespace>

Example output:

quay-operator.v3.7.1-6f9d859bd-p5ftc 1/1 Running 0 12m
quayregistry-clair-postgres-7487f5bd86-xnxpr 1/1 Running 1 (12m ago) 12m
quayregistry-quay-app-upgrade-xq2v6 0/1 Completed 0 12m
quayregistry-quay-database-859d5445ff-cqthr 1/1 Running 0 12m
quayregistry-quay-redis-84f888776f-hhgms 1/1 Running 0 12m
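Checking the pod list by eye works for a one-off backup. For a scripted backup, the same check can be expressed as a filter over the oc get pods output. The sketch below counts pods whose names contain quay-app, quay-mirror, or clair-app and that are not in the Completed state, so the finished upgrade pod in the example output is ignored. The function name is hypothetical, and the name patterns are an assumption based on the pod names shown in this section.

```shell
# Hypothetical helper: reads `oc get pods` output on stdin and prints how many
# Quay, mirror, or Clair application pods are still present (any state except
# Completed). A result of 0 means the deployment is scaled down.
count_active_app_pods() {
  awk '$1 ~ /-(quay-app|quay-mirror|clair-app)-/ && $3 != "Completed" { n++ }
       END { print n + 0 }'
}

# Example use (not executed here):
#   oc get pods -n <quay_namespace> | count_active_app_pods
```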

5.2.4. Backing up the Red Hat Quay managed database

To back up your Red Hat Quay managed database for disaster recovery, you can identify the PostgreSQL pod and use pg_dump to create a backup SQL file. This procedure creates a backup that you can use to restore your database.

If your Red Hat Quay deployment is configured with external, or unmanaged, PostgreSQL databases, refer to your vendor’s documentation on how to create a consistent backup of these databases.

Procedure

Identify the Red Hat Quay PostgreSQL pod name by entering the following command:

$ oc get pod -l quay-component=postgres -n <quay_namespace> -o jsonpath='{.items[0].metadata.name}'

Example output:

quayregistry-quay-database-59f54bb7-58xs7

Download a backup database by entering the following command:

$ oc -n <quay_namespace> exec quayregistry-quay-database-59f54bb7-58xs7 -- /usr/bin/pg_dump -C quayregistry-quay-database > backup.sql
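The two commands above can be combined so that the pod name does not have to be copied by hand. The following is a sketch under stated assumptions. The function name backup_quay_db is hypothetical, and the final check only confirms that the dump file is non-empty, which does not prove that the dump is complete.

```shell
# Hypothetical helper: look up the managed PostgreSQL pod and dump one database.
# $1 = namespace, $2 = database name, $3 = output file.
backup_quay_db() {
  pod=$(oc get pod -l quay-component=postgres -n "$1" \
        -o jsonpath='{.items[0].metadata.name}') || return 1
  oc -n "$1" exec "$pod" -- /usr/bin/pg_dump -C "$2" > "$3" || return 1
  # An empty file suggests that pg_dump failed before writing anything.
  [ -s "$3" ]
}

# Example use (not executed here):
#   backup_quay_db <quay_namespace> quayregistry-quay-database backup.sql
```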

5.2.5. Backing up the Red Hat Quay managed object storage

To back up your Red Hat Quay managed object storage for disaster recovery, you can export AWS credentials from secrets and use the aws s3 sync command to copy all blobs to a local directory. This procedure creates a backup of your registry’s object storage data.

The instructions in this section apply to the following configurations:

- Standalone, multi-cloud object gateway configurations

- OpenShift Data Foundation storage, where the Red Hat Quay Operator provisioned an S3 object storage bucket from it through the ObjectStorageBucketClaim API.

Procedure

Decode and export the AWS_ACCESS_KEY_ID by entering the following command:

$ export AWS_ACCESS_KEY_ID=$(oc get secret -l app=noobaa -n <quay-namespace> -o jsonpath='{.items[0].data.AWS_ACCESS_KEY_ID}' |base64 -d)

Decode and export the AWS_SECRET_ACCESS_KEY by entering the following command:

$ export AWS_SECRET_ACCESS_KEY=$(oc get secret -l app=noobaa -n <quay-namespace> -o jsonpath='{.items[0].data.AWS_SECRET_ACCESS_KEY}' |base64 -d)

Create a new directory by entering the following command:

$ mkdir blobs

Copy all blobs to the directory by entering the following command:

$ aws s3 sync --no-verify-ssl --endpoint https://$(oc get route s3 -n openshift-storage -o jsonpath='{.spec.host}') s3://$(oc get cm -l app=noobaa -n <quay-namespace> -o jsonpath='{.items[0].data.BUCKET_NAME}') ./blobs
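The export commands in this procedure still succeed when the oc lookup inside them fails, because the exit status of a command substitution is discarded by export. The variable is then left empty. A small guard before the sync step can catch that case. The sketch below is illustrative only, and the function name require_env is hypothetical.

```shell
# Hypothetical helper: returns non-zero if any named variable is unset or empty.
require_env() {
  missing=0
  for name in "$@"; do
    # Indirect lookup of the variable whose name is stored in $name.
    eval "value=\${$name:-}"
    if [ -z "$value" ]; then
      echo "missing or empty: $name" >&2
      missing=1
    fi
  done
  return "$missing"
}

# Example use (not executed here):
#   require_env AWS_ACCESS_KEY_ID AWS_SECRET_ACCESS_KEY || exit 1
```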

5.2.6. Scaling up the Red Hat Quay deployment

To restore your Red Hat Quay deployment to normal operation after scaling down, you can re-enable auto scaling and remove replica overrides for quay, mirror workers, and Clair. This restores your registry to full capacity after completing backup or maintenance tasks.

Procedure

Scale up the Red Hat Quay deployment by re-enabling auto scaling, if desired, and removing the replica overrides for Quay, mirror workers and Clair as applicable. For example:

apiVersion: quay.redhat.com/v1
kind: QuayRegistry
metadata:
  name: registry
  namespace: ns
spec:
  components:
    …
    - kind: horizontalpodautoscaler
      managed: true
    - kind: quay
      managed: true
    - kind: clair
      managed: true
    - kind: mirror
      managed: true
    …

where:

- managed: true re-enables auto scaling of Quay, Clair and Mirroring workers.

- overrides are removed to scale the Quay components back up.

Check the status of the Red Hat Quay deployment by entering the following command:

$ oc wait quayregistry registry --for=condition=Available=true -n <quay_namespace>

Example output:

apiVersion: quay.redhat.com/v1
kind: QuayRegistry
metadata:
  ...
  name: registry
  namespace: <quay-namespace>
  ...
spec:
  ...
status:
  - lastTransitionTime: '2022-06-20T05:31:17Z'
    lastUpdateTime: '2022-06-20T17:31:13Z'
    message: All components reporting as healthy
    reason: HealthChecksPassing
    status: 'True'
    type: Available
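Scaling back up can take longer than the default timeout of oc wait, which is 30 seconds in upstream kubectl. The following sketch passes an explicit --timeout value. The function name and the 10 minute default are arbitrary choices made for illustration, not recommendations from Red Hat.

```shell
# Hypothetical helper: wait for the registry to report Available.
# $1 = QuayRegistry name, $2 = namespace, $3 = optional timeout (default 10m).
wait_for_registry() {
  oc wait quayregistry "$1" --for=condition=Available=true \
    --timeout="${3:-10m}" -n "$2"
}

# Example use (not executed here):
#   wait_for_registry registry <quay_namespace> 15m
```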

5.3. Restoring Red Hat Quay

To restore your Red Hat Quay registry when the Operator manages the database, you can restore the configuration, database, and object storage from backups. This procedure restores your registry to a previous state after performing the backup process.

5.3.1. Prerequisites for restoring Red Hat Quay

The following prerequisites are required to restore Red Hat Quay:

- Red Hat Quay is deployed on OpenShift Container Platform using the Red Hat Quay Operator.

- A backup of the Red Hat Quay configuration managed by the Red Hat Quay Operator has been created following the instructions in the Backing up Red Hat Quay section.

- The object storage bucket used by Red Hat Quay has been backed up.

- The components quay, postgres and objectstorage are set to managed: true.

- If the component clair is set to managed: true, the component clairpostgres is also set to managed: true.

- There is no running Red Hat Quay deployment managed by the Red Hat Quay Operator in the target namespace on your OpenShift Container Platform cluster.

If your deployment contains partially unmanaged database or storage components and you are using external services for PostgreSQL or S3-compatible object storage to run your Red Hat Quay deployment, you must refer to the service provider or vendor documentation to restore their data from a backup before restoring Red Hat Quay.
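The prerequisite that no Red Hat Quay deployment is running in the target namespace can be checked by listing QuayRegistry resources there. The sketch below treats any existing QuayRegistry resource as a running deployment, which is a simplifying assumption. The function name is hypothetical.

```shell
# Hypothetical helper: exits 0 if at least one QuayRegistry resource exists
# in the namespace given as $1, non-zero if none is found.
namespace_has_registry() {
  [ -n "$(oc get quayregistry -n "$1" -o name 2>/dev/null)" ]
}

# Example use (not executed here):
#   namespace_has_registry <quay_namespace> && echo "a registry already exists"
```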

5.3.2. Restoring Red Hat Quay from a backup

To restore your Red Hat Quay registry and configuration from a backup, you can restore the configuration bundle, managed secret keys, and QuayRegistry custom resource. This procedure restores your registry to a previous state using backup files created with the backup process.

Prerequisites

- You have backed up your Red Hat Quay registry and configuration.

- You have the backup files config-bundle.yaml, managed-secret-keys.yaml, and quay-registry.yaml.
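A quick check that the three backup files are present and non-empty can prevent a partially applied restore. The following is a minimal sketch. The function name check_backup_files is hypothetical, and the check does not validate the contents of the files.

```shell
# Hypothetical helper: verify that the expected backup files exist and are
# non-empty. $1 = directory holding the files (defaults to the current one).
check_backup_files() {
  dir=${1:-.}
  status=0
  for f in config-bundle.yaml managed-secret-keys.yaml quay-registry.yaml; do
    if [ ! -s "$dir/$f" ]; then
      echo "missing or empty: $dir/$f" >&2
      status=1
    fi
  done
  return "$status"
}

# Example use (not executed here):
#   check_backup_files ./backup || exit 1
```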

Procedure

Restore the backed up Red Hat Quay configuration by entering the following command:

$ oc create -f ./config-bundle.yaml

Important
If you receive the error Error from server (AlreadyExists): error when creating "./config-bundle.yaml": secrets "config-bundle-secret" already exists, you must delete your existing resource with $ oc delete Secret config-bundle-secret -n <quay-namespace> and recreate it with $ oc create -f ./config-bundle.yaml.

Restore the generated keys from the backup by entering the following command:

$ oc create -f ./managed-secret-keys.yaml

Restore the QuayRegistry custom resource by entering the following command:

$ oc create -f ./quay-registry.yaml

Check the status of the Red Hat Quay deployment by entering the following command. Wait for it to be available:

$ oc wait quayregistry registry --for=condition=Available=true -n <quay-namespace>

5.3.3. Scaling down the Red Hat Quay deployment

To scale down your Red Hat Quay deployment, you can disable auto scaling and set replica counts to zero. This reduces resource consumption and stops registry operations temporarily.

Procedure

Scale down the Red Hat Quay deployment by disabling auto scaling and overriding the replica count for Quay, mirror workers and Clair (if managed). For example:

apiVersion: quay.redhat.com/v1
kind: QuayRegistry
metadata:
  name: registry
  namespace: ns
spec:
  components:
    …
    - kind: horizontalpodautoscaler
      managed: false
    - kind: quay
      managed: true
      overrides:
        replicas: 0
    - kind: clair
      managed: true
      overrides:
        replicas: 0
    - kind: mirror
      managed: true
      overrides:
        replicas: 0
    …

where:

- managed: false specifies that the component is not managed by the Red Hat Quay Operator.

- overrides specifies the replica count for the component.

Wait for the registry-quay-app, registry-quay-mirror and registry-clair-app pods (depending on which components you set to be managed by Red Hat Quay Operator) to disappear. You can check their status by running the following command:

$ oc get pods -n <quay-namespace>

Example output:

registry-quay-config-editor-77847fc4f5-nsbbv 1/1 Running 0 9m1s
registry-quay-database-66969cd859-n2ssm 1/1 Running 0 6d1h
registry-quay-redis-7cc5f6c977-956g8 1/1 Running 0 5d21h

5.3.4. Restoring your Red Hat Quay database