Ce contenu n'est pas disponible dans la langue sélectionnée.

Chapter 5. Developing Operators

5.1. About the Operator SDK

The Operator Framework is an open source toolkit to manage Kubernetes native applications, called Operators, in an effective, automated, and scalable way. Operators take advantage of Kubernetes extensibility to deliver the automation advantages of cloud services, like provisioning, scaling, and backup and restore, while being able to run anywhere that Kubernetes can run.

Operators make it easy to manage complex, stateful applications on top of Kubernetes. However, writing an Operator today can be difficult because of challenges such as using low-level APIs, writing boilerplate, and a lack of modularity, which leads to duplication.

The Operator SDK, a component of the Operator Framework, provides a command-line interface (CLI) tool that Operator developers can use to build, test, and deploy an Operator.

Why use the Operator SDK?

The Operator SDK simplifies this process of building Kubernetes-native applications, which can require deep, application-specific operational knowledge. The Operator SDK not only lowers that barrier, but it also helps reduce the amount of boilerplate code required for many common management capabilities, such as metering or monitoring.

The Operator SDK is a framework that uses the controller-runtime library to make writing Operators easier by providing the following features:

- High-level APIs and abstractions to write the operational logic more intuitively

- Tools for scaffolding and code generation to quickly bootstrap a new project

- Integration with Operator Lifecycle Manager (OLM) to streamline packaging, installing, and running Operators on a cluster

- Extensions to cover common Operator use cases

- Metrics set up automatically in any generated Go-based Operator for use on clusters where the Prometheus Operator is deployed

Operator authors with cluster administrator access to a Kubernetes-based cluster, such as OpenShift Container Platform, can use the Operator SDK CLI to develop their own Operators based on Go, Ansible, or Helm. Kubebuilder is embedded into the Operator SDK as the scaffolding solution for Go-based Operators, which means existing Kubebuilder projects can be used as is with the Operator SDK and continue to work.

OpenShift Container Platform 4.6 supports Operator SDK v0.19.4.

5.1.1. What are Operators?

For an overview about basic Operator concepts and terminology, see Understanding Operators.

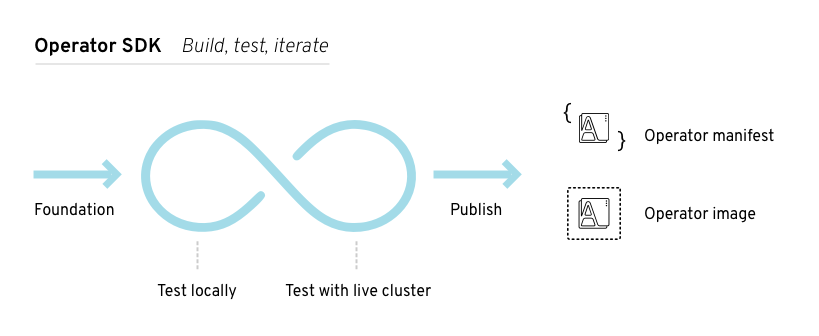

5.1.2. Development workflow

The Operator SDK provides the following workflow to develop a new Operator:

- Create an Operator project by using the Operator SDK command-line interface (CLI).

- Define new resource APIs by adding custom resource definitions (CRDs).

- Specify resources to watch by using the Operator SDK API.

- Define the Operator reconciling logic in a designated handler and use the Operator SDK API to interact with resources.

- Use the Operator SDK CLI to build and generate the Operator deployment manifests.

Figure 5.1. Operator SDK workflow

At a high level, an Operator that uses the Operator SDK processes events for watched resources in an Operator author-defined handler and takes actions to reconcile the state of the application.

5.2. Installing the Operator SDK CLI

The Operator SDK provides a command-line interface (CLI) tool that Operator developers can use to build, test, and deploy an Operator. You can install the Operator SDK CLI on your workstation so that you are prepared to start authoring your own Operators.

OpenShift Container Platform 4.6 supports Operator SDK v0.19.4, which can be installed from upstream sources.

Starting in OpenShift Container Platform 4.7, the Operator SDK is fully supported and available from official Red Hat product sources. See OpenShift Container Platform 4.7 release notes for more information.

5.2.1. Installing the Operator SDK CLI from from GitHub releases

You can download and install a pre-built release binary of the Operator SDK CLI from the project on GitHub.

Prerequisites

- Go v1.13+

-

dockerv17.03+,podmanv1.9.3+, orbuildahv1.7+ -

OpenShift CLI (

oc) v4.6+ installed - Access to a cluster based on Kubernetes v1.12.0+

- Access to a container registry

Procedure

Set the release version variable:

$ RELEASE_VERSION=v0.19.4Download the release binary.

For Linux:

$ curl -OJL https://github.com/operator-framework/operator-sdk/releases/download/${RELEASE_VERSION}/operator-sdk-${RELEASE_VERSION}-x86_64-linux-gnuFor macOS:

$ curl -OJL https://github.com/operator-framework/operator-sdk/releases/download/${RELEASE_VERSION}/operator-sdk-${RELEASE_VERSION}-x86_64-apple-darwin

Verify the downloaded release binary.

Download the provided

.ascfile.For Linux:

$ curl -OJL https://github.com/operator-framework/operator-sdk/releases/download/${RELEASE_VERSION}/operator-sdk-${RELEASE_VERSION}-x86_64-linux-gnu.ascFor macOS:

$ curl -OJL https://github.com/operator-framework/operator-sdk/releases/download/${RELEASE_VERSION}/operator-sdk-${RELEASE_VERSION}-x86_64-apple-darwin.asc

Place the binary and corresponding

.ascfile into the same directory and run the following command to verify the binary:For Linux:

$ gpg --verify operator-sdk-${RELEASE_VERSION}-x86_64-linux-gnu.ascFor macOS:

$ gpg --verify operator-sdk-${RELEASE_VERSION}-x86_64-apple-darwin.asc

If you do not have the public key of the maintainer on your workstation, you will get the following error:

Example output with error

$ gpg: assuming signed data in 'operator-sdk-${RELEASE_VERSION}-x86_64-apple-darwin' $ gpg: Signature made Fri Apr 5 20:03:22 2019 CEST $ gpg: using RSA key <key_id>1 $ gpg: Can't check signature: No public key- 1

- RSA key string.

To download the key, run the following command, replacing

<key_id>with the RSA key string provided in the output of the previous command:$ gpg [--keyserver keys.gnupg.net] --recv-key "<key_id>"1 - 1

- If you do not have a key server configured, specify one with the

--keyserveroption.

Install the release binary in your

PATH:For Linux:

$ chmod +x operator-sdk-${RELEASE_VERSION}-x86_64-linux-gnu$ sudo cp operator-sdk-${RELEASE_VERSION}-x86_64-linux-gnu /usr/local/bin/operator-sdk$ rm operator-sdk-${RELEASE_VERSION}-x86_64-linux-gnuFor macOS:

$ chmod +x operator-sdk-${RELEASE_VERSION}-x86_64-apple-darwin$ sudo cp operator-sdk-${RELEASE_VERSION}-x86_64-apple-darwin /usr/local/bin/operator-sdk$ rm operator-sdk-${RELEASE_VERSION}-x86_64-apple-darwin

Verify that the CLI tool was installed correctly:

$ operator-sdk version

5.2.2. Installing the Operator SDK CLI from Homebrew

You can install the SDK CLI using Homebrew.

Prerequisites

- Homebrew

-

dockerv17.03+,podmanv1.9.3+, orbuildahv1.7+ -

OpenShift CLI (

oc) v4.6+ installed - Access to a cluster based on Kubernetes v1.12.0+

- Access to a container registry

Procedure

Install the SDK CLI using the

brewcommand:$ brew install operator-sdkVerify that the CLI tool was installed correctly:

$ operator-sdk version

5.2.3. Compiling and installing the Operator SDK CLI from source

You can obtain the Operator SDK source code to compile and install the SDK CLI.

Prerequisites

Procedure

Clone the

operator-sdkrepository:$ git clone https://github.com/operator-framework/operator-sdkChange to the directory for the cloned repository:

$ cd operator-sdkCheck out the v0.19.4 release:

$ git checkout tags/v0.19.4 -b v0.19.4Update dependencies:

$ make tidyCompile and install the SDK CLI:

$ make installThis installs the CLI binary

operator-sdkin the$GOPATH/bin/directory.Verify that the CLI tool was installed correctly:

$ operator-sdk version

5.3. Creating Go-based Operators

Operator developers can take advantage of Go programming language support in the Operator SDK to build an example Go-based Operator for Memcached, a distributed key-value store, and manage its lifecycle.

Kubebuilder is embedded into the Operator SDK as the scaffolding solution for Go-based Operators.

5.3.1. Creating a Go-based Operator using the Operator SDK

The Operator SDK makes it easier to build Kubernetes native applications, a process that can require deep, application-specific operational knowledge. The SDK not only lowers that barrier, but it also helps reduce the amount of boilerplate code needed for many common management capabilities, such as metering or monitoring.

This procedure walks through an example of creating a simple Memcached Operator using tools and libraries provided by the SDK.

Prerequisites

- Operator SDK v0.19.4 CLI installed on the development workstation

-

Operator Lifecycle Manager (OLM) installed on a Kubernetes-based cluster (v1.8 or above to support the

apps/v1beta2API group), for example OpenShift Container Platform 4.6 -

Access to the cluster using an account with

cluster-adminpermissions -

OpenShift CLI (

oc) v4.6+ installed

Procedure

Create an Operator project:

Create a directory for the project:

$ mkdir -p $HOME/projects/memcached-operatorChange to the directory:

$ cd $HOME/projects/memcached-operatorActivate support for Go modules:

$ export GO111MODULE=onRun the

operator-sdk initcommand to initialize the project:$ operator-sdk init \ --domain=example.com \ --repo=github.com/example-inc/memcached-operatorNoteThe

operator-sdk initcommand uses thego.kubebuilder.io/v2plug-in by default.

Update your Operator to use supported images:

In the project root-level Dockerfile, change the default runner image reference from:

FROM gcr.io/distroless/static:nonrootto:

FROM registry.access.redhat.com/ubi8/ubi-minimal:latest-

Depending on the Go project version, your Dockerfile might contain a

USER 65532:65532orUSER nonroot:nonrootdirective. In either case, remove the line, as it is not required by the supported runner image. In the

config/default/manager_auth_proxy_patch.yamlfile, change theimagevalue from:gcr.io/kubebuilder/kube-rbac-proxy:<tag>to use the supported image:

registry.redhat.io/openshift4/ose-kube-rbac-proxy:v4.6

Update the

testtarget in your Makefile to install dependencies required during later builds by replacing the following lines:Example 5.1. Existing

testtargettest: generate fmt vet manifests go test ./... -coverprofile cover.outWith the following lines:

Example 5.2. Updated

testtargetENVTEST_ASSETS_DIR=$(shell pwd)/testbin test: manifests generate fmt vet ## Run tests. mkdir -p ${ENVTEST_ASSETS_DIR} test -f ${ENVTEST_ASSETS_DIR}/setup-envtest.sh || curl -sSLo ${ENVTEST_ASSETS_DIR}/setup-envtest.sh https://raw.githubusercontent.com/kubernetes-sigs/controller-runtime/v0.7.2/hack/setup-envtest.sh source ${ENVTEST_ASSETS_DIR}/setup-envtest.sh; fetch_envtest_tools $(ENVTEST_ASSETS_DIR); setup_envtest_env $(ENVTEST_ASSETS_DIR); go test ./... -coverprofile cover.outCreate a custom resource definition (CRD) API and controller:

Run the following command to create an API with group

cache, versionv1, and kindMemcached:$ operator-sdk create api \ --group=cache \ --version=v1 \ --kind=MemcachedWhen prompted, enter

yfor creating both the resource and controller:Create Resource [y/n] y Create Controller [y/n] yExample output

Writing scaffold for you to edit... api/v1/memcached_types.go controllers/memcached_controller.go ...This process generates the Memcached resource API at

api/v1/memcached_types.goand the controller atcontrollers/memcached_controller.go.Modify the Go type definitions at

api/v1/memcached_types.goto have the followingspecandstatus:// MemcachedSpec defines the desired state of Memcached type MemcachedSpec struct { // +kubebuilder:validation:Minimum=0 // Size is the size of the memcached deployment Size int32 `json:"size"` } // MemcachedStatus defines the observed state of Memcached type MemcachedStatus struct { // Nodes are the names of the memcached pods Nodes []string `json:"nodes"` }Add the

+kubebuilder:subresource:statusmarker to add astatussubresource to the CRD manifest:// Memcached is the Schema for the memcacheds API // +kubebuilder:subresource:status1 type Memcached struct { metav1.TypeMeta `json:",inline"` metav1.ObjectMeta `json:"metadata,omitempty"` Spec MemcachedSpec `json:"spec,omitempty"` Status MemcachedStatus `json:"status,omitempty"` }- 1

- Add this line.

This enables the controller to update the CR status without changing the rest of the CR object.

Update the generated code for the resource type:

$ make generateTipAfter you modify a

*_types.gofile, you must run themake generatecommand to update the generated code for that resource type.The above Makefile target invokes the

controller-genutility to update theapi/v1/zz_generated.deepcopy.gofile. This ensures your API Go type definitions implement theruntime.Objectinterface that allKindtypes must implement.

Generate and update CRD manifests:

$ make manifestsThis Makefile target invokes the

controller-genutility to generate the CRD manifests in theconfig/crd/bases/cache.example.com_memcacheds.yamlfile.Optional: Add custom validation to your CRD.

OpenAPI v3.0 schemas are added to CRD manifests in the

spec.validationblock when the manifests are generated. This validation block allows Kubernetes to validate the properties in aMemcachedcustom resource (CR) when it is created or updated.As an Operator author, you can use annotation-like, single-line comments called Kubebuilder markers to configure custom validations for your API. These markers must always have a

+kubebuilder:validationprefix. For example, adding an enum-type specification can be done by adding the following marker:// +kubebuilder:validation:Enum=Lion;Wolf;Dragon type Alias stringUsage of markers in API code is discussed in the Kubebuilder Generating CRDs and Markers for Config/Code Generation documentation. A full list of OpenAPIv3 validation markers is also available in the Kubebuilder CRD Validation documentation.

If you add any custom validations, run the following command to update the OpenAPI validation section for the CRD:

$ make manifests

After creating a new API and controller, you can implement the controller logic. For this example, replace the generated controller file

controllers/memcached_controller.gowith following example implementation:Example 5.3. Example

memcached_controller.go/* Licensed under the Apache License, Version 2.0 (the "License"); you may not use this file except in compliance with the License. You may obtain a copy of the License at http://www.apache.org/licenses/LICENSE-2.0 Unless required by applicable law or agreed to in writing, software distributed under the License is distributed on an "AS IS" BASIS, WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied. See the License for the specific language governing permissions and limitations under the License. */ package controllers import ( "context" "reflect" "github.com/go-logr/logr" appsv1 "k8s.io/api/apps/v1" corev1 "k8s.io/api/core/v1" "k8s.io/apimachinery/pkg/api/errors" metav1 "k8s.io/apimachinery/pkg/apis/meta/v1" "k8s.io/apimachinery/pkg/runtime" "k8s.io/apimachinery/pkg/types" ctrl "sigs.k8s.io/controller-runtime" "sigs.k8s.io/controller-runtime/pkg/client" cachev1 "github.com/example-inc/memcached-operator/api/v1" ) // MemcachedReconciler reconciles a Memcached object type MemcachedReconciler struct { client.Client Log logr.Logger Scheme *runtime.Scheme } // +kubebuilder:rbac:groups=cache.example.com,resources=memcacheds,verbs=get;list;watch;create;update;patch;delete // +kubebuilder:rbac:groups=cache.example.com,resources=memcacheds/status,verbs=get;update;patch // +kubebuilder:rbac:groups=apps,resources=deployments,verbs=get;list;watch;create;update;patch;delete // +kubebuilder:rbac:groups=core,resources=pods,verbs=get;list; func (r *MemcachedReconciler) Reconcile(req ctrl.Request) (ctrl.Result, error) { ctx := context.Background() log := r.Log.WithValues("memcached", req.NamespacedName) // Fetch the Memcached instance memcached := &cachev1.Memcached{} err := r.Get(ctx, req.NamespacedName, memcached) if err != nil { if errors.IsNotFound(err) { // Request object not found, could have been deleted after reconcile request. // Owned objects are automatically garbage collected. For additional cleanup logic use finalizers. // Return and don't requeue log.Info("Memcached resource not found. Ignoring since object must be deleted") return ctrl.Result{}, nil } // Error reading the object - requeue the request. log.Error(err, "Failed to get Memcached") return ctrl.Result{}, err } // Check if the deployment already exists, if not create a new one found := &appsv1.Deployment{} err = r.Get(ctx, types.NamespacedName{Name: memcached.Name, Namespace: memcached.Namespace}, found) if err != nil && errors.IsNotFound(err) { // Define a new deployment dep := r.deploymentForMemcached(memcached) log.Info("Creating a new Deployment", "Deployment.Namespace", dep.Namespace, "Deployment.Name", dep.Name) err = r.Create(ctx, dep) if err != nil { log.Error(err, "Failed to create new Deployment", "Deployment.Namespace", dep.Namespace, "Deployment.Name", dep.Name) return ctrl.Result{}, err } // Deployment created successfully - return and requeue return ctrl.Result{Requeue: true}, nil } else if err != nil { log.Error(err, "Failed to get Deployment") return ctrl.Result{}, err } // Ensure the deployment size is the same as the spec size := memcached.Spec.Size if *found.Spec.Replicas != size { found.Spec.Replicas = &size err = r.Update(ctx, found) if err != nil { log.Error(err, "Failed to update Deployment", "Deployment.Namespace", found.Namespace, "Deployment.Name", found.Name) return ctrl.Result{}, err } // Spec updated - return and requeue return ctrl.Result{Requeue: true}, nil } // Update the Memcached status with the pod names // List the pods for this memcached's deployment podList := &corev1.PodList{} listOpts := []client.ListOption{ client.InNamespace(memcached.Namespace), client.MatchingLabels(labelsForMemcached(memcached.Name)), } if err = r.List(ctx, podList, listOpts...); err != nil { log.Error(err, "Failed to list pods", "Memcached.Namespace", memcached.Namespace, "Memcached.Name", memcached.Name) return ctrl.Result{}, err } podNames := getPodNames(podList.Items) // Update status.Nodes if needed if !reflect.DeepEqual(podNames, memcached.Status.Nodes) { memcached.Status.Nodes = podNames err := r.Status().Update(ctx, memcached) if err != nil { log.Error(err, "Failed to update Memcached status") return ctrl.Result{}, err } } return ctrl.Result{}, nil } // deploymentForMemcached returns a memcached Deployment object func (r *MemcachedReconciler) deploymentForMemcached(m *cachev1.Memcached) *appsv1.Deployment { ls := labelsForMemcached(m.Name) replicas := m.Spec.Size dep := &appsv1.Deployment{ ObjectMeta: metav1.ObjectMeta{ Name: m.Name, Namespace: m.Namespace, }, Spec: appsv1.DeploymentSpec{ Replicas: &replicas, Selector: &metav1.LabelSelector{ MatchLabels: ls, }, Template: corev1.PodTemplateSpec{ ObjectMeta: metav1.ObjectMeta{ Labels: ls, }, Spec: corev1.PodSpec{ Containers: []corev1.Container{{ Image: "memcached:1.4.36-alpine", Name: "memcached", Command: []string{"memcached", "-m=64", "-o", "modern", "-v"}, Ports: []corev1.ContainerPort{{ ContainerPort: 11211, Name: "memcached", }}, }}, }, }, }, } // Set Memcached instance as the owner and controller ctrl.SetControllerReference(m, dep, r.Scheme) return dep } // labelsForMemcached returns the labels for selecting the resources // belonging to the given memcached CR name. func labelsForMemcached(name string) map[string]string { return map[string]string{"app": "memcached", "memcached_cr": name} } // getPodNames returns the pod names of the array of pods passed in func getPodNames(pods []corev1.Pod) []string { var podNames []string for _, pod := range pods { podNames = append(podNames, pod.Name) } return podNames } func (r *MemcachedReconciler) SetupWithManager(mgr ctrl.Manager) error { return ctrl.NewControllerManagedBy(mgr). For(&cachev1.Memcached{}). Owns(&appsv1.Deployment{}). Complete(r) }The example controller runs the following reconciliation logic for each

MemcachedCR:- Create a Memcached deployment if it does not exist.

-

Ensure that the deployment size is the same as specified by the

MemcachedCR spec. -

Update the

MemcachedCR status with the names of thememcachedpods.

The next two sub-steps inspect how the controller watches resources and how the reconcile loop is triggered. You can skip these steps to go directly to building and running the Operator.

Inspect the controller implementation at the

controllers/memcached_controller.gofile to see how the controller watches resources.The

SetupWithManager()function specifies how the controller is built to watch a CR and other resources that are owned and managed by that controller:Example 5.4.

SetupWithManager()functionimport ( ... appsv1 "k8s.io/api/apps/v1" ... ) func (r *MemcachedReconciler) SetupWithManager(mgr ctrl.Manager) error { return ctrl.NewControllerManagedBy(mgr). For(&cachev1.Memcached{}). Owns(&appsv1.Deployment{}). Complete(r) }NewControllerManagedBy()provides a controller builder that allows various controller configurations.For(&cachev1.Memcached{})specifies theMemcachedtype as the primary resource to watch. For each Add, Update, or Delete event for aMemcachedtype, the reconcile loop is sent a reconcileRequestargument, which consists of a namespace and name key, for thatMemcachedobject.Owns(&appsv1.Deployment{})specifies theDeploymenttype as the secondary resource to watch. For eachDeploymenttype Add, Update, or Delete event, the event handler maps each event to a reconcile request for the owner of the deployment. In this case, the owner is theMemcachedobject for which the deployment was created.Every controller has a reconciler object with a

Reconcile()method that implements the reconcile loop. The reconcile loop is passed theRequestargument, which is a namespace and name key used to find the primary resource object,Memcached, from the cache:Example 5.5. Reconcile loop

import ( ctrl "sigs.k8s.io/controller-runtime" cachev1 "github.com/example-inc/memcached-operator/api/v1" ... ) func (r *MemcachedReconciler) Reconcile(ctx context.Context, req ctrl.Request) (ctrl.Result, error) { // Lookup the Memcached instance for this reconcile request memcached := &cachev1.Memcached{} err := r.Get(ctx, req.NamespacedName, memcached) ... }Based on the return value of the

Reconcile()function, the reconcileRequestmight be requeued, and the loop might be triggered again:Example 5.6. Requeue logic

// Reconcile successful - don't requeue return reconcile.Result{}, nil // Reconcile failed due to error - requeue return reconcile.Result{}, err // Requeue for any reason other than error return reconcile.Result{Requeue: true}, nilYou can set the

Result.RequeueAfterto requeue the request after a grace period:Example 5.7. Requeue after grace period

import "time" // Reconcile for any reason other than an error after 5 seconds return ctrl.Result{RequeueAfter: time.Second*5}, nilNoteYou can return

ResultwithRequeueAfterset to periodically reconcile a CR.For more on reconcilers, clients, and interacting with resource events, see the Controller Runtime Client API documentation.

5.3.2. Running the Operator

There are two ways you can use the Operator SDK CLI to build and run your Operator:

- Run locally outside the cluster as a Go program.

- Run as a deployment on the cluster.

Prerequisites

- You have a Go-based Operator project as described in Creating a Go-based Operator using the Operator SDK.

5.3.2.1. Running locally outside the cluster

You can run your Operator project as a Go program outside of the cluster. This method is useful for development purposes to speed up deployment and testing.

Procedure

Run the following command to install the custom resource definitions (CRDs) in the cluster configured in your

~/.kube/configfile and run the Operator as a Go program locally:$ make install runExample 5.8. Example output

... 2021-01-10T21:09:29.016-0700 INFO controller-runtime.metrics metrics server is starting to listen {"addr": ":8080"} 2021-01-10T21:09:29.017-0700 INFO setup starting manager 2021-01-10T21:09:29.017-0700 INFO controller-runtime.manager starting metrics server {"path": "/metrics"} 2021-01-10T21:09:29.018-0700 INFO controller-runtime.manager.controller.memcached Starting EventSource {"reconciler group": "cache.example.com", "reconciler kind": "Memcached", "source": "kind source: /, Kind="} 2021-01-10T21:09:29.218-0700 INFO controller-runtime.manager.controller.memcached Starting Controller {"reconciler group": "cache.example.com", "reconciler kind": "Memcached"} 2021-01-10T21:09:29.218-0700 INFO controller-runtime.manager.controller.memcached Starting workers {"reconciler group": "cache.example.com", "reconciler kind": "Memcached", "worker count": 1}

5.3.2.2. Running as a deployment

After creating your Go-based Operator project, you can build and run your Operator as a deployment inside a cluster.

Procedure

Run the following

makecommands to build and push the Operator image. Modify theIMGargument in the following steps to reference a repository that you have access to. You can obtain an account for storing containers at repository sites such as Quay.io.Build the image:

$ make docker-build IMG=<registry>/<user>/<image_name>:<tag>NoteThe Dockerfile generated by the SDK for the Operator explicitly references

GOARCH=amd64forgo build. This can be amended toGOARCH=$TARGETARCHfor non-AMD64 architectures. Docker will automatically set the environment variable to the value specified by–platform. With Buildah, the–build-argwill need to be used for the purpose. For more information, see Multiple Architectures.Push the image to a repository:

$ make docker-push IMG=<registry>/<user>/<image_name>:<tag>NoteThe name and tag of the image, for example

IMG=<registry>/<user>/<image_name>:<tag>, in both the commands can also be set in your Makefile. Modify theIMG ?= controller:latestvalue to set your default image name.

Run the following command to deploy the Operator:

$ make deploy IMG=<registry>/<user>/<image_name>:<tag>By default, this command creates a namespace with the name of your Operator project in the form

<project_name>-systemand is used for the deployment. This command also installs the RBAC manifests fromconfig/rbac.Verify that the Operator is running:

$ oc get deployment -n <project_name>-systemExample output

NAME READY UP-TO-DATE AVAILABLE AGE <project_name>-controller-manager 1/1 1 1 8m

5.3.3. Creating a custom resource

After your Operator is installed, you can test it by creating a custom resource (CR) that is now provided on the cluster by the Operator.

Prerequisites

-

Example Memcached Operator, which provides the

MemcachedCR, installed on a cluster

Procedure

Change to the namespace where your Operator is installed. For example, if you deployed the Operator using the

make deploycommand:$ oc project memcached-operator-systemEdit the sample

MemcachedCR manifest atconfig/samples/cache_v1_memcached.yamlto contain the following specification:apiVersion: cache.example.com/v1 kind: Memcached metadata: name: memcached-sample ... spec: ... size: 3Create the CR:

$ oc apply -f config/samples/cache_v1_memcached.yamlEnsure that the

MemcachedOperator creates the deployment for the sample CR with the correct size:$ oc get deploymentsExample output

NAME READY UP-TO-DATE AVAILABLE AGE memcached-operator-controller-manager 1/1 1 1 8m memcached-sample 3/3 3 3 1mCheck the pods and CR status to confirm the status is updated with the Memcached pod names.

Check the pods:

$ oc get podsExample output

NAME READY STATUS RESTARTS AGE memcached-sample-6fd7c98d8-7dqdr 1/1 Running 0 1m memcached-sample-6fd7c98d8-g5k7v 1/1 Running 0 1m memcached-sample-6fd7c98d8-m7vn7 1/1 Running 0 1mCheck the CR status:

$ oc get memcached/memcached-sample -o yamlExample output

apiVersion: cache.example.com/v1 kind: Memcached metadata: ... name: memcached-sample ... spec: size: 3 status: nodes: - memcached-sample-6fd7c98d8-7dqdr - memcached-sample-6fd7c98d8-g5k7v - memcached-sample-6fd7c98d8-m7vn7

Update the deployment size.

Update

config/samples/cache_v1_memcached.yamlfile to change thespec.sizefield in theMemcachedCR from3to5:$ oc patch memcached memcached-sample \ -p '{"spec":{"size": 5}}' \ --type=mergeConfirm that the Operator changes the deployment size:

$ oc get deploymentsExample output

NAME READY UP-TO-DATE AVAILABLE AGE memcached-operator-controller-manager 1/1 1 1 10m memcached-sample 5/5 5 5 3m

5.4. Creating Ansible-based Operators

This guide outlines Ansible support in the Operator SDK and walks Operator authors through examples building and running Ansible-based Operators with the operator-sdk CLI tool that use Ansible playbooks and modules.

5.4.1. Ansible support in the Operator SDK

The Operator Framework is an open source toolkit to manage Kubernetes native applications, called Operators, in an effective, automated, and scalable way. This framework includes the Operator SDK, which assists developers in bootstrapping and building an Operator based on their expertise without requiring knowledge of Kubernetes API complexities.

One of the Operator SDK options for generating an Operator project includes leveraging existing Ansible playbooks and modules to deploy Kubernetes resources as a unified application, without having to write any Go code.

5.4.1.1. Custom resource files

Operators use the Kubernetes extension mechanism, custom resource definitions (CRDs), so your custom resource (CR) looks and acts just like the built-in, native Kubernetes objects.

The CR file format is a Kubernetes resource file. The object has mandatory and optional fields:

| Field | Description |

|---|---|

|

| Version of the CR to be created. |

|

| Kind of the CR to be created. |

|

| Kubernetes-specific metadata to be created. |

|

| Key-value list of variables which are passed to Ansible. This field is empty by default. |

|

|

Summarizes the current state of the object. For Ansible-based Operators, the |

|

| Kubernetes-specific annotations to be appended to the CR. |

The following list of CR annotations modify the behavior of the Operator:

| Annotation | Description |

|---|---|

|

|

Specifies the reconciliation interval for the CR. This value is parsed using the standard Golang package |

Example Ansible-based Operator annotation

apiVersion: "test1.example.com/v1alpha1"

kind: "Test1"

metadata:

name: "example"

annotations:

ansible.operator-sdk/reconcile-period: "30s"5.4.1.2. watches.yaml file

A group/version/kind (GVK) is a unique identifier for a Kubernetes API. The watches.yaml file contains a list of mappings from custom resources (CRs), identified by its GVK, to an Ansible role or playbook. The Operator expects this mapping file in a predefined location at /opt/ansible/watches.yaml.

| Field | Description |

|---|---|

|

| Group of CR to watch. |

|

| Version of CR to watch. |

|

| Kind of CR to watch |

|

|

Path to the Ansible role added to the container. For example, if your |

|

|

Path to the Ansible playbook added to the container. This playbook is expected to be a way to call roles. This field is mutually exclusive with the |

|

| The reconciliation interval, how often the role or playbook is run, for a given CR. |

|

|

When set to |

Example watches.yaml file

- version: v1alpha1

group: test1.example.com

kind: Test1

role: /opt/ansible/roles/Test1

- version: v1alpha1

group: test2.example.com

kind: Test2

playbook: /opt/ansible/playbook.yml

- version: v1alpha1

group: test3.example.com

kind: Test3

playbook: /opt/ansible/test3.yml

reconcilePeriod: 0

manageStatus: false5.4.1.2.1. Advanced options

Advanced features can be enabled by adding them to your watches.yaml file per GVK. They can go below the group, version, kind and playbook or role fields.

Some features can be overridden per resource using an annotation on that CR. The options that can be overridden have the annotation specified below.

| Feature | YAML key | Description | Annotation for override | Default value |

|---|---|---|---|---|

| Reconcile period |

| Time between reconcile runs for a particular CR. |

|

|

| Manage status |

|

Allows the Operator to manage the |

| |

| Watch dependent resources |

| Allows the Operator to dynamically watch resources that are created by Ansible. |

| |

| Watch cluster-scoped resources |

| Allows the Operator to watch cluster-scoped resources that are created by Ansible. |

| |

| Max runner artifacts |

| Manages the number of artifact directories that Ansible Runner keeps in the Operator container for each individual resource. |

|

|

Example watches.yml file with advanced options

- version: v1alpha1

group: app.example.com

kind: AppService

playbook: /opt/ansible/playbook.yml

maxRunnerArtifacts: 30

reconcilePeriod: 5s

manageStatus: False

watchDependentResources: False5.4.1.3. Extra variables sent to Ansible

Extra variables can be sent to Ansible, which are then managed by the Operator. The spec section of the custom resource (CR) passes along the key-value pairs as extra variables. This is equivalent to extra variables passed in to the ansible-playbook command.

The Operator also passes along additional variables under the meta field for the name of the CR and the namespace of the CR.

For the following CR example:

apiVersion: "app.example.com/v1alpha1"

kind: "Database"

metadata:

name: "example"

spec:

message: "Hello world 2"

newParameter: "newParam"The structure passed to Ansible as extra variables is:

{ "meta": {

"name": "<cr_name>",

"namespace": "<cr_namespace>",

},

"message": "Hello world 2",

"new_parameter": "newParam",

"_app_example_com_database": {

<full_crd>

},

}

The message and newParameter fields are set in the top level as extra variables, and meta provides the relevant metadata for the CR as defined in the Operator. The meta fields can be accessed using dot notation in Ansible, for example:

- debug:

msg: "name: {{ meta.name }}, {{ meta.namespace }}"5.4.1.4. Ansible Runner directory

Ansible Runner keeps information about Ansible runs in the container. This is located at /tmp/ansible-operator/runner/<group>/<version>/<kind>/<namespace>/<name>.

5.4.2. Building an Ansible-based Operator using the Operator SDK

This procedure walks through an example of building a simple Memcached Operator powered by Ansible playbooks and modules using tools and libraries provided by the Operator SDK.

Prerequisites

- Operator SDK v0.19.4 CLI installed on the development workstation

-

Access to a Kubernetes-based cluster v1.11.3+ (for example OpenShift Container Platform 4.6) using an account with

cluster-adminpermissions -

OpenShift CLI (

oc) v4.6+ installed -

ansiblev2.9.0+ -

ansible-runnerv1.1.0+ -

ansible-runner-httpv1.0.0+

Procedure

Create a new Operator project. A namespace-scoped Operator watches and manages resources in a single namespace. Namespace-scoped Operators are preferred because of their flexibility. They enable decoupled upgrades, namespace isolation for failures and monitoring, and differing API definitions.

To create a new Ansible-based, namespace-scoped

memcached-operatorproject and change to the new directory, use the following commands:$ operator-sdk new memcached-operator \ --api-version=cache.example.com/v1alpha1 \ --kind=Memcached \ --type=ansible$ cd memcached-operatorThis creates the

memcached-operatorproject specifically for watching theMemcachedresource with API versionexample.com/v1apha1and kindMemcached.Customize the Operator logic.

For this example, the

memcached-operatorexecutes the following reconciliation logic for eachMemcachedcustom resource (CR):-

Create a

memcacheddeployment if it does not exist. -

Ensure that the deployment size is the same as specified by the

MemcachedCR.

By default, the

memcached-operatorwatchesMemcachedresource events as shown in thewatches.yamlfile and executes the Ansible roleMemcached:- version: v1alpha1 group: cache.example.com kind: MemcachedYou can optionally customize the following logic in the

watches.yamlfile:Specifying a

roleoption configures the Operator to use this specified path when launchingansible-runnerwith an Ansible role. By default, theoperator-sdk newcommand fills in an absolute path to where your role should go:- version: v1alpha1 group: cache.example.com kind: Memcached role: /opt/ansible/roles/memcachedSpecifying a

playbookoption in thewatches.yamlfile configures the Operator to use this specified path when launchingansible-runnerwith an Ansible playbook:- version: v1alpha1 group: cache.example.com kind: Memcached playbook: /opt/ansible/playbook.yaml

-

Create a

Build the Memcached Ansible role.

Modify the generated Ansible role under the

roles/memcached/directory. This Ansible role controls the logic that is executed when a resource is modified.Define the

Memcachedspec.Defining the spec for an Ansible-based Operator can be done entirely in Ansible. The Ansible Operator passes all key-value pairs listed in the CR spec field along to Ansible as variables. The names of all variables in the spec field are converted to snake case (lowercase with an underscore) by the Operator before running Ansible. For example,

serviceAccountin the spec becomesservice_accountin Ansible.TipYou should perform some type validation in Ansible on the variables to ensure that your application is receiving expected input.

In case the user does not set the

specfield, set a default by modifying theroles/memcached/defaults/main.ymlfile:size: 1Define the

Memcacheddeployment.With the

Memcachedspec now defined, you can define what Ansible is actually executed on resource changes. Because this is an Ansible role, the default behavior executes the tasks in theroles/memcached/tasks/main.ymlfile.The goal is for Ansible to create a deployment if it does not exist, which runs the

memcached:1.4.36-alpineimage. Ansible 2.7+ supports the k8s Ansible module, which this example leverages to control the deployment definition.Modify the

roles/memcached/tasks/main.ymlto match the following:- name: start memcached k8s: definition: kind: Deployment apiVersion: apps/v1 metadata: name: '{{ meta.name }}-memcached' namespace: '{{ meta.namespace }}' spec: replicas: "{{size}}" selector: matchLabels: app: memcached template: metadata: labels: app: memcached spec: containers: - name: memcached command: - memcached - -m=64 - -o - modern - -v image: "docker.io/memcached:1.4.36-alpine" ports: - containerPort: 11211NoteThis example used the

sizevariable to control the number of replicas of theMemcacheddeployment. This example sets the default to1, but any user can create a CR that overwrites the default.

Deploy the CRD.

Before running the Operator, Kubernetes needs to know about the new custom resource definition (CRD) that the Operator will be watching. Deploy the

MemcachedCRD:$ oc create -f deploy/crds/cache.example.com_memcacheds_crd.yamlBuild and run the Operator.

There are two ways to build and run the Operator:

- As a pod inside a Kubernetes cluster.

-

As a Go program outside the cluster using the

operator-sdk upcommand.

Choose one of the following methods:

Run as a pod inside a Kubernetes cluster. This is the preferred method for production use.

Build the

memcached-operatorimage and push it to a registry:$ operator-sdk build quay.io/example/memcached-operator:v0.0.1$ podman push quay.io/example/memcached-operator:v0.0.1Deployment manifests are generated in the

deploy/operator.yamlfile. The deployment image in this file needs to be modified from the placeholderREPLACE_IMAGEto the previous built image. To do this, run:$ sed -i 's|REPLACE_IMAGE|quay.io/example/memcached-operator:v0.0.1|g' deploy/operator.yamlDeploy the

memcached-operatormanifests:$ oc create -f deploy/service_account.yaml$ oc create -f deploy/role.yaml$ oc create -f deploy/role_binding.yaml$ oc create -f deploy/operator.yamlVerify that the

memcached-operatordeployment is up and running:$ oc get deploymentNAME DESIRED CURRENT UP-TO-DATE AVAILABLE AGE memcached-operator 1 1 1 1 1m

Run outside the cluster. This method is preferred during the development cycle to speed up deployment and testing.

Ensure that Ansible Runner and Ansible Runner HTTP Plug-in are installed or else you will see unexpected errors from Ansible Runner when a CR is created.

It is also important that the role path referenced in the

watches.yamlfile exists on your machine. Because normally a container is used where the role is put on disk, the role must be manually copied to the configured Ansible roles path (for example/etc/ansible/roles).To run the Operator locally with the default Kubernetes configuration file present at

$HOME/.kube/config:$ operator-sdk run --localTo run the Operator locally with a provided Kubernetes configuration file:

$ operator-sdk run --local --kubeconfig=config

Create a

MemcachedCR.Modify the

deploy/crds/cache_v1alpha1_memcached_cr.yamlfile as shown and create aMemcachedCR:$ cat deploy/crds/cache_v1alpha1_memcached_cr.yamlExample output

apiVersion: "cache.example.com/v1alpha1" kind: "Memcached" metadata: name: "example-memcached" spec: size: 3$ oc apply -f deploy/crds/cache_v1alpha1_memcached_cr.yamlEnsure that the

memcached-operatorcreates the deployment for the CR:$ oc get deploymentExample output

NAME DESIRED CURRENT UP-TO-DATE AVAILABLE AGE memcached-operator 1 1 1 1 2m example-memcached 3 3 3 3 1mCheck the pods to confirm three replicas were created:

$ oc get podsNAME READY STATUS RESTARTS AGE example-memcached-6fd7c98d8-7dqdr 1/1 Running 0 1m example-memcached-6fd7c98d8-g5k7v 1/1 Running 0 1m example-memcached-6fd7c98d8-m7vn7 1/1 Running 0 1m memcached-operator-7cc7cfdf86-vvjqk 1/1 Running 0 2m

Update the size.

Change the

spec.sizefield in thememcachedCR from3to4and apply the change:$ cat deploy/crds/cache_v1alpha1_memcached_cr.yamlExample output

apiVersion: "cache.example.com/v1alpha1" kind: "Memcached" metadata: name: "example-memcached" spec: size: 4$ oc apply -f deploy/crds/cache_v1alpha1_memcached_cr.yamlConfirm that the Operator changes the deployment size:

$ oc get deploymentExample output

NAME DESIRED CURRENT UP-TO-DATE AVAILABLE AGE example-memcached 4 4 4 4 5m

Clean up the resources:

$ oc delete -f deploy/crds/cache_v1alpha1_memcached_cr.yaml$ oc delete -f deploy/operator.yaml$ oc delete -f deploy/role_binding.yaml$ oc delete -f deploy/role.yaml$ oc delete -f deploy/service_account.yaml$ oc delete -f deploy/crds/cache_v1alpha1_memcached_crd.yaml

5.4.3. Managing application lifecycle using the k8s Ansible module

To manage the lifecycle of your application on Kubernetes using Ansible, you can use the k8s Ansible module. This Ansible module allows a developer to either leverage their existing Kubernetes resource files (written in YAML) or express the lifecycle management in native Ansible.

One of the biggest benefits of using Ansible in conjunction with existing Kubernetes resource files is the ability to use Jinja templating so that you can customize resources with the simplicity of a few variables in Ansible.

This section goes into detail on usage of the k8s Ansible module. To get started, install the module on your local workstation and test it using a playbook before moving on to using it within an Operator.

5.4.3.1. Installing the k8s Ansible module

To install the k8s Ansible module on your local workstation:

Procedure

Install Ansible 2.9+:

$ sudo yum install ansibleInstall the OpenShift python client package using

pip:$ sudo pip install openshift$ sudo pip install kubernetes

5.4.3.2. Testing the k8s Ansible module locally

Sometimes, it is beneficial for a developer to run the Ansible code from their local machine as opposed to running and rebuilding the Operator each time.

Procedure

Install the

community.kubernetescollection:$ ansible-galaxy collection install community.kubernetesInitialize a new Ansible-based Operator project:

$ operator-sdk new --type ansible \ --kind Test1 \ --api-version test1.example.com/v1alpha1 test1-operatorExample output

Create test1-operator/tmp/init/galaxy-init.sh Create test1-operator/tmp/build/Dockerfile Create test1-operator/tmp/build/test-framework/Dockerfile Create test1-operator/tmp/build/go-test.sh Rendering Ansible Galaxy role [test1-operator/roles/test1]... Cleaning up test1-operator/tmp/init Create test1-operator/watches.yaml Create test1-operator/deploy/rbac.yaml Create test1-operator/deploy/crd.yaml Create test1-operator/deploy/cr.yaml Create test1-operator/deploy/operator.yaml Run git init ... Initialized empty Git repository in /home/user/go/src/github.com/user/opsdk/test1-operator/.git/ Run git init done$ cd test1-operatorModify the

roles/test1/tasks/main.ymlfile with the Ansible logic that you want. This example creates and deletes a namespace with the switch of a variable.- name: set test namespace to "{{ state }}" community.kubernetes.k8s: api_version: v1 kind: Namespace state: "{{ state }}" name: test ignore_errors: true1 - 1

- Setting

ignore_errors: trueensures that deleting a nonexistent project does not fail.

Modify the

roles/test1/defaults/main.ymlfile to setstatetopresentby default:state: presentCreate an Ansible playbook

playbook.ymlin the top-level directory, which includes thetest1role:- hosts: localhost roles: - test1Run the playbook:

$ ansible-playbook playbook.ymlExample output

[WARNING]: provided hosts list is empty, only localhost is available. Note that the implicit localhost does not match 'all' PLAY [localhost] *************************************************************************** PROCEDURE [Gathering Facts] ********************************************************************* ok: [localhost] Task [test1 : set test namespace to present] changed: [localhost] PLAY RECAP ********************************************************************************* localhost : ok=2 changed=1 unreachable=0 failed=0Check that the namespace was created:

$ oc get namespaceExample output

NAME STATUS AGE default Active 28d kube-public Active 28d kube-system Active 28d test Active 3sRerun the playbook setting

statetoabsent:$ ansible-playbook playbook.yml --extra-vars state=absentExample output

[WARNING]: provided hosts list is empty, only localhost is available. Note that the implicit localhost does not match 'all' PLAY [localhost] *************************************************************************** PROCEDURE [Gathering Facts] ********************************************************************* ok: [localhost] Task [test1 : set test namespace to absent] changed: [localhost] PLAY RECAP ********************************************************************************* localhost : ok=2 changed=1 unreachable=0 failed=0Check that the namespace was deleted:

$ oc get namespaceExample output

NAME STATUS AGE default Active 28d kube-public Active 28d kube-system Active 28d

5.4.3.3. Testing the k8s Ansible module inside an Operator

After you are familiar with using the k8s Ansible module locally, you can trigger the same Ansible logic inside of an Operator when a custom resource (CR) changes. This example maps an Ansible role to a specific Kubernetes resource that the Operator watches. This mapping is done in the watches.yaml file.

5.4.3.3.1. Testing an Ansible-based Operator locally

After getting comfortable testing Ansible workflows locally, you can test the logic inside of an Ansible-based Operator running locally.

To do so, use the operator-sdk run --local command from the top-level directory of your Operator project. This command reads from the watches.yaml file and uses the ~/.kube/config file to communicate with a Kubernetes cluster just as the k8s Ansible module does.

Procedure

Because the

run --localcommand reads from thewatches.yamlfile, there are options available to the Operator author. Ifroleis left alone (by default,/opt/ansible/roles/<name>) you must copy the role over to the/opt/ansible/roles/directory from the Operator directly.This is cumbersome because changes are not reflected from the current directory. Instead, change the

rolefield to point to the current directory and comment out the existing line:- version: v1alpha1 group: test1.example.com kind: Test1 # role: /opt/ansible/roles/Test1 role: /home/user/test1-operator/Test1Create a custom resource definition (CRD) and proper role-based access control (RBAC) definitions for the custom resource (CR)

Test1. Theoperator-sdkcommand autogenerates these files inside of thedeploy/directory:$ oc create -f deploy/crds/test1_v1alpha1_test1_crd.yaml$ oc create -f deploy/service_account.yaml$ oc create -f deploy/role.yaml$ oc create -f deploy/role_binding.yamlRun the

run --localcommand:$ operator-sdk run --localExample output

[...] INFO[0000] Starting to serve on 127.0.0.1:8888 INFO[0000] Watching test1.example.com/v1alpha1, Test1, defaultNow that the Operator is watching the resource

Test1for events, the creation of a CR triggers your Ansible role to execute. View thedeploy/cr.yamlfile:apiVersion: "test1.example.com/v1alpha1" kind: "Test1" metadata: name: "example"Because the

specfield is not set, Ansible is invoked with no extra variables. The next section covers how extra variables are passed from a CR to Ansible. This is why it is important to set reasonable defaults for the Operator.Create a CR instance of

Test1with the default variablestateset topresent:$ oc create -f deploy/cr.yamlCheck that the namespace

testwas created:$ oc get namespaceExample output

NAME STATUS AGE default Active 28d kube-public Active 28d kube-system Active 28d test Active 3sModify the

deploy/cr.yamlfile to set thestatefield toabsent:apiVersion: "test1.example.com/v1alpha1" kind: "Test1" metadata: name: "example" spec: state: "absent"Apply the changes and confirm that the namespace is deleted:

$ oc apply -f deploy/cr.yaml$ oc get namespaceExample output

NAME STATUS AGE default Active 28d kube-public Active 28d kube-system Active 28d

5.4.3.3.2. Testing an Ansible-based Operator on a cluster

After getting familiar running Ansible logic inside of an Ansible-based Operator locally, you can test the Operator inside of a pod on a Kubernetes cluster, such as OpenShift Container Platform. Running as a pod on a cluster is preferred for production use.

Procedure

Build the

test1-operatorimage and push it to a registry:$ operator-sdk build quay.io/example/test1-operator:v0.0.1$ podman push quay.io/example/test1-operator:v0.0.1Deployment manifests are generated in the

deploy/operator.yamlfile. The deployment image in this file must be modified from the placeholderREPLACE_IMAGEto the previously-built image. To do so, run the following command:$ sed -i 's|REPLACE_IMAGE|quay.io/example/test1-operator:v0.0.1|g' deploy/operator.yamlIf you are performing these steps on macOS, use the following command instead:

$ sed -i "" 's|REPLACE_IMAGE|quay.io/example/test1-operator:v0.0.1|g' deploy/operator.yamlDeploy the

test1-operator:$ oc create -f deploy/crds/test1_v1alpha1_test1_crd.yaml1 - 1

- Only required if the CRD does not exist already.

$ oc create -f deploy/service_account.yaml$ oc create -f deploy/role.yaml$ oc create -f deploy/role_binding.yaml$ oc create -f deploy/operator.yamlVerify that the

test1-operatoris up and running:$ oc get deploymentExample output

NAME DESIRED CURRENT UP-TO-DATE AVAILABLE AGE test1-operator 1 1 1 1 1mYou can now view the Ansible logs for the

test1-operator:$ oc logs deployment/test1-operator

5.4.4. Managing custom resource status using the operator_sdk.util Ansible collection

Ansible-based Operators automatically update custom resource (CR) status subresources with generic information about the previous Ansible run. This includes the number of successful and failed tasks and relevant error messages as shown:

status:

conditions:

- ansibleResult:

changed: 3

completion: 2018-12-03T13:45:57.13329

failures: 1

ok: 6

skipped: 0

lastTransitionTime: 2018-12-03T13:45:57Z

message: 'Status code was -1 and not [200]: Request failed: <urlopen error [Errno

113] No route to host>'

reason: Failed

status: "True"

type: Failure

- lastTransitionTime: 2018-12-03T13:46:13Z

message: Running reconciliation

reason: Running

status: "True"

type: Running

Ansible-based Operators also allow Operator authors to supply custom status values with the k8s_status Ansible module, which is included in the operator_sdk.util collection. This allows the author to update the status from within Ansible with any key-value pair as desired.

By default, Ansible-based Operators always include the generic Ansible run output as shown above. If you would prefer your application did not update the status with Ansible output, you can track the status manually from your application.

Procedure

To track CR status manually from your application, update the

watches.yamlfile with amanageStatusfield set tofalse:- version: v1 group: api.example.com kind: Test1 role: Test1 manageStatus: falseUse the

operator_sdk.util.k8s_statusAnsible module to update the subresource. For example, to update with keytest1and valuetest2,operator_sdk.utilcan be used as shown:- operator_sdk.util.k8s_status: api_version: app.example.com/v1 kind: Test1 name: "{{ meta.name }}" namespace: "{{ meta.namespace }}" status: test1: test2Collections can also be declared in the

meta/main.ymlfor the role, which is included for new scaffolded Ansible Operators:collections: - operator_sdk.utilDeclaring collections in the role meta allows you to invoke the

k8s_statusmodule directly:k8s_status: <snip> status: test1: test2

5.5. Creating Helm-based Operators

This guide outlines Helm chart support in the Operator SDK and walks Operator authors through an example of building and running an Nginx Operator with the operator-sdk CLI tool that uses an existing Helm chart.

5.5.1. Helm chart support in the Operator SDK

The Operator Framework is an open source toolkit to manage Kubernetes native applications, called Operators, in an effective, automated, and scalable way. This framework includes the Operator SDK, which assists developers in bootstrapping and building an Operator based on their expertise without requiring knowledge of Kubernetes API complexities.

One of the Operator SDK options for generating an Operator project includes leveraging an existing Helm chart to deploy Kubernetes resources as a unified application, without having to write any Go code. Such Helm-based Operators are designed to excel at stateless applications that require very little logic when rolled out, because changes should be applied to the Kubernetes objects that are generated as part of the chart. This may sound limiting, but can be sufficient for a surprising amount of use-cases as shown by the proliferation of Helm charts built by the Kubernetes community.

The main function of an Operator is to read from a custom object that represents your application instance and have its desired state match what is running. In the case of a Helm-based Operator, the spec field of the object is a list of configuration options that are typically described in the Helm values.yaml file. Instead of setting these values with flags using the Helm CLI (for example, helm install -f values.yaml), you can express them within a custom resource (CR), which, as a native Kubernetes object, enables the benefits of RBAC applied to it and an audit trail.

For an example of a simple CR called Tomcat:

apiVersion: apache.org/v1alpha1

kind: Tomcat

metadata:

name: example-app

spec:

replicaCount: 2

The replicaCount value, 2 in this case, is propagated into the template of the chart where the following is used:

{{ .Values.replicaCount }}

After an Operator is built and deployed, you can deploy a new instance of an app by creating a new instance of a CR, or list the different instances running in all environments using the oc command:

$ oc get Tomcats --all-namespacesThere is no requirement use the Helm CLI or install Tiller; Helm-based Operators import code from the Helm project. All you have to do is have an instance of the Operator running and register the CR with a custom resource definition (CRD). Because it obeys RBAC, you can more easily prevent production changes.

5.5.2. Building a Helm-based Operator using the Operator SDK

This procedure walks through an example of building a simple Nginx Operator powered by a Helm chart using tools and libraries provided by the Operator SDK.

It is best practice to build a new Operator for each chart. This can allow for more native-behaving Kubernetes APIs (for example, oc get Nginx) and flexibility if you ever want to write a fully-fledged Operator in Go, migrating away from a Helm-based Operator.

Prerequisites

- Operator SDK v0.19.4 CLI installed on the development workstation

-

Access to a Kubernetes-based cluster v1.11.3+ (for example OpenShift Container Platform 4.6) using an account with

cluster-adminpermissions -

OpenShift CLI (

oc) v4.6+ installed

Procedure

Create a new Operator project. A namespace-scoped Operator watches and manages resources in a single namespace. Namespace-scoped Operators are preferred because of their flexibility. They enable decoupled upgrades, namespace isolation for failures and monitoring, and differing API definitions.

To create a new Helm-based, namespace-scoped

nginx-operatorproject, use the following command:$ operator-sdk new nginx-operator \ --api-version=example.com/v1alpha1 \ --kind=Nginx \ --type=helm$ cd nginx-operatorThis creates the

nginx-operatorproject specifically for watching the Nginx resource with API versionexample.com/v1apha1and kindNginx.Customize the Operator logic.

For this example, the

nginx-operatorexecutes the following reconciliation logic for eachNginxcustom resource (CR):- Create an Nginx deployment if it does not exist.

- Create an Nginx service if it does not exist.

- Create an Nginx ingress if it is enabled and does not exist.

- Ensure that the deployment, service, and optional ingress match the desired configuration (for example, replica count, image, service type) as specified by the Nginx CR.

By default, the

nginx-operatorwatchesNginxresource events as shown in thewatches.yamlfile and executes Helm releases using the specified chart:- version: v1alpha1 group: example.com kind: Nginx chart: /opt/helm/helm-charts/nginxReview the Nginx Helm chart.

When a Helm Operator project is created, the Operator SDK creates an example Helm chart that contains a set of templates for a simple Nginx release.

For this example, templates are available for deployment, service, and ingress resources, along with a

NOTES.txttemplate, which Helm chart developers use to convey helpful information about a release.If you are not already familiar with Helm Charts, review the Helm Chart developer documentation.

Understand the Nginx CR spec.

Helm uses a concept called values to provide customizations to the defaults of a Helm chart, which are defined in the

values.yamlfile.Override these defaults by setting the desired values in the CR spec. You can use the number of replicas as an example:

First, inspect the

helm-charts/nginx/values.yamlfile to find that the chart has a value calledreplicaCountand it is set to1by default. To have 2 Nginx instances in your deployment, your CR spec must containreplicaCount: 2.Update the

deploy/crds/example.com_v1alpha1_nginx_cr.yamlfile to look like the following:apiVersion: example.com/v1alpha1 kind: Nginx metadata: name: example-nginx spec: replicaCount: 2Similarly, the default service port is set to

80. To instead use8080, update thedeploy/crds/example.com_v1alpha1_nginx_cr.yamlfile again by adding the service port override:apiVersion: example.com/v1alpha1 kind: Nginx metadata: name: example-nginx spec: replicaCount: 2 service: port: 8080The Helm Operator applies the entire spec as if it was the contents of a values file, just like the

helm install -f ./overrides.yamlcommand works.

Deploy the CRD.

Before running the Operator, Kubernetes must know about the new custom resource definition (CRD) that the Operator will be watching. Deploy the following CRD:

$ oc create -f deploy/crds/example_v1alpha1_nginx_crd.yamlBuild and run the Operator.

There are two ways to build and run the Operator:

- As a pod inside a Kubernetes cluster.

-

As a Go program outside the cluster using the

operator-sdk upcommand.

Choose one of the following methods:

Run as a pod inside a Kubernetes cluster. This is the preferred method for production use.

Build the

nginx-operatorimage and push it to a registry:$ operator-sdk build quay.io/example/nginx-operator:v0.0.1$ podman push quay.io/example/nginx-operator:v0.0.1Deployment manifests are generated in the

deploy/operator.yamlfile. The deployment image in this file needs to be modified from the placeholderREPLACE_IMAGEto the previous built image. To do this, run:$ sed -i 's|REPLACE_IMAGE|quay.io/example/nginx-operator:v0.0.1|g' deploy/operator.yamlDeploy the

nginx-operatormanifests:$ oc create -f deploy/service_account.yaml$ oc create -f deploy/role.yaml$ oc create -f deploy/role_binding.yaml$ oc create -f deploy/operator.yamlVerify that the

nginx-operatordeployment is up and running:$ oc get deploymentExample output

NAME DESIRED CURRENT UP-TO-DATE AVAILABLE AGE nginx-operator 1 1 1 1 1m

Run outside the cluster. This method is preferred during the development cycle to speed up deployment and testing.

It is important that the chart path referenced in the

watches.yamlfile exists on your machine. By default, thewatches.yamlfile is scaffolded to work with an Operator image built with theoperator-sdk buildcommand. When developing and testing your Operator with theoperator-sdk run --localcommand, the SDK looks in your local file system for this path.Create a symlink at this location to point to the path of your Helm chart:

$ sudo mkdir -p /opt/helm/helm-charts$ sudo ln -s $PWD/helm-charts/nginx /opt/helm/helm-charts/nginxTo run the Operator locally with the default Kubernetes configuration file present at

$HOME/.kube/config:$ operator-sdk run --localTo run the Operator locally with a provided Kubernetes configuration file:

$ operator-sdk run --local --kubeconfig=<path_to_config>

Deploy the

NginxCR.Apply the

NginxCR that you modified earlier:$ oc apply -f deploy/crds/example.com_v1alpha1_nginx_cr.yamlEnsure that the

nginx-operatorcreates the deployment for the CR:$ oc get deploymentExample output

NAME DESIRED CURRENT UP-TO-DATE AVAILABLE AGE example-nginx-b9phnoz9spckcrua7ihrbkrt1 2 2 2 2 1mCheck the pods to confirm two replicas were created:

$ oc get podsExample output

NAME READY STATUS RESTARTS AGE example-nginx-b9phnoz9spckcrua7ihrbkrt1-f8f9c875d-fjcr9 1/1 Running 0 1m example-nginx-b9phnoz9spckcrua7ihrbkrt1-f8f9c875d-ljbzl 1/1 Running 0 1mCheck that the service port is set to

8080:$ oc get serviceExample output

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE example-nginx-b9phnoz9spckcrua7ihrbkrt1 ClusterIP 10.96.26.3 <none> 8080/TCP 1mUpdate the

replicaCountand remove the port.Change the

spec.replicaCountfield from2to3, remove thespec.servicefield, and apply the change:$ cat deploy/crds/example.com_v1alpha1_nginx_cr.yamlExample output

apiVersion: "example.com/v1alpha1" kind: "Nginx" metadata: name: "example-nginx" spec: replicaCount: 3$ oc apply -f deploy/crds/example.com_v1alpha1_nginx_cr.yamlConfirm that the Operator changes the deployment size:

$ oc get deploymentExample output

NAME DESIRED CURRENT UP-TO-DATE AVAILABLE AGE example-nginx-b9phnoz9spckcrua7ihrbkrt1 3 3 3 3 1mCheck that the service port is set to the default

80:$ oc get serviceExample output

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE example-nginx-b9phnoz9spckcrua7ihrbkrt1 ClusterIP 10.96.26.3 <none> 80/TCP 1mClean up the resources:

$ oc delete -f deploy/crds/example.com_v1alpha1_nginx_cr.yaml$ oc delete -f deploy/operator.yaml$ oc delete -f deploy/role_binding.yaml$ oc delete -f deploy/role.yaml$ oc delete -f deploy/service_account.yaml$ oc delete -f deploy/crds/example_v1alpha1_nginx_crd.yaml

5.6. Generating a cluster service version (CSV)

A cluster service version (CSV), defined by a ClusterServiceVersion object, is a YAML manifest created from Operator metadata that assists Operator Lifecycle Manager (OLM) in running the Operator in a cluster. It is the metadata that accompanies an Operator container image, used to populate user interfaces with information such as its logo, description, and version. It is also a source of technical information that is required to run the Operator, like the RBAC rules it requires and which custom resources (CRs) it manages or depends on.

The Operator SDK includes the generate csv subcommand to generate a CSV for the current Operator project customized using information contained in manually-defined YAML manifests and Operator source files.

A CSV-generating command removes the responsibility of Operator authors having in-depth OLM knowledge in order for their Operator to interact with OLM or publish metadata to the Catalog Registry. Further, because the CSV spec will likely change over time as new Kubernetes and OLM features are implemented, the Operator SDK is equipped to easily extend its update system to handle new CSV features going forward.

The CSV version is the same as the Operator version, and a new CSV is generated when upgrading Operator versions. Operator authors can use the --csv-version flag to have their Operator state encapsulated in a CSV with the supplied semantic version:

$ operator-sdk generate csv --csv-version <version>

This action is idempotent and only updates the CSV file when a new version is supplied, or a YAML manifest or source file is changed. Operator authors should not have to directly modify most fields in a CSV manifest. Those that require modification are defined in this guide. For example, the CSV version must be included in metadata.name.

5.6.1. How CSV generation works

The deploy/ directory of an Operator project is the standard location for all manifests required to deploy an Operator. The Operator SDK can use data from manifests in deploy/ to write a cluster service version (CSV).

The following command:

$ operator-sdk generate csv --csv-version <version>

writes a CSV YAML file to the deploy/olm-catalog/ directory by default.

Exactly three types of manifests are required to generate a CSV:

-

operator.yaml -

*_{crd,cr}.yaml -

RBAC role files, for example

role.yaml

Operator authors may have different versioning requirements for these files and can configure which specific files are included in the deploy/olm-catalog/csv-config.yaml file.

Workflow

Depending on whether an existing CSV is detected, and assuming all configuration defaults are used, the generate csv subcommand either:

Creates a new CSV, with the same location and naming convention as exists currently, using available data in YAML manifests and source files.

-

The update mechanism checks for an existing CSV in

deploy/. When one is not found, it creates aClusterServiceVersionobject, referred to here as a cache, and populates fields easily derived from Operator metadata, such as Kubernetes APIObjectMeta. -

The update mechanism searches

deploy/for manifests that contain data a CSV uses, such as aDeploymentresource, and sets the appropriate CSV fields in the cache with this data. - After the search completes, every cache field populated is written back to a CSV YAML file.

-

The update mechanism checks for an existing CSV in

or:

Updates an existing CSV at the currently pre-defined location, using available data in YAML manifests and source files.

-

The update mechanism checks for an existing CSV in

deploy/. When one is found, the CSV YAML file contents are marshaled into a CSV cache. -

The update mechanism searches

deploy/for manifests that contain data a CSV uses, such as aDeploymentresource, and sets the appropriate CSV fields in the cache with this data. - After the search completes, every cache field populated is written back to a CSV YAML file.

-

The update mechanism checks for an existing CSV in

Individual YAML fields are overwritten and not the entire file, as descriptions and other non-generated parts of a CSV should be preserved.

5.6.2. CSV composition configuration

Operator authors can configure CSV composition by populating several fields in the deploy/olm-catalog/csv-config.yaml file:

| Field | Description |

|---|---|

|

|

The Operator resource manifest file path. Default: |

|

|

A list of CRD and CR manifest file paths. Default: |

|

|

A list of RBAC role manifest file paths. Default: |

5.6.3. Manually-defined CSV fields

Many CSV fields cannot be populated using generated, generic manifests that are not specific to Operator SDK. These fields are mostly human-written metadata about the Operator and various custom resource definitions (CRDs).

Operator authors must directly modify their cluster service version (CSV) YAML file, adding personalized data to the following required fields. The Operator SDK gives a warning during CSV generation when a lack of data in any of the required fields is detected.

The following tables detail which manually-defined CSV fields are required and which are optional.

| Field | Description |

|---|---|

|

|

A unique name for this CSV. Operator version should be included in the name to ensure uniqueness, for example |

|

|

The capability level according to the Operator maturity model. Options include |

|

| A public name to identify the Operator. |

|

| A short description of the functionality of the Operator. |

|

| Keywords describing the Operator. |

|

|

Human or organizational entities maintaining the Operator, with a |

|

|

The provider of the Operator (usually an organization), with a |

|

| Key-value pairs to be used by Operator internals. |

|

|

Semantic version of the Operator, for example |

|

|

Any CRDs the Operator uses. This field is populated automatically by the Operator SDK if any CRD YAML files are present in

|

| Field | Description |

|---|---|

|

| The name of the CSV being replaced by this CSV. |

|

|

URLs (for example, websites and documentation) pertaining to the Operator or application being managed, each with a |

|

| Selectors by which the Operator can pair resources in a cluster. |

|

|

A base64-encoded icon unique to the Operator, set in a |

|

|

The level of maturity the software has achieved at this version. Options include |

Further details on what data each field above should hold are found in the CSV spec.

Several YAML fields currently requiring user intervention can potentially be parsed from Operator code.

5.6.3.1. Operator metadata annotations

Operator developers can manually define certain annotations in the metadata of a cluster service version (CSV) to enable features or highlight capabilities in user interfaces (UIs), such as OperatorHub.

The following table lists Operator metadata annotations that can be manually defined using metadata.annotations fields.

| Field | Description |

|---|---|

|

| Provide custom resource definition (CRD) templates with a minimum set of configuration. Compatible UIs pre-fill this template for users to further customize. |

|

| Specify a single required custom resource that must be created at the time that the Operator is installed. Must include a template that contains a complete YAML definition. |

|

| Set a suggested namespace where the Operator should be deployed. |

|

| Infrastructure features supported by the Operator. Users can view and filter by these features when discovering Operators through OperatorHub in the web console. Valid, case-sensitive values:

Important

The use of FIPS Validated / Modules in Process cryptographic libraries is only supported on OpenShift Container Platform deployments on the

|

|

|

Free-form array for listing any specific subscriptions that are required to use the Operator. For example, |

|

| Hides CRDs in the UI that are not meant for user manipulation. |

Example use cases

Operator supports disconnected and proxy-aware

operators.openshift.io/infrastructure-features: '["disconnected", "proxy-aware"]'Operator requires an OpenShift Container Platform license

operators.openshift.io/valid-subscription: '["OpenShift Container Platform"]'Operator requires a 3scale license

operators.openshift.io/valid-subscription: '["3Scale Commercial License", "Red Hat Managed Integration"]'Operator supports disconnected and proxy-aware, and requires an OpenShift Container Platform license

operators.openshift.io/infrastructure-features: '["disconnected", "proxy-aware"]'

operators.openshift.io/valid-subscription: '["OpenShift Container Platform"]'5.6.4. Generating a CSV

Prerequisites

- An Operator project generated using the Operator SDK

Procedure

-

In your Operator project, configure your CSV composition by modifying the

deploy/olm-catalog/csv-config.yamlfile, if desired. Generate the CSV:

$ operator-sdk generate csv --csv-version <version>-

In the new CSV generated in the

deploy/olm-catalog/directory, ensure all required, manually-defined fields are set appropriately.

5.6.5. Enabling your Operator for restricted network environments

As an Operator author, your Operator must meet additional requirements to run properly in a restricted network, or disconnected, environment.

Operator requirements for supporting disconnected mode

In the cluster service version (CSV) of your Operator:

- List any related images, or other container images that your Operator might require to perform their functions.

- Reference all specified images by a digest (SHA) and not by a tag.

- All dependencies of your Operator must also support running in a disconnected mode.

- Your Operator must not require any off-cluster resources.

For the CSV requirements, you can make the following changes as the Operator author.

Prerequisites

- An Operator project with a CSV.

Procedure

Use SHA references to related images in two places in the CSV for your Operator:

Update

spec.relatedImages:... spec: relatedImages:1 - name: etcd-operator2 image: quay.io/etcd-operator/operator@sha256:d134a9865524c29fcf75bbc4469013bc38d8a15cb5f41acfddb6b9e492f556e43 - name: etcd-image image: quay.io/etcd-operator/etcd@sha256:13348c15263bd8838ec1d5fc4550ede9860fcbb0f843e48cbccec07810eebb68 ...Update the

envsection in the deployment when declaring environment variables that inject the image that the Operator should use:spec: install: spec: deployments: - name: etcd-operator-v3.1.1 spec: replicas: 1 selector: matchLabels: name: etcd-operator strategy: type: Recreate template: metadata: labels: name: etcd-operator spec: containers: - args: - /opt/etcd/bin/etcd_operator_run.sh env: - name: WATCH_NAMESPACE valueFrom: fieldRef: fieldPath: metadata.annotations['olm.targetNamespaces'] - name: ETCD_OPERATOR_DEFAULT_ETCD_IMAGE1 value: quay.io/etcd-operator/etcd@sha256:13348c15263bd8838ec1d5fc4550ede9860fcbb0f843e48cbccec07810eebb682 - name: ETCD_LOG_LEVEL value: INFO image: quay.io/etcd-operator/operator@sha256:d134a9865524c29fcf75bbc4469013bc38d8a15cb5f41acfddb6b9e492f556e43 imagePullPolicy: IfNotPresent livenessProbe: httpGet: path: /healthy port: 8080 initialDelaySeconds: 10 periodSeconds: 30 name: etcd-operator readinessProbe: httpGet: path: /ready port: 8080 initialDelaySeconds: 10 periodSeconds: 30 resources: {} serviceAccountName: etcd-operator strategy: deploymentNoteWhen configuring probes, the

timeoutSecondsvalue must be lower than theperiodSecondsvalue. ThetimeoutSecondsdefault value is1. TheperiodSecondsdefault value is10.

Add the

disconnectedannotation, which indicates that the Operator works in a disconnected environment:metadata: annotations: operators.openshift.io/infrastructure-features: '["disconnected"]'Operators can be filtered in OperatorHub by this infrastructure feature.

5.6.6. Enabling your Operator for multiple architectures and operating systems