Chapter 6. Using the Node Tuning Operator on ROSA with HCP clusters

Red Hat OpenShift Service on AWS (ROSA) with hosted control planes (HCP) supports the Node Tuning Operator to improve performance of your nodes on your ROSA with HCP clusters. Prior to creating a node tuning configuration, you must create a custom tuning specification.

Purpose

The Node Tuning Operator helps you manage node-level tuning by orchestrating the TuneD daemon and achieves low latency performance by using the Performance Profile controller. The majority of high-performance applications require some level of kernel tuning. The Node Tuning Operator provides users of node-level sysctls with a unified management interface and the flexibility to add custom tuning for their specific needs.

The Operator manages the containerized TuneD daemon for Red Hat OpenShift Service on AWS as a Kubernetes daemon set. It ensures the custom tuning specification is passed to all containerized TuneD daemons running in the cluster in the format that the daemons understand. The daemons run on all nodes in the cluster, one per node.

Node-level settings applied by the containerized TuneD daemon are rolled back on an event that triggers a profile change or when the containerized TuneD daemon is terminated gracefully by receiving and handling a termination signal.

The Node Tuning Operator uses the Performance Profile controller to implement automatic tuning to achieve low latency performance for Red Hat OpenShift Service on AWS applications.

The cluster administrator configures a performance profile to define node-level settings such as the following:

- Updating the kernel to kernel-rt.

- Choosing CPUs for housekeeping.

- Choosing CPUs for running workloads.

The Node Tuning Operator is part of a standard Red Hat OpenShift Service on AWS installation in version 4.1 and later.

In earlier versions of Red Hat OpenShift Service on AWS, the Performance Addon Operator was used to implement automatic tuning to achieve low latency performance for OpenShift applications. In Red Hat OpenShift Service on AWS 4.11 and later, this functionality is part of the Node Tuning Operator.

6.1. Custom tuning specification

The custom resource (CR) for the Operator has two major sections. The first section, profile:, is a list of TuneD profiles and their names. The second, recommend:, defines the profile selection logic.

Multiple custom tuning specifications can co-exist as multiple CRs in the Operator’s namespace. The existence of new CRs or the deletion of old CRs is detected by the Operator. All existing custom tuning specifications are merged and appropriate objects for the containerized TuneD daemons are updated.

Management state

The Operator Management state is set by adjusting the default Tuned CR. By default, the Operator is in the Managed state and the spec.managementState field is not present in the default Tuned CR. Valid values for the Operator Management state are as follows:

- Managed: the Operator will update its operands as configuration resources are updated

- Unmanaged: the Operator will ignore changes to the configuration resources

- Removed: the Operator will remove its operands and resources the Operator provisioned

Profile data

The profile: section lists TuneD profiles and their names.

{

"profile": [

{

"name": "tuned_profile_1",

"data": "# TuneD profile specification\n[main]\nsummary=Description of tuned_profile_1 profile\n\n[sysctl]\nnet.ipv4.ip_forward=1\n# ... other sysctl's or other TuneD daemon plugins supported by the containerized TuneD\n"

},

{

"name": "tuned_profile_n",

"data": "# TuneD profile specification\n[main]\nsummary=Description of tuned_profile_n profile\n\n# tuned_profile_n profile settings\n"

}

]

}

Recommended profiles

The profile: selection logic is defined by the recommend: section of the CR. The recommend: section is a list of items that recommend profiles based on selection criteria.

"recommend": [

<recommend-item-1>,

# ...

<recommend-item-n>

]

The individual items of the list:

{

"profile": [

{

# ...

}

],

"recommend": [

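{

"profile": <tuned_profile_name>, 1

"priority": <priority>, 2

"match": [ 3

{

"label": <label_information> 4

}

]

}

]

}

- 1: The TuneD profile to apply on a match, for example tuned_profile_1.

- 2: Profile ordering priority. Lower numbers mean higher priority.

- 3: An optional list of label-matching rules. If it is omitted, the profile always matches.

- 4: Node or pod label name.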

<match> is an optional list recursively defined as follows:

"match": [

{

"label": <label_name> 1

}

]

- 1: Node or pod label name.

If <match> is not omitted, all nested <match> sections must also evaluate to true. Otherwise, false is assumed and the profile with the respective <match> section will not be applied or recommended. Therefore, the nesting (child <match> sections) works as a logical AND operator. Conversely, if any item of the <match> list matches, the entire <match> list evaluates to true. Therefore, the list acts as a logical OR operator.
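The AND/OR rules above can be sketched in a few lines of Python. This is a minimal illustration, not the Operator's or TuneD's implementation. It assumes that a match item matches when its label is present on the node, or on a pod running on that node when "type" is "pod" (node labels are checked otherwise), and it ignores any other fields. The helper names item_matches and list_matches are hypothetical.

```python
from __future__ import annotations

from typing import Any


def item_matches(item: dict[str, Any], node_labels: set[str], pod_labels: set[str]) -> bool:
    """Evaluate one <match> item: its own label AND its nested <match> list, if any."""
    # Assumption: "type": "pod" checks labels of pods on the node; otherwise node labels.
    labels = pod_labels if item.get("type") == "pod" else node_labels
    if item["label"] not in labels:
        return False
    # Nesting works as a logical AND: a nested list must also evaluate to true.
    return list_matches(item.get("match"), node_labels, pod_labels)


def list_matches(match: list[dict[str, Any]] | None, node_labels: set[str], pod_labels: set[str]) -> bool:
    """Evaluate a <match> list: true if ANY item matches (logical OR)."""
    if match is None:
        # An omitted <match> section always matches.
        return True
    return any(item_matches(item, node_labels, pod_labels) for item in match)


# The <match> list of the priority-10 entry in the example that follows.
es_match = [{
    "label": "tuned.openshift.io/elasticsearch",
    "type": "pod",
    "match": [{"label": "node-role.kubernetes.io/master"},
              {"label": "node-role.kubernetes.io/infra"}],
}]
print(list_matches(es_match, {"node-role.kubernetes.io/infra"}, {"tuned.openshift.io/elasticsearch"}))   # True
print(list_matches(es_match, {"node-role.kubernetes.io/worker"}, {"tuned.openshift.io/elasticsearch"}))  # False
```

The pod label alone is not enough: the nested node-role list must also match, which is why the worker node evaluates to false.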

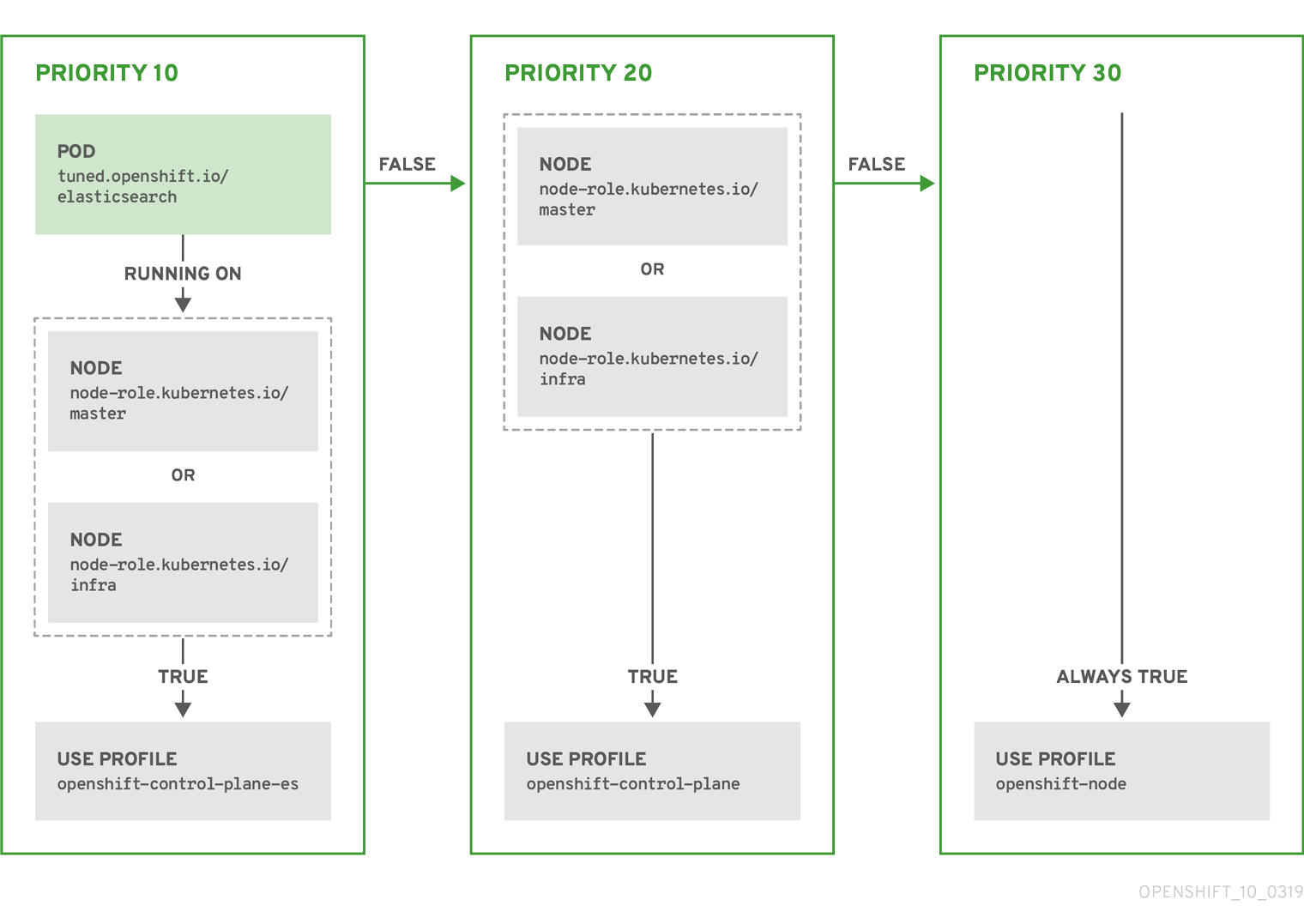

Example: Node or pod label based matching

[

{

"match": [

{

"label": "tuned.openshift.io/elasticsearch",

"match": [

{

"label": "node-role.kubernetes.io/master"

},

{

"label": "node-role.kubernetes.io/infra"

}

],

"type": "pod"

}

],

"priority": 10,

"profile": "openshift-control-plane-es"

},

{

"match": [

{

"label": "node-role.kubernetes.io/master"

},

{

"label": "node-role.kubernetes.io/infra"

}

],

"priority": 20,

"profile": "openshift-control-plane"

},

{

"priority": 30,

"profile": "openshift-node"

}

]

The CR above is translated for the containerized TuneD daemon into its recommend.conf file based on the profile priorities. The profile with the highest priority (10) is openshift-control-plane-es and, therefore, it is considered first. The containerized TuneD daemon running on a given node looks to see if there is a pod running on the same node with the tuned.openshift.io/elasticsearch label set. If not, the entire <match> section evaluates as false. If there is such a pod with the label, in order for the <match> section to evaluate to true, the node label also needs to be node-role.kubernetes.io/master or node-role.kubernetes.io/infra.

If the labels for the profile with priority 10 matched, the openshift-control-plane-es profile is applied and no other profile is considered. If the node/pod label combination did not match, the profile with the second highest priority (openshift-control-plane) is considered. This profile is applied if the containerized TuneD pod runs on a node with the node-role.kubernetes.io/master or node-role.kubernetes.io/infra label.

Finally, the openshift-node profile has the lowest priority, 30. It lacks a <match> section and, therefore, always matches. It acts as a catch-all that sets the openshift-node profile if no other profile with a higher priority matches on a given node.
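The priority walk described above can be sketched in Python using the recommend list from the example. This is a hypothetical simplification of how one profile ends up selected per node, assuming entries are evaluated from the lowest priority number upward and the first matching entry wins; label matching is reduced to label presence, following the AND/OR rules for <match>. The names RECOMMEND, matches, and select_profile are illustrative.

```python
from __future__ import annotations

from typing import Any

# The recommend list from the example above, as Python data.
RECOMMEND: list[dict[str, Any]] = [
    {"match": [{"label": "tuned.openshift.io/elasticsearch", "type": "pod",
                "match": [{"label": "node-role.kubernetes.io/master"},
                          {"label": "node-role.kubernetes.io/infra"}]}],
     "priority": 10, "profile": "openshift-control-plane-es"},
    {"match": [{"label": "node-role.kubernetes.io/master"},
               {"label": "node-role.kubernetes.io/infra"}],
     "priority": 20, "profile": "openshift-control-plane"},
    {"priority": 30, "profile": "openshift-node"},
]


def matches(match: list[dict[str, Any]] | None, node: set[str], pods: set[str]) -> bool:
    # No <match> section: always true. Otherwise OR across items, AND with nesting.
    if match is None:
        return True
    return any(
        item["label"] in (pods if item.get("type") == "pod" else node)
        and matches(item.get("match"), node, pods)
        for item in match
    )


def select_profile(recommend: list[dict[str, Any]], node: set[str], pods: set[str]) -> str | None:
    # Lower priority numbers are considered first; the first match wins.
    for entry in sorted(recommend, key=lambda e: e["priority"]):
        if matches(entry.get("match"), node, pods):
            return entry["profile"]
    return None


master = {"node-role.kubernetes.io/master"}
es_pod = {"tuned.openshift.io/elasticsearch"}
print(select_profile(RECOMMEND, master, es_pod))  # openshift-control-plane-es
print(select_profile(RECOMMEND, master, set()))   # openshift-control-plane
print(select_profile(RECOMMEND, {"node-role.kubernetes.io/worker"}, es_pod))  # openshift-node
```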

Example: Machine pool based matching

{

"apiVersion": "tuned.openshift.io/v1",

"kind": "Tuned",

"metadata": {

"name": "openshift-node-custom",

"namespace": "openshift-cluster-node-tuning-operator"

},

"spec": {

"profile": [

{

"data": "[main]\nsummary=Custom OpenShift node profile with an additional kernel parameter\ninclude=openshift-node\n[bootloader]\ncmdline_openshift_node_custom=+skew_tick=1\n",

"name": "openshift-node-custom"

}

],

"recommend": [

{

"priority": 20,

"profile": "openshift-node-custom"

}

]

}

}

Cloud provider-specific TuneD profiles

With this functionality, all Cloud provider-specific nodes can conveniently be assigned a TuneD profile specifically tailored to a given Cloud provider on a Red Hat OpenShift Service on AWS cluster. This can be accomplished without adding node labels or grouping nodes into machine pools.

This functionality takes advantage of spec.providerID node object values in the form of <cloud-provider>://<cloud-provider-specific-id> and writes the file /var/lib/ocp-tuned/provider with the value <cloud-provider> in the Node Tuning Operator (NTO) operand containers. TuneD then uses the content of this file to load the provider-<cloud-provider> profile, if such a profile exists.
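The mapping described above, from a node's spec.providerID value to the <cloud-provider> string and the provider-<cloud-provider> profile name, can be sketched in Python. This is an illustration, not NTO code: the provider_profile function is hypothetical, and the AWS-style providerID value in the usage line is only an example.

```python
from __future__ import annotations


def provider_profile(provider_id: str) -> tuple[str, str]:
    """Split '<cloud-provider>://<cloud-provider-specific-id>' and derive the profile name."""
    provider, sep, _specific_id = provider_id.partition("://")
    if not sep or not provider:
        raise ValueError(f"unexpected providerID format: {provider_id!r}")
    # <cloud-provider> is the value written to /var/lib/ocp-tuned/provider;
    # TuneD loads provider-<cloud-provider> only if such a profile exists.
    return provider, f"provider-{provider}"


# Hypothetical AWS-style providerID value, for illustration only.
print(provider_profile("aws:///us-east-1a/i-0123456789abcdef0"))  # ('aws', 'provider-aws')
```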

The openshift profile, from which both the openshift-control-plane and openshift-node profiles inherit settings, has been updated to use this functionality through conditional profile loading. Neither NTO nor TuneD currently includes any Cloud provider-specific profiles. However, it is possible to create a custom profile provider-<cloud-provider> that will be applied to all Cloud provider-specific cluster nodes.

Example GCE Cloud provider profile

{

"apiVersion": "tuned.openshift.io/v1",

"kind": "Tuned",

"metadata": {

"name": "provider-gce",

"namespace": "openshift-cluster-node-tuning-operator"

},

"spec": {

"profile": [

{

"data": "[main]\nsummary=GCE Cloud provider-specific profile\n# Your tuning for GCE Cloud provider goes here.\n",

"name": "provider-gce"

}

]

}

}

Due to profile inheritance, any setting specified in the provider-<cloud-provider> profile will be overwritten by the openshift profile and its child profiles.

6.2. Creating node tuning configurations on ROSA with HCP

You can create tuning configurations using the Red Hat OpenShift Service on AWS (ROSA) CLI, rosa.

Prerequisites

- You have downloaded the latest version of the ROSA CLI.

- You have a cluster on the latest version.

- You have a specification file configured for node tuning.
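One way to produce the specification file is to build it in Python and serialize it with json.dump, which escapes the newlines and quotes inside the TuneD data string for you. This is a sketch that assumes the file holds the same profile and recommend lists that appear in the spec field of the rosa list tuning-configs -o json example output in this section; the profile name, sysctl value, and output file name spec.json are examples.

```python
import json

# TuneD profile text: an INI-style [main] section plus a [sysctl] section.
tuned_profile = "\n".join([
    "[main]",
    "summary=Custom OpenShift profile",
    "include=openshift-node",
    "",
    "[sysctl]",
    'vm.dirty_ratio="55"',
    "",  # trailing newline at the end of the profile text
])

spec = {
    "profile": [{"data": tuned_profile, "name": "tuned-1-profile"}],
    # Lower numbers mean higher priority.
    "recommend": [{"priority": 20, "profile": "tuned-1-profile"}],
}

with open("spec.json", "w", encoding="utf-8") as f:
    json.dump(spec, f, indent=2)
```

You can then pass the file to the command in the following procedure, for example rosa create tuning-config -c <cluster_id> --name sample-tuning --spec-path spec.json.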

Procedure

Run the following command to create your tuning configuration:

$ rosa create tuning-config -c <cluster_id> --name <name_of_tuning> --spec-path <path_to_spec_file>

You must supply the path to the spec.json file or the command returns an error.

Example output

I: Tuning config 'sample-tuning' has been created on cluster 'cluster-example'.
I: To view all tuning configs, run 'rosa list tuning-configs -c cluster-example'

Validation

You can verify the existing tuning configurations that are applied by your account with the following command:

$ rosa list tuning-configs -c <cluster_name> [-o json]

You can specify the type of output you want for the configuration list.

Without specifying the output type, you see the ID and name of the tuning configuration:

Example output without specifying output type

ID                                    NAME
20468b8e-edc7-11ed-b0e4-0a580a800298  sample-tuning

If you specify an output type, such as json, you receive the tuning configuration as JSON text:

Note

The following JSON output has hard line-returns for the sake of reading clarity. This JSON output is invalid unless you remove the newlines in the JSON strings.

Example output specifying JSON output

[
  {
    "kind": "TuningConfig",
    "id": "20468b8e-edc7-11ed-b0e4-0a580a800298",
    "href": "/api/clusters_mgmt/v1/clusters/23jbsevqb22l0m58ps39ua4trff9179e/tuning_configs/20468b8e-edc7-11ed-b0e4-0a580a800298",
    "name": "sample-tuning",
    "spec": {
      "profile": [
        {
          "data": "[main]\nsummary=Custom OpenShift profile\ninclude=openshift-node\n\n[sysctl]\nvm.dirty_ratio=\"55\"\n",
          "name": "tuned-1-profile"
        }
      ],
      "recommend": [
        {
          "priority": 20,
          "profile": "tuned-1-profile"
        }
      ]
    }
  }
]
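To script against this output, you can parse it with Python's json module and read the TuneD data string of each profile. The sketch below embeds the example output shown above. Reading the INI-style profile text with configparser is an approximation of TuneD's own parser, and sysctls_by_config is a hypothetical helper name; in practice you would feed it the output of rosa list tuning-configs -c <cluster_name> -o json.

```python
from __future__ import annotations

import configparser
import json

# Example output of `rosa list tuning-configs -o json`, as shown above.
OUTPUT = r'''[ { "kind": "TuningConfig", "id": "20468b8e-edc7-11ed-b0e4-0a580a800298",
  "href": "/api/clusters_mgmt/v1/clusters/23jbsevqb22l0m58ps39ua4trff9179e/tuning_configs/20468b8e-edc7-11ed-b0e4-0a580a800298",
  "name": "sample-tuning",
  "spec": { "profile": [ { "data": "[main]\nsummary=Custom OpenShift profile\ninclude=openshift-node\n\n[sysctl]\nvm.dirty_ratio=\"55\"\n", "name": "tuned-1-profile" } ],
            "recommend": [ { "priority": 20, "profile": "tuned-1-profile" } ] } } ]'''


def sysctls_by_config(raw: str) -> dict[str, dict[str, str]]:
    """Map each tuning config name to the [sysctl] settings of its profiles."""
    result: dict[str, dict[str, str]] = {}
    for config in json.loads(raw):
        settings: dict[str, str] = {}
        for profile in config["spec"]["profile"]:
            # The data field is TuneD profile text; parse it as INI (approximation).
            parser = configparser.ConfigParser(interpolation=None)
            parser.read_string(profile["data"])
            if parser.has_section("sysctl"):
                for key, value in parser.items("sysctl"):
                    settings[key] = value.strip('"')
        result[config["name"]] = settings
    return result


print(sysctls_by_config(OUTPUT))  # {'sample-tuning': {'vm.dirty_ratio': '55'}}
```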

6.3. Modifying your node tuning configurations for ROSA with HCP

You can view and update the node tuning configurations using the Red Hat OpenShift Service on AWS (ROSA) CLI, rosa.

Prerequisites

- You have downloaded the latest version of the ROSA CLI.

- You have a cluster on the latest version.

- Your cluster has a node tuning configuration added to it.

Procedure

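View the tuning configurations with the rosa describe command:

$ rosa describe tuning-config -c <cluster_id> --name <name_of_tuning> [-o json]

The items in this command are:

- <cluster_id>: the ID of the cluster that has the tuning configuration.

- <name_of_tuning>: the name of the tuning configuration.

- [-o json]: optional; returns the tuning configuration as JSON text.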

Example output without specifying output type

Name: sample-tuning
ID: 20468b8e-edc7-11ed-b0e4-0a580a800298
Spec: {
  "profile": [
    {
      "data": "[main]\nsummary=Custom OpenShift profile\ninclude=openshift-node\n\n[sysctl]\nvm.dirty_ratio=\"55\"\n",
      "name": "tuned-1-profile"
    }
  ],
  "recommend": [
    {
      "priority": 20,
      "profile": "tuned-1-profile"
    }
  ]
}

Example output specifying JSON output

{
  "kind": "TuningConfig",
  "id": "20468b8e-edc7-11ed-b0e4-0a580a800298",
  "href": "/api/clusters_mgmt/v1/clusters/23jbsevqb22l0m58ps39ua4trff9179e/tuning_configs/20468b8e-edc7-11ed-b0e4-0a580a800298",
  "name": "sample-tuning",
  "spec": {
    "profile": [
      {
        "data": "[main]\nsummary=Custom OpenShift profile\ninclude=openshift-node\n\n[sysctl]\nvm.dirty_ratio=\"55\"\n",
        "name": "tuned-1-profile"
      }
    ],
    "recommend": [
      {
        "priority": 20,
        "profile": "tuned-1-profile"
      }
    ]
  }
}

After verifying the tuning configuration, edit the existing configuration with the rosa edit command:

$ rosa edit tuning-config -c <cluster_id> --name <name_of_tuning> --spec-path <path_to_spec_file>

In this command, you use the spec.json file to edit your configurations.
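If you keep the specification in a file, a small script can apply a change like the one in the verification output of this procedure, which renames the profile to tuned-2-profile and changes its priority from 20 to 10 (lower numbers mean higher priority). This is a sketch: the rename_profile helper is hypothetical, and it assumes spec.json holds the profile and recommend lists used throughout this chapter. For a self-contained demo it first writes the starting spec.

```python
from __future__ import annotations

import json
from pathlib import Path


def rename_profile(spec: dict, old: str, new: str, priority: int | None = None) -> dict:
    """Rename a TuneD profile and update every recommend entry that points at it."""
    for profile in spec.get("profile", []):
        if profile["name"] == old:
            profile["name"] = new
    for entry in spec.get("recommend", []):
        if entry["profile"] == old:
            entry["profile"] = new
            if priority is not None:
                entry["priority"] = priority
    return spec


path = Path("spec.json")
# Starting spec, matching the sample-tuning examples in this chapter.
path.write_text(json.dumps({
    "profile": [{"data": "[main]\nsummary=Custom OpenShift profile\ninclude=openshift-node\n\n[sysctl]\nvm.dirty_ratio=\"55\"\n",
                 "name": "tuned-1-profile"}],
    "recommend": [{"priority": 20, "profile": "tuned-1-profile"}],
}), encoding="utf-8")

spec = rename_profile(json.loads(path.read_text(encoding="utf-8")),
                      "tuned-1-profile", "tuned-2-profile", priority=10)
path.write_text(json.dumps(spec, indent=2), encoding="utf-8")
```

After saving the file, pass it to rosa edit tuning-config with --spec-path.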

Verification

Run the rosa describe command again to see that the changes you made to the spec.json file are updated in the tuning configurations:

$ rosa describe tuning-config -c <cluster_id> --name <name_of_tuning>

Example output

Name: sample-tuning
ID: 20468b8e-edc7-11ed-b0e4-0a580a800298
Spec: {
  "profile": [
    {
      "data": "[main]\nsummary=Custom OpenShift profile\ninclude=openshift-node\n\n[sysctl]\nvm.dirty_ratio=\"55\"\n",
      "name": "tuned-2-profile"
    }
  ],
  "recommend": [
    {
      "priority": 10,
      "profile": "tuned-2-profile"
    }
  ]
}

6.4. Deleting node tuning configurations on ROSA with HCP

You can delete tuning configurations by using the Red Hat OpenShift Service on AWS (ROSA) CLI, rosa.

You cannot delete a tuning configuration referenced in a machine pool. You must first remove the tuning configuration from all machine pools before you can delete it.

Prerequisites

- You have downloaded the latest version of the ROSA CLI.

- You have a cluster on the latest version.

- Your cluster has a node tuning configuration that you want to delete.

Procedure

To delete the tuning configurations, run the following command:

$ rosa delete tuning-config -c <cluster_id> <name_of_tuning>

The tuning configuration on the cluster is deleted.

Example output

? Are you sure you want to delete tuning config sample-tuning on cluster sample-cluster? Yes
I: Successfully deleted tuning config 'sample-tuning' from cluster 'sample-cluster'