Chapter 31. Load balancing with MetalLB

31.1. About MetalLB and the MetalLB Operator

As a cluster administrator, you can add the MetalLB Operator to your cluster so that when a service of type LoadBalancer is added to the cluster, MetalLB can add an external IP address for the service. The external IP address is added to the host network for your cluster.

31.1.1. When to use MetalLB

Using MetalLB is valuable when you have a bare-metal cluster, or an infrastructure that is like bare metal, and you want fault-tolerant access to an application through an external IP address.

You must configure your networking infrastructure to ensure that network traffic for the external IP address is routed from clients to the host network for the cluster.

After deploying MetalLB with the MetalLB Operator, when you add a service of type LoadBalancer, MetalLB provides a platform-native load balancer.

MetalLB operating in layer 2 mode provides support for failover by utilizing a mechanism similar to IP failover. However, instead of relying on the virtual router redundancy protocol (VRRP) and keepalived, MetalLB leverages a gossip-based protocol to identify instances of node failure. When a node failure is detected, another node assumes the role of the leader node, and a gratuitous ARP message is dispatched to broadcast this change.

MetalLB operating in layer 3 or Border Gateway Protocol (BGP) mode delegates failure detection to the network. The BGP router or routers that the OpenShift Container Platform nodes have established a connection with identify any node failure and terminate the routes to that node.

Using MetalLB instead of IP failover is preferable for ensuring high availability of pods and services.

31.1.2. MetalLB Operator custom resources

The MetalLB Operator monitors its own namespace for the following custom resources:

- MetalLB: When you add a MetalLB custom resource to the cluster, the MetalLB Operator deploys MetalLB on the cluster. The Operator only supports a single instance of the custom resource. If the instance is deleted, the Operator removes MetalLB from the cluster.

- IPAddressPool: MetalLB requires one or more pools of IP addresses that it can assign to a service when you add a service of type LoadBalancer. An IPAddressPool includes a list of IP addresses. The list can be a single IP address that is set using a range, such as 1.1.1.1-1.1.1.1, a range specified in CIDR notation, a range specified as a starting and ending address separated by a hyphen, or a combination of the three. An IPAddressPool requires a name. The documentation uses names like doc-example, doc-example-reserved, and doc-example-ipv6. An IPAddressPool assigns IP addresses from the pool. L2Advertisement and BGPAdvertisement custom resources enable the advertisement of a given IP from a given pool.

  Note: A single IPAddressPool can be referenced by an L2 advertisement and a BGP advertisement.

- BGPPeer: The BGP peer custom resource identifies the BGP router for MetalLB to communicate with, the AS number of the router, the AS number for MetalLB, and customizations for route advertisement. MetalLB advertises the routes for service load-balancer IP addresses to one or more BGP peers.

- BFDProfile: The BFD profile custom resource configures Bidirectional Forwarding Detection (BFD) for a BGP peer. BFD provides faster path failure detection than BGP alone provides.

- L2Advertisement: The L2Advertisement custom resource advertises an IP coming from an IPAddressPool using the L2 protocol.

- BGPAdvertisement: The BGPAdvertisement custom resource advertises an IP coming from an IPAddressPool using the BGP protocol.

After you add the MetalLB custom resource to the cluster and the Operator deploys MetalLB, the controller and speaker MetalLB software components begin running.

MetalLB validates all relevant custom resources.
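As a rough illustration of how an address pool and its advertisement fit together, the following sketch writes an IPAddressPool that mixes the three address formats described above and an L2Advertisement that references the pool. The file name, the doc-example-l2 name, and the 203.0.113.x documentation addresses are assumptions for illustration only. The apply step is left as a comment because it needs a cluster where MetalLB is already running.

```shell
# Sketch only: the addresses are documentation ranges, not values from a real cluster.
cat > doc-example-pool.yaml << 'EOF'
apiVersion: metallb.io/v1beta1
kind: IPAddressPool
metadata:
  name: doc-example
  namespace: metallb-system
spec:
  addresses:
  - 203.0.113.1-203.0.113.1      # single address written as a range
  - 203.0.113.16/28              # range in CIDR notation
  - 203.0.113.64-203.0.113.79    # start and end address separated by a hyphen
---
apiVersion: metallb.io/v1beta1
kind: L2Advertisement
metadata:
  name: doc-example-l2           # hypothetical name
  namespace: metallb-system
spec:
  ipAddressPools:
  - doc-example
EOF

# On a cluster where MetalLB is running, the manifest could then be applied with:
#   oc apply -f doc-example-pool.yaml
```

Keeping the pool and the advertisement in one file is only a convenience. They are separate custom resources and can be managed independently.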

31.1.3. MetalLB software components

When you install the MetalLB Operator, the metallb-operator-controller-manager deployment starts a pod. The pod is the implementation of the Operator. The pod monitors for changes to all the relevant resources.

When the Operator starts an instance of MetalLB, it starts a controller deployment and a speaker daemon set.

You can configure deployment specifications in the MetalLB custom resource to manage how controller and speaker pods deploy and run in your cluster. For more information about these deployment specifications, see the Additional resources section.

- controller: The Operator starts the deployment and a single pod. When you add a service of type LoadBalancer, Kubernetes uses the controller to allocate an IP address from an address pool. In case of a service failure, verify you have the following entry in your controller pod logs:

  Example output

      "event":"ipAllocated","ip":"172.22.0.201","msg":"IP address assigned by controller"

- speaker: The Operator starts a daemon set for speaker pods. By default, a pod is started on each node in your cluster. You can limit the pods to specific nodes by specifying a node selector in the MetalLB custom resource when you start MetalLB. If the controller allocated the IP address to the service and the service is still unavailable, read the speaker pod logs. If the speaker pod is unavailable, run the oc describe pod -n command.

  For layer 2 mode, after the controller allocates an IP address for the service, the speaker pods use an algorithm to determine which speaker pod on which node will announce the load balancer IP address. The algorithm involves hashing the node name and the load balancer IP address. For more information, see "MetalLB and external traffic policy". The speaker uses Address Resolution Protocol (ARP) to announce IPv4 addresses and Neighbor Discovery Protocol (NDP) to announce IPv6 addresses.

  For Border Gateway Protocol (BGP) mode, after the controller allocates an IP address for the service, each speaker pod advertises the load balancer IP address with its BGP peers. You can configure which nodes start BGP sessions with BGP peers.

Requests for the load balancer IP address are routed to the node with the speaker that announces the IP address. After the node receives the packets, the service proxy routes the packets to an endpoint for the service. The endpoint can be on the same node in the optimal case, or it can be on another node. The service proxy chooses an endpoint each time a connection is established.
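The layer 2 election described in this section can be sketched as follows. This is not MetalLB's actual implementation: the elect_speaker function, the # separator, and the choice of SHA-256 with a lowest-digest-wins rule are assumptions, used only to show why every speaker that sees the same node list reaches the same answer without coordination.

```shell
# elect_speaker IP NODE...  -> prints the node chosen to announce IP.
# Hypothetical helper: hashes "node#ip" for each node and picks the lowest digest.
# Uses sha256sum from GNU coreutils.
elect_speaker() {
  ip="$1"
  shift
  for node in "$@"; do
    digest=$(printf '%s#%s' "$node" "$ip" | sha256sum | cut -d ' ' -f 1)
    printf '%s %s\n' "$digest" "$node"
  done | LC_ALL=C sort | head -n 1 | cut -d ' ' -f 2
}

# Every speaker evaluates the same inputs, so the result does not depend on
# which node runs the function or on the order of the node list.
elect_speaker 192.168.100.200 node-1 node-2 node-3
```

Because the load balancer IP address is part of the hashed input, different services tend to be announced from different nodes, which spreads the single-node load of layer 2 mode across the cluster.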

31.1.4. MetalLB and external traffic policy

With layer 2 mode, one node in your cluster receives all the traffic for the service IP address. With BGP mode, a router on the host network opens a connection to one of the nodes in the cluster for a new client connection. How your cluster handles the traffic after it enters the node is affected by the external traffic policy.

- cluster: This is the default value for spec.externalTrafficPolicy. With the cluster traffic policy, after the node receives the traffic, the service proxy distributes the traffic to all the pods in your service. This policy provides uniform traffic distribution across the pods, but it obscures the client IP address and it can appear to the application in your pods that the traffic originates from the node rather than the client.

- local: With the local traffic policy, after the node receives the traffic, the service proxy only sends traffic to the pods on the same node. For example, if the speaker pod on node A announces the external service IP, then all traffic is sent to node A. After the traffic enters node A, the service proxy only sends traffic to pods for the service that are also on node A. Pods for the service that are on additional nodes do not receive any traffic from node A. Pods for the service on additional nodes act as replicas in case failover is needed.

  This policy does not affect the client IP address. Application pods can determine the client IP address from the incoming connections.
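To make the policy setting concrete, the following sketch writes a Service of type LoadBalancer that selects the local policy. The service name, selector label, port numbers, and file name are hypothetical. Note that the Kubernetes API spells the two values with a capital letter, Cluster and Local.

```shell
# Sketch only: names, labels, and ports are placeholders.
cat > doc-example-service.yaml << 'EOF'
apiVersion: v1
kind: Service
metadata:
  name: doc-example-service
spec:
  type: LoadBalancer
  externalTrafficPolicy: Local   # omit this field, or set Cluster, for the default policy
  selector:
    app: doc-example-app
  ports:
  - port: 80
    targetPort: 8080
    protocol: TCP
EOF

# On a cluster, the service could then be created with:
#   oc apply -f doc-example-service.yaml
```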

The following information is important when configuring the external traffic policy in BGP mode.

Although MetalLB advertises the load balancer IP address from all the eligible nodes, the number of nodes load balancing the service can be limited by the capacity of the router to establish equal-cost multipath (ECMP) routes. If the number of nodes advertising the IP is greater than the ECMP group limit of the router, the router uses fewer nodes than the ones advertising the IP.

For example, if the external traffic policy is set to local and the router has an ECMP group limit set to 16 and the pods implementing a LoadBalancer service are deployed on 30 nodes, this would result in pods deployed on 14 nodes not receiving any traffic. In this situation, it would be preferable to set the external traffic policy for the service to cluster.
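The arithmetic behind this example can be checked with a small helper. The unserved_nodes function name is hypothetical. It only subtracts the ECMP group limit from the number of advertising nodes and does not query any router.

```shell
# unserved_nodes ADVERTISING_NODES ECMP_LIMIT
# Prints how many advertising nodes the router leaves out of its ECMP group.
unserved_nodes() {
  advertising="$1"
  limit="$2"
  if [ "$advertising" -gt "$limit" ]; then
    echo $((advertising - limit))
  else
    echo 0
  fi
}

unserved_nodes 30 16   # prints 14
```

With the local policy, pods on those 14 nodes receive no traffic. With the cluster policy, the service proxy on each of the 16 chosen nodes can still forward to pods on any node.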

31.1.5. MetalLB concepts for layer 2 mode

In layer 2 mode, the speaker pod on one node announces the external IP address for a service to the host network. From a network perspective, the node appears to have multiple IP addresses assigned to a network interface.

In layer 2 mode, MetalLB relies on ARP and NDP. These protocols implement local address resolution within a specific subnet. For MetalLB to work in this mode, the client must be able to reach the VIP that MetalLB assigns, and that VIP must be on the same subnet as the nodes that announce the service.

The speaker pod responds to ARP requests for IPv4 services and NDP requests for IPv6.

In layer 2 mode, all traffic for a service IP address is routed through one node. After traffic enters the node, the service proxy for the CNI network provider distributes the traffic to all the pods for the service.

Because all traffic for a service enters through a single node in layer 2 mode, in a strict sense, MetalLB does not implement a load balancer for layer 2. Rather, MetalLB implements a failover mechanism for layer 2 so that when a speaker pod becomes unavailable, a speaker pod on a different node can announce the service IP address.

When a node becomes unavailable, failover is automatic. The speaker pods on the other nodes detect that a node is unavailable and a new speaker pod and node take ownership of the service IP address from the failed node.

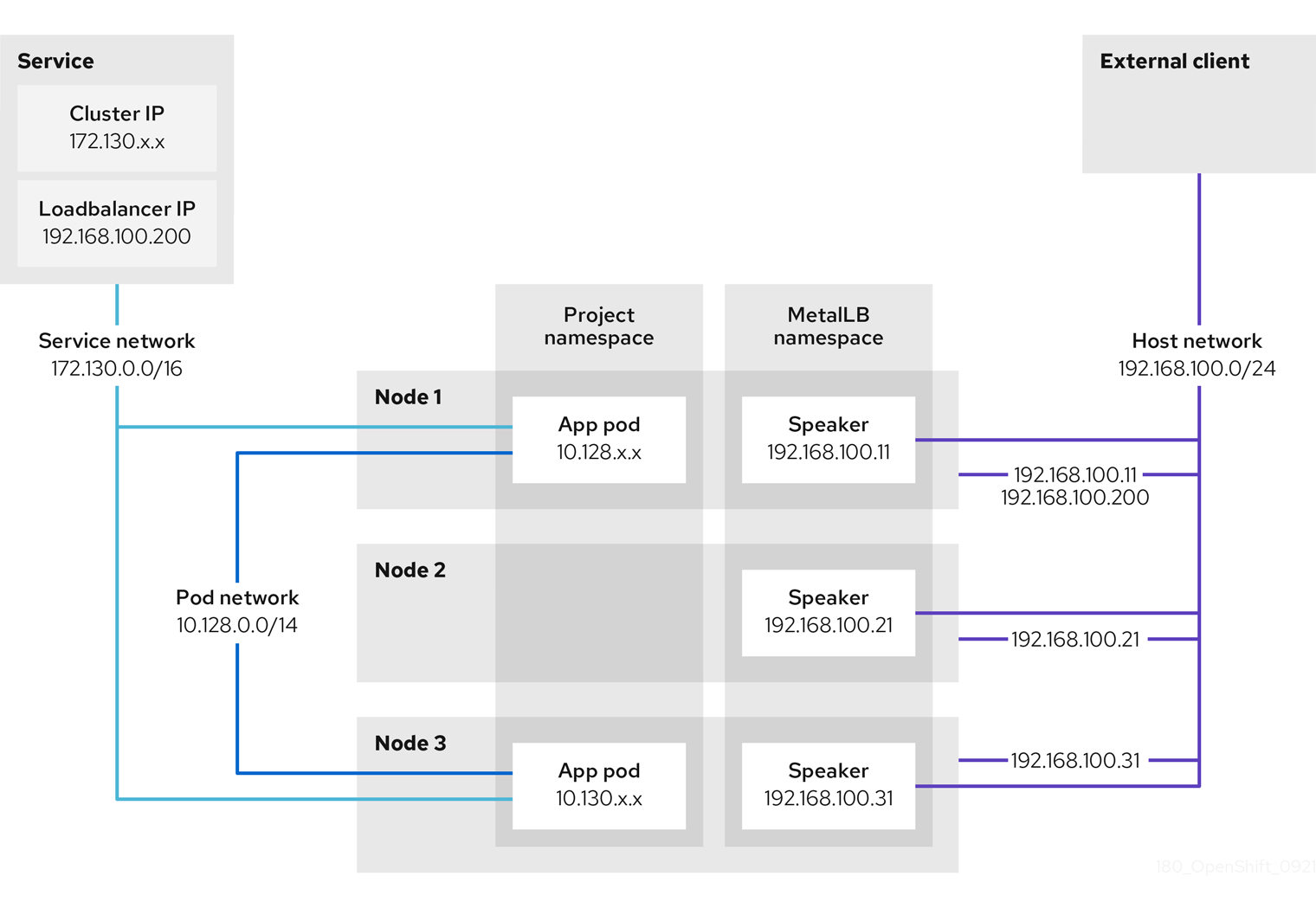

The preceding graphic shows the following concepts related to MetalLB:

- An application is available through a service that has a cluster IP on the 172.130.0.0/16 subnet. That IP address is accessible from inside the cluster. The service also has an external IP address that MetalLB assigned to the service, 192.168.100.200.

- Nodes 1 and 3 have a pod for the application.

- The speaker daemon set runs a pod on each node. The MetalLB Operator starts these pods.

- Each speaker pod is a host-networked pod. The IP address for the pod is identical to the IP address for the node on the host network.

- The speaker pod on node 1 uses ARP to announce the external IP address for the service, 192.168.100.200. The speaker pod that announces the external IP address must be on the same node as an endpoint for the service and the endpoint must be in the Ready condition.

- Client traffic is routed to the host network and connects to the 192.168.100.200 IP address. After traffic enters the node, the service proxy sends the traffic to the application pod on the same node or another node according to the external traffic policy that you set for the service.

  - If the external traffic policy for the service is set to cluster, the node that advertises the 192.168.100.200 load balancer IP address is selected from the nodes where a speaker pod is running. Only that node can receive traffic for the service.

  - If the external traffic policy for the service is set to local, the node that advertises the 192.168.100.200 load balancer IP address is selected from the nodes where a speaker pod is running and at least one endpoint of the service is running. Only that node can receive traffic for the service. In the preceding graphic, either node 1 or 3 would advertise 192.168.100.200.

- If node 1 becomes unavailable, the external IP address fails over to another node. On another node that has an instance of the application pod and service endpoint, the speaker pod begins to announce the external IP address, 192.168.100.200, and the new node receives the client traffic. In the diagram, the only candidate is node 3.

31.1.6. MetalLB concepts for BGP mode

In BGP mode, by default each speaker pod advertises the load balancer IP address for a service to each BGP peer. It is also possible to advertise the IPs coming from a given pool to a specific set of peers by adding an optional list of BGP peers. BGP peers are commonly network routers that are configured to use the BGP protocol. When a router receives traffic for the load balancer IP address, the router picks one of the nodes with a speaker pod that advertised the IP address. The router sends the traffic to that node. After traffic enters the node, the service proxy for the CNI network plugin distributes the traffic to all the pods for the service.
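As a sketch of the optional peer list mentioned above, the following writes a BGPAdvertisement that advertises addresses from one pool to one named peer only. The doc-example-bgp-adv and doc-example-peer names and the file name are hypothetical. The pool and the BGP peer custom resource are assumed to exist already.

```shell
# Sketch only: resource names are placeholders.
cat > doc-example-bgp-adv.yaml << 'EOF'
apiVersion: metallb.io/v1beta1
kind: BGPAdvertisement
metadata:
  name: doc-example-bgp-adv
  namespace: metallb-system
spec:
  ipAddressPools:
  - doc-example          # pool whose addresses are advertised
  peers:
  - doc-example-peer     # omit the peers list to advertise to every BGP peer
EOF

# On a cluster where MetalLB is running:
#   oc apply -f doc-example-bgp-adv.yaml
```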

The directly-connected router on the same layer 2 network segment as the cluster nodes can be configured as a BGP peer. If the directly-connected router is not configured as a BGP peer, you need to configure your network so that packets for load balancer IP addresses are routed between the BGP peers and the cluster nodes that run the speaker pods.

Each time a router receives new traffic for the load balancer IP address, it creates a new connection to a node. Each router manufacturer has an implementation-specific algorithm for choosing which node to initiate the connection with. However, the algorithms commonly are designed to distribute traffic across the available nodes for the purpose of balancing the network load.

If a node becomes unavailable, the router initiates a new connection with another node that has a speaker pod that advertises the load balancer IP address.

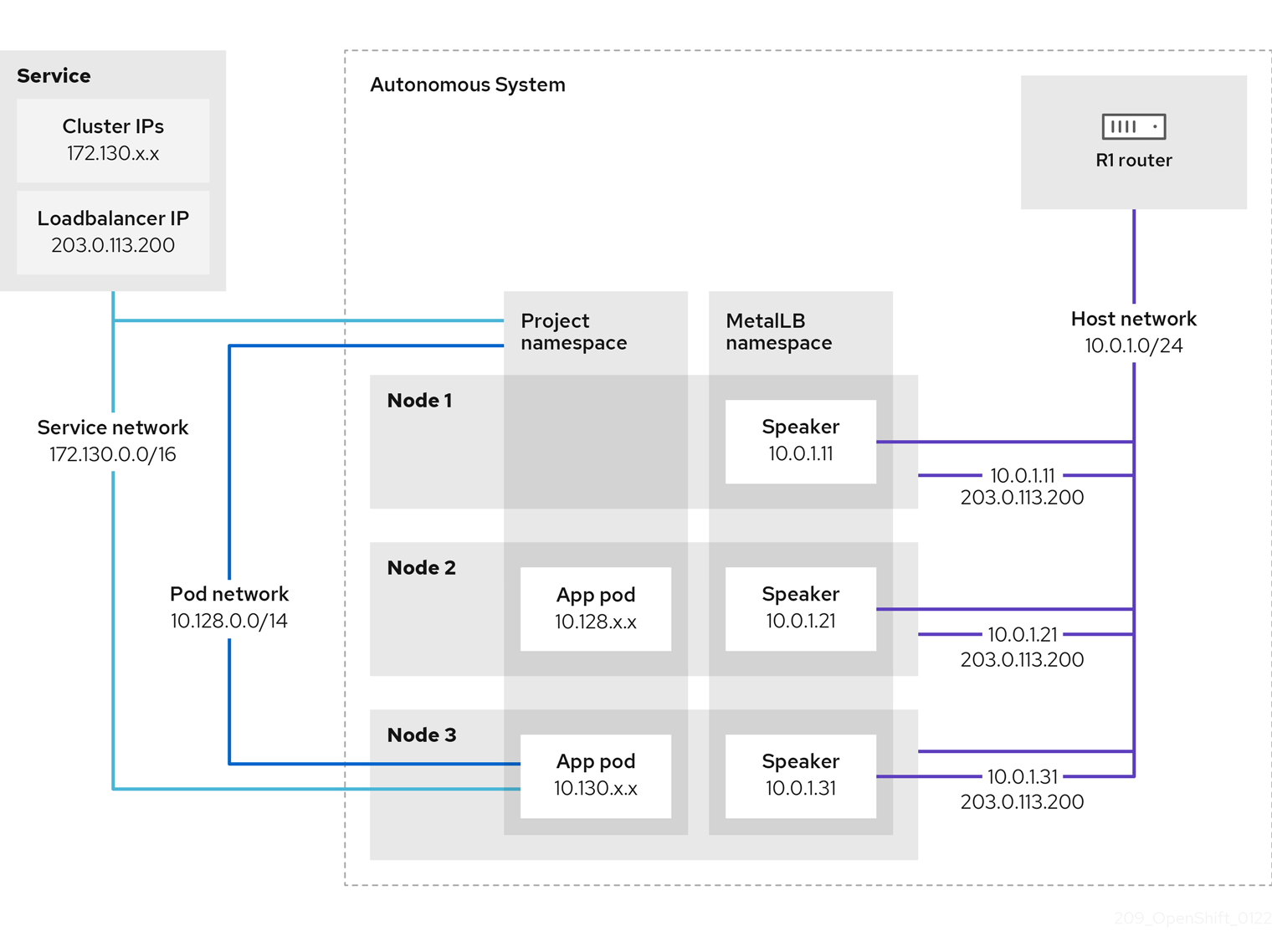

Figure 31.1. MetalLB topology diagram for BGP mode

The preceding graphic shows the following concepts related to MetalLB:

- An application is available through a service that has an IPv4 cluster IP on the 172.130.0.0/16 subnet. That IP address is accessible from inside the cluster. The service also has an external IP address that MetalLB assigned to the service, 203.0.113.200.

- Nodes 2 and 3 have a pod for the application.

- The speaker daemon set runs a pod on each node. The MetalLB Operator starts these pods. You can configure MetalLB to specify which nodes run the speaker pods.

- Each speaker pod is a host-networked pod. The IP address for the pod is identical to the IP address for the node on the host network.

- Each speaker pod starts a BGP session with all BGP peers and advertises the load balancer IP addresses or aggregated routes to the BGP peers. The speaker pods advertise that they are part of Autonomous System 65010. The diagram shows a router, R1, as a BGP peer within the same Autonomous System. However, you can configure MetalLB to start BGP sessions with peers that belong to other Autonomous Systems.

- All the nodes with a speaker pod that advertises the load balancer IP address can receive traffic for the service.

  - If the external traffic policy for the service is set to cluster, all the nodes where a speaker pod is running advertise the 203.0.113.200 load balancer IP address and all the nodes with a speaker pod can receive traffic for the service. The host prefix is advertised to the router peer only if the external traffic policy is set to cluster.

  - If the external traffic policy for the service is set to local, then all the nodes where a speaker pod is running and at least one endpoint of the service is running can advertise the 203.0.113.200 load balancer IP address. Only those nodes can receive traffic for the service. In the preceding graphic, nodes 2 and 3 would advertise 203.0.113.200.

- You can configure MetalLB to control which speaker pods start BGP sessions with specific BGP peers by specifying a node selector when you add a BGP peer custom resource.

- Any routers, such as R1, that are configured to use BGP can be set as BGP peers.

- Client traffic is routed to one of the nodes on the host network. After traffic enters the node, the service proxy sends the traffic to the application pod on the same node or another node according to the external traffic policy that you set for the service.

- If a node becomes unavailable, the router detects the failure and initiates a new connection with another node. You can configure MetalLB to use a Bidirectional Forwarding Detection (BFD) profile for BGP peers. BFD provides faster link failure detection so that routers can initiate new connections earlier than without BFD.
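The node selector and BFD options mentioned in this list might be combined as in the following sketch. It writes a BFDProfile and a BGPPeer that references it and limits BGP sessions to worker nodes. The resource names, the 10.0.0.1 peer address, the timer values, and the file name are assumptions for illustration. The AS number 65010 follows the diagram.

```shell
# Sketch only: peer address, names, and BFD timers are placeholders.
cat > doc-example-peer.yaml << 'EOF'
apiVersion: metallb.io/v1beta1
kind: BFDProfile
metadata:
  name: doc-example-bfd
  namespace: metallb-system
spec:
  receiveInterval: 300     # milliseconds
  transmitInterval: 300    # milliseconds
  detectMultiplier: 3
---
apiVersion: metallb.io/v1beta2
kind: BGPPeer
metadata:
  name: doc-example-peer
  namespace: metallb-system
spec:
  peerAddress: 10.0.0.1
  peerASN: 65010
  myASN: 65010
  bfdProfile: doc-example-bfd
  nodeSelectors:           # only matching nodes start a BGP session with this peer
  - matchLabels:
      node-role.kubernetes.io/worker: ""
EOF

# On a cluster where MetalLB is running:
#   oc apply -f doc-example-peer.yaml
```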

31.1.7. Limitations and restrictions

31.1.7.1. Infrastructure considerations for MetalLB

MetalLB is primarily useful for on-premises, bare metal installations because these installations do not include a native load-balancer capability. In addition to bare metal installations, installations of OpenShift Container Platform on some infrastructures might not include a native load-balancer capability. For example, the following infrastructures can benefit from adding the MetalLB Operator:

- Bare metal

- VMware vSphere

MetalLB Operator and MetalLB are supported with the OpenShift SDN and OVN-Kubernetes network providers.

31.1.7.2. Limitations for layer 2 mode

31.1.7.2.1. Single-node bottleneck

Because MetalLB routes all traffic for a service through a single node, the node can become a bottleneck and limit performance.

Layer 2 mode limits the ingress bandwidth for your service to the bandwidth of a single node. This is a fundamental limitation of using ARP and NDP to direct traffic.

31.1.7.2.2. Slow failover performance

Failover between nodes depends on cooperation from the clients. When a failover occurs, MetalLB sends gratuitous ARP packets to notify clients that the MAC address associated with the service IP has changed.

Most client operating systems handle gratuitous ARP packets correctly and update their neighbor caches promptly. When clients update their caches quickly, failover completes within a few seconds. Clients typically fail over to a new node within 10 seconds. However, some client operating systems either do not handle gratuitous ARP packets at all or have outdated implementations that delay the cache update.

Recent versions of common operating systems such as Windows, macOS, and Linux implement layer 2 failover correctly. Issues with slow failover are not expected except for older and less common client operating systems.

To minimize the impact from a planned failover on outdated clients, keep the old node running for a few minutes after flipping leadership. The old node can continue to forward traffic for outdated clients until their caches refresh.

During an unplanned failover, the service IPs are unreachable until the outdated clients refresh their cache entries.

31.1.7.2.3. Additional Network and MetalLB cannot use same network

Using the same VLAN for both MetalLB and an additional network interface set up on a source pod might result in a connection failure. This occurs when both the MetalLB IP and the source pod reside on the same node.

To avoid connection failures, place the MetalLB IP in a different subnet from the one where the source pod resides. This configuration ensures that traffic from the source pod will take the default gateway. Consequently, the traffic can effectively reach its destination by using the OVN overlay network, ensuring that the connection functions as intended.

31.1.7.3. Limitations for BGP mode

31.1.7.3.1. Node failure can break all active connections

MetalLB shares a limitation that is common to BGP-based load balancing. When a BGP session terminates, such as when a node fails or when a speaker pod restarts, the session termination might result in resetting all active connections. End users can experience a Connection reset by peer message.

The consequence of a terminated BGP session is implementation-specific for each router manufacturer. However, you can anticipate that a change in the number of speaker pods affects the number of BGP sessions and that active connections with BGP peers will break.

To avoid or reduce the likelihood of a service interruption, you can specify a node selector when you add a BGP peer. By limiting the number of nodes that start BGP sessions, a fault on a node that does not have a BGP session has no effect on connections to the service.

31.1.7.3.2. Support for a single ASN and a single router ID only

When you add a BGP peer custom resource, you specify the spec.myASN field to identify the Autonomous System Number (ASN) that MetalLB belongs to. OpenShift Container Platform uses an implementation of BGP with MetalLB that requires MetalLB to belong to a single ASN. If you attempt to add a BGP peer and specify a different value for spec.myASN than an existing BGP peer custom resource, you receive an error.

Similarly, when you add a BGP peer custom resource, the spec.routerID field is optional. If you specify a value for this field, you must specify the same value for all other BGP peer custom resources that you add.

The limitation to a single ASN and a single router ID is a difference from the community-supported implementation of MetalLB.
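Before adding BGP peer custom resources, one way to catch a mismatch early is to compare the spec.myASN values in the manifests. The following is a minimal sketch that assumes one myASN line per manifest. The count_distinct_asn helper, the two sample files, and their addresses and AS numbers are all hypothetical.

```shell
# count_distinct_asn FILE...  -> prints the number of distinct myASN values found.
count_distinct_asn() {
  grep -h -E '^[[:space:]]*myASN:' "$@" | awk '{print $2}' | sort -u | wc -l | tr -d ' '
}

# Two sample BGP peer manifests that share one local ASN.
cat > peer-a.yaml << 'EOF'
apiVersion: metallb.io/v1beta2
kind: BGPPeer
metadata:
  name: peer-a
  namespace: metallb-system
spec:
  peerAddress: 10.0.0.1
  peerASN: 64501
  myASN: 64500
EOF
cat > peer-b.yaml << 'EOF'
apiVersion: metallb.io/v1beta2
kind: BGPPeer
metadata:
  name: peer-b
  namespace: metallb-system
spec:
  peerAddress: 10.0.0.2
  peerASN: 64502
  myASN: 64500
EOF

if [ "$(count_distinct_asn peer-a.yaml peer-b.yaml)" -eq 1 ]; then
  echo "myASN is consistent"
else
  echo "myASN differs between BGP peers"
fi
```

The same comparison could be applied to spec.routerID when that optional field is set.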

31.2. Installing the MetalLB Operator

As a cluster administrator, you can add the MetalLB Operator so that the Operator can manage the lifecycle for an instance of MetalLB on your cluster.

MetalLB and IP failover are incompatible. If you configured IP failover for your cluster, perform the steps to remove IP failover before you install the Operator.

31.2.1. Installing the MetalLB Operator from the OperatorHub using the web console

As a cluster administrator, you can install the MetalLB Operator by using the OpenShift Container Platform web console.

Prerequisites

- Log in as a user with cluster-admin privileges.

Procedure

- In the OpenShift Container Platform web console, navigate to Operators → OperatorHub.

- Type a keyword into the Filter by keyword box or scroll to find the Operator you want. For example, type metallb to find the MetalLB Operator.

  You can also filter options by Infrastructure Features. For example, select Disconnected if you want to see Operators that work in disconnected environments, also known as restricted network environments.

- On the Install Operator page, accept the defaults and click Install.

Verification

To confirm that the installation is successful:

- Navigate to the Operators → Installed Operators page.

- Check that the Operator is installed in the openshift-operators namespace and that its status is Succeeded.

If the Operator is not installed successfully, check the status of the Operator and review the logs:

- Navigate to the Operators → Installed Operators page and inspect the Status column for any errors or failures.

- Navigate to the Workloads → Pods page and check the logs in any pods in the openshift-operators project that are reporting issues.

31.2.2. Installing from OperatorHub by using the CLI

Instead of using the OpenShift Container Platform web console, you can install an Operator from OperatorHub using the CLI. You can use the OpenShift CLI (oc) to install the MetalLB Operator.

It is recommended that when using the CLI you install the Operator in the metallb-system namespace.

Prerequisites

- A cluster installed on bare-metal hardware.

- Install the OpenShift CLI (oc).

- Log in as a user with cluster-admin privileges.

Procedure

Create a namespace for the MetalLB Operator by entering the following command:

    $ cat << EOF | oc apply -f -
    apiVersion: v1
    kind: Namespace
    metadata:
      name: metallb-system
    EOF

Create an Operator group custom resource (CR) in the namespace:

    $ cat << EOF | oc apply -f -
    apiVersion: operators.coreos.com/v1
    kind: OperatorGroup
    metadata:
      name: metallb-operator
      namespace: metallb-system
    EOF

Confirm the Operator group is installed in the namespace:

    $ oc get operatorgroup -n metallb-system

Example output

    NAME               AGE
    metallb-operator   14m

Create a Subscription CR:

Define the Subscription CR and save the YAML file, for example, metallb-sub.yaml:

    apiVersion: operators.coreos.com/v1alpha1
    kind: Subscription
    metadata:
      name: metallb-operator-sub
      namespace: metallb-system
    spec:
      channel: stable
      name: metallb-operator
      source: redhat-operators # (1)
      sourceNamespace: openshift-marketplace

(1) You must specify the redhat-operators value.

To create the Subscription CR, run the following command:

    $ oc create -f metallb-sub.yaml

Optional: To ensure BGP and BFD metrics appear in Prometheus, you can label the namespace as in the following command:

$ oc label ns metallb-system "openshift.io/cluster-monitoring=true"

Verification

The verification steps assume the MetalLB Operator is installed in the metallb-system namespace.

Confirm the install plan is in the namespace:

    $ oc get installplan -n metallb-system

Example output

    NAME            CSV                                    APPROVAL    APPROVED
    install-wzg94   metallb-operator.4.12.0-nnnnnnnnnnnn   Automatic   true

Note: Installation of the Operator might take a few seconds.

To verify that the Operator is installed, enter the following command:

    $ oc get clusterserviceversion -n metallb-system \
      -o custom-columns=Name:.metadata.name,Phase:.status.phase

Example output

    Name                                   Phase
    metallb-operator.4.12.0-nnnnnnnnnnnn   Succeeded

31.2.3. Starting MetalLB on your cluster

After you install the Operator, you need to configure a single instance of a MetalLB custom resource. After you configure the custom resource, the Operator starts MetalLB on your cluster.

Prerequisites

- Install the OpenShift CLI (oc).

- Log in as a user with cluster-admin privileges.

- Install the MetalLB Operator.

Procedure

This procedure assumes the MetalLB Operator is installed in the metallb-system namespace. If you installed the Operator by using the web console, substitute openshift-operators for the namespace.

Create a single instance of a MetalLB custom resource:

    $ cat << EOF | oc apply -f -
    apiVersion: metallb.io/v1beta1
    kind: MetalLB
    metadata:
      name: metallb
      namespace: metallb-system
    EOF

Verification

Confirm that the deployment for the MetalLB controller and the daemon set for the MetalLB speaker are running.

Verify that the deployment for the controller is running:

    $ oc get deployment -n metallb-system controller

Example output

    NAME         READY   UP-TO-DATE   AVAILABLE   AGE
    controller   1/1     1            1           11m

Verify that the daemon set for the speaker is running:

    $ oc get daemonset -n metallb-system speaker

Example output

    NAME      DESIRED   CURRENT   READY   UP-TO-DATE   AVAILABLE   NODE SELECTOR            AGE
    speaker   6         6         6       6            6           kubernetes.io/os=linux   18m

The example output indicates 6 speaker pods. The number of speaker pods in your cluster might differ from the example output. Make sure the output indicates one pod for each node in your cluster.
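The comparison in this verification step could be scripted along the following lines. The speakers_ready helper is hypothetical. The values 6 and 6 are taken from the example output, and the commented oc commands show one way the numbers might be read from the daemon set status on a live cluster.

```shell
# speakers_ready DESIRED READY
# Succeeds when at least one speaker pod is scheduled and all scheduled pods are ready.
speakers_ready() {
  [ "$1" -gt 0 ] && [ "$1" -eq "$2" ]
}

# On a cluster, the two numbers could be read from the daemon set status:
#   desired=$(oc get daemonset speaker -n metallb-system -o jsonpath='{.status.desiredNumberScheduled}')
#   ready=$(oc get daemonset speaker -n metallb-system -o jsonpath='{.status.numberReady}')
desired=6   # values from the example output
ready=6

if speakers_ready "$desired" "$ready"; then
  echo "all $desired speaker pods are ready"
else
  echo "only $ready of $desired speaker pods are ready"
fi
```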

31.2.4. Deployment specifications for MetalLB

When you start an instance of MetalLB using the MetalLB custom resource, you can configure deployment specifications in the MetalLB custom resource to manage how the controller or speaker pods deploy and run in your cluster. Use these deployment specifications to manage the following tasks:

- Select nodes for MetalLB pod deployment.

- Manage scheduling by using pod priority and pod affinity.

- Assign CPU limits for MetalLB pods.

- Assign a container RuntimeClass for MetalLB pods.

- Assign metadata for MetalLB pods.

31.2.4.1. Limit speaker pods to specific nodes

By default, when you start MetalLB with the MetalLB Operator, the Operator starts an instance of a speaker pod on each node in the cluster. Only the nodes with a speaker pod can advertise a load balancer IP address. You can configure the MetalLB custom resource with a node selector to specify which nodes run the speaker pods.

The most common reason to limit the speaker pods to specific nodes is to ensure that only nodes with network interfaces on specific networks advertise load balancer IP addresses. Only the nodes with a running speaker pod are advertised as destinations of the load balancer IP address.

If you limit the speaker pods to specific nodes and specify local for the external traffic policy of a service, then you must ensure that the application pods for the service are deployed to the same nodes.

Example configuration to limit speaker pods to worker nodes

    apiVersion: metallb.io/v1beta1
    kind: MetalLB
    metadata:
      name: metallb
      namespace: metallb-system
    spec:
      nodeSelector: # (1)
        node-role.kubernetes.io/worker: ""
      speakerTolerations: # (2)
      - key: "Example"
        operator: "Exists"
        effect: "NoExecute"

(1) The example configuration specifies to assign the speaker pods to worker nodes, but you can specify labels that you assigned to nodes or any valid node selector.

(2) In this example configuration, the pod that this toleration is attached to tolerates any taint that matches the key value and effect value using the operator.

After you apply a manifest with the spec.nodeSelector field, you can check the number of pods that the Operator deployed with the oc get daemonset -n metallb-system speaker command. Similarly, you can display the nodes that match your labels with a command like oc get nodes -l node-role.kubernetes.io/worker=.

You can optionally use affinity rules to control which nodes the speaker pods should, or should not, be scheduled on. You can also limit these pods by applying a list of tolerations. For more information about affinity rules, taints, and tolerations, see the additional resources.

31.2.4.2. Configuring pod priority and pod affinity in a MetalLB deployment

You can optionally assign pod priority and pod affinity rules to controller and speaker pods by configuring the MetalLB custom resource. The pod priority indicates the relative importance of a pod on a node, and the scheduler schedules the pod based on this priority. Set a high priority on your controller or speaker pod to ensure scheduling priority over other pods on the node.

Pod affinity manages relationships among pods. Assign pod affinity to the controller or speaker pods to control on what node the scheduler places the pod in the context of pod relationships. For example, you can use pod affinity rules to ensure that certain pods are located on the same node or nodes, which can help improve network communication and reduce latency between those components.

Prerequisites

- You are logged in as a user with cluster-admin privileges.

- You have installed the MetalLB Operator.

- You have started the MetalLB Operator on your cluster.

Procedure

Create a PriorityClass custom resource, such as myPriorityClass.yaml, to configure the priority level. This example defines a PriorityClass named high-priority with a value of 1000000. Pods that are assigned this priority class are considered higher priority during scheduling compared to pods with lower priority classes:

    apiVersion: scheduling.k8s.io/v1
    kind: PriorityClass
    metadata:
      name: high-priority
    value: 1000000

Apply the PriorityClass custom resource configuration:

    $ oc apply -f myPriorityClass.yaml

Create a MetalLB custom resource, such as MetalLBPodConfig.yaml, to specify the priorityClassName and podAffinity values:

    apiVersion: metallb.io/v1beta1
    kind: MetalLB
    metadata:
      name: metallb
      namespace: metallb-system
    spec:
      logLevel: debug
      controllerConfig:
        priorityClassName: high-priority # (1)
        affinity:
          podAffinity: # (2)
            requiredDuringSchedulingIgnoredDuringExecution:
            - labelSelector:
                matchLabels:
                  app: metallb
              topologyKey: kubernetes.io/hostname
      speakerConfig:
        priorityClassName: high-priority
        affinity:
          podAffinity:
            requiredDuringSchedulingIgnoredDuringExecution:
            - labelSelector:
                matchLabels:
                  app: metallb
              topologyKey: kubernetes.io/hostname

(1) Specifies the priority class for the MetalLB controller pods. In this case, it is set to high-priority.

(2) Specifies that you are configuring pod affinity rules. These rules dictate how pods are scheduled in relation to other pods or nodes. This configuration instructs the scheduler to schedule pods that have the label app: metallb onto nodes that share the same hostname. This helps to co-locate MetalLB-related pods on the same nodes, potentially optimizing network communication, latency, and resource usage between these pods.

Apply the MetalLB custom resource configuration:

    $ oc apply -f MetalLBPodConfig.yaml

Verification

To view the priority class that you assigned to pods in the metallb-system namespace, run the following command:

    $ oc get pods -n metallb-system -o custom-columns=NAME:.metadata.name,PRIORITY:.spec.priorityClassName

Example output

    NAME                                                 PRIORITY
    controller-584f5c8cd8-5zbvg                          high-priority
    metallb-operator-controller-manager-9c8d9985-szkqg   <none>
    metallb-operator-webhook-server-c895594d4-shjgx      <none>
    speaker-dddf7                                        high-priority

To verify that the scheduler placed pods according to pod affinity rules, view the metadata for the pod's node or nodes by running the following command:

    $ oc get pod -o=custom-columns=NODE:.spec.nodeName,NAME:.metadata.name -n metallb-system

31.2.4.3. Configuring pod CPU limits in a MetalLB deployment

You can optionally assign pod CPU limits to controller and speaker pods by configuring the MetalLB custom resource. Defining CPU limits for the controller or speaker pods helps you to manage compute resources on the node. This ensures all pods on the node have the necessary compute resources to manage workloads and cluster housekeeping.
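As a rough illustration of what the CPU values in this section mean, the following Python sketch converts Kubernetes CPU quantities into cores. It relies on the standard Kubernetes convention that the m suffix denotes millicores; the helper name cpu_to_cores is hypothetical and not part of any MetalLB or OpenShift tooling.

```python
from fractions import Fraction


def cpu_to_cores(quantity):
    """Convert a Kubernetes CPU quantity such as "200m" or "1.5" to cores."""
    if quantity.endswith("m"):
        # The "m" suffix means millicores: 1000m equals one CPU core.
        return Fraction(int(quantity[:-1]), 1000)
    return Fraction(quantity)


# Values used for the controller and speaker pods in this section.
controller_limit = cpu_to_cores("200m")
speaker_limit = cpu_to_cores("300m")
print(float(controller_limit), float(speaker_limit))
```

With these values, the controller pod is limited to one fifth of a core and each speaker pod to three tenths of a core.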

Prerequisites

- You are logged in as a user with cluster-admin privileges.

- You have installed the MetalLB Operator.

Procedure

Create a MetalLB custom resource file, such as CPULimits.yaml, to specify the cpu value for the controller and speaker pods:

    apiVersion: metallb.io/v1beta1
    kind: MetalLB
    metadata:
      name: metallb
      namespace: metallb-system
    spec:
      logLevel: debug
      controllerConfig:
        resources:
          limits:
            cpu: "200m"
      speakerConfig:
        resources:
          limits:
            cpu: "300m"

Apply the MetalLB custom resource configuration:

    $ oc apply -f CPULimits.yaml

Verification

To view compute resources for a pod, run the following command, replacing <pod_name> with your target pod:

    $ oc describe pod <pod_name>

31.2.6. Next steps

31.3. Upgrading the MetalLB Operator

By default, the Subscription custom resource (CR) that subscribes the metallb-system namespace to the MetalLB Operator sets the installPlanApproval parameter to Automatic. This means that when Red Hat-provided Operator catalogs include a newer version of the MetalLB Operator, the MetalLB Operator is automatically upgraded.

If you need to manually control upgrading the MetalLB Operator, set the installPlanApproval parameter to Manual.

31.3.1. Manually upgrading the MetalLB Operator

To manually control upgrading the MetalLB Operator, you must edit the Subscription custom resource (CR) that subscribes the metallb-system namespace to the MetalLB Operator. A Subscription CR is created as part of the Operator installation, and the CR has the installPlanApproval parameter set to Automatic by default.

Prerequisites

- You updated your cluster to the latest z-stream release.

- You used OperatorHub to install the MetalLB Operator.

- You have access to the cluster as a user with the cluster-admin role.

Procedure

Get the YAML definition of the metallb-operator subscription in the metallb-system namespace by entering the following command:

    $ oc -n metallb-system get subscription metallb-operator -o yaml

Edit the Subscription CR by setting the installPlanApproval parameter to Manual:

    apiVersion: operators.coreos.com/v1alpha1
    kind: Subscription
    metadata:
      name: metallb-operator
      namespace: metallb-system
    # ...
    spec:
      channel: stable
      installPlanApproval: Manual
      name: metallb-operator
      source: redhat-operators
      sourceNamespace: openshift-marketplace
    # ...

Find the latest OpenShift Container Platform 4.12 version of the MetalLB Operator by entering the following command:

    $ oc -n metallb-system get csv

Example output

    NAME                       DISPLAY            VERSION   REPLACES   PHASE
    metallb-operator.v4.12.0   MetalLB Operator   4.12.0               Succeeded

Check the install plan that exists in the namespace by entering the following command:

    $ oc -n metallb-system get installplan

Example output that shows install-tsz2g as a manual install plan

    NAME            CSV                                     APPROVAL    APPROVED
    install-shpmd   metallb-operator.v4.12.0-202502261233   Automatic   true
    install-tsz2g   metallb-operator.v4.12.0-202503102139   Manual      false

Edit the install plan that exists in the namespace by entering the following command. Ensure that you replace <name_of_installplan> with the name of the install plan, such as install-tsz2g.

    $ oc edit installplan <name_of_installplan> -n metallb-system

With the install plan open in your editor, set the spec.approval parameter to Manual and set the spec.approved parameter to true.

Note

After you edit the install plan, the upgrade operation starts. If you enter the oc -n metallb-system get csv command during the upgrade operation, the output might show the Replacing or the Pending status.

Verification

Verify the upgrade was successful by entering the following command:

    $ oc -n metallb-system get csv

Example output

    NAME                                    DISPLAY            VERSION               REPLACES                                PHASE
    metallb-operator.v4.12.0-202503102139   MetalLB Operator   4.12.0-202503102139   metallb-operator.v4.12.0-202502261233   Succeeded

31.3.2. Additional resources

31.4. Configuring MetalLB address pools

As a cluster administrator, you can add, modify, and delete address pools. The MetalLB Operator uses the address pool custom resources to set the IP addresses that MetalLB can assign to services. The examples assume that the namespace is metallb-system.

31.4.1. About the IPAddressPool custom resource

The address pool custom resource definition (CRD) and API documented in "Load balancing with MetalLB" in OpenShift Container Platform 4.10 can still be used in 4.12. However, the enhanced functionality associated with advertising the IPAddressPools with layer 2 or the BGP protocol is not supported when using the address pool CRD.

The fields for the IPAddressPool custom resource are described in the following table.

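| Field | Type | Description |
|---|---|---|
| metadata.name | string | Specifies the name for the address pool. When you add a service, you can specify this pool name in an annotation to request an IP address from this pool. |
| metadata.namespace | string | Specifies the namespace for the address pool. Specify the same namespace that the MetalLB Operator uses. |
| metadata.labels | object | Optional: Specifies the key value pair assigned to the IPAddressPool. The ipAddressPoolSelectors field in the BGPAdvertisement and L2Advertisement CRDs can reference this label to associate the IPAddressPool with the advertisement. |
| spec.addresses | array of strings | Specifies a list of IP addresses for MetalLB Operator to assign to services. You can specify multiple ranges in a single pool; they will all share the same settings. Specify each range in CIDR notation or as starting and ending IP addresses separated with a hyphen. |
| spec.autoAssign | boolean | Optional: Specifies whether MetalLB automatically assigns IP addresses from this pool. Specify false to prevent MetalLB from automatically assigning IP addresses from this pool. Note: For IP address pool configurations, ensure the addresses field specifies only IPs that are available and not in use by other network devices, especially gateway addresses, to prevent conflicts when autoAssign is enabled. |
| spec.avoidBuggyIPs | boolean | Optional: When enabled, ensures that IP addresses ending in .0 and .255 are not allocated from the pool. |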

31.4.2. Configuring an address pool

As a cluster administrator, you can add address pools to your cluster to control the IP addresses that MetalLB can assign to load-balancer services.

Prerequisites

- Install the OpenShift CLI (oc).

- Log in as a user with cluster-admin privileges.

Procedure

Create a file, such as ipaddresspool.yaml, with content like the following example:

    apiVersion: metallb.io/v1beta1
    kind: IPAddressPool
    metadata:
      namespace: metallb-system
      name: doc-example
      labels: # <1>
        zone: east
    spec:
      addresses:
      - 203.0.113.1-203.0.113.10
      - 203.0.113.65-203.0.113.75
    # ...

<1> This label assigned to the IPAddressPool can be referenced by the ipAddressPoolSelectors in the BGPAdvertisement CRD to associate the IPAddressPool with the advertisement.

Apply the configuration for the IP address pool:

    $ oc apply -f ipaddresspool.yaml

Verification

View the address pool by entering the following command:

    $ oc describe -n metallb-system IPAddressPool doc-example

Example output

    Name:         doc-example
    Namespace:    metallb-system
    Labels:       zone=east
    Annotations:  <none>
    API Version:  metallb.io/v1beta1
    Kind:         IPAddressPool
    Metadata:
      ...
    Spec:
      Addresses:
        203.0.113.1-203.0.113.10
        203.0.113.65-203.0.113.75
      Auto Assign:  true
    Events:         <none>

Confirm that the address pool name, such as doc-example, and the IP address ranges exist in the output.
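To sanity-check how many addresses a pool definition covers before you apply it, a short Python sketch such as the following can help. It uses only the standard ipaddress module; the helper name range_size is hypothetical, and the sketch counts every address an entry spans without modeling any MetalLB allocation behavior.

```python
import ipaddress


def range_size(entry):
    """Count the addresses covered by one pool entry (CIDR or start-end range)."""
    if "-" in entry:
        start, end = (ipaddress.ip_address(part.strip()) for part in entry.split("-"))
        return int(end) - int(start) + 1
    # strict=False tolerates entries whose host bits are set.
    return ipaddress.ip_network(entry, strict=False).num_addresses


# The two ranges from the doc-example pool in this procedure.
pool = ["203.0.113.1-203.0.113.10", "203.0.113.65-203.0.113.75"]
total = sum(range_size(entry) for entry in pool)
print(total)
```

The same helper accepts CIDR entries, so range_size("192.168.100.0/24") counts the 256 addresses in that block.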

31.4.3. Example address pool configurations

The following examples show address pool configurations for specific scenarios.

31.4.3.1. Example: IPv4 and CIDR ranges

You can specify a range of IP addresses in classless inter-domain routing (CIDR) notation. You can combine CIDR notation with the notation that uses a hyphen to separate lower and upper bounds.

    apiVersion: metallb.io/v1beta1
    kind: IPAddressPool
    metadata:
      name: doc-example-cidr
      namespace: metallb-system
    spec:
      addresses:
      - 192.168.100.0/24
      - 192.168.200.0/24
      - 192.168.255.1-192.168.255.5
    # ...

31.4.3.2. Example: Assign IP addresses

You can set the autoAssign field to false to prevent MetalLB from automatically assigning IP addresses from the address pool. You can then assign a single IP address or multiple IP addresses from an IP address pool. To assign an IP address, append the /32 CIDR notation to the target IP address in the spec.addresses parameter. This setting ensures that only the specific IP address is available for assignment, leaving non-reserved IP addresses for application use.
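The effect of the /32 suffix can be checked with a minimal Python sketch that uses the standard ipaddress module. The helper name pool_contains is hypothetical; the sketch only tests network membership and does not reproduce how MetalLB allocates addresses.

```python
import ipaddress


def pool_contains(entries, address):
    """Return True if the address falls inside any CIDR entry of the pool."""
    ip = ipaddress.ip_address(address)
    return any(ip in ipaddress.ip_network(entry) for entry in entries)


# A /32 network contains exactly one IPv4 address.
reserved = ["192.168.100.1/32", "192.168.200.1/32"]
sizes = [ipaddress.ip_network(entry).num_addresses for entry in reserved]
print(sizes)
print(pool_contains(reserved, "192.168.100.1"))
print(pool_contains(reserved, "192.168.100.2"))
```

Because each entry spans a single address, a neighboring address such as 192.168.100.2 is not part of the pool.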

Example IPAddressPool CR that assigns multiple IP addresses

    apiVersion: metallb.io/v1beta1
    kind: IPAddressPool
    metadata:
      name: doc-example-reserved
      namespace: metallb-system
    spec:
      addresses:
      - 192.168.100.1/32
      - 192.168.200.1/32
      autoAssign: false
    # ...

When you add a service, you can request a specific IP address from the address pool or you can specify the pool name in an annotation to request any IP address from the pool.

31.4.3.3. Example: IPv4 and IPv6 addresses

You can add address pools that use IPv4 and IPv6. You can specify multiple ranges in the addresses list, just like several IPv4 examples.

Whether the service is assigned a single IPv4 address, a single IPv6 address, or both is determined by how you add the service. The spec.ipFamilies and spec.ipFamilyPolicy fields control how IP addresses are assigned to the service.

    apiVersion: metallb.io/v1beta1
    kind: IPAddressPool
    metadata:
      name: doc-example-combined
      namespace: metallb-system
    spec:
      addresses:
      - 10.0.100.0/28
      - 2002:2:2::1-2002:2:2::100
    # ...

31.4.4. Next steps

31.5. About advertising for the IP address pools

You can configure MetalLB so that the IP address is advertised with layer 2 protocols, the BGP protocol, or both. With layer 2, MetalLB provides a fault-tolerant external IP address. With BGP, MetalLB provides fault-tolerance for the external IP address and load balancing.

MetalLB supports advertising using L2 and BGP for the same set of IP addresses.

MetalLB provides the flexibility to assign address pools to specific BGP peers, which effectively limits the advertisement to a subset of nodes on the network. This allows for more complex configurations, for example facilitating the isolation of nodes or the segmentation of the network.

31.5.1. About the BGPAdvertisement custom resource

The fields for the BGPAdvertisements object are defined in the following table:

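| Field | Type | Description |
|---|---|---|
| metadata.name | string | Specifies the name for the BGP advertisement. |
| metadata.namespace | string | Specifies the namespace for the BGP advertisement. Specify the same namespace that the MetalLB Operator uses. |
| spec.aggregationLength | integer | Optional: Specifies the number of bits to include in a 32-bit CIDR mask. To aggregate the routes that the speaker advertises to BGP peers, the mask is applied to the routes for several service IP addresses and the speaker advertises the aggregated route. For example, with an aggregation length of 24, the speaker can aggregate several 10.0.1.x/32 service IP addresses and advertise a single 10.0.1.0/24 route. |
| spec.aggregationLengthV6 | integer | Optional: Specifies the number of bits to include in a 128-bit CIDR mask. For example, with an aggregation length of 124, the speaker can aggregate several fc00:f853:0ccd:e799::x/128 service IP addresses and advertise a single fc00:f853:0ccd:e799::0/124 route. |
| spec.communities | array of strings | Optional: Specifies one or more BGP communities. Each community is specified as two 16-bit values separated by the colon character. Well-known communities must be specified as 16-bit values: NO_EXPORT is 65535:65281, NO_ADVERTISE is 65535:65282, and NO_EXPORT_SUBCONFED is 65535:65283. |
| spec.localPref | integer | Optional: Specifies the local preference for this advertisement. This BGP attribute applies to BGP sessions within the Autonomous System. |
| spec.ipAddressPools | array of strings | Optional: The list of IPAddressPools to advertise with this advertisement, selected by name. |
| spec.ipAddressPoolSelectors | array of objects | Optional: A selector for the IPAddressPools that are advertised with this advertisement. The selector associates the IPAddressPool with the advertisement based on the label assigned to the IPAddressPool instead of the name itself. |
| spec.nodeSelectors | array of objects | Optional: Limits the nodes to announce as next hops for the load balancer IP. |
| spec.peers | array of strings | Optional: Use a list to specify the metadata.name values of the BGPPeer resources that receive this advertisement. |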

31.5.2. Configuring MetalLB with a BGP advertisement and a basic use case

Configure MetalLB as follows so that the peer BGP routers receive one 203.0.113.200/32 route and one fc00:f853:ccd:e799::1/128 route for each load-balancer IP address that MetalLB assigns to a service. Because the localPref and communities fields are not specified, the routes are advertised with localPref set to zero and no BGP communities.

31.5.2.1. Example: Advertise a basic address pool configuration with BGP

Configure MetalLB as follows so that the IPAddressPool is advertised with the BGP protocol.

Prerequisites

- Install the OpenShift CLI (oc).

- Log in as a user with cluster-admin privileges.

Procedure

Create an IP address pool.

Create a file, such as ipaddresspool.yaml, with content like the following example:

    apiVersion: metallb.io/v1beta1
    kind: IPAddressPool
    metadata:
      namespace: metallb-system
      name: doc-example-bgp-basic
    spec:
      addresses:
      - 203.0.113.200/30
      - fc00:f853:ccd:e799::/124

Apply the configuration for the IP address pool:

    $ oc apply -f ipaddresspool.yaml

Create a BGP advertisement.

Create a file, such as bgpadvertisement.yaml, with content like the following example:

    apiVersion: metallb.io/v1beta1
    kind: BGPAdvertisement
    metadata:
      name: bgpadvertisement-basic
      namespace: metallb-system
    spec:
      ipAddressPools:
      - doc-example-bgp-basic

Apply the configuration:

    $ oc apply -f bgpadvertisement.yaml

31.5.3. Configuring MetalLB with a BGP advertisement and an advanced use case

Configure MetalLB as follows so that MetalLB assigns IP addresses to load-balancer services in the ranges between 203.0.113.200 and 203.0.113.203 and between fc00:f853:ccd:e799::0 and fc00:f853:ccd:e799::f.

To explain the two BGP advertisements, consider an instance when MetalLB assigns the IP address of 203.0.113.200 to a service. With that IP address as an example, the speaker advertises two routes to BGP peers:

- 203.0.113.200/32, with localPref set to 100 and the community set to the numeric value of the NO_ADVERTISE community. This specification indicates to the peer routers that they can use this route but they should not propagate information about this route to BGP peers.

- 203.0.113.200/30, which aggregates the load-balancer IP addresses assigned by MetalLB into a single route. MetalLB advertises the aggregated route to BGP peers with the community attribute set to 8000:800. BGP peers propagate the 203.0.113.200/30 route to other BGP peers. When traffic is routed to a node with a speaker, the 203.0.113.200/32 route is used to forward the traffic into the cluster and to a pod that is associated with the service.

As you add more services and MetalLB assigns more load-balancer IP addresses from the pool, peer routers receive one local route, 203.0.113.20x/32, for each service, as well as the 203.0.113.200/30 aggregate route. Each service that you add generates the /30 route, but MetalLB deduplicates the routes to one BGP advertisement before communicating with peer routers.
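The route arithmetic described above can be reproduced with a small Python sketch. It uses only the standard ipaddress module; the function name advertised_routes is hypothetical, and the sketch models only the masking and de-duplication of prefixes, not the BGP session itself.

```python
import ipaddress


def advertised_routes(service_ips, aggregation_length):
    """Return the de-duplicated routes for the given service IP addresses."""
    routes = set()
    for ip in service_ips:
        # strict=False masks the host bits, so 203.0.113.201/30
        # becomes the 203.0.113.200/30 aggregate.
        routes.add(ipaddress.ip_network(f"{ip}/{aggregation_length}", strict=False))
    return sorted(routes)


# Three services that received addresses from the 203.0.113.200/30 pool.
service_ips = ["203.0.113.200", "203.0.113.201", "203.0.113.202"]
local_routes = advertised_routes(service_ips, 32)
aggregate_routes = advertised_routes(service_ips, 30)
print([str(route) for route in local_routes])
print([str(route) for route in aggregate_routes])
```

Three services produce three /32 routes, while the /30 mask collapses all of them into the single 203.0.113.200/30 route.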

31.5.3.1. Example: Advertise an advanced address pool configuration with BGP

Configure MetalLB as follows so that the IPAddressPool is advertised with the BGP protocol.

Prerequisites

- Install the OpenShift CLI (oc).

- Log in as a user with cluster-admin privileges.

Procedure

Create an IP address pool.

Create a file, such as ipaddresspool.yaml, with content like the following example:

    apiVersion: metallb.io/v1beta1
    kind: IPAddressPool
    metadata:
      namespace: metallb-system
      name: doc-example-bgp-adv
      labels:
        zone: east
    spec:
      addresses:
      - 203.0.113.200/30
      - fc00:f853:ccd:e799::/124
      autoAssign: false

Apply the configuration for the IP address pool:

    $ oc apply -f ipaddresspool.yaml

Create a BGP advertisement.

Create a file, such as bgpadvertisement1.yaml, with content like the following example:

    apiVersion: metallb.io/v1beta1
    kind: BGPAdvertisement
    metadata:
      name: bgpadvertisement-adv-1
      namespace: metallb-system
    spec:
      ipAddressPools:
      - doc-example-bgp-adv
      communities:
      - 65535:65282
      aggregationLength: 32
      localPref: 100

Apply the configuration:

    $ oc apply -f bgpadvertisement1.yaml

Create a file, such as bgpadvertisement2.yaml, with content like the following example:

    apiVersion: metallb.io/v1beta1
    kind: BGPAdvertisement
    metadata:
      name: bgpadvertisement-adv-2
      namespace: metallb-system
    spec:
      ipAddressPools:
      - doc-example-bgp-adv
      communities:
      - 8000:800
      aggregationLength: 30
      aggregationLengthV6: 124

Apply the configuration:

    $ oc apply -f bgpadvertisement2.yaml

31.5.4. Advertising an IP address pool from a subset of nodes

To advertise an IP address from an IP address pool from a specific set of nodes only, use the .spec.nodeSelectors specification in the BGPAdvertisement custom resource. This specification associates a pool of IP addresses with a set of nodes in the cluster. This is useful when you have nodes on different subnets in a cluster and you want to advertise IP addresses from an address pool from a specific subnet, for example a public-facing subnet only.

Prerequisites

- Install the OpenShift CLI (oc).

- Log in as a user with cluster-admin privileges.

Procedure

Create an IP address pool by using a custom resource:

    apiVersion: metallb.io/v1beta1
    kind: IPAddressPool
    metadata:
      namespace: metallb-system
      name: pool1
    spec:
      addresses:
      - 4.4.4.100-4.4.4.200
      - 2001:100:4::200-2001:100:4::400

Control which nodes in the cluster advertise the IP address from pool1 by defining the .spec.nodeSelectors value in the BGPAdvertisement custom resource:

    apiVersion: metallb.io/v1beta1
    kind: BGPAdvertisement
    metadata:
      name: example
    spec:
      ipAddressPools:
      - pool1
      nodeSelectors:
      - matchLabels:
          kubernetes.io/hostname: NodeA
      - matchLabels:
          kubernetes.io/hostname: NodeB

In this example, the IP address from pool1 advertises from NodeA and NodeB only.
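The selection logic in this example can be pictured with a short Python sketch. It assumes that a node qualifies when it matches at least one entry in the selector list, which is consistent with NodeA and NodeB both advertising here; the function name node_selected and the third node, NodeC, are hypothetical.

```python
def node_selected(node_labels, selectors):
    """Return True if a node matches at least one selector in the list."""
    if not selectors:
        # Assumption: an empty list places no restriction on the nodes.
        return True
    return any(
        all(node_labels.get(key) == value
            for key, value in selector.get("matchLabels", {}).items())
        for selector in selectors
    )


# The two selector entries from the BGPAdvertisement example.
selectors = [
    {"matchLabels": {"kubernetes.io/hostname": "NodeA"}},
    {"matchLabels": {"kubernetes.io/hostname": "NodeB"}},
]
nodes = {name: {"kubernetes.io/hostname": name} for name in ("NodeA", "NodeB", "NodeC")}
advertising = [name for name, labels in nodes.items() if node_selected(labels, selectors)]
print(advertising)
```

Only the nodes whose hostname label matches one of the entries remain in the list, so the hypothetical NodeC does not advertise addresses from pool1.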

31.5.5. About the L2Advertisement custom resource

The fields for the l2Advertisements object are defined in the following table:

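| Field | Type | Description |
|---|---|---|
| metadata.name | string | Specifies the name for the L2 advertisement. |
| metadata.namespace | string | Specifies the namespace for the L2 advertisement. Specify the same namespace that the MetalLB Operator uses. |
| spec.ipAddressPools | array of strings | Optional: The list of IPAddressPools to advertise with this advertisement, selected by name. |
| spec.ipAddressPoolSelectors | array of objects | Optional: A selector for the IPAddressPools that are advertised with this advertisement. The selector associates the IPAddressPool with the advertisement based on the label assigned to the IPAddressPool instead of the name itself. |
| spec.nodeSelectors | array of objects | Optional: Limits the nodes to announce as next hops for the load balancer IP. Important: Limiting the nodes to announce as next hops is a Technology Preview feature only. Technology Preview features are not supported with Red Hat production service level agreements (SLAs) and might not be functionally complete. Red Hat does not recommend using them in production. These features provide early access to upcoming product features, enabling customers to test functionality and provide feedback during the development process. For more information about the support scope of Red Hat Technology Preview features, see Technology Preview Features Support Scope. |
| spec.interfaces | array of strings | Optional: The list of interfaces that are used to announce the load balancer IP. |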

31.5.6. Configuring MetalLB with an L2 advertisement

Configure MetalLB as follows so that the IPAddressPool is advertised with the L2 protocol.

Prerequisites

- Install the OpenShift CLI (oc).

- Log in as a user with cluster-admin privileges.

Procedure

Create an IP address pool.

Create a file, such as ipaddresspool.yaml, with content like the following example:

    apiVersion: metallb.io/v1beta1
    kind: IPAddressPool
    metadata:
      namespace: metallb-system
      name: doc-example-l2
    spec:
      addresses:
      - 4.4.4.0/24
      autoAssign: false

Apply the configuration for the IP address pool:

    $ oc apply -f ipaddresspool.yaml

Create an L2 advertisement.

Create a file, such as l2advertisement.yaml, with content like the following example:

    apiVersion: metallb.io/v1beta1
    kind: L2Advertisement
    metadata:
      name: l2advertisement
      namespace: metallb-system
    spec:
      ipAddressPools:
      - doc-example-l2

Apply the configuration:

    $ oc apply -f l2advertisement.yaml

31.5.7. Configuring MetalLB with an L2 advertisement and label

The ipAddressPoolSelectors field in the BGPAdvertisement and L2Advertisement custom resource definitions is used to associate the IPAddressPool to the advertisement based on the label assigned to the IPAddressPool instead of the name itself.

This example shows how to configure MetalLB so that the IPAddressPool is advertised with the L2 protocol by configuring the ipAddressPoolSelectors field.
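Label-based selection can be illustrated with a minimal Python sketch that evaluates matchExpressions entries against pool labels. The four operators are the standard Kubernetes label-selector operators; the function name matches_expressions and the second pool, hypothetical-west-pool, are assumptions made for illustration.

```python
def matches_expressions(labels, expressions):
    """Return True if the labels satisfy every expression in the list."""
    for expr in expressions:
        key, operator = expr["key"], expr["operator"]
        values = expr.get("values", [])
        if operator == "In" and labels.get(key) not in values:
            return False
        if operator == "NotIn" and labels.get(key) in values:
            return False
        if operator == "Exists" and key not in labels:
            return False
        if operator == "DoesNotExist" and key in labels:
            return False
    return True


# Selector that picks pools labeled zone=east.
expressions = [{"key": "zone", "operator": "In", "values": ["east"]}]
pools = {
    "doc-example-l2-label": {"zone": "east"},
    "hypothetical-west-pool": {"zone": "west"},
}
selected = [name for name, labels in pools.items() if matches_expressions(labels, expressions)]
print(selected)
```

Only the pool labeled zone: east is selected, so an advertisement that uses this selector would not need to reference the pool by name.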

Prerequisites

- Install the OpenShift CLI (oc).

- Log in as a user with cluster-admin privileges.

Procedure

Create an IP address pool.

Create a file, such as ipaddresspool.yaml, with content like the following example:

    apiVersion: metallb.io/v1beta1
    kind: IPAddressPool
    metadata:
      namespace: metallb-system
      name: doc-example-l2-label
      labels:
        zone: east
    spec:
      addresses:
      - 172.31.249.87/32

Apply the configuration for the IP address pool:

    $ oc apply -f ipaddresspool.yaml

Create an L2 advertisement that advertises the IP address by using ipAddressPoolSelectors.

Create a file, such as l2advertisement.yaml, with content like the following example:

    apiVersion: metallb.io/v1beta1
    kind: L2Advertisement
    metadata:
      name: l2advertisement-label
      namespace: metallb-system
    spec:
      ipAddressPoolSelectors:
      - matchExpressions:
        - key: zone
          operator: In
          values:
          - east

Apply the configuration:

    $ oc apply -f l2advertisement.yaml

31.5.8. Configuring MetalLB with an L2 advertisement for selected interfaces

By default, the IP address pool IP addresses that are assigned to the service are advertised from all the network interfaces. You can use the interfaces field in the L2Advertisement custom resource definition to restrict the network interfaces that advertise the addresses from a given IP address pool.

This example shows how to configure MetalLB so that the IP address pool is advertised only from the network interfaces listed in the interfaces field of all nodes.

Prerequisites

- You have installed the OpenShift CLI (oc).

- You are logged in as a user with cluster-admin privileges.

Procedure

Create an IP address pool:

Create a file, such as ipaddresspool.yaml, and enter the configuration details like the following example:

    apiVersion: metallb.io/v1beta1
    kind: IPAddressPool
    metadata:
      namespace: metallb-system
      name: doc-example-l2
    spec:
      addresses:
      - 4.4.4.0/24
      autoAssign: false

Apply the configuration for the IP address pool like the following example:

    $ oc apply -f ipaddresspool.yaml

Create an L2 advertisement that advertises the IP address with the interfaces selector.

Create a YAML file, such as l2advertisement.yaml, and enter the configuration details like the following example:

    apiVersion: metallb.io/v1beta1
    kind: L2Advertisement
    metadata:
      name: l2advertisement
      namespace: metallb-system
    spec:
      ipAddressPools:
      - doc-example-l2
      interfaces:
      - interfaceA
      - interfaceB

Apply the configuration for the advertisement like the following example:

    $ oc apply -f l2advertisement.yaml

The interface selector does not affect how MetalLB chooses the node to announce a given IP by using L2. The chosen node does not announce the service if the node does not have the selected interface.
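The consequence of this behavior can be sketched in a few lines of Python. The function name announcing_interfaces and the interface name eth0 are hypothetical; the sketch only models the rule stated above, that a node announces on the selected interfaces it actually has, and on all interfaces when none are selected.

```python
def announcing_interfaces(node_interfaces, selected):
    """Return the interfaces on which the chosen node announces the address."""
    if not selected:
        # Default behavior: announce from all network interfaces.
        return sorted(node_interfaces)
    return sorted(set(node_interfaces) & set(selected))


selected = ["interfaceA", "interfaceB"]
print(announcing_interfaces(["eth0", "interfaceA"], selected))
print(announcing_interfaces(["eth0"], selected))
```

An empty result for the second node means that the service is not announced at all if that node is the one chosen, which is why the selected interfaces should exist on every node that can be chosen.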

31.6. Configuring MetalLB BGP peers

As a cluster administrator, you can add, modify, and delete Border Gateway Protocol (BGP) peers. The MetalLB Operator uses the BGP peer custom resources to identify the peers that MetalLB speaker pods contact to start BGP sessions. The peers receive the route advertisements for the load-balancer IP addresses that MetalLB assigns to services.

31.6.1. About the BGP peer custom resource

The fields for the BGP peer custom resource are described in the following table.

| Field | Type | Description |
|---|---|---|
| metadata.name | string | Specifies the name for the BGP peer custom resource. |
| metadata.namespace | string | Specifies the namespace for the BGP peer custom resource. |
| spec.myASN | integer | Specifies the Autonomous System number for the local end of the BGP session. Specify the same value in all BGP peer custom resources that you add. The range is 0 to 4294967295. |
| spec.peerASN | integer | Specifies the Autonomous System number for the remote end of the BGP session. The range is 0 to 4294967295. |
| spec.peerAddress | string | Specifies the IP address of the peer to contact for establishing the BGP session. |
| spec.sourceAddress | string | Optional: Specifies the IP address to use when establishing the BGP session. The value must be an IPv4 address. |
| spec.peerPort | integer | Optional: Specifies the network port of the peer to contact for establishing the BGP session. The range is 0 to 16384. |
| spec.holdTime | string | Optional: Specifies the duration for the hold time to propose to the BGP peer. The minimum value is 3 seconds (3s). |
| spec.keepaliveTime | string | Optional: Specifies the maximum interval between sending keep-alive messages to the BGP peer. If you specify this field, you must also specify a value for the holdTime field. |
| spec.routerID | string | Optional: Specifies the router ID to advertise to the BGP peer. If you specify this field, you must specify the same value in every BGP peer custom resource that you add. |
| spec.password | string | Optional: Specifies the MD5 password to send to the peer for routers that enforce TCP MD5 authenticated BGP sessions. |
| spec.passwordSecret | string | Optional: Specifies the name of the authentication secret for the BGP peer. The secret must live in the same namespace as the MetalLB deployment and be of type basic-auth. |
| spec.bfdProfile | string | Optional: Specifies the name of a BFD profile. |
| spec.nodeSelectors | array of objects | Optional: Specifies a selector, using match expressions and match labels, to control which nodes can connect to the BGP peer. |
| spec.ebgpMultiHop | boolean | Optional: Specifies that the BGP peer is multiple network hops away. If the BGP peer is not directly connected to the same network, the speaker cannot establish a BGP session unless this field is set to true. |

The passwordSecret field is mutually exclusive with the password field, and contains a reference to a secret containing the password to use. Setting both fields results in a failure of the parsing.
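The constraints described for the BGP peer fields can be expressed as a small pre-flight check. The following Python sketch is an assumption-laden illustration, not the validation that MetalLB performs: the function names parse_seconds and validate_peer are hypothetical, the duration parser handles only simple values such as 10s or 5m30s, and the secret name in the usage example is invented.

```python
import re

_UNIT_SECONDS = {"h": 3600, "m": 60, "s": 1}


def parse_seconds(text):
    """Parse a simple duration such as "10s", "1m", or "5m30s" into seconds."""
    parts = re.findall(r"(\d+)([hms])", text)
    if not parts or "".join(number + unit for number, unit in parts) != text:
        raise ValueError(f"unsupported duration: {text!r}")
    return sum(int(number) * _UNIT_SECONDS[unit] for number, unit in parts)


def validate_peer(spec):
    """Return a list of problems found in a BGP peer spec dictionary."""
    problems = []
    if "password" in spec and "passwordSecret" in spec:
        problems.append("password and passwordSecret are mutually exclusive")
    if "holdTime" in spec and parse_seconds(spec["holdTime"]) < 3:
        problems.append("holdTime must be at least 3s")
    return problems


good = {"peerAddress": "10.0.0.1", "peerASN": 64501, "myASN": 64500, "holdTime": "10s"}
# "hypothetical-secret" is an invented name used only for this illustration.
bad = dict(good, password="example", passwordSecret="hypothetical-secret", holdTime="2s")
print(validate_peer(good))
print(validate_peer(bad))
```

Running a check like this before applying a resource surfaces both problems at once instead of one parsing failure at a time.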

31.6.2. Configuring a BGP peer

As a cluster administrator, you can add a BGP peer custom resource to exchange routing information with network routers and advertise the IP addresses for services.

Prerequisites

- Install the OpenShift CLI (oc).

- Log in as a user with cluster-admin privileges.

- Configure MetalLB with a BGP advertisement.

Procedure

Create a file, such as bgppeer.yaml, with content like the following example:

    apiVersion: metallb.io/v1beta2
    kind: BGPPeer
    metadata:
      namespace: metallb-system
      name: doc-example-peer
    spec:
      peerAddress: 10.0.0.1
      peerASN: 64501
      myASN: 64500
      routerID: 10.10.10.10

Apply the configuration for the BGP peer:

    $ oc apply -f bgppeer.yaml

31.6.3. Configure a specific set of BGP peers for a given address pool

This procedure illustrates how to:

- Configure a set of address pools (pool1 and pool2).

- Configure a set of BGP peers (peer1 and peer2).

- Configure BGP advertisement to assign pool1 to peer1 and pool2 to peer2.

Prerequisites

- Install the OpenShift CLI (oc).

- Log in as a user with cluster-admin privileges.

Procedure

Create address pool pool1.

Create a file, such as ipaddresspool1.yaml, with content like the following example:

    apiVersion: metallb.io/v1beta1
    kind: IPAddressPool
    metadata:
      namespace: metallb-system
      name: pool1
    spec:
      addresses:
      - 4.4.4.100-4.4.4.200
      - 2001:100:4::200-2001:100:4::400

Apply the configuration for the IP address pool pool1:

    $ oc apply -f ipaddresspool1.yaml

Create address pool pool2.

Create a file, such as ipaddresspool2.yaml, with content like the following example:

    apiVersion: metallb.io/v1beta1
    kind: IPAddressPool
    metadata:
      namespace: metallb-system
      name: pool2
    spec:
      addresses:
      - 5.5.5.100-5.5.5.200
      - 2001:100:5::200-2001:100:5::400

Apply the configuration for the IP address pool pool2:

    $ oc apply -f ipaddresspool2.yaml

Create BGP peer1.

Create a file, such as bgppeer1.yaml, with content like the following example:

    apiVersion: metallb.io/v1beta2
    kind: BGPPeer
    metadata:
      namespace: metallb-system
      name: peer1
    spec:
      peerAddress: 10.0.0.1
      peerASN: 64501
      myASN: 64500
      routerID: 10.10.10.10

Apply the configuration for the BGP peer:

    $ oc apply -f bgppeer1.yaml

Create BGP peer2.

Create a file, such as bgppeer2.yaml, with content like the following example:

    apiVersion: metallb.io/v1beta2
    kind: BGPPeer
    metadata:
      namespace: metallb-system
      name: peer2
    spec:
      peerAddress: 10.0.0.2
      peerASN: 64501
      myASN: 64500
      routerID: 10.10.10.10

Apply the configuration for the BGP peer:

    $ oc apply -f bgppeer2.yaml

Create BGP advertisement 1.

Create a file, such as bgpadvertisement1.yaml, with content like the following example:

    apiVersion: metallb.io/v1beta1
    kind: BGPAdvertisement
    metadata:
      name: bgpadvertisement-1
      namespace: metallb-system
    spec:
      ipAddressPools:
      - pool1
      peers:
      - peer1
      communities:
      - 65535:65282
      aggregationLength: 32
      aggregationLengthV6: 128
      localPref: 100

Apply the configuration:

    $ oc apply -f bgpadvertisement1.yaml

Create BGP advertisement 2.

Create a file, such as bgpadvertisement2.yaml, with content like the following example:

    apiVersion: metallb.io/v1beta1
    kind: BGPAdvertisement
    metadata:
      name: bgpadvertisement-2
      namespace: metallb-system
    spec:
      ipAddressPools:
      - pool2
      peers:
      - peer2
      communities:
      - 65535:65282
      aggregationLength: 32
      aggregationLengthV6: 128
      localPref: 100

Apply the configuration:

    $ oc apply -f bgpadvertisement2.yaml

31.6.4. Example BGP peer configurations

31.6.4.1. Example: Limit which nodes connect to a BGP peer

You can specify the node selectors field to control which nodes can connect to a BGP peer.

    apiVersion: metallb.io/v1beta2
    kind: BGPPeer
    metadata:
      name: doc-example-nodesel
      namespace: metallb-system
    spec:
      peerAddress: 10.0.20.1
      peerASN: 64501
      myASN: 64500
      nodeSelectors:
      - matchExpressions:
        - key: kubernetes.io/hostname
          operator: In
          values: [compute-1.example.com, compute-2.example.com]

31.6.4.2. Example: Specify a BFD profile for a BGP peer

You can specify a BFD profile to associate with BGP peers. BFD complements BGP by providing more rapid detection of communication failures between peers than BGP alone.

    apiVersion: metallb.io/v1beta2
    kind: BGPPeer
    metadata:
      name: doc-example-peer-bfd
      namespace: metallb-system
    spec:
      peerAddress: 10.0.20.1
      peerASN: 64501
      myASN: 64500
      holdTime: "10s"
      bfdProfile: doc-example-bfd-profile-full

Deleting the bidirectional forwarding detection (BFD) profile and removing the bfdProfile added to the border gateway protocol (BGP) peer resource does not disable the BFD. Instead, the BGP peer starts using the default BFD profile. To disable BFD from a BGP peer resource, delete the BGP peer configuration and recreate it without a BFD profile. For more information, see BZ#2050824.

31.6.4.3. Example: Specify BGP peers for dual-stack networking

To support dual-stack networking, add one BGP peer custom resource for IPv4 and one BGP peer custom resource for IPv6.

    apiVersion: metallb.io/v1beta2
    kind: BGPPeer
    metadata:
      name: doc-example-dual-stack-ipv4
      namespace: metallb-system
    spec:
      peerAddress: 10.0.20.1
      peerASN: 64500
      myASN: 64500
    ---
    apiVersion: metallb.io/v1beta2
    kind: BGPPeer
    metadata:
      name: doc-example-dual-stack-ipv6
      namespace: metallb-system
    spec:
      peerAddress: 2620:52:0:88::104
      peerASN: 64500
      myASN: 64500

31.6.5. Next steps

31.7. Configuring community alias

As a cluster administrator, you can configure a community alias and use it across different advertisements.

31.7.1. About the community custom resource

The community custom resource is a collection of aliases for communities. Users can define named aliases to be used when advertising ipAddressPools using the BGPAdvertisement. The fields for the community custom resource are described in the following table.

The community CRD applies only to BGPAdvertisement.

| Field | Type | Description |

|---|---|---|

|

|

|

Specifies the name for the |

|

|

|

Specifies the namespace for the |

|

|

|

Specifies a list of BGP community aliases that can be used in BGPAdvertisements. A community alias consists of a pair of name (alias) and value (number:number). Link the BGPAdvertisement to a community alias by referring to the alias name in its |

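| Field | Type | Description |
|---|---|---|
| name | string | The name of the alias for the community. |
| value | string | The BGP community value, in number:number form, that the alias refers to. |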
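The number:number notation used for a community value can be illustrated with a short sketch. A standard BGP community is a 32-bit number written as two 16-bit halves, so 65535:65282 corresponds to the well-known NO_ADVERTISE value 0xFFFFFF02. The helper names below are hypothetical and are not part of MetalLB.

```python
def parse_community(value: str) -> int:
    """Convert a 'number:number' community string to its 32-bit integer form."""
    high_text, low_text = value.split(":")
    high, low = int(high_text), int(low_text)
    if not (0 <= high <= 0xFFFF and 0 <= low <= 0xFFFF):
        raise ValueError(f"each half of {value!r} must fit in 16 bits")
    return (high << 16) | low


def format_community(number: int) -> str:
    """Convert a 32-bit community integer back to 'number:number' notation."""
    return f"{number >> 16}:{number & 0xFFFF}"


no_advertise = parse_community("65535:65282")
print(hex(no_advertise))               # 0xffffff02
print(format_community(no_advertise))  # 65535:65282
```

An alias lets the BGPAdvertisement refer to a readable name such as NO_ADVERTISE instead of repeating the numeric pair.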

31.7.2. Configuring MetalLB with a BGP advertisement and community alias

Configure MetalLB as follows so that the IPAddressPool is advertised with the BGP protocol and the community alias set to the numeric value of the NO_ADVERTISE community.

In the following example, the peer BGP router doc-example-bgp-peer receives one 203.0.113.200/32 route and one fc00:f853:ccd:e799::1/128 route for each load-balancer IP address that MetalLB assigns to a service. A community alias is configured with the NO_ADVERTISE community.

Prerequisites

- Install the OpenShift CLI (oc).

- Log in as a user with cluster-admin privileges.

Procedure

Create an IP address pool.

Create a file, such as ipaddresspool.yaml, with content like the following example:

apiVersion: metallb.io/v1beta1
kind: IPAddressPool
metadata:
  namespace: metallb-system
  name: doc-example-bgp-community
spec:
  addresses:
    - 203.0.113.200/30
    - fc00:f853:ccd:e799::/124

Apply the configuration for the IP address pool:

$ oc apply -f ipaddresspool.yaml

Create a community alias named community1.

apiVersion: metallb.io/v1beta1
kind: Community
metadata:
  name: community1
  namespace: metallb-system
spec:
  communities:
    - name: NO_ADVERTISE
      value: '65535:65282'

Create a BGP peer named doc-example-bgp-peer.

Create a file, such as bgppeer.yaml, with content like the following example:

apiVersion: metallb.io/v1beta2
kind: BGPPeer
metadata:
  namespace: metallb-system
  name: doc-example-bgp-peer
spec:
  peerAddress: 10.0.0.1
  peerASN: 64501
  myASN: 64500
  routerID: 10.10.10.10

Apply the configuration for the BGP peer:

$ oc apply -f bgppeer.yaml

Create a BGP advertisement with the community alias.

Create a file, such as bgpadvertisement.yaml, with content like the following example. In the communities list, specify the alias name (NO_ADVERTISE), not the name of the community custom resource.

apiVersion: metallb.io/v1beta1
kind: BGPAdvertisement
metadata:
  name: bgp-community-sample
  namespace: metallb-system
spec:
  aggregationLength: 32
  aggregationLengthV6: 128
  communities:
    - NO_ADVERTISE
  ipAddressPools:
    - doc-example-bgp-community
  peers:
    - doc-example-bgp-peer

Apply the configuration:

$ oc apply -f bgpadvertisement.yaml
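The effect of the aggregationLength and aggregationLengthV6 values in the advertisement can be sketched with Python's standard ipaddress module. This is a minimal illustration, assuming that the aggregation length acts as the prefix length of the route announced for each assigned address. The function name is hypothetical and is not part of MetalLB.

```python
import ipaddress


def advertised_prefix(assigned_ip: str, aggregation_length: int) -> str:
    """Return the prefix that covers assigned_ip at the given aggregation length."""
    # strict=False masks the host bits, so any address inside the prefix is accepted.
    network = ipaddress.ip_network(f"{assigned_ip}/{aggregation_length}", strict=False)
    return str(network)


# With aggregationLength: 32 and aggregationLengthV6: 128, each address is its own route.
print(advertised_prefix("203.0.113.200", 32))           # 203.0.113.200/32
print(advertised_prefix("fc00:f853:ccd:e799::1", 128))  # fc00:f853:ccd:e799::1/128

# A shorter length would roll several addresses up into one route.
print(advertised_prefix("203.0.113.201", 30))           # 203.0.113.200/30
```

With the values used in this procedure, the peer receives one host route per load-balancer IP address, which matches the /32 and /128 routes described at the start of this section.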

31.8. Configuring MetalLB BFD profiles

As a cluster administrator, you can add, modify, and delete Bidirectional Forwarding Detection (BFD) profiles. The MetalLB Operator uses the BFD profile custom resources to identify which BGP sessions use BFD to provide faster path failure detection than BGP alone provides.

31.8.1. About the BFD profile custom resource

The fields for the BFD profile custom resource are described in the following table.

| Field | Type | Description |
|---|---|---|
| metadata.name | string | Specifies the name for the BFD profile custom resource. |
| metadata.namespace | string | Specifies the namespace for the BFD profile custom resource. |
| spec.detectMultiplier | integer | Specifies the detection multiplier to determine packet loss. The remote transmission interval is multiplied by this value to determine the connection loss detection timer. For example, when the local system has the detect multiplier set to ... The range is ... |
| spec.echoMode | boolean | Specifies the echo transmission mode. If you are not using distributed BFD, echo transmission mode works only when the peer is also FRR. The default value is ... When echo transmission mode is enabled, consider increasing the transmission interval of control packets to reduce bandwidth usage. For example, consider increasing the transmit interval to ... |
| spec.echoInterval | integer | Specifies the minimum transmission interval, less jitter, that this system uses to send and receive echo packets. The range is ... |
| spec.minimumTtl | integer | Specifies the minimum expected TTL for an incoming control packet. This field applies to multi-hop sessions only. The purpose of setting a minimum TTL is to make the packet validation requirements more stringent and avoid receiving control packets from other sessions. The default value is ... |
| spec.passiveMode | boolean | Specifies whether a session is marked as active or passive. A passive session does not attempt to start the connection. Instead, a passive session waits for control packets from a peer before it begins to reply. Marking a session as passive is useful when you have a router that acts as the central node of a star network and you want to avoid sending control packets that you do not need the system to send. The default value is ... |
| spec.receiveInterval | integer | Specifies the minimum interval that this system is capable of receiving control packets. The range is ... |
| spec.transmitInterval | integer | Specifies the minimum transmission interval, less jitter, that this system uses to send control packets. The range is ... |
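The relationship between the detection multiplier and the transmission interval can be sketched as simple arithmetic. The following is a minimal illustration of the rule stated in the table (the remote transmission interval multiplied by the detect multiplier), not the full BFD timer negotiation. The function name is hypothetical, and the sample values 3 and 300 are borrowed from the doc-example-bfd-profile-full profile in this chapter, assuming the peer transmits at the same 300 ms interval.

```python
def detection_time_ms(detect_multiplier: int, remote_transmit_interval_ms: int) -> int:
    """Return how long the local system waits without packets before declaring loss."""
    if detect_multiplier < 1 or remote_transmit_interval_ms < 1:
        raise ValueError("both values must be positive")
    return detect_multiplier * remote_transmit_interval_ms


# Assumed values: detectMultiplier 3 locally, peer transmitting every 300 ms.
print(detection_time_ms(3, 300))  # 900
```

A larger multiplier tolerates more lost packets before a failover, at the cost of slower failure detection.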

31.8.2. Configuring a BFD profile

As a cluster administrator, you can add a BFD profile and configure a BGP peer to use the profile. BFD provides faster path failure detection than BGP alone.

Prerequisites

- Install the OpenShift CLI (oc).

- Log in as a user with cluster-admin privileges.

Procedure

Create a file, such as bfdprofile.yaml, with content like the following example:

apiVersion: metallb.io/v1beta1
kind: BFDProfile
metadata:
  name: doc-example-bfd-profile-full
  namespace: metallb-system
spec:
  receiveInterval: 300
  transmitInterval: 300
  detectMultiplier: 3
  echoMode: false
  passiveMode: true
  minimumTtl: 254

Apply the configuration for the BFD profile:

$ oc apply -f bfdprofile.yaml

31.8.3. Next steps

- Configure a BGP peer to use the BFD profile.

31.9. Configuring services to use MetalLB

As a cluster administrator, when you add a service of type LoadBalancer, you can control how MetalLB assigns an IP address.

31.9.1. Request a specific IP address

Like some other load-balancer implementations, MetalLB accepts the spec.loadBalancerIP field in the service specification.

If the requested IP address is within a range from any address pool, MetalLB assigns the requested IP address. If the requested IP address is not within any range, MetalLB reports a warning.

Example service YAML for a specific IP address

apiVersion: v1
kind: Service
metadata:
  name: <service_name>
  annotations:
    metallb.universe.tf/address-pool: <address_pool_name>
spec:
  selector:
    <label_key>: <label_value>
  ports:
    - port: 8080
      targetPort: 8080
      protocol: TCP
  type: LoadBalancer
  loadBalancerIP: <ip_address>
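The range check described above can be sketched with Python's standard ipaddress module. This is only an illustration of the idea, not MetalLB's implementation: the function name is hypothetical, and the sketch assumes that every pool entry is written in CIDR notation. The sample pools are the ones used in the BGP community example earlier in this chapter.

```python
import ipaddress


def ip_in_pools(requested_ip: str, pools: list) -> bool:
    """Return True if requested_ip falls inside any CIDR range in pools."""
    ip = ipaddress.ip_address(requested_ip)
    for cidr in pools:
        network = ipaddress.ip_network(cidr, strict=False)
        # Only compare addresses of the same IP family.
        if ip.version == network.version and ip in network:
            return True
    return False


pools = ["203.0.113.200/30", "fc00:f853:ccd:e799::/124"]
print(ip_in_pools("203.0.113.201", pools))          # True
print(ip_in_pools("fc00:f853:ccd:e799::1", pools))  # True
print(ip_in_pools("4.3.2.1", pools))                # False
```

Running a check like this before you set spec.loadBalancerIP can help you avoid a request that MetalLB cannot satisfy.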

If MetalLB cannot assign the requested IP address, the EXTERNAL-IP for the service reports <pending> and running oc describe service <service_name> includes an event like the following example.

Example event when MetalLB cannot assign a requested IP address

  ...
Events:
  Type     Reason            Age    From                Message
  ----     ------            ----   ----                -------
  Warning  AllocationFailed  3m16s  metallb-controller  Failed to allocate IP for "default/invalid-request": "4.3.2.1" is not allowed in config

31.9.2. Request an IP address from a specific pool

If you want an IP address from a specific range but are not concerned with the specific IP address, you can use the metallb.universe.tf/address-pool annotation to request an IP address from the specified address pool.

Example service YAML for an IP address from a specific pool

apiVersion: v1
kind: Service
metadata:
  name: <service_name>
  annotations:
    metallb.universe.tf/address-pool: <address_pool_name>
spec:
  selector:
    <label_key>: <label_value>
  ports:
    - port: 8080
      targetPort: 8080
      protocol: TCP
  type: LoadBalancer

If the address pool that you specify for <address_pool_name> does not exist, MetalLB attempts to assign an IP address from any pool that permits automatic assignment.

31.9.3. Accept any IP address

By default, address pools are configured to permit automatic assignment. MetalLB assigns an IP address from these address pools.

To accept any IP address from any pool that is configured for automatic assignment, no special annotation or configuration is required.

Example service YAML for accepting any IP address

apiVersion: v1
kind: Service
metadata:
  name: <service_name>
spec:
  selector:
    <label_key>: <label_value>
  ports:
    - port: 8080
      targetPort: 8080
      protocol: TCP
  type: LoadBalancer

31.9.5. Configuring a service with MetalLB

You can configure a load-balancing service to use an external IP address from an address pool.

Prerequisites

- Install the OpenShift CLI (oc).

- Install the MetalLB Operator and start MetalLB.

- Configure at least one address pool.

- Configure your network to route traffic from the clients to the host network for the cluster.

Procedure

Create a <service_name>.yaml file. In the file, ensure that the spec.type field is set to LoadBalancer.

Refer to the examples for information about how to request the external IP address that MetalLB assigns to the service.

Create the service:

$ oc apply -f <service_name>.yaml

Example output

service/<service_name> created

Verification

Describe the service:

$ oc describe service <service_name>

Example output

Name:                     <service_name>
Namespace:                default
Labels:                   <none>
Annotations:              metallb.universe.tf/address-pool: doc-example  <1>
Selector:                 app=service_name
Type:                     LoadBalancer  <2>
IP Family Policy:         SingleStack
IP Families:              IPv4
IP:                       10.105.237.254
IPs:                      10.105.237.254
LoadBalancer Ingress:     192.168.100.5  <3>
Port:                     <unset>  80/TCP
TargetPort:               8080/TCP
NodePort:                 <unset>  30550/TCP
Endpoints:                10.244.0.50:8080
Session Affinity:         None
External Traffic Policy:  Cluster
Events:  <4>
  Type    Reason        Age                From             Message
  ----    ------        ----               ----             -------
  Normal  nodeAssigned  32m (x2 over 32m)  metallb-speaker  announcing from node "<node_name>"

<1> The annotation is present if you request an IP address from a specific pool.

<2> The service type must indicate LoadBalancer.

<3> The load-balancer ingress field indicates the external IP address if the service is assigned correctly.

<4> The events field indicates the node name that is assigned to announce the external IP address. If you experience an error, the events field indicates the reason for the error.
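The same verification can be scripted against the JSON form of the service. The following is a minimal sketch, assuming that you capture the output of oc get service <service_name> -o json. The function name and the trimmed sample document are hypothetical, while status.loadBalancer.ingress is the standard location of the assigned address in a Kubernetes Service.

```python
import json


def load_balancer_ips(service: dict) -> list:
    """Return the external IP addresses listed under status.loadBalancer.ingress."""
    ingress = service.get("status", {}).get("loadBalancer", {}).get("ingress") or []
    return [entry["ip"] for entry in ingress if "ip" in entry]


# Hypothetical, trimmed output of: oc get service <service_name> -o json
sample = json.loads("""
{
  "spec": {"type": "LoadBalancer"},
  "status": {"loadBalancer": {"ingress": [{"ip": "192.168.100.5"}]}}
}
""")

print(load_balancer_ips(sample))                              # ['192.168.100.5']
print(load_balancer_ips({"status": {"loadBalancer": {}}}))    # []
```

An empty list corresponds to the case where no external IP address has been assigned yet, so check the service events for the reason.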

31.10. MetalLB logging, troubleshooting, and support

If you need to troubleshoot MetalLB configuration, see the following sections for commonly used commands.

31.10.1. Setting the MetalLB logging levels

MetalLB uses FRRouting (FRR) in a container, and the default log level of info generates a lot of logging. You can control the verbosity of the logs by setting the logLevel, as illustrated in this example.

Gain a deeper insight into MetalLB by setting the logLevel to debug as follows:

Prerequisites

- You have access to the cluster as a user with the cluster-admin role.

- You have installed the OpenShift CLI (oc).

Procedure

Create a file, such as setdebugloglevel.yaml, with content like the following example:

apiVersion: metallb.io/v1beta1
kind: MetalLB
metadata:
  name: metallb
  namespace: metallb-system
spec:
  logLevel: debug
  nodeSelector:
    node-role.kubernetes.io/worker: ""

Apply the configuration:

$ oc replace -f setdebugloglevel.yaml

Note: Use oc replace because the metallb CR already exists and you are only changing the log level.

Display the names of the speaker pods:

$ oc get -n metallb-system pods -l component=speaker

Example output