Questo contenuto non è disponibile nella lingua selezionata.

Chapter 2. Developer metrics

2.1. Serverless developer metrics overview

Metrics enable developers to monitor how Knative services are performing. You can use the OpenShift Container Platform monitoring stack to record and view health checks and metrics for your Knative services.

You can view different metrics for OpenShift Serverless by navigating to Dashboards in the web console Developer perspective.

If Service Mesh is enabled with mTLS, metrics for Knative Serving are disabled by default because Service Mesh prevents Prometheus from scraping metrics.

For information about resolving this issue, see Enabling Knative Serving metrics when using Service Mesh with mTLS.

Scraping the metrics does not affect autoscaling of a Knative service, because scraping requests do not go through the activator. Consequently, no scraping takes place if no pods are running.

2.2. Knative service metrics exposed by default

| Metric name, unit, and type | Description | Metric tags |

|---|---|---|

|

Metric unit: dimensionless Metric type: counter |

The number of requests that are routed to | configuration_name="event-display", container_name="queue-proxy", namespace_name="apiserversource1", pod_name="event-display-00001-deployment-658fd4f9cf-qcnr5", response_code="200", response_code_class="2xx", revision_name="event-display-00001", service_name="event-display" |

|

Metric unit: milliseconds Metric type: histogram | The response time in milliseconds. | configuration_name="event-display", container_name="queue-proxy", namespace_name="apiserversource1", pod_name="event-display-00001-deployment-658fd4f9cf-qcnr5", response_code="200", response_code_class="2xx", revision_name="event-display-00001", service_name="event-display" |

|

Metric unit: dimensionless Metric type: counter |

The number of requests that are routed to | configuration_name="event-display", container_name="queue-proxy", namespace_name="apiserversource1", pod_name="event-display-00001-deployment-658fd4f9cf-qcnr5", response_code="200", response_code_class="2xx", revision_name="event-display-00001", service_name="event-display" |

|

Metric unit: milliseconds Metric type: histogram | The response time in milliseconds. | configuration_name="event-display", container_name="queue-proxy", namespace_name="apiserversource1", pod_name="event-display-00001-deployment-658fd4f9cf-qcnr5", response_code="200", response_code_class="2xx", revision_name="event-display-00001", service_name="event-display" |

|

Metric unit: dimensionless Metric type: gauge |

The current number of items in the serving and waiting queue, or not reported if unlimited concurrency. | configuration_name="event-display", container_name="queue-proxy", namespace_name="apiserversource1", pod_name="event-display-00001-deployment-658fd4f9cf-qcnr5", response_code="200", response_code_class="2xx", revision_name="event-display-00001", service_name="event-display" |

2.3. Knative service with custom application metrics

You can extend the set of metrics exported by a Knative service. The exact implementation depends on your application and the language used.

The following listing implements a sample Go application that exports the count of processed events custom metric.

2.4. Configuration for scraping custom metrics

Custom metrics scraping is performed by an instance of Prometheus purposed for user workload monitoring. After you enable user workload monitoring and create the application, you need a configuration that defines how the monitoring stack will scrape the metrics.

The following sample configuration defines the ksvc for your application and configures the service monitor. The exact configuration depends on your application and how it exports the metrics.

2.5. Examining metrics of a service

After you have configured the application to export the metrics and the monitoring stack to scrape them, you can examine the metrics in the web console.

Prerequisites

- You have logged in to the OpenShift Container Platform web console.

- You have installed the OpenShift Serverless Operator and Knative Serving.

Procedure

Optional: Run requests against your application that you will be able to see in the metrics:

hello_route=$(oc get ksvc helloworld-go -n ns1 -o jsonpath='{.status.url}') && \ curl $hello_route$ hello_route=$(oc get ksvc helloworld-go -n ns1 -o jsonpath='{.status.url}') && \ curl $hello_routeCopy to Clipboard Copied! Toggle word wrap Toggle overflow Example output

Hello Go Sample v1!

Hello Go Sample v1!Copy to Clipboard Copied! Toggle word wrap Toggle overflow -

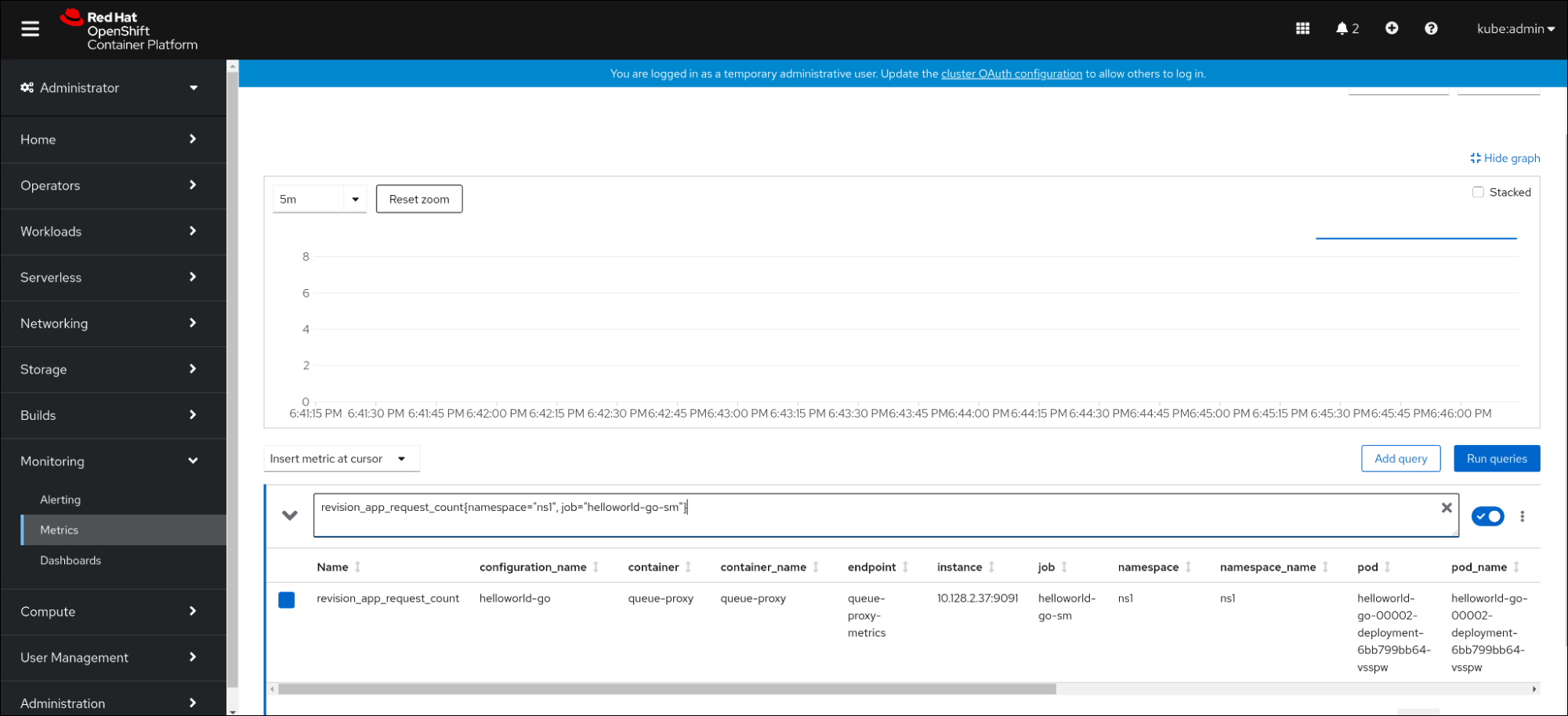

In the web console, navigate to the Observe

Metrics interface. In the input field, enter the query for the metric you want to observe, for example:

revision_app_request_count{namespace="ns1", job="helloworld-go-sm"}revision_app_request_count{namespace="ns1", job="helloworld-go-sm"}Copy to Clipboard Copied! Toggle word wrap Toggle overflow Another example:

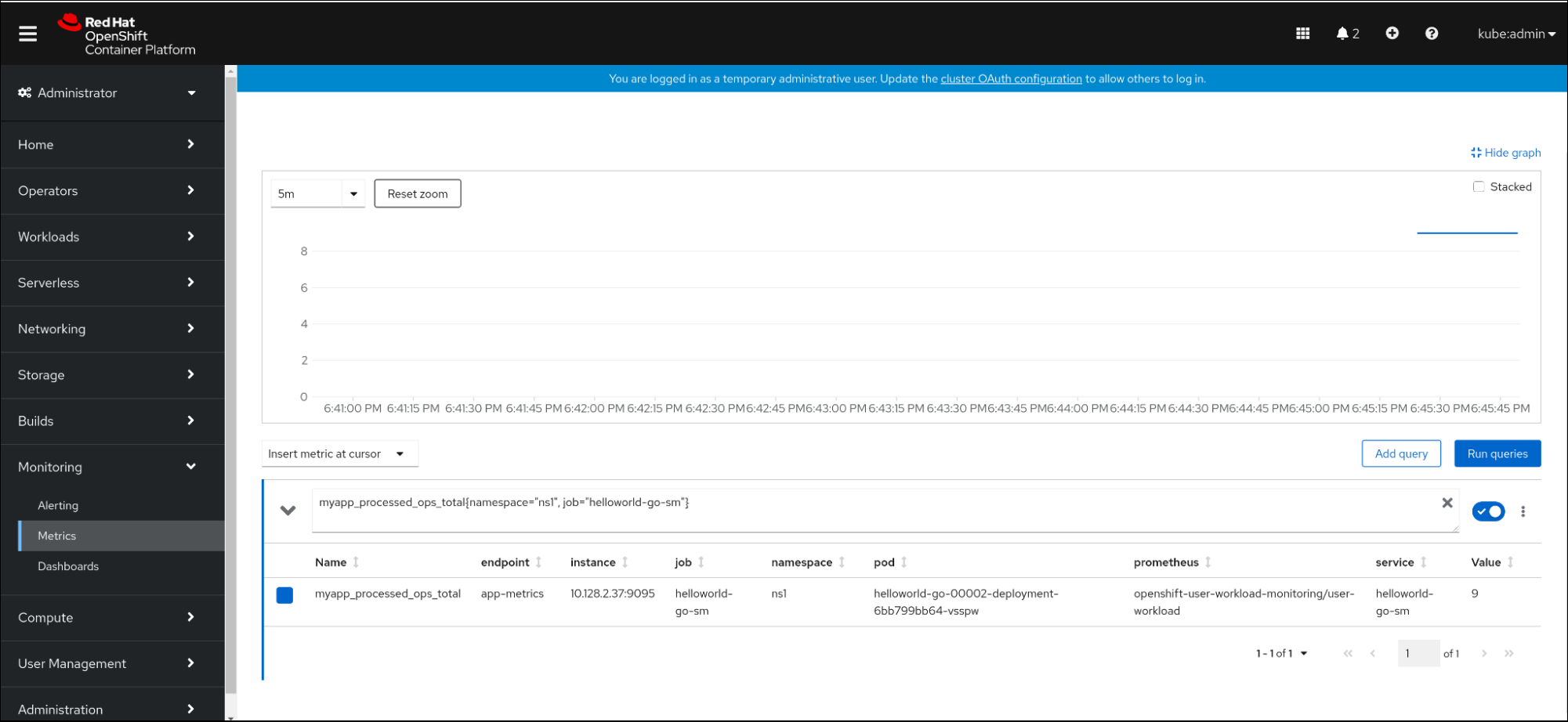

myapp_processed_ops_total{namespace="ns1", job="helloworld-go-sm"}myapp_processed_ops_total{namespace="ns1", job="helloworld-go-sm"}Copy to Clipboard Copied! Toggle word wrap Toggle overflow Observe the visualized metrics:

2.5.1. Queue proxy metrics

Each Knative service has a proxy container that proxies the connections to the application container. A number of metrics are reported for the queue proxy performance.

You can use the following metrics to measure if requests are queued at the proxy side and the actual delay in serving requests at the application side.

| Metric name | Description | Type | Tags | Unit |

|---|---|---|---|---|

|

|

The number of requests that are routed to | Counter |

| Integer (no units) |

|

| The response time of revision requests. | Histogram |

| Milliseconds |

|

|

The number of requests that are routed to the | Counter |

| Integer (no units) |

|

| The response time of revision app requests. | Histogram |

| Milliseconds |

|

|

The current number of items in the | Gauge |

| Integer (no units) |

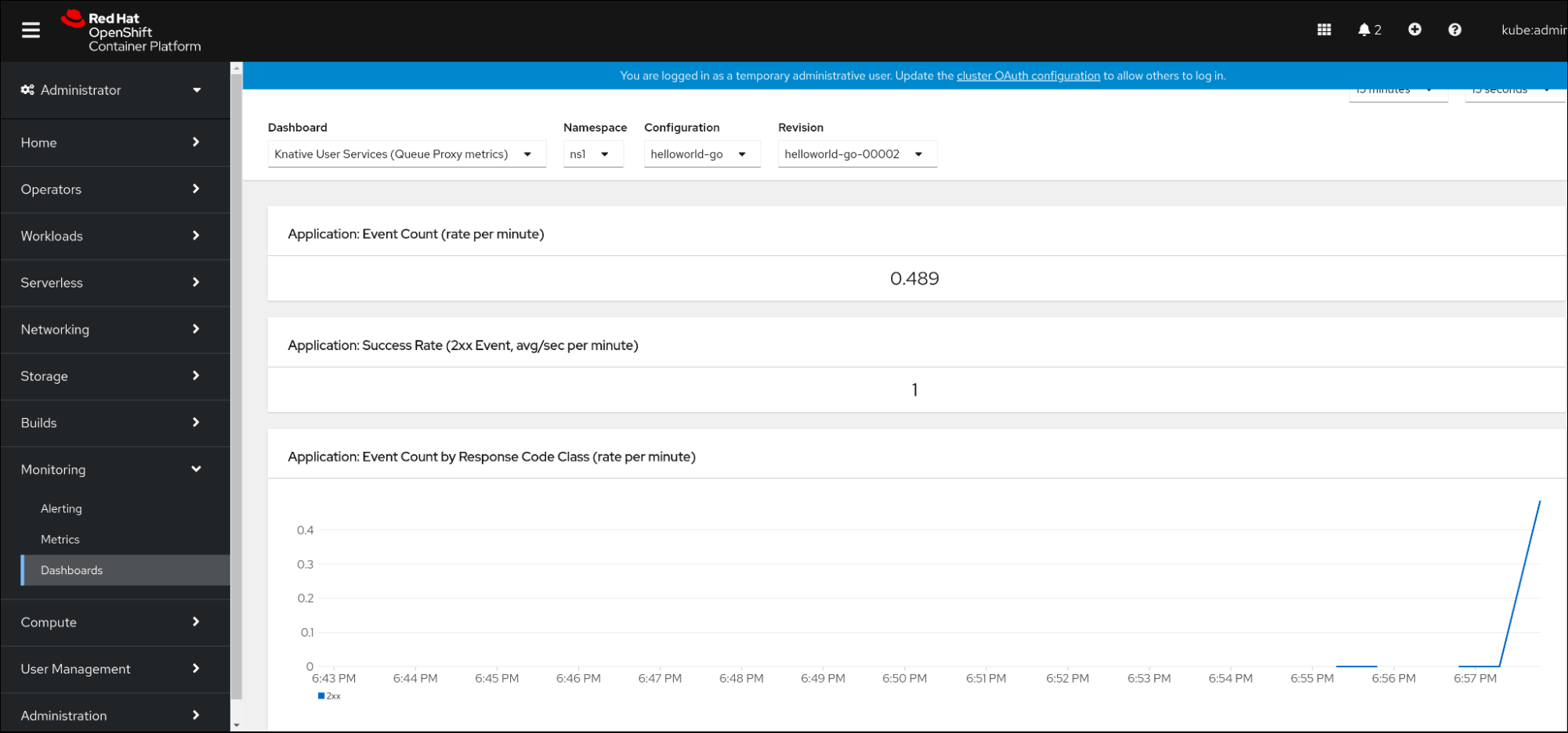

2.6. Dashboard for service metrics

You can examine the metrics using a dedicated dashboard that aggregates queue proxy metrics by namespace.

2.6.1. Examining metrics of a service in the dashboard

Prerequisites

- You have logged in to the OpenShift Container Platform web console.

- You have installed the OpenShift Serverless Operator and Knative Serving.

Procedure

-

In the web console, navigate to the Observe

Metrics interface. -

Select the

Knative User Services (Queue Proxy metrics)dashboard. - Select the Namespace, Configuration, and Revision that correspond to your application.

Observe the visualized metrics: