OpenShift Container Storage is now OpenShift Data Foundation starting with version 4.9.


Chapter 10. Multicloud Object Gateway

10.1. About the Multicloud Object Gateway

The Multicloud Object Gateway (MCG) is a lightweight object storage service for OpenShift, allowing users to start small and then scale as needed on-premises, in multiple clusters, and with cloud-native storage.

10.2. Accessing the Multicloud Object Gateway with your applications

You can access the object service with any application targeting AWS S3 or with code that uses the AWS S3 Software Development Kit (SDK). Applications need to specify the Multicloud Object Gateway (MCG) endpoint, an access key, and a secret access key. You can use your terminal or the MCG CLI to retrieve this information.

Prerequisites

- A running OpenShift Data Foundation Platform.

Download the MCG command-line interface for easier management.

# subscription-manager repos --enable=rh-odf-4-for-rhel-8-x86_64-rpms
# yum install mcg

Note: Specify the appropriate architecture for enabling the repositories using the subscription manager.

- For IBM Power, use the following command:

# subscription-manager repos --enable=rh-odf-4-for-rhel-8-ppc64le-rpms

- For IBM Z infrastructure, use the following command:

# subscription-manager repos --enable=rh-odf-4-for-rhel-8-s390x-rpms

Alternatively, you can install the MCG package from the OpenShift Data Foundation RPMs found on the Download Red Hat OpenShift Data Foundation page.

Note: Choose the correct Product Variant according to your architecture.

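The repository choice above depends only on the host architecture, so it can be scripted. The following is a minimal sketch: the repository IDs are the ones listed above, while the mapping from `uname -m` output to those IDs and the helper names `odf_repo_for_arch` and `install_mcg_cli` are illustrative assumptions.

```shell
#!/usr/bin/env bash
# Sketch: pick the MCG repository for this host's architecture, then install
# the CLI. Helper names are hypothetical; repository IDs come from the steps above.

# odf_repo_for_arch <machine>: print the repository ID for a `uname -m` value.
odf_repo_for_arch() {
  case "$1" in
    x86_64)  echo "rh-odf-4-for-rhel-8-x86_64-rpms" ;;
    ppc64le) echo "rh-odf-4-for-rhel-8-ppc64le-rpms" ;;  # IBM Power
    s390x)   echo "rh-odf-4-for-rhel-8-s390x-rpms" ;;    # IBM Z
    *)       echo "unsupported architecture: $1" >&2; return 1 ;;
  esac
}

# install_mcg_cli: run as root on a registered RHEL 8 host.
install_mcg_cli() {
  local repo
  repo="$(odf_repo_for_arch "$(uname -m)")" || return 1
  subscription-manager repos --enable="$repo" && yum install -y mcg
}
```

Keeping the lookup in its own function makes it easy to check the mapping without touching the system's subscriptions.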
You can access the relevant endpoint, access key, and secret access key in two ways: from the terminal (Section 10.2.1) or from the MCG command-line interface (Section 10.2.2).

Example 10.1. Example

- Accessing the MCG bucket(s) using the virtual-hosted style

If the client application tries to access https://<bucket-name>.s3-openshift-storage.apps.mycluster-cluster.qe.rh-ocs.com, where <bucket-name> is the name of the MCG bucket, a DNS entry must exist for that host name and point to the S3 service.

For example, for https://mcg-test-bucket.s3-openshift-storage.apps.mycluster-cluster.qe.rh-ocs.com, a DNS entry is needed for mcg-test-bucket.s3-openshift-storage.apps.mycluster-cluster.qe.rh-ocs.com to point to the S3 service.

Ensure that you have a DNS entry in order to point the client application to the MCG bucket(s) using the virtual-hosted style.

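In the virtual-hosted style, the bucket name becomes the left-most label of the host name, which is why each bucket needs a resolvable DNS entry. The following minimal sketch derives that host name and URL. The helper names are hypothetical, and the route lookup in the comment (a route named `s3` in `openshift-storage`) is an assumption about a typical deployment.

```shell
#!/usr/bin/env bash
# Sketch: derive the host name that needs a DNS entry for virtual-hosted-style
# access to an MCG bucket. Helper names are hypothetical.
# Assumption: the S3 route host is passed in; on a live cluster it can usually
# be read with: oc get route s3 -n openshift-storage -o jsonpath='{.spec.host}'

# virtual_hosted_host <bucket-name> <s3-route-host>
# The bucket name is prepended as the left-most DNS label.
virtual_hosted_host() {
  [ -n "$1" ] && [ -n "$2" ] || { echo "usage: virtual_hosted_host <bucket> <route-host>" >&2; return 1; }
  printf '%s.%s\n' "$1" "$2"
}

# virtual_hosted_url <bucket-name> <s3-route-host>
virtual_hosted_url() {
  local host
  host="$(virtual_hosted_host "$1" "$2")" || return 1
  printf 'https://%s\n' "$host"
}

# Usage: confirm the derived name resolves before pointing a client at it.
#   getent hosts "$(virtual_hosted_host mcg-test-bucket "$S3_ROUTE_HOST")"
```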
10.2.1. Accessing the Multicloud Object Gateway from the terminal

Procedure

Run the describe command to view information about the Multicloud Object Gateway (MCG) endpoint, including its access key (AWS_ACCESS_KEY_ID value) and secret access key (AWS_SECRET_ACCESS_KEY value).

# oc describe noobaa -n openshift-storage

The output will look similar to the following:

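Instead of copying the keys from the `describe` output by hand, you can read them from the secret that holds them. The following is a sketch under the assumption that the admin credentials live in a secret named `noobaa-admin` with the keys `AWS_ACCESS_KEY_ID` and `AWS_SECRET_ACCESS_KEY`. Check the secret name shown in your own `oc describe noobaa` output. The helper names are hypothetical.

```shell
#!/usr/bin/env bash
# Sketch: load the MCG S3 credentials into the environment variables that S3
# clients read. Assumption: they are stored in the "noobaa-admin" secret.

NAMESPACE="${NAMESPACE:-openshift-storage}"

# decode_b64 <base64-text>: Kubernetes stores secret values Base64-encoded.
decode_b64() {
  printf '%s' "$1" | base64 -d
}

# secret_value <secret-name> <key>: print one decoded value from a secret.
secret_value() {
  local raw
  raw="$(oc get secret "$1" -n "$NAMESPACE" -o "jsonpath={.data.$2}")" || return 1
  decode_b64 "$raw"
}

# load_mcg_credentials: export AWS_ACCESS_KEY_ID and AWS_SECRET_ACCESS_KEY.
load_mcg_credentials() {
  AWS_ACCESS_KEY_ID="$(secret_value noobaa-admin AWS_ACCESS_KEY_ID)" || return 1
  AWS_SECRET_ACCESS_KEY="$(secret_value noobaa-admin AWS_SECRET_ACCESS_KEY)" || return 1
  export AWS_ACCESS_KEY_ID AWS_SECRET_ACCESS_KEY
}
```

Exporting the variables rather than printing them keeps the secret values out of terminal scrollback.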
10.2.2. Accessing the Multicloud Object Gateway from the MCG command-line interface

Prerequisites

Download the MCG command-line interface.

# subscription-manager repos --enable=rh-odf-4-for-rhel-8-x86_64-rpms
# yum install mcg

Note: Specify the appropriate architecture for enabling the repositories using the subscription manager.

- For IBM Power, use the following command:

# subscription-manager repos --enable=rh-odf-4-for-rhel-8-ppc64le-rpms

- For IBM Z infrastructure, use the following command:

# subscription-manager repos --enable=rh-odf-4-for-rhel-8-s390x-rpms

Procedure

Run the status command to access the endpoint, access key, and secret access key:

noobaa status -n openshift-storage

The output will look similar to the following:

You now have the relevant endpoint, access key, and secret access key to connect your applications.

Example 10.2. Example

If the application is the AWS S3 CLI, the following command lists the buckets in OpenShift Data Foundation:

AWS_ACCESS_KEY_ID=<AWS_ACCESS_KEY_ID> AWS_SECRET_ACCESS_KEY=<AWS_SECRET_ACCESS_KEY> aws --endpoint-url <ENDPOINT> --no-verify-ssl s3 ls

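When you run several commands against the same endpoint, a small wrapper avoids repeating the credentials and flags. The following is a minimal sketch. The function name `mcg_s3` and the variable `MCG_ENDPOINT` are hypothetical. It assumes the AWS CLI is installed and the credentials are already exported.

```shell
#!/usr/bin/env bash
# Sketch: run AWS CLI S3 commands against the MCG endpoint.
# Assumptions: aws CLI installed; AWS_ACCESS_KEY_ID, AWS_SECRET_ACCESS_KEY and
# the hypothetical MCG_ENDPOINT (from `noobaa status`) are exported.

# mcg_s3 <s3 subcommand> [args...]
mcg_s3() {
  local v
  # Fail early with a clear message if a required variable is missing.
  for v in AWS_ACCESS_KEY_ID AWS_SECRET_ACCESS_KEY MCG_ENDPOINT; do
    if [ -z "${!v:-}" ]; then
      echo "mcg_s3: $v is not set" >&2
      return 1
    fi
  done
  # --no-verify-ssl mirrors the command above; once the cluster CA is trusted,
  # prefer --ca-bundle <file> instead.
  aws --endpoint-url "$MCG_ENDPOINT" --no-verify-ssl s3 "$@"
}

# Usage:
#   mcg_s3 ls                                   # list buckets
#   mcg_s3 cp ./report.csv s3://<bucket-name>/  # upload an object
```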
10.3. Allowing user access to the Multicloud Object Gateway Console

To allow a user to access the Multicloud Object Gateway (MCG) Console, ensure that the user meets the following conditions:

- The user is in the cluster-admins group.

- The user is in the system:cluster-admins virtual group.

Prerequisites

- A running OpenShift Data Foundation Platform.

Procedure

Enable access to the MCG console.

Perform the following steps once on the cluster:

Create a cluster-admins group.

# oc adm groups new cluster-admins

Bind the group to the cluster-admin role.

# oc adm policy add-cluster-role-to-group cluster-admin cluster-admins

Add or remove users from the cluster-admins group to control access to the MCG console.

- To add a set of users to the cluster-admins group:

# oc adm groups add-users cluster-admins <user-name> <user-name> <user-name>...

where <user-name> is the name of the user to be added.

Note: If you are adding a set of users to the cluster-admins group, you do not need to bind the newly added users to the cluster-admin role to allow access to the OpenShift Data Foundation dashboard.

- To remove a set of users from the cluster-admins group:

# oc adm groups remove-users cluster-admins <user-name> <user-name> <user-name>...

where <user-name> is the name of the user to be removed.

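The one-time setup and the add/remove steps above can be wrapped in small helpers. The following is a minimal sketch. The function names are hypothetical, and it assumes `oc` is logged in as a user allowed to manage groups and cluster role bindings. The group is created only if `oc get group` does not find it, so the setup can be re-run safely.

```shell
#!/usr/bin/env bash
# Sketch: helpers around the cluster-admins group commands shown above.
# Function names are hypothetical.

GROUP="cluster-admins"

# One-time setup: create the group if missing, then bind it to cluster-admin.
setup_mcg_console_group() {
  if ! oc get group "$GROUP" >/dev/null 2>&1; then
    oc adm groups new "$GROUP" || return 1
  fi
  oc adm policy add-cluster-role-to-group cluster-admin "$GROUP"
}

# grant_mcg_console_access <user-name>...
grant_mcg_console_access() {
  [ "$#" -gt 0 ] || { echo "usage: grant_mcg_console_access <user-name>..." >&2; return 1; }
  oc adm groups add-users "$GROUP" "$@"
}

# revoke_mcg_console_access <user-name>...
revoke_mcg_console_access() {
  [ "$#" -gt 0 ] || { echo "usage: revoke_mcg_console_access <user-name>..." >&2; return 1; }
  oc adm groups remove-users "$GROUP" "$@"
}
```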
Verification steps

- On the OpenShift Web Console, log in as a user with access permission to the Multicloud Object Gateway Console.

- Navigate to Storage → OpenShift Data Foundation.

- In the Storage Systems tab, select the storage system and then click Overview → Object tab.

- Select the Multicloud Object Gateway link.

- Click Allow selected permissions.

10.4. Adding storage resources for hybrid or Multicloud

10.4.1. Creating a new backing store

Use this procedure to create a new backing store in OpenShift Data Foundation.

Prerequisites

- Administrator access to OpenShift Data Foundation.

Procedure

- In the OpenShift Web Console, click Storage → OpenShift Data Foundation.

- Click the Backing Store tab.

- Click Create Backing Store.

On the Create New Backing Store page, perform the following:

- Enter a Backing Store Name.

- Select a Provider.

- Select a Region.

- Enter an Endpoint. This is optional.

Select a Secret from the drop-down list, or create your own secret. Optionally, you can click Switch to Credentials view, which lets you fill in the required secrets.

For more information on creating an OpenShift Container Platform secret, see the section Creating the secret in the OpenShift Container Platform documentation.

Each backingstore requires a different secret. For more information on creating the secret for a particular backingstore, see Section 10.4.2, “Adding storage resources for hybrid or Multicloud using the MCG command line interface” and follow the procedure for adding storage resources using a YAML.

Note: This menu is relevant for all providers except Google Cloud and local PVC.

- Enter the Target bucket. The target bucket is a storage container that is hosted on the remote cloud service. It allows you to create a connection that tells the MCG that it can use this bucket for the system.

- Click Create Backing Store.

Verification steps

- In the OpenShift Web Console, click Storage → OpenShift Data Foundation.

- Click the Backing Store tab to view all the backing stores.

10.4.2. Adding storage resources for hybrid or Multicloud using the MCG command line interface

The Multicloud Object Gateway (MCG) simplifies the process of spanning data across cloud providers and clusters.

You must add backing storage that the MCG can use.

Depending on the type of your deployment, you can choose one of the following procedures to create a backing storage:

- For creating an AWS-backed backingstore, see Section 10.4.2.1, “Creating an AWS-backed backingstore”

- For creating an IBM COS-backed backingstore, see Section 10.4.2.2, “Creating an IBM COS-backed backingstore”

- For creating an Azure-backed backingstore, see Section 10.4.2.3, “Creating an Azure-backed backingstore”

- For creating a GCP-backed backingstore, see Section 10.4.2.4, “Creating a GCP-backed backingstore”

- For creating a local Persistent Volume-backed backingstore, see Section 10.4.2.5, “Creating a local Persistent Volume-backed backingstore”

For VMware deployments, skip to Section 10.4.3, “Creating an s3 compatible Multicloud Object Gateway backingstore” for further instructions.

10.4.2.1. Creating an AWS-backed backingstore

Prerequisites

Download the Multicloud Object Gateway (MCG) command-line interface.

# subscription-manager repos --enable=rh-odf-4-for-rhel-8-x86_64-rpms
# yum install mcg

Note: Specify the appropriate architecture for enabling the repositories using the subscription manager. For instance, in case of IBM Z infrastructure, use the following command:

# subscription-manager repos --enable=rh-odf-4-for-rhel-8-s390x-rpms

Alternatively, you can install the MCG package from the OpenShift Data Foundation RPMs found here: https://access.redhat.com/downloads/content/547/ver=4/rhel---8/4/x86_64/packages

Note: Choose the correct Product Variant according to your architecture.

Procedure

From the MCG command-line interface, run the following command:

noobaa backingstore create aws-s3 <backingstore_name> --access-key=<AWS ACCESS KEY> --secret-key=<AWS SECRET ACCESS KEY> --target-bucket <bucket-name> -n openshift-storage

- Replace <backingstore_name> with the name of the backingstore.

- Replace <AWS ACCESS KEY> and <AWS SECRET ACCESS KEY> with an AWS access key ID and secret access key you created for this purpose.

- Replace <bucket-name> with an existing AWS bucket name. This argument tells the MCG which bucket to use as a target bucket for its backing store, and subsequently, data storage and administration.

The output will be similar to the following:

INFO[0001] ✅ Exists: NooBaa "noobaa"
INFO[0002] ✅ Created: BackingStore "aws-resource"
INFO[0002] ✅ Created: Secret "backing-store-secret-aws-resource"

You can also add storage resources using a YAML:

Create a secret with the credentials:

- You must supply and encode your own AWS access key ID and secret access key using Base64, and use the results in place of <AWS ACCESS KEY ID ENCODED IN BASE64> and <AWS SECRET ACCESS KEY ENCODED IN BASE64>.

- Replace <backingstore-secret-name> with a unique name.

Apply the following YAML for a specific backing store:

- Replace <bucket-name> with an existing AWS bucket name. This argument tells the MCG which bucket to use as a target bucket for its backing store, and subsequently, data storage and administration.

- Replace <backingstore-secret-name> with the name of the secret created in the previous step.

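The two YAML steps above can be generated together, with the Base64 encoding done for you. The following is a sketch, not the product's reference manifest. It assumes the Secret keys `AWS_ACCESS_KEY_ID` and `AWS_SECRET_ACCESS_KEY` and the `spec.awsS3` fields of the NooBaa BackingStore CRD. Confirm these with `oc explain backingstore.spec.awsS3` before use. The function names are hypothetical.

```shell
#!/usr/bin/env bash
# Sketch: emit a Secret plus an aws-s3 BackingStore from raw AWS credentials.
# Assumption: field names follow the NooBaa BackingStore CRD (verify with
# `oc explain backingstore.spec.awsS3`). Function names are hypothetical.

# b64 <text>: Base64-encode without a trailing newline or line wrapping.
b64() {
  printf '%s' "$1" | base64 | tr -d '\n'
}

# aws_backingstore_yaml <backingstore-name> <secret-name> <access-key-id> <secret-access-key> <bucket-name>
aws_backingstore_yaml() {
  [ "$#" -eq 5 ] || { echo "usage: aws_backingstore_yaml <bs> <secret> <key-id> <secret-key> <bucket>" >&2; return 1; }
  cat <<EOF
apiVersion: v1
kind: Secret
metadata:
  name: $2
  namespace: openshift-storage
type: Opaque
data:
  AWS_ACCESS_KEY_ID: $(b64 "$3")
  AWS_SECRET_ACCESS_KEY: $(b64 "$4")
---
apiVersion: noobaa.io/v1alpha1
kind: BackingStore
metadata:
  name: $1
  namespace: openshift-storage
spec:
  type: aws-s3
  awsS3:
    targetBucket: $5
    secret:
      name: $2
      namespace: openshift-storage
EOF
}

# Usage (pass keys through variables so they stay out of shell history):
#   aws_backingstore_yaml aws-resource aws-bs-secret "$KEY_ID" "$SECRET" <bucket-name> | oc apply -f -
```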

10.4.2.2. Creating an IBM COS-backed backingstore

Prerequisites

Download the Multicloud Object Gateway (MCG) command-line interface.

# subscription-manager repos --enable=rh-odf-4-for-rhel-8-x86_64-rpms
# yum install mcg

Note: Specify the appropriate architecture for enabling the repositories using the subscription manager. For instance:

- For IBM Power, use the following command:

# subscription-manager repos --enable=rh-odf-4-for-rhel-8-ppc64le-rpms

- For IBM Z infrastructure, use the following command:

# subscription-manager repos --enable=rh-odf-4-for-rhel-8-s390x-rpms

Alternatively, you can install the MCG package from the OpenShift Data Foundation RPMs found here: https://access.redhat.com/downloads/content/547/ver=4/rhel---8/4/x86_64/packages

Note: Choose the correct Product Variant according to your architecture.

Procedure

From the MCG command-line interface, run the following command:

noobaa backingstore create ibm-cos <backingstore_name> --access-key=<IBM ACCESS KEY> --secret-key=<IBM SECRET ACCESS KEY> --endpoint=<IBM COS ENDPOINT> --target-bucket <bucket-name> -n openshift-storage

- Replace <backingstore_name> with the name of the backingstore.

- Replace <IBM ACCESS KEY>, <IBM SECRET ACCESS KEY>, and <IBM COS ENDPOINT> with an IBM access key ID, secret access key, and the appropriate regional endpoint that corresponds to the location of the existing IBM bucket. To generate the above keys on IBM Cloud, you must include HMAC credentials while creating the service credentials for your target bucket.

- Replace <bucket-name> with an existing IBM bucket name. This argument tells the MCG which bucket to use as a target bucket for its backing store, and subsequently, data storage and administration.

The output will be similar to the following:

INFO[0001] ✅ Exists: NooBaa "noobaa"
INFO[0002] ✅ Created: BackingStore "ibm-resource"
INFO[0002] ✅ Created: Secret "backing-store-secret-ibm-resource"

You can also add storage resources using a YAML:

Create a secret with the credentials:

- You must supply and encode your own IBM COS access key ID and secret access key using Base64, and use the results in place of <IBM COS ACCESS KEY ID ENCODED IN BASE64> and <IBM COS SECRET ACCESS KEY ENCODED IN BASE64>.

- Replace <backingstore-secret-name> with a unique name.

Apply the following YAML for a specific backing store:

- Replace <bucket-name> with an existing IBM COS bucket name. This argument tells the MCG which bucket to use as a target bucket for its backing store, and subsequently, data storage and administration.

- Replace <endpoint> with a regional endpoint that corresponds to the location of the existing IBM bucket name. This argument tells the Multicloud Object Gateway which endpoint to use for its backing store, and subsequently, data storage and administration.

- Replace <backingstore-secret-name> with the name of the secret created in the previous step.

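The IBM COS manifests can be generated in the same way. This variant reads the HMAC credentials from environment variables so the keys never appear as command-line arguments. This is a sketch that assumes the Secret keys `IBM_COS_ACCESS_KEY_ID` and `IBM_COS_SECRET_ACCESS_KEY`, which double here as hypothetical shell variable names, and the `spec.ibmCos` fields of the NooBaa BackingStore CRD. Confirm these with `oc explain backingstore.spec.ibmCos`.

```shell
#!/usr/bin/env bash
# Sketch: emit a Secret plus an ibm-cos BackingStore from HMAC credentials in
# environment variables. Assumption: field names follow the NooBaa CRD
# (verify with `oc explain backingstore.spec.ibmCos`).

b64() { printf '%s' "$1" | base64 | tr -d '\n'; }

# ibm_cos_backingstore_yaml <backingstore-name> <secret-name> <endpoint> <bucket-name>
ibm_cos_backingstore_yaml() {
  [ "$#" -eq 4 ] || { echo "usage: ibm_cos_backingstore_yaml <bs> <secret> <endpoint> <bucket>" >&2; return 1; }
  if [ -z "${IBM_COS_ACCESS_KEY_ID:-}" ] || [ -z "${IBM_COS_SECRET_ACCESS_KEY:-}" ]; then
    echo "export IBM_COS_ACCESS_KEY_ID and IBM_COS_SECRET_ACCESS_KEY first" >&2
    return 1
  fi
  cat <<EOF
apiVersion: v1
kind: Secret
metadata:
  name: $2
  namespace: openshift-storage
type: Opaque
data:
  IBM_COS_ACCESS_KEY_ID: $(b64 "$IBM_COS_ACCESS_KEY_ID")
  IBM_COS_SECRET_ACCESS_KEY: $(b64 "$IBM_COS_SECRET_ACCESS_KEY")
---
apiVersion: noobaa.io/v1alpha1
kind: BackingStore
metadata:
  name: $1
  namespace: openshift-storage
spec:
  type: ibm-cos
  ibmCos:
    endpoint: $3
    targetBucket: $4
    secret:
      name: $2
      namespace: openshift-storage
EOF
}

# Usage:
#   ibm_cos_backingstore_yaml ibm-resource ibm-bs-secret <endpoint> <bucket-name> | oc apply -f -
```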

10.4.2.3. Creating an Azure-backed backingstore

Prerequisites

Download the Multicloud Object Gateway (MCG) command-line interface.

# subscription-manager repos --enable=rh-odf-4-for-rhel-8-x86_64-rpms
# yum install mcg

Note: Specify the appropriate architecture for enabling the repositories using the subscription manager. For instance, in case of IBM Z infrastructure, use the following command:

# subscription-manager repos --enable=rh-odf-4-for-rhel-8-s390x-rpms

Alternatively, you can install the MCG package from the OpenShift Data Foundation RPMs found here: https://access.redhat.com/downloads/content/547/ver=4/rhel---8/4/x86_64/packages

Note: Choose the correct Product Variant according to your architecture.

Procedure

From the MCG command-line interface, run the following command:

noobaa backingstore create azure-blob <backingstore_name> --account-key=<AZURE ACCOUNT KEY> --account-name=<AZURE ACCOUNT NAME> --target-blob-container <blob container name>

- Replace <backingstore_name> with the name of the backingstore.

- Replace <AZURE ACCOUNT KEY> and <AZURE ACCOUNT NAME> with an Azure account key and account name you created for this purpose.

- Replace <blob container name> with an existing Azure blob container name. This argument tells the MCG which bucket to use as a target bucket for its backing store, and subsequently, data storage and administration.

The output will be similar to the following:

INFO[0001] ✅ Exists: NooBaa "noobaa"
INFO[0002] ✅ Created: BackingStore "azure-resource"
INFO[0002] ✅ Created: Secret "backing-store-secret-azure-resource"

You can also add storage resources using a YAML:

Create a secret with the credentials:

- You must supply and encode your own Azure Account Name and Account Key using Base64, and use the results in place of <AZURE ACCOUNT NAME ENCODED IN BASE64> and <AZURE ACCOUNT KEY ENCODED IN BASE64>.

- Replace <backingstore-secret-name> with a unique name.

Apply the following YAML for a specific backing store:

- Replace <blob-container-name> with an existing Azure blob container name. This argument tells the MCG which bucket to use as a target bucket for its backing store, and subsequently, data storage and administration.

- Replace <backingstore-secret-name> with the name of the secret created in the previous step.

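For Azure, the Secret carries the account name and account key, and the BackingStore points at a blob container. The following is a sketch that assumes the Secret keys `AccountName` and `AccountKey` and the `spec.azureBlob` fields of the NooBaa BackingStore CRD. Confirm these with `oc explain backingstore.spec.azureBlob`. `AZURE_ACCOUNT_NAME` and `AZURE_ACCOUNT_KEY` are hypothetical variable names.

```shell
#!/usr/bin/env bash
# Sketch: emit a Secret plus an azure-blob BackingStore.
# Assumption: field names follow the NooBaa CRD (verify with
# `oc explain backingstore.spec.azureBlob`). Variable names are hypothetical.

b64() { printf '%s' "$1" | base64 | tr -d '\n'; }

# azure_backingstore_yaml <backingstore-name> <secret-name> <blob-container-name>
azure_backingstore_yaml() {
  [ "$#" -eq 3 ] || { echo "usage: azure_backingstore_yaml <bs> <secret> <container>" >&2; return 1; }
  if [ -z "${AZURE_ACCOUNT_NAME:-}" ] || [ -z "${AZURE_ACCOUNT_KEY:-}" ]; then
    echo "export AZURE_ACCOUNT_NAME and AZURE_ACCOUNT_KEY first" >&2
    return 1
  fi
  cat <<EOF
apiVersion: v1
kind: Secret
metadata:
  name: $2
  namespace: openshift-storage
type: Opaque
data:
  AccountName: $(b64 "$AZURE_ACCOUNT_NAME")
  AccountKey: $(b64 "$AZURE_ACCOUNT_KEY")
---
apiVersion: noobaa.io/v1alpha1
kind: BackingStore
metadata:
  name: $1
  namespace: openshift-storage
spec:
  type: azure-blob
  azureBlob:
    targetBlobContainer: $3
    secret:
      name: $2
      namespace: openshift-storage
EOF
}

# Usage:
#   azure_backingstore_yaml azure-resource azure-bs-secret <blob-container-name> | oc apply -f -
```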

10.4.2.4. Creating a GCP-backed backingstore

Prerequisites

Download the Multicloud Object Gateway (MCG) command-line interface.

# subscription-manager repos --enable=rh-odf-4-for-rhel-8-x86_64-rpms
# yum install mcg

Note: Specify the appropriate architecture for enabling the repositories using the subscription manager. For instance, in case of IBM Z infrastructure, use the following command:

# subscription-manager repos --enable=rh-odf-4-for-rhel-8-s390x-rpms

Alternatively, you can install the MCG package from the OpenShift Data Foundation RPMs found here: https://access.redhat.com/downloads/content/547/ver=4/rhel---8/4/x86_64/packages

Note: Choose the correct Product Variant according to your architecture.

Procedure

From the MCG command-line interface, run the following command:

noobaa backingstore create google-cloud-storage <backingstore_name> --private-key-json-file=<PATH TO GCP PRIVATE KEY JSON FILE> --target-bucket <GCP bucket name>

- Replace <backingstore_name> with the name of the backingstore.

- Replace <PATH TO GCP PRIVATE KEY JSON FILE> with a path to your GCP private key created for this purpose.

- Replace <GCP bucket name> with an existing GCP object storage bucket name. This argument tells the MCG which bucket to use as a target bucket for its backing store, and subsequently, data storage and administration.

The output will be similar to the following:

INFO[0001] ✅ Exists: NooBaa "noobaa"
INFO[0002] ✅ Created: BackingStore "google-gcp"
INFO[0002] ✅ Created: Secret "backing-store-google-cloud-storage-gcp"

You can also add storage resources using a YAML:

Create a secret with the credentials:

- You must supply and encode your own GCP service account private key using Base64, and use the results in place of <GCP PRIVATE KEY ENCODED IN BASE64>.

- Replace <backingstore-secret-name> with a unique name.

Apply the following YAML for a specific backing store:

- Replace <target bucket> with an existing Google storage bucket. This argument tells the MCG which bucket to use as a target bucket for its backing store, and subsequently, data storage and administration.

- Replace <backingstore-secret-name> with the name of the secret created in the previous step.

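For GCP, the value to encode is the whole service account key file rather than a short string. The following is a sketch that builds both manifests directly from that file. It assumes the Secret key `GoogleServiceAccountPrivateKeyJson` and the `spec.googleCloudStorage` fields of the NooBaa BackingStore CRD. Confirm these with `oc explain backingstore.spec.googleCloudStorage`. The function name is hypothetical.

```shell
#!/usr/bin/env bash
# Sketch: emit a Secret plus a google-cloud-storage BackingStore from a service
# account key file. Assumption: field names follow the NooBaa CRD (verify with
# `oc explain backingstore.spec.googleCloudStorage`).

# gcp_backingstore_yaml <backingstore-name> <secret-name> <key-json-file> <target-bucket>
gcp_backingstore_yaml() {
  [ "$#" -eq 4 ] || { echo "usage: gcp_backingstore_yaml <bs> <secret> <key-file> <bucket>" >&2; return 1; }
  [ -r "$3" ] || { echo "cannot read key file: $3" >&2; return 1; }
  local encoded
  # Encode the entire JSON file; strip newlines so the value stays on one line.
  encoded="$(base64 < "$3" | tr -d '\n')"
  cat <<EOF
apiVersion: v1
kind: Secret
metadata:
  name: $2
  namespace: openshift-storage
type: Opaque
data:
  GoogleServiceAccountPrivateKeyJson: ${encoded}
---
apiVersion: noobaa.io/v1alpha1
kind: BackingStore
metadata:
  name: $1
  namespace: openshift-storage
spec:
  type: google-cloud-storage
  googleCloudStorage:
    targetBucket: $4
    secret:
      name: $2
      namespace: openshift-storage
EOF
}

# Usage:
#   gcp_backingstore_yaml google-gcp gcp-bs-secret ./key.json <target bucket> | oc apply -f -
```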

10.4.2.5. Creating a local Persistent Volume-backed backingstore

Prerequisites

Download the Multicloud Object Gateway (MCG) command-line interface.

# subscription-manager repos --enable=rh-odf-4-for-rhel-8-x86_64-rpms
# yum install mcg

Note: Specify the appropriate architecture for enabling the repositories using the subscription manager. For instance, in case of IBM Z infrastructure, use the following command:

# subscription-manager repos --enable=rh-odf-4-for-rhel-8-s390x-rpms

Alternatively, you can install the MCG package from the OpenShift Data Foundation RPMs found here: https://access.redhat.com/downloads/content/547/ver=4/rhel---8/4/x86_64/packages

Note: Choose the correct Product Variant according to your architecture.

Procedure

From the MCG command-line interface, run the following command:

noobaa backingstore create pv-pool <backingstore_name> --num-volumes=<NUMBER OF VOLUMES> --pv-size-gb=<VOLUME SIZE> --storage-class=<LOCAL STORAGE CLASS>

- Replace <backingstore_name> with the name of the backingstore.

- Replace <NUMBER OF VOLUMES> with the number of volumes you would like to create. Note that increasing the number of volumes scales up the storage.

- Replace <VOLUME SIZE> with the required size, in GB, of each volume.

- Replace <LOCAL STORAGE CLASS> with the local storage class. We recommend using ocs-storagecluster-ceph-rbd.

The output will be similar to the following:

INFO[0001] ✅ Exists: NooBaa "noobaa"
INFO[0002] ✅ Exists: BackingStore "local-mcg-storage"

You can also add storage resources using a YAML:

Apply the following YAML for a specific backing store:

- Replace <backingstore_name> with the name of the backingstore.

- Replace <NUMBER OF VOLUMES> with the number of volumes you would like to create. Note that increasing the number of volumes scales up the storage.

- Replace <VOLUME SIZE> with the required size, in GB, of each volume. Note that the letter G should remain.

- Replace <LOCAL STORAGE CLASS> with the local storage class. We recommend using ocs-storagecluster-ceph-rbd.

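The pv-pool manifest needs no secret, but its two numeric values are easy to mistype. The following is a sketch that validates them before emitting the YAML and defaults to the recommended storage class. It assumes the `spec.pvPool` field names of the NooBaa BackingStore CRD, which you can confirm with `oc explain backingstore.spec.pvPool`. It also assumes that the size is written with the `G` suffix mentioned above. The function name is hypothetical.

```shell
#!/usr/bin/env bash
# Sketch: emit a pv-pool BackingStore with basic input checks.
# Assumptions: field names follow the NooBaa CRD (verify with
# `oc explain backingstore.spec.pvPool`); size uses the G suffix.

# pv_pool_backingstore_yaml <backingstore-name> <number-of-volumes> <size-in-GB> [storage-class]
pv_pool_backingstore_yaml() {
  local name="$1" volumes="$2" size="$3"
  local sc="${4:-ocs-storagecluster-ceph-rbd}"   # the class recommended above
  [ -n "$name" ] || { echo "backingstore name is required" >&2; return 1; }
  case "$volumes" in ''|*[!0-9]*|0) echo "number of volumes must be a positive integer" >&2; return 1 ;; esac
  case "$size" in ''|*[!0-9]*|0) echo "size must be a positive integer (GB)" >&2; return 1 ;; esac
  cat <<EOF
apiVersion: noobaa.io/v1alpha1
kind: BackingStore
metadata:
  name: ${name}
  namespace: openshift-storage
spec:
  type: pv-pool
  pvPool:
    numVolumes: ${volumes}
    resources:
      requests:
        storage: ${size}G
    storageClass: ${sc}
EOF
}

# Usage:
#   pv_pool_backingstore_yaml local-mcg-storage 3 50 | oc apply -f -
```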

10.4.3. Creating an s3 compatible Multicloud Object Gateway backingstore

The Multicloud Object Gateway (MCG) can use any S3 compatible object storage as a backing store, for example, Red Hat Ceph Storage’s RADOS Object Gateway (RGW). The following procedure shows how to create an S3 compatible MCG backing store for Red Hat Ceph Storage’s RGW. Note that when the RGW is deployed, the OpenShift Data Foundation operator creates an S3 compatible backingstore for MCG automatically.

Procedure

From the MCG command-line interface, run the following command:

noobaa backingstore create s3-compatible rgw-resource --access-key=<RGW ACCESS KEY> --secret-key=<RGW SECRET KEY> --target-bucket=<bucket-name> --endpoint=<RGW endpoint>

- To get the <RGW ACCESS KEY> and <RGW SECRET KEY>, run the following command using your RGW user secret name:

oc get secret <RGW USER SECRET NAME> -o yaml -n openshift-storage

- Decode the access key ID and the secret access key from Base64 and keep them.

- Replace <RGW ACCESS KEY> and <RGW SECRET KEY> with the appropriate, decoded data from the previous step.

- Replace <bucket-name> with an existing RGW bucket name. This argument tells the MCG which bucket to use as a target bucket for its backing store, and subsequently, data storage and administration.

- To get the <RGW endpoint>, see Accessing the RADOS Object Gateway S3 endpoint.

The output will be similar to the following:

INFO[0001] ✅ Exists: NooBaa "noobaa"
INFO[0002] ✅ Created: BackingStore "rgw-resource"
INFO[0002] ✅ Created: Secret "backing-store-secret-rgw-resource"

You can also create the backingstore using a YAML:

Create a CephObjectStoreUser. This also creates a secret containing the RGW credentials:

- Replace <RGW-Username> and <Display-name> with a unique username and display name.

Apply the following YAML for an S3-compatible backing store:

- Replace <backingstore-secret-name> with the name of the secret that was created with the CephObjectStoreUser in the previous step.

- Replace <bucket-name> with an existing RGW bucket name. This argument tells the MCG which bucket to use as a target bucket for its backing store, and subsequently, data storage and administration.

- To get the <RGW endpoint>, see Accessing the RADOS Object Gateway S3 endpoint.

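The decode-and-substitute steps of the CLI procedure can be combined. The following is a sketch that reads the RGW user credentials from the user secret and passes them straight to `noobaa backingstore create s3-compatible`. It assumes the secret stores them under the data fields `AccessKey` and `SecretKey`. In Rook-based deployments the secret is typically named `rook-ceph-object-user-<object-store>-<username>`. Check both with the `oc get secret ... -o yaml` command above. The function names are hypothetical.

```shell
#!/usr/bin/env bash
# Sketch: create the s3-compatible backing store from the RGW user secret.
# Assumption: the secret holds AccessKey and SecretKey data fields.

NAMESPACE="openshift-storage"

# rgw_secret_field <secret-name> <field>: print one decoded field.
rgw_secret_field() {
  local raw
  raw="$(oc get secret "$1" -n "$NAMESPACE" -o "jsonpath={.data.$2}")" || return 1
  [ -n "$raw" ] || { echo "field $2 not found in secret $1" >&2; return 1; }
  printf '%s' "$raw" | base64 -d
}

# create_rgw_backingstore <rgw-user-secret> <bucket-name> <rgw-endpoint>
create_rgw_backingstore() {
  local access secret
  access="$(rgw_secret_field "$1" AccessKey)" || return 1
  secret="$(rgw_secret_field "$1" SecretKey)" || return 1
  noobaa backingstore create s3-compatible rgw-resource \
    --access-key="$access" --secret-key="$secret" \
    --target-bucket="$2" --endpoint="$3" -n "$NAMESPACE"
}
```

Decoding inside the function keeps the plain-text keys in local variables instead of printing them to the terminal.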

10.4.4. Adding storage resources for hybrid and Multicloud using the user interface

Procedure

- In the OpenShift Web Console, click Storage → OpenShift Data Foundation.

- In the Storage Systems tab, select the storage system and then click Overview → Object tab.

- Select the Multicloud Object Gateway link.

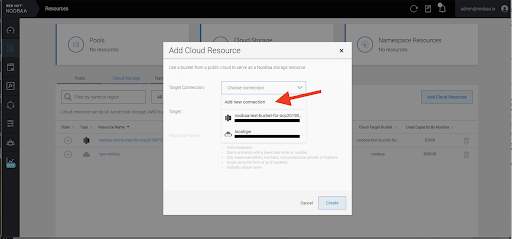

Select the Resources tab on the left, highlighted below. From the list that populates, select Add Cloud Resource.

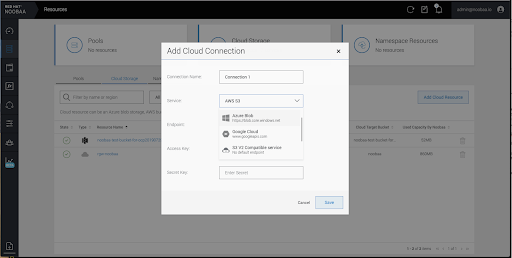

Select Add new connection.

Select the relevant native cloud provider or S3 compatible option and fill in the details.

Select the newly created connection and map it to the existing bucket.

- Repeat these steps to create as many backing stores as needed.

Resources created in the NooBaa UI cannot be used by the OpenShift UI or the MCG CLI.

10.4.5. Creating a new bucket class

A bucket class is a CRD representing a class of buckets that defines tiering policies and data placements for an Object Bucket Claim (OBC).

Use this procedure to create a bucket class in OpenShift Data Foundation.

Procedure

- In the OpenShift Web Console, click Storage → OpenShift Data Foundation.

- Click the Bucket Class tab.

- Click Create Bucket Class.

On the Create new Bucket Class page, perform the following:

Select the bucket class type and enter a bucket class name.

Select the BucketClass type. Choose one of the following options:

- Standard: data is consumed by the Multicloud Object Gateway (MCG), deduplicated, compressed, and encrypted.

- Namespace: data is stored on the NamespaceStores without performing deduplication, compression, or encryption.

By default, Standard is selected.

- Enter a Bucket Class Name.

- Click Next.

In Placement Policy, select Tier 1 - Policy Type and click Next. You can choose either option according to your requirements.

- Spread allows spreading of the data across the chosen resources.

- Mirror allows full duplication of the data across the chosen resources.

- Click Add Tier to add another policy tier.

Select at least one Backing Store resource from the available list if you have selected Tier 1 - Policy Type as Spread and click Next. Alternatively, you can also create a new backing store.

Note: You need to select at least 2 backing stores when you select Policy Type as Mirror in the previous step.

- Review and confirm Bucket Class settings.

- Click Create Bucket Class.
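The wizard's choices map onto a BucketClass resource. The following sketch generates a single-tier manifest from the command line and enforces the backing store minimums stated above: at least one for Spread and at least two for Mirror. It assumes the `spec.placementPolicy.tiers[].placement` and `.backingStores` field names of the NooBaa BucketClass CRD. Confirm these with `oc explain bucketclass.spec.placementPolicy`. The function name is hypothetical.

```shell
#!/usr/bin/env bash
# Sketch: emit a single-tier BucketClass manifest.
# Assumption: field names follow the NooBaa BucketClass CRD (verify with
# `oc explain bucketclass.spec.placementPolicy`).

# bucketclass_yaml <name> <Spread|Mirror> <backing-store>...
bucketclass_yaml() {
  local name="$1" placement="$2"
  shift 2 || return 1
  case "$placement" in
    Spread) [ "$#" -ge 1 ] || { echo "Spread needs at least 1 backing store" >&2; return 1; } ;;
    Mirror) [ "$#" -ge 2 ] || { echo "Mirror needs at least 2 backing stores" >&2; return 1; } ;;
    *) echo "placement must be Spread or Mirror" >&2; return 1 ;;
  esac
  cat <<EOF
apiVersion: noobaa.io/v1alpha1
kind: BucketClass
metadata:
  name: ${name}
  namespace: openshift-storage
spec:
  placementPolicy:
    tiers:
    - placement: ${placement}
      backingStores:
EOF
  local bs
  for bs in "$@"; do
    printf '      - %s\n' "$bs"
  done
}

# Usage:
#   bucketclass_yaml mirrored-class Mirror aws-resource azure-resource | oc apply -f -
```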

Verification steps

- In the OpenShift Web Console, click Storage → OpenShift Data Foundation.

- Click the Bucket Class tab and search for the new Bucket Class.

10.4.6. Editing a bucket class

Use the following procedure to edit the bucket class components through the YAML file by clicking the edit button in the OpenShift Web Console.

Prerequisites

- Administrator access to OpenShift Web Console.

Procedure

- In the OpenShift Web Console, click Storage → OpenShift Data Foundation.

- Click the Bucket Class tab.

- Click the Action Menu (⋮) next to the Bucket class you want to edit.

- Click Edit Bucket Class.

- You are redirected to the YAML file. Make the required changes in this file and click Save.

10.4.7. Editing backing stores for bucket class

Use the following procedure to edit an existing Multicloud Object Gateway (MCG) bucket class to change the underlying backing stores used in a bucket class.

Prerequisites

- Administrator access to OpenShift Web Console.

- A bucket class.

- Backing stores.

Procedure

- In the OpenShift Web Console, click Storage → OpenShift Data Foundation.

- Click the Bucket Class tab.

- Click the Action Menu (⋮) next to the Bucket class you want to edit.

- Click Edit Bucket Class Resources.

On the Edit Bucket Class Resources page, edit the bucket class resources either by adding a backing store to the bucket class or by removing a backing store from the bucket class. You can also edit bucket class resources created with one or two tiers and different placement policies.

- To add a backing store to the bucket class, select the name of the backing store.

- To remove a backing store from the bucket class, clear the name of the backing store.

- Click Save.

10.5. Managing namespace buckets

Namespace buckets let you connect data repositories on different providers together, so you can interact with all of your data through a single unified view. Add the object bucket associated with each provider to the namespace bucket, and access your data through the namespace bucket to see all of your object buckets at once. This lets you write to your preferred storage provider while reading from multiple other storage providers, which reduces the cost of migrating to a new storage provider.

You can interact with objects in a namespace bucket using the S3 API. See Section 10.5.1, “Amazon S3 API endpoints for objects in namespace buckets” for more information.

A namespace bucket can only be used if its write target is available and functional.

10.5.1. Amazon S3 API endpoints for objects in namespace buckets

You can interact with objects in the namespace buckets using the Amazon Simple Storage Service (S3) API.

Red Hat OpenShift Data Foundation 4.6 onwards supports the following namespace bucket operations:

See the Amazon S3 API reference documentation for the most up-to-date information about these operations and how to use them.

Additional resources

10.5.2. Adding a namespace bucket using the Multicloud Object Gateway CLI and YAML

For more information about namespace buckets, see Managing namespace buckets.

Depending on the type of your deployment and whether you want to use YAML or the Multicloud Object Gateway CLI, choose one of the following procedures to add a namespace bucket:

10.5.2.1. Adding an AWS S3 namespace bucket using YAML

Prerequisites

- A running OpenShift Data Foundation Platform.

- Access to the Multicloud Object Gateway (MCG), see Section 10.2, “Accessing the Multicloud Object Gateway with your applications”.

Procedure

Create a secret with the credentials:

- You must supply and encode your own AWS access key ID and secret access key using Base64, and use the results in place of <AWS ACCESS KEY ID ENCODED IN BASE64> and <AWS SECRET ACCESS KEY ENCODED IN BASE64>.

- Replace <namespacestore-secret-name> with a unique name.
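The Base64 encoding and the Secret from this step can be sketched in Python as follows. This is a minimal illustration, assuming the data keys `AWS_ACCESS_KEY_ID` and `AWS_SECRET_ACCESS_KEY` and a default namespace of `openshift-storage`; the secret name and credentials in the example are hypothetical. It prints JSON, which is also valid YAML, so the output can be saved and applied with `oc apply -f`.

```python
import base64
import json


def b64(value: str) -> str:
    """Base64-encode a UTF-8 string, as Secret `data` values require."""
    return base64.b64encode(value.encode("utf-8")).decode("ascii")


def aws_namespacestore_secret(name, access_key_id, secret_access_key,
                              namespace="openshift-storage"):
    """Build a Secret manifest with Base64-encoded AWS credentials.

    Assumption: the data keys are AWS_ACCESS_KEY_ID and AWS_SECRET_ACCESS_KEY,
    and the Secret lives in openshift-storage unless you choose otherwise.
    """
    return {
        "apiVersion": "v1",
        "kind": "Secret",
        "metadata": {"name": name, "namespace": namespace},  # <namespacestore-secret-name>
        "type": "Opaque",
        "data": {
            "AWS_ACCESS_KEY_ID": b64(access_key_id),
            "AWS_SECRET_ACCESS_KEY": b64(secret_access_key),
        },
    }


if __name__ == "__main__":
    # Dummy values for illustration; never hard-code real credentials.
    secret = aws_namespacestore_secret("my-namespacestore-secret",
                                       "AKIAEXAMPLE", "secretEXAMPLE")
    print(json.dumps(secret, indent=2))
```

Encoding in code also avoids a common shell pitfall: `echo` without `-n` adds a trailing newline that ends up inside the encoded key. Kubernetes also accepts unencoded values under `stringData` and encodes them for you; the sketch uses `data` because that is what this procedure describes.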

Create a NamespaceStore resource using OpenShift Custom Resource Definitions (CRDs). A NamespaceStore represents underlying storage to be used as a read or write target for the data in the MCG namespace buckets. To create a NamespaceStore resource, apply the following YAML:

- Replace <resource-name> with the name you want to give to the resource.

- Replace <namespacestore-secret-name> with the secret created in step 1.

- Replace <namespace-secret> with the namespace where the secret can be found.

- Replace <target-bucket> with the target bucket you created for the NamespaceStore.
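The NamespaceStore from this step can be generated the same way. This sketch assumes the `noobaa.io/v1alpha1` `NamespaceStore` schema with `spec.type: aws-s3` and an `awsS3` block holding the target bucket and the secret reference; those field names are an assumption to verify with `oc explain namespacestore.spec`, and the resource, secret, and bucket names are hypothetical.

```python
import json


def aws_s3_namespacestore(name, secret_name, secret_namespace, target_bucket,
                          namespace="openshift-storage"):
    """Build an aws-s3 NamespaceStore manifest.

    Assumption: field names follow the noobaa.io/v1alpha1 NamespaceStore CRD
    (spec.type plus an awsS3 block); confirm them on your cluster.
    """
    return {
        "apiVersion": "noobaa.io/v1alpha1",
        "kind": "NamespaceStore",
        "metadata": {
            "name": name,  # <resource-name>
            "namespace": namespace,
            "labels": {"app": "noobaa"},
        },
        "spec": {
            "type": "aws-s3",
            "awsS3": {
                "targetBucket": target_bucket,  # <target-bucket>
                "secret": {
                    "name": secret_name,            # <namespacestore-secret-name>
                    "namespace": secret_namespace,  # <namespace-secret>
                },
            },
        },
    }


if __name__ == "__main__":
    store = aws_s3_namespacestore("aws-ns", "my-namespacestore-secret",
                                  "openshift-storage", "my-aws-bucket")
    print(json.dumps(store, indent=2))
```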

Create a namespace bucket class that defines a namespace policy for the namespace buckets. The namespace policy requires a type of either single or multi.

A namespace policy of type single requires the following configuration:

- Replace <my-bucket-class> with a unique namespace bucket class name.

- Replace <resource> with the name of a single namespace-store that defines the read and write target of the namespace bucket.

A namespace policy of type multi requires the following configuration:

- Replace <my-bucket-class> with a unique bucket class name.

- Replace <write-resource> with the name of a single namespace-store that defines the write target of the namespace bucket.

- Replace <read-resources> with a list of the names of the namespace-stores that define the read targets of the namespace bucket.
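The two namespace policy types in this step differ only in the `namespacePolicy` block. The sketch below builds either form and rejects incomplete input. The field names (`type`, `single.resource`, `multi.writeResource`, `multi.readResources`) and the capitalized type values `Single` and `Multi` are assumptions about the NooBaa `BucketClass` CRD, and the NamespaceStore names are hypothetical.

```python
import json


def namespace_bucketclass(name, policy_type, resource=None, write_resource=None,
                          read_resources=(), namespace="openshift-storage"):
    """Build a namespace BucketClass manifest for a Single or Multi policy.

    Assumption: field names follow the noobaa.io/v1alpha1 BucketClass CRD;
    confirm them with `oc explain` on your cluster.
    """
    if policy_type == "Single":
        if not resource:
            raise ValueError("a Single policy needs one read/write resource")
        policy = {"type": "Single", "single": {"resource": resource}}
    elif policy_type == "Multi":
        if not write_resource or not read_resources:
            raise ValueError("a Multi policy needs a write resource and read resources")
        policy = {
            "type": "Multi",
            "multi": {
                "writeResource": write_resource,
                "readResources": list(read_resources),
            },
        }
    else:
        raise ValueError(f"unsupported policy type: {policy_type!r}")
    return {
        "apiVersion": "noobaa.io/v1alpha1",
        "kind": "BucketClass",
        "metadata": {"name": name, "namespace": namespace, "labels": {"app": "noobaa"}},
        "spec": {"namespacePolicy": policy},
    }


if __name__ == "__main__":
    # Hypothetical NamespaceStore names.
    single = namespace_bucketclass("my-single-class", "Single", resource="aws-ns")
    multi = namespace_bucketclass("my-multi-class", "Multi", write_resource="aws-ns",
                                  read_resources=["aws-ns", "cos-ns"])
    print(json.dumps([single, multi], indent=2))
```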

Apply the following YAML to create a bucket using an Object Bucket Claim (OBC) resource that uses the bucket class defined in step 3.

- Replace <resource-name> with the name you want to give to the resource.

- Replace <my-bucket> with the name you want to give to the bucket.

- Replace <my-bucket-class> with the bucket class created in the previous step.

Once the OBC is provisioned by the operator, a bucket is created in the MCG, and the operator creates a Secret and a ConfigMap with the same name as the OBC, in the same namespace as the OBC.
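The OBC from the last step can be sketched as a manifest as well. The `additionalConfig.bucketclass` field and the `openshift-storage.noobaa.io` storage class both appear elsewhere in this chapter; `generateBucketName` is an assumption based on the upstream ObjectBucketClaim CRD. The hypothetical helper `expected_outputs` lists the Secret and ConfigMap that, per the paragraph above, the operator creates next to the OBC.

```python
import json


def object_bucket_claim(name, generate_bucket_name, bucket_class,
                        namespace="openshift-storage",
                        storage_class="openshift-storage.noobaa.io"):
    """Build an ObjectBucketClaim that points at a namespace bucket class."""
    return {
        "apiVersion": "objectbucket.io/v1alpha1",
        "kind": "ObjectBucketClaim",
        "metadata": {"name": name, "namespace": namespace},  # <resource-name>
        "spec": {
            "generateBucketName": generate_bucket_name,       # <my-bucket>
            "storageClassName": storage_class,
            "additionalConfig": {"bucketclass": bucket_class},  # <my-bucket-class>
        },
    }


def expected_outputs(obc):
    """Objects the operator is described as creating once the OBC is provisioned."""
    meta = obc["metadata"]
    return [(kind, meta["name"], meta["namespace"]) for kind in ("Secret", "ConfigMap")]


if __name__ == "__main__":
    claim = object_bucket_claim("my-namespace-obc", "my-bucket", "my-bucket-class")
    print(json.dumps(claim, indent=2))
    print(expected_outputs(claim))
```

`generateBucketName` asks the provisioner to derive a unique bucket name from the given prefix; the OBC spec also has a `bucketName` field for requesting an exact name.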

10.5.2.2. Adding an IBM COS namespace bucket using YAML

Prerequisites

- A running OpenShift Data Foundation Platform.

- Access to the Multicloud Object Gateway (MCG), see Section 10.2, “Accessing the Multicloud Object Gateway with your applications”.

Procedure

Create a secret with the credentials:

- You must supply and encode your own IBM COS access key ID and secret access key using Base64, and use the results in place of <IBM COS ACCESS KEY ID ENCODED IN BASE64> and <IBM COS SECRET ACCESS KEY ENCODED IN BASE64>.

- Replace <namespacestore-secret-name> with a unique name.

Create a NamespaceStore resource using OpenShift Custom Resource Definitions (CRDs). A NamespaceStore represents underlying storage to be used as a read or write target for the data in the MCG namespace buckets. To create a NamespaceStore resource, apply the following YAML:

- Replace <IBM COS ENDPOINT> with the appropriate IBM COS endpoint.

- Replace <namespacestore-secret-name> with the secret created in step 1.

- Replace <namespace-secret> with the namespace where the secret can be found.

- Replace <target-bucket> with the target bucket you created for the NamespaceStore.
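Each YAML step in this procedure asks you to replace several angle-bracket placeholders, and a missed one typically surfaces only when the resource fails to reconcile. A small heuristic check before `oc apply` can catch this. The sketch assumes placeholders always take the `<...>` form used here; it also flags any legitimate text in angle brackets, and the script name in the usage comment is hypothetical.

```python
import re
import sys

# Placeholders in these procedures look like <IBM COS ENDPOINT> or <target-bucket>.
PLACEHOLDER = re.compile(r"<[^<>\n]+>")


def unreplaced_placeholders(text):
    """Return the distinct placeholders left in text, in order of first appearance."""
    return list(dict.fromkeys(PLACEHOLDER.findall(text)))


if __name__ == "__main__":
    # Usage: python check_placeholders.py namespacestore.yaml [more files...]
    for path in sys.argv[1:]:
        with open(path, encoding="utf-8") as handle:
            leftovers = unreplaced_placeholders(handle.read())
        if leftovers:
            print(f"{path}: still contains {', '.join(leftovers)}")
```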

Create a namespace bucket class that defines a namespace policy for the namespace buckets. The namespace policy requires a type of either single or multi.

A namespace policy of type single requires the following configuration:

- Replace <my-bucket-class> with a unique namespace bucket class name.

- Replace <resource> with the name of a single namespace-store that defines the read and write target of the namespace bucket.

A namespace policy of type multi requires the following configuration:

- Replace <my-bucket-class> with a unique bucket class name.

- Replace <write-resource> with the name of a single namespace-store that defines the write target of the namespace bucket.

- Replace <read-resources> with a list of the names of namespace-stores that define the read targets of the namespace bucket.

Apply the following YAML to create a bucket using an Object Bucket Claim (OBC) resource that uses the bucket class defined in step 3.

- Replace <resource-name> with the name you want to give to the resource.

- Replace <my-bucket> with the name you want to give to the bucket.

- Replace <my-bucket-class> with the bucket class created in the previous step.

Once the OBC is provisioned by the operator, a bucket is created in the MCG, and the operator creates a Secret and a ConfigMap with the same name as the OBC, in the same namespace as the OBC.

10.5.2.3. Adding an AWS S3 namespace bucket using the Multicloud Object Gateway CLI

Prerequisites

- A running OpenShift Data Foundation Platform.

- Access to the Multicloud Object Gateway (MCG), see Section 10.2, “Accessing the Multicloud Object Gateway with your applications”.

- Download the MCG command-line interface:

subscription-manager repos --enable=rh-odf-4-for-rhel-8-x86_64-rpms
yum install mcg

Specify the appropriate architecture for enabling the repositories using subscription manager. For instance, for IBM Z infrastructure, use the following command:

subscription-manager repos --enable=rh-odf-4-for-rhel-8-s390x-rpms

Alternatively, you can install the MCG package from the OpenShift Data Foundation RPMs found here: https://access.redhat.com/downloads/content/547/ver=4/rhel---8/4/x86_64/package.

Choose the correct Product Variant according to your architecture.

Procedure

Create a NamespaceStore resource. A NamespaceStore represents underlying storage to be used as a read or write target for the data in MCG namespace buckets. From the MCG command-line interface, run the following command:

noobaa namespacestore create aws-s3 <namespacestore> --access-key <AWS ACCESS KEY> --secret-key <AWS SECRET ACCESS KEY> --target-bucket <bucket-name> -n openshift-storage

- Replace <namespacestore> with the name of the NamespaceStore.

- Replace <AWS ACCESS KEY> and <AWS SECRET ACCESS KEY> with an AWS access key ID and secret access key you created for this purpose.

- Replace <bucket-name> with an existing AWS bucket name. This argument tells the MCG which bucket to use as a target bucket for the NamespaceStore, and subsequently, for data storage and administration.

Create a namespace bucket class that defines a namespace policy for the namespace buckets. The namespace policy requires a type of either single or multi.

Run the following command to create a namespace bucket class with a namespace policy of type single:

noobaa bucketclass create namespace-bucketclass single <my-bucket-class> --resource <resource> -n openshift-storage

- Replace <my-bucket-class> with a unique bucket class name.

- Replace <resource> with a single namespace-store that defines the read and write target of the namespace bucket.

Run the following command to create a namespace bucket class with a namespace policy of type multi:

noobaa bucketclass create namespace-bucketclass multi <my-bucket-class> --write-resource <write-resource> --read-resources <read-resources> -n openshift-storage

- Replace <my-bucket-class> with a unique bucket class name.

- Replace <write-resource> with a single namespace-store that defines the write target of the namespace bucket.

- Replace <read-resources> with a list of namespace-stores, separated by commas, that define the read targets of the namespace bucket.
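Because `--read-resources` takes a single comma-separated value, building the command from a list avoids quoting and separator mistakes. This Python sketch only assembles the argument list for the `noobaa bucketclass create namespace-bucketclass multi` command shown above, using the flags from this procedure; the bucket class and NamespaceStore names are hypothetical, and actually running the command requires the MCG CLI and cluster access.

```python
import shlex


def multi_bucketclass_argv(bucket_class, write_resource, read_resources,
                           namespace="openshift-storage"):
    """Build argv for `noobaa bucketclass create namespace-bucketclass multi`."""
    read_resources = list(read_resources)
    if not read_resources:
        raise ValueError("at least one read resource is required")
    if any("," in name for name in read_resources):
        raise ValueError("namespace-store names must not contain commas")
    return [
        "noobaa", "bucketclass", "create", "namespace-bucketclass", "multi",
        bucket_class,
        "--write-resource", write_resource,
        # The CLI takes the read targets as one comma-separated value.
        "--read-resources", ",".join(read_resources),
        "-n", namespace,
    ]


if __name__ == "__main__":
    argv = multi_bucketclass_argv("my-bucket-class", "aws-ns", ["aws-ns", "cos-ns"])
    # shlex.join (Python 3.8+) quotes each argument so the line is safe to paste.
    print(shlex.join(argv))
    # To run it directly (needs the noobaa CLI and cluster access):
    # subprocess.run(argv, check=True)
```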

Run the following command to create a bucket using an Object Bucket Claim (OBC) resource that uses the bucket class defined in step 2.

noobaa obc create my-bucket-claim -n openshift-storage --app-namespace my-app --bucketclass <custom-bucket-class>

- Replace my-bucket-claim with a bucket claim name of your choice.

- Replace <custom-bucket-class> with the name of the bucket class created in step 2.

Once the OBC is provisioned by the operator, a bucket is created in the MCG, and the operator creates a Secret and a ConfigMap with the same name as the OBC, in the same namespace as the OBC.

10.5.2.4. Adding an IBM COS namespace bucket using the Multicloud Object Gateway CLI

Prerequisites

- A running OpenShift Data Foundation Platform.

- Access to the Multicloud Object Gateway (MCG), see Section 10.2, “Accessing the Multicloud Object Gateway with your applications”.

- Download the MCG command-line interface:

subscription-manager repos --enable=rh-odf-4-for-rhel-8-x86_64-rpms
yum install mcg

Note: Specify the appropriate architecture for enabling the repositories using subscription manager.

- For IBM Power, use the following command:

subscription-manager repos --enable=rh-odf-4-for-rhel-8-ppc64le-rpms

- For IBM Z infrastructure, use the following command:

subscription-manager repos --enable=rh-odf-4-for-rhel-8-s390x-rpms

Alternatively, you can install the MCG package from the OpenShift Data Foundation RPMs found here: https://access.redhat.com/downloads/content/547/ver=4/rhel---8/4/x86_64/package.

Note: Choose the correct Product Variant according to your architecture.

Procedure

Create a NamespaceStore resource. A NamespaceStore represents underlying storage to be used as a read or write target for the data in MCG namespace buckets. From the MCG command-line interface, run the following command:

noobaa namespacestore create ibm-cos <namespacestore> --endpoint <IBM COS ENDPOINT> --access-key <IBM ACCESS KEY> --secret-key <IBM SECRET ACCESS KEY> --target-bucket <bucket-name> -n openshift-storage

- Replace <namespacestore> with the name of the NamespaceStore.

- Replace <IBM ACCESS KEY>, <IBM SECRET ACCESS KEY>, and <IBM COS ENDPOINT> with an IBM access key ID, secret access key, and the appropriate regional endpoint that corresponds to the location of the existing IBM bucket.

- Replace <bucket-name> with an existing IBM bucket name. This argument tells the MCG which bucket to use as a target bucket for the NamespaceStore, and subsequently, for data storage and administration.

Create a namespace bucket class that defines a namespace policy for the namespace buckets. The namespace policy requires a type of either single or multi.

Run the following command to create a namespace bucket class with a namespace policy of type single:

noobaa bucketclass create namespace-bucketclass single <my-bucket-class> --resource <resource> -n openshift-storage

- Replace <my-bucket-class> with a unique bucket class name.

- Replace <resource> with a single namespace-store that defines the read and write target of the namespace bucket.

Run the following command to create a namespace bucket class with a namespace policy of type multi:

noobaa bucketclass create namespace-bucketclass multi <my-bucket-class> --write-resource <write-resource> --read-resources <read-resources> -n openshift-storage

- Replace <my-bucket-class> with a unique bucket class name.

- Replace <write-resource> with a single namespace-store that defines the write target of the namespace bucket.

- Replace <read-resources> with a list of namespace-stores, separated by commas, that define the read targets of the namespace bucket.

Run the following command to create a bucket using an Object Bucket Claim (OBC) resource that uses the bucket class defined in step 2.

noobaa obc create my-bucket-claim -n openshift-storage --app-namespace my-app --bucketclass <custom-bucket-class>

- Replace my-bucket-claim with a bucket claim name of your choice.

- Replace <custom-bucket-class> with the name of the bucket class created in step 2.

Once the OBC is provisioned by the operator, a bucket is created in the MCG, and the operator creates a Secret and a ConfigMap with the same name as the OBC, in the same namespace as the OBC.

10.5.3. Adding a namespace bucket using the OpenShift Container Platform user interface

With the release of OpenShift Data Foundation 4.8, namespace buckets can be added using the OpenShift Container Platform user interface. For more information about namespace buckets, see Managing namespace buckets.

Prerequisites

- OpenShift Container Platform with OpenShift Data Foundation operator installed.

- Access to the Multicloud Object Gateway (MCG).

Procedure

- Log in to the OpenShift Web Console.

- Click Storage → OpenShift Data Foundation.

- Click the Namespace Store tab to create the namespacestore resources to be used in the namespace bucket.

- Click Create namespace store.

- Enter a namespacestore name.

- Choose a provider.

- Choose a region.

- Either select an existing secret, or click Switch to credentials to create a secret by entering an access key and a secret key.

- Choose a target bucket.

- Click Create.

- Verify that the namespacestore is in the Ready state.

- Repeat these steps until you have the desired number of resources.

- Click the Bucket Class tab → Create a new Bucket Class.

- Select the Namespace radio button.

- Enter a Bucket Class name.

- Add a description (optional).

- Click Next.

- Choose a namespace policy type for your namespace bucket, and then click Next.

Select the target resource(s).

- If your namespace policy type is Single, you need to choose a read resource.

- If your namespace policy type is Multi, you need to choose read resources and a write resource.

- If your namespace policy type is Cache, you need to choose a Hub namespace store that defines the read and write target of the namespace bucket.

- Click Next.

- Review your new bucket class, and then click Create Bucketclass.

- On the BucketClass page, verify that your newly created resource is in the Created phase.

- In the OpenShift Web Console, click Storage → OpenShift Data Foundation.

- In the Status card, click Storage System and click the storage system link from the pop up that appears.

- In the Object tab, click Multicloud Object Gateway → Buckets → Namespace Buckets tab.

- Click Create Namespace Bucket.

- On the Choose Name tab, specify a Name for the namespace bucket and click Next.

On the Set Placement tab:

- Under Read Policy, select the checkbox for each namespace resource created in step 5 that the namespace bucket should read data from.

- If the namespace policy type you are using is Multi, then under Write Policy, specify which namespace resource the namespace bucket should write data to.

- Click Next.

- Click Create.

Verification

- Verify that the namespace bucket is listed with a green check mark in the State column, the expected number of read resources, and the expected write resource name.

10.6. Mirroring data for hybrid and Multicloud buckets

The Multicloud Object Gateway (MCG) simplifies the process of spanning data across cloud providers and clusters.

Prerequisites

- You must first add a backing store that can be used by the MCG, see Section 10.4, “Adding storage resources for hybrid or Multicloud”.

Then you create a bucket class that reflects the data management policy, mirroring.

Procedure

You can set up data mirroring in three ways:

10.6.1. Creating bucket classes to mirror data using the MCG command-line-interface

From the Multicloud Object Gateway (MCG) command-line interface, run the following command to create a bucket class with a mirroring policy:

noobaa bucketclass create placement-bucketclass mirror-to-aws --backingstores=azure-resource,aws-resource --placement Mirror

Assign the newly created bucket class to a new bucket claim, which generates a new bucket that is mirrored between the two locations:

noobaa obc create mirrored-bucket --bucketclass=mirror-to-aws

10.6.2. Creating bucket classes to mirror data using a YAML

Apply the following YAML. This YAML is a hybrid example that mirrors data between local Ceph storage and AWS:

Add the following lines to your standard Object Bucket Claim (OBC):

additionalConfig:
  bucketclass: mirror-to-aws

For more information about OBCs, see Section 10.8, “Object Bucket Claim”.
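The mirroring bucket class and the OBC change described in this section can be sketched in Python. The sketch assumes the `BucketClass` tier fields `placement` and `backingStores` under `spec.placementPolicy.tiers`, and reuses the names `noobaa-default-backing-store` and `aws-resource` from the examples in this chapter as hypothetical stand-ins for a local Ceph store and an AWS store. `with_bucketclass` adds `additionalConfig.bucketclass` under the OBC `spec` without modifying the original manifest.

```python
import copy
import json


def mirror_bucketclass(name, backing_stores, namespace="openshift-storage"):
    """Build a BucketClass whose single tier mirrors data across backing stores."""
    backing_stores = list(backing_stores)
    if len(backing_stores) < 2:
        raise ValueError("mirroring needs at least two backing stores")
    return {
        "apiVersion": "noobaa.io/v1alpha1",
        "kind": "BucketClass",
        "metadata": {"name": name, "namespace": namespace, "labels": {"app": "noobaa"}},
        "spec": {
            "placementPolicy": {
                "tiers": [{"placement": "Mirror", "backingStores": backing_stores}],
            },
        },
    }


def with_bucketclass(obc, bucket_class):
    """Return a copy of an OBC manifest with spec.additionalConfig.bucketclass set."""
    patched = copy.deepcopy(obc)
    spec = patched.setdefault("spec", {})
    spec.setdefault("additionalConfig", {})["bucketclass"] = bucket_class
    return patched


if __name__ == "__main__":
    # Hypothetical store names: a local Ceph (RGW) store and an AWS store.
    bucket_class = mirror_bucketclass(
        "mirror-to-aws", ["noobaa-default-backing-store", "aws-resource"])
    claim = {
        "apiVersion": "objectbucket.io/v1alpha1",
        "kind": "ObjectBucketClaim",
        "metadata": {"name": "mirrored-bucket", "namespace": "openshift-storage"},
        "spec": {"generateBucketName": "mirrored-bucket",
                 "storageClassName": "openshift-storage.noobaa.io"},
    }
    print(json.dumps([bucket_class, with_bucketclass(claim, "mirror-to-aws")], indent=2))
```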

10.6.3. Configuring buckets to mirror data using the user interface

- In the OpenShift Web Console, click Storage → OpenShift Data Foundation.

- In the Status card, click Storage System and click the storage system link from the pop up that appears.

- In the Object tab, click the Multicloud Object Gateway link.

On the NooBaa page, click the buckets icon on the left side. You can see a list of your buckets:

- Click the bucket you want to update.

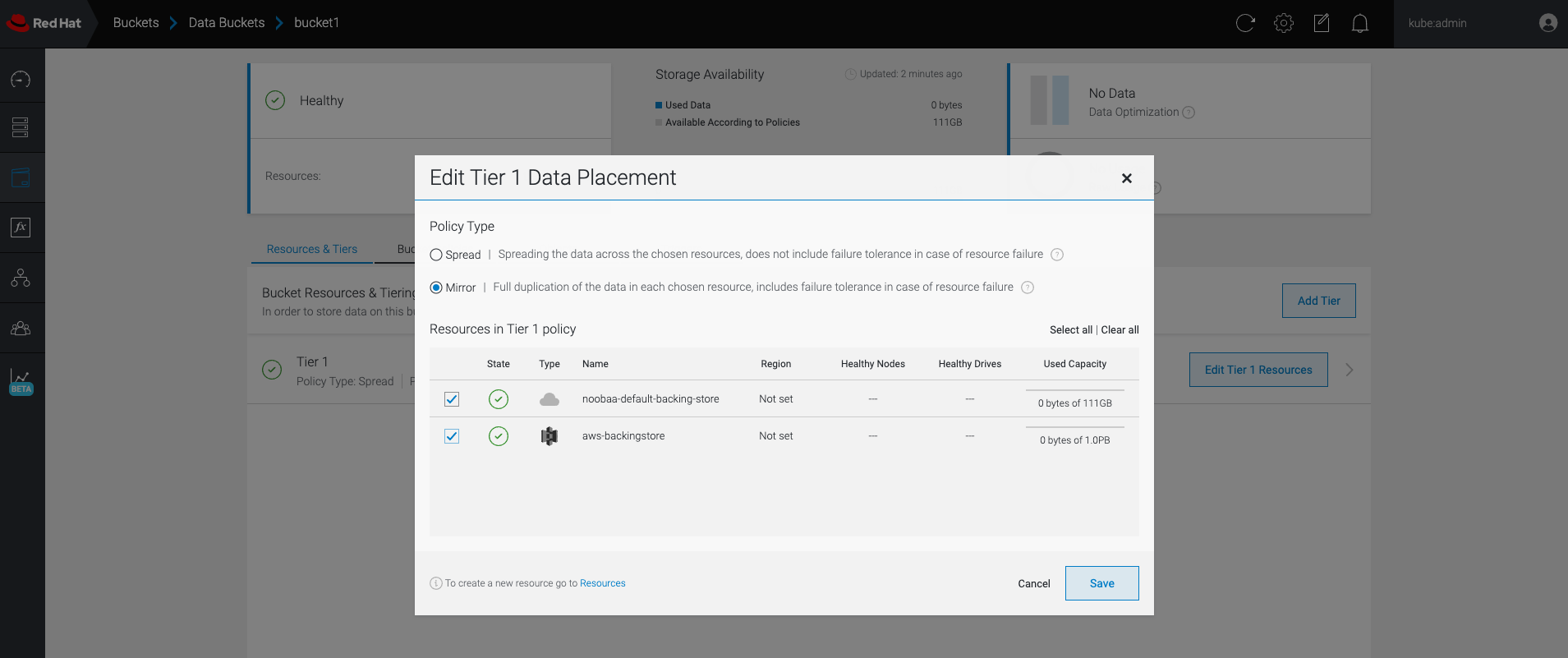

Click Edit Tier 1 Resources:

Select Mirror and check the relevant resources you want to use for this bucket. In the following example, data is mirrored between noobaa-default-backing-store, which is on RGW, and AWS-backingstore, which is on AWS:

- Click Save.

Resources created in the NooBaa UI cannot be used by the OpenShift UI or the Multicloud Object Gateway (MCG) CLI.

10.7. Bucket policies in the Multicloud Object Gateway

OpenShift Data Foundation supports AWS S3 bucket policies. Bucket policies allow you to grant users access permissions for buckets and the objects in them.

10.7.1. About bucket policies

Bucket policies are an access policy option available for you to grant permission to your AWS S3 buckets and objects. Bucket policies use JSON-based access policy language. For more information about access policy language, see AWS Access Policy Language Overview.

10.7.2. Using bucket policies

Prerequisites

- A running OpenShift Data Foundation Platform.

- Access to the Multicloud Object Gateway (MCG), see Section 10.2, “Accessing the Multicloud Object Gateway with your applications”

Procedure

To use bucket policies in the MCG:

Create the bucket policy in JSON format. See the following example:

There are many available elements for bucket policies with regard to access permissions.

For details on these elements and examples of how they can be used to control the access permissions, see AWS Access Policy Language Overview.

For more examples of bucket policies, see AWS Bucket Policy Examples.

Instructions for creating S3 users can be found in Section 10.7.3, “Creating an AWS S3 user in the Multicloud Object Gateway”.
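A bucket policy like the one in this step can be generated and checked in Python before you apply it. This is a minimal sketch assuming the standard AWS policy layout (`Version` `2012-10-17`, `Principal` given as `{"AWS": [...]}`); whether MCG accepts every variant of that layout is an assumption to test on your cluster. It follows two notes later in this section: principals must be NooBaa accounts (OBC accounts are named `obc-account.<generated bucket name>@noobaa.io`), and conditions are not supported, so `check_supported` flags any `Condition` key. The bucket name is hypothetical.

```python
import json


def obc_account(generated_bucket_name):
    """Account NooBaa creates for an OBC, per the note in this section."""
    return f"obc-account.{generated_bucket_name}@noobaa.io"


def bucket_policy(bucket, principals, actions, effect="Allow", sid="Example"):
    """Build a single-statement S3 bucket policy.

    Assumption: MCG accepts the standard AWS layout used here; principals
    must be NooBaa account names.
    """
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": sid,
                "Effect": effect,
                "Principal": {"AWS": list(principals)},
                "Action": list(actions),
                # Cover both the bucket itself and every object in it.
                "Resource": [f"arn:aws:s3:::{bucket}", f"arn:aws:s3:::{bucket}/*"],
            }
        ],
    }


def check_supported(policy):
    """Flag statements that use Condition, which MCG bucket policies do not support."""
    return [
        f"statement {index} uses Condition, which is not supported"
        for index, statement in enumerate(policy.get("Statement", []))
        if "Condition" in statement
    ]


if __name__ == "__main__":
    policy = bucket_policy("john-bucket", ["john.doe@example.com"], ["s3:GetObject"])
    assert not check_supported(policy)
    # Save the output as BucketPolicy and pass it with --policy file://BucketPolicy.
    print(json.dumps(policy, indent=2))
```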

Use the put-bucket-policy command of the AWS S3 client to apply the bucket policy to your S3 bucket:

aws --endpoint ENDPOINT --no-verify-ssl s3api put-bucket-policy --bucket MyBucket --policy BucketPolicy

- Replace ENDPOINT with the S3 endpoint.

- Replace MyBucket with the bucket to set the policy on.

- Replace BucketPolicy with the bucket policy JSON file, referenced with the file:// prefix as in the example below.

- Add --no-verify-ssl if you are using the default self-signed certificates.

For example:

aws --endpoint https://s3-openshift-storage.apps.gogo44.noobaa.org --no-verify-ssl s3api put-bucket-policy --bucket MyBucket --policy file://BucketPolicy

For more information on the put-bucket-policy command, see the AWS CLI Command Reference for put-bucket-policy.

Note: The principal element specifies the user that is allowed or denied access to a resource, such as a bucket. Currently, only NooBaa accounts can be used as principals. In the case of object bucket claims, NooBaa automatically creates an account obc-account.<generated bucket name>@noobaa.io.

Note: Bucket policy conditions are not supported.

10.7.3. Creating an AWS S3 user in the Multicloud Object Gateway

Prerequisites

- A running OpenShift Data Foundation Platform.

- Access to the Multicloud Object Gateway (MCG), see Section 10.2, “Accessing the Multicloud Object Gateway with your applications”

Procedure

- In the OpenShift Web Console, click Storage → OpenShift Data Foundation.

- In the Status card, click Storage System and click the storage system link from the pop up that appears.

- In the Object tab, click the Multicloud Object Gateway link.

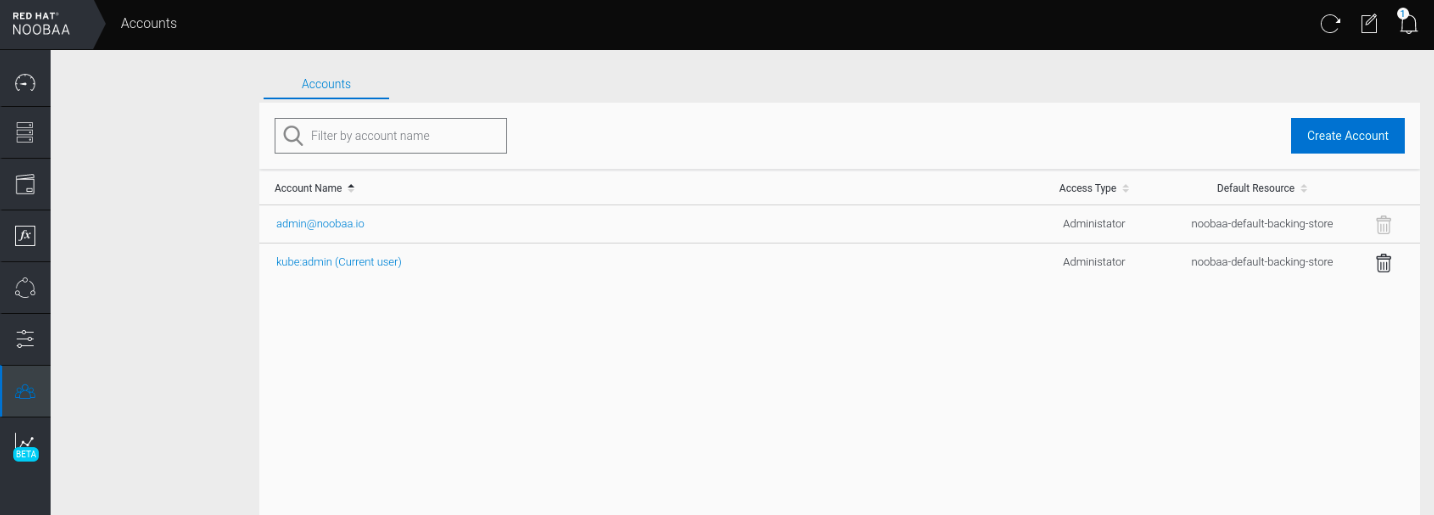

Under the Accounts tab, click Create Account.

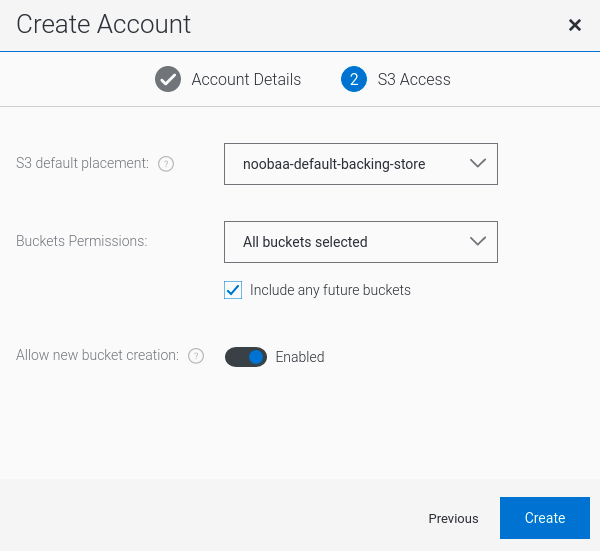

Select S3 Access Only, provide the Account Name, for example, john.doe@example.com. Click Next.

Select S3 default placement, for example, noobaa-default-backing-store. Select Buckets Permissions. A specific bucket or all buckets can be selected. Click Create.

10.8. Object Bucket Claim

An Object Bucket Claim can be used to request an S3-compatible bucket backend for your workloads.

You can create an Object Bucket Claim in three ways:

An object bucket claim creates a new bucket and an application account in NooBaa with permissions to the bucket, including a new access key and secret access key. The application account is allowed to access only a single bucket and can’t create new buckets by default.

10.8.1. Dynamic Object Bucket Claim

Similar to Persistent Volumes, you can add the details of the Object Bucket Claim (OBC) to your application’s YAML and get the object service endpoint, access key, and secret access key available in a configuration map and a secret. You can then read this information dynamically into environment variables of your application.

Procedure

Add the following lines to your application YAML:

These lines are the OBC itself.

- Replace <obc-name> with a unique OBC name.

- Replace <obc-bucket-name> with a unique bucket name for your OBC.

You can add more lines to the YAML file to automate the use of the OBC. The following example shows the mapping of the bucket claim result, which consists of a configuration map with data and a secret with the credentials. This specific job claims the Object Bucket from NooBaa, which creates a bucket and an account.

- Replace all instances of <obc-name> with your OBC name.

- Replace <your application image> with your application image.

Apply the updated YAML file:

oc apply -f <yaml.file>

Replace <yaml.file> with the name of your YAML file.

To view the new configuration map, run the following:

oc get cm <obc-name> -o yaml

Replace <obc-name> with the name of your OBC.

You can expect the following environment variables in the output:

- BUCKET_HOST - Endpoint to use in the application.

- BUCKET_PORT - The port available for the application. The port is related to the BUCKET_HOST. For example, if the BUCKET_HOST is https://my.example.com and the BUCKET_PORT is 443, the endpoint for the object service would be https://my.example.com:443.

- BUCKET_NAME - Requested or generated bucket name.

- AWS_ACCESS_KEY_ID - Access key that is part of the credentials.

- AWS_SECRET_ACCESS_KEY - Secret access key that is part of the credentials.
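The variables listed above can be combined into client settings inside the application. This sketch assumes that a `BUCKET_HOST` without a scheme should be reached over HTTPS; with a scheme, as in the `https://my.example.com` example, it reproduces the documented endpoint `https://my.example.com:443`. The dictionary keys are chosen to match keyword arguments of `boto3.client`, which is not part of this document.

```python
import os
from urllib.parse import urlsplit


def s3_endpoint(env=None):
    """Combine BUCKET_HOST and BUCKET_PORT into an endpoint URL.

    Assumption: if BUCKET_HOST has no scheme, HTTPS is used.
    """
    env = os.environ if env is None else env
    host = env["BUCKET_HOST"]
    if "://" not in host:
        host = "https://" + host
    parts = urlsplit(host)
    return f"{parts.scheme}://{parts.hostname}:{int(env['BUCKET_PORT'])}"


def s3_settings(env=None):
    """Collect the OBC-provided values an S3 client needs."""
    env = os.environ if env is None else env
    return {
        "endpoint_url": s3_endpoint(env),
        "bucket": env["BUCKET_NAME"],
        "aws_access_key_id": env["AWS_ACCESS_KEY_ID"],
        "aws_secret_access_key": env["AWS_SECRET_ACCESS_KEY"],
    }
```

For example, `cfg = s3_settings(); bucket = cfg.pop("bucket"); s3 = boto3.client("s3", **cfg)`. When the keys reach the pod through `secretKeyRef`, Kubernetes has already decoded them, so no Base64 step is needed inside the application.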

Retrieve the AWS_ACCESS_KEY_ID and AWS_SECRET_ACCESS_KEY. These names are used for compatibility with the AWS S3 API. You need to specify the keys while performing S3 operations, especially when you read, write, or list from the Multicloud Object Gateway (MCG) bucket. The keys are encoded in Base64. Decode the keys before using them.

oc get secret <obc_name> -o yaml

<obc_name> - Specify the name of the object bucket claim.
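Outside the pod, the Base64 decoding described above can be scripted. This sketch reads the JSON form of the secret (`oc get secret <obc_name> -o json`, which returns the same data as the `-o yaml` command above) so that it can rely on the Python standard library alone; the sample values are dummies.

```python
import base64
import json


def decode_secret_data(secret_json):
    """Decode every value under .data of a Secret printed by `oc get secret -o json`."""
    secret = json.loads(secret_json)
    return {
        key: base64.b64decode(value).decode("utf-8")
        for key, value in secret.get("data", {}).items()
    }


if __name__ == "__main__":
    # Dummy input shaped like `oc get secret <obc_name> -o json` output.
    sample = json.dumps({"kind": "Secret",
                         "data": {"AWS_ACCESS_KEY_ID": "QUtJQQ==",
                                  "AWS_SECRET_ACCESS_KEY": "c2Vj"}})
    print(decode_secret_data(sample))
```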

10.8.2. Creating an Object Bucket Claim using the command line interface

When creating an Object Bucket Claim (OBC) using the command-line interface, you get a configuration map and a Secret that together contain all the information your application needs to use the object storage service.

Prerequisites

Download the Multicloud Object Gateway (MCG) command-line interface.

subscription-manager repos --enable=rh-odf-4-for-rhel-8-x86_64-rpms
yum install mcg

Note: Specify the appropriate architecture for enabling the repositories using the subscription manager.

- For IBM Power, use the following command:

subscription-manager repos --enable=rh-odf-4-for-rhel-8-ppc64le-rpms

- For IBM Z infrastructure, use the following command:

subscription-manager repos --enable=rh-odf-4-for-rhel-8-s390x-rpms

Procedure

Use the command-line interface to generate the details of a new bucket and credentials. Run the following command:

noobaa obc create <obc-name> -n openshift-storage

Replace <obc-name> with a unique OBC name, for example, myappobc.

Additionally, you can use the --app-namespace option to specify the namespace where the OBC configuration map and secret will be created, for example, myapp-namespace.

Example output:

INFO[0001] ✅ Created: ObjectBucketClaim "test21obc"

The MCG command-line interface has created the necessary configuration and has informed OpenShift about the new OBC.

Run the following command to view the OBC:

oc get obc -n openshift-storage

Example output:

NAME        STORAGE-CLASS                 PHASE   AGE
test21obc   openshift-storage.noobaa.io   Bound   38s
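If you script OBC creation, you can check the PHASE column programmatically instead of reading the table. The following is a minimal sketch. It assumes the PHASE column corresponds to `status.phase` in the JSON returned by `oc get obc -n openshift-storage -o json`, which is a Kubernetes List object with an `items` array. The function name and sample data are hypothetical.

```python
import json


def unbound_claims(obc_list):
    """Return the names of OBCs whose status.phase is not "Bound".

    `obc_list` is assumed to be the parsed output of
    `oc get obc -n openshift-storage -o json`.
    """
    return [
        item["metadata"]["name"]
        for item in obc_list.get("items", [])
        if item.get("status", {}).get("phase") != "Bound"
    ]


if __name__ == "__main__":
    # Hypothetical sample shaped like `oc get obc -o json` output.
    sample = json.loads("""
    {"kind": "List", "items": [
      {"metadata": {"name": "test21obc"}, "status": {"phase": "Bound"}},
      {"metadata": {"name": "pendingobc"}, "status": {"phase": "Pending"}}
    ]}
    """)
    print(unbound_claims(sample))  # ['pendingobc']
```

A claim without any `status` yet is treated as not bound, which is the safe default while the provisioner is still working.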

Run the following command to view the YAML file for the new OBC:

oc get obc test21obc -o yaml -n openshift-storage

Inside your openshift-storage namespace, you can find the configuration map and the secret to use this OBC. The configuration map and the secret have the same name as the OBC. Run the following command to view the secret:

oc get -n openshift-storage secret test21obc -o yaml

The secret gives you the S3 access credentials.

Run the following command to view the configuration map:

oc get -n openshift-storage cm test21obc -o yaml

The configuration map contains the S3 endpoint information for your application.
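An application typically combines the configuration map (endpoint) with the secret (credentials). The following is a minimal sketch for the endpoint part.

It rests on three assumptions:

- The configuration map carries `BUCKET_HOST`, `BUCKET_PORT` and `BUCKET_NAME` keys, as OBC configuration maps commonly do. Verify the keys against your own `oc get -n openshift-storage cm test21obc -o yaml` output.
- Port 443 implies HTTPS.
- The helper name is hypothetical.

```python
def s3_endpoint_from_configmap(configmap):
    """Build an S3 endpoint URL and bucket name from a parsed OBC config map.

    Assumption: the config map data contains BUCKET_HOST, BUCKET_PORT and
    BUCKET_NAME; check these keys against your cluster's output.
    """
    data = configmap["data"]
    host = data["BUCKET_HOST"]
    port = int(data["BUCKET_PORT"])
    # Assumption: port 443 means TLS; any other port is treated as plain HTTP.
    scheme = "https" if port == 443 else "http"
    return {"endpoint": f"{scheme}://{host}:{port}", "bucket": data["BUCKET_NAME"]}


if __name__ == "__main__":
    # Hypothetical values for illustration only.
    sample = {
        "data": {
            "BUCKET_HOST": "s3.openshift-storage.svc",
            "BUCKET_PORT": "443",
            "BUCKET_NAME": "example-bucket",
        }
    }
    print(s3_endpoint_from_configmap(sample))
```

Any S3 client or AWS S3 SDK that accepts a custom endpoint URL can then use this endpoint together with the decoded keys from the secret.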

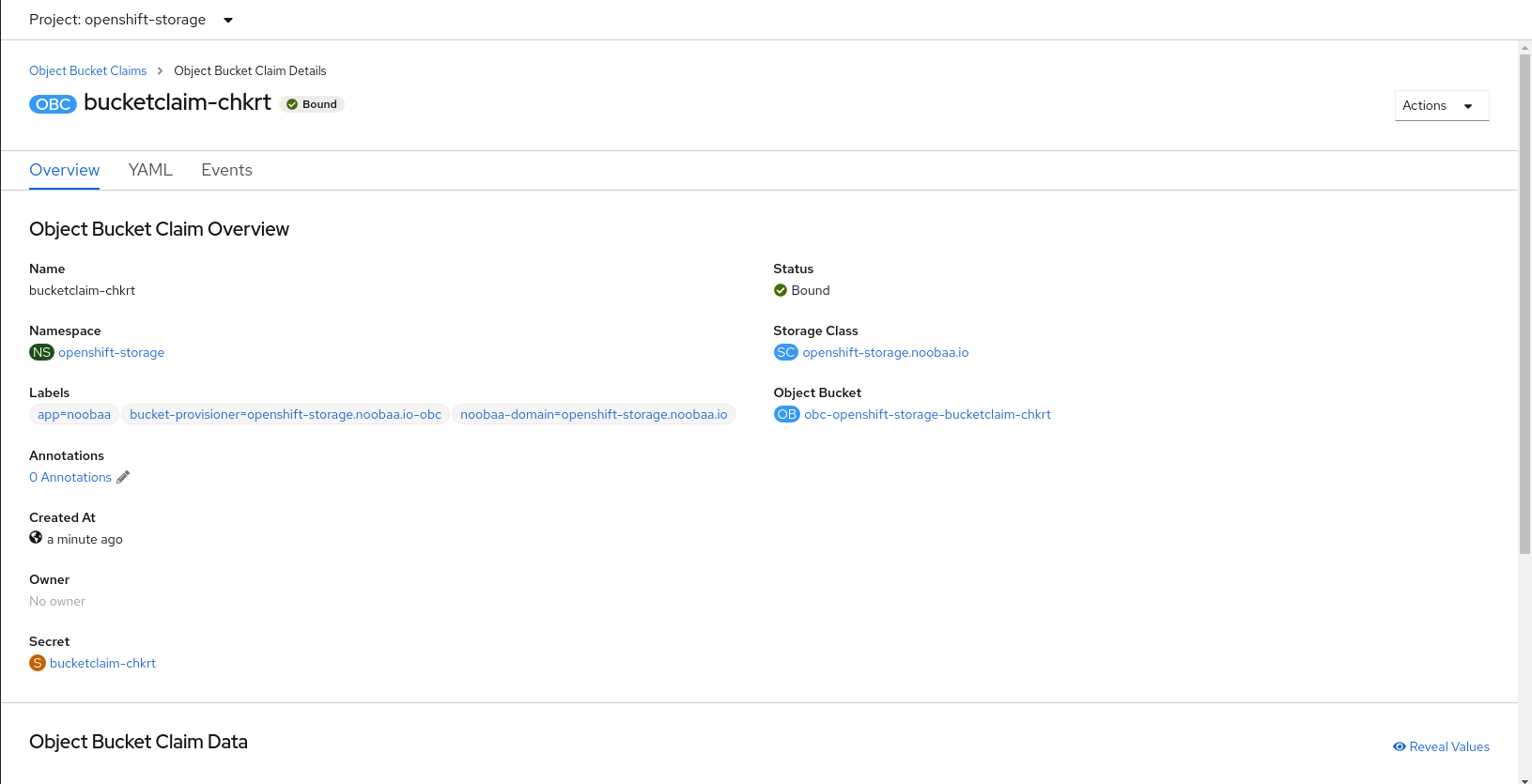

10.8.3. Creating an Object Bucket Claim using the OpenShift Web Console

You can create an Object Bucket Claim (OBC) using the OpenShift Web Console.

Prerequisites

- Administrative access to the OpenShift Web Console.

- For your applications to communicate with the OBC, you need to use the configuration map and secret. For more information about this, see Section 10.8.1, “Dynamic Object Bucket Claim”.

Procedure

- Log in to the OpenShift Web Console.

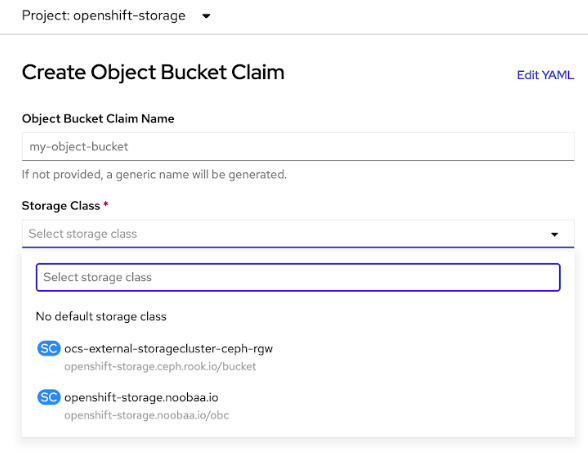

- On the left navigation bar, click Storage → Object Bucket Claims → Create Object Bucket Claim.

- Enter a name for your object bucket claim and select the appropriate storage class based on your deployment, internal or external, from the drop-down menu:

- Internal mode

  The following storage classes, which were created after deployment, are available for use:

  - ocs-storagecluster-ceph-rgw uses the Ceph Object Gateway (RGW)
  - openshift-storage.noobaa.io uses the Multicloud Object Gateway (MCG)

- External mode

  The following storage classes, which were created after deployment, are available for use:

  - ocs-external-storagecluster-ceph-rgw uses the RGW
  - openshift-storage.noobaa.io uses the MCG

Note: The RGW OBC storage class is only available with fresh installations of OpenShift Container Storage version 4.5. It does not apply to clusters upgraded from previous OpenShift Container Storage releases.

- Click Create.

Once you create the OBC, you are redirected to its details page.


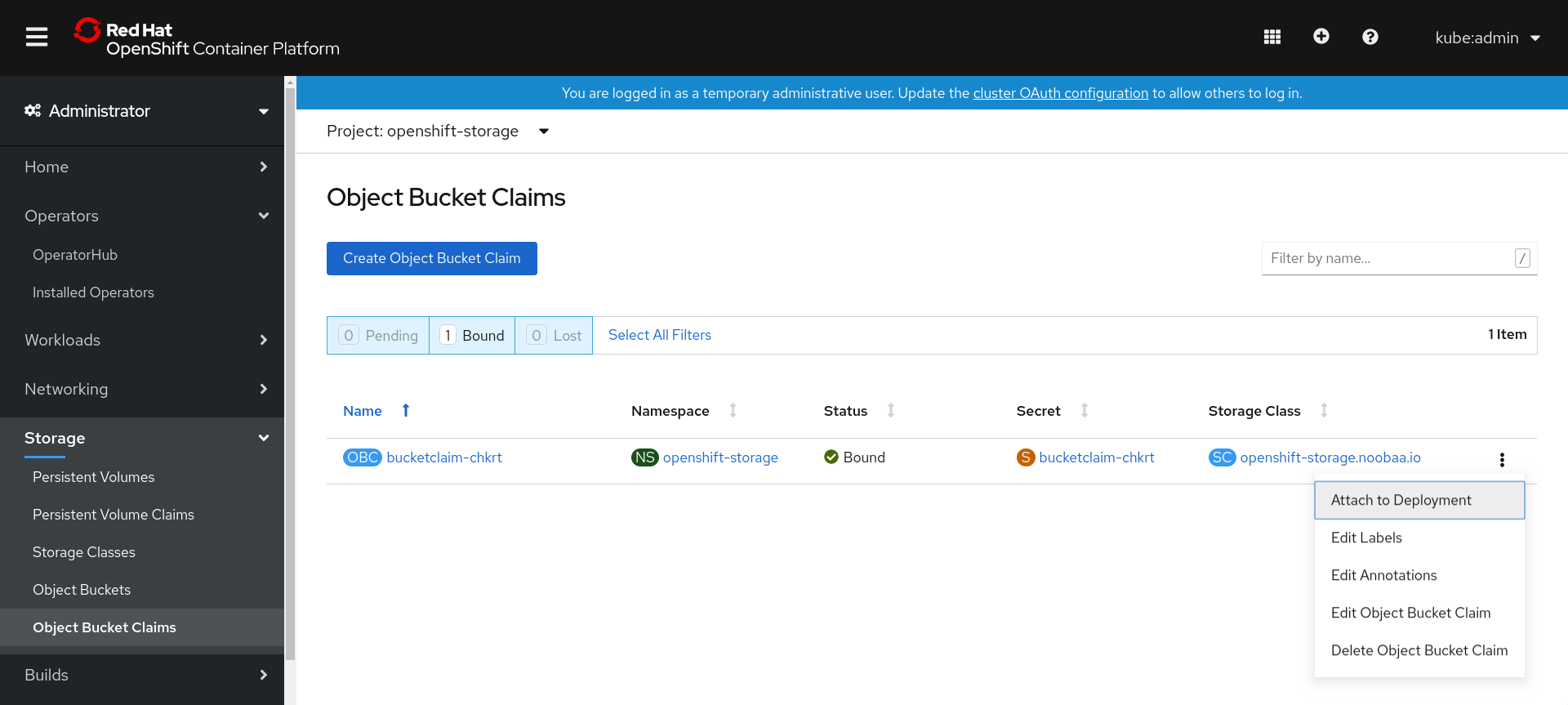

10.8.4. Attaching an Object Bucket Claim to a deployment

Once created, Object Bucket Claims (OBCs) can be attached to specific deployments.

Prerequisites

- Administrative access to the OpenShift Web Console.

Procedure

- On the left navigation bar, click Storage → Object Bucket Claims.

- Click the Action menu (⋮) next to the OBC you created.

- From the drop-down menu, select Attach to Deployment.

- Select the desired deployment from the Deployment Name list, then click Attach.


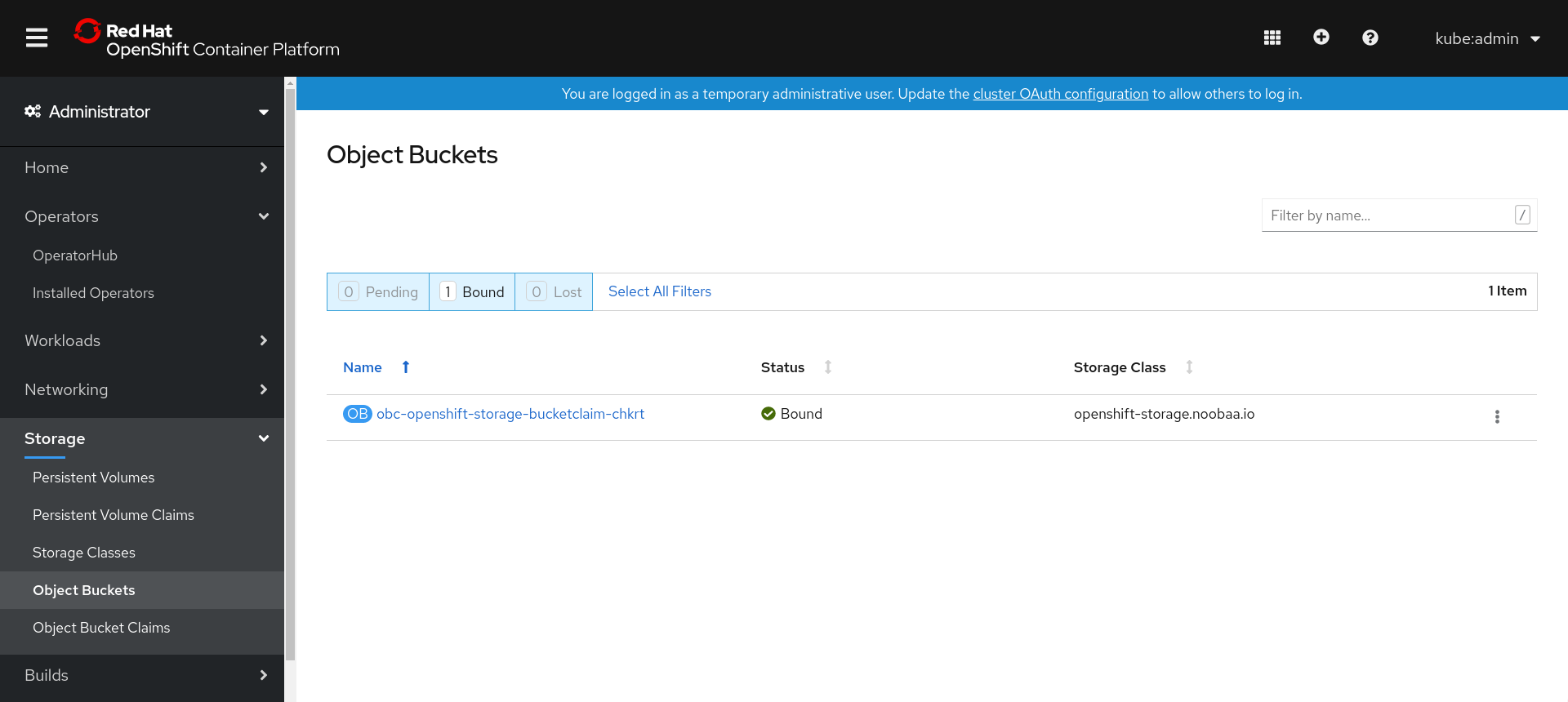

10.8.5. Viewing object buckets using the OpenShift Web Console

You can view the details of object buckets created for Object Bucket Claims (OBCs) using the OpenShift Web Console.

Prerequisites

- Administrative access to the OpenShift Web Console.

Procedure

- Log in to the OpenShift Web Console.

- On the left navigation bar, click Storage → Object Buckets.

  Alternatively, you can navigate to the details page of a specific OBC and click the Resource link to view the object buckets for that OBC.

- Select the object bucket you want to see details for. You are navigated to the Object Bucket Details page.


10.8.6. Deleting Object Bucket Claims

Prerequisites

- Administrative access to the OpenShift Web Console.

Procedure

- On the left navigation bar, click Storage → Object Bucket Claims.

- Click the Action menu (⋮) next to the Object Bucket Claim (OBC) you want to delete.

- Select Delete Object Bucket Claim.

- Click Delete.


10.9. Caching policy for object buckets

A cache bucket is a namespace bucket with a hub target and a cache target. The hub target is an S3-compatible large object storage bucket. The cache target is the local Multicloud Object Gateway bucket. You can create a cache bucket that caches an AWS bucket or an IBM COS bucket.

Cache buckets are a Technology Preview feature. Technology Preview features are not supported with Red Hat production service level agreements (SLAs) and might not be functionally complete. Red Hat does not recommend using them in production. These features provide early access to upcoming product features, enabling customers to test functionality and provide feedback during the development process.

For more information, see Technology Preview Features Support Scope.

10.9.1. Creating an AWS cache bucket

Prerequisites

Download the Multicloud Object Gateway (MCG) command-line interface.

subscription-manager repos --enable=rh-odf-4-for-rhel-8-x86_64-rpms
yum install mcg

Note: Specify the appropriate architecture for enabling the repositories using the subscription manager. For IBM Z infrastructure, use the following command:

subscription-manager repos --enable=rh-odf-4-for-rhel-8-s390x-rpms

Alternatively, you can install the MCG package from the OpenShift Data Foundation RPMs found at https://access.redhat.com/downloads/content/547/ver=4/rhel---8/4/x86_64/package.

Note: Choose the correct Product Variant according to your architecture.

Procedure

Create a NamespaceStore resource. A NamespaceStore represents underlying storage to be used as a read or write target for the data in the MCG namespace buckets. From the MCG command-line interface, run the following command:

noobaa namespacestore create aws-s3 <namespacestore> --access-key <AWS ACCESS KEY> --secret-key <AWS SECRET ACCESS KEY> --target-bucket <bucket-name>

- Replace <namespacestore> with the name of the namespacestore.

- Replace <AWS ACCESS KEY> and <AWS SECRET ACCESS KEY> with an AWS access key ID and secret access key you created for this purpose.

- Replace <bucket-name> with an existing AWS bucket name. This argument tells the MCG which bucket to use as a target bucket for its namespace store, and subsequently, data storage and administration.

You can also add storage resources by applying a YAML. First create a secret with credentials:

You must supply and encode your own AWS access key ID and secret access key using Base64, and use the results in place of <AWS ACCESS KEY ID ENCODED IN BASE64> and <AWS SECRET ACCESS KEY ENCODED IN BASE64>.

Replace <namespacestore-secret-name> with a unique name.

Then apply the following YAML:

- Replace <namespacestore> with a unique name.

- Replace <namespacestore-secret-name> with the secret created in the previous step.

- Replace <namespace-secret> with the namespace used to create the secret in the previous step.

- Replace <target-bucket> with the AWS S3 bucket you created for the namespacestore.
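The secret and NamespaceStore from the YAML route can also be generated programmatically. This also performs the Base64 encoding for you. The following is a minimal sketch, not the official manifest. The field layout assumes the `noobaa.io/v1alpha1` NamespaceStore resource with an `awsS3` section, so compare it with the YAML for your release before applying it. All names and credentials in the example are hypothetical.

```python
import base64
import json


def namespacestore_manifests(name, secret_name, secret_namespace,
                             access_key, secret_key, target_bucket):
    """Return a v1 List holding the credentials Secret and an aws-s3 NamespaceStore.

    Assumption: the NamespaceStore schema (spec.type, spec.awsS3.secret,
    spec.awsS3.targetBucket) matches noobaa.io/v1alpha1 in your cluster.
    """
    def b64(value):
        # Secret data values must be Base64-encoded strings.
        return base64.b64encode(value.encode("utf-8")).decode("ascii")

    secret = {
        "apiVersion": "v1",
        "kind": "Secret",
        "metadata": {"name": secret_name, "namespace": secret_namespace},
        "type": "Opaque",
        "data": {
            "AWS_ACCESS_KEY_ID": b64(access_key),
            "AWS_SECRET_ACCESS_KEY": b64(secret_key),
        },
    }
    store = {
        "apiVersion": "noobaa.io/v1alpha1",
        "kind": "NamespaceStore",
        "metadata": {"name": name, "namespace": "openshift-storage"},
        "spec": {
            "type": "aws-s3",
            "awsS3": {
                "secret": {"name": secret_name, "namespace": secret_namespace},
                "targetBucket": target_bucket,
            },
        },
    }
    return {"apiVersion": "v1", "kind": "List", "items": [secret, store]}


if __name__ == "__main__":
    # Hypothetical names and placeholder credentials.
    manifests = namespacestore_manifests(
        "my-namespacestore", "my-namespacestore-secret", "openshift-storage",
        "EXAMPLEACCESSKEY", "example-secret-key", "my-aws-bucket")
    print(json.dumps(manifests, indent=2))
```

Because `oc apply -f` accepts JSON as well as YAML, you could save the printed output to a file and apply it. Review the generated resources first.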


Run the following command to create a bucket class:

noobaa bucketclass create namespace-bucketclass cache <my-cache-bucket-class> --backingstores <backing-store> --hub-resource <namespacestore>

- Replace <my-cache-bucket-class> with a unique bucket class name.

- Replace <backing-store> with the relevant backing store. You can list one or more backing stores separated by commas in this field.

- Replace <namespacestore> with the namespacestore created in the previous step.
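When several backing stores are involved, it can help to assemble the command from a list. This ensures the --backingstores value is always a single comma-separated argument. The following is a minimal sketch that only builds the argument list for the `noobaa bucketclass create` command shown above. The function name and resource names are hypothetical.

```python
import shlex


def cache_bucketclass_argv(bucket_class, backing_stores, hub_resource):
    """Assemble the `noobaa bucketclass create ... cache` command as an argv list.

    `backing_stores` is a list of names; they are joined with commas because
    the --backingstores field takes one or more comma-separated backing stores.
    """
    if not backing_stores:
        raise ValueError("at least one backing store is required")
    return [
        "noobaa", "bucketclass", "create", "namespace-bucketclass", "cache",
        bucket_class,
        "--backingstores", ",".join(backing_stores),
        "--hub-resource", hub_resource,
    ]


if __name__ == "__main__":
    # Hypothetical resource names for illustration.
    argv = cache_bucketclass_argv("my-cache-bucket-class",
                                  ["my-backing-store-1", "my-backing-store-2"],
                                  "my-namespacestore")
    print(shlex.join(argv))
```

To run the command rather than print it, you could pass the list to `subprocess.run(argv, check=True)` on a host where the MCG command-line interface is installed.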


Run the following command to create a bucket using an Object Bucket Claim (OBC) resource that uses the bucket class defined in step 2.

noobaa obc create <my-bucket-claim> my-app --bucketclass <custom-bucket-class>

- Replace <my-bucket-claim> with a unique name.

- Replace <custom-bucket-class> with the name of the bucket class created in step 2.
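If you manage resources declaratively instead of through the MCG command-line interface, the claim can be described as an ObjectBucketClaim object. The following is a minimal sketch, not a confirmed equivalent of the command above. It assumes the `objectbucket.io/v1alpha1` schema, with the bucket class passed through `spec.additionalConfig.bucketclass` and the MCG storage class `openshift-storage.noobaa.io`. The claim, namespace and bucket class names are hypothetical.

```python
import json


def obc_manifest(claim_name, namespace, bucket_class,
                 storage_class="openshift-storage.noobaa.io"):
    """Return an ObjectBucketClaim that requests a bucket from a bucket class.

    Assumption: spec.additionalConfig.bucketclass selects the bucket class,
    and generateBucketName is used as the prefix for the new bucket's name.
    """
    return {
        "apiVersion": "objectbucket.io/v1alpha1",
        "kind": "ObjectBucketClaim",
        "metadata": {"name": claim_name, "namespace": namespace},
        "spec": {
            "generateBucketName": claim_name,
            "storageClassName": storage_class,
            "additionalConfig": {"bucketclass": bucket_class},
        },
    }


if __name__ == "__main__":
    # Hypothetical names for illustration.
    print(json.dumps(obc_manifest("my-bucket-claim", "my-app-namespace",
                                  "my-cache-bucket-class"), indent=2))
```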


10.9.2. Creating an IBM COS cache bucket

Prerequisites

Download the Multicloud Object Gateway (MCG) command-line interface.

subscription-manager repos --enable=rh-odf-4-for-rhel-8-x86_64-rpms
yum install mcg

Note: Specify the appropriate architecture for enabling the repositories using the subscription manager.

- For IBM Power, use the following command:

subscription-manager repos --enable=rh-odf-4-for-rhel-8-ppc64le-rpms

- For IBM Z infrastructure, use the following command:

subscription-manager repos --enable=rh-odf-4-for-rhel-8-s390x-rpms

Alternatively, you can install the MCG package from the OpenShift Data Foundation RPMs found at https://access.redhat.com/downloads/content/547/ver=4/rhel---8/4/x86_64/package.

Note: Choose the correct Product Variant according to your architecture.

Procedure