
Chapter 6. Monitoring the cluster on the Ceph dashboard

As a storage administrator, you can use Red Hat Ceph Storage Dashboard to monitor specific aspects of the cluster based on types of hosts, services, data access methods, and more.

This chapter covers the following administrative tasks:

- Monitoring hosts of the Ceph cluster on the dashboard.

- Viewing and editing the configuration of the Ceph cluster on the dashboard.

- Viewing and editing the manager modules of the Ceph cluster on the dashboard.

- Monitoring monitors of the Ceph cluster on the dashboard.

- Monitoring services of the Ceph cluster on the dashboard.

- Monitoring Ceph OSDs on the dashboard.

- Monitoring HAProxy on the dashboard.

- Viewing the CRUSH map of the Ceph cluster on the dashboard.

- Filtering logs of the Ceph cluster on the dashboard.

- Viewing centralized logs of the Ceph cluster on the dashboard.

- Monitoring pools of the Ceph cluster on the dashboard.

- Monitoring Ceph file systems on the dashboard.

- Monitoring Ceph Object Gateway daemons on the dashboard.

- Monitoring block device images on the Ceph dashboard.

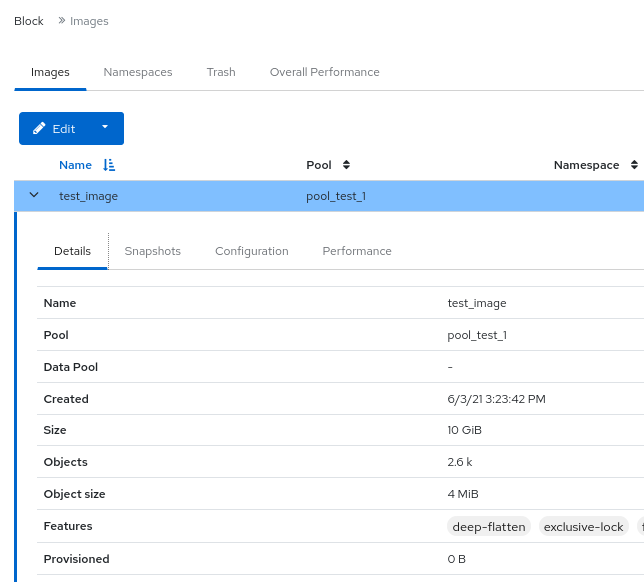

6.1. Monitoring hosts of the Ceph cluster on the dashboard

You can monitor the hosts of the cluster on the Red Hat Ceph Storage Dashboard.

The Hosts page has the following tabs. Each tab contains a table with the relevant information. The tables are searchable and customizable by column and row.

To change the order of the columns, select a column name and drag it to the new position in the table.

To select which columns are displayed, click the Toggle columns button and select or clear column names.

To set how many rows are displayed, enter the number in the row selector field.

- Devices

- This tab has a table that details the device ID, state of the device health, life expectancy, device name, prediction creation date, and the daemons on the hosts.

- Physical Disks

- This tab has a table that details all disks attached to the selected host, including the device path, device type, availability, vendor, model, size, and the OSDs deployed. To identify where a disk is located in the physical host, select the device and click Identify, then select how long the LED should blink so that you can find the selected disk.

- Daemons

- This tab has a table that details all services that have been deployed on the selected host, which container they are running in, and their current status. The table has details such as daemon name, daemon version, status, when the daemon was last refreshed, CPU usage, memory usage (in MiB), and daemon events. Daemon actions can be run from this tab. For more details, see Daemon actions.

- Performance Details

- This tab has details such as OSDs deployed, CPU utilization, RAM usage, network load, network drop rate, and OSD disk performance statistics. View performance information through the embedded Grafana Dashboard.

- Device health

- For SMART-enabled devices, you can view the individual health status and SMART data. This information is available only on hosts with deployed OSDs.

Prerequisites

- A running Red Hat Ceph Storage cluster.

- Dashboard is installed.

- Hosts are added to the storage cluster.

- All the services, including the monitor, manager, and OSD daemons, are deployed on the storage cluster.

Procedure

- From the dashboard navigation, go to Cluster→Hosts.

- On the Hosts List tab, expand the host row and select the host with the daemon to perform the action on.

On the Daemons tab of the host, select the row with the daemon.

Note: The Daemons table can be searched and filtered.

Select the action that needs to be run on the daemon. The options are Start, Stop, Restart, and Redeploy.

Figure 6.1. Monitoring hosts of the Ceph cluster

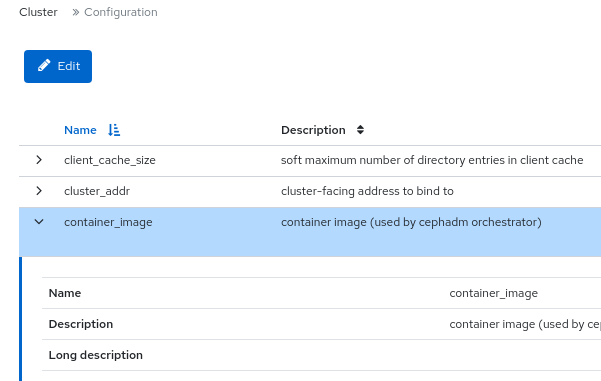
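Each daemon action on the Daemons tab has a command-line counterpart in the Ceph Orchestrator, ceph orch daemon ACTION DAEMON_NAME. The following minimal Python sketch only builds and validates that command. It assumes a cephadm-managed cluster with the ceph CLI and an admin keyring on the node; the helper names and the daemon names used are hypothetical. Nothing is executed unless you pass execute=True.

```python
import subprocess
from typing import List

# Actions offered on the Daemons tab of the Hosts page.
DAEMON_ACTIONS = ("start", "stop", "restart", "redeploy")


def build_daemon_action_cmd(action: str, daemon_name: str) -> List[str]:
    """Return the argv for `ceph orch daemon ACTION DAEMON_NAME`."""
    action = action.lower()
    if action not in DAEMON_ACTIONS:
        raise ValueError(f"unsupported action {action!r}; expected one of {DAEMON_ACTIONS}")
    if not daemon_name:
        raise ValueError("daemon_name must not be empty")
    return ["ceph", "orch", "daemon", action, daemon_name]


def run_daemon_action(action: str, daemon_name: str, execute: bool = False) -> List[str]:
    """Build the command and run it only when execute=True."""
    cmd = build_daemon_action_cmd(action, daemon_name)
    if execute:
        # Requires the ceph CLI and an admin keyring on the node running this script.
        subprocess.run(cmd, check=True)
    return cmd


if __name__ == "__main__":
    # Dry run: print the command instead of executing it.
    print(" ".join(run_daemon_action("Restart", "osd.0")))
```

Keeping command construction separate from execution lets you review the exact command before running it against the cluster.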

6.2. Viewing and editing the configuration of the Ceph cluster on the dashboard

You can view various configuration options of the Ceph cluster on the dashboard. You can edit only some configuration options.

Prerequisites

- A running Red Hat Ceph Storage cluster.

- Dashboard is installed.

- All the services are deployed on the storage cluster.

Procedure

- From the dashboard navigation, go to Administration→Configuration.

To view the details of the configuration, expand the row contents.

Figure 6.2. Configuration options

- Optional: Use the search field to find a configuration.

Optional: You can filter for a specific configuration. Use the following filters:

- Level - Basic, advanced, or dev

- Service - Any, mon, mgr, osd, mds, common, mds_client, rgw, and similar filters.

- Source - Any, mon, and similar filters

- Modified - yes or no

To edit a configuration, select the configuration row and click Edit.

Use the Edit form to edit the required parameters, and click Update.

A notification displays that the configuration was updated successfully.
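To make the combination of filters concrete, the following minimal Python sketch applies the Level, Service, Source, and Modified filters and the search field to a list of configuration records. The ConfigOption record, its field names, and the sample values are hypothetical, and the sketch treats each filter as an exact match; the dashboard's own matching rules, for example for Level, may differ.

```python
from dataclasses import dataclass, field
from typing import Iterable, List, Optional


@dataclass
class ConfigOption:
    """Hypothetical record holding the columns used by the Configuration filters."""
    name: str
    level: str                                          # "basic", "advanced", or "dev"
    services: List[str] = field(default_factory=list)   # for example ["mon", "osd"]
    source: Optional[str] = None                        # for example "mon"
    modified: bool = False


def filter_options(
    options: Iterable[ConfigOption],
    level: Optional[str] = None,
    service: Optional[str] = None,
    source: Optional[str] = None,
    modified: Optional[bool] = None,
    search: str = "",
) -> List[ConfigOption]:
    """Keep options that match every filter that is set; None behaves like "Any"."""
    matches = []
    for opt in options:
        if level is not None and opt.level != level:
            continue
        if service is not None and service not in opt.services:
            continue
        if source is not None and opt.source != source:
            continue
        if modified is not None and opt.modified != modified:
            continue
        if search and search.lower() not in opt.name.lower():
            continue
        matches.append(opt)
    return matches


if __name__ == "__main__":
    # Illustrative sample values, not taken from a real cluster.
    options = [
        ConfigOption("option_a", "basic", ["common"]),
        ConfigOption("option_b", "advanced", ["mon"], "mon", True),
        ConfigOption("option_c", "advanced", ["osd"]),
    ]
    for opt in filter_options(options, level="advanced", modified=True):
        print(opt.name)
```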

6.3. Viewing and editing the manager modules of the Ceph cluster on the dashboard

Manager modules are used to manage module-specific configuration settings. For example, you can enable alerts for the health of the cluster.

You can view, enable or disable, and edit the manager modules of a cluster on the Red Hat Ceph Storage dashboard.

Prerequisites

- A running Red Hat Ceph Storage cluster.

- Dashboard is installed.

Viewing the manager modules

- From the dashboard navigation, go to Administration→Manager Modules.

To view the details of a specific manager module, expand the row contents.

Figure 6.3. Manager modules

Enabling a manager module

Select the row and click Enable from the action drop-down.

Disabling a manager module

Select the row and click Disable from the action drop-down.

Editing a manager module

Select the row and click Edit.

Note: Not all modules have configurable parameters. If a module is not configurable, the Edit button is disabled.

Edit the required parameters and click Update.

A notification displays that the module was updated successfully.
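The same operations are available from the command line. The following Python sketch builds the ceph mgr module enable and ceph mgr module disable commands, and the ceph config set mgr mgr/MODULE/OPTION VALUE form used for module options. It is a dry run by default; the helper names and the SMTP host smtp.example.com are hypothetical, and it assumes the ceph CLI and an admin keyring are available.

```python
import subprocess
from typing import List


def mgr_module_cmd(action: str, module: str) -> List[str]:
    """Return the argv for `ceph mgr module enable|disable MODULE`."""
    if action not in ("enable", "disable"):
        raise ValueError("action must be 'enable' or 'disable'")
    return ["ceph", "mgr", "module", action, module]


def mgr_module_option_cmd(module: str, option: str, value: str) -> List[str]:
    """Return the argv for `ceph config set mgr mgr/MODULE/OPTION VALUE`."""
    return ["ceph", "config", "set", "mgr", f"mgr/{module}/{option}", value]


def run(cmd: List[str], execute: bool = False) -> str:
    """Return the command as a string; run it with the ceph CLI only if execute=True."""
    if execute:
        subprocess.run(cmd, check=True)
    return " ".join(cmd)


if __name__ == "__main__":
    # Dry run: enable the alerts module and point it at a hypothetical SMTP server.
    print(run(mgr_module_cmd("enable", "alerts")))
    print(run(mgr_module_option_cmd("alerts", "smtp_host", "smtp.example.com")))
```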

6.4. Monitoring monitors of the Ceph cluster on the dashboard

You can monitor the performance of the Ceph monitors on the landing page of the Red Hat Ceph Storage dashboard. You can also view details such as the status, quorum, number of open sessions, and performance counters of the monitors in the Monitors panel.

Prerequisites

- A running Red Hat Ceph Storage cluster.

- Dashboard is installed.

- Monitors are deployed in the storage cluster.

Procedure

From the dashboard navigation, go to Cluster→Monitors.

The Monitors panel displays information about the overall monitor status and monitor hosts that are in and out of quorum.

To see the number of open sessions, hover the cursor over Open Sessions.

To see the performance counters for a monitor, click its name in the In Quorum or Not In Quorum table.

Figure 6.4. Viewing monitor Performance Counters

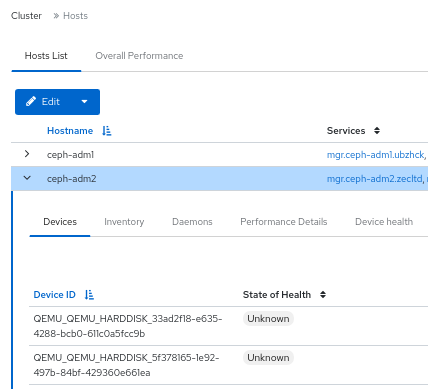
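The In Quorum and Not In Quorum tables reflect the monitor map and the current quorum. As a command-line cross-check, the output of ceph quorum_status --format json includes a quorum_names list and a monmap.mons list. The following Python sketch splits the monitors into the two groups from that JSON; the function name is illustrative and the sample input is trimmed and hypothetical.

```python
import json
from typing import Dict, List


def split_quorum(quorum_status_json: str) -> Dict[str, List[str]]:
    """Split monitors from `ceph quorum_status --format json` into in/out of quorum."""
    status = json.loads(quorum_status_json)
    in_quorum = set(status.get("quorum_names", []))
    all_mons = [mon["name"] for mon in status.get("monmap", {}).get("mons", [])]
    return {
        "in_quorum": [name for name in all_mons if name in in_quorum],
        "not_in_quorum": [name for name in all_mons if name not in in_quorum],
    }


if __name__ == "__main__":
    # Trimmed, hypothetical sample; real output contains many more fields.
    sample = json.dumps({
        "quorum_names": ["host01", "host02"],
        "monmap": {"mons": [{"rank": 0, "name": "host01"},
                            {"rank": 1, "name": "host02"},
                            {"rank": 2, "name": "host03"}]},
    })
    print(split_quorum(sample))
    # {'in_quorum': ['host01', 'host02'], 'not_in_quorum': ['host03']}
```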

6.5. Monitoring services of the Ceph cluster on the dashboard

You can monitor the services of the cluster on the Red Hat Ceph Storage Dashboard. You can view details such as the hostname, daemon type, daemon ID, container ID, container image name, container image ID, version, status, and last refreshed time.

Prerequisites

- A running Red Hat Ceph Storage cluster.

- Dashboard is installed.

- Hosts are added to the storage cluster.

- All the services are deployed on the storage cluster.

Procedure

- From the dashboard navigation, go to Administration→Services.

Expand the service for more details.

Figure 6.5. Monitoring services of the Ceph cluster

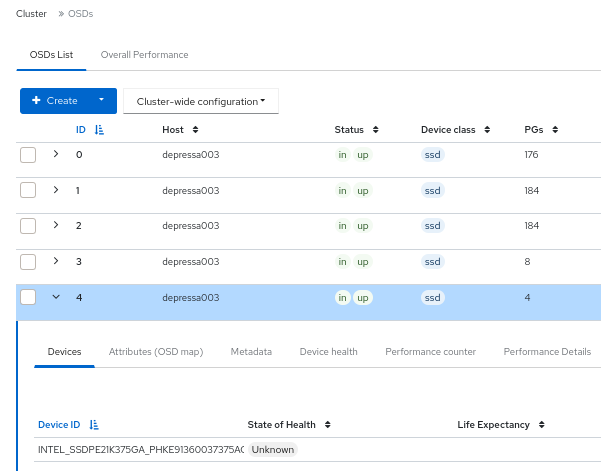

6.6. Monitoring Ceph OSDs on the dashboard

You can monitor the status of the Ceph OSDs on the landing page of the Red Hat Ceph Storage Dashboard. You can also view details such as the host, status, device class, number of placement groups (PGs), size, flags, usage, and read and write operations on the OSDs List tab.

The following are the different tabs on the OSDs page:

- Devices - This tab has details such as Device ID, state of health, life expectancy, device name, and the daemons on the hosts.

- Attributes (OSD map) - This tab shows the cluster address, details of heartbeat, OSD state, and the other OSD attributes.

- Metadata - This tab shows the details of the OSD object store, the devices, the operating system, and the kernel details.

- Device health - For SMART-enabled devices, you can get the individual health status and SMART data.

- Performance counter - This tab gives details of the bytes written on the devices.

- Performance Details - This tab has details such as OSDs deployed, CPU utilization, RAM usage, network load, network drop rate, and OSD disk performance statistics. View performance information through the embedded Grafana Dashboard.

Prerequisites

- A running Red Hat Ceph Storage cluster.

- Dashboard is installed.

- Hosts are added to the storage cluster.

- All the services, including OSDs, are deployed on the storage cluster.

Procedure

- From the dashboard navigation, go to Cluster→OSDs.

To view the details of a specific OSD, from the OSDs List tab, expand an OSD row.

Figure 6.6. Monitoring OSDs of the Ceph cluster

You can view additional details such as Devices, Attributes (OSD map), Metadata, Device Health, Performance counter, and Performance Details by clicking the respective tabs.

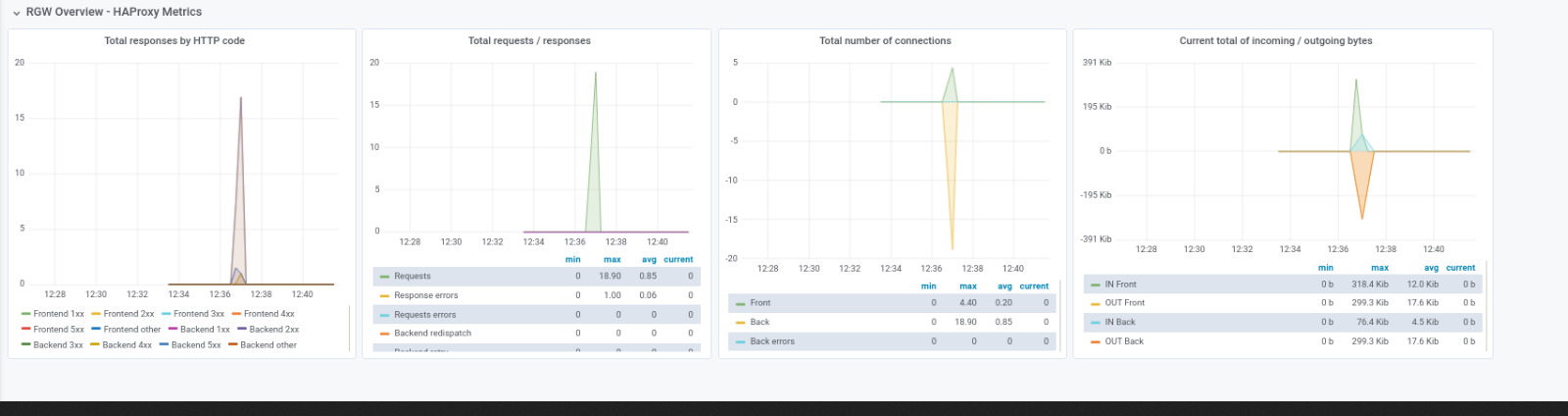

6.7. Monitoring HAProxy on the dashboard

The Ceph Object Gateway allows you to assign many instances of the object gateway to a single zone, so that you can scale out as load increases. Since each object gateway instance has its own IP address, you can use HAProxy to balance the load across Ceph Object Gateway servers.

You can monitor the following HAProxy metrics on the dashboard:

- Total responses by HTTP code.

- Total requests/responses.

- Total number of connections.

- Current total number of incoming / outgoing bytes.

You can also get the Grafana API URL by running the ceph dashboard get-grafana-api-url command.

Prerequisites

- A running Red Hat Ceph Storage cluster.

- Admin level access on the storage dashboard.

- An existing Ceph Object Gateway service, without SSL. If you want SSL service, the certificate should be configured on the ingress service, not the Ceph Object Gateway service.

- Ingress service deployed using the Ceph Orchestrator.

- Monitoring stack components are created on the dashboard.

Procedure

Log in to the Grafana URL and select the RGW_Overview panel:

Syntax

https://DASHBOARD_URL:3000

Example

https://dashboard_url:3000

- Verify the HAProxy metrics on the Grafana URL.

- From the Ceph dashboard navigation, go to Object→Gateways.

From the Overall Performance tab, verify the Ceph Object Gateway HAProxy metrics.

Figure 6.7. HAProxy metrics

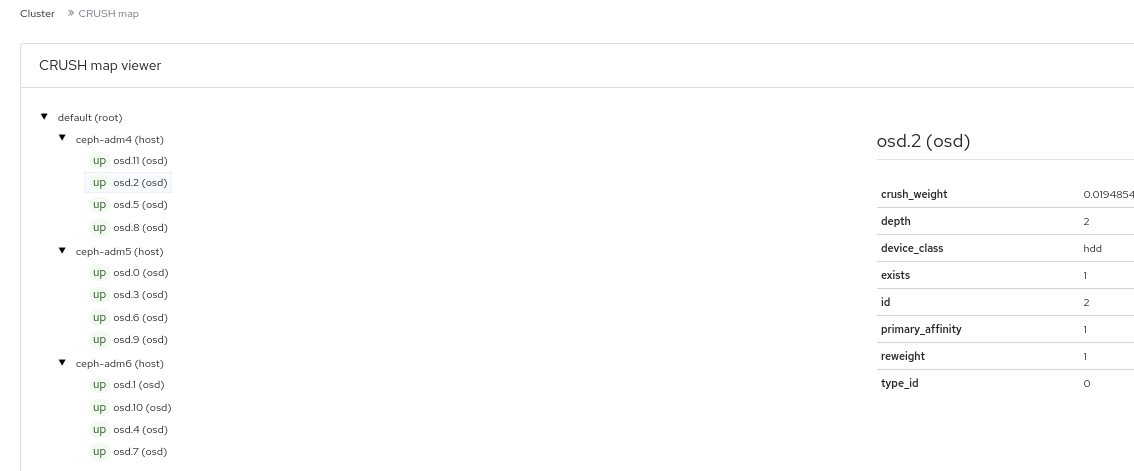
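The Grafana URL in the procedure uses the dashboard host with port 3000. The following Python sketch derives that URL from a dashboard URL by keeping the scheme and host and replacing the port. It assumes, as the Syntax does, that Grafana listens on port 3000 of the same host; if your deployment differs, use the URL returned by ceph dashboard get-grafana-api-url instead. The function name and host names are hypothetical.

```python
from urllib.parse import urlsplit, urlunsplit

GRAFANA_PORT = 3000  # port used in the Syntax of the procedure


def grafana_url_from_dashboard(dashboard_url: str, port: int = GRAFANA_PORT) -> str:
    """Keep the scheme and host of the dashboard URL and switch to the Grafana port."""
    if "://" not in dashboard_url:
        dashboard_url = "https://" + dashboard_url  # default to HTTPS, as in the Syntax
    parts = urlsplit(dashboard_url)
    host = parts.hostname
    if not host:
        raise ValueError(f"no host found in {dashboard_url!r}")
    if ":" in host:
        host = f"[{host}]"  # IPv6 literals must be bracketed in a URL
    return urlunsplit((parts.scheme, f"{host}:{port}", "", "", ""))


if __name__ == "__main__":
    print(grafana_url_from_dashboard("https://host01.example.com:8443/#/dashboard"))
```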

6.8. Viewing the CRUSH map of the Ceph cluster on the dashboard

You can view the CRUSH map, which contains a list of OSDs and related information, on the Red Hat Ceph Storage dashboard. Together, the CRUSH map and CRUSH algorithm determine how and where data is stored. The dashboard allows you to view different aspects of the CRUSH map, including OSD hosts, OSD daemons, ID numbers, device class, and more.

The CRUSH map allows you to determine which host a specific OSD ID is running on. This is helpful if there is an issue with an OSD.

Prerequisites

- A running Red Hat Ceph Storage cluster.

- Dashboard is installed.

- OSD daemons deployed on the storage cluster.

Procedure

- From the dashboard navigation, go to Cluster→CRUSH map.

To view the details of a specific OSD, click its row.

Figure 6.8. CRUSH Map detail view

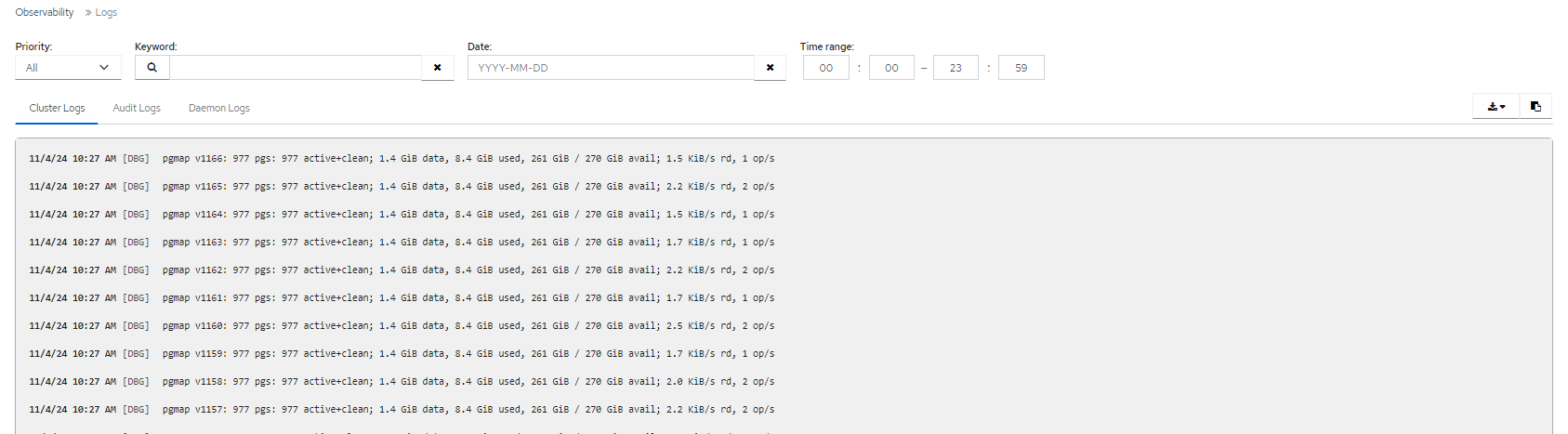
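The same host lookup can be done from the command line. The output of ceph osd tree --format json lists CRUSH buckets and OSDs in a nodes array, where each host bucket lists its OSD IDs in children. The following Python sketch returns the host that holds a given OSD ID. The function name and the trimmed sample tree are hypothetical, and the sketch assumes OSDs sit directly under host buckets, as in the default hierarchy.

```python
import json
from typing import Optional


def host_for_osd(osd_tree_json: str, osd_id: int) -> Optional[str]:
    """Return the CRUSH host bucket that contains osd_id, or None if none does."""
    tree = json.loads(osd_tree_json)
    for node in tree.get("nodes", []):
        # Host buckets list the IDs of their OSDs in "children".
        if node.get("type") == "host" and osd_id in node.get("children", []):
            return node["name"]
    return None


if __name__ == "__main__":
    # Trimmed, hypothetical sample of `ceph osd tree --format json` output.
    sample = json.dumps({"nodes": [
        {"id": -1, "name": "default", "type": "root", "children": [-3, -5]},
        {"id": -3, "name": "host01", "type": "host", "children": [0, 2]},
        {"id": -5, "name": "host02", "type": "host", "children": [1]},
        {"id": 0, "name": "osd.0", "type": "osd"},
        {"id": 1, "name": "osd.1", "type": "osd"},
        {"id": 2, "name": "osd.2", "type": "osd"},
    ], "stray": []})
    print(host_for_osd(sample, 1))
```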

6.9. Filtering logs of the Ceph cluster on the dashboard

You can view and filter logs of the Red Hat Ceph Storage cluster on the dashboard based on several criteria. The criteria include Priority, Keyword, Date, and Time range.

You can also download the logs to your system or copy them to the clipboard for further analysis.

Prerequisites

- A running Red Hat Ceph Storage cluster.

- The Dashboard is installed.

- Log entries have been generated since the Ceph Monitor was last started.

The dashboard logging feature displays only the thirty latest high-level events. The Ceph Monitor stores these events in memory, so the entries disappear when the Monitor restarts. To review detailed or older logs, refer to the file-based logs.

Procedure

- From the dashboard navigation, go to Observability→Logs.

From the Cluster Logs tab, view cluster logs.

Figure 6.9. Cluster logs

- Use the Priority filter to filter by Debug, Info, Warning, Error, or All.

- Use the Keyword field to enter text to search by keyword.

- Use the Date picker to filter by a specific date.

- Use the Time range fields to enter a range in the HH:MM - HH:MM format. Hours must be entered as numbers from 0 to 23.

- To combine filters, set two or more filters.

- To save the logs, use the Download or Copy to Clipboard buttons.
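How the filters combine can be sketched in Python. The following minimal example keeps a log entry only when it passes every filter that is set, and it validates the HH:MM - HH:MM time range, rejecting hours outside 0 to 23. The LogEntry record and the sample messages are hypothetical, the Priority filter is treated as an exact match, and ranges that wrap past midnight are not handled; the dashboard's own implementation may behave differently.

```python
from dataclasses import dataclass
from datetime import date, datetime, time
from typing import Iterable, List, Optional, Tuple


@dataclass
class LogEntry:
    """Hypothetical shape of one line on the Cluster Logs tab."""
    stamp: datetime
    priority: str  # "Debug", "Info", "Warning", or "Error"
    message: str


def parse_time_range(text: str) -> Tuple[time, time]:
    """Parse 'HH:MM - HH:MM'; time() rejects hours outside 0-23 and minutes outside 0-59."""
    start_text, sep, end_text = text.partition("-")
    if not sep:
        raise ValueError("expected the HH:MM - HH:MM format")

    def to_time(part: str) -> time:
        hours, minutes = (int(x) for x in part.strip().split(":"))
        return time(hours, minutes)

    return to_time(start_text), to_time(end_text)


def filter_logs(
    entries: Iterable[LogEntry],
    priority: str = "All",
    keyword: str = "",
    day: Optional[date] = None,
    time_range: Optional[str] = None,
) -> List[LogEntry]:
    """Keep an entry only if it passes every filter that is set."""
    start, end = parse_time_range(time_range) if time_range else (None, None)
    kept = []
    for entry in entries:
        if priority != "All" and entry.priority != priority:
            continue
        if keyword and keyword.lower() not in entry.message.lower():
            continue
        if day is not None and entry.stamp.date() != day:
            continue
        # Compare at minute granularity, inclusive at both ends.
        minute = entry.stamp.time().replace(second=0, microsecond=0)
        if start is not None and not (start <= minute <= end):
            continue
        kept.append(entry)
    return kept


if __name__ == "__main__":
    logs = [
        LogEntry(datetime(2024, 5, 2, 9, 15), "Info", "osd.1 boot"),
        LogEntry(datetime(2024, 5, 2, 10, 5), "Warning", "1 osds down"),
    ]
    for e in filter_logs(logs, priority="Warning", time_range="09:00 - 12:00"):
        print(e.stamp, e.priority, e.message)
```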

6.10. Viewing centralized logs of the Ceph cluster on the dashboard

The Ceph dashboard allows you to view logs from all the clients in the Red Hat Ceph Storage cluster in one centralized place for efficient monitoring. This is achieved by using Loki, a log aggregation system designed to store and query logs, and Promtail, an agent that ships the contents of local logs to a private Grafana Loki instance.

Prerequisites

- A running Red Hat Ceph Storage cluster.

- Dashboard is installed.

- Grafana is configured on the cluster and you are logged in to it.

Procedure

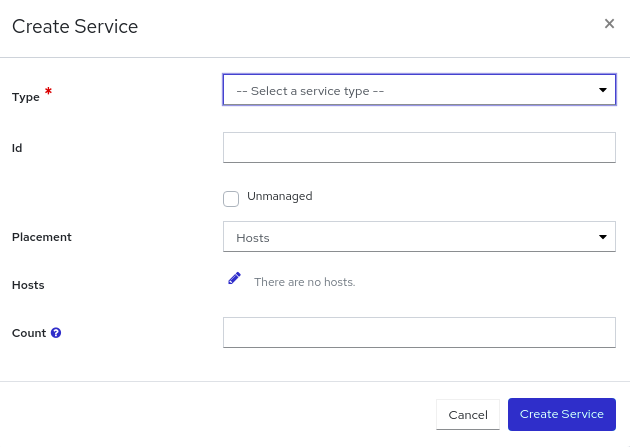

- From the dashboard navigation, go to Administration→Services.

- From Services, click Create.

- In the Create Service form, from the Type list, select loki. Fill in the remaining details, and click Create Service.

- Repeat the previous step to create the Promtail service. Select promtail from the Type list.

The loki and promtail services are displayed in the Services table after being created successfully.

Figure 6.10. Creating Loki and Promtail services

Note: By default, the Promtail service is deployed on all the running hosts.

Enable logging to files.

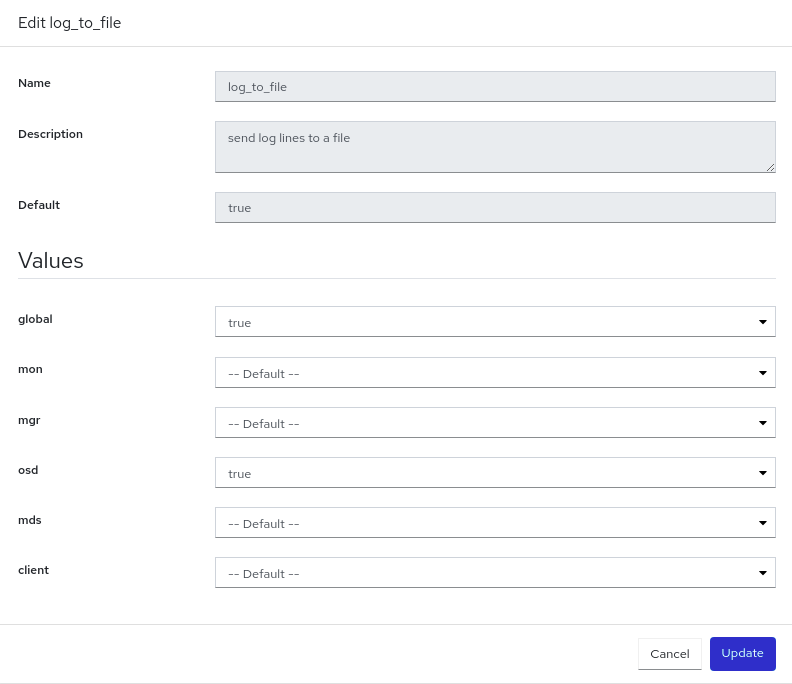

- Go to Administration→Configuration.

- Select log_to_file and click Edit.

- In the Edit log_to_file form, set the global value to true.

Figure 6.11. Configuring log files

- Click Update.

The Updated config option log_to_file notification displays and you are returned to the Configuration table.

- Repeat these steps for mon_cluster_log_to_file, setting the global value to true.

Note: Both the log_to_file and mon_cluster_log_to_file options need to be configured.

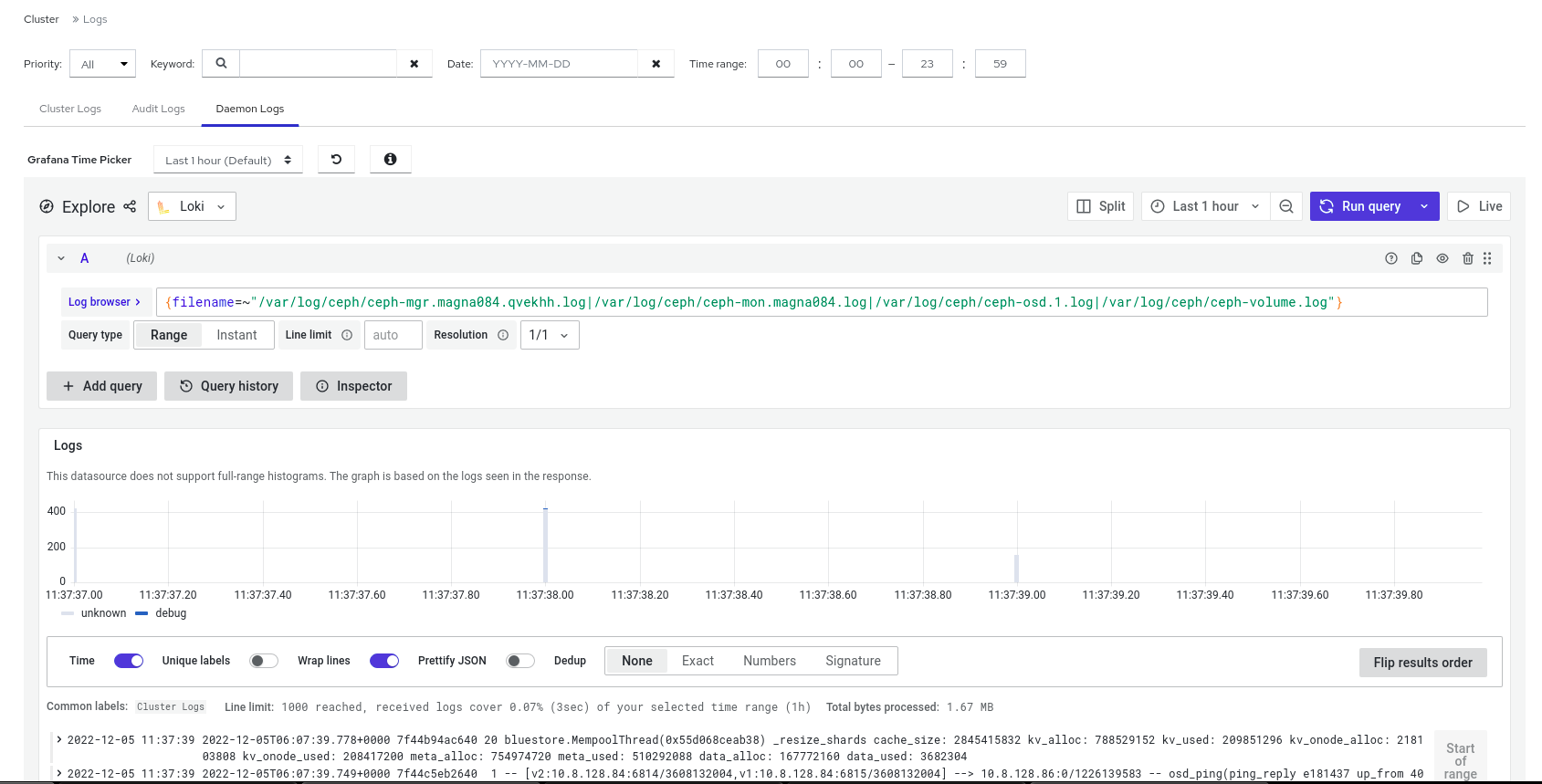

View the centralized logs.

Go to Observability→Logs and switch to the Daemon Logs tab. Use Log browser to select files and click Show logs to view the logs from that file.

Figure 6.12. View centralized logs

Note: If you do not see the logs, sign in to Grafana and reload the page.
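For reference, the dashboard steps in this section roughly correspond to the Ceph commands ceph orch apply loki, ceph orch apply promtail, and ceph config set global for the log_to_file and mon_cluster_log_to_file options. The Python sketch below only lists the commands unless you pass execute=True. It assumes a cephadm-managed cluster whose orchestrator supports the loki and promtail service types, with the ceph CLI and an admin keyring available; the function name is hypothetical.

```python
import subprocess
from typing import List

# Commands mirroring the dashboard steps in this section (cephadm-managed cluster assumed).
CENTRALIZED_LOGGING_CMDS: List[List[str]] = [
    ["ceph", "orch", "apply", "loki"],
    ["ceph", "orch", "apply", "promtail"],
    ["ceph", "config", "set", "global", "log_to_file", "true"],
    ["ceph", "config", "set", "global", "mon_cluster_log_to_file", "true"],
]


def enable_centralized_logging(execute: bool = False) -> List[str]:
    """Return the commands as strings; run them in order only when execute=True."""
    for cmd in CENTRALIZED_LOGGING_CMDS:
        if execute:
            subprocess.run(cmd, check=True)
    return [" ".join(cmd) for cmd in CENTRALIZED_LOGGING_CMDS]


if __name__ == "__main__":
    # Dry run: print the commands for review.
    for line in enable_centralized_logging():
        print(line)
```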

6.11. Monitoring pools of the Ceph cluster on the dashboard

You can view the details, performance details, configuration, and overall performance of the pools in a cluster on the Red Hat Ceph Storage Dashboard.

A pool plays a critical role in how the Ceph storage cluster distributes and stores data. If you have deployed a cluster without creating a pool, Ceph uses the default pools for storing data.

Prerequisites

- A running Red Hat Ceph Storage cluster.

- Dashboard is installed.

- Pools are created.

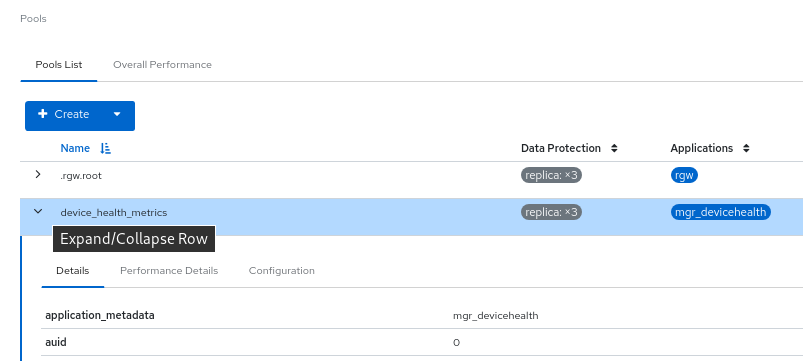

Procedure

- From the dashboard navigation, go to Cluster→Pools.

- On the Pools List tab, view the data protection details and the application for which each pool is enabled. Hover over Usage, Read bytes, and Write bytes to see the corresponding details.

Expand the pool row for detailed information about a specific pool.

Figure 6.13. Monitoring pools

- For general information, go to the Overall Performance tab.

6.12. Monitoring Ceph File Systems on the dashboard

You can use the Red Hat Ceph Storage Dashboard to monitor Ceph File Systems (CephFS) and related components.

For each File System listed, the following tabs are available:

- Details

- View the metadata servers (MDS) and their ranks, any standby daemons, the pools and their usage, and performance counters.

- Directories

- View the list of directories, their quotas, and their snapshots. Select a directory to set and unset the maximum file and size quotas, and to create and delete snapshots for that directory.

- Subvolumes

- Create, edit, and view subvolume information. These can be filtered by subvolume groups.

- Subvolume groups

- Create, edit, and view subvolume group information.

- Snapshots

- Create, clone, and view snapshot information. These can be filtered by subvolume groups and subvolumes.

- Snapshot schedules

- Enable, create, edit, and delete snapshot schedules.

- Clients

- View and evict Ceph File System client information.

- Performance Details

- View the performance of the file systems through the embedded Grafana Dashboard.

Prerequisites

- A running Red Hat Ceph Storage cluster.

- Dashboard is installed.

- MDS service is deployed on at least one of the hosts.

- Ceph File System is installed.

Procedure

- From the dashboard navigation, go to File→File Systems.

- To view more information about an individual file system, expand the file system row.

6.13. Monitoring Ceph Object Gateway daemons on the dashboard

You can use the Red Hat Ceph Storage Dashboard to monitor Ceph Object Gateway daemons. You can view the details, performance counters, and performance details of the Ceph Object Gateway daemons.

Prerequisites

- A running Red Hat Ceph Storage cluster.

- Dashboard is installed.

- At least one Ceph Object Gateway daemon is configured in the storage cluster.

Procedure

- From the dashboard navigation, go to Object→Gateways.

- View information about individual gateways, from the Gateways List tab.

- To view more information about an individual gateway, expand the gateway row.

- If you have configured multiple Ceph Object Gateway daemons, click the Sync Performance tab to view the multi-site performance counters.

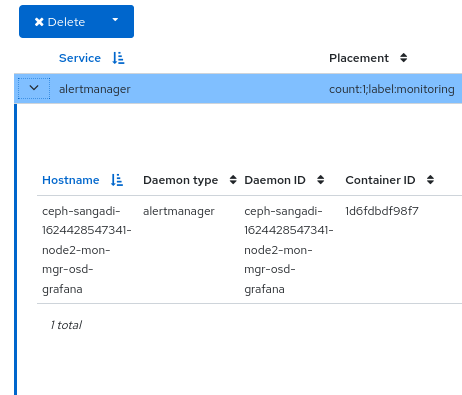

6.14. Monitoring Block Device images on the Ceph dashboard

You can use the Red Hat Ceph Storage Dashboard to monitor and manage block device images. You can view the details, snapshots, configuration details, and performance details of the images.

Prerequisites

- A running Red Hat Ceph Storage cluster.

- Dashboard is installed.

- A pool with the rbd application enabled is created.

- An image is created.

Procedure

- From the dashboard navigation, go to Block→Images.

Expand the image row to see detailed information.

Figure 6.14. Monitoring Block device images