Chapter 4. Additional Concepts

4.1. Networking

Kubernetes ensures that pods are able to network with each other, and allocates each pod an IP address from an internal network. This ensures all containers within the pod behave as if they were on the same host. Giving each pod its own IP address means that pods can be treated like physical hosts or virtual machines in terms of port allocation, networking, naming, service discovery, load balancing, application configuration, and migration.

Creating links between pods is unnecessary. However, it is not recommended that you have a pod talk to another directly by using the IP address. Instead, we recommend that you create a service, then interact with the service.

4.1.1. OpenShift Enterprise DNS

If you run multiple services, such as frontend and backend services used by multiple pods, environment variables are created for user names, service IPs, and more so that the frontend pods can communicate with the backend services. If the service is deleted and recreated, a new IP address can be assigned to it, and the frontend pods must be recreated to pick up the updated value of the service IP environment variable. Additionally, the backend service has to be created before any of the frontend pods to ensure that the service IP is generated properly and that it can be provided to the frontend pods as an environment variable.

For this reason, OpenShift Enterprise has a built-in DNS so that services can be reached by the service DNS name as well as by the service IP/port. OpenShift Enterprise supports split DNS by running SkyDNS on the master, which answers DNS queries for services. The master listens on port 53 by default.

When the node starts, the following message indicates the Kubelet is correctly resolved to the master:

    0308 19:51:03.118430 4484 node.go:197] Started Kubelet for node openshiftdev.local, server at 0.0.0.0:10250
    I0308 19:51:03.118459 4484 node.go:199] Kubelet is setting 10.0.2.15 as a DNS nameserver for domain "local"

If the second message does not appear, the Kubernetes service may not be available.

On a node host, each container’s nameserver has the master name added to the front, and the default search domain for the container will be .<pod_namespace>.cluster.local. The container will then direct any nameserver queries to the master before any other nameservers on the node, which is the default behavior for Docker-formatted containers. The master will answer queries on the .cluster.local domain that have the following form:

| Object Type | Example |

|---|---|

| Default | <pod_namespace>.cluster.local |

| Services | <service>.<pod_namespace>.svc.cluster.local |

| Endpoints | <name>.<namespace>.endpoints.cluster.local |

This prevents having to restart frontend pods in order to pick up a recreated service, which is assigned a new IP address. It also removes the need to use environment variables, because pods can use the service DNS. Because the DNS name does not change, you can reference database services as db.local in configuration files. Wildcard lookups are also supported, because any lookups resolve to the service IP. Finally, the backend service no longer has to be created before any of the frontend pods, since the service name (and hence DNS) is established upfront.

This DNS structure also covers headless services, where a portal IP is not assigned to the service and the kube-proxy does not load-balance or provide routing for its endpoints. Service DNS can still be used and responds with multiple A records, one for each pod of the service, allowing the client to round-robin between each pod.
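As a rough illustration of the name forms in the table above, the following Python sketch assembles the service and endpoints DNS names from their parts. The function names and the `db`/`myproject` values are hypothetical and chosen only for this example; the `cluster.local` domain is the one used in this section.

```python
def service_dns_name(service: str, namespace: str,
                     cluster_domain: str = "cluster.local") -> str:
    """Build a name of the form <service>.<pod_namespace>.svc.cluster.local."""
    return f"{service}.{namespace}.svc.{cluster_domain}"


def endpoints_dns_name(name: str, namespace: str,
                       cluster_domain: str = "cluster.local") -> str:
    """Build a name of the form <name>.<namespace>.endpoints.cluster.local."""
    return f"{name}.{namespace}.endpoints.{cluster_domain}"


# Hypothetical service "db" in a hypothetical project "myproject".
print(service_dns_name("db", "myproject"))
print(endpoints_dns_name("db", "myproject"))
```

A client inside a pod would pass such a name to its normal resolver instead of reading a service IP from an environment variable.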

4.1.2. Network Plugins

OpenShift Enterprise supports the same plugin model as Kubernetes for networking pods. The following network plugins are currently supported by OpenShift Enterprise.

4.1.3. OpenShift Enterprise SDN

OpenShift Enterprise deploys a software-defined networking (SDN) approach for connecting pods in an OpenShift Enterprise cluster. The OpenShift Enterprise SDN connects all pods across all node hosts, providing a unified cluster network.

OpenShift Enterprise SDN is automatically installed and configured as part of the Ansible-based installation procedure. Further administration should not be required; however, further details on the design and operation of OpenShift Enterprise SDN are provided for those who are curious or need to troubleshoot problems.

4.2. OpenShift SDN

4.2.1. Overview

OpenShift Enterprise uses a software-defined networking (SDN) approach to provide a unified cluster network that enables communication between pods across the OpenShift Enterprise cluster. This pod network is established and maintained by the OpenShift Enterprise SDN, which configures an overlay network using Open vSwitch (OVS).

OpenShift Enterprise SDN provides two SDN plug-ins for configuring the pod network:

- The ovs-subnet plug-in is the original plug-in which provides a "flat" pod network where every pod can communicate with every other pod and service.

- The ovs-multitenant plug-in provides OpenShift Enterprise project level isolation for pods and services. Each project receives a unique Virtual Network ID (VNID) that identifies traffic from pods assigned to the project. Pods from different projects cannot send packets to or receive packets from pods and services of a different project.

However, projects which receive VNID 0 are more privileged in that they are allowed to communicate with all other pods, and all other pods can communicate with them. In OpenShift Enterprise clusters, the default project has VNID 0. This allows certain services, such as the load balancer, to communicate with all other pods in the cluster and vice versa.

Following is a detailed discussion of the design and operation of OpenShift Enterprise SDN, which may be useful for troubleshooting.

Information on configuring the SDN on masters and nodes is available in Configuring the SDN.

4.2.2. Design on Masters

On an OpenShift Enterprise master, OpenShift Enterprise SDN maintains a registry of nodes, stored in etcd. When the system administrator registers a node, OpenShift Enterprise SDN allocates an unused subnet from the cluster network and stores this subnet in the registry. When a node is deleted, OpenShift Enterprise SDN deletes the subnet from the registry and considers the subnet available to be allocated again.

In the default configuration, the cluster network is the 10.128.0.0/14 network (i.e. 10.128.0.0 - 10.131.255.255), and nodes are allocated /23 subnets (i.e., 10.128.0.0/23, 10.128.2.0/23, 10.128.4.0/23, and so on). This means that the cluster network has 512 subnets available to assign to nodes, and a given node is allocated 510 addresses that it can assign to the containers running on it. The size and address range of the cluster network are configurable, as is the host subnet size.
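The arithmetic behind these figures can be checked with Python's standard `ipaddress` module. This is only a sketch of the default values quoted above, not of how OpenShift Enterprise SDN itself allocates subnets; the "minus two" step assumes the network and broadcast addresses of each host subnet are not handed out, which matches the 510 figure in the text.

```python
import ipaddress

# Default cluster network and host subnet size quoted in the text.
cluster_network = ipaddress.ip_network("10.128.0.0/14")
host_subnets = list(cluster_network.subnets(new_prefix=23))

# Address range covered by the cluster network.
print(cluster_network[0], "-", cluster_network[-1])

# Number of /23 subnets available to assign to nodes: 2 ** (23 - 14).
print(len(host_subnets))

# The first few subnets, in allocation order as listed in the text.
print([str(subnet) for subnet in host_subnets[:3]])

# Usable addresses per node subnet (network and broadcast excluded).
usable_per_node = host_subnets[0].num_addresses - 2
print(usable_per_node)
```

Changing the prefix lengths in the sketch shows how a different cluster network or host subnet size trades the number of nodes against the number of pod addresses per node.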

Note that OpenShift Enterprise SDN on a master does not configure the local (master) host to have access to any cluster network. Consequently, a master host does not have access to pods via the cluster network, unless it is also running as a node.

When using the ovs-multitenant plug-in, the OpenShift Enterprise SDN master also watches for the creation and deletion of projects, and assigns VXLAN VNIDs to them, which will be used later by the nodes to isolate traffic correctly.

4.2.3. Design on Nodes

On a node, OpenShift Enterprise SDN first registers the local host with the SDN master in the aforementioned registry so that the master allocates a subnet to the node.

Next, OpenShift Enterprise SDN creates and configures six network devices:

- br0, the OVS bridge device that pod containers will be attached to. OpenShift Enterprise SDN also configures a set of non-subnet-specific flow rules on this bridge. The ovs-multitenant plug-in does this immediately.

- lbr0, a Linux bridge device, which is configured as the Docker service’s bridge and given the cluster subnet gateway address (e.g., 10.128.x.1/23).

- tun0, an OVS internal port (port 2 on br0). This also gets assigned the cluster subnet gateway address, and is used for external network access. OpenShift Enterprise SDN configures netfilter and routing rules to enable access from the cluster subnet to the external network via NAT.

- vlinuxbr and vovsbr, two Linux peer virtual Ethernet interfaces. vlinuxbr is added to lbr0 and vovsbr is added to br0 (port 9 with the ovs-subnet plug-in and port 3 with the ovs-multitenant plug-in) to provide connectivity for containers created directly with the Docker service outside of OpenShift Enterprise.

- vxlan0, the OVS VXLAN device (port 1 on br0), which provides access to containers on remote nodes.

Each time a pod is started on the host, OpenShift Enterprise SDN:

- moves the host side of the pod’s veth interface pair from the lbr0 bridge (where the Docker service placed it when starting the container) to the OVS bridge br0.

- adds OpenFlow rules to the OVS database to route traffic addressed to the new pod to the correct OVS port.

- in the case of the ovs-multitenant plug-in, adds OpenFlow rules to tag traffic coming from the pod with the pod’s VNID, and to allow traffic into the pod if the traffic’s VNID matches the pod’s VNID (or is the privileged VNID 0). Non-matching traffic is filtered out by a generic rule.

The pod is allocated an IP address in the cluster subnet by the Docker service itself because the Docker service is told to use the lbr0 bridge, to which OpenShift Enterprise SDN has assigned the cluster gateway address (e.g., 10.128.x.1/23). Note that tun0 is also assigned the cluster gateway IP address because it is the default gateway for all traffic destined for external networks. These two interfaces do not conflict, because the lbr0 interface is only used for IPAM and no OpenShift Enterprise SDN pods are connected to it.

OpenShift Enterprise SDN nodes also watch for subnet updates from the SDN master. When a new subnet is added, the node adds OpenFlow rules on br0 so that packets with a destination IP address in the remote subnet go to vxlan0 (port 1 on br0) and thus out onto the network. The ovs-subnet plug-in sends all packets across the VXLAN with VNID 0, but the ovs-multitenant plug-in uses the appropriate VNID for the source container.

4.2.4. Packet Flow

Suppose you have two containers, A and B, where the peer virtual Ethernet device for container A’s eth0 is named vethA and the peer for container B’s eth0 is named vethB.

If the Docker service’s use of peer virtual Ethernet devices is not already familiar to you, review Docker’s advanced networking documentation.

Now suppose first that container A is on the local host and container B is also on the local host. Then the flow of packets from container A to container B is as follows:

eth0 (in A’s netns)

Next, suppose instead that container A is on the local host and container B is on a remote host on the cluster network. Then the flow of packets from container A to container B is as follows:

eth0 (in A’s netns)

Finally, if container A connects to an external host, the traffic looks like:

eth0 (in A’s netns)

Almost all packet delivery decisions are performed with OpenFlow rules in the OVS bridge br0, which simplifies the plug-in network architecture and provides flexible routing. In the case of the ovs-multitenant plug-in, this also provides enforceable network isolation.
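The three cases above (local pod, remote pod, external host) can be modeled as a simple destination lookup. The Python sketch below is a deliberately simplified model built from the device descriptions in the node design section; it is not the actual OpenFlow rule set, it ignores service IPs and VNID checks, and the function name and return labels are hypothetical.

```python
import ipaddress

# Default cluster network from the text; configurable in a real cluster.
CLUSTER_NETWORK = ipaddress.ip_network("10.128.0.0/14")


def egress_device(dst_ip: str, node_subnet: str) -> str:
    """Return the kind of br0 port a packet from a local pod leaves through."""
    dst = ipaddress.ip_address(dst_ip)
    if dst in ipaddress.ip_network(node_subnet):
        return "veth"    # pod on the same node: delivered to its veth port
    if dst in CLUSTER_NETWORK:
        return "vxlan0"  # pod on a remote node: VXLAN tunnel (port 1 on br0)
    return "tun0"        # external host: NAT via tun0 (port 2 on br0)


# Assume this node was allocated the first default host subnet.
node_subnet = "10.128.0.0/23"
print(egress_device("10.128.0.7", node_subnet))  # same node
print(egress_device("10.128.2.7", node_subnet))  # remote node
print(egress_device("192.0.2.10", node_subnet))  # outside the cluster network
```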

4.2.5. Network Isolation

You can use the ovs-multitenant plug-in to achieve network isolation. When a packet exits a pod assigned to a non-default project, the OVS bridge br0 tags that packet with the project’s assigned VNID. If the packet is directed to another IP address in the node’s cluster subnet, the OVS bridge only allows the packet to be delivered to the destination pod if the VNIDs match.

If a packet is received from another node via the VXLAN tunnel, the Tunnel ID is used as the VNID, and the OVS bridge only allows the packet to be delivered to a local pod if the tunnel ID matches the destination pod’s VNID.

Packets destined for other cluster subnets are tagged with their VNID and delivered to the VXLAN tunnel with a tunnel destination address of the node owning the cluster subnet.

As described before, VNID 0 is privileged in that traffic with any VNID is allowed to enter any pod assigned VNID 0, and traffic with VNID 0 is allowed to enter any pod. Only the default OpenShift Enterprise project is assigned VNID 0; all other projects are assigned unique, isolation-enabled VNIDs. Cluster administrators can optionally control the pod network for the project using the administrator CLI.
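The isolation rule described in this section reduces to a small predicate. The following Python sketch expresses it under the assumption that each packet carries the VNID of its source project and each pod has the VNID of its own project; the function name and the example VNID values are hypothetical.

```python
PRIVILEGED_VNID = 0  # VNID of the default project, per the text


def vnid_allows(source_vnid: int, dest_vnid: int) -> bool:
    """Return True if traffic tagged source_vnid may enter a pod with dest_vnid."""
    if source_vnid == PRIVILEGED_VNID or dest_vnid == PRIVILEGED_VNID:
        # VNID 0 traffic may enter any pod, and any traffic may enter VNID 0 pods.
        return True
    # Otherwise the packet is delivered only if the VNIDs match.
    return source_vnid == dest_vnid


print(vnid_allows(11, 11))  # same project
print(vnid_allows(11, 12))  # different projects
print(vnid_allows(0, 12))   # from the default project
print(vnid_allows(11, 0))   # to the default project
```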

4.3. Authentication

4.3.1. Overview

The authentication layer identifies the user associated with requests to the OpenShift Enterprise API. The authorization layer then uses information about the requesting user to determine if the request should be allowed.

As an administrator, you can configure authentication using a master configuration file.

4.3.2. Users and Groups

A user in OpenShift Enterprise is an entity that can make requests to the OpenShift Enterprise API. Typically, this represents the account of a developer or administrator that is interacting with OpenShift Enterprise.

A user can be assigned to one or more groups, each of which represents a certain set of users. Groups are useful when managing authorization policies to grant permissions to multiple users at once (for example, allowing access to objects within a project) rather than granting them to users individually.

In addition to explicitly defined groups, there are also system groups, or virtual groups, that are automatically provisioned by OpenShift. These can be seen when viewing cluster bindings.

In the default set of virtual groups, note the following in particular:

| Virtual Group | Description |

|---|---|

| system:authenticated | Automatically associated with all authenticated users. |

| system:authenticated:oauth | Automatically associated with all users authenticated with an OAuth access token. |

| system:unauthenticated | Automatically associated with all unauthenticated users. |

4.3.3. API Authentication

Requests to the OpenShift Enterprise API are authenticated using the following methods:

- OAuth Access Tokens
  - Obtained from the OpenShift Enterprise OAuth server using the <master>/oauth/authorize and <master>/oauth/token endpoints.
  - Sent as an Authorization: Bearer… header or an access_token=… query parameter.

- X.509 Client Certificates
  - Requires an HTTPS connection to the API server.
  - Verified by the API server against a trusted certificate authority bundle.
  - The API server creates and distributes certificates to controllers to authenticate themselves.

Any request with an invalid access token or an invalid certificate is rejected by the authentication layer with a 401 error.

If no access token or certificate is presented, the authentication layer assigns the system:anonymous virtual user and the system:unauthenticated virtual group to the request. This allows the authorization layer to determine which requests, if any, an anonymous user is allowed to make.
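As a minimal sketch of the token-based method, the Python snippet below builds (but does not send) a request that carries an OAuth access token in the Authorization: Bearer header. The master URL, the request path, and the token value are placeholders invented for this example, not real endpoints or credentials.

```python
import urllib.request


def build_api_request(master_url: str, path: str, token: str) -> urllib.request.Request:
    """Build an API request that presents an OAuth access token as a Bearer header."""
    return urllib.request.Request(
        master_url.rstrip("/") + path,
        headers={"Authorization": f"Bearer {token}"},
    )


# Placeholder values; substitute a real master URL, API path, and token.
request = build_api_request("https://master.example.com:8443", "/example/path", "<token>")
print(request.full_url)
print(request.get_header("Authorization"))
```

Sending the request with `urllib.request.urlopen` would raise `urllib.error.HTTPError` with code 401 if the authentication layer rejected the token.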

See the REST API Overview for more information and examples.

4.3.4. OAuth

The OpenShift Enterprise master includes a built-in OAuth server. Users obtain OAuth access tokens to authenticate themselves to the API.

When a person requests a new OAuth token, the OAuth server uses the configured identity provider to determine the identity of the person making the request.

It then determines what user that identity maps to, creates an access token for that user, and returns the token for use.

OAuth Clients

Every request for an OAuth token must specify the OAuth client that will receive and use the token. The following OAuth clients are automatically created when starting the OpenShift Enterprise API:

| OAuth Client | Usage |
|---|---|
| openshift-web-console | Requests tokens for the web console. |
| openshift-browser-client | Requests tokens at <master>/oauth/token/request with a user-agent that can handle interactive logins. |
| openshift-challenging-client | Requests tokens with a user-agent that can handle WWW-Authenticate challenges. |

To register additional clients:

    $ oc create -f <(echo '
    {
      "kind": "OAuthClient",
      "apiVersion": "v1",
      "metadata": {
        "name": "demo"
      },
      "secret": "...",
      "redirectURIs": [
        "http://www.example.com/"
      ]
    }')

- The name of the OAuth client is used as the client_id parameter when making requests to <master>/oauth/authorize and <master>/oauth/token.
- The secret is used as the client_secret parameter when making requests to <master>/oauth/token.
- The redirect_uri parameter specified in requests to <master>/oauth/authorize and <master>/oauth/token must be equal to (or prefixed by) one of the URIs in redirectURIs.
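The redirect URI rule can be illustrated with a short check. This Python sketch assumes a plain string comparison (exact match or string prefix), which is one reading of "equal to (or prefixed by)"; the OAuth server's actual matching logic may differ, and the function name is hypothetical.

```python
def redirect_uri_allowed(redirect_uri: str, registered_uris: list) -> bool:
    """Return True if redirect_uri equals, or starts with, a registered URI."""
    # str.startswith also returns True for an exact match.
    return any(redirect_uri.startswith(uri) for uri in registered_uris)


# redirectURIs from the example client registered above.
registered = ["http://www.example.com/"]
print(redirect_uri_allowed("http://www.example.com/", registered))
print(redirect_uri_allowed("http://www.example.com/callback", registered))
print(redirect_uri_allowed("http://other.example.org/", registered))
```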

Integrations

All requests for OAuth tokens involve a request to <master>/oauth/authorize. Most authentication integrations place an authenticating proxy in front of this endpoint, or configure OpenShift Enterprise to validate credentials against a backing identity provider. Requests to <master>/oauth/authorize can come from user-agents that cannot display interactive login pages, such as the CLI. Therefore, OpenShift Enterprise supports authenticating using a WWW-Authenticate challenge in addition to interactive login flows.

If an authenticating proxy is placed in front of the <master>/oauth/authorize endpoint, it should send unauthenticated, non-browser user-agents WWW-Authenticate challenges, rather than displaying an interactive login page or redirecting to an interactive login flow.

To prevent cross-site request forgery (CSRF) attacks against browser clients, Basic authentication challenges should only be sent if an X-CSRF-Token header is present on the request. Clients that expect to receive Basic WWW-Authenticate challenges should set this header to a non-empty value.

If the authenticating proxy cannot support WWW-Authenticate challenges, or if OpenShift Enterprise is configured to use an identity provider that does not support WWW-Authenticate challenges, users can visit <master>/oauth/token/request using a browser to obtain an access token manually.

Obtaining OAuth Tokens

The OAuth server supports the standard authorization code grant and implicit grant OAuth authorization flows.

When requesting an OAuth token using the implicit grant flow (response_type=token) with a client_id configured to request WWW-Authenticate challenges (like openshift-challenging-client), these are the possible server responses from /oauth/authorize, and how they should be handled:

| Status | Content | Client response |
|---|---|---|
| 302 | … | Use the … |
| 302 | … | Fail, optionally surfacing the … |
| 302 | Other | Follow the redirect, and process the result using these rules |
| 401 | … | Respond to challenge if type is recognized (e.g. …) |
| 401 | … | No challenge authentication is possible. Fail and show response body (which might contain links or details on alternate methods to obtain an OAuth token) |
| Other | Other | Fail, optionally surfacing response body to the user |

4.4. Authorization

4.4.1. Overview

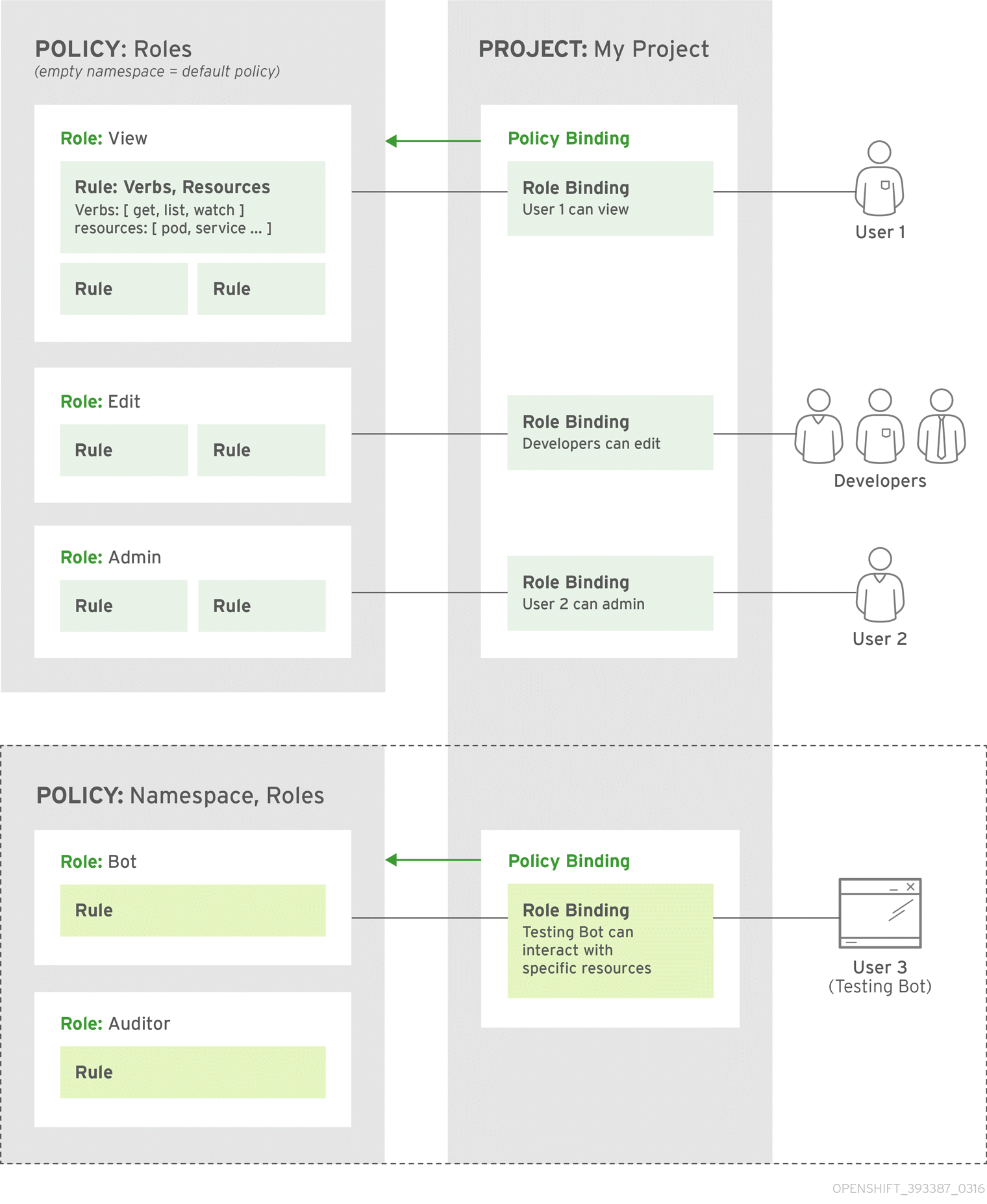

Authorization policies determine whether a user is allowed to perform a given action within a project. This allows platform administrators to use the cluster policy to control who has various access levels to the OpenShift Enterprise platform itself and all projects. It also allows developers to use local policy to control who has access to their projects. Note that authorization is a separate step from authentication, which is more about determining the identity of who is taking the action.

Authorization is managed using:

| Rules | Sets of permitted verbs on a set of objects. For example, whether something can … |
| Roles | Collections of rules. Users and groups can be associated with, or bound to, multiple roles at the same time. |
| Bindings | Associations between users and/or groups with a role. |

Cluster administrators can visualize rules, roles, and bindings using the CLI. For example, consider the following excerpt from viewing a policy, showing rule sets for the admin and basic-user default roles:

    admin       Verbs                                   Resources                                                                                            Resource Names   Extension
                [create delete get list update watch]   [projects resourcegroup:exposedkube resourcegroup:exposedopenshift resourcegroup:granter secrets]   []
                [get list watch]                        [resourcegroup:allkube resourcegroup:allkube-status resourcegroup:allopenshift-status resourcegroup:policy]   []
    basic-user  Verbs                                   Resources                                                                                            Resource Names   Extension
                [get]                                   [users]                                                                                              [~]
                [list]                                  [projectrequests]                                                                                    []
                [list]                                  [projects]                                                                                           []
                [create]                                [subjectaccessreviews]                                                                               []               IsPersonalSubjectAccessReview

The following excerpt from viewing policy bindings shows the above roles bound to various users and groups:

    RoleBinding[admins]:
        Role:   admin
        Users:  [alice system:admin]
        Groups: []
    RoleBinding[basic-user]:
        Role:   basic-user
        Users:  [joe]
        Groups: [devel]

The relationships between the policy roles, policy bindings, users, and developers are illustrated below.

4.4.2. Evaluating Authorization

Several factors are combined to make the decision when OpenShift Enterprise evaluates authorization:

| Identity | In the context of authorization, both the user name and list of groups the user belongs to. |
| Action | The action being performed. In most cases, this consists of: … |
| Bindings | The full list of bindings. |

OpenShift Enterprise evaluates authorizations using the following steps:

- The identity and the project-scoped action are used to find all bindings that apply to the user or their groups.

- Bindings are used to locate all the roles that apply.

- Roles are used to find all the rules that apply.

- The action is checked against each rule to find a match.

- If no matching rule is found, the action is then denied by default.
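The evaluation steps above can be sketched in a few lines of Python. This is a simplified model, not the platform's implementation: it ignores project scoping, resource names, and extensions, and the `Rule`, `Binding`, and `is_allowed` names are hypothetical. The sample roles and bindings are reduced versions of the admin and basic-user excerpts shown earlier.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Rule:
    verbs: frozenset
    resources: frozenset


@dataclass
class Binding:
    role: str
    users: set = field(default_factory=set)
    groups: set = field(default_factory=set)


def is_allowed(user, groups, verb, resource, bindings, roles):
    # Step 1: find the bindings that apply to the user or their groups.
    applicable = [b for b in bindings
                  if user in b.users or b.groups & set(groups)]
    # Steps 2-4: bindings -> roles -> rules, then look for a matching rule.
    for binding in applicable:
        for rule in roles.get(binding.role, ()):
            if verb in rule.verbs and resource in rule.resources:
                return True
    # Step 5: no matching rule was found, so deny by default.
    return False


ROLES = {
    "admin": [Rule(frozenset({"create", "delete", "get", "list", "update", "watch"}),
                   frozenset({"projects", "secrets"}))],
    "basic-user": [Rule(frozenset({"list"}), frozenset({"projects"}))],
}
BINDINGS = [
    Binding("admin", users={"alice", "system:admin"}),
    Binding("basic-user", users={"joe"}, groups={"devel"}),
]

print(is_allowed("joe", [], "list", "projects", BINDINGS, ROLES))
print(is_allowed("joe", [], "delete", "projects", BINDINGS, ROLES))
```

Because there are only "allow" rules, the order in which bindings are visited does not change the outcome; a request is denied only when nothing matches.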

4.4.3. Cluster Policy and Local Policy

There are two levels of authorization policy:

| Cluster policy | Roles and bindings that are applicable across all projects. Roles that exist in the cluster policy are considered cluster roles. Cluster bindings can only reference cluster roles. |

| Local policy | Roles and bindings that are scoped to a given project. Roles that exist only in a local policy are considered local roles. Local bindings can reference both cluster and local roles. |

This two-level hierarchy allows re-usability over multiple projects through the cluster policy while allowing customization inside of individual projects through local policies.

During evaluation, both the cluster bindings and the local bindings are used. For example:

- Cluster-wide "allow" rules are checked.

- Locally-bound "allow" rules are checked.

- Deny by default.

4.4.4. Roles

Roles are collections of policy rules, which are sets of permitted verbs that can be performed on a set of resources. OpenShift Enterprise includes a set of default roles that can be added to users and groups in the cluster policy or in a local policy.

| Default Role | Description |

|---|---|

| admin | A project manager. If used in a local binding, an admin user will have rights to view any resource in the project and modify any resource in the project except for role creation and quota. If the cluster-admin wants to allow an admin to modify roles, the cluster-admin must create a project-scoped … |

| basic-user | A user that can get basic information about projects and users. |

| cluster-admin | A super-user that can perform any action in any project. When granted to a user within a local policy, they have full control over quota and roles and every action on every resource in the project. |

| cluster-status | A user that can get basic cluster status information. |

| edit | A user that can modify most objects in a project, but does not have the power to view or modify roles or bindings. |

| self-provisioner | A user that can create their own projects. |

| view | A user who cannot make any modifications, but can see most objects in a project. They cannot view or modify roles or bindings. |

Remember that users and groups can be associated with, or bound to, multiple roles at the same time.

Cluster administrators can visualize these roles, including a matrix of the verbs and resources each is associated with, by using the CLI to view the cluster roles. Additional system: roles are listed as well, which are used for various OpenShift Enterprise system and component operations.

By default in a local policy, only the binding for the admin role is immediately listed when using the CLI to view local bindings. However, if other default roles are added to users and groups within a local policy, they become listed in the CLI output, as well.

If you find that these roles do not suit you, a cluster-admin user can create a policyBinding object named <projectname>:default with the CLI using a JSON file. This allows the project admin to bind users to roles that are defined only in the <projectname> local policy.

The cluster- role assigned by the project administrator is limited in a project. It is not the same cluster- role granted by the cluster-admin or system:admin.

Cluster roles are roles defined at the cluster level, but can be bound either at the cluster level or at the project level.

Learn how to create a local role for a project.

4.4.4.1. Updating Cluster Roles

After any OpenShift Enterprise cluster upgrade, the recommended default roles may have been updated. See Updating Policy Definitions for instructions on getting to the new recommendations using:

    $ oadm policy reconcile-cluster-roles

4.4.5. Security Context Constraints

In addition to authorization policies that control what a user can do, OpenShift Enterprise provides security context constraints (SCC) that control the actions that a pod can perform and what it has the ability to access. Administrators can manage SCCs using the CLI.

SCCs are also very useful for managing access to persistent storage.

SCCs are objects that define a set of conditions that a pod must run with in order to be accepted into the system. They allow an administrator to control the following:

- Running of privileged containers.

- Capabilities a container can request to be added.

- Use of host directories as volumes.

- The SELinux context of the container.

- The user ID.

- The use of host namespaces and networking.

- Allocating an FSGroup that owns the pod’s volumes.

- Configuring allowable supplemental groups.

- Requiring the use of a read only root file system.

- Controlling the usage of volume types.

Seven SCCs are added to the cluster by default, and are viewable by cluster administrators using the CLI:

    $ oc get scc
    NAME               PRIV    CAPS   SELINUX     RUNASUSER          FSGROUP     SUPGROUP    PRIORITY   READONLYROOTFS   VOLUMES
    anyuid             false   []     MustRunAs   RunAsAny           RunAsAny    RunAsAny    10         false            [configMap downwardAPI emptyDir persistentVolumeClaim secret]
    hostaccess         false   []     MustRunAs   MustRunAsRange     MustRunAs   RunAsAny    <none>     false            [configMap downwardAPI emptyDir hostPath persistentVolumeClaim secret]
    hostmount-anyuid   false   []     MustRunAs   RunAsAny           RunAsAny    RunAsAny    <none>     false            [configMap downwardAPI emptyDir hostPath persistentVolumeClaim secret]
    hostnetwork        false   []     MustRunAs   MustRunAsRange     MustRunAs   MustRunAs   <none>     false            [configMap downwardAPI emptyDir persistentVolumeClaim secret]
    nonroot            false   []     MustRunAs   MustRunAsNonRoot   RunAsAny    RunAsAny    <none>     false            [configMap downwardAPI emptyDir persistentVolumeClaim secret]
    privileged         true    []     RunAsAny    RunAsAny           RunAsAny    RunAsAny    <none>     false            [*]
    restricted         false   []     MustRunAs   MustRunAsRange     MustRunAs   RunAsAny    <none>     false            [configMap downwardAPI emptyDir persistentVolumeClaim secret]

The definition for each SCC is also viewable by cluster administrators using the CLI. For example, for the privileged SCC:

    # oc export scc/privileged
    allowHostDirVolumePlugin: true
    allowHostIPC: true
    allowHostNetwork: true
    allowHostPID: true
    allowHostPorts: true
    allowPrivilegedContainer: true
    allowedCapabilities: null
    apiVersion: v1
    defaultAddCapabilities: null
    fsGroup:
      type: RunAsAny
    groups:
    - system:cluster-admins
    - system:nodes
    kind: SecurityContextConstraints
    metadata:
      annotations:
        kubernetes.io/description: 'privileged allows access to all privileged and host
          features and the ability to run as any user, any group, any fsGroup, and with
          any SELinux context. WARNING: this is the most relaxed SCC and should be used
          only for cluster administration. Grant with caution.'
      creationTimestamp: null
      name: privileged
    priority: null
    readOnlyRootFilesystem: false
    requiredDropCapabilities: null
    runAsUser:
      type: RunAsAny
    seLinuxContext:
      type: RunAsAny
    supplementalGroups:
      type: RunAsAny
    users:
    - system:serviceaccount:default:registry
    - system:serviceaccount:default:router
    - system:serviceaccount:openshift-infra:build-controller
    volumes:
    - '*'

- fsGroup: The FSGroup strategy, which dictates the allowable values for the Security Context.
- groups: The groups that have access to this SCC.
- runAsUser: The run as user strategy type, which dictates the allowable values for the Security Context.
- seLinuxContext: The SELinux context strategy type, which dictates the allowable values for the Security Context.
- supplementalGroups: The supplemental groups strategy, which dictates the allowable supplemental groups for the Security Context.
- users: The users who have access to this SCC.

The users and groups fields on the SCC control which SCCs can be used. By default, cluster administrators, nodes, and the build controller are granted access to the privileged SCC. All authenticated users are granted access to the restricted SCC.

The privileged SCC:

- allows privileged pods.

- allows host directories to be mounted as volumes.

- allows a pod to run as any user.

- allows a pod to run with any MCS label.

- allows a pod to use the host’s IPC namespace.

- allows a pod to use the host’s PID namespace.

- allows a pod to use any FSGroup.

- allows a pod to use any supplemental group.

The restricted SCC:

- ensures pods cannot run as privileged.

- ensures pods cannot use host directory volumes.

- requires that a pod run as a user in a pre-allocated range of UIDs.

- requires that a pod run with a pre-allocated MCS label.

- allows a pod to use any FSGroup.

- allows a pod to use any supplemental group.

For more information about each SCC, see the kubernetes.io/description annotation available on the SCC.

SCCs consist of settings and strategies that control the security features a pod has access to. These settings fall into three categories:

| Controlled by a boolean | Fields of this type default to the most restrictive value. For example, … |
| Controlled by an allowable set | Fields of this type are checked against the set to ensure their value is allowed. |
| Controlled by a strategy | Items that have a strategy to generate a value provide: … |

4.4.5.1. SCC Strategies

4.4.5.1.1. RunAsUser

- MustRunAs - Requires a runAsUser to be configured. Uses the configured runAsUser as the default. Validates against the configured runAsUser.

- MustRunAsRange - Requires minimum and maximum values to be defined if not using pre-allocated values. Uses the minimum as the default. Validates against the entire allowable range.

- MustRunAsNonRoot - Requires that the pod be submitted with a non-zero runAsUser or have the USER directive defined in the image. No default provided.

- RunAsAny - No default provided. Allows any runAsUser to be specified.
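To make the default and validation behavior of the four RunAsUser strategies concrete, here is a small Python model. It is a sketch under stated assumptions rather than the admission controller's code: the function name and parameters are hypothetical, pre-allocated values are not modeled (the UID or range is always passed in explicitly), and `None` stands for "no default provided".

```python
from typing import Optional, Tuple


def resolve_run_as_user(strategy: str, requested: Optional[int] = None, *,
                        uid: Optional[int] = None,
                        uid_range: Optional[Tuple[int, int]] = None,
                        image_defines_user: bool = False) -> Optional[int]:
    """Return the effective runAsUser, or raise ValueError if validation fails."""
    if strategy == "MustRunAs":
        if uid is None:
            raise ValueError("MustRunAs requires a configured runAsUser")
        value = uid if requested is None else requested  # configured UID is the default
        if value != uid:
            raise ValueError(f"runAsUser {value} does not match {uid}")
        return value
    if strategy == "MustRunAsRange":
        if uid_range is None:
            raise ValueError("MustRunAsRange requires minimum and maximum values")
        low, high = uid_range
        value = low if requested is None else requested  # minimum is the default
        if not low <= value <= high:
            raise ValueError(f"runAsUser {value} is outside {low}-{high}")
        return value
    if strategy == "MustRunAsNonRoot":
        if requested is None:
            if not image_defines_user:
                raise ValueError("need a non-zero runAsUser or a USER directive")
            return None  # no default; the image's USER applies
        if requested == 0:
            raise ValueError("runAsUser must be non-zero")
        return requested
    if strategy == "RunAsAny":
        return requested  # no default, any value accepted
    raise ValueError(f"unknown strategy: {strategy}")


print(resolve_run_as_user("MustRunAs", uid=1000))
print(resolve_run_as_user("MustRunAsRange", uid_range=(1000, 1999)))
print(resolve_run_as_user("RunAsAny", 0))
```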

4.4.5.1.2. SELinuxContext

- MustRunAs - Requires seLinuxOptions to be configured if not using pre-allocated values. Uses seLinuxOptions as the default. Validates against seLinuxOptions.

- RunAsAny - No default provided. Allows any seLinuxOptions to be specified.

4.4.5.1.3. SupplementalGroups

- MustRunAs - Requires at least one range to be specified if not using pre-allocated values. Uses the minimum value of the first range as the default. Validates against all ranges.

- RunAsAny - No default provided. Allows any supplementalGroups to be specified.

4.4.5.1.4. FSGroup

- MustRunAs - Requires at least one range to be specified if not using pre-allocated values. Uses the minimum value of the first range as the default. Validates against the first ID in the first range.

- RunAsAny - No default provided. Allows any fsGroup ID to be specified.

4.4.5.2. Controlling Volumes

The usage of specific volume types can be controlled by setting the volumes field of the SCC. The allowable values of this field correspond to the volume sources that are defined when creating a volume:

- azureFile

- flocker

- flexVolume

- hostPath

- emptyDir

- gcePersistentDisk

- awsElasticBlockStore

- gitRepo

- secret

- nfs

- iscsi

- glusterfs

- persistentVolumeClaim

- rbd

- cinder

- cephFS

- downwardAPI

- fc

- configMap

- *

The recommended minimum set of allowed volumes for new SCCs is configMap, downwardAPI, emptyDir, persistentVolumeClaim, and secret.

* is a special value to allow the use of all volume types.

For backwards compatibility, the usage of allowHostDirVolumePlugin overrides settings in the volumes field. For example, if allowHostDirVolumePlugin is set to false but hostPath is allowed in the volumes field, then the hostPath value will be removed from volumes.

4.4.5.3. Admission

Admission control with SCCs allows for control over the creation of resources based on the capabilities granted to a user.

In terms of the SCCs, this means that an admission controller can inspect the user information made available in the context to retrieve an appropriate set of SCCs. Doing so ensures the pod is authorized to make requests about its operating environment or to generate a set of constraints to apply to the pod.

The set of SCCs that admission uses to authorize a pod is determined by the user identity and the groups that the user belongs to. Additionally, if the pod specifies a service account, the set of allowable SCCs includes any constraints accessible to the service account.

Admission uses the following approach to create the final security context for the pod:

- Retrieve all SCCs available for use.

- Generate field values for security context settings that were not specified on the request.

- Validate the final settings against the available constraints.

If a matching set of constraints is found, then the pod is accepted. If the request cannot be matched to an SCC, the pod is rejected.

A pod must validate every field against the SCC. The following are examples for just two of the fields that must be validated:

These examples are in the context of a strategy using the preallocated values.

A FSGroup SCC Strategy of MustRunAs

If the pod defines a fsGroup ID, then that ID must equal the default FSGroup ID. Otherwise, the pod is not validated by that SCC and the next SCC is evaluated. If the FSGroup strategy is RunAsAny and the pod omits a fsGroup ID, then the pod matches the SCC based on FSGroup (though other strategies may not validate and thus cause the pod to fail).

A SupplementalGroups SCC Strategy of MustRunAs

If the pod specification defines one or more SupplementalGroups IDs, then the pod’s IDs must equal one of the IDs in the namespace’s openshift.io/sa.scc.supplemental-groups annotation. Otherwise, the pod is not validated by that SCC and the next SCC is evaluated. If the SupplementalGroups setting is RunAsAny and the pod specification omits a SupplementalGroups ID, then the pod matches the SCC based on SupplementalGroups (though other strategies may not validate and thus cause the pod to fail).

4.4.5.3.1. SCC Prioritization

SCCs have a priority field that affects the ordering when the admission controller attempts to validate a request. A higher-priority SCC is moved to the front of the set when sorting. When the complete set of available SCCs is determined, they are ordered by:

- Highest priority first, nil is considered a 0 priority

- If priorities are equal, the SCCs will be sorted from most restrictive to least restrictive

- If both priorities and restrictions are equal the SCCs will be sorted by name

By default, the anyuid SCC granted to cluster administrators is given priority in their SCC set. This allows cluster administrators to run pods as any user without specifying a RunAsUser on the pod’s SecurityContext. The administrator may still specify a RunAsUser if they wish.
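The three ordering rules above can be sketched as a single sort key. This is an illustration under stated assumptions: the `SCC` class and the numeric `restrictiveness` score are hypothetical stand-ins, since the text does not say how restrictiveness is measured, and the scores in the usage note are invented for the example.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class SCC:
    name: str
    priority: Optional[int]  # None models a nil priority
    restrictiveness: int     # hypothetical score: higher means more restrictive


def sort_sccs(sccs):
    """Order SCCs by priority, then restrictiveness, then name."""
    return sorted(
        sccs,
        key=lambda s: (
            -(s.priority or 0),   # highest priority first; nil counts as 0
            -s.restrictiveness,   # most restrictive first
            s.name,               # final tie-break by name
        ),
    )
```

With `anyuid` at priority 10 and the remaining SCCs at nil priority, `anyuid` sorts first, followed by the others from most to least restrictive, with name order breaking any remaining ties.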

4.4.5.3.2. Understanding Pre-allocated Values and Security Context Constraints

The admission controller is aware of certain conditions in the security context constraints that trigger it to look up pre-allocated values from a namespace and populate the security context constraint before processing the pod. Each SCC strategy is evaluated independently of other strategies, with the pre-allocated values (where allowed) for each policy aggregated with pod specification values to make the final values for the various IDs defined in the running pod.

The following SCCs cause the admission controller to look for pre-allocated values when no ranges are defined in the pod specification:

- A RunAsUser strategy of MustRunAsRange with no minimum or maximum set. Admission looks for the openshift.io/sa.scc.uid-range annotation to populate range fields.

- An SELinuxContext strategy of MustRunAs with no level set. Admission looks for the openshift.io/sa.scc.mcs annotation to populate the level.

- An FSGroup strategy of MustRunAs. Admission looks for the openshift.io/sa.scc.supplemental-groups annotation.

- A SupplementalGroups strategy of MustRunAs. Admission looks for the openshift.io/sa.scc.supplemental-groups annotation.

During the generation phase, the security context provider will default any values that are not specifically set in the pod. Defaulting is based on the strategy being used:

- RunAsAny and MustRunAsNonRoot strategies do not provide default values. Thus, if the pod needs a field defined (for example, a group ID), this field must be defined inside the pod specification.

- MustRunAs (single value) strategies provide a default value which is always used. As an example, for group IDs: even if the pod specification defines its own ID value, the namespace’s default field will also appear in the pod’s groups.

- MustRunAsRange and MustRunAs (range-based) strategies provide the minimum value of the range. As with a single value MustRunAs strategy, the namespace’s default value will appear in the running pod. If a range-based strategy is configurable with multiple ranges, it will provide the minimum value of the first configured range.

FSGroup and SupplementalGroups strategies fall back to the openshift.io/sa.scc.uid-range annotation if the openshift.io/sa.scc.supplemental-groups annotation does not exist on the namespace. If neither exists, the SCC will fail to create.

By default, the annotation-based FSGroup strategy configures itself with a single range based on the minimum value for the annotation. For example, if your annotation reads 1/3, the FSGroup strategy will configure itself with a minimum and maximum of 1. If you want to allow more groups to be accepted for the FSGroup field, you can configure a custom SCC that does not use the annotation.

The openshift.io/sa.scc.supplemental-groups annotation accepts a comma-delimited list of blocks in the format of <start>/<length> or <start>-<end>. The openshift.io/sa.scc.uid-range annotation accepts only a single block.
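The block formats and the FSGroup behavior described above can be sketched as a small parser. The function names are hypothetical, and the sketch assumes that `<start>/<length>` denotes `length` consecutive IDs beginning at `start`; it is an illustration, not the parser OpenShift uses.

```python
def parse_block(block):
    """Parse one '<start>/<length>' or '<start>-<end>' block into (min, max)."""
    text = block.strip()
    if "/" in text:
        start_s, length_s = text.split("/", 1)
        start, length = int(start_s), int(length_s)
        if length < 1:
            raise ValueError(f"length must be positive in {text!r}")
        # Assumption: the block covers `length` IDs starting at `start`.
        return (start, start + length - 1)
    if "-" in text:
        start_s, end_s = text.split("-", 1)
        start, end = int(start_s), int(end_s)
        if end < start:
            raise ValueError(f"end precedes start in {text!r}")
        return (start, end)
    raise ValueError(f"unrecognized block {text!r}")


def parse_supplemental_groups(annotation):
    """Parse a comma-delimited list of blocks into a list of (min, max) ranges."""
    return [parse_block(block) for block in annotation.split(",")]


def fs_group_range(annotation):
    """Annotation-based FSGroup range: a single ID, the minimum of the first block."""
    start, _ = parse_supplemental_groups(annotation)[0]
    return (start, start)
```

Under these assumptions, an annotation of `1/3` parses to the range 1 through 3 for SupplementalGroups, while `fs_group_range("1/3")` gives a minimum and maximum of 1, matching the example in the text.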

4.5. Persistent Storage

4.5.1. Overview

Managing storage is a distinct problem from managing compute resources. OpenShift Enterprise leverages the Kubernetes persistent volume (PV) framework to allow administrators to provision persistent storage for a cluster. Using persistent volume claims (PVCs), developers can request PV resources without having specific knowledge of the underlying storage infrastructure.

PVCs are specific to a project and are created and used by developers as a means to use a PV. PV resources on their own are not scoped to any single project; they can be shared across the entire OpenShift Enterprise cluster and claimed from any project. After a PV has been bound to a PVC, however, that PV cannot then be bound to additional PVCs. This has the effect of scoping a bound PV to a single namespace (that of the binding project).

PVs are defined by a PersistentVolume API object, which represents a piece of existing networked storage in the cluster that has been provisioned by an administrator. It is a resource in the cluster just like a node is a cluster resource. PVs are volume plug-ins like Volumes, but have a lifecycle independent of any individual pod that uses the PV. PV objects capture the details of the implementation of the storage, be that NFS, iSCSI, or a cloud-provider-specific storage system.

High-availability of storage in the infrastructure is left to the underlying storage provider.

PVCs are defined by a PersistentVolumeClaim API object, which represents a request for storage by a developer. It is similar to a pod in that pods consume node resources and PVCs consume PV resources. For example, pods can request specific levels of resources (e.g., CPU and memory), while PVCs can request specific storage capacity and access modes (e.g., they can be mounted once read/write or many times read-only).

4.5.2. Lifecycle of a Volume and Claim

PVs are resources in the cluster. PVCs are requests for those resources and also act as claim checks to the resource. The interaction between PVs and PVCs has the following lifecycle.

4.5.2.1. Provisioning

A cluster administrator creates some number of PVs. They carry the details of the real storage that is available for use by cluster users. They exist in the API and are available for consumption.

4.5.2.2. Binding

A user creates a PersistentVolumeClaim with a specific amount of storage requested and with certain access modes. A control loop in the master watches for new PVCs, finds a matching PV (if possible), and binds them together. The user will always get at least what they asked for, but the volume may be in excess of what was requested.

Claims remain unbound indefinitely if a matching volume does not exist. Claims are bound as matching volumes become available. For example, a cluster provisioned with many 50Gi volumes would not match a PVC requesting 100Gi. The PVC can be bound when a 100Gi PV is added to the cluster.

4.5.2.3. Using

Pods use claims as volumes. The cluster inspects the claim to find the bound volume and mounts that volume for a pod. For those volumes that support multiple access modes, the user specifies which mode is desired when using their claim as a volume in a pod.

Once a user has a claim and that claim is bound, the bound PV belongs to the user for as long as they need it. Users schedule pods and access their claimed PVs by including a persistentVolumeClaim in their pod’s volumes block. See below for syntax details.

4.5.2.4. Releasing

When a user is done with a volume, they can delete the PVC object from the API which allows reclamation of the resource. The volume is considered "released" when the claim is deleted, but it is not yet available for another claim. The previous claimant’s data remains on the volume which must be handled according to policy.

4.5.2.5. Reclaiming

The reclaim policy of a PersistentVolume tells the cluster what to do with the volume after it is released. Currently, volumes can either be retained or recycled.

Retention allows for manual reclamation of the resource. For those volume plug-ins that support it, recycling performs a basic scrub on the volume (e.g., rm -rf /<volume>/*) and makes it available again for a new claim.

4.5.3. Persistent Volumes

Each PV contains a spec and status, which is the specification and status of the volume.

Example 4.1. Persistent Volume Object Definition

    apiVersion: v1
    kind: PersistentVolume
    metadata:
      name: pv0003
    spec:
      capacity:
        storage: 5Gi
      accessModes:
        - ReadWriteOnce
      persistentVolumeReclaimPolicy: Recycle
      nfs:
        path: /tmp
        server: 172.17.0.2

4.5.3.1. Types of Persistent Volumes

OpenShift Enterprise supports the following PersistentVolume plug-ins:

4.5.3.2. Capacity

Generally, a PV will have a specific storage capacity. This is set using the PV’s capacity attribute. See the Kubernetes Resource Model to understand the units expected by capacity.

Currently, storage capacity is the only resource that can be set or requested. Future attributes may include IOPS, throughput, etc.

4.5.3.3. Access Modes

A PersistentVolume can be mounted on a host in any way supported by the resource provider. Providers will have different capabilities and each PV’s access modes are set to the specific modes supported by that particular volume. For example, NFS can support multiple read/write clients, but a specific NFS PV might be exported on the server as read-only. Each PV gets its own set of access modes describing that specific PV’s capabilities.

Claims are matched to volumes with similar access modes. The only two matching criteria are access modes and size. A claim’s access modes represent a request. Therefore, the user may be granted more, but never less. For example, if a claim requests RWO, but the only volume available was an NFS PV (RWO+ROX+RWX), the claim would match NFS because it supports RWO.

Direct matches are always attempted first. The volume’s modes must match or contain more modes than you requested. The size must be greater than or equal to what is expected. If two types of volumes (NFS and iSCSI, for example) both have the same set of access modes, then either of them will match a claim with those modes. There is no ordering between types of volumes and no way to choose one type over another.

All volumes with the same modes are grouped, then sorted by size (smallest to largest). The binder gets the group with matching modes and iterates over each (in size order) until one size matches.
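The matching rules above can be sketched as follows. This is a simplified illustration, not the binder's code: the `Volume` class, the `find_match` function, and the volume names are hypothetical, and treating all volumes with extra modes as one group after the exact-match group is an assumption made to keep the sketch short.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Volume:
    name: str
    size_gi: int
    modes: frozenset  # e.g. frozenset({"RWO", "ROX"})


def find_match(volumes, requested_modes, requested_gi):
    """Return the volume a claim would bind to, or None if nothing matches."""
    requested = frozenset(requested_modes)
    # Direct matches (identical mode sets) are attempted first.
    exact = [v for v in volumes if v.modes == requested]
    # Otherwise a volume may offer more modes than requested, never fewer.
    superset = [v for v in volumes if requested < v.modes]
    for group in (exact, superset):
        # Within a group, iterate from smallest to largest until one is big enough.
        for volume in sorted(group, key=lambda v: v.size_gi):
            if volume.size_gi >= requested_gi:
                return volume
    return None  # the claim stays unbound until a matching volume appears
```

For instance, given a 10Gi RWO volume, a 100Gi RWO volume, and a 50Gi NFS volume offering RWO+ROX+RWX, a claim for 8Gi RWO gets the 10Gi volume, and a claim for RWX can only be satisfied by the NFS volume.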

The access modes are:

| Access Mode | CLI Abbreviation | Description |
|---|---|---|
| ReadWriteOnce | RWO | The volume can be mounted as read-write by a single node. |
| ReadOnlyMany | ROX | The volume can be mounted read-only by many nodes. |
| ReadWriteMany | RWX | The volume can be mounted as read-write by many nodes. |

A volume’s AccessModes are descriptors of the volume’s capabilities. They are not enforced constraints. The storage provider is responsible for runtime errors resulting from invalid use of the resource.

For example, a GCE Persistent Disk has AccessModes ReadWriteOnce and ReadOnlyMany. The user must mark their claims as read-only if they want to take advantage of the volume’s ability for ROX. Errors in the provider show up at runtime as mount errors.

4.5.3.4. Recycling Policy

The current recycling policies are:

| Recycling Policy | Description |
|---|---|
| Retain | Manual reclamation |
| Recycle | Basic scrub (e.g., rm -rf /<volume>/*) |

Currently, only NFS and HostPath support the 'Recycle' recycling policy.

4.5.3.5. Phase

A volume can be found in one of the following phases:

| Phase | Description |

|---|---|

| Available | A free resource that is not yet bound to a claim. |

| Bound | The volume is bound to a claim. |

| Released | The claim has been deleted, but the resource is not yet reclaimed by the cluster. |

| Failed | The volume has failed its automatic reclamation. |

The CLI shows the name of the PVC bound to the PV.

4.5.4. Persistent Volume Claims

Each PVC contains a spec and status, which is the specification and status of the claim.

Example 4.2. Persistent Volume Claim Object Definition

    kind: PersistentVolumeClaim
    apiVersion: v1
    metadata:
      name: myclaim
    spec:
      accessModes:
        - ReadWriteOnce
      resources:
        requests:
          storage: 8Gi

4.5.4.1. Access Modes

Claims use the same conventions as volumes when requesting storage with specific access modes.

4.5.4.2. Resources

Claims, like pods, can request specific quantities of a resource. In this case, the request is for storage. The same resource model applies to both volumes and claims.

4.5.4.3. Claims As Volumes

Pods access storage by using the claim as a volume. Claims must exist in the same namespace as the pod using the claim. The cluster finds the claim in the pod’s namespace and uses it to get the PersistentVolume backing the claim. The volume is then mounted to the host and into the pod:

    kind: Pod
    apiVersion: v1
    metadata:
      name: mypod
    spec:
      containers:
        - name: myfrontend
          image: dockerfile/nginx
          volumeMounts:
            - mountPath: "/var/www/html"
              name: mypd
      volumes:
        - name: mypd
          persistentVolumeClaim:
            claimName: myclaim

4.6. Remote Commands

4.6.1. Overview

OpenShift Enterprise takes advantage of a feature built into Kubernetes to support executing commands in containers. This is implemented using HTTP along with a multiplexed streaming protocol such as SPDY or HTTP/2.

Developers can use the CLI to execute remote commands in containers.

4.6.2. Server Operation

The Kubelet handles remote execution requests from clients. Upon receiving a request, it upgrades the response, evaluates the request headers to determine what streams (stdin, stdout, and/or stderr) to expect to receive, and waits for the client to create the streams.

After the Kubelet has received all the streams, it executes the command in the container, copying between the streams and the command’s stdin, stdout, and stderr, as appropriate. When the command terminates, the Kubelet closes the upgraded connection, as well as the underlying one.

Architecturally, there are options for running a command in a container. The supported implementation currently in OpenShift Enterprise invokes nsenter directly on the node host to enter the container’s namespaces prior to executing the command. However, custom implementations could include using docker exec, or running a "helper" container that then runs nsenter so that nsenter is not a required binary that must be installed on the host.

4.7. Port Forwarding

4.7.1. Overview

OpenShift Enterprise takes advantage of a feature built into Kubernetes to support port forwarding to pods. This is implemented using HTTP along with a multiplexed streaming protocol such as SPDY or HTTP/2.

Developers can use the CLI to port forward to a pod. The CLI listens on each local port specified by the user, forwarding via the described protocol.

4.7.2. Server Operation

The Kubelet handles port forward requests from clients. Upon receiving a request, it upgrades the response and waits for the client to create port forwarding streams. When it receives a new stream, it copies data between the stream and the pod’s port.

Architecturally, there are options for forwarding to a pod’s port. The supported implementation currently in OpenShift Enterprise invokes nsenter directly on the node host to enter the pod’s network namespace, then invokes socat to copy data between the stream and the pod’s port. However, a custom implementation could include running a "helper" pod that then runs nsenter and socat, so that those binaries are not required to be installed on the host.

4.8. Source Control Management

OpenShift Enterprise takes advantage of preexisting source control management (SCM) systems hosted either internally (such as an in-house Git server) or externally (for example, on GitHub, Bitbucket, etc.). Currently, OpenShift Enterprise only supports Git solutions.

SCM integration is tightly coupled with builds, the two points being:

- Creating a BuildConfig using a repository, which allows building your application inside of OpenShift Enterprise. You can create a BuildConfig manually or let OpenShift create it automatically by inspecting your repository.

- Triggering a build upon repository changes.

4.9. Admission Controllers

Admission control plug-ins intercept requests to the master API prior to persistence of a resource, but after the request is authenticated and authorized.

Each admission control plug-in is run in sequence before a request is accepted into the cluster. If any plug-in in the sequence rejects the request, the entire request is rejected immediately, and an error is returned to the end-user.

Admission control plug-ins may modify the incoming object in some cases to apply system configured defaults. In addition, admission control plug-ins may modify related resources as part of request processing to do things such as incrementing quota usage.
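The sequencing described above can be sketched as a simple chain. This is an illustration only: the plug-in functions, the `Rejected` exception, and the dictionary-shaped request are hypothetical and do not correspond to real OpenShift plug-ins or APIs.

```python
class Rejected(Exception):
    """Raised by a plug-in to reject the request."""


def apply_default_labels(request):
    # Hypothetical mutating plug-in: applies a system-configured default.
    request.setdefault("labels", {}).setdefault("team", "unassigned")


def deny_privileged(request):
    # Hypothetical validating plug-in: rejects privileged requests.
    if request.get("privileged"):
        raise Rejected("privileged pods are not allowed")


def run_admission(request, plugins):
    """Run each plug-in in order; the first rejection aborts the whole request."""
    for plugin in plugins:
        plugin(request)  # raises Rejected, so later plug-ins never run
    return request       # accepted, possibly modified by the plug-ins
```

A caller would wrap `run_admission` in a `try`/`except Rejected` block and return the error to the end user; persistence of the resource would happen only after the chain completes.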

The OpenShift Enterprise master has a default list of plug-ins enabled for each type of resource (Kubernetes and OpenShift Enterprise). These are required for the proper functioning of the master. Modifying these lists is not recommended unless you fully understand the consequences. Future versions of the product may use a different set of plug-ins and may change their ordering. If you do override the default list of plug-ins in the master configuration file, you are responsible for updating it to reflect requirements of newer versions of the OpenShift Enterprise master.

Cluster administrators can configure some admission control plug-ins to control certain behavior, such as:

4.10. Other API Objects

4.10.1. LimitRange

A limit range provides a mechanism to enforce min/max limits placed on resources in a Kubernetes namespace.

By adding a limit range to your namespace, you can enforce the minimum and maximum amount of CPU and Memory consumed by an individual pod or container.

See the Kubernetes documentation for more information.

4.10.2. ResourceQuota

Kubernetes can limit both the number of objects created in a namespace, and the total amount of resources requested across objects in a namespace. This facilitates sharing of a single Kubernetes cluster by several teams, each in a namespace, as a mechanism of preventing one team from starving another team of cluster resources.

See Cluster Administration and Kubernetes documentation for more information on ResourceQuota.

4.10.3. Resource

A Kubernetes Resource is something that can be requested by, allocated to, or consumed by a pod or container. Examples include memory (RAM), CPU, disk-time, and network bandwidth.

See the Developer Guide and Kubernetes documentation for more information.

4.10.4. Secret

Secrets are storage for sensitive information, such as keys, passwords, and certificates. They are accessible by the intended pod(s), but held separately from their definitions.

4.10.5. PersistentVolume

A persistent volume is an object (PersistentVolume) in the infrastructure provisioned by the cluster administrator. Persistent volumes provide durable storage for stateful applications.

See the Kubernetes documentation for more information.

4.10.6. PersistentVolumeClaim

A PersistentVolumeClaim object is a request for storage by a pod author. Kubernetes matches the claim against the pool of available volumes and binds them together. The claim is then used as a volume by a pod. Kubernetes makes sure the volume is available on the same node as the pod that requires it.

See the Kubernetes documentation for more information.

4.10.7. OAuth Objects

4.10.7.1. OAuthClient

An OAuthClient represents an OAuth client, as described in RFC 6749, section 2.

The following OAuthClient objects are automatically created:

- Client used to request tokens for the web console

- Client used to request tokens at /oauth/token/request with a user-agent that can handle interactive logins

- Client used to request tokens with a user-agent that can handle WWW-Authenticate challenges

Example 4.3. OAuthClient Object Definition

    kind: "OAuthClient"
    apiVersion: "v1"
    metadata:
      name: "openshift-web-console"
      selflink: "/osapi/v1/oAuthClients/openshift-web-console"
      resourceVersion: "1"
      creationTimestamp: "2015-01-01T01:01:01Z"
    respondWithChallenges: false
    secret: "45e27750-a8aa-11e4-b2ea-3c970e4b7ffe"
    redirectURIs:
      - "https://localhost:8443"

<1> The `name` is used as the `client_id` parameter in OAuth requests.

<2> When `respondWithChallenges` is set to `true`, unauthenticated requests to `/oauth/authorize` will result in `WWW-Authenticate` challenges, if supported by the configured authentication methods.

<3> The value in the `secret` parameter is used as the `client_secret` parameter in an authorization code flow.

<4> One or more absolute URIs can be placed in the `redirectURIs` section. The `redirect_uri` parameter sent with authorization requests must be prefixed by one of the specified `redirectURIs`.
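The prefix rule for `redirect_uri` can be sketched as a one-line check. The function name is hypothetical, and the sketch covers only the prefix comparison stated above; an actual OAuth server may apply additional validation.

```python
def redirect_uri_allowed(redirect_uri, redirect_uris):
    """Return True if redirect_uri starts with one of the client's redirectURIs."""
    return any(redirect_uri.startswith(prefix) for prefix in redirect_uris)
```

With the client from the example, `https://localhost:8443/console/oauth` is accepted because it begins with `https://localhost:8443`, while a URI on another host is not.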

4.10.7.2. OAuthClientAuthorization

An OAuthClientAuthorization represents an approval by a User for a particular OAuthClient to be given an OAuthAccessToken with particular scopes.

Creation of OAuthClientAuthorization objects is done during an authorization request to the OAuth server.

Example 4.4. OAuthClientAuthorization Object Definition

    kind: "OAuthClientAuthorization"
    apiVersion: "v1"
    metadata:
      name: "bob:openshift-web-console"
      resourceVersion: "1"
      creationTimestamp: "2015-01-01T01:01:01-00:00"
    clientName: "openshift-web-console"
    userName: "bob"
    userUID: "9311ac33-0fde-11e5-97a1-3c970e4b7ffe"
    scopes: []

4.10.7.3. OAuthAuthorizeToken

An OAuthAuthorizeToken represents an OAuth authorization code, as described in RFC 6749, section 1.3.1.

An OAuthAuthorizeToken is created by a request to the /oauth/authorize endpoint, as described in RFC 6749, section 4.1.1.

An OAuthAuthorizeToken can then be used to obtain an OAuthAccessToken with a request to the /oauth/token endpoint, as described in RFC 6749, section 4.1.3.

Example 4.5. OAuthAuthorizeToken Object Definition

    kind: "OAuthAuthorizeToken"
    apiVersion: "v1"
    metadata:
      name: "MDAwYjM5YjMtMzM1MC00NDY4LTkxODItOTA2OTE2YzE0M2Fj"
      resourceVersion: "1"
      creationTimestamp: "2015-01-01T01:01:01-00:00"
    clientName: "openshift-web-console"
    expiresIn: 300
    scopes: []
    redirectURI: "https://localhost:8443/console/oauth"
    userName: "bob"
    userUID: "9311ac33-0fde-11e5-97a1-3c970e4b7ffe"

<1> `name` represents the token name, used as an authorization code to exchange for an OAuthAccessToken.

<2> The `clientName` value is the OAuthClient that requested this token.

<3> The `expiresIn` value is the expiration in seconds from the creationTimestamp.

<4> The `redirectURI` value is the location where the user was redirected to during the authorization flow that resulted in this token.

<5> `userName` represents the name of the User this token allows obtaining an OAuthAccessToken for.

<6> `userUID` represents the UID of the User this token allows obtaining an OAuthAccessToken for.

4.10.7.4. OAuthAccessToken

An OAuthAccessToken represents an OAuth access token, as described in RFC 6749, section 1.4.

An OAuthAccessToken is created by a request to the /oauth/token endpoint, as described in RFC 6749, section 4.1.3.

Access tokens are used as bearer tokens to authenticate to the API.

Example 4.6. OAuthAccessToken Object Definition

    kind: "OAuthAccessToken"
    apiVersion: "v1"
    metadata:
      name: "ODliOGE5ZmMtYzczYi00Nzk1LTg4MGEtNzQyZmUxZmUwY2Vh"
      resourceVersion: "1"
      creationTimestamp: "2015-01-01T01:01:02-00:00"
    clientName: "openshift-web-console"
    expiresIn: 86400
    scopes: []
    redirectURI: "https://localhost:8443/console/oauth"
    userName: "bob"
    userUID: "9311ac33-0fde-11e5-97a1-3c970e4b7ffe"
    authorizeToken: "MDAwYjM5YjMtMzM1MC00NDY4LTkxODItOTA2OTE2YzE0M2Fj"

<1> `name` is the token name, which is used as a bearer token to authenticate to the API.

<2> The `clientName` value is the OAuthClient that requested this token.

<3> The `expiresIn` value is the expiration in seconds from the creationTimestamp.

<4> The `redirectURI` is where the user was redirected to during the authorization flow that resulted in this token.

<5> `userName` represents the User this token allows authentication as.

<6> `userUID` represents the User this token allows authentication as.

<7> `authorizeToken` is the name of the OAuthAuthorizeToken used to obtain this token, if any.

4.10.8. User Objects

4.10.8.1. Identity

When a user logs into OpenShift Enterprise, they do so using a configured identity provider. This determines the user’s identity, and provides that information to OpenShift Enterprise.

OpenShift Enterprise then looks for a UserIdentityMapping for that Identity:

- If the Identity already exists, but is not mapped to a User, login fails.

- If the Identity already exists, and is mapped to a User, the user is given an OAuthAccessToken for the mapped User.

- If the Identity does not exist, an Identity, User, and UserIdentityMapping are created, and the user is given an OAuthAccessToken for the mapped User.
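The three login cases above can be sketched with in-memory stand-ins for the API objects. Everything here is hypothetical: `identities` is a dictionary from Identity name to mapped User name (or `None` when unmapped), `users` is a set of User names, and naming a newly created User after the provider user name is an assumption made for the sketch.

```python
class LoginFailed(Exception):
    """Raised when an existing Identity is not mapped to a User."""


def resolve_user(provider_name, provider_user_name, identities, users):
    """Return the User name an access token would be issued for."""
    identity_name = f"{provider_name}:{provider_user_name}"
    if identity_name in identities:
        mapped_user = identities[identity_name]
        if mapped_user is None:
            # The Identity exists but has no User mapping: login fails.
            raise LoginFailed(f"{identity_name} is not mapped to a user")
        # The Identity exists and is mapped: use the mapped User.
        return mapped_user
    # The Identity does not exist: create the Identity, the User, and the mapping.
    # Assumption: the new User takes the provider user name.
    users.add(provider_user_name)
    identities[identity_name] = provider_user_name
    return provider_user_name
```

The returned User name stands in for the User an OAuthAccessToken would be issued for; token creation itself is outside the scope of this sketch.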

Example 4.7. Identity Object Definition

    kind: "Identity"
    apiVersion: "v1"
    metadata:
      name: "anypassword:bob"
      uid: "9316ebad-0fde-11e5-97a1-3c970e4b7ffe"
      resourceVersion: "1"
      creationTimestamp: "2015-01-01T01:01:01-00:00"
    providerName: "anypassword"
    providerUserName: "bob"
    user:
      name: "bob"
      uid: "9311ac33-0fde-11e5-97a1-3c970e4b7ffe"

<1> The identity name must be in the form providerName:providerUserName.

<2> `providerName` is the name of the identity provider.

<3> `providerUserName` is the name that uniquely represents this identity in the scope of the identity provider.

<4> The `name` in the `user` parameter is the name of the user this identity maps to.

<5> The `uid` represents the UID of the user this identity maps to.

4.10.8.2. User

A User represents an actor in the system. Users are granted permissions by adding roles to users or to their groups.

User objects are created automatically on first login, or can be created via the API.

Example 4.8. User Object Definition

    kind: "User"
    apiVersion: "v1"
    metadata:
      name: "bob"
      uid: "9311ac33-0fde-11e5-97a1-3c970e4b7ffe"
      resourceVersion: "1"
      creationTimestamp: "2015-01-01T01:01:01-00:00"
    identities:
      - "anypassword:bob"
    fullName: "Bob User"

<1> `name` is the user name used when adding roles to a user.

<2> The values in `identities` are Identity objects that map to this user. May be `null` or empty for users that cannot log in.

<3> The `fullName` value is an optional display name of the user.

4.10.8.3. UserIdentityMapping

A UserIdentityMapping maps an Identity to a User.

Creating, updating, or deleting a UserIdentityMapping modifies the corresponding fields in the Identity and User objects.

An Identity can only map to a single User, so logging in as a particular identity unambiguously determines the User.

A User can have multiple identities mapped to it. This allows multiple login methods to identify the same User.

Example 4.9. UserIdentityMapping Object Definition

    kind: "UserIdentityMapping"
    apiVersion: "v1"
    metadata:
      name: "anypassword:bob"
      uid: "9316ebad-0fde-11e5-97a1-3c970e4b7ffe"
      resourceVersion: "1"
    identity:
      name: "anypassword:bob"
      uid: "9316ebad-0fde-11e5-97a1-3c970e4b7ffe"
    user:
      name: "bob"
      uid: "9311ac33-0fde-11e5-97a1-3c970e4b7ffe"

<1> The `UserIdentityMapping` name matches the mapped `Identity` name.

4.10.8.4. Group

A Group represents a list of users in the system. Groups are granted permissions by adding roles to users or to their groups.

Example 4.10. Group Object Definition