Windows Container Support for OpenShift

Red Hat OpenShift for Windows Containers Guide

Abstract

Chapter 1. Red Hat OpenShift support for Windows Containers overview

Red Hat OpenShift support for Windows Containers is a feature providing the ability to run Windows compute nodes in an OpenShift Container Platform cluster. This is possible by using the Red Hat Windows Machine Config Operator (WMCO) to install and manage Windows nodes. With a Red Hat subscription, you can get support for running Windows workloads in OpenShift Container Platform. Windows instances deployed by the WMCO are configured with the containerd container runtime. For more information, see the release notes.

You can add Windows nodes either by creating a compute machine set or by specifying existing Bring-Your-Own-Host (BYOH) Windows instances through a configuration map.

Compute machine sets are not supported for bare metal or provider agnostic clusters.

For workloads including both Linux and Windows, OpenShift Container Platform allows you to deploy Windows workloads running on Windows Server containers while also providing traditional Linux workloads hosted on Red Hat Enterprise Linux CoreOS (RHCOS) or Red Hat Enterprise Linux (RHEL). For more information, see getting started with Windows container workloads.

You need the WMCO to run Windows workloads in your cluster. The WMCO orchestrates the process of deploying and managing Windows workloads on a cluster. For more information, see how to enable Windows container workloads.

You can create a Windows MachineSet object to create infrastructure Windows machine sets and related machines so that you can move supported Windows workloads to the new Windows machines. You can create a Windows MachineSet object on multiple platforms.

You can schedule Windows workloads to Windows compute nodes.

You can perform Windows Machine Config Operator upgrades to ensure that your Windows nodes have the latest updates.

You can remove a Windows node by deleting a specific machine.

You can use Bring-Your-Own-Host (BYOH) Windows instances to repurpose Windows Server VMs and bring them to OpenShift Container Platform. BYOH Windows instances benefit users who are looking to mitigate major disruptions in the event that a Windows server goes offline. You can use BYOH Windows instances as nodes on OpenShift Container Platform 4.8 and later versions.

You can disable Windows container workloads by performing the following:

- Uninstalling the Windows Machine Config Operator

- Deleting the Windows Machine Config Operator namespace

Chapter 2. Release notes

2.1. Red Hat OpenShift support for Windows Containers release notes

The release notes for Red Hat OpenShift for Windows Containers track the development of the Windows Machine Config Operator (WMCO), which provides all Windows container workload capabilities in OpenShift Container Platform.

2.1.1. Release notes for Red Hat Windows Machine Config Operator 7.2.2

This release of the WMCO provides a security update and bug fixes for running Windows compute nodes in an OpenShift Container Platform cluster. The components of the WMCO 7.2.2 were released in RHSA-2024:6734.

2.2. Release notes for past releases of the Windows Machine Config Operator

The following release notes are for previous versions of the Windows Machine Config Operator (WMCO).

For the current version, see Red Hat OpenShift support for Windows Containers release notes.

2.2.1. Release notes for Red Hat Windows Machine Config Operator 7.2.1

This release of the WMCO provides new features and bug fixes for running Windows compute nodes in an OpenShift Container Platform cluster. The components of the WMCO 7.2.1 were released in RHBA-2024:1476.

2.2.1.1. Bug fixes

- Previously, the WMCO did not properly wait for Windows virtual machines (VMs) to finish rebooting. This led to occasional timing issues where the WMCO would attempt to interact with a node that was in the middle of a reboot, causing WMCO to log an error and restart node configuration. Now, the WMCO waits for the instance to completely reboot. (OCPBUGS-23036)

- Previously, the WMCO configuration was missing the `DeleteEmptyDirData: true` field, which is required for draining nodes that have `emptyDir` volumes attached. As a consequence, customers that had nodes with `emptyDir` volumes would see the following error in the logs: `cannot delete Pods with local storage`. With this fix, the `DeleteEmptyDirData: true` field was added to the node drain helper struct in the WMCO. As a result, customers are able to drain nodes with `emptyDir` volumes attached. (OCPBUGS-23081)

- Previously, because of bad logic in the networking configuration script, the WICD was incorrectly reading carriage returns in the CNI configuration file as changes, and identified the file as modified. This caused the CNI configuration to be unnecessarily reloaded, potentially resulting in container restarts and brief network outages. With this fix, the WICD now reloads the CNI configuration only when the CNI configuration is actually modified. (OCPBUGS-27771)

- Previously, the WMCO incorrectly approved the node certificate signing requests (CSR) for all nodes trying to join a cluster, not just Windows node CSRs. With this fix, the WMCO approves CSRs for only Windows nodes as expected. (OCPBUGS-27139)

- Previously, because of routing issues present in Windows Server 2019, under certain conditions and after more than one hour of running time, workloads on Windows Server 2019 could have experienced packet loss when communicating with other containers in the cluster. This fix enables Direct Server Return (DSR) routing within kube-proxy. As a result, DSR now causes request and response traffic to use a different network path, circumventing the bug within Windows Server 2019. (OCPBUGS-28254)

- Previously, because the upgrade path from WMCO 6.x to WMCO 7.x included previously released versions, the WMCO would fail during the upgrade. With this fix, you can successfully upgrade from WMCO 6.x to WMCO 7.x. (OCPBUGS-27775)

- Previously, because of a lack of synchronization between Windows compute machine set nodes and Bring-Your-Own-Host (BYOH) instances, during an update the compute machine set nodes and the BYOH instances could update simultaneously, which could have impacted running workloads. This fix introduces a locking mechanism so that compute machine set nodes and BYOH instances update individually. (OCPBUGS-23020)

2.2.2. Release notes for Red Hat Windows Machine Config Operator 7.1.0

This release of the WMCO provides new features and bug fixes for running Windows compute nodes in an OpenShift Container Platform cluster. The components of the WMCO 7.1.0 were released in RHSA-2023:4025.

Due to a known issue, your OpenShift Container Platform cluster must be on version 4.12.3 or greater before updating the WMCO from version 7.0.1 to version 7.1.0. The update fails if the cluster is lower than version 4.12.3.
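The version requirement above can be checked before you start the update. The following is a minimal sketch, not part of the WMCO: `version_ge` is a hypothetical helper name, and it handles only plain numeric `x.y.z` versions (no pre-release suffixes).

```shell
# version_ge A B: succeeds when dotted version A is greater than or equal to B.
# Hypothetical helper for illustration; compares up to three numeric components.
version_ge() {
  _va=$1
  _vb=$2
  for _vi in 1 2 3; do
    _vx=${_va%%.*}
    _vy=${_vb%%.*}
    [ "$_vx" -gt "$_vy" ] && return 0
    [ "$_vx" -lt "$_vy" ] && return 1
    # Drop the leading component; a version with no dots left becomes 0.
    case $_va in *.*) _va=${_va#*.} ;; *) _va=0 ;; esac
    case $_vb in *.*) _vb=${_vb#*.} ;; *) _vb=0 ;; esac
  done
  return 0
}

# Example use against a live cluster (requires a logged-in oc session):
#   current=$(oc get clusterversion version -o jsonpath='{.status.desired.version}')
#   version_ge "$current" 4.12.3 || echo "Update the cluster before updating the WMCO"
```

The comparison is done component by component rather than as a string, so that `4.12.10` is correctly treated as newer than `4.12.3`.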

2.2.2.1. Bug fixes

- Previously, the `containerd` container runtime reported an incorrect version on each Windows node because repository tags were not propagated to the build system. This configuration caused `containerd` to report its `go build` version as the version of each Windows node. With this update, the correct version is injected into the binary during build time, so that `containerd` reports the correct version for each Windows node. (OCPBUGS-7843)

- Previously, the Windows Machine Config Operator (WMCO) could not drain daemon set workloads. This issue caused Windows daemon set pods to block Windows nodes that the WMCO attempted to remove or update. With this update, the WMCO includes additional role-based access control (RBAC) permissions, so that the WMCO can remove daemon set workloads. The WMCO can also delete any processes that were created with the containerd shim, so that daemon set containers do not exist on a Windows instance after a WMCO removes a node from a cluster. (OCPBUGS-8056)

- Previously, on an Azure Windows Server 2019 platform that does not have Azure container services installed, WMCO would fail to deploy Windows instances and would display the `Install-WindowsFeature : Win32 internal error "Access is denied" 0x5 occurred while reading the console output buffer` error message. The failure occurred because the Microsoft `Install-WindowsFeature` cmdlet displays a progress bar, which cannot be sent over an SSH connection. This fix hides the progress bar. As a result, Windows instances can be deployed as nodes. (OCPBUGS-14445)

2.2.3. Release notes for Red Hat Windows Machine Config Operator 7.0.1

This release of the WMCO provides new features and bug fixes for running Windows compute nodes in an OpenShift Container Platform cluster. The components of the WMCO 7.0.1 were released in RHBA-2023:0748.

2.2.3.1. Bug fixes

- Previously, WMCO 7.0.0 did not support running in a namespace other than `openshift-windows-machine-operator`. With this fix, you can run WMCO in a custom namespace and can upgrade clusters that have WMCO installed in a custom namespace. (OCPBUGS-5065)

2.2.4. Release notes for Red Hat Windows Machine Config Operator 7.0.0

This release of the WMCO provides new features and bug fixes for running Windows compute nodes in an OpenShift Container Platform cluster. The components of the WMCO 7.0.0 were released in RHSA-2022:9096.

2.2.4.1. New features and improvements

2.2.4.1.1. Windows Instance Config Daemon (WICD)

The Windows Instance Config Daemon (WICD) is now performing many of the tasks that were previously performed by the Windows Machine Config Bootstrapper (WMCB). The WICD is installed on your Windows nodes. Users do not need to interact with the WICD and should not experience any difference in WMCO operation.

2.2.4.1.2. Support for clusters running on Google Cloud Platform

You can now run Windows Server 2022 nodes on a cluster installed on Google Cloud Platform (GCP). You can create a Windows MachineSet object on GCP to host Windows Server 2022 compute nodes. For more information, see Creating a Windows MachineSet object on GCP.

2.2.4.2. Bug fixes

- Previously, restarting the WMCO in a cluster with running Windows Nodes caused the windows exporter endpoint to be removed. Because of this, each Windows node could not report any metrics data. After this fix, the endpoint is retained when the WMCO is restarted. As a result, metrics data is reported properly after restarting WMCO. (BZ#2107261)

- Previously, the test to determine if the Windows Defender antivirus service is running was incorrectly checking for any process whose name started with Windows Defender, regardless of state. This resulted in an error when creating firewall exclusions for containerd on instances without Windows Defender installed. This fix now checks for the presence of the specific running process associated with the Windows Defender antivirus service. As a result, the WMCO can properly configure Windows instances as nodes regardless of whether Windows Defender is installed or not. (OCPBUGS-3573)

2.2.4.3. Known issues

The following known limitations have been announced after the previous WMCO release:

- OpenShift Serverless, Horizontal Pod Autoscaling, and Vertical Pod Autoscaling are not supported on Windows nodes.

- Red Hat OpenShift support for Windows Containers does not support adding Windows nodes to a cluster through a trunk port. The only supported networking configuration for adding Windows nodes is through an access port that carries traffic for the VLAN.

- WMCO 7.0.0 does not support running in a namespace other than `openshift-windows-machine-operator`. If you are using a custom namespace, it is recommended that you not upgrade to WMCO 7.0.0. Instead, you should upgrade to WMCO 7.0.1 when it is released. If your WMCO is configured with the Automatic update approval strategy, you should change it to Manual for WMCO 7.0.0. See the installation instructions for information on changing the approval strategy.

2.3. Windows Machine Config Operator prerequisites

The following information details the supported platform versions, Windows Server versions, and networking configurations for the Windows Machine Config Operator. See the vSphere documentation for any information that is relevant to only that platform.

2.3.1. WMCO 7.2.x supported platforms and Windows Server versions

The following table lists the Windows Server versions that are supported by WMCO 7.2.0 and 7.2.1, based on the applicable platform. Unlisted Windows Server versions are not supported and attempting to use them will cause errors.

| Platform | Supported Windows Server version |
|---|---|
| Amazon Web Services (AWS) | |
| Microsoft Azure | |
| VMware vSphere | |
| Google Cloud Platform (GCP) | |
| Bare metal or provider agnostic | |

- For disconnected clusters, the Windows AMI must have the EC2LaunchV2 agent version 2.0.1643 or later installed. For more information, see the Install the latest version of EC2Launch v2 in the AWS documentation.

2.3.2. WMCO 7.0 and 7.1 supported platforms and Windows Server versions

The following table lists the Windows Server versions that are supported by WMCO 7.0.0, 7.0.1, and 7.1.0, based on the applicable platform. Unlisted Windows Server versions are not supported and attempting to use them will cause errors.

| Platform | Supported Windows Server version |
|---|---|
| Amazon Web Services (AWS) | |
| Microsoft Azure | |
| VMware vSphere | |
| Google Cloud Platform (GCP) | |
| Bare metal or provider agnostic | |

2.4. Windows Machine Config Operator known limitations

Note the following limitations when working with Windows nodes managed by the WMCO (Windows nodes).

The following OpenShift Container Platform features are not supported on Windows nodes:

- Image builds

- OpenShift Pipelines

- OpenShift Service Mesh

- OpenShift monitoring of user-defined projects

- OpenShift Serverless

- Horizontal Pod Autoscaling

- Vertical Pod Autoscaling

- Hosted Control Planes

The following Red Hat features are not supported on Windows nodes:

- Dual NIC is not supported on WMCO-managed Windows instances.

- Windows nodes do not support workloads created by using deployment configs. You can use a deployment or other method to deploy workloads.

- Windows nodes are not supported in clusters that use a cluster-wide proxy. This is because the WMCO is not able to route traffic through the proxy connection for the workloads.

- Windows nodes are not supported in clusters that are in a disconnected environment.

- Red Hat OpenShift support for Windows Containers does not support adding Windows nodes to a cluster through a trunk port. The only supported networking configuration for adding Windows nodes is through an access port that carries traffic for the VLAN.

- Red Hat OpenShift support for Windows Containers supports only in-tree storage drivers for all cloud providers.

- Red Hat OpenShift support for Windows Containers does not support any Windows operating system language other than English (United States).

- Due to a limitation within the Windows operating system, `clusterNetwork` CIDR addresses of class E, such as `240.0.0.0`, are not compatible with Windows nodes.

Kubernetes has identified the following node feature limitations:

- Huge pages are not supported for Windows containers.

- Privileged containers are not supported for Windows containers.

- Kubernetes has identified several API compatibility issues.

Chapter 3. Getting support

Windows Container Support for Red Hat OpenShift is provided and available as an optional, installable component. Windows Container Support for Red Hat OpenShift is not part of the OpenShift Container Platform subscription. It requires an additional Red Hat subscription and is supported according to the Scope of coverage and Service level agreements.

You must have this separate subscription to receive support for Windows Container Support for Red Hat OpenShift. Without this additional Red Hat subscription, deploying Windows container workloads in production clusters is not supported. You can request support through the Red Hat Customer Portal.

For more information, see the Red Hat OpenShift Container Platform Life Cycle Policy document for Red Hat OpenShift support for Windows Containers.

If you do not have this additional Red Hat subscription, you can use the Community Windows Machine Config Operator, a distribution that lacks official support.

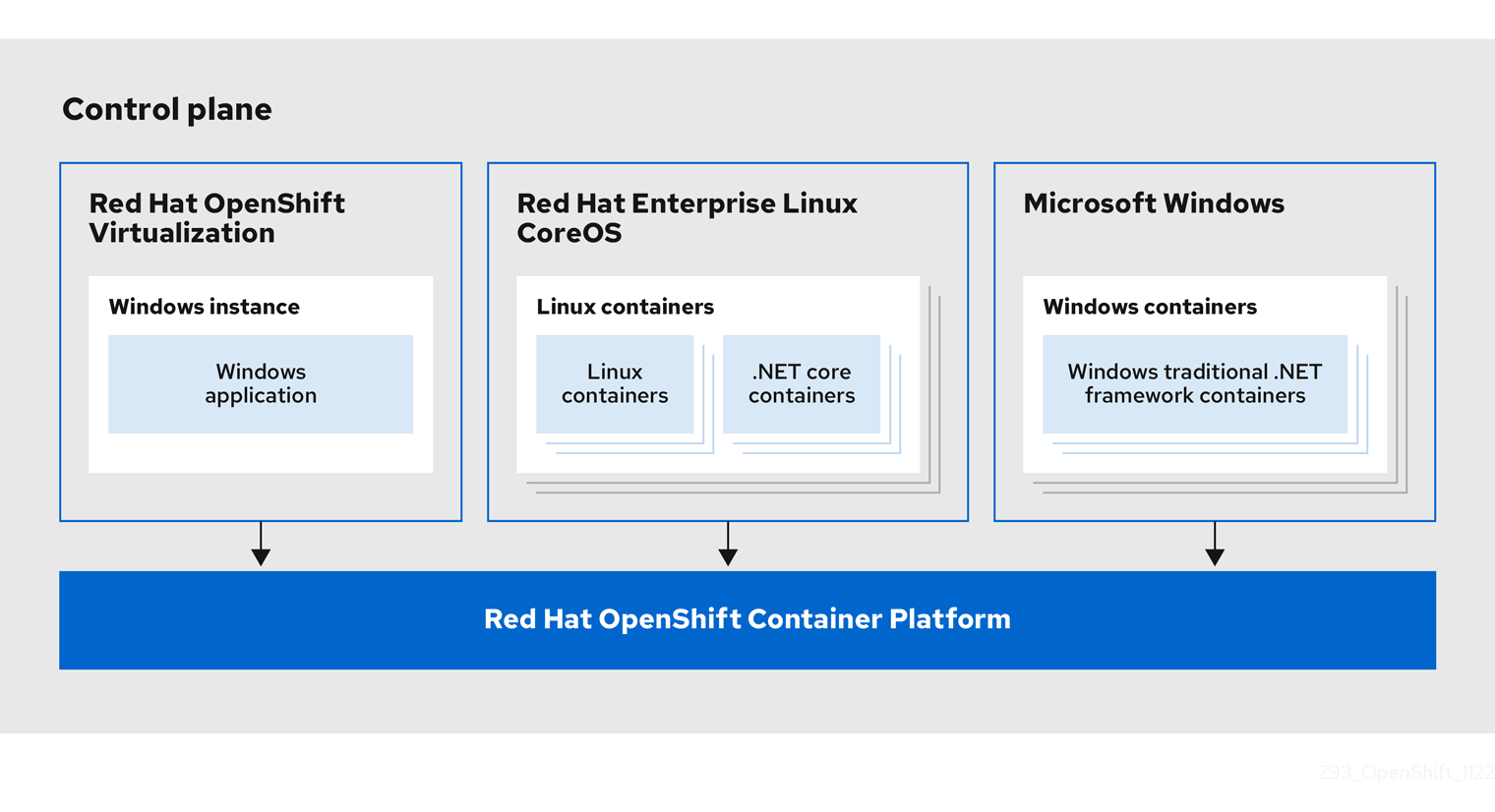

Chapter 4. Understanding Windows container workloads

Red Hat OpenShift support for Windows Containers provides built-in support for running Microsoft Windows Server containers on OpenShift Container Platform. For those that administer heterogeneous environments with a mix of Linux and Windows workloads, OpenShift Container Platform allows you to deploy Windows workloads running on Windows Server containers while also providing traditional Linux workloads hosted on Red Hat Enterprise Linux CoreOS (RHCOS) or Red Hat Enterprise Linux (RHEL).

Multi-tenancy for clusters that have Windows nodes is not supported. Clusters are considered multi-tenant when multiple workloads operate on shared infrastructure and resources. If one or more workloads running on an infrastructure cannot be trusted, the multi-tenant environment is considered hostile.

Hostile multi-tenant clusters introduce security concerns in all Kubernetes environments. Additional security features like pod security policies, or more fine-grained role-based access control (RBAC) for nodes, make exploiting your environment more difficult. However, if you choose to run hostile multi-tenant workloads, a hypervisor is the only security option you should use. The security domain for Kubernetes encompasses the entire cluster, not an individual node. For these types of hostile multi-tenant workloads, you should use physically isolated clusters.

Windows Server Containers provide resource isolation using a shared kernel but are not intended to be used in hostile multitenancy scenarios.

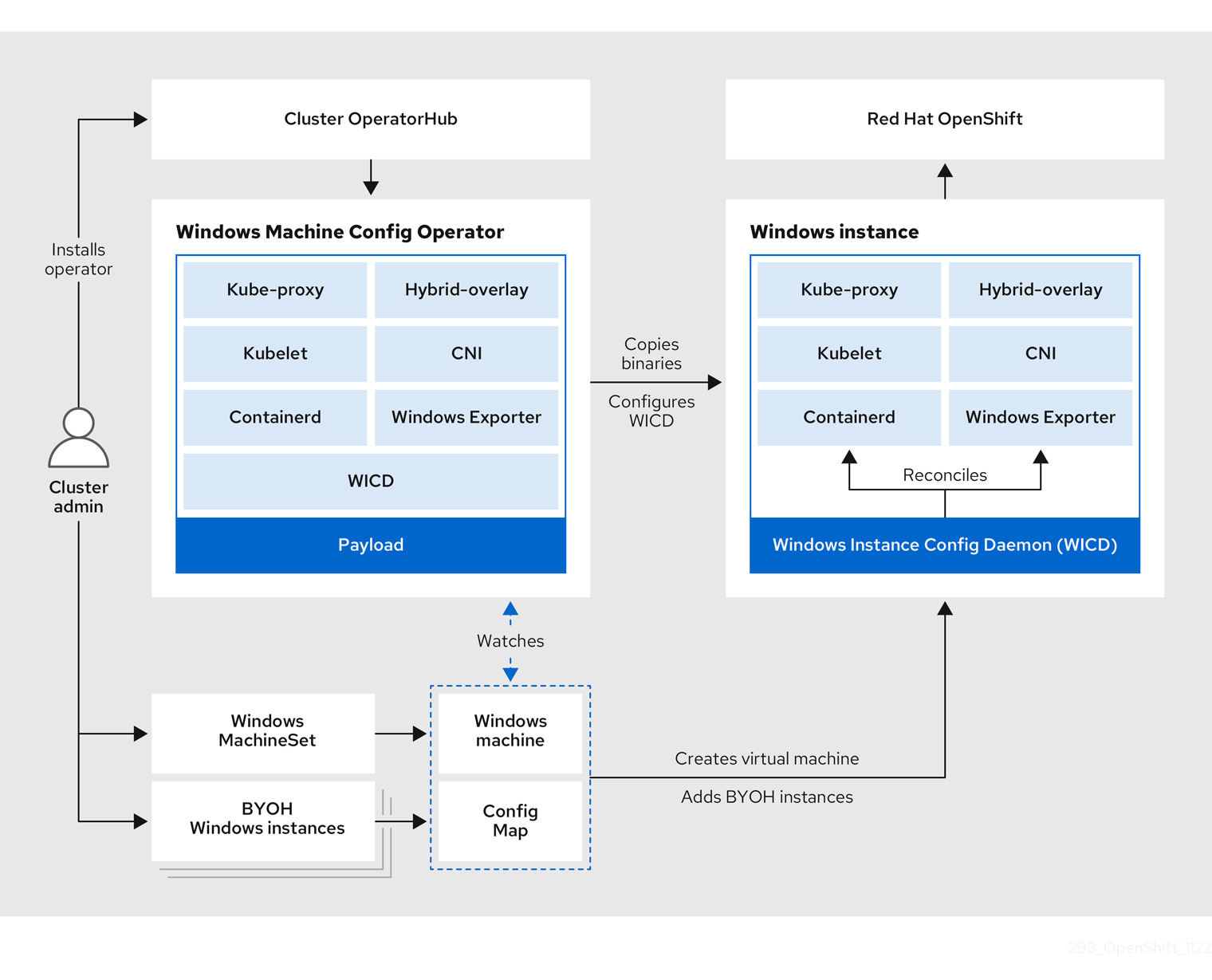

4.1. Windows workload management

To run Windows workloads in your cluster, you must first install the Windows Machine Config Operator (WMCO). The WMCO is a Linux-based Operator that runs on Linux-based control plane and compute nodes. The WMCO orchestrates the process of deploying and managing Windows workloads on a cluster.

Figure 4.1. WMCO design

Before deploying Windows workloads, you must create a Windows compute node and have it join the cluster. The Windows node hosts the Windows workloads in a cluster, and can run alongside other Linux-based compute nodes. You can create a Windows compute node by creating a Windows compute machine set to host Windows Server compute machines. You must apply a Windows-specific label to the compute machine set that specifies a Windows OS image.

The WMCO watches for machines with the Windows label. After a Windows compute machine set is detected and its respective machines are provisioned, the WMCO configures the underlying Windows virtual machine (VM) so that it can join the cluster as a compute node.
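To see which machines and nodes the WMCO is working with, you can filter by label. The sketch below assumes that the Windows label on machines is `machine.openshift.io/os-id=Windows` and that machines live in the `openshift-machine-api` namespace; neither value is stated in this section, so confirm both against your own machine set. The function names are hypothetical.

```shell
# list_windows_machines: show machines that carry the assumed Windows OS label.
# Extra arguments (for example -o wide) are passed through to oc.
list_windows_machines() {
  oc get machines -n openshift-machine-api -l machine.openshift.io/os-id=Windows "$@"
}

# list_windows_nodes: show nodes that joined the cluster as Windows compute nodes,
# using the standard kubernetes.io/os node label.
list_windows_nodes() {
  oc get nodes -l kubernetes.io/os=windows "$@"
}
```

A machine that appears in the first list but has no matching node in the second is typically still being provisioned or configured.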

Figure 4.2. Mixed Windows and Linux workloads

The WMCO expects a predetermined secret in its namespace containing a private key that is used to interact with the Windows instance. WMCO checks for this secret during boot up time and creates a user data secret which you must reference in the Windows MachineSet object that you created. Then the WMCO populates the user data secret with a public key that corresponds to the private key. With this data in place, the cluster can connect to the Windows VM using an SSH connection.

After the cluster establishes a connection with the Windows VM, you can manage the Windows node using similar practices as you would a Linux-based node.

The OpenShift Container Platform web console provides most of the same monitoring capabilities for Windows nodes that are available for Linux nodes. However, the ability to monitor workload graphs for pods running on Windows nodes is not available at this time.

Scheduling Windows workloads to a Windows node can be done with typical pod scheduling practices like taints, tolerations, and node selectors; alternatively, you can differentiate your Windows workloads from Linux workloads and other Windows-versioned workloads by using a RuntimeClass object.
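As an illustration of the RuntimeClass approach, the following sketch writes a RuntimeClass manifest to a temporary file. The object name `windows2019`, the `runhcs-wcow-process` handler, the `10.0.17763` build label (Windows Server 2019), and the `os=Windows:NoSchedule` toleration are assumptions chosen for the example and are not taken from this section; adjust them to match the labels and taints on your Windows nodes.

```shell
# Write an example RuntimeClass manifest to a temporary file.
# All names and label values below are illustrative assumptions.
manifest=$(mktemp)
cat > "$manifest" <<'EOF'
apiVersion: node.k8s.io/v1
kind: RuntimeClass
metadata:
  name: windows2019
handler: runhcs-wcow-process
scheduling:
  nodeSelector:
    kubernetes.io/os: windows
    kubernetes.io/arch: amd64
    node.kubernetes.io/windows-build: '10.0.17763'
  tolerations:
  - effect: NoSchedule
    key: os
    operator: Equal
    value: Windows
EOF
echo "Wrote RuntimeClass manifest to $manifest"
# Create the object with: oc create -f "$manifest"
```

A pod or deployment then selects this class by setting `runtimeClassName: windows2019` in its pod spec, which applies the node selector and toleration without repeating them in every workload.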

4.2. Windows node services

The following Windows-specific services are installed on each Windows node:

| Service | Description |
|---|---|
| kubelet | Registers the Windows node and manages its status. |
| Container Network Interface (CNI) plugins | Exposes networking for Windows nodes. |
| Windows Instance Config Daemon (WICD) | Maintains the state of all services running on the Windows instance to ensure the instance functions as a worker node. |
| Windows Exporter | Exports Prometheus metrics from Windows nodes. |
| Kubernetes Cloud Controller Manager (CCM) | Interacts with the underlying Azure cloud platform. |
| hybrid-overlay | Creates the OpenShift Container Platform Host Network Service (HNS). |
| kube-proxy | Maintains network rules on nodes allowing outside communication. |
| containerd container runtime | Manages the complete container lifecycle. |

Chapter 5. Enabling Windows container workloads

Before adding Windows workloads to your cluster, you must install the Windows Machine Config Operator (WMCO), which is available in the OpenShift Container Platform OperatorHub. The WMCO orchestrates the process of deploying and managing Windows workloads on a cluster.

Dual NIC is not supported on WMCO-managed Windows instances.

Prerequisites

- You have access to an OpenShift Container Platform cluster using an account with `cluster-admin` permissions.

- You have installed the OpenShift CLI (`oc`).

- You have installed your cluster using installer-provisioned infrastructure, or using user-provisioned infrastructure with the `platform: none` field set in your `install-config.yaml` file.

- You have configured hybrid networking with OVN-Kubernetes for your cluster. This must be completed during the installation of your cluster. For more information, see Configuring hybrid networking.

- You are running an OpenShift Container Platform cluster version 4.6.8 or later.

Windows instances deployed by the WMCO are configured with the containerd container runtime. Because the WMCO installs and manages the runtime, it is recommended that you do not manually install containerd on nodes.

5.1. Installing the Windows Machine Config Operator

You can install the Windows Machine Config Operator using either the web console or OpenShift CLI (oc).

- The WMCO is not supported in clusters that use a cluster-wide proxy because the WMCO is not able to route traffic through the proxy connection for the workloads.

- Due to a limitation within the Windows operating system, `clusterNetwork` CIDR addresses of class E, such as `240.0.0.0`, are not compatible with Windows nodes.
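Class E covers the IPv4 range 240.0.0.0/4, that is, any address whose first octet is 240 or higher. The following sketch shows one way to test a CIDR before you install the WMCO; `is_class_e_cidr` is a hypothetical helper, not an OpenShift command.

```shell
# is_class_e_cidr CIDR: succeeds when the IPv4 network address falls in 240.0.0.0/4.
# Returns 2 when the argument does not look like a dotted IPv4 address.
is_class_e_cidr() {
  _first_octet=${1%%.*}
  case $_first_octet in
    ''|*[!0-9]*) return 2 ;;
  esac
  [ "$_first_octet" -ge 240 ]
}

# Example use against a live cluster (requires a logged-in oc session):
#   for cidr in $(oc get network.config/cluster -o jsonpath='{.spec.clusterNetwork[*].cidr}'); do
#     is_class_e_cidr "$cidr" && echo "$cidr is class E and is not compatible with Windows nodes"
#   done
```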

5.1.1. Installing the Windows Machine Config Operator using the web console

You can use the OpenShift Container Platform web console to install the Windows Machine Config Operator (WMCO).

Dual NIC is not supported on WMCO-managed Windows instances.

Procedure

- From the Administrator perspective in the OpenShift Container Platform web console, navigate to the Operators → OperatorHub page.

- Use the Filter by keyword box to search for `Windows Machine Config Operator` in the catalog. Click the Windows Machine Config Operator tile.

- Review the information about the Operator and click Install.

On the Install Operator page:

- Select the stable channel as the Update Channel. The stable channel enables the latest stable release of the WMCO to be installed.

- The Installation Mode is preconfigured because the WMCO must be available in a single namespace only.

- Choose the Installed Namespace for the WMCO. The default Operator recommended namespace is `openshift-windows-machine-config-operator`.

- Click the Enable Operator recommended cluster monitoring on the Namespace checkbox to enable cluster monitoring for the WMCO.

Select an Approval Strategy.

- The Automatic strategy allows Operator Lifecycle Manager (OLM) to automatically update the Operator when a new version is available.

- The Manual strategy requires a user with appropriate credentials to approve the Operator update.

Click Install. The WMCO is now listed on the Installed Operators page.

Note: The WMCO is installed automatically into the namespace you defined, like `openshift-windows-machine-config-operator`.

- Verify that the Status shows Succeeded to confirm successful installation of the WMCO.

5.1.2. Installing the Windows Machine Config Operator using the CLI

You can use the OpenShift CLI (oc) to install the Windows Machine Config Operator (WMCO).

Dual NIC is not supported on WMCO-managed Windows instances.

Procedure

Create a namespace for the WMCO.

Create a `Namespace` object YAML file for the WMCO. For example, `wmco-namespace.yaml`:

    apiVersion: v1
    kind: Namespace
    metadata:
      name: openshift-windows-machine-config-operator
      labels:
        openshift.io/cluster-monitoring: "true"

Create the namespace:

    $ oc create -f <file-name>.yaml

For example:

    $ oc create -f wmco-namespace.yaml

Create the Operator group for the WMCO.

Create an `OperatorGroup` object YAML file. For example, `wmco-og.yaml`:

    apiVersion: operators.coreos.com/v1
    kind: OperatorGroup
    metadata:
      name: windows-machine-config-operator
      namespace: openshift-windows-machine-config-operator
    spec:
      targetNamespaces:
      - openshift-windows-machine-config-operator

Create the Operator group:

    $ oc create -f <file-name>.yaml

For example:

    $ oc create -f wmco-og.yaml

Subscribe the namespace to the WMCO.

Create a `Subscription` object YAML file. For example, `wmco-sub.yaml`:

    apiVersion: operators.coreos.com/v1alpha1
    kind: Subscription
    metadata:
      name: windows-machine-config-operator
      namespace: openshift-windows-machine-config-operator
    spec:
      channel: "stable" # (1)
      installPlanApproval: "Automatic" # (2)
      name: "windows-machine-config-operator"
      source: "redhat-operators" # (3)
      sourceNamespace: "openshift-marketplace" # (4)

- (1) Specify `stable` as the channel.

- (2) Set an approval strategy. You can set `Automatic` or `Manual`.

- (3) Specify the `redhat-operators` catalog source, which contains the `windows-machine-config-operator` package manifests. If your OpenShift Container Platform is installed on a restricted network, also known as a disconnected cluster, specify the name of the `CatalogSource` object you created when you configured the Operator Lifecycle Manager (OLM).

- (4) Namespace of the catalog source. Use `openshift-marketplace` for the default OperatorHub catalog sources.

Create the subscription:

    $ oc create -f <file-name>.yaml

For example:

    $ oc create -f wmco-sub.yaml

The WMCO is now installed to the `openshift-windows-machine-config-operator` namespace.

Verify the WMCO installation:

    $ oc get csv -n openshift-windows-machine-config-operator

Example output

    NAME                                    DISPLAY                           VERSION   REPLACES   PHASE
    windows-machine-config-operator.2.0.0   Windows Machine Config Operator   2.0.0                Succeeded
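The cluster service version (CSV) can take a short time to reach the `Succeeded` phase after you create the subscription. The following sketch polls for it instead of checking once. The function names, the retry count, and the delay are illustrative choices, and the script assumes that the CSV name starts with `windows-machine-config-operator`, as in the example output.

```shell
# wmco_csv_phase NAMESPACE: print the phase of the WMCO CSV in the given namespace.
wmco_csv_phase() {
  oc get csv -n "${1:-openshift-windows-machine-config-operator}" \
    -o jsonpath='{range .items[*]}{.metadata.name}{" "}{.status.phase}{"\n"}{end}' |
    awk '$1 ~ /^windows-machine-config-operator/ { print $2; exit }'
}

# wait_for_wmco NAMESPACE ATTEMPTS DELAY_SECONDS: succeed once the phase is Succeeded,
# fail after ATTEMPTS checks without success.
wait_for_wmco() {
  _ns=${1:-openshift-windows-machine-config-operator}
  _attempts=${2:-30}
  _delay=${3:-10}
  _try=0
  while [ "$_try" -lt "$_attempts" ]; do
    if [ "$(wmco_csv_phase "$_ns")" = "Succeeded" ]; then
      return 0
    fi
    _try=$((_try + 1))
    sleep "$_delay"
  done
  return 1
}
```

For example, `wait_for_wmco openshift-windows-machine-config-operator 30 10` checks every 10 seconds for up to 5 minutes.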

5.2. Configuring a secret for the Windows Machine Config Operator

To run the Windows Machine Config Operator (WMCO), you must create a secret in the WMCO namespace containing a private key. This is required to allow the WMCO to communicate with the Windows virtual machine (VM).

Prerequisites

- You installed the Windows Machine Config Operator (WMCO) using Operator Lifecycle Manager (OLM).

- You created a PEM-encoded file containing a private key by using a strong algorithm, such as ECDSA.

If you created the key pair on a Red Hat Enterprise Linux (RHEL) system, before you can use the public key on a Windows system, make sure the public key is saved using ASCII encoding. For example, the following PowerShell command copies a public key, encoding it for the ASCII character set:

    C:\> echo "ssh-rsa <ssh_pub_key>" | Out-File <ssh_key_path> -Encoding ascii

where:

- `<ssh_pub_key>`: Specifies the SSH public key used to access the cluster.

- `<ssh_key_path>`: Specifies the path to the SSH public key.

Procedure

Define the secret required to access the Windows VMs:

    $ oc create secret generic cloud-private-key --from-file=private-key.pem=${HOME}/.ssh/<key> \
        -n openshift-windows-machine-config-operator

You must create the private key secret in the WMCO namespace, like `openshift-windows-machine-config-operator`.

It is recommended to use a different private key than the one used when installing the cluster.
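Before you create the secret, it can help to confirm that the key file is PEM-armored text and not, for example, a public key. The following is a rough sanity check only: `looks_like_pem_private_key` is a hypothetical helper that inspects the first line of the file and does not validate the key itself.

```shell
# looks_like_pem_private_key FILE: succeeds when the first line of FILE is a
# PEM private-key header such as "-----BEGIN EC PRIVATE KEY-----".
# Returns 2 when the file cannot be read.
looks_like_pem_private_key() {
  [ -r "$1" ] || return 2
  _first_line=
  IFS= read -r _first_line < "$1" || :
  case $_first_line in
    "-----BEGIN "*"PRIVATE KEY-----") return 0 ;;
    *) return 1 ;;
  esac
}

# Example use:
#   looks_like_pem_private_key "${HOME}/.ssh/<key>" || echo "Not a PEM private key file"
```

A file saved with Windows line endings fails this check because of the trailing carriage return on the header line.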

5.3. Using Windows containers in a proxy-enabled cluster

The Windows Machine Config Operator (WMCO) can consume and use a cluster-wide egress proxy configuration when making external requests outside the cluster’s internal network.

This allows you to add Windows nodes and run workloads in a proxy-enabled cluster, allowing your Windows nodes to pull images from registries that are secured behind your proxy server or to make requests to off-cluster services and services that use a custom public key infrastructure.

The cluster-wide proxy affects system components only, not user workloads.

In proxy-enabled clusters, the WMCO is aware of the NO_PROXY, HTTP_PROXY, and HTTPS_PROXY values that are set for the cluster. The WMCO periodically checks whether the proxy environment variables have changed. If there is a discrepancy, the WMCO reconciles and updates the proxy environment variables on the Windows instances.

Windows workloads created on Windows nodes in proxy-enabled clusters do not inherit proxy settings from the node by default, the same as with Linux nodes. Also, by default PowerShell sessions do not inherit proxy settings on Windows nodes in proxy-enabled clusters.

5.4. Rebooting a node gracefully

The Windows Machine Config Operator (WMCO) minimizes node reboots whenever possible. However, certain operations and updates require a reboot to ensure that changes are applied correctly and securely. To safely reboot your Windows nodes, use the graceful reboot process. For information on gracefully rebooting a standard OpenShift Container Platform node, see "Rebooting a node gracefully" in the Nodes documentation.

Before rebooting a node, it is recommended to back up etcd data to avoid any data loss on the node.

For single-node OpenShift clusters that require users to perform the oc login command rather than having the certificates in the kubeconfig file to manage the cluster, the oc adm commands might not be available after cordoning and draining the node. This is because the openshift-oauth-apiserver pod is not running due to the cordon. You can use SSH to access the nodes as indicated in the following procedure.

In a single-node OpenShift cluster, pods cannot be rescheduled when cordoning and draining. However, doing so gives the pods, especially your workload pods, time to properly stop and release associated resources.

Procedure

To perform a graceful restart of a node:

Mark the node as unschedulable:

$ oc adm cordon <node1>Drain the node to remove all the running pods:

$ oc adm drain <node1> --ignore-daemonsets --delete-emptydir-data --forceYou might receive errors that pods associated with custom pod disruption budgets (PDB) cannot be evicted.

Example error

error when evicting pods/"rails-postgresql-example-1-72v2w" -n "rails" (will retry after 5s): Cannot evict pod as it would violate the pod's disruption budget.In this case, run the drain command again, adding the

disable-evictionflag, which bypasses the PDB checks:$ oc adm drain <node1> --ignore-daemonsets --delete-emptydir-data --force --disable-evictionSSH into the Windows node and enter PowerShell by running the following command:

C:\> powershellRestart the node by running the following command:

C:\> Restart-Computer -Force

Windows nodes on Amazon Web Services (AWS) do not return to READY state after a graceful reboot due to an inconsistency with the EC2 instance metadata routes and the Host Network Service (HNS) networks.

After the reboot, SSH into any Windows node on AWS and add the route by running the following command in a shell prompt:

C:\> route add 169.254.169.254 mask 255.255.255.255 <gateway_ip>

where:

169.254.169.254: Specifies the address of the EC2 instance metadata endpoint.

255.255.255.255: Specifies the network mask of the EC2 instance metadata endpoint.

<gateway_ip>: Specifies the corresponding IP address of the gateway in the Windows instance, which you can find by running the following command:

C:\> ipconfig | findstr /C:"Default Gateway"

After the reboot is complete, mark the node as schedulable by running the following command:

$ oc adm uncordon <node1>

Verify that the node is ready:

$ oc get node <node1>

Example output

NAME      STATUS   ROLES    AGE     VERSION
<node1>   Ready    worker   6d22h   v1.18.3+b0068a8
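The cluster-side steps of the procedure above can be collected in a small helper script. The following is a minimal sketch rather than a supported tool: the function names reboot_steps and run and the DRY_RUN variable are hypothetical, and the script uses only the oc commands shown in the procedure. By default it prints the commands instead of running them, and the reboot itself is still performed on the Windows node.

```shell
#!/bin/sh
# Hypothetical helper: print (DRY_RUN=1, the default) or execute a command.
run() {
    if [ "${DRY_RUN:-1}" = "1" ]; then
        echo "$*"
    else
        "$@"
    fi
}

# Hypothetical helper: cluster-side steps before or after rebooting a node.
# Usage: reboot_steps <node> before|after
reboot_steps() {
    node="$1"
    phase="$2"
    case "$phase" in
        before)
            # Stop new pods from landing on the node, then evict running pods.
            run oc adm cordon "$node"
            run oc adm drain "$node" --ignore-daemonsets --delete-emptydir-data --force
            ;;
        after)
            # Allow scheduling again and check that the node reports Ready.
            run oc adm uncordon "$node"
            run oc get node "$node"
            ;;
        *)
            echo "usage: reboot_steps <node> before|after" >&2
            return 2
            ;;
    esac
}
```

Running reboot_steps <node1> before prints the cordon and drain commands for review. Setting DRY_RUN=0 runs them against the cluster that oc is currently logged in to.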


Chapter 6. Creating Windows machine sets

6.1. Creating a Windows machine set on AWS

You can create a Windows MachineSet object to serve a specific purpose in your OpenShift Container Platform cluster on Amazon Web Services (AWS). For example, you might create infrastructure Windows machine sets and related machines so that you can move supporting Windows workloads to the new Windows machines.

Prerequisites

- You installed the Windows Machine Config Operator (WMCO) using Operator Lifecycle Manager (OLM).

- You are using a supported Windows Server as the operating system image.

Use one of the following aws commands, as appropriate for your Windows Server release, to query valid AMI images:

Example Windows Server 2022 command

$ aws ec2 describe-images --region <aws_region_name> --filters "Name=name,Values=Windows_Server-2022*English*Core*Base*" "Name=is-public,Values=true" --query "reverse(sort_by(Images, &CreationDate))[*].{name: Name, id: ImageId}" --output table

Example Windows Server 2019 command

$ aws ec2 describe-images --region <aws_region_name> --filters "Name=name,Values=Windows_Server-2019*English*Core*Base*" "Name=is-public,Values=true" --query "reverse(sort_by(Images, &CreationDate))[*].{name: Name, id: ImageId}" --output table

where:

<aws_region_name>: Specifies the name of your AWS region.

- For disconnected clusters, the Windows AMI must have the EC2LaunchV2 agent version 2.0.1643 or later installed. For more information, see "Install the latest version of EC2Launch v2" in the AWS documentation.

6.1.1. Machine API overview

The Machine API is a combination of primary resources that are based on the upstream Cluster API project and custom OpenShift Container Platform resources.

For OpenShift Container Platform 4.12 clusters, the Machine API performs all node host provisioning management actions after the cluster installation finishes. Because of this system, OpenShift Container Platform 4.12 offers an elastic, dynamic provisioning method on top of public or private cloud infrastructure.

The two primary resources are:

- Machines: A fundamental unit that describes the host for a node. A machine has a providerSpec specification, which describes the types of compute nodes that are offered for different cloud platforms. For example, a machine type for a compute node might define a specific machine type and required metadata.

- Machine sets: MachineSet resources are groups of compute machines. Compute machine sets are to compute machines as replica sets are to pods. If you need more compute machines or must scale them down, you change the replicas field on the MachineSet resource to meet your compute need.

Warning: Control plane machines cannot be managed by compute machine sets.

Control plane machine sets provide management capabilities for supported control plane machines that are similar to what compute machine sets provide for compute machines.

For more information, see "Managing control plane machines".

The following custom resources add more capabilities to your cluster:

- Machine autoscaler: The MachineAutoscaler resource automatically scales compute machines in a cloud. You can set the minimum and maximum scaling boundaries for nodes in a specified compute machine set, and the machine autoscaler maintains that range of nodes.

  The MachineAutoscaler object takes effect after a ClusterAutoscaler object exists. Both ClusterAutoscaler and MachineAutoscaler resources are made available by the ClusterAutoscalerOperator object.

- Cluster autoscaler: This resource is based on the upstream cluster autoscaler project. In the OpenShift Container Platform implementation, it is integrated with the Machine API by extending the compute machine set API. You can use the cluster autoscaler to manage your cluster in the following ways:

  - Set cluster-wide scaling limits for resources such as cores, nodes, memory, and GPU

  - Set the priority so that the cluster prioritizes pods and new nodes are not brought online for less important pods

  - Set the scaling policy so that you can scale up nodes but not scale them down

- Machine health check: The MachineHealthCheck resource detects when a machine is unhealthy, deletes it, and, on supported platforms, makes a new machine.

In OpenShift Container Platform version 3.11, you could not roll out a multi-zone architecture easily because the cluster did not manage machine provisioning. Beginning with OpenShift Container Platform version 4.1, this process is easier. Each compute machine set is scoped to a single zone, so the installation program distributes compute machine sets across availability zones on your behalf. Because your compute capacity is dynamic, you always have a zone available for rebalancing your machines if a zone fails. In global Azure regions that do not have multiple availability zones, you can use availability sets to ensure high availability. The autoscaler provides best-effort balancing over the life of a cluster.

6.1.2. Sample YAML for a Windows MachineSet object on AWS

This sample YAML defines a Windows MachineSet object running on Amazon Web Services (AWS) that the Windows Machine Config Operator (WMCO) can act on.

apiVersion: machine.openshift.io/v1beta1
kind: MachineSet
metadata:
  labels:
    machine.openshift.io/cluster-api-cluster: <infrastructure_id> # (1)
  name: <infrastructure_id>-windows-worker-<zone> # (2)
  namespace: openshift-machine-api
spec:
  replicas: 1
  selector:
    matchLabels:
      machine.openshift.io/cluster-api-cluster: <infrastructure_id> # (3)
      machine.openshift.io/cluster-api-machineset: <infrastructure_id>-windows-worker-<zone> # (4)
  template:
    metadata:
      labels:
        machine.openshift.io/cluster-api-cluster: <infrastructure_id> # (5)
        machine.openshift.io/cluster-api-machine-role: worker
        machine.openshift.io/cluster-api-machine-type: worker
        machine.openshift.io/cluster-api-machineset: <infrastructure_id>-windows-worker-<zone> # (6)
        machine.openshift.io/os-id: Windows # (7)
    spec:
      metadata:
        labels:
          node-role.kubernetes.io/worker: "" # (8)
      providerSpec:
        value:
          ami:
            id: <windows_container_ami> # (9)
          apiVersion: awsproviderconfig.openshift.io/v1beta1
          blockDevices:
            - ebs:
                iops: 0
                volumeSize: 120
                volumeType: gp2
          credentialsSecret:
            name: aws-cloud-credentials
          deviceIndex: 0
          iamInstanceProfile:
            id: <infrastructure_id>-worker-profile # (10)
          instanceType: m5a.large
          kind: AWSMachineProviderConfig
          placement:
            availabilityZone: <zone> # (11)
            region: <region> # (12)
          securityGroups:
            - filters:
                - name: tag:Name
                  values:
                    - <infrastructure_id>-worker-sg # (13)
          subnet:
            filters:
              - name: tag:Name
                values:
                  - <infrastructure_id>-private-<zone> # (14)
          tags:
            - name: kubernetes.io/cluster/<infrastructure_id> # (15)
              value: owned
          userDataSecret:
            name: windows-user-data # (16)
            namespace: openshift-machine-api

(1), (3), (5), (10), (13), (14), (15): Specify the infrastructure ID that is based on the cluster ID that you set when you provisioned the cluster. You can obtain the infrastructure ID by running the following command:

$ oc get -o jsonpath='{.status.infrastructureName}{"\n"}' infrastructure cluster

(2), (4), (6): Specify the infrastructure ID, worker label, and zone.

(7): Configure the compute machine set as a Windows machine.

(8): Configure the Windows node as a compute machine.

(9): Specify the AMI ID of a supported Windows image with a container runtime installed.

Note: For disconnected clusters, the Windows AMI must have the EC2LaunchV2 agent version 2.0.1643 or later installed. For more information, see "Install the latest version of EC2Launch v2" in the AWS documentation.

(11): Specify the AWS zone, like us-east-1a.

(12): Specify the AWS region, like us-east-1.

(16): Created by the WMCO when it is configuring the first Windows machine. After that, the windows-user-data is available for all subsequent compute machine sets to consume.
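Filling in the placeholders of the sample by hand is error-prone because the infrastructure ID appears many times. The following sketch shows one way to substitute them with sed. The function name render_machineset and the file names in the usage comment are hypothetical, and the sketch assumes that the values contain no "|" or "&" characters, which sed would interpret.

```shell
#!/bin/sh
# Hypothetical helper: read a machine set template on stdin and replace the
# <infrastructure_id>, <zone>, <region>, and <windows_container_ami>
# placeholders with the values passed as arguments.
# Usage: render_machineset <infra_id> <zone> <region> <ami_id> < template.yaml
render_machineset() {
    infra_id="$1"
    zone="$2"
    region="$3"
    ami="$4"
    sed -e "s|<infrastructure_id>|${infra_id}|g" \
        -e "s|<zone>|${zone}|g" \
        -e "s|<region>|${region}|g" \
        -e "s|<windows_container_ami>|${ami}|g"
}

# Example usage (file names are placeholders, not created by the product):
#   INFRA_ID="$(oc get -o jsonpath='{.status.infrastructureName}' infrastructure cluster)"
#   render_machineset "$INFRA_ID" us-east-1a us-east-1 "$AMI_ID" \
#       < windows-machineset-template.yaml > windows-machineset.yaml
```

Review the rendered file before you create the MachineSet object from it, because the sketch does not validate the values.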

6.1.3. Creating a compute machine set

In addition to the compute machine sets created by the installation program, you can create your own to dynamically manage the machine compute resources for specific workloads of your choice.

Prerequisites

- Deploy an OpenShift Container Platform cluster.

- Install the OpenShift CLI (oc).

- Log in to oc as a user with cluster-admin permission.

Procedure

Create a new YAML file that contains the compute machine set custom resource (CR) sample and is named <file_name>.yaml.

Ensure that you set the <clusterID> and <role> parameter values.

Optional: If you are not sure which value to set for a specific field, you can check an existing compute machine set from your cluster.

To list the compute machine sets in your cluster, run the following command:

$ oc get machinesets -n openshift-machine-api

Example output

NAME                                DESIRED   CURRENT   READY   AVAILABLE   AGE
agl030519-vplxk-worker-us-east-1a   1         1         1       1           55m
agl030519-vplxk-worker-us-east-1b   1         1         1       1           55m
agl030519-vplxk-worker-us-east-1c   1         1         1       1           55m
agl030519-vplxk-worker-us-east-1d   0         0                             55m
agl030519-vplxk-worker-us-east-1e   0         0                             55m
agl030519-vplxk-worker-us-east-1f   0         0                             55m

To view values of a specific compute machine set custom resource (CR), run the following command:

$ oc get machineset <machineset_name> \
  -n openshift-machine-api -o yaml

Example output

apiVersion: machine.openshift.io/v1beta1
kind: MachineSet
metadata:
  labels:
    machine.openshift.io/cluster-api-cluster: <infrastructure_id> # (1)
  name: <infrastructure_id>-<role> # (2)
  namespace: openshift-machine-api
spec:
  replicas: 1
  selector:
    matchLabels:
      machine.openshift.io/cluster-api-cluster: <infrastructure_id>
      machine.openshift.io/cluster-api-machineset: <infrastructure_id>-<role>
  template:
    metadata:
      labels:
        machine.openshift.io/cluster-api-cluster: <infrastructure_id>
        machine.openshift.io/cluster-api-machine-role: <role>
        machine.openshift.io/cluster-api-machine-type: <role>
        machine.openshift.io/cluster-api-machineset: <infrastructure_id>-<role>
    spec:
      providerSpec: # (3)
        ...

(1): The cluster infrastructure ID.

(2): A default node label.

Note: For clusters that have user-provisioned infrastructure, a compute machine set can only create worker and infra type machines.

(3): The values in the <providerSpec> section of the compute machine set CR are platform-specific. For more information about <providerSpec> parameters in the CR, see the sample compute machine set CR configuration for your provider.

Create a MachineSet CR by running the following command:

$ oc create -f <file_name>.yaml

Verification

View the list of compute machine sets by running the following command:

$ oc get machineset -n openshift-machine-api

Example output

NAME                                        DESIRED   CURRENT   READY   AVAILABLE   AGE
agl030519-vplxk-windows-worker-us-east-1a   1         1         1       1           11m
agl030519-vplxk-worker-us-east-1a           1         1         1       1           55m
agl030519-vplxk-worker-us-east-1b           1         1         1       1           55m
agl030519-vplxk-worker-us-east-1c           1         1         1       1           55m
agl030519-vplxk-worker-us-east-1d           0         0                             55m
agl030519-vplxk-worker-us-east-1e           0         0                             55m
agl030519-vplxk-worker-us-east-1f           0         0                             55m

When the new compute machine set is available, the DESIRED and CURRENT values match. If the compute machine set is not available, wait a few minutes and run the command again.
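The comparison of the DESIRED and CURRENT columns described above can be scripted. The following is a minimal sketch that assumes the default tabular output of oc get machineset, with the name in the first column and the DESIRED and CURRENT values in the second and third columns. The function name pending_machinesets is hypothetical.

```shell
#!/bin/sh
# Hypothetical helper: read `oc get machineset -n openshift-machine-api`
# output on stdin and print the name of every compute machine set whose
# DESIRED (column 2) and CURRENT (column 3) values differ.
pending_machinesets() {
    # NR > 1 skips the header row.
    awk 'NR > 1 && $2 != $3 { print $1 }'
}

# Example usage:
#   oc get machineset -n openshift-machine-api | pending_machinesets
```

Empty output means that the DESIRED and CURRENT values match for every compute machine set in the list.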

6.2. Creating a Windows machine set on Azure

You can create a Windows MachineSet object to serve a specific purpose in your OpenShift Container Platform cluster on Microsoft Azure. For example, you might create infrastructure Windows machine sets and related machines so that you can move supporting Windows workloads to the new Windows machines.

Prerequisites

- You installed the Windows Machine Config Operator (WMCO) using Operator Lifecycle Manager (OLM).

- You are using a supported Windows Server as the operating system image.

6.2.1. Machine API overview

The Machine API is a combination of primary resources that are based on the upstream Cluster API project and custom OpenShift Container Platform resources.

For OpenShift Container Platform 4.12 clusters, the Machine API performs all node host provisioning management actions after the cluster installation finishes. Because of this system, OpenShift Container Platform 4.12 offers an elastic, dynamic provisioning method on top of public or private cloud infrastructure.

The two primary resources are:

- Machines: A fundamental unit that describes the host for a node. A machine has a providerSpec specification, which describes the types of compute nodes that are offered for different cloud platforms. For example, a machine type for a compute node might define a specific machine type and required metadata.

- Machine sets: MachineSet resources are groups of compute machines. Compute machine sets are to compute machines as replica sets are to pods. If you need more compute machines or must scale them down, you change the replicas field on the MachineSet resource to meet your compute need.

Warning: Control plane machines cannot be managed by compute machine sets.

Control plane machine sets provide management capabilities for supported control plane machines that are similar to what compute machine sets provide for compute machines.

For more information, see "Managing control plane machines".

The following custom resources add more capabilities to your cluster:

- Machine autoscaler: The MachineAutoscaler resource automatically scales compute machines in a cloud. You can set the minimum and maximum scaling boundaries for nodes in a specified compute machine set, and the machine autoscaler maintains that range of nodes.

  The MachineAutoscaler object takes effect after a ClusterAutoscaler object exists. Both ClusterAutoscaler and MachineAutoscaler resources are made available by the ClusterAutoscalerOperator object.

- Cluster autoscaler: This resource is based on the upstream cluster autoscaler project. In the OpenShift Container Platform implementation, it is integrated with the Machine API by extending the compute machine set API. You can use the cluster autoscaler to manage your cluster in the following ways:

  - Set cluster-wide scaling limits for resources such as cores, nodes, memory, and GPU

  - Set the priority so that the cluster prioritizes pods and new nodes are not brought online for less important pods

  - Set the scaling policy so that you can scale up nodes but not scale them down

- Machine health check: The MachineHealthCheck resource detects when a machine is unhealthy, deletes it, and, on supported platforms, makes a new machine.

In OpenShift Container Platform version 3.11, you could not roll out a multi-zone architecture easily because the cluster did not manage machine provisioning. Beginning with OpenShift Container Platform version 4.1, this process is easier. Each compute machine set is scoped to a single zone, so the installation program distributes compute machine sets across availability zones on your behalf. Because your compute capacity is dynamic, you always have a zone available for rebalancing your machines if a zone fails. In global Azure regions that do not have multiple availability zones, you can use availability sets to ensure high availability. The autoscaler provides best-effort balancing over the life of a cluster.

6.2.2. Sample YAML for a Windows MachineSet object on Azure

This sample YAML defines a Windows MachineSet object running on Microsoft Azure that the Windows Machine Config Operator (WMCO) can act on.

apiVersion: machine.openshift.io/v1beta1
kind: MachineSet
metadata:
  labels:
    machine.openshift.io/cluster-api-cluster: <infrastructure_id> # (1)
  name: <windows_machine_set_name> # (2)
  namespace: openshift-machine-api
spec:
  replicas: 1
  selector:
    matchLabels:
      machine.openshift.io/cluster-api-cluster: <infrastructure_id> # (3)
      machine.openshift.io/cluster-api-machineset: <windows_machine_set_name> # (4)
  template:
    metadata:
      labels:
        machine.openshift.io/cluster-api-cluster: <infrastructure_id> # (5)
        machine.openshift.io/cluster-api-machine-role: worker
        machine.openshift.io/cluster-api-machine-type: worker
        machine.openshift.io/cluster-api-machineset: <windows_machine_set_name> # (6)
        machine.openshift.io/os-id: Windows # (7)
    spec:
      metadata:
        labels:
          node-role.kubernetes.io/worker: "" # (8)
      providerSpec:
        value:
          apiVersion: azureproviderconfig.openshift.io/v1beta1
          credentialsSecret:
            name: azure-cloud-credentials
            namespace: openshift-machine-api
          image: # (9)
            offer: WindowsServer
            publisher: MicrosoftWindowsServer
            resourceID: ""
            sku: 2019-Datacenter-with-Containers
            version: latest
          kind: AzureMachineProviderSpec
          location: <location> # (10)
          managedIdentity: <infrastructure_id>-identity # (11)
          networkResourceGroup: <infrastructure_id>-rg # (12)
          osDisk:
            diskSizeGB: 128
            managedDisk:
              storageAccountType: Premium_LRS
            osType: Windows
          publicIP: false
          resourceGroup: <infrastructure_id>-rg # (13)
          subnet: <infrastructure_id>-worker-subnet
          userDataSecret:
            name: windows-user-data # (14)
            namespace: openshift-machine-api
          vmSize: Standard_D2s_v3
          vnet: <infrastructure_id>-vnet # (15)
          zone: "<zone>" # (16)

(1), (3), (5), (11), (12), (13), (15): Specify the infrastructure ID that is based on the cluster ID that you set when you provisioned the cluster. You can obtain the infrastructure ID by running the following command:

$ oc get -o jsonpath='{.status.infrastructureName}{"\n"}' infrastructure cluster

(2), (4), (6): Specify the Windows compute machine set name. Windows machine names on Azure cannot be more than 15 characters long. Therefore, the compute machine set name cannot be more than 9 characters long, due to the way machine names are generated from it.

(7): Configure the compute machine set as a Windows machine.

(8): Configure the Windows node as a compute machine.

(9): Specify a WindowsServer image offering that defines the 2019-Datacenter-with-Containers SKU.

(10): Specify the Azure region, like centralus.

(14): Created by the WMCO when it is configuring the first Windows machine. After that, the windows-user-data is available for all subsequent compute machine sets to consume.

(16): Specify the zone within your region to place machines on. Be sure that your region supports the zone that you specify.
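Because the compute machine set name on Azure cannot be more than 9 characters long, it can help to check a candidate name before creating the object. The following is a minimal sketch of such a check. The function name check_azure_machineset_name is hypothetical, and the sketch enforces only the 9-character limit stated above, not any other naming rule.

```shell
#!/bin/sh
# Hypothetical helper: verify that a Windows compute machine set name for
# Azure is at most 9 characters long, as required by the sample above.
check_azure_machineset_name() {
    name="$1"
    max=9
    if [ "${#name}" -gt "$max" ]; then
        echo "too long: '${name}' has ${#name} characters (limit ${max})"
        return 1
    fi
    echo "ok: '${name}' has ${#name} characters"
}

# Example usage:
#   check_azure_machineset_name winworker
```

A name such as winworker passes the check, while a longer name such as windows-worker is rejected.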

6.2.3. Creating a compute machine set

In addition to the compute machine sets created by the installation program, you can create your own to dynamically manage the machine compute resources for specific workloads of your choice.

Prerequisites

- Deploy an OpenShift Container Platform cluster.

- Install the OpenShift CLI (oc).

- Log in to oc as a user with cluster-admin permission.

Procedure

Create a new YAML file that contains the compute machine set custom resource (CR) sample and is named <file_name>.yaml.

Ensure that you set the <clusterID> and <role> parameter values.

Optional: If you are not sure which value to set for a specific field, you can check an existing compute machine set from your cluster.

To list the compute machine sets in your cluster, run the following command:

$ oc get machinesets -n openshift-machine-api

Example output

NAME                                DESIRED   CURRENT   READY   AVAILABLE   AGE
agl030519-vplxk-worker-us-east-1a   1         1         1       1           55m
agl030519-vplxk-worker-us-east-1b   1         1         1       1           55m
agl030519-vplxk-worker-us-east-1c   1         1         1       1           55m
agl030519-vplxk-worker-us-east-1d   0         0                             55m
agl030519-vplxk-worker-us-east-1e   0         0                             55m
agl030519-vplxk-worker-us-east-1f   0         0                             55m

To view values of a specific compute machine set custom resource (CR), run the following command:

$ oc get machineset <machineset_name> \
  -n openshift-machine-api -o yaml

Example output

apiVersion: machine.openshift.io/v1beta1
kind: MachineSet
metadata:
  labels:
    machine.openshift.io/cluster-api-cluster: <infrastructure_id> # (1)
  name: <infrastructure_id>-<role> # (2)
  namespace: openshift-machine-api
spec:
  replicas: 1
  selector:
    matchLabels:
      machine.openshift.io/cluster-api-cluster: <infrastructure_id>
      machine.openshift.io/cluster-api-machineset: <infrastructure_id>-<role>
  template:
    metadata:
      labels:
        machine.openshift.io/cluster-api-cluster: <infrastructure_id>
        machine.openshift.io/cluster-api-machine-role: <role>
        machine.openshift.io/cluster-api-machine-type: <role>
        machine.openshift.io/cluster-api-machineset: <infrastructure_id>-<role>
    spec:
      providerSpec: # (3)
        ...

(1): The cluster infrastructure ID.

(2): A default node label.

Note: For clusters that have user-provisioned infrastructure, a compute machine set can only create worker and infra type machines.

(3): The values in the <providerSpec> section of the compute machine set CR are platform-specific. For more information about <providerSpec> parameters in the CR, see the sample compute machine set CR configuration for your provider.

Create a MachineSet CR by running the following command:

$ oc create -f <file_name>.yaml

Verification

View the list of compute machine sets by running the following command:

$ oc get machineset -n openshift-machine-api

Example output

NAME                                        DESIRED   CURRENT   READY   AVAILABLE   AGE
agl030519-vplxk-windows-worker-us-east-1a   1         1         1       1           11m
agl030519-vplxk-worker-us-east-1a           1         1         1       1           55m
agl030519-vplxk-worker-us-east-1b           1         1         1       1           55m
agl030519-vplxk-worker-us-east-1c           1         1         1       1           55m
agl030519-vplxk-worker-us-east-1d           0         0                             55m
agl030519-vplxk-worker-us-east-1e           0         0                             55m
agl030519-vplxk-worker-us-east-1f           0         0                             55m

When the new compute machine set is available, the DESIRED and CURRENT values match. If the compute machine set is not available, wait a few minutes and run the command again.

6.3. Creating a Windows machine set on vSphere

You can create a Windows MachineSet object to serve a specific purpose in your OpenShift Container Platform cluster on VMware vSphere. For example, you might create infrastructure Windows machine sets and related machines so that you can move supporting Windows workloads to the new Windows machines.

Prerequisites

- You installed the Windows Machine Config Operator (WMCO) using Operator Lifecycle Manager (OLM).

- You are using a supported Windows Server as the operating system image.

6.3.1. Machine API overview

The Machine API is a combination of primary resources that are based on the upstream Cluster API project and custom OpenShift Container Platform resources.

For OpenShift Container Platform 4.12 clusters, the Machine API performs all node host provisioning management actions after the cluster installation finishes. Because of this system, OpenShift Container Platform 4.12 offers an elastic, dynamic provisioning method on top of public or private cloud infrastructure.

The two primary resources are:

- Machines: A fundamental unit that describes the host for a node. A machine has a providerSpec specification, which describes the types of compute nodes that are offered for different cloud platforms. For example, a machine type for a compute node might define a specific machine type and required metadata.

- Machine sets: MachineSet resources are groups of compute machines. Compute machine sets are to compute machines as replica sets are to pods. If you need more compute machines or must scale them down, you change the replicas field on the MachineSet resource to meet your compute need.

Warning: Control plane machines cannot be managed by compute machine sets.

Control plane machine sets provide management capabilities for supported control plane machines that are similar to what compute machine sets provide for compute machines.

For more information, see "Managing control plane machines".

The following custom resources add more capabilities to your cluster:

- Machine autoscaler: The MachineAutoscaler resource automatically scales compute machines in a cloud. You can set the minimum and maximum scaling boundaries for nodes in a specified compute machine set, and the machine autoscaler maintains that range of nodes.

  The MachineAutoscaler object takes effect after a ClusterAutoscaler object exists. Both ClusterAutoscaler and MachineAutoscaler resources are made available by the ClusterAutoscalerOperator object.

- Cluster autoscaler: This resource is based on the upstream cluster autoscaler project. In the OpenShift Container Platform implementation, it is integrated with the Machine API by extending the compute machine set API. You can use the cluster autoscaler to manage your cluster in the following ways:

  - Set cluster-wide scaling limits for resources such as cores, nodes, memory, and GPU

  - Set the priority so that the cluster prioritizes pods and new nodes are not brought online for less important pods

  - Set the scaling policy so that you can scale up nodes but not scale them down

- Machine health check: The MachineHealthCheck resource detects when a machine is unhealthy, deletes it, and, on supported platforms, makes a new machine.

In OpenShift Container Platform version 3.11, you could not roll out a multi-zone architecture easily because the cluster did not manage machine provisioning. Beginning with OpenShift Container Platform version 4.1, this process is easier. Each compute machine set is scoped to a single zone, so the installation program distributes compute machine sets across availability zones on your behalf. Because your compute capacity is dynamic, you always have a zone available for rebalancing your machines if a zone fails. In global Azure regions that do not have multiple availability zones, you can use availability sets to ensure high availability. The autoscaler provides best-effort balancing over the life of a cluster.

6.3.2. Preparing your vSphere environment for Windows container workloads

You must prepare your vSphere environment for Windows container workloads by creating the vSphere Windows VM golden image and enabling communication with the internal API server for the WMCO.

6.3.2.1. Creating the vSphere Windows VM golden image

Create a vSphere Windows virtual machine (VM) golden image.

Prerequisites

You have created a private/public key pair, which is used to configure key-based authentication in the OpenSSH server. The private key must be configured in the Windows Machine Config Operator (WMCO) namespace so that the WMCO can communicate with the Windows VM.

If you created the key pair on a Red Hat Enterprise Linux (RHEL) system, before you can use the public key on a Windows system, make sure the public key is saved using ASCII encoding. For example, the following PowerShell command copies a public key, encoding it for the ASCII character set:

PS C:\> echo "ssh-rsa <ssh_pub_key>" | Out-File <ssh_key_path> -Encoding ascii

where:

<ssh_pub_key>: Specifies the SSH public key used to access the cluster.

<ssh_key_path>: Specifies the path to the SSH public key.

See the "Configuring a secret for the Windows Machine Config Operator" section for more details.

You must use Microsoft PowerShell commands in several cases when creating your Windows VM. PowerShell commands in this guide are distinguished by the PS C:\> prefix.

Procedure

- Select a compatible Windows Server version. Currently, the Windows Machine Config Operator (WMCO) stable version supports Windows Server 2022 Long-Term Servicing Channel with the OS-level container networking patch KB5012637.

Create a new VM in the vSphere client using the VM golden image with a compatible Windows Server version. For more information about compatible versions, see the "Windows Machine Config Operator prerequisites" section of the "Red Hat OpenShift support for Windows Containers release notes."

Important: The virtual hardware version for your VM must meet the infrastructure requirements for OpenShift Container Platform. For more information, see the "VMware vSphere infrastructure requirements" section in the OpenShift Container Platform documentation. Also, you can refer to VMware’s documentation on virtual machine hardware versions.

- Install and configure VMware Tools version 11.0.6 or greater on the Windows VM. See the VMware Tools documentation for more information.

After installing VMware Tools on the Windows VM, verify the following:

The C:\ProgramData\VMware\VMware Tools\tools.conf file exists with the following entry:

exclude-nics=

If the tools.conf file does not exist, create it with the exclude-nics option uncommented and set as an empty value.

This entry ensures the cloned vNIC generated on the Windows VM by the hybrid-overlay is not ignored.

The Windows VM has a valid IP address in vCenter:

C:\> ipconfig

The VMTools Windows service is running:

PS C:\> Get-Service -Name VMTools | Select Status, StartType

- Install and configure the OpenSSH Server on the Windows VM. See Microsoft’s documentation on installing OpenSSH for more details.

Set up SSH access for an administrative user. See Microsoft’s documentation on the Administrative user to do this.

Important: The public key used in the instructions must correspond to the private key you create later in the WMCO namespace that holds your secret. See the "Configuring a secret for the Windows Machine Config Operator" section for more details.

You must create a new firewall rule in the Windows VM that allows incoming connections for container logs. Run the following PowerShell command to create the firewall rule on TCP port 10250:

PS C:\> New-NetFirewallRule -DisplayName "ContainerLogsPort" -LocalPort 10250 -Enabled True -Direction Inbound -Protocol TCP -Action Allow -EdgeTraversalPolicy Allow

- Clone the Windows VM so it is a reusable image. Follow the VMware documentation on how to clone an existing virtual machine for more details.

In the cloned Windows VM, run the Windows Sysprep tool:

C:\> C:\Windows\System32\Sysprep\sysprep.exe /generalize /oobe /shutdown /unattend:<path_to_unattend.xml>

where:

<path_to_unattend.xml>: Specifies the path to your unattend.xml file.

Note: There is a limit on how many times you can run the sysprep command on a Windows image. Consult Microsoft’s documentation for more information.

An example unattend.xml is provided, which maintains all the changes needed for the WMCO. You must modify this example; it cannot be used directly.

Example 6.1. Example unattend.xml

<?xml version="1.0" encoding="UTF-8"?>
<unattend xmlns="urn:schemas-microsoft-com:unattend">
   <settings pass="specialize">
      <component xmlns:wcm="http://schemas.microsoft.com/WMIConfig/2002/State" xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance" name="Microsoft-Windows-International-Core" processorArchitecture="amd64" publicKeyToken="31bf3856ad364e35" language="neutral" versionScope="nonSxS">
         <InputLocale>0409:00000409</InputLocale>
         <SystemLocale>en-US</SystemLocale>
         <UILanguage>en-US</UILanguage>
         <UILanguageFallback>en-US</UILanguageFallback>
         <UserLocale>en-US</UserLocale>
      </component>
      <component xmlns:wcm="http://schemas.microsoft.com/WMIConfig/2002/State" xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance" name="Microsoft-Windows-Security-SPP-UX" processorArchitecture="amd64" publicKeyToken="31bf3856ad364e35" language="neutral" versionScope="nonSxS">
         <SkipAutoActivation>true</SkipAutoActivation>
      </component>
      <component xmlns:wcm="http://schemas.microsoft.com/WMIConfig/2002/State" xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance" name="Microsoft-Windows-SQMApi" processorArchitecture="amd64" publicKeyToken="31bf3856ad364e35" language="neutral" versionScope="nonSxS">
         <CEIPEnabled>0</CEIPEnabled>
      </component>
      <component xmlns:wcm="http://schemas.microsoft.com/WMIConfig/2002/State" xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance" name="Microsoft-Windows-Shell-Setup" processorArchitecture="amd64" publicKeyToken="31bf3856ad364e35" language="neutral" versionScope="nonSxS">
         <ComputerName>winhost</ComputerName> <!-- (1) -->
      </component>
   </settings>
   <settings pass="oobeSystem">
      <component xmlns:wcm="http://schemas.microsoft.com/WMIConfig/2002/State" xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance" name="Microsoft-Windows-Shell-Setup" processorArchitecture="amd64" publicKeyToken="31bf3856ad364e35" language="neutral" versionScope="nonSxS">
         <AutoLogon>
            <Enabled>false</Enabled> <!-- (2) -->
         </AutoLogon>
         <OOBE>
            <HideEULAPage>true</HideEULAPage>
            <HideLocalAccountScreen>true</HideLocalAccountScreen>
            <HideOEMRegistrationScreen>true</HideOEMRegistrationScreen>
            <HideOnlineAccountScreens>true</HideOnlineAccountScreens>
            <HideWirelessSetupInOOBE>true</HideWirelessSetupInOOBE>
            <NetworkLocation>Work</NetworkLocation>
            <ProtectYourPC>1</ProtectYourPC>
            <SkipMachineOOBE>true</SkipMachineOOBE>
            <SkipUserOOBE>true</SkipUserOOBE>
         </OOBE>
         <RegisteredOrganization>Organization</RegisteredOrganization>
         <RegisteredOwner>Owner</RegisteredOwner>
         <DisableAutoDaylightTimeSet>false</DisableAutoDaylightTimeSet>
         <TimeZone>Eastern Standard Time</TimeZone>
         <UserAccounts>
            <AdministratorPassword>
               <Value>MyPassword</Value> <!-- (3) -->
               <PlainText>true</PlainText>
            </AdministratorPassword>
         </UserAccounts>
      </component>
   </settings>
</unattend>

(1): Specify the ComputerName, which must follow the Kubernetes names specification. These specifications also apply to Guest OS customization performed on the resulting template while creating new VMs.

(2): Disable the automatic logon to avoid the security issue of leaving an open terminal with Administrator privileges at boot. This is the default value and must not be changed.

(3): Replace the MyPassword placeholder with the password for the Administrator account. This prevents the built-in Administrator account from having a blank password by default. Follow Microsoft’s best practices for choosing a password.

After the Sysprep tool has completed, the Windows VM will power off. You must not use or power on this VM anymore.

- Convert the Windows VM to a template in vCenter.
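Callout 1 of the example unattend.xml requires the ComputerName to follow the Kubernetes names specification. As a minimal sketch, the following shell function checks a candidate name against the RFC 1123 DNS label rules: lowercase alphanumeric characters and hyphens, starting and ending with an alphanumeric character, at most 63 characters. Treating the requirement as the DNS label form is an assumption, and the function name is hypothetical; it is not part of the WMCO. Windows might impose further limits on computer names that this sketch does not check.

```shell
# Hypothetical helper (not part of the WMCO): returns 0 if the name is a
# valid RFC 1123 DNS label, and a nonzero status otherwise.
is_valid_computer_name() {
    name=$1
    # Reject names longer than 63 characters.
    [ "${#name}" -le 63 ] || return 1
    # Lowercase letters, digits, and hyphens only; no leading or trailing hyphen.
    # LC_ALL=C keeps the a-z range from matching uppercase letters.
    printf '%s\n' "$name" | LC_ALL=C grep -Eq '^[a-z0-9]([a-z0-9-]*[a-z0-9])?$'
}

# "winhost" is the placeholder value used in the example unattend.xml.
if is_valid_computer_name "winhost"; then
    echo "winhost: valid"
fi
```

Running a check like this before Sysprep can catch names with uppercase letters or underscores, which the pattern rejects.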

6.3.2.2. Enabling communication with the internal API server for the WMCO on vSphere

The Windows Machine Config Operator (WMCO) downloads the Ignition config files from the internal API server endpoint. You must enable communication with the internal API server so that your Windows virtual machine (VM) can download the Ignition config files. In addition, the kubelet on the configured VM can communicate only with the internal API server.

Prerequisites

- You have installed a cluster on vSphere.

Procedure

- Add a new DNS entry for api-int.<cluster_name>.<base_domain> that points to the external API server URL api.<cluster_name>.<base_domain>. This can be a CNAME or an additional A record.

The external API endpoint was already created as part of the initial cluster installation on vSphere.
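As a rough illustration of the DNS entry described in this procedure, the following shell function prints a CNAME record in BIND zone-file syntax that points the internal API name at the external one. The function name, the sample cluster name and base domain, and the choice of BIND syntax are all assumptions; adapt the record to the DNS service that your environment uses.

```shell
# Hypothetical helper: print a CNAME record (BIND zone-file syntax) that
# points api-int.<cluster_name>.<base_domain> at api.<cluster_name>.<base_domain>.
api_int_cname_record() {
    cluster_name=$1
    base_domain=$2
    printf 'api-int.%s.%s. IN CNAME api.%s.%s.\n' \
        "$cluster_name" "$base_domain" "$cluster_name" "$base_domain"
}

# The values below are placeholders, not taken from a real cluster.
api_int_cname_record "mycluster" "example.com"
```

After the record is in place, a lookup of api-int.<cluster_name>.<base_domain> from the network that hosts the Windows VMs should resolve to the same address as the external API name.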

6.3.3. Sample YAML for a Windows MachineSet object on vSphere

This sample YAML defines a Windows MachineSet object running on VMware vSphere that the Windows Machine Config Operator (WMCO) can react to.

apiVersion: machine.openshift.io/v1beta1
kind: MachineSet
metadata:
  labels:
    machine.openshift.io/cluster-api-cluster: <infrastructure_id> # 1
  name: <windows_machine_set_name> # 2
  namespace: openshift-machine-api
spec:
  replicas: 1
  selector:
    matchLabels:
      machine.openshift.io/cluster-api-cluster: <infrastructure_id> # 3
      machine.openshift.io/cluster-api-machineset: <windows_machine_set_name> # 4
  template:
    metadata:
      labels:
        machine.openshift.io/cluster-api-cluster: <infrastructure_id> # 5
        machine.openshift.io/cluster-api-machine-role: worker
        machine.openshift.io/cluster-api-machine-type: worker
        machine.openshift.io/cluster-api-machineset: <windows_machine_set_name> # 6
        machine.openshift.io/os-id: Windows # 7
    spec:
      metadata:
        labels:
          node-role.kubernetes.io/worker: "" # 8
      providerSpec:
        value:
          apiVersion: vsphereprovider.openshift.io/v1beta1
          credentialsSecret:
            name: vsphere-cloud-credentials
          diskGiB: 128 # 9
          kind: VSphereMachineProviderSpec
          memoryMiB: 16384
          network:
            devices:
            - networkName: "<vm_network_name>" # 10
          numCPUs: 4
          numCoresPerSocket: 1
          snapshot: ""
          template: <windows_vm_template_name> # 11
          userDataSecret:
            name: windows-user-data # 12
          workspace:
            datacenter: <vcenter_datacenter_name> # 13
            datastore: <vcenter_datastore_name> # 14
            folder: <vcenter_vm_folder_path> # 15
            resourcePool: <vsphere_resource_pool> # 16
            server: <vcenter_server_ip> # 17

- 1 3 5

- Specify the infrastructure ID that is based on the cluster ID that you set when you provisioned the cluster. You can obtain the infrastructure ID by running the following command:

$ oc get -o jsonpath='{.status.infrastructureName}{"\n"}' infrastructure cluster

- 2 4 6

- Specify the Windows compute machine set name. The compute machine set name cannot be more than 9 characters long, due to the way machine names are generated in vSphere.

- 7

- Configure the compute machine set as a Windows machine.

- 8

- Configure the Windows node as a compute machine.

- 9

- Specify the size of the vSphere Virtual Machine Disk (VMDK).

Note

This parameter does not set the size of the Windows partition. You can resize the Windows partition by using the unattend.xml file or by creating the vSphere Windows virtual machine (VM) golden image with the required disk size.

- 10

- Specify the vSphere VM network to deploy the compute machine set to. This VM network must be where other Linux compute machines reside in the cluster.

- 11

- Specify the full path of the Windows vSphere VM template to use, such as golden-images/windows-server-template. The name must be unique.

Important

Do not specify the original VM template. The VM template must remain off and must be cloned for new Windows machines. Starting the VM template configures the VM template as a VM on the platform, which prevents it from being used as a template that compute machine sets can apply configurations to.

- 12

- The windows-user-data is created by the WMCO when the first Windows machine is configured. After that, the windows-user-data is available for all subsequent compute machine sets to consume.

- 13

- Specify the vCenter Datacenter to deploy the compute machine set on.

- 14

- Specify the vCenter Datastore to deploy the compute machine set on.

- 15

- Specify the path to the vSphere VM folder in vCenter, such as /dc1/vm/user-inst-5ddjd.

- 16

- Optional: Specify the vSphere resource pool for your Windows VMs.

- 17

- Specify the vCenter server IP or fully qualified domain name.
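The callout for the Windows compute machine set name limits it to 9 characters on vSphere. The following shell sketch shows one way to check a candidate name before putting it in the YAML file. The function name and the sample names are hypothetical.

```shell
# Hypothetical helper: returns 0 if the machine set name is non-empty and
# at most 9 characters long, which is the limit stated for vSphere.
is_valid_vsphere_windows_machineset_name() {
    name=$1
    [ -n "$name" ] && [ "${#name}" -le 9 ]
}

# Sample names are placeholders chosen to show both outcomes.
for candidate in "winworker" "windows-worker"; do
    if is_valid_vsphere_windows_machineset_name "$candidate"; then
        echo "$candidate: ok"
    else
        echo "$candidate: too long (${#candidate} characters, limit is 9)"
    fi
done
```

The check covers only the length limit; it does not validate the characters in the name.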

6.3.4. Creating a compute machine set

In addition to the compute machine sets created by the installation program, you can create your own to dynamically manage the machine compute resources for specific workloads of your choice.

Prerequisites

- Deploy an OpenShift Container Platform cluster.

- Install the OpenShift CLI (oc).

- Log in to oc as a user with cluster-admin permission.

Procedure

Create a new YAML file that contains the compute machine set custom resource (CR) sample and is named <file_name>.yaml.

Ensure that you set the <clusterID> and <role> parameter values.

Optional: If you are not sure which value to set for a specific field, you can check an existing compute machine set from your cluster.

To list the compute machine sets in your cluster, run the following command:

$ oc get machinesets -n openshift-machine-api

Example output

NAME                                DESIRED   CURRENT   READY   AVAILABLE   AGE
agl030519-vplxk-worker-us-east-1a   1         1         1       1           55m
agl030519-vplxk-worker-us-east-1b   1         1         1       1           55m
agl030519-vplxk-worker-us-east-1c   1         1         1       1           55m
agl030519-vplxk-worker-us-east-1d   0         0                             55m
agl030519-vplxk-worker-us-east-1e   0         0                             55m
agl030519-vplxk-worker-us-east-1f   0         0                             55m

To view values of a specific compute machine set custom resource (CR), run the following command:

$ oc get machineset <machineset_name> \
    -n openshift-machine-api -o yaml

Example output

apiVersion: machine.openshift.io/v1beta1
kind: MachineSet
metadata:
  labels:
    machine.openshift.io/cluster-api-cluster: <infrastructure_id> # 1
  name: <infrastructure_id>-<role> # 2
  namespace: openshift-machine-api
spec:
  replicas: 1
  selector:
    matchLabels:
      machine.openshift.io/cluster-api-cluster: <infrastructure_id>
      machine.openshift.io/cluster-api-machineset: <infrastructure_id>-<role>
  template:
    metadata:
      labels:
        machine.openshift.io/cluster-api-cluster: <infrastructure_id>
        machine.openshift.io/cluster-api-machine-role: <role>
        machine.openshift.io/cluster-api-machine-type: <role>
        machine.openshift.io/cluster-api-machineset: <infrastructure_id>-<role>
    spec:
      providerSpec: # 3
        ...

- 1

- The cluster infrastructure ID.

- 2

- A default node label.

Note

For clusters that have user-provisioned infrastructure, a compute machine set can only create worker and infra type machines.

- 3

- The values in the <providerSpec> section of the compute machine set CR are platform-specific. For more information about <providerSpec> parameters in the CR, see the sample compute machine set CR configuration for your provider.

Create a MachineSet CR by running the following command:

$ oc create -f <file_name>.yaml

Verification

View the list of compute machine sets by running the following command:

$ oc get machineset -n openshift-machine-api

Example output

NAME                                        DESIRED   CURRENT   READY   AVAILABLE   AGE
agl030519-vplxk-windows-worker-us-east-1a   1         1         1       1           11m
agl030519-vplxk-worker-us-east-1a           1         1         1       1           55m
agl030519-vplxk-worker-us-east-1b           1         1         1       1           55m
agl030519-vplxk-worker-us-east-1c           1         1         1       1           55m
agl030519-vplxk-worker-us-east-1d           0         0                             55m
agl030519-vplxk-worker-us-east-1e           0         0                             55m
agl030519-vplxk-worker-us-east-1f           0         0                             55m

When the new compute machine set is available, the DESIRED and CURRENT values match. If the compute machine set is not available, wait a few minutes and run the command again.
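The comparison of the DESIRED and CURRENT values can also be scripted. The following is a minimal sketch that reads the tabular output of the verification command on standard input and succeeds only if the named compute machine set is listed and its second and third columns are equal. The function name is hypothetical, and the sketch assumes the default column order shown in the example output.

```shell
# Hypothetical helper: reads `oc get machineset` output on stdin and succeeds
# only if the named machine set is listed and DESIRED ($2) equals CURRENT ($3).
machineset_desired_matches_current() {
    awk -v name="$1" '
        $1 == name { found = 1; ok = ($2 == $3) }
        END { exit (found && ok) ? 0 : 1 }
    '
}

# With cluster access, the helper could be fed from the real command:
#   oc get machineset -n openshift-machine-api | machineset_desired_matches_current <name>

# Sample rows taken from the example output in this section.
sample_output='NAME DESIRED CURRENT READY AVAILABLE AGE
agl030519-vplxk-windows-worker-us-east-1a 1 1 1 1 11m
agl030519-vplxk-worker-us-east-1d 0 0 55m'

if printf '%s\n' "$sample_output" |
    machineset_desired_matches_current "agl030519-vplxk-windows-worker-us-east-1a"; then
    echo "DESIRED and CURRENT match"
fi
```

A nonzero exit status means either that the values differ or that the machine set was not found, so a wrapper script could wait and retry in both cases.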

6.4. Creating a Windows machine set on Google Cloud

You can create a Windows MachineSet object to serve a specific purpose in your OpenShift Container Platform cluster on Google Cloud. For example, you might create infrastructure Windows machine sets and related machines so that you can move supporting Windows workloads to the new Windows machines.

Prerequisites

- You installed the Windows Machine Config Operator (WMCO) using Operator Lifecycle Manager (OLM).

- You are using a supported Windows Server as the operating system image.

6.4.1. Machine API overview

The Machine API is a combination of primary resources that are based on the upstream Cluster API project and custom OpenShift Container Platform resources.

For OpenShift Container Platform 4.12 clusters, the Machine API performs all node host provisioning management actions after the cluster installation finishes. Because of this system, OpenShift Container Platform 4.12 offers an elastic, dynamic provisioning method on top of public or private cloud infrastructure.

The two primary resources are:

- Machines

A fundamental unit that describes the host for a node. A machine has a providerSpec specification, which describes the types of compute nodes that are offered for different cloud platforms. For example, a machine type for a compute node might define a specific machine type and required metadata.

- Machine sets

MachineSet resources are groups of compute machines. Compute machine sets are to compute machines as replica sets are to pods. If you need more compute machines or must scale them down, you change the replicas field on the MachineSet resource to meet your compute need.

Warning

Control plane machines cannot be managed by compute machine sets.

Control plane machine sets provide management capabilities for supported control plane machines that are similar to what compute machine sets provide for compute machines.

For more information, see "Managing control plane machines".

The following custom resources add more capabilities to your cluster:

- Machine autoscaler

The MachineAutoscaler resource automatically scales compute machines in a cloud. You can set the minimum and maximum scaling boundaries for nodes in a specified compute machine set, and the machine autoscaler maintains that range of nodes.

The MachineAutoscaler object takes effect after a ClusterAutoscaler object exists. Both ClusterAutoscaler and MachineAutoscaler resources are made available by the ClusterAutoscalerOperator object.

- Cluster autoscaler