Building applications

Creating and managing applications on OpenShift Container Platform

Abstract

Chapter 1. Building applications overview

Using OpenShift Container Platform, you can create, edit, delete, and manage applications using the web console or command-line interface (CLI).

1.1. Working on a project

Using projects, you can organize and manage applications in isolation. You can manage the entire project lifecycle, including creating, viewing, and deleting a project in OpenShift Container Platform.

After you create the project, you can grant or revoke access to a project and manage cluster roles for the users using the Developer perspective. You can also edit the project configuration resource while creating a project template that is used for automatic provisioning of new projects.

Using the CLI, you can create a project as a different user by impersonating a request to the OpenShift Container Platform API. When you make a request to create a new project, OpenShift Container Platform uses an endpoint to provision the project according to a customizable template. As a cluster administrator, you can choose to prevent an authenticated user group from self-provisioning new projects.

1.2. Working on an application

1.2.1. Creating an application

To create applications, you must have created a project or have access to a project with the appropriate roles and permissions. You can create an application by using either the Developer perspective in the web console, installed Operators, or the OpenShift CLI (oc). You can source the applications to be added to the project from Git, JAR files, devfiles, or the developer catalog.

You can also use components that include source or binary code, images, and templates to create an application by using the OpenShift CLI (oc). With the OpenShift Container Platform web console, you can create an application from an Operator installed by a cluster administrator.

1.2.2. Maintaining an application

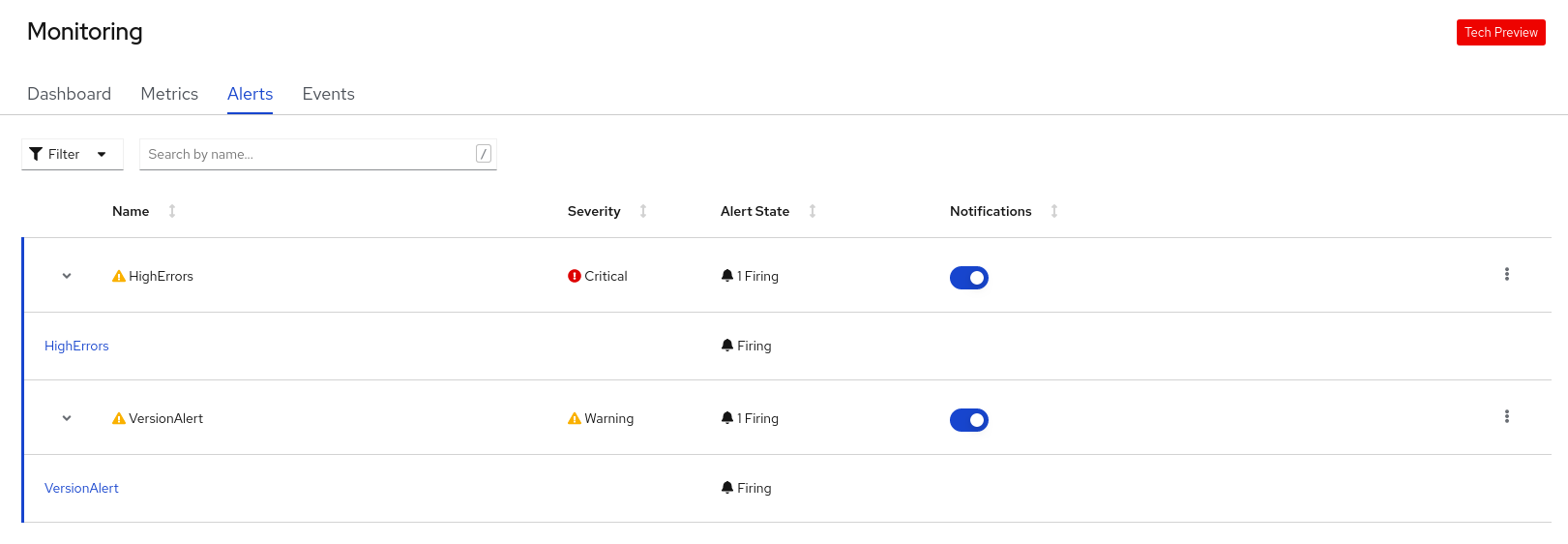

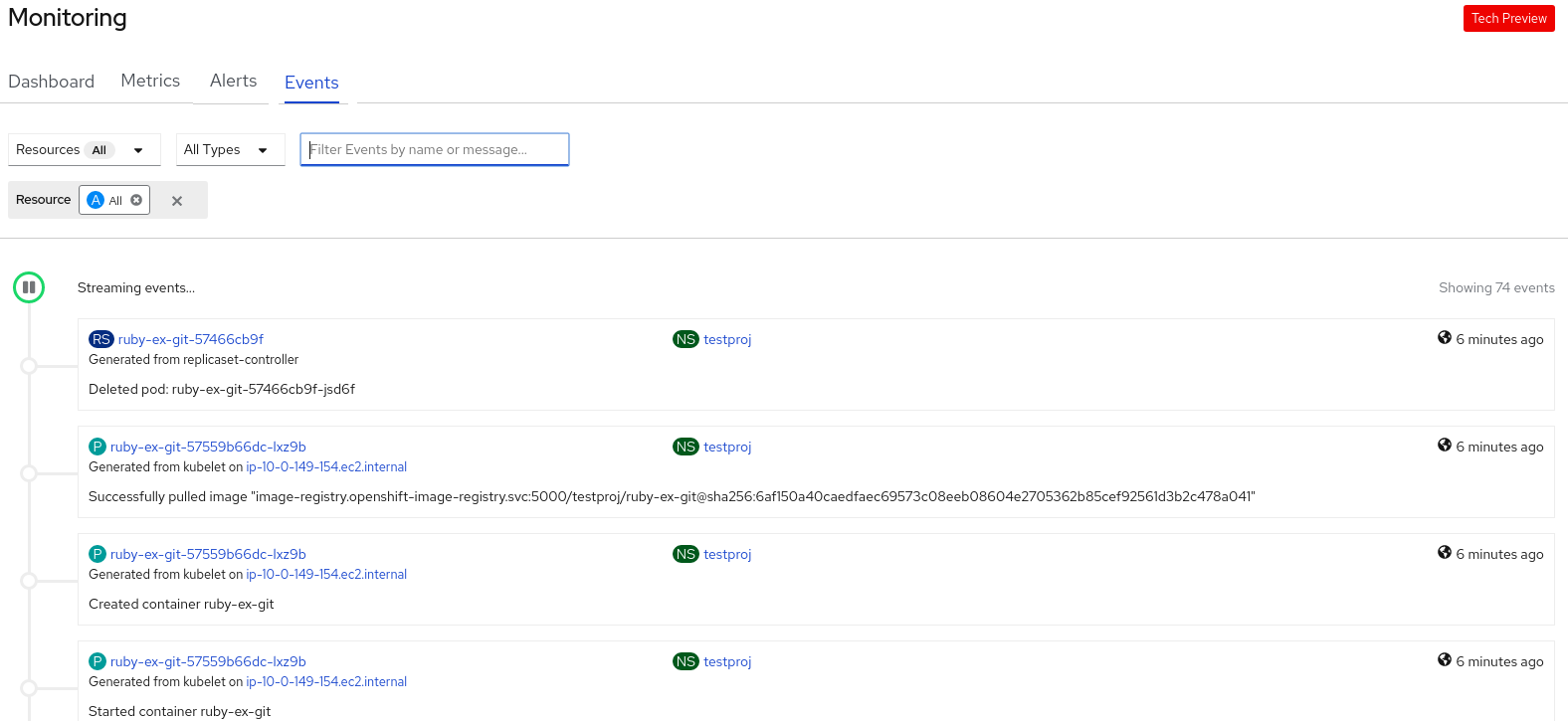

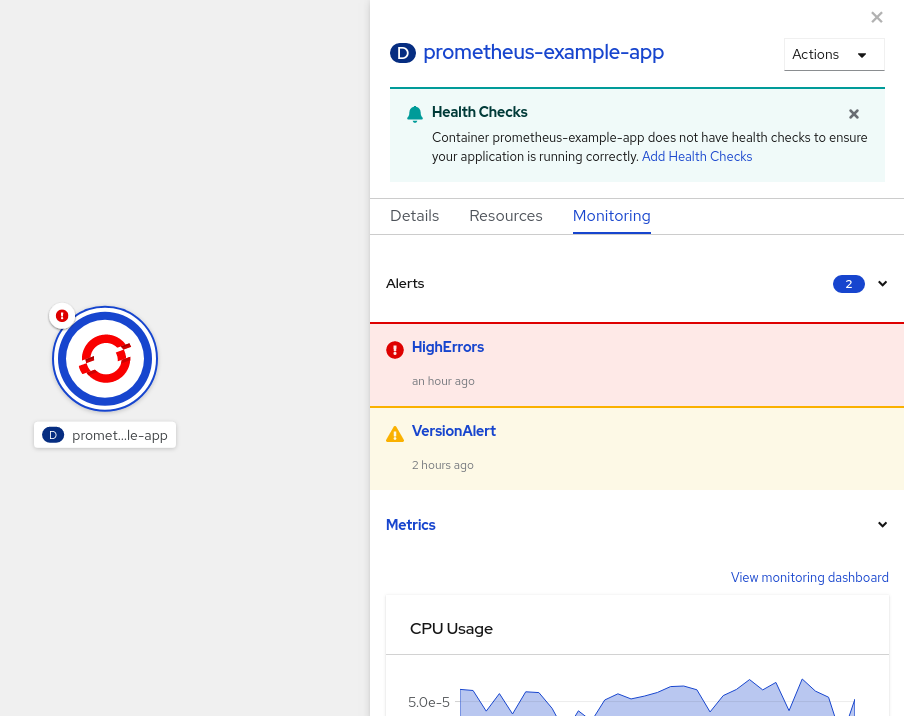

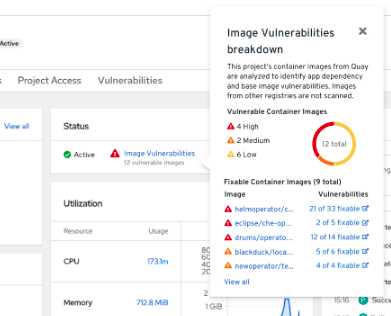

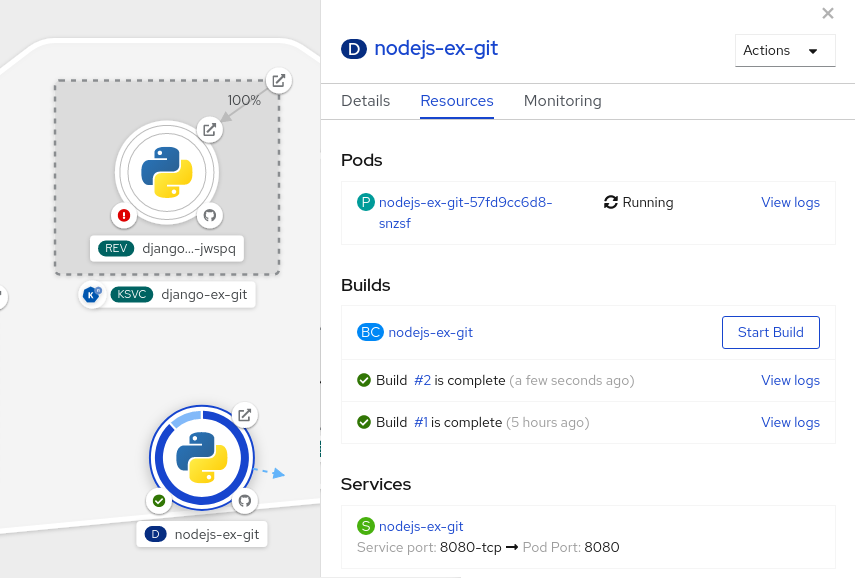

After you create the application, you can use the web console to monitor your project or application metrics. You can also edit or delete the application using the web console.

When the application is running, not all application resources are used. As a cluster administrator, you can choose to idle these scalable resources to reduce resource consumption.

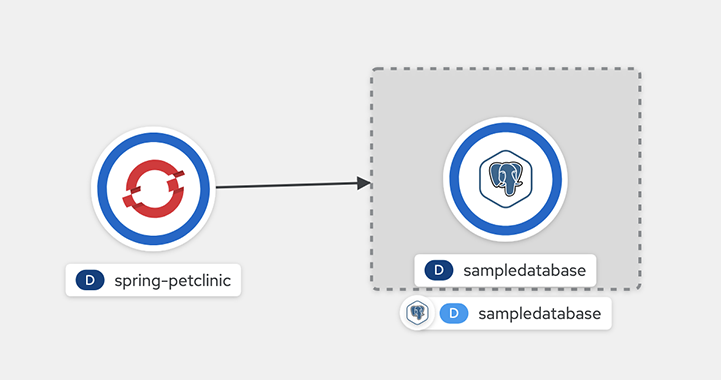

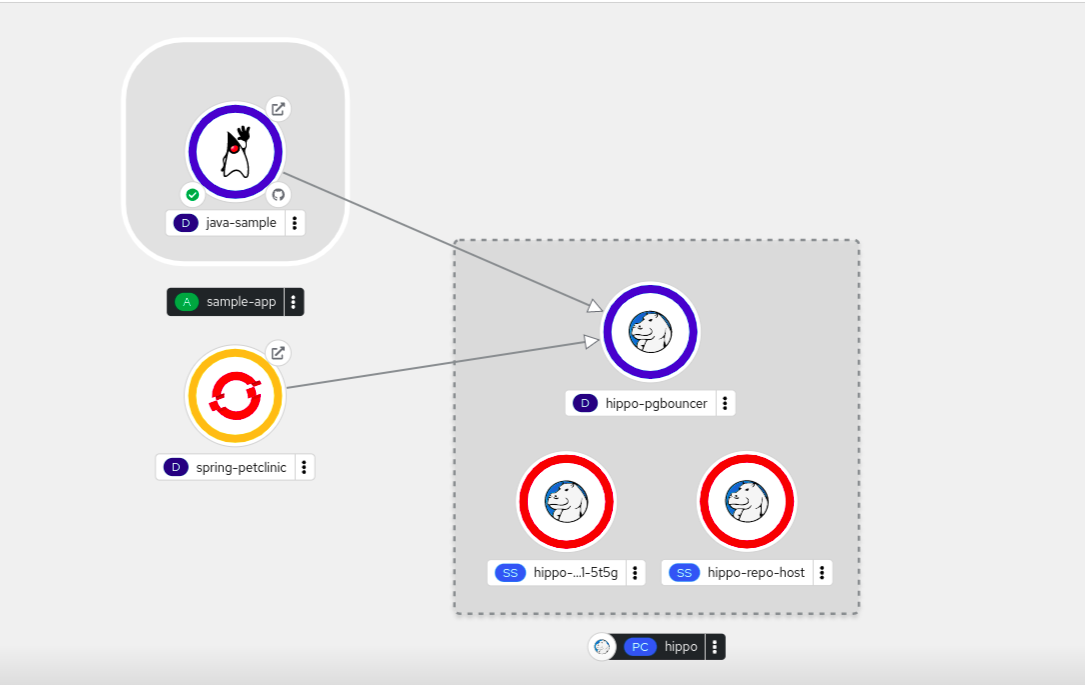

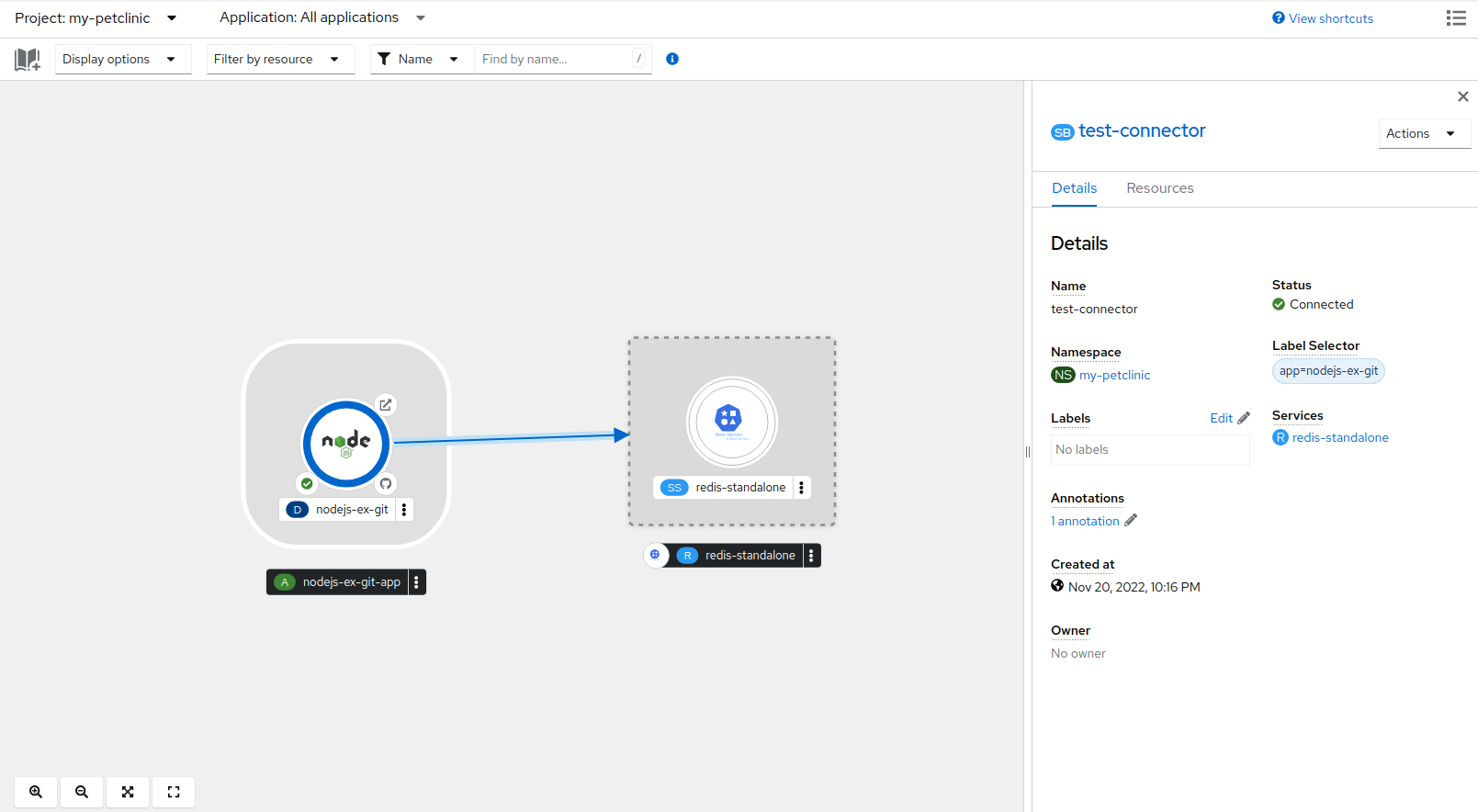

1.2.3. Connecting an application to services

An application uses backing services to build and connect workloads, which vary according to the service provider. Using the Service Binding Operator, as a developer, you can bind workloads together with Operator-managed backing services, without any manual procedures to configure the binding connection. You can also apply service binding on IBM Power Systems, IBM Z, and LinuxONE environments.

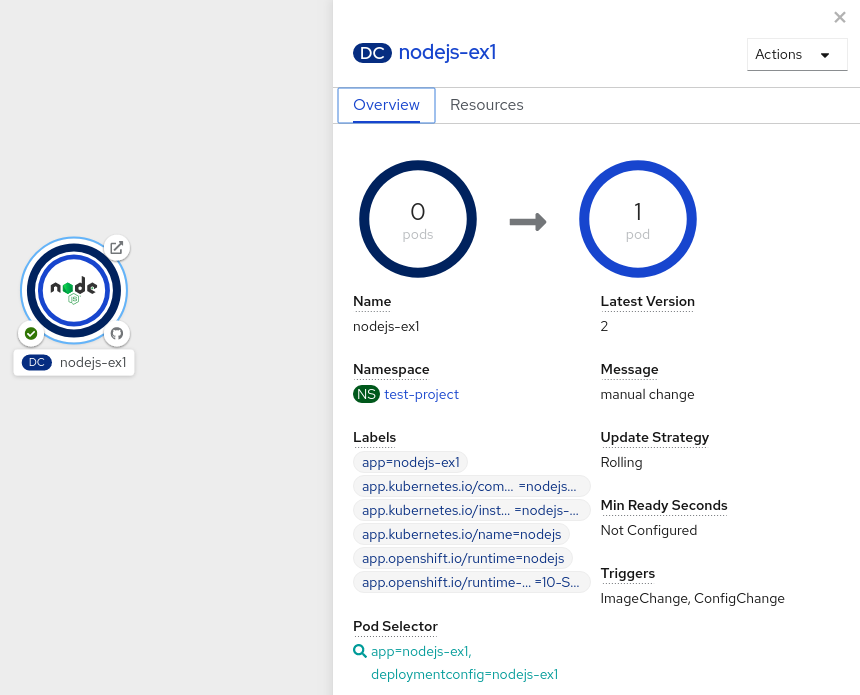

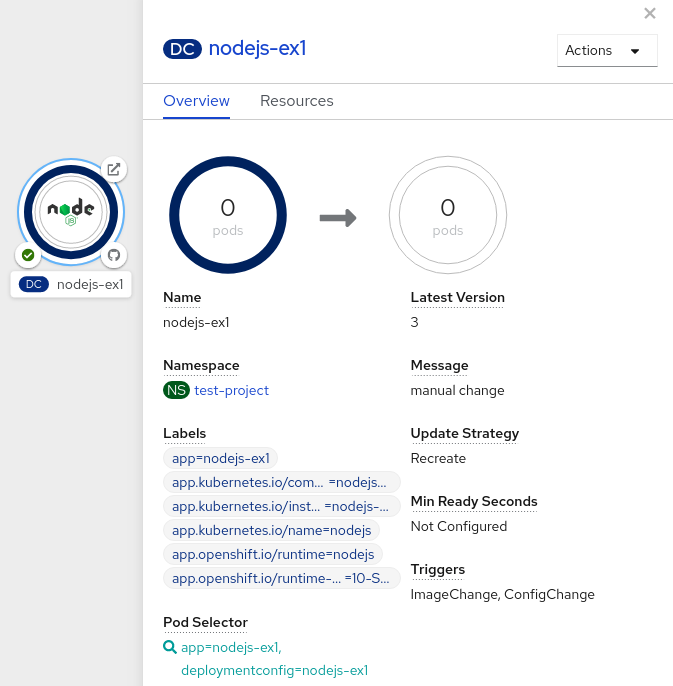

1.2.4. Deploying an application

You can deploy your application using Deployment or DeploymentConfig objects and manage them from the web console. You can create deployment strategies that help reduce downtime during a change or an upgrade to the application.

You can also use Helm, a software package manager that simplifies deployment of applications and services to OpenShift Container Platform clusters.

1.3. Using the Red Hat Marketplace

The Red Hat Marketplace is an open cloud marketplace where you can discover and access certified software for container-based environments that run on public clouds and on premises.

Chapter 2. Projects

2.1. Working with projects

A project allows a community of users to organize and manage their content in isolation from other communities.

Projects starting with openshift- and kube- are default projects. These projects host cluster components that run as pods and other infrastructure components. As such, OpenShift Container Platform does not allow you to create projects starting with openshift- or kube- using the oc new-project command. Cluster administrators can create these projects using the oc adm new-project command.

Do not run workloads in or share access to default projects. Default projects are reserved for running core cluster components.

The following default projects are considered highly privileged: default, kube-public, kube-system, openshift, openshift-infra, openshift-node, and other system-created projects that have the openshift.io/run-level label set to 0 or 1. Functionality that relies on admission plugins, such as pod security admission, security context constraints, cluster resource quotas, and image reference resolution, does not work in highly privileged projects.

2.1.1. Creating a project

You can use the OpenShift Container Platform web console or the OpenShift CLI (oc) to create a project in your cluster.

2.1.1.1. Creating a project by using the web console

You can use the OpenShift Container Platform web console to create a project in your cluster.

Projects starting with openshift- and kube- are considered critical by OpenShift Container Platform. As such, OpenShift Container Platform does not allow you to create projects starting with openshift- using the web console.

Prerequisites

- Ensure that you have the appropriate roles and permissions to create projects, applications, and other workloads in OpenShift Container Platform.

Procedure

If you are using the Administrator perspective:

- Navigate to Home → Projects.

- Click Create Project:

  - In the Create Project dialog box, enter a unique name, such as myproject, in the Name field.

  - Optional: Add the Display name and Description details for the project.

  - Click Create.

    The dashboard for your project is displayed.

- Optional: Select the Details tab to view the project details.

- Optional: If you have adequate permissions for a project, you can use the Project Access tab to provide or revoke admin, edit, and view privileges for the project.

If you are using the Developer perspective:

- Click the Project menu and select Create Project:

  Figure 2.1. Create project

  - In the Create Project dialog box, enter a unique name, such as myproject, in the Name field.

  - Optional: Add the Display name and Description details for the project.

  - Click Create.

- Optional: Use the left navigation panel to navigate to the Project view and see the dashboard for your project.

- Optional: In the project dashboard, select the Details tab to view the project details.

- Optional: If you have adequate permissions for a project, you can use the Project Access tab of the project dashboard to provide or revoke admin, edit, and view privileges for the project.

2.1.1.2. Creating a project by using the CLI

If allowed by your cluster administrator, you can create a new project.

Projects starting with openshift- and kube- are considered critical by OpenShift Container Platform. As such, OpenShift Container Platform does not allow you to create projects starting with openshift- or kube- using the oc new-project command. Cluster administrators can create these projects using the oc adm new-project command.

Procedure

Run:

$ oc new-project <project_name> --description="<description>" --display-name="<display_name>"

For example:

$ oc new-project hello-openshift --description="This is an example project" --display-name="Hello OpenShift"

The number of projects you are allowed to create might be limited by the system administrator. After your limit is reached, you might have to delete an existing project in order to create a new one.
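The reserved-prefix rule can also be checked locally before the request reaches the cluster. The following is a minimal sketch, not part of the oc CLI: `create_project` is a hypothetical helper name, and it relies only on the `oc new-project` options shown above.

```shell
# Hypothetical helper: reject reserved prefixes locally, then call oc new-project.
# The body runs in a subshell so its variables do not leak into the caller.
create_project() (
  name=$1
  description=${2:-}
  display_name=${3:-$name}

  case $name in
    '')
      echo "usage: create_project <name> [description] [display_name]" >&2
      exit 2
      ;;
    openshift-*|kube-*)
      # These prefixes are reserved; only `oc adm new-project` can create them.
      echo "error: '$name' uses a reserved prefix (openshift- or kube-)" >&2
      exit 1
      ;;
  esac

  oc new-project "$name" \
    --description="$description" \
    --display-name="$display_name"
)
```

For example, `create_project hello-openshift "This is an example project" "Hello OpenShift"` forwards the request, while `create_project kube-demo` stops before contacting the API.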

2.1.2. Viewing a project

You can use the OpenShift Container Platform web console or the OpenShift CLI (oc) to view a project in your cluster.

2.1.2.1. Viewing a project by using the web console

You can view the projects that you have access to by using the OpenShift Container Platform web console.

Procedure

If you are using the Administrator perspective:

- Navigate to Home → Projects in the navigation menu.

- Select a project to view. The Overview tab includes a dashboard for your project.

- Select the Details tab to view the project details.

- Select the YAML tab to view and update the YAML configuration for the project resource.

- Select the Workloads tab to see workloads in the project.

- Select the RoleBindings tab to view and create role bindings for your project.

If you are using the Developer perspective:

- Navigate to the Project page in the navigation menu.

- Select All Projects from the Project drop-down menu at the top of the screen to list all of the projects in your cluster.

- Select a project to view. The Overview tab includes a dashboard for your project.

- Select the Details tab to view the project details.

- If you have adequate permissions for a project, select the Project access tab to view and update the privileges for the project.

2.1.2.2. Viewing a project using the CLI

When viewing projects, you are restricted to seeing only the projects you have access to view based on the authorization policy.

Procedure

To view a list of projects, run:

$ oc get projects

You can change from the current project to a different project for CLI operations. The specified project is then used in all subsequent operations that manipulate project-scoped content:

$ oc project <project_name>
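For scripting, it can be more convenient to iterate over the visible projects than to switch the current project repeatedly. The sketch below assumes that `oc get projects -o name` prints one `<resource>/<name>` entry per line and that `oc status` accepts the global `-n` option; both helper names are hypothetical.

```shell
# Hypothetical helper: print only the names of the projects you can view.
list_project_names() (
  # Strip everything up to the last slash, leaving the bare project name.
  oc get projects -o name | sed 's|^.*/||'
)

# Hypothetical helper: show a status summary for every visible project
# without changing the current project.
status_of_all_projects() (
  list_project_names | while IFS= read -r project; do
    echo "== $project"
    oc status -n "$project"
  done
)
```

Because the result is still filtered by the authorization policy, the list contains only projects that you are allowed to view.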

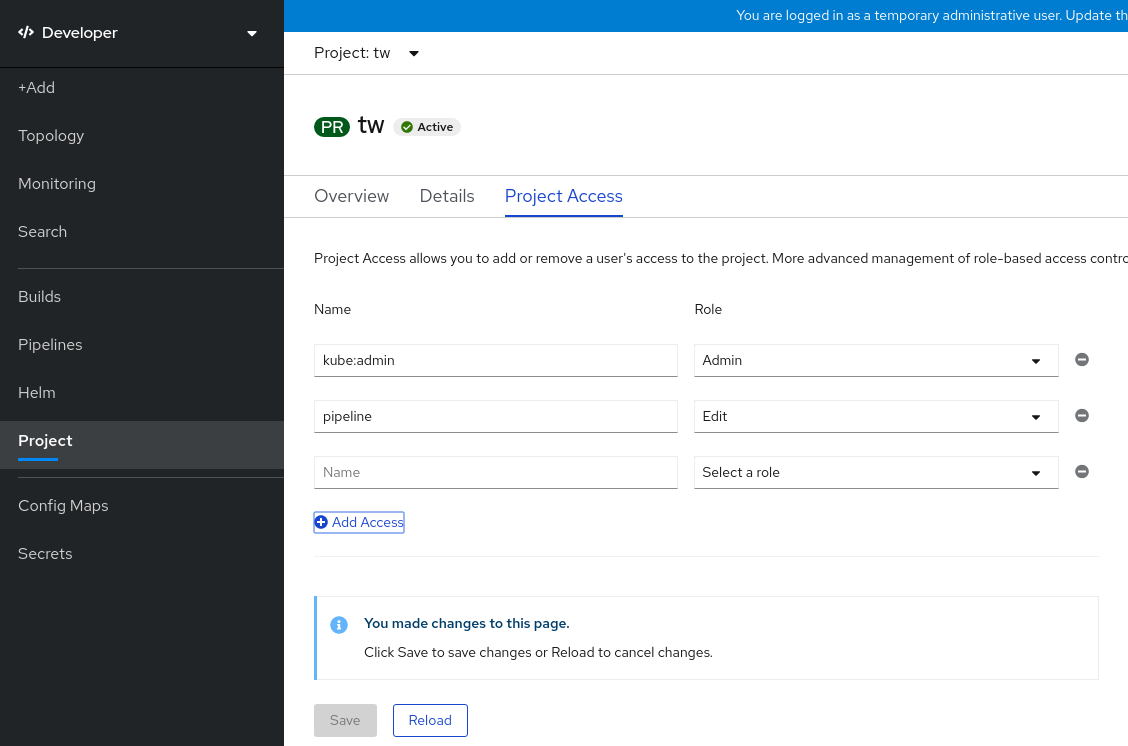

2.1.3. Providing access permissions to your project using the Developer perspective

You can use the Project view in the Developer perspective to grant or revoke access permissions to your project.

Prerequisites

- You have created a project.

Procedure

To add users to your project and provide Admin, Edit, or View access to them:

- In the Developer perspective, navigate to the Project page.

- Select your project from the Project menu.

- Select the Project Access tab.

Click Add access to add a new row of permissions to the default ones.

Figure 2.2. Project permissions

- Enter the user name, click the Select a role drop-down list, and select an appropriate role.

- Click Save to add the new permissions.

You can also use:

- The Select a role drop-down list, to modify the access permissions of an existing user.

- The Remove Access icon, to completely remove the access permissions of an existing user to the project.

Advanced role-based access control is managed in the Roles and RoleBindings views in the Administrator perspective.
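The same grants can be scripted from the CLI. The following sketch wraps `oc adm policy add-role-to-user` and `oc adm policy remove-role-from-user`; the helper names are hypothetical, and the check against admin, edit, and view is an assumption that mirrors the default roles offered in the console.

```shell
# Hypothetical helper: grant one of the default project roles to a user.
grant_project_role() (
  project=$1 user=$2 role=$3
  case $role in
    admin|edit|view) ;;  # default roles; adjust if your cluster offers others
    *)
      echo "error: role must be admin, edit, or view" >&2
      exit 1
      ;;
  esac
  oc adm policy add-role-to-user "$role" "$user" -n "$project"
)

# Hypothetical helper: remove a previously granted role from a user.
revoke_project_role() (
  project=$1 user=$2 role=$3
  oc adm policy remove-role-from-user "$role" "$user" -n "$project"
)
```

For example, `grant_project_role myproject alice edit` gives the user alice edit access to myproject.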

2.1.4. Customizing the available cluster roles using the web console

In the Developer perspective of the web console, the Project → Project access page enables a project administrator to grant roles to users in a project. By default, the available cluster roles that can be granted to users in a project are admin, edit, and view.

As a cluster administrator, you can define which cluster roles are available in the Project access page for all projects cluster-wide. You can specify the available roles by customizing the spec.customization.projectAccess.availableClusterRoles object in the Console configuration resource.

Prerequisites

- You have access to the cluster as a user with the cluster-admin role.

Procedure

- In the Administrator perspective, navigate to Administration → Cluster settings.

- Click the Configuration tab.

- From the Configuration resource list, select Console operator.openshift.io.

- Navigate to the YAML tab to view and edit the YAML code.

- In the YAML code under spec, customize the list of available cluster roles for project access. The following example specifies the default admin, edit, and view roles:

      apiVersion: operator.openshift.io/v1
      kind: Console
      metadata:
        name: cluster
      # ...
      spec:
        customization:
          projectAccess:
            availableClusterRoles:
            - admin
            - edit
            - view

- Click Save to save the changes to the Console configuration resource.

Verification

- In the Developer perspective, navigate to the Project page.

- Select a project from the Project menu.

- Select the Project access tab.

- Click the menu in the Role column and verify that the available roles match the configuration that you applied to the Console resource configuration.
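As an alternative to editing the YAML in the console, the same field can be set with a merge patch. This is a sketch under assumptions: the helper names are hypothetical, and it assumes the Console resource is addressable as `console.operator.openshift.io` with the name `cluster`, as in the YAML example in this section.

```shell
# Hypothetical helper: build a merge patch for
# spec.customization.projectAccess.availableClusterRoles from a list of role names.
roles_patch_json() (
  list=
  for role in "$@"; do
    # Append each role as a quoted JSON string, comma-separated.
    list="${list:+$list,}\"$role\""
  done
  printf '{"spec":{"customization":{"projectAccess":{"availableClusterRoles":[%s]}}}}\n' "$list"
)

# Hypothetical helper: apply the patch to the Console configuration resource.
set_available_cluster_roles() (
  oc patch console.operator.openshift.io cluster --type=merge \
    -p "$(roles_patch_json "$@")"
)
```

Running `roles_patch_json admin edit view` on its own prints the patch, which makes it easy to review before applying it with `set_available_cluster_roles admin edit view`.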

2.1.5. Adding to a project

You can add items to your project by using the +Add page in the Developer perspective.

Prerequisites

- You have created a project.

Procedure

- In the Developer perspective, navigate to the +Add page.

- Select your project from the Project menu.

- Click on an item on the +Add page and then follow the workflow.

You can also use the search feature in the +Add page to find additional items to add to your project. Click * under Add at the top of the page and type the name of a component in the search field.

2.1.6. Checking the project status

You can use the OpenShift Container Platform web console or the OpenShift CLI (oc) to view the status of your project.

2.1.6.1. Checking project status by using the web console

You can review the status of your project by using the web console.

Prerequisites

- You have created a project.

Procedure

If you are using the Administrator perspective:

- Navigate to Home → Projects.

- Select a project from the list.

- Review the project status in the Overview page.

If you are using the Developer perspective:

- Navigate to the Project page.

- Select a project from the Project menu.

- Review the project status in the Overview page.

2.1.6.2. Checking project status by using the CLI

You can review the status of your project by using the OpenShift CLI (oc).

Prerequisites

- You have installed the OpenShift CLI (oc).

- You have created a project.

Procedure

Switch to your project:

$ oc project <project_name>

Replace <project_name> with the name of your project.

Obtain a high-level overview of the project:

$ oc status

2.1.7. Deleting a project

You can use the OpenShift Container Platform web console or the OpenShift CLI (oc) to delete a project.

When you delete a project, the server updates the project status to Terminating from Active. Then, the server clears all content from a project that is in the Terminating state before finally removing the project. While a project is in Terminating status, you cannot add new content to the project. Projects can be deleted from the CLI or the web console.
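The Active to Terminating transition can be observed from the CLI. The following sketch deletes a project and then polls its phase until the project can no longer be found. It assumes that `oc get project <name> -o jsonpath='{.status.phase}'` reports the phase and fails once the project is gone; the helper name and the `POLL_SECONDS` variable are hypothetical.

```shell
# Hypothetical helper: delete a project and wait until it has been removed.
# Usage: delete_project_and_wait <name> [max_attempts]
delete_project_and_wait() (
  name=$1
  attempts=${2:-30}

  oc delete project "$name" || exit 1

  while [ "$attempts" -gt 0 ]; do
    # A failing `oc get` is treated as "project removed". Note that other
    # failures, such as a lost connection, look the same to this sketch.
    phase=$(oc get project "$name" -o jsonpath='{.status.phase}' 2>/dev/null) || exit 0
    echo "project $name is $phase"
    attempts=$((attempts - 1))
    sleep "${POLL_SECONDS:-2}"
  done

  echo "timed out waiting for $name to be removed" >&2
  exit 1
)
```

While the loop reports Terminating, requests to add new content to the project are rejected.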

2.1.7.1. Deleting a project by using the web console

You can delete a project by using the web console.

Prerequisites

- You have created a project.

- You have the required permissions to delete the project.

Procedure

If you are using the Administrator perspective:

- Navigate to Home → Projects.

- Select a project from the list.

Click the Actions drop-down menu for the project and select Delete Project.

Note: The Delete Project option is not available if you do not have the required permissions to delete the project.

- In the Delete Project? pane, confirm the deletion by entering the name of your project.

- Click Delete.

If you are using the Developer perspective:

- Navigate to the Project page.

- Select the project that you want to delete from the Project menu.

Click the Actions drop-down menu for the project and select Delete Project.

Note: If you do not have the required permissions to delete the project, the Delete Project option is not available.

- In the Delete Project? pane, confirm the deletion by entering the name of your project.

- Click Delete.

2.1.7.2. Deleting a project by using the CLI

You can delete a project by using the OpenShift CLI (oc).

Prerequisites

- You have installed the OpenShift CLI (oc).

- You have created a project.

- You have the required permissions to delete the project.

Procedure

Delete your project:

$ oc delete project <project_name>

Replace <project_name> with the name of the project that you want to delete.

2.2. Creating a project as another user

Impersonation allows you to create a project as a different user.

2.2.1. API impersonation

You can configure a request to the OpenShift Container Platform API to act as though it originated from another user. For more information, see User impersonation in the Kubernetes documentation.

2.2.2. Impersonating a user when you create a project

You can impersonate a different user when you create a project request. Because system:authenticated:oauth is the only bootstrap group that can create project requests, you must impersonate that group.

Procedure

To create a project request on behalf of a different user:

$ oc new-project <project> --as=<user> --as-group=system:authenticated --as-group=system:authenticated:oauth

2.3. Configuring project creation

In OpenShift Container Platform, projects are used to group and isolate related objects. When a request is made to create a new project using the web console or oc new-project command, an endpoint in OpenShift Container Platform is used to provision the project according to a template, which can be customized.

As a cluster administrator, you can allow and configure how developers and service accounts can create, or self-provision, their own projects.

2.3.1. About project creation

The OpenShift Container Platform API server automatically provisions new projects based on the project template that is identified by the projectRequestTemplate parameter in the cluster’s project configuration resource. If the parameter is not defined, the API server creates a default template that creates a project with the requested name, and assigns the requesting user to the admin role for that project.

When a project request is submitted, the API substitutes the following parameters into the template:

| Parameter | Description |
|---|---|
| PROJECT_NAME | The name of the project. Required. |
| PROJECT_DISPLAYNAME | The display name of the project. May be empty. |
| PROJECT_DESCRIPTION | The description of the project. May be empty. |
| PROJECT_ADMIN_USER | The user name of the administrating user. |
| PROJECT_REQUESTING_USER | The user name of the requesting user. |

Access to the API is granted to developers with the self-provisioner role and the self-provisioners cluster role binding. This role is available to all authenticated developers by default.

2.3.2. Modifying the template for new projects

As a cluster administrator, you can modify the default project template so that new projects are created using your custom requirements.

To create your own custom project template:

Prerequisites

- You have access to an OpenShift Container Platform cluster using an account with cluster-admin permissions.

Procedure

- Log in as a user with cluster-admin privileges.

- Generate the default project template:

  $ oc adm create-bootstrap-project-template -o yaml > template.yaml

- Use a text editor to modify the generated template.yaml file by adding objects or modifying existing objects.

- The project template must be created in the openshift-config namespace. Load your modified template:

  $ oc create -f template.yaml -n openshift-config

- Edit the project configuration resource using the web console or CLI.

Using the web console:

- Navigate to the Administration → Cluster Settings page.

- Click Configuration to view all configuration resources.

- Find the entry for Project and click Edit YAML.

Using the CLI:

- Edit the project.config.openshift.io/cluster resource:

  $ oc edit project.config.openshift.io/cluster

- Update the spec section to include the projectRequestTemplate and name parameters, and set the name of your uploaded project template. The default name is project-request.

  Project configuration resource with custom project template

      apiVersion: config.openshift.io/v1
      kind: Project
      metadata:
      # ...
      spec:
        projectRequestTemplate:
          name: <template_name>
      # ...

- After you save your changes, create a new project to verify that your changes were successfully applied.
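The interactive edit can also be expressed as a single merge patch, which is easier to automate. This is a sketch rather than the documented procedure: `set_project_request_template` is a hypothetical helper, and it assumes that `oc patch` with `--type=merge` is accepted for the project.config.openshift.io/cluster resource.

```shell
# Hypothetical helper: point the cluster project configuration at an uploaded template.
# Defaults to project-request, the default template name mentioned in this procedure.
set_project_request_template() (
  template=${1:-project-request}
  patch=$(printf '{"spec":{"projectRequestTemplate":{"name":"%s"}}}' "$template")
  oc patch project.config.openshift.io/cluster --type=merge -p "$patch"
)
```

The template name is inserted as-is, so this sketch is only suitable for names without quotes or backslashes, which is the case for valid resource names.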

2.3.3. Disabling project self-provisioning

You can prevent an authenticated user group from self-provisioning new projects.

Procedure

- Log in as a user with cluster-admin privileges.

- View the self-provisioners cluster role binding usage by running the following command:

  $ oc describe clusterrolebinding.rbac self-provisioners

  Example output

      Name:         self-provisioners
      Labels:       <none>
      Annotations:  rbac.authorization.kubernetes.io/autoupdate=true
      Role:
        Kind:  ClusterRole
        Name:  self-provisioner
      Subjects:
        Kind   Name                        Namespace
        ----   ----                        ---------
        Group  system:authenticated:oauth

  Review the subjects in the self-provisioners section.

- Remove the self-provisioner cluster role from the group system:authenticated:oauth.

  - If the self-provisioners cluster role binding binds only the self-provisioner role to the system:authenticated:oauth group, run the following command:

    $ oc patch clusterrolebinding.rbac self-provisioners -p '{"subjects": null}'

  - If the self-provisioners cluster role binding binds the self-provisioner role to more users, groups, or service accounts than the system:authenticated:oauth group, run the following command:

    $ oc adm policy remove-cluster-role-from-group self-provisioner system:authenticated:oauth

- Edit the self-provisioners cluster role binding to prevent automatic updates to the role. Automatic updates reset the cluster roles to the default state.

  - To update the role binding using the CLI:

    Run the following command:

    $ oc edit clusterrolebinding.rbac self-provisioners

    In the displayed role binding, set the rbac.authorization.kubernetes.io/autoupdate parameter value to false, as shown in the following example:

        apiVersion: authorization.openshift.io/v1
        kind: ClusterRoleBinding
        metadata:
          annotations:
            rbac.authorization.kubernetes.io/autoupdate: "false"
        # ...

  - To update the role binding by using a single command:

    $ oc patch clusterrolebinding.rbac self-provisioners -p '{ "metadata": { "annotations": { "rbac.authorization.kubernetes.io/autoupdate": "false" } } }'

- Log in as an authenticated user and verify that the user can no longer self-provision a project:

  $ oc new-project test

  Example output

  Error from server (Forbidden): You may not request a new project via this API.

  Consider customizing this project request message to provide more helpful instructions specific to your organization.
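The choice between the two removal commands in this procedure depends on which subjects the binding currently lists. The following sketch automates that decision and then disables automatic updates. It is an illustration only: the helper name is hypothetical, and it assumes that the jsonpath expression `{.subjects[*].name}` prints the subject names separated by spaces.

```shell
# Hypothetical helper: remove self-provisioning from system:authenticated:oauth
# and stop automatic updates from restoring it. Requires cluster-admin privileges.
disable_self_provisioning() (
  group=system:authenticated:oauth

  subjects=$(oc get clusterrolebinding.rbac self-provisioners \
    -o jsonpath='{.subjects[*].name}') || exit 1

  if [ "$subjects" = "$group" ]; then
    # The group is the only subject, so clear the subject list.
    oc patch clusterrolebinding.rbac self-provisioners -p '{"subjects": null}'
  else
    # Other subjects exist, so remove only the group.
    oc adm policy remove-cluster-role-from-group self-provisioner "$group"
  fi || exit 1

  oc patch clusterrolebinding.rbac self-provisioners \
    -p '{"metadata":{"annotations":{"rbac.authorization.kubernetes.io/autoupdate":"false"}}}'
)
```

After running it, repeat the `oc new-project test` check as an ordinary authenticated user to confirm the result.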

2.3.4. Customizing the project request message

When a developer or a service account that is unable to self-provision projects makes a project creation request using the web console or CLI, the following error message is returned by default:

You may not request a new project via this API.

Cluster administrators can customize this message. Consider updating it to provide further instructions on how to request a new project specific to your organization. For example:

- To request a project, contact your system administrator at projectname@example.com.

- To request a new project, fill out the project request form located at https://internal.example.com/openshift-project-request.

To customize the project request message:

Procedure

Edit the project configuration resource using the web console or CLI.

Using the web console:

- Navigate to the Administration → Cluster Settings page.

- Click Configuration to view all configuration resources.

- Find the entry for Project and click Edit YAML.

Using the CLI:

- Log in as a user with cluster-admin privileges.

- Edit the project.config.openshift.io/cluster resource:

  $ oc edit project.config.openshift.io/cluster

Update the spec section to include the projectRequestMessage parameter and set the value to your custom message:

Project configuration resource with custom project request message

    apiVersion: config.openshift.io/v1
    kind: Project
    metadata:
    # ...
    spec:
      projectRequestMessage: <message_string>
    # ...

For example:

    apiVersion: config.openshift.io/v1
    kind: Project
    metadata:
    # ...
    spec:
      projectRequestMessage: To request a project, contact your system administrator at projectname@example.com.
    # ...

After you save your changes, attempt to create a new project as a developer or service account that is unable to self-provision projects to verify that your changes were successfully applied.
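If you prefer a non-interactive change, the message can be set with a merge patch. This is a sketch under assumptions: `set_project_request_message` is a hypothetical helper, it assumes `oc patch --type=merge` is accepted for this resource, and its escaping covers only backslashes and double quotes, so multi-line messages are out of scope.

```shell
# Hypothetical helper: set spec.projectRequestMessage on the cluster project configuration.
set_project_request_message() (
  message=$1
  # Escape backslashes first, then double quotes, so the message is valid JSON.
  escaped=$(printf '%s' "$message" | sed 's/\\/\\\\/g; s/"/\\"/g')
  oc patch project.config.openshift.io/cluster --type=merge \
    -p "{\"spec\":{\"projectRequestMessage\":\"$escaped\"}}"
)
```

For example, `set_project_request_message "To request a project, contact your system administrator at projectname@example.com."` applies the sample message from this section.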

Chapter 3. Creating applications

3.1. Using templates

The following sections provide an overview of templates, as well as how to use and create them.

3.1.1. Understanding templates

A template describes a set of objects that can be parameterized and processed to produce a list of objects for creation by OpenShift Container Platform. A template can be processed to create anything you have permission to create within a project, for example services, build configurations, and deployment configurations. A template can also define a set of labels to apply to every object defined in the template.

You can create a list of objects from a template using the CLI or, if a template has been uploaded to your project or the global template library, using the web console.

3.1.2. Uploading a template

If you have a JSON or YAML file that defines a template, you can upload the template to projects using the CLI. This saves the template to the project for repeated use by any user with appropriate access to that project. Instructions about writing your own templates are provided later in this topic.

Procedure

Upload a template using one of the following methods:

- To upload a template to your current project’s template library, pass the JSON or YAML file with the following command:

  $ oc create -f <filename>

- To upload a template to a different project, use the -n option with the name of the project:

  $ oc create -f <filename> -n <project>

The template is now available for selection using the web console or the CLI.

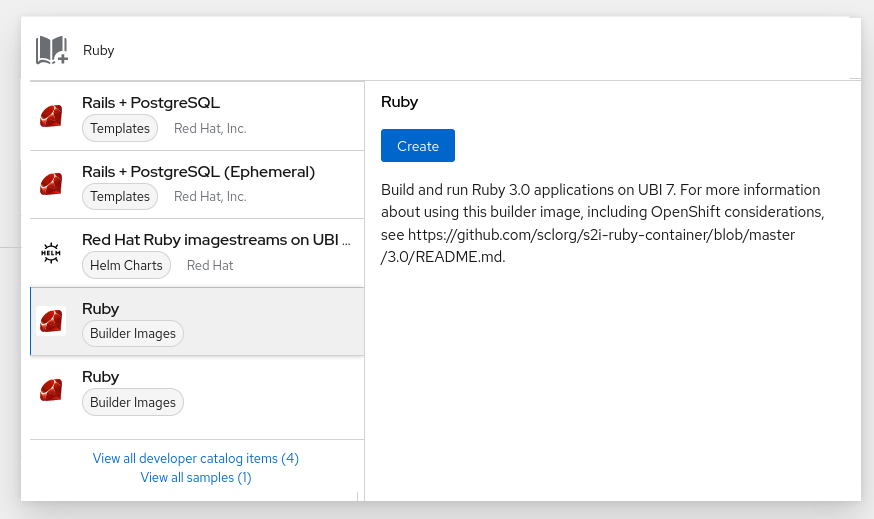

3.1.3. Creating an application by using the web console

You can use the web console to create an application from a template.

Procedure

- Select Developer from the context selector at the top of the web console navigation menu.

- While in the desired project, click +Add.

- Click All services in the Developer Catalog tile.

- Click Builder Images under Type to see the available builder images.

  Note: Only image stream tags that have the builder tag listed in their annotations appear in this list, as demonstrated here:

      kind: "ImageStream"
      apiVersion: "v1"
      metadata:
        name: "ruby"
        creationTimestamp: null
      spec:
      # ...
        tags:
          - name: "2.6"
            annotations:
              description: "Build and run Ruby 2.6 applications"
              iconClass: "icon-ruby"
              tags: "builder,ruby"
              supports: "ruby:2.6,ruby"
              version: "2.6"
      # ...

  Including builder in the tags annotation ensures this image stream tag appears in the web console as a builder.

- Modify the settings in the new application screen to configure the objects to support your application.

3.1.4. Creating objects from templates by using the CLI

You can use the CLI to process templates and use the configuration that is generated to create objects.

3.1.4.1. Adding labels

Labels are used to manage and organize generated objects, such as pods. The labels specified in the template are applied to every object that is generated from the template.

Procedure

Add labels in the template from the command line:

$ oc process -f <filename> -l name=otherLabel

3.1.4.2. Listing parameters

The parameters that you can override are listed in the parameters section of the template.

Procedure

You can list parameters with the CLI by using the following command and specifying the file to be used:

$ oc process --parameters -f <filename>

Alternatively, if the template is already uploaded:

$ oc process --parameters -n <project> <template_name>

For example, the following shows the output when listing the parameters for one of the quick start templates in the default openshift project:

$ oc process --parameters -n openshift rails-postgresql-example

Example output

| NAME | DESCRIPTION | GENERATOR | VALUE |
|---|---|---|---|
| SOURCE_REPOSITORY_URL | The URL of the repository with your application source code | | https://github.com/sclorg/rails-ex.git |
| SOURCE_REPOSITORY_REF | Set this to a branch name, tag or other ref of your repository if you are not using the default branch | | |
| CONTEXT_DIR | Set this to the relative path to your project if it is not in the root of your repository | | |
| APPLICATION_DOMAIN | The exposed hostname that will route to the Rails service | | rails-postgresql-example.openshiftapps.com |
| GITHUB_WEBHOOK_SECRET | A secret string used to configure the GitHub webhook | expression | [a-zA-Z0-9]{40} |
| SECRET_KEY_BASE | Your secret key for verifying the integrity of signed cookies | expression | [a-z0-9]{127} |
| APPLICATION_USER | The application user that is used within the sample application to authorize access on pages | | openshift |
| APPLICATION_PASSWORD | The application password that is used within the sample application to authorize access on pages | | secret |
| DATABASE_SERVICE_NAME | Database service name | | postgresql |
| POSTGRESQL_USER | database username | expression | user[A-Z0-9]{3} |
| POSTGRESQL_PASSWORD | database password | expression | [a-zA-Z0-9]{8} |
| POSTGRESQL_DATABASE | database name | | root |
| POSTGRESQL_MAX_CONNECTIONS | database max connections | | 10 |
| POSTGRESQL_SHARED_BUFFERS | database shared buffers | | 12MB |

The output identifies several parameters that are generated with a regular expression-like generator when the template is processed.
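When you only need the parameter names, for example to check which `-p` overrides a template accepts, the listing can be filtered. The sketch below assumes that the output starts with a single header row and that each following row begins with the parameter name; `template_param_names` is a hypothetical helper.

```shell
# Hypothetical helper: print only the parameter names of a template.
# All arguments are passed through to `oc process --parameters`.
template_param_names() (
  # Skip the header row and print the first whitespace-separated field.
  oc process --parameters "$@" | awk 'NR > 1 { print $1 }'
)
```

For example, `template_param_names -n openshift rails-postgresql-example` lists names such as SOURCE_REPOSITORY_URL and POSTGRESQL_USER, one per line.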

3.1.4.3. Generating a list of objects

Using the CLI, you can process a file defining a template to return the list of objects to standard output.

Procedure

Process a file defining a template to return the list of objects to standard output:

$ oc process -f <filename>

Alternatively, if the template has already been uploaded to the current project:

$ oc process <template_name>

Create objects from a template by processing the template and piping the output to oc create:

$ oc process -f <filename> | oc create -f -

Alternatively, if the template has already been uploaded to the current project:

$ oc process <template> | oc create -f -

You can override any parameter values defined in the file by adding the -p option for each <name>=<value> pair you want to override. A parameter reference can appear in any text field inside the template items.

For example, in the following command the POSTGRESQL_USER and POSTGRESQL_DATABASE parameters of a template are overridden to output a configuration with customized environment variables:

Creating a List of objects from a template

$ oc process -f my-rails-postgresql -p POSTGRESQL_USER=bob -p POSTGRESQL_DATABASE=mydatabase

The JSON output can either be redirected to a file or applied directly without uploading the template by piping the processed output to the oc create command:

$ oc process -f my-rails-postgresql -p POSTGRESQL_USER=bob -p POSTGRESQL_DATABASE=mydatabase | oc create -f -

If you have a large number of parameters, you can store them in a file and then pass this file to oc process:

$ cat postgres.env
POSTGRESQL_USER=bob
POSTGRESQL_DATABASE=mydatabase

$ oc process -f my-rails-postgresql --param-file=postgres.env

You can also read the environment from standard input by using "-" as the argument to --param-file:

$ sed s/bob/alice/ postgres.env | oc process -f my-rails-postgresql --param-file=-

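The parameter-file approach can be wrapped so that overrides are validated before the template is processed. The following is a minimal sketch: `process_with_params` is a hypothetical helper that writes its NAME=VALUE arguments to a temporary file and passes that file to `oc process` through `--param-file`.

```shell
# Hypothetical helper: process a template file with overrides given as NAME=VALUE arguments.
# Usage: process_with_params <template_file> NAME=VALUE [NAME=VALUE ...]
process_with_params() (
  template_file=$1
  shift

  param_file=$(mktemp) || exit 1
  # Remove the temporary file when this subshell exits.
  trap 'rm -f "$param_file"' EXIT

  for pair in "$@"; do
    case $pair in
      *=*) printf '%s\n' "$pair" >> "$param_file" ;;
      *)
        echo "error: expected NAME=VALUE, got '$pair'" >&2
        exit 1
        ;;
    esac
  done

  oc process -f "$template_file" --param-file="$param_file"
)
```

The processed output can then be piped on, for example `process_with_params my-rails-postgresql POSTGRESQL_USER=bob POSTGRESQL_DATABASE=mydatabase | oc create -f -`.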
3.1.5. Modifying uploaded templates

You can edit a template that has already been uploaded to your project.

Procedure

Modify a template that has already been uploaded:

$ oc edit template <template>

3.1.6. Using instant app and quick start templates

OpenShift Container Platform provides a number of default instant app and quick start templates to make it easy to quickly get started creating a new application for different languages. Templates are provided for Rails (Ruby), Django (Python), Node.js, CakePHP (PHP), and Dancer (Perl). Your cluster administrator must create these templates in the default, global openshift project so you have access to them.

By default, the templates build using a public source repository on GitHub that contains the necessary application code.

Procedure

You can list the available default instant app and quick start templates with:

$ oc get templates -n openshift

To modify the source and build your own version of the application:

- Fork the repository referenced by the template’s default SOURCE_REPOSITORY_URL parameter.

- Override the value of the SOURCE_REPOSITORY_URL parameter when creating from the template, specifying your fork instead of the default value.

By doing this, the build configuration created by the template now points to your fork of the application code, and you can modify the code and rebuild the application at will.

Some of the instant app and quick start templates define a database deployment configuration. The configuration they define uses ephemeral storage for the database content. These templates should be used for demonstration purposes only as all database data is lost if the database pod restarts for any reason.

3.1.6.1. Quick start templates

A quick start template is a basic example of an application running on OpenShift Container Platform. Quick starts come in a variety of languages and frameworks, and are defined in a template, which is constructed from a set of services, build configurations, and deployment configurations. This template references the necessary images and source repositories to build and deploy the application.

To explore a quick start, create an application from a template. Your administrator must have already installed these templates in your OpenShift Container Platform cluster, in which case you can select them from the web console.

Quick starts refer to a source repository that contains the application source code. To customize the quick start, fork the repository and, when creating an application from the template, substitute the default source repository name with your forked repository. This results in builds that are performed using your source code instead of the provided example source. You can then update the code in your source repository and launch a new build to see the changes reflected in the deployed application.

3.1.6.1.1. Web framework quick start templates

These quick start templates provide a basic application of the indicated framework and language:

- CakePHP: a PHP web framework that includes a MySQL database

- Dancer: a Perl web framework that includes a MySQL database

- Django: a Python web framework that includes a PostgreSQL database

- NodeJS: a NodeJS web application that includes a MongoDB database

- Rails: a Ruby web framework that includes a PostgreSQL database

3.1.7. Writing templates

You can define new templates to make it easy to recreate all the objects of your application. The template defines the objects it creates along with some metadata to guide the creation of those objects.

The following is an example of a simple template object definition (YAML):

apiVersion: template.openshift.io/v1
kind: Template
metadata:
  name: redis-template
  annotations:
    description: "Description"
    iconClass: "icon-redis"
    tags: "database,nosql"
objects:
  - apiVersion: v1
    kind: Pod
    metadata:
      name: redis-master
    spec:
      containers:
        - env:
            - name: REDIS_PASSWORD
              value: ${REDIS_PASSWORD}
          image: dockerfile/redis
          name: master
          ports:
            - containerPort: 6379
              protocol: TCP
parameters:
  - description: Password used for Redis authentication
    from: '[A-Z0-9]{8}'
    generate: expression
    name: REDIS_PASSWORD
labels:
  redis: master

3.1.7.1. Writing the template description

The template description informs you what the template does and helps you find it when searching in the web console. Additional metadata beyond the template name is optional, but useful to have. In addition to general descriptive information, the metadata also includes a set of tags. Useful tags include the name of the language the template is related to, for example, Java, PHP, or Ruby.

The following is an example of template description metadata:

kind: Template
apiVersion: template.openshift.io/v1
metadata:
  name: cakephp-mysql-example
  annotations:
    openshift.io/display-name: "CakePHP MySQL Example (Ephemeral)"
    description: >-
      An example CakePHP application with a MySQL database. For more information
      about using this template, including OpenShift considerations, see
      https://github.com/sclorg/cakephp-ex/blob/master/README.md.
      WARNING: Any data stored will be lost upon pod destruction. Only use this
      template for testing.
    openshift.io/long-description: >-
      This template defines resources needed to develop a CakePHP application,
      including a build configuration, application DeploymentConfig, and
      database DeploymentConfig. The database is stored in
      non-persistent storage, so this configuration should be used for
      experimental purposes only.
    tags: "quickstart,php,cakephp"
    iconClass: icon-php
    openshift.io/provider-display-name: "Red Hat, Inc."
    openshift.io/documentation-url: "https://github.com/sclorg/cakephp-ex"
    openshift.io/support-url: "https://access.redhat.com"
message: "Your admin credentials are ${ADMIN_USERNAME}:${ADMIN_PASSWORD}"

The fields in this example have the following meanings:

- name: The unique name of the template.

- openshift.io/display-name: A brief, user-friendly name, which can be employed by user interfaces.

- description: A description of the template. Include enough detail that users understand what is being deployed and any caveats they must know before deploying. It should also provide links to additional information, such as a README file. Newlines can be included to create paragraphs.

- openshift.io/long-description: Additional template description. This may be displayed by the service catalog, for example.

- tags: Tags to be associated with the template for searching and grouping. Add tags that include it into one of the provided catalog categories. Refer to the id and categoryAliases in CATALOG_CATEGORIES in the console constants file. The categories can also be customized for the whole cluster.

- iconClass: An icon to be displayed with your template in the web console.

Example 3.1. Available icons

- icon-3scale
- icon-aerogear
- icon-amq
- icon-angularjs
- icon-ansible
- icon-apache
- icon-beaker
- icon-camel
- icon-capedwarf
- icon-cassandra
- icon-catalog-icon
- icon-clojure
- icon-codeigniter
- icon-cordova
- icon-datagrid
- icon-datavirt
- icon-debian
- icon-decisionserver
- icon-django
- icon-dotnet
- icon-drupal
- icon-eap
- icon-elastic
- icon-erlang
- icon-fedora
- icon-freebsd
- icon-git
- icon-github
- icon-gitlab
- icon-glassfish
- icon-go-gopher
- icon-golang
- icon-grails
- icon-hadoop
- icon-haproxy
- icon-helm
- icon-infinispan
- icon-jboss
- icon-jenkins
- icon-jetty
- icon-joomla
- icon-jruby
- icon-js
- icon-knative
- icon-kubevirt
- icon-laravel
- icon-load-balancer
- icon-mariadb
- icon-mediawiki
- icon-memcached
- icon-mongodb
- icon-mssql
- icon-mysql-database
- icon-nginx
- icon-nodejs
- icon-openjdk
- icon-openliberty
- icon-openshift
- icon-openstack
- icon-other-linux
- icon-other-unknown
- icon-perl
- icon-phalcon
- icon-php
- icon-play
- icon-postgresql
- icon-processserver
- icon-python
- icon-quarkus
- icon-rabbitmq
- icon-rails
- icon-redhat
- icon-redis
- icon-rh-integration
- icon-rh-spring-boot
- icon-rh-tomcat
- icon-ruby
- icon-scala
- icon-serverlessfx
- icon-shadowman
- icon-spring-boot
- icon-spring
- icon-sso
- icon-stackoverflow
- icon-suse
- icon-symfony
- icon-tomcat
- icon-ubuntu
- icon-vertx
- icon-wildfly
- icon-windows
- icon-wordpress
- icon-xamarin
- icon-zend

- openshift.io/provider-display-name: The name of the person or organization providing the template.

- openshift.io/documentation-url: A URL referencing further documentation for the template.

- openshift.io/support-url: A URL where support can be obtained for the template.

- message: An instructional message that is displayed when this template is instantiated. This field should inform the user how to use the newly created resources. Parameter substitution is performed on the message before being displayed so that generated credentials and other parameters can be included in the output. Include links to any next-steps documentation that users should follow.

3.1.7.2. Writing template labels

Templates can include a set of labels. These labels are added to each object created when the template is instantiated. Defining a label in this way makes it easy for users to find and manage all the objects created from a particular template.

The following is an example of template object labels:

kind: "Template"
apiVersion: "v1"
...
labels:
  template: "cakephp-mysql-example"
  app: "${NAME}"

3.1.7.3. Writing template parameters

Parameters allow a value to be supplied by you or generated when the template is instantiated. Then, that value is substituted wherever the parameter is referenced. References can be defined in any field in the objects list field. This is useful for generating random passwords or allowing you to supply a hostname or other user-specific value that is required to customize the template. Parameters can be referenced in two ways:

- As a string value by placing values in the form ${PARAMETER_NAME} in any string field in the template.

- As a JSON or YAML value by placing values in the form ${{PARAMETER_NAME}} in place of any field in the template.

When using the ${PARAMETER_NAME} syntax, multiple parameter references can be combined in a single field and the reference can be embedded within fixed data, such as "http://${PARAMETER_1}${PARAMETER_2}". Both parameter values are substituted and the resulting value is a quoted string.

When using the ${{PARAMETER_NAME}} syntax, only a single parameter reference is allowed, and leading and trailing characters are not permitted. The resulting value is unquoted if, after substitution is performed, it is a valid JSON value. If the result is not a valid JSON value, the resulting value is quoted and treated as a standard string.

A single parameter can be referenced multiple times within a template and it can be referenced using both substitution syntaxes within a single template.
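The two reference forms can be illustrated with a small Python sketch. It only mimics the behavior described above and is not the OpenShift implementation; the function name substitute and the sample parameter values are assumptions made for illustration.

```python
import json
import re

# Hypothetical helper that mimics the documented substitution rules.
STRING_REF = re.compile(r"\$\{([A-Za-z0-9_]+)\}")
WHOLE_VALUE_REF = re.compile(r"\$\{\{([A-Za-z0-9_]+)\}\}")


def substitute(field, params):
    """Return the value a template field takes after parameter substitution."""
    whole = WHOLE_VALUE_REF.fullmatch(field)
    if whole:
        raw = params[whole.group(1)]
        try:
            # ${{NAME}}: the value is used unquoted when it parses as JSON.
            return json.loads(raw)
        except json.JSONDecodeError:
            # Otherwise it is treated as an ordinary string.
            return raw
    # ${NAME}: references can be mixed with fixed text; the result is a string.
    return STRING_REF.sub(lambda m: params[m.group(1)], field)


params = {"REPLICA_COUNT": "2", "HOST": "example.com", "PATH": "/app", "NAME": "frontend"}
replicas = substitute("${{REPLICA_COUNT}}", params)   # integer 2
url = substitute("http://${HOST}${PATH}", params)     # combined string
fallback = substitute("${{NAME}}", params)            # not valid JSON, stays a string
```

In this sketch, a field such as replicas receives a number rather than a quoted string, which is why the ${{PARAMETER_NAME}} form suits fields that do not accept strings.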

A default value can be provided, which is used if you do not supply a different value. The following is an example of setting an explicit value as the default value:

parameters:
  - name: USERNAME
    description: "The user name for Joe"
    value: joe

Parameter values can also be generated based on rules specified in the parameter definition, for example generating a parameter value:

parameters:
  - name: PASSWORD
    description: "The random user password"
    generate: expression
    from: "[a-zA-Z0-9]{12}"

In the previous example, processing generates a random password 12 characters long consisting of all upper and lowercase alphabet letters and numbers.

The syntax available is not a full regular expression syntax. However, you can use \w, \d, \a, and \A modifiers:

- [\w]{10} produces 10 alphabet characters, numbers, and underscores. This follows the PCRE standard and is equal to [a-zA-Z0-9_]{10}.

- [\d]{10} produces 10 numbers. This is equal to [0-9]{10}.

- [\a]{10} produces 10 alphabetical characters. This is equal to [a-zA-Z]{10}.

- [\A]{10} produces 10 punctuation or symbol characters. This is equal to [~!@#$%\^&*()\-_+={}\[\]\\|<,>.?/"';:`]{10}.
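To make the generator syntax concrete, the following Python sketch expands an expression of the form [class]{length} into a random value. It is an illustration only: it assumes a single character class followed by a length, the helper names expand_class and generate are hypothetical, and the real generator may accept forms this sketch rejects.

```python
import re
import secrets
import string

# Character sets for the modifiers listed above.
MODIFIERS = {
    "w": string.ascii_letters + string.digits + "_",
    "d": string.digits,
    "a": string.ascii_letters,
    "A": "~!@#$%^&*()-_+={}[]\\|<,>.?/\"';:`",
}

# Assumption: one bracketed class followed by a repeat count.
EXPRESSION = re.compile(r"\[(.+)\]\{(\d+)\}")


def expand_class(body):
    """Expand a class body such as 'a-zA-Z0-9' or a backslash modifier."""
    chars = []
    i = 0
    while i < len(body):
        if body[i] == "\\" and i + 1 < len(body) and body[i + 1] in MODIFIERS:
            chars.extend(MODIFIERS[body[i + 1]])
            i += 2
        elif i + 2 < len(body) and body[i + 1] == "-":
            chars.extend(chr(c) for c in range(ord(body[i]), ord(body[i + 2]) + 1))
            i += 3
        else:
            chars.append(body[i])
            i += 1
    return "".join(dict.fromkeys(chars))  # drop duplicates, keep order


def generate(expression):
    """Return a random string that satisfies the expression."""
    match = EXPRESSION.fullmatch(expression)
    if match is None:
        raise ValueError(f"unsupported expression: {expression!r}")
    alphabet = expand_class(match.group(1))
    return "".join(secrets.choice(alphabet) for _ in range(int(match.group(2))))


password = generate("[a-zA-Z0-9]{12}")
```

The sketch draws characters with the secrets module because generated values are typically used as credentials.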

Depending on whether the template is written in YAML or JSON, and the type of string that the modifier is embedded within, you might need to escape the backslash with a second backslash. The following examples are equivalent:

Example YAML template with a modifier

parameters:
  - name: singlequoted_example
    generate: expression
    from: '[\A]{10}'
  - name: doublequoted_example
    generate: expression
    from: "[\\A]{10}"

Example JSON template with a modifier

{
    "parameters": [
        {
            "name": "json_example",
            "generate": "expression",
            "from": "[\\A]{10}"
        }
    ]
}

Here is an example of a full template with parameter definitions and references:

kind: Template
apiVersion: template.openshift.io/v1
metadata:
  name: my-template
objects:
  - kind: BuildConfig
    apiVersion: build.openshift.io/v1
    metadata:
      name: cakephp-mysql-example
      annotations:
        description: Defines how to build the application
    spec:
      source:
        type: Git
        git:
          uri: "${SOURCE_REPOSITORY_URL}"
          ref: "${SOURCE_REPOSITORY_REF}"
        contextDir: "${CONTEXT_DIR}"
  - kind: DeploymentConfig
    apiVersion: apps.openshift.io/v1
    metadata:
      name: frontend
    spec:
      replicas: "${{REPLICA_COUNT}}"
parameters:
  - name: SOURCE_REPOSITORY_URL
    displayName: Source Repository URL
    description: The URL of the repository with your application source code
    value: https://github.com/sclorg/cakephp-ex.git
    required: true
  - name: GITHUB_WEBHOOK_SECRET
    description: A secret string used to configure the GitHub webhook
    generate: expression
    from: "[a-zA-Z0-9]{40}"
  - name: REPLICA_COUNT
    description: Number of replicas to run
    value: "2"
    required: true
message: "... The GitHub webhook secret is ${GITHUB_WEBHOOK_SECRET} ..."

The fields in this example have the following meanings:

- uri: This value is replaced with the value of the SOURCE_REPOSITORY_URL parameter when the template is instantiated.

- replicas: This value is replaced with the unquoted value of the REPLICA_COUNT parameter when the template is instantiated.

- name: The name of the parameter. This value is used to reference the parameter within the template.

- displayName: The user-friendly name for the parameter. This is displayed to users.

- description: A description of the parameter. Provide more detailed information for the purpose of the parameter, including any constraints on the expected value. Descriptions should use complete sentences to follow the console’s text standards. Do not make this a duplicate of the display name.

- value: A default value for the parameter, which is used if you do not override the value when instantiating the template. Avoid using default values for things like passwords; instead, use generated parameters in combination with secrets.

- required: Indicates this parameter is required, meaning you cannot override it with an empty value. If the parameter does not provide a default or generated value, you must supply a value.

- generate: A parameter which has its value generated.

- from: The input to the generator. In this case, the generator produces a 40 character alphanumeric value including upper and lowercase characters.

- message: Parameters can be included in the template message. This informs you about generated values.

3.1.7.4. Writing the template object list

The main portion of the template is the list of objects that are created when the template is instantiated. Each entry can be any valid API object, such as a build configuration, deployment configuration, or service. The object is created exactly as defined here, with any parameter values substituted in prior to creation. The definition of these objects can reference parameters defined earlier.

The following is an example of an object list:

kind: "Template"
apiVersion: "v1"
metadata:
  name: my-template
objects:
  - kind: "Service"
    apiVersion: "v1"
    metadata:
      name: "cakephp-mysql-example"
      annotations:
        description: "Exposes and load balances the application pods"
    spec:
      ports:
        - name: "web"
          port: 8080
          targetPort: 8080
      selector:
        name: "cakephp-mysql-example"

- kind: "Service": The definition of a service, which is created by this template.

If an object definition metadata includes a fixed namespace field value, the field is stripped out of the definition during template instantiation. If the namespace field contains a parameter reference, normal parameter substitution is performed and the object is created in whatever namespace the parameter substitution resolved the value to, assuming the user has permission to create objects in that namespace.
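The namespace rule can be sketched in Python as follows. This is an illustration of the behavior described above, not the platform's code; the function name resolve_namespace and the TARGET_NAMESPACE parameter are hypothetical.

```python
import re

# Matches ${NAME} or ${{NAME}} anywhere in a value.
PARAM_REF = re.compile(r"\$\{\{?([A-Za-z0-9_]+)\}?\}")


def resolve_namespace(metadata, params):
    """Return a copy of object metadata with the namespace rule applied."""
    result = dict(metadata)
    namespace = result.get("namespace")
    if namespace is None:
        return result
    if PARAM_REF.search(namespace):
        # Parameter reference: substitute it and keep the field.
        result["namespace"] = PARAM_REF.sub(lambda m: params[m.group(1)], namespace)
    else:
        # Fixed value: the field is stripped during instantiation.
        del result["namespace"]
    return result


fixed = resolve_namespace({"name": "svc", "namespace": "hardcoded"}, {})
templated = resolve_namespace(
    {"name": "svc", "namespace": "${TARGET_NAMESPACE}"},
    {"TARGET_NAMESPACE": "team-a"},
)
```

In the first call the namespace field disappears, so the object is created in the project where the template is instantiated. In the second call the object targets team-a, which only succeeds if the user can create objects there.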

3.1.7.5. Marking a template as bindable

The Template Service Broker advertises one service in its catalog for each template object of which it is aware. By default, each of these services is advertised as being bindable, meaning an end user is permitted to bind against the provisioned service.

Procedure

Template authors can prevent end users from binding against services provisioned from a given template.

- Prevent end users from binding against services provisioned from a given template by adding the annotation template.openshift.io/bindable: "false" to the template.

3.1.7.6. Exposing template object fields

Template authors can indicate that fields of particular objects in a template should be exposed. The Template Service Broker recognizes exposed fields on ConfigMap, Secret, Service, and Route objects, and returns the values of the exposed fields when a user binds a service backed by the broker.

To expose one or more fields of an object, add annotations prefixed by template.openshift.io/expose- or template.openshift.io/base64-expose- to the object in the template.

Each annotation key, with its prefix removed, is passed through to become a key in a bind response.

Each annotation value is a Kubernetes JSONPath expression, which is resolved at bind time to indicate the object field whose value should be returned in the bind response.

Bind response key-value pairs can be used in other parts of the system as environment variables. Therefore, it is recommended that every annotation key with its prefix removed should be a valid environment variable name — beginning with a character A-Z, a-z, or _, and being followed by zero or more characters A-Z, a-z, 0-9, or _.
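The naming recommendation can be checked mechanically. The following Python sketch strips the expose prefixes from an annotation key and tests whether the remainder is a valid environment variable name under the rule stated above; the helper names bind_key and is_valid_env_name are assumptions for illustration.

```python
import re
from typing import Optional

EXPOSE_PREFIXES = (
    "template.openshift.io/expose-",
    "template.openshift.io/base64-expose-",
)

# A letter or underscore, followed by letters, digits, or underscores.
ENV_NAME = re.compile(r"[A-Za-z_][A-Za-z0-9_]*")


def bind_key(annotation_key: str) -> Optional[str]:
    """Return the bind response key for an expose annotation, or None."""
    for prefix in EXPOSE_PREFIXES:
        if annotation_key.startswith(prefix):
            return annotation_key[len(prefix):]
    return None


def is_valid_env_name(key: str) -> bool:
    """Check the key against the recommended environment variable pattern."""
    return ENV_NAME.fullmatch(key) is not None


key = bind_key("template.openshift.io/expose-service_ip_port")
```

A key such as service_ip_port passes the check, while a key containing a period or a hyphen does not and is therefore best avoided.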

Unless escaped with a backslash, Kubernetes' JSONPath implementation interprets characters such as ., @, and others as metacharacters, regardless of their position in the expression. Therefore, for example, to refer to a ConfigMap datum named my.key, the required JSONPath expression would be {.data['my\.key']}. Depending on how the JSONPath expression is then written in YAML, an additional backslash might be required, for example "{.data['my\\.key']}".

The following is an example of different objects' fields being exposed:

kind: Template
apiVersion: template.openshift.io/v1
metadata:
  name: my-template
objects:
  - kind: ConfigMap
    apiVersion: v1
    metadata:
      name: my-template-config
      annotations:
        template.openshift.io/expose-username: "{.data['my\\.username']}"
    data:
      my.username: foo
  - kind: Secret
    apiVersion: v1
    metadata:
      name: my-template-config-secret
      annotations:
        template.openshift.io/base64-expose-password: "{.data['password']}"
    stringData:
      password: <password>
  - kind: Service
    apiVersion: v1
    metadata:
      name: my-template-service
      annotations:
        template.openshift.io/expose-service_ip_port: "{.spec.clusterIP}:{.spec.ports[?(.name==\"web\")].port}"
    spec:
      ports:
        - name: "web"
          port: 8080
  - kind: Route
    apiVersion: route.openshift.io/v1
    metadata:
      name: my-template-route
      annotations:
        template.openshift.io/expose-uri: "http://{.spec.host}{.spec.path}"
    spec:
      path: mypath

An example response to a bind operation given the above partial template follows:

{
  "credentials": {
    "username": "foo",
    "password": "YmFy",
    "service_ip_port": "172.30.12.34:8080",
    "uri": "http://route-test.router.default.svc.cluster.local/mypath"
  }
}

Procedure

- Use the template.openshift.io/expose- annotation to return the field value as a string. This is convenient, although it does not handle arbitrary binary data.

- If you want to return binary data, use the template.openshift.io/base64-expose- annotation instead to base64 encode the data before it is returned.
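The difference between the two annotations can be illustrated with Python's standard base64 module. The sketch below is a hypothetical stand-in for the value returned in a bind response, not the broker's code. The value YmFy in the example response above is what base64 encoding produces for the three bytes of the text bar.

```python
import base64


def expose_value(raw: bytes, use_base64: bool) -> str:
    """Return the string a bind response could carry for a field value."""
    if use_base64:
        # base64-expose: safe for arbitrary binary data.
        return base64.b64encode(raw).decode("ascii")
    # Plain expose: only works when the bytes are valid text.
    return raw.decode("utf-8")


encoded = expose_value(b"bar", use_base64=True)
plain = expose_value(b"foo", use_base64=False)
binary = expose_value(b"\xff\x00", use_base64=True)
```

A consumer of the bind response reverses the encoding with base64.b64decode before using the value.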

3.1.7.7. Waiting for template readiness

Template authors can indicate that certain objects within a template should be waited for before a template instantiation by the service catalog, Template Service Broker, or TemplateInstance API is considered complete.

Before starting the procedure, read the following considerations:

- Set memory, CPU, and storage default sizes to make sure your application is given enough resources to run smoothly.

- Avoid referencing the latest tag from images if that tag is used across major versions. This can cause running applications to break when new images are pushed to that tag.

- A good template builds and deploys cleanly without requiring modifications after the template is deployed.

Procedure

To use the template feature, mark one or more objects of kind Build, BuildConfig, Deployment, DeploymentConfig, Job, or StatefulSet in a template with the following annotation:

"template.alpha.openshift.io/wait-for-ready": "true"

Template instantiation is not complete until all objects marked with the annotation report ready. Similarly, if any of the annotated objects report failed, or if the template fails to become ready within a fixed timeout of one hour, the template instantiation fails.

For the purposes of instantiation, readiness and failure of each object kind are defined as follows:

| Kind | Readiness | Failure |
| --- | --- | --- |
| Build | Object reports phase complete. | Object reports phase canceled, error, or failed. |
| BuildConfig | Latest associated build object reports phase complete. | Latest associated build object reports phase canceled, error, or failed. |
| Deployment | Object reports new replica set and deployment available. This honors readiness probes defined on the object. | Object reports progressing condition as false. |
| DeploymentConfig | Object reports new replication controller and deployment available. This honors readiness probes defined on the object. | Object reports progressing condition as false. |
| Job | Object reports completion. | Object reports that one or more failures have occurred. |
| StatefulSet | Object reports all replicas ready. This honors readiness probes defined on the object. | Not applicable. |
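The overall rule (complete when every annotated object is ready, failed when any object fails or the one-hour timeout expires) can be summarized in a short Python sketch. The ObjectState enum and the instantiation_outcome function are hypothetical names; how each kind maps to ready or failed is defined by the table above and is not modeled here.

```python
from enum import Enum

TIMEOUT_SECONDS = 60 * 60  # the fixed one-hour timeout described above


class ObjectState(Enum):
    PENDING = "pending"
    READY = "ready"
    FAILED = "failed"


def instantiation_outcome(states, elapsed_seconds):
    """Combine per-object states into an overall instantiation result."""
    if any(state is ObjectState.FAILED for state in states):
        return "failed"
    if all(state is ObjectState.READY for state in states):
        return "complete"
    if elapsed_seconds >= TIMEOUT_SECONDS:
        return "failed"
    return "waiting"


outcome = instantiation_outcome([ObjectState.READY, ObjectState.PENDING], 120)
```

With one object still pending after two minutes, the sketch reports waiting; the same states after an hour would be reported as failed.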

The following is an example template extract, which uses the wait-for-ready annotation. Further examples can be found in the OpenShift Container Platform quick start templates.

kind: Template
apiVersion: template.openshift.io/v1
metadata:
  name: my-template
objects:
  - kind: BuildConfig
    apiVersion: build.openshift.io/v1
    metadata:
      name: ...
      annotations:
        # wait-for-ready used on BuildConfig ensures that template instantiation
        # will fail immediately if build fails
        template.alpha.openshift.io/wait-for-ready: "true"
    spec:
      ...
  - kind: DeploymentConfig
    apiVersion: apps.openshift.io/v1
    metadata:
      name: ...
      annotations:
        template.alpha.openshift.io/wait-for-ready: "true"
    spec:
      ...
  - kind: Service
    apiVersion: v1
    metadata:
      name: ...
    spec:
      ...

3.1.7.8. Creating a template from existing objects

Rather than writing an entire template from scratch, you can export existing objects from your project in YAML form, and then turn the YAML into a template by adding parameters and other customizations.

Procedure

Export objects in a project in YAML form:

$ oc get -o yaml all > <yaml_filename>

You can also substitute a particular resource type or multiple resources instead of all. Run oc get -h for more examples.

The object types included in oc get -o yaml all are:

- BuildConfig
- Build
- DeploymentConfig
- ImageStream
- Pod
- ReplicationController
- Route
- Service

Using the all alias is not recommended because the contents might vary across different clusters and versions. Instead, specify all required resources.

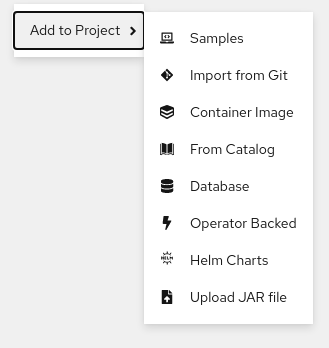

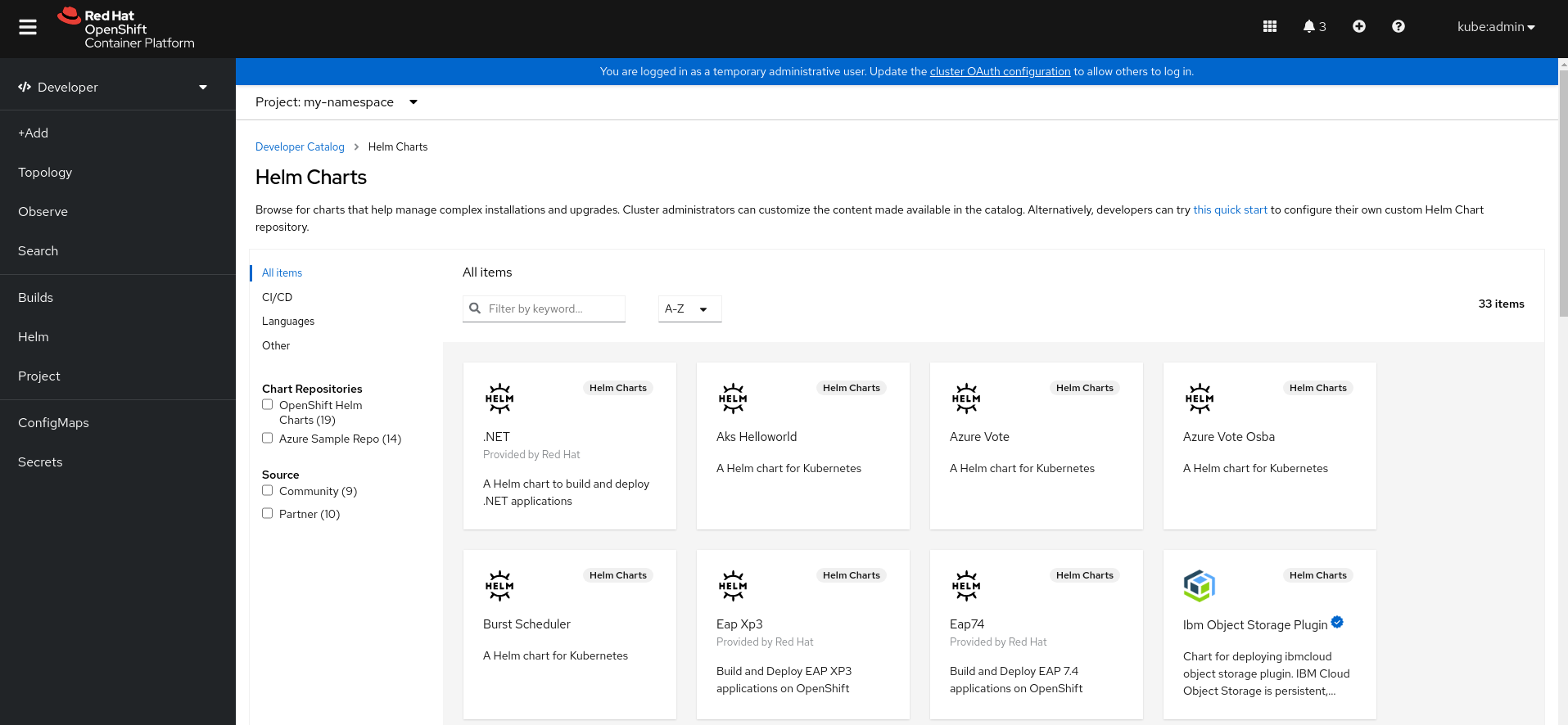

3.2. Creating applications by using the Developer perspective

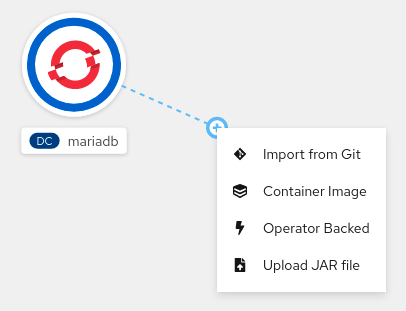

The Developer perspective in the web console provides you the following options from the +Add view to create applications and associated services and deploy them on OpenShift Container Platform:

- Getting started resources: Use these resources to help you get started with Developer Console. You can choose to hide the header using the Options menu.

  - Creating applications using samples: Use existing code samples to get started with creating applications on the OpenShift Container Platform.

  - Build with guided documentation: Follow the guided documentation to build applications and familiarize yourself with key concepts and terminologies.

  - Explore new developer features: Explore the new features and resources within the Developer perspective.

- Developer catalog: Explore the Developer Catalog to select the required applications, services, or source to image builders, and then add it to your project.

  - All Services: Browse the catalog to discover services across OpenShift Container Platform.

  - Database: Select the required database service and add it to your application.

  - Operator Backed: Select and deploy the required Operator-managed service.

  - Helm chart: Select the required Helm chart to simplify deployment of applications and services.

  - Devfile: Select a devfile from the Devfile registry to declaratively define a development environment.

  - Event Source: Select an event source to register interest in a class of events from a particular system.

    Note: The Managed services option is also available if the RHOAS Operator is installed.

- Git repository: Import an existing codebase, Devfile, or Dockerfile from your Git repository using the From Git, From Devfile, or From Dockerfile options respectively, to build and deploy an application on OpenShift Container Platform.

- Container images: Use existing images from an image stream or registry to deploy it on to the OpenShift Container Platform.

- Pipelines: Use Tekton pipeline to create CI/CD pipelines for your software delivery process on the OpenShift Container Platform.

- Serverless: Explore the Serverless options to create, build, and deploy stateless and serverless applications on the OpenShift Container Platform.

  - Channel: Create a Knative channel to create an event forwarding and persistence layer with in-memory and reliable implementations.

- Samples: Explore the available sample applications to create, build, and deploy an application quickly.

- Quick Starts: Explore the quick start options to create, import, and run applications with step-by-step instructions and tasks.

- From Local Machine: Explore the From Local Machine tile to import or upload files on your local machine for building and deploying applications easily.

  - Import YAML: Upload a YAML file to create and define resources for building and deploying applications.

  - Upload JAR file: Upload a JAR file to build and deploy Java applications.

- Share my Project: Use this option to add or remove users to a project and provide accessibility options to them.

- Helm Chart repositories: Use this option to add Helm Chart repositories in a namespace.

- Re-ordering of resources: Use these resources to re-order pinned resources added to your navigation pane. The drag-and-drop icon is displayed on the left side of the pinned resource when you hover over it in the navigation pane. The dragged resource can be dropped only in the section where it resides.

Note that certain options, such as Pipelines, Event Source, and Import Virtual Machines, are displayed only when the OpenShift Pipelines Operator, OpenShift Serverless Operator, and OpenShift Virtualization Operator are installed, respectively.

3.2.1. Prerequisites

To create applications using the Developer perspective, ensure that:

- You have logged in to the web console.

- You have created a project or have access to a project with the appropriate roles and permissions to create applications and other workloads in OpenShift Container Platform.

To create serverless applications, in addition to the preceding prerequisites, ensure that:

3.2.2. Creating sample applications

You can use the sample applications in the +Add flow of the Developer perspective to create, build, and deploy applications quickly.

Prerequisites

- You have logged in to the OpenShift Container Platform web console and are in the Developer perspective.

Procedure

- In the +Add view, click the Samples tile to see the Samples page.

- On the Samples page, select one of the available sample applications to see the Create Sample Application form.

- In the Create Sample Application Form:

  - In the Name field, the deployment name is displayed by default. You can modify this name as required.

  - In the Builder Image Version, a builder image is selected by default. You can modify this image version by using the Builder Image Version drop-down list.

  - A sample Git repository URL is added by default.

- Click Create to create the sample application. The build status of the sample application is displayed on the Topology view. After the sample application is created, you can see the deployment added to the application.

3.2.3. Creating applications by using Quick Starts

The Quick Starts page shows you how to create, import, and run applications on OpenShift Container Platform, with step-by-step instructions and tasks.

Prerequisites

- You have logged in to the OpenShift Container Platform web console and are in the Developer perspective.

Procedure

- In the +Add view, click the Getting Started resources → Build with guided documentation → View all quick starts link to view the Quick Starts page.

- In the Quick Starts page, click the tile for the quick start that you want to use.

- Click Start to begin the quick start.

- Perform the steps that are displayed.

3.2.4. Importing a codebase from Git to create an application

You can use the Developer perspective to create, build, and deploy an application on OpenShift Container Platform using an existing codebase in GitHub.

The following procedure walks you through the From Git option in the Developer perspective to create an application.

Procedure

- In the +Add view, click From Git in the Git Repository tile to see the Import from git form.

- In the Git section, enter the Git repository URL for the codebase you want to use to create an application. For example, enter the URL of this sample Node.js application https://github.com/sclorg/nodejs-ex. The URL is then validated.

- Optional: You can click Show Advanced Git Options to add details such as:

  - Git Reference to point to code in a specific branch, tag, or commit to be used to build the application.

  - Context Dir to specify the subdirectory for the application source code you want to use to build the application.

  - Source Secret to create a Secret Name with credentials for pulling your source code from a private repository.

- Optional: You can import a Devfile, a Dockerfile, a Builder Image, or a Serverless Function through your Git repository to further customize your deployment.

  - If your Git repository contains a Devfile, a Dockerfile, a Builder Image, or a func.yaml, it is automatically detected and populated on the respective path fields.

  - If a Devfile, a Dockerfile, or a Builder Image are detected in the same repository, the Devfile is selected by default.

  - If func.yaml is detected in the Git repository, the Import Strategy changes to Serverless Function.

  - Alternatively, you can create a serverless function by clicking Create Serverless function in the +Add view using the Git repository URL.

  - To edit the file import type and select a different strategy, click the Edit import strategy option.

  - If multiple Devfiles, Dockerfiles, or Builder Images are detected, to import a specific instance, specify the respective paths relative to the context directory.

- After the Git URL is validated, the recommended builder image is selected and marked with a star. If the builder image is not auto-detected, select a builder image. For the https://github.com/sclorg/nodejs-ex Git URL, by default the Node.js builder image is selected.

  - Optional: Use the Builder Image Version drop-down to specify a version.

  - Optional: Use the Edit import strategy to select a different strategy.

  - Optional: For the Node.js builder image, use the Run command field to override the command to run the application.

- In the General section:

  - In the Application field, enter a unique name for the application grouping, for example, myapp. Ensure that the application name is unique in a namespace.

  - The Name field to identify the resources created for this application is automatically populated based on the Git repository URL if there are no existing applications. If there are existing applications, you can choose to deploy the component within an existing application, create a new application, or keep the component unassigned.

    Note: The resource name must be unique in a namespace. Modify the resource name if you get an error.

In the Resources section, select:

- Deployment, to create an application in plain Kubernetes style.

- Deployment Config, to create an OpenShift Container Platform style application.

- Serverless Deployment, to create a Knative service.

Note: To set the default resource preference for importing an application, go to User Preferences → Applications → Resource type field. The Serverless Deployment option is displayed in the Import from Git form only if the OpenShift Serverless Operator is installed in your cluster. The Resources section is not available while creating a serverless function. For further details, refer to the OpenShift Serverless documentation.

In the Pipelines section, select Add Pipeline, and then click Show Pipeline Visualization to see the pipeline for the application. A default pipeline is selected, but you can choose the pipeline you want from the list of available pipelines for the application.

Note: The Add pipeline checkbox is checked and Configure PAC is selected by default if the following criteria are fulfilled:

- Pipeline operator is installed

- pipelines-as-code is enabled

- .tekton directory is detected in the Git repository

Add a webhook to your repository. If Configure PAC is checked and the GitHub App is set up, you can see the Use GitHub App and Setup a webhook options. If GitHub App is not set up, you can only see the Setup a webhook option:

- Go to Settings → Webhooks and click Add webhook.

- Set the Payload URL to the Pipelines as Code controller public URL.

- Select the content type as application/json.

- Add a webhook secret and note it in an alternate location. With openssl installed on your local machine, generate a random secret.

- Click Let me select individual events and select these events: Commit comments, Issue comments, Pull request, and Pushes.

- Click Add webhook.

Optional: In the Advanced Options section, the Target port and the Create a route to the application options are selected by default so that you can access your application using a publicly available URL.

If your application does not expose its data on the default public port, 80, clear the check box, and set the target port number you want to expose.

Optional: You can use the following advanced options to further customize your application:

- Routing

By clicking the Routing link, you can perform the following actions:

- Customize the hostname for the route.

- Specify the path the router watches.

- Select the target port for the traffic from the drop-down list.

- Secure your route by selecting the Secure Route check box. Select the required TLS termination type and set a policy for insecure traffic from the respective drop-down lists.

Note: For serverless applications, the Knative service manages all the routing options above. However, you can customize the target port for traffic, if required. If the target port is not specified, the default port of 8080 is used.
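
For orientation, the routing options in the form correspond roughly to fields of a Route resource. The following is a minimal sketch, not the exact object the console generates: the name myapp, the hostname, the path, and the chosen TLS settings are all hypothetical values picked for illustration.

```yaml
# Illustrative Route; every value below is an assumption, not a console default.
apiVersion: route.openshift.io/v1
kind: Route
metadata:
  name: myapp                        # hypothetical resource name
spec:
  host: myapp.apps.example.com       # custom hostname for the route (hypothetical)
  path: /api                         # path the router watches (hypothetical)
  to:
    kind: Service
    name: myapp                      # service that receives the traffic
  port:
    targetPort: 8080                 # target port for the traffic
  tls:
    termination: edge                # TLS termination type: edge, passthrough, or reencrypt
    insecureEdgeTerminationPolicy: Redirect   # policy for insecure (HTTP) traffic
```

Leaving out the tls block gives an unsecured route, which matches clearing the Secure Route check box.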

- Domain mapping

If you are creating a Serverless Deployment, you can add a custom domain mapping to the Knative service during creation.

In the Advanced options section, click Show advanced Routing options.

- If the domain mapping CR that you want to map to the service already exists, you can select it from the Domain mapping drop-down menu.

- If you want to create a new domain mapping CR, type the domain name into the box, and select the Create option. For example, if you type in example.com, the Create option is Create "example.com".
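
As a rough illustration of what a domain mapping CR looks like, the sketch below maps example.com to a Knative service. The service name myapp and the namespace my-project are hypothetical, and the apiVersion is an assumption that depends on the installed OpenShift Serverless release, so check the version available in your cluster.

```yaml
# Illustrative DomainMapping; names and apiVersion are assumptions.
apiVersion: serving.knative.dev/v1beta1
kind: DomainMapping
metadata:
  name: example.com                  # the custom domain is used as the resource name
  namespace: my-project              # hypothetical project
spec:
  ref:
    name: myapp                      # hypothetical Knative service to map the domain to
    kind: Service
    apiVersion: serving.knative.dev/v1
```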

- Health Checks

Click the Health Checks link to add Readiness, Liveness, and Startup probes to your application. All the probes have prepopulated default data; you can add the probes with the default data or customize it as required.

To customize the health probes:

- Click Add Readiness Probe, modify the parameters if required to check if the container is ready to handle requests, and select the check mark to add the probe.

- Click Add Liveness Probe, modify the parameters if required to check if a container is still running, and select the check mark to add the probe.

- Click Add Startup Probe, modify the parameters if required to check if the application within the container has started, and select the check mark to add the probe.

For each of the probes, you can specify the request type (HTTP GET, Container Command, or TCP Socket) from the drop-down list. The form changes as per the selected request type. You can then modify the default values for the other parameters, such as the success and failure thresholds for the probe, number of seconds before performing the first probe after the container starts, frequency of the probe, and the timeout value.
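
The three probe types and request types map onto standard Kubernetes container fields. The fragment below is a sketch of how they might look in a pod template: the container name, image, endpoint path, command, and all numeric values are hypothetical and are not the defaults that the form prepopulates.

```yaml
# Fragment of a pod template (spec.template.spec of a Deployment); values are illustrative.
containers:
  - name: myapp                              # hypothetical container name
    image: registry.example.com/my-project/myapp:latest   # hypothetical image
    ports:
      - containerPort: 8080
    readinessProbe:                          # is the container ready to handle requests?
      httpGet:                               # request type: HTTP GET
        path: /healthz                       # hypothetical endpoint
        port: 8080
      initialDelaySeconds: 5                 # seconds before the first probe
      periodSeconds: 10                      # frequency of the probe
      timeoutSeconds: 1                      # timeout value
      successThreshold: 1
      failureThreshold: 3
    livenessProbe:                           # is the container still running?
      tcpSocket:                             # request type: TCP Socket
        port: 8080
      periodSeconds: 10
      failureThreshold: 3
    startupProbe:                            # has the application started?
      exec:                                  # request type: Container Command
        command: ["cat", "/tmp/started"]     # hypothetical command
      periodSeconds: 5
      failureThreshold: 30
```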

- Build Configuration and Deployment

Click the Build Configuration and Deployment links to see the respective configuration options. Some options are selected by default; you can customize them further by adding the necessary triggers and environment variables.

For serverless applications, the Deployment option is not displayed as the Knative configuration resource maintains the desired state for your deployment instead of a DeploymentConfig resource.

- Scaling

Click the Scaling link to define the number of pods or instances of the application you want to deploy initially.

If you are creating a serverless deployment, you can also configure the following settings:

- Min Pods determines the lower limit for the number of pods that must be running at any given time for a Knative service. This is also known as the minScale setting.

- Max Pods determines the upper limit for the number of pods that can be running at any given time for a Knative service. This is also known as the maxScale setting.

- Concurrency target determines the number of concurrent requests desired for each instance of the application at a given time.

- Concurrency limit determines the limit for the number of concurrent requests allowed for each instance of the application at a given time.

- Concurrency utilization determines the percentage of the concurrent requests limit that must be met before Knative scales up additional pods to handle additional traffic.

- Autoscale window defines the time window over which metrics are averaged to provide input for scaling decisions when the autoscaler is not in panic mode. A service is scaled-to-zero if no requests are received during this window. The default duration for the autoscale window is 60s. This is also known as the stable window.
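
These settings can be pictured as autoscaling annotations and a concurrency field on a Knative service. The sketch below assumes a hypothetical service named myapp with illustrative values. The annotation keys follow upstream Knative naming, and older releases spell the first two minScale and maxScale, so treat the exact keys as an assumption to verify against your Serverless version.

```yaml
# Illustrative Knative service; name, image, and numbers are assumptions.
apiVersion: serving.knative.dev/v1
kind: Service
metadata:
  name: myapp                                          # hypothetical
spec:
  template:
    metadata:
      annotations:
        autoscaling.knative.dev/min-scale: "0"         # Min Pods (0 allows scale-to-zero)
        autoscaling.knative.dev/max-scale: "5"         # Max Pods
        autoscaling.knative.dev/target: "50"           # Concurrency target
        autoscaling.knative.dev/target-utilization-percentage: "70"   # Concurrency utilization
        autoscaling.knative.dev/window: "60s"          # Autoscale (stable) window
    spec:
      containerConcurrency: 100                        # Concurrency limit
      containers:
        - image: registry.example.com/my-project/myapp:latest   # hypothetical image
```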

- Resource Limit

- Click the Resource Limit link to set the amount of CPU and Memory resources a container is guaranteed or allowed to use when running.

- Labels

- Click the Labels link to add custom labels to your application.
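
As a sketch of where the scaling, resource limit, and label options end up, the following Deployment shows the corresponding fields. The names, image, custom label, and all quantities are hypothetical. The app.kubernetes.io/part-of label is shown because the Topology view uses it for application grouping.

```yaml
# Illustrative Deployment; all names and values are assumptions.
apiVersion: apps/v1
kind: Deployment
metadata:
  name: myapp                                # hypothetical resource name
  labels:
    app.kubernetes.io/part-of: myapp         # application grouping
    team: payments                           # hypothetical custom label
spec:
  replicas: 1                                # Scaling: number of pods to deploy initially
  selector:
    matchLabels:
      app: myapp
  template:
    metadata:
      labels:
        app: myapp
    spec:
      containers:
        - name: myapp
          image: registry.example.com/my-project/myapp:latest   # hypothetical image
          resources:
            requests:                        # amount the container is guaranteed
              cpu: 100m
              memory: 128Mi
            limits:                          # amount the container is allowed to use
              cpu: 500m
              memory: 256Mi
```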

- Click Create to create the application. A success notification is displayed. You can see the build status of the application in the Topology view.

3.2.5. Creating applications by deploying container image

You can use an external image registry or an image stream tag from an internal registry to deploy an application on your cluster.

Prerequisites

- You have logged in to the OpenShift Container Platform web console and are in the Developer perspective.

Procedure

- In the +Add view, click Container images to view the Deploy Images page.

In the Image section:

- Select Image name from external registry to deploy an image from a public or a private registry, or select Image stream tag from internal registry to deploy an image from an internal registry.

- Select an icon for your image in the Runtime icon tab.

In the General section:

- In the Application name field, enter a unique name for the application grouping.

- In the Name field, enter a unique name to identify the resources created for this component.

In the Resource type section, select the resource type to generate:

- Select Deployment to enable declarative updates for Pod and ReplicaSet objects.

- Select DeploymentConfig to define the template for a Pod object, and manage deploying new images and configuration sources.

- Select Serverless Deployment to enable scaling to zero when idle.

- Click Create. You can view the build status of the application in the Topology view.

3.2.6. Deploying a Java application by uploading a JAR file

You can use the web console Developer perspective to upload a JAR file by using the following options:

- Navigate to the +Add view of the Developer perspective, and click Upload JAR file in the From Local Machine tile. Browse and select your JAR file, or drag a JAR file to deploy your application.

- Navigate to the Topology view and use the Upload JAR file option, or drag a JAR file to deploy your application.

- Use the in-context menu in the Topology view, and then use the Upload JAR file option to upload your JAR file to deploy your application.

Prerequisites

- The Cluster Samples Operator must be installed by a cluster administrator.

- You have access to the OpenShift Container Platform web console and are in the Developer perspective.

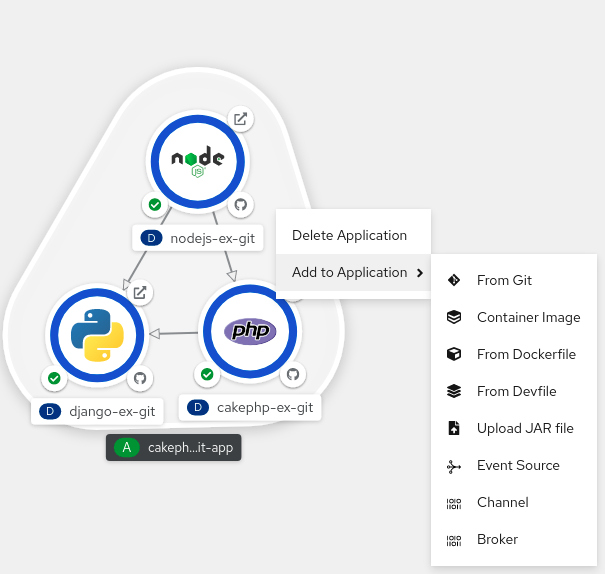

Procedure

- In the Topology view, right-click anywhere to view the Add to Project menu.

- Hover over the Add to Project menu to see the menu options, and then select the Upload JAR file option to see the Upload JAR file form. Alternatively, you can drag the JAR file into the Topology view.

- In the JAR file field, browse for the required JAR file on your local machine and upload it. Alternatively, you can drag the JAR file onto the field. A toast alert is displayed at the top right if an incompatible file type is dragged into the Topology view. A field error is displayed if an incompatible file type is dropped on the field in the upload form.

- The runtime icon and builder image are selected by default. If a builder image is not auto-detected, select a builder image. If required, you can change the version using the Builder Image Version drop-down list.

- Optional: In the Application Name field, enter a unique name for your application to use for resource labeling.

- In the Name field, enter a unique component name for the associated resources.

- Optional: Use the Resource type drop-down list to change the resource type.

- In the Advanced options menu, click Create a Route to the Application to configure a public URL for your deployed application.

- Click Create to deploy the application. A toast notification is shown to notify you that the JAR file is being uploaded. The toast notification also includes a link to view the build logs.

If you attempt to close the browser tab while the build is running, a web alert is displayed.

After the JAR file is uploaded and the application is deployed, you can view the application in the Topology view.

3.2.7. Using the Devfile registry to access devfiles

You can use the devfiles in the +Add flow of the Developer perspective to create an application. The +Add flow provides a complete integration with the devfile community registry. A devfile is a portable YAML file that describes your development environment, so you do not need to configure it from scratch. Using the Devfile registry, you can use a preconfigured devfile to create an application.
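
To give a sense of what a devfile contains, here is a minimal sketch in the devfile 2.x format. It is not taken from the registry: the component name, image, port, and run command are hypothetical choices for a small Node.js project.

```yaml
# Illustrative devfile; every name and value is an assumption.
schemaVersion: 2.2.0
metadata:
  name: nodejs-example                 # hypothetical devfile name
components:
  - name: runtime                      # container the application is built and run in
    container:
      image: registry.access.redhat.com/ubi8/nodejs-16:latest   # assumed image
      memoryLimit: 1024Mi
      endpoints:
        - name: http
          targetPort: 3000             # hypothetical application port
commands:
  - id: run
    exec:
      component: runtime
      commandLine: npm start           # hypothetical start command
      workingDir: ${PROJECT_SOURCE}
      group:
        kind: run
        isDefault: true
```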

Procedure