Chapter 6. Colocation of containerized Ceph daemons

This section describes how colocation works, its advantages, and how to set dedicated resources for colocated daemons.

6.1. How colocation works and its advantages

You can colocate containerized Ceph daemons on the same node. Here are the advantages of colocating some of Ceph’s services:

- Significant improvement in total cost of ownership (TCO) at small scale.

- Reduction from six nodes to three for the minimum configuration.

- Easier upgrade.

- Better resource isolation.

See the Knowledgebase article Red Hat Ceph Storage: Supported Configurations for more information on colocation of daemons in the Red Hat Ceph Storage cluster.

How Colocation Works

You can colocate one daemon from the following list with an OSD daemon (ceph-osd) by adding the same node to the appropriate sections in the Ansible inventory file.

- Ceph Metadata Server (ceph-mds)

- Ceph Monitor (ceph-mon) and Ceph Manager (ceph-mgr) daemons

- NFS Ganesha (nfs-ganesha)

- RBD Mirror (rbd-mirror)

- iSCSI Gateway (iscsigw)

Starting with Red Hat Ceph Storage 4.2, Metadata Server (MDS) can be colocated with one additional scale-out daemon.

Additionally, you can colocate either the Ceph Object Gateway (radosgw) or Grafana with an OSD daemon plus a daemon from the above list, excluding RBD Mirror. For example, the following is a valid five-node colocated configuration:

| Node | Daemon | Daemon | Daemon |
|---|---|---|---|
| node1 | OSD | Monitor | Grafana |
| node2 | OSD | Monitor | RADOS Gateway |
| node3 | OSD | Monitor | RADOS Gateway |
| node4 | OSD | Metadata Server | |
| node5 | OSD | Metadata Server | |

To deploy a five-node cluster like the one above, configure the Ansible inventory file as follows:

Ansible inventory file with colocated daemons

[grafana-server]

node1

[mons]

node[1:3]

[mgrs]

node[1:3]

[osds]

node[1:5]

[rgws]

node[2:3]

[mdss]

node[4:5]
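As a quick sanity check, the inventory above can be expanded into a per-node view and compared with the table. The following is a minimal Python sketch, not part of ceph-ansible: the `expand` and `daemons_per_node` helpers are hypothetical names, and the sketch assumes only the simple inclusive numeric range form `name[start:end]` used in this example.

```python
import re

# Inventory groups copied from the example above.
INVENTORY = {
    "grafana-server": ["node1"],
    "mons": ["node[1:3]"],
    "mgrs": ["node[1:3]"],
    "osds": ["node[1:5]"],
    "rgws": ["node[2:3]"],
    "mdss": ["node[4:5]"],
}

# Matches a simple numeric range such as node[1:3]; zero-padded and
# alphabetic ranges are deliberately not handled in this sketch.
RANGE = re.compile(r"^(?P<prefix>.*)\[(?P<start>\d+):(?P<end>\d+)\](?P<suffix>.*)$")


def expand(pattern):
    """Expand 'node[1:3]' into ['node1', 'node2', 'node3'] (inclusive range)."""
    match = RANGE.match(pattern)
    if match is None:
        return [pattern]
    start, end = int(match["start"]), int(match["end"])
    return [f"{match['prefix']}{i}{match['suffix']}" for i in range(start, end + 1)]


def daemons_per_node(inventory):
    """Map each host to the list of inventory groups it appears in."""
    layout = {}
    for group, patterns in inventory.items():
        for pattern in patterns:
            for host in expand(pattern):
                layout.setdefault(host, []).append(group)
    return layout


layout = daemons_per_node(INVENTORY)
for host in sorted(layout):
    print(host, sorted(layout[host]))
```

Each printed line lists the groups for one node, so node1 should show the Grafana, Monitor, Manager, and OSD groups, and node4 and node5 only the OSD and Metadata Server groups.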

Because ceph-mon and ceph-mgr work together closely, they do not count as two separate daemons for the purposes of colocation.

Colocating Grafana with any other daemon is not supported with a Cockpit-based installation. Use ceph-ansible to configure the storage cluster.

Red Hat recommends colocating the Ceph Object Gateway with OSD containers to increase performance. To achieve the highest performance without incurring an additional cost, use two gateways by setting radosgw_num_instances: 2 in group_vars/all.yml. For more information, see Red Hat Ceph Storage RGW deployment strategies and sizing guidance.

Adequate CPU and network resources are required to colocate Grafana with two other containers. If resource exhaustion occurs, colocate Grafana with a Monitor only, and if resource exhaustion still occurs, run Grafana on a dedicated node.

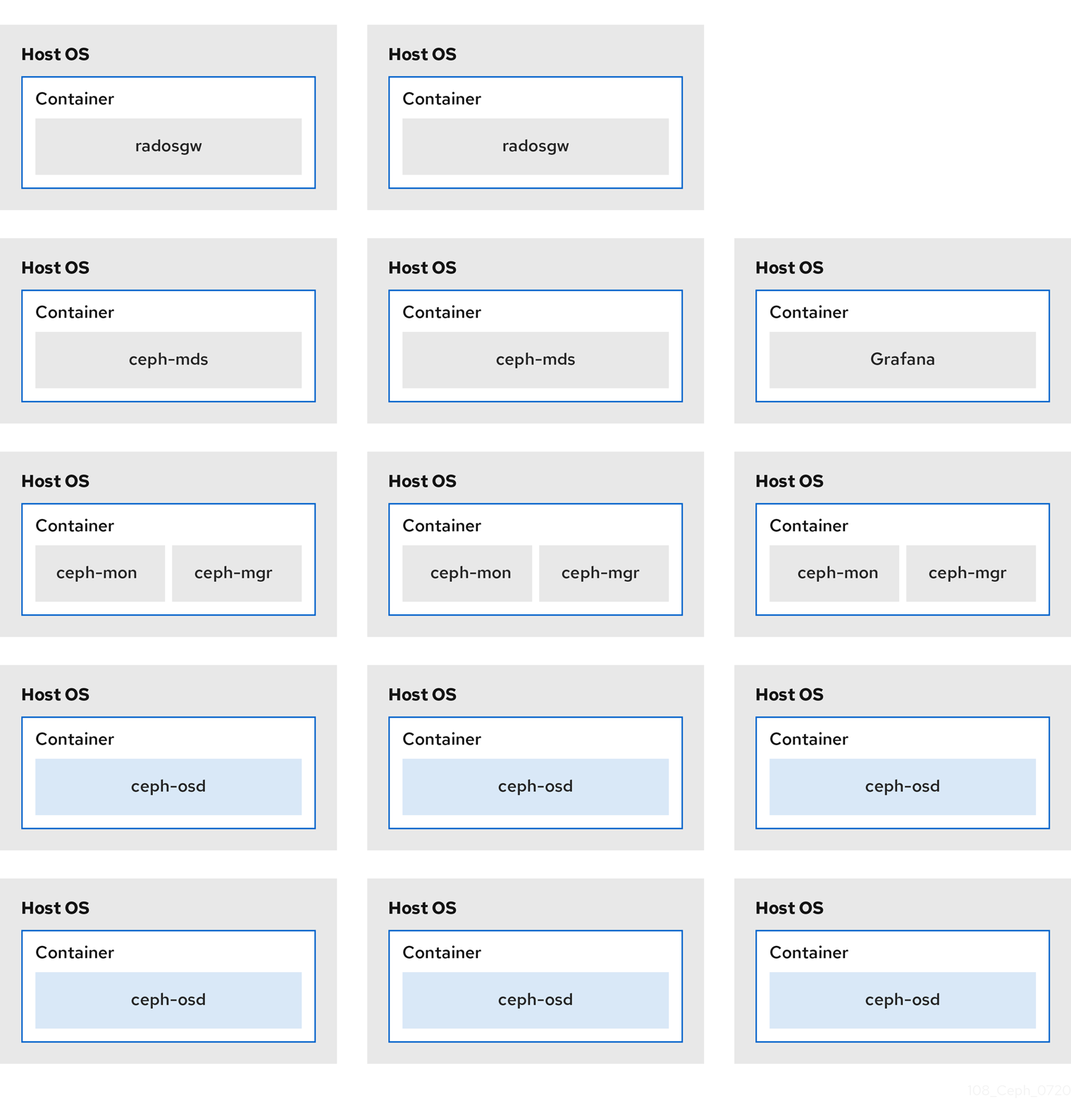

Figure 6.1, “Colocated Daemons” and Figure 6.2, “Non-colocated Daemons” show the difference between clusters with colocated and non-colocated daemons.

Figure 6.1. Colocated Daemons

Figure 6.2. Non-colocated Daemons

When you colocate multiple containerized Ceph daemons on the same node, the ceph-ansible playbook reserves dedicated CPU and RAM resources for each daemon. By default, ceph-ansible uses values listed in the Recommended Minimum Hardware chapter in the Red Hat Ceph Storage Hardware Guide. To learn how to change the default values, see the Setting Dedicated Resources for Colocated Daemons section.

6.2. Setting Dedicated Resources for Colocated Daemons

When colocating two Ceph daemons on the same node, the ceph-ansible playbook reserves CPU and RAM resources for each daemon. The default values that ceph-ansible uses are listed in the Recommended Minimum Hardware chapter in the Red Hat Ceph Storage Hardware Selection Guide. To change the default values, set the needed parameters when deploying Ceph daemons.

Procedure

To change the default CPU limit for a daemon, set the ceph_daemon-type_docker_cpu_limit parameter in the appropriate .yml configuration file when deploying the daemon. See the following table for details.

| Daemon | Parameter | Configuration file |
|---|---|---|
| OSD | ceph_osd_docker_cpu_limit | osds.yml |
| MDS | ceph_mds_docker_cpu_limit | mdss.yml |
| RGW | ceph_rgw_docker_cpu_limit | rgws.yml |

For example, to change the default CPU limit to 2 for the Ceph Object Gateway, edit the /usr/share/ceph-ansible/group_vars/rgws.yml file as follows:

    ceph_rgw_docker_cpu_limit: 2

To change the default RAM for OSD daemons, set the osd_memory_target in the /usr/share/ceph-ansible/group_vars/all.yml file when deploying the daemon. For example, to limit the OSD RAM to 6 GB:

    ceph_conf_overrides:
      osd:
        osd_memory_target: 6000000000

Important

In a hyperconverged infrastructure (HCI) configuration, you can also use the ceph_osd_docker_memory_limit parameter in the osds.yml configuration file to change the Docker memory CGroup limit. In this case, set ceph_osd_docker_memory_limit to 50% higher than osd_memory_target, so that the CGroup limit is more constraining than it is by default for an HCI configuration. For example, if osd_memory_target is set to 6 GB, set ceph_osd_docker_memory_limit to 9 GB:

    ceph_osd_docker_memory_limit: 9g
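The 50% rule for HCI configurations is simple arithmetic, and a short calculation can help when choosing values other than 6 GB. The following Python sketch is illustrative only: `hci_memory_limit_bytes` is a hypothetical helper, not a ceph-ansible function, and it follows the rounding used in this section, where 6000000000 bytes is treated as 6 GB and the result is written with a `g` suffix without distinguishing decimal from binary units.

```python
def hci_memory_limit_bytes(osd_memory_target: int) -> int:
    """Return a CGroup memory limit 50% above osd_memory_target (in bytes)."""
    if osd_memory_target <= 0:
        raise ValueError("osd_memory_target must be a positive number of bytes")
    # Multiply by 3/2 using integer arithmetic to avoid float rounding.
    return osd_memory_target * 3 // 2


target = 6_000_000_000  # the 6 GB example value from this section
limit = hci_memory_limit_bytes(target)

print(f"osd_memory_target: {target}")
# Assumption: express the limit in whole decimal gigabytes, as the section does.
print(f"ceph_osd_docker_memory_limit: {limit // 10**9}g")
```

For the 6 GB target this yields a 9 GB limit, matching the example above. For targets that do not divide evenly into whole gigabytes, check the result by hand before writing it to osds.yml.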

Additional Resources

- The sample configuration files in the /usr/share/ceph-ansible/group_vars/ directory