Managing, monitoring, and updating the kernel

A guide to managing the Linux kernel on Red Hat Enterprise Linux 8

Abstract

Providing feedback on Red Hat documentation

We are committed to providing high-quality documentation and value your feedback. To help us improve, you can submit suggestions or report errors through the Red Hat Jira tracking system.

Procedure

Log in to the Jira website.

If you do not have an account, select the option to create one.

- Click Create in the top navigation bar.

- Enter a descriptive title in the Summary field.

- Enter your suggestion for improvement in the Description field. Include links to the relevant parts of the documentation.

- Click Create at the bottom of the dialogue.

Chapter 1. The Linux kernel

Learn about the Linux kernel and the Linux kernel RPM package provided and maintained by Red Hat (Red Hat kernel). Keeping the Red Hat kernel updated ensures that the operating system has all the latest bug fixes, performance enhancements, and patches, and is compatible with new hardware.

1.1. What the kernel is

The kernel is a core part of a Linux operating system that manages the system resources and provides an interface between hardware and software applications.

The Red Hat kernel is a custom-built kernel based on the upstream Linux mainline kernel that Red Hat engineers further develop and harden with a focus on stability and compatibility with the latest technologies and hardware.

Before Red Hat releases a new kernel version, the kernel needs to pass a set of rigorous quality assurance tests.

The Red Hat kernels are packaged in the RPM format so that they are easily upgraded and verified by the YUM package manager.

Kernels that are not compiled by Red Hat are not supported by Red Hat.

1.2. RPM packages

An RPM package consists of an archive of files and metadata used to install and erase these files. Specifically, the RPM package contains the following parts:

- GPG signature: used to verify the integrity of the package.

- Header (package metadata): the RPM package manager uses this metadata to determine package dependencies, where to install files, and other information.

- Payload: a cpio archive that contains files to install to the system.

There are two types of RPM packages. Both types share the file format and tooling, but have different contents and serve different purposes:

Source RPM (SRPM)

An SRPM contains source code and a spec file, which describes how to build the source code into a binary RPM. Optionally, the SRPM can contain patches to source code.

Binary RPM

A binary RPM contains the binaries built from the sources and patches.

1.3. The Linux kernel RPM package overview

The kernel RPM is a meta package that ensures required subpackages are properly installed, including kernel-core and kernel-modules for the Linux kernel binary and modules.

kernel-core - Provides the binary image of the kernel, all initramfs-related objects to bootstrap the system, and a minimal number of kernel modules to ensure core functionality. This subpackage alone could be used in virtualized and cloud environments to provide a Red Hat Enterprise Linux 8 kernel with a quick boot time and a small disk size footprint.

kernel-modules - Provides the remaining kernel modules that are not present in kernel-core.

The small set of kernel subpackages above aims to provide a reduced maintenance surface to system administrators especially in virtualized and cloud environments.

Examples of optional kernel packages:

kernel-modules-extra - Provides kernel modules for rare hardware. Loading of these modules is disabled by default.

kernel-debug - Provides a kernel with many debugging options enabled for kernel diagnosis, at the expense of reduced performance.

kernel-tools - Provides tools for manipulating the Linux kernel and supporting documentation.

kernel-devel - Provides the kernel headers and makefiles that are enough to build modules against the kernel package.

kernel-abi-stablelists - Provides information pertaining to the RHEL kernel ABI, including a list of kernel symbols required by external Linux kernel modules and a yum plug-in to aid enforcement.

kernel-headers - Includes the C header files that specify the interface between the Linux kernel and user-space libraries and programs. The header files define structures and constants required for building most standard programs.

1.4. Displaying contents of a kernel package

By querying the repository, you can see if a kernel package provides a specific file, such as a module. It is not necessary to download or install the package to display the file list.

Use the yum utility, which is based on DNF in RHEL 8, to query the file list, for example, of the kernel-core, kernel-modules-core, or kernel-modules package. Note that the kernel package is a meta package that does not contain any files.

Procedure

List the available versions of a package:

$ yum repoquery <package_name>

Display the list of files in a package:

$ yum repoquery -l <package_name>
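To check whether a package ships one particular module, you can filter the file list instead of reading it. The following is a minimal sketch: list_has_module is a hypothetical helper, not part of yum or kmod, and it assumes that module files end in .ko, optionally followed by a compression extension such as .xz.

```shell
# Hypothetical helper: read a package file list on stdin (for example the
# output of `yum repoquery -l <package_name>`) and print the first path
# whose module name matches $1. Exits 0 on a match, 1 otherwise.
list_has_module() (
    # modprobe treats "-" and "_" in module names as equivalent.
    want=$(printf '%s' "$1" | sed 's/-/_/g')
    while IFS= read -r path; do
        base=${path##*/}
        # Only consider kernel module files.
        case $base in
            *.ko|*.ko.xz|*.ko.gz|*.ko.zst) ;;
            *) continue ;;
        esac
        # Strip the .ko suffix and any compression extension.
        name=$(printf '%s' "${base%%.ko*}" | sed 's/-/_/g')
        if [ "$name" = "$want" ]; then
            printf '%s\n' "$path"
            exit 0
        fi
    done
    exit 1
)
```

For example:

$ yum repoquery -l kernel-modules | list_has_module <MODULE_NAME>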

1.5. Installing specific kernel versions

Install new kernels using the yum package manager.

Procedure

To install a specific kernel version, enter the following command, where <version> is the version and release of the kernel, for example 4.18.0-305.el8:

# yum install kernel-<version>

1.6. Updating the kernel

Update the kernel using the yum package manager.

Procedure

To update the kernel, enter the following command:

# yum update kernel

This command updates the kernel along with all dependencies to the latest available version.

- Reboot your system for the changes to take effect.
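After the reboot, you can confirm that the system is running the kernel that GRUB boots by default. A minimal sketch, assuming the grubby --default-kernel option, which prints the path of the default kernel image; reboot_pending is a hypothetical helper name.

```shell
# Hypothetical helper: $1 is the running kernel release (as printed by
# `uname -r`), $2 is the default kernel image path (as printed by
# `grubby --default-kernel`). Succeeds when they differ, that is, when a
# reboot is still needed to start the default kernel.
reboot_pending() (
    running=$1
    default_path=$2
    [ "$default_path" != "/boot/vmlinuz-$running" ]
)
```

For example:

# reboot_pending "$(uname -r)" "$(grubby --default-kernel)" && echo "Reboot required"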

When upgrading from RHEL 7 to RHEL 8, follow the relevant sections of the Upgrading from RHEL 7 to RHEL 8 document.

1.7. Setting a kernel as default

Set a specific kernel as default by using the grubby command-line tool and GRUB.

Procedure

Setting the kernel as default by using the grubby tool.

Enter the following command to set the kernel as default using the grubby tool:

# grubby --set-default $kernel_path

The command uses a machine ID without the .conf suffix as an argument.

Note: The machine ID is located in the /boot/loader/entries/ directory.

Setting the kernel as default by using the id argument.

List the boot entries using the id argument:

# grubby --info ALL | grep id

Set an intended kernel as default:

# grubby --set-default /boot/vmlinuz-<version>.<architecture>

Note: To list the boot entries using the title argument, run the following command:

# grubby --info=ALL | grep title

Setting the default kernel for only the next boot.

To set the default kernel for only the next boot, use the grub2-reboot command:

# grub2-reboot <index|title|id>

Warning: Set the default kernel for only the next boot with care. Installing new kernel RPMs, self-built kernels, and manually adding the entries to the /boot/loader/entries/ directory might change the index values.
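When you set the default kernel by path, a mistyped version string does not select the kernel you intended. The following sketch assumes kernel images are named /boot/vmlinuz-<version>.<architecture>, as in the steps above; it builds the path from a release string and fails if no such file exists. kernel_image_for and its optional boot directory argument are hypothetical.

```shell
# Hypothetical helper: print the kernel image path for release $1
# (for example 4.18.0-305.el8.x86_64). $2 optionally replaces /boot,
# so the helper can be tried against a scratch directory.
kernel_image_for() (
    release=$1
    boot_dir=${2:-/boot}
    image="$boot_dir/vmlinuz-$release"
    if [ ! -f "$image" ]; then
        echo "no kernel image: $image" >&2
        exit 1
    fi
    printf '%s\n' "$image"
)
```

For example, grubby only runs if the image exists:

# img=$(kernel_image_for 4.18.0-305.el8.x86_64) && grubby --set-default "$img"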

Chapter 2. Managing kernel modules

Learn about kernel modules, how to display their information, and how to perform basic administrative tasks with kernel modules.

2.1. Introduction to kernel modules

Extend the Red Hat Enterprise Linux kernel functionality without rebooting by using kernel modules. These compressed object files add support for hardware drivers, file systems, and system calls. Manage them dynamically to load or unload features as required.

The most common functionality enabled by kernel modules is:

- Device drivers that add support for new hardware

- Support for a file system such as GFS2 or NFS

- System calls

On modern systems, kernel modules are automatically loaded when needed. However, in some cases it is necessary to load or unload modules manually.

Similarly to the kernel, modules accept parameters that customize their behavior.

You can use the kernel tools to perform the following actions on modules:

- Inspect modules that are currently running.

- Inspect modules that are available to load into the kernel.

- Inspect parameters that a module accepts.

- Load kernel modules into, and unload them from, the running kernel.

2.2. Kernel module dependencies

Some kernel modules depend on one or more other kernel modules. The /lib/modules/<KERNEL_VERSION>/modules.dep file contains a complete list of kernel module dependencies for the corresponding kernel version.

depmod

The dependency file is generated by the depmod program, included in the kmod package. Many utilities provided by kmod consider module dependencies when performing operations. Therefore, manual dependency-tracking is rarely necessary.
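Each line of modules.dep names a module file, followed by a colon and the module files it depends on, with paths relative to /lib/modules/<KERNEL_VERSION>/. As an illustration of that format, the sketch below prints the direct dependencies of one module. module_deps is a hypothetical helper; in practice, modprobe --show-depends <MODULE_NAME> resolves the full dependency chain for you.

```shell
# Hypothetical helper: print the direct dependencies of module $1 as
# recorded in a modules.dep file ($2, default: the running kernel's).
# Line format: path/to/mod.ko.xz: path/to/dep1.ko.xz path/to/dep2.ko.xz
module_deps() (
    name=$1
    dep_file=${2:-/lib/modules/$(uname -r)/modules.dep}
    awk -v want="$name" '
        BEGIN { gsub(/-/, "_", want) }
        # Reduce a module path to its module name.
        function modname(p) {
            sub(/.*\//, "", p)
            sub(/\.ko.*$/, "", p)
            gsub(/-/, "_", p)
            return p
        }
        {
            target = $1
            sub(/:$/, "", target)
            if (modname(target) == want) {
                for (i = 2; i <= NF; i++) print modname($i)
                found = 1
                exit
            }
        }
        END { if (!found) exit 1 }
    ' "$dep_file"
)
```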

The code of kernel modules executes in kernel-space in the unrestricted mode. Be mindful of what modules you are loading.

weak-modules

In addition to depmod, Red Hat Enterprise Linux provides the weak-modules script, which is a part of the kmod package. weak-modules determines the modules that are kABI-compatible with installed kernels. When checking the kernel compatibility of modules, weak-modules processes module symbol dependencies from the highest to the lowest release of the kernel for which they were built. It processes each module independently of the kernel release.

2.3. Listing installed kernel modules

The grubby --info=ALL command displays an indexed list of installed kernels, both on installations that use the Boot Loader Specification (BLS) and on those that do not.

Procedure

List the installed kernels using the following command:

# grubby --info=ALL | grep title

The list of all installed kernels is displayed as follows:

title=Red Hat Enterprise Linux (4.18.0-20.el8.x86_64) 8.0 (Ootpa)
title=Red Hat Enterprise Linux (4.18.0-19.el8.x86_64) 8.0 (Ootpa)
title=Red Hat Enterprise Linux (4.18.0-12.el8.x86_64) 8.0 (Ootpa)
title=Red Hat Enterprise Linux (4.18.0) 8.0 (Ootpa)
title=Red Hat Enterprise Linux (0-rescue-2fb13ddde2e24fde9e6a246a942caed1) 8.0 (Ootpa)

The output lists the installed kernels shown in the GRUB menu, as reported by grubby-8.40-17.

2.4. Listing currently loaded kernel modules

View the currently loaded kernel modules.

Prerequisites

- The kmod package is installed.

Procedure

To list all currently loaded kernel modules, enter:

$ lsmod
Module                  Size  Used by
fuse                  126976  3
uinput                 20480  1
xt_CHECKSUM            16384  1
ipt_MASQUERADE         16384  1
xt_conntrack           16384  1
ipt_REJECT             16384  1
nft_counter            16384  16
nf_nat_tftp            16384  0
nf_conntrack_tftp      16384  1 nf_nat_tftp
tun                    49152  1
bridge                192512  0
stp                    16384  1 bridge
llc                    16384  2 bridge,stp
nf_tables_set          32768  5
nft_fib_inet           16384  1
…

In the example above:

- The Module column provides the names of currently loaded modules.

- The Size column displays the amount of memory per module in bytes.

- The Used by column shows the number of users of the module and, optionally, the names of the modules that depend on it.
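lsmod formats the contents of /proc/modules, where the first field of each line is the module name. A script that only needs to know whether a module is loaded can read that file directly, as in this minimal sketch. module_loaded is a hypothetical helper, and its optional second argument only points it at a sample file.

```shell
# Hypothetical helper: succeed if module $1 is currently loaded.
# Reads /proc/modules (the data source of lsmod) unless $2 names
# another file in the same format.
module_loaded() (
    # The kernel reports module names with underscores, so map dashes.
    want=$(printf '%s' "$1" | sed 's/-/_/g')
    awk -v want="$want" '
        $1 == want { found = 1; exit }
        END { if (!found) exit 1 }
    ' "${2:-/proc/modules}"
)
```

For example:

$ module_loaded bridge && echo "bridge is loaded"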


2.5. Listing all installed kernels

Use the grubby utility to list all installed kernels on your system.

Prerequisites

- You have root permissions.

Procedure

To list all installed kernels, enter:

# grubby --info=ALL | grep ^kernel
kernel="/boot/vmlinuz-4.18.0-305.10.2.el8_4.x86_64"
kernel="/boot/vmlinuz-4.18.0-240.el8.x86_64"
kernel="/boot/vmlinuz-0-rescue-41eb2e172d7244698abda79a51778f1b"

The output shows the path and versions of all the kernels installed.
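To reuse this list in a script, for example to pick a path for grubby --set-default, you can extract the paths from the full grubby --info=ALL output. This sketch assumes the kernel="..." line format shown above and that rescue images contain -rescue- in their file name, as in the example; installed_kernels is a hypothetical helper.

```shell
# Hypothetical helper: read `grubby --info=ALL` output on stdin and print
# one installed kernel image path per line, skipping rescue images.
installed_kernels() {
    sed -n 's/^kernel="\(.*\)"$/\1/p' | grep -v -- '-rescue-'
}
```

For example:

# grubby --info=ALL | installed_kernels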

2.6. Displaying information about kernel modules

Use the modinfo command to display detailed information about the specified kernel module.

Prerequisites

- The kmod package is installed.

Procedure

To display information about any kernel module, enter:

$ modinfo <KERNEL_MODULE_NAME>

For example:

$ modinfo virtio_net
filename:       /lib/modules/4.18.0-94.el8.x86_64/kernel/drivers/net/virtio_net.ko.xz
license:        GPL
description:    Virtio network driver
rhelversion:    8.1
srcversion:     2E9345B281A898A91319773
alias:          virtio:d00000001v*
depends:        net_failover
intree:         Y
name:           virtio_net
vermagic:       4.18.0-94.el8.x86_64 SMP mod_unload modversions
…
parm:           napi_weight:int
parm:           csum:bool
parm:           gso:bool
parm:           napi_tx:bool

You can query information about all available modules, regardless of whether they are loaded. The parm entries show parameters the user is able to set for the module, and what type of value they expect.

Note: When entering the name of a kernel module, do not append the .ko.xz extension to the end of the name. Kernel module names do not have extensions; their corresponding files do.
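modinfo shows which parameters a module accepts, but not the values a loaded module is currently using. For a loaded module, the kernel exposes the parameters that the module makes visible in sysfs as files under /sys/module/<MODULE_NAME>/parameters/. The following minimal sketch prints them as name=value pairs; module_param_values is a hypothetical helper, and its optional second argument replaces /sys/module only so it can be tried on a sample tree.

```shell
# Hypothetical helper: print name=value for each readable parameter of
# loaded module $1. $2 optionally replaces /sys/module.
module_param_values() (
    name=$(printf '%s' "$1" | sed 's/-/_/g')
    dir="${2:-/sys/module}/$name/parameters"
    if [ ! -d "$dir" ]; then
        echo "no parameters directory for $name" >&2
        exit 1
    fi
    for p in "$dir"/*; do
        # Skip the unexpanded pattern (empty directory) and unreadable files.
        [ -f "$p" ] && [ -r "$p" ] || continue
        printf '%s=%s\n' "${p##*/}" "$(cat "$p" 2>/dev/null)"
    done
)
```

For example:

$ module_param_values virtio_net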

2.7. Loading kernel modules at system runtime

You can extend the functionality of the Linux kernel by loading kernel modules. Use the modprobe command to find and load a kernel module into the currently running kernel.

The changes described in this procedure will not persist after rebooting the system. For information about how to load kernel modules to persist across system reboots, see Loading kernel modules automatically at system boot time.

Prerequisites

- Root permissions

- The kmod package is installed.

- The kernel module is not loaded. To verify this, see Listing currently loaded kernel modules.

Procedure

Select a kernel module you want to load.

The modules are located in the /lib/modules/$(uname -r)/kernel/<SUBSYSTEM>/ directory.

Load the relevant kernel module:

# modprobe <MODULE_NAME>

Note: When entering the name of a kernel module, do not append the .ko.xz extension to the end of the name. Kernel module names do not have extensions; their corresponding files do.

Verification

Optionally, verify the relevant module was loaded:

$ lsmod | grep <MODULE_NAME>

If the module was loaded correctly, this command displays the relevant kernel module. For example:

$ lsmod | grep serio_raw
serio_raw              16384  0
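Because modprobe expects a module name rather than a file name, a file you select in the /lib/modules/$(uname -r)/kernel/<SUBSYSTEM>/ directory has to be reduced to its name first. A minimal sketch; module_name_from_file is a hypothetical helper that strips the directory and everything from the .ko suffix onward.

```shell
# Hypothetical helper: turn a module file path such as
# .../serio_raw.ko.xz into the module name serio_raw.
# A plain module name is returned unchanged.
module_name_from_file() (
    base=${1##*/}          # drop the directory part
    printf '%s\n' "${base%%.ko*}"   # drop .ko and any compression suffix
)
```

For example:

# modprobe "$(module_name_from_file /lib/modules/$(uname -r)/kernel/drivers/input/serio/serio_raw.ko.xz)"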

2.8. Unloading kernel modules at system runtime

Use the modprobe command to find and unload a kernel module from the currently running kernel.

Do not unload kernel modules that the running system uses, because doing so can make the system unstable or non-operational.

Unloading a module affects only the running system: modules that are configured to load automatically at boot are loaded again after a reboot. For information about how to prevent this outcome, see Preventing kernel modules from being automatically loaded at system boot time.

Prerequisites

- You have root permissions.

- The kmod package is installed.

Procedure

List all the loaded kernel modules:

# lsmod

Select the kernel module you want to unload.

If other kernel modules depend on the module you want to unload, as listed in its Used by column, unload those modules first. For details on identifying modules with dependencies, see Listing currently loaded kernel modules and Kernel module dependencies.

Unload the relevant kernel module:

# modprobe -r <MODULE_NAME>

Note: When entering the name of a kernel module, do not append the .ko.xz extension to the end of the name. Kernel module names do not have extensions; their corresponding files do.

Verification

Optionally, verify the relevant module was unloaded:

$ lsmod | grep <MODULE_NAME>

If the module is unloaded successfully, this command does not display any output.
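Before unloading a module, you can check the Used by count that lsmod reports. The sketch below reads that count from the third field of /proc/modules. A count of 0 means that no other module or user currently holds a reference; it does not prove that the running system can do without the module, so the warning about modules used by the running system still applies. module_refcount is a hypothetical helper.

```shell
# Hypothetical helper: print the reference count of loaded module $1,
# shown by lsmod as the number in the "Used by" column. Fails if the
# module is not loaded.
# /proc/modules fields: name, size, refcount, dependents, state, address.
module_refcount() (
    want=$(printf '%s' "$1" | sed 's/-/_/g')
    awk -v want="$want" '
        $1 == want { print $3; found = 1; exit }
        END { if (!found) exit 1 }
    ' "${2:-/proc/modules}"
)
```

For example:

# [ "$(module_refcount <MODULE_NAME>)" = 0 ] && modprobe -r <MODULE_NAME>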

2.9. Unloading kernel modules at early stages of the boot process

Unload a kernel module early in the boot process if it causes system unresponsiveness preventing normal access. Use the boot loader to temporarily block specific modules, allowing you to reach a state where permanent changes can be made.

The changes described in this procedure will not persist after the next reboot. For information about how to add a kernel module to a denylist so that it will not be automatically loaded during the boot process, see Preventing kernel modules from being automatically loaded at system boot time.

Prerequisites

- You have a loadable kernel module that you want to prevent from loading for some reason.

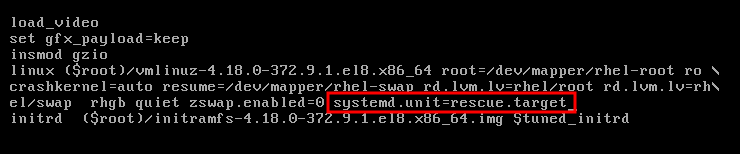

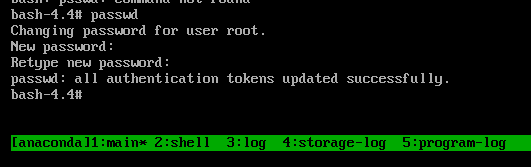

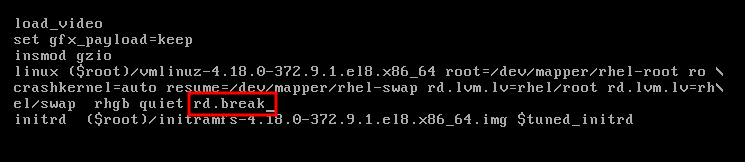

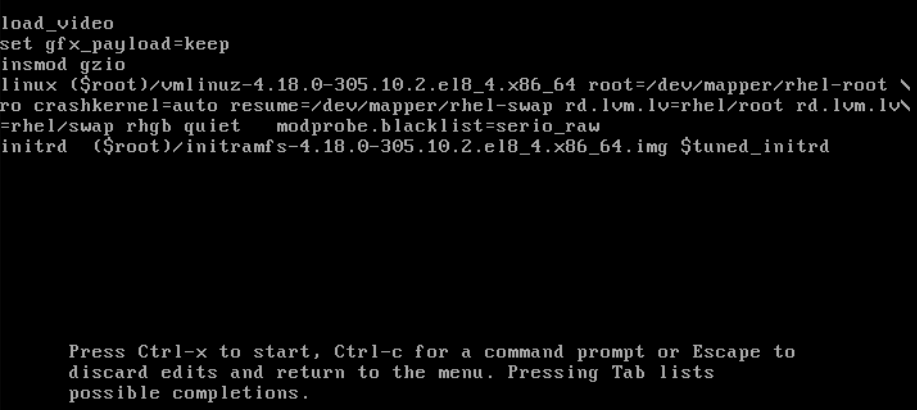

Procedure

- Boot the system into the boot loader.

- Use the cursor keys to highlight the relevant boot loader entry.

- Press the e key to edit the entry.

- Use the cursor keys to navigate to the line that starts with linux.

- Append modprobe.blacklist=module_name to the end of the line.

Figure 2.2. Kernel boot entry

The serio_raw kernel module illustrates a rogue module to be unloaded early in the boot process.

- Press Ctrl+X to boot using the modified configuration.

Verification

After the system boots, verify that the relevant kernel module is not loaded:

# lsmod | grep serio_raw

If the module is not loaded, the command does not display any output.
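To confirm that the edited boot entry actually reached the kernel, you can look for the parameter in /proc/cmdline. The modprobe.blacklist= parameter also accepts a comma-separated list of module names, so the sketch below splits its value on commas. cmdline_blacklists is a hypothetical helper; its optional second argument replaces /proc/cmdline only so it can be tried on a sample file.

```shell
# Hypothetical helper: succeed if the kernel command line contains a
# modprobe.blacklist= parameter that lists module $1.
cmdline_blacklists() (
    want=$1
    set -f  # no pathname expansion while splitting the command line
    for word in $(cat "${2:-/proc/cmdline}"); do
        case $word in
            modprobe.blacklist=*)
                # Turn the comma-separated value into separate words.
                for mod in $(printf '%s' "${word#modprobe.blacklist=}" | tr ',' ' '); do
                    if [ "$mod" = "$want" ]; then
                        exit 0
                    fi
                done
                ;;
        esac
    done
    exit 1
)
```

For example:

$ cmdline_blacklists serio_raw && echo "serio_raw is denied on this boot"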

2.10. Loading kernel modules automatically at system boot time

Configure a kernel module to load it automatically during the boot process.

Prerequisites

- Root permissions

- The kmod package is installed.

Procedure

Select a kernel module you want to load during the boot process.

The modules are located in the /lib/modules/$(uname -r)/kernel/<SUBSYSTEM>/ directory.

Create a configuration file for the module:

# echo <MODULE_NAME> > /etc/modules-load.d/<MODULE_NAME>.conf

Note: When entering the name of a kernel module, do not append the .ko.xz extension to the end of the name. Kernel module names do not have extensions; their corresponding files do.

Verification

After reboot, verify the relevant module is loaded:

$ lsmod | grep <MODULE_NAME>

The changes described in this procedure will persist after rebooting the system.
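If you enable several modules this way, a small helper keeps file names and contents consistent. At boot, systemd-modules-load.service reads the .conf files in /etc/modules-load.d/; this sketch assumes one module name per line, which is the format the procedure writes. enable_module_at_boot is a hypothetical helper that rejects file names such as serio_raw.ko.xz, and its optional second argument replaces /etc/modules-load.d only so it can be tried in a scratch directory.

```shell
# Hypothetical helper: write /etc/modules-load.d/<NAME>.conf containing
# the module name $1. $2 optionally replaces /etc/modules-load.d.
enable_module_at_boot() (
    name=$1
    conf_dir=${2:-/etc/modules-load.d}
    # Module names have no path and no .ko extension.
    case $name in
        */*|*.ko*)
            echo "pass a module name, not a file: $name" >&2
            exit 1
            ;;
    esac
    printf '%s\n' "$name" > "$conf_dir/$name.conf"
)
```

For example:

# enable_module_at_boot <MODULE_NAME>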

2.11. Preventing kernel modules from being automatically loaded at system boot time

Prevent the system from loading a kernel module automatically during boot by listing the module in the modprobe configuration file with a corresponding command.

Prerequisites

- The commands in this procedure require root privileges. Either use su - to switch to the root user or preface the commands with sudo.

- The kmod package is installed.

- Ensure that your current system configuration does not require a kernel module you plan to deny.

Procedure

List modules loaded into the currently running kernel by using the lsmod command:

$ lsmod
Module                  Size  Used by
tls                   131072  0
uinput                 20480  1
snd_seq_dummy          16384  0
snd_hrtimer            16384  1
…

In the output, identify the module you want to prevent from getting loaded.

Alternatively, identify an unloaded kernel module you want to prevent from potentially loading in the /lib/modules/<KERNEL-VERSION>/kernel/<SUBSYSTEM>/ directory, for example:

$ ls /lib/modules/4.18.0-477.20.1.el8_8.x86_64/kernel/crypto/
ansi_cprng.ko.xz  chacha20poly1305.ko.xz  md4.ko.xz  serpent_generic.ko.xz
anubis.ko.xz  cmac.ko.xz…

Create a configuration file serving as a denylist:

# touch /etc/modprobe.d/denylist.conf

In a text editor of your choice, pair the name of each module you want to exclude from automatic loading with the blacklist configuration command, for example:

# Prevents <KERNEL-MODULE-1> from being loaded
blacklist <MODULE-NAME-1>
install <MODULE-NAME-1> /bin/false

# Prevents <KERNEL-MODULE-2> from being loaded
blacklist <MODULE-NAME-2>
install <MODULE-NAME-2> /bin/false
…

Because the blacklist command does not prevent the module from getting loaded as a dependency for another kernel module that is not in a denylist, you must also define the install line. In this case, the system runs /bin/false instead of installing the module. The lines starting with a hash sign are comments you can use to make the file more readable.

Note: When entering the name of a kernel module, do not append the .ko.xz extension to the end of the name. Kernel module names do not have extensions; their corresponding files do.

Create a backup copy of the current initial RAM disk image before rebuilding:

# cp /boot/initramfs-$(uname -r).img /boot/initramfs-$(uname -r).bak.$(date +%m-%d-%H%M%S).img

Alternatively, create a backup copy of an initial RAM disk image which corresponds to the kernel version for which you want to prevent kernel modules from automatic loading:

# cp /boot/initramfs-<VERSION>.img /boot/initramfs-<VERSION>.img.bak.$(date +%m-%d-%H%M%S)

Generate a new initial RAM disk image to apply the changes:

# dracut -f -v

If you build an initial RAM disk image for a different kernel version than your system currently uses, specify both target initramfs and kernel version:

# dracut -f -v /boot/initramfs-<TARGET-VERSION>.img <CORRESPONDING-TARGET-KERNEL-VERSION>

Restart the system:

# reboot

The changes described in this procedure take effect and persist after rebooting the system. If you add a kernel module that the system requires to the denylist, the system can become unstable or non-operational.
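When you deny several modules, writing the blacklist and install lines by hand is repetitive. This sketch prints entries in the format used in the denylist procedure for each module name given; denylist_entries is a hypothetical helper. It only prints text, so you still write the file and rebuild the initial RAM disk image as described in the procedure.

```shell
# Hypothetical helper: for each module name argument, print a comment,
# a blacklist line, and an install line that runs /bin/false.
denylist_entries() (
    for mod in "$@"; do
        printf '# Prevents %s from being loaded\n' "$mod"
        printf 'blacklist %s\n' "$mod"
        printf 'install %s /bin/false\n' "$mod"
    done
)
```

For example, the following overwrites the denylist file and then rebuilds the initial RAM disk image:

# denylist_entries <MODULE-NAME-1> <MODULE-NAME-2> > /etc/modprobe.d/denylist.conf
# dracut -f -v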

2.12. Compiling custom kernel modules

You can build a custom kernel module when a hardware or software configuration requires one. The following procedure builds a minimal sample module.

Prerequisites

- You installed the kernel-devel, gcc, and elfutils-libelf-devel packages:

# dnf install kernel-devel-$(uname -r) gcc elfutils-libelf-devel

- You have root permissions.

- You created the /root/testmodule/ directory where you compile the custom kernel module.
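The make command in this procedure builds against /lib/modules/$(uname -r)/build, which on RHEL 8 typically points into the tree installed by the matching kernel-devel package (the compilation output shows /usr/src/kernels/<version>). If kernel-devel does not match the running kernel, the build fails. This minimal sketch checks for the build directory before you start; check_build_tree is a hypothetical helper, and its optional second argument replaces /lib/modules only so it can be tried in a scratch directory.

```shell
# Hypothetical helper: succeed if the kernel build tree exists for
# release $1 (default: the running kernel). $2 optionally replaces
# /lib/modules. A dangling build link counts as missing.
check_build_tree() (
    release=${1:-$(uname -r)}
    modules_root=${2:-/lib/modules}
    if [ ! -d "$modules_root/$release/build" ]; then
        echo "missing $modules_root/$release/build: install kernel-devel-$release" >&2
        exit 1
    fi
)
```

For example:

# check_build_tree && make -C /lib/modules/$(uname -r)/build M=/root/testmodule modules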

Procedure

Create the /root/testmodule/test.c file with the following content.

#include <linux/module.h>
#include <linux/kernel.h>

int init_module(void)
{
    printk("Hello World\n This is a test\n");
    return 0;
}

void cleanup_module(void)
{
    printk("Good Bye World");
}

The test.c file is a source file that provides the main functionality to the kernel module. The file has been created in a dedicated /root/testmodule/ directory for organizational purposes. After the module compilation, the /root/testmodule/ directory will contain multiple files.

The test.c file includes the following header files:

- The linux/kernel.h header file is necessary for the printk() function in the example code.

- The linux/module.h header file contains function declarations and macro definitions that are shared across multiple C source files.

The init_module() and cleanup_module() functions are the entry and exit points of the module: the kernel calls init_module() when it loads the module and cleanup_module() when it unloads it. Both functions call the kernel logging function printk(), which prints text to the kernel ring buffer.

Create the /root/testmodule/Makefile file with the following content.

obj-m := test.o

The Makefile instructs the kernel build system to produce an object file named test.o. The obj-m directive specifies that the resulting test.ko file is going to be compiled as a loadable kernel module. Alternatively, the obj-y directive instructs the build system to build the code into the kernel image as built-in code.

Compile the kernel module.

# make -C /lib/modules/$(uname -r)/build M=/root/testmodule modules
make: Entering directory '/usr/src/kernels/4.18.0-305.el8.x86_64'
  CC [M]  /root/testmodule/test.o
  Building modules, stage 2.
  MODPOST 1 modules
WARNING: modpost: missing MODULE_LICENSE() in /root/testmodule/test.o
see include/linux/module.h for more information
  CC      /root/testmodule/test.mod.o
  LD [M]  /root/testmodule/test.ko
make: Leaving directory '/usr/src/kernels/4.18.0-305.el8.x86_64'

The modpost warning appears because test.c does not declare a license with the MODULE_LICENSE() macro; the module still builds.

The compiler creates an object file (test.o) for each source file (test.c) as an intermediate step before linking them together into the final kernel module (test.ko).

After a successful compilation, /root/testmodule/ contains additional files that relate to the compiled custom kernel module. The compiled module itself is represented by the test.ko file.

Verification

Optional: check the contents of the /root/testmodule/ directory:

# ls -l /root/testmodule/
total 152
-rw-r--r--. 1 root root    16 Jul 26 08:19 Makefile
-rw-r--r--. 1 root root    25 Jul 26 08:20 modules.order
-rw-r--r--. 1 root root     0 Jul 26 08:20 Module.symvers
-rw-r--r--. 1 root root   224 Jul 26 08:18 test.c
-rw-r--r--. 1 root root 62176 Jul 26 08:20 test.ko
-rw-r--r--. 1 root root    25 Jul 26 08:20 test.mod
-rw-r--r--. 1 root root   849 Jul 26 08:20 test.mod.c
-rw-r--r--. 1 root root 50936 Jul 26 08:20 test.mod.o
-rw-r--r--. 1 root root 12912 Jul 26 08:20 test.o

Copy the kernel module to the /lib/modules/$(uname -r)/ directory:

# cp /root/testmodule/test.ko /lib/modules/$(uname -r)/

Update the module dependency list:

# depmod -a

Load the kernel module:

# modprobe -v test
insmod /lib/modules/4.18.0-305.el8.x86_64/test.ko

Verify that the kernel module was successfully loaded:

# lsmod | grep test
test                   16384  0

Read the latest messages from the kernel ring buffer:

# dmesg
[74422.545004] Hello World This is a test

Chapter 3. Signing a kernel and modules for Secure Boot

Enhance system security by using signed kernels and modules. On UEFI systems with Secure Boot, you can self-sign custom kernel builds or kernel modules, and import public keys to target systems. Secure Boot requires signing the boot loader, kernel, and all kernel modules to ensure a successful boot process.

RHEL 8 includes:

- Signed boot loaders

- Signed kernels

- Signed kernel modules

In addition, the signed first-stage boot loader and the signed kernel include embedded Red Hat public keys. These signed executable binaries and embedded keys enable RHEL 8 to install, boot, and run with the Microsoft UEFI Secure Boot Certification Authority keys. These keys are provided by the UEFI firmware on systems that support UEFI Secure Boot.

- Not all UEFI-based systems include support for Secure Boot.

- The build system, where you build and sign your kernel module, does not need to have UEFI Secure Boot enabled and does not even need to be a UEFI-based system.

3.1. Prerequisites

To be able to sign externally built kernel modules, install the utilities from the following packages:

# yum install pesign openssl kernel-devel mokutil keyutils

Table 3.1. Required utilities

| Utility | Provided by package | Used on | Purpose |
|---|---|---|---|
| efikeygen | pesign | Build system | Generates public and private X.509 key pair |
| openssl | openssl | Build system | Exports the unencrypted private key |
| sign-file | kernel-devel | Build system | Executable file used to sign a kernel module with the private key |
| mokutil | mokutil | Target system | Optional utility used to manually enroll the public key |
| keyctl | keyutils | Target system | Optional utility used to display public keys in the system keyring |

3.2. What is UEFI Secure Boot

With the Unified Extensible Firmware Interface (UEFI) Secure Boot technology, you can prevent the execution of kernel-space code that is not signed by a trusted key. The system boot loader is signed with a cryptographic key, and a database of public keys in the firmware authorizes that signing key. You can subsequently verify a signature in the next-stage boot loader and the kernel.

UEFI Secure Boot establishes a chain of trust from the firmware to the signed drivers and kernel modules as follows:

- A UEFI private key signs the shim first-stage boot loader, and a public key authenticates it. A certificate authority (CA) in turn signs the public key. The CA is stored in the firmware database.

- The shim file contains the Red Hat public key Red Hat Secure Boot (CA key 1) to authenticate the GRUB boot loader and the kernel.

- The kernel in turn contains public keys to authenticate drivers and modules.

Secure Boot is the boot path validation component of the UEFI specification. The specification defines:

- Programming interface for cryptographically protected UEFI variables in non-volatile storage.

- Storing the trusted X.509 root certificates in UEFI variables.

- Validation of UEFI applications such as boot loaders and drivers.

- Procedures to revoke known-bad certificates and application hashes.

UEFI Secure Boot helps in the detection of unauthorized changes but does not:

- Prevent installation or removal of second-stage boot loaders.

- Require explicit user confirmation of such changes.

- Stop boot path manipulations. Signatures are verified during booting, but not when the boot loader is installed or updated.

If the boot loader or the kernel is not signed by a system trusted key, Secure Boot prevents it from starting.

3.3. UEFI Secure Boot support

You can install and run RHEL 8 on systems with UEFI Secure Boot enabled if a trusted key signs the kernel and all loaded drivers. Red Hat provides signed and authenticated kernels and drivers. You must sign externally built kernels or drivers before loading them.

Restrictions imposed by UEFI Secure Boot

- The system only runs the kernel-mode code after its signature has been properly authenticated.

- GRUB module loading is disabled because no infrastructure exists for signing and verification of GRUB modules. Allowing module loading would run untrusted code within the security perimeter defined by Secure Boot.

- Red Hat provides a signed GRUB binary that has all supported modules on RHEL 8.

3.4. Requirements for authenticating kernel modules with X.509 keys

When loading a kernel module, the kernel verifies its signature against public X.509 keys in the system (.builtin_trusted_keys) and platform (.platform) keyrings. Keys in the .blacklist keyring are explicitly excluded from verification.

You need to meet certain conditions to load kernel modules on systems with enabled UEFI Secure Boot functionality:

If UEFI Secure Boot is enabled or if the module.sig_enforce kernel parameter has been specified:

- You can only load those signed kernel modules whose signatures were authenticated against keys from the system keyring (.builtin_trusted_keys) and the platform keyring (.platform).

- The public key must not be on the system revoked keys keyring (.blacklist).

If UEFI Secure Boot is disabled and the module.sig_enforce kernel parameter has not been specified:

- You can load unsigned kernel modules and signed kernel modules without a public key.

If the system is not UEFI-based or if UEFI Secure Boot is disabled:

- Only the keys embedded in the kernel are loaded onto .builtin_trusted_keys and .platform.

- You have no ability to augment that set of keys without rebuilding the kernel.

| Module signed | Public key found and signature valid | UEFI Secure Boot state | sig_enforce | Module load | Kernel tainted |
|---|---|---|---|---|---|
| Unsigned | - | Not enabled | Not enabled | Succeeds | Yes |
| Unsigned | - | Not enabled | Enabled | Fails | - |
| Unsigned | - | Enabled | - | Fails | - |
| Signed | No | Not enabled | Not enabled | Succeeds | Yes |
| Signed | No | Not enabled | Enabled | Fails | - |
| Signed | No | Enabled | - | Fails | - |
| Signed | Yes | Not enabled | Not enabled | Succeeds | No |
| Signed | Yes | Not enabled | Enabled | Succeeds | No |
| Signed | Yes | Enabled | - | Succeeds | No |
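The table reduces to a short rule: a module with a valid signature from a trusted key always loads without tainting the kernel; any other module loads, with the kernel tainted, only when neither UEFI Secure Boot nor module.sig_enforce is enabled, and fails otherwise. The following sketch encodes that rule; expected_module_load is a hypothetical helper that takes yes or no answers for the four input columns of the table.

```shell
# Hypothetical helper encoding the module load table.
# Arguments (yes/no): module signed, public key found and signature
# valid, UEFI Secure Boot enabled, sig_enforce enabled.
# Prints "load", "load tainted", or "fail".
expected_module_load() (
    signed=$1 valid=$2 secure_boot=$3 sig_enforce=$4
    if [ "$signed" = yes ] && [ "$valid" = yes ]; then
        echo "load"
    elif [ "$secure_boot" = yes ] || [ "$sig_enforce" = yes ]; then
        echo "fail"
    else
        echo "load tainted"
    fi
)
```

On a target system, mokutil --sb-state reports the UEFI Secure Boot state, and /sys/module/module/parameters/sig_enforce shows the value of the module.sig_enforce parameter.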

3.5. Sources for public keys

During boot, the kernel loads X.509 keys from a set of persistent key stores into the following keyrings:

- The system keyring (.builtin_trusted_keys)

- The .platform keyring

- The system .blacklist keyring

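| Source of X.509 keys | User can add keys | UEFI Secure Boot state | Keys loaded during boot |
|---|---|---|---|
| Embedded in kernel | No | - | .builtin_trusted_keys |
| UEFI Secure Boot db | Limited | Not enabled | No |
| UEFI Secure Boot db | Limited | Enabled | .platform |
| Embedded in the shim boot loader | No | Not enabled | No |
| Embedded in the shim boot loader | No | Enabled | .platform |
| Machine Owner Key (MOK) list | Yes | Not enabled | No |
| Machine Owner Key (MOK) list | Yes | Enabled | .platform |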

.builtin_trusted_keys

- A keyring that is built on boot.

- Provides trusted public keys.

- root privileges are required to view the keys.

.platform

- A keyring that is built on boot.

- Provides keys from third-party platform providers and custom public keys.

- root privileges are required to view the keys.

.blacklist

- A keyring with X.509 keys which have been revoked.

- A module signed by a key from .blacklist will fail authentication even if your public key is in .builtin_trusted_keys.

UEFI Secure Boot db

- A signature database.

- Stores keys (hashes) of UEFI applications, UEFI drivers, and boot loaders.

- The keys can be loaded on the machine.

UEFI Secure Boot dbx

- A revoked signature database.

- Prevents keys from getting loaded.

- The revoked keys from this database are added to the .blacklist keyring.

3.6. Generating a public and private key pair

Generate an X.509 key pair to use custom kernels or modules on Secure Boot systems. Use the private key to sign your binaries, and enroll the public key in the Machine Owner Key (MOK) list to validate and authorize them for boot.

Apply strong security measures and access policies to guard the contents of your private key. In the wrong hands, the key could be used to compromise any system which is authenticated by the corresponding public key.

Procedure

Create an X.509 public and private key pair:

If you only want to sign custom kernel modules:

# efikeygen --dbdir /etc/pki/pesign --self-sign --module --common-name 'CN=Organization signing key' --nickname 'Custom Secure Boot key'

If you want to sign a custom kernel:

# efikeygen --dbdir /etc/pki/pesign --self-sign --kernel --common-name 'CN=Organization signing key' --nickname 'Custom Secure Boot key'

When the RHEL system is running in FIPS mode:

# efikeygen --dbdir /etc/pki/pesign --self-sign --kernel --common-name 'CN=Organization signing key' --nickname 'Custom Secure Boot key' --token 'NSS FIPS 140-2 Certificate DB'

Note: In FIPS mode, you must use the --token option so that efikeygen finds the default "NSS Certificate DB" token in the PKI database.

The public and private keys are now stored in the /etc/pki/pesign/ directory.

It is a good security practice to sign the kernel and the kernel modules within the validity period of their signing key. However, the sign-file utility does not warn you, and the key is usable in RHEL 8 regardless of the validity dates.
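If you script key generation, building the argument list in code avoids the line-continuation mistakes that are easy to make when you copy multi-line shell commands. The following Python sketch assembles the three efikeygen invocations shown above. The helper name efikeygen_argv is hypothetical, and the helper uses only the options that appear in this procedure.

```python
from typing import List


def efikeygen_argv(target: str, fips: bool = False) -> List[str]:
    """Build the efikeygen command line for a 'module' or 'kernel' signing key."""
    if target not in ("module", "kernel"):
        raise ValueError("target must be 'module' or 'kernel'")
    argv = [
        "efikeygen",
        "--dbdir", "/etc/pki/pesign",
        "--self-sign",
        "--" + target,  # becomes --module or --kernel
        "--common-name", "CN=Organization signing key",
        "--nickname", "Custom Secure Boot key",
    ]
    if fips:
        # In FIPS mode, pass the token name explicitly, as the procedure requires.
        argv += ["--token", "NSS FIPS 140-2 Certificate DB"]
    return argv


if __name__ == "__main__":
    # Print the command. To run it as root, pass the list to subprocess.run(..., check=True).
    print(" ".join(efikeygen_argv("kernel", fips=True)))
```

Passing a list to subprocess.run avoids shell quoting entirely, so the nickname with spaces reaches efikeygen as a single argument.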

3.7. Example output of system keyrings

You can display information about the keys on the system keyrings using the keyctl utility from the keyutils package.

Prerequisites

- You have root permissions.

- You have installed the keyctl utility from the keyutils package.

Example 3.1. Keyrings output

The following is a shortened example output of .builtin_trusted_keys, .platform, and .blacklist keyrings from a RHEL 8 system where UEFI Secure Boot is enabled.

# keyctl list %:.builtin_trusted_keys

6 keys in keyring:

...asymmetric: Red Hat Enterprise Linux Driver Update Program (key 3): bf57f3e87...

...asymmetric: Red Hat Secure Boot (CA key 1): 4016841644ce3a810408050766e8f8a29...

...asymmetric: Microsoft Corporation UEFI CA 2011: 13adbf4309bd82709c8cd54f316ed...

...asymmetric: Microsoft Windows Production PCA 2011: a92902398e16c49778cd90f99e...

...asymmetric: Red Hat Enterprise Linux kernel signing key: 4249689eefc77e95880b...

...asymmetric: Red Hat Enterprise Linux kpatch signing key: 4d38fd864ebe18c5f0b7...

# keyctl list %:.platform

4 keys in keyring:

...asymmetric: VMware, Inc.: 4ad8da0472073...

...asymmetric: Red Hat Secure Boot CA 5: cc6fafe72...

...asymmetric: Microsoft Windows Production PCA 2011: a929f298e1...

...asymmetric: Microsoft Corporation UEFI CA 2011: 13adbf4e0bd82...

# keyctl list %:.blacklist

4 keys in keyring:

...blacklist: bin:f5ff83a...

...blacklist: bin:0dfdbec...

...blacklist: bin:38f1d22...

...blacklist: bin:51f831f...

The .builtin_trusted_keys keyring in the example shows the addition of two keys from the UEFI Secure Boot db as well as the Red Hat Secure Boot (CA key 1), which is embedded in the shim boot loader.
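The keyctl list output is line-oriented, so you can post-process it in a script, for example to confirm that your custom key appears in .platform. The following Python sketch parses lines in the shortened format shown above. In the real output, each line also begins with a key serial number and permissions, which this example elides as "...". The regular expression deliberately skips that prefix. The function name parse_keyctl_list is hypothetical.

```python
import re
from typing import List, Tuple

# Matches the "<type>: <description>" tail of a keyctl list line, for example
# "...asymmetric: Red Hat Secure Boot CA 5: cc6fafe72..."
KEY_LINE = re.compile(r"\b(asymmetric|blacklist): (.+)$")


def parse_keyctl_list(output: str) -> List[Tuple[str, str]]:
    """Return (key type, description) pairs from `keyctl list` output."""
    keys = []
    for line in output.splitlines():
        match = KEY_LINE.search(line)
        if match:  # header lines such as "6 keys in keyring:" do not match
            keys.append((match.group(1), match.group(2)))
    return keys


if __name__ == "__main__":
    sample = "4 keys in keyring:\n...asymmetric: Red Hat Secure Boot CA 5: cc6fafe72...\n"
    for key_type, description in parse_keyctl_list(sample):
        print(key_type, "->", description)
```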

Example 3.2. Kernel console output

The following example shows the kernel console output. The messages identify the keys that come from a UEFI Secure Boot-related source. These sources include the UEFI Secure Boot db, the shim boot loader, and the MOK list.

# dmesg | egrep 'integrity.*cert'

[1.512966] integrity: Loading X.509 certificate: UEFI:db

[1.513027] integrity: Loaded X.509 cert 'Microsoft Windows Production PCA 2011: a929023...

[1.513028] integrity: Loading X.509 certificate: UEFI:db

[1.513057] integrity: Loaded X.509 cert 'Microsoft Corporation UEFI CA 2011: 13adbf4309...

[1.513298] integrity: Loading X.509 certificate: UEFI:MokListRT (MOKvar table)

[1.513549] integrity: Loaded X.509 cert 'Red Hat Secure Boot CA 5: cc6fa5e72868ba494e93...

3.8. Enrolling public key on target system by adding the public key to the MOK list

Authenticate kernel module access by enrolling your public key in the target system’s platform keyring (.platform). Using the Machine Owner Key (MOK) facility, you can expand the UEFI Secure Boot key database to include custom keys for persistent authentication across reboots.

The MOK facility is supported by shim, MokManager, GRUB, and the mokutil utility that enables secure key management and authentication for UEFI-based systems.

To have your kernel module authenticated on your systems, consider asking your system vendor to incorporate your public key into the UEFI Secure Boot key database in their factory firmware image.

Prerequisites

- You have generated a public and private key pair and know the validity dates of your public keys. For details, see Generating a public and private key pair.

Procedure

Export your public key to the sb_cert.cer file:

# certutil -d /etc/pki/pesign -n 'Custom Secure Boot key' -Lr > sb_cert.cer

Import your public key into the MOK list:

# mokutil --import sb_cert.cer

- Enter a new password for this MOK enrollment request.

Reboot the machine.

The shim boot loader notices the pending MOK key enrollment request and launches MokManager.efi so that you can complete the enrollment from the UEFI console.

Choose Enroll MOK, enter the password you previously associated with this request when prompted, and confirm the enrollment.

Your public key is added to the MOK list, which is persistent.

Once a key is on the MOK list, it is automatically propagated to the .platform keyring on this and subsequent boots when UEFI Secure Boot is enabled.

3.9. Signing a kernel with the private key

You can obtain enhanced security benefits on your system by loading a signed kernel if the UEFI Secure Boot mechanism is enabled.

Prerequisites

- You have generated a public and private key pair and know the validity dates of your public keys. For details, see Generating a public and private key pair.

- You have enrolled your public key on the target system. For details, see Enrolling public key on target system by adding the public key to the MOK list.

- You have a kernel image in the ELF format available for signing.

Procedure

On the x64 architecture:

Create a signed image:

# pesign --certificate 'Custom Secure Boot key' --in vmlinuz-version --sign --out vmlinuz-version.signed

Replace version with the version suffix of your vmlinuz file, and Custom Secure Boot key with the name that you chose earlier.

Optional: Check the signatures:

# pesign --show-signature --in vmlinuz-version.signed

Overwrite the unsigned image with the signed image:

# mv vmlinuz-version.signed vmlinuz-version

On the 64-bit ARM architecture:

Decompress the vmlinuz file:

# zcat vmlinuz-version > vmlinux-version

Create a signed image:

# pesign --certificate 'Custom Secure Boot key' --in vmlinux-version --sign --out vmlinux-version.signed

Optional: Check the signatures:

# pesign --show-signature --in vmlinux-version.signed

Compress the vmlinux file:

# gzip --to-stdout vmlinux-version.signed > vmlinuz-version

Remove the uncompressed vmlinux file:

# rm vmlinux-version*

3.10. Signing a GRUB build with the private key

On a system where the UEFI Secure Boot mechanism is enabled, you can sign a GRUB build with an existing custom private key. You must do this if you are using a custom GRUB build, or if you have removed the Microsoft trust anchor from your system.

Prerequisites

- You have generated a public and private key pair and know the validity dates of your public keys. For details, see Generating a public and private key pair.

- You have enrolled your public key on the target system. For details, see Enrolling public key on target system by adding the public key to the MOK list.

- You have a GRUB EFI binary available for signing.

Procedure

On the x64 architecture:

Create a signed GRUB EFI binary:

# pesign --in /boot/efi/EFI/redhat/grubx64.efi --out /boot/efi/EFI/redhat/grubx64.efi.signed --certificate 'Custom Secure Boot key' --sign

Replace Custom Secure Boot key with the name that you chose earlier.

Optional: Check the signatures:

# pesign --in /boot/efi/EFI/redhat/grubx64.efi.signed --show-signature

Overwrite the unsigned binary with the signed binary:

# mv /boot/efi/EFI/redhat/grubx64.efi.signed /boot/efi/EFI/redhat/grubx64.efi

On the 64-bit ARM architecture:

Create a signed GRUB EFI binary:

# pesign --in /boot/efi/EFI/redhat/grubaa64.efi --out /boot/efi/EFI/redhat/grubaa64.efi.signed --certificate 'Custom Secure Boot key' --sign

Replace Custom Secure Boot key with the name that you chose earlier.

Optional: Check the signatures:

# pesign --in /boot/efi/EFI/redhat/grubaa64.efi.signed --show-signature

Overwrite the unsigned binary with the signed binary:

# mv /boot/efi/EFI/redhat/grubaa64.efi.signed /boot/efi/EFI/redhat/grubaa64.efi

3.11. Signing kernel modules with the private key

Load signed kernel modules to enhance system security when UEFI Secure Boot is active.

Your signed kernel module is also loadable on systems where UEFI Secure Boot is disabled or on a non-UEFI system. As a result, you do not need to provide both a signed and an unsigned version of your kernel module.

Prerequisites

- You have generated a public and private key pair and know the validity dates of your public keys. For details, see Generating a public and private key pair.

- You have enrolled your public key on the target system. For details, see Enrolling public key on target system by adding the public key to the MOK list.

- You have a kernel module in ELF image format available for signing.

Procedure

Export your public key to the sb_cert.cer file:

# certutil -d /etc/pki/pesign -n 'Custom Secure Boot key' -Lr > sb_cert.cer

Extract the key from the NSS database as a PKCS #12 file:

# pk12util -o sb_cert.p12 -n 'Custom Secure Boot key' -d /etc/pki/pesign

- When the previous command prompts, enter a new password that encrypts the private key.

Export the unencrypted private key:

# openssl pkcs12 -in sb_cert.p12 -out sb_cert.priv -nocerts -nodes

Important: Keep the unencrypted private key secure.

Sign your kernel module. The following command appends the signature directly to the ELF image in your kernel module file:

# /usr/src/kernels/$(uname -r)/scripts/sign-file sha256 sb_cert.priv sb_cert.cer my_module.ko

Your kernel module is now ready for loading.

In RHEL 8, the validity dates of the key pair matter. The key does not expire, but the kernel module must be signed within the validity period of its signing key. The sign-file utility will not warn you of this. For example, a key that is only valid in 2019 can be used to authenticate a kernel module signed in 2019 with that key. However, users cannot use that key to sign a kernel module in 2020.
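Because sign-file does not check the dates for you, you might add this check to your signing script. This Python sketch compares the signing time with the validity window of the key, using the 2019 example from this paragraph. It assumes that you have already read the notBefore and notAfter dates from your certificate, for example with openssl x509 -noout -dates. The function name signed_within_validity is hypothetical.

```python
from datetime import datetime, timezone


def signed_within_validity(signed_at: datetime, not_before: datetime, not_after: datetime) -> bool:
    """Return True if a signature made at `signed_at` falls inside the key's validity period."""
    return not_before <= signed_at <= not_after


# The example from the text: a key that is valid only during 2019.
NOT_BEFORE = datetime(2019, 1, 1, tzinfo=timezone.utc)
NOT_AFTER = datetime(2019, 12, 31, 23, 59, 59, tzinfo=timezone.utc)

if __name__ == "__main__":
    now = datetime.now(timezone.utc)
    if not signed_within_validity(now, NOT_BEFORE, NOT_AFTER):
        print("refusing to sign: the key is outside its validity period")
```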

Verification

Display information about the kernel module’s signature:

# modinfo my_module.ko | grep signer

signer: Your Name Key

Check that the signature lists your name as entered during generation.

Note: The appended signature is not contained in an ELF image section and is not a formal part of the ELF image. Therefore, utilities such as readelf cannot display the signature on your kernel module.

Load the module:

# insmod my_module.ko

Remove (unload) the module:

# modprobe -r my_module

3.12. Loading signed kernel modules

After enrolling your public key in the system keyring (.builtin_trusted_keys) and the MOK list, and signing kernel modules with your private key, you can load them using the modprobe command.

Prerequisites

- You have generated the public and private key pair. For details, see Generating a public and private key pair.

- You have enrolled the public key into the system keyring. For details, see Enrolling public key on target system by adding the public key to the MOK list.

- You have signed a kernel module with the private key. For details, see Signing kernel modules with the private key.

Install the kernel-modules-extra package, which creates the /lib/modules/$(uname -r)/extra/ directory:

# yum -y install kernel-modules-extra

Procedure

Verify that your public keys are on the system keyring:

# keyctl list %:.platform

Copy the kernel module into the extra/ directory of the kernel that you want:

# cp my_module.ko /lib/modules/$(uname -r)/extra/

Update the modular dependency list:

# depmod -a

Load the kernel module:

# modprobe -v my_module

Optional: To load the module on boot, add it to the /etc/modules-load.d/my_module.conf file:

# echo "my_module" > /etc/modules-load.d/my_module.conf

Verification

Verify that the module was successfully loaded:

# lsmod | grep my_module
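To automate this verification, you can check /proc/modules, which is where lsmod gets its data, together with the modules-load.d file you created. This minimal Python sketch assumes the documented modules-load.d format: one module name per line, with lines starting with # or ; treated as comments. The function names are hypothetical.

```python
from typing import List, Set


def modules_from_load_conf(text: str) -> List[str]:
    """Return module names listed in a modules-load.d file."""
    names = []
    for line in text.splitlines():
        line = line.strip()
        # Skip empty lines and comment lines starting with '#' or ';'.
        if line and line[0] not in "#;":
            names.append(line)
    return names


def loaded_modules(proc_modules: str) -> Set[str]:
    """Return the module names from /proc/modules content (the first field of each line)."""
    return {line.split()[0] for line in proc_modules.splitlines() if line.strip()}


if __name__ == "__main__":
    from pathlib import Path
    wanted = modules_from_load_conf(Path("/etc/modules-load.d/my_module.conf").read_text())
    missing = set(wanted) - loaded_modules(Path("/proc/modules").read_text())
    print("missing modules:", sorted(missing))
```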

Chapter 4. Configuring kernel command-line parameters

With kernel command-line parameters, you can change the behavior of certain aspects of the Red Hat Enterprise Linux kernel at boot time. As a system administrator, you control which options get set at boot. Note that certain kernel behaviors can only be set at boot time.

Changing the behavior of the system by modifying kernel command-line parameters can have negative effects on your system. Always test changes before deploying them in production. For further guidance, contact Red Hat Support.

4.1. What are kernel command-line parameters

With kernel command-line parameters, you can override default values and set specific hardware settings. At boot time, you can configure the following features:

- The Red Hat Enterprise Linux kernel

- The initial RAM disk

- The user space features

By default, the kernel command-line parameters for systems using the GRUB boot loader are defined in the kernelopts variable of the /boot/grub2/grubenv file for each kernel boot entry.

For IBM Z, the kernel command-line parameters are stored in the boot entry configuration file because the zipl boot loader does not support environment variables. Thus, the kernelopts environment variable cannot be used.

You can manipulate boot loader configuration files by using the grubby utility. With grubby, you can perform these actions:

- Change the default boot entry.

- Add or remove arguments from a GRUB menu entry.

4.2. Understanding boot entries

A boot entry is a collection of options stored in a configuration file and tied to a particular kernel version. In practice, you have at least as many boot entries as your system has installed kernels. The boot entry configuration file is located in the /boot/loader/entries/ directory:

6f9cc9cb7d7845d49698c9537337cedc-4.18.0-5.el8.x86_64.conf

The file name above consists of a machine ID stored in the /etc/machine-id file, and a kernel version.

The boot entry configuration file contains information about the kernel version, the initial ramdisk image, and the kernelopts environment variable that contains the kernel command-line parameters. The configuration file can have the following contents:

title Red Hat Enterprise Linux (4.18.0-74.el8.x86_64) 8.0 (Ootpa)

version 4.18.0-74.el8.x86_64

linux /vmlinuz-4.18.0-74.el8.x86_64

initrd /initramfs-4.18.0-74.el8.x86_64.img $tuned_initrd

options $kernelopts $tuned_params

id rhel-20190227183418-4.18.0-74.el8.x86_64

grub_users $grub_users

grub_arg --unrestricted

grub_class kernel

The kernelopts environment variable is defined in the /boot/grub2/grubenv file.
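Boot entry files use a simple format with one key and value per line, so you can inspect them from a script. This Python sketch parses an entry such as the one above and splits the file name into the machine ID and the kernel version. It is a simplification: when a key repeats, only the last value is kept. The function names are hypothetical.

```python
from typing import Dict, Tuple


def parse_boot_entry(text: str) -> Dict[str, str]:
    """Parse a /boot/loader/entries/*.conf file into a key -> value mapping."""
    entry = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue
        parts = line.split(None, 1)  # key, then the rest of the line
        entry[parts[0]] = parts[1] if len(parts) > 1 else ""
    return entry


def split_entry_filename(name: str) -> Tuple[str, str]:
    """Split '<machine-id>-<kernel version>.conf' into its two parts."""
    stem = name[: -len(".conf")] if name.endswith(".conf") else name
    machine_id, _, version = stem.partition("-")
    return machine_id, version
```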

4.3. Changing kernel command-line parameters for all boot entries

Change kernel command-line parameters for all boot entries on your system.

Prerequisites

- The grubby utility is installed on your system.

- The zipl utility is installed on your IBM Z system.

Procedure

To add a parameter:

# grubby --update-kernel=ALL --args="<NEW_PARAMETER>"

For systems that use the GRUB boot loader, the command updates the /boot/grub2/grubenv file by adding a new kernel parameter to the kernelopts variable in that file.

On IBM Z, update the boot menu:

# zipl

To remove a parameter:

# grubby --update-kernel=ALL --remove-args="<PARAMETER_TO_REMOVE>"

On IBM Z, update the boot menu:

# zipl

Newly installed kernels inherit the kernel command-line parameters from your previously configured kernels.
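To reason about what a change does before you run grubby, you can model the command line as a list of whitespace-separated tokens. The following Python sketch is a simplified model, not the implementation of grubby. It appends a parameter only if that exact token is absent. It removes tokens that match exactly, and for a bare name it also removes any name=value token with that name. The function names are hypothetical.

```python
def add_args(cmdline: str, new_args: str) -> str:
    """Append each new argument unless the exact token is already present."""
    args = cmdline.split()
    for new in new_args.split():
        if new not in args:
            args.append(new)
    return " ".join(args)


def remove_args(cmdline: str, old_args: str) -> str:
    """Drop tokens equal to a target, or named like a bare target (name=value)."""
    targets = old_args.split()

    def matches(arg: str) -> bool:
        return any(arg == t or ("=" not in t and arg.split("=", 1)[0] == t)
                   for t in targets)

    return " ".join(a for a in cmdline.split() if not matches(a))
```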

4.4. Changing kernel command-line parameters for a single boot entry

Make changes in kernel command-line parameters for a single boot entry on your system.

Prerequisites

- The grubby and zipl utilities are installed on your system.

Procedure

To add a parameter:

# grubby --update-kernel=/boot/vmlinuz-$(uname -r) --args="<NEW_PARAMETER>"

On IBM Z, update the boot menu:

# grubby --args="<NEW_PARAMETER>" --update-kernel=/boot/vmlinuz-$(uname -r) --zipl

To remove a parameter:

# grubby --update-kernel=/boot/vmlinuz-$(uname -r) --remove-args="<PARAMETER_TO_REMOVE>"

On IBM Z, update the boot menu:

# grubby --remove-args="<PARAMETER_TO_REMOVE>" --update-kernel=/boot/vmlinuz-$(uname -r) --zipl

On systems that use the grub.cfg file, each kernel boot entry has an options parameter by default, which is set to the kernelopts variable. This variable is defined in the /boot/grub2/grubenv configuration file.

On GRUB systems:

- If the kernel command-line parameters are modified for all boot entries, the grubby utility updates the kernelopts variable in the /boot/grub2/grubenv file.

- If kernel command-line parameters are modified for a single boot entry, the kernelopts variable is expanded, the kernel parameters are modified, and the resulting value is stored in that boot entry's /boot/loader/entries/<RELEVANT_KERNEL_BOOT_ENTRY.conf> file.

On zIPL systems:

- grubby modifies and stores the kernel command-line parameters of an individual kernel boot entry in the /boot/loader/entries/<ENTRY>.conf file.

4.5. Changing kernel command-line parameters temporarily at boot time

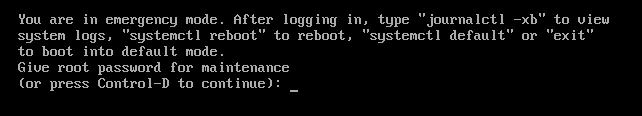

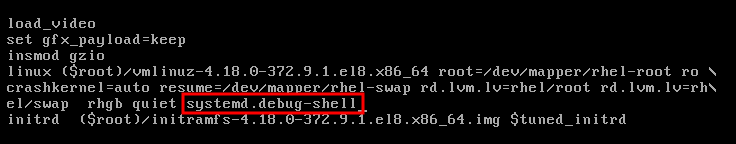

Make temporary changes to a kernel menu entry by changing the kernel parameters only during a single boot process.

This procedure applies only to a single boot and does not make the changes persistent.

Procedure

- Boot into the GRUB boot menu.

- Select the kernel you want to start.

- Press the e key to edit the kernel parameters.

- Find the kernel command line by moving the cursor down. The kernel command line starts with linux on 64-bit IBM Power Series and x86-64 BIOS-based systems, or linuxefi on UEFI systems. Move the cursor to the end of the line.

Note: Press Ctrl+a to jump to the start of the line and Ctrl+e to jump to the end of the line. On some systems, the Home and End keys might also work.

Edit the kernel parameters as required. For example, to run the system in emergency mode, add the emergency parameter at the end of the linux line:

linux ($root)/vmlinuz-4.18.0-348.12.2.el8_5.x86_64 root=/dev/mapper/rhel-root ro crashkernel=auto resume=/dev/mapper/rhel-swap rd.lvm.lv=rhel/root rd.lvm.lv=rhel/swap rhgb quiet emergency

To enable the system messages, remove the rhgb and quiet parameters.

- Press Ctrl+x to boot with the selected kernel and the modified command-line parameters.

If you press the Esc key to leave command-line editing, GRUB discards all the changes you made.

4.6. Configuring GRUB settings to enable serial console connection

The serial console is beneficial when you need to connect to a headless server or an embedded system and the network is down, or when you need to avoid security rules and obtain login access on a different system.

You need to configure some default GRUB settings to use the serial console connection.

Prerequisites

- You have root permissions.

Procedure

Add the following two lines to the /etc/default/grub file:

GRUB_TERMINAL="serial"
GRUB_SERIAL_COMMAND="serial --speed=9600 --unit=0 --word=8 --parity=no --stop=1"

The first line disables the graphical terminal. The GRUB_TERMINAL key overrides the values of the GRUB_TERMINAL_INPUT and GRUB_TERMINAL_OUTPUT keys.

The second line adjusts the baud rate (--speed), parity, and other values to fit your environment and hardware. Note that a much higher baud rate, for example 115200, is preferable for tasks such as following log files.

Update the GRUB configuration file.

On BIOS-based machines:

# grub2-mkconfig -o /boot/grub2/grub.cfg

On UEFI-based machines:

# grub2-mkconfig -o /boot/efi/EFI/redhat/grub.cfg

- Reboot the system for the changes to take effect.
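If you manage several machines, you might apply the two settings with a script instead of editing the file by hand. This Python sketch updates the content of /etc/default/grub in memory. It replaces existing keys and appends missing ones. It assumes simple KEY=value lines and values without embedded double quotes. You still need to run grub2-mkconfig afterwards. The function name set_grub_defaults is hypothetical.

```python
from typing import Dict


def set_grub_defaults(text: str, settings: Dict[str, str]) -> str:
    """Set KEY="value" lines in /etc/default/grub content, replacing existing keys."""
    remaining = dict(settings)
    out = []
    for line in text.splitlines():
        key = line.split("=", 1)[0].strip()
        if "=" in line and key in remaining:
            out.append('%s="%s"' % (key, remaining.pop(key)))
        else:
            out.append(line)  # unrelated or commented-out lines are kept as-is
    out.extend('%s="%s"' % (key, value) for key, value in remaining.items())
    return "\n".join(out) + "\n"


if __name__ == "__main__":
    print(set_grub_defaults('GRUB_TERMINAL="console"\n', {
        "GRUB_TERMINAL": "serial",
        "GRUB_SERIAL_COMMAND": "serial --speed=115200 --unit=0 --word=8 --parity=no --stop=1",
    }), end="")
```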

Chapter 5. Configuring kernel parameters at runtime

As a system administrator, you can modify many facets of the Red Hat Enterprise Linux kernel’s behavior at runtime. Configure kernel parameters at runtime by using the sysctl command and by modifying the configuration files in the /etc/sysctl.d/ and /proc/sys/ directories.

Configuring kernel parameters on a production system requires careful planning. Unplanned changes can render the kernel unstable, requiring a system reboot. Verify that you are using valid options before changing any kernel values.

For more information about tuning the kernel for IBM DB2, see Tuning Red Hat Enterprise Linux for IBM DB2.

5.1. What are kernel parameters

Kernel parameters are tunable values that you can adjust while the system is running. Note that for changes to take effect, you do not need to reboot the system or recompile the kernel.

You can address the kernel parameters through:

- The sysctl command

- The virtual file system mounted at the /proc/sys/ directory

- The configuration files in the /etc/sysctl.d/ directory

Tunables are divided into classes by the kernel subsystem. Red Hat Enterprise Linux has the following tunable classes:

| Tunable class | Subsystem |
|---|---|
| abi | Execution domains and personalities |
| crypto | Cryptographic interfaces |
| debug | Kernel debugging interfaces |
| dev | Device-specific information |
| fs | Global and specific file system tunables |
| kernel | Global kernel tunables |
| net | Network tunables |
| sunrpc | Sun Remote Procedure Call (NFS) |
| user | User Namespace limits |
| vm | Tuning and management of memory, buffers, and cache |
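Each tunable class is a top-level directory under /proc/sys/. A sysctl name maps to a file path by replacing the dots with slashes, so vm.swappiness corresponds to /proc/sys/vm/swappiness. The following minimal Python sketch shows that mapping. It ignores the corner case of names whose components themselves contain dots, such as some network interface names. The function name sysctl_path is hypothetical.

```python
from pathlib import PurePosixPath


def sysctl_path(name: str) -> PurePosixPath:
    """Map a sysctl name such as 'vm.swappiness' to its file under /proc/sys/."""
    # sysctl accepts both '.' and '/' as separators, so normalize to '.' first.
    # Assumption: no single component contains a dot.
    return PurePosixPath("/proc/sys", *name.replace("/", ".").split("."))
```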

5.2. Configuring kernel parameters temporarily with sysctl

Use the sysctl command to temporarily set kernel parameters at runtime. The command is also useful for listing and filtering tunables.

Prerequisites

- Root permissions

Procedure

List all parameters and their values.

# sysctl -a

Note: The sysctl -a command displays kernel parameters, which can be adjusted at runtime and at boot time.

To configure a parameter temporarily, enter:

# sysctl <TUNABLE_CLASS>.<PARAMETER>=<TARGET_VALUE>

The sample command above changes the parameter value while the system is running. The changes take effect immediately, without a need for restart.

Note: The changes revert to the default values after your system reboots.

5.3. Configuring kernel parameters permanently with sysctl

Use the sysctl command to permanently set kernel parameters.

Prerequisites

- Root permissions

Procedure

List all parameters.

# sysctl -a

The command displays all kernel parameters that can be configured at runtime.

Configure a parameter permanently:

# sysctl -w <TUNABLE_CLASS>.<PARAMETER>=<TARGET_VALUE> >> /etc/sysctl.conf

The sample command changes the tunable value and writes it to the /etc/sysctl.conf file, which overrides the default values of kernel parameters. The changes take effect immediately and persistently, without a need for restart.

To permanently modify kernel parameters, you can also make manual changes to the configuration files in the /etc/sysctl.d/ directory.

5.4. Using configuration files in /etc/sysctl.d/ to adjust kernel parameters

To set kernel parameters permanently, you can manually create or modify configuration files in the /etc/sysctl.d/ directory.

Prerequisites

- You have root permissions.

Procedure

Create a new configuration file in /etc/sysctl.d/:

# vim /etc/sysctl.d/<some_file.conf>

Include kernel parameters, one per line:

<TUNABLE_CLASS>.<PARAMETER>=<TARGET_VALUE>
<TUNABLE_CLASS>.<PARAMETER>=<TARGET_VALUE>

- Save the configuration file.

Reboot the machine for the changes to take effect.

Alternatively, apply changes without rebooting:

# sysctl -p /etc/sysctl.d/<some_file.conf>

The command reads and applies the values from the configuration file that you created earlier.
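Before you apply a file with sysctl -p, you might want to check its contents in a script. This Python sketch parses the one-parameter-per-line format described above, including the name = value form that sysctl -w prints. It assumes the comment conventions of sysctl.conf(5), where lines start with # or ;. It does not check whether a parameter exists. The function name parse_sysctl_conf is hypothetical.

```python
from typing import Dict


def parse_sysctl_conf(text: str) -> Dict[str, str]:
    """Parse sysctl.conf-style content into a name -> value mapping.

    Lines starting with '#' or ';' are comments, and whitespace around '='
    is ignored. When a name appears more than once, the last value wins.
    """
    settings = {}
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line[0] in "#;":
            continue
        name, sep, value = line.partition("=")
        if not sep:
            raise ValueError("not a name=value line: %r" % raw)
        settings[name.strip()] = value.strip()
    return settings
```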

5.5. Configuring kernel parameters temporarily through /proc/sys/

Set kernel parameters temporarily through the files in the /proc/sys/ virtual file system directory.

Prerequisites

- Root permissions

Procedure

Identify a kernel parameter you want to configure.

# ls -l /proc/sys/<TUNABLE_CLASS>/

You can use the writable files returned by the command to configure the kernel. The files with read-only permissions provide feedback on the current settings.

Assign a target value to the kernel parameter.

# echo <TARGET_VALUE> > /proc/sys/<TUNABLE_CLASS>/<PARAMETER>

Changes applied this way are not persistent and are lost when the system restarts.

Verification

Verify the value of the newly set kernel parameter.

# cat /proc/sys/<TUNABLE_CLASS>/<PARAMETER>
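You can also script the ls -l check from the first step. This Python sketch sorts the files of one tunable class directory, for example /proc/sys/vm, into writable and read-only lists based on the owner write permission bit. It is an illustration only. It skips subdirectories, and the function name split_tunables is hypothetical.

```python
import stat
from pathlib import Path
from typing import List, Tuple


def split_tunables(directory: Path) -> Tuple[List[str], List[str]]:
    """Return (writable, read_only) file names, using the owner write bit that ls -l shows."""
    writable, read_only = [], []
    for entry in sorted(Path(directory).iterdir()):
        if not entry.is_file():
            continue  # subdirectories such as net/ipv4/ are skipped
        mode = entry.stat().st_mode
        (writable if mode & stat.S_IWUSR else read_only).append(entry.name)
    return writable, read_only
```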

Chapter 7. Making persistent changes to the GRUB boot loader

Use the grubby tool to make persistent changes in GRUB.

7.1. Prerequisites

- You have successfully installed RHEL on your system.

- You have root permissions.

7.2. Listing the default kernel

By listing the default kernel, you can find the file name and the index number of the default kernel to make permanent changes to the GRUB boot loader.

Procedure

- To get the file name of the default kernel, enter:

# grubby --default-kernel

/boot/vmlinuz-4.18.0-372.9.1.el8.x86_64

- To get the index number of the default kernel, enter:

# grubby --default-index

0

7.4. Editing a Kernel Argument

You can change a value in an existing kernel argument. For example, you can change the virtual console (screen) font and size.

Procedure

Change the virtual console font to latarcyrheb-sun with the size of 32:

# grubby --args=vconsole.font=latarcyrheb-sun32 --update-kernel /boot/vmlinuz-4.18.0-372.9.1.el8.x86_64

7.6. Adding a new boot entry

You can add a new boot entry to the boot loader menu entries.

Procedure

Copy all the kernel arguments from your default kernel to this new kernel entry:

# grubby --add-kernel=new_kernel --title="entry_title" --initrd="new_initrd" --copy-default

Get the list of available boot entries:

# ls -l /boot/loader/entries/*
-rw-r--r--. 1 root root 408 May 27 06:18 /boot/loader/entries/67db13ba8cdb420794ef3ee0a8313205-0-rescue.conf
-rw-r--r--. 1 root root 536 Jun 30 07:53 /boot/loader/entries/67db13ba8cdb420794ef3ee0a8313205-4.18.0-372.9.1.el8.x86_64.conf
-rw-r--r-- 1 root root 336 Aug 15 15:12 /boot/loader/entries/d88fa2c7ff574ae782ec8c4288de4e85-4.18.0-193.el8.x86_64.conf

Create a new boot entry. For example, for the 4.18.0-193.el8.x86_64 kernel, issue the command as follows:

# grubby --grub2 --add-kernel=/boot/vmlinuz-4.18.0-193.el8.x86_64 --title="Red Hat Enterprise 8 Test" --initrd=/boot/initramfs-4.18.0-193.el8.x86_64.img --copy-default

Verification

Verify that the newly added boot entry is listed among the available boot entries:

# ls -l /boot/loader/entries/*
-rw-r--r--. 1 root root 408 May 27 06:18 /boot/loader/entries/67db13ba8cdb420794ef3ee0a8313205-0-rescue.conf
-rw-r--r--. 1 root root 536 Jun 30 07:53 /boot/loader/entries/67db13ba8cdb420794ef3ee0a8313205-4.18.0-372.9.1.el8.x86_64.conf
-rw-r--r-- 1 root root 287 Aug 16 15:17 /boot/loader/entries/d88fa2c7ff574ae782ec8c4288de4e85-4.18.0-193.el8.x86_64.0~custom.conf
-rw-r--r-- 1 root root 287 Aug 16 15:29 /boot/loader/entries/d88fa2c7ff574ae782ec8c4288de4e85-4.18.0-193.el8.x86_64.conf

7.7. Changing the default boot entry with grubby

With the grubby tool, you can change the default boot entry.

Procedure

- To make a persistent change in the kernel designated as the default kernel, enter:

# grubby --set-default /boot/vmlinuz-4.18.0-372.9.1.el8.x86_64

The default is /boot/loader/entries/67db13ba8cdb420794ef3ee0a8313205-4.18.0-372.9.1.el8.x86_64.conf with index 0 and kernel /boot/vmlinuz-4.18.0-372.9.1.el8.x86_64

7.9. Changing default kernel options for current and future kernels

By using the kernelopts variable, you can change the default kernel options for both current and future kernels.

Procedure

List the kernel parameters from the kernelopts variable:

# grub2-editenv - list | grep kernelopts
kernelopts=root=/dev/mapper/rhel-root ro crashkernel=auto resume=/dev/mapper/rhel-swap rd.lvm.lv=rhel/root rd.lvm.lv=rhel/swap rhgb quiet

Make the changes to the kernel command-line parameters. You can add, remove, or modify a parameter. For example, to add the debug parameter, enter:

# grub2-editenv - set "$(grub2-editenv - list | grep kernelopts) debug"

Optional: Verify the parameter newly added to kernelopts:

# grub2-editenv - list | grep kernelopts
kernelopts=root=/dev/mapper/rhel-root ro crashkernel=auto resume=/dev/mapper/rhel-swap rd.lvm.lv=rhel/root rd.lvm.lv=rhel/swap rhgb quiet debug

- Reboot the system for the changes to take effect.
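grub2-editenv stores these variables in /boot/grub2/grubenv. This file is a fixed-size 1024-byte block that starts with a "# GRUB Environment Block" header line, holds one name=value line per variable, and is padded with # characters. The following Python sketch reads and rewrites such a block to show what the set command changes. It is for illustration only, because it ignores the escaping that GRUB applies to special characters in values. Use grub2-editenv to modify the real file. The function names are hypothetical.

```python
from typing import Dict

GRUBENV_SIZE = 1024
HEADER = "# GRUB Environment Block\n"


def parse_grubenv(block: bytes) -> Dict[str, str]:
    """Return the variables stored in a GRUB environment block."""
    env = {}
    for line in block.decode().splitlines()[1:]:  # skip the header line
        if line.startswith("#"):
            break  # '#' padding fills the rest of the block
        key, sep, value = line.partition("=")
        if sep:
            env[key] = value
    return env


def build_grubenv(env: Dict[str, str]) -> bytes:
    """Serialize variables into a 1024-byte block padded with '#'."""
    body = (HEADER + "".join("%s=%s\n" % (k, v) for k, v in env.items())).encode()
    if len(body) > GRUBENV_SIZE:
        raise ValueError("variables do not fit in the GRUB environment block")
    return body + b"#" * (GRUBENV_SIZE - len(body))
```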

As an alternative, you can use the grubby command to pass the arguments to current and future kernels:

# grubby --update-kernel ALL --args="<PARAMETER>"

Chapter 9. Reinstalling GRUB

You can reinstall the GRUB boot loader to fix certain problems, usually caused by an incorrect installation of GRUB, missing files, or a broken system. You can resolve these issues by restoring the missing files and updating the boot information.

Reasons to reinstall GRUB:

- Upgrading the GRUB boot loader packages.

- Adding the boot information to another drive.

- The user requires the GRUB boot loader to control installed operating systems. However, some operating systems are installed with their own boot loaders and reinstalling GRUB returns control to the desired operating system.

GRUB restores files only if they are not corrupted.

9.1. Reinstalling GRUB on BIOS-based machines

You can reinstall the GRUB boot loader on your BIOS-based system. Always reinstall GRUB after updating the GRUB packages.

This overwrites the existing GRUB to install the new GRUB. Ensure that the system does not cause data corruption or boot crash during the installation.

Procedure

Reinstall GRUB on the device where it is installed. For example, if sda is your device:

# grub2-install /dev/sda

Reboot your system for the changes to take effect:

# reboot

9.2. Reinstalling GRUB on UEFI-based machines

You can reinstall the GRUB boot loader on your UEFI-based system.

Ensure that the system does not cause data corruption or boot crash during the installation.

Procedure

Reinstall the grub2-efi and shim boot loader files:

# yum reinstall grub2-efi shim

Reboot your system for the changes to take effect:

# reboot

9.3. Reinstalling GRUB on IBM Power machines

Reinstall the GRUB boot loader on the Power PC Reference Platform (PReP) boot partition of your IBM Power system. Always reinstall GRUB after updating the GRUB packages.

This overwrites the existing GRUB to install the new GRUB. Ensure that the system does not cause data corruption or boot crash during the installation.

Procedure

Determine the disk partition that stores GRUB:

# bootlist -m normal -o
sda1

Reinstall GRUB on the disk partition:

# grub2-install partition

Replace partition with the identified GRUB partition, such as /dev/sda1.

Reboot your system for the changes to take effect:

# reboot

9.4. Resetting GRUB

Resetting GRUB removes all GRUB configuration files and system settings. It reinstalls the boot loader and restores all configuration settings to their default values. This process fixes failures caused by corrupted files and invalid configuration.

The following procedure removes all customizations made by the user.

Procedure

Remove the configuration files:

# rm /etc/grub.d/*
# rm /etc/sysconfig/grub

Reinstall packages.

On BIOS-based machines:

# yum reinstall grub2-tools

On UEFI-based machines:

# yum reinstall grub2-efi shim grub2-tools grub2-common

Rebuild the grub.cfg file for the changes to take effect.

On BIOS-based machines:

# grub2-mkconfig -o /boot/grub2/grub.cfg

On UEFI-based machines:

# grub2-mkconfig -o /boot/efi/EFI/redhat/grub.cfg

- Follow the Reinstalling GRUB procedure to restore GRUB on the /boot/ partition.

Chapter 10. Protecting GRUB with a password

You can protect GRUB with a password in two ways:

- Password is required for modifying menu entries but not for booting existing menu entries.

- Password is required for modifying menu entries and for booting existing menu entries.

Chapter 11. Keeping kernel panic parameters disabled in virtualized environments

When configuring a virtual machine in RHEL 8, do not enable the softlockup_panic and nmi_watchdog kernel parameters, because the virtual machine might suffer from a spurious soft lockup, which should not require a kernel panic.

Find the reasons behind this advice in the following sections.

11.1. What is a soft lockup

A soft lockup occurs when a task executes in kernel space without rescheduling, preventing other tasks from running on that CPU. This issue, often caused by a bug, triggers a warning on the system console to alert users.

11.2. Parameters controlling kernel panic

The following kernel parameters can be set to control a system’s behavior when a soft lockup is detected.

softlockup_panic
Controls whether or not the kernel panics when a soft lockup is detected.

Type     Value  Effect
Integer  0      kernel does not panic on soft lockup
Integer  1      kernel panics on soft lockup

By default, on RHEL 8, this value is 0.

The system needs to detect a hard lockup first to be able to panic. The detection is controlled by the nmi_watchdog parameter.

nmi_watchdog
Controls whether lockup detection mechanisms (watchdogs) are active or not. This parameter is of integer type.

Value  Effect
0      disables lockup detector
1      enables lockup detector

The hard lockup detector monitors each CPU for its ability to respond to interrupts.

watchdog_thresh
Controls the frequency of the watchdog hrtimer, NMI events, and soft or hard lockup thresholds.

Default threshold  Soft lockup threshold
10 seconds         2 * watchdog_thresh

Setting this parameter to zero disables lockup detection altogether.
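The relation between watchdog_thresh and the soft lockup threshold is simple arithmetic. The following sketch, using a hypothetical softlockup_threshold function, computes the soft lockup threshold in seconds from a watchdog_thresh value and reports when detection is disabled. With the default of 10 seconds, the result is 20 seconds.

```shell
#!/bin/bash
# Hypothetical helper: derive the soft lockup threshold (in seconds) from a
# watchdog_thresh value, using the relation "2 * watchdog_thresh".
softlockup_threshold() {
    local thresh="$1"
    if [ "$thresh" -eq 0 ]; then
        echo "disabled"          # watchdog_thresh=0 disables lockup detection
    else
        echo $(( 2 * thresh ))   # soft lockup threshold in seconds
    fi
}
```

To evaluate the value currently set on a system:

# softlockup_threshold "$(sysctl -n kernel.watchdog_thresh)"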

11.3. Spurious soft lockups in virtualized environments

Soft lockup warnings on guest operating systems can be false alarms caused by host workload or resource contention. On physical hosts, these warnings usually indicate a bug. In virtualized environments, they might be triggered falsely when the host schedules out the guest CPU for an extended period.

Heavy workload on a host or high contention over some specific resource, such as memory, can cause a spurious soft lockup warning, because the host might schedule out the guest CPU for longer than 20 seconds. When the host schedules the guest CPU to run again, the guest experiences a time jump that fires all timers that became due in the meantime. These timers include the watchdog hrtimer, which can then report a soft lockup on the guest CPU.

Because a soft lockup reported in a virtualized environment can be false, you must not enable the kernel parameters that trigger a system panic when a soft lockup is reported on a guest CPU.

To understand soft lockups in guests, it is essential to know that the host schedules the guest as a task, and the guest then schedules its own tasks.
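To apply this advice on a guest, you can compare the current values of both parameters against 0. The following is a minimal sketch with a hypothetical check_vm_lockup_params function; it assumes that the kernel.softlockup_panic and kernel.nmi_watchdog sysctl entries exist on the guest. It prints one line per enabled parameter and returns a non-zero status if any of them is enabled.

```shell
#!/bin/bash
# Hypothetical check for a virtual machine: report each lockup-related
# parameter that should stay disabled (0) on a guest.
# Arguments: values of kernel.softlockup_panic and kernel.nmi_watchdog.
check_vm_lockup_params() {
    local softlockup_panic="$1" nmi_watchdog="$2" status=0
    if [ "$softlockup_panic" -ne 0 ]; then
        echo "softlockup_panic is enabled"
        status=1
    fi
    if [ "$nmi_watchdog" -ne 0 ]; then
        echo "nmi_watchdog is enabled"
        status=1
    fi
    return "$status"   # 0 only if both parameters are disabled
}
```

To check the running guest:

# check_vm_lockup_params "$(sysctl -n kernel.softlockup_panic)" "$(sysctl -n kernel.nmi_watchdog)"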

Chapter 12. Adjusting kernel parameters for database servers

To ensure efficient operation of database servers and databases, you must configure the required sets of kernel parameters.

12.1. Introduction to database servers

A database server is a service that provides features of a database management system (DBMS). A DBMS provides utilities for database administration and interacts with end users, applications, and databases.

Red Hat Enterprise Linux 8 provides the following database management systems:

- MariaDB 10.3

- MariaDB 10.5 - available since RHEL 8.4

- MariaDB 10.11 - available since RHEL 8.10

- MySQL 8.0

- PostgreSQL 10

- PostgreSQL 9.6

- PostgreSQL 12 - available since RHEL 8.1.1

- PostgreSQL 13 - available since RHEL 8.4

- PostgreSQL 15 - available since RHEL 8.8

- PostgreSQL 16 - available since RHEL 8.10

12.2. Parameters affecting performance of database applications

The following kernel parameters affect performance of database applications.

- fs.aio-max-nr

Defines the maximum number of asynchronous I/O operations the system can handle on the server.

Note: Raising the fs.aio-max-nr parameter produces no additional changes beyond increasing the aio limit.

- fs.file-max

Defines the maximum number of file handles (temporary file names or IDs assigned to open files) that the system supports at any one time.

The kernel dynamically allocates file handles whenever a file handle is requested by an application. However, the kernel does not free these file handles when they are released by the application. It recycles these file handles instead. The total number of allocated file handles will increase over time even though the number of currently used file handles might be low.

- kernel.shmall

Defines the total number of shared memory pages that can be used system-wide. To use the entire main memory, the value of the kernel.shmall parameter should be ≤ total main memory size, expressed in pages.

- kernel.shmmax

Defines the maximum size in bytes of a single shared memory segment that a Linux process can allocate in its virtual address space.

- kernel.shmmni

Defines the maximum number of shared memory segments the database server is able to handle.

- net.ipv4.ip_local_port_range

The system uses this port range for programs that connect to a database server without specifying a port number.

- net.core.rmem_default

Defines the default receive socket memory through Transmission Control Protocol (TCP).

- net.core.rmem_max

Defines the maximum receive socket memory through Transmission Control Protocol (TCP).

- net.core.wmem_default

Defines the default send socket memory through Transmission Control Protocol (TCP).

- net.core.wmem_max

Defines the maximum send socket memory through Transmission Control Protocol (TCP).

- vm.dirty_bytes / vm.dirty_ratio

Defines a threshold, in bytes (vm.dirty_bytes) or as a percentage of dirty-able memory (vm.dirty_ratio), at which a process generating dirty data starts writeback itself within the write() function.

Only one of vm.dirty_bytes and vm.dirty_ratio can be specified at a time.

- vm.dirty_background_bytes / vm.dirty_background_ratio

Defines a threshold, in bytes (vm.dirty_background_bytes) or as a percentage of dirty-able memory (vm.dirty_background_ratio), at which the kernel tries to actively write dirty data to the hard disk.

Only one of vm.dirty_background_bytes and vm.dirty_background_ratio can be specified at a time.

- vm.dirty_writeback_centisecs

Defines the time interval between periodic wake-ups of the kernel threads responsible for writing dirty data to the hard disk.

This parameter is measured in hundredths of a second.

- vm.dirty_expire_centisecs

Defines the age at which dirty data is considered old enough to be written to the hard disk.

This parameter is measured in hundredths of a second.
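Two of the parameters above use units that need conversion: kernel.shmall counts pages, and the centisecs parameters count hundredths of a second. The following sketch shows both conversions with hypothetical helper functions. For example, a machine with 16 GiB (17179869184 bytes) of memory and 4096-byte pages has 17179869184 / 4096 = 4194304 pages, and 5 seconds corresponds to a value of 500.

```shell
#!/bin/bash
# Hypothetical helpers for the unit conversions used by the parameters above.

# kernel.shmall is counted in pages: the value that covers all of main memory
# is the memory size in bytes divided by the page size in bytes.
shmall_for_memory() {
    local mem_bytes="$1" page_size="$2"
    echo $(( mem_bytes / page_size ))
}

# vm.dirty_writeback_centisecs and vm.dirty_expire_centisecs are measured in
# hundredths of a second: convert whole seconds to centiseconds.
seconds_to_centisecs() {
    echo $(( $1 * 100 ))
}
```

To compute the page count for the current machine, read MemTotal (reported in kB) from /proc/meminfo and the page size from getconf:

# mem=$(( $(awk '/^MemTotal:/ {print $2}' /proc/meminfo) * 1024 ))
# shmall_for_memory "$mem" "$(getconf PAGE_SIZE)"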

Chapter 13. Getting started with kernel logging

Log files provide messages about the system, including the kernel, services, and applications running on it. The syslog service provides native support for logging in Red Hat Enterprise Linux. Various utilities use this system to record events and organize them into log files. These files are useful when auditing the operating system or troubleshooting problems.

13.1. What is the kernel ring buffer

The kernel ring buffer captures kernel messages, including those from early boot, which prevents their loss during startup. This cyclic buffer stores the printk() output, which you can view with the dmesg command or save to permanent logs by using the syslog service.

The ring buffer is a cyclic data structure that has a fixed size, and is hard-coded into the kernel. Users can display data stored in the kernel ring buffer through the dmesg command or the /var/log/boot.log file. When the ring buffer is full, the new data overwrites the old.
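The overwrite behavior of a fixed-size cyclic buffer can be illustrated with a few lines of bash. This is a conceptual sketch only, with a hypothetical three-slot buffer and hypothetical function names; the kernel's own log buffer uses a different, more elaborate implementation. After four writes, the oldest entry has been overwritten and only the three newest remain.

```shell
#!/bin/bash
# Conceptual sketch only: a fixed-size ring buffer built on a bash array.
# It shows how new entries overwrite the oldest ones once the buffer is full.
# This is NOT how the kernel implements its log buffer.
RING_SIZE=3
ring=()
ring_next=0    # index of the slot that the next write uses
ring_count=0   # number of slots currently filled

ring_write() {
    ring[ring_next]="$1"                          # overwrite the oldest slot when full
    ring_next=$(( (ring_next + 1) % RING_SIZE ))  # wrap around at the end
    if [ "$ring_count" -lt "$RING_SIZE" ]; then
        ring_count=$(( ring_count + 1 ))
    fi
}

# Print the stored entries from oldest to newest, one per line.
ring_dump() {
    local start=$(( (ring_next - ring_count + RING_SIZE) % RING_SIZE )) i
    for (( i = 0; i < ring_count; i++ )); do
        echo "${ring[(start + i) % RING_SIZE]}"
    done
}
```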

13.2. Role of printk on log-levels and kernel logging

The kernel assigns a log-level to every message to indicate its importance. While the ring buffer collects all messages, the kernel.printk parameter determines which messages appear on the console, which effectively filters output based on severity.

The log-level values break down in this order:

- 0: Kernel emergency. The system is unusable.

- 1: Kernel alert. Action must be taken immediately.

- 2: Condition of the kernel is considered critical.

- 3: General kernel error condition.

- 4: General kernel warning condition.

- 5: Kernel notice of a normal but significant condition.

- 6: Kernel informational message.

- 7: Kernel debug-level messages.

By default, kernel.printk in RHEL 8 has the following values:

# sysctl kernel.printk

kernel.printk = 7 4 1 7

The four values define the following, in order:

- Console log-level: messages with a log-level numerically lower than this value (that is, a higher priority) are printed to the console.

- Default log-level for messages without an explicit log-level attached to them.

- Minimum console log-level: the lowest value to which the console log-level can be set.

- Default console log-level: the value of the console log-level at boot time.

Each of these values defines a different rule for handling error messages.

The default 7 4 1 7 printk value allows for better debugging of kernel activity. However, when coupled with a serial console, this printk setting might cause intense I/O bursts that might lead to a RHEL system becoming temporarily unresponsive. To avoid these situations, setting a printk value of 4 4 1 7 typically works, but at the expense of losing the extra debugging information.

Also note that certain kernel command line parameters, such as quiet or debug, change the default kernel.printk values.
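To see which messages a given kernel.printk value lets through, note that a message reaches the console when its log-level is numerically lower than the console log-level, the first of the four values. The following sketch uses a hypothetical printk_console_levels function to list those log-levels. With 7 4 1 7, levels 0 to 6 reach the console; with 4 4 1 7, only levels 0 to 3 (emergency through error) do.

```shell
#!/bin/bash
# Hypothetical helper: given a kernel.printk value such as "7 4 1 7", print the
# log-levels (0-7) that reach the console. A message is printed when its
# log-level is numerically lower than the console log-level (the first field).
printk_console_levels() {
    local console_level rest levels="" lvl
    read -r console_level rest <<< "$1"   # splits on spaces or tabs
    for (( lvl = 0; lvl < console_level && lvl <= 7; lvl++ )); do
        levels+="$lvl "
    done
    echo "${levels% }"                    # drop the trailing space
}
```

To decode the current setting:

# printk_console_levels "$(sysctl -n kernel.printk)"

To try the 4 4 1 7 setting at runtime, you can use # sysctl -w kernel.printk="4 4 1 7". A value set this way does not persist across reboots.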

Chapter 14. Installing kdump

The kdump service is installed and activated by default on new RHEL 8 installations.

14.1. What is kdump

kdump is a crash dumping mechanism that generates a vmcore file containing the system memory contents for analysis. It uses kexec to boot into a second kernel, loaded into reserved memory, without rebooting the system. As a result, the memory of the crashed kernel is captured accurately.

A kernel crash dump can be the only information available if a system failure occurs. Therefore, an operational kdump is important in mission-critical environments. Red Hat recommends regularly updating and testing kexec-tools as part of your normal kernel update cycle. This is especially important when you install new kernel features.

If you have multiple kernels on a machine, you can enable kdump for all installed kernels or for specified kernels only. When you install kdump, the system creates a default /etc/kdump.conf file. /etc/kdump.conf includes the default minimum kdump configuration, which you can edit to customize the kdump configuration.

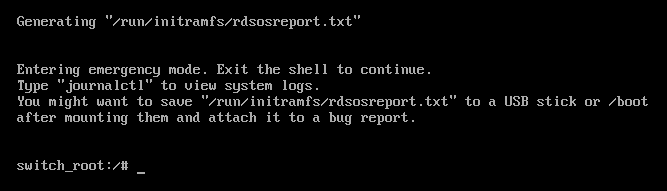

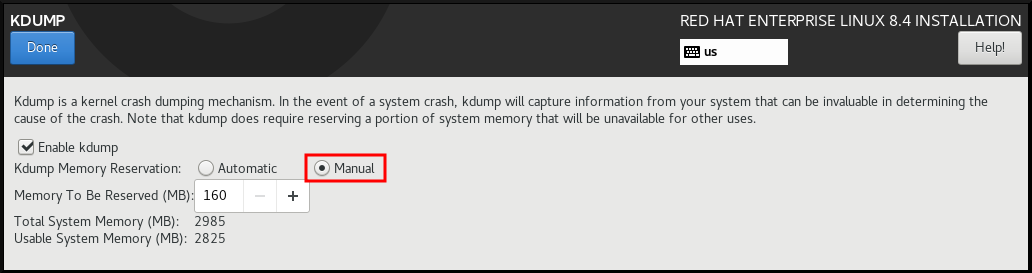

14.2. Installing kdump using Anaconda

The Anaconda installer provides a graphical interface screen for kdump configuration during an interactive installation. You can enable kdump and reserve the required amount of memory.

Procedure

On the Anaconda installer, click KDUMP and enable kdump:

- In Kdump Memory Reservation, select Manual if you must customize the memory reserve.

- In KDUMP > Memory To Be Reserved (MB), set the required memory reserve for kdump.

14.3. Installing kdump on the command line

Some installation options, such as custom Kickstart installations, might not install or enable kdump by default. In this case, use the following procedure to enable kdump.

Prerequisites

- An active RHEL subscription.

- A repository containing the kexec-tools package for your system CPU architecture.

- Fulfilled requirements for kdump configurations and targets. For details, see Supported kdump configurations and targets.

Procedure

Check if kdump is installed on your system:

# rpm -q kexec-tools

Output if the package is installed:

kexec-tools-2.0.17-11.el8.x86_64

Output if the package is not installed:

package kexec-tools is not installed

Install kdump and other necessary packages:

# dnf install kexec-tools
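The check and the installation steps can be combined into a single idempotent step, for example in a provisioning script. The following is a minimal sketch with a hypothetical ensure_kexec_tools function. It relies on rpm -q returning a non-zero exit status when the package is not installed, and it adds the -y option so that dnf does not prompt for confirmation. Run it as root.

```shell
#!/bin/bash
# Hypothetical wrapper: install kexec-tools only if the RPM database does not
# already list it. rpm -q exits with a non-zero status for a missing package.
ensure_kexec_tools() {
    if rpm -q kexec-tools >/dev/null 2>&1; then
        return 0                   # already installed, nothing to do
    fi
    dnf install -y kexec-tools     # -y answers the confirmation prompt
}
```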