Using image mode for RHEL to build, deploy, and manage operating systems

Using RHEL bootc images on Red Hat Enterprise Linux 9

Abstract

Providing feedback on Red Hat documentation

We are committed to providing high-quality documentation and value your feedback. To help us improve, you can submit suggestions or report errors through the Red Hat Jira tracking system.

Procedure

- Log in to the Jira website. If you do not have an account, select the option to create one.

- Click Create in the top navigation bar.

- Enter a descriptive title in the Summary field.

- Enter your suggestion for improvement in the Description field. Include links to the relevant parts of the documentation.

- Click Create at the bottom of the dialog.

Chapter 1. Introducing image mode for RHEL

Use image mode for RHEL to build, test, and deploy operating systems by using the same tools and techniques as application containers. Image mode for RHEL is available by using the registry.redhat.io/rhel9/rhel-bootc bootc image. The RHEL bootc images differ from the existing application Universal Base Images (UBI) in that they contain additional components necessary to boot that were traditionally excluded, such as the kernel, initrd, boot loader, and firmware.

The rhel-bootc container image and user-created container images based on it are subject to the Red Hat Enterprise Linux end user license agreement (EULA). You are not allowed to publicly redistribute these images.

Red Hat provides bootc images for the following computer architectures:

- AMD and Intel 64-bit architectures (x86-64-v2)

- The 64-bit ARM architecture (ARMv8.0-A)

- IBM Power Systems 64-bit Little Endian architecture (ppc64le)

- IBM Z 64-bit architecture (s390x)

Bootc supports the following container image formats and disk image formats:

| Image type | Target environment |
|---|---|
| OCI container image | Physical, virtualized, cloud, and edge environments. |
| ami | Amazon Machine Image. |
| qcow2 | QEMU (targeted for environments such as Red Hat OpenStack, Red Hat OpenStack services for OpenShift, and OpenShift Virtualization), Libvirt (RHEL). |
| vmdk | VMDK for vSphere. |
| anaconda-iso | An unattended Anaconda installer that installs to the first disk found. |
| raw | Unformatted raw disk. Also supported in QEMU and Libvirt. |
| vhd | VHD for Virtual PC, among others. |
| gce | Google Compute Engine (GCE) environment. |

Containers help streamline the lifecycle of a RHEL system by offering the following possibilities:

- Building container images

- You can configure your operating system at build time by modifying the Containerfile. Image mode for RHEL is available by using the registry.redhat.io/rhel9/rhel-bootc container image. You can use Podman, OpenShift Container Platform, or other standard container build tools to manage your containers and container images. You can automate the build process by using CI/CD pipelines.

- Versioning, mirroring, and testing container images

- You can version, mirror, introspect, and sign your derived bootc image by using any container tools such as Podman or OpenShift Container Platform.

- Deploying container images to the target environment

- You have several options for deploying your image:

  - Anaconda: the installation program used by RHEL. You can deploy all image types to the target environment by using Anaconda and Kickstart to automate the installation process.

  - bootc-image-builder: a containerized tool that converts the container image to different types of disk images, and optionally uploads them to an image registry or object storage.

  - bootc: a tool responsible for fetching container images from a container registry and installing them to a system, updating the operating system, or switching from an existing ostree-based system. The RHEL bootc image contains the bootc utility by default and works with all image types. However, rpm-ostree is not supported and must not be used to make changes.

- Updating your operating system

- The system supports in-place transactional updates with rollback after deployment. Automatic updates are on by default. A systemd service unit and a systemd timer unit check the container registry for updates and apply them to the system. Because the updates are transactional, a reboot is required to apply them. For environments that require more sophisticated or scheduled rollouts, disable automatic updates and use the bootc utility to update your operating system.
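The item on updates recommends disabling automatic updates for scheduled rollouts. The following is a minimal sketch of one way to do that at build time, not a documented procedure. It assumes that the automatic update timer is the bootc-fetch-apply-updates.timer unit shipped with bootc; the file name Containerfile.no-auto-update is hypothetical. The script only writes and prints the file; it does not build an image.

```shell
#!/bin/sh
set -eu

# Write a derived Containerfile that turns off automatic updates at build time.
# Assumption: bootc-fetch-apply-updates.timer is the timer unit that drives automatic updates.
cat > Containerfile.no-auto-update <<'EOF'
FROM registry.redhat.io/rhel9/rhel-bootc:latest
RUN systemctl mask bootc-fetch-apply-updates.timer
EOF

# On a deployed system, an administrator would then update manually, for example:
#   sudo bootc upgrade       # fetch and stage the new image
#   sudo systemctl reboot    # boot into the staged image
#   sudo bootc rollback      # return to the previous deployment if needed
cat Containerfile.no-auto-update
```

Keeping the choice in the Containerfile means that every system deployed from the image inherits the same update policy.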

RHEL has two deployment modes. Both provide the same stability, reliability, and performance during deployment. They differ as follows:

- Package mode: You can build package-based images and OSTree images by using RHEL image builder, and you can manage the package mode images by using composer-cli or the web console. The operating system uses RPM packages and is updated by using the dnf package manager. The root filesystem is mutable. However, the operating system cannot be managed as a containerized application. See the Composing a customized RHEL system image product documentation.

- Image mode: A container-native approach to build, deploy, and manage RHEL. The same RPM packages are delivered as a base image and updates are deployed as a container image. The root filesystem is immutable by default, except for /etc and /var, with most content coming from the container image.

You can choose to use either the Image mode or the Package mode deployment to build, test, and share your operating system. Image mode additionally enables you to manage your operating system in the same way as any other containerized application.

1.1. Benefits of image mode for RHEL

The benefits of image mode for RHEL occur across the lifecycle of a system. The following list contains some of the most important advantages:

- Container images are easier to understand and use than other image formats and are fast to build

- Containerfiles, also known as Dockerfiles, provide a straightforward approach to defining the content and build instructions for an image. Container images are often significantly faster to build and iterate on compared to other image creation tools.

- Consolidate process, infrastructure, and release artifacts

- As you distribute applications as containers, you can use the same infrastructure and processes to manage the underlying operating system.

- Immutable updates

- Just as containerized applications are updated in an immutable way, with image mode for RHEL the operating system is updated immutably too. You can boot into updates and roll back when needed, in the same way that you do on rpm-ostree systems.

The use of rpm-ostree to make changes, or install content, is not supported.

- Portability across hybrid cloud environments

- You can use bootc images across physical, virtualized, cloud, and edge environments.

Although containers provide the foundation to build, transport, and run images, it is important to understand that after you deploy these bootc images, either by using an installation mechanism or by converting them to a disk image, the system does not run as a container.

1.2. Prerequisites

- You have a subscribed RHEL 9 system. For more information, see the Getting Started with RHEL System Registration documentation.

- You have a container registry. You can create your registry locally or create a free account on the Quay.io service. To create the Quay.io account, see the Red Hat Quay.io page.

- You have a Red Hat account with either production or developer subscriptions. No-cost developer subscriptions are available on the Red Hat Enterprise Linux Overview page.

- You have authenticated to registry.redhat.io. For more information, see the Red Hat Container Registry Authentication article.

Chapter 2. Building and testing RHEL bootc images

You can build and test RHEL container images by using Podman and Containerfiles to create, customize, and share bootable RHEL system images across environments efficiently. You can also use other tools, for example, OpenShift Container Platform. For more examples of configuring RHEL systems by using containers, see the rhel-bootc-examples repository.

2.1. Building and configuring bootc-based images from a Containerfile

You can use a Containerfile to build and customize your own bootc-based image with the tools, configurations, and applications you need. While most standard instructions work, some are ignored when the image is installed on a system.

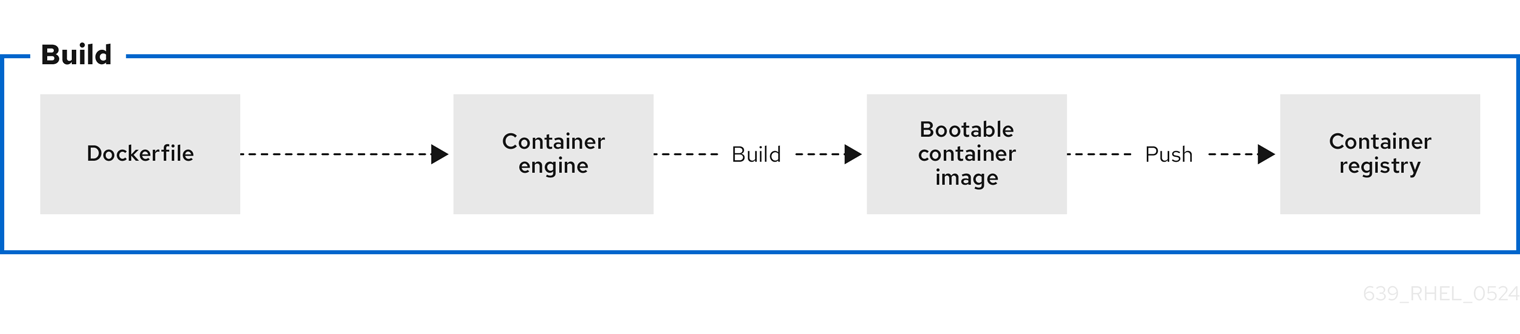

Figure 2.1. Building an image by using instructions from a Containerfile, testing the container, pushing an image to a registry, and sharing it with others

A general Containerfile structure is the following:

FROM registry.redhat.io/rhel9/rhel-bootc:latest

RUN dnf -y install [software] [dependencies] && dnf clean all

ADD [application]

ADD [configuration files]

RUN [config scripts]

The instructions that you can use in a Containerfile are the same as those that you can use in a Dockerfile.

However, the following instructions in a Containerfile are ignored when the rhel-9-bootc image is installed to a system:

- ENTRYPOINT and CMD (OCI: Entrypoint/Cmd): you can set CMD /sbin/init instead.

- ENV (OCI: Env): change the systemd configuration to configure the global system environment.

- EXPOSE (OCI: exposedPorts): it is independent of how the system firewall and network function at runtime.

- USER (OCI: User): configure individual services inside the RHEL bootc to run as unprivileged users instead.

The rhel-9-bootc container image reuses the OCI image format.

- The rhel-9-bootc container image ignores the container config section (Config) when it is installed to a system.

- The rhel-9-bootc container image does not ignore the container config section (Config) when you run this image by using container runtimes such as podman or docker.
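Because ENV and USER are ignored on an installed system, the equivalent settings have to live in systemd configuration that the image ships. The following sketch is an illustration under stated assumptions, not a documented procedure. It stages two systemd drop-in files in a build context directory. The directory name rootfs, the service name myapp.service, the user name myapp, and the variable APP_MODE are all hypothetical, and the myapp user would have to exist in the image. DefaultEnvironment= and User= are standard systemd options.

```shell
#!/bin/sh
set -eu

# Hypothetical staging directory that a Containerfile could later COPY into the image.
ROOTFS=./rootfs

# Global environment for all services: stands in for what ENV does in an application container.
mkdir -p "$ROOTFS/usr/lib/systemd/system.conf.d"
cat > "$ROOTFS/usr/lib/systemd/system.conf.d/50-env.conf" <<'EOF'
[Manager]
DefaultEnvironment=APP_MODE=production
EOF

# Per-service unprivileged user: stands in for what USER does in an application container.
mkdir -p "$ROOTFS/usr/lib/systemd/system/myapp.service.d"
cat > "$ROOTFS/usr/lib/systemd/system/myapp.service.d/10-user.conf" <<'EOF'
[Service]
User=myapp
EOF

# Show the staged files.
find "$ROOTFS" -type f
```

A Containerfile could then add the staged files with an instruction such as COPY rootfs/ /.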

2.2. Building reproducible container images with container tools

Red Hat Enterprise Linux (RHEL) supports reproducible container builds with Podman and Buildah, so that builds from consistent inputs produce consistent image layers over time. Reproducible builds matter for supply chain security, reliable software deployment, and effective debugging. Because image layers remain consistent, reproducible builds also reduce registry storage, create smaller update payloads, and enable faster downloads. Previously, challenges with tarball creation and escalating container image sizes led to increased storage burdens and unnecessary layer pulls, even when the underlying data remained unchanged, which hindered faster updates in environments such as rhel-bootc and RHEL AI.

2.3. Building a container image

To build an image by using instructions from a Containerfile, use the podman build command.

Prerequisites

- The container-tools meta-package is installed.

Procedure

Create a Containerfile:

    $ cat Containerfile
    FROM registry.redhat.io/rhel9/rhel-bootc:latest
    RUN dnf -y install cloud-init && \
        ln -s ../cloud-init.target /usr/lib/systemd/system/default.target.wants && \
        dnf clean all

This Containerfile example adds the cloud-init tool, so the system automatically fetches SSH keys, can run scripts from the infrastructure, and gathers configuration and secrets from the instance metadata. For example, you can use this container image for pre-generated AWS or KVM guest systems.

Build the <image> image by using the Containerfile in the current directory:

    $ podman build -t quay.io/<namespace>/<image>:<tag> .

Verification

List all images:

    $ podman images
    REPOSITORY          TAG      IMAGE ID       CREATED              SIZE
    localhost/<image>   latest   b28cd00741b3   About a minute ago   2.1 GB

2.4. Benefits of custom bootable images with multi-stage builds

The deployment image must include only the application and its required runtime, without adding any build tools or unnecessary libraries. To achieve this, use a two-stage Containerfile: one stage for building the artifacts and another for hosting the application.

With multi-stage builds, you use multiple FROM instructions in your Containerfile. Each FROM instruction can use a different base, and each of them begins a new stage of the build. You can selectively copy artifacts from one stage to another and exclude everything you do not need in the final image.

Multi-stage builds offer several advantages:

- Smaller image size

- By separating the build environment from the runtime environment, only the necessary files and dependencies are included in the final image, significantly reducing its size.

- Improved security

- Since build tools and unnecessary libraries are excluded from the final image, the attack surface is reduced, leading to a more secure container.

- Optimized performance

- A smaller image size means faster download, deployment, and startup times, improving the overall efficiency of the containerized application.

- Simplified maintenance

- With the build and runtime environments separated, the final image is cleaner and easier to maintain, containing only what is needed to run the application.

- Cleaner builds

- Multi-stage builds help avoid clutter from intermediate files, which could accumulate during the build process, ensuring that only essential artifacts make it into the final image.

- Resource efficiency

- The ability to build in one stage and discard unnecessary parts minimizes the use of storage and bandwidth during deployment.

- Better layer caching

- With clearly defined stages, Podman can efficiently cache the results of previous stages, which speeds up future builds.

The following Containerfile consists of two stages. The first stage, conventionally named builder, compiles a Go binary. The second stage copies the binary from the first stage. The default working directory for the go-toolset builder is /opt/app-root/src.

FROM registry.access.redhat.com/ubi9/go-toolset:latest as builder

RUN echo 'package main; import "fmt"; func main() { fmt.Println("hello world") }' > helloworld.go

RUN go build helloworld.go

FROM registry.redhat.io/rhel9/rhel-bootc:latest

COPY --from=builder /opt/app-root/src/helloworld /

CMD ["/helloworld"]

As a result, the final container image includes the helloworld binary but no data from the previous stage.

You can also use multi-stage builds in the following scenarios:

- Stopping at a specific build stage

- When you build your image, you can stop at a specified build stage. For example:

    $ podman build --target builder -t hello .

  You can use this approach to debug a specific build stage.

- Using an external image as a stage

- You can use the COPY --from instruction to copy from a separate image by using the local image name, a tag available locally or on a container registry, or a tag ID. For example:

    COPY --from=<image> <source_path> <destination_path>

- Using a previous stage as a new stage

- You can continue where a previous stage ended by using the FROM instruction. For example:

    FROM ubi9 AS stage1
    [...]
    FROM stage1 AS stage2
    [...]
    FROM ubi9 AS final-stage
    [...]

2.5. Running a container image

To run and test your container, use the podman run command.

Prerequisites

- The container-tools meta-package is installed.

Procedure

Run the container by using the podman run command with the appropriate options. For example, to run the container named mybootc based on the quay.io/<namespace>/<image>:<tag> container image:

    $ podman run -it --rm --name mybootc quay.io/<namespace>/<image>:<tag> /bin/bash

- The -i option creates an interactive session. Without the -i option, the shell opens and then exits.

- The -t option opens a terminal session. Without the -t option, the shell stays open, but you cannot type anything to the shell.

- The --rm option removes the container after it exits.

Verification

List all running containers:

    $ podman ps
    CONTAINER ID  IMAGE                              COMMAND     CREATED        STATUS            PORTS  NAMES
    7ccd6001166e  quay.io/<namespace>/<image>:<tag>  /sbin/init  6 seconds ago  Up 5 seconds ago         mybootc

2.6. Overriding the version of bootc images

Red Hat Enterprise Linux (RHEL) base images, Universal Base Images (UBI), and rhel-bootc use version numbers that track only the major operating system version. To define a specific version number for a derived image, you can override this value by adding the version label to your Containerfile.

Prerequisites

- The container-tools meta-package is installed.

Procedure

Create a Containerfile:

    FROM registry.redhat.io/rhel9/rhel-bootc:latest

    # In this example, the custom operating system has its own
    # version scheme.
    # The operating system major version is copied
    # and a sub-version of it is added, which represents a point-in-time
    # snapshot of the base OS content.
    # This just changes the output of bootc status. A deeper level
    # of customization is available by also changing the contents of /usr/lib/os-release.

    # Define the custom version and release metadata
    LABEL org.opencontainers.image.version="10.1.1"

Build the <image> image by using the Containerfile from the current directory:

    $ podman build -t quay.io/<namespace>/<image>:<tag> .

Verification

Verify that the override was applied:

$ podman inspect <image-name> --format '{{index .Labels "org.opencontainers.image.version"}}'
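A version scheme that copies the major version and appends a point-in-time sub-version can be generated rather than typed by hand. The following sketch assumes one possible scheme, the major version followed by the UTC build date. The scheme itself, the MAJOR value, and the file name Containerfile.versioned are illustrative assumptions, not part of the procedure above.

```shell
#!/bin/sh
set -eu

# Assumed version scheme: <major>.<UTC build date in YYYYMMDD form>.
MAJOR=9
SNAPSHOT=$(date -u +%Y%m%d)
VERSION="${MAJOR}.${SNAPSHOT}"

# Generate a Containerfile that carries the computed version label.
cat > Containerfile.versioned <<EOF
FROM registry.redhat.io/rhel9/rhel-bootc:latest
LABEL org.opencontainers.image.version="${VERSION}"
EOF

cat Containerfile.versioned
```

You could then pass the generated file to podman build with the -f option and confirm the label with the podman inspect command shown above.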

2.7. Pushing a container image to the registry

To push an image to your own registry, or a third-party registry, and share it with others, use the podman push command. The following procedure uses the Red Hat Quay registry as an example.

Prerequisites

- The container-tools meta-package is installed.

- An image is built and available on the local system.

- You have created the Red Hat Quay registry. For more information, see Proof of Concept - Deploying Red Hat Quay.

Procedure

Push the quay.io/<namespace>/<image>:<tag> container image from your local storage to the registry:

    $ podman push quay.io/<namespace>/<image>:<tag>

Chapter 3. Building and managing logically bound images

With logically bound images, you can use container images whose lifecycle is bound to the lifecycle of the base bootc image. This helps combine different operational processes for applications and operating systems. The container application images are referenced from the base image as .image files or an equivalent. Consequently, you can manage multiple container images for system installations.

You can use containers for lifecycle-bound workloads, such as security agents and monitoring tools. You can also upgrade such workloads by using the bootc upgrade command.

3.1. Introduction to logically bound images

By using logically bound images, you can associate container application images to a base bootc system image. By default, application containers as executed by, for example, podman have a lifecycle independent of host upgrades; they can be added or removed at any time, and are typically fetched on demand after booting if the container image is not present.

A key benefit of logically bound images is that the application containers bound in this way have a lifecycle tied to the host upgrade and are available before the host reboots into the new operating system. The container images bound in this way are present as long as a bootc container references them.

The following are examples of lifecycle-bound workloads, which are usually not updated outside of the host:

- Logging, for example, journald→remote log forwarder container

- Monitoring, for example, Prometheus node_exporter

- Configuration management agents

- Security agents

For these types of workloads, it is often important that the container starts very early in the boot process, for example before networking is available. Logically bound images enable you to start containers, often through systemd, with the same reliability as using ExecStart= to run a binary in the base bootc image.

The term logically bound can also be contrasted with another model of physically bound images, where application container content is physically stored in the bootc container image. A key advantage of logically bound images over physically bound images is that you can update the bootc system without re-downloading application container images that have not changed.

When using logically bound images, you must manage multiple container images for the system to install the logically bound images. This is an advantage and also a disadvantage. For example, for a disconnected or offline installation, you must mirror all the containers, not just one. The application images are only referenced from the base image as .image files or an equivalent.

- Logically bound images installation

- When you run bootc install, logically bound images must be present in the default /var/lib/containers container store. The images are copied into the target system and are present directly at boot, alongside the bootc base image.

- Logically bound images lifecycle

- Logically bound images are referenced by the bootable container and have guaranteed availability when the bootc-based server starts. The image is always upgraded by using bootc upgrade and is available as read-only to other processes, such as Podman.

- Logically bound images management on upgrades, rollbacks, and garbage collection

  - During upgrades, the logically bound images are managed exclusively by bootc.

  - During rollbacks, the logically bound images corresponding to rollback deployments are retained.

  - bootc performs garbage collection of unused bound images.

3.2. Creating logically bound images

You can create logically bound images by using Podman Quadlet .image or .container files.

Prerequisites

- The container-tools meta-package is installed.

Procedure

Select the image that you want to bind logically.

Create a Containerfile:

    $ cat Containerfile
    FROM quay.io/<namespace>/<image>:latest
    COPY ./<app_1>.image /usr/share/containers/systemd/<app_1>.image
    COPY ./<app_2>.container /usr/share/containers/systemd/<app_2>.container
    RUN ln -s /usr/share/containers/systemd/<app_1>.image \
        /usr/lib/bootc/bound-images.d/<app_1>.image && \
        ln -s /usr/share/containers/systemd/<app_2>.container \
        /usr/lib/bootc/bound-images.d/<app_2>.container

In the .container definition, use:

    GlobalArgs=--storage-opt=additionalimagestore=/usr/lib/bootc/storage

In the Containerfile example, the image is selected to be logically bound by creating a symlink in the /usr/lib/bootc/bound-images.d directory pointing to either an .image or a .container file.

Run the bootc upgrade command:

    $ bootc upgrade

The bootc upgrade command performs the following overall steps:

- Fetches the new base image from the image repository. See Configuring container pull secrets (https://docs.redhat.com/en/documentation/red_hat_enterprise_linux/9/html/using_image_mode_for_rhel_to_build_deploy_and_manage_operating_systems/managing-users-groups-ssh-key-and-secrets-in-image-mode-for-rhel#configuring-container-pull-secrets_managing-users-groups-ssh-key-and-secrets-in-image-mode-for-rhel).

- Reads the new base image root file system to discover logically bound images.

- Automatically pulls any discovered logically bound images defined in the new bootc image into the bootc-owned /usr/lib/bootc/storage image storage.

Make the bound images available to container runtimes such as Podman. To do so, you must explicitly configure the runtime to use the bootc storage as an "additional image store". For example:

    $ podman --storage-opt=additionalimagestore=/usr/lib/bootc/storage run <image>

Important: Do not attempt to globally enable the /usr/lib/bootc/storage image storage in /etc/containers/storage.conf. Only use the bootc storage for logically bound images.

The bootc image store is owned by bootc. The logically bound images will be garbage collected when they are no longer referenced by a file in the /usr/lib/bootc/bound-images.d directory.
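The procedure refers to Quadlet .image and .container files without showing their contents. The following sketch writes a minimal pair of such files. The image reference quay.io/example/monitoring-agent:1.0 and the file names are hypothetical placeholders. The [Image] and [Container] sections with the Image= key follow the Podman Quadlet unit format, and the GlobalArgs= line is the one given in the procedure.

```shell
#!/bin/sh
set -eu

# Hypothetical application image reference; replace it with your own.
APP_IMAGE='quay.io/example/monitoring-agent:1.0'

# Quadlet .image unit: declares the image so that it can be discovered and pulled.
cat > monitoring-agent.image <<EOF
[Image]
Image=${APP_IMAGE}
EOF

# Quadlet .container unit: runs the image and reads it from the bootc-owned store.
cat > monitoring-agent.container <<EOF
[Unit]
Description=Monitoring agent (logically bound image)

[Container]
Image=${APP_IMAGE}
GlobalArgs=--storage-opt=additionalimagestore=/usr/lib/bootc/storage

[Install]
WantedBy=default.target
EOF

ls monitoring-agent.image monitoring-agent.container
```

Either file can then take the place of <app_1>.image or <app_2>.container in the Containerfile from the procedure.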

Chapter 4. Building and managing physically bound images

Physically bound images embed all necessary application containers, configurations, and Quadlet files directly into the root filesystem during the bootc build process. As a result, the entire system ships as one integrated artifact, which enables disconnected and offline deployment and makes applications available immediately on boot.

4.1. Introduction to physically bound images

RHEL bootc physically binds images to the base operating system image by directly embedding containerized applications. This creates a fully self-contained and immutable system. The underlying mechanism resembles that of logically bound images because physically bound images can be pre-pulled during image build time and made available at runtime.

Physically bound images contain the data in the final tar of the image, while logically bound images only reference the image and pull it during a bootc upgrade. Physically bound images offer strict offline reliability, while logically bound images accelerate development by avoiding an update to the entire base image for a small application change.

The key benefits of physically bound images include:

- Because the base operating system and all required application containers ship as one artifact, you have pre-installed application availability right at boot.

- You gain resilience against registry downtime, simplify your offline deployments, and secure atomic updates for both the operating system and applications.

4.2. Creating physically bound images by using system roles

You can use RHEL system roles to create physically bound images. Because the roles run during the image build, they must detect the non-booted environment and adjust their behavior. For example, they must not try to start systemd units or talk to network services; instead, they must defer all of that to the first boot.

Prerequisites

- You have prepared the control node and the managed nodes.

- The account you use to connect to the managed nodes has sudo permissions for these nodes.

- You are logged in to the control node as a user who can run playbooks on the managed nodes.

Procedure

Create a playbook file, for example ./setup.yml, with the following content:

    ---
    - name: Configure physically bound images with RHEL system roles
      hosts: all
      vars:
        podman_registries_conf:
          unqualified-search-registries:
            - "registry.access.redhat.com"
            - "docker.io"
            - "my-company-registry.com"
          registry:
            - location: "my-company-registry.com"
            - location: "my-local-registry:5000"
              insecure: true
        podman_quadlet_specs:
          - file_src: my-quadlet.container
            state: started
      tasks:
        - name: Run the podman role
          include_role:
            name: redhat.rhel_system_roles.podman

The Podman system role in the Ansible Playbook configures the system with registries and a Quadlet application.

Validate the playbook syntax:

    $ ansible-playbook --syntax-check ./setup.yml

This command only validates the syntax and does not protect against a wrong but valid configuration.

Create a my-quadlet.container file, matching the file_src value in the playbook, to configure the container application, for example:

    [Install]
    WantedBy=default.target

    [Container]
    Image=quay.io/my_org/my_image:20260326
    ContainerName=my-quadlet

Create a Containerfile file with the following content:

    FROM quay.io/centos-bootc/centos-bootc:stream10
    RUN dnf -y install ansible-core rhel-system-roles
    COPY ./setup.yml .
    COPY ./my-quadlet.container .
    RUN ansible-playbook -c local setup.yml

You perform every action inside the image build, running the playbooks against localhost. You can use a multi-stage build to keep Ansible and the roles out of your final image. Because this process is entirely self-contained, it works well in your automatic container build pipelines.

Note: The images must be copied over before any container or service (for example, a Quadlet) that depends on such an image is started.

Build the bootc image, for example:

    $ podman build -t quay.io/<namespace>/<image>:<tag> .

Chapter 5. Creating bootc-compatible base disk images by using bootc-image-builder

The bootc-image-builder is a containerized tool that creates disk images from bootc images. You can use the images that you build to deploy disk images in different environments, such as edge, server, and cloud.

5.1. Introducing image mode for RHEL for bootc-image-builder

By using the bootc-image-builder tool, you can convert bootc images into disk images for a variety of different platforms and formats. Converting bootc images into disk images is equivalent to installing a bootc image. After you deploy these disk images to the target environment, you can update them directly from the container registry.

You can build your base images by using one of the following methods:

- Use a local RHEL system, install the Podman tool, and build your image locally. Then, you can push the images to your private registry.

- Use a CI/CD pipeline: Create a CI/CD pipeline that uses a RHEL-based system to build images and push them to your private registry.

The bootc-image-builder tool supports generating the following image types:

- Disk image formats, such as ISO, which are suitable for disconnected installations.

- Virtual disk image formats, such as:

  - QEMU copy-on-write (QCOW2)

  - Amazon Machine Image (AMI)

  - Unformatted raw disk (Raw)

  - Virtual Machine Image (VMI)

bootc-image-builder uses the local container storage by default. The tool cannot pull container images from remote registries itself. To build disk images, you must make the base bootc container image available in the system's local container storage and mount that storage into the bootc-image-builder container, so that the tool can use containers from the system storage.

Deploying from a container image is beneficial when you run VMs or servers because you can achieve the same installation result. That consistency extends across multiple different image types and platforms when you build them from the same container image. Consequently, you can minimize the effort in maintaining operating system images across platforms. You can also update systems that you deploy from these disk images by using the bootc tool, instead of re-creating and uploading new disk images with bootc-image-builder.

Although you can deploy a rhel-9-bootc image directly, you can also create your own customized images that are derived from this bootc image. The bootc-image-builder tool takes the rhel-9-bootc OCI container image as an input.

Generic base container images do not include any default passwords or SSH keys. Also, the disk images that you create by using the bootc-image-builder tool do not contain the tools that are available in common disk images, such as cloud-init. These disk images are transformed container images only.

5.2. Installing bootc-image-builder

The bootc-image-builder is intended to be used as a container and it is not available as an RPM package in RHEL. To install the bootc-image-builder, use the Red Hat Container Registry.

Prerequisites

- The container-tools meta-package is installed. The meta-package contains all container tools, such as Podman, Buildah, and Skopeo.

- You are authenticated to registry.redhat.io. For details, see Red Hat Container Registry Authentication.

Procedure

Log in to authenticate to registry.redhat.io:

    $ sudo podman login registry.redhat.io

Install the bootc-image-builder tool:

    $ sudo podman pull registry.redhat.io/rhel9/bootc-image-builder

Verification

List all images pulled to your local system:

    $ sudo podman images
    REPOSITORY                                     TAG      IMAGE ID       CREATED        SIZE
    registry.redhat.io/rhel9/bootc-image-builder   latest   b361f3e845ea   24 hours ago   676 MB

5.3. Supported image customizations for a configuration file

You can use a build configuration file in the TOML or JSON format to add customizations for your resulting disk image.

The container directory maps the config file to /config.toml. The customizations object defines the image modifications. Additionally, you can embed a build configuration file, either as config.json or config.toml in the /usr/lib/bootc-image-builder directory. The system automatically uses any file system or disk customizations found in this directory by default. This behavior can be overridden if you explicitly specify a different customization.

For the JSON format, you can also pass the configuration by using stdin when you use the --config argument.
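To make the mapping of the configuration file to /config.toml concrete, the following sketch writes a small config.toml and composes a bootc-image-builder invocation that mounts it. This is an illustration under stated assumptions, not the definitive command line: the qcow2 type, the output directory, and the image reference placeholder are choices made for this example, and the script only prints the command so that you can review it before running it yourself.

```shell
#!/bin/sh
set -eu

# Minimal build configuration; the user name and group are placeholders.
cat > config.toml <<'EOF'
[[customizations.user]]
name = "user"
groups = ["wheel"]
EOF

# Directory on the host that receives the generated disk image.
mkdir -p output

# Placeholder image reference; replace it with your own bootc image.
IMAGE='quay.io/<namespace>/<image>:<tag>'

# Compose the invocation without running it.
# The config file is mounted read-only at /config.toml, and the host
# container storage is mounted so that the tool can find the bootc image.
BIB_CMD="sudo podman run --rm -it --privileged \
  --security-opt label=type:unconfined_t \
  -v ./config.toml:/config.toml:ro \
  -v ./output:/output \
  -v /var/lib/containers/storage:/var/lib/containers/storage \
  registry.redhat.io/rhel9/bootc-image-builder:latest \
  --type qcow2 --config /config.toml $IMAGE"

# Print the command for review.
echo "$BIB_CMD"
```

Because the bootc image must already be in the root user's local container storage, you would typically pull or build it with sudo podman before running the printed command.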

- User customization

Add a user to your disk image, and optionally set an SSH key. All fields for this section are optional except for the name.

TOML:

    [[customizations.user]]
    name = "user"
    password = "password"
    key = "ssh-rsa AAA ... user@email.com"
    groups = ["wheel"]

JSON:

    {
      "customizations": {
        "user": [
          {
            "name": "user",
            "password": "password",
            "key": "ssh-rsa AAA ... user@email.com",
            "groups": [
              "wheel",
              "admins"
            ]
          }
        ]
      }
    }

- Kernel configuration

You can customize the kernel boot parameters in the configuration file.

TOML:

    [customizations.kernel]
    name = "kernel-debug"
    append = "nosmt=force"

JSON:

    {
      "customizations": {
        "kernel": {
          "append": "mitigations=auto,nosmt"
        }
      }
    }

- File systems configuration

You can use the file system section of the customizations to set the minimum size of the base partitions, such as / and /boot, and to create extra partitions with mount points under /var.

TOML:

    [[customizations.filesystem]]
    mountpoint = "/"
    minsize = "10 GiB"

    [[customizations.filesystem]]
    mountpoint = "/var/data"
    minsize = "20 GiB"

JSON:

    {
      "customizations": {
        "filesystem": [
          {
            "mountpoint": "/",
            "minsize": "10 GiB"
          },
          {
            "mountpoint": "/var/data",
            "minsize": "20 GiB"
          }
        ]
      }
    }

- File system type interaction with rootfs

The root file system type (--rootfs) argument overrides the default value from the source container. It also sets the file system types for all additional mount points for the ext4, xfs, and btrfs types.

For supported mount points and sizes, the following restrictions and rules apply, unless the rootfs is btrfs:

  - You can specify / to set the minimum size of the root file system. The final size of the file system, mounted at /sysroot on a booted system, equals the value you specify in this configuration or 2x the size of the base container, whichever is larger.

  - You can specify /boot to set the minimum size of the boot partition. You can also specify subdirectories of /var, but you cannot specify symlinks in /var. For example, /var/home and /var/run are symlinks and cannot be file systems on their own.

  - /var itself cannot be a mount point. The rootfs option defines the file system type for the root file system.

  - Currently, there is no support for creating btrfs subvolumes during build time. Therefore, if the rootfs is btrfs, no custom mount points are supported under /var. You can only configure / and /boot.

- Anaconda ISO (installer) configuration options

Create a Kickstart file that contains the installation commands of your choice. Then, add a Kickstart file to your ISO build to create a fully customized and automated installation medium.

Note: The following combined customizations are not supported: [customizations.user] and [customizations.installer.kickstart]. When you add a Kickstart, use a configuration file in the TOML format, because multi-line strings in the JSON format are prone to error.

TOML:

    [customizations.installer.kickstart]
    contents = """
    text --non-interactive
    zerombr
    clearpart --all --initlabel --disklabel=gpt
    autopart --noswap --type=lvm
    network --bootproto=dhcp --device=link --activate --onboot=on
    """

JSON:

    {
      "customizations": {
        "installer": {
          "kickstart": {
            "contents": "text --non-interactive\nzerombr\nclearpart --all --initlabel --disklabel=gpt\nautopart --noswap --type=lvm\nnetwork --bootproto=dhcp --device=link --activate --onboot=on"
          }
        }
      }
    }

Warning: bootc-image-builder does not add any Kickstart commands other than the command that installs the container image, which it adds automatically. See Creating Kickstart files for more information.
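The root file system sizing rule from the file systems configuration (the configured minimum or 2x the size of the base container, whichever is larger) can be sketched as a small calculation. The helper name root_fs_final_size_mib and the example sizes are assumptions made for illustration; the helper is not part of bootc-image-builder and works in whole MiB only.

```shell
#!/bin/sh
# Illustrative sketch only: root_fs_final_size_mib is a hypothetical helper,
# not a bootc-image-builder command. All sizes are whole MiB.
root_fs_final_size_mib() {
    requested_mib=$1    # minsize configured for "/"
    container_mib=$2    # size of the base container image
    doubled_mib=$((container_mib * 2))
    # The final size is the larger of the two values.
    if [ "$requested_mib" -ge "$doubled_mib" ]; then
        echo "$requested_mib"
    else
        echo "$doubled_mib"
    fi
}

# A 10 GiB request with a 1.5 GiB container keeps the requested size.
root_fs_final_size_mib 10240 1536
# A 2 GiB request with the same container is raised to 2x the container size.
root_fs_final_size_mib 2048 1536
```

A calculation like this can help you choose a minsize for / that the build does not silently enlarge.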

5.4. Creating QCOW2 images by using bootc-image-builder

Build a RHEL bootc image into a QEMU (QCOW2) image for the architecture that you are running the commands on.

The RHEL base image does not include a default user. Optionally, you can inject a user configuration by using the --config option to run the bootc-image-builder container. Alternatively, you can configure the base image with cloud-init to inject users and SSH keys on first boot. See Users and groups configuration - Injecting users and SSH keys by using cloud-init.

Prerequisites

- You have Podman installed on your host machine.

- You have root access to run the bootc-image-builder tool, and run the containers in --privileged mode, to build the images.

Procedure

Optional: Create a config.toml to configure user access, for example:

    [[customizations.user]]
    name = "user"
    password = "pass"
    key = "ssh-rsa AAA ... user@email.com"
    groups = ["wheel"]

Before running the container, initialize the output folder. Use the -p argument to ensure that the command does not fail if the directory already exists:

    $ mkdir -p ./output

Run bootc-image-builder. Optionally, if you want to use user access configuration, pass the config.toml as an argument.

Note: If you do not have the container storage mount, your image must be public.

The following example creates a public QCOW2 image. The image must be accessible from a registry, such as registry.redhat.io/rhel9/bootc-image-builder:latest.

    $ sudo podman run \
        --rm \
        --privileged \
        --pull=newer \
        --security-opt label=type:unconfined_t \
        -v ./config.toml:/config.toml:ro \
        -v ./output:/output \
        -v /var/lib/containers/storage:/var/lib/containers/storage \
        registry.redhat.io/rhel9/bootc-image-builder:latest \
        --type qcow2 \
        --config /config.toml \
        quay.io/<namespace>/<image>:<tag>

This example creates a private QCOW2 image from a local container.

    $ sudo podman run \
        --rm \
        -it \
        --privileged \
        --pull=newer \
        --security-opt label=type:unconfined_t \
        -v ./config.toml:/config.toml:ro \
        -v ./output:/output \
        -v /var/lib/containers/storage:/var/lib/containers/storage \
        registry.redhat.io/rhel9/bootc-image-builder:latest \
        --type qcow2 \
        --config /config.toml \
        quay.io/<namespace>/<image>:<tag>

You can find the .qcow2 image in the output folder.

5.5. Creating VMDK images by using bootc-image-builder

Create a Virtual Machine Disk (VMDK) from a bootc image and use it within VMware’s virtualization platforms, such as vSphere, or use it in Oracle VirtualBox.

Prerequisites

- You have Podman installed on your host machine.

- You have authenticated to the Red Hat Registry by using the podman login registry.redhat.io command.

- You have pulled the rhel9/bootc-image-builder container image.

Procedure

Create a Containerfile with the following content:

    FROM registry.redhat.io/rhel9/rhel-bootc:latest
    RUN dnf -y install cloud-init open-vm-tools && \
        ln -s ../cloud-init.target /usr/lib/systemd/system/default.target.wants && \
        rm -rf /var/{cache,log} /var/lib/{dnf,rhsm} && \
        systemctl enable vmtoolsd.service

Before running the container, initialize the output folder. Use the -p argument to ensure that the command does not fail if the directory already exists:

    $ mkdir -p ./output

Build the bootc image:

    # podman build . -t localhost/rhel-bootc-vmdk

Create a VMDK file from the previously created bootc image. The image must be accessible from a registry, such as registry.redhat.io/rhel9/bootc-image-builder:latest.

    # podman run \
        --rm \
        -it \
        --privileged \
        -v /var/lib/containers/storage:/var/lib/containers/storage \
        -v ./output:/output \
        --security-opt label=type:unconfined_t \
        --pull newer \
        registry.redhat.io/rhel9/bootc-image-builder:latest \
        --type vmdk \
        localhost/rhel-bootc-vmdk:latest

A VMDK disk file for the bootc image is stored in the output/vmdk directory.

5.6. Creating GCE images by using bootc-image-builder

Build a RHEL bootc image into a GCE image for the architecture that you are running the commands on. The RHEL base image does not include a default user. Optionally, you can inject a user configuration by using the --config option to run the bootc-image-builder container. Alternatively, you can configure the base image with cloud-init to inject users and SSH keys on first boot. See Users and groups configuration - Injecting users and SSH keys by using cloud-init.

Prerequisites

- You have Podman installed on your host machine.

- You have root access to run the bootc-image-builder tool, and run the containers in --privileged mode, to build the images.

Procedure

Optional: Create a config.toml to configure user access, for example:

    [[customizations.user]]
    name = "user"
    password = "pass"
    key = "ssh-rsa AAA ... user@email.com"
    groups = ["wheel"]

Before running the container, initialize the output folder. Use the -p argument to ensure that the command does not fail if the directory already exists:

    $ mkdir -p ./output

Run bootc-image-builder. Optionally, if you want to use user access configuration, pass the config.toml as an argument. The image must be accessible from a registry, such as registry.redhat.io/rhel9/bootc-image-builder:latest.

Note: If you do not have the container storage mount, your image must be public.

The following is an example of creating a gce image:

    $ sudo podman run \
        --rm \
        -it \
        --privileged \
        --pull=newer \
        --security-opt label=type:unconfined_t \
        -v ./config.toml:/config.toml \
        -v ./output:/output \
        -v /var/lib/containers/storage:/var/lib/containers/storage \
        registry.redhat.io/rhel9/bootc-image-builder:latest \
        --type gce \
        --config /config.toml \
        quay.io/<namespace>/<image>:<tag>

You can find the gce image in the output folder.

5.7. Creating AMI images by using bootc-image-builder and uploading it to AWS

Create an Amazon Machine Image (AMI) from a bootc image and use it to launch an Amazon Web Services (AWS) Amazon Elastic Compute Cloud (EC2) instance.

Prerequisites

- You have Podman installed on your host machine.

- You have an existing AWS S3 bucket within your AWS account.

- You have root access to run the bootc-image-builder tool, and run the containers in --privileged mode, to build the images.

- You have the vmimport service role configured on your account to import an AMI into your AWS account.

Procedure

Create a disk image from the bootc image.

- Configure the user details in the Containerfile. Make sure that you assign it with sudo access.

- Build a customized operating system image with the configured user from the Containerfile. It creates a default user with passwordless sudo access.

Optional: Configure the machine image with cloud-init. See Users and groups configuration - Injecting users and SSH keys by using cloud-init. The following is an example:

    FROM registry.redhat.io/rhel9/rhel-bootc:latest
    RUN dnf -y install cloud-init && \
        ln -s ../cloud-init.target /usr/lib/systemd/system/default.target.wants && \
        rm -rf /var/{cache,log} /var/lib/{dnf,rhsm}

Note: You can also use cloud-init to add users and additional configuration by using instance metadata.

Build the bootc image. For example, to deploy the image to an x86_64 AWS machine, use the following commands:

    $ podman build -t quay.io/<namespace>/<image>:<tag> .
    $ podman push quay.io/<namespace>/<image>:<tag>

Before running the container, initialize the output folder. Use the -p argument to ensure that the command does not fail if the directory already exists:

    $ mkdir -p ./output

Use the bootc-image-builder tool to create a public AMI image from the bootc container image. The image must be accessible from a registry, such as registry.redhat.io/rhel9/bootc-image-builder:latest.

    $ sudo podman run \
        --rm \
        -it \
        --privileged \
        --pull=newer \
        -v $HOME/.aws:/root/.aws:ro \
        -v /var/lib/containers/storage:/var/lib/containers/storage \
        --env AWS_PROFILE=default \
        registry.redhat.io/rhel9/bootc-image-builder:latest \
        --type ami \
        --aws-ami-name rhel-bootc-x86 \
        --aws-bucket rhel-bootc-bucket \
        --aws-region us-east-1 \
        quay.io/<namespace>/<image>:<tag>

Note: The following flags must be specified all together. If you do not specify any flag, the AMI is exported to your output directory.

  - --aws-ami-name - The name of the AMI image in AWS

  - --aws-bucket - The target S3 bucket name for intermediate storage when you are creating the AMI

  - --aws-region - The target region for AWS uploads

The bootc-image-builder tool builds an AMI image and uploads it to your AWS S3 bucket by using your AWS credentials to push and register an AMI image after building it.
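Because the three AWS flags must be specified all together or not at all, a wrapper script can check the combination before it starts a long build. The following is a minimal sketch; the function name aws_flags_consistent is hypothetical, and the check only mirrors the rule stated in the note above, not any validation that bootc-image-builder performs itself.

```shell
#!/bin/sh
# Illustrative sketch only: aws_flags_consistent is a hypothetical helper.
# It succeeds when all three values are set or when none of them is set.
aws_flags_consistent() {
    ami_name=$1    # value intended for --aws-ami-name
    bucket=$2      # value intended for --aws-bucket
    region=$3      # value intended for --aws-region
    set_count=0
    for value in "$ami_name" "$bucket" "$region"; do
        if [ -n "$value" ]; then
            set_count=$((set_count + 1))
        fi
    done
    [ "$set_count" -eq 0 ] || [ "$set_count" -eq 3 ]
}

if aws_flags_consistent "rhel-bootc-x86" "rhel-bootc-bucket" "us-east-1"; then
    echo "AWS flags are consistent"
else
    echo "Specify all three AWS flags or none of them" >&2
fi
```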

Next steps

- You can deploy your image. See Deploying a container image to AWS with an AMI disk image.

- You can make updates to the image and push the changes to a registry. See Managing RHEL bootc images.

- If you have any issues configuring the requirements for your AWS image, see the following documentation:

  - AWS IAM account manager

  - Using high-level (s3) commands with the AWS CLI

  - S3 buckets

  - Regions and Zones

  - Launching a customized RHEL image on AWS

- For more details on users, groups, SSH keys, and secrets, see Users and groups configuration - Injecting users and SSH keys by using cloud-init.

5.8. Creating raw disk images by using bootc-image-builder

You can convert a bootc image to a raw image with an MBR or GPT partition table by using bootc-image-builder.

The RHEL base image does not include a default user, so optionally, you can inject a user configuration by using the --config option to run the bootc-image-builder container. Alternatively, you can configure the base image with cloud-init to inject users and SSH keys on first boot. See Users and groups configuration - Injecting users and SSH keys by using cloud-init.

Prerequisites

- You have Podman installed on your host machine.

- You have root access to run the bootc-image-builder tool, and run the containers in --privileged mode, to build the images.

- You have pulled your target container image in the container storage.

Procedure

Optional: Create a config.toml to configure user access, for example:

    [[customizations.user]]
    name = "user"
    password = "pass"
    key = "ssh-rsa AAA ... user@email.com"
    groups = ["wheel"]

Before running the container, initialize the output folder. Use the -p argument to ensure that the command does not fail if the directory already exists:

    $ mkdir -p ./output

Run bootc-image-builder. If you want to use user access configuration, pass the config.toml as an argument. The image must be accessible from a registry, such as registry.redhat.io/rhel9/bootc-image-builder:latest.

    $ sudo podman run \
        --rm \
        --privileged \
        --pull=newer \
        --security-opt label=type:unconfined_t \
        -v /var/lib/containers/storage:/var/lib/containers/storage \
        -v ./config.toml:/config.toml \
        -v ./output:/output \
        registry.redhat.io/rhel9/bootc-image-builder:latest \
        --type raw \
        --config /config.toml \
        quay.io/<namespace>/<image>:<tag>

You can find the .raw image in the output folder.

5.9. Creating ISO images by using bootc-image-builder

You can use bootc-image-builder to create an ISO image from which you can perform an offline deployment of a bootable container.

Prerequisites

- You have Podman installed on your host machine.

- Your host system is subscribed or you have injected repository configuration by using bind mounts to ensure the image build process can fetch RPMs.

- You have root access to run the bootc-image-builder tool, and run the containers in --privileged mode, to build the images.

Procedure

Optional: Create a config.toml file that overrides the default embedded Kickstart, which performs an automatic installation.

    [customizations.installer.kickstart]
    contents = """
    text --non-interactive
    zerombr
    clearpart --all --initlabel --disklabel=gpt
    autopart --noswap --type=lvm
    network --bootproto=dhcp --device=link --activate --onboot=on
    """

Before running the container, initialize the output folder. Use the -p argument to ensure that the command does not fail if the directory already exists:

    $ mkdir -p ./output

Run bootc-image-builder to create a public ISO image. If you do not want to add any configuration, omit the -v $(pwd)/config.toml:/config.toml and --config /config.toml arguments. The image must be accessible from a registry, such as registry.redhat.io/rhel9/bootc-image-builder:latest.

    $ sudo podman run \
        --rm \
        --privileged \
        --pull=newer \
        --security-opt label=type:unconfined_t \
        -v /var/lib/containers/storage:/var/lib/containers/storage \
        -v $(pwd)/config.toml:/config.toml \
        -v $(pwd)/output:/output \
        registry.redhat.io/rhel9/bootc-image-builder:latest \
        --type iso \
        --config /config.toml \
        quay.io/<namespace>/<image>:<tag>

You can find the .iso image in the output folder.

Next steps

- You can use the ISO image for unattended installation methods, such as USB sticks or Install-on-boot. The installable boot ISO contains a configured Kickstart file. See Deploying a container image by using Anaconda and Kickstart.

Warning: Booting the ISO on a machine with an existing operating system or data can be destructive, because the Kickstart is configured to automatically reformat the first disk on the system.

- You can make updates to the image and push the changes to a registry. See Managing RHEL bootable images.

5.10. Using bootc-image-builder to build ISO images with a Kickstart file

You can use a Kickstart file to configure various parts of the RHEL installation process, such as setting up users, customizing partitioning, and adding an SSH key. You can include the Kickstart file in an ISO build to configure any part of the installation process, except the deployment of the base image. For ISOs with bootc container base images, you can use a Kickstart file to configure all installation settings except the ostreecontainer command.

For example, you can use a Kickstart to perform a partial installation or a full installation, or to omit the user creation. Use bootc-image-builder to build an ISO image that contains the custom Kickstart to configure your installation process.

Prerequisites

- You have Podman installed on your host machine.

- You have root access to run the bootc-image-builder tool, and run the containers in --privileged mode, to build the images.

Procedure

Create your Kickstart file. The following Kickstart file is an example of a fully unattended Kickstart file configuration that contains user creation, and partition instructions.

    [customizations.installer.kickstart]
    contents = """
    lang en_GB.UTF-8
    keyboard uk
    timezone CET

    user --name <user> --password <password> --plaintext --groups <groups>
    sshkey --username <user> ssh-<type> <public key>
    rootpw --lock

    zerombr
    clearpart --all --initlabel
    autopart --type=plain
    reboot --eject
    """

Save the Kickstart configuration in the toml format to inject the Kickstart content. For example, config.toml.

Run bootc-image-builder, and include the Kickstart file configuration that you want to add to the ISO build. The bootc-image-builder automatically adds the ostreecontainer command that installs the container image.

    $ sudo podman run \
        --rm \
        -it \
        --privileged \
        --pull=newer \
        --security-opt label=type:unconfined_t \
        -v /var/lib/containers/storage:/var/lib/containers/storage \
        -v ./config.toml:/config.toml \
        -v ./output:/output \
        registry.redhat.io/rhel9/bootc-image-builder:latest \
        --type iso \
        --config /config.toml \
        quay.io/<namespace>/<image>:<tag>

You can find the .iso image in the output folder.

5.11. Verification and troubleshooting

- If you have any issues configuring the requirements for your AWS image, see the following documentation

- For more details on users, groups, SSH keys, and secrets, see

Chapter 6. Customizing disk images of RHEL image mode with advanced partitioning

You can use the following methods to customize advanced partitioning, which gives you granular control over disk and filesystem layouts when building and deploying RHEL systems as container images:

- Disk customizations

- Filesystem customizations

However, the two customizations are incompatible with each other. You cannot use both customizations in the same blueprint, which is a text file in TOML format that defines the specifications and configurations for a custom RHEL system image.

6.1. Understanding partitions

The following are the general principles about partitions:

- The full disk image size is always larger than the size of the sum of the partitions, due to requirements for headers and metadata. Consequently, all sizes are treated as minimum requirements, whether for specific filesystems, partitions, logical volumes, or the image itself.

- When the partition is automatically added, the partition that contains the root filesystem is always the last in the partition table layout. This is valid for a plain formatted partition, an LVM Volume Group, or a Btrfs partition. For disk customizations, the order that you defined is respected.

- For the raw partitioning, that is, with no LVM, the partition containing the root filesystem is grown to fill any leftover space on the partition table. Logical Volumes are not grown to fill the space in the Volume Group because they are simple to grow on a live system. Some directories have hard-coded minimum sizes which cannot be overridden. These are 1 GiB for / and 2 GiB for /usr. As a result, if /usr is not on a separate partition, the root filesystem size is at least 3 GiB.
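The hard-coded minimums can be expressed as a small calculation that raises a requested size to the applicable floor. The following is a sketch under stated assumptions: the helper name effective_min_mib and its yes/no argument are hypothetical, sizes are whole MiB, and only the 1 GiB and 2 GiB minimums named above are encoded.

```shell
#!/bin/sh
# Illustrative sketch only: effective_min_mib is a hypothetical helper.
# It applies the hard-coded minimums for / (1 GiB) and /usr (2 GiB).
effective_min_mib() {
    mountpoint=$1
    requested_mib=$2
    usr_is_separate=$3    # "yes" when /usr has its own partition
    case "$mountpoint" in
        /)
            # Without a separate /usr, the root filesystem also holds /usr.
            if [ "$usr_is_separate" = "yes" ]; then
                floor_mib=1024
            else
                floor_mib=3072
            fi
            ;;
        /usr) floor_mib=2048 ;;
        *)    floor_mib=0 ;;
    esac
    if [ "$requested_mib" -gt "$floor_mib" ]; then
        echo "$requested_mib"
    else
        echo "$floor_mib"
    fi
}

# A 2 GiB root request without a separate /usr is raised to 3 GiB.
effective_min_mib / 2048 no
```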

6.2. Benefits of disk customizations in RHEL image mode

Disk customizations provide a more powerful interface to control the entire partitioning layout of the image.

- Allowed mountpoints

When using bootc-image-builder, only the following directories allow customization:

  - The / (root) directory.

  - Custom directories under /var, but not /var itself.

- Not allowed mountpoints

Under /var, the following mount points do not allow customization:

  - /var/home

  - /var/lock

  - /var/mail

  - /var/mnt

  - /var/roothome

  - /var/run

  - /var/srv

  - /var/usrlocal
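The two lists above can be checked before a build with a short shell function. This is a minimal sketch: the function name is_allowed_mountpoint is hypothetical, it encodes only the rules listed in this section, and it matches the disallowed paths exactly. bootc-image-builder performs its own validation, which remains authoritative.

```shell
#!/bin/sh
# Illustrative sketch only: is_allowed_mountpoint is a hypothetical helper
# that encodes the allowed and not allowed mount points listed above.
is_allowed_mountpoint() {
    case "$1" in
        /)
            return 0 ;;
        /var/home|/var/lock|/var/mail|/var/mnt|/var/roothome|/var/run|/var/srv|/var/usrlocal)
            return 1 ;;
        /var/?*)
            # Any other custom directory under /var.
            return 0 ;;
        *)
            # Everything else, including /var itself.
            return 1 ;;
    esac
}

for mp in / /var/data /var /var/home; do
    if is_allowed_mountpoint "$mp"; then
        echo "$mp: allowed"
    else
        echo "$mp: not allowed"
    fi
done
```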

6.3. Disk customizations in blueprints

You can define the partition table almost entirely in a blueprint by using disk customizations. Disk customizations have the following structure:

Partitions: The top level is a list of partitions.

- type: Each partition has a type, which can be either plain or lvm. If the type is not set, it defaults to plain. The remaining required and optional properties of the partition depend on the type.

  - plain: A plain partition is a partition with a filesystem. It supports the following properties:

    - fs_type: The filesystem type, which should be one of xfs, ext4, vfat, or swap. Setting it to swap will create a swap partition. The mount point for a swap partition must be empty.

    - minsize: The minimum size of the partition, as an integer (in bytes) or a string with a data unit (for example 3 GiB). The final size of the partition in the image might be larger for specific mount points. See the Understanding partitions section.

    - mountpoint: The mount point for the filesystem. For swap partitions, this must be empty.

    - label: The label for the filesystem (optional).

  - lvm: An lvm partition is a partition with an LVM volume group. Only volume groups with a single physical volume (PV) are supported. It supports the following properties:

    - name: The name of the volume group (optional; if unset, the system generates a name automatically).

    - minsize: The minimum size of the volume group, as an integer (in bytes) or a string with a data unit (for example 3 GiB). The final size of the partition and volume group in the image might be larger if the value is smaller than the sum of logical volumes it contains.

    - logical_volumes: One or more logical volumes for the volume group. Each logical volume supports the following properties:

      - name: The name of the logical volume (optional; if unset, a name will be generated automatically based on the mount point).

      - minsize: The minimum size of the logical volume, as an integer (in bytes) or a string with a data unit (for example 3 GiB). The final size of the logical volume in the image might be larger for specific mount points.

      - label: The label for the filesystem (optional).

      - fs_type: The filesystem type, which should be one of xfs, ext4, vfat, or swap. Setting it to swap will create a swap logical volume. The mount point for a swap logical volume must be empty.

      - mountpoint: The mount point for the logical volume's filesystem. For swap logical volumes, this must be empty.

- Order:

The order of each element in a list is respected when creating the partition table. The partitions are created in the order they are defined, regardless of their type.

- Incomplete partition tables:

Incomplete partitioning descriptions are valid. Partitions and LVM logical volumes are added automatically to create a valid partition table. The following rules are applied:

  - A root filesystem is added if one is not defined. This is identified by the mount point /. If an LVM volume group is defined, the root filesystem is created as a logical volume; otherwise, it is created as a plain partition with a filesystem. The type of the filesystem, for plain and LVM, depends on the distribution (xfs for RHEL and CentOS, ext4 for Fedora). See the Understanding partitions section for information about the sizing and order of the root partition.

  - A boot partition is created if needed and if one is not defined. This is identified by the mount point /boot. A boot partition is needed when the root partition (mount point /) is on an LVM logical volume. It is created as the first partition after the ESP (see next item).

  - An EFI system partition (ESP) is created if needed. This is identified by the mount point /boot/efi. An ESP is needed when the image is configured to boot with UEFI. This is defined by the image definition and depends on the image type, the distribution, and the architecture. The type of the filesystem is always vfat. By default, the ESP is 200 MiB and is the first partition after the BIOS boot (see next item).

  - A 1 MiB unformatted BIOS boot partition is created at the start of the partition table if the image is configured to boot with BIOS. This is defined by the image definition and depends on the image type, the distribution, and the architecture. Both a BIOS boot partition and an ESP are created for images that are configured for hybrid boot.

- Combining partition types:

You can define multiple partitions. The following combination of partition types are valid:

  - plain and lvm: Plain partitions can be created alongside an LVM volume group. However, only one LVM volume group can be defined.

- Examples: Blueprint to define two partitions

The following blueprint defines two partitions. The first is a 50 GiB partition with an ext4 filesystem that will be mounted at /data. The second is an LVM volume group with three logical volumes, one for root /, one for /var/home directories, and a swap space in that order. The LVM volume group will have 15 GiB of non-allocated space.

    [[customizations.disk.partitions]]
    type = "plain"
    label = "data"
    mountpoint = "/data"
    fs_type = "ext4"
    minsize = "50 GiB"

    [[customizations.disk.partitions]]
    type = "lvm"
    name = "mainvg"
    minsize = "20 GiB"

    [[customizations.disk.partitions.logical_volumes]]
    name = "rootlv"
    mountpoint = "/"
    label = "root"
    fs_type = "ext4"
    minsize = "2 GiB"

    [[customizations.disk.partitions.logical_volumes]]
    name = "homelv"
    mountpoint = "/var/home"
    label = "home"
    fs_type = "ext4"
    minsize = "2 GiB"

    [[customizations.disk.partitions.logical_volumes]]
    name = "swaplv"
    fs_type = "swap"
    minsize = "1 GiB"

6.4. Benefits of the filesystem customization in RHEL image mode

With filesystem customizations, the final partition table of an image that you build with image builder is determined by a combination of the following factors:

- The base partition table for a given image type.

- The relevant blueprint customizations:

  - Partitioning mode.

  - Filesystem customizations.

- The image size parameter of the build request:

  - On the command line, this is the --size option of the composer-cli compose start command.

The following describes how these factors affect the final layout of the partition table.

- Modifying partition tables

You can modify the partition table by taking the following aspects in consideration:

- Partitioning modes

The partitioning mode controls how the partition table is modified from the image type’s default layout.

  - The raw mode does not convert any partition to LVM.

  - The lvm mode always converts the partition that contains the / root mountpoint to an LVM Volume Group and creates a root Logical Volume. Except for /boot, any extra mountpoint is added to the Volume Group as a new Logical Volume.

  - The auto-lvm mode is the default mode and converts a raw partition table to an LVM-based one if and only if new mountpoints are defined in the filesystems customization. See the Mountpoints entry for more details.

- Mountpoints

You can define new filesystems and minimum partition sizes by using the filesystems customization in the blueprint. By default, if new mountpoints are created, a partition table is automatically converted to LVM. See the Partitioning modes entry for more details.

- Image size

The minimum size of the partition table is the size of the disk image. The final size of the image is either the value of the size parameter or the sum of all partitions and their associated metadata, whichever is larger.
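The image size rule (the larger of the size parameter and the sum of all partitions plus metadata) can be sketched as follows. The helper name final_image_size_mib is hypothetical, sizes are whole MiB, and the metadata overhead is passed in as an assumed value because the real overhead depends on the image type.

```shell
#!/bin/sh
# Illustrative sketch only: final_image_size_mib is a hypothetical helper.
# Usage: final_image_size_mib SIZE_PARAM OVERHEAD PARTITION_SIZE...
final_image_size_mib() {
    size_param_mib=$1    # image size requested in the build request
    overhead_mib=$2      # assumed space for headers and metadata
    shift 2
    total_mib=$overhead_mib
    for part_mib in "$@"; do
        total_mib=$((total_mib + part_mib))
    done
    # The final size is whichever value is larger.
    if [ "$size_param_mib" -gt "$total_mib" ]; then
        echo "$size_param_mib"
    else
        echo "$total_mib"
    fi
}

# A 10 GiB size parameter is larger than 3 GiB + 2 GiB + 10 MiB of overhead.
final_image_size_mib 10240 10 3072 2048
# A 4 GiB size parameter is too small, so the partitions determine the size.
final_image_size_mib 4096 10 3072 2048
```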

6.5. Creating images with specific sizes in filesystem customization

When you create images with specific sizes in RHEL Image Mode, especially when using bootc and bootc-image-builder, you define the desired disk size within your image build process. While exact sizes can be challenging due to overheads, you can specify minimum requirements.

Prerequisites

- You must specify the exact image size in the build request.

- You must specify mountpoints as customizations whose sizes sum to less than the total image size. This is required because the partition table, partitions, and other entities often require extra space for metadata and headers. Thus, the space to fit all mountpoints is always larger than the sum of the partition sizes. However, the exact size of the extra space varies based on many factors, such as image type.

Procedure

The following are steps to create a disk image of a very specific size in the TOML file:

Define the image_size parameter within the [disk] section of your config.toml file:

    [disk]
    image_size = 10737418240 # Example: 10 GiB in bytes

Add any extra mountpoints with their required minimum sizes. Ensure that the sum of the sizes is smaller than the image size by at least 3.01 GiB if no /usr mountpoint exists, or at least 1.01 GiB if one does. The extra 0.01 GiB is more than enough for the headers and metadata, for which extra space might be reserved. Do not specify a size for the / mountpoint.

With this, you create a disk with a partition table of the desired size with each partition sized to fit the desired mountpoints. The root partition, or root LVM Logical Volume, is at least 3 GiB, or 1 GiB if /usr is specified. See Understanding partitions for more details.

- If the partition table does not have any LVM Volume Groups (VG), the root partition will be grown to fill the remaining space.

- If the partition table contains an LVM Volume Group (VG), the VG will have unallocated extents that can be used to grow any of the Logical Volumes.
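The size budget from this procedure can be checked before you start a build. The following sketch assumes whole MiB values and rounds the 3.01 GiB and 1.01 GiB reserves up to 3083 MiB and 1035 MiB; the function name fits_image_size is hypothetical and not part of bootc-image-builder.

```shell
#!/bin/sh
# Illustrative sketch only: fits_image_size is a hypothetical helper.
# Usage: fits_image_size IMAGE_SIZE HAS_USR MOUNTPOINT_SIZE...
fits_image_size() {
    image_mib=$1
    has_usr=$2    # "yes" when a /usr mountpoint is defined
    shift 2
    if [ "$has_usr" = "yes" ]; then
        reserve_mib=1035    # 1.01 GiB, rounded up to whole MiB
    else
        reserve_mib=3083    # 3.01 GiB, rounded up to whole MiB
    fi
    sum_mib=0
    for mp_mib in "$@"; do
        sum_mib=$((sum_mib + mp_mib))
    done
    # Succeed only if the mountpoints plus the reserve fit in the image.
    [ $((sum_mib + reserve_mib)) -le "$image_mib" ]
}

# A 10 GiB image with 2 GiB and 4 GiB extra mountpoints and no /usr mountpoint.
if fits_image_size 10240 no 2048 4096; then
    echo "mountpoints fit"
else
    echo "reduce the mountpoint sizes or increase the image size"
fi
```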

6.6. Using bootc-image-builder to add advanced partitioning to disk images of image mode

You can customize your bootc-image-builder blueprint to implement advanced partitioning for osbuild-composer. The following are possible custom mountpoints:

- You can create LVM-based images with all partitions on LVs, except /boot and /boot/efi.

- You can create an LV-based swap.

- You can give VGs and LVs custom names.

Include partitioning configurations in the base image that bootc-image-builder will read to create the partitioning layout, making the container itself the source of truth for the partition table. Mountpoints for partitions and logical volumes should be created in the base container image used to build the disk. This is particularly important for top-level mountpoints, such as the /app mountpoint. The bootc-image-builder will validate the configuration against the bootc container before building, in order to avoid creating unbootable images.

Prerequisites

- You have Podman installed on your host machine.

- You have root access to run the bootc-image-builder tool, and run the containers in --privileged mode, to build the images.

- QEMU is installed.

Procedure

Create a config.toml file with the following content:

    # Add a user to the image.
    [[customizations.user]]
    name = "user1"
    password = "user2"
    key = "ssh-rsa AAA ... user@email.com"
    groups = ["wheel"]

    # Set a size for the root partition:
    [[customizations.partitioning.plain.filesystems]]
    mountpoint = "/"
    type = "ext4"
    minsize = "3 GiB"

    # Add an extra data partition
    [[customizations.partitioning.plain.filesystems]]
    mountpoint = "/var/data"
    type = "xfs"
    minsize = "2 GiB"
    label = "data"

    # Add an app partition with a top-level mountpoint.
    # Requires derived container.
    [[customizations.partitioning.plain.filesystems]]
    mountpoint = "/app"
    type = "xfs"
    minsize = "1 GiB"
    label = "app"

    # Add the LVM configuration:
    # Define the LVM Volume Group name and size
    [[customizations.partitioning.lvm.volume_groups]]
    name = "rhelvg"
    minsize = 10737418240 # 10 GiB

    # Add a data Logical Volume to the group
    [[customizations.partitioning.lvm.volume_groups.logical_volumes]]
    name = "datalv"
    mountpoint = "/var/data"
    label = "data"
    minsize = "1 GiB"
    type = "xfs"

    # The root Logical Volume is created automatically if not defined, but setting
    # it lets us set the name, label, and size explicitly
    [[customizations.partitioning.lvm.volume_groups.logical_volumes]]
    name = "rootlv"
    mountpoint = "/"
    label = "system"
    minsize = "2 GiB"
    type = "ext4"

    # Add an app Logical Volume with a top-level mountpoint.
    # Requires derived container.
    [[customizations.partitioning.lvm.volume_groups.logical_volumes]]
    mountpoint = "/app"
    type = "xfs"
    minsize = "1 GiB"
    label = "app"
    name = "applv"

Run bootc-image-builder. Optionally, if you want to use user access configuration, pass the config.toml file as an argument. The following is an example of creating a public QCOW2 image:

    sudo podman run \
        --rm \
        -it \
        --privileged \
        --pull=newer \
        --security-opt label=type:unconfined_t \
        -v ./config.toml:/config.toml:ro \
        -v ./output:/output \
        registry.redhat.io/rhel9/bootc-image-builder:latest \
        --type qcow2 \
        --config /config.toml \
        quay.io/<namespace>/<image>:<tag>

You can find the .qcow2 image in the output folder.
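Before you boot the result, you can confirm that the build actually produced a disk image. The following is a minimal sketch: the helper name find_qcow2 is hypothetical, and it assumes the output/qcow2/disk.qcow2 layout that the verification command in this procedure uses.

```shell
#!/usr/bin/env bash
# Hypothetical helper: confirm the build produced a QCOW2 disk and print its path.
# Assumes the <output>/qcow2/disk.qcow2 layout used in the verification step.
find_qcow2() {
    local output_dir=${1:-./output}
    local disk="${output_dir}/qcow2/disk.qcow2"
    if [ -f "$disk" ]; then
        echo "$disk"
    else
        echo "no QCOW2 image found at ${disk}" >&2
        return 1
    fi
}
```

If qemu-img is installed, qemu-img info "$(find_qcow2 ./output)" then reports the image format and virtual size.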

Verification

Run the resulting QCOW2 file on a virtual machine.

    qemu-system-x86_64 \
        -enable-kvm \
        -cpu host \
        -m 8G \
        -bios /usr/share/edk2/ovmf/OVMF_CODE.fd \
        -snapshot \
        -drive file="${path}/output/qcow2/disk.qcow2"

Use SSH to access the system that runs in the virtual machine.

    # ssh -i /<path_to_private_ssh-key> <user1>@<ip-address>

Next steps

- You can deploy your image. See Deploying a container image using KVM with a QCOW2 disk image.

- You can make updates to the image and push the changes to a registry. See Managing RHEL bootc images.

6.7. Building disk images of image-mode RHEL with advanced partitioning

You can create image-mode disk images with advanced partitioning by using bootc-image-builder. The disk images that you create for RHEL image mode can include custom mount points, custom mount options, LVM-based partitions, and LVM-based swap volumes.

For example, you can change the size of the / and /boot file systems by using a config.toml file. When installing the RHEL image mode on bare metal, you can benefit from all partitioning features available in the Anaconda installer.

Prerequisites

- You have Podman installed on your host machine.

- You have virt-install installed on your host machine.

- You have root access to run the bootc-image-builder tool, and run the containers in --privileged mode, to build the images.

Procedure

Create a config.toml file to configure custom mount options, for example:

    [[customizations.filesystem]]
    mountpoint = "/"
    minsize = "10 GiB"

    [[customizations.filesystem]]
    mountpoint = "/var/data"
    minsize = "20 GiB"

Run bootc-image-builder, passing the config.toml file as an argument.

Note: If you do not have the container storage mount and --local image options, your image must be public.

The following is an example of creating a public image:

    $ sudo podman run \
        --rm \
        -it \
        --privileged \
        --pull=newer \
        --security-opt label=type:unconfined_t \
        -v ./config.toml:/config.toml \
        -v ./output:/output \
        registry.redhat.io/rhel9/bootc-image-builder:latest \
        --type <image_type> \
        --config /config.toml \
        quay.io/<namespace>/<image>:<tag>
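If you maintain several mountpoints, you can generate the [[customizations.filesystem]] entries instead of writing them by hand. The following is a minimal sketch under that assumption. The helper name emit_filesystem_entries and the mountpoint=size argument format are hypothetical, and the output uses only the two keys shown in the example above.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print one [[customizations.filesystem]] entry per argument.
# Each argument has the form "<mountpoint>=<minsize>", for example "/var/data=20 GiB".
emit_filesystem_entries() {
    local pair
    for pair in "$@"; do
        # Text before the first "=" is the mountpoint, text after it is the size.
        printf '[[customizations.filesystem]]\nmountpoint = "%s"\nminsize = "%s"\n\n' \
            "${pair%%=*}" "${pair#*=}"
    done
}
```

For example, emit_filesystem_entries "/=10 GiB" "/var/data=20 GiB" > config.toml writes a file equivalent to the configuration shown in this procedure.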

Chapter 7. Best practices for running containers by using local sources

You can access content hosted in an internal registry that requires a custom Transport Layer Security (TLS) root certificate, when running RHEL bootc images.

To install content to a container by using only local resources, you can use one of the following options:

- Bind mounts: Override the container’s store with the host’s.

- Derived image: Create a new container image with your custom certificates by building it using a Containerfile.

You can use these techniques to run a bootc-image-builder container or a bootc container when appropriate.

7.1. Importing custom certificate to a container by using bind mounts

Use bind mounts to override the container’s store with the host’s.

Procedure

Run the RHEL bootc image and use a bind mount, for example -v /etc/pki:/etc/pki, to override the container’s store with the host’s:

    # podman run \
        --rm \
        -it \
        --privileged \
        --pull=newer \
        --security-opt label=type:unconfined_t \
        -v $(pwd)/output:/output \
        -v /etc/pki:/etc/pki \
        localhost/<image> \
        --type iso \
        --config /config.toml \
        quay.io/<namespace>/<image>:<tag>

Verification

- The disk image build process should now be able to access internal certificates.

7.2. Importing custom certificates to a container by using a Containerfile

Create a new container image with your custom certificates by building it using a Containerfile.

Procedure

Create a Containerfile:

    FROM <internal_repository>/<image>
    RUN mkdir -p /etc/pki/ca-trust/extracted/pem/
    COPY tls-ca-bundle.pem /etc/pki/ca-trust/extracted/pem/
    RUN rm -rf /etc/yum.repos.d/*
    COPY echo-rhel9_4.repo /etc/yum.repos.d/

Build the custom image:

    # podman build -t <your_image> .

Run the <your_image>:

    # podman run -it --rm <your_image>

Verification

List the certificates inside the container:

    # ls -l /etc/pki/ca-trust/extracted/pem/
    tls-ca-bundle.pem
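Listing the directory shows that the bundle file exists, but not that it contains certificates. As an additional check, the following minimal sketch counts the PEM certificates in a bundle. The helper name count_certs is hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical helper: count the PEM certificates in a CA bundle file.
count_certs() {
    local bundle=$1
    # Every PEM-encoded certificate starts with a BEGIN CERTIFICATE line.
    grep -c -- '-----BEGIN CERTIFICATE-----' "$bundle"
}
```

Inside the container, count_certs /etc/pki/ca-trust/extracted/pem/tls-ca-bundle.pem prints the number of certificates in the copied bundle. A result of 0 suggests that the wrong file was copied.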

Chapter 8. Deploying RHEL bootc images

You can deploy RHEL bootc images by various methods, depending on your target environment, installation method, and automation requirements.

8.1. Available methods for deploying RHEL bootc images

You can deploy the rhel-bootc container image by using the following mechanisms:

- Anaconda

- bootc-image-builder

- bootc install

The following bootc image types are available:

Disk images that you generated by using bootc-image-builder, such as:

- QCOW2 (QEMU copy-on-write, virtual disk)

- Raw (Mac Format)

- AMI (Amazon Cloud)

- ISO: Unattended installation method, by using a USB stick or install-on-boot.

After you have created a layered image that you can deploy, there are several ways that the image can be installed to a host:

You can use RHEL installer and Kickstart to install the layered image to a bare metal system, by using the following mechanisms:

- Deploy by using USB

- PXE

- You can also use bootc-image-builder to convert the container image to a disk image and deploy it to a bare metal system or to a cloud environment.

The installation happens only one time. After you deploy your image, future updates apply directly from the container registry as they are published.
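On a deployed system, you can query the registry for a newer image without applying it. The following is a minimal sketch that assumes you run it as root on a host that was installed from a bootc image. The wrapper name check_for_update is hypothetical, while bootc status and bootc upgrade --check are existing bootc subcommands.

```shell
#!/usr/bin/env bash
# Hypothetical wrapper: show the booted image and check the registry for an update.
# Assumes it runs as root on a system deployed from a bootc image.
check_for_update() {
    if ! command -v bootc >/dev/null 2>&1; then
        echo "bootc not found; run this on a deployed image mode system" >&2
        return 127
    fi
    # Print the currently booted image, then query the registry without applying anything.
    bootc status
    bootc upgrade --check
}
```

To download and stage the update, run bootc upgrade without the --check option.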

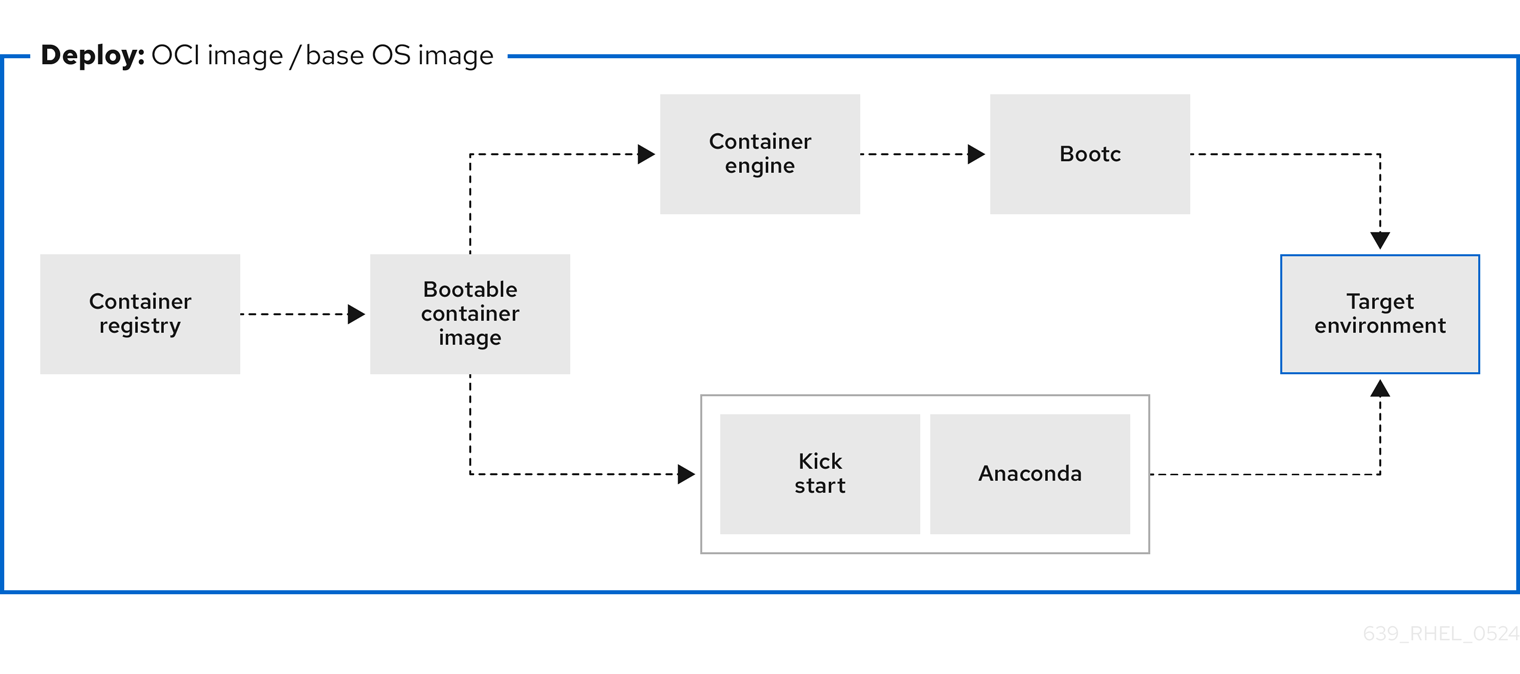

Figure 8.1. Deploying a bootc image by using a basic build installer bootc install, or deploying a container image by using Anaconda and Kickstart

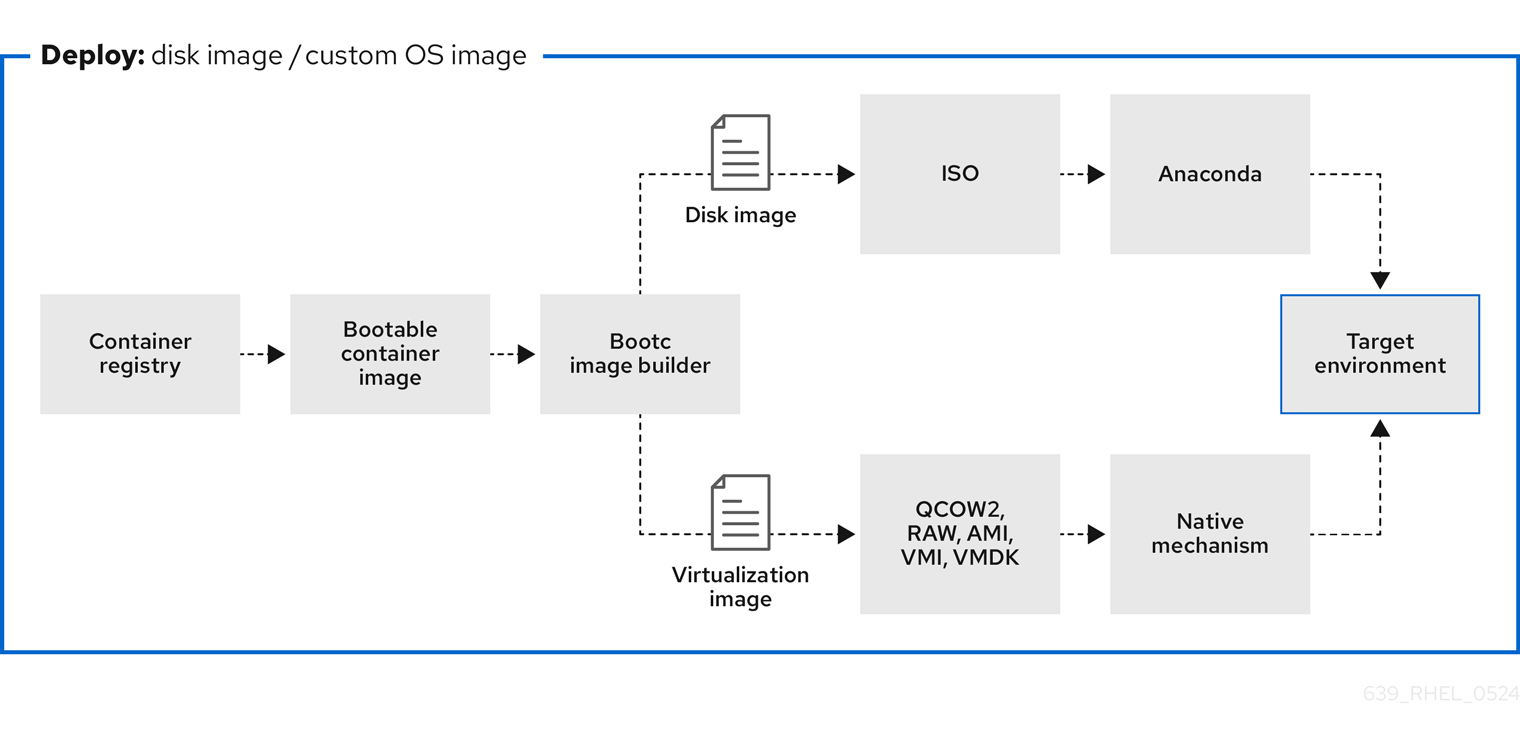

Figure 8.2. Using bootc-image-builder to create disk images from bootc images and deploying disk images in different environments, such as the edge, servers, and clouds by using Anaconda, bootc-image-builder or bootc install

8.2. Deploying a container image by using KVM with a QCOW2 disk image

After creating a QCOW2 image from a RHEL bootc image by using the bootc-image-builder tool, you can use virtualization software to boot it.

Prerequisites

- You created a container image.

- You pushed the container image to an accessible repository.

- You created a QCOW2 image by using bootc-image-builder. For instructions, see Creating QCOW2 images by using bootc-image-builder.

Procedure

By using libvirt, create a virtual machine (VM) with the disk image that you previously created from the container image. For more details, see Creating virtual machines by using the command line.

The following example uses virt-install to create a VM. Replace <qcow2/disk.qcow2> with the path to your QCOW2 file:

    $ sudo virt-install \
        --name bootc \
        --memory 4096 \
        --vcpus 2 \
        --disk <qcow2/disk.qcow2> \
        --import

Verification

- Connect to the VM in which you are running the container image. See Configuring bridges on a network bond to connect virtual machines with the network for more details.
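To connect to the VM, you first need its IP address. The following is a minimal sketch that assumes the VM is named bootc, as in the virt-install example, and is attached to a libvirt-managed network that hands out DHCP leases. The helper name first_ipv4 is hypothetical, and it parses the table that virsh domifaddr prints.

```shell
#!/usr/bin/env bash
# Hypothetical helper: extract the first IPv4 address from `virsh domifaddr` output on stdin.
first_ipv4() {
    # Address rows have the protocol in column 3 and "<ip>/<prefix>" in column 4.
    awk '$3 == "ipv4" { sub(/\/.*/, "", $4); print $4; exit }'
}
```

For example, sudo virsh domifaddr bootc | first_ipv4 prints the address that you can then pass to ssh.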

Next steps

- You can make updates to the image and push the changes to a registry. See Managing RHEL bootc images.

8.3. Deploying a container image by using AWS with an AMI disk image

After using the bootc-image-builder tool to create an AMI from a bootc image, and uploading it to an AWS S3 bucket, you can deploy a container image to AWS by using the AMI disk image.

Prerequisites

- You created an Amazon Machine Image (AMI) from a bootc image. See Creating AMI images by using bootc-image-builder and uploading it to AWS.

- cloud-init is available in the Containerfile that you previously created so that you can create a layered image for your use case.

Procedure

- In a browser, access Service→EC2 and log in.

- On the AWS console dashboard menu, choose the correct region. The image must have the Available status, to indicate that it was correctly uploaded.

- On the AWS dashboard, select your image and click .

- In the new window that opens, choose an instance type according to the resources you need to start your image. Click .

- Review your instance details. You can edit each section if you need to make any changes. Click .

- Before you start the instance, select a public key to access it. You can either use the key pair you already have or you can create a new key pair.

Click to start your instance. You can check the status of the instance, which displays as Initializing.

After the instance status is Running, the Connect button becomes available.

- Click Connect. A window appears with instructions on how to connect by using SSH.

Run the following command to set the permissions of your private key file so that only you can read it. See Connect to your Linux instance.

    $ chmod 400 <your-instance-name.pem>

Connect to your instance by using its Public DNS:

    $ ssh -i <your-instance-name.pem> ec2-user@<your-instance-IP-address>

Your instance continues to run unless you stop it.
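The chmod step matters because ssh refuses private keys that other users can read. The following minimal sketch checks the key mode before you connect. The helper name key_is_private is hypothetical, and it assumes GNU stat, which RHEL provides.

```shell
#!/usr/bin/env bash
# Hypothetical helper: succeed only if the key file is accessible by its owner alone.
key_is_private() {
    # GNU stat prints the octal permission bits, for example 400 or 644.
    case "$(stat -c '%a' "$1")" in
        400|600) return 0 ;;
        *) return 1 ;;
    esac
}
```

For example, key_is_private <your-instance-name.pem> || chmod 400 <your-instance-name.pem> tightens the permissions only when needed.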

Verification

After launching your image, you can:

- Try to connect to http://<your_instance_ip_address> in a browser.

- Check if you are able to perform any action while connected to your instance by using SSH.

Next steps

- After you deploy your image, you can make updates to the image and push the changes to a registry. See Managing RHEL bootc images.

8.4. Deploying a container image from the network by using Anaconda and Kickstart

You can deploy an ISO image by using Anaconda and Kickstart to install your container image. The installable boot ISO already contains the ostreecontainer Kickstart file configured that you can use to provision your custom container image.

Prerequisites

- You have downloaded the 9.4 Boot ISO for your architecture from Red Hat. See Downloading RH boot images.

Procedure

Create an